id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |
|---|---|---|---|---|---|---|---|---|

1,700,430 | open re: playtest! playtest! playtest! | Fellow list member Kamran Ayub (who maintains and works on Excalibur.js and runs Keep Track of My... | 0 | 2023-12-24T16:20:52 | https://onwriting.games/daily/20231124-1046-open-re-playtest-playtest-playtest/ | gamedev, writing | ---

title: open re: playtest! playtest! playtest!

published: true

date: 2023-11-24 00:00:00 UTC

tags: [ 'gamedev', 'writing' ]

canonical_url: https://onwriting.games/daily/20231124-1046-open-re-playtest-playtest-playtest/

---

Fellow list member Kamran Ayub (who maintains and works on [Excalibur.js](https://excaliburjs.com/) and runs [Keep Track of My Games](https://keeptrackofmygames.com/)) shared an interesting thought about yesterday's email:

> One thing it seems like narrative games would benefit from playtesting is for inclusivity. Your game could be played from all types of people from different cultures different to yours, so you may unintentionally introduce language or elements that don't land how you intended.

This.

**So. Much. This.**

Inclusion should be a **priority for us**.

It is practically impossible to have a complete view of where we are being non-inclusive.

At least not without **many years** of experience in the subject.

Clearly, playtesting is a good tool to **identify inclusivity issues**.

I'm not going to delve into the ramble of cultural Marxism. What matters to me is making the world accessible and inclusive, which is why I was excited about the response.

Although Kamran is focusing on content issues (e.g., whether a word or another might be offensive, etc.), I believe this is also true in **matters of form**.

For instance, a children's book has less text than a book for adults.

This is true in video games as well: **the length of the texts is greatly reduced**. We will talk about this in an upcoming email.

And not to mention those of us with ADHD.

There are games I cannot play because of the amount of information that needs to be managed at the same time without getting distracted.

This speaks to the importance of playtesting with a **variety of players**.

It's clear that this extends to all types of playtesting, not just narrative, but it's good to emphasize it.

Thank you, Kamran, for your comment!

Question:

Do you know any games that would have benefited from having done narrative playtesting on something in particular? Why do you think that? | onwritinggames |

1,700,437 | frustrating results | Something's been on my mind lately, summarizing some of the things we've been talking about: When... | 0 | 2023-12-24T16:21:19 | https://onwriting.games/daily/20231201-2349-frustrating-results/ | gamedev, writing | ---

title: frustrating results

published: true

date: 2023-12-01 00:00:00 UTC

tags: [ 'gamedev', 'writing' ]

canonical_url: https://onwriting.games/daily/20231201-2349-frustrating-results/

---

Something's been on my mind lately, summarizing some of the things we've been talking about:

When writing narrative games, we have a unique opportunity to **explore the intricacies of the real world**.

We can work complex moral and ethical dilemmas into our stories and create experiences that are thought-provoking and reflective of the nuanced world we live in. Or not.

For instance, think about the tough choices some people face in resource allocation in real life, like in healthcare or during a natural disaster.

That's something we don't experience daily, unless we work in those areas, and many people might find that interesting.

Many video games default to violence as the primary conflict.

It's the easiest thing to do, obviously.

Two opposing sides, war, and that's enough.

Many of us are tired of this and are looking for different ways of interpreting conflict.

The selection of options opens the possibility of generating a type of conflict that does not exist in linear media, but is very strong in human experience.

And I'm not referring so much to **moral dilemmas**, which are great to explore.

I'm referring more to the conflict that arises between making a decision and the outcome that follows.

This is slightly related to Brian Upton's concept of the horizon of intention and horizon of action.

The intention the player has when choosing an option and the action (or result) that follows can differ, and that gap is a great source of conflict.

Obviously, we need to be careful and subtle with this. It's not about removing the player's agency, but about exploring, as a design goal, the frustration that comes when things turn out to be more complex than one imagines.

Have you played any games that do this? I don't think I have, but I'd love to... | onwritinggames |

1,700,598 | Experimenting around FP in PHP | Warn: in this Post, it's tried to use as much typing as possible also if sometimes this means to... | 0 | 2023-12-18T13:23:01 | https://dev.to/stefanofago73/experimenting-around-fp-in-php-5ek6 | > **Warning**: this post uses type declarations wherever possible, even when that means departing somewhat from the official documentation.

Several powerful specifications have contributed significantly to the evolution of the PHP language:

- the [Callable](https://www.php.net/manual/en/language.types.callable.php)

- the [Anonymous Function](https://www.php.net/manual/en/functions.anonymous.php)

- the [Closure](https://www.php.net/manual/en/class.closure.php)

These are now enriched by newer RFCs:

- the [Anonymous Classes](https://www.php.net/manual/en/language.oop5.anonymous.php)

- the [Arrow Functions](https://www.php.net/manual/en/functions.arrow.php)

- the [First Class Callable Syntax](https://www.php.net/manual/en/functions.first_class_callable_syntax.php)

> **Note**: These RFCs make possible a lot of different constructs that go further than what will be exposed in this Post: remember that the "White Rabbit hole" is deep!

## Callable, Anonymous Function, Closure, and FP!

***Callables*** are generally used as the main type hint to define callbacks: a summary of how they can be defined and used can be seen in the [official documentation](https://www.php.net/manual/en/language.types.callable.php#118032). Declaring the ***__invoke*** method, even on an interface or an anonymous class, lets an object be used as a function, turning it into a Callable.

```php

class Butterfly {

public function __invoke():void {echo "flying...";}

}

$butterfly = new Butterfly();

$butterfly(); // flying...

```

***Closure*** is the class used to represent anonymous functions. This kind of construct, also in the form of an ***arrow*** function, is useful not only as a callback in place of a callable: because it can capture a different scope, it can also simulate FP constructs such as [currying](https://en.wikipedia.org/wiki/Currying), as in the following code:

```php

class Person{

private function __construct(

public readonly string $name,

public readonly string $familyName,

public readonly int $age){}

public static function create():\Closure

{

return

static fn(string $name):\Closure

=> static fn(string $familyName):\Closure

=> static fn(int $age):Person

=> new Person($name,$familyName,$age);

}

}

$person = Person::create()('Frank')('Jamison')(50);

```

The introduction of ***First-Class Callable Syntax*** makes it possible to create a Closure from any kind of function, defining a sort of ***function reference*** usable in places other than where the function is defined (the following reuses the example above):

```php

$personBuilder = Person::create(...);

$frank = $personBuilder()('Frank')('Jamison')(45);

$john= $personBuilder()('John')('Smith')(40);

```

## Arrays, arrays functions, and the anonymous functions

The use of anonymous functions is particularly useful when we refer to native functions on arrays: the potential is notable and makes the use of [loops superfluous](https://szymonkrajewski.pl/why-you-should-use-array-functions-instead-of-basic-loops/) as in the following code.

```php

$stock = [

['name' => 'Phone', 'qty' => 0],

['name' => 'Notebook', 'qty' => 1],

['name' => 'SSD Drive', 'qty' => 0],

['name' => 'HDD Drive', 'qty' => 3]

];

$inStock = array_filter($stock, static fn($item) => $item['qty'] > 0);

```

Native array functions reveal their functional side most clearly in the ***fold operation*** (the [array_reduce](https://www.php.net/manual/en/function.array-reduce.php) function), which serves as a foundation on which to build other fundamental functions such as ***map*** and ***filter***:

```php

/**

*

*@template T

*@template R

*@param array<T> $data

*@param callable(T):R $mapper

*@return array<R>

*

*/

function map(array $data, callable $mapper):array

{

return array_reduce($data,

/** @param T $element */

function(array $accumulator, $element) use ($mapper)

{

$accumulator[] = $mapper($element);

return $accumulator;

}, []);

}

/**

*

*@template T

*@param array<T> $data

*@param callable(T):bool $predicate

*@return array<T>

*

*/

function filter(array $data, callable $predicate):array

{

return array_reduce($data,

/** @param T $element */

function(array $accumulator, $element)use($predicate){

if($predicate($element)){

$accumulator[]=$element;

}

return $accumulator;},[]);

}

```

Array performance has improved since PHP 7.x, and anonymous functions are efficient in both execution time and memory consumption, although relying only on arrays can keep you from considering the many benefits of alternative data structures.

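Anonymous functions and closures are easy to tell apart thanks to the ***use clause***, which lets you capture elements from outside the anonymous function. By default, a captured variable is copied by value when the closure is declared, so later changes to the outer variable are not visible inside the closure. Arrow functions capture what they need from the enclosing scope automatically, also by value, as in the following example.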

```php

class Product{ ... }

final class PriceRange {

private function __construct(private float $minimumValue,

private float $maximumValue){}

public static function of(

float $minimumValue,

float $maximumValue): PriceRange{

return new PriceRange($minimumValue,$maximumValue);

}

public function priceFilter():\Closure{

return fn(Product $value)=>

$value->price() >= $this->minimumValue

&&

$value->price() < $this->maximumValue;

}

}

/** @var array<Product> $products */

$products = [ ... ];

$range = PriceRange::of(5.0,10.0);

array_filter($products, $range->priceFilter());

```

Closures are also handy in designs where you want to restrict the visibility of data, in keeping with the FP paradigm, as in the following example.

```php

/** @return \Closure():int */

function rndNumber():\Closure{

return fn()=> rand(0,getrandmax()-1);

}

/** @return \Closure():int */

function rndConstant():\Closure{

$constant = rand(0,getrandmax()-1);

return fn()=> $constant;

}

$rnd1 = rndNumber();

$c1 = rndConstant();

$c2 = rndConstant();

echo $rnd1(); // 505035335

echo $rnd1(); // 1353685165

echo $c1(); // 2030702172

echo $c1(); // 2030702172

```

These possibilities are also useful when building internal domain-specific languages (***DSL***) or adopting ***higher-order functions*** (in FP parlance): the [Loan](https://www.oreilly.com/library/view/design-patterns-and/9781786463593/56585929-d828-45ce-9a91-648ad0dd4823.xhtml) Pattern and the [Execute Around](https://www.dontpanicblog.co.uk/2020/11/28/execute-around-idiom-in-java/) Pattern are examples of this concept.

```php

//

//Example of Execute-Around...

// ...also used in DSL definition for nested-dsl

//

MailSender::Send(

fn(Mail $config):Mail=>

$config

->From("john.black@kmail.com")

->To("jack.white@jmail.com")

->Subject("Test message")

->Body("Hello World!")

);

//

// Example of Loan Pattern

//

class ToyBox{

...

/**

*

* @param \Closure(ToyBox):ToyBox $boxFillerPolicy

* @param \Closure(ToyBox):ToyBox $playActions

* @return \Closure():void

*/

public static final function play(\Closure $boxFillerPolicy, \Closure $playActions):\Closure

{

return function()use($boxFillerPolicy,$playActions):void{

$box = new ToyBox();

$box->Open();

try{

$box = $boxFillerPolicy($box);

$box = $playActions($box);

}finally{

$box->Close()

->CleanUpToys();

}

};

}

...

public function nextToy():ToyBox{ ... }

public function addToy(string $toyName):ToyBox{ ... }

}

function Usage():void{

// Arrow functions take a single expression: no braces, one closing semicolon

$refill = fn(ToyBox $box):ToyBox

=>$box

->addToy("lego")

->addToy("mechano")

->addToy("laser");

$play = fn(ToyBox $box):ToyBox

=> $box

->nextToy()

->nextToy()

->nextToy();

$playSession = ToyBox::play($refill, $play);

$playSession();

}

```

## The Scope element in Closures

Closures can refer to scopes other than the one in which they were created, thanks to the ***bind*** functions. This makes closures considerably more powerful: they can even replace ***Reflection***, since they can access the private state of objects. This has limits, however, under static analysis tools such as PHPStan and PSALM, which flag *abuses of closures* as in the following example.

```php

class SimpleClass {

private int $privateData = 2;

}

$simpleClosure = function():int {return $this->privateData;};

$instance = new SimpleClass();

$resultClosure = \Closure::bind(

$simpleClosure,

$instance,

SimpleClass::class);

echo $resultClosure==false?-1:$resultClosure();

...

//

//PSALM Output

//

// ERROR: InvalidScope - Invalid reference to $this in a

// non-class context

// INFO: MixedInferredReturnType - Could not verify the return

// type 'int' for...

//

```

It is possible, however, to work around these problems and properly exploit the potential of closures from outside a class. One trick is to define a ***bridge operation*** (a protected method, *static* if needed) to use when the Closure is created, as follows:

```php

class SimpleClass {

private int $privateData = 2;

protected final function data():int{

return $this->privateData;

}

}

$instance = new SimpleClass();

// @phpstan-ignore return.unusedType

$accessTo = fn (string $bridgeOperation): ?\Closure

=>

\Closure::bind(

function(SimpleClass $instance) use ($bridgeOperation): int{

/** @var callable():int $operation */

$operation = [$instance,$bridgeOperation];

return $operation();

},

NULL,

SimpleClass::class);

/** @var null|\Closure(SimpleClass):int $resultClosure **/

$resultClosure = $accessTo("data"); // name of the bridge method, not of the private property

echo ($resultClosure===null?-1:$resultClosure($instance)); // 2

```

This approach seems more verbose, but it can be made reusable, and it passes static analysis thanks to the stronger typing. It's also an example of how we can approach the FP concept of [Optics](https://medium.com/@gcanti/introduction-to-optics-lenses-and-prisms-3230e73bfcfe).

## Conclusion

The importance of the specifications presented here lies not only in having introduced types for the different elements but also in the new design possibilities they open up. They have also brought PHP closer to supporting Functional Programming natively, so it's important to study and experiment, with static analysis enabled too! It is worth noting that PHP lets you approach FP in either a more function-oriented or a more object-oriented way, using generics and advanced techniques thanks to PHPStan or PSALM.

## To Go Further

More elements deserve consideration that are not covered here, such as the design consequences of ***read-only*** constructs or ***Generators***. Even if you don't adopt them, these concepts are worth studying to enrich our ability to design Clean Software!

Other resources help with adopting functional idioms/constructs and with studying the concepts and approaches presented in this post: so... Happy learning!

- https://www.infoq.com/articles/php7-function-improvements/

- https://github.com/fp4php/functional

- https://github.com/haskellcamargo/php-partial-function-application

- https://github.com/marcosh/lamphpda

- https://github.com/phunkie/phunkie

- https://github.com/functional-php

- https://github.com/widmogrod/php-functional

- https://github.com/loophp/collection

- https://leanpub.com/thinking-functionally-in-php

- https://www.phparch.com/books/functional-programming-in-php/

- https://link.springer.com/book/10.1007/978-1-4842-2958-3

| stefanofago73 | |

1,700,785 | Eager loading vs lazy loading | Basically, do you know when you need to study for a test? You don't have much time: eager loading.... | 0 | 2023-12-18T03:39:56 | https://dev.to/juanpinheirx/eager-loading-vs-lazy-loading-90o | javascript, node, programming, webdev | Basically, do you know when you need to study for a test? You don't have much time: eager loading. Whatever you see in your sight is pretty much enough for you to have. But if you need to study a specific thing: lazy loading. That's when you have time to study specific concepts. Therefore, specific results.

Let's have another example with other things like our solar system.

Asking about our solar system in general gets us a general answer. Let's call this concept a macroquery. We have information about the other planets (enough to know what they're made of, for example).

Let's say we now want more information about Mars. Let's call this inquiry a microquery. Mars has its own specific details, just as Earth does.

When we want to get from a query nothing in particular: eager loading (macroquery). When we want specifics: lazy loading (microquery).
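One way to read this distinction in code is sketched below. It is only an illustration, assuming a hypothetical in-memory store in place of a real database or ORM: the names `queryPlanets`, `queryMoons`, `loadEager`, and `loadLazy` are invented for the example. The eager version fetches planets and all their moons up front; the lazy version fetches planets now and a planet's moons only when someone asks.

```typescript
// Hypothetical in-memory "database": all names and data are illustrative only.
type Planet = { name: string; composition: string };
type Moon = { name: string; planet: string };

const planets: Planet[] = [
  { name: 'Earth', composition: 'rock' },
  { name: 'Mars', composition: 'rock' },
];
const moons: Moon[] = [
  { name: 'Moon', planet: 'Earth' },
  { name: 'Phobos', planet: 'Mars' },
  { name: 'Deimos', planet: 'Mars' },
];

// Counts simulated round trips to the data store.
let queryCount = 0;

function queryPlanets(): Planet[] {
  queryCount++;
  return planets;
}
function queryAllMoons(): Moon[] {
  queryCount++;
  return moons;
}
function queryMoons(planet: string): Moon[] {
  queryCount++;
  return moons.filter((m) => m.planet === planet);
}

// Eager ("macroquery"): load everything related in one go, whether or not it gets used.
function loadEager(): Array<Planet & { moons: Moon[] }> {
  const allPlanets = queryPlanets();
  const allMoons = queryAllMoons();
  return allPlanets.map((p) => ({
    ...p,
    moons: allMoons.filter((m) => m.planet === p.name),
  }));
}

// Lazy ("microquery"): load the planets now, and each planet's moons only on request.
function loadLazy(): Array<Planet & { moons: () => Moon[] }> {
  return queryPlanets().map((p) => ({
    ...p,
    moons: () => queryMoons(p.name),
  }));
}
```

In this sketch the eager load always costs two queries, while the lazy load costs one query plus one more for each planet whose moons are actually requested. Which is cheaper depends on how many of those details you end up needing.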

Think about atoms and how they were discovered pretty much after stars. In terms of mass, both are completely different however, they share the same matter.

Doesn't it look like a planet with its moons?

Let's talk a bit more about space and look at the difference between being on a planet, watching it through a telescope, and looking up at the sky.

We have more information about Mars now than ever before, thanks to exploration technologies. We can call this push by mankind toward knowledge a microquery: we now want to know the details of Mars.

Imagine you wake up in a remote period of ancient times, where all you can do to learn about space is look up. Seeing little stars with different colors, or constellations, gives you coordinates or some kind of information, such as the North Star or whether you're in a western or eastern part of the Earth. We'll call it a macroquery. We have little to work with, and much to do if we want specifics.

Now imagine you woke up beside Copernicus as he looked at the stars through a telescope. You could see with your own eyes the studies that led to our general answer today: the sun does not revolve around the Earth.

Is this a micro- or a macroquery? The answer for us is: _it depends._

If you need specific data from your database: lazy loading. Remember microquery. Else, use eager loading and remember macroquery. | juanpinheirx |

1,700,923 | `On School: Top defensemen to view this period | A few of the most effective defensemen in the NHL are graduates of college hockey. Cale Makar of the... | 0 | 2023-12-18T07:14:28 | https://dev.to/brito11/on-school-top-defensemen-to-view-this-period-11og | Some of the best defensemen in the NHL are products of college hockey. Cale Makar of the Colorado Avalanche won the Norris Trophy in 2021-22, one year after Adam Fox of the New York Rangers won it. Look at the NHL's top scorers and, along with Makar, you'll see Quinn Hughes of the Vancouver Canucks, who leads the League with 37 points and attended the University of Michigan. The list goes on, including Charlie McAvoy of the Boston Bruins, Brandon Montour of the Florida Panthers, Jaccob Slavin of the Carolina Hurricanes and Devon Toews of the Avalanche. Luke Hughes of the New Jersey Devils, Quinn's younger brother, is among the early favorites for the Calder Trophy as the League's best rookie. And more are on the way. It won't be long before the next wave of college standouts moves on to the NHL. Here are 10 of the top NCAA defensemen to watch this season.

Sean Behrens, University of Denver

Selected by the Colorado Avalanche in the second round of the 2021 NHL Draft, the skilled puck-mover was part of Denver's 2022 championship team. He has 14 points in 14 games this season <a href="https://www.avalancheprostore.com/ben-meyers-jersey">Ben Meyers Jersey</a>. The 20-year-old junior played for the United States in the 2023 World Junior Championship, where he had three points to help them win a bronze medal.

Zeev Buium, University of Denver

A 17-year-old freshman who is an excellent skater, Buium is ranked by NHL Central Scouting as an A prospect for the 2024 NHL Draft. He plays with Behrens on Denver's top pair. He earned National Collegiate Hockey Conference Defenseman of the Week honors after scoring a goal in a 5-0 win on Nov. 24 and having four assists in a 9-0 win on Nov. 25 as Denver swept Yale University. Buium has 18 points and is plus-16 in 14 games this season, and has 14 points in his past eight games. His brother, Shai, who also plays at Denver, was selected by the Detroit Red Wings in the second round of the 2021 draft.

Seamus Casey, University of Michigan

After an outstanding freshman year with 29 points in 36 games, Casey is off to a fast start as a sophomore. He is the top-scoring defenseman in the nation and is tied for third among all players with 22 points in 16 games <a href="https://www.avalancheprostore.com/peter-forsberg-jersey">Peter Forsberg Jersey</a>. The 19-year-old was selected by the New Jersey Devils in the second round of the 2022 NHL Draft.

Ryan Chesley, University of Minnesota

Selected by the Washington Capitals in the second round of the 2022 draft, Chesley competes hard, a trait that figures to earn him a spot on the United States team at the 2024 World Junior Championship for the second straight year. The 19-year-old has five points in 14 games this season.

Lane Hutson, Boston University

The 19-year-old was a Hobey Baker Award finalist and led Hockey East in scoring as a freshman last season with 48 points in 39 games. This season, he has 16 points in 13 games <a href="https://www.avalancheprostore.com/henry-bowlby-jersey">Henry Bowlby Jersey</a>. Selected by the Montreal Canadiens in the second round of the 2022 draft, he figures to be a key player for the United States at the World Junior Championship and possesses a high hockey IQ.

Artyom Levshunov, Michigan State University

After a strong season with Green Bay of the USHL last season, Levshunov has been a standout in his first year in college and is expected to be a high pick in the 2024 draft. The 18-year-old has 15 points in 16 games this season.

Scott Morrow, University of Massachusetts

Selected by the Carolina Hurricanes in the second round of the 2021 draft, Morrow led his team in scoring last season and is doing it again in his junior year with 15 points in 13 games. The 21-year-old had 31 points in 35 games last season. ` | brito11 | |

1,700,947 | Skyflow's privacy vault for building LLMs | Skyflow's Privacy Vault empowers organizations to build and deploy LLMs responsibly, ensuring data privacy and compliance throughout the entire LLM lifecycle. | 21,488 | 2023-12-18T07:31:25 | https://codingcat.dev/podcast/3-24-skyflow-privacy-vault | webdev, javascript, beginners, podcast |

Original: https://codingcat.dev/podcast/3-24-skyflow-privacy-vault

{% youtube https://youtu.be/f_gNOK8cpwI %}

In the rapidly evolving realm of artificial intelligence, large language models (LLMs) have emerged as powerful tools for natural language processing and generation. These models, trained on massive datasets of text and code, have demonstrated remarkable capabilities in tasks such as machine translation, text summarization, and creative writing. However, the development and utilization of LLMs raise significant privacy concerns, particularly with regard to the handling of sensitive personal information.

Skyflow's Privacy Vault offers a groundbreaking solution to address these concerns, enabling organizations to build and deploy LLMs while upholding the highest standards of data privacy and security. This innovative privacy vault provides a secure environment for sensitive data, ensuring that it remains protected throughout the entire LLM lifecycle, from data collection and preparation to model training and deployment.

## Safeguarding Sensitive Data Throughout the LLM Lifecycle

The LLM lifecycle encompasses various stages, each presenting unique data privacy challenges. Skyflow's Privacy Vault effectively addresses these challenges, ensuring that sensitive data is safeguarded at every step.

1. **Data Collection and Preparation:** During data collection, Skyflow's Privacy Vault allows organizations to identify and redact sensitive data before it is used for training or inference. This process helps prevent the inadvertent exposure of personally identifiable information (PII) or other sensitive information.

2. **Model Training:** The Privacy Vault maintains its protection during model training, ensuring that sensitive data remains encrypted and inaccessible to unauthorized parties. This encryption safeguards sensitive data from potential breaches or unauthorized access during the training process.

3. **Model Deployment and Inference:** When deployed for inference, LLMs interact with user-provided data. Skyflow's Privacy Vault extends its protection to this stage, ensuring that sensitive data is redacted or anonymized before being exposed to the LLM. This protection prevents the LLM from learning or disclosing sensitive information during inference.
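The redaction described in steps 1 and 3 can be pictured with a generic sketch. This is not Skyflow's API: the `redact` and `restore` functions, the two regular expressions, and the token format are all assumptions made for illustration, and a plain in-memory object stands in for the vault that would hold the original values.

```typescript
// Generic illustration only: this is NOT Skyflow's API.
// A local object stands in for the vault that maps tokens back to originals.
type Redaction = { redacted: string; tokens: Record<string, string> };

// Deliberately simple patterns; real PII detection needs far more than two regexes.
const PII_PATTERNS: Array<{ label: string; pattern: RegExp }> = [
  { label: 'EMAIL', pattern: /[\w.+-]+@[\w-]+(?:\.[\w-]+)+/g },
  { label: 'SSN', pattern: /\b\d{3}-\d{2}-\d{4}\b/g },
];

// Replace each sensitive match with a placeholder token before the text
// is used for training or sent to a model.
function redact(text: string): Redaction {
  const tokens: Record<string, string> = {};
  let redacted = text;
  for (const { label, pattern } of PII_PATTERNS) {
    let count = 0;
    redacted = redacted.replace(pattern, (match) => {
      count += 1;
      const token = `<${label}_${count}>`;
      tokens[token] = match; // the original value never leaves this map
      return token;
    });
  }
  return { redacted, tokens };
}

// Swap tokens back into text (for example, a model response) for an authorized reader.
function restore(text: string, tokens: Record<string, string>): string {
  return Object.keys(tokens).reduce(
    (out, token) => out.split(token).join(tokens[token]),
    text,
  );
}
```

The design point is that the model only ever sees the placeholder tokens, while the mapping back to real values stays in a store the model cannot reach.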

## Enhancing Data Privacy with Granular Controls and Compliance

Skyflow's Privacy Vault goes beyond basic data protection by providing organizations with granular controls over data access and usage. These controls enable organizations to define who can access sensitive data and for what purposes, ensuring that sensitive information is only used for authorized purposes.

Furthermore, the Privacy Vault facilitates compliance with various data privacy regulations, such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). The vault's data residency capabilities ensure that sensitive data remains within the specified geographic regions, complying with data localization requirements.
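As a rough picture of what granular access control means in practice, the sketch below masks every field a role is not allowed to see. It is a hypothetical illustration, not Skyflow's policy model: the `Role` names, the `POLICY` table, and the `viewFor` function are invented for the example.

```typescript
// Hypothetical field-level access policy; not Skyflow's policy model.
type CustomerRecord = { name: string; email: string; ssn: string; region: string };
type Role = 'support' | 'analyst' | 'auditor';

// Which fields each role may read in the clear.
const POLICY: Record<Role, Array<keyof CustomerRecord>> = {
  support: ['name', 'email'],
  analyst: ['region'],
  auditor: ['name', 'email', 'ssn', 'region'],
};

// Return a copy of the record with every disallowed field masked.
function viewFor(role: Role, record: CustomerRecord): CustomerRecord {
  const allowed = POLICY[role];
  const view: CustomerRecord = { ...record };
  (Object.keys(view) as Array<keyof CustomerRecord>).forEach((field) => {
    if (allowed.indexOf(field) === -1) {
      view[field] = '***';
    }
  });
  return view;
}
```

An analyst calling `viewFor('analyst', record)` would see only the region, which is enough for aggregate reporting without exposing anything that identifies a person.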

## Unlocking the Potential of LLMs with Confidence

Skyflow's Privacy Vault empowers organizations to harness the power of LLMs without compromising data privacy. By providing comprehensive data protection and compliance capabilities, the Privacy Vault enables organizations to build and deploy LLMs responsibly, fostering trust and transparency among stakeholders.

**Key Benefits of Skyflow's Privacy Vault**

* **Protect sensitive data throughout the LLM lifecycle**

* **Implement granular access controls for sensitive data**

* **Comply with data privacy regulations, such as GDPR and CCPA**

* **Maintain data residency in specified geographic regions**

* **Build and deploy LLMs responsibly and ethically**

## Conclusion

As LLMs continue to revolutionize various industries, Skyflow's Privacy Vault plays a crucial role in ensuring that these powerful models are developed and deployed in a privacy-conscious manner. By safeguarding sensitive data and enabling compliance with data privacy regulations, the Privacy Vault empowers organizations to leverage the full potential of LLMs while upholding the highest standards of data protection. | codercatdev |

1,701,077 | Simplify and Stabilize Your Playwright Locators | Who controls the locators, controls the web. Much of Playwright's power comes from its ability to... | 25,769 | 2023-12-19T12:00:00 | https://www.browsercat.com/post/strengthen-selectors-and-locators-in-playwright?canonical=2 | playwright, javascript, automation, testing | Who controls the locators, controls the web.

Much of Playwright's power comes from its ability to target and interact with elements on a webpage. But as you well know, the web is a finicky place. Elements come and go, and the slightest change in HTML can break your automation scripts.

In this article, we'll explore strategies for creating simple and stable Playwright locators that can withstand the test of time and changes in web applications. We'll also look at how to optimize your selectors for speed and efficiency.

For a more in-depth look at these concepts, check out my complete deep dive on [optimizing Playwright locators](https://www.browsercat.com/post/strengthen-selectors-and-locators-in-playwright).

Let's go!

## Optimize Selector Specificity

Refining selector specificity involves finding an ideal equilibrium: you need selectors that are precise enough to unambiguously identify target elements across different page states, yet not so intricate that they shatter with the slightest DOM adjustments.

Steer clear of overly rigid selectors; such fragility means that a trivial HTML modification could render them useless. On the flip side, too loose selectors might lead you down a path of position-dependent logic (like indices) or unwarranted reliance on the web page's current implementation.

We're looking for the Goldilocks zone of search queries.

Here are a few anti-patterns and how to fix them:

```ts

// 🔴 BAD: Selectors based on fragile structural assumptions

const $link = page.locator('li:nth-child(3) > a');

// 🟢 GOOD: Selectors grounded in distinguishing features

const $link = page.locator('a[href*="privacy"]');

// 🔴 BAD: Selectors anchored to mutable text

const $button = page.locator('button', {hasText: 'Sign up'});

// 🟢 GOOD: Robust, pattern-based selectors

const $button = page.locator('button', {

hasText: /(sign|start|subscribe|launch)/i,

});

// 🔴 BAD: Selectors tied to changeable style-specific classes

const $input = page.locator('input.email');

// 🟢 GOOD: Selectors focused on functional attributes

const $input = page.locator('input[type="email"]');

// 🔴 BAD: Excessively specific hierarchical selectors

const $list = page.locator('footer > ul.inline-links > li');

// 🟢 GOOD: Shallow, adaptive nesting

const $list = page.locator('footer:last-of-type li', {

has: page.locator('a'),

});

```

To sum up:

- Prioritize attributes that capture the essence of the target element, rather than arbitrary qualities.

- If your target elements have no stable defining characteristics, anchor your queries to parents or children who do have such qualities.

- Keep chain selectors short, and try to only use stable elements within them.

- Leverage regular expressions for text matching to accommodate variations.
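The last point can be made reusable with a small helper. The sketch below is not part of Playwright: `escapeRegExp` and `anyOfText` are hypothetical names, and the list of label variants is an assumption about what a sign-up button might say. It builds a case-insensitive pattern from plain strings, escaping any regex metacharacters so that a label like `Save (draft)` is matched literally.

```typescript
// Hypothetical helpers; not part of Playwright.

// Escape regex metacharacters so the label is matched as literal text.
function escapeRegExp(text: string): string {
  return text.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
}

// Build one case-insensitive pattern that matches any of the given labels.
function anyOfText(variants: string[]): RegExp {
  const alternatives = variants.map(escapeRegExp).join('|');
  return new RegExp(`(?:${alternatives})`, 'i');
}

// Assumed label variants for a sign-up call to action.
const signUpText = anyOfText(['Sign up', 'Get started', 'Subscribe']);

// Usage with Playwright (assumes a `page` object from a test or script):
// const $button = page.locator('button', { hasText: signUpText });
```

Keeping the variants in one named list also gives you a single place to update when the copy on the page changes.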

## Prefer Semantic Locator Methods

When it comes to selecting elements, it's always better to prioritize semantic attributes over stylistic or functional attributes. While an element's classes or its location in the DOM are typically the easiest to target, they're also the most prone to change. On the other hand, semantic attributes (such as role, label, or title) change infrequently. And when they do, they tend to change in ways that can be accounted for ahead of time.

Given the benefit of targeting semantic attributes, Playwright provides utility methods for directly accessing them. Use them whenever possible. Here's why:

- Semantic methods encourage best practices. As you'll see in the passages that follow, the most durable selector patterns have helper methods.

- Semantic methods are typed, improving your IDE experience and alerting you to errors. Plain selector strings can only be debugged at runtime, resulting in more bugs.

- Semantic methods are chainable. This dramatically simplifies the queries themselves. It also results in better error messages when queries fail.

Let's run through each method that's currently available:

```ts

// Target Test IDs when you have control of the HTML

// E.G. `<section data-testid="delete-modal" />`

const $modal = page.getByTestId('delete-modal');

// Target explicit or implied element roles

// "button" matches `<button>`, `<input type="button">`, or `<div role="button">`

const $button = page.getByRole('button', {name: 'Buy'});

// Target text HTML attributes that are unlikely to change

const $input = page.getByLabel('Email');

const $search = page.getByPlaceholder(/^search/i);

const $image = page.getByAltText('Profile Picture');

const $icon = page.getByTitle('Info', {exact: false});

// Or target elements by `innerText`, when you can be sure it's stable

const $dialog = page.getByText(/^confirm/i);

```

I cannot stress enough how valuable it is to target based on `role` and `data-testid` in particular.

When you have control of the DOM, `data-testid` is a fantastic [convention](/glossary/data-testid) to implement across dev teams. Unlike every other HTML attribute, there is never any reason for `data-testid` to change, making it the least brittle of all possible selectors.

That said, given that you won't always have control over the HTML of target pages, `role` is an excellent fallback. As shown in the example code above, `role` is an inherently forgiving selector. It can continue working even across substantial DOM changes.

## Chain Locators, Not Selectors

As described above, it's best to avoid long selector query strings. They're inherently difficult to debug, and they result in less descriptive error messages.

Of course, you're not always going to be able to avoid chaining. Sometimes, you need to target particularly evasive DOM nodes. More often, you simply want to break a page up into subtrees and drill down from an intermediate HTML element. (For example, a list of articles in a blog feed.)

Here are two strategies for designing locators that are both easy to read and easy to debug...

### Drill Down Within Subtrees

Chaining locators is like adding layers to a sketch; each additional stroke refines the image. Begin with a broad locator and use methods like `.filter()`, `.first()`, `.last()`, and conditional parameters to progressively narrow down to your target element.

```ts

// Find the first article about BrowserCat

const $articles = page.locator('article');

const $aboutBrowserCat = $articles.filter({

hasText: /BrowserCat/i

});

const $firstArticle = $aboutBrowserCat.first();

```

This approach separates concerns, ties the locators to logical entities, and keeps the code readable and adaptable. If your layout changes, you might only need to adjust the parent locator instead of unraveling multiple complex strings.

### Filter by Content with `{has}` and `{hasNot}`

Sometimes you need to select an element not only by its properties but also by its relation to others. With `{has}` and `{hasNot}` parameters, you can define these relationships clearly, creating a robust context for your selectors.

```ts

// Find the first article with an image

const $articles = page.locator('article');

const $withImages = $articles.filter({

has: page.locator('img'),

});

const $firstArticle = $withImages.first();

```

These parameters act as assertions about the presence of certain elements within a parent. This increases the number of stable, unique attributes you can leverage in creating good query patterns.

## Next steps

We've touched on several key practices for creating simple and stable Playwright locators that can withstand the test of time and changes in web applications. I go much deeper on performance, error handling, and refactoring your selectors in my [Playwright locators deep-dive](https://www.browsercat.com/post/strengthen-selectors-and-locators-in-playwright). Check it out.

Until next time, happy automating!

| mikestopcontinues |

1,701,183 | Unit test in Laravel by example | Testing is fun! Don't take my word for it. You should probably try it yourself. To get familiar with... | 0 | 2023-12-18T11:21:41 | https://dev.to/amirsabahi/learn-unit-test-in-laravel-by-example-1k5p | laravel, php, unittest, filesystem | Testing is fun! Don't take my word for it. You should probably try it yourself. To get familiar with testing or even get better at writing unit tests, you can learn from prominent open-source projects. Just open the project and go to the test folder. You might see things like feature tests, integration tests, or unit tests. Choose a unit test and go through the test files.

Here we walk through the unit tests for the Filesystem component in the Laravel framework.

The test file is at [tests/Filesystem/FilesystemTest.php](https://github.com/laravel/framework/blob/10.x/tests/Filesystem/FilesystemTest.php)

## Setting the Stage:

Before delving into the details of the unit test, let's set the stage by briefly introducing the Illuminate Filesystem component. This component provides a unified interface for interacting with file systems, allowing developers to perform tasks such as reading, writing, and manipulating files and directories.

## Test Class Overview:

The unit test is written in PHP using the PHPUnit testing framework. It is part of the Illuminate\Tests\Filesystem namespace and extends the PHPUnit\Framework\TestCase class. The test class covers a wide range of scenarios, including file retrieval, storage, line operations, permissions, directory manipulation, and more.

### Mock

The test file uses the Mockery framework. Sometimes we do not want to actually run a piece of code, for example one that sends real emails or SMS messages. For instance, in filesystem testing, we do not want to create a real file. Instead, we use a mock object to call the delete method. A mock is a stand-in for a real object. It pretends to be the real thing in a controlled way, allowing developers to test specific parts of their code independently.

Take a look at the code below:

` $files = m::mock(Filesystem::class)->makePartial();

$files->shouldReceive('deleteDirectory')->once()->andReturn(false);`

We use `mock` to create a partial test double* and fake the call to `deleteDirectory`. The real method is never called and nothing is deleted; instead, the mock returns the value we tell it to return (here, `false`).

Now open the code and walk through it along with the following explanations.

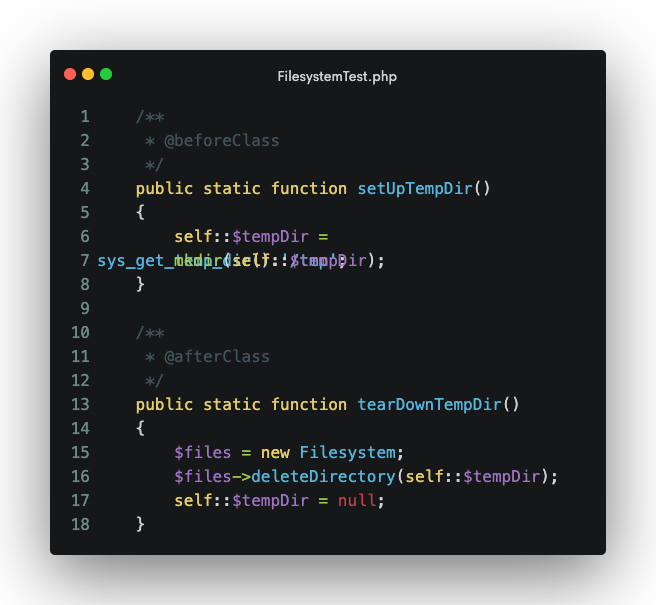

### Setting up Temporary Directory:

The test class employs a temporary directory for creating and testing files. The setupTempDir() method, annotated with @beforeClass, creates a temporary directory for testing, while tearDownTempDir() (annotated with @afterClass) cleans up the temporary directory after all tests have been executed.

### Filesystem Initialization:

In various test methods, an instance of the Filesystem class is created to simulate real-world usage. This includes scenarios where files need to be created, modified, or deleted.

### Testing File Retrieval:

The testGetRetrievesFiles method ensures that the get method retrieves the correct content from a file, asserting that the retrieved content matches the expected value.

### Testing File Storage:

The testPutStoresFiles method examines the put method's ability to store content in a file, with an assertion to validate that the file content matches the expected value.

### Lazy Collection for Lines:

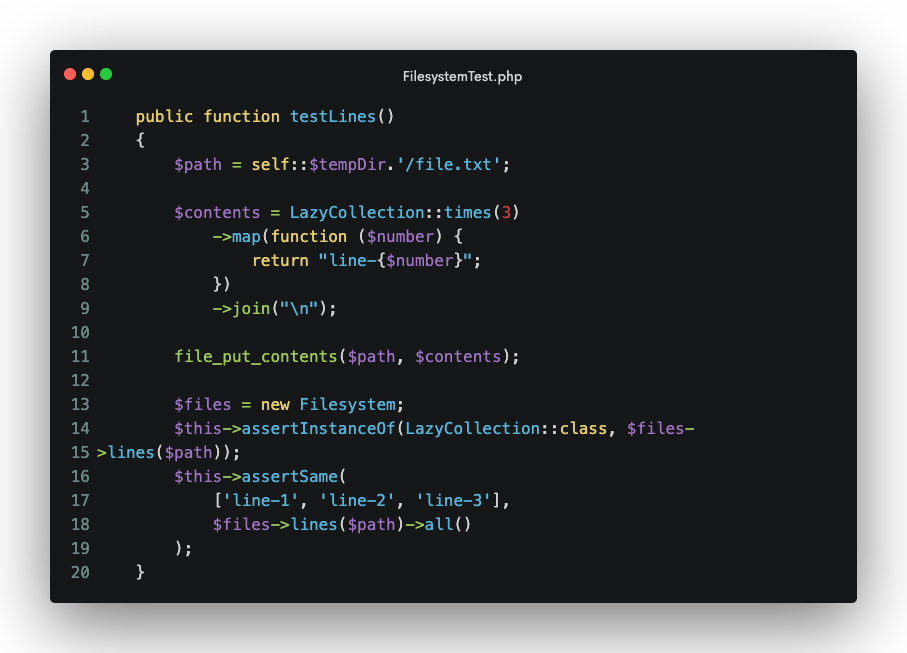

The testLines method demonstrates the use of LazyCollection for working with lines in a file. It ensures that lines are read correctly and returned as a LazyCollection.

### File Replacement Operations:

The testReplaceCreatesFile and testReplaceInFileCorrectlyReplaces methods test the replace method, both for creating a file and for replacing content within an existing file.

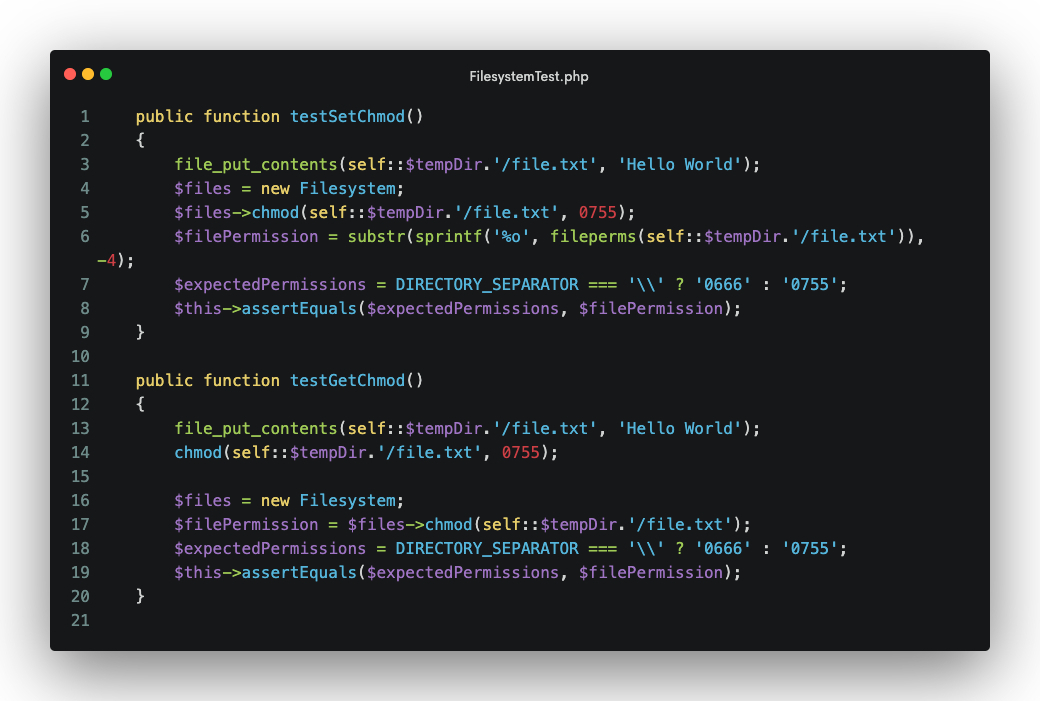

### Testing File Permissions:

The testSetChmod and testGetChmod methods focus on testing the _chmod_ method for setting and retrieving file permissions.

### Directory and File Deletion:

The testDeleteRemovesFiles method checks the delete method's ability to remove both single files and arrays of files.

### Prepending to Files:

The testPrependExistingFiles and testPrependNewFiles methods examine the prepend method's functionality for adding content to the beginning of a file.

### File Existence Checks:

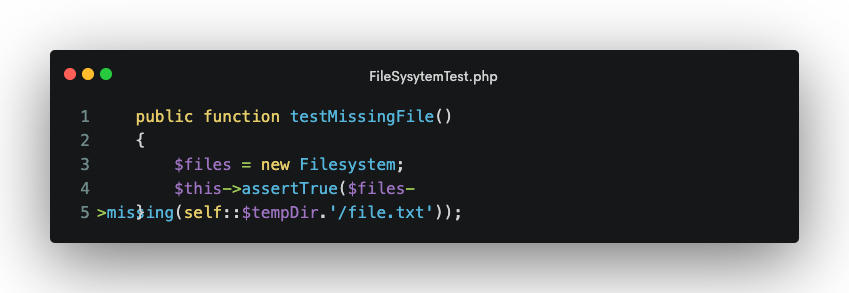

The testMissingFile method checks the missing method for detecting the absence of a file.

### Directory Manipulation:

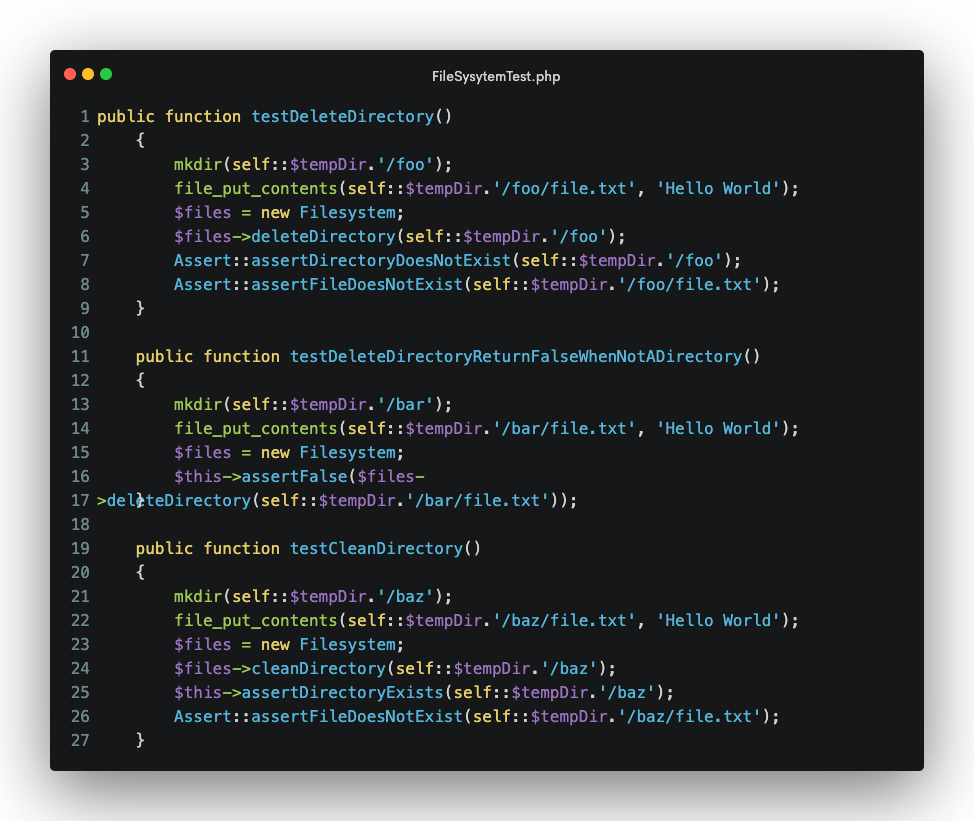

Methods like testDeleteDirectory, testDeleteDirectoryReturnFalseWhenNotADirectory, and testCleanDirectory focus on testing directory-related operations.

### Macro Functionality:

The testMacro method demonstrates the ability to add macros dynamically to the Filesystem class.

### Additional File and Directory Operations:

The testFilesMethod, testCopyDirectory, testMoveDirectory, and other methods cover a range of file and directory operations, ensuring correct behavior in various scenarios.

### Exception Handling:

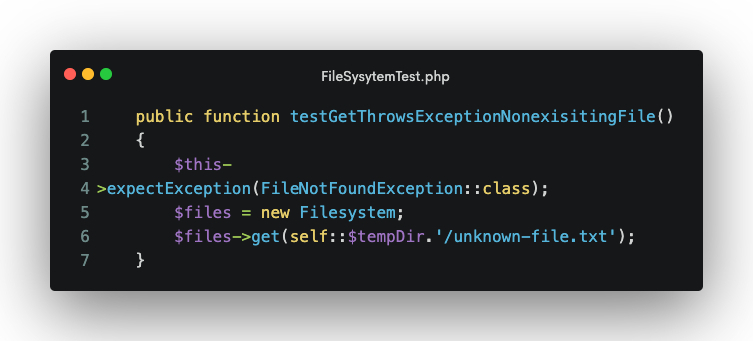

Methods like testGetThrowsExceptionNonexisitingFile and testGetRequireThrowsExceptionNonExistingFile test the handling of FileNotFoundExceptions.

I hope this post helps you take your testing skills even further.

> Test Doubles: In computer programming and computer science, programmers employ a technique called automated unit testing to reduce the likelihood of bugs occurring in the software. Frequently, the final release software consists of a complex set of objects or procedures interacting together to create the final result. In automated unit testing, it may be necessary to use objects or procedures that look and behave like their release-intended counterparts but are simplified versions that reduce the complexity and facilitate testing. A test double is a generic term used for these objects or procedures.

> Wikipedia

| amirsabahi |

1,701,533 | Modern GL + Google Colab = triangle animation | Rendered not in my PC image: *i mean not this gif here in your browser but in colab cloud? Link to... | 0 | 2023-12-18T16:54:50 | https://dev.to/fakelaboratory/modern-gl-google-colab-triangle-animation-38bc | moderngl, colab, python | Rendered not in my PC image:

*I mean the GIF itself was rendered in the Colab cloud rather than on my PC, not that it is being displayed in your browser.

Link to colab:

https://colab.research.google.com/drive/10Ig1Nqbwqd1lF7HAF0rJuxpZpThgjHcB?usp=sharing

This notebook was restored from an old (2018) Google notebook, with some changes to the code. Links to the references are in the notebook's comments.

```

!pip install moderngl

!pip install moviepy

import moderngl as gl

import numpy as np

from PIL import Image

import moviepy.editor as mpy

ctx = gl.create_context(standalone=True, backend='egl')

print(ctx.info)

```

```

prog = ctx.program(

vertex_shader="""

#version 330

in vec2 in_vert;

in vec3 in_color;

out vec3 v_color;

void main() {

v_color = in_color;

gl_Position = vec4(in_vert, 0.0, 1.0);

}

""",

fragment_shader="""

#version 330

in vec3 v_color;

out vec3 f_color;

void main() {

f_color = v_color;

}

""",

)

vertices = np.asarray([

-0.75, -0.75, 1, 0, 0,

0.75, -0.75, 0, 1, 0,

0.0, 0.649, 0, 0, 1

], dtype='f4')

```

```

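def render_frame(time):
    # Upload the interleaved position/color data and bind it to the shader inputs
    vbo = ctx.buffer(vertices.tobytes())
    vao = ctx.vertex_array(prog, vbo, "in_vert", "in_color")

    # Render into an offscreen 512x512 RGB texture
    fbo = ctx.framebuffer(
        color_attachments=[ctx.texture((512, 512), 3)]
    )
    fbo.use()

    # The background brightens as time advances
    fbo.clear(0.0+time, 0.0+time, 0.0+time, 1.0)
    vao.render()

    # Read the pixels back and flip vertically (OpenGL's origin is bottom-left)
    return np.array(Image.frombytes(
        "RGB", fbo.size, fbo.color_attachments[0].read(),
        "raw", "RGB", 0, -1
    ))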

```

```

clip = mpy.VideoClip(render_frame, duration=2) # 2 seconds

clip.write_gif("anim.gif",fps=15)

# anim.gif now appears in the Colab files panel

```

---

_May the new year bless you with health, wealth, and happiness._

from "65 Happy New Year Wishes for Friends and Family 2024" countryliving article

| fakelaboratory |

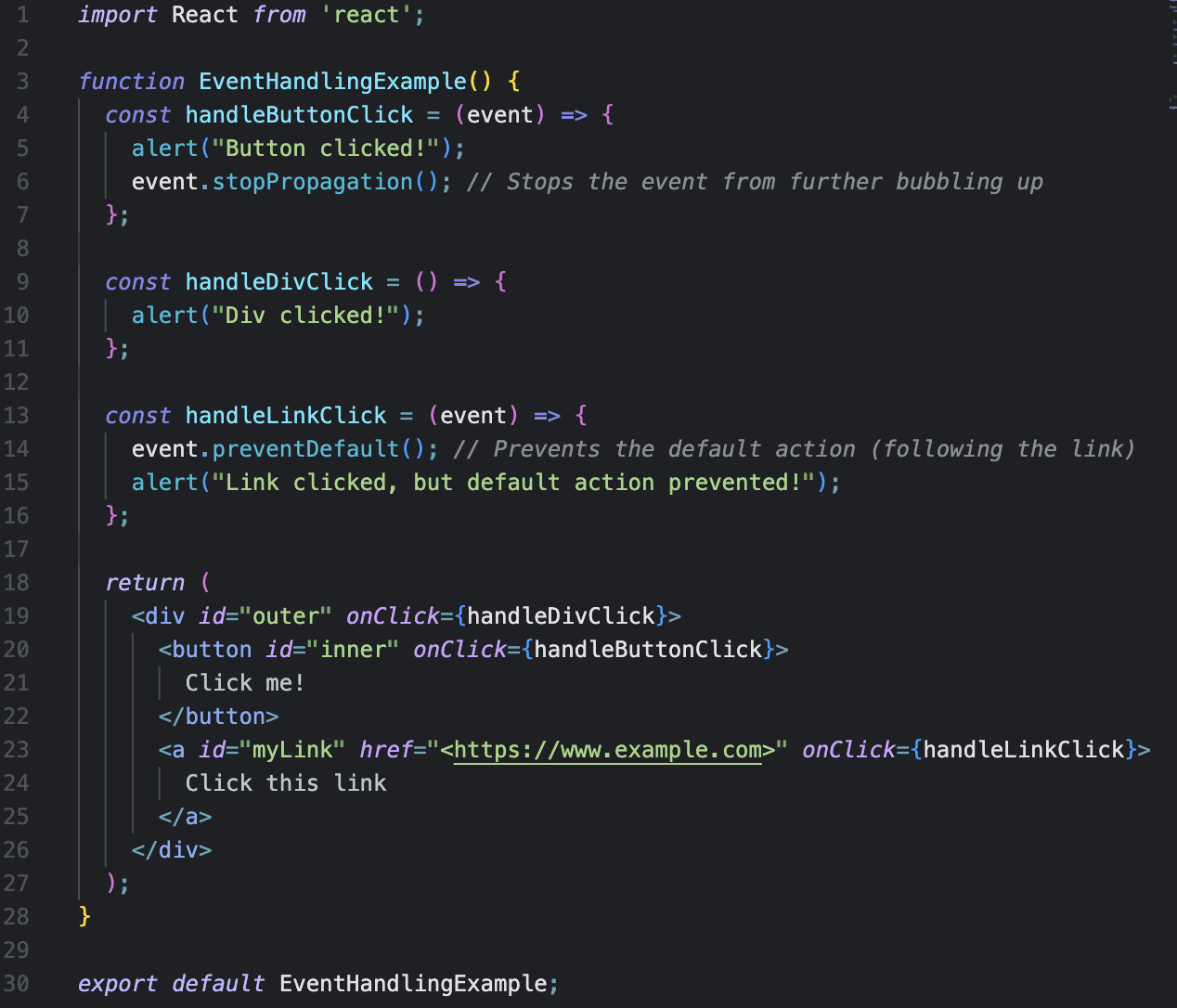

1,701,941 | What is Event Bubbling and how we can handle this? | Hi Devs, Have you all heard about Event Bubbling? Recently, someone asked me if I knew about this... | 0 | 2023-12-18T20:46:25 | https://dev.to/jaelynlee/what-is-event-bubbling-and-how-we-can-handle-this-501c | webdev, programming, beginners, discuss | Hi Devs,

Have you all heard about **Event Bubbling**? Recently, someone asked me if I knew about this concept, and I had no idea what it was! They were also asked about it in an interview and couldn't answer. So, I decided to research and summarize the definition, as well as how we can use it in real code.

Shall we start our deep dive into it?

## What is Event Bubbling?

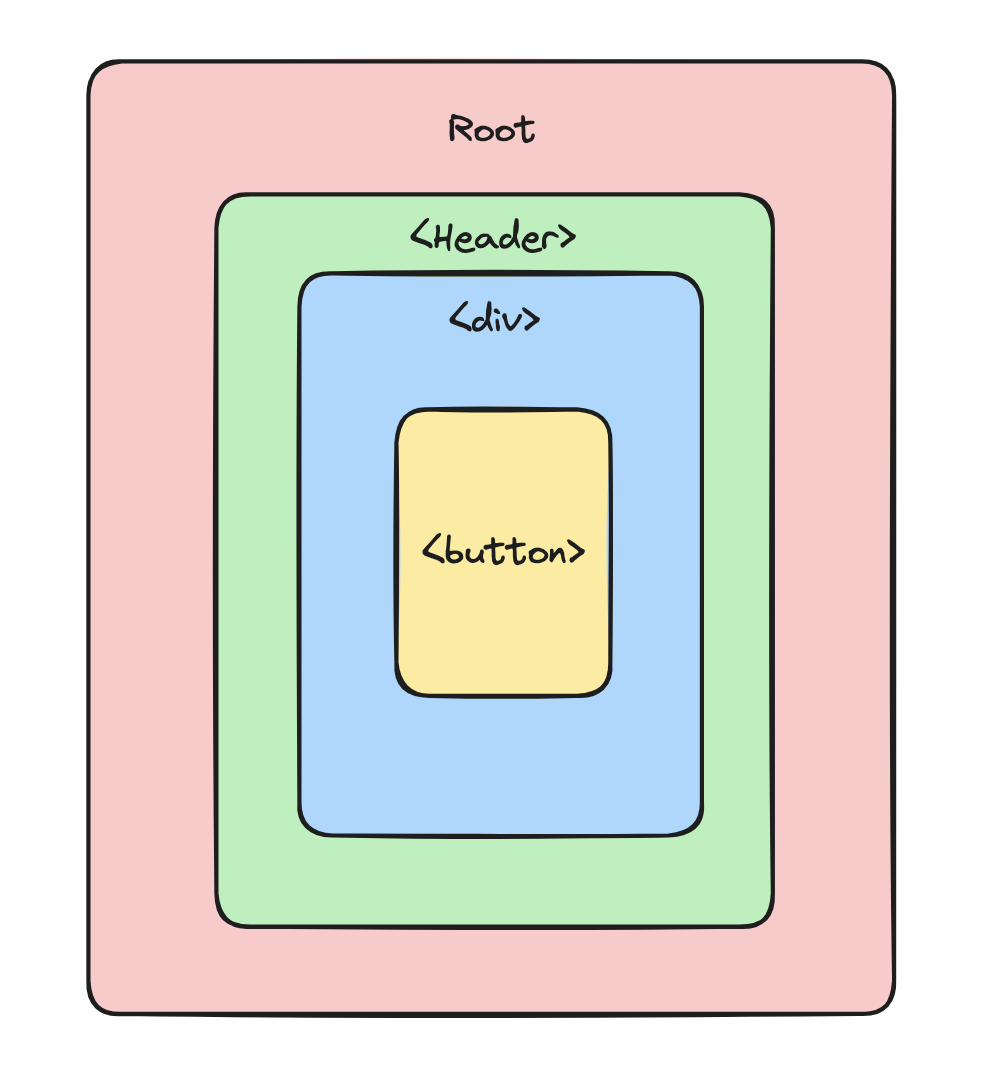

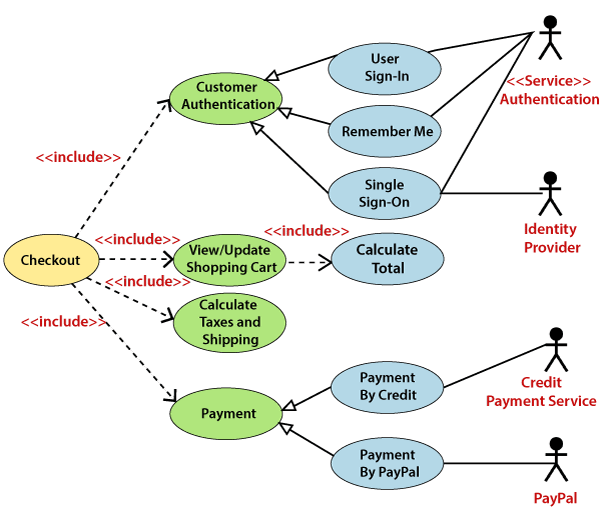

**Event Bubbling** is a concept in the DOM (Document Object Model). It occurs when an element receives an event, and that event bubbles up to its parent and ancestor elements in the DOM tree until it reaches the root element.

As you can see from this diagram, if `button` is clicked, the click event propagates up through each ancestor until it reaches the root element. Event bubbling is the default behavior for events but can be prevented in certain cases.

Let me give you some examples to help you understand better.

Of course, I am too lazy to write code, so I asked ChatGPT to generate it for me, and it did a great job, to be honest!

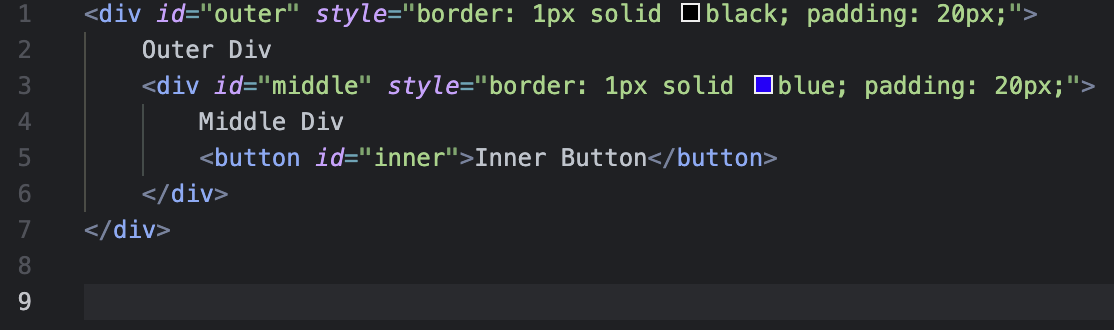

So, just like the diagram above, the elements are stacked on top of each other like a set of Russian dolls.

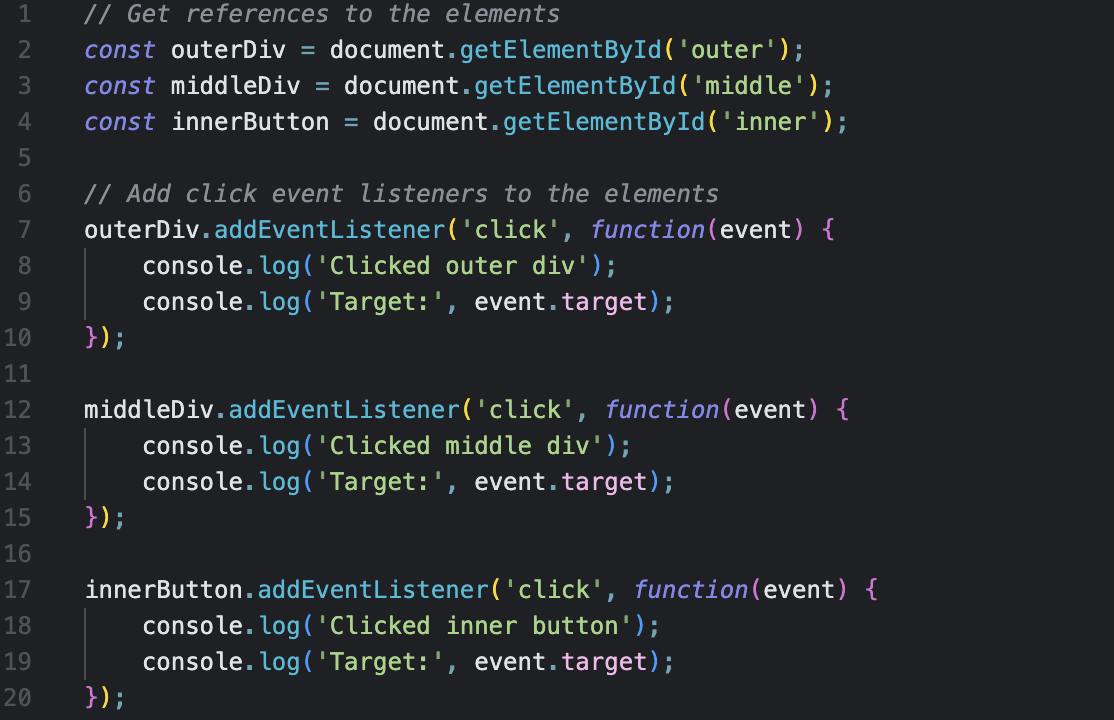

By clicking the `Inner Button`, the logs on `innerButton`, `middleDiv`, and `outerDiv` will be executed sequentially. And this is Event bubbling! A very straightforward concept, right?
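To make the order concrete without a browser, here is a minimal sketch of the same idea. It is a toy model, not the real DOM API: `ToyNode`, `onClick`, and `click` are made-up names for illustration, and the only thing it tries to show is that handlers run on the clicked element first and then on each ancestor in turn.

```typescript
// Toy model of bubbling (hypothetical names, not the browser's implementation).
type Handler = () => void;

class ToyNode {
  name: string;
  parent: ToyNode | null;
  handlers: Handler[] = [];

  constructor(name: string, parent: ToyNode | null = null) {
    this.name = name;
    this.parent = parent;
  }

  // Register a click handler on this node.
  onClick(handler: Handler): void {
    this.handlers.push(handler);
  }

  // Run this node's handlers, then walk up through every ancestor to the root.
  click(): void {
    for (let node: ToyNode | null = this; node !== null; node = node.parent) {
      for (const handler of node.handlers) handler();
    }
  }
}

// Same nesting as the example: outerDiv > middleDiv > innerButton.
const log: string[] = [];
const outerDiv = new ToyNode("outerDiv");
const middleDiv = new ToyNode("middleDiv", outerDiv);
const innerButton = new ToyNode("innerButton", middleDiv);

for (const node of [outerDiv, middleDiv, innerButton]) {
  node.onClick(() => log.push(`${node.name} handler ran`));
}

innerButton.click();
console.log(log);
```

In a real page the browser does this walk for you; you only attach the listeners with `addEventListener`.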

## Importance of understanding Event bubbling in Web development

Think of event bubbling like throwing a pebble into a pond. When you toss the pebble, ripples spread outward from where it landed. Event bubbling works similarly on web pages. When something happens, like a click on a button, that "event" ripples through the different parts of a webpage, starting from where it happened and moving up to the main part of the page.

1. **Controlling how things flow**: Just like you can predict where ripples go in a pond, understanding event bubbling lets developers decide how things react when you click or interact with something on a webpage.

2. **Smart handling for many things at once**: Imagine if you could clap once and have everyone in a room who heard it react in a certain way. Event bubbling lets developers set up reactions for many things (like buttons) all at once without having to do it for each one individually.

3. **Building pieces that work everywhere**: It's like creating LEGO pieces that fit together perfectly. With event bubbling, developers can make these parts (components) that know how to handle their own events. Then, they can use these pieces in different parts of a website without having to rebuild them each time.

4. **Making websites fun to use**: Event bubbling helps in creating web pages that respond when you do something, like clicking a button or dragging an item. It's what makes websites feel interactive and enjoyable to use.

In short, understanding event bubbling helps developers control how events move around a webpage, handle lots of things efficiently, make reusable parts, and create fun and interactive websites.

## How can we handle this Event bubbling?

What if you want to stop this from happening?

There are several ways to handle Event bubbling, but I am going to explain 2 methods today.

1. stopPropagation()

The stopPropagation() method prevents further propagation of the current event. However, if an element has multiple event handlers on a single event, then even if one of them stops the bubbling, the other ones still execute. In other words, `event.stopPropagation()` stops the upward movement, but all other handlers on the current element will still run.

2. preventDefault()

If you want to stop the browser's default behavior for an event, you should use the `preventDefault()` method.

The `preventDefault()` method is used to stop a specific action from happening when an event occurs. Normally, when you interact with something on a webpage (like clicking a link), the browser does something automatically (like navigating to a new page). But with `preventDefault()`, you can tell the browser not to do that automatic action. It's like saying "Hey browser, don't do the default thing, I want to do something else instead."

Just keep in mind that using `preventDefault()` should be done carefully and only when necessary because it can change how a webpage or application behaves.

Let’s have a look at a simple scenario to better understand.

There are 3 functions to explain event handling methods.

So what do you think will happen if the button is clicked?

As the button is stopping the propagation from going up to the parent elements, the alert message on the outer container will not be executed.

What about preventDefault()?

It will prevent us from navigating to the link and will execute the remaining action.

The difference between `e.preventDefault()` and `e.stopPropagation()` is that `e.preventDefault()` is used to stop a specific action from happening when an event occurs, such as preventing a link from navigating to a new page. On the other hand, `e.stopPropagation()` stops the propagation of the event to parent and ancestor elements, but other event handlers on the current element will still execute.
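The difference can be sketched with a small toy model. Everything here (`ToyEvent`, `ToyElement`, `click`) is a hypothetical stand-in, not the real DOM API, and it assumes a simplified rule set: `stopPropagation()` only stops the walk to ancestors, while `preventDefault()` only skips the default action.

```typescript
// Toy event holding the two flags that the real methods conceptually flip.
class ToyEvent {
  propagationStopped = false;
  defaultPrevented = false;

  stopPropagation(): void {
    this.propagationStopped = true;
  }

  preventDefault(): void {
    this.defaultPrevented = true;
  }
}

type Listener = (event: ToyEvent) => void;

class ToyElement {
  name: string;
  parent: ToyElement | null;
  listeners: Listener[] = [];

  constructor(name: string, parent: ToyElement | null = null) {
    this.name = name;
    this.parent = parent;
  }
}

// Dispatch a click on `target`, then run the default action unless it was prevented.
function click(target: ToyElement, defaultAction: () => void): void {
  const event = new ToyEvent();
  for (let el: ToyElement | null = target; el !== null; el = el.parent) {
    // Every listener on the current element runs, even after stopPropagation().
    for (const listener of el.listeners) listener(event);
    // Only the walk up to ancestors is cut short.
    if (event.propagationStopped) break;
  }
  if (!event.defaultPrevented) defaultAction();
}

const calls: string[] = [];

const container = new ToyElement("container");
container.listeners.push(() => calls.push("container alert"));

// The button stops propagation, but its second listener still runs.
const button = new ToyElement("button", container);
button.listeners.push((e) => {
  e.stopPropagation();
  calls.push("button: first listener");
});
button.listeners.push(() => calls.push("button: second listener"));

// The link prevents navigation, but the event still bubbles to the container.
const link = new ToyElement("link", container);
link.listeners.push((e) => {
  e.preventDefault();
  calls.push("link: custom action");
});

click(button, () => calls.push("button default action"));
click(link, () => calls.push("navigate to href"));
console.log(calls);
```

Note that the two methods are independent: if you need both effects in real code, you call both on the same event.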

I hope this post was helpful for your coding. Happy coding, everyone! | jaelynlee |

1,702,071 | Discuss the current job market? | Yes, I have also experienced job loss like you. We can indeed discuss the current job market and... | 0 | 2023-12-19T02:34:32 | https://dev.to/jialudev/discuss-the-current-job-market-58g | discuss | Yes, I have also experienced job loss like you. We can indeed discuss the current job market and explore the new roles that are in high demand that a programmer can transition into. | jialudev |

1,702,125 | The Evolving Role of Property Managers in the Modern Real Estate Market | In an ever-changing real estate landscape, the role of property managers has evolved significantly to... | 0 | 2023-12-19T05:00:50 | https://dev.to/acceleratemarketing03/the-evolving-role-of-property-managers-in-the-modern-real-estate-market-jii | In an ever-changing real estate landscape, the role of property managers has evolved significantly to meet the dynamic needs of both landlords and tenants. As technology, demographics, and market dynamics reshape the way we approach property management, professionals in this field are embracing new strategies and adopting innovative tools to stay ahead. Let's delve into the key aspects of the evolving role of property managers in the modern real estate market.

## Embracing Technological Advancements

Gone are the days of manual paperwork and traditional communication methods. Modern property managers leverage cutting-edge technology to streamline their operations. From property management software that automates tasks like rent collection and maintenance requests to online portals that enhance communication between landlords and tenants, embracing technology is a hallmark of the contemporary property manager.

## Data-Driven Decision Making

In the digital age, data is a powerful tool for informed decision-making. Property managers now analyze market trends, tenant preferences, and property performance metrics to make strategic decisions. This data-driven approach allows for better forecasting, proactive issue resolution, and the optimization of property portfolios.

## Enhanced Communication Strategies

Effective communication has always been a cornerstone of successful property management, but the methods have evolved. Property managers are now utilizing various communication channels, including email, text messaging, and social media, to stay connected with both landlords and tenants. Proactive and transparent communication helps build trust and fosters positive relationships.

## Focus on Tenant Experience

Modern property managers recognize the importance of tenant satisfaction. With an increased focus on providing exceptional tenant experiences, property managers are implementing amenities, services, and community-building initiatives that go beyond the basic requirements. This emphasis on tenant satisfaction contributes to tenant retention and positive word-of-mouth referrals.

## Adaptation to Regulatory Changes

The legal landscape of real estate is ever-changing, with new regulations and compliance requirements emerging regularly. Property managers stay abreast of these changes, ensuring that landlords remain compliant with local, state, and federal laws. Their understanding of legal nuances protects both landlords and tenants and contributes to the overall stability of the rental market.

## Green and Sustainable Practices

Sustainability is no longer a mere buzzword; it's a driving force in property management. Modern property managers are implementing eco-friendly practices, from energy-efficient property upgrades to waste reduction initiatives. These practices not only align with broader environmental goals but also appeal to environmentally conscious tenants.

## Strategic Marketing and Branding

Property managers today understand the significance of effective marketing. Whether it's showcasing properties through high-quality online listings, utilizing social media for targeted advertising, or implementing SEO strategies, modern property managers take a proactive approach to attract quality tenants and maximize property exposure.

## Financial Expertise and Budgeting

Beyond rent collection, property managers now play a more active role in financial management. They work closely with landlords to develop comprehensive budgets, ensuring that properties remain profitable and well-maintained. Financial expertise is particularly crucial in navigating economic uncertainties and unexpected market shifts.

## Crisis Management and Emergency Response

Recent global events have highlighted the importance of crisis management and emergency response in property management. Modern property managers are well-prepared to handle unexpected challenges, whether they be natural disasters, public health crises, or other emergencies. Their ability to adapt swiftly ensures the safety and well-being of tenants and the preservation of property value.

## Professional Development and Industry Certifications

Recognizing the complexity of their evolving role, property managers invest in continuous professional development. Many pursue industry certifications, attend workshops, and participate in networking events to stay informed about the latest trends and best practices. This commitment to ongoing education enhances their expertise and benefits the clients they serve.

## Conclusion

In the modern real estate market, [**best property management**](https://www.johnpye.com.au/) professionals are no longer simply rent collectors and maintenance coordinators. Their role has transformed into a multifaceted, dynamic profession that requires adaptability, technological savvy, and a holistic understanding of the real estate landscape. As property managers continue to evolve, their ability to navigate complexities, embrace innovation, and prioritize the needs of landlords and tenants positions them as indispensable players in the ever-changing world of real estate.

| acceleratemarketing03 | |

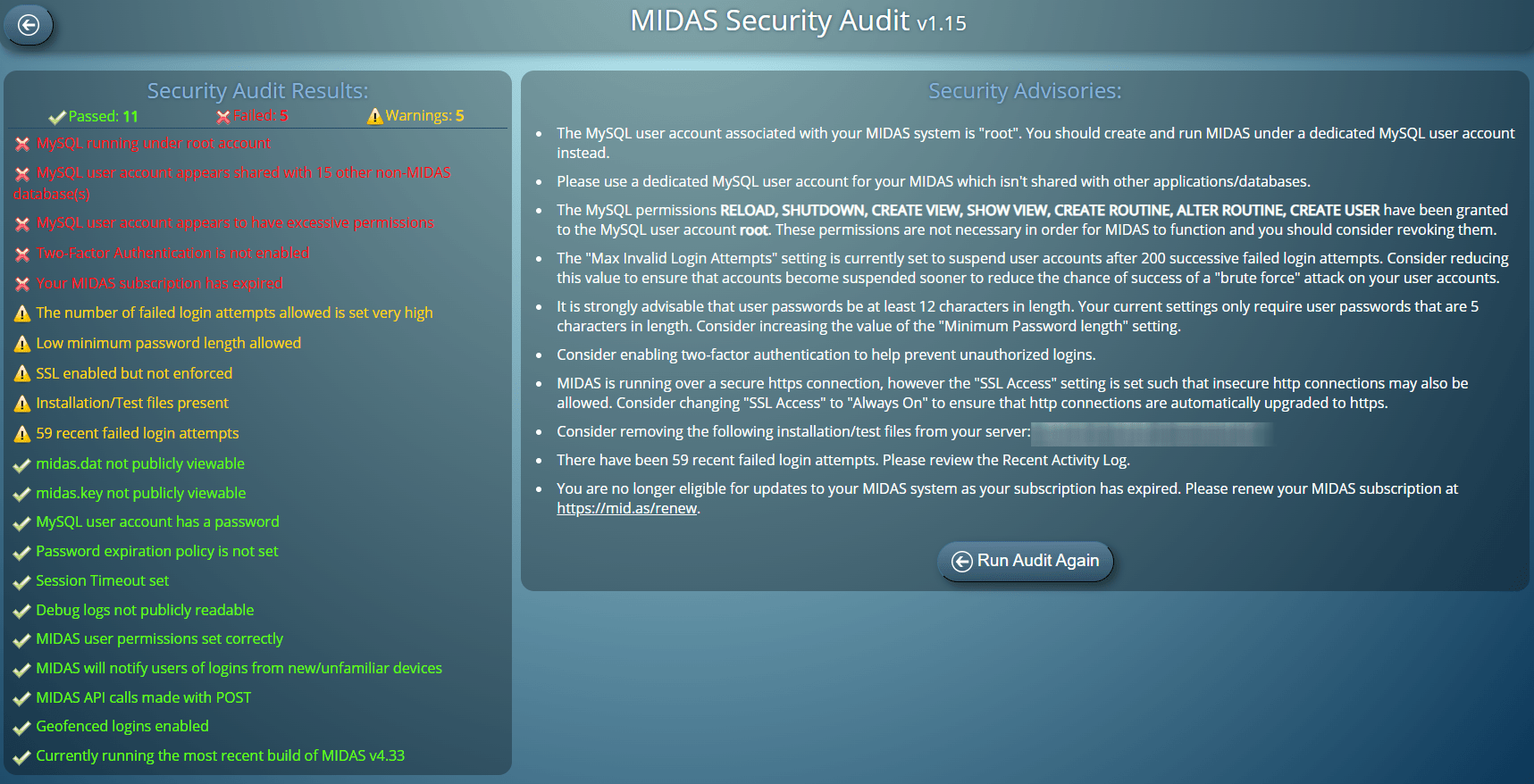

1,702,416 | Schedule regular Security Audits | You may not know, but MIDAS includes a built-in “Security Audit” tool. This allows you to perform a... | 0 | 2023-12-19T10:21:00 | https://mid.as/blog/schedule-regular-security-audits/ | development, scheduledtasks, security, v433 | ---

title: Schedule regular Security Audits

published: true

date: 2023-04-17 19:23:39 UTC

tags: Development,scheduledtasks,security,v433

canonical_url: https://mid.as/blog/schedule-regular-security-audits/

---

You may not know, but MIDAS includes a built-in “Security Audit” tool.

This allows you to perform a quick and on-demand security analysis of your MIDAS system.

_Perform a detailed Security Audit of your MIDAS room booking system_

[First introduced with the release of MIDAS v4.13 in 2016](https://mid.as/blog/security-enhancements-in-v4-13/), the “Security Audit” tool tests a number of key metrics of your MIDAS booking system.

The audit checks your MySQL / MariaDB setup, MIDAS files, and recommended MIDAS security settings.

It provides a detailed report with appropriate advisories for hardening the security of your MIDAS system.

When the Security Audit was first introduced, it analyzed 15 metrics. Today, that number has increased to over 20.

For MIDAS v4.33, the audit now also:

- Indicates the number of recently failed login attempts to your MIDAS system.

- Checks whether [Geofenced logins](https://mid.as/blog/geolocation-and-geofencing/) have been enabled.

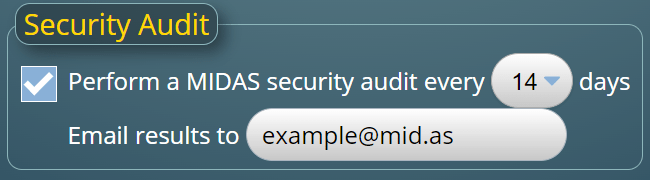

But the biggest improvement to Security Audits for MIDAS v4.33 is the ability to schedule regular automated security audits.

Until now, a Security Audit could only be manually initiated (via MIDAS Admin Options → Manage MIDAS → Security → Perform a Security Audit)

From MIDAS v4.33, you can now use [Scheduled Tasks](https://mid.as/help/manage-scheduled-tasks) to automatically run a Security Audit and email you the results. Audits can be configured to run every 7, 14, 30, 60, or 90 days.

_Schedule automated security audits of your MIDAS booking system_

The post [Schedule regular Security Audits](https://mid.as/blog/schedule-regular-security-audits/) appeared first on [MIDAS - Room Booking System | Blog](https://mid.as/blog). | midas |

1,702,897 | Unlocking Entertainment: Watching the Best Korean Dramas Online for Free | In the vast landscape of online streaming, Korean dramas have emerged as a global entertainment... | 0 | 2023-12-19T19:11:23 | https://dev.to/blogul/unlocking-entertainment-watching-the-best-korean-dramas-online-for-free-20ig | serialecoreene, blogulluiatanase, koreandramas | In the vast landscape of online streaming, Korean dramas have emerged as a global entertainment phenomenon, captivating audiences with their compelling stories and cultural richness. The good news is that you don't need to break the bank to enjoy the best of K-dramas. Here's a guide on how to be entertained by watching the finest Korean dramas online for free.

**1. Explore Free Streaming Platforms:**

Numerous streaming platforms offer a selection of Korean dramas for free. Platforms like Viki, Rakuten Viki, and Tubi provide access to a variety of K-dramas without requiring a subscription fee. While there may be some ads, the content is accessible, making it an excellent starting point for budget-conscious viewers.

**2. Utilize Ad-Supported Streaming:**

Some premium streaming platforms, including Viu and Kocowa, offer ad-supported viewing options. By enduring occasional ads, you can access a wide range of K-dramas without paying a subscription fee. Keep an eye out for free trials or limited-time promotional periods that platforms may offer.

**3. YouTube Channels and Playlists:**

YouTube is a treasure trove for free K-drama content. Many official channels or content creators upload dramas legally, and you can find playlists with full episodes. Be cautious to choose content from legitimate sources to ensure quality and legality.

**4. Free Trials on Premium Platforms:**

Take advantage of free trial periods offered by premium streaming services. Platforms like [https://blogulluiatanase.net/](https://blogulluiatanase.net/) often provide a limited trial period for new subscribers. During this time, you can binge-watch your favorite K-dramas without incurring any cost. Just remember to cancel the subscription before the trial period ends if you choose not to continue.

**5. Check Local Broadcasting Services:**

In some regions, local broadcasting services or streaming platforms may offer Korean dramas for free as part of their content library. Explore the options available in your region to discover if any platforms provide free access to K-dramas.

**6. Engage with Apps:**

Certain mobile apps offer free K-drama content. Apps like KOCOWA, OnDemandKorea, and Viu allow users to watch dramas without a subscription. While these apps may have limitations on the available content, they still provide an opportunity to enjoy K-dramas without a financial commitment.

**7. Community Recommendations:**

Engage with online communities and forums where K-drama enthusiasts share recommendations and links to free streaming sites. Websites like MyDramaList, Reddit, or dedicated K-drama forums often have threads where users discuss and share legal sources for free streaming.

**8. Public Libraries and Educational Platforms:**

Some public libraries offer streaming services with a selection of K-dramas. Additionally, educational platforms may host cultural content, including dramas, as part of their outreach programs. Check with your local library or educational institutions for potential free access to K-dramas.

**9. Stay Updated on Promotions:**

Streaming platforms occasionally run promotional events or partnerships that allow users to access premium content for free during specific periods. Stay informed about these promotions by following the social media accounts of streaming services or subscribing to their newsletters.

**10. Support Legal Channels:**

While enjoying K-dramas for free is fantastic, consider supporting legal channels when possible. Legal streaming services ensure the quality of content, provide a smoother viewing experience, and contribute to the continued production of captivating dramas.

In conclusion, the world of Korean dramas is at your fingertips, and you can embark on an entertainment journey without spending a dime. By exploring free streaming platforms, utilizing trial periods, engaging with online communities, and staying informed about promotions, you can unlock the magic of K-dramas without breaking the bank. Enjoy the stories, emotions, and cultural richness of the best Korean dramas from the comfort of your screen, all while staying within your budget. | blogul |

1,703,056 | Blackjack Terminal Game | This is my game for a portfolio project on a Computer Science course at Codecademy. I would... | 0 | 2023-12-19T22:00:46 | https://dev.to/matpluta99/blackjack-terminal-game-16nd | codecademy, beginners, codenewbie | #### This is my game for a portfolio project on a Computer Science course at Codecademy. I would be very grateful if you reviewed it and left feedback on what I could improve :D

#### https://github.com/mat-pluta99/Blackjack

### What were the goals of the project?

The first goal of the project was to create a simple program for me and my friends or family to play with. I decided to create a Blackjack game, since I'd spent too much time on the Strip in Fallout: New Vegas.

The last goal was to create a blog post about the project. So here I am, writing my first blog post on DEV :D

### Lady Luck

I've managed to create a Blackjack game for 1-5 players. You can play alone or gather friends and try your luck in this classic card game. If you earn enough chips and quit before your luck runs out, your score will be saved in the Top 10 leaderboard. | matpluta99 |

1,703,110 | The 'Serendipity Effect' of Brainstory | A first time Brainstory user shared this story in our community Discord. I originally was going in... | 0 | 2023-12-20T00:14:46 | https://blog.brainstory.ai/blog/brainstory-serendipity-effect/ | psychology, questioning, culture | ---

title: The 'Serendipity Effect' of Brainstory

published: true

date: 2023-12-19 00:00:00 UTC

tags: psychology, questioning, culture

canonical_url: https://blog.brainstory.ai/blog/brainstory-serendipity-effect/

---

A first time Brainstory user shared this story in our [community Discord](https://brainstory.ai/discord).

> I originally was going in the direction of trying to give myself criteria to make my movie ratings more objective, but it turned into me [talking] about what makes a movie important to me and helped me visualize that for my ratings instead. First time using Brainstory, I like how it pulled me from my first line of thinking (making an objective scale) to a different train of thought (Making me defend and elaborate on my categories of subjective ranking) very seamlessly, very cool!

Let’s talk about what this experience is and why!

## What does Brainstory _do_?

Brainstory asks you **questions**. It does not give advice or suggestions. While you’re talking, it may seem like Brainstory is “pulling” you into a different train of thought, but that’s actually _you_ pulling yourself into a different direction. All Brainstory does is ask you questions about your thinking.

## Why did we make Brainstory ask questions?

Asking questions is good, but there are a lot of reasons humans don’t want to ask other humans questions. Maybe you feel like you’re wasting someone’s time, or you think the question is too sensitive. [Research](https://www.sciencedirect.com/science/article/abs/pii/S0749597820304003) has shown that the benefits of asking questions outweigh the perceived consequences, but tell that to your emotions!

That’s why we made Brainstory— to be a safe place where you can always have your best thoughts.

## Are you reading this in December of 2023?

Join our [giveaway](https://brainstory.ai/giveaway) and win awesome cash prizes! See you in the Discord! | lilchenzo |

1,703,249 | Beginner's TypeScript #6 | ⭐ Constraining Value Types ⭐ We have a User interface below: interface User { id: number; ... | 25,770 | 2023-12-23T15:00:00 | https://dev.to/nhannguyendevjs/beginners-typescript-6-jn5 | programming, beginners, javascript, typescript |

⭐ **Constraining Value Types** ⭐

We have a **User** interface below:

```ts

interface User {

id: number;

firstName: string;

lastName: string;

role: string;

}

```

We would not want to use a **freeform string**, because a User could have only a set number of roles: **admin**, **user**, or **super-admin**.

Consider this **defaultUser**:

```ts

export const defaultUser: User = {

id: 1,

firstName: "Uri",

lastName: "Pilot",

role: "I_SHOULD_NOT_BE_ALLOWED",

}

```

It is being defined with a role that is not one of our options.

We will update the **User interface** to restrict the role property to one of the set options.

The **I_SHOULD_NOT_BE_ALLOWED** role should cause an error.

👉 **Solution:**

The solution is to update **role** to be a [Union type](https://www.typescriptlang.org/docs/handbook/2/everyday-types.html#union-types).

The syntax uses **|** to separate the values that a key is allowed to hold:

```ts

interface User {

id: number;

firstName: string;

lastName: string;

role: "admin" | "user" | "super-admin";

}

```

✍️ **About Union Types:**

Any type can be a member of a union, not just string literals. For example, we can make a new **SuperAdmin** type and add it to the role:

```ts

type SuperAdmin = "super-admin"

interface User {

// ...other stuff

role: "admin" | "user" | SuperAdmin;

}

```

Union types are everywhere within TypeScript. They give our code safety and allow us to be really sure of the types of data flowing through our app.
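One thing worth keeping in mind: the union only protects values the compiler can see. A role that arrives as a plain string at runtime (from a form or an API response, say) still has to be checked. Here is a minimal sketch of one way to do that. The names `ROLES`, `Role`, `isRole`, and `describeRole` are hypothetical and only used for illustration; they are not part of the original exercise.

```ts
// Hypothetical helper names (ROLES, Role, isRole, describeRole), for illustration only.
const ROLES = ["admin", "user", "super-admin"] as const;

// Derive the union from the array: "admin" | "user" | "super-admin"
type Role = (typeof ROLES)[number];

interface User {
  id: number;
  firstName: string;
  lastName: string;
  role: Role;
}

// Type guard: narrows an arbitrary string to Role at runtime.
function isRole(value: string): value is Role {
  return ROLES.some((role) => role === value);
}

// Exhaustive switch: adding a new entry to ROLES makes this fail to compile
// until a matching case is added.
function describeRole(role: Role): string {
  switch (role) {
    case "admin":
      return "Can manage users";
    case "user":
      return "Regular account";
    case "super-admin":
      return "Can manage admins";
    default: {
      const unreachable: never = role;
      return unreachable;
    }
  }
}

// A value whose type is only known to be string, e.g. parsed from JSON.
const incoming: string = "super-admin";

// Inside the true branch, `incoming` is narrowed to Role, so the object type-checks.
const user: User | null = isRole(incoming)
  ? { id: 1, firstName: "Uri", lastName: "Pilot", role: incoming }
  : null;
```

Deriving `Role` from a single array keeps the runtime check and the compile-time type in sync, so there is only one list of roles to maintain.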

---

I hope you found it useful. Thanks for reading. 🙏

Let's get connected! You can find me on:

- **Medium:** https://medium.com/@nhannguyendevjs/

- **Dev**: https://dev.to/nhannguyendevjs/

- **Hashnode**: [https://nhannguyen.hashnode.dev](https://nhannguyen.hashnode.dev/)

- **Linkedin:** https://www.linkedin.com/in/nhannguyendevjs/

- **X (formerly Twitter)**: https://twitter.com/nhannguyendevjs/

| nhannguyendevjs |

1,703,393 | hehe_game | Check out this Pen I made! | 0 | 2023-12-20T08:05:12 | https://dev.to/rishitagarg/hehegame-e33 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Rishita-Garg/pen/MWxgvVm %} | rishitagarg |

1,784,948 | Blockchain Apps | Does anyone understand programming to make blockchain apps? | 0 | 2024-03-09T01:26:40 | https://dev.to/anonimus/blockchain-apps-51bd | Does anyone understand programming to make blockchain apps? | anonimus | |

1,703,434 | Building an AI powered WebGL experience with Supabase and React Three Fiber | TL;DR: Baldur's Gate 3 inspired WebGL experience called Mirror of Loss Demo video GitHub... | 0 | 2023-12-21T19:59:59 | https://dev.to/laznic/building-an-ai-powered-webgl-experience-with-supabase-and-react-three-fiber-3dfn | webgl, webdev, supabase, react | > TL;DR:

> - Baldur's Gate 3 inspired WebGL experience called [Mirror of Loss](https://mirrorofloss.com)

> - [Demo video](https://drive.google.com/file/d/1t9DJvSedcPtayNZb4--8g1-sm4P8X1wf/preview)

> - [GitHub repo](https://github.com/laznic/mirror-of-loss)

> - React Three Fiber with [Drei](https://github.com/pmndrs/drei) makes things simple

> - Tech stack used: React, React Three Fiber, Vite, Supabase, OpenAI, Stable Diffusion, Stable Audio

I recently participated in the [Supabase Launch Week X Hackathon](https://supabase.com/blog/supabase-hackathon-lwx) where I ended up dabbling in WebGL and created a project called [Mirror of Loss](https://mirrorofloss.com). I had a lot of fun working on it, and figured it would be nice to share a bit about it here, too.

I've participated in three different Launch Week Hackathons before and I've always tried to do something a bit outside my regular web dev work. Usually they turn out to be more of an experience than an app. The hackathons run for a week, so it's a good time to focus on and learn something new and cool!

> **Note**: this article will not go into every detail of how to build a similar WebGL app with React Three Fiber and Supabase. For example, installation instructions can be found on the libraries' own websites instead of being repeated here.

>

> This article just provides the bigger picture of how you can build WebGL apps & experiences by sharing my experience with it during the Supabase hackathon.

>

> Not all the code will be displayed here, as the article would grow too long. It is, however, open source, so you can find all the little details in the [GitHub repo](https://github.com/laznic/mirror-of-loss).

## The idea

Recently I've been indulging in [Baldur's Gate 3](https://baldursgate3.game/), and what better way to show your appreciation as a fan than to create something (closely) inspired by it! In the game they introduced a [Mirror of Loss (spoiler warning)](https://bg3.wiki/wiki/Mirror_of_Loss), and being very intrigued by the aesthetics (and the whole story/concept) of [Shar](https://forgottenrealms.fandom.com/wiki/Shar) in the game, I thought it would be nice to do a representation of it myself. And in 3D/WebGL! However, I wasn't originally planning on doing this during the hackathon: I just wanted to make it a new art project for myself. In the end I decided to try and create it within the hackathon week, since it seemed like a good time to do it.

## Preparation

So before the hackathon started, around one or two weeks prior, I started wondering how to build this thing in WebGL. I was aware of [Three.js](https://threejs.org/), which I had dabbled in a bit in the past, but it seemed a bit intimidating. No way I'd have time to learn the vanilla way of creating WebGL experiences. Luckily I had heard about [React Three Fiber](https://docs.pmnd.rs/react-three-fiber/getting-started/introduction) before, although I hadn't paid a lot of attention to it. And boy, I was really happy with what I read in their documentation! It abstracts the tedious bits of Three.js into easy-to-use React components, and I can do React for sure. The ecosystem also provides a lot of additional helper libraries, such as [Drei](https://github.com/pmndrs/drei) and [Ecctrl](https://github.com/pmndrs/ecctrl), to make developing a lot easier.

Drei is a collection of various ready-made abstractions for React Three Fiber, and includes things like [trails](https://drei.pmnd.rs/?path=/docs/misc-trail--docs), [Billboard, which makes an object always face the camera](https://drei.pmnd.rs/?path=/docs/abstractions-billboard--docs), and [animated distort material](https://drei.pmnd.rs/?path=/docs/shaders-meshdistortmaterial--docs), for example. Ecctrl, on the other hand, allows you to set up a character controller very quickly. I recommend checking out both of these if you are planning to do any React Three Fiber work.

> **Tip**: The easiest way to get started is to set up your project with [Vite](https://docs.pmnd.rs/react-three-fiber/getting-started/installation#vite.js).

Other than that, my plan was to do two different scenes: one with a mirror to which the user can give a memory, and one "inside" the mirror where you can see every single memory the mirror holds. I thought this would be a pretty cool concept, and with this in mind, I started experimenting a bit.

I started playing around to see if I could create some elements I'd like to use in the scene. I was mostly obsessed with creating a brazier, because you gotta have braziers! Below is what I came up with. It's very simple, but it has a nice vibe to it. I didn't have time to study how to create a realistic-looking flame via shaders, so I had to be quite creative here. Basically it is just two stacked Cone geometries with some transparency.

It came nicely together in React Three Fiber with `Lathe`, `Cone`, and `MeshDistortMaterial` components.

```javascript

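// This snippet lives inside a component. It assumes these imports at the top of the file:

// import { Vector2 } from "three";

// import { Cone, Lathe, MeshDistortMaterial } from "@react-three/drei";

// Build the bowl profile that Lathe revolves around the Y axis.

const lathePoints = [];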

for (let i = 0; i < 11; i++) {

lathePoints.push(new Vector2(Math.sin(i * 0.2) * 2, (i - 5) * 0.2));

}

return (

<>

<Lathe args={[lathePoints]} rotation={[0, 0, 0]} position={[0, 1.2, 0]}>

<meshLambertMaterial color="#777" />

</Lathe>

<pointLight color="#fff" intensity={10} position={[0, 5, 0]} />

<Cone args={[1.5, 2, undefined, 50]} position={[0, 2.75, 0]}>

<MeshDistortMaterial

distort={0.5}

speed={10}

color="#baa8ff"

transparent

roughness={0.01}

transmission={4.25}

ior={1} />

</Cone>

<Cone args={[1, 1.5, undefined, 50]} position={[0, 2.25, 0]}>

<MeshDistortMaterial

distort={0.75}

speed={10}

color="#fff"

roughness={0.8}

ior={7} />

</Cone>

</>

)

```
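To get a feel for the shape that loop hands to `Lathe`, it can help to print the profile as plain numbers. The sketch below reproduces the same formula without Three.js. `Point2` and `brazierProfile` are hypothetical names, and plain `{ x, y }` objects stand in for `Vector2`.

```ts
// Hypothetical stand-in for three's Vector2 so the sketch runs without dependencies.
interface Point2 {
  x: number;
  y: number;
}

// Same formula as the loop above: x is the radius of the bowl at height y.
function brazierProfile(): Point2[] {
  const points: Point2[] = [];
  for (let i = 0; i < 11; i++) {
    points.push({ x: Math.sin(i * 0.2) * 2, y: (i - 5) * 0.2 });
  }
  return points;
}

const profile = brazierProfile();

// Find the widest ring of the bowl.
const widest = profile.reduce((a, b) => (b.x > a.x ? b : a));

// Print every (radius, height) pair, then the widest point.
console.log(profile.map((p) => `(${p.x.toFixed(2)}, ${p.y.toFixed(2)})`).join(" "));
console.log(`widest radius ${widest.x.toFixed(2)} at height ${widest.y.toFixed(2)}`);
```

The radius starts at 0 (a closed bottom), grows to roughly 2 units, and then curls slightly inward over the last two points, which is what gives the bowl its rim.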

At this point I really had no idea what I was doing, however felt surprisingly confident that I could do some cool stuff for the hackathon.

## Starting the project, and the Mirror scene

When the hackathon kicked off, I figured that a 3D model of the mirror would play an important part in this, as I could quickly generate textures for other, simpler objects (e.g. walls, pillars, etc.) via an AI. So I fired up [Spline](https://spline.design) and got to work.

After spending several hours on the model, I just needed to import it into the project and add some materials to it. Before you can use GLTF files in your project, you'll need to tell Vite to include them in the assets like so:

```javascript

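import { defineConfig } from "vite";

// Assumption: the standard React plugin. Use @vitejs/plugin-react-swc instead if your template ships with it.

import react from "@vitejs/plugin-react";

// https://vitejs.dev/config/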

export default defineConfig({

assetsInclude: ["**/*.gltf"],

plugins: [react()],

});

```

It's a bit of a tedious process to create meshes for the GLTF model by hand, and luckily the Poimandres collective has also created the [GLTFJSX](https://github.com/pmndrs/gltfjsx) library to help in that regard. They even have [a website to test it out](https://gltf.pmnd.rs/), which I just ended up using directly. [It prints out nicely grouped meshes](https://github.com/laznic/mirror-of-loss/blob/57dfb1702e106808f5ebfeae2d65d0b05d20ac00/src/scenes/mirror/components/Mirror.tsx), which can be [altered individually](https://github.com/laznic/mirror-of-loss/commit/eb9501a8fe7f9788c74de9ae836cac4fe9ba6307).

At this point, after adding some initial materials to the model as you can see in the latter commit link, I realized that this was going to look very bad if I couldn't nail the materials perfectly. The alternative route would be creating something with a more old-school vibe, and mixing 2D with 3D. Basically using sprites in a 3D environment like they did in old games such as Wolfenstein, Duke Nukem, and Hexen. I have always especially liked the aesthetics of the last game mentioned, so I decided to try it out quickly to see if I could make it work.
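The core trick behind those sprite-based games is that a flat image is rotated around the vertical axis so it always faces the viewer. Drei's `Billboard` component, mentioned earlier, handles this for you. The sketch below only illustrates the underlying math and is not the code used in Mirror of Loss. `Vec3` and `billboardYaw` are hypothetical names, and the sketch assumes Three.js conventions (Y is up, and a plane faces +Z by default).

```ts
// Hypothetical minimal vector type so the sketch runs without Three.js.
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

// Yaw (rotation around Y, in radians) that turns a +Z-facing plane toward the camera.
// The height difference is ignored on purpose so the sprite stays upright.
function billboardYaw(sprite: Vec3, camera: Vec3): number {
  const dx = camera.x - sprite.x;
  const dz = camera.z - sprite.z;
  return Math.atan2(dx, dz);
}

const sprite: Vec3 = { x: 0, y: 0, z: 0 };

// Camera straight in front of the sprite: no rotation needed.
const yawFront = billboardYaw(sprite, { x: 0, y: 1, z: 5 });

// Camera directly to the sprite's +X side: a quarter turn.
const yawRight = billboardYaw(sprite, { x: 5, y: 1, z: 0 });

console.log(yawFront, yawRight);
```

In a React Three Fiber scene the result would typically be applied as the Y component of a mesh's `rotation` on every frame, but for most cases the ready-made `Billboard` is the simpler choice.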