id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

47,222 | CallerMemberName and Interfaces |

[CallerMemberName] is a attribute that is available in C# 5.0. It is a very use... | 0 | 2018-09-01T13:42:39 | http://www.dotnetindia.com/2018/08/callermembername-and-interfaces.html | net, softwarearchitecture, csharp | ---

title: CallerMemberName and Interfaces

published: true

tags: .NET,Software Architecture, CSharp

canonical_url: http://www.dotnetindia.com/2018/08/callermembername-and-interfaces.html

---

[CallerMemberName] is a attribute that is available in C# 5.0. It is a very useful functionality especially when doing common app framework components like logging, auditing etc.

One of the things that you usually try to do in your common logging infrastructure is to log the function that generated that log.

So before C# 5.0 it was most common to interrogate the stack to find the caller, which can be an expensive operation. Or the caller information is passed from the source function, which hard codes its name in the call.

Something like

```cs

_logger.Debug("Message", "myFunctionName");

```

This becomes hard to maintain and so in most cases the logger actually interrogates the stack to get this information and as mentioned before that comes with a performance penalty.

Using this new attribute, you can write something like the following for your log function:

```cs

public void Debug(string Message, [CallerMemberName] string callerFunction = "NoCaller")

```

The second parameter here is not passed by the caller, but is rather filled up by the infrastructure (i.e. .NET Framework). The parameter needs to be an optional parameter for you to be able to use this attribute. And this does not have a performance penalty as the compiler will fill in this parameter at compile time and so no run-time overheads.

This works fine, except when you use Interfaces. Again interfaces are generally a pattern that is often used in framework components like logging. So you will have a ILogger interface that will have a definition like about which is implemented by your actual Logger class and the caller use the Interface to talk to your implementation.

If you do something like this, the gotcha is that the interface also needs to have the attribute defined, or the value will not be auto filled. This is something that is easy to miss and is not well documented from whatever I could find. So your interface definition for this function will have the attribute defined like below:

```cs

void Debug(string Message, [CallerMemberName] string callerFunction = "")

```

for the caller value to flow through to your implementation.

When you are designing your interfaces this is so easy to miss and can take a long time to figure out why. | anandmukundan |

47,396 | Language Idea: Limit custom types to three arguments | The general rule should be to name your data, even when using custom types. | 0 | 2018-09-23T19:35:20 | https://dev.to/robinheghan/language-idea-limit-custom-types-to-three-arguments-27p1 | elm | ---

title: Language Idea: Limit custom types to three arguments

published: true

description: The general rule should be to name your data, even when using custom types.

tags: elm

---

_Language Idea is a new series where I note down some ideas I have on how Elm could improve as a language. Each post isn't necessarily a fully thought out idea, as part of my thinking process is writing stuff down. This is more a discussion starter than anything else._

In Elm 0.19 you can no longer create tuples which contains more than three elements. The reasoning behind this is that it's easy to lose track of what the elements represents after that. As an example:

```elm

(0, 64, 32, 32)

```

Is a bit more cryptic than:

```elm

{ xPosition = 0

, yPosition = 64

, width = 32

, height = 32

}

```

The rule here is quite simple, if you feel yourself in need of a tuple containing more than three elements you're better of using a record with named fields.

There is, however, another way in which you can work around this restriction in Elm 0.19.

## Custom Types

Yes. Custom types can still contain more than three parameters. Which is strange, as there is little to separate tuples from custom types with the exception of syntax and comparability.

Let me be clear though, you should prefer records with named fields. Names provide basic documentation, and records let you forget about what order parameters are in. I'd say that even when you're using custom types, you should always limit them to three (maybe even two) parameters and use records if you need more than that.

As to why Elm allows more than three parameters in custom types; I don't know. It might just be an oversight.

So here is a question: should Elm limit the number of parameters in custom types to three, as it does with tuples?

I think it should.

_Edit: The idea here is that one can do `type Person = Person { name : String, age : Int }` instead of `type Person = Person String Int` as it would make it more obious what each parameter is. It should then be encouraged to use records within custom types if there are many fields to store within the custom type._ | robinheghan |

47,566 | Want to write defect-free software? Learn the Personal Software Process | PSP is too hard to follow for most of software developers. But I think you can adapt the PSP principles and get 80% of the benefits with 20% of the work. | 0 | 2018-09-03T22:47:44 | https://smallbusinessprogramming.com/want-to-write-defect-free-software-learn-the-personal-software-process/ | codequality, learning, productivity | ---

title: Want to write defect-free software? Learn the Personal Software Process

published: true

description: PSP is too hard to follow for most of software developers. But I think you can adapt the PSP principles and get 80% of the benefits with 20% of the work.

tags: #quality, #learning, #productivity

cover_image: https://thepracticaldev.s3.amazonaws.com/i/mut20ft48dsfcjdmt1cz.jpg

canonical_url: https://smallbusinessprogramming.com/want-to-write-defect-free-software-learn-the-personal-software-process/

---

I'm on a journey to [become a better software developer](https://smallbusinessprogramming.com/how-i-intend-to-become-a-better-software-developer/) by reducing the number of defects in my code. The Personal Software Process (PSP) is one of the few proven ways to achieve ultra-low defect rates. I did a deep dive on it over the last few months. And in this post I'm going to tell you everything you need to know about PSP.

### The Promise of the Personal Software Process (PSP)

Watts Humphrey, the creator of [PSP](https://en.wikipedia.org/wiki/Personal_software_process)/[TSP](https://en.wikipedia.org/wiki/Team_software_process), makes extraordinary claims for defects rates and productivity achievable by individuals and teams following his processes. He wrote a couple of books on the topic but the one I'm writing about today is [PSP: A Self-Improvement Process for Software Engineers](https://www.amazon.com/PSP-Self-Improvement-Process-Software-Engineers/dp/0321305493/) (2005).

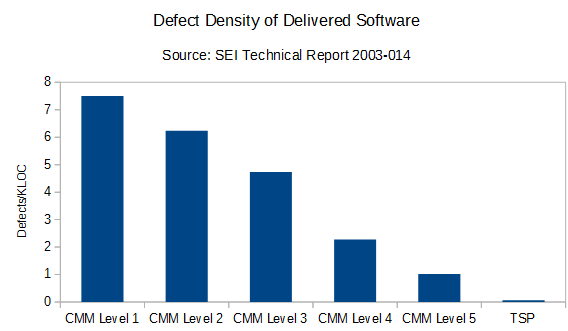

This chart shows a strong correlation between the formality with which software is developed and the number of defects in the delivered software. As you can see, PSP/TSP delivered a shocking 0.06 defects/KLOC, which is about **100 times fewer defects than your average organization** hovering around [CMM level 2](https://en.wikipedia.org/wiki/Capability_Maturity_Model) (6.24 defects/KLOC). Impressive, right?

Humphrey further claims:

>Forty percent of the TSP teams have reported delivering defect-free products. And these teams had an average productivity improvement of 78% (Davis and Mullaney 2003). Quality isn't free--it actually pays.

**Bottom line: defects make software development slower than it needs to be. If you adopt PSP and get your team to adopt TSP, you can dramatically reduce defects and deliver software faster.**

It seems counter-intuitive but Steve McConnell explains why reducing defect rates actually allows you to deliver software more quickly in this article: [Software Quality at Top Speed](https://stevemcconnell.com/articles/software-quality-at-top-speed/)

Of course, you can only get those gains if you follow a process like PSP. I'll explain more on that later but I want to take a small detour to tell you a story.

### Imagine your ideal job

Imagine you work as a software developer for a truly enlightened company--I'm talking about something far beyond Google or Facebook or Amazon or whomever you think is the best at software development right now.

#### You are assigned a productivity coach

Now, your employer cares so much about developer productivity that every software developer in your company is assigned a coach. Here's how it works. Your coach sits behind you and watches you work every day (without being disruptive). He keeps detailed records of:

* time spent on each task

* programming tasks are broken down into categories like requirements, requirements review, design, design review, coding, personal code review, testing, peer code review, etc.

* non-programming tasks are also broken down into meaningful categories like meetings, training, administrative, etc.

* defects you inject and correct are logged and categorized

* anything else you think might be useful

#### Your coach runs experiments on your behalf

After you go home, your coach runs your work products (requirements, designs, code, documentation, etc.) through several tools to extract even more data such as lines of code added and modified in the case of code. He combines and analyzes additional data from your bug tracker and other data sources into useful reports for your review when the results become statistically significant.

Your coach's job is to **help you discover how to be the very best programmer you can be**. So, you can propose experiments and your coach will set everything up, collect the data, and present you with the results. Maybe you want to know:

* if test-first development works better for you than test-last development?

* are design reviews worth the effort?

* does pair programming work for you?

* do you actually get more done by working more than 40 hours a week?

* are your personal code reviews effective?

Those would be the kinds of experiments your coach would be happy to run. Or your coach might suggest you try an experiment that has been run by several of your colleagues with favorable outcomes. The important point is that your coach is doing all the data collection and analysis for you while you focus on programming.

Doesn't that sound great? Imagine how productive you could become.

#### Unfortunately this is the real world so you have to be your own coach

This is the essence of the Personal Software Process (PSP). So, ***in addition to developing software, you also have to learn statistics, propose experiments, collect data, analyze it, draw meaningful conclusions, and adjust your behavior based on what your experiments reveal***.

### What the Personal Software Process gets right

Humphrey gets to the root of a number of the problems in software development:

* projects are often doomed by arbitrary and often impossible deadlines

* the longer a defect is in the project, the more it will cost to fix it

* defects accumulate in large projects and lead to expensive debugging and unplanned rework in system testing

* debugging and defect repair times increase exponentially as projects grow in size

* a heavy reliance on testing is inefficient, time-consuming, and unpredictable

Here's an overview of his solution:

>***The objective, therefore, must be to remove defects from the requirements, the designs, and the code as soon as possible. By reviewing and inspecting every work product as soon as you produce it, you can minimize the number of defects at every stage. This also minimizes the volume of rework and the rework costs. It also improves development productivity and predictability as well as accelerating development schedules***.

Humphrey convinced me that he's right about all aspects of the problem. He has data and it's persuasive. However, the prescription--what you need to do to fix the problem--is unappealing, as I'll explain in the next section.

### Why almost nobody practices the Personal Software Process

PSP is too hard to follow for 99.9% of software developers. The discipline required to be your own productivity coach is beyond what most people can muster, especially in the absence of organizational support and sponsorship. Software development is already demanding work and now you need to add this whole other layer of thinking, logging, data analysis, and behavior changes if you're going to practice the Personal Software Process.

Most software developers just aren't going to do that unless you hold a gun to their heads. And if you do hold a gun to someone's head PSP won't work at all. They'll just sabotage it or quit.

Here's a quote from Humphrey's book just to give you a taste for how detailed and process-driven PSP is:

>The PSP's recommended personal quality-management strategy is to use your plans and historical data to guide your work. That is, start by striving to meet the PQI guidelines. Focus on producing a thorough and complete design and then document that design with the four PSP design templates that are covered in Chapters 11 and 12. Then, as you review the design, spend enough time to find the likely defects. If the design work took four hours, plan to spend at least two, and preferably three, hours doing the review. To do this productively, plan the review steps based in the guidelines in the PSP Design Review Script described in Chapter 9....

It goes on from there...for about 150 pages. I can see how you could create 'virtually defect-free' software using this kind of process but it's hard for me to imagine a circumstance where you could get a room full of developers to voluntarily adopt such an involved process and apply it successfully.

But that's not the only barrier to adoption.

### Additional barriers to adoption

#### The Personal Software Process is meant to be taught as a classroom course

The course is prohibitively expensive. So, the book is the only realistic option available to most people.

You'll find that reading the book, applying the process, figuring out how to fill out all the forms properly, and then analyzing your data all without the help of classmates or an instructor is hard.

#### The book assumes your current process is some version of waterfall

TDD doesn't not play nice with the version of PSP you'll learn in the book. Neither does the process where you write a few lines, compile, run, repeat until you're done. It breaks the stats and the measures you need to compute to get the feedback you require to track your progress. It's not impossible to come up with your own measures to get around these problems but it's definitely another hurdle in your way.

Languages without a compile phase (python, PHP, etc.) also mess up the stats in a similar way.

#### The chapters on estimating are of little value to agile/scrum practitioners

There's a whole estimating process in PSP that involves collecting data, breaking down tasks, and using historical data to make very detailed estimates about the size of a task that just don't matter that much in the age of agile/scrum. In fact, I didn't see any evidence that the Personal Software Process estimating methods are any better than just getting an experienced group of developers together to play planning poker.

#### It's very difficult to collect good data on a real-world project

If you're going to count defects and lines of code, you need good definitions for those things. And at first glance, PSP seems to have a reasonably coherent answer. But when I actually tried to collect that data I was flooded by edge cases for which I had no good answers.

For example, a missing requirement caught in maintenance could take one hour to fix or it could take months to fix but you are supposed to record them both as requirement defects and record the fix time. Then you use the arithmetic mean for the fix time in your stats. So that one major missing requirement could completely distort your stats, even if it is unlikely to be repeated.

Garbage in, garbage out. Very concerning.

By the way, there's some free software you can use to help you with the data collection and analysis for PSP. It's hasn't been very useful in my experience but it's probably better than the alternative, which is paper forms.

#### Your organization needs to be completely on board

Learning the Personal Software Process is a huge investment. I think I read somewhere that it will take the average developer about 200 hours of devoted study to get proficient with the PSP processes and practices. Not many organizations are going to be up for that.

PSP/TSP works because the people using it follow a detailed process. But most software development occurs at [CMM Level 1](https://en.wikipedia.org/wiki/Capability_Maturity_Model) because the whole business is at CMM Level 1. I think it's pretty unlikely that a chaotic business is going to see the value in sponsoring a super-process-driven software development methodology on the basis that it will make the software developers more productive and increase the quality of their software.

You could argue that the first 'P' in PSP stands for 'personal' and that you don't need any organizational buy-in to do PSP on your own. And that may technically be true but that means you'll have to learn it on your own, practice it on your own, and resist all the organizational pressure to abandon it whenever management decides your project is taking too long.

### Is the Personal Software Process (PSP) a waste of time then?

No, I don't think so. Humphrey nails the problems in software development. And PSP is full of great ideas. The reason most people won't be able to adopt the Personal Software Process--or won't even try--is the same reason people people drop their new year's resolutions to lose weight or exercise more by February: our brains resist radical change. There's a bunch of research behind this human quirk and you can read [The Spirit of Kaizen](https://www.amazon.com/Spirit-Kaizen-Creating-Lasting-Excellence-ebook/dp/B009Q0CQMA/) or [The Power of Habit](https://www.amazon.com/Power-Habit-What-Life-Business-ebook/dp/B00564GPKY/) if you want to learn more.

Humphrey comes at this problem like an engineer trying to make a robot work more efficiently and that's PSP's fatal flaw. Software developers are people, not robots.

So, here are some ideas for how you can get the benefits from the Personal Software Process (PSP) that are more compatible with human psychology.

#### 1. Follow the recommendations without doing any of the tracking

Your goal in following PSP is to remove as many defects as possible as soon as possible. You definitely don't want any errors in your work when you give it to another person for peer review and/or system testing. Humphrey recommends written requirements, personal requirements review, written designs, personal design reviews, careful coding in small batches, personal code reviews, the development and use of [checklists](https://smallbusinessprogramming.com/code-review-checklist-prevents-stupid-mistakes/), etc.

You can do all that stuff without doing the tracking. He even recommends ratios of effort for different tasks that you could adopt. Will that get you to 0.06 defects/KLOC? No. But you might get 80% of the way there for 20% of the effort.

#### 2. Adopt PSP a little bit at a time

To get around the part of your brain that resists radical change, you could adopt PSP over many months. Maybe you just adopt the recommendations from one chapter every month or two. Instead of investing 200 hours up front, you could start with 5-10 hours and see how that goes.

Or you could just track enough data to prove to yourself that method A is better than method B. And once you're satisfied, you could drop the tracking altogether. For example, if you wanted to know if personal design reviews are helpful for you, you don't need to do all the PSP tracking all the time. You could just setup an experiment where you could choose five tasks as controls and five tasks for design reviews and run that for a week or two, stop tracking, and then decide which design review method to adopt based on what you learn.

#### 3. Read the PSP book and then follow a process with a better chance of succeeding in the long run

[Rapid Development](https://www.amazon.com/Rapid-Development-Devment-Developer-Practices-ebook/dp/B00JDMPOB6/) by Steve McConnell is all about getting your project under control and delivering working software faster. McConnell, unlike Humphrey, doesn't ignore human psychology. In fact, he embraces it. I believe most teams would follow McConnell's advice long before they'd consider adopting the Personal Software Process (PSP).

Most of the advice in Rapid Development is aimed at the team or organization instead of the individual, which I think it the correct focus. PSP is aimed at the individual but I can't see how you're going to get good results with it unless nearly everyone working on your project uses it. For example, if everyone on your team is producing crappy software as quickly as they can, your efforts to produce defect-free software won't have much effect on the quality or delivery date of the finished software.

### What I'm going to do

I develop e-commerce software on a team of two. My colleague and I have adopted a number of processes to ensure only high quality software makes it into production. And we've been successful at that; we've only had 4 critical (but easily fixed) defects and a handful of minor defects make it into production in the last year. Our problem is that we have quite a bit of rework because too many defects are making to the peer code review stage.

I showed my colleague PSP and he wasn't excited to adopt it, especially all the tracking. But he was willing to add design reviews to our process. So we'll start there and improve our processes in little steps at our retrospectives--just like we've been doing.

Rod Chapman recorded a nice talk on [PSP](https://youtu.be/nWScAkGn-zw) and I like his idea of "moving left". If you want go faster and save more money you should move your QA to the left--or closer to the beginning--of your development process. That sounds about right to me.

### Additional resources

Here are some resources to help you learn more about PSP:

* [Nice overview of PSP](https://youtu.be/nWScAkGn-zw) by Rod Chapman (video)

* [Watts Humphrey speaking about TSP/PSP](https://youtu.be/4GFqodvsugY) (video)

* [A PSP case study supporting PSP](https://pdfs.semanticscholar.org/86b9/16e062217867543889fc2c487ac368414698.pdf) (pdf)

* [Research paper that casts doubt on the benefits of PSP](https://www.researchgate.net/profile/Karlheinz_Kautz/publication/258223987_Improving_Software_Developer%27s_Competence_Is_the_Personal_Software_Process_Working/links/54bda13f0cf218da9391b500/Improving-Software-Developers-Competence-Is-the-Personal-Software-Process-Working.pdf?origin=publication_detail) (pdf)

* [PSP: A Self-Improvement Process for Software Engineers](https://www.amazon.com/PSP-Self-Improvement-Process-Software-Engineers/dp/0321305493/) (book)

* [Link to the programming exercises for the book](https://smallbusinessprogramming.com/where-to-find-the-psp-programming-exercises/) (website)

* [Process Dashboard](https://www.processdash.com/) - a PSP support tool (website)

### Takeaways

It's tough to recommend the Personal Software Process (PSP) unless you are building safety-critical software or you've got excellent organizational support and sponsorship for it. Most developers are just going to find the Personal Software Process overwhelming, frustrating, and not very compatible with the demands of their jobs.

On the other hand, PSP is full of good ideas. I know most of you won't adopt it. But that doesn't preclude you from using some of the ideas from PSP to improve the quality of your software. I outlined three alternate paths you could take to get some of the benefits of PSP without doing the full Personal Software Process (PSP). I hope one of those options will appeal to you.

*Have you ever tried PSP? Would you ever try PSP? I'd love to hear your thoughts in the comments.* | bosepchuk |

47,656 | Progressive Web Apps tutorial from scratch | What are progressive web apps? And how do you learn about them? | 0 | 2018-09-06T14:42:51 | https://dev.to/jadjoubran/progressive-web-apps-tutorial-from-scratch-3eim | pwa, progressivewebapp, javascript, serviceworker | ---

title: Progressive Web Apps tutorial from scratch

published: true

description: What are progressive web apps? And how do you learn about them?

tags: pwa, progressive web app, javascript, service worker

cover_image: https://i.imgur.com/80fkZFD.jpg

---

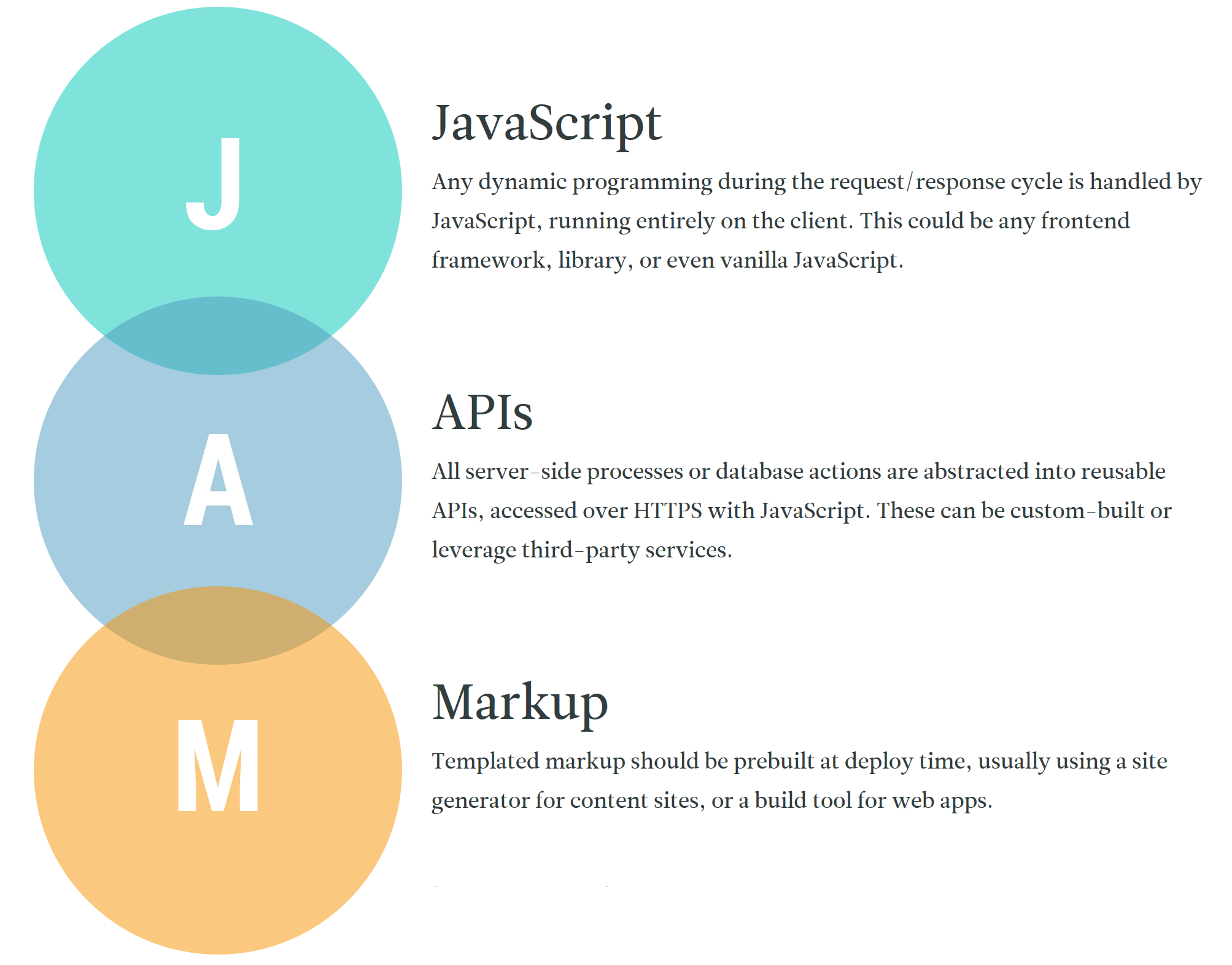

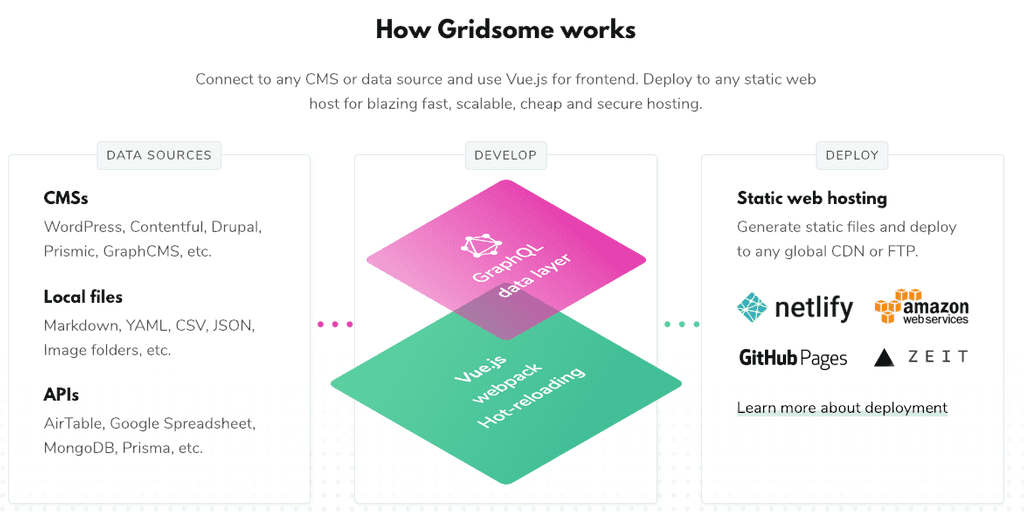

The modern web is super exciting. There's a whole new range of possibilities for us web developers thanks to a set of new Web APIs, collectively popularized under the term Progressive Web Apps.

When somebody asks me what is a PWA, it's always hard to come up with a concise definition that doesn't include a lot of technical terms. However, I finally came up with a definition that holds true in most scenarios:

> **A Progressive Web App is a modern Website that consistently delivers a superior User Experience.** ✨

The reason why I think this holds true in most scenarios is because it covers most of the technical features possible by PWAs. Here's an example:

Making your website work offline is about the User Experience. If your user gets interrupted with the Offline Dinosaur because they briefly lost connection, then this is a bad User Experience.

> **Everything revolves around User Experience.**

## How do you learn it?

Now the big question is, how do we learn Progressive Web Apps?

Here's why I have an extremely important 3-step recommendation:

1. Set your front-end framework of choice aside

2. Learn Progressive Web Apps from scratch

3. Apply what you learned in PWAs to your front-end framework

Front-end frameworks are great, but the web platform has been moving so fast that we as web developers need to keep up with it by understanding how these new Web APIs work.

Having a wrapper on top of these APIs is great for productivity, but terrible for understanding how something works.

This is exactly why I recorded a free video series on YouTube that teaches Progressive Web Apps from scratch. We start from a repository with a simple index.html, app.js & app.css all the way to building a simple PWA.

Watch the [📽 PWA Video series](https://www.youtube.com/watch?v=GSSP5BxBnu0&list=PLIiQ4B5FSuphk6P-zg_E3W9zL3J22U4dT&index=1) for free! | jadjoubran |

47,739 | What are some projects or exercises I can do to practice HTML and CSS? | (I just got notified that I've written this a while back. It's aimed at beginners wanting to practice... | 0 | 2018-09-05T03:23:31 | https://dev.to/aurelkurtula/what-are-some-projects-or-exercises-i-can-do-to-practice-html-and-css-5f6a | htlm, css, design, beginners | ---

title: What are some projects or exercises I can do to practice HTML and CSS?

published: true

description:

tags: htlm, css, design, beginners

---

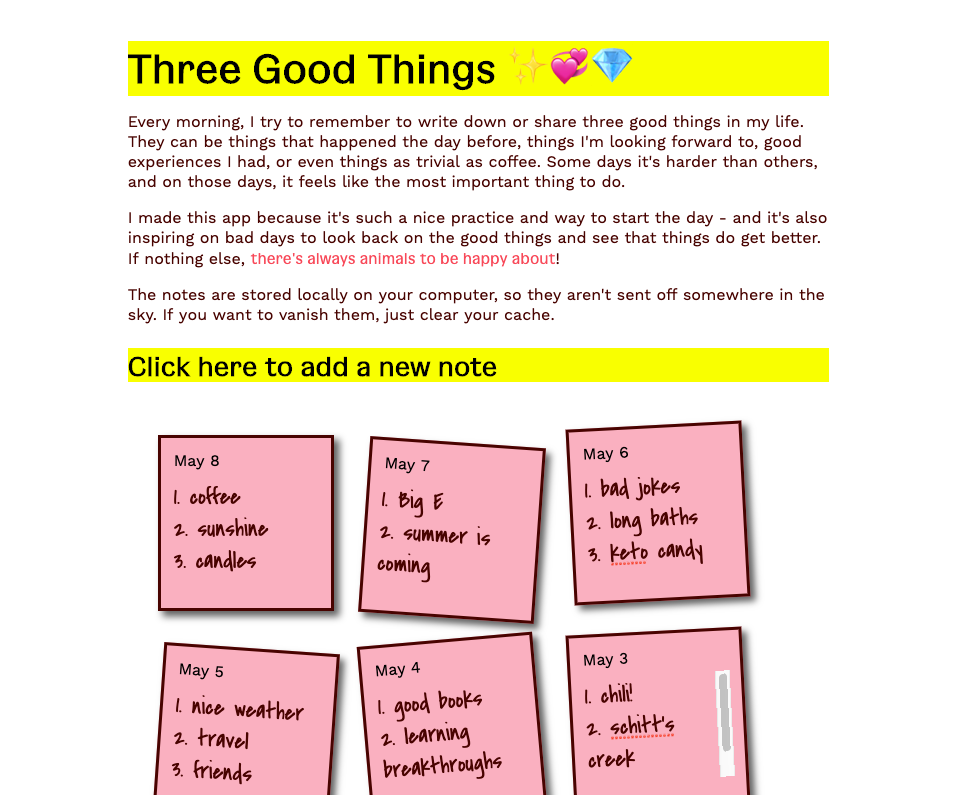

(I just got notified that I've written this a while back. It's aimed at beginners wanting to practice HTML and CSS, I still stand by it)

#### First: Pen and Paper!

Create a site with pen an paper. An idea would be a site that displays your favorite books - with pen and paper draw boxes representing book covers, thick lines where the title goes, thinner lines for the author.

Then start translating that drawing in HTML.

Stop!

You have created a drawing, now you, and you alone, need to choose where to use the HTML tags. What's the best tag for the title what its the best tag for the author, looking at your paper design would the two benefit by being grouped together. Do you group them with a div, a header, a hgroup, or do you use just one element and distinguish the two with a span.

Do you need an id, a class or an id and few classes.

Now into css. Your drawing might be simple and you might get the style perfectly in one go.

Stop!

Why is it that I am using the same styles on different elements, can I make this css less repetitive? I need to add few more classes,

#### Step two

Shrink the size of your browser, now your design is broke. Get back to a fresh pen and paper think about how to re-organize the same elements to fit the space.

Now get back to css. Learn about the best way. If media queries are hard for you. Copy the project, and start from the beginning and design the site for that screen size now. This is not good practice, a site should be responsive, but you are practicing, you tried designing for big screen, now you are trying to design for small screen.

(Of topic: learn the very basics of git, even you, learning the very basics would extremely benefit from the basics of git. In broad strokes, it's like a CCTV for your code. You can go back in time to the moment your code was working.)

#### Step three

By this point you have two different sites, one that looks good in small screen and one that looks good in big screen. Now, Create a third version with media queries!

With your first and second step you are fully aware of the problems you faced when designing for the small and big screens. Now you know how your HTML needs to be structured to accommodate the transition. You know that you might need more classes, maybe one or two more divs here and there.

#### Back to the top

You don't have to use photoshop, you don't have to create a great design. You just have to fill an A4 page with some elements (if a book site: book covers, and book info). For now the CSS would be enough if you only use it to lay those elements in the same way you did in the A4.

Similar with your smaller site: take the A4 cut out a perfect square. Through the square away and design the site (exactly the same one you did in the A4 design) on the remaining slice of the A4 you cut.

Designing these two sites, and then merging them together (the definition of responsive design) is going to be better than any artistic design you could possibly do or clone.

If you need to, write whilst you work. I do this when ever I learn something complicated. I create a markdown file (if you don't know what that is: a text file) and write down what I am doing, what I should think about, I record what I should remember for future projects.

After doing this. You might have noticed that you need more practice in the same process. You might have had an idea for another design, just go for it. Get fresh paper and scribble the hell out of it.

There are screenshots of beautiful websites all over the web. Pinterest has plenty of them. Pick one that you like. Or pick things you like from every design you see and collage your own (still pen and paper can take you a long way). And then start re-creating the new site in html and css.

There is a tool called [Frank DeLoupe](http://www.jumpzero.com/frank/) it is a color picker, you pick any color from any thing and the color get's copied into clipboard - look out for something like that in you operating system. Browsers might have something like that also, but with frank, you can pick colors from anywhere, not just the web

| aurelkurtula |

47,973 | 10 Great Hacks to Boost Your Sales this Holiday Season | 10 best tips o boost your WooCommerce Store sales this holiday season. | 0 | 2018-09-06T06:28:55 | https://dev.to/alexxpaull/10-great-hacks-to-boost-your-sales-this-holiday-season-418c | webdev, productivity, devops | ---

title: 10 Great Hacks to Boost Your Sales this Holiday Season

published: true

description: 10 best tips o boost your WooCommerce Store sales this holiday season.

tags: #webdev #productivity #devops

---

<img src="https://thepracticaldev.s3.amazonaws.com/i/rbwztj8hyyzcs9bqfsbw.png">

If you want to sell your products and build your customers for long-term then you must have to offer them some advantage that makes them buy your product. As a WooCommerce owner you should have to overlook on all of the marketing aspects including your niche, your targeted audience and of course your product that is your asset and after focusing on all of these things you will start building your store for the Holiday Season.

As we know that launching a WooCommerce store and then managing is not an easy task. Mostly owners miss small things while taking the store online that has a big impact on the revenue as well as the performance of the store during the Holiday Season.

Most customers have a certain expectation from an online store. If these expectations no going to meet then your customer will never return and might share a bad review regarding your product and services.

To help you out, here are </b>10 simple Hacks</b> to prepare your online WooCommerce store for the Holiday Season.

<b>1. Choose Your Platform</b>

<img src="http://www.systemseeders.com/wp-content/uploads/2016/10/choose-the-best-ecommerce-platform-shopify-vs-woocommerce-vs-magento.jpg">

There are a lot of options in the market but I suggest you launch an online store on WooCommerce. The community around WooCommerce is awesome and also you will find several extensions that help you to make your WooCommerce store perform well.

<b>2. Choose Managed WooCommerce Hosting</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/sle26v81uycktw1jtl07.jpg">

Managing your online store is a long-time job and during this phase, you will face a lot of challenges managing your store. Most of the store owners not experience in managing the servers and security-related issues. That is the reason experts recommend <a href="https://www.cloudways.com/en/hosting-woocommerce.php"><b><i>Managed WooCommerce hosting</i></b></a> for launching an online store. All of the issues whether it is related to the server, hosting etc will be managed by the hosting providers. They also ensure you for the regular backups.

<b>3. Fast Speed can Boost Sales</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/14ghdkh5gxjcbcj89hfn.png">

The speed of your online WooCommerce store is one of the important factors that directly affect your revenue. Slow online stores with a high page load time sour up the user experience even one delay seconds can cause your conversions to drop so optimizing your online stores is necessary to avoid bad user experience.

<b>4. Responsive Design</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/akrndlwjk1kikpt7rceh.jpg">

Most the sales come from the smartphone in Holiday Season. That is why your store must have a responsive design to ensure that your WooCommerce store is easy to browse.

<b>5. Attractive Design and Layout</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/t8idz9e72cqh7tozvvz6.jpeg">

Most of the customers bounce back just because of the messy store. Customers want a shop that is attractive and easy to find thing what they are looking for. Investing time and money for a well-designed store pay them back through high conversions.

Recommended Top 2018 Themes

<a href="https://www.cloudways.com/en/astra-theme-partnership.php"><b><i>Astra Theme</i></b></a>

<a href="https://www.cloudways.com/en/oceanwp-theme-partnership.php"><b><i>OceanWP Theme</i></b></a>

<b>6. Create a Page with Gift Ideas</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/7apn14qsanjx89mnd46x.jpg">

Creating a gift page is one of the best ways to boost your sales because a visitors always looking for some good collection of gifts in Holiday Season for their loved ones. Also, you can offer some deals, offer discounts this Holiday Season to attract the customer towards your store to boost your sales.

<b>7. Offer Free Shipping</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/7bia2m7ekr75cgtrjzt1.jpeg">

Most of the shoppers irritate to pay the shipping amount for their purchases. If possible give them a free shipping in Holiday Season or offer any discount on a specific amount of purchase.

<b>8. Use Secure Payment Gateways</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/zaeh6wn1707vbnhwm53b.jpeg">

Customers nowadays more concerned about their payments and the security of their credit cards. They looking for secure payment gateways that ensure the protection of their credit card. On WooCommerce store you can easily set up a secure payment option through Stripe Payment Gateway or any other extension available.

<b>9. Involve Your Customers</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/kiuxh9nm6mh70jsfdegu.jpg">

Boost your sales using social media launching a small campaign for this Holiday Season. CUstomers use social media as an alternative resource to buy products. This is the best way to engage your customers in the sales promotion process.

<b>10. Simplify Purchase Processes</b>

<img src="https://thepracticaldev.s3.amazonaws.com/i/ba2lxlewzyl5sf6wiyxj.png">

Most of the customers feel annoying while filling in several forms during the checkout process. This Holiday Season, plan to replace your checkout process with a simple one-page checkout.

| alexxpaull |

48,088 | New to Reactjs (I want to REACT to this) | Hello, I am Michael Owolabi from Nigeria and I am new to React js, hope to explore more as I progress... | 0 | 2018-09-06T19:08:52 | https://dev.to/imichaelowolabi/new-to-reactjs-i-want-to-react-to-this-eb3 | react, newbie | ---

title: New to Reactjs (I want to REACT to this)

published: true

description:

tags: reactjs, newbie

---

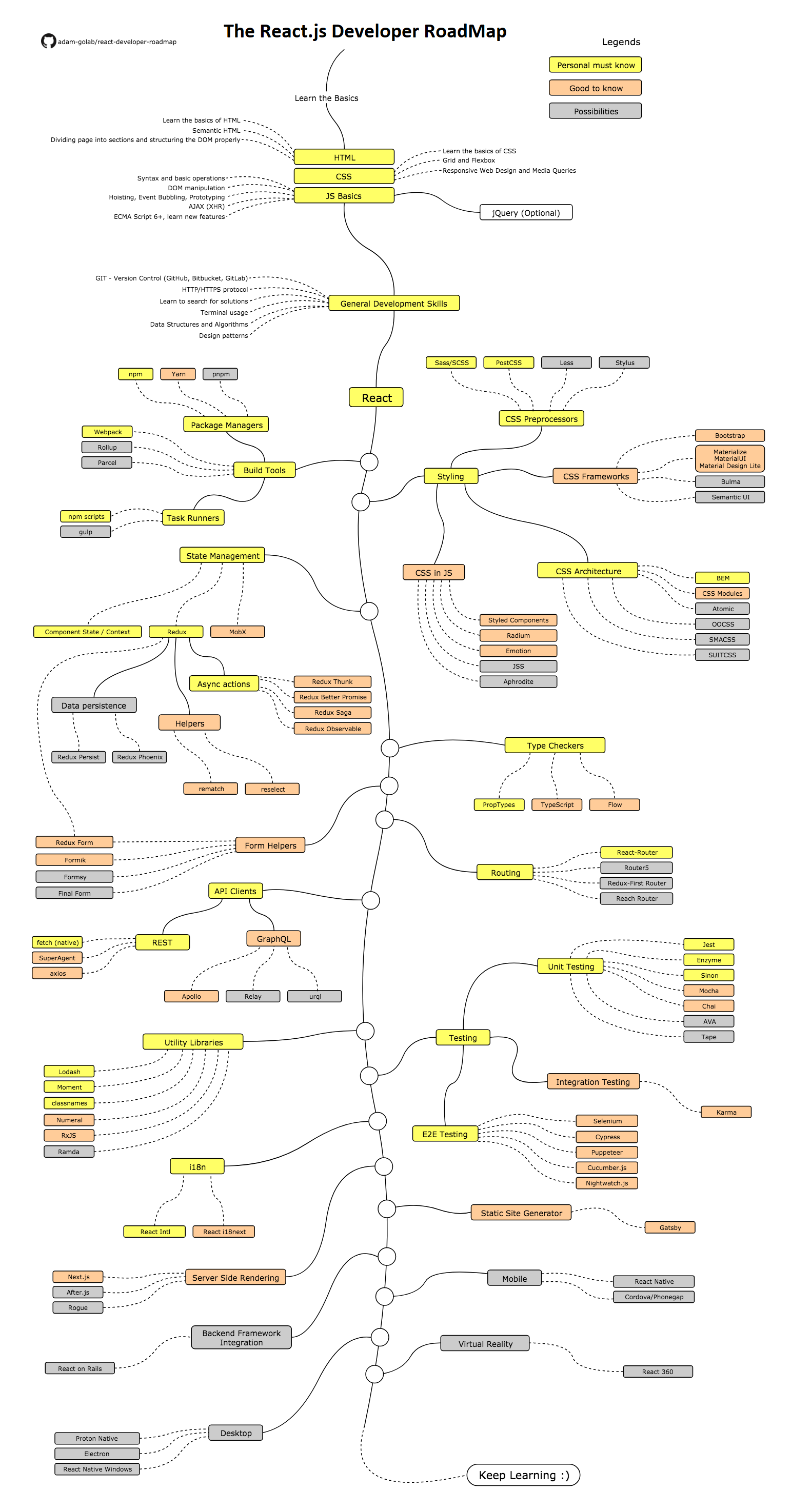

Hello, I am Michael Owolabi from Nigeria and I am new to React js, hope to explore more as I progress in this journey. plz, if you have anything that you think might help me grow as fast and solid as possible kindly get in touch.

Thanks.

| imichaelowolabi |

48,277 | Working Remotely | I really want to start looking for remote web developer positions but I'm not sure where to start. I... | 0 | 2018-09-07T19:46:28 | https://dev.to/nicostar26/working-remotely-1lkj | help, remote | ---

title: Working Remotely

published: true

description:

tags: help, remote

---

I really want to start looking for remote web developer positions but I'm not sure where to start. I know HTML and CSS fairly well and I'm getting there with JavaScript. I feel like at this point I can look up anything that I don't know (which I'm understanding is normal even for the most experienced developers).

My question is, do I need to know more before I start looking for a job? If not, can anyone suggest where I should start looking? I really want to get a good start in this field and I'm really tired of holding down jobs that have nothing to do with where my heart is. Any advice would be appreciated. | nicostar26 |

48,628 | Software that helps | I am wondering who of you write software that „truly“ helps other people. In a way, all software... | 0 | 2018-09-09T21:37:13 | https://dev.to/bertilmuth/software-that-helps-33ge | discuss | ---

title: Software that helps

published: true

tags: discuss

---

I am wondering who of you write software that „truly“ helps other people.

In a way, all software does. But I am thinking e.g. about software that runs a pacemaker, or maybe you’re developing software for a non-profit that deals with social issues.

| bertilmuth |

48,653 | Back to the basics, you're gonna NOT need hypes to drive you crazy | For all of us, want to master the real fundamentals of frontend development, just learn from this is... | 0 | 2018-09-10T04:37:49 | https://dev.to/revskill10/back-to-the-basic-youre-gonna-not-need-hypes-to-drive-you-crazy-145e | css, javascript, html | ---

title: Back to the basics, you're gonna NOT need hypes to drive you crazy

published: true

description:

tags: #css, #javascript, #html

---

For all of us, want to master the real fundamentals of frontend development, just learn from [this](https://www.w3schools.com/howto/default.asp) is more than enough.

Stop chasing for hypes, courses, frameworks, library, articles, blogs, twitters, or ebooks, focus on it and you'll get very far. Seriously.

| revskill10 |

48,941 | I have been banned from Lobste.rs, ask me anything. | Lobste.rs is a great community, but apparently it's a bit vulnerable to misuse Statistics. | 0 | 2018-09-11T19:37:00 | https://dev.to/shamar/i-have-been-banned-from-lobsters-ask-me-anything-5041 | ama, security, opensource, javascript | ---

title: I have been banned from Lobste.rs, ask me anything.

published: true

description: Lobste.rs is a great community, but apparently it's a bit vulnerable to misuse Statistics.

tags: ama, security, opensource, javascript

---

Let me start by saying that [Lobste.rs](https://lobste.rs) is a **great community** that I enjoined for more than an year. Several very smart guys hungs there, and I got great conversations with them about operating system design, programming languages, artificial intelligence and machine learning, security, privacy and so on.

I also tried to be a constructive member of such community, [posting there interesting documents](https://lobste.rs/newest/Shamar) I came across.

**NOTE** In the url above the two submission marked as "**[Story removed by original submitter]**" have been removed by the administrator after my ban.

I didn't remove them. **I have nothing to hide.**

One was [my recent article documenting an exploit](https://dev.to/shamar/the-meltdown-of-the-web-4p1m) that let any website you visit to tunnel into your private network (bypassing many corporate firewalls and proxies).

The other was [the related bug report that I wrote to Mozilla](https://bugzilla.mozilla.org/show_bug.cgi?id=1487081) (than reported [to Chromium too](https://shamar.github.io/documents/Mozilla-Bug1487081-Attachments/ChromiumBug879381.html)) before disclosing such Proof-of-concept exploit.

Something went wrong after these submissions, because despite the fact Lobste.rs was suggested by a [Mozilla Security developer](https://bugzilla.mozilla.org/show_bug.cgi?id=1487081#c3) as a place to continue the discussion about the HTTP/JavaScript vulnerability I reported, nobody answered to my question "[are Firefox users vulnerable to this wide class of attacks?](https://shamar.github.io/documents/Mozilla-Bug1487081-Attachments/Undetectable_Remote_Arbitrary_Code_Execution_Attacks_through_JavaScript_and_HTTP_headers_trickery__Lobsters.html#c_i5j37u)".

Yet I got downvoted so much that an administrator (after writing me on August 30 for the first time) decided that I do not suit to the community's culture.

The [official reason of the ban](https://lobste.rs/u/Shamar) was: "Constant antagonstic behavior and no hope for improvement".

Now let's be clear, I'm fine with [Peter](https://lobste.rs/u/pushcx)'s decision, even if I don't agree with it. **Your server, your rules**.

But I think that my ban is a very nice example of **Statistics misuse**.

Indeed, since the first private message I got from Peter, he asked me to explain why I was downvoted 18 (and later 22) standard deviations more than the average.

Note, I was also upvoted enough to get a positive ranking on most of my comments and posts, but he was **just** looking to the downvotes, in isolation.

As one who knows [how to lie with statistics](https://www.horace.org/blog/wp-content/uploads/2012/05/How-to-Lie-With-Statistics-1954-Huff.pdf) this was a bit of a smell, but since my private explanations were not enough [I carefully explained](https://lobste.rs/s/pnfmzr/google_certbot_letsencrypt#c_o2zvx2) how most of those downvotes did not complied with the Lobste.rs own guideline about downvotes (sorry, due to the downvotes, you have to expand [this comment](https://lobste.rs/s/pnfmzr/google_certbot_letsencrypt#c_s8oksi) to see the [explaination](https://lobste.rs/s/pnfmzr/google_certbot_letsencrypt#c_o2zvx2)).

To get a clue about my bad behavior [you can give a look to my recent comments on Lobste.rs](https://lobste.rs/threads/Shamar) (some of the comments have been censored, but Peter has kindly sent me a CSV containing a full export from the DB).

Here some examples of the missing contents (beware, 18+ only! :-D):

> > I feel very uneasy about the safe browsing thing.

>

> For most people (those using WHATWG browsers like [Firefox](https://bugzilla.mozilla.org/show_bug.cgi?id=1487081), [Chromium](https://shamar.github.io/documents/Mozilla-Bug1487081-Attachments/ChromiumBug879381.html), IE/Edge and derived such as [Tor Browser](https://www.torproject.org/docs/faq.html.en#TBBJavaScriptEnabled), Safari or Google Chrome) [there is not such a thing like "safe browsing"](https://dev.to/shamar/the-meltdown-of-the-web-4p1m).

>

> I mean: if any website you visit can enter your private network or check in your cache if you visited a certain page... or upload illegal contents into your hard disk... calling it safe is rather misleading!

>

> HTTPS protects users by certain threats, by reducing the number of potential attackers to CA and those who have access to certificates (which is a varying and large number of people anyway, if you consider CDN or custom CA you might have to install on your work pc).

>

> As for this being anticompetitive... maybe.

>

> But some of the issues here are rooted in Copyright protection, so... it might just be one of the many problems of a legal system designed before information technology.

[`see in context here`](https://lobste.rs/threads/Shamar#c_s8oksi)

> NOTE: **every browser** executing JavaScript and honouring HTTP cache controls headers is equally vulnerable.

[`see in context here`](https://lobste.rs/threads/Shamar#c_qtjegw)

> I'm seriously concerned by this **attitude** among IT people.

> My question is simple and have a boolean answer.

>

> Are the **attacks** described in the bug report possible, or not?

[`see in context here`](https://lobste.rs/threads/Shamar#c_22ksxd)

> > Okay, I’ll bite.

>

> +1! I'm Italian! I'm very tasty! ;-)

>

> > > > Bugzilla is not a discussion forum.

> > >

> > > Indeed this is a bug report.

> >

> > Ah, here’s where we disagree. I understand that a bug is an ambiguous concept. This is why we have our Bugzilla etiquette, which also contains a link to Mozilla’s bug writing guidelines.

>

> I'm pretty serious with netiquette, and I checked your before writing the report.

>

> I'm **very sorry** if I violated one of your etiquette rule, but honestly [I cannot see which one](https://bugzilla.mozilla.org/page.cgi?id=etiquette.html).

>

> Even about [Bug writing](https://developer.mozilla.org/en-US/docs/Mozilla/QA/Bug_writing_guidelines) I tried my best, what exactly I got wrong?

>

> Note that this is not a single RCE, but a whole category of them.

>

> And the problem are not just the JavaScript attacks themselves, but the fact that **they can remove all evidences**.

> > > > Furthermore, what you seek to discuss is not specific to Mozilla or Firefox.

> > >

> > > True. Several other browsers are affected too, but:

> > >

> > > - This doesn’t means that it’s not a bug in Firefox

> > > - As a browser “built for people, not for profit” I think you are more interested about the topic.

> >

> > Please elaborate, I am not sure what you mean to imply.

>

> As a Firefox user (and "evangelist") from version 0.8 I know Mozilla as a brand that cares about people.

>

> Even the word you used, "people" instead of "users", has always been inspirational to me.

>

> Now, the issue here is specifically dangerous because not all **people** live under the same law.

>

> Thus I think (and hope) that **Mozilla is more interested to the safety of such people** than other browser vendors that are led by profit.

>

> > I agree with what @callahad@wandering.shop says right away: If you browse to a website. It gives you JavaScript. The browser executes it. That’s by design! Nowadays, the web is specified by W3C and WHATWG as an application platform. You have to accept that the web is not about hyper*text* anymore.

>

> I worked (and still work) on such application platform for 20 years, I think I have understood that pretty well.

>

> The point is if such application platform is **broken at design level** or not.

>

> > > > This is not a bug in Firefox.

> > >

> > > Are you saying that these attacks are not possible?

> >

> > I am saying that this is not specific to Firefox, but inherent to the browser as a concept.

>

> Sorry if I ask it again, but I'm pretty dumb.

>

> Are the attacks described in the [bug report](https://bugzilla.mozilla.org/show_bug.cgi?id=1487081) possible in Firefox, or not?

[`see in context here`](https://lobste.rs/threads/Shamar#c_hd61dm)

This is a just a sampling but if you find other censored contents that you are curious about feel free to ask.

----

Now, I still think that Lobste.rs is a great technical community and you should really join them. And even Peter is a good administrator: he just did an error.

But I'm a Data Science hobbyist myself, so feel free to ask me how an actual troll could fool such metric by downvoting others. Or why if you do not care about Internet points (and do not try to maximize them), you will obviously loose a lot of them.

Or well... ask me anything else! :-D

I'm not from Mozilla Security.

I will answer. I'm a hacker.

<img src="https://files.mastodon.social/media_attachments/files/006/053/256/original/0e2a898b01052765.jpeg"/> | shamar |

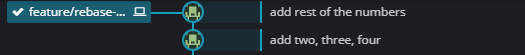

49,644 | One Command to Change the Last Git Commit Message | Ever wanted to amend the git commit message that you just committed and pushed it to remote as well. Well, I wrote one single bash function to deal with it. | 0 | 2018-09-14T10:35:53 | https://dev.to/ahmadawais/one-command-to-change-the-last-git-commit-message--42hb | git, bash, zsh | ---

title: One Command to Change the Last Git Commit Message

published: true

description: Ever wanted to amend the git commit message that you just committed and pushed it to remote as well. Well, I wrote one single bash function to deal with it.

tags: git, bash, zsh

---

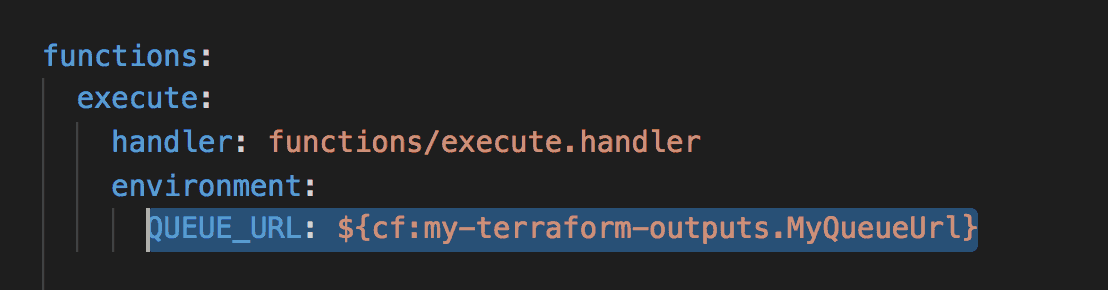

🔥 Hot-tip: Do you mess up git commit messages quite often? I DO!

Most of the time I have to amend the last git commit message. So, I made a small bash function out of it.

```sh

# Amend the last commit message.

# Push the changes to remote by force.

# USAGE: gamend "Your New Commit Msg"

function gamend() {

git commit --amend -m "$@"

git push --force-with-lease

}

```

> ⚠️ Avoid `--force` unless it is absolutely necessary and you can be sure that nobody else is syncing your project at the same time.

> ℹ️ Why git push --force-with-lease! If someone else pushed changes to the same branch, you probably want to avoid destroying those changes. The --force-with-lease option is the safest, because it will abort if there are any upstream changes.

✅ Amend git commit in one go

🤖 Put it in .bashrc/.zshrc etc files

👌 Sharing this quick-tip is a fun thing to do

{%tweet 1040539814536392709 %} | ahmadawais |

49,664 | Contributing to an open source project for the first time! | Things I realized when I started contributing | 0 | 2018-09-14T12:46:23 | https://dev.to/nina_rallies/contributing-to-an-open-source-project-for-the-first-time--34me | googletest, cpp, algos | ---

title: Contributing to an open source project for the first time!

published: true

description: Things I realized when I started contributing

tags: #GoogleTest #CPP #Algos

---

Alright, so here is the truth. I have been testing everyone's code, except my own! To be more precise, I've been involved in customer acceptance testing more than integration testing and NEVER in unit testing.

I started working early, so I practically never had much free time and never contributed to any open source projects. When I moved to Germany, I was pretty sure I could finally make time to contribute, thinking I was the one "giving".

The task I'm at is pretty easy, "write down the shunting yard algorithm and test it". Did the first part in a few minutes, and have been stuck for two to three days since! I mean... Testing was my job, it was what I did for 6 years, wasn't it!? I should've been way better at it!

I've been reading on and clicking on every single documentation page of GoogleTest to figure out where it goes wrong.

Apart from the frustration I feel, there's something else I feel as well, satisfaction. I am finally learning to "learn" and understanding that contribution isn't about me "giving" but also about me becoming aware of what I do not know.

P.S. probably very bad timing to get stuck on googleTest since I also have a couple of exams in October :-D

| nina_rallies |

49,918 | Simple snippet to make Node's built in modules globally accessible | This will create a global.fs, global.childProcess, global.http and so on. | 0 | 2018-09-16T13:18:42 | https://dev.to/jochemstoel/simple-snippet-to-make-nodes-built-in-modules-globally-accessible-23ep | javascript, node, snippet | ---

title: Simple snippet to make Node's built in modules globally accessible

published: true

description: This will create a global.fs, global.childProcess, global.http and so on.

tags: javascript, node, snippet

---

I am very lazy and don't want to type the same _fs = require('fs')_ in every little thing I'm doing and every temporary file that is just a means to an end and will never be used in production.

I decided to share this little snippet that iterates Node's internal (built in) modules and globalizes only the _valid_ ones. The invalid ones are those you can't or shouldn't require directly such as internals and 'sub modules' (containing a '/'). Simply include _globals.js_ or copy paste from below.

The [camelcase](https://github.com/sindresorhus/camelcase/blob/master/index.js) function is only there to convert *child_process* into *childProcess*. If you prefer to have no NPM dependencies then just copy paste the function from GitHub or _leave it out entirely_ because camelcasing is only cute and not necessary.

#### globals.js

```js

/* https://github.com/sindresorhus/camelcase/blob/master/index.js */

const camelCase = require('camelcase')

Object.keys(process.binding('natives')).filter(el => !/^_|^internal/.test(el) && [

'freelist',

'sys',

'worker_threads',

'config'

].indexOf(el) === -1 && el.indexOf('/') == -1).forEach(el => {

global[camelCase(el)] = require(el) // global.childProcess = require('child_process')

})

```

Just require that somewhere and all built in modules are global.

```js

require('./globals')

fs.writeFileSync('dir.txt', childProcess.execSync('dir'))

```

These are the modules exposed to the global scope (Node v10.10.0)

```text

asyncHooks

assert

buffer

childProcess

console

constants

crypto

cluster

dgram

dns

domain

events

fs

http

http2

https

inspector

module

net

os

path

perfHooks

process

punycode

querystring

readline

repl

stream

stringDecoder

timers

tls

traceEvents

tty

url

util

v8

vm

zlib

```

> Um. I suggest we start using the [#snippet](https://dev.to/t/snippet) tag to share snippets with each other. =) | jochemstoel |

50,037 | Testing your CosmosDB C# code and automating it with AppVeyor | In this blog I will talk about the challenges we face when we are writing unit tests for CosmosDB and how to automate them with AppVeyor. | 0 | 2018-09-17T13:08:29 | https://dev.to/elfocrash/testing-your-cosmosdb-c-code-and-automating-it-with-appveyor-1001 | cosmosdb, azure, appveyor, nosql | ---

title: Testing your CosmosDB C# code and automating it with AppVeyor

published: true

description: In this blog I will talk about the challenges we face when we are writing unit tests for CosmosDB and how to automate them with AppVeyor.

tags: cosmosdb, azure, appveyor, nosql

---

#### Introduction

Don't we all love well tested code? I know I do.

It's just an awesome feeling knowing that if I change something over here, the thing over there didn't break.

However, when your code depends on a third party library, then you have to hope that the code in that library is easy to work with and easy to test.

If this isn't the first blog of mine that you read then you probably know that I'm working on an open-source ORM for CosmosDB called [Cosmonaut](https://github.com/Elfocrash/Cosmonaut). When I'm using a third party library and its code happens to be opensource then the first thing I do is to go to wherever it's code is hosted and check if there are tests covering it. Nobody wants to use untested code, so I knew when I started that I should cover as many scenarios and cases as possible.

#### Unit testing

The CosmosDB SDK is not as friendly as it could be when it comes to unit testing (and the CosmosDB SDK team knows it, so it will get better).

Normally, the way you would unit test such code would be to mock the calls that would go over the wire and just return the dataset that you want this scenario to return.

However here is a list of things that make it really hard for you to test your code.

##### Problem 1

The `ResourceResponse` class has the constructor that contains the `DocumentServiceResponse` parameter marked as `internal`. This is bad because even though you can create a `ResourceReponse` object from your DTO class you cannot set things like RUs consumed, response code and pretty much anything else because they are all coming from the `ResourceResponseBase` which also has the `DocumentServiceResponse` marked as internal.

##### Solution

To solve this problem you have to somehow set the response headers for the `ResourceResponse`. As you might have guessed already, then only way you can do that is via reflection. Here is an extension method that can convert your generic type object to a `ResourceResponse` and allows you to set the `responseHeaders`.

{% gist https://gist.github.com/Elfocrash/ae6ef0b472ae170a70be4cb183706a34 %}

*This extension method is also part of Cosmonaut*

##### Problem 2

The SDK is using IQueryable in order to build a fluent LINQ query and use it to query your CosmosDB. This LINQ query will be then converted into SQL via an internal LINQ2CosmosDBSQL provider that the SDK comes with. The problem with that is that if you want to mock your `IDocumentClient`'s `CreateDocumentQuery` to return a specific data set based on a LINQ expression then you're in for a ride.

##### Solution

Lets take a look at the code.

{% gist https://gist.github.com/Elfocrash/163d3210204fd661ddf9bff14607a812 %}

As you can see, it needs quite the setup. That's because the `IQueryable` needs to be setup from the ground up or else it won't be properly translated.

##### Problem 3

It's basically the same as Problem 2 but for SQL, which makes it slightly easier because there is no IQueryable to setup.

##### Solution

Almost everything in the code from "Problem 2" is the same. The only differences are between the lines 24-47. You need to replace those with the code below.

{% gist https://gist.github.com/Elfocrash/c9b77c5f41cc0d080acc900939abb840 %}

These 3 are the most common unit testing problems you will face with the CosmosDB SDK. Thankfully the other operations are easier to test, especially if you make use of the `ToResourceResponse` extension method.

**UPDATE**: As I was writing this Microsoft released the 2.0.0 version of the CosmosDB SDK packages and in them they changed the constructors of come classes.

If you are using a post 2.0.0 version of the SDK, here are the extension methods you need.

{% gist https://gist.github.com/Elfocrash/276b6518633338091dc8ec2daaec69b2 %}

#### Integration testing

Unit testing is awesome, but you also want to test your system against a real database. These tests should have a wider scope than the unit-sized context of your unit tests. I won't go in depth on this one because everyone's idea of integration testing seems to be different but what you need to remember is that we are trying to test again the real CosmosDB database.

As you probably know, CosmosDB comes with it's own local emulator. That's great because we can use it to run our integration/system tests against. What's also great is that we can use the same emulator as part of our CI pipeline.

#### Automating unit and integration testing

I personally use [AppVeyor](https://www.appveyor.com/) because it is incredibly easy to setup and get started, it supports loads of stuff such as Azure services and Nuget building out of the box and it is also free for open source projects.

AppVeyor is as simple to setup as linking your Github account and selecting the repository you want it to run against. Once you set that up it will trigger a build every time you commit code. There are tons of stuff to configure if you want something more specific but in this blog I will just explain how you can setup the CosmosDB emulator in AppVeyor.

AppVeyor will look for an `appveyor.yml` file in your repository. If it's not there it will use the settings that can be found in the "Configuration" section. Our `appveyor.yml` will be pretty simple. We just want to `dotnet build` and `dotnet test` our code. Before that however we need to install the CosmosDB Emulator on the AppVeyor VM. In order to do that we will need the following powershell script:

{% gist https://gist.github.com/Elfocrash/9eef5ee99052c5fd0da9f4272b618cb0 %}

Now the CosmosDB Emulator is installed and you can enjoy testing against it using the default CosmosDB Emulator url `https://localhost:8081` and the well known key `C2y6yDjf5/R+ob0N8A7Cgv30VRDJIWEHLM+4QDU5DE2nQ9nDuVTqobD4b8mGGyPMbIZnqyMsEcaGQy67XIw/Jw==`.

Here is the final appveyor.yml

{% gist https://gist.github.com/Elfocrash/dd0d8ce654309ac7b51b81abbc794866 %}

Once you set that up, a build will be kicked off every time you commit code in the repository and both unit and integration tests will be run against the local CosmosDB emulator instance.

Happy testing! | elfocrash |

50,410 | Clean iOS Architecture pt.7: VIP (Clean Swift) – Design Pattern or Architecture? | Watch on YouTube Today we're going to analyze the VIP (Clean Swift) Architecture. And, as we did... | 0 | 2018-09-26T14:47:54 | https://www.essentialdeveloper.com/articles/clean-ios-architecture-part-7-vip-clean-swift-design-pattern-or-architecture | ---

title: Clean iOS Architecture pt.7: VIP (Clean Swift) – Design Pattern or Architecture?

published: true

tags:

canonical_url: https://www.essentialdeveloper.com/articles/clean-ios-architecture-part-7-vip-clean-swift-design-pattern-or-architecture

cover_image: http://static1.squarespace.com/static/5891c5b8d1758ec68ef5dbc2/58921b5a6b8f5bd75e20b0f4/5b97ab984fa51a9057c24d8f/1536667692697/thumbnail.png?format=1000w

---

{% youtube AnUcZUMGVBI %} [Watch on YouTube](https://www.youtube.com/watch?v=AnUcZUMGVBI?list=PLyjgjmI1UzlSWtjAMPOt03L7InkCRlGzb)

Today we're going to analyze the VIP (Clean Swift) Architecture. And, as we did in previous videos with [VIPER](https://dev.to/essentialdeveloper/clean-ios-architecture-pt6-viper--design-pattern-or-architecture-3f1f-temp-slug-2599990), [MVC, MVVM, and MVP](https://dev.to/essentialdeveloper/clean-ios-architecture-pt5-mvc-mvvm-and-mvp-ui-design-patterns-5ha5-temp-slug-7121127), we will decide if we can call VIP a Software Architecture or a Design Pattern.

The Clean Swift Architecture or, as also called, "VIP" was introduced to the world by [clean-swift.com](https://clean-swift.com) and, just like VIPER and other patterns, the main goals for the architecture were Testability and to fix the Massive View Controller problem.

The name VIP can be confused with VIPER, and interestingly enough the creators of VIPER [almost called it VIP](https://mutualmobile.com/resources/meet-viper-fast-agile-non-lethal-ios-architecture-framework) but decided to drop the name because it could stand for “Very Important Architecture,” which VIPER creators thought it was derogatory.

VIP is very similar to VIPER as both originated from [Uncle Bob's Clean Architecture](https://8thlight.com/blog/uncle-bob/2012/08/13/the-clean-architecture.html) ideas.

So can we consider VIP a Software Architecture or just a Design Pattern?

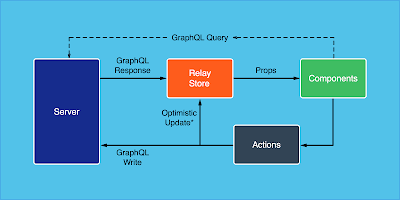

The VIP diagram describes its main structure, which explains its acronym definition: the ViewController/Interactor/Presenter relationship, or as they call it: The VIP cycle.

The unidirectional VIP cycle.

The VIP cycle differs from the VIPER relationship model described in our previous video. In VIPER, the communication between Interactor and Presenter, and View and Presenter is bidirectional.

The bidirectional VIPER cycle.

Instead, VIP follows a unidirectional approach, where the ViewController talks to the Interactor, the Interactor runs business logic with its collaborators and passes the output to the Presenter, and the Presenter formats the Interactor output and gives the response (or view model) to the ViewController, so it can render its Views.

Does this unidirectional communication model define an architecture? Also, are those 3 components (the ViewController, Interactor, and Presenter) enough to describe the Application Architecture?

It may seem like an architecture, but the VIP cycle is such a limited outlook of the application that we consider it a Design Pattern. And this design pattern has a name already: MVP. Regardless of what we call it, the MVP design pattern is not a software architecture.

However, VIP or "Clean Swift" has more components than just ViewControllers, Interactors, and Presenters, for example, Data Models, Routers and Workers.

Like VIPER, the Clean Swift author describes that VIP can have less or even more layers of separation, as needed. But the core must follow MVP or the VIP cycle! So the VIP cycle sounds more like an organizational design pattern that can solve the Massive View Controller problem and make your code more testable, but it doesn't describe the big picture or the “Software Architecture.”

VIP or Clean Swift, just like VIPER and other patterns, is trying to solve class dependency issues like the Massive View Controller and testability, rather than module dependency issues, like modularity. With the described Clean Swift components, we may end up with testable code but Spaghetti Architecture.

VIP also encourages the use of templates to "facilitate" its implementation. It can be very convenient to have templates, but, at the same time, it may create a limited framework to think. We don't believe there's a design pattern or "template" that can solve all the problems and can be infinitely extended. We believe that, instead of trying to fit every problem into a template, every software architecture must be carefully crafted to solve the system challenges. For example, some systems would benefit more from an Event-Driven (Producer/Consumer) streaming model where other systems would not.

Let's have a look at the [Clean Swift sample project (CleanStore)](https://github.com/Clean-Swift/CleanStore) dependencies diagram.

[CleanStore sample project](https://github.com/Clean-Swift/CleanStore) class and module dependencies diagram.

As you can see, there are arrows everywhere, crossing module boundaries, so changes to the software can break multiple modules (and of course, numerous tests...).

In the Clean Swift sample app, the application is separated in scenes (or modules). There’s a List Orders Scene, Create Order Scene and Show Order Scene. A higher level look at the modules dependencies shows that scenes are highly coupled with each other and with other system’s components.

High-level modules dependencies diagram shows a highly coupled architecture.

Another way to look at the application architecture is to examine its modules in a circular form so we can see the dependencies between the modules. The closer to the center, the more abstract and independent the module is.

High-level modules dependencies diagram shows a highly coupled, monolithic architecture.

Different than Uncle Bob’s Clean Architecture, we can see services, frameworks, and drivers in the core rings. Also, inside each scene, some Interactors might contain business logic, and they depend on frameworks and other services. Throughout the codebase, we can even notice that core models are used in all levels of abstraction (UI/Presentation/Business Logic/Routing/Services/Workers) which is a no-no in Uncle Bob’s Clean Architecture.

The VIP sample app architecture is a highly coupled and monolithic architecture. If we want to create a somewhat testable monolith, it might work well. However, we might quickly find out that the lack of modularity prevents us from scaling the team, moving fast and prevents us from achieving key business metrics. For example:

- Deployment Frequency

- Estimation Accuracy

- Lead Time for Changes

- Mean Time to Recover

As explained in the previous video, we believe that software architecture is less about types, classes, and even responsibilities and more about how the components communicate to each other, how they depend on each other, and what is the shared understanding of the senior developers regarding: What are the important parts? What is coupled? What is decoupled? What is hard to change, What is easy to change? How is the data flowing between layers and why – is the data going in one direction? Two directions? Multiple directions? Can we support the business short and long-term goals?

We would like to reinforce that our codebases are like living organisms and they're changing all the time. So does the architecture. It's continually evolving, and there are no templates for that.

At Essential Developer, we do believe software architecture has a strong correlation with Product Success, Product Longevity, and Product Sustainability. We advise professional developers to learn from Uncle Bob's Clean Architecture, VIP, VIPER, MVC, MVVM, MVP, and other patterns, but not try to copy and paste solutions. Remember: Every challenge is different, and there are no silver bullets.

VIP, VIPER, MVC, MVVM, MVP, as design patterns, can guide you towards more structured components. However, they don't define the big picture or the Software Architecture. So, use them with care!

For more, visit [the Clean iOS Architecture Playlist](https://www.youtube.com/watch?v=PnqJiJVc0P8&list=PLyjgjmI1UzlSWtjAMPOt03L7InkCRlGzb).

[Subscribe now to our Youtube channel](https://www.youtube.com/essentialdeveloper?sub_confirmation=1) and catch **free new episodes every week**.

* * *

Originally published at [www.essentialdeveloper.com](https://www.essentialdeveloper.com/articles/the-importance-of-discipline-for-ios-programmers).

We’ve been helping dedicated developers to get from low paying jobs to **high tier roles – sometimes in a matter of weeks!** To do so, we continuously run and share free market researches on how to improve your skills with **Empathy, Integrity, and Economics** in mind. If you want to step up in your career, [access now our latest research for free](https://www.essentialdeveloper.com/courses/career-and-market-strategy-for-professional-ios-developers).

## Let’s connect

If you enjoyed this article, visit us at [https://essentialdeveloper.com](https://essentialdeveloper.com) and get more in-depth tailored content like this.

Follow us on: [YouTube](https://youtube.com/essentialdeveloper) • [Twitter](https://twitter.com/essentialdevcom) • [Facebook](https://facebook.com/essentialdeveloper) • [GitHub](https://github.com/essentialdevelopercom) | caiozullo | |

50,744 | Shell Aliases For Easy Directory Navigation #OneDevMinute | Shell Aliases For Easy Directory Navigation #OneDevMinute | 0 | 2018-09-21T16:24:48 | https://dev.to/ahmadawais/shell-aliases-for-easy-directory-navigation-onedevminute-30nm | onedevminute, devtips, shell, zsh | ---

title: Shell Aliases For Easy Directory Navigation #OneDevMinute

published: true

description: Shell Aliases For Easy Directory Navigation #OneDevMinute

tags: OneDevMinute, DevTips, Shell, Zsh

---

> 🔥 #OneDevMinute is my new series on development tips in one minute. Wish me luck to keep it consistent on a daily basis. Your support means a lot to me. Feedback on the video is welcomed as well.

Ever had that typo where you wrote `cd..` instead of `cd ..` — well this tip not only addresses that typo but also adds a couple other aliases to help you easily navigate through your systems directories. More in the video…

[](https://www.youtube.com/watch?v=x-on-xD3OdE&feature=youtu.be)

```sh

################################################

# 🔥 #OneDevMinute

#

# Daily one minute developer tips.

# Ahmad Awais (https://twitter.com/MrAhmadAwais)

################################################

# Easier directory navigation.

alias ~="cd ~"

alias .="cd .."

alias ..="cd ../.."

alias ...="cd ../../.."

alias ....="cd ../../../.."

alias cd..="cd .." # Typo addressed.

```

---

> Copy paste these aliases at the end of your `.zshrc`/`.bashrc` files and then reload/restart your shell/terminal application.

{%tweet 1043164426860408832 %}

> If you'd like to [📺 watch in 1080p that's on Youtube →](https://www.youtube.com/watch?v=x-on-xD3OdE)

> This is a new project so bear with me. Peace! ✌️

| ahmadawais |

50,891 | How to conduct project post-mortem? | What are your tips or strategies in conducting a post-mortem? Can it be conducted offline? When is th... | 0 | 2018-09-22T14:56:14 | https://dev.to/sforce/how-to-conduct-project-post-mortem--2ep4 | discuss | ---

title: How to conduct project post-mortem?

published: true

description:

tags: #Discuss

---

What are your tips or strategies in conducting a post-mortem?

Can it be conducted offline?

When is the best time to do it?

Any memorable/funny/insightful post-mortem experience? | sforce |

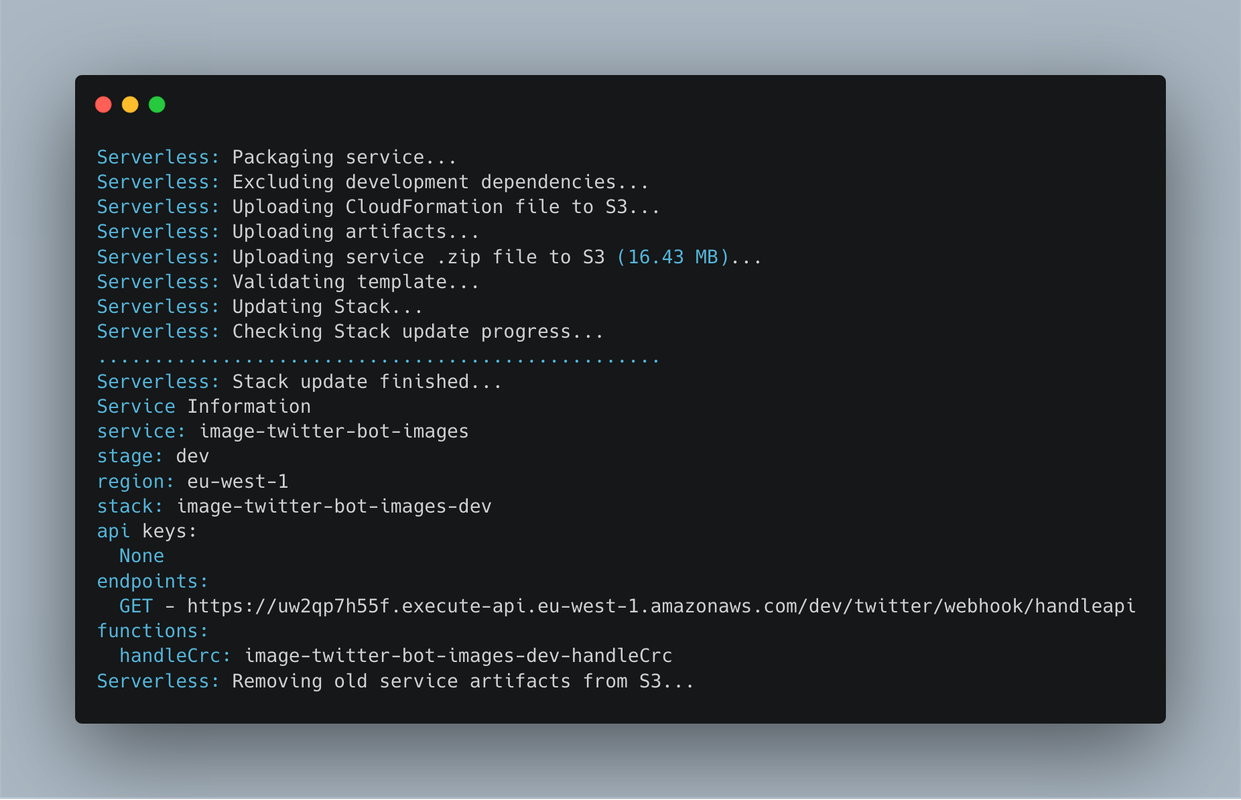

50,996 | Build Chatbot for Twitter Direct Message | Sample scripts to get started with Twitter's premium Account Activity API (Direct Messages Activities). Written in Node.js. Full documentation for this API can be found on developer.twitter.com | 0 | 2018-09-23T06:18:42 | https://dev.to/sandeshsuvarna/build-chatbot-for-twitter-direct-message-32fn | twitterchatbot, node, twitterapi, directmessage | ---

title: Build Chatbot for Twitter Direct Message

published: true

description: Sample scripts to get started with Twitter's premium Account Activity API (Direct Messages Activities). Written in Node.js. Full documentation for this API can be found on developer.twitter.com

tags: Twitter Chatbot, node.js, twitter api, direct message

cover_image: https://thepracticaldev.s3.amazonaws.com/i/jo95kfgwwoymilunmypw.jpg

---

#Step 1: Get Developer account

https://developer.twitter.com/en/apply-for-access

Note: Review & approval usually takes 10–15 days.

#Step 2: Create Twitter App & Dev Environment

https://developer.twitter.com/en/account/get-started

#Step 3: Generate app access token for the direct message using twitter developer portal

Note: Change the app permissions to "Read, write, and direct messages" & generate the access token.

#Step 4: Create the Node module & run it.

{% codepen https://codepen.io/sandeshsuvarna/pen/gdyXWg %}

Run command: node app.js

#Step 5: Tunnel to your localhost webhook using Ngrok

run the following command on the same directory using terminal/command prompt: ngrok http 1337

Copy the "https" url. (It will be something like https://XXXXXX.ngrok.io)

#Step 6: Download account activity dashboard

Git clone https://github.com/twitterdev/account-activity-dashboard.git

run the module using "npm start" using the terminal/command prompt

#Step 7: Attach Webhook

open "localhost:5000" on the browser.

Click on "Manage Webhook"

Paste the "ngrok url" into "Create or Update Webhook" field & click submit

#Step 8: Add a user/page subscription

Open terminal/Command prompt

Goto "account activity dashboard" folder

execute "node example_scripts/subscription_management/add-subscription-app-owner.js -e <TWITTER_DEV_ENV_NAME>"

note: Add user subscription for the user that owns the app.

##Goto Twitter DM & start talking to your bot

###Thanks for reading! :) If you enjoyed this article, hit that heart button below ❤ Would mean a lot to me and it helps other people see the story. | sandeshsuvarna |