id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

110,846 | Is Web-development the Best Career Choice for 2019? | Before becoming a web developer, it is extremely important to know which type of web developer you would want to become. Read here how and why is web development the best career choice for 2019. | 0 | 2019-05-21T06:16:56 | https://dev.to/sadiakhan3/is-web-development-the-best-career-choice-for-2019-3dm2 | webdev, career | ---

title: Is Web-development the Best Career Choice for 2019?

published: true

description: Before becoming a web developer, it is extremely important to know which type of web developer you would want to become. Read here how and why is web development the best career choice for 2019.

tags: #webdev #career

---

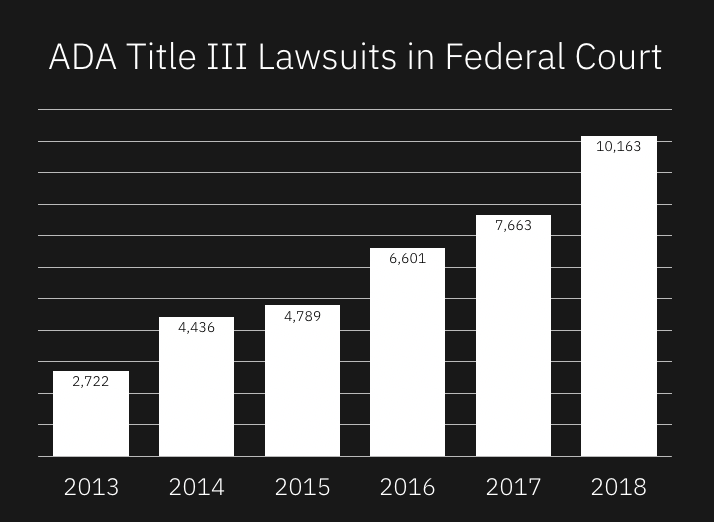

Whether you are an experienced web developer or wish to become one, it is important to understand that the IT industry is getting more and more competitive due to continuous advancements and developments. With a rapidly increasing number of IT professionals entering into the field, many young aspirants face the dreaded question: is becoming a web developer a sound career choice or not? The process of selection for a programmer job has itself become a mammoth task, specifically after the drastic increase in the tasks involved in coding interviews.

Before becoming a web developer, it is extremely important to make an informed decision about which type of web developer you would want to become. You can either become a full stack web developer, a front end or a back end developer.

<h1>Major Responsibilities Of Web-Developers</h1>

Usually, full stack developers are those experienced professionals who possess all the necessary skills and expertise that are required to independently operate a whole website. Full stack developers are those experts who have the capabilities to work with both front and back end development. Therefore, in order to become a skilled full stack developer, it is extremely important that you learn the basics of both, front end and back end operations, to get your skills validated.

Whereas, front-end web developers are those professionals who work on the overall appearance and visual aesthetics of a site, which is usually the end product. Basically, front-end web-developers deal with the operations concerned more directly with the final product which clients get to view on their screens and engage with.

Lastly, back-end developers are responsible for managing the data servers for a website.

<h1>Prerequisite Skills For Each Type Of Web-Developer</h1>

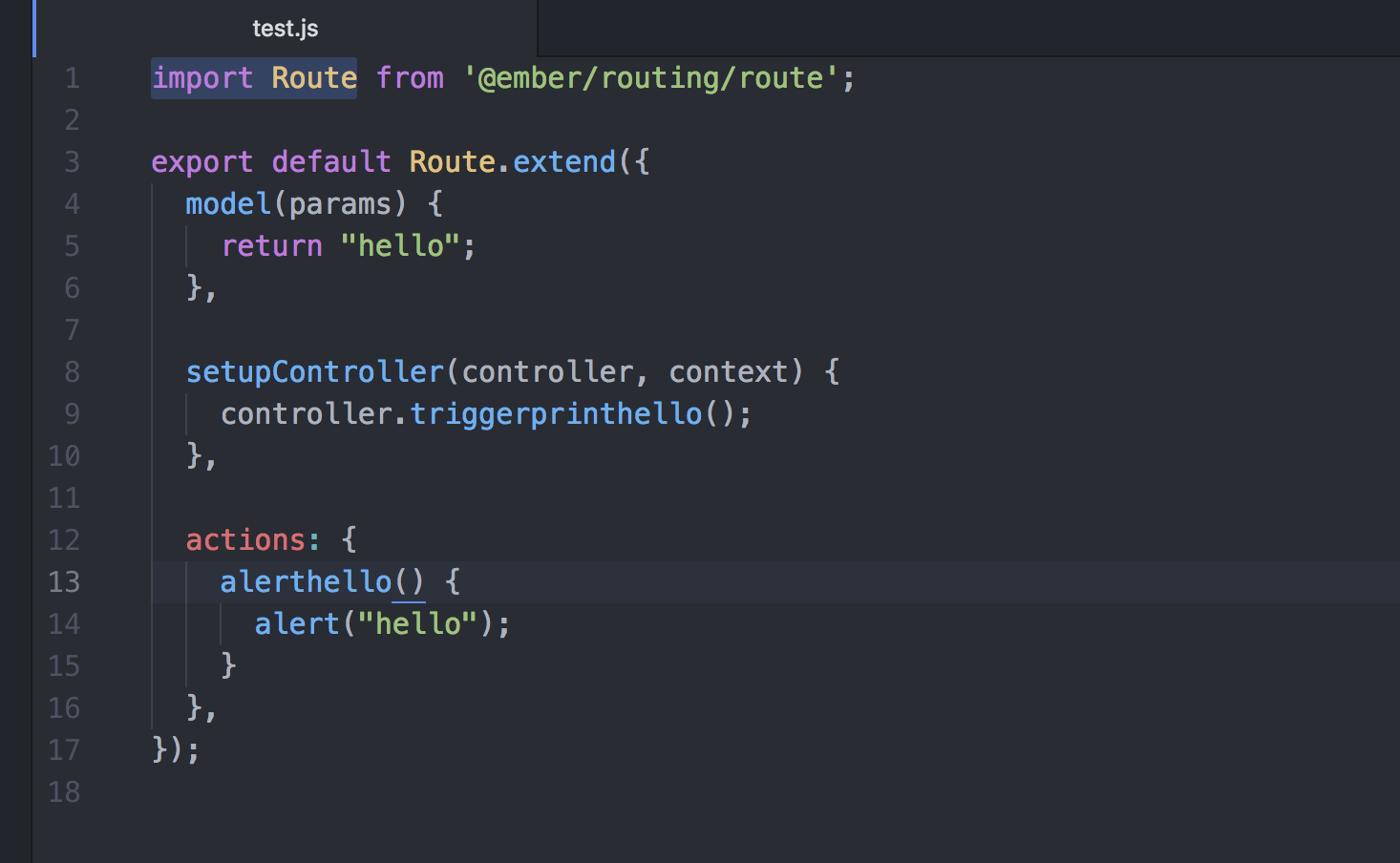

Usually, front-end web developers are expected to have unmatchable skills in programming languages. Therefore, if you are planning to become a front-end web developer, you need to learn various programming languages such as JavaScript, HTML as well as CSS. However, back-end web development has been observed to be one of the IT skills in great demand and most desired by IT firms. To become a back-end web developer, you are required to cover numerous skills and areas of programming. Presently, back-end programming is done in a number of languages such as JavaScript, Ruby, Java, PHP and Python.

Also, having command over different technologies such as Kubernetes, NginX, Varnish and Docker are considered as an added bonus for back end developers. Therefore, to stand out and make your mark in the industry as an experienced programmer, it is extremely important that you have command on multiple programming languages as well as technologies. To achieve this goal, experts recommend professionals to opt for an <a href="https://edu.wagner.quickstart.com/">IT bootcamp</a> if they wish to enhance and improve their existing skills.

<h1>A Smart Career Move</h1>

If experts are to be believed, presently, a career in web development is a smart choice as it is a very secure and safe option for IT professionals. There are many areas of web development that are still in the developing stage and requires trained professionals to help it grow.

Instead of working as a web developer for a particular organization, you also have the option of becoming a freelance web developer, provided that you have the necessary skills and knowledge to find new clients. If you are a quick learner and have a knack to remain up to date with <a href="https://www.trendmut.com/top-web-development-trends-2019/">current trends</a>, then freelance web development might be a good option for you. To develop and improve your skills in web development, there are a number of online tutorials that might give you a basic idea of web programming.

If you work as a web developer and want to improve and update your skills in lesser time for getting better job offers, then you can choose <a href="https://edu.wagner.quickstart.com/bootcamps/web-development/">the web developer bootcamp</a> that is specially designed for experienced web developers to update their knowledge in web development. You can select any course according to your needs and budget.

Pursuing a career in the field of web development is not as simple as it seems, as it requires constant effort, hard work and undivided attention in learning different types of programming languages and becoming up to date with recent changes and development in web development.

<h1>Myths And False Notions</h1>

As a result of recent developments in technologies such as ML, data science, cloud computing and IoT, there is a wrong conception that these technologies have made web development obsolete. However, in reality, all these new technologies cannot take place in web development. Rather, they are seen to be interlinked with the skills of web development, complementing them in various uses.

The concept of web development is broad and extremely versatile. It includes the development of various games, web applications and most importantly, mobile applications. Pursuing a career in the field of web development is an excellent option for 2019 as it is constantly growing, and the demand for skilled web developers does not seem to decline shortly. Currently, skilled web-developers enjoy great demand in the job market, and you can easily make a six-figure income by working as a web developer.

It is safe to say that web development is an ideal choice for individuals who have just stepped into the IT industry. There is no doubt about the fact that web developers play a key role in the current IT industry. This is proved by the fact that there is a great demand for skilled web developers in each and every field of IT.

<h1>Final Word</h1>

Therefore, if you want to step into the field of web development, then now is the right time to do so. If you are planning to become a web developer, it is advisable to become familiar with different web developer roles. Each position has a different pay scale and requires varied skillsets. Web development is a growing field with a vast array of job opportunities.

However, it is still advisable that you first evaluate your strengths and skills before opting for a career as a front, back or full stack developer. Usually, the majority of the individuals who wish to become a web developer prefer working as a back end developer first, and then as a front developer. After gaining several years of experience as a back and front end developer, it becomes quite easy to become a full stack developer, as it requires knowledge and expertise of both; front and back end web development. | sadiakhan3 |

111,017 | How to deploy a Symfony 4 project in a shared host. | Hi, anybody knows if i can deploy a Symfony 4 project in a shared free host (http... | 0 | 2019-05-21T14:13:38 | https://dev.to/kevinhch/how-to-deploy-a-symfony-4-project-in-a-shared-host-197k | help, apache, symfony4, sharedhost | ---

title: How to deploy a Symfony 4 project in a shared host.

published: true

tags: help, apache, symfony4, shared host

---

Hi, anybody knows if i can deploy a Symfony 4 project in a shared free host (https://www.000webhost.com/).

I saw and read a lot of post about projects in Symfony 2 or 3, but im trying to deploy my personal project in Symfony 4, but i don't know how to configure the .htaccess or ENV_VARS and im starting to think it's impossible because maybe this type of host can't support Symfony 4.

Thanks. | kevinhch |

111,035 | SSL Certificates in Development | Recently I've been working a lot with multiple multi-app servers and their SSL certificates. Once y... | 0 | 2019-05-21T17:49:10 | https://jimbuck.io/ssl-certs-in-dev | security, powershell | ---

title: SSL Certificates in Development

published: true

tags: Security,PowerShell

canonical_url: https://jimbuck.io/ssl-certs-in-dev

---

Recently I've been working a lot with multiple multi-app servers and their SSL certificates. Once you get more than two or three environments up and running it can get a little tricky finding and wrangling certificates from each instance. Thankfully I've found a few super tips that have been a big time saver when it comes to solving issues with certs when your working in less than ideal system setups.

### Tip 1: Find and Destroy

I find there are certain times when the dev certs on my PC are just out of hand. The first step to cleaning them up is by removing the old ones. The [certificates snap-in](https://docs.microsoft.com/en-us/dotnet/framework/wcf/feature-details/how-to-view-certificates-with-the-mmc-snap-in) for the Microsoft Management Console is a decent tool if you want to look at a specific cert. Simply select the folder on the left and you can easily find the certs you need. But when you're looking for one or more possible certs, then try the following Powershell snippet:

<!--kg-card-begin: code-->

```

ls Cert:\ -Recurse | where { $_.Issuer -like "*Jim Buck*" }

```

<!--kg-card-end: code-->

This snippet will recursively search all installed certificates but only display those that match the criteria (in this case the `Issuer` must contain `Jim Buck`. You can filter on any of the following fields:

1. `Subject` - text (might be generic or the same as issuer)

2. `Issuer` - text (typically quite reliable to filter on)

3. `Thumbprint` - hash (unique per certificate)

4. `FriendlyName` - string (sometimes empty)

5. `NotBefore` - Datetime

6. `NotAfter` - Datetime

7. `Extensions` - List of `Oid` objects (they have `FriendlyName` and `Value` properties).

Early in the development process you might be adding quite a few certs to your store. Run the same command but pipe it to the `rm` command and you can easily remove all of the pesky old certificates:

<!--kg-card-begin: code-->

```

ls Cert:\ -Recurse | where { $_.Issuer -like "*Jim Buck*" } | rm

```

<!--kg-card-end: code-->

I don't recommend clearing out certs too often though. Ideally you only have to do it before installing the "good" certs. Once you have a nice clean cert store adding new certs that are known to be good should be just fine to have installed.

### Tip 2: Trust the CA Root certificate

Our application relies on an in-house data service. During the install of this service, it generates a CA Root certificate and an end-user certificate for the server's [FQDN](https://en.wikipedia.org/wiki/Fully_qualified_domain_name) (signed by the generated CA Root). We can't modify the install logic of the service, so we have to make due with the certs it produces. The best approach is to simply download the CA Root cert from each instance and install it in the Trusted Root of our Local Machine.

<!--kg-card-begin: gallery-->

<figcaption>I typically select "Local Machine" (just in case) and manually select "Trusted Root Certification Authorities".</figcaption>

<!--kg-card-end: gallery-->

Don't be fooled, certs of the same name (but different thumbprint) can be installed side-by-side. Just remember to restart your browser/client apps so they pick up the new certificates.

### Tip 3: Use a shared CA Root certificate

By far the best approach is to simply make your certificate generation use a shared CA Root. This would allow a project or even a whole department to use one common root cert that signs all server-specific development/test certs. No more downloading of certs to trust, no more hacks to ignore cert errors. No more rummaging through each environment trying to update all references of which cert to use. Just one shared cert that keeps developers, testers, managers, and product owners safe and secure.

I am currently finalizing a script to help create (dev-only) CA Root and SSL certs. Once I can test it a bit more and get the usage as simple as possible I will write a special article all about it.

### | jimbuck |

1,429,589 | How to Create a Yearly Count Down Timer | Introduction Countdown timers have been around since the early twentieth The development... | 0 | 2023-04-07T18:33:04 | https://dev.to/fortune42/how-to-create-a-yearly-count-down-timer-1d60 | ## Introduction

Countdown timers have been around since the early twentieth

The development of digital electronics in the 1950s resulted in the development of electronic countdown timers. These have been used in a wide range of applications, including scientific experiments, space missions, and sporting events.

Digital timers became more widely available in the 1970s and were used in consumer products such as microwave ovens and alarm clocks.

Countdown timers have become increasingly popular in the digital realm in recent years, appearing in online games, apps, and websites. Countdown timers are now widely used in a wide range of applications, from cooking and fitness to business and education.Countdown timers have been around since the early 1900s. Countdown timers were first used in aviation, where pilots used them to time flights and monitor fuel consumption.In this article i we will be creating a simple yearly count down timer with html, css, and javascript

## What is a Count Down Timer

A countdown timer is a tool that displays the amount of time until a specific event or deadline. It is commonly used to help keep track of time to avoid missing a significant event or deadline. Countdown timers are found in various contexts, such as sporting events, concerts, and online meetings. They are viewable on smartphones, computers, and dedicated digital clocks. Countdown timers can help people stay on track and manage their time more effectively.

## Prerequsite

Before getting started with this tutorial, you need to have basic knowledge of the following:

- Html

- CSS

- Javascript

## Developing the Frontend

creating our frontend with just html and css. copy and paste the following.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8" />

<meta http-equiv="X-UA-Compatible" content="IE=edge" />

<meta name="viewport" content="width=device-width, initial-scale=1.0" />

<link rel="stylesheet" href="style.css" />

<title>Document</title>

</head>

<body>

<h1>New Year Eve</h1>

<div class="container">

<div class="count-hours">

<p class="big-text" id="days">0</p>

<span>days</span>

</div>

<div class="count-hours">

<p class="big-text" id="hours">0</p>

<span>hours</span>

</div>

<div class="count-hours">

<p class="big-text" id="mins">0</p>

<span>mins</span>

</div>

<div class="count-hours">

<p class="big-text" id="seconds">0</p>

<span>seconds</span>

</div>

</div>

<script src="index.js"></script>

</body>

</html>

```

Adding style to our html design

```css

*{

box-sizing: border-box;

}

body{

background-size: cover;

background-position: center, center;

min-height: 100vh;

font-family: "poppins" sans-serif;

margin: 0;

display: flex;

flex-direction: column;

background-image: url(time.jpg);

align-items: center;

justify-content: center;

}

.container{

display: flex;

}

h1{

color:white;

font-size: 5rem;

padding-top:20px ;

}

.count-hours{

}

span{

color: white;

padding-left:40px;

}

.big-text{

color: azure;

font-size: 70px;

padding: 10px;

margin: 0 2rem;

}

```

Now that we are done with our frontend design, let's head straight to adding functionality to our work.

```javascript

const day = document.querySelector("#days");

const hour = document.querySelector("#hours");

const min = document.querySelector("#mins");

const sec = document.querySelector("#seconds");

const newYear = "1 jan 2024";

function countDown() {

const newYearDate = new Date(newYear);

const currentDate = new Date();

const totalSeconds = (newYearDate - currentDate) / 1000;

const days = Math.floor(totalSeconds / 3600 / 24);

const hours = Math.floor(totalSeconds / 3600) % 24;

const mins = Math.floor(totalSeconds / 60) % 60;

const seconds = Math.floor(totalSeconds % 60);

day.innerHTML = days;

hour.innerHTML = hours;

min.innerHTML = mins;

sec.innerHTML = seconds;

// console.log(days, hours, mins, seconds);

}

countDown();

setInterval(countDown, 1000);

```

From the code above, Firstly, we get all html documents needed for the id attribute. Then, declare the variable for the new year in anticipation.

The countdown() performs all the activities, like getting the total number of seconds, days, hours, mins, and seconds remaining in the new year(1 jan 2024). And displaying the data to our frontend using the innerHTML property

## Conclusion

We have been able to create a simple countdown timer using html, css, and javascript. If u follow this tutorial, your project should be working fine without any errors.

Thanks for reading. Please like and add your comments in the comment session | fortune42 | |

111,196 | Why I Love useReducer | I didn't realize until recently how much I loved the React Hook useReducer. It's one of those advance... | 0 | 2019-05-22T02:17:18 | https://dev.to/hswolff/why-i-love-usereducer-4mnk | react, hooks, reducer, redux | ---

title: Why I Love useReducer

published: true

description:

tags:

- react

- hooks

- reducer

- redux

cover_image: https://thepracticaldev.s3.amazonaws.com/i/agv1wz8romklodvwmqul.jpg

---

I didn't realize until recently how much I loved the [React Hook useReducer](https://reactjs.org/docs/hooks-reference.html#usereducer). It's one of those advanced hooks, and while I read the documentation about it and already have a good amount of experience with [Redux](https://redux.js.org/), it took a little while for me to fully understand just how powerful `useReducer` can make your components.

# Why do I love useReducer?

The simple answer is that it lets you separate the _What_ from the _How_. To expand upon that, it may be that _What_ a user wants to do is `login`.

With `useState` when a user wants to `login` I create function that handles a lot of the _How_. _How_ my component has to behave when a user wants to `login`:

- Sets `loading` to true

- Clears out old `error` state

- Disables the button.

With `useReducer` all my component has to do is think about _What_ the user wants. Which is:

- `dispatch('login')`

After that all the _How_ is handled inside the `loginReducer` function.

Furthermore, any future _How_ questions become completely centralized inside of that one `loginReducer` function. My component can just keep on thinking about the _What_.

It's a subtle distinction but an extremely powerful one.

To further show the point [you can check out the full source code here](https://github.com/hswolff/youtube/tree/master/videos/why-i-love-usereducer) or see these inline examples.

I'm going to ignore showing the UI, if you want to see that you can check out the repo. For now I just want to focus on the data we're storing and updating.

# Using useState

Here I have 5 calls to useState to manage all the distinct state transitions.

In my `onSubmit` call I have to careful orchestrate all the state changes that I want.

They're tightly coupled to the onSubmit handler and awkward to extract.

```js

function LoginUseState() {

const [username, setUsername] = useState('');

const [password, setPassword] = useState('');

const [isLoading, showLoader] = useState(false);

const [error, setError] = useState('');

const [isLoggedIn, setIsLoggedIn] = useState(false);

const onSubmit = async e => {

e.preventDefault();

setError('');

showLoader(true);

try {

await login({ username, password });

setIsLoggedIn(true);

} catch (error) {

setError('Incorrect username or password!');

showLoader(false);

setUsername('');

setPassword('');

}

};

return; // remaining UI code here

}

```

# Using useReducer

While it may be overall longer, I would argue that it's much easier to read and track what's going on.

If you jump straight to the `onSubmit` function I can now clearly show the intent of the user. There's only 3 behaviors that can happen, 'login', 'success', and 'error'. What that means is not a concern of my component, it's all handled in the `loginReducer`.

Even better, it becomes easier for me to make wide-ranging changes to state changes because all the state changes are centrally located.

And even more exciting is that all state changes become easy to share by default.

If I want to show my error state from elsewhere in the component I can easily re-use the same `dispatch({ type: 'error' })` and I'm good to go.

```js

function LoginUseReducer() {

const [state, dispatch] = useReducer(loginReducer, initialState);

const { username, password, isLoading, error, isLoggedIn } = state;

const onSubmit = async e => {

e.preventDefault();

dispatch({ type: 'login' });

try {

await login({ username, password });

dispatch({ type: 'success' });

} catch (error) {

dispatch({ type: 'error' });

}

};

return; // UI here

}

function loginReducer(state, action) {

switch (action.type) {

case 'field': {

return {

...state,

[action.fieldName]: action.payload,

};

}

case 'login': {

return {

...state,

error: '',

isLoading: true,

};

}

case 'success': {

return {

...state,

isLoggedIn: true,

isLoading: false,

};

}

case 'error': {

return {

...state,

error: 'Incorrect username or password!',

isLoggedIn: false,

isLoading: false,

username: '',

password: '',

};

}

case 'logOut': {

return {

...state,

isLoggedIn: false,

};

}

default:

return state;

}

}

const initialState = {

username: '',

password: '',

isLoading: false,

error: '',

isLoggedIn: false,

};

```

# Think like the user

`useReducer` gets you to write code the way a user will interact with your component.

You are encouraged to think in the _What_ and centralize all _How_ questions inside the reducer.

I'm so excited `useReducer` is now built-in to React. It's one more reason why I love it.

---

If you enjoyed this article you can find more like this [on my blog](https://hswolff.com/)!

And if you like to see my talk about things you can [check out my YouTube channel](https://www.youtube.com/user/hswolff) for tutorial videos!

| hswolff |

111,300 | 5 Awesome Laravel Nova Packages to Kickstart Your Next Project | A collection of five packages to kickstart your next project with Laravel Nova. These are some of my personal favourites that I use on every project. | 0 | 2019-05-22T09:58:37 | https://dev.to/jackabox/5-awesome-laravel-nova-packages-to-kickstart-your-next-project-1932 | laravel, nova, php, laravelnova | ---

title: 5 Awesome Laravel Nova Packages to Kickstart Your Next Project

published: true

description: A collection of five packages to kickstart your next project with Laravel Nova. These are some of my personal favourites that I use on every project.

tags: laravel, nova, php, laravel nova

---

I've been using Laravel Nova a lot since it's release and find myself using the same packages across projects just because they're awesome and work well. In this post I've compiled a list of my five favourite packages to include in your Laravel Nova install.

If you haven't used Nova before, it's an admin package written for Laravel and allows for rapid prototyping of a CRUD admin system quickly. It's also extensible and all written within Vue/Tailwind. I'd suggest checking it out for your projects at [https://nova.laravel.com/](https://nova.laravel.com/).

## Nova Flexible Content

Nova Flexible Content is a package written by [Whitecube](https://github.com/whitecube) and allows for a way to build repeatable fields on your models. It generally allows for any subfield or other package to be passed through and will collate the data into JSON to be saved in your table.

The team have been awesome at fixing out a few bugs and providing updates. They've recently included the ability to have nested flexible content which takes this package to the next level.

GitHub Link: [https://github.com/whitecube/nova-flexible-content](https://github.com/whitecube/nova-flexible-content)

## Advanced Nova Media Library

Advanced Nova Media Library takes the [Spatie's Media Library](https://github.com/spatie/laravel-medialibrary) and extends it to be integrated into Nova. I've been using Spatie's Media Library for several years and have found it to be an invaluable resource - especially with the generation of responsive images.

This package by [Eduard Bess](https://github.com/ebess) integrates all the Spatie goodies into Nova and allows for the defining of gallery, files or single images. It also allows for the clipping of images upon upload which makes package even nicer.

GitHub Link: [https://github.com/ebess/advanced-nova-media-library](https://github.com/ebess/advanced-nova-media-library)

## Nova Duplicate Field

Nova Duplicate Field is the first package I wrote for Nova and allows for the quick duplication of models and their relations. This package takes a few parameters and utilises them at the click of the button. It will then redirect you to the edit view so that you can make any necessary changes that you need.

It's currently at around ~2000 installs.

GitHub Link: [https://github.com/jackabox/nova-duplicate-field](https://github.com/jackabox/nova-duplicate-field)

## Nova Snowball

This one is a theme but [Stephen Lake](https://github.com/stephenlake) has generated a really clean interface on top of Nova which cleans up a few things to my personal preference such as collapsable field groups and reducing the white space around elements. It also provides some responsive elements which Nova does not do by default.

GitHub Link: [https://github.com/stephenlake/nova-snowball](https://github.com/stephenlake/nova-snowball)

## Nova Backup Tool

This one is direct from [Spatie](https://github.com/spatie) and provides a slick interface for monitoring the back ups of your site. It's crucially important to keep a solid backup and this package will allow you to trigger a manual backup or see the status of your scheduled backups.

GitHub Link: [https://github.com/spatie/nova-backup-tool](https://github.com/spatie/nova-backup-tool)

That's all for this list, but if you have any packages your particularly fond of? Drop me a tweet [@jackabox](https://twitter.com/jackabox) and let me know!

Originally written at [https://jackwhiting.co.uk](https://jackwhiting.co.uk) | jackabox |

111,302 | Elixirメタプログラミング 02: マクロ | Elixir公式サイトの許諾を得て「Macros」の解説にもとづき、加筆補正を加えて、Elixirにおけるマクロの定め方と使い方についてご説明します。 | 0 | 2019-12-20T08:53:00 | https://dev.to/gumi/elixir-02-7l | elixir, webdev, tutorial, programming | ---

title: Elixirメタプログラミング 02: マクロ

published: true

description: Elixir公式サイトの許諾を得て「Macros」の解説にもとづき、加筆補正を加えて、Elixirにおけるマクロの定め方と使い方についてご説明します。

tags: #elixir, #webdev, #tutorial, #programming

---

本稿はElixir公式サイトの許諾を得て「[Macros](https://elixir-lang.org/getting-started/meta/macros.html)」の解説にもとづき、加筆補正を加えて、Elixirにおけるマクロの定義方法についてご説明します。

# はじめに

Elixirには、マクロをできるだけ安全に使える環境が整えられています。とはいえ、マクロでクリーンなコードを書くことは開発者の責任です。マクロをつくるのは、通常のElixirの関数を使うより難しいといえます。むやみにマクロを用いるのは、避けた方がよいでしょう。

Elixirには、データ構造や関数によりわかりやすく読みやすいコードを書ける仕組みがすでに備わっています。コードは黙示的より明示的に、短くよりわかりやすくすべきです。マクロはどうしても必要な場合にお使いください。

# はじめてのマクロ

Elixirのマクロは[`defmacro/2`](https://hexdocs.pm/elixir/Kernel.html#defmacro/2)により定めます。本稿では、コードを基本的に`.exs`ファイルに書いて、`elixir ファイル名`または`iex ファイル名`のコマンドで実行しましょう。

簡単なマクロを書いて、動きを確かめてみましょう。モジュールは`macros.exs`に定め、マクロと関数を加えます。コードの中身はどちらも同じです。反転した条件に応じて、引数の式を実行します。

```elixir

defmodule Unless do

def fun_unless(clause, do: expression) do

if(!clause, do: expression)

end

defmacro macro_unless(clause, do: expression) do

quote do

if(!unquote(clause), do: unquote(expression))

end

end

end

```

関数は、受け取った引数を[`if/2`](https://hexdocs.pm/elixir/Kernel.html#if/2)に渡します。けれど、マクロが受け取るのは内部表現です(「[Elixirメタプログラミング 01: 内部表現 ー quote/2とunquote/1](https://dev.to/gumi/elixir---quote2unquote1-f4i-temp-slug-2512891?preview=aa737ae159320789d47880244c4d8f7932cf1d320b50728763c9f568b45c560772df16045eab26a2814ecb951abf68dc9c5df380e95ec4d0273989ea)」参照)。そして、それを差し込んだ別の内部表現を返します。

前述で定義したマクロを試すために、このモジュールで`iex`を開きましょう。

```

$ iex macros.exs

```

マクロを使うには、その前に[`require/2`](https://hexdocs.pm/elixir/Kernel.SpecialForms.html#require/2)でモジュールを要求しなければなりません。そのあと、関数と同じように呼び出せます。

```elixir

iex> require Unless

Unless

iex> Unless.macro_unless(true, do: IO.puts "this should never be printed")

nil

iex> Unless.fun_unless(true, do: IO.puts "this should never be printed")

this should never be printed

nil

```

マクロも関数も戻り値(`nil`)は同じでした。けれど、マクロは[`IO.puts/2`](https://hexdocs.pm/elixir/IO.html#puts/2)に渡した文字列が出力されません。文字列が関数で出力されたのは、値を返す前に引数が評価されるからです。これに対して、マクロは渡された引数を評価しません。引数は内部表現として受け取られ、別の内部表現にされるのです。今回、定義した`macro_unless`マクロは、`if`の内部表現になります。

前掲`macro_unless`の呼び出しは、引数につぎのような内部表現を用いたのと同じです。

```elixir

iex> Unless.macro_unless(

...> true,

...> [

...> do: {{:., [], [{:__aliases__, [alias: false], [:IO]}, :puts]}, [],

...> ["this should never be printed"]}

...> ]

...> )

```

さらに、マクロの定義も内部表現に展開すると、つぎのようになります。

```elixir

{:if, [context: Unless, import: Kernel],

[

{:!, [context: Unless, import: Kernel], [true]},

[

do: {{:., [],

[

{:__aliases__, [alias: false, counter: -576460752303422719], [:IO]},

:puts

]}, [], ["this should never be printed"]}

]

]}

```

引数の内部表現は、[`quote/2`](https://hexdocs.pm/elixir/Kernel.SpecialForms.html#quote/2)で確かめられるでしょう。さらに、その内部表現を展開するのが[`Macro.expand_once/2`](https://hexdocs.pm/elixir/Macro.html#expand_once/2)です。

```elixir

iex> expr = quote do: Unless.macro_unless(true, do: IO.puts "this should never be printed")

{{:., [], [{:__aliases__, [alias: false], [:Unless]}, :macro_unless]}, [],

[

true,

[

do: {{:., [], [{:__aliases__, [alias: false], [:IO]}, :puts]}, [],

["this should never be printed"]}

]

]}

iex> res = Macro.expand_once(expr, __ENV__)

{:if, [context: Unless, import: Kernel],

[

{:!, [context: Unless, import: Kernel], [true]},

[

do: {{:., [],

[

{:__aliases__, [alias: false, counter: -576460752303422719], [:IO]},

:puts

]}, [], ["this should never be printed"]}

]

]}

iex> IO.puts Macro.to_string(res)

if(!true) do

IO.puts("this should never be printed")

end

:ok

iex> IO.puts Macro.to_string(expr)

Unless.macro_unless(true) do

IO.puts("this should never be printed")

end

:ok

```

`Macro.expand_once/2`は内部表現を受け取って、現在の環境に応じて展開します。前述の例では、マクロ`Unless.macro_unless/2`が展開されて呼び出され、結果が返りました(`__ENV__`については後述します)。さらに戻り値の内部表現を[`IO.puts/2`](https://hexdocs.pm/elixir/IO.html#puts/2)で文字列に出力して確かめたということです。

なお、内部表現をコードの文字列表現として確かめたいときは、つぎのように書くと簡単です。

```elixir

iex> expr |> Macro.expand_once(__ENV__) |> Macro.to_string |> IO.puts

if(!true) do

IO.puts("this should never be printed")

end

:ok

```

以上が、マクロの基本的な働きです。内部表現を受け取って、別のものに変換するという役割を果たします。実際、Elixirの[`unless/2`](https://hexdocs.pm/elixir/Kernel.html#unless/2)の実装はつぎのようなものです。

```elixir

defmacro unless(clause, do: expression) do

quote do

if(!unquote(clause), do: unquote(expression))

end

end

```

`unless/2`や[`defmacro/2`](https://hexdocs.pm/elixir/Kernel.html#defmacro/2)、[`def/2`](https://hexdocs.pm/elixir/Kernel.html#def/2)、[`defprotocol/2`](https://hexdocs.pm/elixir/Kernel.html#defprotocol/2)などの構文、その他公式サイトのガイドに掲げられているコードは、純粋なElixirに加え、マクロで実装されているものも少なくありません。言語を構築している構文は、開発者がそれぞれ開発しているドメインに言語を拡張するために用いることもできます。

関数やマクロを用途に応じて定め、さらにElixirに組み込み済みの定義を上書きすることもできます。ただし、Elixirの特殊フォームだけは例外です。Elixirで実装されていないため、上書きができません。特殊フォームに何があるか、詳しくは「[`Kernel.SpecialForms`](https://hexdocs.pm/elixir/Kernel.SpecialForms.html)」をご参照ください。

# マクロの健全さ

Elixirのマクロは、あとで解決されます。つまり、マクロで定められた変数は、マクロが展開されるコンテキストに定義された変数と競合することはないということです。

たとえば、つぎのようにマクロと関数を、それぞれ別のモジュールに定義したとします。

```elixir

defmodule Hygiene do

defmacro no_interference do

quote do: a = 1

end

end

defmodule HygieneTest do

def go do

require Hygiene

a = 13

Hygiene.no_interference

a

end

end

```

関数が変数に値を定義したあと、呼び出したマクロが同名の変数に異なる値を与えても、関数の変数値は変わりません。

```elixir

iex> HygieneTest.go

13

```

あえて、マクロが呼び出されたコンテキストに影響を与えたいときには、[`var!/2`](https://hexdocs.pm/elixir/Kernel.html#var!/2)を使ってください。

```elixir

defmodule Hygiene do

# defmacro no_interference do

defmacro interference do

# quote do: a = 1

quote do: var!(a) = 1

end

end

defmodule HygieneTest do

def go do

require Hygiene

a = 13

# Hygiene.no_interference

Hygiene.interference

a

end

end

```

マクロが`var/2`に与えた変数は、呼び出されたコンテキストに上書きして定義されます。

```elixir

iex> HygieneTest.go

1

```

上書きされたもとの変数値は使われません。そのため、コンパイル時に、それを告げる警告が示されます。

```

warning: variable "a" is unused

```

変数のコンテキストは、内部表現の第3要素のアトムで示されます。そして、モジュールから`quote/2`で引用された変数は、そのモジュールをコンテキストにもつのです。そのため、他のコンテキストを汚すことなく健全さが保たれます(「[健全なマクロ](https://ja.wikipedia.org/wiki/%E5%81%A5%E5%85%A8%E3%81%AA%E3%83%9E%E3%82%AF%E3%83%AD)」参照)。

```elixir

defmodule Sample do

def quoted do

quote do: x

end

end

```

```elixir

iex> quote do: x

{:x, [], Elixir}

iex> Sample.quoted

{:x, [], Sample}

```

Elixirはインポートとエイリアスにも、同じ仕組みを与えます。マクロはもとのモジュールのもとで動作し、展開された先と競合することはありません。あえて、影響を及したいときに用いるのが、`var!/2`や[`alias!/1`](https://hexdocs.pm/elixir/Kernel.html#alias!/1)です。ただし、健全さが失われ、使われる環境を直接変えることになりますので、ご注意ください。

[`Macro.var/2`](https://hexdocs.pm/elixir/Macro.html#var/2)を使うと、動的に変数をつくることができます。第1引数が変数名で、第2引数はコンテキストです。

```elixir

defmodule Sample do

defmacro initialize_to_char_count(variables) do

Enum.map variables, fn(name) ->

var = Macro.var(name, nil)

length = name |> Atom.to_string |> String.length

quote do

unquote(var) = unquote(length)

end

end

end

def run do

initialize_to_char_count [:red, :green, :yellow]

[red, green, yellow]

end

end

```

```elixir

iex> Sample.run

[3, 5, 6]

```

# 環境

前述「はじめてのマクロ」の項で`Macro.expand_once/2`の第2引数に[`__ENV__`](https://hexdocs.pm/elixir/Kernel.SpecialForms.html#__ENV__/0)を渡しました。戻り値は[`Macro.Env`](https://hexdocs.pm/elixir/Macro.Env.html)構造体のインスタンスです。構造体にはコンパイル環境の有用な情報が納められています。たとえば、現在のモジュールやファイル、行番号、現在のスコープのすべての変数などです。`import/2`や`require/2`で加わったものも含まれます。

```elixir

iex> __ENV__.module

nil

iex> __ENV__.file

"iex"

iex> __ENV__.requires

[IEx.Helpers, Kernel, Kernel.Typespec]

iex> require Integer

Integer

iex> __ENV__.requires

[IEx.Helpers, Integer, Kernel, Kernel.Typespec]

```

`Macro`モジュールの多くの関数は環境を与えて呼び出します。詳しくは、「[Macro](https://hexdocs.pm/elixir/Macro.html)」をご参照ください。また、コンパイル環境については「[Macro.Env](https://hexdocs.pm/elixir/Macro.Env.html)」で解説されています。

# プライベートマクロ

Elixirは[`defmacrop/2`](https://hexdocs.pm/elixir/Kernel.html#defmacrop/2)で、プライベートマクロが定義されます。プライベートな関数になりますので、マクロを定義したモジュールの中で、コンパイル時にしか使えません。

```elixir

defmodule Sample do

defmacrop two, do: 2

def four, do: two + two

end

```

```elixir

iex> Sample.four

4

```

そして、プライベートマクロは、使う前に定義されていることが必要です。マクロは展開されてから、関数として呼び出せます。そのため、定義の前に呼び出すと、エラーが生じるのです。

```elixir

defmodule Sample do

def four, do: two + two # ** (CompileError) macros.exs: undefined function two/0

defmacrop two, do: 2

end

```

# 責任のあるマクロを書く

マクロはできることが豊富な構文です。Elixirはさまざまな仕組みで、責任のあるマクロが書けるようにしています。

- **健全**: デフォルトでは、マクロ内で定義された変数は、使う側のコードに影響を与えません。さらに、マクロのコンテキストにおける関数呼び出しやエイリアスも、ユーザーコンテキストからは切り離されます。

- **レキシカル**: コードやマクロをグローバルに差し込むことはできません。マクロが定められたモジュールを、明示的に`require/2`または`import/2`で使う必要があります。

- **明示**: マクロは明示的に呼び出さなければ実行できません。言語によってはパースやリフレクションなどといった仕組みも用いて、開発者が外からわからないように関数をすっかり書き替えられたりします。Elixirのマクロは、呼び出す側がコンパイルのとき明示的に実行しなければならないのです。

- **明確**: 多くの言語には`quote`や`unquote`に省略記法が備えられています。Elixirではフルに入力することにしました。マクロ定義と内部表現をはっきりと識別できるようにするためです。

このような仕組みはあるものの、マクロを書く責任の多くは開発者が担います。マクロの助けがいると判断した場合、マクロがAPIではないことは頭においてください。

マクロの定義は、内部表現も含めて短くしましょう。つぎのように書くのは、よくない例です。

```elixir

defmodule MyModule do

defmacro my_macro(a, b, c) do

quote do

do_this(unquote(a))

...

do_that(unquote(b))

...

and_that(unquote(c))

end

end

end

```

つぎのように書けば、コードは明確になり、テストや管理もしやすくなります。関数`do_this_that_and_that/3`は直接呼び出してテストできるからです。また、マクロに依存したくない開発者向けのAPIを設計するのにも役立つでしょう。

```elixir

defmodule MyModule do

defmacro my_macro(a, b, c) do

quote do

# マクロに書くのは最小限に

# その他の処理はすべて関数に

MyModule.do_this_that_and_that(unquote(a), unquote(b), unquote(c))

end

end

def do_this_that_and_that(a, b, c) do

do_this(a)

...

do_that(b)

...

and_that(c)

end

end

```

| gumitech |

111,339 | The visual learner’s guide to async JS |

Have you ever watched or read hours’ worth of tutorials but were still left con... | 0 | 2019-07-08T20:06:56 | https://blog.logrocket.com/the-visual-learners-guide-to-async-js-62a0a03d1d57 | javascript, learntocode, frontend, programming | ---

title: The visual learner’s guide to async JS

published: true

tags: javascript,learn-to-code,frontend,programming

canonical_url: https://blog.logrocket.com/the-visual-learners-guide-to-async-js-62a0a03d1d57

---

Have you ever watched or read hours’ worth of tutorials but were still left confused? That’s how I felt when I first dove into learning asynchronous JavaScript. I struggled to clearly see the differences between promises and async/await, especially because under the hood, they’re the same.

Async JS has evolved a lot over the years. Tutorials are great, but they often give you a snapshot of what is the “right” way to do things at that particular point in time. Not realizing I should pay attention to the content’s date (😅), I found myself mixing different syntaxes together. Even when I tried to only consume the most recent content, something was still missing.

I realized much of the material out there wasn’t speaking to my learning style. I’m a visual learner, so in order to make sense of all the different async methods, I needed to organize it all together in a way that spoke to my visual style. Here I’ll walk you through the questions I had about async and how I differentiated promises and async/await through examples and analogies.

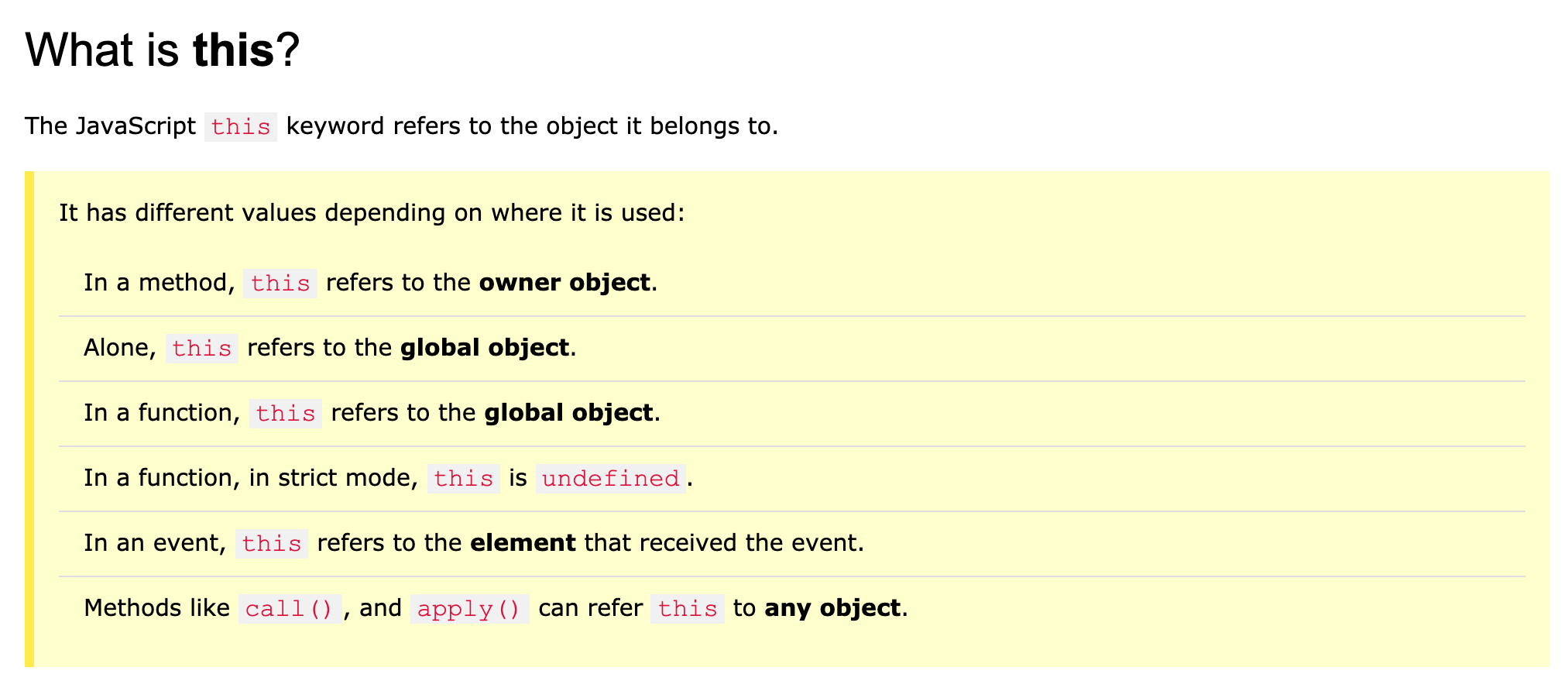

### Why do we need async?

At its core, JavaScript is a synchronous, blocking, single-threaded language. If those words don’t mean much to you, this visual helped me better understand how asynchronous JS can be more time-efficient:

<figcaption><a href="https://eloquentjavascript.net/11_async.html">Thick lines = time the program spends running normally. Thin lines = time spent waiting for the network</a>.</figcaption>

We want to use async methods for things that can happen in the background. You wouldn’t want your entire app to wait while you query something from the database or make an API request. In real life, that would be the equivalent of not being able to do anything — no phone calls, no eating, no going to the bathroom — until the laundry machine is done. This is less than ideal.

Out of the box, JS is synchronous, but we have ways of making it _behave_ asynchronously.

[](https://logrocket.com/signup/)

### Evolution of async

When searching online for “async JS,” I came across many different implementations: callbacks, promises, and async/await. It was important for me to be clear about each method and its unique value proposition so I could code with consistent syntax throughout. Here’s a breakdown of each one:

#### **Callbacks**

Before ES6, we’d implement this async behavior using callbacks. I won’t get too deep into it here, but, in short, a callback is a function that you send as a parameter to another function that will be executed once the current function is finished executing. Let’s just say there’s a reason why people refer to it as “callback hell.”

In order to control the sequence of events, using callbacks, you’d have to nest functions within callbacks of other functions to ensure they occur in the order you expect.

<figcaption>“Callback hell.”</figcaption>

Since implementing this gave us all headaches, the JS community came up with the promise object.

#### **Promises**

As humans, it’s easier for us to understand and read synchronous code, so promises were created to _look_ more synchronous but _act_ asynchronously. Ideally, it would look something like this:

This might look nice, but it’s missing a few key elements, one of which is error handling. Have you ever gotten an unhandledPromiseRejection error or warning? This is because some error occurred, which caused the promise to be rejected instead of resolved.

In the snippet above, we only handle the case of “success,” meaning that an unhandled promise is never settled, and the memory it is taking up is never freed. If you’re not careful, a promise will silently fail, unless manually handled with catch:

#### **Async/await**

This is the syntactic sugar on top of promises, which helps the code look more readable. When we add the async keyword in front of the function, it changes its nature.

An async function will return a value inside of a promise. In order to access that value, we need to either .then() the method or await it.

Style and conventions aside, it is technically OK to use different async methods together in your code since they all implement async behavior. But once you fully understand the differences between each one, you’ll be able to write with consistent syntax without hesitation.

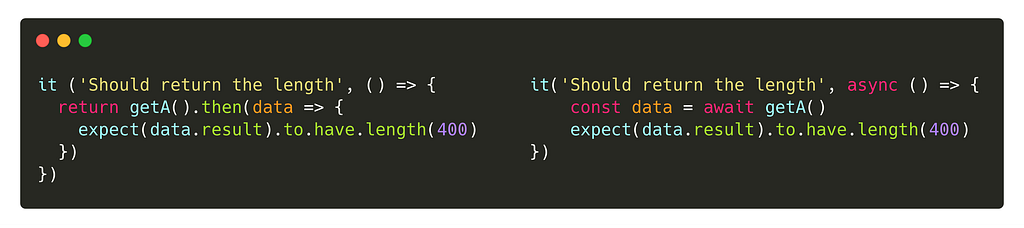

Since async/await utilizes promises, I initially struggled to separate the two methods in terms of syntax and conventions. To clear up the differences between them, I mapped out each method and its syntax for each use case.

### **Comparing promises and async/await**

These comparisons are a visually upgraded version of what I originally mapped out for myself. **Promises are on the left, async/await on the right**.

#### **Consuming**

getJSON() is a function that returns a promise. For promises, in order to resolve the promise, we need to .then() or .catch() it. Another way to resolve the promise is by awaiting it.

N.B., await can only be called inside of an async function. The async function here was omitted to show a more direct comparison of the two methods.

#### **Creating**

Both of these will return Promise {<resolved>: "hi"} . With async , even if you don’t explicitly return a promise, it will ensure your code is passed through a promise.

resolve() is one of the executor functions for promises. When called, it returns a promise object resolved with the value. In order to directly compare this behavior, the async method is wrapped in an immediately invoked function.

#### **Error handling**

There’s a few ways to catch errors. One is by using then/catch, and the other is by using try/catch. Both ways can be used interchangeably with promises and async/await, but these seem to be the most commonly used conventions for each, respectively.

A major advantage of using async/await is in the error stack trace. With promises, once B resolves, we no longer have the context for A in the stack trace. So, if B or C throw an exception, we no longer know A’s context.

With async/await, however, A is suspended while waiting for B to resolve. So, if B or C throw an exception, we know in the stack trace that the error came from A.

#### **Iterating**

I’m using single letters for names here to help you more clearly see the differences between the syntaxes. Before, I would read through code samples where I felt like I had to whack through the weeds of the function names to understand what was happening. It became very distracting to me, especially as such a visual learner.

N.B., even though each task is async, these both won’t run the tasks concurrently. I’ll touch on this in **Parallel execution** below.

#### **Testing**

There are subtle but important differences here. Remember that async functions return promises, so similarly, if you are using regular promises, you must return them.

Other things to note:

- Not putting await in front of something async results in an unresolved promise, which would make your test result return a false positive

- If you want to stub an async method that returns a promise, you can do something like this:

Now that we’ve covered most of the basic scenarios, let’s touch on some more advanced topics regarding async.

### Parallel vs. sequential async

Since async/await makes the syntax so readable, it can get confusing to tell when things are executed in parallel versus sequentially. Here are the differences:

#### **Parallel execution**

Let’s say you have a long to-do list for the day: pick up the mail, do laundry, and respond to emails. Since none of these things depend on one another, you can use Promise.all() to run each of these tasks. Promise.all() takes an array (for any iterable) of promises and resolves once all of the async methods resolve, or rejects when one of them rejects.

#### **Sequential execution**

Alternatively, if you have tasks that are dependent on one another, you can execute them in sequence. For example, let’s say you’re doing laundry. You have to do things in a sequence: wash, dry, fold. You cannot do all three at the same time. Since there’s an order to it, you would do it this way:

These functions are executed in sequence because the return values here are used as inputs for the next functions. So the function must wait until the value is returned in order to proceed executing.

### Tip for success

Everyone has a different learning style. No matter how many tutorials I watched or blog posts I read, there were still holes in my async knowledge. Only when I sat down and mapped everything out did I finally put the pieces together.

Don’t get frustrated or discouraged when you come across a concept you struggle with. It’s simply because the information isn’t being presented to you in a way that speaks to your learning style. If the material isn’t out there for you, create it yourself and share it! It might surprise you how many people out there are feeling the same way as you.

Thanks for reading 🙌! Would love to hear your thoughts, feel free to leave a comment.

Connect with me on [Instagram](https://www.instagram.com/edenadler) and check out my [website](https://edenadler.com) 👈.

### Plug: [LogRocket](https://logrocket.com/signup/), a DVR for web apps

[](https://logrocket.com/signup/)<figcaption><a href="https://logrocket.com/signup/">https://logrocket.com/signup/</a></figcaption>

[LogRocket](https://logrocket.com/signup/) is a frontend logging tool that lets you replay problems as if they happened in your own browser. Instead of guessing why errors happen, or asking users for screenshots and log dumps, LogRocket lets you replay the session to quickly understand what went wrong. It works perfectly with any app, regardless of framework, and has plugins to log additional context from Redux, Vuex, and @ngrx/store.

In addition to logging Redux actions and state, LogRocket records console logs, JavaScript errors, stacktraces, network requests/responses with headers + bodies, browser metadata, and custom logs. It also instruments the DOM to record the HTML and CSS on the page, recreating pixel-perfect videos of even the most complex single-page apps.

[Try it for free](https://logrocket.com/signup/).

* * *

The post [The visual learner's guide to async JS](https://blog.logrocket.com/the-visual-learners-guide-to-async-js-62a0a03d1d57) appeared first on [LogRocket Blog](https://blog.logrocket.com).

| bnevilleoneill |

111,380 | On Glitch This Week | Just a few projects that caught our eye this week on Glitch (May 22, 2019). | 741 | 2019-05-22T14:45:31 | https://glitch.com/@glitch/glitch-this-week-may-22-2019 | glitch, glitchthisweek, showdev | ---

title: On Glitch This Week

published: true

canonical_url: https://glitch.com/@glitch/glitch-this-week-may-22-2019

series: Glitch This Week

cover_image: https://cdn.glitch.com/2bdfb3f8-05ef-4035-a06e-2043962a3a13%2FdevBanner4.png?1558536124883

description: Just a few projects that caught our eye this week on Glitch (May 22, 2019).

tags: glitch,glitchthisweek,showdev

---

Glitch This Week is a regular series in which we round-up just some of the amazing projects that fantastic creators have made on [Glitch](https://glitch.com/). Here are a few projects that caught our eye this week.

## [Create Your Own Pac-Man Game](https://glitch.com/~figma-pacman)

As the name suggests "[figma-pacman](https://glitch.com/~figma-pacman)" by [Gleb Sabirzyanov](https://glitch.com/@zyumbik) is a Pac-Man game that you can customize in Figma. With a copy of the Figma file, you can customize the game's level design and then upload it to the app to play. Gleb created a [pink heart-shaped Pac-Man level](https://twitter.com/zyumbik/status/1129361314504216576) and another one in the shape of [the Figma logo](https://twitter.com/zyumbik/status/1129564817738620929), while Ali Salah made a [Twitter Vs. Social Media version](https://twitter.com/alollou/status/1130491266192617472). What level will you create?

{% twitter 1129031291041669121 %}

{% glitch figma-pacman app %}

## [Make Twitter More Accessible](https://glitch.com/~tweet-a11y-stats)

[Cole Gleason](https://glitch.com/@colegleason) is a Ph.D. student researching accessibility technology for people with vision impairments. He created "[tweet-a11y-stats](https://glitch.com/~tweet-a11y-stats)" - a tool to tell Twitter users how many of their photos are accessible for users who are blind. Currently, only around 0.1% of photo tweets on Twitter have an image description, but image descriptions are critical for people with vision impairments to access visual content on Twitter as the descriptions get read by screen reader or Braille display software. Cole's app provides a useful reminder to build or maintain the habit of making sure content is accessible by all when sharing on Twitter.

{% glitch tweet-a11y-stats app %}

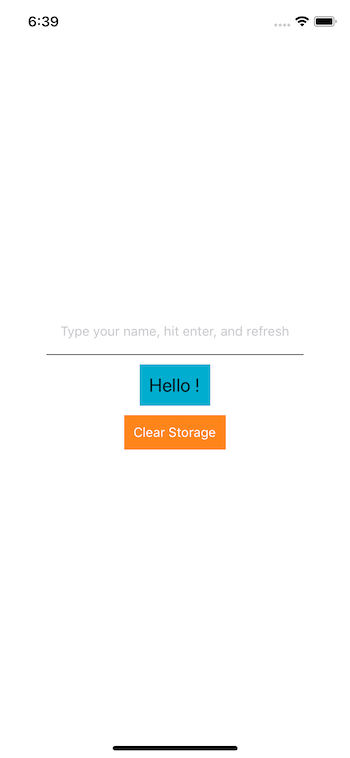

## [Get Started with Local Storage](https://glitch.com/~hello-local-storage)

[Kelly Lougheed](https://glitch.com/@kellylougheed), a Computer Science teacher created "[hello-local-storage](https://glitch.com/~hello-local-storage)" so her students could get started with storing info in the browser using local storage. You can use it too by remixing the app and checking out the [commented source code](https://glitch.com/edit/#!/hello-local-storage?path=script.js:1:0) to see how it works.

{% user kellylougheed1 %}

{% glitch hello-local-storage app %}

Other apps in [this week's round-up](https://glitch.com/@glitch/glitch-this-week-may-22-2019) include a Game of Thrones soundboard, a sparkly real-time web chat, and a 3D visualization of CSS named colors. [Check them out](https://glitch.com/@glitch/glitch-this-week-may-22-2019)! | _gw |

111,403 | How to Grow Your Business as a Developer | Breaking out on your own can be daunting, but also very rewarding. Here we pulled together 6 top tips to help you get on your way to success. | 0 | 2019-05-22T16:10:35 | https://dev.to/officialsilb/how-to-grow-your-business-as-a-developer-5dkg | freelance, businessasadeveloper, entrepreneurtips | ---

title: How to Grow Your Business as a Developer

published: true

description: Breaking out on your own can be daunting, but also very rewarding. Here we pulled together 6 top tips to help you get on your way to success.

tags: freelance, business as a developer, entrepreneur tips

---

As with most industries, many developers dream of turning their skills into a full-time business. However, going out on your own and finding clients can be a daunting task. There is often a lot to learn to go from skilled dev to skilled business owner. Here, we will look at some great ways to grow as a dev and build a business.

Build Your Technical Skills

Having a strong and [varied skillset](https://blog.teamtreehouse.com/how-to-build-your-professional-portfolio-as-a-developer) will help place you ahead of the competition when pitching for client work. Not only becoming a full stack developer but also a skilled marketer, a smart business man/woman and a savvy accountant will stand you in great stead for success in the future. If there is any area you feel you are lacking, try to fit time in to train or work alongside someone who can plug your knowledge gaps.

Network

[Networking](https://www.forbes.com/sites/theyec/2014/07/28/how-to-network-the-right-way-eight-tips/#14bd78fb6d47) is a great way to not only meet likeminded individuals but also to be introduced to potential clients or encourage others to recommend you to potential clients. Networking can also be a great place to meet potential mentors who can help guide you through the process of setting up a business as a developer.

Know Your Marketing

Having great software with no sales or amazing dev skills and no clients will quickly lead to failure. Know who you want to work with or sell to and write a list of ways you can reach them, perhaps via social media, LinkedIn or at networking events. Create a [marketing strategy](https://medium.com/career-change-coder/what-to-consider-when-marketing-yourself-as-a-developer-784c324c551c) in which you’ve identified your demographic and marketing platform along with your budget to help give your efforts some focus.

Attend Tech Events

Today there are many events and conferences held for web developers. Tech events are a great way to build awareness of your business as well as to learn loads about the industry from expert speakers. These events also attract lots of potential clients and investors, such as cyber security venture capital firm [C5 Capital](https://www.c5capital.com/Blog/tech-tour-2019-growth-summit/) who attended the Tech Tour 2019 Growth Summit in Geneva.

Have Contracts in Place

It’s important when working with any clients as a web developer that you have solid contracts in place. [A contract](https://www.crunch.co.uk/knowledge/contracts/protect-yourself-with-these-contract-samples/) will protect you should the client have an issue with your work or refuses to pay on time or even at all. Have important communications with clients in writing and make sure both parties have signed off and are in agreement. The contract doesn’t need to be overly complicated; if you’re unsure, there are many contract templates online you can amend to fit with your business.

Stay Focused

As your business grows it can be easy to lose focus. It can be tricky to keep clients happy, manage accounts, pitch for more work and have a life outside of your business. It’s for this reason that it’s so important to efficiently manage your time and keep your focus on the areas of business you deem to be the most important. It’s also useful to remember that if you’re struggling you can seek outside help in the form of freelancers or employees.

| officialsilb |

111,424 | Do you use isolated tests? | During my professional career, I've worked on projects where most of the tests are integration... | 0 | 2019-05-22T22:43:27 | https://dev.to/delbetu/do-you-use-isolated-tests-4ehc | discuss, testing, design | ---

title: Do you use isolated tests?

published: true

tags: discuss, testing, design

---

During my professional career, I've worked on projects where most of the tests are integration tests.

After watching this video --> [Integrated Tests Are A Scam](https://vimeo.com/80533536) it seems that we've been doing all wrong.

Are you using isolated tests in your projects?

How does it feel to program with these kinds of tests?

Thoughts??

| delbetu |

111,471 | The Uri composition mystery | A quick tale of an idiot wasting hours by not checking the docs earlier. | 0 | 2019-05-22T20:58:01 | https://blog.codingmilitia.com/2019/05/22/the-uri-composition-mystery | dotnet, csharp | ---

title: The Uri composition mystery

published: true

tags: dotnet,csharp

canonical_url: https://blog.codingmilitia.com/2019/05/22/the-uri-composition-mystery

cover_image: https://thepracticaldev.s3.amazonaws.com/i/u5fi889caoii2p4zjc7e.png

description: "A quick tale of an idiot wasting hours by not checking the docs earlier."

---

## Intro

This will be one of those posts of shame, that I'll use to make sure the next time I get into the same problem, I don't waste hours trying to figure it out 😛.

## So, what's the problem?

So I was using an `HttpClient`, passing it an `Uri` for the request to make. To compose the `Uri`, the constructor that gets two `Uri`s was being used, the first `Uri` represents the base address, so an absolute `Uri`, while the second should be a relative address.

With this in mind, looking at the following code, what would you expect to be written to the console?

```csharp

var baseUri = new Uri("https://api.dev/v3");

var routeUri = new Uri("stuff", UriKind.Relative);

var fullUri = new Uri(baseUri, routeUri);

Console.WriteLine(fullUri);

```

My expectation would be `https://api.dev/v3/stuff`, but alas, that's not what we get! The output is `https://api.dev/stuff`, because I didn't add a `/` to the end of the base address. If the base address ends with `/`, then the composition would work as expected.

But wait! There's more...

Even with the trailing slash in the base address, if the relative address starts with a slash, it will replace the relative part of the base address as well.

So, the following code:

```csharp

var baseUri = new Uri("https://api.dev/v3/");

var routeUri = new Uri("/stuff", UriKind.Relative);

var fullUri = new Uri(baseUri, routeUri);

Console.WriteLine(fullUri);

```

Will also output `https://api.dev/stuff`.

## "Adding insult to injury"

What's worse than the time I wasted on this, is that this behavior is described in the [docs](https://docs.microsoft.com/en-us/dotnet/api/system.uri.-ctor?view=netcore-2.2#System_Uri__ctor_System_Uri_System_Uri_).

> Remarks

>

> This constructor creates a new Uri instance by combining an absolute Uri instance, baseUri, with a relative Uri instance, relativeUri. If relativeUri is an absolute Uri instance (containing a scheme, host name, and optionally a port number), the Uri instance is created using only relativeUri.

>

> If the baseUri has relative parts (like /api), then the relative part must be terminated with a slash, (like /api/), if the relative part of baseUri is to be preserved in the constructed Uri.

>

> Additionally, if the relativeUri begins with a slash, then it will replace any relative part of the baseUri

>

> This constructor does not ensure that the Uri refers to an accessible resource.

The behavior just seemed so strange to me (although there's probably a good reason for it), I didn't think about looking at the docs, and kept scouring the code for some other reason to what was happening.

## Outro

Wrapping up, if we want to be sure the `Uri` composition works well, we should end the base address with a `/` and **not** start the relative part with one.

```csharp

var baseUri = new Uri("https://api.dev/v3/");

var routeUri = new Uri("stuff", UriKind.Relative);

var fullUri = new Uri(baseUri, routeUri);

Console.WriteLine(fullUri);

// outputs -> https://api.dev/v3/stuff

```

Hopefully I'll remember this the next time! 🙃 | joaofbantunes |

111,479 | Call for JavaScript library: "navigation history" | Based on the discussion in this post: Is there any way to det... | 0 | 2019-05-22T21:43:46 | https://dev.to/ben/call-for-a-navigation-history-library-2k43 | contributorswanted, opensource, webdev, javascript | Based on the discussion in this post:

{% link https://dev.to/ben/is-there-any-way-to-detect-if-a-user-can-go-back-in-pwas-desktop-pwa-most-specifically-203j %}

We got on to this thread:

{% devcomment b2jh %}

{% devcomment b2ke %}

{% devcomment b2mc %}

I believe all the information about determining `cangoback` would exist in the information about past actions taken on the site and persisted in whichever way was called for.

If someone wants to take a stab at this, it would be really useful! | ben |

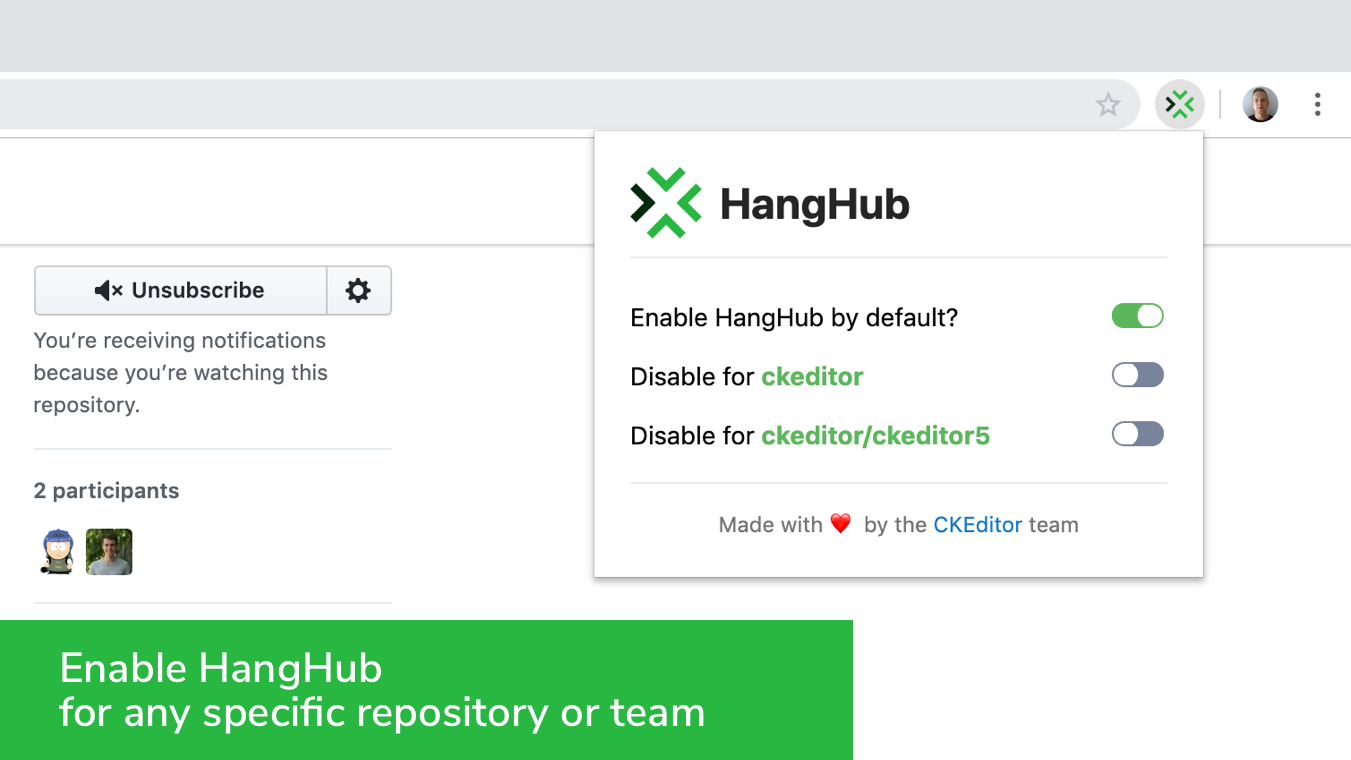

113,649 | Meet HangHub, a new productivity tool for GitHub | What is HangHub HangHub is a team productivity tool that lets you see other users who are... | 0 | 2019-05-23T16:29:02 | https://ckeditor.com/blog/Meet-HangHub-a-new-productivity-tool-for-GitHub/ | github, productivity, opensource, c | #What is HangHub

HangHub is a team productivity tool that lets you see other users who are working on the same GitHub issue or pull request as you. They can be commenting, editing, simply viewing or merging. After you and your teammates install HangHub, your team will never have to worry about wasting time working on the exact same thing at the same time.

<p><a href="https://ckeditor.com/blog/Meet-HangHub-a-new-productivity-tool-for-GitHub/hanghub-screencast.gif" class="article-body-image-wrapper"><img src="https://ckeditor.com/blog/Meet-HangHub-a-new-productivity-tool-for-GitHub/hanghub-screencast.gif" alt=""></a></p>

HangHub is currently available to download as a browser extension for [Chrome](https://chrome.google.com/webstore/detail/hanghub/egnoioofamlapfbecfkjgeobkfmfflfo) and [Firefox](https://addons.mozilla.org/en-US/firefox/addon/hanghub/). You can also [build](https://github.com/ckeditor/hanghub) it (and enhance) by yourself. It is available for free, under a permissive MIT Open Source license.

#Why HangHub

With the arrival of CKEditor 5 and its collaboration features, we have shown our commitment to collaboration. We are still working on ways to make CKEditor 5 your ultimate collaboration framework and during this ongoing effort, we also learned a lot about collaboration itself.

During the annual CKEditor hackathon, our developers wanted to tackle the collaboration and communication troubles we have when using GitHub. Have you ever written a lengthy answer to a GitHub discussion only to discover that your teammate spent the last 30 minutes writing about the same? We most definitely have, and this is why HangHub was born.

#How HangHub works

To communicate with your collaborators, HangHub uses the WebSocket protocol. HangHub is based on Preact and VirtualDOM to make updates of the user state really fast and efficient. Thanks to this you can see exactly when the collaborators — other HangHub users — join in, leave, comment on, and edit a GitHub issue or merge a pull request.

Note that you can disable HangHub for any specific organization or repository.

Install HangHub now from [Chrome Web Store](https://chrome.google.com/webstore/detail/hanghub/egnoioofamlapfbecfkjgeobkfmfflfo) or [Firefox Add-ons](https://addons.mozilla.org/en-US/firefox/addon/hanghub/) and share it with your teammates to make the GitHub management process in your organization more efficient!

We hope that HangHub will make your work with GitHub easier and are curious to hear your thoughts about our new tool. For any questions or feedback you might have, you can join the discussion on Product Hunt or contact us. You can also head straight to the [GitHub repository](https://github.com/ckeditor/hanghub) to report issues and submit feature requests.

And don’t forget to star it on GitHub!

This article was originally posted on [ckeditor.com](https://ckeditor.com/blog/Meet-HangHub-a-new-productivity-tool-for-GitHub/) | gok9ok |

113,923 | The Social Worker Who Codes | The beginnings story of a social worker who learned to love code. | 0 | 2019-05-24T22:21:09 | https://dev.to/taylorpage71/trying-to-find-balance-in-change-3bjn | beginners, codenewbie, webdev | ---

title: The Social Worker Who Codes

published: true

description: The beginnings story of a social worker who learned to love code.

tags: beginners, codenewbie, webdev

---

I didn't really mean for it to happen, but I really became enraptured with the idea that one day I could become a web developer full-time. This has been a tough dream to wake up to, but maybe not for reasons you'd think. You see, I spent a lot of time and money in my earlier days building up a career in social work. I'm still paying on a mountain of student loan debt for a Master's degree and yet I really want to "jump ship" so to speak for a completely unrelated career field? I really don't want to become another stereotypical US student statistic who ends up getting an advanced degree but ends up working in a totally different career, but here I am.

## How it Started

I got my first taste for web development 5 or 6 years ago. My wife and I were on staff at a church and ran the worship and media teams. I also worked full-time as a social worker and was wrapping up my Masters degree (this was before kids, so I could stay very busy). Without much notice, we found out in a staff meeting that the guy who ran our website just left. *"Does anyone know anything about websites?"* Of course no one did. Without any better options available, I volunteered to do it. Why not? "I'm good with computers and technology," I thought. I knew I was also a strong self-learner and enjoyed learning new things.

### It's Hacked!

I started off very quickly in over my head. Thankfully, the previous web admin had left some sign-in credentials for our WordPress site so I at least was able to learn how to log in and set up my own username and password. *phew* I was confused quickly though to find a lot of pages had random ads and words that didn't make sense at all. Why would the admin insert all this odd stuff about product offers and promotions on a church website? Not only were they unrelated, they were just weird. After some additional research, I learned the site had been hacked and that this wasn't an altogether uncommon occurrence for WordPress sites. I did some Googling and found out that our website didn't implement a lot of common best practices for WordPress to avoid such hacks. So, I started cleaning it all up. And oddly enough, despite the frustration of it all, I found it really enjoyable.

### I Actually Like This

Over time, I found myself spending more free time learning about web development and how we could improve our online presence and implement more features on the website. I was having a lot of fun. I had changed jobs in social work for a more flexible schedule and working with the developmentally disabled population. I really enjoyed my full-time social work job. The flexibility, helping people, and getting to flex some creative and technical muscles at my church gig seemed to be the perfect situation. I even got to do a complete site remodel. I ended up cheating and rather than building my own theme I went with Divi from Elegant Themes. My hope was to train some other folks on content creation for the site so I could focus on maintenance and technical improvements.

### All Good Things Must Come to an End

But unfortunately, my ideal situation didn't last forever. Some changes in the church occurred and my wife and I decided to step down from our leadership positions. I offered to run the website until they could find someone else to take over the maintenance. Several months later I was logging into the site to do some updates and found that the entire site had been replaced with a Wix site. *A Wix site*. I was crushed. Shortly after we left that church altogether but I found that I *_really_* missed working on the website. So I searched around and found one of the most frequently recommended web development courses was Colte Steele's Online Bootcamp on Udemy. I signed up and got started learning the building blocks of the web.

## Derailed and Juggling Priorities

Not long after we stepped down from our leadership positions at church, I was offered a supervisor position at my social work job. I had always turned down or avoided advancing previously because our work in the church had taken priority, so I thought the timing was perfect.

I had started the Colte Steele course on Udemy but quickly fell off track with the discipline of online learning when I became a supervisor. I found myself working tons of hours and we now had an infant at home. I was getting mentally drained and exhausted at work and also not getting enough sleep with a baby at home. I found less and less time available for my newfound love of web development.

It would take me another year with this schedule to complete the course. But it was awesome. Learning how to build something as *advanced* as a Yelp-type web app was so cool. It wasn't just building websites I was interested in anymore - now I wanted to build web apps!

## Fast Forward to Today

My journey to figure out how I can code and not give up social work entirely has been a confusing one to say the least. I love helping people, so maybe I can do it with code? I've become really interested in accessibility and have found a real desire as well to work with local businesses/professionals in my area. I'm obviously still learning and have a long ways to go before I can call myself a real web developer.

I've found myself really enjoying working with React and Gatsby is one of my favorite tools to work with. I'm trying to learn Next.js and eventually I think I'd like to learn Vue.js but I know I really need to step up my core JavaScript skills first. Sometimes I'm all over the place with what I want to learn and it's a struggle to stay disciplined on one task before moving on to the next tutorial. I randomly spent 4 hours on a full-stack serverless tutorial before I even realized, *"Why am I doing this? I'm still getting React down - focus on JavaScript first!"* Needless to say, I'm still learning on how to stay focused on my self-learning path. It's amazing that there's so much available from the web development community online, but for a true outsider like me it can be very overwhelming.

### Looking Forward

My wife and I have started a business doing websites, social media, and content creation in hopes to work with small businesses in our area. I think one day, I'd like to get a full-time gig as a developer, but I'm content with social work at the moment. I am in the process of switching my full-time job to a different social work agency and giving up a supervisory role so I can have more time with my family and also with pursuing this passion of web development. I'll be working with a developing agency who is just starting to invest in some online technologies and my hope is to learn where I am so I can advance my skills in web development.

I'm excited for what the future holds and to learn more from this amazing community. Who knows? Maybe some day I'll find the perfect marriage in my love for web development and helping those who can't help themselves. For now, social work is my full-time work thing and web development is my free-time passion.

| taylorpage71 |

113,971 | What's One Thing You Wish You Knew Before Starting A Career In Tech? | If you were asked this what would be your response? | 0 | 2019-05-24T13:43:09 | https://dev.to/lauragift21/what-s-one-thing-you-wish-you-knew-before-starting-a-career-in-tech-423k | discuss | If you were asked this what would be your response? | lauragift21 |

114,054 | Customize shell prompt | Change the appearance of the terminal shell prompt: customize colors, text formatting, and... | 0 | 2019-05-24T17:37:15 | https://dev.to/pldg/customize-shell-prompt-2bpa | linux, bash, tutorial | Change the appearance of the terminal [shell prompt](https://en.wikibooks.org/wiki/Guide_to_Unix/Explanations/Shell_Prompt): customize colors, text formatting, and dynamically display other type of information (including git status).

We're going to use bash on Ubuntu but most concepts can also be applied in other unix-based systems (e.g. MacOS and Windows Subsystem for Linux).

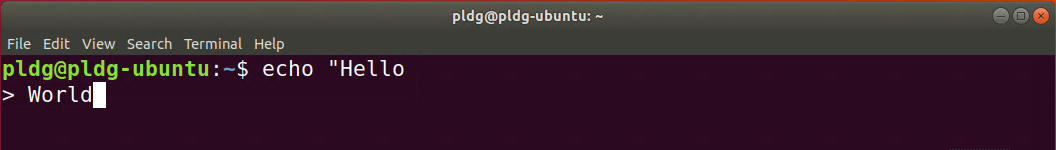

## Prompts variables

Bash has four type of prompts controlled by those variables:

- `PS1` primary prompt.

- `PS2` displayed when you've to type more commands (multi-line commands).

- `PS3` displayed when the select command is waiting for input.

- `PS4` displayed when debugging Bash scripts.

If you `echo $PS1` you'll see a bunch of characters sequences:

```txt

\[\e]0;\u@\h: \w\a\]${debian_chroot:+($debian_chroot)}\[\033[01;32m\]\u@\h\[\033[00m\]:\[\033[01;34m\]\w\[\033[00m\]\$

```

When you enter an interactive shell session, the shell read `PS1` and output something like this:

```txt

username@hostname:directory$

```

The dollar `$` symbol at the end signifies that you're a normal user, for root user it's replaced with the hash `#` symbol.

If you `echo $PS2` it'll display only the greater-than sign `>` symbol.

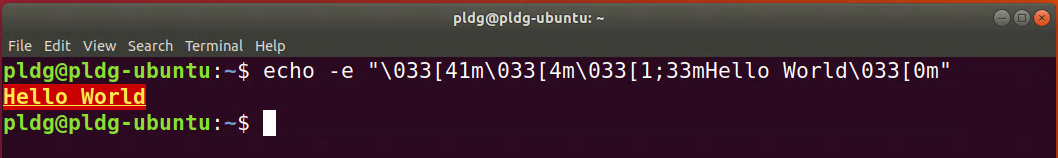

You can see both `PS1` and `PS2` in the screenshot below:

The `PS3` and `PS4` prompts are not very common. In this guide we'll focus on the primary prompt.

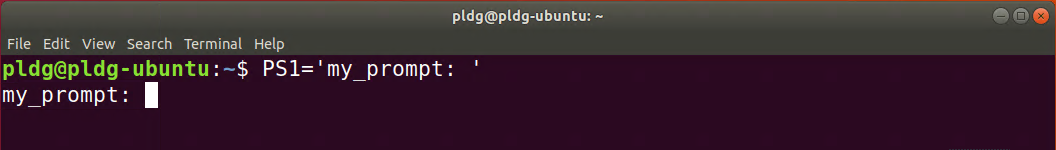

## Change the prompt

To control the output of your primary prompt, edit the `PS1` variable:

```sh

PS1='my_prompt: '

```

Because `PS1` was replaced directly in Bash, changes will disappear on shell `exit`. Later in this guide we'll learn how to make this changes permanent.

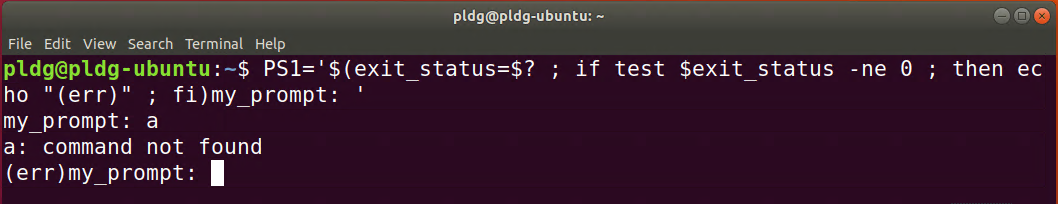

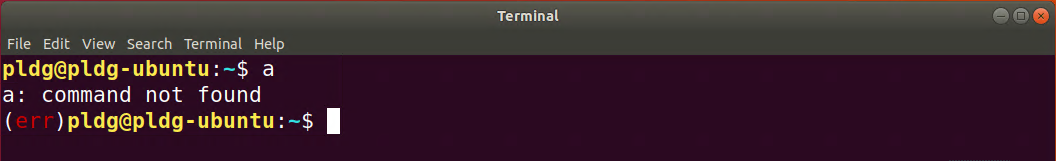

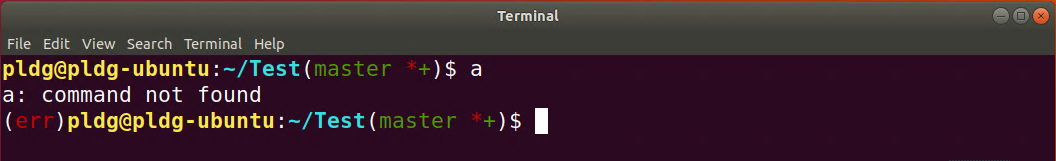

## Embedding commands

You can run commands inside `PS1` variable:

```sh

PS1='$(exit_status=$? ; if test $exit_status -ne 0 ; then echo "(err)" ; fi)my_prompt: '

```

If exit code is not equal to `0` it'll display `(err)`.

## Backslash-escaped characters

There are some backslash-escaped special characters you can use to dynamically display useful information in the prompt.

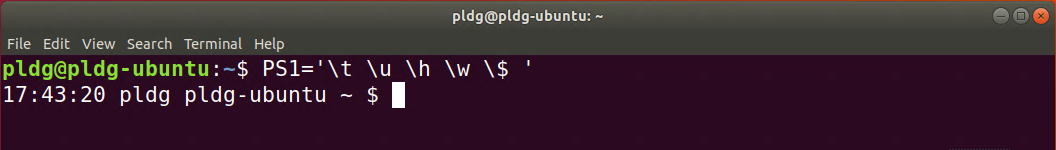

For example:

```sh

PS1='\t \u \h \w \$ '

```

- `\t` display time.

- `\u` display user name.

- `\h` display hostname.

- `\w` display current working directory.

- `\$` display dollar *$* symbol if you're normal user; display hash *#* symbol if you're root user.

Here is a complete list of [backslash-escaped characters](https://ss64.com/bash/syntax-prompt.html).

## ANSI escape sequences

Bash allows the user to call a series of [ANSI escape sequences](https://en.wikipedia.org/wiki/ANSI_escape_code) to change colors, text formatting, cursor location and other options of the terminal window. Those sequences are a set of non-printable [control characters](https://en.wikipedia.org/wiki/ASCII#Control_characters) which the shell interprets as commands.

An ANSI escape sequence always start with an escape character and a left-bracket character, followed by one or more control characters:

```txt

ESC[COMMAND

```

- The escape character `ESC` can be written as `\033` or `\e` or `\x1b`.

- `COMMAND` is the control character.

See the [list of ANSI sequences](https://bluesock.org/~willkg/dev/ansi.html#sequences) for all available commands (some terminals may have partial support for ANSI sequences).

## Colors and text formatting

To colorize the output of your text terminal use the following ANSI sequence:

```txt

ESC[CODEm

```

Where `CODE` is a series of one or more semicolon-separated color codes.

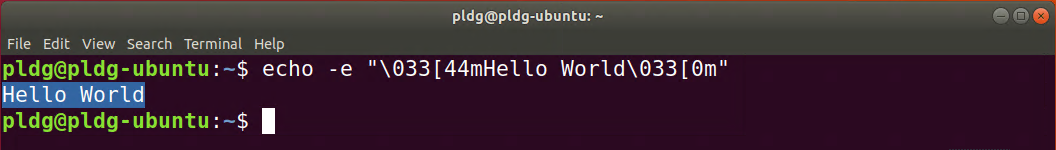

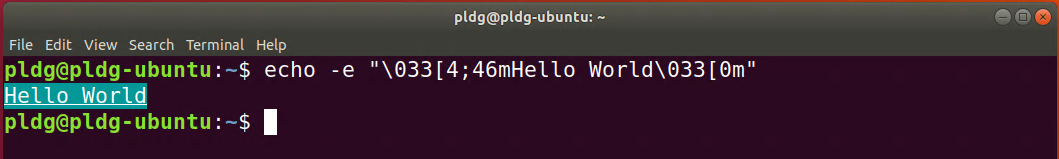

For example:

```sh

echo -e "\033[44mHello World\033[0m"

```

- `-e` enable `echo` to parse escape sequences.

- `\033[` mark the start of an ANSI sequence.

- `44` is the code for background color blue.

- `m` mark the end of color codes.

- `0` removes all text attributes (formatting and colors). It's important to reset attributes, otherwise the styles will be applied to all texts after *Hello World* (including the prompt and the text we type).