id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

163,368 | Migrating learn-dojo to Dojo 6 | The latest release of Dojo 6 brings with it some major updates to how you can build apps with Dojo. T... | 0 | 2019-08-29T15:17:01 | https://learn-dojo.com/migrating-to-dojo-6 | javascript, dojo, typescript, webdev | The latest release of [Dojo 6](https://dojo.io/) brings with it some major updates to how you can build apps with Dojo. There are some enhancements to builds, and custom elements, but I think one of the biggest new features available to developers is the use of function-based widgets. This doesn't mean you can't continue to use class-based widgets, but there are some nice advantages to using the new function-based widgets.

In case you didn't know, [learn-dojo](https://learn-dojo.com) is a static site, [built with Dojo](https://learn-dojo.com/building-static-site-with-dojo). So as Dojo 6 development was ongoing, I was already looking at what I would need to do to migrate the site to the latest Dojo.

## Updates to Widgets

Let's take a look at a basic [Header](https://github.com/odoe/learn-dojo/blob/df41818497429706e235c7b39437abb5ed4ee3b5/src/widgets/header/Header.tsx) widget, that accepts properties to display the title and and links to the main page.

```tsx

// src/widgets/header/Header.tsx

import WidgetBase from "@dojo/framework/widget-core/WidgetBase";

import { tsx } from "@dojo/framework/widget-core/tsx";

import Link from "@dojo/framework/routing/Link";

import * as css from "./Header.m.css";

export default class Header extends WidgetBase<{ title: string }> {

protected render() {

const { title } = this.properties || "My Site";

return (

<header classes={[css.root]}>

<div classes={[css.title]}>

<Link to="/" isOutlet={false} classes={[css.link]}>

{title}

</Link>

</div>

</header>

);

}

}

```

This widget takes an object with a `title` that is a `string` as its property. This isn't a complicated widget, Now, when we convert it to a function-based widget, not much is going to change in terms of what is being rendered, but there are some slight differences in the [Header](https://github.com/odoe/learn-dojo/blob/c480ef742b088dd3bea9a28d686c35a3e551271b/src/widgets/header/Header.tsx).

```tsx

// converted to Dojo 6

// src/widgets/header/Header.tsx

import { tsx, create } from '@dojo/framework/core/vdom';

import Link from '@dojo/framework/routing/Link';

import * as css from './Header.m.css';

const factory = create().properties<{ title: string }>();

export default factory(({ properties }) => {

const { title } = properties() || 'My Site';

return (

<header classes={[css.root]}>

<div classes={[css.title]}>

<Link to="/" isOutlet={false} classes={[css.link]}>

{title}

</Link>

</div>

</header>

);

});

```

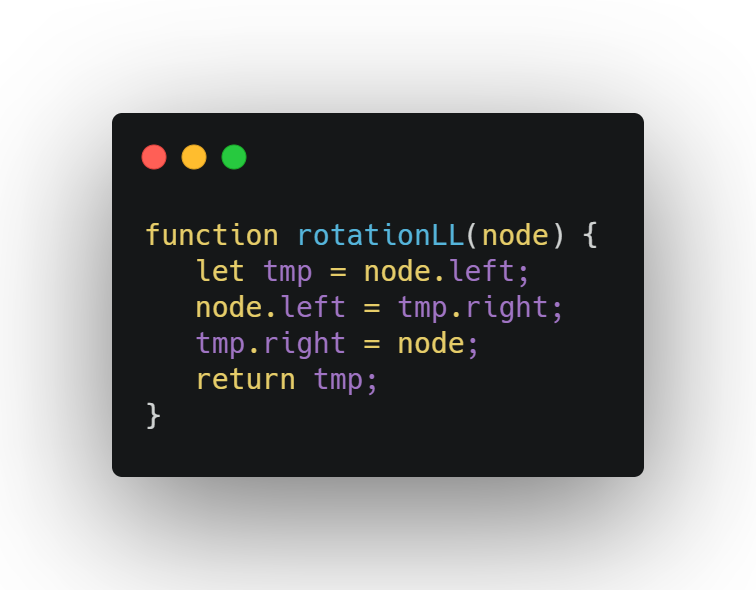

First of all, the folder `widget-core` has been renamed to `core` in `@dojo/framework`. This is just a organizational change to Dojo. But the other new one is the use of this `create` module. The `create` module that lets you create a factory method for your render function.

To create a basic factory render function, you could do something like this.

```tsx

// return a render factory

const factory = create();

export factory(function MyBasicWidget() {

return <h2>Everything is awesome!</h2>;

});

```

But the `Header` widget requires some properties, so we can tell the render factory that properties are expected, and we can type them.

```ts

const factory = create().properties<{ title: string }>();

```

Now in the factory method, it will be pass a `properties()` method that will return the provided properties to the widget.

```tsx

export default factory(({ properties }) => {

const { title } = properties() || 'My Site';

...

});

```

Why is `properties` a function and not just an object? This has to do with some other features of function-based widgets that allow middleware to be used. This ensures that you don't get stale values from the properties.

_We'll cover the new middleware capabilities in more detail in in a future blog post_.

The rest of this widget looks like the previous version returning JSX vdom.

It is normally recommended that you provide _named_ render methods to the render factory as it will help you track down errors in debugging, but it isn't required. _Sometimes you just need to live on the edge_.

## Basic Middleware

One of the standout features of Dojo is the use of [blocks](https://learn-dojo.com/dojo-from-the-blocks) that let you run code in node when you use build time rendering. It's critical in how learn-dojo is built because blocks are used to parse the posts from markdown, and run various tooling for code blocks, and formatting. In class-based widgets, this is done via the use metas.

Here is how a blog page is rendered with a class-based widget.

```tsx

// src/pages/Blog.tsx

import WidgetBase from '@dojo/framework/widget-core/WidgetBase';

import Block from '@dojo/framework/widget-core/meta/Block';

import { tsx } from '@dojo/framework/widget-core/tsx';

import compileBlogIndex from '../blocks/compile-blog-index.block';

import Post from '../templates/blog-post/BlogPost';

import * as css from './Blog.m.css';

export default class Blog extends WidgetBase<{

standalone?: boolean;

path?: string;

}> {

protected render() {

const { standalone = false, path } = this.properties;

// run the block as a meta

const blogs: any = this.meta(Block).run(compileBlogIndex)({});

// render blog excerpts or single blog post

return (

<div classes={[css.root]}>

{!standalone

? blogs &&

blogs.map((blog: any) => [

<Post key={blog.file} path={blog.file} excerpt />,

<hr key={blog.file} />

])

: undefined}

{path && path.length && <Post key={path} path={path} />}

</div>

);

}

}

```

Let's dive right into how the [Blog](https://github.com/odoe/learn-dojo/blob/c480ef742b088dd3bea9a28d686c35a3e551271b/src/pages/Blog.tsx) module looks as a function-based widget in Dojo 6.

```tsx

// converted to Dojo 6

// src/pages/Blog.tsx

import { tsx, create } from '@dojo/framework/core/vdom';

import block from '@dojo/framework/core/middleware/block';

import compileBlogIndex from '../blocks/compile-blog-index.block';

import Post from '../templates/blog-post/BlogPost';

import * as css from './Blog.m.css';

const factory = create({ block }).properties<{ standalone?: boolean; path?: string }>();

export default factory(({ middleware: { block }, properties }) => {

const { standalone = false, path } = properties();

const blogs: any = block(compileBlogIndex)({});

return (

<div classes={[ css.root ]}>

{!standalone ? (

blogs &&

blogs.map((blog: any) => [

<Post key={blog.file} path={blog.file} excerpt />,

<hr key={blog.file} />

])

) : (

undefined

)}

{path && path.length && <Post key={path} path={path} />}

</div>

);

});

```

To use this in a render factory method, pass the block middleware to the `create` method so that it's available to your render factory.

Anything you pass into the create() method will be available to your render factory methods as middleware.

```ts

const factory = create({ block }).properties<{ standalone?: boolean; path?: string }>();

// render factory

export default factory(({ middleware: { block }, properties }) => {...});

```

Now the `block` is available on the `middleware` property passed to the render factory method.

```ts

const blogs: any = block(compileBlogIndex)({});

```

Notice that now, you can run the middleware block independently of any `meta` helpers like in a class-based method. This is one of my favorite features of the new function-based widgets!

## Composable Widgets

The learn-dojo site takes advantage of the ability to create wrapper widgets that renders any children provided to it. This is used in something like the [`Layout`](https://github.com/odoe/learn-dojo/blob/df41818497429706e235c7b39437abb5ed4ee3b5/src/layouts/Layout.tsx) widget.

```tsx

// src/layouts/Layout.tsx

export default class Layout extends WidgetBase<SiteMeta> {

protected render() {

const { title, description, author, footerLinks } = this.properties;

return (

<div classes={[css.root]}>

<Header title={title} />

<Hero description={description} />

{/* render the children */}

<main classes={[css.section]}>{this.children}</main>

<SignUp />

<Footer {...{ author, footerLinks }} />

</div>

);

}

}

```

Like the update to make `properties` a function so you always have the latest values, the same is true for `children` now being a function in the [Layout](https://github.com/odoe/learn-dojo/blob/c480ef742b088dd3bea9a28d686c35a3e551271b/src/layouts/Layout.tsx).

```tsx

// converted to Dojo 6

// src/layouts/Layout.tsx

const factory = create().properties<SiteMeta>();

export default factory(({ children, properties }) => {

const { title, description, author, footerLinks } = properties();

return (

<div classes={[ css.root ]}>

<Header title={title} />

<Hero description={description} />

{/* render the children */}

<main classes={[ css.section ]}>{children()}</main>

<SignUp />

<Footer {...{ author, footerLinks }} />

</div>

);

});

```

That's the only change in regard to rendering children in your widgets.

## Summary

Dojo 6 is a significant release in the Dojo roadmap, offering some exciting new capabilities in build reactive widgets for your applications. There are plenty more new features not covered in this blog post that will be discussed in the future. For details, see the [official Dojo 6 blog post](https://dojo.io/blog/version-6-dojo).

I was able to migrate [learn-dojo](https://learn-dojo.com/) in a single morning based off the new Dojo documentation. I'm really impressed with the new function-based widget pattern in Dojo and the use of middleware that we barely scratched the surface of in this post.

Stay tuned for more! | odoenet |

163,820 | AWS Amplify GraphQL Operations with TypeScript and Hooks - Part 4 [Subscriptions] | Subscriptions provide a way to easily supply real-time information in an application using GraphQL. Let's see what they look like with AWS Amplify and how they can be made better with TypeScript and hooks! | 1,515 | 2019-09-14T00:19:32 | https://dev.to/mwarger/aws-amplify-graphql-operations-with-typescript-and-hooks-part-4-subscriptions-h0j | typescript, graphql, amplify, javascript | ---

title: AWS Amplify GraphQL Operations with TypeScript and Hooks - Part 4 [Subscriptions]

published: true

description: Subscriptions provide a way to easily supply real-time information in an application using GraphQL. Let's see what they look like with AWS Amplify and how they can be made better with TypeScript and hooks!

tags: TypeScript, GraphQL, Amplify, JavaScript

series: AWS Amplify GraphQL Operations with TypeScript and Hooks

---

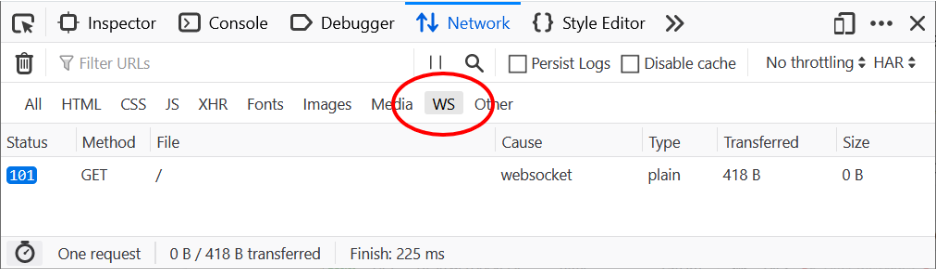

Next up is subscriptions. This is a fun feature of GraphQL and AppSync in general. The ability to leverage real-time data can really bring some nice UX to your apps. I tend to use it sparingly, but it's super helpful for small lists and instant feedback.

Our previous posts have built up a fairly nice set of abstractions that we can use here as well. Because of this, I'm going to paste the result at the start this time and break it down into pieces. Grab a cup of coffee and we'll get to it.

## The Code

Here's our finished custom hook:

```typescript

type ConfigType<VariableType extends {}> = {

query: string;

key: string;

variables?: VariableType;

};

export const useSubscriptionByItself = <

ItemType extends { id: string },

VariablesType extends {} = {}

>({

config,

itemData,

}: {

config?: ConfigType<VariablesType>;

itemData?: ItemType;

} = {}) => {

const [item, update] = React.useState<ItemType | undefined>(itemData);

React.useEffect(() => {

let unsubscribe;

if (config) {

const { query, key, variables } = config;

const subscription = API.graphql(graphqlOperation(query, variables));

if (subscription instanceof Observable) {

const sub = subscription.subscribe({

next: payload => {

try {

const {

value: {

data: { [key]: item },

},

}: {

value: { data: { [key: string]: ItemType } };

} = payload;

update(item);

} catch (error) {

console.error(

`${error.message} - Check the key property: the current value is ${key}`

);

}

},

});

unsubscribe = () => {

sub.unsubscribe();

};

}

}

return unsubscribe;

}, [JSON.stringify(config)]);

return [item];

};

```

There's a lot here, but our use-case is simple. Our subscription is going to handle an item. This could be something as simple as subscribing to new blog posts that are created, for example:

```typescript

const [item] = useSubscription<postFragment>({

config: {

key: 'onCreatePost',

query: onCreatePost,

},

});

```

We could also pass some variables to subscribe to a comment when it is updated:

```typescript

const [comment] = useSubscriptionByItself<

commentFragment,

onUpdateCommentSubscriptionVariables

>({

itemData: comment,

config: {

key: 'onUpdateComment',

query: onUpdateComment,

variables: {

id,

},

},

});

```

> The point is that we are able to take the boilerplate of `const subscription = API.graphql(graphqlOperation(query, variables));` and extract it away into something that can be re-used, as well as leaning into the convention of how AWS Amplify returns data to handle everything in a strongly-typed way.

Let's start at the top and see what's going on.

## Typing the Configuration

```typescript

type ConfigType<VariableType extends {}> = {

query: string;

key: string;

variables?: VariableType;

};

export const useSubscription = <

ItemType extends { id: string },

VariablesType extends {} = {}

>({

config,

itemData,

}: {

config?: ConfigType<VariablesType>;

itemData?: ItemType;

} = {}) => {

```

Let's take a look at the type parameters (the things in between the angle brackets). This takes some explaining because I start out assuming a convention. The `ItemType` represents the object that we're going to be returning and operating on in our hook. The `extends { id: string }` means that whatever object we pass in, it must have an id of type `string` as a property. This is useful, as we want a unique identifier for our object. The `itemData` used in case we want to initialize our state.

Note that I'm leveraging fragments to provide a single typed object that we can work with. Once created, the Amplify `codegen` tool will create types for your fragments that you can then use as we are in this example. You can learn more about fragments and how to use them with GraphQL [here](https://graphql.org/learn/queries/#fragments).

The second `VariableType` is going to be an object that represents any variables that we will be passing to our subscription `graphqlOperation`. This is used further down in the type declaration to the `ConfigType`. This represents the configuration that holds the subscription `query`, `variables` and `key` that we will use to establish our subscription. We'll come back to the `key` a bit later.

## The State

```typescript

const [item, update] = React.useState<ItemType | undefined>(itemData);

```

This is pretty straightforward. We use the `ItemType` parameter we passed in to type the `useState` function. This is possibly undefined, so we note that as well. If we passed in initial `itemData`, we use this as well to establish the state that will keep track of the subscription we're working with.

## The Effect

Here's the real meat of it.

```typescript

React.useEffect(() => {

let unsubscribe;

if (config) {

const { query, key, variables } = config;

const subscription = API.graphql(graphqlOperation(query, variables));

if (subscription instanceof Observable) {

const sub = subscription.subscribe({

next: payload => {

try {

const {

value: {

data: { [key]: item },

},

}: {

value: { data: { [key: string]: ItemType } };

} = payload;

update(item);

} catch (error) {

console.error(

`${error.message} - Check the key property: the current value is ${key}`

);

}

},

});

unsubscribe = () => {

sub.unsubscribe();

};

}

}

return unsubscribe;

}, [JSON.stringify(config)]);

```

First things first, we're going to be establishing a subscription, so for an effect, we need to [clean it up](https://reactjs.org/docs/hooks-effect.html#example-using-hooks-1) when we're done. We declare a variable that will hold the function we want to run when returning from the effect.

Next, we will check if the config exists, as it is optional. We destructure the components and will use them to construct our subscription. The next lines are important:

```typescript

const subscription = API.graphql(graphqlOperation(query, variables));

if (subscription instanceof Observable) {

...

```

The `API.graphql` call actually returns `Observable | Promise<>` - what this means is that the result will be one or the other. To get the autocomplete help that we expect (and stop TypeScript from yelling at us) we need to do what is called "type narrowing" using a [type guard](https://basarat.gitbooks.io/typescript/docs/types/typeGuard.html). We do this by using the `instanceof` keyword to check if the type is an `Observable`. I've added the `@types/zen-observable` package (`yarn add -D @types/zen-observable`) to provide the type.

## The Subscription

```typescript

const sub = subscription.subscribe({

next: payload => {

try {

const {

value: {

data: { [key]: item },

},

}: {

value: { data: { [key: string]: ItemType } };

} = payload;

update(item);

} catch (error) {

console.error(

`${error.message} - Check the key property: the current value is ${key}`

);

}

},

});

unsubscribe = () => {

sub.unsubscribe();

};

```

We have our subscription that's returned from our graphql call, so now we need to subscribe to it. This is using what is called an observable. Last time I checked, Amplify is using the [zen-observable](https://github.com/zenparsing/zen-observable) library for the subscription implementation. Observables operate with values by returning them as streams, so you can listen for updates to the stream by supplying callbacks - in this case, `next`. Our `next` callback takes a `payload` (this will be the value of the next event in the stream) and we then do some destructuring on this value to get the underlying data we want. Amplify follows a convention for returning data in the subscriptions, so we can use this to make sure our destructuring is correct.

```typescript

const {

value: {

data: { [key]: item },

},

}: {

value: { data: { [key: string]: ItemType } };

} = payload;

```

We use the `key` we talked about earlier, as well as the `ItemType` type we passed in, to create a type and properly destructure from the nested object (in the form of `value.data[key]`). Once this data is handled, we use the `update` method from our `useState` hook to persist our state, and if anything goes wrong we log out the error.

After the callback, we assign a small arrow function to our `unsubscribe` variable that will do the work of unsubscribing from our subscription if the component the hook is used in is unmounted.

```typescript

[JSON.stringify(config)];

```

Our `useEffect` hook takes in one dependency (an object) so we'll just `stringify` it to make sure that if it's changed in any way, our hook will run again and we can re-establish the appropriate subscription.

The last line merely returns the data kept in state, so we can use it from the caller.

```typescript

return [item];

```

## The Wrap

This is, at its core, just a wrapper over the existing Amplify tools. But for TypeScript projects, it gives you the help you can use to make sure your app is doing what you expect. The nice by-product, in my opinion, is that the API surface is more complete while abstracting away the common bits. It's generally a good practice to extract these things away and avoid having `useEffect` directly in your components. This is just one little step in that direction.

If anyone has any feedback on this approach, please leave it in the comments. I use this often, only in TypeScript projects, and I hope it can help someone. You can also ping me on twitter @mwarger.

...

But wait, what if you need to subscribe to many events? That's next up - follow me to be notified when it's published!

| mwarger |

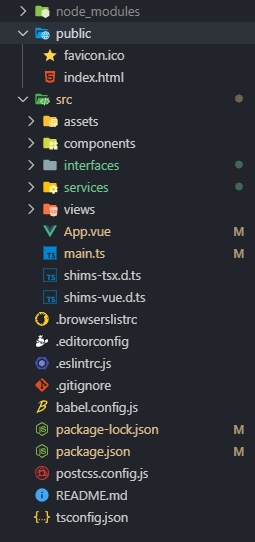

163,390 | VS Code August 2019 Release Highlights Video | Highlights of some of the latest features found in the August 2019 release of Visual Studio Code. | 1,833 | 2019-09-09T16:48:56 | https://www.clarkio.com/2019/09/03/vs-code-august-release-video/ | discuss, vscode, javascript, webdev | ---

title: VS Code August 2019 Release Highlights Video

published: true

description: Highlights of some of the latest features found in the August 2019 release of Visual Studio Code.

tags: discuss,vscode,javascript,webdev

cover_image: https://thepracticaldev.s3.amazonaws.com/i/usptjx26n1vuqlkt2xb4.jpg

canonical_url: https://www.clarkio.com/2019/09/03/vs-code-august-release-video/

series: vscode-release

---

## August 2019 VS Code Release

Visual Studio Code has a new release out with lots of improvements and new features. You can read through the [release notes](https://code.visualstudio.com/updates/v1_38?WT.mc_id=devto-blog-brcl) to find out more about them or watch this short video to see some of the highlights from it.

{% youtube YA8QJPGf2M4 %}

### Features Highlighted

- [Copy and Revert Actions for Diffs](https://code.visualstudio.com/updates/v1_38#_copy-and-revert-in-the-inline-diff-editor?WT.mc_id=devto-blog-brcl)

- [Multi-line search in Find](https://code.visualstudio.com/updates/v1_38#_multiline-search-in-find?WT.mc_id=devto-blog-brcl)

- [Maximize/Restore Bottom Panel](https://code.visualstudio.com/updates/v1_38#_maximize-editor-toggle-command?WT.mc_id=devto-blog-brcl)

- [Cursor Surround Lines (a.k.a Scroll Off)](https://code.visualstudio.com/updates/v1_38#_cursor-surrounding-lines-scrolloff?WT.mc_id=devto-blog-brcl)

- [Link Protection for Outgoing Links](https://code.visualstudio.com/updates/v1_38#_link-protection-for-outgoing-links?WT.mc_id=devto-blog-brcl)

What's your favorite feature or improvement in this month's release? Share it below 👇

| clarkio |

163,465 | How to create an Android App: Android Room | Hi again (x3) everyone! We’re almost done with all app functionality, missing the saving m... | 1,850 | 2019-08-29T19:16:01 | https://dev.to/edrome/how-to-create-an-android-app-android-room-il | android, java, tutorial | ### Hi again (x3) everyone! ###

We’re almost done with all app functionality, missing the saving mechanism. Once the user has filled his information, then it has to be saved on database to avoid the user fill it again and again.

Android support either SQLite or Room, I prefer the second one because adding queries and modifying columns it’s easier. Also, allow singleton instance, and asynchronous task.

As we establish on the last post, the readability is important and the way to accomplish it is by creating packages where classes are going to be stored.

Inside gradle add the following implementations. They will add android room annotations that are very important.

```

implementation 'androidx.room:room-runtime:2.1.0'

annotationProcessor 'androidx.room:room-compiler:2.1.0'

implementation 'org.jetbrains:annotations-java5:15.0'

```

Android Room needs four different classes:

1. ***Entity:*** it is table definition.

2. ***DAO:*** it has all queries available to be performed to the table.

3. ***POJO:*** here it is the DAO functionality

4. ***Room Database:*** here we create the database instance.

Start creating the package database, inside it we’ll create subpackages in case we want to add more tables. I suggest to structure all your database packages like this.

```

+-- com.main.package

| +-- database

| +-- dao

| +-- entity

| +-- pojo

| +-- room

```

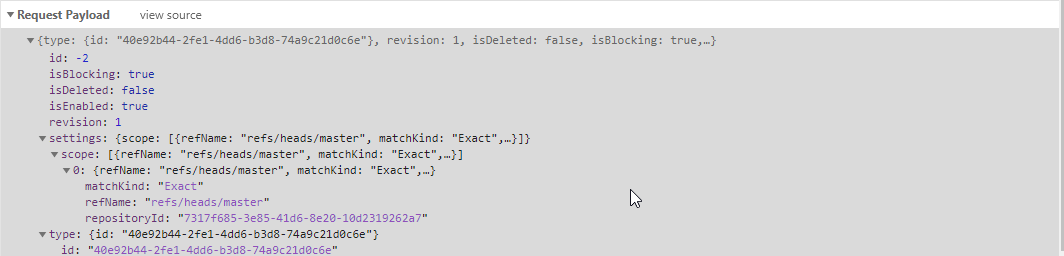

First we’ll create the entity, the name I chose is profileModel. The class needs a constructor either empty or not, prefered private attributes, getter and setters of every attribute, otherwise an error will be thrown.

The table design is as described below:

| Columns name | Data Type | Attributes |

| ------------- |:-------------:| -----------:|

| profileID | Integer | Primary Key, Auto generate, Non null |

| username | String | Non Null |

| gender | String | Non Null |

| birthday | Date | Non Null |

| photo | byte[] | |

As you can see we add a primary key auto generated, every column might be non null except for photo, allowing the user to either select or not a profile photo.

Add the annotation for every column, and generate the constructor including getters and setters. Note the “Entity” annotation at the beginning of the class, it tells android that this class is a table and its name is profile.

```{java}

@Entity(tableName = "profile")

public class profileModel {

public profileModel(@NonNull String username,

@NonNull String gender,

@NonNull Date birthday,

@NonNull byte[] photo) {

this.username = username;

this.gender = gender;

this.birthday = birthday;

this.photo = photo;

}

@NonNull

@PrimaryKey(autoGenerate = true)

private Integer profileId;

@NonNull

private String username;

@NonNull

private String gender;

@NonNull

private Date birthday;

@NonNull

private byte[] photo;

@NonNull

public Integer getProfileId() {

return profileId;

}

public void setProfileId(@NonNull Integer profileId) {

this.profileId = profileId;

}

@NonNull

public String getUsername() {

return username;

}

public void setUsername(@NonNull String username) {

this.username = username;

}

@NonNull

public String getGender() {

return gender;

}

public void setGender(@NonNull String gender) {

this.gender = gender;

}

@NonNull

public Date getBirthday() {

return birthday;

}

public void setBirthday(@NonNull Date birthday) {

this.birthday = birthday;

}

@NonNull

public byte[] getPhoto() {

return photo;

}

public void setPhoto(@NonNull byte[] photo) {

this.photo = photo;

}

}

```

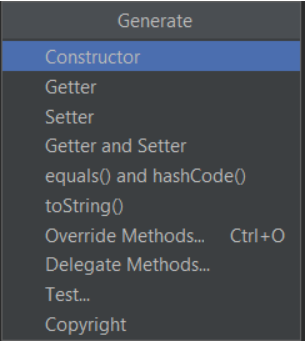

> Tip: You can press alt+insert or right-click generate to popup a generate menu.

>

> Select all columns excluding the primary key and press ok.

>

> These steps also work for getter and setter, in that case you might select all columns, including primary key.

The DAO will be interface instead of classe, containing all table operations like inserts, queries, deletes and updates. For this tutorial purpose, we'll define an insert, an update and a query.

```{java}

@Dao

public interface profileDAO {

@Insert

void insert(profileModel profile);

@Update

void update(profileModel profile);

@Query("SELECT * FROM profile WHERE profileId = :id")

profileModel getProfile(Integer id);

}

```

Once the entity and DAO are create, we add the database room class. It has to be abstract, with an annotation at the top of it. This annotation indicates the entities contained inside the database, along with a version number, that has to increase each time you do a change on the entity, and an exportSchema indicator, it is defined as false for this series.

```{java}

@Database(entities = {profileModel.class}, version = 1, exportSchema = false)

public abstract class databaseRoom extends RoomDatabase {

public abstract profileDAO foodsDao();

public static volatile databaseRoom INSTANCE;

public static databaseRoom getDatabase(final Context context){

if (INSTANCE == null){

synchronized (databaseRoom.class){

if (INSTANCE == null){

INSTANCE = Room.databaseBuilder(

context.getApplicationContext(),

databaseRoom.class,

"profile_database")

.fallbackToDestructiveMigration()

.allowMainThreadQueries()

.build();

}

}

}

return INSTANCE;

}

}

```

When all files are ready, then we create a POJO class that will be the one used to perform operations inside our database.

```java

public class profilePOJO {

private profileDAO mProfileDao;

private profileModel mProfile;

public profilePOJO(Application application) {

databaseRoom db = databaseRoom.getDatabase(application);

mProfileDao = db.foodsDao();

}

public profileModel getProfile(Integer id) {

return mProfileDao.getProfile(id);

}

public void insert(profileModel profile){

new insertAsyncTask(mProfileDao).execute(profile);

}

private static class insertAsyncTask extends AsyncTask<profileModel, Void, Void> {

private profileDAO mAsyncTaskDao;

insertAsyncTask(profileDAO dao){

mAsyncTaskDao = dao;

}

@Override

protected Void doInBackground(final profileModel... profiles) {

mAsyncTaskDao.insert(profiles[0]);

return null;

}

}

}

```

Aaand.... we're going to leave it here or the post will be to large for reading.

As always, I share the link to this [project](https://github.com/EdRome/AppProfile) on GitHub. Feel free to use it as you wish.

> Edit: I forgot to add Dao annotation. Sorry for that | edrome |

163,537 | Demystifying webpack - What's a Bundler doing? | Originally publised on jnielson.com In my introduction to this series on Demystifying Build Tools, I... | 1,893 | 2019-08-29T23:46:11 | https://jnielson.com/demystifying-webpack-whats-a-bundler-doing | webpack, javascript | _Originally publised on [jnielson.com](https://jnielson.com/demystifying-webpack-whats-a-bundler-doing)_

In my introduction to this series on [Demystifying Build Tools](https://jnielson.com/build-tools-demystified-my-thoughts), I introduced the core concepts of webpack and babel. I've created a couple other posts on various facets of babel, like [`@babel/preset-env`](https://jnielson.com/demystifying-babel-preset-env) and [`babel plugins more generally`](https://jnielson.com/demystifying-babel-plugins-a-debugging-story). If you haven't read those, I'd highly recommend them (obviously!). In this post I'll shift and cover a little more about webpack. In the talk I'm prepping for, I'm intending to spend more time on babel and less time on webpack, which you might have guessed from the blog coverage difference.

## Why less on webpack?

I haven't had nearly as much in our projects to manage with webpack since we're using the defaults provided by [next.js](https://nextjs.org) (thanks Next team!). But, the things that I have found valuable to be aware of include a knowledge of what webpack is at a little more depth than the concepts docs referenced in the introduction post and also how to use and read the `webpack-bundle-analyzer` plugin. In my opinion, having a knowledge of webpack makes it simpler to work with as the core concepts build together masterfully, and then the bundle-analyzer plugin is super useful to examine what webpack is outputting that I can't imagine doing a project where I don't use it at least once to sanity check that nothing I don't expect is included in the bundle.

So, to learn more about webpack where do you start? First, I'd start with breaking down the description they use for webpack in their docs:

> "At its core, webpack is a static module bundler for modern JavaScript applications."

>

> [webpack docs](https://webpack.js.org/concepts/)

That statement is relatively simple, but can be broken down to emphasize the key features and goals of webpack. I'll talk more to each of the following ideas:

- Bundler

- Module

- Static

- Modern JavaScript

- Applications (including libraries)

## Bundler

At its core, webpack is a bundler. Not a task runner or a compiler, a bundler. What is a bundler? In the context of webpack, it takes all files referenced from the entry point(s) and spits out at least 1 file called "the bundle". The goal of the bundle is to package code in a way that makes sense for the target environment, in most cases that's the browser. With HTTP 1.1, it tends to be best to serve as much of the application in a single file, to reduce the number of round-trips needed to get the code for the browser to execute. But, with HTTP 2 as well as in environments where you want heavier caching it makes sense to split your "bundle" into multiple files that can be cached and served independently and in parallel.

How does webpack's role as a bundler impact you? Well, for the most part it doesn't. Since it's a bundler it usually does its thing just fine, and once setup in an application it doesn't take much maintenance unless you add a new file type or want to process something differently. More on that later though!

## Module

In stating its place as a bundler, the webpack docs clarify that it is a `module` bundler. In that aspect, it treats everything as a module: JavaScript Code, Images, Raw files, you name it and it is a module in webpack. Modules are loaded into webpack through a variety of `loaders`, which you can read more about [on the loaders concepts page](https://webpack.js.org/concepts/#loaders). Essentially in order to support a large variety of file types you'll need to add loaders for them so that webpack can understand them. Out of the box it supports JavaScript and JSON "modules", much like Node itself. In webpack 4 at least, the module type you use greatly impacts the extra features webpack is able to enable, such as Tree Shaking. Modules are key in webpack, since that is how it determines what code to include in the bundle that it creates. It starts from your "entry point" (which is a module) and pulls in everything referenced by that module. In order to pull it in, it needs to be a module! So, anything that you `import` in that entry module will end up in your bundle that is created. Without module boundaries, webpack wouldn't be able to determine code that can be left out, and we'd be back to including entire directories in what we serve to the browser.

## Static

One of the best features of webpack, in my opinion, is the static analysis capabilities that are unlocked by it being a `static` (in other words, build time) module bundler. A runtime bundler could probably work, but it wouldn't be able to do Tree Shaking or Dead Code Elimination. This would be a pretty large drawback for me, since it is pretty common in my projects to only use part of the aspects that a library or component exposes. In my opinion, the word `static` in this context also implies that the build output won't change unless the build input does (assuming you have things configured correctly), which gives me some confidence in being able to run builds as many times as needed. Related to that, another benefit of `static` in this context is that it allows the build process to support plugins that act on those `static` assets to transform, adjust, or otherwise do something to the code.

There are some downsides to it being a `static` module bundler. One of the largest I've run into is the inability to dynamically use `require.context` in storybook to get just the stories that I want with some sort of option string. This led to us re-writing our storybook config file whenever we want a different set of components to work on, which thankfully was relatively easy to implement.

## Modern JavaScript

Since the docs statement says "modern JavaScript applications", I decided that there should be a comma in there and broke it down even further. Modern can be made to indicate that it is something up to date, but I think when you combine it with JavaScript you usually get the idea of `ESNext` or `ES2015`. In the case of new language features, that job is actually handled by `babel`, which webpack can run on your code as it bundles it. This interplay is something that I wanted to highlight since it illustrates the capability of the module bundler to take in anything that you can tell it how to handle. Since it runs in node, webpack can be default handle whatever syntax your version of node can. Since you can run it with `babel`, webpack can optionally handle whatever syntax you throw at it (within the limits of babel of course). These two libraries work together to output your code in a manner that's suitable for browser consumption. In the simplest configuration, babel will take your files and output them, one for one or all to one, transformed according to the plugins you use. Using webpack, it can be a little smarter than that and only run `babel` on the files that it is bundling, allowing you to have other files in your `src` directory (or however you organize yourself) that don't need to be processed by babel.

Splitting this up further, `Modern` is also a good descriptor of webpack itself. The team there does a great job adding new features/plugins, fixing things, and overall keeping the tool `modern` in the sense of up to date and useful! `JavaScript` by itself doesn't mean all that much though, it does indicate that webpack is focused on that language (though if I understand correctly it supports web assembly to some extent).

## Applications (including libraries)

The core use case for webpack is definitely applications that are served to the browser, but it can also be used for libraries if they have a desire to do so. There is support for libraries in a similar way to applications, and they have an [awesome guide on their docs site](https://webpack.js.org/guides/author-libraries/) about how to use webpack to bundle your library code. Since webpack focuses on the application level, there are tons of plugins that support that use providing things like aliasing, loading all the file types you use, and others.

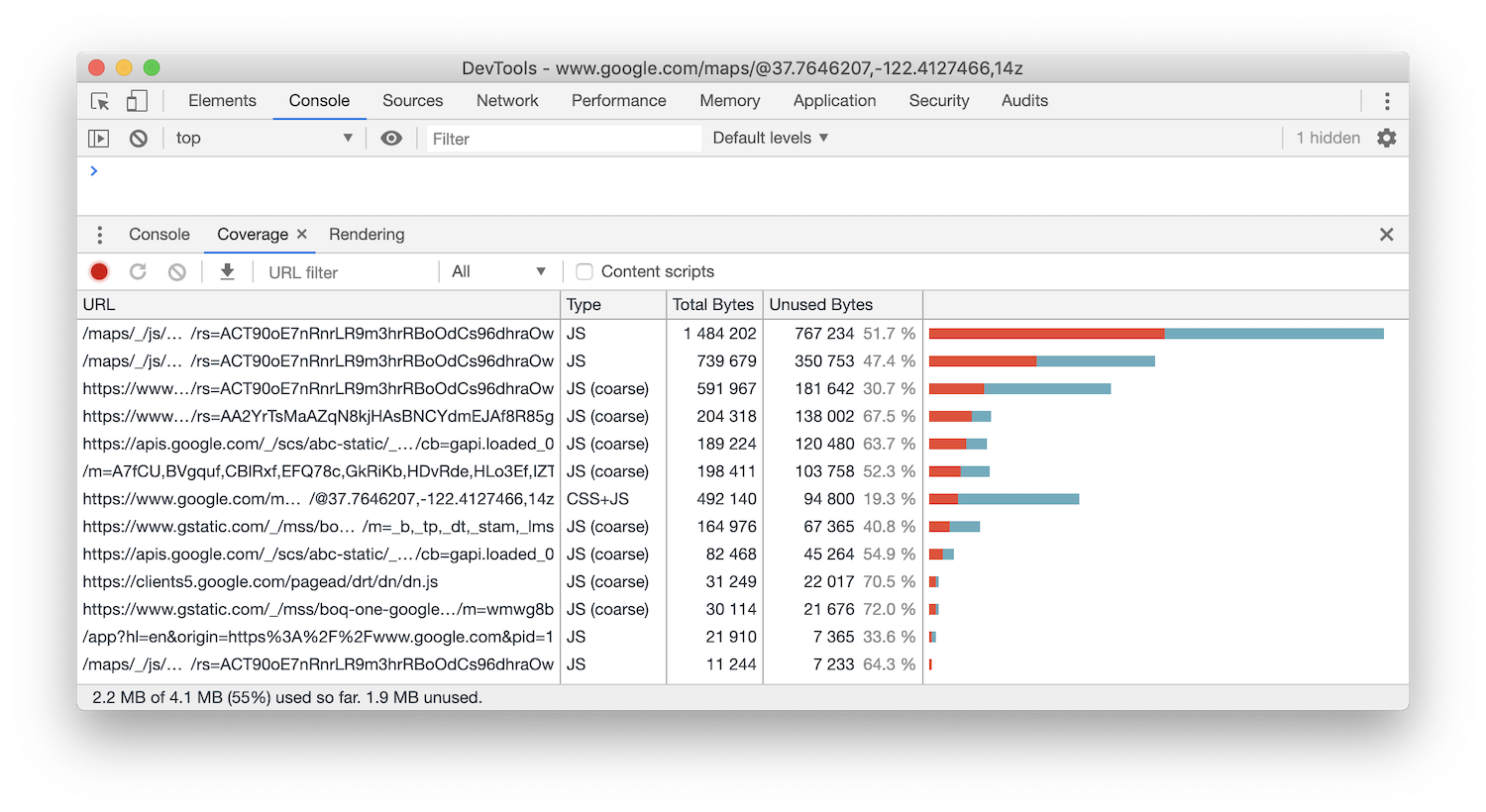

## The Bundle Analyzer

After you've got webpack setup and outputting some wonderful files to serve to the browser, you might run into a case where you're curious what is in there. In most cases, your bundle will be minified and uglified so it won't be much good to try and read what's there, though there are some things that don't uglify very well that you can use if you're trying to check to see if something is there quickly. But, outside of that the `webpack-bundle-analyzer` is a fantastic tool. For use in [next.js](https://nextjs.org), it's as simple as installing the [Next.js plugin](https://github.com/zeit/next.js/tree/canary/packages/next-bundle-analyzer) and following the instructions in the readme to add it to your project. Since Next produces two bundles, one for the server and another for the client, it can be pretty intimidating to set up any webpack things from scratch. So, I'm super grateful for the team that added this plugin since it's already setup to create a bundle analyzer for both bundles. Most of the time I just use the client bundle, but the server bundle is also quite helpful. The [bundle analyzer](https://www.npmjs.com/package/webpack-bundle-analyzer) looks pretty overwhelming when you first look at it, since it shows in some manner every file that is included in the bundle. There's a number of things to look at when using the bundle analyzer, but there are a few that I want to call out:

1. Different Size Settings

1. Hiding chunks

1. Outputting a JSON file (not currently supported by the next-bundle-analyzer plugin)

### Different Size Settings

One of the first things you might wonder is "where does this size information come from?", since in most cases you won't be seeing what your file explorer told you the size was. In the sidebar menu when analyzing your bundle, you can select between `stat`, `parsed`, and `gzip`. These are described in detail on the documentation page linked above, but I think it's useful to point out that `stat` should be close to your file system output, `parsed` should be the post-webpack size (minified/uglified) and then `gzip` is the compressed size of the post-webpack file. By default the `parsed` size is pulled up, which is why I pointed out that they might look different than you might expect. In most cases I've seen, `parsed` is the most useful number, since `stat` doesn't help much as it's pre-webpack and `gzip` is useful... but I don't want to spend my time optimizing my code for `gzip` compression since the time the browser spends parsing it is usually longer than the network time a few more bytes off would save. There's more information on this in [the documentation](https://www.npmjs.com/package/webpack-bundle-analyzer#user-content-size-definitions).

### Hiding Chunks

In most cases, the output from the bundle analyzer will be entirely too much to handle as most projects that care to analyze their bundle will have hundreds of modules. If you haven't used it before, clicking on a module/section will zoom in on it, but that doesn't actually hide the ones that now can't be seen. To do that, you can uncheck them in the sidebar menu, which will actually re-draw the entire page in most cases. There are a number of things that you might want to hide, like a node_module that you're stuck with and can't reduce the size of or a section of your application that you're not working on right now and is distracting from the actual part you are inspecting. There's more information on this in [the documentation](https://www.npmjs.com/package/webpack-bundle-analyzer).

### Outputting a JSON file

In a lot of cases, webpack has way more information available then even the bundle analyzer shows, and in that case I find the bundle analyzer's capability to output the `stats.json` file from webpack for you to be wonderful. Since the bundle analyzer already uses a lot of the stats options (and webpack does slow down a bit when you use a bunch of stats options), it's helpful to be able to re-use those and output them to a file. Sadly the next-bundle-analyzer plugin doesn't currently support passing any options to the bundle analyzer (they'd probably add it, but I haven't cared enough yet since it isn't terribly hard to use for a one-off case). So, if you want to do this in a next context you'd need to manually adjust your next.config.js to use the bundle analyzer (in a similar way to [what the plugin does](https://github.com/zeit/next.js/blob/canary/packages/next-bundle-analyzer/index.js) ideally) to pass the `generateStatsFile: true` option to the bundle analyzer, with the `statsFilename` changed based off which build is running. The stats file is a bit of a beast to handle, so we're not going to talk about it much here, but it is super useful if you think webpack is doing something weird!

Thanks for reading! Ideally this helps you understand a little bit more about webpack, in combination with going through their [core concepts docs](https://webpack.js.org/concepts/). I'd highly recommend spending some time on doing so, since even if you're using an awesome tool like [next.js](https://nextjs.org) there's still benefits that come from understanding what is happening to bundle your code.

_Cover image courtesy of undraw.co_

| jnielson94 |

163,683 | Nebula container orchestrator — container orchestration for IoT devices & distributed systems | Let’s say for example you started a new job as a DevOps/Dev/SRE/etc at a company that created a new s... | 0 | 2019-08-30T11:33:11 | https://dev.to/naorlivne/nebula-container-orchestrator-container-orchestration-for-iot-devices-distributed-systems-48f7 | devops, docker | Let’s say for example you started a new job as a DevOps/Dev/SRE/etc at a company that created a new smart speaker (think Amazon Echo or Google home), said device gained a lot of success and you quickly find yourself with a million clients, each with a single device at his\hers home, Sounds great right? Now the only problem you have is how do you handle deployments to a million of devices located all across the world?

* You could go the way most old school vendors do it by releasing a package for the end user to download and install himself on the company website but at this day and age this will quickly lose you customers to the competition who doesn’t have such high maintenance needs.

* You could create a self updating system built into your codebase but that will require a lot of maintenance and man hours from the development team & even then will likely lead to problems and failures down the road.

* You could containerize the codebase, create on each smart speaker a single server Kubernetes cluster and create a huge federated cluster out of all of them (as Kubernetes doesn’t support this scale nor latency tolerant workers this is required) but that will lead to huge costs on all the resources wasted only to run all said clusters.

* You could use Nebula Container Orchestrator — which was designed to solve exactly this kind of distributed orchestration needs.

As you may have guessed from the title I want to discuss about the last option from the list.

Nebula Container Orchestrator aims to help devs and ops treat IoT devices just like distributed Dockerized apps. It aim is to act as Docker orchestrator for IoT devices as well as for distributed services such as CDN or edge computing that can span thousands (or even millions) of devices worldwide and it does it all while being open-source and completely free.

# Different requirements leads to different orchestrators

When you think about it a distributed orchestrator has the following requirements:

* It needs to be latency tolerant — if the IoT devices are distributed then each will connect to the orchestrator through the Internet at a connection that might not always be stable or fast.

* It needs to scale out to handle thousands (and even hundreds of thousands) of IoT devices — massive scale deployments are quickly becoming more and more common.

* It needs to run on multiple architectures — a lot of IoT devices uses ARM boards.

* It needs to be self healing — you don’t want to have to run across town to reset a device every time there is a little glitch do you?

* Code needs to be coupled to the hardware — if your company manufacture the smart speaker in the example mentioned above & a smart fridge you will need to ensure coupling of the code to the device it’s intended to run on (no packing different apps into the same devices in the IoT use case).

This is quite different from the big Three orchestrators (Kubernetes, Mesos & Swarm) which are designed to pack as many different apps\microservices onto the same servers in a single (or relatively few) data centers and as a result non of them provide truly latency tolerant connection and the scalability of Swarm & Kubernetes is limited to a few thousands workers.

Nebula was designed with stateless RESTful Manger microservice to provide a single point to manage the clusters as well as providing a single point which all containers check for updates with a Kafka inspired Monotonic ID configuration updates in a pull based methodology, this ensure that changes to any of the applications managed by Nebula are pulled to all managed devices at the same time and also ensures that all devices will always have the latest version of the configuration(thanks to the monotonic ID), all data is stored in MongoDB which is the single point of truth for the system, on the workers side it’s based around a worker container on each devices that is in charge of starting\stopping\changing the other containers running on that device, due to the design each component can be scaled out & as such Nebula can grow as much as you require it.

you can read more about Nebula architecture at https://nebula.readthedocs.io/en/latest/architecture/

# Nebula features

As it was designed from the ground up to support distributed systems Nebula has a few neat features that allows it to control distributed IoT systems:

* Designed to scale out on all of it’s components (IoT devices, API layer, & Mongo all scale out)

* Able to manage millions of IoT devices

* Latency tolerant — even if a device goes offline it will be re-synced when he gets back online

* Dynamically add/remove managed devices

* Fast & easy code deployments, single API call with the new container image tag (or other configuration changes) and it will be pushed to all devices of that app.

* Simple install —MongoDB & a stateless API is all it takes for the management layer & a single container with some envvars on each IoT device you want to manage takes care of the worker layer

* Single API endpoint to manage all devices

* Allows control of multiple devices with the same Nebula orchestrator (multiple apps & device_groups)

* Not limited to IoT, also useful for other types of distributed systems

* API, Python SDK & CLI control available

# A little example

The following command will install an Nebula cluster for you to play on and will create an example app as well, requires Docker, curl & docker-compose installed:

```

curl -L "https://raw.githubusercontent.com/nebula-orchestrator/docs/master/examples/hello-world/start_example_nebula_cluster.sh" -o start_example_nebula_cluster.sh && sudo sh start_example_nebula_cluster.sh

```

But let’s go over what this command does to better understand the process:

* The scripts downloads and runs a docker-compose.yml file which creates:

1. A MongoDB container — the backend DB where Nebula apps current state is saved.

2. A manager container — A RESTful API endpoint, this is where the admin manages Nebula from & where devices pulls the latest configuration state from to match against their current state

3. A worker container — this normally runs on the IoT devices, only one is needed on each device but as this is just an example it runs on the same server as the management layer components runs on.

It’s worth mentioning the “DEVICE_GROUP=example” environment variable set on the worker container, this DEVICE_GROUP variable controls what nebula apps will be connected to the device (similar to a pod concept in other orchestrators).

* The script then waits for the API to become available.

* Once the API is available the scripts sends the following 2 commands:

```

curl -X POST \

http://127.0.0.1/api/v2/apps/example \

-H 'authorization: Basic bmVidWxhOm5lYnVsYQ==' \ -H 'cache-control: no-cache' \

-H 'content-type: application/json' \ -d '{

"starting_ports": [{"81":"80"}],

"containers_per": {"server": 1},

"env_vars": {},

"docker_image" : "nginx",

"running": true,

"volumes": [],

"networks": ["nebula"],

"privileged": false,

"devices": [],

"rolling_restart": false

}'

```

This command creates an app named “example” and configures it to run an nginx container to listen on port 81 , as you can see it can also control other parameters usually passed to the docker run command such as envvars or networks or volume mounts.

```

curl -X POST \

http://127.0.0.1/api/v2/device_groups/example \ -H 'authorization: Basic bmVidWxhOm5lYnVsYQ==' \ -H 'cache-control: no-cache' \

-H 'content-type: application/json' \ -d '{

"apps": ["example"]

}'

```

This command creates a device_group that is also named “example” & attaches the app named “example” to it.

* After the app & device_groups arecreated on the nebula API the worker container will pick it up the changes to the device_group which is been confiugred to be part of (“example” in this case) and will start an Nginx container on the server, you can run “docker logs worker” to see the Nginx container being downloaded before it starts (this might take a bit if your on a slow connection). and after it’s completed you can access http://<server_exterior_fqdn>:81/ on your browser to see it running

Now that we have a working Nebula system running we can start playing around with it to see it’s true strengths:

* We can add more remote workers by running a worker container on them:

```

sudo docker run -d --restart unless-stopped -v /var/run/docker.sock:/var/run/docker.sock --env DEVICE_GROUP=example --env REGISTRY_HOST=https://index.docker.io/v1/ --env MAX_RESTART_WAIT_IN_SECONDS=0 --env NEBULA_MANAGER_AUTH_USER=nebula --env NEBULA_MANAGER_AUTH_PASSWORD=nebula --env NEBULA_MANAGER_HOST=<your_manager_server_ip_or_fqdn> --env NEBULA_MANAGER_PORT=80 --env nebula_manager_protocol=http --env NEBULA_MANAGER_CHECK_IN_TIME=5 --name nebula-worker nebulaorchestrator/worker

```

It’s worth mentioning that a lot of the envvars passed through the command above are optional (with sane defaults) & that there is no limit on how many devices we can run this command on, at some point you might have to scale out the managers and\or backend DB but those are not limited as well.

* We can change the container image on all devices with a single API call, let’s for example replace the container image to Apache to simulate that

```

curl -X PUT \http://127.0.0.1/api/v2/apps/example/update \-H ‘authorization: Basic bmVidWxhOm5lYnVsYQ==’ \-H ‘cache-control: no-cache’ \-H ‘content-type: application/json’ \-d ‘{“docker_image”: “httpd:alpine”}’

```

* Similarly we can also update any parameter of the app such as env_vars, privileged permissions, volume mounts, etc, — the full list of API endpoints as well as the Python SDK & the CLI is available at documentation page at https://nebula.readthedocs.io/en/latest/

Hopefully this little guide allowed you to see the need of an IoT docker orchestrator and it’s use case & should you find yourself interested in reading more about it you can visit Nebula Container Orchestrator site at https://nebula-orchestrator.github.io/ or skip right ahead to the documentation at https://nebula.readthedocs.io | naorlivne |

163,714 | NextJS Vs Preact, Which do I go for? | I am working on a big Forum project and i'm trying to figure out which one to use to handle my... | 0 | 2019-08-30T11:02:08 | https://dev.to/misteryomi/nextjs-vs-preact-which-do-i-go-for-iom | help, preact, nextjs | ---

title: NextJS Vs Preact, Which do I go for?

published: true

tags: help, preact, Next, NextJS

---

I am working on a big Forum project and i'm trying to figure out which one to use to handle my frontend between NextJS and Preact.

Suggestions are kindly needed... and why.

| misteryomi |

163,903 | Queixa: a faculdade não ensina nada atualizado! | Um vídeo do canal O Universo da Programação sobre o conteúdo acadêmico | 0 | 2020-05-22T18:00:24 | https://dev.to/etc_william/queixa-a-faculdade-nao-ensina-nada-atualizado-166i | udp, universodaprogramacao, podcast, youtube | ---

title: "Queixa: a faculdade não ensina nada atualizado!"

published: true

description: "Um vídeo do canal O Universo da Programação sobre o conteúdo acadêmico"

tags: udp, universo-da-programacao, podcast, youtube

---

Muita gente reclama do conteúdo da faculdade.

Será que a faculdade ensina tudo o que precisamos para o mercado? Será que a faculdade deveria ensinar tecnologias de mercado?

Neste vídeo temos uma reflexão sobre o assunto e espero que te ajude a se encontrar nesse universo de opções que é o Universo da Programação!

{% youtube BwdKfV7wvWo %}

| 1ilhas |

164,005 | Configuring standard policies for all repositories in Azure Repos |

A couple of weeks ago I blogged about setting collection level permissions on Az... | 0 | 2019-08-30T21:00:43 | https://jessehouwing.net/azure-repos-git-configuring-standard-policies-on-repositories/ | azuredevops, git, azurerepos | ---

title: Configuring standard policies for all repositories in Azure Repos

published: true

tags: Azure DevOps,git,Azure Repos

canonical_url: https://jessehouwing.net/azure-repos-git-configuring-standard-policies-on-repositories/

---

> A couple of weeks ago I blogged about [setting collection level permissions on Azure Repos](https://dev.to/jessehouwing/setting-default-repository-permissions-on-your-azure-devops-organization-1i6g-temp-slug-9023663). That sparked questions whether the same was possible on Branch Policies in the comments, twitter and the Azure DevOps Club slack channel.

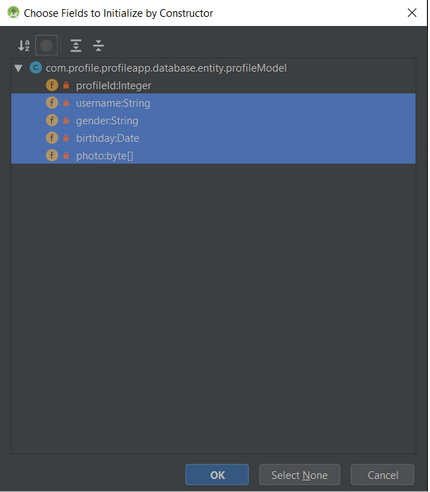

By default you can only configure policies on specific branches in Azure Repos. You access the policies through the Branch's [...] menu and set the policy from there. But if you're using a strict naming pattern for your branches (e.g. when using [Release Flow](https://docs.microsoft.com/en-us/azure/devops/learn/devops-at-microsoft/release-flow) or [GitHub Flow](https://guides.github.com/introduction/flow/)), you may want to set a policy for all future Release Branches, or all Feature branches.

**It would be nice if you could write these policies into law, that way you don't have to set them for every future branch.**

> **Let's start with the bad news** : the policy API is specific to a Project. Because of that you can't set the policies for all Git Repositories in an account, but you can specify the policy for all repositories in a Project.

<figcaption>Set a policy on a branch.</figcaption>

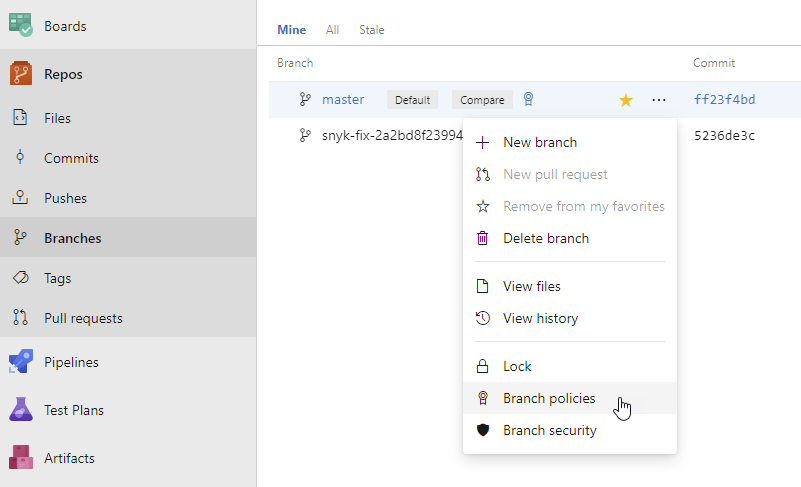

If you look at the request that's generated when saving a Branch Policy, you can see the UI sending a POST request to the `/{Project Guid}/api/policy/Configurations` REST API when creating a new policy. That request contains the scope for each policy:

<figcaption>Each policy has a scope in Azure Repos</figcaption>

As you can see, the policy has a Scope. You can have multiple active policies and each can have its own scope. The UI will always create a specific scope that contains the `repositoryId` and the exact branch name.

```

"scope": [

{

"refName": "refs/heads/master",

"matchKind": "Exact",

"repositoryId": "7317f685-3e85-41d6-8e20-10d2319262a7"

}

]

```

<figcaption>Scope: (default) Specific Git Repo and single branch.</figcaption>

[But if you look at the docs for this API, you'll find that this is not the only option available](https://docs.microsoft.com/en-us/rest/api/azure/devops/policy/configurations/create?view=azure-devops-server-rest-5.0). The widest scope you can create has no repository scope at all and applies to all repositories in that project:

```

"scope": [

{

"repositoryId": null

}

]

```

<figcaption>Scope: All Git Repos in the project.</figcaption>

But there are other cool options as well. You can configure a policy for all branches with a specific prefix by setting the `matchKind` from `exact` to `prefix`.

```

"settings": {

"scope": [

{

"repositoryId": null,

"refName": "refs/heads/features/",

"matchKind": "prefix"

}

]

}

```

<figcaption>Scope: All feature branches for all repositories in the project.</figcaption>

Unfortunately, it looks like this API exists at the Project level only. One can't set the policy for all future projects. _But, think about it, that makes sense. You can't predict all the future group names, Build Definition IDs and such for projects that don't exist yet._ But it's less restricted than the UI would let you believe.

To figure out how each of the policies is specified, configure one branch the way you want ant then open `/{Project Guid}/_apis/policy/Configurations/` on your account. you'll be treated with the JSON for your current configuration:

```

{

"count": 1,

"value": [

{

"isEnabled": true,

"isBlocking": true,

"settings": {

"useSquashMerge": false,

"scope": [

{

"refName": "refs/heads/master",

"matchKind": "Exact",

"repositoryId": "7317f685-3e85-41d6-8e20-10d2319262a7"

}

]

}

}

]

}

```

Find out all you need to know about policy types by querying them from your account as well, my account returns these:

```

[

{

"description": "GitRepositorySettingsPolicyName",

"id": "0517f88d-4ec5-4343-9d26-9930ebd53069",

"displayName": "GitRepositorySettingsPolicyName"

},

{

"description": "This policy will reject pushes to a repository for paths which exceed the specified length.",

"id": "001a79cf-fda1-4c4e-9e7c-bac40ee5ead8",

"displayName": "Path Length restriction"

},

{

"description": "This policy will reject pushes to a repository for names which aren't valid on all supported client OSes.",

"id": "db2b9b4c-180d-4529-9701-01541d19f36b",

"displayName": "Reserved names restriction"

},

{

"description": "This policy ensures that pull requests use a consistent merge strategy.",

"id": "fa4e907d-c16b-4a4c-9dfa-4916e5d171ab",

"displayName": "Require a merge strategy"

},

{

"description": "Check if the pull request has any active comments",

"id": "c6a1889d-b943-4856-b76f-9e46bb6b0df2",

"displayName": "Comment requirements"

},

{

"description": "This policy will require a successfull status to be posted before updating protected refs.",

"id": "cbdc66da-9728-4af8-aada-9a5a32e4a226",

"displayName": "Status"

},

{

"description": "Git repository settings",

"id": "7ed39669-655c-494e-b4a0-a08b4da0fcce",

"displayName": "Git repository settings"

},

{

"description": "This policy will require a successful build has been performed before updating protected refs.",

"id": "0609b952-1397-4640-95ec-e00a01b2c241",

"displayName": "Build"

},

{

"description": "This policy will reject pushes to a repository for files which exceed the specified size.",

"id": "2e26e725-8201-4edd-8bf5-978563c34a80",

"displayName": "File size restriction"

},

{

"description": "This policy will ensure that required reviewers are added for modified files matching specified patterns.",

"id": "fd2167ab-b0be-447a-8ec8-39368250530e",

"displayName": "Required reviewers"

},

{

"description": "This policy will ensure that a minimum number of reviewers have approved a pull request before completion.",

"id": "fa4e907d-c16b-4a4c-9dfa-4906e5d171dd",

"displayName": "Minimum number of reviewers"

},

{

"description": "This policy encourages developers to link commits to work items.",

"id": "40e92b44-2fe1-4dd6-b3d8-74a9c21d0c6e",

"displayName": "Work item linking"

}

]

```

<figcaption>All policy types available in my account.</figcaption>

The configuration for each policy is a bit of a mystery. I tend to configure a policy through the UI, then retrieve the configured policy to see what the JSON looks like.

Now that you understand the underlying concepts, guids and things, you can use the raw REST requests from PowerShell or... [You could use the new Azure CLI for Azure DevOps](https://docs.microsoft.com/en-us/cli/azure/ext/azure-devops/repos/policy?view=azure-cli-latest):

```

az extension add --name "azure-devops"

az login

az repos policy create --org {your org} --project {your project name or guid} --config "path/to/config/file"

```

For reference:

- [Policy API docs](https://docs.microsoft.com/en-us/rest/api/azure/devops/policy/?view=azure-devops-server-rest-5.0)

- [Policy Configurations REST API](https://docs.microsoft.com/en-us/rest/api/azure/devops/policy/configurations/list?view=azure-devops-server-rest-5.0)

- [Policy Type REST API](https://docs.microsoft.com/en-us/rest/api/azure/devops/policy/types/get?view=azure-devops-server-rest-5.0)

- [Policy Examples](https://docs.microsoft.com/en-us/rest/api/azure/devops/policy/configurations/create?view=azure-devops-server-rest-5.0#examples) | jessehouwing |

164,031 | Why I can't spell but I CAN code | How do you spell function? You had to think for a split second didn't you? You probably wr... | 0 | 2019-08-30T21:30:08 | https://dev.to/adam_cyclones/why-i-can-t-spell-but-i-can-code-2lp5 | ### How do you spell function?

You had to think for a split second didn't you? You probably write the word in some form atleast 30 times per day, 10,950 per year and 87,600 keystrokes just to fit that statistical guess work. But still.. it's a small wonder our keyboards haven't inverted, caught fire, or complained to a workers Union. It's all down to muscle memory (which I will now googlize into my own brains short term memory) and define it thusly:

_Muscle memory is a form of procedural memory that involves consolidating a specific motor task into memory through repetition, which has been used synonymously with motor learning._

Ace now we have that out the way, thanks googled generic text, I can't spell because I have been told I am dyslexic. But I can code because I need to get it write in order to survive at life, this is my job and my hobby. So yeah working with me is probably full of typos and disorganized rambles because dyslexia is more than just spelling. It's a state of mind. I want to know your point of view, is dyslexia a label, an excuse or a genuine thing, I won't be offended, so have at it. | adam_cyclones | |

164,042 | Population Growth and Housing Availability | New housing and new residents A certain amount of housing prices are due to supply and dem... | 2,079 | 2019-09-27T21:59:50 | https://dev.to/rpost/population-growth-and-housing-affordability-79e | data, analytics, techforgood | # New housing and new residents

A certain amount of housing prices are due to supply and demand - more people means more demand. It can be hard, if not impossible, to keep up with that demand, which means more expensive housing. Of course there are [tons of factors that play into housing affordability](https://www.curbed.com/2019/5/15/18617763/affordable-housing-policy-rent-real-estate-apartment) but supply (new units being built) and demand (new residents moving to an area) are easy to find data that can show the impact of these factors.

According to the Census estimates, the Austin-Round Rock Metropolitan area grew by 53,086 people from July 1, 2017 to July 1, 2018. A little less than a quarter, or 23.6%, of those individuals moved to live within the Austin city limits. That also means that about 40,000 people moved into the surrounding areas. During that same period, construction was completed on 12,453 housing units. Using the Census average household size of 2.48 people, that means we gained enough housing for a little over 30,000 people! This, in theory, should have helped slow down housing cost increases.

Austin has been experiencing a similar growth rate since 2010, but there have not been enough housing units completed to keep up with the change - 9,449 were completed between July 1, 2018 and June 30, 2019. Using the same average household size, that made room for just 23,400 people, which means that well over half of the new residents moved to the area, but outside of the city limits. The low number of new units likely increased the cost of housing by not meeting demand.

If units continue to be finished at the same rate, we will see 13,750 more units come available by June 30, 2020, which should be able to provide housing for about 34,100 new residents at the current average household size. While these are not huge numbers, and may not lower average rents it may help slow the relentless increase of housing costs.

# Dev - estimating upcoming housing availability

Here's why this is dev-related to me. Once you have the data, it's fairly easy to figure out how many houses have been built within a certain period. Look at the number of building permits and a status of "Final" along with their Status date. (The Status date looks to have been started in 2007, and retroactively applied through 2008, so even this method only works for about the last 10 years.)

More difficult is estimating the units that may be completed soon. To do this, I first created a field called "Time to Completion" that finds the difference between the Completed Date and the Issue Date. That allowed me to looked at the average completion time in days. I narrowed the time range down to the last two years because construction methods change, crew availability changes, and I thought that two years would be a large enough time frame to have a broad average while also reflecting a state that may be similar to what we're facing now. (As I'm a developer, and not a construction or real estate professional, I might be wrong about that.)

If you look at the average completion time for all Building Permits related to new housing units, from July 1, 2017 to now, it is 331.7 days. I did exclude 16 permits that were listed at taking over 2000 days to complete. That's 5 1/2 years! Something seems to have gone really wrong with those, and I'm comfortable calling them outliers for our current purposes. That may seem like a good number to use, but if you think for a second longer - does it take the same amount of time to build a 3,000 unit complex as a single house? I hope not! I looked at the relationship between number of units and time to completion and, yes! Common sense works here, it takes longer to build more units. You can see it in a [dashboard I made on Tableau Public](https://public.tableau.com/profile/rebekah3261#!/vizhome/AustinTXUnitCompletionEstimates/EstimatingUnitCompletion?publish=yes).

I added a line to my scatter plot with Housing Units and Time to Completion. We could use the nice formula that Tableau generated based on number of units alone, but the line goes way above the reality for larger complexes, which means we would seriously over-estimate completion time for larger projects, like those with more than 150 units. What else might be at play? Next I checked the time to completion by permit class and wow! Now we see a range from 297.5 days to 989.9. That's from about 10 months at the shortest to over two and a half years! That seems like a better estimate.

After exploring these options, I decided to use the average Time to Completion by Permit Class. I created a new field that calculates an Estimated Completion Date based on the Issue Date plus the average Time to Completion for a given permit's Permit Class. If you are a more visual person, go download [my Tableau workbook](https://public.tableau.com/profile/rebekah3261#!/vizhome/AustinTXUnitCompletionEstimates/EstimatingUnitCompletion?publish=yes) and play with it!

The final step was to account for *actual completed date* and *estimated completed date* at the same time. I created one more field that looks at the project status, decides what date to use (actual or estimate) and then allows us to view all of the building permits together, whether they have been completed yet or not.

# Should we ever expect housing costs in Austin to go down?

If more people are moving to Austin and the area every year, and are increasing the demand for housing, then we can never really expect the housing costs to go down as long as the economy is doing well. It's awkward, but what incentive do housing developers have to make houses that will sell for less? Apartments that command lower rents may be profitable to management companies because they could tap a separate market, but at some point the profit may not be worth it to those companies. That is where local government steps in - they can (and do!) create incentives for developers to build affordable units.

A related issue is land. You can build on empty land, or you can tear down existing units. The former is very limited within city limits, and the latter often results in simple replacement of older (read: cheaper) housing with newer (read: more expensive) housing. Changing the land development code is one way to encourage greater density, which will allow for more housing within city limits. Existing options, like two detached homes on a single lot, are becoming more popular as a way for those willing to live in smaller homes to stay within the city. This, then is another area where local government can help control housing costs. And we'll get into that next time. | rpost |

164,146 | Magento 2 Store Pickup | Use Magento 2 Store Pickup Extension to drive more revenues! By allowing your customers to pick up th... | 0 | 2019-08-31T07:07:04 | https://dev.to/jadeblanne/magento-2-store-pickup-hp6 | magento, webdev | Use [Magento 2 Store Pickup](https://www.mageants.com/store-pickup-extension-for-magento-2.html) Extension to drive more revenues! By allowing your customers to pick up their orders from the nearest store, offer improved shopping experience.

**Key Features**

Configuration of multiple stores

Store pick up with the Store locator is included

Map clustering is automatic

On every product information page link to store is provided

Store can be searched instantly by area and distance

**Overview**

Magento 2 Store Pickup provides an additional shipping technique that allows clients to collect their orders on their own right at the shop. This helps to decrease a third party's potential shipping hazards and enhances shopping experience with a fresh selection of pickup.

| jadeblanne |

164,254 | Building an Enigma machine with only TypeScript and then use Angular DI system to properly instantiate it | [Part 2] - Building Enigma with TypeScript and Angular | 1,667 | 2019-09-10T09:11:17 | https://dev.to/maxime1992/building-an-enigma-machine-with-only-typescript-and-then-use-angular-di-system-to-properly-instantiate-it-2e2h | cryptography, enigma, angular | ---

published: true

title: "Building an Enigma machine with only TypeScript and then use Angular DI system to properly instantiate it"

cover_image: "https://raw.githubusercontent.com/maxime1992/my-dev.to/master/blog-posts/enigma-part-2/assets/enigma-2-cover-image.png"

description: "[Part 2] - Building Enigma with TypeScript and Angular"

tags: cryptography, enigma, angular

series: "Enigma: Understand it, implement it, crack it"

canonical_url:

---

This blog post is the second of a series of 3, called **"Enigma: Understand it, implement it, crack it"**:

- 1 - [Enigma machine, how does the famous encryption device work?](https://dev.to/maxime1992/enigma-machine-how-does-the-famous-encryption-device-work-5aon)

- **2 - Building an Enigma machine with only TypeScript and then use Angular DI system to properly instantiate it _[this blog post]_**

- 3 - [Brute-forcing an encrypted message from Enigma using the web worker API](https://dev.to/maxime1992/brute-forcing-an-encrypted-message-from-enigma-using-the-web-worker-api-166b)

# Table of contents

<!-- toc -->

- [Intro](#intro)

- [1 - Enigma library](#1-enigma-library)

- [A - Reflector](#a-reflector)

- [B - Rotor](#b-rotor)

- [C - Machine](#c-machine)

- [2 - Enigma app](#2-enigma-app)

- [A - Display the initial config rotors and current ones](#a-display-the-initial-config-rotors-and-current-ones)

- [B - Encrypt a message from the app](#b-encrypt-a-message-from-the-app)

- [B1 - Logic and template](#b1-logic-and-template)

- [B2 - Create an Enigma machine using dependency injection](#b2-create-an-enigma-machine-using-dependency-injection)

- [Conclusion](#conclusion)

- [Found a typo?](#found-a-typo)

<!-- tocstop -->

If you find any typo please just make the edit yourself here: https://github.com/maxime1992/my-dev.to/blob/master/blog-posts/enigma-part-2/enigma-part-2.md and submit a pull request :ok_hand:

# Intro

In the [first blog post of this series](https://dev.to/maxime1992/enigma-machine-how-does-the-famous-encryption-device-work-5aon), we've seen the internal mechanism of Enigma. In this one, I'll explain how I decided to implement it.

The Enigma library I've built has nothing to do with Angular, it's just **pure TypeScript**. The reasons behind that are:

- It shouldn't in the first place because it could be used as a separate package with vanilla JS or any other framework

- [:warning: Spoiler alert :warning:] To crack Enigma in the next blog post of the series, we will use a [web worker](https://developer.mozilla.org/en-US/docs/Web/API/Web_Workers_API) and importing anything from Angular within the worker context would break it as it's not aware of the DOM at all

BUT. For Angular lovers, worry no more. We will use Angular and especially its dependency injection API to build the UI that'll consume Enigma library.