id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

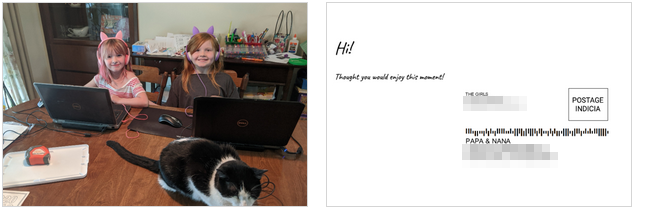

321,124 | SMS Postcards for Grandparents | What I built With the quarantine/lock-down in place my parents have been really missing se... | 0 | 2020-04-28T03:06:59 | https://dev.to/mattdini/placeholder-title-2b04 | twiliohackathon, serverless, aws | [Comment]: # (All of this is placeholder text. Use this format or any other format of your choosing to best describe your project.)

[Reminder]: # (Make sure you've submitted the Twilio CodeExchange agreement: https://ahoy.twilio.com/code-exchange-community)

[Important]: # (By making a submission, you agree to the competition's terms: https://www.twilio.com/legal/twilio-dev-hackathon-terms)

## What I built

With the quarantine/lock-down in place my parents have been really missing seeing their granddaughters. I built this as a way to send them pictures in the mail with the least amount of resistance. No apps to download, no software, use any cellphone... just send a text message to a Twilio number and a postcard will be mailed the next day!

#### Category Submission:

COVID-19 Communications and Interesting Integrations

## Link to Code

https://github.com/mattdini/sms-postcard

## How I built it (what's the stack? did I run into issues or discover something new along the way?)

Built with:

* API Gateway

* Lambdas

* S3

* Secrets Manager

* Twilio!

* https://lob.com/

Some fun things learned along the way:

* Hangouts + Google Fi will resize images to 100x100, not great for postcards!

* https://github.com/keithrozario/Klayers is a life saver! | mattdini |

321,151 | List all current environment variables - Linux Tips | Here we introduce 2 ways to list out all current environment variables: 1, use printenv com... | 0 | 2020-04-28T03:56:01 | https://dev.to/bitecode/list-all-current-environment-variables-linux-tips-4n01 | c, linux | Here we introduce 2 ways to list out all current environment variables:

## 1, use `printenv` command:

```bash

$ printenv

SHELL=/bin/bash

SESSION_MANAGER=local/pop-os

COLORTERM=truecolor

...

```

## 2, write a C program to list them

Environment variables will be put in a environment table in process, and it has a pre-defined `environ` pointer points to that table. To iterate all environment variables, we just need to walk through this table via the pointer:

```c

#include <stdlib.h>

#include <stdio.h>

extern char** environ;

int main() {

char** ep = NULL;

for (ep=environ; *ep!=NULL; ep++) {

puts(*ep);

}

return 0;

}

```

Compile and run it: `gcc main.c -o listenv`

```bash

$ ./listenv

SHELL=/bin/bash

SESSION_MANAGER=local/pop-os

COLORTERM=truecolor

...

```

| bitecode |

321,279 | 5 Reasons as to why you Need a Wifi Booster | A wifi booster is the same as the wifi range extender, which helps in boosting the wifi range of the... | 0 | 2020-04-28T07:03:30 | https://dev.to/mynewextenders1/5-reasons-as-to-why-you-need-a-wifi-booster-208b |

A wifi booster is the same as the wifi range extender, which helps in boosting the wifi range of the signals to those areas or dead zones where the wifi is feeble or altogether absent. The wifi range extender is also known as a ‘repeater’ because it catches the wifi signals of the router and re-transmits it to all the areas of the house or office. It can be easily configured on mywifiext site and placed at a place where it receives good wifi signals from the wifi router.

Here we will discuss in detail below the 5 reasons as to why you need the wifi range extender.

1. To Increase the Wifi Range

If you have a wifi router which is not giving you maximum wifi signals to all the areas of the house, then you need a wifi range extender, placed between the router and the wifi enabled device/s so that it transmits the wifi signals of the router further to the dead zones of the house or office. You can use the extender in either the extender mode or the access point mode to get great results.

2. To Increase Speed and Connectivity

The wifi range extender not only extends the wifi signals but also improves the wifi speed and gives you a boosted internet speed with no interference in between. You can stream you HD videos with best quality and no need to wait for buffering while watching videos as well.

3. To Act as an Access Point

When you want to boost the range of the internet in a large area like a large office, you can get a wifi range extender and configure it as an access point. Once you have successfully configured it as an access point, you can place it in the dead zones and the wifi extender, acting as an access point will give the extender wifi signals directly in the dead zones of a large office or home.

4. To have Wifi in the Surrounding Area

When you have a big home with a nice lawn, a separate pool area, a garage, a basement or cellar, and a wonderful large play area, you really need the wifi signals to reach all those areas surrounding your house. The wifi range extender or booster, does just that for your large homes. Get a booster which is best suitable for large homes, set it up on mywifiext.net and place it at the right spot to give extender wifi range to all of these areas surrounding your house.

5. To Have a Good Wifi Signal without Throwing Away the Old Router

If you have an old router, which is not performing at its best, and giving troubles regarding speed and connectivity, do not throw it away yet. You can get the wifi range extender and boost the wifi signals, speed and connectivity of the wifi even with the old router in place. The wifi extender will retransmit the wifi signals from your old router with its own network and give you much better results than what you would have got from a new wifi router. https://mynewextendersetup.net/ | mynewextenders1 | |

321,302 | From Ph.D. to Independent Software Developer | Life as a Ph.D., even with its ups and downs, is a relatively comfortable life. There is quite a reas... | 0 | 2020-04-28T07:56:20 | https://dev.to/aquicarattino/from-ph-d-to-independent-software-developer-5dci | science, phd, startup | Life as a Ph.D., even with its ups and downs, is a relatively comfortable life. There is quite a reasonable job security, a project, colleagues, a supervisor. But doctorates have hard deadlines which can't be extended. It forces us to think once more about what do we want to pursue for the future: academic life, a job in the industry, a sabbatical, an entrepreneurial path.

I knew academic life was not for me because of several reasons, which I hope to be able to put in writing one day. Getting a job in a company was a possibility, and I was actively applying to some roles. However, something that always characterized me was the pulsion of learning and starting new things. Starting something on my own was an aspiration, but without a concrete plan until the very last few months of my contract.

## Identifying Options

One of the hardest things to do when you want to start something on your own is to identify the options you have. And once you identify them, isolate your value proposition. Of course, back then, I had no idea what the meaning of the words *value proposition* was. During my Ph.D. I had developed several skills, some directly related to my work, some in my free time.

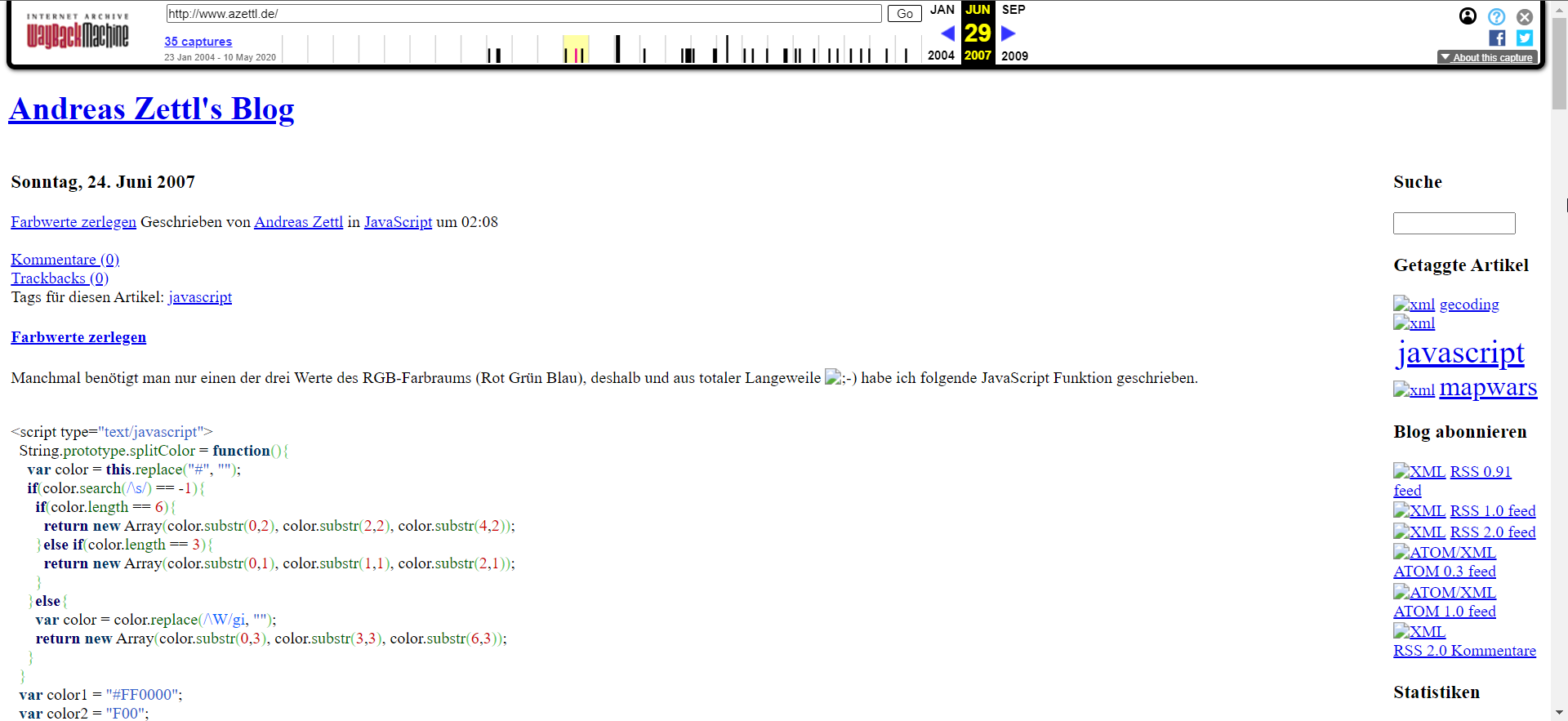

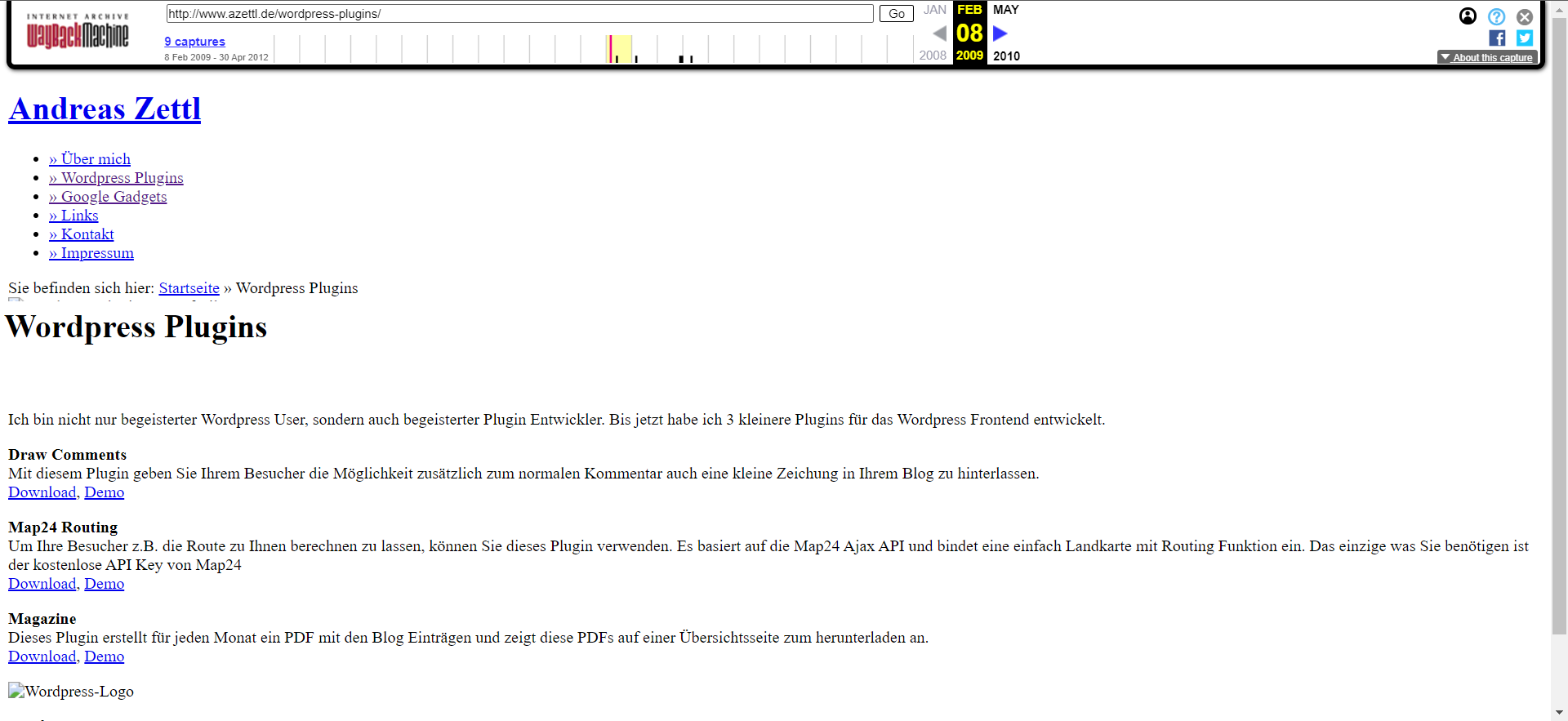

I knew how to build websites. It was something I always enjoyed, and over time I kept updating my skills. My first option was to create a service to develop websites for small shops in my area that didn't have an online presence yet. I developed a website, built a couple of sites to have on my portfolio, including my group's website. However, it was a very time-consuming task, with little joy. Customers are always complaining, I have no design skills, and the competition is fierce.

I also knew how to work with Raspberry Pi's and Arduinos. I started thinking about building a consumer product such as an intelligent thermostat, or a private VPN to use at home. I even contacted friends overseas to discuss options. I had the base code for the thermostat, and I could monitor the temperature of my house at different points and stream the data. I also set up a VPN to work at home, with a private cloud accessible from anywhere else in the world. It was fun, but it was just a hobby. Lots of people could do it much better than me.

I knew that I had to keep thinking about what I wanted to do, time was still passing, and the end of my contract was getting closer and closer. I started contacted startups and small companies to try to understand how someone with my profile could fit into their needs.

## The Serendipity

One of my former colleagues, Sanli Faez, was starting his group when we met for a beer. He told me he needed a hand developing software for controlling experiments. During my Ph.D., part of what I did was building instrumentation software to do experiments that were not possible to do by hand. I automatize a lot of repetitive tasks, and that allowed me to build statistics much faster, with measurements running overnight or even for several days uninterrupted.

It was just a random talk that made all my choices crystallize. In that talk, I understood that there was something I could do that was a skill hard to find. Programming software for a lab is not just about being a good software developer. It is also about understanding the experiment itself. Very few people have those overlapping skills. I was not only one of those people, but I was also available to start a new project.

## Becoming an Expert

When I started developing software for research labs, I started with what I knew. I was behaving like I saw the postdocs act. I was participating in group meetings, offering feedback. I even participated in a conference showing the software I had developed and the new things I had learned over time.

One day, out of the blue, someone wrote to me asking for advice on a program they were writing. I didn't know the person, but they found me on Github, and we had someone in common on LinkedIn. The first time, it was just a coincidence, the second time, it was a pattern that was starting to emerge. Perhaps I was more than just a person developing software.

It is a strange feeling when it hits you. People are seeking your advice on a topic that you were giving for granted. At that time is when you realize that you have become an expert. It is not a choice. It is something that happens when you dedicate day and night to learning about a topic on which very few people are focusing.

## Building a Brand

When people start asking for advice, when they seek your opinion or your approval on a topic, you might want to separate yourself from the product. Developing software was allowing me to live, but I didn't want people to know me just because of it. I started to build a brand, Python for the Lab, to separate me from my product. In my long term vision, it would allow me to hire people or to diversify.

I am not sure whether it was a good move or not, but I know people started perceiving what I do as a product. I have heard people talking about my website or my book, without actively realizing I was behind them. I still don't know how to feel about it. I know I should feel proud of having a successful product, but not being recognized for it still feels strange.

## Value Proposition and Unique Selling Point

Understanding what a value proposition is, took me a long time. Still, it is something that can be applied to many different situations. In any transaction between people, one person delivers value, and the other acquires it. The perception of the value being exchanged, however, can be very different. In my case, developing software for the lab was a natural task that I learned out of need. I hadn't realized that it could be of any importance for someone else.

It took me a while to find a way of formulating what I was doing. What I realized was that I could save hundreds of hours of a Ph.D. or a postdoc who had to learn what I already knew. Because of my experience, I was much faster at developing solutions than someone starting from scratch. Those hours that the group was saving was my value proposition. Hours are a quantifiable measure. The other aspect is the sheer interest of a researcher in learning or not how to do what I could do. Very few experimentalists want to spend so many hours behind a computer screen.

Once I could formulate the value of what I did, I had to wrap my mind around is why someone would pay me. That is also called *the unique selling point*. In the case of software for the lab, the answer is straightforward: no one else can do what I do. Better said, no one else is available to be hired for a specific project. In labs, most people are doing a Ph.D. or a postdoc. Very few groups have access to support departments that can develop custom software. There is almost no academic gain in developing just software and not producing results.

I was not looking for scientific achievements. That was my unique selling point setting me apart from people pursuing a career in Academia and who could learn to do what I was doing. My other unique selling point was the brand I was building. Python for the Lab is years ahead from anyone else who would start now. That generates a reputation that helps to justify why me and not someone else.

## Summary

Looking in retrospect, I could have saved much time if I would have known what I was looking for. Better said, if I would have had the tools to identify what I was able to do. I spent much time focusing on topics in which I was no expert just because it seemed like the easy path to follow. We all have heard the stories of successful websites and apps. But what I was trying to achieve was a sustainable way of life, one that could give me both joy and enough money to live.

Being able to identify what made me unique was a journey. It is hard to understand at first until you validate the feeling with others. Your life experiences accumulate, and that is what makes you unique. It is not only the technical skills that set you apart but every choice, every experience from your past.

If you want to know more about the things I do, follow me on [Twitter](https://twitter.com/aquicarattino) | aquicarattino |

321,344 | Json parse data after line 1 to 28 Column | Step1 : Json Marshal function receive data and return data after convert into json format -----------... | 0 | 2020-04-28T09:49:29 | https://dev.to/wisdomenigma/json-parse-data-after-line-1-to-28-column-37em | go, json | Step1 : Json Marshal function receive data and return data after convert into json format --------------------------------- return ok

step 2 : Apply NewDecoder on given data and it throw me error

</

var member Member

data ,err := json.Marshal(member)

if err != nil{println("error", err)} println(data)

err = json.NewDecoder(request.Body).Decode(member); if err != nil{

println("Error ", err ) // return eof

}

>

hOW TO RESOLVE THIS ERROR

| wisdomenigma |

330,924 | I published two books last week and you can publish a book too! | How to publish a book using Kindle Direct Publishing | 0 | 2020-05-09T11:01:55 | https://dev.to/napolux/i-published-two-books-last-week-and-you-can-publish-a-book-too-bkh | sideprojects, books, watercooler, discuss | ---

title: I published two books last week and you can publish a book too!

published: true

description: How to publish a book using Kindle Direct Publishing

tags: sideprojects,books,watercooler,discuss

cover_image: https://i.imgur.com/798JOrt.jpg

---

Ok, so this was the plan last monday morning:

* I have a one week long holiday (at home, thanks Covid-19) with my wife working in the other room (no kids or pets around)

* I'm a programmer (they pay me to program, so I must be good)

* I want to achieve "something" by the end of the week

* I want to do something "print related"

Spoiler: **I have two books published on Amazon!**

### What?

**Yes, you got it**. I've published two books on Amazon in less than a week. More specifically two sudoku puzzle books:

* [360 sudoku puzzles](https://www.amazon.com/dp/B088B6XW6G/)

* [1200 sudoku puzzles](https://www.amazon.com/1200-Sudoku-Puzzles-intermediate-solutions/dp/B088B8MFNN/)

### Why?

I didn't want to publish just another app. I choose something you can print and touch. A book was the best choice.

### How?

If you follow me you've probably read this post: [https://dev.to/napolux/how-to-generate-pdf-from-web-pages-5781](How to generate PDFs from web pages).

So, I've used a Sudoku generator in a little PHP app to generate HTML books. Then I created the "master" PDF file for my book with [Weasyprint](https://weasyprint.org/) and a cover with [Canva.com](https://www.canva.com).

I've spent a couple of days fine-tuning my PDF and then I was ready.

The final book and cover were uploaded to the [Amazon KDP platform](https://kdp.amazon.com), et voilà, my books were ready to be printed on demand! No ebook as I said, a real book!

### You can publish a book (or an ebook) too!

**So, what's blocking you?** You can easily publish (and sell) a book in a matter of minutes. Your blog posts and tutorials, your ideas, your puzzles, your personal diary or that novel about dinosaurs that work as programmers.

**We all have something to say**. This time were Sudokus, but I'll probably publish something more in the next weeks!

### Will I make money?

I don't know and I don't care. The achievement was really what I was looking for (at least for now).

**If you have any question, just ask in the comments section!**

### My two books

* [360 sudoku puzzles](https://www.amazon.com/dp/B088B6XW6G/)

* [1200 sudoku puzzles](https://www.amazon.com/1200-Sudoku-Puzzles-intermediate-solutions/dp/B088B8MFNN/)

| napolux |

330,958 | Migrate from Graphcool to Hasura | Migrate your existing graphcool project to Hasura GraphQL backed by PostgreSQL. | 0 | 2020-05-09T12:29:12 | https://dev.to/open-graphql/migrate-from-graph-cool-to-hasura-2mfi | graphcool, hasura, graphql, migration | ---

title: Migrate from Graphcool to Hasura

published: true

description: Migrate your existing graphcool project to Hasura GraphQL backed by PostgreSQL.

tags: graphcool, hasura, graphql, migration

---

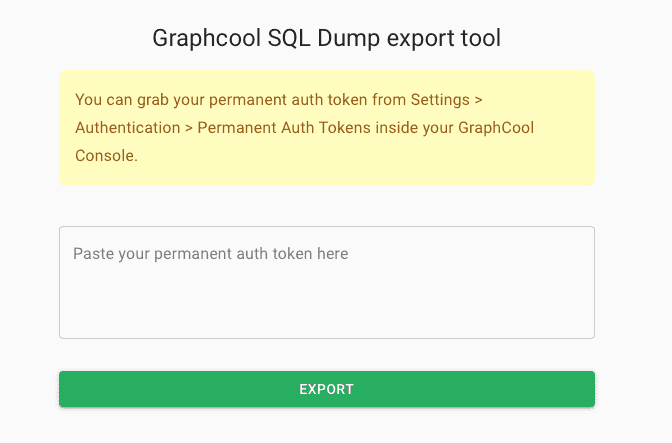

Graph.cool will be [sunsetted](https://www.graph.cool/sunset-notice) July 1, 2020. If you have an existing project, it is time to migrate off from their platform to have enough leeway for testing and going production.

I have been helping a few folks migrate from Graph.cool to Hasura and decided to put together a rough migration path.

[Hasura](https://hasura.io) is an open source engine that connects to your databases & microservices and auto-generates a production-ready GraphQL backend.

## Pre-requisites:

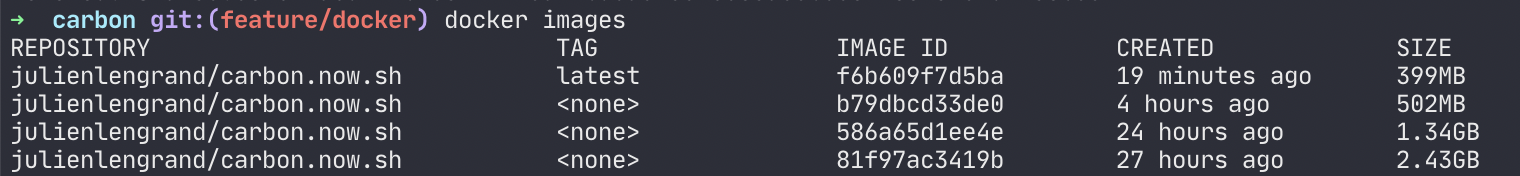

We will be using `docker` to run some containers (MySQL, Postgres and Hasura). If you don't have docker on your machine yet, it is time to set up. [Read their official docs](https://docs.docker.com/get-docker/)

**Note**: This guide is not comprehensive and some steps require manual intervention depending on your Graph.cool project. Feel free to ask any queries in the comments. You can also [DM me on Twitter](https://twitter.com/praveenweb). I will be happy to help you out :)

But here's roughly what you need to follow to get going.

## Step 1: Export data from Graph.cool

Export your Graph.cool data using their [export tool](https://export.graph.cool/). This will give a MySQL binary dump of your current Graphcool data.

## Step 2: Set up an intermediary MySQL server

We need to set up a MySQL server as an intermediary step in order to migrate data from Graph.cool to Postgres.

### Step 2.1: Start MySQL with Docker

```bash

docker run --name graphcool-mysql -e MYSQL_ROOT_PASSWORD=my-secret-pw -p 3306:3306 -d mysql:latest --default-authentication-plugin=mysql_native_password

```

Replace the password (my-secret-pw) as required.

### Step 2.2: Connect to MySQL via mysql CLI

```

mysql --user=root --password=my-secret-pw --host=<host>

```

Replace the host and password as required.

### Step 2.3: Create a database in MySQL

```bash

create database public;

```

### Step 2.4: Import data

Import Graph.cool's MySQL export into your local MySQL instance:

```bash

mysql --user=root --password=my-secret-pw --host=<host> public --binary-mode=1 < <pathtomysqlexport>

```

Replace host and pathtomysqlexport as required. Your data should now be present in the MySQL database.

## Step 3: Migrate data to Hasura

Since MySQL is now set up with all the data from Graph.cool, we need to create a Hasura and Postgres instance, and to import the data to the same.

### Step 3.1: Set up Hasura

Refer to the [Getting started guide](https://hasura.io/docs/1.0/graphql/manual/getting-started/docker-simple.html#docker-simple) to set up Hasura using `docker-compose`.

### Step 3.2: Import data into Postgres

We will use `pgloader` to migrate from MySQL to Postgres. Refer to their [installation guide](https://github.com/dimitri/pgloader) for setting this up.

Once you have installed, execute the following command:

```bash

pgloader mysql://root:my-secret-pw@<host>/public postgresql://postgres:postgrespassword@<host>:5432/postgres

```

Replace `<host>` and mysql password (my-secret-pw) as required.

Your data should now be present in the Postgres database.

### Step 3.3: Connect Hasura to Postgres

Once the dataset is migrated to Postgres, Hasura should be able to track tables and relationships.

**Note**: If you have enums in your Graph.cool project, check out [Enums in Hasura](https://hasura.io/docs/1.0/graphql/manual/schema/enums.html), since they're handled differently in Hasura and you would need to change the data structure a bit.

## Step 4: Migrate structure & functionality

After migrating the data to Hasura, there is some manual work involved in migrating the structure and functionality of your Graph.cool project.

### Step 4.1: Restructure connection tables

You can rename tables/columns to match your client-side queries as required. Do note that, for every one-to-one relationship, Graph.cool would have created a connection table to link them. This would require a bit of manual work to restructure. Currently, there is no automation available for this step. Carefully review the connection tables and make the necessary changes.

Read up more about [Relationships in Hasura](https://hasura.io/docs/1.0/graphql/manual/schema/relationships/index.html)

### Step 4.2: Migrate functions

In case you have functions in Graph.cool, Hasura has an equivalent feature called [Event Triggers](https://hasura.io/docs/1.0/graphql/manual/event-triggers/index.html). Migrating this involves taking your code and deploying it on a different platform. It could be a serverless function or all the functions can be combined into a monolith Node.js server. The choice is up to you.

Do note that for event triggers, the [payload](https://hasura.io/docs/1.0/graphql/manual/event-triggers/payload.html) that Hasura sends might be different, and you might have to change the way the request body parameters are handled in your function code.

### Step 4.3: Migrate auth

There are two ways of authenticating users in Graph.cool:

1. Using Auth0

2. Using email-password auth.

If you were using Auth0 with Graph.cool, the migration should be fairly straightforward. You can configure Hasura with Auth0 easily by following the [Auth0 guide](https://hasura.io/docs/1.0/graphql/manual/guides/integrations/auth0-jwt.html).

In case you are using email-password auth, Graph.cool generates mutations for

- creating a user

```graphql

createUser(authProvider: { email: { email, password } })

```

- login

```graphql

signinUser(email: { email, password })

```

You will need to implement these custom mutations using [Hasura Actions](https://hasura.io/docs/1.0/graphql/manual/actions/index.html).

Refer to this example for a [custom signup mutation](https://github.com/hasura/hasura-actions-examples/tree/master/auth). You can modify this to include login functionality.

### Step 4.4: Migrate permissions

The CRUD permissions in Graph.cool can be manually migrated to Hasura's permission system. You can define roles in Hasura and configure permissions declaratively for all the CRUD operations.

Refer to [authorization](https://hasura.io/docs/1.0/graphql/manual/auth/authorization/index.html) for configuring Hasura permissions.

## Community Tooling for File Storage

Nhost has a community maintained solution [hasura-backend-plus](https://github.com/nhost/hasura-backend-plus) for handling Files on Cloud providers like S3 in case you are looking for a way to migrate Files from Graph.cool. They also have an Auth solution that can be integrated with Hasura :)

## Subscriptions

Hasura gives you realtime APIs out of the box. All your Postgres tables can be subscribed from the client using GraphQL Subscriptions.

I hope this guide gives an indication of the steps involved. In case you are stuck with any of the steps, do ping me :)

Hasura has an active and a helpful discord community. Do [join the discord server](https://hasura.io/discord) as well and post your queries. | praveenweb |

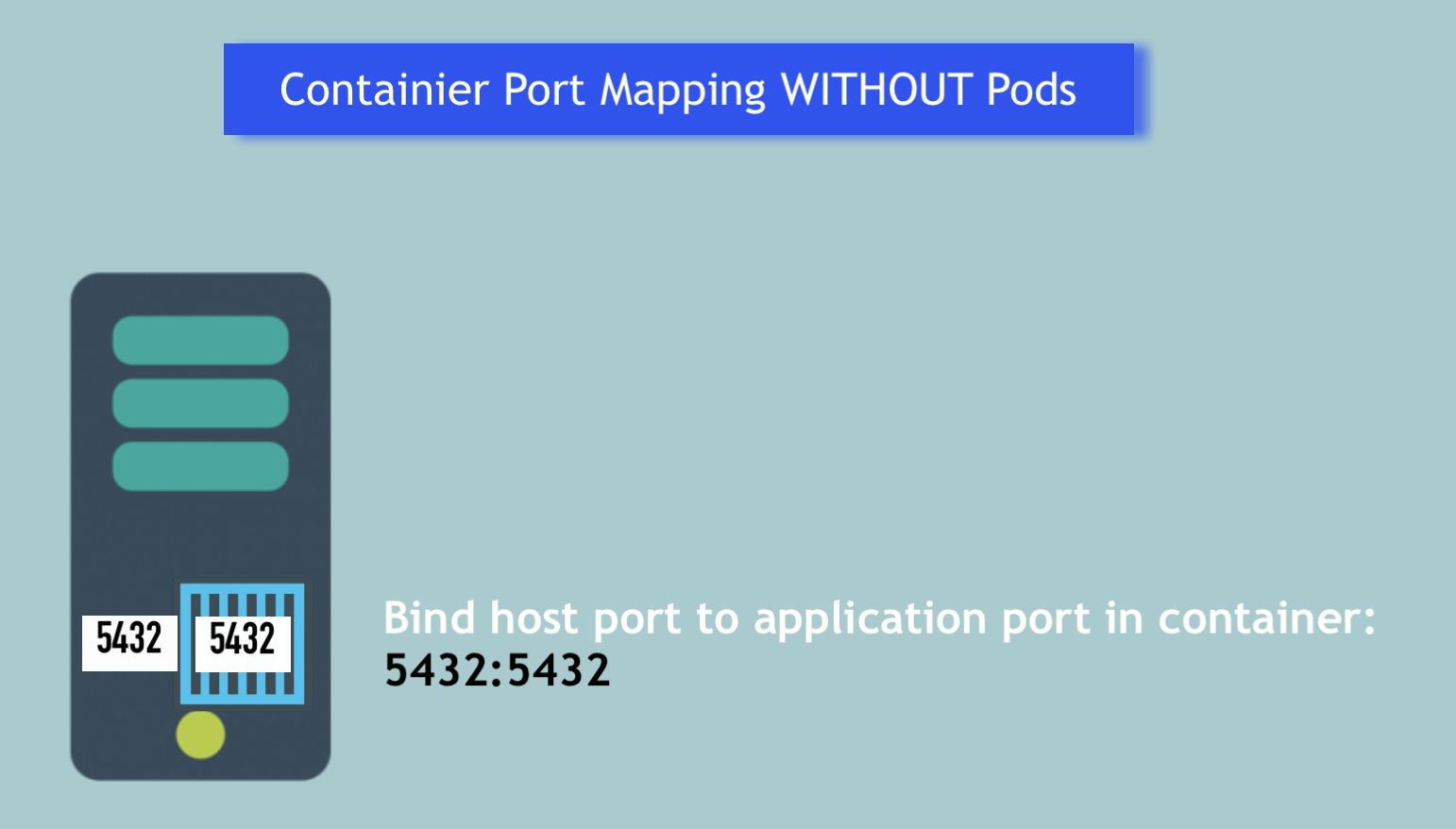

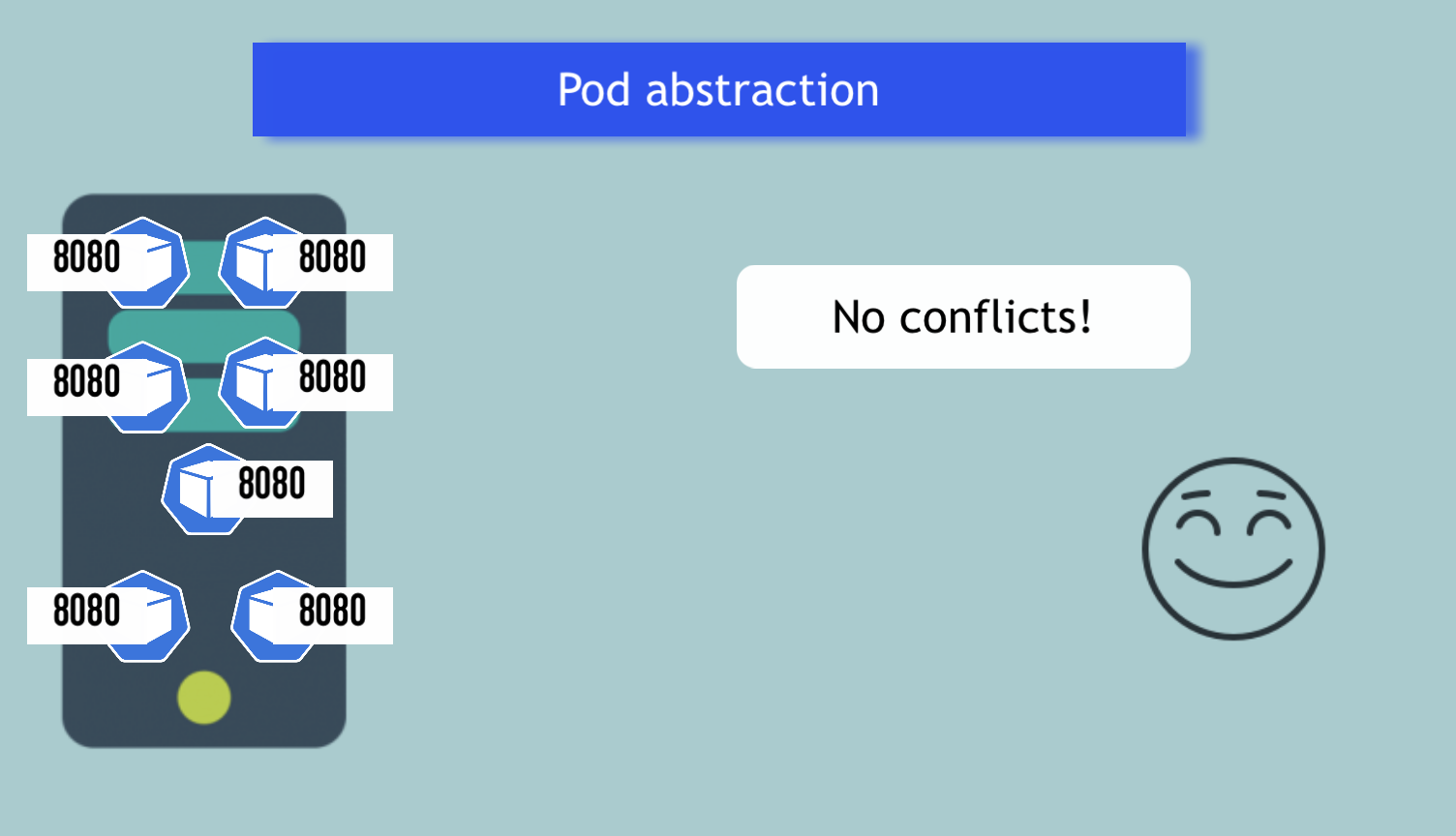

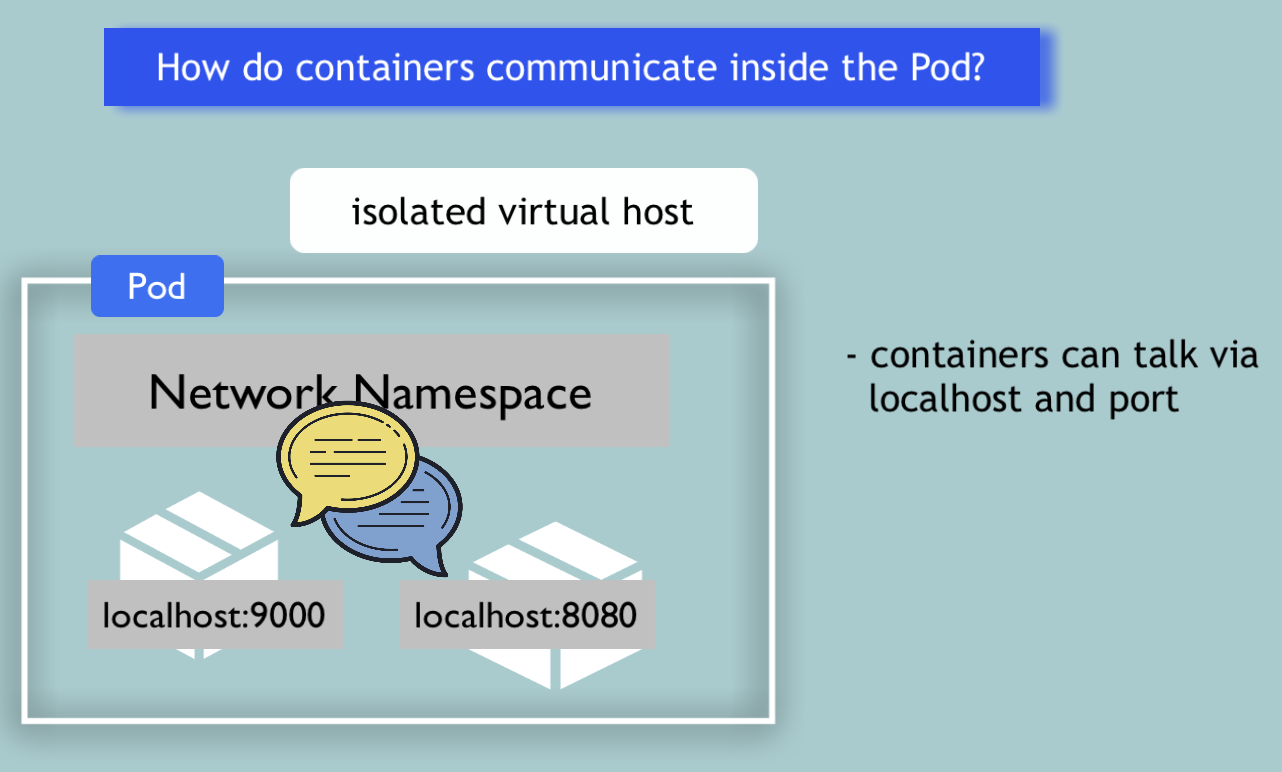

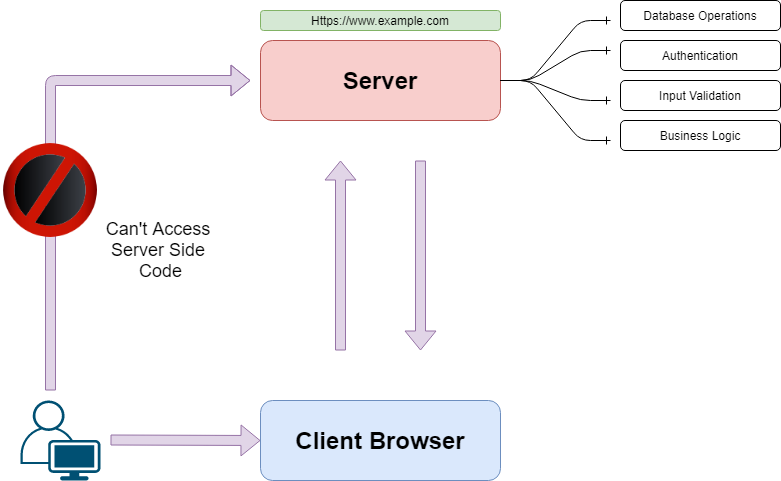

331,060 | Kubernetes Pods vs Containers | K8s Networking Basics 💡 | In this video I cover one part of the broader Kubernetes Networking topic, which is container commun... | 4,349 | 2020-05-09T14:11:55 | https://dev.to/techworld_with_nana/kubernetes-pods-vs-docker-containers-k8s-networking-basics-32mm | kubernetes, devops, tutorial, docker | In this video I cover one part of the broader Kubernetes Networking topic, which is container communication inside pods.

Considering Pods mostly contain only 1 main container, I start by answering the question of **why having a Pod as an abstraction over container is such an important concept in Kubernetes**.

I show **how container port mapping is a problem with hundreds of containers**, specifically how to allocate ports without getting conflicts, and **how Pods solve this port allocation problem**. 💪🏼

Using containers directly, you can only use a specific port ***once on a server***:

The same port can be used multiple times:

In addition, I show you in which cases you would need to run **multiple containers in one pod** and how these **containers communicate** with each other inside this pod:

You can find the full video here:

{% youtube 5cNrTU6o3Fw %}

---------------------

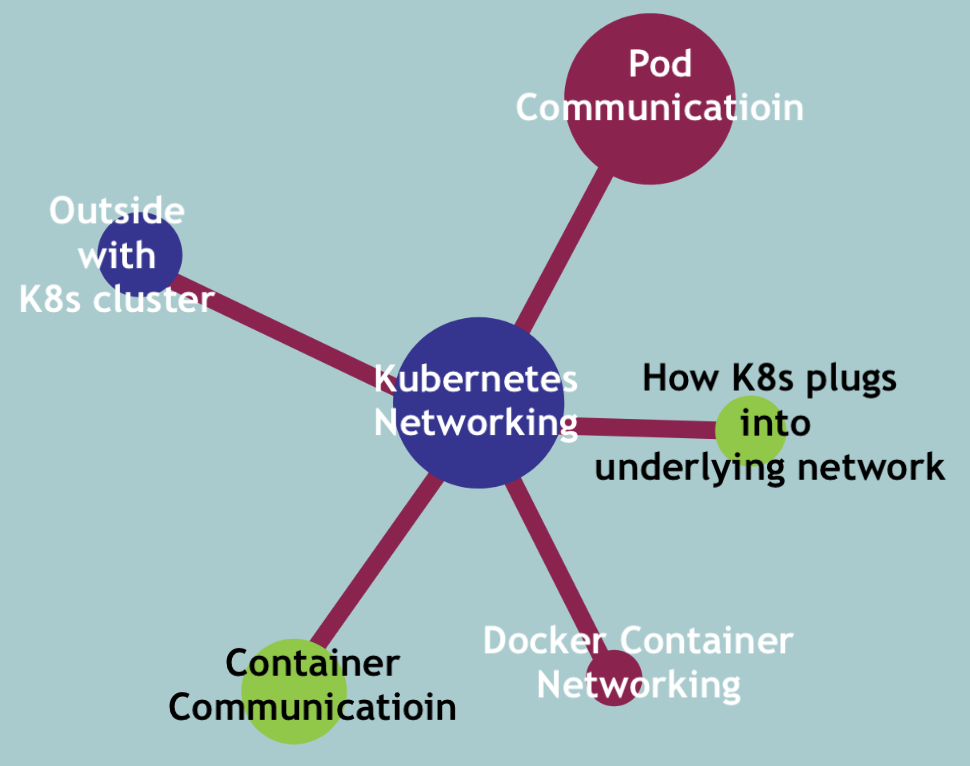

####Complete Kubernetes Networking Course 🎬:####

I'm making a complete course about Kubernetes Networking, covering rest of the topics, like

► how pods communicate with each other on the same node and across hundreds of servers?

► how does the outside world communicate with K8s cluster?

► how K8s cluster plugs into the underlying infrastructure network?

► Docker Container Networking

-----------------

**Kubernetes 101** ► [Compact and easy-to-read ebook bundle 🚀](https://bit.ly/3l4qXkR)

It's a handy way to quickly look something up or refresh your knowledge at work and use it as your cheatsheet 😎

I'm happy to connect with you on 🙂

- [Twitter](https://twitter.com/Njuchi_)

- [YouTube](https://www.youtube.com/channel/UCdngmbVKX1Tgre699-XLlUA?sub_confirmation=1)

- [Instagram](https://www.instagram.com/techworld_with_nana/) | techworld_with_nana |

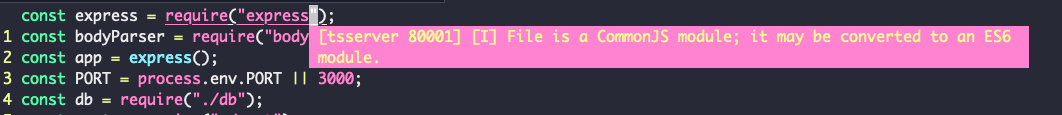

331,086 | Getting started with Glitch | Glitch is a great platform for hosting your Github or newly made projects running node.js, web servers, and more. | 0 | 2020-05-09T19:58:28 | https://developerbacon.ca/articles/getting-started-with-glitch/ | webdev, tutorial | ---

title: Getting started with Glitch

published: true

date: 2020-05-09 07:00:00 MDT

tags: webdev, tutorial

description: Glitch is a great platform for hosting your Github or newly made projects running node.js, web servers, and more.

canonical_url: https://developerbacon.ca/articles/getting-started-with-glitch/

---

[Glitch.com](https://glitch.com/) is a website that can host your online web apps. You can host entire websites on Glitch with Vue.js, React, Angular, Nuxt, and more. There is also the option to make bots for Discord, Slack, or even Twitch with a language like Node.js.

## Creating your first app

When you start a project you will have the option to start from a starter template or a Github repo.

The Glitch editor has a bunch of great features like a file formator and an automatic package update checker.

## Packages

After you have a project set up and ready to go, and you can make changes in the built-in editor, you will need to make sure you have a `package.json` file with a start script. You can ignore the package file if you are running a website with an `index.html` file. The start script is what Glitch looks for when running your app. Here is a template for the package file if you don't have one.

Glitch comes with an easy way to install and update NPM packages. To use this functionality go to your package.json file in your Glitch project and at the top you will see an `Add Package` button. To add a package just click it and search for the package you would like to install and Glitch will install it for you.

Updating packages is very similar to installing them. If a package has an available update just click on the Add Package button and any available package updates will display above the `What is npm` link.

The script that Glitch will run is `start`. As far as I can tell there is no way to change the script the Glitch will run if there is a way just let me know.

```json

{

"scripts": {

"start": "node index.js"

}

}

```

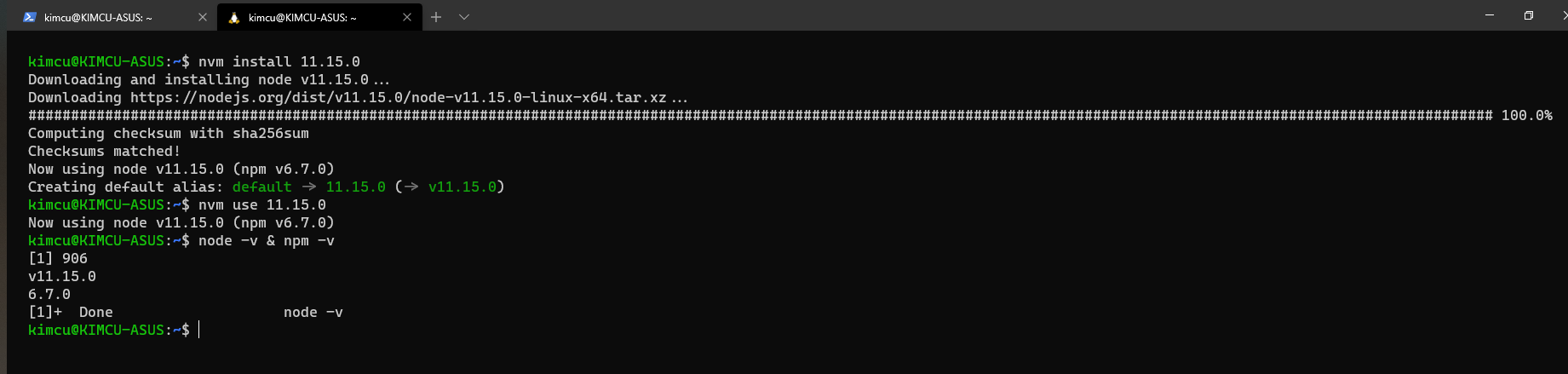

Currently, the highest node version that Glitch uses is v12, but if you would like to use another version of node for your project

```json

{

"engines": {

"node": ">=12.x"

}

}

```

Here is an example of what your package.json file should look like for your Glitch project.

```json

{

"name": "something-cool",

"version": "1.0.0",

"main": "index.js",

"scripts": {

"start": "node index.js"

},

"dependencies": {

"express": "^4.17.1"

},

"engines": {

"node": ">=12.x"

}

}

```

## Formatting your files

Glitch also comes with a file formating tool, to help make your documents look just a bit nicer to look at. To use this function open a file that you would like to format and at the top of the editor, there will be a `Format This File` button. Using this is as simple as clicking that button.

## New files and directories

Making a new file is easy with the `New File` button at the top left of the file hierarchy menu, but did you know that you are also able to make new folders with this as well. To do so just add the directory before the new file you want to create and add a slash in between the directory and file. Here is an example `new_directory/new_file.js`

<!-- assets -->

## .env

The `.env` file is where you can keep all of your super-secret private tokens for your projects. You can put all of your tokens in this file and they won't be visible to the public and won't duplicate if the project is remixed.

To access the variables that have been set in the .env file you can use the `process.env` object, followed by the variable, like so: `process.env.SOMEVAR`. This is an example of what a .env file would look like:

```

# Environment Config

# reference these in your code with process.env.SECRET

TOKEN=123456abcdefg

# note: .env is a shell file so there can't be spaces around =

# Scrubbed by Glitch 2020-04-17T08:15:39+0000

```

| brettanda |

331,210 | Why did you decide to study Software Engineering? | The reason I have to become a software developer... “You can focus on your spiritual growth more whe... | 0 | 2020-05-09T17:02:56 | https://dev.to/ameerrah9/why-did-you-decide-to-study-software-engineering-3kog |

The reason I have to become a software developer...

“You can focus on your spiritual growth more when you’re not in survival mode living paycheck to paycheck.”

-Ayodeji Awosika

I come from a low income household and not the most educated. I was the first to graduate from college in my family. My family lived on public assistance for most of my life. I found freedom in a skill which allowed me to further my education at a lower cost than most; playing basketball. Though a scholarship funded my education, I still had to take out student loans. After I earned a degree I was not able to find work in my field, a bachelor's only gets you but so far nowadays. Before this pandemic I was making a $30k salary barely enough to pay rent, eat healthy or enjoy activities out of my home. After fighting myself and beating myself up over the position I was in in life, I discovered a way to financial freedom.

"Today, every company is a Software company. The Software industry is booming and demand for qualified software engineers is expected to increase by over 23% by 2028."

Technology is an essential skill of the world! If you have desires to survive and thrive, learn about technology. The technology industry is trending towards engineers; the need for engineers is very high. The chance to be a part of an organization's decision making and being in those rooms where innovative change is discussed and planned is something that drives me. I’m intrigued by the influence engineers have on a product directly and the impact we can have on a business. Software Engineering is a very collaborative task and I love to immerse myself in team environments. I am very intrigued by the field because of the real world application of the projects. When I learn Software Engineering I will get the necessary preparation and knowledge needed to make a break in this industry.

I have been interested in coding for as long as I can remember, more distinctly, since high school. I have always had the desire but I’ve never been given the chance to be educated formally since then. Learning Software Engineering creates a competitive advantage for myself to gain the attention of employers. Let's be completely transparent, there's $100,000 ON THE TABLE A YEAR!!! I just need to believe in my infinite capacity to learn. I intend to learn new skills for self-enrichment and financial freedom. I intend to start a new in-field job within 60 days of graduating the Flatiron program. With no formal education in this field and no Computer Science degree, it's clear I have an insane amount of self determination to be successful.

The reason I have to become a software developer...

I have a strong desire to learn! Not just programming but in life, I'm a lifelong learner. Yes, adding coding skills will allow me to add value to a business in exchange for gainful employment; but it's about more than money. In fact, I’m enjoying the process of learning that I would do it for free! Engineers are respected and for good reason. Software Engineering is full of twists, turns and roadblocks that can have you thinking, "Can I actually do this?" This journey will be a test of toughness and resilience. I'm constantly reaching out and networking so I can find ambitious people so that I am surrounded by motivated, hard working people! There's an abundance of reasons why I chose to learn Software Engineering, but a key reason is to challenge myself.

The reason I have to become a software developer...

A career in software engineering would be life changing, I would be doing something impactful, something I love and enjoy and can feel fulfilled by and it would financially allow me to have more buying power and freedom for my family and future success. I think of programming as a craft. I was an athlete in college so I truly enjoy craftsmanship. When I put my mind to something, I try to learn as much as possible about it, in hopes of enjoying them more. I love the challenge of programming. I see it as a creative mode of expression. Upon graduating this course my goals are to continuously learn more and more skills to better my craft as an engineer, my education starts with Flatiron School but it doesn't end. My goals include being employed at a company that I feel adds value to the world and aligns with my values. My goals are to earn six figures by 2021 and be able to do more for my family. My goals have always been to contribute to the human advancement through technology. Learning Software Engineering allows me to provide a valued skill to individuals and businesses that can utilize this unique skill. Once I complete Flatiron's course I will have the confidence, the skills and the experience to make an impact on a company and further produce for their bottom line. My goals are to add value to an organization using my coding skills and problem solving ability, I also have desires to create a better life for my family and other coders who come after me.

So that’s it! The plentiful reasons why I chose to embark on this crazy journey of learning Software Engineering. My friends and family think I’m crazy but I know I can do this! Let’s face it, I’m a NERD. I can’t get enough of this! Keep following my journey as I give updates on my story.

| ameerrah9 | |

331,216 | Fullstack Serverless App Template (React + Apollo + GraphQL + TypeScript + Netlify) | I created a template that will enable developers to quickly bootstrap an Apollo GraphQL + TypeScript project, which can be deployed in a serverless fashion on Netlify. Also, with the FaunaDB addon, it will enable developers to have a stateful app. Thus, developers can concentrate on core application logic and scale the application as their app grows at the same time they don't have to sacrifice on security. | 0 | 2020-05-09T17:19:56 | https://abstracted.in/fullstack-serverless-template/ | gftwhackathon, netlify, fullstack, serverless | ---

title: Fullstack Serverless App Template (React + Apollo + GraphQL + TypeScript + Netlify)

published: true

canonical_url: https://abstracted.in/fullstack-serverless-template/

description: I created a template that will enable developers to quickly bootstrap an Apollo GraphQL + TypeScript project, which can be deployed in a serverless fashion on Netlify. Also, with the FaunaDB addon, it will enable developers to have a stateful app. Thus, developers can concentrate on core application logic and scale the application as their app grows at the same time they don't have to sacrifice on security.

tags: gftwhackathon, netlify, fullstack, serverless

---

[Instructions]: # (To submit to the Grant For The Web x DEV Hackathon, please fill out all sections.)

## What I built

I created a template that will enable developers to quickly bootstrap an Apollo GraphQL + TypeScript project, which can be deployed in a serverless fashion on Netlify. Also, with the FaunaDB addon, it will enable developers to have a stateful app. Thus, developers can concentrate on core application logic and scale the application as their app grows at the same time they don't have to sacrifice on security.

Netlify functions enable developers to deploy lambda functions. They have a template for Apollo GraphQL as well. However, this template doesn't support TypeScript. Limiting the advantages of GraphQL to just querying and mutating data. Having TypeScript helps developers to change code with reliability. This also enhances overall experience with GraphQL as schema defined in GraphQL can be directly exported to TypeScript types.

For such cases, Netlify recommends using legacy command `netlify-lambda` command. But people ran into other issues using it.

### Submission Category: Foundational Technology

[Note]: # (Foundational Technology, Creative Catalyst, or Exciting Experiments)

## Demo

Application: https://apollo-graphql-typescript.netlify.app/

GraphQL PlayGround: https://apollo-graphql-typescript.netlify.app/.netlify/functions/graphql

## Link to Code

[Note]: # (Our markdown editor supports pretty embeds. If you're sharing a GitHub repo, try this syntax: `{% github link_to_your_repo %}`)

{% github https://github.com/pushkar8723/apollo-graphql-typescript %}

## How I built it

[Note]: # (For example, what's the stack? did you run into issues or discover something new along the way? etc!)

I integrated TypeScript at the build time itself. Thus there is no need to use the legacy command.

## Additional Resources/Info

https://community.netlify.com/t/getting-typescript-to-work-with-netlify-functions/6198

https://community.netlify.com/t/does-netlify-dev-support-typescript/3842

[Reminder]: # (We hope you consider expanding your submission into a full-on application for the Grant for the Web CFP, due on June 12.)

**UPDATE:**

I further integrated `graphql-codegen` to automatically generate TypeScript types for GraphQL schema. And also integrated `eslint` and `husky` to introduce lint checks and validate them before each commit.

**UPDATE 2:**

I created a demo project using this template and also integrated FaunaDB addon on [GitHub](https://github.com/pushkar8723/social-board). | pushkar8723 |

331,218 | Deploying Angular app with Netlify in 3 steps | Netlify is a great platform to build/deploy any kind of web applications (not just Angular). It's ver... | 0 | 2020-05-10T15:54:41 | https://dev.to/salimchemes/deploying-angular-app-with-netlify-in-3-steps-55k6 | angular, netlify, github | ---

title: Deploying Angular app with Netlify in 3 steps

published: true

description:

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/6syudn32kwrpwmuzobfl.png

tags: #angular #netlify #github

---

Netlify is a great platform to build/deploy any kind of web applications (not just Angular).

It's very useful when you need to deploy your app fast and easy.

I found it handy to have demos or examples running when writing posts or when I need to have some coding working and live (not just local), but Netlify is not just for that, it is a very powerful platform.

We can deploy our Angular app following these steps:

**1. Create your angular project on github (could also be on bitbucket/gitlab)**

**2. Log in into Netlify, look for your repo and setup the build options**

**3. Deploy the new web site created!**

Let's start

**1. Create you angular project on github (could also be on bitbucket/gitlab)**

```javascript

ng new my-angular-app

```

Create a repo on github and push your code.

**2. Log in into Netlify, look for your repo and setup the build options**

* Log in https://www.netlify.com/

* Clic on New site from Git

* Select Github as provider

* After authorization, we will see the list of available repositories to pick.

If `my-angular-app` repo is not on the list, we need to provide access from github. (If you see your repo, you can skip this step).

Clic on the highlighted link "_Configure the Netlify app on GitHub_".

We will be redirected to github to look for our missing repository

* Now we can see `my-angular-app`

* As part of build options setup, this is what we need:

1. build command: we build our code in prod mode

2. publish directory: location of build files

**3. Deploy the new web site created**

After clic on Deploy site, the first build is triggered and deploy is in progress

Finally, we have our site running

Let's go to the site list to see one we have just created

Clic on our site, and then on the url provided by Netlify

That's all! site deployed and running!

**Conclusions**

Netlify provides a lot of cool features and tools, this post is just to demo how to deploy fast with Angular but there is a lot more to work with.

Other Netlify features

* Custom domains

* Functions with AWS Lambda

* Identity

* Forms

* Large Media

* Split Testing

* Analytics

**References**

* github [repo](https://github.com/salimchemes/my-angular-app) (nothing special here)

* Netlify site running: https://focused-bhaskara-dee416.netlify.app/

| salimchemes |

331,233 | VIM auto-completion with coc.nvim | VIM is a modal text editor which gives bare minimal setup for text editing. However it's highly... | 0 | 2020-05-09T18:25:10 | https://dev.to/rajikaimal/vim-auto-completion-with-coc-nvim-ie3 | vim, languageserver, texteditor | VIM is a modal text editor which gives bare minimal setup for text editing. However it's highly flexible and configurable with plugins. With few configurations, we can make VIM similar to VSCode. First you need to install a plugin managers. There are a bunch of plugin managers,

- [Vundle](https://github.com/VundleVim/Vundle.vim)

- [Vim Plug](https://github.com/junegunn/vim-plug)

- [Pathogen](https://github.com/tpope/vim-pathogen)

Installing any of the above managers is a straight forward task. After installing a plugin manager we are good to go :clap:

Next up installing [coc.nvim](https://github.com/neoclide/coc.nvim). Coc is an intellisense engine for VIM. (same as in VSCode) This uses [language server protocol](https://microsoft.github.io/language-server-protocol/).

A prerequisite for Coc is Node.js as it's written in TypeScript. Navigate to [vimawesome](https://vimawesome.com/plugin/coc-nvim) and copy `install from` script for the plugin manager you already installed earlier. Open your `.vimrc` and paste the copied plugin reference and run `:source %` and `:PluginInstall` to install the plugin.

From this point onwards we can use Coc intellisense, but there are a couple of commands that can be mapped from `.vimrc` to make it really convenient to be used quickly. [Coc README](https://github.com/neoclide/coc.nvim#example-vim-configuration) offers a starting point for this configuration. This configuration can be copied and pasted to `.vimrc`. This will add few key mappings like gr, gi, gd for moving to method references, implementations, and definitions.

For every programming language Coc expects extensions to be installed. For an example for web development following command can be used.

`:CocInstall coc-tsserver coc-html coc-css`

This adds intellisense support TypeScript, HTML, and CSS.

Once that's done, that's all what we need for intellisense :tada:

Documentation with `K` mapping

:page_facing_up: Check out my not so minimal and messy [`.vimrc`](https://github.com/rajikaimal/dotfiles/tree/master/vim) | rajikaimal |

331,240 | Build your own Linked List in JS | I am trying to improve my hold on data structures and algorithms so, I implemented my own linked list... | 6,738 | 2020-05-16T08:40:40 | https://ajitblogs.com/build-your-own-linked-list-in-js/ | javascript, webdev, algorithms, beginners | I am trying to improve my hold on data structures and algorithms so, I implemented my own linked list class JavaScript. I've shared the API here and tell me if any other methods should be implemented.

Try implementing it on your own as I also was afraid of linked lists using the .next, .next each time. Implementing it really increased my confidence to use linked lists as a data structure.

API's implemented:

size() - returns number of data elements in list

empty() - bool returns true if empty

value_at(index) - returns the value of the nth item (starting at 0 for first)

push_front(value) - adds an item to the front of the list

pop_front() - remove front item and return its value

push_back(value) - adds an item at the end

pop_back() - removes end item and returns its value

front() - get value of front item

back() - get value of end item

insert(index, value) - insert value at index, so current item at that index is pointed to by new item at index

erase(index) - removes node at given index

value_n_from_end(n) - returns the value of the node at nth position from the end of the list

reverse() - reverses the list

remove_value(value) - removes the first item in the list with this value

> Link to the [class](https://github.com/ajitsinghkaler/javascript/blob/master/interview/linkedList.js)

> I am trying to improve my hold on data structures and algorithms please tell me if you have any good resources available in the comments below.

If you find any error in my code please raise a pull request in my [repo](https://github.com/ajitsinghkaler/javascript/blob/master/interview/linkedList.js) | ajitsinghkaler |

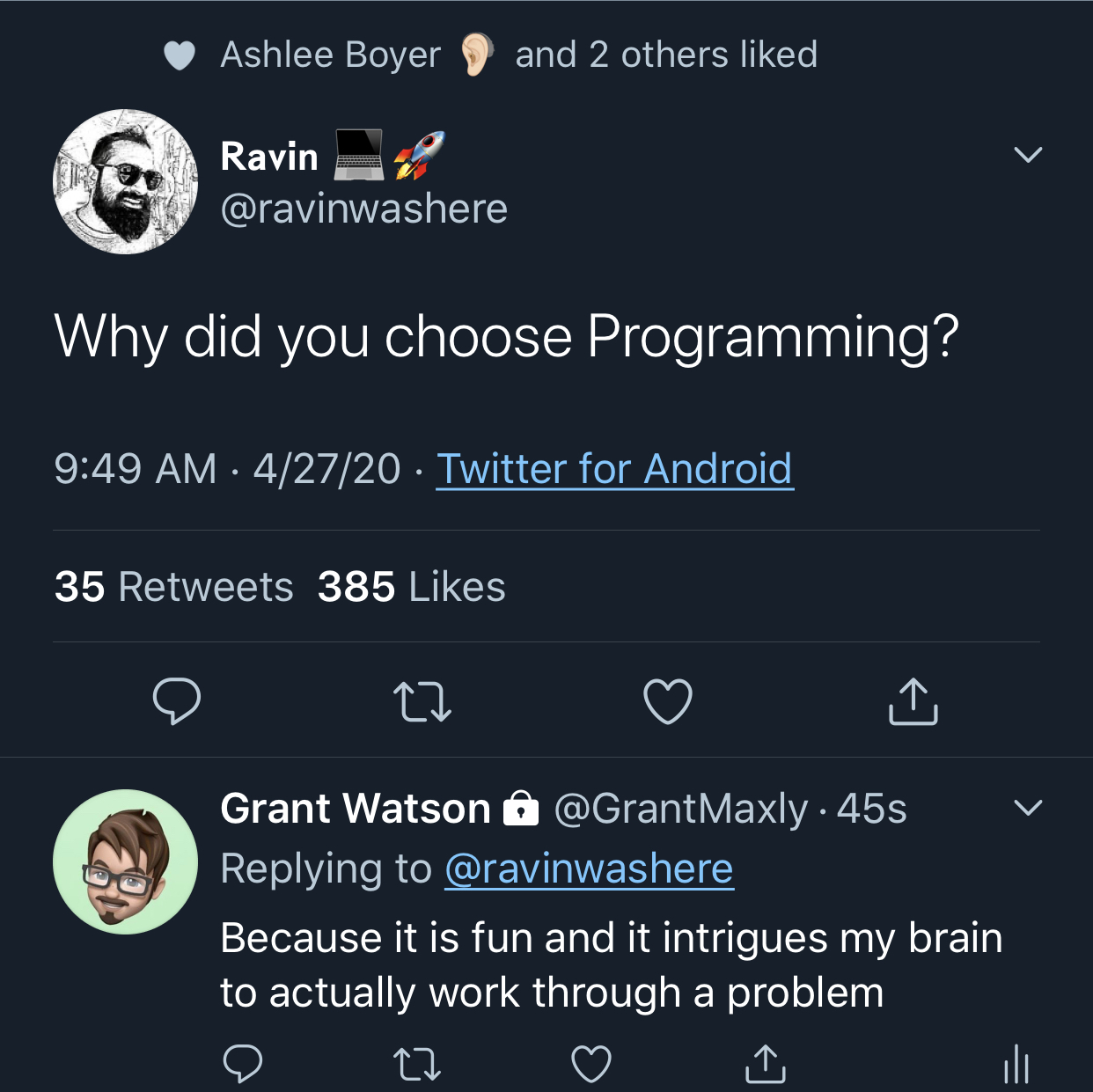

331,251 | Why would you become a programmer? | I love Twitter. It’s a way for a person to connect with others, to see snippets of other technologies... | 0 | 2020-05-09T19:00:56 | https://dev.to/grantwatsondev/why-would-you-become-a-programmer-1f8g | programmer, career | I love Twitter. It’s a way for a person to connect with others, to see snippets of other technologies, and to share ideas. It is also a place to bash people, people who may have different views from you. It would be a lie if I have not been part of an online discussion that has led to an argument between two agitated individuals who are set in their ways. I am not perfect, nor do I want to be. But as I have grown older, I tend to try my hardest to stay away from the poker and prodded tweets and Facebook posts so that I can stay sane.

This post is not about online feuds, but on the opposite end: the ability to spark conversations with one ourselves from posts.

I responded to a tweet asking a “simple” question: “Why did you choose programming?”

To be honest, this has been the second time only that I have truly considered why I chose programming as a career path. Programming is one of those career paths that, for the right person, can be the most frustratingly fun thing to do in one’s lifetime. The job can bring about some of the individuals’ greatest accomplishments, while also bringing about some of the greatest failures. And in my short career, it is very fair to say that I have experienced some of both sides of the aisle. Programming is one of those skills that MAKE a person work through seen and unforeseen issues that could and will come up in a given situation.

I wanted to go over some of the pros of why a person will choose programming as a career path, as well as sprinkle in some more of my reasons of doing so.

If you Google “Why choose programming as a career?”, the first bits of shared information will read something similar to “5 reasons why you should learn programming”, “the benefits of choosing a computer programming career.”, or “where do I start in my career as a programmer”. You will see a trend online that programming can be an amazing career choice for the masses. But I will state that I do not believe, and this is Grant Watson’s opinion, that not everyone is cut out to be a programmer/engineer/developer. This goes to say the same that not every person so pick up singing, being a doctor, being a salesperson, or mechanic as a career. Different people have different talents, and those talents may or may not be in the computer science realm. The computer sciences takes a special type of person. It takes critical thinking, mathematical thinking where X may not be easily defined. It takes a form of creativity that is outside of the box. And yes, a programmer does want to throw their computer across the room sometimes.

Let’s talk about some of the pros of working in programming.

Increased career opportunities

Looking at the job market, even before the pandemic known as COVID-19, software development is a needed atmosphere to drive businesses across all genres of life. If you want to work in the healthcare realm, but do not want to work as a medical professional, you can help with the development of the software and technologies used in the medical realm. The same goes for any other form of business. Insurance, military, automotive, industrial, food, marketing, what have you, the arena is yours to choose. Learning to program will make you more employable in the IT workplace as companies are looking for candidates who have a comprehensive IT skill set. And programming is becoming a more and more standard requirement for many information technology jobs, whether or not it makes up a big part of your day-to-day responsibilities.

Competitive advantage

Similar to what was stated, once you have education and/or experience, the ability to jump into the technology world will be quick and fast. I got into my career as a full stack developer 7 months before I finished my degree. Because programming and coding are both becoming a critical part of operations for all types of businesses, you’re more likely to get hired if you have these skills. Though demand for tech-based jobs is higher than ever and on the rise, it still helps to stand out among other candidates for the same position. Most IT jobs require both a knowledge of business processes and the ability to code, so you need to have a strong background in both areas, not just one or the other.

Flexibility in the workplace

As an IT professional, you will be working closely with software developers and web designers. To communicate effectively and operate efficiently, understanding the programming process will enable you to do your job better. Even though you may not be using programming skills every day, having a background in this key tech area can make you an asset to your company’s IT department.

Relevance in the modern world

Learning to program will help you stay relevant in all types of industries. And in today’s tech-driven society, the more computer skills you have, the easier it will be to get the job you want. Because businesses rely so heavily on web-based processes and services, learning the science and theory behind how those things work is essential for effective work in information technology.

| grantwatsondev |

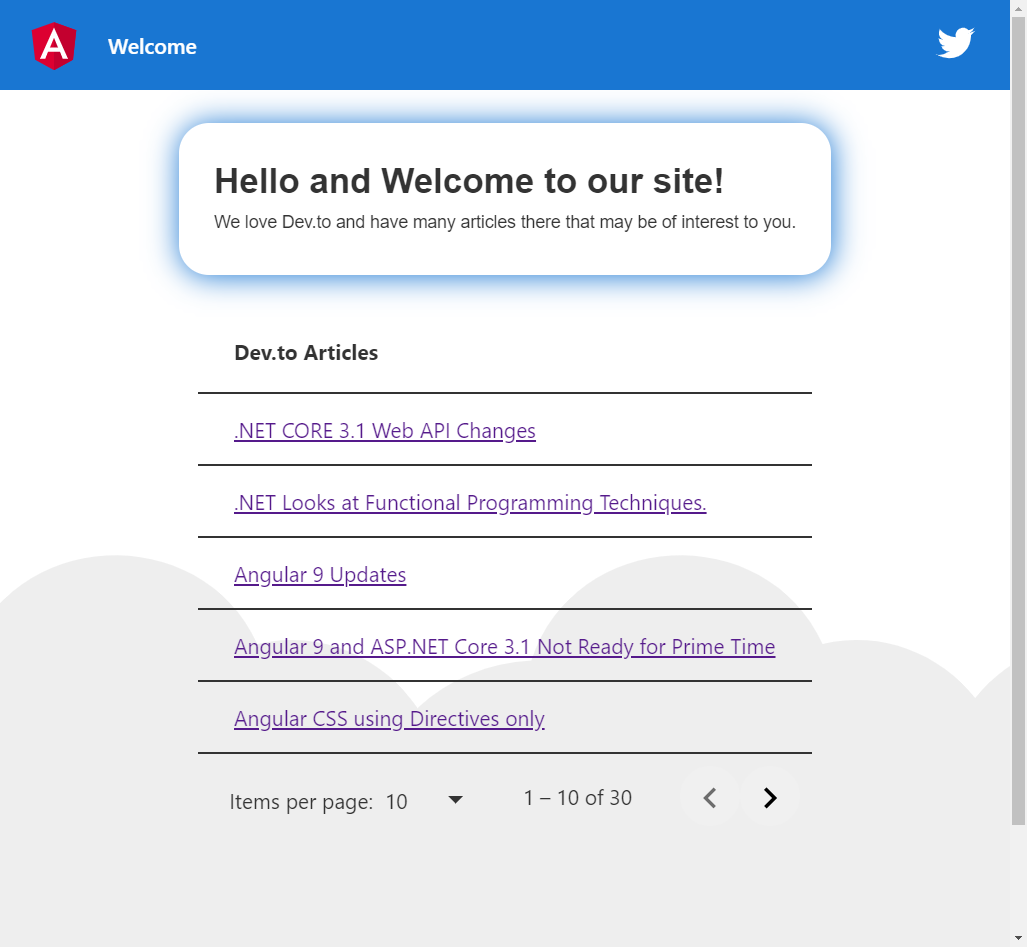

331,330 | Material Table II in 20 Minutes (Styling) | In our previous article, we demonstrated how to wire up a Material Table to a JSON file (containing a... | 6,526 | 2020-05-09T21:56:31 | https://dev.to/jwp/angular-material-table-ii-in-20-minutes-32gg | angular, typescript, material | In our previous article, we demonstrated how to wire up a Material Table to a JSON file (containing articles), and a paginator; which, looks like this:

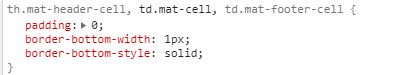

**Changing the Style of the Rows**

We didn't like the lines on each row and found the root cause to be the default style from MatTable.

Let's get rid of the lines by adding this to our page's css.

```css

th.mat-header-cell,

td.mat-cell,

td.mat-footer-cell {

border-bottom-width: 0px;

}

```

Ok now let's change the default a:hover behavior and get rid of the typical underscore.

```css

a {

cursor: pointer;

border-radius: 5px;

position: relative;

padding-left: 0.5em;

padding-right: 1em;

padding-bottom: 0.1em;

text-decoration: none;

}

a:hover {

box-shadow: inset 0px -7px 15px #1976d255;

}

```

**Results**

No more lines, and a soft inset box shadow following our theme color! This is what it looks like in action.

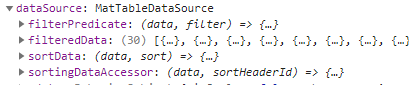

**Search**

The matTable datasource has these properties:

In our next article we will show how to tie into these properties to provide automatic (and mostly instant) searches. We'll also explore the sortData function.

JWP2020

| jwp |

331,376 | Solution to Leetcode’s Valid Perfect Square | This is an easy Leetcode problem 367. The question gives a number and you are to write a function to... | 0 | 2020-05-09T23:34:28 | https://dev.to/codechunker/solution-to-leetcode-s-valid-perfect-square-5gd | tutorial, codenewbie, leetcode, algorithms | This is an easy [Leetcode problem 367](https://leetcode.com/problems/valid-perfect-square/). The question gives a number and you are to write a function to determine if it is a perfect square. See the image below for description.

![problem description]

(https://miro.medium.com/max/1400/1*P5i0IPr8UqObEFFcpMIZlQ.png)

According to [wikipedia](https://en.wikipedia.org/wiki/Square_number), a perfect square is the product of a number with itself. in this context, a perfect square is a number when multiplied by itself will give you the number in question.

There are many ways in solving this problem but I will talk about three in this article.

**NOTE: Do not use any library functions like Math.sqrt().**

<h3><b>THE NAIVE APPROACH</b></h3>

Of course the naive or brute force approach would be to loop through from 1 to the number, multiplying a number by itself and checking if the product equals the number you are looking for. if it does, then return true, else return false.

```

public static boolean isPerfectSquareWithBruteForce(int num) {

if (num == 1) return true;

for (int i = 1; i < num; i++) {

if (i*i == num) return true;

}

return false;

}

```

The code above works but can be very slow. its runtime is O(n).

To make this better, we may decide to loop from 1 to half of the number because there is no way you can get the number in question by multiplying number greater than half (i.e num/2) by itself. e.g to determine if 9 is a perfect square, there is no way a number between 4 to 9 would give you 9. So in other words, to know if a number is a perfect square, the number that will multiply itself will have to be in the first half (between 1–3).

```

public static boolean isPerfectSquareWithHalfNumber(int num) {

if (num == 1 || num == 0) return true;

for (int i = num/2; i >= 0; i-- ) {

if (i*i == num) return true;

}

return false;

}

```

Technically speaking, it may seem that the above code would make it faster but the truth is , the runtime of this is O(n/2) which still translates to O(n) because in computer science, we don’t consider constants when dealing with runtime.

<h3><b>A FASTER SOLUTION</b></h3>

A better way to solve this problem would be to use Binary Search. This solves the problem by using the divide and conquer methodology. It keeps splitting the number into half and checking if number is found.

```

public static boolean isPerfectSquareWithBinarySearch(int num) {

double start = 1;

double end = num/2;

if (num == 1) return true;

while (start <= end) {

double mid = Math.floor((end - start)/2) + start;

if (mid * mid == num) {

return true;

}else if (mid * mid < num) {

start = mid + 1;

}else {

end = mid - 1;

}

}

return false;

}

```

The runtime of the above is O(log n) which is faster than O(n) linear time.

Thank you for reading and please leave a comment or suggestion. | codechunker |

331,381 | How To Use Command Prompt | What is command prompt for? | 0 | 2020-05-10T00:52:41 | https://dev.to/ameliaruzek/how-to-use-command-prompt-138c | learning, beginners, webdev, frontend | ---

title: How To Use Command Prompt

published: true

description: What is command prompt for?

tags: #learning #beginner #webdevelopment #frontend

---

# Search for Command Prompt and open the application.

Usually tutorials about web development say something about “terminal” “command line” “command prompt” “bash” “shell” and then say something about “run this command” etc…

The first thing to know is that all these applications are very similar and serve similar functions. There ARE differences, but for a beginner, you don’t really need to worry about it. If you’re on a Windows computer, use Command Prompt as the application.

Sometimes they’ll tell you to download Node.js first, and if you have done so, you’ll probably see another application show up in the search called Node.js Command Prompt. That’s fine — either one is fine.

# Does white text on a black background make you feel like you’re definitely going to accidentally break your computer?

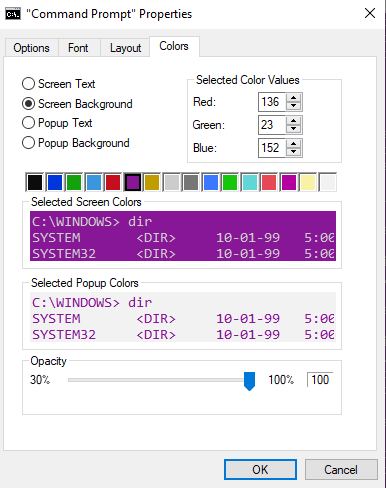

Click on the thumbnail icon at the top left of the application, then select properties.

Move to the colors tab and make it pink or purple or customize it any way you choose, then click “ok”

# Help

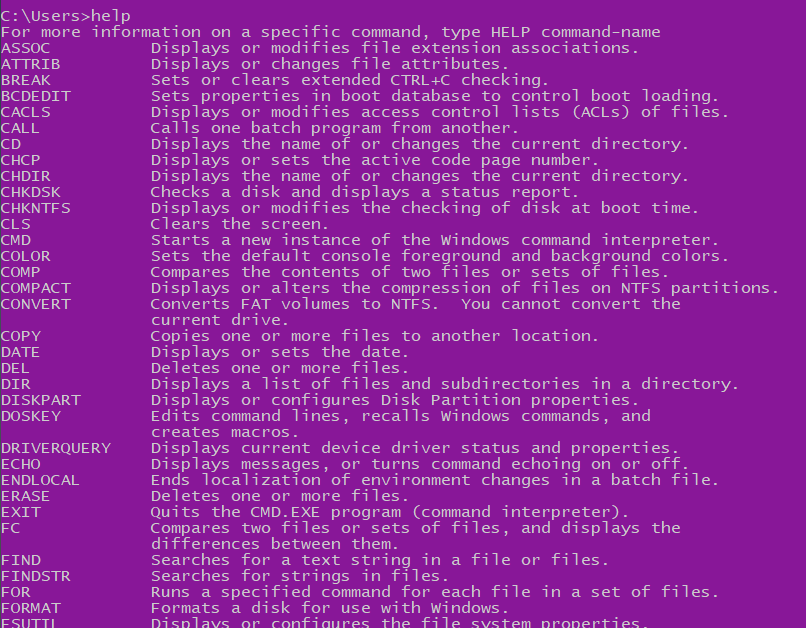

Next up, type `help`. When you do this all of the default commands will be shown like a little dictionary.

If you’re planning to use Command Prompt for web development, then the only important commands listed here are `cd` and maybe `mkdir`.

The other commands you’ll use are additional ones that you download such as the `node` command, `git` command, and either `yarn` or `npm` commands.

# Try it out

Ok, let’s try out `cd` first. The help dictionary said that `cd` will display the name of the directory or change the directory. What it doesn’t say is that `cd` only moves forward through directories. To move backward, you’ll need to use `cd..`

Since usually Command Prompt defaults to the directory of your Windows user profile, you’ll probably see something like this:

`C:\Users\yourName>`

Type `cd..` and it will change to

`C:\Users>`

Then type `cd yourName` to move it back to the directory that you were in.

Open that same directory using File Explorer (the folder GUI). Click through the folders/directories. Do you see “Users” and the folder “yourName”? Keep the File Explorer open on one side, and on the other side user Command Prompt to type `mkdir HelloWorld`

Wow! There’s a new folder there now called “HelloWorld”

# Wait…why does Command Prompt exist? What’s the point?

Websites used to take a really long time to set up. But with custom commands that you can download like the way that Node.js downloads the custom command npm , you can create websites and other things really quickly.

If you’ve already downloaded Node.js, you can type `npm -l` to see its dictionary of additional commands.

These commands are very useful for when you are downloading packages of existing scripts and helper frameworks for web development.

# Not sure where to start?

I’m guessing you’re here because you know some basic HTML, CSS, and JavaScript, and you’d like to become a front-end developer, but all of the tutorials about frameworks and scripts have command line in their tutorials.

If that’s you, and you’re looking for an easy framework to learn, I recommend starting with Vue.

Follow these instructions https://cli.vuejs.org/guide/installation.html to get started, and good luck! You can do this!!

| ameliaruzek |

331,393 | Building Calndr, a free AddEvent alternative | Coronavirus has impacted pretty much every business large and small, and has resulted in a massive su... | 0 | 2020-05-10T02:37:29 | https://dev.to/atymic/building-calndr-a-free-addevent-alternative-54h | showdev, laravel, php, javascript | Coronavirus has impacted pretty much every business large and small, and has resulted in a massive surge in online events. Companies that previous provided solely physical services are switching to webinars and online courses, so the demand for tech in this area has spiked.

In the last few months, I've had multiple different clients come to me with requests to help them schedule events online. There's some existing services that allow you to create add to calendar links out there, such as AddEvent, but they are expensive for what they do - essentially just generating a few links in a certain format (for different calendar software).

After integrating a simple version into one of my client's applications, I decided to create [Calndr.link](https://calndr.link/). It's a super simple service that allows you to generate calendar links for all the major providers in a couple of clicks. Enter the event details (title, location/meeting link, date, etc) and hit generate and you'll be provided with some HTML to copy/paste directly into your newsletter, website, email signature, etc. You can also copy/paste the direct links as well, if you prefer.

## The Tech

I decided to have some fun when building this, using Interia.js (totally overkill for moment, but fun!). On the back end, it's running Laravel 7.

It's deployed on Google Cloud using Cloud Run, so it's running completely serverless. I love the flexibility and ease of use, just whip up a simple docker container, push it to the image registry and hit deploy!

Since you're only charged for requests that actually hit the server, it's extremely cheap. It's basically Lambda, but you can run anything (since it runs custom docker containers).

I'm planning on writing a guide on how to deploy Laravel on GCR serverless, so keep an eye out for that!

Feel free to leave a comment if you've got any questions or suggestions for [Calndr.link](https://calndr.link/)!

| atymic |

331,512 | Vanilla JavaScript string includes | While we recently checked if a string startsWith or a string endsWith a specific substring. We are no... | 0 | 2020-05-10T07:26:41 | https://daily-dev-tips.com/posts/vanilla-javascript-string-includes/ | javascript | While we recently checked if a [string `startsWith`](https://daily-dev-tips.com/posts/vanilla-javascript-string-startswith/) or a [string `endsWith`](https://daily-dev-tips.com/posts/vanilla-javascript-string-endswith/) a specific substring. We are now going to find out if a string contains another substring.

To do so, we are using the `JavaScript` function `includes()`.

## Using includes() function in JavaScript

We can use this function by calling it on a string and passing a substring to it.

```js

var string =

'The greatest glory in living lies not in never falling, but in rising every time we fall.';

// Check if it includes with `living`

console.log(string.includes('living'));

// true

```

For the `includes()` function it is important to know it's a case sensitive function, so the following will fail:

```js

var string =

'The greatest glory in living lies not in never falling, but in rising every time we fall.';

// Check if it includes with `Living`

console.log(string.includes('Living'));

// false

```

## Using an offset starting position with includes()

As the brothers startsWith() and endsWith() this one has another position parameter. This position is from where it will start to look.

```js

var string =

'The greatest glory in living lies not in never falling, but in rising every time we fall.';

// Check if it includes with `living`

console.log(string.includes('living', 30));

// false

```

Feel free to play with this Codepen:

<p class="codepen" data-height="265" data-theme-id="dark" data-default-tab="js,result" data-user="rebelchris" data-slug-hash="eYprJZd" style="height: 265px; box-sizing: border-box; display: flex; align-items: center; justify-content: center; border: 2px solid; margin: 1em 0; padding: 1em;" data-pen-title="Vanilla JavaScript string includes">

<span>See the Pen <a href="https://codepen.io/rebelchris/pen/eYprJZd">

Vanilla JavaScript string includes</a> by Chris Bongers (<a href="https://codepen.io/rebelchris">@rebelchris</a>)

on <a href="https://codepen.io">CodePen</a>.</span>

</p>

<script async src="https://static.codepen.io/assets/embed/ei.js"></script>

## Browser Support

This function works well in all modern browsers, including edge!

### Thank you for reading, and let's connect!

Thank you for reading my blog. Feel free to subscribe to my email newsletter and connect on [Facebook](https://www.facebook.com/DailyDevTipsBlog) or [Twitter](https://twitter.com/DailyDevTips1)

| dailydevtips1 |

331,517 | Folding in JS | Mutatis mutandis between Haskell and JS, the two approaches to fold a list/array are foldl, which doe... | 6,544 | 2020-05-10T09:28:18 | https://dev.to/mandober/folding-mol | javascript | Mutatis mutandis between Haskell and JS, the two approaches to fold a list/array are `foldl`, which does it from the "left", and `foldr`, which does it from the "right"[^1].

```js

const foldl = f => z => ([x, ...xs]) =>

x === undefined ? z

: foldl (f) (f(z)(x)) (xs)

const foldr = f => z => ([x, ...xs]) =>

x === undefined ? z

: f (x) (foldr(f)(z)(xs))

```

With these two functions defined, recursion over an array is abstracted, so there's no need to manually traverse it - all other operations can now be defined in terms of `foldr` or `foldl` (at least as an exercise in futility)[^2].

## In terms of fold

```js

// helper function

const cons = x => ([...xs]) => [x,...xs]

const append = ([...xs]) => ([...ys]) => [...xs, ...ys]

const apply = f => x => f(x)

const flip = f => a => b => f(b)(a)

const add = a => b => a + b

const mul = a => b => a * b

const and = a => b => a && b

const or = a => b => a || b

const K = a => _ => a;

const B = g => f => x => g(f(x))

// In Terms Of Fold

// foldr_id - leaves a list intact, showing that folding a list means replacing all cons with `f` and the empty list ctor `[]` with an initial value, `z`

const foldr_id = foldr (cons) ([])

const reverse = foldl (flip (cons)) ([])

const flatten = foldr (append) ([])

const compose = foldr (apply)

const pipe = foldl (flip(apply))

const head = foldr (K) (undefined)

const last = foldl (flip(K)) (undefined)

const sum = foldr (add) (0)

const product = foldr (mul) (1)

const all = foldr (and) (true)

const some = foldr (or) (false)

const map = f => foldr (B(cons)(f)) ([])

const filter = p => foldr (x => xs => p(x) ? cons(x)(xs) : xs) ([])

// Check

let m = [1,2,3]

let n = [5,6,7]

let t = [[1,2,3], [5,6,7]]

let p = [true, true, false]

console.log(

'\n',

'foldr_id:' , foldr_id (m) , '\n' ,

'reverse :' , reverse (m) , '\n' ,

'flatten :' , flatten (t) , '\n' ,

'sum :' , sum (m) , '\n' ,

'product :' , product (m) , '\n' ,

'all :' , all (p) , '\n' ,

'some :' , some (p) , '\n' ,

'head :' , head (m) , '\n' ,

'last :' , last (m) , '\n' ,

'map :' , map (add(10)) (m) , '\n' ,

'filter :' , filter (a=>a>1) (m) , '\n' ,

'compose :' , compose (5) ([x=>x*3, x=>x+5]) , '\n' ,

'pipe :' , pipe (5) ([x=>x*3, x=>x+5]) , '\n' ,

)

```

[^1]: The elements are accessed from the left in both cases.

[^2]: Haskell's lists are singly-linked lists, so prepending is the most efficient operation to add an element; but to append an element, the entire list needs to be traversed. Although JS arrays have many incarnations (depending whether they hold homogeneous or heterogeneous elements, whether they are sparse, etc.), appending should be the most efficient operation.

| mandober |

331,561 | "Is PHP Dead?" Discussion | A brief look at the popular discussion if PHP is dead or not. | 0 | 2020-05-10T09:54:41 | https://dev.to/actuallymab/is-php-dead-discussion-3230 | php, programming, code | ---

title: "Is PHP Dead?" Discussion

published: true

description: A brief look at the popular discussion if PHP is dead or not.

tags: php, discuss, programming, code

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/675ovmli79v37figa12a.png

---

Tl;dr: it is not.

It was an important moment for me. I was turning 30. One of my best friends organized a lovely house party, and another DJ friend was playing nice music. Even my brother, who was living in Turkey, flew 3000 kilometers and caught the event at the last moment. There were some people that I knew but also some people I barely knew. One of these guys from the second category, who was working in a big IT company, asked me that famous question with a loud voice so I could hear him.

**“So you're coding in PHP, is it not dead yet?”**

He was sipping his cocktail and made that face with a big grin, which I see on most of the people who asked the same question to me. Yes, writing PHP is a part of my job, but as a software engineer (some call me a developer, but this is another discussion), it’s not the only thing I do. If there could be a chance for me to describe myself, instead of answering the “oh, which language are you working on for server-side programming?” question, I would love to talk about problems I solve, decisions I make, and the architectural reasons behind them.

But yes, I was (and still am) coding in PHP.

And there is a simple reason behind that. It is not just because PHP has the best tools for me, or because I love to write in PHP. But it is because someone else already made this decision ages before me. Yes, in today’s world, people at Booking.com are still writing in PERL, and that does not make them bad developers. The same argument is valid for developers who work for Facebook. Most of the successful companies around the world interview candidates without asking language-specific questions. They mostly focus on the algorithmic intelligence of their potential colleagues without being worried about their language backgrounds. That said, I don’t personally know anyone who only codes in PHP. Still, I am not sure if this is the perfect world that we are living in, but most of the developers I personally know are busy using different languages every day.

Then why is this question still coming up, as Jeffrey mentioned in his tweet here?

{% twitter 1258422468315951105 %}

I see there are many sins for PHP developers here. However, I do not blame them for this entirely, either. The interesting thing about PHP comes from its nature. Most of the engineers out there did not see PHP as a purely functional language. Indeed, PHP implemented most features of functional programming, but the creators of PHP did not initially build it for this reason. The same thing applies to the argument that PHP is an object-oriented language. Even though the evolution of PHP supplied the required tools to us for good object-oriented design, again, that was not the language’s main goal.

PHP comes with the simplicity of releasing websites. That was the main goal of the language itself, which is mostly forgotten. In today’s world, PHP still runs 78% of the entire internet because of this simplicity.

I’m coming from the simplicity of the language to another simplicity, and that is managing content. Let’s face facts: Wordpress is still the de facto leader of the web. Most content creators use its weird admin interface every single day. Nine out of ten news websites in today’s world draw their strength from this CMS. Honestly, I don’t know how much Wordpress developers earn from their freelance work, but they still do a lot.

And the thing I know, the most important thing, is that content managers don’t know and don’t give a f*ck if the language behind their beautiful admin panel is PHP or not.

I’m coming back to the question and the smile on the face. I can imagine why these smart engineers blame PHP as the source of spaghetti code out there. And let’s get back to the fact that I’m coding in PHP; yes, I do. But believe me, I have no idea what is going on when I look at *wp-blog-header.php*. A lot has changed in the PHP world over the last ten years, and yes, not surprisingly, you can find elegant code in this world nowadays.

Either way, PHP and Wordpress rule the internet. Let’s respect that and continue to provide solutions to the problems we are dealing with.

Thanks to Jeffrey Way, the reason for this post.

| actuallymab |

331,572 | Multiple PHP Pools | PHP has gotten phenomenal to work with over the last few years.There have been so many improvements -... | 0 | 2020-05-10T09:59:29 | http://jamessessford.com/blog/multiple-php-pools | ubuntu, development, php | ---

title: Multiple PHP Pools

published: true

date: 2020-05-10 00:00:00 UTC

tags: ubuntu, development, php

canonical_url: http://jamessessford.com/blog/multiple-php-pools

---

PHP has gotten phenomenal to work with over the last few years.There have been so many improvements - both to the language itself and, in my humble opinion, the developer experience in using the language every day.

I'm a terrible hypeman for anything but if you're interested in the current state of PHP, I'd highly recommend reading [PHP in 2020](https://dev.to/brendt/php-in-2020-1nag-temp-slug-1485828) by Brent Roose.

One of the new features of PHP that I wanted to experiment with was preloading but I had several sites on my Rasperry Pi and they didn't all depend on the same code, so I needed to seperate my instances.

## Enter pools

PHP pools are seperate processes that can each be used to serve a subset of requests. You'll probably already be using the default pool at the moment:

```

server {

###

server_name www.test;

location ~ \.php$ {

fastcgi_pass unix:/var/run/php/php7.4-fpm.sock;

}

###

}

```

On Ubuntu, PHP pool configuration is located at `/etc/php/7.4/fpm/pool.d/`. I'd start by copying the template that's there for my new pool.

```

cd /etc/php/7.4/fpm/pool.d

sudo cp www.conf www2eb.conf

```

You'll then want to edit this file and update, at minimum, a setting or two.

```

sudo nano www2eb.conf

```

Update the name of the pool:

```

; Start a new pool named 'www'.

; the variable $pool can be used in any directive and will be replaced by the

; pool name ('www' here)

[www2eb]

```