id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

301,240 | Advanced TypeScript Exercises - Question 8 | Welcome back! Let's go back on track after bonus questions. This question will be less abstract, and... | 4,717 | 2020-04-06T21:40:46 | https://dev.to/macsikora/advanced-typescript-exercises-question-8-2la4 | typescript, javascript, challenge | --- series: Advanced TypeScript Exercises ---

Welcome back! Let's go back on track after bonus questions. This question will be less abstract, and more practical, we will land more at value level 🚁.

We have a function `concatToField` which takes object, and key of this object, and string value. The purpose of this function is to create a new object with concatenated property object[key] with third argument.

**The question** - How to type generic types `T` and `K` in the definition of `concatToField` function in order to achieve compile time guarantee that `obj[key]` can be only `string`.

```ts

const concatToField =

<T /* here your code 💪*/, K /* here your code 💪*/>(obj: T, key: K, payload: string): T => {

const prop = obj[key]; // compile error should not be here

return { ...obj, [key]: prop.concat(payload) }; // compile error should not be here

}

// tests

const test = { fieldStr: 'text', fieldNum: 1, fieldStr2: 'text' };

concatToField(test, 'fieldStr', 'test'); // should be ok 👌

concatToField(test, 'fieldNum', 'test'); // should be error fieldNum is not string field 🛑

concatToField(test, 'notExistingField', 'test'); // should be error - no such field 🛑

concatToField(test, 'fieldStr2', 'test'); // should be ok 👌

```

Full code available in [the playground](https://www.typescriptlang.org/play/index.html?ssl=11&ssc=61&pln=1&pc=1#code/MYewdgzgLgBKbAIZQCogGIEsCmAbAJjALwCwAUDDADwowD0AVDABbYBO2MAniAK5twQ+ToF4NwFU7DOgBoYAaXpNWHbnwGhhMcZIB8AChAAjAFYAuGChkBrbFzOyZAB0RdcIRPjPQ2mMAHMAlGa0RNowAN7klJTw0DAObCAOxDCGRgDa1lwAugDc9HSCALYOmLic7AlskVEcUPxg4TAAdC2pMhk2WWbxiU3wSFC6Ti5u+P4wAL55dAWgxaXlbJXkE+QzMFDY0BDkMbCbsUSNAGY4BADKUGxmAOSbAB5QNzKnePgAcryFZgCML2f4S5sABMtweT0mOV24AGaCwb10BygMhurwuV2eMDuWye-mmBQgzD4BBgBk4IEsmkAMjvQhDIOEAxE4lFoj5fTHY6A3PH5GCE4mEMkwCogASsz6FGCYCAwMAgWBeHy+GCszSARb3abCMIykSi5VAAKL3aVQJXwggcpHc-F8om8ElCkUCAC0spAfN4wGYKoB6s19O1CN1WNZQOBlpx1t5-PtgvJlJENLI5CAA)

**Important** - body of the function should remain unchanged, no type assertion (as), or any changes of the body are allowed. The only thing needs to be done is constraint on `T` and `K` generic types.

Post your answers in comments (preferred links to the playground). Have fun! Answer will be published soon!

**This series will continue**. If you want to know about new exciting questions from advanced TypeScript please follow me on [dev.to](https://dev.to/macsikora) and [twitter](https://twitter.com/macsikora). | macsikora |

301,244 | Transforming Teaching with Teachingo - #TwilioHackathon Submission | The Team Peter and I are two software engineers from Belfast, Northern Ireland who graduat... | 0 | 2020-04-06T21:48:34 | https://dev.to/chloemcateer3/transforming-teaching-with-teachingo-4lc5 | twiliohackathon, javascript, node, react | [Comment]: # (All of this is placeholder text. Use this format or any other format of your choosing to best describe your project.)

[Reminder]: # (Make sure you've submitted the Twilio CodeExchange agreement: https://ahoy.twilio.com/code-exchange-community)

[Important]: # (By making a submission, you agree to the competition's terms: https://www.twilio.com/legal/twilio-dev-hackathon-terms)

## The Team

[Peter](https://twitter.com/PMc_A) and I are two software engineers from Belfast, Northern Ireland who graduated from university last summer! Whenever we discovered the Twilio/DEV hackathon, we thought it was a great opportunity to jump into something that we can really get stuck in to.

With the hackathon being the majority of the month of April, this allowed us to really take our time with the idea and build something that could have a real impact in the world right now.

Given the current state of affairs in the world, everyone is flocking to the internet and various software/resources for communicating. _Everyone._

## The Problem

Most governments around the world have temporarily closed educational institutions in an attempt to contain the spread of the COVID-19 pandemic.

These global closures are impacting over 89% of the world's student population (source - https://en.unesco.org/covid19/educationresponse).

With these closures, schools across the globe are attempting to transition to online, remote learning. With some of our personal connections working in the education space, they had voiced their frustration with the lack of general tools in the wild that they could use to fit their needs - specifically, when it comes to video conferencing software.

Sure, there are a bunch of services that provide that facility to video call one another but they are mostly aimed at corporate businesses rather than education.

## Our Proposed Solution

We are creating an E-Learning platform that is specific to teachers in order to fulfil their different needs when teaching a remote lesson. Some of these features include:

* Video conferencing including: screen share, the ability to mute users and facilitate live chat.

* Automated attendance checker.

* Automated message sent to students that did not attend lesson.

* Reported lesson statistics - who/how many asked questions in live chat, what percentage of the class attended (list of who did and who didn't).

* Request a transcript or recording of the lesson (could be emailed to the students who attended or to the students that missed the class).

* Feedback request - students can show they understand the topic being taught via red, amber, green feedback mid-lesson.

* General student feedback about topics post-lesson.

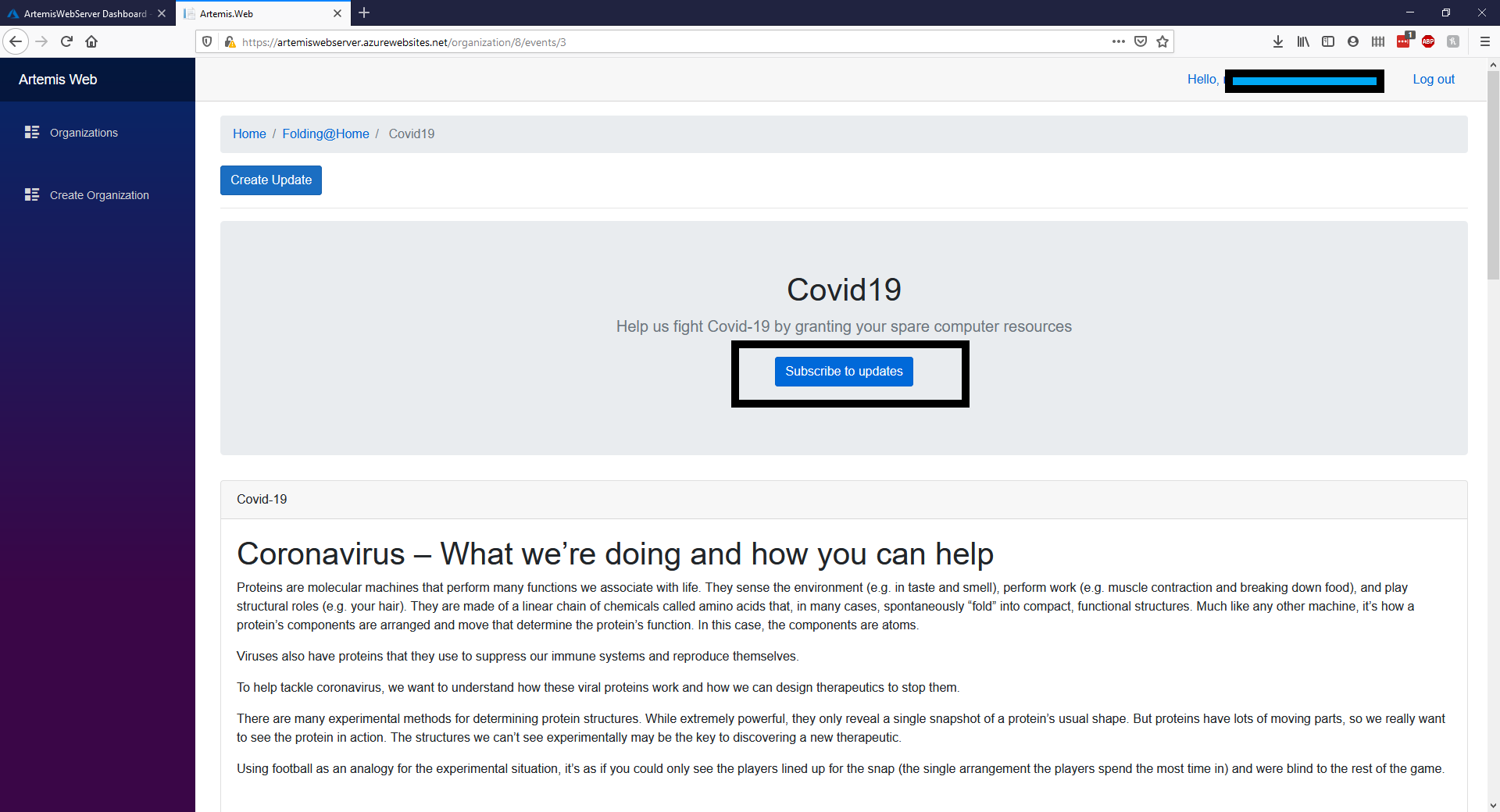

## What we built

#### Category Submission: COVID-19 Communications/Interesting Integrations

### Choosing the right technology 📚

We wanted to make our solution platform agnostic, thus we opted to create a web application that both the teachers and students could use.

We decided to play to our strengths, with both of us having some experience with React and a lot of experience with JavaScript, we decided to build all the things with JS. For more details on our tech choice, check out our first progress update blog 👇

{% link https://dev.to/pmca/transforming-teaching-with-teachingo-update-1-5df2 %}

### Let's talk data 🔢

With our tech stack chosen, it was time to really think about the data that would be passing through our systems. How would it be structured? Where would it be stored? And most importantly, how would it be secured? Check out more about our data decisions in our second progress update blog:

{% link https://dev.to/chloemcateer3/transforming-teaching-with-teachingo-update-2-12bm %}

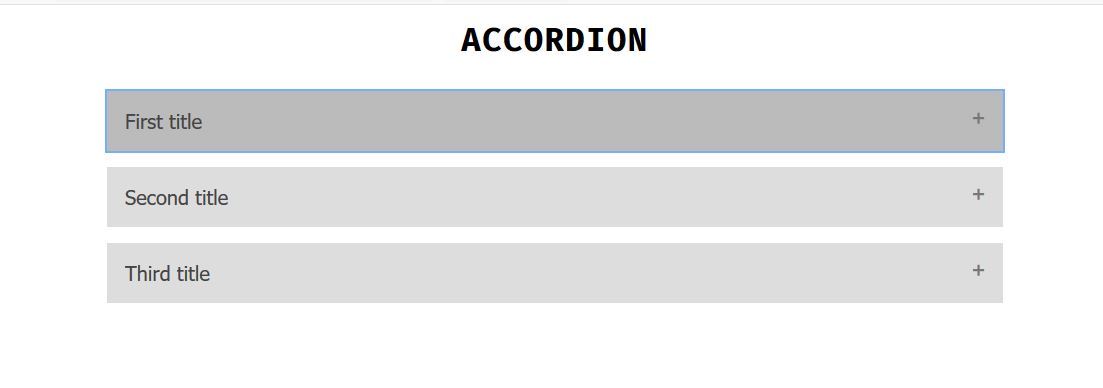

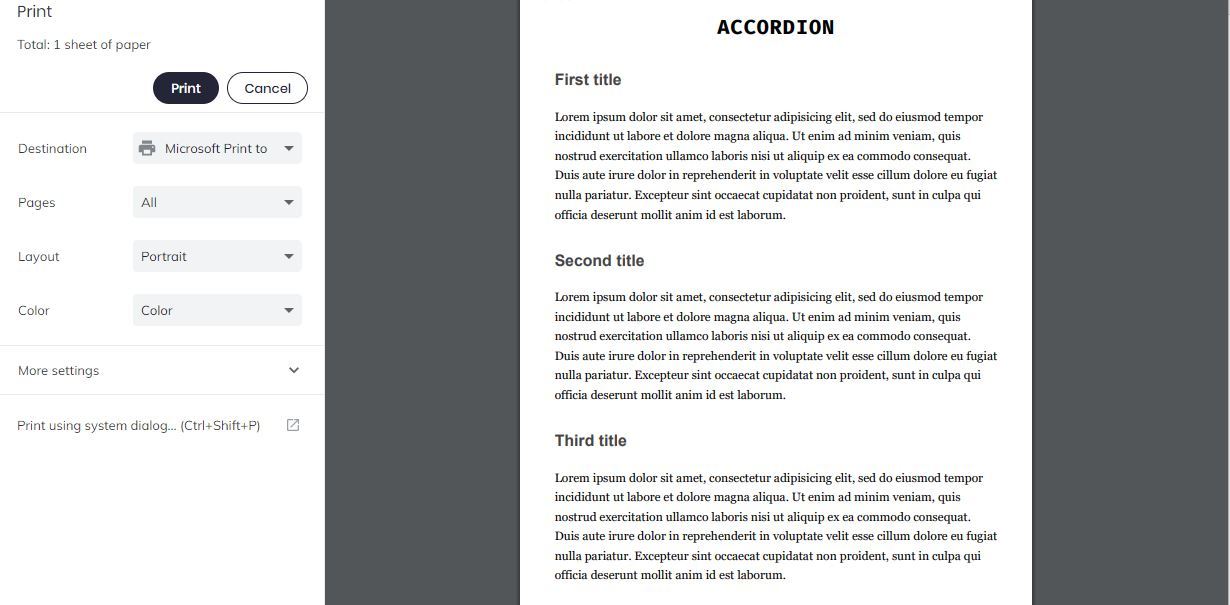

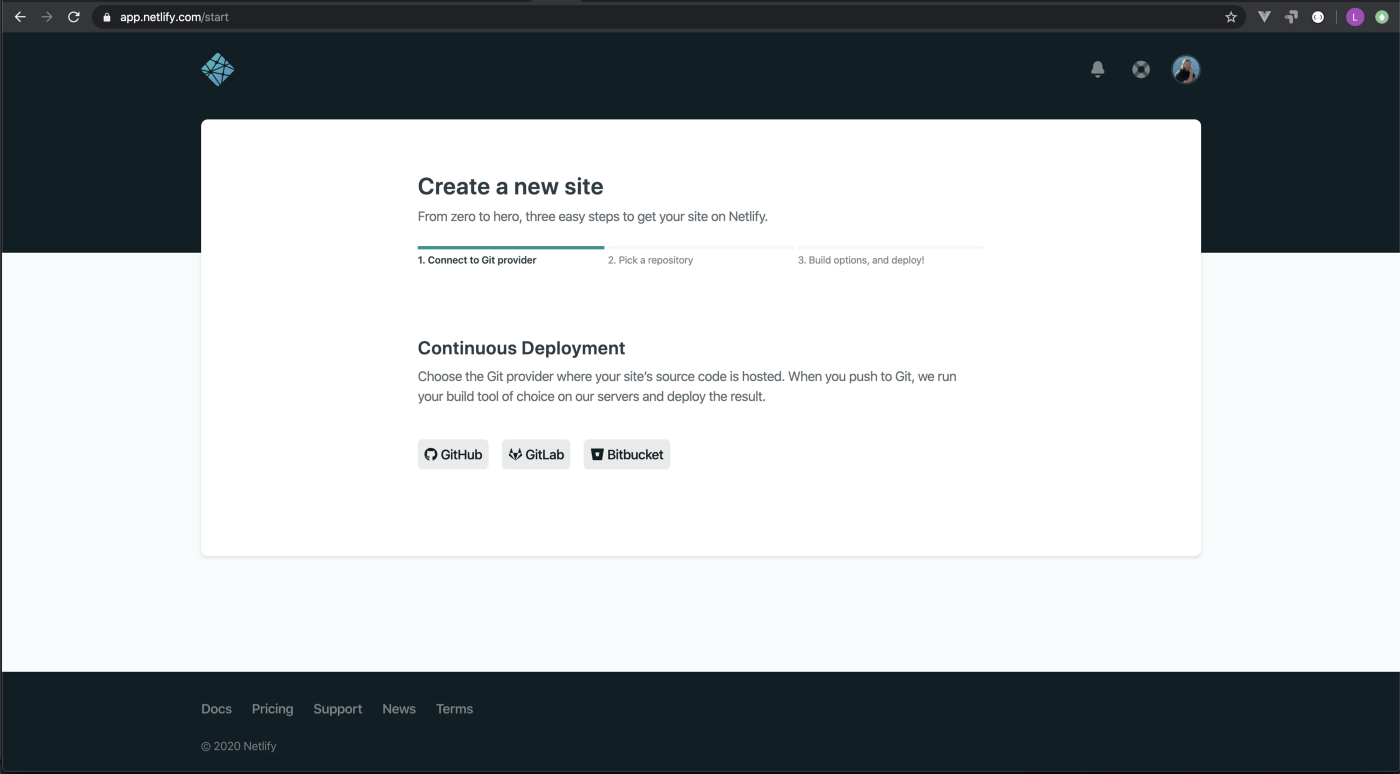

### Prepare to Lanuch 🚀

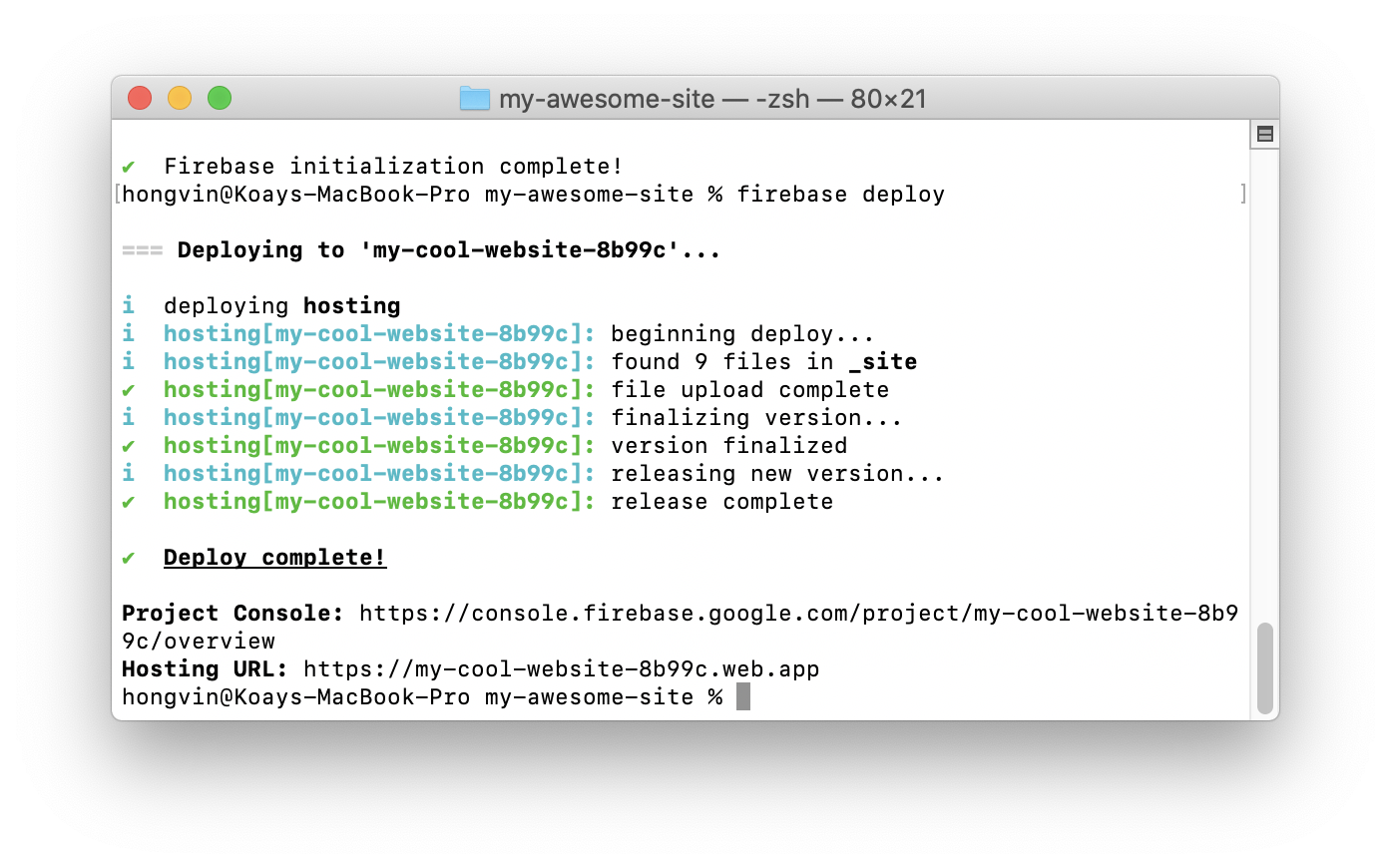

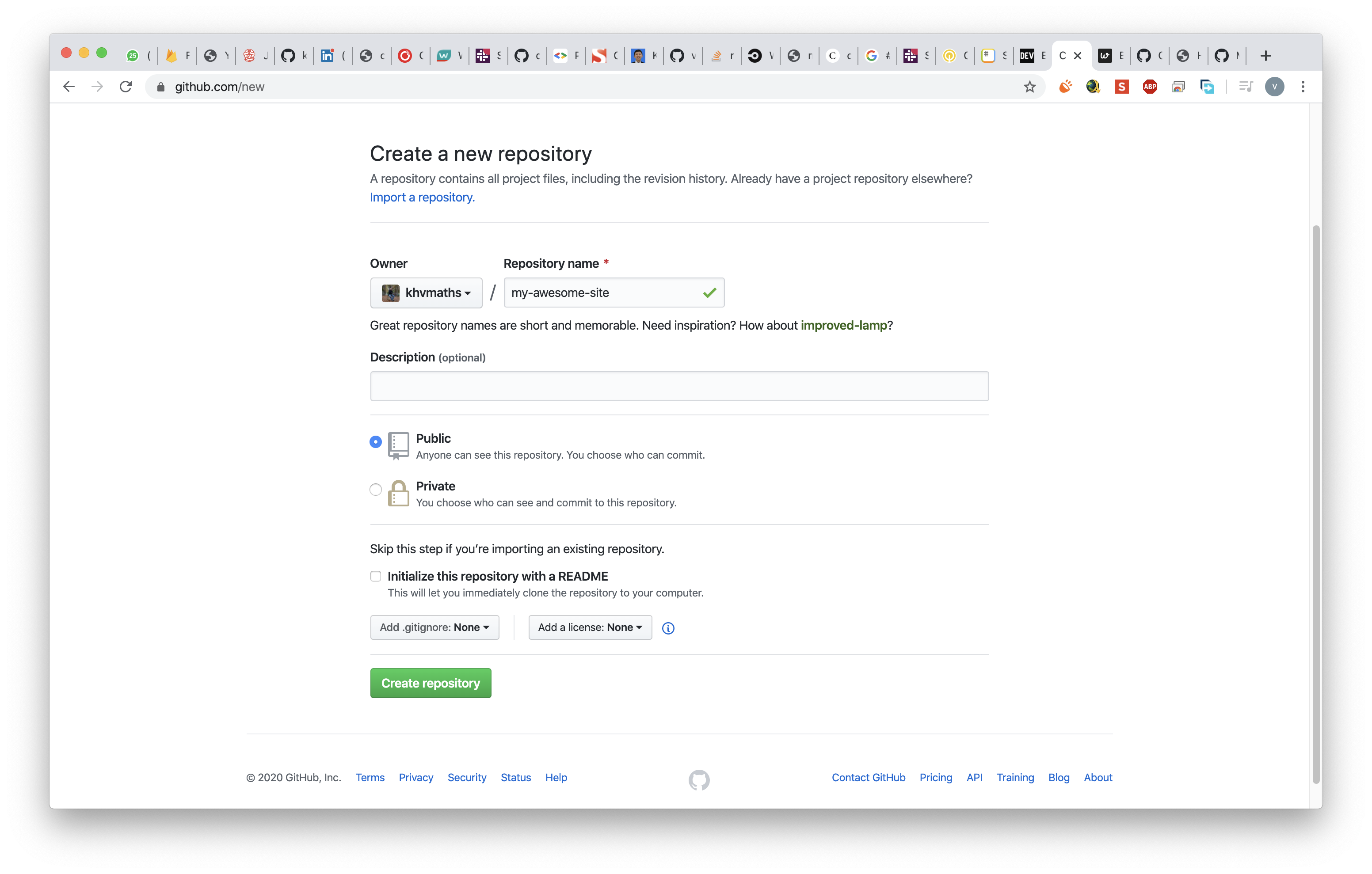

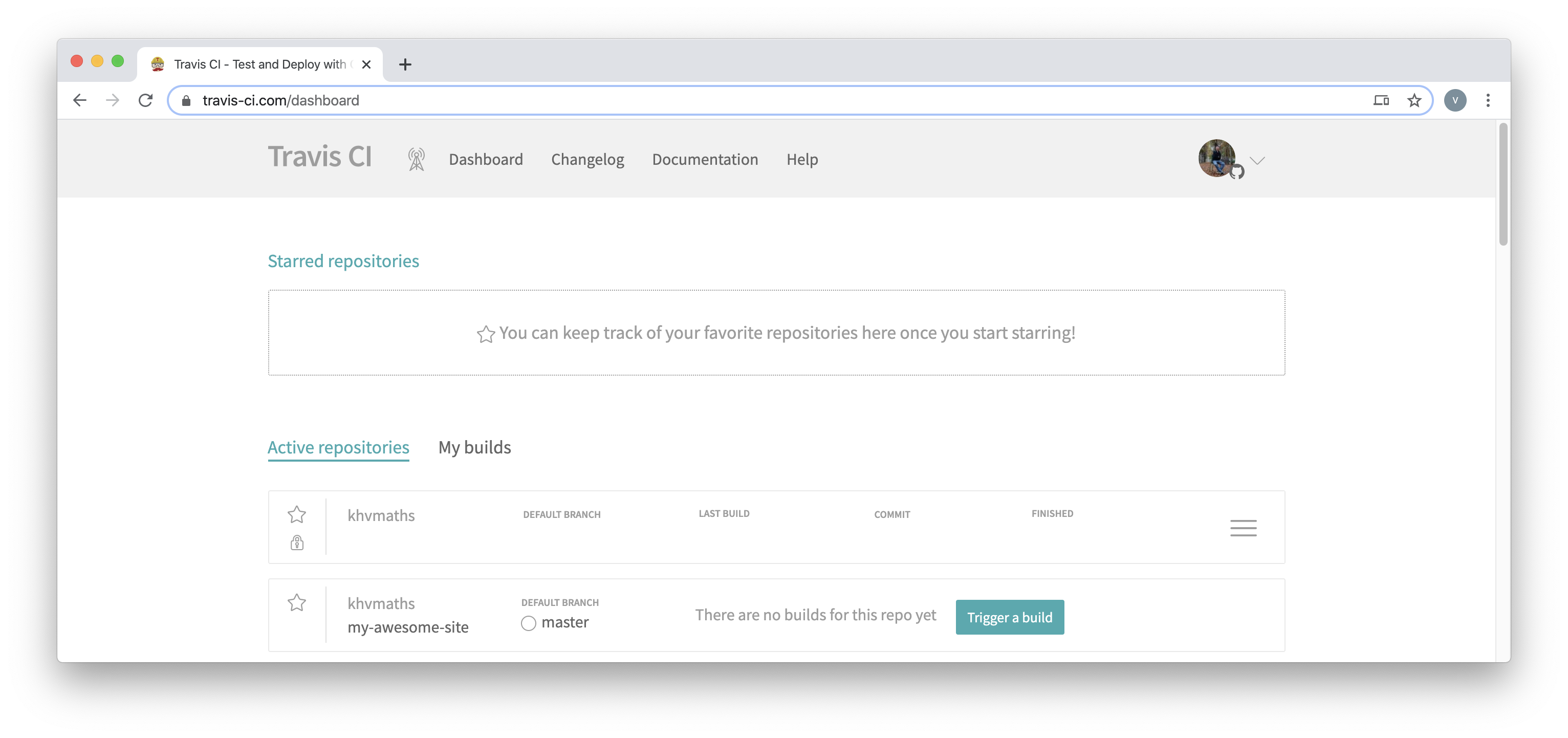

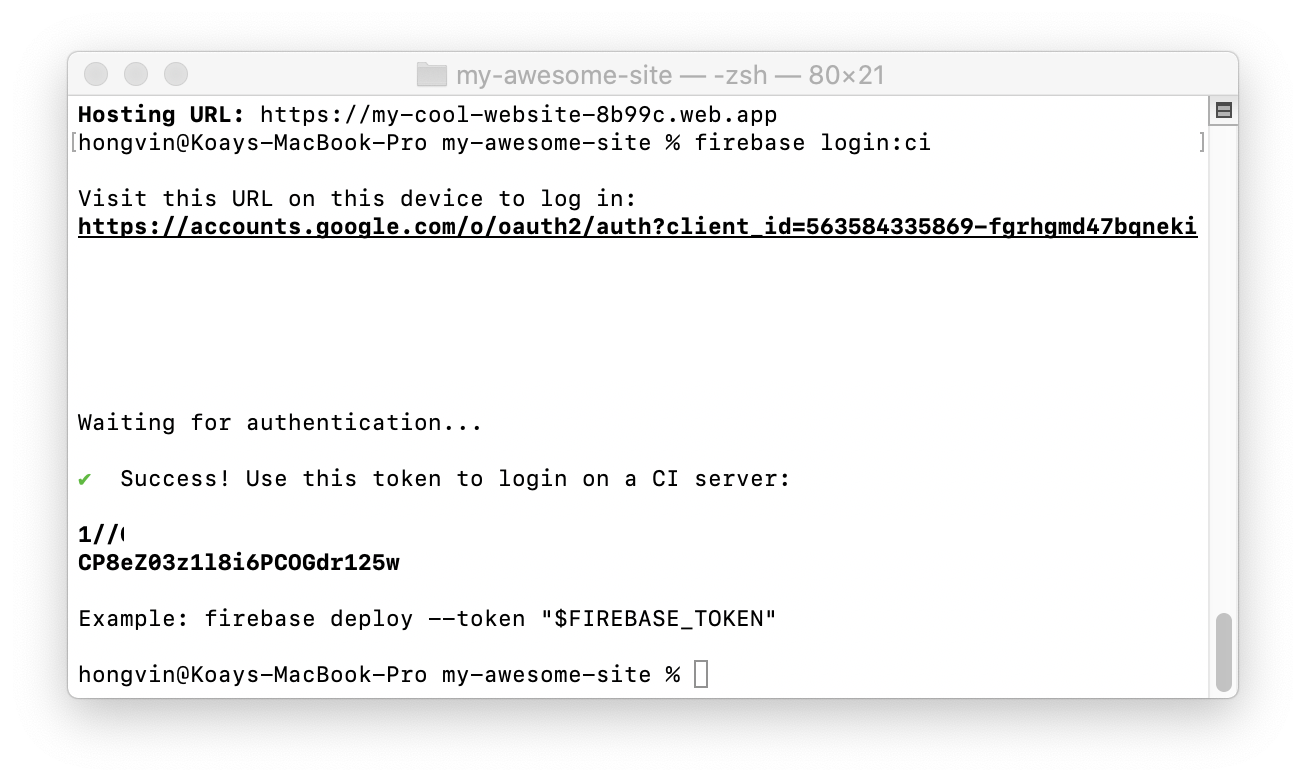

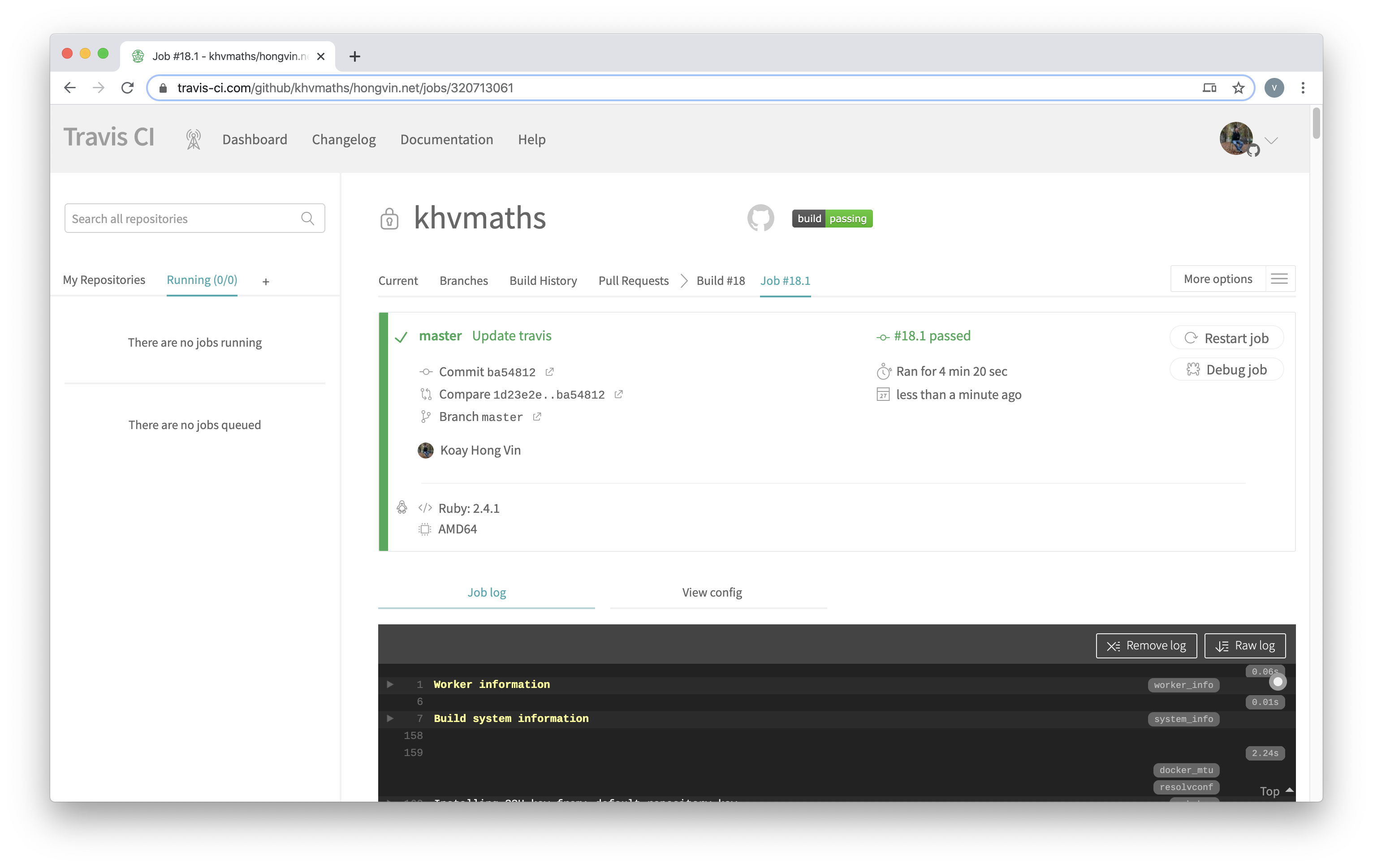

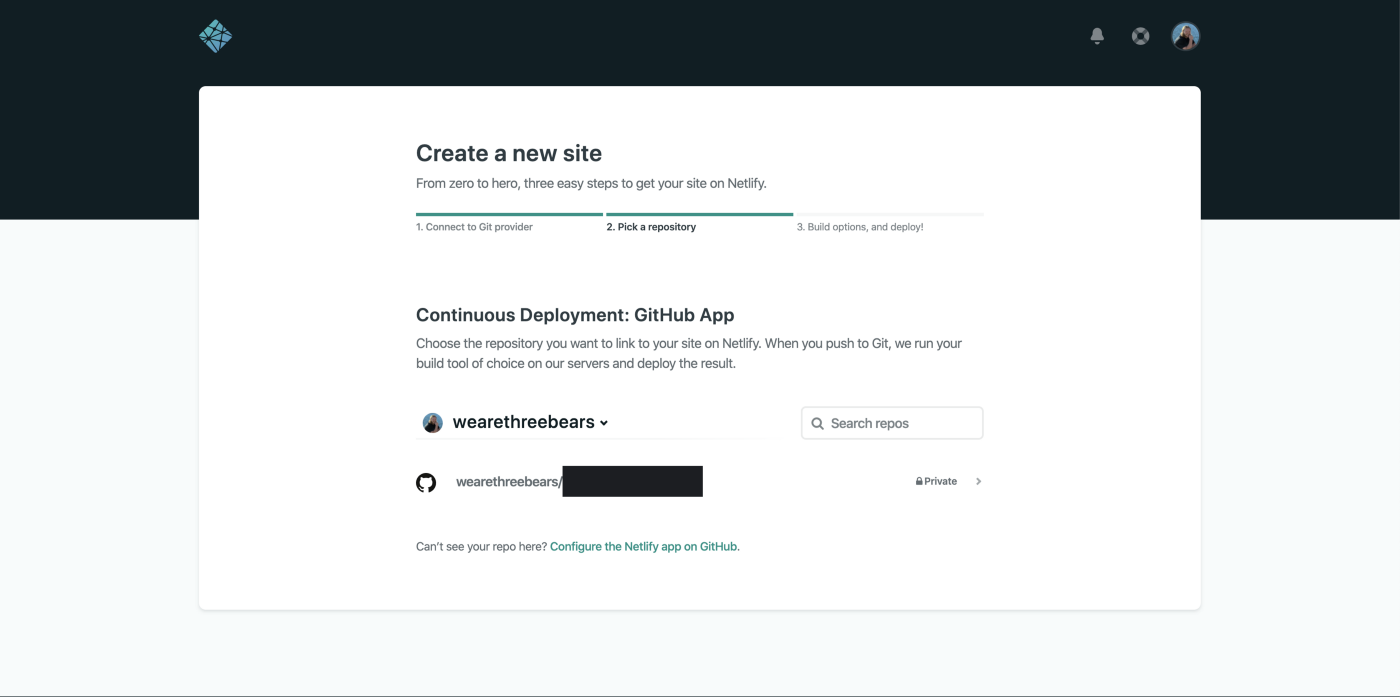

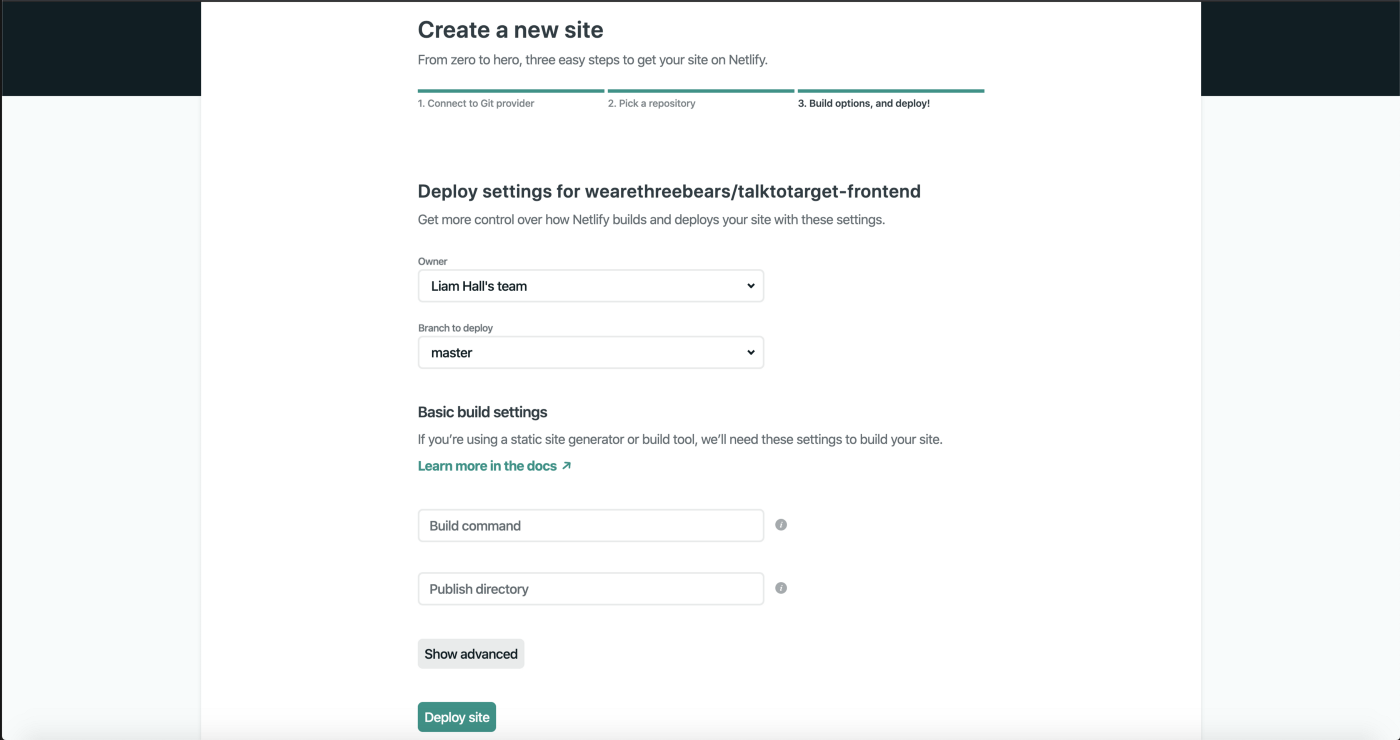

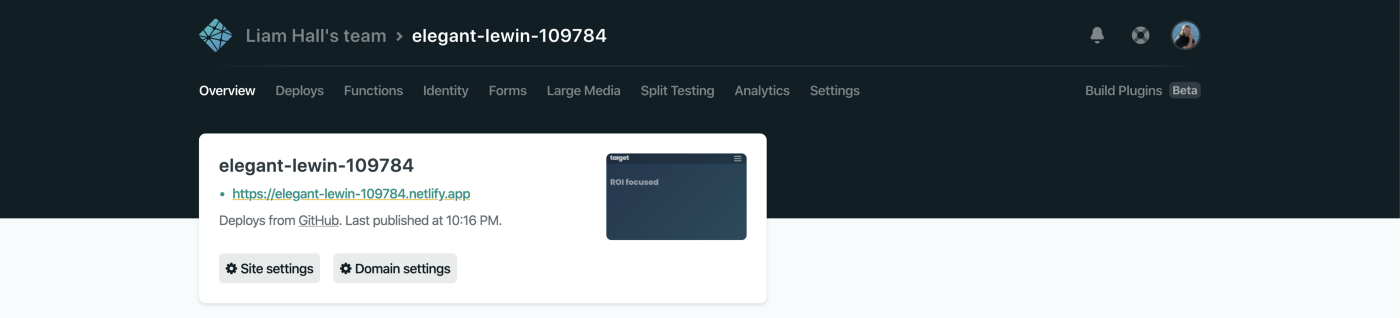

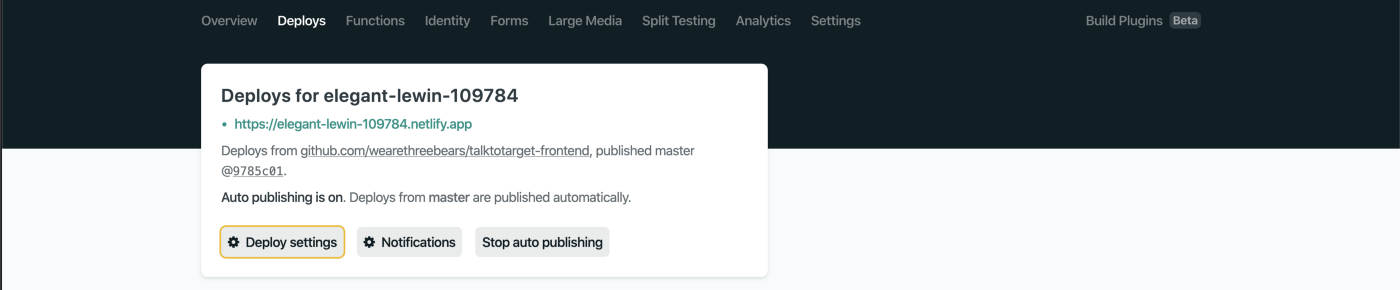

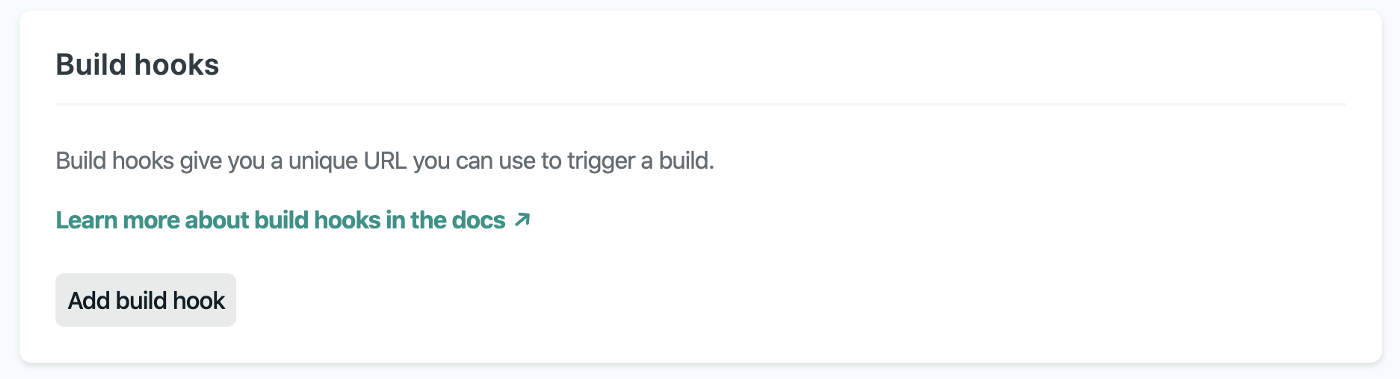

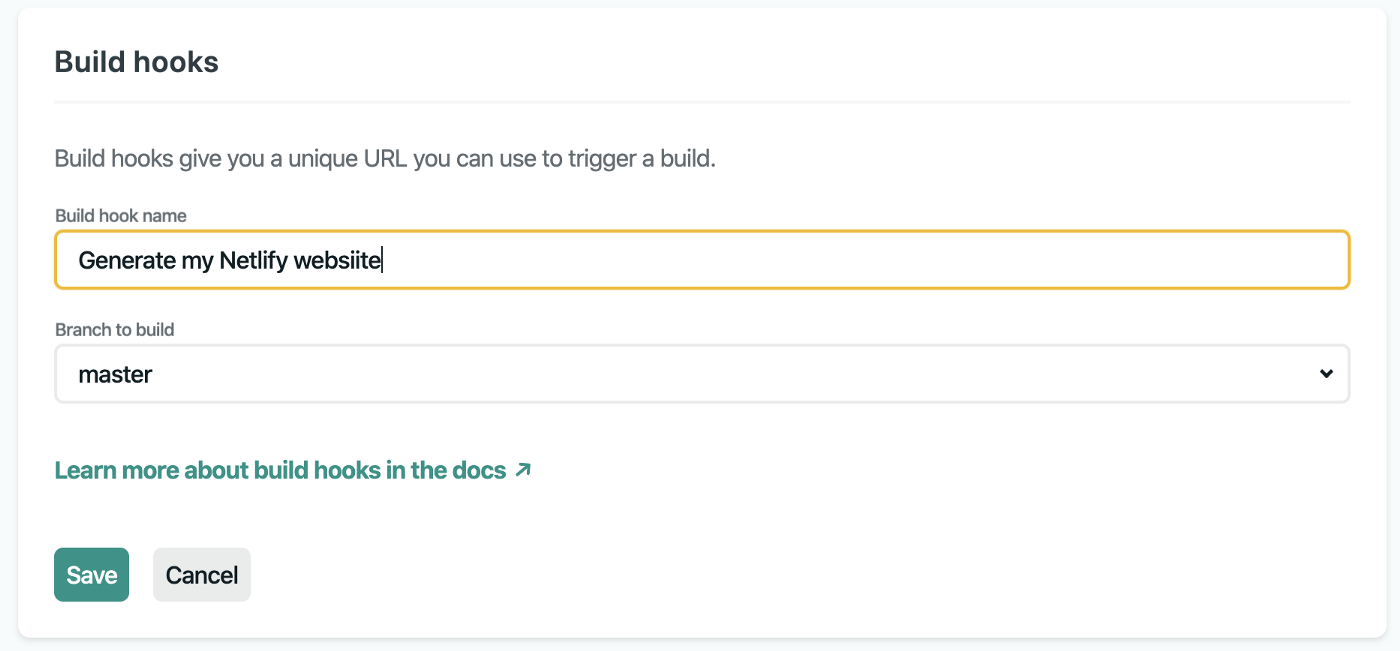

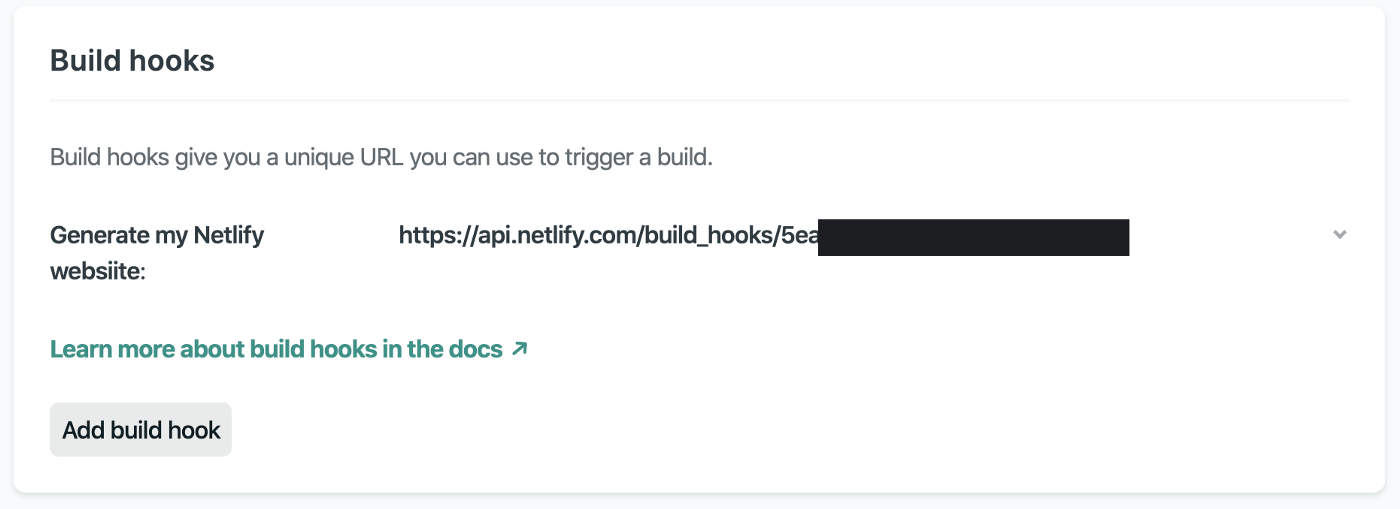

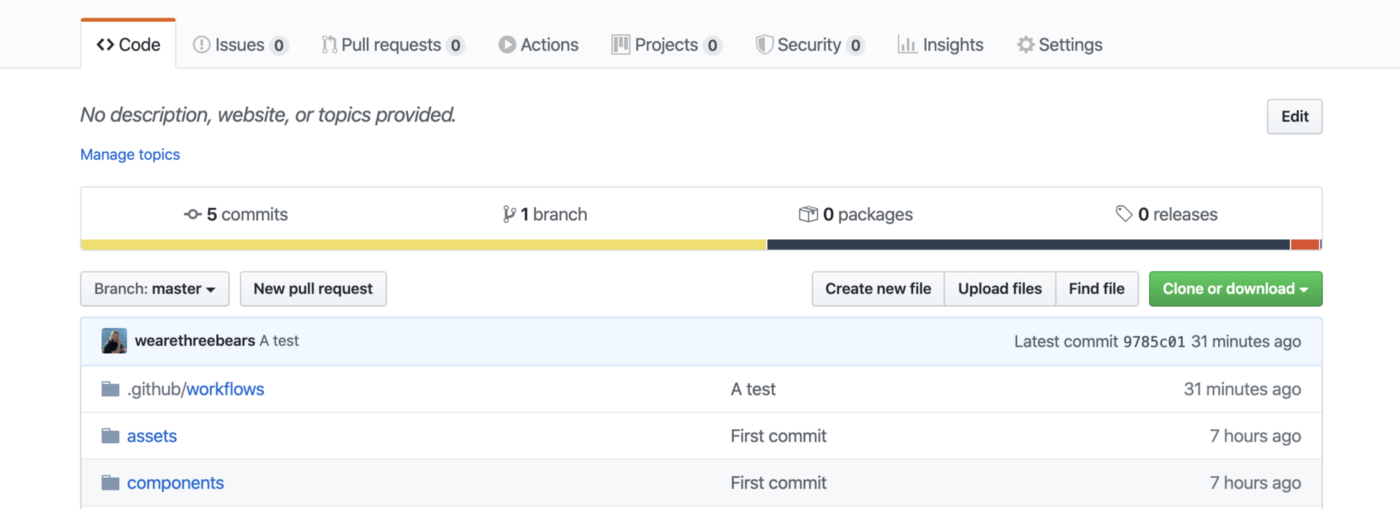

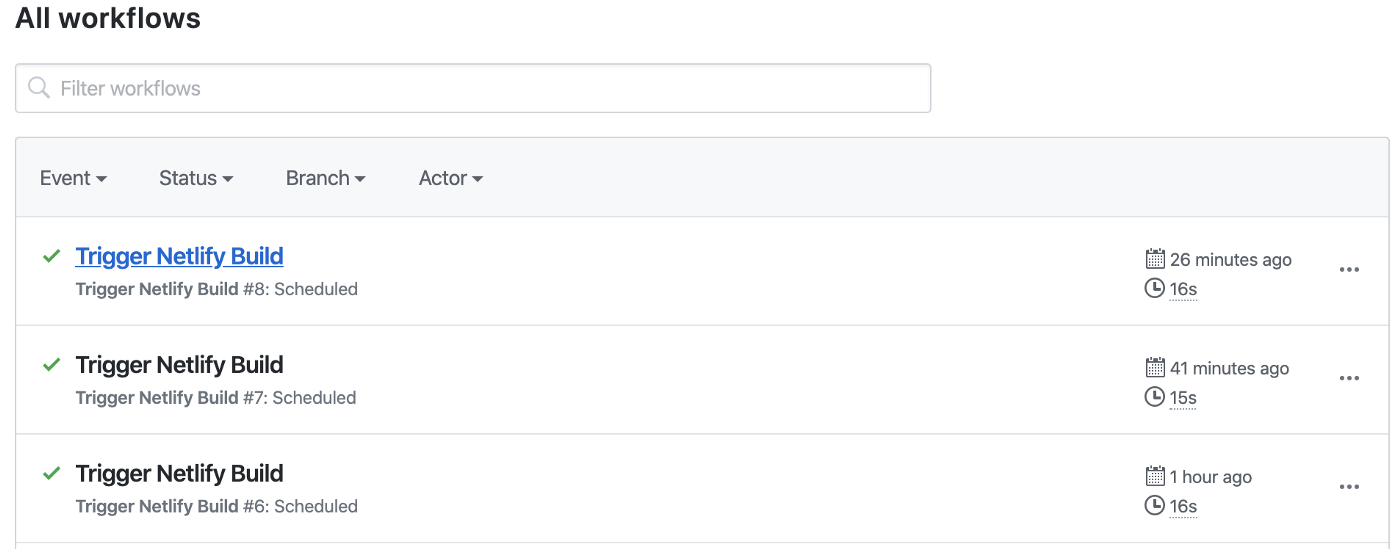

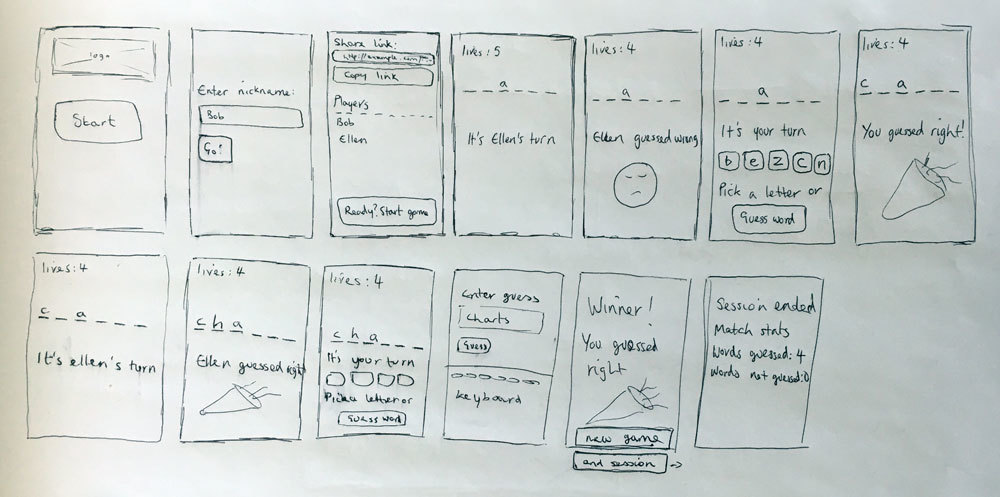

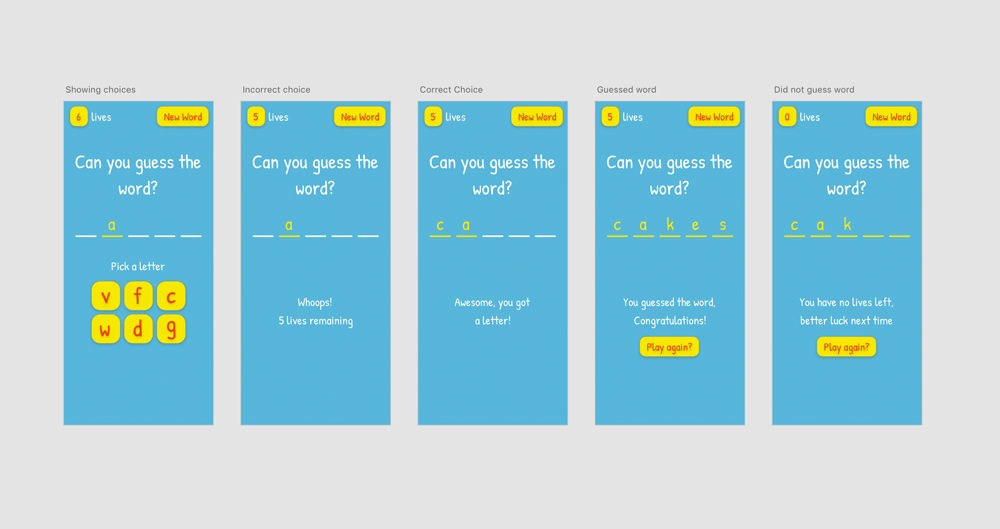

With just a simple spike created of our front end application, server and database - we wanted to hit the ground running and set up a CI/CD pipeline to automate deployment. Check out how we set it up below 👇

{% link https://dev.to/pmca/transforming-teaching-with-teachingo-update-3-5daf %}

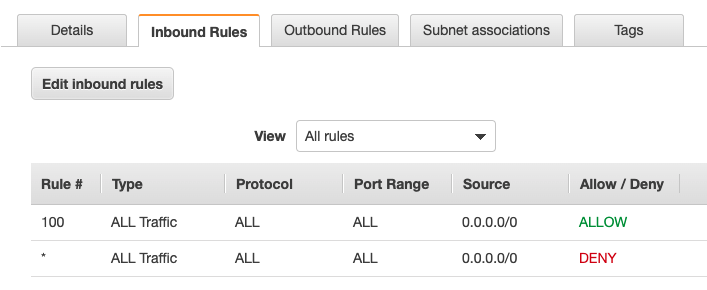

### Security, Security, Security 🕵️♀️

As the application is going to be used as an educational tool, it is essential that it is secure - to see some of the security measures we have taken, have a read of our blog number 4:

{% link https://dev.to/chloemcateer3/transforming-teaching-with-teachingo-update-4-1cgj %}

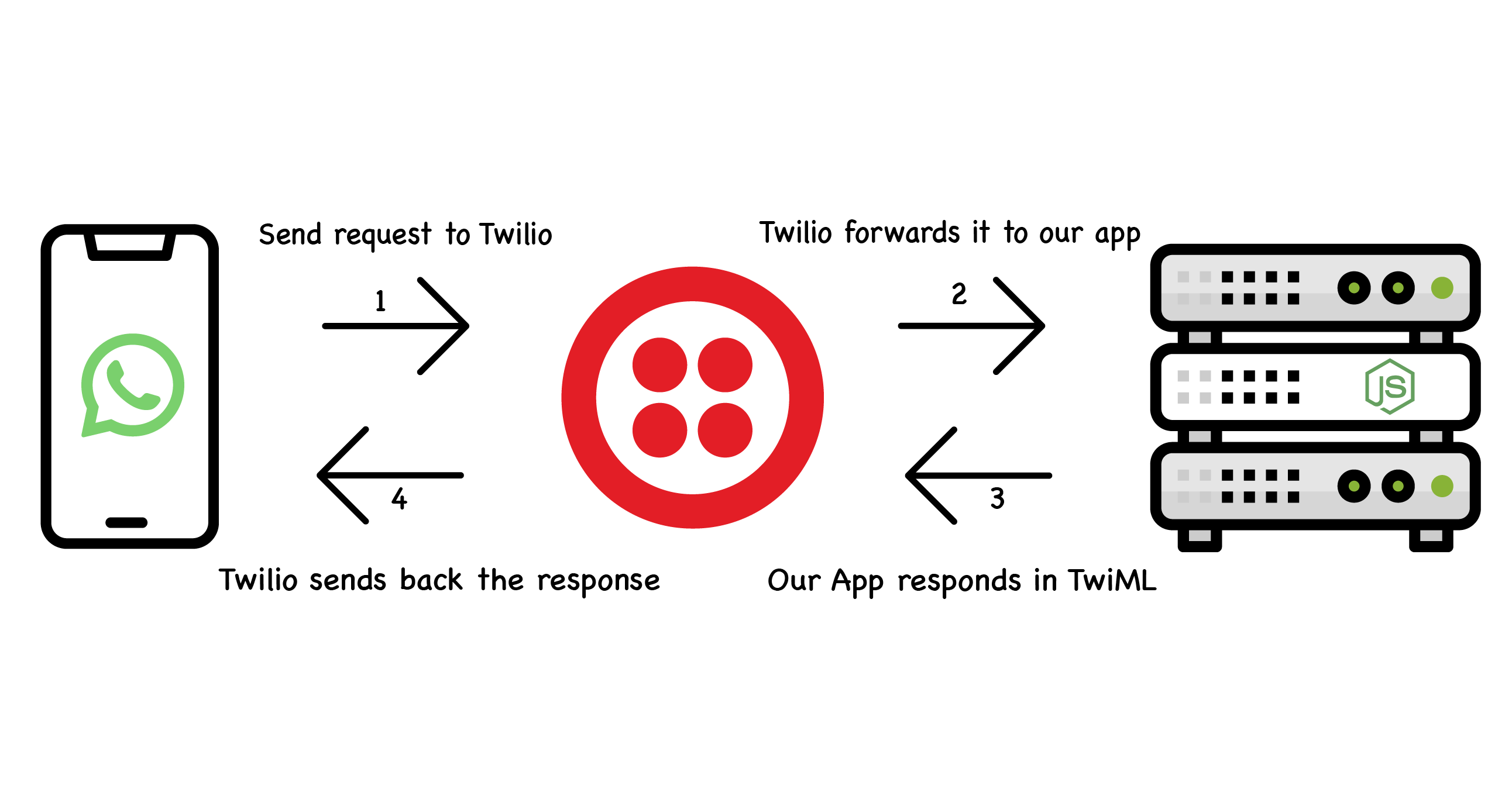

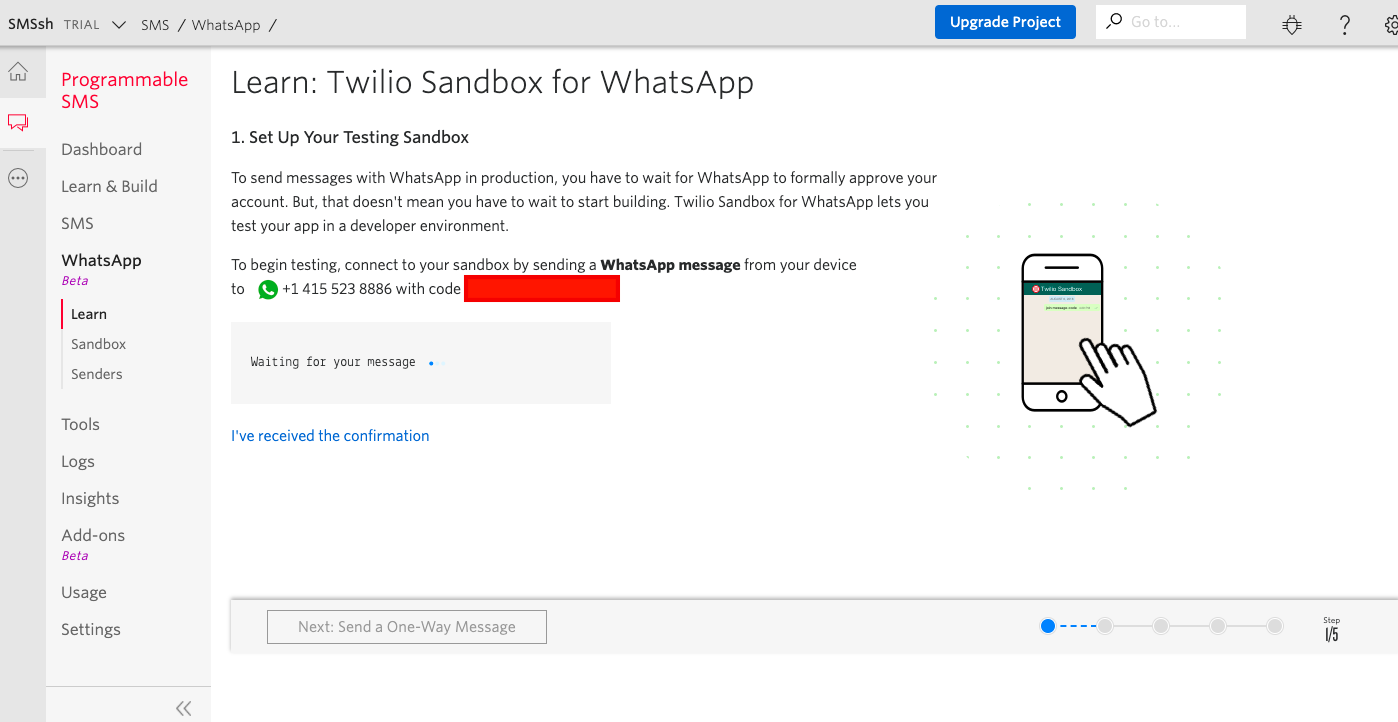

### Twilio Time ⏰

Next step was to check out the Twilio services, SDKs and more to see what they could offer us. At a first glance we couldn't believe what Twilio could do out of the box and jumped straight into working with it. Take a look at how we got started and some of our code snippets in progress update number 5!

{% link https://dev.to/pmca/transforming-teaching-with-teachingo-update-5-58g2 %}

## Demo Link

We recorded a [short demo](https://drive.google.com/open?id=1h9GgnBrbDLK4oHnUhiDgYJ1T0ddvsEOa) of the main features in Teachingo.

You can check out the deployed application [here](https://confident-pike-86a4c7.netlify.app)

## Link to Code

{% github pmc-a/teachingo-client no-readme %}

{% github pmc-a/teachingo-api no-readme %}

**GitHub Profiles:**

- [Chloe - chloeMcAteer](https://github.com/chloeMcAteer)

- [Peter - pmc-a](https://github.com/pmc-a)

## Completed Features

Once we started this hackathon, we had an endless list of possible features we wanted to add to this application, but unfortunately due to time constraints it wasn't possible to add everything we wanted, but below is a full feature list of what we **achieved**:

* Ability to securely log in as a teacher or student

* Ability to view upcoming lessons

* Ability to start/join a video call

* Ability to mute/unmute mic, turn on/off camera and share screen

* Ability to live chat with everyone in the lesson to ask questions

* Abiltity for the teacher to view summary lesson statistcs at the end of the lesson.

* Ability for the teacher to send an SMS to students who missed the lesson.

Some additional features that we wanted to add and didn't have time to complete:

* Ability for students to show red, amber or green to highlight their understanding of the topic

* Ability to request a transcript/recording of the lesson

* Ability to obtain general student feedback at the end of the lesson

## Additional Resources/Info

We hope you like our submission! We have been tweeting our progress throughout the hackathon so if you want to see our journey check us out at [@chloeMcAteer3](https://twitter.com/chloeMcAteer3) & [@PMc_A](https://twitter.com/PMc_A)! | chloemcateer3 |

301,247 | Job Search Week 7 | So I decided to clean up some of my repositories from Makers this week. I thought it'd be nice to go... | 5,212 | 2020-04-06T22:00:31 | https://dev.to/kealanheena/job-search-week-6-3dcp | makers | So I decided to clean up some of my repositories from Makers this week. I thought it'd be nice to go back over the basics and also have everything looking nice.

Day 1

So on Monday, I started with Boris bikes picking up where I had left off in week 1 of makers. It was quite fun to go back and see my old code and also see how far I've come since then. I managed to get through some of the things I had been stuck on and do some of the challenges I didn't get a chance to do.

Day 2

On Tuesday, I got to do some mocks and stubs which was nice because I wanted to get some practice in using them and now I've done that it makes more sense to me.

Day 3

I got more functionality done on Wednesday. I continued working with classes although a bit repetitive I think it's good to go over the basics because a solid understanding of the basics will help when implementing more complicated functionality. I also updated the documentation on Boris bikes to make it more relevant.

Day 4

I continued to work through Boris bike adding the van and garage classes to the project. I did notice that there were some similarities between the van, garage and docking station classes which was where the module came in.

Day 5

On Friday, I just finished off Boris bikes completing the last of the functionality and updating the documentation. I also got to use a module which I didn't get to during makes which were interesting to work with.

Summary

Overall I think this was a good week I worked on the basics and got Boris bikes and the airport challenge nicely cleaned up. I think I'll keep working through the Makers projects till along with my side projects. | kealanheena |

301,292 | Curso JSON - 3. Herramientas | Curso sobre JSON de novato a experto (versión GRATIS) Presentación:... | 5,620 | 2020-04-06T23:45:45 | https://youtu.be/uE8QD3ztKUg | spanish, vscode, node, javascript | {% youtube uE8QD3ztKUg %}

Curso sobre JSON de novato a experto (**versión GRATIS**)

Presentación: https://slides.com/equimancho/json/#/3

Repositorio:

https://github.com/equiman/playground-json

Descargas:

Git: https://git-scm.com/

Visual Studio Code: https://code.visualstudio.com/

NodeJS: https://nodejs.org/

Curso recomendado de Visual Studio Code (Fernando Herrera):

https://cursos.devtalles.com/courses/visual-studio-code

Contadores (Mr. Timer):

https://www.youtube.com/channel/UCYo5An-3L_eOd1l5WQdU3oQ

---

**That's All Folks!

Happy Coding** 🖖

[](https://github.com/sponsors/deinacademy) | equiman |

301,557 | Replacing React's Redux library with the useReducer Hook | If you'd like to see more content like this 😍, we should definitely connect on Twitter! 🤝. Take a... | 0 | 2020-04-07T08:03:12 | https://robkendal.co.uk/blog/2020-04-07-replacing-reacts-redux-library-with-usereducer-hook/ | react, showdev, tutorial, beginners | ---

title: Replacing React's Redux library with the useReducer Hook

published: true

date: 2020-04-07 08:01:00 UTC

tags: react, showdev, tutorial, beginners

canonical_url: https://robkendal.co.uk/blog/2020-04-07-replacing-reacts-redux-library-with-usereducer-hook/

cover_image: https://robkendal.co.uk/img/useReducer%20-%20blog%20post.png

---

_If you'd like to see more content like this_ 😍, _we should definitely connect on Twitter!_ 🤝. _Take a look at [my Twitter profile](https://twitter.com/kendalmintcode) and I'll look forward to seeing you there_ 👍🏻

I've been moving over to using React Hooks in my development of late. They offer a much simpler, terser approach to development and are super powerful. They do require a certain mind-shift towards [thinking in React Hooks](https://wattenberger.com/blog/react-hooks) (read that article by Amelia Wattenberger, it's so well written and helpful!), but they really push your development on.

Anyway, up until now, I had been like a lot of developers who wanted to employ a centralised state management system; I had been using the [Redux library](https://redux.js.org/), specifically [React Redux](https://react-redux.js.org/introduction/why-use-react-redux) and the [Redux Toolkit](https://redux-toolkit.js.org/) (which just simplifies things a little I feel).

However, the React core team has [introduced the `useReducer` Hook](https://reactjs.org/docs/hooks-reference.html#usereducer) and I've found it a little nicer to use. It doesn't require additional libraries or tooling, and I feel like it might just take some of the edge of learning the Redux pattern(s) for beginners. It certainly removes a lot of the configuration 'fun' that accompanies a typical Redux setup.

So let's start using it!

## Contents

This is a long article, so if you want to skip around then you'll find this outline handy:

- [Redux primer](#redux-primer) a gentle introduction to Redux for beginners

- [Reducers](#reducers)

- [Action creators](#action-creators)

- [Dispatchers](#dispatch-methods)

- [Redux with useReducer](#redux-with-usereducer)

- [Creating the reducer](#creating-reducers)

- [Wiring up the App component](#wiring-the-app)

- [Faking API calls](#fake-api-calls)

- [Rendering components](#rendering-components)

- [Displaying our ShoppingList](#displaying-the-list)

- [Introducing React's Context](#introducing-context)

- [Dispatching updates to state](#dispatch-updates)

- [Adding new items](#add-new-items)

- [Demo and working code in action](#demo)

- [Further reading and resources](#further-reading)

## A Redux primer

<a name="redux-primer"></a>**(If you're already a Redux king or queen and just want to start using the useReducer Hook, you can [skip to the tutorial part now](#redux-with-usereducer))**

I mentor some junior and aspiring developers and at some point they all land on the Redux methodology for manging their state. It's easy to see why: it's a very common approach to solving application state management in complex apps; let's face it, most commercial apps qualify as 'complex' and you are always better off learning things that are geared to helping you in a realistic role.

However, the concepts involved in Redux are where a lot of beginners come unstuck. I think it's a combination of having to understand several moving parts that wire together to make a Redux-y state change, as well as some unfamiliar (and potentially confusing) terminology.

Hopefully, this little interlude can help get you familiar with the basics before we plough on with implementing the useReducer Hook for our own Redux stuff.

### The main players in Redux

So, there are four main players within the Redux pattern:

1. Application state

2. Dispatchers

3. Action creators

4. Reducers

### Application state

The most straightforward of the bunch, this is simply a centralised object with various properties that represent the 'state' of our application at a given moment. It can contain anything your app needs, but typically it could contain collections of items, settings, preferences and so on.

In more complex applications you might find that state is broken into small sections (often referred to as 'slices' in Redux land) which are then stitched together when the application is served.

### Reducers

<a name="reducers"></a> Reducers are functions that modify our state.

They usually accept an output of an **action creator** and use this to determine what _action_ to take on our state.

You might see something like this:

```JavaScript

function mySuperReducer(state, action) {

switch(action.type) {

case 'INCREMENT':

return state + 1;

default:

return state;

}

}

```

For some state changes, however, we also need to modify our state based on a passed in value. For this, we'll use an action that contains a **payload**.

A **payload** could be anything: a value, string, integer, array, object, etc. It's passed in with the action object into the reducer and is used to modify state.

It might look like this:

```JavaScript

function mySuperReducer(state, action) {

switch(action.type) {

case 'ADD_USER':

return [...state, action.payload.newUser]

default:

return state;

}

}

```

### Action creators

<a name="action-creators"></a>Action creators are functions that create actions. That's not very helpful though.

What they are, are quite simple functions that return an object. This object usually contains two things:

1. The type of action you want to take (often a string constant)

2. The value you want to take action with (see above example in the reducers section)

When you pass an action (the result of an action creator) into a reducer, it is the action type that determines what will happen to state, and (if required/available) what _value_ will be used as part of the state changes.

What they look like varies from use to use, but in a relatively standard Redux setup, they'll look either like this:

```JavaScript

const ADD_USER = 'ADD USER; // our string constant part

function addUser(newUser) {

return { type: ADD_USER, newUser };

}

```

or like this if you're using one of the Redux library's handy helpers:

```JavaScript

const addUser = createAction('ADD USER');

```

### Dispatchers

<a name="dispatch-methods"></a>The final piece of the puzzle, dispatchers. Dispatchers are the gophers between actions and reducers. Essentially, dispatchers are functions that trigger/kick-off all state updates.

You call a dispatch function, passing in an action. The dispatch function takes the action to the reducer and the reducer modifies the state.

Using a dispatcher might look like this:

```JavaScript

// Here's our action

function addUser(newUser) {

return { type: 'ADD_USER', newUser };

}

// here's a new user object

const user = {

name: 'rob kendal',

age: 380,

dob: '01/01/1901'

}

// and here's the dispatch to trigger things

dispatch(addUser(user));

```

[](https://twitter.com/kendalmintcode)

### Putting it all together

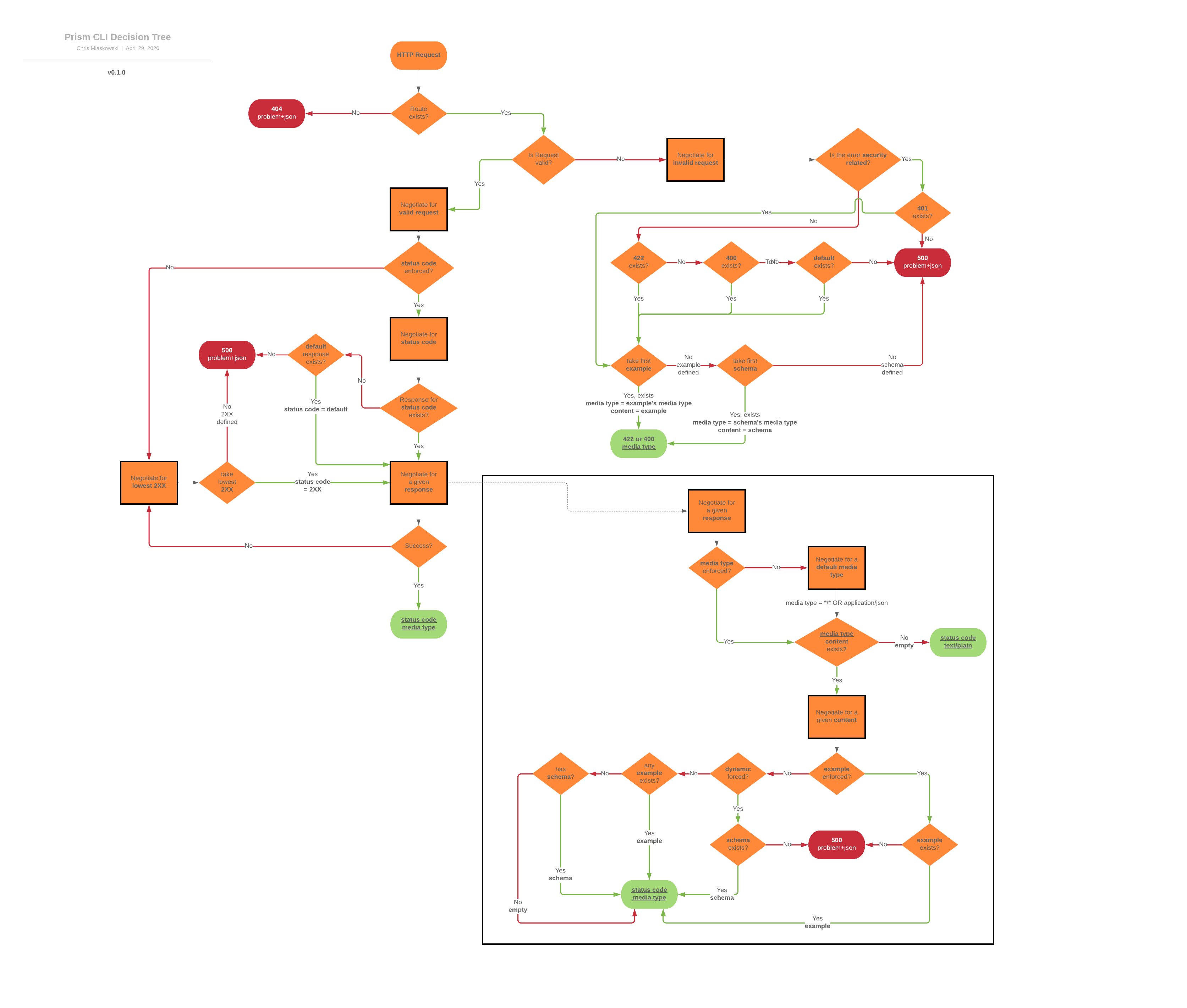

There is [a good explanation of the flow of data and the various interaction points](https://redux.js.org/basics/data-flow) in a Redux update cycle available on the Redux JS website. In the meantime, here's a handy diagram that should help cement the concepts at a high level.

### Further reading

If you need further help on this, check out the various [Redux JS documentation sites](https://redux.js.org/) and I have a couple of articles on using Redux with a data handler:

1. [React and Redux components - part one](https://dev.to/kendalmintcode/react-redux-components-api-s-and-handler-utilities-3fk9)

2. [React and Redux components with data handlers - part two](https://dev.to/kendalmintcode/react-redux-components-api-s-and-handler-utilities-part-two-389p)

## Redux with React and useReducer

<a name="redux-with-usereducer"></a>OK, to the main meat and potatoes of the article, using the useReducer Hook to manage your application state in React.

We're going to build a small shopping list app that accepts some simple data via input elements and uses the Redux pattern to update a global state-held list.

The tools we'll be using include:

- `useReducer` - this is the [React Hook](https://reactjs.org/docs/hooks-reference.html#usereducer) that is billed as an alternative to `useState`.

- `useContext` - the [useContext Hook](https://reactjs.org/docs/hooks-reference.html#usecontext) will allow us to grab the current context value from the specific context we're referencing. In our case, we'll be passing down both a dispatch function to allow for state updates and the state object itself to make use of its contents.

- Creating a reducer function to update our state

- Making an action creator function that just simplifies the building of an action

- Creating an initial state with some predefined items on our list

So let's get to it; first up, we'll create our initial state

### Initial state

Firstly, we'll need a place to store our app's data, our initial state. We'll create a new file `initialstate.js` and load it up.

```JavaScript

export default {

loadingItems: false, // our app uses this to determine if we're loading our list

shoppingList: [ // our initial list of items

{

id: 1,

name: "Bananas",

description: "A bunch of 5 bananas, fresh from the plant",

price: 1.83

},

{

id: 2,

name: "Soup",

description: "A can of beef broth",

price: 0.54

}

]

};

```

Nothing too clever here, just a plain old JavaScript object with a couple of properties that are fairly self-explanatory;

### Creating the reducer

<a name="creating-reducers"></a>Next, we'll create our reducer file, `reducer.js`. It will contain a few items when we're done:

1. **Two React contexts** , one that will contain our dispatch method and one that will contain our state. They will both be wrapped around our React app to be referenced in child components further down the tree.

2. **Action types** : this is just be a simple JS object with string constants. We'll use these to prevent ambiguity or errors when triggering dispatches.

3. **A reducer function** , the main star of the show that will ultimately affect change in our app's state.

Our new file looks like this:

```JavaScript

// We need React in scope to create our context objects

import React from "react";

// Contexts

// will be used to pass down the dispatch method and our

// application state via the Context Provider and consumed

// in child components using the useContext Hook

export const StateContext = React.createContext(null);

export const ShoppingContext = React.createContext(null);

// Action constants

// we will import this object and use the various properties

// in child objects when calling the dispatch method

export const actions = {

GET_ITEMS: "get items",

GET_ITEMS_SUCCESS: "get items success",

ADD_ITEM: "add item",

REMOVE_ITEM: "remove item"

};

// This is a simple helper function that will take a type

// (from the constants above) and a payload, which will be the

// value which needs to be affected in state it returns

// a simple object that will be passed to our dispatch function

export const createAction = (type, payload) => {

return {

type,

payload

};

};

// Reducer

// the function that accepts our app state, and the action to

// take upon it, which then carries out that action

export const reducer = (state, action) => {

switch (action.type) {

case actions.GET_ITEMS:

return {

...state,

loadingItems: true

};

case actions.GET_ITEMS_SUCCESS:

return {

...state,

loadingItems: false

};

case actions.ADD_ITEM:

const nextId = Math.max.apply(

null,

state.shoppingList.map(item => item.id)

);

const newItem = {

...action.payload,

id: nextId + 1

};

return {

...state,

shoppingList: [...state.shoppingList, newItem]

};

case actions.REMOVE_ITEM:

return {

...state,

shoppingList: state.shoppingList.filter(

item => item.id !== action.payload

)

};

default:

return state;

}

};

```

In a more complex app, it may make sense to split these functions out, but for smaller apps and our example, it makes sense to me to keep them contained within one reducer file. You could name it something that encompasses the more holistic nature of the elements within it, but for now, it's fine.

The main thing to understand is that each part in here is related and will join together throughout our app to make changes to our state.

The reducer function is the most interesting part and you can see that it accepts our current state (this is taken care of by React's `useReducer` function that you will see later on) and the action we want to take against the state.

Based on the supplied action's type, the reducer determines which action we're talking about and then does some simple state mutation based on what that action type may be.

For example, if we pass the action type 'REMOVE\_ITEM' (just a string constant), the reducer returns a new version of state with the 'shoppingList' property where the previous shopping list has been filtered to remove the item that matches the action's payload (which will be the item's id value).

## Wiring up the App component with `useReducer`

<a name="wiring-the-app"></a>So we've got a reducer (and it's other moving parts) and some state for the reducer to act upon. Now we need to wire this up into our App.

First, we'll import some important items at the top of the file:

```JavaScript

import React, { useReducer, useEffect } from "react";

// Styles

import "./styles.css";

// Data

import initialState from "./initialstate";

import { reducer, StateContext, ShoppingContext, actions } from "./reducer";

// Components

import AddItem from "./components/AddItem";

import ShoppingList from "./components/ShoppingList";

```

We'll get to useReducer and useEffect in a minute. The important bits of this so far are that we're importing our app's initial state, as well as most items from the `/reducer.js` file.

Next, we'll define our main export and proceed to fill it as we go.

```JavaScript

export default props => {

return (

<div>The app has landed</div>

);

};

```

From here, we'll finally use our useReducer Hook:

```JavaScript

export default props => {

const [state, dispatch] = useReducer(reducer, initialState);

useEffect(() => {

// simulate loading of items from an API

dispatch({

type: actions.GET_ITEMS

});

setTimeout(() => {

dispatch({

type: actions.GET_ITEMS_SUCCESS

});

}, 2000);

}, []);

return (

...

);

};

```

The useReducer Hook is a really simple function in essence. It returns an array, `[state, dispatch]` which contains our app's state, and the dispatch function we will use to update it.

We're also using the useEffect Hook with an empty array, which means it will only fire once, **not on every render**.

The useEffect Hook here is not at all necessary, but I've used it to mimic a realistic scenario whereby an app would load and then go off and fetch some data from an API.

### Faking the API call

<a name="fake-api-calls"></a>In a real app, you'll need to interact with an API and you'll most likely want to show some sort of loading message whilst you wait for data back. We're not using an API and our data is miniscule by comparison, but we can fake the _effects_ of an API using a `setTimeout` callback.

In the useEffect Hook, we actually use the dispatch method for the first time. We pass it a type of 'GET\_ITEMS' which is a string property on our imported actions constants object (`actions`) from the top of our App component.

You can see in our `reducer.js` file what affect this has on state:

```JavaScript

export const reducer = (state, action) => {

switch (action.type) {

case actions.GET_ITEMS:

return {

...state,

loadingItems: true

};

// ... rest of reducer

}

};

```

We simply set the 'loadingItems' flag to true, which means in our App component, we'll display a loading element.

### Rendering the components

<a name="rendering-components"></a>Finally, we need to wire up the app so that it actually renders something useful. We'll do that here:

```JavaScript

export default props => {

// ...unchanged

return (

<ShoppingContext.Provider value={dispatch}>

<StateContext.Provider value={state}>

<h1>Redux fun with shopping lists</h1>

<hr />

{state.loadingItems && <div className="loading">...loading</div>}

{!state.loadingItems && (

<div className="columns">

<div className="column">

<h2>Add a new item</h2>

<AddItem />

</div>

<div className="column">

<h2>Shopping list</h2>

<ShoppingList />

</div>

</div>

)}

</StateContext.Provider>

</ShoppingContext.Provider>

);

};

```

The main take away here is the two context providers that we use to wrap the main App component in.

The first, `<ShoppingContext.Provider value={dispatch}>` allows us to pass down the dispatch function to child components.

The second `<StateContext value={state}>` is the same, but allows child components to access our application state when they need.

These are a key part of the process as they allow us to access dispatch and state from child components. You can [read more about React's Context on the official documentation](https://reactjs.org/docs/context.html).

### Finishing off the App component

Everything else is pretty much standard React stuff. We check to see if the 'loadingItems' property/flag is set to 'true' and either display a loading element, or our AddItem and ShoppingList components.

Here's our app's entry point in complete, the App component:

```JavaScript

import React, { useReducer, useEffect } from "react";

// Styles

import "./styles.css";

// Data

import initialState from "./initialstate";

import { reducer, StateContext, ShoppingContext, actions } from "./reducer";

// Components

import AddItem from "./components/AddItem";

import ShoppingList from "./components/ShoppingList";

export default props => {

const [state, dispatch] = useReducer(reducer, initialState);

useEffect(() => {

// simulate loading of items from an API

dispatch({

type: actions.GET_ITEMS

});

setTimeout(() => {

dispatch({

type: actions.GET_ITEMS_SUCCESS

});

}, 2000);

}, []);

return (

<ShoppingContext.Provider value={dispatch}>

<StateContext.Provider value={state}>

<h1>Redux fun with shopping lists</h1>

<hr />

{state.loadingItems && <div className="loading">...loading</div>}

{!state.loadingItems && (

<div className="columns">

<div className="column">

<h2>Add a new item</h2>

<AddItem />

</div>

<div className="column">

<h2>Shopping list</h2>

<ShoppingList />

</div>

</div>

)}

</StateContext.Provider>

</ShoppingContext.Provider>

);

};

```

## Displaying our list in the ShoppingList component

<a name="displaying-the-list"></a>Next, we'll dig into the ShoppingList component. At the top of the file, we'll see a familiar set of imports:

```JavaScript

import React, { useContext } from "react";

// State

import {

ShoppingContext,

StateContext,

actions,

createAction

} from "../reducer";

```

Next, we'll define the main output for this component:

```JavaScript

export default props => {

const state = useContext(StateContext);

const dispatch = useContext(ShoppingContext);

const handleRemoveItem = id => {

dispatch(createAction(actions.REMOVE_ITEM, id));

};

return (

<>

{!state.shoppingList && <p>no items in list</p>}

{state.shoppingList && (

<table>

<thead>

<tr>

<th>Name</th>

<th>Description</th>

<th>Price</th>

<th>Actions</th>

</tr>

</thead>

<tbody>

{state.shoppingList &&

state.shoppingList.map(item => (

<tr key={item.id}>

<td>{item.name}</td>

<td>{item.description}</td>

<td>£{item.price}</td>

<td>

<button onClick={() => handleRemoveItem(item.id)}>

remove

</button>

</td>

</tr>

))}

</tbody>

</table>

)}

</>

);

};

```

The main return of the component doesn't have anything too interesting beyond standard React stuff. The interesting things, however, are the 'const' definitions and the `handleRemoteItem()` method.

### Wiring up context in the ShoppingList component

<a name="introducing-context"></a>We know from our App component that we're already passing down the Redux dispatch method and our application state, but how do we access them?

Simple: with the `useContext` Hook...

```JavaScript

const state = useContext(StateContext);

const dispatch = useContext(ShoppingContext);

```

That's all there is to it. We can now use 'state' to access various properties on our global application state, such as 'shoppingList', which we actually use to display our table.

Similarly, we use 'dispatch' to trigger state changes; in our case to remove items from our list.

### Dispatching updates to our shopping list

<a name="dispatch-updates"></a>Whilst you could inline the following directly into the button element (and I normally would for brevity), I think it's a little clearer for learning to abstract the 'remove' button's click handler into its own variable.

```JavaScript

const handleRemoveItem = id => {

dispatch(createAction(actions.REMOVE_ITEM, id));

};

```

Again, quite a simple approach, but we call the dispatch function, passing in the result of the createAction function. The createAction function accepts a 'type' and a value, referred to as a 'payload'.

It's worth noting that the above is functionally equivalent to the following:

```JavaScript

const handleRemoveItem = id => {

dispatch({ type: 'remove item', payload: id});

};

```

It just looks a bit neater in the first example, and leaves less room for error(s).

Again, you can see that this links through to our reducer file like so:

```JavaScript

export const reducer = (state, action) => {

switch (action.type) {

// ...rest of reducer

case actions.REMOVE_ITEM:

return {

...state,

shoppingList: state.shoppingList.filter(

item => item.id !== action.payload

)

};

}

};

```

We employ a straightforward `Array.filter()` on the state's shoppingList property that just skips over the item with the id value that we've passed in, that we want to remove.

## Adding new items with the AddItem component

<a name="add-new-items"></a>Finally, we need to be able to add an item to our list to complete the circle of CRUD (almost, we're not doing updates...).

By now, things should start looking familiar, so we'll take a look at the entire AddItem component as a whole and walk through the finer points:

```JavaScript

import React, { useContext, useState } from "react";

// State

import { ShoppingContext, actions, createAction } from "../reducer";

export default props => {

const _defaultFields = {

name: "",

description: "",

price: ""

};

const dispatch = useContext(ShoppingContext);

const [fields, setFields] = useState({ ..._defaultFields });

const handleInputChange = evt => {

setFields({

...fields,

[evt.target.id]: evt.target.value

});

};

const handleFormSubmit = evt => {

evt.preventDefault();

dispatch(createAction(actions.ADD_ITEM, fields));

setFields(_defaultFields);

};

return (

<form onSubmit={handleFormSubmit}>

<label htmlFor="name">Name</label>

<input

id="name"

type="text"

value={fields.name}

onChange={handleInputChange}

/>

<label htmlFor="description">Description</label>

<input

id="description"

type="text"

value={fields.description}

onChange={handleInputChange}

/>

<label htmlFor="price">Price</label>

<input

id="price"

type="text"

value={fields.price}

onChange={handleInputChange}

/>

<button type="submit">Add item</button>

</form>

);

};

```

Right at the top, we've got our React and state imports.

Next, in our main output, we have a default state object, `_defaultFields` that we're using to reset the fields in local state when we've finished adding a new item.

We consume the dispatch function using useContext so we can pass a new item into our shopping list. **Notice that we're not consuming the state context, however.** We don't need to use anything from our application's state, so there's no need to consume the context.

Most everything else is pretty standard React form field handling [using controlled components](https://reactjs.org/docs/forms.html#controlled-components) that is beyond the scope of this article.

What we're interested in, however, happens in the `handleFormSubmit()` method:

```JavaScript

const handleFormSubmit = evt => {

evt.preventDefault();

dispatch(createAction(actions.ADD_ITEM, fields));

setFields(_defaultFields);

};

```

Firstly, we call the synthetic event's `preventDefault()` method to prevent the page from refreshing.

Next, we call our familiar dispatch method, passing in the action 'ADD\_ITEM' and the fields object from state which is a collection of any values we've made into the form's fields.

What happens in our reducer looks like this:

```JavaScript

export const reducer = (state, action) => {

switch (action.type) {

// ...rest of reducer

case actions.ADD_ITEM:

const nextId = Math.max.apply(

null,

state.shoppingList.map(item => item.id)

);

const newItem = {

...action.payload,

id: nextId + 1

};

return {

...state,

shoppingList: [...state.shoppingList, newItem]

};

// ...rest of reducer

}

};

```

This is arguably the most complex part of our reducer, but it's easy to follow:

- We work out the current highest id value in our shopping list items and increment it by one (not recommended in real life!);

- We add the id to a new item object;

- We update the state's shoppingList property by copying the array to a new array, adding in the new item object.

Finally, we clear out any saved fields/input data by replacing local state with the `_defaultFields` object.

## Putting it all together

<a name="demo"></a>You can see the finished app and play about with it below, and you can [view it online in the CodeSandbox environment](https://codesandbox.io/s/redux-with-usereducer-9tfko).

{% codesandbox redux-with-usereducer-9tfko %}

## Caveats, gotchas and things to bear in mind

This article covers the basics of using the useReducer Hook in conjunction with React's Context mechanism to both update and access your application's state. It can be used instead of the standard Redux library's approach, and it certainly requires no additional setup or configuration, which is handy (because there's a lot of that in the traditional Redux world).

However, this particular approach I've used may not suit you and your situation. It probably won't scale that well 'as-is' and could benefit from some smoothing out in terms of using this exact approach for a full-scale application. For example, you may wish to split your state into smaller parts for different areas of your application, which is great, but you can see how you'll need to work on that with from we've done here.

There is always more than one way to approach a problem and I think it's worth knowing your options. This article helps to introduce the Redux patterns and concepts whilst employing a nice new approach of employing reducers using built-in Hooks.

I would (and do) use this commercially, but do take what you see here and adapt it to your own means.

## Further reading and references

It's always handy to have a list of other sources of information, so here's that very list of useful references, links, resources that are worth a peek to help you in your quest to be a Redux master:

- [Redux JS](https://redux-toolkit.js.org/tutorials/basic-tutorial) - discover more about the Redux methodology and library

- [Redux Toolkit](https://redux-toolkit.js.org/) - an opinionated version of the Redux JS library for React

- [React's official documentation on Hooks](https://reactjs.org/docs/hooks-reference.html) - especially helpful for the [useContext](https://reactjs.org/docs/hooks-reference.html#usecontext) and [useReducer](https://reactjs.org/docs/hooks-reference.html#usereducer) Hooks

- Amelia Wattenberger's ['thinking in hooks' article](https://wattenberger.com/blog/react-hooks) - super helpful, clear resource for shifting your mindset into using Hooks in your own code

- My own articles on Redux and React, using API's and data handlers. I have an [article part one](https://dev.to/kendalmintcode/react-redux-components-api-s-and-handler-utilities-3fk9), and [article part two](https://dev.to/kendalmintcode/react-redux-components-api-s-and-handler-utilities-part-two-389p) available, which cover some more real-world examples.

## EDIT - 09 April 2020

Shout out to [Daishi Kato](https://twitter.com/dai_shi) on Twitter for his suggestion of using [react tracked](https://react-tracked.js.org/) in conjunction with the work in this article.

{% twitter 1247652386690093058 %}

[React tracked](https://react-tracked.js.org/) is a simple utility that supports the use of useReducer Hooks but helps to eliminate unnecessary rerenders upon smaller, unrelated state changes.

| kendalmintcode |

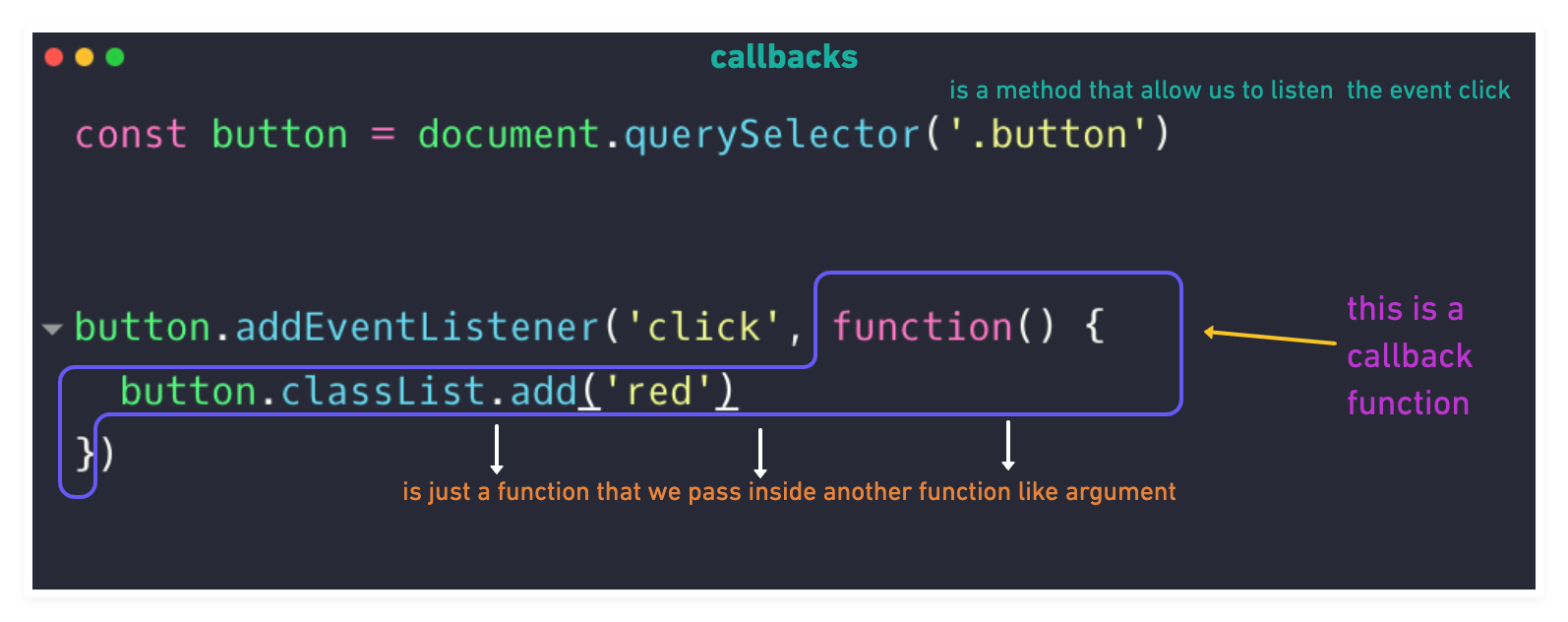

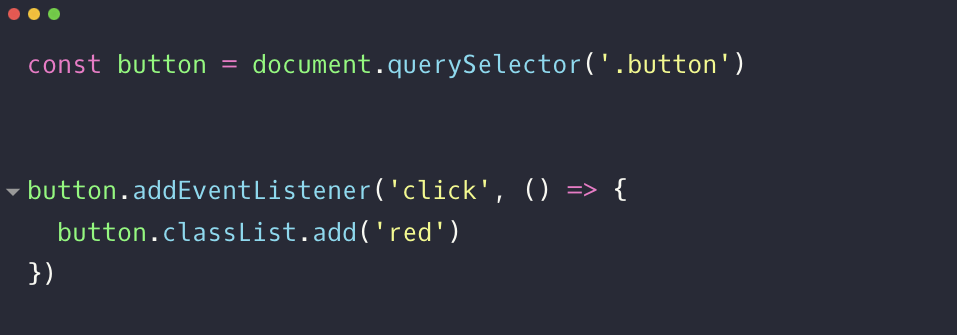

301,586 | Online and Offline events in JavaScript | In this post you will learn how to build a fully offline-capable app that will show an alert to user... | 0 | 2020-04-07T09:06:13 | https://dev.to/zeeshanahmad/online-and-offline-events-in-javascript-h25 | javascript | In this post you will learn how to build a fully offline-capable app that will show an alert to user when the application is offline or online. But first let me explain what are events and what are the advantages of using them in your application. Many function starts working when a webpage loads in a browser. But in many cases you want to start a function or take an action when a mouse button is clicked, mouse hovered on an object, when a page fully loaded in browser, input value is changed or keyboard button is pressed etc. All these actions are called events. You can write functions to run when a specific event happens. All these function listens for an event and then start taking the action by initiating the function.

There are two methods by which we can check the connection status both are listed below:

1. Navigator Object

2. Listening to events

## 1. Navigator Object

There is a global object **navigator** in javascript by which you can easliy check if a user is offline or online. The **navigator.onLine** returns `true` if a user is connected to the internet but it will return false if the user is offline.

```javascript

if (navigator.onLine)

console.log("Congratulations, You are connected to the internet.")

else

console.log("Congratulations, You are not connected to the internet.")

```

## 2. Listening to events

Now lets review the other method to check the connection status. In this method we continually listen to the two events `online` and `offline`. And when the connection is interpreted the `offline` event is fired and we capture it by listening to this event. And when the connection is back online the `online` is fired. So, lets take a look at the following example:

### Example

```javascript

class Connection {

constructor() {

this.options = {

onlineText: 'Your device is connected to the internet.',

offlineText: 'Your device lost its internet connection.',

reconnectText: 'Attempting to reconnect...',

notifier: document.querySelector('.notifier'),

notifierText: document.querySelector('.notifier span'),

spinner: document.querySelector('.notifier .lds-css')

};

this.init();

}

init() {

if (navigator.onLine) {

this.on();

} else {

this.off();

setTimeout(() => {

this.reconnect();

}, 1500);

}

window.addEventListener('online', () => {

this.on();

});

window.addEventListener('offline', () => {

this.off();

setTimeout(() => {

this.reconnect();

}, 1500);

});

}

on() {

this.options.notifierText.innerText = this.options.onlineText;

this.options.notifier.classList.remove('error', 'warning');

this.options.notifier.classList.add('success');

this.options.notifier.style.display = "block";

this.options.spinner.style.display = "none";

}

off() {

this.options.notifierText.innerText = this.options.offlineText;

this.options.notifier.classList.remove('success', 'warning');

this.options.notifier.classList.add('error');

this.options.notifier.style.display = "block";

this.options.spinner.style.display = "none";

}

reconnect() {

this.options.notifierText.innerText = this.options.reconnectText;

this.options.notifier.classList.remove('error', 'success');

this.options.notifier.classList.add('warning');

this.options.notifier.style.display = "block";

this.options.spinner.style.display = "block";

}

}

(function () {

new Connection();

})();

```

<a href="https://codepen.io/zeeshanu/pen/zpLMxo">See demo on CodePen</a> | zeeshanahmad |

301,606 | Reading Snippets [52 => CSS] 🎨 | Calc() can be used in CSS to perform math operations on values. It can be used for performing diffe... | 0 | 2020-04-07T09:40:20 | https://dev.to/calvinoea/reading-snippets-52-css-1mhi | todayilearned, css, beginners, html | Calc() can be used in CSS to perform math operations on values.

It can be used for performing different calculations, including adding and subtracting values, using percentages.

For example:

<code>

div {

max-width: calc(80% - 100px)

}

</code>

<kbd><small><a href="https://flaviocopes.nyc3.digitaloceanspaces.com/css-handbook/css-handbook.pdf">Flavio Copes,The CSS Handbook</a></small></kbd> | calvinoea |

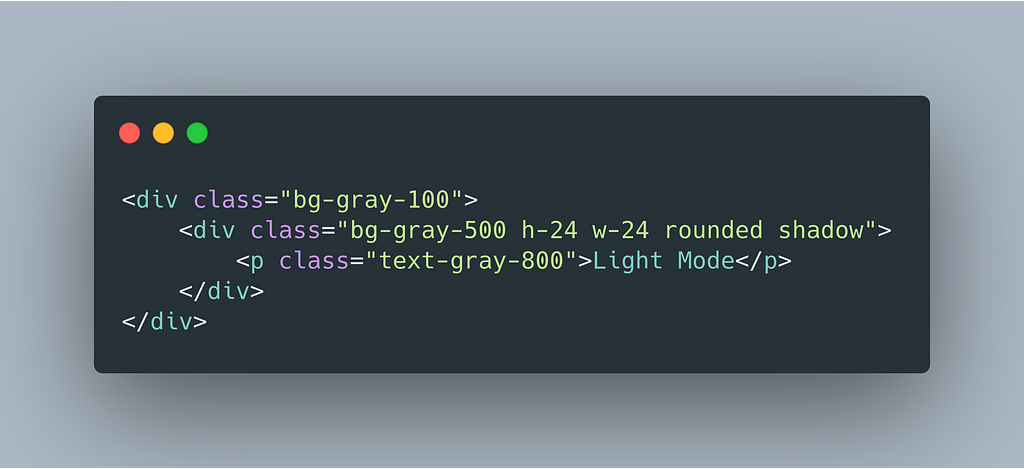

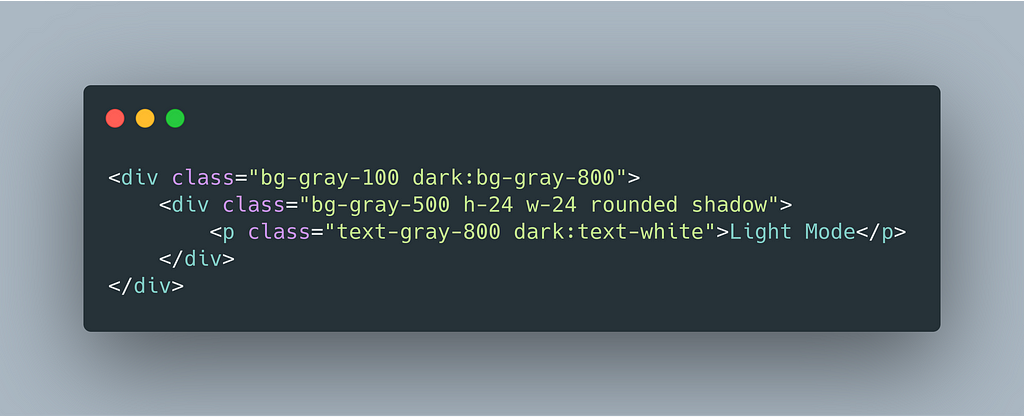

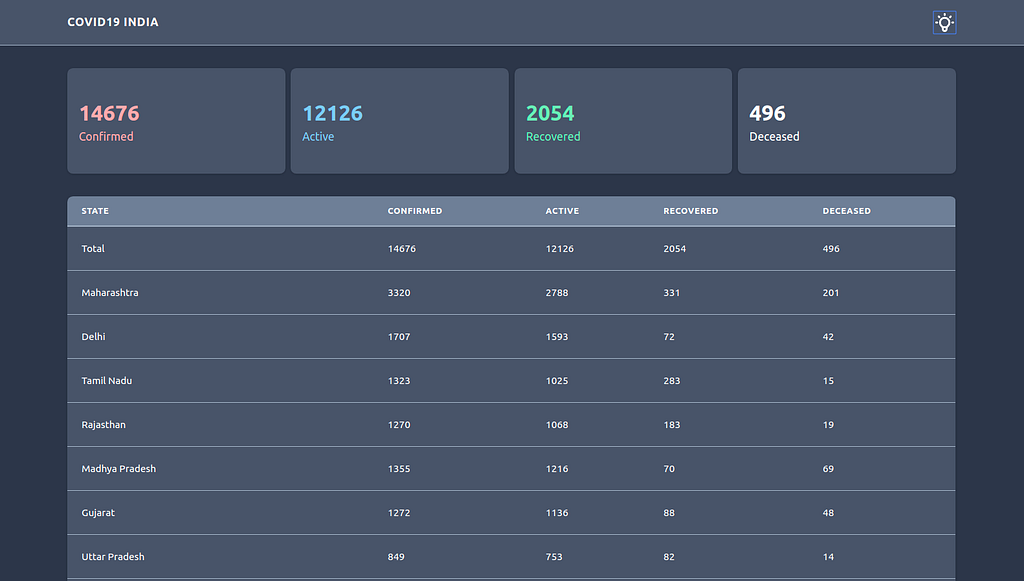

301,611 | TUNS Dark Mode ON! | here is the new DARK look of TUNS ( https://www.tunsapp.com ). Enjoy!! | 0 | 2020-04-07T09:47:23 | https://dev.to/daviducolo/tuns-dark-mode-on-46nl | here is the new DARK look of TUNS ( https://www.tunsapp.com ).

<a href="https://ibb.co/qsKZxKG"><img src="https://i.ibb.co/r6YWvYP/Screenshot-2020-04-07-at-11-46-08.png" alt="Screenshot-2020-04-07-at-11-46-08" border="0"></a>

<a href="https://ibb.co/zVc4cW5"><img src="https://i.ibb.co/TMXgXDT/Screenshot-2020-04-07-at-11-41-53.png" alt="Screenshot-2020-04-07-at-11-41-53" border="0"></a>

Enjoy!! | daviducolo | |

301,632 | Working with 3D models in WebGL | Credits: model in cover by Meeee This is a lazy checklist for your workflow talking about the wonder... | 5,827 | 2020-04-07T10:55:50 | https://dev.to/adam_cyclones/what-i-ve-learned-about-gltf-390d | javascript | Credits: model in cover by Meeee

This is a lazy checklist for your workflow talking about the wonderful GLTF format. Your going to need JavaScript to view this stuff so its tagged with that.

I am exploring its capabilities and what workflow you or I will need in Blender to achieve the results we want. First off you wont achieve the results you want, anyone familiar with photo realism in blender will know about Cycles renderer, it's not going to look like that so lower your expectations a pinch, then do that again 5 or 6 times.

As soon as I found out about GLTF I downloaded a copy of blender and made my first model in 12 months or more, I built that lovely watch model in the cover image (I love watches!). I am not fully happy with this model because its missing a few things, but still thats not the point.

Exporting this to a GLTF file using Blenders built in GLTF exporter yielded some results with let's say... potential.

The first thing you will notice and perhaps this is not a shock, GLTF does not respect blenders modifiers which resulted in my first attempt having only quarter of the model visible, fortunately the exporter has an option to apply said modifiers without any distractive results to the original model.

The next problem, although GLTF does handle PBR materials, my glass was opaque, it looked good but not good for a watch. "Hey whats the time, IDK I have an opaque watch you moron!". I probably should use Glass BSDF shader?

Lastly performance, my model is not optimised, in-fact its so bad internally that If I where to target the web again (which I will), a lot and I mean a lot of cleanup would need to be done, I will look into this in a further article, but for the moment, 16FPS was what I got.

Anyways here is the checklist:

- Apply modifiers in export

- Dont expect lighting to work

- Materials will look more like material preview then render

- Consider if transperncy works

- Optimise mesh and clean it up, only keep what is visible, simplify what you can get away with

Whats next, GLTF animation of the hands :)

Thanks for reading.

| adam_cyclones |

311,875 | About Me | Yup. Thats's me :) First off, I would like to introduce myself. My name is Mariana and I am from Br... | 0 | 2020-04-17T13:21:26 | https://dev.to/cafecotech/about-me-3lp1 |

*Yup. Thats's me :)*

First off, I would like to introduce myself.

My name is Mariana and I am from Brazil 🇧🇷 . I have a bachelor's degree in Civil Engineering, currently finishing my specialization in Structures and Foundations. However, I always liked the power and magic that come from a computer yet never have given it a try. Along with this *ignored* desire, when I graduated there was not much room for me from where I live (*professionally-wise*) and so I started to explore more and more about programming. Starting with data science then right after I got in touch with web development. As expected, my passion just reflourished (*pretends to be shocked*) and now I am changing careers.

When I started to get back in touch with programming, I had the fortune to come across wonderful people from the tech industry which made a **HUGE** difference in my life. I am truly and deeply grateful for them. It led me to many good things: an internship, meet more wonderful people, getting scholarships from online courses ... It is like all started to happen (and still is ). And now I know what I want for my professional life. Like. For. Sure. I am struggling with a lot of things and concepts and I will struggle a lot more but I feel happy, rewarded and motivated with this environment.

So ... how do I came up with the idea of this blog? 🤔 Well, I have never written a blog or anything alike but I always had the will to communicate with people and to exchange knowledge. And this blog is a good approach I found to do so (and train my English and writing haha). I believe that when we share knowledge we both teach and learn in the best way possible (active learning). Besides, when we share our journey/perspectives/tips we enable people to resonate with us and help them in their own journey. I guarantee that having someone to push you forward and/or to look up to can have a great impact on our lives.

I hope I can support you somehow and please feel free to be in touch.

Oh, and don't forget to get your daily coffee dose.

Now, let's code 🤓

---

Also posted on [Hashnode](https://cafecotech.hashnode.dev/about-me-ck8mj4wg601en79s1ytiu6v9h) | cafecotech | |

311,908 | Developers: How do you tackle your bugs? | In my organization we set up a rotating Bug Stream process, to handle incoming and existing bugs.... | 0 | 2020-04-17T13:55:15 | https://dev.to/thatferit/developers-how-do-you-tackle-with-bugs-47a8 | discuss, bugs, webdev | ---

title: Developers: How do you tackle your bugs?

published: true

description:

tags: discuss, bugs, webdev

---

In my organization we set up a rotating Bug Stream process, to handle incoming and existing bugs. When blogging about it, I've asked myself

"How are others tackling bugs?"

So let's share how your team, organization is handling bugs.

- How are they collected?

- How are they prioritized?

- How are you fixing them ? | thatferit |

311,911 | Two Equations to Improve Your Analysis of Algorithms | In this tutorial, you will learn how to calculate permutations and combinations of a series of n integers with simple and easy to remember equations. | 0 | 2020-04-17T15:03:27 | https://jarednielsen.com/calculate-permutations-combinations/ | mathematics, career, beginners, algorithms | ---

title: Two Equations to Improve Your Analysis of Algorithms

published: true

description: In this tutorial, you will learn how to calculate permutations and combinations of a series of n integers with simple and easy to remember equations.

tags: mathematics, career, beginners, algorithms

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/eu7mo1a2mjadrpywqm4h.png

canonical_url: https://jarednielsen.com/calculate-permutations-combinations/

---

You don’t need to be a math whiz to be a good programmer, but there are a handful of tricks you will want to add to your problem solving bag to improve the performance of your algorithms and make an impression in technical interviews. In this tutorial, you will learn how to calculate permutations and combinations of a series of n integers with simple and easy to remember equations.

_This article originally published at [jarednielsen.com](https://jarednielsen.com/calculate-permutations-combinations/)_

## What is the Difference Between Permutations and Combinations?

* Permutations, sequence is important

We are interested in the order of placement of items.

* Combinations, sequence is not important

We are interested in the number of groups we can create.

Let’s look at an example.

How many three letter permutations are there for the letters A, B & C?

```

ABC

ACB

BCA

BAC

CAB

CBA

```

There are six permutations.

What about combinations?

Well, there’s only one.

Why?

No matter the order, our group always contains the same three letters, A, B & C.

When we calculated the permutations for three letters, did you notice a pattern?

Let’s try four letters:

```

ABCD

ABDC

ACBD

ACDB

ADBC

ADCB

BACD

BADC

BCAD

BCDA

BDAC

BDCA

CABD

CADB

CBAD

CBDA

CDAB

CDBA

DABC

DACB

DBAC

DBCA

DCAB

DCBA

```

We just made a big jump in the number of permutations we need to calculate!

Where have we seen this, or something like it, before?

🤔

Factorial!

In the first example using three letters, our permutations were _3!_, or:

```

3 * 2 * 1 = 6

```

In the second example using four letters, our permutations were _4!_, or:

```

4 * 3 * 2 * 1 = 24

```

What about calculating subsets of permutations?

## How to Calculate Permutations

Say you’re the judge of a baking competition and you need to award gold, silver and bronze cake stands to the top three of 12 contestants, but all of the bakers are deserving of an award.

How many options do you need to calculate?

This is a permutations problem.

Why?

The order is important.

We are ranking, or ordering, the three best bakers.

We award the gold to Noel.

Now there are only eleven contestants to choose from.

We award the silver to Sandy.

Now there are only ten contestants to choose from.

We award the bronze to Prue.

Those three are the obvious winners.

(Sorry, Paul.)

But if it weren’t so obvious, how many possible permutations would we need to calculate?

Each time we select a winner, that individual is removed from the group and we calculate the possible permutations of the remaining individuals.

What’s 12!?

```

12 * 11 * 10 * 9 * 8 * 7 * 6 * 5 * 4 * 3 * 2 * 1 = 479001600

```

That’s a lot of processing!

Luckily, we don’t need to calculate that many permutations.

We only need to calculate the permutations for three winners.

How do we do this?

We simply stop after the three largest values in our sequence:

```

12 * 11 * 10 = 1320

```

Why?

There’s no need to calculate _all_ of the permutations, only those for the three largest values.

This is still a lot of permutations, but a much more manageable number.

What if we didn’t know the size of our input at the outset?

Let’s convert this to an equation!

What’s another way of describing the numbers we did not factor?

```

9 * 8 * 7 * 6 * 5 * 4 * 3 * 2 * 1

```

9!

Because factorial is the _product_ of a sequence, we can’t simply subtract 9! from 12!.

We need to divide it:

```

12! / 9!

```

We could also write this as:

```

12! / (12 - 3)!

```

Now it’s simply a matter of substituting values with variables.

```

n! / (n - k)!

```

## How to Calculate Combinations

Let’s say we’re ordering pizzas to feed the participants of a hackathon.

Because these are programmers, they want to know how many possible combinations are available to choose from.

The local pizza shop gives us 12 options for toppings, but we can only choose three per pizza.

How many different pizzas are possible?

This is a combinations problem.

Why?

Because order doesn’t matter.

A pizza with pepperoni, peppers, and pineapple is the same as a pizza with pineapple, peppers, and pepperoni.

But because order doesn’t matter, redundancy does.

When we calculate permutations, there are no redundancies in the _order_ of the elements, but there are a lot of redundancies in the _grouping_ of elements.

How do we remove the redundant combinations?

We simply divide by the number of permutations of _k_, or _k!_

```

( n! / (n - k)! ) / k!

```

Which is also:

```

( n! / (n! - k!) ) * 1 / k!

```

When simplified is:

```

n! / (n - k)! * k!

```

This is often written as:

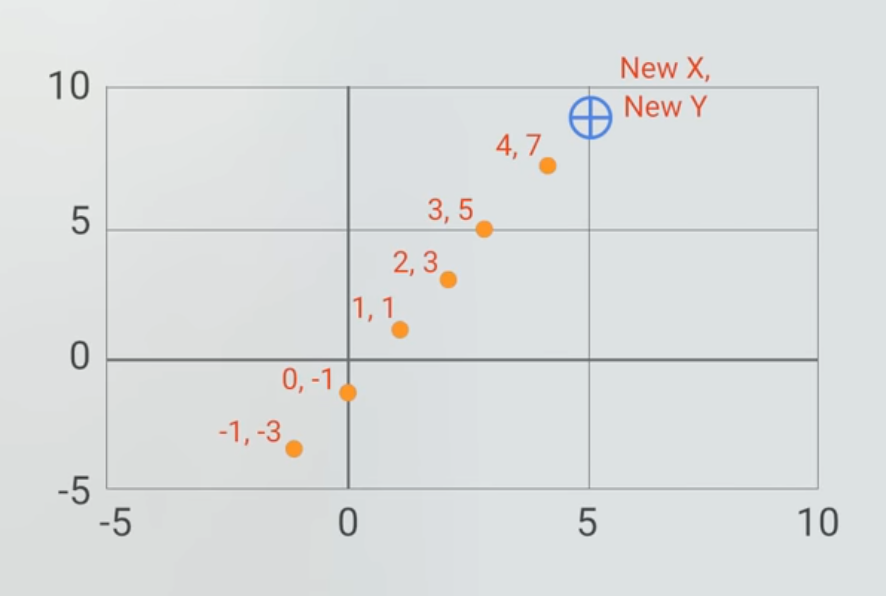

And read as “_n_ choose _k_”, because there are _n_ ways to choose an unordered subset of _k_ elements from a fixed set of _n_ elements.

AKA the [binomial coefficient](https://en.wikipedia.org/wiki/Binomial_coefficient)

## How to Calculate Permutations and Combinations

You don’t need to be a math whiz to be a good programmer, but there are a handful of equations you will want to add to your problem solving toolbox. In this tutorial, you learned how to calculate permutations and combinations of a series of n integers with simple and easy to remember equations. They’re like party tricks for technical interviews.

---

Want to stay in the loop? I write a weekly newsletter about programming, problem solving and lifelong learning. [Sign up for _The Solution_](http://eepurl.com/cP8CMn)

---

| nielsenjared |

311,968 | Improve Laravel code readability using PHPDoc | Improve Laravel code readability using PHPDoc | 0 | 2020-04-17T21:36:04 | https://dev.to/meathanjay/improve-laravel-code-readability-using-phpdoc-2670 | php, laravel, phpdoc, typehint | ---

title: Improve Laravel code readability using PHPDoc

published: true

description: Improve Laravel code readability using PHPDoc

tags: PHP, laravel, phpdoc, type-hint

---

If you're writing PHP lately, very likely to know the Laravel framework, very easy to get started, and more likely, you can develop the same MVP app at the lowest time in compared to other frameworks. It has rich built-in and ready-to-use packages and easy configuration and huge community support, and all while you don't have to compromise the performance.

If you're writing Laravel applications, you know how extensively Laravel uses PHP's magic methods, especially in Eloquent.

You never explicitly mention a Model's properties except making some fields guarded and mass-assignable. When you try to access that property, the `__get` method looks for database columns with the same name or return null; you access any defined relation as property in the same way.

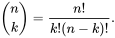

Even `$user->is_avaiable` is a boolean property, IDE shows mixed because you never declared that and your IDE can't help with auto-completion or if you mistyped a character.

And, how you know a property is available in that model class? Well, in your head, because you created the migration, database schema; What about the new developers or your future-self?

Your model is not so readable, and no one can guess `$user->is_available` or `$users->projects` exists or type until looking into columns and parent classes.

So, why not make your code self-explanatory and let your IDE help you avoid making a mistake and help your future self?

PHPDoc can help you add a piece of information in a code block like file, class, properties, methods, and variables.

PHPDoc is the same as multiline comments but starts with a forward-slash and two asterisks(`/**`) and ends with an asterisk and forward-slash(`*/`), in-between you add those missing information in PHPDoc DSL language.

```php

<?php

namespace App\Domain\User;

use Illuminate\Database\Eloquent\Relations\HasMany;

use Illuminate\Foundation\Auth\User as Authenticatable;

/**

* User Model

*

* @property bool $is_available

* @property-read Project[] $projects

* @method static User create(array $attributes = [])

* @method static \Illuminate\Database\Eloquent\Builder where($column, $operator = null, $value = null, $boolean = 'and')

*/

class User extends Authenticatable

{

protected $guarded = [

'id', 'email_verified_at', 'remember_token',

];

protected $hidden = [

'password', 'remember_token',

];

public function projects(): HasMany

{

return $this->hasMany(Project::class);

}

}

```

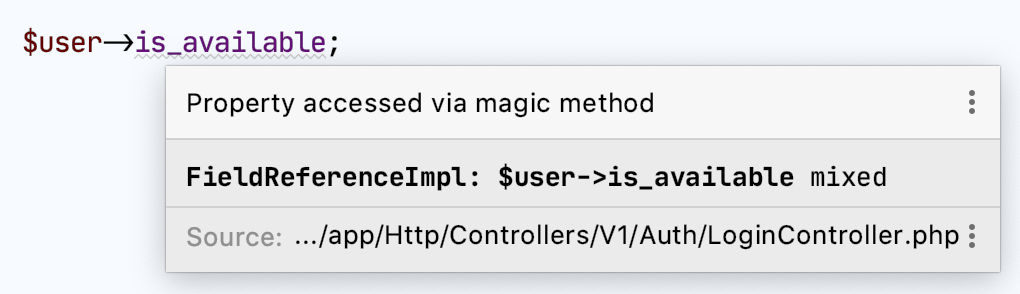

Now, those missing properties and methods are self-explanatory, and you know precisely what their type and return value.

Declaring return type for `create` method in the User model, let you avoid re-declaring return type every-time you call `create` and it makes your code clean and maintainable.

```php

$user = User::create($data);

// instead

/** @var User $user */

$user = User::create($data);

```

| meathanjay |

312,000 | Render Hook Pattern in React | Working on a React code base I found my self in a need to display many confirmation modals. I got fru... | 0 | 2020-04-17T16:12:02 | https://dev.to/tomslutsky/returning-components-from-custom-hooks-5281 | react, javascript, reactnative |

Working on a React code base I found my self in a need to display many confirmation modals.

I got frustrated after the third one and found a nifty pattern to use: **returning the modal component itself from a hook.**

I assume that a this point in time there is no longer a need to introduce the concept of hooks in React. However if you need a refresher you might want to check https://reactjs.org/docs/hooks-intro.html

So my basic Idea was to use an API that will look roughly like that:

```javascript

const ComponentWithDangerAction = () => {

const [Modal, onClick] = useConfirm({

onConfirm: ({ id }) => alert("deleted item id: " + id),

onDismiss: alert,

title: "Danger Zone",

message: "This action is irreversible. Are you sure you want to continue?"

});

return (

<div className="App">

<Modal />

<Button onClick={() => onClick({ id: 5 })}>

Press here to delete something important

</Button>

</div>

);

};

```

Next step is to create the useConfirm hook itself and it easiest of course to start with a minimal non crashing api (assuming we have a Modal component).

```javascript

const useConfirm = () => {

const onClick = () => null;

const ConfirmModal = () => <Modal />

return [ConfirmModal, onClick];

}

```

Now adding disclosure related state and callbacks functionality

```javascript

const useConfirm = ({onConfirm, onDismiss, message }) => {

const [isOpen, setOpen] = useState(false);

const toggle = setOpen(!isOpen);

const onClick = () => toggle();

const handleConfirm = () => {

onConfirm && onConfirm();

toggle();

}

const handleDismiss = () => {

onDismiss && onDismiss();

toggle();

}

const ConfirmModal = () => (

<Modal isOpen={isOpen} onClose={toggle}>

<span>{message}</span>

<button onClick={handleConfirm}>Confirm</button>

<button onClick={handleDismiss}></Dismiss>

</Modal>)

return [ConfirmModal, onClick];

}

```

Almost Done! The only problem is I want to be able to pass arguments to the confirm function (I want to delete a specific item from a list).

My solution was to store arguments passed to onClick to the state of the hook. That way when Confirm button is pressed I can call the onConfirm callback with the arguments passed to it.

```javascript

const useConfirm = ({onConfirm, onDismiss, message }) => {

const [isOpen, setOpen] = useState(false);

const [confirmArgs, setConfirmArgs] = useState(false);

const toggle = setOpen(!isOpen);

const onClick = (args) => {

setConfirmArgs(args); // storing the args

};

const handleConfirm = () => {

onConfirm && onConfirm(confirmArgs); // using the args

toggle();

}

const handleDismiss = () => {

onDismiss && onDismiss();

toggle();

}

const ConfirmModal = () => (

<Modal isOpen={isOpen} onClose={toggle}>

<span>{message}</span>

<button onClick={handleConfirm}>Confirm</button>

<button onClick={handleDismiss}></Dismiss>

</Modal>)

return [ConfirmModal, onClick];

}

```

Hope you will find this pattern useful :)

you can find a more complete example on [codesandbox](https://codesandbox.io/s/useconfirm-n3v5y)

And of course follow me on twitter [@SlutskyTom](https://twitter.com/slutskytom) | tomslutsky |

312,135 | Day 119 : The Whole World | liner notes: Professional : Had our product team meeting. It was good to hear what everyone has bee... | 0 | 2020-04-17T22:08:49 | https://dev.to/dwane/day-119-the-whole-world-4bii | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Had our product team meeting. It was good to hear what everyone has been up to for the week. Mostly did more research for my blog post. Had our "Happy Hour" where a few of us get to catch up. That was fun.

- Personal : Last night, I went through a few tracks for the radio show and worked on adding fill in data and styling it for the Web Component I've been working on.

Got my laundry washing. Going to finish up the work for the radio show and also get the Web Component up and running. This weekend, I want to get an old project working so I can use it as a base for our hackathon next week.

Side note: Does it sometimes seem like the whole world is crazy?! haha

Have a great night and weekend!

peace piece

Dwane / conshus

https://dwane.io / https://HIPHOPandCODE.com

{% youtube udmTfK6_aM8 %} | dwane |

312,149 | Multilingual Magic Part 2 | The previous post went over the frontend basics of a Bootstrap tab structure to display some text in... | 0 | 2020-04-17T22:55:39 | https://dev.to/aellon/multilingual-magic-part-2-of-2-3303 | wordpress, bootstrap, acf | ---

series: [Bootstrap Tabs for Translations in Wordpress]

---

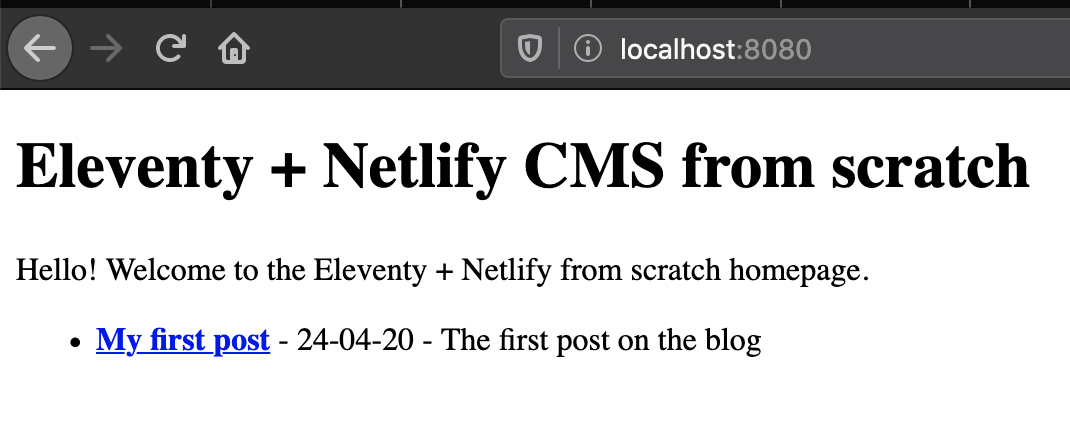

The previous post went over the frontend basics of a Bootstrap tab structure to display some text in three tabs, one for each translation. Now we will take a look at the Wordpress side of adding those fields for the client to enter the text.

Using the [Advanced Custom Fields plugin](https://www.advancedcustomfields.com), I added a field group for Languages, with a field for Russian and one for Spanish. The default tab will be English so we don't need to add a field for that one since it can be the regular content. This is what the ACF field group looks like:

I selected a Wysiwyg Editor as the field type since this will give the client control over the text, but you could just as easily choose any other field type. This is what it will look like for the client:

In the previous post I included the following HTML to get the tabs working.

```HTML

<div class="tab-content" id="alertTabContent">

<div class="tab-pane fade show active" id="english" role="tabpanel" aria-labelledby="english-tab">

<a href="<?php echo esc_url( get_permalink($id) ); ?>">

<h5 class="text-red alert-content"><?php the_content(); ?></h5>

</a>

</div>

<div class="tab-pane fade" id="russian" role="tabpanel" aria-labelledby="russian-tab">

<a href="<?php echo esc_url( get_permalink($id) ); ?>">

<h5 class="text-red alert-content"><?php the_field('russian'); ?></h5>

</a>

</div>

<div class="tab-pane fade" id="spanish" role="tabpanel" aria-labelledby="spanish-tab">

<a href="<?php echo esc_url( get_permalink($id) ); ?>">

<h5 class="text-red alert-content"><?php the_field('spanish'); ?></h5>

</a>

</div>

</div>

```

Two key pieces of code here are the lines pulling in the content from those custom fields: `<?php the_field('russian'); ?>` and `<?php the_field('spanish'); ?>`.

`the_field()` is a built in [ACF function](https://www.advancedcustomfields.com/resources/the_field/). The selectors are the field names. ACF made it so simple yet so powerful too!

With that, you have a fully customizable set up for displaying text in three discreet tabs using Bootstrap and pulling in the content from Wordpress. After the site is live I will add the frontend design here so be sure to check back!

| aellon |

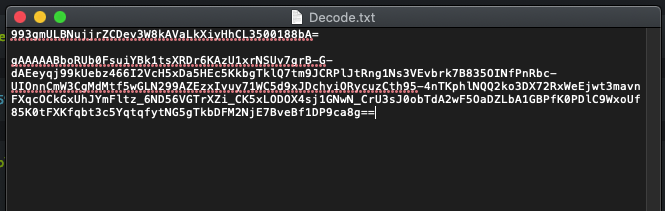

312,177 | HTB CTF - Decode Me!! | CTF Name: Decode Me!! Resource: Hack The Box CTF Difficulty: [30 pts] easy range Note::: NO, I won... | 10,223 | 2020-04-17T23:32:06 | https://dev.to/caffiendkitten/htb-ctf-decode-me-3n8a | codenewbie, security, htb, ctf | - CTF Name: Decode Me!!

- Resource: Hack The Box CTF

- Difficulty: [30 pts] easy range

Note::: NO, I won't be posting my found FLAGS, but I will be posting the methods I used.

<hr>

### Flag1

- Hint: Try find the flag!

- Acquired By:

- First thing to do is obviously download the file and extract the file. While a lot of people will use the command line for this I usually just to my file folders. It's easy so why not.

- Next is to just look at it. At first it appears to be something that is Base64 encoded as the lines end with the usual "=" but this is not the case... yet. So first thing I did, like many others, is try to decode it like this, but it fails.

- After a bunch of Googling and browsing the HTB fourms someone mentions a key and code so if we look into that we find the Fernet (symmetric encryption). This is a "symmetric encryption method which makes sure that the message encrypted cannot be manipulated/read without the key. It uses URL safe encoding for the keys. Fernet uses 128-bit AES in CBC mode and PKCS7 padding, with HMAC using SHA256 for authentication. The IV is created from os.random()." (1)

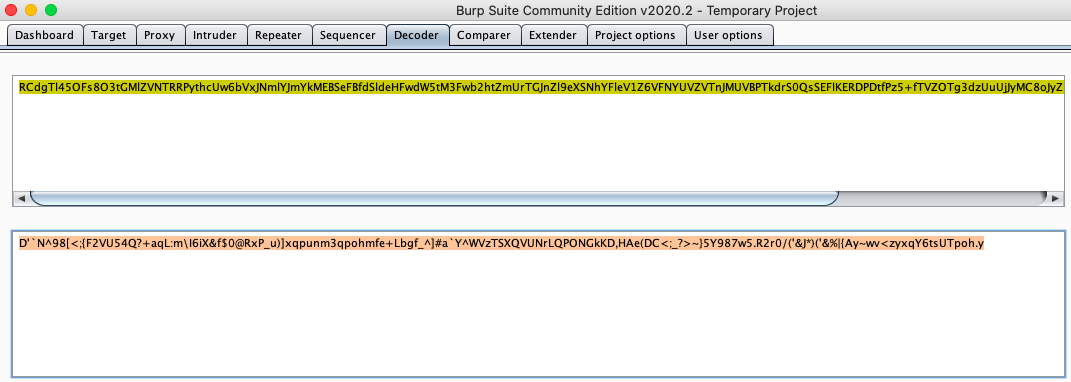

- This produced something that was less than finished but it looks like there is another string of Base64 so lets decode that now.This is still not really helpful though...

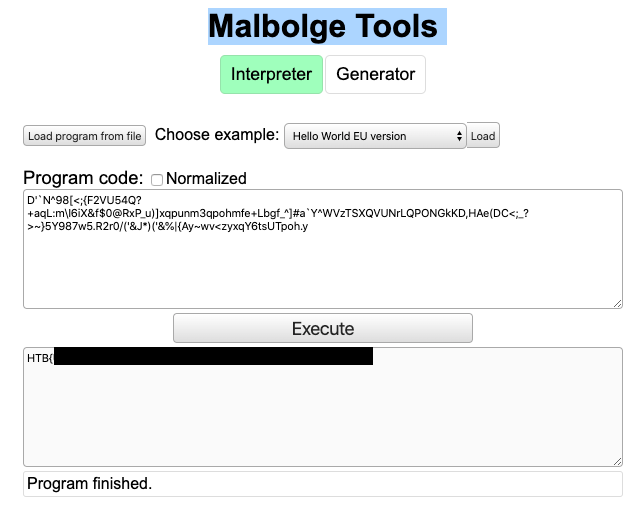

- Now it's time for MORE Googling and HTB forums and I got a hint about "Malbolge Tools". Malbolge is "Malbolge is a public domain esoteric programming language... that was specifically designed to be almost impossible to use, via a counter-intuitive 'crazy operation', base-three arithmetic, and self-altering code." (2)

- Once I found the Malbolge Decoding tool I was able to throw in the new output and get the flag.

### Learned

This flag taught me the power of forums and getting help.

This flag was a crazy 3 step process that I would not have been able to get on my own. The input for the Malbolge Tool was something I have never seen before and don't think that I will see again outside of CTFs but I am glad I've seen it.

<hr>

Happy Hacking

### Resources:

1. https://asecuritysite.com/encryption/ferdecode

2. https://en.wikipedia.org/wiki/Malbolge

3. https://zb3.me/malbolge-tools/

4. https://asecuritysite.com/encryption/ferdecode

###### Please Note: that I am still learning and if something that I have stated is incorrect please let me know. I would love to learn more about what I may not understand fully.

| caffiendkitten |

312,198 | Command Object Pattern | How would I explain Command Object Pattern to Myself. What is it? The Command Object Patt... | 5,745 | 2020-04-18T01:00:04 | https://blog.kleeut.com/2020/04/command-object-pattern.html | oop, patterns | How would I explain Command Object Pattern to Myself.

# What is it?

The Command Object Pattern to encapsulate what would have otherwise been a function call inside an object that is abstracted away behind an interface.

The Command Object Pattern is a pattern of four classes:

## The Command

Implements the Command Interface and takes a dependency on the receiver. The command encapsulates everything needed to call the receiver.

## The Receiver

The class being on by invoking the command.

## The Invoker

Depends on an abstract Command interface. Does not know anything about the concrete implementation of the Command.

## The Client

High level class responsible for connecting the invoker with commands.

# Why is it good?

The Command Object Pattern facilitates using the Open Closed Principle on the Invoker. Making the Invoker open for extension and closed for modification by providing behaviour at run time. This allows for independent testing of components and component reuse.

# Where would you use it ?

The Command Object Pattern's use case is any where that you have an object that needs to share some behaviour across multiple instances (possibly in multiple applications) while allowing other behaviours to be specific to an instance.

An example is implementing a Button class. The button knows about display and interaction logic. The behaviour that is invoked when the button is pressed is not an implementation detail of the Button class but is provided at run time.

# N.B.

This is a pattern that I thought I understood. I actually had it confused with the Command Bus / Command Handler Pattern. | kleeut |

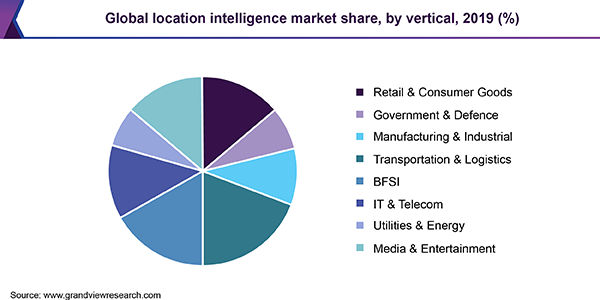

312,602 | Five industries that can make the most out of Location Intelligence | We’re all familiar with the drill of booking a cab, isn’t it? We set a destination, choose a cab tha... | 0 | 2020-04-30T13:58:20 | https://dev.to/nandhini_95/five-industries-that-can-make-the-most-out-of-location-intelligence-of0 | datascience, database | We’re all familiar with the drill of booking a cab, isn’t it?