id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

338,515 | Overwriting Shared Libraries in AWS Lambda | The latest Ruby runtime for AWS Lambda runs Ruby 2.7. Though this version of ruby is only 6 months ol... | 0 | 2020-05-18T23:26:39 | https://dev.to/nckslvrmn/overwriting-shared-libraries-in-aws-lambda-479h | aws, serverless, ruby | The latest Ruby runtime for AWS Lambda runs Ruby 2.7. Though this version of ruby is only 6 months old, the version of OpenSSL that Lambdas instance of Ruby was compiled with is over 3 years old. You can verify that by running the function below and seeing what it returns:

```

require 'openssl'

def lambda_handler(event:, context:)

return OpenSSL::OPENSSL_VERSION

end

# OpenSSL 1.0.2k 26 Jan 2017

```

That's Old! That means that Ruby's OpenSSL library is missing some key features like `SHA-3`, `TLS 1.3`, and the `scrypt` KDF.

I wanted to see if I could load in a newer version of the OpenSSL shared library ruby loads so I could leverage some of these shiny new features. Well, it turns out, [AWS Lambda Layers](https://docs.aws.amazon.com/lambda/latest/dg/configuration-layers.html) was a big part of the answer here. In the documentation, a Lambda Layer is available to your Lambda code via the `/opt` directory. Now anyone who uses a lot of gem dependencies might have already come across this feature as it's a great way to share gems across different functions while keeping the function size itself fairly small.

But interestingly enough, it's not just a place to load gems. Lambda also adds to the `RUBYLIB` environment variable with a path you can fill with a Lambda Layer (specifically `/opt/ruby/lib`). This path is also *prefixed* to the `LOAD_PATH` variable. This is where things get interesting.

Now that we know we can load up a Lambda Layer with a shared library that will be part of the auto searched `LOAD_PATH`, we can construct a Lambda Layer with the necessary files to load our own version of OpenSSL. To do this, we need a newer instance of `openssl.so` that was compiled with Ruby and we also need the `libssl.so.1.1` and `libcrypto.so.1.1` files to support the shared library.

I was able to extract a copy of these files by installing the latest version of OpenSSL from my package manager (pacman), and installing Ruby 2.7 from RVM so it re-compiled on my machine. In the end, I constructed a directory structure that looked like this:

```

.

├── lib

│ ├── libcrypto.so -> libcrypto.so.1.1

│ ├── libcrypto.so.1.1

│ ├── libssl.so -> libssl.so.1.1

│ └── libssl.so.1.1

└── ruby

└── lib

└── openssl.so

```

I then zipped that up and uploaded that zip to a new Lambda Layer destined for my function. Upon running the below function, we can see that my OpenSSL version is now nice and new and should include the features I want! Running the original function above, I now see `OpenSSL 1.1.1d 10 Sep 2019`. Excellent! Now I can go generate all the `scrypt` keys and initiate all the `TLS 1.3` connections I want right?

Not exactly. It turns out, Ruby has a fun little behavior when it sees it needs to load some files. when calling `require`, ruby will search through the `LOAD_PATH` for the code you are trying to load, but specifically with `require`, it will load .rb files **and** shared libraries with the `.so` extension. So When I tried to create a new `SHA-256` digest, I was met with an unexpected error:

```

require 'openssl'

def lambda_handler(event:, context:)

return OpenSSL::Digest::SHA256.new

end

# uninitialized constant OpenSSL::Digest::SHA256

```

What happened? Well it turns out, because my `openssl.so` file is now *ahead* of Ruby's built-in `openssl.rb` code, I am only loading the shared library which comes with some classes, but not all the classes I expect. To get around this, it's quite simple:

```

require 'openssl.rb'

def lambda_handler(event:, context:)

return OpenSSL::Digest::SHA256.new

end

# #<OpenSSL::Digest::SHA256: ...>

```

By specifying the `.rb` extension, I am now instructing Ruby to look through its `LOAD_PATH` until it finds the first instance of a file called `openssl.rb`. This is included with ruby and is the code that loads in all of the classes I expect to see, as well as an explicit call to load `openssl.so`. This now allows me to use all of the shiny new features that OpenSSL 1.1.1(x) provides without having to use a [Custom Runtime](https://docs.aws.amazon.com/lambda/latest/dg/runtimes-custom.html). | nckslvrmn |

338,527 | Using scispaCy for Named-Entity Recognition (Part 1) | A step-by-step tutorial for extracting data from biomedical literature Photo by Beatriz Pé... | 0 | 2020-05-18T23:32:37 | https://dev.to/akashkaul/using-scispacy-for-named-entity-recognition-5ddh | namedentityrecognition, tutorial, nlp, scispacy | ---

title: Using scispaCy for Named-Entity Recognition (Part 1)

published: true

date: 2020-05-18 03:13:23 UTC

tags: namedentityrecognition,tutorial,nlp,scispacy

canonical_url:

---

#### _A step-by-step tutorial for extracting data from biomedical literature_

<figcaption>Photo by <a href="https://unsplash.com/@beatriz_perez?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Beatriz Pérez Moya</a> on <a href="https://unsplash.com/backgrounds/art/paper?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a></figcaption>

In 2019, the Allen Institute for Artificial Intelligence (AI2) developed scispaCy, a full, open-source spaCy pipeline for Python designed for analyzing biomedical and scientific text using natural language processing (NLP). scispaCy is a powerful tool, especially for named entity recognition (NER), or identifying keywords (called entities) and ordering them into categories. I will be taking you through a basic introduction to using scispaCy for NER, and you will soon be on your way to becoming a master of NLP.

### **Agenda**

1. Set up Environment

2. Install pandas

3. Install scispaCy

4. Choose a Model

5. Import Packages

6. Import Data

7. Select Data

8. Implementing Named-Entity Recognition

9. Larger Data

### **Setting Up an Environment**

The first step is to choose an environment to work in. I used Google Colab, but Jupyter Notebook or simply working from the terminal are fine, too. If you do work from the terminal, just make sure to create a virtual environment to work in. If you are working in Google Colab, there is no need to do this. Easy-to-follow instructions on creating a virtual environment can be found [here](https://uoa-eresearch.github.io/eresearch-cookbook/recipe/2014/11/26/python-virtual-env/).

_Because I used Google Colab, the syntax used may be slightly different than that used for other environments._

The full code for this project can be found [here](https://github.com/akash-kaul/Using-scispaCy-for-Named-Entity-Recognition).

### **Installing pandas**

Pandas is a Python library used for data manipulation. This will help with importing and representing the data we will analyze (talked about in the next section). If you’re working from Google Colab, pandas comes pre-installed, so you can ignore this step. Otherwise, install pandas using either Conda or PyPI (whichever you prefer). You can view all the steps for the installation process [here](https://pandas.pydata.org/pandas-docs/stable/getting_started/install.html).

### **Installing scispaCy**

Installing scispaCy is pretty straight-forward. It is installed just like any other Python package.

```

!pip install -U spacy

!pip install scispacy

```

### **Picking a Pre-trained scispaCy Model**

After installing scispaCy, you next need to install one of their premade models. scispaCy models come in two flavors: Core and NER. The Core models come in three sizes (small, medium, large) based on the amount of vocabulary stored, and they identify entities but do not classify them. The NER models, on the other hand, identify and classify entities. There are 4 different NER models built on different entity categories. You may need to experiment with the different models to find which one works best for your needs. The full list of models and specifications can be found [here](https://allenai.github.io/scispacy/). Once you pick a model, install it using the model URL.

```

!pip install https://s3-us-west-2.amazonaws.com/ai2-s2-scispacy/releases/v0.2.4/en_core_sci_sm-0.2.4.tar.gz

```

_Example of installing the “en_core_sci_sm” model_

### **Import your packages**

Once you have installed all of your packages and a virtual environment is created, simply import the packages you just downloaded.

```

import scispacy

import spacy

import en_core_sci_sm

from spacy import display

import pandas as pd

```

You may notice we also import an additional package “displacy”. Displacy isn’t required to perform any of the NER actions, but it is a visualizer that helps us see what’s going on.

### **Importing Data**

For this example, we used the metadata from CORD-19, an open database of research papers about Covid-19. The metadata, as well as the full collection of articles, can be found [here](https://www.semanticscholar.org/cord19/download).

_The metadata file is a bit finicky, so if the file is doing what you want, just copy the contents to a new file. This should resolve any problems you encounter with the file._

To import the data, we use the pandas _read_csv()_ function.

```

df = pd.read_csv(“content/sample_data/metadata.csv”)

```

The function reads in the file path and stores the data as a DataFrame, the main Pandas data structure. For more information on how pandas stores and manipulates data, you can view the documentation [here](https://pandas.pydata.org/pandas-docs/stable/index.html).

_If you are using Colab, you can drag the file into the “Files” section, then right-click and choose “Copy Path” to easily access the path to the file you want._

### **Selecting the Relevant Data**

The metadata provides lots of useful information about the over 60,00 papers in CORD-19, including authors, reference numbers, etc. However, for our purposes, the data we care about is the abstracts. The full abstract of each paper is listed under the column named “abstract”. So, our next step is to choose this text. We will do this using the DataFrame _loc_ function. This function takes in the location of a cell in the DataFrame and returns the data present in that cell. To access a specific abstract, just type the specific row you want and the header of the column, and store as a string variable.

```

text = meta_df.loc[0, “abstract”]

```

This finds the abstract located in the first row of the table (remember, in Python indexing starts at 0). You can then print your newly created string to verify you have the data you want. It should look something like this:

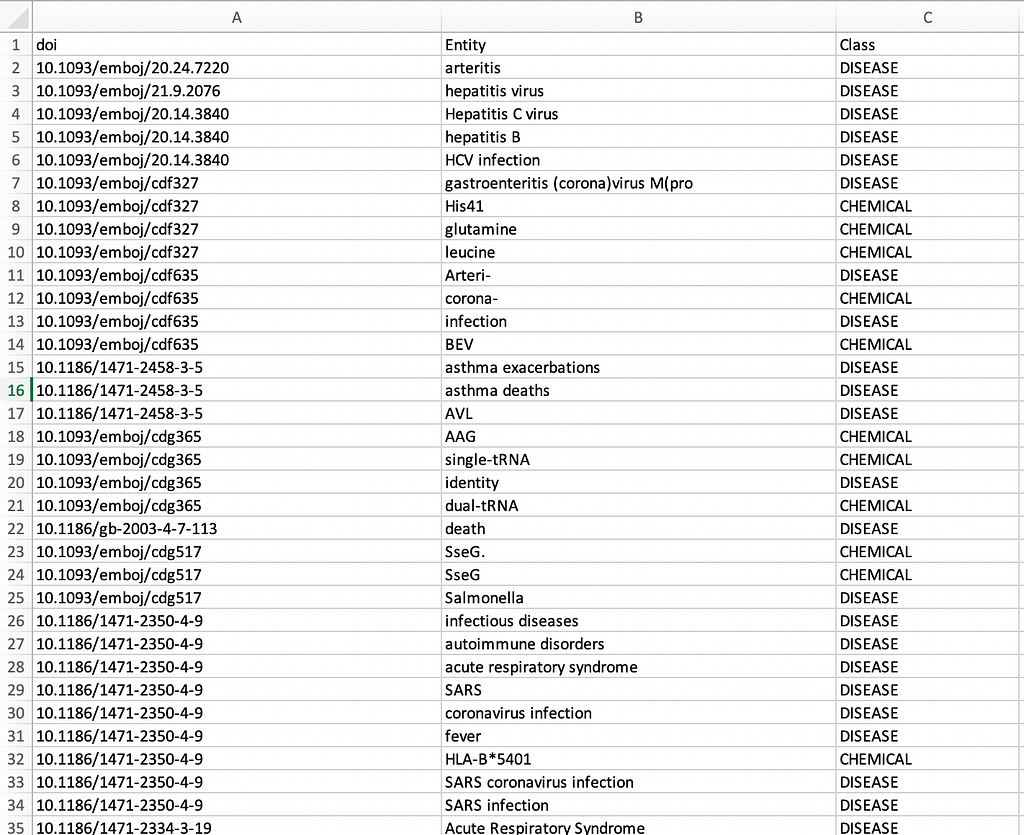

### **Named- Entity Recognition**

Now that you have your text, you can get into the fun part. Thanks to scispaCy, entity extraction is relatively easy. We will be using a Core model and a NER model to highlight the differences between the two.

**Core model:**

```

nlp = en_core_sci_sm.load()

doc = nlp(text)

displacy_image = displacy,render(doc, jupyter = True, style = ‘ent’)

```

Your output should look like this:

**NER model:**

```

nlp = en_ner_jnlpba_md.load()

doc = nlp(text)

displacy_image = displacy,render(doc, jupyter = True, style = ‘ent’)

```

_Here we used a model designed to identify entities of the type DNA, Cell Type, RNA, Protein, Cell Line_

The output should look like this:

### **Expanding to Larger Data**

Just like that, you have successfully used NER on a sample text! But, that was only one abstract of the over 60,000 in the CORD-19 metadata. What if we wanted to use NER on 100 abstracts? What about 1,000? What about all of them? Well, the process, though requiring a little more finesse, is essentially the same as before.

I highly recommend following along with the [Google Colab project](https://github.com/akash-kaul/Using-scispaCy-for-Named-Entity-Recognition) as you read this section to fully understand what we are doing.

So, the first step is the same as before. We need to read in our data.

```

meta_df = pd. read_csv(“/content/metadata.csv”)

```

_Again use the specific path to the metadata file_

Next, we load in our models. For this example, we are going to use all 4 NER models, so you’ll need to install and import them if you haven’t already. Just follow the instructions as described earlier, then load them.

```

nlp_cr = en_ner_craft_md.load()

nlp_bc = en_ner_bc5cdr_md.load()

nlp_bi = en_ner_bionlp13cg_md.load()

nlp_jn = en_ner_jnlpba_md.load()

```

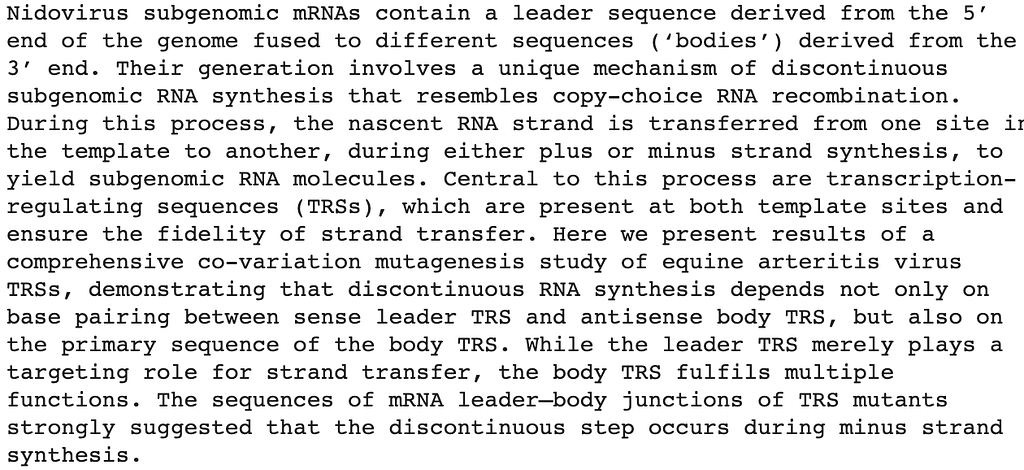

Next, we want to create an empty table that will store the entity and value pairs. The table will have 3 columns: “_doi”_, “_entity”_, and “_class”_. The table will be normalized so that the doi for every entity/class pair will be in the “_doi”_ column, even if that doi has already been listed. This is done so that there are no blank spaces in any of the columns, which helps if you want to use the data for other programs later. To create the table, you need to create a dictionary with 3 lists inside.

```

table= {“doi”:[], “Entity”:[], “Class”:[]}

```

Now is where things get a little complicated. We’ll start by looping over the entire file. To do this, we use the pandas _index_ function, which gives you the range of values (the number of rows. We then use the _itterrows()_ function to iterate over the entire file. So, your loop should look something like this.

```

meta_df.index

for index, row in meta_df.iterrows():

```

For each iteration of the loop, we want to extract the relevant abstract and doi. We also want to ignore any empty abstracts. The empty cells are stored as NaNs in Python, which have the type float.

```

text = meta_df.loc[index, “abstract”]

doi = meta_df.loc[index, “doi”]

if type(text) == float:

continue

```

_The continue statement ends the current iteration of the loop and moves on to the next one. This allows us to skip any rows with blank abstracts._

Now that we have our text, we need to use one of our models loaded earlier to extract the entities. If you view the code on Google Colab, this step is divided into several separate methods, but it can also be written without the use of any helper methods. Do note, however, that it is best to run the models one at a time, especially in Colab where reading and writing files take quite a long time. The aggregate code using one of the 4 NER models should look something like this:

```

doc = nlp_bc(text)

ent_bc = {}

for x in doc.ents:

ent_bc[x.text] = x.label_

for key in ent_bc:

table[“doi”].append(doi)

table[“Entity”].append(key)

table[“Class”].append(ent_bc[key])

```

_Remember that all of this code is inside the initial for loop_

This code might look scary, but in reality, it’s quite similar to what we’ve already practiced. We pass our text through a model, but this time instead of displaying the result using displacy, we store it in a dictionary. We then loop through the dictionary and append the results, along with the corresponding doi of the article we are looking at, to our table. We continue to do this, looping over every row in the file. Once the table is filled, and the for loop has ended, the last step is to create an output CSV file. Thanks to pandas, this is quite easy.

```

trans_df = pd.DataFrame(table)

trans_df.to_csv (“Entity.csv”, index=False)

```

You can choose any title for the output file, as long as it follows the format shown. Once your code is done running, the output file will show up in the “Files” section on Google Colab. You can then download the file and admire all of your hard work.

<figcaption>Example CSV output using the bc5cdr model(opened in Excel)</figcaption>

Alternatively, you can download all 4 output CSV files from [Gofile](https://gofile.io/d/mMh5zc).

### **Conclusion**

If you followed along with this post, Congratulations! You just made your first step in the world of scispaCy and NER for scientific documents; however, there’s so much more to explore. Within scispaCy alone, there are methods for abbreviation detection, dependency parsing, sentence detection, and much more. I hope you enjoyed learning a little about scispaCy and how it can be used for biomedical NLP, and I hope you all continue to play around, explore, and learn.

### **Resources:**

1. [https://uoa-eresearch.github.io/eresearch-cookbook/recipe/2014/11/26/python-virtual-env/](https://uoa-eresearch.github.io/eresearch-cookbook/recipe/2014/11/26/python-virtual-env/)

2. [https://github.com/akash-kaul/Using-scispaCy-for-Named-Entity-Recognition](https://github.com/akash-kaul/Using-scispaCy-for-Named-Entity-Recognition)

3. [https://pandas.pydata.org/pandas-docs/stable/getting\_started/install.html](https://pandas.pydata.org/pandas-docs/stable/getting_started/install.html)

4. [https://allenai.github.io/scispacy/](https://allenai.github.io/scispacy/)

5. [https://www.semanticscholar.org/cord19/download](https://www.semanticscholar.org/cord19/download)

6. [https://pandas.pydata.org/pandas-docs/stable/index.html](https://pandas.pydata.org/pandas-docs/stable/index.html)

* * * | akashkaul |

338,565 | Testes em Python - Parte 1: Introdução | Essa é a primeira parte da série de testes em Python. A primeira parte tem por objetivo fazer uma i... | 0 | 2020-05-19T01:22:52 | https://dev.to/womakerscode/testes-em-python-parte-1-introducao-43ei | python, testes, iniciante, womenintech |

Essa é a primeira parte da série de testes em Python.

A primeira parte tem por objetivo fazer uma introdução a testes automatizados utilizando o framework nativo do Python unnitest. Aqui, veremos alguns conceitos primordiais para escrever os primeiros casos de teste.

### Pré-requisito

- Conhecimento básico de Python

### Roteiro

1. O que são testes

2. Teste unitário (de unidade)

3. Conceitos

4. Exemplos

5. Considerações adicionais

6. Conclusão

---

## 1. O que são testes

### 1.1 Motivação

Ao escrever nossos programas, precisamos validar se o resultado que ele produz é o **correto**.

Se tratando dos primeiros programas que escrevemos, normalmente rodamos o nosso código e passamos diversos valores para verificar se o que ele retorna é o esperado. Quando isso não acontece, temos que fazer uma varredura pelo nosso código e tentar entender o que ocorreu. Pode ter sido exibido [algum erro ou exceção ao usuário](https://docs.python.org/3/tutorial/errors.html) ou o programa retornar o valor errado e não exibir erro nenhum (ocasionado por erro de lógica na maioria das vezes). Nos dois casos, podemos usar o [modo debug](https://docs.python.org/3/library/pdb.html) (que são facilitados por uma IDE¹) ou adicionar alguns *outputs* no terminal usando a função *print.* Com isso, podemos identificar o problema e corrigir nosso código para retornar o que é esperado. **Isso é um teste manual.**

> IDE¹ (Integrated Development Environment) do português ambiente integrado de desenvolvimento. É um ambiente que nos ajuda a desenvolver nossos códigos sendo possível não só utilizar como editor de texto, mas também facilitar execução, debbug e integração com outras ferramentas. Em Python, o [Pycharm](https://www.jetbrains.com/pt-br/pycharm/) é uma IDE muito utilizada e difundida.

Repare que isso se torna extremamente cansativo e está sujeito a falhas fazer validações dessa forma. Assim, não garantimos a qualidade do nosso programa de forma facilitada se mais funcionalidades forem adicionadas pois todas as vezes teríamos que executar nosso programa e verificar os cenários na mão.

### 1.2 Testes automatizados

Testes automatizados são cenários que escrevemos através de linha de código simulando os testes manuais que antes eram feitos. Poupando assim tempo e esforço nessas verificações.

Escrever testes automatizados vai muito além do que somente escrever cenários para validar que o código faça o que deveria fazer. O teste também nos ajuda a:

- Deixar o código mais limpo (ajuda na remoção de [code smell](https://www.quora.com/What-are-some-common-Python-code-smells-and-how-should-one-refactor-them))

- Garantir maior manutenção do código

- Servir como documentação: de forma visual conseguimos saber quais são os cenários esperados e os tratamentos em caso de erro olhando o arquivo de teste

- Evitar trabalho manual (um teste automatizado é muito melhor do que um teste manual com *print*)

- Evitar bug's

- Prover feedback para quem está desenvolvendo a aplicação: conseguimos saber se o programa está retornando o que é esperado mesmo alterando a lógica do programa principal.

## 2. Teste unitário

Um teste unitário é a maneira de você testar as pequenas unidades existentes em seu código. Também é chamado de teste de unidade.

Para nos ajudar com os testes, iremos utilizar o framework built-in (nativo) do Python chamado [unittest](https://docs.python.org/3/library/unittest.html).

**Exemplo 1:**

```python

from unittest import main, TestCase

def square(x):

return x ** 2

class TestSquare(TestCase):

def test_if_returns_square_of_2(self):

result = square(2)

expected = 4

self.assertEqual(result, expected)

if __name__ == '__main__':

main()

```

No exemplo acima, estamos testando uma pequena unidade do nosso código, estamos validando um cenário da função chamada **square**.

Repare que todo o contexto de teste está sendo utilizado dentro de uma [classe](https://docs.python.org/pt-br/3/tutorial/classes.html).

Mas, para entender melhor como funciona o exemplo acima, vamos primeiro entender alguns conceitos.

## 3. Conceitos

### 3.1 Preparação do teste

Nessa etapa é feita a preparação do ambiente para fazer o teste rodar. Essa preparação é chamada de **fixture** e consiste nos itens necessários para um ou mais testes serem executados.

- Exemplo: para conseguir testar uma função que lê um determinado arquivo precisamos de um arquivo no nosso ambiente de teste para ser possível fazer a validação

Pode conter o uso dos métodos [setUp() e tearDown()](https://riptutorial.com/python/example/13280/test-setup-and-teardown-within-a-unittest-testcase). Isso são ações que são executadas antes e depois, respectivamente, da execução de cada um dos cenários de teste.

### 3.2 Caso de teste

É o conjunto de cenários que queremos testar.

Em um caso de teste agrupamos todos os pequenos cenários que queremos validar de forma unitária que fazem parte do mesmo contexto.

Com o framework unittest, usamos uma classe base chamada **TestCase**.

### 3.3 Asserções

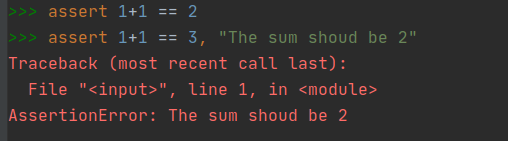

Asserções servem para validar que o cenário do seu código ocorreu como o esperado.

O **assert** é um comando built-in (nativo) do Python. E podemos usar da seguinte forma:

Repare que na primeira asserção é comparada se a soma de 1+1 é igual a 2. Como o resultado é verdadeiro, nada é exibido no console.

Já na asserção seguinte, há uma falha e um erro é levantado do tipo **AssertionError** (erro de asserção).

O framework **unittest** facilita nessas asserções que vamos precisar fazer. As asserções completas podem ser vistas em sua própria [documentação](https://docs.python.org/3/library/unittest.html#assert-methods).

### 3.4 Test Runner

Permite rodar a execução dos testes. O test runner orquestra a execução dos testes e exibe para o usuário os resultados.

Além de utilizar o [test runner do unittest](https://docs.python.org/3/library/unittest.html#command-line-interface) outros podem ser utilizados como o [pytest](https://docs.pytest.org/en/latest/usage.html).

### 3.5 Coverage

[Coverage](https://coverage.readthedocs.io/en/coverage-5.1/) remete a cobertura de testes, ou seja, o quanto o seu código está sendo testado.

Executando o coverage, conseguimos saber quais trechos de código foram testados e quais não foram.

**ATENÇÃO:** 100% de cobertura é diferente de ter todos os cenários testados! Além de testar o fluxo principal do programa também precisamos testar casos inesperados (veremos mais adiante na série "Testes em Python").

### 3.6 Mock

[Mock](https://docs.python.org/3/library/unittest.mock.html) é uma biblioteca utilizada em testes quando queremos simular um determinado comportamento. Mock em inglês é literalmente "imitar". Ele é bastante utilizado quando nosso código se comunica com elementos externos como por exemplo: conexão com o banco de dados e chamadas HTTP.

Se não for utilizado o mock, no momento que executarmos nosso código chamadas reais irão acontecer para a rede ou banco de dados, por exemplo (veremos mais adiante na série "Testes em Python").

## 4. Exemplos

Cat writing: show me the code!

### 4.1 Vamos entender o funcionamento do Exemplo 1

```python

from unittest import main, TestCase

def square(x):

return x ** 2

class TestSquare(TestCase):

def test_if_returns_square_of_2(self):

result = square(2)

expected = 4

self.assertEqual(result, expected)

if __name__ == '__main__':

main()

```

Na primeira linha importamos os itens necessários do framework unittest.

`main()` ⇒ chamamos o **Test Runner** da biblioteca para ao rodar nosso código Python (python meu_arquivo.py).

`TestCase` ⇒ fornece a estrutura necessária para montar o caso de teste.

```python

from unittest import main, TestCase

```

O próximo bloco se refere ao nosso código com o trecho que queremos validar

```python

def square(x):

return x ** 2

```

Criamos uma classe de teste herdando o `TestCase` do unittest e seus métodos são os **casos de teste**.

Repare que dentro da classe escrevemos de modo descritivo o cenário que está sendo testado (estamos testando se o nosso código retorna o quadrado de dois). Depois fazemos uma **asserção** usando `assertEqual` comparando se a chamada da função **square** retorna o esperado, que é 4.

```python

class TestSquare(TestCase):

def test_if_returns_square_of_2(self):

result = square(2)

expected = 4

self.assertEqual(result, expected)

```

**Nota:** Repare que o nome da classe e o cenário começam com a palavra "test". O nome "test" é obrigatório ser iniciado para as funções de teste, mas opcionais para a classe (no entanto é recomendado por questões de clareza).

Por fim, o último trecho chama o **main()** do unittest quando o arquivo python for executado.

```python

if __name__ == '__main__':

main()

```

Para rodar o exemplo basta rodar como um arquivo python normalmente (no meu caso eu salvei em um arquivo my_first_test.py)

```bash

python my_first_test.py

```

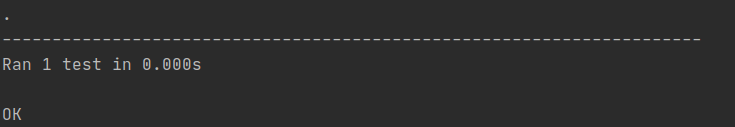

No console será exibido que 1 teste foi executado e ele está com o status OK, ou seja, o teste passou.

Vamos adicionar outro cenário de teste para simular um erro

```python

from unittest import main, TestCase

def square(x):

return x ** 2

class TestSquare(TestCase):

def test_if_returns_square_of_2(self):

result = square(2)

expected = 4

self.assertEqual(result, expected)

def test_if_returns_square_of_4(self):

result = square(4)

expected = 4

self.assertEqual(result, expected)

if __name__ == '__main__':

main()

```

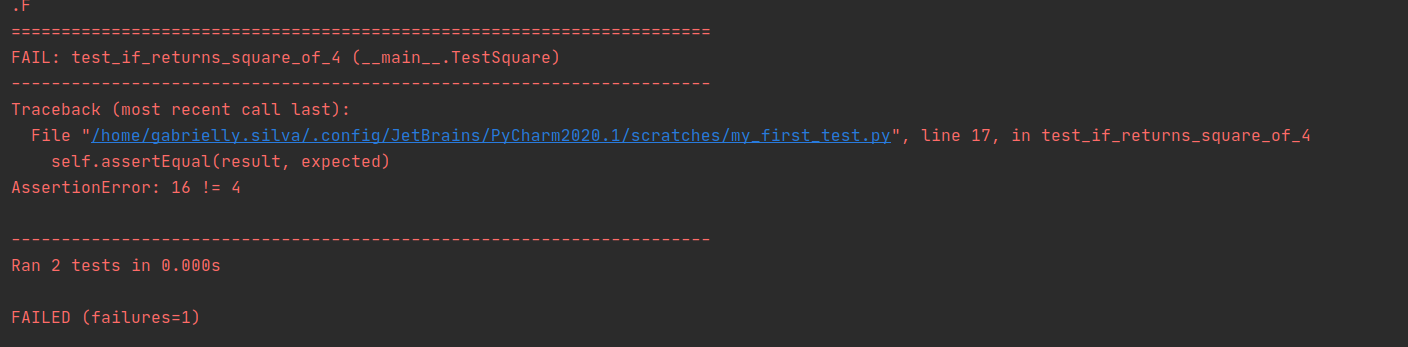

Executando novamente o código acima temos o seguinte retorno:

Repare que adicionando o cenário **test_if_returns_square_of_4** executamos no total 2 testes e justamente esse cenário falhou. Isso por que chamando a função **square** ela retorna 16 e estamos tentando validar que o esperado a ser retornado é 4.

Corrigindo o nosso código, ele deveria ficar da seguinte forma:

```python

from unittest import main, TestCase

def square(x):

return x ** 2

class TestSquare(TestCase):

def test_if_returns_square_of_2(self):

result = square(2)

expected = 4

self.assertEqual(result, expected)

def test_if_returns_square_of_4(self):

result = square(4)

expected = 16

self.assertEqual(result, expected)

if __name__ == '__main__':

main()

```

**Observação:** Os testes nos ajudam a identificar problemas no nosso código principal, mas o próprio cenário de teste também está suscetível a erros. Devemos sempre validar se o teste está bem escrito e se realmente testando o cenário esperado.

**Observação 2:** Note que existe outra forma de calcular o quadrado de um número. No exemplo acima calculamos como `x*x` mas perceba que se mudarmos a forma que é calculada para `x ** 2` o resultado retornado é o mesmo e isso não afeta os nossos testes. Se executarmos novamente veremos que os cenários continuarão passando.

Um teste não deve enviesar a forma como é implementado o código. Por isso se efetuarmos refatorações² no código, rodando os testes conseguimos garantir se o comportamento é o mesmo retornado pelo código anterior.

> Refatoração² é o efeito de você mudar a implementação do código sem afetar o seu retorno (comportamento externo). A implementação é mudada de forma que o código fique mais organizado, mais limpo e melhor estruturado.

**Nota:** É uma boa prática separar o código principal do código dos testes, veremos isso no próximo exemplo.

### 4.2 Detecção de divisão

O exemplo abaixo mostra uma função que detecta uma divisão. Se a divisão for possível deverá retornar **verdadeiro**, se não deverá retornar **falso**.

Essa função está armazenada dentro de um arquivo chamado **division_detect.py**

```python

def division_detect(numerator: int, denominator: int) -> bool:

if numerator / denominator:

return True

return False

```

*Código 4.2.1*

Vamos escrever um cenário de testes para essa função. Para isso, vamos criar um arquivo chamado **test_division_detect.py**

**Nota:** Apesar dos testes rodarem independente do nome do arquivo, é uma boa prática escrever o arquivo de teste começando com a palavra **"test"** assim como nossa classe.

O arquivo de teste possui o seguinte esqueleto:

```python

from unittest import TestCase

class TestDivisionDetect(TestCase):

def test_it_returns_true_if_division_by_number_is_successful(self):

pass

```

*Código 4.2.2*

Para escrever esse cenário de teste, queremos validar se a nossa função `division_detect` retorna `true` se a divisão for bem sucedida.

A questão é: qual número vamos usar para fazer esse teste? Poderíamos escolher dois números aleatórios como 10 para o numerador e 2 para o denominador. Importando a função e comparando se o resultado retornado é verdadeiro com a asserção `assertTrue` temos o seguinte cenário de teste:

```python

from unittest import TestCase

from division_detect import division_detect

class TestDivisionDetect(TestCase):

def test_it_returns_true_if_division_by_number_is_successful(self):

result = division_detect(

numerator=10, denominator=2

)

self.assertTrue(result)

```

*Código 4.2.3*

No teste acima, vamos validar se a divisão de `10/2` é considerada válida.

Vamos rodar o arquivo de teste com o comando abaixo:

```bash

python -m unittest test_division_detect.py

```

Como sabemos, o resultado da operação é 5 e `bool(5)` é verdadeiro

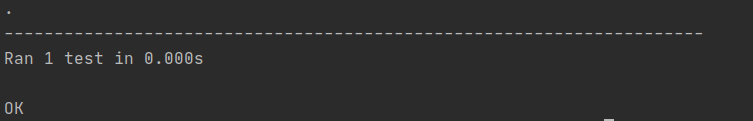

Veremos que um teste rodou e o resultado foi **OK** (o cenário de teste passou)

Mas, o que aconteceria se a gente mudasse o código principal no arquivo **division_detect.py** para esse código:

```python

def division_detect(numerator: int, denominator: int) -> bool:

if numerator == 10:

return True

return False

```

*Código 4.2.4*

Claro que aqui estamos forçando um pouco a barra e alterando completamente a lógica do código principal, mas se os testes forem executados novamente o teste vai passar.

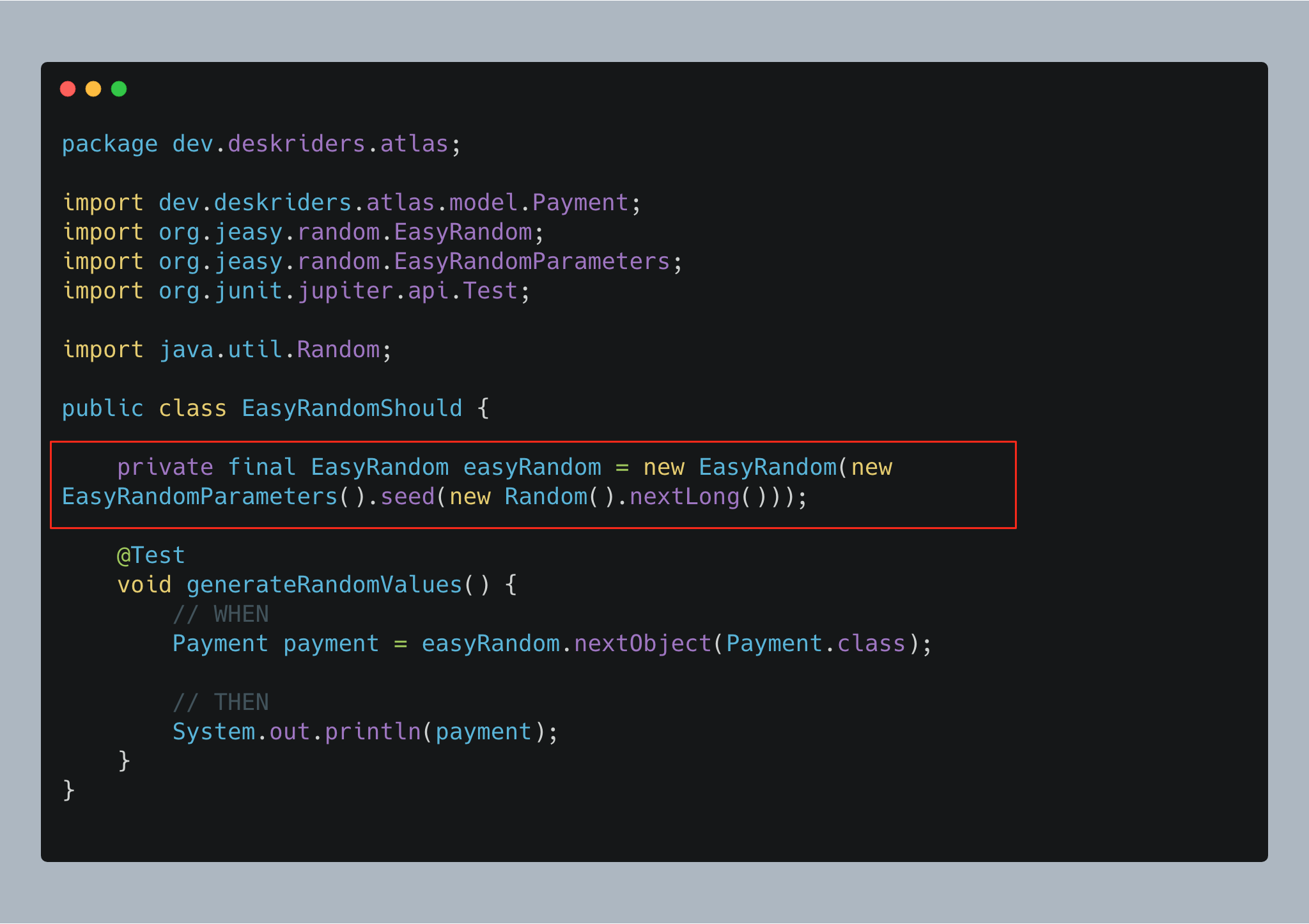

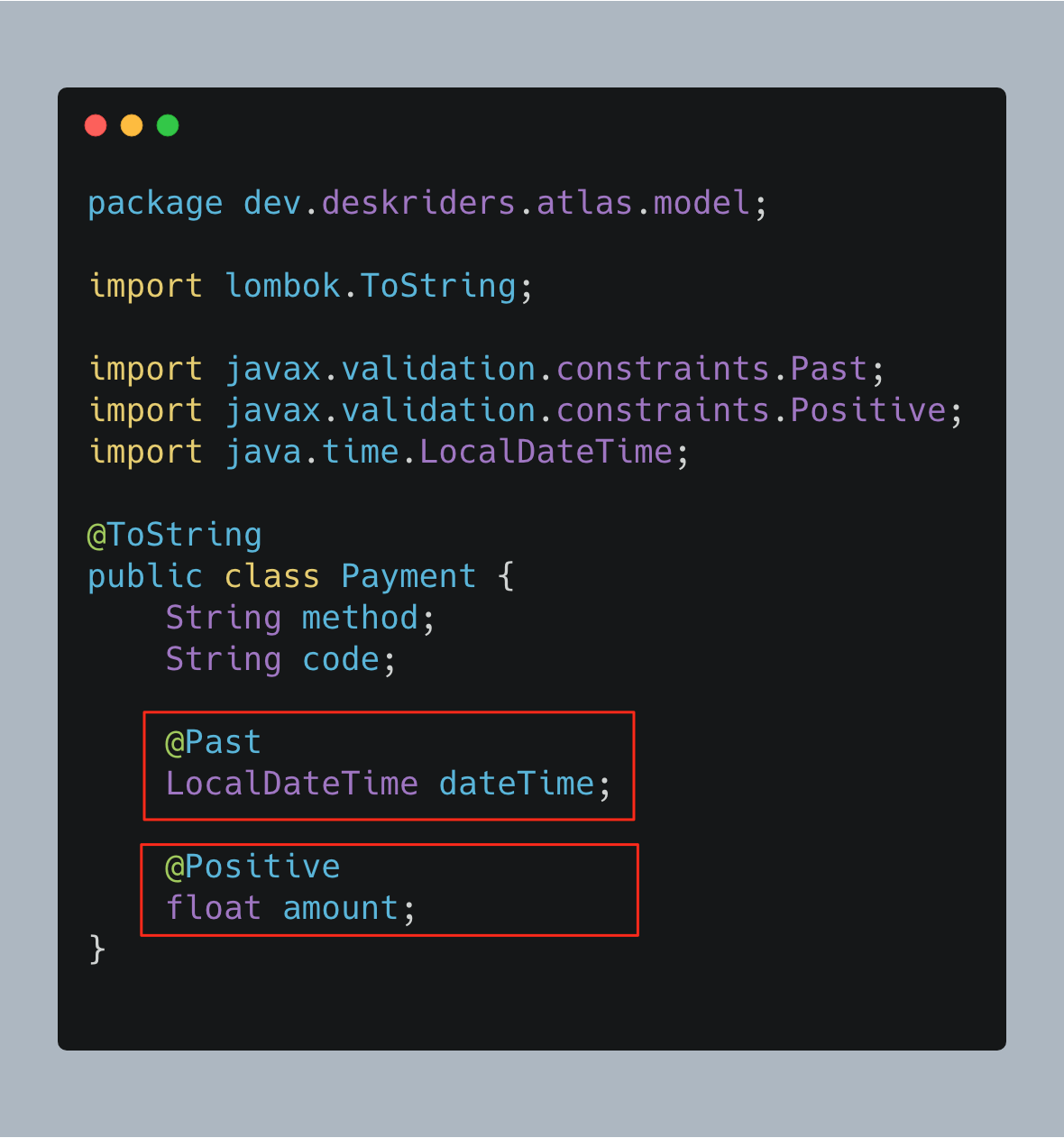

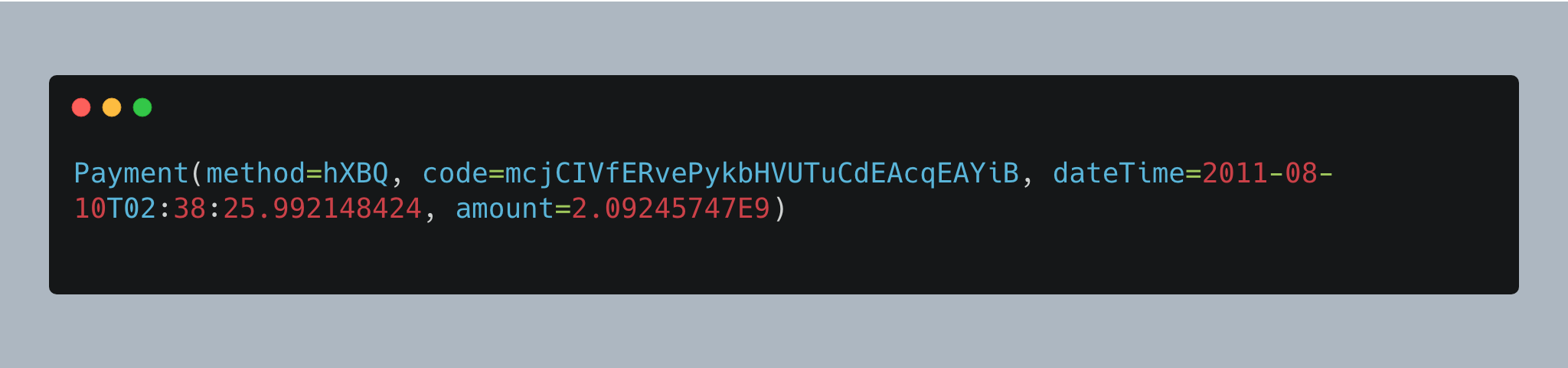

Nesse caso o teste passou mas isso não é garantia que o cenário está sendo validado de fato. Para garantir que a divisão está sendo feita vamos gerar números aleatórios tanto para o numerador quanto para o denominador. Para isso vamos usar a função do python `randint`

```python

from random import randint

from unittest import TestCase

from division_detect import division_detect

class TestDivisionDetect(TestCase):

def test_it_returns_true_if_division_by_number_is_successful(self):

result = division_detect(

numerator=randint(0, 100000), denominator=randint(0, 100000)

)

self.assertTrue(result)

```

*Código 4.2.5*

Repare que tanto para o numerador como para o denominador está sendo gerado números aleatórios entre 0 e 100000 e se executarmos novamente o arquivo **division_detect.py** que foi escrito no **Código 4.2.4** vamos ver que o código não vai passar. Vamos voltar o código para que fique o mesmo do **Código 4.2.1**.

Olhando novamente para o cenário de teste, o que aconteceria se o denominador sorteado fosse 0?

É, aqui temos um problema. A divisão de qualquer número por zero é **indefinida**!*

> *[Divisão por zero é uma operação que tende ao infinito e portanto é dada como indefinida.](https://pt.khanacademy.org/math/algebra/introduction-to-algebra/division-by-zero/v/why-dividing-by-zero-is-undefined)

Vamos adicionar um novo cenário de teste para validar isso, dessa vez vamos forçar que o denominador seja zero.

```python

from random import randint

from unittest import TestCase

from division_detect import division_detect

class TestDivisionDetect(TestCase):

def test_it_returns_true_if_division_by_number_is_successful(self):

result = division_detect(

numerator=randint(0, 100000), denominator=randint(0, 100000)

)

self.assertTrue(result)

def test_it_returns_false_if_division_by_number_is_not_possible(self):

result = division_detect(numerator=randint(0, 100000), denominator=0)

self.assertFalse(result)

```

*Código 4.2.6*

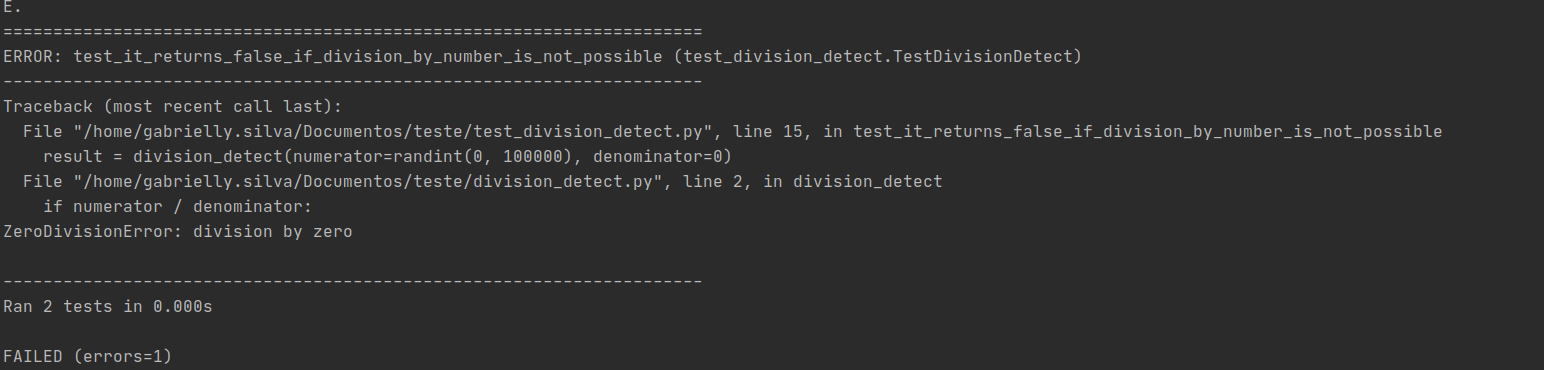

Rodando novamente esse arquivo de testes, vamos receber o seguinte retorno

Repare que dois testes foram executados, o que é correto pois escrevemos dois cenários de teste. Mas um dos testes, o `test_it_returns_false_if_division_by_number_is_not_possible`, falhou. Isso pois aconteceu um erro chamado `ZeroDivisionError` (https://docs.python.org/3/library/exceptions.html#ZeroDivisionError).

Olhando nosso código principal, vemos que não está sendo feito nenhum tipo de tratamento para esse erro. Vamos refatorar então o nosso código para lidar com esse erro, para isso vamos usar o [statement try/except do Python](https://docs.python.org/3/tutorial/errors.html#handling-exceptions).

```python

def division_detect(numerator: int, denominator: int) -> bool:

try:

numerator / denominator

except ZeroDivisionError:

return False

else:

return True

```

*Código 4.2.7*

No código acima primeiro tentamos fazer a divisão entre os dois parâmetros recebidos, se a operação for bem sucedida irá retornar `True`, mas se acontecer uma exceção do tipo `ZeroDivisionError` irá retornar `Falso`.

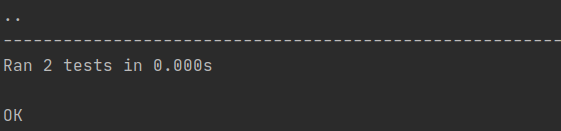

Rodando novamente os testes com `python -m unittest test_division_detect.py` vamos ver que os dois cenários passaram.

**Nota:** Repare que nosso código original não estava preparado para uma divisão por zero. Isso é chamado de corner case, ou seja, são cenários que podem acontecer fora do padrão esperado. Para tratar esse cenário tivemos que adicionar um tratamento com as exceções do Python.

No arquivo de teste, para os dois cenários que estamos testando, estamos repetindo `randint(0, 100000)` . Como queremos pegar sempre um numerador aleatório para todos os testes, podemos utilizar o `setUp()`(https://docs.python.org/3/library/unittest.html#unittest.TestCase.setUp) que é uma inicialização que é executada sempre no início de cada cenário de teste.

Por fim, chegaremos ao código abaixo:

```python

from random import randint

from unittest import TestCase

from division_detect import division_detect

class TestDivisionDetect(TestCase):

def setUp(self) -> None:

self.random_numerator = randint(0, 100000)

def test_it_returns_true_if_division_by_number_is_successful(self):

result = division_detect(

numerator=self.random_numerator, denominator=randint(1, 100000)

)

self.assertTrue(result)

def test_it_returns_false_if_division_by_number_is_not_possible(self):

result = division_detect(numerator=self.random_numerator, denominator=0)

self.assertFalse(result)

```

Repare que o denominador no primeiro cenário sorteia um número entre 1 e 100000, pois estamos querendo validar um cenário onde a divisão é bem sucedida.

## 5. Considerações adicionais

Aqui, listo alguns pontos que devem ser levados em consideração no momento de programar e testar seus códigos:

- Divida para conquistar: Mantenha a estrutura de arquivos organizadas. Se um projeto é grande, é sempre uma boa prática dividir em arquivos menores (isso facilita a manutenção e legibilidade)

- Um teste também deve ser limpo igual ao código principal (Clean Code - Robert C. Martin)

- Use nomes descritivos para as funções de teste, mesmo que seja um nome muito longo

- Pense em corner cases (cenários fora do padrão esperado), igual fizemos na divisão por zero no exemplo 2

- Um teste não deve engessar a implementação do seu código, igual vimos no exemplo 1 (o quadrado de um número pode ser feito de duas formas)

- Escrever em pequenas unidades ajudam a testar o código e a melhorar sua clareza

- Refatore: Sempre que puder melhorar seu código melhore! (Lema de escoteiro)

De acordo com a [PEP20](https://www.python.org/dev/peps/pep-0020/#id3)³:

> Errors should never pass silently

PEP³ (do inglês (Python Enhancement Proposals) se refere a propostas de como utilizar o Python da melhor forma: https://www.python.org/dev/peps/

## 6. Conclusão

Testes são uma maneira de garantir que o seu programa **retorna o** **resultado esperado.** Além disso, garante maior qualidade no produto que está sendo entregue. Os testes também ajudam que a equipe de desenvolvedores entendam os cenários que acontecem na aplicação e ajudam a identificar cenários fora do padrão. Testando nosso código, conseguimos encontrar também maneiras de deixar nosso código mais limpo e conciso, facilitando assim na manutenção futura e a evitar bugs que possam ocorrer. É melhor que um teste pegue o erro do que o cliente usando o seu produto :)

Essa foi a primeira série de Testes em Python, espero que tenha ficado claro o entendimento dessa introdução e em breve teremos mais publicações sobre esse assunto. Dúvidas podem ser colocadas no comentário e lembre-se que testar faz parte do processo de escrever um bom código, segundo o programador Pete Goodliffe

> "Um bom código não surge do nada. [...] Para ter um bom código é preciso trabalhar nele. Arduamente. E você só terá um código bom se realmente se importar com códigos bons."

Como Ser Um Programador Melhor: um Manual Para Programadores que se Importam com Código (Pete Goodliffe)

| gabriellydeandrade |

338,601 | blah | A post by Tod Sacerdoti | 0 | 2020-05-19T03:16:05 | https://dev.to/tod/this-article-updates-itself-44ao | tod | ||

344,580 | Contact Tracing and Exposure Notification | When Google and Apple announced in April that they would be working together on a contract tracing AP... | 0 | 2020-05-27T00:58:36 | http://www.thagomizer.com/blog/2020/05/26/contact-tracing-and-exposure-notification.html | covid19 | ---

title: Contact Tracing and Exposure Notification

published: true

date: 2020-05-27 00:17:45 UTC

tags: COVID-19

canonical_url: http://www.thagomizer.com/blog/2020/05/26/contact-tracing-and-exposure-notification.html

---

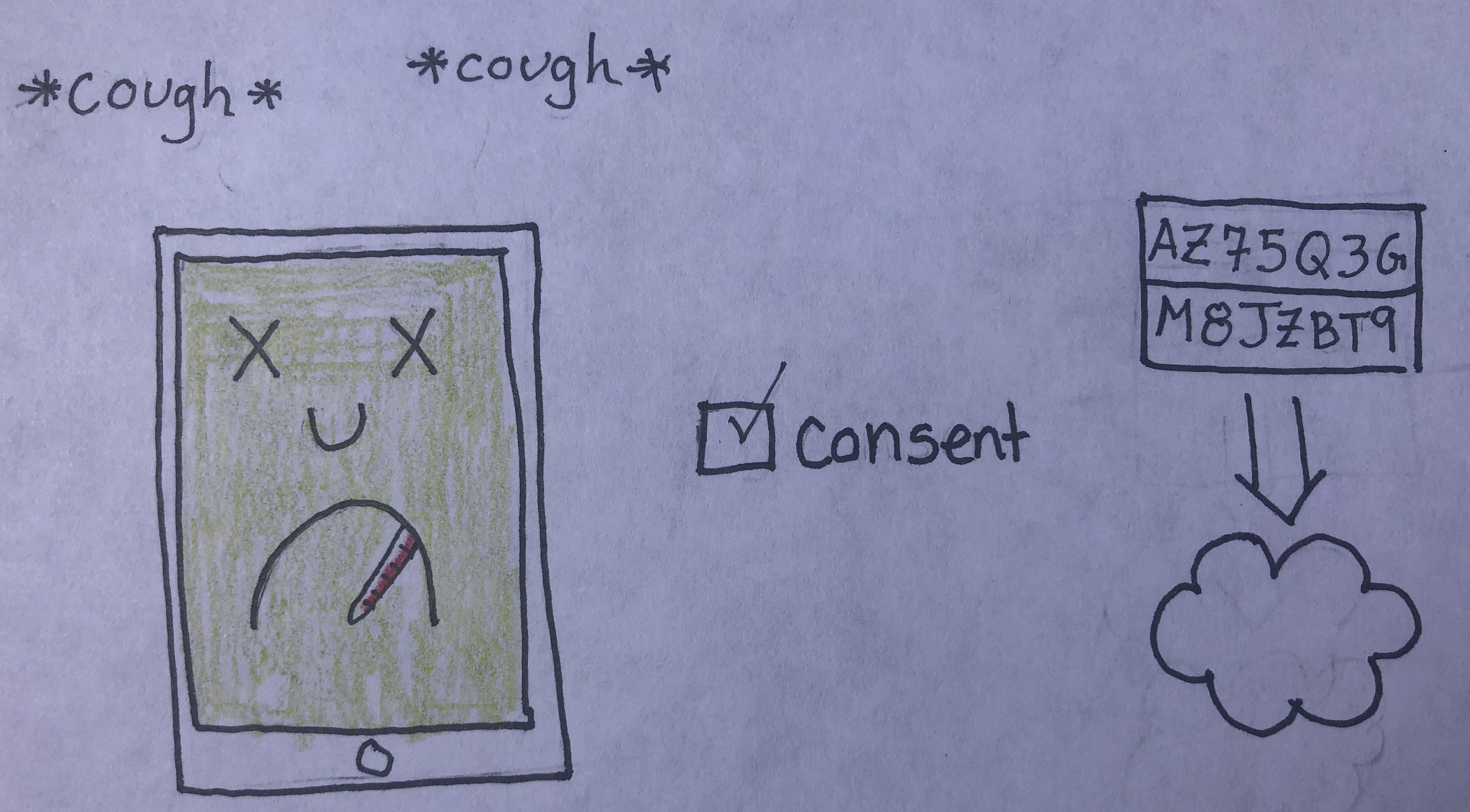

When Google and Apple announced in April that they would be working together on a contract tracing API, a lot of people got concerned about privacy. Today, I’m going to try to explain how these apps work so that people can make an informed decision about the technology. Personally, if an app using this technology becomes available for my area, I’ll install it.

## Dispelling Misconceptions

First, Apple and Google aren’t making apps. Instead, they are working together to build core technology to make it easier for public health authorities to build apps for their local area. By working together, they can ensure that everyone can get important health information no matter what phone they have.

Second, the Google and Apple joint effort doesn’t use location data[1]. Some COVID related health apps use [location data](https://9to5mac.com/2020/05/13/utah-dismisses-covid-19-exposure-api-from-apple-and-google-opts-for-location-based-solution/), but this project does not. To understand why location data via GPS isn’t ideal, think about how often the GPS in your phone is off by a block. Also, location data can’t differentiate between someone on the 1st floor of a building and the 15th floor of a skyscraper. That difference could be relevant for COVID-19 exposure.

The Apple and Google effort uses Bluetooth. Bluetooth works well over the distances health experts say are relevant when determining COVID-19 exposure. Bluetooth also works in cell phone and GPS dead zones. Like subway stations, basements, parking garages, and offices and houses like mine that don’t have a strong cell or GPS signal. Also, the signal strength of the Bluetooth connection can be used to approximate the distance between two phones to determine if they are close enough that COVID transmission is likely.

Finally, you must actively consent to have your data shared. By default, all the data stays on your device. Data is only shared if you get sick and you tell the app to share your data, which it does anonymously.

If you are curious, here is [Google’s Explanation](https://blog.google/documents/66/Overview_of_COVID-19_Contact_Tracing_Using_BLE_1.pdf) of the API and [Apple’s FAQ](https://covid19-static.cdn-apple.com/applications/covid19/current/static/contact-tracing/pdf/ExposureNotification-FAQv1.1.pdf).

## So How Does It Work?

So if these apps don’t use location data, how do they work?

First, you have to install an app from your local health department. Your phone won’t get the exposure notification application automatically.

Once you install the app, your phone starts broadcasting a random code via Bluetooth to anyone nearby who also has the app installed. This code changes a couple of times an hour as an additional privacy measure. Your phone keeps track of which codes it has broadcast.

Your phone is also listening for any other phones nearby that are broadcasting codes. It records all the codes it “hears.”

Once a day or so, the app on your phone contacts the cloud. It downloads a list of these random codes that were broadcast by the phones of people diagnosed with COVID-19. It compares that list to the list of codes it has “heard.” If there’s a match, the app shows you an alert saying you may have been exposed to COVID-19 and gives you instructions about how to proceed.

If you get diagnosed with COVID-19, you can open up the app and volunteer to share all the codes your phone has broadcast for the last 14 days. If you volunteer this info, the list of codes gets uploaded to servers in the cloud. None of your personally identifying information is shared, just the codes.

## Summary

To summarize the essential points:

- The exposure notification software Google and Apple are cooperating on does not use location data.

- No personally-identifying information is shared.

- No data leaves your phone without your consent.

- You choose whether or not to share your COVID-19 diagnosis, and if you do, it’s done so anonymously.

The main downside I see to these apps is that they need as many people as possible to install them to be effective. My goal for this blog post is to explain the technology so that people can make an informed decision about whether to install the app for their region. I’ll be installing it, and I hope many others do as well.

* * *

[1] The [terms of service](https://blog.google/documents/72/Exposure_Notifications_Service_Additional_Terms.pdf) for the Exposure Notification API explicitly prevent apps from using location data. Apps also must be endorsed by a government health authority. Apps will not be available in the relevant store if they don’t meet terms of service. | thagomizer |

344,653 | Timer - tjctf | This one is a cool blacklist bypass. It's pretty simple. You are presented a text interface asking yo... | 0 | 2020-05-27T03:48:40 | https://cheuksblog.ca/writeup/2020/05/26/timed-tjctf.html | security, ctf | This one is a cool blacklist bypass. It's pretty simple. You are presented a text interface asking you to enter a python command.

```console

Type a command to time it!

```

Trying some simple functions seem to work fine, but....

```console

Type a command to time it!

print(1)

Runtime: 1.09672546387e-05

Type a command to time it!

import os

Hey, no hacking!

```

...there seems to be a blacklist of characters that get screened before the command is run. We must obfuscate it somehow. By digging around, we can see exactly what is in the blacklist and what isn't in the blacklist. Most peculiarly, `timeit` is **NOT** in the blacklist.

> Sidenote: it is hinted that `timeit` could be a possibility because it is visible in the source whenever we provide invalid python code for the program to execute.

```console

Type a command to time it!

arst

Traceback (most recent call last):

File "/timed.py", line 36, in <module>

time1=t.timeit(1)

File "/usr/lib/python2.7/timeit.py", line 202, in timeit

timing = self.inner(it, self.timer)

File "<timeit-src>", line 6, in inner

arst

NameError: global name 'arst' is not defined

Runtime: 0

Type a command to time it!

12.,,

Traceback (most recent call last):

File "/timed.py", line 31, in <module>

t=timeit.Timer(res)

File "/usr/lib/python2.7/timeit.py", line 129, in __init__

compile(setup + '\n' + stmt, dummy_src_name, "exec")

File "<timeit-src>", line 2

12.,,

^

SyntaxError: invalid syntax

```

We can do something like the following in order to trick the program into executing blacklisted commands:

```python

# We want to execute the following python

want_exec = 'import pty;pty.spawn("/bin/bash")'

# So we obfuscate it a bit

obfuscated = [ord(x) for x in want_exec]

# and we can just reverse it using a join-map

assert want_exec == ''.join(map(chr, obfuscated))

# Payload:

timeit(''.join(map(chr, [105, ...])))

```

By pasting the payload into the program, we get a shell:

```console

Type a command to time it!

timeit(''.join(map(chr, [105, ...])))

bash: /root/.bashrc: Permission denied

nobody@c51f99923c23:/$ ls

ls

bin dev flag.txt lib media opt root sbin sys tmp var

boot etc home lib64 mnt proc run srv timed.py usr

nobody@c51f99923c23:/$ cat flag.txt

cat flag.txt

tjctf{iTs_T1m3_f0r_a_flaggg}

```

| csys |

344,658 | Makefile Application Presets | We saw in the last post how to use Makefile wildcards to write targets like this: migrate-to-%:... | 0 | 2020-05-27T04:08:23 | https://www.robg3d.com/2020/05/makefile-application-presets/ | make, programming, cli | ---

title: Makefile Application Presets

published: true

date: 2020-05-26 13:30:21 UTC

tags: make, programming, cli

canonical_url: https://www.robg3d.com/2020/05/makefile-application-presets/

---

We saw in the [last post](https://lithic.tech/blog/2020-05/makefile-wildcards) how to use Makefile wildcards to write targets like this:

```

migrate-to-%:

@bundle exec rake migrate[$(*)]

guard-%:

@if [-z '${${*}}']; then echo 'ERROR: variable $* not set' && exit 1; fi

logs: guard-STACK

@awslogs get -w /ecs/$(STACK)_MyService

```

So that we can build CLIs like this:

```

$ make migrate-to-50

Migrating to version 50...

$ make logs

ERROR: variable STACK not set

$ STACK=qa make logs

...

```

(Note the `@` prefix on commands in the Makefile, it avoids the line being echoed to stdout)

This is neat but it only works well for user-supplied values, like `"50"`.There are cases where we want the user to supply an **argument** , but not the **value**.Say, for example, users want to specify ‘production’ to ‘staging’but we don’t want them to remember the URL to the server.

We can use wildcards to dynamically select a Make variable:

```

staging_url:=https://staging-api.lithic.tech

production_url:=https://api.lithic.tech

ping-%:

curl "$($(*)_url)"

```

And we can use it as so:

```

$ make ping-staging

curl "https://staging-api.lithic.tech"

```

Okay, this example isn’t incredibly useful.But for some clients, we have multiple deployed versions of the same application,and we can use these variables to avoid having to remember where applications are deployed.

For example, let’s say we have 3 versions of a codebase deployed in Heroku: one staging and two production apps.In the Make snippet below, each `_app` variable refers to the name of a Heroku app.We can use that app name to get the database string using the Heroku CLI,and pass that to `psql` (Postgres CLI).

```

staging_app:=lithic-api-staging

production-pdx_app:=lithic-api-production

production-nyc_app:=lithic-api-production-nyc

psql-%:

psql `heroku config:get DATABASE_URL --app=$($(*)_app)`

```

Now to connect to staging, it’s as simple as:

```

$ make psql-staging

```

If we use Heroku’s Review Apps, we should also support an environment-variable version of these sorts of commands,since the app names are ephemeral. Instead of a wildcard, we’ll require the `APP` environment variable is set:

```

psql-app: guard-APP

psql `heroku config:get DATABASE_URL --app=${APP}`

```

## Putting It All Together

The example above has a couple small error cases that may confuse users:if `psql` or `heroku` are not on the `PATH`,the command will error with a sort of cryptic error:

```

$ make psql-staging

psql `heroku config:get DATABASE_URL --app=lithic-api-staging`

/bin/sh: heroku: command not found

could not connect to server: No such file or directory

```

Yuck! Can we use something like our lovely `guard-%` target to declare our executable dependencies?

**_You bet we can!_**

```

cmd-exists-%:

@hash $(*) > /dev/null 2>&1 || \

(echo "ERROR: '$(*)' must be installed and available on your PATH."; exit 1)

guard-%:

@if [-z '${${*}}']; then echo 'ERROR: environment variable $* not set' && exit 1; fi

psql-%: cmd-exists-heroku cmd-exists-psql

psql `heroku config:get DATABASE_URL --app=$($(*)_app)`

psql-app: guard-APP cmd-exists-heroku cmd-exists-psql

psql `heroku config:get DATABASE_URL --app=${APP}`

```

Now if you’re missing `heroku` or `psql`, you get a nice message:

```

$ make psql-staging

ERROR: 'heroku' must be installed and available on your PATH.

```

Who knew Make could be so _fun_ to use?

## Not done with Make yet

We’ll have one more blog post involving Make next week, along with a dump of a bunch of useful Makefile helpers.Stay tuned!

_This was originally posted on my consultancy’s blog, at [https://lithic.tech/blog/2020-05/makefile-apps](https://lithic.tech/blog/2020-05/makefile-apps). If you have any questions, please leave a comment here!_ | robgalanakis |

344,665 | Visualizing the power of CSS Filters using Picturesque.css | Hey folks! How you doing? I was quite busy recently focusing all my efforts into making this app wor... | 0 | 2020-05-27T05:16:53 | https://dev.to/thebuildguy/visualizing-the-power-of-css-filters-using-picturesque-css-o2 | showdev, css, javascript, codenewbie | Hey folks! How you doing?

I was quite busy recently focusing all my efforts into making this app work! I know the name sounds terrific, but that's actually what the app is all about.

{% twitter 1265332914125930497 %}

# 🤷♂️ What is Picturesque.css?

Picturesque is an online tool that provides a convenient interface to visualize your images with CSS filter effects and get the corresponding CSS styles after the filters have been applied. And that's it.

## 💁♂️ What it's built using?

Well, it's pretty much a beginner's stack, as I wanted to use what I learned, to build something from scratch. Yes, it's good old **HTML, CSS (Sass) and JS (jQuery)**.

## 🙌 Who does it help?

Almost about anyone, starting to learn the powers of CSS Filters and also people who used to spend a lot of time earlier getting the right %'s of `brightness` or `blur` to their images!

## 🤘 How can I access it?

It's proudly hosted on amazing **[Vercel](https://vercel.com/).** You can watch the live site, **[Picturesque](https://picturesque-css.now.sh/)**.

## ⭐ Is it Open Source?

Hell yeah, it's at the heart of every software. Here's a link to its source code, at GitHub. Make sure to give it a ⭐ if its something you liked!

{% github heytulsiprasad/picturesque.css %}

# 🎉 Contribute

If you are having any dope ideas to improve this *(even as little as changing the font-face)*, don't think twice, the repo is very **beginner-friendly. (**I'm too **😅)** Go ahead, create an issue, and start working or let me know at Twitter [@heytulsiprasad](https://twitter.com/heytulsiprasad). | thebuildguy |

344,727 | [Question] Dev API podcast URL | Hi everyone, Just a quick question regarding the DEV API. Is there any method to get the URL of a po... | 0 | 2020-05-27T07:53:30 | https://dev.to/cristianionut/question-dev-api-podcast-url-2g50 | Hi everyone,

Just a quick question regarding the DEV API.

Is there any method to get the URL of a podcast?

Based on the docs you can get the relative path of an episode, but I would also like to get the URL of the stream.

This is the model of a podcast episode that I can get right now from https://dev.to/api/podcast_episodes:

```json

{

"type_of": "podcast_episodes",

"id": 13894,

"path": "/codenewbie/s11-e7-why-site-reliability-is-so-important-molly-struve",

"image_url": "https://dev-to-uploads.s3.amazonaws.com/uploads/podcast/image/2/9f50a462-9152-429a-b15e-d024baaa8e01.png",

"title": "S11:E7 - Why site reliability is so important (Molly Struve)",

"podcast": {

"title": "CodeNewbie",

"slug": "codenewbie",

"image_url": "https://dev-to-uploads.s3.amazonaws.com/uploads/podcast/image/2/9f50a462-9152-429a-b15e-d024baaa8e01.png"

}

}

``` | cristianionut | |

344,845 | Top 6 Unique Reasons Why Need To Hire Android App Developer | Biggest and major companies in mobile industry and technology – Apple and Google are continuously int... | 0 | 2020-05-27T13:06:26 | https://dev.to/dannyroot/top-6-unique-reasons-why-need-to-hire-android-app-developer-d75 | Hokuapps | <p style="text-align: justify;">Biggest and major companies in mobile industry and technology – Apple and Google are continuously introducing new innovations and advancements to dominate the mobile industry. In this era, mobile application development (Android and iPhone apps) are emerging through different sectors especially in most businesses, brands, and other larger industry. Both of these major mobile platforms are competing with each other but only shares one goal – to contribute a more futuristic approach to every task of human life.</p>

<p style="text-align: justify;">According to data gathered from statistic reports, Android users are continuously increasing and contributing to the market for about 80% at a much higher rate compared to iOS which is only 17%. So, if you’re business doesn’t have an Android application yet, then now is time to consider hiring Android app developers.</p>

<p style="text-align: justify;">In this article, let’s tackle the top reasons that will prove how important and beneficial it is to hire android app programmers from an Android app development company.</p>

<p style="text-align: justify;">Let’s get started.</p>

<p style="text-align: justify;"><strong>#1: Customer convenience</strong></p>

<p style="text-align: justify;">It is true that having a mobile application for your business can contribute its overall success and increase in profitability. The fact that many people use their smartphones is to fulfill their general needs in terms of transactions and communications. With these benefits such as online shopping, location finding using Google Maps, or just searching solutions or knowledge, the need for Android OS devices is also increasing.</p>

<p style="text-align: justify;">Android application is a great way to communicate with other people for a different location, sharing contents such as images, videos, or documents, and so much more. So, if you’re planning to create successful Android applications, you should consider hiring an experienced Android app developer.</p>

<p style="text-align: justify;"><strong>#2: Revenue and Sales increase</strong></p>

<p style="text-align: justify;">Of course, the main and sole goal of building a business is to make more money. Taking advantage of the use of mobile applications could surely increase your business profitability in terms of sales and revenue. Creating a perfect business-centric and market-centric application can be quite difficult which is why it is beneficial for your business to hire Android app developers to achieve your goals.</p>

<p style="text-align: justify;">Aforementioned, having a mobile application for your business has now become mandatory. So, you’ll want to consider hiring an Android app developer to provide you a successful application. This could pave your way through a greater chance of sale and customer experience in your business as well.</p>

<p style="text-align: justify;"><strong>#3: Ease of Accessibility</strong></p>

<p style="text-align: justify;">Nowadays, more and more manufacturers are integrating the open-source platform ability of Android. Besides, there are also several mobile application stores that allow the users to purchase and download any Android apps for their devices that use the Android platform. Google play, for instance, is the counter-part of Apple’s app store, but this market is made available for other developers who can contribute to the improvement of the existing mobile app.</p>

<p style="text-align: justify;"><strong>#4: Multiple version and devices support</strong></p>

<p style="text-align: justify;">In the iOS platform, 5 main devices can support iOS applications. While Android applications can be compatible with over 170 types of devices. Which is why it is very important to choose and hire Android app developer that has the ability to develop an app that can be used on any devices regarding its version and screen sizes.</p>

<p style="text-align: justify;">It is also important to make a thorough research while selecting a certain version for the developed app. hiring a developer from trusted mobile application development companies like <a href="https://www.thedigitalenterprise.com/articles/ai-chatbots-machine-learning/5-ways-hokuapps-transforming-an-app-development-industry-with-ai/" target="_blank">HokuApps</a> can let you know if there any issues of the development of the Android application.</p>

<p style="text-align: justify;"><strong>#5: Great user experience</strong></p>

<p style="text-align: justify;">One of the major goals of having a mobile application whether it is an iOS or Android app is the user experience. In order to achieve this goal, it is very important to choose the right and suitable Android application developer that is aware of the user’s behavior and preferences as well. This will allow you to create a list of detailed information about the features of your app.</p>

<p style="text-align: justify;"><strong>#6: Concerns about Security</strong></p>

<p style="text-align: justify;">With the fact that the Android platform is an open source technology, more and more developers are continuously improving its security issues. Hiring Android app developers that have proper training, certifications, credibility, and skill can help you address these issues concerning your app’s security.</p>

<p style="text-align: justify;">There are many professionals out there that could help you in your next mobile app projects. HokuApps is a Singapore-based Android and iOS application development company that can transform your ideas into reality.</p>

<p style="text-align: justify;"><strong>Conclusion:</strong></p>

<p style="text-align: justify;">When it comes to hiring an Android application developer, it is important to make a thorough research and having a focus on the core area of your business in order to know what type of Android application should you develop. Leave it to the experts and professional developer from Techtechnology, Singapore.</p>

<p style="text-align: justify;">Are you ready for your first mobile application for your business? If yes, then contact Techtechnology, Singapore now.</p>

<p style="text-align: justify;"><strong>Related Links:</strong><br /><a href="https://www.einnews.com/pr_news/492207304/hokuapps-automation-platform-helps-improve-efficiency-for-the-field-service-industry" target="_blank">HokuApps Automation Platform helps Improve Efficiency for the Field Service Industry</a> <br /><a href="https://www.prnewswire.com/news-releases/hokuapps-redefines-enterprise-mobility-for-roofing-southwest-300658333.html" target="_blank">HokuApps Redefines Enterprise Mobility for Roofing Southwest</a> <br /><a href="https://www.prnewswire.com/news-releases/hokuapps-iphone-apps-development-services-promise-fastest-app-development-300622874.html" target="_blank">HokuApps iPhone Apps Development Services Promise Fastest App Development</a> <br /><a href="https://www.cloudexpoasia.com/exhibitors/hokuapps" target="_blank">HokuApps – Cloud Expo Asia Singapore 2019</a> </p>

<p style="text-align: justify;">Visit Hokuapps on <a href="https://twitter.com/HokuApps" target="_blank">Twitter</a> And Watch Video of <a href="https://youtu.be/h2TI4yGS_8A" target="_blank">Hokuapps</a></p> | dannyroot |

344,872 | Choose Distroless containers by default | I have been using Distroless containers for a little over a year now, and they're awesome! They're se... | 0 | 2020-05-29T13:59:30 | https://dev.to/jacobfrericks/choose-distroless-containers-by-default-19oi | containers, docker, devops, security | ---

title: Choose Distroless containers by default

published: true

description:

cover_image: https://tr1.cbsistatic.com/hub/i/r/2017/04/06/6f6fb9b1-b297-464d-a0e0-48b366745fe2/resize/770x/e75cd06318a179e5041993a6a8034df6/dockersechero.jpg

tags: containers, Docker, devops, security

---

I have been using Distroless containers for a little over a year now, and they're awesome! They're secure, easy to use, and smaller than your average container. So why don't I see them being used more often? Let's take a look!

# What is Distroless?

Distroless containers are based off of the Debian container, but they are very different from Ubuntu. First of all, Google manages these containers. That means you can rely on them to mow the lawn, pull the weeds, and remove newly discovered vulnerabilities.

Second difference is there are [specific containers for specific languages](https://console.cloud.google.com/gcr/images/distroless/GLOBAL). As of now, they have dotnet, java, node, rust, and golang (which just runs on the base distroless image). Why have specific containers for specific images? Why not install them all on one container? Besides the size problem, Google has removed 90% of the container and have kept only what is required to run the specific language. This greatly removes the amount of vulnerabilities that can be found in the container. For instance, let's say someone is able to exec into your container and they want to run a command. Guess what? There's no shell. Let's say a vulnerability has been found in a library in Debian. Chances are that the library doesn't exist in Distroless. It would be like if you only used your place of residence for sleeping. You don't need a kitchen, basement, living room, tv, etc. So why pay for a full house? This is how it is when you use a full container for simply running an app.

# Advantages of Distroless

As I already mentioned, someone the size of Google is maintaining these containers, so you can bet they are kept up to date. They have publicly said that they use them internally, and just expose them for everyone else. There is a team constantly updating them, so they're going to stick around.

I know some people got a bad taste when I said that these are Google containers. Let me help put your mind at ease.

1. Google doesn't install anything on these containers. There's no Google Play Services on these containers. It's open source, so if you want to verify for yourself, [have at it](https://github.com/GoogleContainerTools/distroless).

1. I know Google has a reputation of trying things for a while, then just dropping them. While I don't think this would happen (Google has taken security seriously in the past, and they want their own containers to be secure), it is a very easy migration to another container. I was able to just change the base image of my Java application to the OpenJDK image, and run a java command at the end, and it worked just fine. This can also be done if you simply don't want the weighted blanket of Distroless helping you sleep at night anymore. The migration process is very simple.

# Disadvantages of Distroless

That all sounds perfect... why aren't they used everywhere? There are some disadvantages.

1. You cannot install anything else with your app. You want to install something to assist with some metrics? Sorry, not in this container. Does your security want to install something on everyone's container? Sorry, not here! (is that a disadvantage?) Many of these things can be made up if you host on a PaaS, however.

1. One of my biggest issues with Distroless came when attempting to publish to AWS. AWS does not expect to not have a shell in a container, so it's health checks start failing. I'll go into how I fixed this in a future post (stay tuned!)

1. You can only use the versions of each language they provide. Do you want to use Java 6? They don't support that. What about Java 14? Sorry, only 8 and 11 [are supported right now](https://github.com/GoogleContainerTools/distroless/issues/459).

1. You can use one and only one language. I came across someone who wanted to use both python and java in the same container. That won't work with distroless!

# How to use Distroless?

Using a distroless image is very simple, but it might be a little confusing at first. How can I start a Java app (for example) if I can't run any java commands (it requires a shell to run "java -jar xxx.jar", remember?) Luckily, they have excellent documentation. Here's an example, as well as the links to other examples:

```

FROM gcr.io/distroless/java:11

COPY ./main.jar /app

WORKDIR /app

CMD ["main.jar"]

```

The container is set to run the equivalent of "java -jar" already, it just needs the path to your jar in a CMD. Here are the official examples:

* [dotnet](https://github.com/GoogleContainerTools/distroless/blob/master/examples/dotnet/Dockerfile)

* [golang](https://github.com/GoogleContainerTools/distroless/blob/master/examples/go/Dockerfile)

* [java](https://github.com/GoogleContainerTools/distroless/blob/master/examples/java/Dockerfile)

* [nodejs](https://github.com/GoogleContainerTools/distroless/blob/master/examples/python3/Dockerfile)

* [python](https://github.com/GoogleContainerTools/distroless/blob/master/examples/python3/Dockerfile)

* [rust](https://github.com/GoogleContainerTools/distroless/blob/master/examples/rust/Dockerfile)

Questions? Comments? Concerns? Let me know! | jacobfrericks |

344,873 | JAM Stack Conference is live! | Hi everyone, the JAM Stack Conf' is live right now. https://jamstackconf.com/ | 0 | 2020-05-27T13:55:32 | https://dev.to/bernardbaker/jam-stack-conference-is-live-5f1l | beginners | Hi everyone, the JAM Stack Conf' is live right now.

https://jamstackconf.com/ | bernardbaker |

344,897 | Get Chrome To Show The Full URL In The Address Bar | Hey Devs 👋, Has the address bar change been frustrating you because you can't see the full URL anym... | 0 | 2020-05-27T14:47:35 | https://dev.to/frontenddude/get-chrome-to-show-the-full-url-in-the-address-bar-ag9 | chrome, tutorial, browsers, tips | Hey Devs 👋,

Has the address bar change been frustrating you because you can't see the full URL anymore? Here is a handy tip to get it back, including the protocol.

1. Enable chrome://flags/#omnibox-context-menu-show-full-urls

2. Right click the address bar

3. Select 'Always show full URLs'

---

For more awesome tips about FrontEnd dev follow me:

💙[Twitter](https://www.twitter.com/frontenddude)

🖤[Dev.to](https://dev.to/frontenddude)

| frontenddude |

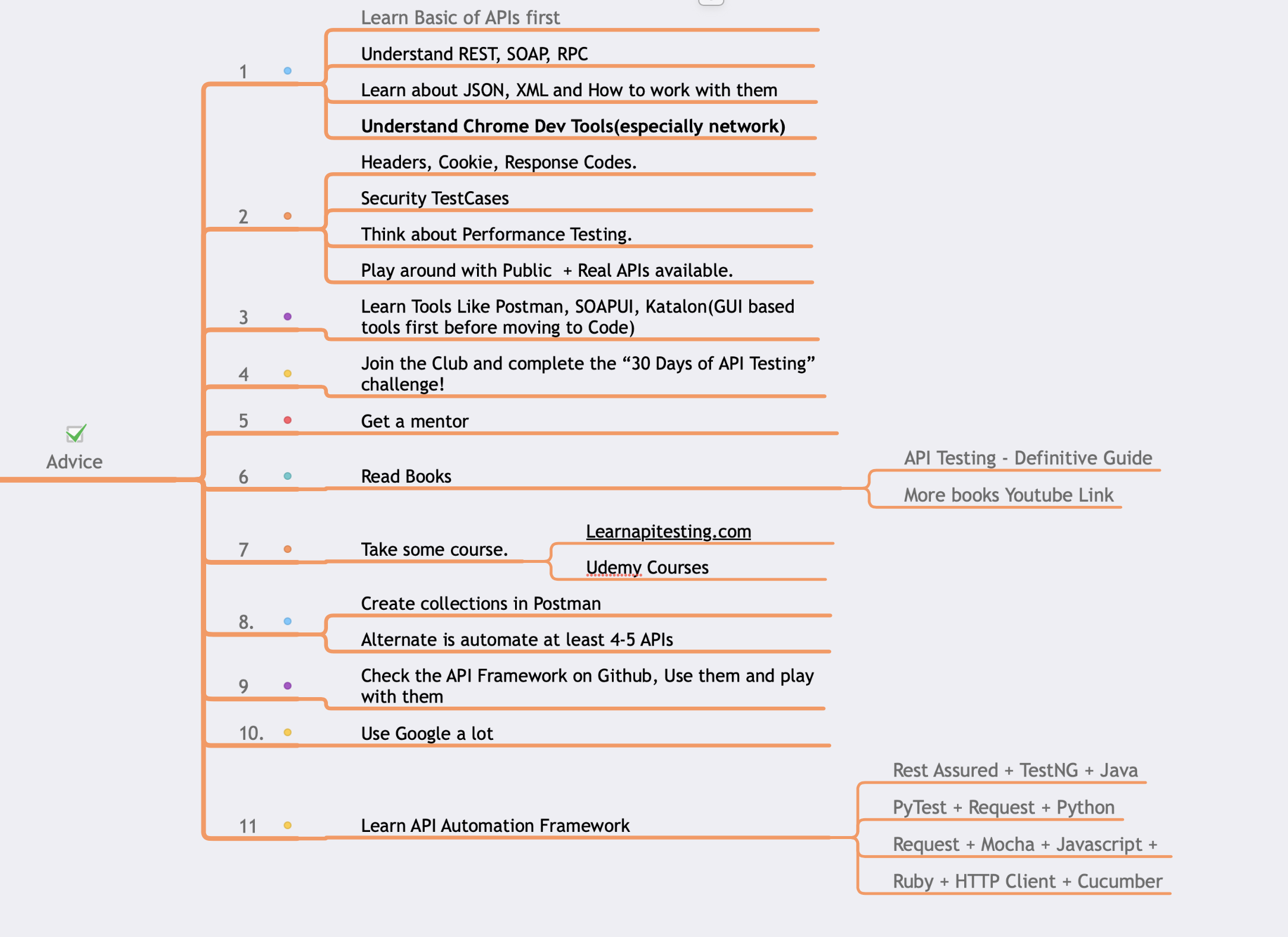

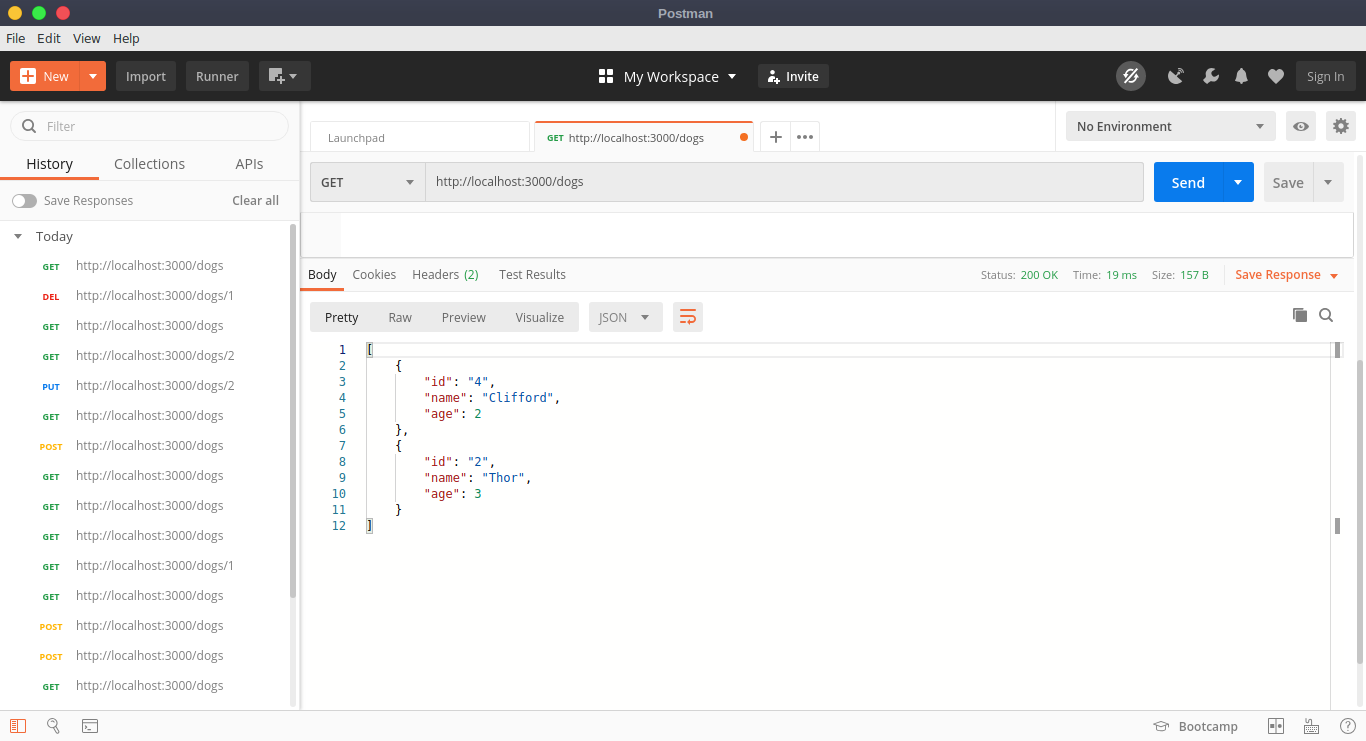

344,963 | 🛑🛑 Advice to Software Tester Before Starting API Testing 🛑🛑 | In this video of 30 Days of API Testing Challenge, I am going to discuss Advice to Software Tester Be... | 0 | 2020-05-27T16:48:40 | https://dev.to/promode/advice-to-software-tester-before-starting-api-testing-3o18 | testing, tutorial, webdev, beginners | In this video of 30 Days of API Testing Challenge, I am going to discuss Advice to Software Tester Before Starting API Testing.

> 🚀 Day 27 Task: Advice for someone looking to get started with API testing.

[](https://youtu.be/YJsNKmxCKaQ "30 Days of API Testing")

🚀 Thread: https://scrolltest.com/api/day27

🚀 All Task List: https://scrolltest.com/api/task

🚀 Watch Full Playlist: https://apitesting.co/30days

--

## 🚀 Mastering API Testing - https://www.learnapitesting.com

--

Be sure to subscribe for more videos like this!

[](https://www.youtube.com/TheTestingAcademy?sub_confirmation=1 "TheTestingAcademy")

| promode |

344,974 | Permissions Security For Deno | Today I released a new security module for Deno called permission-guard! This module is a zero-depen... | 0 | 2020-05-27T17:09:16 | https://dev.to/craigmorten/permissions-security-for-deno-2k8b | deno, javascript, typescript, security | Today I released a new security module for [Deno](https://deno.land/) called [permission-guard](https://github.com/asos-craigmorten/permission-guard)!

This module is a zero-dependency, minimal permission guard for Deno to prevent overly permissive execution of your applications.

```ts

import { guard } from "https://deno.land/x/permissionGuard@2.0.1/mod.ts";

await guard();

console.log("Execute my code...!");

```

If the application is run with permissions it doesn't need, or without permissions it does need, the guard will shield your application and prevent it from running.

For example, the `guard` in the above example would prevent

```bash

deno run --unstable -A ./index.ts

```

from executing any further. This extra protection means you are safer from potential malicious 3rd party code that could otherwise take advantage of overly permission application executions.

## Installation

This is a [Deno](https://deno.land/) module available to import direct from this repo and via the [Deno Registry](https://deno.land/x).

Before importing, [download and install Deno](https://deno.land/#installation).

You can then import `permission-guard` straight into your project:

```ts

import { guard } from "https://deno.land/x/permissionGuard@2.0.1/mod.ts";

```

> **Note:** `permission-guard` makes use of the unstable Deno Permissions API which requires `--unstable` to be passed in the Deno `run` command. You can use `permission-guard` in applications and not provide the `--unstable` flag, `permission-guard` will simply return as a no-op and not provide any defenses.

## Features

- Protection against unnecessary top-level permissions.

- Protection against missing required permissions.

- Recommendations where permissions could be better scoped (if `log: true` provided).

- Useful logs detailing the missing or insecure permissions (if `log: true` provided).

## Docs

- [Docs](https://asos-craigmorten.github.io/permission-guard/) - usually the best place when getting started ✨

- [Deno Docs](https://doc.deno.land/https/deno.land/x/permissionGuard/mod.ts)

## Examples

`permission-guard` has [all the examples you need](https://github.com/asos-craigmorten/permission-guard/tree/master/examples) to get started.

To run the [examples](./examples):

1. Clone the `permission-guard` repo locally:

```bash

git clone git://github.com/asos-craigmorten/permission-guard.git --depth 1

cd permission-guard

```

Then run the example you want:

```bash

deno run --unstable ./examples/defaults/index.ts

```

All the [examples](./examples) contain example commands in their READMEs to help get you started.

## More!

Want to know more? Head over to the [permission-guard GitHub](https://github.com/asos-craigmorten/permission-guard) page for full details.

Want to help, found a bug, or have a suggestion? Please reach out by commenting below or raising issues / PR on the repo! | craigmorten |

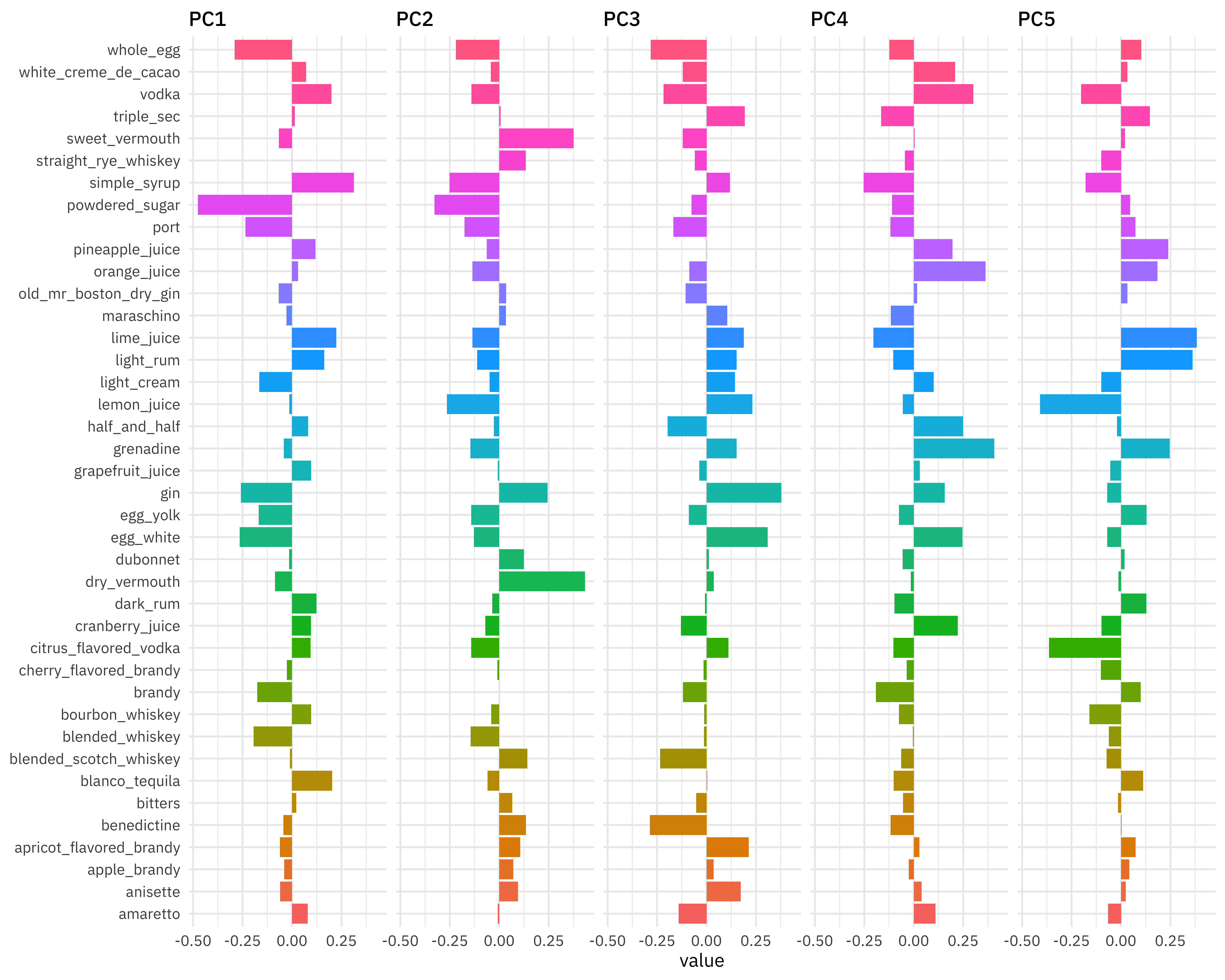

344,983 | PCA and UMAP with cocktail recipes 🥃🍸🍹 | Lately I’ve been publishing screencasts demonstrating how to use the tidymodels framework, from first... | 0 | 2020-05-27T17:29:51 | https://juliasilge.com/blog/cocktail-recipes-umap/ | machinelearning, datascience, tutorial, rstats | ---

title: PCA and UMAP with cocktail recipes 🥃🍸🍹

published: true

date: 2020-05-27 00:00:00 UTC

tags: machinelearning, datascience, tutorial, rstats

canonical_url: https://juliasilge.com/blog/cocktail-recipes-umap/

---

Lately I’ve been publishing [screencasts](https://juliasilge.com/category/tidymodels/) demonstrating how to use the [tidymodels](https://www.tidymodels.org/) framework, from first steps in modeling to how to evaluate complex models. Today’s screencast isn’t about predictive modeling, but about **unsupervised machine learning** using with this week’s [`#TidyTuesday` dataset](https://github.com/rfordatascience/tidytuesday) on cocktail recipes. 🍸

{% youtube _1msVvPE_KY %}

Here is the code I used in the video, for those who prefer reading instead of or in addition to video.

## Explore the data

Our modeling goal is to use unsupervised algorithms for dimensionality reduction with [cocktail recipes from this week’s #TidyTuesday dataset](https://github.com/rfordatascience/tidytuesday/blob/master/data/2020/2020-05-26/readme.md). In my [earlier blog post](https://juliasilge.com/blog/tidylo-cran/) this week, I used one of the cocktail datasets included and here let’s use the other one.

```

boston_cocktails <- readr::read_csv("https://raw.githubusercontent.com/rfordatascience/tidytuesday/master/data/2020/2020-05-26/boston_cocktails.csv")

boston_cocktails %>%

count(ingredient, sort = TRUE)

## # A tibble: 569 x 2

## ingredient n

## <chr> <int>

## 1 Gin 176

## 2 Fresh lemon juice 138

## 3 Simple Syrup 115

## 4 Vodka 114

## 5 Light Rum 113

## 6 Dry Vermouth 107

## 7 Fresh Lime Juice 107

## 8 Triple Sec 107

## 9 Powdered Sugar 90

## 10 Grenadine 85

## # … with 559 more rows

```

There’s a bit of data cleaning to do to start, both for the `ingredient` column and the `measure` column.

```

cocktails_parsed <- boston_cocktails %>%

mutate(

ingredient = str_to_lower(ingredient),

ingredient = str_replace_all(ingredient, "-", " "),

ingredient = str_remove(ingredient, " liqueur$"),

ingredient = str_remove(ingredient, " (if desired)$"),

ingredient = case_when(

str_detect(ingredient, "bitters") ~ "bitters",

str_detect(ingredient, "lemon") ~ "lemon juice",

str_detect(ingredient, "lime") ~ "lime juice",

str_detect(ingredient, "grapefruit") ~ "grapefruit juice",

str_detect(ingredient, "orange") ~ "orange juice",

TRUE ~ ingredient

),

measure = case_when(

str_detect(ingredient, "bitters") ~ str_replace(measure, "oz$", "dash"),

TRUE ~ measure

),

measure = str_replace(measure, " ?1/2", ".5"),

measure = str_replace(measure, " ?3/4", ".75"),

measure = str_replace(measure, " ?1/4", ".25"),

measure_number = parse_number(measure),

measure_number = if_else(str_detect(measure, "dash$"),

measure_number / 50,

measure_number

)

) %>%

add_count(ingredient) %>%

filter(n > 15) %>%

select(-n) %>%

distinct(row_id, ingredient, .keep_all = TRUE) %>%

na.omit()

cocktails_parsed

## # A tibble: 2,542 x 7