id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

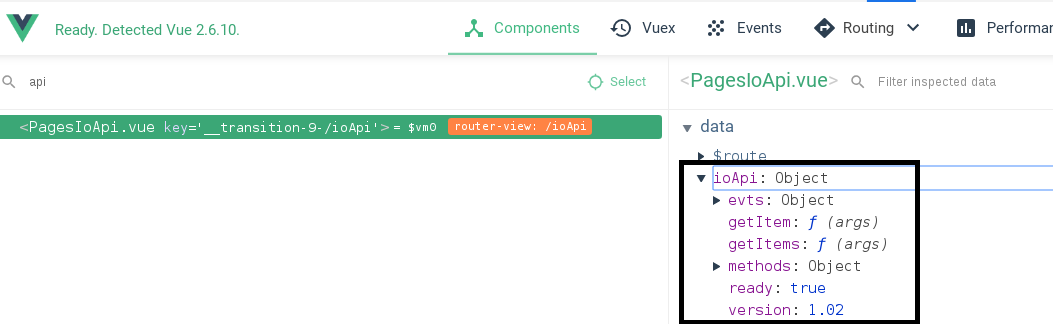

280,537 | A web-based JavaScript(canvas) spreadsheet | A web-based JavaScript spreadsheet demo: https://myliang.github.io/x-spreadsheet/ github: https://gi... | 5,386 | 2020-03-13T09:19:10 | https://myliang.github.io/x-spreadsheet/ | webdev, javascript | > **A web-based JavaScript spreadsheet**

> demo: https://myliang.github.io/x-spreadsheet/

> github: https://github.com/myliang/x-spreadsheet

<p align="center">

<a href="https://github.com/myliang/x-spreadsheet">

<img width="100%" src="https://raw.githubusercontent.com/myliang/x-spreadsheet/master/docs/demo.png">

</a>

</p>

## CDN

```html

<link rel="stylesheet" href="https://unpkg.com/x-data-spreadsheet@1.0.13/dist/xspreadsheet.css">

<script src="https://unpkg.com/x-data-spreadsheet@1.0.13/dist/xspreadsheet.js"></script>

<script>

x.spreadsheet('#xspreadsheet');

</script>

```

## NPM

```shell

npm install x-data-spreadsheet

```

```html

<div id="x-spreadsheet-demo"></div>

```

```javascript

import Spreadsheet from "x-data-spreadsheet";

// If you need to override the default options, you can set the override

// const options = {};

// new Spreadsheet('#x-spreadsheet-demo', options);

const s = new Spreadsheet("#x-spreadsheet-demo")

.loadData({}) // load data

.change(data => {

// save data to db

});

// data validation

s.validate()

```

```javascript

// default options

{

showToolbar: true,

showGrid: true,

showContextmenu: true,

view: {

height: () => document.documentElement.clientHeight,

width: () => document.documentElement.clientWidth,

},

row: {

len: 100,

height: 25,

},

col: {

len: 26,

width: 100,

indexWidth: 60,

minWidth: 60,

},

style: {

bgcolor: '#ffffff',

align: 'left',

valign: 'middle',

textwrap: false,

strike: false,

underline: false,

color: '#0a0a0a',

font: {

name: 'Helvetica',

size: 10,

bold: false,

italic: false,

},

},

}

```

## Bind events

```javascript

const s = new Spreadsheet("#x-spreadsheet-demo")

// event of click on cell

s.on('cell-selected', (cell, ri, ci) => {});

s.on('cells-selected', (cell, { sri, sci, eri, eci }) => {});

// edited on cell

s.on('cell-edited', (text, ri, ci) => {});

```

## Internationalization

```javascript

// npm

import Spreadsheet from 'x-data-spreadsheet';

import zhCN from 'x-data-spreadsheet/dist/locale/zh-cn';

Spreadsheet.locale('zh-cn', zhCN);

new Spreadsheet(document.getElementById('xss-demo'));

```

```html

<!-- Import via CDN -->

<link rel="stylesheet" href="https://unpkg.com/x-data-spreadsheet@1.0.32/dist/xspreadsheet.css">

<script src="https://unpkg.com/x-data-spreadsheet@1.0.32/dist/xspreadsheet.js"></script>

<script src="https://unpkg.com/x-data-spreadsheet@1.0.32/dist/locale/zh-cn.js"></script>

<script>

x.spreadsheet.locale('zh-cn');

</script>

```

## Features

- Undo & Redo

- Paint format

- Clear format

- Format

- Font

- Font size

- Font bold

- Font italic

- Underline

- Strike

- Text color

- Fill color

- Borders

- Merge cells

- Align

- Text wrapping

- Freeze cell

- Functions

- Resize row-height, col-width

- Copy, Cut, Paste

- Autofill

- Insert row, column

- Delete row, column

- Data validations

## Development

```sheel

git clone https://github.com/myliang/x-spreadsheet.git

cd x-spreadsheet

npm install

npm run dev

```

Open your browser and visit http://127.0.0.1:8080.

## Browser Support

Modern browsers(chrome, firefox, Safari).

## LICENSE

MIT

| myliang |

280,556 | Universal Principles Of UX Design | You also need to follow some principles that can ensure the success of the interface you have been bu... | 0 | 2020-03-13T10:09:10 | https://dev.to/uiux_studio/universal-principles-of-ux-design-48m7 | ux, userexperience, uxprinciples | You also need to follow some principles that can ensure the success of the interface you have been building for your website and its successful acceptance by the prospective users. It is essential to keep these principles in mind because they form the necessary foundation on which great design experiences are built. Here's am showing the most important UX design principles that you should follow for making the perfect website: http://bit.ly/2IDRl33 | uiux_studio |

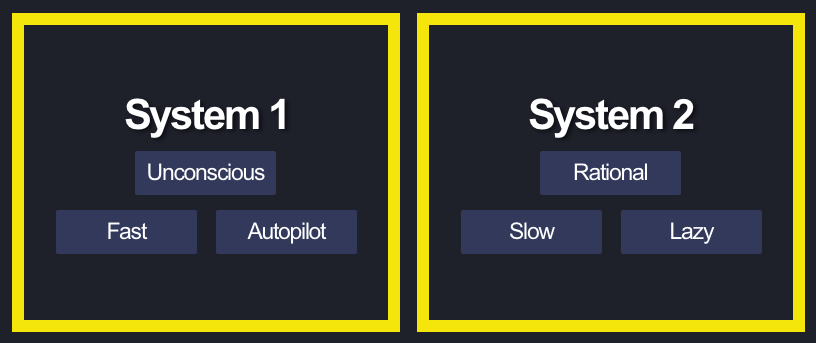

280,725 | Software Lessons from Scarcity | Sendir Mullainathan and Eldar Shafir's book Scarcity: The New Science of Having Less and How it Defin... | 5,388 | 2020-03-13T14:57:15 | https://dev.to/phm200/software-lessons-from-scarcity-book-7i7 | books | Sendir Mullainathan and Eldar Shafir's book [*Scarcity: The New Science of Having Less and How it Defines Our Lives*](https://www.amazon.com/Scarcity-Science-Having-Defines-Lives/dp/125005611X/) is a wonderful achievement and a great read for anyone with an interest in psychology and behavioral economics. Mullainathan and Sharif present a novel frame for the common problem of scarcity, of not having enough money, time or other resource.

Their insight is that scarcity taxes our attention, what they call a bandwidth tax, and causes us to narrowly focus our (compromised) attention on the most immediate problem ahead, what they call tunneling. The result is consistent and predictably poor decision making by those facing scarcity. Poor decision making hinders getting more of the scarce resource and so in the end, scarcity systematically creates more scarcity.

Mullainathan and Sharif use descriptive anecdotes and experimental data to support their thesis. Again, worth your time to check out if you liked books like *Thinking Fast and Slow* by Daniel Kahneman or *Freakonomics* by Steven D. Levitt and Stephen J. Dubner.

The idea of scarcity is an interesting lens to apply to software development as well. Here's a few thoughts that came to mind, some conventional wisdom, some not, all informed by scarcity.

*Organizations and teams are right to cautiously adopt new technologies*

As a long-time software consultant, I'm used to hearing complaints, and complaining myself about a client that seems stuck in the mind, intent on using what seems like a Stone Age tech stack. How can they not realize how much better, cooler, faster new technology X is?

From a scarcity perspective, sticking with a known solution can be a smart strategy. In the context of a consulting project, it is often the case that the teams' bandwidth is limited by time or money. Operating under this bandwidth tax, the team doesn't have enough capacity to fairly evaluate a new tech approach, in addition to implementing the specific deliverable. In contrast, if those tech decisions are already made, the team can focus their limited attention on the business value.

This does not mean orgs and teams are always right to be cautious. Creative organizations will find a way to give the right people enough time and support to make a well reasoned evaluation of new technology. Organizations that want to innovate can also build more slack into their timelines. Slack is a critical way to mitigate the mistakes that come about in a scarce environment. A team that has enough time to make a mistake is often one that can learn from it.

*One person cannot shape and implement at the same time*

I've long been a huge fan of Basecamp, formally known as 37Signals. Recently, Basecamp released guidance on their software development lifecycle, [Shape Up](https://basecamp.com/shapeup). A key facet of their process is that a small group shapes (defines) the parameters of a small cycle of work and then another group implements that work. Once the pitch for the work is complete and approved, the implementing team has freedom within the pitch definition to implement it.

Having these [two tracks](https://basecamp.com/shapeup/1.1-chapter-02#two-tracks), of shaping and building, makes perfect sense from a scarcity perspective. When leading a team to build an application, I'm focused (tunneled) into the implementation. If I'm also trying to figure out what we're building, one is going to get short-changed. Our brains are good at focusing and we can be incredibly productive in the tunnel, but at the expense of items outside it. Whatever track we are on, our brain wants to get back to and will shortchange the other track.

This insight seems mundane on the surface, but in my experience it is quite common for technical leads on projects to be in charge of both implementing the current phase and planning the next one. While they may have the skill to do both, the expectation that those different tracks can occur in parallel without a loss of quality in one or the other is misleading.

*Even LeBron James needs rest days*

If NBA teams were run more like software projects, then star players like LeBron James would never be given a rest day, or limited minutes. Why would you take your best performer off the court? What seems obvious in a physical undertaking like basketball, that overwork, a scarcity of rest, leads to injury or poor performance is just as true for mental work. From *Scarcity*:

> ...our effects [of scarcity] correspond to between 13 and 14 IQ points... losing 13 points can take you from "average" to a category labeled "borderline deficient"

More to the point of high performers, the (temporary) loss of IQ is also enough to take someone from "superior" to "average". This effect has nothing to do with that person's inherent grit or toughness. Put the same person in a better, more abundant situation can perform to their potential.

To put it another way, when a team is told to consistently put in extra hours, the implicit message is that we are no longer concerned about the quality of the work, we just hope to get the work done at any quality level in a given calendar timeframe. For software consultancies that differentiate on quality of work, this doesn't sound too appealing.

There's a lot more in *Scarcity* that I didn't cover here. And I'm sure other sources to provide contrary lenses on these points. Keep reading and learning, and as always leave me comments and questions below. Thanks! | phm200 |

280,733 | Flutter Vs React Native: Everything You Ever Wanted to Know [INFOGRAPHIC] | The space of technology is ever-evolving since the demands are many. And the increase in demand has l... | 0 | 2020-03-14T07:30:51 | https://www.moweb.com/blog/flutter-vs-react-native-everything-you-ever-wanted-to-know | reactnative, flutter | The space of technology is ever-evolving since the demands are many. And the increase in demand has led to multiple new technologies to come into existence. Today the world of app development is flourishing at a higher speed, and the credit goes to the unbelievably robust technology advancements. Every business prefers to provide the best experience to the users. And no doubt this purpose is well-served by the mobile application technology. However, on looking closer, you may find that there is a big group of technical advancements in the market.

For any business, it is hard to make a smart move with these new tech stacks. The innovative technological spectrum has brought two sensational technologies; React Native & Flutter.

These two technologies are scaling higher on the performance factors and both the platforms have their own benefits.

But hold on, as these both the technologies are super-efficient, it is making a daunting task for the business owners to select the best.

Is it troubling you???

You don’t need to be worried anymore since with this post, we have tried to cover each and every aspect of the Flutter & React technology. We are sure by the end of the post you would be able to make the informed decision.

Let’s start learning more about these two trending technologies, and explore what they have kept hidden in their Pandora box.

##Flutter VS React Native

To start with it can easily be stated that in the current market trend these two platforms are the giant players.

Every big brand & enterprise is embracing these two technologies in their business model. Indeed, both platforms have a lot more to offer to your app and can help you scale higher.

So let’s begin…

##A Word About Flutter

In simple words, Flutter is a portable UI toolkit. It contains a complete set of widgets and tools. It lets the developers build cross-platform apps. It uses a single codebase to build apps for Android, iOS & web. Also, it is a free and open-source, based on Dart – a fast, object-oriented programming language.

The incredible set of the widget in Flutter allows apps to have a great look and feel.

Now let’s take a quick look at the advantages and the disadvantages owned by the Flutter platform.

###Flutter Pros

**1) Hot Reload**

No doubt Flutter has a different development approach. It brings dynamic & faster app development. Developers can make changes in the codebase and can see them immediately reflecting on the app. With this feature, the developer can fix bugs, or update instantly.

**2) One Codebase for Multiple Platforms**

The development of the Flutter app is easier, compared to any other. Flutter allows developers to write just one codebase for multiple platforms such as Android, iOS & web. And this code works efficiently on other platforms as well. It saves time and money in the development process.

**3) Needs 50% Less Testing**

Testing a Flutter app is a very easy process. Since the application testing works on both the platforms, it reduces app testing efforts by 50%. In this run, developers only need to invest their time in testing on one Platform and on the other, it works itself.

**4) Builds Apps Faster**

As the [Flutter app development](https://www.moweb.com/flutter-app-development) needs to be done on one specific platform, it helps a lot. Developers can build faster apps with scaling performance. It can deliver 60fps- frames per second seamlessly.

**5) Custom Widgets**

Flutter has many ready-made widgets. It doesn’t need to upgrade to support old devices. Also, widgets from Flutter can be combined to create layouts, and also developers can select any level of customization.

**6) MVP Perfect**

MVP for a mobile app is a necessary aspect. It helps in building only the required features & functionalities within the app. Flutter is the best choice to build an MVP for the app. It works well when there is less time for development.

###Flutter Cons

**1) Simple Libraries & Support**

Flutter makes it a convenient choice for app developers to utilize multiple libraries during the development process. But the inclusion of libraries in the Flutter doesn’t have the support of every existing library. However, some of the libraries are needed to be customized by the developers to be used further.

**2) Regular Support**

The regular support is much needed to handle the challenges in the development process. Flutter doesn’t support CI platforms like Travis. That’s why developers need to use a custom script to build, test, and deploy. This can disturb the flow of development.

**3) Risky Platform**

Google is the main working mechanism behind Flutter. However, Flutter is open source, but without Google’s support, there can’t be any future for Flutter. Therefore, it makes Flutter a risky platform that can’t survive without Google’s support.

**4) Size of App**

The size of a mobile app is the main concern, which disturbs the developers. As no one wants to download an app that consumes a bigger space on the phone. And this is where Flutter has a demerit. Flutter apps are bigger than their native counterparts.

Hmm, now let’s move towards the next popular technology React Native, and let’s discuss what it has got within its mechanism.

##A Word About React Native

React Native is an open-source mobile app framework that utilizes JavaScript. It is an effective framework for building cross-platform apps. Apps can be built on both the platforms, using a single codebase.

Also, clear the doubt that ReactNative apps are not mobile web apps. With React Native developers can use the iOS & Android’s UI building blocks.

How about gaining some information about the merits & demerits this very technology holds? Let’ find out together…

###React Native Pros

**1) Fast Refresh Feature**

React Native brings live reloading & hot reloading features together into a single new feature; “Fast Refresh”. This very feature fully supports modern React and doesn’t work on the invasive code transformations. Hence it is very reliable through every possible corner.

**2) One Codebase for Two Major Platforms**

For an app to save time and money on the development process, is a much-needed aspect. React lets the one single codebase to be written and let the app to work on both the platforms. This helps in saving time and money and works efficiently on both platforms.

**3) React Utilizes JavaScript**

JavaScript is the best programming language among developers across the globe. You get to experience JavaScript with React Native. As a developer, you get to make statically-typed programming languages and let the app run seamlessly.

**4) A Mature Platform**

React Native is no more in a nascent stage. React Native was released 5 years ago and meanwhile it has gone through massive change. This has let the developers focus on fixing the issues in a better manner along with helping the efficient app to come into existence.

**5) Vast React Native community**

The developer community from React Native is quite large. The team of experts keeps on sharing new technology updates. This has allowed developers to learn technology quickly. Also, it helps in sharing new information related to Flutter.

**6) Very Easy to Learn**

React Native is easy to learn. Developers can use the same libraries, tools, and patterns. The mechanism of the React platform is not at all tough. It does not require any sort of special training to be given to the developers and they can use it.

**7) React Reduces Testing Efforts**

Every app requires the testing process. This helps in making the app work without any glitch on the app platform. React apps work efficiently on both the platforms, and this makes the need for the testing to be done on one of the platforms only. It reduces the demand for testing efforts and helps in bringing efficient products.

###React Native Cons

**1) It Is Not Thoroughly Real Native**

The performance and the quality excellence Native apps have, are commendable. But in the React Native, the UI experience & performance are not at all similar. They are not just alike in the React native, and there are certain differences.

**2) Fewer Components**

Despite being popular and mature, React Native still lacks in some components. Only basic components are supported by React Native. And others which are existing are underdeveloped. This will make developers use fewer components.

**3) Many Abandoned Packages**

React Native has a massive number of libraries. These libraries are of low quality or can be abandoned as well. Therefore, within the development process, developers find many abandoned packages, which are not used at all.

**4) Fragile UI**

React Native is built on native components, wherein the updates can cause certain changes in the Native Components API. And these changes if not handled well can affect the complete mechanism of the mobile app and disturb the flow.

**5) React Apps Are Bigger Than Native**

Every app written on React Native runs on the JavaScript code. But Android doesn’t hold this functionality. Android needs to include a library supporting JavaScript code. It leads to the apps to be bigger than the native Android apps.

This is the quick summary of the pros and cons and the information related to Flutter & React.

**Here is the visual comparison of Flutter Vs React Native**

Still, one question that is hot enough comes, that

##Which Technology Is the Best?

On an honest note, both the technologies are supreme in their own space. If you will analyze then you can see that React Native is very popular owing to the multiple aspects. And one of the biggest advantages it gives to the businesses is to control the cost factor and reduced delivery time.

On the other hand, Flutter possesses an almost similar set of benefits and ensures that your app gets build in no time, with the amazing features. Therefore, it might be difficult to give a vote to one specific technology. It is not the diplomatic answer but suggests the fact.

Both of these technologies have incredible values intact within them. And if used as per the business requirements, either of them can do wonders.

To wrap up, it can be said that it should not be about Flutter VS React Native, but should always be Flutter & React. So it is highly recommended to use any of these technologies. It must depend upon the requirements of your business and further implement the best of technology in your business.

| mowebtech |

280,795 | Encapsulation in Python | Encapsulation is an essential aspect of Object-Oriented Programming. Let’s explain encapsulation in... | 0 | 2020-03-15T11:39:47 | https://coderscat.com/encapsulation-in-python | python | ---

title: Encapsulation in Python

published: true

date: 2020-03-12 16:22:00 UTC

tags: Python

canonical_url: https://coderscat.com/encapsulation-in-python

---

Encapsulation is an essential aspect of **Object-Oriented Programming**.

Let’s explain encapsulation in plain words: **information hiding**. This means delimiting of the internal interface and attribute from the external world.

The benefit of information hiding is **reducing system complexity and increasing robustness**.

Why? Because encapsulation limits the interdependencies of different software components. Suppose we create a module. Our users could only interact with us through public APIs; they don’t care about the internals of this module. Even when the details of internals implementation changed, the user’s code doesn’t need a corresponding change.

To implement encapsulation, we need to learn how to define and use private attribute and a private function.

Enough theory now, let’s talk about how we do this in Python?

Python is an interpreted programming language and implements **weak encapsulation**. Weak encapsulation means it is performed by convention rather than being enforced by the language. So there are some differences with Java or C++.

## Protected attribute and method

If you have read some Python code, you will always find some attribute names with a prefixed underscore. Let’s write a simple Class:

```python

class Base(object):

def __init__ (self):

self.name = "hello"

self._protected_name = "hello_again"

def _protected_print(self):

print "called _protected_print"

b = Base()

print b.name

print b._protected_name

b._protected_name = "new name"

print b._protected_name

b._protected_print()

```

The output will be:

```

hello

hello_again

new name

called _protected_print

```

From the result, an attribute or method with a prefixed underscore acts the same as the normal one.

So, why we need to add a prefixed underscore for an attribute?

The prefix underscore is a warning for developers: please be careful about this attribute or method, don’t use it outside of declared Class!

`pylint` will report out this kind of bad smell code:

Another benefit of prefix score is: it avoids wildcard importing the internal functions outside of the defined module. Let’s have a look at this code:

```python

# foo module: foo.py

def func_a():

print("func_a called!")

def _func_b():

print("func_b called!")

```

Then if we use wildcard import in another part of code:

```python

from foo import *

func_a()

func_b()

```

We will encounter an error:

By the way, [wildcard import](http://pep8.org/#imports) is another bad smell in Python and we should avoid in practice.

## Private attribute and method

In traditional OOP programming languages, why private attributes and methods can not accessed by derived Class?

Because it is useful in information hiding. Suppose we declare an attribute with name `mood`, but in the derived Class we redeclare another attribute of name `mood`. This overrides the previous one in the parent Class and will likely introduce a bug in code.

So, how to use the private attribute in Python?

The answer is adding a double prefix underscore in an attribute or method. Let’s run this code snippet:

```python

class Base(object):

def __private(self):

print("private value in Base")

def _protected(self):

print("protected value in Base")

def public(self):

print("public value in Base")

self.__private()

self._protected()

class Derived(Base):

def __private(self):

print("derived private")

def _protected(self):

print("derived protected")

d = Derived()

d.public()

```

The output will be:

```

public value in Base

private value in Base

derived protected

```

We call the `public` function from a derived object, which will invoke the `public` function in Base class. Note this, because `__private` is a private method, only object its self could use it, there is no naming conflict for a private method.

If we add another line of code:

```python

d.__private()

```

It will trigger another error:

Why?

Let’s print all the methods of object and find out there a method with name of `_Base__private`.

This is called `name mangling` that the Python interpreter applies. Because the name was added `Class` prefix name, private methods are protected carefully from getting overridden in derived Class.

Again, this means we can use `d._Base__private` to call the private function. Remember, it’s not enforced!

The post [Encapsulation in Python](https://coderscat.com/encapsulation-in-python) appeared first on [CodersCat](https://coderscat.com). | snj |

280,858 | Curated list of things you can do now that your stuck at home | Photo by Dollar Gill on Unsplash During the height of the current Coronavirus / COVID-19 pandemic, y... | 0 | 2020-03-13T18:57:56 | https://dev.to/armiedema/curated-list-of-things-you-can-do-now-that-your-stuck-at-home-haf | selfimprovement, workfromhome, learning | ---

title: Curated list of things you can do now that your stuck at home

published: true

date: 2020-03-13 18:53:53 UTC

tags: self-improvement,work-from-home,learning

canonical_url:

---

<figcaption>Photo by <a href="https://unsplash.com/@dollargill?utm_source=medium&utm_medium=referral">Dollar Gill</a> on <a href="https://unsplash.com?utm_source=medium&utm_medium=referral">Unsplash</a></figcaption>

During the height of the current Coronavirus / COVID-19 pandemic, you are likely finding yourself at home more often, not going out as much, and are wondering what to do to occupy your time. ⏳

Yes - you can certainly play video games 🕹 or watch Netflix 📺 to pass the time. However, this is the perfect opportunity to do something different, learn some new skills, and better yourself!

Here is a curated list, pulled from [That App Show](https://thatappshow.com), of things that you can do from the safety of your home that will give you some marketable skillz. 🔥

### #1 — Learn to code

There are plenty of websites that teach you how to learn how to code. And, developing coding skills is probably one of the most marketable and return on time investments things you can be doing for yourself right now!

#### [Zappy Code](https://thatappshow.com/apps/zappy-code)

Zappy Code has hours of online course that will get you on your way learning how to code up some awesome iOS apps. The courses are very in-depth and mainly focused on Swift language development right now, which will get you on your way to creating delightful iPhone apps. Plus, the “zanniness” of the lessons makes learning to code super fun!

#### [ColtXP](https://thatappshow.com/apps/ds)

ColtXP is a platform where you can meet more senior-level software developers and work on open-source projects with them. Often, the best way to really learn is to code up some real-world examples and using real-world methods. ColtXP brings the master and the padawan together and makes both parties all the better for it.

#### [Alpas](https://thatappshow.com/apps/alpas)

Alpas is a new, Kotlin-based web framework that is super powerful and super easy to get started on. Checkout the quick-start guide to create a to-do app or go a step further and checkout the Fireplace tutorial to learn more about some of Alpas’s advanced features, such as user authentication.

#### [FlatLogic](https://thatappshow.com/apps/flat-logic)

If you zoom past the above 3, then add some beautification and dashboard goodness to your new project with FlatLogic. Choose from dozens of beautifully crafted dashboard templates and add some sparkle to your app.

### #2 — Learn to market

Become a marketing hacker and learn how to grow a product or your own personal brand.

#### [reThumbnail](https://thatappshow.com/apps/re-thumbnail)

Do you have some old, funny home videos that you want to share out with the world and get some YouTube internet $$$? Make sure they stand out with eye-catching video thumbnails. reThumbnail helps you design thumbnails that will prompt visitors to click on your video over others.

#### [Pentos](https://thatappshow.com/apps/pentos)

Get on the TikTok craze! Pentos is one of the few apps that provide you with great insights on how TikTok videos are performing, what types of videos are going viral, and how you can better market a brand on this exploding new social platform. Why not makes some TikTok videos and see if you can find the secret formula for going viral?!

#### [Inview](https://thatappshow.com/apps/inview)

You will likely be glued to your phone during this time anyways. Well, let’s be honest, who isn’t always glued to their phone? A key metric that sales and marketing professionals constantly want to know is “are people looking at my email?” Inview provides you with insights on who views your emails (send from your phone) and what they click on.

### #3 — Become a sommelier

Since your home, why not have wine sent to your door so you can refine your wine-tasting-buds? Yea — this category is more for fun. But, just because your home, doesn’t mean you don’t need breaks while learning how to be a coding and marketing guru! Plus, if you enjoy it enough, you can earn good money being a sommelier. 😍

#### [The Wine List](https://thatappshow.com/apps/the-wine-list)

Have bottles of craft wine you cannot find in the neighborhood store sent directly to your door.

#### [Buy The Glass](https://thatappshow.com/apps/buy-the-glass)

Don’t want to commit to a whole bottle? No problem! Buy The Glass let’s you pick single-serve sized wines so you can convert your kitchen counter into your very own wine tasting table. 🍷

I hope you enjoyed the list! Please feel free to comment and add some other suggestions. Please be safe and be healthy. 🙏 | armiedema |

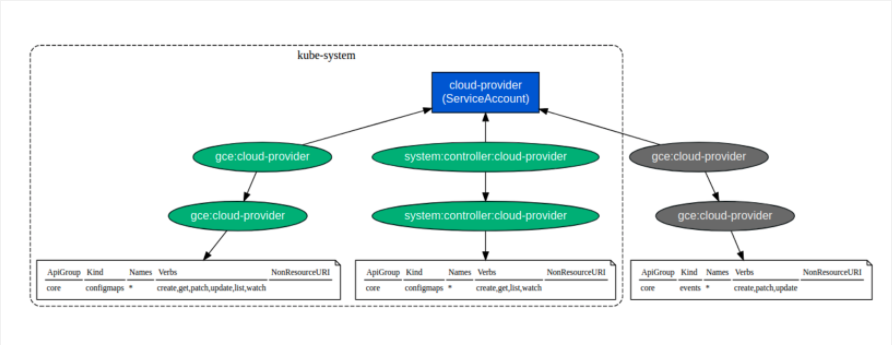

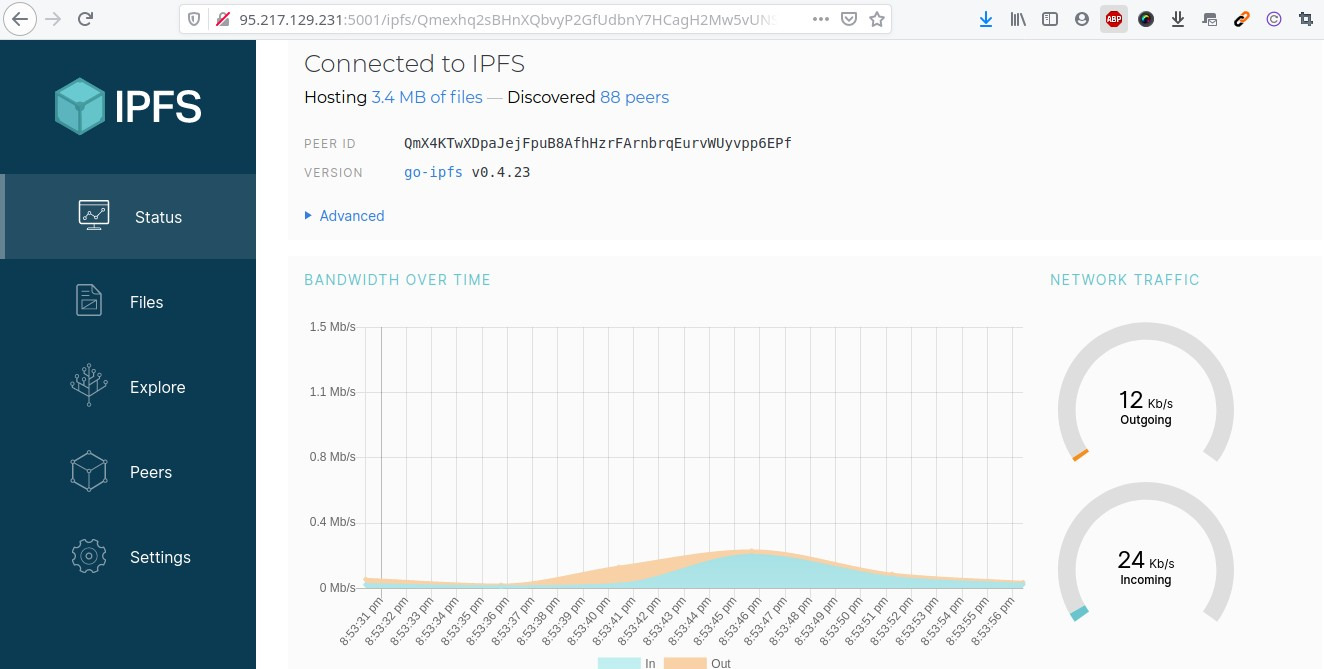

280,862 | The Technical skill you need to master to Become Devops based on My Experiences | Introduction In the previous post (part 1), I talk about devops engineer from my experienc... | 5,551 | 2020-04-26T07:30:34 | https://dev.to/iilness2/the-technical-skill-you-need-to-master-to-become-devops-based-on-my-experiences-2jf9 | devops, productivity, career, learning | ## Introduction

In the previous post (part [1](https://dev.to/iilness2/how-s-devops-engineer-develop-so-far-from-my-experiences-perspective-2bf8)), I talk about devops engineer from my experiences until now.

In this part, I want to talk about the technical skill you need to know/master when you want become a good practical Devops Engineer. Let's talk about it!

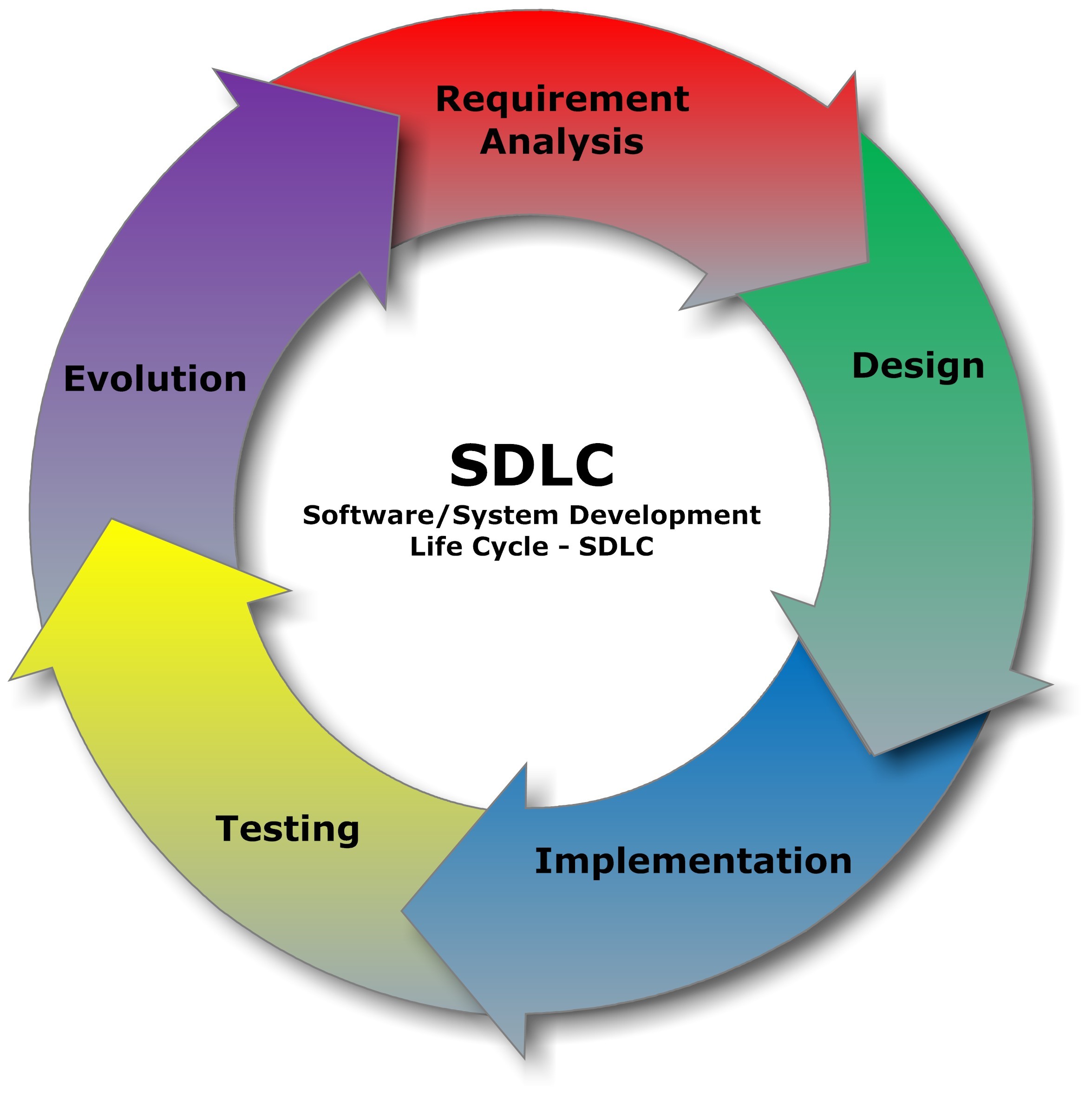

## Software Development Life Cycle(SDLC)

SDLC is the first thing you need to know when you want become a good Devops Engineer.

The most famous model for SDLC are *Agile* and *Waterfall*. Those model will give you the most benefit when you can implement it correctly on the right process.

When you come to the company, you need to understand their flow/process. Every company has a unique process, It got influenced by the organization structure, their purpose, who is people behind it, vision, mission, etc. Understanding SDLC will help you to choose the best solution for compliment the process.

> Yes, Compliment the process not against them!

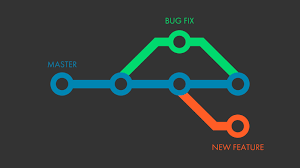

## GIT

GIT is the version control most widely used in modern development. GIT help us enables non-linear workflows in a distributed way by offering data assurance when develop application. With this model, we can have a distributed team for working on another task/feature without worries anything about history/track/timeline of the main code later.

If you want to know more, you can follow my other series talk about GIT [here](https://dev.to/iilness2/practical-git-command-for-working-with-the-project-part-1-4nce).

## YAML

YAML is a human-readable language that mostly used for configuration file. Yaml is understandable and easy to use at the same time. You just need to carefully follow the rules like indentation (YAML indentation sensitive) when using it.

Recently, most of the CI tools also already support YAML as a language for their configuration file. Some of them even migrating their existing configuration language to YAML so many people more familiar when use their service (I already talk and review about it [here](https://dev.to/iilness2/6-ci-tools-review-by-me-l10))

## Scripting language

Scripting still the most favorite basic tool to interact with our server. But, since deployment more happens in the cloud, many new tools for answering this necessity comes and arise for replacing the old scripting way.

These tools offer easiness to interact with the modern cloud. Some of them, offer interesting features like maintain the state, versioning, configuration management, declarative language, etc..

So, as a person who architect/do the operation for deployment, you need to be wise to choose which scripting language need to choose when do the job since we have more option right now.

## Application Installation & Configuration

Every code language has their characteristic when you want implement it. Some of them can upload directly, some of them need to be build, some of them need to got compile with their dependency.

To answer these needs, some new tools/ways were introduced for standardizing the process installation. The popular way is build one big immutable VM with all the environments that needed for application. with this way, you can do deployment (rollback/rollout) with ease. The other way is with empowering container with the most popular tool to do which the tool name known as *Docker*.

You need to choose which one is suitable for your organization application process and needs.

## Cloud & Orchestration

Cloud orchestration is of interest to many IT organizations and DevOps adopters as a way to speed the delivery of services and reduce costs. A cloud orchestrator automates the management, coordination and organization of complicated computer systems, services, and middleware.

Many vendors offer cloud orchestrator products. DevOps teams can also implement cloud orchestration in numerous ways via automation and management tools to fit with their processes and methodologies.

When evaluating cloud orchestration products, it is recommended that administrators first map the workflows of the applications involved. This step will help the administrator visualize how complicated the internal workflow for the application is and how often information flows outside the set of app components. This, in turn, can help the administrator decide which type of orchestration product will help automate workflow best and meet business requirements in the most cost-effective manner.

So if you want to learn more, you can follow my other articles on [here](https://dev.to/iilness2) or on other platforms [here](https://community.alibabacloud.com/users/5246280492402877?spm=a2c65.11461447.0.0.662f15f51xm6yq)

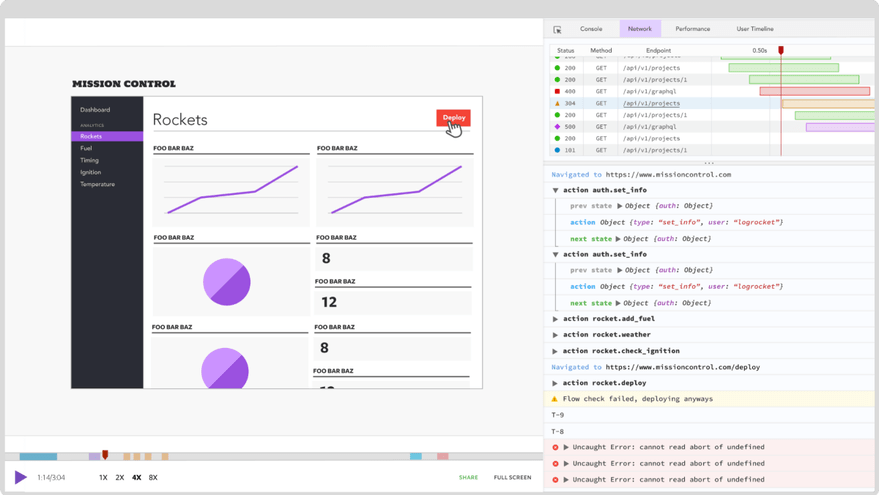

## Monitoring / Debugging / Logging & alerting

Monitoring the service performance is the task usually we do after the deployment process finish.

Monitoring itself divided into 4 main parts.

The first part is server/host monitoring which we will monitor the host that hosting our application environment.

The second part is Application Monitoring and Application profiling which monitor our application performance when got actual traffic from time to time.

The third part is logging. Logging is several events that are recorded on files by a software application. Usually, it contains errors, informational events, and warnings.

The last part which arises recently because of the automation trend is alert. Alerts are typically delivered through a notification system and usually combine with other monitoring system to made an automation prevention based on application performance.

## Conclusion

In the profesional world where everything needs to have high standard, deep understanding on what you do is needed.

Since Devops still developing until now, many new theory and new skill arise for complement Devops positions. With this article, I hope you can choose which skill you really need to know/ just nice to have for complement your journey as a Devops in professional work.

I think that's it for now for this article. Leave a comment below about your thoughts! Thanks.

| iilness2 |

295,586 | Controlling access to files uploaded by users | Imagine a situation where you have to check whether or not a user that sent the request can access or... | 0 | 2020-03-31T07:58:50 | https://fitodic.github.io/controlling-access-to-files-uploaded-by-users | webdev, security, nginx, django | ---

title: Controlling access to files uploaded by users

published: true

date: 2020-03-25 19:46:00 UTC

tags: webdev,security,nginx,django

canonical_url: https://fitodic.github.io/controlling-access-to-files-uploaded-by-users

---

Imagine a situation where you have to check whether or not a user that sent the request can access or download files that were uploaded by another user. Perhaps user A uploaded a file that needs to be shared only with user B or only with authenticated users. If your application is deployed behind a [reverse-proxy](https://en.wikipedia.org/wiki/Reverse_proxy) such as [`nginx`](https://www.nginx.com/resources/wiki/), you can use the best of both worlds: your application for checking the user’s permissions and the Web server for serving the files the application tells it to serve.

Before we begin, there are a couple of things I would like to address. First of all, having your Web application serve the media files by loading it into memory and sending it in a response is [grossly inefficient](https://docs.djangoproject.com/en/dev/howto/static-files/#serving-static-files-during-development). You may not know the size of the file, or there could be many requests happening at once. Whatever the case may be, there is a better way.

## `X-Accel`

To quote the [official documentation](https://www.nginx.com/resources/wiki/start/topics/examples/x-accel/):

> X-accel allows for internal redirection to a location determined by a header returned from a backend.

> This allows you to handle authentication, logging or whatever else you please in your backend and then have NGINX handle serving the contents from redirected location to the end user, thus freeing up the backend to handle other requests. This feature is commonly known as [`X-Sendfile`](https://www.nginx.com/resources/wiki/start/topics/examples/xsendfile/).

To achieve this, at least two things have to be implemented:

1. The application’s response must contain the [`X-Accel-Redirect`](#x-accel-redirect-header) header;

2. The location should be marked as [`internal;`](#internal) to prevent direct access to the URI.

### `X-Accel-Redirect` header

This header tells `nginx` which URI to serve. Although the following example uses [`django-rest-framework`](https://www.django-rest-framework.org/), the same thing can be achieved with any other Web framework.

If we assume all files uploaded by users are located in the `/home/user/repo/media/` directory (also defined in Django’s [`MEDIA_ROOT`](https://docs.djangoproject.com/en/dev/ref/settings/#media-root) setting), or more precisely, the `/home/user/repo/media/files/{user.id}/` directory by the `FileField`’s [`upload_to`](https://docs.djangoproject.com/en/dev/ref/models/fields/#django.db.models.FileField.upload_to) function, the view looks something like this:

```

from pathlib import Path

from django.conf import settings

from django.http import HttpResponseRedirect

from rest_framework.decorators import action

from rest_framework.response import Response

from rest_framework.viewsets import ModelViewSet

from .models import File

from .permissions import CanAccessFile

class FileViewSet(ModelViewSet):

permission_classes = [CanAccessFile]

queryset = File.objects.all()

@action(detail=True, methods=["get"])

def download(self, request, pk=None):

obj = self.get_object()

if settings.DEBUG:

return HttpResponseRedirect(obj.upload.url)

file_name = Path(obj.upload.path).name

headers = {

"Content-Disposition": f"attachment; filename={file_name}",

"X-Accel-Redirect": (

f"/uploads/files/{obj.user_id}/{file_name}"

),

}

return Response(data=b"", headers=headers)

```

Note that the `settings.DEBUG` block is here so developers can keep using [Django’s `static` mechanism for serving media files during development](https://docs.djangoproject.com/en/dev/howto/static-files/#serving-files-uploaded-by-a-user-during-development).

There are also [other `X-Accel-*` headers](https://www.nginx.com/resources/wiki/start/topics/examples/x-accel/#special-headers) that can be set by the application to further refine the process.

### `internal`

The application’s response that contains the `X-Accel-Redirect` header is picked up by the Web server on its way back to the client. In order for `nginx` to locate the file that should be sent to the client, the configuration should look something like this:

```

server {

server_name example.com;

location /favicon.ico { access_log off; log_not_found off; }

location /static/ {

root /home/user/repo;

}

location /uploads/ {

internal;

alias /home/user/repo/media/;

}

location / {

include /etc/nginx/proxy_params;

proxy_pass http://unix:/run/gunicorn.sock;

}

}

```

With that all set, you’re ready to start serving files to select users! | fitodic |

280,953 | Understanding Closures in JavaScript | "Learn about closures in JavaScript, how to use them, and how they can be tricky." | 0 | 2020-03-13T22:49:44 | https://popovich.io/2020/2020-03-12-closure/ | javascript, closures, scope, let | ---

title: "Understanding Closures in JavaScript"

published: true

description: "Learn about closures in JavaScript, how to use them, and how they can be tricky."

tags: javascript, closures, scope, let

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/5u0uusz7ltlnuvmw7htz.png

canonical_url: https://popovich.io/2020/2020-03-12-closure/

---

1. [What's a Closure?](#what)

2. [Uses for Closures](#uses)

3. [How Might This Trip Us Up?](#bugs)

<a name='what'></a>

## [#](#what) What's a Closure?

When you declare a function inside another function, a **closure** is the new environment created by combining the inner function with references to all variables available to it from outer scopes (this concept of all scopes accessible from a certain area is known as the **lexical environment**).

In other words, in a closure, all variables accessible to the inner function -- including variables declared outside the function itself -- *remain* accessible to it, even when that inner function is removed and called in some other context. The inner function remembers all the stuff it has access to at the time of its declaration.

Let's look at an example:

```js

let makeSayFullNameFunction = () => {

let lastName = `Skywalker`;

return (firstName) => {

return `${firstName} ${lastName}`;

};

};

let sayFullName = makeSayFullNameFunction();

sayFullName(`Luke`); // Luke Skywalker

```

Here, `lastName` is locally scoped to `makeSayFullNameFunction`. So it might seem that when we pull out the returned function as `sayFullName` and call it, we'll get an error, because it relies internally on `lastName`, but `lastName` isn't accessible from the global scope.

But in fact, this works just fine. When the inner function is created, `lastName` is **enclosed** (or **closed over**) into the closure of the inner function, so it is considered in scope no matter where the function is called.

For the purposes of calling the inner function, this:

```js

let makeSayFullNameFunction = () => {

let lastName = `Skywalker`;

return (firstName) => {

return `${firstName} ${lastName}`;

};

};

```

...is equivalent to this:

```js

let makeSayFullNameFunction = () => {

return (firstName) => {

let lastName = `Skywalker`;

return `${firstName} ${lastName}`;

};

};

```

The main benefit of closures is that they allow us to compose more modular programs. We don't have to stuff everything a function needs into that function to ensure it'll be able to access everything it needs in another environment, as we're about to see.

<a name='uses'></a>

## [#](#uses) Uses for Closures

### 1. When a Function Returns a Function

Let's look at our example from above again:

```js

let makeSayFullNameFunction = () => {

let lastName = `Skywalker`;

return (firstName) => {

return `${firstName} ${lastName}`;

};

};

let sayFullName = makeSayFullNameFunction();

sayFullName(`Luke`); // Luke Skywalker

```

Even though `lastName` doesn't appear to be in scope when `sayFullName` is called, it was in scope when the function was declared, and so a reference to it was *enclosed* in the function's closure. This allows us to reference it even when we use the function elsewhere, so that it's not necessary to stuff everything we need in scope into the actual function expression.

### 2. When a Module Exports a Function

```js

// sayName.js

let name = `Matt`;

let sayName = () => {

console.log(name);

};

export sayName;

```

```js

// index.js

import sayName from '/sayName.js';

sayName(); // Matt

```

Again, we see that even though `name` doesn't appear to be in scope when `sayName` is called, it was in scope when the function was declared, and so a reference to it was *enclosed* in the function's closure. This allows us to reference it even when we use the function elsewhere.

### 3. Private Variables and Functions

Closures also allow us to create methods that reference internal variables that are otherwise inaccessible outside those methods.

Consider this example:

```js

let Dog = function () {

// this variable is private to the function

let happiness = 0;

// this inner function is private to the function

let increaseHappiness = () => {

happiness++;

};

this.pet = () => {

increaseHappiness();

};

this.tailIsWagging = () => {

return happiness > 2;

};

};

let spot = new Dog();

spot.tailIsWagging(); // false

spot.pet();

spot.pet();

spot.pet();

spot.tailIsWagging(); // true

```

This pattern is only possible because references to `happiness` and `increaseHappiness` are preserved in a closure when we instantiate `this.pet` and `this.tailIsWagging`.

<a name='bugs'></a>

## [#](#bugs) How Might This Trip Us Up?

One big caveat is that we have to remember we're only enclosing the references to *variables*, not their *values*. So if we reassign a variable after enclosing it in a function...

```js

let name = `Steve`;

let sayHiSteve = () => {

console.log(`Hi, ${name}!`);

};

// ...many lines later...

name = `Jen`;

// ...many lines later...

sayHiSteve(); // Hi, Jen!

```

...we might be left with an unwanted result.

In ES5, this often tripped up developers when writing `for` loops due to the behavior of `var`, which was then the only way to declare a variable. Consider this situation where we want to create a group of functions:

```js

var sayNumberFunctions = [];

for (var i = 0; i < 3; i++) {

sayNumberFunctions[i] = () => console.log(i);

}

sayNumberFunctions[0](); // Expected: 0, Actual: 3

sayNumberFunctions[1](); // Expected: 1, Actual: 3

sayNumberFunctions[2](); // Expected: 2, Actual: 3

```

Though our intention is to enclose the *value* of `i` inside each created function, we are really enclosing a reference to the *variable* `i`. After the loop completed, `i`'s value was `3`, and so each function call from then on will always log `3`.

This bug arises because `var` (unlike `let`) can be redeclared in the same scope (`var a = 1; var a = 2;` is valid outside strict mode) and because `var` is scoped to the nearest *function*, not the nearest block, unlike `let`. So each iteration was just *changing* the value of a single global-scope variable `i`, rather than declaring a new variable, and that single variable was being passed to all of the created functions.

The easiest way to solve this is to replace `var` with `let`, which is block-scoped to each iteration's version of the loop block. Every time the loop iterates, `i` declared with `let` will be a new, independent variable scoped to that loop only.

```js

var sayNumberFunctions = [];

for (let i = 0; i < 3; i++) {

sayNumberFunctions[i] = () => console.log(i);

}

sayNumberFunctions[0](); // 0

sayNumberFunctions[1](); // 1

sayNumberFunctions[2](); // 2

```

But what if for some reason we can't use `let`? Alternatively, we could work around this problem by changing what's being enclosed:

```js

var sayNumberFunctions = [];

for (var i = 0; i < 3; i++) {

let newFunction;

(function(iInner){

newFunction = () => console.log(iInner);

})(i);

sayNumberFunctions[i] = newFunction;

}

sayNumberFunctions[0](); // 0

sayNumberFunctions[1](); // 1

sayNumberFunctions[2](); // 2

```

We can't use `let`, so we have to find a new way to enclose a unique value into `newFunction`. Since `var` is function-scoped, we'll need to declare another function and then immediately invoke it. Since we're declaring and invoking a new function on each iteration, our variable `iInner` is being redeclared as a unique variable each time, so we're now enclosing a unique variable with its own unique value on each pass, preserving the value we want.

As you've probably noticed, forcing the developer to use closures to detangle local variables from the global state is less than ideal. This was a major impetus for the behavior of `let` in ES6.

But it's still good idea to understand how closures work, and to keep in mind that they don't freeze the lexical environment's *values*; they only preserve references to *variables* that are in scope. | mattpopovich |

280,960 | Stepping stones: Web Components | Today I delved into web components to learn the innards of web components, before getting into angula... | 5,394 | 2020-03-13T23:13:16 | https://dev.to/omnoms/stepping-stones-web-components-569j | webcomponents, native, javascript | Today I delved into web components to learn the innards of web components, before getting into angular elements and understanding how angular elements wraps the native stuff.

So I did a floating icon, wrapping a little bit of functionality before rendering content. It's like a preamble to a work-related task where I will create a common floating container-area for our floating buttons for things like chat, surveys, etc.

###Initial thoughts

I keep IE11 in the back of my head, and so many things, need to be "reworked" to get this to function in IE11, even a basic thing such as this. Ignoring the ES6 class issue it was quite easy to get the "bound" properties. However, all bound properties are sent as strings, so no fancy schmancy conversions happening.

So to send an object, it would be sent as a string or you need to do so atleast, and functions are also passed as string, so you could eval() those, for better or worse, mostly worse, or if it's non-native events that you wish to raise, you can just raise them normally and parent elements can capture them through addEventListener or similar methodology.

###The template

If I wanted to make something entirely self-contained, I had to create the elements through JS and not through a template definition made in an html file because then you would have to have that template in the consuming markup. Maybe that's not a problem for you. But for the intents that I have, where I want to be able to ship out custom components to other projects disconnected from mine, that's not ideal.

So I had to do a fair bit of document.createElement() in my code to detach it from that dependency and have my "template" through code.

I'll have to go over this code once more to make it IE11 safe.

It was surprisingly easy to get started from scratch. Next part of this will likely be angular elements or the IE11 variation.

####chatButton.js

```js

customElements.define('chat-button',

class extends HTMLElement {

_imgSrc = "";

_initials = "";

constructor() {

super();

this.parseImg();

this.parseInitials();

const buttonContent = document.createElement("span");

buttonContent.id= "chat-button-content";

buttonContent.addEventListener("click", this._clickFn);

if(this._imgSrc !== "") {

let img = document.createElement("img");

img.src = this._imgSrc;

img.className = "chat__icon";

buttonContent.appendChild(img);

} else {

let initSpan = document.createElement("span");

initSpan.textContent = this._initials;

initSpan.className = "chat__initials";

buttonContent.appendChild(initSpan);

}

const randomColor = this.getRandColor();

const style = document.createElement("style");

const styleStr = `

#chat-button-content {

display: inline-block;

height: 50px;

width: 50px;

border-radius: 50px;

box-shadow: 2px 2px 3px #999;

overflow: hidden;

text-align: center;

margin: 5px;

${this._imgSrc === ""?"background-color: " + randomColor: ""}

}

#chat-button-content > .chat__icon {

margin: auto;

width: 50px;

height: 50px;

max-width: 200px;

}

#chat-button-content > .chat__icon > img {

position: absolute;

left: 50%;

top: 50%;

height: 100%;

width: auto;

}

#chat-button-content > .chat__initials {

vertical-align: center;

line-height: 50px;

}`;

style.textContent = styleStr;

var wrapper = document.createElement("div");

wrapper.appendChild(style);

wrapper.appendChild(buttonContent);

this.attachShadow({mode: 'open'}).appendChild(wrapper);

}

getRandomInt(max) {

return Math.floor(Math.random() * Math.floor(max));

}

getRandColor() {

const r = this.getRandomInt(16).toString(16);

const g = this.getRandomInt(16).toString(16);

const b = this.getRandomInt(16).toString(16);

return "#" + r+g+b;

}

parseImg() {

const img = this.getAttribute("img");

if(Object.prototype.toString.call(img) === "[object String]" && img !== "") {

this._imgSrc = img;

}

}

parseInitials() {

const initials = this.getAttribute("initials");

if(Object.prototype.toString.call(initials) === "[object String]" && initials !== "") {

this._initials = initials;

}

}

/// LIFE-CYCLE

connectedCallback() {

console.log("Connected.");

}

disconnectedCallback() {

console.log('Disconnected.');

}

adoptedCallback() {

console.log('Adopted.');

}

attributeChangedCallback(name, oldValue, newValue) {

console.log('Attributes changed.', name, oldValue, newValue);

}

}

);

```

####index.html

```html

<html>

<head>

<title>

WebComponent test

</title>

<script defer src="chatButton.js"></script>

<style>

.chat__container {

position: fixed;

bottom: 60px;

right: 60px;

}

</style>

<script>

function _myClickFunction() {

console.log("Callback!");

}

</script>

</head>

<body>

<div class="chat__container">

<chat-button onClick="_myClickFunction()" img="https://vision.org.au/business/wp-content/uploads/sites/14/2019/08/1600-business-success.jpg" initials="AD"></chat-button>

<chat-button initials="JD"></chat-button>

</div>

</body>

</html>

```

reference;

https://developer.mozilla.org/en-US/docs/Web/Web_Components/Using_shadow_DOM

https://github.com/mdn/web-components-examples/blob/master/popup-info-box-web-component/main.js

| omnoms |

280,971 | Which Programming Language Should I Learn First? | Hello folks, We all need to start somewhere when learning to code. Everyone has a reason to start th... | 4,790 | 2020-03-13T23:51:55 | https://dev.to/domenicosolazzo/which-programming-language-should-i-learn-first-5ha | codenewbie, beginners, programming, career | Hello folks,

We all need to start somewhere when learning to code. Everyone has a reason to start this journey but everyone gets confused on where to start.

Let's talk about which programming language you should learn first.

Common questions that I often get on social media and during my days as a software engineer:

> - _Which programming language should I learn first?_

> - _I am a student: they are teaching us Java but everyone tells me to learn Python, Javascript, <put here your favorite programming language>..._

> - _Which language should I learn to get a job?_

Do these questions sound familiar to you?

I have both a short answer and a long answer. The short answer is more subjective.

I worked with multiple programming languages throughout my career. Programming languages like *Visual Basic* (remember this one!?!?), *C#*, *PHP*, *Java*, *Python*, *Javascript*, *C++*.

So, the answer is based on my experience in the last 15 years as a software engineer and my personal taste.

{% youtube Yny83Y-8oWY %}

So, which programming language should you learn first?

My short answer is **Python** and **Javascript**.

### Javascript

*Javascript* is the programming language that is powering the web.

The syntax of Javascript is quite easy to learn and you can start using this programming language without setting up any development environment.

Just open your favorite browser, and open the developer console.

That's all you need to start writing Javascript code.

Ok, that's not how you would write production code, but if you are just learning your first programming languages and you need a Javascript playground, that's all you need to start writing code in Javascript!

In Web Development, you need the frontend and backend part of the website. Learning Javascript can give you the "superpower" of being able to write both sides with just one programming language using something called *Node.JS*, which is actually Javascript on the server-side.

### Python

Another programming language that I would recommend is called **Python**.

Python is one of the fast-growing programming languages out there and it can be used for Web Development, Data Science, Machine Learning, Automation.

The simplicity in its syntax is one of the best parts of this programming language and actually, the thing that made me fall in love with it.

The community is huge and there are so many job opportunities using Python and making it a worthy "first" programming language to learn.

That's my personal short answer based on my experience, but should you really learn Python and Javascript first? Maybe yes, maybe not!

Everyone has different needs and goals on why they are learning code, so there is no magic first programming language that would be good for anyone.

## BEFORE CHOOSING YOUR FIRST PROGRAMMING LANGUAGE

There are 3 points to take into account when deciding your first programming language and I am sharing my strategy on how I would go choosing my first programming language if I would start my career in Software Engineering today.

### 1. JOB MARKET

The job market is the first point to take into consideration,

Most of you are learning to code for a different reason. Some of you are looking to find a job in this field and it is totally understandable. There are so many opportunities in software engineering that I see many of you have this as the main reason to start learning to code.

The job market depends on your own location and industry you want to work in.

Different countries have different demands for some programming languages. Same when we talk about the industries you might work in: working as a software engineer in oil & gas might require experience than working in Banking or the Gaming industry.

If you want to work in the Gaming Industry, maybe learning C++, C# could be two good options as the first programming language.

If you want to work in the Banking Industry, learning Java is the first option for you.

If you want to build mobile apps for a living, Swift / Objective-C are the options for iOS and Java / Kotlin for Android.

Do you want to work in Data Science and Machine Learning? Python could be a good bet as the first programming language to learn.

Understand the kind of job you want to get and the technologies required for it, that's a good way to understand the programming language that you would learn first.

### 2. WHAT DO YOU WANT TO BUILD?

If you are not looking for a job, learning to code might just be a pure pleasure for you at the moment.

In that case, building project-based learning can be a good option.

What does it mean? Instead of only relying on a tutorial, you try to build a project with a given programming language you choose.

Let me ask you: what do you want to build?

- A Website? You should start learning Javascript in combination with HTML / CSS for the frontend part.

- A Mobile app: You should choose Java or Kotlin for building Android apps and Swift / Objective-C for iOS app. If you want to build cross-platform apps, you could choose to learn Javascript using React Native or Dart using Flutter.

- Want to build a game?

You could choose Swift for the iOS game but probably I would choose to learn C# using Unity.

Based on what you want to build, you might choose a different programming language.

### 3. EASE OF LEARNING

Last but not least, it is the ease of learning a programming language. Some programming languages might be easier to learn and work with than others. Let me explain...

Whenever you are solving and trying to solve a problem in software engineering, you have two factors to take into consideration:

- Problem Solving: Each problem that you are trying to solve has a certain logical complexity. It is your job to understand what will be the steps for solving the problem.

- Syntax complexity: Each programming language has its own syntax. Some can be easier to learn than others.

When learning to code, it is wise to choose programming languages with an easier syntax complexity. For example, learning the syntax and how you write software in Python could be easier than Java.

## WHICH PROGRAMMING LANGUAGE SHOULD I LEARN FIRST?

My short answer on which programming language to learn first was Python and Javascript. As I said, it is based on my previous experience but I think they are quite easy to learn and great programming languages to work with offering so many opportunities for your career.

But, you should choose the right programming languages for you and your needs. That's why I suggested you to take into account 3 valuable points: job market, what you want to build and ease of learning.

Let me know in the comment below which programming language you are learning and how do you feel about it.

{% youtube Yny83Y-8oWY %}

Well, the last thing: If you arrived here, thank you so much for your support and time that you spend on this page.

If you enjoyed this story, please click the like button and share it to help others find it! Feel free to leave a comment below.

⠀

⠀

⠀

⠀

### ABOUT THE AUTHOR

Domenico is a tech lead and software developer with a passion for design, psychology, and leadership.

If you need help with software development, you need consultancy for your technical challenges or you need a leader for your software team or just want to collaborate online, feel free to contact me!

### FOLLOW ME

Do you know that I have a YouTube channel? [Subscribe!](http://bit.ly/YT_DOMENICOSOLAZZO)

Where can you find me?

**Youtube:** [Domenico Solazzo's Channel](http://bit.ly/YT_DOMENICOSOLAZZO)

**Instagram**: [domenicosolazzo](https://www.instagram.com/domenicosolazzo/)

**Linkedin**: [solazzo](https://www.linkedin.com/in/solazzo/)

**Medium**: [domenicosolazzo](https://medium.com/@domenicosolazzo)

**Facebook**: [domenicosolazzo](https://www.facebook.com/domenicosolazzo.labs/)

**Twitter**: [domenicosolazzo](https://twitter.com/domenicosolazzo)

**Snapchat**: [domenicosolazzo](https://twitter.com/domenicosolazzo)

**Github**: [domenicosolazzo](https://github.com/domenicosolazzo)

**Website**: [https://www.domenicosolazzo.com](https://www.domenicosolazzo.com)

**Dev.To**: [https://dev.to/domenicosolazzo](https://dev.to/domenicosolazzo)

**Hashnode**: [https://hashnode.com/@domenicosolazzo](https://hashnode.com/@domenicosolazzo) | domenicosolazzo |

281,027 | TensorFlow, Deep Learning, Red Hat OpenShift – watch February 2020 online meetup recordings | IBM Developer SF team hosts weekly online meetups on various topics. Online events are one of the bes... | 0 | 2020-03-16T22:13:45 | https://maxkatz.org/2020/03/13/tensorflow-deep-learning-red-hat-openshift-watch-february-2020-online-meetup-recordings/ | containers, kubernetes, machinelearning, ibmcloud | ---

title: TensorFlow, Deep Learning, Red Hat OpenShift – watch February 2020 online meetup recordings

published: true

date: 2020-03-14 01:50:47 UTC

tags: Containers,Kubernetes,Machine Learning,IBM Cloud

canonical_url: https://maxkatz.org/2020/03/13/tensorflow-deep-learning-red-hat-openshift-watch-february-2020-online-meetup-recordings/

---

[IBM Developer SF team](https://www.meetup.com/IBM-Developer-SF-Bay-Area-Meetup/) hosts weekly online meetups on various topics. [Online events are one of the best ways to scale](https://dev.to/ibmdeveloper/using-online-meetups-to-scale-your-developer-relations-program-17li) your Developer Relations program and reach developers anywhere, anytime and for a long time after the event.

The following are online meetups we hosted in February 2020. Register for any future events on [Crowdcast](http://crowdcast.io/ibmdevelopersf).

**Introduction to TensorFlow and Watson Machine Learning**

February 5, 2020

[Watch the recording](https://www.crowdcast.io/e/introduction-to-11) 📺

**Deep Learning Master Class II – Computer Vision**

February 6, 2020

[Watch the recording](https://www.crowdcast.io/e/deep-learning-master-2) 📺

**Fraud Prediction using AutoAI**

February 12, 2020

[Watch the recording](https://www.crowdcast.io/e/fraud-prediction-using) 📺

**DL Master Class III – Model Performance, Quantization, & Hyperparameter Search**

February 13, 2020

[Watch the recording](https://www.crowdcast.io/e/dl-master-class-iii--) 📺

**Deploy Microservices with Red Hat OpenShift**

February 19, 2020

[Watch the recording](https://www.crowdcast.io/e/deploy-microservices) 📺

**Serverless Mobile Backend as a Service**

February 26, 2020

[Watch the recording](https://www.crowdcast.io/e/serverless-mobile) 📺 | maxkatz |

292,368 | Creating a dynamic application with LoopBack | Written by Idorenyin Obong✏️ Node.js is a popular JavaScript framework with a strong, ever-growing... | 0 | 2020-04-24T13:18:22 | https://blog.logrocket.com/creating-a-dynamic-application-with-loopback/ | node, tutorial | ---

title: Creating a dynamic application with LoopBack

published: true

date: 2020-03-26 13:00:01 UTC

tags: node, tutorial

canonical_url: https://blog.logrocket.com/creating-a-dynamic-application-with-loopback/

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/vy4qi92xwa6folilldeq.png

---

**Written by [Idorenyin Obong](https://blog.logrocket.com/author/idorenyinobong/)**✏️

Node.js is a popular JavaScript framework with a strong, ever-growing community. Among the many things the ecosystem has to offer, [LoopBack](https://loopback.io/) is an excellent framework for building APIs and microservices.

According to the [official docs](https://developer.ibm.com/open/projects/loopback/), “LoopBack is a highly extensible, open-source Node.js and TypeScript framework based on Express that enables you to quickly create APIs and microservices composed from backend systems such as databases and SOAP or REST services.”

[Express.js](https://expressjs.com/) — still the most popular [Node.js framework](https://snipcart.com/blog/graphql-nodejs-express-tutorial) — is fast, unopinionated, and minimalist, but it lacks most of the functionality that is common in a full-fledged web application framework. Since it’s unopinionated, you have to do a lot of decision-making, such as creating the structure that supports your backend and identifying the most appropriate package. You really need to know what you’re doing and where you’re going.

With LoopBack, your project has a predefined structure. You can define API endpoints and schemas using the [OpenAPI](https://www.openapis.org/) standard. You can also integrate with databases, web services, and other platforms easily using connectors. LoopBack offers an built-in API explorer you can use to test endpoints.

[](https://logrocket.com/signup/)

## What we will build

For this tutorial, you’ll need to have Node.js (v8+) installed on your machine, as well as a text editor.

We’re going to build a book store application using LoopBack. Our app will implement basic CRUD operations, and we’ll demonstrate how to use LoopBack’s CLI and API explorer.

## Bootstrapping your application

To bootstrap a LoopBack application, we’ll use the LoopBack CLI. Run the following command to install it.

```jsx

npm i -g @loopback/cli

```

Now you have the LoopBack CLI tool on your machine. Next, create a new project with the CLI tool. Go to your project directory and run this command:

```jsx

lb4 app

```

This is an interactive command that prompts you to answer a few questions to set up your new project. You’ll be required to enter a name for your app; we’ll call it `book``-store`. For a project description, you can enter `A dynamic application with Loopback`. When asked for the root directory and application class name, press enter to maintain the defaults. Finally, for features, enable Prettier, ESLint and `loopBackBuild`.

Here is how the process should go:

After the project is created, start the application by running the following commands.

```jsx

# Move into the app directory

cd book-store

# Start application

npm start

```

You should see a message on your terminal with a `URL` to test out. When you open the `URL`, you should see a `JSON` displayed in your browser.

## Adding a book model

Since you’re building a simple book store, you want your app to be able to store and retrieve books from a data source. To achieve this, we need to build a model that describes your domain objects (the type of data).

LoopBack provides decorators — `@model` and `@property` — that make defining models extensible. You can use the `@model` decorator to configure the model settings (such as enable strict mode or hide a certain property) and the `@property` decorator to define model property characteristics (e.g., specify a property type as a string or boolean or set a property to be required).

The next step is to create a book entity containing a list of properties — namely, `id`, `title`, `description` , `author`, and `release_date`. You can use LoopBack’s interactive command for creating models.

Run the following command in your app directory and answer the prompts to generate your book model.

```jsx

lb4 model

```

You may need to stop your server from running if you’re using the same terminal window. On a Mac, you can use Ctrl+C to stop the server.

Here is how the process of creating a model should go:

For a model to be persisted in a database, the model must have an `id` property and inherit from `Entity` base class.

## Setting up a datasource

A datasource in LoopBack acts as an interface for connecting to various sources of data, such as a database, REST service, SOAP web service, or gRPC microservice, by providing the necessary configuration properties. In the previous section, you defined a model by the type of data it should accept. Here, you need to define how the data is stored.

In LoopBack, you should use the `lb4 datasource` command provided by the CLI to generate a datasource. When you run this command, you’ll be asked some questions. Below is a screenshot of responses you should provide.

Note: In the field for user and password, you can skip the values by just pressing enter since this is just a sample app.

In the screenshot above, the specified datasource connection is named `db`, and you selected MongoDB as the datasource connector. LoopBack provides other connection types you can also choose from, such as Redis, MySQL, [PostgresSQL](https://dev.to/bnevilleoneill/getting-started-with-postgres-in-your-react-app-275b-temp-slug-9504682), and REST services.

## Adding a book repository

Now that you have a model and a datasource, you need to create a repository to handle operations of the book model against the underlying datasource.

For a repository to perform CRUD operations, it needs to use the `DefaultCrudRepository` class, which binds the model with a datasource. Leverage the LoopBack CLI to create a repository for your app.

Inside the project folder, run this command:

```jsx

lb4 repository

```

Your responses should look like this:

Now open the `src/repositories/book.repository.ts` file in your favorite editor, such as [VSCode](https://dev.to/bnevilleoneill/learn-these-keyboard-shortcuts-to-become-a-vs-code-ninja-31me). Inside the file, replace it with this snippet:

```jsx

// src/repositories/book.repository.ts

import {DefaultCrudRepository} from '@loopback/repository';

import {Book, BookRelations} from '../models';

import {DbDataSource} from '../datasources';

import {inject} from '@loopback/core';

export class BookRepository extends DefaultCrudRepository<

Book,

typeof Book.prototype.id,

BookRelations

> {

constructor(@inject('datasources.db') dataSource: DbDataSource) {

super(Book, dataSource);

}

public findByTitle(title: string) {

return this.findOne({where: {title}});

}

public findByAuthor(author: string) {

return this.findOne({where: {author}});

}

}

```

The `BookRepository` class extends the `DefaultCrudRepository` class. This makes it possible to handle basic crud operations like creating a new book. You can also add custom methods to the repository to perform more operations like `findByTitle` and `findByAuthor`.

## Adding a book controller

So far you’ve implemented the model, datasource and repository, but where do you define the logic that handles requests for the application? In LoopBack, you do this in the `Controller` class. The controllers handle the request-response lifecycle for your app.

According to the [official documentation](https://loopback.io/doc/en/lb4/Controllers.html), a controller “implements an application’s business logic and acts as a bridge between the HTTP/REST API and domain/database models.”