id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

404,332 | How do you stay motivated with your side projects? | Tips and tricks for helping stay motivated on side projects. | 0 | 2020-07-19T23:16:15 | https://dev.to/jonoyeong/how-do-you-stay-motivated-with-your-side-projects-4e59 | discuss, productivity, beginners | ---

title: How do you stay motivated with your side projects?

published: true

description: Tips and tricks for helping stay motivated on side projects.

tags: discuss, productivity, beginners

//cover_image: https://direct_url_to_image.jpg

---

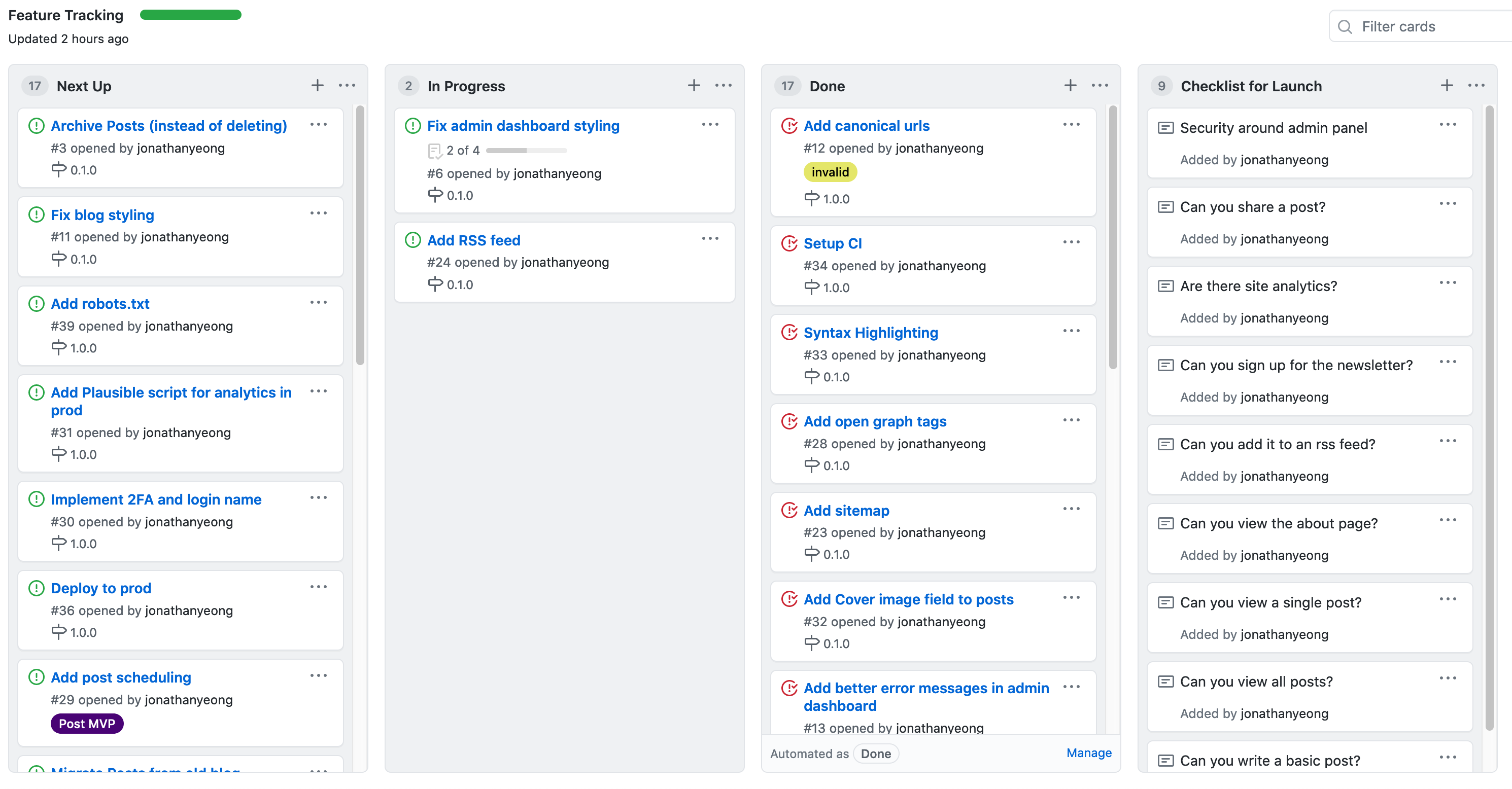

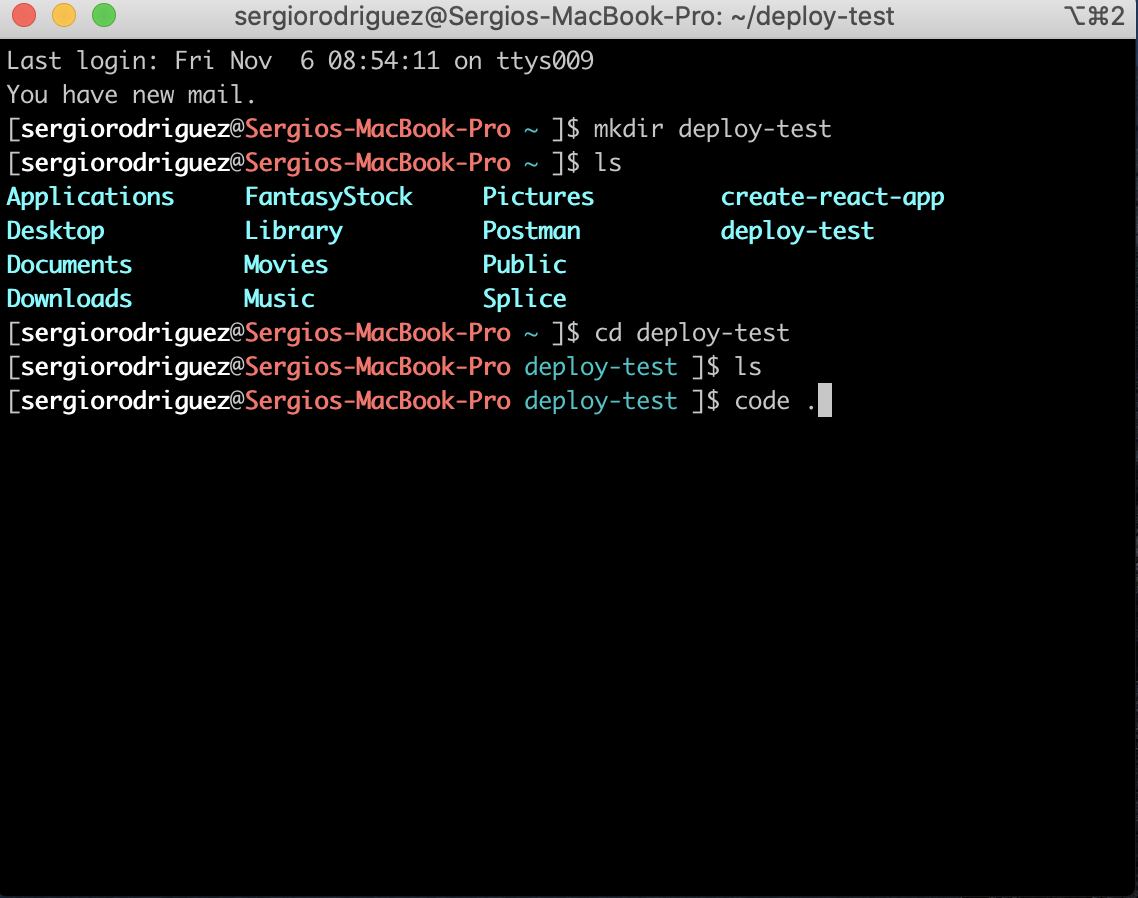

For me, it's staying organized. I'm tracking my feature list on my Github project. Keeping the features relevant and up to date has helped me stay on track. Here's what my [Github project](https://github.com/jonathanyeong/phoenix_blog/projects/1) looks like:

What helps keep you motivated with your side projects? I'd love to hear some of your tips and tricks! | jonoyeong |

404,432 | When to use OOP in Python if you are not developing large applications | It is not a matter of how, but why. | 0 | 2020-07-20T02:50:56 | https://dev.to/pedrohasantiago/when-to-use-oop-in-python-if-you-are-not-developing-large-applications-2h2k | python, beginners, oop, datatypes | ---

title: When to use OOP in Python if you are not developing large applications

published: true

description: It is not a matter of how, but why.

tags: python, beginners, oop, datatypes

cover_image: https://images.unsplash.com/photo-1588591795084-1770cb3be374?ixlib=rb-1.2.1&ixid=eyJhcHBfaWQiOjEyMDd9&auto=format&fit=crop&w=1500&q=80

---

Having started studying programming from a data science perspective, I had a lot of trouble understanding not exactly *what* object-oriented programming (OOP) was, but *why* should I use it. After all, the code I wrote seemed to do just well without custom classes and objects.

Inspired by [an introduction on OOP in Python I watched in a recent online conference (in Brazilian Portuguese)](https://youtu.be/QBmEvGMZG6A) – *congrats on the talk, Maria Clara!* –, I decided to write an introduction to OOP that will not focus on syntax or Python perks (I will assume you already know fairly well how to declare classes in Python), but will actually focus on when OOP should be used. I will present: (1) to what kind of code and issues OOP was created, (2) whether it has a place in non-complex applications and (3) what features Python has that can be used besides ordinary class declarations for most of the projects.

## Why OOP in the first place?

OOP was developed as a strategy to organize code in large and complex applications.

Let's say we are making an application in a bank that will read the balance of an account from a database and decrease it under request of a user, if there are enough funds. It could follow this very simplified layout:

```python

from db_connection import db_connection

def get_balance(db_connection, account_no):

...

return balance

def withdraw(balance, amount):

if amount <= balance:

return balance - amount

else:

raise Exception('Insufficient funds')

def update_balance(db_connection, account_no, new_balance):

...

if __name__ == '__main__':

account_no = input('Type account number: ')

to_withdraw = float(input('Type amount to be withdrawn: '))

old_balance = get_balance(db_connection, account_no)

new_balance = withdraw(old_balance, to_withdraw)

update_balance(db_connection, account_no, new_balance)

```

What if we want to allow a certain category of bank accounts to withdraw more money than available in the balance under a certain interest rate, as a loan? Our code quickly becomes way more complex:

```python

from db_connection import db_connection

ACCOUNT_CATEGORIES_ALLOWED_TO_LOAN = set(...)

def get_balance(db_connection, account_no):

...

return balance

def get_account_category(db_connection, account_no):

...

return account_category

def withdraw(balance, amount, is_allowed_to_loan):

if not is_allowed_to_loan and amount > balance:

raise Exception('Insufficient funds')

else:

return balance - amount

def update_balance(db_connection, account_no, new_balance):

...

def register_loan(db_connection, account_no, amount_loaned):

...

if __name__ == '__main__':

# Get account info

account_no = input('Type account number: ')

to_withdraw = float(input('Type amount to be withdrawn: '))

acct_category = get_account_category(db_connection, account_no)

# Withdrawal operation

old_balance = get_balance(db_connection, account_no)

is_allowed_to_loan = acct_category in ACCOUNT_CATEGORIES_ALLOWED_TO_LOAN

new_balance = withdraw(old_balance, to_withdraw, is_allowed_to_loan)

# Updates

update_balance(db_connection, account_no, new_balance)

if new_balance < 0:

register_loan(db_connection, account_no, new_balance)

```

Now imagine that there could be many different bank account categories, each of them with different consequences for different operations and available funds. The code base would quickly become spaghetti code: a bunch of different functions and flow control statements that is difficult to understand and to maintain.

That is where OOP steps in. It associates a specific set of data with specific functions to act on them, in a way that the relation between the information your code is dealing with and what it is able to do with it is very clear. Let's check how this is done.

### Abstraction

Code in OOP is organized through abstractions of real-life objects. In this sense, the bank account in our application example would be a class, a "thing" with its own characteristics (the data, called "attributes" in OOP) and actions (the "methods"), just like it is in the real world:

```python

class Account:

def __init__(self, balance):

self.balance = balance

def withdraw(self, amount):

if amount <= self.balance:

self.balance -= amount

else:

raise Exception('Insufficient funds')

```

Just from taking a quick look at the snippet above, you can know that (1) every account has a certain balance (2) every account can receive a "withdraw" action. This is different from what we had in our previous spaghetti application: if we were to change anything regarding bank accounts in our system, we would have to search through all the code to find where the changes should be made – and we would have to hope that we were making the change in all the necessary places. Classes help everything be more concentrated.

Our application could now be simplified to this:

```python

from db_connection import db_connection

from classes.accounts import Account

def get_account(db_connection, account_no) -> Account:

...

return Account(balance)

def update_database(db_connection, account_obj: Account):

...

if __name__ == '__main__':

account_no = input('Type account number: ')

to_withdraw = float(input('Type amount to be withdrawn: '))

account = get_account(db_connection, account_no)

account.withdraw(to_withdraw)

update_database(db_connection, account)

```

Notice that all the actions related to changing the data related to the account (ie, the account's *state*) is not present in this script anymore. The act of withdrawing money can now be all in a separate script where the definition of the `Account` class is - a package called "classes", with a script called "accounts.py", for example. Any change related to what happens when money is withdrawn from an account should be made in that separate script; any change related to how a user withdraws money (what information is requested, for example), should be made in our main script.

If you paid attention to the type annotations, you may have noticed that the database-related functions now deal with `Account` objects directly. This makes it easier if, in addition to withdrawing money, we also want the user to be able to call other methods from the `Account` class - that would just require the addition of some more lines, with no need to instantiate new objects.

### Encapsulation

Our `Account` class can have its balance easily edited during runtime. If we do `account.balance = 0.0005`, the balance would change, even though that would be a strange amount for an ordinary account in dollars.

That is why it is recommended that the attributes of a class be encapsulated, ie, hidden from the outside world (the rest of the code). In Python, this can be done with the help of the `@property` decorator (or, alternatively, with the convention of naming attributes with leading underscores[^1]):

[^1]: Check the use of single and double leading underscores here: https://dbader.org/blog/meaning-of-underscores-in-python. This is a convention adopted in PEP8, the style guide of the Python language: https://www.python.org/dev/peps/pep-0008/.

```python

class Account:

def __init__(self, balance):

self.balance = balance

def withdraw(self, amount):

if amount <= self.balance:

self.balance -= amount

else:

raise Exception('Insufficient funds')

@property

def balance(self):

# Nothing special about getting the balance;

# we will just return it.

return self._balance

@balance.setter

def balance(self, new_value):

# When changing the balance, however,

# we want to enforce certain rules

if (new_value * 100) % 1 > 0:

raise Exception('Balance can only have up to two decimal houses')

else:

self._balance = new_value

```

Now, any time that the `balance` attribute of the `Account` class is set up with no respect to the rules defined in the `Account` class itself, an exception is raised:

```python

>>> acct = Account(55.663)

...

Exception: Balance can only have up to two decimal houses

```

Encapsulation allows the implementation of the attributes to be reserved to the code of the class itself. Instead of checking in our main script if the new balance is a valid value, this action is reserved to the class declaration. Again, this results in a more organized code.

### Inheritance

Inheritance is a nice feature of OOP that allows classes to be related to each other. When one class inherits another, all the attributes and the methods of the class it is inheriting from are automatically attached to it, with no code repetition being necessary. In our bank application, this allows different `Account` types to be easily implemented, as a different `HighIncomeAccount` type, for example:

```python

class HighIncomeAccount(Account):

pass

```

Just the lines of code above are enough to create a different data structure that has the same attributes and methods of the main `Account` class (and is recognized as an instance of it, although "indirectly", in practice), at the same time it can be recognized as an object of a different type:

```python

>>> simple_account = Account(55)

>>> high_income_account = HighIncomeAccount(99955)

>>> all(hasattr(acct, 'withdraw')

... for acct in (simple_account, high_income_account))

True

>>> isinstance(high_income_account, Account)

True

>>> type(simple_account) is type(high_income_account)

False

```

In our application, we would have to change our `get_account` function to create either an `Account` or a `HighIncomeAccount` object depending on the case. However, besides that change, the rest of the code would be able to continue calling `account.withdraw` in the same way as before. **This is how OOP programs are seen to work: as "messages" (such as the `withdraw` order) being transmitted from one part of the code to another.**

### Polymorphism

Inheritance can be better used in our application by taking advantage of polymorphism: the same method can produce different results depending on which object it is called. We can, for example, change how `withdraw` works for a `HighIncomeAccount`:

```python

class HighIncomeAccount(Account):

def withdraw(self, amount):

diff = self.balance - amount

if diff >= 0:

super().withdraw(amount)

else:

self.amount_loaned = diff

self.balance = diff

```

That way, the exception regarding insufficient funds is raised only on `Account` objects, but not on `HighIncomeAccount` objects:

```python

>>> simple_account = Account(55)

>>> high_income_account = HighIncomeAccount(99955)

>>> simple_account.withdraw(99999999)

...

Exception: Insufficient funds

>>> high_income_account.withdraw(99999999)

>>> high_income_account.balance

-99900044

```

And, once again, our main script representing the user interaction can remain unchanged (besides the database interactions, which should be updated to consider the new `amount_loaned` attribute). All the logic regarding the bank accounts is concentrated in the classes definition. The code base as a whole is, therefore, much easier to read and maintain.

## Using OOP in simpler applications

All the above makes a lot of sense if you are dealing with code for complex systems. It is a different reality, however, if you write code for exploratory data analysis, for example, which is much more objective: given a certain dataset, tasks are executed one after another in order to provide certain insights (results). In this case, classes may not be necessary, as your code may not have to deal with different data structures. If everything is a DataFrame and all your functions can act on any DataFrame, there is not much reason to waste your time creating classes and declaring different methods. Much of the features of OOP, such as inheritance and polymorphism, in fact, would just not be useful at all.

As a rule of thumb, **creating custom classes is useful when you have the need to associate specific data and actions**. That was the case in the application example above: we needed a way to associate a "balance" with a certain "withdraw" action. As a different example, it can also be useful if we are building a scraper that collects information from different sources or in different ways, as the scraper of a hospitals database that also looks for the distance between one hospital and another and checks a different database for the number of beds in the hospital:

```python

class Hospital():

def __init__(self, address):

self.address = address

self.beds_no = self.get_number_of_beds()

def get_number_of_beds(self):

...

return beds_no

def get_distance(self, to: 'Hospital'):

...

return distance

```

Using such a class in your program can make information be transmitted much more easily between different parts of your code. It is clearer and shorter to call `Hospital.get_distance(to=another_hospital)` when necessary than to retrieve an address, call a separate function like `get_distance(from=one_address, to=another_address)` and deal with scattered information.

Another good application of data and actions being put together is when you need a different custom data type. In Python, data types such as `list` and `dict` can be seen as classes with special methods - as any other class, you can inherit from them and change their behavior. Let's say you need a `list` that only accepts instances of a `dict`, and you need to be sure of that, for any obscure reason. Then you can be creative and do:

```python

class ListOfDicts(list):

def __init__(self):

# We will not accept iterables as an argument to the constructor,

# or else ListOfDicts({'a': 'dict'}) will result in ['a'].

super()

def append(self, item):

self._execute_if_is_dict(super().append, item)

def insert(self, idx, obj):

self._execute_if_is_dict(super().insert, idx, obj)

def __setitem__(self, item):

self._execute_if_is_dict(super().__setitem__, item)

def _execute_if_is_dict(self, action, *args):

if not isinstance(args[-1], dict):

raise Exception('Only dicts are accepted as items')

else:

action(*args)

```

This approach should only be used if you are really sure of which methods you need to override. It shows, however, how Python can be flexible. If you ever catch yourself asking what if a certain data type could behave in a specific way, do some research: it is probable that someone has already written a custom class that does exactly what you need.

## Beyond classes: useful data structures

You may be tempted to create a class to encapsulate a simple set of information, for example:

```python

>>> class Person:

... def __init__(self, name, age, address):

... self.name = name

... self.age = age

... self.address = address

>>> holmes = Person('Sherlock Holmes', 60, '221B Baker Street')

```

Do not do it this way for simple data structures like this one. You can aggregate data like that in a simple `dict`, and that will not raise questions regarding the possibility of any special method being attached to your `Person` class – which is, actually, very simple:

```python

>>> holmes = {

... 'name': 'Sherlock Holmes',

... 'age': 60,

... 'address': '221B Baker Street'

... }

```

This will equally allow you to retrieve information from the "holmes" object in a very direct way. It is true, however, that you may need a template, ie, a way of ensuring that every possible `Person` have three different attributes associated with it: a name, an age, and an address. That is the use case of a `NamedTuple`.

### Named tuples

A [named tuple](https://docs.python.org/3/library/collections.html#namedtuple-factory-function-for-tuples-with-named-fields) is, like a `tuple`, an immutable ordered collection. However, its items can be retrieved based on a named index, just like in a `dict`. In the end, they are like an immutable `dict` that must be created from a specific template:

```python

>>> from collections import namedtuple

>>> Person = namedtuple('Person', ['name', 'age', 'address'])

>>> holmes = Person('Sherlock Holmes', 60, '221B Baker Street')

```

Instantiating an object from a named tuple is very similar to instantiating an object from a custom class. Accessing the attributes is also done with dot notation and, besides all that, printing the object will exhibit a user-friendly representation:

```python

>>> holmes.age

60

>>> print(holmes)

Person(name='Sherlock Holmes', age=60, address='221B Baker Street')

```

### Data classes

Named tuples may present issues in some applications:

- A named tuple can be compared as equal to another that carries the same fields. The `holmes` object we created could be considered equal to a named tuple `Character(name='Sherlock Holmes', age=60, address='221B Baker Street')`, for example;

- In the same way, a named tuple is also considered equal to a tuple carrying the same fields: `holmes == ('Sherlock Holmes', 60, '221B Baker Street')` returns `True`.

- Named tuples are iterable. Part of your code may iterate on a `Person` named tuple and expect it to return a name, an age and then an address; if you add a different field to the named tuple definition (a `country` attribute, for example), you may break this other part unwillingly.

- You may want to change the values of an attribute in the named tuple. However, as tuples are immutable, that is not possible.

- You may want more complexity. Maybe you want to query Wikipedia before creating your `holmes` object, and then save the resulting link to the named tuple itself. This is not possible, as you cannot change the methods underlying the named tuple (unless you create a new custom class yourself).

- Composing the attributes in a named tuple based on other named tuples (as if doing class inheritance) is complicated and may result in obscure code.

These issues require a complex data structure - which is solved with the use of classes. However, much of the work related to the creation of a class to hold different attributes was made easier in Python 3.7 with the addition of the `@dataclass` decorator (see the [documentation](https://docs.python.org/3/library/dataclasses.html) and the discussion reported in [PEP 557](https://www.python.org/dev/peps/pep-0557/#python-ideas-discussion)). Its basic use eliminates some of the boilerplate necessary when creating a class, at the same time it adds a lot of advanced functionality for when you need something more complex than both a `dict` and a named tuple:

```python

from dataclasses import dataclass, field

@dataclass

class Person:

name: str

age: int

address: str

wikipedia_page: str = field(init=False, repr=False)

def __post_init__(self):

self.wikipedia_page = get_wikipedia_page(self.name)

def get_wikipedia_page(query):

...

return page_address

```

The code above is equivalent to this one:

```python

class Person:

def __init__(self, name: str, age: int, address: str):

self.name = name

self.age = age

self.address = address

self.wikipedia_page: str = get_wikipedia_page(self.name)

def __repr__(self):

attrs_dict = vars(self)

attrs_dict.pop('wikipedia_page')

attrs_as_str = ', '.join(f'{k}={v.__repr__()}' for k, v in attrs_dict.items())

return f'{type(self).__name__}({attrs_as_str})'

def get_wikipedia_page(query):

...

return page_address

```

What the `@dataclass` decorator does is to look for the class variables that contain a type annotation and make both a `__init__` constructor and a `__repr__` method with them. There is also extra functionality: the `field` function, for example, is telling the decorator that this field should be taken care of by the `__post_init__` function and that it should not show up in the `__repr__` result. The `dataclass` class also contains extra functionality that allows for a finer control of how the object will be instantiated (see the options of the class constructor and the field function), compared to others (see `eq` and `order` parameters of the constructor) and transformed into different data types (`asdict` and `astuple` methods) or in just a different object with different fields (`replace` method). This is a good amount of fine tuning in a much simpler code structure, as seen from the reduction of lines above.

## Conclusion

OOP does not have much space in simple, procedural programs. When necessary, however, they can add a lot of functionality to your data structures at the same time they can make code that is easier to scale and maintain. For everyday scripting (as in much of data science tasks), a `dict`, a named tuple or the simplification provided by the `@dataclass` decorator are all good alternatives to the creation of a custom class if there is no necessity of putting together specific data and functions.

---

Let me know your thoughts and comments. This is my first article on programming and any criticism is very appreciated :smile:

What do you think about OOP when not building complex applications? Do you think functional programming or other programming styles can scale just as well?

Cover image by [Ross Sneddon](https://unsplash.com/@rosssneddon?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText) on [Unsplash](https://unsplash.com/?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText). | pedrohasantiago |

404,467 | Show * Database MySQL | A brief overview of Database Management Systems (DBMS) and proper syntax while interacting with MySQL... | 0 | 2020-07-20T05:07:51 | https://dev.to/jamesfthomas/show-database-mysql-4cfh | *A brief overview of Database Management Systems (DBMS) and proper syntax while interacting with MySQL.*

Before we can get into the specific meat and bones of interacting with the MySQL database, we must first do a little bit of explaining what a database is and why you would ever need to use one. Once we have established our base understanding of what and why the specific ways to interact with the MySQL database will be far more relevant to the future success of your web design projects. So without any more waisted whitespace let’s `describe * databases`.

### What is a database && why use one?

A database is simply a specifically organized assemblage of correlated electronic information that can range from a simple list of items, groups of photos, or even massive anthologies of customer-related information from a large corporation. Typically, the data or information housed within these electronic storage containers is arranged into tables, that then displayed in a column x row format, which makes interaction with these stored values far more economical. A Database is usually employed when multiple processes are accessing and altering data concurrently. The database serves to ensure that these simultaneous inquiries, do not overwrite the changes being performed on either the user or server-side. In the case of someone interacting with database information, this task is called performing a “query” and will require you to use particular verbiage to be successful in the appending or retrieving of desired data.

### Are all databases the same?

There are serval different types of databases available for use in your next project, but which one you employ is dependent upon not only some personal choices but also upon the project itself. Some considerations to help guide your database management system (DBMS) choice is:

* Data structure (how it looks and is to be presented)

* Data consistency (usability of stored data)

* Data protection (access and encryption of stored data)

* Accessibility/integration (the ability of multiple parties to

access and utilize data)

* Interaction efficiency/ scalability (response time to

interactions and ability to change data)

* Usability (regards how easy it is to work with DBMS)

* Service/implementation costs.

Once you have considered the factors that will guide your choice you can sit down and choose your DBMS type. Here is a list of some of the types of DBMS:

* Centralized – database information is stored at a centralized

location

* Distributed – database information is stored across varies sites

* Personal – data collected and stored on personal computers

* End-user – shared data designed for end-users (i.e. different

department managers)

* Commercial – for huge data sets, subject-specific, paid access

through commercial links

* NoSQL – for large sets of unstructured data

* Operational – information related to company operations

* Relational – data organized by tables (rows x columns),

predefined category

* Cloud – database built for a virtual environment

* Object-oriented – a mixture of object-oriented programing and

relations database structures.

* Graph – a type of NoSQL database that uses graph theory to store,

map, and query data relationships

With all these factors to consider and types of databases to choose from you can see just how complex this decision of which database to use in your next project can be. So, in that light, I have included links to this very influential information below in my sources section in the hope that you can utilize it to make the most appropriate choice for your project shortly. As for this blog project we will choose the relational database management system specifically MySQL and highlight how to interact with the tabled values stored within this database.

###How do I interact with relationally stored data?

Remember earlier we stated that the interaction with stored information was done via something called a query. Well that query, is simply a statement to the DBMS containing keywords along with instructions of how the program should alter data you want to target. Unlike other programing interaction verbiage, you may have encountered in the world of code, I find MySQL syntax extremely readable and more closely resembling human speech than JavaScript or syntax.

Keywords such as `SHOW`, `USE`, `CREATE`, `SELECT`, `DELETE`, `DESCRIBE`, `INSERT`, `LOAD DATA`, `FROM`, `WHERE`, `IN`, & `ORDER BY` are just some of the words and or terms used to interact in specific ways with stored data. Since this is our first encounter with MySQL syntax lets stick with some basic queries and get more advanced in the blogs to come.

**Create a new database on server**

```

mysql> CREATE DATABASE test;

```

**View existing databases on the server:**

```

mysql> SHOW DATABASES;

+----------+

| Database |

+----------+

| mysql |

| test |

| tmp |

+----------+

```

**Create a new table**

```

mysql> CREATE TABLE animal (name VARCHAR(20), owner VARCHAR(20),

species VARCHAR(20), sex CHAR(1), birth DATE, death DATE);

```

**Display the new table you just made**

```

mysql> DESCRIBE animal;

+---------+-------------+------+-----+---------+-------+

| Field | Type | Null | Key | Default | Extra |

+---------+-------------+------+-----+---------+-------+

| name | varchar(20) | YES | | NULL | |

| owner | varchar(20) | YES | | NULL | |

| species | varchar(20) | YES | | NULL | |

| sex | char(1) | YES | | NULL | |

| birth | date | YES | | NULL | |

| death | date | YES | | NULL | |

+---------+-------------+------+-----+---------+-------+

```

**Add a value to the table you just made**

```

mysql> INSERT INTO pet

VALUES ('Puffball','Diane','hamster','f','1999-03-30',NULL);

```

These simple queries will get you started arranging information in your database by creating a place for and adding more values to your relational tables. We have only scratched the surface of our journey into databases, so I do hope these 4 examples show just how simple yet readable the MySQL query syntax is to understand and if you choose, put to use.

####Topic Recap:

* a database is an organized collection of electronic information

* many factors influence the type of database you will employ in

your project

* interaction with stored data is called a query, queries are

strings

* MySQL query syntax is very human-readable

##### Conclusion:

The modern database has evolved into many different choices that will be affected by a multitude of different factors that should be considered before your project beginning. I suggest a little bit of research into some queries into the syntax used in each one before you make your choice. While your free to choose anyone you want, I like the syntax utilized in the RDBMS MySQL for its human readability and structured displays. These may change with greater experience but for now, MySQL is the base I am banking on. With the information highlighted in this blog, I hope to have armed you with all you need to choose the best DBMS for your next project no matter it is big or small.

Happy Coding!!!

Sources:

* MySQL Docs (https://dev.mysql.com/doc/refman/8.0/en/what-is-

mysql.html)

* Oracle Docs (https://www.oracle.com/database/what-is-

database.html)

* “8 Key Considerations When Choosing a DBMS”

(https://blog.paessler.com/key-considerations-when-choosing-a-

dbms)

* “Types of Databases” (https://www.tutorialspoint.com/Types-of-

databases)

| jamesfthomas | |

404,559 | Javascript Promises | A promise is an object that can potentially turn into a value in the future. The value will either be... | 0 | 2020-07-20T04:11:24 | https://dev.to/jcorley44/javascript-promises-357d | A *promise* is an object that can potentially turn into a value in the future. The value will either be resolved or unresolved and if it does resolve it will be either resolved, rejected or pending. A better example of this would be to conceptually think of a promise in javascript just like a promise you might make to someone in real life. When you make that promise it will either be unresolved or resolved. While waiting for the promise to be fulfilled it has three stages of existence as mentioned previously: resolved, rejected or pending.

###**Stages of a Promise**

**Resolve**: the promise will have a value

**Reject**: the promise has failed

**Pending**: the stasis period of waiting for the promise to receive either of the two previously mentioned states.

###**Example of a Promise**

````javascript

let firstPromise = new Promise(function(resolve, reject) {

setTimeout(function() {

resolve('I will only eat one cookie');

}, 2000);

});

firstPromise.then(function(data) {

console.log(data); //will log 'I will only eat one cookie' after two seconds

});

```

Promises have become the standard way to deal with asynchronous code. Asynchronous coding models let multiple code blocks execute at the same time. It allows for new actions to start while the program is being ran. In the example the *firstPromise* is using *resolve* to fulfill the promise. After we use the *then* function to bind a callback so we can use the data from the resolve function. It is possible to bind multiple callbacks too!

````javascript

var indecisivePromise = new Promise(function(resolve, reject) {

setTimeout(function() {

resolve('I will only eat one cookie');

}, 2000);

});

indecisivePromise.then(function(data) {

console.log(data + ' hopefully.');

});

indecisivePromise.then(function(data) {

console.log(data + ' maybe.');

});

indecisivePromise.then(function(data) {

console.log(data + ' I make no promises.');

});

```

Promises can only be resolved once. The method *then* can be chained but there can only be one resolve. Just like in real life once a promise has been fulfilled you can not fulfill the same promise for a second time unless you have another condition to the promise. That's why the *then* method is useful. IT

````javascript

let multiResolvePromise = new

Promise(function(resolve, reject) {

setTimeout(function() {

//only this will revolve

resolve('first resolve');

//the promise ends after the first resolve

resolve('second resolve');

resolve('third resolve');

resolve('fourth resolve');

}, 1000);

});

multiResolvePromise.then(function success(data) {

console.log(data); //will print 'first resolve'

});

```

What happens if the promise is not fulfilled? The *catch* method allows us to have an output if the promise fails.

```javascript

const failedPromise = new Promise((resolve, reject) => {

let numberOfCookies = 1000000;

if (numberOfCookies < 1) {

resolve('Good job you only at one cookie!')

} else {

reject('I knew you would eat more than one, but one million that is madness.')

}

});

failedPromise.then((resolveData) => {

console.log(resolveData)

}).catch((rejectData) => {

console.log(rejectData)

});

```

Since the conditional statement above is false the promise fails so the *resolve* method will not trigger thus leaving the promise unfulfilled.

###Conclusion

Promises allow for coding asynchronously to be easier to understand and avoid using more complicated programming strategies. Promises will either resolve or reject depending on the criteria of the promise object. Once the promise is pending we can then chain then and catch to implement more intuitive asynchronous code instead of venturing into a "callback hell". If we were to use a lot of callbacks the code would be extremely hard to follow and become unruly making errors and debugging much harder to do. | jcorley44 | |

404,641 | Iterators in Java | The iterator pattern is one of approaches to access elements of a collection, alongside with streams.... | 0 | 2020-07-20T06:38:44 | https://www.mednikov.tech/how-to-use-iterators-in-java/ | java, tutorial | ---

title: Iterators in Java

published: true

description:

tags: java, tutorial

cover_image: https://www.mednikov.tech/wp-content/uploads/2020/07/unrecognizable-indian-man-using-laptop-while-working-at-home-4307853-1260x709.jpg

---

The iterator pattern is one of approaches to access elements of a collection, alongside with streams. From a technical point of view, the iterator traverses elements in a sequential and predictable order. In Java the behaviour of iterators is defined in _java.util.Iterator_ contract, which is a member of Java Collections Framework.

Iterators are similar to enumerators, but there are differences between these concepts too. The enumerator provides inderect and iterative access to each element of a data structure exactly once. From the other side, iterators does the same task, but the traversal order is _predictable_. With this abstraction a caller can work with collection elements, without a direct access to them. Also, iterators allow to delete values from a collection during the iteration.

## Access elements of a collection

As it was mentioned before, the order of accessing elements is predictable, which means that the iterator traverses elements sequentialy. Therefore, the general Java contract does not allow to access _the particular element_ (however, that is possible using Commons Collections, even this violates the idea). In order to access the next element, use _next()_ method. It returns an element or throws _NoSuchElementException_, when the iterator does not contain more elements.

In order to prevent this unchecked exception, you should call the _hasNext()_ method prior to accessing an element.

```java

List<Integer> numbers = List.of(1, 2, 3, 4, 5, 6);

Iterator<Integer> iterator = numbers.iterator();

while (iterator.hasNext()) {

int x = iterator.next() * 2;

System.out.println(x);

}

```

This code snippet demonstrates the usage of the iterator for sequential retrieving of elements and the printing of double value of each element.

The important thing to note here, is that once elements are consumed, the iterator can not be used. That means, that calling the iterator after traversing will lead to an exception:

```java

List<Integer> numbers = List.of(1, 2, 3, 4, 5, 6);

Iterator<Integer> iterator = numbers.iterator();

while (iterator.hasNext()) {

int x = iterator.next() * 2;

System.out.println(x);

}

int value = iterator.next();

```

The execution of the above code snippet will lead to the following result:

I already mentioned Apache Commons Collections. This library contains a helper class _IteratorUtils_, which has a number of static utility methods to work with iterators. While some of them violate the core pattern, they can be useful. So, alongside with a sequential access, it possible to access a particular element by its index, as well there is a wrapper method to get the first element.

```java

List<Integer> numbers = List.of(1, 2, 3, 4, 5, 6);

Iterator<Integer> iterator = numbers.iterator();

int first = IteratorUtils.first(iterator);

Assertions.assertThat(first).isEqualTo(1);

```

## Consuming an element

Since Java 8 iterators permit also to specify a consumer function, which can be performed on each element. This is a shortcut of what we can do using a while block. Let consider the first example implemented with the _forEachRemaining()_ method. Take a look on the code snippet below:

```java

List<Integer> numbers = List.of(1, 2, 3, 4, 5, 6);

Iterator<Integer> iterator = numbers.iterator();

iterator.forEachRemaining(number -> System.out.printf("This is a consumer. Value: %d \n", number*2));

```

This program listing produces the following result:

It is possible to perform such behavior with IteratorUtils. It has two static methods:

* _forEach()_ = applies the function to each element of the provided iterator.

* _forEachButLast()_ = executes the defined function on each but the last element in the iterator

Both methods accept two arguments: an iterator instance and a [closure](http://commons.apache.org/proper/commons-collections/apidocs/org/apache/commons/collections4/Closure.html) function. The second concept defines a block of code which is executed from inside some block, function or iteration. Technically, it is a same thing as a consumer functional interface. The example of using this helper method is below:

```java

List<Integer> numbers = List.of(1, 2, 3, 4, 5, 6);

Iterator<Integer> iterator = numbers.iterator();

IteratorUtils.forEach(iterator, number -> System.out.printf("This is a closure. Value: %d \n", number*2));

```

The output of this snippet is:

## Remove elements

Finally, we need to observe how to use an iterator to delete elements. The _java.util.Iterator_ interface defines the _remove()_ method. It deletes from the underlying collection the last element returned by the iterator. This method can be called only once per call of the _next()_ function. Note, that this operation is optional.

```java

List<Integer> numbers = new ArrayList<>();

numbers.add(1);

numbers.add(2);

numbers.add(3);

numbers.add(4);

numbers.add(5);

System.out.println("Initial list: ");

numbers.stream().forEach(System.out::println);

Iterator<Integer> iterator = numbers.iterator();

while(iterator.hasNext()) {

iterator.next();

iterator.remove();

}

System.out.println("Modified list: ");

numbers.stream().forEach(System.out::println);

```

This code snippet executes and prints elements of the array list before and after removing elements, like it is snown on the screenshot below:

There are things to remember, when you use _iterator.remove()_:

* As this operation is optional, it can throw the _UnsupportedOperationException_, if a collection does not have an implementation of this method (that is why I used an array [list](https://www.mednikov.tech/how-to-check-if-a-list-contains-a-value-in-clojure/) and not a generic list)

* You must call the _next()_ method BEFORE calling the _remove()_ method, otherwise it will lead to the _IllegalStateException_ to be thrown

That is how you can utilize an iterator in order to access elements of a collection, to perform an action on each element or to remove elements from a collection. These are general considerations. If you want to learn how to use iterators with concrete types of collections (lists, sets, queues) and to learn more advanced iterator patterns - check my [book on Java Collections Framework](https://www.smashwords.com/books/view/1029860).

| iuriimednikov |

404,820 | Scalar Type Declaration | Today, I like to share some of the key features provided in PHP. The PHP community has introduced som... | 0 | 2020-07-20T16:16:12 | https://dev.to/ashish11/scalar-type-declaration-a28 | php | Today, I like to share some of the key features provided in PHP. The PHP community has introduced some good features like Scalar type declaration, Return type declarations, etc.., which add some extra features to the PHP development, and provides flexibility in the development lifecycle. In this blog, I would like to explain the Scalar type declaration feature, I hope this will help you to improve your knowledge and help you somehow in the future.

# Scalar Type Declaration

Type declaration is also known as Type Hinting, allows a function to accept a parameter of a certain type if the given value is not of the correct type (Integer, Float, Boolean, string) PHP will return a fatal error. The older version of PHP (PHP 5) gives a recoverable error while the new release (PHP 7) returns a throwable error.

PHP 7 has introduced the following Scalar type declarations.

1.Boolean

2.String

3.Float

4.Integer

5.Iterable

Scalar Type Declaration comes in two types Coercive(i.e. default), and another one is Strict, if we want to enforce PHP to use a strict type declaration then, we should set the strict value to 1 using a declare directive, please check the example below.

`declare(strict_types=1);`

Please check the example below for Coercive and Strict type declarations.

# Coercive Scalar Type Declaration

```

try{

function sum(int …$ints)

{

return array_sum($ints);

}

}catch(Exception $ex)

{

echo $ex->getMessage();

}

print(sum(2, ‘3’, 4.1));

```

In the above example just want to highlight one thing i.e. we are not using a strict value for parameter type like 2 of Integer type, 3 of String type 4.1 of Float type. The above code will output the value int(9).

# Strict Scalar Type Declaration

```

declare(strict_types=1);

try {

function sum(int …$ints)

{

return array_sum($ints);

}

} catch (Exception $ex)

{

echo $ex->gt;

getMessage();

}

print(sum(2, ‘3’, 4.1));

```

Note that, if we declare the `strict_type` value to 1, the above code will output “Fatal error: Uncaught TypeError: Argument 2 passed to sum() must be of the type integer, string is given”.

The above code will help us to enforce the other developer to use a variable of strict type i.e. Integer, Float, etc.

# Use of Scalar Type Declaration

The scalar type declaration can be used in different areas of development, like, defining strict values in an interface, so that, the child class which is overriding the interface must follow the strict type.

I hope that will help you somehow. Feel free to leave a comment below. Let me know what you think about this | ashish11 |

404,938 | How can I update a JSON file using fetch? | Fetch I just started using fetch to make requests over http. I used to use axios, but I do... | 0 | 2020-07-20T13:06:38 | https://dev.to/seanolad/how-can-i-update-a-json-file-using-fetch-5ag7 | explainlikeimfive, help | #Fetch

I just started using `fetch` to make requests over http. I used to use axios, but I don't any more. I need to make changes to the json file using fetch, but I don't know how. What can I do? | seanolad |

405,095 | Basic explanation – Lean Web Test Automation Architecture | This article belongs to a How to create Lean Test Automation Architecture for Web using Java librarie... | 0 | 2020-07-20T14:51:44 | https://dev.to/eliasnogueira/basic-explanation-lean-web-test-automation-architecture-3dek | java, testing, architecture | This article belongs to a How to create [Lean Test Automation Architecture for Web using Java libraries](http://www.eliasnogueira.com/how-to-create-lean-test-automation-architecture-for-web-using-java-libraries/) series

# Introduction

This is a series of articles showing how to create lean test automation architecture for web test automation using Java libraries.

**Lean** means that we will have less code as possible to create realize, maintainable and useful architecture.

**Architecture** means the organization, features, and configurations that should be present to enable you to create the automated scripts.

This first article will show to you the basic explanation about the necessary elements to create lean architecture.

# Basic Architecture

## Clean architecture

Architecture is the overall design of the project. It organizes the code into different layers and defines how they will interact with each other.

In Clean architecture, you will organize the project to make it easy to understand and change as the project evolves.

There’s an excellent post by Uncle Bob about [The Clean Architecture](https://blog.cleancoder.com/uncle-bob/2012/08/13/the-clean-architecture.html) that will show you a proposed organization based on an application (not a test framework/architecture).

## Design Patterns

You can use any design pattern to help you to better organize and maintain your code. There are some you can always use to achieve those benefits for a specific task.

### Factory Method

You can use the [Factory Method](https://en.wikipedia.org/wiki/Factory_method_pattern) pattern to create **browser instances** because a better test architecture for web test automation should provide an easy mechanism to create them.

You can also use this design pattern to create a Data Factory.

### Builder

You can use the [Builder](https://en.wikipedia.org/wiki/Builder_pattern) pattern to create simplify the creation of the complex objects you have in your code, like:

* in the model classes, you will create to reuse the concrete entities

* in the page object, to make it easier to consume

* in the test data builder, to make the data creation easier

### Singleton

You can use the [Singleton](https://en.wikipedia.org/wiki/Singleton_pattern) pattern reuse the same object instance among your tests, like:

* have shared access to the data of your configuration files

* share the same database connection (if you need)

## Testing Patters

As the Design Pattern, you can use some Testing Patterns that will show the same benefits.

### Base Test

You can use the Base Test to reuse the same behavior in all your tests avoiding code duplication. The best example for the web test automation is the pre and post condition you will always have: create the browser instance opening it, and close it when the test finishes.

### Test Data Factory

It combines the [Object Mother](https://martinfowler.com/bliki/ObjectMother.html) and the Fluent Builder into a class to create data for your models. Imagine you have a Payment model wherein your test you will use it to approve or reject depending. The Test Data Factory will create differents Payment objects based on the data, so you can have an amount that won’t be accepted on the approval process, and another that will be accepted.

### Page Object

You can use the [Page Object](https://www.selenium.dev/documentation/en/guidelines_and_recommendations/page_object_models/) pattern to enhancing test maintenance and reducing code duplication modeling your pages into classes that will be the interface between the code and the UI.

## Logs and Reports

Test code needs to be handled like your production code. You might face problems during the testing execution like:

* assertion errors

* elements not present in the UI

* timeout exceptions

* architecture-specific exceptions

You can add a **logging strategy** in your test architecture to easily, analyze, and understand the errors, so you can take action or have historical information about the problems.

You might need to inform your stakeholders about how reliable your application is, showing a user-friendly report that can show all the important metrics, as well as the testing evidence (like screenshots).

The **report strategy** is required even for you to quickly analyze the test results.

## Data Generation

Generate data for the test is one of the most time-consuming actions, even for automated tests, but it is necessary.

We can apply, at least, three different approaches:

* **Fake generation**: is the way we can generate non-sensitive data

* **Static generation**: is the way we can control the data through a data pool, like files (csv, yaml, txt, json, etc…)

* **Dynamic generation**: is the way we can generate sensitive data using an approach without any changes in our scripts

## Parallel Execution

This is the most needed feature in a web test architecture today, We need to execute the test as fastest as we can, and to speed up all the test execution, thought a test suite, we need to use a grid infrastructure.

The first step is to either create a parallelization solution or use an existent one present in the testing library (like JUnit or TestNG).

The second step is to define the environment solution. It can be either:

local solution using bare metal that might not scale

local solution using containers and auto-scale up and down it

cloud solution to auto-scale up and down

## Pipeline

The last item we need to create is the strategy to execute all the scripts. It will require first the definition of your **regression testing execution** where you will create est suites. Defining this strategy you can control when and where executing the test scripts.

The second part is the **pipeline script** that you should create dividing into stages to sequentially execute your test suites to get faster feedback on your application reliability. | eliasnogueira |

405,126 | Make a Ruby gem configurable | In the Ruby ecosystem, a well established way of customizing gem behavior is by using configuration... | 0 | 2020-07-21T13:32:50 | https://dev.to/vinistock/make-a-ruby-gem-configurable-228d | ruby, rails, tutorial, webdev | In the Ruby ecosystem, a well established way of customizing gem behavior is by using configuration blocks. Most likely, you came across code that looked like this.

```ruby

MyGem.configure do |config|

config.some_config = true

config.some_class = MyApp

config.some_lambda = ->(variable) { do_important_stuff(variable) }

end

```

This standard way of configuring gems runs once, usually when starting an application, and allows developers to tailor the gem's functionality to their needs.

# Adding the configuration block to a gem

Let's take a look at how this style of configuration can be implemented. We'll divide our implementation in two steps: writing a configuration class and exposing it to users.

## Writing the configuration class

The configuration class is responsible for keeping all the available options and their defaults. It is composed of two things: an initialize method where defaults are set and attribute readers/writers.

Let's take a look at an example, where we can configure an animal and the sound that it makes. If the sound does not match the expected, we want to raise an error while configuring our gem. We'll then access what is configured for animal and sound and use it in our logic.

```ruby

# lib/my_gem/configuration.rb

module MyGem

class Configuration

# Custom error class for sounds that don't

# match the expected animal

class AnimalSoundMismatch < StandardError; end

# Animal sound map

ANIMAL_SOUND_MAP = {

"Dog" => "Barks",

"Cat" => "Meows"

}

# Writer + reader for the animal instance variable. No fancy logic

attr_accessor :animal

# Reader only for the sound instance variable.

# The writer contains custom logic

attr_reader :sound

# Initialize every configuration with a default.

# Users of the gem will override these with their

# desired values

def initialize

@animal = "Dog"

@sound = "Barks"

end

# Custom writer for sound.

# If the sound variable is not exactly what is

# mapped in our hash, raise the custom error

def sound=(sound)

raise AnimalSoundMismatch, "A #{@animal} can't #{sound}" if SOUND_MAP[@animal] != sound

@sound = sound

end

end

end

```

## Exposing the configuration

To expose the configuration means both letting users configure it and allowing the gem itself to read the value of each option.

This is done with a singleton of our Configuration class and a few utility methods.

```ruby

# lib/my_gem.rb

module MyGem

class << self

# Instantiate the Configuration singleton

# or return it. Remember that the instance

# has attribute readers so that we can access

# the configured values

def configuration

@configuration ||= Configuration.new

end

# This is the configure block definition.

# The configuration method will return the

# Configuration singleton, which is then yielded

# to the configure block. Then it's just a matter

# of using the attribute accessors we previously defined

def configure

yield(configuration)

end

end

end

```

The gem is now set to be configured by the applications that use it.

```ruby

MyGem.configure do |config|

# Notice that the config block argument

# is the yielded singleton of Configuration.

# In essence, all we're doing is using the

# accessors we defined in the Configuration class

config.animal = "Cat"

config.sound = "Meows"

end

```

# Using the configuration in the gem

Now that we have the customizable Configuration singleton, we can read the values to change behavior based on it.

```ruby

# lib/my_gem/make_sound.rb

module MyGem

class AnimalSound

def initialize

@animal = MyGem.configuration.animal

@sound = MyGem.configuration.sound

end

def make_sound

"The #{@animal} #{@sound}"

end

end

end

```

# Using lambdas for configuration

In specific scenarios, there may be a need for a configuration to not have a predetermined value, but rather to evaluate some logic as the application is running.

For these cases, it is typical to define a lambda for the configuration value. Let's go through an example. The configuration class is similar to our previous case.

```ruby

# lib/my_gem/configuration.rb

module MyGem

class Configuration

attr_reader :key_name

# Define no lambda as the default

def initialize

@key_name = nil

end

# Raise an error if trying to set the key_name

# to something other than a lambda

def key_name=(lambda)

raise ArgumentError, "The key_name must be a lambda" unless lambda.is_a?(Proc)

@key_name = lambda

end

end

end

```

Now we can configure the lambda to whatever we need. You could even query the database if desired inside the lambda and return values from the app's models.

```ruby

MyGem.configure do |config|

config.lambda_config = ->(model_name) { model_name == "Post" ? :posts : :articles }

end

```

Finally, the gem can use the lambda and get different results as the app is running.

```ruby

MyGem.configuration.key_name&.call("Article")

=> :articles

MyGem.configuration.key_name&.call("Post")

=> :posts

```

That's about it for configuration blocks. Have you used this before to make your code/gems customizable? Do you know other strategies for configuring third party libraries? Let me know in the comments! | vinistock |

405,283 | Ultimate PROBLEM SOLVING GUIDE for Acing Programming Interviews | Advice like "clarify the question" and "write pseudocode" is a good place to start, but it's not wher... | 0 | 2020-07-21T16:58:40 | https://dev.to/candidateplanet/problem-solving-guide-for-acing-faang-coding-interviews-4nof | career, interview, beginners, computerscience | Advice like "clarify the question" and "write pseudocode" is a good place to start, but it's not where you want to end if you're trying to impress an interviewer with your problem solving skills. How do you actually SOLVE the problem?

In this video I dive deep into all of the steps -- and problem solving strategies -- you need in order to ace a high-bar coding interview. Watch this video if the feedback(lol) you're getting from rejections isn't helpful, and/or you want an honest explanation of what your interviewer is looking for.

In addition to challenging one-size-fits-all advice, I address the crux of the problem solving coding interview: coming up with a solution while managing risk and limited time.

For timestamp links see video description in YouTube: {% youtube -UhBywlBvw8 %}

CANDIDATE PLANET:

Empowering candidates to ace interviews, negotiate offers and advocate for themselves at work. Leave a comment or email Lusen@CandidatePlanet.com with questions you want me to answer.

PLEASE SUBSCRIBE \o/:

☆ Youtube ☆ https://youtube.com/CandidatePlanet

☆ Newsletter ☆ http://newsletter.candidateplanet.com

☆ LinkedIn ☆ https://linkedin.com/in/lus

ONE-ON-ONE COACHING:

Schedule a one-on-one coaching session with me: https://lusen.youcanbook.me

SALARY NEGOTIATION PLAYLIST:

☆ https://www.youtube.com/playlist?list=PLY0uEDNh-Haqes9eEvyGtzrUWOHv-c1op

ACE YOUR INTERVIEWS PLAYLIST:

☆ https://www.youtube.com/playlist?list=PLY0uEDNh-HarP6UtKFYRJZnkXzz4ICi73

Dubstep bee and Kipo art inspired by Kipo and the Age of Wonderbeasts. | candidateplanet |

405,393 | Gooey SVG Effects, Game UI, and Meetups - Frontend Horse #7 | This is an issue from the newsletter Frontend Horse. This was originally published on July 16th, 2020... | 7,253 | 2020-07-20T19:31:13 | https://frontend.horse/issues/7/ | webdev, css, html, javascript | _This is an issue from the newsletter Frontend Horse. This was originally published on July 16th, 2020. Visit [Frontend.Horse](https://frontend.horse) to subscribe and find more issues._

---

Helloooo, and welcome to _the Seabiscuit of frontend newsletters_!

We’ve got a coffee cup, a Zelda UI tutorial, and a meetup I'm hosting all ready for race day. I hope you're wearing [some ridiculous clothing and your fanciest hat](https://www.thetrendspotter.net/what-to-wear-to-the-races/).

So place your bets, find your seats, and let’s get started!

---

### CODEPEN

## Coffee For You

I’m amazed by the level of detail and care that’s gone into [Vadim Bauer](https://twitter.com/BauerVadim)’s gorgeous [cup of coffee](https://codepen.io/v_Bauer/pen/zYrBoJa). It's a great piece and _I just had to know how he made it._

{% codepen https://codepen.io/v_Bauer/pen/zYrBoJa %}

The detailed CSS drawing is stunning in its own right. However, the part that dropped my jaw was the animation on the bubbles. They drift from the center of the cup towards the edge to join the other foam there. When they get close, the edge foam moves outward like real form on liquid would!

How the heck is he achieving this effect?

His answer? **SVG Filters**

Welp, it’s official: I’m crushing _hard_ on SVG filters. They’re just so dang versatile! In previous issues we’ve seen them used to make water and lightning effects. Now you’re telling me that they can make stuff goop together? So rad.

Vadim breaks down the effect:

> It’s an SVG filter that basically applies a huge blur to the elements and then increases the contrast for the alpha channel, so that the now bigger shape doesn’t look blurred anymore. The result is the elements merging when they get close to each other.

> It’s known as the gooey effect. You can find a pretty good post about it on [CSS-Tricks: Gooey Effect](https://css-tricks.com/gooey-effect/)

This is an awesome tutorial and you can see how it’s similar to Vadim’s effect.

The other thing I loved about “Coffee for you” was the subtle steam that drifted by. Guess how he made it?

> That’s also an SVG filter. This time with feTurbulence for the texture and feDisplacementMap to distort it. The rest was pure trial and error to get it to look like some real steam.

To learn, Vadim recommends digging into the CodePens you admire and playing with them.

> Changing things and seeing what happens is always a great way to learn about code.

Vadim, I couldn’t agree more.

[Check it out ->](https://codepen.io/v_Bauer/pen/zYrBoJa)

---

### TUTORIAL

## Building a Zelda UI

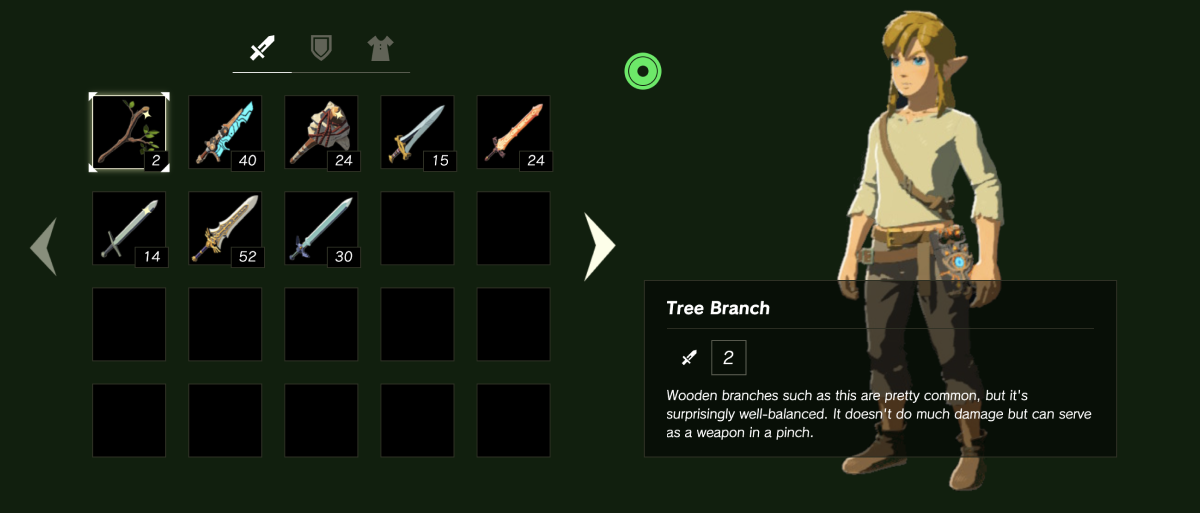

I’ve always been a fan of video games, and the Zelda series is by far my favorite franchise. So when my friend Tim sent me a [a Zelda UI tutorial](https://dev.to/flagrede/how-to-replicate-the-zelda-botw-interface-with-react-tailwind-and-framer-motion-part-1-298g), how could I not dig into it?

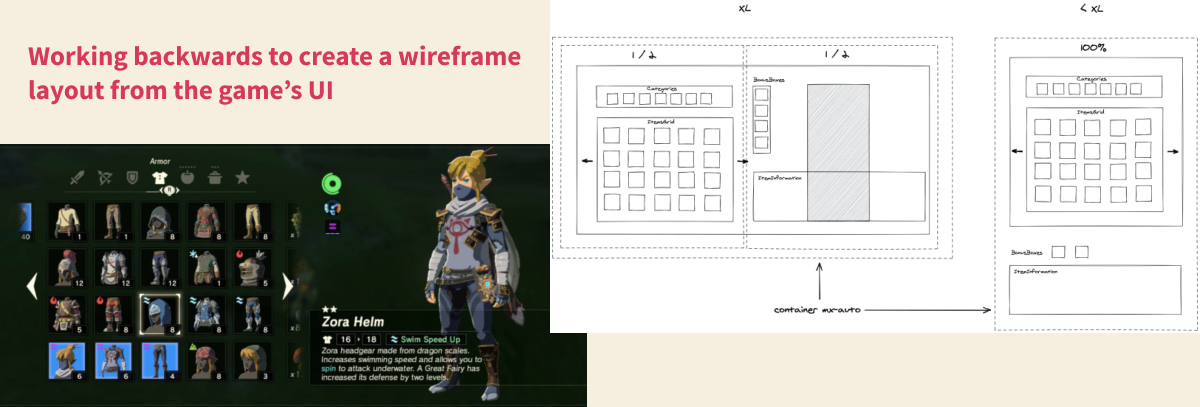

At first I thought it was just a cool idea, maybe a fun gimmick for a tutorial. But it’s much more than that. The author, [Florent Lagrede](https://twitter.com/flagrede), shows you how to build out a quality UI using React, TailwindCSS, and Framer-Motion.

This is the gummy-vitamin of tutorials. Zelda is the hook, but there’s great stuff here, too.

So much of teaching is packaging the content in ways that the learner enjoys. That’s why people like Bill Nye the Science Guy was able to sneak so much education into our brains.

Throughout both articles, Florent decomposes the interface down to small parts, and concretely talks about how he plans to implement it. Turning a design spec into a web layout is such an important skill to develop, and it’s always helpful for me to see how other people approach it.

[The result](https://www.gameuionweb.com/zelda-botw/inventory) is just awesome. Not only can you navigate with your keyboard the way you’d expect to in the game, but it even accurately combines character buffs you get from wearing certain parts of clothing.

The tutorials are a part of something larger: Florent’s project, [Game UI on Web](https://www.gameuionweb.com/). It’s Florent’s site where he’s recreating video game UIs and creating tutorials on his process. To start he’s recreated menus from Hollow Knight and Zelda. It started when Florent was looking through the UI resource [Interface In Game](https://www.interfaceingame.com), which has hundreds of stills and videos from all kinds of video games. He wondered _why can’t we do this on the web_?

So he built out the [Hollow Knight demo](https://www.gameuionweb.com/hollow-knight) as a challenge, and had so much fun he got hooked on making game UIs.

> Then I came up with the idea to make tutorials that would focus on _doing_ instead of only _reading_. As developers, I think we learn best by doing. However, it can be difficult to get started. I find that having a motivating goal can help you begin. Having fun while learning makes the process even easier.

I asked Florent what he thought web developers could learn from building these game interfaces? He said:

• How to make interactions more enjoyable, visible and understandable

• Small details that add to the experience

• Using sounds in an interface

While web dev has a lot of ‘sameness’ right now, Florent’s projects and tutorials on GameUIonWeb feel unique. Creating game UIs with web technology is such a great concept. So many UX design principles carry over.

Picking apart a well designed game UI will deepen your understanding of web and user experience design. Plus, as the [web becomes more whimsical](https://whimsical.club/), some of these game dev skills are going to come in handy!

**What’s to Come**

Florent has big plans for Game UI on Web. He wants to build more game UIs that go beyond the ‘inventory menu’ type that he’s done already. He also wants to write tutorials that teach web developers the tricks that bring delight and joy to games.

I think it’s safe to say that Florent wants to make the web a lot more fun.

Check out [Part 1](https://dev.to/flagrede/how-to-replicate-the-zelda-botw-interface-with-react-tailwind-and-framer-motion-part-1-298g) and [Part 2](https://dev.to/flagrede/how-to-replicate-the-zelda-botw-interface-with-react-tailwind-and-framer-motion-part-2-3nd4) of his Zelda Breath of the Wild tutorial. Then check out [Game UI on Web](https://www.gameuionweb.com/) to see the demos of what he’s built so far.

### Resources

This [Interface In Game](https://interfaceingame.com/) site is such a fantastic resource. For example, check out this huge collection of [Animal Crossing screenshots and videos](https://interfaceingame.com/games/animal-crossing-new-horizons/)!

Florent created his excellent wireframes in [Excalidraw](https://excalidraw.com/), a free web-based drawing tool.

---

### EVENT

## Codefwd: Design Patterns

If you’re reading this as it comes out, then I have some exciting news! I’m going to be hosting a meetup with a wonderful round of talks and I’d LOVE to see you there!

We’ll be streaming over on the [Codefwd Twitch channel](https://www.twitch.tv/codefwd) starting at 6PM EST. Please ride through and throw some horse puns into the chat! It’s my first time hosting a remote conference, so I’m pretty excited.

Here’s the lineup:

* Responsive Design in 2020 - Kilian Valkhof

* Event Driven Serverless Microservices - Gareth Mc Cumskey

* Simple Rules for Complex Systems - Jake Burden

* MongoDB Schema Design Best Practices - Joe Karlsson

I hope to see you there!

---

## So Long, Partner

And it's a photo finish! What a race, I don't care who won, I'm just glad you were here for it!

Follow [@FrontendHorse on Twitter](https://twitter.com/frontendhorse). If you enjoyed this I’d be over the moon if you share it with a friend or tweet about it.

Special thanks to Vadim Bauer and Florent Lagrede for speaking with me about their work.

Now let's get out of here before the next race starts. You take care.

Your Neigh-bor,

Alex

---

If you liked this post, head to [Frontend.Horse](https://www.frontend.horse) to subscribe! You'll get the next issue before it's posted here on Dev. | trostcodes |

405,537 | Resource recommendations | I am currently teaching myself web development through online courses and other means. I work four 12... | 0 | 2020-07-20T23:12:45 | https://dev.to/kessinger_c/resource-recommendations-e6n | discuss | I am currently teaching myself web development through online courses and other means. I work four 12 hour shifts each week, during those times I am not around a computer so coding isn’t possible. Does anyone have any recommendations for books, blogs, or any other source that I could read on web dev while I am at work? | kessinger_c |

405,565 | Kinx Library - Isolate (Multi Thread without GIL) | Hello, everybody! The script language Kinx is published with the concept of Looks like JavaScript, F... | 0 | 2020-07-29T21:49:18 | https://dev.to/krayg/kinx-library-isolate-multi-thread-without-gil-1m5e | kinx, ruby, javascript, thread | Hello, everybody!

The script language [Kinx](https://github.com/Kray-G/kinx) is published with the concept of **Looks like JavaScript, Feels like Ruby, Stable like AC/DC(?)**.

This time it is an Isolate, which is a native thread library without **GIL**.

* Reference

* First motivation ... [The post of introduction](https://dev.to/krayg/kinx-as-a-script-language-4iko)

* Kinx, I wanted a scripting language with a syntax of C family.

* Repository ... https://github.com/Kray-G/kinx

* I am waiting for pull requests.

As you can see from the name Isolate, as a thread model, each thread operates independently and does not share the memory. This is chosen to increase safety.

## Isolate

### Model of multi threading

The memory sharing model of threads like C/C++ is too dangerous and difficult. You have to pay attention much to the race condition trap and ensure deadlock control, but you will still get deadlock easily. The battle between multithreading and safety is still ongoing.

The threading model of Ruby and Python is safe, but its weakness is that GIL (Global Interpreter Lock) gives many limitations for parallelism.

* [GIL (Global Interpreter Lock)](https://en.wikipedia.org/wiki/Global_interpreter_lock)

Let's challenge this wall also at **Kinx**. Ruby hasn't been freed from GIL due to past concerns, but it's likely to move on to the next stage.

So Kinx prepared the mechanism named as **Isolate**. It's a completely independent **native thread**. The exchange of information is limited to Integer, Double and String. Therefore, if you want to send an object, you need to prepare the mechanism of serializing and deserializing it. The most easy way is to make it a string and just execute it because the source code of Isolate is given as a string.

But note that only the compile phase is not reentrant. So the compilation phase will be locked and processed in sequence.

### Isolate Object

Roughly, the Isolate object is used as follows.

* Create an Isolate object by `new Isolate(src)`. Not executed yet at this point. `src` is just a string.

* Compile & execute with `Isolate#run()`. The return value is `this` of the Isolate object.

* By `Isolate#join()`, wait for the thread to finish.

* When the main thread ends, all threads will end **with nothing to be cared**.

* Therefore, when controlling the end, synchronize by using a method such as data transfer described later, and correctly `join` in the main thread.

### Example

#### Creating a new thread

Look at the example first. What is passed to the constructor of Isolate is just a **string**. It feels good to looks like a program code when write it as a raw string style, but there is a trap on it.

* A `%{...}` in a raw string has been recognized as an inner-expression for the raw string itself.

So, you had better avoid to use `%{...}` inside a raw string.

For example below, using `%1%` for that purpose and applying the value directly into a string. It is like a little JIT.

```javascript

var fibcode = %{

function fib(n) {

return n < 3 ? n : fib(n-2) + fib(n-1);

}

v = fib(%1%);

var mutex = new Isolate.Mutex();

mutex.lock(&() => System.println("fib(%1%) = ", v));

};

34.downto(1, &(i, index) => new Isolate(fibcode % i).run())

.each(&(thread, i) => { thread.join(); });

```

Locking for printing has been used to avoid to a strange output.

```

fib(15) = 987

fib(10) = 89

fib(20) = 10946

fib(3) = 3

fib(11) = 144

fib(21) = 17711

fib(4) = 5

fib(9) = 55

fib(23) = 46368

fib(16) = 1597

fib(14) = 610

fib(8) = 34

fib(2) = 2

fib(24) = 75025

fib(26) = 196418

fib(28) = 514229

fib(29) = 832040

fib(7) = 21

fib(30) = 1346269

fib(25) = 121393

fib(5) = 8

fib(13) = 377

fib(12) = 233

fib(19) = 6765

fib(22) = 28657

fib(18) = 4181

fib(17) = 2584

fib(6) = 13

fib(27) = 317811

fib(31) = 2178309

fib(1) = 1

fib(32) = 3524578

fib(33) = 5702887

fib(34) = 9227465

```

The order might be changed because of a multi thread.

```

fib(10) = 89

fib(19) = 6765

fib(14) = 610

fib(11) = 144

fib(26) = 196418

fib(17) = 2584

fib(21) = 17711

fib(20) = 10946

fib(9) = 55

fib(13) = 377

fib(28) = 514229

fib(18) = 4181

fib(30) = 1346269

fib(31) = 2178309

fib(7) = 21

fib(3) = 3

fib(8) = 34

fib(4) = 5

fib(25) = 121393

fib(16) = 1597

fib(22) = 28657

fib(23) = 46368

fib(12) = 233

fib(27) = 317811

fib(29) = 832040

fib(15) = 987

fib(2) = 2

fib(5) = 8

fib(1) = 1

fib(6) = 13

fib(32) = 3524578

fib(24) = 75025

fib(33) = 5702887

fib(34) = 9227465

```

#### End of thread

The thread will be finished when the `Isolate` code has been reached at the end.

The returned status code from the thread will be returned as a return code of `join`.

```javascript

var r = new Isolate(%{ return 100; }).run().join();

System.println("r = %d" % r);

```

```

r = 100

```

#### Transfer data - Isolate.send/receive/clear

For simple data transfer you can use `Isolate.send(name, data)` and `Isolate.receive(name)`. The buffer is distingished by `name`, the threads are send/receive data by the `name`.

* `name` can be omitted. When it is omitted, it is same as specifying `"_main"`.

* As `data`, only Integer, Double, and String is supported.

* That is why for an object, you should stringify it and it should be reconstructed by receiver.

* To clear the buffer by `Isolate.clear(name)`.

* If you do not clear the buffer by `Isolate.clear(name)`, the buffer data will be remaining. It means the same data can be got by `Isolate.receive(name)` many times.

#### Mutex

Mutex object is constructed by `Isolate.Mutex`. By the way, the mutex is distinguished by the name even if it is in the same process.

```javascript

var m = new Isolate.Mutex('mtx');

```

By using the same name, the same mutex will be constructed. If you omit the name, the name will be the same as `"_main"`.

Mutex object is used with `Mutex#lock(func)` method. The callback function of `func` is called with a locked mutex.

```javascript

var m = new Isolate.Mutex('mtx');

m.lock(&() => {

// locked

...

});

```

#### Condition

You can use a condition variable. That is used with a mutex object together. When passing a locked mutex to `Condition#wait()`, it waits after mutex is unlocked. In that status, when another thread do the `Condition#notifyAll()` and the thread can get the lock, to come back from the waiting status.

`Condition#notifyOne()` is not supported because everybody says 'nobody should use it!'.

```javascript

var cond = %{

var m = new Isolate.Mutex('mtx');

var c = new Isolate.Condition('cond');

m.lock(&() => {

var i = 0;

while (i < 10) {

System.println("Wait %1%");

c.wait(m);

System.println("Received %1%");

++i;

}

System.println("Ended %1%");

});

};

var ths = 34.downto(1, &(i, index) => new Isolate(cond % i).run());

System.sleep(1000);

var c = new Isolate.Condition('cond');

16.times(&(i) => {

System.println("\nNotify ", i);

c.notifyAll();

System.sleep(500);

});