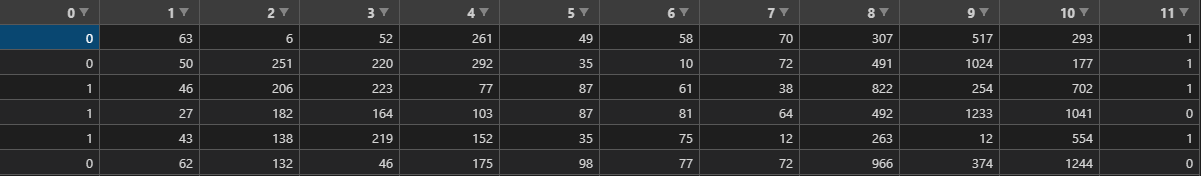

id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

613,638 | UI Trends: Center Column Squeezing | We've just opened our desktop browser to full screen and clicked on a link to one of the sites below... | 0 | 2021-02-21T15:05:50 | https://dev.to/jwp/ui-trends-wide-screen-and-high-resolution-monitors-24fm |

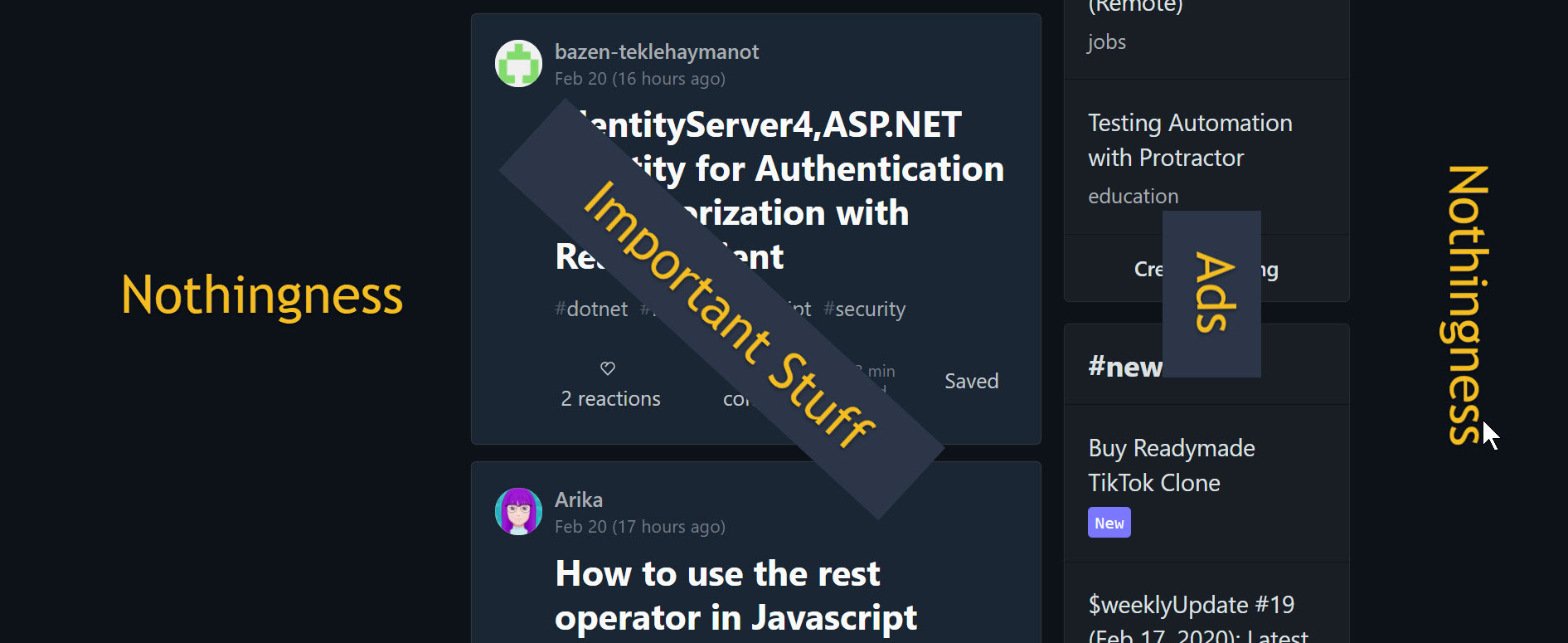

> We've just opened our desktop browser to full screen and clicked on a link to one of the sites below. Our browser zoom is 100%.

- Dev.To,

- FaceBook

- LinkedIn,

- Git Api Docs

The important column; which is either column (two or three) in a four column layout is squeezed as shown below.

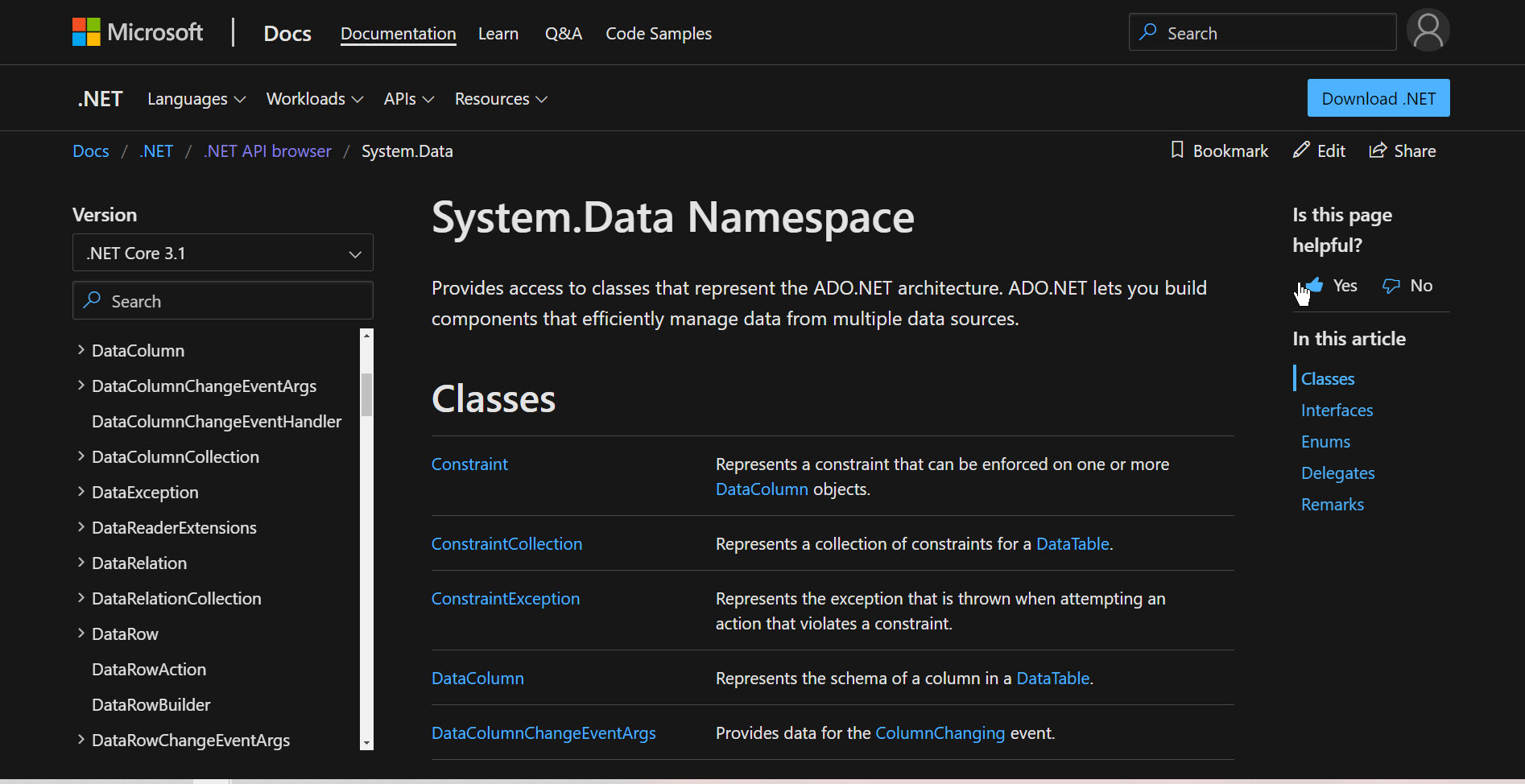

High Resolution and Wide Monitors show this:

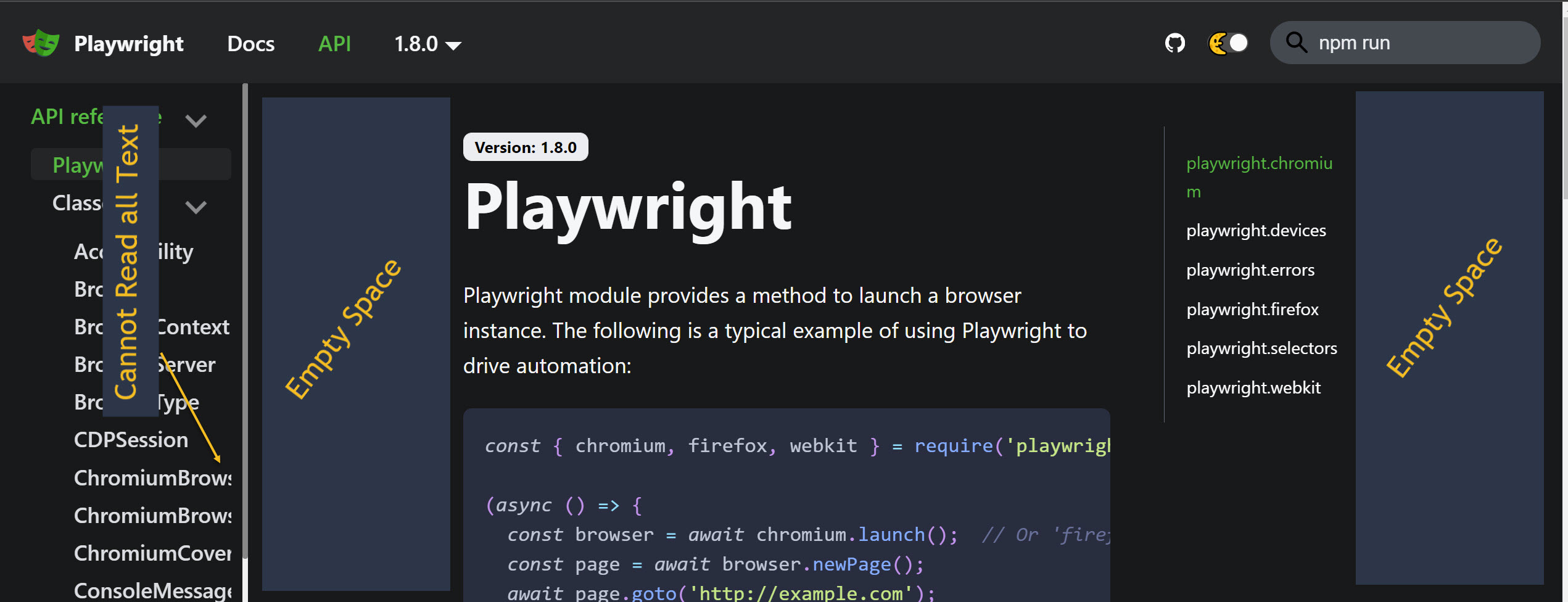

Here's a random 'current' API doc on Git. In this one, the left hand menu item is not resizable.

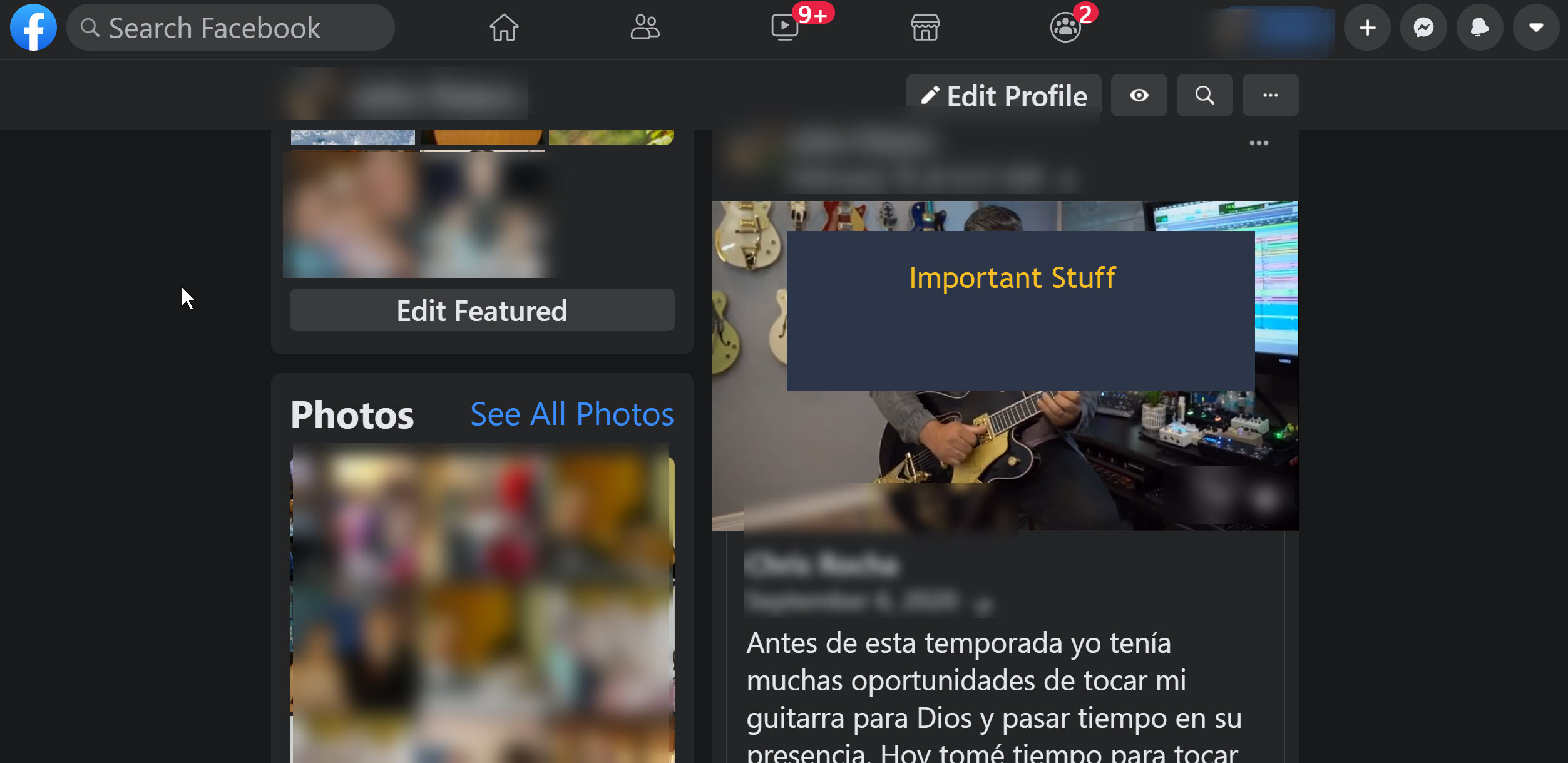

**Facebook**

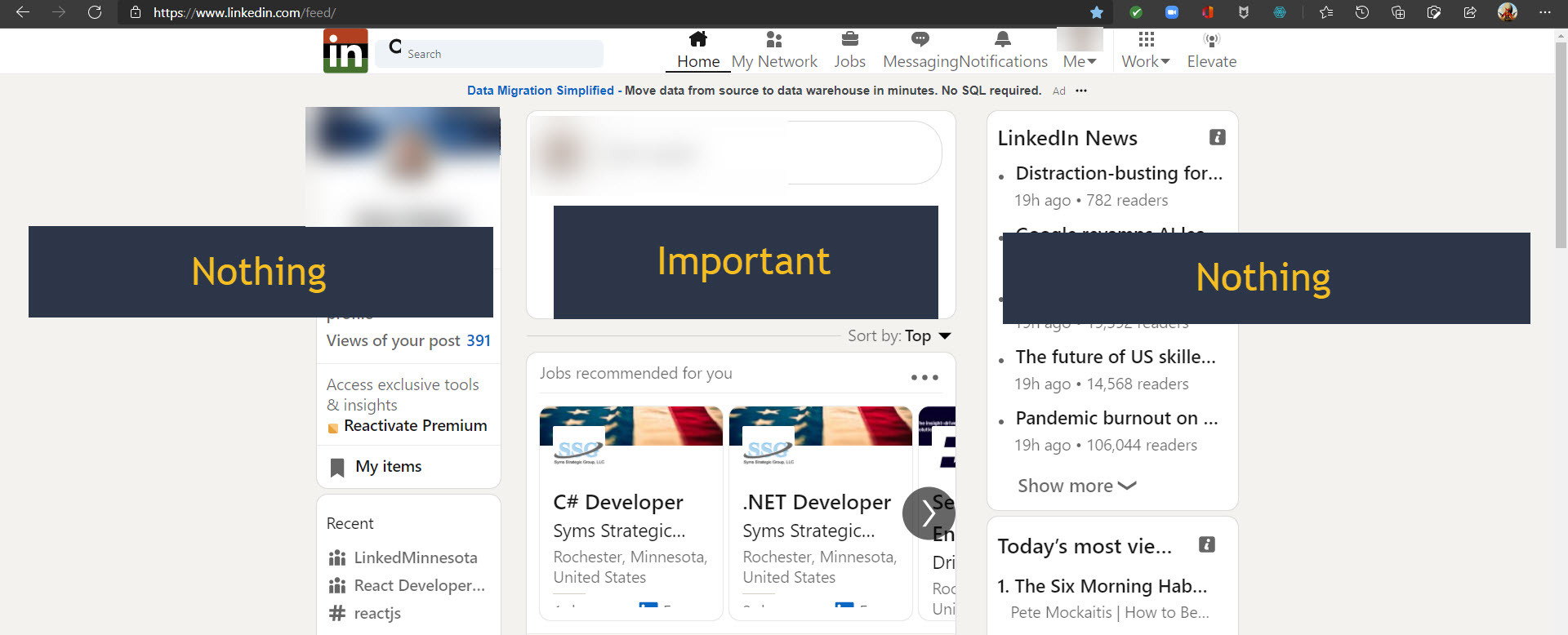

**LinkedIn**

Probably the most wasted space of all.

**Good Examples**

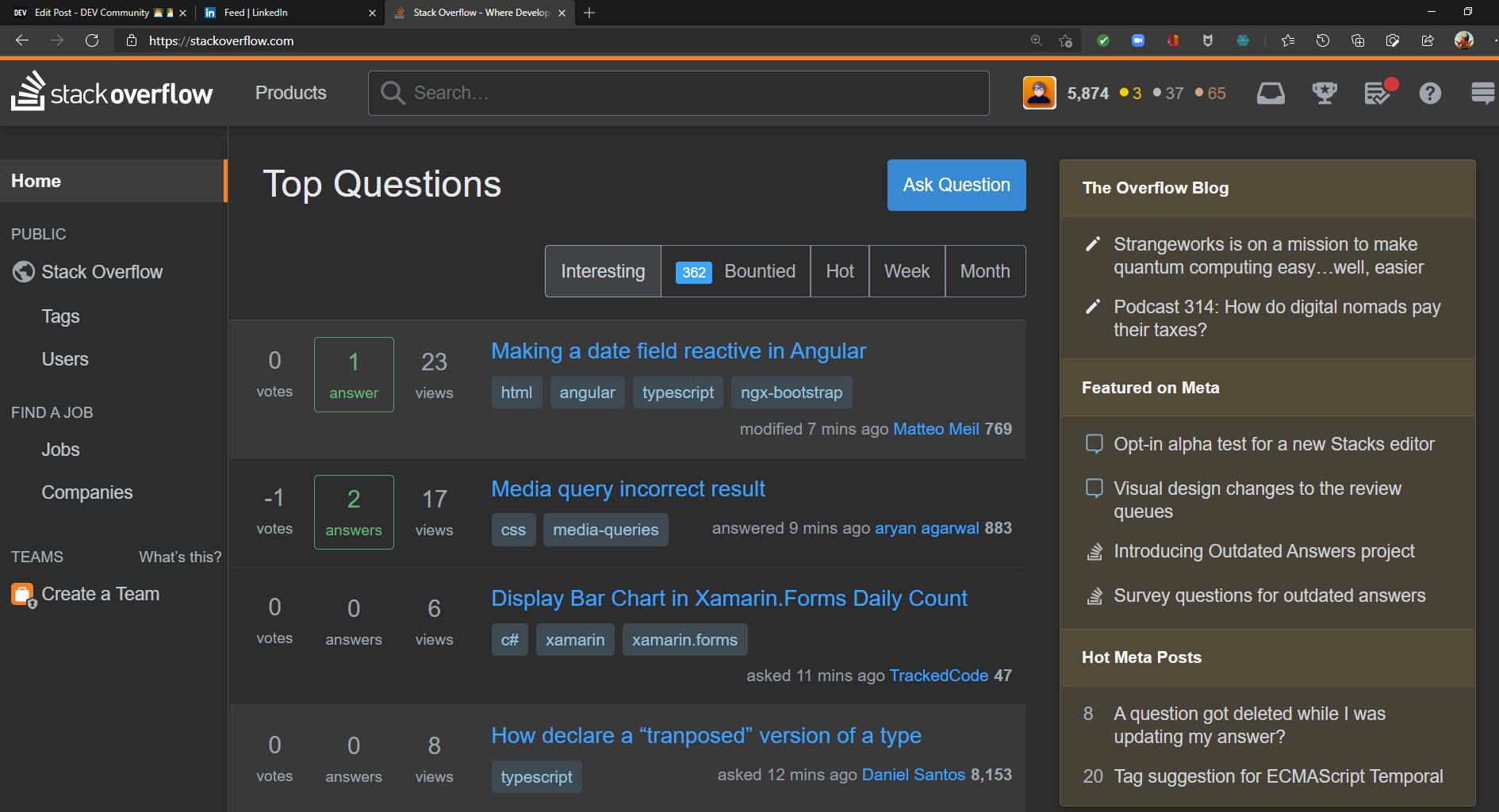

- StackOverFlow

Passable but just barely.

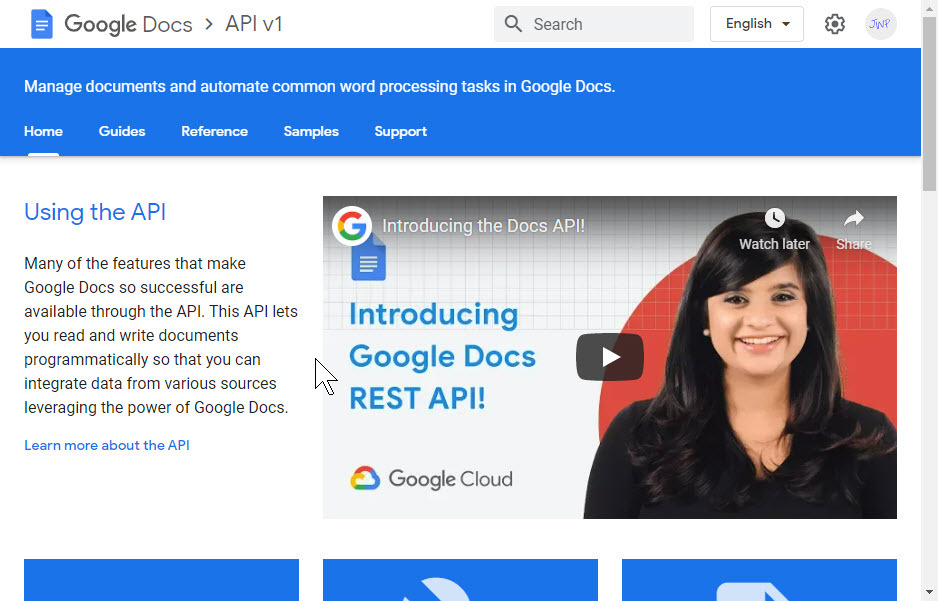

- Google Developer Docs

At first, it looks like too much space, but a browser resize will collapse both asides and just show important stuff, like this. Great example of responsive focusing on 'important stuff'.

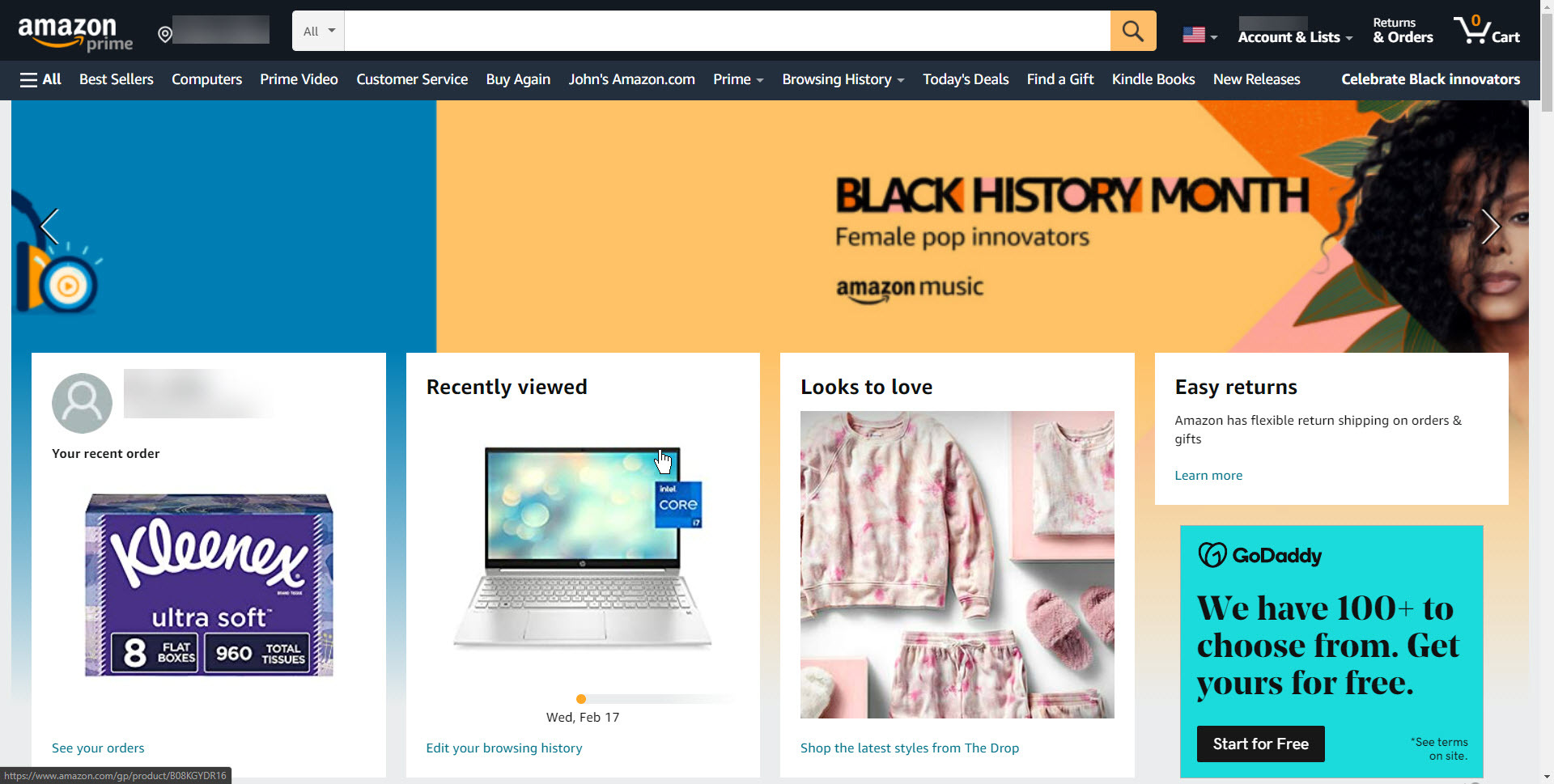

- Amazon

At it for over 15 years now.

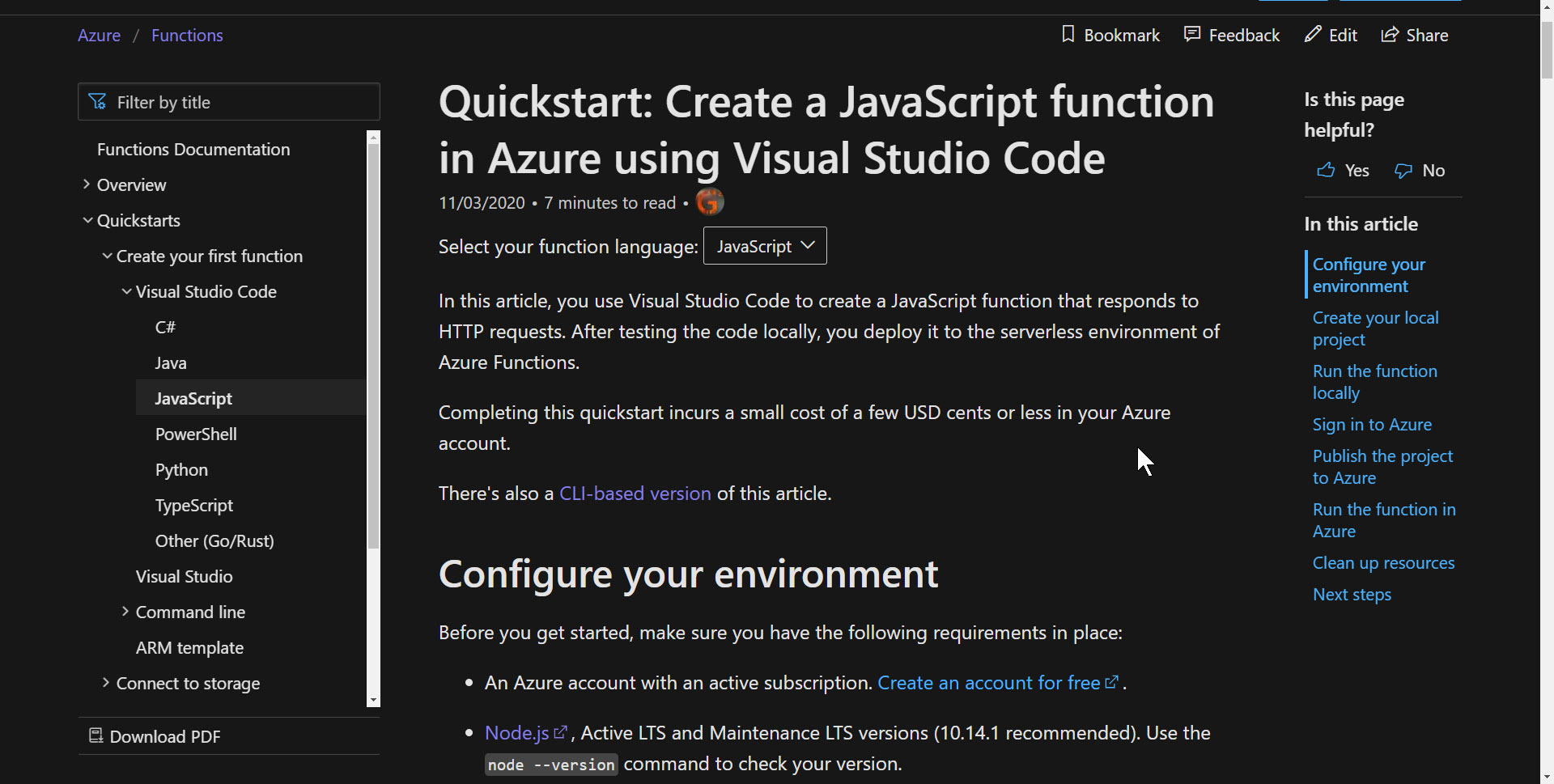

- Azure

Microsoft wakes up, in their new designs.

- .NET Core

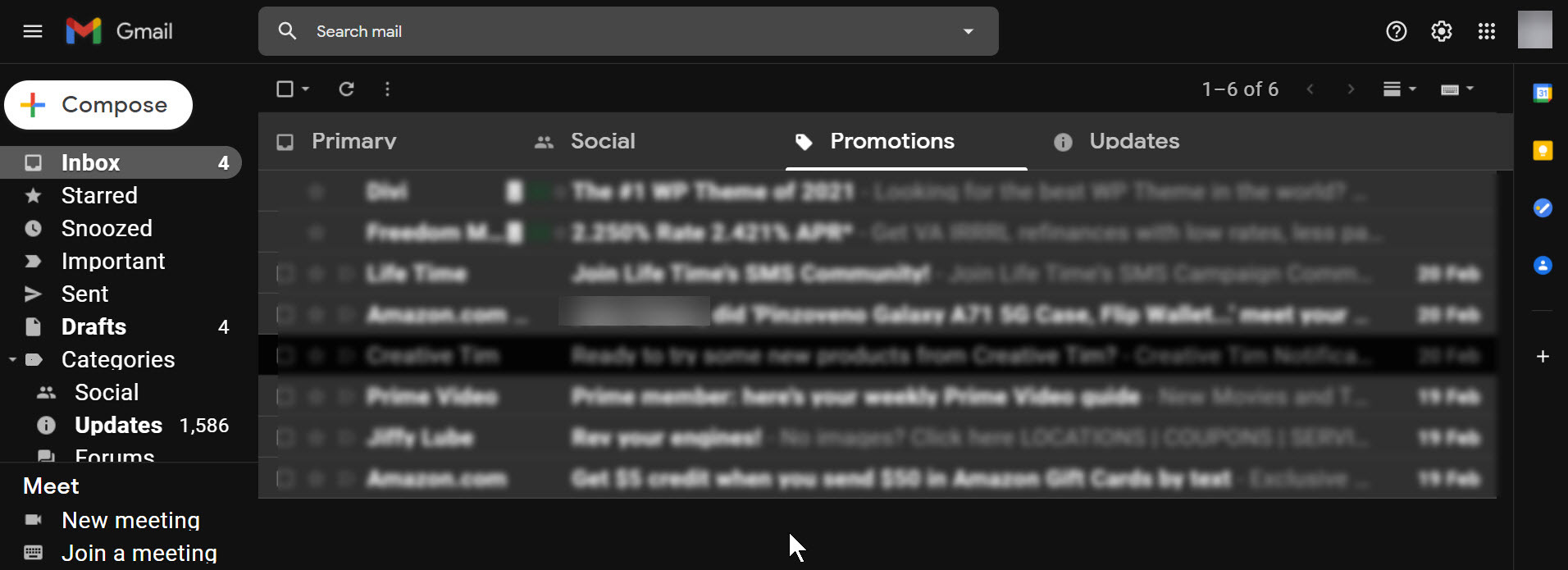

- Gmail

KISS (Keep it stupid simple)

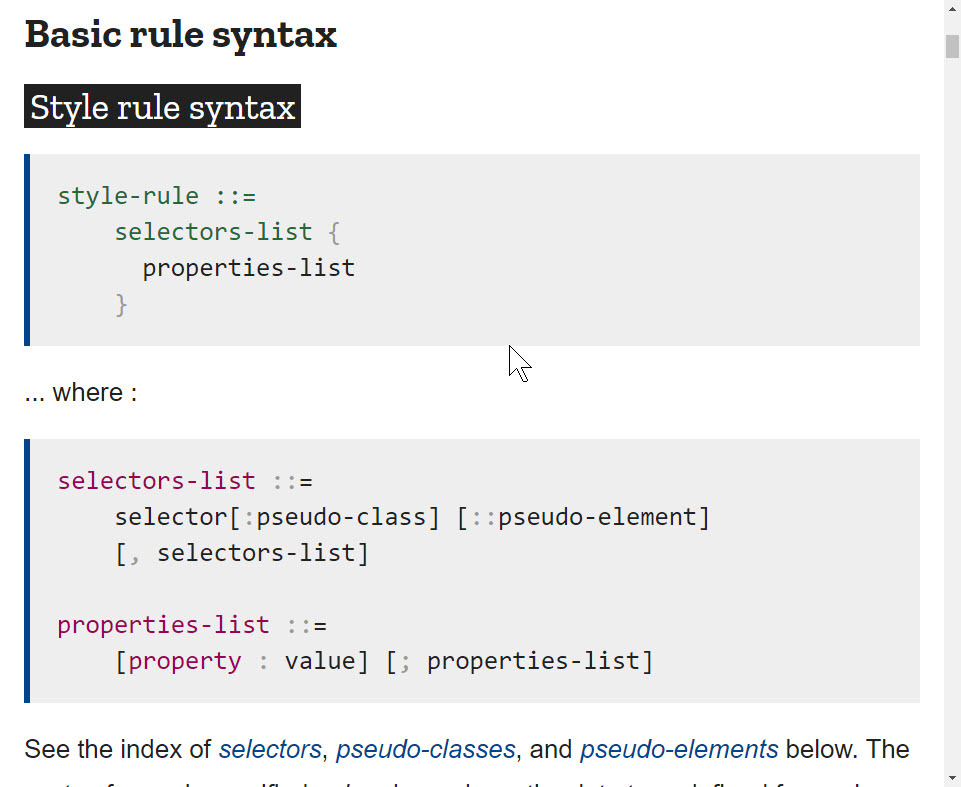

- MDN

KISS

Note: I don't claim to be an expert stylist; however, as a programmer; I read tons of material daily. I am a smart user of programming information. If I have to fuss with it too much to read it better, I'm apt to quit using that site altogether.

Sites I've quit over the years:

- Any Medium site

- Most sites that are not updated often.

- Secondary sites that do not have the Subject Matter Expertise I require.

- Any API or Training site which hides a table of content. This forces continual searches.

- Any site who has a built-in search that doesn't work well.

Smart Users know their stuff, as developers we owe a good experience to them.

**Take Away**

- The mobile first philosophy is good, but don't forget wide screen and high res desktop monitors

- Responsive sites, should always favor the stuff our users are there to read.

- Collapse asides when resizing width and focus only on the center column.

- Get rid of outdated layout styles and favor fully responsive sites that adapt to any width and resolution.

- Continuously improve.

| jwp | |

613,734 | Day 44,45,46:100DaysOfCode | not much within these days but ... studied spring boot further... continued with dp some good leetco... | 0 | 2021-02-21T16:09:57 | https://dev.to/taran17809555/day-44-45-46-100daysofcode-2p4j | 100daysofcode, programming, productivity | not much within these days but ...

studied spring boot further...

continued with dp

some good leetcode medium on dp, bst and array..

few past codeforces ques..

few past kickstart ques.....

completed binary search various ques from cp sheet.

You can Follow me->

https://github.com/singhtaran1005

https://www.linkedin.com/in/taranpreet-singh-chabbra-27517918a/ | taran17809555 |

613,750 | How to use 11ty with Headless WordPress | In this tutorial, we'll learn how to use 11ty with Headless WordPress, and then deploy it to Netlify.... | 0 | 2021-02-28T17:08:59 | https://davedavies.dev/post/how-to-use-11ty-with-headless-wordpress/ | wordpress, 11ty, headless | In this tutorial, we'll learn how to use 11ty with Headless WordPress, and then deploy it to Netlify.

You can see the final project hosted on Netlify at [headless-wordpress-11ty.netlify.app](https://headless-wordpress-11ty.netlify.app/), or skip the tutorial altogether and view the Git repo at [github.com/thedavedavies/Headless-WordPress-11ty](https://github.com/thedavedavies/Headless-WordPress-11ty). **Lets get started!**

##What is Eleventy?

Eleventy (or 11ty) is a Static Site Generator, which we can use to fetch our WordPress posts and pages, and then compile the data at build time. This allows us to use WordPress as a Headless CMS, and deploy an entirely static and lightweight site to [Netlify](https://www.netlify.com/). We can get this data from WordPress using either the WordPress REST API, or using a WPGraphQL endpoint. In this tutorial, we'll be using the WordPress REST API.

## Why use 11ty with Headless WordPress?

These days there are many great headless Content Management Systems (CMS) and Static Site Generators (SSG) to choose from, so why pick WordPress over any other? I've been working with WordPress for over 10 years, and had a load of established sites I wanted to be able to use the data on. Some of these sites didn't need any extra functionality and so entirely static HTML pages are a perfect solution.

WordPress also has an active and supportive community, with thousands of plugins to help you build any type of website. For sites which need extra functionality, you can use [NextJS with Headless WordPress](https://dev.to/thedavedavies/using-nextjs-with-headless-wordpress-44om) too.

## Using the WordPress fetch API

If you've never used Eleventy before, then there's loads of great resources at [https://www.11ty.dev](https://www.11ty.dev/). In the meantime, create a fresh project (we'll call ours **Headless-WordPress-11ty**) and open that new project in your code editor (I'm using VS Code).

Installing Eleventy into our project requires a `package.json` file. Let's create it with `npm init -y`. The `-y` parameter tells npm to skip all the questions and just use the defaults.

```

npm init -y

npm install --save-dev @11ty/eleventy node-fetch

```

In that code snippet above, the dependencies we're installing are:

- **Eleventy** -- The Static Site Generator

- [**node-fetch**](https://www.npmjs.com/package/node-fetch) -- We'll use this to fetch our data from the WordPress REST API.

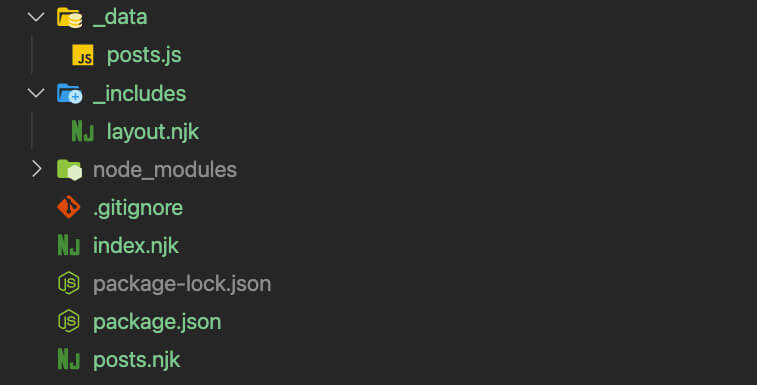

## Preparing our 11ty project to fetch data from the WordPress REST API

After installing the **Eleventy** and **node-fetch** packages, create a new directory in the root of your project called **_data**, and inside that new **_data** folder create a file called **posts.js**. The **_data** directory is where all of our global data will be controlled. In our case, that means using the WordPress REST API to hit our endpoint and fetch our posts and pages.

Back in your root directory, create another new directory named **_layouts**, and inside **_layouts** create a new file called **layout.njk**.

> **What's with the .njk file extension?**

>

> The .njk file extension means that we're using Nunjucks as a templating language. You can easily use 10 different templating languages in 11ty (or a mixture of them all) so whilst we're using Nunjucks, if there's a different template language you're more comfortable using, then go ahead and use that.

Finally, back in your root directory again, create 2 new files called **index.njk** and **posts.njk**.

Having added these new directories and files, your project should be looking similar to this screenshot:

> **Creating a .gitignore file**

>

> While a **.gitignore** file isn't essential to testing out the WordPress REST API with 11ty, it's highly recommended for when you want to deploy your site. Here's the **.gitignore** settings I'm using for this project:

```

_site/

_tmp/

.DS_Store

node_modules/

package-lock.json

.env*

```

## Step 1: Fetching post data from the WordPress REST API

Now that we've prepared the foundations and set up our base project, let's get writing some code to fetch our posts from WordPress.

Head to your **_data/posts.js** file, and add the following code:

```

const fetch = require("node-fetch");

module.exports = async function () {

console.log("Fetching data...");

return fetch("https://fake-data.better-wordpress.dev/wp-json/wp/v2/posts")

.then((res) => res.json())

.then((json) => json);

};

```

**What does this code do?**

Exclusively a browser API, we can't use **fetch** in NodeJS. Using the **node-fetch** package brings the ability to use **fetch** into NodeJS. We're then setting up an **asynchronous function**, which expects a promise to be returned, which is exactly what's happening when we run `return fetch("https://fake-data.better-wordpress.dev/wp-json/wp/v2/posts")`.

## Step 1.5: Testing your fetch

Next, open your `package.json` file and in your scripts property add the following 2 scripts:

```

"scripts": {

"start": "npx @11ty/eleventy --serve",

"build": "npx @11ty/eleventy"

},

```

**What does this code do?**

The **scripts** property in your **package.json** file allows you to run predefined scripts. Once you've added the **start** and **build** scripts above, you'll be able to run `npm run start` to start up a hot-reloading local web server, and `npm run build` to compile any templates into the output folder (this defaults to **_site**).

**What do we get back from our fetch?**

The **async function** we wrote earlier in **_data/posts.js** returns to us a JSON object which we can then work with. To confirm that the data is coming back successfully from the WordPress REST API, you can change the final `.then` in your function to a `console.log()`:

```

return fetch("https://fake-data.better-wordpress.dev/wp-json/wp/v2/posts")

.then((res) => res.json())

.then((json) => console.log(json);

```

and run your `npm run start` script in your console. If all goes well with your fetch, then you should see an output similar to the screenshot below, with your JSON data logged out into the console:

## Step 2: Creating a layout template

Now that we're successfully fetching our posts from the WordPress REST API, we can start creating a template to show that data.

>If you changed your **fetch** function to `console.log(JSON)` the JSON data, then make sure you now return it to how it was in Step 1.

In your **_includes/layout.njk** file, paste in the following code:

```

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8"/>

<meta http-equiv="X-UA-Compatible" content="IE=edge"/>

<meta name="viewport" content="width=device-width, initial-scale=1.0"/>

<title>{{ posts.title.rendered }}</title>

</head>

<body>

{{ content | safe }}

</body>

</html>

```

**What does this code do?**

Very simply, the code above scaffolds out the HTML we'll use to display our data.

- `{{ posts.title.rendered }}` is the title object that we get back in our JSON object.

- Using `{{ content | safe }}` means that the layout template will populate the `content` data with the child template's content. Using the `safe` filter prevents double-escaping the output (this is built into Nunjucks).

## Step 3: Creating our index page

Now we get to start seeing content in our browser! In your **index.njk** file, paste the following code:

```

---

pagination:

data: posts

size: 2

layout: layout.njk

title: Latest Posts

---

<ol>

{%- for item in pagination.items %}

<li>

<a href="/posts/{{ item.title.rendered | slug }}">

{{ item.title.rendered }}</li>

</a>

{% endfor -%}

</ol>

<nav>

<ol>

<li>

{% if pagination.href.previous %}

<a href="{{ pagination.href.previous }}">Previous</a>

{% else %}Previous{% endif %}

</li>

{%- for pageEntry in pagination.pages %}

<li>

<a href="{{ pagination.hrefs[ loop.index0 ] }}" {% if page.url == pagination.hrefs[ loop.index0 ] %} aria-current="page" {% endif %}>Page

{{ loop.index }}</a>

</li>

{%- endfor %}

<li>

{% if pagination.href.next %}

<a href="{{ pagination.href.next }}">Next</a>

{% else %}Next{% endif %}

</li>

</ol>

</nav>

```

**What does this code do?**

Nunjucks uses **front matter**, which will be processed by our templates when we build our site. So what does this front matter do?

- **pagination** iterates over our data set and then creates pages for individual chunks of data.

- **data: posts** is taking our **_data/posts.js** as a data set. The front matter value for **data** needs to match up with our data file. So, if the file was called **_data/pages.js**, then our front matter would instead be: **data: pages**.

- **size: 2** is telling 11ty to list 2 of our posts before moving onto pagination

- **layout: layout.njk** is telling 11ty to use the layout that we built in Step 2.

With that saved, and `npm run start` running, you should now be able to see a paginated list of our pre-build posts from WordPress!

## Step 4: Creating a template for our single posts

The final task we have to do before launching our site is to create a template for our single posts. Paste the following code into **posts.njk**:

```

---

pagination:

data: posts

size: 1

alias: posts

permalink: "posts/{{ posts.title.rendered | slug }}/"

layout: layout.njk

---

<h1>{{ posts.title.rendered }}</h1>

<div class="mainContent">

{{posts.content.rendered | safe}}

</div>

```

**What does this code do?**

The front matter in our **posts.njk** file is similar to our **index.njk**, however this time our pagination size is just 1, and we're using an [alias](https://www.11ty.dev/docs/pagination/) in the slug.

11ty provides a number of filters which we can pass into our content. Here, we're using the **slug** filter to 'slugify' our URL, and the **safe** filter again to render out the HTML we get back from the WordPress REST API.

Finally we have a fully built (but very much unstyled) site which is populated from our existing WordPress website! Our last task is to deploy this site to Netlify.

## Step 5: Hosting our 11ty site with Netlify

Netlify is a powerful serverless hosting platform with an intuitive git-based workflow and a very generous free tier. This means we can deploy our static 11ty site to Netlify, and if you're using Git you can connect Netlify to your Git repo to trigger a rebuild every time you commit.

At this point, it's best practice to remove your API endpoint URLs from your code and use a **.env** file instead. So let's do that by installing the [**dotenv**](https://www.npmjs.com/package/dotenv) package: `npm i dotenv`. Next, create a **.env** file in the root of your project. This is where we'll add all of our secret endpoint URLs. If you created a **.gitignore** file earlier, make sure to have **.env*** in the file. This will tell git to ignore all .env files.

Open up your **.env** file, paste in your WordPress REST API endpoint along with a variable to link it to -- i.e.: `WORDPRESS_REST_API_URL=https://fake-data.better-wordpress.dev/wp-json/wp/v2/posts`

Next, we need to make sure that 11ty knows about our .env file, so create a **.eleventy.js** file (note the dot at the start of that filename), and paste the following code:

```

module.exports = function () {

require("dotenv").config();

};

```

**What does this code do?**

The **.eleventy.js** file holds all our custom site-wide configuration, but for now all we need to pass to it is our dotenv config. You can [read more about Eleventy configuration](https://www.11ty.dev/docs/config/).

Finally in our **_data/posts.js** file, we can replace our endpoint url with **process.env.WORDPRESS_REST_API_URL**. We can now safely commit our code to our Git repo without exposing our secret endpoint URLs, or any other API keys you need to keep secret.

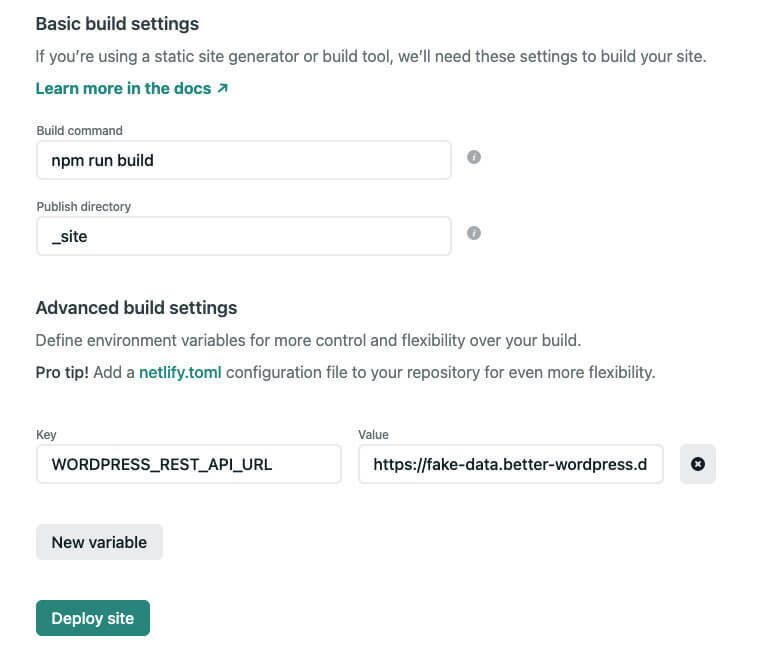

Netlify already has a super in-depth blog post on [deploying your site](https://www.netlify.com/blog/2016/09/29/a-step-by-step-guide-deploying-on-netlify/), so follow that to get your site up on Netlify. One addition we want to make though, is just before you Deploy your site - click **Advanced build settings** and add in your env key and value from your local .env file (which hopefully hasn't been committed to your repo).

## Finished!

And we're done! We now have a very high level proof-of-concept project to fetch our posts from the WordPress REST API, build them into a static site using Eleventy, then deploy that site to Netlify.

There's plenty more we can do, including styling the site and fetching various pages and other data that the WordPress REST API gives us. | thedavedavies |

614,026 | How to Build a Spoken Universal Translator with Node-RED and Watson AI services | What is Node-RED? Node-RED is a low-code programming environment for event-driven applicat... | 0 | 2021-02-22T01:43:26 | https://dev.to/ivanadokic/how-to-build-a-spoken-universal-translator-with-node-red-and-watson-ai-services-3pdb | cloud, javascript, node, ibmwatson | # What is Node-RED?

[Node-RED](https://nodered.org/) is a low-code programming environment for event-driven applications. It's a programming tool for wiring together hardware devices, APIs, and online services in new and interesting ways.

At the core of Node-RED is Node.js a JavaScript runtime environment built on Chrome's V8 JavaScript engine. Top 5 reasons to use Node-RED can be found [here](https://developer.ibm.com/blogs/top-5-reasons-to-use-node-red-right-now/).

## Let's build a spoken universal translator using Node-RED and Watson AI services

We will build a universal translator by using a Node-RED Starter application to connect with those Watson AI services:

- Speech to Text

- Language Translator

- Text to Speech

The [Node-RED Starter application](https://developer.ibm.com/tutorials/how-to-create-a-node-red-starter-application/) includes a Node-RED Node.js web server and a Cloudant database to store the Node-RED flows.

#### We will learn how to:

- Create a **Node-RED starter** app running in IBM Cloud. Create instances of the Watson services: Speech to Text, Text to Speech, and Language Translator and how to connect those services to your Node-Red app.

- Launch and configure the Node-RED visual programming editor.

- Install additional Node-RED nodes and create flows that use the Watson services to create the spoken universal translator.

## Prerequisites

This app can be completed using an IBM Cloud Lite account.

Create an [IBM Cloud account](https://cloud.ibm.com/registration?cm_sp=ibmdev-_-developer-tutorials-_-cloudreg)

Log into [IBM Cloud](https://cloud.ibm.com/login?cm_sp=ibmdev-_-developer-tutorials-_-cloudreg)

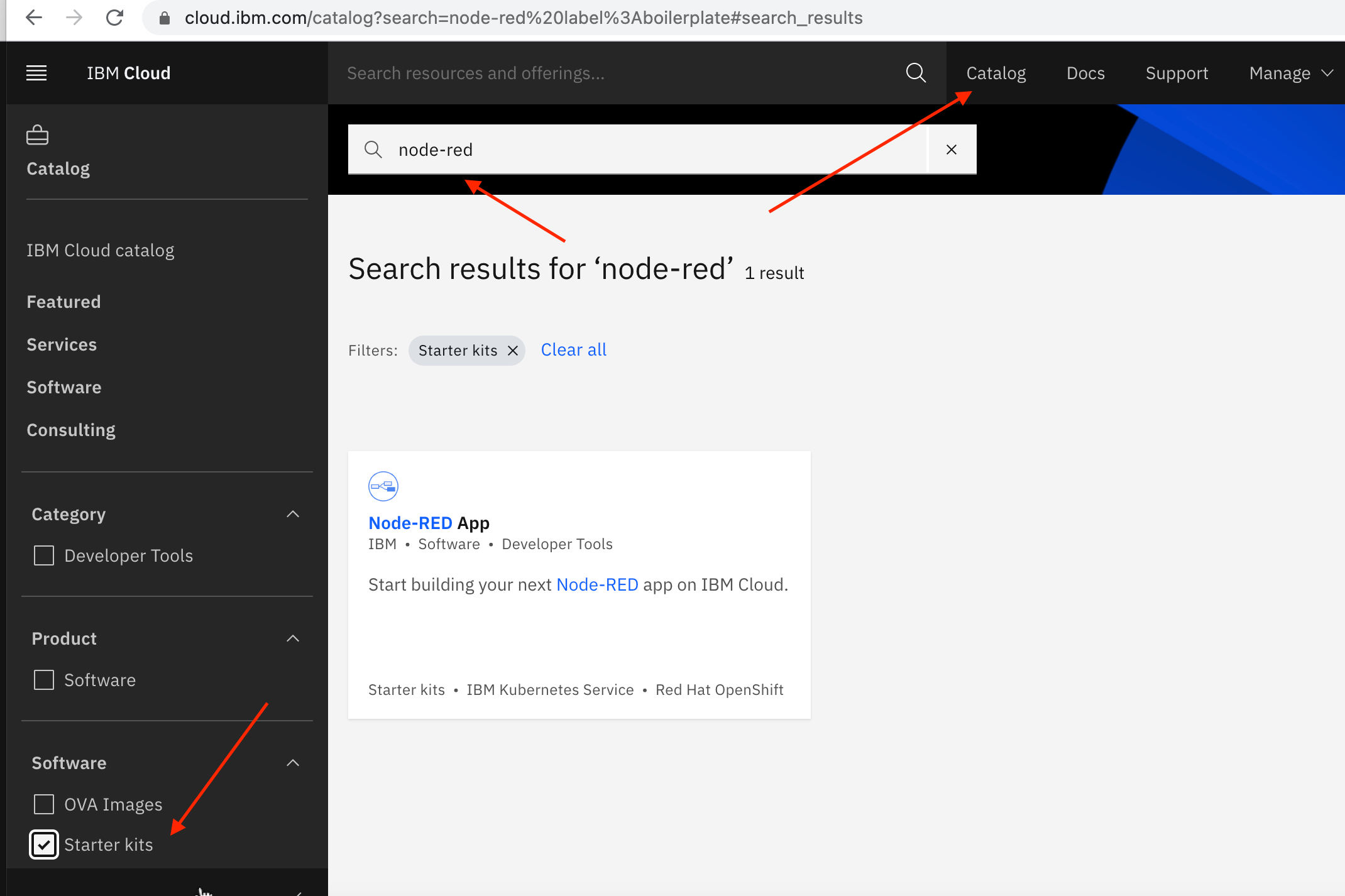

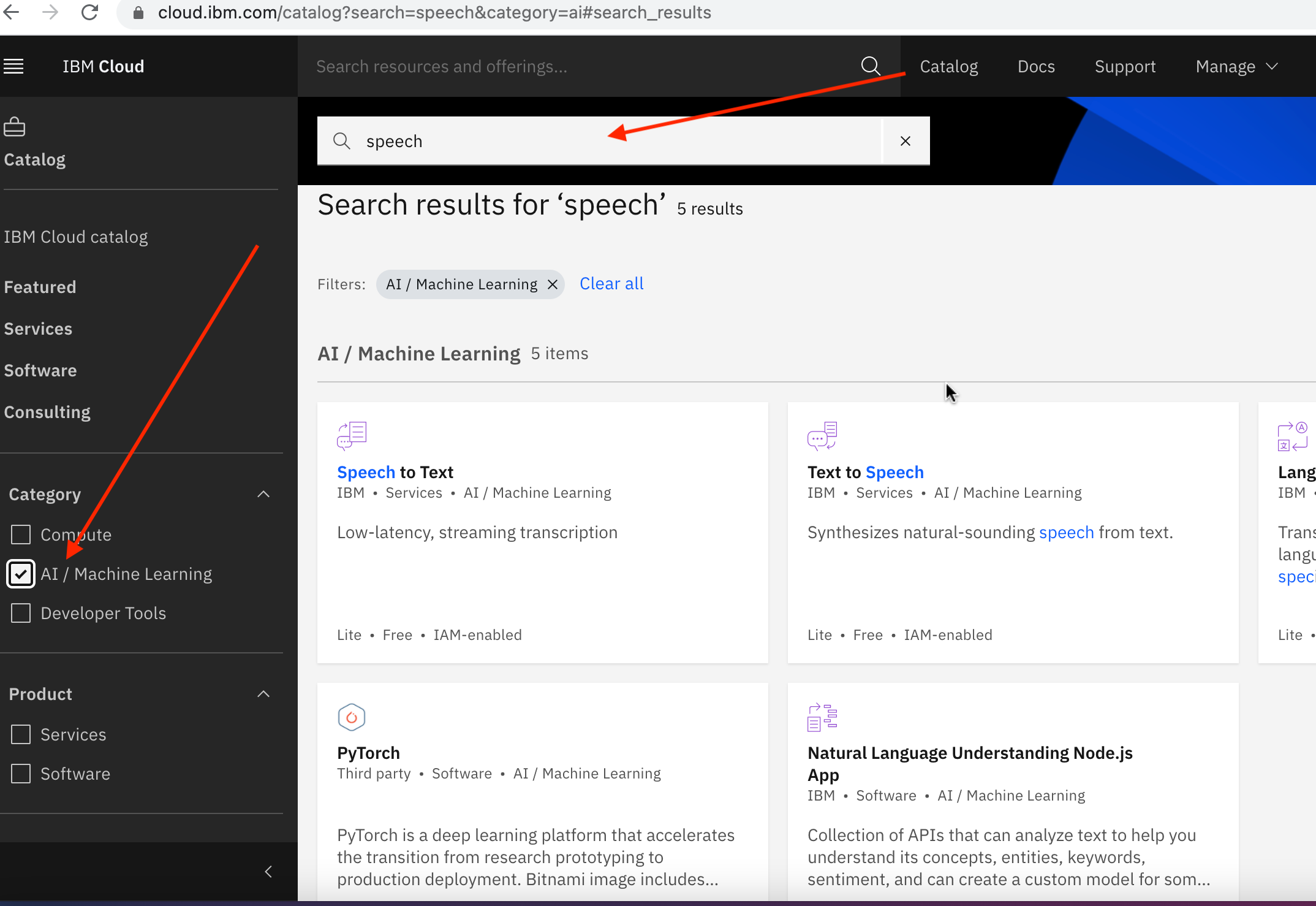

# Step 1 – Let's create a Node-RED Starter App

Follow these steps to create a Node-RED Starter application in IBM Cloud.

1. Log in to your IBM Cloud account.

2. Click on the Catalog

3. Search for node-red

4. Select the Starter Kits category, then select Node-RED Starter.

5. Enter a unique name for your application, it will be part of the application URL :

6. The Region, Organization, and Space fields will be pre-populated with valid options for your IBM Cloud account. I have a Lite account and I just accepted the defaults.

7. In the Selected Plan section, choose Lite.

8. Click the Create button.

The Node-RED Starter application will be provisioned in the IBM Cloud region that was specified. This process is called staging an application.

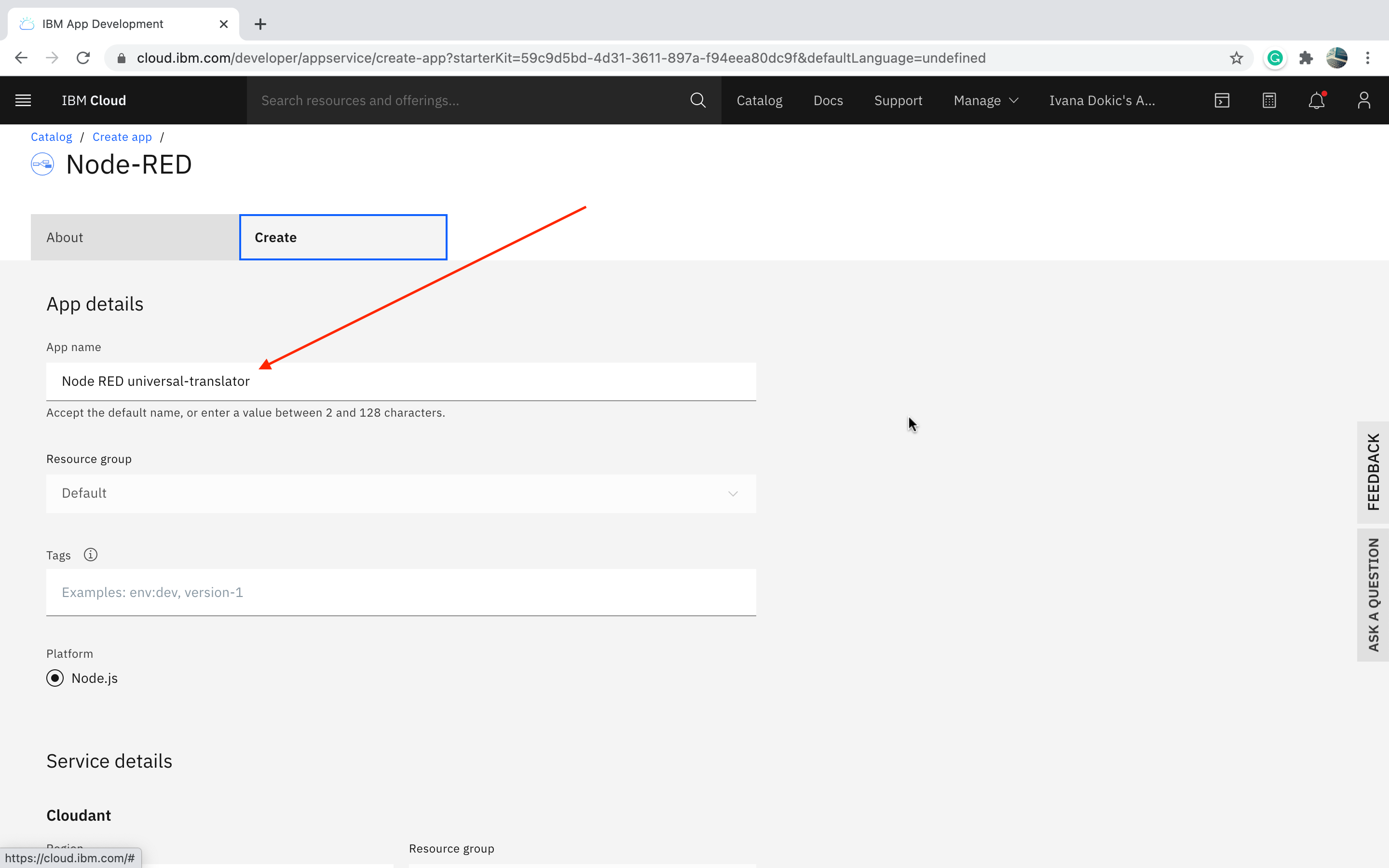

# Step 2 – Let's create the Watson AI service instances

You can add Watson AI microservices to your application as APIs, through instances that you can manage through credentials. We will create and bind these microservices to your Cloud Foundry application. There are three Watson AI services, all available in the *IBM Cloud Lite tier*, needed to build a universal translator:

- Watson Speech to Text

- Watson Text to Speech

- Watson Language Translator

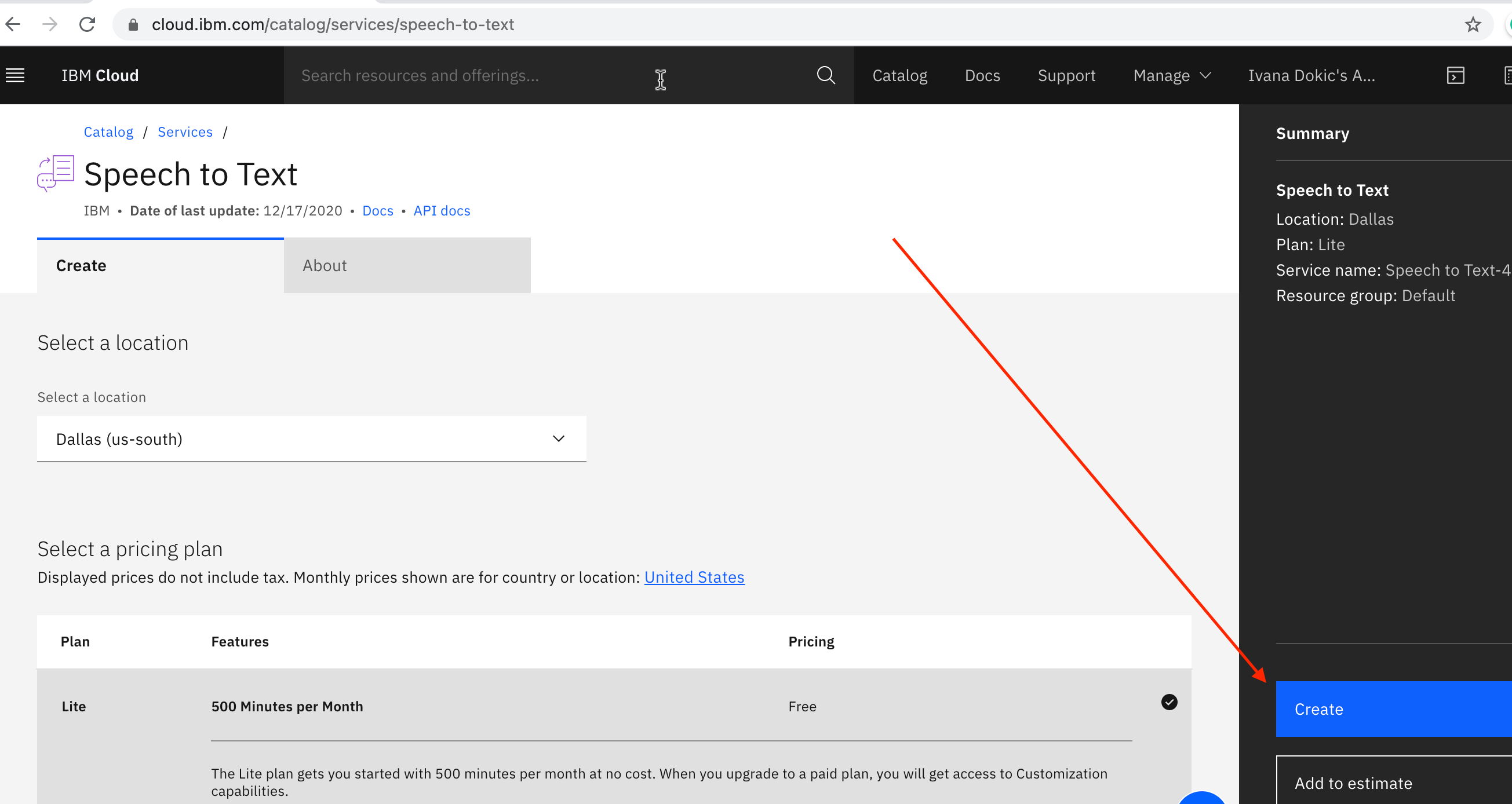

In IBM Cloud Catalog search for speech and navigate to the AI category:

Select **Speech to Text**, and click the Create button.

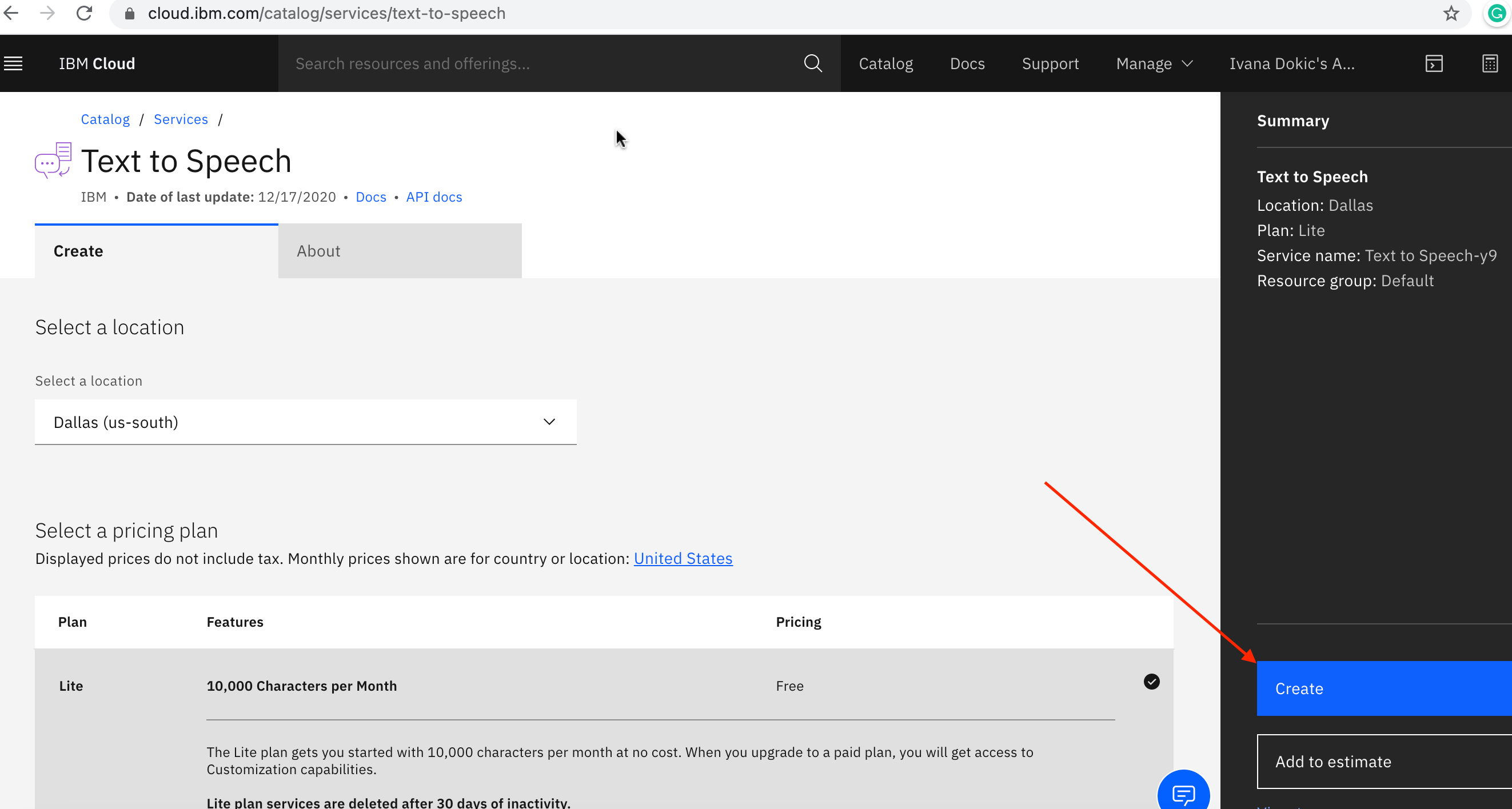

Return to the AI category in the IBM Cloud Catalog, and select **Text to Speech**, and click the Create button:

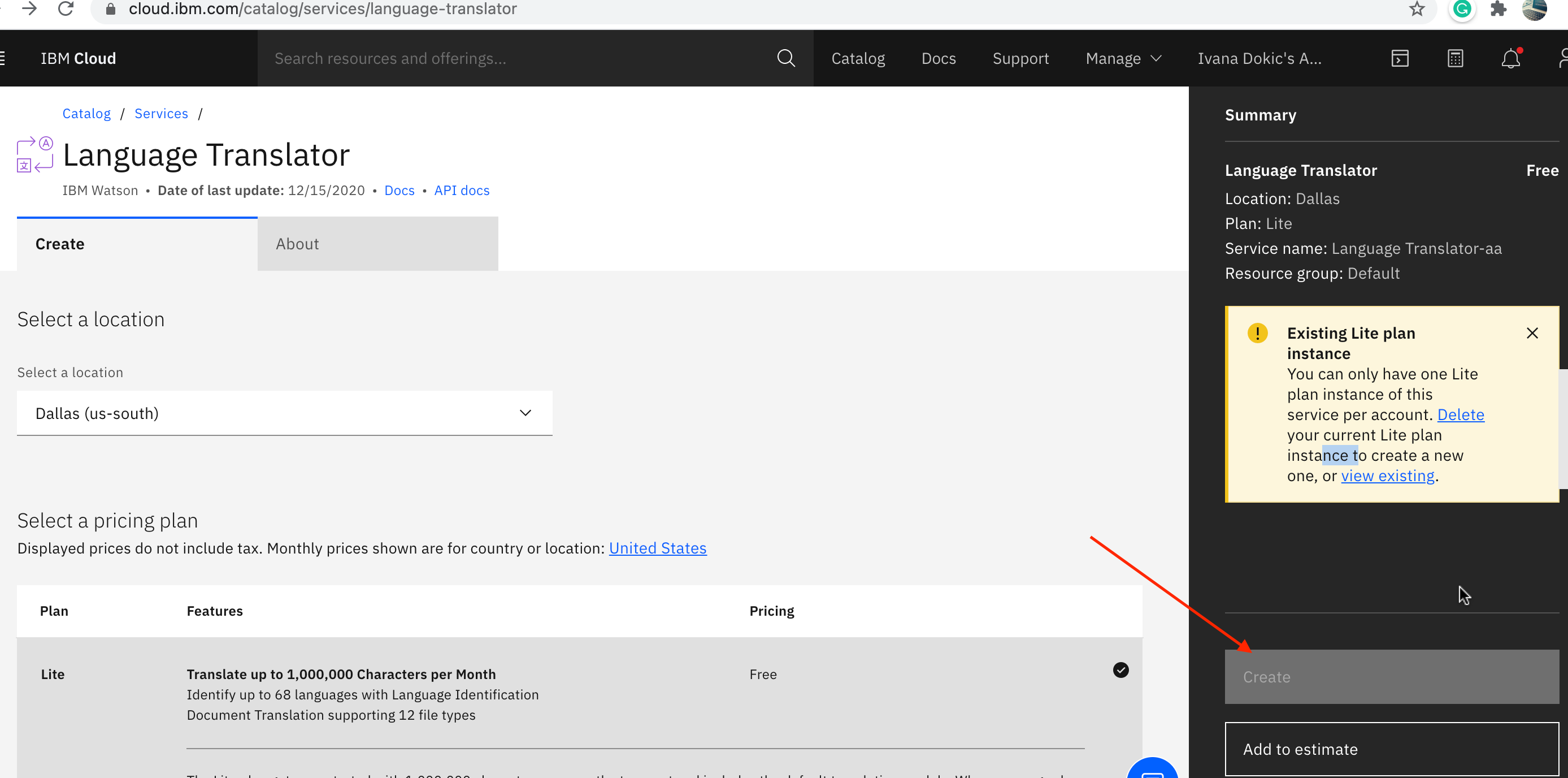

In IBM Cloud Catalog, search for a translator, navigate to the AI category, select **Language Translator**, and click the Create button, I already had the one:

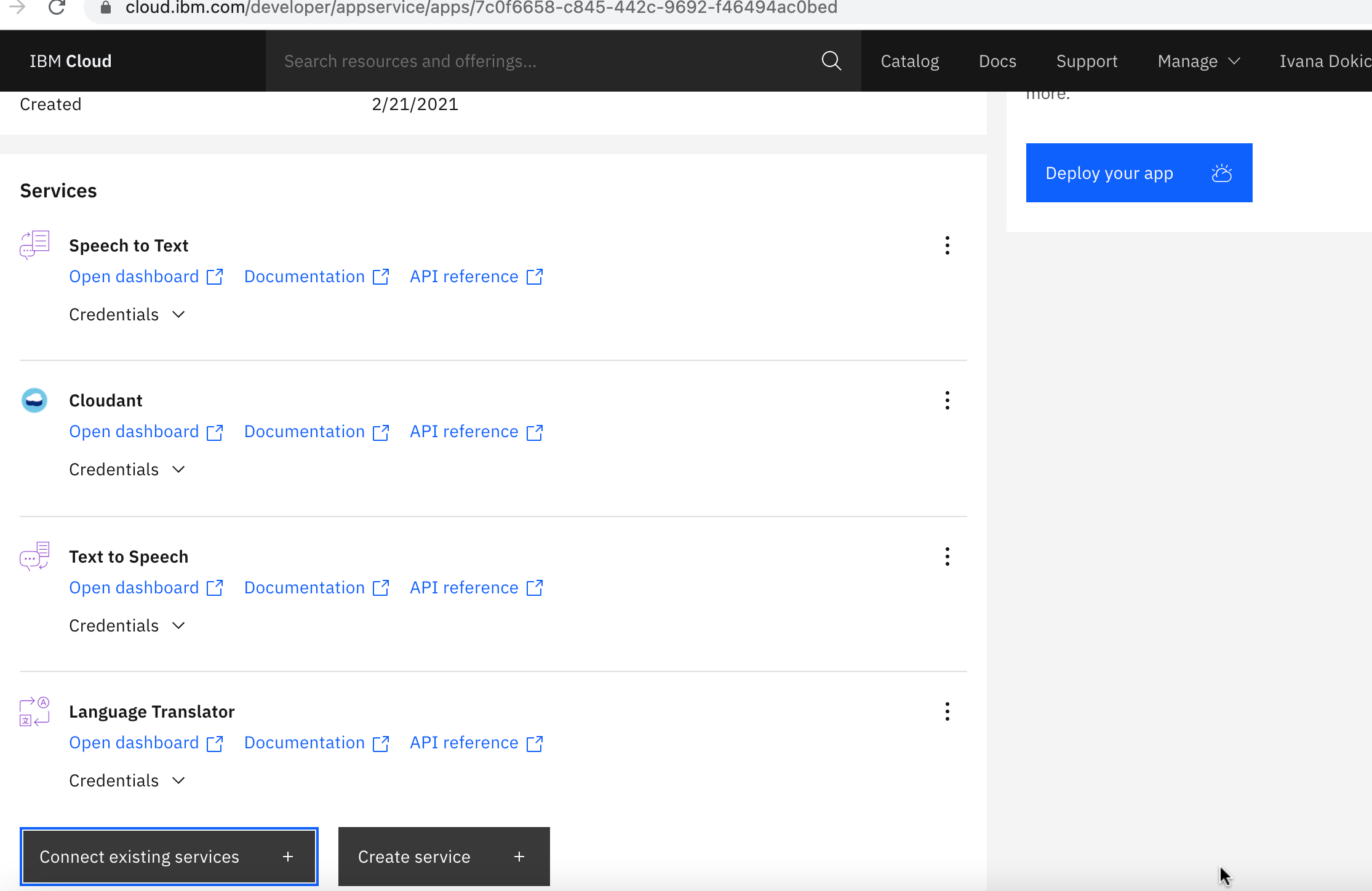

# Step 3 – Let's connect the Watson AI Services to the Node-RED Starter Application

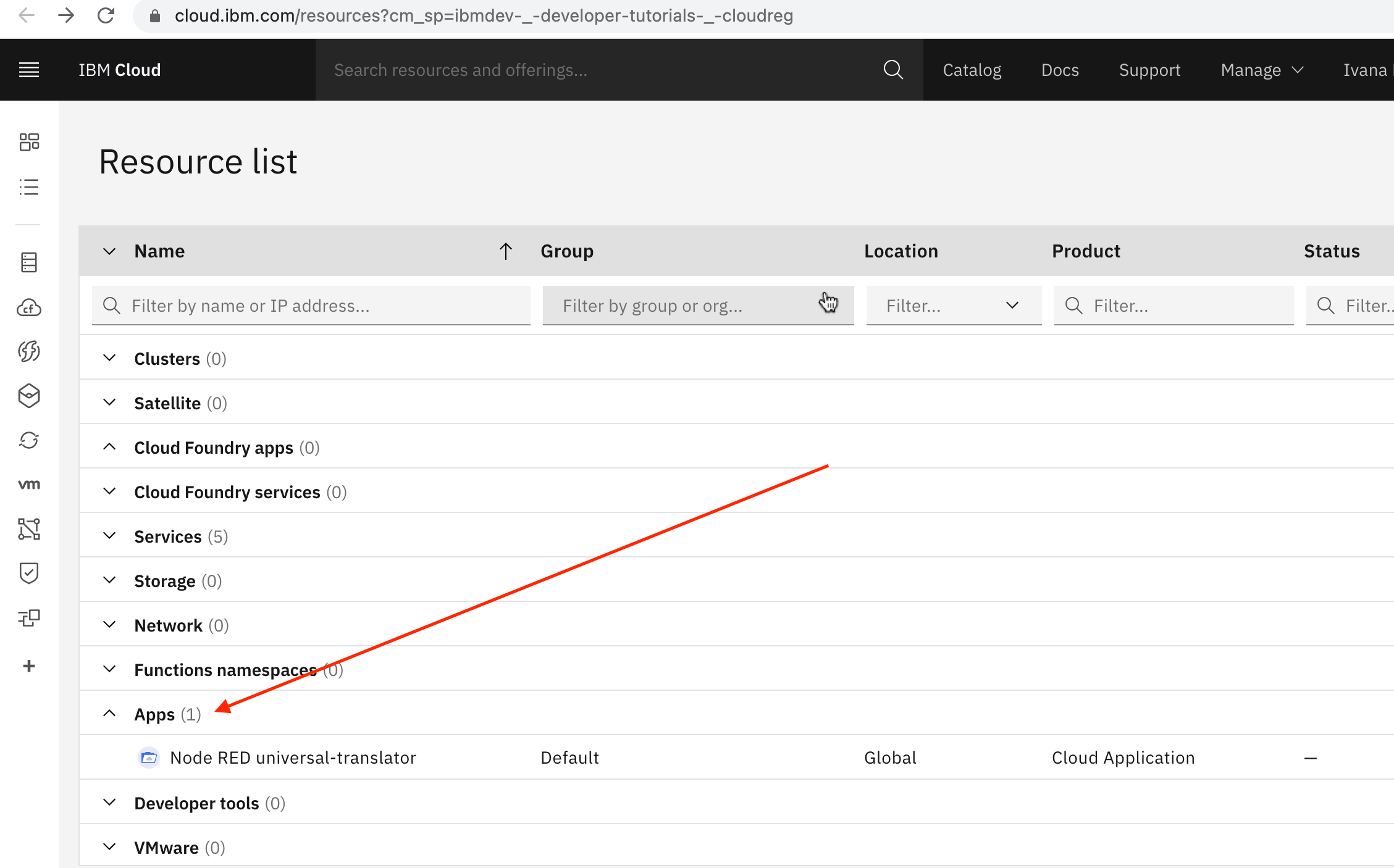

1. In [IBM Cloud Dashboard](https://cloud.ibm.com/resources?cm_sp=ibmdev-_-developer-tutorials-_-cloudreg) navigate to the Apps section and select Node-RED universal-translator (your apps unique name):

The Applications Details opens, search for the Watson services that you created in the previous step and press the Connect button. Once it's connected you will have those Services ( Speech to Text, Text to Speech, and Language Translator ) connected like this:

# Step 4 – Launch your Node-RED app and open the Node-RED visual programming editor

Node-RED is an open-source Node.js application that provides a visual programming editor that makes it easy to wire together flows.

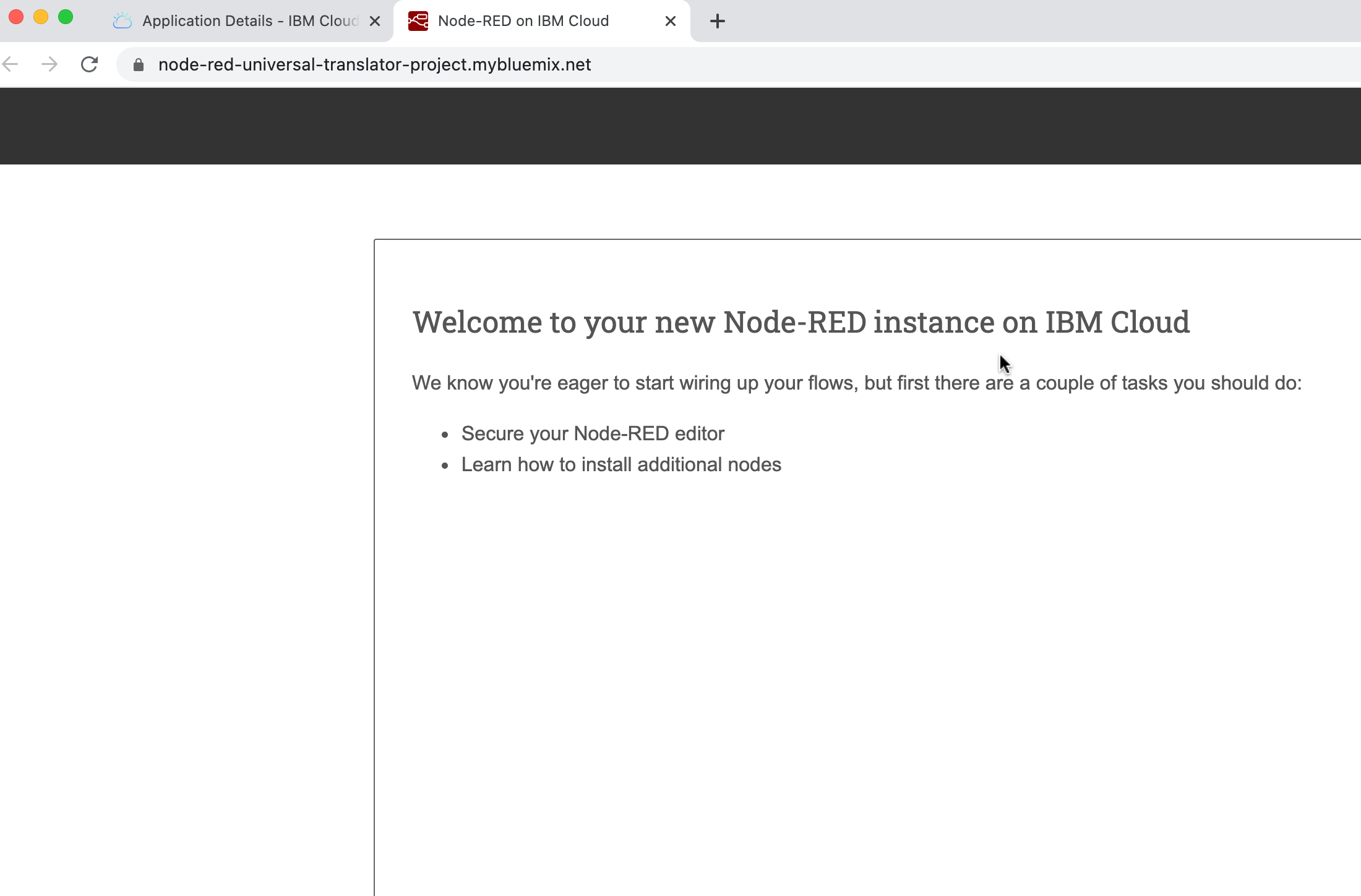

The first time you start the Node-RED app, it will help you configure the visual programming editor. Once the Green Running icon appears, click the View App URL link, a new browser tab opens to the Node-RED start page:

A new browser tab opens to the Node-RED start page.

Use the setup wizard to secure your editor with a user name and password and to browse and add more nodes. Click the Finish button to proceed:

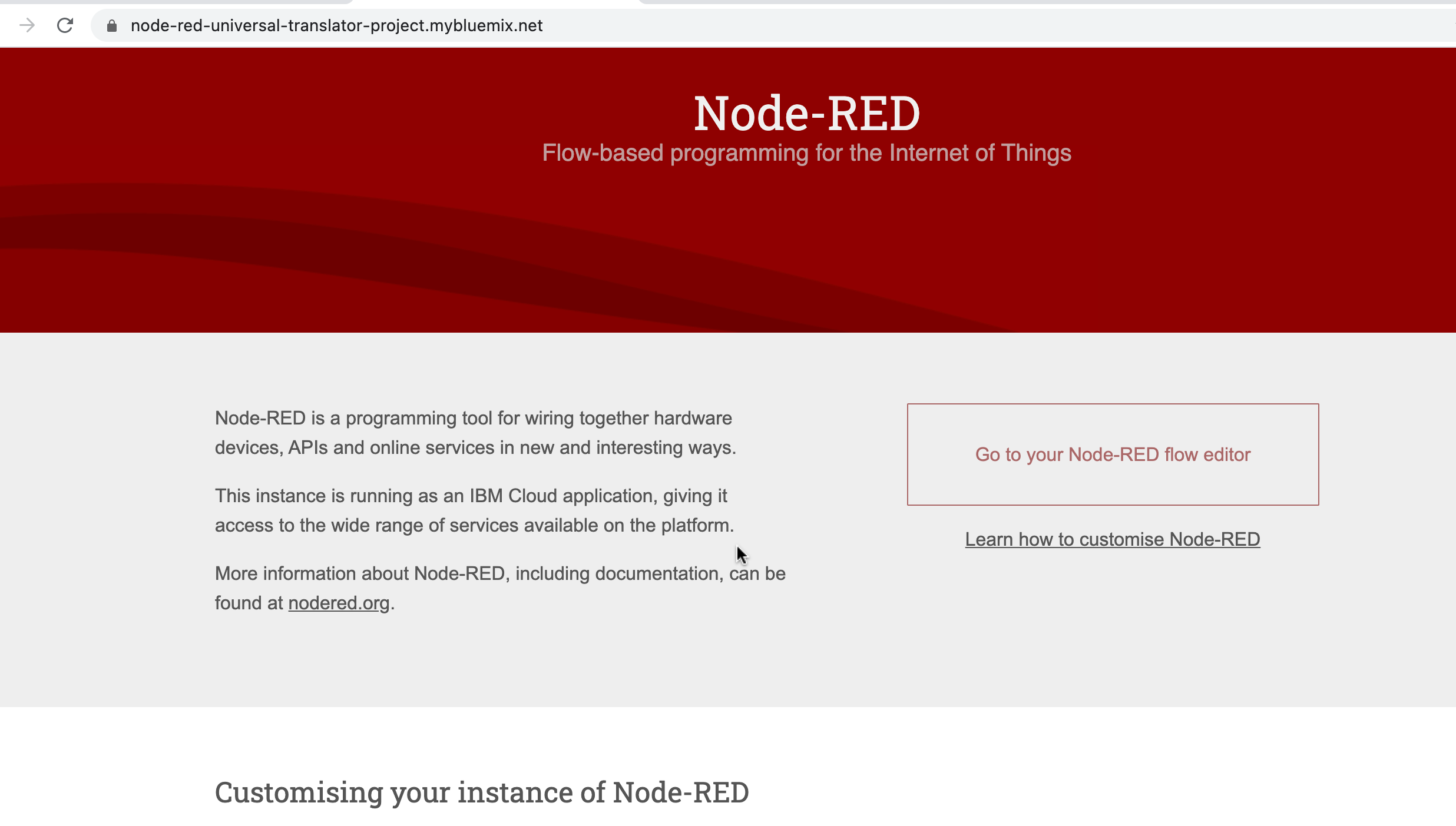

Click the Go to your Node-RED flow editor button to launch the Node-RED flow editor:

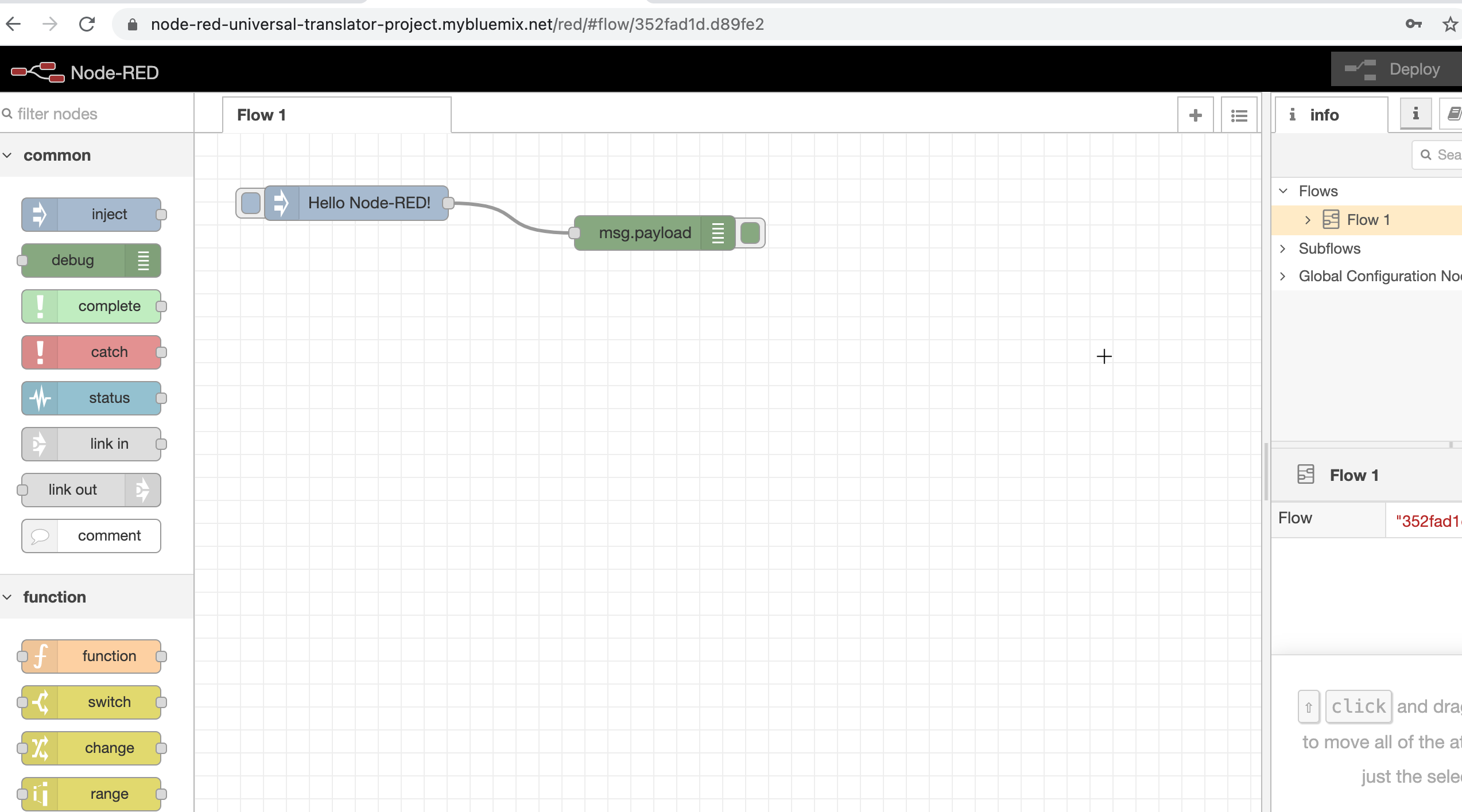

Click the Person icon in the upper right corner, and Sign in with your new username and password credentials and you will get a screen like this:

The Node-RED Visual Programming Editor has a left side with a palette of nodes that you can drag onto the flow and wire nodes together to create a program.

# Step 5 – Let's install Additional Node-RED Nodes

The universal translator that we are building needs a **microphone** to record your message and the ability to play the audio of the translation. We can add nodes to the Node-RED palette that add these capabilities, such a great thing!

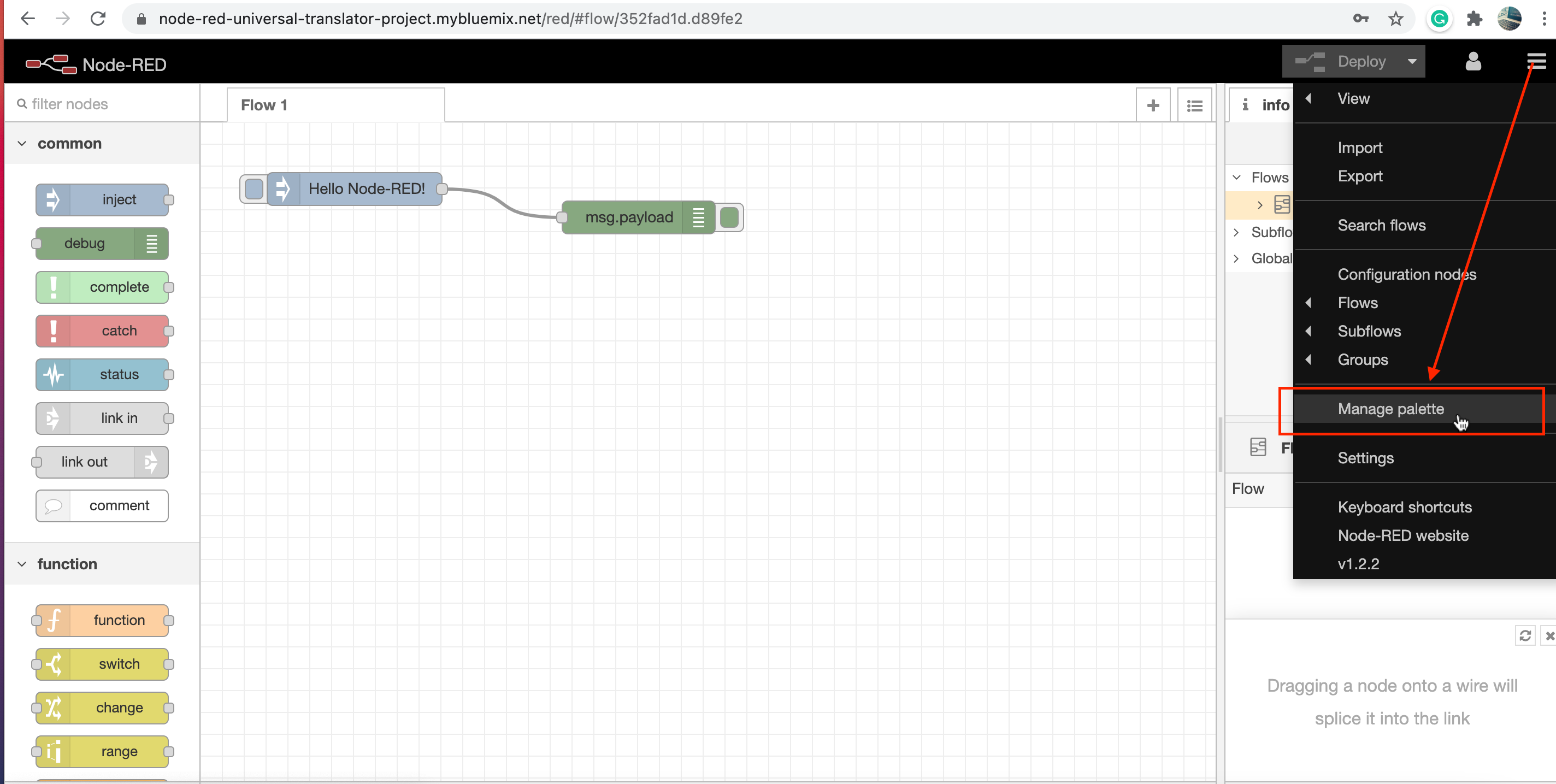

1. Click the Node-RED Menu, and select Manage palette

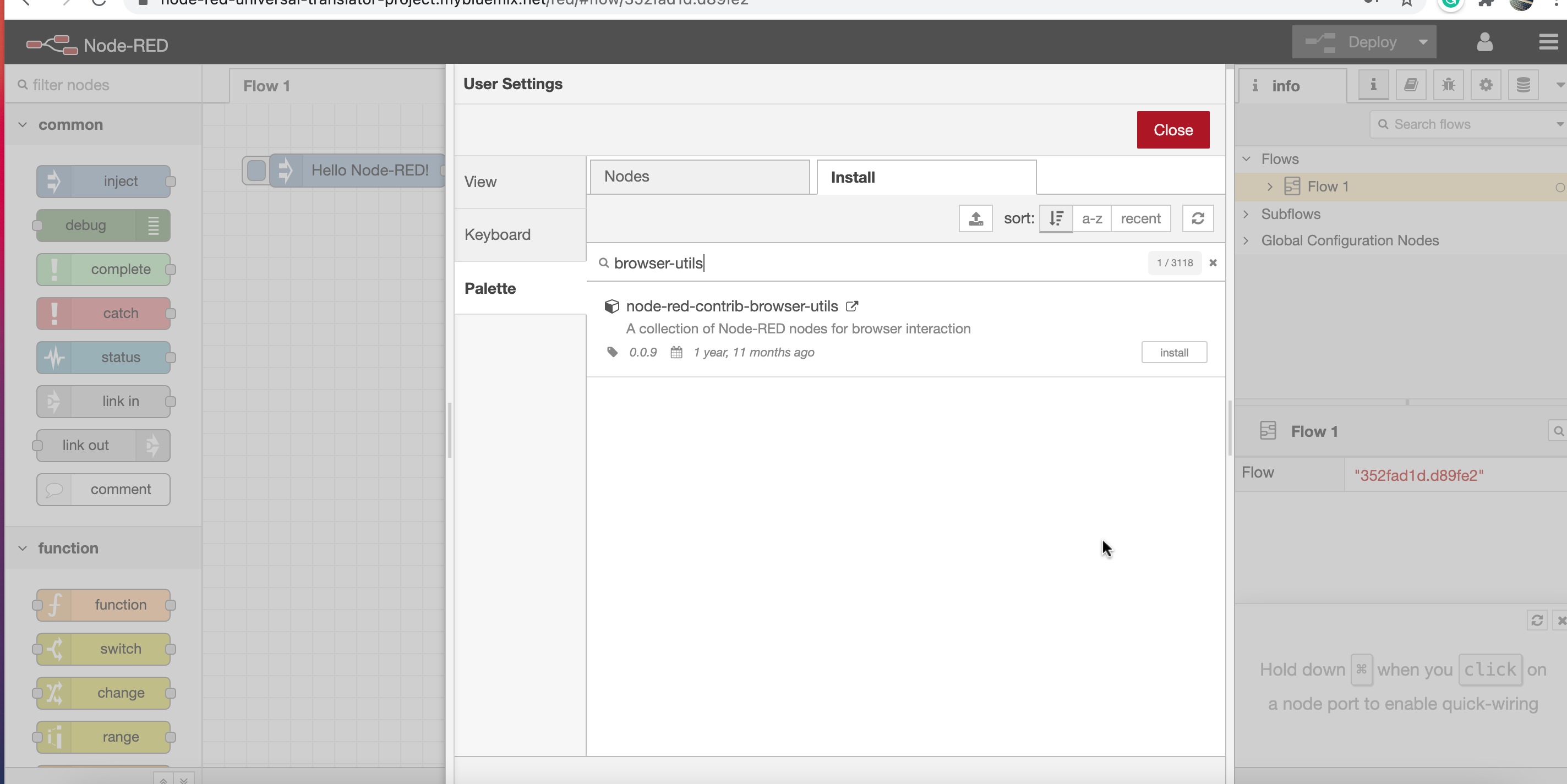

2. Select the Install tab, and search for *browser-utils* and Install the **node-red-contrib-browser-utils** node.

3. Search for *play-audio*, find the **node-red-contrib-play-audio** node, and click the Install button.

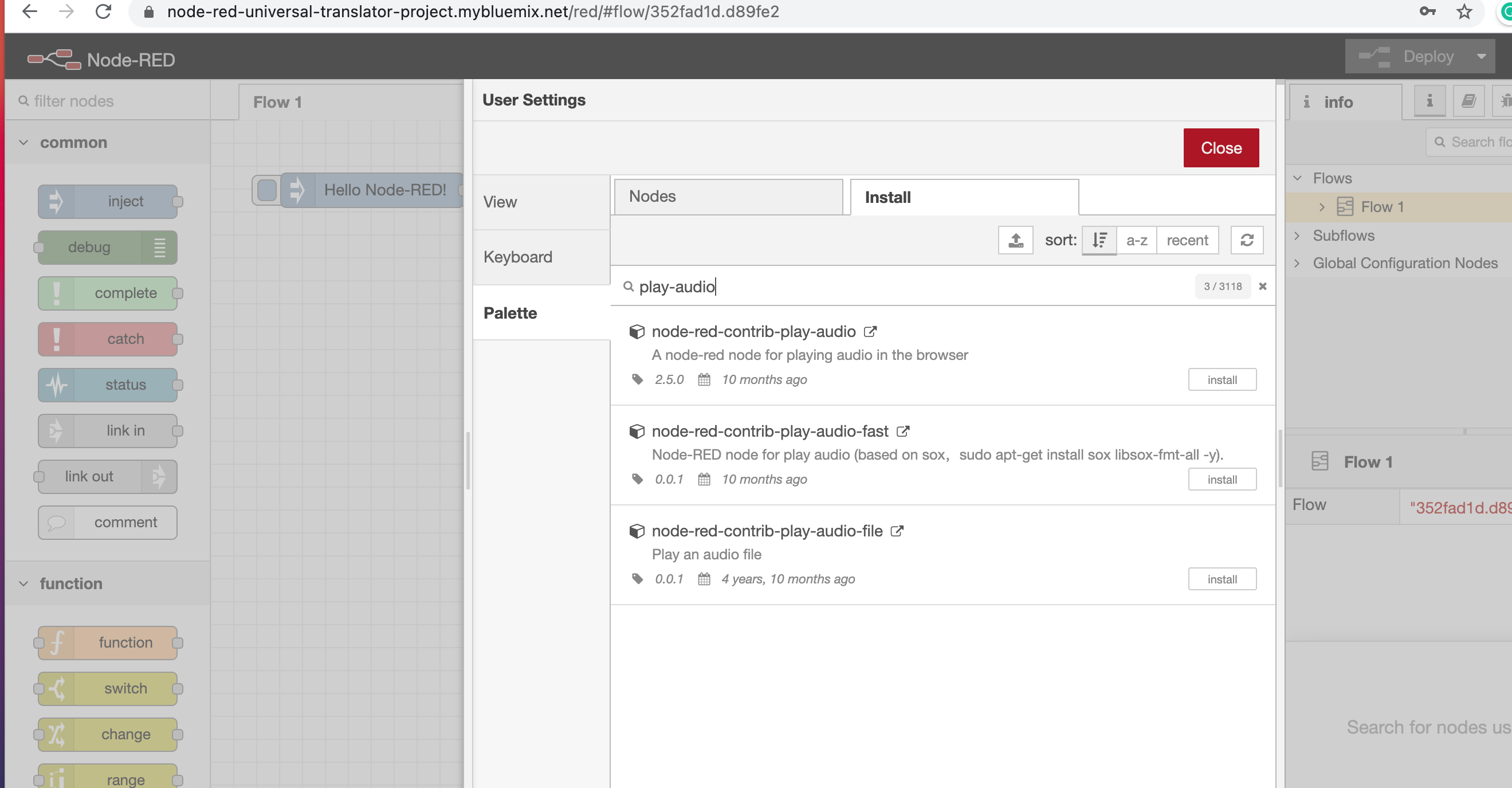

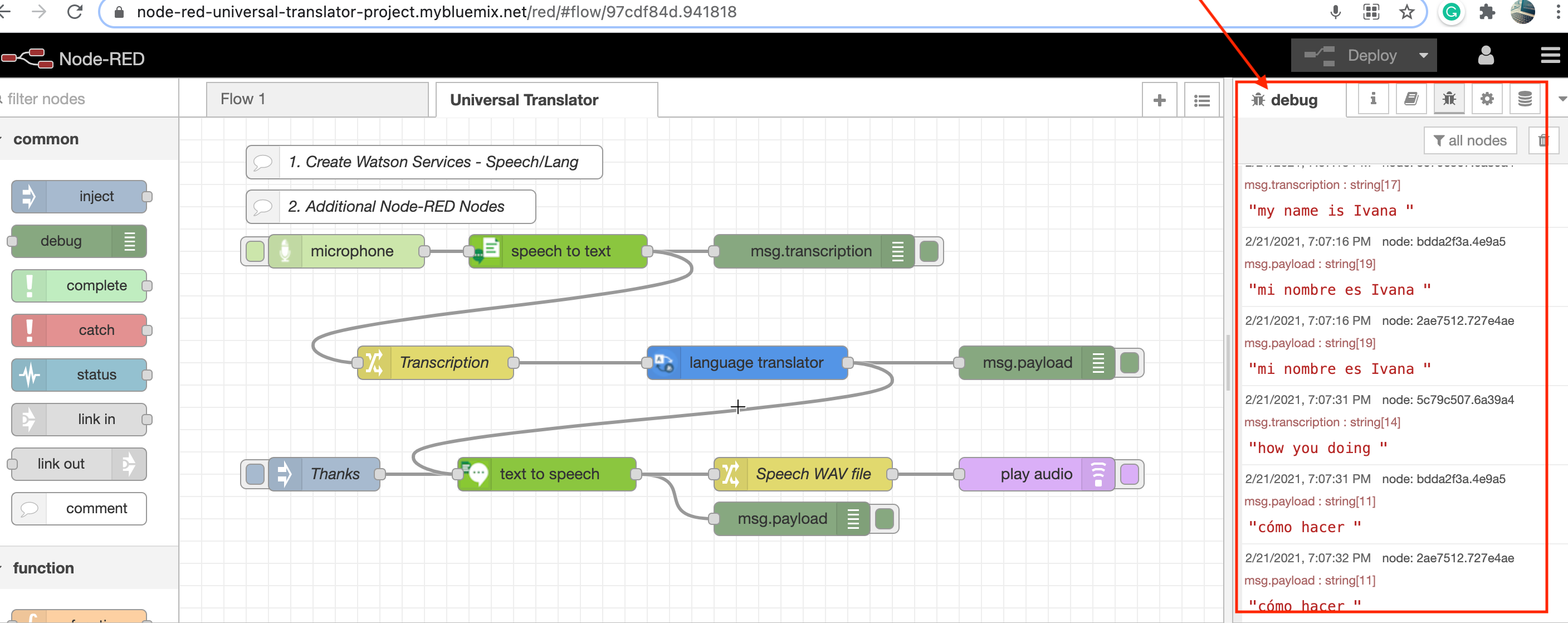

# Step 6 – Build the flows for the universal translator

Node-RED allows you to drag and drop Nodes from the left palette onto your flow canvas and wire them together to create programs.

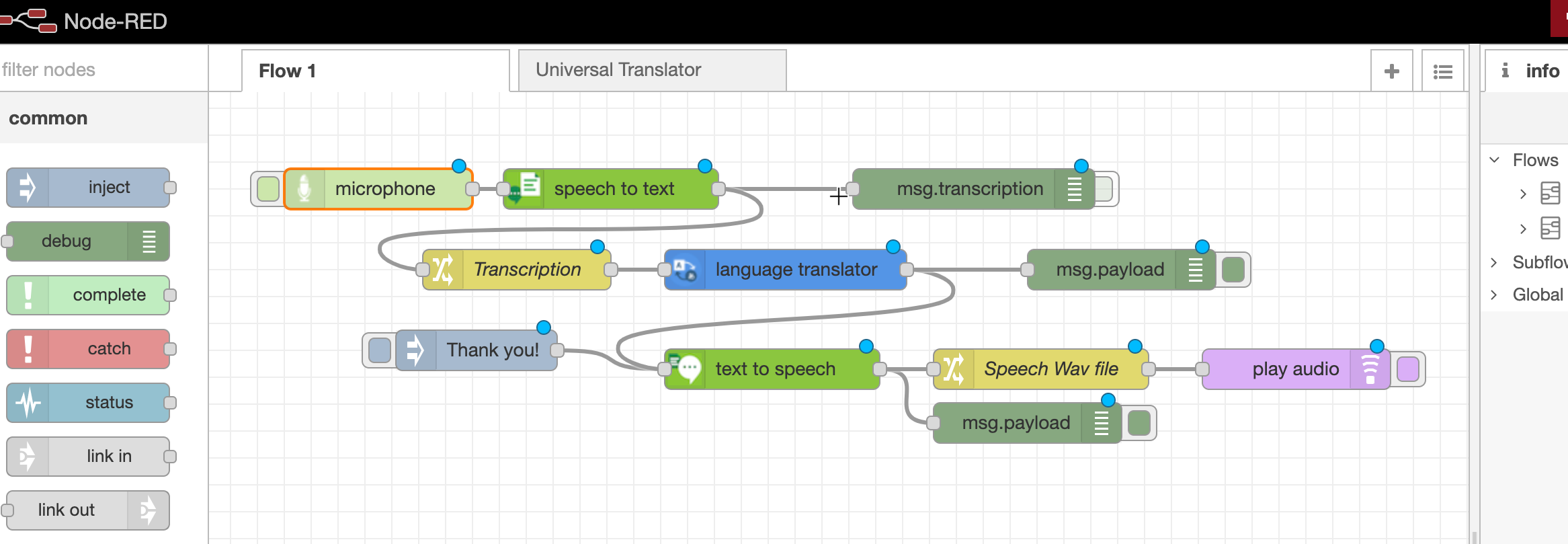

### Speech-to-Text flow

Click and drag a microphone node to your flow.

Click and drag a Speech to Text node to your flow. Double-click it and select US English.

Click and drag a Debug node to your flow. Double-click it and have it output msg.transcription.

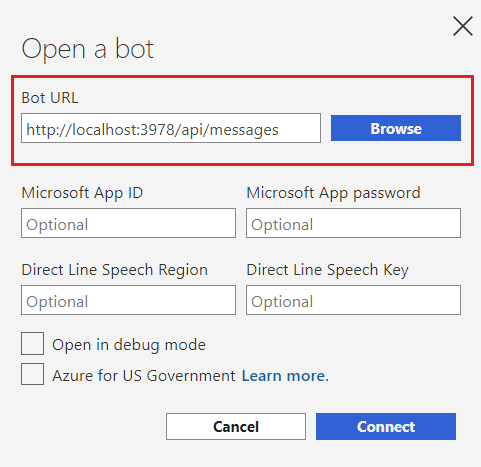

Wire the nodes together as shown in the screenshot below.

Click the red Deploy button.

Select the tab to the left of the microphone node and allow your browser access to the microphone on the laptop.

Record a message, like “wow this is so much fun!”

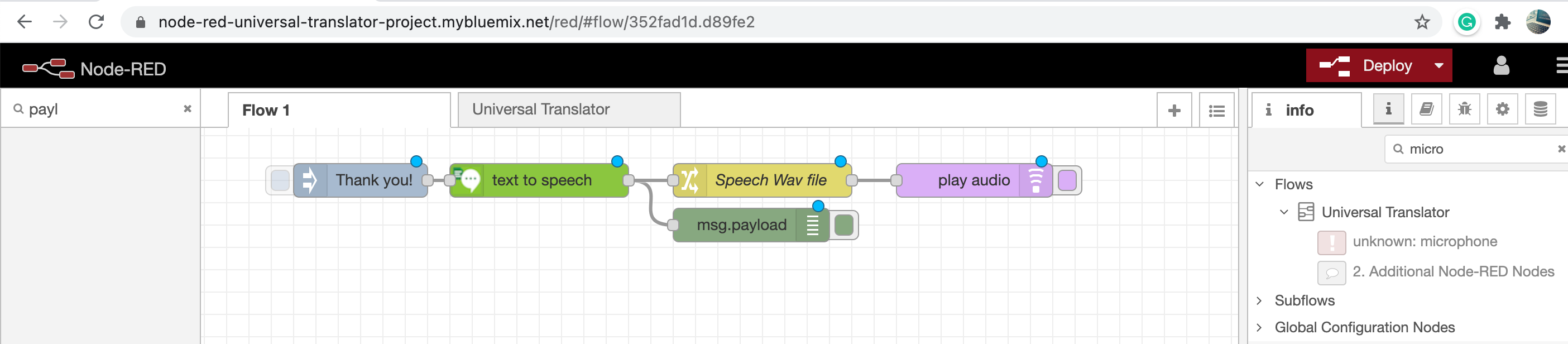

# Text-to-Speech flow

Now, let’s build the Text-to-Speech flow.

1. Click and drag an Inject node to your flow and double-click it and change the payload type to a string and type a message.

2. Click and drag a Text to Speech node to your flow, double-click it and select US English.

3. Click and drag a Change node to your flow, double-click the Change node and assign the msg.payload to msg.speech.

4. Click and drag a play-audio node to your flow.

5. Wire the nodes together as shown in the screenshot below:

6. Press the Deploy button (the red one).

7. Select the tab to the left of the Inject node. The audio of the message will play.

# Language Translator flow

The universal translator will use the recorded transcript as the input to the language translator node, and send the foreign language to the Text to Speech node.

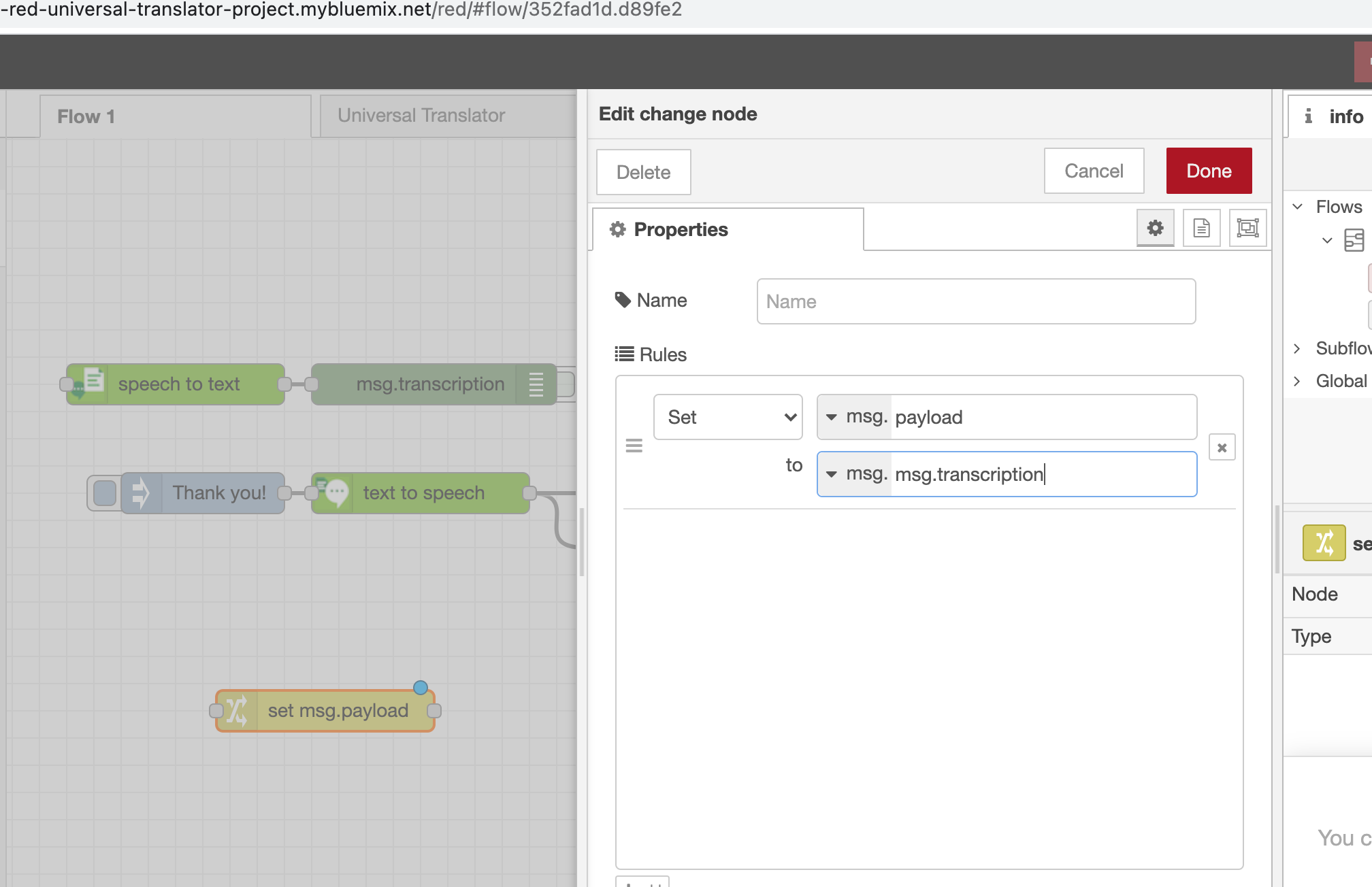

1. Click and drag another Change node to your flow, double-click it and assign msg.payload to msg.transcription like this:

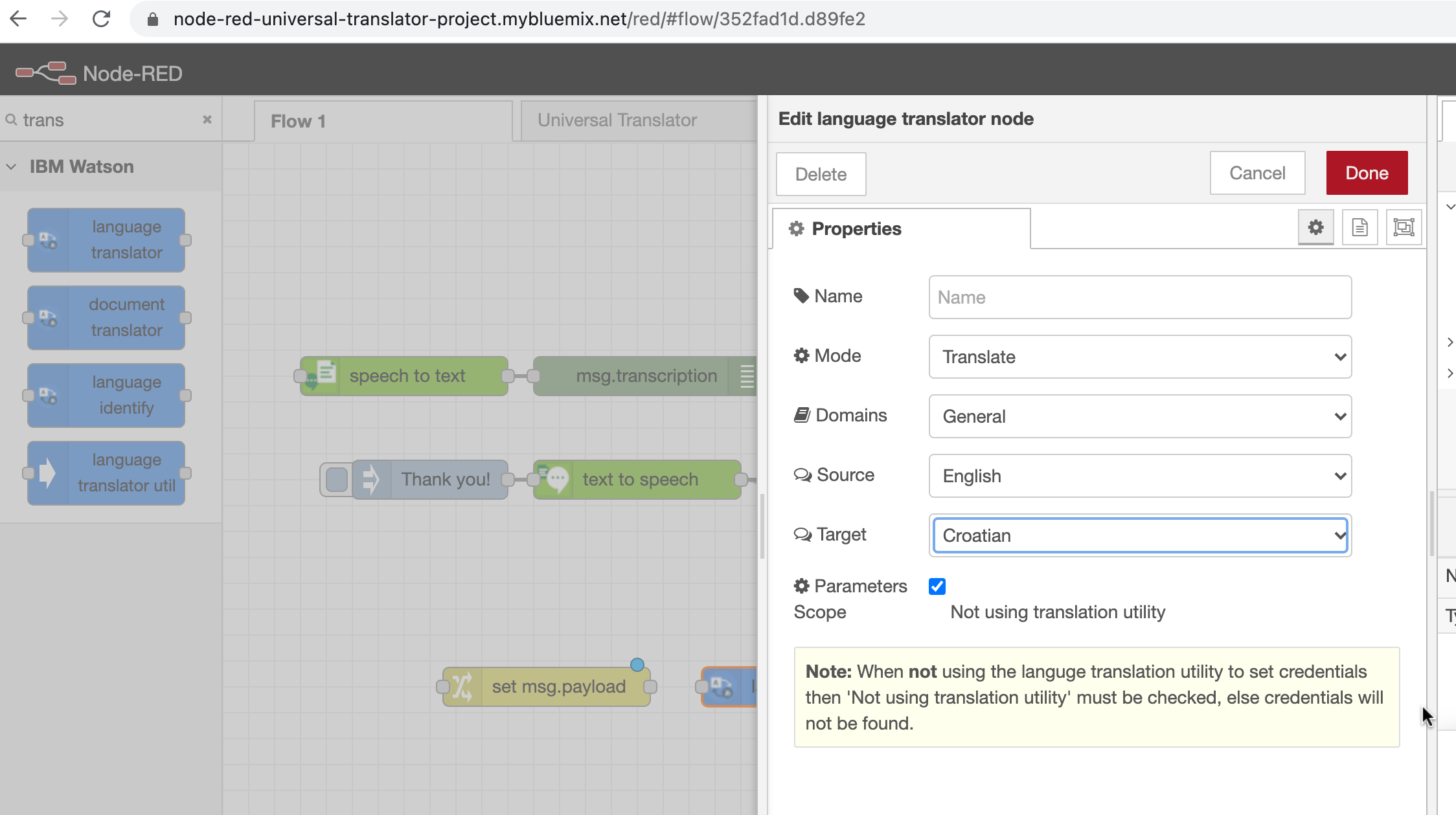

2. Click and drag a language translator node to your flow. Double-click it and select English as the Source and Croatian

as the Target.

3. Click and drag a Debug node to your flow.

4. Double-click the Text to Speech node, and change the language to Spanish and select a voice (here I clicked and tried Croatian language but it was not available, doesn’t show it on the list and I'll stick with Spanish)

This is how your final flow should look like:

Finally, click the Deploy button (the red one) on the right top corner!

# Final Step – let's test universal translator

Select the tab to the left of the microphone node and allow your browser access to the microphone on the laptop and **record a message**.

You can follow the process and view the translations in the **Debug** tab of Node-RED:

Full documentation and more details on how to build Node-RED starter application using Watson services in IBM Cloud can be found [here] (https://developer.ibm.com/technologies/iot/tutorials/build-universal-translator-nodered-watson-ai-services/)

To connect please check my [Github](https://github.com/ivanadokic), [LinkedIn](https://www.linkedin.com/in/ivana-dokic/) or [Twitter](https://twitter.com/LloydPile).

Thank you for reading!

| ivanadokic |

614,050 | How to use multiple GIT accounts per folder on Linux | Organize your repositories by GIT account without much stress | 0 | 2021-02-22T04:12:39 | https://dev.to/devatreides/how-to-use-multiple-git-accounts-per-folder-on-linux-1h50 | linux, webdev, git, howto | ---

title: How to use multiple GIT accounts per folder on Linux

published: true

description: Organize your repositories by GIT account without much stress

tags: linux, webdev, git, howto

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/6a69aped9087gaq34sqd.png

---

Tipically, one of the first tools that a developer learns when starting the "dev journey" is **git**. For those who still don't know much about this little guy, it was created by *Linus Benedict Torvalds*, a Finnish software engineer, naturalized American, known as the creator of **linux kernel**. Torvald's idea was to have a simple versioning system that met three requirements that he himself considered indispensable (and that other software couldn't guarantee):

- It needed to be distributed;

- The performance had to be good;

- It needed to ensure that what was put in, was exactly what was obtained afterwards;

According to him, these three parameters were sufficient to discard virtually all versioning systems until then, so he decided to build his own system.

## A single git account on Linux: kid's stuff

Everyone who has ever set up a local git user knows that it have no secrets. You define the user name and email and... that's it.

>```bash

>git config --global user.name "Tom Benevides"

>git config --global user.email "tombenevides@mail.com"

>```

From now on, all the repositories you create will use these credentials.

>```bash

> mkdir new_repo && cd "$_"

> git init

> touch text.txt

> git add text.txt

> git commit -m "new file"

>```

And if I take a look at the *git log*, this will be the result:

>```bash

> commit 036573401e5788917383a27fb6c2acf607f5e441 (HEAD -> master)

> Author: Tom Benevides <tombenevides@mail.com>

> Date: Sun Feb 7 13:50:05 2021 -0400

>

> new file

>```

So, everything is fine with our configuration but... what if my project uses a different account?

## A git account per repository: still piece of cake, but it can be a little tricky

We'll now create another repository. This is going to be the *work_repo*. It actually uses a different email account because it's a company project and not a personal project like *new_repo*.

>```bash

> cd ~

> mkdir work_repo && cd "$_"

> git init

> touch text.txt

>```

If we commit the changes now, my personal account (system default) will be registered, but I need to use the work email, tombenevides@work.com. So, we need to configure the new credentials in the repository.

>```bash

> git config user.email "tombenevides@work.com"

> git add text.txt

> git commit -m "new file"

>```

Now, when committing, my registered email will be the work email.

>```bash

> commit f47e5c7140296c9fbe1f4fb001149b04b329b655 (HEAD -> master)

> Author: Tom Benevides <tombenevides@work.com>

> Date: Sun Feb 7 14:05:02 2021 -0400

>

> new file

>```

All right, happy ending? So-so. Now, imagine that you have several work repositories and several personal repositories. It will be a bit annoying that you have to configure the correct credential in each of the repositories. If your work account email changes or you have a new git account? You'll need to make the change in all repositories. Lot of job right? There're people who don't think so.

Personally, what I can do to make my life simpler, I’m doing and a nice idea would be to configure git so that every time I create a repository in a given folder, the credentials I want are automatically assigned to it. And look how cool; git does that!

## A git account per folder: the light at the end of the tunnel

The idea is pretty simple: we'll have two base folders (*Work* and *Personal*) where the repositories will be stored according to their respective origin. All repositories within each base folder will use the same credentials, different from the default and without configuration by repository. Structure proposal below.

>```bash

> Projects

> ├── Personal

> └── Work

>```

Now, we need to tell git that the *Personal* folder repositories will use the email "tombenevides@newmail.com" and not the default email we set up earlier. To do this, in your home (`cd ~`), create a file called `.gitconfig-personal` and as content, simply recipient of the email.

>```bash

> [user]

> email = tombenevides@newmail.com

>```

Once we set up the Personal folder credential, we will create a `.gitconfig-work` file to set up work credential.

>```bash

> [user]

> email = tombenevides@work.com

>```

With our config files created, we'll now edit the `.gitconfig` file found in the user home (`cd ~`) and let git know that whenever there is a repository inside the *Personal* folder, it must use the credential of the `.gitconfi-personal` file and every time there is a repository in the *Work* folder, git must use the credential of the `.gitconfig-work` file.

>```bash

> [user]

> name = Tom Benevides

> email = tombenevides@mail.com

> [includeIf "gitdir:Projects/Personal/**"]

> path = .gitconfig-personal

> [includeIf "gitdir:Projects/Work/**"]

> path = .gitconfig-work

>```

... and voilà! Now, the work repositories only need to be inside the `~/Projects/Work` directory and all commits will use the correct credentials, without any configurations by repository. The same goes to the *Personal* folder repositories. And if at any point, your work (or personal) git account email changes, just change the `.gitconfig-X` file corresponding to the folder and all repositories inside will use the new credential.

So... that's it! Try it and then tell me your experience in the comments, via [Twitter](https://twitter.com/tongedev) or [Instagram](https://www.instagram.com/tomb.dev)!

| devatreides |

614,230 | Animazioni con il Canvas di javascript: Come animare con gli sprite | Animazione gli sprites con il canvas | 0 | 2021-09-24T09:22:20 | https://dev.to/camizzilla/animazioni-con-il-canvas-di-javascript-come-animare-con-gli-sprite-3m2l | javascript, canvas, sprites, spritesheet | ---

title: Animazioni con il Canvas di javascript: Come animare con gli sprite

published: true

description: Animazione gli sprites con il canvas

tags: js, canvas, sprites, spritesheet

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/8m55nddwh0p5skfs34ie.jpg

---

Se volessimo creare una presentazioni animata o un videogioco con una grafica "non vettoriale" ma con una grafica raster (pixellosa) dovremmo __imparare a gestire gli sprites__.

Ma cosa sono gli sprites?

__Gli sprites sono delle immagini in sequenza__ che fatte scorrere ad una certa velocità ingannano l'occhio facendolo sembrare in movimento.

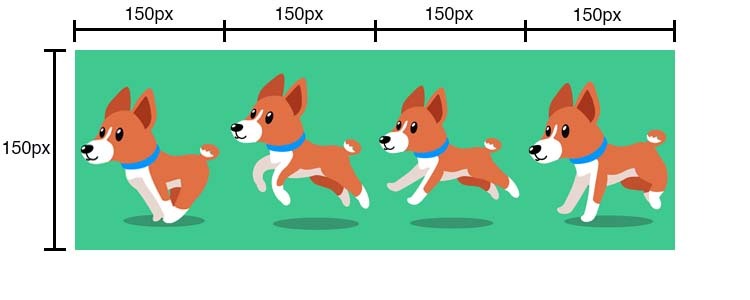

Per implementare gli sprite nel canvas abbiamo bisogno di uno spritesheet cioè __un immagine che contiene tutte le sequenze__ della nostra animazione. Come in questo esempio.

Se come me non siete dei grandi grafici, ci sono un sacco di risorse in internet. Li potete trovare tra gli archivi di immagini o in siti come

* https://itch.io/game-assets

* https://www.spriters-resource.com/ (quest'ultima è sotto copyright, quindi se li usate fatelo per uso personale)

* https://opengameart.org/

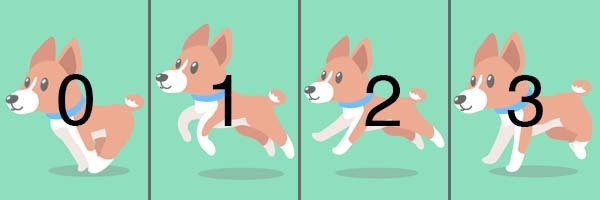

Io ho scelto di animare __questo cane che corre__, è __uno spritesheet semplice su una sola riga con 4 fasi dell'animazione__, più avanti vedremo sprite più complessi su più righe e che comanderemo con i tasti.

###INIZIALIZIAMO

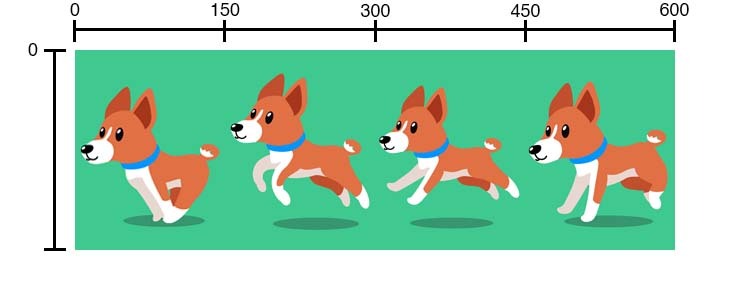

Iniziamo inizializando un elemento _canvas_ grande 600x400, stampando, a video l'immagine (spritesheet) che contiente i 4 sprite una volta caricata.

* **HTML**

``` html

<canvas id="myCanvas"></canvas>

```

* **JS**

``` js

var canvas = document.getElementById("myCanvas");

var ctx = canvas.getContext("2d");

canvas.width = 600;

canvas.height = 400;

let img = new Image();

img.src = 'https://i.ibb.co/tmdzwtT/dog.jpg';

img.addEventListener('load', () => {

ctx.drawImage(img, 0, 0);

})

```

Una volta caricata, si vede __un'immagine 600 x 200 divisa in 4 frame della grandezza di 150 x 200 che comporranno la nostra animazione__.

Visto che a noi serve solo un frame alla volta dobbiamo __creare una maschera di 150 x 200 che mostra solo un frame alla volta__.

Questa operazione di ritaglio si può fare direttamente con il metodo ctx.drawImage che ha delle opzioni che ci premettono di visualizzare a video sono una pozione rettangolare di un immagine.

Avevo già trattato in questo post, ["Canvas Javascript: Come disegnare immagini"](https://dev.to/camizzilla/canvas-javascript-come-disegnare-immagini-54in), come fare, ma oggi andremo a vedere come si può __utilizzare questo metodo per creare del movimento__.

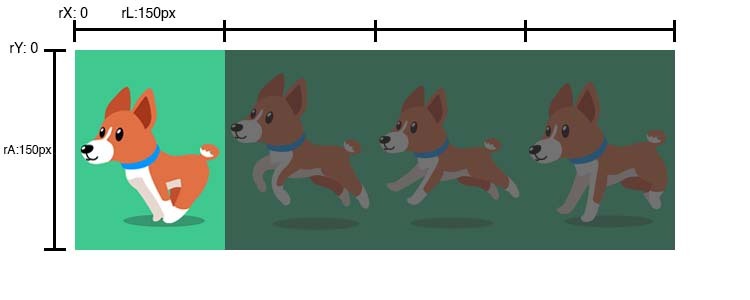

__Ritagliamo lo spritesheet in modo da visualizzare solo il primo frame__.

Partiamo dalla coordinata dell'immagine 0, 0 (rX, rY) e tagliamo una porzione larga 150px (rL) e lunga 200px (rA) ( il rettangolo che contiene il primo frame)

__Posizioniamo la maschera sul canvas__, circa al centro, alle coordinate 225, 100 (x,y) e lo visualizziamo con le stesse dimensioni del ritaglio, 150x200px (l, a)

``` js

img.addEventListener('load', () => {

//ctx.drawImage(img, rX, rY, rL, rA, x, y, l, a);

ctx.drawImage(img, 0, 0, 150, 200, 225, 100, 150, 200);

})

```

Adesso per creare l'effetto di movimento dobbiamo spostare la funzione _ctx.drawImage_ in un ciclo e far scorrere la maschera di ritaglio ad ogni frame e una volta finiti tornare al frame 0.

Creiamo una funzione loop che si chiamerà in modo ricorsivo grazie al metodo _requestAnimationFrame_ ad ogni fine ciclo.

_requestAnimationFrame_ è il metodo creato apposta per gestire i cicli nel canvas. Ne parlo in maniera più approfondita in questo post: ["Animazioni con il Canvas di javascript: Come cos'è e come implementarlo con un esempio base"](https://dev.to/camizzilla/animazioni-con-il-canvas-di-javascript-come-cos-e-e-come-implementarlo-con-un-esempio-base-gh)

``` js

let img = new Image();

img.src = 'https://i.ibb.co/d264Yhf/greeting.png';

img.addEventListener('load', () => {

requestAnimationFrame(loop)

})

let loop = () => {

ctx.clearRect(0, 0, canvas.width, canvas.height);

ctx.drawImage(img, 0, 0, 150, 200, 225, 100, 150, 200);

requestAnimationFrame(loop)

}

```

Al caricamento dell'immagine viene chiamata la funzione loop.

Che ha 3 metodi al suo interno: _clearRect_ che ripulisce il canvas, il metodo che stampa a video che abbiamo creato precedentemente e per ultimo _requestAnimationFrame(loop)_ che richiama se stessa.

Il prossimo passo è quello di aumentare il frame ad ogni ciclo.

Nel nostro caso sono 4 frames e vanno dal frame 0 al frame 3.

I frame si trovano ad una distanza di 150px, quindi il valore per rX sarà di:

* 0 per il frame 0

* 150px per il frame 1

* 300px per il frame 2

* 450px per il frame 3

Da questo si può inturire che se moltiplichiamo il numero del frame con la larghezza (Lr) otterremo il valore rX.

rX = lr * frameCounter;

``` js

let lr= 150;

let frameCounter = 0;

let rX = 0

let loop = () => {

ctx.clearRect(0, 0, canvas.width, canvas.height);

rX = lr * frameCounter;

ctx.drawImage(img, rX, 0, lr, 200, 225, 100, 150, 200);

framecounter < 3 ? frameCounter++ : frameCounter = 0;

requestAnimationFrame(loop)

}

```

Nel primo ciclo il secondo argomento prende come risultato della moltiplicazione 150 * 0, quindi il ritaglio partirà dalla posizione 0, poi il framecounter aumenta di uno perchè il "frame" è minore di 3.

Nel secondo ciclo, il frame vale 1 che moltiplicato a 150 fa rX: 150... e cosi via 150 * 2 = 300, 150 * 3 = 450 e poi l'animazione ricomincia perchè il valore frame non è maggiore di 3 e il valore di framecounter tornerà a 0.

{% codepen https://codepen.io/camizzilla/pen/xxrjEjN %}

Se dovessimo lasciare il codice così, il nostro cane correrebbe troppo veloce, perchè gli fps, frame per secondo, sono troppo alti.

Gli fps sono dei valore che indicano quante volte il ciclo viene riprodotto in un secondo.

Niente paura, nel prossimo post, spegherò in maniera semplice cos'è l'fps e qual'è la tecnica per implementarlo al meglio.

Per adesso gli applicheremo un setTimeout che non è la miglior soluzione, ma sicuramente la più veloce

``` js

let lr= 150;

let frameCounter = 0;

let rX = 0

let framesPerSecond = 10;

let loop = () => {

setTimeout( () => {

ctx.clearRect(0, 0, canvas.width, canvas.height);

rX = lr * frameCounter;

ctx.drawImage(img, rX, 0, lr, 200, 225, 100, 150, 200);

frameCounter < 3 ? frameCounter++ : frameCounter = 0;

requestAnimationFrame(loop)

}, 1000 / framesPerSecond);

}

```

CONCLUSIONE

Abbiamo visto come gestire un animazione semplice con gli Sprites.

Successivamente vedremo gli fps per la gestire la velocità dei frame, come gestire SpriteSheet su più righe e come unire i comandi di tastiera con le animazioni.

Se avete dei consigli, suggerimenti o critiche costruttive lasciatemi un commento qui sotto oppure contattatemi trammite i miei social.

| camizzilla |

616,214 | Day.js with RelativeTime in Nuxt.js | This is a relatively short guide, and it's just to show how easy it is to implement day.js inside a... | 0 | 2021-02-23T23:21:42 | https://dev.to/seankerwin/day-js-with-relativetime-in-nuxt-js-3kk9 | dayjs, nuxt, vue | This is a relatively short guide, and it's just to show how easy it is to implement [day.js](https://day.js.org/) inside a Nuxt app.

I've always just used Moment.js for date formatting, but since Moment.js has now been abandoned and they're encouraging people to use alternatives, I needed to find a way to convert timestamps that are provided by the API's I consume. They usually return timestamps in the following format:

```

2020-10-08T07:51:58Z

```

Which to be honest, isn't really useful to anyone. That's where day.js comes in, it can convert the timestamp above into something like

```

Oct 8th 2020

```

It's pretty simple to do.

First we need to pull in the `@nuxtjs/dayjs` package with the following command.

```

yarn add @nuxtjs/dayjs

or

npm install @nuxtjs/dayjs

```

Once that is installed, open up your `nuxt.config.js` and add

`'@nuxtjs/dayjs'` to the `modules` section, and then outside of that, add the following `dayjs` object.

```js

modules: [

'@nuxtjs/dayjs',

...

],

dayjs: {

locales: ['en'],

defaultLocale: 'en',

plugins: ['relativeTime', 'advancedFormat'],

},

```

Set any *locales* you want, for me, being in the United Kingdom, I set my locale to `en` and then add any additional dayjs plugins you need. I'm using the [RelativeTime](https://day.js.org/docs/en/plugin/relative-time) and [AdvancedFormat](https://day.js.org/docs/en/plugin/advanced-format) plugins.

Once everything is installed, you from within any component you can do the following

```js

{{ $dayjs('2020-10-08T07:51:58Z').format('MMM Do YYYY') }}

```

Which will output this

```

Oct 8th 2020

```

You can also use the RelativeTime plugin to turn it into this:

```js

{{ $dayjs('2020-10-08T07:51:58Z').fromNow() }}

```

Which will return

`a year ago`

You can obviously, not use hard-coded dates and use props/variables such as

```js

{{ $dayjs(post.published_at).fromNow() }}

```

Day.js is a simple and ultra-lightweight replacement for Moment.js and is super easy to use.

| seankerwin |

614,394 | Free and open source Bootstrap 5 UI Kit | Hello devs 👋🏻 Today I want to show you a Bootstrap 5 UI Kit which we've been working on for about a... | 0 | 2021-02-22T10:10:20 | https://themesberg.com/product/ui-kit/pixel-free-bootstrap-5-ui-kit | opensource, bootstrap, css, javascript | Hello devs 👋🏻

Today I want to show you a Bootstrap 5 UI Kit which we've been working on for about a year with my friend. The first version one year ago was based on Bootstrap 4, but now that Bootstrap 5 has been updated to Beta we thought it was time to update the whole kit once again.

We used NPM, Gulp, and Sass to manage libraries, compile source files, and more easily change and update styles using the variables and mixins from Sass.

Pixel Bootstrap 5 UI Kit features over 80 UI components including date pickers, modals, pricing cards, profile cards, blog cards, and also 5 example pages.

## Workflow

* Most popular CSS Framework Bootstrap

* Productive workflow tool Gulp

* Awesome CSS preprocessor Sass

So without further ado, let me show you how you can install and use this Bootstrap 5 based UI Kit.

## Quick start

1. Download for free from [Themesberg](https://themesberg.com/product/ui-kits/pixel-lite-free-bootstrap-4-ui-kit?ref=github-pixel-lite-bootstrap) or [clone the repository on Github](https://github.com/themesberg/pixel-bootstrap-ui-kit)

2. Make sure you have Node and NPM installed on your machine

3. Download Gulp Command Line Interface to be able to use gulp in your Terminal:

```

npm install gulp-cli -g

```

4. After installing Gulp, run `npm install` in the main pixel/ folder to download all the project dependencies. You'll find them in the `node_modules/` folder.

5. Run `gulp` in the pixel/ folder to serve the project files using BrowserSync. Running gulp will compile the theme and open `/index.html` in your main browser.

While the gulp command is running, files in the `assets/scss/`, `assets/js/` and `components/` folders will be monitored for changes. Files from the `assets/scss/` folder will generate injected CSS.

Hit `CTRL+C` to terminate the gulp command. This will stop the local server from running.

## Theme without Sass, Gulp or Npm

If you'd like to get a version of our theme without Sass, Gulp or Npm, we've got you covered. Run the following command:

```

gulp build:dev

```

This will generate a folder `html&css` which will have unminified CSS, Html and Javascript.

## Minified version

If you'd like to compile the code and get a minified version of the HTML and CSS just run the following Gulp command:

```

gulp build:dist

```

This will generate a folder `dist` which will have minified CSS, Html and Javascript.

## Documentation

The documentation for Pixel Bootstrap UI Kit is hosted on our [website](https://themesberg.com/docs/pixel-bootstrap/getting-started/overview).

## File Structure

Within the download you'll find the following directories and files:

```

Pixel Bootstrap UI Kit

.

├── LICENSE

├── README.md

├── dist

│ ├── assets

│ ├── css

│ ├── html

│ ├── index.html

│ └── vendor

├── gulpfile.js

├── html&css

│ ├── assets

│ ├── css

│ ├── html

│ ├── index.html

│ └── vendor

├── package-lock.json

├── package.json

└── src

├── assets

├── html

├── index.html

├── partials

└── scss

```

## Resources

- Demo: <https://demo.themesberg.com/pixel-bootstrap-5-ui-kit/index.html>

- Download Page: <https://themesberg.com/product/ui-kits/pixel-lite-free-bootstrap-4-ui-kit?ref=github-pixel-lite-bootstrap>

- Documentation: <https://themesberg.com/docs/pixel-bootstrap/getting-started/overview?ref=github-pixel-lite-bootstrap>

- License Agreement: <https://themesberg.com/licensing?ref=github-pixel-lite-bootstrap>

- Support: <https://themesberg.com/contact?ref=github-pixel-lite-bootstrap>

- Issues: [Github Issues Page](https://github.com/themesberg/pixel-bootstrap-ui-kit/issues)

## Reporting Issues

We use GitHub Issues as the official bug tracker for Pixel Pro Bootstrap UI Kit. Here are some advices for our users that want to report an issue:

1. Make sure that you are using the latest version of Pixel Pro Bootstrap UI Kit. Check the CHANGELOG from your dashboard on our [website](https://themesberg.com?ref=github-pixel-lite-bootstrap).

2. Providing us reproducible steps for the issue will shorten the time it takes for it to be fixed.

3. Some issues may be browser specific, so specifying in what browser you encountered the issue might help.

## Technical Support or Questions

If you have questions or need help integrating the product please [contact us](https://themesberg.com/contact?ref=github-pixel-lite-bootstrap) instead of opening an issue.

## Licensing

- Copyright 2020 Themesberg (Crafty Dwarf LLC) (https://themesberg.com)

- Themesberg [license](https://themesberg.com/licensing#mit) (MIT License)

## Useful Links

- [More themes](https://themesberg.com/themes) from Themesberg

- [Free themes](https://themesberg.com/templates/free) from Themesberg

- [Bootstrap Themes, Templates & UI Kits](https://themesberg.com/templates/bootstrap) from Themesberg

- [Affiliate Program](https://themesberg.com/affiliate?ref=github-pixel-lite-bootstrap)

##### Social Media

Twitter: <https://twitter.com/themesberg>

Facebook: <https://www.facebook.com/themesberg/>

Dribbble: <https://dribbble.com/themesberg>

Instagram: <https://www.instagram.com/themesberg/>

| zoltanszogyenyi |

614,550 | Figuring it out no matter what ~ Leo Lima | Bringing you the next episode of Everyone Sucks, I (Kartik Budhiraja) had the honour to have... | 0 | 2021-02-22T13:41:56 | https://dev.to/kartikbudhiraja/figuring-it-out-no-matter-what-leo-lima-59hf | motivation, frontend, podcast, softwareengineering | ---

title: Figuring it out no matter what ~ Leo Lima

published: true

date: 2021-02-22 13:29:18 UTC

tags: motivation,frontenddevelopment,podcast,softwareengineering

canonical_url:

---

Bringing you the next episode of Everyone Sucks, I ([Kartik Budhiraja](https://medium.com/u/56d3cb6a43ed)) had the honour to have [Leonardo Lima](https://medium.com/u/5ad35097ded0) as the guest for this episode. Leo is from Brazil and is currently working as a Senior Software Engineer at a crypto marketplace company.

His story of being in development is really fascinating, as he studied journalism but pivoted into software development later. He talks about how many challenges he faced not being from a computer science background and how he was able to find what he loves and gets better at it.

Some key takeaways:

### Never complain

Leo provides us all with an example of making the best of our resources without complaining. For his very first internship interview (which he got by sending a cold-email to the founder of the company), he was given a coding assignment. Fortune playing its usual role, Leo was moving at that time and ended up being without an internet connection at his place with an assignment to do in a programming language he did not have experience in ( If you’re a developer, you can imagine how hard can that be). But instead of complaining, Leo actually spent his days at the public library as they had internet and finished the assessment on time.

Talk about finding a way out no matter what.

### Build and use the support network around you

When asked about his way of handling the pressure of moving into tech and dealing with the imposter syndrome, he talks about the importance of having a support network around oneself. He mentions how he used to talk with his best friend and other people around him openly about the challenges he’s facing and the doubts he has.

He puts it in a really impactful way by saying that “You do not have to fight alone. Help people around you and ask for help when you need it.”

### Transform self-doubt to fuel

At his first development job, Leo talks about how he always felt the pressure to prove himself. Not being from a computer science background, the self-doubt added additional pressure as one could imagine. Leo turned that self-doubt around and started using that as fuel for more focus. He talks about how he ended up taking his work with intense focus and putting in more hours just because he knew he had something to prove. It’s not to say that the pressure is good, it’s more to show that everyone feels that self-doubt, but the only thing we need to do with it eradicates it by focusing on what we can do i.e get after it and work with more focus.

### It’s always a people business

> “No matter what your role is, you have to remember it’s always a people business” ~ Leo

Leo tells how calling people as resources make him a bit uncomfortable as people are human, no matter what happens. He explains how important is it to remember this whether you’re a developer or in any other job. At the end of the day you are still working and communicating with people, so behave like one.

### Focus on what other person requires, not what you want to offer

When asked about his favourite failure, he talks about the importance of understanding other people’s needs. Through his own experience where he tried to help an intern by providing what he _thought_ would be the best for them. He explains how that backfired and reminded him that every person is different, just because you think that one thing would benefit that person, does not mean they would agree with you.

If you’re in a management role, take a step to figure out what your team wants first, and then work on providing them. Do not put the cart in front of the horse.

_Bonus Tip_: Coming from Journalism, writing is a major part of Leo’s life. He uses writing down the problem and its prospective solution to break it down into chunks and suggests everyone give it a shot.

Please feel free to reach out to Leo if anyone needs any guidance. You can find Leo online on Twitter at @leocavia.

We talked about a lot of other cool stuff, join us on youtube, Spotify, and every other place you get your podcast from. (Search for Everyone Sucks)

Spotify: [https://open.spotify.com/episode/6sm92aEs2vO8ES3KfegM3E?si=oHd4uv6qROKk5MBxH6pcdg](https://open.spotify.com/episode/6sm92aEs2vO8ES3KfegM3E?si=oHd4uv6qROKk5MBxH6pcdg)

{% youtube xbdXiHxth3w %}

* * * | kartikbudhiraja |

614,884 | The font-size trick in layouts | When you try to fit two 50% containers and they stack down | 0 | 2021-04-22T19:13:14 | https://dev.to/kenystev/the-font-size-trick-in-layouts-4joe | css, html, tips, webdev | ---

title: The font-size trick in layouts

published: true

description: When you try to fit two 50% containers and they stack down

tags: css, html, tips, webdev

//cover_image: https://direct_url_to_image.jpg

---

Have you ever faced the issue when 50% and 50% does not fit in 100%? 🤯 Where has the math gone?

I remember my first days learning CSS, putting markup tags here and there then styling them out! yeah! the least aesthetic page ever xD

Flexbox had just appeared and then barely used, we still fought aligning items with `float: left` and `float: right` and the famously used `clear: both`

Nothing worse than dealing with those properties and then boom! we played the fools trying to split a view evenly in two pieces 50/50 using `display: inline-block` and `width: 50%` ending with something like this:

### HTML

```html

<div class="container">

<div class="item">

item 1

</div>

<div class="item">

item 2

</div>

</div>

```

### CSS

```css3

.container {

width: 100%;

}

.item {

display: inline-block;

width: 50%;

}

```

# Fooling!

And you know the end, how 50% plus 50% is different than 100%? then more than one ended doing the weirdest thing ever done 50% and 49% 🤦🏻♂️ others tried to heal the wound a bit by evenly distributing the difference like 49.5% and 49.5%

But you know what? just to make it worse guess what? That's not gonna behave as well as expected if you're aiming for responsive views

# Problem solved!

Do you know what's the real issue here?

Well it's even simpler than you thought! The reason why 50/50 doesn't fit 100 is because of the `font-size` yeah!

Remember we're using `display: inline-block` right? to change the default `block` value of divs, and as fact in CSS all the inline elements by default share a property called `font-size`.

Wait! divs could have `font-size`? for sure they can once you change their display property from *block* to *inline*, even *inline-block*

## Conclusion

If you do not set a `font-size` property to your 50/50 elements they will inherit from their parent (which most of the time might not be zero)

All you have to do is set `font-size: 0;` to your items and boom! out of magic everything will work! Here's an example of the result so you could check it out:

{% codepen https://codepen.io/kenystev/pen/PoGMRNe %}

### Before you go!

Note: The example above it's working pretty well just because we don't have content inside those divs, once you have some content there by default most of the browsers set the property `box-sizing: content-box;` and I'd suggest you to set that property to `box-sizing: border-box;`.

Thanks for reading and see you soon... 🤗 | kenystev |

615,072 | Downloading and Displaying a file in React Native | React Native does not currently offer full support for downloading and showing a file. The approach i... | 0 | 2021-02-22T21:12:17 | https://dev.to/johannawad/how-to-download-and-display-a-file-in-react-native-26d8 | reactnative, firstpost, typescript, javascript | React Native does not currently offer full support for downloading and showing a file. The approach in this article shows you how to download and display a file using the [react-native-fs](https://github.com/itinance/react-native-fs) and [react-native-webview](https://github.com/react-native-webview/react-native-webview) libraries respectively.

In this example, the file is of a PDF format, however, the same approach can be used for images or other text file formats.

###Prerequisites:

A working React Native App. Not sure how to do this? Checkout the setup instructions on the [React Native Website](https://reactnative.dev/docs/getting-started).

##Downloading the file

###Install react-native-fs:

```

yarn add react-native-fs

```

or

```

npm install react-native-fs

```

If you're using React Native version 0.60.0 or higher, it a does auto-linking for you. If not, check the extra setup instructions on react-native-fs page.

Install the CocoaPods dependencies (iOS specific):

```

cd ios && pod install

```

###Using downloadFile function:

In this example, I will be retrieving a PDF file from an API endpoint using the downloadFile function from `react-native-fs`. This function has two required parameters - fromUrl and toFile , along with several other optional ones. I created an async function downloadPDF which requires a url and fileName. It also contains a basic header with an authorization token and content-type.

React-native-fs' DocumentDirectoryPath provides the Android or iOS where documents are stored. You can change this to your customised path if you wish.

```javascript

downloadPDF = async (url: string, fileName: string): Promise<any> =>{

//Define path to store file along with the extension

const path = `${DocumentDirectoryPath}/${fileName}.pdf`;

const headers = {

'Accept': 'application/pdf',

'Content-Type': 'application/pdf',

'Authorization': `Bearer [token]`

}

//Define options

const options: DownloadFileOptions = {

fromUrl: [baseUrl] + url,

toFile: path,

headers: headers

}

//Call downloadFile

const response = await downloadFile(options);

return response.promise.then(async res =>

//Transform response

if(res && res.statusCode === 200 && res.bytesWritten > 0 &&

res.path){

doSomething(res)

}else{

logError(res)

}};

```

The response from downloadFile contains `statusCode` , `jobId` and `bytesWritten` . To know if a request is successful, check whether the `statusCode` is `200` and the `bytesWritten` > `0`. It is important to check both values because, I found it returning 200 even when no files were written.

I saved the `path` in the Redux's `state` to later retrieve the file.

##Opening the saved file in a WebView

###Install react-native-webview:

```

yarn add react-native-webview

```

or

```

npm install react-native-webview

```

Again, install the CocoaPods dependencies (iOS specific):

```

cd ios && pod install

```

###Implementing the WebView:

Create a React Funcional Component containing a WebView .

```javascript

const WebViewComponent: React.FunctionComponent = ({ navigation, route}: any): JSX.Element => (

<WebView

source={{ uri: "file://"+ path}}

style={{ flex: 1 }}

originWhitelist={["*"]}

/>

)};

export default WebViewComponent;

```

The WebView source's URI should point to `file://` + `pathToFile` . Don't forget to include the `style` property and set `flex:1` as shown above. | johannawad |

615,080 | Easily embed React apps into WordPress with ReactPress plugin | Why React and WordPress WordPress and React are a killer combination to develop web apps. With Wo... | 0 | 2021-02-22T21:39:05 | https://rockiger.com/en/easily-embed-react-apps-into-wordpress-with-reactpress-plugin/ | react, wordpress, webdev |

<!-- wp:heading -->

<h2>Why React and WordPress</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p><a href="https://www.wordpress.org" target="_blank" aria-label="WordPress (opens in a new tab)" rel="noreferrer noopener" class="rank-math-link">WordPress</a> and <a href="https://reactjs.org" target="_blank" aria-label="React (opens in a new tab)" rel="noreferrer noopener" class="rank-math-link">React</a> are a killer combination to develop web apps.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>With WordPress you get:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><li>easy content management for help pages, your landing page, your blog, and your other marketing activities</li><li>secure and proven user management</li><li>over 100.000 plugins</li><li>a lot of flexibility over time to change the character of your site</li></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>React brings you the largest ecosystem to build great rich JavaScript apps that allow a simple data model, good performance and are easy to test.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>For example, if we want to write a new revolutionary email app, we can write the interface of the email client with React, but for everything else use WordPress. So while you're developing your app, you might want to:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><li>choose and install a theme</li><li>create a landing page for your app</li><li>add a form to collect email addresses</li><li>create a blog and publish your posts on Twitter to promote your app</li><li>optimize your site for search engines</li></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Later it might be useful to</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><li>offer a paid membership</li><li>create a forum or FAQ</li><li>create separate landing pages</li><li>all this can easily be done with WordPress, without writing a single line of code.</li></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2>Embedding a React app into WordPress with ReactPress</h2>

<!-- /wp:heading -->

<!-- wp:image {"align":"left","id":1359,"width":256,"height":256,"sizeSlug":"large","linkDestination":"none"} -->

<div class="wp-block-image"><figure class="alignleft size-large is-resized"><img src="https://rockiger.com/wp-content/uploads/icon-256x256-1.png" alt="" class="wp-image-1359" width="256" height="256"/></figure></div>

<!-- /wp:image -->

<!-- wp:paragraph -->

<p>While there are other ways to integrate React with WordPress, the <a aria-label="ReactPress (opens in a new tab)" href="https://wordpress.org/plugins/reactpress/" target="_blank" rel="noreferrer noopener" class="rank-math-link">ReactPress</a> plugin is the easiest to embed a React app into a WordPress page and lets you use <a aria-label="create-react-app (opens in a new tab)" href="https://reactjs.org/docs/create-a-new-react-app.html" target="_blank" rel="noreferrer noopener" class="rank-math-link">create-react-app</a> without any custom build configurations.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Not only does ReactPress automate the React integration for you, but it also ensures a seamless development experience, through running your local React dev server with the theme of your WordPress site.</p>

<!-- /wp:paragraph -->

<!-- wp:group {"backgroundColor":"light-background"} -->

<div class="wp-block-group has-light-background-background-color has-background"><div class="wp-block-group__inner-container"><!-- wp:heading {"level":3,"style":{"typography":{"fontSize":20}}} -->

<h3 style="font-size:20px">Why not just using headless WordPress with SSR</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>I know that headless WordPress with <a aria-label="Next.js (opens in a new tab)" href="https://nextjs.org" target="_blank" rel="noreferrer noopener" class="rank-math-link">Next.js</a>, <a aria-label="Gatsby (opens in a new tab)" href="https://www.gatsbyjs.com" target="_blank" rel="noreferrer noopener" class="rank-math-link">Gatsby</a>, or <a aria-label="Frontity (opens in a new tab)" href="https://frontity.org" target="_blank" rel="noreferrer noopener" class="rank-math-link">Frontity</a> is all the rage right now, but with these solutions, you add a layer of complexity to your app, and more importantly, you lose a lot of the benefits of the WordPress ecosystem (themes and plugins).</p>

<!-- /wp:paragraph --></div></div>

<!-- /wp:group -->

<!-- wp:paragraph -->

<p></p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>The steps from development to deployment are:</p>

<!-- /wp:paragraph -->

<!-- wp:list {"ordered":true,"start":0} -->

<ol start="0"><li>Setup your local dev environment.</li><li>Install ReactPress on your local WordPress installation</li><li>Create a new React app from your WP installation</li><li>Develop your React app</li><li>Build the app</li><li>Install ReactPress on live WordPress site</li><li>Create the same React app there</li><li>Upload the build of your React app to your live site to deploy it.</li></ol>

<!-- /wp:list -->

<!-- wp:embed {"url":"https://youtu.be/pVi07A_OZYA","type":"video","providerNameSlug":"youtube","responsive":true,"className":"wp-embed-aspect-16-9 wp-has-aspect-ratio"} -->

<figure class="wp-block-embed is-type-video is-provider-youtube wp-block-embed-youtube wp-embed-aspect-16-9 wp-has-aspect-ratio"><div class="wp-block-embed__wrapper">

https://youtu.be/pVi07A_OZYA

</div><figcaption>The video shows the process with the TwentyTwenty-theme.</figcaption></figure>

<!-- /wp:embed -->

<!-- wp:paragraph -->

<p>Repeat steps 3, 4 and 7 until your app is finished.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Setup your local dev environment.</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To develop React apps your WordPress installations needs access to: </p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><li>the PHP function <code>shell_exec</code> and <code>exec</code>,</li><li>the <code>nodejs</code> package manager <code>npm</code> version 6 or higher</li><li>and a POSIX compatible system</li></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This means <a aria-label="Local by Flywheel (opens in a new tab)" href="https://localwp.com" target="_blank" rel="noreferrer noopener" class="rank-math-link">Local by Flywheel</a> won't work, because you don't have control over the node environment there. The easiest way to create a local WordPress installation that fits the requirements is using the <a aria-label="Bitnami-Installers (opens in a new tab)" href="https://bitnami.com/stack/wordpress/installer" target="_blank" rel="noreferrer noopener" class="rank-math-link">Bitnami-Installers</a>. They provide a self-contained installation that doesn't pollute your system. </p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>However you install your WordPress dev system, if it meets the requirements you should be fine.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Install ReactPress on your local WordPress installation</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>In your local WordPress go to plugin installation, search for <strong>ReactPress</strong>, install and activate the plugin.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Create a new React app from your local WP installation</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>In the admin of your WP installation, click on <strong>ReactPress</strong> in the sidebar. There you should see a form to create a new React app.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Enter a <strong>name</strong> (later you need to use the same name in the live system), enter a <strong>pageslug</strong>, choose the type "<strong>Develop a new app (Usually on a local machine).</strong>" and choose the <strong>create-react-app-template</strong> you would like to use and finally click the "<strong>Create React App</strong>" button.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>After a short while your app should be created and running at port: <strong>3000</strong>. If you click on the link you can see your local dev app running in the theme of your WP installation.</p>

<!-- /wp:paragraph -->

<!-- wp:image -->

<figure class="wp-block-image"><img src="https://ps.w.org/reactpress/assets/screenshot-2.png?rev=2471443" alt=""/><figcaption>ReactPress with running React app <strong>reactino</strong>.</figcaption></figure>

<!-- /wp:image -->

<!-- wp:heading {"level":3} -->

<h3>Develop your React app</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Now you can develop your React app as you are used to. Use <a href="https://developer.wordpress.org/rest-api/" target="_blank" aria-label="WordPress's built-in REST-API (opens in a new tab)" rel="noreferrer noopener" class="rank-math-link">WordPress's built-in REST-API</a> to get data or use the <a href="https://www.wpgraphql.com" target="_blank" aria-label="WPGraphQL plugin (opens in a new tab)" rel="noreferrer noopener" class="rank-math-link">WPGraphQL plugin</a> if you prefer <a href="https://graphql.org" target="_blank" aria-label="GraphQL (opens in a new tab)" rel="noreferrer noopener" class="rank-math-link">GraphQL</a>.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Build the app</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>If you want to deploy to your live site, build your app through the WP admin. Don't use the CRA command line for this!</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Install ReactPress on live WordPress site</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Before you upload your React app, install ReactPress on your live site the same way you did on your local installation.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Create the same React app there</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Create the same React app on your live system, that you created on your local system. <em>Important!</em> Use the <em>exact same name</em> and this time choose "<strong>Deploy an already build app (Usually on a server).</strong>" as the type.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3>Upload the build of your React app to your live site to deploy it.</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Finally, upload the build of your React app. Upload the build folder of your local React app to the app on your live system. </p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>The ReactPress admin shows where your apps are located. It will be something like this: <code>.../htdocs/wp-content/plugins/reactpress/apps/[your-appname]</code>.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>If you click on the URL slug of your React app in ReactPress you should see your React app on your live system.</p>

<!-- /wp:paragraph -->

<!-- wp:image -->

<figure class="wp-block-image"><img src="https://ps.w.org/reactpress/assets/screenshot-6.png?rev=2471443" alt=""/><figcaption>Deployed React app embedded into live WordPress site with TwentyTwenty-theme.</figcaption></figure>

<!-- /wp:image -->

<!-- wp:heading -->

<h2>Where to go from here?</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>You should be able to create React app embedded in WordPress now. If you have the next big app idea you are ready to start. </p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>If you have any questions, let me know in the comments.</p>

<!-- /wp:paragraph --> | rockiger |

616,265 | How to Add Your Recently Published Medium Articles to Your GitHub Readme | Show off your latest Medium work on GitHub Photo by Christin Hume on Unsplash. GitHub recently... | 0 | 2021-03-22T06:26:02 | https://betterprogramming.pub/add-your-recent-published-mediums-article-on-github-readme-9ffaf3ad1606 | softwaredevelopment, opensource, medium, github | ---

title: How to Add Your Recently Published Medium Articles to Your GitHub Readme

published: true

date: 2020-08-16 16:32:37 UTC

tags: softwaredevelopment,opensource,medium,github

canonical_url: https://betterprogramming.pub/add-your-recent-published-mediums-article-on-github-readme-9ffaf3ad1606

---

> Show off your latest Medium work on GitHub

<figcaption>Photo by <a href="https://unsplash.com/@christinhumephoto?utm_source=medium&utm_medium=referral">Christin Hume</a> on <a href="https://unsplash.com?utm_source=medium&utm_medium=referral">Unsplash</a>.</figcaption>

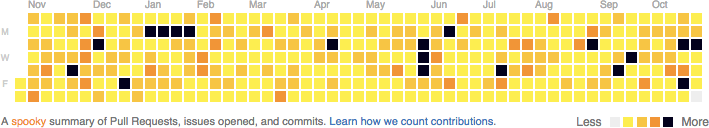

GitHub recently released a new feature that allows you to create a Readme profile, so you can now customize your GitHub profile page.

You can see an example on [my GitHub profile](https://github.com/bxcodec):

<figcaption>My GitHub profile.</figcaption>

This feature is really nice. It makes your GitHub profile look more professional and content-rich. In the future, I expect GitHub to look like LinkedIn for developers.

### Introducing GitHub Readme — Recent Medium Articles

I’ve seen a lot of plug-ins that people have made, like the GitHub stats card, programming language stats, and even games (e.g. this [online chess game](https://github.com/timburgan/timburgan) or even [tic-tac-toe](https://github.com/alfari16/alfari16)).

In [this readme](https://github.com/alfari16/alfari16), the user has included a list of their recently published Medium articles. But it’s only available on their profile. To achieve the same results, I need to copy their code, which takes time.

That’s the original idea behind this plug-in. I created a separate repository with a customized function. I then made the function more generic so everyone can add their recently published Medium articles to their GitHub readme.

{% youtube hk6MoV-qWW8 %}

### Steps

To use this plug-in, you only need to add this script to your GitHub readme:

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/60fa24f400869923cd2f970850db9bf1/href">https://medium.com/media/60fa24f400869923cd2f970850db9bf1/href</a></iframe>

So the format is:

```

https://github-readme-medium-recent-article.vercel.app/medium/<medium-username>/<article-index>

```

- medium-username: Your medium username/profile

- article-index : Your recent article index (e.g. 0 means your latest article)

The full steps can be seen in [my repository](https://github.com/bxcodec/github-readme-medium-recent-article). Also if you’ve found any issues, just open an issue or create a PR directly on that repository.

### More About This Plug-In

- I’m using [Vercel](https://vercel.com/) for the static hosting and the serverless function to retrieve the recent articles. I might be able to add a custom domain, but that’s for later.

- I’m using an RSS feed from Medium. You can get your RSS feed from Medium by entering this URL: [https://medium.com/feed/@](https://medium.com/feed/@imantumorang)yourMediumUsername.

- Then convert it to JSON using API RSS to JSON:

```

[https://api.rss2json.com/v1/api.json?rss\_url=https://medium.com/feed/@imantumorang](https://api.rss2json.com/v1/api.json?rss_url=https://medium.com/feed/@imantumorang)

```

- Make it generic so everyone is able to use it.

To make it generic so people can directly pass their Medium username to the serverless function:

```

https://github-readme-medium-recent-article.vercel.app/medium/@imantumorang

```

I need to make the folder of my serverless function as follows:

```

└── medium

└── [user]

└── [index].ts

```

The [user] directory is required so I can make the username dynamic. I was stuck on this problem when I was making the plug-in. Actually, I can create it using query-param like ?username=@imantumorang, but from my experience and keeping REST in mind, making it path-param is the proper way to tell that the param is required. Also, I want to keep the experience the same as when you’re visiting your Medium profile (e.g. medium.com/@imantumorang).

I knew that comments in the Medium articles would be added automatically to the RSS. To only display the article, I added a filter function on the function:

```

if (thumbnail.includes("cdn")) {

fixItem.push(element)

}

```

So I only enabled articles that have a thumbnail. I’m still looking for a workaround for this because if the article doesn’t have any thumbnail, it will be skipped. At least for now, if your article has a thumbnail, it will be displayed when we import it to your GitHub readme.

It may take time to be able to get your recent article to be listed (for a new article) since the API RSS to JSON is cached. Please wait around 1-3 hours after the article has been published on Medium.

### Conclusion

Well, I think that’s all for now. If you find any issues, you can directly open an issue on GitHub. I’ll try to help as much as I can.

* * * | bxcodec |

615,361 | Project 50 of 100 - Firebase Sign Up and Login with React Router | Hey! I'm on a mission to make 100 React.js projects ending March 31st. Please follow my dev.to profil... | 0 | 2021-02-23T06:15:36 | https://dev.to/jameshubert_com/project-50-of-100-firebase-sign-up-and-login-with-react-router-5fbn | react, javascript, 100daysofcode | *Hey! I'm on a mission to make 100 React.js projects ending March 31st. Please follow my dev.to profile or my [twitter](https://www.twitter.com/jwhubert91) for updates and feel free to reach out if you have questions. Thanks for your support!*

Link to the deployed project: [Link](https://100-react-projects-day-50-compras-auth.netlify.app/)