id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

714,819 | Apache Kafka: Apprentice Cookbook | Apache Kafka is a distributed event streaming platform built over strong concepts. Let’s dive into... | 0 | 2021-07-01T07:03:57 | https://aveuiller.github.io/kafka_apprentice_cookbook.html | tutorial, eventdriven, kafka |

Apache Kafka is a distributed event streaming platform built over strong concepts. Let’s dive into the possibilities it offers.

[Apache Kafka](https://kafka.apache.org/) is a distributed event streaming platform built with an emphasis on reliability, performance, and customization. Kafka can send and receive messages in a [publish-subscribe](https://aws.amazon.com/pub-sub-messaging/) fashion. To achieve this, the ecosystem relies on few but strong basic concepts, which enable the community to build many features solving [numerous use cases](https://kafka.apache.org/uses), for instance:

* Processing messages as an [Enterprise Service Bus](https://www.confluent.io/blog/apache-kafka-vs-enterprise-service-bus-esb-friends-enemies-or-frenemies/).

* Tracking Activity, metrics, and telemetries.

* Processing Streams.

* Supporting [Event sourcing](https://www.confluent.io/blog/event-sourcing-cqrs-stream-processing-apache-kafka-whats-connection/).

* Storing logs.

This article will see the concepts backing up Kafka and the different tools available to handle data streams.

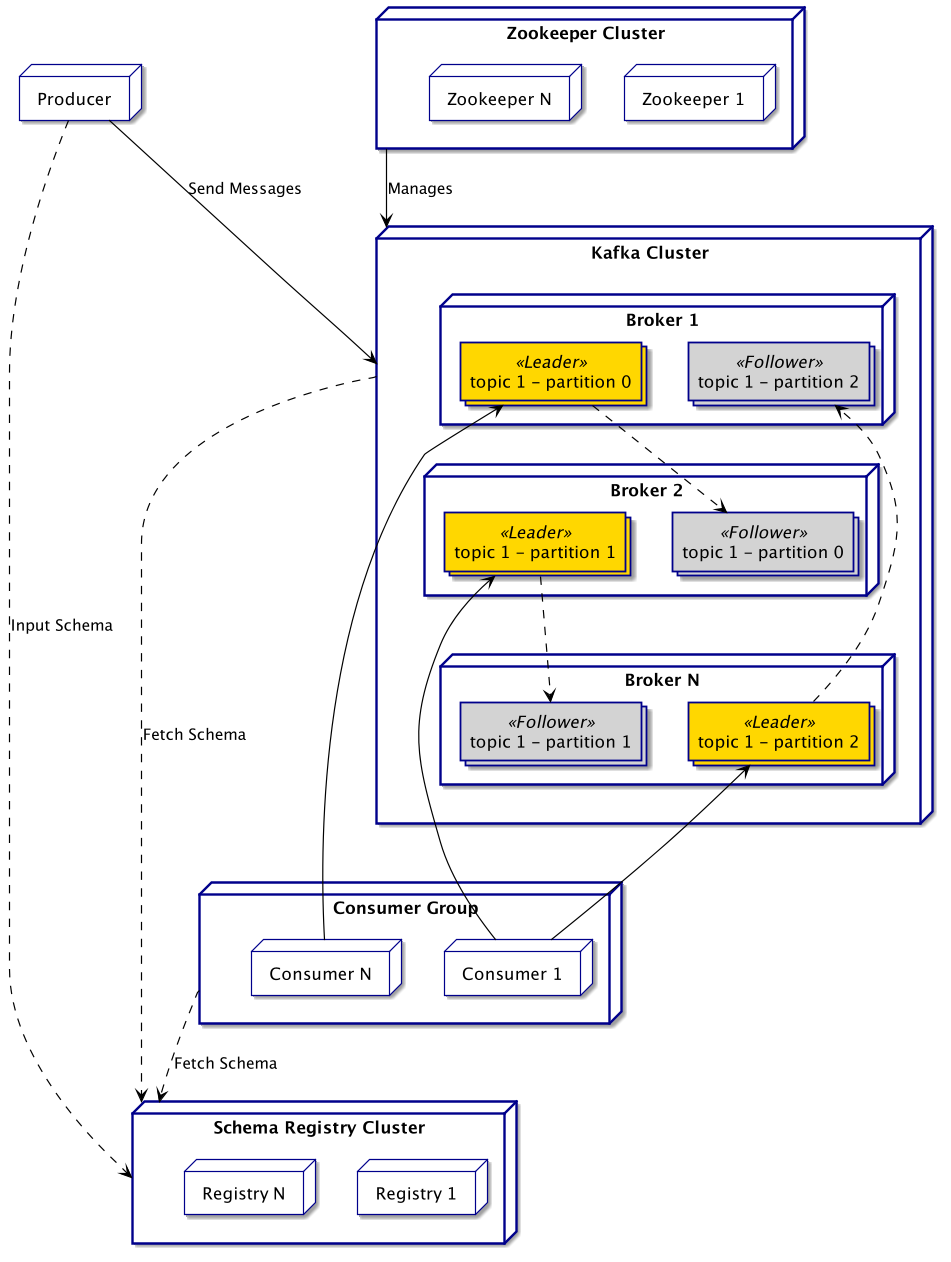

## Architecture

The behaviour of Kafka is pretty simple: **Producers** push _Messages_ into a particular _Topic_, and **Consumers** subscribe to this _Topic_ to fetch and process the _Messages_. Let’s see how it is achieved by this technology.

### Infrastructure side

Independently of the use, the following components will be deployed:

* One or more **Producers** sending messages to the brokers.

* One or more Kafka **Brokers**, the actual messaging server handling communication between producers and consumers.

* One or more **Consumers** fetching and processing messages, in clusters named **Consumer Groups**.

* One or more [**Zookeeper**](https://zookeeper.apache.org/) instances managing the brokers.

* (Optionally) One or more **Registry** instances uniformizing message schema.

As a scalable distributed system, Kafka is heavily relying on the concept of _clusters_. As a result, on typical production deployment, there will likely be multiple instances of each component.

A **Consumer Group** is a cluster of the same consumer application. This concept is heavily used by Kafka to balance the load on the applicative side of things.

_Note: The dependency on Zookeeper will be removed soon, Cf._ [_KIP-500_](https://cwiki.apache.org/confluence/display/KAFKA/KIP-500%3A+Replace+ZooKeeper+with+a+Self-Managed+Metadata+Quorum)

> Further Reading:

> [Design & Implementation Documentation](https://kafka.apache.org/documentation/#majordesignelements)

> [Kafka Basics and Core Concepts: Explained — Aritra Das](https://hackernoon.com/kafka-basics-and-core-concepts-explained-dd1434dv)

### Applicative side

A **Message** in Kafka is a `key-value` pair. Those elements can be anything from an integer to a [Protobuf message](https://developers.google.com/protocol-buffers), provided the right serializer and deserializer.

The message is sent to a **Topic**, which will store it as a **Log**. The topic should be a collection of logs semantically related, but without a particular structure imposed. A topic can either keep every message as a new log entry or only keep the last value for each key (a.k.a. [Compacted log](https://docs.confluent.io/platform/current/kafka/design.html#log-compaction)).

To take advantage of the multiple brokers, topics are [sharded](https://en.wikipedia.org/wiki/Shard_%28database_architecture%29) into **Partitions** by default. Kafka will assign any received message to one partition depending on its key,

or using [a partitioner algorithm](https://www.confluent.io/blog/apache-kafka-producer-improvements-sticky-partitioner) otherwise, which results in a random assignment from the developer's point of view. Each partition has a **Leader** responsible for all I/O operations, and **Followers** replicating the data. A follower will take over the leader role in case of an issue with the current one.

The partition holds the received data in order, increasing an **offset** integer for each message. However, there is no order guarantee between two partitions. So for order-dependent data, one must ensure that they end up in the same partition by using the same key.

Each partition is assigned to a specific consumer from the consumer group. This consumer is the only one fetching messages from this partition. In case of shutdown of one customer, the brokers will [reassign partitions](https://medium.com/streamthoughts/understanding-kafka-partition-assignment-strategies-and-how-to-write-your-own-custom-assignor-ebeda1fc06f3) among the customers.

Being an asynchronous system, it can be hard and impactful on the performances to have every message delivered exactly one time to the consumer. To mitigate this, Kafka provides [different levels of guarantee](https://kafka.apache.org/documentation/#semantics) on the number of times a message will be processed (_i.e._ at most once, at least once, exactly once).

> Further Reading:

> [Log Compacted Topics in Apache Kafka — Seyed Morteza Mousavi](https://towardsdatascience.com/log-compacted-topics-in-apache-kafka-b1aa1e4665a7)

> [(Youtube) Apache Kafka 101: Replication — Confluent](https://www.youtube.com/watch?v=Vo6Mv5YPOJU&list=PLa7VYi0yPIH0KbnJQcMv5N9iW8HkZHztH&index=5)

> [Replication Design Doc](https://cwiki.apache.org/confluence/display/KAFKA/Kafka+Replication)

> [Processing Guarantees in Details — Andy Briant](https://medium.com/@andy.bryant/processing-guarantees-in-kafka-12dd2e30be0e)

### Schema and Registry

Messages are serialized when quitting a producer and deserialized when handled by the consumer. To ensure compatibility, both must be using the same data definition. Ensuring this can be hard considering the application evolution. As a result, when dealing with a production system, it is recommended to use a schema to explicit a contract on the data structure.

To do this, Kafka provides a **Registry** server, storing and binding schema to topics. Historically only [Avro](https://avro.apache.org/docs/current/) was available, but the registry is now modular and can also handle [JSON](https://json-schema.org/) and [Protobuf](https://developers.google.com/protocol-buffers) out of the box.

Once a producer sent a schema describing the data handled by its topic to the registry, other parties (_i.e._ brokers and consumers) will fetch this schema on the registry to validate and deserialize the data.

> Further Reading:

> [Schema Registry Documentation](https://docs.confluent.io/platform/current/schema-registry/index.html)

> [Kafka tutorial #4-Avro and the Schema Registry— Alexis Seigneurin](https://aseigneurin.github.io/2018/08/02/kafka-tutorial-4-avro-and-schema-registry.html)

> [Serializer-Deserializer for Schema](https://docs.confluent.io/platform/current/schema-registry/serdes-develop/index.html#serializer-and-formatter)

## Integrations

Kafka provides multiple ways of connecting to the brokers,

and each can be more useful than the others depending on the needs. As a result, even if a library is an abstraction layer above another, it is not necessarily better for every use case.

### Kafka library

There are client libraries available in [numerous languages](https://docs.confluent.io/platform/current/clients/index.html) which help develop a producer and consumer easily. We will use Java for the example below, but the concept remains identical for other languages.

The producer concept is to publish messages at any moment, so the code is pretty simple.

```java

public class Main {

public static void main(String[] args) throws Exception {

// Configure your producer

Properties producerProperties = new Properties();

producerProperties.put("bootstrap.servers", "localhost:29092");

producerProperties.put("acks", "all");

producerProperties.put("retries", 0);

producerProperties.put("linger.ms", 1);

producerProperties.put("key.serializer", "org.apache.kafka.common.serialization.LongSerializer");

producerProperties.put("value.serializer", "io.confluent.kafka.serializers.KafkaAvroSerializer");

producerProperties.put("schema.registry.url", "http://localhost:8081");

// Initialize a producer

Producer<Long, AvroHelloMessage> producer = new KafkaProducer<>(producerProperties);

// Use it whenever you need

producer.send(new AvroHelloMessage(1L, "this is a message", 2.4f, 1));

}

}

```

The code is a bit more complex on the consumer part since the consumption loop needs to be created manually. On the other hand, this gives more control over its behaviour. The consumer state is automatically handled by the Kafka library. As a result, restarting the worker will start at the most recent offset he encountered.

```java

public class Main {

public static Properties configureConsumer() {

Properties consumerProperties = new Properties();

consumerProperties.put("bootstrap.servers", "localhost:29092");

consumerProperties.put("group.id", "HelloConsumer");

consumerProperties.put("key.deserializer", "org.apache.kafka.common.serialization.LongDeserializer");

consumerProperties.put("value.deserializer", "io.confluent.kafka.serializers.KafkaAvroDeserializer");

consumerProperties.put("schema.registry.url", "http://localhost:8081");

// Configure Avro deserializer to convert the received data to a SpecificRecord (i.e. AvroHelloMessage)

// instead of a GenericRecord (i.e. schema + array of deserialized data).

consumerProperties.put(KafkaAvroDeserializerConfig.SPECIFIC_AVRO_READER_CONFIG, true);

return consumerProperties;

}

public static void main(String[] args) throws Exception {

// Initialize a consumer

final Consumer<Long, AvroHelloMessage> consumer = new KafkaConsumer<>(configureConsumer());

// Chose the topics you will be polling from.

// You can subscribe to all topics matching a Regex.

consumer.subscribe(Pattern.compile("hello_topic_avro"));

// Poll will return all messages from the current consumer offset

final AtomicBoolean shouldStop = new AtomicBoolean(false);

Thread consumerThread = new Thread(() -> {

final Duration timeout = Duration.ofSeconds(5);

while (!shouldStop) {

for (ConsumerRecord<Long, AvroHelloMessage> record : consumer.poll(timeout)) {

// Use your record

AvroHelloMessage value = record.value();

}

// Be kind to the broker while polling

Thread.sleep(5);

}

consumer.close(timeout);

});

// Start consuming && do other things

consumerThread.start();

// [...]

// End consumption from customer

shouldStop.set(true);

consumerThread.join();

}

}

```

> Further Reading:

> [Available Libraries](https://docs.confluent.io/platform/current/clients/index.html)

> [Producer Configuration](https://docs.confluent.io/platform/current/installation/configuration/producer-configs.html)

> [Consumer Configuration](https://docs.confluent.io/platform/current/installation/configuration/consumer-configs.html)

### Kafka Streams

Kafka Streams is built on top of the consumer library. It continuously reads from a topic and processes the messages with code declared with a functional DSL.

During the processing, transitional data can be kept in structures called [KStream](https://kafka.apache.org/23/javadoc/org/apache/kafka/streams/kstream/KStream.html) and [KTable](https://kafka.apache.org/23/javadoc/org/apache/kafka/streams/kstream/KTable.html), which are stored into topics. The former is equivalent to a standard topic, and the latter to a compacted topic. Using these data stores will enable automatic tracking of the worker state by Kafka, helping to get back on track in case of restart.

The following code sample is extracted from the [tutorial provided by Apache](https://kafka.apache.org/28/documentation/streams/tutorial). The code connects to a topic named `streams-plaintext-input` containing strings values, without necessarily providing keys. The few lines configuring the `StreamsBuilder` will:

1. Transform each message to lowercase.

1. Split the result using whitespaces as a delimiter.

1. Group previous tokens by value.

1. Count the number of tokens for each group and save the changes to a KTable named `counts-store`.

1. Stream the changes in this Ktable to send the values in a KStream named `streams-wordcount-output`.

```java

public class Main {

public static void main(String[] args) throws Exception {

Properties props = new Properties();

props.put(StreamsConfig.APPLICATION_ID_CONFIG, "streams-wordcount");

props.put(StreamsConfig.BOOTSTRAP_SERVERS_CONFIG, "localhost:29092");

props.put(StreamsConfig.DEFAULT_KEY_SERDE_CLASS_CONFIG, Serdes.String().getClass());

props.put(StreamsConfig.DEFAULT_VALUE_SERDE_CLASS_CONFIG, Serdes.String().getClass());

final StreamsBuilder builder = new StreamsBuilder();

builder.<String, String>stream("streams-plaintext-input")

.flatMapValues(value -> Arrays.asList(value.toLowerCase(Locale.getDefault()).split("\\W+")))

.groupBy((key, value) -> value)

.count(Materialized.<String, Long, KeyValueStore<Bytes, byte[]>>as("counts-store"))

.toStream()

.to("streams-wordcount-output", Produced.with(Serdes.String(), Serdes.Long()));

final Topology topology = builder.build();

final KafkaStreams streams = new KafkaStreams(topology, props);

final CountDownLatch latch = new CountDownLatch(1);

// attach shutdown handler to catch control-c

Runtime.getRuntime().addShutdownHook(new Thread("streams-shutdown-hook") {

@Override

public void run() {

streams.close();

latch.countDown();

}

});

// The consumer loop is handled by the library

streams.start();

latch.await();

}

}

```

> Further Reading:

> [Kafka Streams Concepts](https://docs.confluent.io/platform/current/streams/concepts.html)

> [Developer Guide](https://docs.confluent.io/platform/current/streams/developer-guide/write-streams.html)

> [Kafka Stream Work Allocation — Andy Briant](https://medium.com/@andy.bryant/kafka-streams-work-allocation-4f31c24753cc)

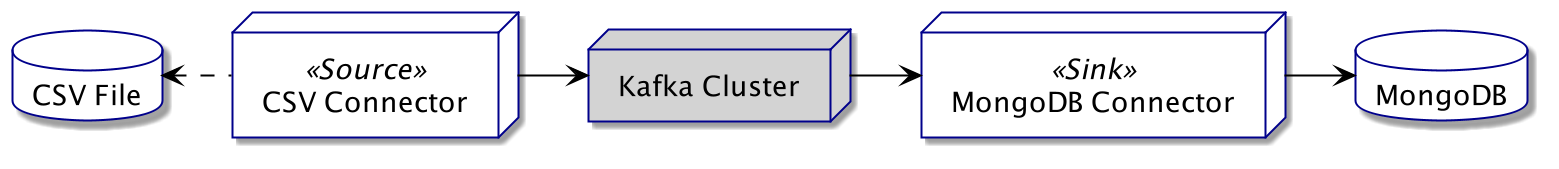

### Kafka Connect

Kafka Connect provides a way of transforming and synchronizing data between almost any technology with the use of **Connectors**. Confluent is hosting a [Hub](https://www.confluent.io/hub/), on which users can share connectors for various technologies. This means that integrating a Kafka Connect pipeline is most of the time only a matter of configuration, without code required. A single connector can even handle both connection sides:

* Populate a topic with data from any system: _i.e._ a **Source**.

* Send data from a topic to any system: _i.e._ a **Sink**.

The source will read data from CSV files in the following schema then publish them into a topic. Concurrently, the sink will poll from the topic and insert the messages into a MongoDB database. Each connector can run in the same or a distinct worker, and workers can be grouped into a cluster for scalability.

The connector instance is created through a configuration specific to the library. The file below is a configuration of the [MongoDB connector](https://www.confluent.io/hub/mongodb/kafka-connect-mongodb). It asks to fetch all messages from the topic `mongo-source` to insert them into the collection `sink` of the database named `kafka-connect`. The credentials are provided from an external file, which is a feature of Kafka Connect to [protect secrets](https://docs.confluent.io/platform/current/connect/security.html#externalizing-secrets).

```json

{

"name": "mongo-sink",

"config": {

"topics": "mongo-source",

"tasks.max": "1",

"connector.class": "com.mongodb.kafka.connect.MongoSinkConnector",

"connection.uri": "mongodb://${file:/auth.properties:username}:${file:/auth.properties:password}@mongo:27017",

"database": "kafka_connect",

"collection": "sink",

"max.num.retries": "1",

"retries.defer.timeout": "5000",

"document.id.strategy": "com.mongodb.kafka.connect.sink.processor.id.strategy.BsonOidStrategy",

"post.processor.chain": "com.mongodb.kafka.connect.sink.processor.DocumentIdAdder",

"delete.on.null.values": "false",

"writemodel.strategy": "com.mongodb.kafka.connect.sink.writemodel.strategy.ReplaceOneDefaultStrategy"

}

}

```

Once the configuration complete, registering the connector is as easy as an HTTP call on the running [Kafka Connect instance](https://docs.confluent.io/home/connect/userguide.html#configuring-and-running-workers). Afterwards, the service will automatically watch the data without further work required.

```shell

$ curl -i -X POST -H "Accept:application/json" -H "Content-Type:application/json" \

http://localhost:8083/connectors -d @sink-conf.json

```

> Further Reading:

> [Getting Started Documentation](https://docs.confluent.io/platform/current/connect/userguide.html#connect-userguide)

> [Connector Instance API Reference](https://docs.confluent.io/platform/current/connect/references/restapi.html)

> [(Youtube) Tutorials Playlist — Confluent](https://www.youtube.com/playlist?list=PLa7VYi0yPIH1MB2n2w8pMZguffCDu2L4Y)

### KSQL Database

Ksql is somehow equivalent to Kafka Streams, except that every transformation is declared in an SQL-like language. The server is connected to the brokers and can create **Streams** or **Tables** from topics. Those two concepts behave in the same way as a KStream or KTable from Kafka Streams (_i.e._ respectively a topic and a compacted topic).

There are three types of query in the language definition:

1. **Persistent Query** (_e.g._ `CREATE TABLE <name> WITH (...)`): Creates a new stream or table that will be automatically updated.

2. **Pull Query** (_e.g._ `SELECT * FROM <table|stream> WHERE ID = 1`): Behaves similarly to a standard DBMS. Fetches data as an instant snapshot and closes the connection.

3. **Push Query** (_e.g._ `_SELECT * FROM <table|stream> EMIT CHANGES_`): Requests a persistent connection to the server, asynchronously pushing updated values.

The database can be used to browse the brokers' content. Topics can be discovered through the command `list topics`, and their content displayed using `print <name>`.

```sql

ksql> list topics;

Kafka Topic | Partitions | Partition Replicas

----------------------------------------------------

hello_topic_json | 1 | 1

----------------------------------------------------

ksql> print 'hello_topic_json' from beginning;

Key format: KAFKA_BIGINT or KAFKA_DOUBLE or KAFKA_STRING

Value format: JSON or KAFKA_STRING

rowtime: 2021/05/25 08:44:20.922 Z, key: 1, value: {"user_id":1,"message":"this is a message","value":2.4,"version":1}

rowtime: 2021/05/25 08:44:20.967 Z, key: 1, value: {"user_id":1,"message":"this is another message","value":2.4,"version":2}

rowtime: 2021/05/25 08:44:20.970 Z, key: 2, value: {"user_id":2,"message":"this is another message","value":2.6,"version":1}

```

The syntax to create and query a stream, or a table is very close to SQL.

```sql

-- Let's create a table from the previous topic

ksql> CREATE TABLE messages (user_id BIGINT PRIMARY KEY, message VARCHAR)

> WITH (KAFKA_TOPIC = 'hello_topic_json', VALUE_FORMAT='JSON');

-- We can see the list and details of each table

ksql> list tables;

Table Name | Kafka Topic | Key Format | Value Format | Windowed

----------------------------------------------------------------------

MESSAGES | hello_topic_json | KAFKA | JSON | false

----------------------------------------------------------------------

ksql> describe messages;

Name : MESSAGES

Field | Type

------------------------------------------

USER_ID | BIGINT (primary key)

MESSAGE | VARCHAR(STRING)

------------------------------------------

For runtime statistics and query details run: DESCRIBE EXTENDED <Stream,Table>;

-- Appart from some additions to the language, the queries are almost declared in standard SQL.

ksql> select * from messages EMIT CHANGES;

+--------+------------------------+

|USER_ID |MESSAGE |

+--------+------------------------+

|1 |this is another message |

|2 |this is another message |

```

Kafka recommends using a [headless ksqlDB server](https://www.confluent.io/blog/deep-dive-ksql-deployment-options/) for production, with a file declaring all streams and tables to create. This avoids any modification to the definitions.

_Note: ksqlDB servers can be grouped in a cluster like any other consumer._

> Further Reading:

>

> [Official Documentation](https://docs.confluent.io/platform/current/streams-ksql.html)

>

> [KSQL Query Types In Details](https://docs.ksqldb.io/en/latest/concepts/queries/)

>

> [(Youtube) Tutorials Playlist — Confluent](https://www.youtube.com/playlist?list=PLa7VYi0yPIH2eX8q3mPpZAn3qCS1eDX8W)

## Conclusion

This article gives a broad view of the Kafka ecosystem and possibilities, which are numerous. This article only scratches the surface of each subject. But worry not, as they are all well documented by Apache, Confluent, and fellow developers.

Here are a few supplementary resources to dig further into Kafka:

* [(Youtube) Kafka Tutorials - _Confluent_](https://www.youtube.com/playlist?list=PLa7VYi0yPIH0KbnJQcMv5N9iW8HkZHztH)

* [Kafka Tutorials in Practice](https://kafka-tutorials.confluent.io/)

* [Top 5 Things Every Apache Kafka Developer Should Know — Bill Bejeck](https://www.confluent.io/blog/5-things-every-kafka-developer-should-know/)

* [Kafkacat user Guide](https://docs.confluent.io/platform/current/app-development/kafkacat-usage.html)

* [Troubleshooting KSQL Part 2: What’s Happening Under the Covers? — Robin Moffatt](https://www.confluent.io/blog/troubleshooting-ksql-part-2)

* [Apache Kafka Internals — sudan](https://ssudan16.medium.com/kafka-internals-47e594e3f006)

_The complete experimental code is available on my [GitHub repository](https://github.com/aveuiller/frameworks-bootstrap/tree/master/Kafka)._

*Thanks to Sarra Habchi, and Dimitri Delabroye for the reviews*

| aveuiller |

714,973 | iOS App Security Cheatsheet | In a previous article we saw an example on how an attacker could analyse an app in the search of vuln... | 0 | 2021-06-02T08:44:17 | https://apiumhub.com/tech-blog-barcelona/ios-app-security-cheatsheet/ | agilewebandappdevelo, ioscheatsheet, iossecurity | ---

title: iOS App Security Cheatsheet

published: true

date: 2021-06-01 08:57:15 UTC

tags: Agilewebandappdevelo,iOSCheatsheet,iOSSecurity

canonical_url: https://apiumhub.com/tech-blog-barcelona/ios-app-security-cheatsheet/

---

In a [previous article](https://dev.to/apium_hub/security-awareness-in-an-ios-environment-1683) we saw an example on how an attacker could analyse an app in the search of vulnerabilities, and perform an XSS attack through the misuse of a web view. Hopefully after reading that, if you weren’t aware of how easy it is to at least get into some source code of an app published on the AppStore, now you are and you might be wondering if there are other ways to hack an iOS application and how to prevent it.

In this article I will try to make a compilation of stuff to check if you want to ensure your app handles most common security flaws. We will cover the following topics: system API’s, Data Handling, Data transportation and App Hardening.

## System API’s Usage

- **Cryptography:** Use CryptoKit whenever possible and check correct usage of it. Avoid implementing custom crypto algorithms, apart from mistakes it could be an issue during AppStore reviews.

- **App backgrounding:** If the app handles any sensitive user data visible on the screen, you may want to implement a way to hide the content when the app is entering background mode (a snapshot of the app is taken at this moment and stored on the device). Check `applicationDidEnterBackground.`

- **Handle the pasteboard securely:** if pasteboard persistence is enabled, check that it’s being cleared when application backgrounds. Check `UIPasteboardNameFind` and `UIPasteboardNameGeneral`.

- **Disable auto-correction** for sensitive input fields or mark them as secure (passwords, credit cards, etc).

- Check for unwanted screen recordings and capturing of **sensitive data**. Subscribe to `userDidTakeScreenshotNotification` and use `UIScreen.isCaptured()` in order to blur or hide the content.

- Keep in mind possible **SQL injection** or formatted strings injection vulnerabilities (the latter should not be an issue in Swift). Always use parameterized strings for NSPredicates and for formatted strings. E.g.: instead of using `NSPredicate(format: “(email LIKE ‘\(user.email)’) AND (password LIKE ‘\(user.password)’)”, nil)` you should better use `NSPredicate(format: “(email = @) AND (password = @)”, user.email, user.password)`. If necessary input text validation measures can be taken in place before saving to the database or interacting with the server.

- Ensure a **proper use of Web Views** , check and validate possible javascript code injection.

## Data Handling

- Setting a **Protection Level** for writing data: Check for desired usage of Data Protection API, refer to `NSData.WritingOptions` and `URLFileProtection`.

- CoreData and Realm store the databases as .db files that can be copied from your bundle, and easily read. **Make sure to encrypt sensitive data before storing it to your database**.

- Do not use `UserDefaults` to store any sensitive data, such as Access Tokens, subscription flags, or relevant account information. It can be easily accessed from outside the app. Use KeychainService API instead.

- Hash data using **CryptoKit** instead of Swift Standard Library hashing functions, as the latter have a high collision rate.

- Check [Apple documentation](https://developer.apple.com/library/archive/documentation/FileManagement/Conceptual/FileSystemProgrammingGuide/FileSystemOverview/FileSystemOverview.html#//apple_ref/doc/uid/TP40010672-CH2-SW12) in order to be sure on where to store your data in the file system.

## Data Transportation

- Configure **App Transport Security** (ATS) correctly, try to avoid adding exceptions for it.

- **Use TLS/SSL securely**. Check for any HTTP and replace by HTTPS, also check for any token being sent in the URL, they should always be sent in the headers.

- Keep in mind that HTTP Requests/Responses are cached by NSURLSession by default in a Cache.db file. If handling sensitive data, you may want to use `ephemeralSessionConfiguration` which does not store cookies nor caches. Global cache can also be disabled, check URLCache, you can assign 0 capacity to it and assign it to `URLCache.shared`.

## App Hardening

- Check if you want to support third party keyboards, and disable them if you believe it can be a threat for your data.

- Run **XCode** static analysis report. It can help to reveal memory leaks and other common bugs.

- **Debug logs** : keep in mind that logs are public by default, so never log sensitive information, and use the appropriate tools for it. Avoid the usage of prints.

- **Code obfuscation** : code can be easily reverse engineered, so in order to prevent that we can obfuscate the code. There are many third party libraries for that matter.

- Do not abuse URL schemes usage, and validate each URL you will handle to prevent XSS attacks.

- Ensure you have a strong **Jailbreak detection system** , jailbreak is still possible for most devices and iOS’s. Attackers from jailbroken devices can reverse engineer your app and access sensitive data.

## Conclusion

As you can see, there are a considerable amount of things to keep in mind when addressing the security of your application. And of course these are not all of them, but at least with this list you have a point to start checking your application and think which items are relevant for your application security profile. I hope this short read was useful for you and that you keep it handy for the next time you have to audit an application’s security. | apium_hub |

715,112 | TUTORIAL: How to create a modal in UI Builder | A modal is a common UI element used to grab the user’s attention by overlaying the page. In this step... | 0 | 2021-06-01T14:26:31 | https://dev.to/backendless/tutorial-how-to-create-a-modal-in-ui-builder-5b97 | ux, webdev | A modal is a common UI element used to grab the user’s attention by overlaying the page. In this step-by-step tutorial, we will show you how to implement, style, and interact with modals in UI Builder.

Also known as overlays or dialog windows, modals are an easy way to ensure your user receives information or takes an action you want them to take. In order to guarantee the user interacts with the modal, most modals prevent the user from interacting with the underlying page.

While this can be effective in small doses, the modal UI element should be used in moderation as it tends to disrupt the user experience.

Check out an example of the component [in this demo](https://eu.backendlessappcontent.com/8AAA8E74-06F7-48FD-9154-1AA3227BFA24/D8E91033-BD89-40C0-9FD9-126973003E38/files/web/app/index.html?page=Modal).

In this tutorial, we will take you through the process of implementing a modal in Backendless’ built-in UI Builder. To follow along, simply login or create a free Backendless account.

_Note from the author: The names for the classes and elements in this component are used for example. You can them to whatever you prefer._

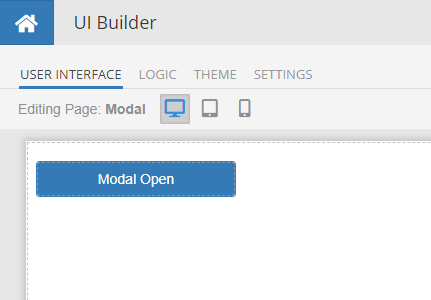

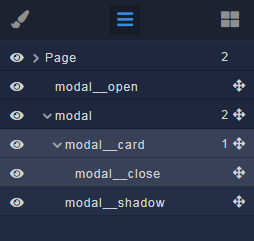

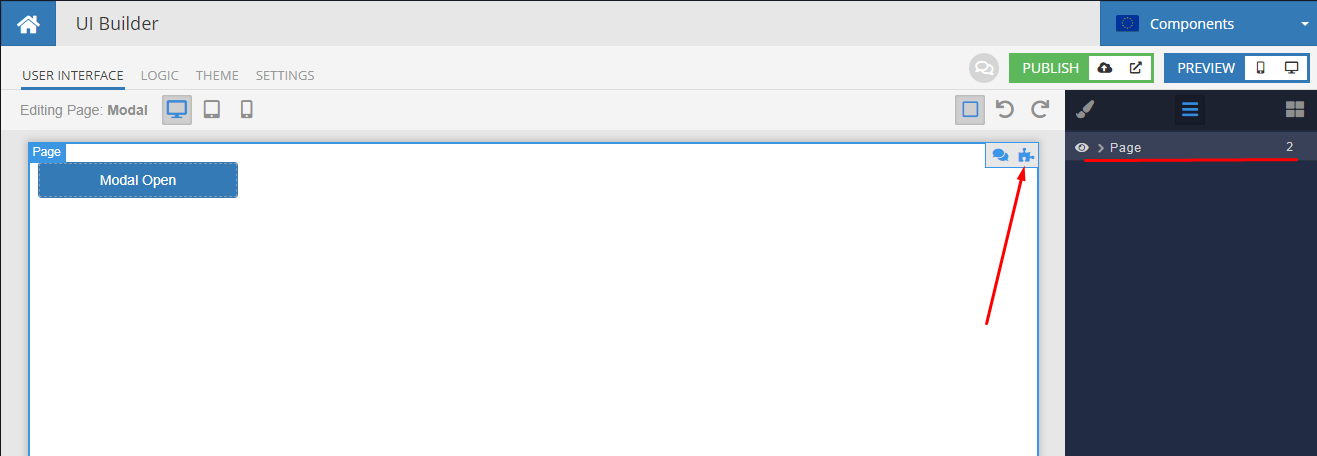

##Modal Structure In UI Builder

Let’s start assembling the modal window by creating the component structure on the User interface tab.

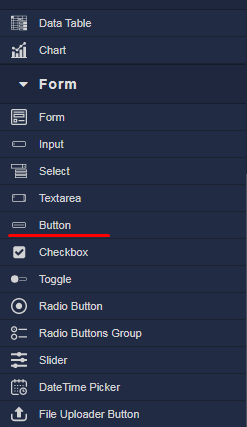

The general structure of the component is shown below. For clarity, element IDs are named the same as classes.

###Descriptions

*`modal__open` – button for opening a modal window (can be any of your custom solutions)

*`modal` – root element of the modal (required)

*`modal__card` – root block for your content; inside this element put what you need (required)

*`modal__close` – button to close the modal window (you can do as you need)

*`modal__shadow` – shading curtain behind the modal window, restricts access to other elements of the page (required)

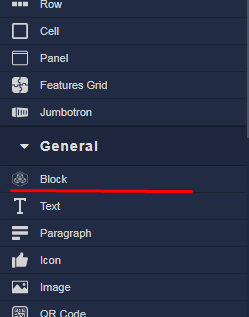

All elements of the component, except buttons, use the Block component.

For the buttons, we will use the Button element but you can use whatever you want.

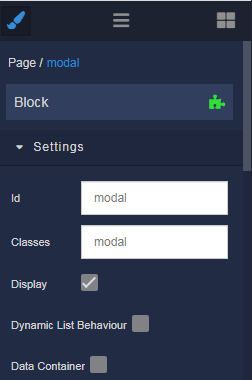

When creating elements, immediately assign ID and Classes according to this structure:

As a result, you should get something similar to this:

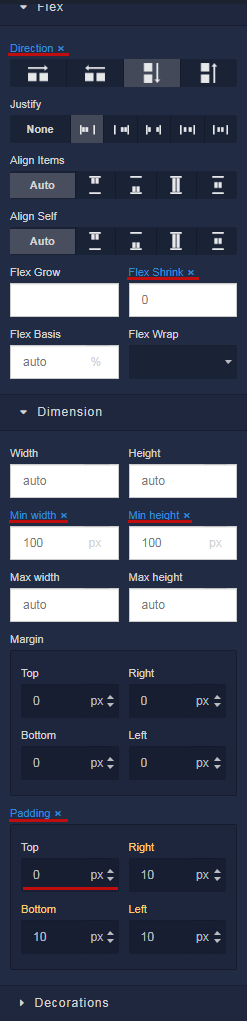

After you create the entire structure of the component, you need to reset all settings for the Block elements. To do this, you need to delete all the selected properties. Later, we will indicate the necessary ones through the styles. The Padding property is set to 0 and then reset in the same way.

##Styles

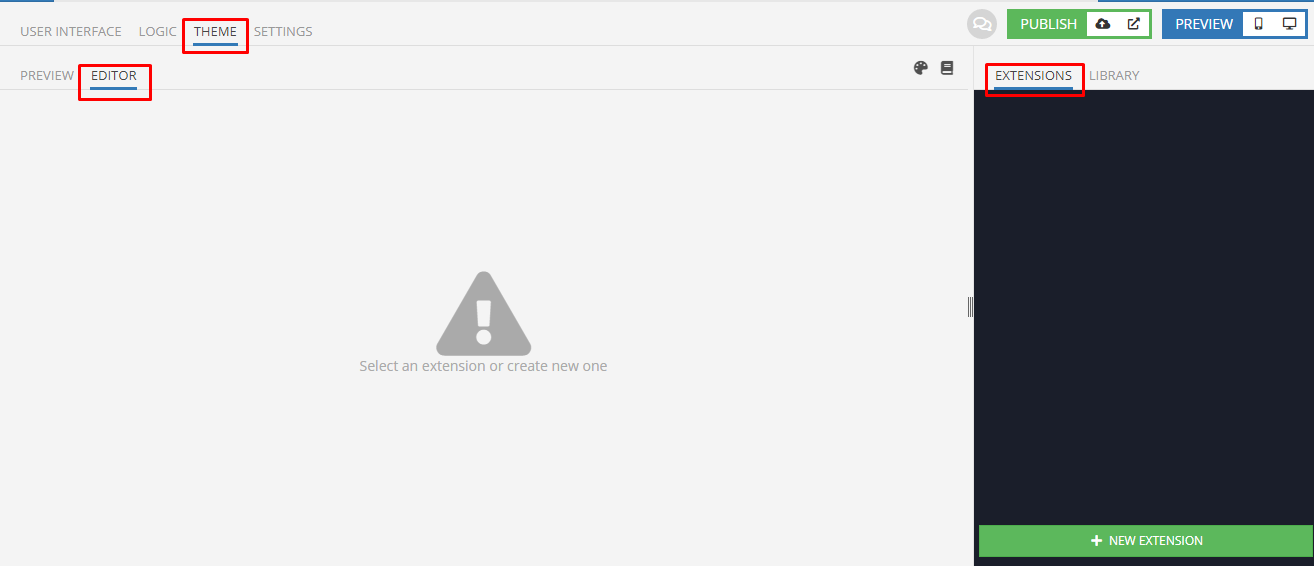

To create styles, switch to the Theme tab. Inside the page, select the Editor tab and then Extensions.

Now we’ll create Extensions. You can change the names as you like.

Extension `MxModal` is a LESS-mixin in which the basic styles of the component are taken out for ease of multiple use. Edit only if you know what you are doing!

[Learn more about CSS LESS in UI Themes.](https://backendless.com/how-to-add-customized-styles-to-your-app/)

```

.mx-modal {

display: none !important;

position: fixed !important;

top: 0 !important;

bottom: 0 !important;

left: 0 !important;

right: 0 !important;

z-index: 1000 !important;

flex-direction: column !important;

justify-content: center !important;

align-items: center !important;

width: 100% !important;

height: 100% !important;

padding: 0 15px !important;

&.open {

display: flex !important;

}

@media (min-width: 768px) {

padding: 0 !important;

}

}

.mx-modal__curtain {

position: fixed !important;

top: 0 !important;

bottom: 0 !important;

left: 0 !important;

right: 0 !important;

z-index: -1 !important;

background-color: rgba(0, 0, 0, 0.7);

width: 100% !important;

height: 100% !important;

}

.mx-modal__card {

width: 100% !important;

@media (min-width: 768px) {

width: 600px !important;

}

}

```

The Extension `Modal` contains the general styling of the component on the page according to your project. The most important thing is to import mixins; any other properties can be adjusted as you like.

```

.modal__open {

width: 200px !important;

}

.modal {

.mx-modal();

}

.modal__card {

.mx-modal__card();

flex-direction: column !important;

justify-content: flex-end !important;

align-items: flex-end !important;

background-color: #fff;

height: 300px !important;

border-radius: 5px;

box-shadow: 0px 3px 1px -2px rgba(0, 0, 0, 0.20),

0px 2px 2px 0px rgba(0, 0, 0, 0.14),

0px 1px 5px 0px rgba(0, 0, 0, 0.12);

}

.modal__close {

width: 200px !important;

}

.modal__shadow {

.mx-modal__curtain();

}

```

##Logic

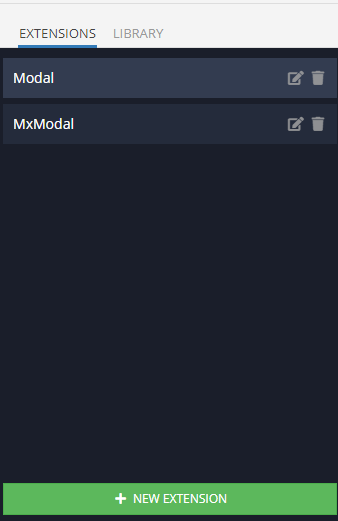

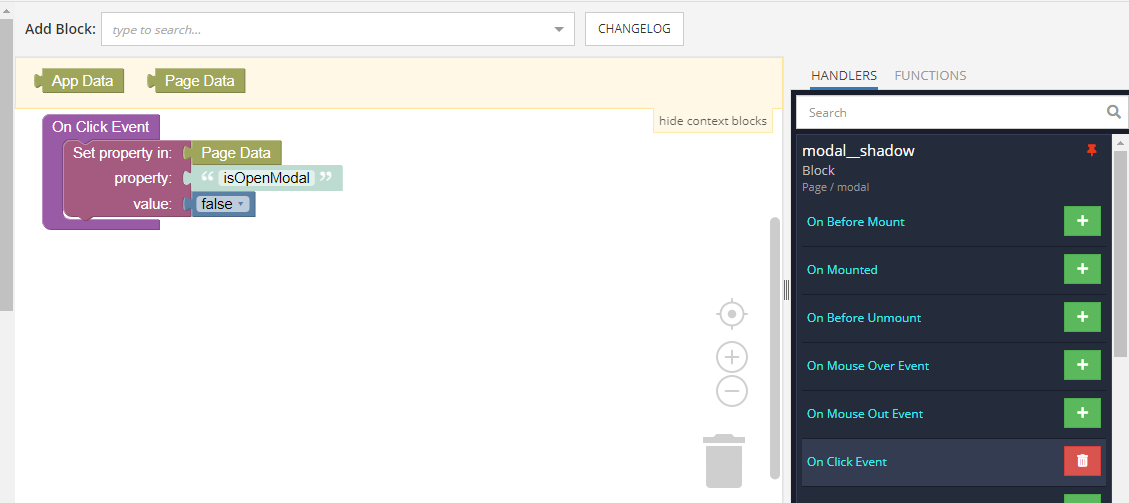

Let’s start adding [Codeless logic](https://backendless.com/features/backendless-core/codeless) from the root Page element. To do this, we return to the User interface tab, select the Page element and click on the puzzle icon as in the screenshot.

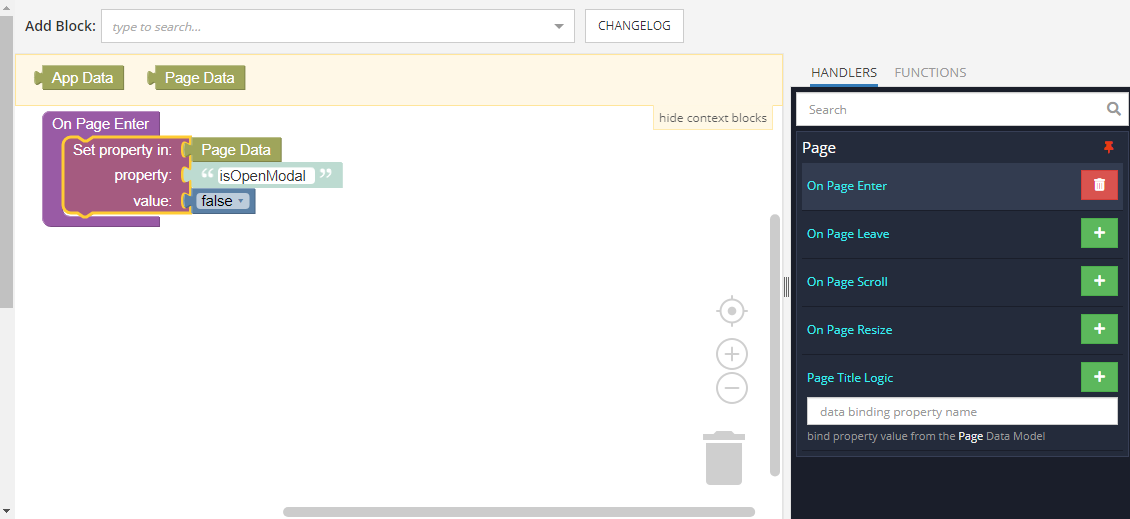

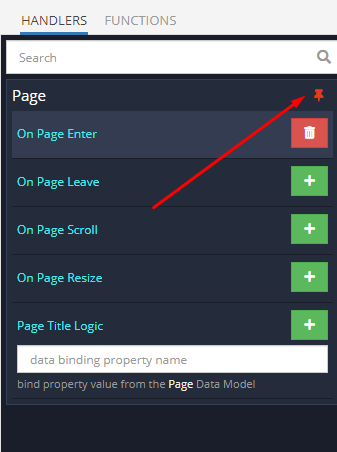

In the Logic tab that opens for the Page element, we hang the logic on the On Page Enter event as in the screenshot. This will create a global modal state variable `isOpenModal` for the entire page. We set the value to `false`, which in our logic will define a closed modal window.

If you want to use several different modals, add a unique variable for each window.

In order not to switch between tabs to select the following items, we will use the navigator. To do this, unpin the Page element by clicking on the button icon.

Now we add logic for the rest of the elements.

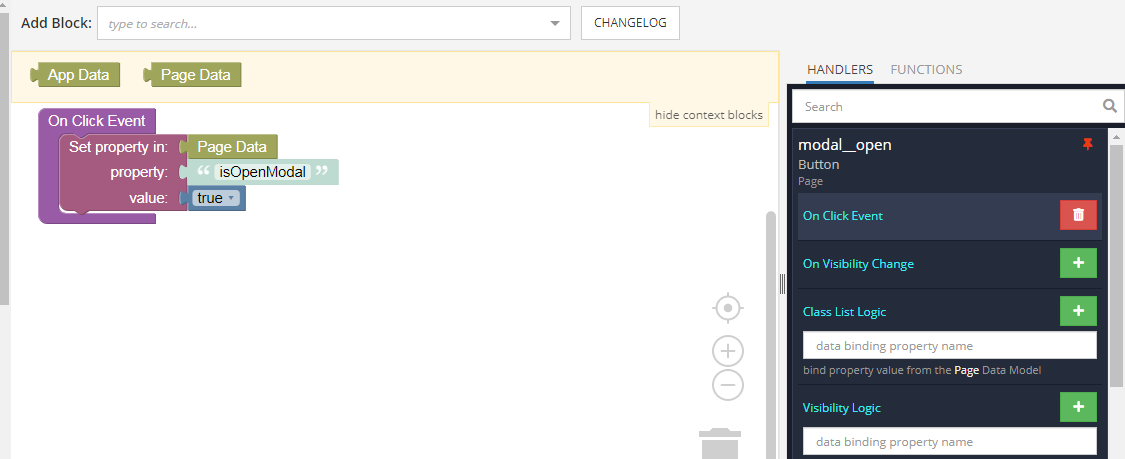

On the window open button, use the On Click Event. Set the `isOpenModal` variable to `true`.

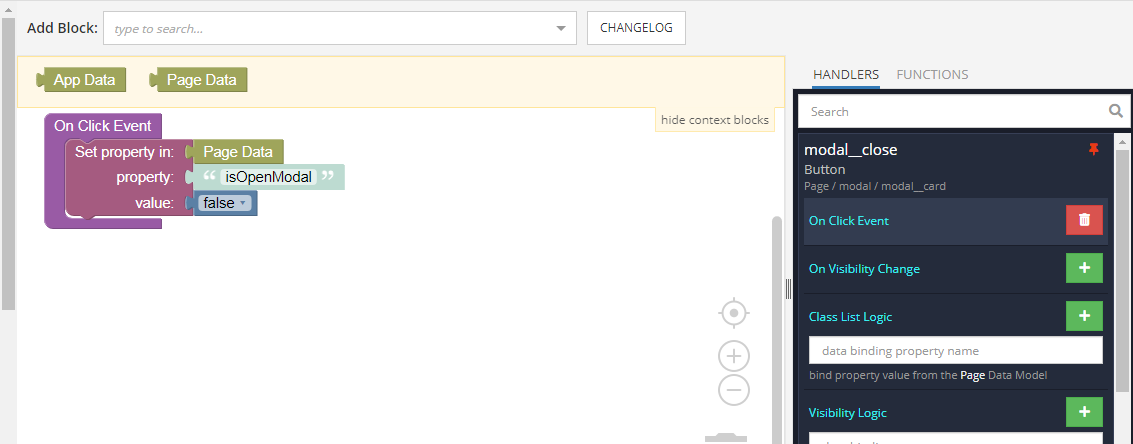

Similarly, add a handler for the On Click Event for the close button and shade curtain.

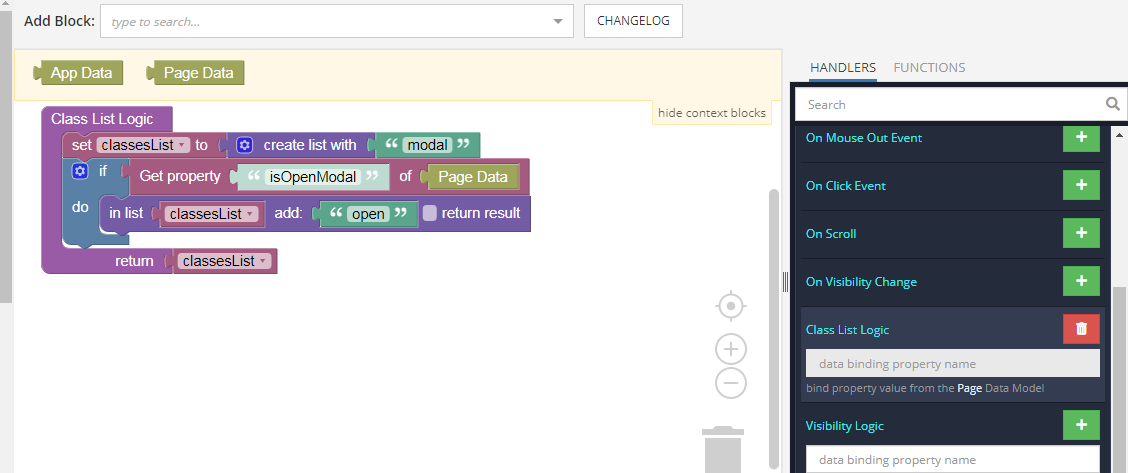

Now, all that remains is to add logic to the element with the `modal` class. For this, we use the Class List Logic event. Here, depending on the value of the `isOpenModal` variable, the open class is added or removed.

That’s all there is to it! We hope that you found this useful and, as always, happy codeless coding! | backendless |

715,267 | Twitch EventSub - The Direct Approach to Getting Started With It | Start Here You want to write something to react to Twitch Events. You look at the docs, an... | 0 | 2021-06-02T05:51:08 | https://dev.to/wyhaines/twitch-eventsub-the-direct-approach-to-getting-started-with-it-3dcj | tutorial, crystal | # Start Here

You want to write something to react to Twitch Events. You look at the docs, and maybe it is a little confusing. You see a table of contents that looks like this:

Authentication

Twitch API

EventSub

PubSub

If you click around a little, you might get more confused. There are a lot of details, and there seems to be more than one way to do things, at least in some cases, and it is easy to lose track of the thread of things amidst all of the information.

You might look at PubSub, and you might think, "Wow! This seems very simple and straightforward!"

You would be right. It is. Then you might discover that it only provides access to a small percentage of the Twitch events, and things that you really want, like *channel.follow* notifications, are not available.

Eventually, you look more at EventSub. This is the future. This is what everyone should be using:

"Awesome!" you think. "Let's dive in and....wait, how do I use this?"

To use it, you have to subscribe to events. And to subscribe to events, you have to have authorization. And to be authorized...there are no links there, but in the left menu there is an *Authentication* link, so you click there, and...

OK, more steps. Registering the app. Then getting a token. Only there are 5 different kinds. Which one is needed? OK, and how do I get that? And how do I use it once I have it?

You get the picture. The details are all there, in the documentation, but it is a maze of twisty little passages, and you may spend a lot of time flipping from one page to another to piece it all together.

Fear not. I've done the flipping. I've got your back.

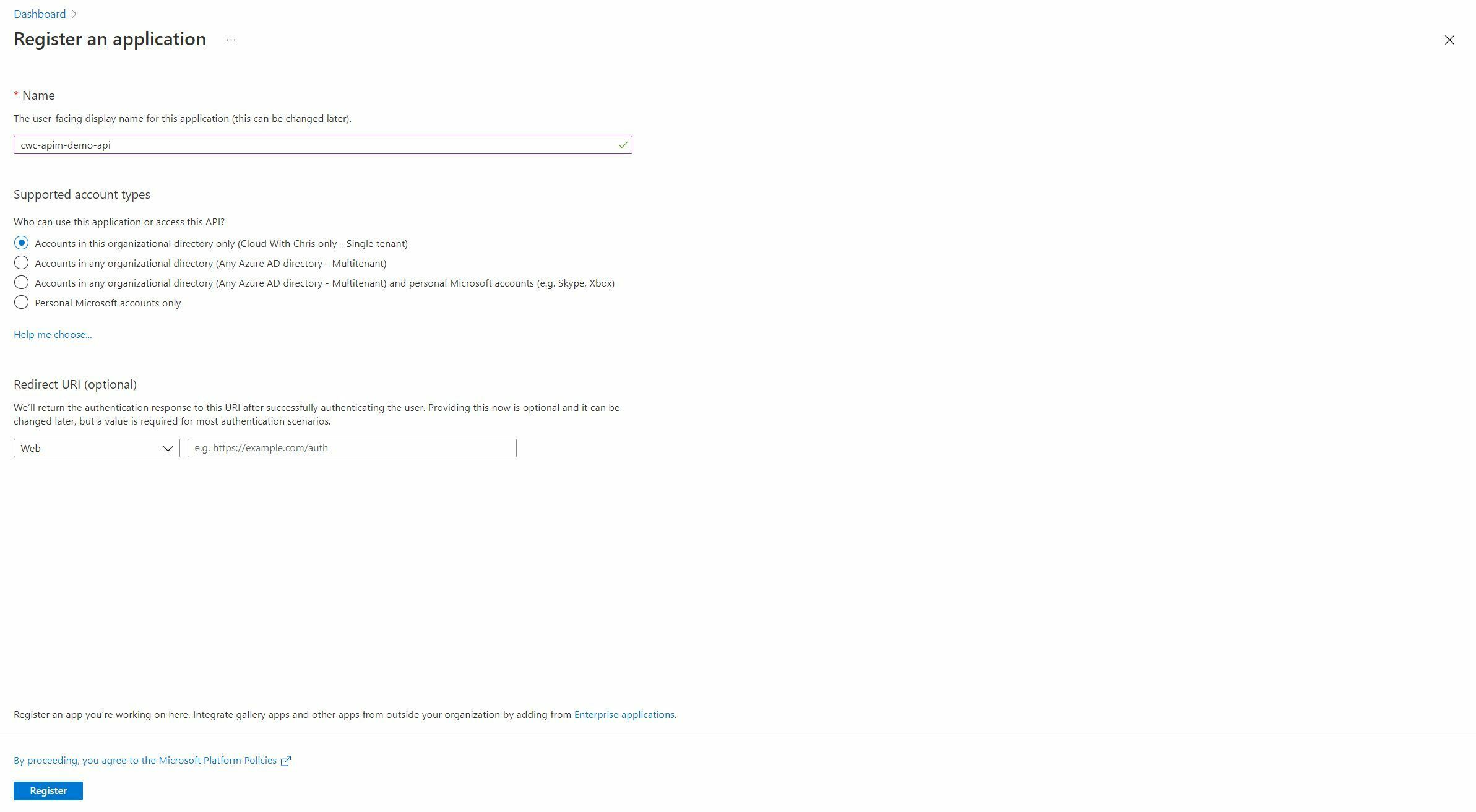

## Step 1 -- You need to register your app

First things first -- app registration. In order for your app to interact with eventsub, Twitch wants to know about it as a unique entity. So, you need to register it.

Go to [https://dev.twitch.tv/console](https://dev.twitch.tv/console). On the right of the page, there should be a section labeled *Applications*. Click on the  button.

In the form that is on the next page, you have to provide a few elements of information.

Give your app a name.

Unless you know with certainty what this is or will be, just put `https://localhost` in, and press the *Add* button.

Finally, select an appropriate category for your app, and then press the *Create* button.

You will be taken to the Apps Console, where you will see something like this:

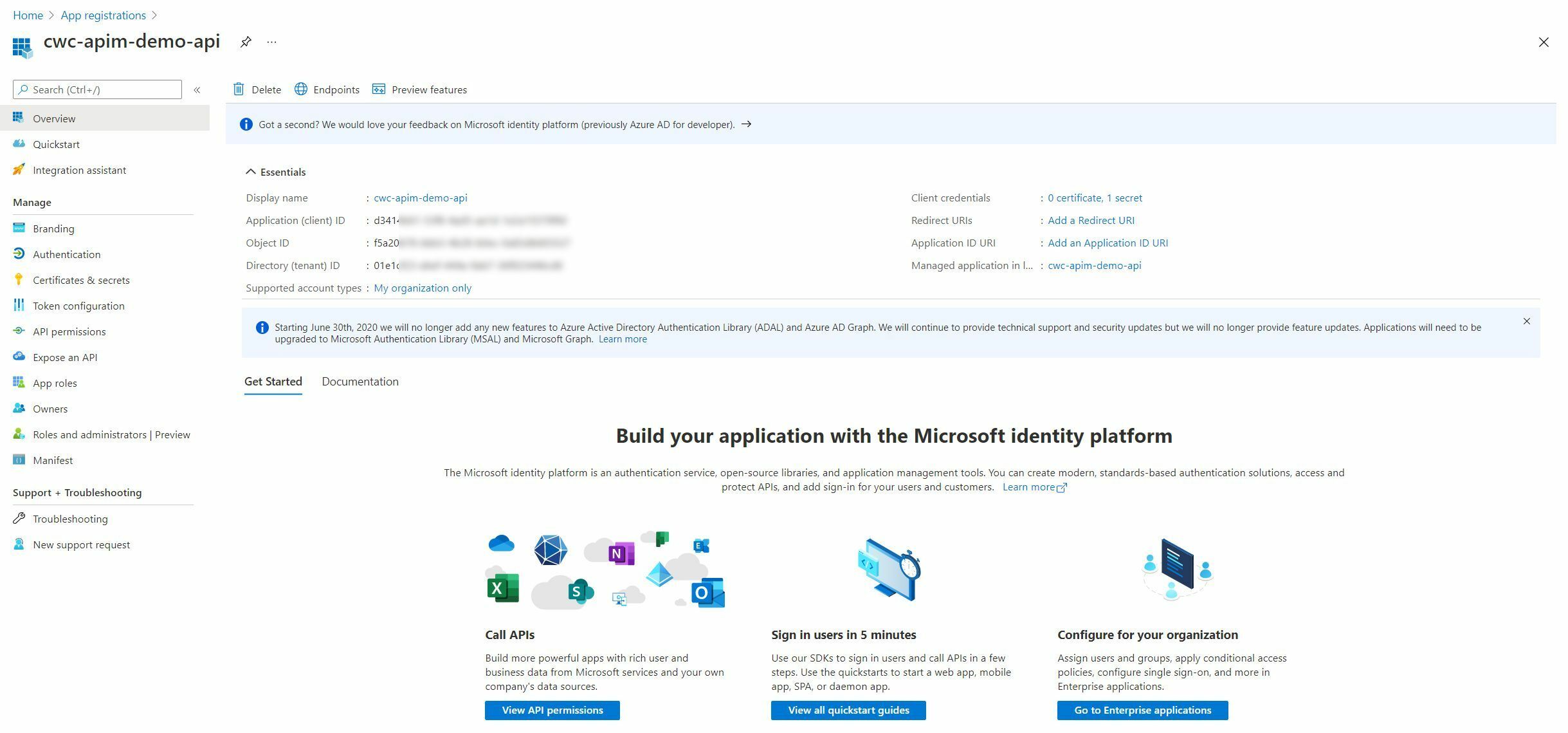

The next step is to click the *Manage* button on your newly created app. You will be taken back to a page that looks just like the one where you created the app, except that it has a few extra bits of information at the bottom:

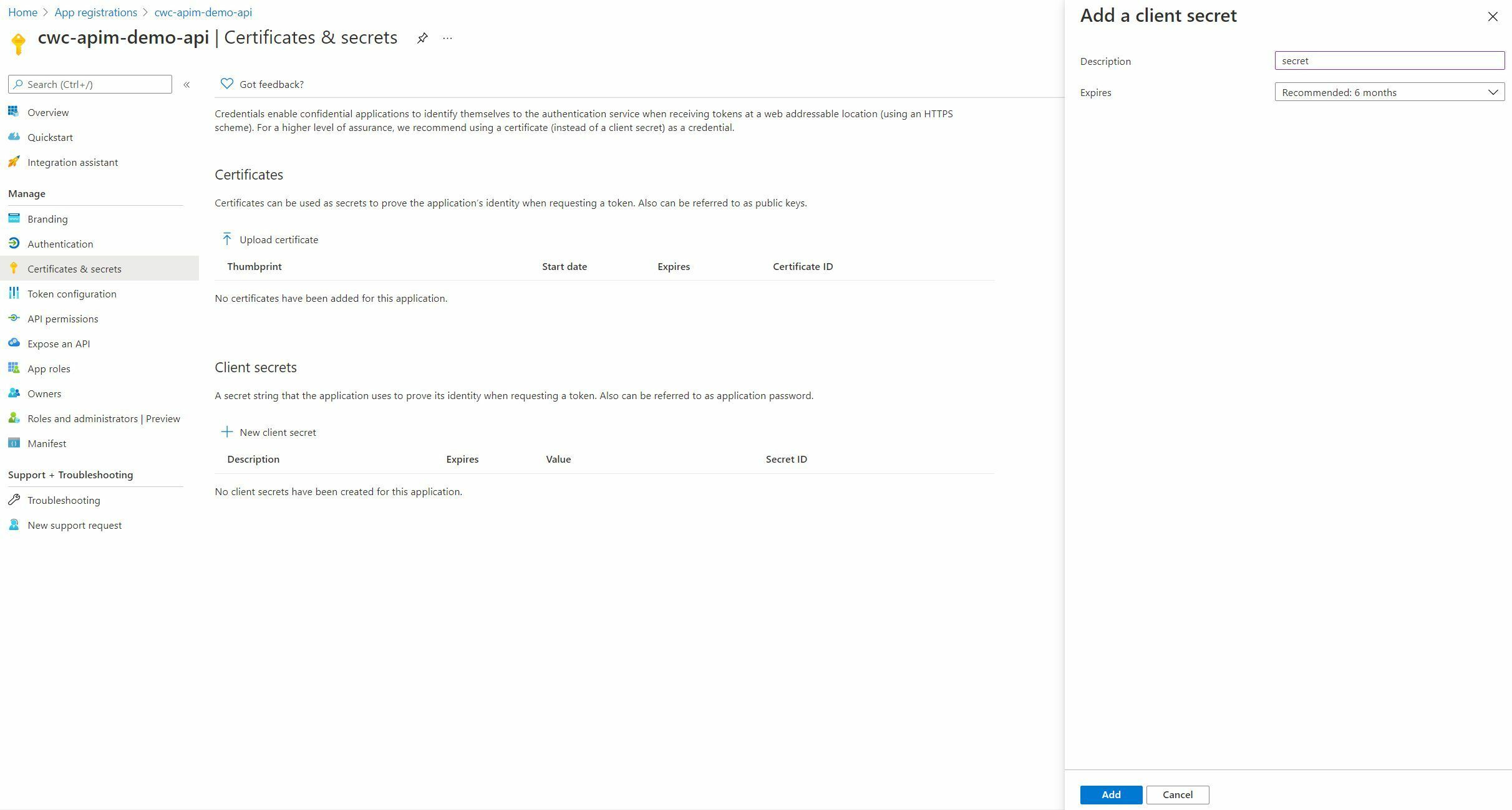

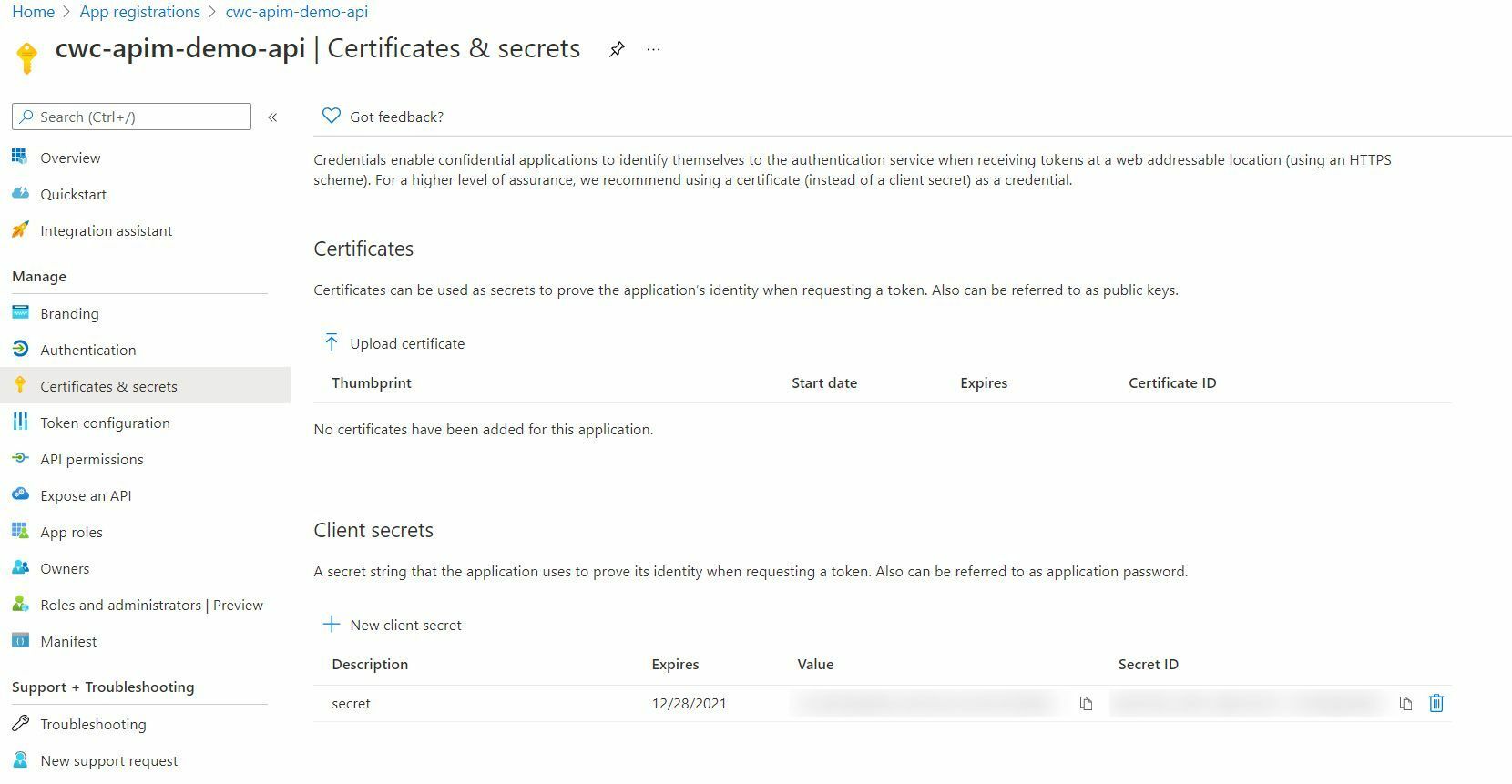

The Client ID is public information. There is no need to hide that. The application secret, however, is, well, secret. It will be generated when you press the  button. Take note of the string of characters that are revealed when you press that button, and save it somewhere else for later use. You can not see it again once you leave the page, so if you lose the secret, you will have to regenerate a new one, which expires the old one.

See? This is easy so far.

The next thing that you need to do is to generate an access token for your application, using the *Client-ID* and *Client-Secret* that you have just generated.

## Step 2 -- Generate your access token

The next step is to generate your access token. There are five different types of access token, but the one that is needed for EventSub is the [OAuth Client Credentials Flow](https://dev.twitch.tv/docs/authentication/getting-tokens-oauth/#oauth-client-credentials-flow) token type.

This type of token is an Application Access Token, intended only for server-to-server API requests, which is exactly what is needed for EventSub activities.

To get your very own shiny, new Application Access Token, you need to make a POST request to the Twitch API. The documentation detailing what is needed is in the link above, but it has the potential to be a little bit confusing.

HTTP *GET* requests pass extra parameters within the query string of the URL.

`GET /foo?param1=abc¶m2=123`

HTTP *POST* requests typically pass parameters within the body of the HTTP request. The examples show a *POST* being done with the data in the URL as a Query String.

While this is not illegal per the HTTP spec, it is not typical, nor is it required when working with the Twitch API.

If you want to generate an access key manually, you can do this using `curl`:

```bash

curl -d client_id=$TWITCH_CLIENT_ID \

-d client_secret=$TWITCH_CLIENT_SECRET \

-d grant_type=client_credentials \

https://id.twitch.tv/oauth2/token

```

If your *Client-ID* and your *Client-Secret* are stored in a couple of environment variables, *TWITCH_CLIENT_ID* and *TWITCH_CLIENT_SECRET*, the above should work from most unix-like command lines.

What is returned is one of two things. If either your *Client-ID* is invalid, there will be an error like this:

```json

{"status":400,"message":"invalid client"}

```

If the *Client-ID* is valid, but the *Client-Secret* is invalid, the error will look like this:

```json

{"status":403,"message":"invalid client secret"}

```

If both are valid, the result will be returned in JSON something like this:

```json

{"access_token":"q3b5n90ua7du0mgpwl149ge2yf90r0","expires_in":4776914,"token_type":"bearer"}

```

The value for the `access_token` key is your golden ticket. It is what permits you to access the rest of the EventSub API.

If you want or need your software to be able to generate an access key at will, though, you will need to issue the request and receive the response programmatically. The details of this may vary considerably depending on your programming language, but maybe I can help with a few examples:

### Ruby

```ruby

require "uri"

require "net/http"

require "json"

uri = URI("https://id.twitch.tv/oauth2/token")

response = Net::HTTP.post(

uri,

{"client_id" => CLIENT_ID,

"client_secret" => CLIENT_SECRET,

"grant_type" => "client_credentials"}.to_json,

{"Content-Type" => "application/json"})

access_code = JSON.parse(response.body)["access_token"]

```

### Javascript

The Javascript example assumes the use of *Fetch*.

```javascript

const Url = "https://id.twitch.tv/oauth2/token"

const Data = {

client_id: CLIENT_ID,

client_secret: CLIENT_SECRET,

grant_type: "client_credentials"

}

const Params = {

headers: { "Content-Type": "application/json" },

body: Data,

method: "POST"

}

let access_token = ""

fetch(Url, Params)

.then(response => {access_token = response.json()["access_token"]}

```

### Crystal

```crystal

require "http/client"

require "json"

response = HTTP::Client.post(

url: "https://id.twitch.tv/oauth2/token",

headers: HTTP::Headers{ "Content-Type" => "application/json" },

body: {

"client_id" => CLIENT_ID,

"client_secret" => CLIENT_SECRET,

"grant_type" => "client_credentials"}.to_json)

access_token = JSON.parse(response.body)["access_token"].as_s

```

### Bash

The Bash example assumes that the [jq](https://stedolan.github.io/jq/) utility is installed.

```bash

DATA=`curl -d client_id=CLIENT_ID \

-d client_secret=CLIENT_SECRET \

-d grant_type=client_credentials \

-s https://id.twitch.tv/oauth2/token`

ACCESS_TOKEN=echo $DATA | jq .access_token

```

Once you have a valid *access token*, the world is your oyster. The rest of the Twitch EventSub API is accessible.

## A Quick Note About the EventSub API URL

The sections that follow provide examples for how to perform each of the EventSub management actions, and an astute reader will note that the URL for each of the sections is the same. All EventSub API actions operate through the same API URL:

`https://api.twitch.tv/helix/eventsub/subscriptions`'

What differentiates the different types of actions that can be performed with EventSub requests are the HTTP verbs that are used to perform the request and the payload that accompanies it.

## Listing Subscriptions

The EventSub API provides a mechanism to see what subscriptions a client currently has and their status. This is important, because there is a limited number of subscriptions allowed per client (10000), and even failed subscription requests count against that limit. This makes it important to monitor all current subscriptions so that failed or unneeded subscriptions can be deleted.

To access a list of subscriptions, a *GET* request must be issues to *https://api.twitch.tv/helix/eventsub/subscriptions*. This request should provide *Client-ID* and *Authorization* parameters, where the value of the *Authorization* parameter is the access token generated earlier, with `Bearer ` prepended to it:

```

Authorization: Bearer deadbeefdeadbeef

```

With a valid access token, the response will be a JSON payload with a `data` field containing a list of subscriptions, along with some fields showing the limit on the number of subscriptions, as well as how many total subscriptions the client has.

A full specification for this API request can be found at [https://dev.twitch.tv/docs/api/reference#get-eventsub-subscriptions](https://dev.twitch.tv/docs/api/reference#get-eventsub-subscriptions).

## Creating a Subscription

This is the most complex operations when dealing with EventSub, as subscription creation also involves a verification step that allows Twitch to validate that the callback that was given in the subscription request is owned by the client that requested it, as well as a signature validation to allow the client to verify that the Twitch verification request is itself valid.

#### Step 1 -- Request a subscription

A subscription request is initiated by sending a *POST* request to *https://api.twitch.tv/helix/eventsub/subscriptions* with the following HTTP Headers:

```HTTP

Client-ID: CLIENT_ID

Authorization: Bearer ACCESS_TOKEN

Content-Type: application/json

```

Within the body of the request, a JSON payload with four keys, `version`, `type`, `condition`, and `transport` is expected. Each should have a value as follows:

* **version** : Currently, this is always `1`.

* **type** : This is the [name of the event](https://dev.twitch.tv/docs/eventsub/eventsub-subscription-types) to subscribe to.

* **condition** : This is an object which itself will have a single key, `broadcaster_user_id`, which contains the numeric user id of the account that is requesting the subscription.

* **transport** : This is an object with three keys, `method`, `callback`, and `secret`, which are used to specify the transport mechanism for Twitch to send event information. Currently only `webhook` is supported for the `method` key, though the documentation alludes to plans to support others in the future. The `callback` will be the URL that Twitch will contact when the subscribed-to-event occurs, and the `secret` should be a 10 to 100 character secret value unique to this subscription.

The secret will be used to validate the subsequent subscription request verification, so your code must remember what it sent as the secret for this subscription.

The whole package to issue a request for a *channel.follow* subscription will look something like this:

```json

{

version: "1",

type: "channel.follow",

"condition": {

"broadcaster_user_id": "12826"

},

"transport": {

"method": "webhook",

"callback": "https://example.com/webhooks/callback",

"secret": "abcdefghij0123456789"

}

}

```

#### Step 2 -- Receive a response indicating request status

In response to the subscription request, Twitch will send a JSON payload as a response. If the request was successfully received, the response from Twitch will be similar to that described above when listing subscriptions, except that the subscription contained in the *data* array will have a status of *webhook_callback_verification_pending*:

```json

"status": "webhook_callback_verification_pending"

```

This indicates that Twitch has received and will be verifying the subscription request.

#### Step 3 -- Receive verification

Twitch must verify that the callback provided in the subscription request belongs to the caller. To that end, it will contact the callback URL in order to initiate a verification exchange.

It will make a *POST* request to the callback URL. The handler for the callback URL must be able to recognize a Twitch verification request and respond appropriately. Twitch sets a number of custom HTTP headers on the request, several of which are particularly important:

* **Twitch-Eventsub-Message-Id** : This is a UUID representing the unique ID of this specific message. This will be used in *step 4*.

* **Twitch-Eventsub-Message-Timestamp** : The timestamp is also used in *step 4*

* **Twitch-Eventsub-Message-Type** : This is the message type. For a verification attempt, this will be set to `webhook_callback_verification`.

* **Twitch-Eventsub-Message-Signature** : This is a *HMAC-SHA256* message signature in the format of `sha256=4471d611ed1f44cf2fe1d7a462fc62`. This is used in *step 4*

* **Twitch-Eventsub-Subscription-Type** : This is the subscription type that is being verified. From the example above, this would be `channel.follow`.

In the body of the request will be a JSON payload that contains another copy of the subscription, in the same format that has already been discussed, along with a *challenge* key, and a value in the form of a random string of letters and digits separated into clusters by dashes. The *challenge* will be used after the request is validated, in *step 5*.

#### Step 4 -- Validate the request's *Message-Signature*

The *Twitch-Eventsub-Message-Signature* is calculated with *HMAC-SHA256* using the secret that was provided to Twitch in the original subscription request. It is a concantenation of the value of the *Twitch-Eventsub-Message-Id* and the *Twitch-Eventsub-Message-Timestamp* headers with the message body, signed using *HMAC-SHA256* with the aforementioned secret.

If the calculated signature does not match the signature that was provided in the *Twitch-Eventsub-Message-Signature* header, return a 403 status. If it does match, continue to *step 5*.

Your code will probably look something like this:

```crystal

calculated_signature = OpenSSL::HMAC.hexdigest(

OpenSSL::Algorithm::SHA256,

secret,

request.headers["Twitch-Eventsub-Message-Id"] +

request.headers["Twitch-Eventsub-Message-Timestamp"] +

request.body.gets_to_end

)

signature = request.headers["Twitch-Eventsub-Message-Signature"]

if signature != calculated_signature

response.respond_with_status(403)

else

# Yay! The Signature was verified. Continue with processing.

end

```

#### Step 5 -- Respond to the verification request with the *challenge*

At this point, your code will have validated Twitch's request for verification. The only thing that is left to do is to respond to the validation request.

As mentioned previously, the JSON payload in the body of the request will have contained a key *challenge*. The value for this key must be returned in a status code 200 response to the Twitch request, with nothing added or changed.

A sample of how this might look is as follows:

```crystal

body = request_body.gets_to_end

params = JSON.parse(body)

challenge = params["challenge"]?

if challenge

response.status_code = 200

response.write challenge.as_s.to_slice

response.close

end

```

#### Step 6 -- There is no step 6

At this point the subscription is active.

## Deleting a Subscription

At some point, you will want to delete a subscription, either because you no longer need the information, or because a subscription request failed, and you need to clear it out.

Deleting subscriptions is a straight forward process, fortunately.

Every subscription has a UUID that identifies it. This ID can be retrieved from the JSON response that is returned when subscriptions are listed. To delete a subscription, a *DELETE* request is sent to the *https://api.twitch.tv/helix/eventsub/subscriptions* URL, with *Client-ID* and *Authorization* headers, just as for listing subscriptions.

## Handling Notifications

When an event occurs for one of the active subscriptions, Twitch will send a *POST* request to the callback URL with the details. The headers will be the same as was discussed above for subscription verification, except that the *Twitch-Eventsub-Message-Type* header will have a value of `notification.

The JSON payload for the request will contain an object with two top-level keys, `subscription`, and `event`.

The value for the `subscription` key will contain a copy of the subscription that generated the event.

The value for the `event` key will contain an object that describes the event details. The precise values that will be available in this object depend on the event type. Please [refer to the Twitch documentation](https://dev.twitch.tv/docs/eventsub/eventsub-reference) in order to figure out what to expect.

It is expected that the notification will be verified in exactly the same manner that the subscription request was verified, by checking the *HMAC2SHA256* signature of the request before trusting it. If it is validated, it is expected that a 200 response will be sent back to Twitch to confirm that the event was received. If validation fails, it is expected that a 403 response (or other appropriate 4xx response) is sent to Twitch to indicate the validation failure.

If Twitch isn't sure that the event was received (such as a case where neither a 2xx nor a 4xx response are received in response to an event), Twitch may resend the event, so one's event handler must be able to cope if an event that was already received is received a second time.

## All Of The Details Are In The API Docs

The Twitch API documentation contains all of the above details, and while they are not presented in a linear fashion that is easy to implement-your-own-code from, they are all present in full details if one hunts enough.

Please use this as a guide to get yourself going, and then refer back to the Twitch documentation for full details, as in many places some of the details have been elided in order to keep this guide as direct and simple as possible.

---

I stream on Twitch for The Relicans. [Stop by and follow me at https://www.twitch.tv/wyhaines](https://www.twitch.tv/wyhaines), and feel free to drop in any time. In addition to whatever I happen to be working on that day, I'm always happy to field questions or to talk about anything that I may have written. | wyhaines |

715,451 | Kotlin monthly wrap up - May 2021 | Let me share with you 3 interesting articles from #kotlin from May 2021. I follow here the example... | 13,419 | 2021-06-01T20:22:01 | https://dev.to/jmfayard/the-3-most-interesting-kotlin-posts-of-may-2021-4i4g | kotlin, bestofdev, android | Let me share with you 3 interesting articles from [#kotlin](https://dev.to/t/kotlin/top/month) from May 2021.

I follow here the example from @sandordargo [for #c++](https://dev.to/sandordargo/the-3-most-interesting-c-posts-of-may-2021-1974), and like him, it's not a popularity contest, I add subjectivity to the list yet try not to include multiple articles from the same author.

This month, it's all about Jetpack Compose!

----

@zachklipp does a deep dive inside Compose reactive state model. He takes an historical look at how we used to write code with callbacks, what RxJava brought to the table and what questions were left open. He then shows how Jetpack Compose allows us to write fully reactive apps with less boilerplate and hopefully less cognitive overhead than we’ve been able to do in the past. Simple, clear code that is easy to read and understand will (usually) just work as intended. In particular, Compose makes mutable state not be scary anymore.

{% link https://dev.to/zachklipp/a-historical-introduction-to-the-compose-reactive-state-model-19j8 %}

---

@tkuenneth has started a cartography of Jetpack Compose. It's not a tutorial on how to use it, but an exploratory approach of what Jetpack Compose is made of. What belongs to the runtime? What is in the compiler? What is inside the foundation?

{% link https://dev.to/tkuenneth/cartographing-jetpack-compose-compiler-and-runtime-1605 %}

---

So much for the theory, here goes some coding. Sebastian Aigner from @kotlin tells us how he built a small clone of the classic arcade game Asteroids with Jetpack Compose for Desktop, a port from JetBrains to MacOS/Windows/Linux. Impressively the code is only 300 lines of code and was written in one night!

{% link https://dev.to/kotlin/how-i-built-an-asteroids-game-using-jetpack-compose-for-desktop-309l %}

---

Happy coding, feel free to share your favorite one in the comments.

| jmfayard |

715,722 | How To Deploy Django App To Heroku- The Simple Way | Have you ever tried uploading your django app to heroku but felt it was too complicated? If so, in... | 0 | 2021-06-02T14:06:14 | https://dev.to/rabbilyasar/how-to-deploy-django-app-to-heroku-the-simple-way-21mh | django, heroku, github, git | Have you ever tried uploading your django app to heroku but felt it was too complicated? If so, in this segment we will look at how you can upload your app to heroku. Don't worry, it is going to be short and to the point.

For this tutorial, I will be assuming you have an app built, so the next step you will be delving into, is on how to deploy your app to heroku.

Keeping that in mind, let's dive in and see how we can setup the app.

## Prepare your app

### Requirements.txt

If you are already working in a `virtualenv` you can easily run to create your `requirements.txt` file.

```

pip freeze > requirements.txt

```

If you want to manually write the version of the package just go to pypi website and find the latest version.

It should look something like this:

```

asgiref==3.3.4

Django==3.2.3

gunicorn==20.1.0

Pillow==8.2.0

pytz==2021.1

sqlparse==0.4.1

django-heroku==0.3.1

whitenoise==5.2.0

```

### Procfile

- Create a file with name `Procfile` (Make sure to not have any extension). The `Procfile` should be in the same directory as your `manage.py` file.

- Install gunicorn.

```

pip install gunicorn

```

- Make sure to add gunicorn to your `requirements.txt` file.

- Add the following line to your `Procfile`. The app name is basically the folder name where you have your `wsgi.py` file.

```

web: gunicorn <app_name>.wsgi

```

### settings.py

- Next we will be installing a package called `django-heroku`, after installing make sure to add it on the `requirements.txt` file.

```

pip install django-heroku

```

- Now go to your `settings.py` and import it on top.

```

import django_heroku

```

and paste this at the bottom of the file or else you are going to get a `KeyError`.

```

django_heroku.settings(locals())

```

### Django Static

- Now to setup the static assets.

```python

BASE_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))

# Static files (CSS, JavaScript, Images)

# https://docs.djangoproject.com/en/1.9/howto/static-files/

STATIC_ROOT = os.path.join(BASE_DIR, 'staticfiles')

STATIC_URL = '/static/'

```

This will basically tell django where to look for the static files and which folder to look for when `collectstatic` is run.

- Install WhiteNoise and update your `requirements.txt` file.

```

pip install whitenoise

```

- Next, install WhiteNoise into your Django application. This is done in settings.py‘s middleware section (at the top):

```python

MIDDLEWARE_CLASSES = (

# Simplified static file serving.

# https://warehouse.python.org/project/whitenoise/

'whitenoise.middleware.WhiteNoiseMiddleware',

...

```

- Finally, if you would like gzip functionality enabled, also add the following setting to settings.py.

```python

# Simplified static file serving.

# https://warehouse.python.org/project/whitenoise/

STATICFILES_STORAGE = 'whitenoise.storage.CompressedManifestStaticFilesStorage'

```

We will need to create one more file called `runtime.txt`. This will tell heroku which version of python needs to be installed. This step is optional because heroku will use a python version automatically when building but if you want a specific python version you can add it like this.

```

python-3.8.5

```

That is it for configuring our app for deployment. Now we go to the heroku cli and see how to upload the app.

## Deployment

If you don't have heroku installed on your machine follow this [link](https://devcenter.heroku.com/articles/heroku-cli)

- login to heroku.

```

heroku login

```

After successful login we will be able to create our app directly from the terminal.

- Create a heroku app

```

heroku create

```

This will create a heroku app with a random available name. However, if you want to give a name of your choice, just add the name after `heroku create`. Make sure the name is unique and available.

If you already have a heroku app and want to add the app as a Git remote, you need to execute

```

heroku git:remote -a <yourapp>

```

- Now add all the files to git and commit.

```

git add .

git commit -m "deploy heroku"

```

- Push all the files and build.

```

git push heroku master

```

Once done this will deploy your app. Once deployed, we will need to migrate our database.

```

heroku run bash

```

This will give us a quick terminal to control our app. Here you can run all your django commands.

```

python manage.py migrate

```

- This will migrate all our files to the database.

## Conclusion

That is all for now. If you have followed all the steps above you should have an app which is now running on heroku. If you have come across any issues please leave a comment or knock-me. I will try to help fix them.

Best of luck. Happy coding :D | rabbilyasar |

715,838 | Font Snag in my code | Hey Guys! Quick question.. I have run into a lil snag with my code and not sure what i'm doing wrong.... | 0 | 2021-06-02T09:45:05 | https://dev.to/mikacodez/font-snag-in-my-code-a7g | beginners, help | Hey Guys! Quick question.. I have run into a lil snag with my code and not sure what i'm doing wrong...

Is there any reason my h1 title is coming out as Comic sans instead of any other Google Fonts I try?? | mikacodez |

715,962 | flutter-doctor: Android Studio (not installed) | After installing Android studio and run "flutter-doctor" on windows terminal, it gives me an error sa... | 0 | 2021-06-02T10:47:55 | https://dev.to/thiagoanjos/fixed-android-studio-not-installed-1cao | After installing Android studio and run "flutter-doctor" on windows terminal, it gives me an error saying that: Android Studio ( not installed ). After some research, I found this solution that worked pretty well.

On terminal type: flutter config --android-studio-dir=<android studio path>

**Example on Windows:**

flutter config --android-studio-dir="C:\Program Files\Android\Android Studio"

**Example on Linux:**

flutter config --android-studio-dir="/snap/android-studio/current/android-studio"

| thiagoanjos | |

715,982 | Cryptography- Shift Cipher | Shift cipher is the most basic and simple substitution cipher ever existed. It was used by numerous k... | 0 | 2021-06-06T09:45:15 | https://dev.to/sirri69/cryptography-shift-cipher-2oki | python, security, cybersecurity, javascript |

Shift cipher is the most basic and simple substitution cipher ever existed. It was used by numerous kings and common people in ancient times, because of its simplicity. It is also known as the Caesar cipher as he used a generalized form of it known as ROT-13.

##How Shift Cipher Works:-

Shift cipher is nothing but substitution of letters to their left or right by some specific number of letters, and that number of letters will be known as the key of the cipher. Once the key is decided and the plaintext is encrypted to ciphertext, we can send the cipher text to whomever we want. If he/she will have the key, then he/she will easily decrypt the message and read it and can reply to that encrypting the message with the same key.

##Encrypting the text using Shift Cipher

1. Select a key, if you want to shift your letters to right, choose a number which is positive, a negative number will result in shifting of letters to left side.

2. Once the key is selected, convert the letters to their respective numeric positions, where A->1, B->2 and so on.

Now apply the given formula to every number:-

```

C=(P+K)%26

```

Where P is your plaintext converted to numeric positions, K is the key and C is the numeric positions of the letters in ciphertext.

Now convert the numeric positions of ciphertext (C) to alphabets according to 0->Z, 1->A so on, and you have your plaintext encrypted!!!

Example:-

Let our plaintext to be:-

“ ”

Then numeric positions of our plaintext will be:-

| k | i | l | l | t | h | e | k | i | n | g | t | o | n | i | g | h | t |

|----|---|----|:--:|:--:|---|---|----|---|----|---|----|----|----|---|---|---|----|

| 11 | 9 | 12 | 12 | 20 | 8 | 5 | 11 | 9 | 14 | 7 | 20 | 15 | 14 | 9 | 7 | 8 | 20 |

Let our key be 7, after using the formula for encryption, the number will look like:-

| 11 | 9 | 12 | 12 | 20 | 8 | 5 | 11 | 9 | 14 | 7 | 20 | 15 | 14 | 9 | 7 | 8 | 20 |

|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|:--:|

| 18 | 16 | 19 | 19 | 1 | 15 | 12 | 18 | 16 | 21 | 14 | 1 | 22 | 21 | 16 | 14 | 15 | 1 |

And finally, converting the numbers back to letters will give us our ciphertext,

| 18 | 16 | 19 | 19 | 1 | 15 | 12 | 18 | 16 | 21 | 14 | 1 | 22 | 21 | 16 | 14 | 15 | 1 |

|:--:|:--:|:--:|:--:|:-:|:--:|:--:|:--:|:--:|:--:|:--:|:-:|:--:|:--:|:--:|:--:|:--:|:-:|

| R | P | S | S | A | O | L | R | P | U | N | A | V | U | P | N | O | A |

Letters after conversion are :-

> 'RPSSAOLRPUNAVUPNOA'

The gibberish above is ciphertext, it is often written without any spaces to add complexity to the ciphertext.

##Writing Python code for Shift Cipher

Writing code for this cipher is really easy, a one liner, some might say. Here's the code:--

```py

def shift_encrypt(plain_text: str, key: int):

cipher_text = [] # stores encrtypted text

plain_text = plain_text.lower().replace(' ','') # reduce complexity

for i in list(plain_text):

cipher_text.append(chr((ord(i)-97 + key)%26 + 97)) # real encryption happens here

return "".join(cipher_text)

```

The code up here is pretty self explanatory, except a few lines. Let's go through all the lines on by one.

```py

cipher_text = [] # stores encrtypted text

plain_text = plain_text.lower().replace(' ','') # reduce complexity

```

These two lines define a list names `cipher_text` to store the text after encryption and reduces the complexity of the plain text by converting the text to lower case and removing all the whitespaces.

Then comes the most important line in the code:-

```py

cipher_text.append(chr((ord(i)-97 + key)%26 + 97))

```

First of all, this line of code converts the letters to their ASCII representation using the `ord` function, which means a will become 97, b will become 98, and so on.

Then it will subtract 97 from the ASCII code, which will convert 97 to 0, hence placing 'a' at 0th position and placing 'z' at 25th position. This is done to simplify the operation of shifting.

After that is done, we proceed and add the `KEY` to shift and actually encrypt the data.

Then we do `mod 26` because after subtracting 97 our alphabets lies from 0 to 25, and doing `mod 26` makes sure that nothing goes out of range of our alphabets. 97 is added in end to convert the the shifted numbers back to their ASCII representations.

After this much is done, we convert the shifted ASCII codes back to characters using the `chr` function. And the encryption is done.

You can see that this line is inside a for loop, that is to ensure that the transformation in this line of code is applied to every single letter of the `plain_text`.

In the end,

```py

return "".join(cipher_text)

```

We convert the encrypted characters to a string and return it.

Decryption part of the cipher is also pretty much the same, except a small thing.

```py

def shift_decrypt(cipher_text: str, key: int):

plain_text = []

cipher_text = cipher_text.lower().replace(' ','')

for i in cipher_text:

plain_text.append(chr((ord(i)-97 - key)%26 + 97))

return "".join(plain_text)

```

Instead of adding `KEY`, we subtract it this time to perform a inverse of the shift operation we did in the encryption process.

You can try the code here:--

(JUST CLICK ON THE RUN(>) BUTTON)

{% replit @PranavPatel4/CryptoCode %} | sirri69 |

716,131 | Python on the ev3dev framework | Introduction: Recently, I have been working on a project to use Python on the ev3dev platf... | 0 | 2021-06-02T15:27:23 | https://dev.to/colewilson/python-on-the-ev3dev-framework-4ali | python, ev3, robotics, linux | ---

title: Python on the ev3dev framework

published: true

date: 2020-07-20 21:37:53 UTC

tags: python,ev3,robotics,linux

canonical_url:

---

## Introduction:

Recently, I have been working on a project to use Python on the ev3dev platform. This is useful, because you can write complex programs for your robots. For example, you could [connect the ev3 to a PS4 controller.](https://by-the-w3i.github.io/2018/01/03/EV3-PS4-controller/) For me, I was working on a SumoBot, a robot that battles with another, and tries to push it out of a ring. However, this approach will work with an sort of robot.

## Overview:

In this tuorial, I will show you how to:

- Download the ev3dev operating system for your brick.

- Connect your brick to your Windows or Mac computer.

- Setup PyCharm on your computer.

- Connect to a GitHub repo.

- Sync the code to your brick.

## Materials:

You will need the following:

- A Windows or Mac with a working Internet and Bluetooth connection.

- An ev3 brick with various sensor and motors.

- A 2-16gb SD card.

- A way to put the SD card into your computer.

## 1: Getting started:

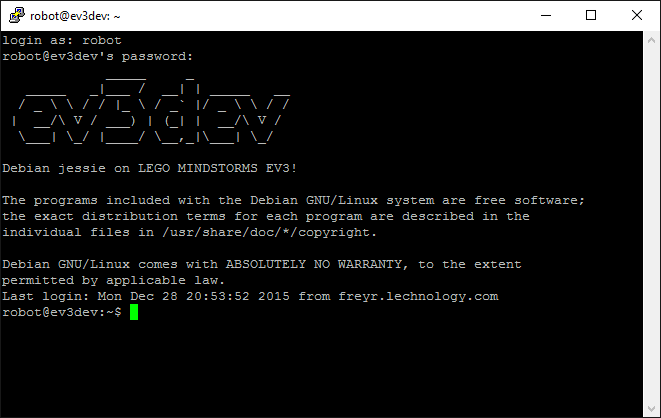

1. First, you will have to download the ev3dev boot image for the ev3 brick. This enables it to work with the Python programming language. You can find the image here.

2. Unzip the file, and move the contents to somewhere where you can easily access them.

3. Next, flash the image onto your SD card.

> **Note:** to do this, use a program like Etcher, or Rufus, or use the command line. Make sure that you select the correct disk image.

4. Insert the SD card into the slot in the brick. Make sure that your brick is off.

5. Next, turn on your brick. It will flash wierdly and show lots of text. If it doesn’t show a menu screen in 15 minutes, repeat steps 4-5.

6. Next, we need to connect the brick to your computer.

7. [View this website for instructions on how to connect your brick to the computer.](https://www.ev3dev.org/docs/tutorials/connecting-to-the-internet-via-bluetooth/)

8. Connect to your computer with ssh:

- If you have a Windows computer download PuTTy, and launch it. Next type in `ev3dev.local`

- Then, type `robot` as the username.

- If you have a Mac, then open Terminal, then type `ssh robot@ev3dev.local`

- Next type in `maker` as the password.

> Having an error message? Make sure your robot is on, and that you have connected the brick. Also, make sure you have the correct username and password.

- You have access to your brick’s inner workings! Try typing `fortune` to get a witty response from the computer.

- Your home folder is `/home/robot` this is where you are put when you connect to the brick.

> **Some basic commands:**

>

> - **cd {path}** : changes the current working directory to

> - **ls** : This shows all the files and folders in the current working directory.

> - **cat {file}** : This returns the contents of the specified file.

> - **nano {file}** : This opens an editor for the specified file. Press `ctrl+x` (`^x`)to save and exit.

> - **clear** : Clears the terminal.

> - **logout** : Logs out of remote connection.

> - **rm -rf {path}** : Deletes file or folder. - **python3 {file}** : Runs the specified python program.

- Right now, you can `nano` files into existence and run them from the terminal, but this is less than ideal, in the next section, you will set up PyCharm and Git.

## 2: Setting up a Development Environment:

1. [Download PyCharm community edition](https://www.jetbrains.com/pycharm/download/) (or the Pro version if you have it, it’s not needed.)

2. Open the file and install it to your computer.

3. If you have Windows, you have to configure Git, if you have Mac, you already have it installed, so skip to step 4.

- Download [git-scm for Windows](https://git-scm.com/download/win).

- Open and follow the install instructions with default options.

- Open PyCharm, and push `ctrl + alt + s` to open preferences.

- Goto `Version Control > git` and in the top file path put `C:\Users\<your username>\AppData\Local\Programs\Git\git.exe`

4. Now, [get a GitHub account](https://github.com/signup) if you don’t have one yet. They are free, and tremendously useful.

5. Goto [https://github.com/new](https://github.com/new) and create a new repository with the name `ev3`, and select the option to include a `README.md`.

6. Open PyCharm.

7. Goto `Checkout from Version Control` and go to the GitHub tab. Sign in, and select your `ev3` repository.

8. Now, you should have your code in PyCharm. As you can see, all that you have now is a file called `README.md`.

## 3: Adding Code to the Program.

1. First, in the upper lefthand corner, it will say your project name. Right click on it, and push Create New Python file.

2. Pycham should ask you if you wan to add the file to Git. You should do this, and select the option to automatically do this.

3. In the new file, type:

```

import time

print('The program is starting...')

time.sleep(2)

print('The program is ending.')

```

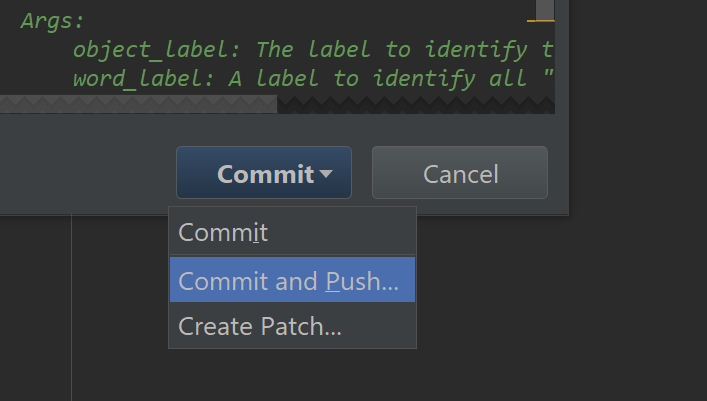

4. In the VCs tab in PyCharm, press commit changes.

5. Click the blue arrow next to the COMMIT button, and push `Commit and Push`.

6. Then push `Push`.

7. Your code is now on GitHub, but how to get it on the brick?

> You can view your code by going to https://github.com/{your-username}/ev3.

## 4: Adding code to the brick:

1. If you are on Windows, open Git-Bash from the start menu, if you have a Mac, open Terminal.

2. Type `git clone https://github.com/{ github username }/ev3.git`. This copies your code into a local file.

3. Next, type `sftp robot@ev3dev.local`, _notice it is not `ssh`_

4. Put in your password.

5. Now type `cd ~`, then `put ev3`

6. Now open up your SSH session. Type `ls` to view your files.

7. You should see a blue or green folder called `ev3`

8. Go into the folder by typing `cd ~/ev3`

9. Then, type `python3 main.py`

10. Your program takes a while to load, but then it runs!

## 5. Simplifying the process:

1. Every time you would like to add code to your robot, you will have to remove the old code from the robot, and then do all of sections 3 and 4 again.

2. We will simplify this process.

3. Close your terminal. (Either Git-Bash, or Terminal)

4. Open a new terminal.

5. Type nano `e.sh`

6. Add this code to the file:

```

echo "Starting..."

rm -rf ev3

git clone htttps://github.com/< username >/ev3

sftp robot@ev3.local:~ <<< $'put {ev3}'

echo "Done."

```

7. Push `^X` to save, and type `chmod + x e.sh`

8. Now, all you have to do is type `rm -rf ~/ev3` on the robot, and `sh e.sh` on the local computer to update the brick.

9. We can go even further and make custom commands on the brick!

10. On the brick, type `sudo nano /etc/bash/bash.rc` and enter your password.

11. Add this to the end of the file:

```

alias prep='rm -rf ~/ev3'

alias run='python3 ~/ev3/main.py'

```

12. Restart the brick.

## 6. So far…

Now, you write the program in Pycharm, and commit+push to GitHub. Then, on an SSH session, you type `prep` to clear the brick. Then, on your local terminal, type `sh e.sh`. On your SSH session, type `run`. | colewilson |

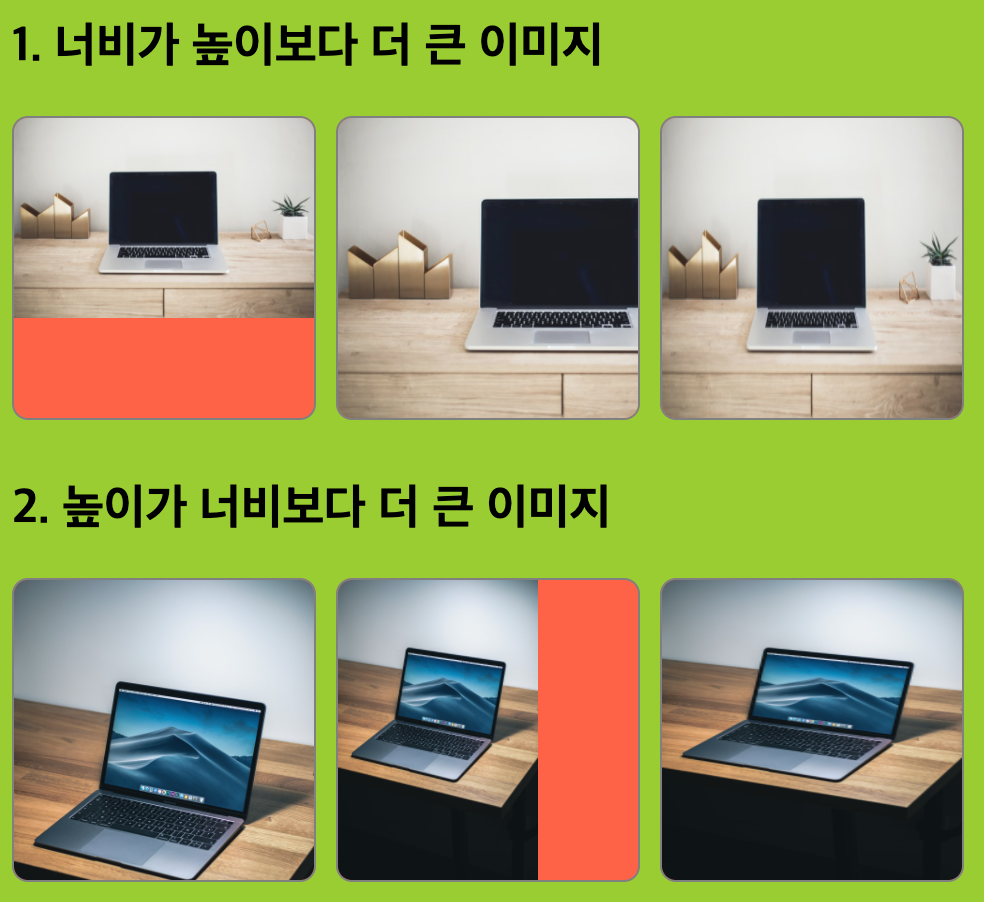

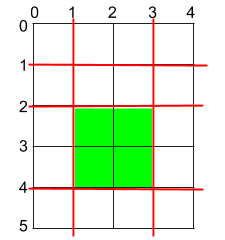

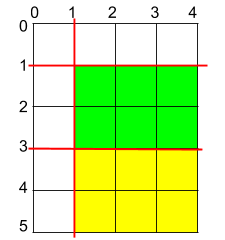

716,278 | CSS object-fit 속성 사용하기 | CSS object-fit 속성 사용하기 오늘은 CSS의 object-fit 속성을 사용해볼 것입니다. 최근 프로필 컴포넌트 개발 중 정사각형 컨테이너... | 0 | 2021-06-02T15:46:41 | https://dev.to/smilejin92/css-object-fit-mdg | html, css | # CSS object-fit 속성 사용하기

오늘은 CSS의 `object-fit` 속성을 사용해볼 것입니다.

최근 프로필 컴포넌트 개발 중 정사각형 컨테이너에 프로필 이미지를 표시해야하는 일이 있었습니다. 요구사항을 구체적으로 작성해보면 아래와 같습니다.

* 만약 이미지 크기가 컨테이너 크기보다 작거나 같다면, 컨테이너에 가득 채워서 표시

* 만약 이미지 크기가 컨테이너 크기보다 크다면, 해당 이미지의 정중앙 영역을 컨테이너 크기만큼 표시

바쁘신 분들은 [샌드박스 링크](https://codesandbox.io/s/css-object-fit-property-ubwgu)에 방문하여 소스코드를 먼저 살펴봐주세요.

## 1. width 100%; height 100%;

가장 먼저 시도해본 것은 이미지 전체를 컨테이너에 맞춰 표시해보는 것이었습니다. 아래 코드를 확인해주세요.

```html

<section>

<h2>1. 너비가 높이보다 더 큰 이미지</h2>

<div class="block">

<div class="img-wrapper">