id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

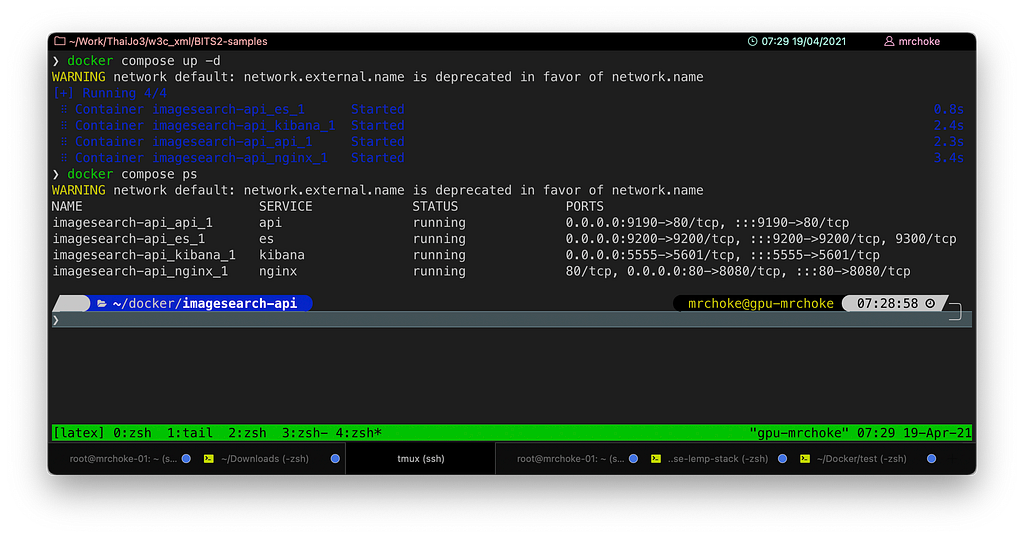

670,906 | docker compose not docker-compose | docker-compose กำลังจะถูก deprecate แล้วใช้ docker compose แทน มาดูว่าใช้ยังไงกัน สืบเนื่องมาจาก... | 0 | 2021-04-19T00:37:52 | https://dev.to/mrchoke/docker-compose-not-docker-compose-nph | composecli, ubuntu, dockercompose | ---

title: docker compose not docker-compose

published: true

date: 2021-04-19 00:33:40 UTC

tags: composecli,ubuntu,dockercompose

canonical_url:

---

docker-compose กำลังจะถูก deprecate แล้วใช้ docker compose แทน มาดูว่าใช้ยังไงกัน

สืบเนื่องมาจาก blog ของ **_คุณปุ๋ย_**

[https://www.somkiat.cc/docker-compose-to-docker-compose/](https://www.somkiat.cc/docker-compose-to-docker-compose/)

ทำให้เกิดอยากลองใช้บ้างซึ่งพบว่า

บน Docker Desktop macOS สามารถใช้คำสั่งนี้ได้เลย (ลองบน M1) เลยลองบน Ubuntu บ้างพบว่ายังใช้ไม่ได้ เลยตามไปสืบค้นดูพบว่า Project คือ

[docker/compose-cli](https://github.com/docker/compose-cli)

ทำมานานพอสมควรถ้าอ้างตามเอกสารนี้

[docker/compose-cli](https://github.com/docker/compose-cli/blob/main/INSTALL.md)

Docker Desktop macOS และ Windows จะถูก built-in ไปแล้วเลยใช้ได้ทันทีเหมือน buildx เลย

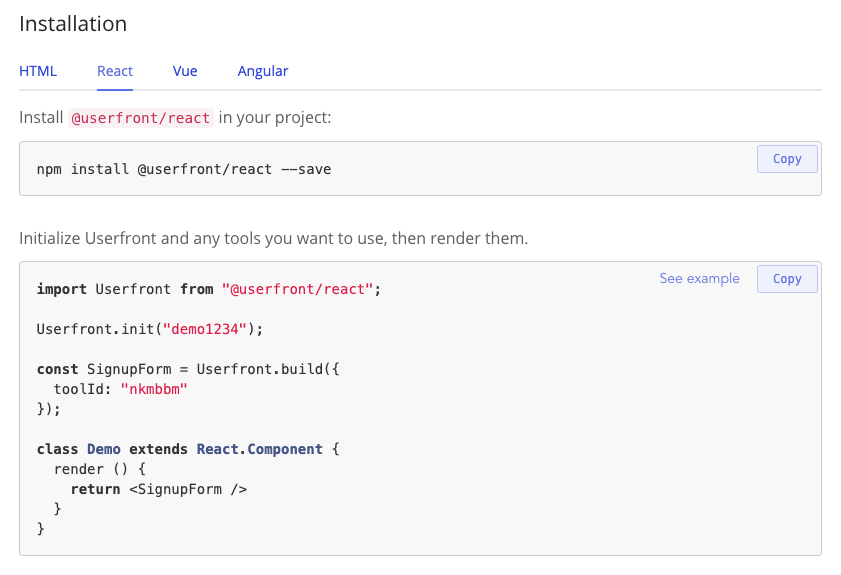

### Installation

ตาม link ด้านบนวิธีการติดตั้ง (ผมติดตั้งบน Ubuntu)

```

curl -L https://raw.githubusercontent.com/docker/compose-cli/main/scripts/install/install_linux.sh | sh

```

เมื่อติดตั้งสำเร็จจะมีคำสั่ง docker เพิ่มขึ้นมาใน path

```

/usr/local/bin/docker

```

ลองทดสอบดูว่าตอนนี้คำสั่ง docker ถูกเรียกจาก path ไหน

```

type docker

```

หรือ

```

which docker

```

ถ้ายังเรียกไปที่

```

/usr/bin/docker

```

ก็ให้ปิด shell แล้วเปิดใหม่ อ้อ!! ดูลำดับ path ของ PATH ด้วยนะครับว่า /usr/local/bin ต้องอยู่ก่อน /usr/bin

คราวนี้เราก็สามารถใช้คำสั่ง

```

docker compose

```

ได้แล้วจ้า….

<figcaption>docker compose</figcaption>

* * * | mrchoke |

671,101 | Quantum Computing | Quantum Computing Outline: Schrödinger's cat Quantum computer Basic operations Schrödinger's... | 0 | 2021-04-19T06:23:55 | https://dev.to/geehaad/quantum-computing-2a1h |

<body style="font-size:22px">

<h1>Quantum Computing</h1>

<div>

Outline:

<ul>

<li>Schrödinger's cat

</li>

<li>Quantum computer

</li>

<li>Basic operations

</li>

</ul>

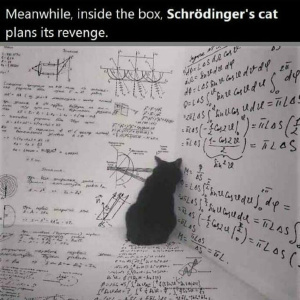

<h1>Schrödinger's cat</h1>

</ul>

</div>

<div>

<br>

<p>

You probably heard before about the Schrödinger's cat, Schrödinger's cat is a thought experiment that asks a question, is the cat, which is inside a box, alive or dead?<br>

Let's imagine you opened the box at time x-1, and you found the cat is alive, now you think that the cat is always alive,<br>

but what if you opened the box at time x+1, and found that the cat is dead!!<br>

Now you know definitely that the cat is alive at time x-1 and dead at time x+1, but what is her situation at time x?<br>

In the thought experiment, a hypothetical cat may be considered simultaneously both alive and dead as a result of being linked to a random subatomic event that may or may not occur. <br>

</p><br>

<br><br>

</div>

<h1>Quantum computer</h1>

<p >

The experiment we viewed before is the main idea of the quantum computer.<br>

In normal computers, which we use nowadays, and are also known as classical computers, the data, or the bit, is either 0 or 1, on or off, and it cannot be both, <br>

that gives us one out of 2 to the power N possible permutations.<br>

But the quantum data, which is also known as a qubit, can be both 0 and 1 with All of 2 to the power N possible permutations.<br>

</p>

<h1>Basic operation</h1>

<p >

<div>

Now we will see some linear algebra operation in classical computer and quantum computer:<br>

</div>

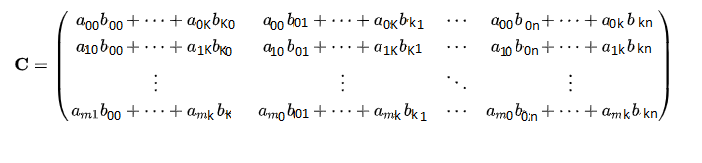

<h1>Matrix multiplication</h1>

<h2 >Definition</h2>

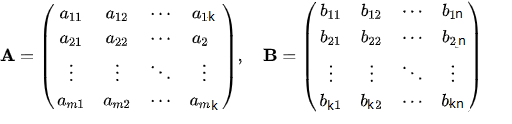

<p >In mathematics, particularly in linear algebra, <span style="color:yellow">matrix multiplication</span> <br>

is a binary operation that produces a matrix from two matrices. <br>

For <span style="color:yellow">matrix multiplication</span>, the number of columns in the first matrix must be equal to the number of rows in the second matrix.<br>

The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. <br>

The product of matrices A and B is denoted as AB. <br>

<div>From Wikipedia, the free encyclopedia</div></p>

<h2>How can we do this?</h2>

<p>Let's Define matrix A with size: m X k ,and matrix B with size: k X n <br></p>

<br>

Then, as we saw in the definition, the number of columns in A is equal to the number of columns in B, then the multiplication, AB, can be done by the following approach:<br>

<br>

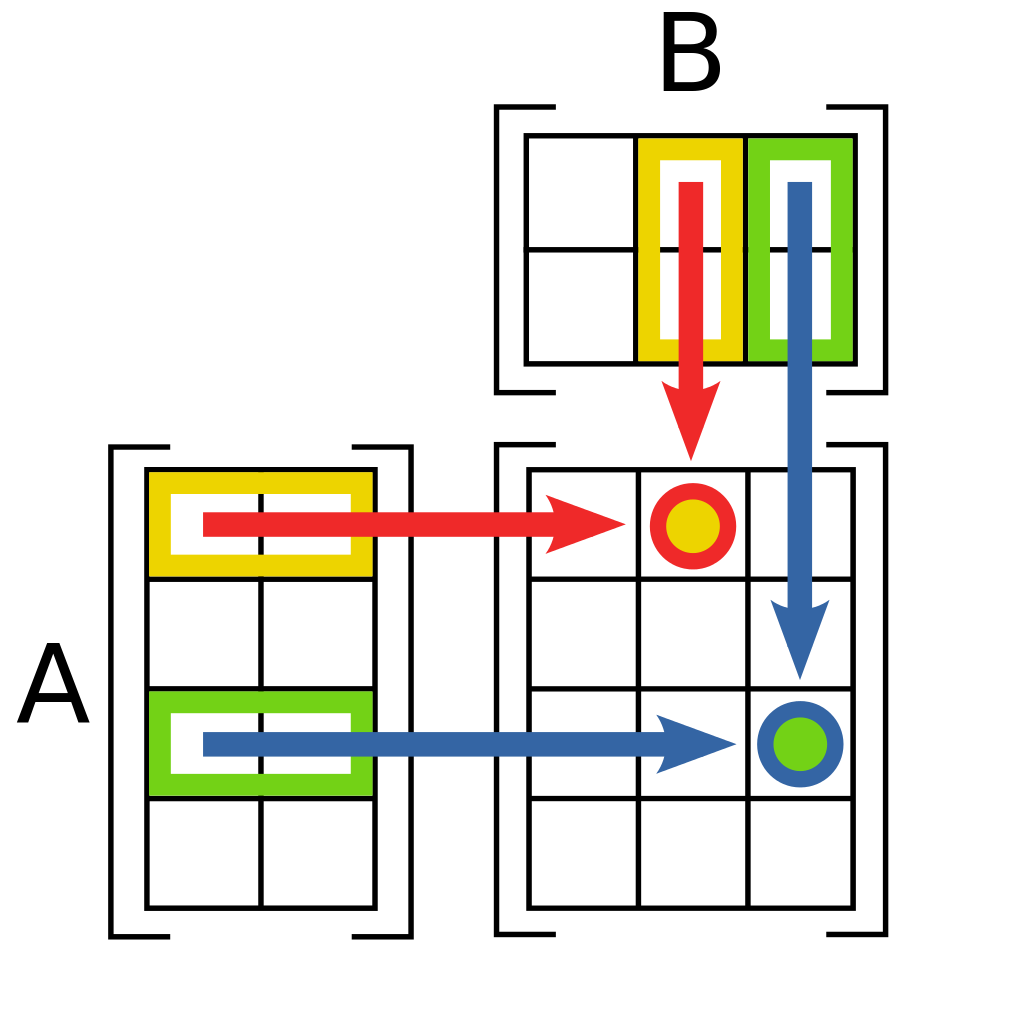

We consider multiplying the rows of the first matrix with the opposite columns of the second matrix.<br>

<h3>Steps: </h3>

We multiply the first element in the first row in the first matrix, A, with the first element of the first column in the second matrix, B, we also call this <span style="color:blue">dot notation</span><br>,<br>

we still in the same row of A, and the same column of B, we add the previous multiplication to the second multiplication, <br>

the second multiplication is multiplying the second element in the first row in the first matrix, A, with the second element of the first column in the second matrix, <br>

until the end of the row and the column <small>-remember that the number of columns of the first matrix is equal to the number of rows of the second matrix-</small> <br>

then we move to the second row of A and the second column of B and apply the same approach till the end of the two matrics.<br>

<div style=" text-align: center;">

<br>

</div>

Well, This is a lot of talking, let's write it in beautiful notations:<br>

1- Let's consider the output matrix is C, we know that the first ever element:<br>

in A: A[0][0], and in B: B[0][0],<br>

we multiply them in put the answer in the first element in C:<br>

C[0][0] = A[0][0].B[0][0]<br>

2- The second element in the same row of A: a[0][1] and same column of B: b[1][0], <br>

as we still in the same row of A, we add this in the same element of C:<br>

C[0][0] += A[0][1].B[1][0],<br>

Do you get the equation? <br>

let's take another row of A:<br>

3- Consider we moved to the second row of A [1][0], and the second column of B[0][1]:<br>

remember the row of C is as the row of A, and the column of C is as B:<br>

C[1][0] = A[0][1].B[0][0]<br>

at the end C is looking like that:<br>

<br>

Now we are ready to write the formal equation:<br>

C[i][j]= A[i][0].B[0][j]+ A[i][1].B[1][j]+ ... +A[i][n].B[n][j]<br>

<p>

<h1>Implement the outer product:</h1>

We Will see how to write the code in python:<br>

<ul>

<li>nested for loop <br>

With : O(n^3) <br>

</li>

<li><pre>for i in range(len(matrixA)): # iterat through rows of A

for j in range(len(matrixB[0])): # iterat through columns of B

for k in range(len(matrixB)): #iterate through raws of B

matrixC[i][j] += matrixA[i][k]*matrixB[k][j]</li>

</pre>

<p> The main idea here is to iterate over the rows of the first matrix and the columns of the second matrix,then apply the function we produce before.</p><br>

</ul>

</p>

<h2>Quantum computer</h2>

<p>

as we did before in classical linear algebra, the multiplication is the same, but it differes in that we get the transpose of the second matrix,<br>

we will use the vectore|matrix notation<br>

let's get the transpose of the matrix:<br>

<pre>

def trans(matrix):<br>

trans_matrix = [([0]*len(matrix)) for i in range(len(matrix[0]))]<br>

for i in range(len(matrix)):<br>

for j in range(len(matrix[0])):<br>

trans_matrix[j][i] = matrix[i][j]<br>

return trans_matrix<br>

</pre>

</p>

</p>

<div style=" text-align: center;"> </div>

</body> | geehaad | |

671,543 | Free desktop tool for insightful reporting. Let me know what you think😺 | Hey Dev.to community😸, I want to share the project my team and I are working on. We are developers... | 0 | 2021-04-19T11:51:31 | https://dev.to/juliianikitina/flexmonster-desktop-on-product-hunt-4mij | datascience, analytics, javascript, report | Hey Dev.to community😸,

I want to share the project my team and I are working on.

We are developers of JS data visualization library that is used by integrators as a powerful pivot table component for any web project.

Our team has decided to give **free access to the app to all people** using pivots in their everyday tasks.

We have embedded our pivot table into a simple desktop application on the base of our integration with Electron.js.

And now everyone can easily start working with it right after the download. This product is a convenient and simple solution for your fast reporting. And it doesn’t require any extra skills, knowledge or technical specialization.

**Flexmonster Pivot Table & Charts for desktop** is a lightweight reporting tool that provides a rich set of data analysis features: grouping, aggregating, filtering, sorting, pivot charts.

Once you build a pivot grid, emphasize important insights with formatting, customize your report or save results to any format, be it PDF, Excel, HTML, CSV, or PNG.

What makes Flexmonster Desktop an effective reporting tool for anyone is:

* a super quick start by loading data from desired sources,

* a lot of options to export your result to different formats,

* a simple way to change the view on your data: charts, compact or flat view,

* a convenient drag-and-drop and super friendly UI, that allow you to easily configure your report

& more.

You can just [download the app](https://desktop.flexmonster.com/?r=dt1), run it on your computer and quickly make a customized report to present it to your boss or colleagues in just a few minutes.

If you find it useful but need more custom scenarios for your own soft or website we have a [developer's version](https://www.flexmonster.com) of our component that can be embedded in your own project. It is powered by extended API calls and different customization options for any use case. You can also customize the app basing it on our Electron integration

😼 Today is a very important day for us - we are launching on ProductHunt: [https://www.producthunt.com/posts/flexmonster-desktop](https://www.producthunt.com/posts/flexmonster-desktop)

So we would greatly appreciate your feedback on the app and support on the platform😻.

If reporting is something you are familiar with - do give it a try!

| juliianikitina |

671,575 | Getting started with Jest in just 5 minutes | Testing with Jest, this may sound overwhelming but in fact getting started is very simple.... | 0 | 2021-04-19T13:00:40 | https://dev.to/ghana7989/getting-started-with-jest-in-just-minutes-297c | jest, testing, testdev, basictesting | Testing with Jest, this may sound overwhelming but in fact getting started is very simple.

## To get started let us test a simple function

```js

const celsiusToFahrenheit = (temp) => {

return (temp * 1.8) + 32

}

```

Here we declared a function named celsiusToFahrenheit which takes in a parameter temp which is temperature and is of type number. It returns the Fahrenheit value of celsius `temp`.

## Now let us test this!

Yeah, this our first test

First `npm i -D jest` here `-D` parameter to add it to dev dependency.

Next create a file name called anything.test.js, anything I mean literally anything.

```js

test('Should convert 0 C to 32 F', () => {

const temp = celsiusToFahrenheit(0)

if(temp!==32) throw new Error("This Test Failed")

})

```

Just paste this code and Voila! first test is done, Now let's dive into the code `test()` is a function that is made available globally by jest library when we installed. It takes two parameters, first one being simple the name of the test and second one being the testing function itself.

The testing function when finds an error then shows the output as failed this means a function with nothing inside still shows as a passed test

```js

test('Dummy', () => {})

```

The above test still passes.

But wait, what if there are many conditions to check or some asynchronous code?

Jest got our back

```js

test('Should convert 0 C to 32 F', () => {

const temp = celsiusToFahrenheit(0)

expect(temp).toBe(32)

})

```

Yes, expect is also a function provided in-built with jest package, now what that line means is - expect the temp variable to be a number 32 if not throw an error.

Now in terminal root project folder just run `jest` and see the passing test.

That's all and by the way this is my first blog post | ghana7989 |

671,952 | How to add unique meta tags in Django? | How to add unique meta tags in Django? | 0 | 2021-04-19T21:03:48 | http://makneta.herokuapp.com/post/how-to-add-unique-meta-tags-in-django/ | html, django | ---

title: How to add unique meta tags in Django?

published: true

description: How to add unique meta tags in Django?

tags: HTML, Django

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/hsb1pjqsq3qi95p3icwu.png

canonical_url: http://makneta.herokuapp.com/post/how-to-add-unique-meta-tags-in-django/

---

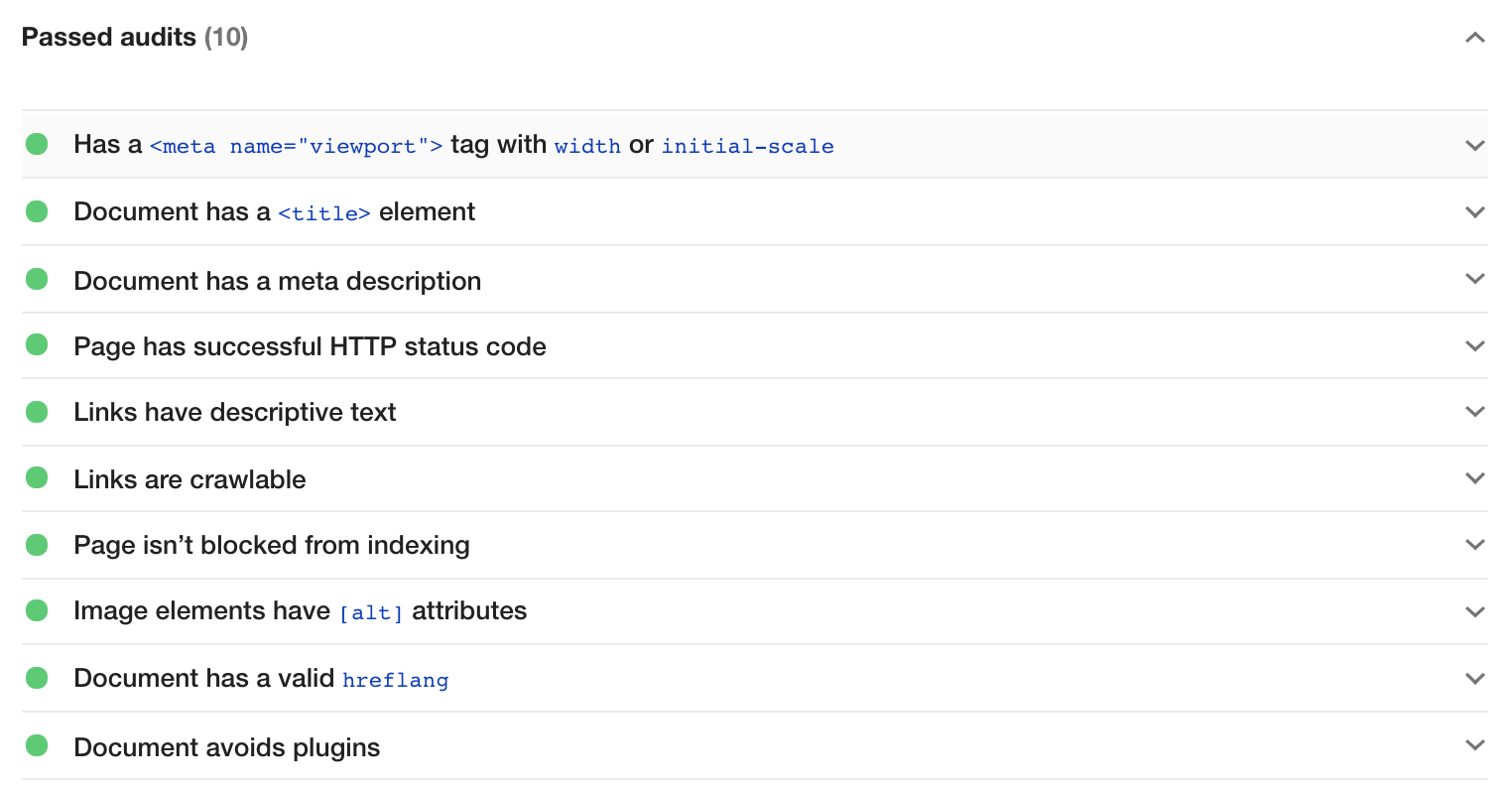

*Today I'm going to continue the subject of meta tags.* *I'll be talking about two things:*

*1. what meta tags we need to create Twitter Cards*

*2. how to make meta tags unique for each article in Django*

In my last article, I was writing about adding Open Graph to our meta tags to create Social Media Cards. http://makneta.herokuapp.com/post/what-are-metadata-and-meta-tags/ But to make sure that it will work on Twitter too, we should add a few more meta tags.

The tags that are required are **twitter:card** and **twitter:title** (or **og:title**).

If we want to display a big image with a title and description, we should set the content of **twitter:card** to **“summary_large_image”**

```

<meta name="twitter:card" content="summary_large_image" />

<meta name="twitter:title" content="Your title" />

```

If we set content of twitter:card to “summary”, we will display the thumbnail image.

<meta name="twitter:card" content="summary" />

We can also add twitter:creator with our Twitter handle in content and twitter:site with the site’s Twitter handle

```

<meta name="twitter:creator" content="@your_handle" />

<meta name="twitter:site" content="@your_site_handle" />

```

Those two are not required but specific for Twitter cards.

If we have Open Graph tags such as og:description, og:title, og:image, og:url, we don’t have to add them separately for Twitter.

If we want to make our meta tags work for SEO purposes, each article on our website should have a unique title, description, image and URL.

Because I learn the best in practice (and because I had wanted to have nice Social Media cards for ages), now it’s time to add open graph tags and Twitter tags to my Django blog.

First, let’s look at my Post model

```

class Post(models.Model):

author = models.ForeignKey(settings.AUTH_USER_MODEL, on_delete=models.CASCADE)

title = models.CharField(max_length=250)

slug = models.SlugField(max_length=250, unique=True, default='', editable=False)

header_image = models.ImageField(upload_to='blog', blank=True, null=True)

image_alt = models.CharField(max_length=250, default="cover image", null=True)

text = RichTextUploadingField(max_length=50000, blank=True, null=True)

summary = models.TextField(blank=True, null=True)

created_on = models.DateTimeField(auto_now_add=True)

updated = models.DateTimeField(auto_now=True)

status = models.IntegerField(choices=STATUS, default=0)

```

I’m showing the Post model because in order to create unique cards for each blog post we need to use fields from the Post model.

In my meta tags, I’m using such fields as title, slug, header_image and summary. For most of them, it’s enough to use ```{{object.field_name}}```.

```

<title>{{object.title}}</title>

<meta property="og:title" content="{{object.title}}">

<meta property="og:url" content="http://yourwebsite.com/{{object.slug}}" >

```

Because my header_image field, as well as the summary field, are not mandatory, in order to avoid crashing the website I’m using some conditions.

If there is a summary, it should be used by meta tag description but if there isn’t a post summary (truncated to 160characters), it should take 160 characters from the blog article.

```

<meta property="og:description" content="{% if object.summary %}{{object.summary|striptags|truncatechars:160}}{% else %}

{{object.text|safe|striptags|truncatechars:160}}{% endif %}">

```

I don’t always add header images, sometimes I display my “universal” picture and I need to add the URL to an appropriate pic.

```

<meta property="og:image" content="{% if post.header_image %}http://yourwebsite.com{{ post.header_image.url }}

{% else %}http://yourwebsite.com{% static 'images/home-office.jpg' %}{% endif %}">

```

Now all those tags together

We can forget about twitter:card tag

```

<meta name="twitter:card" content="summary_large_image" />

<title>{{object.title}}</title>

<meta property="og:title" content="{{object.title}}">

<meta property="og:url" content="http://yourwebsite.com/{{object.slug}}" >

<meta property="og:description" content="{% if object.summary %}{{object.summary|striptags|truncatechars:160}}{% else %}

{{object.text|safe|striptags|truncatechars:160}}{% endif %}">

<meta property="og:image" content="{% if post.header_image %}http://yourwebsite.com{{ post.header_image.url }}

{% else %}http://yourwebsite.com{% static 'images/home-office.jpg' %}{% endif %}">

<meta name="twitter:card" content="summary_large_image" />

<title>{{object.title}}</title>

<meta name="twitter:creator" content="@your_handle" />

<meta name="twitter:site" content="@your_site_handle" />

```

One more thing. If we leave meta tags like here, the description, title and image won’t work on the home page or the about page.

That’s why we need to wrap it in a condition like this:

```

{% if object.slug in request.path %}

<meta property="og:title" content="{{object.title}}">

<meta property="og:url" content="http://yourwebsite.com/{{object.slug}}" >

<meta property="og:description" content="{% if object.summary %}{{object.summary|striptags|truncatechars:160}}{% else %}

{{object.text|safe|striptags|truncatechars:160}}{% endif %}">

<meta property="og:image" content="{% if post.header_image %}{{ post.header_image.url }}

{% else %}http://yourwebsite.com{% static 'images/home-office.jpg' %}{% endif %}">

<meta name="twitter:card" content="summary_large_image" />

<title>{{object.title}}</title>

{% else %}

<title>Your title</title>

<meta property="og:title" content="Your title">

<meta property="og:description" content="Description up to 160 characters">

<meta property="og:image" content="http://image-url.png">

<meta property="og:url" content="http://yourwebsite.com" >

<meta name="twitter:card" content="summary_large_image" />

{% endif %}

<meta name="twtter:site" content="@website_handle" />

<meta name="twtter:creator" content="@your_handle" />

```

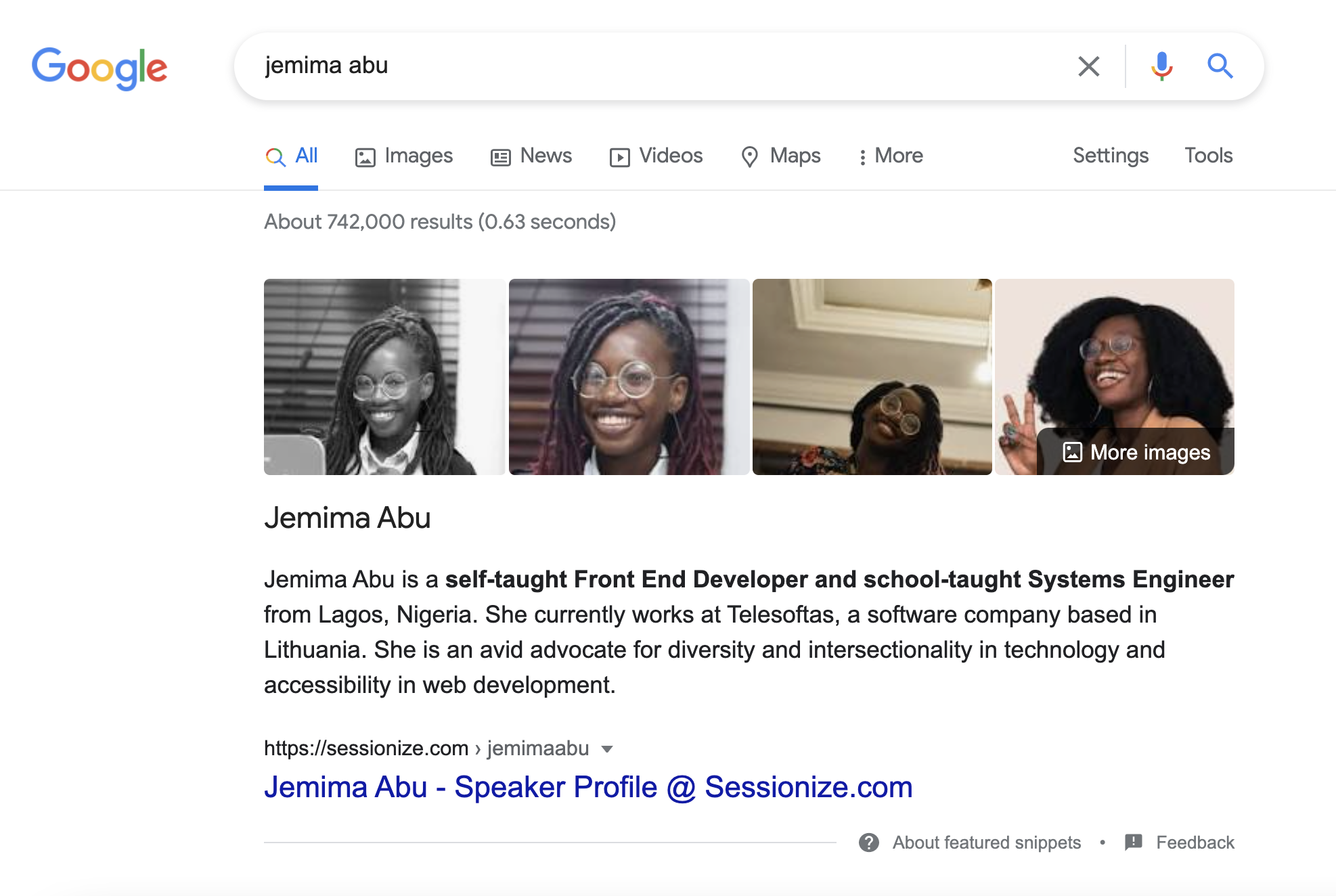

This way my Twitter card from the previous post looks like that:

| makneta |

672,277 | Code Splitting in React using React.lazy and Loadable Components | When our project grows and we add more functionalities, we end up adding a lot of code and libraries,... | 0 | 2021-04-20T03:21:58 | https://www.codingdeft.com/posts/react-code-splitting/ | react, suspense | When our project grows and we add more functionalities, we end up adding a lot of code and libraries,

which result in a larger bundle size. A bundle size of a few hundred KBs might not feel a lot,

but in slower networks or in mobile networks it will take a longer time to load thus creating a bad user experience.

The solution to this problem is to reduce the bundle size.

But if we delete the large packages then our functionalities will be broken. So we will not be deleting the packages,

but we will only be loading the js code which is required for a particular page.

Whenever the user navigates or performs an action on the page, we will download the code on the fly,

thereby speeding up the initial page load.

When the Create React App builds the code for production, it generates only 2 main files:

1. A file having react library code and its dependencies.

2. A file having your app logic and its dependencies.

So to generate a separate file for each component or each route we can either make use of `React.lazy`,

which comes out of the box with react or any other third party library. In this tutorial, we will see both the ways.

{% youtube UbRdRRPbows%}

# Initial Project Setup

Create a react app using the following command:

```

npx create-react-app code-splitting-react

```

# Code splitting using React.lazy

Create a new component `Home` inside the file `Home.js` with the following code:

```jsx

import React, { useState } from "react"

const Home = () => {

const [showDetails, setShowDetails] = useState(false)

return (

<div>

<button

onClick={() => setShowDetails(true)}

style={{ marginBottom: "1rem" }}

>

Show Dog Image

</button>

</div>

)

}

export default Home

```

Here we have a button, which on clicked will set the value of `showDetails` state to `true`.

Now create `DogImage` component with the following code:

```jsx

import React, { useEffect, useState } from "react"

const DogImage = () => {

const [imageUrl, setImageUrl] = useState()

useEffect(() => {

fetch("https://dog.ceo/api/breeds/image/random")

.then(response => {

return response.json()

})

.then(data => {

setImageUrl(data.message)

})

}, [])

return (

<div>

{imageUrl && (

<img src={imageUrl} alt="Random Dog" style={{ width: "300px" }} />

)}

</div>

)

}

export default DogImage

```

In this component,

whenever the component gets mounted we are fetching random dog image from [Dog API](https://dog.ceo/dog-api/) using the `useEffect` hook.

When the URL of the image is available, we are displaying it.

Now let's include the `DogImage` component in our `Home` component, whenever `showDetails` is set to `true`:

```jsx

import React, { useState } from "react"

import DogImage from "./DogImage"

const Home = () => {

const [showDetails, setShowDetails] = useState(false)

return (

<div>

<button

onClick={() => setShowDetails(true)}

style={{ marginBottom: "1rem" }}

>

Show Dog Image

</button>

{showDetails && <DogImage />}

</div>

)

}

export default Home

```

Now include `Home` component inside `App` component:

```jsx

import React from "react"

import Home from "./Home"

function App() {

return (

<div className="App">

<Home />

</div>

)

}

export default App

```

Before we run the app, let's add few css to `index.css`:

```css

body {

margin: 1rem auto;

max-width: 900px;

}

```

Now if you run the app and click on the button, you will see a random dog image:

## Wrapping with Suspense

React introduced [Suspense](https://reactjs.org/docs/react-api.html#suspense) in version 16.6,

which lets you wait for something to happen before rendering a component.

Suspense can be used along with [React.lazy](https://reactjs.org/docs/react-api.html#reactlazy) for dynamically loading a component.

Since details of things being loaded or when the loading will complete is not known until it is loaded, it is called suspense.

Now we can load the `DogImage` component dynamically when the user clicks on the button.

Before that, let's create a `Loading` component that will be displayed when the component is being loaded.

```jsx

import React from "react"

const Loading = () => {

return <div>Loading...</div>

}

export default Loading

```

Now in `Home.js` let's dynamically import `DogImage` component using `React.lazy` and wrap the imported component with `Suspense`:

```jsx

import React, { Suspense, useState } from "react"

import Loading from "./Loading"

// Dynamically Import DogImage component

const DogImage = React.lazy(() => import("./DogImage"))

const Home = () => {

const [showDetails, setShowDetails] = useState(false)

return (

<div>

<button

onClick={() => setShowDetails(true)}

style={{ marginBottom: "1rem" }}

>

Show Dog Image

</button>

{showDetails && (

<Suspense fallback={<Loading />}>

<DogImage />

</Suspense>

)}

</div>

)

}

export default Home

```

`Suspense` accepts an optional parameter called `fallback`,

which will is used to render a intermediate screen when the components wrapped inside `Suspense` is being loaded.

We can use a loading indicator like spinner as a fallback component.

Here, we are using `Loading` component created earlier for the sake of simplicity.

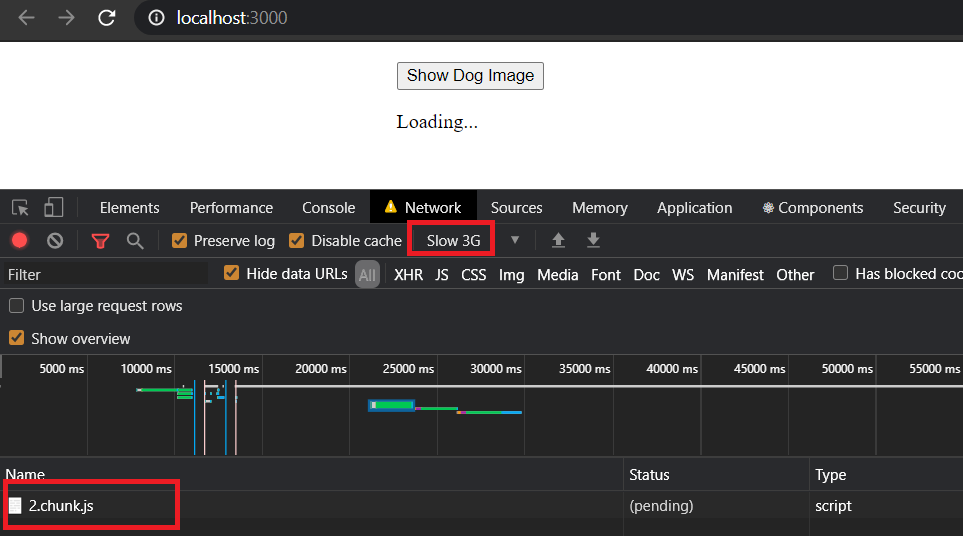

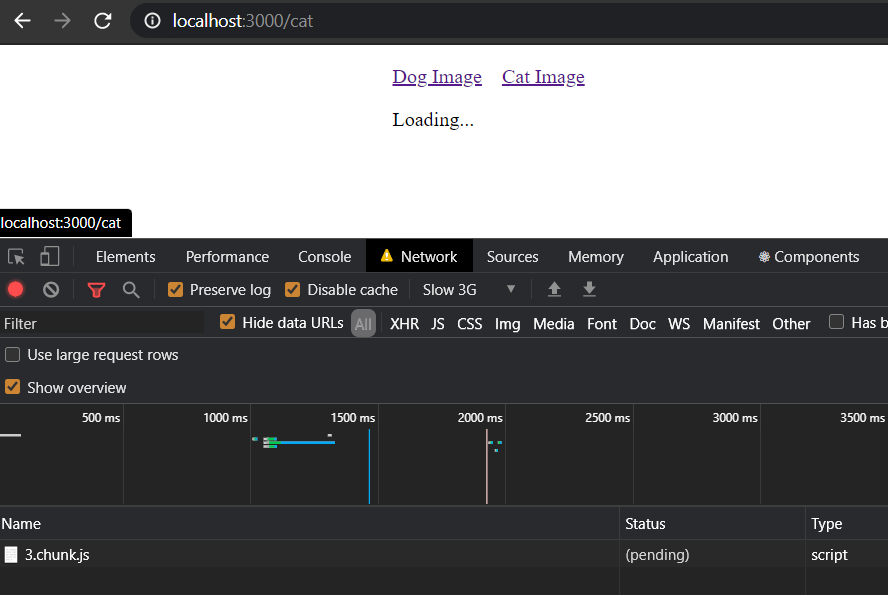

Now if you simulate a slow 3G network and click on the "Show Dog Image" button,

you will see a separate js code being downloaded and "Loading..." text being displayed during that time.

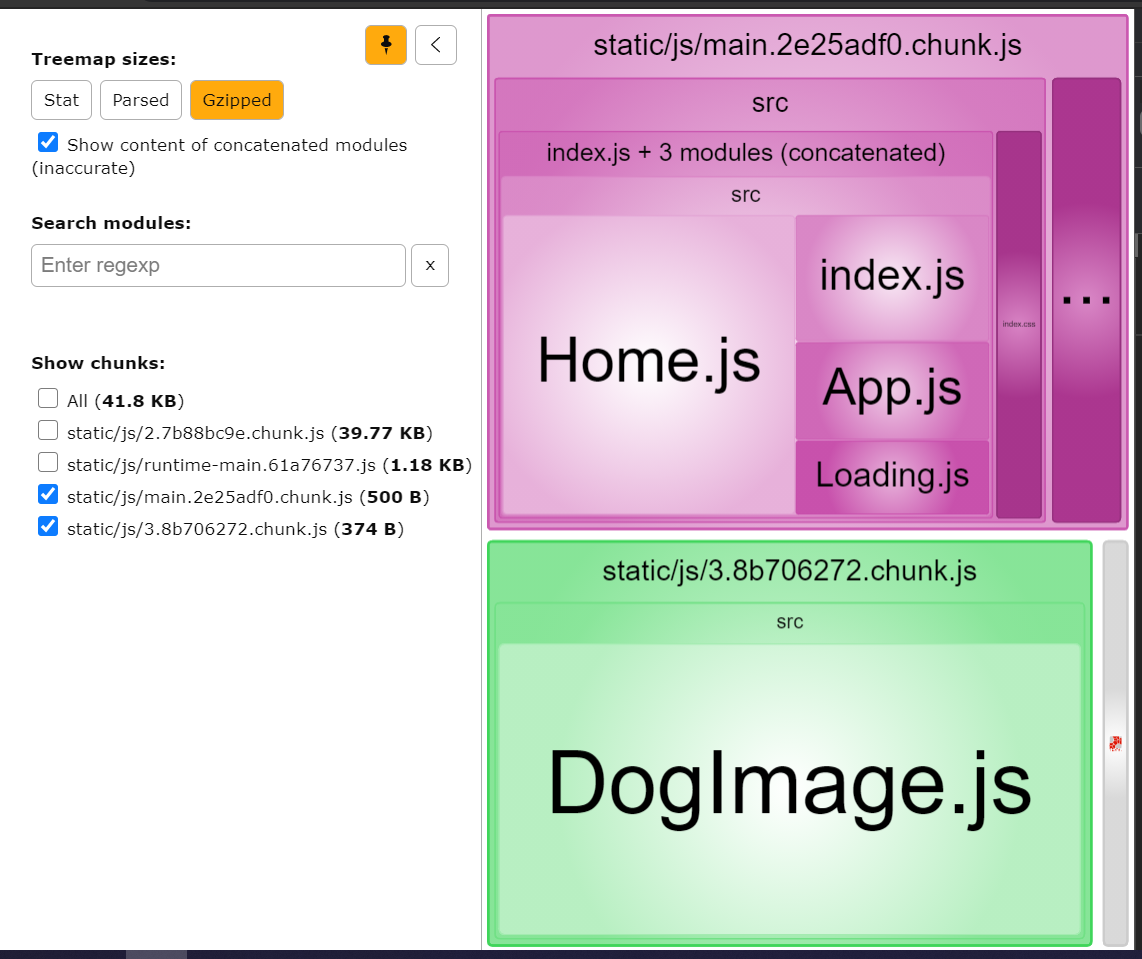

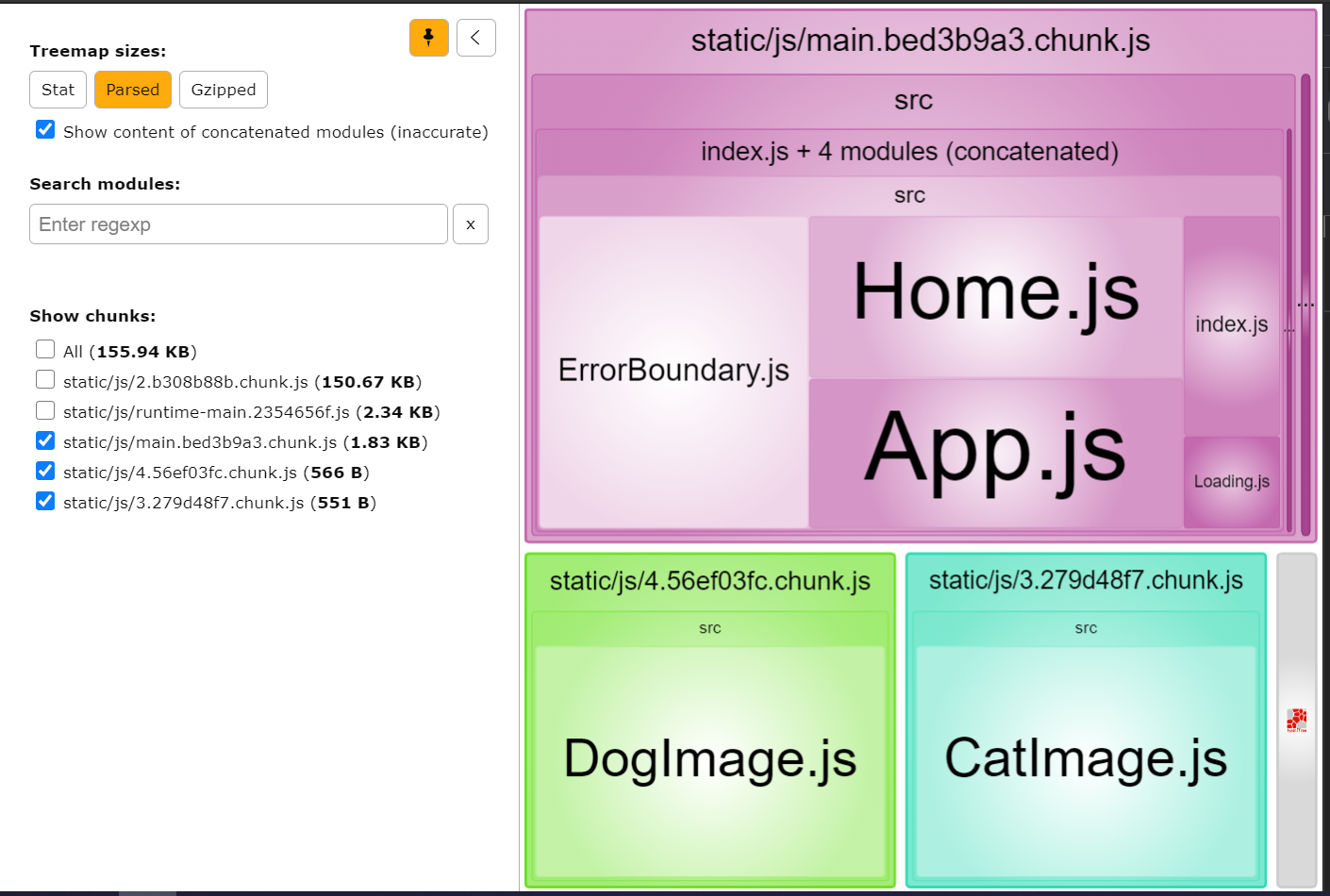

## Analyzing the bundles

To further confirm that the code split is successful, let's see the bundles created using `webpack-bundle-analyzer`

Install `webpack-bundle-analyzer` as a development dependency:

```bash

yarn add webpack-bundle-analyzer -D

```

Create a file named `analyze.js` in the root directory with the following content:

```js

// script to enable webpack-bundle-analyzer

process.env.NODE_ENV = "production"

const webpack = require("webpack")

const BundleAnalyzerPlugin = require("webpack-bundle-analyzer")

.BundleAnalyzerPlugin

const webpackConfigProd = require("react-scripts/config/webpack.config")(

"production"

)

webpackConfigProd.plugins.push(new BundleAnalyzerPlugin())

// actually running compilation and waiting for plugin to start explorer

webpack(webpackConfigProd, (err, stats) => {

if (err || stats.hasErrors()) {

console.error(err)

}

})

```

Run the following command in the terminal:

```bash

node analyze.js

```

Now a browser window will automatically open with the URL http://127.0.0.1:8888

If you see the bundles, you will see that `DogImage.js` is stored in a different bundle than that of `Home.js`:

## Error Boundaries

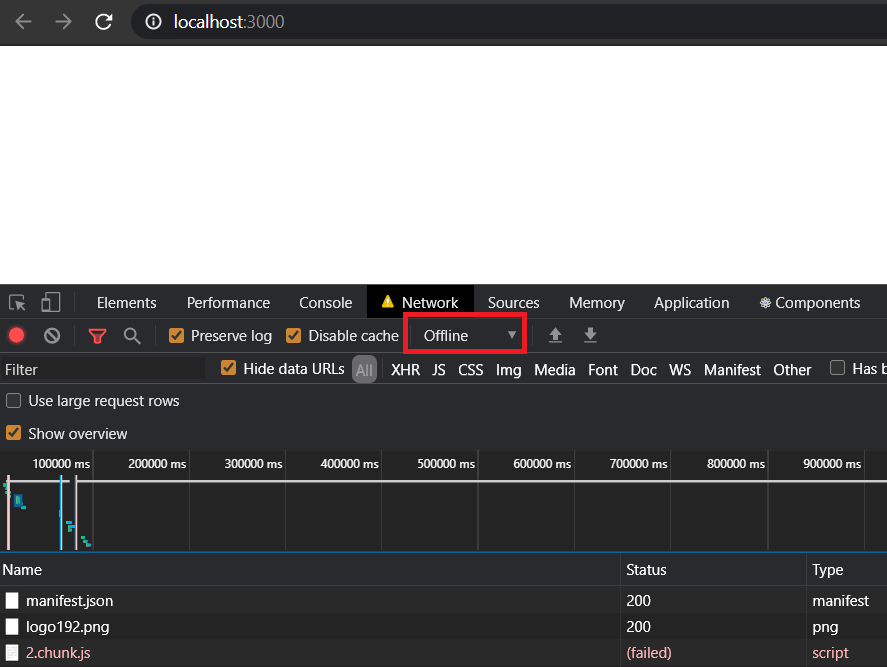

Now if you try to click on "Show Dog Image" when you are offline,

you will see a blank screen and if your user encounters this, they will not know what to do.

This will happen whenever there no network or the code failed to load due to any other reason.

If we check the console for errors, we will see that React telling us to add

[error boundaries](https://reactjs.org/docs/error-boundaries.html):

We can make use of error boundaries to handle any unexpected error that might occur during the run time of the application.

So let's add an error boundary to our application:

```jsx

import React from "react"

class ErrorBoundary extends React.Component {

constructor(props) {

super(props)

this.state = { hasError: false }

}

static getDerivedStateFromError(error) {

return { hasError: true }

}

render() {

if (this.state.hasError) {

return <p>Loading failed! Please reload.</p>

}

return this.props.children

}

}

export default ErrorBoundary

```

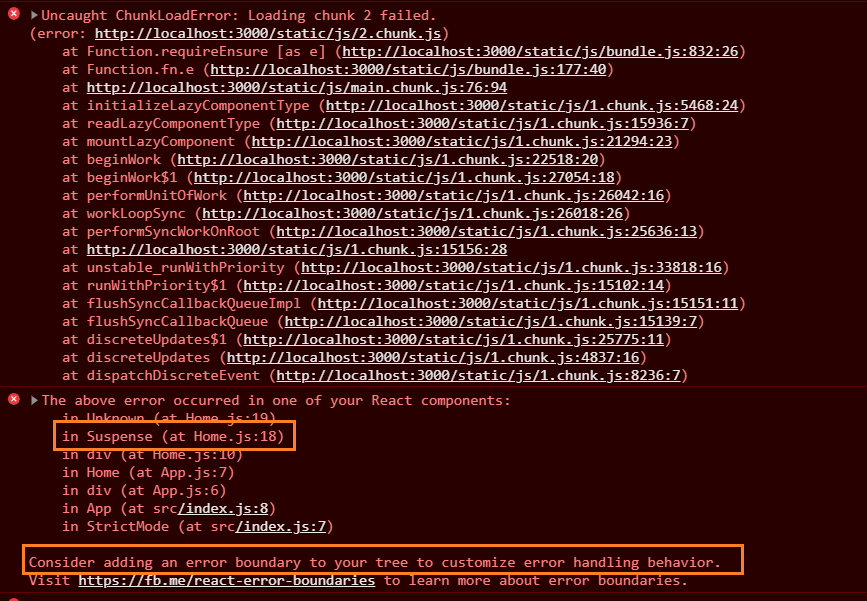

In the above class based component,

we are displaying a message to the user to reload the page whenever the local state `hasError` is set to `true`.

Whenever an error occurs inside the components wrapped within `ErrorBoundary`,

`getDerivedStateFromError` will be called and `hasError` will be set to `true`.

Now let's wrap our suspense component with error boundary:

```jsx

import React, { Suspense, useState } from "react"

import ErrorBoundary from "./ErrorBoundary"

import Loading from "./Loading"

// Dynamically Import DogImage component

const DogImage = React.lazy(() => import("./DogImage"))

const Home = () => {

const [showDetails, setShowDetails] = useState(false)

return (

<div>

<button

onClick={() => setShowDetails(true)}

style={{ marginBottom: "1rem" }}

>

Show Dog Image

</button>

{showDetails && (

<ErrorBoundary>

<Suspense fallback={<Loading />}>

<DogImage />

</Suspense>

</ErrorBoundary>

)}

</div>

)

}

export default Home

```

Now if our users click on "Load Dog Image" when they are offline, they will see an informative message:

# Code Splitting Using Loadable Components

When you have multiple pages in your application and if you want to bundle code of each route a separate bundle.

We will make use of react router dom for routing in this app.

In my previous article, I have explained in [detail about React Router](https://www.codingdeft.com/posts/react-router-tutorial/).

Let's install `react-router-dom` and `history`:

```bash

yarn add react-router-dom@next history

```

Once installed, let's wrap `App` component with `BrowserRouter` inside `index.js`:

```jsx

import React from "react"

import ReactDOM from "react-dom"

import "./index.css"

import App from "./App"

import { BrowserRouter } from "react-router-dom"

ReactDOM.render(

<React.StrictMode>

<BrowserRouter>

<App />

</BrowserRouter>

</React.StrictMode>,

document.getElementById("root")

)

```

Let's add some Routes and Navigation links in `App.js`:

```jsx

import React from "react"

import { Link, Route, Routes } from "react-router-dom"

import CatImage from "./CatImage"

import Home from "./Home"

function App() {

return (

<div className="App">

<ul>

<li>

<Link to="/">Dog Image</Link>

</li>

<li>

<Link to="cat">Cat Image</Link>

</li>

</ul>

<Routes>

<Route path="/" element={<Home />}></Route>

<Route path="cat" element={<CatImage />}></Route>

</Routes>

</div>

)

}

export default App

```

Now let's create `CatImage` component similar to `DogImage` component:

```jsx

import React, { useEffect, useState } from "react"

const DogImage = () => {

const [imageUrl, setImageUrl] = useState()

useEffect(() => {

fetch("https://aws.random.cat/meow")

.then(response => {

return response.json()

})

.then(data => {

setImageUrl(data.file)

})

}, [])

return (

<div>

{imageUrl && (

<img src={imageUrl} alt="Random Cat" style={{ width: "300px" }} />

)}

</div>

)

}

export default DogImage

```

Let's add some css for the navigation links in `index.css`:

```css

body {

margin: 1rem auto;

max-width: 900px;

}

ul {

list-style-type: none;

display: flex;

padding-left: 0;

}

li {

padding-right: 1rem;

}

```

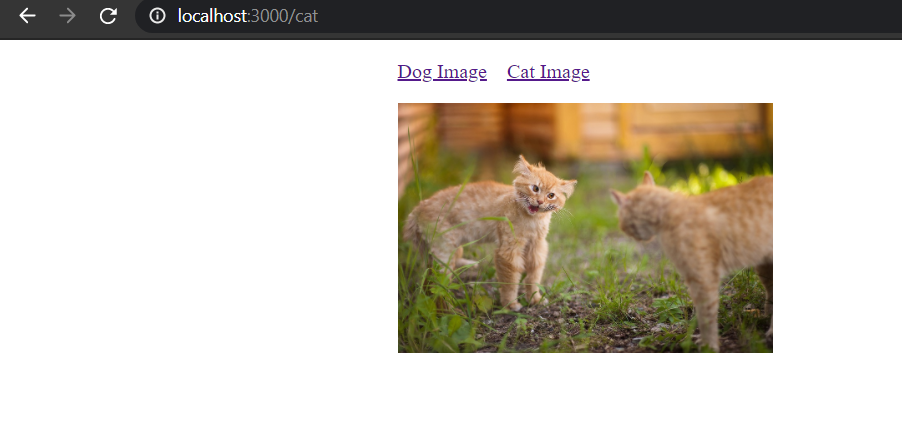

Now if you open the `/cat` route, you will see a beautiful cat image loaded:

In order to load the `CatImage` component to a separate bundle, we can make use of [loadable components](https://loadable-components.com/).

Let's add `@loadable-component` to our package:

```bash

yarn add @loadable/component

```

In `App.js`, let's load the `CatImage` component dynamically using `loadable` function,

which is a default export of the loadable components we installed just now:

```jsx

import React from "react"

import { Link, Route, Routes } from "react-router-dom"

import Home from "./Home"

import loadable from "@loadable/component"

import Loading from "./Loading"

const CatImage = loadable(() => import("./CatImage.js"), {

fallback: <Loading />,

})

function App() {

return (

<div className="App">

<ul>

<li>

<Link to="/">Dog Image</Link>

</li>

<li>

<Link to="cat">Cat Image</Link>

</li>

</ul>

<Routes>

<Route path="/" element={<Home />}></Route>

<Route path="cat" element={<CatImage />}></Route>

</Routes>

</div>

)

}

export default App

```

You can see that even `loadable` function accepts a fallback component to display a loader/spinner.

Now if you run the application in a slow 3G network,

you will see the loader and js bundle related to `CatImage` component being loaded:

Now if you run the bundle analyzer using the following command:

```bash

node analyze.js

```

You will see that `CatImage` is located inside a separate bundle:

> You can use `React.lazy` for Route based code splitting as well.

# Source code and Demo

You can view the complete [source code here](https://github.com/collegewap/code-splitting-react) and a [demo here](https://code-splitting-react.vercel.app/).

| collegewap |

672,284 | Operating Systems in space! | NASA has just deployed Ingenuity, a lightweight helicopter sent with the payload of the most recent M... | 0 | 2021-04-20T04:05:57 | https://dev.to/arindavis/operating-systems-in-space-bie | [NASA](https://www.nytimes.com/2021/04/19/science/nasa-mars-helicopter.html#:~:text=At%203%3A34%20a.m.,crater%2C%20into%20the%20Martian%20sky.) has just deployed *Ingenuity*, a lightweight helicopter sent with the payload of the most recent Mars rover, *Perseverance*. At exactly 3:34 AM (ET) Ingenuity successfully executed the first powered flight on another planet.

That wasn't the only first in space history today, though. As it turns out, it was also the first time a linux process has ever been executed on another planet. Which got me wondering, what other operating systems have we as a species hurled into the cosmic abyss?

Let's get into it.

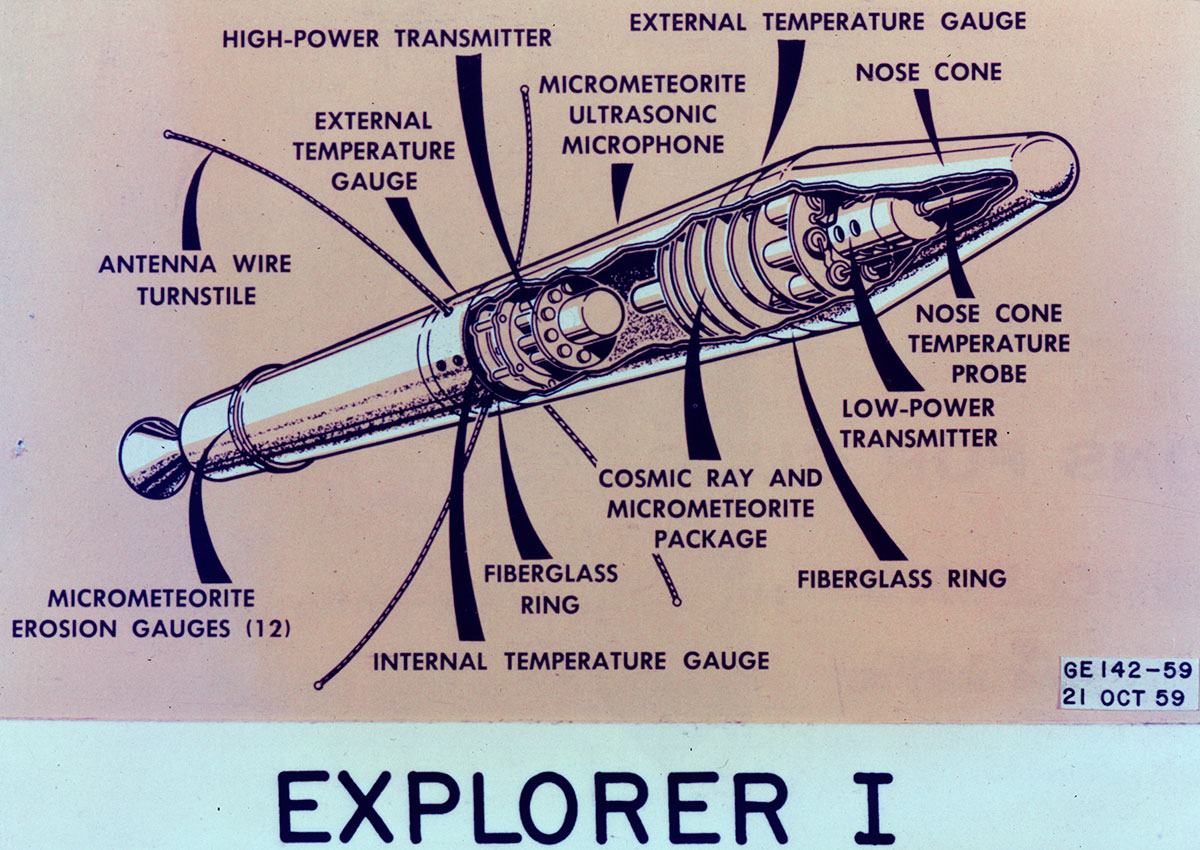

In the early days of spaceflight, when programs like Russia's [Sputnik](https://en.wikipedia.org/wiki/Sputnik_1) and the U.S's [Explorer](https://en.wikipedia.org/wiki/Explorer_1) were being rolled out, each new craft came with its own custom built OS. These were often tailored to those craft's specific needs, and extremely basic by modern standards.

Enter stage right: a man by the name of [J. Halcombe Laning](https://en.wikipedia.org/wiki/J._Halcombe_Laning). Laning was a pioneer of his time who worked with engineers at MIT to create the first ever real time computer in the early fifties, among many other notable accolades. By the time the [Apollo missions](https://www.nasa.gov/mission_pages/apollo/missions/index.html) were being conceived, NASA knew it would need a state of the art guidance system for it's new generation of space-craft, so they turned to Laning to help build out the software for it. Years later, the [Apollo Guidance Computer](https://en.wikipedia.org/wiki/Apollo_Guidance_Computer#cite_note-Hoag-17) was launched with Apollo I, which included the same basic real time operating system designed by Laning that would dominate most of the rest of the Apollo Program.

But, as with the early days, there was no real standardization during this period. That didn't come until 1987, when NASA adopted [VXworks](https://www.windriver.com/products/vxworks) as it's primary OS of choice.

Here's the crazy part: that standard hasn't changed in over thirty years, since it was originally adopted! Perseverance, the most recent rover that dropped off our little helicopter buddy from earlier, is running on the [same OS](https://blogs.windriver.com/wind_river_blog/2018/11/back-to-mars-with-vxworks/) that crafts in the late 80's and early 90's were.

Which, to be fair, is a bit of an oversimplification. While they haven't changed from VXworks in the last three decades, NASA *has* updated their proprietary version of it as time has gone on, so it's not like it is completely outdated.

VXworks itself was chosen because it was one of the leading OS's at the time, especially among government agencies and the military. According to [wikipedia](), VXworks is---

*"... designed for use in embedded systems requiring real-time, deterministic performance and, in many cases, safety and security certification, for industries, such as aerospace and defense, medical devices, industrial equipment, robotics, energy, transportation, network infrastructure, automotive, and consumer electronics."*

Which makes the choice of OS for Ingenuity even more interesting. It's packing a [Qualcomm Snapdragon 801 Processor](https://en.wikipedia.org/wiki/Qualcomm_Snapdragon), which is a CPU you'd usually find in a run of the mill laptop. Running the CPU is a relatively standard linux environment that is utilizing an open source framework called ["F Prime"](https://www.theverge.com/2021/2/19/22291324/linux-perseverance-mars-curiosity-ingenuity), that was specifically designed for powered flight using Ingenuity. This means that if you wanted to, you could go to [NASA's website](https://trs.jpl.nasa.gov/handle/2014/48425) and tinker with it yourself! How cool is that???

Now, I understand no conversation about modern space-tech would be complete without a mention of [Space-X](https://www.spacex.com/), the renegade hot-shot of the international aerospace community.

Unsurprisingly, their [engineers chose linux](https://thenewstack.io/the-hardware-and-software-used-in-space/#:~:text=But%20it's%20not%20necessarily%20a,its%20flightpath%2Ddirecting%20grid%20fins.) as their primary OS, which runs everything from their rockets to their onboard nav systems. Like the little mars helicopter who could, they are sporting modern x86 processors to run most of their processes. The interesting part to me, a web developer who is only just getting started in the field, is that they are using languages like Javascript, Python and C++ to execute the complex processes behind the scenes and tools like HTML and CSS to display information to their astronauts in the cockpit. Hell, they are using chromium and javascript for the touchscreen functionality on the main displays!

Which, in a weird way, makes me feel oddly closer to the cosmos at large. Like, one would assume that the kind of tech that helps put people into space is somewhat unknowable, left to only the smartest among us. But here we are, throwing CSS and HTML into the eternal nothing.

Of course these are all incredibly complex systems built by literally the smartest people in the world, but when it's all laid out into one brief overview you can notice that it's just one generation iterating into another, and is really no more unknowable than the first time you sat down to code javascript.

So raise a glass to the first linux process on the Red Planet. It will not be the last!

| arindavis | |

672,636 | Is SMS OTP authentication as safe as we believe? | Hi I'm Siddharth from SAWO Labs All of us who use banking or e-commerce applications are familiar... | 0 | 2021-04-20T12:23:06 | https://dev.to/sawolabs/sawo-one-stop-solution-for-your-authentication-needs-5dib | authentication, sawolabs, passwordless |

Hi

I'm Siddharth from [SAWO Labs](https://sawolabs.com/)

- All of us who use banking or e-commerce applications are familiar with the authentication grind - enter your username and password and as additional security, enter the OTP sent to your registered phone number.

- Though we find it cumbersome and even downright annoying, especially when the phone is not by your side or has run out of charge, still, we go with the process simply because we believe it is necessary to protect our data and credentials. But is the SMS OTP authentication as safe as we believe?

- Before we jump into the answer, let's quickly look at the evolution of the practice of sending SMS OTPs to get an understanding of its advantages.

<b>Evolution of SMS OTP</b>

- With the growth of Internet applications and the availability of advanced tools for hackers, security became a concern, and the existing security mechanism of just a password became insufficient. So, a two-factor authentication became a preferred option as the identity of a user was verified at two levels.

- The first was the good old password, and this was followed by a code sent to a registered email ID or phone number. Since no downloads or physical tokens were required, this quickly became the de-facto way of authenticating users. But only until the downside was understood.

<b>Is SMS OTP safe?</b>

Though SMS OTP is touted to be safe and convenient, in reality, it is neither.

And why?

<u>SMS can be hacked</u>

- Don't be shocked at the heading! It is true.

- There have been many instances where the SMS codes have been hacked by leveraging the loopholes present in the telecom providers network.

- Take the case of a massive hack that happened in 2017. Fraudsters leveraged a loophole in Signaling System 7 (SS7), an internal telecommunications standard that defines how mobile phones must connect and exchange a number with each other. As a result, they intercepted the codes associated with the banking transactions of users to transfer funds to their own accounts.

- When this came to light, it caused a big uproar, but the practice of using SMS OTPs continued for a lack of other viable options.

<u>Greater acceptance for non-OTP options</u>

- Almost every major organization today is listening to the millennials, as they are the future users of existing systems.

- According to a survey conducted by Aite Group, more than 48% of millennials were open to the idea of switching authentication modes. In comparison, only 16% of seniors were open to this possibility.

- This goes to show that the next generation of users are more willing to explore authentication methods that go beyond just passwords and SMS codes. In fact, 85% of the respondents in this age group were open to fingerprints while 76% were willing to go with facial recognition. Since biometrics have a wider acceptance, many solutions have started embracing it, to not just meets the users' preferences, but also to improve the level of security and authentication.

- - -

<b>What is SAWO?</b>

- Secure Authentication Without OTP (SAWO) is the next-gen authentication system that moves away from passwords and OTPs, and at the same time, securely authenticates users.

- With SAWO, all that users have to do is enter their username and the associated email ID or phone number. That's it! No passwords and no OTPs at all. The system will trigger the phone lock and based on it, will automatically authenticate the user against the registered phone number or email ID to verify the credentials, and will accordingly, provide access.

- The best part is that SAWO stores no passwords, so there is no chance for hackers to access them. From an organization's standpoint, there are no hassles involved, and it is cost-effective as well because there is no dependence or financial payouts to third-party providers like cellular operators.

- For users, it is a safe and easy way to log in without having to remember complicated mashed-up passwords or waiting for the SMS code on their phones.

- In all, a win-win situation for everyone involved.

So, reach out to us right away to get started!

<b>Support & queries</b>

You can join our [Discord Server](https://discord.gg/TpnCfMUE5P) Community and interact with other developers and can ask for any support you require.

In case of any other query, feel free to reach out to us at community.sawolabs@gmail.com | sawolabs |

672,785 | Building a Daily Standup Application in 30 Minutes | by Kayode Alade The daily standup has become a norm in the schedule of most developers around the wo... | 0 | 2021-04-20T13:42:23 | https://dev.to/appsmith/building-a-daily-standup-application-in-30-minutes-9cb | opensource, javascript, firebase, lowcode | by **Kayode Alade**

The daily standup has become a norm in the schedule of most developers around the world. A [standup](https://www.atlassian.com/agile/scrum/standups) is a daily team meeting, at a specific time for a specific duration, that asks team members to answer three major questions:

1. What did I work on yesterday?

2. What am I working on today?

3. What issues are blocking me?

The daily standup answers these questions but does not resolve them. When put to good use, daily standups increase team productivity and also enhance cohesion between all the parties involved.

## Tutorial Overview with Appsmith

In this tutorial, you’ll learn how to build a daily standup application using [Appsmith](https://www.appsmith.com?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith), an open-source framework for building internal tools, admin panels, dashboards, and workflows. You’ll be using Appsmith to forward a summary of daily standups to [Slack](https://slack.com/ ). Using a web framework like Appsmith is a much quicker way to add this feature to your workspace than building a completely new internal tool.

Appsmith comes out-of-the-box with prebuilt widgets like forms, charts, and maps that you can easily configure to your team’s needs. It also supports APIs and different types of databases. For more details about its capability, visit their official [GitHub page](https://github.com/appsmithorg/appsmith?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith).

## Setting Up the Application and Data Model

First things first: head over to [Appsmith](https://www.appsmith.com?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith) to get a free account. After you sign up, it’s time to set up the user interface of your standup app.

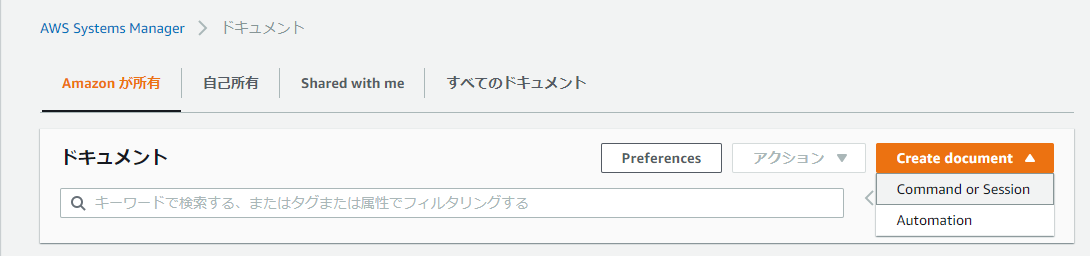

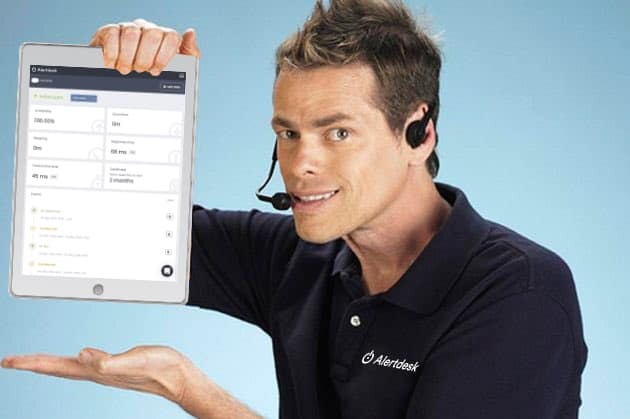

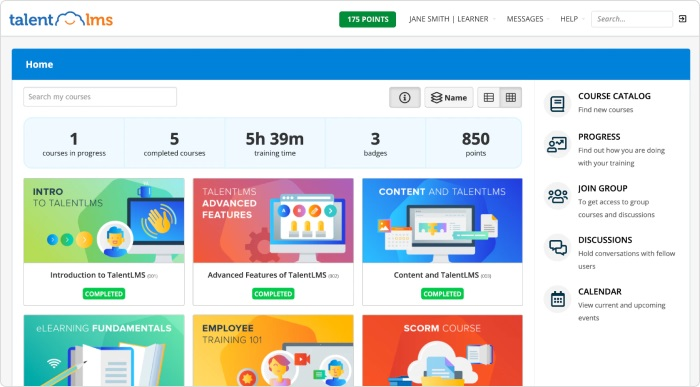

- Click **Create New** on the dashboard to create a new app. You will be taken to an empty canvas as shown below where you can start creating your app. The explorer sidebar on the left is used to add widgets, create pages, and connect to APIs and data sources such as [Firestore](https://firebase.google.com/docs/firestore).

- To build all the features needed for this app, you’ll need to create two pages in Appsmith. Double-click **Page1** to rename it as _First Page_.

- On the Pages bar, click the **+** icon to add a page, then double-click to rename the new page as _Second Page_.

Now that you’ve created your two pages, it’s time to start adding widgets. Your app’s first page will contain:

* A personalized welcome message

* A paragraph showing yesterday's standup

* A text area where the user can enter what was done the previous day

* A text area to write out what they plan to do today

* An option field to show their blockers

* A table to show users who completed yesterday’s tasks

* Submit and reset buttons

Let’s create the custom welcome message next:

- Navigate to the **First Page** and click the **+** icon beside **Widgets** to add a new widget.

- Drag the text widget and drop it on the canvas.

- Type in a custom welcome message as shown below.

Next, let’s display yesterday’s standup to-do on top so that you can see at a glance what you planned to do yesterday and then make plans based on that for today.

1. Add two text widgets side by side on your canvas.

2. Label the first _Last Standup todo_. The second widget will hold the value, or what was on the last standup to-do list. This will eventually be drawn from the database, but for now, you can pre-populate it with filler text.

3. Style the widget’s text as you prefer via the Settings gear at the top right of each widget.

As mentioned earlier, the goal of a standup is to provide information about the previous day’s tasks, tasks that need to be done today, and anything standing in the way of accomplishing those tasks. Obviously, you’ll need a form to input all that information.

To create a form:

- Drag the form widget from the sidebar onto the canvas.

- Label the inputs or dropdowns appropriately (eg, _User_, _Yesterday’s todos_, _Yesterday completed_, and so on). Note that the form widget comes out-of-the-box with **Reset** and **Submit** buttons.

- Rename the form by double-clicking on the default name and editing it. Naming this particular form seemed unnecessary, so that the title widget in the form was deleted.

- To delete a widget, hover over it, then right-click the widget name at the top right corner. In the dropdown menu, you’ll see a Delete option. Click to delete the widget.

To finalize your first page’s UI, let’s add a table to display the users who’ve submitted their standup for the day:

- Drag the table widget onto the canvas. Note that the Table Data option in this widget already contains an array of objects. Later, you’ll change this to a query response from your database.

- Navigate to your **Second Page**, where you’ll add your table.

- Drag the table widget onto the canvas.

- Open the table options and add a new column called _Actions_.

- Click the Settings gear above the **Actions** column and set the following properties:

- Column Type: Button

- Label: Edit

- onClick: OpenModal

- Modal Name: New Modal

- In the **Actions** column you just created, click the button that now reads **Edit**. A new modal will popup, which you’ll use to edit the table’s data.

- Change the title text widget to _Edit Table_.

- Drag a text widget into the modal and set the following properties:

- Text value: Username

- Text align: Left

- Text style: Label

- Add a dropdown widget beside the label you just created. In the Settings for that widget, set **Selection type** to _Single Select_. This dropdown, which ought to display all users of your app, will read data from your database after connecting the database to Appsmith later in this tutorial.

- To add a field for blockers, drop in a text widget, name it _Blocker_, and add a dropdown widget as you’ve done previously.

- Add one field each for today’s to-do and yesterday’s to-do. These will take a text widget and an input widget each.

- Finally, add a field to confirm if yesterday’s to-do is complete. Drag over a text widget and a dropdown widget with the values _Yes_ or _No_.

## Connecting Your Database

Appsmith allows you to link data from several databases. For this tutorial, you’ll make use of [Firestore](https://firebase.google.com/products/firestore).

- In Appsmith, click **Second Page** on the sidebar, then click the **+** icon beside **DB Queries**.

- Select **Add a new data source**.

- Select **Firestore**.

- [Create a Firestore database](https://firebase.google.com/docs/firestore/quickstart) to get the project ID.

- From your Firebase console, click the Settings gear on the sidebar.

- Copy your project ID and paste it into Appsmith. Your database URL is `https://_your-project-id_.firebaseio.com`.

- Back in your Firebase console, click the **Service accounts** tab.

- Click **Create service account**. The JSON file containing your service account's credentials will download.

- Copy the contents of the file and paste it into the **Service Account Credentials** field.

- Click **Test** so that Appsmith can verify everything is correct, then click **Save**.

- Back in Firestore, click **Start Collection** to create a collection, or database table. Set the Collection ID to **User** and add fields for **name** and **email**, both as string type. Sample user values will work for each, eg _Chris_ for the name value and _chris@email.com_ for the email.

- To add a collection named _StandUps_, add fields for date ([in seconds](https://stackoverflow.com/questions/3830244/get-current-date-time-in-seconds)), today's to-dos, yesterday's to-dos, completed, and blocker in Firestore.

Note that since you’re building an internal app, you can create more users and standups in their respective collections.

## Creating Standup Queries

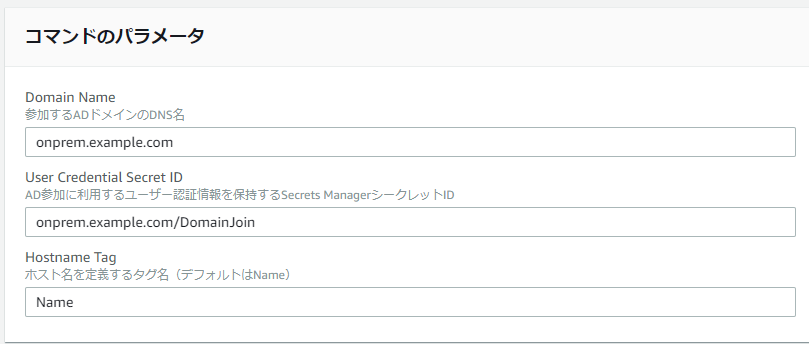

Mustache syntax (`{{...}}`) allows you to write JavaScript in Appsmith to read data from elements defined on a particular page. Let’s take advantage of this to pull information from queries or other widgets. First, let’s create the queries:

1. Click the **+** icon on the **DB Queries** menu. You should see your database as an option.

2. Click **New query** on the top right corner of your database option.

3. Rename it to _createStandUp_.

4. In the **Method** dropdown of the **createStandUp** window, select **Add Document to Collection**.

5. Set the database to the name of your database in Firestore. Fill in the body with the following code:

```

{

"yesterday": "{{Input3.value}}",

"user": "{{Input2.value}}",

"blocker": "{{Input5.value}}",

"todos": "{{Input4.value}}",

"prev_completed": "{{Dropdown2.value}}"

"date": {{Date.now()}}

}

```

Note that widgets in Appsmith are global objects, so you can access their values simply by calling `widget_name.value`.

Continue to round out your app’s queries:

- For **fetchUsers,** set the following properties:

- Method: Get Documents in Collection

- Document/Collection Path: users

- For **fetchStandUps**, set the following properties:

- Method: Get Documents in Collection

- Document/Collection Path: standUps

- Order By: `["date"]`

- For **updateStandUps**, set the following properties:

- Method: Update Document

- Document/Collection Path: `standUps/{{Table1.selectedRow._ref.id}}`

- Body: _paste in the following JSON_

```

{

"yesterday": "{{Input3.value}}",

"user": "{{Dropdown3.value}}",

"blocker": "{{Dropdown4.value}}",

"todos": "{{Input4.value}}",

"prev_completed": "{{Dropdown2.value}}"

}

```

Note that queries can only be referenced on the page where they’re defined. If you need the same query on another page, you need to copy and rename it on the other page.

## Connecting Widgets to Queries

Now let’s connect these queries to the widgets in your Appsmith app.

- On the **First Page** of your Appsmith app, replace the text in the widget next to **Last Standup todo** with:

```Javascript

{{fetchUserStandUps.data[0].todos}}

```

- For the **User** and **Blockers** dropdowns, replace the options with this:

```Javascript

{{fetchUsers.data.map((e,i) => {return {label: e.name, value: e.name}}) }}

```

- Fo the **Yesterday completed** dropdown, replace its options with this:

```JSON

[{"label": "Yes", "value": "true" }, { "label": "No", "value": "false" }]

```

- To configure the First Page’s Submit button, select **Execute DB query** under **onClick**, then select the **createStandUp** query.

- To configure the Second Page’s Refresh button, select **Execute DB query** under **onClick**, then select the **fetchStandUps** query.

- To configure the Second Page’s Search button, select **Execute DB query** under **onClick**, then select the **StandUpsByName** query. Set **onSucess** to store value, key to data, then set value to `{{StandUpsByName.data}}`.

## Integrating with Slack

To send the summary of your standup to Slack, integrate your Appsmith app with Slack using incoming webhooks.

> “Incoming Webhooks are a simple way to post messages from apps into Slack. Creating an Incoming Webhook gives you a unique URL to which you send a JSON payload with the message text and some options. You can use all the usual formatting and layout blocks with Incoming Webhooks to make the messages stand out.” - [Slack](https://api.slack.com/messaging/webhooks)

Let’s dive in with the integration:

- Head to [Slack](https://slack.com/) to create an account if you don’t have one.

- Open the [Create an App page](https://api.slack.com/apps?new_app=1). The **Create a Slack App** window appears automatically. If it doesn’t, click **Create New App*.

- Give your app a name and choose the Slack workspace you’re building it for. Click **Create App**. The **Building Apps for Slack** page opens.

- Click **Incoming Webhooks** to open the feature, and toggle the switch to **On** to activate it. Scroll to the bottom of the page to copy the webhook URL.

- Back in Appsmith, under **First Page**, click the **+** icon beside **APIs**, then select **Create new**.

- Paste the webhook in the first input field and change the request type to **POST**.

- Click the **Body** tab and fill in the message as a JSON object as shown:

```

{

"text": "New Standup added by {{Dropdown1.value}}, Yesterdays todo: {{Input1.value}}, Completed: {{Dropdown3.value}}, Todays todo: {{Input2.value}}, Blockers: {{Dropdown2.value}}, link: https://app.appsmith.com/applications/6043f3a5faf5de39951a897e/pages/6043f3a5faf5de39951a8980 "

}

```

Let’s go back to your **First Page** in your app and configure the **Submit** button so that it sends a Slack message on submit.

Click the Settings gear for the **Submit** button. Below **onClick**, find the **onSuccess** field and from the **Call An API** option, select your Slack API.

## Viewing the Completed Daily Standup Application

At this point, your Appsmith app should look like this:

And as a result, your Slack channel should look like this:

You can check out [this tutorial’s completed app on Appsmith](https://app.appsmith.com/applications/6043f3a5faf5de39951a897e/pages/6043f3a5faf5de39951a8980?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith).

## Summary

In this tutorial, you learned how to build a daily standup app using [Appsmith](https://www.appsmith.com?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith), including widgets that enable users to detail their accomplished tasks, their daily to-do lists, and any blockers keeping them from their goals. You then integrated your app with Slack, so you can send summarized standup reports to a specific Slack channel via incoming webhooks.

Have an idea for another app you’d like to build without reinventing the wheel? Check out Appsmith’s Getting Started [documentation](http://docs.appsmith.com?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith), or jump right in by [signing up for a free account](https://app.appsmith.com/user/signup?utm_source=devto&utm_medium=blog&utm_content=standup_appsmith_tutorial&utm_campaign=weeklyblog&utm_term=standup_appsmith).

----

Author Bio: Kayode is a tech enthusiast specializing in embedded systems and system design and modelling. His programming languages of choice include C, C++, JavaScript, and Python. In his free time, he loves adding value to people's lives with technology.

| vihar |

672,790 | Learn JavaScript's for...of and for...in - in 2 minutes | The for...in loop We use for...in when we want to use the keys of an Object. const myOb... | 0 | 2021-04-20T14:01:55 | https://jordienric.com/blog/for-in-for-of-javascript | javascript, webdev, beginners, codenewbie |

## The `for...in` loop

We use `for...in` when we want to use the **keys** of an Object.

```js

const myObject = {

keyOne: 'valueOne',

keyTwo: 'valueTwo',

keyThree: 'valueThree'

}

for (const propertyKey in myObject) {

console.log(propertyKey)

}

// Will result in:

> 'keyOne'

> 'keyTwo'

> 'keyThree'

```

As we can see in the example `propertyKey` will be the key of the object.

> You should know

> 💡 `for...in` will ignore any [Symbol](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Symbol)s in your Object

If we want to access the value we can still do it like this

```js

for (const propertyKey in myObject) {

console.log(myObject[propertyKey])

}

```

But instead of doing this we could use a `for...of` loop.

## The `for...of` loop

The `for...of` loop will iterate over the values of the Iterable Object.

Here's an example with an Array

```js

const myIterableObject = [

'valueOne', 'valueTwo', 'valueThree'

]

for (const myValue of myIterableObject) {

console.log(myValue)

}

// Will result in

> 'valueOne'

> 'valueTwo'

> 'valueThree'

```

This is a good alternative to the `forEach` method

This was a quick introduction to these two syntaxes of the for loop in Javascript. I recommend you play around with them. These two are really useful to know when you want to write short for loops.

## 🚀 Follow me on [twitter](https://twitter.com/jordienr) for more

| jordienr |

672,805 | 7 Tips For Clean Code | It's all about maintaining clean and readable code(which works of course). So, I wanted to share a... | 0 | 2021-07-26T09:33:47 | https://dev.to/akashshyam/7-tips-for-clean-code-3nk1 | discuss, codequality | It's all about maintaining clean and readable code(which works of course). So, I wanted to share a few tips with you guys. Feel free to correct me or share more tips of your own in the comments to create a learning experience for all of us. Let's get to it then:

### 1. Comments, Comments and More Comments

I'm not the ideal developer whose code is understood by everyone and I'm betting you aren't either. Instead of explaining what exactly to do, I'm going to tell you what not to do. Let's look at some sample code:

```js

// Created a constant variable "foo" and assigned a value of "bar"

const foo = "bar";

```

### 2. Meaningful Names

You want to use those kinds of names where the reader goes, "Yes! I know exactly what this does".

```js

// NEVER DO THIS

const abc = validateEmail('writecleancode@gmail.com')

// Good Job!

const isEmailValidated = validateEmail('writecleancode@gmail.com');

```

### 3. Console Warns/Errors

This is a huge problem, so many times I've seen developers commit code with eslint warnings. Infact, a couple of months ago, I started working on an existing project. When I compiled the frontend, there were over 100 warnings by jsx-ally, eslint etc.

We can use [husky] (https://www.npmjs.com/package/husky) along with [lint-staged] (https://www.npmjs.com/package/lint-staged) which will not let you commit code until you clear all the warnings and errors.

### 4. `as unknown` in Typescript

Typescript is smart, but sometimes, it just isn't smart enough! I've seen a lot of `// @ts-ignore`s or `as unknown`s in typescript code. So instead of doing this:

```

const user = dog as unknown;

```

do this:

```

const user dog as IUser

```

Even though doing this is not advisable, at least you get some type safety.

### 5. Use Babel Instead of tsc

From version 7, Babel added support for TypeScript, which means you no longer need to use the TypeScript compiler i.e. `tsc` to build your project, but instead can just use Babel which simply strips your types from all TypeScript files and then emits the result as JavaScript.

This is not only much faster than tsc, especially in bigger projects, but also allows you to use the whole Babel ecosystem within your project. For example, it's great when you want to use react or javascript features which are still in stage 3.

For back-end projects, this means you can simplify your clunky file-watching scripts and just use babel-node to watch for changes.

### 6. Use SonarJS and Eslint

Eslint has many rules that enforce best practises and conventions and will also help to prevent bugs.

(TSLint is being deprecated in favor of typescript-eslint; the TSLint plugin SonarTS has been adopted and is now part of SonarJS).

In addition to ESLint’s features, SonarJS adds some complexity checks to your code, which are helpful to just code away and then break your methods into smaller pieces.

### 7. Opaque Types

I'm not going to explain, I'll just demonstrate this to you.

Imagine we are building a banking API.

```typescript

// Account.ts

export type PaymentAmt = number;

export type Balance = number;

export type AccountNumberType = number;

function spend(accountNo: AccountNumberType, amount: PaymentAmt) {

const account = getAccount(accountNo);

account.balance -= amount;

}

```

```typescript

// controller.ts

import {spend} from "./accounting";

type Request = {

body: {

accountNumber: number,

amount: number

}

};

export function withdrawAmt(req: Request) {

const {accountNumber, amount} = req.body;

spend(amount, accountNumber);

}

```

Did you spot the bug? If you didn't, look at the place where we are calling the `spend()` function. I've (intentionally) passed the amount before the accountNumber but typescript does not complain.

If you are wondering why this happens, this is because `AccountNumberType` and `PaymentAmt` are assignable to each other because both of them are of type `number`.

There is a long standing issue in the typescript repo about this. Until the typescript team does something about this, we can use the following hack

```

// Can be used with any type

type Opaque<K, T> = T & { __TYPE__: K };

type Uuid = Opaque<"Uuid", string>;

```

The utility function Opaque<K, T> simply defines a new type that, aside from a variable’s value, also stores a (unique) key, such as Uuid.

###Conclusion

Thanks for reading! Check out my [twitter] (https://twitter.com/AkashShyam11) where I (try) to post tips & tricks daily. Bye 🤟

| akashshyam |

672,810 | Challenge: Create a `pad` function without using loops! | Create a function that takes some configuration and a value and returns the value with conditional amount of padding | 0 | 2021-04-20T14:28:32 | https://dev.to/_gdelgado/challenge-create-a-pad-function-without-using-loops-2id5 | challenge, functional | ---

title: Challenge: Create a `pad` function without using loops!

published: true

description: Create a function that takes some configuration and a value and returns the value with conditional amount of padding

tags: challenge, fp

cover_image: https://images.unsplash.com/photo-1574642860096-d04d5d385d86?ixid=MnwxMjA3fDB8MHxwaG90by1wYWdlfHx8fGVufDB8fHx8&ixlib=rb-1.2.1&auto=format&fit=crop&w=1350&q=80

---

> Photo Credit: Kylie Fitts / www.kyliefitts.com & https://unsplash.com

In any language, implement a function `pad` that takes a value and conditionally pads it with `n` number of `padding`:

```javascript

const padded = pad({

value: '👋',

padding: '*',

requiredLength: 4,

})

console.log(padded) // --> ***👋

//////////

// Case 2: do not pad a value whose length is equal to `requiredLength`

//

const padded = pad({

value: '👋👋👋👋',

padding: '*',

requiredLength: 4,

})

console.log(padded) // --> 👋👋👋👋

//////////

// Case 3: do not overwrite a value that is longer than `requiredLength`

//

const padded = pad({

value: '👋👋👋👋👋👋',

padding: '*',

requiredLength: 4,

})

console.log(padded) // --> 👋👋👋👋👋👋

```

#### Submit your solutions down below! 👇👇👇

Remember, your solution cannot use any sort of loop construct such as `while`, `do`, or `for`!

**WARNING**: Here is [my solution](https://gist.github.com/supermacro/1cdb1dbc40d3a5efa05ccd164162cc9f) in typescript.

| _gdelgado |

672,878 | Kotlin Multiplatform vs Flutter: Which One to Choose for Your Apps | Kotlin Multiplatform and Flutter are two of the hottest multi/cross-platform app frameworks and are... | 0 | 2021-04-20T15:40:49 | https://dev.to/tgloureiro/kotlin-multiplatform-vs-flutter-which-one-to-choose-for-your-apps-51oj | kotlinmultiplatform, flutter, kotlin, kmm | Kotlin Multiplatform and Flutter are two of the hottest multi/cross-platform app frameworks and are maturing quite fast. Both help you to reuse code when developing apps for Android and iOS. However, these platforms are very different and serve distinct purposes. Having worked with both, I'm going to share what I consider to be each one's strengths and weaknesses.

## Kotlin Multiplatform Mobile (KMM)

Kotlin is a great choice for developing mobile apps. Its conciseness, simplicity, and expressivity allow you to write correct and readable code effortlessly. Kotlin is the suggested language if you are starting a new native Android Project. Hence, if you have to target Android devices, you will probably end with the Android business logic written in Kotlin. Now, imagine being able to put your Android's business logic code, almost untouched, inside your iOS project as an iOS Framework. KMM is the best tool to do this right now and is being used by companies like VMware, Philips, and Netflix.

In KMM, you have a shared folder that is completely reused between platforms. Most of the business logic will be platform-independent. But, when you need to write code that depends on native/system libraries, you can count with KMM's **expect/actual** keywords.

> With this mechanism, a common source set defines an expected declaration, and platform source sets must provide the actual declaration that corresponds to the expected declaration. This works for most Kotlin declarations, such as functions, classes, interfaces, enumerations, properties, and annotations.

Curious for how it works? Look at this code:

```

// Shared (Common)

expect fun randomUUID(): String

```

```

// Android

import java.util.*

actual fun randomUUID() = UUID.randomUUID().toString()

```

```

// iOS

import platform.Foundation.NSUUID

actual fun randomUUID(): String = NSUUID().UUIDString()

```

(Available in [kotlinlang.org/docs](https://kotlinlang.org/docs/mpp-connect-to-apis.html))

Very straightforward, right? This way, the randomUUID() function can be used from reused code (common code), with the correct implementation being provided for each platform during compilation. Then, common code is provided for each platform as an Android module or iOS Framework.

## Flutter

Flutter is a cross-platform framework to target iOS and Android with a single codebase. Google calls it a "UI toolkit for building beautiful, natively compiled applications for mobile, web, and desktop". One of the superpowers of Flutter is that you can design almost any interaction or animation and have it running the same way in Android and iOS. No need to make special adjustments or to recreate the designed user interface using native platforms. For those who are looking to innovate in UI space or don't need to make strong use of native features, Flutter is a strong choice.

To write Flutter apps, you need to program in Dart. Dart is a C/Javascript/Java-like programming language, that may be very familiar if you have previous contact with some of these languages. New features like sound null safety makes Dart even closer to Kotlin and Swift. But make no mistake: to have modern language features like sealed classes, inline classes you may need to use code generators (Take a look at Remi Rousselet's [Freezed](https://pub.dev/packages/freezed) as an example of generated code in Flutter).

Flutter is very easy to use and has a really fast hot reload. It is nice to see your mobile UI rendered in almost real-time in an emulator/simulator as you code. It's not the best choice if you depend heavily on native features that don't have an existing library, because otherwise you may have to spend a lot of time making plumbing code for Flutter's platform channel. Another weakness is that the native UI components are recreated in Flutter's engine. This means that if a new OS version is released with new UI components, you will have to wait until Flutter catches up to use the newest resources.

# My experience with KMM and Flutter in a real world project

Yes, you're reading right. I worked with KMM for shared business logic between iOS and Android + Flutter for UI in a real-world app, that is yet to be launched. That way, we unified our low-level network code and business logic between platforms with KMM, leaving Flutter with only the UI layer of the app. I will detail the design decisions and how everything went in a next post.

## Which one should I choose? Summary:

### Kotlin Multiplatform Mobile may be a good choice if...

- Already have existing native-specific code to be reused.

- You care about having the most updated native components and want to have a 100% native look and feel on every new OS version.

- You want to incrementally share code between platforms on an existing codebase.

- You don't need to publish a desktop or web version of your app.

- You don't want to depend on Flutter engine's implementation on each platform.

- Your project isn't going to be released very soon, or you don't mind about KMM being in alpha status right now.

- Your app is strongly tied to multi-thread or it has background processing needs.

**Beware: KMM is currently in alpha and the APIs may change until it hits stable. Kotlin native memory management is being replaced (important for iOS). You will need to study how it handles memory management, concurrency and immutability to correctly implement multiplatform code for now. Check: https://kotlinlang.org/docs/native-concurrency.html#global-variables-and-singletons**

### Flutter may be a good choice if...

- You are in a small team and don't have resources for two native UI codebases.

- Your app depends mostly on simple API and database calls, a common use case in mobile apps. In this scenario, you can avoid writing Flutter's Platform Channel code as you won't need to interact with native code.

- Your app has a custom UI or you are innovating in UI space.

- You target Web and Desktop besides Mobile and want to have a working solution for these platforms right now. | tgloureiro |

673,065 | tmux: 13 Cool Tweaks to Make It Personal and Powerful | Intended Audience: tmux users (beginner) or who read the part one of my "Command Line Happiness" post... | 12,381 | 2021-04-20T18:07:20 | https://dev.to/krishnam/tmux-13-cool-tweaks-to-make-it-personal-and-powerful-487p | linux, productivity, tmux, developer | **Intended Audience:** tmux users (beginner) or who read [the part one](https://dev.to/krishnam/dev-productivity-command-line-happiness-with-terminal-multiplexing-5067) of my "Command Line Happiness" post or looking for best tips & tricks.

**Why do you want to tweak the default setup ?**

1. Keyboard shortcuts in tmux are a bit of **a stretch, both physically and sometimes mentally**

2. tmux has a lot of **less-than-stellar default** setting

3. Moreover, the configuration is fun, especially when you personalize it to **suit your needs**; after all, that's what it's for!

In general, I prefer using the default setting with any tech/tools that I use as long as it serves its purpose well. However, tmux is different. It is designed to be customizable. On top of that, these are my reasons why you should tweak it.

Follow along, and let's make your tmux friendly. Along the way, do not forget to put more comments in your configuration file; they'll jog your memory later. Treat your tmux config as a living document; Learn, practice, and update.

Here is your ready-to-use valuable tmux tips-&-tweaks. Try these to improve your day-to-day development while using tmux. Let's start with the biggie ! [prefix]

#### 1. Prefix Should be Simple

By default, all key bindings will demand a "prefix" key before they are active. It is similar to a [leader] key in vim. The default is `Ctrl-b`.

The default is a little hard to trigger as the keyboard button is pretty far. Most prefer the `Ctrl-a` as prefix key:

- It puts your prefix in the home row.

- CapsLock can be remapped with a Ctr key, and A sits just next to it.

- If you have already used the GNU screen, `Ctrl-a` is already the standard key for you.

```

unbind-key C-b # free the original bind-key key

set-option -g prefix C-a # setting the prefix from C-b to C-a