id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,151,080 | A Series of Tubes | Advent of Code 2017 Day 19 Part 1 This seems familiar... Could I just eyeball... | 16,285 | 2022-07-25T17:05:11 | https://dev.to/rmion/a-series-of-tubes-2a76 | adventofcode, algorithms, computerscience, programming | ## [Advent of Code 2017 Day 19](https://adventofcode.com/2017/day/19)

## Part 1

1. This seems familiar...

2. Could I just eyeball it?

3. How many letters are in my puzzle input?

4. Deciding not to solve it manually

5. Solving it algorithmically

### This seems familiar...

- I'm reminded of [2020 Day 13 - Mine Cart Madness](https://adventofcode.com/2018/day/13)

- Surprisingly - thankfully? - this puzzle has just one moving object: a packet

- Also different: the corner markers - `+` instead of `/` and `\`

### Could I just eyeball it?

- My puzzle input is large, yes

- But it doesn't look like there are many letters

- And with careful study and patience, I could probably follow the tubes with my eyes, recording the letters as I see them

Before I do that, a few exercises.

### How many letters are in my puzzle input?

I could do this one of two ways:

1. Use the `Find...` web browser feature when viewing the raw puzzle input, searching for and recording each capitalized letter of the alphabet, tallying them as I go

2. Write an algorithm

Option two seems far more exciting.

My algorithm works like this:

```

Generate a 2D array representing the tubes:

Split the input at each newline character into an array of strings

Split each string at each character into an array of characters

Identify the letters:

For each row, accumulate an array of characters

For each columns, accumulate an array of characters

If the character is not one of these: `-,|,+, `

Insert the character into the array

```

It works on the example input: `ACFEDB`

It works on my puzzle input: `DRSFXNPJLZ`

Wow! Only 11 letters!

I could probably solve this part manually!

If I do, I would want to make a GIF.

### Deciding not to solve it manually

- I'd have to work on a very small, rotated copy of my puzzle input

- Drawing lines carefully and continually over more and more of the tube tracks

- My mouse is kind of finicky, so that would probably be a frustrating process

- I'm not feeling great about this

I'd much rather solve it algorithmically.

Then, build a simulator that showed the packet moving through the tubes.

### Solving it algorithmically

Ingredients for my algorithm recipe:

- Each tile in the grid: that will be a 2D array of characters

- The number of letters to collect: that will be the length of the list of letters found in the grid

- The current location and direction of the packet: that will be a 3-element array where the first two elements are the row and column and the third element is one of four characters: `v^<>`

- The relative coordinates of each next location based on the direction: that will be a dictionary where the keys are the four characters above and the values are 2-element arrays with `0`, `1` or `-1` for the relative row and column

- An initially empty unique set that will eventually store all the collected letters

The main loop:

```

Do as long as the size of the set of collected letters is not equal to the number of letters in the tubes

Check the character in the next tube

If it's a letter:

Add that letter to the set and continue in the current direction

Else, if it's a +:

Determine which of the four tiles adjacent to the + must be the next tube

If the character at that tile is a letter, add the letter to the set

Update the direction to account for turning a corner

Else (meaning it's a - or |):

Continue in the current direction

```

That all seems straightforward.

But a few details required more code than I was expecting.

#### Determine which of the four tiles adjacent to the + must be the next tube

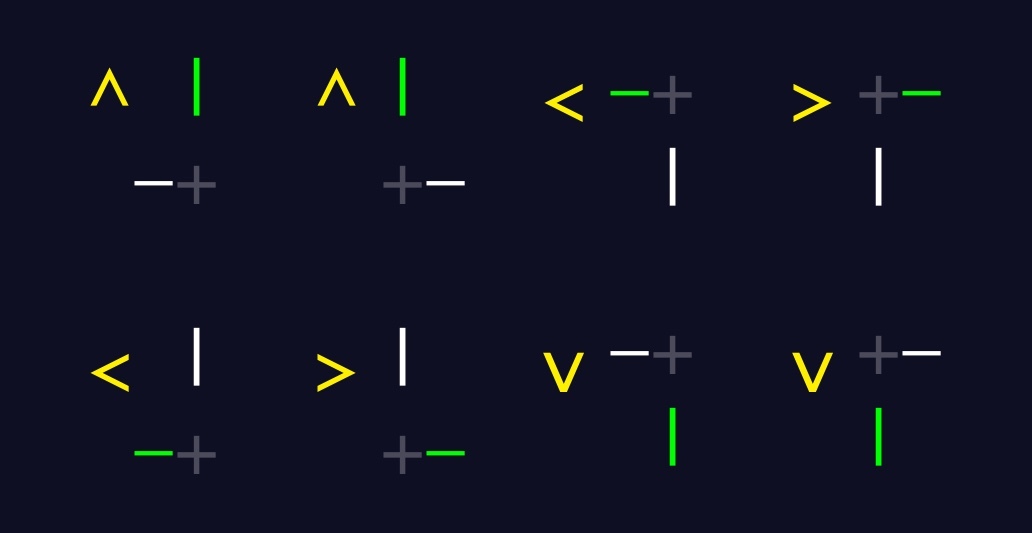

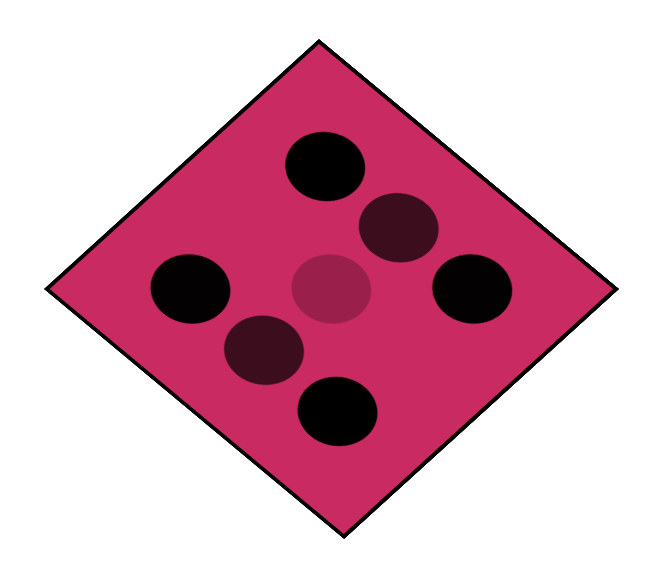

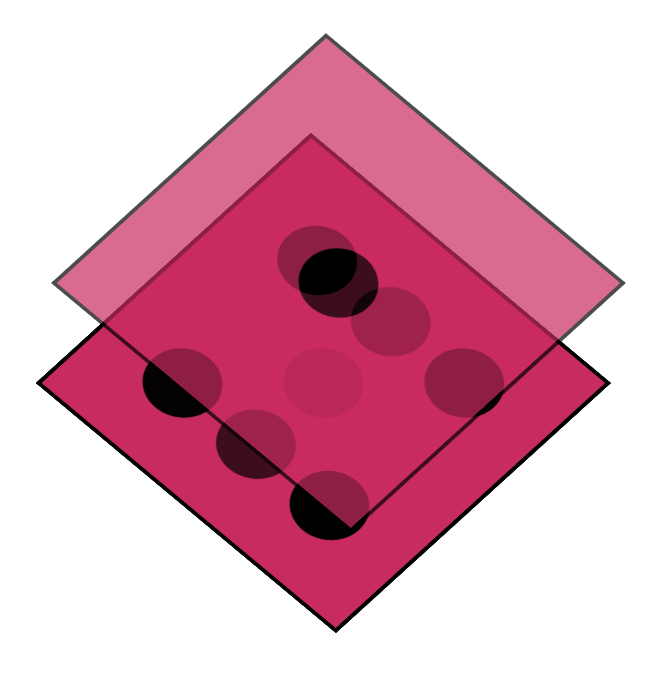

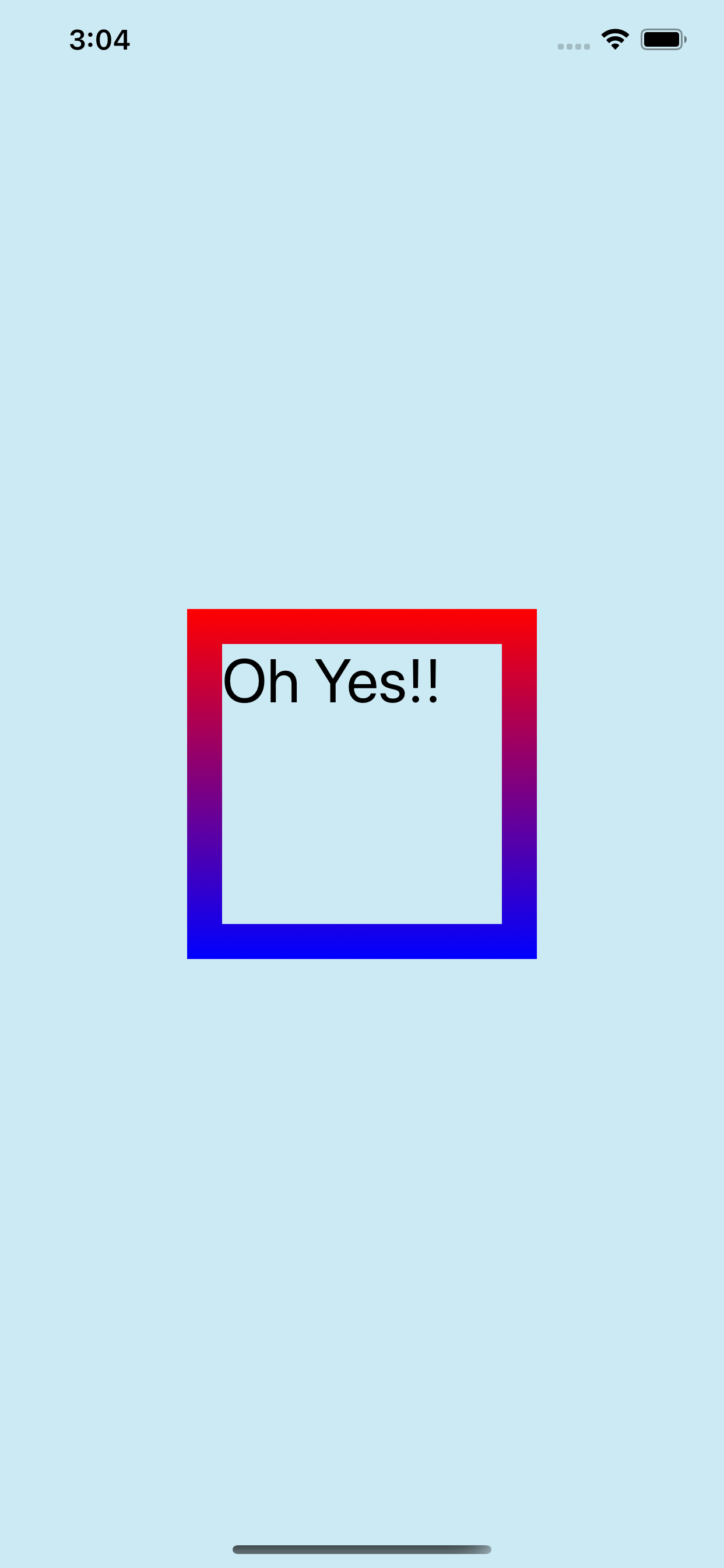

This animation depicts how my algorithm works:

In more written detail:

```

Start with a list of the four relative adjacent coordinates:

[[-1,0],[0,1],[1,0],[0,-1]]

Filter that list to exclude the pair that corresponds to the current location of the packet

Change each coordinate into a 3-element array with this blueprint:

['character', row #, column #]

Filter that list to exclude the two items where 'character' is a space

Alas, we now have a 1-item array! Flatten it, resulting in:

['character', row #, column #]

```

#### Update the direction to account for turning a corner

Now that I know the location and character of the next tube that the packet should occupy, I need to:

- Move the packet there

- Update the packet's direction

- Record the letter at that spot if there is one

I used a `switch` statement to update the packet's direction.

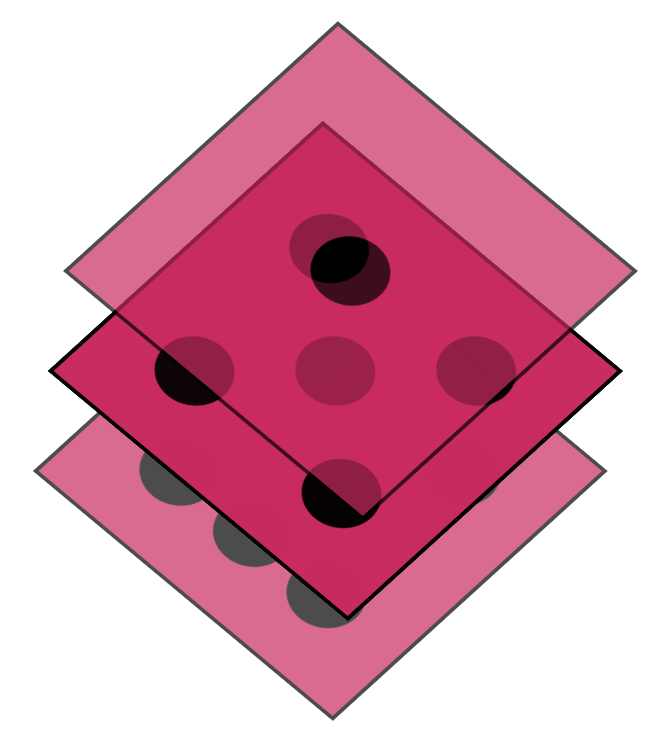

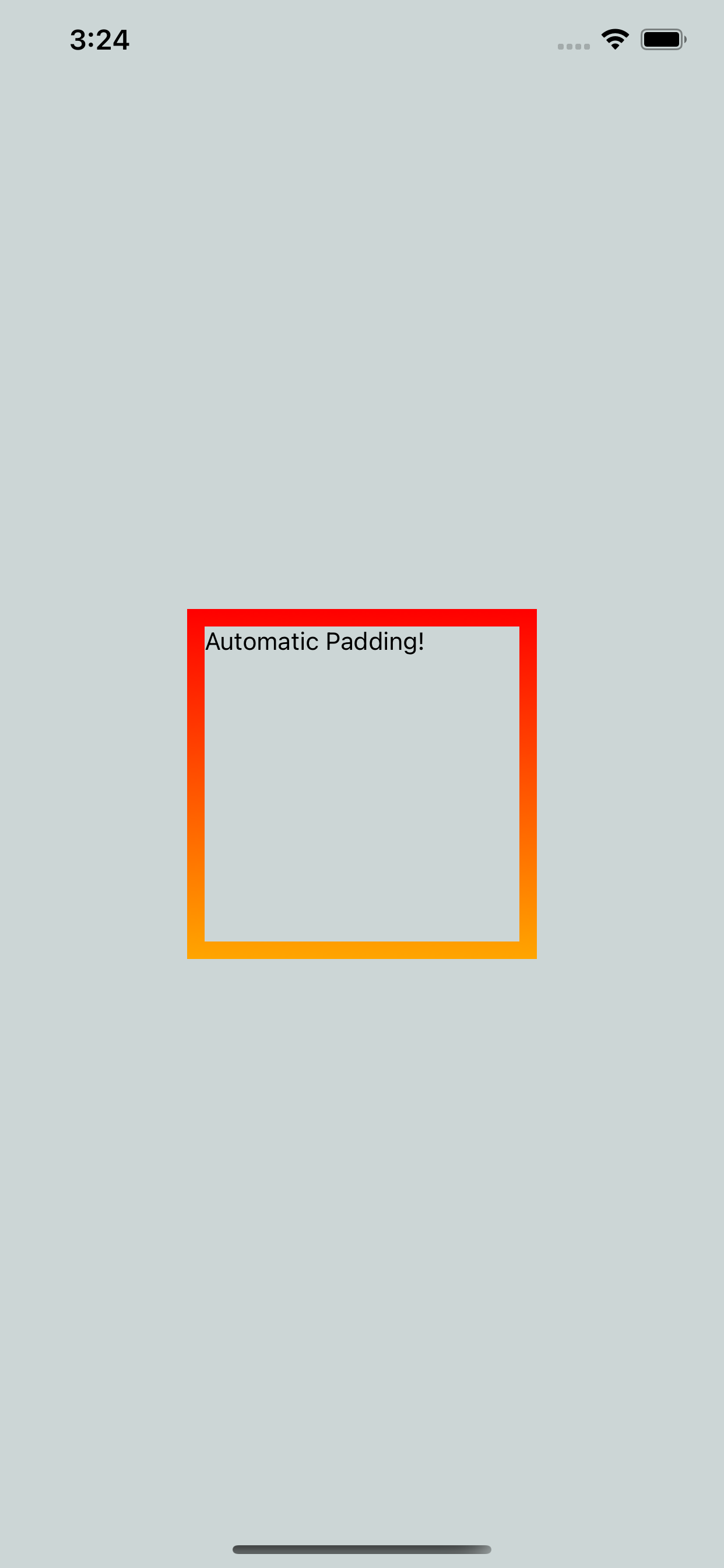

Depending on the packet's direction, and either the row or column of the next tube, update the direction according to the following diagram:

#### Testing my algorithm

There was quite a bit of troubleshooting:

- I incorrectly referred to rows instead of columns in one place

- I incorrectly referenced an index in some places

- I collected each number twice

Eventually, my algorithm finished, having collected each letter using the example input and my puzzle input.

I'm debating whether it would be fun to watch in a simulator.

Before I do, I'm anxious to see how much more difficult Part 2 is...

## Part 2

1. Woah! That's it??!!

### Woah! That's it??!!

- Add a variable to track the number of steps

- Increment that variable in three places throughout my loop

- Run it on the example: success!

- Run it on my input: success!

## I did it!!

- I solved both parts!

- I made a GIF to describe one of my smaller algorithms

- I've now bested my star count by this point for any year!

I opted not to make a simulator.

I'm more excited to attempt the next puzzle than I am to spend even an hour making one for this puzzle.

| rmion |

1,151,315 | Top 5 Youtubr channels for learning JavaScript | in this article i will give you the best channels for learning javascript : so let's get started ... | 0 | 2022-07-25T21:11:07 | https://dev.to/lodstare/top-5-youtubr-channels-for-learning-javascript-2lna | javascript, beginners, programming, tutorial | in this article i will give you the best channels for learning javascript :

so let's get started

## 1.Programming with Mosh :

click here =>[Programming with Mosh](https://www.youtube.com/c/programmingwithmosh)

## 2.Dave Gray :

click here => [Dave Gray](https://www.youtube.com/c/DaveGrayTeachesCode)

## 3.freeCodeCamp.org :

[freeCodeCamp.org](https://www.youtube.com/c/Freecodecamp)

## 4.Clever Programmer :

click here => [Clever Programmer](https://www.youtube.com/c/CleverProgrammer)

## 5.Bro Code :

click here => [Bro Code ](https://www.youtube.com/c/BroCodez)

## for know other information about learning javascript

click here => [Article](https://lodstare.blogspot.com/2022/07/javascript.html)

## connect with me on :

- [YouTube](https://www.youtube.com/channel/UCEDF5YBStH5cWnqK2U4BCkw)

- [Twitter](https://twitter.com/ayoub_el_achab)

- [Instagram](https://www.instagram.com/ayoub_el_achab_/)

- [Git Hub](https://github.com/Lodstare)

- [Linkedin](https://www.linkedin.com/in/ayoub-el-achab/)

| lodstare |

1,152,083 | Need Advice: Going to College for Software Developer | Hello everyone. I'm going to college this August for an Associates and then Bachelors in Computer... | 0 | 2022-07-26T17:57:02 | https://dev.to/jackler2/need-advice-going-to-college-for-software-developer-3la7 | beginners, computerscience, discuss | Hello everyone.

I'm going to college this August for an Associates and then Bachelors in Computer Science with a Concentration on Software Development.

Looking through the courses they offer, it's hard to decipher exactly what I'm going to learn and for the sake of career potential, and I'm trying to find out what things I should be learning about in my spare time to compliment my coursework in college.

To start with, its Southern New Hampshire University. They said I could either get a Bachelors in CS or IT, but everything I've read online points to CS. I've read countless job posts on Indeed for Software Engineering/Development, and almost all point to CS, so I changed majors today to CS.

The problem is though, I also compiled a list of no less than 100 common skills/expertise that jobs report looking for; I've come to understand that breaking it down, they are different programming languages, frameworks, APIs, certifications, proficiency in variants of Java or Javascript like Vue.js and scripting languages, etc.

Since this degree is Computer Science and not specifically for Software Engineering, I'm not sure what I need to compliment my learning with during my 4 years of college. I want to make sure I'm fully rounded for the highest chance of success when I finish college, and my career plan is to obtain my Associates so I can begin work, at least as an intern, to have job experience in the field and title, and start a portfolio of projects to show during interviews.

So where should I start? With wanting to jump-start my career with an Associates in CS while continuing to earn my Bachelors, what terms and things should I start researching now? Databases? Algorithms? If you started all over again and your plan was mine, how would you structure your learning path so you would be ready to start at a company when you obtained your Associates?

I look forward to hearing from all of you, and thank you in advance for helping me succeed.

Thank you,

Patrick

| jackler2 |

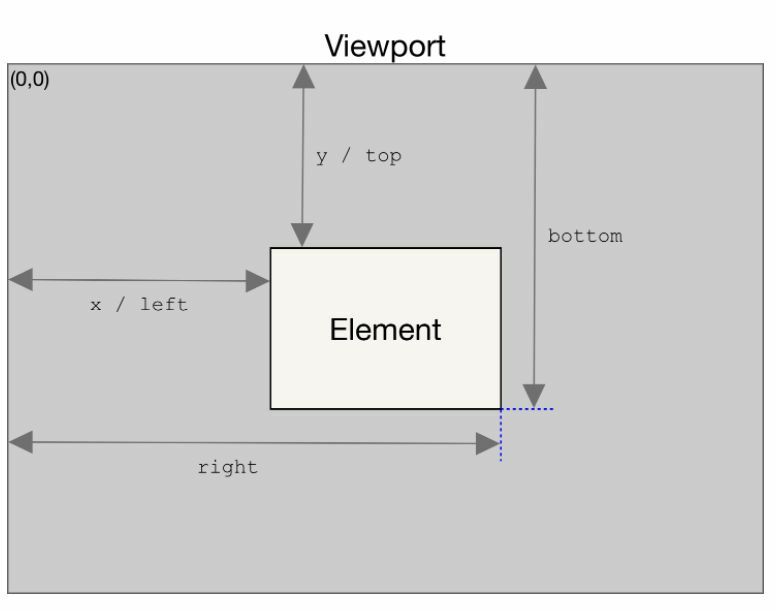

1,152,146 | 𝗘𝗹𝗲𝗺𝗲𝗻𝘁.𝗴𝗲𝘁𝗕𝗼𝘂𝗻𝗱𝗶𝗻𝗴𝗖𝗹𝗶𝗲𝗻𝘁𝗥𝗲𝗰𝘁() 𝘮𝘦𝘵𝘩𝘰𝘥 | 𝗘𝗹𝗲𝗺𝗲𝗻𝘁.𝗴𝗲𝘁𝗕𝗼𝘂𝗻𝗱𝗶𝗻𝗴𝗖𝗹𝗶𝗲𝗻𝘁𝗥𝗲𝗰𝘁() 𝘮𝘦𝘵𝘩𝘰𝘥 𝘳𝘦𝘵𝘶𝘳𝘯𝘴 𝘢 𝘋𝘖𝘔𝘙𝘦𝘤𝘵 𝘰𝘣𝘫𝘦𝘤𝘵 𝘱𝘳𝘰𝘷𝘪𝘥𝘪𝘯𝘨 𝘪𝘯𝘧𝘰𝘳𝘮𝘢𝘵𝘪𝘰𝘯 𝘢𝘣𝘰𝘶𝘵 𝘵𝘩𝘦 𝘴𝘪𝘻𝘦... | 0 | 2022-07-26T18:53:33 | https://dev.to/talenttinaapi/-52ic | react, webdev, frontend, javascript | 𝗘𝗹𝗲𝗺𝗲𝗻𝘁.𝗴𝗲𝘁𝗕𝗼𝘂𝗻𝗱𝗶𝗻𝗴𝗖𝗹𝗶𝗲𝗻𝘁𝗥𝗲𝗰𝘁() 𝘮𝘦𝘵𝘩𝘰𝘥 𝘳𝘦𝘵𝘶𝘳𝘯𝘴 𝘢 𝘋𝘖𝘔𝘙𝘦𝘤𝘵 𝘰𝘣𝘫𝘦𝘤𝘵 𝘱𝘳𝘰𝘷𝘪𝘥𝘪𝘯𝘨 𝘪𝘯𝘧𝘰𝘳𝘮𝘢𝘵𝘪𝘰𝘯 𝘢𝘣𝘰𝘶𝘵 𝘵𝘩𝘦 𝘴𝘪𝘻𝘦 𝘰𝘧 𝘢𝘯 𝘦𝘭𝘦𝘮𝘦𝘯𝘵 𝘢𝘯𝘥 𝘪𝘵𝘴 𝘱𝘰𝘴𝘪𝘵𝘪𝘰𝘯 𝘳𝘦𝘭𝘢𝘵𝘪𝘷𝘦 𝘵𝘰 𝘵𝘩𝘦 𝘷𝘪𝘦𝘸𝘱𝘰𝘳𝘵.

𝘛𝘩𝘦 𝘋𝘖𝘔𝘙𝘦𝘤𝘵 𝘰𝘣𝘫𝘦𝘤𝘵 𝘵𝘩𝘢𝘵 𝘪𝘴 𝘳𝘦𝘵𝘶𝘳𝘯𝘦𝘥 𝘪𝘴 𝘵𝘩𝘦 𝘴𝘮𝘢𝘭𝘭𝘦𝘴𝘵 𝘳𝘦𝘤𝘵𝘢𝘯𝘨𝘭𝘦 𝘵𝘩𝘢𝘵 𝘦𝘯𝘤𝘰𝘮𝘱𝘢𝘴𝘴𝘦𝘴 𝘵𝘩𝘦 𝘸𝘩𝘰𝘭𝘦 𝘦𝘭𝘦𝘮𝘦𝘯𝘵, 𝘪𝘯𝘤𝘭𝘶𝘥𝘪𝘯𝘨 𝘱𝘢𝘥𝘥𝘪𝘯𝘨 𝘢𝘯𝘥 𝘣𝘰𝘳𝘥𝘦𝘳-𝘸𝘪𝘥𝘵𝘩. 𝘛𝘩𝘦 𝘭𝘦𝘧𝘵, 𝘵𝘰𝘱, 𝘳𝘪𝘨𝘩𝘵, 𝘣𝘰𝘵𝘵𝘰𝘮, 𝘹, 𝘺, 𝘸𝘪𝘥𝘵𝘩, 𝘢𝘯𝘥 𝘩𝘦𝘪𝘨𝘩𝘵 𝘢𝘵𝘵𝘳𝘪𝘣𝘶𝘵𝘦𝘴 𝘥𝘦𝘧𝘪𝘯𝘦 𝘵𝘩𝘦 𝘵𝘰𝘵𝘢𝘭 𝘳𝘦𝘤𝘵𝘢𝘯𝘨𝘭𝘦'𝘴 𝘭𝘰𝘤𝘢𝘵𝘪𝘰𝘯 𝘢𝘯𝘥 𝘴𝘪𝘻𝘦 𝘪𝘯 𝘱𝘪𝘹𝘦𝘭𝘴. 𝘖𝘵𝘩𝘦𝘳 𝘵𝘩𝘢𝘯 𝘸𝘪𝘥𝘵𝘩 𝘢𝘯𝘥 𝘩𝘦𝘪𝘨𝘩𝘵, 𝘢𝘭𝘭 𝘱𝘳𝘰𝘱𝘦𝘳𝘵𝘪𝘦𝘴 𝘢𝘳𝘦 𝘳𝘦𝘭𝘢𝘵𝘦𝘥 𝘵𝘰 𝘵𝘩𝘦 𝘷𝘪𝘦𝘸𝘱𝘰𝘳𝘵'𝘴 𝘵𝘰𝘱-𝘭𝘦𝘧𝘵 𝘤𝘰𝘳𝘯𝘦𝘳.

source MDN Web docs

| talenttinaapi |

1,152,439 | sketchmypic All-in-one photo effect tool to add spark to your selfies and pictures! | The Sketchmypic drawing suite is a full-featured drawing package with immense functionality for... | 0 | 2022-07-27T02:16:32 | https://dev.to/kiyararobins/sketchmypicall-in-one-photo-effect-tool-to-add-spark-to-your-selfies-and-pictures-5c5b | onlinetool, sketchimages, free, colorsketc | The [Sketchmypic](https://sketchmypic.com/) drawing suite is a full-featured drawing package with immense functionality for amateurs and professionals alike. The creative sketch features you get with this app include converting an image to a pencil sketch and an image to a colored pencil sketch effect.

Apart from the sketch filters this app also turns your photos and selfies into amazing artistic paintings. Images can be converted to watercolor paintings, oil paintings, and black-and-white paintings with the Sketchmypic app.

The app has some amazing effects of turning your pictures into pixel images, cartoon images, pop art & ASCII text art.

| kiyararobins |

1,152,513 | Creating a 3D dice in CSS | I'm finally going to touch on a subject I've been putting off for way too long in this article. 3D... | 0 | 2022-07-27T06:09:13 | https://daily-dev-tips.com/posts/creating-a-3d-dice-in-css/ | css | I'm finally going to touch on a subject I've been putting off for way too long in this article.

3D in CSS!

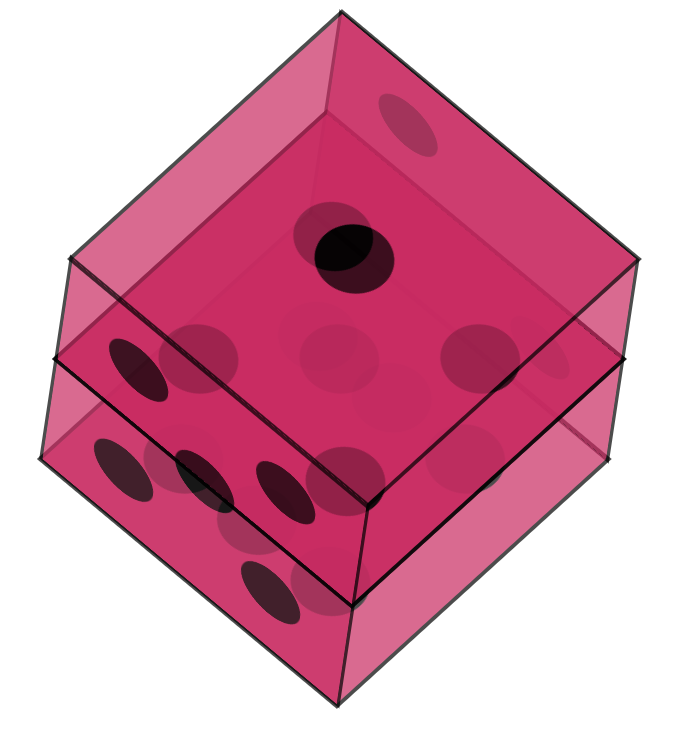

Yes, 3D, and what better example of trying and making a 3D dice in CSS.

The result of this article will be this rotating dice.

(Code and demo at the bottom)

## Setting up the HTML

Let's start with the HTML. We need a dice container and six sides to it.

```html

<div class="dice">

<div class="side one"></div>

<div class="side two"></div>

<div class="side three"></div>

<div class="side four"></div>

<div class="side five"></div>

<div class="side six"></div>

</div>

```

That will be all for our HTML part. Let's quickly move on to the fun part, which will be the CSS.

## Basic styling

Let's start with some basic styling.

First, I ensured the dice element was in the middle of the page since it's easier to work with.

```css

body {

display: flex;

justify-content: center;

align-items: center;

min-height: 100vh;

}

```

Then I moved on to arranging the dice to be a big square box.

```css

.dice {

position: relative;

width: 200px;

height: 200px;

}

```

Then each side is another wrapper that centers all its children (the dots).

```css

.side {

width: 100%;

height: 100%;

background: #da0060;

border: 2px solid black;

position: absolute;

opacity: 0.7;

display: flex;

align-items: center;

justify-content: center;

}

```

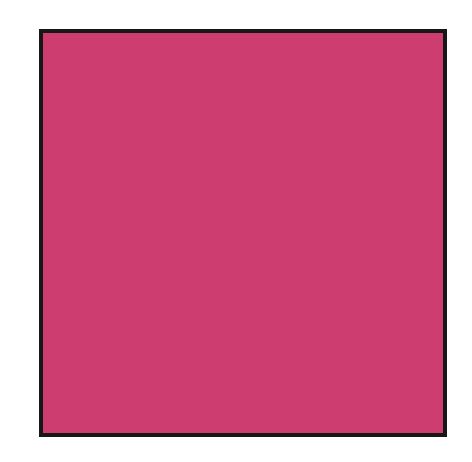

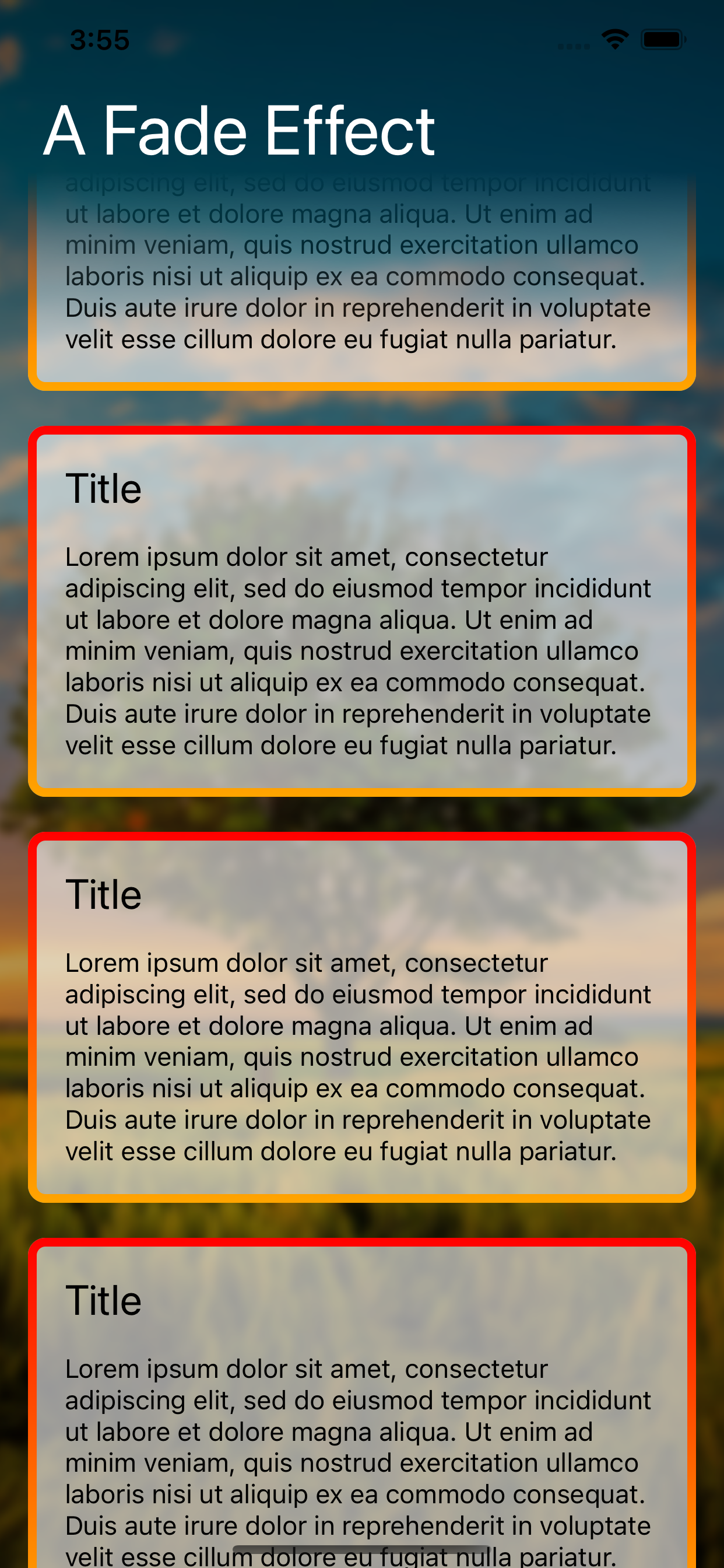

By now, you should see something that looks like this:

It might not look like much, but trust me, there are six sides!

## Creating dice dots in CSS

I will start by explaining how I achieved the dots since this is quite an excellent technique.

I didn't want to use a lot of extra divs, so each option only consists of one pseudo selector.

And it starts with the #1 side.

```css

.side {

&:before {

content: '';

width: 20%;

height: 20%;

background: black;

border-radius: 50%;

}

}

```

I can hear you think, but what about the others?

And there is a very cool trick for that! We can leverage the box-shadow rule for it.

And if we think about it, there are only seven positions a dot can have.

We already have one, so let's look at the other six.

```css

&:before {

content: '';

width: 20%;

height: 20%;

background: black;

border-radius: 50%;

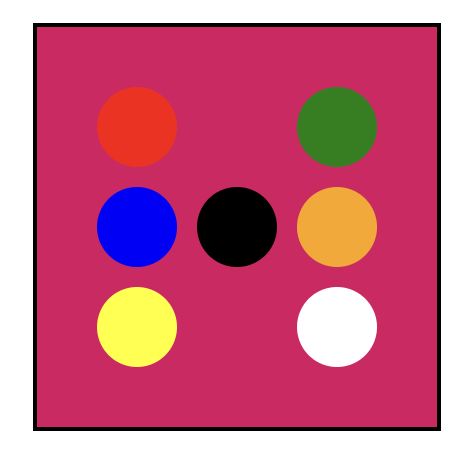

box-shadow: red -50px -50px 0px 0px, blue -50px 0px 0px 0px,

yellow -50px 50px 0px 0px, green 50px -50px 0px 0px, orange 50px 0px 0px 0px,

white 50px 50px 0px 0px;

}

```

I used random colors in the example above so you can see which position is which dot.

> Note: The black dot is our initial dot.

Now let's go ahead and create all possible dot variants.

For the #2 side, we don't need the initial dot so that we can turn off the background.

```css

.two {

&:before {

background: transparent;

box-shadow: #000 -50px -50px 0px 0px, #000 50px 50px 0px 0px;

}

}

```

For number three we can use a similar approach, but not hide the background:

```css

.three {

&:before {

box-shadow: #000 -50px 50px 0px 0px, #000 50px -50px 0px 0px;

}

}

```

Then for the fourth one:

```css

.four {

&:before {

background: transparent;

box-shadow: #000 -50px 50px 0px 0px, #000 -50px -50px 0px 0px,

#000 50px 50px 0px 0px, #000 50px -50px 0px 0px;

}

}

```

And on to number five:

```css

.five {

&:before {

box-shadow: #000 -50px -50px 0px 0px, #000 -50px 50px 0px 0px,

#000 50px -50px 0px 0px, #000 50px 50px 0px 0px;

}

}

```

And last but not least, number six, which we used as our template.

```css

.six {

&:before {

background: transparent;

box-shadow: #000 -50px -50px 0px 0px, #000 -50px 0px 0px 0px,

#000 -50px 50px 0px 0px, #000 50px -50px 0px 0px, #000 50px 0px 0px 0px, #000

50px 50px 0px 0px;

}

}

```

Our result will look weird since our transparent layers sit on each other.

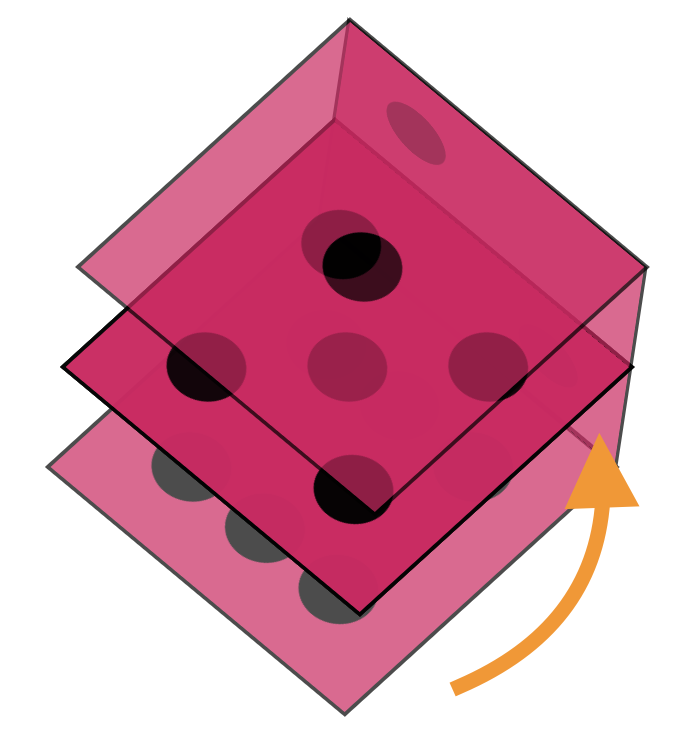

## Arranging a dice in 3D CSS

Now that we have all our elements in place let's start the fun and apply our 3D effect!

We can achieve a 3D perspective in CSS by using transforms. We have three axes to play with: the X, Y, and Z axes.

First, let's put our cube in perspective.

```css

.dice {

transform-style: preserve-3d;

transform: rotateY(185deg) rotateX(150deg) rotateZ(315deg);

}

```

Now, it might look a bit weird if we look at what we got.

This is already 3D, but it doesn't look like a cube yet. We need to modify each side to do its transformation.

Let's start with side one.

```css

.one {

transform: translateZ(100px);

}

```

This will lift the side, so it sticks out at the top.

You might already be able to see where this is going.

Now let's do the opposite end, which is side number six.

We will offset that to a negative 100px. This means the distance between one and six is now 200px (our cube size).

```css

.six {

transform: translateZ(-100px);

}

```

Now let's do the number two side. This one needs to be connected by transforming a different axis.

```css

.two {

transform: translateX(-100px) rotateY(-90deg);

}

```

Now the easiest one to connect is the opposite, side number five.

```css

.five {

transform: translateX(100px) rotateY(90deg);

}

```

As you can see, it's the same transformation, but we offset it by 200 pixels.

Two more sides left. Let's do number three.

```css

.three {

transform: translateY(100px) rotateX(90deg);

}

```

As you can see, it's the opposite transformation from two to five.

The last one we need is number four, which will be the opposite of number three.

```css

.four {

transform: translateY(-100px) rotateX(90deg);

}

```

With this one in place, our dice are complete!

## Animating the dice

Now that we have our dice let's make it a bit more interactive by animating it!

Each of the sides we described can also be animated, I'll only be turning the dice on one side, but you can have a play around with any of them to achieve a different animation.

```css

@keyframes rotate {

from {

transform: rotateY(0) rotateX(45deg) rotateZ(45deg);

}

to {

transform: rotateY(360deg) rotateX(45deg) rotateZ(45deg);

}

}

.dice {

animation: rotate 5s linear infinite;

}

```

You can find the completed example in this CodePen.

{% codepen https://codepen.io/rebelchris/pen/poLRpbQ %}

### Thank you for reading, and let's connect!

Thank you for reading my blog. Feel free to subscribe to my email newsletter and connect on [Facebook](https://www.facebook.com/DailyDevTipsBlog) or [Twitter](https://twitter.com/DailyDevTips1) | dailydevtips1 |

1,153,185 | Make Inlay Type Hints in Python Appear/Disappear | A Type Hinting Tip for Those Not Completely Onboard TLDR: You can set Inlay Hints:... | 19,069 | 2022-07-27T21:46:57 | https://kjaymiller.com/blog/make-inlay-type-hints-in-python-appear-disappear | python, vscode, beginners, tutorial | ## A Type Hinting Tip for Those Not Completely Onboard

> TLDR: You can set `Inlay Hints: Enabled` to `On/OffUnless pressed` in the settings to show/hide inlay type hints in Python code.

In July the VS Code Python Team released an [update for VS Code](https://devblogs.microsoft.com/python/python-in-visual-studio-code-july-2022-release/) that announced inlay Type Hint Support.

{% embed https://www.youtube.com/watch?v=hHBp0r4w86g %}

Adding type hint inferences next to your code is very nice. The more I started playing with it, I noticed that sometimes the type hints didn't feel helpful and made my code look cluttered.

Don't get me wrong, I like type hints. They are a massive help with troubleshooting and documentation. They are even used in [dataclasses](https://docs.python.org/3/library/dataclasses.html), one of my favorite standard library tools. But as I tell my child, there is a time and place for everything. When it comes to type hints, _All the Time!_, is not the answer.

### Let's take the following example.

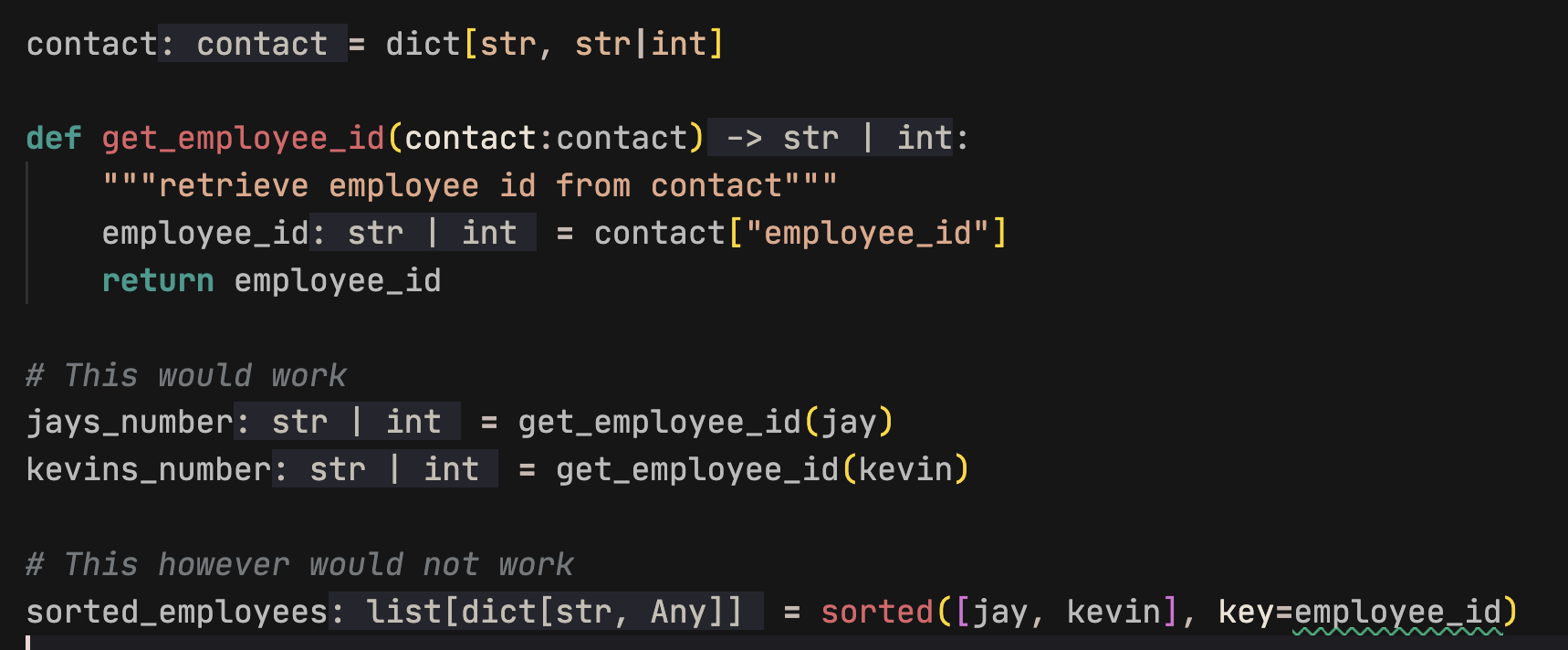

Let's say we have some dictionary objects that are brought into our code from multiple systems. Sometimes the `employee_id` will be a numerical id and other times it will be a unique string.

```python

jay = {

"name": "Jay",

"employee_id": "abcd1234" # some records could be integers depending on the schema

}

```

If we don't define types, PyLance will assume that the contact `jay` is the type `dict[str, str]` because all the values in the dictionary are `str`. What happens if we have a different record like:

```python

kevin = {

"name": "Kevin",

"employee_id": 12345678

}

```

The variable of `kevin` would be typed `dict[str, Any]` because the type of the `employee_id` differs from the `name`.

If we build a function that gets the employee id of multiple entries and sorts by `employee_id`, We'll get a `TypeError`.

```python

def get_employee_id(contact):

"""retrieve employee id from contact"""

return contact["employee_id"]

sorted_employees = sorted([jay, kevin], key=get_employee_id)

>>> Traceback (most recent call last):

File "<stdin>", line 1, in <module>

TypeError: '<' not supported between instances of 'int' and 'str'

```

The solution is to return the contact variable as a str. Type Hints would have told us that the contact would have been `Any` type (like `kevin`). And this would have been a hint we need to make types consistent.

We could even create a custom named type as Łukasz Langa mentions in his [PyCon US 2022 Keynote](https://youtu.be/wbohVjhqg7c?t=753). This would provide helpful hints as we're writing the code.

## The July 2022 Update

The aforementioned VS Code Python update made it so that types could be inlayed next to your code. This makes adding type hints much simpler because hints (which are not added to your code) are valid Python code and can be added by the author.

In this case the hints would have been helpful but I don't want them always be present. There is an existing feature that may be new to Python developers using VS Code that will make showing your type hints only when you want to see them.

## Turning On Inlay Hints

For this to work you must first turn on Inlay Hints for python. Make sure the [Python Extension](https://marketplace.visualstudio.com/items?itemName=ms-python.python) is installed in VS Code and search "Python Inlay Hints" in settings.

The first value to turn on is `Python › Analysis › Inlay Hints: Function Return Types`. This gives typing for what a function or method is returning.

The second is `Python › Analysis › Inlay Hints: Variable Types`. This inlays hints on variables that are written (like the ones above).

## Customizing How Inlay Hints Present in Your Editor

Next in settings just search for "Inlay". You should find `Editor › Inlay Hints: Enabled`. The value is set to `on` by default, but it has a few options, including `OnUnlessPressed` and `OffUnlessPressed`.

**If you change the value to `OffUnlessPressed`, you will no longer see inlayed hints until you enter _Ctrl + Alt_ (_⌃ + ⌥_ on MacOS)**. When you need a hint, press the keys and the type hints will reappear. Release the keys and they disappear again.

You can also set `OnUnlessPressed`. This does the opposite, only showing the code that exists in the file. This entry in settings is also next to other Inlay hint stylings that may help you differentiate your code from hints. | kjaymiller |

1,153,476 | PrivcacyIN Open Class | PrivacyIN will broadcast an open class on cryptography on 28th Aug. The lecturer is wangxiao,... | 0 | 2022-07-28T07:51:59 | https://dev.to/kingslaugter/privcacyin-open-class-1dm6 | machinelearning, zeroknowledge, platon, privacy |

PrivacyIN will broadcast an open class on cryptography on 28th Aug. The lecturer is

wangxiao, assistant professor at Northwestern University.

scan to sign up.

This session will introduce a ZK system (Mystique)and show the protocol implementation in LatticeX-Rosetta | kingslaugter |

1,153,654 | The useEffect hook in ReactJS | Introduction So, when I was a kid, my mama told me "The new React hooks API is really... | 0 | 2022-07-28T11:56:03 | https://dev.to/kuvambhardwaj/the-useeffect-hook-in-reactjs-2dna | javascript, react | ## Introduction

So, when I was a kid, my mama told me

"The new React hooks API is really cool, the `useEffect` hook replaced `componentDidMount` & `componentDidUpdate` of the Class API"

I wanted to ask her more about it but she got busy optimizing her React component that was re-rendering multiple times.

When I got older, I learned more about React & these hooks API, and today I'll try explaining to YOU what my mom didn't explain to ME & Probably your mom didn't too — **`useEffect`**

## Setup

So, here I created a React app in *codesandbox* on my browser

<img src="https://cdn.hashnode.com/res/hashnode/image/upload/v1659006078804/PICp8H_WM.png" align="left" />

**Yup! My Web browser!**

Here's the [link](https://codesandbox.io/s/cranky-mcclintock-l5he9q), interact with it yourself!

For those of you lazy folks who don't wanna go anywhere, here's the code 🫠

```javascript

// App.js

import { useEffect, useState } from "react";

export default function App() {

const [counter, setCounter] = useState(0);

useEffect(() => {

console.log(counter);

});

return (

<div style={{ width: "100%", textAlign: "center" }}>

<h3>{counter}</h3>

<button onClick={() => setCounter((prevValue) => prevValue + 1)}>

Increment

</button>

</div>

);

}

```

Simple? huh...

Only 1 state variable defined `counter` which is being incremented at every button tap by `setCounter`, also a `useEffect` with `console.log(counter)` in its function body, nothing kubernetes!

## Code

Let's now focus on the `useEffect` defined here

```javascript

useEffect(() => {

console.log(counter);

});

```

As evident, by the code, the hook takes a function which it'll internally call at "times". Those "times" can be the component re-render, initial component mount or any state update call which causes the component to, of course, re-render.

After running this code, I tap on the increment button 3 times & these are the console logs I get:

<img src="https://cdn.hashnode.com/res/hashnode/image/upload/v1658992237044/IOOXLZTEg.png" align="left" />

🤔 Hmm... looks like, this particular `useEffect` ran on these occasions:

1. When the component is first mounted ( printed "0" to the console )

2. When I tap the increment button or in short, the state update occurred

Now, lets change the `useEffect` to this:

```javascript

useEffect(() => {

console.log(counter);

}, []);

```

Notice that we're now passing an empty array "[]" (also known as ***Dependency Array***) as the second argument to the `useEffect` hook

Running the code again, I tap on the increment button 3 times & these are the console log I get:

<img src="https://cdn.hashnode.com/res/hashnode/image/upload/v1658992644563/wc1nrSXHo.png" align="left" />

Okay, so this time the `useEffect` ran only at the time of mount of the component & NOT at the time of state update... INTERESTING! 🧐

<img src="https://media.giphy.com/media/RhPvGbWK78A0/giphy.gif" />

Now, again! let us edit the code once more, bear with me :)

```javascript

//App.js

import { useEffect, useState } from "react";

export default function App() {

const [counter, setCounter] = useState(0);

const [anotherCounter, setAnotherCounter] = useState(0)

useEffect(() => {

console.log("COUNTER VALUE", counter);

}, [counter]);

return (

<div style={{ width: "100%", textAlign: "center" }}>

<h3>{counter}</h3>

<button onClick={() => setCounter((prevValue) => prevValue + 1)}>

Increment

</button>

<br /><br />

<h3>{anotherCounter}</h3>

<button onClick={() => setAnotherCounter((prevValue) => prevValue + 1)}>

Random State Update

</button>

</div>

);

}

```

Alright, so... I've done 3 things here:

1. Add the `counter` state variable to the previously left empty array

2. Add another state variable & called it `anotherCounter`

3. Add the JSX to display & increment the `anotherCounter` variable

I'll now run the app & click on the "Increment" button 3 times & "Random State Update" button 2 times and these are the console logs I got

<img src="https://cdn.hashnode.com/res/hashnode/image/upload/v1658993740296/cfnHngUBa.png" align="left" />

Oohoo! so now the `useEffect` hook is firing our function SELECTIVELY when the `counter` state is changed and not when `anotherCounter` state is changed, although, the `setAnotherCounter` is causing the component to re-render & update the `anotherCounter` to the UI.

Now, you've probably understood to some extent what that "empty array ( [] )" meant...

NICE!

Let us recap what `useEffect` when correctly written can do!

1. With the dependency array left empty, the `useEffect` will run the callback function (defined by us) ONLY ONCE *right after the component renders UI*. (equivalent to `componentDidMount` in class components)

```javascript

useEffect(() => {

/*

Here, state initialization logic can be added

OR, fetch requests to Backend servers can be made for data-fetching

As this is only running once, you're not bombarding your server

*/

}, [])

```

2. With state variable(s) given in the dependency array, the `useEffect` will run when the component first mounts & will also be running whenever the given state variables are changed

```javascript

useEffect(() => {

// ...

}, [...dependencies])

```

3. With no dependency array defined as its second argument, the hook will call our function on EVERY SUBSEQUENT component re-render

```javascript

useEffect(() => {

// I don't prefer this but yeah! it's there if you want to use it!

})

```

---

Aaaand... that's a wrap!

Like this post, if you liked it 🙃<br>

But if you loved it? man you gotta follow me on [Twitter](https://twitter.com/bhardwajkuvam) 😉

Feedback is much appreciated! 🤗

---

Meet you another day with another post ⚡️ | kuvambhardwaj |

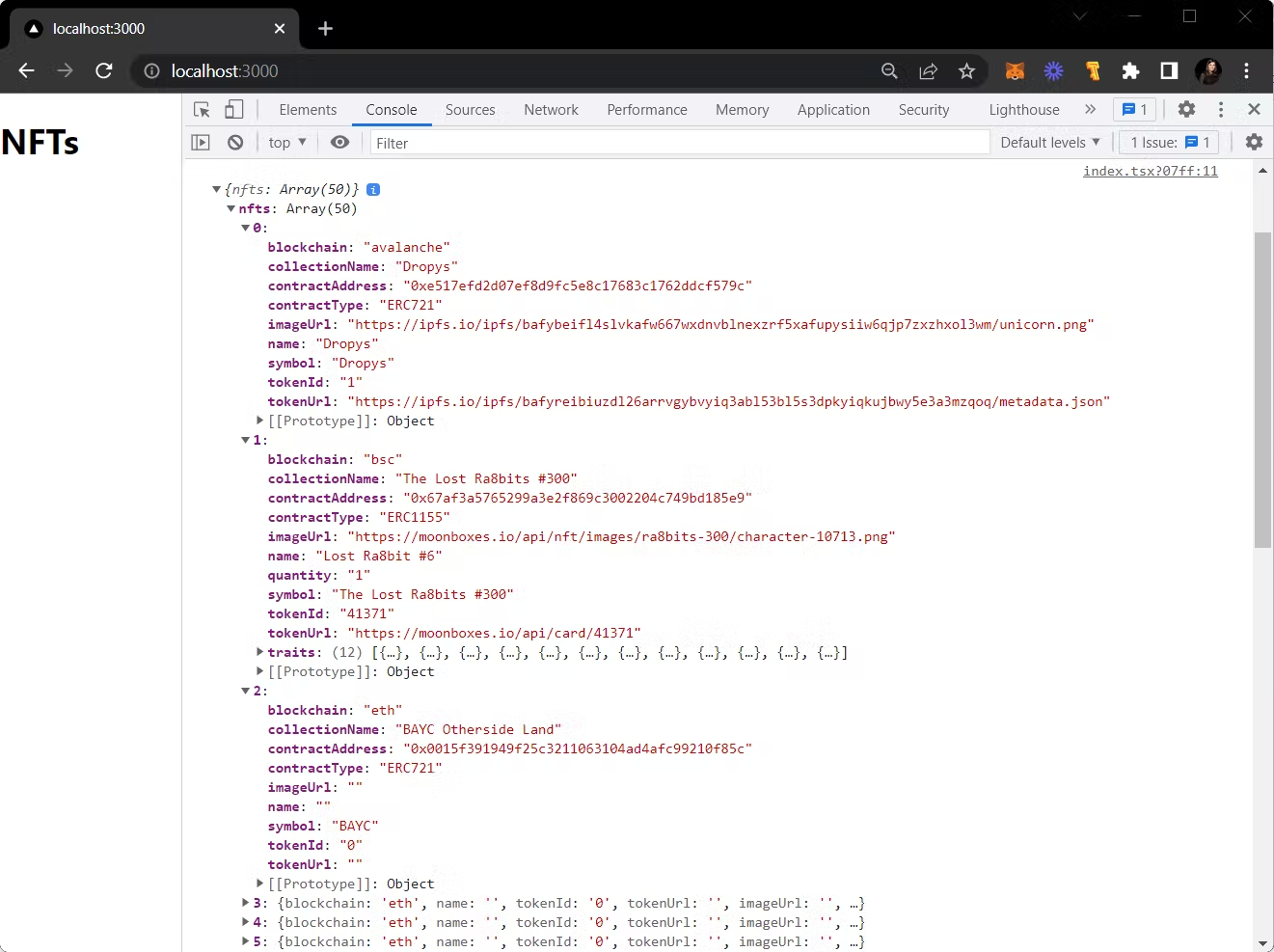

1,153,784 | NFTs provide a new funding model for free-to-play games. | NFTs are a new way to fund free-to-play games, but it has to be done right. Here's how to design the game without losing players. | 0 | 2022-07-28T14:24:12 | https://dev.to/garrett/nfts-provide-a-new-funding-model-for-free-to-play-games-2mna | web3, nfts, nft, gamedesign | ---

title: NFTs provide a new funding model for free-to-play games.

published: true

description: NFTs are a new way to fund free-to-play games, but it has to be done right. Here's how to design the game without losing players.

tags: web3, nfts, nft, gamedesign

cover_image: https://garrettmickley.com/wp-content/uploads/2022/03/pexels-photo-5698363-1.jpeg

# Use a ratio of 100:42 for best results.

---

<p>NFTs are a potential funding model for free-to-play games.</p>

<p>You can decide whether or not that funding model is suitable for you.</p>

<p>NFTs are highly debated, maybe even more so in the games community.</p>

<p>I'm not interested in arguing about NFTs.</p>

<p>If you're against NFTs in video games or against NFTs at all in any capacity, stop reading now.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>And definitely don't waste your time commenting just to hate on NFTs.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>That said, I do appreciate the honest discussion.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Discussing ways to fund game development is vital to the industry, especially for Solo Game Devs like me.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>And I would like to have an honest discussion.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Please, poke holes in my ideas here.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Help me make them better.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>We can figure this out together.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>First, let's address some funding models of the past.</p>

<!-- /wp:paragraph -->

<!-- wp:uagb/table-of-contents {"block_id":"12bab932","classMigrate":true,"mappingHeaders":[true,true,true,true,true,true]} /-->

<!-- wp:heading -->

<h2>Self Funded Games</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Self-funding games are how the first games were made and how many indie games are still made today.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>This is usually people building small games in their free time.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Or big games in the case of Stardew Valley.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>This is still going on today and will probably go on forever.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>But it's not ideal.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>It sucks to work a day job 40 (or more) hours every week, build a game, and still find time to play games and spend time with friends and family.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2>Investor Funded Games</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Some people can get funding from investors.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>This means someone is paying you to build your game for a cut of the profits.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>I don't think this happens very often, but I know it does happen because I have been approached by investors wanting to fund my games.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>This isn't ideal for me because I don't like being accountable to someone in that way.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2>Crowd Funded Games</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Crowdfunding through platforms like Kickstarter or IndieGoGo is a currently popular way to get the ball rolling, but I have found most of these fail.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>There are games I have put Kickstarter money into almost a decade ago that still haven't come to fruition.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>To be successful in crowdfunding, you first have to have a large following.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>It's infrequent that something on Kickstarter takes off without already having a decent-sized audience.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>People are much warier of crowdfunding now because many don't make it to total funding, and often projects that do make it never end up getting finished.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>I don't think most projects are maliciously trying to make money and run.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>They just mismanage or underestimate the money needed to finish the project.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2>How to Use NFTs to Fund a Game</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Now let's look at the pros and cons of using NFTs to fund your game and how to do it right.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>First things first, the controversy around NFTs.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>Anyone who has a problem with NFTs is not your target market.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>So forget about them.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>I frequently have to explain to my marketing clients that you can't be everything to everyone all the time.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p>"You can't please everyone" is a well-worn refrain I'm sure you've heard.</p>

<p>It's true, so don't try.</p>

<p>Focus on the people interested in NFTs in games, and don't worry about anyone else.</p>

<p>Next, you need to be fair about the NFTs.</p>

<p>Building a pay-to-win game is not sustainable.</p>

<p>But as we've seen with many games such as Roblox, Guild Wars 2, Fortnite, etc., people are willing to spend real money on aesthetics even if no advanced power comes with it.</p>

<p>On top of that, you should design a game where skills are more important than power.</p>

<p>Diablo III's real-money auction house was not a good idea because it's a game based on power.</p>

<p>Plus, the gameplay loop is Kill => Acquire Loot, and the auction house removed the need to Kill.</p>

<p>The design of your game is essential when adding something in that can be purchased with real money.</p>

<p>Another thing you can do to keep it somewhat fair is not sell any of the NFTs yourself as the game developer.</p>

<p>The players can sell them to each other, but someone has to earn or unlock them first.</p>

<p>That way, people who want to spend real-world money to acquire things can't flood the market by just buying the item off of the developer.</p>

<p>There may be some really awesome-looking armor that is very difficult to obtain.</p>

<p>When you see someone running around with that armor, you know someone there worked really hard to get it.</p>

<p>The person wearing it right now in front of you may have just paid for it.</p>

<p>But someone out there worked very hard to get it.</p>

<p>Which increases the value of the item by a lot.</p>

<p>This is where I think NFTs in games really shine.</p>

<p>The developer makes money off of the trades.</p>

<p>With enough players trading enough in-game, this could easily fund continued development and allow a game to be free to play.</p>

<p>It's essential to maintain a fair percentage of the trade as well.</p>

<p>Too small of a percentage, and your game won't be funded.</p>

<p>And if it's too large, players aren't incentivized to trade.</p>

<p>This also gives players a direct stake in the game that is much bigger than just being a player.</p>

<p>I don't know if Play-to-Earn games will ever reach a point where the average player can earn a living wage.</p>

<p>But if I'm going to play video games anyway, and I enjoy a game, and I can get some real-world money out of playing it, that's the game I will choose.</p>

<p>Even if it only affords me ordering a pizza once a week.</p>

<p>And giving players a reason to help grow the player base will make your game successful.</p>

<p>In fact, I would work in some sort of referral incentive for players to bring their friends in.</p>

<p>Long-term referral incentives would be great.</p>

<p>That way, it's not based on how many people they bring into the game, but how long those people actually stay active.</p>

<p>Comments are open, but as I said, if you're just here to complain about NFTs, don't bother. You'll just get blocked.</p>

<p>Legit discussion is encouraged!</p> | garrett |

1,154,051 | Introduction to Linux | WHAT IS LINUX. Just like Windows, iOS, and Mac OS, Linux is an operating system. One of... | 0 | 2022-07-28T19:44:52 | https://dev.to/muhammadshakirdev/introduction-to-linux-69j | linux, ubuntu, content, opensource |

## WHAT IS LINUX.

Just like Windows, iOS, and Mac OS, Linux is an operating system. One of the most popular platforms on the planet, Android, is powered by the Linux operating system. An operating system is software that manages all of the hardware resources associated with your desktop or laptop. To put it simply, the operating system manages the communication between your software and your hardware. Without the operating system (OS), the software wouldn't function.

The Linux operating system comprises several different pieces:

1. BOOTLOADER

2. KERNAL

3. INIT SYSTEM

4. DAEMONS

5. GRAPHICAL SERVER

6. DESKTOP ENVIRONMENT

7. APPLICATIONS

## BOOT LOADER.

The software that manages the boot process of your computer. For most users, this will simply be a splash screen that pops up and eventually goes away to boot into the operating system.

## **KERNEL.**

This is the one piece of the whole that is called? Linux?. The kernel is the core of the system and manages the CPU, memory, and peripheral devices. The kernel is the lowest level of the OS.

## INIT SYSTEM.

What is init, System Manager? In Linux/Unix-based operating systems, init (short for initialization) is the first process that started during the system boot-up by the kernel. It's holding a process id (PID) of 1. It will be running in the background continuously until the system is shut down. It is the init system that manages the boot process, once the initial booting is handed over from the bootloader (i.e., GRUB or GRand Unified Bootloader).

## DAEMONS.

These are background services (printing, sound, scheduling, etc.) that either start up during boot or after you log into the desktop.

## GRAPHICAL SERVER.

This is the sub-system that displays the graphics on your monitor. It is commonly referred to as the X server or just X.

## DESKTOP ENVIRONMENT.

This is the piece that the users interact with. There are many desktop environments to choose from (GNOME, Cinnamon, Mate, Pantheon, Enlightenment, KDE, Xfce, etc.). Each desktop environment includes built-in applications (such as file managers, configuration tools, web browsers, and games).

## APPLICATIONS.

Desktop environments do not offer the full array of apps. Just like Windows and macOS, Linux offers thousands upon thousands of high-quality software titles that can be easily found and installed. Most modern Linux distributions (more on this below) include App Store-like tools that centralize and simplify application installation. For example, Ubuntu Linux has the Ubuntu Software Center (a rebrand of GNOME Software) which allows you to quickly search among the thousands of apps and install them from one centralized location.

## WHY USE LINUX?

This is the one question that most people ask. Why bother learning a completely different computing environment, when the operating system that ships with most desktops, laptops and servers work just fine?

To answer that question, I would pose another question. Does that operating system you? re currently using work? just fine?? Or, do you find yourself battling obstacles like viruses, malware, slowdowns, crashes, costly repairs, and licensing fees?

If you struggle with the above, Linux might be the perfect platform for you. Linux has evolved into one of the most reliable computer ecosystems on the planet. Combine that reliability with zero cost of entry and you have the perfect solution for a desktop platform.

## OPEN-SOURCE.

Linux is also distributed under an open-source license. Open source follows these keys:

The freedom to run the program, for any purpose.

The freedom to study how the program works, and change it to make it do what you wish.

The freedom to redistribute copies so you can help your neighbor.

The freedom to distribute copies of your modified versions to others.

These points are crucial to understanding the community that works together to create the Linux platform. Without a doubt, Linux is an operating system that is ? by the people, for the people?. These tenants are also the main factor in why many people choose Linux. It's about freedom and freedom of use and freedom of choice.

## WHAT IS DISTRIBUTION.

Linux has some different versions to suit any type of user. From new users to hard-core users, you’ll find a “flavor” of Linux to match your needs. These versions are called distributions (or, in the short form, “distros”). Nearly every distribution of Linux can be downloaded for free, burned onto disk (or USB thumb drive), and installed (on as many machines as you like).

**Popular Linux distributions include:**

1. LINUX MINT

2. MANJARO

3. DEBIAN

4. UBUNTU

5. ANTERGOS

6. SOLUS

7. FEDORA

8. ELEMENTARY OS

9. OpenSUSE

Each distribution has a different take on the desktop. Some opt for very modern user interfaces (such as GNOME and Elementary OS’s Pantheon), whereas others stick with a more traditional desktop environment (openSUSE uses KDE).

| muhammadshakirdev |

1,154,107 | [Algorithms] 9 - Product of Array Except Self | Link for the problem Link for the solution Overview To solve the problem is pretty easy.... | 19,083 | 2022-07-28T21:53:00 | https://dev.to/_ben/algorithms-9-product-of-array-except-self-fk3 | algorithms | [Link for the problem](https://leetcode.com/problems/product-of-array-except-self/)

[Link for the solution](https://github.com/neetcode-gh/leetcode/blob/main/cpp/neetcode_150/01_arrays_%26_hashing/product_of_array_except_self.cpp)

#### Overview

To solve the problem is pretty easy. But the given conditions are what make this problem very tricky. I would like to introduce the problem because the solution is pretty unique.

#### Problem in detail

The constraints for this problem are:

- Time complexity: O(n)

- Space complexity: O(1)

- Cannot use division

> Notice that the problem will not count the returning array as an extra space.

It seems almost impossible to solve the problem with those constraints. Especially achieving O(1) space complexity without using division drove me crazy to think about the solution. So I gave it up and looked at the solution.

#### Solution

The solution was something that I have never seen, but easy to understand. First, we need to think how to get the products without itself. It is actually the product of left side of an element and the right side of an element.

```

[ 1, 2, 3, 4 ]

ex) product without 1

- left side: 1 (does not exist)

- right side: 2*3*4 (without 1)

- left side * right side = 1 * 24 = 24

ex) product without 3

- left side: 1*2 (does not exist)

- right side: 4 (without 3)

- left side * right side = 1 * 2 * 4 = 8

```

Next, we need to find the way to get prefixes and postfixes in a linear time, and using only constant space. Actually this part is very hard to come up with by ourselves.

The way to create prefixes is:

1. Set up a variable prefix, initialized with 1.

2. Push the prefix to the returning array.

3. Keep multiplying prefix as loop goes through.

> Notice that the first prefix is always going to be 1 since there is no element on the left side of the first element.

After the step, we will get an array that filled with prefixes for each element. Now all we need to do is get postfixes for each element and simply update the returning array by multiplying those postfixes.

#### Code implementation

```cpp

vector<int> productExceptSelf(vector<int>& nums) {

vector<int> res;

int prefix = 1;

for (int i = 0; i < nums.size(); i++)

{

res.push_back(prefix);

prefix *= nums[i];

}

int postfix = 1;

for (int i = nums.size()-1; i >= 0; i--)

{

res[i] *= postfix;

postfix *= nums[i];

}

return res;

}

```

| _ben |

1,154,225 | Projeto - Stone | Nesse projeto, fiz um clone da página do banco Stone. Onde usei HTML e CSS para desenvolver a Landing... | 0 | 2022-07-29T02:40:03 | https://dev.to/lucasfelipedonascimento/projeto-stone-46l6 |

Nesse projeto, fiz um clone da página do banco Stone. Onde usei HTML e CSS para desenvolver a Landing Page.

No código procurei fazer padrões, já que vi que em algumas seções sempre tinha **Texto e imagem**, então procurei padronizar para poder economizar na hora de estilizar no CSS. Usei **GRID** para fazer a divisão de textos e imagens nas seções que tinha ambas.

**Link do projeto:** https://lucasfelipedonascimento.github.io/page-stone/

---

Também usei o https://excalidraw.com/ para fazer o protótipo do projeto, onde fiz a divisão da página em seções para facilitar o trabalho na hora de codar. | lucasfelipedonascimento | |

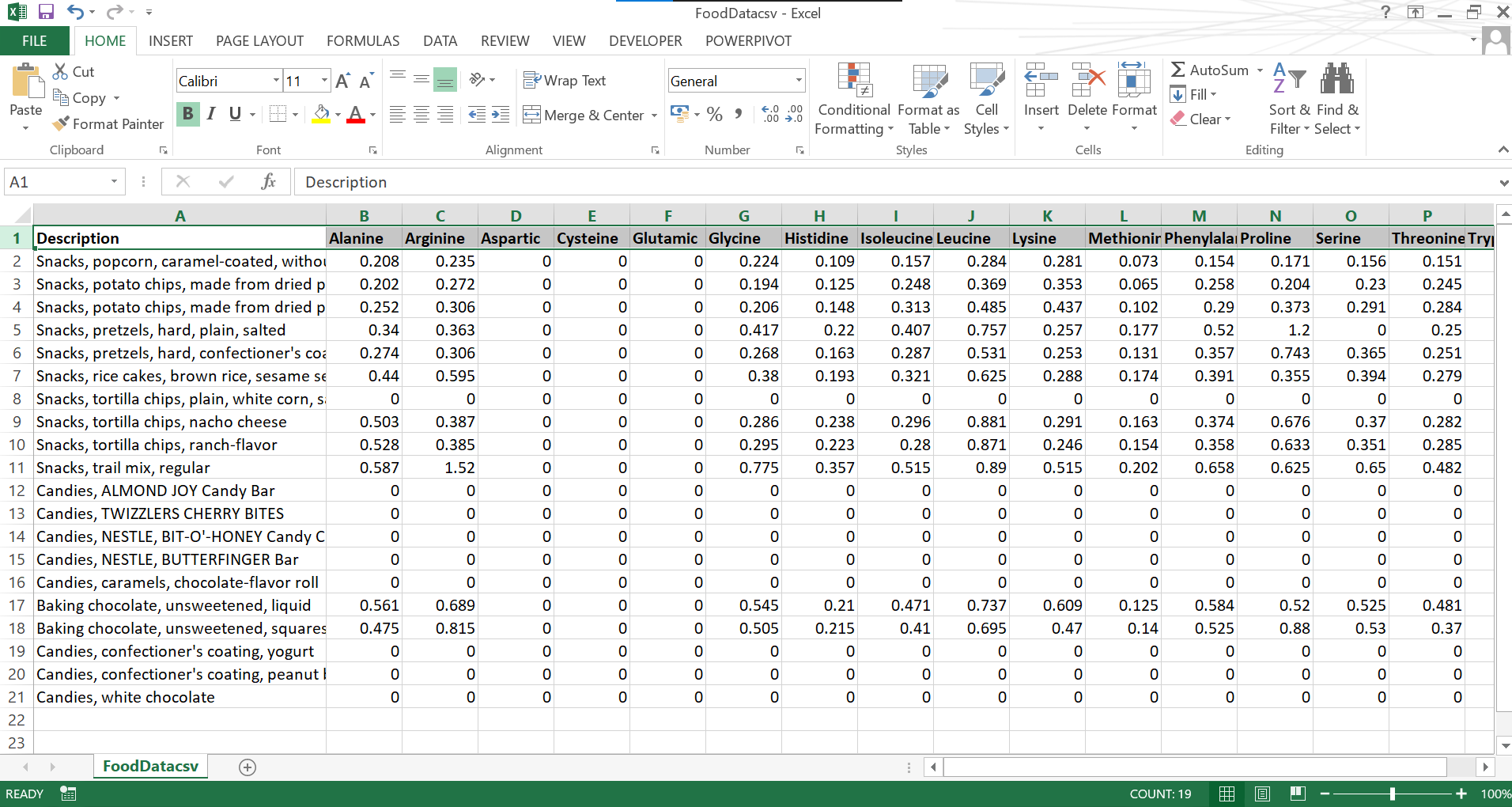

1,170,009 | Introducing a Simple Module for Parsing CSV Files | Today I want to introduce a simple module for parsing CSV files Recently I was exploring my old... | 0 | 2022-08-17T20:48:49 | https://dev.to/atherdon/introducing-a-simple-module-for-parsing-csv-files-310k |

Today I want to introduce a simple module for parsing CSV files

Recently I was exploring my old repository: https://github.com/Food-Static-Data/food-datasets-csv-parser

Inside I have a cool set of small modules, that helped me a lot. As my code is highly tied to those packages -> I need to pray for developers that build them, so I don't need to spend precious time.

List of modules that I'm using:

- csv-parser

- fs

- lodash

- path

- path-exists

- shelljs

Why did I create this package? It's simple. During our work @ Groceristar, we came around a number of databases and datasets, related to "food-tech". To be able to extract that data and play with it -> you need to parse CSV files.

Link to the repository: https://github.com/Food-Static-Data/food-datasets-csv-parser

Link to npm page: https://www.npmjs.com/package/@groceristar/food-dataset-csv-parser

I will also post updates about building modules for static data on indie hackers. While it didn't help with promotions a lot, founders are pretty positive people and their support really matters. Here is an org that I created few years ago: https://www.indiehackers.com/product/food-static-data

As usually, experienced developers might tell me that I'm stupid and CSV parsing is a mundane procedure. But I don't care. I realized that for a few separate projects we are running similar code. So I decided to isolate it.

I did it a few times before I finally find a way to make it work as I like. And you can see how it looks right now.

I can say, not ideal, but it was working fine for me. Right now I plan to revamp this package a little bit, in order to make it work with the latest versions of rollupjs and babel.

While the idea is simple: connecting a dataset in CSV format, parsing it, and exporting data as you need it, while you need to make it work with 10 different datasets, things arent as easy as they sound in your head.

CSVs not only related to food tech datasets. But for me was important to be able to use different datasets and easy to play with it. It makes other modules that we are building data-agnostic and more independent to a database/frameworks/logic. Basically, around this idea, we created and optimized like 13 repositories. Recently I created a separate organization that will be focused on those repositories only.

Link: https://github.com/Food-Static-Data

Later I plan to remove some repositories when they wouldn't be replaced by other, more popular, and stable tools. This current module can be useful for parsing other datasets too. But making it separate from the food tech topic isn't my task at this point.

And I was able to include and implement cool and important packages, like husky and coveralls. I can't say that I get most from them, but at the same time, it helped me to jump into the "open source ocean" that related to the GitHub rabbit hole that I'm still exploring for so many years.

and it's good to not just type another line of code, but also be able to see that your codebase is solid and nothing breaking it behind your back

CodeClimate(https://codeclimate.com/) helped me to explore and be able to take another look at how to develop things.

Yeah, codeclimate shows that I have code duplicates and ~50h of tech debt. Looks like a lot, right? But this is a small independent package.

Imagine how much tech debt has your huge monolith project. Years of wasted time of developers, probably 🙂

At some point i'll remove duplicates and it will reduce number of hours on this page.

Plus, usually, your product owner or CTO is busy and can't review code and be able to track things inside your code.

CodeClimate can do some stuff for you. Just check those settings. Plus, they support open-source movement. So if your code is open and located on GitHub, you can use it for free.

Stretch goals are simple

- I want to invest some time into CI/CD stuff. At this moment i'll pick Travic CI. At some point i'll extend it, so when a new version of this package is published, i'll run it against our datasets and will see if something breaks or not.

- I also need to remove duplicated code that i was moved into separated packages but still present here, due to back capability.

- and it's also not cool to see JS code with all there csv files at the same repository. I need to came with idea about how to organize folders and make it easy to navigate. While it works for me - other people might find it very confusing.

We even did a great readme file with an explanation of how to run this package

We collected a great number of datasets that can help a wast number of food projects. Some of them even sell the latest updates for money.

Right now this module was tested with:

- FoodComposition dataset

- USDA dataset(i pick 4 major tables with data)

- FAO(Food and Agriculture Organization of the United Nations) dataset

This module is not just for parsing data, we also have a need to write files in JSON format with formatted data inside.

Show some love if you want more articles like this one! any activity will be appreciated.

Similar articles:

- https://hackernoon.com/introducing-a-simple-npm-module-with-email-templates

- https://hackernoon.com/how-i-started-to-build-react-components-for-email-templates

- https://hackernoon.com/organizing-an-advanced-structure-for-html-email-template

- https://hackernoon.com/open-sourcing-regular-expressions-for-markdown-syntax-module

| atherdon | |

1,174,879 | 60 Days of Data Science and Machine Learning | Hello, everyone. Following are third week of this series. You can find them on my github repository.... | 0 | 2022-08-24T07:09:06 | https://dev.to/levintech/60-days-of-data-science-and-machine-learning-1740 | python, datascience, machinelearning, ai | Hello, everyone.

Following are third week of this series. You can find them on my github repository. You can run all the notebook on colab or jupyter as well.

- [Day 15 - Repression Part2](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Day_15_Regression_Part2.ipynb)

Topic : Support Vector Regression, Decision Tree Regression and Random Forest Regression

- [Day 17 - Kaggle's Annual Machine Learning and Data Science Survey Part1](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Day_17_Kaggle%E2%80%99s_Annual_Machine_Learning_and_Data_Science_Survey_Part1.ipynb)

Topic : Data Cleaning, Preprocessing, EDA and etc

- [Day 18 - DecisionTreeRegressor and RandomForestRegressor](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Day_18_DecisionTreeRegressor_and_RandomForestRegressor.ipynb)

Topic : Implement Regressor with Decision Tree and Random Forest

- [Day 19 - Kaggle's Annual Machine Learning and Data Science Survey Part2](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Kaggle%27s_Annual_Machine_Learning_and_Data_Science_Survery_Part2.ipynb)

Topic : Data Cleaning, Preprocessing, EDA and etc

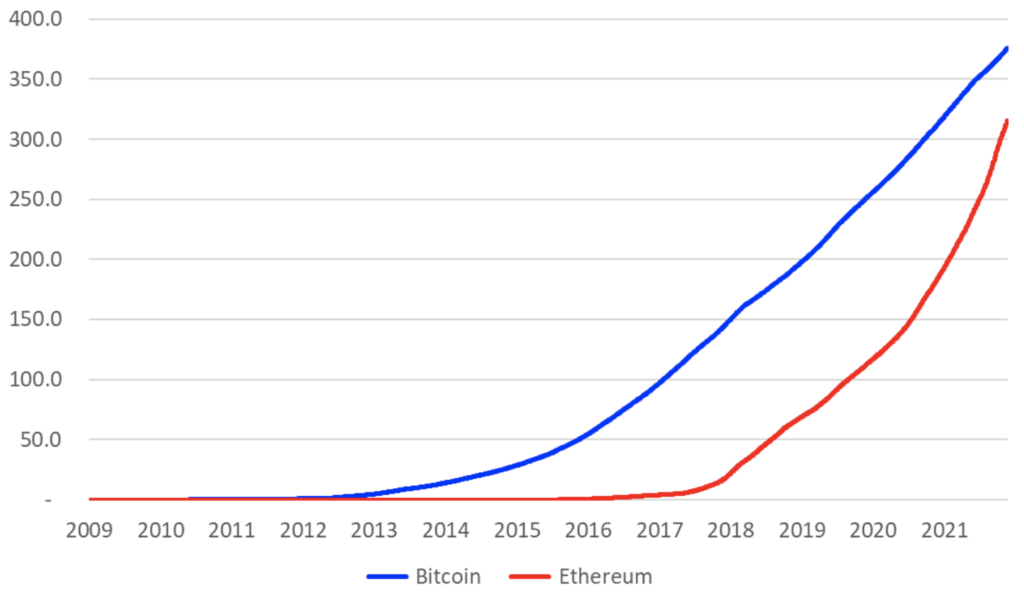

- [Day 20 - Detailed Crypto Analysis](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Day_20_Detailed_Crypto_Analysis.ipynb)

Topic : Basic intuition to buid model to predict

- [Day 21 - Detailed Analysis of the Netflix Content](https://github.com/levintech/60-Days-of-Data-Science-and-ML/blob/main/Day_21_Detailed_of_the_Netflix_Content.ipynb)

Topic : Detailed analysis of the Netflix Content

Hope my work would be little bit helpful for AI enthusiastic.

If that, please star that repository then follow me on **Github** and **Dev.to**

https://github.com/levintech

https://dev.to/levintech

Best Regards. | levintech |

1,175,027 | Hibernate & JPA Tutorial - Crash Course | Ever looked for a comprehensive tutorial to Hibernate & JPA that is fun and entertaining at the... | 0 | 2022-08-24T10:26:52 | https://dev.to/marcobehler/hibernate-jpa-tutorial-crash-course-57fb | java, tutorial | Ever looked for a comprehensive tutorial to Hibernate & JPA that is fun and entertaining at the same time?

[This video](https://www.youtube.com/watch?v=xHminZ9Dxm4) is a crash course into the [Hibernate](https://hibernate.org/) & JPA universe.

We'll start with getting the correct project dependencies, annotating our classes with JPA annotations and setting up a SessionFactory. This allows us to execute basic CRUD operations, HQL and Criteria queries.

Afterwards we can simply JPAify our code, switching out a couple of classes and you'll understand how Hibernate / JPA & Spring Boot play together.

By the end of it, you'll have a good (initial) understanding of how to use it and what your future Hibernate / JPA learning journey entails.

{% embed https://www.youtube.com/watch?v=xHminZ9Dxm4 %} | marcobehler |

1,175,292 | Lazy Minting NFT - Solidity, Hardhat | What is NFT ? Non-fungible tokens, often referred to as NFTs, are blockchain-based tokens... | 0 | 2022-09-03T04:46:38 | https://dev.to/hussainzz/lazy-minting-nft-solidity-hardhat-30l1 | solidity, web3, nft, hardhat | ## What is NFT ?

Non-fungible tokens, often referred to as NFTs, are blockchain-based tokens that each represent a unique asset like a piece of art, digital content, or media.

## Why Lazy Minting ?

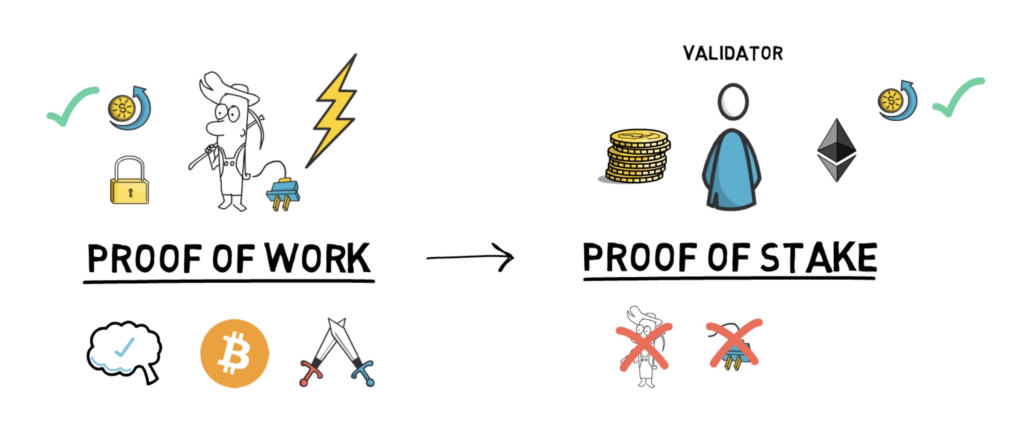

Before understanding what is lazy minting lets understand why we really need it.When minting an NFT the owner needs to pay Gas fee which is a fee that the creator or the one who made the NFTs must pay in exchange for the computational energy needed to process and validate transactions on the blockchain.So with lazy minting we don't have to pay a gas fee when listing an NFT, We only pay gas fee when we actually mint the nft, once the asset is purchased and transfered on chain.

##How it works

Usually when we mint an NFT we call the contract function directly & mint NFT on Chain.But in case of Lazy minting,Creator prepares a cryptographic signature with their wallet private key.

That cryptographic signature is know as "Voucher" which is then used to redeem NFT.It may also include some additional information required while minting the NFT on Chain.

## Tech Stack

Solidity & Hardhat for smart contract development

React Js & Tailwind CSS for dapp

##Let's start

By creating & understanding the signed voucher.In order to accomplish the signing we are going to use

[EIP-712: Typed structured data hashing and signing](https://eips.ethereum.org/EIPS/eip-712)

Which allows us to standardize signing a typed data structure, Which then can be sent to smart contract to claim the NFT.

eg.

```

struct SomeVoucher {

uint256 tokenId;

uint256 someVariable;

uint256 nftPrice;

string uri;

bytes signature;

}

```

Create a new directory called `lazymint`

```

> mkdir lazymint

> cd lazymint

> yarn add -D hardhat

```

Next, Initialize hardhat development environment

```javascript

> yarn hardhat

888 888 888 888 888

888 888 888 888 888

888 888 888 888 888

8888888888 8888b. 888d888 .d88888 88888b. 8888b. 888888

888 888 "88b 888P" d88" 888 888 "88b "88b 888

888 888 .d888888 888 888 888 888 888 .d888888 888

888 888 888 888 888 Y88b 888 888 888 888 888 Y88b.

888 888 "Y888888 888 "Y88888 888 888 "Y888888 "Y888

Welcome to Hardhat v2.10.2

? What do you want to do? …

▸ Create a JavaScript project

Create a TypeScript project

Create an empty hardhat.config.js

Quit

```

Select `Create a JavaScript project` and let it install all the dependencies.

Next, lets install a package called [hardhat-deploy](https://github.com/wighawag/hardhat-deploy) which makes working with hardhat 2x easier & fun 👻

```

> yarn add -D hardhat-deploy

```

And add the following statement to your hardhat.config.js:

```

require('hardhat-deploy');

```

Next,

```

yarn add -D @nomiclabs/hardhat-ethers@npm:hardhat-deploy-ethers ethers

```

All the hardhat.config.js changes can be found in my [REPO](https://github.com/Hussainzz/lazy-mint-contract).

Next, Create a new contract in contracts directory feel free to give any name, i'll call it `LazyMint.sol`

```javascript

// SPDX-License-Identifier: MIT

pragma solidity ^0.8.4;

import "@openzeppelin/contracts/token/ERC721/ERC721.sol";

import "@openzeppelin/contracts/token/ERC721/extensions/ERC721URIStorage.sol";

import "@openzeppelin/contracts/access/Ownable.sol";

import "@openzeppelin/contracts/utils/cryptography/draft-EIP712.sol";

import "@openzeppelin/contracts/access/AccessControl.sol";

contract LazyMint is ERC721, ERC721URIStorage, Ownable, EIP712, AccessControl {

error OnlyMinter(address to);

error NotEnoughValue(address to, uint256);

error NoFundsToWithdraw(uint256 balance);

error FailedToWithdraw(bool sent);

bytes32 public constant MINTER_ROLE = keccak256("MINTER_ROLE");

string private constant SIGNING_DOMAIN = "Lazy-Domain";

string private constant SIGNING_VERSION = "1";

event NewMint(address indexed to, uint256 tokenId);

event FundsWithdrawn(address indexed owner, uint256 amount);

struct LazyMintVoucher{

uint256 tokenId;

uint256 price;

string uri;

bytes signature;

}

constructor(address minter) ERC721("LazyMint", "MTK") EIP712(SIGNING_DOMAIN, SIGNING_VERSION) {

_setupRole(MINTER_ROLE, minter);

}

function mintNFT(address _to, LazyMintVoucher calldata _voucher) public payable {

address signer = _verify(_voucher);

if(hasRole(MINTER_ROLE, signer)){

if(msg.value >= _voucher.price){

_safeMint(_to, _voucher.tokenId);

_setTokenURI(_voucher.tokenId, _voucher.uri);

emit NewMint(_to, _voucher.tokenId);

}else{

revert NotEnoughValue(_to, msg.value);

}

}else{

revert OnlyMinter(_to);

}

}

function _hash(LazyMintVoucher calldata voucher) internal view returns(bytes32){

return _hashTypedDataV4(keccak256(abi.encode(

//function selector

keccak256("LazyMintVoucher(uint256 tokenId,uint256 price,string uri)"),

voucher.tokenId,

voucher.price,

keccak256(bytes(voucher.uri))

)));

}

function _verify(LazyMintVoucher calldata voucher) internal view returns(address){

bytes32 digest = _hash(voucher);

//returns signer

return ECDSA.recover(digest, voucher.signature);

}

// The following functions are overrides required by Solidity.

function _burn(uint256 tokenId) internal override(ERC721, ERC721URIStorage) {

super._burn(tokenId);

}

function tokenURI(uint256 tokenId)

public

view

override(ERC721, ERC721URIStorage)

returns (string memory)

{

return super.tokenURI(tokenId);

}

function supportsInterface(bytes4 interfaceId) public view virtual override(AccessControl, ERC721) returns (bool){

return ERC721.supportsInterface(interfaceId) || AccessControl.supportsInterface(interfaceId);

}

function withdrawFunds() public payable onlyOwner{

uint256 balance = address(this).balance;

if(balance <= 0){revert NoFundsToWithdraw(balance);}

(bool sent,) = msg.sender.call{value: balance}("");

if(!sent){revert FailedToWithdraw(sent);}

emit FundsWithdrawn(msg.sender, balance);

}

}

```

Let's quickly go through the `LazyMint.sol` contract.

i am using `ERC721` & `ERC721URIStorage` from [OpenZeppelin Contracts](https://github.com/OpenZeppelin/openzeppelin-contracts)

**ERC721** - is a standard for representing ownership of non-fungible tokens, that is, where each token id is unique.

**ERC721URIStorage** - is an implementation of ERC721 that includes the metadata standard extensions ( IERC721Metadata ) as well as a mechanism for per-token metadata.

In Simple words, contract implemented without `ERC721Storage` generates tokenURI for tokenId on fly by concatenating baseURI + tokenID.In case of contracts using `ERC721Storage` we provide the tokenURI(metadata) when minting the token which is then stored on-chain.

Next, i am using Ownable & AccessControl.

[**Ownable**](https://docs.openzeppelin.com/contracts/2.x/api/ownership) - which enables contract exclusive access to functions eg. OnlyOwner has access to certain functions.

[**AccessControl**](https://docs.openzeppelin.com/contracts/2.x/access-control) - Which allows us to assign certain roles to address like in our case,Specific address can sign vouchers to mint NFTs, for that we can create a role called `MINTER`. or an admin for the overall contract.

Next, i have defined some custom errors which were introduced in `solidity version 0.8.4`

```javascript

error OnlyMinter(address to);

error NotEnoughValue(address to, uint256);

error NoFundsToWithdraw(uint256 balance);

error FailedToWithdraw(bool sent);

```

Next, we define the minter role, signing domain & version.

so that when minting we can check that the signed voucher explained above was signed by minter address.And includes the same domain and version.

```javascript

bytes32 public constant MINTER_ROLE = keccak256("MINTER_ROLE");

string private constant SIGNING_DOMAIN = "Lazy-Domain";

string private constant SIGNING_VERSION = "1";

```

Next, lets take a look at the Voucher which we defined in solidity using `struct`

```javascript

struct LazyMintVoucher{

uint256 tokenId;

uint256 price;

string uri;

bytes signature;

}

```

Which includes `tokenId` to mint, price, uri[usually a url pointing to metadata] for the nft,and signature.Let's Understand the mintNFT function to understand how this voucher does all the magic 🪄.

```javascript

function mintNFT(address _to, LazyMintVoucher calldata _voucher) public payable {

address signer = _verify(_voucher);

if(hasRole(MINTER_ROLE, signer)){

if(msg.value >= _voucher.price){

_safeMint(_to, _voucher.tokenId);

_setTokenURI(_voucher.tokenId, _voucher.uri);

emit NewMint(_to, _voucher.tokenId);

}else{

revert NotEnoughValue(_to, msg.value);

}

}else{

revert OnlyMinter(_to);

}

}

```

the important part of the voucher is signature which we sign off-chain with the Minters private key which has all the additional data in the same order defined in the above `struct`.Then in `mintNFT` function which expects two arguments `_to` & `_voucher`.

nft will be minted for `_to` address.And voucher basically helps redeem the NFT.First line in `mintNFT` function is to verify the signature from the voucher. to verify the signer we call a cryptographic function from `draft-EIP712.sol` called `_hashTypedDataV4` which takes in our hashed version of our voucher struct and the return value then can be used with a `recover` function from [`Elliptic Curve Digital Signature Algorithm (ECDSA) `](https://docs.openzeppelin.com/contracts/2.x/utilities) to get the signer address.We then compare the recovered signer to check if it matches our `MINTER` address & also check if that signer has the minter_role, If Yes ? we proceed and check the value(eth passed) matches the price value mentioned in the voucher. If yes ? we go ahead and mint the token and emit an event `emit NewMint(_to, _voucher.tokenId)`.

That's it for the Voucher Verification Magic Trick 🪄🪄🪄

Next, we have a `withdrawFunds` which allows only the contract owner to withdraw funds, if any.

## How to sign a voucher ?

Open [createSalesOrder](https://github.com/Hussainzz/lazy-mint-contract/blob/main/scripts/createSalesOrder.js), go to the scripts folder open `createSalesOrder.js` which is a simple script to create vouchers.

First we get the signer account.

```javascript

const [signer] = await ethers.getSigners();

```

Next, We need the signing domain & version these values should same as one defined in the contract.

```javascript

const SIGNING_DOMAIN = "Lazy-Domain";

const SIGNING_VERSION = "1";

const MINTER = signer;

```

As per EIP 712 we need a domain, which is made up of chainId, ContractAddress, domain & the version.

```javascript

const domain = {

name: SIGNING_DOMAIN,

version: SIGNING_VERSION,

verifyingContract: lazyMint.address,

chainId: network.config.chainId

}

let voucher = {

tokenId: i,

price: PRICE,

uri: META_DATA_URI

}

```

`META_DATA_URI` i have already pinned it to [ipfs](https://ipfs.io/ipfs/QmPE9bwcVXiCxtH5q5R3mC2DpDkyDbJUZKmNAbAkhZVfWE)

```javascript

`ghost_metadata.json`

{

"description": "Boooooo",

"image": "https://ipfs.io/ipfs/QmeueVyGRuTH939fPhGcPC8iF6HYhRixGBRmEgiZqFUvEW",

"name": "Baby Ghost",

"attributes": [

{

"trait_type": "cuteness",

"value": 100

}

]

}

```

Next, we need the `createVoucher` function open `voucherHelper.js`. In order to create a signed voucher we need three arguments `domain` `voucher` `types`. Now types is nothing but the `struct` voucher which we have defined in solidity smart contract.Make sure the name of the `struct` & also the order of variables match.

```javascript

const types = {

LazyMintVoucher:[

{name: "tokenId", type:"uint256"},

{name: "price", type:"uint256"},

{name: "uri", type:"string"}

]

}

```

Next, we sign the voucher with the MINTER(signer) wallet & get the signed voucher 🖋️

```javascript

const signature = await signer._signTypedData(domain, types, voucher);

```

In `createSalesOrder.js` at the end once i get the signed vouchers, In my case to keep things simple i am just saving the signed vouchers inside a file called `NFTVouchers.json` which i am creating directly in my dapp `'../lazymintdapp/src/NFTVouchers.json'`.Ideally in real life scenario you store these signed vouchers in your centralized DB 🤪

Done! 🎉🎉

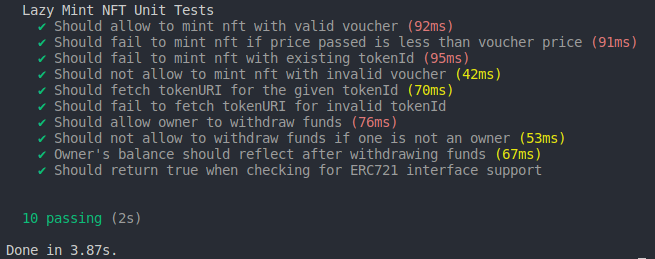

##Contract Tests

I have written some unit tests which can be found in `test/LazyMintUnit.test.js`

```

> yarn hardhat test

```

##Deployed Contract & Demo

[https://ghost-mint.vercel.app/](https://ghost-mint.vercel.app/)

[https://mumbai.polygonscan.com/address/0x3077B8941e6337091FbB2E3216B1D5797B065C71](https://mumbai.polygonscan.com/address/0x3077B8941e6337091FbB2E3216B1D5797B065C71)

[**CODE REPO**](https://github.com/Hussainzz/lazy-mint-contract) Don't forget to drop a ⭐⭐⭐⭐⭐

## LazyNFTs Dapp

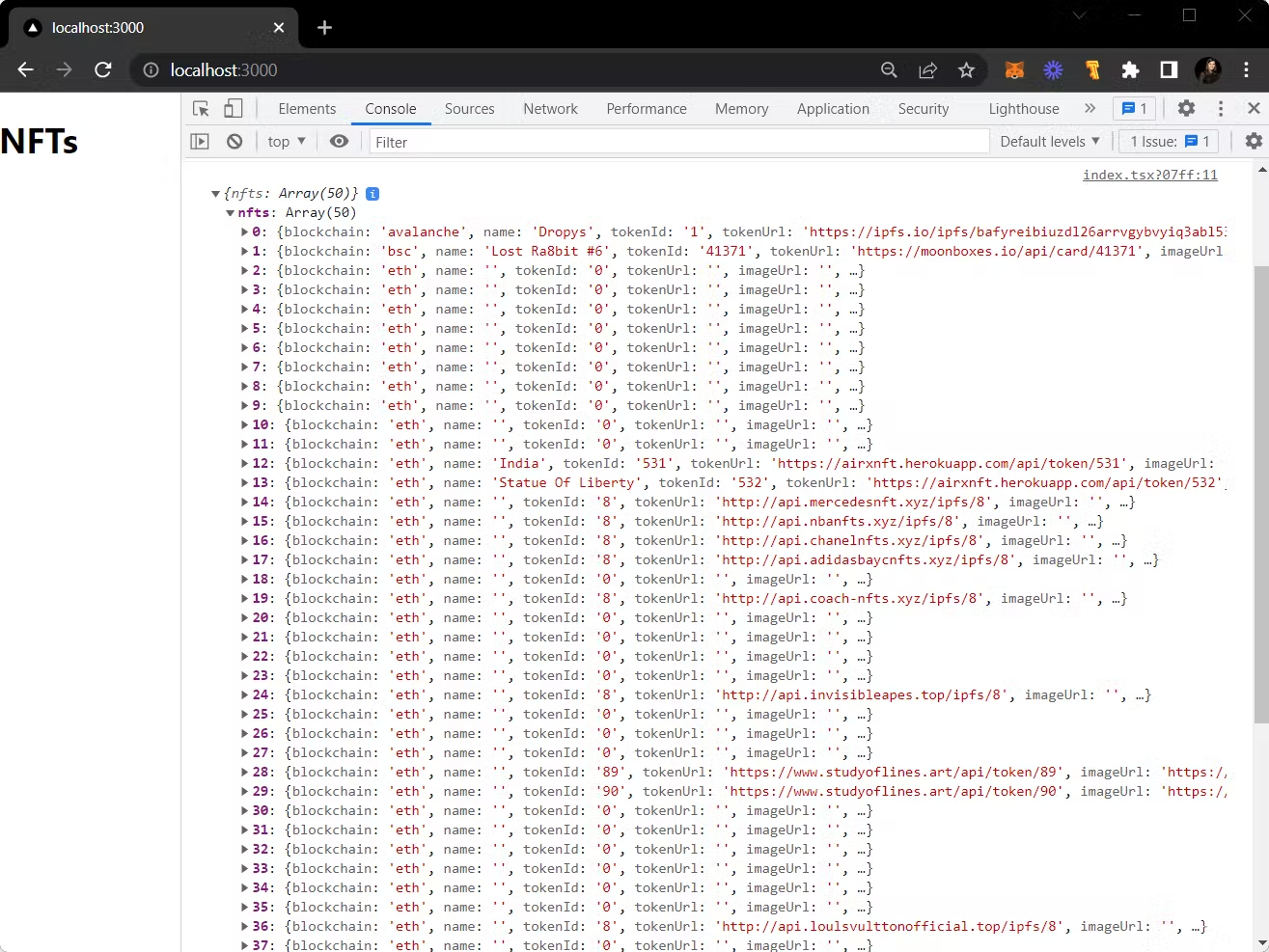

i won't be going through the dapp completely.But for reactjs dapp development i used `create-react-app`.

**Packages**

- **Wagmi**: https://wagmi.sh/ for contract interaction, Cool and easy react hooks.And their documentation has lots of examples which makes it way easier to implement. 🦍

##Resources

There are multiple ways in which one can approach the lazy minting flow. this was all my learning experience.Here are some resources which help me understand lazy minting.

- https://www.alchemy.com/overviews/lazy-minting

- https://eips.ethereum.org/EIPS/eip-712

- https://medium.com/metamask/eip712-is-coming-what-to-expect-and-how-to-use-it-bb92fd1a7a26

- https://nftschool.dev/tutorial/lazy-minting

That's it on lazy minting. 👻

[](https://www.buymeacoffee.com/shkhussainb) | hussainzz |

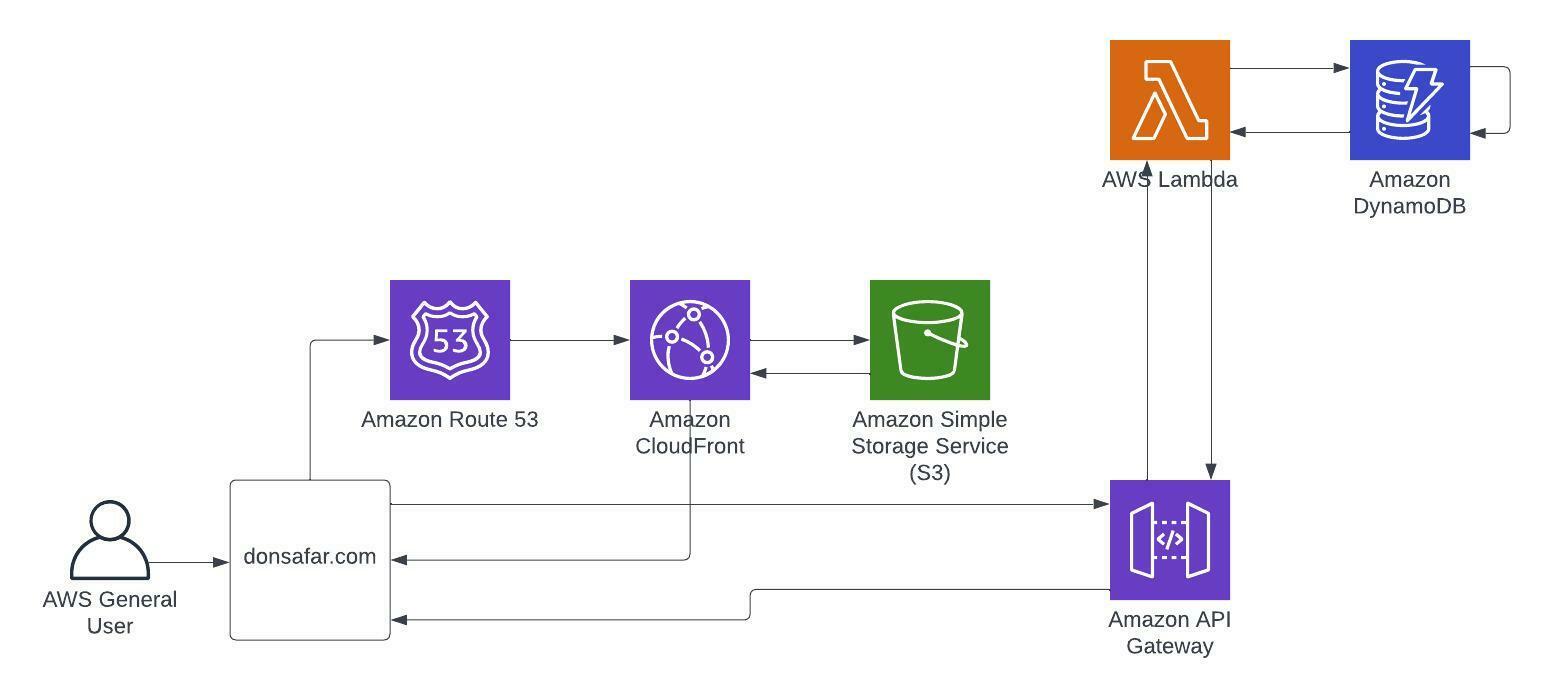

1,175,614 | Cloud Resume Challenge | Welcome! My name is Don Safar. In the summer of 2019 I was in the process of winding down our... | 0 | 2022-08-29T21:19:00 | https://dev.to/dwsafar/cloud-resume-challenge-6g0 | career, aws, devops, serverless |

Welcome! My name is Don Safar. In the summer of 2019 I was in the process of winding down our company, Safe Haven Holding Corp dba Safar Motor Group. Prior to Safar Motor Group, I worked at Lender Processing Services for fifteen years, which became part of Black Knight Inc under enterprise restructuring. During my tenure at LSI, I became an SME within a group that would help transition our legacy system to a newly built modern system. I was tasked with transferring knowledge of existing workflows and modifying legacy workflows into the new system. This was my first full time job in the IT industry. I started at the very bottom of the company and eventually transferred to the IT department. So enough of the back story let's get to what this post is all about.

The Cloud Resume Challenge or CRC was way more than just learning to do some cool stuff in the cloud. The CRC taught me how to really push the boundaries of what I thought I was capable of. Actually it was more of a reminder of my capabilities. Below you will find the project broken out into six phases. In each phase I go over my challenges and how I over came each of them.

<a name="TOP"></a>

Table of Contents

[Phase 0 - AWS Certification](#CERT)

[Phase 1 - HTML,CSS, S3, DNS, SSL, AWS Cloudfront] (#HTML)

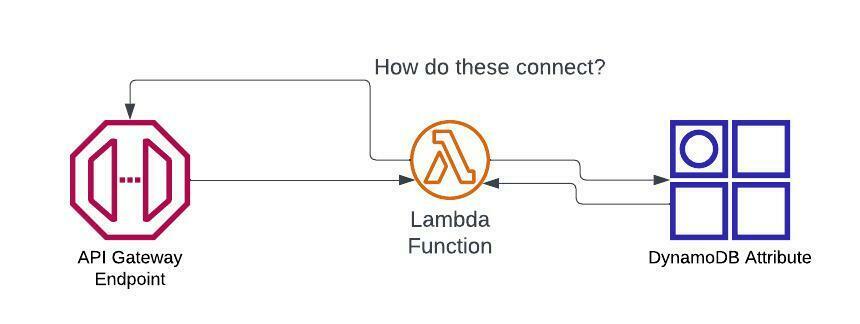

[Phase 2 - API, Lambda, DyanomDB](#LAMBDA)

[Phase 3 - Java Script, API, Smoke Test, CORS](#CORS)

[Phase 4 - IaC, CI/CD](#IAC)

[Phase 5 - Blog](#BLOG)

[Cloud Resume](https://donsafar.com)

Here are the detailed instructions of the Challenge.

[Cloud Resume Instructions](https://cloudresumechallenge.dev/docs/the-challenge/aws/)

### CRC Project

#### <a name="CERT"></a>Phase 0 - AWS Cloud Practitioner Certification

1. Can I do this?

2. Sit down we need to talk...