id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

968,969 | Quasar | Can anyone help with programming in Quasar? I am having issues getting the software to work properly. | 0 | 2022-01-26T22:17:22 | https://dev.to/william_pate2022/quasar-37m8 | Can anyone help with programming in Quasar? I am having issues getting the software to work properly. | william_pate2022 | |

969,020 | Cloud Resume Challenge AWS | Background I was introduced to the Cloud Resume Challenge while interviewing for a... | 0 | 2022-01-27T20:19:13 | https://dev.to/joshb8/cloud-resume-challenge-aws-38oa | cloudresumechallenge, aws, cloud | ## Background

I was introduced to the [Cloud Resume Challenge](https://cloudresumechallenge.dev/docs/the-challenge/aws/) while interviewing for a position in October of 2021. I was looking for a change in direction in my IT career, and the cloud piqued my interest. Over the past couple of months, I have been slowly working on this challenge. With many late nights in the books, I have completed it. Although it was frustrating at times, I would recommend this challenge to anyone interested. The rewarding feeling after every completed step makes you want to come back for more.

## Challenge Instructions

**Certification** AWS Certified Cloud Practitioner (In Progress)

✔️ **HTML** [Check out my HTML code](https://github.com/joshb8/cloud-resume/blob/main/resume-site/index.html)

✔️ **CSS** [Check out my CSS code](https://github.com/joshb8/cloud-resume/blob/main/resume-site/styles.css)

✔️ **Static Website** [Used AWS SAM template](https://github.com/joshb8/cloud-resume/blob/main/template.yaml)

✔️ **HTTPS** This was made easy by AWS CloudFront

✔️ **DNS** Used AWS Route 53 [joshscloudresume.net](joshscloudresume.net)

✔️ **JavaScript** There is a script at the end of my [HTML File](https://github.com/joshb8/cloud-resume/blob/main/resume-site/index.html)

✔️ **Database** Used AWS DynamoDB

✔️ **API** Created and deployed with AWS SAM

✔️ **Python** Lambda Functions [Get Function](https://github.com/joshb8/cloud-resume/blob/main/get-function/app.py) [Put Function](https://github.com/joshb8/cloud-resume/blob/main/put-function/app.py)

✔️ **Tests** Integration and upload test using [GitHub Actions](https://github.com/joshb8/cloud-resume/actions/runs/1752827599)

✔️ **Infrastructure as Code** AWS SAM [template.yaml](https://github.com/joshb8/cloud-resume/blob/main/template.yaml)

✔️ **CI/CD** [GitHub Actions](https://github.com/joshb8/cloud-resume/actions)

✔️**Blog Post** Here we are

**My thoughts on the challenge**

Looking back on the challenge I should have been writing this blog post along the way. It is impossible to remember all of the struggles and AH-HA! moments involved in the learning process. With that being said I will go over the main struggles that took up most of my time.

Although [The Cloud Resume Challenge](https://cloudresumechallenge.dev/) gives great instructions and excellent resources to start the journey, I spent many hours on Stack Overflow figuring out the finer details.

**How I approached the challenge**

**1. HTML/CSS/JS** I first simply coded my resume in HTML and added a little CSS to meet that requirement. I would later dive deeper into creating an actual website once I realized that I enjoyed the design aspect of this.

**2. Host website** I used the AWS SAM template to host a static website in an S3 bucket. I originally used Google DNS to purchase a domain name; however, after hours of troubleshooting, I simply could not get it to resolve. I purchased another domain through AWS Route 53 and it worked instantly.

**3. CloudFront/HTTPS** At first, I had some issues implementing HTTPS for security because the rules had changed about the target origin ID name and I was going off an old version. After some Stack Overflow searching, I corrected the issue.

**4. API/DYNAMODB/LAMBDA** Steps 7 through 12 were the most difficult but rewarding challenges yet. Creating the API and database on DynamoDB was easy enough. Linking them together through Lambda functions was another story. Once I found the right docs and templates for the boto3 SDK to access the database, things started to come together nicely.

**5. Deploying with CI/CD** Deploying with CI/CD was a learning experience. I had not created a GitHub account at this point, and pushing my folders to GitHub using git was a learning curve. One problem I ran into with GitHub Actions was that it was blocking my user account while updating the get/put Lambda functions. I solved this by adding my user to the policy statements on each of the Lambda functions. Another useful feature that I learned about was GitHub secrets. I used them to hide my user access key and secret key in my .yml file so that I could build and deploy my site, as well as upload it to S3, through the Actions feature.

**6. Certification** I am pending certification, giving myself a week to study up and pass the exam.
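For anyone stuck on step 4, here is a minimal sketch of what a visitor-counter Lambda using boto3 might look like. It is not the code from my repository: the table name `cloud-resume-visits`, the `id` key, and the `visits` attribute are hypothetical names chosen for illustration, and the CORS header assumes the API is called from a browser.

```python
import json

# Hypothetical names: the table "cloud-resume-visits", the "id" key and the
# "visits" attribute are assumptions for illustration only.


def increment_visits(table, site_id="resume"):
    """Atomically add 1 to the counter item and return the new total."""
    response = table.update_item(
        Key={"id": site_id},
        UpdateExpression="ADD visits :one",
        ExpressionAttributeValues={":one": 1},
        ReturnValues="UPDATED_NEW",
    )
    # DynamoDB numbers come back as Decimal, so convert before serializing.
    return int(response["Attributes"]["visits"])


def lambda_handler(event, context, table=None):
    """API Gateway proxy handler; the table can be injected for testing."""
    if table is None:
        import boto3  # imported lazily so the module loads without AWS access

        table = boto3.resource("dynamodb").Table("cloud-resume-visits")
    count = increment_visits(table)
    return {
        "statusCode": 200,
        "headers": {"Access-Control-Allow-Origin": "*"},
        "body": json.dumps({"visits": count}),
    }
```

Passing the table in as an argument keeps the counting logic testable without touching AWS, which is handy once the tests run in GitHub Actions.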

I am proud of myself for completing this challenge and hope to pursue my newfound interest in all things cloud.

**Thanks for reading about my journey**

| joshb8 |

969,372 | Rails React Streaming SPA | Introduction TLTR: Feel free to get the source code. Requirements -The code... | 0 | 2022-01-27T09:21:24 | https://dev.to/mwpenn94/kaleidoscope-rails-react-streaming-spa-294h | # Introduction

[TL;DR: Feel free to get the source code.](https://github.com/mwpenn94/kaleidoscope)

## Requirements

- The code should be written in ES6 as much as possible

- Use the create-react-app generator to start the project

- The Application should have one HTML page to render your react-redux application

- There should be 5 stateless components

- There should be 3 routes

- The Application must make use of react-router and proper RESTful routing

- Use Redux middleware to respond to and modify state change

- Make use of async actions and redux-thunk middleware to send data to and receive data from a server

- The Rails API should handle the data persistence with a database.

- Use fetch() within actions to GET and POST data from the API - do not use jQuery methods.

- The client-side application should handle the display of data with minimal data manipulation

## App Design

The overall plan for this single page application was to design a full-stack web site using a Rails backend with a ReactJS frontend.

Based on the requirements, the app gives users CRUD functionality for their own streams.

Similar to its large-scale counterparts, it is designed for straightforward use with minimal explanation required for the user.

## Frontend Design

See the "backend/app/javascript" directory for more info.

## Backend Design

See the "backend" directory for more info. | mwpenn94 | |

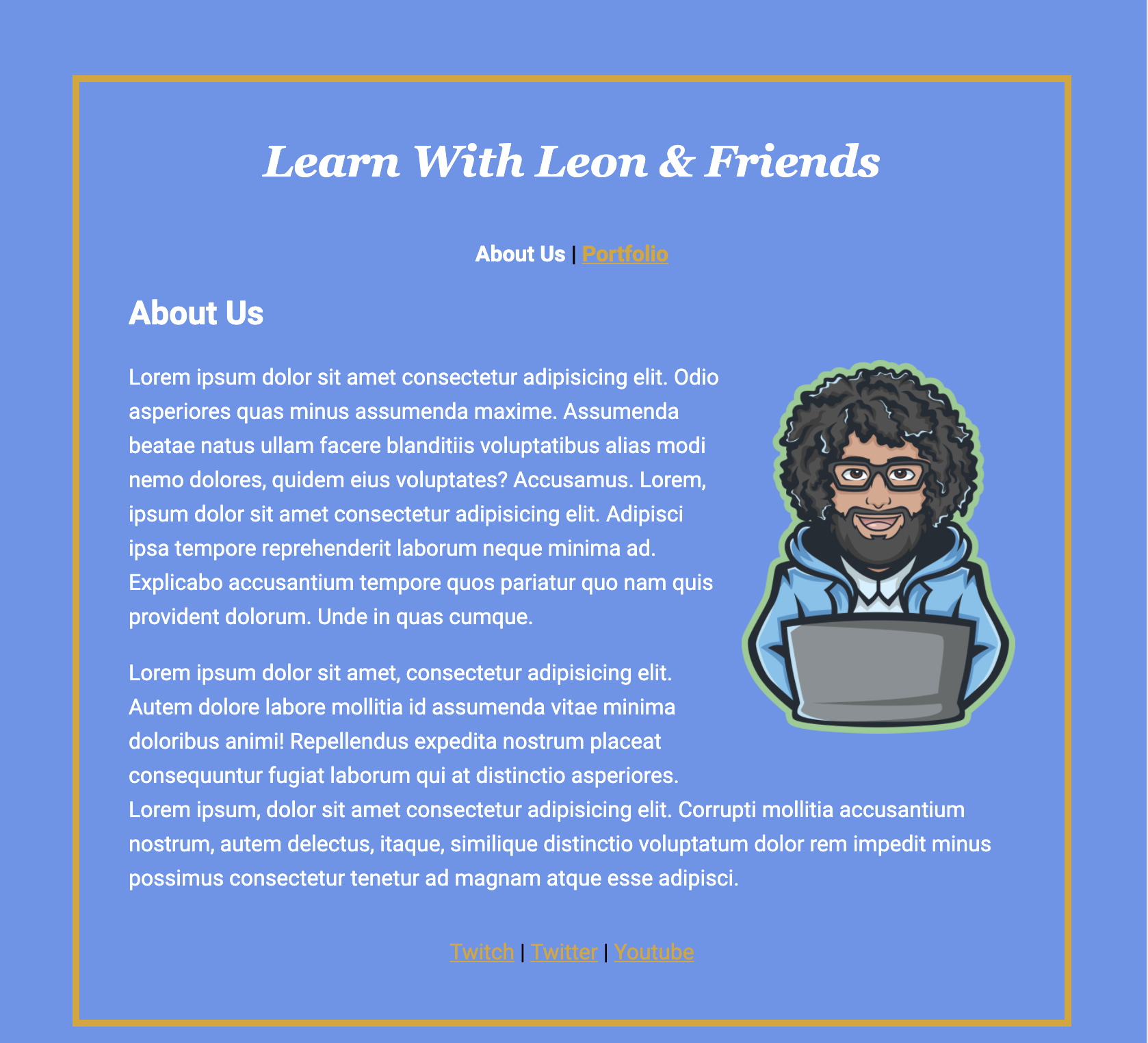

972,064 | Three Weeks and Beyond with #100Devs | The Recap Woah! We finally made it through three weeks of #100Devs Cohort 2, and are... | 0 | 2022-01-29T18:06:18 | https://codewithfan.hashnode.dev/three-weeks-and-beyond-with-100devs | beginners, 100devs, webdev, codenewbie | # The Recap

Woah! We finally made it through three weeks of #100Devs Cohort 2, and are proficient enough to lay down serious vomity code to create a webpage with HTML & CSS. If I were to talk to my past self three weeks ago and say, "You are going to be able to create something out of nothing in three weeks. Trust me!", I would have laughed.

In today's blog, I am covering what I have learned so far in the past three weeks of #100Devs to become an employed Full Stack Software Developer.

**⚠ Please feel free to leave feedback, comments, likes, or dislikes on this or any blog I post. It can help me unlock new potential and become a better blogger. 👍🏾**

> ***💡 "I want to help you become a Software Engineer for free"*** - Leon Noel

<iframe src="https://giphy.com/embed/fdLR6LGwAiVNhGQNvf" width="480" height="270" frameBorder="0" class="giphy-embed" allowFullScreen></iframe><p><a href="https://giphy.com/gifs/fdLR6LGwAiVNhGQNvf"></a></p>

# Week 1

Class one was the most hyped and most-watched stream in the Software & Game Development category on Twitch...EVER! [A total of 7K+ Software Engineers tuned in live](https://www.twitch.tv/videos/1260641923) to figure out:

1. Is the **#100Devs** Bootcamp real?

2. What's the catch of a *free* Bootcamp?

3. Am I ready to become a **Software Engineer**?

If you answered "Yes" to all three questions, you faced homework the very first class:

- [Learning How to Learn](https://www.coursera.org/learn/learning-how-to-learn)

- [How to Study for Exams, by Ali Abdaal](https://www.youtube.com/watch?v=ukLnPbIffxE)

- [Spaced Repetition, by Ali Abdaal](https://www.youtube.com/watch?v=Z-zNHHpXoMM)

The objective was to complete four weeks of learning how to learn in one week and supplement the course with [Ali Abdaal's](https://www.youtube.com/c/aliabdaal) how-to-study videos. I discovered that I was learning new things wrong and that utilizing active recall and spaced repetition is the key to retaining anything.

Introducing [**Anki**](https://apps.ankiweb.net/)! Anki is an intelligent flashcard reader that allows you to create, organize, and study decks of cards. This is a tool that is meant to be used not only for the cohort but for life! The game plan: while you are reading or learning, Anki is your best friend for creating flashcards so you can perform active recall later, then do it again, and again...and again 😂

<iframe src="https://giphy.com/embed/Uv2K54K09f5x2GqqqW" width="480" height="480" frameBorder="0" class="giphy-embed" allowFullScreen></iframe><p><a href="https://giphy.com/gifs/cbc-cooking-show-fridge-wars-fridgewars-Uv2K54K09f5x2GqqqW"></a></p>

The second set of homework, which set me in the trough of sorrow (yes, you Shay) was:

- [Learn to Code HTML & CSS](https://learn.shayhowe.com/html-css/) 💀

- [Intro to using MDN DOCS](https://developer.mozilla.org/en-US/docs/Web/HTML)

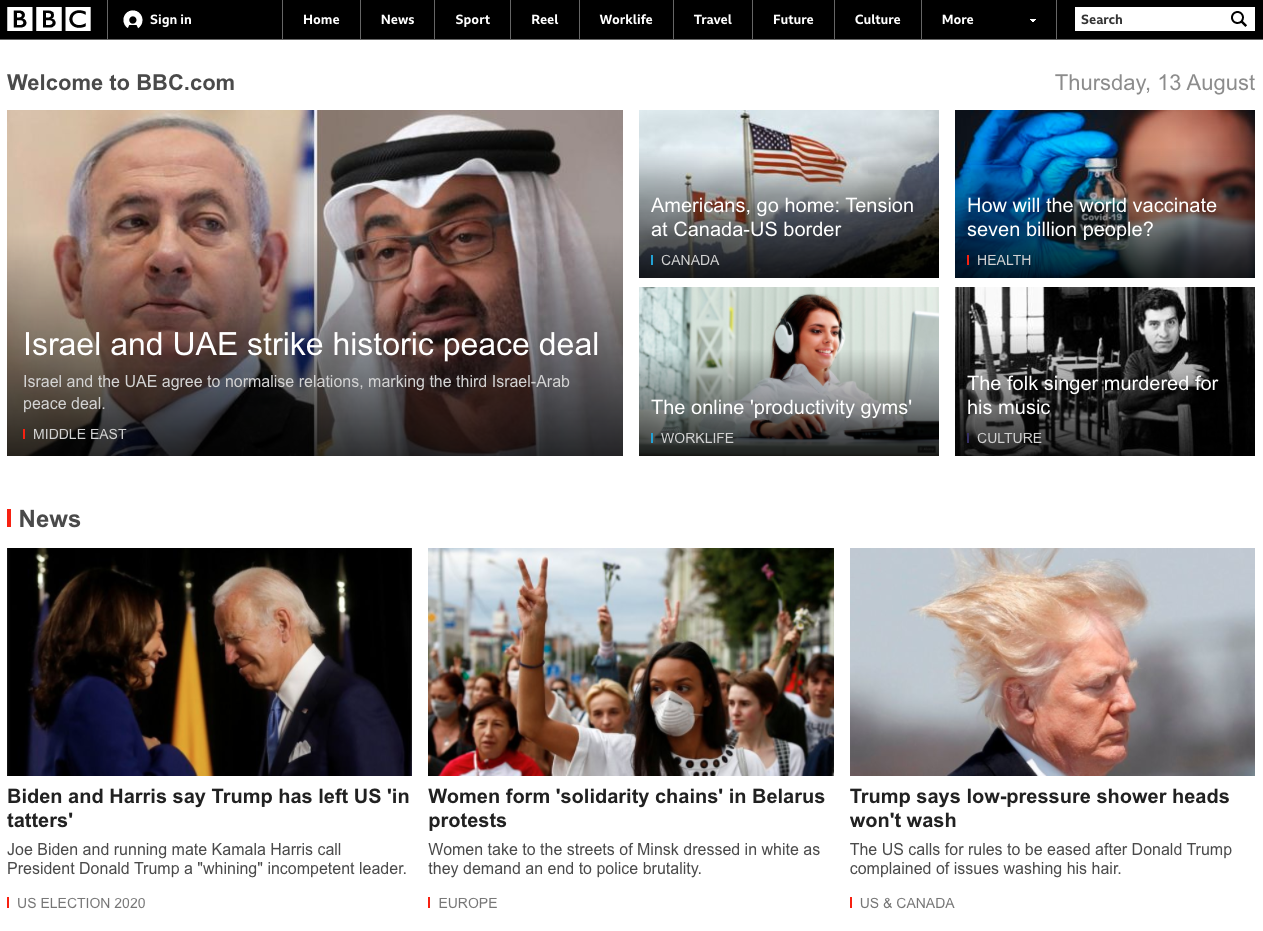

- BBC Website

Since learning from [The Odin Project](https://www.theodinproject.com/paths/foundations/courses/foundations), I was familiar with adding HTML semantics to the [BBC Homework Assignment](https://glitch.com/edit/#!/bbc-homepage). The trough of sorrow hit me like a train when reading 12 lessons back to back and creating various flashcards on Anki. Because I have not been in a structured learning environment in seven years, paying attention and staying focused was no easy task. Thankfully, I was able to complete the lessons using the [Pomodoro Technique](https://tomato-timer.com/), or in my case, Animedoro.

<blockquote class="twitter-tweet"><p lang="en" dir="ltr">so leon mentioned "animedoro"... sounds like a distraction but I won't knock it until I try it. 😂 maybe 35 on, 25 off with death note. <br><br>any <a href="https://twitter.com/hashtag/100Devs?src=hash&ref_src=twsrc%5Etfw">#100Devs</a> have a favorite anime series they recommend? <a href="https://t.co/A0VFWmyUQA">pic.twitter.com/A0VFWmyUQA</a></p>— stefan 🍍 (@codewithfan) <a href="https://twitter.com/codewithfan/status/1482893465469915136?ref_src=twsrc%5Etfw">January 17, 2022</a></blockquote> <script async src="https://platform.twitter.com/widgets.js" charset="utf-8"></script>

# Week 2

> ***💡 "Leon Noel is a Pokemon Master"***

<iframe src="https://giphy.com/embed/xuXzcHMkuwvf2" width="480" height="360" frameBorder="0" class="giphy-embed" allowFullScreen></iframe><p><a href="https://giphy.com/gifs/90s-the-girls-are-in-charge-xuXzcHMkuwvf2"></a>

We made it out of the first week of #100Devs alive. Some were able to submit their Coursera homework on time while others were still catching up with Barbara.

For Class 3 - Tuesday's class, we reviewed the following:

- Navigation

```
<nav>
  <ul>
    <li><a href="#">Donate</a></li>
    <li><a href="#">Login</a></li>
    <li><a href="#">Sign Up</a></li>
  </ul>
</nav>
```

- Forms:

`<form action="/my-handling-form-page" method="post"></form>`

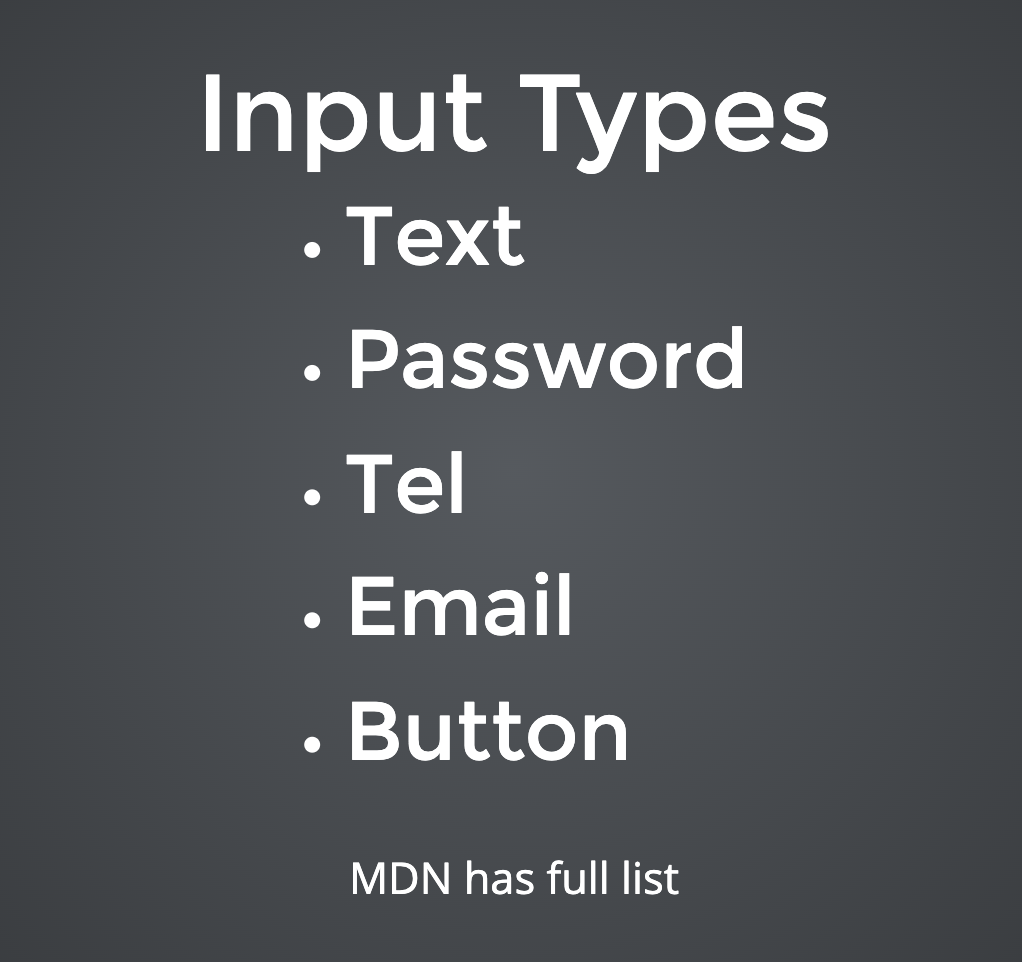

- Input Types

In addition, we went over how Leon approaches a webpage when he sets out to write its HTML. The homework assigned was to re-create the [Khan Academy](https://glitch.com/edit/#!/khan-academy-100devs) and [TechCrunch](https://glitch.com/edit/#!/tech-crunch-100devs) webpages using only HTML, and to learn how to do layouts on [learnlayout.com](https://learnlayout.com/).

<iframe src="https://giphy.com/embed/zOvBKUUEERdNm" width="480" height="270" frameBorder="0" class="giphy-embed" allowFullScreen></iframe><p><a href="https://giphy.com/gifs/coding-zOvBKUUEERdNm"></a>

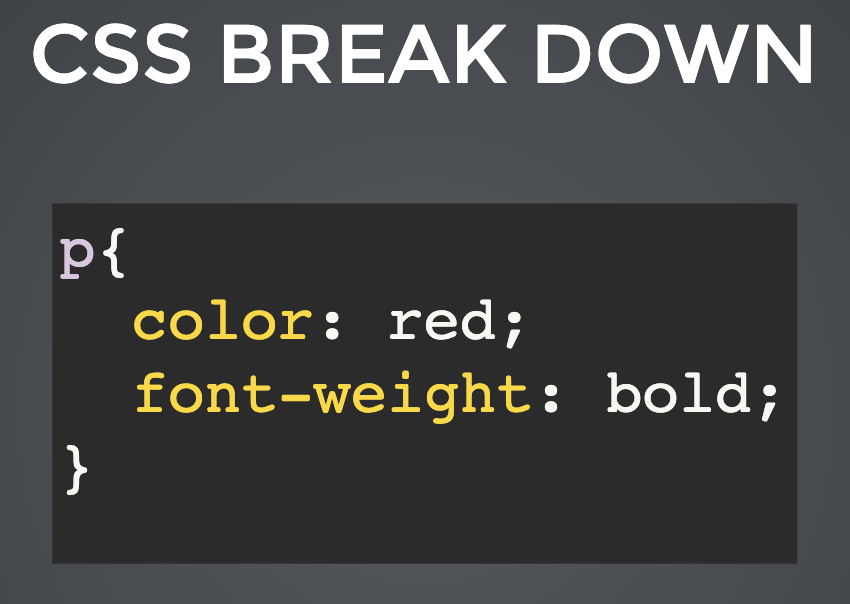

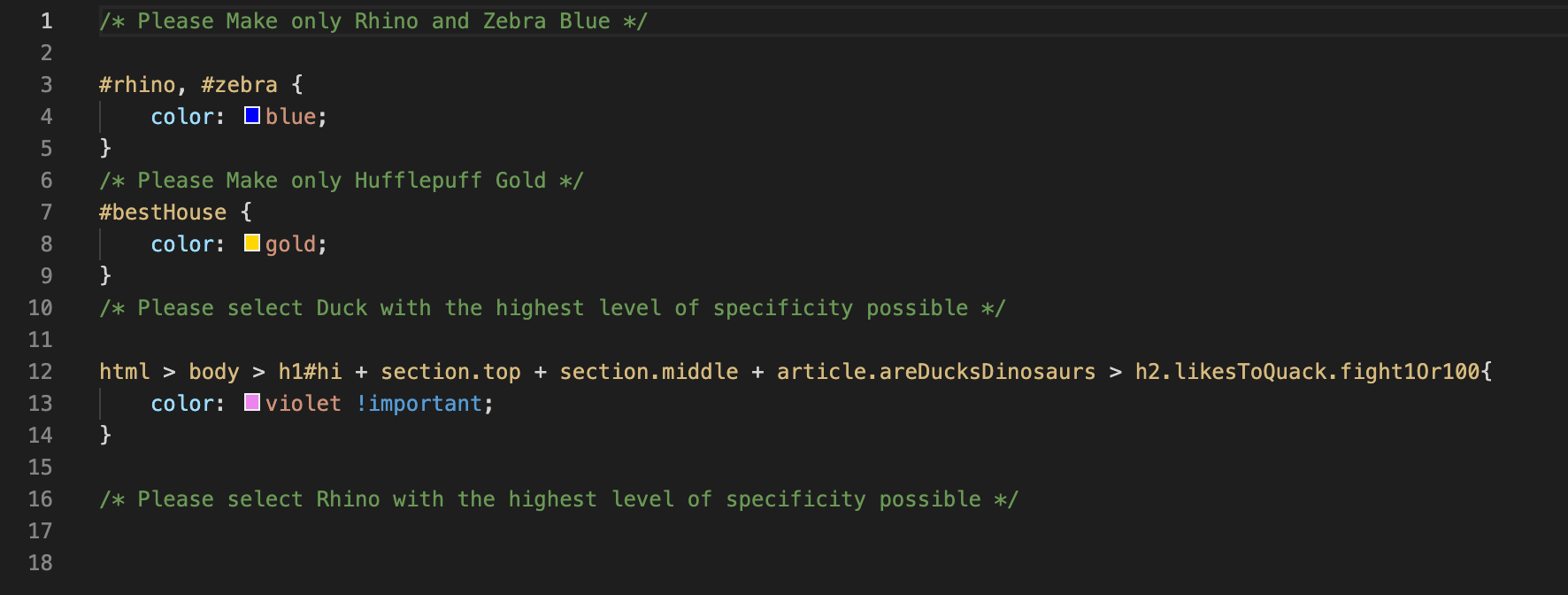

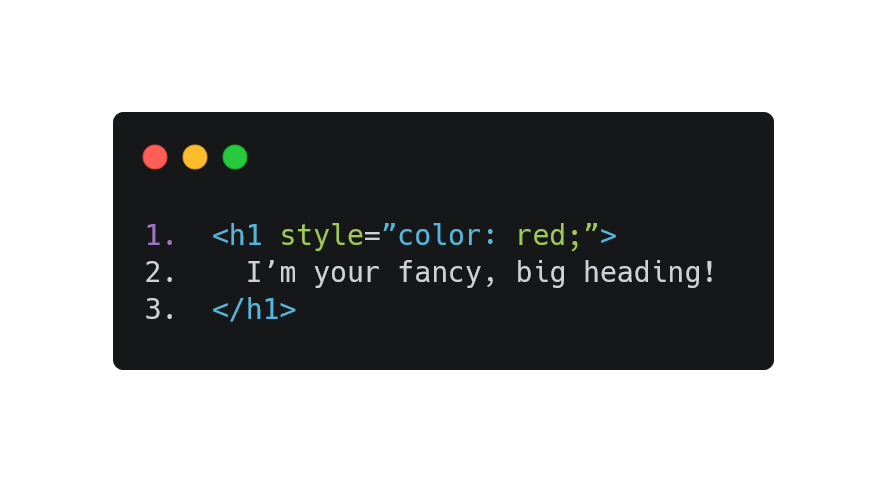

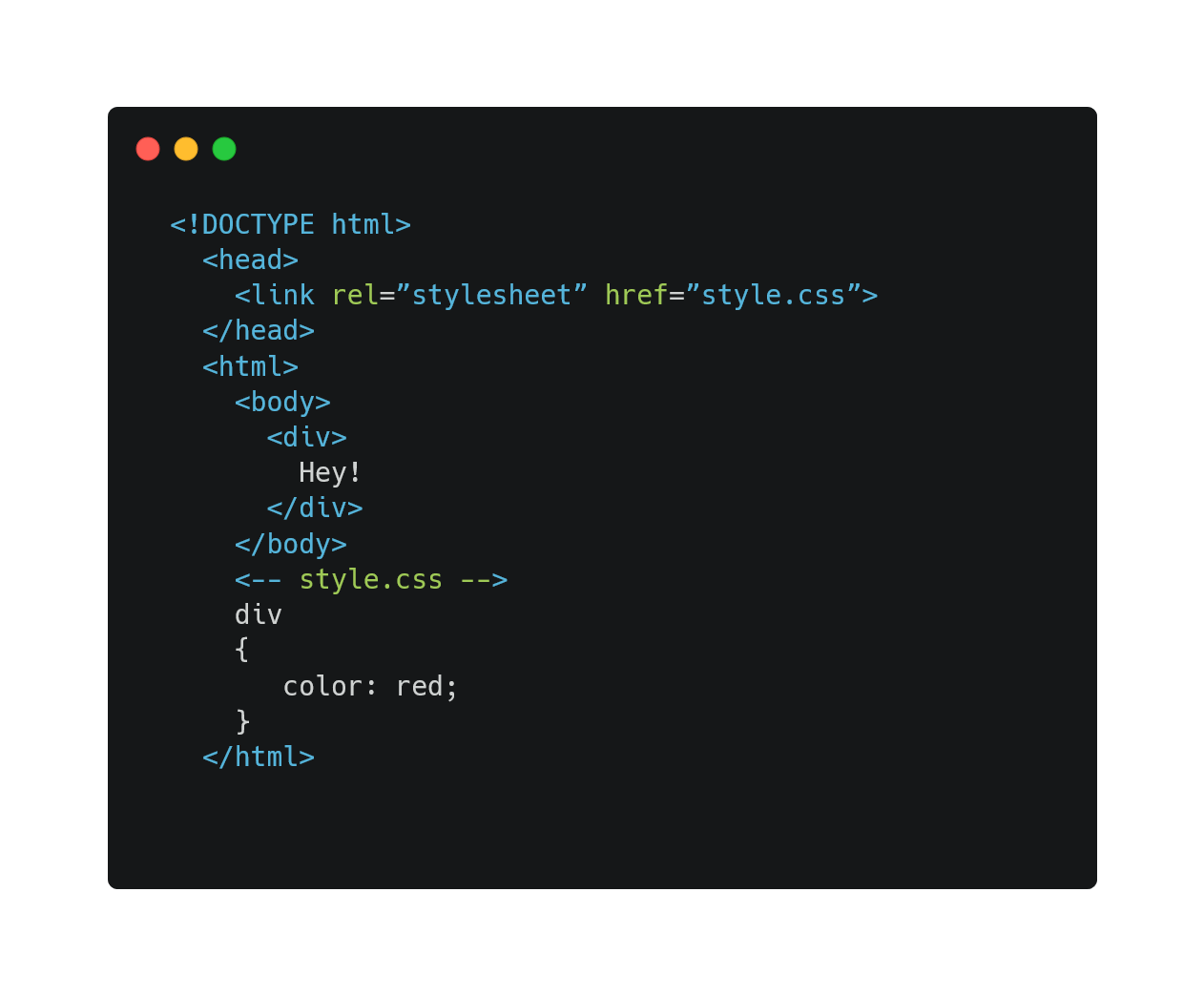

In Class 4, we dove into the nitty-gritty of CSS fundamentals such as:

- Where should you style your CSS?

- How do you link your HTML & CSS together?

- The CSS Breakdown

- Classes, IDs, and basic properties

- Most !important

To conclude week 2, we were given the task of using HTML & CSS to create a [simple lab site](https://glitch.com/edit/#!/simple-lab-leon-100-devs).

# Week 3

> ***💪🏽 "We don't get got. We go get!"***

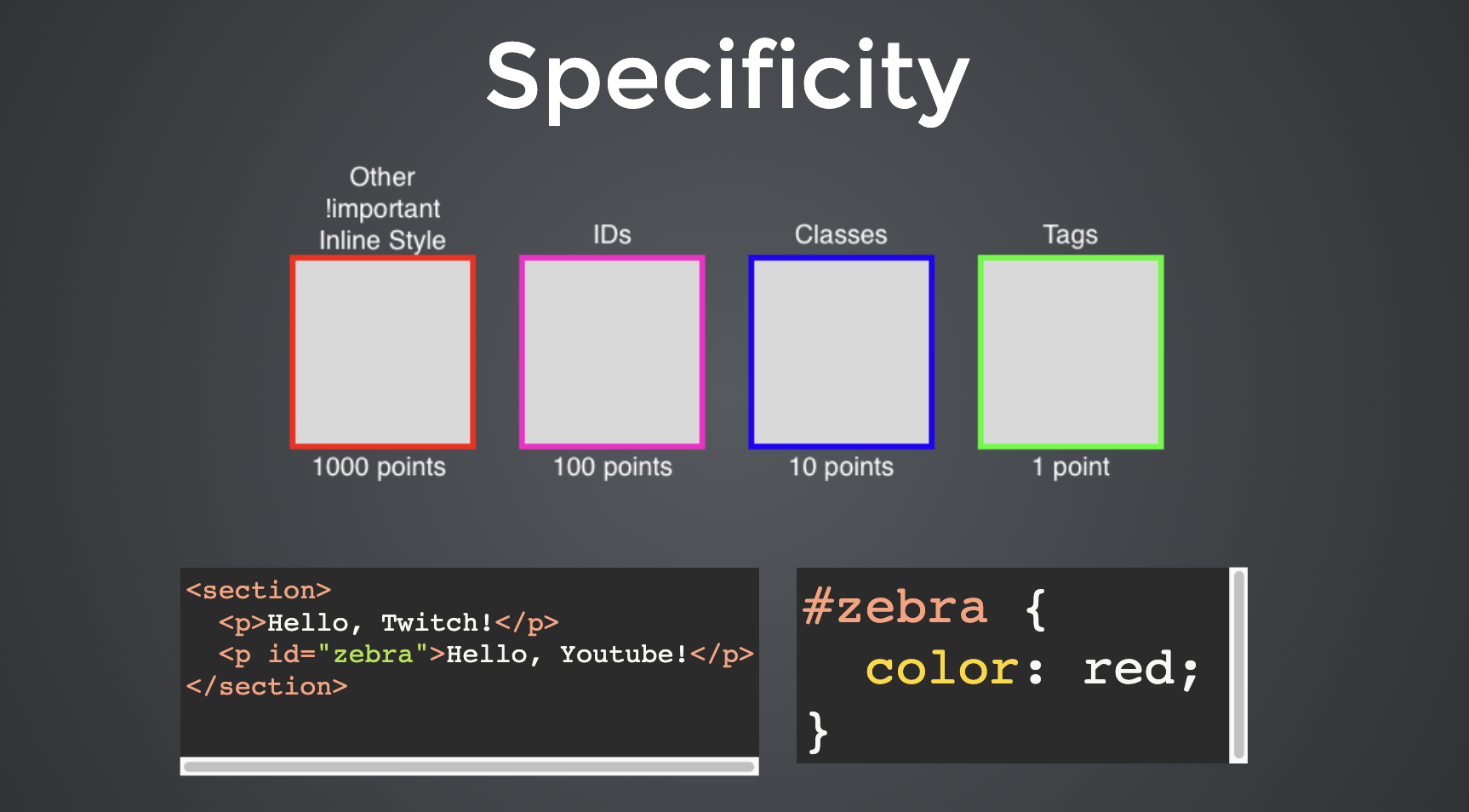

Classes 5 and 6 are where we put our true learning abilities to the test. This week, we discussed:

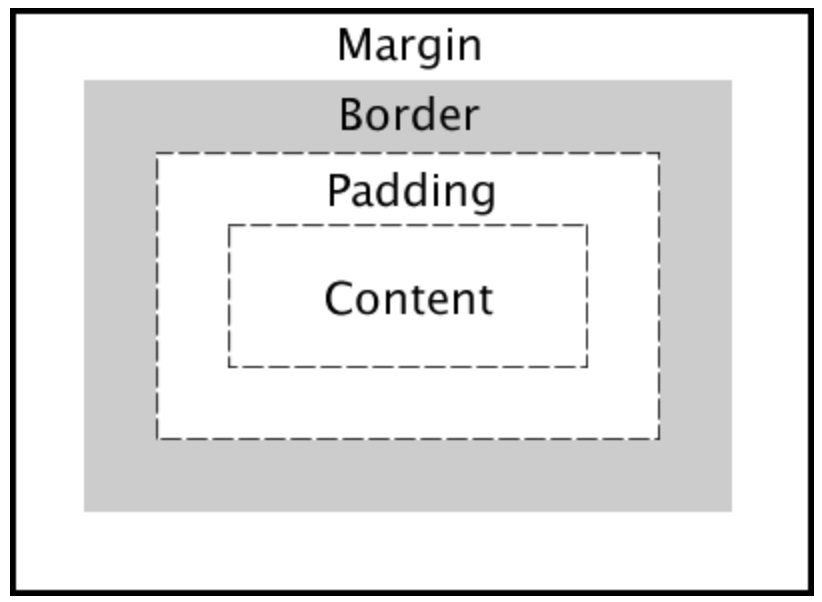

- The Box Model

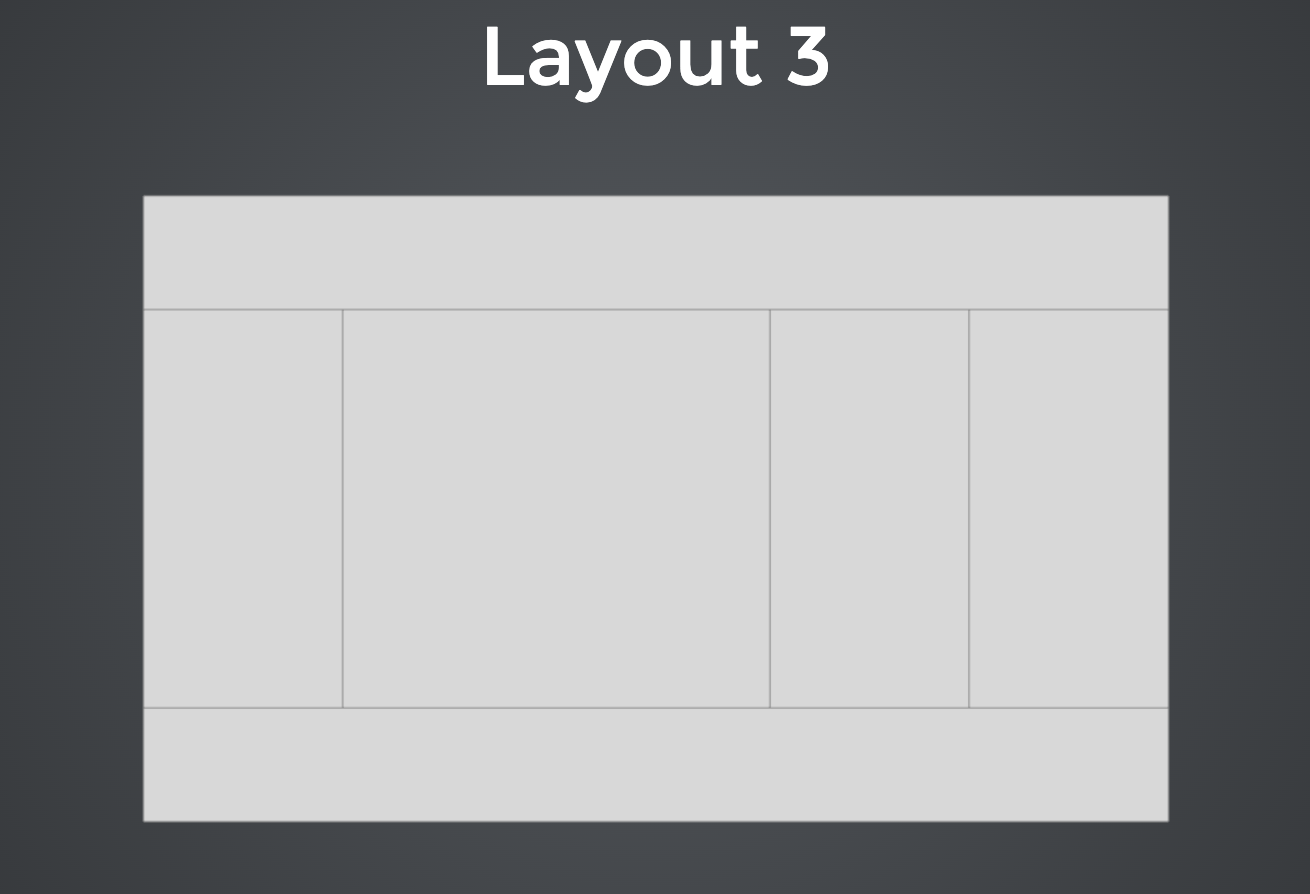

- Creating basic layouts

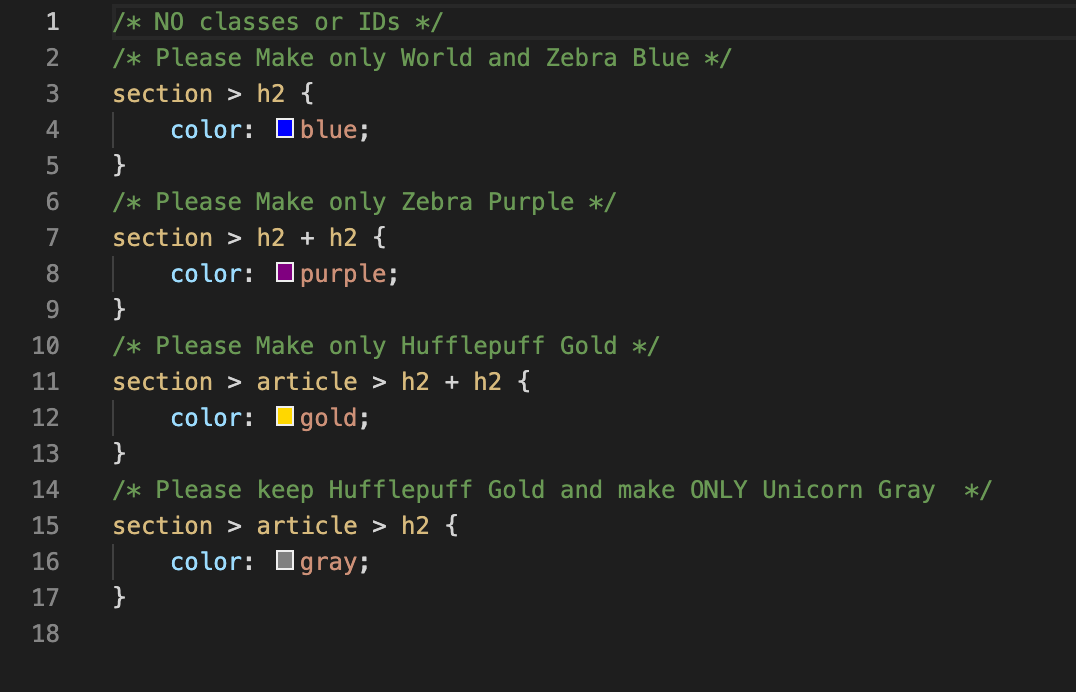

- Practicing relationship selectors without using classes or IDs

- Specificity practice

After completing the three layouts, the next objective is to read up on and dive into Responsive Web Design. From my foxhole, it means creating websites so they are accessible and viewable across all devices and platforms. Our main resource is superstar Shay Howe's [Learning to Code Advanced HTML & CSS](https://learn.shayhowe.com/advanced-html-css/), supplemented with the MDN Docs. Once that is all understood, the goal is to make the three layouts responsive, like Nicole did!

<blockquote class="twitter-tweet"><p lang="en" dir="ltr">Day 19 of <a href="https://twitter.com/hashtag/100DaysofCode?src=hash&ref_src=twsrc%5Etfw">#100DaysofCode</a>: <a href="https://twitter.com/hashtag/100Devs?src=hash&ref_src=twsrc%5Etfw">#100Devs</a> assignment today. <a href="https://twitter.com/leonnoel?ref_src=twsrc%5Etfw">@leonnoel</a> said we needed to make it responsive. It's definitely responding. 😅 <a href="https://t.co/zM56c8T6Zv">pic.twitter.com/zM56c8T6Zv</a></p>— Nicole Barnabee (@NicoleBarnabee) <a href="https://twitter.com/NicoleBarnabee/status/1487085289851994117?ref_src=twsrc%5Etfw">January 28, 2022</a></blockquote> <script async src="https://platform.twitter.com/widgets.js" charset="utf-8"></script>

# That's all friends!

<iframe src="https://giphy.com/embed/w89ak63KNl0nJl80ig" width="480" height="400" frameBorder="0" class="giphy-embed" allowFullScreen></iframe><p><a href="https://giphy.com/gifs/theoffice-w89ak63KNl0nJl80ig"></a>

No joke, this was difficult to write. Writing is an art I want to continue to practice while on the #100Devs journey. I will post a weekly recap of the lessons at the end of each week. If you have any questions, comments, concerns, or **feedback**, do let me know. Until next time ~

This is where you can find me → [Twitter](https://twitter.com/codewithfan), [LinkedIn](https://www.linkedin.com/in/stefantaitano/)!

| codewithfan |

969,531 | A recap of the Apama Advent Calendar of articles | On December 1, 2021, ‘someone’ (cough, cough) decided, having just opened the first door of our... | 0 | 2022-02-02T12:44:32 | https://tech.forums.softwareag.com/t/a-recap-of-the-apama-advent-calendar-of-articles/254620 | iot, cumulocity, streaminganalytics, newsletter | ---

title: A recap of the Apama Advent Calendar of articles

published: true

date: 2022-01-27 08:45:41 UTC

tags: iot, cumulocity, streaminganalytics, newsletter

canonical_url: https://tech.forums.softwareag.com/t/a-recap-of-the-apama-advent-calendar-of-articles/254620

---

**On December 1, 2021, ‘someone’ (cough, cough) decided, having just opened the first door of our family advent calendars at home, that it would be “fun” to produce a kind of informational “advent calendar” providing a short article series of brief tips, tricks, hints, and reminders of information relating to the Apama Streaming Analytics platform from Software AG and the Community.**

Our goal was to have one article each workday (Monday-Friday) from the 1st of December until Christmas Eve (maybe with a couple of special bonus weekend articles to help get it all flowing).

[](https://aws1.discourse-cdn.com/techcommunity/original/3X/e/0/e0aa0eaa044994257cb6939a7c33fa415a0b105e.jpeg "image")

We wanted the content to be diverse, informative, and progressive, helping to introduce a novice or intermediate reader to Apama Streaming Analytics. Of course, we also had the idea of ending with something fun in the final article, like opening the last door of a real calendar.

Below you will find a table of the articles and the content “levels.” Just one of the articles, for Thursday, December 23, is nontechnical in content and instead rediscovers several use cases related to environmental issues. Other than that one day, we cover all the basics and the more commonly used aspects of Apama, particularly when it is used as a component of, or in conjunction with, our market focus around Cumulocity IoT.

| Level | Article subject | Date |
| --- | --- | --- |
| Beginner | [Finding information about Apama](https://tech.forums.softwareag.com/t/apama-advent-calendar-01-dec-2021-finding-information/253610/6) | Wed 1 Dec 2021 |
| Beginner | [Apama anywhere from multi-cloud to ThinEdge](https://tech.forums.softwareag.com/t/apama-advent-calendar-02-dec-2021-apama-anywhere-from-multi-cloud-to-thinedge/253649/3) | Thu 2 Dec 2021 |
| Beginner | [Apama for domain experts](https://tech.forums.softwareag.com/t/apama-advent-calendar-03-dec-2021-apama-for-domain-experts/253719/2) | Fri 3 Dec 2021 |
| Beginner | [Apama fundamental concepts](https://tech.forums.softwareag.com/t/apama-advent-calendar-04-dec-2021-apama-fundamental-concepts/253723) | Sat 4 Dec 2021 (Bonus!) |
| Beginner | [Apama CLI tooling](https://tech.forums.softwareag.com/t/apama-advent-calendar-05-dec-2021-apama-cli-tooling/253730) | Sun 5 Dec 2021 (Bonus!) |
| Beginner | [Apama EPL fundamentals](https://tech.forums.softwareag.com/t/apama-advent-calendar-06-dec-2021-apama-epl-fundamentals/253758) | Mon 6 Dec 2021 |
| Beginner / Intermediate | [Apama logging](https://tech.forums.softwareag.com/t/apama-advent-calendar-07-dec-2021-apama-logging/253779) | Tue 7 Dec 2021 |
| Intermediate | [Apama Connectivity](https://tech.forums.softwareag.com/t/apama-advent-calendar-08-dec-2021-apama-connectivity/253799) | Wed 8 Dec 2021 |
| Intermediate | [Apama and automated testing](https://tech.forums.softwareag.com/t/apama-advent-calendar-09-dec-2021-apama-and-automated-testing/253829) | Thu 9 Dec 2021 |
| Beginner | [Apama Development Environments](https://tech.forums.softwareag.com/t/apama-advent-calendar-10-dec-2021-apama-development-environments/253845) | Fri 10 Dec 2021 |
| | Weekend | Sat 11 Dec 2021 |
| | Weekend | Sun 12 Dec 2021 |
| Intermediate | [Apama in containers](https://tech.forums.softwareag.com/t/apama-advent-calendar-13-dec-2021-apama-in-containers/253888) | Mon 13 Dec 2021 |
| Intermediate | [Deploying Apama projects](https://tech.forums.softwareag.com/t/apama-advent-calendar-14-dec-2021-deploying-apama-projects/253914) | Tue 14 Dec 2021 |
| Beginner / Intermediate | [EPL in Cumulocity IoT](https://tech.forums.softwareag.com/t/apama-advent-calendar-15-dec-2021-epl-in-cumulocity-iot/253939) | Wed 15 Dec 2021 |
| Intermediate / Advanced | [The Analytics Builder Block SDK and Custom Blocks](https://tech.forums.softwareag.com/t/apama-advent-calendar-16-dec-2021-the-analytics-builder-block-sdk-and-custom-blocks/253968) | Thu 16 Dec 2021 |
| Advanced | [Enhancing Cloud Fieldbus using Apama](https://tech.forums.softwareag.com/t/apama-advent-calendar-17-dec-2021-enhancing-cloud-fieldbus-using-apama/253984) | Fri 17 Dec 2021 |
| | Weekend | Sat 18 Dec 2021 |
| | Weekend | Sun 19 Dec 2021 |
| Advanced | [Using Connectivity Plugins in apama-ctrl](https://tech.forums.softwareag.com/t/apama-advent-calendar-20-dec-2021-using-connectivity-plugins-in-apama-ctrl/254018) | Mon 20 Dec 2021 |
| Intermediate | [EPL Stream Listeners](https://tech.forums.softwareag.com/t/apama-advent-calendar-21-dec-2021-epl-stream-listeners/254020) | Tue 21 Dec 2021 |
| Intermediate | [The MemoryStore plugin](https://tech.forums.softwareag.com/t/apama-advent-calendar-22-dec-2021-the-memorystore-plugin/254021) | Wed 22 Dec 2021 |
| Use Cases | [Apama on planet Earth](https://tech.forums.softwareag.com/t/apama-advent-calendar-23-dec-2021-apama-on-planet-earth/254022) | Thu 23 Dec 2021 |
| Intermediate | [Apama Christmas Tree Lights](https://tech.forums.softwareag.com/t/apama-advent-calendar-24-dec-2021-apama-christmas-tree-lights/254139) | Fri 24 Dec 2021 |

You can find all of these as well as any other related knowledge base articles here:

[Latest Streaming-Analytics-Apama topics in Knowledge base - Software AG Tech Community & Forums](https://tech.forums.softwareag.com/tags/c/knowledge-base/6/Streaming-Analytics-Apama)

Phew! I must admit, having instigated the creation of such an advent calendar, I now understand why companies choose instead to do “12 days of Christmas.”

Many thanks to my coauthors, Harald, Mario, Nick, Bevan, and Louise.

We hope you find this collection of articles helpful. Don’t forget there are also a large number of blog posts over on [www.apamacommunity.com](http://www.apamacommunity.com/), and you can ask questions or have discussions about Apama Streaming Analytics here in the Tech Communities [Forum](https://tech.forums.softwareag.com/tags/c/forum/1/Streaming-Analytics-Apama).

* * *

> This article is part of the TECHniques newsletter blog - technical tips and tricks for the Software AG community. [Subscribe](https://info.softwareag.com/TechCommunity-Subscription-Page.html) to receive our quarterly updates or read the [latest issue](https://tech.forums.softwareag.com/techniques-latest).

[Visit the original article](https://tech.forums.softwareag.com/t/a-recap-of-the-apama-advent-calendar-of-articles/254620) | techcomm_sag |

969,729 | Is it possible to create custom tag like <If> with condition using jsx ? | A post by Ramya Yegu | 0 | 2022-01-27T14:12:06 | https://dev.to/yramyayegu/is-it-possible-to-create-custom-tag-like-with-condition-using-jsx--3jeh | react | yramyayegu | |

970,079 | Incident Management Metrics and Key Performance Indicators | In 2008, I got my first job at a software-as-a-service company. We built learning management software... | 0 | 2022-01-27T20:53:33 | https://earthly.dev/blog/incident-management-metrics/ | devops, aws, linux, kubernetes | ---

title: Incident Management Metrics and Key Performance Indicators

tags: devops, aws,linux, kubernetes

canonical_url: https://earthly.dev/blog/incident-management-metrics/

published: true

---

In 2008, I got my first job at a software-as-a-service company. We built learning management software and ran it on servers in the small data center connected to our office.

We released new software onto these production servers monthly and measured quality by counting bugs per release. We also had account managers who kept us informed of how many large clients seemed upset about the last release.

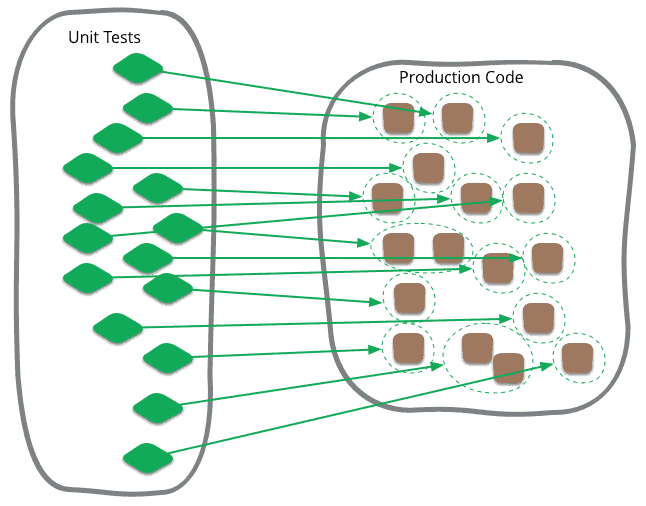

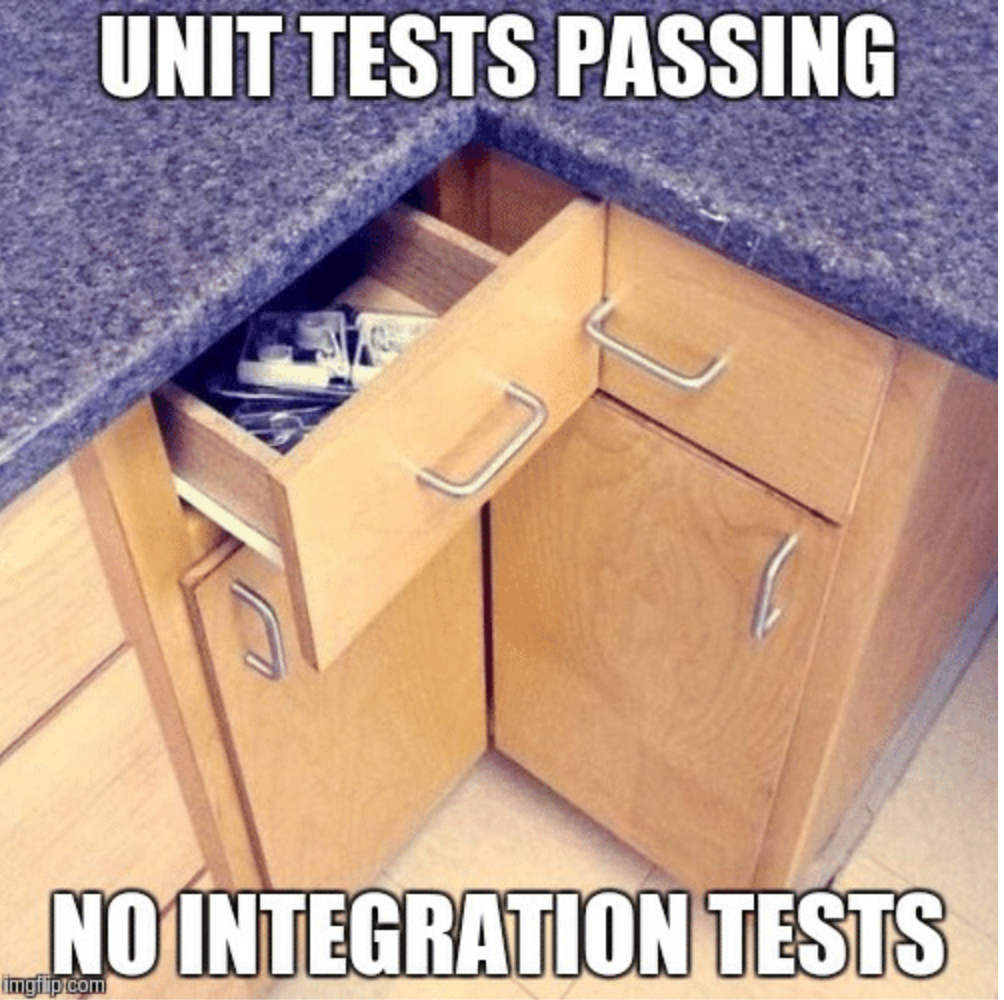

Occasionally, when something went wrong, we would do a stability release and spend a month only fixing bugs. [Testing](https://earthly.dev/blog/unit-vs-integration) was not a part of our build process but a part of our team: every feature team had quality assurance people who tested each feature before it was released.

This wasn't that long ago, but cloud software development has matured a lot since then. Incident management has become standard practice, and many great metrics and Key Performance Indicators (KPIs) exist for measuring release quality. Let's review some of them.

## Mean Time Between Failures (MTBF)

When software is being released only once a month, on a fixed timeline, with extensive manual testing, counting the number of bugs might work. But once you start releasing many times per week or per day, this won't work, and another way to measure software quality is required.

Mean time between failures is a metric from the field of [reliability](https://earthly.dev/blog/achieving-repeatability) engineering. Calculating it is simple: it is the length of a time period divided by the number of failures that occurred during that period. If in the last 30 days you have had two production incidents, then the mean time between failures is 15 days.

<div class="notice--big--primary">

### Calculating MTBF

| Incident (last 30 days) | Date |
| ------------- | -------- |
| #1 | Jan 3 |
| #2 | Jan 25 |

Mean Time Between Failures =

: 30 days / 2 incidents = 15 days

</div>
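If you track incidents in a script or notebook, the same arithmetic can be sketched in a few lines of Python. This is only an illustration of the window-over-count formula above; the function name is my own, not from any monitoring tool.

```python
from datetime import timedelta


def mean_time_between_failures(window: timedelta, incident_count: int) -> timedelta:
    """Observation window divided by the number of incidents inside it."""
    if incident_count <= 0:
        raise ValueError("MTBF is undefined without at least one incident")
    return window / incident_count


mtbf = mean_time_between_failures(timedelta(days=30), incident_count=2)
print(mtbf.days)  # 15
```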

## Mean Time to Recovery (MTTR)

Something funny happens when you start releasing more frequently. You may end up with a higher count of issues in production, but resolving them will happen much faster. If each change is released separately using a continuous delivery model, then recovering gets easier -- often, all that is required is hitting a rollback button.

If you are measuring MTBF, your software may be getting much better, but your numbers will be getting worse. Enter mean time to recovery. Mean time to recovery is just what it sounds like: you start a timer when the incident begins and stop it when production is healthy again - even a simple rollback counts. Average this number across incidents, and you have MTTR. You now have a metric that captures the health of your incident response process.

<div class="notice--big--primary">

### Calculating Mean Time to Recovery

| Incident #1 | |
| ------------- | -------- |
| Reported | 10:00 am |
| Recovered | 12:00 pm |
| Recovery Time | 2 hours |

| Incident #2 | |
| ------------- | -------- |
| Reported | 10:00 am |
| Recovered | 2 days later at 10:00 am |
| Recovery Time | 48 hours |

Mean Time To Recovery =

: (2 hours + 48 hours) / 2 incidents = 25 hours

</div>
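The order of operations matters here: sum the recovery times first, then divide by the number of incidents. A small Python sketch makes that explicit. The calendar dates below are made up for illustration; only the durations match the example.

```python
from datetime import datetime, timedelta

# (reported, recovered) pairs; the calendar dates are made up for illustration.
incidents = [
    (datetime(2022, 1, 3, 10, 0), datetime(2022, 1, 3, 12, 0)),    # 2 hours
    (datetime(2022, 1, 25, 10, 0), datetime(2022, 1, 27, 10, 0)),  # 48 hours
]


def mean_time_to_recovery(incidents):
    """Sum every recovery duration first, then divide by the incident count."""
    total = sum(
        (recovered - reported for reported, recovered in incidents),
        timedelta(),
    )
    return total / len(incidents)


mttr = mean_time_to_recovery(incidents)
print(mttr.total_seconds() / 3600)  # 25.0
```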

## Mean Time to Resolve (MTTRe)

<div class="notice--info">

ℹ️ Acronym Collision Alert

Mean Time To Resolve, MTTRe, differs from Mean Time To Recover, MTTR, but some resources use MTTR for both. To avoid confusion, ensure you are using the correct terminology for your metric.

</div>

Rolling back to address an incident is a great idea: it's often the quickest way to get things back in a good place. But there are other types of incidents. Imagine your application deadlocks every once in a while, and you have to restart it to unlock. You may have an excellent mean time to recovery, but you've never actually addressed the root cause. This is what MTTRe measures, not the time to get the service back up and running but to resolve the root cause and ensure the problem never happens again.

The never-happens-again part is hard to achieve but vital. If you are responding quickly but never getting to the root cause, you will be living in a stressful world of constant fire fighting. However, if you are resolving the root cause of each incident, then quality will increase over time.

<div class="notice--big--primary">

### Calculating Mean Time to Resolve

| Incident #3 | |
| -------------------- | -------- |
| Reported | day 1 |
| Addressed | day 1 |
| Root Cause Analysis | day 2 |
| Root Cause Addressed | day 31 |
| **Resolve Time** | **30 days** |

| Incident #4 | |
| -------------------- | -------- |
| Reported | day 1 |
| Addressed | day 1 |
| Root Cause Analysis | day 2 |
| Root Cause Addressed | day 11 |
| **Resolve Time** | **10 days** |

Mean Time To Resolve =

: (30 days + 10 days) / 2 incidents = 20 days

</div>

## Mean Time to Acknowledge (MTTA)

An essential part of good incident management is an on-call rotation. You need someone around to respond to incidents when they occur. Our previous metrics would be unable to differentiate between an incident that took 3 hours to recover from and one that was recoverable in 5 minutes but took two hours and 55 minutes to be acknowledged.

MTTA highlights this difference. It is a metric for measuring the responsiveness of the on-call person to any alerts.

<div class="notice--big--primary">

### Calculating Mean Time to Acknowledge

| Incident #5 | |
| -------------------- | -------- |
| Reported | 10:00 am |
| Acknowledged | 10:05 am |
| Recovered | 12:00 pm |
| **Acknowledge Time** | **5 minutes** |

| Incident #6 | |
| -------------------- | -------- |
| Reported | 10:00 am |
| Acknowledged | 11:55 am |
| Recovered | 12:00 pm |
| **Acknowledge Time** | **115 minutes** |

Mean Time To Acknowledge =

: (5 minutes + 115 minutes) / 2 incidents = 60 minutes

</div>
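To see why MTTA adds information that MTTR hides, here is a short Python sketch that computes both for incidents #5 and #6. The date is arbitrary and the variable names are my own; only the times of day come from the example.

```python
from datetime import datetime

# The date is arbitrary; only the times of day come from the example above.
DAY = (2022, 1, 27)
incidents = [
    # (reported, acknowledged, recovered)
    (datetime(*DAY, 10, 0), datetime(*DAY, 10, 5), datetime(*DAY, 12, 0)),   # incident 5
    (datetime(*DAY, 10, 0), datetime(*DAY, 11, 55), datetime(*DAY, 12, 0)),  # incident 6
]


def minutes(delta):
    """Convert a timedelta to minutes."""
    return delta.total_seconds() / 60


ack_minutes = [minutes(acked - reported) for reported, acked, _ in incidents]
recovery_minutes = [minutes(recovered - reported) for reported, _, recovered in incidents]

mtta = sum(ack_minutes) / len(ack_minutes)            # 60.0 minutes
mttr = sum(recovery_minutes) / len(recovery_minutes)  # 120.0 minutes
print(ack_minutes, mtta, mttr)
```

Both incidents took two hours to recover from, so MTTR alone cannot tell them apart; the per-incident acknowledge times (5 versus 115 minutes) show where the time actually went.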

## Summary

There are many ways to measure the quality of your software as a service product. MTBF, MTTR, MTTRe, and MTTA can each offer a different lens for viewing your software release life cycle. As you improve your Software Development Life Cycle, find ways to collect aggregate metrics like these and choose one or two to target for improvement.

Invest in improving these metrics, and you'll make up for it in time saved fighting fires. Also, focusing on aggregate metrics can be an effective way to move the discussion from blame about specific incidents to a higher-level debate around changing the process to better support the company's goals.

If your build pipeline is taking more than 15 minutes and therefore negatively affecting your metrics, then take a look at Earthly's [free and open build tool](http://earthly.dev/).

Originally published on [Earthly's blog](https://earthly.dev/blog/incident-management-metrics/)

| adamgordonbell |

970,513 | How I cleared Microsoft Azure AZ-204 in one month | AZ-204: Developing Solutions for Microsoft Azure focuses on design, development, deployment,... | 0 | 2022-01-28T09:35:09 | https://dev.to/devanandukalkar/how-i-cleared-az-204-in-one-month-51k8 | azure, az204, python |

**AZ-204: Developing Solutions for Microsoft Azure** focuses on the design, development, deployment, maintenance, and monitoring of scalable solutions on Azure. There is no prerequisite for this certification, but if you complete [AZ-900: Azure Fundamentals](https://docs.microsoft.com/en-us/learn/certifications/exams/az-900) first, you will pick up the general concepts of Microsoft Azure faster. The exam covers each of these aspects with varying weight. Below are the core skills that Microsoft has outlined on its learning portal.

1. _Develop Azure compute solutions (25-30%)_

2. _Develop for Azure storage (15-20%)_

3. _Implement Azure security (20-25%)_

4. _Monitor, troubleshoot, and optimize Azure solutions (15-20%)_

5. _Connect to and consume Azure services and third-party services (15-20%)_

Detailed exam skills outline can be found [here](https://query.prod.cms.rt.microsoft.com/cms/api/am/binary/RE4oZ7B).

To give you a glimpse, these are the Azure services you will need to study for the exam:

1. _Azure Virtual Machines_

2. _Azure Functions_

3. _Azure Storage Account_

4. _Azure Web Apps_

5. _Azure Logic Apps_

6. _Azure Cosmos DB_

7. _Azure Container Registry/Docker_

8. _Azure Key Vault_

9. _Azure Active Directory_

10. _Azure Authentication (OAuth 2.0), Security_

11. _Azure Service Bus/Queue, Azure Storage queues_

12. _Azure Event Grid, Event Hub_

13. _Azure API management_

14. _Azure Cache for Redis_

15. _Azure ARM Templates_

16. _Application Insights_

17. _Azure Monitor_

18. _Azure CLI, PowerShell_

19. _Azure Batch_

20. _Azure Search_

The exam requires some experience with a programming language supported by Azure (e.g. C#, Python, Java, Node.js). Most of the tutorials out there use C#, but that should not matter much, as there are Azure SDKs for each of these languages that you can use to develop a solution.

**How I prepared for the exam:**

I started with Scott Duffy's [AZ-204 Developing for Microsoft Azure Exam Prep](https://www.udemy.com/course/70532-azure/) course on Udemy. It is a practical guide to developing with each of the Azure services outlined for the exam, and it can be completed within a week or two (depending on your pace).

The second course I followed was Alan Rodrigues's on YouTube ([link](https://www.youtube.com/watch?v=wWBW6ojr-Nw&list=PLLc2nQDXYMHpekgrToMrDpVtFtvmRSqVt)). It is longer but more comprehensive: Alan builds solutions for each of those Azure services from scratch using C#/.NET.

If you are short on time, I would suggest taking the first course, by Scott Duffy. But if you want to learn the material in detail, I would recommend Alan Rodrigues's course on YouTube.

As the courses cover a lot, it's almost impossible to absorb everything just by watching the videos. You must practice building these solutions in your preferred programming language. That way, it will be easier to understand the nitty-gritty concepts, which will ultimately help you in the exam.

I practiced Azure development using Python. The SDKs can be found in this [GitHub](https://github.com/Azure/azure-sdk-for-python) repository. There are many extensions for these Azure services in Visual Studio Code; make sure to use them.

Additionally, you could read Microsoft's official documentation and make use of their learning portal. Details can be found [here](https://docs.microsoft.com/en-us/learn/certifications/azure-developer/).

**Practice Questions:**

Practice questions should make up about 30% of your preparation time. This is very important, as you get to know the variety of questions asked and how to manage your time during the examination.

There are about 2 case studies in the exam, with 10-15 questions based on them. Reading a case study and understanding the problem can take time. The trick is not to read the entire case study up front. Instead, read the question first and then go back to the relevant portion of the case study. This will save time during the exam.

You must get a good grasp of Azure CLI and PowerShell commands and their arguments, as many questions ask you to complete a command by filling in the blanks. There are also a few questions on completing unfinished code (e.g. importing libraries or invoking methods/classes).

I would recommend the two resources below to practice questions for AZ-204.

1. Practice tests by [Skillcertpro](https://skillcertpro.com/product/developing-solutions-for-microsoft-azure-az-204-practice-exam-test/)

2. Practice tests by [Whizlabs](https://www.whizlabs.com/microsoft-azure-certification-az-204/)

In fact, completing just the first one should be more than enough to clear the examination.

The key is to get as much hands-on experience as possible. I hope this helps you prepare for the examination.

Best of luck, and let me know your questions in the comments section.

**Check out my verified badge:**

https://www.credly.com/badges/05857a22-73a8-4ec2-a438-c5ee8733d6ee

| devanandukalkar |

971,086 | The Intl object: JavaScript can speak many languages | JavaScript has a useful yet unknown object to handle formatting dates, numbers, and other values in... | 0 | 2022-01-28T16:03:11 | https://nicozerpa.com/intl-javascript-can-speak-many-languages/ | javascript, webdev, programming | JavaScript has a useful yet unknown object to handle formatting dates, numbers, and other values in different languages, the `Intl` object.

This object is very useful when you have a raw date or a big number and you need to **display it in a more user-friendly way**.

You can, for example, convert the date `2022-01-16T20:10:48.142Z` to "January 16, 2022 at 8:10 PM" for people in the US, and to "16 de enero de 2022, 20:10" for those who live in Spain.

## Formatting numbers and currency

You can format numbers and currency with the `Intl.NumberFormat` object. This is how it works:

```javascript
const usaCurrencyFormatter = new Intl.NumberFormat(
    "en-US", // <-- Language and country
             // (in this case, US English)
    {
        style: "currency", // <-- it can also be
                           // "decimal", "percent"
                           // or "unit"
        currency: "USD" // <-- Which currency to use
                        // (not needed if the style
                        // is not "currency")
    }
);

usaCurrencyFormatter.format(2349.56);
// ☝️ returns "$2,349.56"

const spainCurrencyFormatter = new Intl.NumberFormat(
    "es-ES", // <-- Spanish from Spain
    {
        style: "currency",
        currency: "EUR" // <-- Euros
    }
);

spainCurrencyFormatter.format(2349.56);
// ☝️ returns "2349,56 €"

const qatarNumberFormatter = new Intl.NumberFormat(
    "ar-QA", // <-- Arabic from Qatar
    {
        style: "decimal"
    }
);

qatarNumberFormatter.format(4583290.458);
// ☝️ returns "٤٬٥٨٣٬٢٩٠٫٤٥٨"
```

When you're formatting currency, you have to specify the `currency` parameter with the code of the currency you want/need to use. [You can check a list of currency codes here](https://en.wikipedia.org/wiki/ISO_4217#Active_codes).
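
The other styles mentioned in the code comments work the same way. Here is a minimal sketch of the `"percent"` and `"unit"` styles; the variable names are only for illustration, and the exact unit label can vary with the JavaScript engine's locale data.

```javascript
// Sketch: the "percent" and "unit" styles of Intl.NumberFormat.
const percentFormatter = new Intl.NumberFormat("en-US", { style: "percent" });

const speedFormatter = new Intl.NumberFormat("en-US", {
    style: "unit",
    unit: "kilometer-per-hour" // <-- required when the style is "unit"
});

// The input is a fraction: 0.256 means 25.6%, rounded to whole percents by default.
const percentText = percentFormatter.format(0.256);
// ☝️ returns "26%"

// The number is followed by a short unit label (km/h).
const speedText = speedFormatter.format(88);

console.log(percentText, speedText);
```

Note that the `"unit"` style only accepts a fixed set of unit identifiers, so an unknown unit throws a `RangeError`.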

## Formatting dates

`Intl.DateTimeFormat` lets you format dates in different languages and locales:

```javascript
const date = new Date("2022-01-16T20:10:48.142Z");

const usaDateFormatter = new Intl.DateTimeFormat(
    "en-US", // US English
    {
        dateStyle: "short", // <-- how to display the date
                            // ("short", "medium", "long" or "full")
        timeStyle: "short", // <-- how to display the time
                            // if you don't include this parameter,
                            // it will just show the date
        timeZone: "America/Los_Angeles" // <-- this object also
                                        // converts time zones
    }
);

usaDateFormatter.format(date);
// ☝️ returns "1/16/22, 12:10 PM"

const brazilDateFormatter = new Intl.DateTimeFormat(
    "pt-BR", // Portuguese from Brazil
    {
        dateStyle: "long",
        timeStyle: "medium",
        timeZone: "UTC"
    }
);

brazilDateFormatter.format(date);
// ☝️ returns "16 de janeiro de 2022 20:10:48"

const japanDateFormatter = new Intl.DateTimeFormat(
    "ja", // Japanese
    {
        dateStyle: "long",
        timeStyle: "short",
        timeZone: "Asia/Tokyo"
    }
);

japanDateFormatter.format(date);
// ☝️ returns "2022年1月17日 5:10"
```

However, these are only two of the many utilities in `Intl` to format other types of values into different languages. [On this page, there's the full list of formatters](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Intl).
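
As a quick taste of those other formatters, here is a small sketch using `Intl.RelativeTimeFormat` and `Intl.ListFormat`. The variable names are just for illustration.

```javascript
// Relative times: "numeric: auto" prefers words like "yesterday" over "1 day ago".
const relativeTime = new Intl.RelativeTimeFormat("en", { numeric: "auto" });

const yesterday = relativeTime.format(-1, "day");
// ☝️ returns "yesterday"

const inTwoWeeks = relativeTime.format(2, "week");
// ☝️ returns "in 2 weeks"

// Lists: joins items with the separators and conjunction of the language.
const listFormatter = new Intl.ListFormat("en", {
    style: "long",
    type: "conjunction" // <-- "and"; use "disjunction" for "or"
});

const colors = listFormatter.format(["red", "green", "blue"]);
// ☝️ returns "red, green, and blue"

console.log(yesterday, inTwoWeeks, colors);
```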

## Where to get languages and country codes?

Language codes consist of up to three parts: *language*-*writingSystem*-*countryOrRegion*. Only the first part is required, and the writing system is needed only if the language can be written in more than one alphabet or writing system.

Here are some examples:

```
en-US: English, United States
es: Spanish
pt-BR: Portuguese, Brazil
zh-Hans-CN: Chinese, simplified writing ("Hans"), from China
```

The entire list of languages, countries or regions, and writing systems (or "scripts") [can be found here](https://www.iana.org/assignments/language-subtag-registry/language-subtag-registry).
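
If you want to check how a code is split into its parts, the `Intl.Locale` object can parse it for you. A minimal sketch (the variable names are only illustrative):

```javascript
// Parse a language code into its parts.
const chinese = new Intl.Locale("zh-Hans-CN");

const parts = {
    language: chinese.language, // "zh"
    script: chinese.script,     // "Hans" (the writing system)
    region: chinese.region      // "CN"
};

// Parts that are not in the code are simply undefined.
const spanish = new Intl.Locale("es");
const spanishRegion = spanish.region; // undefined

// Codes are case-insensitive; this returns them in their canonical casing.
const [canonical] = Intl.getCanonicalLocales("EN-us");
// ☝️ canonical is "en-US"

console.log(parts, spanishRegion, canonical);
```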

---

Free JavaScript Newsletter: Every other Monday, easy and actionable steps to level up your JavaScript skills. [Click here to subscribe.](https://nicozerpa.com/newsletter/) | nicozerpa |

971,860 | What is the best practice to provide a test account for Google SignIn in Google Play Console? | What is the best practice to provide a... | 0 | 2022-01-29T12:36:50 | https://dev.to/torufuruya/what-is-the-best-practice-to-provide-a-test-account-for-google-signin-in-google-play-console-4293 | {% stackoverflow 70827749 %} | torufuruya | |

972,144 | Why a Spring Cloud Config Server is Crucial to a Good CI/CD Pipeline (Pt 1) | Introduction Before I began developing large-scale, enterprise level software, I didn’t... | 0 | 2022-04-12T20:49:45 | https://www.paigeniedringhaus.com/bloh/why-a-spring-cloud-config-server-is-crucial-to-a-good-ci-cd-pipeline-pt-1 | java, devops, springboot, backend | ---

title: Why a Spring Cloud Config Server is Crucial to a Good CI/CD Pipeline (Pt 1)

published: true

date: 2018-05-26 00:00:00 UTC

tags: java,devops,springboot,backend

canonical_url: https://www.paigeniedringhaus.com/bloh/why-a-spring-cloud-config-server-is-crucial-to-a-good-ci-cd-pipeline-pt-1

---

[](/static/24d70e51d7ba55ddbed9ad6d61d1d908/eea4a/screen-of-code.jpg)

## Introduction

Before I began developing large-scale, enterprise level software, I didn’t fully comprehend the value of things like integration tests, automated build processes and shared libraries.

For the small applications I built during my [coding bootcamp](https://www.paigeniedringhaus.com/blog/how-i-went-from-a-digital-marketer-to-a-software-engineer-in-4-months), it was easy for me to do things like hard code URLs so my frontend application could talk to its backend counterparts and their databases.

I only had two environments: my local development environment on my laptop and my AWS production environment where I hosted my projects portfolio, and it was a fairly simple, albeit entirely manual, process (with a good bit of trial and error) to get the pieces of the puzzle connected.

Fast forward several months to my software engineering job of maintaining and improving upon an application backed by no less than 13 microservices. No, I’m not joking. Yes, all 13 are critical to this application working.

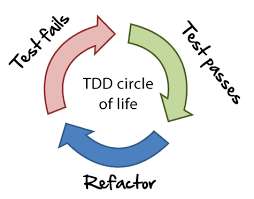

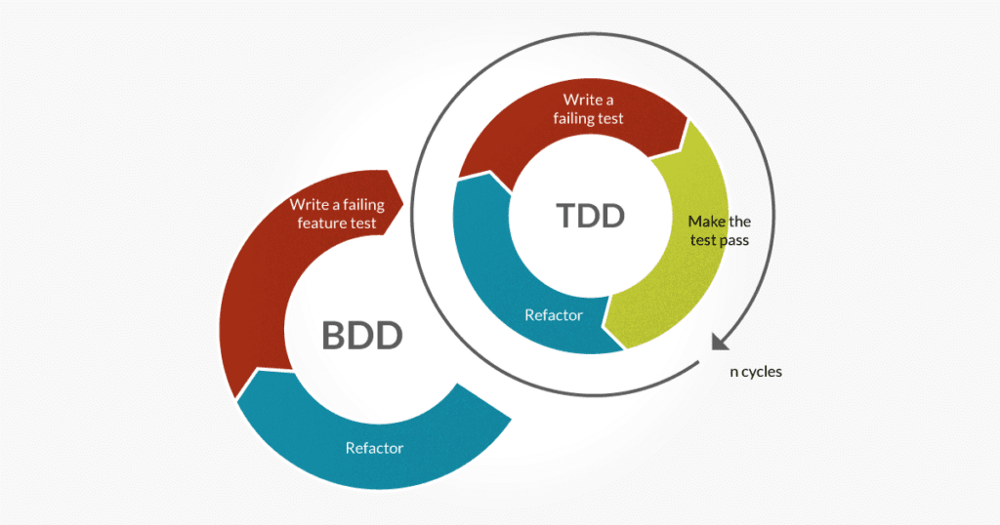

Did I mention that in my team’s [test driven development (TDD)](https://en.wikipedia.org/wiki/Test-driven_development), agile process we also have 4 different development environments before a new feature makes it to production? Yep: a local development space, a QA space, a Q1 space, an acceptance space and finally our production space.

So, think about it: does it make sense to have to manually update each and every one of these services as they move through the development process on their way to production? Of course it doesn’t.

You need a centralized, easy-to-automate way to update those environment variables. This is where a cloud configuration server can shine.

To give you a quick overview, cloud config servers are designed to:

> “Provide server and client-side support for externalized configuration in a distributed system. With the Config Server you have a central place to manage external properties for applications across all environments.”

>

> [- Spring Cloud Config](https://cloud.spring.io/spring-cloud-config/)

**Basically, a config server allows you to externally store variables your application will need to run in all environments, regardless of lifecycle, and update them in one, centralized place.**

[](/static/4b53473c4b91ca751900865efaa74475/f93b5/spring-cloud.jpg)

_Behold, the Spring Cloud._

The first config server I was introduced to is the [Spring Cloud Config](https://cloud.spring.io/spring-cloud-config/) server, since my team is responsible for a full stack application composed of many Java Spring Boot backend microservices and a JavaScript Node.js frontend microservice.

---

## Setting Up Your Config Server

Setting up the config server is actually pretty easy.

To get started, you can go to the [Spring Initializr site](http://start.spring.io/), add `Config Server` as a dependency to the project and click the "Generate Project" button in the browser.

> I’ll also add that, at least at the time I’m writing this, the Spring Cloud Config Server is not compatible with Spring Boot version 2 (I found this out the hard way), so choose the highest Spring Boot version 1 snapshot you can.

[](/static/44b116839628c34184589e88e7a330fa/c679a/spring-initializr.png)

_This is all you need to get your Spring Cloud Config Server running: the Config Server dependency. Note the version of Spring Boot is 1.5.14, not 2.0.2, which is the latest version it automatically defaults to._

Once you’ve downloaded the project and opened it up in your IDE of choice (mine is [IntelliJ](https://www.jetbrains.com/idea/)), you can go straight to the main application file, add the `@EnableConfigServer` and `@SpringBootApplication` Spring Boot annotations, and you’re almost ready to go.

Below is my actual main application file. Truly, that’s all it needs.

```java
package com.myExampleConfigServer;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.cloud.config.server.EnableConfigServer;

@EnableConfigServer
@SpringBootApplication
public class MyExampleConfigServerApplication {

    public static void main(String[] args) {
        SpringApplication.run(MyExampleConfigServerApplication.class, args);
    }
}
```

The other file you’ll need to configure is your `application.yml` file in your `resources/` folder. This file will be where you set up your cloud config server’s access to GitHub (and the GitHub files it will use to provide application configuration variables).

As you can see, there’s a spot for your GitHub URL (which I’ll come back to in a minute), username and password.

Since our applications are hosted on [Pivotal Cloud Foundry](https://pivotal.io/platform), we use what are called [VCAP services](https://docs.cloudfoundry.org/devguide/deploy-apps/environment-variable.html) (it stands for VMware Cloud Application Platform). It’s a fancy way of saying a place to store environment variables that only the people (and applications) with access to that space within PCF can read. This lets us put sensitive information like service accounts and passwords somewhere besides a public GitHub repo, where the credentials are available to the config server but not to anyone without authorization.

Here’s my `application.yml`. With these two files configured and a cloud platform to host your server, you’ll be ready to start using your config server.

**application.yml**

```yaml
server:
  port: 8888

spring:
  application:
    name: myexample-config-server
  cloud:
    config:
      server:
        git:
          uri: ${vcap.services.config-service.credentials.url}
          username: ${vcap.services.config-service.credentials.user}
          password: ${vcap.services.config-service.credentials.token}
```

If you’d like to see an example of the config server app, I’ve put a starter project [here in GitHub](https://github.com/paigen11/spring-cloud-config-server-example/tree/master).

Ok, so you’ve got your config server. Great, now it’s time to set up your config server properties — the files the config server will actually access to pull environment variables for your projects.

## Setting Up Your Config Server Properties

This part is a cinch. Create a totally empty new repo in GitHub, and then create a new file with the following naming conventions:

```yaml
[application name]-[life cycle profile].yml
ex. my-app-to-config-QA.yml
```

Within this YAML file, you’ll be able to add your configuration properties for your application. Here’s some sample info you might include in the YAML.

**my-app-to-config-QA.yml**

```yaml
configurations:
  featureToggleFlag: true
  my-custom-flag: false
  sampleCronJob: "0 0 1 * * *"
  sampleUrl: http://google.com
```

Commit this file to a GitHub repo, copy that repo URL and place it in the Cloud Config Server URI in your config server’s `application.yml`. Now the server knows where to look in GitHub for the config files you want to use.

You can see an example of a config properties repo [here in GitHub](https://github.com/paigen11/spring-cloud-config-properties-sample).
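
A quick way to check that the server can see the new file is to request it over HTTP. The server exposes each properties file at a `/{application}/{profile}` path, which mirrors the file naming convention above. Here is a minimal sketch of that URL shape, written in JavaScript since that is what the frontend in part two uses. `buildConfigUrl` is a hypothetical helper, and the host assumes the server is running locally on the port from the `application.yml` above.

```javascript
// Hypothetical helper: builds the URL where the config server serves
// the properties for one application in one life cycle profile.
function buildConfigUrl(serverUrl, application, profile) {
    const base = serverUrl.replace(/\/+$/, ""); // drop trailing slashes
    return `${base}/${encodeURIComponent(application)}/${encodeURIComponent(profile)}`;
}

// my-app-to-config-QA.yml  ->  /my-app-to-config/QA
const qaUrl = buildConfigUrl("http://localhost:8888/", "my-app-to-config", "QA");
// qaUrl is "http://localhost:8888/my-app-to-config/QA"

console.log(qaUrl);
```

Opening that URL in a browser (or with `curl`) should return a JSON document listing the properties from the YAML file, if everything is wired up correctly.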

## Connecting Your Java Spring Boot Application to Your Config Server

With your config server running and the properties you want it to provide in place, your project needs to be able to fetch those properties when your Spring Boot service starts up.

Again, there’s not a lot of overhead to getting this working. In the `build.gradle` file of your app that needs these config server values, you’ll need the following dependencies:

**build.gradle**

```groovy
compile group: 'org.springframework.cloud', name: 'spring-cloud-starter-config', version:'1.3.3.RELEASE'
compile group: 'org.springframework.boot', name: 'spring-boot-configuration-processor', version: "${springBootVersion}"
```

Then, you’ll create a `bootstrap.yml` file which will live alongside your `application.yml` file in your `src/main/resources/` folder, and it will contain information pointing to the config server’s location wherever it’s being hosted. It will look something like this:

**bootstrap.yml**

```yaml
spring:
  application:
    name: ##APPLICATION NAME GOES HERE##
  cloud:
    config:
      uri: https://myexample-config-server.non-prod.com

---

spring:
  profiles: production
  cloud:
    config:
      uri: https://myexample-config-server.prod.com
```

Next, you’ll go to whichever files actually need the config properties and add the annotations `@Configuration` and `@ConfigurationProperties(prefix = "configurations")` (or whatever you’ve named your config properties in the config properties YAML).

Here's an example:

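**ConfigProperties.java**

```java
import org.springframework.boot.context.properties.ConfigurationProperties;
import org.springframework.context.annotation.Configuration;

@Configuration
@ConfigurationProperties(prefix = "configurations")
public class ConfigProperties {

}
```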

If you notice, the properties in the YAML are nested under `configurations`. That is why the `@ConfigurationProperties` annotation on the `ConfigProperties` class above sets `prefix = "configurations"`: the prefix tells Spring which group of properties to bind to the class.

To bind the `featureToggleFlag` property, add the following member variable along with its getter and setter. Or use [Lombok](https://projectlombok.org/features/constructor)’s `@Getter` and `@Setter` annotations and skip writing them by hand - your choice.

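**ConfigProperties.java**

```java
import org.springframework.boot.context.properties.ConfigurationProperties;
import org.springframework.context.annotation.Configuration;

@Configuration
@ConfigurationProperties(prefix = "configurations")
public class ConfigProperties {

    private boolean featureToggleFlag;

    // Spring binds the property through the setter
    public boolean isFeatureToggleFlag() {
        return featureToggleFlag;
    }

    public void setFeatureToggleFlag(boolean featureToggleFlag) {
        this.featureToggleFlag = featureToggleFlag;
    }
}
```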

If you match the member variable name with the actual property name in the configuration properties, Spring will automatically bind it to the member variable. So far so good.

The very last thing you must do before starting up your service is set `SPRING_PROFILES_ACTIVE` to the correct config properties environment. The current values would be `QA` for the QA environment, `Q1` for the Q1 environment, `prod` for production and so on. Be warned, these values are case sensitive, so they must match the names in your config properties files exactly when you enter them in the "Active Profiles" input in IntelliJ's run configuration.

In IntelliJ, this can be done by clicking the "Edit Configurations" property when setting up a Spring Boot project to run, and adding the correct value to the `"Active Profiles"` input midway down the config modal.

[](/static/6ae9167b7e83f687a89594e2da8007c4/0d390/intellij-active-profiles.png)

_Inside your IntelliJ run configuration, add this line in the "Active Profiles" input._

And that’s it.

Now, when you start up the Spring Boot service, you should see `Spring Profile` being set in the logs, and to be sure, you might also put in some `System.out.println` messaging to let you know if it’s successfully reached the config server and acquired the properties needed.

Fantastic, we've built a config server, we've moved environment variables out of your Spring Boot project to a centralized location, so changes can be made quickly and we've configured a Java service to pull those variables from the config server. Nicely done.

---

## Conclusion and Part Two: The Config Server and Node.js

But, how do you do the same thing with a JavaScript / Node.js project? Can you use the same Spring Boot config server? Or do you have to set up a separate Node-based config server?

You can use the existing one, and I’ll show you how to set it up, and how you might use it in a JavaScript project to enable and disable feature toggles for faster feature deployments in my **[next post](https://www.paigeniedringhaus.com/blog/leveraging-a-spring-cloud-config-server-in-a-node-js-apps-feature-toggles-pt-2)**.

Check back in a few weeks — I’ll be writing more about JavaScript, React, IoT, or something else related to web development.

If you’d like to make sure you never miss an article I write, sign up for my newsletter here: https://paigeniedringhaus.substack.com

Thanks for reading!

---

## Further References & Resources

- [Spring Cloud Config Initializr](https://cloud.spring.io/spring-cloud-config)

- [Sample Cloud Config Server Repo](https://github.com/paigen11/spring-cloud-config-server-example)

- [Sample Cloud Config Properties Repo](https://github.com/paigen11/spring-cloud-config-properties-sample) | paigen11 |

972,145 | Leveraging a Spring Cloud Config Server in a Node.js App's Feature Toggles (Pt 2) | Introduction As I showed in my first post about the Spring Cloud Config server, setting... | 0 | 2022-05-15T13:42:35 | https://www.paigeniedringhaus.com/blog/leveraging-a-spring-cloud-config-server-in-a-node-js-apps-feature-toggles-pt-2 | javascript, node, devops, springboot | ---

title: Leveraging a Spring Cloud Config Server in a Node.js App's Feature Toggles (Pt 2)

published: true

date: 2018-06-02 00:00:00 UTC

tags: javascript,nodejs,devops,springboot

canonical_url: https://www.paigeniedringhaus.com/blog/leveraging-a-spring-cloud-config-server-in-a-node-js-apps-feature-toggles-pt-2

---

[](/static/1bc85da9de192091881a510c69f4a2e3/eea4a/more-code.jpg)

## Introduction

As I showed in my [first post about the Spring Cloud Config server](https://www.paigeniedringhaus.com/blog/why-a-spring-cloud-config-server-is-crucial-to-a-good-ci-cd-pipeline-pt-1), setting up a configuration server for a Java Spring Boot application and then leveraging that server to provide environment variables to existing Spring Boot projects is really handy.

The next trick was figuring out if it was possible to use that very same config server in a Node.js project.

To give you a quick rundown, my team worked on a microservice-based application architecture, meaning, we had up to thirteen separate Java services and one Node service all running to power our application.

It made total sense to go with a Java-based config server, given the thirteen Spring Boot microservices, but when our business partners decided they wanted to implement feature toggles, it became a priority to get the config server working in the Node project as well.

---

## What is a Feature Toggle, and Why Do I Need It?

Let me set the stage by giving you a quick run down of what a feature toggle is and why it’s so beneficial for developers, business partners and customers.

> A **feature toggle** is a technique in [software development](https://en.wikipedia.org/wiki/Software_development) that attempts to provide an alternative to maintaining multiple [source-code](https://en.wikipedia.org/wiki/Source_code) branches (known as feature branches), such that a feature can be tested even before it is completed and ready for release. A feature toggle is used to hide, enable or disable the feature during run time. For example, during the development process, a developer can enable the feature for testing and disable it for other users.

>

> [— Wikipedia, Feature Toggle](https://en.wikipedia.org/wiki/Feature_toggle)

Basically, a feature toggle allows developers to introduce new features to users in a more Agile development fashion.

With feature toggles, devs can continue to maintain just one codebase but show different views of the UI to users in production versus the development team in lower life cycles, based on if those toggles are set to ‘on’ or ‘off’.

They also allow our business and UX partners to validate early on whether new features are beneficial to our users, or whether we need to reevaluate how we’re approaching a solution.

Let me make one thing crystal clear though: **feature toggles are not meant to be permanent solutions.** They are temporary, meant to remain only until the feature has been completed and validated that it’s useful or meets whatever other criteria was set for success or failure. Then the code around the feature toggle should be removed to keep the codebase clean and as free of technical debt as is possible.

For my specific situation, our feature toggle was needed to hide a button that didn’t have full functionality yet, because the backend service to support it wasn’t finished, and to hide the related checkboxes from users. They wouldn’t be needed until the button and service were done.

[](/static/097dd5b077051b3987a8884e99515af7/2cefc/feature-toggle-users.png)

_Feature toggle view for users: no button and no checkboxes._

Above is the UI view users in production would see: no buttons, no checkboxes. Below is the UI view developers working on the feature need to see: buttons and checkboxes present.

[](/static/cd8585f87c6ca9fa4882efabf18b3f41/2cefc/feature-toggle-devs.png)

_Feature toggle view for devs: buttons and checkboxes._

Let's look at how we got these two views simultaneously.

---

## Setting Up Your Config Server Properties

Before we get to the Node config server, let’s first set up the new file with the following naming conventions in our config server properties repo:

```yaml
[application name]-[life cycle profile].yml
ex. my-ui-app-to-config-QA.yml
```

Within this YAML file, you’ll be able to add your configuration properties for your UI feature toggle. Here’s all I had to include for my feature toggles.

```yaml

modifyDatesToggle: true

```

Commit this to a GitHub repo, and we’re set there. You can see an example of the config properties repo [here in GitHub](https://github.com/paigen11/spring-cloud-config-properties-sample).

### nodecloud-config-client Node.js Set Up

To leverage the existing Spring Cloud Config server set up with our Node frontend application, I chose the [`nodecloud-config-client`](https://www.npmjs.com/package/nodecloud-config-client) on npm, as it’s a fairly well documented Spring Cloud Config Server client written for JavaScript.

> I should mention, this is not to be confused with `node-cloud-config-client` or `cloud-config-client` — really, there’s a million versions of config client packages written for Node.js, but the one listed above and in the resources link is the one I used.

So to get down to business, after saving the npm package to our UI’s `package.json` dependencies, add it to the `server.js` file (or wherever your Node server’s configuration is located).

[](/static/3e4d6fedebe868cce1a95acbd2414d9e/1e043/node-cloud-config.png)

_See the `client` variable at the bottom: that’s where the config client comes into play._

If you’re developing in multiple environments like my team did (local, QA, Q1, production, etc.), add a variable to access the Spring Cloud config server for all stages of the development process (these [environment variable services](https://docs.cloudfoundry.org/devguide/deploy-apps/environment-variable.html#VCAP-SERVICES) are how we connected to it in Pivotal Cloud Foundry). I mentioned these back in my **[previous post](https://www.paigeniedringhaus.com/blog/why-a-spring-cloud-config-server-is-crucial-to-a-good-ci-cd-pipeline-pt-1)**, if you’d like more info.

[](/static/8b2f4460d32b995fd95fc968a5a96c72/610c0/config-logic.png)

_The `configServerUrl` goes through a series of checks for environment variables, and if it finds none of those (like during local development), it defaults to the hard coded URL. I used the same environment variables and logic for the `configServicesProfile` and `featureToggle`._

Next, add a variable to account for where the config services profile will be located (for local development I had it default to QA). And I added a variable to check for enabled/disabled feature toggles.
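
The fallback chain described above can be sketched roughly like this. It is not the exact code from the screenshot: `resolveConfigServerUrl` and the `CONFIG_SERVER_URL` override are hypothetical names, the service name `config-service` and the default URL are borrowed from the examples in part one, and the lookup assumes Cloud Foundry's `VCAP_SERVICES` variable, which holds a JSON object of service lists.

```javascript
// Default used for local development, when no environment variables are set.
const DEFAULT_CONFIG_SERVER_URL = "https://myexample-config-server.non-prod.com";

// Hypothetical helper: walks through the environment checks in order
// and falls back to the hard coded URL if none of them match.
function resolveConfigServerUrl(env, serviceName = "config-service") {
    // 1. An explicit override (assumed variable name)
    if (env.CONFIG_SERVER_URL) {
        return env.CONFIG_SERVER_URL;
    }

    // 2. The bound service in Cloud Foundry's VCAP_SERVICES JSON
    try {
        const services = JSON.parse(env.VCAP_SERVICES || "{}");
        for (const instances of Object.values(services)) {
            if (!Array.isArray(instances)) continue;
            const match = instances.find((service) => service.name === serviceName);
            if (match && match.credentials && match.credentials.url) {
                return match.credentials.url;
            }
        }
    } catch (err) {
        // Malformed JSON: ignore it and use the default below
    }

    // 3. Nothing found (e.g. local development)
    return DEFAULT_CONFIG_SERVER_URL;
}

const configServerUrl = resolveConfigServerUrl(process.env);
console.log(configServerUrl);
```

The same pattern (check the environment, then default) would apply to the profile and to the toggle on/off switch.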

The below code connects to the config server (when feature toggles are enabled), and maps any feature toggles found to an object.

```javascript
{ modifyDatesFeature : true }
```

Then that object is sent to the rest of the UI with the `app.get` endpoint simply called `"/featureToggles"`.

[](/static/ec2063dabcc7ff699489c64a0bf017ca/62a6a/node-feature-toggle.png)

_Feature flag variable, call to the client to access the config server with the correct environment (QA in my case), and a check if the `featureToggle` is `‘on’`, map the properties that are available to it. Below is the endpoint to call the features._

Great. The Node server is set up, and you can get the feature toggles (or whatever else you’re storing in the config server) just by changing a few variables.
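
To make the mapping step more concrete, here is a rough sketch of turning the config server's JSON response into a plain toggles object. It doesn't use the config client package. It works directly on the response shape the Spring Cloud Config server returns (a `propertySources` array, where earlier entries win). `extractToggles` and `featureTogglesHandler` are hypothetical names, and the handler assumes an Express-style `res.json`.

```javascript
// Hypothetical helper: flattens the config server response into one object.
function extractToggles(configResponse) {
    const sources = (configResponse && configResponse.propertySources) || [];
    const toggles = {};
    // Earlier property sources take precedence, so apply them last.
    for (const { source } of [...sources].reverse()) {
        Object.assign(toggles, source);
    }
    return toggles;
}

// Hypothetical Express-style handler for the "/featureToggles" endpoint.
function featureTogglesHandler(toggles) {
    return (req, res) => res.json(toggles);
}

// Example response for the QA profile (shape only, values made up)
const sampleResponse = {
    name: "my-ui-app-to-config",
    profiles: ["QA"],
    propertySources: [
        { name: "my-ui-app-to-config-QA.yml", source: { modifyDatesToggle: true } },
        { name: "my-ui-app-to-config.yml", source: { modifyDatesToggle: false, otherToggle: false } }
    ]
};

const sampleToggles = extractToggles(sampleResponse);
console.log(sampleToggles);
```

With Express, the handler would be registered as `app.get("/featureToggles", featureTogglesHandler(toggles))`.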

### Connecting Your Client Side UI Framework to Your Node Server

The first thing you’ll need to do on the frontend is make an AJAX call to the Node server to check for any existing feature toggles. The framework of the app implementing this was JavaScript MVC (an older framework popular years ago, I'd never heard of it before I worked with it either), and here’s an example of what the call looked like.

[](/static/08fa392466f831d1e687036a78b88e80/000c7/javascript-mvc.png)

In the JavaScript file that’s actually concerned with feature flags (for me, it was a `dates.js` file), I imported the feature toggle AJAX call and created a function to check for the specific feature flag associated with this functionality: `modifyDatesToggle`.

[](/static/c9c250b288bb39d8f8c12f1643ce353f/5f6dd/js-feature-toggle-code.png)

_The feature flag was imported at the top of the file, then I call to the feature flag endpoint to see if there’s anything there the file needs to be aware of. If the feature flag matches the variable I named “modifyDatesToggle”, it gets pulled in and applied to the file._

Finally, inside the JavaScript file using the feature toggle, group the code, wherever possible, according to whether the feature toggle is enabled, like so.

[](/static/f74ee8cfe78d761e09949ec07632fb67/3c492/feature-toggle-grouped-code.png)

_Example 1: This check displays the check boxes if the `modifyDatesToggle` is `“on.”`_

The thinking goes, that by wrapping all the feature enabled code inside of the feature toggle check, even if the call to the config server fails for some reason, users will only ever see the code that should be deployed to production. This gives us extra protection by not being as dependent on more variables than necessary.
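
That "off unless proven on" idea can be sketched like this. It's a simplified illustration rather than the code from the screenshots: `isFeatureEnabled` and `renderDateControls` are hypothetical names, and the control names are placeholders.

```javascript
// A toggle only counts as "on" when it is explicitly true.
// A missing response, a missing key, or any other value means "off".
function isFeatureEnabled(toggles, name) {
    return Boolean(toggles) && toggles[name] === true;
}

// Hypothetical example: decide which controls to show on the dates screen.
function renderDateControls(toggles) {
    const controls = ["dateList"]; // always shown

    if (isFeatureEnabled(toggles, "modifyDatesToggle")) {
        // Everything for the unfinished feature lives inside this check
        controls.push("checkboxes", "modifyButton");
    }

    return controls;
}

// If the call to the config server failed, toggles might be null:
console.log(renderDateControls(null));
// With the toggle on:
console.log(renderDateControls({ modifyDatesToggle: true }));
```

Because the production view is the default path, a failed call degrades to what users already see instead of exposing half-built features.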

---

## But Wait, There’s More — Enabling End-to-End Tests with Feature Toggles

There’s one more thing I wanted to include in this blog: how I used the same feature toggle logic to run or skip some of the Protractor end-to-end tests.

If you’re using a configuration file for Protractor (and I don’t know why anyone wouldn’t), there’s a cool feature that lets you define your own [custom parameters](https://moduscreate.com/blog/protractor_parameters_adding_flexibility_automation_tests/). I took a page out of my own book, where the feature toggle is set in the `server.js` file, and did a similar setup in the Protractor config file: if an environment variable is passed in for the feature toggle, the tests that concern that feature will run; if not, they’ll be ignored. I’ll show you the test syntax in a moment, as it’s a little unconventional.

[](/static/16ebbe609d61f226bf2d751080c234ba/63ec5/feature-toggle-e2e-config.png)

_Here’s the configuration that all the Protractor tests use. Params takes in the modifyDatesToggle object and if it exists, it sets the params, if it doesn’t, it sets it to null._
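
In text form, the params logic from that config might look something like the sketch below. The environment variable name `MODIFY_DATES_TOGGLE` and the `buildParams` helper are assumptions for illustration, not the names from the screenshot.

```javascript
// Hypothetical helper: builds the custom params for the Protractor config.
// If the environment variable is missing or empty, the param is null,
// which the tests can check for.
function buildParams(env) {
    const raw = env.MODIFY_DATES_TOGGLE; // assumed variable name
    return {
        modifyDatesToggle: raw === undefined || raw === "" ? null : raw
    };
}

const params = buildParams(process.env);
console.log(params);

// In the Protractor config file, the result would be used as:
//   exports.config = {
//       specs: [ ... ],
//       params: buildParams(process.env)
//   };
```

Inside the tests, the value is then available as `browser.params.modifyDatesToggle`.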

When you’re running the tests locally, you can actually pass the params in through the command line like this:

```bash
$ protractor e2e/conf.js --params.modifyDatesToggle='true'
```

To implement the feature toggle in the actual end-to-end tests, inside of each test that requires a feature toggle check, add an if statement right after the `‘it’` declaration, checking if the feature toggle is null or not. As I said, this is a little weird to see, but it works.

[](/static/5b10677f0d6acfc3724bddbf96a836d1/a3767/feature-toggle-test.png)

_An example of a Protractor end-to-end test with a feature toggle check. Not all the tests need this check — just the ones that require the feature toggle to be either off or on._

If the feature toggle is not enabled, the test will be skipped, but show up as if it passed in the console (eliminating failing tests because the proper environment wasn’t present for the test to use).

If the feature toggle is enabled, the test will run as normal. This saves us from going through the tests one by one and either `x`-ing them out to ignore them or removing the `x` so they’ll run, depending on the desired functionality. And once it’s time to remove the feature toggle, it’s easy enough to either remove the unnecessary tests from the `spec` file or just `x` them out, depending on your team’s preference for keeping tests even after functionality has changed. (Personally, I would remove them.)
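Here is a small sketch of what such a guarded test could look like. To keep it runnable on its own with Node, `browser` and `it` are stand-ins (in a real suite Protractor and Jasmine provide them), and the spec name and `executedSpecs` array are hypothetical.

```js
// Stand-ins so this sketch runs outside Protractor; a real suite gets
// `browser` from Protractor and `it` from Jasmine.
const browser = { params: { modifyDatesToggle: null } };
const executedSpecs = [];
const it = (description, body) => body();

function modifyDatesSpec() {
  // Feature toggle check: with no toggle param the body is skipped, and the
  // runner reports the spec as passing because nothing inside it failed.
  if (browser.params.modifyDatesToggle !== null) {
    // Real assertions against the page would go here.
    executedSpecs.push('modify dates check boxes');
  }
}

it('displays the modify dates check boxes', modifyDatesSpec);
```

With the toggle param left at `null`, the spec body never executes, which is why the test shows up as passing rather than failing.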

Above, I showed how to run the feature toggle tests from the command line, but it can also be run programmatically when the application is being deployed.

Segue: my team uses [Jenkins](https://jenkins.io/) for all our builds. That’s a whole other set of blog posts, but suffice it to say that while the build is running, all the unit tests run, all the end-to-end tests run, and all the rest of the checks we have in place happen before we declare a feature tested and ready for our business team to accept.

Back to how this matters: since Jenkins is our job runner, we can pass the environment variables for the feature toggle tests to Jenkins through a `Jenkinsfile`.

[](/static/2aa4b21d04f265f1c5152f0faf43d15f/8cdda/feature-toggles-jenkinsfile.png)

_All that’s needed is the simple line at the bottom: `env.MODIFY_DATES_TOGGLE=true`_

And that should be all you need to run your end-to-end tests — feature toggles on or off.

### Automating The Feature Toggles

As I said before, my team hosted our applications on Pivotal Cloud Foundry, and in PCF, we use things called VCAP services to hold our environment variables. Through manifest files we could bind these services to our applications and pass in environment variables, such as whether feature toggles should be turned on or off. See where I’m going here?

The check for the feature toggle environment variable makes deploying the app in an automated fashion easier. By including a simple environment variable (or not) to the manifest that deploys with each different stage of development, it’s easy to tell the application to check for settings in the config server (and employ them if need be) or not.

[](/static/1353543796a724400b25393482e96286/3ddad/qa-manifest.png)

_QA manifest screenshot._

QA manifest: note the `SPRING_PROFILES_ACTIVE` pointing to `QA`, `FEATURE_TOGGLE` environment variables with `‘on’`.

[](/static/e043e464058470192b22f20b6cbdea92/e9131/prod-manifest.png)

_Production manifest screenshot._

Production manifest: no feature toggle in sight, and the `SPRING_PROFILES_ACTIVE` is set to `production`.

---

## Conclusion

There you have it. Now you have seen how you can leverage a Spring Cloud Config server you’ve built with your Node.js application. Not only that, you can also configure end-to-end tests to run with the feature toggle on or off.

If you’ve found other ways to implement feature toggles or other nifty things like this in your own JavaScript projects, I’d love to hear about them.

Check back in a few weeks — I’ll be writing more about JavaScript, React, IoT, or something else related to web development.

If you’d like to make sure you never miss an article I write, sign up for my newsletter here: https://paigeniedringhaus.substack.com

Thanks for reading!

---

## Further References & Resources

- [Wikipedia, Feature Toggle](https://en.wikipedia.org/wiki/Feature_toggle)

- [NodeCloud Config Client](https://www.npmjs.com/package/nodecloud-config-client)

- [Sample Cloud Config Properties Repo](https://github.com/paigen11/spring-cloud-config-properties-sample)

- [Protractor documentation around environment params](https://moduscreate.com/blog/protractor_parameters_adding_flexibility_automation_tests/) | paigen11 |

972,420 | Leetcode diary: 259. 3Sum Smaller | This is a new series where I document my struggles of leetcode questions hoping seeing however small... | 0 | 2022-01-30T04:00:32 | https://dev.to/kevin074/leetcode-diary-259-3sum-smaller-2gl6 | javascript, algorithms, devjournal, motivation | This is a new series where I document my struggles of leetcode questions hoping seeing however small of an audience I get gives me the motivation to continue.

[link](https://leetcode.com/problems/3sum-smaller/)

The leetcode gods have not been kind to me. I am far from worthy of its blessing ... the depression brought on by failing to pass the tests is weighing down on my soul ... oh god ~~

This question is hard ... I thought it would be fun to do a series of questions, but that again proves to be a crazy idea.

Below is the best of my attempt:

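```js
var threeSumSmaller = function(nums, target) {
    // Sort ascending (note: this sorts nums in place)
    const sorted = nums.sort(function(a, b) { return a - b; });
    let midI, rightI;
    let midNum, rightNum;
    let sum;
    let answers = 0;

    sorted.forEach(function(leftNum, leftI) {
        // Start both other pointers at the end of the array
        rightI = sorted.length - 1;
        midI = rightI - 1;

        while (rightI - leftI > 1) {
            rightNum = sorted[rightI];
            midNum = sorted[midI];
            sum = leftNum + midNum + rightNum;

            // Walk mid to the left until the sum drops below target
            while (sum >= target && leftI < midI) {
                midI--;
                midNum = sorted[midI];
                sum = leftNum + midNum + rightNum;
            }

            // Every index in (leftI, midI] works as the middle element
            answers += midI - leftI;

            // "Spring back": move right in by one and reset mid next to it
            rightI--;
            midI = rightI - 1;
        }
    });

    return answers;
};
```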

The idea is that instead of checking every possible combination of indexes, for each left index I start the other two pointers at the end of the array. That way, as I move the "mid" pointer to the left, I can stop searching as soon as the sum becomes < target.

Take for example:

[1,2,3,4,5,6,7], target = 13

1+6+7 = 14

1+5+7 = 13

1+4+7 = 12

Note that since we found that the sum is smaller than target at [1,4,7], it then means [1,2,7] and [1,3,7] must also be smaller than target, so we can just stop the iteration there and move on to the next one.

However, the performance of this is poor: it is only slightly better than the brute force solution, where you just straight up try a triple nested for loop.
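For comparison, a brute-force baseline might look like the sketch below. The function name is made up for illustration; it simply checks every triple of indexes `i < j < k`, which takes O(n³) time, and is mostly useful for checking faster attempts against.

```js
// Brute-force baseline: test every triple i < j < k. O(n^3) time.
function threeSumSmallerBruteForce(nums, target) {
  let count = 0;
  for (let i = 0; i < nums.length - 2; i++) {
    for (let j = i + 1; j < nums.length - 1; j++) {
      for (let k = j + 1; k < nums.length; k++) {
        // Count the triple only if its sum is strictly below target
        if (nums[i] + nums[j] + nums[k] < target) {
          count++;
        }
      }
    }
  }
  return count;
}
```

No sorting is needed here, since every combination gets checked anyway.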

Apparently there was a VERY similar answer: the two pointer approach in the solution. Below is my code above, modified to use it:

```js
var threeSumSmaller = function(nums, target) {
    // Sort ascending (note: this sorts nums in place)
    const sorted = nums.sort(function(a, b) { return a - b; });
    let midI, rightI;
    let midNum, rightNum;
    let sum;
    let answers = 0;

    sorted.forEach(function(leftNum, leftI) {
        // mid starts right after left, right starts at the end
        midI = leftI + 1;
        rightI = sorted.length - 1;

        while (midI < rightI) {
            rightNum = sorted[rightI];
            midNum = sorted[midI];
            sum = leftNum + midNum + rightNum;

            if (sum < target) {
                // Every right index in (midI, rightI] also gives a sum < target
                answers += rightI - midI;
                midI++;
            } else {
                // Sum too large: shrink it by moving right inward
                rightI--;
            }
        }
    });

    return answers;
};
```

WELL I'LL BE DARNED!!! it is basically the exact fucking same thing except for some reason midI starts at leftI +1 as we normally think it should.

The thing that bothered me is why is answers+= rightI-midI in this case?

[1,2,3,4,5,6,7], target = 13

1+2+7 = 10, midI = 1, rightI = 6, answers += 5 = 5

1+3+7 = 11, midI = 2, rightI = 6, answers += 4 = 9

1+4+7 = 12, midI = 3, rightI = 6, answers += 3 = 12

ah okay, so when 1+2+7 is smaller than 13, it means also that:

1+2+6 < 13

1+2+5 < 13

1+2+4 < 13

1+2+3 < 13

So basically the same logic but backwards...so backwards of my backward logic ...nice...
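To convince myself of the counting step, here is a compact sketch of the same two pointer idea. The function name is hypothetical, and unlike the versions above it copies the array before sorting so the input is left alone.

```js
// Compact two-pointer sketch: O(n^2) after an O(n log n) sort.
function countSmallerTriples(nums, target) {
  const sorted = [...nums].sort((a, b) => a - b); // copy, then sort ascending
  let count = 0;

  for (let left = 0; left < sorted.length - 2; left++) {
    let mid = left + 1;
    let right = sorted.length - 1;

    while (mid < right) {
      if (sorted[left] + sorted[mid] + sorted[right] < target) {
        // The array is sorted, so swapping `right` for any index in
        // (mid, right] keeps the sum below target: right - mid triples.
        count += right - mid;
        mid++;
      } else {
        // Sum too large: only a smaller right value can help.
        right--;
      }
    }
  }

  return count;
}
```

Each step of the inner loop moves either `mid` or `right` and never moves one back, which is where the speedup over my "spring back" version comes from.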

However, I went back to modify my backwards solutions just to see whether I could make it work. I could get a solution that calculates correctly, but inefficiently still.

I believe the reason is that the correct solution shrinks the search in the while loop no matter what and does not need to revisit. On the other hand, my solution requires a "spring back to end" action every time, which makes it do some unnecessary revisiting.

So the lesson here is that ... I don't have good intuition on questions that deal with sorted array, yeah I don't know how to word it at all even...fuck... I was so close though!

Let me know anything on your mind after reading through this, THANKS! | kevin074 |

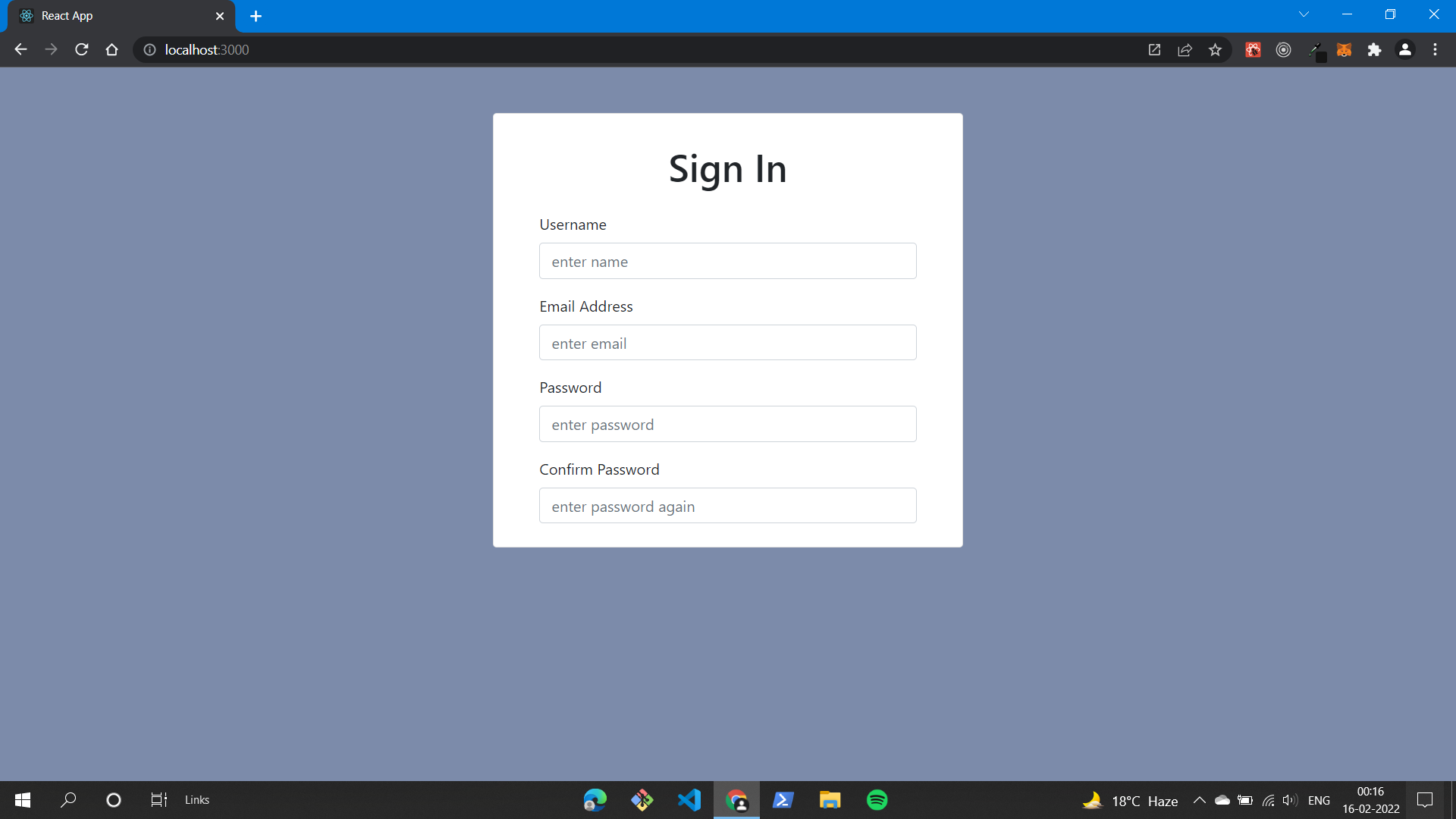

973,516 | awesome npm packages for data validation and parsing(user login validation) | in this post we covering three of the best npm packages for data validation and schema building for... | 0 | 2022-01-31T09:18:33 | https://dev.to/alguercode/awesome-npm-packages-for-data-validation-and-parsinguser-login-validation-3ai4 | beginners, productivity, javascript, webdev | In this post we cover three of the best npm packages for data validation and schema building in JavaScript.

before you start reading, give a follow.

## 1.[Yup](https://github.com/jquense/yup)

Yup is a JavaScript schema builder for value parsing and validation. Define a schema, transform a value to match, validate the shape of an existing value, or both. Yup schema are extremely expressive and allow modeling complex, interdependent validations, or value transformations.

Yup's API is heavily inspired by Joi, but leaner and built with client-side validation as its primary use case. Yup separates parsing and validating into distinct steps: `cast()` transforms data, while `validate()` checks that the input is the correct shape. The two can be performed together (such as for HTML form validation) or separately (such as when deserializing trusted data from APIs).

## 2.[volder](https://github.com/devSupporters/volder)

**volder** is a powerful object schema validator. It lets you describe your data using a simple, readable schema and transform a value to match the requirements. It supports custom error messages, custom types, and nested schemas.

## 3. [joi](https://github.com/sideway/joi)

The most powerful schema description language and data validator for JavaScript.

### Don't leave without starring these repos | alguercode |

975,117 | Paracetamol.js💊| #52: Explain this JavaScript code | Explain this JavaScript code const info = { [Symbol('a')]:... | 16,071 | 2022-02-12T16:26:26 | https://dev.to/duxtech/paracetamoljs-52-explica-este-codigo-javascript-kme | javascript, webdev, spanish, beginners | ## **Explain this JavaScript code**

```js

const info = {

[Symbol('a')]: 'b'

}

console.log(info)

console.log(Object.keys(info))

```

- A: `{Symbol('a'): 'b'}` and `["{Symbol('a')"]`

- B: `{}` and `[]`

- C: `{ a: "b" }` and `["a"]`

- D: `{Symbol('a'): 'b'}` and `[]`

Answer in the first comment.

---

| duxtech |

1,220,997 | Test | Just a test | 0 | 2022-10-16T05:41:50 | https://dev.to/urto/provierka-1fh9 | Just a test | urto | |

977,232 | Why Use Crypto Wallets? | How individuals and Businesses benefit from using crypto-wallets. Due to the numerous advantages... | 0 | 2022-02-03T11:30:57 | https://dev.to/bitpowr/why-use-crypto-wallets-216k | **How individuals and Businesses benefit from using crypto-wallets.**

Due to the numerous advantages over traditional fiat currencies, cryptocurrencies such as Bitcoin and Ethereum are becoming increasingly popular. You’ll need to grasp how crypto wallets function if you wish to use any of the coins. This article covers what a cryptocurrency wallet is, why you would want to use one, how other people use their wallets and the many types of cryptocurrency wallets.

**Why Use a Crypto Wallet?**

Are you still wondering why you should use a wallet? Well, a study from Juniper Research found that the number of people using digital wallets will increase from 2.3 billion to nearly 4 billion, or 50% of the world’s population, by 2024. This would increase wallet transaction values by more than 80%, to more than $9 trillion per year.