id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

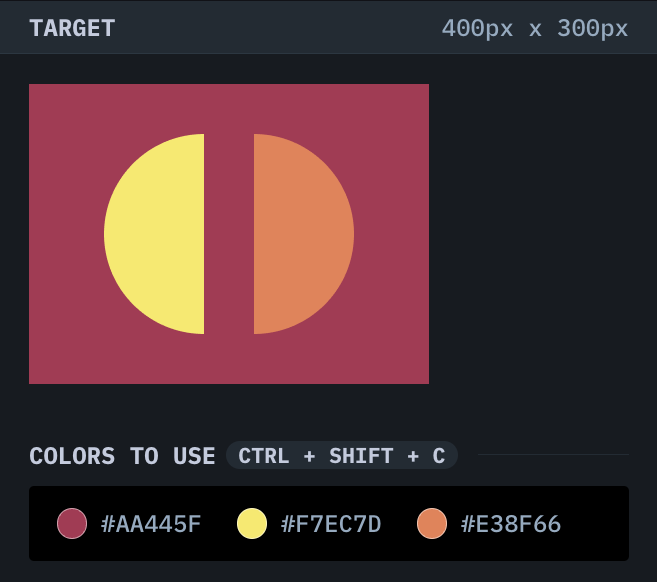

1,221,427 | CSSBattle | #31 Equals | Welcome to CSSBattle Challenges! In this short article, I go through my solution for CSSBattle - #31... | 17,102 | 2022-10-16T16:24:10 | https://dev.to/npranto/cssbattle-31-equals-70h | css, challenge, cssbattle, equals | Welcome to CSSBattle Challenges!

In this short article, I go through my solution for [CSSBattle - #31 Equals](https://cssbattle.dev/play/31) challenge. Please refer to the code snippet below to get a better insight into my thought processes and the implementation detail.

---

> #### Challenge:

---

> #### Solution:

```html

<div class="container">

<div class="semi-circle left"></div>

<div class="semi-circle right"></div>

</div>

<style>

* {

padding: 0;

margin: 0;

box-sizing: border-box;

}

.container {

width: 100vw;

height: 100vh;

background: #aa445f;

}

.semi-circle {

width: 200px;

height: 100px;

border-top-right-radius: 100px;

border-top-left-radius: 100px;

position: absolute;

top: 50%;

left: 50%;

}

.right {

background: #e38f66;

transform: translate(calc(-50% + 75px), -50%) rotate(90deg);

}

.left {

background: #f7ec7d;

transform: translate(calc(-50% - 75px), -50%) rotate(-90deg);

}

</style>

```

---

> #### Key Takeaway(s):

- create semi-circles with height half the size of the width along with border radius properties

- use multiple transform properties to shift or rotate an element in multiple ways

As always, I welcome any feedback or questions regarding the implementation detail of the challenge. Otherwise, I hope this was useful! | npranto |

1,221,465 | Some links to pimp your gitHub profile | https://dev.to/dailydevtips1/pimp-your-github-profile-hoc https://docs.github.com/en/account-and-pro... | 0 | 2022-10-16T18:12:18 | https://dev.to/dgloriaweb/some-links-to-pimp-your-github-profile-4koa | https://dev.to/dailydevtips1/pimp-your-github-profile-hoc

https://docs.github.com/en/account-and-profile/setting-up-and-managing-your-github-profile/customizing-your-profile/about-your-profile

https://medium.com/nerd-for-tech/stand-out-by-personalizing-your-github-profile-f0a5d73f2b4d

| dgloriaweb | |

1,221,640 | Is It Possible To Run A Digital Startup on a Chromebook? | The other day, my 2012 Mac Book Pro hard drive cable decided to give in...So, the last option I had... | 0 | 2022-10-17T01:27:00 | https://dev.to/vonthecreat0r/is-it-possible-to-run-a-mobile-startup-on-a-chromebook-8a0 | tutorial, productivity, opensource |

The other day, my 2012 Mac Book Pro hard drive cable decided to give in...So, the last option I had was to go out and find a quick replacement without breaking the bank.

I picked the Duet 3 Chromebook off Kijiji from a local place.

And, being into software and hardware, this Chromebook is, not bad at all...

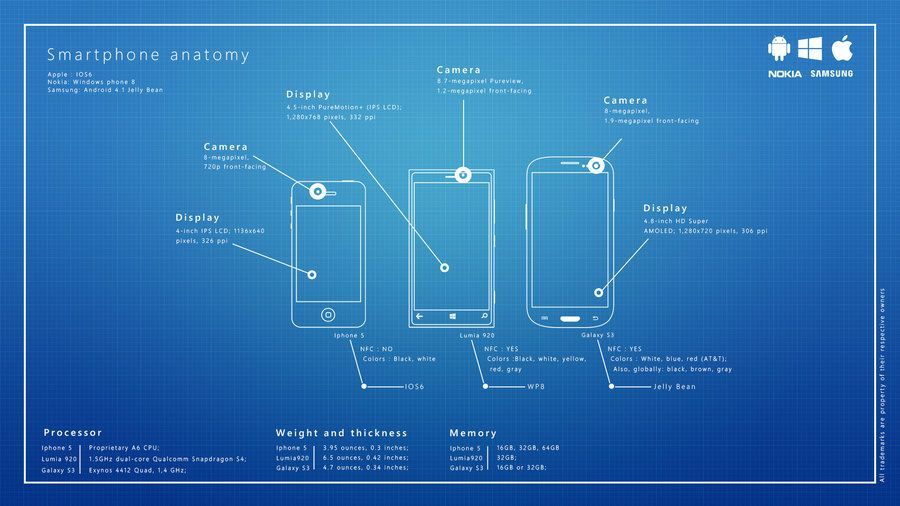

If you think about it, most our phones today have enough power to run a mini web server backed with a SQLite database; given today's world of digital everything,

You can see where this could end up...

Chromebooks have a unique power if you have a technical know-how of making them produce extra-ordinary results using basic tools.

Like a friend once said:

> 'Pro tools don't make you a pro, with tools...'

To say the least, what I'm getting at is, you can do far more on a Chromebook today, with very few distractions in the way; compared to the other major operating systems.

### The future is mobile;

I strongly sense, Chromebooks have a greater chance at taking over the digital space overnight...

These are simply my observations based on a few technical trends and software patterns I am noticing:

Such as, [rust programming language being introduced into the Linux kernel](https://www.zdnet.com/article/linus-torvalds-rust-may-make-it-into-the-next-linux-kernel-after-all/), JavaScript (ES6) being at the forefront of front-end and back-end development of web services...

Of course some of these trends have been a norm for a while; but ask yourself, why more and more hardware manufactures pushing towards ARM architecture?

From my perspective, I say few reasons:

- Due to the past 2 and a half years that took a hit on the chip supply,

- Crunching down for true optimisation (ARM is great at this)

- To counter the chip shortage with smaller, faster and more efficient alternatives.

Therefore if a chip can be smaller & still maintain the same output on the software side, while also being able to save on size, power input & simultaneously adding new processing clusters that do special computations...

> It is no wonder why even the forbidden fruit has taken the same root of action...

Which means the focus is on whatever works well with arm architecture.

Most of our digital lives today evolve around:

- Art

- Commerce

- Entertainment

That means:

- Speed

- Access

- Ease of use

So to conclude everything, you now see how the Chromebook could have a edge here?

Please note: I am in no way sponsored by chrome or google, I am simply stating that if you compare all major platforms that can hit those three categories without trouble: it would be the Chromebook.

They're:

- Cheap

- Accessible

- Easy to use

<br>

<br>

You can say well, but then what?

That's it?

If you look at technical history timeline; you realise that at every turn, there's always a urge to reinvent the wheels based on a few catalysts,

The first wave was that we wanted to take our library into our pockets. And indeed it is so, Arts, Entertainment, Commerce; now live at your finger tips, today.

As for the following wave, I'm sure you notice a few patterns too: **privacy**, **censorship**, **security (cryptography)**, mobility & ease-of-access...

Of all these: the major key pulling all these strings together are: **mobility & access**.

Therefore; **privacy** and **security** ought to be paramount.

Sure Chromebooks have a chance, but I'd agree more, to say that: we all prefer variety over singular especially in these times...

So my final thought is...

**Who can make the most secure, fastest, accessible platform and hardware haven for the next generation of computing that people can trust?** | vonthecreat0r |

1,221,718 | How can I read this type of font? | A post by RicardoViteriR | 0 | 2022-10-17T03:11:39 | https://dev.to/rviteri/how-can-i-read-this-type-of-font-2d76 | tesseract |

| rviteri |

1,221,862 | Running Minecraft servers from Docker | This article is also available on my blog. Since picking up docker last year it's completely changed... | 20,159 | 2022-10-17T07:50:33 | https://jamesnewman.dev/blog/docker-minecraft-servers/ | docker, devops, minecraft, guide | _This article is also available [on my blog](https://jamesnewman.dev/blog/docker-minecraft-servers/)._

Since picking up docker last year it's completely changed how I set up projects, home network applications, and now how I deploy Minecraft servers for friends. Gone are the days of figuring out which version of JDK I need on an ubuntu box and remembering the commands to reconnect to a [screen](https://help.ubuntu.com/community/Screen) session.

In this post I'll walk you through setting up a few different Minecraft servers, running vanilla, Paper and Forge, all on the same host. In a [follow up post](/blog/routing-minecraft-servers) I go through routing to these server on the same port.

Before we get started, I'd recommend having some basic command line and linux knowledge. In addition, pre-existing docker and docker-compose experience is a bonus but **not** required.

### The Environment

Docker being docker, you can run this on Windows, Linux, macOS - A spare computer, the cheapest DigitalOcean droplet, heck even a Raspberry Pi! Just make sure your systems up to date, has docker and docker-compose installed. Here's what we'll be using:

- A docker host, the steps below are for Ubuntu but you can use any.

- [itzg/minecraft-server](https://hub.docker.com/r/itzg/minecraft-server) docker image to run the server.

#### Install Docker & Docker Compose

Go ahead and follow the [Docker install steps](https://docs.docker.com/engine/install/) for the platform you wish to run the server on. Here's the [Ubuntu guide](https://docs.docker.com/engine/install/ubuntu/).

_Handy tip: if you're on linux, make sure you add your user to the docker group:_

``` bash

sudo usermod -aG docker $USER

```

_You'll need to log out and back in again after._

Now you should be all set! To double check, run the following:

``` bash

# Check the Docker version

docker -v

# Check Docker Compose version

docker compose -v

```

### Creating our first server with Docker Compose

It's vary rarely I'll run a docker image with the docker command line, remembering the command and all the arguments I need is a faff. So lets define our first Minecraft server, a simple vanilla server, in a docker compose YAML file.

First up, we'll create a directory to contain all our files including the Minecraft servers:

``` bash

# Create directory

mkdir minecraft-servers

# cd into the directory

cd minecraft-servers

# Create a docker-compose.yaml file

touch docker-compose.yaml

# Create a directory to store our vanilla server files in

mkdir -p vanilla/server

```

Go ahead and open the `docker-compose.yaml`. You can use nano, vim or even connect [VS Code with SSH](https://code.visualstudio.com/docs/remote/ssh).

In here we want to define our vanilla service:

``` yaml

version: "3.8"

services:

vanilla:

container_name: vanilla

image: itzg/minecraft-server

ports:

- 25565:25565

environment:

EULA: "TRUE"

MEMORY: 2G

volumes:

- ./vanilla/server:/data

restart: unless-stopped

tty: true

stdin_open: true

```

This is the bare minimum configuration, but there's a whole bunch of options you can define such as ops, whitelist, difficulty etc, all documented [here](https://github.com/itzg/docker-minecraft-server/blob/master/README.md#server-configuration). Now we're ready to test our first server works, so lets spin it up!

``` bash

# Start the service

docker compose up

```

You'll should now see the server begin to start up. It'll take a little while on the first launch as it downloads the image and generates server files. You'll know it's ready when you see:

``` log

vanilla | [17:22:18] [Server thread/INFO]: Done (13.045s)! For help, type "help"

```

Congratulations, you can now connect to your new server using the server IP or `localhost` if you're running it on the same machine! To exit, you can use `ctrl + c` in the terminal.

If you take a look in the `vanilla/server` directory we created earlier, you'll see it's generated all the server files needed. You *can* edit the `server.properties` to make changes to the servers configuration, however I'd recommend adding these to the `environment` block in our `docker-compose.yaml` and simply deleting the `server.properties` file. This file will be recreated when you next start the server with your newly added environment options.

### Adding different server types

So we have our vanilla server set up, but if we want to use some plugins to manage permissions or add prefixes to usernames in chat, we'll need to use a different server type. For a vanilla server that you can connect an unmodded client to, you have a few options: Bukkit, Spigot and Paper. We'll use Paper for the sake of this post.

First, lets create a directory to keep our plugins in:

``` bash

mkdir vanilla/plugins

```

You can go ahead and add any plugins to this directory and we'll configure the container to mount these. You can also leave it blank for now and once we start the server you'll see `bStats` automatically added by Paper.

Now in our `docker-compose.yaml` file, we'll add a new entry to the `environment` block of our `vanilla` service, defining the server type. We'll also mount the plugins directory we just created.

``` yaml

version: "3.8"

services:

vanilla:

container_name: vanilla

image: itzg/minecraft-server

ports:

- 25565:25565

environment:

# Add our server type

TYPE: PAPER

EULA: "TRUE"

MEMORY: 2G

volumes:

- ./vanilla:/data

# Mount our plugins directory

- ./vanilla/plugins:/plugins

restart: unless-stopped

tty: true

stdin_open: true

```

Lets spin up the updated server, you'll see it download the new jar and create some new files.

``` bash

# Start the service

docker-compose up

```

Now you should be able to edit the configuration files for the plugins you added, log in and see them working!

### Proxying and Routing domains to Minecraft Servers

In a follow up post, I go through adding adding [itzg/mc-router](https://hub.docker.com/r/itzg/mc-router) to route domains to multiple servers, all using the default `25565` port. No more ugly server addresses with different ports! You can read about that [here](https://jamesnewman.dev/blog/routing-minecraft-servers)

### Closing thoughts

So in this post we've created a Vanilla server, maintained and managed through docker. I highly recommend reading through the [docs](https://github.com/itzg/docker-minecraft-server) for this image as there's tons of configuration available, including support for modded servers. You can add more servers to this config and use something like [BungieCord](https://github.com/SpigotMC/BungeeCord) or [PaperMC Waterfall](https://github.com/PaperMC/Waterfall) switch servers ingame.

_This article is also available [on my blog](https://jamesnewman.dev/blog/docker-minecraft-servers/)._ | jam3sn |

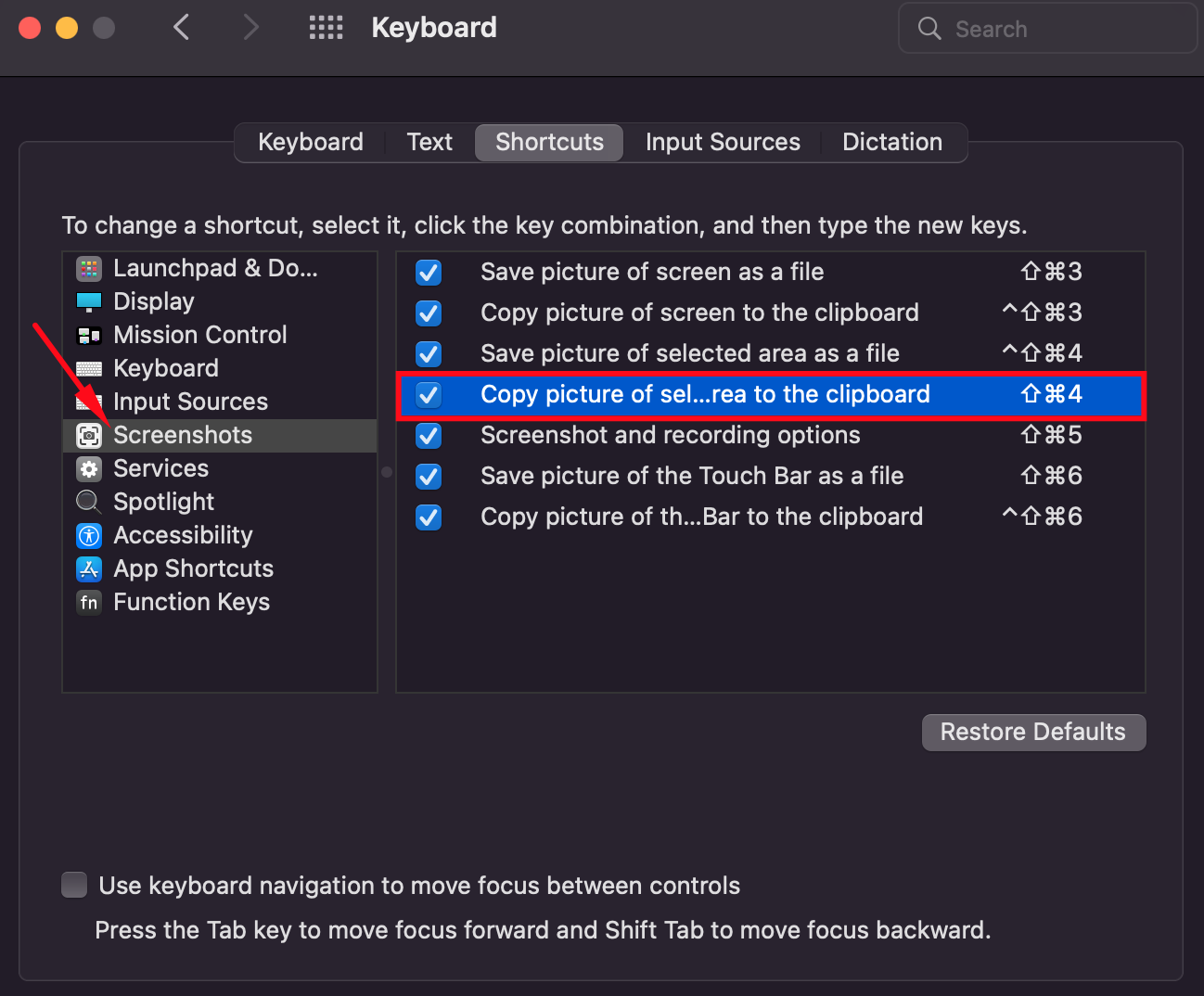

1,221,927 | 5 Preferences Tweaks For A New Mac | 1. Screenshot Shortcut The default when taking a screenshot is to save it, but most often... | 0 | 2022-10-22T11:02:33 | https://dev.to/danielbellmas/5-preferences-tweaks-for-a-new-mac-2f4f | productivity, customize, tooling, ios | ## 1. Screenshot Shortcut

The default when taking a screenshot is to save it, but most often we want to just send it to someone right away so to make things easier, change the shortcut to:

<kbd>⌘ Cmd</kbd> + <kbd>⇧ Shift</kbd> + <kbd>4</kbd>

> Go to `System Preferences` -> `Keyboard` -> `Shortcuts` tab

---

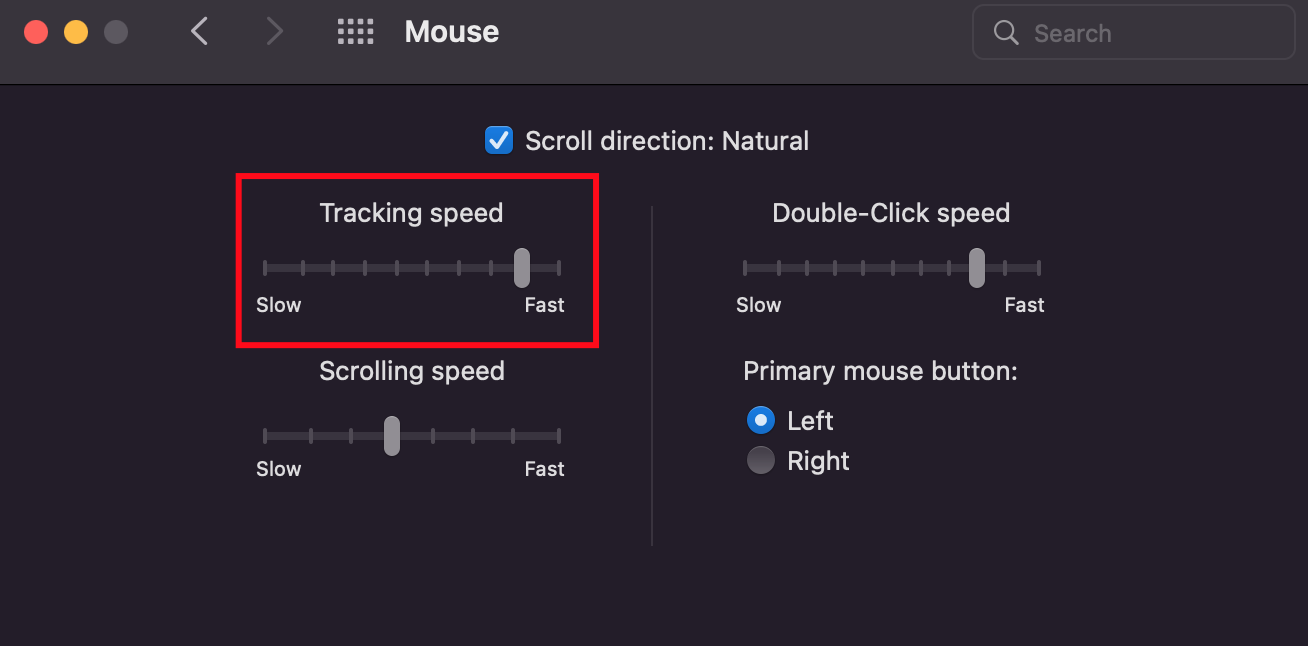

## 2. Speed of Mouse Cursor

The default speed is too slow in my opinion, change it to your liking, he is mine 🙂

> Go to `System Preferences` -> `Mouse`

---

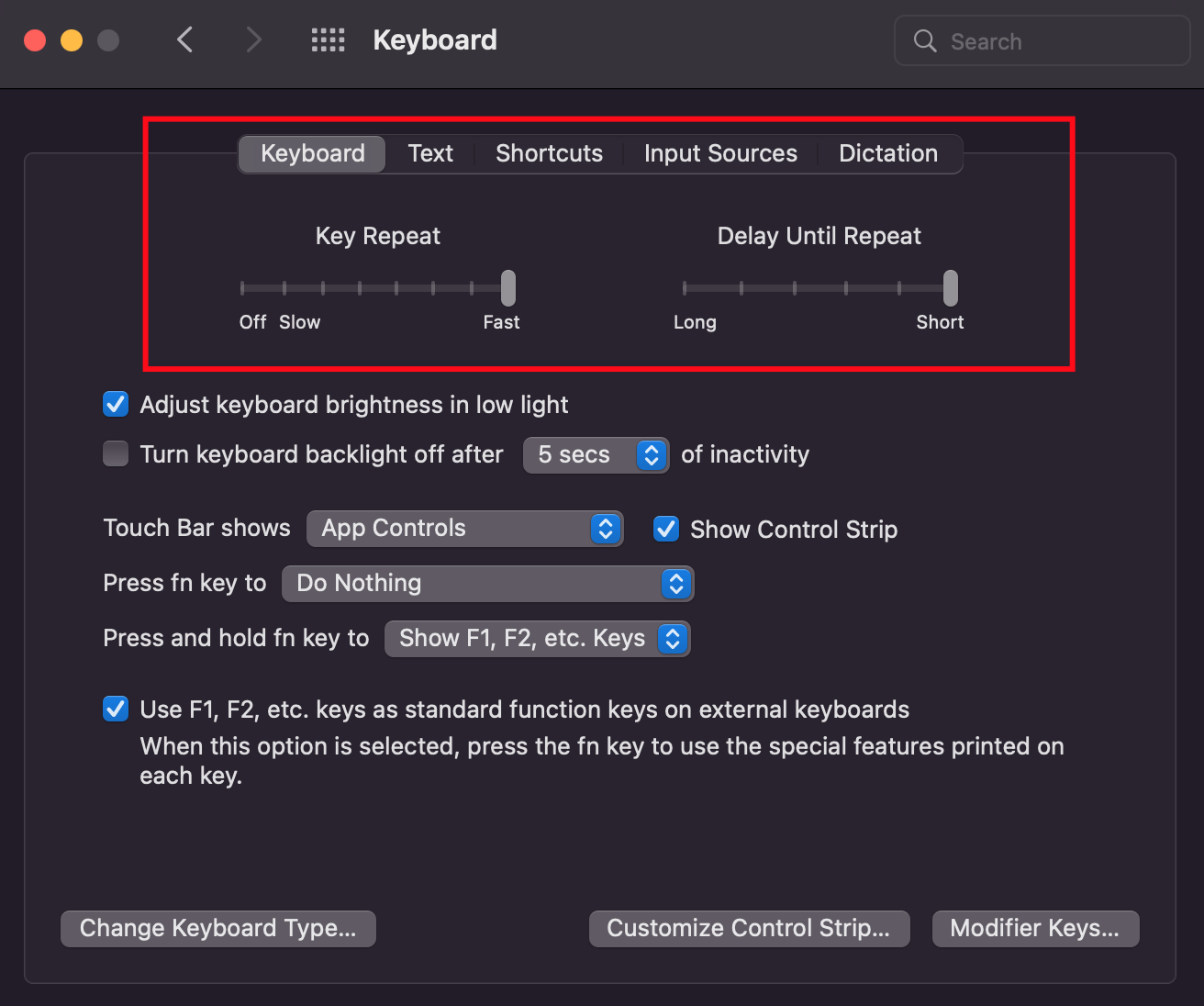

## 3. Speed of keyboard Cursor

The default here is also slower than what I prefer.

> Go to `System Preferences` -> `Keyboard`

---

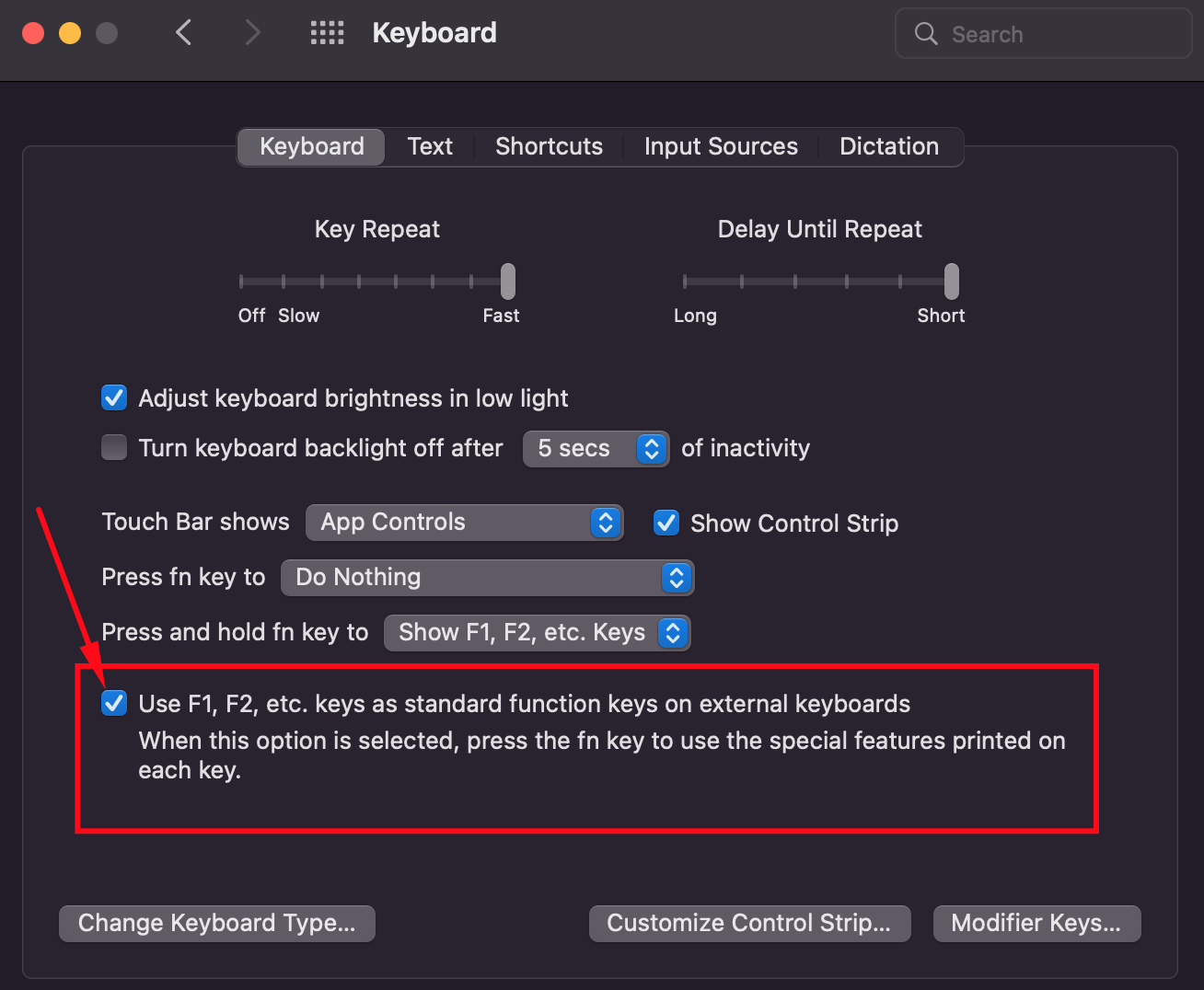

## 4. Untick the `fn` Option

This option will remove the need for pressing the `fn` key with one of the `F1, F2, F3....` keys.

Most often I use the <kbd>F2</kbd> key to [change variable names in `VsCode`](https://dev.to/danielbellmas/productivity-boost-with-cmd-ctrl-1nh8#bonus-productivity-tip-%EF%B8%8F).

> Unfortunately, this only works for an apple keyboard, not external ones.

> Go to `System Preferences` -> `Keyboard`

---

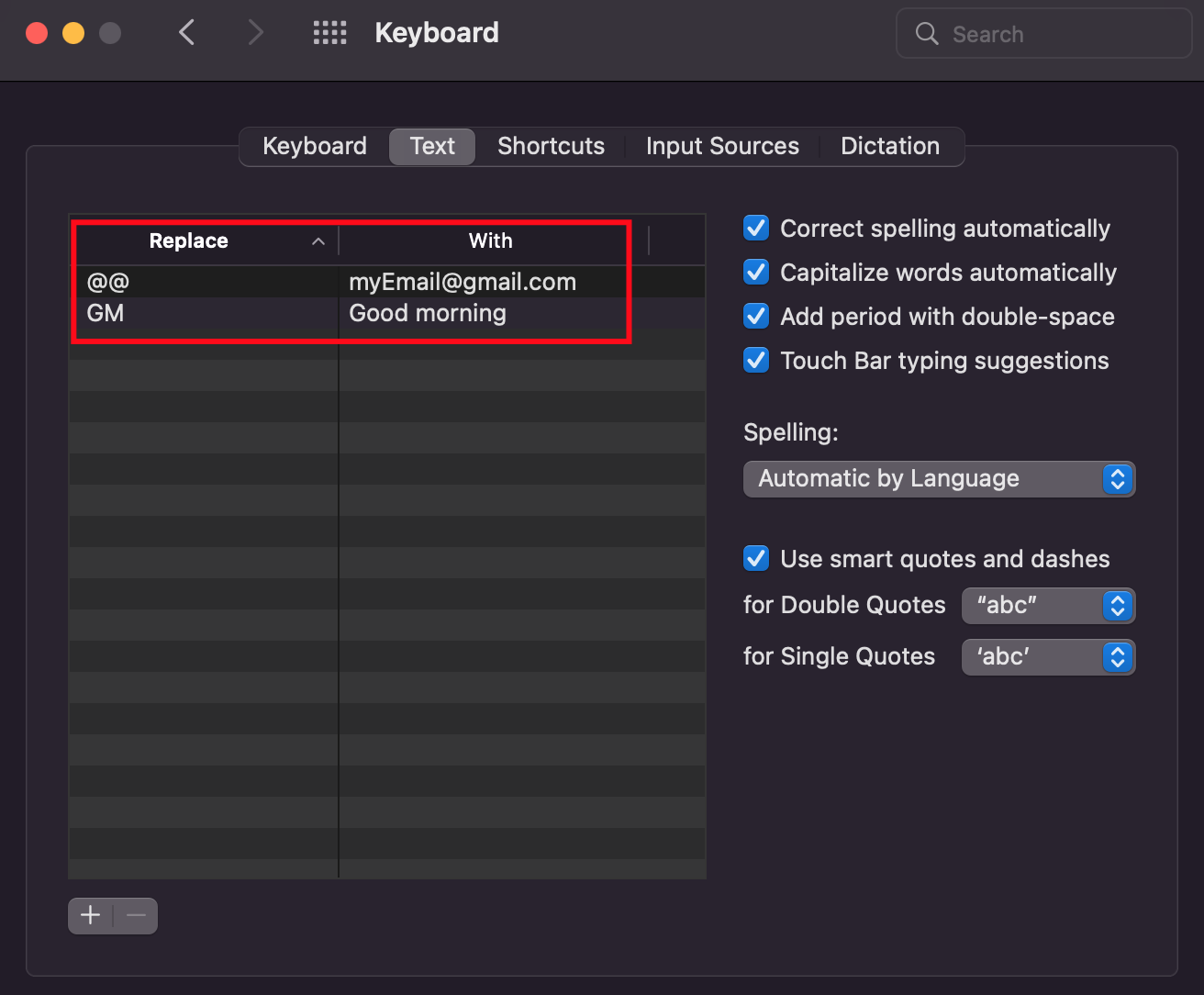

## 5. Text Replacements

Save time by putting your most frequently used phrases here.

My favorite is the email one :)

> Go to `System Preferences` -> `Keyboard` -> `Text`

| danielbellmas |

1,222,076 | Implementing Cursor-based Pagination For Every GraphQL API | Implementing Cursor-based Pagination For Every GraphQL API Backends often return a massive... | 0 | 2022-10-17T12:02:26 | https://stepzen.com/blog/implementing-cursor-based-pagination-graphql | Implementing Cursor-based Pagination For Every GraphQL API

==========================================================

Backends often return a massive amount of data, and intaking all data simultaneously causes more overhead and increases the response time. Pagination preserves the application's performance by receiving small pieces of data in subsequent requests until the entire dataset is received.

When Facebook publicly released GraphQL as a client-driven API-based query language, it quickly gained hype because it allowed front-end developers to modify their backends easily. Applications using GraphQL are more efficient and work promptly on slow networks, and therefore, it is quickly replacing the traditional API architectures like REST. Pagination is an essential concept in GraphQL, but there aren't many resources on pagination in GraphQL.

In this post, we'll compare the different ways to handle pagination in GraphQL and learn how to configure a REST directive to perform cursor-based pagination for every REST API using StepZen.

> **TL;DR**: You can find the complete documentation on paginating GraphQL using StepZen [here](https://stepzen.com/docs/connecting-backends/rest-services-graphql-pagination).

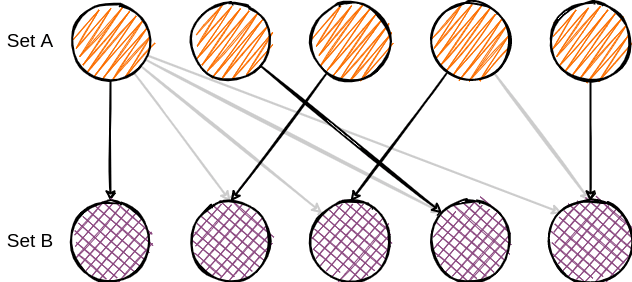

Comparing Different Methods of Pagination in GraphQL

----------------------------------------------------

Pagination in GraphQL is not different from pagination in REST APIs, although some types of pagination better fit GraphQL. Before discussing the preferred pagination method in GraphQL, let's look at different pagination types. Typically, APIs offer three methods for pagination. These are:

* [Offset Pagination](https://stepzen.com/blog#1-offset-pagination)

* [Page Number Pagination](https://stepzen.com/blog#2-page-number-pagination)

* [Cursor Pagination](https://stepzen.com/blog#3-cursor-pagination)

### 1\. Offset Pagination

Offset pagination consists of two primary parameters: limit and offset. The limit indicates the maximum number of results to show. And the offset denotes the position in the list from where the pagination starts.

Suppose you have a list of 100 students. If you set the limit parameter to 10 and offset to 20, the database engine will count from the 20th student and display the following ten students in each iteration. For instance, the first iteration will show the students from 20 to 30, then from 30 to 40, etc.

Although offset pagination is the most straightforward, it has a significant drawback. When some items are added or deleted, offset pagination results in repeated or missing data values.

**Pros:**

* Most common way to do pagination in general.

* Relatively simple implementation.

* Most SQL-based databases support the limit and the offset variables, so it's easier to map values.

**Cons:**

* Prone to data inconsistencies (repeated or missing data).

* It doesn't provide information about more pages, total pages, or retrieval of previous pages.

### 2\. Page Number Pagination

As the name specifies, page number pagination returns a single page per request. You all have seen a "next page" option when working with tables; each sheet shows the same number of results or entries. Similarly, page number pagination returns the same number of results per request.

Let's take an example to understand how it works. Page number pagination uses the after parameter to indicate the starting point for pagination. For instance, if you have a list of 30 students and set the after and the limit parameters to 23 and 5, respectively, then the output list will show a list of 5 students from the 23rd to 28th entry.

Page number pagination is more reliable as the upcoming results start from the last fetched values.

**Pros:**

* Easier to implement.

* It doesn't require complex logical analysis.

**Cons:**

* Data inconsistencies.

### 3\. Cursor Pagination

The third kind of pagination is cursor-based pagination. However, it is most complicated but is adaptable to dynamic data. Therefore, it is the preferred way to do pagination in GraphQL.

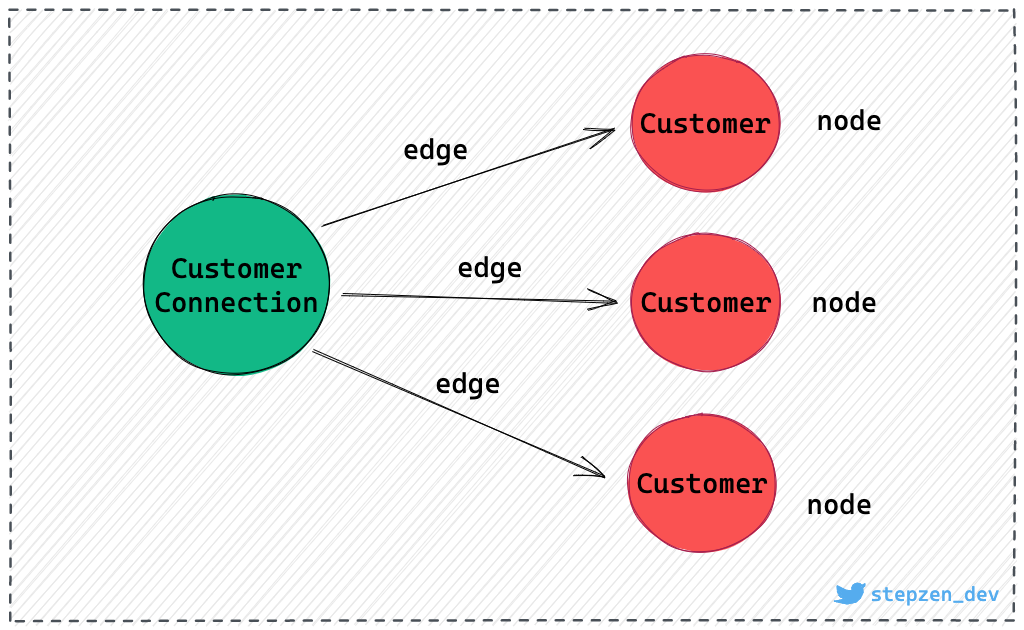

This method includes a specific parameter for the cursor. A cursor is nothing but a reference point that shows the position of an item in the database. The data are represented as nodes and a cursor as an edge in the graphical representation.

A cursor is a base64 encoded number. The query written for cursor-based pagination returns an object representation instead of a list.

**Pros:**

* The preferred way to do pagination in GraphQL.

* Provides valuable data for UX uses.

* Allows reverse pagination.

* No issues in the case of dynamic data as pagination are done to a specific row.

**Cons:**

* It doesn't allow random access.

* Needs complicated queries.

How to Implement Pagination in GraphQL using StepZen

----------------------------------------------------

Now you know about all the possible pagination methods and the preferred way of doing pagination in GraphQL, which is cursor-based pagination. If your REST API supports offset or page number pagination, you can easily implement cursor-based pagination in GraphQL using StepZen.

The two essential parameters you need to specify for every type of pagination are `type` and `setters`. The `type` parameters define the pagination style you want to implement. It has three possible values: `PAGE_NUMBER`, `OFFSET`, and `NEXT_CURSOR`. Next comes the `setters` parameters, which need to be pointed towards a field that indicates how many results or pages the response will output. You can find the list of all parameters with different pagination styles in the [documentation](https://stepzen.com/docs/connecting-backends/rest-services-graphql-pagination/).

Let's look at some examples:

### 1\. Implementing Cursor Pagination for Offset

The below snippet illustrates how to implement GraphQL cursor-based pagination using StepZen for REST APIs supporting offset pagination. Note that the parameter `first` is set to the number of required results. The second parameter, `after`, is set to the starting point of the pagination.

```graphql

customers(

first: Int! = 20

after: String! = ""

): CustomerConnection

@rest(

endpoint:"https://api.example.com/customers?limit=$first&offset=$after"

pagination: {

type: OFFSET

setters: [{field:"total" path: "meta.total_count"}]

}

)

```

Since it's the first request, the `after` parameter is equal to an empty string. The `first` parameter is set to `20`, which means that the first 20 results will be returned. On the second request, you can change the value for `after` to be equal to the value of `first` from the previous request. Every new request will be a multiple of the `first` parameter.

### 2\. Implementing Page Number Pagination

Take a look at the following code to get a better understanding of implementing cursor-based pagination in GraphQL using StepZen, when your REST API is relying on page number pagination:

```graphql

customers(

first: Int! = 20

after: String! = ""

): CustomerConnection

@rest(

endpoint:"https://api.example.com/customers?page=$after&per_page=$first"

pagination: {

type: NEXT_CURSOR

setters: [{field:"nextCursor" path: "meta.next"}]

}

)

```

The above example illustrates some important aspects. The `after` parameter is set to an empty string, which indicates that it is the first request. In contrast, the first parameter is set to `20`, meaning that 20 results will be returned per page. Remember, the value of the first parameter remains the same for subsequent requests as well. The `after` parameter in the upcoming request will be according to the page number you want to be returned. For instance, it is `3` for the third and `4` for the fourth page.

### 3\. Implementing Cursor Pagination

Implementing cursor-based pagination is very similar to the other two pagination methods. The only difference is that you need to specify the `type` parameter as `NEXT_CURSOR`, and change the setters parameter to point towards the field that indicates the next cursor.

```graphql

customers(

first: Int! = 20

after: String! = ""

): CustomerConnection

@rest(

endpoint:"https://api.example.com/customers?first=$first&after=$after"

pagination: {

type: NEXT_CURSOR

setters: [{field:"nextCursor" path: "meta.next"}]

}

)

```

This, of course, only applies if your REST API already supports cursor-based pagination.

How to Query Cursor Pagination in GraphQL

-----------------------------------------

In the previous section, you've learned how to implement cursor-based pagination for any REST API using StepZen. Cursor-based pagination is for example, used by [Relay](https://relay.dev/graphql/connections.htm), and is also supported by the StepZen GraphQL API. Cursor-based pagination is the preferred way to do pagination in GraphQL, as it provides valuable data for UX uses. But it comes with the downside of being more complex to query, as we saw in the first section.

Let's look at the `Connection` type that is used by cursor-based pagination:

```graphql

type Customer {

activities: [Activity]

addresses: [Address]

contacts: Contacts

description: String

designation: String

}

type CustomerEdge {

node: Customer

cursor: String

}

type CustomerConnection {

pageInfo: PageInfo!

edges: [CustomerEdge]

}

```

The `CustomerConnection` type is the one that is returned by the `customers` query. It contains a `pageInfo` field, which is of type `PageInfo`. The `PageInfo` type contains the following fields:

```graphql

query MyQuery {

customers {

pageInfo {

endCursor

hasNextPage

hasPreviousPage

startCursor

}

}

}

```

These fields inform you about the current page, and whether there are more pages to be fetched. The `endCursor` and `startCursor` fields are the cursors that you need to use in the next request. The `hasNextPage` and `hasPreviousPage` fields indicate whether there are more pages to be fetched.

The `edges` field contains a list of `CustomerEdge` objects. The `CustomerEdge` type contains a `node` field of type `Customer`. To get the information about the customer, you would need to use the `node` field:

```graphql

query MyQuery {

customers(first: 3, after: "eyJjIjoiTzpRdWVyeTpwYXJrcyIsIm8iOjl9") {

edges {

node {

id

description

}

}

}

}

```

The concept of "edges and nodes" is a bit confusing but very straightforward. It comes from the concept that everything in GraphQL is a graph. The `edges` field contains a list of `CustomerEdge` objects, information that is specific for this connection but not shared by all nodes. The `CustomerEdge` type contains a `node` field of type `Customer`. This is the actual customer information.

For Relay, you can find more information on GraphQL connections in the [documentation](https://relay.dev/graphql/connections.htm).

Conclusion

----------

GraphQL allows different kinds of pagination. But how does one decide which one will work best? GraphQL recommends using cursor-based pagination. However, it depends on the requirements of your application. If you are using a REST API already supporting cursor-based pagination, then it's a no-brainer. But if you are using a REST API that is not supporting cursor-based pagination, then you can use StepZen to implement it.

The common and the most straightforward kinds of pagination are **offset** and **page number**. Although they are relatively simple to implement, they are more suitable for static data. In contrast, **cursor-based pagination** is complex but best for changing data as it prevents data inconsistencies. With StepZen, it's straightforward to implement cursor-based pagination for any API.

Learn more by visiting [StepZen Docs](https://stepzen.com/docs/quick-start). Try it out with a [free account](https://stepzen.com/signup), and we'd love to get your feedback and answer any questions on our [Discord](https://discord.com/invite/9k2VdPn2FR). | gethackteam | |

1,222,094 | The testing pyramid is outdated! E2E tests are now easy to write | No doubt having a well-tested code base allows us to cover regression errors during releases. ... | 0 | 2022-10-19T15:39:13 | https://dev.to/gioboa/the-testing-pyramid-is-outdated-e2e-tests-are-now-easy-to-write-414a | testing, webdev, programming, frontend | No doubt having a well-tested code base allows us to cover regression errors during releases.

### Unit test

Often we just test the business logic with unit tests, because they have a smaller scope and a very high execution speed.

These tests are a fair compromise between the effort spent and the benefits we get with a well-tested code base.

### End-to-end

There are also other types of tests such as End-to-End ones, they are more demanding and time-consuming to write and require more execution time.

Until recently, I was also on the same line of thinking until I tried to use ... Cypress.

It is an automatic tool and allows us to write and execute E2E tests quickly and easily.

Wow, this is awesome, but there is more...

Now with Cypress Studio, you can record tests quickly and easily instead of writing them.

### But how can I register for my test

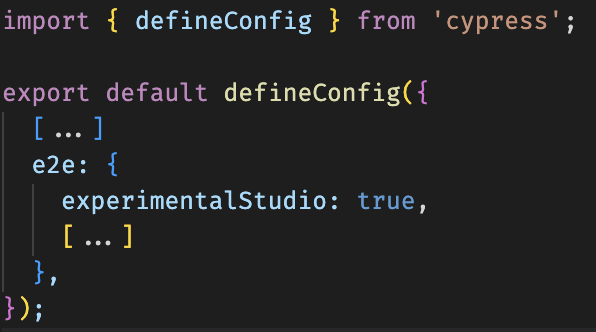

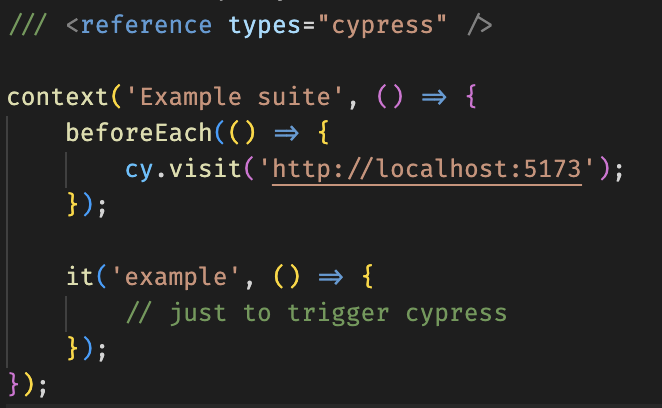

Once [Cypress has been installed](https://docs.cypress.io/guides/end-to-end-testing/writing-your-first-end-to-end-test) a "trigger" test and the _experimentalStudio_ property in the cypress configuration will be sufficient to start recording your first test.

#### Cypress configuration

#### Trigger test

Now you can start Cypress Studio and record new tests like this example:

<img width="100%" style="width:100%" src="https://s4.gifyu.com/images/ezgif-4-fc4ecc7cfd.gif">

✅ In the [docs](https://docs.cypress.io/guides/references/cypress-studio) you can the official example and all the steps to use it.

### Final thought

Recently I introduced this functionality in a project that we wanted to cover with the E2E test.

Together with the users of the platform we recorded the core flows of the application.

The results are excellent, with a little effort we were able to strengthen our tests and now we can sleep more peacefully.

You can [follow me on Twitter](https://twitter.com/giorgio_boa), where I'm posting or retweeting interesting articles.

I hope you enjoyed this article, don't forget to give ❤️.

Bye 👋

{% embed https://dev.to/gioboa %}

| gioboa |

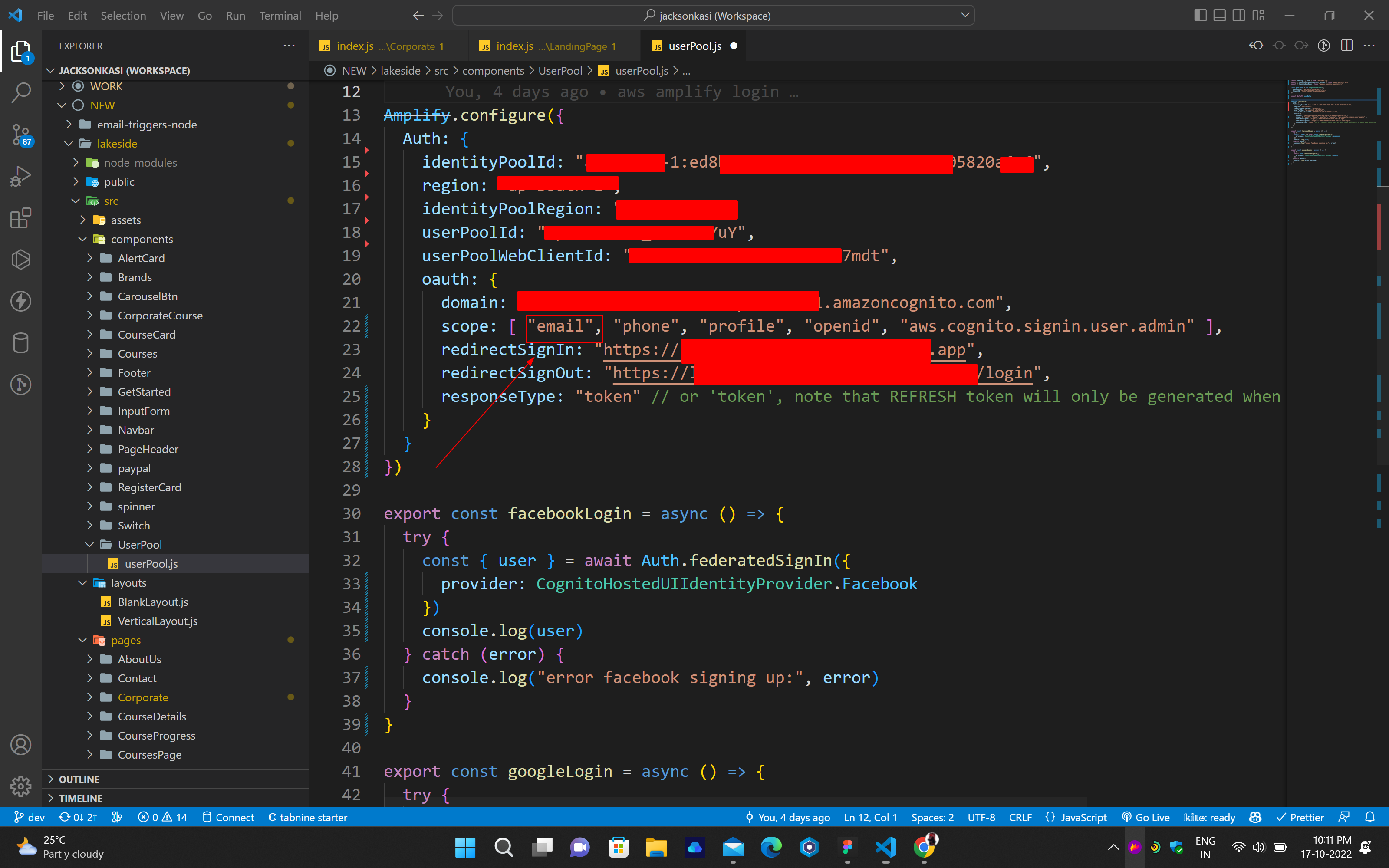

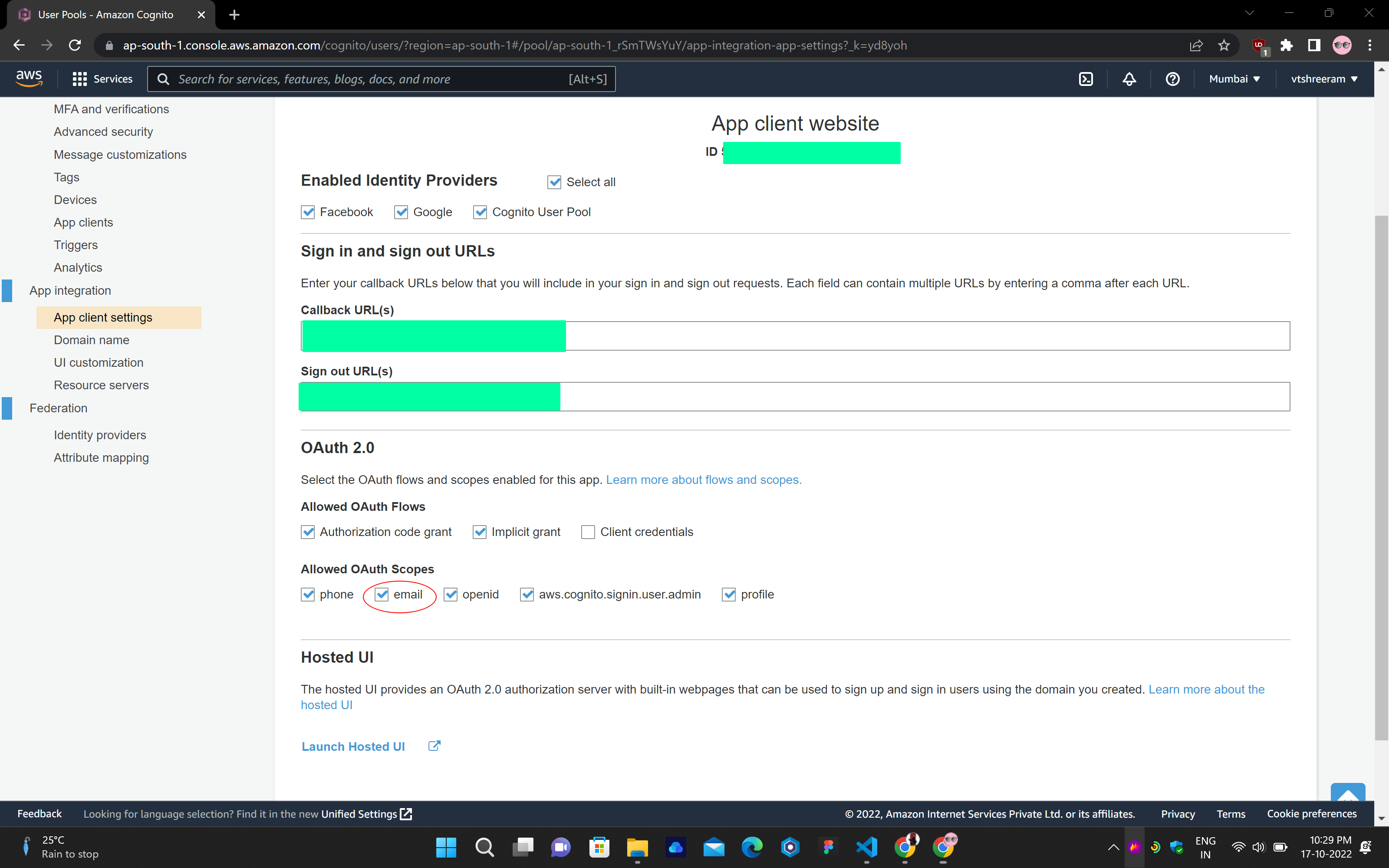

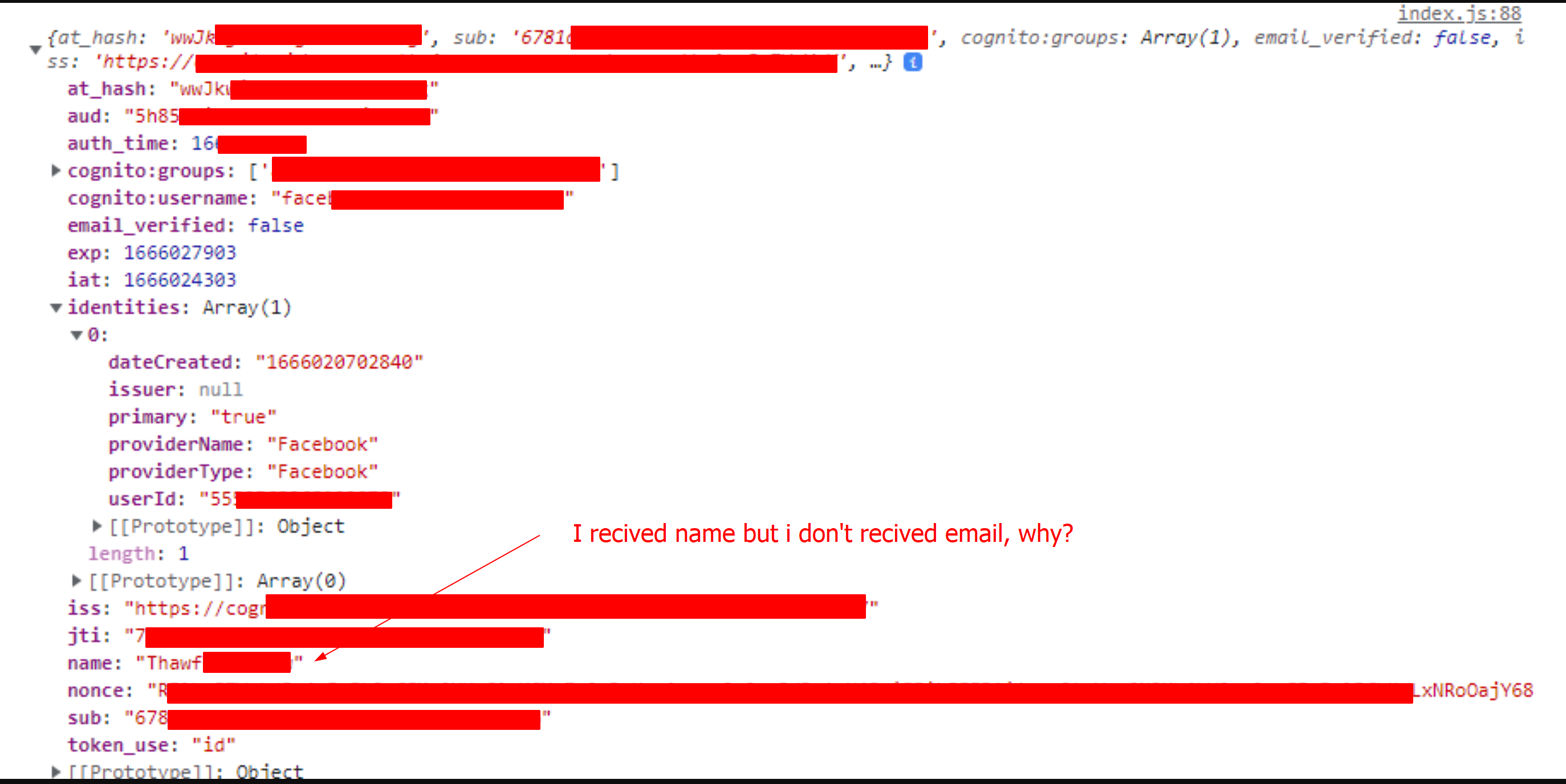

1,222,365 | AWS Cognito ( Facebook log ). Why token doesn't have an email? | I tried google and Facebook login using AWS Cognito & Amplify. Google login works fine. But... | 0 | 2022-10-17T17:02:49 | https://dev.to/jacksonkasi/aws-cognito-facebook-log-why-token-doesnt-have-an-email-48ln | aws, javascript, webdev, react | I tried google and Facebook login using `AWS Cognito & Amplify`.

Google login works fine.

But when I log in to Facebook, if I decode the token that comes, I don't get the email only.

But I think I did everything right.

Please help me if you have an answer to my question!

Thanks

## Code:

## Cognito:

## response:

| jacksonkasi |

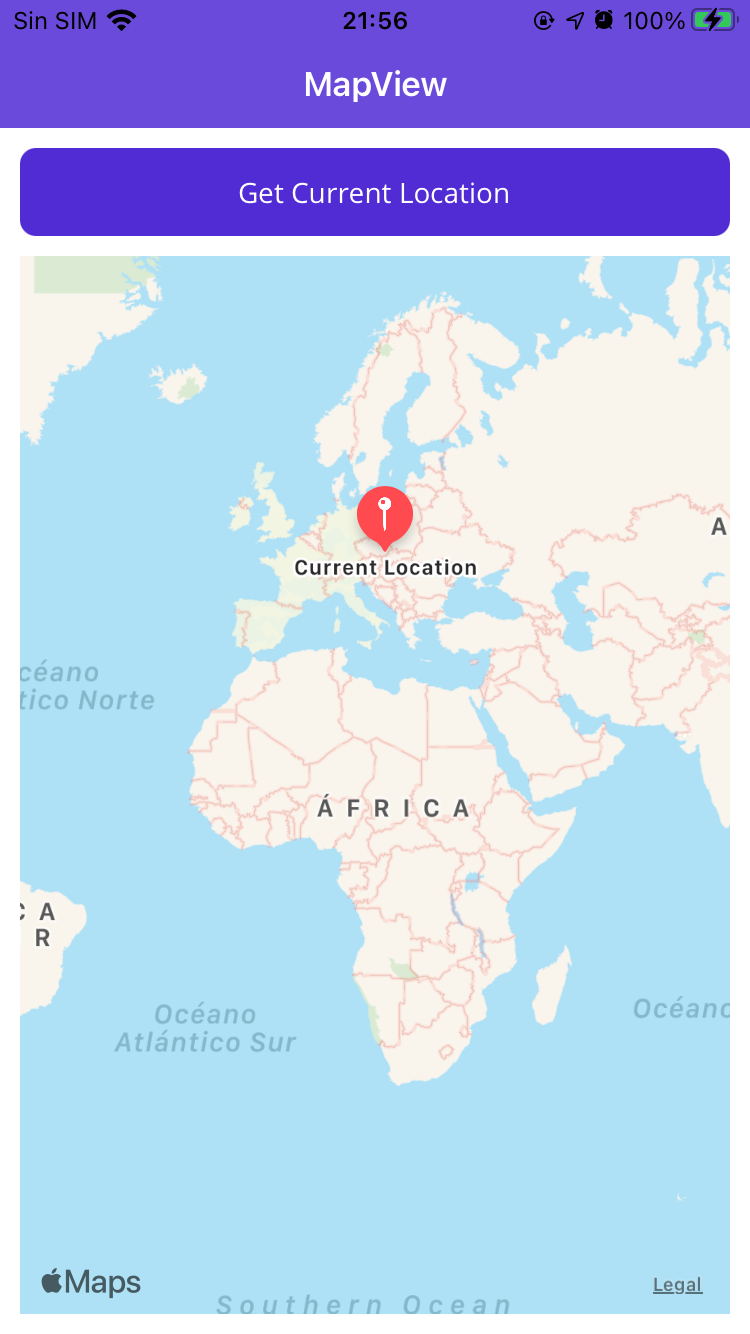

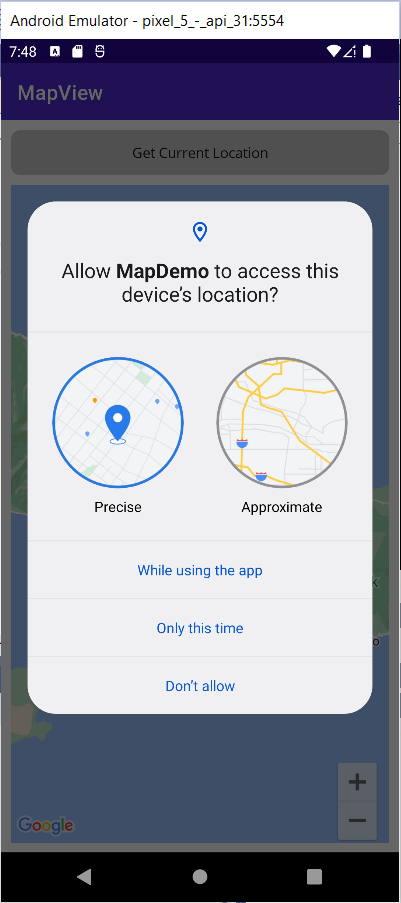

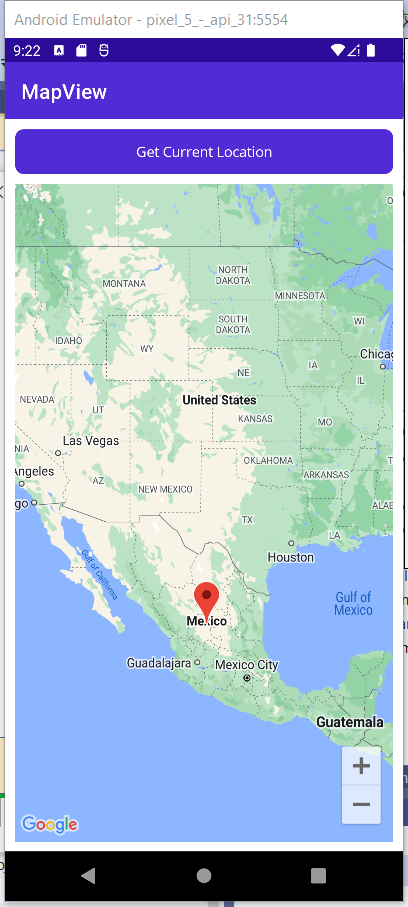

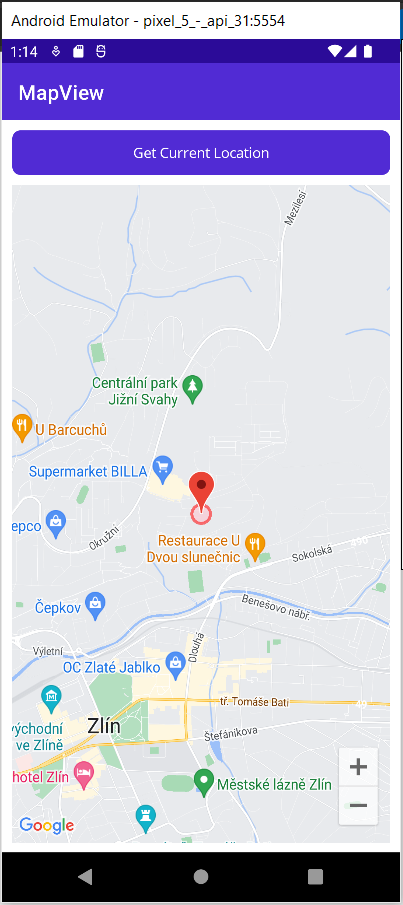

1,222,373 | Demonstration Sample Project "Practice1" (Movie) | Shirates (shirates-core) Shirates is an integration testing framework that makes it easy... | 0 | 2022-10-17T17:32:45 | https://dev.to/wave1008/demonstration-sample-project-practice1-movie-5412 | ## Shirates (shirates-core)

Shirates is an integration testing framework that makes it easy and fun to write test code for mobile apps.

[shirates-core](https://github.com/ldi-github/shirates-core) is core library.

---

## Demonstraion

This is a demonstration movie for the article [Introducing Shirates, a Mobile Testing Automation Tool](https://dev.to/wave1008/introducing-shirates-a-mobile-testing-automation-tool-3nmc).

{% embed https://youtu.be/kwCL11BU2SQ %}

| wave1008 | |

1,222,483 | Edit Someone Else’s Website: contenteditable & designMode | The first time I opened up my devtools and changed the contents of a website, I actually thought... | 0 | 2022-10-18T16:46:50 | https://austingil.com/contenteditable-and-designmode/ | development, frontend, html, coding | ---

title: Edit Someone Else’s Website: contenteditable & designMode

published: true

tags: Development,FrontEnd,HTML,coding

canonical_url: https://austingil.com/contenteditable-and-designmode/

cover_image: https://austingil.com/wp-content/uploads/HTML-Blog-Cover.png

---

{% embed https://www.youtube.com/watch?v=vnhwbuyXEmk %}

The first time I opened up my devtools and changed the contents of a website, I actually thought that I had hacked it. I thought, “Oh my gosh, what sort of crazy powers have I unlocked?”

Little did I know that it was just a local change that would go away when I reload the browser. Still, it is kind of cool to think we can do that.

I bring it up today because there’s actually a couple APIs that are sort of related, that I wanted to highlight.

## `contenteditable`

`contenteditable` is an HTML attribute that when assigned the value of `"true"`, allows for the content of the element to be modified from the front end.

`contenteditable` is cool because you can add it to any HTML element you want, and allow users to modify the content on of that element from their end.

Now, if your first thought is a low cost visual editor for websites, unfortunately, [`contenteditable` is not really a great solution](https://medium.engineering/why-contenteditable-is-terrible-122d8a40e480). It has a lot of ‘s a lot of pitfalls that the industry has agreed that it’s not the right approach.

(see “[Why ContentEditable is Terrible](https://medium.engineering/why-contenteditable-is-terrible-122d8a40e480)” by [Nick Santos](https://twitter.com/nicksantos))

Nevertheless, it’s a pretty fun and interesting attribute that I wanted to share. And I bring that up because contenteditable is a good introduction to this next thing that I wanted to share.

## `designMode`

There’s an API on the `document` object called `designMode` and you can set it to either `"on"` or `"off"`. The cool thing about this `designMode` is that it allows you to enable that `contenteditable` state on the entire document.

So we can go to my website, open up devtools, set the `document.designMode` to `"on"`, and then close devtools, now every single thing on the website can be editable.

```js

document.designMode = "on"

```

Which means with just a couple of clicks and keystrokes we can turn this:

Into this:

How about that? I’ve defaced my own website.

If you haven’t heard of these two browser features, I’m not surprised because they’re pretty uncommon. The reason being that there’s not many very good use-cases for them.

Maybe you could create a browser extension that could toggle `designMode` on and off. Then allow for users to easily modify a webpage in order to provide feedback to team members or maybe capture it in a screenshot or send it to Slack or GitHub. I don’t know, I’m sure there’s something there, but it probably isn’t going to be useful for most folks very frequently.

…Unless you’re someone like me that likes to make satirical article titles from the New York Times.

And let me just explicitly state for legal reasons: I do not encourage or promote using these features to modify websites, to spread misinformation. That is a terrible thing to do.

Nevertheless, these are cool, interesting APIs that I thought I’d share.

Thank you so much for reading. If you liked this article, please [share it](https://twitter.com/share?via=heyAustinGil). It's one of the best ways to support me. You can also [sign up for my newsletter](https://austingil.com/newsletter/) or [follow me on Twitter](https://twitter.com/heyAustinGil) if you want to know when new articles are published.

* * *

_Originally published on [austingil.com](https://austingil.com/contenteditable-and-designmode/)._ | austingil |

1,222,600 | Setup local k8s cluster using kind | In k8s world, there is a need for testing before going production. One among them is using kind to... | 0 | 2022-10-18T00:36:09 | https://dev.to/hyohung/setup-simple-kind-cluster-19jp | kubernetes | In k8s world, there is a need for testing before going production. One among them is using `kind` to build local cluster.

There are many ways in [official document](https://kind.sigs.k8s.io/docs/user/quick-start/), here is my prefer way using yaml to define my expectation.

Creating a yaml file named `kind.yaml` as below to configure 1 master and 2 workers:

```

apiVersion: kind.x-k8s.io/v1alpha4

kind: Cluster

nodes:

- role: control-plane

image: kindest/node:v1.22.0@sha256:b8bda84bb3a190e6e028b1760d277454a72267a5454b57db34437c34a588d047

extraPortMappings:

- containerPort: 30778

hostPort: 30778

listenAddress: "0.0.0.0"

protocol: tcp

- role: worker

image: kindest/node:v1.22.0@sha256:b8bda84bb3a190e6e028b1760d277454a72267a5454b57db34437c34a588d047

- role: worker

image: kindest/node:v1.22.0@sha256:b8bda84bb3a190e6e028b1760d277454a72267a5454b57db34437c34a588d047

```

Install kind if not have. If you're using MacOS, run: `brew install kind` for package manager using brewk

Run command to setup cluster: `kind create cluster --name local --config kind.yaml`

Here is my result, tested in Mac M1 chip:

```

kind create cluster --name local --config kind.yaml

Creating cluster "local" ...

✓ Ensuring node image (kindest/node:v1.22.0) 🖼

✓ Preparing nodes 📦 📦 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

✓ Joining worker nodes 🚜

Set kubectl context to "kind-local"

You can now use your cluster with:

kubectl cluster-info --context kind-local

Have a nice day! 👋

```

With k8s contents as below:

```

kubectl config set-context local-kind Context "local-kind" created.

kubectl get node

NAME STATUS ROLES AGE VERSION

local-control-plane Ready control-plane,master 7m58s v1.22.0

local-worker Ready <none> 7m26s v1.22.0

local-worker2 Ready <none> 7m26s v1.22.0

``` | hyohung |

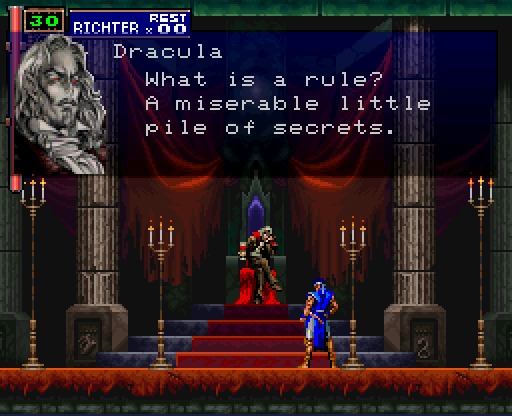

1,222,607 | Table stakes for Detection Engineering | What is a rule, really? Dracula refuses a call with a security vendor For as long as I... | 0 | 2022-10-18T00:56:41 | https://dev.to/teachmetechy/table-stakes-for-detection-engineering-1h68 | security, detectionengineering, threatdetection | ## What is a rule, really?

*Dracula refuses a call with a security vendor*

For as long as I have been in the security industry, there has been a concerted effort to sort through massive troves of data with powerful and mysterious tools called “rules”. It allows us mere mortals to take a million line logfile and separate each line into two buckets: interesting or not interesting, malicious or not malicious, vulnerable or not vulnerable. If you know what “bad” or “vulnerable” is, then you can codify it and let the computer do the sorting for you.

I cut my teeth in security research writing WAF rules for modsecurity and looking for interesting HTTP-based attacks on behalf of a customer base. I also launched the security detection and research team at startups that are now public. At my current gig, I help my organization write detection content against 100s of data sources with terabytes of cloud-based control-plane and data-plane events flowing through our systems. Seeing how detection and research have evolved in my 10+ year career has been rewarding and tiring.

The security elders at my previous companies would scoff at my WAF rules. They would talk to me about a time when vulnerability scanner rules were the only thing that mattered. A team of researchers would feverishly comb through binaries and RE tools like the Matrix. When they would find a vulnerability, they would rush out a rule so their company would be the first to disclose it and have a detection before their competitors.

*A security researcher from McAfee deploys a new rule to their vulnerability scanner (2003, colorized)*

At the end of the day, this fell into the realm of "security research". Companies would scoop up new grads and old heads alike, put them on a security research team, and put them to work. They would then measure how many rules and detections they could push into production in a month. Hopefully, it was enough to claim that their products protected customers from more attacks and vulnerabilities than their competitors.

This go-to-market strategy can be effective but suffers diminishing returns. It begs the question: why is "more" better, and why is "lots more", lots better? In the age of vulnerability scanners, more rules meant more vulnerabilities being detected. This translates to better coverage, which is a great sales statistic. The same pervasiveness of coverage crept into threat detection products, but threats are not equal to vulnerabilities. Sure, you want to have coverage against an overwhelming number of threats, but is that going to help protect you and your firm? Can you do “all” threats, forever? More than a competitor, more than a threat actor? Probably not.

This culture of more is better has caused burnout and pain for researchers at these companies. It doesn't matter if you wrote an exceptional rule that was relevant, contextual, and precise: it carried the same weight as another bad rule with bad results within the game of quotas. When detection counts are up, the sales engine gets revved up, and they rush to their pipelines to close more deals.

*Detorction rules are like stonks, they can only go up*

## Threat detection is dead. Long live threat detection!

The security research team in these times (maybe not as much now, but I have recency bias) was treated like wizards. They were the identity of the company. They had cringe-inducing named research teams, such as the IBM Hacker Ninjas or the McAfee Alpha Bro Exploiter Extraordinaires. The wizards would come down from their spire and preach to the world their latest findings, present at Blackhat and DEFCON. Afterwards, they would head back up the spire and close the door behind them. Their rules, research, and detections would then be left for other people to deal with. They had bigger things to worry about, like writing more rules to hit that damn quota.

In my opinion, this concept of "more is better" for detection rules is a sign that a company's go-to market is either a) stuck in the past of vulnerability research coverage or b) doesn't know what they are doing so they just do as much as possible to hide that fact. Believe me, I was part of this a few times in my career.

Now, I am not saying that you shouldn’t crank them out for the sake of coverage. There are legitimate reasons to write, deploy and maintain a vast ruleset. What I am saying is that I think we got into this mess because we think more coverage is more secure. This fallacy can lead internal teams, or in my case a product detection team, down rabbit holes that aren't fruitful in the longrun. And the more I get into my career, the more I realize that I can’t solely blame sales or marketing people for this strategy. It's up to us, the researchers, to let them know which path is the more fruitful and why.

When a company relies heavily on a research team to pump out content, they need to make sure that the team has the right people supporting them. This will enable the team to focus on the nuances of security detection. Companies should provide access to project management resources, software engineering capabilities to scale rule writing efforts and infrastructure, and consider the impact of rules using tried and tested methods in everyone’s favorite high school class: statistics.

I think the industry is starting to see that security detection and research, for the sole purpose of writing threat detection rules, is evolving into a more advanced and exciting type of security engineer: the Detection Engineer!

## Detection Engineering is the new hotness but requires solid foundations in more than just security subject matter expertise

Detection Engineering, in my opinion, is the next level of security research. It's an evolution because companies have realized that it's more scalable to require security researchers to have skills in software engineering, project management, and statistics. If you want to scale your detection program, you need to hire a Detection Engineering team that can complement each other in the following areas:

1. Subject matter expertise in security

2. Software engineering

3. Statistics

That's it. That's all you need. Of course, this list can be picked apart, stretched, and folded under other areas like DevOps or Infrastructure. However, at the end of the day, these 3 pillars can get you far without having to hire a ton of bodies.

You can't write detections for your network security product if you don't have network security experts. This is the same for endpoint, cloud, application and host-based detections. It’s like having a bunch of data scientists build a machine learning model to detect asthma in patients. However, they forgot to bring in a doctor to show them how pneumonia patients would give the model false positives. You need the subject matter experts. This has not changed in the industry, nor should it.

What has changed is that these experts need a solid basis in software engineering principles. You can't scale all of those detections and deploy them in a modern environment, manage sprints (yes this is software engineering :)), or write unit, integration, and regression tests without lots of bodies or lots of automation. I can reliably say my boss would rather hear that I can scale the problem away with software than with hiring more people.

Lastly, and I think this is the next step in the evolution of security research to detection engineering: we all must improve the explainability, and thus impact, of our rules, and statistics is how you do it. You can't reliably create, improve, deprecate or justify your detections to your sales teams, internal leadership, or customers without a background in statistics. This does not mean you need a graduate degree, but I think if security engineers and researchers spent some time looking at concepts like sampling bias and error, confusion matrices, precision and recall, they could better understand how rules perform under certain conditions and spot errors much earlier on before a rule hits production.

The more you learn, the more you realize you don't know anything

## Conclusion

I am excited to see these 3 pillars being talked about more in the detection engineering and security realm. It shows how much we've matured as an industry. I wrote this post as a rant but also as a warning: do not do what I did. Do not fall victim to the "more is better" farce. I have a few more post ideas going into detail on what separates a good detection from a great detection (my team asks this question all the time), or what a go-to-market strategy for security detection rules should be (it's covering the right things, not more things). But for now, my parting advice for aspiring researchers and engineers is this Einstein quote:

"If I had only one hour to save the world, I would spend fifty-five minutes defining the problem, and only five minutes finding the solution."

Also, turns out, Einstein may not have said this, but the premise is still great. We write solutions (detections) trying to find problems (threats) without focusing on the problem (threat) beforehand. Don't do what I did. Don't commit to a quota!

| teachmetechy |

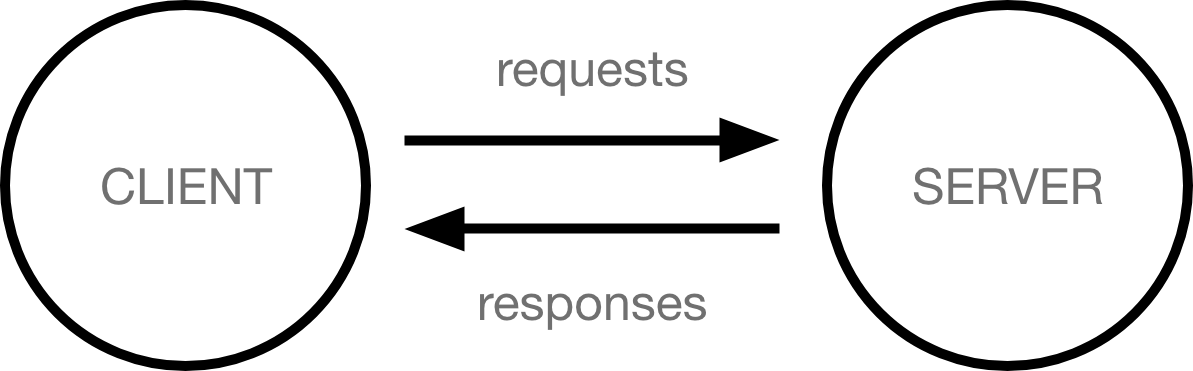

1,228,494 | What is react ?And How to learn react in one week | So before moving to react you should know how the web works from giving a request to getting a... | 0 | 2022-10-24T16:47:30 | https://dev.to/vivek7038/how-react-works--bkf | javascript, react, webdev, beginners | **So before moving to react you should know how the web works from giving a request to getting a response from the server.__**

_First of all React is not a framework like angular and vue ,it is a javascript librabry.React APP is basically a collection of components .What is component ' Component are a simple function that you can call with some input and they render some output.

As they are reusable and interactable, so you can merge many components in order to make a entire React app.

..

Best resources and yt channels for learning react :

1. freecodecamp

2. code evolution

3. 6 pack programmer

4. perdotech

HOW TO LEARN REACT IN ONE WEEK?

FIRST I WILL SHARE HOW I LEARNED REACT IN ONE WEEK .

**DAY 1**

I LEARNT ABOUT WHY DO WE ACTUALLY USE REACT RATHER THAN PURE VANILLA JS.THEN I REFRESHED ES6 JS CONCEPTS AND GET FAMILIER ABOUT THE FILE STRUCTURE OF REACT.

**DAY 2**

I LEARNT ABOUT JSX ,PROPS AND STYLING IN REACT .

**DAY 3**

ON THIRD DAY I LEARNT ABOUT FORMS IN REACT AND ALSO USESTATE AND USEEFFECT HOOKS .

**DAY 4**

CREATED A SIMPLE TO DO LIST APP TO KNOW HOW WE PERFORM CRUD OPERATIONS AND MAMNAGE THE COMPONENTS RENDERING

**DAY5**

I LEARNT ABOUT THE ROUTING IN REACT USING REACT-ROUTER-DOM.YOU SHOULD MUST LEARN IT WELL .THE OFFICIAL DOCS ARE PRETTY WELL FOR THAT .

**DAY 6**

THEN I LEARNT ABOUT THE REACT LIFECYCLE AND CLASS BASED COMPONENTS .ON THE SAME DAY I SOLVDED ALL THE REACT CHALLENGES ON FREECODECAMP.COM.IT WAS GREAT TO PRACTISE ALL THAT I HAVE LEARNT .

**DAY 7**

THEN I FINALLY MADE A CLONE OF MI STORE SITE .ALSO USED REACT BOOSTRAP .IT IS PRETTY GOOD FOR FAST DEVELOPEMENT OF INTERFACES.

**ADVICE FOR THE BEGINNERS :**

`_

1.DON'T TRY TO LEARN EVERYTHING ON ONE DAY AND RUSH TOWARDS THE ADVANCED TOPICS .TAKE YOUR OWN TIME.

2.AFTER WATCHING A TUTORIAL FIRST PRACTISE IT THEN SWITCH TO ANOTHER TOPIC.REMEMBER YOU GET GOOD AT ANYTHING BY CREATING NOT JUST ONLY BY CONSUMING .

3.DO PEER PROGRAMMING .MEANS FIND SOMEONE WHO ALSO WANTS TO LEARN REACT AND PASSIONATE ABOUT THE FRONT END .BELEIVE ME IT'S GREAT WAY TO LEARN .

4.CREATE AS MANY PROJECTS AS YOU CAN .YOU DON'T NEED TO LEARN THE SYNTAX DOCS AND GOOGLE IS ALWAYS THERE JUST BUILD THE LOGIC ABOUT WHERE YOU NEED TO USE WHICH CONCEPT

5.GO THROUGH THE CODE OF OTHERS ON GITHUB .OBSERVE HOW THEY MAINATAIN COMPONENTS FOLDER ,EXTENSIONS OF VS CODE FOR REACT,AND MANY STUFFS FIGURE OUT ALONG THE JOURNEY .

6.THERE IS NO SHORTCUT YOU JUST HAVE TO START .

_`

| vivek7038 |

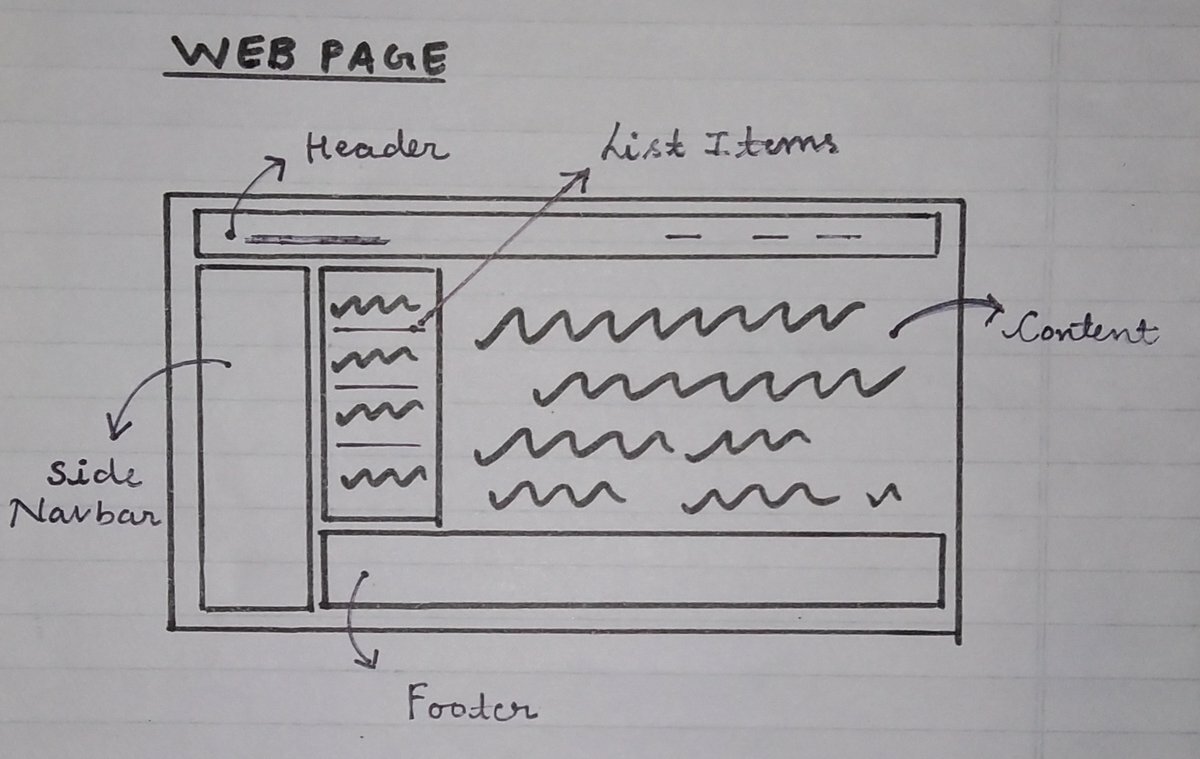

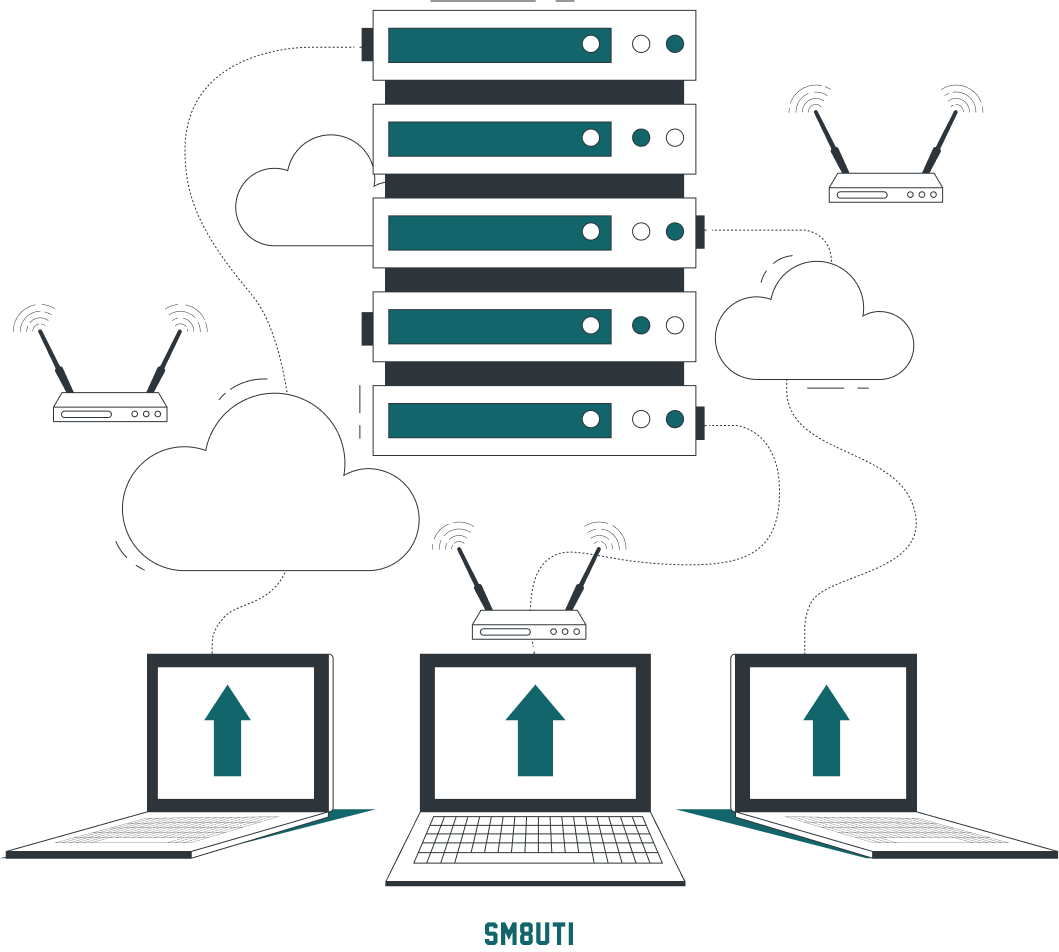

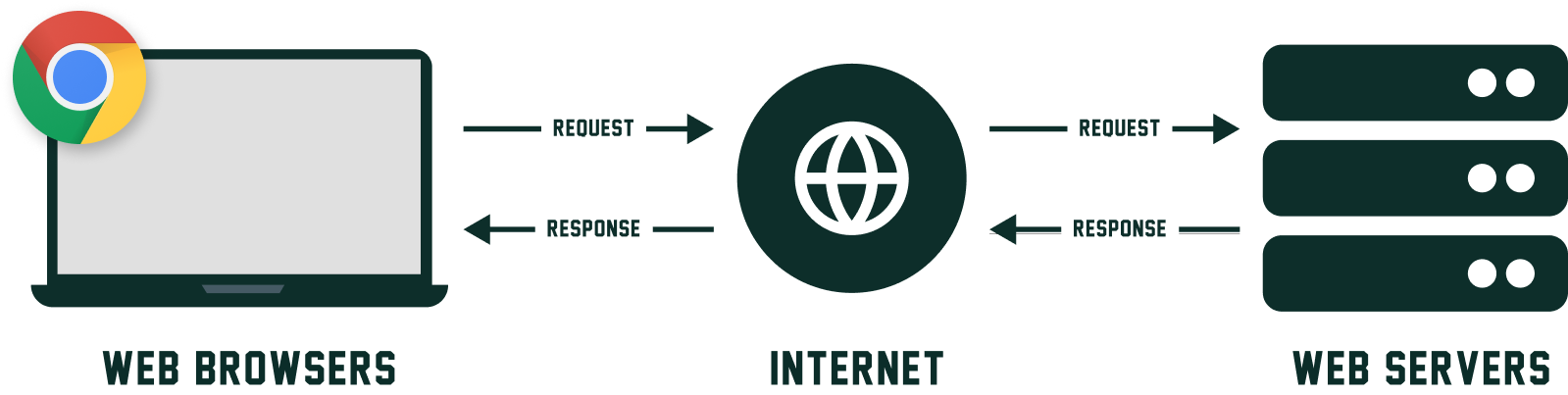

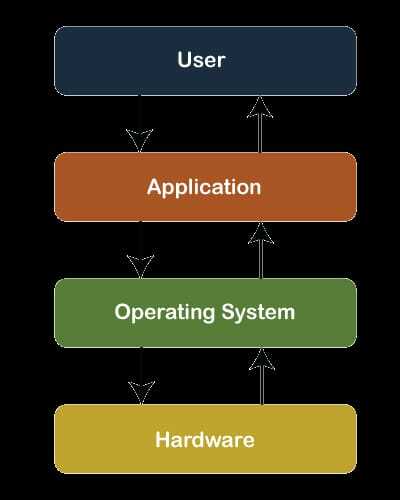

1,245,284 | Introduction to WEB and HTML | What is Web Server? Web servers are an important part of a website and it is important to... | 0 | 2022-11-06T07:08:55 | https://dev.to/sm8uti/introduction-to-web-and-html-159j | iwritecode, webdev, html, webserver | ### What is Web Server?

**Web servers** are an important part of a website and it is important to understand how a web server works on the internet or the World wide web. A website doesn't just depend on the coding or content part it depends on how the website performs on the internet and how much it is efficient so we need a good web server.

> A website is a collection of web pages while a web server is software that responds to the request for web resources.

<br>

<br>

A **web server** is a computer where web content is stored and also the content is served on the internet. that's why a user visits your website and sees your all content over the world because of a web server.

Nowadays worldwide most famous & widely used web server is [Apache 2](https://httpd.apache.org/) and other web servers are `Nginx, Lighttpd`, etc.

The biggest advantage of using this server is supports all operating systems ( `Windows, Mac, and Linux, etc.` ) and most websites ( around 70% ) are using `Apache` for hosting their content. `Apache is open-source` which means it is available for free, and can easily be accessed through online communities. a lot of online support is available in case you are stuck in a problem or error.

### How does it be Works?

The **web server** responds to the client's request in two ways one is sending the file to the client associated with the requested URL ( `Uniform Resource Locator` ) and the second ways generating a response by invoking a script and communicating with the server.

> URL is an acronym for Uniform Resource Locator and is a reference (an address) to a resource on the Internet

<br>

```

Example of URL : http://example.com

where the http is protocol identifier and the example.com is resource address.

```

When you type a URL into a browser that means the client sends a request for a web page, the web server searches for the requested page if it exists or is found then it will send it to the client with an HTTP ( `Hypertext Transfer Protocol` ) response. and if the requested web page is not found or does not exist web server sends an HTTP response ( `404 not found` )

### What is HTML ?

Basically, **HTML** is the main part of a web page. its full form is `Hypertext Markup Language`. and you can also imagine `HTML is a bone of a web page` because you use it to structure your web page.

The origin of the `HTML language` dates back to the `physicist Tim Berners-Lee`, its creator, a worker at CERN (European Organization for Nuclear Research) who at the end of 1989 proposed the HTML language together with the HTTP protocol, whose objective was to create a means to be able to share information between physicists of the time who worked all over the world.

The first version of HTML was released in 1991 ( HTML 1 ) .

- HTML 2 - Released in 1995

- HTML 3 - Released in 1997

- HTML 4 - Released in 1999

- HTML 5 - Released in 2014

HTML 5 is the latest version and this version includes many outstanding features.

#### Basic structure of HTML:

```html

<!DOCTYPE html>

<html>

<head>

<title>SM8UTI</title>

</head>

<body>

<h1>Smruti Ranjan Nayak</h1>

<p>SM8UTI</p>

</body>

</html>

```

in the above html code the `<!DOCTYPE html>` declaration defines that this document is an HTML document or file. the `html, head, title, body these are called elements or tags`. `<html>` is the root element. `<head>`, this element contains meta information, title, and links etc. and `<body>`, this element contains the body part of web page or content part.

The two most used extensions of HTML documents are `.html` and `.htm`.

Thank you very much for reading.

Sm8uti

| sm8uti |

1,244,346 | 用 HTML 的 capture 屬性,開啟手機鏡頭進行拍照、錄影 | 本篇要解決的問題 以前研究過一下怎麼用 JavaScript 打開手機的鏡頭,進行拍照或錄影,當時做出了 Demo 後,然後就……突然一陣子忙,就忘記寫文章了... | 18,536 | 2022-11-05T10:03:18 | https://www.letswrite.tw/html-capture/ | webdev, beginners, tutorial, html | ## 本篇要解決的問題

以前研究過一下怎麼用 JavaScript 打開手機的鏡頭,進行拍照或錄影,當時做出了 Demo 後,然後就……突然一陣子忙,就忘記寫文章了 XD。

直到昨天看到一篇文章,才知道原來 HTML 本身就有 attribute 讓使用者開啟鏡頭,進行拍照或錄影,而且寫起來 Hen~ 簡單,就決定製作一個小 Demo,並寫出這篇筆記文。

參考的文章及 MDN 的說明連結在這:

- [You Can Access A User’s Camera with Just HTML](https://austingil.com/html-capture-attribute/)

- [HTML attribute: capture](https://developer.mozilla.org/en-US/docs/Web/HTML/Attributes/capture)

製作出來的 Demo 在這,只能用手機操作,用桌機的話無法使用。

<https://letswritetw.github.io/letswrite-html-capture/>

---

## HTML 屬性開啟鏡頭

這邊直接提供開啟手機鏡頭的 HTML 屬性是什麼:

- capture:`user` 前鏡頭、`environment` 後鏡頭

- accept:`audio` 聲音檔、`video` 影片檔、`image` 圖檔

這二個屬性是寫在 `input type="file"` 裡的,範例如下:

```html

<!-- 開啟 前鏡頭 錄影、拍照 -->

<input type="file" capture="user" accept="video/*"/>

<input type="file" capture="user" accept="image/*"/>

<!-- 開啟 後鏡頭 錄影、拍照 -->

<input type="file" capture="environment" accept="video/*"/>

<input type="file" capture="environment" accept="image/*"/>

```

想看效果的朋友可以進到 Demo 裡去玩一玩,Demo 頁不會把大家的照片或影片給存下來,一切都是在頁面上操作而已 ~~(因為沒有酷錢錢買空間存)~~。

另外,August 用 iPhone 實測時,前鏡頭預設會打開閃光燈,拍照前記得關掉,不然會被閃到看見人生的跑馬燈。

---

## 把使用者照片、影片放到頁面上預覽

在 Demo 頁上如果大家有試玩拍照跟錄影,會看見 August 有把拍照的照片跟錄影的影片給放在結果顯示區,這個不用擔心,並不是先存到某台主機上後,再把路徑丟回給頁面,而是直接用 [FileReader](https://developer.mozilla.org/zh-TW/docs/Web/API/FileReader) 把各位當下的檔案給塞到頁面上 `img`、`video` 的 `src` 裡。

這段是要寫怎麼把照片、影片給轉成網頁可讀的 `src`~~(買不起空間啊廣告還不點起來讓本站賺個微簿的酷錢錢)~~。

### 轉圖片

最簡單的方式,先在 HTML 上放個不寫 `src` 的 `img`,之後抓到 Base64 後再寫進 `src` 裡。

```html

<img id="Im_image">

```

```javascript

const input = document.getElementById('xxx');

input1.addEventListener('change', handleFilesImage, false);

function handleFilesImage() {

const fileData = this.files;

const reader = new FileReader();

reader.addEventListener('load', file => {

const img = document.getElementById('Im_image');

img.src = file.target.result;

});

reader.readAsDataURL(fileData[0]);

}

```

### 轉影片

一樣用最簡單的方式,先在 HTML 上放個不寫 `src` 的 `video`,之後抓到 Blob 後再寫進 `src` 裡。

轉影片的 JavaScript 主要參考這篇:[How to read large video files in JavaScript using FileReader?](https://stackoverflow.com/questions/61012790/how-to-read-large-video-files-in-javascript-using-filereader)

```html

<video id="Im_video" controls="controls"></video>

```

```javascript

const input = document.getElementById('xxx');

input1.addEventListener('change', handleFilesVideo, false);

function handleFilesVideo() {

const fileData = this.files;

const reader = new FileReader();

reader.readAsArrayBuffer(fileData[0]);

reader.addEventListener('load', file => {

const buffer = file.target.result;

const videoBlob = new Blob([new Uint8Array(buffer)], { type: 'video/mp4' });

const url = window.URL.createObjectURL(videoBlob);

const video = document.getElementById('Im_video');

video.src = url;

});

}

```

---

## 關於客製 input file

如果直接用 `input type="file"`,預設會長的像這樣:

但因為這邊我們讓使用者做的動作是「打開鏡頭」,如果顯示的像預設那樣是寫「選擇檔案」,使用者會感到疑惑,所以 Demo 上有客製了 input file 的樣子成一個按鈕,上面可以寫上我們想要的文字:

客製 input file 的方式本站以前有寫過,這邊就不再重複寫,有興趣的朋友可以點連結觀看:

[File API 客製上傳檔案按鈕 / input file](https://www.letswrite.tw/file-api-custom-input/)

---

## 支援度、安全性

HTML `capture` 這個 attribute 的支援度,在 [Can I use](https://caniuse.com/html-media-capture) 上是這樣:

可以看到支援的部份全在手機,這也正常,現在大家都手機不離身了,要拍照或錄影也不會用電腦來使用。

關於安全性,這邊要寫的並不是說用這個 HTML 的屬性安不安全,而是像參考連結裡第一篇文章提到的,用 `capture` 這個方式,並不會像用 App 那樣,會先詢問使用者能不能授權開啟相機功能,而是直接就打開了,這對使用者來說會有安全的疑慮。

但就像參考文章中說的,這最後的產出是一個 input 裡的檔案,當我們逛網頁時,如果自己點擊了 input,打開相機,又自己按下了拍照或錄影,然後又按下了確定使用照片或影片,這都是我們自己決定的,而且至少要 3 次的點擊才會把照片傳到網頁上,這中間的過程,目前只能說,在瀏覽器並未限制使用者點擊了帶有 `capture` 屬性的 `input` 的當下,大家逛網站真的是不要亂點不信任的網站任何按鈕或連結,看到點了某個按鈕時突然打開了鏡頭時,更要當心。 | letswrite |

1,244,534 | Journey of a web page - A mind map ! | Ever wondered what happens when you hit enter after typing a url in the browser’s address bar?... | 0 | 2022-11-06T07:33:38 | https://dev.to/abhighyaa/the-journey-of-a-web-request-a-mind-map-4g5g | webdev, webperf, architecture, html |

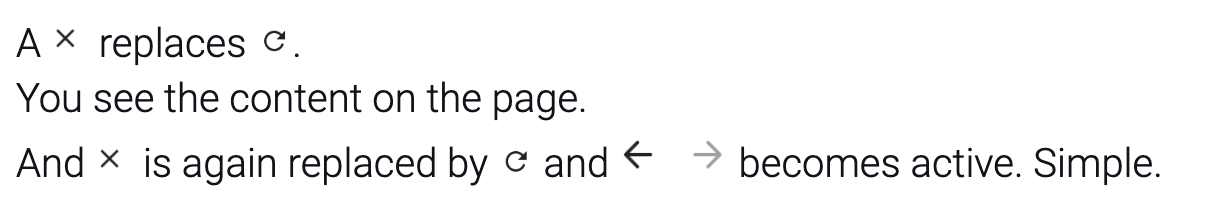

Ever wondered what happens when you hit enter after typing a url in the browser’s address bar? 🤔

Oh, I wish!😨

The browser BTS does so many things for this to appear so seamless. And understanding those are very important to make our applications highly performant.

Let's understand these one by one -

1. You enter some input

2. Browser parses the input to check if its a url or a search query.

For search query => it redirects to the search engine.

3. For URL, it -

💡 In the n/w response, we can see vast differences between 14kb and 15kbs response size, but not between 15 and 16 kbs. Knowing which all Kbs are more critical than others can give you a big time [TTFB](https://web.dev/ttfb/) benefit. More details [here](https://developer.mozilla.org/en-US/docs/Web/Performance/How_browsers_work#tcp_slow_start_14kb_rule).

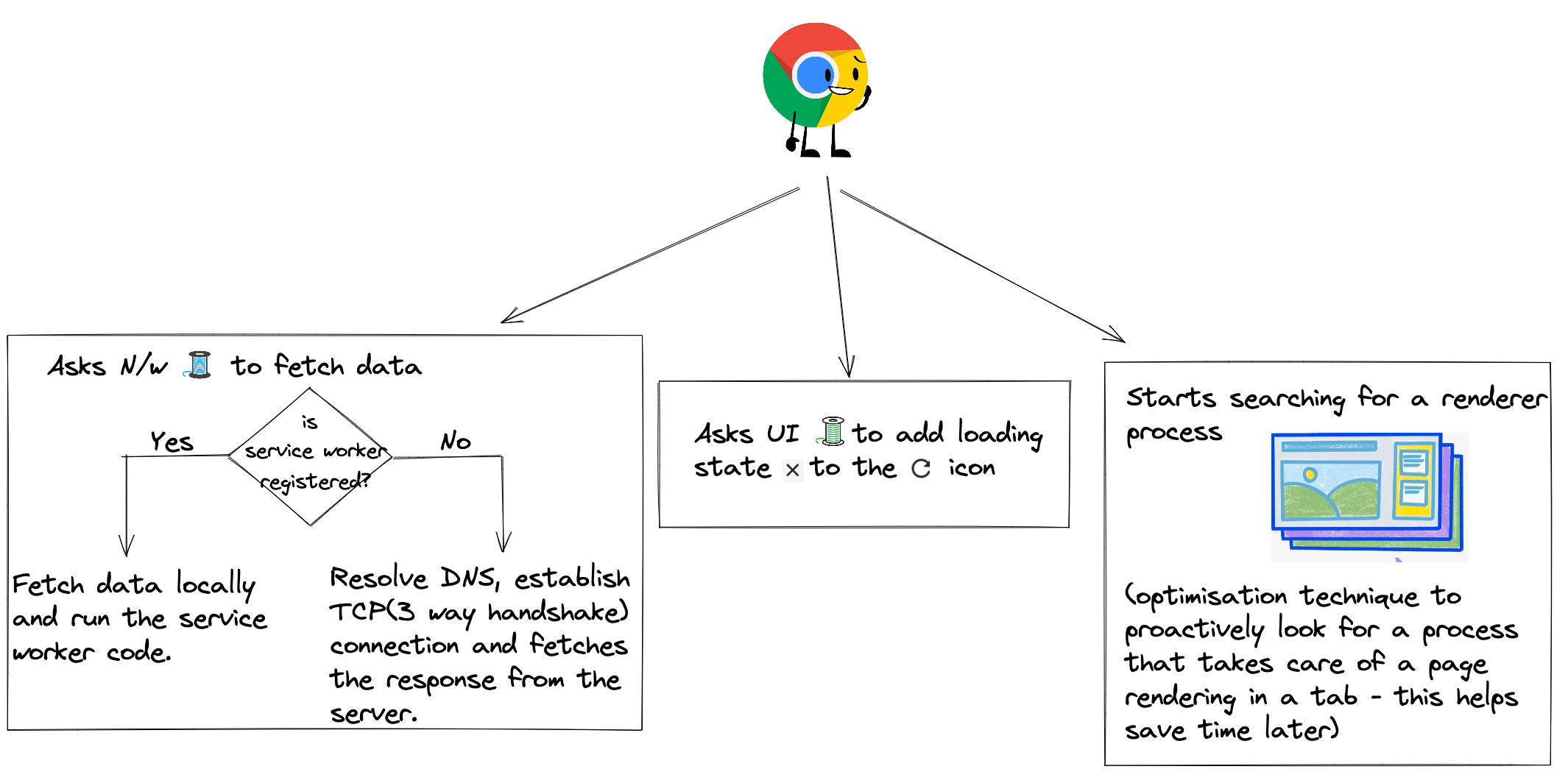

4. Once the response is fetched, it -

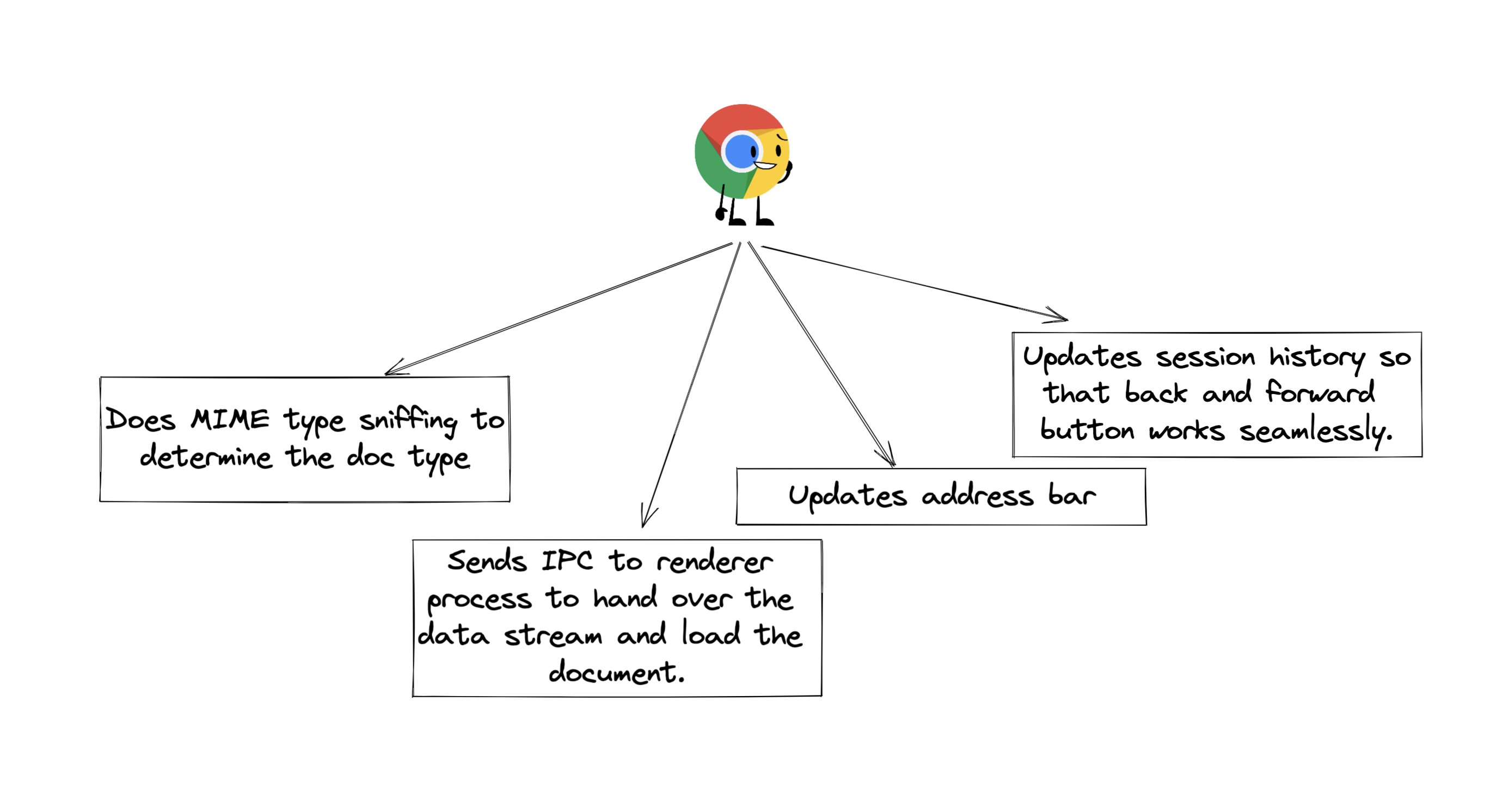

5. Once renderer process receives the IPC and data stream, it -

* Starts parsing the HTML received. HTML received is in bytes format.

* [DOM](https://developer.mozilla.org/en-US/docs/Web/API/Document_Object_Model/Introduction) (the data structure browsers use to process html into web pages) - it contains all the nodes of HTML and their relationships.

* When parser comes across downloading resources(like css, js, assets like fonts, image, video), it sends requests to the network thread in the browser process.

* Preload scanner is a browser optimisation technique to speeden up this process. It peaks at the tokens generated by parsers and sends requests to the network thread in the browser process.

* CSS, JS are render blocking resources. They stop html parsing and block page rendering, thus creating more delays and worsening the page load times.

* 💡 This is why we use techniques like [preloads](https://developer.mozilla.org/en-US/docs/Web/HTML/Link_types/preload), [preconnects](https://developer.mozilla.org/en-US/docs/Web/HTML/Link_types/preconnect), [async](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/script#attr-async), [defer](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/script#attr-defer), splitting CSS into critical and non critical and defer the non critical one etc wherever suitable.

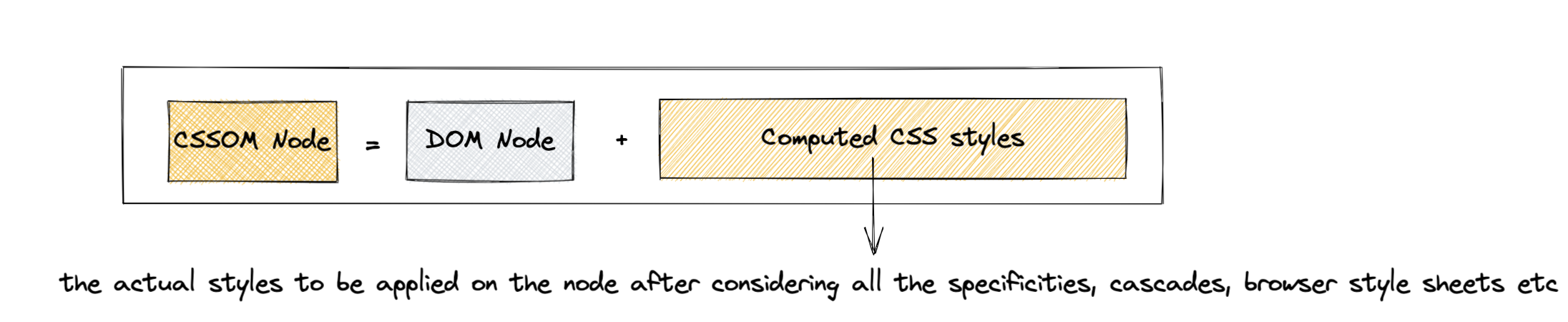

6. Once the DOM is constructed, the main thread parses the CSS to create [CSSOM](https://developer.mozilla.org/en-US/docs/Web/API/CSS_Object_Model) - the data structure similar to DOM, but containing the computed styles for each node in the DOM.

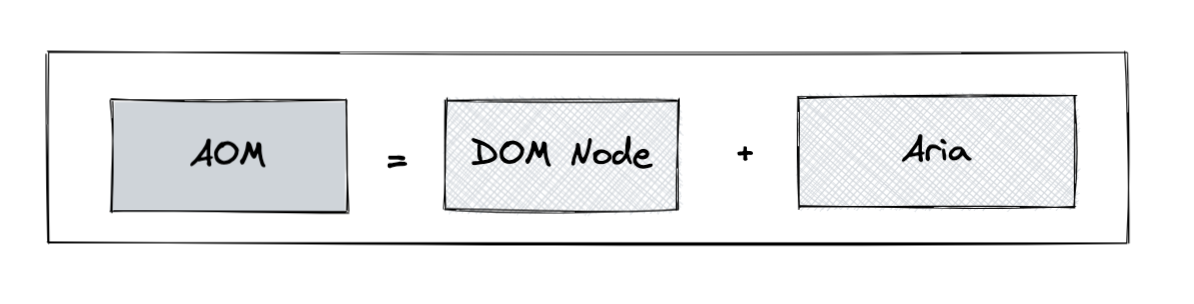

7. Now the browser creates an [accessibility tree](https://developer.mozilla.org/en-US/docs/Glossary/Accessibility_tree) - AOM - semantic version of DOM - to be used by screen readers and other accessibility devices.

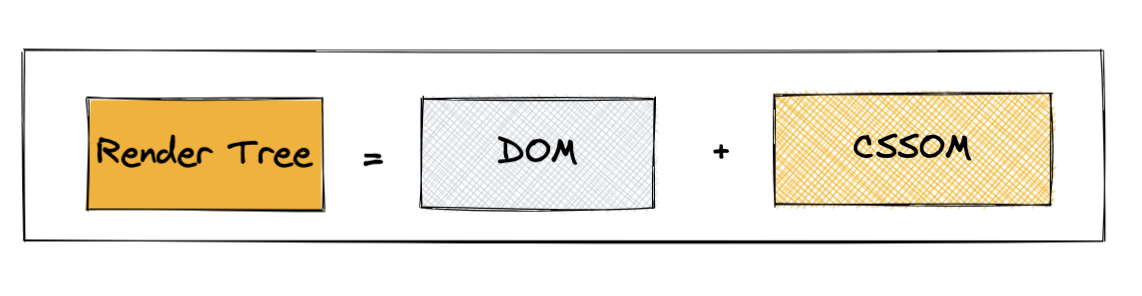

8. Now to render the page, the browser needs to know the exact positions of the nodes. So, it creates the render/layout tree, containing the coordinates information for the nodes which are to be shown on the page, considering all line breaks, height and width of elements.

🤔 Why do we need it, if we have dom and cssom?

Because CSS is mighty 💪🏻 It can hide elements from the web page even if they are present in DOM. Vice versa, it can add info(using the pseudo classes), even if they are not present in DOM. It can make the elements float to one side, mask overflow items, and change writing directions.

- Subsequent calculation of these positions is called reflow.

- 💡 If for elements like image, we can provide dimensions before loading, reflow can be saved improving the [CLS](https://web.dev/cls/).

9. Next it creates paint records - to add z-index and determine orders in which to paint.

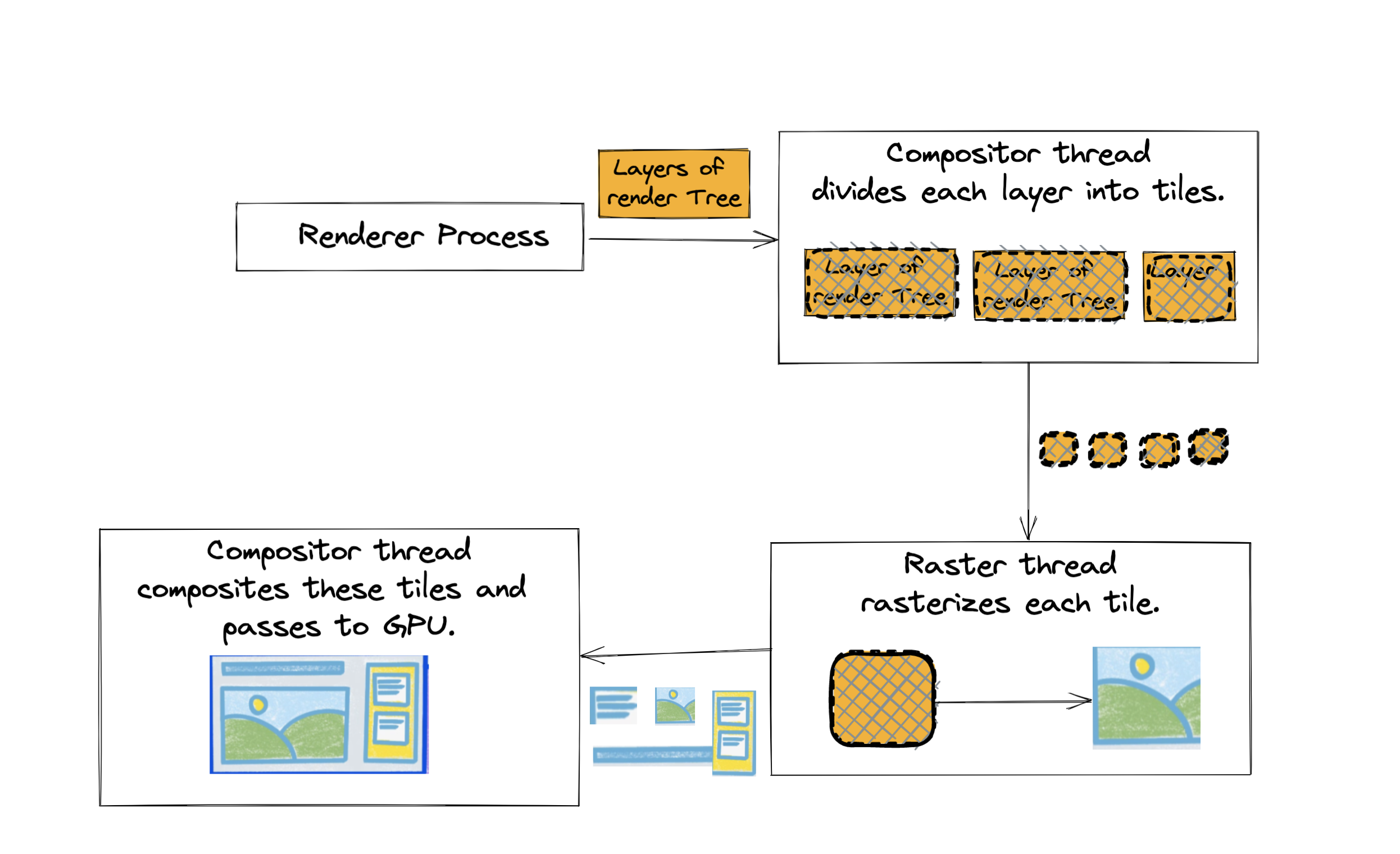

10. Now comes the step where we actually see something on the screen - rasterization - transforming the images created into actual pixels using GPUs.

To optimise this, the browser creates layers of the page (based on video, canvas, 3d transform, [will-change css property](https://developer.mozilla.org/en-US/docs/Web/CSS/will-change)). These layers are individually rasterized and then composited to form the actual page.

- The first occurrence of this marks the [FCP](https://developer.mozilla.org/en-US/docs/Glossary/First_contentful_paint#:~:text=First%20Contentful%20Paint%20(FCP)%20is,the%20page%20is%20actually%20loading).

- Also, the entire time duration from when the DNS lookup started to here, marks our [TTI](https://developer.mozilla.org/en-US/docs/Glossary/Time_to_interactive).

- 💡 When we encounter janky scrolls and animations, we should check reflows and repaints, it must be skipping some frames.

11. It is now that the renderer process sends a message back to the browser process to replace the spinner with reload icon. And all onload events are fired.

This is how eventful and expensive a web page’s journey is. So, be mindful of the renders next time you code an application.

| abhighyaa |

1,244,537 | REMOTE Data Science Job that pays up to $165K - Santhoscheeku77 | Original Tweet From @Santhoscheeku77 Santhosh Kumar ... | 0 | 2022-11-05T13:45:34 | https://infotweets.com/blog/remote-data-science-job-that-pays-up-to-165k-santhoscheeku77-19np | career, job, productivity | #### Original Tweet From [@Santhoscheeku77](https://twitter.com/Santhoscheeku77)

{% embed https://twitter.com/Santhoscheeku77/status/1588546664918405122 %}

### 1 Decentral, US

▪ Role - Machine Learning Engineer

▪ Salary - $70k – $120k • 0.3% – 1.5% ( equity )

[https://angel.co/company/decentralinc/jobs/576333-machine-learning-engineer](https://angel.co/company/decentralinc/jobs/576333-machine-learning-engineer)

### 2 Weights & Biases, US

▪ Role - Machine Learning Engineer

▪ Salary - $100k – $160k

[https://angel.co/company/wandb/jobs/1193123-machine-learning-engineer-customer-success-remote](https://angel.co/company/wandb/jobs/1193123-machine-learning-engineer-customer-success-remote)

AdSpace Here!!

### 3 Neo Cybernetica

▪ Role - AI Research Engineer

▪ Salary - $60k – $140k

[https://angel.co/company/neo-cybernetica-1/jobs/2432007-ai-research-engineer-reasoning](https://angel.co/company/neo-cybernetica-1/jobs/2432007-ai-research-engineer-reasoning)

### 4 Generally Intelligent

▪ Role - Machine Learning Engineer

▪ Salary - $140k

[https://angel.co/company/generally-intelligent/jobs/1490456-machine-learning-engineer-remote](https://angel.co/company/generally-intelligent/jobs/1490456-machine-learning-engineer-remote)

AdSpace Here!!

### 5 Light, Europe

▪ Role - AI developer

▪ Salary - €65k – €100k • 0.1% – 0.5% ( equity )

[https://angel.co/company/light-15/jobs/2458863-ai-developer](https://angel.co/company/light-15/jobs/2458863-ai-developer)

### 6 Paleo

▪ Role - Data Scientist

▪ Salary - $85k – $165k • 1.0% – 15.0% ( equity )

[https://angel.co/company/paleo-3/jobs/2466427-data-scientist](https://angel.co/company/paleo-3/jobs/2466427-data-scientist)

AdSpace Here!!

###7 Growthday, US

▪ Role - Data Analyst

▪ Salary - $70k – $90k

[https://angel.co/company/growthday/jobs/2462744-data-analyst](https://angel.co/company/growthday/jobs/2462744-data-analyst)

That's a wrap! & Thank you for Reading ❤

AdSpace Here!!

If you enjoyed this thread:

1. I plan to share such resources in Future

2. Follow me [@Santhoscheeku77](https://twitter.com/Santhoscheeku77) for more of this Data Science content

3. Like & RT the tweet below to share this thread with your audience [https://twitter.com/Santhoscheeku77/status/1588546664918405122](https://twitter.com/Santhoscheeku77/status/1588546664918405122)

Click [Here](https://www.knowledge-twitter.com/?utm_source=dev.to) For More Posts Like This [https://www.knowledge-twitter.com](https://www.knowledge-twitter.com/?utm_source=dev.to) | knowledgefromtwitter |

1,244,544 | Variables in JavaScript | In JavasScript, variables are containers in which we can accumulate some information. There are 3... | 0 | 2022-11-05T16:51:42 | https://dev.to/kima_badalyan/variables-in-javascript-14po | variable, javascript, scope, visibility | In JavasScript, variables are containers in which we can accumulate some information.

There are 3 ways of **declaring** the variables:

1. var(used 1995-2015);

2. let(created in 2015);

3. const(created in 2015).

The differences between these three types will be discussed later.

The declaration of the variable starts from the variable type, and then we write the name of the variable we want to create;

let name;

For creating the names of variables in JS, there are several rules:

- We can use letters, numbers, underscore(_), and the dollar symbol($);

- The name must begin with a letter; _ or $;

- The variable names are sensitive, which means small letters(y) and capital letters(Y) are different;

- We cannot use reserved names, such as JS keywords or variable names.

After declaring the variables, we can give some values o these variables. In JS, it is called **assigning**. We assign a value to the variable using the = symbol:

let name;

name = value;

Alternatively, we assign the value immediately after creating the variable:

let name = value;

The value can be:

- **number**:

let name = 42;

- a text, which is called **string** in JS, and is written in double or single quotation marks ('' or ""):

let name = "This is a string";

- a **boolean value**, which means that variable is eather _true_ or _false_:

let name = true;

There are some differences between var, let, and const.

One difference is whether it may or may not be **redeclared**. Const and let can be declared only once in the same {} or globally, while var can be redeclared as many times as we want.

So

var x = 10;

var x = 25;

is going to work, but

let x = 10;

let x = 25;

and

const x = 10;

const x = 25;

are going to result in an Error.

Nevertheless, const and let can be redeclared in different {}.

Another difference is whether the variables can be **used before declaring**. For var, it will work out, while for let and const, they should be declared before we can use them:

So

x = x + 7;

var x;

will work while

x = x + 7;

let x;

and

x = x + 7;

const x;

will result in an error.

One more difference between the variable declaration types is their **scope**. Scope determines the **visibility **or **accessibility **of variables.

Let, and const are **block** scope, while var is not. This means when a variable is created with let or const inside {}, it is local, and it does not work outside of the {}, but if the variable is created by var, it is created **globally**. Outside of the {}, when the variables are created, they are the same because they all have global scope.

So far, we have not seen any difference between the let and const. The let can constantly be reassigned with a new value, while const is constant and does not change its value.

So

const PI = 3.141592653589793;

PI = 3.14;

will give an Error, but

let number = 93;

number = 444;

will reassign 444 to the variable number.

However, the word constant is a little misleading. For a constant variable, we cannot reassign a constant value, array, or object, but we can change the array's elements and the object's value properties.

| kima_badalyan |

1,244,607 | FTDI Bitbanging GCC | This is a short note on how to setup a C programming environment for the FTDI chip in bit banging... | 0 | 2014-05-18T10:00:00 | https://ladvien.com/ftdi-bitbanging | robots, ftdi, gcc | ---

title: FTDI Bitbanging GCC

tags: robots, ftdi, gcc

published_at: 20140518T10:00Z

canonical_url: https://ladvien.com/ftdi-bitbanging

main_image: https://ladvien.com/images/FTDI.jpg

published: true

---

This is a short note on how to setup a C programming environment for the FTDI chip in bit banging mode, since that's what I had difficulty doing.

There may be easier ways to go about this, but I wanted to use GCC to compile a small C program to control the 8 IOs. The purpose was to write a small command-line program that would reset my LPC1114 before and after programming.

To setup the environment:

1. I downloaded and setup [MinGW32](http://www.mingw.org/wiki/HOWTO_Install_the_MinGW_GCC_Compiler_Suite).

2. I then downloaded [FTD2XX libraries](http://www.ftdichip.com/Drivers/D2XX.htm). **This included the ftd2xx.h file and ftd2xx.lib**.

3. I then stole the test code from Hack-a-Day's article on [bitbanging with the FTDI](http://hackaday.com/2009/09/22/introduction-to-ftdi-bitbang-mode/).

4. I modified the code as they suggested by including, in this order, the Windows compatibility files:

```cpp

#include <stdio.h>

#include <stdarg.h>

#include <windows.h>

#include <windef.h>

#include <winnt.h>

#include <winbase.h>

#include <string.h>

#include <math.h>

#include "ftd2xx.h"

```

5. I then used the rest of their code as a base: [Hack-a-Day's FTDI PWM Code](https://github.com/Ladvien/FTDI_Bitbangin_GCC/blob/master/ftdi_Test.c)

I used this line to build it:

```bash

gcc -o ftdi_PWM ftdi_Test.c -L./ -lftd2xx

```

You must have both the ftd2xx.h and ftd2xx.lib in the same directory as you attempt to build.

6. I then wrote two programs, one to send DTR and CTS high and low in order to reset the LPC1114 into **programming mode. ** Second, to send DTR and CTS high and low in order to send the LPC1114 into **run program mode.** The idea being, I could use the typical [Sparkfun FTDI programmer](https://www.sparkfun.com/search/results?term=ftdi) to program my [LPC1114](http://letsmakerobots.com/content/lpc1114-setup-bare-metal-arm).

1. [LPC1114_reset_to_program](https://github.com/Ladvien/FTDI_Bitbangin_GCC/blob/master/LPC1114_reset_to_program.c)

2. [LPC1114_reset_to_bootloader](https://github.com/Ladvien/FTDI_Bitbangin_GCC/blob/master/LPC1114_reset_to_bootloader.c)

That's it. Just wanted to make sure this was out in the universe for the next guy's sake. | ladvien |

1,245,535 | 【TypeScript 30】Day 1:型別推論及註記 | 【TypeScript 30】Day 1:型別推論及註記 距離上次寫文章已經相隔了四個月,在這四個月期間大量練習了 React、node.js、Boostrap... | 0 | 2022-11-06T12:41:32 | https://dev.to/angushyx/typescript-30-day-1xing-bie-tui-lun-ji-zhu-ji-o2n | webdev, typescript |

## 【TypeScript 30】Day 1:型別推論及註記

距離上次寫文章已經相隔了四個月,在這四個月期間大量練習了 React、node.js、Boostrap 等等技術,也大致上掌握以上技術的核心,也因此都沒有好好沉澱寫文章,最近在學習 Next.js、typeScript 以及 tailwindcss,因為對於 React、Boostrap 都蠻熟習的,因此在學習 Next.js 和 tailwind 上 gap 比較小,相對的 typeScript 就比較需要花時間研究,接下來就聊聊 typeScript 吧!!!

最一開始來建置 ts 專案,因為平常習慣使用 React 開發,就使用方便的 create react app 吧。

### Insatll

npx create-react-app ts-30 --template typescript

或

yarn create react-app ts-30 --template typescript

安裝完後,緊接著安裝使用 typeScript 時所需要的套件,一樣可以選擇使用 npm 或是 yarn

### Install

npm install — save typescript @types/node @types/react @types/react-dom @types/jest

或

yarn add typescript @types/node @types/react @types/react-dom @types/jest

接著在專案中安裝 eslint 以及 prettier 模組

yarn add prettier eslint-config-prettier eslint-plugin-prettier eslint-plugin-react-hooks

再加入設定檔於 /src 底下,專案建立完成後,先來釐清最基本的觀念【型別推論及註記】

> # **【型別推論**(Inference)**及註記**(Annotation)**】**

下面就要來介紹個別的原理以及使用時機

**型別大致上分為幾種**

**原始型別 Primitive Types :**number, string, boolean, undefined, null ES6 的 symbol與時常會在**函式型別**裡看到的 void皆屬於**原始型別。**

**物件型別 Object Types:**這些型別的共同特徵是 — — ***從原始型別或物件型別組合出來的複合型態***(比如物件裡面的 Key-Value 個別是 string 和 number 型別組合成)

* 基礎物件型別:JSON 物件,陣列,類別以及類別產出的物件(也就是 Class 以及藉由 Class *new* 出來的 Instance)

* TypeScript 擴充型別:Enum、Tuple(皆內建於 TS )

* 函式型別 Function Type:型別樣貌像是 ( input ) => ( output )

**明文型別 Literal Type:**值的本身也可以成為一個型別。如下圖,常數 string 被賦值 ' hello ts ' ,直接被宣告為 string 類型。

**特殊型別**:參考的部落格作者所細分出的型別,即 any、never即以及 unknow ,這三種看起來都是沒有被定義型別所衍生出的型別,後面會介紹他們的差異。

**複合型別**:同上,即 union 與 intersection 的型別組合,但是跟其他的型別的差別在於:這類型的型別都是由邏輯運算子組成,分別為 | 與 &。

> 大致上將 typeScript 的型別多分類後,我們先回到最一開始要介紹的主題:型別推論及註記的原理以及使用時機

**型別推論:**還記得上面的這張圖嗎 ? 完美的展示了型別推論,typeScript 會**自動幫你推論型別。**

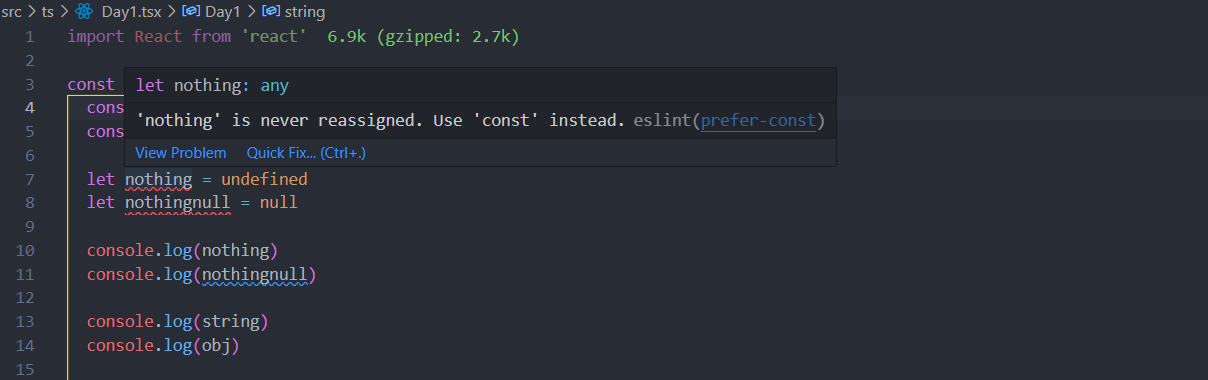

不過讀者也許會思考那這樣 ts 和 js 的寫法哪裡不一樣了呢 ? 在這個案例中 typeScript 的確是幫我們把型別定義好,不過在更多情況下 ts 會把型別定義為 any那既然都已經使用 typeScript 開發了,當然還是不要讓型別是 any 的情況發生。

如下圖,如果把 nothing 這個變數設置為undefiend數就會被定義為 any ,正常情況下這邊並不會爆出錯誤,不過因為已經使用了 eslint ,所以這個錯誤直接被 eslint 判斷出來。

* *此類 null 跟 undefined 稱為 **Nullable Types **這類 **Nullable Types **會被推論為 any。*

不過,型別推論的本意並不是在這裡,而是當變數已經被推論過後,ts 就會跳出警告 — 不能再更改其型別。

拿上面 string這個變數例子,如果重新賦值該變數為數字型別的 22 ts 的型別小蚯蚓就跑出來了。

TypeScript 就會提出這個變數應該是 string 類型這個質疑。

那在這邊我們回到剛剛所提到的 ***Nullable Types ***測試看看,並不會跳出紅色蚯蚓。

檢查完型別後會發現是 any,結論就是當型別被定義為 any 時某種程度上就已經失去使用 TypeScript 幫我們監督程式碼的本意了,因此在大多情況之下,盡量避免讓型別是 any 。

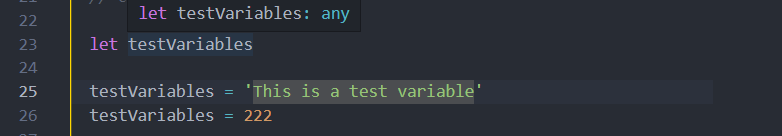

### 遲滯性初始 Delayed Initialization

除了沒有定義好型別之外,還有一種狀況會出現 any,就和原生的 JS 類似,當今天先定義了變數後,不直接指派值,**而是程式碼執行到後面才賦值**

在這邊 TS 已經把 testVariables 這個變數的型別認定為 any ,因此後面無論賦值是字串或是數字,TypeScript 都並不會檢查出錯誤。

概念其實就與我們剛剛提到的 ***Nullable Types ***有關,當只有宣告變數而沒有給他值時,該值自然就是 ***Nullable Types ***的 undefined,也因此型別就等於是 any 。

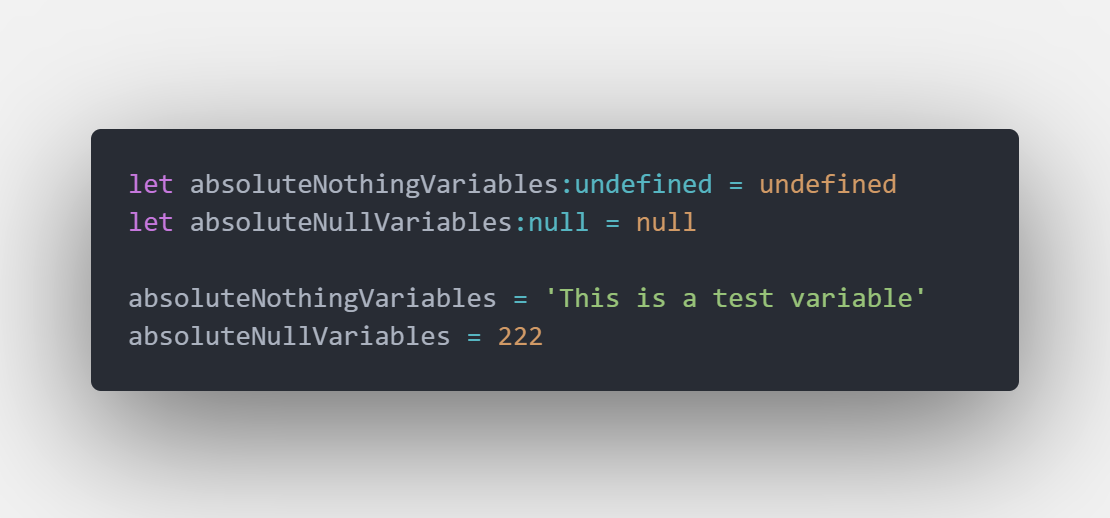

> 如何避免 any 的型態出現 — — 型別註記 ( Type Annotation )

我們將 absoluteNothingVariables 和 absoluteNullVariables註記型別後再重新賦值

TypeScript 就會把錯誤拋出來了

再測試看看

可以發現,經過型別註記後重新賦與註記的型別 ( 這裡是 string ) 後,接下來重新賦予 string 類型的值並不會出錯,不過一但清除為 undefined 或 null 就跳出錯誤,原因是: TS 已經將 stringVariable認定為 string 類型。

不過如果還沒指派值之前就使用該變數,那這個 stringVariable雖然已經進行型別註記了,不過該值也還是 undefiend 不是嗎 ?

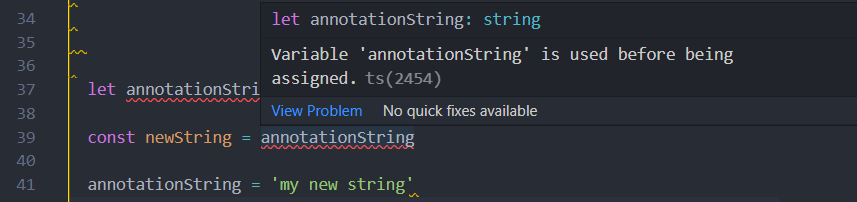

來嘗試看看,就像這樣,在annotationString 還沒賦值的情況之下,先使用了該變數

跳出的錯誤非常明顯指出不能在還沒賦值時就使用該變數,有寫過 JS 的讀者應該對於這個問題比較熟悉,概念有點像 TDZ (Temporal Dead Zone,暫時性死區)這個討論範圍就延伸到了 JavaScript 的作用域,因此就不在這裡多做討論了。

小結:起初想說 TypeScript 不就只是加入型別而已沒甚麼,聽到大家說TypeScript 學習門檻很高,不太相信,不過經過這兩到三天的整理發現好像其實並不只是單純的加入型別這樣而已,剛開始就冷汗直流,希望能夠讓整理文章的速度再快一點。

參考資料:

[**Day 02. 前線維護・型別推論 X 註記 - Type Inference & Annotation - iT 邦幫忙::一起幫忙解決難題,拯救 IT 人的一天**](https://ithelp.ithome.com.tw/articles/10214719)

[**Adding TypeScript | Create React App**](https://create-react-app.dev/docs/adding-typescript/)

[**HiSKIO 專業技能線上學習平台 | 來自全球高品質的職場專業課程**](https://hiskio.com/account/courses?tab=completed&type=course)

| angushyx |