id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,302,121 | How to create Prefabs in Unity3D? | This short article explains what Unity's Prefabs are and what they are useful for. Motivation Let's... | 0 | 2022-12-19T12:14:07 | https://dev.to/kiranjodhani/how-to-create-prefabs-in-unity3d-2egi | unity3d, csharp, gamedev, tutorial | This short article explains what Unity's Prefabs are and what they are useful for.

**Motivation**

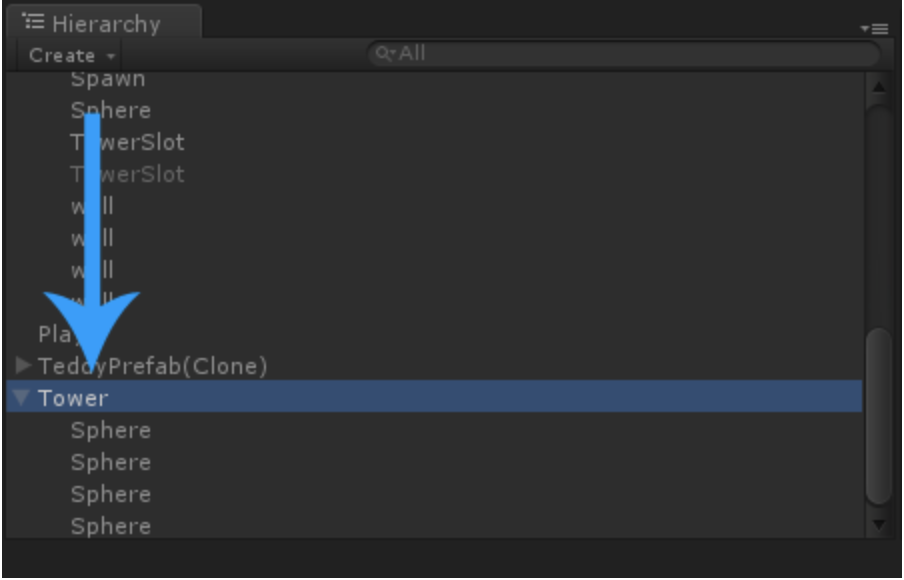

Let's imagine that we want to make a Tower Defence game in Unity. At first we would create a Tower GameObject, that would look something like this in the Hierarchy:

When the player starts the game, usually there are no Towers in the scene yet. What we need is a way to save our Tower GameObjects somewhere and then load them into the Hierarchy again as soon as the player wants to build a tower.

This is what Prefabs are for.

**Creating a Prefab**

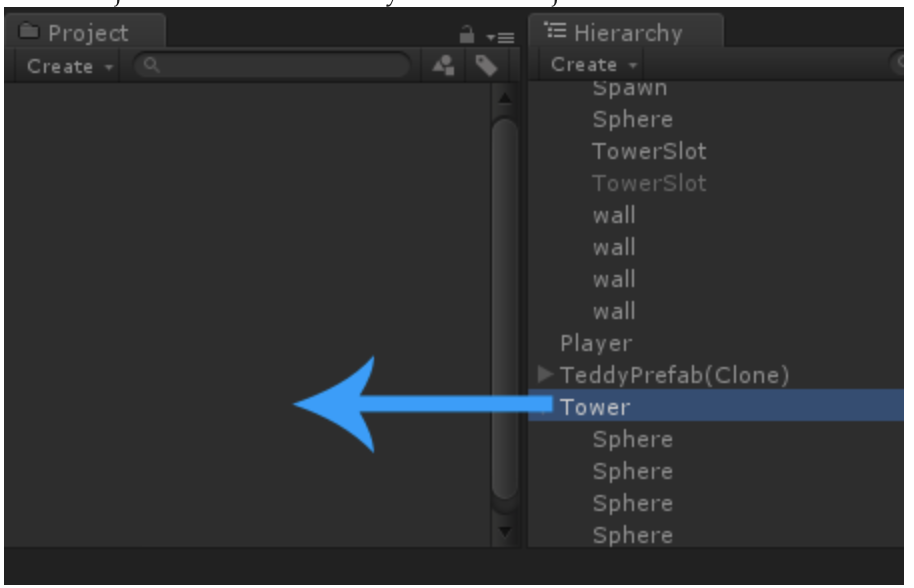

Let's create a Prefab. It's very easy, all we have to do is drag our GameObject from the Hierarchy into the Project area like this:

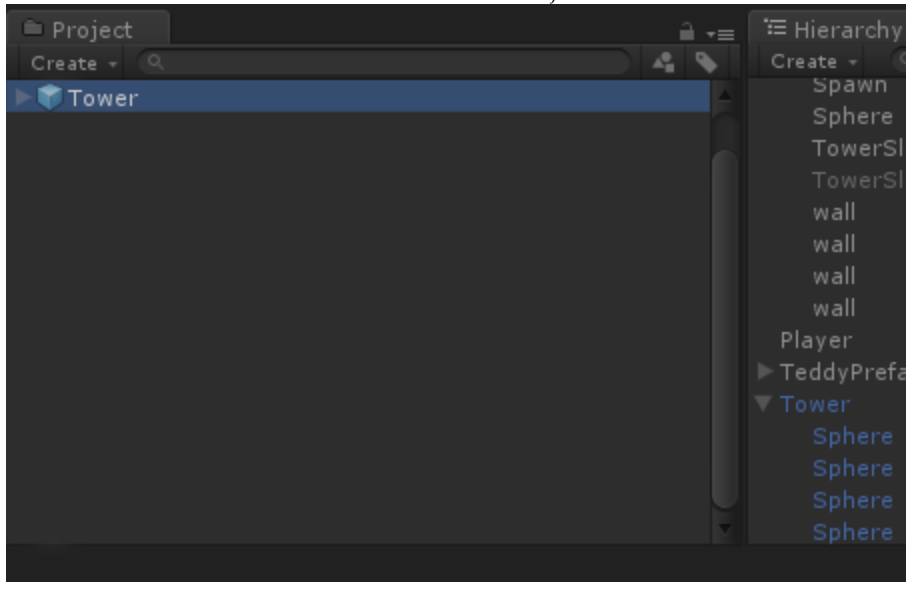

Afterwards we can see the Prefab in our Project area:

Now we can delete the GameObject from our Hierarchy so it's not in the game world anymore. But since it's in the Project area, we don't lose it completely.

**Loading a Prefab**

If we want to load a prefab, we have two options. We can either do it manually by dragging it from the Project area into the Hierarchy area, or by using a script that calls Instantiate.

Here is an example script that loads a Prefab as soon as the game starts:

using UnityEngine;

using System.Collections;

public class Test : MonoBehaviour {

public GameObject prefab = null;

void Start () {

// instantiate the prefab

// -> transform.position means that it will be

// instantiated at the position of *this*

// gameobject

// -> quaternion.identity means that it will

// have the default rotation, hence its not

// rotated in any weird way

Instantiate(prefab,

transform.position,

Quaternion.identity);

}

}

Now all we have to do is add the Test script to a GameObject in the scene, take a look at the Inspector and then set the public prefab variable to any Prefab from our Project area like this:

After pressing start, it immediately loads our Tower Prefab into the scene.

Simple as that!

| kiranjodhani |

1,302,344 | Collecting info for the development of a community mobile app shielding SDK (freeRASP React Native flavor) | Based on experience with freeRASP for Android/iOS/Flutter/Cordova, there should be a demand for a... | 0 | 2022-12-19T13:39:33 | https://dev.to/sirionrazzer/collecting-info-for-the-development-of-a-community-mobile-app-shielding-sdk-freerasp-react-native-flavor-2koo | reactnative, security, freerasp, mobile | Based on experience with freeRASP for Android/iOS/Flutter/Cordova, there should be a demand for a shielding SDK for RN-based apps, right? I worked on the development of previous freeRASP flavors, and I would like to support React Native apps also. However, this will be quite a new experience as I am not well-versed in the RN ecosystem yet. That's why I would highly appreciate any tips regarding package management, nuances of hybrid development in the RN world, etc. I target iOS and Android platforms.

Is there anything specific you would like to see in the implementation?

Anything to watch out for?

Goals

Easy to use SDK that satisfies needs of app security and mobile OWASP MAS compliance

Available on GitHub and also as a npm package

Configurable (~ same as Flutter version) - configuration as a single object

Lightweight

It will be able to detect reverse engineering, debugger, simulator, repackaging or cloning attempts, running the app in an unsafe OS environment (e.g., root or jailbreak), hooking frameworks, and installing the application through unofficial stores. | sirionrazzer |

1,302,414 | Oh boy, this is exciting! | So last Saturday and Sunday I took the time to finally complete the set of features I wanted to... | 0 | 2022-12-19T15:54:55 | https://dev.to/webjose/oh-boy-this-is-exciting-3mee | webdev, javascript, programming, react | So last Saturday and Sunday I took the time to finally complete the set of features I wanted to deliver in **v2.0.0** of [wj-config](https://www.npmjs.com/package/wj-config).

So I did that on Saturday and started re-working the React v18.2.0 example I have in the repository to make sure all was working as expected. While doing this and updating the project's README, I realized of a few things that led me to work on higher API to help comply with **DRY**. This took the Sunday evening.

#### Quick Screenshot of the React Sample

---

Anyway, the end result is quite interesting. Let me put it like this: Have you ever had the need to have configuration values vary depending on things like the browser the user is using? (Honest question, I am no backend developer). Well, now you'll be able to!

One new feature, **conditional inclusion of data sources** allows you to run abritrary checks, including things like checking for browser capabilities. I'll give you a preview of the example code I wrote for the README:

```javascript

import wjConfig, { Environment } from "wj-config";

import mainConfig from './config.json';

// Chromium-based browsers.

var isChromium = !!window.chrome && (!!window.chrome.webstore || !!window.chrome.runtime);

export default await wjConfig()

.addObject(mainConfig)

.name('Main')

.addFetchedConfig('/config.chromium.json')

.when(e => isChromium, 'Chromium')

.build();

```

## Per-Region Or Per-Tenant Configuration

>This was the original feature I wanted to complete before releasing **v2.0.0** as a production package.

Have you ever had this need to have different production configuration files per region or per tenant, and maybe even for pre-production environments too? Have you done this combination of files yourself, producing stuff like `config.prod.tenantA.json`, `config.prod.tenantB.json`, `config.pre-prod.tenantA.json` and `config.prod.tenantB.json`? And so on for other environments?

Well, with **Per-Trait Configuration** this multiple configuration file creation will no longer be needed. Here's an example preview taken from the upcomming README. It basically computes the combination you need on the spot:

```javascript

import wjConfig, { Environment, EnvironmentDefinition } from "wj-config";

import myTraits from './myTraits.js';

// Easiest to show with NodeJS as we already have an environment object with all variables ready.

// The ENV_TRAITS environment variable would contain the desired trait value assigned when deploying.

const currentEnvDef = new EnvironmentDefinition(process.env.NODE_ENV, process.env.ENV_TRAITS);

const env = new Environment(currentEnvDef, ['MyList', 'OfPossible', 'Environments']);

// Main configuration file. Boolean argument defines if the file must exist.

const mainConfig = loadJsonFile('./config.json', true);

// Classic per-environment configuration.

const perEnvConfig = loadJsonFile(`config.${env.current.name}.json`, false);

export default await wjConfig()

.addObject(mainConfig)

.name('Main')

.addObject(perEnvConfig)

.name(env.current.name)

.addComputed(() => loadJsonFile('config.amr.json', false))

// The second parameter is just the data source name.

.whenAllTraits(myTraits.Americas, 'Americas') // <-- It conditions the recently added data source.

.addComputed(() => loadJsonFile('config.eur.json', false))

.whenAllTraits(myTraits.Europe, 'Europe')

.addComputed(() => loadJsonFile('config.asa.json', false))

.whenAllTraits(myTraits.Asia, 'Asia')

.includeEnvironment(env)

.build();

```

Oh, if you don't know, **wj-config** works for **React** and **NodeJS** the same way. The only differences you see is things like fetching config files in React, but loading files in NodeJS.

Still working on the final details of the documentation and making sure the TypeScript types are all updated and reflecting the new API properly. Maybe by next weekend we'll have a commit and a new NPM package upload. Fingers crossed! | webjose |

1,302,499 | Build a full-stack application with AdminJS | Written by Clara Ekekenta✏️ Building custom admin panels for each Node.js project can be a... | 0 | 2022-12-21T15:00:25 | https://blog.logrocket.com/build-full-stack-application-adminjs | node, webdev | **Written by [Clara Ekekenta](https://blog.logrocket.com/build-full-stack-application-adminjs/)✏️**

Building custom admin panels for each Node.js project can be a time-consuming task for a developer, especially given the number of projects they handle. As a result, there is a growing demand for alternative tools designed to reduce the developer's workload.

This article highlights the features of an open source Node.js admin panel that promises to do just that: [AdminJS](https://adminjs.co). The tutorial portion of this post will demonstrate how to use AdminJS to build a full-stack application.

_Jump ahead:_

* [What is AdminJS?](#what-is-adminjs)

* [Why use AdminJS?](#why-use-adminjs)

* [Setting up a new project](#setting-up-a-new-project)

* [Adding the Express.js plugin](#adding-the-expressjs-plugin)

* [Adding the MongoDB adapter](#adding-the-mongodb-adapter)

* [Creating the blog model](#creating-the-blog-model)

* [Creating resources](#creating-resources)

* [Creating action handlers](#creating-action-handlers)

* [Backend actions](#backend-actions)

* [Actions with visible UI](#actions-with-visible-ui)

* [Adding user authentication](#adding-user-authentication)

* [Setting up the frontend](#setting-up-the-frontend)

* [Testing the application](#testing-the-application)

## What is AdminJS?

AdminJS, previously called AdminBro, is an open source administrative panel interface tailored to meet the needs of Node.js applications. This interface eliminates the time and effort required to develop a custom admin page. Instead, users can easily view and manage content with the AdminJS UI.

AdminJS is built with React and offers a range of customizability, it also provides a REST API that can be integrated into other applications.

## Why use AdminJS?

With AdminJS, users can quickly build and set up administrative dashboards and applications. To help you evaluate whether you should consider AdminJS for your application needs, here’s a summary of its features:

* Easy integration with other applications: AdminJS can be easily integrated into a host of other applications such as SQL and NoSQL data sources and frameworks like Express.js, NestJS, and Fastify

* Does not impose its database schema on the user: AdminJS supports a variety of ORMs and ODMs, enabling users to connect with their database of choice

* Backend agnostic: Users can create, read, update, and delete content regardless of the choice of data source

* Advanced filtering feature: Users can easily trace specific search queries by applying multiple criteria to quickly filter out unwanted results

* Flexible user management: Different authorization levels can be set for users. This feature can also create roles and can restrict specific actions, such as data modification, to particular users

* Easy customization: The visual appearance of the AdminJS UI can be modified to meet user needs

* Customizable features: Several standard features, like file upload, bulk edits, export, user profile, and password hashing, can be applied to data sources; users can also create unique characteristics as desired

## Setting up a new project

To start with AdminJS, we’ll need to install the AdminJS core package and set it up with a plugin and adapter of our choosing. For this tutorial, we’ll use the Express.js plugin and MongoDB adapter.

To install the AdminJS core package on your local machine, navigate to the directory of your choice and open up a CLI. In the command line, use one of the following commands to install AdminJS with npm or Yarn:

```javascript

npm init

//select default options and fill out fields as desired

npm i adminjs

```

```javascript

yarn init

//select default options and fill out fields as desired

yarn add adminjs

```

### Adding the Express.js plugin

To add the Express plugin, we’ll use one of the following commands in the CLI:

```javascript

npm i @adminjs/express # for Express server

```

```javascript

yarn add @adminjs/express # for Express server

```

### Adding the MongoDB adapter

Next, we’ll add the MongoDB adapter to our application with one of the following commands:

```javascript

npm i @adminjs/mongoose mongoose # for Mongoose

```

```javascript

yarn add @adminjs/mongoose mongoose # for Mongoose

```

With our installation completed, we can finish our setup by connecting the installed plugin and adapter to our AdminJS package. First, we’ll install Express.js:

```javascript

//npm

npm i express tslib express-formidable express-session

//yarn

yarn add express tslib express-formidable express-session

```

Next, we’ll set up a simple application with Express. In the file directory, we’ll create a new file, `App.js`, and add the following:

```javascript

const AdminJS = require('adminjs')

const AdminJSExpress = require('@adminjs/express')

const express = require('express')

const PORT = 3000

const startAdminJS = async () => {

const app = express()

const admin = new AdminJS({})

const adminRouter = AdminJSExpress.buildRouter(admin)

app.use(admin.options.rootPath, adminRouter)

app.listen(PORT, () => {

console.log(`Listening on port ${PORT}, AdminJS server started on URL: http://localhost:${PORT}${admin.options.rootPath}`)

})

}

startAdminJS()

```

Here we created a simple AdminJS interface. In this tutorial, we’ll add a MongoDB data source, add authentication to our AdminJS UI, and use the database to create a simple application.

## Creating the blog model

We’ll be using MongoDB as the data source for our AdminJS panel. As a prerequisite, we’ll need to create a database on MongoDB and connect our application to it with the Mongoose adapter.

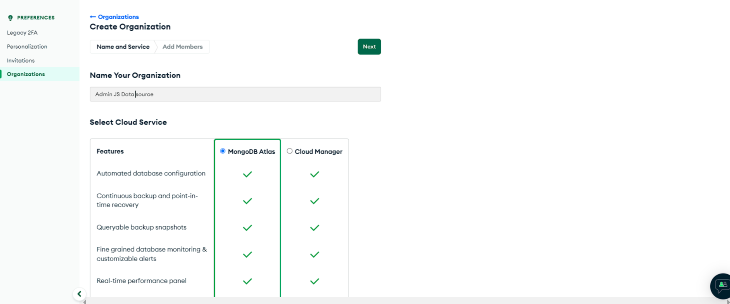

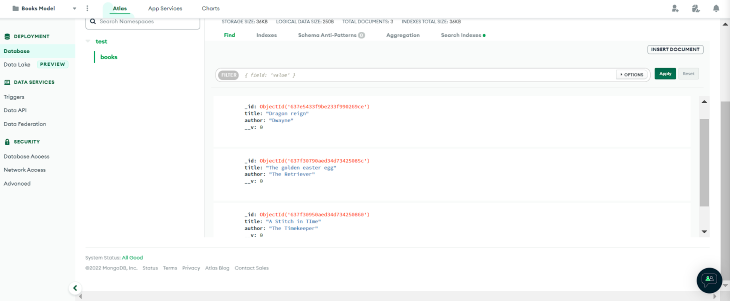

To get started, log into MongoDB and select **Create Organization**:  Here we created an organization named “AdminJS data source”.

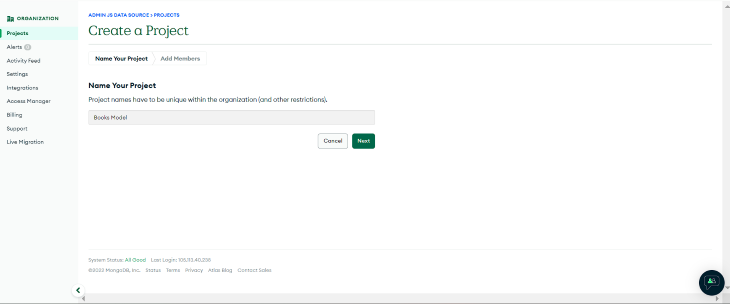

Next, we’ll add a new project to our organization; we’ll name the project “Books Model”:  Next, we’ll be prompted to create a new database. For this tutorial, we’ll build a shared cluster called "Books".

Now, we’ll create admin credentials for the cluster, and add the localhost URL to the **IP address** field. To get connection credentials, click on **Connect** and select **connect with MongoDB native adapters**. In the full-stack application, we can find the unique URI to connect our app to the database.

In the application's working directory, we’ll create a `bookModel` folder and a `book.model.js` file. In `book.model.js` file, we’ll define the schema for our database:

```javascript

const mongoose = require('mongoose');

const BookSchema = new mongoose.Schema({

title: { type: String },

author: { type: String },

});

const Book = mongoose.model('Book', BookSchema);

module.exports = {

BookSchema,

Book,

}

```

The `BookModel` defined schema will have the following fields: `title` and `author`.

### Creating resources

Next, we’ll add the model created in the previous section to our `app.js` file, connect our application to MongoDB, and create an AdminJS instance.

To do this, make the following modifications to the `app.js` file:

```javascript

//previous libraries import

const mongoose = require("mongoose");

const AdminJSMongoose = require("@adminjs/mongoose");

const { Book } = require("./bookModel/book.model.js");

AdminJS.registerAdapter({

Resource: AdminJSMongoose.Resource,

Database: AdminJSMongoose.Database,

})

//port

const startAdminJS = async () => {

const app = express();

const mongooseDB = await mongoose

.connect(

"mongodb+srv://ZionDev:Itszion4me@books.gawbiam.mongodb.net/?retryWrites=true&w=majority",

{

useNewUrlParser: true,

useUnifiedTopology: true,

}

)

.then(() => console.log("database connected"))

.catch((err) => console.log(err));

const BookResourceOptions = {

databases: [mongooseDB],

resource: Book,

};

const adminOptions = {

rootPath: "/admin",

resources: [BookResourceOptions],

};

const admin = new AdminJS(adminOptions);

//other code

```

Here we added the `Book` model as a resource to AdminJS. We also added the MongoDB database so that it will automatically update as we perform CRUD operations in AdminJS.

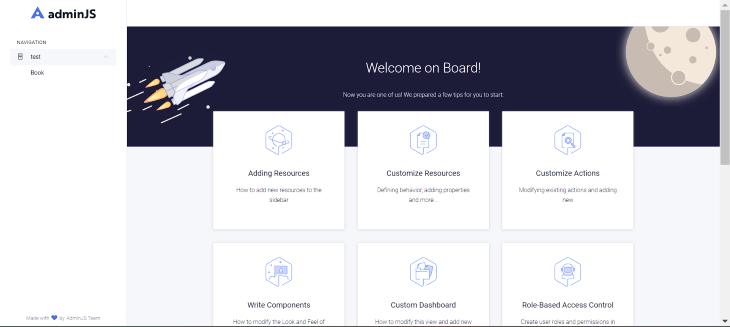

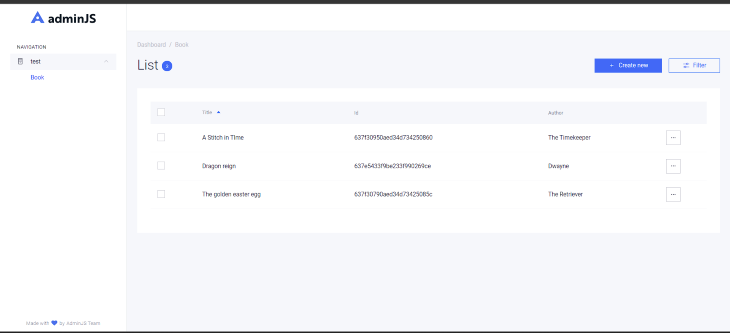

If we run the application with the `node App.js` command, we’ll get the AdminJS default screen and the `Book` model will appear in the navigation section:

## Creating action handlers

AdminJS provides the following actions: list, search, new, show, edit, delete, and bulk delete. It also allows the user to define custom actions when required. Actions to be created can be placed in two categories:

* Actions that run on the backend and do not display visible UI

* Actions that render components

Both actions are similar in that they are created in the same pattern. The significant difference between both patterns is the addition of a `component` props. Let's look at how we can make both types of actions.

### Backend actions

To create these actions, we’ll use the following syntax:

```javascript

const BookResourceOptions = {

resource: Book,

options: {

actions: {

GetJsonData: {

actionType: "record",

component: false,

handler: (request, response, context) => {

const { record, currentAdmin } = context;

console.log("record", record);

return {

record: record.toJSON(currentAdmin),

msg: "Hello world",

};

},

},

},

},

};

```

Here, we added a custom action to the `BookResourceOption`. The above command has the `component` property set to `false`. Hence, no component will be rendered and the action will run on the backend. The resulting output will be the selected record's data.

### Actions with visible UI

Next, we’ll need to create a component that the action will render. Then, we’ll add the designed component to the `component` property field.

For example, suppose we have the following custom React component:

```javascript

import React from 'react'

import { ActionProps } from 'adminjs'

const ShowRecord = (props) => {

const { record } = props

return (

<Div>

<h1>This is a simple component</h1>

<p>Below are our records</p>

<span>

{JSON.stringify(record)}

</span>

</Div>

)

}

export default ShowRecord

```

Once it’s created, we can add it to the `component` property, like so:

```javascript

component: AdminJS.bundle('./ShowRecord'),

```

## Adding user authentication

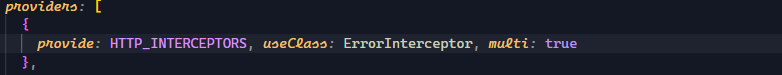

AdminJS can add user authentication for viewing and managing content; this can help better secure data and restrict unwanted access. We can add authentication to our AdminJS application with the `express` plugin. To do so, we’ll make the following modification to the `App.js` file:

```javascript

//other code

//login details

const DEFAULT_ADMIN = {

email: 'developer@admin.com',

password: 'administrator',

}

// handle authentication

const authenticate = async (email, password) => {

//condition to check for correct login details

if (email === DEFAULT_ADMIN.email && password === DEFAULT_ADMIN.password) {

//if the condition is true

return Promise.resolve(DEFAULT_ADMIN)

}

//if the condition is false

return null

}

```

Finally, we’ll replace AdminJS `buildRouter` with the `buildAuthenticatedRouter` and pass the authentication credentials to it:

```javascript

const adminRouter = AdminJSExpress.buildAuthenticatedRouter(

admin,

{

authenticate,

cookieName: "AdminJS",

cookiePassword: "Secret",

},

null,

{

store: mongooseDB,

resave: true,

saveUninitialized: true,

secret: 'Secret',

name: 'adminjs',

}

);

```

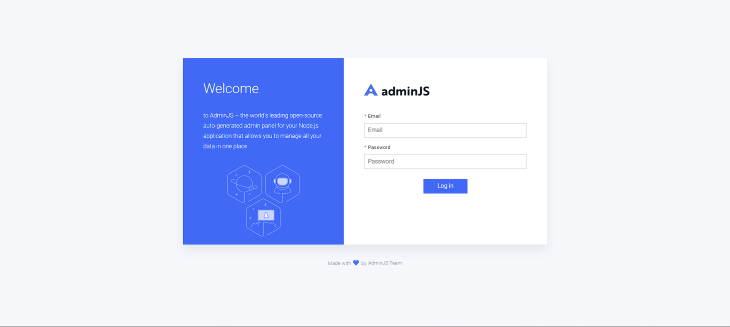

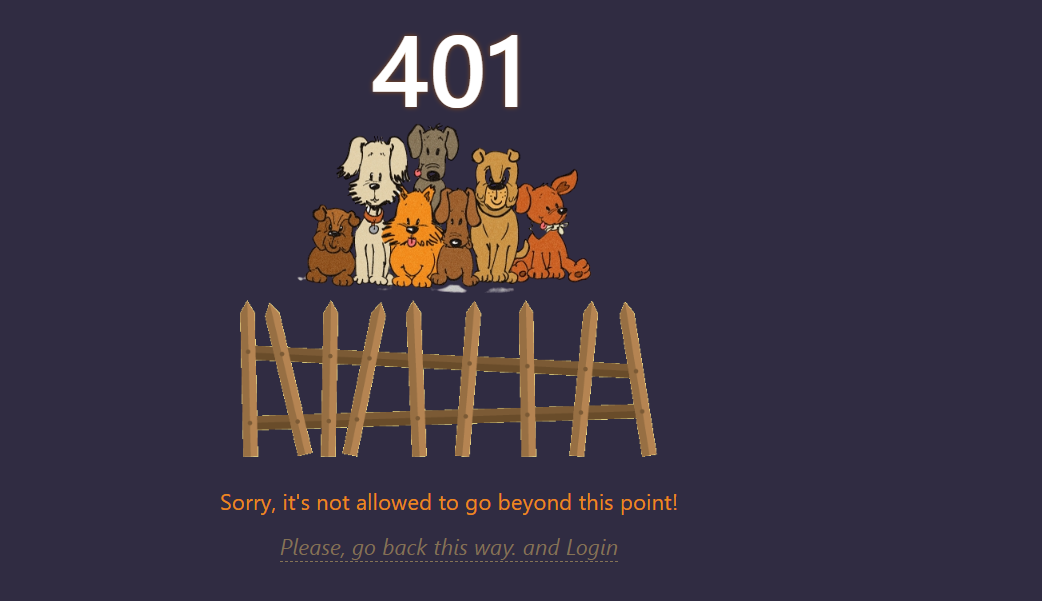

With this, we get a login page to access the AdminJS instance:

## Setting up the frontend

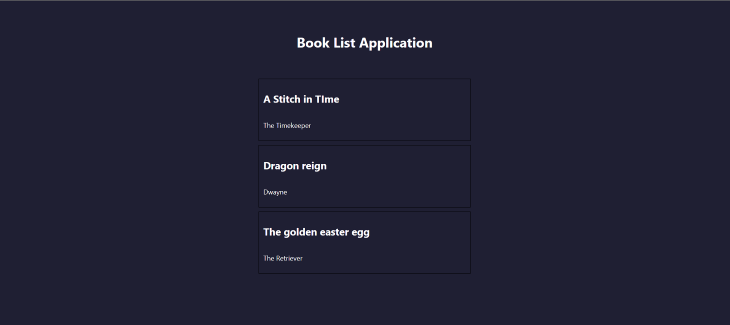

Next, we’ll build a book list application with Next.js and Axios, connect the AdminJS interface to the application, and display stored content. To access the AdminJS content, we’ll create an API request to the URL instance running on the backend.

In the `api` directory, we’ll create a file: `getBooks.js`. Next, we’ll make an API request to the `Books` resource in this file. The API endpoint for resources takes the following syntax:

```javascript

.../api/resources/{resourceId}/actions/{action}

```

In this case, our resource `id` is `Book`, and the action to be performed is `list`. This action will return all data stored in the resource. Add the following code to the `getBooks.js` file:

```javascript

import axios from "axios";

export default async function handler(req, res) {

await axios

.get("http://localhost:3000/admin/api/resources/Book/actions/list")

.then((response) => {

return res.status(200).json(response.data.records);

})

.catch((error) => {

console.log(error);

});

}

```

The above code returns a response containing our resource data. We can access this data as `static props` on the frontend in our `index.js` file:

```javascript

export default function Home(props) {

console.log(props);

return (

<div style={{display:"flex", alignItems:"center", height:"100vvh", paddingTop:"55px", flexDirection:"column"}}>

<h1>Book List Application</h1>

<div style={{marginTop:"34px"}} >

{/* book List container */}

{props.books.map((book) => {

return (

<div style={{display:"flex", flexDirection:"column", border:"1px solid black", width:"500px", padding:"10px", margin:"10px"}}>

<h2>{book.params.title}</h2>

<p>{book.params.author}</p>

</div>

);

}

)}

</div>

</div>

)

}

export const getStaticProps = async () => {

const res = await fetch('http://localhost:3001/api/getBooks');

const data = await res.json();

return {

props: { books: data }

}

}

```

We use `getStaticProps` to fetch data from the API route and pass it as a `props`. Then, we can access this `prop` on the frontend and return the `title` and `author` for each array element in the response.

## Testing the application

To test our application, we’ll create entries using the AdminJS instance:  There are three entries in the above dashboard list, each containing a book title and author. If we navigate to the MongoDB `Books` collection on MongoDB Atlas, we can see the data produced by the `Create` operation performed in the AdminJS instance:

Now, when we run our Next.js application, we get the following result:

## Conclusion

In this tutorial, we introduced AdminJS, reviewed its many features, and then used it to build a full-stack Node.js application with Express.js and MongoDB. How will you use AdminJS in your next project?

---

## 200’s only ✔️ Monitor failed and slow network requests in production

Deploying a Node-based web app or website is the easy part. Making sure your Node instance continues to serve resources to your app is where things get tougher. If you’re interested in ensuring requests to the backend or third party services are successful, [try LogRocket] (https://logrocket.com/signup/).

[](https://lp.logrocket.com/blg/signup)

[LogRocket] (https://lp.logrocket.com/blg/signup) is like a DVR for web apps, recording literally everything that happens on your site. Instead of guessing why problems happen, you can aggregate and report on problematic network requests to quickly understand the root cause.

LogRocket instruments your app to record baseline performance timings such as page load time, time to first byte, slow network requests, and also logs Redux, NgRx, and Vuex actions/state. [Start monitoring for free] (https://lp.logrocket.com/blg/signup). | mangelosanto |

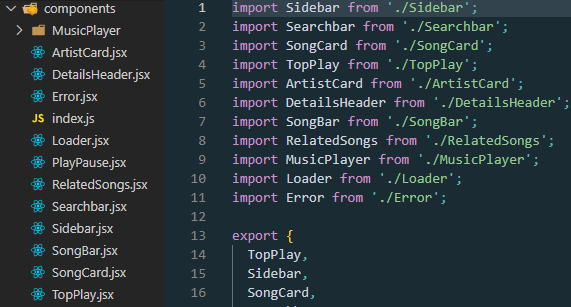

1,302,823 | ⚛️Componentes Funcionais e de Classe em React⚛️ | 👇Um artigo objetivo sobre componentes em React👇 ⚛️O que são React... | 0 | 2022-12-19T22:52:38 | https://dev.to/mpetry/componentes-funcionais-e-de-classe-em-react-150c | javascript, reactnative, webdev, react | ##

_**👇Um artigo objetivo sobre componentes em React👇**_

<br>

**⚛️O que são _React Components_?**

- _Components_ são considerados os principais blocos de construção de um aplicativo em React;

- Embora todos os componentes estejam no mesmo local da estrutura de pastas, eles operam de forma independente uns dos outros e se combinam em um componente pai para formar a interface final para o usuário.

<br>

**⚛️Que problema resolveu?**

- Anteriormente, a criação de uma página web simples requeria que os devs escrevessem centenas de linhas de código;

- Na estrutura tradicional de DOM, simples mudanças podiam ser bastante desafiadoras;

- Para resolver esses problemas, uma abordagem baseada em componentes **independentes e reutilizáveis** foi introduzida.

<br>

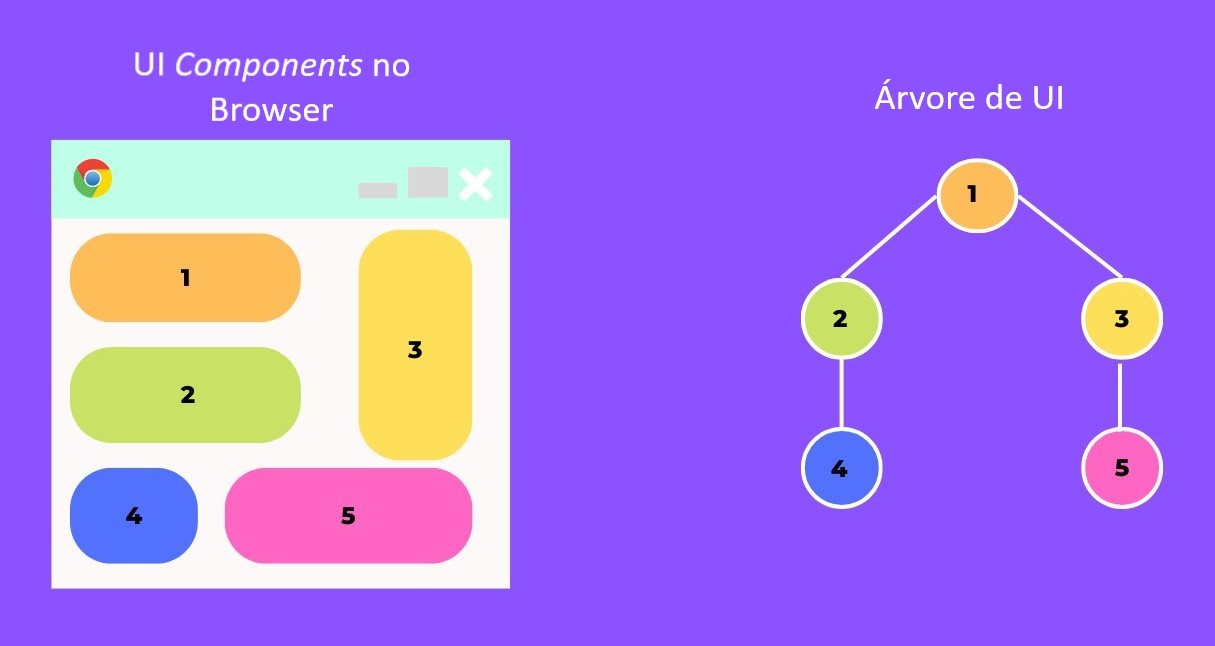

**⚛️Como um componente se parece?**

- Considere toda a interface do usuário (UI) como uma árvore;

- Aqui, a raiz é o componente inicial e cada uma das outras peças se torna um ramo, os quais são subdivididos em sub-ramos.

<br>

**⚛️Quais são os tipos de componentes?**

**📲Componentes Funcionais:**

- É o primeiro tipo de componente e também o mais recomendado;

- Um _functional component_ é uma função em JavaScript/ES6 que retorna um elemento do React (JSX);

- Ele sempre começa com uma letra maiúscula (convenção de nomes);

- Se necessário, aceita props como parâmetro;

- Por exemplo, você pode criar um componente funcional com sua definição por _arrow function_ 👇

```

const Welcome = (props) => {

return <h1>Hello, {props.name}</h1>;

}

```

> "Essa função é um componente do React válido, pois ele aceita um único argumento de objeto 'props' com dados e retorna um elemento do React." (reactjs.org)

- Para utilizar um componente mais tarde, você precisa exportá-lo para, então, importá-lo em outro lugar.

<br>

**🔃Componentes de Classe:**

- _Class Component_ é uma classe do ES6 que será um componente quando fizer o ‘extend’ de um componente (comportamento ou valor) do React;

- Aceita props (no construtor), se necessário;

- Deve ter um método de render() para retornar o JSX;

- Abaixo vemos a mesma função Welcome anterior, porém retornada como um componente de classe:

```

class Welcome extends React.Component {

render() {

return <h1>Hello, {this.props.name}</h1>;

}

}

```

<br>

**⚛️Conclusão**

- Componentes são a melhor maneira de organizar seu aplicativo React e ajudam a escrever um código mais bem gerenciado;

- Ambos são bons, porém prefiro _Funcional Components_ a _Class Components._

Obrigado se você acompanhou até aqui e espero que esse artigo o ajude na trajetória como dev.

---

_Autor: Marcelo Schäffer Petry_

https://linkedin.com/in/m-petry/

https://marcelopetry.com/

| mpetry |

1,303,007 | Import and animate an external SVG file with Javascript and Gsap. | About GSAP GSAP (GreenSock Animation Platform) is a robust high-performance... | 0 | 2022-12-20T01:30:49 | https://dev.to/steinarvdesign/import-and-animate-an-external-svg-file-with-javascript-and-gsap-4ikb | javascript, gsap, external, svg | {% codepen https://codepen.io/steinarV/pen/KKBKpPd %}

## About GSAP

GSAP (GreenSock Animation Platform) is a robust

high-performance JavaScript animation library.

Read more at: https://greensock.com/docs/

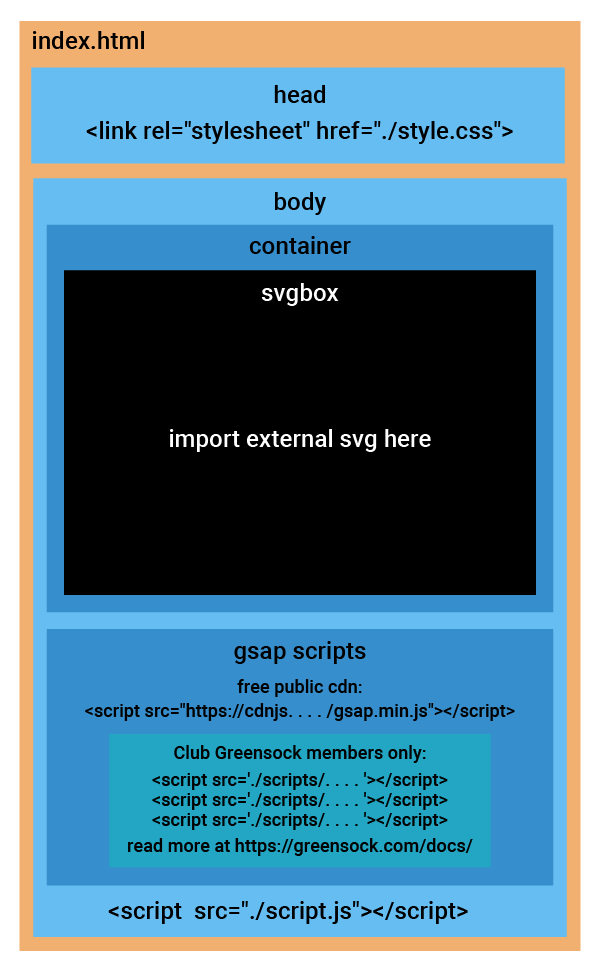

## The html-file

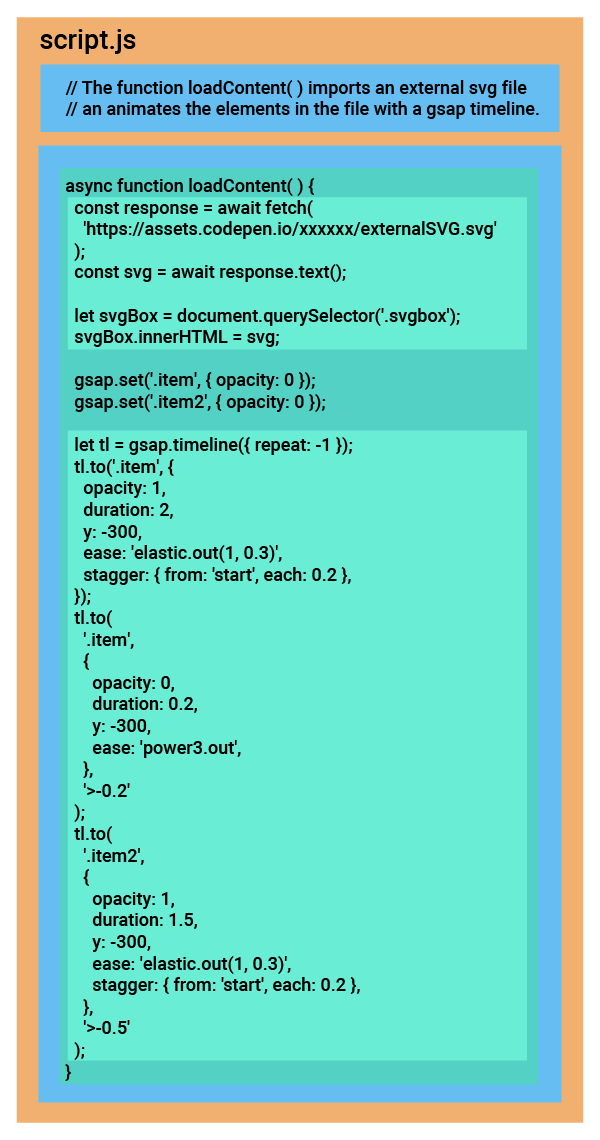

## The Javascript-file

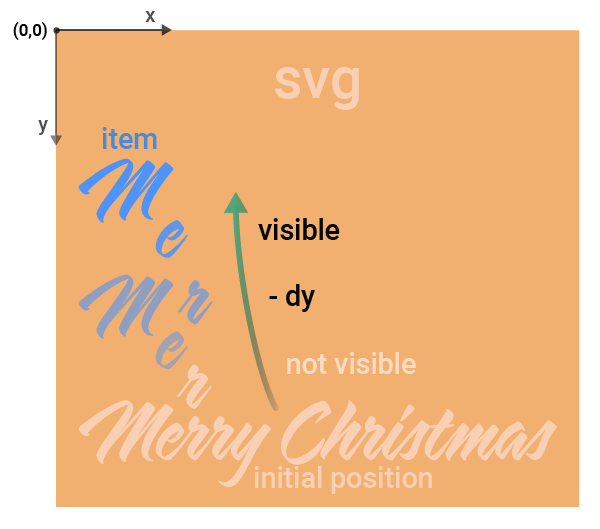

## Diagram

## Code from the external SVG-file

```

<svg xmlns="http://www.w3.org/2000/svg" id="mysvg" viewBox="0 0 1460 930">

<style type="text/css">

.st1{fill:#FFFFFF;}

.st2{fill:#FC5B6B;}

</style>

<g id="m1" class="item m1">

<path id="m1black" d="M348.7,666.5c-60.1,72.5-62.9,104-62.9,104c-2.1,15.3,8.6,20.8,8.6,20.8l2.6,0.8c1.6,0.3,1.6,1.6,1.6,1.6 c0.3,0.8-1.6,1.3-1.6,1.3c-27.3,0.5-28.6-27.3-28.6-27.3c-1-7.3,3.4-21.3,3.4-21.3c1.6-4.7,3.6-10.1,6.8-16.4 c10.4-21.3,39.8-60.6,39.8-60.6l-0.5-0.3c-54.6,62.4-71,85-71,85c-14.8,22.4-20.3,35.6-20.3,35.6c-1.6,2.6,0.3,4.9,0.3,4.9 c0.8,1,0,2.1,0,2.1c-0.5,1.3-3.4,0.3-3.4,0.3c-13.5-7.3-13.5-19.8-13.5-19.8c-1-4.7,6.8-19.8,6.8-19.8c30.4-57.2,58-95.2,58-95.2 l-0.5-0.5c-76.7,81.1-90.5,100.6-90.5,100.6c-17.4,24.4-16.6,33.3-16.6,33.3c0,1.8,1.3,4.7,1.3,4.7c0.8,1.6-1,2.9-1,2.9 c-1.6,1.3-3.9-0.8-3.9-0.8c-12.7-12.2-12.7-20.3-12.7-20.3c-3.6-5.5,13.5-30.9,13.5-30.9c49.7-69.9,54.9-78.5,54.9-78.5 c14.3-20.5,14.8-26.3,14.8-26.3c0.8-1.6-0.8-1-0.8-1c-33.3,12.5-63.7,36.9-63.7,36.9c-34.1,26.3-44.2,40-44.2,40 c-0.3,1,4.7,1.3,4.7,1.3c2.1-0.3,2.1,1.6,2.1,1.6c0.5,0.8-1.3,1.6-1.3,1.6c-10.1,4.7-16.4-1.3-16.4-1.3 c-8.8-7.5-11.7-15.6-11.7-15.6c-1.3-1.3,2.6-4.4,2.6-4.4c16.9-13.5,37.7-28.1,37.7-28.1c15.3-11.4,33.3-21.8,47.6-28.9 c23.7-11.4,34.6-13,34.6-13c7-1,12.5,3.9,12.5,3.9l4.9,4.7c5.5,5.2,3.4,14.8,3.4,14.8c-3.1,18.7-23.4,45.8-23.4,45.8 c-12.5,16.9-15.6,20-15.6,20l0.5,0.8l54.1-61.9l17.7-20.3c2.6-3.6,5.7-3.6,5.7-3.6c1.8-0.5,5.5,2.6,5.5,2.6 c8.6,7.5,11.4,10.4,11.4,10.4c3.9,3.6,0.3,9.1,0.3,9.1l-46.5,70.5l0.5,0.5c52.8-65.5,70.2-83.2,70.2-83.2c3.4-6.2,7.5-4.2,7.5-4.2 c8.3,5.2,15.3,12.5,15.3,12.5C354.1,661.1,348.7,666.5,348.7,666.5z"/>

<path id="m1white" class="st1" d="M347.7,656.5c-60.1,72.5-62.9,104-62.9,104c-2.1,15.3,8.6,20.8,8.6,20.8l2.6,0.8 c1.6,0.3,1.6,1.6,1.6,1.6c0.3,0.8-1.6,1.3-1.6,1.3c-27.3,0.5-28.6-27.3-28.6-27.3c-1-7.3,3.4-21.3,3.4-21.3 c1.6-4.7,3.6-10.1,6.8-16.4c10.4-21.3,39.8-60.6,39.8-60.6l-0.5-0.3c-54.6,62.4-71,85-71,85c-14.8,22.4-20.3,35.6-20.3,35.6 c-1.6,2.6,0.3,4.9,0.3,4.9c0.8,1,0,2.1,0,2.1c-0.5,1.3-3.4,0.3-3.4,0.3c-13.5-7.3-13.5-19.8-13.5-19.8c-1-4.7,6.8-19.8,6.8-19.8 c30.4-57.2,58-95.2,58-95.2l-0.5-0.5c-76.7,81.1-90.5,100.6-90.5,100.6c-17.4,24.4-16.6,33.3-16.6,33.3c0,1.8,1.3,4.7,1.3,4.7 c0.8,1.6-1,2.9-1,2.9c-1.6,1.3-3.9-0.8-3.9-0.8c-12.7-12.2-12.7-20.3-12.7-20.3c-3.6-5.5,13.5-30.9,13.5-30.9 c49.7-69.9,54.9-78.5,54.9-78.5c14.3-20.5,14.8-26.3,14.8-26.3c0.8-1.6-0.8-1-0.8-1c-33.3,12.5-63.7,36.9-63.7,36.9 c-34.1,26.3-44.2,40-44.2,40c-0.3,1,4.7,1.3,4.7,1.3c2.1-0.3,2.1,1.6,2.1,1.6c0.5,0.8-1.3,1.6-1.3,1.6c-10.1,4.7-16.4-1.3-16.4-1.3 c-8.8-7.5-11.7-15.6-11.7-15.6c-1.3-1.3,2.6-4.4,2.6-4.4c16.9-13.5,37.7-28.1,37.7-28.1c15.3-11.4,33.3-21.8,47.6-28.9 c23.7-11.4,34.6-13,34.6-13c7-1,12.5,3.9,12.5,3.9l4.9,4.7c5.5,5.2,3.4,14.8,3.4,14.8c-3.1,18.7-23.4,45.8-23.4,45.8 c-12.5,16.9-15.6,20-15.6,20l0.5,0.8l54.1-61.9l17.7-20.3c2.6-3.6,5.7-3.6,5.7-3.6c1.8-0.5,5.5,2.6,5.5,2.6 c8.6,7.5,11.4,10.4,11.4,10.4c3.9,3.6,0.3,9.1,0.3,9.1l-46.5,70.5l0.5,0.5c52.8-65.5,70.2-83.2,70.2-83.2c3.4-6.2,7.5-4.2,7.5-4.2 c8.3,5.2,15.3,12.5,15.3,12.5C353.1,651.1,347.7,656.5,347.7,656.5z"/>

</g>

<g id="e" class="item e">

<path id="eblack" d="M348.7,666.5c-60.1 . . . . . 6.5z"/>

<path id="ewhite" class="st1" d="M347.7,6 . . . . . 7,656.5z"/>

</g>

/* more <g> </g> elements here*/

</svg>

```

## About Codepen

"Codepen" - https://codepen.io/ - is a social development environment - an online code editor for developers of any skill - that allows people to write code in the browser, and see the results of it as they build. Codepen is particularly useful for people learning to code.

View the full code for this pen at https://codepen.io/steinarV/pen/KKBKpPd

Here is a link to my blog: https://steinarv.design/blog

Thanks for watching !

| steinarvdesign |

1,303,209 | My first npm, state management | https://github.com/vyquocvu/anystate Features: Lightweight object state management Open Source... | 0 | 2022-12-20T04:59:32 | https://dev.to/samplemml/my-first-npm-state-management-3kki | [https://github.com/vyquocvu/anystate

](https://github.com/vyquocvu/anystate

)

Features:

Lightweight object state management

Open Source Project

Small App Size (~2Kb)

Easy to use with nay framework, just a callback on change

About:

AnyState is an open source programs built using Typescript (for a more secure environment).

| samplemml | |

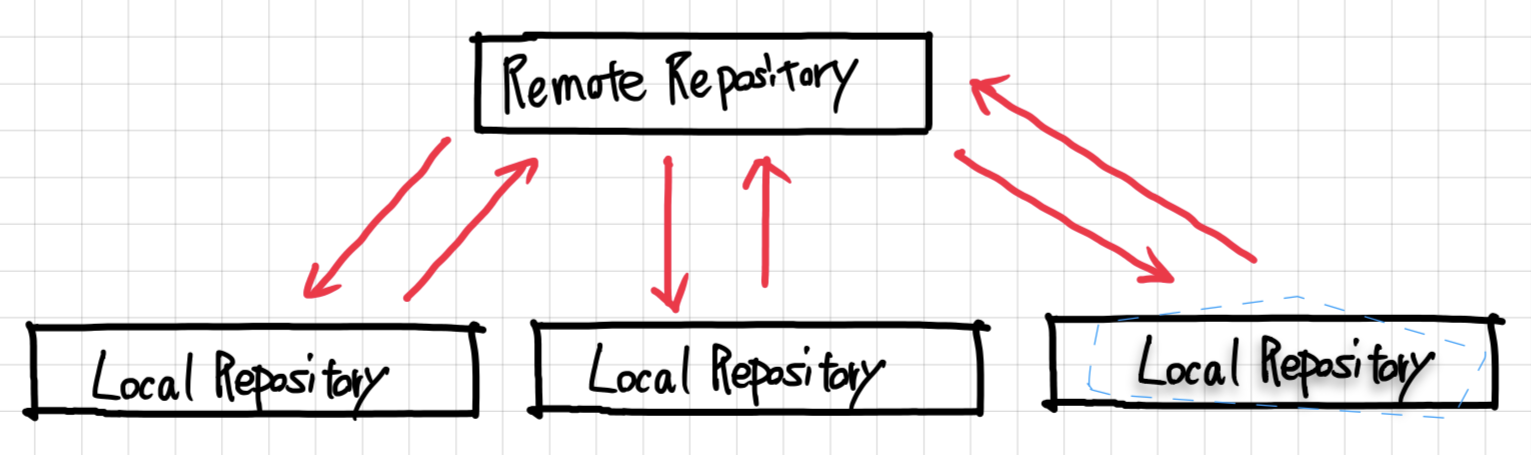

1,303,299 | Deploying Vendure | The cool part about Vendure is how easy it is to set up and how abstract each layer is. Basically, we... | 0 | 2022-12-20T07:11:02 | https://daily-dev-tips.com/posts/deploying-vendure/ | webdev, javascript, beginners | The cool part about Vendure is how easy it is to set up and how abstract each layer is.

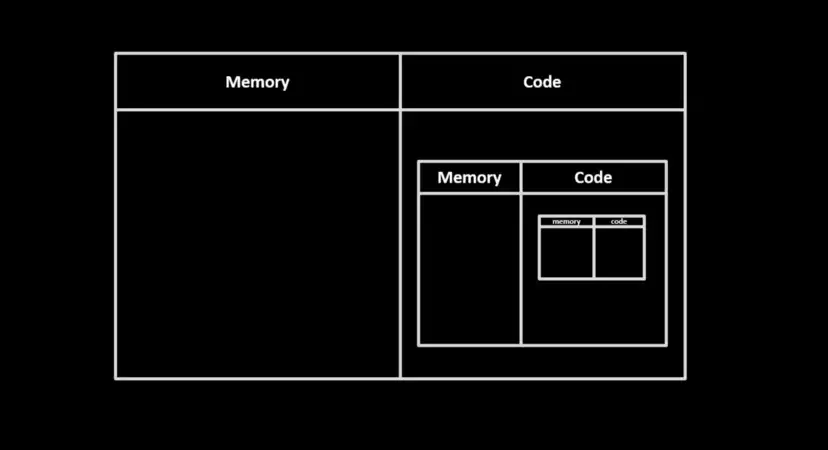

Basically, we get the following elements:

- External database

- Server

- Worker

- Admin UI

- Frontend

While this is amazing, it also brings a bit of complexity when it comes to hosting your Vendure shop.

At the time of writing, I'm still doing some research, and it seems Michael from Vendure is also working on an excellent guide for hosting. (Which I'll add here once it's done).

For my testing purpose, I decided to try out hosting to see what's possible and go with the following setup.

- [RailwayApp](https://daily-dev-tips.com/posts/hosting-a-discord-bot-on-railway/) for the database, server, worker, and admin UI

- [Netlify](https://daily-dev-tips.com/posts/hosting-a-static-blog-on-netlify/) for the storefront

I wouldn't change the front end, as Netlify works fine. However, Vercel or Cloudflare would work equally well.

As for the backend side, Railway works, but it's a bit slow on their free tier. (Still experimenting with a nice setup there).

## Setting up the backend

I'll still show you how to host Vendure for free but with limited resources for this guide.

You should be able to apply this process to another provider or potentially upgrade the railway subscription.

Create a new [Railway account](https://railway.app/) or login into your existing one, creating a new project.

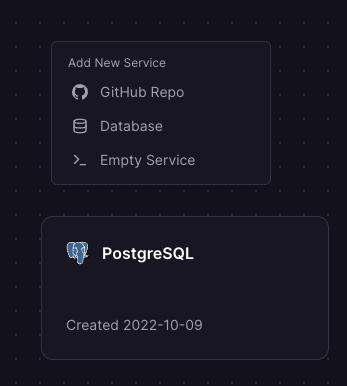

The first thing I added was a PostgreSQL database. I then manually connected to it with [TablePlus](https://daily-dev-tips.com/posts/top-5-mysql-clients-for-mac/#1-tableplus) and imported the database I had locally.

You'll see the database connection string we'll need in a bit on the connection screen, so copy that to a safe spot.

The next thing we need to do is add a new service, a project, from GitHub. (Assuming you pushed your project to GitHub).

In Railway, you'll be able to right-click on the canvas and select the new service option.

Once you add the service, it should automatically add the Postgres variables.

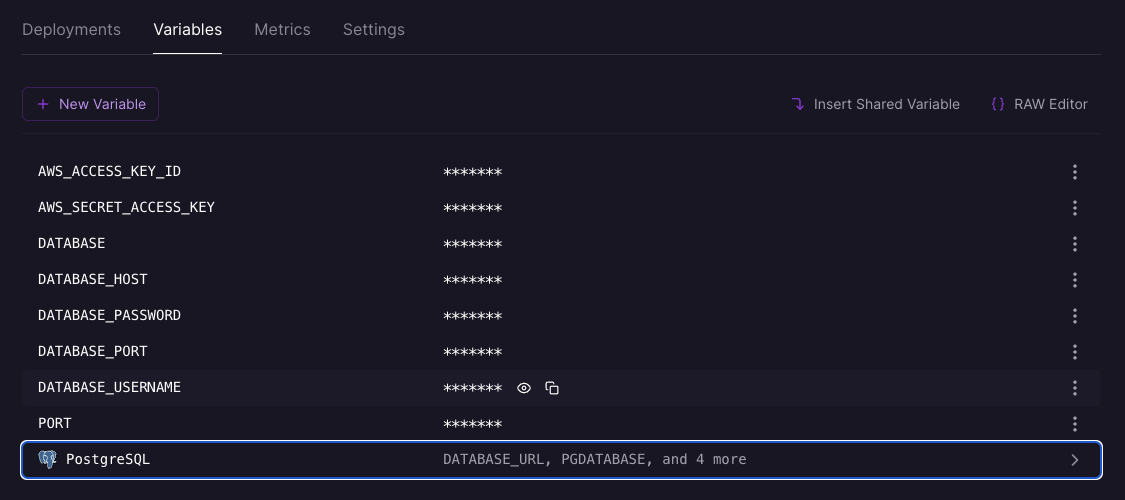

Add optional variables you have set in your `.env` file.

You'll need to add the database options here, as Vendure uses an indirect connection.

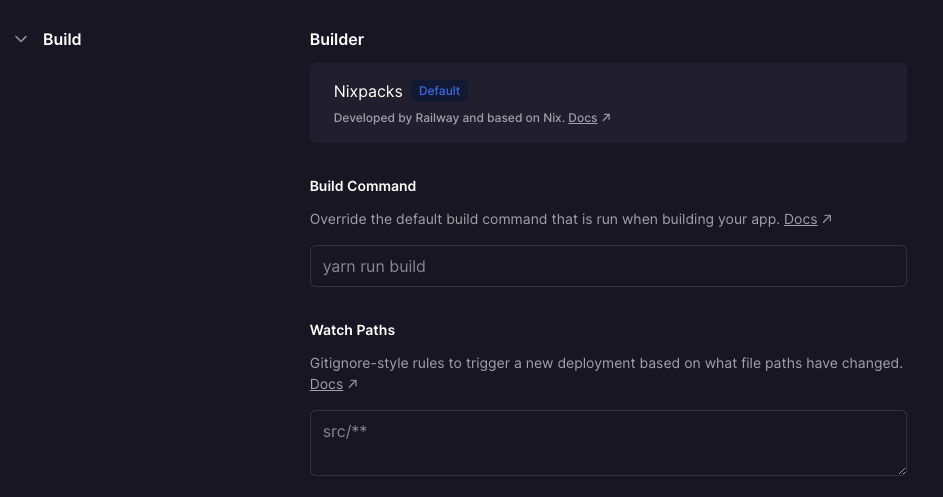

Then we'll also need to define what command it should run in the settings.

And in the case of Vendure, we can set it to `yarn run build`.

It should then auto-build your application every single time you push new changes.

> Note: I sometimes found you need to trigger re-deploy as it would timeout the first deployment manually.

Once it's running, you should already have access to your admin UI!

The URL should be something like this: `https://your-name.up.railway.app/admin`.

## Hosting the storefront

Depending on which storefront option you choose, we have multiple options.

I chose Remix, so I found Netlify super easy.

Log in to Netlify and choose the project from your GitHub repos.

It will automatically recognize it's a Remix project and set all the configurations for us.

All we need to do here is set an environment variable for the `VENDURE_API_URL`. This should point to your Railway environment.

Then go ahead and deploy it, and it should be up and running.

### Thank you for reading, and let's connect!

Thank you for reading my blog. Feel free to subscribe to my email newsletter and connect on [Facebook](https://www.facebook.com/DailyDevTipsBlog) or [Twitter](https://twitter.com/DailyDevTips1) | dailydevtips1 |

1,303,336 | JavaScript Objects | This blog post is your crash course for you to learn about one of the vital topics in JavaScript, i.e., JavaScript Objects. | 0 | 2022-12-20T08:35:59 | https://michaelsolati.com/blog/javascript-objects/ | javascript, webdev, programming, beginners | ---

title: JavaScript Objects

published: true

description: This blog post is your crash course for you to learn about one of the vital topics in JavaScript, i.e., JavaScript Objects.

tags: javascript, webdev, programming, beginners

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/g2y60a6osx9eagi84cst.png

canonical_url: https://michaelsolati.com/blog/javascript-objects/

---

This blog post is your crash course for you to learn about one of the vital topics in JavaScript, i.e., JavaScript Objects.

There are two ways JavaScript data can be defined either a [Primitive](https://developer.mozilla.org/en-US/docs/Glossary/Primitive) or an Object. Objects are what most developers interact with. Some Objects you may be familiar with are:

- String

- Number

- Math

- Date

- Array

- Functions

- And [so much more](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Object)!

JavaScript objects are mutable, and their values can be changed. Objects can have properties and methods as well. It's crucial to remember [JavaScript is designed on a simple object-based paradigm](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Guide/Working_with_Objects#:~:text=JavaScript%20is%20designed%20on%20a,is%20known%20as%20a%20method.).

Objects are collections of properties, and properties are an association between a key and a value. In some cases, though, the value of a property is a function, making that property a method. The properties of an object usually describe the characteristics of its variable. For example, an Array has `.length` to know how many elements are in them. The Math object has a `.PI` property, in case you get hungry.

Methods behave differently, as they're functions that need to be called. They can be used to modify or convert a property of an object, perform an action, return a specific piece of information, and more. For example, if you've worked with a String, you may have called the `toUpperCase()` method to get the string in complete upper case.

Likewise, with an Array, you could call `.sort()` to sort the elements.

There are different ways to create an Object in JavaScript. For example, you could make an Object using an object initializer or create a constructor function and instantiate a new instance of that object. Object initializers are creating objects with literal notation. This is consistent with the terminology used by C++.  This is wildly different from creating an Object using a constructor function where we can create reusable and distinct instances of an object. To create this type of object, start with creating a function where the properties and methods are added to the `this` object.

You can then instantiate a new instance of that object with `new` and reuse it repeatedly.

This blog is inspired by one of our Twitter threads that we post on our Twitter account. There are many threads like this; you should [check them out](https://twitter.com/amplication).

{% embed https://twitter.com/amplication/status/1551869682256420866?s=20&t=mUNPj8vQqgdTG12jvpVuUw %} | michaelsolati |

1,303,392 | How To Sum Total From Array Of Object Properties With JavaScript Reduce Method | Calculating the total price for your shopping cart was a hassle in the days before the JavaScript... | 0 | 2022-12-20T15:42:54 | https://byrayray.dev/posts/2022-12-19-sum-total-array-object-properties-javascript-reduce-method | ---

title: How To Sum Total From Array Of Object Properties With JavaScript Reduce Method

published: true

date: 2022-12-20 08:44:08 UTC

tags:

canonical_url: https://byrayray.dev/posts/2022-12-19-sum-total-array-object-properties-javascript-reduce-method

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/m2dfzuzyzfbaub3ugzuq.png

---

Calculating the total price for your shopping cart was a hassle in the days before the JavaScript `reduce` method. In this post, I will show you how easy it is to use the `reduce` method to calculate the total price of a product array in the shopping cart. The JavaScript `reduce` method is powerful and can calculate a total price based on an array of object properties.

## How does the reduce method work?

The **`reduce()`** method in JavaScript is used to execute a function to each element in an array, resulting in a single output value. It operates on an array of elements, performing a computation on each element in the array and building up the result.

Here is the syntax for using the **`reduce()`** method:

```js

array.reduce((accumulator, currentValue, currentIndex, array) => {

// code to be executed

}, initialValue);

```

The **`reduce()`** method takes in a callback function as its first argument. This callback function is called for each element in the array and takes four parameters:

- **`accumulator`** : This is the value returned in the final iteration. It starts with the initial value if provided or the first element of the array if no initial value is provided. And adds up every iteration.

- **`currentValue`** : This is the current element being processed in the array.

- **`currentIndex`** (_optional_): This is the index of the current item that is being processed in the array.

- **`array`** (_optional_): This is the array **`reduce()`** that was called upon.

The callback function should return the updated value for the accumulator.

Here is a simple example of using **`reduce()`** to calculate all the numbers in an array:

```js

const numbers = [1, 2, 3, 4, 5];

const sum = numbers.reduce((accumulator, currentValue) => {

return accumulator + currentValue;

}, 0);

console.log(sum); // 15

```

Check [runkit example](https://runkit.com/devbyrayray/calculate-total-based-on-numbers-array-with-javascript-reduce)

In this example, the **`reduce()`** method is called on the **`numbers`** array and is passed a callback function that adds the accumulator and the current value. The initial value for the accumulator is 0.

The **`reduce()`** method iterates through the numbers in the array, starting with the first element, and applies the callback function to each element.

The returned value from the callback function becomes the new value for the accumulator, which is passed to the next iteration. This process continues until all elements in the array have been processed and the final value of the accumulator is returned.

Let's check how we can apply this to our product array of a fictional shopping cart.

## Get a total price from an array of object properties

Let's say you have an array of products in your shopping cart.

```js

const products = [

{

"price": 10,

"title": "Item 1"

},

{

"price": 20,

"title": "Item 2"

},

{

"price": 30,

"title": "Item 3"

}

]

```

To calculate the prices, we first need an array of prices. We want to calculate the total price of our shopping cart. We can do that by using the `.map()` method. In the example below, we create a new array with only the prices of our products.

```js

const prices = shoppingCart.map((product) => product.price)

```

After that, we can use that array to calculate the total price with the `reduce` method.

```js

const prices = shoppingCart.map((product) => product.price)

const total = prices.reduce((acc, curr) => acc + curr)

console.log('total: ', total )

```

We can also write this shorter and chain the `map()` and `reduce()` methods.

```js

const totalPrice = shoppingCart.reduce((acc, curr) => acc + curr.price, 0)

console.log('total: ', totalPrice )

```

Check [runkit example](https://runkit.com/devbyrayray/calculate-total-price-from-javascript-array-of-product-objects)

That was easy. 👍

## Thanks!

_After reading this story, I hope you learned something new or are inspired to create something new! 🤗 If so, consider subscribing via email (scroll to the top of this page) or follow me here on Hashnode._

> Did you know that you can create a [Developer blog like this one, yourself](https://hashnode.com/@devbyrayray/joinme)? It's entirely for free. 👍💰🎉🥳🔥

_If I left you with questions or something to say as a response, scroll down and type me a message. Please send me a_ [_DM on Twitter @DevByRayRay_](https://twitter.com/@devbyrayray) _when you want to keep it private. My DM's are always open 😁_ | devbyrayray | |

1,303,499 | Troubleshooting: compiled against a different Node version | Symptom Running a node project with the dependency on Better Sqlite3 got the following... | 0 | 2022-12-20T11:20:43 | https://dev.to/foxgem/troubleshooting-compiled-against-a-different-node-version-2alf | node, troubleshooting | ## Symptom

Running a node project with the dependency on Better Sqlite3 got the following error message:

```

Error: The module '... /node_modules/better-sqlite3/build/Release/better_sqlite3.node'

was compiled against a different Node.js version using

NODE_MODULE_VERSION 83. This version of Node.js requires

NODE_MODULE_VERSION 93. Please try re-compiling or re-installing

the module (for instance, using `npm rebuild` or `npm install`).

' the module (for instance, using `npm rebuild` or `npm install`).

' bindings (node_modules/bindings/bindings.js:112:48)

```

## Cause

The error message itself contains enough details, also shows the cause and solution.

In short: the node version used to compile the installed `better_sqlite3` is not the same as the node version currently used, so it asks to recompile it with the current version.

## Solution

- `npm i -D electron-rebuild`.

- Delete the `node_modules` directory.

- In `package.json` add `scripts` to.

```

"rebuild": "electron-rebuild -f -w better-sqlit3",

```

- `npm i`

- `npm rebuild`

Reference link:

- https://github.com/WiseLibs/better-sqlite3/issues/549

- https://github.com/WiseLibs/better-sqlite3/issues/545 | foxgem |

1,303,532 | What is Bitcoin? | Bitcoin (BTC) is a cryptocurrency, a virtual currency designed to act as money and a form of payment... | 0 | 2022-12-20T11:51:02 | https://dev.to/bitpowr/what-is-bitcoin-2f | Bitcoin (BTC) is a cryptocurrency, a virtual currency designed to act as money and a form of payment outside the control of any one person, group, or entity. It provides an alternative payment system to fiat currencies issued and controlled by central banks. Bitcoin is not managed and maintained by any one central entity but by a network of users and miners who verify transactions on the blockchain through cryptographic techniques.

## How does Bitcoin work?

It is critical to understand that Bitcoin is made up of three distinct components that work together to form a decentralized payment system:

1. The Bitcoin network - which operates as a peer-to-peer system where users exchange bitcoin with others on the network without the assistance of middlemen to carry out and validate transactions.

2. The native cryptocurrency of the Bitcoin network, called bitcoin (BTC) which could be likened to money but unlike government-issued currencies such as the dollar or euro, Bitcoin allows online transfers without a middleman such as a bank or payment processor. It is also a a store of value similar to gold.

- The Bitcoin blockchain is much more than just a form of cryptocurrency: It is the foundation upon which the majority of cryptocurrencies, including Bitcoin, are created. Because it guarantees the accuracy of every transaction, the Bitcoin blockchain is exceptional. Nothing is missed from the network because everything that happens on the blockchain is recorded. The entire record of an action is accessible to anyone in the system once it has been time-stamped, recorded, and stored in one of the information blocks to data that is kept in "blocks" of information and then permanently connected as a "chain" by other "blocks" of information.

No matter how many transactions are awaiting confirmation, Bitcoin is set up to allow new blocks to be added to the blockchain about every ten minutes.

The blockchain's openness allows all network users to view and analyze bitcoin transactions in real time. The likelihood of double spending, a problem with online payments, is decreased by this technology. When a person tries to spend the same cryptocurrency twice, this is known as double spending.

### Bitcoin Innovations

**Lightning Network**

Bitcoin was never intended to be scalable. It was intended to be a decentralized payment system that users could use anonymously and from anywhere. However, one of its drawbacks was its popularity: transactions became much slower and more expensive than intended. As a result, developers created cryptocurrency layers, the first of which was the primary blockchain. Each layer beneath that was a secondary, tertiary, and so on.

Each layer complements and adds functionality to the layer above it. In that light the Lightning Network is a second layer for Bitcoin that uses micropayment channels to increase the capacity of the blockchain to conduct transactions more efficiently- In simple terms, the Lightning Network (LN) allows participants to use their digital wallets to send BTC to one another for free. [Read more](https://decrypt.co/resources/bitcoin-lightning-network)

**SegWit**

Before now, Legacy addresses were the original BTC addresses, now SegWit addresses are the newer address format with lower fees.

SegWit stands for Segregated Witness, where Segregated means to separate and Witness refers to the transaction signatures involved with a specific transaction. In a nutshell, it is an improvement over the current bitcoin blockchain in that it increases the block size limit of a blockchain by removing signature data from Bitcoin transactions. This creates more space to add more transactions to the chain when portions of a transaction are withdrawn. [Read more](https://bitpowr.com/blog/bitpowr-wallet-now-supports-bitcoin-seg-wit-addresses)

**Taproot**

The Taproot upgrade was suggested by Bitcoin Core developer Greg Maxwell in January 2018. Three years later, on June 12, 2021, the 90% mark for blocks mined with miner support was reached. It means that some encoded data was left in 1,815 of the 2,016 blocks that were mined during the course of the two-week period by miners to show support for the upgrade.

The Taproot upgrade simply groups several signatures and transactions together.

On the Bitcoin network, digital signatures are necessary to validate transactions. They are produced with the aid of private keys and checked against those of others.

Before the introduction of Taproot, the network of Bitcoin required each digital signature to be verified against a public key, which made transaction verification time-consuming. The time needed for sophisticated multi-sig transactions that require numerous inputs and signatures was doubled by this technique. [Read more](https://cointelegraph.com/bitcoin-for-beginners/a-beginners-guide-to-the-bitcoin-taproot-upgrade)

**Conclusion**

Bitcoin has gained widespread acceptance and popularity over the years, with more and more people choosing to use it as a payment method and store of value. Though Bitcoin isn't yet optimized to handle the kinds of transaction volumes that the digital currency is capable of producing, ideas behind products like Lightning Network is changing this and making Bitcoin more attractive for everyday use.

In addition, the introduction of SegWit is a significant step toward resolving it’s scalability issues and enable it to process more transactions more quickly and cheaply

Bitpowr supports Segwit addresses to also serve as a commitment to providing better solutions and features to help reduce business overhead cost.

Check out [our documentation](https://docs.bitpowr.com/docs) to learn more about our wallet services and other related products. [Contact sales](https://www.bitpowr.com/contact#contact) or send an email to [sales@bitpowr.com](mailto:sales@bitpowr.com) to get started! | bitpowr | |

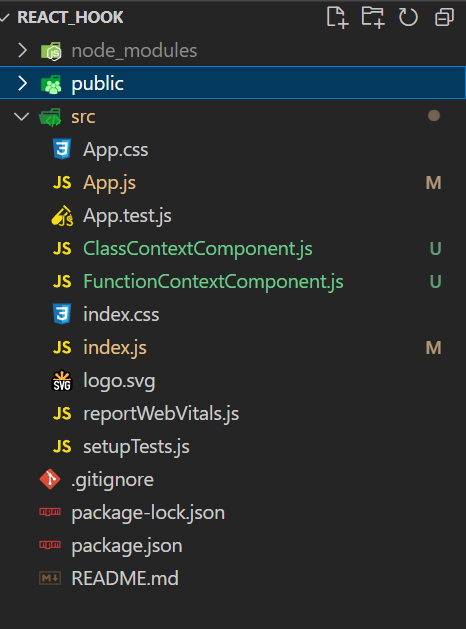

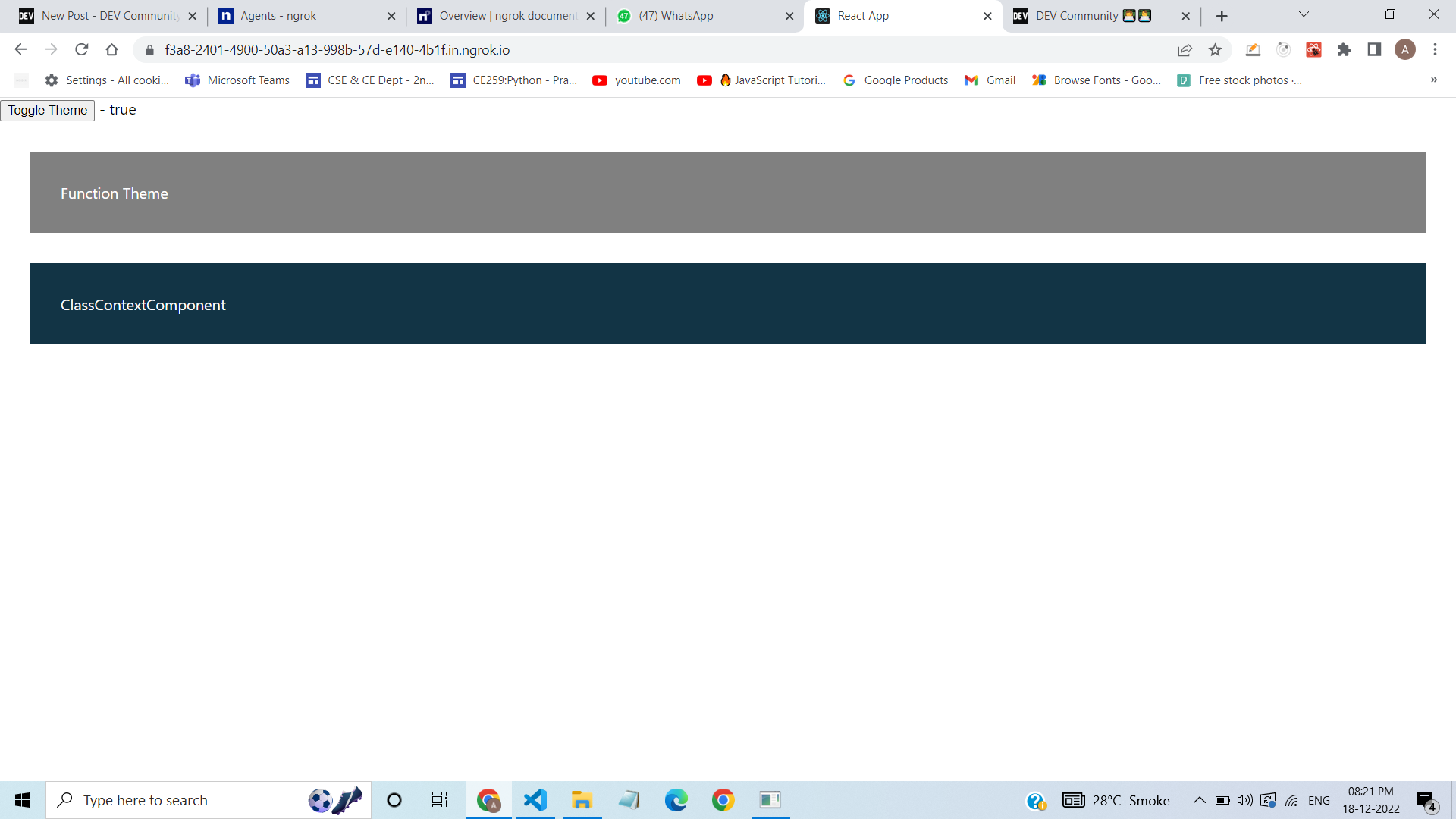

1,303,802 | react useContext() Hook - web dev simplified | Part1 Code:- Folder Structure :- Output Photo :- App.js Code:- import {... | 0 | 2022-12-20T15:11:22 | https://dev.to/akshdesai1/react-usecontext-hook-web-dev-simplified-3o9p | webdev, javascript, react, beginners | ## Part1 Code:-

**Folder Structure :-**

**Output Photo :-**

**App.js Code:-**

``` react

import { createContext, useState } from 'react'

import FunctionContextComponent from './FunctionContextComponent'

import ClassContextComponent from './ClassContextComponent'

export const ThemeContext = createContext();

export default function App() {

const [darkTheme, setDarkTheme] = useState(true);

// eslint-disable-next-line

const [timepass, setTimepass] = useState("initialState");

function toggleTheme() {

setDarkTheme(prevTheme => !prevTheme);

};

return (

<ThemeContext.Provider value={{ darkTheme, timepass }}>

<button onClick={toggleTheme}>Toggle Theme</button> - {darkTheme ? "true" : "false"}

<FunctionContextComponent />

<ClassContextComponent />

</ThemeContext.Provider>

)

}

```

**FunctionContextComponent.js :-**

``` react

import { useContext } from 'react'

import { ThemeContext } from './App';

export default function FunctionContextComponent() {

const theme = useContext(ThemeContext);

const style = {

backgroundColor: theme.darkTheme ? "grey" : "white",

color: theme.darkTheme ? "white" : "black",

padding: "2rem",

margin: "2rem"

}

return (

<div style={style}>

Function Theme

</div>

)

}

```

**ClassContextComponent.js :-**

``` react

import React, { Component } from 'react'

import { ThemeContext } from './App'

export default class ClassContextComponent extends Component {

style(dark) {

return {

backgroundColor: dark ? "#123445" : "white",

color: dark ? "white" : "black",

padding: "2rem",

margin: "2rem"

}

}

render() {

return (

<ThemeContext.Consumer>

{(theme) => {

return <div style={this.style(theme.darkTheme)}> ClassContextComponent </div>

}}

</ThemeContext.Consumer>

)

}

}

```

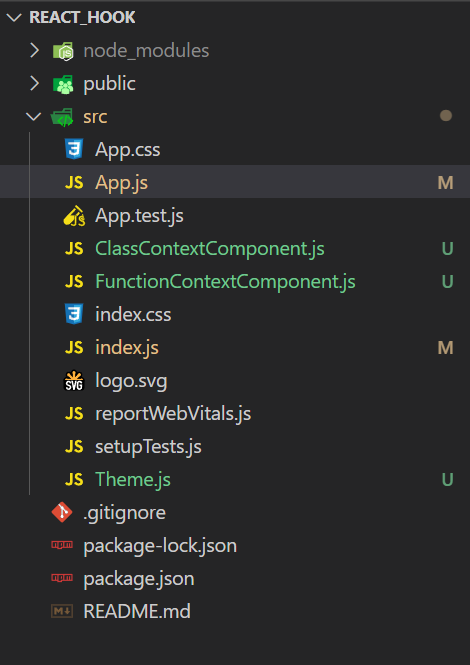

**Part2 Source Code**

**Folder Structure**

**index.js code :-**

``` react

import React from 'react';

import ReactDOM from 'react-dom/client';

import './index.css';

import App from './App';

import reportWebVitals from './reportWebVitals';

import { ThemeContext } from "./Theme.js";

const root = ReactDOM.createRoot(document.getElementById('root'));

root.render(

<ThemeContext>

<App />

</ThemeContext>

);

// If you want to start measuring performance in your app, pass a function

// to log results (for example: reportWebVitals(console.log))

// or send to an analytics endpoint. Learn more: https://bit.ly/CRA-vitals

reportWebVitals();

```

**App.js Code :-**

``` react

import { createContext, useState, useContext } from 'react'

import FunctionContextComponent from './FunctionContextComponent'

import ClassContextComponent from './ClassContextComponent'

import { ThemeContext, ThemeContext1 } from "./Theme.js";

export default function App() {

const theme = useContext(ThemeContext1);

return (

<>

<button onClick={theme.toggleTheme}>Toggle Theme</button>

<FunctionContextComponent />

<ClassContextComponent />

</>

)

}

```

**FunctionContextComponent.js**

``` react

import { useContext } from 'react'

import { ThemeContext1 } from './Theme';

export default function FunctionContextComponent() {

const theme = useContext(ThemeContext1);

const style = {

backgroundColor: theme.darkTheme ? "grey" : "white",

color: theme.darkTheme ? "white" : "black",

padding: "2rem",

margin: "2rem"

}

return (

<>

<div style={style}>

Function Theme

</div>

</>

)

}

```

**ClassContextComponent.js**

``` react

import React, { Component } from 'react'

import { ThemeContext1 } from './Theme'

export default class ClassContextComponent extends Component {

style(dark) {

return {

backgroundColor: dark ? "#123445" : "white",

color: dark ? "white" : "black",

padding: "2rem",

margin: "2rem"

}

}

render() {

return (

<ThemeContext1.Consumer>

{(theme) => {

return <div style={this.style(theme.darkTheme)}> ClassContextComponent </div>

}}

</ThemeContext1.Consumer>

)

}

}

```

**Theme.js Code**

``` react

import { useState, createContext, useContext } from 'react';

export const ThemeContext1 = createContext();

export function ThemeContext(props) {

const [darkTheme, setDarkTheme] = useState(true);

// eslint-disable-next-line

const [timepass, setTimepass] = useState("initialState");

const toggleTheme = () => {

return setDarkTheme(prevTheme => !prevTheme);

};

return (

<ThemeContext1.Provider value={{ darkTheme, toggleTheme, timepass }}>

{props.children}

</ThemeContext1.Provider>

)

}

```

Thank You.

You can follow us on:

[Youtube ](https://www.youtube.com/c/ULTIMATEPROGRAMMING)

[Instagram](https://www.instagram.com/full_stack_web_developer1/) | akshdesai1 |

1,428,769 | [ML] Data should be checked with your eyes | Following the previous effort, I'm challenging the finished Kaggle image competition and making ahead... | 0 | 2023-04-06T22:26:32 | https://dev.to/nekot0/ml-data-should-be-checked-with-your-eyes-11k7 | machinelearning, python, tutorial | Following [the previous effort](https://dev.to/nekot0/errors-in-the-implementation-of-model-training-with-effdet-4pcd), I'm challenging [the finished Kaggle image competition](https://www.kaggle.com/competitions/siim-covid19-detection/overview) and making ahead the implementation. Overcoming recurring errors, I started the model training process by one epoch. My programme was being trained well, but after 50% of learning, the error occurred.

```

ValueError: Caught ValueError in DataLoader worker process 1.

Original Traceback (most recent call last):

File "/opt/conda/lib/python3.7/site-packages/torch/utils/data/_utils/worker.py", line 302, in _worker_loop

data = fetcher.fetch(index)

File "/opt/conda/lib/python3.7/site-packages/torch/utils/data/_utils/fetch.py", line 58, in fetch

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/opt/conda/lib/python3.7/site-packages/torch/utils/data/_utils/fetch.py", line 58, in <listcomp>

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/tmp/ipykernel_28/3482151344.py", line 46, in __getitem__

labels = labels

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/composition.py", line 202, in __call__

p.preprocess(data)

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/utils.py", line 83, in preprocess

data[data_name] = self.check_and_convert(data[data_name], rows, cols, direction="to")

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/utils.py", line 91, in check_and_convert

return self.convert_to_albumentations(data, rows, cols)

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/bbox_utils.py", line 124, in convert_to_albumentations

return convert_bboxes_to_albumentations(data, self.params.format, rows, cols, check_validity=True)

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/bbox_utils.py", line 390, in convert_bboxes_to_albumentations

return [convert_bbox_to_albumentations(bbox, source_format, rows, cols, check_validity) for bbox in bboxes]

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/bbox_utils.py", line 390, in <listcomp>

return [convert_bbox_to_albumentations(bbox, source_format, rows, cols, check_validity) for bbox in bboxes]

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/bbox_utils.py", line 334, in convert_bbox_to_albumentations

check_bbox(bbox)

File "/opt/conda/lib/python3.7/site-packages/albumentations/core/bbox_utils.py", line 417, in check_bbox

raise ValueError(f"Expected {name} for bbox {bbox} to be in the range [0.0, 1.0], got {value}.")

ValueError: Expected x_min for bbox (-0.0009398496240601503, 0.46129587155963303, 0.32471804511278196, 0.9730504587155964, array([1])) to be in the range [0.0, 1.0], got -0.0009398496240601503.

```

Good was that I had experienced and overcome a lot of errors. This time, I could guess which part of my code made this error. According to the message, it is because the element of the bounding box is out of the range [0.0, 1.0]. The range [0.0, 1.0] is probably relevant to scaling. Scaling is made when input images are resized. In other words, a bounding box is resized with the inappropriate ratio when the input image is resized.

However, the scaling ratio is showing negative. Why is it negative?

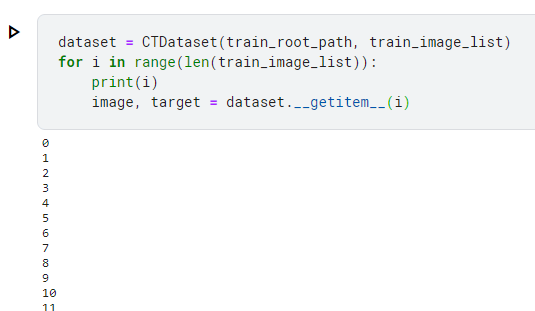

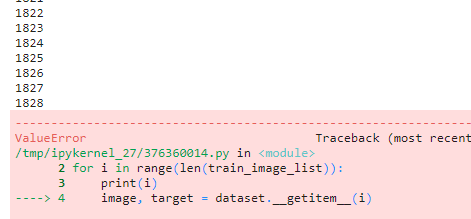

I wanted to check which data made this error but the message didn't tell it to me. So, I tried the code below.

```python

dataset = CTDataset(train_root_path, train_image_list)

for i in range(len(train_image_list)):

print(i)

image, target = dataset.__getitem__(i)

```

This only prints indices and gets data from dataset. As the indices are printed before getting data, we know the index that makes the error when it occurs.

I ran the code.

And finally I found it.

Then I checked the data.

I see. This bounding box shows the area out of the image. The resizing method I'm using (Albumentations) only deals with the bounding box within the area, and it makes the error if the box goes out. In that case, we can avoid it by replacing negative coordinates by 0.

```python

x = max(0, int(round(bounding_box['x'])))

```

After the correction, the programme is working well, and now it exceeds 50% of epoch 1, so it should be fine.

The learning point today is we should check the original data with our eyes before executing time-consuming programme. We should imagine what values the original data has and write a code so that it eliminates potentially error-making data or switch processes. Data should be checked with our eyes.

| nekot0 |

1,309,839 | Block Cipher Vs Stream Cipher – the Difference Explained | Get An Overview and Head-to-Head Comparison of Block Cipher Vs Stream Cipher! No matter if you’re... | 0 | 2022-12-27T10:02:05 | https://dev.to/me_jessicahowe/block-cipher-vs-stream-cipher-the-difference-explained-268b | Get An Overview and Head-to-Head Comparison of Block Cipher Vs Stream Cipher!

No matter if you’re using the internet for banking or for sending a message on WhatsApp, encryption has a role to play everywhere. For example, whenever you enter your credentials on the web, they are shared across the internet in an encrypted form. It ensures security. Ciphers responsible for keeping your data safe are of two types, namely Block Cipher and Stream Cipher. Now, what are they, and how are they different? Let’s read ahead and compare Block Cipher vs Stream Cipher.

Both of these Ciphers are a part of symmetric encryption.

[Symmetric encryption](https://cheapsslweb.com/blog/symmetric-encryption-vs-asymmetric-encryption) is a process where the same key is used to encrypt and decrypt the data. Some of the best examples of symmetric encryption are Blowfish, AES, DES, RC6, etc.

Here is how stream ciphers and block ciphers are used in symmetric encryption!

Difference Between Block Cipher and Stream Cipher Explained

Before we jump into the comparison of block vs stream cipher, let’s analyze them individually!

## What is Block Ciphers?

As the name suggests, the block ciphers are used to encrypt the data and return an output of a fixed block size. It means that no matter what is the size of the input, the resultant ciphertext will be of fixed block size. The size of the ciphertext is usually in octaves (the multiples of 8). However, it also depends on the encryption scheme used in the ciphering method.

## Here is Examples of Block Cipher

If you have a plain text of 148 bits, but the encryption scheme used is 128-bit encryption, the remaining 20 bits will be adjusted in the second block. As there will be space for 108 more bits, the encryption scheme will add padding of 0s or 1s or both to complete the ciphertext block.

Now, as we know that ciphertexts are of fixed blocks, and they are encrypted. But how?

Block ciphers use symmetric keys and algorithms to encrypt sensitive data. As they can be used for various modes, the complexity of encryption can vary. For example, the blocks are chained together in some modes.

## Here is the process of how chained block ciphers work!

In this process, a pseudorandom sequence of characters is used to encrypt the first block of characters. This random sequence of characters is termed as Initialization Vector (IV). After obtaining the first block cipher, it is used as an IV for the next block. This process is followed for each block, and it thus produces a unique ciphertext each time.

The core benefit of a block cipher is that it is tamper-proof, which means that no one can crack it. As the IV used for each iteration is different and dependent on the previous block, the cipher has high diffusion.

Though it is highly unlikely, a change of one character can lead to a massive change in the cipher. Hence, the block cipher also has a higher error propagation rate.

What are the different techniques of block ciphers cryptography

Some of the best examples of block ciphers are:

- DES (Data Encryption Standard)

- AES (Advanced Encryption Standard)

- Twofish

- Blowfish

- RC5

- Triple-DES

## Modes of Operation for Block Ciphers

As we have teased the modes of block ciphers above, let’s explore them properly here! The modes of block ciphers are divided into two major categories, namely.

## Confidentiality-only modes

There are three types under this!

1. Electronic Codebook: The ECB method splits the plaintext into various blocks. Each block is applied with encryption separately. As the pattern is not hidden in this case, it cannot be used in security frameworks.

2. Cipher block chaining mode (CBC: As explained above, the output ciphertext of one block is used as an initialization vector for the following block with plaintext input using the XOR function.

3. Propagating cipher block chaining (PCBC): This method involves XOR of current plaintext, previous plaintext, and previous ciphertext.

Authenticated encryption with additional data operation modes

There are two categories under this!

1. Galois Counter mode: In this mode, an incremental counter is used to generate a universal hash that generates message authentication codes before encryption and decryption.

2. Synthetic initialization vector (SIV): The SIV block cipher uses a plaintext input, an encryption key, and a header to achieve authenticated encryption. The header used is an authenticated variable-length octet string. SIV keeps the plaintext private while keeping the authenticity of the header and encryption key intact.

## What is Stream Ciphers?

Just like its name, the stream cipher encrypts the data in a continuous stream. Unlike block ciphers, bit-by-bit encryption happens in Stream ciphers. How?

## Here is the Stream Cipher uses and process!

The input plaintext is not arranged in blocks before encryption, but it is directly encrypted bit by bit. The encryption process uses keystreams that are generated by combining an encryption key and a seed, called the nonce. The combination of the two produces a pseudorandom number, a keystream that is XORed (exclusive disjunction) with the plaintext to make the ciphertext.

Now, the encryption key used here can be 128 or 256 bits, and nonce can be 64 or 128 bits. The keystream used to produce ciphertext has to be unique, but the encryption key and nonce can be reused. As it may seem tough, the process uses feedback shift registers that generate a unique seed or nonce every time. This nonce is only used one time to create the keystream.

Now, the major benefit of stream ciphers is that they are less likely to experience system-wide propagation errors. How? As each bit is encrypted individually, it will not affect the ciphertext of the entire string, hence, fewer chances of errors. Moreover, as stream ciphers happen in a continuous stream, they are faster and easy to implement.

As each bit is converted to ciphertext, stream ciphers do not have diffusion. Hence, hackers can easily break them. They can easily add or manipulate a message without being noticed.

Stream ciphers are ideal for scenarios where the amount of plain text is more and cannot be determined.

Some of the best examples of Stream Ciphers are

- A5

- ChaCha40

- Salsa20

- RC4

- Stream Ciphers Examples

The stream ciphers are primarily of two types!

1. Synchronous Stream Ciphers: These types of ciphertexts are generated without the use of keystreams that are not generated using previous ciphertexts or plaintexts. For each ciphertext, a unique pseudorandom number is used to make the keystream. This keystream is further XORed with the plaintext to generate the ciphertext.

2. Asynchronous Stream Ciphers: In this cipher, the keystream is generated using the synchronous key and fixed size of the previous ciphertext. As the contents of the ciphertext are affected, the respective keystream is also affected. This cipher can be used to detect active attacks and further limit error propagation.

Differentiate Stream Cipher and Block Cipher using a suitable example. – How Do They Vary?

The block cipher vs stream cipher comparison is easy to make as we know what they are! So, let’s get started! Here is a quick tabular comparison of both the ciphers!

One of the prime and visible differences between block vs stream cipher is bit conversion. How? Well, the block conversion method requires the block of plaintext that needs to be accumulated first and then converted to ciphertext. On the other hand, the stream cipher converts quickly as plaintext is encrypted one bit at a time. Thus, making it continuous and fast.

As the devices today are fast, the time difference may not be that significant.

## Security

If we compare the ciphers based on security, the strength of the key determines the power of a cipher. However, out of both of these ciphers, block ciphers can be used to provide authenticated encryption, not stream cipher. So, a block cipher is better. Stream cipher only uses the confusion principle to encrypt data, so it is better only in terms of maintaining confidentiality.

## Reversibility

It is obvious that if the ciphering takes more time to encrypt, the decryption time will also be more. Stream-based encryption can be easily reversed by XORing their outputs. But, in the case of block cipher, the bits encrypted are more. Hence, the reversibility, in this case, is slow.

## Resources

This is where the stream ciphers outshine from block ciphers. As block ciphers require complex calculations as compared to stream ciphers, they require relatively more resources.

## Redundancy

Redundancy is one of the critical factors. If the block cipher does not find exact octaves in the input plaintext, it adds padding bits to complete the block. Though it is necessary to produce the ciphertext, it also increases the redundancy of plain text.

However, stream ciphers do not have any redundancies as it encrypts bit by bit.

## Application area

As block cipher provides authenticated encryption, it is best for almost any type of application where encryption is necessary. In contrast, the stream cipher is used only for certain applications like data-in-transit encryption.

So, that’s all about Block Cipher Vs Stream Cipher.

## Wrapping Up!

So, we know that encryption is the wall that stands between your sensitive data and the hackers. And the warriors that take care of this wall are the block and stream ciphers. Block ciphers are powerful in terms of security but slow. On the other hand, the stream ciphers have a limited scope of application but are faster than block ciphers.

We hope this article was fruitful and you enjoyed reading it! | me_jessicahowe | |

1,314,775 | Creating Your Own Personalized Avatars with AI | Have you ever wanted to create your own personalized avatars from photos, but found the process to... | 0 | 2023-01-02T08:03:40 | https://dev.to/geocine/creating-your-own-personalized-avatars-with-ai-30c6 | ai, machinelearning, tutorial, deeplearning |

Have you ever wanted to create your own personalized avatars from photos, but found the process to be difficult or expensive? With the advent of artificial intelligence, it has become easier than ever to create unique and striking images using your own photos as a reference.

I have created a Google Colab tool that allows you to do just that, and it's completely free to use with just a Google account. This tool is based on the latest AI technology and allows you to train an AI text-to-image generator called Stable Diffusion.

To get started, simply upload your photos and follow the prompts to begin training the model. You can adjust the settings to fine-tune the model's performance and improve the accuracy of the generated images. Once you are satisfied with the results, you can save the model to your Google Drive and use it to generate images anytime.

This is a great opportunity to explore the capabilities of AI and see how it can be used to create novel and striking images. Give it a try and see what you can create!

Link to Google Colab below 👇🏼

https://colab.research.google.com/github/geocine/sd-easy-mode/blob/main/EasyMode_Stable_Diffusion.ipynb | geocine |

1,316,163 | Swift's POP (Protocol Oriented Programming) paradigm in rea life | Introduction Imagine we want to implement a sort of postal system, where messages can be... | 0 | 2023-01-03T14:19:43 | https://dev.to/stoiandan/swifts-pop-protocol-oriented-programming-paradigm-in-rea-life-46kj | swift, pop | ## Introduction

Imagine we want to implement a sort of postal system, where messages can be _sent_ from _someone_ to the post office; where it will be accordingly _dispatched_ to the right destination.

## Beginning

We could go ahead and define a simple _data model_, that represents a _message_.

This entity holds the _actual_ message (we'll call it _content_), and some meta-data, i.e. data about the message itself.

Since we want this message to be _immutable_ and we don't want _shared references_ of it, we can make it a _struct_, as opposed to a class:

```swift

struct Message {

// the meta-data; who is sending this message

let sender: String

// also meta-data; the receiver of the message

let to: String

// the actual content of the message

let content: String

}

```

## The Dispatcher

Now that we have a data model, which will be a shared entity between _sender_ (a person) and _dispatcher_ (the postal office).

We can go ahead and imagine our _dispatcher_:

```swift

class Dispatcher {

public func dispatch(_ message: Message) {

print("Sending \(message.content) to \(message.to) from \(message.from)")

}

}

```

## The Sender

Finally let's imagine the person sending the message:

```swift

class Person {

private unowned let dispatcher: Dispatcher

let name: String

init(dispatcher: Dispatcher, ) {

self.dispatcher = dispatcher

self.name = name

}

public func sendMessage(of message: Message) {

dispacher.dispatch(message)

}

}

```

## Example

This looks ok. Let's see how it will look in practice:

```swift

let dispatcher = Dispatcher()

let helvidius = Person(dispatcher: dispatcher, name: "Helvidius")

let jovinian = Person(dispatcher: dispatcher, name: "Jovinian")