id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

201,732 | js typewriter | A post by weptim | 0 | 2019-11-07T10:00:55 | https://dev.to/weptim/js-typewriter-3a4f | 100daysofcode | {% codepen https://codepen.io/weptim/pen/dyyeVQx %} | weptim |

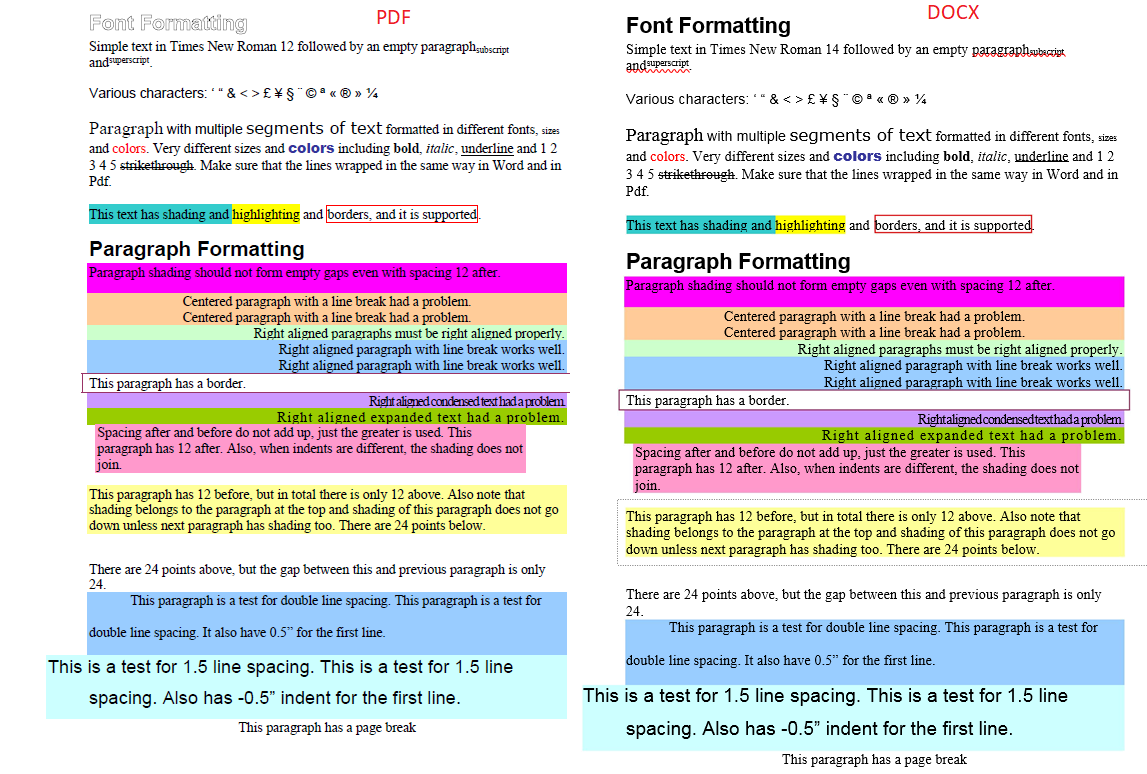

201,823 | Convert PDF to Editable DOCX with Python | While working with document conversion feature, you came across a requirement to convert PDF to DOCX.... | 0 | 2019-11-07T12:56:18 | https://blog.groupdocs.cloud/2019/11/06/convert-pdf-to-editable-word-document-with-python-sdk/ | python, pdftodocx, documentconversion, restapi | While working on a document conversion feature, you may come across a requirement to convert PDF to DOCX. For this purpose, I would like to introduce the GroupDocs.Conversion Cloud SDK for Python. It can also convert all popular industry-standard document formats from one to another without depending on any third-party tool or software.

To convert PDF to DOCX in Python, follow these steps:

* Before we begin with coding, sign up with [groupdocs.cloud](https://docs.groupdocs.cloud/display/gdtotalcloud/Creating+and+Managing+Account) to get your APP SID and APP Key.

* Install groupdocs-conversion-cloud package from [pypi](https://pypi.org/project/groupdocs-conversion-cloud/) with the following command.

```pip install groupdocs-conversion-cloud```

* Open your favorite editor and copy and paste the following code into a script file. The code performs these steps:

1. Import the GroupDocs.Conversion Cloud Python package

2. Initialize the API

3. Upload source PDF document to GroupDocs default storage

4. Convert the PDF document to editable DOCX

```python
# Import module
import groupdocs_conversion_cloud

# Get your app_sid and app_key at https://dashboard.groupdocs.cloud (free registration is required).
app_sid = "xxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxx"
app_key = "xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx"

# Create instances of the API
convert_api = groupdocs_conversion_cloud.ConvertApi.from_keys(app_sid, app_key)
file_api = groupdocs_conversion_cloud.FileApi.from_keys(app_sid, app_key)

try:
    # Upload the source file to storage
    filename = 'Sample.pdf'
    remote_name = 'Sample.pdf'
    output_name = 'sample.docx'
    strformat = 'docx'

    request_upload = groupdocs_conversion_cloud.UploadFileRequest(remote_name, filename)
    response_upload = file_api.upload_file(request_upload)

    # Convert the PDF to a Word document
    settings = groupdocs_conversion_cloud.ConvertSettings()
    settings.file_path = remote_name
    settings.format = strformat
    settings.output_path = output_name

    # Options applied while loading the source PDF
    loadOptions = groupdocs_conversion_cloud.PdfLoadOptions()
    loadOptions.hide_pdf_annotations = True
    loadOptions.remove_embedded_files = False
    loadOptions.flatten_all_fields = True
    settings.load_options = loadOptions

    # Options applied to the DOCX output (convert only the first page)
    convertOptions = groupdocs_conversion_cloud.DocxConvertOptions()
    convertOptions.from_page = 1
    convertOptions.pages_count = 1
    settings.convert_options = convertOptions

    request = groupdocs_conversion_cloud.ConvertDocumentRequest(settings)
    response = convert_api.convert_document(request)

    print("Document converted successfully: " + str(response))

except groupdocs_conversion_cloud.ApiException as e:
    print("Exception when calling convert_document: {0}".format(e.message))
```

* And that’s it. The PDF document is converted to DOCX, and the API response includes the URL of the resulting document. [Read more](https://blog.groupdocs.cloud/2019/11/06/convert-pdf-to-editable-word-document-with-python-sdk/).

| tilalahmad |

201,881 | Day 5 of⚡️ #30DaysOfWebPerf ⚡️: Your laptop is a filthy liar | Sia Karamalegos @thegree... | 3,017 | 2019-11-07T14:32:24 | https://sia.codes/posts/30-days-web-perf-5/ | webperf, devtools, webpagetest, webdev | {% twitter 1192448463717437440 %}

{% twitter 1192448470419943429 %}

{% twitter 1192448472202518531 %}

{% twitter 1192448473196613633 %}

{% twitter 1192448474379423744 %}

| thegreengreek |

201,922 | Don't throw away your old MBP, upgrade it!. | I have a Mid-2010 MBP that my kids use for homework, youtube and "stuff". Back then Apple allowed yo... | 0 | 2019-11-07T16:00:39 | https://dev.to/hminaya/don-t-throw-away-your-old-mbp-upgrade-it-1ld0 | hardware, mac, beginners, upgrade | I have a Mid-2010 MBP that my kids use for homework, youtube and "stuff".

Back then Apple allowed you to swap out some of the internal components (HDD, RAM, Battery) and perform upgrades. If you still have one of these MBPs, it's still worth doing some small upgrades to get some more life out of it.

So far I've done the following 👇

🧠 Upgraded RAM from 4GB to 16GB!

💾 Swapped out the 250 GB HDD for a 512GB Samsung EVO SSD

🔋 Replaced the original battery

The difference in terms of performance is huge; an SSD and 16GB of RAM are worth it!

Best of all, it's very simple to do: just open up the bottom cover and swap the parts, just like old times!

If you need some guides to walk you through it, head over to [iFixit](https://www.ifixit.com/Device/MacBook_Pro); they cover pretty much everything.

For parts I usually compare between [MacSales](https://www.macsales.com/) and Amazon. Getting quality parts through MacSales is usually better and not that expensive. | hminaya |

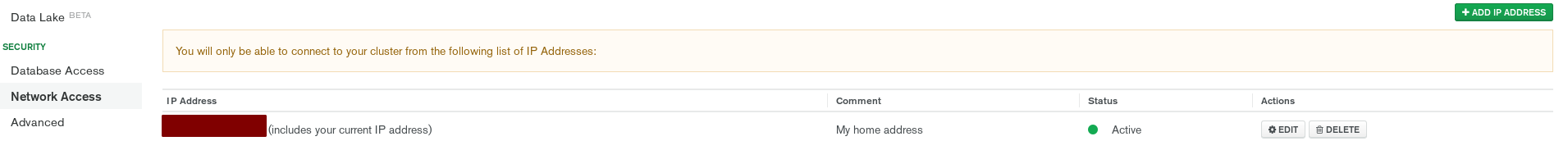

884,640 | How I Manage My Knowledge | This post is originally from my blog. This is an overview of the tools and software I use to... | 0 | 2021-11-01T21:16:18 | https://dev.to/uzayg/how-i-manage-my-knowledge-1cgp | automation, python, productivity, git | This post is originally from my [blog](https://www.uzpg.me/general/2021/07/20/my-knowledge-process.html).

This is an overview of the tools and software I use to maintain an index of my knowledge and life in an efficient and shareable way.

# Tech

The main knowledge base program I use to save and gather information is [Archivy](https://archivy.github.io), an open source project I created that supports hierarchical and bidirectional notes, local bookmarking (downloads the webpages you want) and is highly extensible.

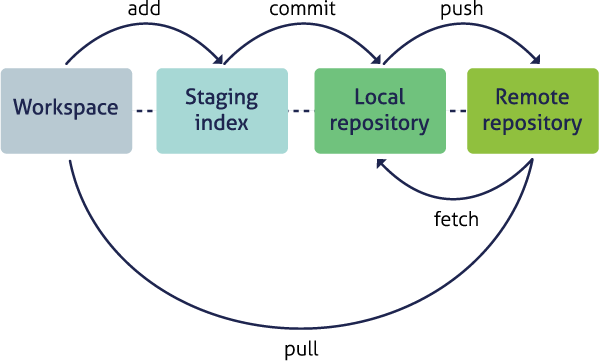

I run a local instance of this program on my computer, through which I edit / manage my knowledge, simply opening a new browser tab or vim when I have content to write. All my data is stored as markdown files in a local git repository that I push to a private GitHub repo for backup / access on my phone.

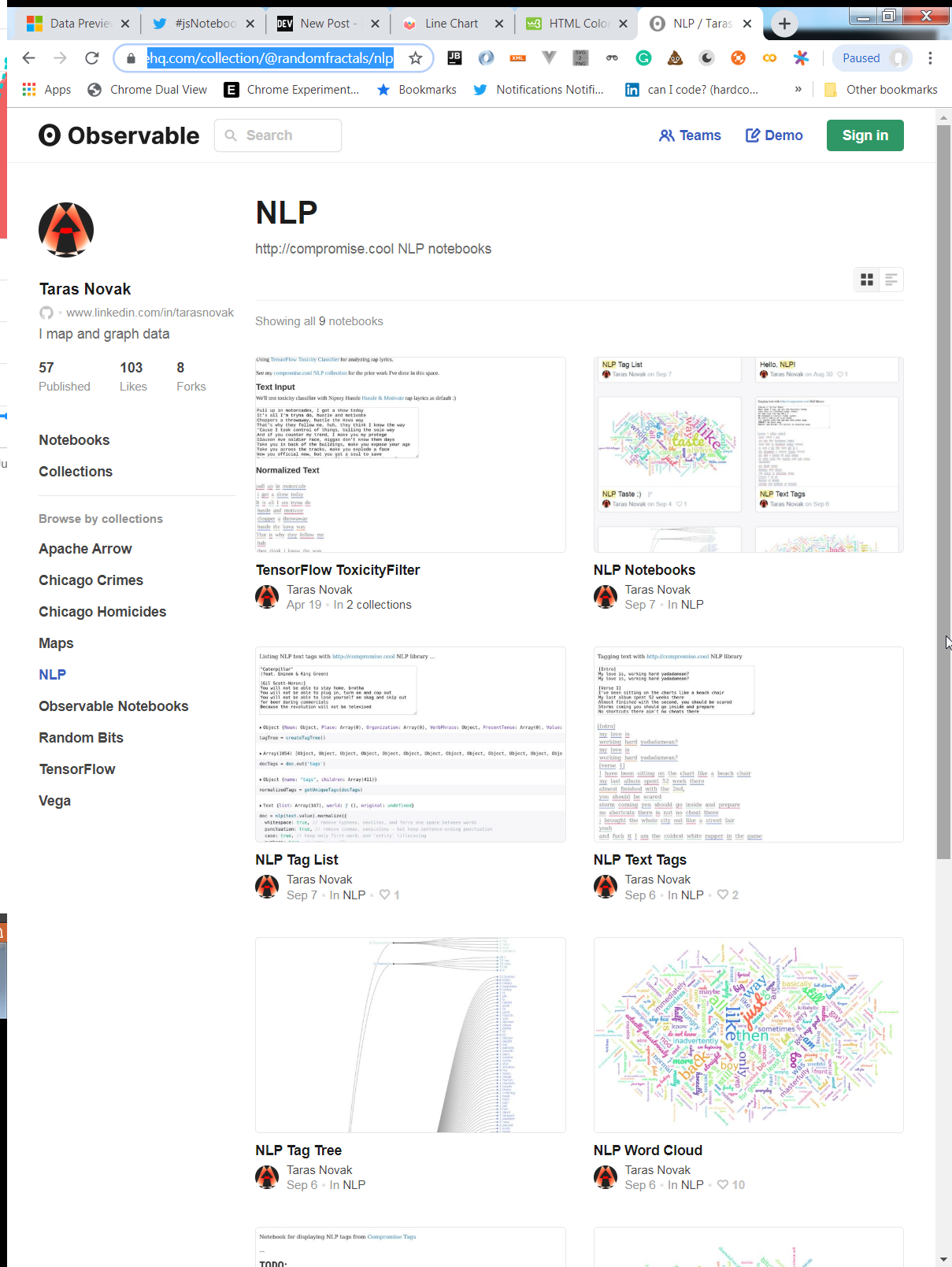

I wrote a [plugin](https://github.com/archivy/archivy-static-site-generator) to turn user Archivy knowledge bases into static HTML websites so that users can share their knowledge bases online. Mine is hosted at [knowledge.uzpg.me](https://knowledge.uzpg.me).

The git setup I have makes it so that whenever I push my changes and mark new files as publicly visible, this website is updated.

This setup allows for a few advantages:

- **extensibility** - the software I use is very flexible, so it's very easy for me to script / set up infrastructure around my knowledge base. This is possible using tools like Archivy [plugins](https://github.com/archivy/awesome-archivy) and [APIs](https://archivy.github.io/reference/architecture/).

- **ease of sharing** - this extensibility gives me many ways to share my knowledge base, and also to distribute the extensions / scripts I use to organize it. My knowledge base can then also have value for other people, and I can directly send my notes, on top of having it act as a personal knowledge repository.

- **ease of access** - I can access my knowledge whenever I want, on any device, through github / my public static site.

# Content

The actual content I save into my knowledge base can be divided into multiple types.

## Notes

Notes are a very important part of my knowledge base, as they are useful for retention and helpful for going back over things I've learned. My knowledge base is constantly compounding with new information that I can link together.

Whenever I read a non-fiction book I always try to highlight and annotate it. Then, once I'm done I come back to it and try to synthesize its main ideas. Although this process is long, it helps me really make sure I understand all the core ideas of the work and have a way to review its essential message. Examples: [The Selfish Gene](https://knowledge.uzpg.me/dataobj/1214/) or [The Theoretical Minimum](https://knowledge.uzpg.me/dataobj/1913/).

I also keep notes on courses or talks that I have attended, often related to STEM. For these I use embedded LaTeX and screenshots of course material. [Example](https://knowledge.uzpg.me/dataobj/1923/)

## Miscellaneous lists

This part of my knowledge base acts as somewhat of a personal record, or journal of the things I've done and the content I've consumed.

Indeed, I find it useful to compile collections of miscellaneous content I appreciated. It helps me keep track of what I've done and when I did it. This is a form of **bookmarking** generalized beyond just links. For example, the lists of [the books I've read](https://knowledge.uzpg.me/dirs/books/), [words I like](https://knowledge.uzpg.me/dataobj/153/), [quotes](https://knowledge.uzpg.me/dataobj/1611/), [poetry](https://knowledge.uzpg.me/dataobj/1925/) or [articles I saved](https://knowledge.uzpg.me/dataobj/1849/). I gradually add to these whenever I find something relevant.

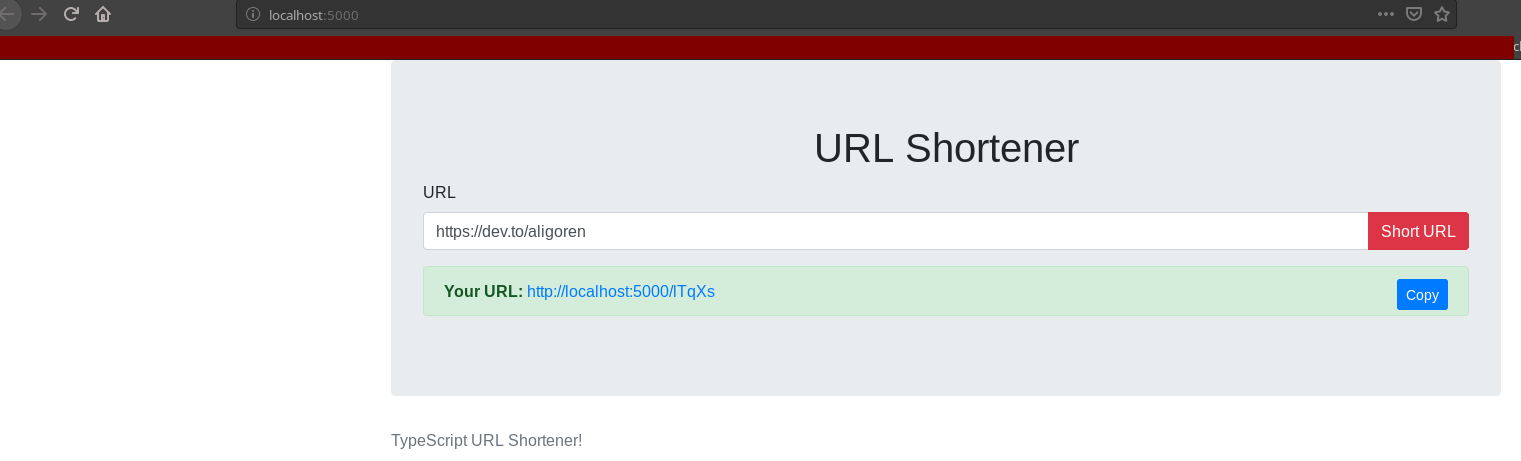

I also jot down lists of events or activities I participated in and would like to keep a digital reference of, in the form of a journal. I'm very fond of cyber-security for example, and when I do a cyber-security competition (CTF) I enjoy saving my opinion of the event and [its challenges](http://localhost:5000/dataobj/318).

## Web Content

All of these different types of content benefit from links to articles as references. One of the core features of Archivy is its ability to download / store web content locally. To this effect, I often use Archivy's functionality to download relevant articles or webpages that I then link inside my notes. This also ensures the content survives [link rot](https://en.wikipedia.org/wiki/Link_rot).

I can also use existing scripts to quickly download content from my online accounts, like [my pocket account](https://github.com/archivy/archivy-pocket) or [my Hacker News posts](https://github.com/archivy/archivy-hn).

## General Documents

I also keep many standalone documents that I'd like to have backed up in my knowledge base: for example, school material that I can then share with classmates, or the [poetry I write infrequently](https://knowledge.uzpg.me/dirs/poetry/).

# Conclusion

This process allows me to quickly search and navigate all the things I've learned / done / appreciated. I can explore and handle this content manually or programmatically through scripting.

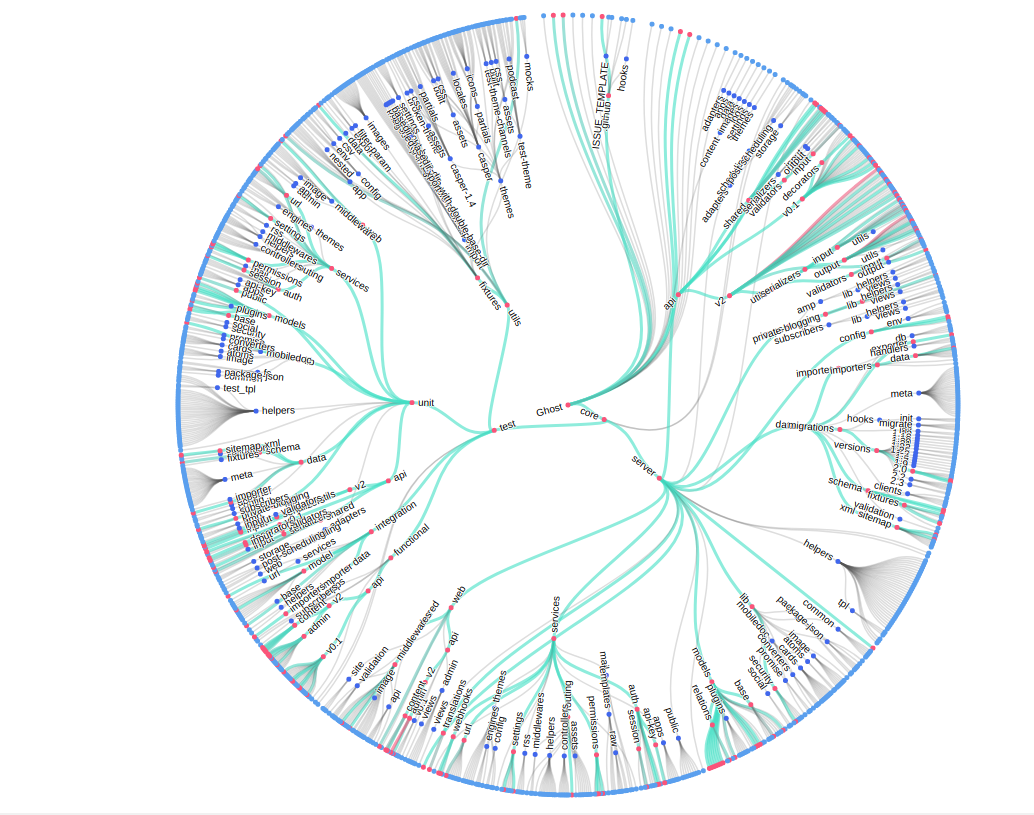

This way of interfacing helps me access my knowledge base efficiently and share without any hassle. I'm very satisfied with my setup but plan on adding more features to the software I use, including a graph view of links similar to Obsidian's, and better tagging.

I also think there are many interesting plugin ideas I could develop to script with my content, like a script that uses Natural Language Processing to generate spaced repetition quizzes on your knowledge base.

I plan on implementing ML integrations to have automated suggestions of tags and links between notes, something I'm really excited about too!

| uzayg |

202,140 | What is cloud computing, benefits and services? | Cloud computing provides a simple way to access servers, storage, databases and a broad set of applic... | 0 | 2019-11-08T03:28:36 | https://dev.to/siddharthr0318/what-is-cloud-computing-benefits-and-services-22ao | aws, linux, cloudcomputing | Cloud computing provides a simple way to access servers, storage, databases and a broad set of application services over the Internet.

Benefits of Cloud Computing:

Flexibility

Pay per service

Security

Environmentally Friendly

Disaster Recovery

Read More: https://realprogrammer.in/what-is-cloud-computing-benefits-and-services/ | siddharthr0318 |

202,166 | What is Big-O Notation? Understand Time and Space Complexity in JavaScript. | As we know, there may be more than one solution to any problem. But it is hard to define, what is the... | 0 | 2020-01-10T08:43:05 | https://dev.to/chandra/what-is-big-o-notation-understand-time-and-space-complexity-in-javascript-4684 | javascript, productivity, bigonotation, algorithms | As we know, there may be more than one solution to any problem, but it is hard to decide which approach is the best for solving a given programming problem.

Writing an algorithm that solves a given problem gets more difficult when we need to handle a large amount of data. Every line of code we write matters.

There are two main complexities that can help us choose the best way of writing an efficient algorithm:

### 1. Time Complexity - The time the algorithm takes to run

### 2. Space Complexity - The total space or memory the algorithm uses

When we write an algorithm, we give our machine instructions to do some tasks, and the machine needs some time to complete each task. Yes, that time is very small, but it still adds up. So the question arises: does time really matter?

Let's take an example. Suppose you search for something on Google and it takes about 2 minutes to return a result. Generally this never happens, but if it did, what do you think would be going on in the back-end? Developers at Google understand time complexity, and they try to write smart algorithms that take the least time to execute and return the result as fast as they can.

So here is the challenge: how can we define time complexity?

## What is Time Complexity?

It quantifies the amount of time taken by an algorithm to run as a function of the size of its input. We can understand the difference in time complexity with an example.

*Suppose you need to create a function that takes a number and returns the sum of all numbers from 1 up to that number.*

*E.g. `addUpTo(10);`*

*should return the sum of the numbers 1 to 10, i.e. 1 + 2 + 3 + 4 + 5 + 6 + 7 + 8 + 9 + 10.*

We can write it this way:

```javascript
function addUpTo(n) {
  let total = 0;
  for (let i = 1; i <= n; i++) {
    total += i;
  }
  return total;
}

addUpTo(5); // it will take less time
addUpTo(1000); // it will take more time
```

Now you can understand why the same function takes a different amount of time for different inputs. This happens because the loop inside the function runs according to the size of the input. If the argument passed is 5, the loop runs five times, but if the input is 1000 or 10,000, the loop runs that many times.
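To make this visible, here is a small sketch that counts the loop iterations instead of measuring time. The helper name `addUpToCounted` is my own and only used for illustration; it is the same loop as above with a counter added.

```javascript
// Hypothetical helper for illustration: same loop as addUpTo,
// but it also counts how many times the loop body runs.
function addUpToCounted(n) {
  let total = 0;
  let iterations = 0;
  for (let i = 1; i <= n; i++) {
    total += i;
    iterations++; // one unit of work per loop pass
  }
  return { total, iterations };
}

console.log(addUpToCounted(5));    // { total: 15, iterations: 5 }
console.log(addUpToCounted(1000)); // { total: 500500, iterations: 1000 }
```

The iteration count is always equal to the input, which is exactly what "the work grows with the input" means.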

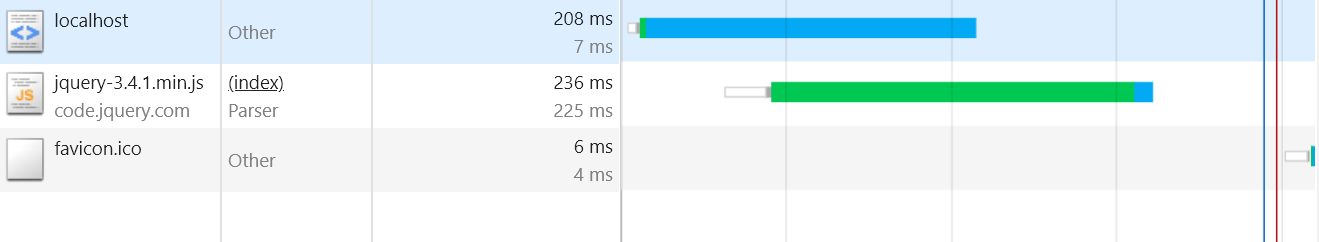

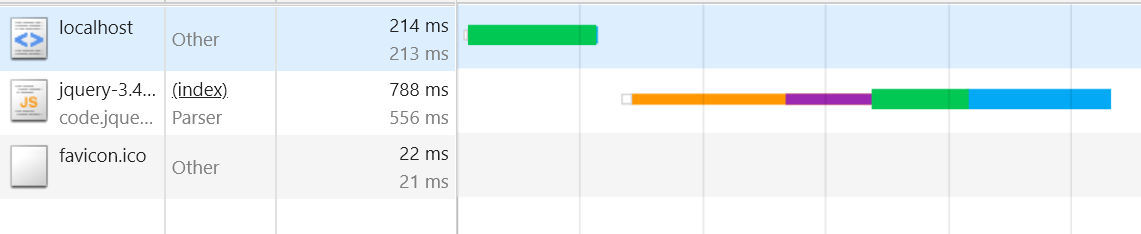

But there is a problem: different machines record different timings for the same code. The processor in my machine is different from the one in yours, and the same goes for every other user.

## So, how can we measure this time complexity?

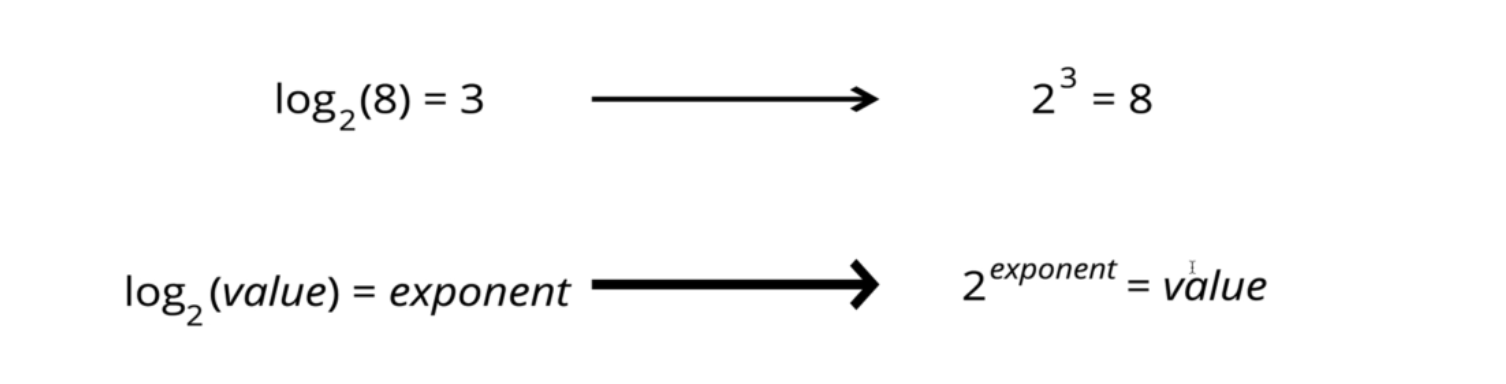

Here, Big O notation helps us solve this problem. According to Wikipedia, *Big O Notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. The letter O is used because the growth rate of a function is also referred to as the order of the function.*

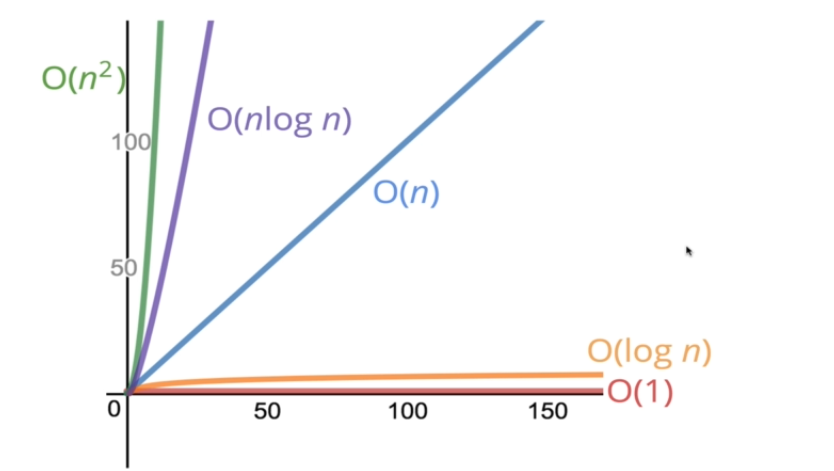

According to Big O notation, we can express time complexities like this:

1. If the complexity grows linearly with the input, it is O(n). _Here 'n' is the size of the input, which determines the number of operations the algorithm has to perform._

2. If the complexity stays constant regardless of the input, it is O(1).

3. If the complexity grows quadratically with the input, it is O(n^2). _You can pronounce it as "O of n squared"._

4. If the complexity grows logarithmically with the input (the logarithm being the inverse of exponentiation), it is O(log n).
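As a rough sketch of the other growth rates in this list, here are three small functions. Their names are my own, chosen only for illustration: one does a fixed amount of work, one uses nested loops, and one halves its input until nothing is left.

```javascript
// O(1): the arithmetic-series formula gives the same sum as addUpTo(n)
// with a fixed number of operations, whatever n is.
function addUpToConstant(n) {
  return (n * (n + 1)) / 2;
}

// O(n^2): a loop inside a loop does n * n units of work.
function countOrderedPairs(n) {
  let pairs = 0;
  for (let i = 0; i < n; i++) {
    for (let j = 0; j < n; j++) {
      pairs++;
    }
  }
  return pairs;
}

// O(log n): the input is halved on every pass, so the number of passes
// grows very slowly compared to n.
function halvingSteps(n) {
  let steps = 0;
  while (n > 1) {
    n = Math.floor(n / 2);
    steps++;
  }
  return steps;
}

console.log(addUpToConstant(10));   // 55
console.log(countOrderedPairs(10)); // 100
console.log(halvingSteps(1024));    // 10
```

Notice that `addUpToConstant` returns the same result as the loop-based `addUpTo`, but it never needs more work for a bigger input.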

We can simplify these expressions as shown below. When calculating Big O notation, we ignore constant factors and lower-order terms and focus on the highest-order term, the one that dominates the running time as the input grows. So,

1. instead of O(2n) prefer O(n);

2. instead of O(5n^2) prefer O(n^2);

3. instead of O(55log n) prefer O(log n);

4. instead of O(12nlog n) prefer O(nlog n);
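One way to see why the constant can be dropped is to count operations rather than seconds. In this sketch (the function names are hypothetical), one function loops once and the other loops twice, so it does 2n units of work. Doubling the input doubles the work for both, which is why both are described as O(n).

```javascript
// n units of work: a single pass over the input size.
function countOpsOneLoop(n) {
  let ops = 0;
  for (let i = 0; i < n; i++) ops++;
  return ops;
}

// 2n units of work: two passes, one after the other.
function countOpsTwoLoops(n) {
  let ops = 0;
  for (let i = 0; i < n; i++) ops++;
  for (let i = 0; i < n; i++) ops++;
  return ops;
}

// Growth factor when the input doubles from 1000 to 2000.
const growthOne = countOpsOneLoop(2000) / countOpsOneLoop(1000);
const growthTwo = countOpsTwoLoops(2000) / countOpsTwoLoops(1000);

console.log(growthOne); // 2
console.log(growthTwo); // 2
```

The second function is slower by a fixed factor, but its growth has the same shape, and Big O only describes that shape.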

For a better understanding, let's look at some everyday algorithms that have O(n), O(n^2), and O(log n) complexities.

On Quora, Mark Gitters said,

``

O(n): buying items from a grocery list by proceeding down the list one item at a time, where “n” is the length of the list

O(n): buying items from a grocery list by walking down every aisle (now “n” is the length of the store), if we assume list-checking time is trivial compared to walking time

O(n): adding two numbers in decimal representation, where n is the number of digits in the number.

O(n^2): trying to find two puzzle pieces that fit together by trying all pairs of pieces exhaustively

O(n^2): shaking hands with everybody in the room; but this is parallelized, so each person only does O(n) work.

O(n^2): multiplying two numbers using the grade-school multiplication algorithm, where n is the number of digits.

O( log n ): work done by each participant in a phone tree that reaches N people. Total work is obviously O( n ), though.

O( log n ): finding where you left off in a book that your bookmark fell out of, by successively narrowing down the range

``

and Arav said,

"

If you meant algorithms that we use in our day to day lives when we aren't programming:

O(log n): Looking for a page in a book/word in a dictionary.

O(n): Looking for and deleting the spam emails (newsletters, promos) in unread emails.

O(n ^ 2): Arranging icons on the desktop in an order of preference (insertion or selection sort depending on the person)."
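The "looking for a page in a book" example corresponds to binary search. Below is a minimal sketch, assuming the pages are a sorted array of numbers; the `binarySearch` function and the `steps` counter are my own additions for illustration.

```javascript
// Binary search over a sorted array: each step discards half of the
// remaining range, so the number of steps grows like log2(n).
function binarySearch(sorted, target) {
  let low = 0;
  let high = sorted.length - 1;
  let steps = 0;

  while (low <= high) {
    steps++;
    const mid = Math.floor((low + high) / 2);
    if (sorted[mid] === target) {
      return { index: mid, steps };
    }
    if (sorted[mid] < target) {
      low = mid + 1; // target is in the upper half
    } else {
      high = mid - 1; // target is in the lower half
    }
  }
  return { index: -1, steps }; // not found
}

// A "book" with pages numbered 1 to 1000.
const pages = Array.from({ length: 1000 }, (_, i) => i + 1);
const result = binarySearch(pages, 737);

console.log(result.index); // 736 (page 737 sits at index 736)
console.log(result.steps <= 10); // true: at most 10 steps for 1000 pages
```

Checking every page one by one could take up to 1000 steps, while halving the range never needs more than 10 for a book of this size.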

I hope you are now familiar with these complexities.

I am not covering the whole topic in this article; I will write another one in the future.

If you have any questions or suggestions, please leave a comment or feel free to contact me.

Thanks for taking the time to read this article.

| chandra |

202,205 | Symfony on a lambda: first deployment | Easily deploy a Symfony application on AWS Lambda | 3,140 | 2019-11-08T07:27:54 | https://julesmatsounga.com/en/article/symfony-lambda-chapter-1 | php, symfony, lambda, serverless | ---

title: Symfony on a lambda: first deployment

published: true

description: Easily deploy a Symfony application on AWS Lambda

tags: PHP, Symfony, Lambda, Serverless

series: Symfony on a lambda

canonical_url: https://julesmatsounga.com/en/article/symfony-lambda-chapter-1

---

# Symfony on a lambda: first deployment

***English isn't my first language, help me improve my posts by pointing out my mistakes***

We can run serverless applications on many platforms, and the same goes for cloud functions. As Bref only supports AWS, we will focus on this platform.

As mentioned, we will use [Bref](https://bref.sh/). It helps run PHP on AWS Lambda.

We will use it with Symfony, a popular PHP framework.

If you are lost, you can find the project on GitHub: [link](https://github.com/hyoa/symfony-on-lambda)

Each branch will match a chapter.

### Requirements

* Have the requirements to run Symfony on your computer (PHP and some extensions)

* Have an AWS account (you can create one here: [registration](https://portal.aws.amazon.com/billing/signup?nc2=h_ct&src=default&redirect_url=https%3A%2F%2Faws.amazon.com%2Fregistration-confirmation#/start)). We will stay below the free tier limit, so no worries for your wallet.

* Create an access key:

    * Create a new user [here](https://console.aws.amazon.com/iam/home?#/users$new?step=details)

    * Add a user name

    * Enable `Programmatic access`

    * Click **Attach existing policies directly**, search for **AdministratorAccess** and select it.

      Warning: it is recommended to only grant the rights that you really need. But to keep this presentation simple, we will use a full access key.

    * Finish creating the user

    * Take note of the generated keys, we will need them later

* Install serverless: `npm install -g serverless`

* Create the serverless configuration: `serverless config credentials --provider aws --key <key> --secret <secret>`, where key and secret come from the keys generated earlier

### Symfony

We now have everything we need to start our development. Let's go!

#### Installation of Symfony

In your terminal, type the following command at the root of your projects folder: `composer create-project symfony/website-skeleton [my_project_name]` (remember to replace [my_project_name] with yours)

Once the installation is done, go into the folder that contains your Symfony application.

We can now install Bref, which is required to deploy PHP on a lambda. To do so, once in the project, type `composer require bref/bref`

### Creation of the serverless.yml

This file defines the architecture that we will deploy on AWS as a **CloudFormation** stack. In this file we define the services and resources we want on AWS, along with their configuration.

To create this file, Bref gives us a command that helps initialize a project. Type `vendor/bin/bref init` and select `HTTP application`.

The command creates our **serverless.yml** file and an **index.php** file at the root of our project. You can delete **index.php**, we won't use it.

The **serverless.yml** should look like this:

```yaml
service: app #Name of your application

provider:
    name: aws #Provider used by Serverless
    region: us-east-1 #Region where you will deploy your CloudFormation
    runtime: provided

plugins:
    - ./vendor/bref/bref

functions:
    api: #Name of the function
        handler: index.php
        description: ''
        timeout: 28 # in seconds (API Gateway has a timeout of 29 seconds)
        layers:
            - ${bref:layer.php-73-fpm}
        events:
            - http: 'ANY /'
            - http: 'ANY /{proxy+}'
```

The most interesting part is **functions**, where we define the functions we need (they can be commands, APIs, crons, etc.).

* handler: the PHP file used by the lambda

* description: well, a description?

* timeout: maximum execution time of the function

* layers: layers are the environments the lambda uses to run; you can have multiple layers to add other extensions, dependencies, etc. Here, we use the layer of Bref, which runs PHP with the layer of Node ([more on layers](https://docs.aws.amazon.com/fr_fr/lambda/latest/dg/configuration-layers.html))

* events: the events that trigger the function. For this function, HTTP events trigger the lambda, but there are a lot of other AWS events that we can listen to. We will see another one later

Now that we have a better understanding of our file, let's change it to run Symfony.

```yaml
service: cloud-project

provider:
    name: aws
    region: eu-west-2
    runtime: provided
    stage: dev
    environment:
        APP_ENV: prod

plugins:
    - ./vendor/bref/bref

functions:
    website:
        handler: public/index.php
        timeout: 28
        layers:
            - ${bref:layer.php-73-fpm}
        events:
            - http: 'ANY /'
            - http: 'ANY /{proxy+}'
    console:
        handler: bin/console
        timeout: 120
        layers:
            - ${bref:layer.php-73}
            - ${bref:layer.console}
```

Not too many changes. I changed the name of the application to `cloud-project` (you can put whatever you want). I also added a stage that defines the environment being published.

In **environment** we have the environment variables used by our functions.

I also created 2 functions:

* website, which is the same as the api function we had in the file. We only changed the handler, which now targets Symfony's **index.php**.

* console, used to run Symfony commands

#### Symfony configuration

We will have to change some files in Symfony to make it work on a lambda.

The file system is **read-only** except for **/tmp**, so we have to change where the cache and logs are stored.

In **src/Kernel.php**, we need to add 2 methods:

```php
// src/Kernel.php
...
public function getLogDir(): string
{
    // LAMBDA_TASK_ROOT is only set when running on AWS Lambda
    if (getenv('LAMBDA_TASK_ROOT') !== false) {
        return '/tmp/log/';
    }

    return parent::getLogDir();
}

public function getCacheDir(): string
{
    if (getenv('LAMBDA_TASK_ROOT') !== false) {
        return '/tmp/cache/'.$this->environment;
    }

    return parent::getCacheDir();
}
```

We also need to change **index.php**.

Once deployed, a lambda that uses API Gateway gets a generated domain that ends with the deployed stage (ex: https://lamnda/dev). This can create some issues with PHP frameworks. You can easily solve this by creating a custom domain that gets rid of this suffix ([more information](https://bref.sh/docs/environment/custom-domains.html)). But to keep this presentation accessible, we won't do it.

We have to change some server variables so the Symfony routing won't break.

```php
// public/index.php
$_SERVER['SCRIPT_NAME'] = '/dev/index.php';

if (strpos($_SERVER['REQUEST_URI'], '/dev') === false) {
    $_SERVER['REQUEST_URI'] = '/dev'.$_SERVER['REQUEST_URI'];
}
```

You need to put these lines before `new Kernel(...)`. `/dev` is the stage defined in **serverless.yml**. If you create your own application, you should really set up your own domain. It can take a while (DNS propagation), but otherwise it's quite simple.

We don't need anything else. Let's code a bit.

#### Building a homepage

We will create a simple landing page so we have something to deploy. But we will keep it simple:

Create a file **HomeController.php** in **src/Controller**

```php
<?php

namespace App\Controller;

use Symfony\Bundle\FrameworkBundle\Controller\AbstractController;
use Symfony\Component\HttpFoundation\Response;
use Symfony\Component\Routing\Annotation\Route;

class HomeController extends AbstractController
{
    /**
     * @Route("/", name="home")
     */
    public function homeAction(): Response
    {
        return $this->render('home/index.html.twig');
    }
}
```

Create a file **index.html.twig** in **templates/home**

```twig

{% extends 'base.html.twig' %}

{% block body %}

<h1>Symfony and lambdas</h1>

{% endblock %}

```

Yes, it's really simple but we don't really need anything else.

To see if it's working, type: `php bin/console server:run` and go to the url displayed. You should see your page.

Now, let's deploy it!

#### Deploy in the cloud

It might disappoint you, but you just need to run `serverless deploy`. The command will package your project and create the required resources on AWS. After a few minutes, the endpoints of your function will be displayed. Use the first one, and you should see your site deployed!

You can see your **CloudFormation** on AWS [here](https://eu-west-2.console.aws.amazon.com/cloudformation/home?region=eu-west-2#/stacks?filteringText=&filteringStatus=active&viewNested=true&hideStacks=false). You can also see the resources created by selecting **Model** then **Display in Designer**.

***

We now have a Symfony application running on AWS. Of course we are only at the beginning, but we will discover more in the next chapters.

In the next chapter we will talk about assets. Because without assets, there is no CSS or JS! | hyoa |

202,291 | What's the most inefficient thing you do? | We all have weird things we do, long ways around short problems. We should probably get around to... | 0 | 2019-11-08T11:03:54 | https://dev.to/moopet/what-s-the-most-inefficient-thing-you-do-4bmh | discuss, workflow, watercooler | ---

title: What's the most inefficient thing you do?

published: true

tags: discuss, workflow, watercooler

cover_image: https://thepracticaldev.s3.amazonaws.com/i/j1egpfb1l5pamnsfseec.jpg

---

We all have weird things we do, long ways around short problems. We should probably get around to sorting them out, but we've gotten used to That Way Of Doing Them.

Sometimes we've created monster workflows that Rube Goldberg or Heath Robinson would eye suspiciously before backing away from. Sometimes we hold onto them because they're comfortable, sometimes because fixing them seems too difficult, sometimes just because we don't know any better until it's way too late to make any difference.

What do you do that fits this description? Well, what are you vaguely embarrassed to admit to, anyway?

--

Cover image by [Valentin Petkov](https://unsplash.com/@thefreak1337) on Unsplash. | moopet |

202,342 | Setting Up a Python Remote Interpreter Using Docker | Why a Remote Interpreter instead of a Virtual Environment? A well-known pattern in Python... | 0 | 2019-11-08T13:28:19 | https://dev.to/alvarocavalcanti/setting-up-a-python-remote-interpreter-using-docker-1i24 | python, pycharm, vscode, tdd | # Why a Remote Interpreter instead of a Virtual Environment?

A well-known pattern in Python (and many other languages) is to rely on virtual environment tools (`virtualenv`, `pyenv`, etc) to avoid the [SnowflakeServer](https://martinfowler.com/bliki/SnowflakeServer.html) anti-pattern. These tools create an isolated environment to install all dependencies for any given project.

But as of today there's an improvement to that pattern, which is to use Docker containers instead. Such containers provide much more flexibility than virtual environments because they are not limited to a single platform/language; instead they offer a fully isolated environment, much like a lightweight virtual machine. Not to mention the `docker-compose` tool, where one can have several containers interacting with each other.

This article will guide the reader on how to set up the two most used Python IDEs for using Docker containers as remote interpreters.

## Pre-requisites

A running Docker container with:

- A volume mounted to your source code (henceforth, `/code`)

- SSH setup

- SSH enabled for the `root:password` creds and the root user allowed to login

Refer to [this gist](https://gist.github.com/alvarocavalcanti/24a6f1470d1db724a398ea6204384f00) for the necessary Docker files.

## PyCharm Professional Edition

1. Preferences (CMD + ,) > Project Settings > Project Interpreter

1. Click on the gear icon next to the "Project Interpreter" dropdown > Add

1. Select "SSH Interpreter" > Host: localhost, Port: 9922, Username: root > Password: password > Interpreter: /usr/local/bin/python, Sync folders: Project Root -> /code, Disable "Automatically upload..."

1. Confirm the changes and wait for PyCharm to update the indexes

## Visual Studio Code

1. Install the [Python](https://marketplace.visualstudio.com/items?itemName=ms-python.python) extension

1. Install the [Remote - Containers](https://marketplace.visualstudio.com/items?itemName=ms-vscode-remote.remote-containers) extension

1. Open the Command Palette and type `Remote-Containers`, then select `Attach to Running Container...` and select the running Docker container

1. VS Code will restart and reload

1. On the `Explorer` sidebar, click the `open a folder` button and then enter `/code` (this will be loaded from the remote container)

1. On the `Extensions` sidebar, select the `Python` extension and install it on the container

1. When prompted for which interpreter to use, select `/usr/local/bin/python`

1. Open the Command Palette and type `Python: Configure Tests`, then select the `unittest` framework

## Expected Results

1. Code completion works

1. Code navigation works

1. Organize imports works

1. Import suggestions/discovery works

1. (VS Code) Tests (either classes or methods) will have a new line above their definitions, containing two actions: `Run Test | Debug Test`, and will be executed upon clicking on them

1. (PyCharm) Tests (either classes or methods) can be executed by placing the cursor on them and then using `Ctrl+Shift+R`

## Bonus: TDD Enablement

One of the key aspects of Test-Driven Development is a short feedback loop on each iteration (write a failing test, fix the test, refactor). A lot of the time a project's tooling works against this principle: it's fairly common for a project to have a way of executing its test suite, but it is also common for this task to run the entire suite, not just a single test.

But if your IDE of choice is able to execute just a single test in a matter of seconds, you will feel way more comfortable giving TDD a try.

| alvarocavalcanti |

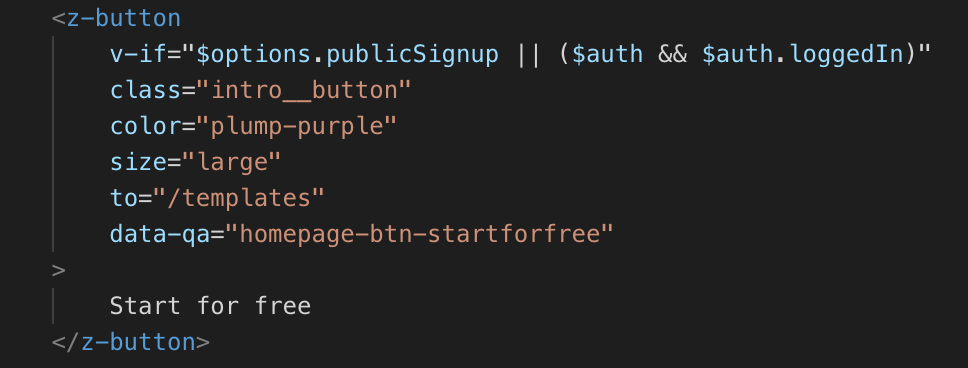

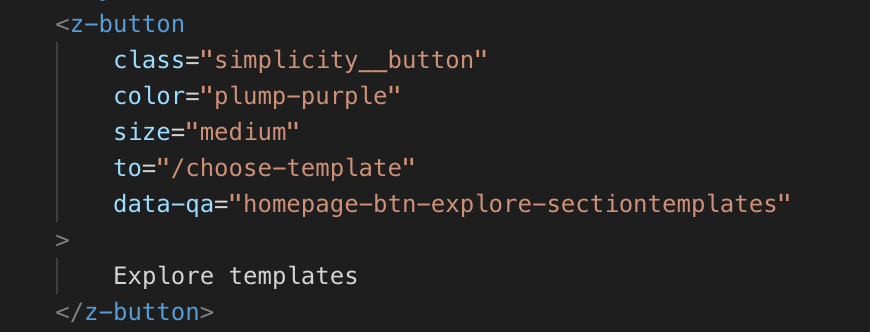

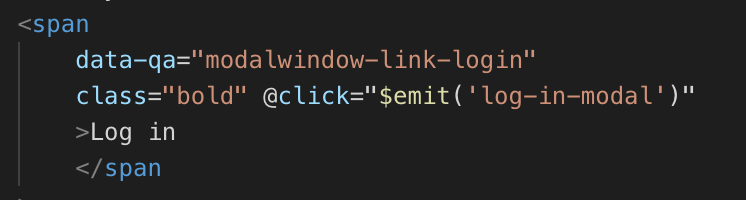

202,369 | How to build applications with Vue’s composition API | Written by Raphael Ugwu✏️ Vue’s flexible and lightweight nature makes it really awesome for... | 0 | 2019-11-10T16:53:29 | https://blog.logrocket.com/how-to-build-applications-with-vues-composition-api/ | vue, javascript, tutorial, webdev | ---

title: How to build applications with Vue’s composition API

published: true

date: 2019-11-08 14:00:31 UTC

tags: vue,javascript,tutorial,webdev

canonical_url: https://blog.logrocket.com/how-to-build-applications-with-vues-composition-api/

cover_image: https://thepracticaldev.s3.amazonaws.com/i/qjup3j93vxj4foj548zh.png

---

**Written by [Raphael Ugwu](https://blog.logrocket.com/author/raphaelugwu/)**✏️

Vue’s flexible and lightweight nature makes it really awesome for developers who quickly want to scaffold small and medium scale applications.

However, Vue’s current API has certain limitations when it comes to maintaining growing applications. This is because the API organizes code by [component options](https://012.vuejs.org/api/options.html) (Vue’s got a lot of them) instead of logical concerns.

As more component options are added and the codebase gets larger, developers could find themselves interacting with components created by other team members. That’s where things start to get really confusing, and it becomes difficult for teams to improve or change components.

Fortunately, Vue addressed this in its latest release by rolling out the [Composition API](https://vue-composition-api-rfc.netlify.com/#summary). From what I understand, it’s a function-based API that is meant to facilitate the composition of components and their maintenance as they get larger. In this blog post, we’ll take a look at how the composition API improves the way we write code and how we can use it to build highly performant web apps.


## Improving code maintainability and component reuse patterns

[Vue 2](https://vuejs.org/v2/guide/) had two major drawbacks. The first was **difficulty maintaining large components.**

Let’s say we have a component called `App.vue` in an application whose job is to handle payment for a variety of products called from an API. Our initial steps would be to list the appropriate data and functions to handle our component:

```jsx
// App.vue
<script>
import PayButton from "./components/PayButton.vue";

const productKey = "778899";
const API = `https://awesomeproductresources.com/?productkey=${productKey}`; // not real ;)

export default {
  name: "app",
  components: {
    PayButton
  },
  mounted() {
    fetch(API)
      // fetch resolves with a Response; parse the JSON body before reading it
      .then(response => response.json())
      .then(jsonResponse => {
        this.productResponse = jsonResponse.data.listings;
      })
      .catch(error => {
        console.log(error);
      });
  },
  data: function() {
    return {
      productResponse: [],
      email: "ugwuraphael@gmail.com",
      custom: {
        title: "Retail Shop",
        logo: "We are an awesome store!"
      }
    };
  },
  computed: {
    paymentReference() {
      let text = "";
      let possible =
        "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789";
      for (let i = 0; i < 10; i++)
        text += possible.charAt(Math.floor(Math.random() * possible.length));
      return text;
    }
  }
};
</script>
```

All `App.vue` does is retrieve data from an API and pass it into the `data` property while handling an imported component, `PayButton`. It doesn’t seem like much, yet we’ve already used three component options (`components`, `computed`, and `data`) plus the [`mounted()`](https://vuejs.org/v2/api/#mounted) lifecycle hook.

In the future, we’ll probably want to add more features to this component, for example, some functionality that tells us whether payment for a product was successful. To do that, we’ll have to use the `methods` component option.

Adding the `methods` component option only makes the component larger, more verbose, and less maintainable. Imagine that we had several components of an app written this way. It is definitely not the ideal kind of framework a developer would want to use.

Vue 3’s fix for this is a `setup()` method that enables us to use the composition syntax. Every piece of logic is defined as a composition function outside this method. Using the composition syntax, we would employ a separation of concerns approach and first isolate the logic that calls data from our API:

```jsx
// productApi.js
import { reactive, watch } from '@vue/composition-api';

const productKey = "778899";

export const useProductApi = () => {
  const state = reactive({
    productResponse: [],
    email: "ugwuraphael@gmail.com",
    custom: {
      title: "Retail Shop",
      logo: "We are an awesome store!"
    }
  });

  watch(() => {
    const API = `https://awesomeproductresources.com/?productkey=${productKey}`;
    fetch(API)
      .then(response => response.json())
      .then(jsonResponse => {
        state.productResponse = jsonResponse.data.listings;
      })
      .catch(error => {
        console.log(error);
      });
  });

  return state;
};
```

Then when we need to call the API in `App.vue`, we’ll import `useProductApi` and define the rest of the component like this:

```jsx
// App.vue
<script>
import { useProductApi } from './productApi';
import PayButton from "./components/PayButton.vue";

export default {
  name: 'app',
  components: {
    PayButton
  },
  setup() {
    const state = useProductApi();
    return {
      state
    }
  }
}

function paymentReference() {
  let text = "";
  let possible =
    "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789";
  for (let i = 0; i < 10; i++)
    text += possible.charAt(Math.floor(Math.random() * possible.length));
  return text;
}
</script>
```

It’s important to note that this doesn’t mean our app will have fewer components. We’ll still have the same number of components; they’ll just use fewer component options and be a bit more organized.

[Vue 2](https://vuejs.org/v2/guide/)’s second drawback was an inefficient component reuse pattern.

The way to reuse functionality or logic in a Vue component is to put it in a [mixin](https://vuejs.org/v2/guide/mixins.html) or [scoped slot](https://vuejs.org/v2/guide/components-slots.html). Let’s say we still have to feed our app certain data that will be reused. To do that, let’s create a mixin and insert this data:

```jsx
<script>
const storeOwnerMixin = {
  data() {
    return {
      name: 'RC Ugwu',
      subscription: 'Premium'
    }
  }
}

export default {
  mixins: [storeOwnerMixin]
}
</script>
```

This is great for small-scale applications. But as with the first drawback, once the project gets larger and we need more mixins to handle other kinds of data, we could run into issues such as name conflicts and implicit property additions. The composition API aims to solve all of this by letting us define whatever function we need in a separate JavaScript file:

```jsx
// storeOwner.js
export default function storeOwner(name, subscription) {
  const object = {
    name: name,
    subscription: subscription
  };
  return object;
}
```

and then import it wherever we need it to be used like this:

```jsx
<script>
import storeOwner from './storeOwner.js'

export default {
  name: 'app',
  setup() {
    const storeOwnerData = storeOwner('RC Ugwu', 'Premium');
    return {
      storeOwnerData
    }
  }
}
</script>
```

Clearly, we can see the edge this has over mixins. Aside from using less code, it also lets you express yourself more in plain JavaScript and your codebase is much more flexible as functions can be reused more efficiently.
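One way to see how plain functions sidestep the name conflicts mentioned earlier: two functions may both return a `name` field, and the caller decides what each one is called locally. The sketch below is framework-free, and `useStoreOwner`, `useProduct`, and their data are hypothetical names made up for illustration, not part of the demo.

```javascript
// Hypothetical composition functions: both expose a `name` field.
function useStoreOwner() {
  return { name: "RC Ugwu", subscription: "Premium" };
}

function useProduct() {
  return { name: "Blue Kettle", price: 25 };
}

// The caller renames on destructuring, so nothing is overwritten silently
// and it stays obvious where every value comes from.
function setup() {
  const { name: ownerName, subscription } = useStoreOwner();
  const { name: productName, price } = useProduct();
  return { ownerName, subscription, productName, price };
}

console.log(setup());
```

With a mixin, both `name` fields would be merged into the same component and one of them would win implicitly; here the conflict is resolved explicitly at the call site.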

## Vue Composition API compared to React Hooks

Though Vue’s Composition API and React Hooks are both sets of functions used to handle state and reuse logic in components, they work in different ways. Vue’s `setup` function runs only once, when a component is created, while React Hooks run every time the component renders. For handling state, React’s basic Hook is `useState`:

```jsx
import React, { useState } from "react";

// Hooks can only be called inside a function component (or another Hook)
function Greeting() {
  const [name, setName] = useState("Mary");
  const [subscription, setSubscription] = useState("Premium");
  console.log(`Hi ${name}, you are currently on our ${subscription} plan.`);
  return null;
}
```

The composition API is quite different. It provides two functions for handling state: `ref` and `reactive`. `ref` returns an object whose inner value can be accessed through its `value` property:

```jsx
const name = ref('RC Ugwu');
const subscription = ref('Premium');

watch(() => {
  // outside the template, a ref's inner value is read through `.value`
  console.log(`Hi ${name.value}, you are currently on our ${subscription.value} plan.`);
});
```

`reactive` is a bit different. It takes an object as its input and returns a reactive proxy of it:

```jsx
const state = reactive({
  name: 'RC Ugwu',
  subscription: 'Premium',
});

watch(() => {
  console.log(`Hi ${state.name}, you are currently on our ${state.subscription} plan.`);
});
```
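To make the difference between the two more concrete, here is a toy model in plain JavaScript. It is not how Vue implements either function: `toyRef`, `toyReactive`, and the `onChange` callback are made-up names, and the callback only stands in for Vue's dependency tracking.

```javascript
// Toy stand-in for `ref`: a wrapper object whose inner value lives on `.value`.
function toyRef(initial, onChange) {
  let inner = initial;
  return {
    get value() {
      return inner;
    },
    set value(next) {
      inner = next;
      onChange("value", next);
    }
  };
}

// Toy stand-in for `reactive`: a Proxy that reports every property write.
function toyReactive(target, onChange) {
  return new Proxy(target, {
    set(obj, key, next) {
      obj[key] = next;
      onChange(key, next);
      return true; // tells the runtime the assignment succeeded
    }
  });
}

const changes = [];
const record = (key, next) => changes.push(`${key} -> ${next}`);

const ownerName = toyRef("RC Ugwu", record);
const account = toyReactive({ subscription: "Premium" }, record);

ownerName.value = "Mary";       // refs are read and written through `.value`
account.subscription = "Basic"; // reactive objects are used like plain objects

console.log(changes); // [ 'value -> Mary', 'subscription -> Basic' ]
```

The practical takeaway is the access pattern: a single value wrapped by `ref` always goes through `.value` in script code, while an object wrapped by `reactive` keeps its ordinary property syntax.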

Vue’s Composition API is similar to React Hooks in a lot of ways, although the latter has more popularity and support in the community for now. It will be interesting to see if composition functions can catch up with Hooks. You may want to check out this [detailed post](https://dev.to/voluntadpear/comparing-react-hooks-with-vue-composition-api-4b32) by Guillermo Peralta Scura to find out more about how the two compare.

## Building applications with the Composition API

To see how the composition API can further be used, let’s create an image gallery out of pure composition functions. For data, we’ll use [Unsplash’s API](https://unsplash.com/developers). You will want to sign up and get an API key to follow along with this. Our first step is to create a project folder using Vue’s CLI:

```bash
# install Vue's CLI
npm install -g @vue/cli

# create a project folder
vue create vue-image-app

# navigate to the newly created project folder
cd vue-image-app

# install axios for the purpose of handling the API call
npm install axios

# run the app in a development environment
npm run serve
```

When our installation is complete, we should have a project folder similar to the one below:

Vue’s CLI still uses Vue 2, so to use the composition API we have to install it separately. In your terminal, navigate to your project folder’s directory and install Vue’s composition plugin:

```bash
npm install @vue/composition-api
```

After installation, we’ll import it in our `main.js` file:

```jsx
import Vue from 'vue'
import App from './App.vue'
import VueCompositionApi from '@vue/composition-api';

Vue.use(VueCompositionApi);

Vue.config.productionTip = false

new Vue({
  render: h => h(App),
}).$mount('#app')
```

It’s important to note that for now, the composition API is just a different option for writing components and not an overhaul. We can still write our components using component options, mixins, and scoped slots just as we’ve always done.

## Building our components

For this app, we’ll have three pieces:

- `App.vue`: The parent component. It gets its data from `PhotoApi.js` and passes each photo on to `Photo.vue`
- `PhotoApi.js`: A composition function (not a component in the strict sense) created solely for handling the API call
- `Photo.vue`: The child component. It handles each photo retrieved from the API call

First, let’s get data from the Unsplash API. In your project’s `src` folder, create a folder `functions` and in it, create a `PhotoApi.js` file:

```jsx
import { reactive } from "@vue/composition-api";
import axios from "axios";

export const usePhotoApi = () => {
  const state = reactive({
    info: null,
    loading: true,
    errored: false
  });

  const PHOTO_API_URL =
    "https://api.unsplash.com/photos/?client_id=d0ebc52e406b1ac89f78ab30e1f6112338d663ef349501d65fb2f380e4987e9e";

  axios
    .get(PHOTO_API_URL)
    .then(response => {
      state.info = response.data;
    })
    .catch(error => {
      console.log(error);
      state.errored = true;
    })
    .finally(() => (state.loading = false));

  return state;
};
```

The code sample above relies on `reactive` from Vue’s composition API.

`reactive` is the long-term replacement for `Vue.observable()`. It wraps an object and returns a reactive proxy of it, whose properties can be accessed directly.
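The `loading` and `errored` flags in that state follow a common pattern that is easier to see without the HTTP call. The sketch below is framework-free: `loadPhotos`, `freshState`, and the `fetcher` argument are hypothetical names, and a plain object stands in for the reactive state.

```javascript
// `fetcher` is any function returning a promise of { data },
// which is the shape an axios response resolves with.
function loadPhotos(state, fetcher) {
  return fetcher()
    .then(response => {
      state.info = response.data;
    })
    .catch(error => {
      console.log(error.message);
      state.errored = true;
    })
    .finally(() => {
      // runs after success and after failure alike
      state.loading = false;
    });
}

const freshState = () => ({ info: null, loading: true, errored: false });

const okState = freshState();
const failedState = freshState();

// Fake fetchers stand in for the real API call.
loadPhotos(okState, () => Promise.resolve({ data: [{ id: "abc" }] }))
  .then(() => console.log("success:", okState));

loadPhotos(failedState, () => Promise.reject(new Error("network down")))
  .then(() => console.log("failure:", failedState));
```

Passing the fetcher in as an argument is only a convenience for trying the flow without a network; the article's version calls `axios.get` directly.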

Let’s go ahead and create the component that displays each photo. In your `src/components` folder, create a file and name it `Photo.vue`. In this file, input the code sample below:

```jsx
<template>
  <div class="photo">
    <h2>{{ photo.user.name }}</h2>
    <div>
      <img width="200" :alt="altText" :src="photo.urls.regular" />
    </div>
    <p>{{ photo.user.bio }}</p>
  </div>
</template>

<script>
import { computed } from '@vue/composition-api';

export default {
  name: "Photo",
  props: ['photo'],
  setup(props) {
    // read through `props` instead of destructuring it, so the computed value stays reactive
    const altText = computed(() => `Hi, my name is ${props.photo.user.name}`);
    return { altText };
  }
};
</script>

<style scoped>
p {
  color:#EDF2F4;
}
</style>
```

In the code sample above, the `Photo` component gets the photo of a user to be displayed and displays it alongside their bio. For our `alt` field, we use the `setup()` and `computed` functions to wrap and return the variable `photo.user.name`.

Finally, let’s create our `App.vue` component to handle both children components. In your project’s folder, navigate to `App.vue` and replace the code there with this:

```jsx
<template>
  <div class="app">
    <div class="photos">
      <!-- each Unsplash photo object carries an `id`, which makes a stable key -->
      <Photo v-for="photo in state.info" :photo="photo" :key="photo.id" />
    </div>
  </div>
</template>

<script>
import Photo from './components/Photo.vue';
import { usePhotoApi } from './functions/PhotoApi';

export default {
  name: 'app',
  components: { Photo },
  setup() {
    const state = usePhotoApi();
    return {
      state
    };
  }
}
</script>
```

There, all `App.vue` does is use the `Photo` component to display each photo and set the state of the app to the state defined in `PhotoApi.js`.

## Conclusion

It’s going to be interesting to see how the Composition API is received. One of its key advantages I’ve observed so far is its ability to separate concerns for each component – every component has just one function to carry out. This makes stuff very organized. Here are some of the functions we used in the article demo:

- `setup` – this controls the logic of the component. It receives `props` and context as arguments

- `ref` – it returns a reactive variable and triggers the re-render of the template on change. Its value can be changed by altering the `value` property

- `reactive` – this returns a reactive object. It re-renders the template on reactive variable change. Unlike `ref`, its value can be changed without changing the `value` property

Have you found out other amazing ways to implement the Composition API? Do share them in the comments section below. You can check out the full implementation of the demo on [CodeSandbox](https://codesandbox.io/s/vue-template-x9bqm?fontsize=14).

* * *

**Editor's note:** Seeing something wrong with this post? You can find the correct version [here](https://blog.logrocket.com/how-to-build-applications-with-vues-composition-api/).


* * *

The post [How to build applications with Vue’s composition API](https://blog.logrocket.com/how-to-build-applications-with-vues-composition-api/) appeared first on [LogRocket Blog](https://blog.logrocket.com). | bnevilleoneill |

202,381 | 9 Ways to Increase Your Financial Flow | The simplest way of calculating your cash flow is to deduct your expenses from your income. The formu... | 0 | 2019-11-08T14:38:09 | https://dev.to/anna_j_stinson/9-ways-to-increase-your-financial-flow-3age | The simplest way of calculating your cash flow is to deduct your expenses from your income. The formula itself is rather simple and tells you that you can increase your disposable cash by either increasing your income or reducing your expenses. Both are valid, but the best results are often achieved by combining the two approaches, and that is often the only way to avoid living paycheck to paycheck or, even worse, going into debt. The same rules apply in business as well.

## Lease, Don’t Buy

Long-term, leasing is more expensive than buying. So why do experts advise startups to lease? Because of the financial flow. By leasing the equipment you need, you are actually buying it with incremental payments. This essentially creates a payment plan, allowing you to skip big purchases right off the bat when you need the cash the most. Of course, if you are flush with money, you can afford to buy everything you need and pay full retail price at once. Then again, if you are in that situation, you wouldn’t be reading articles about increasing cash flow.

## Offer Discounts

Quick payments are a great way of bolstering your cash flow and one way of getting them is to offer discounted prices, especially on larger orders. There will be some of your customers who simply can’t afford to shell out that much cash in advance, but some of them will jump at the opportunity to save some money. If you can reduce the payment time from the usual 30 days to just 10 by offering a 2% discount for early payment, you should do it. Even at the larger discount, the exchange is still favorable, especially if you are strapped for cash.
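As a rough way to weigh such an offer, the discount can be converted into an implied annual rate and compared with what other short-term financing would cost. The sketch below uses the 2% discount and the 30-versus-10-day terms from the paragraph above; the function name and the simple, non-compounded annualization are assumptions made for illustration.

```javascript
// Implied simple annual rate of giving up `discount` to be paid `daysEarly` days sooner.
function impliedAnnualRate(discount, daysEarly) {
  const costPerPeriod = discount / (1 - discount); // e.g. 0.02 / 0.98
  const periodsPerYear = 365 / daysEarly;
  return costPerPeriod * periodsPerYear;
}

const rate = impliedAnnualRate(0.02, 30 - 10);
console.log(`${(rate * 100).toFixed(1)}% per year`); // 37.2% per year
```

If that figure is well above the cost of a loan or credit line, the discount is the more expensive way to speed up cash, though it can still be worth it when no other financing is available.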

## Check Your Expenses

The least popular way of increasing your cash flow is by cutting expenses, either operational or by firing people. Unfortunately, sometimes it is the only way of moving forward. If you are forced to do it, think carefully and create a plan before committing to it. These changes will shake your company to the core and shouldn’t be taken lightly.

## Maintain Your Equipment

Equipment maintenance isn’t something startups usually bother with, since they are usually getting brand new stuff that needs little or no intervention. As time goes by and wear and tear kicks in, all of a sudden everything starts breaking down, causing massive downtime and delays. The best way to avoid this is preventive maintenance. Eventually, a part or a whole machine will need replacing, but with proper maintenance, this can be delayed significantly.

## Consider Buying Used Equipment

Not a very popular suggestion, but often you can find used equipment in excellent condition for a fraction of the price of a new one. This can be demanding on your time, as you have to sort through piles of junk to find one decent piece, but you can save thousands of dollars on just one purchase. Start in your area by looking for local auctions and advertisements. Every once in a while, somebody will go out of business, and this is a perfect opportunity to get your hands on professional equipment for little money. If you are unsure what to look for, make sure to bring someone who knows how to assess the condition.

## Expand Your Market

In the short term, expanding will set you back significantly. You will have to expand production or hire more people to provide services, invest in a marketing campaign, and pay for a gazillion other smaller things. In the long term, this is perhaps the best way to increase your cash flow. Not only will this increase your income, but it will also open up new possibilities.

## Invest

A small percentage of your monthly income should be devoted to investments. This will ensure your long-term cash flow by providing you with dividends and interest. To be a successful investor, you need to educate yourself first, and getting to know all [the important trading terminology](https://www.asktraders.com/learn-to-trade/trading-terminology/) is just the first step. Learn the difference between stocks, bonds, ETFs, mutual funds, and index funds, and see which of these can benefit you the most. Over time, your portfolio will yield different returns and you should be ready to swap investments as the opportunity arises.

## Offer New Product/Service

Hold a brainstorming session with your team to come up with a product or a service that you can offer to your customers. It may sound farfetched, but in reality, it is surprising how often good ideas can spring up when you least expect them. It doesn’t even have to be in line with your core business. Perhaps there is some space you aren’t currently using that can be rented?

## Consider a Loan

Finally, if you are left with no other option, [taking out a loan](https://www.fundera.com/blog/advantages-of-sba-loans) until you can recover may be the only solution, however unpopular it is. If you have reasonable expectations that your income will improve in the future and that you are just missing one vital part, like a piece of crucial machinery, taking out a commercial loan doesn’t have to be the end of the world. After all, take a look at the United States government, in debt for over $20 trillion. A few thousand dollars are almost insignificant in comparison. Of course, it is very important to have a clear idea of why you need the loan and the discipline to spend it on that exact purpose. People often make the mistake of diverting newly found funds to other things and neglecting the purpose of the loan. Not only will this get you in trouble with your lender, but it will also jeopardize the whole idea.

Cash is king, and whoever says otherwise is either lying or delusional. It is no wonder companies like Apple keep hundreds of billions stashed in offshore accounts, just waiting for an opportunity to present itself. However, getting to that level demands a lot of work, and the first step is creating a positive financial flow. Only then can you think about creating an emergency fund for unexpected opportunities.

| anna_j_stinson | |

202,408 | Dual-booting Linux Mint | Why Linux I’ve always heard that Linux is the way to go but I never tried it. I had... | 0 | 2019-11-21T17:53:10 | https://dev.to/stephencavender/dual-booting-linux-mint-3cgk | linux | ---

title: Dual-booting Linux Mint

published: true

date: 2019-11-08 05:00:00 UTC

tags: Linux

canonical_url:

---

## Why Linux

I’ve always heard that Linux is the way to go but I never tried it. I had Windows and it worked fine for me. I took some training at work that required Linux so I started using it inside a virtual machine. I got comfortable with it and decided it would be fun to try at home.

## Why Mint

Based on this [Dev.to](https://dev.to/pluralsight/which-distribution-of-linux-should-i-use-51g7) article it sounded like where a Linux newbie like myself should start. I tried a couple versions inside VirtualBox before committing. I used [OSBoxes](https://www.osboxes.org/) to quickly get them up and running.

## Why Dual-Boot

I chose to dual-boot because I didn’t want to risk losing Windows if I messed up the Mint install. Also because the Mint install made it really easy.

## How I did it

### Disclaimer!

I’ll recount the steps I took and the references I used but can’t guarantee any of it for anyone else. Also, it’s a good idea to follow along with [Mint’s install docs](https://www.linuxmint.com/documentation.php).

### 1. Back Up Data

I backed up my data because there’s always a chance it could get wiped from existence.

### 2. Download Linux Mint

I grabbed the 64-bit Cinnamon version from [here](https://linuxmint.com/download.php).

### 3. Create a Bootable USB

I used [Etcher](https://www.balena.io/etcher/) to flash the image onto my USB drive but any flashing software should do the trick.

### 4. Create Disk Space

My first attempt didn’t take because I didn’t have any room. I ended up freeing up some space from my Windows partitions.

### 5. Update Boot Configuration

I had to disable secure boot and change the boot order in the BIOS.

### 6. Install Mint

I followed the on-screen instructions at this point. Here are the important bits:

- Dual booting with Windows
- Create partitions
  - Root (I used 20 GB)
  - Swap (I used 8 GB)
  - Home (I used the rest of my free space)

A few more on-screen instructions and I was ready to go!

### 7. Use Mint

Mint is installed and ready to go. I’m on a Razer Blade Stealth and everything works out of the box except for closing the lid. I’m sure there are other things that don’t quite work that I haven’t encountered yet. When I close the lid Mint is supposed to suspend but when I open the lid back up I have to hard shutdown before my laptop will wake up and respond. Other than that I’m very happy with Mint and hope that this article helps you! | stephencavender |

202,441 | Spartan Breakpoints! | Just wanted to get some opinions from other UI Enthusiasts about the breakpoints they are using for their UIs | 0 | 2019-11-08T22:57:39 | https://dev.to/srsheldon/spartan-breakpoints-59a1 | responsive, css, breakpoints, ux | ---

title: Spartan Breakpoints!

published: true

description: Just wanted to get some opinions from other UI Enthusiasts about the breakpoints they are using for their UIs

tags: responsive, css, breakpoints, ux

---

So I know this topic has probably been talked about more than enough (there is even a [really awesome article about it](https://dev.to/rstacruz/what-media-query-breakpoints-should-i-use-292c) on Dev.to), but I wanted to get some feedback on a slightly new set of breakpoints.

I was hoping to make them even more generic and get some feedback and thoughts from the incredible developer community here on Dev.to.

I was going to call this new set of breakpoints "the spartan breakpoint system" because the media queries are approximately every 300 pixels.

I was planning on using it in a component library I am building for fun to teach myself some of the various custom element APIs and enhance my web accessibility skills.

Here's a table comparing a few different CSS Framework breakpoints:

| Size | Devices | Spartan Breakpoints | [Bootstrap](https://getbootstrap.com/docs/4.3/layout/overview/#responsive-breakpoints) | [Bulma](https://bulma.io/documentation/overview/responsiveness/#breakpoints) | [Tailwind](https://tailwindcss.com/docs/breakpoints/) | [Foundation](https://foundation.zurb.com/sites/docs/v/5.5.3/media-queries.html) | [Semantic UI](https://github.com/Semantic-Org/Semantic-UI/blob/383871090cda527df916e1751279b3de79b07480/src/themes/default/globals/site.variables#L208-L216) |
| ---- | ---- | --------- | -------- | --- | ---- | ---- | --- |
| Extra Small (xs) | small phone | 0 - 300px | 0 - 575px | 0 - 768px | 0 - 639px | 0 - 640px | 320 - 767px |
| Small (sm) | phone | 301 - 600px | 576 - 767px | 769 - 1,023px | 640 - 767px | 641 - 1,024px | 768 - 991px |
| Medium (md) | large phone/small tablet | 601 - 900px | 768 - 991px | 1,024 - 1,215px | 768 - 1,023px | 1,025 - 1,440px | 992 - 1,199px |
| Large (lg) | tablet | 901 - 1,200px | 992 - 1,200px | 1,216 - 1,407px | 1,024 - 1,279px | 1,441 - 1,920px | 1,200 - 1,919px |
| Extra Large (xl) | desktop/large tablet | 1,201 - 1,500px | > 1,200px | > 1,408px | > 1,280px | > 1,921px | > 1,920px |
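For reference, the Spartan column could be expressed as a small lookup. This is only a sketch: the names `SPARTAN_BREAKPOINTS` and `spartanBreakpoint` are made up here, and treating every width above 1,200px as `xl` is an assumption, since the table's last range stops at 1,500px.

```javascript
// Upper bound (in px) of each Spartan range, smallest first.
const SPARTAN_BREAKPOINTS = [
  { name: "xs", max: 300 },
  { name: "sm", max: 600 },
  { name: "md", max: 900 },
  { name: "lg", max: 1200 },
  { name: "xl", max: Infinity } // assumption: no upper limit on the last range
];

// Returns the name of the first range whose upper bound covers `width`.
function spartanBreakpoint(width) {
  return SPARTAN_BREAKPOINTS.find(bp => width <= bp.max).name;
}

console.log(spartanBreakpoint(375));  // "sm"
console.log(spartanBreakpoint(1280)); // "xl"
```

In a browser, the same boundaries could feed `window.matchMedia` or be written out as `min-width` media queries in CSS.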

Thanks in advance everyone for your feedback!

| srsheldon |

202,494 | problem rendering images in react app | Hello I am fairly new to react & node. I have an app which displays image buttons amongst other t... | 0 | 2019-11-08T18:39:36 | https://dev.to/rrn518/problem-rendering-images-in-react-app-5d64 | Hello

I am fairly new to react & node. I have an app which displays image buttons amongst other things. When starting from visual studio code they render perfectly unless my associated node listener is started first on the same port, in which case they don't render at all. 404 is returned for all. Why is that and what's the fix please ?

Cheers | rrn518 | |

886,077 | A new life transition | Its true that with any transition in life there’s upheaval, fear, and frustration, that’s the point... | 0 | 2021-11-03T02:46:01 | https://dev.to/mikeketterling/a-new-life-transition-5kd | beginners, career | It’s true that with any transition in life there’s upheaval, fear, and frustration; that’s the point of a transition. I think that sharing any life transition ultimately helps inspire at least someone out there, and therefore does something good for someone. That’s all I hope for with this and any other posts I’ll be publishing.

With this being my first post, I thought it would only be fitting to write about this transition. As I said, this has not been an easy experience, and if I can offer my story and maybe some advice for any future transitions, then great. Everyone’s experiences will be different in a transition into the tech space – it’s important to recognize this, and I hope that my experience resonates with someone.

I come from a background in Human Resources and healthcare. Most of my work experience had to do with all the non-technical aspects of most office settings. So as far as what I had been used to for some years, the jump to learning multiple technical languages was going to be significant - but not out of reach. In knowing that I was going to be learning many new skills, I had a few strategies in mind.

## My Three Strategies

*A healthy routine*

I knew from working the past year or so at home that for me to complete a technical program I would have to have a solid routine to help me through the rough days and keep me on track when I’m ahead in the coursework. I also knew that a routine would support my mental exhaustion as well, and to be honest this is what I was most concerned about.

My mental state had gone through a lot in that past year with COVID-19, and my job. My mental fortitude was stretched so thin to the point I almost completely broke. It was a rough time for all of us in the world I do believe, and I do not want to take that away from anyone - but my own experience was difficult. If I had to say what kept me going, it was my wife and dogs. My wife, so that we can keep driving towards our combined aspirations, and my dogs were the only ones around me when I was at my lowest, to bring me back to rational thinking when I needed it the most. Taking all this into consideration I started to put together a routine that seemed realistic and that I could follow.

I wanted to keep my body sharp as well as my mind: a regular morning workout when I wake up, followed by strong and healthy meals through the day, with time set aside every night for homework and much-needed time for family and friends.

*Prep-work*

Most bootcamps can offer you insight into what languages or tech stacks you will be using during the bootcamp. Some bootcamps will even provide you with pre-work modules, prepping you for your time at the bootcamp of your choosing. For me I unfortunately entered the program at the almost last possible moment, so I couldn’t spend a significant amount of time with the provided pre-work modules, but I would change that if I could go back. Another option is purchasing or finding free resources to review along with your prep coursework. This is a strategy I use often when learning something new. I like to see and hear plenty of different examples of how to complete similar tasks, and if you’re like me, and have adequate time, I think it’s a great strategy to immerse you in content.

*Find your motivation*

The last strategy I’ll mention is finding your motivation. Motivation might be the most important piece of anyone’s success. It helps drive you towards the finish line, especially when the road bumps come and the path gets tough. You’ve got to be able to lean on your motivation when things get rough. I think your motivation for a bootcamp needs to be something that is truly important to you. The obstacles and trouble you will face during these learning opportunities can be intense, and the stronger your motivation, the more likely you are to overcome adversity.

For me, my motivation is my family, and giving them as many opportunities as possible. I know that with a career in tech being something I’m more passionate about, not only will I be happier, but my family will be as well.

I strongly believe that anyone can learn anything. Sometimes the understanding will come quickly, and sometimes it won’t, but if you put in the work and continue your path, you can be successful too. I reflect on this thought almost daily, and especially when the understanding is coming slower than I want. But I’ll keep digging in and I hope you do too.

| mikeketterling |

202,507 | Machine Learning Applications in Tabletop Gaming | Digital Dungeon Diving Those who know me are well aware of my passion for gaming (To those who don... | 0 | 2019-11-08T19:11:35 | https://dev.to/geoffreyianward/machine-learning-applications-in-tabletop-gaming-dng | <h3>Digital Dungeon Diving</h3>

<p>Those who know me are well aware of my passion for gaming (To those who don't know me - hello! I love gaming). While I love all forms of gaming, I have a special place in my heart for tabletop games. I love the ability to gather with friends and explore game systems and mechanics together in a shared social environment. Tabletop roleplaying games are even more precious to me, as they challenge us to think creatively to solve problems as a team.</p>

<p>However, the actual mechanics for playing tabletop role-playing games remain firmly locked in the technology of the 1970s. Some relics, like the tiny miniatures we use to represent player characters and monsters alike, are immensely customizable and allow players to find figures that represent their ideal heroes and villains. These minis, while technically unnecessary, are a valuable part of the tabletop gaming experience. When we look to how these minis interact with the game world, however, we often find that the rest of our game world doesn't hold up when compared to these detailed miniatures.</p>

<p>Often, sprawling cities and labyrinthine dungeons are reduced to a simple hand-drawn map, blocked out on sheets with dry-erase markers. While very reusable, and instantly adaptable to changing game conditions, these 'battle maps' are barely interactive and typically crude, not to mention how they shatter immersion. Some intrepid dungeon masters have also acknowledged these shortcomings, and the tabletop community has been hard at work trying to engineer solutions. These approaches typically fall into two categories.</p>

<p>The first solution (and currently, the more popular one) is to build out the game maps using modular 'dungeon kits' that represent walls, floors, traps, and doors. These can be combined to great effect, given ample time, space, and money, allowing gamers to build giant three-dimensional playgrounds for their miniatures to explore. The effect of a dungeon finished in this way is absolutely impressive.</p>

<p>However, these maps present immediate problems. First and foremost, they are slow to build. This means that maps cannot be constructed on the fly, severely limiting the scope of exploration available to the players. Secondly, there is an issue with 'fog of war'. In this instance, players can see the entire map laid out before them, and can use that knowledge to plan strategies based on information that their characters should not have. These problems are not game-breaking, but they do limit the arena in which a session can be allowed to breathe.</p>

<p>A second solution has been growing in popularity, although it remains a niche. Some resourceful gamers have been utilizing projectors and televisions to digitally render the maps directly onto the tabletop. This approach is expensive but opens up an enormous variety of options when it comes to gaming. While the setup using a TV is nice, it requires a dedicated gaming table with a television literally built into it, which is not an option for many people. Mounting a projector on the ceiling is a much less obtrusive approach that allows for a lot of the same mechanics. Either way, a digital map allows for all sorts of new interactions. We can simulate fog of war, we can change the map as easily as changing a spreadsheet, and we can even add in immersion-building elements such as flickering torches and running water.</p>

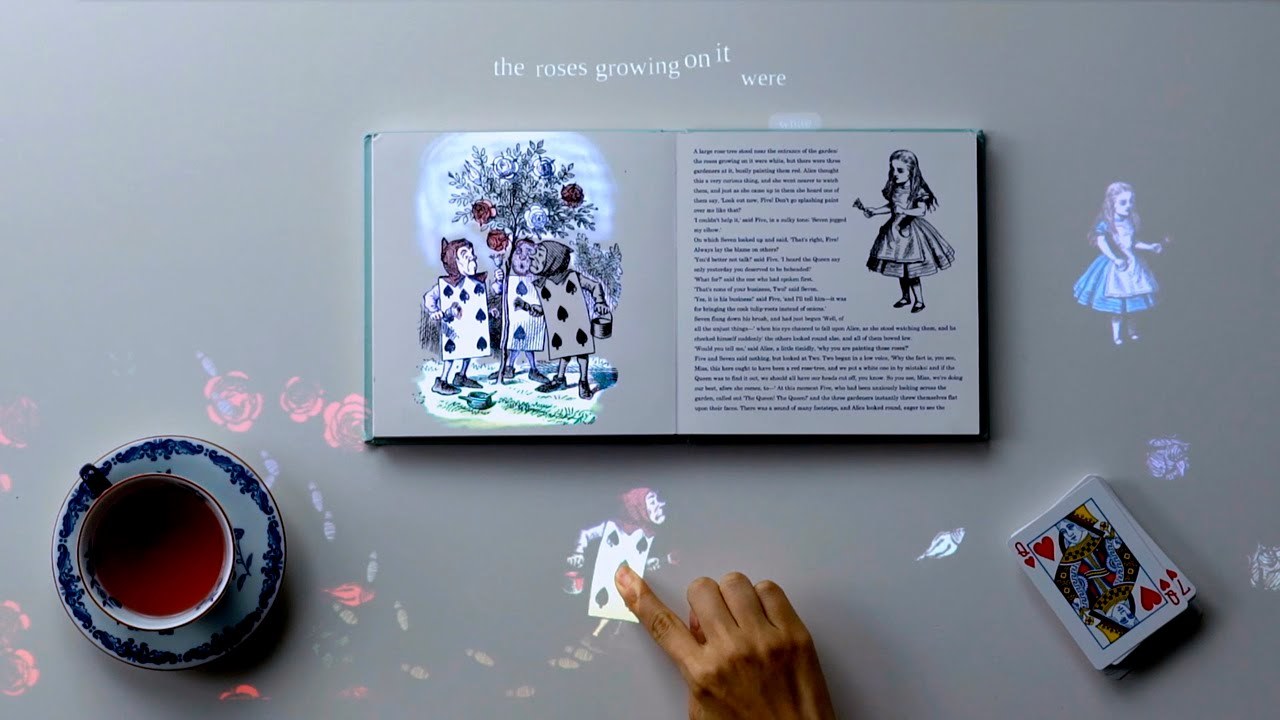

<p>So it's with this context in mind that I approached Sony's Future Lab Concept 'T'. Concept 'T' is a projector, with sensors built into it, mounted above a table, much like the projectors being used for gaming. The difference here is those sensors, which Sony uses to pair with machine learning algorithms to 'see' objects that are placed onto the table's surface. So far, Sony has shown us how this technology can be used to interact with a book, bringing characters to life straight off the page, or with objects, recognizing them and projecting their information adjacent to the object being sensed. </p>

<p>These machine learning systems could be adapted to great effect for tabletop gaming. Let's imagine, for a second, placing a few miniatures upon a table. Instantly, the projector recognizes the miniatures and projects the characters' names and stats onto the table next to the figures. If a character is carrying a torch, the projector could illuminate the area around the figure, and track that light as the character moves around the map. Cards played could be read using OCR technology, and effects could be animated in response to those cards being played. Fog of war could be rendered based on the actual lines of sight of the miniatures, based on where they are placed on the map.</p>

<p>The applications for this technology in gaming go on and on. What's important here is that using these systems, we might finally have a way to bring tabletop roleplaying into the twenty-first century. Sony has yet to announce plans to bring the Concept T model into mass production, so, for now, the technology will have to be cobbled together from other pieces. I'm thinking that Microsoft's new Azure Kinect DK (which uses the same sensors as the HoloLens 2) would be able to accomplish a lot of the same functionality, as far as the computer vision goes.</p>

https://youtu.be/0TThak7sF94 | geoffreyianward | |

202,539 | Default a View in NavigationView with SwiftUI | A guide to default a View in NavigationView with SwiftUI | 3,158 | 2019-11-08T21:14:42 | https://medium.com/@maeganwilson_/default-a-view-in-navigationview-with-swiftui-b6e64a17fb20 | swift, swiftui | ---

title: Default a View in NavigationView with SwiftUI

published: true

description: A guide to default a View in NavigationView with SwiftUI

tags: swift, swiftui

canonical_url: https://medium.com/@maeganwilson_/default-a-view-in-navigationview-with-swiftui-b6e64a17fb20

series: SwiftUI Examples

---

# Default a View in NavigationView with SwiftUI

I'm going to walk through the steps to create a default view in a `NavigationView` in a brand new project.

The finished GitHub project can be found here.

{% github maeganjwilson/swiftui-examples %}

# 1. Create a Single View App

Create a new Xcode project using SwiftUI.

# 2. In ContentView.swift, add a NavigationView

A fresh `ContentView.swift` looks like this:

```swift

import SwiftUI

struct ContentView: View {
    var body: some View {
        Text("Hello, World!")
    }
}

struct ContentView_Previews: PreviewProvider {
    static var previews: some View {
        ContentView()
    }
}

```

To add a NavigationView that looks like a list, we first need to embed the `Text` in a `List`. Embedding in a `List` can be done by `CMD + Click` on `Text` and choosing Embed in List from the menu.

You should then get the code sample below.

```swift

struct ContentView: View {
    var body: some View {
        List(0 ..< 5) { item in
            Text("Hello, World!")
        }
    }
}

```

Now, put the list inside the `NavigationView`. `ContentView` should now have the following code:

```swift

import SwiftUI

struct ContentView: View {
    var body: some View {
        NavigationView {
            List(0 ..< 5) { item in
                Text("Hello, World!")
            }
        }
    }
}

struct ContentView_Previews: PreviewProvider {
    static var previews: some View {
        ContentView()
    }
}

```

If using the Live Preview in Xcode, then the preview should look like the picture below.

Let's also make each row of the list different. Change the string in the `Text` to `"Navigation Link \(item)"` and start the range at 1 instead of 0, so the rows are numbered 1 through 4.

This is what the code should look like.

```swift

List(1 ..< 5) { item in
    Text("Navigation Link \(item)")
}

```

Here is what the preview will look like

# 3. Add a NavigationLink

The `Text` needs to be inside a `NavigationLink` in order to navigate to a different view. We will use `NavigationLink(destination: Destination, tag: Hashable, selection: Binding<Hashable?>, label: () -> Label)`.

Let's break this down a bit before implementing it.

- `destination`: the `View` to present when the link is selected

- `tag`: a value of a `Hashable` type that distinguishes this link from the others

  - To read more about `Hashable`, [click here](https://developer.apple.com/documentation/swift/hashable). The link will take you to Apple's documentation about Hashable.

- `selection`: a binding to an optional `Hashable` value; the link is active when that value equals the link's `tag`

- `label`: a closure that returns a `View`, which is what the user will see and be able to tap

Now that all the parts are explained let's implement the `NavigationLink`.

```swift

List(1 ..< 5) { item in
    NavigationLink(destination: Text("Destination \(item)"), tag: item, selection: self.$selectedView) {
        Text("Navigation Link \(item)")
    }
}

```

Once it's implemented, you should get an error that says `Use of unresolved identifier '$selectedView'`. This error is expected since we do not have a Binding variable called `selectedView` in our code. Let's add it to the `ContentView` struct.

Place `@State private var selectedView: Int? = 0` before declaring `body`. The error should go away now. When declaring `selectedView`, the type needs to be optional since `NavigationLink` wants an optional Hashable type.

As of right now, if you run the app, it will look like no default view is set. This is because there is no `NavigationLink` with a tag of 0. If `selectedView` is assigned a tag that doesn't exist, then the view will be the list of NavigationLinks.

If you change the initial value of `selectedView` to 1, then it will open to the destination of `NavigationLink` that has a tag of 1.
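Putting the steps so far together, a minimal sketch of the whole `ContentView` might look like the following. It only combines the snippets from this tutorial; the initial value of `1` is just one choice for illustration. Note that newer SDKs mark `NavigationView` and this `NavigationLink` initializer as deprecated, so treat this as a sketch for the SwiftUI version used here.

```swift
import SwiftUI

struct ContentView: View {
    // Optional, because NavigationLink's `selection` binding expects an optional tag.
    // Starting at 1 opens the destination of the link tagged 1 on launch;
    // a value with no matching tag (such as 0) leaves the list showing.
    @State private var selectedView: Int? = 1

    var body: some View {
        NavigationView {
            List(1 ..< 5) { item in
                NavigationLink(
                    destination: Text("Destination \(item)"),
                    tag: item,
                    selection: self.$selectedView
                ) {
                    Text("Navigation Link \(item)")
                }
            }
        }
    }
}
```

Changing the initial value to `nil` or `0` should bring back the plain list, since no link carries either of those tags.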

# Basics are done!

That completes the basic tutorial. In the next section, I'm going to cover how to improve the UX: on iOS, opening straight into a destination is not great behavior, but on iPadOS in landscape, it is excellent!

# Bettering the UX

On iPhones, you don't usually want the whole screen to be taken over by a destination. You usually want the user to decide where to navigate. On iPads in landscape, the screen is so big that having a view selected is okay since the navigation links are always shown. This can be achieved by using `onAppear()` and figuring out which device is being used.
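As a rough sketch of that idea, and not necessarily how the finished project does it, the device check could live in a small helper that `onAppear()` calls. The helper name `defaultSelection(isPad:isLandscape:)` is hypothetical and introduced only for this example; `UIDevice.current.userInterfaceIdiom` and `UIDevice.current.orientation` are standard UIKit properties.

```swift
import SwiftUI
import UIKit

// Hypothetical helper (not from the original project): decides which link,
// if any, should be preselected when the list first appears.
func defaultSelection(isPad: Bool, isLandscape: Bool) -> Int? {
    // Only preselect a destination when the list stays visible beside it.
    return (isPad && isLandscape) ? 1 : nil
}

struct ContentView: View {
    // Start with nothing selected; onAppear decides whether to pick a default.
    @State private var selectedView: Int? = nil

    var body: some View {
        NavigationView {
            List(1 ..< 5) { item in
                NavigationLink(destination: Text("Destination \(item)"), tag: item, selection: self.$selectedView) {
                    Text("Navigation Link \(item)")
                }
            }
            .onAppear {
                let device = UIDevice.current
                self.selectedView = defaultSelection(
                    isPad: device.userInterfaceIdiom == .pad,
                    isLandscape: device.orientation.isLandscape
                )
            }
        }
    }
}
```

Keeping the decision in a plain function makes it easy to check without running the UI. One caveat: the device orientation may report `.unknown` right at launch, in which case this sketch falls back to showing the list.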