id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

175,659 | How to Rename a Modern SharePoint Site URL in Office 365 | This post explains how to rename a Modern SharePoint site URL in Office 365.

... | 0 | 2019-10-22T20:23:09 | https://blogit.create.pt/miguelisidoro/2019/09/23/how-to-rename-a-modern-sharepoint-site-url-in-office-365/ | office365, sharepoint, collaboration, modernsharepoint | ---

title: How to Rename a Modern SharePoint Site URL in Office 365

published: true

tags: Office 365,SharePoint,Collaboration,Modern SharePoint

canonical_url: https://blogit.create.pt/miguelisidoro/2019/09/23/how-to-rename-a-modern-sharepoint-site-url-in-office-365/

---

The post [How to Rename a Modern SharePoint Site URL in Office 365](https://blogit.create.pt/miguelisidoro/2019/09/23/how-to-rename-a-modern-sharepoint-site-url-in-office-365/) appeared first on [Blog IT](https://blogit.create.pt).

This post explains how to rename a Modern SharePoint site URL in Office 365.

## Introduction

Renaming a site URL has been one of the most popular requests on [UserVoice](https://sharepoint.uservoice.com/forums/329214-sites-and-collaboration/suggestions/13217277-enable-renaming-the-site-collection-urls). At [SharePoint Conference 2019](https://dev.to/mlisidoro/what-s-new-for-sharepoint-and-office-365-from-sharepoint-conference-2019-part-2-o5g), in one of my favorite announcements of the event, Microsoft finally announced the ability to rename a site URL.

This can be done either using the SharePoint Admin Center or using a PowerShell script.

## How It Works

### Using the SharePoint Admin Center

The easiest way to rename a site URL is using the SharePoint Admin Center. Select “Active Sites”, then the site you want to rename and click “Edit”.

<figcaption>Renaming a Site URL using SharePoint Admin Center</figcaption>

A popup will appear. All you have to do is enter the new URL and make sure it is not already in use.

<figcaption>Set the new URL for the SharePoint site</figcaption>

To read the entire article, click [here](https://blogit.create.pt/miguelisidoro/2019/09/23/how-to-rename-a-modern-sharepoint-site-url-in-office-365/).

Happy SharePointing!

| mlisidoro |

175,749 | Make and Deploy a Serverless Application Into AWS lambda | At my job we needed a solution for writing, maintaining and deploying aws lambdas. The serverless fra... | 0 | 2019-09-24T09:13:37 | https://dev.to/pcmagas/make-and-deploy-a-serverless-application-into-aws-lambda-1ijd | serverless, lamda, aws, node | At my job we needed a solution for writing, maintaining and deploying aws lambdas. The serverless framework is a nodejs framework used for making and deploying serverless applications such as AWS Lambdas.

We chose the serverless framework for these reasons:

- It is easy to manage per-environment configuration via environment variables.

- It is easy to keep a record of the lambda settings and change history in git, so we can kill the person who made a mistake. (ok ok just kidding, no human has been killed ;) ... yet )

- Because it is a node.js framework, we can use the usual frameworks for unit and integration testing.

- For the same reason, we can manage and deploy dependencies using a combination of nodejs tools and the ones provided by the serverless framework.

- We can have a single, easy to maintain codebase containing more than one aws lambda, without duplicating code.

# Install serverless

```

sudo -H npm i -g serverless

```

(On Windows, omit the `sudo -H` part.)

# Our first lambda

First we need to create our project folder, initialize a node.js project, and put it under git:

```

mkdir myFirstLambda

cd myFirstLambda

npm init

git init

git add .

git commit -m "Our first project"

```

Then install `serverless` as a dev dependency. On collaborative projects this ensures that `npm install` gives every developer the tooling required to deploy and run the project:

```

npm install --save-dev serverless

```

And then run the following command to bootstrap our first lambda function:

```

serverless create --template aws-nodejs

```

That command generates 2 files:

* `handler.js`, which contains our aws lambda handlers.

* `serverless.yml`, which contains all the deployment and runtime settings.

Then in `handler.js`, rename the function `module.exports.hello` to something that reflects its functionality. For our purposes we will keep it as is. We can run the lambda function locally via the command:

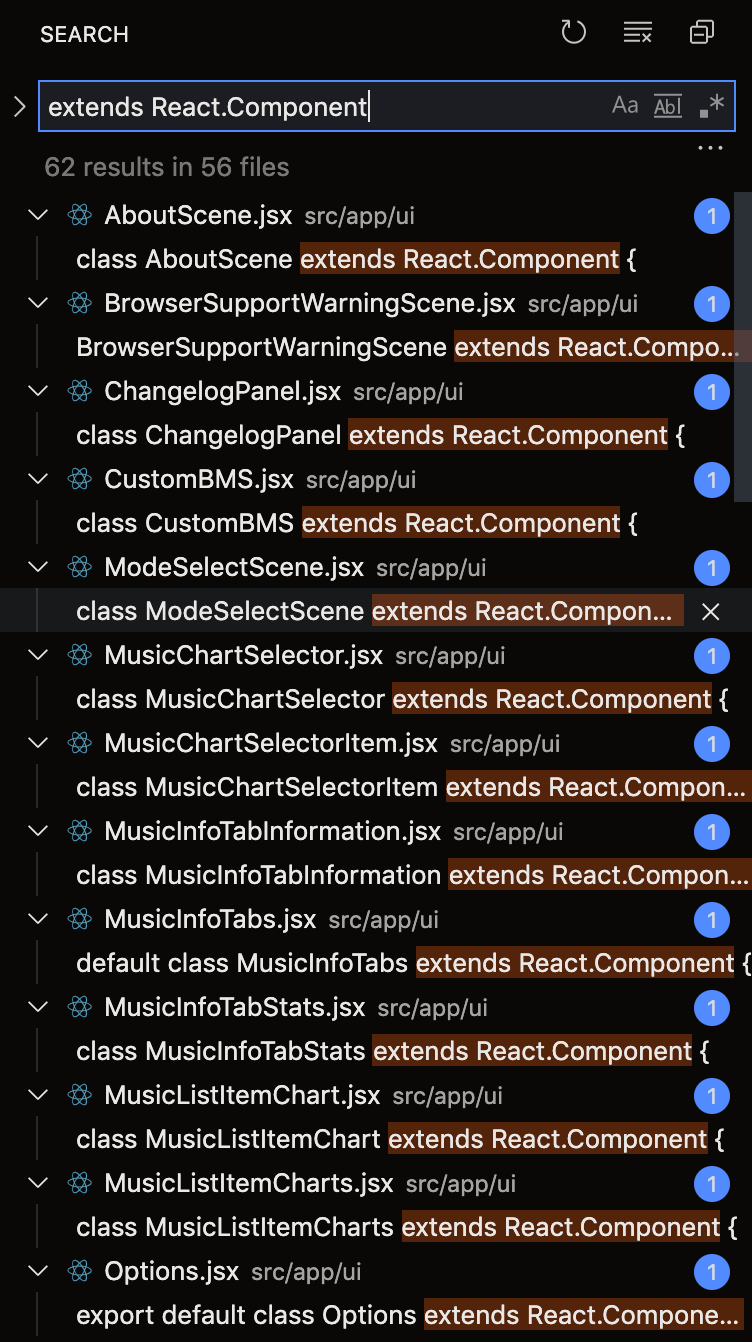

```

sls invoke local --stage=dev --function hello

```

This shows the return value of the function `hello` in `handler.js`. It is also a good idea to add the command above as a `start` script in the `scripts` section of `package.json`.

# Deploy aws lambda

First of all we need to specify the lambda name, so we modify `serverless.yml` accordingly. We change the `functions` section from:

```
functions:
  hello:
    handler: handler.hello
```

Into:

```
functions:
  hello:
    handler: handler.hello
    name: MyLambda
    description: "My First Lambda"
    timeout: 10
    memorySize: 512
```

With that, the deployed lambda is listed as `MyLambda` in the aws console. As seen above, we can also specify the lambda settings here and share them through the repository.

Furthermore, it is a good idea to specify environment variables in the `environment:` section with the following setting:

```

environment: ${file(./.env.${self:provider.stage}.yml)}

```

With that, the `stage` selects the deployment environment, and each environment's settings are read from its own .env file. Upon deployment, the same `.env` file also provides the **deployed** lambda's environment variables.

It is also a good idea to ship a template .env file named `.env.yml.dist`, so each developer only needs to do:

```

cp .env.yml.dist .env.dev.yml

```

And fill in the appropriate settings. For production you need to do:

```

cp .env.yml.dist .env.prod.yml

```

Then exclude these files from the deployment package, except the one selected by the stage parameter (as we will see below):

```
package:
  include:
    - .env.${self:provider.stage}.yml
  exclude:
    - .env.*.yml.dist
    - .env.*.yml
```

Then deploy with the command:

```

sls deploy --stage ^environment_type^ --region ^aws_region^

```

As seen, the pattern followed is `.env.^environment_type^.yml`, where `^environment_type^` is the value provided by the `--stage` parameter of both the `sls invoke` and `sls deploy` commands.

We can also make the lambda name depend on the environment using the same variable:

```
functions:
  hello:
    handler: handler.hello
    name: MyLambda-${self:provider.stage}
    description: "My First Lambda"
    timeout: 10
    memorySize: 512
```

Here `${self:provider.stage}` takes its value from the `--stage` parameter. The same applies wherever `${self:provider.stage}` appears in the `serverless.yml` file. | pcmagas |

175,814 | Slider | Slider for site. Animation in the form of flipping cards in a circle. #javascript #html #css #w... | 0 | 2019-09-24T11:14:00 | https://dev.to/iderevyansky/slider-1h8d | codepen, javascript, html, webdev | <p>Slider for site. Animation in the form of flipping cards in a circle.</p>

{% codepen https://codepen.io/IDerevyansky/pen/jONdvOv %}

#javascript #html #css #web #react #nodejs | iderevyansky |

175,823 | Command Execution Tricks with Subprocess - Designing CI/CD Systems | The most crucial step in any continuous integration process is the one that executes build instructio... | 0 | 2019-10-01T02:10:12 | https://tryexceptpass.org/article/continuous-builds-subprocess-execution/ | python, subprocess, ci, continuousdelivery | ---

title: Command Execution Tricks with Subprocess - Designing CI/CD Systems

published: true

tags: python, subprocess, ci, continuousdelivery

canonical_url: https://tryexceptpass.org/article/continuous-builds-subprocess-execution/

cover_image: https://tryexceptpass.org/images/continuous-builds-execution.webp

---

The most crucial step in any continuous integration process is the one that executes build instructions and tests their output. There’s an infinite number of ways to implement this step ranging from a simple shell script to a complex task system.

Keeping with the principles of simplicity and practicality, today we’ll look at continuing the series on [Designing CI/CD Systems](https://tryexceptpass.org/designing-continuous-build-systems) with our implementation of the execution script.

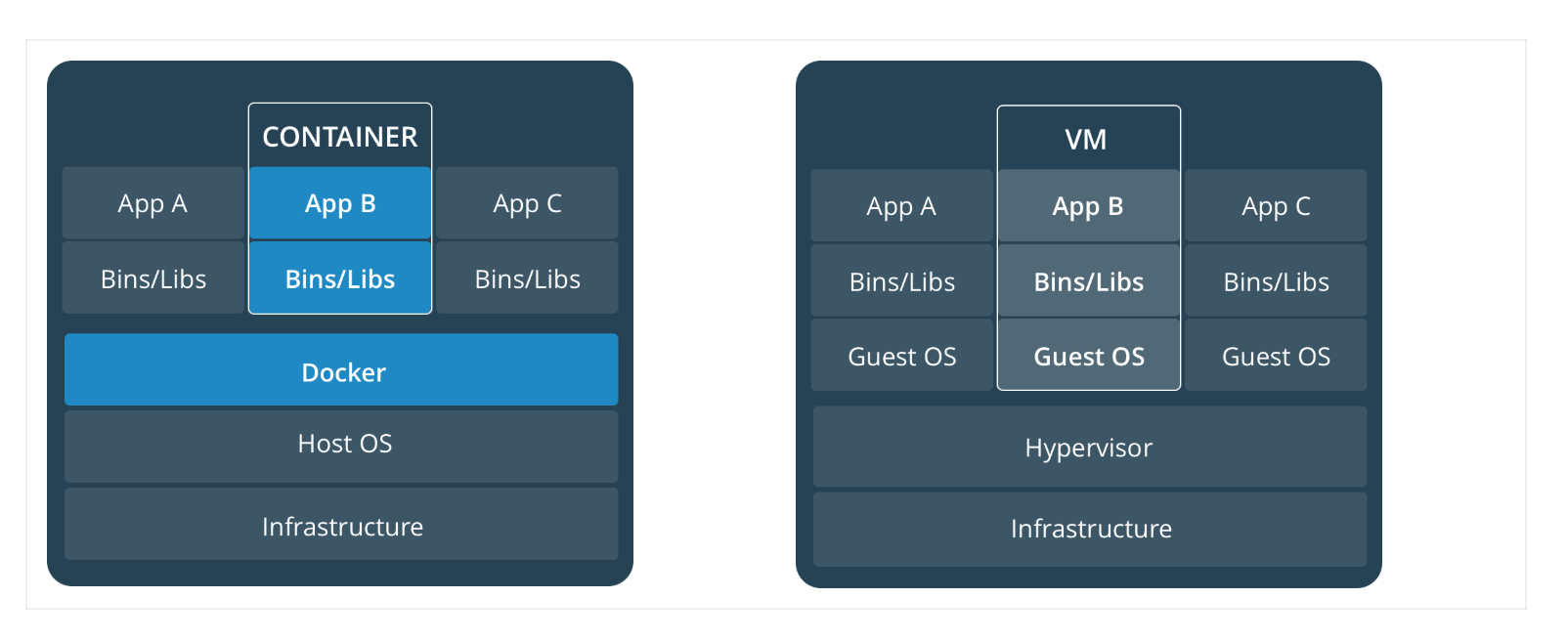

Previous chapters in the series already established the [build directives](https://tryexceptpass.org/article/continuous-builds-parsing-specs/) to implement. They covered the format and location of the build specification file, as well as the [docker environment](https://tryexceptpass.org/article/continuous-builds-docker-swarm) in which it runs and its limitations.

## Execution using subprocess

Most directives supplied in the YAML spec file are lists of shell commands. So let's look at how Python's [subprocess](https://docs.python.org/3/library/subprocess.html) module helps us in this situation.

We need to execute a command, wait for it to complete, check the exit code, and print any output that goes to stdout or stderr. We have a choice between `call()`, `check_call()`, `check_output()`, and `run()`, all of which are wrappers around the lower-level `Popen` class that can provide more granular process control.

The `run()` function is a more recent addition, new in Python 3.5. It provides the execute, block, and check behavior we're looking for, raising a `CalledProcessError` exception on a non-zero exit code when called with `check=True`.
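As a minimal sketch of that execute, block, and check behavior (the helper name `run_step` is hypothetical and not taken from the series), a single build step could be wrapped like this:

```python
import subprocess
import sys


def run_step(args):
    """Run one command, block until it exits, and return its stdout.

    `run_step` is an illustrative name, not part of the article's code.
    Raises subprocess.CalledProcessError on a non-zero exit code.
    """
    result = subprocess.run(
        args,                      # list of arguments, no shell involved
        stdout=subprocess.PIPE,    # capture stdout
        stderr=subprocess.PIPE,    # capture stderr
        universal_newlines=True,   # decode output to str
        check=True,                # raise on failure
    )
    return result.stdout


if __name__ == "__main__":
    # Use the current interpreter so the example does not depend on
    # any particular program being installed.
    print(run_step([sys.executable, "-c", "print('build ok')"]))
```

Passing the command as a list keeps the shell out of the picture, which matters once the commands come from someone else's spec file.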

Also of note, the [shlex](https://docs.python.org/3/library/shlex.html) module is a complementary library that provides some utilities to aid you in making subprocess calls. It provides a `split()` function that's smart enough to properly format a list given a command-line string, as well as `quote()` to help *escape* shell commands and avoid shell injection vulnerabilities.
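A short illustration of those two helpers, using made-up command strings rather than anything from a real build spec:

```python
import shlex

# A command line as it might appear in a spec file (illustrative value).
command = 'git commit -m "fix build"'

# split() honors shell quoting, so the quoted message stays one argument.
args = shlex.split(command)

# A value that must not be interpreted by the shell (illustrative value).
untrusted = "release; rm -rf /"

# quote() wraps the value so a shell treats it as a single literal word.
safe = shlex.quote(untrusted)

print(args)
print(safe)
```

The list produced by `split()` can be handed directly to `subprocess.run()`, while `quote()` is only needed when a string really has to pass through a shell.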

## Security considerations

Thinking about this for a minute, realize that you're writing an execution system that runs command-line instructions as written by a third party. It has significant security implications and is the primary reason why most online build services do not let you get down into this level of detail.

So what can we do to mitigate the risks?

[Read On ...](https://tryexceptpass.org/article/continuous-builds-subprocess-execution/) | tryexceptpass |

175,840 | Serenity automation framework - Part 2/4 - Automation Test with UI using Cucumber | Guideline for how to implement UI test using Cucumber with Serenity | 0 | 2019-09-25T09:13:07 | https://dev.to/cuongld2/serenity-automation-framework-part-2-4-automation-test-with-ui-using-cucumber-3n7b | serenity, java, cucumber, ui | ---

title: Serenity automation framework - Part 2/4 - Automation Test with UI using Cucumber

published: true

description: Guideline for how to implement UI test using Cucumber with Serenity

tags: #Serenity #Java #Cucumber #UI

---

Hi folks, I'm back with another post.

Please check out [this](https://dev.to/cuongld2/serenity-automation-framework-part-1-4-automation-test-with-api-2mb5) for the previous post about Serenity.

At its core, Serenity is all about BDD.

The philosophy of Serenity is to make tests serve as living documentation.

In this blog post I will show you how to implement UI tests in Serenity with Cucumber and the Screenplay pattern.

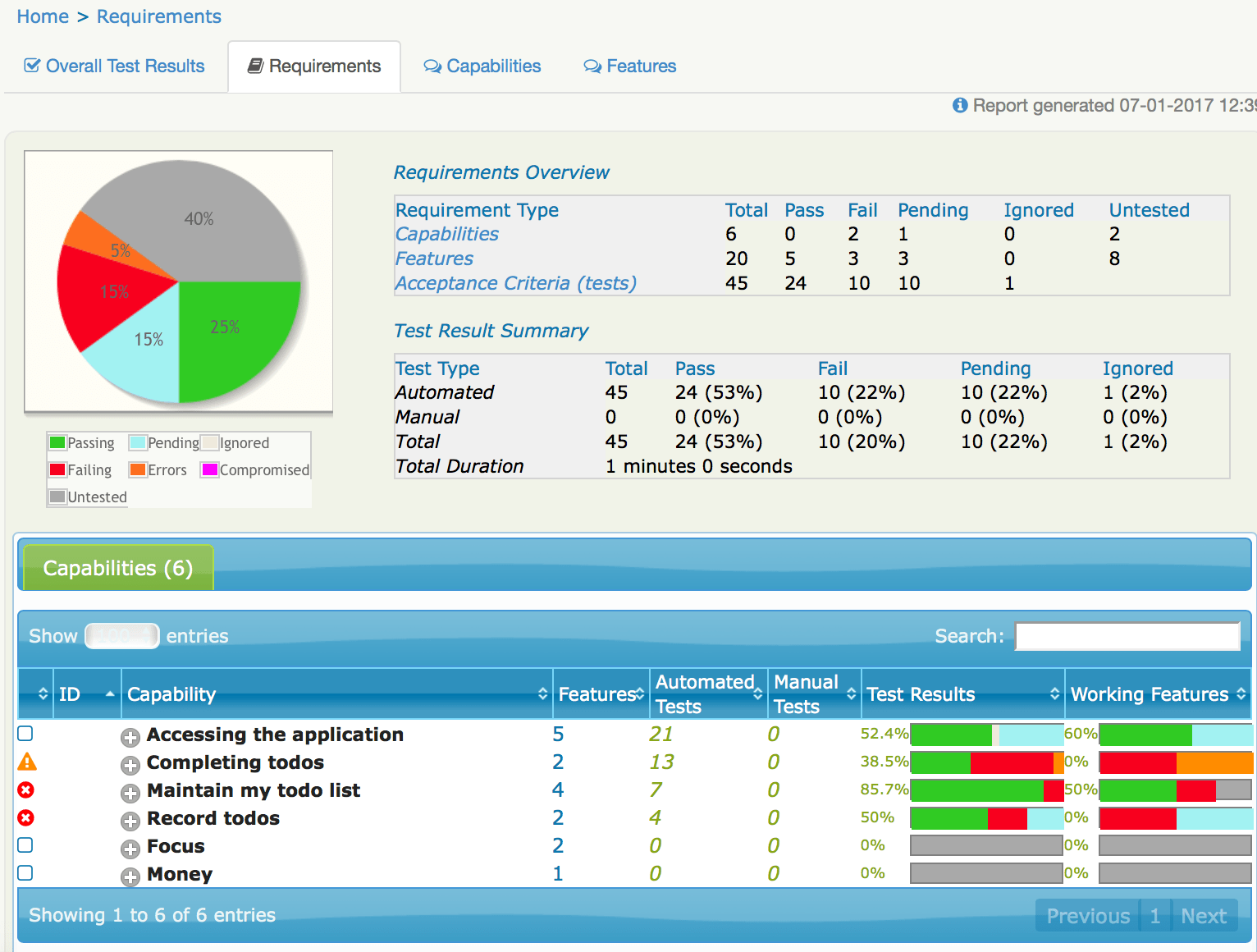

And don't forget, how to create beautiful and detailed report like this:

I.Why Cucumber

Cucumber is a software tool used by computer programmers that supports behavior-driven development (BDD). Central to the Cucumber BDD approach is its plain language parser called Gherkin. It allows expected software behaviors to be specified in a logical language that customers can understand.

By using Cucumber, we separate the intent of the tests from how they are implemented.

Non-technical people like BAs or POs can easily understand what we are testing from a feature file like:

```gherkin

Feature: Allow users to login to quang cao coc coc website

@Login

Scenario Outline: Login successfully with email and password

Given Navigate to quang cao coc coc login site

When Login with '<email>' and '<password>'

Then Should navigate to home page site

Examples:

|email|password|

|xxxxxxxxxx|xxxxxxxxxx|

@Login

Scenario Outline: Login failed with invalid email

Given Navigate to quang cao coc coc login site

When Login with '<email>' and '<password>'

Then Should prompt with '<errormessage>'

Examples:

|email|password|errormessage|

|a|FernandoTorres12345#|abc@example.com|

```

II.Implementation

We will go through the setup needed to implement tests using Cucumber with Serenity.

1.POM file

We need serenity-cucumber for our project, so make sure to add the dependency for it:

```xml

<!-- https://mvnrepository.com/artifact/net.serenity-bdd/serenity-cucumber -->

<dependency>

<groupId>net.serenity-bdd</groupId>

<artifactId>serenity-cucumber</artifactId>

<version>1.9.45</version>

</dependency>

```

We also need to add some plugins to build the Serenity report with Maven:

```xml

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.8.0</version>

<configuration>

<source>8</source>

<target>8</target>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-surefire-plugin</artifactId>

<version>2.22.0</version>

<configuration>

<testFailureIgnore>true</testFailureIgnore>

</configuration>

</plugin>

<plugin>

<artifactId>maven-failsafe-plugin</artifactId>

<version>2.18</version>

<configuration>

<includes>

<include>**/features/**/When*.java</include>

</includes>

<systemProperties>

<webdriver.driver>${webdriver.driver}</webdriver.driver>

</systemProperties>

</configuration>

</plugin>

<plugin>

<groupId>net.serenity-bdd.maven.plugins</groupId>

<artifactId>serenity-maven-plugin</artifactId>

<version>${serenity.maven.version}</version>

<executions>

<execution>

<id>serenity-reports</id>

<phase>post-integration-test</phase>

<goals>

<goal>aggregate</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

```

2.Serenity config file

To set the default configuration for Serenity, we can use a serenity.conf or serenity.properties file.

In this example I will show serenity.conf:

```

webdriver {

base.url = "https://cp.qc.coccoc.com/sign-in?lang=vi-VN"

driver = chrome

}

headless.mode=false

serenity {

project.name = "Serenity Guidelines"

tag.failures = "true"

linked.tags = "issue"

restart.browser.for.each = scenario

take.screenshots = AFTER_EACH_STEP

console.headings = minimal

browser.maximized = true

}

jira {

url = "https://jira.tcbs.com.vn"

project = Auto

username = username

password = password

}

drivers {

windows {

webdriver.chrome.driver = src/main/resources/webdriver/windows/chromedriver.exe

}

mac {

webdriver.chrome.driver = src/main/resources/chromedriver

}

linux {

webdriver.chrome.driver = src/main/resources/webdriver/linux/chromedriver

}

}

```

Here we define some common settings, like where the driver is stored for each platform:

```

drivers {

windows {

webdriver.chrome.driver = src/main/resources/webdriver/windows/chromedriver.exe

}

mac {

webdriver.chrome.driver = src/main/resources/chromedriver

}

linux {

webdriver.chrome.driver = src/main/resources/webdriver/linux/chromedriver

}

}

```

or taking a screenshot after each step:

```

serenity {

take.screenshots = AFTER_EACH_STEP

}

```

3.Page Object

An experienced automation tester is one who can implement tests in an abstracted way, for better readability and maintenance.

As a best practice for UI tests, we should always define a page object class for each web page we interact with.

If the web page has a lot of functions and elements, we should split the page object into several, according to the features they cover, for easier maintenance.

For example, the LoginPage of the qcCocCoc site:

```java

@DefaultUrl("https://cp.qc.coccoc.com/sign-in?lang=vi-VN")

public class LoginPage extends PageObject {

@FindBy(name = "email")

private WebElementFacade emailField;

@FindBy(name = "password")

private WebElementFacade passwordField;

@FindBy(css = "button[data-track_event-action='Login']")

private WebElementFacade btnLogin;

@FindBy(xpath = "//form[@method='post'][not(@name)]//div[@class='form-errors clearfix']")

private WebElementFacade errorMessageElement;

public void login(String email, String password) {

waitFor(emailField);

emailField.sendKeys(email);

passwordField.sendKeys(password);

btnLogin.click();

}

public String getMessageError(){

waitFor(errorMessageElement);

return errorMessageElement.getTextContent();

}

}

```

Here we define how to find the web elements, and which methods we need on that page.

Usually, we should avoid Thread.sleep and use an explicit wait instead, as in the example:

```java

public void login(String email, String password) {

waitFor(emailField);

emailField.sendKeys(email);

passwordField.sendKeys(password);

btnLogin.click();

}

```

In the above, we wait for emailField to appear before running the next steps.

If that field does not appear, a timeout error is raised.

4.Implement the test with Cucumber:

First you need to declare the feature file.

Feature files should be located in the test/resources/features folder:

```gherkin

@Login

Scenario Outline: Login successfully with email and password

Given Navigate to quang cao coc coc login site

When Login with '<email>' and '<password>'

Then Should navigate to home page site

Examples:

|email|password|

|xxxxxxxxxx|xxxxxxxxxx|

```

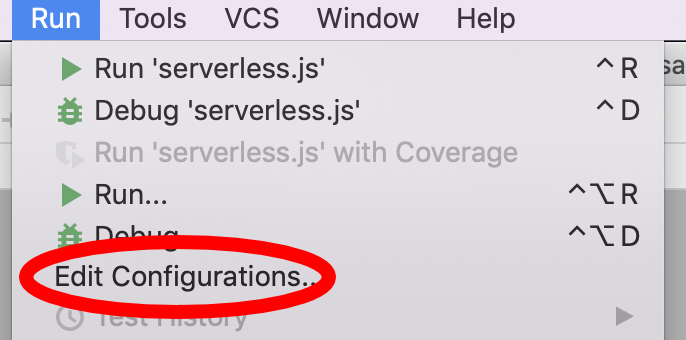

IntelliJ can automatically create the function for each step.

Click on the step, press "Alt + Enter", then follow the prompts.

I usually put the cucumber tests in test/ui/cucumber/qc_coccoc, and define the steps in the step package:

```java

public class LoginPage extends BaseTest {

@Steps

private pages.qcCocCoc.LoginPage loginPage_pageobject;

@cucumber.api.java.en.Given("^Navigate to quang cao coc coc login site$")

public void navigateToQuangCaoCocCocLoginSite() {

loginPage_pageobject.open();

}

@When("^Login with '(.*)' and '(.*)'$")

public void loginWithEmailAndPassword(String email, String password) {

loginPage_pageobject.login(email,password);

}

@Then("^Should navigate to home page site$")

public void shouldNavigateToHomePageSite() {

WebDriverWait wait = new WebDriverWait(getDriver(),2);

wait.until(ExpectedConditions.urlContains("welcome"));

softAssertImpl.assertAll();

}

@Then("^Should prompt with '(.*)'$")

public void shouldPromptWithErrormessage(String errorMessage) {

softAssertImpl.assertThat("Verify message error",loginPage_pageobject.getMessageError().contains(errorMessage),true);

softAssertImpl.assertAll();

}

}

```

Here we extend BaseTest so that we can use its assertions.

The email and password values from the feature file are captured by the regex groups in @When("^Login with '(.*)' and '(.*)'$") and passed as the function's parameters (String email, String password).
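To make the capture-group idea concrete, here is a small sketch of the same matching, written in Python for brevity. Cucumber performs the equivalent matching on the Java side; the function name `extract_login_args` and the sample step text are made up for illustration.

```python
import re

# Same pattern as in the @When annotation above.
STEP_PATTERN = re.compile(r"^Login with '(.*)' and '(.*)'$")


def extract_login_args(step_text):
    """Return (email, password) captured from a step line, or None.

    Illustrative helper only; Cucumber does this binding for you.
    """
    match = STEP_PATTERN.match(step_text)
    if match is None:
        return None
    # Each '(.*)' group becomes one positional argument of the step method.
    return match.groups()


if __name__ == "__main__":
    print(extract_login_args("Login with 'user@example.com' and 'secret'"))
```

Each group is handed to the step method in order, which is why the parameter order must follow the order of the groups in the pattern.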

```java

@RunWith(CucumberWithSerenity.class)

@CucumberOptions(features = "src/test/resources/features/qcCocCoc/", tags = { "@Login" }, glue = { "ui.cucumber.qc_coccoc.step" })

public class AcceptanceTest {

}

```

We create the AcceptanceTest class so that we can flexibly run tests by tag.

We need to specify the path to the feature files, "src/test/resources/features/qcCocCoc/", and the package containing the step definitions, "ui.cucumber.qc_coccoc.step".

5.How to run the test

- You can run the test from the feature file by right-clicking the scenario and choosing Run in the IDE

- Or you can run from command line:

mvn clean verify -Dtest=path_to_the_AcceptanceTest

6.Serenity report

To create the Serenity report, just run the following command:

mvn clean verify -Dtest=path_to_the_AcceptanceTest serenity:aggregate

The test report is generated at target/site/serenity/index.html by default.

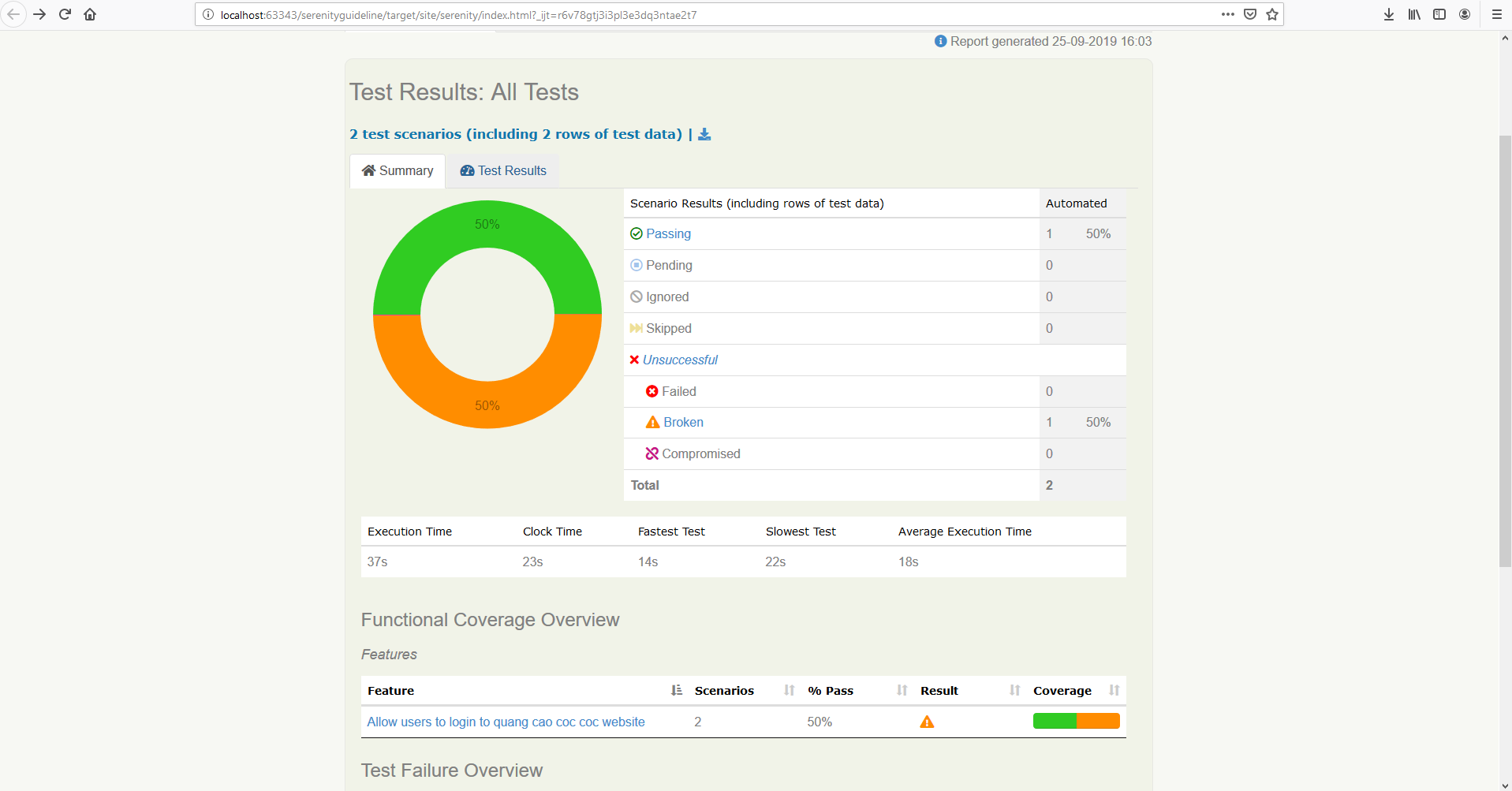

The summary report will look like this:

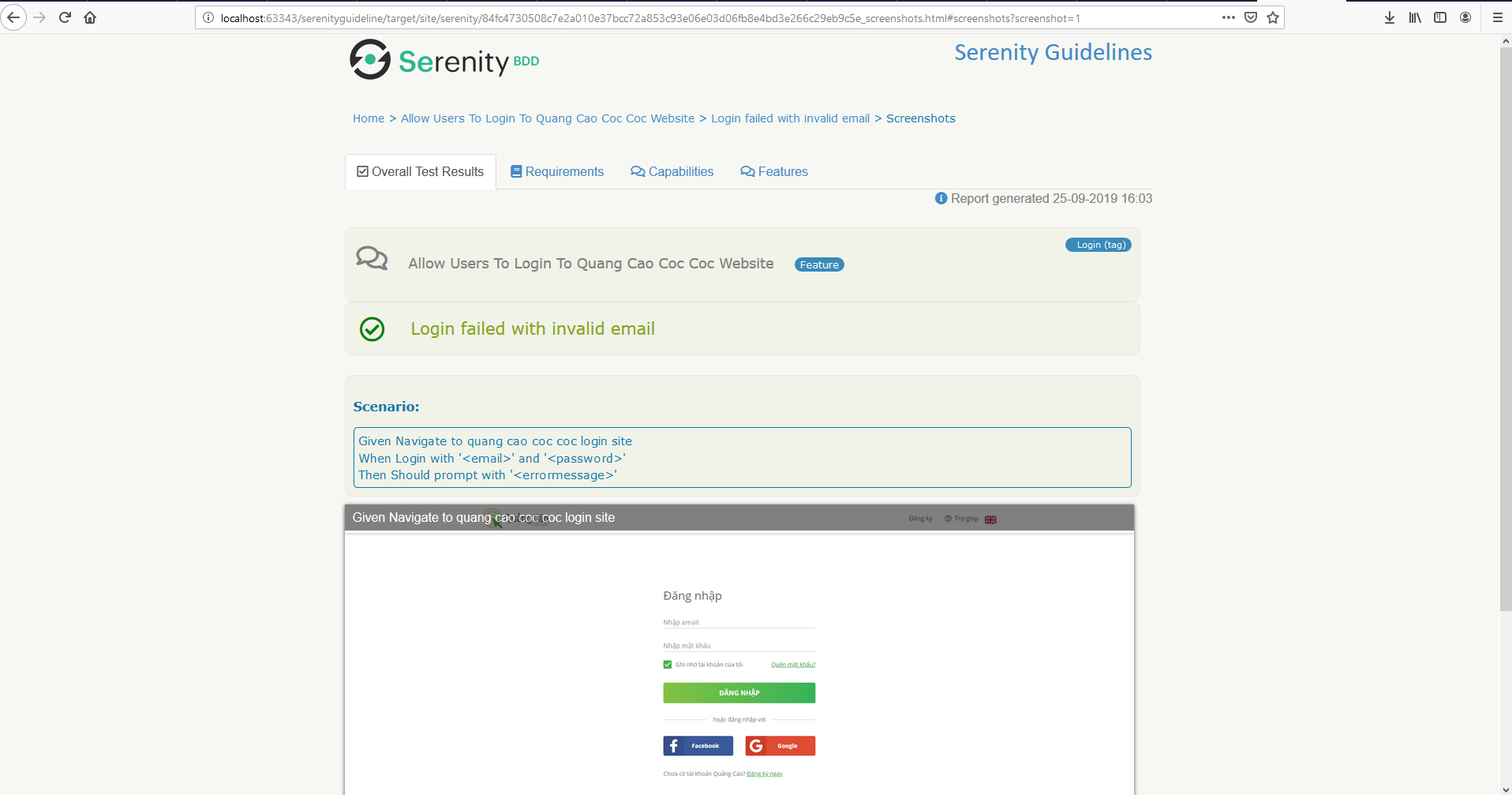

With a screenshot captured after each step in the Test Results tab:

As usual, you can always check out the source code on GitHub: [serenity-guideline](https://github.com/cuongld2/serenityguideline)

Yay. That's it for today.

If you like the blog post, leave a heart or a comment.

I will write another post on the Screenplay pattern with UI tests in a couple of days.

Take care~~

Notes: If you feel this blog helped you and want to show your appreciation, feel free to drop by:

[<img src="https://thepracticaldev.s3.amazonaws.com/i/cno42wb8aik6o9ek1f89.png">](https://www.buymeacoffee.com/dOaeSPv)

This will help me contribute more valuable content.

| cuongld2 |

175,848 | Azure Functions in the Portal – ALM | Author Credits: Michael Stephenson, Microsoft Azure MVP. Originally Published at Serverless360 Blogs... | 0 | 2019-09-24T12:53:51 | https://dev.to/suryavenkat_v/azure-functions-in-the-portal-alm-1a73 | azure, serverless | <p>Author Credits: <a href="https://www.serverless360.com/blog/author/michael" rel="noopener noreferrer" target="_blank">Michael Stephenson</a>, Microsoft Azure MVP.</p>

<p>Originally Published at <a href="https://serverless360.com/" rel="noopener noreferrer" target="_blank">Serverless360 Blogs</a>.</p>

<blockquote>This article is part of <a href="https://dev.to/azure/serverless-september-content-collection-2fhb" rel="noopener noreferrer" target="_blank">#ServerlessSeptember</a>. You'll find other helpful articles, detailed tutorials, and videos in this all-things-serverless content collection. New articles are published every day — that's right, every day — from community members and cloud advocates in the month of September.<br><br>

Find out more about how Microsoft Azure enables your Serverless functions at <a href="https://docs.microsoft.com/en-us/azure/azure-functions/?WT.mc_id=servsept_devto-blog-cxa" rel="noopener noreferrer" target="_blank">https://docs.microsoft.com/azure/azure-functions/</a>.</blockquote>

<p>One of the advantages of Azure is that for some use cases you can develop solutions in the Azure Portal. This has the benefits that you can just focus on writing some code and not have to worry about versions of Visual Studio and extensions and all of the other overheads which turn a few simple lines of code which can be written by anyone into something which requires an additional level of developer skills. Let’s face it the ALM processes have been around for years but there are still large portions of the developer community who don’t follow them.</p>

<p>The ability to just get the job done in the portal is compelling and I expect that we will see more of that in the future, but it does give you a challenge when it comes to ALM activities like keeping a safe version of the code and being able to move between environments reliably.</p>

<p>This article explores the options for being able to develop an Azure Function in the portal but use some of the basic ALM type activities which would only be a minor overhead but gives some good practices so that developing in the portal would be ok in the real world.</p>

<h2>My Process</h2>

<p>The process I am going to follow is as follows:</p>

<ul>

<li>I will have a development resource group which will contain my Azure Function and the code for it</li>

<li>The resource group will also contain other assets for the function like AppInsights and Storage</li>

<li>I will create a 2<sup>nd</sup> resource group called Test. In my Build process, I will refresh the Test resource group with the latest version so I can do some testing</li>

<li>Once I am happy with the test resource group I will then execute a release pipeline which will copy the latest for the function to other environments such as UAT which I assume are used by other testers etc</li>

</ul>

<p>To summarise the pipeline usage see below:</p>

<ul>

<li>Dev -> Test = Build Pipeline</li>

<li>-> UAT and beyond = Release Pipeline.</li>

</ul>

<h2>Assumptions</h2>

<p>I am going to make a few assumptions:</p>

<ul>

<li>The function apps and Azure resources will be created by hand in advance</li>

<li>Any config settings will be added to the function apps by hand.</li>

</ul>

<p>In this simple example, we are assuming that everything is quite simple, and we can just update the code between environments. In a future example, we will look at some more complex scenarios.</p>

<h2>Walk-through</h2>

<p>To begin the walk-through, let’s have a look at the code for our function below:</p>

<p><img class="alignnone size-full wp-image-80217451" src="https://www.serverless360.com/wp-content/uploads/2019/07/Function-Code.png" alt="Azure Function in the portal" /></p>

<p>You can see this is a very simple function which is just reading some config settings and returning them.</p>

<h3>Build Process</h3>

<p>From here we need to go to our Build process in Azure DevOps. The build process looks like the following:</p>

<p><img class="alignnone size-full wp-image-80217452" src="https://www.serverless360.com/wp-content/uploads/2019/07/build-process-azure-functions.png" alt="Build process in Azure DevOps" /></p>

<p>I have defined a build process which I could use for any function app in the portal. I would simply need to change the variables and subscriptions references and it could be reused easily via the Clone function.</p>

<p>The build executes the following steps:</p>

<ul>

<li>Show all build variables = I use this for troubleshooting as it shows the values for all build variables</li>

<li>Export code = This uses the App Service Kudu API features to download the source code for the function app as a zip file</li>

<li>Publish Artifact = This attaches the zip file to the build so I can use it in Release pipelines later</li>

<li>Azure Function App Deploy = This will deploy the zip file to the Test function app so that I can do some manual testing if I want.</li>

</ul>

<h3>A closer look at Export</h3>

<p>I think in the build process the Export Function Code step warrants a closer look. This step uses PowerShell to execute a web request to download the code as a zip file. I have used the publish profile in the function app to get the publisher credentials, which I can save as build variables and then use as a basic authentication header for the web request to do the download. See the piece of code below:</p>

<pre class="lang:ps decode:true ">$user = '$(my.functionapp.deployment.username)'

$pass = '$(my.functionapp.deployment.password)'

$pair = "$($user):$($pass)"

Write-Host $pair

$encodedCreds = [System.Convert]::ToBase64String([System.Text.Encoding]::ASCII.GetBytes($pair))

$basicAuthValue = "Basic $encodedCreds"

$Headers = @{

Authorization = $basicAuthValue

}

Write-Host $(my.functionapp.name)

Invoke-WebRequest -Uri "https://$(my.functionapp.name).scm.azurewebsites.net/api/zip/site/wwwroot/" -OutFile "$Env:BUILD_STAGINGDIRECTORY\Function.zip" -Headers $Headers

</pre>
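
The header construction in that script is plain HTTP basic authentication. As a language-neutral illustration, here is the same step sketched in Python; the function name `basic_auth_header` and the sample credentials are placeholders, not values from the pipeline.

```python
import base64


def basic_auth_header(user, password):
    """Build an HTTP Basic Authorization header from credentials.

    Illustrative helper mirroring the PowerShell above: join user and
    password with a colon, Base64-encode, and prefix with "Basic ".
    """
    pair = f"{user}:{password}"
    token = base64.b64encode(pair.encode("ascii")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


if __name__ == "__main__":
    # Placeholder credentials, for demonstration only.
    print(basic_auth_header("user", "pass"))
```

Base64 is an encoding, not encryption, so the resulting header should be treated as carefully as the password itself.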

<h3>A closer look at Azure Function App Deploy</h3>

<p>The Azure Function app deploy is simply using the out of the box task. I am pointing to the zip file I have just downloaded in the earlier step and it will automatically deploy it for me. I have set the deployment type on this task to Zip deployment.</p>

<p><img class="alignnone size-full wp-image-80217476" src="https://www.serverless360.com/wp-content/uploads/2019/07/Function-App-Deploy.png" alt="Function App Deploy"/></p>

<h3>Release Process</h3>

<p>We now have a repeatable build process which will take the latest version of the code from my development function app and push it to the test instance and package the zip file so I can at some future point release this version of the code.</p>

<p>To do the release to other environments I have an Azure DevOps Release pipeline. You can see this below:</p>

<p><img class="alignnone size-full wp-image-80217454" src="https://www.serverless360.com/wp-content/uploads/2019/07/Azure-devops-release-pipeline.png" alt="Azure devops release pipeline" /></p>

<p>The Release pipeline contains a reference to the build output I should use and then contains a set of tasks for each environment we want to deploy to. In this case it's just UAT. The UAT release process looks like the following:</p>

<p><img class="alignnone size-full wp-image-80217455" src="https://www.serverless360.com/wp-content/uploads/2019/07/UAT-release.png" alt="UAT release" /></p>

<p>You can see that in this case the Release process is very simple and really it’s a cut down version of the Build process. In this case, I am downloading the artifact we saved in the build. We then use the Azure Function deploy to copy the function to the UAT function app. We are just using the same OOTB configuration as in the above Build process but this time we are pointing to the UAT Function app.</p>

<p>I now just need to run the Release process to deploy the function to other environments.</p>

<h2>Limitations</h2>

<ul>

<li>I am not using Visual Studio, so I am unlikely to be automatically testing my functions much. I could potentially look at doing something in this area but it's out of the scope of this article</li>

<li>I am not keeping the code in source control in this article. I am happy that the zip file attached to the build is sufficient. I could possibly look to saving the zip to source control or unpacking it and saving files to source control if I wanted</li>

<li>I am not using any continuous integration here, you could maybe monitor Azure events with Logic Apps and then develop your own trigger.</li>

</ul>

<h2 style="padding: 5px 0 0px; margin-bottom: 10px; border-bottom: 3px solid #3081ed; display: inline-block;">Summary</h2>

<p>Hopefully, you can see that it is very simple to implement the most basic of ALM processes for your development in the portal effort which will add some maturity to it.</p> | suryavenkat_v |

175,856 | Quick vim tips to generate and increment numbers | Too lazy to type each one of them numbers | 0 | 2019-09-25T12:57:31 | https://irian.to/blogs/quick-vim-tips-to-generate-and-increment-numbers | vim, productivity, tips, numbers | ---

title: Quick vim tips to generate and increment numbers

published: true

description: Too lazy to type each one of them numbers

tags: vim, productivity, tips, numbers

canonical_url: https://irian.to/blogs/quick-vim-tips-to-generate-and-increment-numbers

---

There are times when I need to either increment or generate a column of numbers quickly in vim. Vim 8 and Neovim come with useful number tricks.

I will share two of them here.

# Quickly generate numbers with put and range

You can quickly generate ascending numbers by

```

:put=range(1,5)

```

This will give you:

```

1

2

3

4

5

```

We can also control the increments. If we want to quickly generate descending numbers, we do:

```

:put=range(10,0,-1)

```

Some other variations:

```

:put=range(0,10,2) " increments by 2 from 0 to 10

:put=range(5) " starts at 0 and stops at 4 (five numbers)

```

This trick might be helpful for generating a list when taking notes. To get the current line number in Vim, we can use `line('.')`. This can be combined with put/range. Let's say you are currently on line 40. To generate numbers up to 50, you do:

```

:put=range(line('.'),50)

```

And you'll get:

```

40 // prints at line 41.

41

42

43

44

45

46

47

48

49

50

```

Because `:put` inserts below the cursor, the first number (40) lands on line 41. To make each number match the line it lands on, use `:put=range(line('.')+1,50)` instead.

# Quickly increment column of numbers

Suppose we have a column of numbers, like the 0's in HTML below:

```

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

```

If we want to increment all the zeroes (1, 2, 3, ...), we can quickly do that. Here is how:

First, move cursor to top 0 (I use `[]` to signify cursor location).

```

<div class="test">[0]</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

<div class="test">0</div>

```

Using `VISUAL BLOCK` mode (`<C-v>`), go down 8 times (`<C-v>8j`) to visually select all 0's.

```

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

<div class="test">[0]</div>

```

Now type `g <C-a>`. Voila!

```

<div class="test">1</div>

<div class="test">2</div>

<div class="test">3</div>

<div class="test">4</div>

<div class="test">5</div>

<div class="test">6</div>

<div class="test">7</div>

<div class="test">8</div>

<div class="test">9</div>

```

_Wait a minute... what just happened?_

Vim 8 and Neovim have a feature that increments numbers with `<C-a>` (and decrements them with `<C-x>`). You can check it out by going to `:help CTRL-A`.

We can also change the step by prefixing a count. If we want to have `10,20,30,...` instead of `1,2,3,...`, do `10g<C-a>` instead.

_Btw, one super cool tip with `<C-a>` and `<C-x>`: you can increment not only decimal numbers, but octal, hex, bin, and alpha! For me, I don't really use the first three, but I sure use alpha a lot. Alpha is a fancy word for *alpha*betical characters. If we do `set nrformats=alpha`, we can increment letters like we do numbers._

Isn't that cool or what? Please feel free to share any other number tricks with Vim in comment below. Thanks for reading! Happy vimming!

| iggredible |

176,198 | AWS Application Integration | Step Functions it helps in defining the lambda function Amazon MQ it’s replace... | 0 | 2019-09-25T05:21:36 | https://dev.to/vikashagrawal/aws-application-integration-18oi | # Step Functions

It helps coordinate Lambda functions (and other AWS services) into workflows.

# Amazon MQ

It’s a managed message broker that can replace a self-hosted broker such as RabbitMQ.

# SNS (Simple Notification Service)

• It's a push-based service.

• It can be used to push notifications to:

```

o Mobile devices

o SQS

o HTTP endpoint

o SMS text messages

o Email

o Lambda can be consumer of this topic and trigger another SNS or AWS services.

```

• Topic:

```

o An access point for allowing recipients to dynamically subscribe.

o It can deliver to multiple recipient types together, like iOS, Android and SMS.

o It's stored across multiple AZs.

o The messages from this topic will be delivered to all the subscribers.

```

# SQS (Simple Queue Service)

• It’s message oriented.

• It helps in integrating with other AWS services.

• It's a pull-based service.

• The maximum size of the message stored in the queue is 256 KB.

• Type of message can be XML, JSON, and unformatted text

• If messages are produced faster than they are consumed (or vice versa), we can use an Auto Scaling Group: more EC2 instances are created when the queue grows, and unused EC2 instances are terminated when it shrinks.

• Messages can be kept in the queue from 1 minute to 14 days; the default is 4 days.

• Each message is guaranteed to be processed at least once.

• Polling

```

o Short: Returns immediately if no messages are in queue.

o Long: Polls the queue periodically and only returns the response when a message is in the queue or timeout is reached.

```

• Visibility Timeout

```

o When a consumer receives a message, the message is marked as invisible in the queue. If the consumer processes the job before the timeout, the message is deleted from the queue; otherwise it becomes visible again.

o The default is 30 seconds and the maximum is 12 hours. For any job that needs more than 12 hours, it is better to have a Lambda function consume the message, split it across multiple topics, and have other Lambdas subscribed to those topics.

```

• Types

```

o Standard

This is the default queue.

Messages are generally delivered in the order they are received, but this is not guaranteed.

A message may occasionally be consumed more than once.

o FIFO

Consumption of message is guaranteed based on the order they are received.

It behaves like the default queue, with one limitation: 300 Tx/sec. This limit may exist because standard is the default implementation, and the design changes needed for FIFO ordering introduce it.

Each message is delivered once and remains available until a consumer processes and deletes it.

```
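The visibility timeout described above can be illustrated with a small in-memory model. This is only a sketch: `ToyQueue` and its methods are hypothetical names, not the AWS SDK, and timestamps are passed in by hand so the behaviour is easy to follow.

```javascript
// Toy in-memory queue, NOT the AWS SDK: it only models the visibility timeout.
class ToyQueue {
  constructor(visibilityTimeoutMs = 30000) {
    this.visibilityTimeoutMs = visibilityTimeoutMs;
    this.messages = []; // each entry: { id, body, invisibleUntil }
    this.nextId = 1;
  }

  send(body) {
    this.messages.push({ id: this.nextId++, body, invisibleUntil: 0 });
  }

  // Return the first visible message and hide it for the timeout window.
  receive(now) {
    const msg = this.messages.find(m => m.invisibleUntil <= now);
    if (!msg) return null;
    msg.invisibleUntil = now + this.visibilityTimeoutMs;
    return { id: msg.id, body: msg.body };
  }

  // The consumer deletes the message once it has finished processing it.
  delete(id) {
    this.messages = this.messages.filter(m => m.id !== id);
  }
}

const queue = new ToyQueue(30000);
queue.send('resize-image-42');

const first = queue.receive(0);       // a consumer picks up the message
const hidden = queue.receive(10000);  // still inside the 30 s window: nothing visible
const again = queue.receive(30000);   // window elapsed without a delete: visible again
queue.delete(again.id);               // this time the consumer finishes and deletes it
const empty = queue.receive(100000);  // nothing left in the queue
```

The second delivery of the same message is also why a standard queue only promises at-least-once processing: a slow consumer and a retry can both end up handling it.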

# SWF (Simple Workflow Service)

• It's task-oriented and has the following actors:

```

o Workflow starters: an application that initiates the workflow, e.g. a website.

o Workers: programs that get tasks, process them, and return the results.

o Decider: it controls the coordination of tasks.

```

• Domains: a kind of metadata, a collection of related workflows, stored in JSON.

• The maximum workflow retention period is 1 year, and the value is measured in seconds.

• SWF brokers the interactions between workers and deciders.

• SWF makes sure that a task is not repeated.

• It allows the decider to have a clear idea of the progress of tasks and to start new tasks.

# SES (Simple Email Service)

| vikashagrawal | |

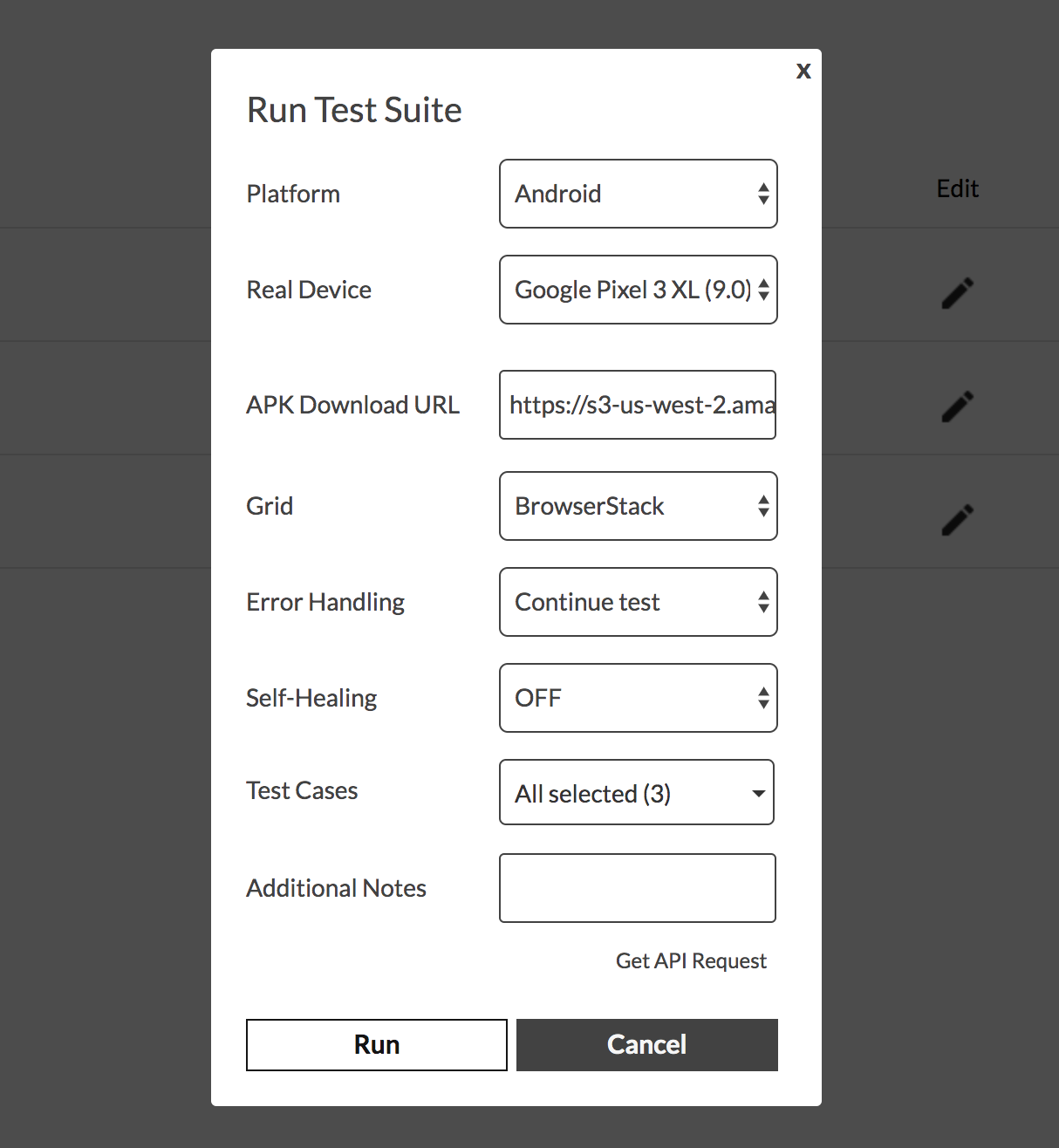

176,301 | How to integrate Endtest with BrowserStack | Codeless Automated Testing for Mobile Apps with Endtest and BrowserStack | 0 | 2019-09-25T14:25:54 | https://dev.to/endtest/how-to-integrate-endtest-with-browserstack-2gkj | webdev, testing, productivity, devops | ---

title: How to integrate Endtest with BrowserStack

published: true

description: Codeless Automated Testing for Mobile Apps with Endtest and BrowserStack

tags: webdev, testing, productivity, devops

cover_image: https://thepracticaldev.s3.amazonaws.com/i/61k02d7k2t18rhhflj46.png

---

###**Introduction**###

[Endtest](https://endtest.io) allows you to create, manage and execute Automated Tests, without having to write any code.

By integrating with [BrowserStack](https://www.browserstack.com/), you can execute Mobile Tests created with Endtest on a range of real Android and iOS mobile devices offered by BrowserStack.

###**Getting Started**###

1) Go to your **BrowserStack** account.

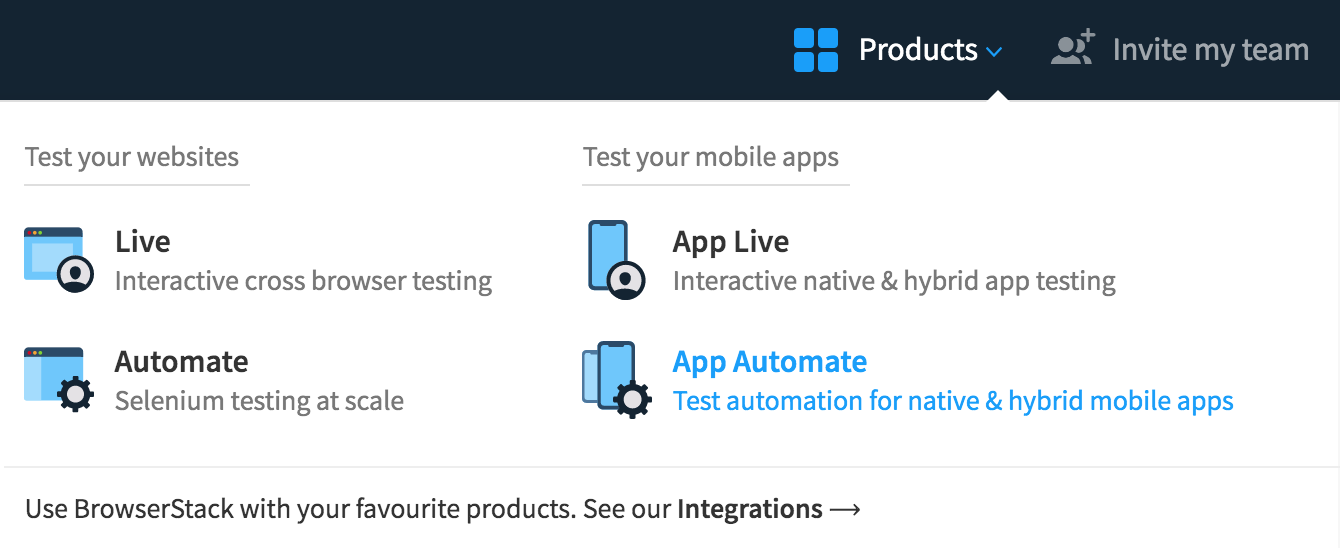

2) Click on **App Automate** from the **Products** section:

3) Click on the **Show** button from the **Username and Access Keys** section from the left side of the page:

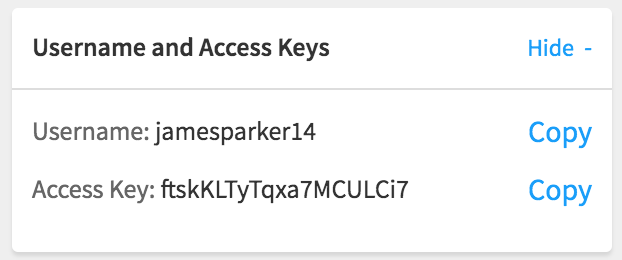

4) Go to the **Settings** page from [Endtest](https://endtest.io).

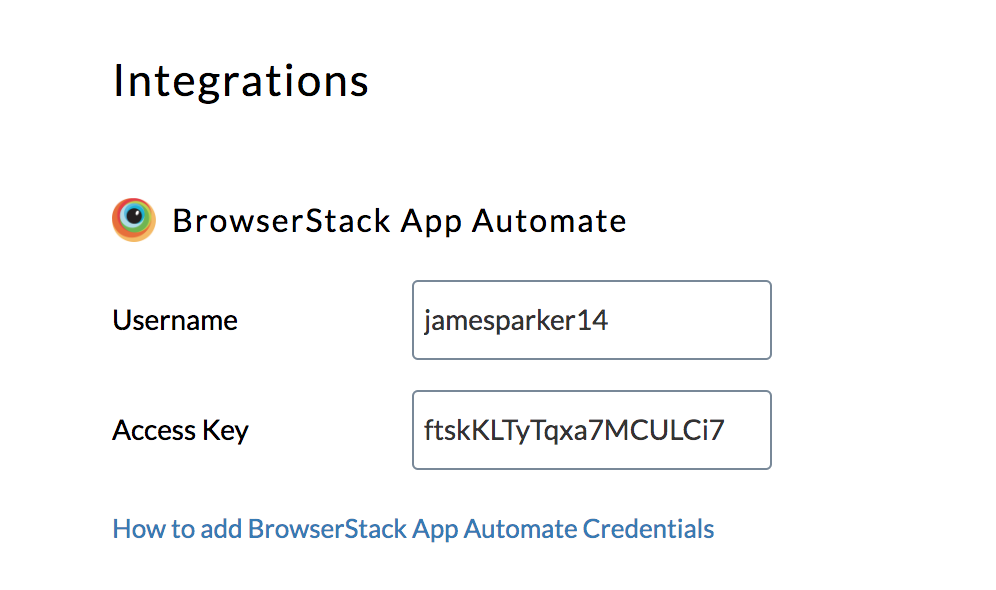

5) Add the **Username** and **Access Key** from BrowserStack App Automate in the BrowserStack User and BrowserStack Key inputs from the Endtest Settings page.

6) Click on the **Save** button.

Nice job! Your Endtest account is now connected with your BrowserStack account.

###**Running your first test**###

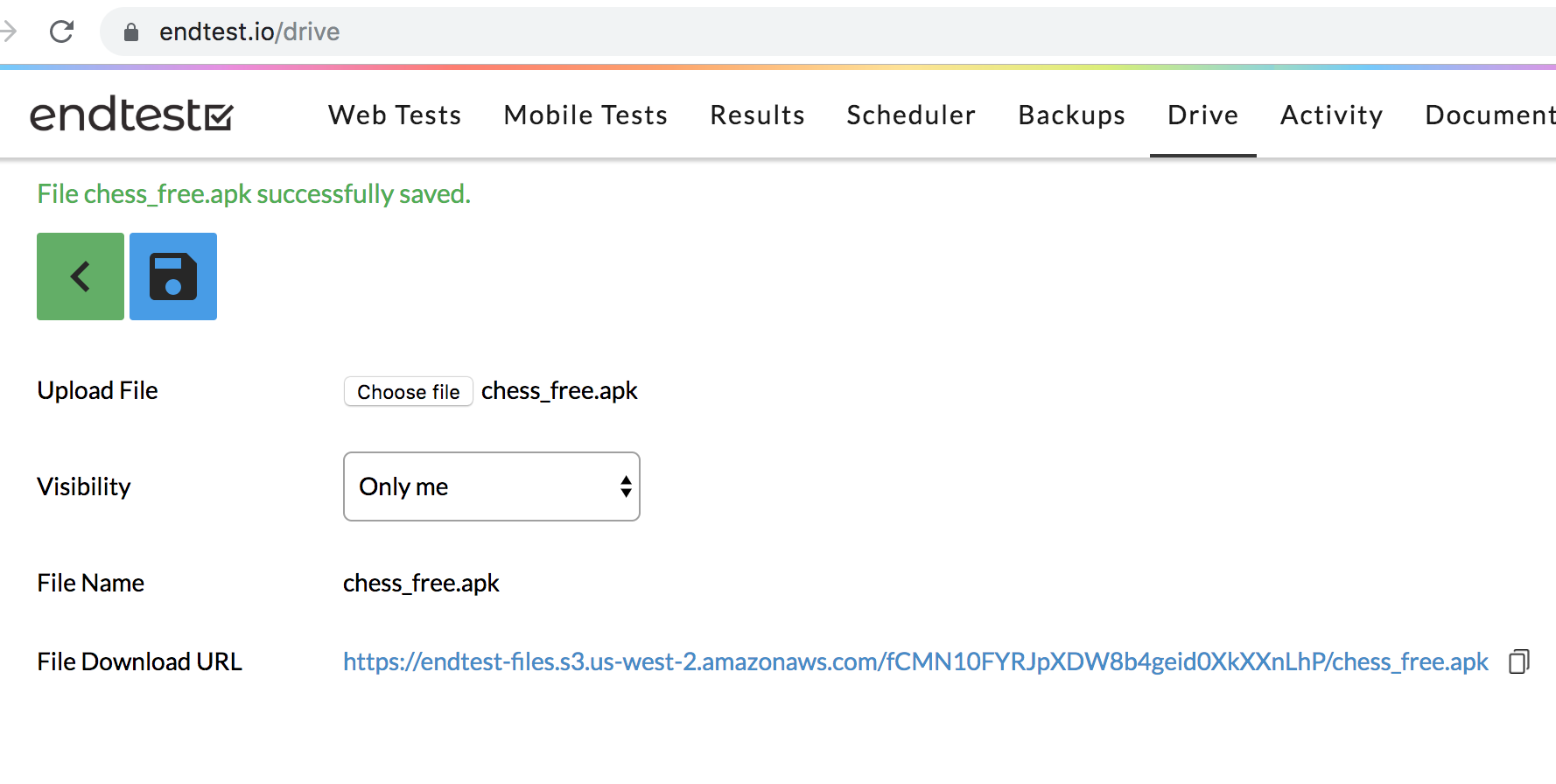

1) Upload your APK or IPA file in the **Drive** section from Endtest.

2) After that, go to the **Mobile Tests** section and click on the Run button.

3) Select **BrowserStack** from the **Grid** dropdown.

4) Select the **Platform** and the **Real Device** on which you want to execute your test.

5) Select your APK file in the **APK Download URL** input.

If you select an iOS device, the **APK Download URL** input would be replaced with the **IPA Download URL** input.

After starting the test execution, you will be redirected to the Results section where you'll get live video and all the results, logs and details in real-time.

| razgandeanu |

177,761 | Caching JavaScript data file results when using Eleventy | How to cache the query results of a Web API to speed up the development of an Eleventy website. | 0 | 2019-09-27T19:14:56 | https://dev.to/heypieter/caching-javascript-data-file-results-when-using-eleventy-38ch | javascript, eleventy, cache | ---

title: Caching JavaScript data file results when using Eleventy

published: true

description: How to cache the query results of a Web API to speed up the development of an Eleventy website.

tags: javascript, Eleventy, cache

---

[Eleventy](https://www.11ty.io) by [Zach Leatherman](https://twitter.com/zachleat/) has become my default static site generator. It is simple, uses JavaScript, and is easy to extend. It allows me to include custom code to access additional data sources, such as RDF datasets.

Querying data can take up some time, for example, when using an external Web API. During deployment of a website this is not a big deal, as this probably doesn't happen every minute. But when you are developing then it might become an issue: you don't want to wait for query results every time you make a change that doesn't affect the results, such as updating a CSS property, which only affects how the results are visualized. Ideally, you want to reuse these results without querying the data over and over again. I explain in this blog post how that can be done by introducing a cache.

The cache has the following features:

- The cache is only used when the website is locally served (`eleventy --serve`).

- The cached data is written to and read from the filesystem.

This is done by using the following two files:

- `serve.sh`: a Bash script that runs Eleventy.

- `cache.js`: a JavaScript file that defines the cache method.

An example Eleventy website using these two files is available on [GitHub](https://github.com/pheyvaer/eleventy-cache-example).

## Serve.sh

```bash

#!/usr/bin/env bash

# trap ctrl-c and call ctrl_c()

trap ctrl_c INT

function ctrl_c() {

rm -rf _data/_cache

exit 0

}

# Remove old folders

rm -rf _data/_cache # Should already be removed, but just in case

rm -rf _site

# Create needed folders

mkdir -p _data/_cache

ELEVENTY_SERVE=true npx eleventy --serve --port 8080

```

This Bash script creates the folder for the cached data and serves the website locally. First, we remove the cache folder and the files generated by Eleventy, which might still be there from before. Strictly speaking removing the latter is not necessary, but I have noticed that removed files are not removed from `_site`, which might result in unexpected behaviour. Second, we create the cache folder again, which of course is now empty. Finally, we set the environment variable `ELEVENTY_SERVE` to `true` and start Eleventy: we serve the website locally on port 8080. The environment variable is used by `cache.js` to check if the website is being served, because currently this information can't be extracted from Eleventy directly. Note that I have only tested this on macOS 10.12.6 and 10.14.6, and Ubuntu 16.04.6. Changes might be required for other OSs.

## Cache.js

```JavaScript

const path = require('path');

const fs = require('fs-extra');

/**

* This method returns a cached version if available, else it will get the data via the provided function.

* @param getData The function that needs to be called when no cached version is available.

* @param cacheFilename The filename of the file that contains the cached version.

* @returns the data either from the cache or from the getData function.

*/

module.exports = async function(getData, cacheFilename) {

// Check if the environment variable is set.

const isServing = process.env.ELEVENTY_SERVE === 'true';

const cacheFilePath = path.resolve(__dirname, '_data/_cache/' + cacheFilename);

let dataInCache = null;

// Check if the website is being served and that a cached version is available.

if (isServing && await fs.pathExists(cacheFilePath)) {

// Read file from cache.

dataInCache = await fs.readJSON(cacheFilePath);

console.log('Using from cache: ' + cacheFilename);

}

// If no cached version is available, we execute the function.

if (!dataInCache) {

const result = await getData();

// If the website is being served, then we write the data to the cache.

if (isServing) {

// Write data to cache.

fs.writeJSON(cacheFilePath, result, err => {

if (err) {console.error(err)}

});

}

dataInCache = result;

}

return dataInCache;

};

```

The method defined by the JavaScript file above takes two parameters: `getData` and `cacheFilename`. The former is the expensive function that you don't want to repeat over and over again. The latter is the filename of the file with the cached version. The file will be put in the folder `_data/_cache` relative to the location of `cache.js`. The environment variable used in `serve.sh` is checked here to see if the website is being served. Note that the script requires the package `fs-extra`, which adds extra methods to `fs` and is not available by default.
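If you would rather not add `fs-extra`, the same idea can be sketched with Node's built-in modules only. This is a hypothetical variant, not the author's `cache.js`: the name `cachedData` and the explicit `isServing` parameter are assumptions made to keep the example self-contained.

```javascript
const fs = require('fs');
const path = require('path');

// Hypothetical variant of cache.js that relies only on Node's built-in modules.
// `isServing` is passed in here; the original reads process.env.ELEVENTY_SERVE.
async function cachedData(getData, cacheFilePath, isServing) {
  if (isServing) {
    try {
      // Reuse the cached JSON when the file exists and parses cleanly.
      return JSON.parse(await fs.promises.readFile(cacheFilePath, 'utf8'));
    } catch (err) {
      // Missing or unreadable cache file: fall through and fetch fresh data.
    }
  }

  const result = await getData();

  if (isServing) {
    // Create the cache folder if needed, then store the result for next time.
    await fs.promises.mkdir(path.dirname(cacheFilePath), { recursive: true });
    await fs.promises.writeFile(cacheFilePath, JSON.stringify(result));
  }
  return result;
}
```

A data file could then call something like `cachedData(queryApi, path.resolve(__dirname, '_cache/books.json'), process.env.ELEVENTY_SERVE === 'true')`, where `queryApi` and the file name stand in for your own expensive function and cache file.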

## Putting it all together

To get it all running, we put both files in our Eleventy project root folder. Do not forget to make the script executable and run `serve.sh`.

When running the [aforementioned example](https://github.com/pheyvaer/eleventy-cache-example), the first build of the website takes 10.14 seconds (see screencast below). No cached version of the query results is available at this point and thus the Web API has to be queried. But the second time, when we update the template, it only takes 0.03 seconds. This is because the cached version of the query results is used instead of querying the Web API again.

<p class="caption">Screencast: When the Web API is queried it takes 10.14 seconds. When the cached version of the query results is used it takes 0.03 seconds.</p>

| heypieter |

176,411 | How to Build a Dashboard of Live Conversations with Flask, React, and Nexmo | Nexmo recently introduced the Conversation API. This API enables you to have different styles of com... | 0 | 2019-09-25T15:58:22 | https://dev.to/vonagedev/how-to-build-a-dashboard-of-live-conversations-with-flask-react-and-nexmo-1kdh | flask, react, webdev, tutorial | Nexmo recently introduced the [Conversation API](https://developer.nexmo.com/conversation/overview). This API enables you to have different styles of communication (voice, messaging, and video) and connect them all to each other.

It's now possible for multiple conversations within an app to coincide and to retain context across all of those channels! Being able to record and work with the history of a conversation is incredibly valuable for businesses and customers alike so, as you can imagine, we're really excited about this.

> Find out more of what can be done with programmable conversations at Vonage Campus, our first customer and developer conference taking place in San Francisco on October 29-30. It's free to attend, so [request your invite now](https://web.cvent.com/event/9bba9ffb-c9b5-4022-a9b8-3a8184c70aa8/register)!

## What The Dashboard Does

This tutorial covers how to build a dashboard with Flask and React that monitors all current conversations within an [application](https://developer.nexmo.com/conversation/concepts/application). The goal is to showcase relevant data from the live conversations that are currently happening in real-time.

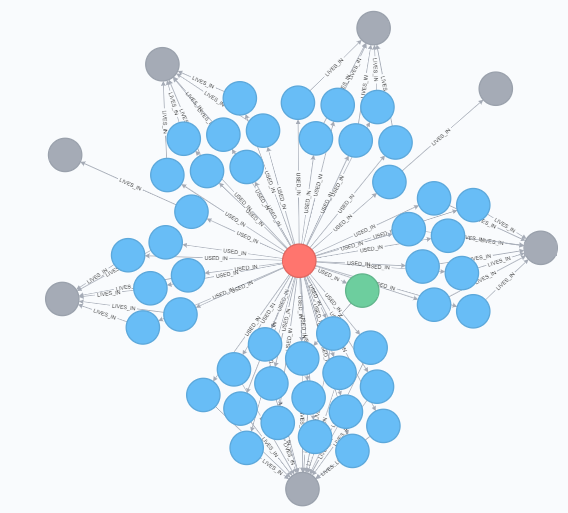

When a single [conversation](https://developer.nexmo.com/conversation/concepts/conversation) is selected from the list of current conversations, the connected [members](https://developer.nexmo.com/conversation/concepts/member) and [events](https://developer.nexmo.com/conversation/concepts/event) will be displayed. An individual member can then be selected to reveal even more information related to that particular [user](https://developer.nexmo.com/conversation/concepts/user).

<a href="https://www.nexmo.com/wp-content/uploads/2019/09/5d894f3a32766539224729.gif"><img src="https://www.nexmo.com/wp-content/uploads/2019/09/5d894f3a32766539224729.gif" alt="dashboard gif" width="500" class="alignnone size-full wp-image-30261" /></a>

## What Does The Conversation API Do?

The Nexmo [Conversation API](https://developer.nexmo.com/conversation/overview) enables you to build conversation features where communication can take place across multiple mediums including IP Messaging, PSTN Voice, SMS, and WebRTC Audio and Video. The context of the conversations is maintained through each communication event taking place within a conversation, no matter the medium.

Think of a conversation as a container of communications exchanged between two or more Users. There could be a single interaction or the entire history of all interactions between them.

The API also allows you to create Events and Legs to enable text, voice, and video communications between two Users and store them in Conversations.

## Workflow of The Application

<a href="https://www.nexmo.com/wp-content/uploads/2019/09/flowofapp.png"><img src="https://www.nexmo.com/wp-content/uploads/2019/09/flowofapp.png" alt="flow of app" width="1698" height="892" class="alignnone size-full wp-image-30265" /></a>

### Create A Nexmo Application

To work through this tutorial, you will need a [Nexmo account](https://dashboard.nexmo.com/sign-up?utm_source=DEV_REL&utm_medium=github&utm_campaign=https://github.com/nexmo-community/nexmo-python-capi). You can sign up now for free if you don’t already have an account.

This tutorial also assumes that you will be running [Ngrok](https://ngrok.com/) to run your [webhook](https://developer.nexmo.com/concepts/guides/webhooks) server locally.

If you are not familiar with Ngrok, please refer to our [Ngrok tutorial](https://www.nexmo.com/blog/2017/07/04/local-development-nexmo-ngrok-tunnel-dr/) before proceeding.

First, you will need to create a Nexmo Application:

```bash

nexmo app:create "Conversation App" http://demo.ngrok.io:3000/webhooks/answer http://demo.ngrok.io:3000/webhooks/event --keyfile private.key

```

Next, assuming you have already rented a Nexmo Number (`NEXMO_NUMBER`), you can link your Nexmo Number with your application via the command line:

```bash

nexmo link:app NEXMO_NUMBER APP_ID

```

### Clone [Git Repo](https://github.com/nexmo-community/nexmo-python-capi)

To get this app up and running on your local machine, start by cloning [this repository](https://github.com/nexmo-community/nexmo-python-capi):

```bash

git clone https://github.com/nexmo-community/nexmo-python-capi

```

Then install the dependencies:

```bash

npm install

```

Copy the example `.env.example` file with the following command:

```bash

cp .env.example .env

```

Open that new `.env` file and fill in the Application ID and path to your `private.key` that we just generated when creating our Nexmo Application.

### Flask Backend

The important file to inspect in our Flask code is `server.py`, as it establishes all of the endpoints that talk to the Conversation API.

The function `make_capi_request()` connects to Nexmo and authenticates the application:

```python

def make_capi_request(api_uri):
    nexmo_client = nexmo.Client(
        application_id=os.getenv("APPLICATION_ID"), private_key=os.getenv("PRIVATE_KEY")
    )
    try:
        response = nexmo_client._jwt_signed_get(request_uri=api_uri)
    except nexmo.errors.ClientError:
        response = {}
    return jsonify(response)

```

Underneath that, we create the necessary routes:

```python

@app.route("/")
def index():  # Index page structure
    return render_template("index.html")


@app.route("/conversations")
def conversations():  # List of conversations
    return make_capi_request(api_uri="/beta/conversations")


@app.route("/conversation")
def conversation():  # Conversation detail
    cid = request.args.get("cid")
    return make_capi_request(api_uri=f"/beta/conversations/{cid}")


@app.route("/user")
def user():  # User detail
    uid = request.args.get("uid")
    return make_capi_request(api_uri=f"/beta/users/{uid}")


@app.route("/events")
def events():  # Event detail
    cid = request.args.get("cid")
    return make_capi_request(api_uri=f"/beta/conversations/{cid}/events")

```

Once authenticated, each of these routes accesses the Conversation API based on the Application ID and eventually the Conversation or User ID.

### React Frontend

We’ll make use of React's ability to break our code into modularized and reusable components. The components we’ll need are:

<a href="https://www.nexmo.com/wp-content/uploads/2019/09/components.png"><img src="https://www.nexmo.com/wp-content/uploads/2019/09/components.png" alt="components - react tree" width="204" class="alignnone size-full wp-image-30252" /></a>

At the `App.js` level, notice that the `"/conversations"` endpoint is called within the constructor, meaning that any current conversations within the application are immediately displayed on the page.

```javascript

fetch("/conversations").then(response =>

response.json().then(

data => {

this.setState({ conversations: data._embedded.conversations });

},

err => console.log(err)

)

);

```
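Because `make_capi_request()` returns an empty object when the API call fails, `data._embedded` can be undefined in the callback above. A minimal guard might look like the sketch below; `extractConversations` is a hypothetical helper, not part of the example repository.

```javascript
// Hypothetical helper: returns the conversations array, or [] when the
// response has no `_embedded.conversations` (e.g. the backend returned {}).
function extractConversations(data) {
  const list = data && data._embedded && data._embedded.conversations;
  return Array.isArray(list) ? list : [];
}
```

With this in place, the state update could read `this.setState({ conversations: extractConversations(data) })`, so a failed request shows an empty list instead of throwing.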

The user then will have the option to select one of the conversations from the list and the meta details of that conversation, such as name and timestamp, will be displayed.

```javascript

<div>

<article className="message is-info">

<div className="message-header">

<p>{this.props.conversation.uuid}</p>

</div>

<div className="message-body">

<ul>

<li>Name: {this.props.conversation.name}</li>

<li>ttl: {this.props.conversation.properties.ttl}</li>

<li>Timestamp: {this.props.conversation.timestamp.created}</li>

</ul>

</div>

</article>

<Tabs

members={this.props.conversation.members}

events={this.props.events}

conversation={this.props.conversation}

/>

</div>

```

Notice that once a particular `conversation` has been selected two tabs become visible: `Events` and `Members`.

`Members` is set as the default state, meaning that it is displayed first. It is at this point that the `"/conversation"` and `"/events"` endpoints are called. Using the `cid` that is passed within the state, the details of the current members and events are now available.

```javascript

refreshMembers = () => {

fetch("/conversation?cid=" + this.props.conversation.uuid)

.then(results => results.json())

.then(data => {

this.setState({ members: data.members });

});

};

refreshEvents = () => {

fetch("/events?cid=" + this.props.conversation.uuid)

.then(results => results.json())

.then(data => {

this.setState({ events: data });

});

};

```

The `MembersList.js` component will call the `/user` endpoint to retrieve even more data on that particular user, which then is shown within the `MemberDetail.js` component.

```javascript

showMemberDetails = user_id => {

fetch("/user?uid=" + user_id)

.then(results => results.json())

.then(data => {

this.setState({ member: data });

});

};

```

### Connect It All Together

To start up the backend, run the Flask command:

```bash

export FLASK_APP=server.py && flask run

```

And in another tab within your terminal, run the React command:

```bash

cd frontend-react && npm start

```

Open up `http://localhost:3000` in a browser, and your app will be up and running!

Any conversations that are currently running within that connected application will now be visible within this dashboard.

Congrats! You've now created an application with Flask, React, and Nexmo's [Conversation API](https://developer.nexmo.com/conversation). You can now monitor all sorts of things related to your application's conversations. We encourage you to continue playing with and exploring this API's capabilities.

### Contributions And Next Steps

At Nexmo, the [Conversation API](https://developer.nexmo.com/conversation) is currently in beta and is ever-evolving based on your input and feedback. As always, we are happy to help with any questions in our [community slack](https://developer.nexmo.com/community/slack) or support@nexmo.com.

The post [How to Build a Dashboard of Live Conversations with Flask and React](https://www.nexmo.com/blog/2019/09/24/how-to-build-a-dashboard-of-live-conversations-with-flask-and-react-dr) appeared first on [Nexmo Developer Blog](https://www.nexmo.com/blog).

| lolocoding |

176,432 | Better Technical Interviews: Part 4 – My Opinions on Various Techniques | This post part of a series I'm writing on better technical interviews. I'd love your feedback in the... | 0 | 2019-09-25T19:59:03 | https://seankilleen.com/2019/09/better-technical-interviews-part-4-my-opinions-on-various-techniques/ | interviewing, culture, hiring | ---

title: Better Technical Interviews: Part 4 – My Opinions on Various Techniques

published: true

tags: interviewing,culture,hiring

canonical_url: https://seankilleen.com/2019/09/better-technical-interviews-part-4-my-opinions-on-various-techniques/

---

_This post is part of [a series](https://seankilleen.com/2019/09/better-technical-interviews-part-1-whats-the-point/) I'm writing on better technical interviews. I'd love your feedback in the comments!_

* [Part 1 - What's the Point?](https://seankilleen.com/2019/09/better-technical-interviews-part-1-whats-the-point/)

* [Part 2 - Preparation](https://seankilleen.com/2019/09/better-technical-interviews-part-2-preparation/)

* [Part 3 - The Actual Interview](https://seankilleen.com/2019/09/better-technical-interviews-part-3-the-interview-itself/)

* [Part 4 - My Opinion on Various Techniques](https://seankilleen.com/2019/09/better-technical-interviews-part-4-my-opinions-on-various-techniques/)

* [Part 5 - Common Interview Questions](https://seankilleen.com/2019/10/better-technical-interviews-part-5-common-questions/)

## My Opinions on Certain Interview Practices

These are my personal opinions with some reasoning behind them.

### Should candidates code during the interview?

I say: No. If I am able, through conversation, to determine that this person has the fundamentals both conceptually and in terms of being a colleague, and is an open-minded, collaborative person that wants to improve, I usually don’t need to watch them code. Everyone has different styles, and I expect us all to be learning together anyway.

### To whiteboard or not to whiteboard?

I believe white-boarding for conceptual / architectural explanations is a helpful tool. It helps me see how a person uses that space to see and explain things, like how they would potentially approach a problem. I have not found that I get much out of code in whiteboard format. It will at best be pseudo-code, and to expect more than that is unfair in my opinion.

### Should I push the interviewee's buttons to see how they respond?

Absolutely not. Would you do this to them in the real world? I should certainly hope not. If a client or coworker were to do this in some situation and someone handled it poorly, hopefully you’d be mentoring and coaching someone on how to improve, and also advocating for them in an instance where someone was treating them poorly.

### We’ll be doing coding; should they use Google?

If you ask someone to code, I’d suggest that you should treat it like you’re pairing with a teammate.

- It should be collaborative

- Tooling and resources should be available

- They should be able to use the machine / development environment of their choice

Otherwise, what’s the point? Pretending that devs don’t use tools or Google things just shows an interviewee that the exercise is pointless.

### What about having the candidate solve FizzBuzz?

If you’re bringing someone into a technical interview and don’t know whether or not they’d pass a problem like FizzBuzz, I think that’s the real problem.

Shift that process to the left and answer those questions earlier on. Ask a few screening questions. Run a small coding exam with something like coderpad.io, but don’t make it something so overdone. Put a little thought in. Be creative and clear.

### We came up with a pretty intricate problem we’re proud of. We think it’ll be a good litmus test.

Great. You should ask everyone on your team – particularly less senior developers – to complete that problem. And then you should adjust that problem based on what you will inevitably learn. And then you should think about how cloudy someone’s brain is when they feel under pressure.

### Should I have someone balance a B-tree, do factorial calculations, etc.?

Only if someone on your team has had to do something similar to that in the past year.

Otherwise you’re optimizing for the wrong thing. I don’t need an algorithm wiz to write a great line-of-business app; I need someone who cares about a domain, collaborates with stakeholders, and is invested in improving as they go.

If you ask how someone would implement a fast-sorting algorithm and their answer is “first, I’d understand what we’re trying to optimize for, and then I’d open Google and research about different types of sorting algorithms” – I’d say that’s a solid answer.

### Should we use HackerRank, etc.?

No. At least not unless you’re utilizing the pairing functionality.

- These tools feed into the idea that if someone can solve a coding problem, they’ll be a good fit for a team.

- These tools risk dropping some senior folks from the funnel who avoid the sort of algorithmic minutiae that this article recommends against.

- In my opinion, broadly speaking, these tools are reductive and commoditize the skillset you’re looking for and the notion of the work we do.

### I can’t really tell so much from conversation as what you’re expecting. Is that a problem?

I’d say yes. If you can’t have a conversation and determine whether this is the sort of person who will make the impact you need at your team / company, I would argue that you may not be the best person to give the interview.

### What about half-day / whole day interviews?

My opinion:

- If you’re scheduling a long interview because everyone needs to take a turn with an interviewee, I would argue that your interview process may be broken. Figure out who are the people to trust, and make the interview with them. At my current company we’ve had fantastic success with a 1-1.5 hour interview with two folks from a practice area, and a 30 minute conversation with one of our executives.

- If you bring someone in for a half day technical interview, it should be to work on a real style problem in a pairing or team setting, as close to an actual work day setup as possible. They should have access to tools, Google, OSS, etc. during the process.

- If you’re having someone join an actual team for a half or full day session to work on actual client or product work, you need to compensate them for their time. Agree on an hourly rate that shows someone you respect their time and effort. Pay them for their time even if the interview ends early. Are you worried they’ll only last an hour? Do more prep and screening work up front. | seankilleen |

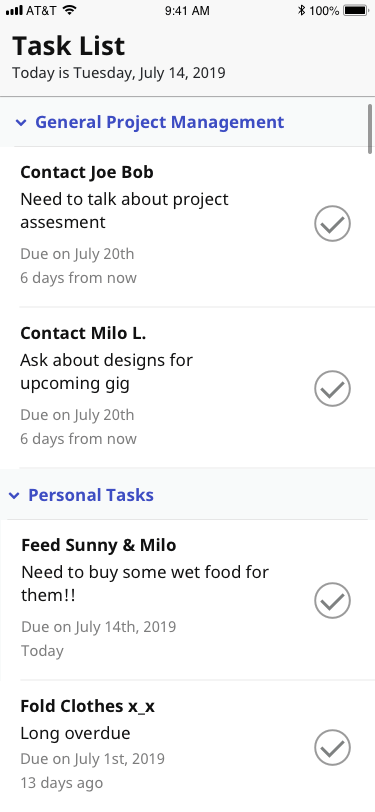

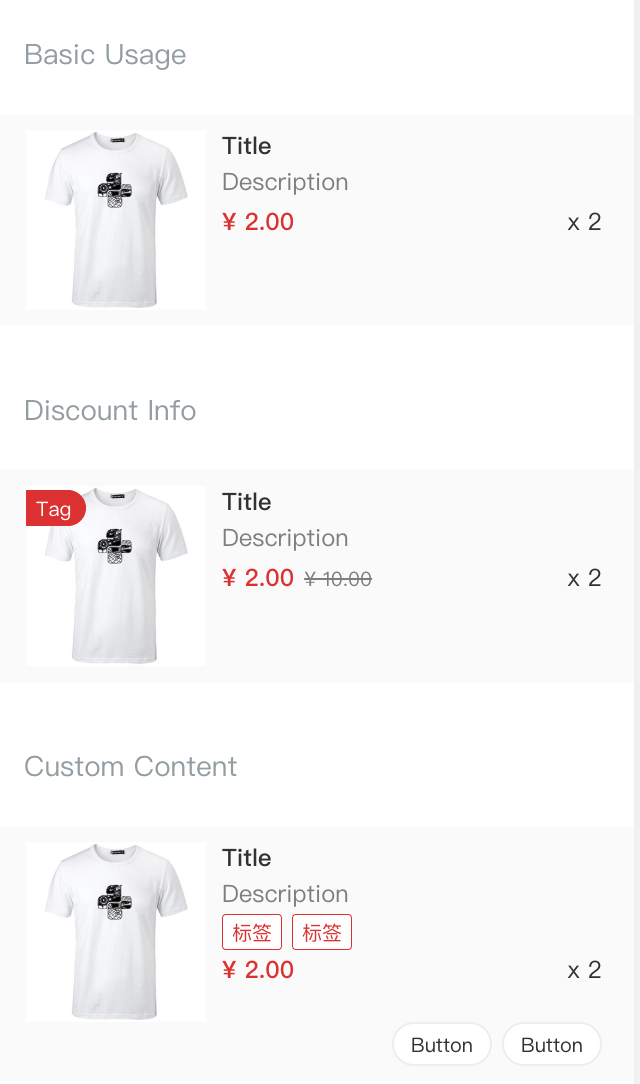

176,445 | React Native: Best Practices When Using FlatList or SectionList | Have you had any performance issues when using React Native SectionList or FlatList? I know I did. It... | 0 | 2019-09-25T17:10:30 | https://dev.to/m4rcoperuano/react-native-best-practices-when-using-flatlist-or-sectionlist-4j41 | reactnative, performance, javascript | Have you had any performance issues when using React Native [SectionList](https://facebook.github.io/react-native/docs/sectionlist) or [FlatList](https://facebook.github.io/react-native/docs/flatlist)? I know I did. It took me many hours and one time almost an entire week to figure out why performance was so poor in my list views (seriously, I thought I was going to lose it and never use React Native again). So let me save you some headaches (or maybe help you resolve existing headaches 😊) by providing you with a couple of tips on how to use SectionLists and FlatLists in a performant way!

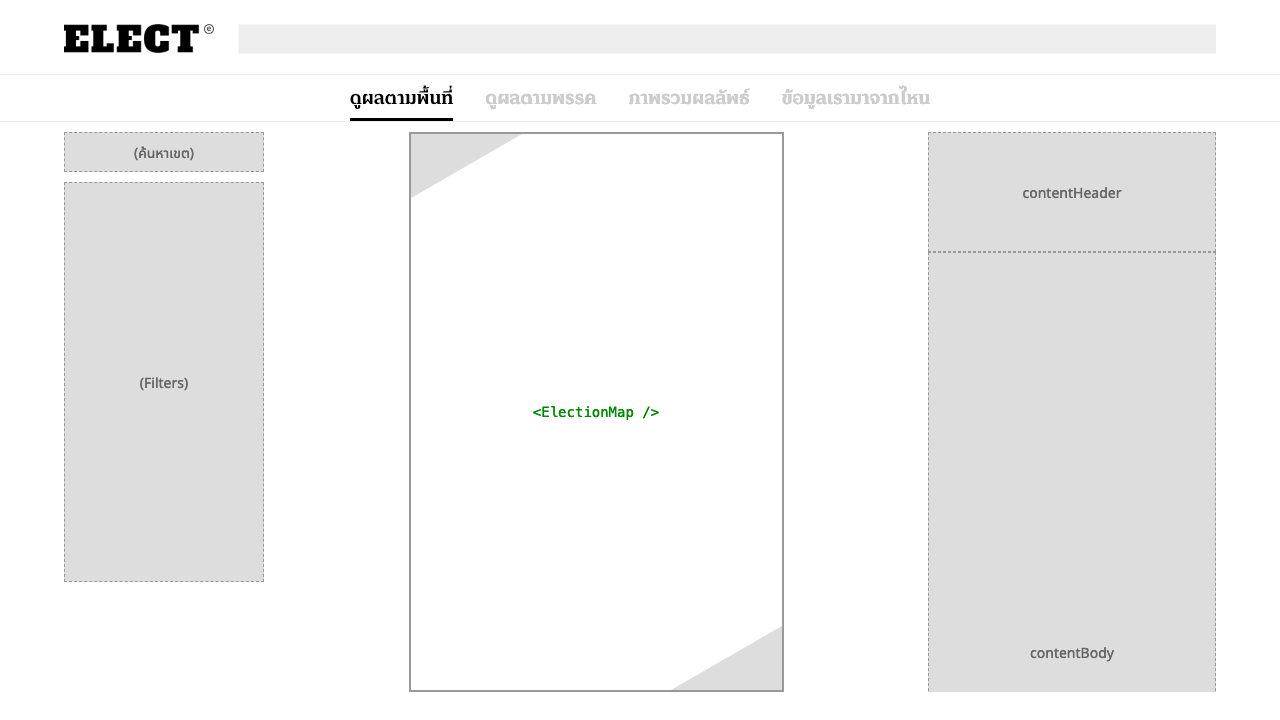

(This article assumes you have some experience with React Native already).

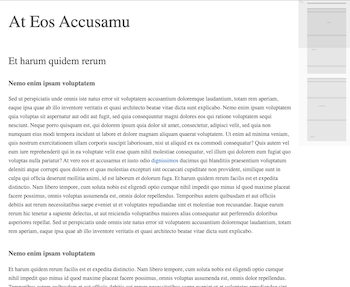

## Section List Example

Above is a simple app example where users manage their tasks. The headers represent “categories” for each task, each row represents a “task” along with the date it is due, and the Check is a button that marks tasks as “done” – simple!

From a frontend perspective, these would be the components I would design:

- **CategoryHeader**

- Contains the Title and an arrow icon on the left of it.

- **TaskRow**

- Contains the task’s Title, details, and the Check button that the user can interact with.

- **TaskWidget**

- Contains the logic that formats my task data.

This also uses React Native’s SectionList component to render those tasks.

And here’s how my **SectionList** would be written in my **TaskWidget**:

```javascript

<SectionList

backgroundColor={ThemeDefaults.contentBackgroundColor}

contentContainerStyle={styles.container}

renderSectionHeader={( event ) => {

return this.renderHeader( event ); //This function returns my `CategoryHeader` component

}}

sections={[

{title: 'General Project Management', data: [ {...taskObject} /* ...more tasks */ ]},

// ...additional items omitted for simplicity

]}

keyExtractor={( item ) => item.key}

/>

```

Pretty straightforward, right? The next thing to focus on is what each component is responsible for (and this is what caused my headaches).

## Performance Issues

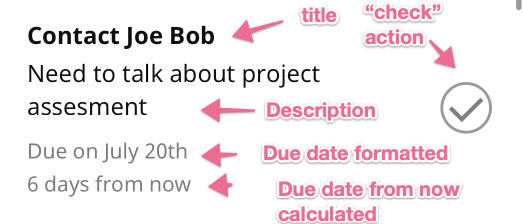

If we look at **TaskRow**, we see that we have several pieces of information that we have to display and calculate:

1. Title

2. Description

3. Due date formatted

4. Due date from now calculated

5. “Check” button action

Previously, I would’ve passed a JavaScript object as a “prop” to my **TaskRow** component. Maybe an object that looks like this:

```json

{

"title": "Contact Joe Bob",

"description": "Need to talk about project assessment",

"due_date": "2019-07-20"

}

```

I then would have my **TaskRow** display the first two properties without any modification and calculate the due dates on the fly (all this would happen during the component’s “render” function). In a simple task list like above, that would probably be okay. But when your component starts doing more than just displaying data, **following this pattern can significantly impact your list’s performance and lead to antipatterns**. I would love to spend time describing how SectionLists and FlatLists work, but for the sake of brevity, let me just tell you the better way of doing this.

## Performance Improvements

Here are some rules to follow that will help you avoid performance issues in your lists:

#### I. Stop doing calculations in your SectionList/FlatList header or row components.

Section List Items will render whenever the user scrolls up or down in your list. As the list recycles your rows, new ones that come into view will execute their `render` function. With this in mind, you probably don’t want any expensive calculations during your Section List Item's `render` function.

> Quick Story

> I made the mistake of instantiating `moment()` during my task component's render function (`moment` is a date utility library for JavaScript). I used this library so I could calculate how many days from "now" my task was due. In another project, I was doing money calculations and date formatting in each of my SectionList row components (also using `moment` for date formatting). In both cases, I saw performance drop significantly on Android devices. Older iPhone models were also affected. I was pulling my hair out trying to find out why. I even implemented Pure Components, but (like I’ll describe later) I wasn’t doing this right.

So when should you do these expensive calculations? Do them before you render any rows, for example in your parent component’s `componentDidMount()` method (and do it asynchronously). Create a function that “prepares” your data for your section list components, rather than “preparing” your data inside those components.
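As a minimal sketch of such a “prepare” step, here is one way the date work could be done once up front. It assumes plain `Date` arithmetic in place of `moment`, and the helper names (`prepareTasks`, `parseDueDate`, `formatDueDate`, `describeDaysFromNow`) are hypothetical, not taken from the original app:

```javascript
// Hypothetical helpers for illustration; none of these names come from the original app.
const MS_PER_DAY = 24 * 60 * 60 * 1000;
const MONTHS = ['Jan', 'Feb', 'Mar', 'Apr', 'May', 'Jun',
  'Jul', 'Aug', 'Sep', 'Oct', 'Nov', 'Dec'];

// Parse a "YYYY-MM-DD" string as a UTC calendar date and return its timestamp.
function parseDueDate(isoDate) {
  const [year, month, day] = isoDate.split('-').map(Number);
  return Date.UTC(year, month - 1, day);
}

// Turn a UTC timestamp into a display string such as "Jul 20, 2019".
function formatDueDate(time) {
  const date = new Date(time);
  return `${MONTHS[date.getUTCMonth()]} ${date.getUTCDate()}, ${date.getUTCFullYear()}`;
}

// Describe the distance between the due date and today in whole days.
function describeDaysFromNow(dueTime, todayTime) {
  const days = Math.round((dueTime - todayTime) / MS_PER_DAY);
  if (days === 0) return 'today';
  const unit = Math.abs(days) === 1 ? 'day' : 'days';
  return days > 0 ? `in ${days} ${unit}` : `${Math.abs(days)} ${unit} ago`;
}

// Run once in the parent, before the list renders, so each row only displays strings.
function prepareTasks(tasks, now = new Date()) {
  // Truncate "now" to midnight UTC so the result is a whole number of days.
  const today = Date.UTC(now.getUTCFullYear(), now.getUTCMonth(), now.getUTCDate());
  return tasks.map((task) => {
    const dueTime = parseDueDate(task.due_date);
    return {
      title: task.title,
      description: task.description,
      dueDateFormatted: formatDueDate(dueTime),
      dueDateFormattedFromNow: describeDaysFromNow(dueTime, today),
    };
  });
}
```

The parent could call `prepareTasks` once after loading its tasks and keep the result in state, so the rows never touch date logic while the user scrolls.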

#### II. Make your SectionList’s header and row components REALLY simple.

Now that you've removed the computational work from the components, what should the components have as props? Well, these components should just display text on the screen and do very little computational work. Any actions (like API calls or internal state changes that affect your stored data) that happen inside the component should be pushed “up” to the parent component. So, instead of building a component like this (that accepts a JavaScript object):

```javascript

<TaskRow task={taskObject} />

```

Write a component that takes in all the values it needs to display:

```javascript

<TaskRow

title={taskObject.title}

description={taskObject.description}

dueDateFormatted={taskObject.dueDateFormatted}

dueDateFormattedFromNow={taskObject.dueDateFormattedFromNow}

onCheckButtonPress={ () => this.markTaskAsDone(taskObject) }

/>

```

Notice how `onCheckButtonPress` is just a callback function. This lets the component that uses TaskRow handle any of TaskRow's actions. **Making your SectionList components simpler like this will increase your Section List’s performance and make your component’s functionality easier to understand**.

#### III. Make use of Pure Components

This took me a while to understand. Most of our React components extend from `React.Component`. But when working with lists, I kept seeing articles about using `React.PureComponent`, and they all said the same thing:

>When props or state changes, PureComponent will do a shallow comparison on both props and state

>https://codeburst.io/when-to-use-component-or-purecomponent-a60cfad01a81 and many other React Native Posts

I honestly couldn’t follow what this meant for the longest time. But now that I do understand it, I’d like to explain what this means in my own words.

Let’s first take a look at our TaskRow component:

```javascript

class TaskRow extends React.PureComponent {

// ...prop definitions...

// ...methods, etc.

}

<TaskRow

title={taskObject.title}

description={taskObject.description}

dueDateFormatted={taskObject.dueDateFormatted}

dueDateFormattedFromNow={taskObject.dueDateFormattedFromNow}

onCheckButtonPress={ () => this.markTaskAsDone(taskObject) }

/>

```

**TaskRow** has been given props that are all primitives (with the exception of `onCheckButtonPress`). What PureComponent does is look at each prop it’s been given and compare it with the previous value using strict equality (in the above example: has the `description` changed from the previous description it had? Has the `title` changed?). If any prop differs, it re-renders that row. If not, it won’t! Primitives (strings, numbers, etc.) are compared by value, while objects and functions are compared by reference. That means `onCheckButtonPress` does count: an inline arrow function like the one above is a new function on every parent render, so pass a stable function reference (for example, a method bound once in the parent) if you want the row to skip re-rendering.

My mistake was not understanding what they meant by "shallow comparisons". So even after I extended PureComponent, I still sent my TaskRow an object as a prop, and since an object is compared by reference rather than by its contents, it didn’t re-render the way I was expecting. At times, it caused my other list row components to re-render even though nothing changed! So don’t make my mistake. **Use Pure Components, and make sure you use primitives for your props so that rows re-render only when they need to.**
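To make "shallow comparison" concrete, here is a simplified sketch of the idea. It is not React's actual source; `shallowEqual` and the sample props are written only for illustration, on the assumption that each prop is compared with `Object.is`:

```javascript
// Simplified sketch of a shallow props comparison; not React's actual implementation.
function shallowEqual(prevProps, nextProps) {
  const prevKeys = Object.keys(prevProps);
  const nextKeys = Object.keys(nextProps);
  if (prevKeys.length !== nextKeys.length) return false;
  // Each prop is compared one level deep only: by value for primitives,
  // by reference for objects and functions.
  return prevKeys.every(
    (key) =>
      Object.prototype.hasOwnProperty.call(nextProps, key) &&
      Object.is(prevProps[key], nextProps[key])
  );
}

// Primitives with the same value are equal, so the row could skip re-rendering.
const primitivesSame = shallowEqual(
  { title: 'Contact Joe Bob' },
  { title: 'Contact Joe Bob' }
);

// Two objects with identical contents are still different references.
const objectsSame = shallowEqual(
  { task: { title: 'Contact Joe Bob' } },
  { task: { title: 'Contact Joe Bob' } }
);

// An inline arrow function is recreated on every render, so it never matches.
const makeProps = () => ({ onCheckButtonPress: () => {} });
const inlineCallbacksSame = shallowEqual(makeProps(), makeProps());

// A function created once and reused keeps the same reference.
const handler = () => {};
const stableCallbackSame = shallowEqual(
  { onCheckButtonPress: handler },
  { onCheckButtonPress: handler }
);

console.log({ primitivesSame, objectsSame, inlineCallbacksSame, stableCallbackSame });
```

Only the primitive and stable-callback cases compare as equal, which is why flat primitive props and stable function references are what let a pure row skip work.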

## Summary, TLDR

Removing expensive computations from your list components, simplifying your list components, and using Pure Components went a long way toward improving performance in my React Native apps. It seriously felt like a night-and-day difference in performance and renewed my love for React Native.

I’ve always been a native-first type of mobile dev (coding in Objective C, Swift, or Java). I love creating fluid experiences with cool animations, and because of this, I’ve always been extra critical/cautious of cross-platform mobile solutions. But React Native has been the only one that has been able to change my mind and has me questioning why I would ever want to code in Swift or Java again. | m4rcoperuano |

176,521 | Let's Fix Some A11y Issues this Hacktoberfest 👩💻👨💻 | With Hacktoberfest just around the corner I started thinking about what I would like to contribute th... | 0 | 2019-09-30T05:50:35 | https://www.upyoura11y.com/contribute-to-a11y-in-oss | a11y, hacktoberfest, webdev, showdev |

With Hacktoberfest just around the corner I started thinking about what I would like to contribute this year (both in October and going forward).

Over the course of the year I've been doing my best to be an advocate for accessibility in web applications, and so I thought a natural thing to do would be to **help improve accessibility in Open Source, one Pull Request at a time**!

## Would you like to join me?

I've added a page over at [Up Your A11y: Open A11y OSS Issues Looking for Help](https://www.upyoura11y.com/contribute-to-a11y-in-oss) to help identify issues actively looking for contributors.

You'll find open GitHub issues that:

- Are from open source projects

- Reference 'a11y', 'accessibility', or 'accessible'

- Have the "Help wanted" or "Good first issue" label

- Have no assignee or pull request yet

- Are for JavaScript or HTML projects

## Have a great Hacktoberfest

Whatever you end up doing this year and beyond in OSS, happy coding! 👩💻👨💻

--------

*Did you find this post useful? Please consider [buying me a coffee](https://www.buymeacoffee.com/mgkZuRU) so I can keep making content* 🙂 | s_aitchison |

176,535 | hello world | apparently I have one of these now, hello | 0 | 2019-09-25T19:58:49 | https://dev.to/weems/hello-world-5d2b | apparently I have one of these now, hello | weems | |

176,602 | Improving Performance for Low-Bandwidth Users with save-data | Detect when users have requested lighter pages and serve them less | 0 | 2019-09-25T23:42:08 | https://www.mikehealy.com.au/save-data-for-low-bandwidth-users/ | performance, http, wordpress, php | ---

title: Improving Performance for Low-Bandwidth Users with save-data

published: true

description: Detect when users have requested lighter pages and serve them less

cover_image: https://cdn.mikehealy.com.au/wp-content/uploads/2019/09/save-data-cover.jpg

tags: performance,http,wordpress,php