id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,188,940 | What's a cool project to make? | Just finished a project (webpage) and I want to make something different. Does anyone have an idea? | 0 | 2022-09-09T11:02:55 | https://dev.to/pleasebcool/whats-a-cool-project-to-make-445 | Just finished a project (webpage) and I want to make something different. Does anyone have an idea? | pleasebcool | |

1,188,993 | Yet another implementation for Slack Commands | We're using Slack for communication within our team. Using Slack slash commands, we also handle... | 0 | 2022-09-19T13:27:48 | https://dev.to/wajahataliabid/yet-another-implementation-for-slack-commands-4bmd | python, devops, api | We're using Slack for communication within our team. Using Slack slash commands, we also handle common day-to-day tasks like triggering deployments on different servers based on requirements. For a long time, that remained our primary use case, so we had a single AWS Lambda function taking care of this job; however, wi... | wajahataliabid |

1,189,222 | Choosing a PHP Framework over Core PHP | Some of you may be saying, it is quite obvious that using a PHP framework is better than using core... | 0 | 2022-09-12T11:46:26 | https://dev.to/kansoldev/choosing-a-php-framework-over-core-php-4i7g | php, frameworks, programming | Some of you may be saying, it is quite obvious that using a PHP framework is better than using core PHP, and even trying to debate this is a waste of time. Yes, you are right to an extent, because it looks that way at first glance, before you think much about it. I want to discuss in this article some of the reasons why... | kansoldev |

1,189,385 | Usefull Tech Stack - App, Frontend, Backend, Server | This is my "complex" tech stack, now in production. Principles of clean code, use of the best... | 0 | 2022-09-09T22:54:08 | https://dev.to/dennisglowiszyn/usefull-tech-stack-app-frontend-backend-server-1c4 | techstack, webdev, programming, beginners | This is my "complex" tech stack, now in production.

Principles of clean code, use of the best tool in each area, and a clear separation of frontend and backend are important to me.

Server (Backend)

- Apache2

- PHP

- MySQL

- Symfony Framework (API)

Frontend (Web)

- Twig

- HTML, SCSS, JS

- Used API

- Standalone

Fro... | dennisglowiszyn |

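1,189,470 | Unsigned integer arithmetic in C, starting from 3 - 5 | Would you believe that 3 - 5 can be a positive number? In C, if the operands are unsigned integers, 3 - 5 is positive, because unsigned integers follow the rule of taking the remainder modulo $2^n$, so 3 -... | 0 | 2022-09-10T04:04:28 | https://dev.to/codemee/cong-3-5-tan-c-yu-yan-wu-hao-zheng-shu-de-yun-suan-400i | c, cpp | Would you believe that `3 - 5` can be a positive number? In C, if the operands are unsigned integers, `3 - 5` is positive. This is because unsigned integers [follow the rule of taking the remainder modulo $2^n$](https://en.cppreference.com/w/c/language/operator_arithmetic#Overflows). The mathematical result of `3 - 5` is -2, but since unsigned integers cannot be negative, the result is reduced according to that rule. Here is [a simple example](https://www.online-ide.com/PsifvoE2hx):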

```c

#include<stdio.h>

unsigned char a ... | codemee |

1,189,648 | Welcome to my creative space ! A space for nothing but fun programming projects. | This space is to document my voyage back into tech, and some more... Previously I worked in the tech... | 0 | 2022-09-10T11:40:13 | https://dev.to/masaix/welcome-to-my-creative-space-a-space-for-nothing-but-fun-programming-projects-1o6d | This space is to document my voyage back into tech, and some more...

Previously I worked in the tech sector, at '[hosteurope](www.hosteurope.com)', '[ionos](www.ionos.de)' and '[iomart](www.iomart.com)'. These roles were as a Network Engineer and System Administrator, mainta... | masaix | |

1,189,656 | N-Queen Visualization made with React as a tribute to Queen Elizabeth | I have a knack for making visualizations for data structures and algorithms to understand them... | 0 | 2022-09-10T12:03:40 | https://dev.to/ritamchakraborty/n-queen-visualization-made-with-react-as-a-tribute-to-queen-elizabeth-2ep9 | react, algorithms, vite | I have a knack for making visualizations for data structures and algorithms to understand them correctly. Recently I was working on a visualization of the N-Queen problem. I happened to complete the project on the same day that Queen Elizabeth passed away, so I dedicated the application to her. Check the links out if intereste... | ritamchakraborty |

1,189,754 | How to find react native developers? | How and where to find React Native developers? You might be a recruiter or a company owner... | 0 | 2022-09-10T15:08:57 | https://dev.to/developerbishwas/how-to-find-react-native-developers-4o2 | javascript, reactnative, react, mobile | # **How and where to find React Native developers?**

You might be a recruiter or a company owner who wants to find and hire React Native developers. In short, you can find React Native developers by doing the following:

* Create a job post on a job board and wait for React Native developers to apply for i... | developerbishwas |

1,190,117 | Podman (An alternative to Docker !?!) 🦭 | Exploring new tech What is Podman? 🤔 Podman is a daemonless, open source,... | 0 | 2022-09-11T09:16:43 | https://dev.to/devangtomar/podman-an-alternative-to-docker--4n0e | ---

title: Podman (An alternative to Docker !?!) 🦭

published: true

date: 2022-07-30 11:00:56 UTC

tags:

canonical_url:

---

#### Exploring new tech

#### What is Podman? 🤔

Podman is a daemonless, open source, Linux nat... | devangtomar | |

1,256,779 | What is Decision Intelligence – Here’s What You Should Know | What is Decision Intelligence? Gartner defines decision intelligence as “a practical... | 0 | 2022-11-15T03:49:24 | https://www.purpleslate.com/thoughts/think-about-how-people-think-decision-intelligence/ | ai, news |

## [What is Decision Intelligence?](https://www.purpleslate.com/thoughts/think-about-how-people-think-decision-intelligence/)

Gartner defines decision intelligence as “a practical domain framing a wide range of de... | harinarayang |

1,190,264 | 25 Customer Feedback Tools All Developers Need | As an indie maker, you know that your product is only as good as the feedback you get from your... | 0 | 2022-09-11T13:29:40 | https://dev.to/ayushjangra/25-customer-feedback-tools-all-developers-need-296m | productivity, showdev, news, webdev | As an indie maker, you know that your product is only as good as the feedback you get from your customers.

The more you know about how your customers use your product and why they use it, the better equipped you are to develop a product that will meet their needs.

There are many different types of feedback tools and ... | ayushjangra |

1,190,299 | Finding remote work in 2022 | A few years back I wrote how to find remote work in 2019 after my own research into having to find... | 0 | 2022-09-11T15:07:11 | https://dev.to/k_ivanow/finding-remote-work-in-2022-26j | remote, interview, career, jobs | A few years back I wrote [how to find remote work in 2019](https://dev.to/k_ivanow/finding-remote-work-in-2019-6ce) after my own research into having to find remote work for the first time on my own, rather than by being approached by a headhunter. That article did better than a lot of other things I've written. Howeve... | k_ivanow |

1,190,426 | Artificial Intelligence | Hey guys!! Yay, you’ve made it to my first article! Let’s talk about Artificial Intelligence (Ai |... | 0 | 2022-09-11T18:09:55 | https://dev.to/agrimasharma/artificial-intelligence-341l | python, programming, pythondevlopment, ai | Hey guys!! Yay, you’ve made it to my first article! Let’s talk about Artificial Intelligence (Ai | Python). What is the importance of Artificial Intelligence in today’s modern era and what can we make out of Artificial Intelligence?

Artificial Intelligence is a constellation of many different technologies working toge... | agrimasharma |

1,190,865 | Testing article | Lorem lipsome | 0 | 2022-09-12T09:30:24 | https://filecoinvm.hashnode.dev/testing-article | Lorem lipsome | truckerfling | |

1,190,869 | Why ReactJS is an Ideal Choice for SaaS Product Development? | Most software developers and companies are familiar with the popular programming languages and... | 0 | 2022-09-12T09:42:52 | https://www.solutelabs.com/blog/reactjs-for-saas-product-development | react, saas, javascript | Most software developers and companies are familiar with the popular programming languages and frameworks such as Java, Python, PHP, etc.

One of the most talked-about web frameworks at the moment is ReactJS, with over 40% of developers preferring to use it. SaaS-based products commonly use it to build user interfaces. Bi... | karishmavijay |

1,190,889 | What to expect from your first week as a junior web developer | The weeks leading up to my first Junior developer job were terrifying. I had no idea what to... | 0 | 2022-09-12T10:49:59 | https://scrimba.com/articles/first-week-as-a-junior-web-developer/ | beginners, webdev, junior, career | The weeks leading up to my first Junior developer job were terrifying.

- I had no idea what to expect

- What if I don't know what to do?

- **What if they hired me by accident 😱?**

In the end, it turns out I was worried about nothing.

Teams are experienced at hiring people and they don't hire you unless they believe yo... | thatlondondev |

1,190,966 | The price of healthy eating | It’s March 2020 and suddenly we’re all forced to spend time at home and because restaurants and bars... | 0 | 2022-09-12T13:00:10 | https://dataroots.io/research/contributions/the-price-of-healthy-eating | ---

title: The price of healthy eating

published: true

date: 2022-09-12 06:07:00 UTC

tags:

canonical_url: https://dataroots.io/research/contributions/the-price-of-healthy-eating

---

It’s March 2020 and suddenly we’re all forced to spend time at home and because restaurants and bars are closed, all together we redisco... | bart6114 | |

1,191,098 | The simplest way to differentiate between the data engineers, data scientists and the data analyst. | Taking the three roles as a complete architectural model, we have architecture engineers designing... | 0 | 2022-09-12T14:10:07 | https://dev.to/ndurumo254/the-simplest-way-to-differentiate-between-the-data-engineers-data-scientists-and-the-data-analyst-21ei | datascience | Taking the three roles as a complete architectural model, we have architecture engineers designing and building the house, and lorries and trucks bringing the building materials and drivers to the construction site.

## Data engineers

Data engineers, in this case, are like architectural engineers. Just like the architectural... | ndurumo254 |

1,191,408 | I made a Fee Token in Balancer | Balancer is a project that has caught my attention because it is innovating in the DeFi space. Balancer... | 0 | 2022-10-20T18:11:23 | https://dev.to/filosofiacodigoen/i-made-a-fee-token-in-balancer-38kg | ---

title: I made a Fee Token in Balancer

published: true

description:

tags:

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/8d0i1ntwcx0k6fu32346.png

---

Balancer is a project that has caught my attention because it is innovating in the DeFi space. Balancer allows investors to get passive income w... | turupawn | |

1,191,459 | 10 new Android Libraries And Projects To Inspire You In 2022 | This is my new compilation of really inspirational, promising Android projects and... | 0 | 2022-09-12T20:58:17 | https://medium.com/@mmbialas/10-new-android-libraries-and-projects-to-inspire-you-in-2022-18eeb64ef70b | android, kotlin, programming, productivity | This is my new compilation of really inspirational, promising Android projects and libraries worth checking out, all released or heavily refreshed in 2022.

I have listed projects written in [Kotlin](https://kotlinlang.org/) and [Jetpack Compose](https://developer.android.com/jetpack/compose) in an unordered list so if you ar... | mmbialas |

1,191,990 | Creating a todo CLI with Rust 🔥 | Hey! In this article, we'll build a to-do CLI application with Rust. Local JSON files are used to... | 0 | 2022-09-13T11:01:55 | https://www.tronic247.com/creating-a-todo-cli-with-rust/ | rust, beginners, tutorial, programming | Hey! In this article, we'll build a to-do CLI application with Rust. Local JSON files are used to store the data. Here's a preview of the app:

Let's get started!

## Getting started

First, we create a new project with Cargo:

... | posandu |

1,192,273 | Top 6 HTML Tags for SEO Every Developer Should Know | This post was originally published on Hackmamba Search engine optimization (SEO) is a vital part of... | 0 | 2022-09-13T15:25:19 | https://hackmamba.io/blog/2022/09/top-6-html-tags-for-seo-every-developer-should-know/ | webdev, html, css, beginners | This post was originally published on [Hackmamba](https://hackmamba.io/blog/2022/09/top-6-html-tags-for-seo-every-developer-should-know/)

Search engine optimization ([SEO](https://www.techtarget.com/whatis/definition/search-engine-optimization-SEO)) is a vital part of online marketing. It helps to make your website re... | cesscode |

1,192,288 | Let's Get Cyber-Physical: The Expanding Role of CPS | Cyber-physical systems are closely tied to the IoT and will increasingly use AI in every imaginable... | 0 | 2022-09-13T16:01:54 | https://www.electronicdesign.com/technologies/embedded-revolution/article/21250006/luos-lets-get-cyberphysical-the-expanding-role-of-cps | opensource, microservices, systems, luos | Cyber-physical systems are closely tied to the IoT and will increasingly use AI in every imaginable use case to operate more autonomously.

⏩ https://www.electronicdesign.com/technologies/embedded-revolution/article/21250006/luos-lets-get-cyberphysical-the-expanding-role-of-cps

Nicolas Rabault wrote a new article on E... | emanuel_allely |

1,192,314 | SOLID : Dependency Inversion | When I searched about it, it turned out Dependency Inversion is accomplished by using Dependency... | 19,756 | 2022-09-16T14:28:13 | https://dev.to/kaziusan/solid-dependency-inversion-399h | typescript, architecture, programming | When I searched about it, it turned out **Dependency Inversion** is accomplished by using **Dependency Injection**

So I'm going to use my own code about **Dependency Injection**. Please take a look at this article first if you haven't read it yet.

{% link https://dev.to/kaziusan/-escape-from-crazy-boy-friend-explain-depe... | kaziusan |

1,192,553 | Setting up WSL environment with VSCode, git, node. | Setting up an environment for cloud development is very important because you need to set up... | 0 | 2022-09-13T22:06:58 | https://dev.to/smitgabani/setting-up-wsl-environment-with-vscode-git-node-docker-162k | Setting up an environment for cloud development is very important because you need to set up the environment and use environment variables while developing and deploying your application.

As we know Linux is the operating system used for the cloud because it is accepted everywhere and also because some cloud services a... | smitgabani | |

1,321,604 | Reliable, Scalable, and Maintainable Applications | This is a reading note for Designing Data-Intensive Applications Overview For a developer... | 0 | 2023-01-09T12:38:04 | https://dev.to/atriiy/reliable-scalable-and-maintainable-applications-1fim | programming, architecture, distributedsystems | _This is a reading note for [Designing Data-Intensive Applications](https://www.oreilly.com/library/view/designing-data-intensive-applications/9781491903063/)_

## Overview

For a developer working in a commercial company, the most frequently encountered systems are __data-intensive systems__. Different companies have ... | atriiy |

1,192,688 | Getting the Offer | Hello and welcome to the first post in my new series, After the Interview! If you've already been... | 19,784 | 2022-09-14T12:21:09 | https://corydorfner.com/getting-the-offer | career, learn, interview, offers | [Acing the Interview]: https://dev.to/dorf8839/series/12684

[contact page]: https://corydorfner.com/contact/

[survey by TopResume]: https://talentinc.com/press-2017-11-14

[survey by CareerBuilder]: https://www.careerbuilder.com/advice/these-5-simple-mistakes-could-be-costing-you-the-job

Hello and welcome to the first ... | dorf8839 |

1,192,820 | Auto-expand menu using Angular Material | This post is going to be a bit longer than usual so bear with me 🙂 I was working on a task at work... | 0 | 2022-09-14T14:31:25 | https://dzhavat.github.io/2022/09/14/auto-expand-menu-using-angular-material.html | angular, material | This post is going to be a bit longer than usual so bear with me 🙂

I was working on a task at work where one of the requirements was to make a menu auto-expand whenever the user navigates to a sub-page that is part of a menu group. To give you a visual idea, take a look at the following video:

**Git me... | sidharth8891 | |

1,193,200 | Using GitHub in Teaching Students | The articles were first published on Habr: Using GitHub in Teaching Students -... | 0 | 2022-09-14T14:45:38 | https://dev.to/anstfoto/ispolzovaniie-github-v-obuchienii-studientov-3gg3 | github, education, learning, team | *The articles were first published on Habr:*

- *[Using GitHub in Teaching Students](https://habr.com/ru/post/533940/) - https://habr.com/ru/post/533940/*

- *[The fork-based approach](https://habr.com/ru/post/534198/) - https://habr.com/ru/post/534198/*

- *[The teamwork approach](https://habr.com/ru/post/534292/)... | anstfoto |

1,193,256 | Let's create a React File Manager Chapter XII: Progress Bars, Skeltons And Overlays | We can now navigate between directories, but we don't have any feedback when we are loading the... | 19,719 | 2022-09-14T15:51:42 | https://dev.to/hassanzohdy/lets-create-a-react-file-manager-chapter-xii-progress-bars-skeltons-and-overlays-1ih3 | react, typescript, mongez, javascript | ---

title: Let's create a React File Manager Chapter XII: Progress Bars, Skeltons And Overlays

published: true

description:

series: File Manager React Mongez

tags: react, typescript, mongez, javascript

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/4fmnreiqss4xt7e9wuz1.png

# Use a ratio of 100:4... | hassanzohdy |

1,193,951 | How to merge two arrays? | Let’s say you have two arrays and want to merge them: const firstTeam = ['Olivia', 'Emma',... | 0 | 2022-09-15T10:16:48 | https://dev.to/jolamemushaj/how-to-merge-two-arrays-3eb0 | javascript, webdev, 100daysofcode, beginners | Let’s say you have two arrays and want to merge them:

```javascript

const firstTeam = ['Olivia', 'Emma', 'Mia']

const secondTeam = ['Oliver', 'Liam', 'Noah']

```

One way to merge two arrays is to use concat() to concatenate the two arrays:

```javascript

const total = firstTeam.concat(secondTeam)

```

But since the 201... | jolamemushaj |

1,193,960 | Open Edge Developer Tools automatically by launching debug with Visual Studio 2022 | During my daily activities, I use Visual Studio constantly to work with Blazor projects. Since Blazor... | 16,758 | 2022-09-15T11:04:08 | https://dev.to/kasuken/open-edge-developer-tools-automatically-by-launching-debug-with-visual-studio-2022-54og | programming, dotnet, blazor, productivity | During my daily activities, I use Visual Studio constantly to work with Blazor projects.

Since Blazor is a frontend technology that exchanges data with APIs, the developer toolbar of the browser is a very important tool.

My flow to debug an application is: press F5, wait until the browser is loaded, press F12, open the... | kasuken |

1,193,978 | Add all the first elements and second elements to new list from a list of list using `list comprehension` | Question: Add all the first elements and second elements to new list from a list of list using list... | 0 | 2022-09-15T11:32:23 | https://dev.to/mu/python-interview-question-12-34-56-to-1-3-5-2-4-6-5fa8 | python, interview, career | Question:

Collect all the first elements and all the second elements from a list of lists into a new list using a `list comprehension`

Ex:

Given: [ [1,2], [3,4], [5,6] ]

Expected: [[1, 3, 5], [2, 4, 6]]

Solution:

```python

a = [ [1,2], [3,4], [5,6] ]

final_list = [[x[0] for x in a], [x[1] for x in a]]

print(final_list)
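
# A hedged alternative (an addition, not part of the original answer):
# zip(*rows) groups the i-th items of every inner list, so the same
# comprehension also works when the inner lists have more than two items.
# The name `b` and its values are made up for illustration.
b = [[1, 2, 3], [4, 5, 6]]
transposed = [list(column) for column in zip(*b)]
print(transposed)  # [[1, 4], [2, 5], [3, 6]]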

``` | mu |

1,203,046 | Test your PHP | Got these tests from recent interviews and thought I'd share them with everyone to once in a while... | 0 | 2022-09-26T04:29:06 | https://dev.to/jtwebguy/test-your-php-237d | php, phpskills, phptest | I got these tests from recent interviews and thought I'd share them with everyone so you can measure your PHP skill level once in a while.

#1. Check if Parentheses, Brackets and Braces Are Balanced. It's better to use a stack-based method, but use your own if you must.

`'{{}}' = Pass

'[]}{{}}' = Fail

'[[{]{{}}' = Fail

'[]()(' = 1x open pa... | jtwebguy |

1,210,177 | Centralized Outbound Routing on AWS | Managing and securing external connectivity can be challenging and expensive when an organization's... | 0 | 2022-12-15T19:31:09 | https://dev.to/andreacfm/centralized-outbound-routing-on-aws-212o | aws, vpc | Managing and securing external connectivity can be challenging and expensive when an organization's workload is split between many isolated accounts.

Let's consider a use case where an organization has dev, prod, and shared workloads deployed on private subnets in 3 isolated AWS accounts. Some of these workloads must be... | andreacfm |

1,210,194 | It's Hacktoberfest! | Get a chance to win $30k worth of cash prizes, a team meeting with the CTO, and exclusive swag by... | 0 | 2022-10-03T21:24:21 | https://dev.to/singlestore/its-hacktoberfest-100a | database, hacktoberfest, opensource, github | Get a chance to win $30k worth of cash prizes, a team meeting with the CTO, and exclusive swag by building your application idea with SingleStore through Nov. 7th.

Sounds appealing, right?

Here's what you need to know: [https://singlestore.devpost.com/](https://singlestore.devpost.com/) | drmartingit |

1,210,292 | Strong Parameters in Rails? | Ah, strong parameters in Rails. The magic charm that allows developers to write spell-bound code. Just... | 0 | 2022-12-16T19:29:56 | https://dev.to/hermitex/strong-parameters-4po1 | webdev, ruby, beginners, rails | Ah, strong parameters in Rails.

The magic charm that allows developers to write spell-bound code. Just kidding, they're actually a pretty useful tool for protecting your application from malicious user input. But let's... | hermitex |

1,210,337 | Gesture Implementation for Cross Platform native Swift mobile apps | Introduction Hello everyone 👋 In our continuous series of SCADE articles, today we will... | 0 | 2022-10-04T02:35:21 | https://dev.to/scade/gesture-implementation-for-cross-platforms-mobile-apps-3be7 | android, swift, ios, scade | ---

title: Gesture Implementation for Cross Platform native Swift mobile apps

published: true

description:

tags: android, swift, ios, scade

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/9i7cmfnt6jwyv9w9ht0f.png

# Use a ratio of 100:42 for best results.

---

## Introduction

Hello everyone 👋

I... | scade |

1,210,389 | Turnstile , alternative to CAPTCHA from cloudflare | Demo: https://demo.turnstile.workers.dev/ Turnstile is our smart CAPTCHA alternative. It... | 0 | 2022-10-04T03:44:16 | https://dev.to/chandrapenugonda/turnstile-alternative-to-captcha-from-cloudflare-4410 | captcha, javascript, cloudflare, privacy | Demo: https://demo.turnstile.workers.dev/

Turnstile is our smart CAPTCHA alternative. It automatically chooses from a rotating suite of non-intrusive browser challenges based on telemetry and client behavior exhibited during a session.

> Less data collection, more privacy, same security

## Swap out your existing CAP... | chandrapenugonda |

1,210,684 | All about Game Developer | Hello! You probably already know that the technology industry offers many career possibilities. But... | 0 | 2022-10-04T10:58:07 | https://dev.to/albericojr/all-about-game-developer-3gbj | gamedev, devops, programming | Hello!

You probably already know that the technology industry offers many career possibilities. But today we will talk a little about one of today's most promising professions: Game Developer.

In recent years, the game market has been established as the largest entertainment industry, with movemen... | albericojr |

1,210,402 | New Usefull JavaScript Tips For Everyone | *Old Ways * const getPrice = (item) => { if(item==200){return 200} else if... | 0 | 2022-10-04T04:52:35 | https://dev.to/shaon07/new-usefull-javascript-tips-for-everyone-1389 | javascript, webdev, beginners, programming | ## **Old Ways**

```

const getPrice = (item) => {

if(item==200){return 200}

else if (item==500){return 500}

else if (item===400) {return 400}

else {return 100}

}

console.log(getPrice(foodName));

```

## **New Ways**

```

const prices = {

food1 : 100,

food2 : 200,

food3 : 400,

food4 : 500

}

const ge... | shaon07 |

1,210,441 | How To find easy to rank keywords on Google - Quick SEO Hack | There are many ways to find low competition keywords that rank easily on Google, Bing, and other... | 0 | 2022-10-04T06:53:12 | https://dev.to/its__keziah/how-to-find-easy-to-rank-keywords-on-google-quick-seo-hack-311l | rankongoogle, seo, python, laravel | There are many ways to find low-competition keywords that rank easily on Google, Bing, and other search engines. Below I discuss some techniques for finding low-competition, profitable keywords that will rank easily.

**1. KGR Keyword Research Method:**

The KGR keyword research method is an effective strategy to find l... | its__keziah |

1,210,576 | Webinar: BDD Pitfalls and How to Avoid Them | When new products are launched, the disconnect between business professionals and engineers often... | 0 | 2022-10-04T08:34:15 | https://www.lambdatest.com/blog/bdd-pitfalls-and-how-to-avoid-them/ | webinar, techtalks, bdd | When new products are launched, the disconnect between business professionals and engineers often results in wasted time and resources. A strategy for improving communication can help prevent bottlenecks to the project’s progress.

When business managers understand the capabilities of the engineering team, and when eng... | lambdatestteam |

1,210,607 | Learning Vue - fetching data and re-render | Introduction I am a "codenewbie", currently I am learning Vue and have some re-appearing... | 0 | 2022-10-04T09:28:08 | https://dev.to/viktoriabors/learning-vue-fetching-data-and-re-render-38i3 | help, vue, beginners, codenewbie | ## Introduction

I am a "codenewbie". I am currently learning Vue and keep running into some recurring problems that I don't have the knowledge to fix.

## Fetching data and rendering it

I am using Vue 3 and the Composition API (the `setup()` method). I can fetch data from a fake todo API and it's rendered on the page.

The problem starts w... | viktoriabors |

1,210,654 | Sending Emails with Firebase | Firebase is a Google-owned web and mobile application development platform that allows you to create... | 0 | 2022-10-04T10:11:01 | http://mailtrap.io/blog/sending-emails-with-firebase/ | firebase, node, javascript, tutorial | Firebase is a Google-owned web and mobile application development platform that allows you to create high-quality apps and take your business to the next level. You can also use the platform to send emails and enhance the capabilities of your apps.

In this article, we’re going to talk about how to send emails with Fir... | sofiatarhonska |

1,210,731 | Connect to an OpenVPN server running on Synology DSM 7 | Introduction This is the second part of the series "Configure OpenVPN on Synology DSM 7".... | 20,026 | 2022-10-05T15:37:08 | https://dev.to/dider/connect-to-an-openvpn-server-running-on-synology-dsm-7-5bal | synology, openvpn, openvpnconnect, security | ### Introduction

This is the second part of the series "Configure OpenVPN on Synology DSM 7". In the [first part](https://dev.to/dider/configure-openvpn-server-on-synology-dsm-7-371n) we've set up an OpenVPN server on Synology DSM 7, configured port forwarding and firewall on our router and NAS.

In this part we'll se... | dider |

1,210,929 | Why you shouldn't ignore .gitignore | If you already know some basic git commands, it is time to learn about .gitignore. When I started to... | 0 | 2022-10-04T13:52:23 | https://www.cristina-padilla.com/gitignore.html | webdev, beginners, tutorial, git | If you already know some [basic git commands](https://www.cristina-padilla.com/gitcommands.html), it is time to learn about **.gitignore**.

When I started to learn Git, I read that .gitignore is basically a file that helps hide other files to keep a repository cleaner, meaning we are telling Git not to track every sin... | crispitipina |

1,211,191 | Using NDI in your Real Time Live Streaming Production Workflow | If you're a developer who's also creating live streams and content you know that it takes a lot of... | 0 | 2022-11-07T20:18:33 | https://dev.to/dolbyio/using-ndi-in-your-real-time-live-streaming-production-workflow-59m7 | streaming, contentproduction, webdev | If you're a developer who's also creating live streams and content, you know that it takes a lot of effort to set up a solid content streaming workflow.

## NDI to the rescue!

NDI® (Network Device Interface) is a free protocol for Video over IP, developed by NewTek. The innovation is in the protocol, which makes it possibl... | dzeitman |

1,211,689 | UUIDs Are Bad for Database Index Performance, enter UUID7! | UUIDs, Universally Unique Identifiers, are a specific form of identifier designed to be unique even... | 20,041 | 2022-10-07T22:10:14 | https://www.toomanyafterthoughts.com/uuids-are-bad-for-database-index-performance-uuid7/ | database, performance, webdev, news | UUIDs, Universally Unique Identifiers, are a specific form of identifier designed to be unique even when generated on multiple machines. Compared to the autoincremented sequential identifiers commonly used in relational databases, generating them does not require centralized storage of the current state, i.e., the identifiers tha... | vdorot |

1,211,955 | Process Analytics - September 2022 News | Welcome to the Process Analytics monthly news 👋. Our monthly reminder: The goal of the Process... | 20,050 | 2022-10-06T07:38:56 | https://medium.com/@process-analytics/process-analytics-september-2022-news-d5d3a69830c6 | news, typescript, analytics, visualization |

Welcome to the Process Analytics monthly news 👋.

Our monthly reminder: The goal of the Process Analytics project is to provide a means to rapidly display meaningful Process Analytics components in your web pages using BPMN 2.0 notation and Open Source libraries.

Goodbye summer 🏖️, welcome colorful autumn 🍂! For t... | assynour |

1,212,007 | Prerendering in Angular - Part IV | The last piece of our quest is to adapt our Angular Prerender Builder to create multiple folders, per... | 19,852 | 2022-10-05T17:05:57 | https://garage.sekrab.com/posts/prerendering-in-angular-part-iv | angular, webdev, javascript, tutorial | The last piece of our quest is to adapt our Angular Prerender Builder to create multiple folders, per language, and add the index files into them. Hosting those files depends on the server we are using, and we covered different hosts back in [Twisting Angular Localization](https://garage.sekrab.com/posts/using-angular-ap... | ayyash |

1,212,180 | CLI application for working with disposable email service | Here's my latest Go project - Mail.tm CLI, a CLI application for working with Mail.tm disposable... | 0 | 2022-10-05T21:03:42 | https://dev.to/abgeo/cli-application-for-working-with-disposable-email-service-1n31 | go, cli, cobra, pterm | Here's my latest Go project - Mail.tm CLI, a CLI application for working with Mail.tm disposable email service.

Disposable email is a free email service that lets you receive emails at a temporary address that self-destructs after a specific time elapses.

Mail.tm CLI is a command-line-based application that works w... | abgeo |

1,212,194 | What is android? How you can create app for that? | Android is a mobile operating system and a platform for mobile apps. It's created by Google, and the... | 0 | 2022-10-05T22:02:23 | https://dev.to/tiztechy/how-to-create-android-apps-without-coding-3k7o | android, javascript, beginners, programming | Android is a mobile operating system and a platform for mobile apps. It's created by Google, and the open-source code is available to download free of charge from the Android Open Source Project (AOSP). Android apps run on handheld electronic devices, such as smartphones and tablets, run on the internet, and can be use... | tiztechy |

1,212,316 | Learn The Code Used For Apps on Android and iOS Platforms | The most challenging aspect of app development is convincing users to make the most of their... | 0 | 2022-10-06T03:43:13 | https://dev.to/ferry/learn-the-code-used-for-apps-on-android-and-ios-platforms-1kcd | android, ios, mobile, startup | The most challenging aspect of app development is convincing users to make the most of their development time and money. According to Statista, 25% of consumers leave apps after their first use, while 71% of mobile users worldwide admit to deleting apps three months after downloading them.

How did it happen? One of th... | ferry |

1,212,322 | Image search engine with React JS - React Query 🔋 | This time we will make an image search engine with the help of Unsplash API and React Query, with... | 0 | 2022-10-07T12:28:13 | https://dev.to/franklin030601/image-search-engine-with-react-js-react-query-39 | react, javascript, tutorial, beginners | This time we will make an image search engine with the help of the [Unsplash API](https://unsplash.com/) and **React Query**. With just a few lines of code, React Query can noticeably improve the performance of your application!

> 🚨 Note: This post requires you to know th... | franklin030601 |

1,212,452 | Mapping Records to arrays | I needed this functionality to parse a configuration regardless of whether it was supplied as an... | 0 | 2022-10-06T08:14:05 | https://dev.to/brense/ever-needed-to-map-a-record-to-an-array-ml1 | typescript, webdev | I needed this functionality to parse a configuration regardless of whether it was supplied as an array or a Record. This function converts the Record into an array of the same type:

```typescript

function mapRecordToArray<T, R extends Omit<T, K>, K extends keyof T>(record: Record<string, R>, recordKeyName: K) {

retu... | brense |
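The original function is cut off above, so the following is only a minimal sketch of the same idea, not the author's implementation. The names `recordToArraySketch`, `normalizeServices`, and the `Service` shape are assumptions introduced for illustration.

```typescript
// Hypothetical configuration shape, used only for illustration.
interface Service {
  name: string;
  port: number;
}

// Turn a keyed record into an array, copying each key into the field `keyName`.
function recordToArraySketch<V extends object, K extends string>(
  record: Record<string, V>,
  keyName: K,
): Array<V & Record<K, string>> {
  return Object.keys(record).map(
    (key) => ({ ...record[key], [keyName]: key }) as V & Record<K, string>,
  );
}

// Accept either form of configuration and always hand back an array.
function normalizeServices(
  input: Service[] | Record<string, Omit<Service, "name">>,
): Service[] {
  return Array.isArray(input) ? input : recordToArraySketch(input, "name");
}

const fromRecord = normalizeServices({ api: { port: 8080 }, web: { port: 3000 } });
const fromArray = normalizeServices([{ name: "db", port: 5432 }]);

console.log(fromRecord);
console.log(fromArray);
```

Copying the record key into a named field is one possible design; it lets downstream code treat both input forms identically.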

1,212,479 | CSS defaults styles | A personal CSS project to embed in your webpage that set bunch of styles to your HTML elements. You... | 0 | 2022-10-06T09:14:34 | https://dev.to/ptibat/css-defaults-styles-7f |

A personal CSS project to embed in your webpage that sets a bunch of styles on your HTML elements.

You can get it on GitHub:

{% embed https://github.com/ptibat/css_defaults %}

| ptibat | |

1,214,713 | How to create a video streaming app using React and Vime | Video streaming has transformed the way video media is delivered to us online as it allows users to... | 0 | 2022-12-20T12:41:42 | https://dev.to/gbadeboife/how-to-create-a-video-streaming-app-using-react-and-vime-4fb3 | javascript, react, webdev, tutorial | Video streaming has transformed the way video media is delivered to us online, as it allows users to watch videos without having to download them.

This is highly convenient as it saves us the time spent downloading a video and the storage space required for downloaded content.

It is a key resource for information sh... | gbadeboife |

1,248,560 | Introdução a Cuelang | Aposto que nesse momento uma frase paira na sua cabeça: "Mais uma linguagem de... | 0 | 2022-11-08T19:22:09 | https://dev.to/eminetto/introducao-a-cuelang-2bgc | cuelang, cue | ---

title: Introduction to Cuelang

published: true

description:

tags: cuelang, cue

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2022-11-08 19:20 +0000

---

I bet that right now one sentence is hovering in your head:

> "Yet another programming language"?

Calma, cal... | eminetto |

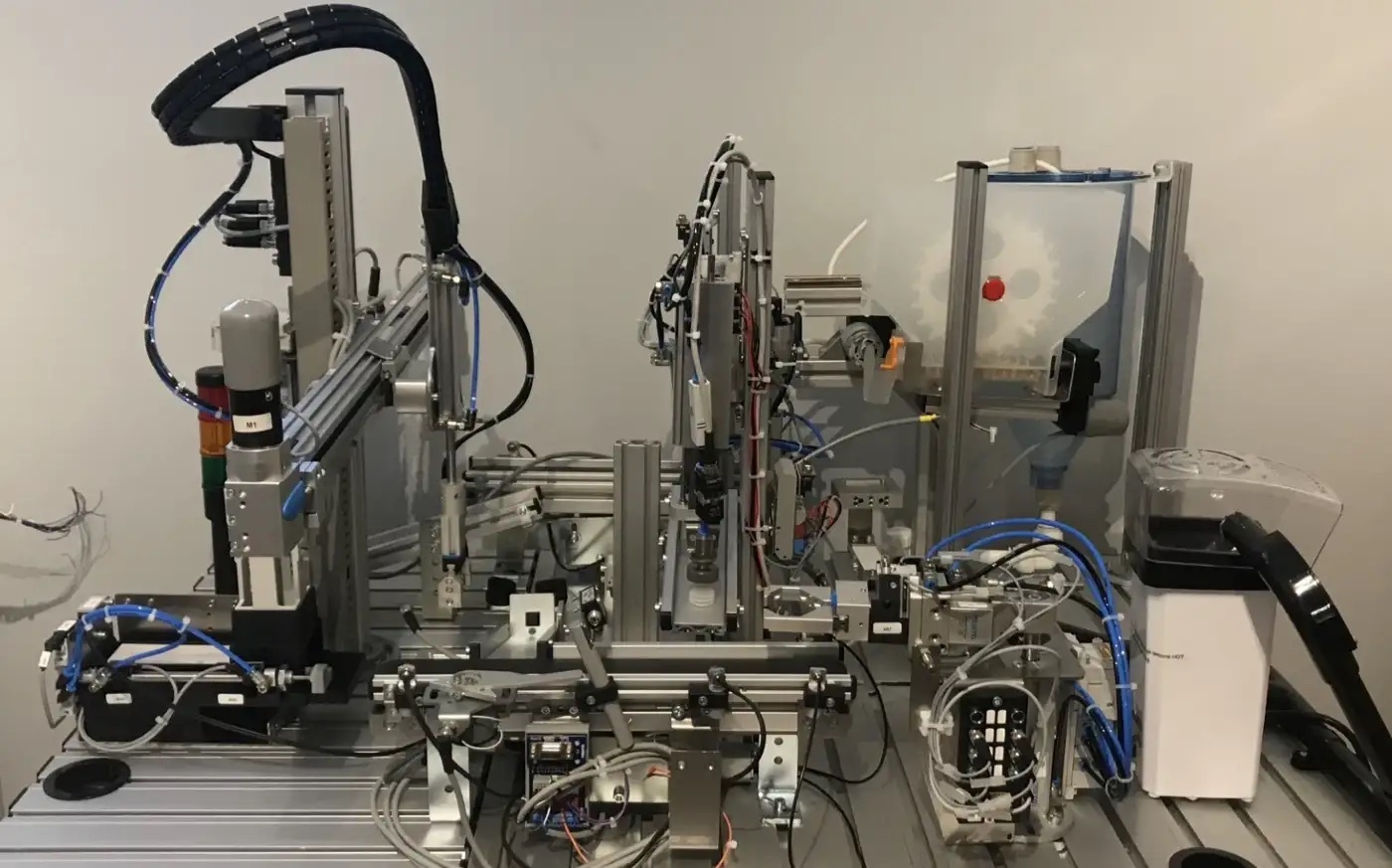

1,250,304 | Designing a Mechatronic Popcorn System | For the 2019 WorldSkills UK Mechatronic Team Selection competition in March, I wanted to design a... | 0 | 2022-11-09T23:17:30 | https://dev.to/calumk/designing-a-mechatronic-popcorn-mps-1b08 | mechatronics, worldskills, robotics, plc | For the 2019 WorldSkills UK Mechatronic Team Selection competition in March, I wanted to design a modular production line that produced popcorn (and drinks).

The System needed to be capable of dispensing &... | calumk |

1,251,674 | Yarn Workspace Scripts Refactor - A Case Study | It happens to all of us - you implement a solution only to realize later on that it’s not robust... | 0 | 2022-11-11T10:18:16 | https://dev.to/mbarzeev/yarn-workspace-scripts-refactor-a-case-study-2f25 | yarn, tutorial, webdev, testing | It happens to all of us - you implement a solution only to realize later on that it’s not robust enough and probably could use a good refactor.

The same happened to me, and I thought this was a good opportunity to share the reasons that made me refactor my code and how I went about it.

A case study if you ... | mbarzeev |

1,252,422 | bootcamp is here around the counter. less than 24 hours, i need your advise, kind nervous 😂 please! i had only the prework nuts! | A post by americanoame | 0 | 2022-11-11T07:32:19 | https://dev.to/americanoame/bootcamp-is-here-around-the-counter-less-than-24-hours-4dhe | americanoame | ||

1,252,457 | Installation of Embulk on Ubuntu 20.04 | Environment Ubuntu 20.04.3 LTS Instruction (1) Check Java version $ java... | 0 | 2022-11-11T09:04:35 | https://dev.to/tomoyk/installation-of-embulk-on-ubuntu-2004-100e | ubuntu, embulk | ## Environment

- Ubuntu 20.04.3 LTS

## Instruction

(1) Check Java version

```

$ java -version

Command 'java' not found, but can be installed with:

sudo apt install default-jre # version 2:1.11-72, or

sudo apt install openjdk-11-jre-headless # version 11.0.17+8-1ubuntu2~20.04

sudo apt install openjdk... | tomoyk |

1,252,817 | Object Oriented Programming (OOP) Concepts | The high-level programming languages are broadly categorized into two categories:... | 0 | 2022-11-11T15:41:59 | https://dev.to/sagary2j/high-level-object-oriented-programmingoop-concepts-f0b | python, devops, programming, oop | The high-level programming languages are broadly categorized into two categories:

- Procedure-oriented programming (POP) language.

- Object-oriented programming (OOP) language.

## Procedure-Oriented Programming Language

In the procedure-oriented approach, the problem is viewed as a sequence of things to be done... | sagary2j |

1,252,827 | Cumulocity Web Development Tutorial - Part 1: Start your journey | Starting your journey as a web developer in Cumulocity can be quite overwhelming in the beginning.... | 0 | 2022-11-24T11:03:28 | https://tech.forums.softwareag.com/t/cumulocity-web-development-tutorial-part-1-start-your-journey/259613 | iot, tutorial, webdeveloper, programming | ---

title: Cumulocity Web Development Tutorial - Part 1: Start your journey

published: true

date: 2022-11-11 13:42:29 UTC

tags: iot, tutorial, webdeveloper, programming

canonical_url: https://tech.forums.softwareag.com/t/cumulocity-web-development-tutorial-part-1-start-your-journey/259613

---

Starting your journey ... | techcomm_sag |

1,253,442 | How to think like a programmer: The human part | Welcome to the inaugural post of this series, designed to help you think like a programmer! I got the... | 0 | 2022-11-11T23:57:55 | https://blog.nikfp.com/how-to-think-like-a-programmer-the-human-part | softskills, beginners, motivation | ---

title: How to think like a programmer: The human part

published: true

date: 2022-11-11 22:31:52 UTC

tags: softskills, beginners, motivation

canonical_url: https://blog.nikfp.com/how-to-think-like-a-programmer-the-human-part

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/5t0qksijf3u9j4jemq0l.j... | nikfp |

1,253,471 | Running graphic apps in Docker: AWS WorkSpaces | How to run GUI apps using X.Org and Docker in Linux or Windows | 0 | 2022-12-29T12:19:18 | https://dev.to/cloudx/running-graphic-apps-in-docker-aws-workspaces-1jj3 | tutorial, docker, aws, security | ---

title: 'Running graphic apps in Docker: AWS WorkSpaces'

published: true

description: How to run GUI apps using X.Org and Docker in Linux or Windows

tags: 'tutorial, docker, aws, security'

cover_image: 'https://raw.githubusercontent.com/cloudx-labs/posts/main/posts/navarroaxel/assets/gui-apps-docker-cover.jpg'

id: 1... | navarroaxel |

1,253,684 | Protecting MySQL Beyond mysql_secure_installation: A Guide | MySQL is a complex beast to tame. Part of that is because the RDBMS has a lot of settings and... | 0 | 2022-11-13T08:00:00 | https://breachdirectory.com/blog/mysql_secure_installation/ | mysql, database, security, webdev | <!-- wp:paragraph -->

<p>MySQL is a complex beast to tame. Part of that is because the RDBMS has a lot of settings and parameters that can be configured to make it able to perform at the very best of its ability, but another side of the reason is that its protection and security are more than clicking a couple of butto... | breachdirectory |

1,253,716 | What is the best website for practicing C++ problems? | Fundamentally, C++ is a programming language utilized for algorithmic critical thinking and bit... | 0 | 2022-11-12T04:20:08 | https://dev.to/ridhisingla001/what-is-the-best-website-for-practicing-c-problems-21kg | cpp, programming, productivity, beginners | Fundamentally, C++ is a programming language utilized for algorithmic critical thinking and bit advancement of Os. At the point when you come to HTML, it is a prearranging language that is utilized for the front-end advancement of a site page.

So, for software development, you need to learn all the algorithms required... | ridhisingla001 |

1,253,991 | Deploy Promtail as a Sidecar to you Main App. | Hello, in this tutorial the goal is to describe the steps needed to deploy Promtail as a Sidecar... | 0 | 2022-11-12T11:48:27 | https://dev.to/tvelmachos/deploy-promtail-as-a-sidecar-to-you-main-app-2fk5 | devops, kubernetes, observabillity, grafana | Hello! The goal of this tutorial is to describe the steps needed to deploy Promtail as a sidecar container to your app, in order to ship only the logs you need to the log management system; in our case, we will use [Grafana Loki](https://grafana.com/oss/loki/).

Before we start, I would like to explain to you the rea... | tvelmachos |

1,254,159 | 4 Tips for How to Work with Critical Feedback | What to do if you get negative feedback at work? How to make sure your career stays on track and your... | 0 | 2022-11-12T14:28:54 | https://dev.to/dadyasasha/4-tips-for-how-to-work-with-critical-feedback-353c | career, softskills, feedback, management | What should you do if you get negative feedback at work? How do you make sure your career stays on track and your manager is actually happy with you?

Getting critical (negative) feedback might be painful, but if you approach it constructively, it can be beneficial for you, your manager, and the entire organization. In this video I share ... | dadyasasha |

1,254,375 | Free Tailwind CSS Site Templates For Your Your Next Project | I love Tailwind CSS. A lot of people love Tailwind CSS. So I made a site to share my Tailwind CSS... | 0 | 2022-11-12T20:20:37 | https://dev.to/wes_walke/free-tailwind-css-site-templates-for-your-your-next-project-58h3 | tailwindcss, webdev, design | I love Tailwind CSS. A lot of people love Tailwind CSS.

So I made a site to share my Tailwind CSS site templates I've made over the years.

Use them on your next project, to learn, or to show off to your friends and pretend you made them. Doesn't matter to me. Enjoy :)

https://tailwindsites.com/

` and `Date.now()` can be used to get current date in JS.

Let's log the results to the console.

```js

console.log(new Date()... | coderslang |

1,257,381 | JavaScript Events | JavaScript Events: An event is a signal that something happened. All DOM generated... | 0 | 2022-11-15T08:15:18 | https://dev.to/sadiqshah786/javascript-events-22hn | javascript, beginners, programming, webdev | ## JavaScript Events:

_An event is a signal that something happened._

All DOM nodes generate such signals.

# Types of Events

There are many events in JavaScript; this article discusses only some of them.

### - Mouse Events

1. Click: fired when the mouse clicks on an element,

and touchscreen users can tap to per... | sadiqshah786 |
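As a minimal sketch of how a click event is listened for and triggered, the snippet below uses the standard `EventTarget` and `Event` classes. The plain `EventTarget` named `button` is an assumption standing in for a real DOM element, so the example can also run outside a browser (recent Node.js versions expose both classes globally).

```typescript
// `button` is a stand-in for a real DOM element such as document.querySelector("button").
const button = new EventTarget();

// Collected messages, so the effect of each dispatch is visible.
const handled: string[] = [];

// The listener receives the Event object describing what happened.
function onClick(event: Event): void {
  handled.push(`handled ${event.type}`);
}

button.addEventListener("click", onClick);
button.dispatchEvent(new Event("click")); // the listener runs synchronously

button.removeEventListener("click", onClick);
button.dispatchEvent(new Event("click")); // no listener is attached any more

console.log(handled);
```

In a browser, the event would normally be dispatched by the user's click or tap rather than by calling `dispatchEvent` by hand.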

1,255,611 | Tired of doing all the initial setup that a fullstack typescript project requires? | Start your next project with the dna architecture, and be good to go with the best opensource tools... | 0 | 2022-11-13T18:41:28 | https://dev.to/cesarsalesgomes/tired-of-doing-all-the-initial-setup-that-a-fullstack-typescript-project-requires-2b5p | react, directus, nest, typesafety | Start your next project with the **dna** architecture and be good to go with the best open-source tools for each layer, ensuring a type-safe environment from the start:

Repo: https://github.com/cesarsalesgomes/dna

Backend: Nestjs / Directus

Frontend: React / Tailwind

| cesarsalesgomes |

1,256,355 | Where to impliment Password Encryption in node.js | Security is one of the most essential features of any application. We mainly impliment this through... | 0 | 2022-11-14T09:03:51 | https://dev.to/jane49cloud/where-to-impliment-password-encryption-in-nodejs-4e7k | javascript, node | Security is one of the most essential features of any application.

We mainly implement this by hashing passwords. Today I will focus on implementing this in a MERN app.

A well-organized Node application typically has the following folders and files:

###### connection(database)

###### models (defines prope... | jane49cloud |

1,256,503 | Hello | My name is Graciela, I am a beginner and I want to learn. | 0 | 2022-11-14T15:17:52 | https://dev.to/gracesl/hola-266j | My name is Graciela, I am a beginner and I want to learn. | gracesl | |

1,256,534 | Maximum call stack size exceeded | I faced this problem with NextJs recently and the solution was quite simple. I had a component Name... | 0 | 2022-11-14T16:48:13 | https://dev.to/theindianappguy/maximum-call-stack-size-exceeded-5f2n | I faced this problem with NextJs recently and the solution was quite simple.

I had a component named PeopleInfo and a page with the same name, so when I tried importing the component into the page, this error occurred. | theindianappguy | |
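The post does not show the offending code, so the following is only a sketch of the likely mechanism, under the assumption that the shared name made the page end up referring to itself instead of the component. `PeopleInfoPage` and `tryRender` are hypothetical names, and plain functions stand in for React components.

```typescript
// Hypothetical stand-in for the page. It was meant to render the PeopleInfo component,
// but because both share one name, the reference resolves back to the page itself.
function PeopleInfoPage(): string {
  return `<main>${PeopleInfoPage()}</main>`; // unbounded self-reference
}

// Render once and report the failure instead of crashing the process.
function tryRender(): string {
  try {
    return PeopleInfoPage();
  } catch (error) {
    if (error instanceof RangeError) {
      return `RangeError: ${error.message}`;
    }
    throw error;
  }
}

const outcome = tryRender();
console.log(outcome);
```

Giving the page and the component distinct names (or distinct import paths) removes the self-reference.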

1,256,770 | Porque isso tá aqui e como? Pensamentos sobre modelos de Pull Request | Bom, todo desenvolvedor uma hora ou outra vai se deparar com as seguintes situações: Voltar de... | 0 | 2022-11-15T03:37:56 | https://dev.to/wesleynepo/porque-isso-ta-aqui-e-como-pensamentos-sobre-modelos-de-pull-request-3enf |

Well, sooner or later every developer will run into the following situations:

- Coming back from vacation and having to catch up on the projects;

- Dealing with an incident after a version rollout;

- Reviewing a new change without being able to talk to the author;

Todas essas situações tem um ponto comum, são resolvidas com uma bo... | wesleynepo | |

1,257,441 | Hey, check this amazing tool! | Looking for a tool that will help you with everything at one place? Well, the solution is... | 0 | 2022-11-15T10:41:32 | https://dev.to/random_toolkit/hey-check-this-amazing-tool-5nk | socialmedia, webdev, devops, randomtools | Looking for a tool that will help you with everything at one place? Well, the solution is here.

Random tool (https://randomtools.io/reddit-comment-search/) is software that aims to make your daily life easier. It comes with developer tools such as SQL formatter, CSS beautifier, URL encoder and decoder, and Lorem Ipsum generat... | random_toolkit |

1,257,866 | Introduction to Data Analysis | What is Data analysis? Data analysis is a process of inspecting, cleansing, transforming, and... | 0 | 2022-11-15T14:51:08 | https://dev.to/manawariqbal/introduction-to-data-analysis-3alp | machinelearning, datascience, beginners, tutorial |

**What is Data analysis?**

Data analysis is a process of inspecting, cleansing, transforming, and modeling data with the goal of discovering useful information, informing conclusions, and supporting decision-making.

**Tools used in Data Analysis :**

Auto-managed closed tools->

Qwiklabs, Tableau,Looker, Zoho Analytics... | manawariqbal |

1,257,909 | All about Kafka | This post will give a brief about Kafka technology and suitable for beginner audience. Moving ahead I... | 0 | 2022-11-15T16:10:24 | https://dev.to/poojave/all-about-kafka-part-1-18im | kafka | This post gives a brief introduction to Kafka and is suitable for a beginner audience. In later posts I will build on this knowledge.

## What should you expect from this post?

Background - Why is Kafka needed?

What does it take to understand Kafka?

Downsides of using Kafka

How does Kafka work?

Best practices

How can yo... | poojave |

1,257,938 | DynamoDB 101 | This post is written considering you're a fresher to DynamoDB and it only explains the basic concepts... | 0 | 2022-11-15T17:04:03 | https://dev.to/ckmonish2000/intro-to-dynamodb-with-node-keb | aws, serverless, node, database | This post assumes you are new to DynamoDB and explains only the basic concepts required to perform CRUD operations.

The language used in this post is Node.js, but the underlying concepts are the same no matter which language you choose.

## What is DynamoDB?

- DynamoDB is a fully managed No-... | ckmonish2000 |

1,258,276 | Today is the beginning of my coding journey 🙌 | Today marks the first day on my coding bootcamp and to be honest im so excited but at the same time... | 0 | 2022-11-15T20:12:18 | https://dev.to/paschalcodes/today-is-the-beginning-of-my-coding-journey-3c6o | beginners, webdev, javascript, programming | Today marks the first day of my coding bootcamp and, to be honest, I'm so excited, but at the same time I'm **scared** of the unknown and what awaits me in the deep dark internet world of learning how to code 😭 lol

So if there's any tips or advice you guys can give to someone coming into this field from a complete beginn... | paschalcodes |

1,258,526 | What editor theme do you use ? 🧑🎨🎨 | I use Bearded Theme along with Bearded Icons . What is your theme of choice ? | 0 | 2022-11-16T00:34:06 | https://dev.to/fadhilsaheer/what-editor-theme-do-you-use--10h3 | vscode, javascript, programming, productivity | I use [Bearded Theme](https://marketplace.visualstudio.com/items?itemName=BeardedBear.beardedtheme) along with [Bearded Icons](https://marketplace.visualstudio.com/items?itemName=BeardedBear.beardedicons).

What is your theme of choice ? | fadhilsaheer |

1,258,595 | Data Indexing, Replication, and Sharding: Basic Concepts | A database is a collection of information that is structured for easy access. It mainly runs in a... | 20,359 | 2022-11-16T03:24:29 | https://pragyasapkota.medium.com/data-indexing-replication-and-sharding-basic-concepts-7376db7f245a | database, indexing, replication, sharding | A database is a collection of information that is structured for easy access. It mainly runs in a computer system and is controlled by a database management system (DBMS). Let’s see some concepts of the database here — Indexing, Replication, and Sharding respectively.

## Indexing

The database can have a large amount ... | pragyasapkota |

1,258,611 | memo, 2022-04-07 | Eclipse window ⇨ other ⇨ search ⇨ containing text : what you want to search for + working set : search scope ctrl + D :... | 0 | 2022-11-16T04:25:27 | https://dev.to/sunj/memo-2022-04-07-1kkk | java, eclipse | Eclipse

- window ⇨ other ⇨ search

⇨ containing text : what you want to search for + working set : search scope

- ctrl + D : delete line

- revert : restore to the original state

- conflicts & errors ⇨ revert or overwrite | sunj |

1,258,951 | what is array map() in JavaScript | map() method creates a new array with the results of calling a function for every array element. A... | 0 | 2022-11-16T09:18:35 | https://dev.to/wizdomtek/what-is-array-map-in-javascript-39ne | javascript, webdev, beginners, programming | The **map()** method creates a new array from the results of calling a function on every array element.

The callback passed into **map()** is executed for every element in the array and receives these parameters:

* current element

* the index of the current element

* array that map() is being called on

An example o... | wizdomtek |
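The original example is cut off above, so here is a minimal sketch of the three callback parameters in use. The `prices` array and the variable names are made up for illustration.

```typescript
const prices: number[] = [10, 20, 30];

// Using only the current element.
const doubled = prices.map((price) => price * 2);

// Using all three parameters: current element, its index, and the array map() was called on.
const described = prices.map(
  (price, index, all) => `${index + 1}/${all.length}: ${price}`,
);

console.log(doubled); // [20, 40, 60]
console.log(described); // ["1/3: 10", "2/3: 20", "3/3: 30"]
console.log(prices); // [10, 20, 30], the original array is left unchanged
```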

1,258,982 | The Perks of Combining Angular With ASP.Net Core | It's excellent to be aware of the unique advantages of these great techs in their respective fields,... | 0 | 2022-11-16T10:17:33 | https://dev.to/rachgrey/the-perks-of-combining-angular-with-aspnet-core-332n | angular, aspnet, webdev, programming | It's excellent to be aware of the unique advantages of these great techs in their respective fields, Angular for the front end and ASP.NET Core for the back end, but don't you want your business apps to shine? This blog will discuss how merging Angular with asp.net core can give you the best of both worlds. You may ben... | rachgrey |

1,259,215 | BE A 10X BY UTILISING A WIKI. | As hard as it is to debug and solve issue. One of the more common mistakes we make as developers, is... | 0 | 2022-11-16T12:15:12 | https://dev.to/fortunembulazi/be-a-10x-by-utilising-a-wiki-2h2n | webdev, javascript, programming, productivity | Debugging and solving issues is hard enough. One of the more common mistakes we make as developers is to solve a problem and forget about it, only to run into a similar problem in the next challenge or project.

I **myself** am no stranger to this issue, and it wasn't until I tracked all the issues I faced on a wiki and ho... | fortunembulazi |

1,259,539 | Quick tip: Using Deno and npm to persist and query data in SingleStoreDB | Abstract This short article will show how to install and use Deno, a modern runtime for... | 0 | 2022-11-16T16:08:44 | https://dev.to/singlestore/quick-tip-using-deno-and-npm-to-persist-and-query-data-in-singlestoredb-4co2 | singlestoredb, deno, npm, javascript | ## Abstract

This short article will show how to install and use [Deno](https://deno.land/), a modern runtime for JavaScript and TypeScript. We'll use Deno to run a small program to connect to SingleStoreDB and perform some simple database operations.

## Create a SingleStoreDB Cloud account

A [previous article](https:/... | veryfatboy |

1,260,224 | Reflect: PR2 of Release 0.3 | The issue that I was not able to fix... This week I made a contribution to Telescope, and... | 0 | 2022-11-17T05:29:26 | https://dev.to/liutng/reflect-to-pr2-of-release-03-4j9c | ## The issue that I was not able to fix...

This week I made a contribution to Telescope, and this is my first-time creating a Pull Request for Telescope which means I had zero knowledge of this project regarding its system structure design and it actually caused me to under-estimate my first attempt on issue [#3639](ht... | liutng | |

1,260,516 | What the CRUD Active Record | The following will be a tutorial on CRUD methods used in ruby Active Record to... | 0 | 2022-11-17T09:14:49 | https://dev.to/cedsengine/what-the-crud-active-record-1cd2 | programming, ruby, database, help | _The following is a tutorial on the CRUD methods used in Ruby Active Record to manipulate (read/write) data. Learn more in the [Active Record documentation](https://guides.rubyonrails.org/active_record_basics.html)._

**CRUD?**

What is it, what does it mean? If you aren't familiar already with CRUD, I ... | cedsengine |

1,260,519 | Basics of Z-transform With Graphical Representation | Laplace transformation analyzes linear time-invariant (LTI) systems that operate continuously.... | 0 | 2022-11-17T09:58:55 | https://dev.to/kellygreene/basics-of-z-transform-with-graphical-representation-234h | ztransform, graphicalrepresentation, basics, signalsandsystems | [Laplace transformation](https://byjus.com/maths/laplace-transform/) analyzes linear time-invariant (LTI) systems that operate continuously. Additionally, the z-transform is used to analyze the discrete-time LTI system. A mathematical expression of a complex-valued variable called Z, the Z-transform is mostly used as a... | kellygreene |

1,260,524 | Dedicated features of Oracle E-business Suite automated testing solutions | Oracle E-business Suite serves as a dedicated solution that is adopted by business organizations for... | 0 | 2022-11-17T09:08:23 | https://newshunt360.com/dedicated-features-of-oracle-e-business-suite-automated-testing-solutions/ | Oracle E-business Suite serves as a dedicated solution that is adopted by business organizations for various operations. Providing necessary storage space, network facilities, and other solutions, the Oracle E-business Suite serves as the best solution, which is adopted by businesses all around the world. Proper implem... | rohitbhandari102 | |

1,260,844 | A Beginner's Guide to Content Management Systems | Content management systems (CMS) are web applications that allow users to organise and manage their... | 0 | 2022-11-17T14:16:07 | https://dev.to/hr21don/a-beginners-guide-to-content-management-systems-34h6 | opensource, beginners, webdev, tutorial | Content management systems (CMS) are web applications that allow users to organise and manage their digital content. It provides the user with a GUI (Graphical User Interface) to manage the website. To build a website, users do not need to have knowledge of databases or programming.

In this post, we will define what ... | hr21don |

1,260,896 | Top 10 Bootstrap Themes | Website is always the front face to your business. Every user who gets to know about you goes through... | 0 | 2022-11-17T13:45:41 | https://www.lambdatest.com/blog/top-10-bootstrap-themes/ | webdev, tutorial, testing |

A website is always the front face of your business. Every user who gets to know about you goes through your website as the first line of enquiry, so you must make sure that your website looks its best.

Themes add structure to your website. Nearly every CMS, such as WordPress, Drupal, or Joomla, is built upon the aspe... | surajkumaar |

1,260,927 | Laravel - Cashier - Stripe Subscriptions | I created a Laravel app using Cashier and Stripe. It uses "teams" as the subscription approach. I... | 0 | 2022-11-17T15:03:59 | https://dev.to/bkl256/laravel-cashier-stripe-subscriptions-3h6j | laravel, stripe, cashier, subscription | I created a Laravel app using Cashier and Stripe. It uses "teams" as the subscription approach. I have it working exactly as I want it for test. However, once I use production and actual payments, I am not getting any response (a null response) when checking for subscription. The subscribed call returns null. I ad... | bkl256 |
