id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

938,771 | [BTY] Day 2: Fancy packages to work with Dataframe | 2 packages I want to mention is pandas-profiling and Mito. pandas-profiling It will... | 16,070 | 2021-12-28T16:19:07 | https://dev.to/nguyendhn/day-2-fancy-packages-to-work-with-dataframe-203p | betterthanyesterday, python, pandas, dataanalysis | 2 packages I want to mention is [pandas-profiling](https://github.com/pandas-profiling/pandas-profiling) and [Mito](https://trymito.io).

### pandas-profiling

It will generate profile reports from a pandas DataFrame. It's more powerful than the default `df.describe()`. These are statistics presented in an **interact... | nguyendhn |

938,780 | Vagrant with xdebug | Finally i found the problem , change the vagrant timezone to be same as host timezone server xdebug... | 0 | 2021-12-28T16:33:37 | https://dev.to/toqadev91/vagrant-with-xdebug-41ke | xdebug, php, vagrant, vscode |

Finally i found the problem ,

change the vagrant timezone to be same as host timezone

server xdebug configuration .ini file changed to be :

```

xdebug.idekey=VSCODE

xdebug.mode=debug

xdebug.start_with_request=yes

xdebug.remote_autorestart = 1

xdebug.client_port=9003

xdebug.discover_client_host=1

xdebug.max_... | toqadev91 |

938,806 | Anbody working with MERN? I have error. | I have a problem with reading data from mongo. I can't find the problem. I get an empty array from... | 0 | 2021-12-28T17:38:10 | https://dev.to/ivkemilioner/anbody-working-with-mern-i-have-error-16pk | mern, react, help | I have a problem with reading data from mongo. I can't find the problem. I get an empty array from mongo.

https://github.com/dragoslavIvkovic/MERN-WOLT/tree/master | ivkemilioner |

938,822 | A brief look at Go's new generics | In this post, I'm describing my experience of trying out Go 1.18's new generics, pitfalls I came across and how I solved them. | 0 | 2021-12-28T19:19:59 | https://dev.to/akrennmair/a-brief-look-at-gos-new-generics-52j3 | go, generics | ---

title: A brief look at Go's new generics

published: true

description: In this post, I'm describing my experience of trying out Go 1.18's new generics, pitfalls I came across and how I solved them.

tags: golang,generics

---

I have been following Go's development fairly closely ever since it was first publicly releas... | akrennmair |

939,065 | Adding Cypress to an existing Angular project | (Originally published on my blog December 23, 2021) When I set up my Angular13 single-page... | 0 | 2021-12-29T02:14:54 | https://annardunster.com/programming/2021/adding-cypress-angular.html | angular, cypress, testing, typescript | (Originally published on my blog December 23, 2021)

When I set up my Angular13 single-page application (SPA) at work, I initially considered setting up Selenium for end to end testing, but due to the steep learning curve I was experiencing with the stack in general (and some other projected hurdles such as figuring ou... | ardunster |

938,827 | Pass secrets to Docker build to fetch private Github repositories | To fetch private repositories as dependencies in a Docker image build procedure, you must set the... | 0 | 2021-12-28T18:20:17 | https://dev.to/theredrad/pass-secrets-to-docker-build-to-fetch-private-github-repositories-291i | docker, github | To fetch private repositories as dependencies in a Docker image build procedure, you must set the Github credentials.

The popular errors while an invalid credential is set:

> fatal: could not read Username for ‘https://github.com': No such device or address

---

## Git URL config

There are lots of posts on the inter... | theredrad |

938,869 | Hooks | React's new feature is the React hook.It has made many difficult things easier since it came. The... | 0 | 2021-12-28T19:35:58 | https://dev.to/dev_learner/hooks-4d7p |

React's new feature is the React hook.It has made many difficult things easier since it came. The hook can be compared to a hook. The hook helps to hold something in place, just as the hook holds something in place.We can store any data in external file in the form of stat with the help of hook. We can use this data b... | dev_learner | |

938,880 | Speeding up geodata processing with feather | Previously, on speeding up geodata processing... In this post, I compare the read and write... | 0 | 2021-12-28T20:15:04 | https://dev.to/spara_50/speeding-up-geodata-processing-with-feather-4bmk | feather, geodata, pickle, geopandas | Previously, on [speeding up geodata processing](https://dev.to/spara_50/speeding-up-geo-data-processing-ig7)...

In this post, I compare the read and write performance of the feather file format against the pickle file format.

> From Hadley Wickham's [blog](https://www.rstudio.com/blog/feather/):

> What is Feather?

... | spara_50 |

938,975 | How to emulate iOS on Linux with Docker | After several unsuccessful attempts, I was finally able to virtualize a macOS to run tests on an iOS... | 0 | 2021-12-29T01:06:06 | https://dev.to/ianito/how-to-emulate-ios-on-linux-with-docker-4gj3 | linux, ios, virtualization, xcode | After several unsuccessful attempts, I was finally able to virtualize a macOS to run tests on an iOS app I was working on.

But before proceeding, it is necessary to know that this is not a stable solution and has several performance issues, however, for my purpose I managed to do what I wanted.

We'll use QEMU to emul... | ianito |

938,989 | HTML-Form 📝 | Going to start my journey and mark 100 days calendar.👨💻🚀 Day: 1/100 📅 Project - 1 🔨 # HTML... | 0 | 2021-12-28T23:36:31 | https://dev.to/itsahsanmangal/html-form-1k56 | html, css, javascript, webdev | Going to start my journey and mark 100 days calendar.👨💻🚀

Day: 1/100 📅

<u>**Project - 1 🔨**</u>

```

# HTML Form📝

> Client side form validation with HTML 📜

`Check required, length, email and password match 📧`

- Create form UI 👨💻

- Show error messages under specific inputs ⚠️

- check Required() to acce... | itsahsanmangal |

939,071 | Reporting dan logging di PostgreSQL | Tujuan Log query yang berjalan mulai dari 2 detik (2000ms) Memastikan file log berada di... | 0 | 2021-12-29T02:48:46 | https://dev.to/sihar/reporting-dan-logging-di-postgresql-5b07 | logging, postgres | ## Tujuan

- Log query yang berjalan mulai dari 2 detik (2000ms)

- Memastikan file log berada di direktori /var/log/postgresql

- File log tergenerate harian

- Log mencatat kapan dijalankannya sebuah query

PostgreSQL yang digunakan versi 12

Create direktori /var/log/postgresql dan set user ownernya

```

# mkdir /var/lo... | sihar |

939,109 | Secret Key Cryptography | Hi. I have just finished writing my first "real" book (that is, not counting my 5 books of Sudoku... | 0 | 2021-12-29T05:18:44 | https://dev.to/contestcen/secret-key-cryptography-10ck | security, computerscience | Hi. I have just finished writing my first "real" book (that is, not counting my 5 books of Sudoku puzzles and SumSum puzzles.) The title is, you guessed it, "Secret Key Cryptography," and that is exactly what it's about.

If anyone is into writing cryptography primitives, this book is loaded with ideas, 140 differe... | contestcen |

939,253 | 5 WEB UX LAWS EVERY DEVELOPER SHOULD KNOW | 1. JAKOB’S LAW Users spend most of their time on other sites. This means that users prefer... | 0 | 2021-12-29T08:21:55 | https://dev.to/visualway/5-web-ux-laws-every-developer-should-know-f6h | javascript, webdev, design, programming | ---

title: 5 WEB UX LAWS EVERY DEVELOPER SHOULD KNOW

published: true

description:

tags: javascript, webdev, design, programming

cover_image: https://i.imgur.com/aglkI3m.png

---

## 1. JAKOB’S LAW

Users spend most of their time on other sites. This means that users prefer your site to work the same way as all the othe... | visualway |

939,264 | Striver's SDE Sheet Journey - #8 Merge Overlapping Subintervals | Problem Statement :- Given an array of intervals where intervals[i] = [starti, endi], merge all... | 0 | 2021-12-29T09:14:24 | https://dev.to/sachin26/strivers-sde-sheet-journey-8-merge-overlapping-subintervals-4jff | beginners, programming, computerscience, codenewbie | > **<u>Problem Statement</u> :-**

_Given an array of intervals where `intervals[i] = [starti, endi]`, merge all overlapping intervals, and return an array of the non-overlapping intervals that cover all the intervals in the input._.

**Example 1:**

```

Input: intervals=[[1,3],[2,6],[8,10],[15,18]]

Output: [[1,6],[8,1... | sachin26 |

939,288 | How to specify your Xcode version on GitHub Actions | Hello ! I’m Xavier Jouvenot and in this small post, I am going to explain how to specify your Xcode... | 15,933 | 2021-12-29T18:05:29 | https://10xlearner.com/2021/12/29/how-to-specify-your-xcode-version-on-github-actions/ | cpp, howto, tutorial, githubactions | ---

title: How to specify your Xcode version on GitHub Actions

published: true

date: 2021-12-29 05:00:00 UTC

tags: Cpp,Howto,tutorial,GitHubActions

canonical_url: https://10xlearner.com/2021/12/29/how-to-specify-your-xcode-version-on-github-actions/

series: Xcode switch

---

Hello ! I’m Xavier Jouvenot and in this smal... | 10xlearner |

939,316 | Quickly ship your changes to git? Use 'ship' | Ship is a small cli command I wrote that automatically adds and commits all your changes and pushes... | 0 | 2021-12-29T10:25:31 | https://dev.to/karsens/quickly-ship-your-changes-to-git-use-ship-1i4c | bash | Ship is a small cli command I wrote that automatically adds and commits all your changes and pushes them to your current branch. It then lets you know if it succeeded or failed.

To add ship to your cli, copy and paste this into your terminal:

```echo "ship () { BRANCH=$(git branch --show-current); git add . && git co... | karsens |

939,349 | 7 front-end interview processes I did in December 2021 | I recently went through the task of getting myself a new job and, to do this, I took part of 7... | 0 | 2021-12-31T09:08:49 | https://dev.to/anabella/7-front-end-interview-processes-i-did-in-december-2021-5484 | career, webdev, javascript, react | I recently went through the task of getting myself a new job and, to do this, I took part of **7 simultaneous interviewing processes for front-end roles** with React and Typescript.

I learned a lot as days, weeks, and interviews went by. I learned about myself and about the way companies evaluate candidates. I think ... | anabella |

939,419 | Blur Animation: CSS Transition | Little experiment for create a blur movement effect using CSS animation. | 0 | 2021-12-29T11:42:23 | https://dev.to/argonauta/blur-animation-css-transition-2ndc | codepen, javascript, css, tutorial | <p>Little experiment for create a blur movement effect using CSS animation.</p>

{% codepen https://codepen.io/riktar/pen/bdEVPP %} | argonauta |

939,471 | Force Https with .htaccess | After installing an SSL certificate, your website will be accessible via HTTP and HTTPS. However, it... | 0 | 2021-12-29T12:34:00 | https://dev.to/dhuettner/force-https-with-htaccess-5bb9 | htaccess, https | After installing an SSL certificate, your website will be accessible via HTTP and HTTPS. However, it is better to use only the latter, because it encrypts and additionally secures the data of your website. With some hosters there is a setting in the web interface to force HTTPS with just one click. Unfortunately, it ha... | dhuettner |

939,479 | Crypto business that makes you a Billionaire (Part-1) | Crypto is booming day by day pace. According to the projections, digital money would be over $1100... | 0 | 2021-12-29T12:49:15 | https://dev.to/avalaauren/crypto-business-that-makes-you-a-billionaire-part-1-bg1 | businessideas, cryptobusinessideas, cryptoexchange | Crypto is booming day by day pace. According to the projections, digital money would be over $1100 million by 2025. Cryptocurrencies will have an annual growth rate of around 10% which indicates that in the future we will going to talk more than now.

Among the customers, they buy and sell items using cryptocurrency thr... | avalaauren |

939,482 | Top 15 Web Application Templates with Perfect Design [2021] | Web application templates are turnkey solutions for your website. They are affordable and easy to adjust. In this article, we list our favorite web app templates and explain which ones and why we recommend. | 0 | 2021-12-29T13:05:05 | https://flatlogic.com/blog/web-application-templates-with-perfect-design/ | webapp, webdesign, webdev | ---

title: Top 15 Web Application Templates with Perfect Design [2021]

published: true

description: Web application templates are turnkey solutions for your website. They are affordable and easy to adjust. In this article, we list our favorite web app templates and explain which ones and why we recommend.

tags: webapp,... | anaflatlogic |

939,501 | Api Gateway Simple Tutorial | CREATE A SIMPLE API GATEWAY ENDPOINT How to create a very simple API with API... | 0 | 2021-12-29T13:48:23 | https://dev.to/aws-builders/api-gateway-simple-tutorial-548m | CREATE A SIMPLE API GATEWAY ENDPOINT

====================================

*How to create a very simple API with API Gateway.*

Log into AWS and open the API Gateway module. This is where we will create an API gateway (mock for this purpose) to be used later.

[;

```

Check out this example in [Babel REPL](... | fromaline |

939,728 | Test Post | A post by John S. | 0 | 2021-12-29T17:18:42 | https://dev.to/outofgamut/test-post-52bh | outofgamut | ||

939,741 | cross-env for front-end (Nextjs) on Windows 10 11 | If you are doing front-end projects on windows 10 or 11. specially, you want to run some of sample... | 0 | 2021-12-29T17:48:53 | https://dev.to/gemcloud/front-end-nextjs-on-windows-10-11-3idj | nextjs, webdev, startup | If you are doing front-end projects on windows 10 or 11.

specially, you want to run some of sample codes from GitHub!

Do not forget to install "npm i cross-env" on your project.

```

> npm i cross-env

```

The "cross-env" helps us save a lot of time. otherwise the codes threw errors!

for example:

1. read some of files ... | gemcloud |

939,801 | Software Developer Vs Software Engineer — Which Suits You Best? | Have you ever wondered if software development and software engineering are the same thing? According... | 0 | 2021-12-29T19:48:14 | https://www.daxx.com/blog/development-trends/software-developer-vs-software-engineer | softwaredeveloper, softwareengineer, softwareskills | Have you ever wondered if software development and software engineering are the same thing? According to the Computer Science Degree Hub, these two jobs are different in terms of their functions.

Software developers do the small-scale work, writing a program that performs a specific function or set of functions, while... | martakravs |

939,816 | Highlighting: sync-contribution-graph | A couple of weeks ago, I nearly scrolled past this gem on my twitter feed: sync-contribution-graph,... | 0 | 2021-12-29T20:39:25 | https://dev.to/mtfoley/highlighting-sync-contribution-graph-6o8 | github, javascript, bash, git | A couple of weeks ago, I nearly scrolled past this gem on my twitter feed: [sync-contribution-graph](https://github.com/kefimochi/sync-contribution-graph), by @kefimochi. Go have a look!

You can use this tool to have your GitHub contribution graph accurately reflect contributions from other accounts you make use of. ... | mtfoley |

939,853 | Take care of your soft skills | I’m not sure how unpopular this opinion is but I believe that for most of the positions I have... | 0 | 2021-12-31T15:08:05 | https://www.manuelobregozo.com/blog/take-care-of-your-soft-skills | softskills, interview, behavioralinterview | ---

title: Take care of your soft skills

published: true

date: 2021-12-29 00:00:00 UTC

tags: softskills, interviews, behavioralinterview

canonical_url: https://www.manuelobregozo.com/blog/take-care-of-your-soft-skills

---

I’m not sure how unpopular this opinion is but I believe that for most of the positions I have c... | manuelobre |

939,861 | Generative Art With Python | Let's Create Art With Code Join us as we learn how to generate art with Python! Even if... | 12,727 | 2021-12-29T21:41:24 | https://dev.to/iceorfiresite/generative-art-with-python-347h | python, programming, tutorial | #Let's Create Art With Code

Join us as we learn how to [generate art with Python](https://www.iceorfire.com/post/generative-art-with-python)! Even if you aren't artistic you can give Jackson Pollock a run for his money.

# Support Me

If you find these tutorials helpful, please consider [buying me a coffee](https://www.... | iceorfiresite |

940,093 | Holidays, Entrepreneurship and SLOs with Nobl9 | It's finally here, the end of season 1 of the podcast is upon us! To celebrate, Santa is bringing... | 0 | 2021-12-30T20:57:22 | https://devinterrupted.com/podcast/holidays-entrepreneurship-and-slos-with-nobl9/ | leadership, podcast, techtalks, startup | It's finally here, the end of season 1 of the podcast is upon us! To celebrate, Santa is bringing something special - entrepreneurship advice for all the would-be founders of the world, [ages 1 to 92.](https://www.youtube.com/watch?v=hwacxSnc4tI&ab_channel=WalterTan)

Brian Singer, co-founder & CPO of Nobl9, sits down ... | conorbronsdon |

940,133 | Unlock The Worth Of Logo By Following The Best Practices | It is not a surprise to see what value a logo holds for the business these days. It can impact... | 0 | 2021-12-30T06:34:17 | https://dev.to/jack46986117/unlock-the-worth-of-logo-by-following-the-best-practices-4nan | discuss, design, beginners, opensource |

It is not a surprise to see what value a logo holds for the business these days. It can impact customers in such a powerful way that they end up drawing to the brand it portrays... | jack46986117 |

940,243 | JSON WEB TOKEN(JWT)

| JSON Web Token (JWT) is an open standard (RFC 7519) that defines a compact and self-contained way for... | 0 | 2021-12-30T10:08:38 | https://dev.to/delwarjnu11/json-web-tokenjwt-4ofk | JSON Web Token (JWT) is an open standard (RFC 7519) that defines a compact and self-contained way for securely transmitting information between parties as a JSON object. So in the tutorial, I introduce how to implement an application “Reactjs JWT token Authentication Example” with details step by step and 100% running ... | delwarjnu11 | |

940,301 | Topic: JS Promise vs Async await | As JavaScript is an Asynchronous(behaves synchronously), we need to use callbacks, promises and async... | 0 | 2021-12-30T11:51:32 | https://dev.to/zahidulislam144/topic-js-promise-vs-async-await-3dp1 | javascript | As JavaScript is an Asynchronous(behaves synchronously), we need to use callbacks, promises and async await. You need to learn what is async-await, what is promise, how to use promise and async-await in javascript and where to use it.

## **Promise**

- **What is Promise?**

A Promise is a rejected value or succeeded va... | zahidulislam144 |

941,146 | Why We Use React Js Instead of Angular Js? | Introduction The framework you choose to use is crucial in the development's success.... | 0 | 2021-12-31T08:51:15 | https://dev.to/bhaviksadhu/why-we-use-react-js-instead-of-angular-js-54il | programming, angular, react | ##Introduction

The framework you choose to use is crucial in the development's success. AngularJS and ReactJS remain the most popular frameworks for developing React js with Java Point or JavaScript. You can utilize both of these frameworks to build mobile and web-based applications.

We should investigate the [differ... | bhaviksadhu |

955,372 | Emulating the Sega Genesis - Part III | Originally published at jabberwocky.ca Written December 2021/January 2022 by... | 16,249 | 2022-01-14T18:13:41 | https://jabberwocky.ca/posts/2022-01-emulating_the_sega_genesis_part3.html | rust, emulator, sega, genesis | *Originally published at [jabberwocky.ca](https://jabberwocky.ca/posts/2022-01-emulating_the_sega_genesis_part3.html)*

###### *Written December 2021/January 2022 by transistor_fet*

A few months ago, I wrote a 68000 emulator in Rust named [Moa](https://jabberwocky.ca/projects/moa/). My original goal was to emulate a... | transistorfet |

949,985 | What is VOID Operator - Daily JavaScript Tips #3 | The void operator returns undefined value; In simple words, the void operator specifies a... | 0 | 2022-01-10T04:16:59 | https://codewithsnowbit.hashnode.dev/what-is-void-operator-daily-javascript-tips-3 | javascript, node, webdev, tutorial | The `void` operator returns `undefined` value;

In simple words, the `void` operator specifies a function/expression to be executed without returning `value`

```js

const userName = () => {

return "John Doe";

}

console.log(userName())

// Output: "John Doe"

console.log(void userName())

// Output: undefined

```

[Live D... | dhairyashah |

954,331 | Copy public IP address to system clipboard | curl ifconfig.me | xclip -sel clipboard Enter fullscreen mode Exit fullscreen... | 0 | 2022-01-13T18:09:04 | https://dev.to/csinclair/copy-public-ip-address-to-system-clipboard-4ejd | linux, cli, networking | ```bash

curl ifconfig.me | xclip -sel clipboard

``` | csinclair |

954,848 | JS Intro | There are 8 fundamental data types in JavaScript: strings, numbers, Bigint, booleans, null,... | 0 | 2022-01-14T07:35:34 | https://dev.to/shinyo627/js-intro-397i | javascript, tutorial, beginners | - [There are 8 fundamental data types in JavaScript: strings, numbers, Bigint, booleans, null, undefined, symbol, and object.](https://www.codecademy.com/resources/docs/javascript/data-types?page_ref=catalog)

- First seven data types except object are primitive data types.

- BigInt is necessary for big numbers becau... | shinyo627 |

954,979 | Why practicing DRY in tests is bad for you | This post is a bit different from the recent ones I’ve published. I’m going to share my point of view... | 0 | 2022-01-14T08:58:43 | https://dev.to/mbarzeev/why-practicing-dry-in-tests-is-bad-for-you-j7f | testing, react, javascript, webdev | This post is a bit different from the recent ones I’ve published. I’m going to share my point of view on practicing DRY in unit tests and why I think it is bad for you. Care to know why? Here we go -

## What is DRY?

Assuming that not all of us know what DRY means here is a quick explanation:

“Don't Repeat Yourself (D... | mbarzeev |

955,341 | Working on my 2nd Project: JavaScript Tic Tac Toe! | Hey Guys, Been a week or 2 but I'm back like I said I would regarding the 2nd Project. This will be... | 0 | 2022-01-14T16:48:19 | https://dev.to/mikacodez/working-on-my-2nd-project-javascript-tic-tac-toe-4704 | javascript, webdev, beginners, programming | Hey Guys,

Been a week or 2 but I'm back like I said I would regarding the 2nd Project.

This will be a short post just to document my experience so far of the last 12 days. The challenge of pushing the game out so far has been a bitter/sweet one. On one hand I was able to get everything coded pretty quickly with the a... | mikacodez |

955,963 | How to apply the AWS Community Builder Program | The AWS Community Builders program offers technical resources, mentorship, and networking... | 0 | 2022-01-15T09:48:07 | https://dev.to/santhakumar_munuswamy/how-to-apply-the-aws-community-builder-program-43gl | aws, machinelearning, datascience, artificalintelligene | The **AWS Community Builders program** offers technical resources, mentorship, and networking opportunities to AWS technical enthusiasts and emerging thought leaders who are passionate about sharing knowledge and connecting with the technical community.

Are you Interested to join AWS builders Program? you should apply... | santhakumar_munuswamy |

956,794 | Leetcode 1326 Minimum Number of Taps to Open to Water a Garden

| from typing import List class Solution: def minTaps(self, n: int, ranges: List[int]) ->... | 0 | 2022-01-15T22:35:31 | https://dev.to/kardelchen/leetcode-1326-minimum-number-of-taps-to-open-to-water-a-garden-4ilh | ```python

from typing import List

class Solution:

def minTaps(self, n: int, ranges: List[int]) -> int:

# find intervals starting from different locations

intervals = []

for center, width in enumerate(ranges):

# if the element is 0, then this element is useless

if wi... | kardelchen | |

956,915 | Introducing Myself..... | Hey I'm Muhammed Fuhad. Iam New To This Development Community. First of all Iam introducing... | 0 | 2022-01-16T02:52:38 | https://dev.to/fuhadkalathingal/introducing-myself-4hob | Hey I'm Muhammed Fuhad. Iam New To This Development Community. First of all Iam introducing myself.Iam 13 years old boy intrested in coding. Currently iam learning the basics of coding.this are the all things about me now introduce yourself developers... | fuhadkalathingal | |

957,006 | Front-End Web and

Mobile Development

on AWS | AWS offers a wide range of tools and services to support development workflows for iOS, Android,... | 0 | 2022-01-16T06:16:13 | https://dev.to/nirmalnaveen/front-end-web-andmobile-developmenton-aws-5f7f | aws, webdev, cloud, mobile | AWS offers a wide range of tools and services to support development workflows for iOS, Android, React Native, and web front-end developers. There is a set of services that make it easy to build, test, and deploy an application, even with minimal knowledge of AWS. With the speed and reliability of the AWS infrastructur... | nirmalnaveen |

957,193 | Creating Docker Image with Dockerfile | There are many way where you can create a Docker Image and make a container of it, but using... | 0 | 2022-01-16T11:10:15 | https://dev.to/sshiv5768/creating-docker-image-with-dockerfile-1n58 | docker, devops, container | There are many way where you can create a Docker Image and make a container of it, but using ``Dockerfile`` is an easy way. Let's create an **Apache Server** Image using this method.

In this method we are going to follow below steps:

- First choose a ``__base_image__``. In our case, base image is ``Ubuntu``.

- Execute... | sshiv5768 |

957,281 | This week in Flutter #37 | I have been impressed by the Wordle story this week. Wordle is a word game made by Josh Wardle, that... | 12,898 | 2022-01-16T14:14:40 | https://ishouldgotosleep.com/news/this-week-in-flutter-37/ | dart, flutter, news | I have been impressed by the [Wordle story](https://www.macrumors.com/2022/01/11/wordle-app-store-clones/) this week.

Wordle is a word game made by **Josh Wardle**, that became viral recently. After that, a lot of

clones appeared on the mobile stores, trying to make money from its sudden success.

And they did make mon... | mvolpato |

957,324 | start new journey | Fast of all I am FASILU 2 nd year bsc computer science student. I dicide to become a freelancer wen... | 0 | 2022-01-16T15:42:42 | https://dev.to/fasilu/start-new-journey-1moc |

Fast of all I am FASILU 2 nd year bsc computer science student. I dicide to become a freelancer wen I study 12th fast I create a youtube channel but it's floped

And start learn html and python for web development .I make my own website then I think iam a full stake devoloper🤗. then i joined freelancer.com and upwor... | fasilu | |

957,423 | Learning Elixir/Phoenix | TL;DR; a rant about how much is missing and why is there no easy setup guides. I've starting... | 0 | 2022-01-16T16:24:16 | https://dev.to/neophen/learning-elixirphoenix-fh9 | elixir, phoenix, beginners, webdev | TL;DR; a rant about how much is missing and why is there no easy setup guides.

I've starting dabbling with some Elixir/Phoenix about a year ago. It looks amazing, but I was always just following tutorials.

Now i'm on the road to actually write a product with this stack. If I was super serious about the product i wou... | neophen |

957,466 | Dealing with asynchronous data in Javascript : Promises | What is Promise ? Well, we use this word in our daily life so many times. We often make... | 0 | 2022-01-16T20:33:00 | https://dev.to/swasdev4511/asynchronous-javascript-promises-472f | javascript, webdev, programming, beginners | ## What is Promise ?

Well, we use this word in our daily life so many times. We often make promises to ourselves , sometimes we keep them and sometimes break them 😉. Does it have the exact same meaning when it comes to Programming ?

Well, sort of!. To make this understand we should recall what is the purpose of ha... | swasdev4511 |

957,618 | An introduction to open-wc | Upon dipping your feet into the behemoth that is web development, you will quickly realize how... | 0 | 2022-01-17T20:47:47 | https://dev.to/rajivthummalapsu/an-introduction-to-open-wc-27i3 | Upon dipping your feet into the behemoth that is web development, you will quickly realize how non-static this field is. Everything is constantly changing and evolving, from updates to web protocols to constant syntax alterations. Consequently, a 1337 developer must periodically update their toolkit with the new fads a... | rajivthummalapsu | |

1,002,652 | Task force5.0 {Automation} | Welcome back to the taskforce 5.0 blog series In this second episode I will go through what I... | 0 | 2022-02-27T07:35:51 | https://dev.to/rkay250/task-force50-automation-47b4 | episode2, devops, taskforce, coding |

Welcome back to the taskforce 5.0 blog series

In this second episode I will go through what I learned and experienced in my second week.

## DevOps process

This last week we learnt more about software development operations.

Being software developer always requires efficiency and effective team collaboration and ... | rkay250 |

957,742 | Swift Notes: Enums | This is a collection of code-snippets that I've accumulated on my journey of learning iOS Development... | 0 | 2022-01-17T04:07:43 | https://dev.to/mikewestdev/100daysofswift-days-1-2-complex-data-types-2cln | swift |

This is a collection of code-snippets that I've accumulated on my journey of learning iOS Development with Swift; I'm hoping it can serve as a cheat-sheet or resource for others learning.

## Enums

Swift is smart and will iterate the rawValues of your enum from 0 or according to whatever initial value(s) you set

```ts... | mikewestdev |

957,771 | Discovering LIT | I thought I knew some basics of Javascript and CSS, but I was wrong. To begin, I'm writing this blog... | 0 | 2022-01-17T04:59:48 | https://dev.to/jfz5219/discovering-lit-egg | I thought I knew some basics of Javascript and CSS, but I was wrong. To begin, I'm writing this blog for one of my IST lab assignments. I first had to complete a list of thing.

List:

- Download VSCode, NPM, and NodeJS

- Create GitHub account

- Link SSH

NPM is important because it provides tools that Node.js files may... | jfz5219 | |

957,781 | Developing Express and React on the same port | Without CRA. I was quite annoyed at how difficult it was to integrate React with Express. The... | 0 | 2022-01-17T05:52:38 | https://dev.to/codingjlu/developing-express-and-react-on-the-same-server-55p7 | node, express, webdev, react | _Without CRA._

I was quite annoyed at how difficult it was to integrate React with Express. The process goes something like:

1. Setup your Express app in one directory

2. Use CRA to generate the frontend in another directory

3. Develop backend

4. Use a proxy for the frontend and mess with CORS

5. Done. Production? Squ... | codingjlu |

957,899 | How to fix QuickBooks Script Error? [Experts’ Tips] | There are situations when you try to get the right of entry to an internet web page from QuickBooks... | 0 | 2022-01-17T07:29:13 | https://dev.to/alexpoter0356/how-to-fix-quickbooks-script-error-experts-tips-4j3o | ux | There are situations when you try to get the right of entry to an internet web page from QuickBooks however the specific web page does not get loaded.

Additionally, an error message articulating "An issue has take... | alexpoter0356 |

958,016 | Cross-Platform Game Apps: Trends and Top Picks For the Year 2021 | The year 2020 will be remembered as the year of the pandemic. Everything came to a standstill that... | 0 | 2022-01-17T11:03:57 | https://dev.to/rvtechnologies/cross-platform-game-apps-trends-and-top-picks-for-the-year-2021-4jhf | gamedev, gameapps, mobilegameapp, android | The year 2020 will be remembered as the year of the pandemic. Everything came to a standstill that year, and humanity faced difficult circumstances.

Despite the fact that the rampaging pandemic killed many enterprises across several industries, the gaming industry was spared. Games are still the most popular sort of a... | rvtechnologies |

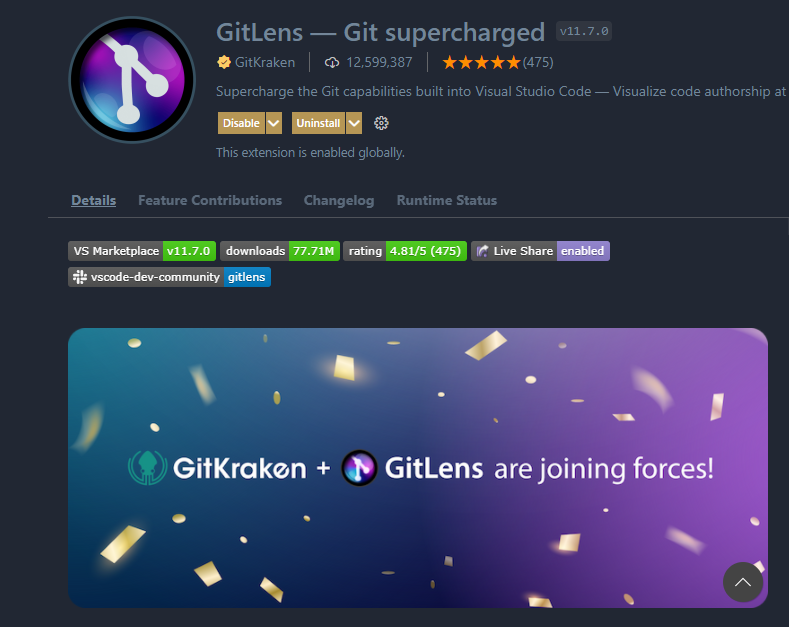

958,332 | Best Visual Studio Code Extensions for Developers | 1. GitLens — Git supercharged Usage - GitLens simply helps you better understand code.... | 0 | 2022-01-17T19:52:06 | https://dev.to/samithawijesekara/best-visual-studio-code-extensions-for-developers-1o42 | productivity, vscode, webdev, programming |

## 1. GitLens — Git supercharged

Usage - GitLens simply helps you better understand code. Quickly glimpse into whom, why, and when a line or code block was changed. Jump back through ... | samithawijesekara |

958,358 | This is my first article on DEV.to | So, this is the first article here on DEV.to. I just don't know yet why have I started it. I already... | 0 | 2022-01-17T17:11:11 | https://dev.to/peterteszary/this-is-my-first-article-on-devto-5bie | devjournal, devto, firstpost | So, this is the first article here on DEV.to. I just don't know yet why have I started it. I already have two blogs. One is for my business and the other one is for fun. The non-business one is similar to a diary. But I just like to try out new things, so that is why I've decided to click around DEV.to.

Maybe I will s... | peterteszary |

958,620 | Changing AntD locale dynamically | Hello devs, it's new year and here i'm struggling with React and AntD. I'm trying to change AntD... | 0 | 2022-01-17T21:08:53 | https://dev.to/dcruz1990/changing-antd-locale-dynamically-3e15 | help, react | Hello devs, it's new year and here i'm struggling with React and AntD.

I'm trying to change AntD locale dynamically. As documentation refers, AntD has a <ConfigProvider> context that wraps <App>, its receives 'lang' as a prop.

So here i'm doing this dumb thing:

```Javascript

import i18n from './i18n'

ReactDOM.render... | dcruz1990 |

958,767 | Install MYSQL on Ubuntu server 18.04 | Install MYSQL on Ubuntu server 18.04 MySQL is an open-source database management system,... | 0 | 2022-01-18T02:49:30 | https://dev.to/ilhamsabir/install-mysql-on-ubuntu-server-1804-47bg | mysql, devops, webdev, ubuntu | # Install MYSQL on Ubuntu server 18.04

MySQL is an open-source database management system, commonly installed as part of the popular LAMP (Linux, Apache, MySQL, PHP/Python/Perl) stack. It uses a relational database and SQL (Structured Query Language) to manage its data.

The short version of the installation is simple... | ilhamsabir |

958,857 | Dogs not barking | Gregory (Scotland Yard detective): Is there any other point to which you would wish to draw my... | 0 | 2022-02-01T11:46:30 | https://dev.to/maddevs/dogs-not-barking-594h | webdev, testing | > *Gregory (Scotland Yard detective): Is there any other point to which you would wish to draw my attention?*

> *Holmes: To the curious incident of the dog in the night-time.*

> *Gregory: The dog did nothing in the night-time.*

> *Holmes: That was the curious incident.*

*The Adventure of Silver Blaze*

2. Part 2: Understanding the MediaDevices API and getting access to the user’s media devices [Link](https://dev.to/ethand91/webrtc-for-beginners-part-2-media... | ethand91 |

958,924 | Top 5 Content Writing Company | Every business is built on the content as its fundamental foundation, as it describes its company,... | 0 | 2022-01-18T06:32:45 | https://dev.to/viveksh41162642/top-5-content-writing-company-2fp8 |

Every business is built on the content as its fundamental foundation, as it describes its company, products, or services.

Using social media is becoming a necessity for all businesses today. A significant reason f... | viveksh41162642 | |

959,111 | Quick Tips to Open a Handyman Business Using Uber for Handyman | Uber for Handyman is a popular on-demand multi-service application servicing millions of global users... | 0 | 2022-01-18T09:12:52 | https://dev.to/wademathewsr/quick-tips-to-open-a-handyman-business-using-uber-for-handyman-1ebc | flutter, javascript, programming, node | Uber for Handyman is a popular on-demand multi-service application servicing millions of global users with its fascinating features and 200+ services. The popularity and the advancements of this application made multi-service businesses adopt the Uber for Handyman business model for the betterment of their businesses. ... | wademathewsr |

968,129 | Editing PDFs (Code Example) | C#: // PM> Install-Package IronPdf using IronPdf; using System.Collections.Generic; var... | 0 | 2022-01-26T08:17:31 | https://ironpdf.com/examples/editing-pdfs/ | ---

canonical_url: https://ironpdf.com/examples/editing-pdfs/

---

**C#:**

```

// PM> Install-Package IronPdf

using IronPdf;

using System.Collections.Generic;

var Renderer = new IronPdf.ChromePdfRenderer();

// Join Multiple Existing PDFs into a single document

var PDFs = new List<PdfDocument>();

PDFs.Add(PdfDoc... | ironsoftware | |

969,466 | 🎶 Background Music to Get Into the Zone | Maybe the most essential skill for a developer is having a crystal clear focus. Some call it "the... | 0 | 2022-01-27T12:07:02 | https://bas.codes/posts/concentration-music | programming, productivity, discuss, motivation | Maybe the most essential skill for a developer is having a crystal clear focus. Some call it "the zone", a state of mind in which your mind is genuinely focussed on the very problem you are dealing with right at that moment.

Your surrounding plays a crucial role in that. In the office, you might easily be distracted b... | bascodes |

996,811 | Android Games with Capacitor and JavaScript | In this post we put a web canvas game built in Excalibur into an Android (or iOS) app with... | 0 | 2022-02-21T21:17:54 | https://erikonarheim.com/posts/capacitorjs-game/ | javascript, android, canvas | ---

title: Android Games with Capacitor and JavaScript

published: true

tags:

- javascript

- android

- canvas

cover_image: https://erikonarheim.com/images/capacitorjs-game/examplerunning.png

canonical_url: https://erikonarheim.com/posts/capacitorjs-game/

---

In this post we put a web canvas game built in Excalibu... | eonarheim |

997,059 | Importance of Website Designing | Putting aside all the other SEO considerations (which are evenly important), we can examine how... | 0 | 2022-02-22T05:29:07 | https://dev.to/draggital/importance-of-website-designing-3n2b |

Putting aside all the other SEO considerations (which are evenly important), we can examine how CONTENT specifically (text, images, videos & layout) influences your SEO. It can be summed up like this: Poor design i... | draggital | |

997,186 | Create a new GitHub Repository from the command line | It can be frustrating when you are working on a project on your local machine and need to commit some... | 0 | 2022-02-22T20:03:20 | https://dev.to/techielass/create-a-new-github-repository-from-the-command-line-575d | github, git, beginners | It can be frustrating when you are working on a project on your local machine and need to commit some changes to GitHub, but you haven't set up that repository.

The GitHub CLI can help in this situation, from your terminal you can create that repository and commit your project without leaving your terminal or Integra... | techielass |

997,450 | [Infographic] AWS SNS from a serverless perspective | The Simple Notification Service, or SNS for short, is one of the central services to build... | 0 | 2022-02-22T13:04:11 | https://dev.to/dashbird/infographic-aws-sns-from-a-serverless-perspective-24h9 | aws, serverless, cloud, devops | * * * * *

The **Simple Notification Service**, or SNS for short, is one of the central services to build serverless architectures in the AWS cloud. SNS itself is a serverless messaging service that can distribute massive numbers of messages to different recipients. These include mobile end-user devices, like smartphon... | taavirehemagi |

997,762 | Getting Started with Docker | Why is Docker a Powerful Tool? One of the main reasons Docker is a powerful tool is its... | 0 | 2022-02-27T20:43:59 | https://dev.to/taylormorini/getting-started-with-docker-36en | webdev, docker | ## Why is Docker a Powerful Tool?

One of the main reasons Docker is a powerful tool is its architecture. Docker uses a client-server architecture. According to the Docker documentation,

> The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker con... | taylormorini |

997,869 | The big STL Algorithms tutorial: wrapping up | With the last article on algorithms about dynamic memory management, we reached the end of a... | 362 | 2022-02-23T07:53:32 | https://www.sandordargo.com/blog/2022/02/23/stl-alogorithms-tutorial-part-31-wrap-up | cpp, tutorial, stl, algorithms | With the last article on algorithms about [dynamic memory management](https://www.sandordargo.com/blog/2022/02/02/stl-alogorithms-tutorial-part-30-memory-header), we reached the end of a 3-year-long journey that we started at the beginning of 2019.

Since then, in about 30 different posts, we learned about the algorith... | sandordargo |

997,974 | Recommend me the best phone for productive mobile app development! | My phone just died on me. I am in the hunt for a new phone. I've always been an Android User but... | 0 | 2022-02-22T20:59:29 | https://dev.to/azrinsani/recommend-me-the-dest-phone-for-productive-mobile-app-development-df5 | flutter, xamarin, reactnative, android | My phone just died on me. I am in the hunt for a new phone.

I've always been an Android User but wouldn't mind moving to iOS.

However, my main concern is development speed. Particularly a phone that is able to power up and launch debug mode of an app in a few seconds. I develop apps mostly in C# Xamarin & Flutter.

... | azrinsani |

998,006 | Usa console.table en lugar de console.log | Una forma interesante de mostrar los resultados de un array o un objeto es utilizar console.table.... | 17,009 | 2022-02-22T22:51:30 | https://jfbarrios.com/usa-consoletable-en-lugar-de-consolelog | javascript, tips, spanish, logging | Una forma interesante de mostrar los resultados de un array o un objeto es utilizar `console.table`. Esta función toma un argumento obligatorio: `data`, que debe ser un `array` o un `objeto`, y un parámetro adicional: `columns`.

## Colecciones de tipos primitivos

```javascript

// Array de string

console.table(['Manzan... | jfernandogt |

998,015 | Truthy and Falsy values in JavaScript | Introduction In this article, we shall learn about the concept of Truthy and Falsy values... | 0 | 2022-02-22T23:19:54 | https://dev.to/naftalimurgor/truthy-and-falsy-values-in-javascript-458p | javascript, webdev, beginners, tutorial | ## Introduction

In this article, we shall learn about the concept of Truthy and Falsy values in JavaScript and why this concept is useful. Let's jump in!

## What are truthy values?

Truthy values are values that evaluate to `boolean` in a conditional such as `if..else` and `switch...case`. In Computer Science, a Boo... | naftalimurgor |

998,148 | I made a Hacker News reader with Flutter | Here is the GitHub repo: https://github.com/Livinglist/Hacki | 0 | 2022-02-23T01:04:30 | https://dev.to/livinglist/i-made-a-hacker-news-reader-with-flutter-3dfl | flutter, engineering, android, ios | ---

title: I made a Hacker News reader with Flutter

published: true

description:

tags: Flutter, engineering, android, iOS

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/albjomq6nm9sfjdxbwoq.png

---

Here is the GitHub repo: https://github.com/Livinglist/Hacki

| livinglist |

1,002,430 | Testing a Feature: A concise approach | Any change in the existing program that enhances it's functionality is called as a "Feature" For a... | 0 | 2022-02-26T18:00:22 | https://dev.to/yashmunjal/testing-a-feature-a-concise-approach-4h63 | testing, beginners, productivity | Any change in the existing program that enhances it's functionality is called as a "Feature"

For a feature to work effectively, it has to be properly tested. Testing ensures that the software is working correctly, and that it meets its requirements. It ensures that the product does what it says on the box.

### Softw... | yashmunjal |

998,325 | How to use Netlify as your continuous integration | Netlify is a hosting provider that you can use for static websites or web applications. The free plan... | 0 | 2022-02-23T05:52:56 | https://how-to.dev/how-to-use-netlify-as-your-continuous-integration | javascript, netlify, ci | Netlify is a hosting provider that you can use for static websites or web applications. The free plan comes with 300 minutes of build time, which should be enough to set up continuous deployment (CD) for a project that doesn’t receive a lot of commits. I’ll show you how to use those resources to add simple continuous i... | marcinwosinek |

998,486 | Test test test | A post by Besi | 0 | 2022-02-23T09:01:53 | https://www.linkedin.com/testest | besi | ||

998,492 | Remind Solution (Australia) - It Helps You Remember All Details Well! | This is not simple that buds are more interested in Remind Solution than in Brain Booster... | 0 | 2022-02-23T09:24:11 | https://dev.to/remindsolution1/remind-solution-australia-it-helps-you-remember-all-details-well-254e | This is not simple that buds are more interested in [Remind Solution](https://ipsnews.net/business/2021/09/18/remind-solution-real-brain-booster-or-a-scam-read-ingredients-and-side-effects-reports/) than in Brain Booster Supplements. There have been several new Brain Booster Supplements endorsements. It is a worthwhile... | remindsolution1 | |

998,849 | Module 2 | React BillsApp Project with Material Ui and Context Api

| Just Released the Second Module of BillsApp Project on My Channel Do Check it out and Provide your... | 0 | 2022-02-23T14:06:19 | https://dev.to/47karimasif/module-2-react-billsapp-project-with-material-ui-and-context-api-5e2j | javascript, react, webdev, devops | Just Released the Second Module of BillsApp Project on My Channel

Do Check it out and Provide your feedback :)

[Link for The Video](https://youtu.be/T6XM7uI3138)

Do check it out and Share :) | 47karimasif |

999,182 | Javascript: isFunctions | In every language, we need to validate data before modifying or displaying it. So, here in the case... | 16,797 | 2022-02-23T16:54:29 | https://dev.to/urstrulyvishwak/isfunctions-in-javascript-5d0h | javascript, webdev, programming, discuss | In every language, we need to validate data before modifying or displaying it.

So, here in the case of javascript also we almost regularly use these functions and we maintain all these in single util class to reuse quickly.

No further theory let’s jump into stuff.

Basically,

{% embed https://gist.github.com/K-Vishw... | urstrulyvishwak |

343,230 | A post about productivity | If you have a programming blog you have to write a post about programmer productivity. It's kind of l... | 0 | 2020-05-25T05:52:38 | https://dev.to/zasuh_/a-post-about-productivity-407g | programming, productivity, work | If you have a programming blog you have to write a post about programmer productivity. It's kind of like a ritual to be accepted into the pantheon of programming bloggers (next to writing your opinion on the industry and how it has changed as a whole).

Productivity in programming is weird when you are starting out. Yo... | zasuh_ |

999,252 | How to POST and Receive JSON Data using PHP cURL | JSON is the most popular data format for exchanging data between a browser and a server. The JSON... | 0 | 2022-02-23T19:21:09 | https://dev.to/saymon/how-to-post-and-receive-json-data-using-php-curl-5pn | php, webdev, beginners, tutorial | **JSON** is the most popular data format for exchanging data between a browser and a server. The JSON data format is mostly used in web services to interchange data through API. When you working with web services and APIs, sending **JSON data via POST** request is the most required functionality. PHP cURL makes it easy... | saymon |

999,444 | cryptocurrency Coinmarketdo news | Coinmarketcap has been the leader in tracking cryptocurrency prices in real time. It was founded in... | 0 | 2022-02-23T22:04:08 | https://coinmarketdo.com | cryptocurrency, crypto, bitcoin, ethereum | ---

title: cryptocurrency Coinmarketdo news

published: true

date: 2021-07-23 21:36:50 UTC

tags: Cryptocurrency,crypto,Bitcoin,Ethereum

canonical_url: https://coinmarketdo.com

---

Coinmarketcap has been the leader in tracking cryptocurrency prices in real time. It was founded in May 2013 and has been a well-known brand... | coiner |

999,474 | Longest Palindromic Substring - Leetcode #5 | https://www.youtube.com/watch?v=Aiw3m8EK0rs&t=8s Verdict: Pass Score: 5/5 Coding:... | 0 | 2022-02-23T21:56:45 | https://dev.to/dannyhabibs/longest-palindromic-substring-1aim | career, interview, programming, python | https://www.youtube.com/watch?v=Aiw3m8EK0rs&t=8s

Verdict: Pass

Score: 5/5

Coding: 5

Communication: 5

Problem Solving: 5

Inspection: 3

Review: 5

Leetcode #5 - Longest Palindromic Substring (https://leetcode.com/problems/longest-palindromic-substring/)

The candidate did an excellent job. He had seen the problem duri... | dannyhabibs |

1,000,267 | Web3 Tutorial: build DApp with Web3-React and SWR | In "Tutorial: Build DAPP with hardhat, React and Ethers.js", we connect to and interact with the... | 0 | 2022-02-24T14:18:00 | https://dev.to/yakult/tutorial-build-dapp-with-web3-react-and-swr-1fb0 | blockchain, web3, dapp, react | In "[Tutorial: Build DAPP with hardhat, React and Ethers.js](https://dev.to/yakult/a-tutorial-build-dapp-with-hardhat-react-and-ethersjs-1gmi)", we connect to and interact with the blockchain using `Ethers.js` directly. It is ok, but there are tedious processes needed to be done by ourselves.

We would rather use hand... | yakult |

1,000,558 | Busy Creating A rideshare app using react native | I am going through a error when running my app ,what's the best online IDE for react-native or the... | 0 | 2022-02-24T18:03:02 | https://dev.to/bigboycrypto/busy-creating-a-rideshare-app-using-react-native-5h57 | I am going through a error when running my app ,what's the best online IDE for react-native or the best emulator for react . | bigboycrypto | |

1,000,805 | Accept Web3 Crypto Donations right on GitHub Pages | This approach is a game-changer for every dev who thinks about accepting donations/support for his or... | 0 | 2022-02-25T00:13:08 | https://dev.to/web3-payments/accept-web3-crypto-donations-right-on-github-pages-2oj8 | web3, tutorial, javascript, opensource | This approach is a game-changer for every dev who thinks about accepting donations/support for his or her projects or currently does so.

I will show you how to accept donations with any ERC-20 or BEP-20 token with automatic conversion right on GitHub Pages.

**The coolest part:**

- your supporters pay with any token a... | lxp |

1,000,961 | How to add Rive animations in Flutter? | Simple animations in Flutter are boring and obsolete, so let’s spice up the game and learn about a... | 0 | 2022-02-25T05:18:37 | https://dev.to/wolfizsolutions/how-to-add-rive-animations-in-flutter-5ib | gamedev, programming, rive, flutter | Simple animations in Flutter are boring and obsolete, so let’s spice up the game and learn about a new tool that will change the whole landscape of your animations in any project.

Yes, I am talking about Rive. So, let’s get started!

## What is Rive?

Rive, formally known as Flare, is a real-time interactive design ... | wolfizsolutions |

1,001,322 | Create a Hyperlink UI in .NET MAUI Preview 13 | On Feb. 15, 2022, Microsoft released .NET MAUI Preview 13. In this preview release, .NET MAUI... | 0 | 2022-02-25T12:39:44 | https://www.syncfusion.com/blogs/post/create-a-hyperlink-ui-in-net-maui-preview-13.aspx | csharp, dotnet, maui, mobile | ---

title: Create a Hyperlink UI in .NET MAUI Preview 13

published: true

date: 2022-02-25 11:51:30 UTC

tags: csharp, dotnet, maui, mobile

canonical_url: https://www.syncfusion.com/blogs/post/create-a-hyperlink-ui-in-net-maui-preview-13.aspx

cover_image: https://www.syncfusion.com/blogs/wp-content/uploads/2022/02/Create... | sureshmohan |

1,001,601 | Build a Next.js Website in 4 Steps

| Carson Gibbons is the Co-Founder & CMO of Cosmic JS, an API-first Cloud-based Content Management... | 0 | 2022-02-25T17:21:52 | https://dev.to/mdmahirfaisal/build-a-nextjs-website-in-4-steps-3m4c | Carson Gibbons is the Co-Founder & CMO of Cosmic JS, an API-first Cloud-based Content Management Platform that decouples content from code, allowing devs to build slick apps and websites in any programming language they want.

In this blog I will show you how to pick up an existing website example and curtail it into yo... | mdmahirfaisal | |

1,001,824 | Postgres Connection Pooling and Proxies

| One essential concept that every backend engineer should know is connection pooling. This technique... | 0 | 2022-02-25T23:07:04 | https://arctype.com/blog/connnection-pooling-postgres | technology, programming, tutorial, productivity | One essential concept that every backend engineer should know is connection pooling. This technique can improve the performance of an application by reducing the number of open connections to a database. Another related term is "proxies," which help us implement connection pools.

In this article, we'll discuss connect... | rettx |

1,002,986 | Python for everyone: Mastering Python The Right Way | We all know that food, shelter and clothing are the basic needs in live and are essential for... | 0 | 2022-02-27T09:01:08 | https://dev.to/mainashem/python-for-everyone-mastering-python-the-right-way-523l | We all know that food, shelter and clothing are the basic needs in live and are essential for survival. Similarly in being a developer especially now in web3, python is more like a basic need. With the ever evolving changing and new advancements in technology, python is the present and the future.

Many will argue that... | mainashem | |

1,003,055 | Coding Interview – Converting Roman Numerals in Python | A common assignment in Python Coding Interviews is about converting numbers to Roman Numerals and... | 17,096 | 2022-02-28T07:41:05 | https://bas.codes/posts/python-roman-numerals/ | python, career, beginners, algorithms |

A common assignment in Python Coding Interviews is about converting numbers to Roman Numerals and vice versa.

Today, we'll look at two possible implementations in Python.

## Roman Numerals

Roman Numerals consists of these symbols:

| Symbol | Numerical Value |

|--------|-----------------|

| `I` | 1 ... | bascodes |

1,003,743 | What is React-Redux and Why it is used? | Today we will discuss react-redux and its use in web development projects. Also, Linearloop is a... | 0 | 2022-02-28T06:27:14 | https://www.linearloop.io/blog/what-is-react-redux-and-why-it-is-used/ | react, redux, webdev, beginners | ---

canonical_url: https://www.linearloop.io/blog/what-is-react-redux-and-why-it-is-used/

---

Today we will discuss react-redux and its use in web development projects. Also, Linearloop is a prominent [React JS web development company in India & USA](https://www.linearloop.io/web-development/), and we have prepared th... | linearloophq |

1,003,766 | How do I keep my devs busy while waiting on code review? | I was recently involved in a discussion with a Scrum master who asked this question. “Last sprint,... | 0 | 2022-08-13T11:41:25 | https://jhall.io/archive/2022/02/28/how-do-i-keep-my-devs-busy-while-waiting-on-code-review/ | agile, flow, flowengineering, scrum | ---

title: How do I keep my devs busy while waiting on code review?

published: true

date: 2022-02-28 00:00:00 UTC

tags: agile,flow,flowengineering,scrum

canonical_url: https://jhall.io/archive/2022/02/28/how-do-i-keep-my-devs-busy-while-waiting-on-code-review/

---

I was recently involved in a discussion with a Scrum m... | jhall |

1,004,145 | Next.js + Tailwind CSS | Create your project Start by creating a new Next.js project if you don’t have one set up... | 0 | 2022-02-28T14:41:10 | https://dev.to/reactwindd/nextjs-tailwind-css-1ci1 | javascript, nextjs, react, tailwindcss | ## Create your project

Start by creating a new Next.js project if you don’t have one set up already. The most common approach is to use [Create Next App](https://nextjs.org/docs/api-reference/create-next-app).

```

// Terminal

$ npx create-next-app my-project

$ cd my-project

```

## Install Tailwind CSS

Install `tai... | reactwindd |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.