id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |
|---|---|---|---|---|---|---|---|---|

1,261,344 | Auto-Run Tests: Test Via Continuous Integration | What is Continuous Integration(CI)? It's a way to make sure new codes are continuously... | 0 | 2022-11-17T23:16:38 | https://dev.to/cychu42/auto-run-tests-test-via-continuous-integration-ib6 | beginners, javascript, opensource | ## What is Continuous Integration(CI)?

It's a way to make sure new code is continuously integrated: automated tests run against each change to check that it follows certain standards and doesn't break anything.

## How to do it?

I used GitHub Actions, which made the process pretty easy.

1. In your GitHub repo, click the Actio... | cychu42 |

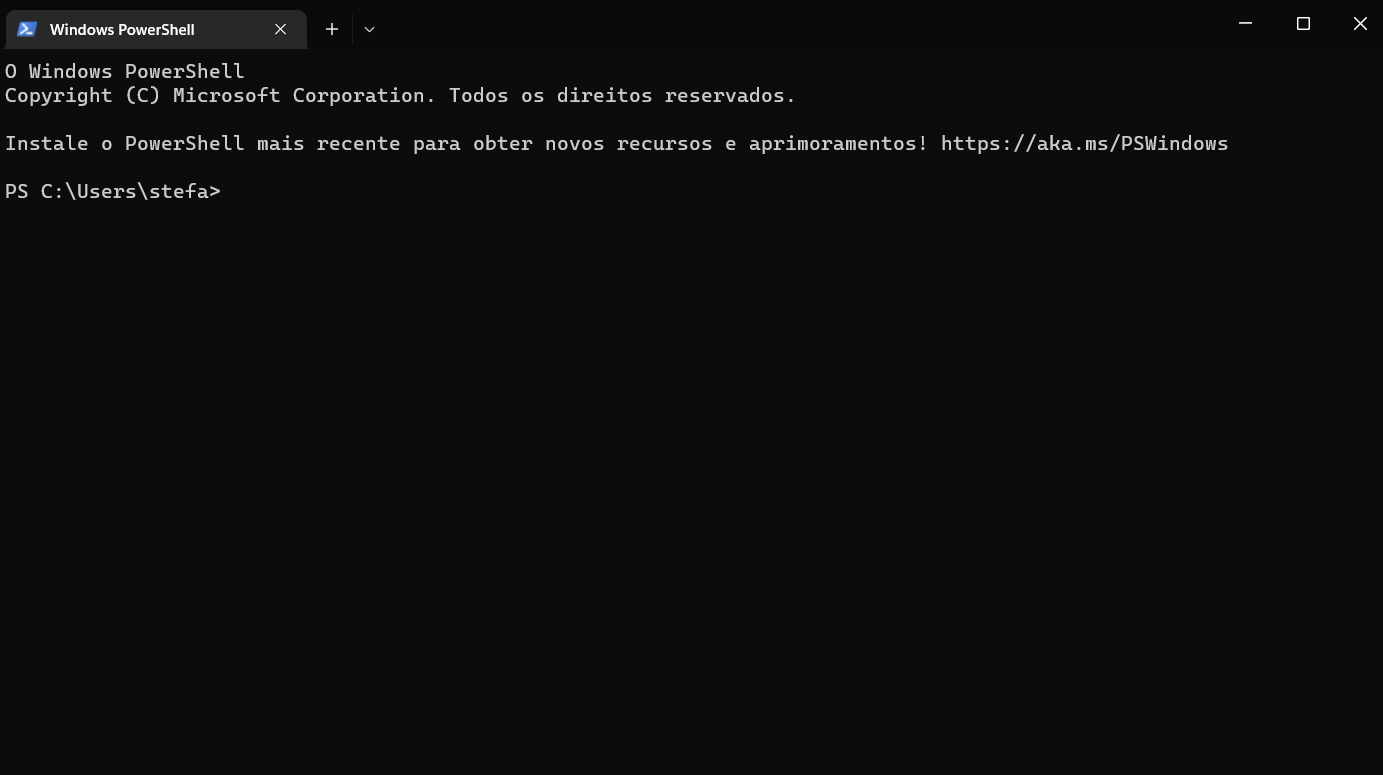

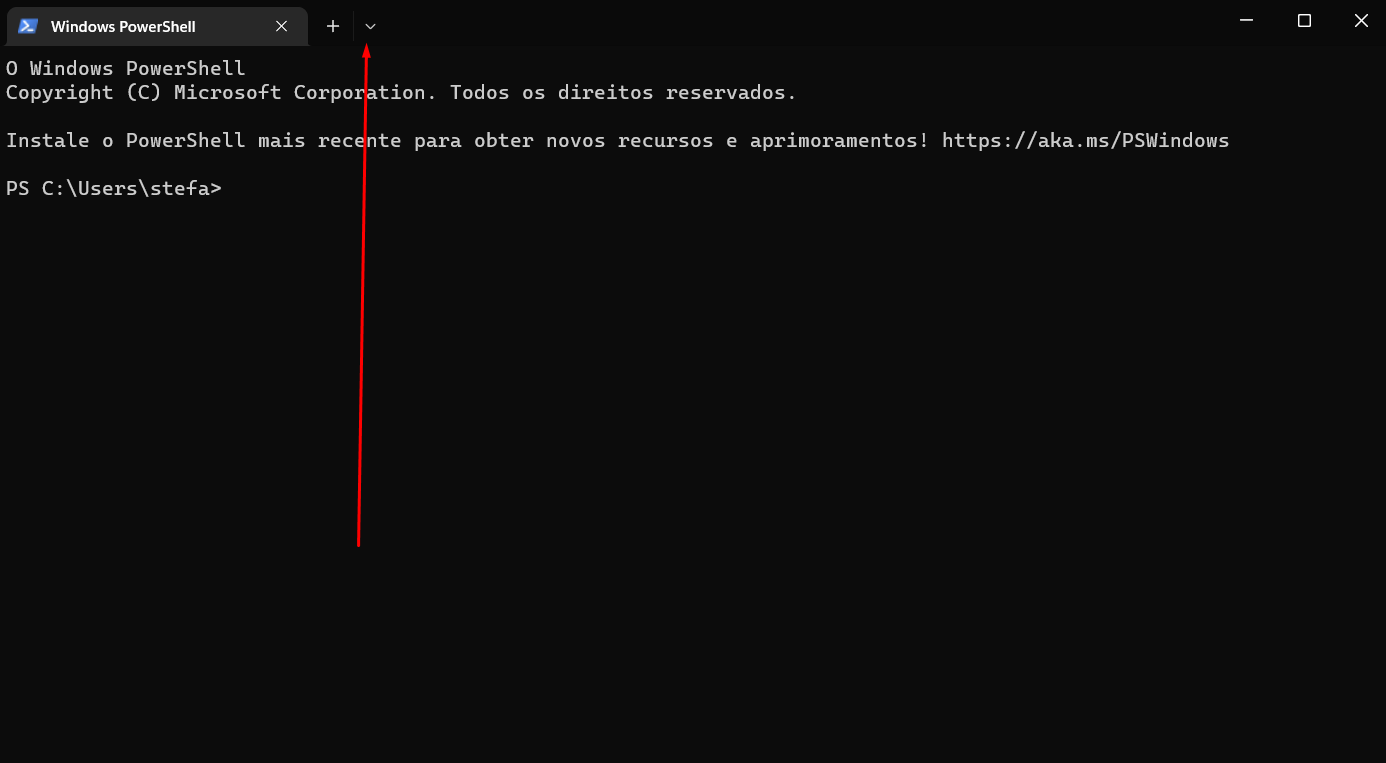

1,261,348 | How to change the terminal background | 1. Open your terminal: 2. Click the downward-pointing arrow: 3. Go into the settings of your... | 0 | 2022-11-17T20:42:06 | https://dev.to/stefanyrepetcki/como-mudar-o-fundo-do-terminal-128c | frontend, develop, tutorial, programming | 1. Open your terminal:

2. Click the downward-pointing arrow:

3. Go into your terminal's settings:

... | stefanyrepetcki |

1,261,883 | The Best Ways to Exploit Rate Limit Vulnerabilities | TL;DR- If you’re into bug bounties or just white-hat hacking in general, you’ve probably heard of... | 0 | 2022-11-18T04:22:30 | https://medium.com/the-gray-area/the-best-ways-to-exploit-rate-limit-vulnerabilities-f73ac24a08f1 | cyber, cybersecurity, bughunting, bugbounty | ---

title: The Best Ways to Exploit Rate Limit Vulnerabilities

published: true

date: 2022-11-18 01:36:39 UTC

tags: cyber,cybersecurity,bughunting,bugbounty

canonical_url: https://medium.com/the-gray-area/the-best-ways-to-exploit-rate-limit-vulnerabilities-f73ac24a08f1

---

[

November has been such a difficult month for Solana developers given the recent events. While many people look to be leaving the Solana blockchain as a result, we are glad to note that most dApp developers are stay... | extrnode |

1,263,411 | Youtube Shorts Beta is now Available for india || 🔥The biggest Short video app of Youtube | Youtube starting off by launching an early beta of YouTube Shorts in India, this includes a... | 0 | 2022-11-19T05:17:26 | https://dev.to/testdef/youtube-shorts-beta-is-now-available-for-india-the-biggest-short-video-app-of-youtube-5cap | <div class="separator" style="clear: both; text-align: center;"> <a href="https://lh3.googleusercontent.com/-OtdQ5WkHKfM/X2MKWAk5imI/AAAAAAAAGT4/zh5qjGB_GrMTvxn-aAY9H1u9mYtURNTBgCLcBGAsYHQ/s1600/1600326208800097-0.png" imageanchor="1" style="margin-left: 1em; margin-right: 1em;"> <img border="0" src="https://lh3.... | testdef | |

1,263,445 | How to convert HTML To React Component | Just Simply Copy your HTML CODE into this website https://magic.reactjs.net/htmltojsx.htm This Site... | 0 | 2022-11-19T06:54:31 | https://dev.to/farhadi/how-to-convert-html-to-react-component-1dpn | webdev, javascript, react, html |

Simply copy your HTML code into this website: https://magic.reactjs.net/htmltojsx.htm. The site generates JSX code, which you can then copy and paste into your React project files. | farhadi |

1,263,668 | A Thorough View of Strings in JavaScript | Introduction In JavaScript, textual data is stored as strings. There is no separate type... | 0 | 2022-11-19T08:43:36 | https://dev.to/indirakumar/a-thorough-view-on-strings-in-javascript-1c | javascript, webdev, programming, beginners | ## Introduction

In JavaScript, textual data is stored as strings. There is no separate type for a single character.
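To make the point about single characters concrete, here is a minimal sketch (the variable names are illustrative, not from the original article). It shows that indexing into a string yields another string, and that `length` counts UTF-16 code units, so a character outside the Basic Multilingual Plane reports a length of 2.

```javascript
// Indexing a string gives back a one-character string; there is no char type.
const letter = "hello"[0];
console.log(typeof letter); // "string"

// length counts UTF-16 code units, not visible characters.
const ascii = "a";
const emoji = "\u{1F600}"; // a code point above U+FFFF, stored as a surrogate pair
console.log(ascii.length); // 1
console.log(emoji.length); // 2

// String iteration walks code points, so spreading yields one element here.
console.log([...emoji].length); // 1
```

In practice this matters mostly when truncating or counting user-visible characters, where iterating by code point is usually the safer choice.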

The internal format for strings is always UTF-16, no matter what encoding the page uses. Each UTF-16 code unit takes 2 bytes; most characters fit in a single unit, but some (such as many emoji) need two. Any string can be created by enclosing the value with singl... | indirakumar |

1,263,704 | Working with telescope | I haven't worked with a large repository of any sort before, and I don't think my skills are great... | 0 | 2022-11-19T11:01:11 | https://dev.to/ririio/working-with-telescope-4g5g | I haven't worked with a large repository of any sort before, and I don't think my skills are great enough to actually add anything to it. What I have learned from working with repositories is that contributors tend to overlook the most basic problems because they are focused on much bigger issues. Therefore, when I worked ... | ririio | |

1,264,558 | Artificial "Intelligence" and Controversial Ideas about Future Technology | This article is intended for developers with some prior knowledge of web technology. A less technical... | 22,566 | 2022-11-21T12:13:05 | https://dev.to/ingosteinke/artificial-intelligence-and-controversial-ideas-about-future-technology-1chj | web3, openai, chatgpt, machinelearning | This article is intended for developers with some prior knowledge of web technology. A less technical article with a greater focus on computer generated art, cyberpunk, and augmented reality can be found in my weblog ([open-mind-culture.org](https://www.open-mind-culture.org/)). I published this as a DEV post just a fe... | ingosteinke |

1,264,767 | CSS3 Code Generators | CSS3 Code Generators - That can speed up your web design development workflow. As a web developer or... | 0 | 2022-11-20T17:36:47 | https://dev.to/farhanacsebd/css3-code-generators-cf5 | **CSS3 code generators** can speed up your web design and development workflow.

As a web developer or web designer, you may sometimes struggle to get the right CSS for your design. For example, if you want to add a drop shadow to an HTML element but don't know the exact values for the box-shadow CSS property... | farhanacsebd | |

1,265,473 | Browser Storage Hook React | import this -> import { useLocalStorage,useSessionStorage } from... | 0 | 2022-11-21T10:22:04 | https://dev.to/mdwahiduzzamanemon/browser-storage-hook-react-12l1 | npm, react, hook, package | > import this ->

import { useLocalStorage,useSessionStorage } from 'browser_storage_hook_react';

> uses ->

const [value, setValue] = useLocalStorage('key', 'defaultValue');

const [value, setValue] = useSessionStorage('key', 'defaultValue');

{% embed https://www.npmjs.com/package/browser_storage_hook_react?activeTab=r... | mdwahiduzzamanemon |

1,265,584 | Difference Between Manual And Automated Testing Procedures For User Acceptance Testing | Automated testing serves as a reliable process that can help in the identification of any kind of... | 0 | 2022-11-21T11:13:02 | https://remarkmart.com/difference-between-manual-and-automated-testing/ | manual, testing, automated | Automated testing serves as a reliable process that can help in the identification of any kind of defects in applications developed by a business organization. Dedicated tools that can help with automated testing of every aspect related to application and can help in resolving any kind of problems within the same befor... | rohitbhandari102 |

1,265,590 | GTK calculator on Rust | (Almost done) Made my first application with user interface and built it on Linux. Have to improve... | 0 | 2022-11-21T11:26:14 | https://dev.to/antonov_mike/gtk-calculator-on-rust-4cc7 | rust, gtk, algorithms | (Almost done)

I made my first [application with a user interface](https://github.com/antonovmike/calculator_gtk) and built it on Linux. I still have to fix a few issues, and I also need to find out how to build it for Windows and Mac.

Some issues I have to solve to consider the project complete:

- Listen for keyboard events (the program... | antonov_mike |

1,265,594 | Don't make these mistakes in your CV | Ah yes, another year is slowly coming to an end. This means that project plans are nearing... | 0 | 2022-11-21T11:33:21 | https://dev.to/topjer/reading-cvs-is-suffering-3ijh | watercooler, tutorial, career | Ah yes, another year is slowly coming to an end. This means that project plans are nearing fruition/failure. Maybe people start to feel the call for change, thinking to themselves that 2023 will be the year when they leave the hell hole they wander daily.

Or maybe their company is bought by an eccentric billionaire who... | topjer |

1,289,229 | How and Why LimaCharlie Secures Google Chrome and ChromeOS | Chrome is the world’s most popular web browser—and ChromeOS is becoming more prevalent due to the use... | 0 | 2022-12-15T22:11:04 | https://www.limacharlie.io/blog/endpoint-detection-and-response-on-chrome | security | ---

title: How and Why LimaCharlie Secures Google Chrome and ChromeOS

published: true

date: 2022-12-08 00:00:00 UTC

tags: security

canonical_url: https://www.limacharlie.io/blog/endpoint-detection-and-response-on-chrome

---

Chrome is the world’s most popular web browser—and ChromeOS is becoming more prevalent due to t... | charltonlc |

1,265,657 | Formatting dates and times without a library in JavaScript | Dates and times can be tricky in JavaScript. You always want to be sure you are doing it correctly.... | 0 | 2022-11-21T13:41:27 | https://dev.to/daryllukas/formatting-dates-and-times-without-a-library-in-javascript-18he | javascript, webdev, beginners, tutorial | Dates and times can be tricky in JavaScript. You always want to be sure you are doing it correctly. Luckily, JavaScript has a built-in Date object that makes working with dates and times much easier. In fact, the Date object has many methods for doing different things with dates. ... | daryllukas |

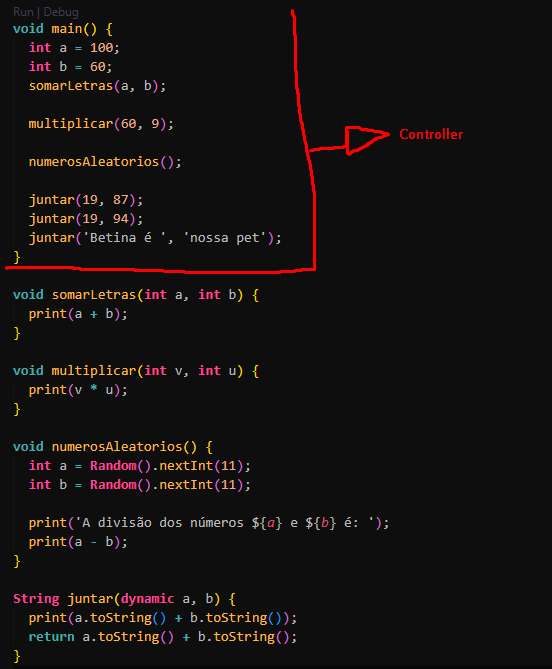

1,266,298 | Function | - In the image below: Some functions we can write with Dart. Creating functions of... | 0 | 2022-11-21T21:39:23 | https://dev.to/ramondeveloper/function-4a9m | dart | ## - In the image below:

Some functions we can write with Dart: functions that use logical operators to calculate specific numbers or random numbers, or to join numbers or names. In main we put the Controller... | ramondeveloper |

1,266,333 | Forever Functional: Higher-order functions with TypeScript | by Federico Kereki In a previous article in this series, I discussed several types of higher-order... | 22,768 | 2022-11-22T00:16:09 | https://blog.openreplay.com/forever-functional-higher-order-functions-with-typescript/ | typescript, functional, programming | by [Federico Kereki](https://blog.openreplay.com/forever-functional-higher-order-functions-with-typescript/)

In a [previous article in this series](https://blog.openreplay.com/forever-functional-higher-order-functions-functions-to-rule-functions/), I discussed several types of higher-order functions (HOF) whose argume... | asayerio_techblog |

1,266,356 | Boxing metaverse - whaat? | Hi folks, if there was a boxing metaworld what would you like to see there - please give me any ideas... | 0 | 2022-11-22T00:57:28 | https://dev.to/davidsl/boxing-metaverse-whaat-38d5 | metaverse, gamedev, nft, webx | Hi folks, if there were a boxing metaworld, what would you like to see there? Please give me any ideas. I'm doing research, and your opinions as professionals would be very helpful. | davidsl |

1,266,659 | First post | I was about to spend ages trying to figure out how to work this and format posts but decided to just... | 0 | 2022-11-22T05:28:18 | https://dev.to/nyashanice/first-post-294g | I was about to spend ages trying to figure out how to work this and format posts but decided to just play with it and figure it out as I go. :) learning new things is exciting | nyashanice | |

1,266,848 | Quick Guide To CSS Preprocessors | You might believe you now know the web development process, and then the next moment, your colleague... | 0 | 2022-11-22T06:42:36 | https://dev.to/quokkalabs/quick-guide-to-css-preprocessors-10al | css, guide, tutorial, startup | You might believe you now know the web development process, and then the next moment, your colleague is casually going around talking about CSS and its preprocessors. What are CSS preprocessors? How do they make developers' lives much easier?

Now it's time to enter the world of CSS preprocessors, an essential part of any coder... | labsquokka |

1,266,940 | Event Streams Are Nothing Without Action | Each data point in a system that produces data on an ongoing basis corresponds to an Event. Event... | 0 | 2022-11-22T09:23:43 | https://memphis.dev/blog/event-streams-are-nothing-without-action/ | eventdriven, streamprocessing, batchprocessing | Each data point in a system that produces data on an ongoing basis corresponds to an Event. Event Streams are described as a continuous flow of events or data points. Event Streams are sometimes referred to as Data Streams within the developer community since they consist of continuous data points. Event Stream Process... | atrifsik |

1,267,304 | AWS Lambda (Node.js) calling SOAP service | REST service: Uses HTTP for exchanging information between systems in several ways such as... | 0 | 2022-11-22T16:30:30 | https://dev.to/prabusah_53/http-get-and-delete-from-aws-lambda-7ml | ### REST service:

Uses HTTP to exchange information between systems in several formats, such as JSON, XML, and plain text.

### SOAP service:

A protocol for exchanging information between systems over the internet using only XML.

### Requirement:

Calling SOAP services from Lambda.

We'll use this npm package: https://www.npmjs.co... | prabusah_53 | |

1,267,358 | How To Poll an Airflow Job (i.e. DAG Run) | Ever wanted to actually know when an Airflow DAG Run has completed? Perhaps your use case involves... | 0 | 2022-11-22T15:31:53 | https://dev.to/joeauty/how-to-poll-an-airflow-job-ie-dag-run-1ffc | airflow, devops | Ever wanted to actually know when an Airflow DAG Run has completed? Perhaps your use case involves this completed work being some sort of workflow dependency, or perhaps it is used in a CI/CD pipeline. I'm sure there are a myriad of possible scenarios here beyond ours at [Redactics](https://www.redactics.com), which is... | joeauty |

1,267,670 | Java Collections Framework | A collection is a grouping of objects, a gathering of several objects in a single place. For example,... | 0 | 2022-11-22T17:34:51 | https://dev.to/jeronimafloriano/java-collections-framework-1deb | java, programming, backend, ptbr | A collection is a grouping of objects, a gathering of several objects in a single place. For example, a folder holding a collection of letters, a drawer with several keys, and so on. There are several kinds of collections, each with its own particularities.

Java has an architecture that represents various collections: the collections framework... | jeronimafloriano |

1,268,551 | Web scraping Google Shopping Product Reviews with Nodejs | What will be scraped Full code If you don't need an explanation, have a look at the full code... | 0 | 2022-11-23T08:43:09 | https://dev.to/serpapi/web-scraping-google-shopping-product-reviews-with-nodejs-4n9e | webscraping, node, serpapi | <h2 id='what'>What will be scraped</h2>

<h2 id='full_code'>Full code</h2>

If you don't need an explanation, have a look at [the full code example in the online IDE](https://replit.com/@MikhailZub/Scrape-Goo... | mikhailzub |

1,268,625 | How to Create an Online Shopping App | About 60 % of users around the globe are mobile users. This is so because smartphones are easy to use... | 0 | 2022-11-23T11:03:31 | https://dev.to/christinek989/how-to-create-an-online-shopping-app-1kem | mobile, shoppingapp, softwar, java | About 60% of users around the globe are mobile users, because smartphones are easy to use and more accessible. If so many people use mobile phones, [creating an online shopping app](https://addevice.io/blog/how-to-make-online-selling-app/) like Amazon must be the first and easiest investment that a busi... | christinek989 |

1,268,647 | Front-end Guide | Front-end Guide Credits: Illustration by @dev_lindseyk This guide has been cross-posted... | 0 | 2022-11-23T11:43:43 | https://dev.to/codelikeagirl29/front-end-guide-2h7k | webdev, programming, codenewbie | Front-end Guide

==

_Credits: Illustration by [@dev_lindseyk](https://lindseyk.dev)_

_This guide has been cross-posted on [Free Code Camp](https://medium.freecodecamp.com/grabs-front-end-guide-for... | codelikeagirl29 |

1,268,659 | 5 ways to start delivering sustainable technology through Serverless | Delivering sustainable technology is becoming mandatory for organisations across the planet, from... | 0 | 2022-11-23T12:02:28 | https://theserverlessedge.com/you-can-deliver-sustainable-technology-through-serverless/ | serverless, aws, cloud, architecture | Delivering [sustainable technology](https://theserverlessedge.com/watch-our-talk-on-sustainable-software-engineering-at-beltech/) is becoming mandatory for organisations across the planet, from both a regulatory and moral standpoint. We have written a lot on how using Well-Architected and [Serverless First principles](... | serverlessedge |

1,270,779 | Typescript: The keyof operator | This article was originally written at https://matiashernandez.dev A few primary concepts... | 0 | 2022-11-24T13:36:36 | https://matiashernandez.dev/blog/post/typescript-the-keyof-operator | webdev, programming, typescript |

> This article was originally written at [https://matiashernandez.dev](https://matiashernandez.dev/blog/post/typescript-the-keyof-operator)

A few primary concepts in TypeScript help you build complex data shapes. One of those building blocks is the keyof operator.

This operator or keyword is the Typescri... | matiasfha |

1,276,351 | React Refactoring - Composition: When don't use it! | Intro I refact a dropdown composition component. Changing it to object. We had a dropdown... | 0 | 2022-11-29T01:22:53 | https://dev.to/raafacachoeira/react-refactoring-component-children-to-component-with-prop-objets-5f9o | react, refactoring, webdev, beginners | ## Intro

I refactored a dropdown composition component, changing it to an object-based one.

We had a dropdown in the following structure:

``` jsx

<Dropdown>

<Dropdown.Toggle color='primary' />

<Dropdown.Menu>

<Dropdown.Item onClick={() => {}}>

MyLabel

</Dropdown.Item>

<Dropdown.Item onClic... | raafacachoeira |

1,277,230 | Git Checkout / Reset / Revert. When to use what? | We often come across some weird scenarios (especially my team) while committing our code changes.... | 20,115 | 2022-11-29T13:48:16 | https://medium.com/@5minslearn/git-checkout-reset-revert-when-to-use-what-dc1fc9f3c5bb | git, gitcheckout, gitrevert, gitreset | We often come across some weird scenarios (especially my team) while committing our code changes. Some of them are,

* How can I move a file that I added to the staging area by mistake back to the working area?

* OMG! I made a commit with production keys in the code but did not push the code yet. Is it possible to de... | 5minslearn |

1,278,541 | Tesla Solar Panel Installation Company in Texas & Colorado | ECG Solar is a quality solar panel installation company for Texas & Colorado. We Install all... | 0 | 2022-11-30T10:07:02 | https://dev.to/excelcgsolar/tesla-solar-panel-installation-company-in-texas-colorado-416b | ECG Solar is a quality [solar panel installation company](https://excelcgsolar.com/) for Texas & Colorado. We install all forms of solar, from residential, commercial, and utility-scale systems to less common ones such as solar roofs and building-integrated solar systems. | excelcgsolar | |

1,288,957 | Reduce your Jest tests running time (especially on hooks!) with the maxWorkers option | Photo by Josh Olalde on Unsplash Many applications have hundreds (and sometimes thousands!) of... | 0 | 2022-12-08T15:52:29 | https://buaiscia.github.io/blog/tips/reduce-jest-tests-running-time-maxworkers | webdev, testing, jest, beginners | Photo by <a href="https://unsplash.com/@josholalde?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Josh Olalde</a> on <a href="https://unsplash.com/s/photos/workers?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

Many applications have hundreds (and sometimes thousa... | buaiscia |

1,288,964 | How to avoid “schema drift” | We are all familiar with drifting in-app configuration and IaC. We’re starting with a specific... | 0 | 2022-12-08T16:07:43 | https://memphis.dev/blog/how-to-avoid-schema-drift/ | schemaregistry, schemaverse, schemamanagemen, schemadrift | We are all familiar with drifting in-app configuration and IaC. We’re starting with a specific configuration, backed with IaC files. Soon after, we are facing a “drift” or a change between what is actually configured in our infrastructure and our files. The same behavior happens in data. Schema starts in a certain shap... | atrifsik |

1,289,275 | Treetop Tree House | Advent of Code 2022 Day 8 Part 1 Analyzing each cross-section My algorithm in... | 16,285 | 2022-12-08T20:59:51 | https://dev.to/rmion/treetop-tree-house-354c | adventofcode, algorithms, javascript, programming | ## [Advent of Code 2022 Day 8](https://adventofcode.com/2022/day/8)

## Part 1

1. Analyzing each cross-section

2. My algorithm in pseudocode

3. My algorithm in JavaScript

### Analyzing each cross-section

Given a grid of trees:

```

. . . . .

. . . . .

. . . . .

. . . . .

. . . . .

```

Knowing that all trees on the edg... | rmion |

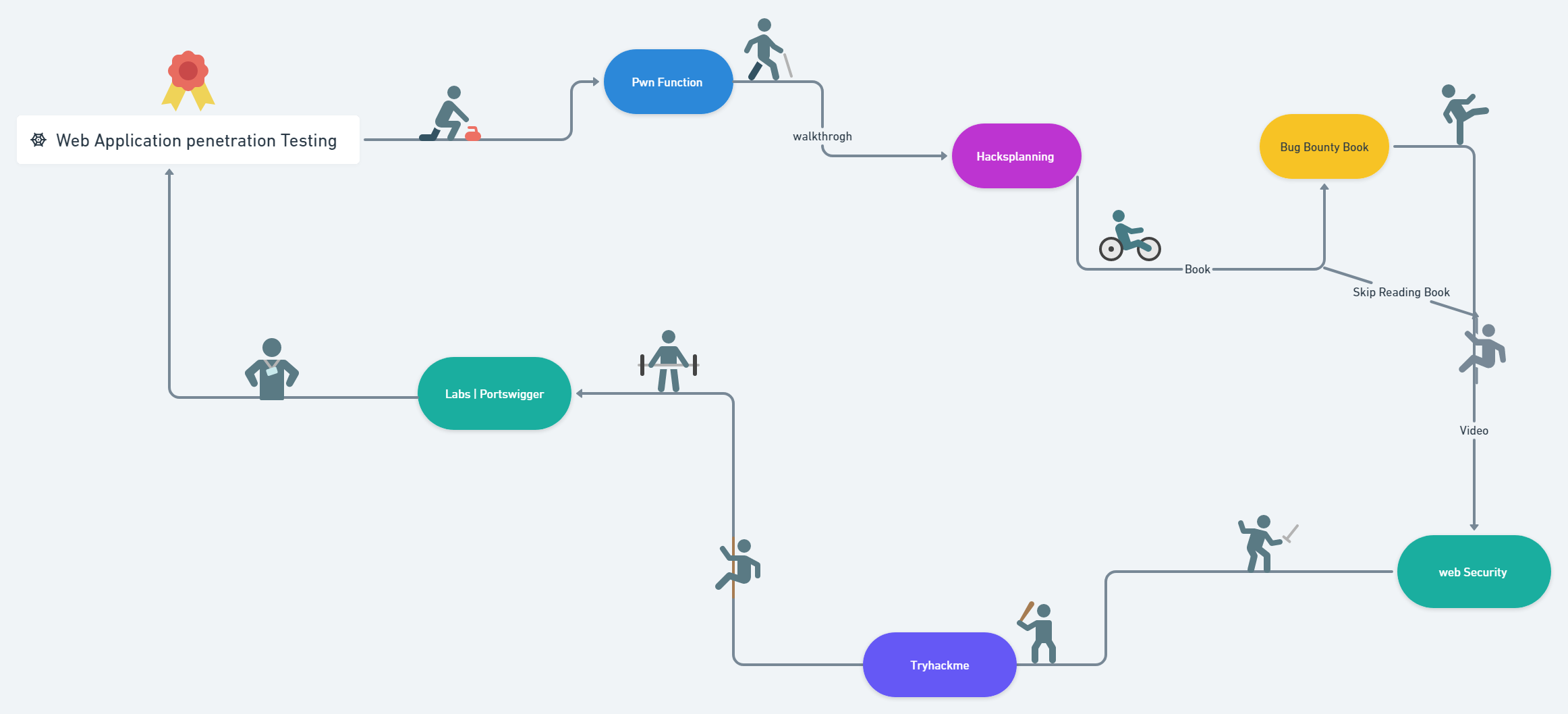

1,289,332 | Web Application Penetration Testing 101 | https://medium.com/@vishnuv4910/web-application-penetration-testing-101-a1fea91e36a9 | 0 | 2022-12-08T18:48:51 | https://dev.to/vz360/web-application-penetration-testing-101-5bnh | https://medium.com/@vishnuv4910/web-application-penetration-testing-101-a1fea91e36a9

| vz360 | |

1,289,389 | CSS Selectors: Style lists with the ::marker pseudo-element | For HTML lists, there was no straightforward way to style the bullets a different color from the list... | 20,455 | 2022-12-29T18:39:27 | https://dev.to/dianale/css-selectors-style-lists-with-the-marker-pseudo-element-ee4 | css, webdev, codepen, tutorial | For HTML lists, there was no straightforward way to style the bullets a different color from the list text until a couple of years ago. If you wanted to change just the marker color, you'd have to generate your own with pseudo-elements. Now with widespread browser support of the `::marker` pseudo-element, this can be t... | dianale |

1,289,953 | Creating A Carousel In React With Bootstrap | Creating a carousel in react might sound a bit intimidating; but with the right tools and background... | 0 | 2022-12-09T07:12:38 | https://dev.to/javirodmart/creating-a-carousel-in-react-with-bootstrap-3f0p | webdev, javascript, react, beginners | Creating a carousel in React might sound a bit intimidating, but with the right tools and background knowledge, it becomes much easier. First, we'll start by installing Bootstrap with npm.

`npm install react-bootstrap bootstrap`

After you have installed Bootstrap, you will need to import it into your JS.

`import Car... | javirodmart |

1,290,153 | MIG/MAG wires | MIG/MAG welding wire is used in welding. There is a difference between MIG and MAG: MIG stands for ... | 0 | 2022-12-09T09:04:22 | https://dev.to/royalweldingwire/migmag-wires-4l27 | **[MIG/MAG welding wire](https://royalweldingwires.com/mig-mag-welding-mild-steel-wires/#70S3)** is used in welding. There is a difference between MIG and MAG: MIG stands for

metal inert gas (ARGON, HELIUM), and MA... | royalweldingwire | |

1,290,274 | Tutorial on Robot Framework | So you know how you can use selenium webdriver to control browser for automation purposes? There's... | 0 | 2022-12-09T12:44:53 | https://dev.to/lakhbir_x1/tutorial-on-robot-framework-5bof | So you know how you can use Selenium WebDriver to control a browser for automation purposes? There's something called Robot Framework that is great for test automation and RPA. You can see how it is used with Python to run tests: {% embed http://bit.ly/3F9Gv1A %} | lakhbir_x1 | |

1,290,277 | Two Peas In A Pod - What Makes Isabella And Aiden's Friendship So Beautiful? | They say all good things come in pairs. After all, why chuckle alone when you can laugh... | 0 | 2022-12-09T12:50:24 | https://dev.to/ja0424953/two-peas-in-a-pod-what-makes-isabella-and-aidens-friendship-so-beautiful-367e | friendship, relationship, isabella, love |

They say all good things come in pairs. After all, why chuckle alone when you can laugh uncontrollably with someone? Why cry alone when you can wipe your tears using your best friend's shirt? Why go through this jo... | ja0424953 |

1,290,529 | Open Source Dashboard (for js developers) | Javascript open-source maintainers often have a hard time tracking their npm package status. I used... | 0 | 2022-12-09T14:08:52 | https://blog.niradler.com/open-source-dashboard-for-js-developers | ---

title: Open Source Dashboard (for js developers)

published: true

date: 2019-06-15 16:00:02 UTC

tags:

canonical_url: https://blog.niradler.com/open-source-dashboard-for-js-developers

---

Javascript open-source maintainers often have a hard time tracking their npm package status.

> I used until recently npm-stats.... | niradler | |

1,290,578 | Generate JWT secret key using OpenSSL | Searching online for a way to generate an HS512 JWT secret key, I found a Stack Overflow post with... | 0 | 2022-12-09T15:00:50 | https://dev.to/arc95/generate-jwt-secret-key-using-openssl-5edn | Searching online for a way to generate an HS512 JWT secret key, I found a [Stack Overflow post](https://stackoverflow.com/a/56934114/177416) with the OpenSSL command:

```

openssl rand -base64 172 | tr -d '\n'

```
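If you would rather generate the key from Node.js than call OpenSSL, a rough equivalent of the command above can be sketched with the built-in `crypto` module. This is an assumption-laden sketch, not something from the Stack Overflow post: it assumes a Node.js runtime, and the variable name `secret` is only illustrative. It reads 172 random bytes and Base64-encodes them on a single line, so no `tr` step is needed.

```javascript
// Built-in Node.js module; no extra packages required.
const { randomBytes } = require("node:crypto");

// 172 cryptographically strong random bytes, matching `openssl rand -base64 172`.
const secret = randomBytes(172).toString("base64");

// Base64 turns every 3 bytes into 4 characters, with padding on the last group,
// so 172 bytes become 232 characters with no line breaks.
console.log(secret.length); // 232
```

Treat the printed value like any other credential: keep it out of source control and load it from the environment or a secrets manager.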

Then the question was: do I have OpenSSL installed on my PC? If we have Git installed, [it includes OpenS... | arc95 | |

1,290,929 | Getting Started with Pixel Vision 8 | Start developing a game with the Pixel Vision 8 fantasy game console | 0 | 2022-12-09T20:15:45 | https://dev.to/cmiles74/getting-started-with-pixel-vision-8-1cmg | gamedev, pixelvision8, retrogaming | ---

layout: post

title: Getting Started with Pixel Vision 8

published: true

description: Start developing a game with the Pixel Vision 8 fantasy game console

tags: gamedev, pixelvision8, retrogaming

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/br5jv0pbuzqmjcdv3p8g.png

---

I've been interested ... | cmiles74 |

1,290,974 | AdventJS 2022 - JavaScript coding challenges for every day of December | I recently found out about an initiative that first came out last year and in... | 20,870 | 2022-12-09T23:22:51 | https://dev.to/joseayram/adventjs-2022-retos-de-codigo-para-javascript-cada-dia-de-diciembre-3o17 | javascript, typescript, adventjs, adventofcode | I recently found out about an initiative that first came out last year and is continuing this year with its second edition.

It is [adventJS](https://adventjs.dev/), a space created by [@midudev](https://midu.dev/) where you will have the chance to put into practice your knowledge of programm... | joseayram |

1,291,480 | A bit of blabbering about website startup’s. | I’ll be honest… It's really hard trying get audience for new startups in this age & day if... | 0 | 2022-12-10T08:03:21 | https://dev.to/zackuknow/a-bit-of-blabberingabout-website-startups-3ofp | beginners, career, writing, startup | I’ll be honest…

It's really hard to build an audience for a new startup in this day and age if you're new to this.

I mean, can you REALLY do SEO and backlinking from the get-go just by reading a few articles?

That was until my friend told me about writing guest posts on already popular websites or advertising on mark... | zackuknow |

1,291,710 | Flexbox (Parent Properties) | When we talk about flexbox properties we should separate between properties for parent &... | 0 | 2022-12-11T09:03:09 | https://dev.to/omaradelattia/flexbox-parent-properties-38jb | css, flexbox, webdev, programming | When we talk about flexbox properties, we should distinguish between properties for the parent and properties for the children.

## Properties for the Parent

- <u>**Display**</u>

`.container {display: flex;}` vs `.container {display: inline-flex;}`

Both have the same effect on the children; the only difference is on... | omaradelattia |

1,291,722 | HTML-FS-1.1. Task 3 - New article widget for the Netology blog | A post by AlexFRANTSUZOV | 0 | 2022-12-10T12:31:27 | https://dev.to/alexfrantsuzov/html-fs-11-zadacha-3-vidzhiet-novoi-stati-v-bloghie--9im | codepen | {% codepen https://codepen.io/frantsuzovALEX/pen/poKBLag %} | alexfrantsuzov |

1,291,937 | ChatGPT, the last nail on the coffin for coding interviews? | You know how you rely on Google to solve all of your life's problems? No? So it's just me... | 0 | 2022-12-11T18:39:32 | https://dev.to/sharonsinei/chatgpt-the-last-nail-on-the-coffin-for-coding-interviews-5b6l | chatgpt, programming, webdev, discuss | You know how you rely on Google to solve all of your life's problems? No? So it's just me then?

Programmers, I'm certain, can relate when it comes to solving their coding problems. Now imagine if Google were on steroids. That's how some are describing [ChatGPT](https://en.m.wikipedia.org/wiki/ChatGPT).

As the front-end ... | vuemasteryteam |

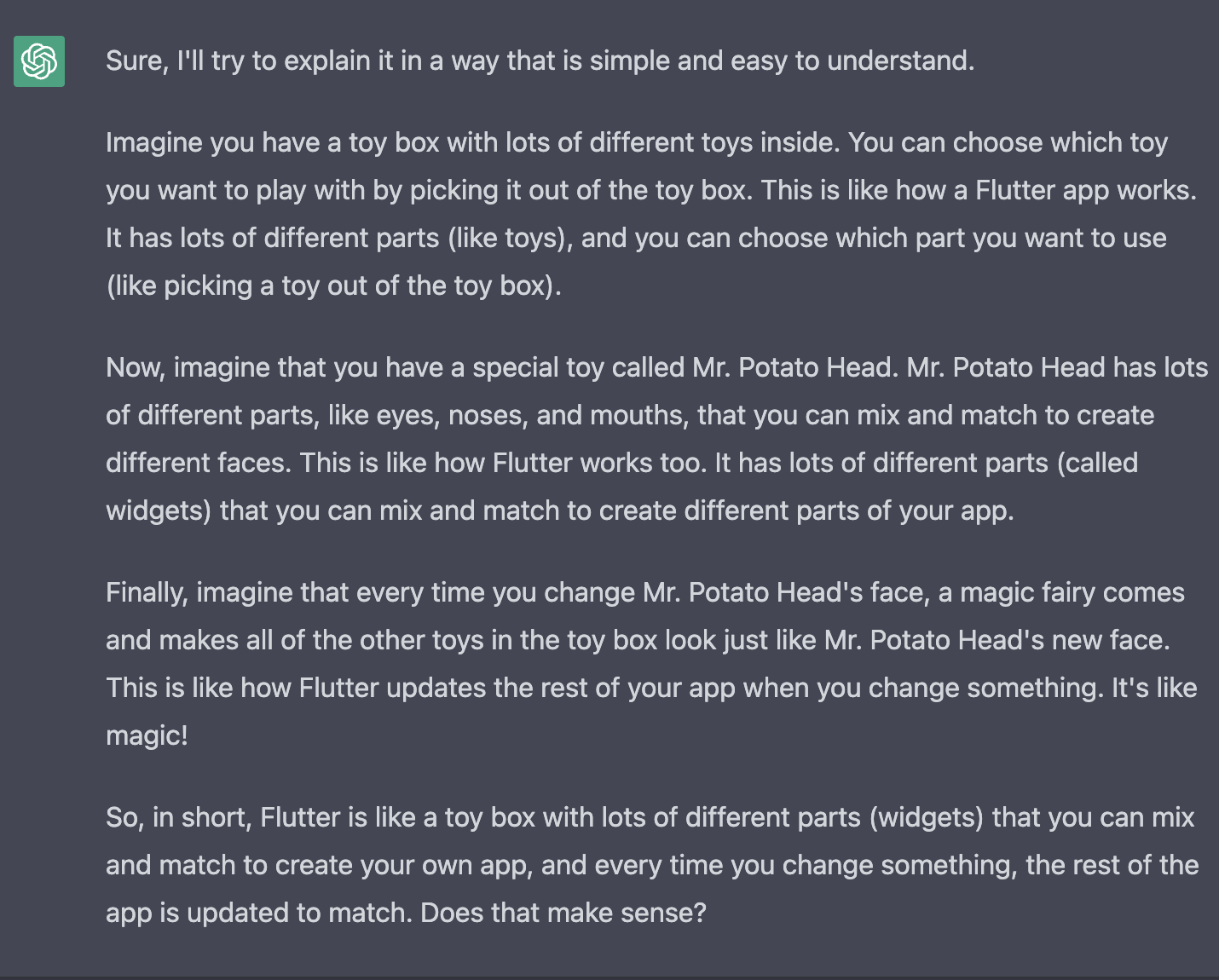

1,320,340 | Explain like I'm 5 | I asked chatGPT to explain the Flutter Architecture to me as if I were 5 years old. Considering... | 0 | 2023-01-07T00:02:10 | https://dev.to/joshjgomes/explain-like-im-5-4h2e | webdev, flutter, programming, beginners | I asked chatGPT to explain the Flutter Architecture to me as if I were 5 years old.

_Considering how creative its explanation was, chatGPT will continue to be a sharp tool in the kit of those with great ambition f... | joshjgomes |

1,320,441 | Supply chain security incident at CircleCI: Rotate your secrets | On January 4, CircleCI, an automated CI/CD pipeline setup tool, reported a security incident in their... | 0 | 2023-01-09T10:20:36 | https://snyk.io/blog/supply-chain-security-incident-circleci-secrets/ | vulnerabilities | ---

title: Supply chain security incident at CircleCI: Rotate your secrets

published: true

date: 2023-01-06 22:07:00 UTC

tags: Vulnerabilities

canonical_url: https://snyk.io/blog/supply-chain-security-incident-circleci-secrets/

---

On January 4, CircleCI, an automated CI/CD pipeline setup tool, reported a security inc... | snyk_sec |

1,320,777 | A simple, easy analysis of the Bootstrap card component's classes | Today we will try to discuss a little here which classes Bootstrap uses in the code of its card component, and why... | 0 | 2023-01-07T13:18:33 | https://dev.to/chayti/buttsttraap-kaardd-kmponentt-er-klaasgulor-shj-simpl-bishlessnn-18ln | bootstrap, css | Today we will try to discuss a little here which classes Bootstrap uses in the code of its card component, and why.

```

<div class="card" style="width: 18rem;">

<img src="..." class="card-img-top" alt="... | chayti |

1,320,786 | Free LGPD Course - General Data Protection Law 2023 | Thumb LGPD Essential Kit - Guia de TI Contents What is LGPD? Curiosities about the... | 0 | 2023-01-07T13:40:54 | https://dev.to/guiadeti/curso-gratuito-de-lgpd-lei-geral-de-protecao-de-dados-2023-45oj | cursogratuito, treinamento, cursosgratuitos, lgpd | ---

title: Free LGPD Course - General Data Protection Law 2023

published: true

date: 2023-01-07 13:32:19 UTC

tags: CursoGratuito,Treinamento,CursosGratuitos,LGPD

canonical_url:

---

**

I would like to introduce one impressive service in this slide.

It may seem like a sales presentation.

But I just love this service. I don't work for this company.

Have you, as engineers, ever ... | kakisoft | |

1,320,918 | The Role of Automation in Increasing Productivity | As developers, we are constantly looking for ways to streamline our workflows and increase our... | 0 | 2023-01-07T16:49:39 | https://dev.to/sammaji15/the-role-of-automation-in-increasing-productivity-3p20 | ci, automation, introduction | As developers, we are constantly looking for ways to streamline our workflows and increase our productivity. One strategy that can have a big impact is the use of automation. Automation refers to the use of technology to perform tasks without human intervention. In the context of software development, this can take man... | sammaji15 |

1,320,964 | Pandas-Basics In Short | Pandas is a Python library that is used to analyse data. It is a table-themed library like... | 0 | 2023-01-09T13:03:53 | https://dev.to/mdmusfikurrahmansifar/pandas-basics-in-short-1196 | Pandas is a Python library used to analyse data. It is a table-themed library, like a spreadsheet in Excel, unlike NumPy, which has a matrix-like theme. It allows us to analyse, manipulate and explore huge amounts of data.

For the basics, we will discuss a few topics-

* Series

* DataFrame

* Missing data

* Groupby

* M... | mdmusfikurrahmansifar | |

1,321,046 | Frontend: One-on-one (Duologue) chatting application with Django channels and SvelteKit | Introduction This is the second part of a series of tutorials on building a one-on-one... | 21,300 | 2023-01-07T20:13:21 | https://dev.to/sirneij/frontend-one-on-one-duologue-chatting-application-with-django-channels-and-sveltekit-d8l | webdev, svelte, typescript, tutorial | ## Introduction

This is the second part of a series of tutorials on building a one-on-one (duologue) chatting application with Django channels and SvelteKit. We will focus on building the app's frontend in this part.

**NOTE: I won't delve much into the nitty-gritty of SvelteKit as I only intend to show how one can int... | sirneij |

1,321,342 | Weekly Challenge 198 | Two relatively straightforward tasks to start the new year. Challenge, My solutions Task... | 0 | 2023-01-08T06:13:59 | https://dev.to/simongreennet/weekly-challenge-198-2jbl | perl, python, theweeklychallenge | Two relatively straightforward tasks to start the new year.

[Challenge](https://theweeklychallenge.org/blog/perl-weekly-challenge-198/), [My solutions](https://github.com/manwar/perlweeklychallenge-club/tree/master/challenge-198/sgreen)

## Task 1: Max Gap

### Task

You are given a list of integers, `@list`.

Write ... | simongreennet |

1,321,486 | Generate SSH key pair on Git Bash in 2 Steps | Step 1 Generate a new SSH key pair by running the following command in Git... | 0 | 2023-01-08T11:08:36 | https://dev.to/pizofreude/generate-ssh-key-pair-on-git-bash-in-2-steps-3931 | security, gitbash, tutorial, beginners | ## Step 1

Generate a new SSH key pair by running the following command in Git Bash:

```bash

ssh-keygen -t rsa -b 4096

```

This will prompt you to enter a file to save the key to. You can accept the default file location by pressing Enter, or specify a different location by entering the file path and name.

The defau... | pizofreude |

1,321,758 | Developing and Deploying Microservices with Go | Introduction Entire books have been written on the subject of microservices. This post... | 0 | 2023-01-08T17:27:16 | https://dev.to/bruc3mackenzi3/developing-and-deploying-microservices-with-go-3ldo | microservices, go, docker, kubernetes | ## Introduction

Entire books have been written on the subject of microservices. This post will scratch the surface by exploring the key ingredients that go into development and deployment of microservices. [A demo microservice app](https://github.com/bruc3mackenzi3/go-microservice-template) will be used as a case st... | bruc3mackenzi3 |

1,321,760 | Creating a toast notification system for you react web app | Introduction I was developing a web app for a company and needed to implement a toast... | 0 | 2023-01-08T18:06:03 | https://dev.to/gatesvert81/creating-a-toast-notification-system-for-you-react-web-app-fl0 | webdev, javascript, react, nextjs |

## Introduction

I was developing a web app for a company and needed to implement a toast notification system. I don't like using a lot of npm packages for my projects to avoid them being bulky and prevent crashes when they are not managed properly. So, I set out to make my own simple toast notification system that wor... | gatesvert81 |

1,321,785 | Getting Started with PICO-8 | Start developing a game with the PICO-8 fantasy game console | 0 | 2023-01-08T18:27:44 | https://dev.to/cmiles74/getting-started-with-pico-8-4nla | pico8, retrogaming, gamedev | ---

layout: post

title: Getting Started with PICO-8

published: true

description: Start developing a game with the PICO-8 fantasy game console

tags: pico8, retrogaming, gamedev

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/9m0vva6e1tncd1ixd3ht.png

---

I've been interested in fantasy consoles for... | cmiles74 |

1,321,795 | Facade pattern in TypeScript | Introduction The facade pattern is a structural pattern which allows you to communicate... | 20,586 | 2023-01-08T18:57:46 | https://www.jmalvarez.dev/posts/facade-pattern-typescript | typescript, architecture, javascript, webdev | ## Introduction

The facade pattern is a structural pattern that lets your application communicate with complex software (such as a library or framework) in a simpler way. It does so by providing a simplified interface to a complex system.
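To make the idea concrete, here is a minimal sketch of a facade. It is not taken from the article: the `Tokenizer`, `Counter`, `Ranker`, and `TextStatsFacade` classes are hypothetical stand-ins for a "complex system", invented purely for illustration.

```typescript
// Hypothetical subsystem classes, invented for illustration only.
class Tokenizer {
  split(text: string): string[] {
    return text.trim().split(/\s+/).filter((w) => w.length > 0);
  }
}

class Counter {
  count(words: string[]): Map<string, number> {
    const counts = new Map<string, number>();
    for (const word of words) {
      const key = word.toLowerCase();
      counts.set(key, (counts.get(key) ?? 0) + 1);
    }
    return counts;
  }
}

class Ranker {
  top(counts: Map<string, number>, n: number): string[] {
    return Array.from(counts.entries())
      .sort((a, b) => b[1] - a[1])
      .slice(0, n)
      .map((entry) => entry[0]);
  }
}

// The facade: one simple method hides how the three parts are wired together.
class TextStatsFacade {
  private tokenizer = new Tokenizer();
  private counter = new Counter();
  private ranker = new Ranker();

  mostCommonWords(text: string, n: number): string[] {
    const words = this.tokenizer.split(text);
    const counts = this.counter.count(words);
    return this.ranker.top(counts, n);
  }
}

// Client code only talks to the facade, never to the subsystem classes.
const stats = new TextStatsFacade();
const topWords = stats.mostCommonWords("the cat and the dog and the bird", 2);
console.log(topWords.join(", ")); // the, and
```

The client never needs to know that tokenizing, counting, and ranking are separate steps. If the subsystem changes, only the facade has to be updated.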

pattern:

First, you would need to install the necessary dependencies and configure your MySQL database:

```

// Install the MySQL and TypeScript dependencies

npm install mysql2... | elhamnajeebullah |

1,322,089 | A Tale of Hashery and Woe: How Mutable Hash Keys Led to an ActiveRecord Bug | In the changelog of Active Record right after version 7.0.4 is a small little bugfix that would, if I... | 0 | 2023-01-10T14:25:13 | https://dev.to/rnubel/a-tale-of-hashery-and-woe-how-mutable-hash-keys-led-to-an-activerecord-bug-3e85 | rails, ruby, programming | In the changelog of Active Record right after version 7.0.4 is a small little bugfix that would, if I wasn't about to quote it and follow it up with this whole article, probably not catch your attention:

> Fix a case where the query cache can return wrong values. See #46044

>

> Aaron Patterson

Behind this innocuous r... | rnubel |

1,322,110 | Deploying Go Applications to AWS App Runner: A Step-by-Step Guide | ...With the managed runtime for Go In this blog post you will learn how to run a Go application to... | 0 | 2023-03-02T10:17:13 | https://abhishek1987.medium.com/deploying-go-applications-to-aws-app-runner-a-step-by-step-guide-4cacfa2a7a38 | go, tutorial, programming, aws | ***...With the managed runtime for Go***

In this blog post you will learn how to deploy a Go application to AWS App Runner using the [Go platform runtime](https://docs.aws.amazon.com/apprunner/latest/dg/service-source-code-go1.html). You will start with an [existing Go application on GitHub](https://github.com/abhirockzz... | abhirockzz |

1,322,307 | How to use Firestore with Redux in a React application | You’re using Firebase as your backend-as-a-service platform, with Firestore holding your data.... | 0 | 2023-01-09T10:11:03 | https://dev.to/emotta/how-to-use-firestore-with-redux-in-a-react-application-44g7 | webdev, react, redux, firebase |  on [Unsplash](https://unsplash.com?utm_source=medium&utm_medium=referral)](https://cdn-images-1.medium.com/max/9012/0*WNb40yO0ma4mKbC3)

You’re using **Firebase** as your backend-as-a-service platform, with **Fire... | emotta |

1,322,443 | How to Create a real-time chat application with WebSocket | WebSockets are a technology for creating real-time, bi-directional communication channels over a... | 0 | 2023-01-09T13:20:50 | https://blog.learnhub.africa/2023/01/09/how-to-create-a-real-time-chat-application-with-websocket/ | websocket, webdev, programming, javascript | WebSockets are a technology for creating real-time, bi-directional communication channels over a single TCP connection.

They help build applications that require low-latency, high-frequency communication, such as chat applications, online gaming, and collaborative software.

In this tutorial, we will be building a re... | scofieldidehen |

1,322,543 | Doubly Linked List Series: Creating shift() and unshift() methods with JavaScript | Building off of last week's blog on Doubly Linked List classes, push(), and pop() methods - linked... | 0 | 2023-01-09T13:46:16 | https://dev.to/tartope/doubly-linked-list-series-creating-shift-and-unshift-methods-with-javascript-2mmd | Building off of last week's blog on Doubly Linked List classes, push(), and pop() methods - [linked here](https://dev.to/tartope/doubly-linked-list-series-node-and-doubly-linked-list-classes-with-push-and-pop-methods-using-javascript-37e) - today I will be discussing shift() and unshift() methods. Starting with shift(... | tartope | |
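The shift() and unshift() operations named in the post above can be sketched as follows. This is an illustrative TypeScript version, not the author's code (the original post uses JavaScript); the `ListNode` and `DoublyLinkedList` class names and their fields are assumptions made for this example.

```typescript
// Hypothetical node and list classes; names are assumptions for illustration.
class ListNode<T> {
  value: T;
  next: ListNode<T> | null = null;
  prev: ListNode<T> | null = null;

  constructor(value: T) {
    this.value = value;
  }
}

class DoublyLinkedList<T> {
  head: ListNode<T> | null = null;
  tail: ListNode<T> | null = null;
  length = 0;

  // unshift: add a node to the front of the list.
  unshift(value: T): this {
    const node = new ListNode(value);
    if (this.head === null) {
      this.head = node;
      this.tail = node;
    } else {
      node.next = this.head;
      this.head.prev = node;
      this.head = node;
    }
    this.length++;
    return this;
  }

  // shift: remove the node at the front and return its value (undefined if empty).
  shift(): T | undefined {
    const oldHead = this.head;
    if (oldHead === null) return undefined;
    if (this.length === 1) {
      this.head = null;
      this.tail = null;
    } else {
      this.head = oldHead.next;
      if (this.head !== null) this.head.prev = null;
      oldHead.next = null; // detach the removed node completely
    }
    this.length--;
    return oldHead.value;
  }
}

const list = new DoublyLinkedList<number>();
list.unshift(3).unshift(2).unshift(1); // list is now 1 <-> 2 <-> 3
const first = list.shift(); // removes 1, leaving 2 <-> 3
console.log(first, list.length); // 1 2
```

Both operations only touch the head of the list, so they run in constant time regardless of how many nodes the list holds.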

1,322,601 | Getting started with Nix Package Manager | Nix is a standalone package manager from the NixOS family that can be installed on other Linux/Unix... | 0 | 2023-01-10T15:30:00 | https://dev.to/blackbeard173/getting-started-with-nix-package-manger-4b21 | nix, linux, wsl2 | Nix is a standalone package manager from the NixOS family that can be installed on other Linux/Unix OS.

`https://nixos.org/download.html`

## Installation:

To install nix on WSL:

```bash

sh <(curl -L https://nixos.org/nix/install) --no-daemon

```

Then add `source $HOME/.nix-profile/etc/profile.d/nix.sh` to your `.b... | blackbeard173 |

1,322,607 | Running KubernetesPodOperator Tasks in different AWS accounts | update August, 14th I wanted to update to newer version of MWAA, so I have tested the original blog... | 0 | 2023-01-09T17:36:43 | https://blog.beachgeek.co.uk/mwaa-eks-multi-aws/ | opensource, aws | **update August, 14th**

I wanted to update to a newer version of MWAA, so I tested the original blog post against EKS 1.24 and MWAA version 2.4.3. I also had a few messages about whether this would work across different AWS regions. The good news is that it does. I have also put together a repo for this [here](http... | 094459 |

1,322,700 | Feature highlight AMBOSS SPACE | Recently I have taken a bit of a deep dive into the features offered by Amboss Space. First off, I... | 0 | 2023-01-14T06:13:41 | https://www.secondl1ght.site/blog/amboss-space | devops, tooling, productivity, tutorial | Recently I have taken a bit of a deep dive into the features offered by Amboss Space. First off, I want to have full disclosure that I will be joining the team at Amboss next month as a Frontend Engineer, which I am super excited about!

I started using their product and services more lately in order to research the c... | secondl1ght |

1,322,843 | How To fetch data from an API in a React application | To fetch data from an API in a React application, you can use the fetch() function, which is a... | 0 | 2023-01-09T18:26:59 | https://dev.to/ikamran01/how-to-fetch-data-from-an-api-in-a-react-application-212f | javascript, beginners, react, api | To fetch data from an API in a React application, you can use the fetch() function, which is a built-in JavaScript function for making network requests. Here's an example of how you might use fetch() to retrieve data from an API and store it in a React component:

```Javascript

import React, { useState, useEffect } fr... | ikamran01 |

1,322,904 | - What are the top programming languages to learn in 2023. | A post by Esther Ugute | 0 | 2023-01-09T20:15:42 | https://dev.to/cute6269/-what-are-the-top-programming-languages-to-learn-in-2023-23mk | java, python, javascript, csharp | cute6269 | |

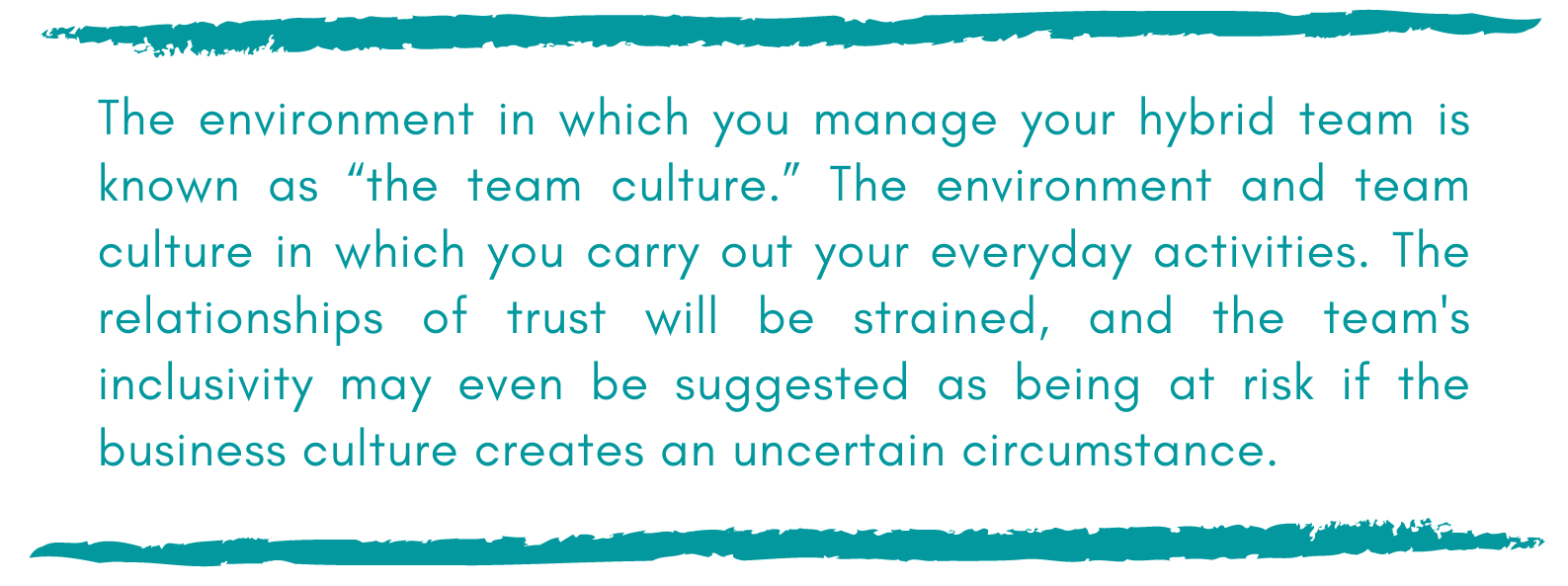

1,322,913 | Managing Hybrid Teams 6 | 8. Creating a positive team culture What then is the appropriate culture, and... | 21,181 | 2023-01-12T09:23:18 | https://dev.to/fpaghar/managing-hybrid-teams-6-m47 | ## 8. Creating a positive team culture

#### What then is the appropriate culture, and how do you develop it?

How we fit into our larger social environment is a part of our personal identity. Because that group is ... | fpaghar | |

1,322,925 | How to Download a YouTube video in MP3 Format with Python | Let's talk about how to use pytube, a lightweight, dependency-free library written in Python, to download a YouTube video. | 0 | 2023-01-09T21:15:12 | https://code.pieces.app/blog/how-to-download-a-youtube-video-in-mp3-format-with-python | python, video | <figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/pytube_341958a171c035a8045af6d9c6324e39.jpg" alt="The YouTube app on an iPad."/></figure>

Without a doubt, YouTube is the most popular video-sharing platform in the world. As a software developer, you may encounter a situation where you want to scri... | get_pieces |

1,322,944 | Azure Data Factory - Trigger on SFTP upload | In this tutorial, we'll focus on configuring Storage Event Triggers with SFTP-enabled storage... | 0 | 2023-01-09T21:44:58 | https://dev.to/dinisrodrigues/azure-data-factory-trigger-on-sftp-upload-3cgb |

In this tutorial, we'll focus on configuring Storage Event Triggers with SFTP-enabled storage accounts. We'll walk through the basic steps of setting up a trigger, as well as the specific configuration changes you'll need to make to trigger an ADF pipeline in response to SFTP-related storage events.

---

## The Story... | dinisrodrigues | |

1,322,947 | CSS Width and Max-Width: Understanding the Difference | Confused about when to use the CSS width property, and when to opt for max-width? This guide... | 0 | 2023-01-09T22:18:12 | https://www.w3tweaks.com/difference-between-css-width-and-max-width.html | css, csswidth, cssmaxwidth |

---

Confused about when to use the [CSS](https://www.w3tweaks.com/category/css-tutorials) width property, and when to opt for max-width? This guide will provide you with a clear explanation of the different roles e... | w3tweaks |

1,322,966 | Developers - which tools have you ever paid for or wouldn't mind paying for? | In their farewell post, the Kite team says that one of the biggest challenges they had was that they... | 0 | 2023-01-09T22:56:29 | https://dev.to/npobbathi/developers-which-tools-have-you-ever-paid-for-or-wouldnt-mind-paying-for-5ce9 | discuss, startup | In their [farewell post](https://www.kite.com/blog/product/kite-is-saying-farewell/), the Kite team says that one of the biggest challenges they had was that they couldn't get their 500K-strong community to pay for the product.

I was wondering: which tools do developers consider worth paying for, and why? | npobbathi |

1,323,134 | 5 websites will make you a smarter 🏆 developer👩💻 | Here is the list of the best 5 websites to help you with your projects. Novu The... | 0 | 2023-01-10T02:21:00 | https://dev.to/mahmoudessam/5-websites-will-make-you-a-smarter-developer-2jld | programming, webdev, javascript, python | Here is the list of the best 5 websites to help you with your projects.

### Novu

- The open-source notification infrastructure for developers.

- Simple components and APIs for managing all communication channels in one place: Email, SMS, Direct, and Push

### Reactive Resume

- Reactive Resume is a free and open sour... | mahmoudessam |

1,323,344 | Gorilla Flow - Ingredients, Side Effects, Warning & Complaints? | Gorilla Flow oil is reduced greatly by just choosing an efficient mattress. While mattresses are... | 0 | 2023-01-10T07:34:32 | https://dev.to/gorillaflow2/gorilla-flow-ingredients-side-effects-warning-complaints-1jdb | webdev, javascript, beginners, programming | Gorilla Flow oil is reduced greatly by just choosing an efficient mattress. While mattresses are expensive, suppliers want that try them for around a month or longer, provided it is really protected.TIP! Speak with your doctor hard to think but coffee is said to be of help when attempting to sooth chronic back pain. Re... | gorillaflow2 |

1,323,397 | Cyber Liability vs. Data Breach | These days, data breaches are a frequent friend of web applications – however, there are so many... | 0 | 2023-01-10T10:00:00 | https://breachdirectory.com/blog/cyber-liability-vs-data-breach/ | webdev, security, programming, beginners | These days, data breaches are a frequent friend of web applications – however, there are so many terms related to them... As no two data breaches are exactly alike and the details are often hard to find out, it's sometimes hard to distinguish a data breach from a data leak.

In this space, two terms ... | breachdirectory |

1,323,950 | Destroy All Goblins is Launched | After a month of work, my largest game to date is finished! It’s called Destroy All Goblins. I made... | 0 | 2023-01-10T18:22:18 | http://www.brettchalupa.com/destroy-all-goblins-is-launched | gamedev | ---

title: Destroy All Goblins is Launched

published: true

date: 2023-01-09 18:09:00 UTC

tags: gamedev

canonical_url: http://www.brettchalupa.com/destroy-all-goblins-is-launched

---

After a month of work, my largest game to date is finished! It’s called _Destroy All Goblins_. I made it with DragonRuby Game Toolkit. I... | brettchalupa |

1,323,482 | Unlocking the Power of Big Data Processing with Resilient Distributed Datasets | A resilient distributed dataset (RDD) is a fundamental data structure in the Apache Spark framework... | 0 | 2023-01-10T09:27:55 | https://dev.to/sabareh/unlocking-the-power-of-big-data-processing-with-resilient-distributed-datasets-28kf | devops, database, datascience, data | A resilient distributed dataset (RDD) is a fundamental data structure in the Apache Spark framework for distributed computing. It is a fault-tolerant collection of elements that can be processed in parallel across a cluster of machines. RDDs are designed to be immutable, meaning that once an RDD is created, its element... | sabareh |
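The two properties described above (elements split into partitions, and immutability) can be illustrated with a toy model. This sketch is not Spark's API and does not model fault tolerance or cluster execution; `ToyRDD` and all of its methods are hypothetical names invented for this example.

```typescript
// Toy model only: this is NOT Spark's API. All names here are hypothetical.
class ToyRDD<T> {
  // Elements are split into partitions; in Spark these would be spread over a cluster.
  readonly partitions: ReadonlyArray<ReadonlyArray<T>>;

  constructor(partitions: ReadonlyArray<ReadonlyArray<T>>) {
    // Copy each partition so later changes to the caller's arrays cannot leak in.
    this.partitions = partitions.map((p) => p.slice());
  }

  // Split a plain array into roughly equal partitions (numPartitions >= 1 assumed).
  static fromArray<T>(items: ReadonlyArray<T>, numPartitions: number): ToyRDD<T> {
    const size = Math.ceil(items.length / numPartitions);
    const parts: T[][] = [];
    for (let i = 0; i < numPartitions; i++) {
      parts.push(items.slice(i * size, (i + 1) * size));
    }
    return new ToyRDD(parts);
  }

  // A transformation never changes this dataset; it returns a new one.
  map<U>(fn: (item: T) => U): ToyRDD<U> {
    return new ToyRDD(this.partitions.map((p) => p.map((item) => fn(item))));
  }

  // An action gathers every partition back into a single array.
  collect(): T[] {
    const out: T[] = [];
    this.partitions.forEach((p) => p.forEach((item) => out.push(item)));
    return out;
  }
}

const numbers = ToyRDD.fromArray([1, 2, 3, 4, 5, 6], 3);
const squares = numbers.map((n) => n * n);
console.log(squares.collect().join(", ")); // 1, 4, 9, 16, 25, 36
console.log(numbers.collect().join(", ")); // 1, 2, 3, 4, 5, 6 (unchanged)
```

Because `map` builds a new dataset instead of editing the old one, each partition could be processed independently, which is the property that makes parallel execution straightforward.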

1,323,546 | Garbage Collection C# | Garbage Collector Glossary Heap Portion of memory where dynamically... | 0 | 2023-01-10T11:24:42 | https://dev.to/amansinghparihar/garbage-collection-c-ja9 | csharp, garbagecollection | # Garbage Collector

## Glossary

### Heap

The portion of memory where dynamically allocated objects reside.

### Managed Heap

Whenever we start a process or run our .NET code, the runtime

reserves a contiguous region of memory,

which is called the managed heap.

There were the days when the developer... | amansinghparihar |

1,323,564 | Are SASS @mixins really that lightweight? And what are %placeholders? | Mixins are a powerful feature in Sass that allow you to reuse a set of CSS declarations across... | 0 | 2023-01-10T12:55:06 | https://dev.to/kemotiadev/are-sass-mixins-really-that-lightweight-and-what-are-placeholders-119i | saas, mixin, css, webdev | **Mixins** are a powerful feature in Sass that allow you to reuse a set of CSS declarations across multiple selectors.

One of the main advantages of using Mixins is that they can help keep your CSS code organized and maintainable, making it easier to update and maintain styles across a large code base.

However, one ... | kemotiadev |

1,323,784 | A Bloody Case Caused by a Thread Pool Rejection Strategy | Conduct an in-depth analysis of the full gc problem of the online business of the inventory... | 0 | 2023-01-10T15:16:35 | https://dev.to/ppsrap/a-bloody-case-caused-by-a-thread-pool-rejection-strategy-15j5 | java, threadpool, exception, programming | ## Conduct an in-depth analysis of the full gc problem of the online business of the inventory center, and combine the solution method and root cause analysis of this problem to implement the solution to such problems ##

**1. Event review**

Starting from 7.27, the main site, distributors, and other business parties b... | ppsrap |

1,323,857 | Pulumi and AWS SAM - How to convert Cloud Formation IAC to Pulumi | SAM stands for AWS Serverless Application Model. It's a framework for building serverless... | 0 | 2023-01-10T18:09:09 | https://dev.to/ferjssilva/pulumi-and-aws-sam-how-to-convert-cloud-formation-iac-to-pulumi-62n | iac, pulumi, aws, cloud | **SAM** stands for AWS Serverless Application Model. It's a framework for building serverless applications on AWS.

**IAC** is a way to manage and provision infrastructure using code.

**Pulumi** is a multi-cloud development platform for provisioning infrastructure as code (IAC).

While **SAM is specific to AWS** and ... | ferjssilva |

1,324,342 | CVE vulnerabilities on Google Chrome prior to releases around on Dec. 2022 | Overview Google Chrome vulnerabilities CVE-2023-0140 (and more) Chrome on... | 21,348 | 2023-01-10T23:26:52 | https://scqr.net/en/blog/2023/01/11/chrome-chromium-vulnerabilities-cve-2023-0140-etc/index.html | chrome, chromium, security, vulnerability | ## Overview

### Google Chrome vulnerabilities

[CVE](https://cve.mitre.org/cve/)-2023-0140 (and more)

[Chrome](https://www.google.co.jp/chrome/browser/) on Windows (and other platforms), in versions prior to 109.0.5414.74, is at risk of making remote attacks easy.

109 was released around last month.

\-- Recommended to update.... | nabbisen |

1,324,653 | Create a Real Time Crypto Price App with Next.js, TypeScript, Tailwind CSS & Binance API | Web App: A Real Time Crypto Price App built using Next.js, TypeScript, Tailwind CSS, and... | 0 | 2023-01-11T05:55:02 | https://dev.to/codeofrelevancy/create-a-real-time-crypto-price-app-with-nextjs-typescript-tailwind-css-binance-api-1oc8 | web3, crypto, nextjs, typescript | ## Web App:

A Real Time Crypto Price App built using Next.js, TypeScript, Tailwind CSS, and Binance API would be a dynamic application that provides users with up-to-date information on the current value of various cryptocurrencies.

---

## Source code and Tutorial:

{% embed https://www.youtube.com/embed/9n8B3zflzeI %... | codeofrelevancy |
