id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username

|---|---|---|---|---|---|---|---|---|

1,371,100 | Collaborative Virtual Teams | Some employers are demanding a return to the office. Is this really good for them or their employees? | 0 | 2023-02-19T01:33:12 | https://dev.to/cheetah100/collaborative-virtual-teams-58ng | remote, home, virtual | ---

title: Collaborative Virtual Teams

published: true

description: Some employers are demanding a return to the office. Is this really good for them or their employees?

tags: remote, home, virtual

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/pmcgk6u7q7ypn5q7clpb.png

# Use a ratio of 100:42 for... | cheetah100 |

1,371,477 | How To Make a Calculator Using HTML, CSS & JavaScript | HTML Calculator 🧮 | We are going to make a dark theme♠️ calculator using simple HTML, CSS & JavaScript.🧑‍💻 The goal... | 0 | 2023-02-19T11:32:24 | https://dev.to/kaizendeveloper/how-to-make-a-calculator-using-html-css-javascript-html-calculator-3a2i | We are going to make a dark theme♠️ calculator using simple HTML, CSS & JavaScript.🧑‍💻

The goal is not to get into complex logic and CSS. The main goal is to keep it simple and write as little code as possible to make a beautiful UI with basic calculator functionality.

1. Simple HTML Markup

```

<!DOCTYPE html>

<html lang="en">

<head... | kaizendeveloper | |
```

1,371,537 | Why do browser consoles return undefined? Explained | Overview As web developers, we are blessed with consoles in the browsers to interact and... | 0 | 2023-02-19T12:35:27 | https://dev.to/sobitp59/why-do-browser-consoles-return-undefined-explained-26lm | ## Overview

As web developers, we are blessed with consoles in the browsers to interact and debug our web pages and applications.

The most common type of console is the JavaScript console, which is found in most modern web browsers.

These consoles are built into the web browser and provide a command-line interface (... | sobitp59 | |
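The excerpt stops before the explanation. The usual reason is that the console echoes the value of whatever you evaluate, and many inputs have no value. Below is a minimal TypeScript sketch of two common cases: calling a function with no `return`, and evaluating a bare declaration. `logGreeting` is a hypothetical name. `eval` is used here only to imitate what the console does with a line you type.

```typescript
// A function without a `return` statement implicitly returns undefined.
function logGreeting(name: string): void {
  console.log(`Hello, ${name}`); // side effect: prints the greeting
}

// In a console, this call prints "Hello, dev" and then echoes undefined,
// because the call expression itself evaluates to undefined.
const callResult: unknown = logGreeting("dev");

// A declaration has no completion value, so eval returns undefined,
// which is what the console shows after typing `let x = 5`.
const declarationResult: unknown = eval("let x = 5");

// An expression does have a value, so the console echoes it instead.
const expressionResult: unknown = eval("2 + 3");

console.log(callResult, declarationResult, expressionResult); // undefined undefined 5
```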

1,371,541 | GitHub Webhook: our own “server” in NodeJs to receive Webhook events over the internet. | We are writing an HTTP “server” in NodeJs to receive GitHub Webhook events. We use the ngrok program... | 0 | 2023-02-19T12:42:56 | https://dev.to/behainguyen/github-webhook-our-own-server-in-nodejs-to-receive-webhook-events-over-the-internet-354d | git, webhook, node, ngrok | *We are writing an HTTP “server” in NodeJs to receive GitHub Webhook events. We use the ngrok program to make our server publicly accessible over the internet. Finally, we set up a GitHub repo and define some Webhooks on this repo, then see how our now public NodeJs server handles GitHub Webhook's notifications.*

<a hr... | behainguyen |
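The server code itself is cut off. The sketch below is a rough illustration of the idea in TypeScript, not the author's implementation. A Node.js HTTP server can read the `X-GitHub-Event` header that GitHub sends with each delivery and parse the JSON payload. The sketch assumes the webhook was configured with the `application/json` content type. `summarizeDelivery` and `startWebhookServer` are hypothetical names, and signature verification is left out for brevity.

```typescript
import { createServer, Server } from "node:http";

// Hypothetical summary of one webhook delivery.
interface DeliverySummary {
  event: string;         // value of the X-GitHub-Event header, e.g. "push"
  action: string | null; // payload.action, present on events such as "issues"
}

// Pure helper: turns the header value and raw body into a summary.
// Throws a SyntaxError if the body is not valid JSON.
function summarizeDelivery(eventHeader: string | string[] | undefined, rawBody: string): DeliverySummary {
  const event = (Array.isArray(eventHeader) ? eventHeader[0] : eventHeader) ?? "unknown";
  const payload: unknown = JSON.parse(rawBody);
  const rawAction = (payload as { action?: unknown } | null)?.action;
  return { event, action: typeof rawAction === "string" ? rawAction : null };
}

// Starts a server that accepts POSTed deliveries on any path.
function startWebhookServer(port: number): Server {
  const server = createServer((req, res) => {
    if (req.method !== "POST") {
      res.writeHead(405).end();
      return;
    }
    let body = "";
    req.setEncoding("utf8");
    req.on("data", (chunk: string) => {
      body += chunk;
    });
    req.on("end", () => {
      try {
        // Node lower-cases incoming header names.
        const summary = summarizeDelivery(req.headers["x-github-event"], body);
        console.log(`Received "${summary.event}" event`, summary.action ?? "");
        res.writeHead(200, { "Content-Type": "text/plain" }).end("ok");
      } catch {
        res.writeHead(400, { "Content-Type": "text/plain" }).end("invalid JSON");
      }
    });
  });
  server.listen(port, () => console.log(`Listening on http://localhost:${port}`));
  return server;
}
```

Calling `startWebhookServer(3000)` and exposing that port with ngrok, as the article describes, would make the server reachable from GitHub.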

1,373,018 | Liveblocks - Collab Instantly! | What is Liveblocks? I have recently started playing around with some collaboration related... | 0 | 2023-02-21T17:49:22 | https://dev.to/mantiq/liveblocks-collab-instantly-29m6 | liveblocks, typescript, react, webdev | ## What is Liveblocks?

I have recently started playing around with some collaboration related technologies, most notably [yjs](https://docs.yjs.dev/yjs-in-the-wild) & [liveblocks](https://liveblocks.io)

In this article, I will be focusing on Liveblocks, as it was a lot of fun implementing features in applications whil... | mantiq |

1,372,091 | Effortlessly Elevate Your React Code with ESLint and Prettier: A Step-by-Step Guide for 2023 | Hey Dev community! If you're a #React developer, you know the pain of dealing with messy and... | 0 | 2023-02-20T01:45:54 | https://dev.to/aaron_janes/effortlessly-elevate-your-react-code-with-eslint-and-prettier-a-step-by-step-guide-for-2023-179d | webdev, javascript, react, tutorial | Hey Dev community! If you're a #React developer, you know the pain of dealing with messy and inconsistent code. That's where my latest blog post comes in - I have put together a step-by-step guide on how to use #ESLint and #Prettier to effortlessly elevate your code in 2023. Check it out and let me know what you think!... | aaron_janes |

1,372,133 | What is Monkey Patching? | All about monkey patching with examples. | 0 | 2023-02-20T03:08:00 | https://dev.to/himankbhalla/what-is-monkey-patching-4pf | monkeypatching, programmingbasics, python, ruby | ---

title: What is Monkey Patching?

published: true

description: All about monkey patching with examples.

tags: monkeypatching, programmingbasics, python, ruby

cover_image: https://miro.medium.com/v2/resize:fit:1400/0*i7I-kxMt-huH82fu

# Use a ratio of 100:42 for best results.

# published_at: 2023-02-20 03:08 +0000

---

... | himankbhalla |
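The article's own examples (tagged Python and Ruby) are cut off here. For readers following along in TypeScript, here is a minimal sketch of the same technique. It keeps a reference to an existing method, then replaces that method on the class prototype at runtime, so every instance picks up the new behaviour. `Greeter` is a hypothetical class standing in for code you do not own.

```typescript
// Stand-in for a class from a module you cannot edit.
class Greeter {
  greet(name: string): string {
    return `Hello, ${name}`;
  }
}

const existing = new Greeter(); // created before the patch

// Keep the original so the patch can delegate to it and so it can be restored.
const originalGreet = Greeter.prototype.greet;

// The monkey patch: swap the method on the prototype at runtime.
Greeter.prototype.greet = function (this: Greeter, name: string): string {
  return originalGreet.call(this, name).toUpperCase() + "!";
};

// Existing instances are affected too, because methods are looked up on the prototype.
const patched = existing.greet("dev"); // "HELLO, DEV!"

// Undo the patch by putting the original method back.
Greeter.prototype.greet = originalGreet;
const restored = existing.greet("dev"); // "Hello, dev"
```

Patching the prototype rather than a single object is what makes the change global. That global reach is also the main risk of the technique.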

1,372,213 | Exploring the Best Mobile App Development Frameworks of 2023 | The world has gone mobile and mobile apps are now a necessity for businesses to stay relevant and... | 0 | 2023-02-20T05:04:16 | https://dev.to/janefraserof/exploring-the-best-mobile-app-development-frameworks-of-2023-3kg1 | programming, javascript, android | The world has gone mobile and mobile apps are now a necessity for businesses to stay relevant and competitive. Mobile app development frameworks make it easier and faster for developers to create high-quality apps as [numerous app ideas](https://appticz.com/mobile-app-ideas) emerge daily. In this blog, we will explore... | janefraserof |

1,372,492 | Create a rainbow-coloured list with :nth-of-type() | This is my favourite thing to do with the :nth-of-type() selector: First, you need some colour... | 0 | 2023-04-09T10:59:59 | https://rachsmith.com/create-a-rainbow-coloured-list-with-css/ | css, frontend | ---

title: Create a rainbow-coloured list with :nth-of-type()

published: true

date: 2023-02-20 20:03:00 UTC

tags: css, frontend

canonical_url: https://rachsmith.com/create-a-rainbow-coloured-list-with-css/

---

This is my favourite thing to do with the `:nth-of-type()` selector:

{% codepen https://codepen.io/rachsmith... | rachsmith |

1,372,554 | Sharp & Glowing dark card | Chrome only | Based on this tweet: https://twitter.com/aleksliving/status/1620874863690014721?s=20 Please note... | 0 | 2023-02-20T11:45:28 | https://dev.to/lukyvj/sharp-glowing-dark-card-chrome-only-5g90 | codepen, css, houdini | <p>Based on this tweet: <a href="https://twitter.com/aleksliving/status/1620874863690014721?s=20" target="_blank">https://twitter.com/aleksliving/status/1620874863690014721?s=20</a></p>

<p>Please note that this pen uses CSS @property, which is not yet available everywhere; I’ll make a cross-browser version.</p>

<p... | lukyvj |

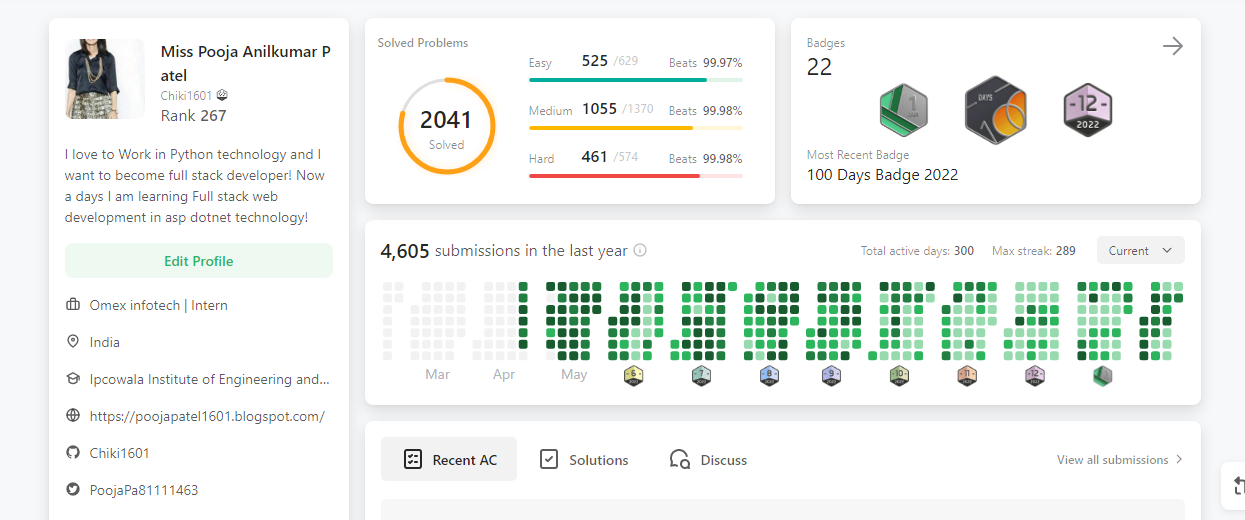

1,372,565 | Today I completed 2041 Solutions on Leetcode🥳🥳🥳 | A post by Miss Pooja Anilkumar Patel | 0 | 2023-02-20T12:06:34 | https://dev.to/chiki1601/today-i-completed-2041-solutions-on-leetcode-2ge4 | chiki1601, challenge, programming, beginners |

| chiki1601 |

1,372,612 | How do you gracefully shut down Pods in Kubernetes? | When you type kubectl delete pod, the pod is deleted, and the endpoint controller removes its IP... | 0 | 2023-02-20T13:16:07 | https://dev.to/danielepolencic/how-do-you-gracefully-shut-down-pods-in-kubernetes-4gl0 | kubernetes, devops | When you type `kubectl delete pod`, the pod is deleted, and the endpoint controller removes its IP address and port (endpoint) from the Services and etcd.

You can observe this with `kubectl describe service`.

# Top 30 React UI Libraries for Building Beautiful Interfaces

Discover the top 30 React UI libraries for enhancing the user interface of your web applications. From navigation menus to modals, cal... | ziontutorial |

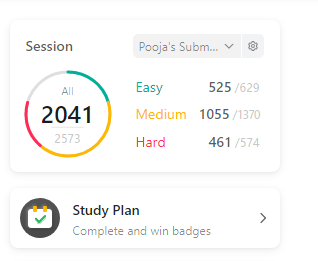

1,373,116 | A simple mortgage calculator using python | Hi Guys! I want to show my simple python mortgage calculator. It calculates the monthly payment and... | 0 | 2023-02-20T20:33:29 | https://dev.to/norbikx1/a-simple-mortgage-calculator-using-python-271j | beginners, programming, python | Hi Guys! I want to show my simple Python mortgage calculator. It calculates the monthly payment and the total amount to pay back. It only works with a fixed interest rate.

This is very simple code. However, it does the... | norbikx1 |
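The calculator's code is cut off. Fixed-rate mortgage payments are usually computed with the standard annuity formula. The TypeScript sketch below assumes monthly payments and a nominal annual rate; it is not necessarily how the author's Python version works.

```typescript
// Monthly payment for a fixed-rate loan using the annuity formula:
//   M = P * r * (1 + r)^n / ((1 + r)^n - 1)
// where P is the principal, r the monthly rate, and n the number of payments.
function monthlyPayment(principal: number, annualRatePercent: number, years: number): number {
  const n = years * 12;                   // number of monthly payments
  const r = annualRatePercent / 100 / 12; // monthly interest rate as a fraction
  if (r === 0) return principal / n;      // no interest: split the principal evenly
  const growth = Math.pow(1 + r, n);
  return (principal * r * growth) / (growth - 1);
}

// Total amount paid back over the life of the loan.
function totalRepaid(principal: number, annualRatePercent: number, years: number): number {
  return monthlyPayment(principal, annualRatePercent, years) * years * 12;
}

const payment = monthlyPayment(200_000, 6, 30);
console.log(payment.toFixed(2)); // 1199.10
console.log(totalRepaid(200_000, 6, 30).toFixed(2));
```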

1,373,127 | How to protect yourself from employer scams in remote jobs | Working remotely has become an increasingly common option for professionals all over the... | 0 | 2023-02-20T21:01:38 | https://dev.to/mvfernando/como-ter-cuidado-com-as-burlas-de-empregadores-em-trabalhos-remoto-30od | trabalhoremoto, devalert, mvfernando, evitarburlas | Working remotely has become an increasingly common option for professionals all over the world. However, this way of working can bring some risks, especially when it comes to dealing with untrustworthy employers. Unfortunately, there are many cases of employer scams that do not... | mvfernando |

1,373,460 | IPv4 Address 2023 Infographics | Introduction An Internet Protocol (IP) address is a unique identifier of a computer on... | 0 | 2023-02-21T04:21:26 | https://dev.to/ip2location/ipv4-address-2023-infographics-6jh | ipv4, programming, datascience | ## Introduction

An Internet Protocol (IP) address is a unique identifier of a computer on the internet or on a local network. With IP addresses, computers on a network can communicate with each other and send information. IP addresses are managed by the Internet Assigned Numbers Authority (IANA) and its regional... | ip2location |
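To make the "unique identifier" concrete: an IPv4 address is a 32-bit number written as four 8-bit octets. That is why the address space holds 2^32 (about 4.3 billion) addresses. The TypeScript sketch below converts between dotted notation and the underlying integer; the function names are illustrative only.

```typescript
// Convert dotted-quad notation ("192.168.1.1") to its 32-bit unsigned value.
function ipv4ToInt(address: string): number {
  const octets = address.split(".");
  if (octets.length !== 4) throw new Error(`Invalid IPv4 address: ${address}`);
  return octets.reduce((acc, part) => {
    if (!/^\d{1,3}$/.test(part) || Number(part) > 255) {
      throw new Error(`Invalid octet "${part}" in ${address}`);
    }
    // Multiply instead of shifting: bitwise shifts in JavaScript are signed 32-bit.
    return acc * 256 + Number(part);
  }, 0);
}

// Convert a 32-bit unsigned value back to dotted-quad notation.
function intToIpv4(value: number): string {
  if (!Number.isInteger(value) || value < 0 || value > 0xffffffff) {
    throw new Error(`Out of IPv4 range: ${value}`);
  }
  // >>> treats the value as unsigned 32-bit, so the top octet stays positive.
  return [24, 16, 8, 0].map((shift) => (value >>> shift) & 0xff).join(".");
}

console.log(ipv4ToInt("192.168.1.1")); // 3232235777
console.log(intToIpv4(3232235777));    // 192.168.1.1
console.log(2 ** 32);                  // 4294967296 possible IPv4 addresses
```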

1,381,790 | Steps To Master AWS | Steps To Master AWS Mastering AWS requires dedication, practice, and ongoing learning.... | 0 | 2023-02-27T21:39:00 | https://dev.to/pranjal563/steps-to-master-aws-1fke | ## Steps To Master AWS

Mastering AWS requires dedication, practice, and ongoing learning. _Here are some steps you can follow to master AWS_:

**1. Learn the Basics**: Start by gaining a solid understanding of the core AWS services such as EC2, S3, and VPC. Review AWS documentation and take online tutorials to learn t... | pranjal563 | |

1,383,016 | How We Can Help You Improve Your Existing Website | As the digital world becomes increasingly competitive, it's essential to have a website that not only... | 0 | 2023-02-28T20:35:03 | https://dev.to/vantero/how-we-can-help-you-improve-your-existing-website-1gm5 | As the digital world becomes increasingly competitive, it's essential to have a website that not only looks great but also functions smoothly and provides a great user experience. However, designing and maintaining a website can be a daunting task, especially for business owners who may not have the necessary technical... | vantero | |

1,384,051 | Discover @ Linux FINOS | Proud that Discover is Joining the Fintech Open-Source Foundation (FINOS) https://bit.ly/3m9EIUh !... | 0 | 2023-03-01T17:02:32 | https://dev.to/angel_diaz_rodriguez/discover-linux-finos-3k6e | Proud that Discover is Joining the Fintech Open-Source Foundation (FINOS) https://bit.ly/3m9EIUh ! Explore Discover Technology https://technology.discover.com | angel_diaz_rodriguez | |

1,384,622 | Tagged templates in JavaScript | JavaScript tagged templates are a powerful feature that allows developers to create custom template... | 0 | 2023-03-02T03:11:13 | https://dev.to/mrh0200/tagged-templates-in-javascript-n44 | javascript, webdev, beginners, programming | JavaScript tagged templates are a powerful feature that allows developers to create custom template literals that can be used to manipulate and transform data in a more flexible and expressive way.

### Template literals

Template literals were introduced in ECMAScript 6 as a new way to create strings in JavaScript.... | mrh0200 |
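The excerpt ends before the first example. As an illustration (not the article's code): a tag function receives the literal string parts as a `TemplateStringsArray`, plus the interpolated values, and can build any result it likes. The hypothetical `highlight` tag below wraps each interpolated value in brackets.

```typescript
// A tag function: `strings` holds the literal chunks, `values` the ${...} results.
// strings.length is always values.length + 1.
function highlight(strings: TemplateStringsArray, ...values: unknown[]): string {
  return strings.reduce(
    (result, chunk, i) => result + chunk + (i < values.length ? `[${String(values[i])}]` : ""),
    "",
  );
}

const user = "Ada";
const unread = 3;
const message = highlight`User ${user} has ${unread} new messages`;
console.log(message); // User [Ada] has [3] new messages

// The unprocessed chunks are available too: escape sequences stay exactly as typed,
// so the \n below is a backslash followed by "n", not a newline.
function rawChunks(strings: TemplateStringsArray): readonly string[] {
  return strings.raw;
}
console.log(rawChunks`line1\nline2`);
```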

1,384,729 | How to automatically close your issues once you merge a PR | It's really annoying to go through all your issues and close each one once a feature request or bug... | 13,860 | 2023-03-03T06:27:19 | https://dev.to/github/how-to-automatically-close-your-issues-once-you-merge-a-pr-1li4 | github, opensource, management | It's really annoying to go through all your issues and close each one once a feature request or bug report has been completed. The pull request gets merged and then you gotta go find the issue that it corresponds to... way too much time.

Now GitHub has given you a way to automatically close your issues once a pull reque... | mishmanners |

1,384,739 | Progressive Web App Development using React.js: A comprehensive guide | React.js is a prominent solution for creating sophisticated UI interactions that connect with the... | 0 | 2023-03-02T05:31:10 | https://www.ifourtechnolab.com/blog/progressive-web-app-development-using-react-js-a-comprehensive-guide | webdev, beginners, react, reactnative | React.js is a prominent solution for creating sophisticated UI interactions that connect with the server in real time using JavaScript-driven websites. It is capable of competing with top-tier UI frameworks and greatly simplifies the development process. In this article, we will learn how to create a progressive web app... | ifourtechnolab |

1,384,823 | How To Train Your Employees in Cybersecurity Awareness | Cybersecurity threats have become increasingly sophisticated and complex, making protecting an... | 0 | 2023-03-02T07:11:06 | https://dev.to/navcharans/how-to-train-your-employees-in-cybersecurity-awareness-2gcl | cybersecurity | Cybersecurity threats have become increasingly sophisticated and complex, making protecting an organization's critical assets and data more challenging. As a result, educating employees on the importance of cybersecurity and how to recognize and respond to potential threats is essential. Cybersecurity awareness trainin... | navcharans |

1,384,917 | Using Grafana to visualize CI Workflow Stats | Grafana is an excellent tool for creating dashboards to gather data from various sources and serve... | 0 | 2023-03-02T08:43:53 | https://www.runforesight.com/blog/using-grafana-to-visualize-ci-workflow-stats | githubactions, ci, devops, github | Grafana is an excellent tool for creating dashboards to gather data from various sources and serve them in a single place. According to [The New Stack](https://thenewstack.io/will-grafana-become-easier-to-use-in-2022/), Grafana is the second most popular observability tool, with 800,000 active installs and over 10 milli... | boroskoyo |

1,385,017 | Server-render your SPA in CI at deploy time 📸 | If you deploy your SPA using GitHub Actions you can add this new action to your workflow to have it... | 0 | 2023-03-02T10:47:36 | https://dev.to/bryce/server-render-your-spa-in-ci-at-deploy-time-2798 | javascript, webdev, github, githubactions | If you deploy your SPA using [GitHub Actions](https://github.com/features/actions) you can add this new [action](https://github.com/marketplace/actions/server-side-render-ssr-with-react-snap) to your workflow to have it build server-rendered HTML!

Server-side rendering (SSR) is [great for SEO and performance](https://... | bryce |

1,385,117 | Create and Validate a Sign-Up Form in .NET MAUI | A sign-up form allows users to create an account in an application by providing details such as name,... | 0 | 2023-03-02T15:31:59 | https://www.syncfusion.com/blogs/post/create-validate-sign-up-form-in-dotnet-maui.aspx | maui, forms, mobile, data | ---

title: Create and Validate a Sign-Up Form in .NET MAUI

published: true

date: 2023-03-02 11:00:00 UTC

tags: maui, forms, mobile, data

canonical_url: https://www.syncfusion.com/blogs/post/create-validate-sign-up-form-in-dotnet-maui.aspx

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/53odc1ne0xq... | jollenmoyani |

1,385,218 | Building Serverless Applications with AWS Lambda | “AWS Lambda in Action: Event-driven serverless applications” by Danilo Poccia is a comprehensive... | 0 | 2023-03-02T14:08:44 | https://dev.to/dominguezdaniel/building-serverless-applications-with-aws-lambda-1l0k | aws, books, serverless, lambda | {% youtube gGe022vdK5E %}

“[AWS Lambda in Action: Event-driven serverless applications](https://rebrand.ly/devshelf-004)” by Danilo Poccia is a comprehensive guide for developers looking to understand and implement serverless applications using Amazon Web Services (AWS) Lambda. The book covers the core concepts of ser... | dominguezdaniel |

1,385,221 | Validate file with ZOD | Zod is a TypeScript-first schema validation library with static type inference. You can create... | 0 | 2023-03-03T09:33:12 | https://dev.to/banerjeeprodipta/validate-file-with-zod-20o | zod, validation, file, schema | Zod is a TypeScript-first schema validation library with static type inference. You can create validation schemas for either field-level validation or form-level validation. Here’s an example of how you can use Zod for schema validation for a file:

```

const MAX_FILE_SIZE = 5000000;

function checkFileType(file: Fil... | banerjeeprodipta |
```

1,385,253 | JavaScript Data Types | Understanding data types is an essential aspect of writing effective JavaScript code. ... | 0 | 2023-03-03T16:33:17 | https://www.90-10.dev/js-data-types/ | javascript | ---

title: JavaScript Data Types

published: true

date: 2023-01-07 08:00:00 UTC

tags: JavaScript

canonical_url: https://www.90-10.dev/js-data-types/

---

Understanding data types is an essential aspect of writing effective JavaScript code.

## Primitive Data Types

There are seven primitive data types - they are simple an... | 90_10_dev |
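Under the current ECMAScript specification, JavaScript has seven primitive types: string, number, bigint, boolean, undefined, symbol, and null. Bigint was added in ES2020, which is why older material often says six. Here is a quick TypeScript check with `typeof`, including its well-known quirk for `null`:

```typescript
// One sample value for each primitive type, paired with its name.
const samples: Array<[string, unknown]> = [
  ["string", "hello"],
  ["number", 42],
  ["bigint", BigInt(10)],
  ["boolean", true],
  ["undefined", undefined],
  ["symbol", Symbol("id")],
  ["null", null],
];

// typeof reports the runtime type; note that typeof null is "object",
// a historical quirk kept for backward compatibility.
const report = samples.map(([name, value]) => `${name}: ${typeof value}`);
console.log(report.join("\n"));

// Everything else (objects, arrays, functions) is non-primitive.
console.log(typeof {}, typeof [], typeof (() => 0)); // object object function
```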

1,385,308 | Free resources that helped me master React as a Self Taught Web Developer | React? Why? When it comes to web development and to be precise - JavaScript frameworks /... | 0 | 2023-03-02T15:51:02 | https://dev.to/asheeshh/free-resources-that-helped-me-master-react-as-a-self-taught-web-developer-58k2 | webdev, react, javascript, beginners | ## React? Why?

When it comes to web development and to be precise - JavaScript frameworks / libraries, React.js is always the one that most people think of first. According to [State of JS 2022](https://2022.stateofjs.com/en-us/libraries/front-end-frameworks/), React is the most used frontend framework by web develope... | asheeshh |

1,385,314 | Part 1: C# and .NET Core | Foreword Before starting this series, regarding the topics it covers and my writing style... | 22,078 | 2023-03-03T02:49:22 | https://dev.to/myozawlatt/part-1-c-and-net-core-168p | csharp, dotnetcore, dotnet | **Foreword**

Before starting this series, let me first talk about the topics it will cover and my writing style. This series explains ASP.NET Core, one of Microsoft's web development stacks, using the C# programming language, and it is written in Burmese. In my writing, spelling... | myozawlatt |

1,385,321 | AWS open source newsletter, #147 | March 6th, 2023 - Instalment #147 Welcome Welcome to edition #147 of the AWS open source... | 0 | 2023-03-06T07:58:07 | https://blog.beachgeek.co.uk/newsletter/aws-open-source-news-and-updates-147/ | opensource, aws | ## March 6th, 2023 - Instalment #147

**Welcome**

Welcome to edition #147 of the AWS open source newsletter, featured in the [latest episode of Build on Open Source](https://www.twitch.tv/videos/1754262947)... | 094459 |

1,408,191 | Soroban Contracts 101 : Tokens (Part 1) | Hi there! Welcome to my tenth post of my series called "Soroban Contracts 101", where I'll be... | 22,205 | 2023-03-20T18:25:46 | https://dev.to/yuzurush/soroban-contracts-101-tokens-part-1-lao | soroban, stellar, smartcontract, sorobanathon | Hi there! Welcome to my tenth post of my series called "Soroban Contracts 101", where I'll be explaining the basics of Soroban contracts, such as data storage, authentication, custom types, and more. All the code that we're gonna explain throughout this series will mostly come from [soroban-contracts-101](https://githu... | yuzurush |

1,385,376 | Manage Profiles in VS Code | In Visual Studio Code, a profile is a set of settings that help you customize your environment for... | 0 | 2023-03-02T17:07:28 | https://blog.paschalogu.com/Manage-Profiles-in-Visual-Studio-Code-466ba8eb5af849798bf55012646578a8 | vscode, productivity, tutorial | In Visual Studio Code, a profile is a set of settings that help you customize your environment for your projects. Profiles are a great way to customize VS Code to better fit your needs. You can save a set of configurations such as settings, extensions, font size, color theme etc., sync them across your devices, and even... | paschalogu |

1,385,408 | Multiple Inheritance in Solidity | Solidity supports multiple inheritance, which allows a single contract to inherit from multiple... | 0 | 2023-03-02T17:13:31 | https://dev.to/shlok2740/multiple-inheritance-in-solidity-4pg | ethereum, blockchain, solidity, web3 | >Solidity supports multiple inheritance, which allows a single contract to inherit from multiple contracts at the same time

Multiple inheritance is a powerful feature of Solidity, the programming language used to develop smart contracts on Ethereum. It allows for code reuse and better organization of complex projects.... | shlok2740 |

1,386,015 | How an open-source feature flagging service supports tens of thousands of DUs on a free cloud instance | I developed an open-source feature flagging service written in .NET 6 and Angular. I have created a... | 0 | 2023-03-03T04:21:39 | https://dev.to/cosmicflood/how-an-open-source-feature-flagging-service-supports-tens-of-thousands-of-dus-on-a-free-cloud-instance-2ebp | I developed [**an open-source feature flagging service written in .NET 6 and Angular**](https://github.com/featbit/featbit). I have created a load test for the real-time feature flag evaluation service to understand my current service's bottlenecks better.

The evaluation service receives and holds the WebSocket connec... | cosmicflood | |

1,391,383 | GitHub Self-Hosted Runners on Azure Container Apps with Autoscaling | Here's the story: I wanted GitHub Self-Hosted Runners that autoscale with the workload... | 0 | 2023-03-07T04:41:00 | https://dev.to/peepeepopapapeepeepo/github-selfhosted-runners-on-azure-container-apps-with-autocsaling-5h02 | github, azure, cloud, devops | Here's the story: I wanted GitHub Self-Hosted Runners that autoscale with the workload, and that can scale in to 0 instances when they are not being used at all. While searching, I found [Autoscaling self hosted GitHub runner containers with Azure Container Apps (ACA)](https://dev.to/pwd9000/run-docker-based-github-runner-containers-on-azure-con... | peepeepopapapeepeepo |

1,405,085 | An Introduction to Pieces for Developers - JetBrains Plugin | In 2022, our team set out on a journey to build the most advanced code snippet management and... | 22,291 | 2023-03-19T19:08:00 | https://dev.to/getpieces/an-introduction-to-pieces-for-developers-jetbrains-plugin-4p3p | devops, tooling, webdev, programming | In 2022, our team set out on a journey to build the most advanced code snippet management and workflow context platform yet.

The debut release of our Flagship Desktop App got the ball rolling, and now our JetBrains Plugin aims to take developers' productivity to the next level by incorporating key capabilities and our... | get_pieces |

1,406,490 | Make a video about the best contributor of the month with React and NodeJS 🚀 | TL;DR See the cover of the article? We are going to create this. We will take an... | 0 | 2023-03-20T13:04:13 | https://dev.to/novu/make-a-video-about-the-best-contributor-of-the-month-with-react-and-nodejs-2feg | webdev, javascript, programming, tutorial | # TL;DR

See the cover of the article? We are going to create this.

We will take an organization on GitHub, review all its repositories, and count the merged pull requests made by every contributor during the month. We will declare the contributor with the most merged pull requests the winner by creating the video... | nevodavid |
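The counting step described above can be sketched in TypeScript as follows. The `MergedPullRequest` shape is a hypothetical simplification; fetching the data from the GitHub API is not shown. Months are compared in UTC, and ties go to the contributor who appears first in the input.

```typescript
// Hypothetical, simplified record for one merged pull request.
interface MergedPullRequest {
  author: string;
  mergedAt: string; // ISO-8601 timestamp, e.g. "2023-02-14T10:00:00Z"
}

interface Winner {
  author: string;
  count: number;
}

// Count merged PRs per author within one calendar month (UTC) and return the top author.
function topContributor(prs: MergedPullRequest[], year: number, month: number): Winner | null {
  const counts = new Map<string, number>();
  for (const pr of prs) {
    const merged = new Date(pr.mergedAt);
    // getUTCMonth() is zero-based, so add 1 to compare with a 1-12 month.
    if (merged.getUTCFullYear() !== year || merged.getUTCMonth() + 1 !== month) continue;
    counts.set(pr.author, (counts.get(pr.author) ?? 0) + 1);
  }

  let winner: Winner | null = null;
  // Map iteration follows insertion order, so ties go to the author seen first.
  for (const [author, count] of counts) {
    if (winner === null || count > winner.count) winner = { author, count };
  }
  return winner;
}

const sample: MergedPullRequest[] = [
  { author: "alice", mergedAt: "2023-02-03T09:00:00Z" },
  { author: "bob", mergedAt: "2023-02-10T12:30:00Z" },
  { author: "bob", mergedAt: "2023-02-21T16:45:00Z" },
  { author: "alice", mergedAt: "2023-03-01T08:00:00Z" }, // outside February
];
console.log(topContributor(sample, 2023, 2)); // { author: 'bob', count: 2 }
```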

1,407,064 | Scala 102: Collections and Monads | Hey there, welcome back to our Scala series! Last time, we introduced the basic types. But today,... | 22,188 | 2023-03-19T23:20:25 | https://dev.to/krlz/scala-102-collections-47n0 | beginners, programming, scala, functional | Hey there, welcome back to our Scala series! Last time, we introduced the basic types. But today, we're talking about collections and implementing monads on lists.

Let's start talking about Lists specifically! They're immutable, which means once you create them, they can't be changed - but that's not a bad thing! You c... | krlz |

1,407,423 | openGauss Checking the Number of Database Connections | Background If the number of connections reaches its upper limit, new connections cannot be created.... | 0 | 2023-03-20T07:57:52 | https://dev.to/tongxi99658318/opengauss-checking-the-number-of-database-connections-gg4 | opengauss | Background

If the number of connections reaches its upper limit, new connections cannot be created. Therefore, if a user fails to connect to a database, the administrator must check whether the number of connections has reached the upper limit. The following are details about database connections:

The maximum number of g... | tongxi99658318 |

1,407,633 | Medusa Vs Woocommerce: Comparing Two Open source Online Commerce Platforms | Introduction Woocommerce is an open source, customizable ecommerce platform built on... | 0 | 2023-03-20T10:57:53 | https://dev.to/gunkev/medusa-vs-woocommerce-comparing-two-open-source-online-commerce-platforms-4g8g | headless, opensource, commerce, medusajs |

## Introduction

[Woocommerce](https://woocommerce.com) is an [open source](https://www.learn-dev-tools.blog/what-does-open-source-software-mean-a-beginners-guide/), customizable ecommerce platform built on WordPress. Woocommerce offers features like flexible and secure payment and shipping integration. It is written ... | gunkev |

1,407,634 | Leveraging Microsoft Teams to Boost Collaboration and Productivity | Collaboration and productivity tools have become increasingly vital for businesses to streamline... | 21,622 | 2023-03-20T11:02:20 | https://intranetfromthetrenches.substack.com/p/leveraging-microsoft-teams-to-boost | microsoftcl, microsoftteams, customization | Collaboration and productivity tools have become increasingly vital for businesses to streamline their operations and stay competitive in today's fast-paced digital landscape. Microsoft Teams is a cloud-based platform that offers a comprehensive set of features for collaboration, document management, and business proce... | jaloplo |

1,407,732 | Obsidian Notes with git-crypt 🔐 | Reposting my github guide Obsidian Vault, the best markdown note setup👾. Private key encrypted cross... | 0 | 2023-03-20T11:38:18 | https://dev.to/snazzybytes/obsidian-notes-with-git-crypt-376m | obsidian, termux, markdown, gitcrypt | Reposting my [github guide](https://github.com/snazzybytes/obsidian-scripts/blob/master/README.md#obsidian-notes-with-git-crypt)

Obsidian Vault, the best markdown note setup👾. Private key encrypted cross device synced notes 🤌(every pixel matches my laptop extensions, plugins, icons, themes, all of it 🌋)

💻 Laptop:... | snazzybytes |

1,407,832 | New Suspense Hooks for Meteor | In the previous part of this series, we learned why and how we could use Suspense. This article... | 22,320 | 2023-03-20T13:42:48 | https://blog.meteor.com/new-suspense-hooks-for-meteor-5391570b3007 | webdev, javascript, react, showdev | 

In the previous part of this series, we learned why and how we could use [Suspense](https://dev.to/grubba/making-promises-suspendable-452f). This article will discuss and show how to use Meteor's... | grubba |

1,407,840 | Register for Notifications on Android SDK 33 | Problem: Notifications are not being received after upgrading to Android SDK 33. Solution: In... | 0 | 2023-03-20T14:14:43 | https://dev.to/lancer1977/register-for-notifications-on-android-sdk-33-ao9 | Problem: Notifications are not being received after upgrading to Android SDK 33.

Solution: In Android SDK 33, you now have to request permission to display notifications. Asking for POST_NOTIFICATIONS alone wasn't sufficient; some of these may be overkill, but this is what worked for me.

To get the notifications pe... | lancer1977 | |

1,407,884 | Use AWS Controllers for Kubernetes to deploy a Serverless data processing solution with SQS, Lambda and DynamoDB | In this blog post, you will be using AWS Controllers for Kubernetes on an Amazon EKS cluster to put... | 22,895 | 2023-03-20T16:03:54 | https://abhishek1987.medium.com/use-aws-controllers-for-kubernetes-to-deploy-a-serverless-data-processing-solution-with-sqs-lambda-62025dba97bf | kubernetes, serverless, tutorial, cloud | In this blog post, you will be using [AWS Controllers for Kubernetes](https://aws-controllers-k8s.github.io/community/docs/community/overview/) on an Amazon EKS cluster to put together a solution wherein data from an [Amazon SQS queue](https://docs.aws.amazon.com/AWSSimpleQueueService/latest/SQSDeveloperGuide/sqs-queue... | abhirockzz |

1,407,969 | HTML tags for beginners | HTML elements consist of start and end tags. If you start the tag, you must always end it! An example... | 0 | 2023-03-20T15:35:10 | https://dev.to/codejem/html-tags-for-beginners-46b7 | beginners, html, frontend, devjournal | Most HTML elements consist of a start tag and an end tag. If you open a tag, you must close it! (Void elements such as `<br>` and `<img>` are the exception: they have no end tag.) An example of this would be:

`<tagname>Text goes here...</tagname>`

As you can see this tag has a start tag and an end tag.

Here are some HTML elements in which you can recognise the start and end tags:

1.... | codejem |

1,408,239 | How to make long functions more readable | Long functions are usually harder to understand. While there are techniques in OOP (Object-oriented... | 0 | 2023-03-20T19:22:47 | https://tahazsh.com/blog/make-long-functions-more-readable | javascript, webdev, programming, codequality | Long functions are usually harder to understand. While there are techniques in OOP (Object-oriented programming) to make them smaller, some developers prefer to see the whole code in one place. Having all code in a big function is not necessarily bad. What's actually bad is messy, unclear code in that function. Fortun... | tahazsh |

1,408,296 | Apify ❤️ Python: Releasing a Python SDK for Actors | Whether you are scraping with BeautifulSoup, Scrapy, Selenium, or Playwright, the Apify Python SDK... | 0 | 2023-03-20T19:55:46 | https://blog.apify.com/apify-python-sdk/ | python, webscraping, webautomation | ---

title: Apify ❤️ Python: Releasing a Python SDK for Actors

published: true

date: 2023-03-14 10:37:49 UTC

tags: python, webscraping, webautomation

canonical_url: https://blog.apify.com/apify-python-sdk/

---

Whether you are scraping with BeautifulSoup, Scrapy, Selenium, or Playwright, the Apify Python SDK helps you r... | frantiseknesveda |

1,408,670 | openGauss Users | You can use CREATE USER and ALTER USER to create and manage database users, respectively. openGauss... | 0 | 2023-03-21T02:53:41 | https://dev.to/tongxi99658318/opengauss-users-54pc | opengauss | You can use CREATE USER and ALTER USER to create and manage database users, respectively. openGauss contains one or more named database users and roles that are shared across openGauss. However, these users and roles do not share data. That is, a user can connect to any database, but after the connection is successful,... | tongxi99658318 |

1,408,677 | Basic database operations: create and manage tablespaces (openGauss) | Create and manage tablespaces Background Information By using tablespaces, administrators can control... | 0 | 2023-03-21T03:02:39 | https://dev.to/490583523leo/basic-database-operations-create-and-manage-tablespaces-opengauss-2jll | Create and manage tablespaces

Background Information

By using tablespaces, administrators can control the disk layout of a database installation. This has the following advantages:

If the partition or volume where the database is initialized is full, and more space cannot be expanded logically, you can create and use ... | 490583523leo | |

1,408,694 | OpenGauss common log introduction: system log | system log The logs generated by the openGauss runtime database node and the openGauss installation... | 0 | 2023-03-21T03:33:27 | https://dev.to/490583523leo/opengauss-common-log-introduction-system-log-8kl | system log

The logs generated by the openGauss runtime database node and the openGauss installation and deployment are collectively called system logs. If openGauss fails during operation, you can use these system logs to locate the cause of the failure in time, and formulate a method to restore openGauss according to ... | 490583523leo | |

1,408,770 | creating badge-lists, fancy stuff | We're taking this, we're stealing it and we're making it open source. Like modern day Robin... | 0 | 2023-03-21T06:25:46 | https://dev.to/lizblake/creating-badge-lists-fancy-stuff-o30 | webdev, javascript, beginners, opensource |

We're taking this, we're stealing it, and we're making it open source. Like a modern-day Robin Hood.

Since I've borrowed an iPad from the University, I've been getting into making stickers and drawing stuff, so here's a roug... | lizblake |

1,408,920 | Codecademy Python Terminal Project | https://github.com/cece3333/Flashcard_game.git One of my first project! A simple terminal game in... | 0 | 2023-03-21T09:37:42 | https://dev.to/cece3333/codecademy-python-terminal-project-43o9 | codecademy, python, portfolio | https://github.com/cece3333/Flashcard_game.git

One of my first project! A simple terminal game in which you can create, modify, delete and show flashcards stored in a json file.

A "play mode" is accessible in which the front of a random flashcard is displayed for you to guess the back (like Anki without spaced repetit... | cece3333 |

1,409,200 | What is the best example to learn the ES6 spread operator (...) ? | The following example taken from MDN is the best example I ever found to learn the spread operator as... | 0 | 2023-03-21T12:47:45 | https://dev.to/mbshehzad/what-is-the-best-example-to-learn-about-the-es6-spread-operator--2aj8 | javascript, node, react | The following example taken from **MDN** is the best example I ever found to learn the spread operator as of 21 March 2023:-

```javascript
function sum(x, y, z) {
  return x + y + z;
}

const numbers = [1, 2, 3];

console.log(sum(...numbers));
```

| mbshehzad |

1,409,337 | Mac: Change the VSCode git extension account | Keychain Access: delete the credential. | 0 | 2023-03-21T15:29:08 | https://dev.to/coderethanliu/mac-change-the-vscode-git-extension-account-fm9 | Keychain Access: delete the credential. | coderethanliu | |

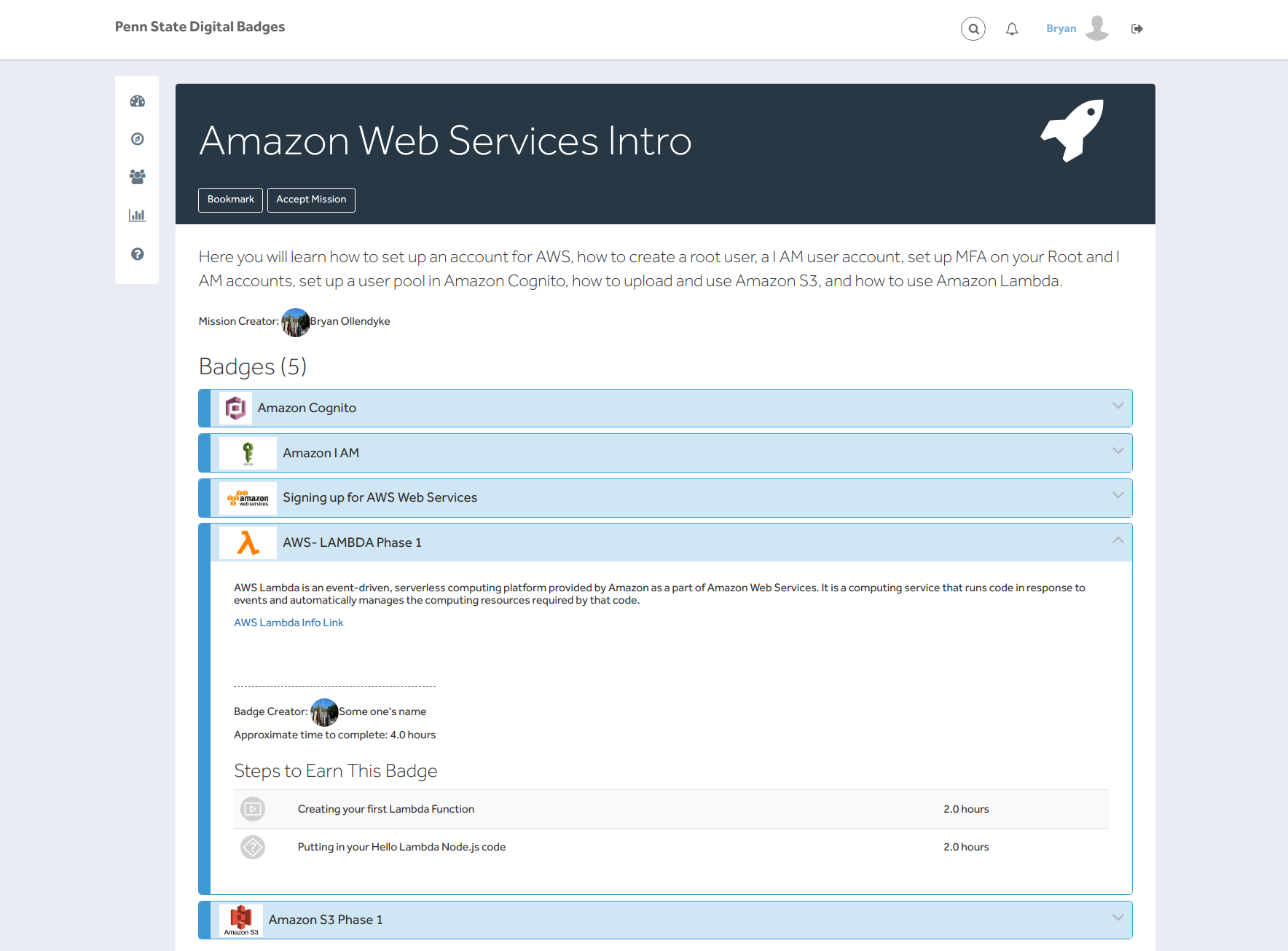

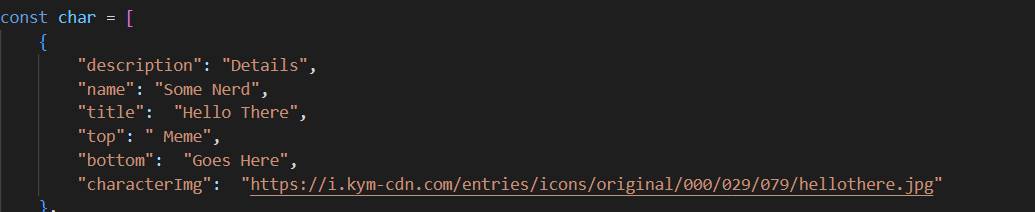

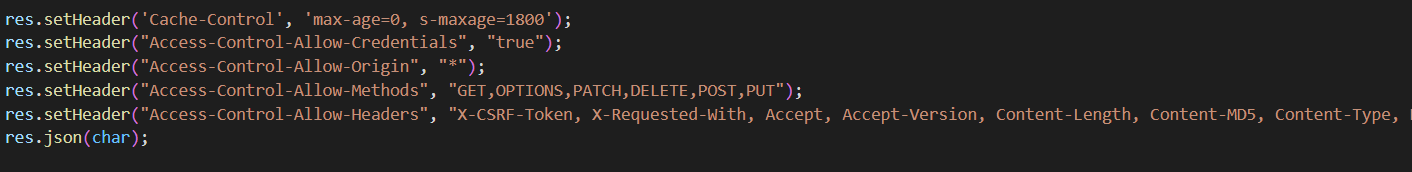

1,409,339 | 256 HW 10- Joseph Vanacore & John Szwarc | Include images of how you are conceiving the API for the elements involved and the names As... | 0 | 2023-03-21T16:03:52 | https://dev.to/joevan21/256-hw-10-joseph-vanacore-john-szwarc-39d1 | **Include images of how you are conceiving the API for the elements involved and the names**

As shown in th... | joevan21 | |

1,409,400 | Saas Reporting Analytics - Guide to New Startup Business Building | In today’s digital age, businesses generate vast amounts of data from multiple sources, including... | 0 | 2023-03-21T16:22:49 | https://dev.to/shbz/saas-reporting-analytics-guide-to-new-startup-business-building-49e7 | startup |

**In today’s digital age, businesses generate vast amounts of data from multiple sources, including customer interactions, sales transactions, marketing campaigns, and website visits.**

However, more than data is... | shbz |

1,409,616 | Interview Questions to Assess Company Culture Fit | Learn how to assess company culture fit with these top interview questions. Understand the importance of leadership, diversity, feedback, and career advancement in finding the right work environment. | 0 | 2023-03-21T18:25:12 | https://bekahhw.com/Interview-Culture-Questions | codenewbie, webdev, career | ---

title: Interview Questions to Assess Company Culture Fit

published: true

description: Learn how to assess company culture fit with these top interview questions. Understand the importance of leadership, diversity, feedback, and career advancement in finding the right work environment.

date: 2023-03-21 00:00:00 UTC

... | bekahhw |

139,659 | JavaScript of the Week: a conversation with Peter Cooper | A quick conversation with Peter Cooper, editor of JavaScript Weekly. Learn how he got started and what he thinks about JavaScript! | 0 | 2019-07-12T16:04:19 | https://www.fdoglio.com/post/javascript-of-the-week-a-conversation-with-peter-cooper | javascript, node, javascriptoftheweek, interview | ---

title: JavaScript of the Week: a conversation with Peter Cooper

published: true

description: A quick conversation with Peter Cooper, editor of JavaScript Weekly. Learn how he got started and what he thinks about JavaScript!

tags: javascript, node.js, javascript of the week, interview

canonical_url: https://www.fdog... | deleteman123 |

141,935 | Face Detection in Python | Hi in this post i'll show you how to use OpenCV library to detect faces inside a picture, in a future... | 0 | 2019-07-17T22:23:20 | https://dev.to/demg_dev/face-detection-in-python-1n5f | python, facedetection, opencv | Hi! In this post I'll show you how to use the OpenCV library to detect faces inside a picture. In a future post I'll show you how to detect faces in real-time video, which is amazing practice for visual recognition.

> Well, let's start.

## Requirements

Check your version of Python or install Python 3.6.

``` bash

p... | demg_dev |

145,639 | Interfacing your UI components | In recent years, front-end development became an important part of my life. But when I started years... | 0 | 2019-07-20T08:33:11 | https://kevtiq.co/blog/interfacing-your-ui-components/ | webdev, javascript, learning, ui | In recent years, front-end development has become an important part of my life. But when I started years ago, I did not understand what an API was. I worked with them, but I never cared what exactly it was, or what it takes to build one. I knew the concept of interfaces in UI, but its relation with the letter "I" o... | vyckes |

180,540 | Being a Successful Beginner | It’s only been a month since I started learning how to code, but that month has felt like a year. Sin... | 0 | 2019-09-30T15:57:29 | https://dev.to/lberge17/being-a-successful-beginner-1ljc | beginners | It’s only been a month since I started learning how to code, but that month has felt like a year. Since then, my perspective on learning and curriculum has changed drastically. In college, I viewed classes and assignments as items on a checklist—meaning the importance was placed in the completion of a checklist rather ... | lberge17 |

182,269 | Take a screenshot of VSCode using Polacode Extension | Sometimes I add some tweets that should include code, so we don't have the option to embed code on... | 0 | 2019-10-03T22:39:55 | https://dev.to/arbaoui_mehdi/take-a-screenshot-of-vscode-using-polacode-extension-524h | webdev, vscode, plugins, tools | ---

title: Take a screenshot of VSCode using Polacode Extension

published: true

description:

cover_image: https://thepracticaldev.s3.amazonaws.com/i/k8o4tn51n5zwprs2ioio.png

tags: #webdev #vscode #plugins #tools

---

Sometimes I add some tweets that should include code, so we don't have the option to embed code on twi... | arbaoui_mehdi |

202,090 | Relative color luminance | C/P-ing this from my older blog posts. This one is from 2014. since I was a junior dev pretty much. N... | 0 | 2019-11-07T23:26:39 | https://lvidakovic.com/blog/relative-color-luminance | color, javascript, luminance | C/P-ing this from my older blog posts. This one is from 2014, when I was pretty much a junior dev. Nevertheless, it's astonishing how many digital products get this wrong when applying the hyped-up dark mode.

---

This is the method for calculating color luminance about which Lea Verou gave a talk at the Smashing confer... | apisurfer |

204,793 | Windows JS Dev in WSL Redux | Back in September I did a post on setting up a JS dev environment in Windows using WSL (Windows Subsy... | 0 | 2019-11-16T18:39:50 | https://dev.to/vetswhocode/windows-js-dev-in-wsl-redux-33d5 | javascript, webdev, windows, linux | Back in September I did a post on setting up a JS dev environment in Windows using WSL (Windows Subsystem for Linux). Quite a bit has changed in the past couple of months, so I think we need to revisit and streamline it a bit. You can now get WSL2 in the Slow ring for Insiders, and a lot has changed in Microsoft's new Termin... | heytimapple |

205,304 | Infographic: Top 11 Chrome Extensions For Developers And Designers In 2019 | Dominating the browser market share with 63.99% as of Aug 2018 – Aug 2019, Google Chrome has been the... | 0 | 2019-11-14T10:41:31 | https://www.lambdatest.com/blog/infographic-top-11-chrome-extensions-for-developers-and-designers-in-2019/ | webdev, productivity, ux |

Dominating the [browser market share](https://gs.statcounter.com/browser-market-share) with 63.99% as of Aug 2018 – Aug 2019, Google Chrome has been the pinnacle of web browsers. As a result of immense worldwide adoption, it is no surprise to witness a bustling marketplace for Google Chrome extensions. Some are there ... | rahul8124 |

205,918 | How to build a social network with mongoDB? | I'm wanted to start developing a social network like instagram (more or less). but I have tried to un... | 0 | 2019-11-15T13:46:47 | https://dev.to/rynrn/how-to-build-a-social-network-with-mongodb-42b | mongodb, node, database, architecture | I want to start developing a social network like Instagram (more or less).

But I've been trying to understand how to design my DB (using MongoDB) for the main queries.

So I have a few questions:

1. How should I save the followers/following data in the DB? Should it be in the same document as the users, or in a separate one?

2. How to f... | rynrn |

214,160 | JavaScript Data Structures: Singly Linked List: Recap | JavaScript Data Structures: Singly Linked List: Recap | 3,259 | 2019-12-02T20:13:20 | https://dev.to/miku86/javascript-data-structures-singly-linked-list-reca-210b | beginners, tutorial, javascript, webdev | ---

title: "JavaScript Data Structures: Singly Linked List: Recap"

description: "JavaScript Data Structures: Singly Linked List: Recap"

published: true

tags: ["Beginners", "Tutorial", "JavaScript", "Webdev"]

series: JavaScript Data Structures

---

## Intro

[Last time](https://dev.to/miku86/javascript-data-structures-s... | miku86 |

221,266 | SwiftUI for Mac - Part 3 | In part 1 of this series, I created a Mac app using SwiftUI. The app uses a Master-Detail design to l... | 0 | 2019-12-19T08:26:55 | https://troz.net/post/2019/swiftui-for-mac-3/ | swift, swiftui, mac | ---

title: SwiftUI for Mac - Part 3

published: true

date: 2019-12-15 07:28:20 UTC

tags: Swift, SwiftUI, Mac

canonical_url: https://troz.net/post/2019/swiftui-for-mac-3/

---

In [part 1 of this series](https://dev.to/trozware/swiftui-for-mac-part-1-24ne), I created a Mac app using SwiftUI. The app uses a Master-Detail d... | trozware |

254,554 | iPad Sidecar Issues Over USB with VPN | When I'm working away from home, I like to use my iPad as a second monitor using Apple's "sidecar" fe... | 0 | 2020-02-03T20:16:17 | https://dev.to/bmatcuk/ipad-sidecar-issues-over-usb-with-vpn-3m7 | ipad, sidecar, vpn, usb | When I'm working away from home, I like to use my iPad as a second monitor using Apple's "sidecar" feature. However, I noticed that, if the wifi network isn't great, there can be some performance issues or disconnects. So, I wanted to use a USB cable instead.

This worked fine, until I switched on my company's VPN. Wit... | bmatcuk |

221,498 | Why are these the gitignore rules for VS Code? | If you search for good .gitignore rules for Visual Studio Code, you often come across these. ## ##... | 0 | 2019-12-15T18:57:07 | https://dev.to/thebuzzsaw/why-are-these-the-gitignore-rules-for-vs-code-3p03 | csharp, dotnet, git | If you search for good `.gitignore` rules for Visual Studio Code, you often come across these.

```
##
## Visual Studio Code
##
.vscode/*
!.vscode/settings.json
!.vscode/tasks.json
!.vscode/launch.json
!.vscode/extensions.json
```

I tried using them for a while, but they just led to problems. It is the equivalent of ... | thebuzzsaw |

221,975 | Usar taxonomías en el campo select de un formulario | La entrada Usar taxonomías en el campo select de un formulario se publicó primero en 🈴KungFuPress por... | 0 | 2020-01-26T00:40:51 | https://kungfupress.com/usar-taxonomias-desde-el-campo-select-de-un-formulario/ | customposttype, plugins, taxonomías | ---

title: Usar taxonomías en el campo select de un formulario

published: true

date: 2019-12-18 16:40:21 UTC

tags: Custom Post Type,Plugins,taxonomías

canonical_url: https://kungfupress.com/usar-taxonomias-desde-el-campo-select-de-un-formulario/

---

La entrada [Usar taxonomías en el campo select de un formulario](http... | juananruiz |

222,581 | W3C confirms: WebAssembly becomes the fourth language for the Web 🔥 What do you think? | World Wide Web Consortium (W3C) brings a new language to the Web as WebAssembly becomes a W3C Recomm... | 0 | 2019-12-17T15:34:53 | https://dev.to/destrodevshow/w3c-confirms-webassembly-becomes-the-fourth-language-for-the-web-what-do-you-think-45e0 | webassembly, javascript, performance, discuss | <b> World Wide Web Consortium (W3C) brings a new language to the Web as WebAssembly becomes a W3C Recommendation.

Following HTML, CSS, and JavaScript, WebAssembly becomes the fourth language for the Web which allows code to run in the browser. </b>

---------------

<h3>5 December 2019</h3>

The World Wide Web Consorti... | destro_mas |

222,594 | What's the best thing to do when you've run into a debugging dead end? | So you've hit a wall. What is the best course of action in this situation? | 0 | 2019-12-17T15:59:02 | https://dev.to/ben/what-s-the-best-thing-to-do-when-you-ve-run-into-a-debugging-dead-end-39jg | discuss, debugging | So you've hit a wall. What is the best course of action in this situation? | ben |

268,919 | Sign in with Google Button 🎯🪁 | A few months ago when I am started learning 👨💻 Web Development from Eduonix I was super curious... | 0 | 2020-02-25T16:12:50 | https://dev.to/atulcodex/sign-in-with-google-button-4je4 | google, html, css | ---

title: Sign in with Google Button 🎯🪁

published: true

tags: google, html, css

---

A few months ago, when I started learning 👨💻 [Web Development](https://www.eduonix.com/web-development-css3-scratch-till-advanced-project-based/UHJvZHVjdC0xMzE1MDIw) from [Eduonix](https://www.eduonix.com/web-development-css3-s... | atulcodex |

228,931 | Vue.js Composition API: usage with MediaDevices API | Introduction In this article I would like to share my experience about how Vue Composition... | 0 | 2019-12-30T22:18:02 | https://dev.to/3vilarthas/vue-js-composition-api-usage-with-mediadevices-api-2efb | vue, javascript, tutorial | ## Introduction

In this article I would like to share my experience of how the Vue Composition API helped me organise and structure work with the browser's [`navigator.mediaDevices`](https://developer.mozilla.org/en-US/docs/Web/API/Navigator/mediaDevices) API.

It's **highly encouraged** to skim through the RFC of the u... | 3vilarthas |

232,868 | What does everyone plan to learn in web development this year? | A post by John Au-Yeung | 0 | 2020-01-06T03:21:29 | https://dev.to/aumayeung/what-does-everyone-plan-to-learn-in-web-development-this-year-5ej | discuss, javascript, webdev | aumayeung | |

235,498 | Why and how you should migrate from Visual Studio Code to VSCodium | Why and how you should migrate from Visual Studio Code to VSCodium | 4,182 | 2020-01-10T01:56:14 | https://dev.to/0xdonut/why-and-how-you-should-to-migrate-from-visual-studio-code-to-vscodium-j7d | vscode, productivity, opensource, tutorial | ---

title: Why and how you should migrate from Visual Studio Code to VSCodium

published: true

description: Why and how you should migrate from Visual Studio Code to VSCodium

tags: vscode, productivity, opensource, tutorial

cover_image: https://i.imgur.com/LlwMOnj.png

series: code productivity

---

In this tutorial we'... | 0xdonut |

237,816 | Angular Developer Roadmap | Angular has become one of the most famous frameworks for frontend development. Angular is backed by... | 0 | 2020-02-05T07:42:11 | https://codesquery.com/angular-developer-roadmap/ | angular, angular7 | ---

title: Angular Developer Roadmap

published: true

date: 2019-12-04 14:32:42 UTC

tags: Angular,angular7

canonical_url: https://codesquery.com/angular-developer-roadmap/

---

Angular has become one of the most famous frameworks for frontend development. Angular is backed by the tech giant Google who also used it in al... | hisachin |

253,300 | Reading Snippets [41 => Ruby ] 💎 | "Computer science is never tied to a programming language; it is tied to the task of solving problems... | 0 | 2020-02-02T05:10:00 | https://dev.to/calvinoea/reading-snippets-40-ruby-522g | ruby, rails, beginners, computerscience |

"Computer science is never tied to a programming language; it is tied to the task of solving problems efficiently using a computer. Programming languages come and go but the essence of computer science stays the same. The core goal of computer science is to study algorithms that solve real problems."

<kbd><... | calvinoea |

270,193 | Fetch Data with Next.js (getInitialProps) | In the sequence of the Next.js tutorial, part 2 about data fetching is now available: | 0 | 2020-02-27T16:48:34 | https://dev.to/bmvantunes/fetch-data-with-next-js-getinitialprops-38lc | javascript, react | Continuing the Next.js tutorial series, part 2, about data fetching, is now available:

{% youtube Os3JZc2CtwY %} | bmvantunes |

310,130 | E5 - Sample Solution | The files are viewable on this page directly, or on my Github. Files in solution: _header.php cart... | 5,954 | 2020-04-15T19:40:09 | https://dev.to/herobank110/e5-sample-solution-m0m | php | The files are viewable on this page directly, or on my [Github](https://github.com/herobank110/PHP-Tutorial).

Files in solution:

* [_header.php](#_header)

* [cart.php](#cart)

* [db_commands.sql](#db_commands)

* [edit_cart.php](#edit_cart)

* [index.php](#index)

* [on_checkout.php](#on_checkout)

* [order_confirm.php](#o... | herobank110 |

321,548 | 11 NPM Commands Every Node Developer Should Know. | 1. Create a package.json file npm init -y # -y to initialize with default values.... | 0 | 2020-04-28T15:08:23 | https://dev.to/vyasriday/npm-commands-that-a-node-developer-should-know-2h84 | npm, node, codenewbie, beginners | #### 1. Create a package.json file

```bash
npm init -y # -y to initialize with default values.
```

#### 2. Install a package locally or globally

```bash
npm i package # to install locally. i is short for install
npm i -g package # to install globally
```

#### 3. Install a specific version of a package

```bash

npm in... | vyasriday |

322,542 | How to Undo Last Commit and Keep Changes | To undo the last commit but keep the changes, run the following command: git reset --soft HEAD~1... | 0 | 2020-04-30T03:06:26 | https://dev.to/andyrewlee/how-to-undo-last-commit-and-keep-changes-1eh2 | git, beginners, tutorial | To undo the last commit but keep the changes, run the following command:

```bash
git reset --soft HEAD~1
```

Now when we run `git status`, we will see that all of our changes are in staging. When we run `git log`, we can see that our commit has been removed.

If we want to completely remove changes in staging, we can... | andyrewlee |

327,414 | How to Embed Video Chat in your Unity Games | When you're making a video game, you want to squeeze every last drop out of performance out of your g... | 0 | 2020-05-05T16:59:34 | https://dev.to/joelthomas362/create-an-agora-group-video-chat-using-unity-33ce | agora, unity3d, photon, csharp |

When you're making a video game, you want to squeeze every last drop of performance out of your graphics, code, and any plugins you may use. Agora's Unity SDK has a low footprint and performance cost, making it a great tool for any platform, from mobile to VR!

In this tutorial, I'm going to show you how to use Ag... | joelthomas362 |

328,784 | Web forms with DotVVM controls | Currently, web pages allow internet users to know about a particular product or service, how to conta... | 0 | 2020-05-22T00:21:40 | https://dev.to/dotvvm/web-forms-with-dotvvm-controls-6bk | webdev, html, dotnet, dotvvm | Currently, web pages let internet users learn about a particular product or service, or how to contact a company, but they can also help collect information about their users and thus establish a data source. An important tool for this purpose is the form.

For the design of a form, the most basic way is to use HTML tags ... | esdanielgomez |

328,822 | Mocking Nuxt Global Plugins to Test a Vuex Store File | How to deal with mocking plugins when you're not mounting a test file | 0 | 2020-05-06T20:47:31 | https://dev.to/rdelga80/mocking-nuxt-global-plugins-to-test-a-vuex-store-file-45e6 | vue, testing, webdev, frontend | ---

title: Mocking Nuxt Global Plugins to Test a Vuex Store File

published: true

description: How to deal with mocking plugins when you're not mounting a test file

tags: vue, testing, webdev, frontend

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/9qojni4llwopl6zzx69h.jpg

---

This is one of those edge cases th... | rdelga80 |

328,930 | Iterators & Generators | 5. Iterators & Generators 5.1. Iterators We use for statement for looping over a list. >>... | 6,570 | 2020-05-06T15:15:39 | https://dev.to/estherwavinya/iterators-generators-1ghk | python, beginners, codenewbie, writing | **5. Iterators & Generators**

**5.1. Iterators**

We use the `for` statement to loop over a list.

```
>>> for i in [1, 2, 3, 4]:
...     print(i)
...
1
2
3
4
```

If we use it with a string, it loops over its characters.

```
>>> for c in "python":
...     print(c)
...
p
y
t
h
o
n
```

If we use it with a dictionary, it lo... | estherwavinya |

328,935 | 5 Tips for Overcoming Coder's Block | TL;DR Use a sandbox Get a cheat-sheet Take a quickstart Break down the problem Mix 'em up... | 0 | 2020-07-01T16:43:53 | https://dev.to/vicradon/5-tips-for-overcoming-coder-s-block-28mo | devlive, codepen | TL;DR

1. Use a sandbox

2. Get a cheat-sheet

3. Take a quickstart

4. Break down the problem

5. Mix 'em up

## Intro

Developers often experience a block: a period during which they don't write any useful, reasonable code. This block may be due to tiredness; you may have been coding for days without breaks. But when... | vicradon |

329,046 | 11 Days of Salesforce Storefront Reference Architecture (SFRA) — Day 3: Creating a Storefront | 11 Days of Salesforce Storefront Reference Architecture (SFRA) — Day 3: Creating a... | 8,976 | 2020-05-18T19:43:39 | https://medium.com/perimeterx/11-days-of-salesforce-storefront-reference-architecture-sfra-day-3-creating-a-storefront-1f85001ebf1d | sfcc, sfra, salesforce | ---

title: 11 Days of Salesforce Storefront Reference Architecture (SFRA) — Day 3: Creating a Storefront

published: true

date: 2020-05-06 15:52:20 UTC

tags: sfcc,sfra,salesforce

series: 11 Days of Salesforce Storefront Reference Architecture (SFRA)

canonical_url: https://medium.com/perimeterx/11-days-of-salesforce-stor... | pxjohnny |

330,593 | RPC-like API for your Laravel project | JSON-RPC 2.0 this is a simple stateless protocol for creating an RPC (Remote Procedure Call) style AP... | 0 | 2020-05-08T19:30:13 | https://dev.to/tabuna/rpc-like-api-for-your-laravel-project-3oeh | laravel, api, jsonrpc | [JSON-RPC 2.0](https://www.jsonrpc.org/specification) is a simple, stateless **protocol** for creating an RPC (Remote Procedure Call) style API. It usually looks as follows:

You have a single endpoint on the server that accepts requests with a body of the form:

```json

{"jsonrpc": "2.0", "method": "post.like", ... | tabuna |

329,235 | Animated Login Form 2020 tutorial using HTML & CSS Flexbox only [video format] | In this tutorial, we'll create an awesome Animated Login Form using HTML & CSS Flexbox only.... | 6,475 | 2020-05-06T23:11:15 | https://dev.to/codeleague7/animated-login-form-2020-tutorial-using-html-css-flexbox-only-video-format-1339 | css, html, beginners, tutorial | {% youtube 1DWJ65XP-zs %}

In this tutorial, we'll create an awesome **Animated Login Form using HTML & CSS Flexbox only**. This project is suitable for everyone, especially **beginners**. We'll also use **CSS transitions**, which allow us to change property values smoothly over a given duration. | codeleague7 |

329,240 | Mid Meet Py - Ep.6 - Interview with Steve Dower | We meet in the middle of the day in the middle of the week to chat about Python news. Interview with Steve Dower, Python tools developer at Microsoft and core CPython developer | 0 | 2020-05-07T01:22:16 | https://dev.to/midmeetpy/mid-meet-py-ep-6-interview-with-steve-dower-209m | python, chat, communities, irl | ---

title: Mid Meet Py - Ep.6 - Interview with Steve Dower

published: true

description: We meet in the middle of the day in the middle of the week to chat about Python news. Interview with Steve Dower, Python tools developer at Microsoft and core CPython developer

tags: python, chat, communities, IRL

---

PyChat (Python... | cheukting_ho |

329,332 | DNS Resolution | Recently was working around DNS and thought to put it here! Computers work with numbers. Computers t... | 0 | 2020-05-07T04:18:27 | https://dev.to/_nancychauhan/dns-resolution-3pbg | dns, dnsresolution, networking | I was recently working with DNS and thought I'd write about it here!

Computers work with numbers. Computers talk to one another using numeric addresses called IP addresses. Though structured, and thus great for computers, these addresses are tough for humans to remember.

DNS acts as the phonebook of the internet 🌐. It converts a web add... | _nancychauhan |

329,365 | what's your side hustle after work hours? | What do you do after work time ? If you have a side hustle how motivated are you to achieve it ? | 0 | 2020-05-07T05:13:52 | https://dev.to/get_hariharan/what-s-your-side-hustle-after-work-hours-9m1 | discuss | What do you do after work hours?

If you have a side hustle, how motivated are you to achieve it? | get_hariharan |
