id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

807,607 | Showdev: deploy apps to edge devices and servers with Synpse | Synpse lets you manage IoT device fleets and servers and deploy apps to them, with automated updates, SSH access, and TCP port forwarding | 0 | 2021-09-02T14:07:39 | https://dev.to/krusenas/showdev-deploy-apps-to-edge-devices-and-servers-with-synpse-43k4 | showdev, productivity, tooling, iot | ---

title: Showdev: deploy apps to edge devices and servers with Synpse

published: true

description: Synpse lets you manage IoT device fleets and servers and deploy apps to them, with automated updates, SSH access, and TCP port forwarding

tags: showdev, productivity, tooling, iot

cover_image: https://dev-to-uploads.s3.amazo... | krusenas |

807,709 | Leetcode Solutions to Graph Algorithm Problems | Leetcode contains some of the most popular interview questions asked across organizations. This... | 0 | 2021-08-30T07:42:05 | https://dev.to/abhilash1910/leetcode-solutions-to-graph-algorithm-problems-ak0 | algorithms, computerscience | [Leetcode](https://leetcode.com/) contains some of the most popular interview questions asked across organizations. This YouTube playlist contains solutions to some of the most important graph algorithm problems that are asked in interviews and available on Leetcode:

https://www.youtube.com/watch?v=w9wv2jlF3jY&list=PLovuuDh4TF... | abhilash1910 |

807,727 | 10 HTML Form Tags You Should Know | This article was originally posted on Getform Blog. HTML tags can be considered as special... | 0 | 2021-08-30T13:47:00 | https://blog.getform.io/10-html-form-tags-you-should-know/ | This article was originally posted on [Getform Blog](https://blog.getform.io/10-html-form-tags-you-should-know/?utm_source=dev.to&utm_medium=website&utm_campaign=html-form-tags-article).

----

HTML tags can be thought of as special keywords that help web browsers define how to format and display the content w... | mertcanyucel | |

807,753 | How to Serve images in next Gen Formats? | Google do recommend converting images from png, jpeg to webp formats that’s why serve images in next... | 0 | 2021-08-30T10:16:19 | https://dev.to/wpsyed/how-to-serve-images-into-next-gen-formats-p9f | programming, wordpress, webdev, devops | Google recommends converting images from PNG and JPEG to WebP, which is why the “Serve images in next-gen formats” suggestion appears in Google PageSpeed Insights. If you don’t know how to fix this issue on your WordPress website, read on. I will briefly go over serving images in next-gen formats.

... | wpsyed |

807,771 | React if you use replit | If you use repl.it kindly react with the heart or unicorn. If you don't then react with a comment... | 0 | 2021-08-30T10:52:18 | https://dev.to/codeboi/react-if-you-use-repl-it-2k1e | poll, debate, codequality, replit | If you use [repl.it](https://replit.com/), kindly react with the heart or unicorn. If you don't, leave a comment saying no and explain why. Suggestions for other code editors would also be nice. | codeboi |

807,787 | Implement traditional auth system in Symfony with less code than ever | PHP 8 introduced some new concepts and really helpful syntax features. To significantly reduce the... | 0 | 2021-09-06T10:16:16 | https://dev.to/bornfightcompany/implement-traditional-auth-system-in-symfony-with-less-code-than-ever-5h25 | engineeringmonday, symfony, php, doctrine | PHP 8 introduced some new concepts and really helpful syntax features.

To significantly reduce the boilerplate code, whenever possible, we can use [Constructor property promotion](https://stitcher.io/blog/constructor-promotion-in-php-8). Another thing I'll focus on in this guide is replacing annotations with [PHP attr... | gh0c |

807,964 | Career in IT | This post is a list of comments I made in the t.me/SegInfoBRasil group about how to take the steps... | 0 | 2021-08-30T12:00:52 | https://dev.to/vitormattos/carreira-na-area-de-ti-4n7l | career, opensource, beginners |

This post is a list of comments I made in the t.me/SegInfoBRasil group about how to take the first steps in a technology career:

Take part in communities not only as a consumer; participate actively, and there are several ... | vitormattos |

807,980 | See how Java frameworks like Spring work with your code | Spring + Your Code = ❤️ Most of the time! The one criticism that sticks to the Spring framework (and... | 0 | 2021-08-30T13:01:29 | https://dev.to/appmap/see-how-java-frameworks-like-spring-work-with-your-code-1acl | java, debugging, webdev | Spring + Your Code = ❤️ Most of the time!

The one criticism that sticks to the Spring framework (and other big web frameworks, to be honest) is that Spring does so much for you that it can be hard to understand what's really going on. Sometimes, we can just follow the docs and tutorials and watch the magic happen. But some... | kgilpin |

807,981 | MarkdownX Editor 🎉 | Our MarkdownX editor is officially live and available on the DevDojo. It's also available for you to... | 0 | 2021-08-30T12:41:35 | https://devdojo.com/tnylea/markdownx-editor | markdown, laravel, tailwindcss, saas | Our MarkdownX editor is officially live and available on the DevDojo. It's also available for you to use in your [TallStack](https://tallstack.dev) applications 🍻.

If this is your first time hearing about the [MarkdownX Editor](https://devdojo.com/markdownx), continue reading to learn more about how it can make writi... | bobbyiliev |

808,048 | Build a simple guessing game in Golang. | A complete beginner’s guide Concept The player will guess a number between 0... | 0 | 2021-08-31T11:18:30 | https://dev.to/nagatodev/build-a-simple-guessing-game-in-golang-48ig | go, gamedev, webdev, programming | # A complete beginner’s guide

## Concept

The player will guess a number between 0 and 10. If their guess is correct, they win. Otherwise, the program will give the player a hint to guess either higher or lower, depending on the correct number. The player will have three (3) attempts; if they guess incorrectly thr... | nagatodev |

808,070 | [note]Rails HTTP Code and Symbol mapping | ... | 0 | 2021-08-30T16:00:04 | https://dev.to/kevinluo201/note-rails-http-code-and-symbol-mapping-5ckl | ## References

* [Rack::Utils::HTTP_STATUS_CODES](https://github.com/rack/rack/blob/master/lib/rack/utils.rb#L492)

* [https://github.com/rack/rack/blob/master/lib/rack/utils.rb#L520](https://github.com/rack/rack/blob/master/lib/rack/utils.rb#L520)

## HTTP status code symbols for Rails

Thanks to Cody Fauser for this l... | kevinluo201 | |

808,080 | In which I am cranky about the urban/rural divide | I just came back from a weeks-long trip to the American West. It was amazing, gorgeous. More... | 0 | 2021-08-30T21:58:14 | https://heidiwaterhouse.com/2021/08/30/in-which-i-am-cranky-about-the-urban-rural-divide/?utm_source=rss&utm_medium=rss&utm_campaign=in-which-i-am-cranky-about-the-urban-rural-divide | bestpractices, industry, life, personal | ---

title: In which I am cranky about the urban/rural divide

published: true

date: 2021-08-30 15:07:00 UTC

tags: Bestpractices,Industry,Life,Personal

canonical_url: https://heidiwaterhouse.com/2021/08/30/in-which-i-am-cranky-about-the-urban-rural-divide/?utm_source=rss&utm_medium=rss&utm_campaign=in-which-i-am-cranky-a... | wiredferret |

808,251 | Two years ago I couldn't learn Java, and that taught me to respect my own pace | You can also listen to this article on Pingback This week, in my Instagram stories memories... | 0 | 2021-09-01T21:19:20 | https://dev.to/jeniblo_dev/2-anos-atras-eu-nao-consegui-aprender-java-e-isso-me-ensinou-a-respeitar-o-meu-tempo-abj | aprendizado, evolucao, dev | > *You can also listen to this article on [Pingback](https://pingback.com/jeniblo_dev/2-anos-atras-eu-nao-consegui-aprender-java-e-isso-me-ensinou-a-respeitar-o-meu-tempo)*

This week, my Instagram stories memories showed me the image below from two years ago: the first time I... | jeniblo_dev |

808,252 | How to configure AWS SSO enabling access for a user in two different AWS accounts using a customized user-portal | “Challenges faced to find the solution of how to configure AWS SSO enabling access for a user in two... | 0 | 2021-08-30T17:33:44 | https://dev.to/aws-builders/how-to-configure-aws-sso-enabling-access-for-a-user-in-two-different-aws-accounts-using-a-customized-user-portal-5ao5 | awss3, awssso, awsrds, security | “Challenges faced in finding out how to configure AWS SSO to enable access for a user in two different AWS accounts using a customized user portal.” I tried different approaches to find the simplest way to access multiple accounts without having to log in to each account again and again. I got... | bhatnagargargee |

256,261 | Create a new Promise in JavaScript? | This video covers how to create a new Promise in JavaScript and what are the states of a Promise. Yo... | 0 | 2020-03-20T16:30:27 | https://bonsaiilabs.com/create-promise | javascript, react, node, codenewbie | ---

canonical_url: https://bonsaiilabs.com/create-promise

---

This video covers how to create a new Promise in JavaScript and what the states of a Promise are.

You can access the code at https://bonsaiilabs.com/create-promise/

{% youtube RK_0h-slUIA %}

Subscribe for more videos on JavaScript with Visualization: htt... | deekshasharma25 |

310,542 | Create Your Own Elegant Code Screenshots with Carbon | I often get asked how I make "those great screenshots" of my code that I share on social media and here on dev.to. The answer is simple: I use the website https://carbon.now.sh! | 0 | 2020-04-16T11:01:08 | https://dev.to/nas5w/create-your-own-elegant-code-screenshots-with-carbon-357l | productivity, career, javascript, programming | ---

title: Create Your Own Elegant Code Screenshots with Carbon

published: true

description: I often get asked how I make "those great screenshots" of my code that I share on social media and here on dev.to. The answer is simple: I use the website https://carbon.now.sh!

tags: productivity, career, javascript, programmi... | nas5w |

329,081 | Been a busy boy | I've just had a busy old weekend and managed to get my digital counter working with CSS animations.... | 0 | 2020-05-11T15:29:56 | https://drmsite.blogspot.com/2019/07/been-busy-boy.html | css, graphics, html, javascript | ---

title: Been a busy boy

published: true

date: 2019-07-29 11:02:00 UTC

tags: CSS,Graphics,HTML,JavaScript

canonical_url: https://drmsite.blogspot.com/2019/07/been-busy-boy.html

---

[](... | mouseannoying |

329,546 | In search of the ultimate number regex | I was recently working on a number input field and ran into the age old problem of validating the use... | 0 | 2020-05-07T11:06:30 | https://dev.to/ryandunn/in-search-of-the-ultimate-number-regex-3kk | javascript, ux | I was recently working on a number input field and ran into the age-old problem of validating user input to ensure a valid numerical value. Validating is simple enough once the complete value has been entered, but not so much while the user is still typing.

We need the user to be able to input a `-` on the w... | ryandunn |

329,897 | Best free services for sending transactional emails | When you start developing a web application or service, you will probably need to... | 0 | 2020-05-14T19:54:50 | https://marquesfernandes.com/melhores-servicos-para-enviar-e-mails-transacionais-gratuitos/ | self, tech, email, emails | ---

title: Best free services for sending transactional emails

published: true

date: 2020-05-07 19:46:37 UTC

tags: Self,Tech,email,emails

canonical_url: https://marquesfernandes.com/melhores-servicos-para-enviar-e-mails-transacionais-gratuitos/

---

When you start developing an application or a web ser... | shadowlik |

338,700 | Planning to develop an app like Snapchat? Here is all you need to know | Snapchat is a popular and most admired augmented reality application. AR has seen a drastic growth si... | 0 | 2020-05-19T07:08:40 | https://dev.to/sheetalrawat18/planning-to-develop-an-app-like-snapchat-here-is-all-you-need-to-know-2lpe | hiresoftwaredeveloper, softwaredevelopmentcompany | <span style="font-weight: 400;">Snapchat is a popular and widely admired augmented reality application. AR has grown dramatically since the first AR systems were developed in the 1990s. It is expected that by 2023, </span><a href="https://citrusbits.com/stats-and-facts-about-augmented-reality/"><span style="font-weight... | sheetalrawat18 |

670,024 | How to use the Tailwind JIT compiler | Recently, Tailwind v2.1 was released with the JIT compiler included. The JIT (Just In Time) compiler... | 0 | 2021-04-18T09:28:35 | https://www.jeroenvanrensen.nl/blog/tailwind-jit-compiler | tailwindcss | Recently, Tailwind v2.1 was released with the JIT compiler included. The JIT (Just-In-Time) compiler only generates the CSS you actually use, instead of all sorts of classes you (almost) never use, like `subpixel-antialiased`, `place-self-start`, and `backdrop-brightness-95`. And even better, compiling your CSS go... | jeroenvanrensen |

357,190 | Secure remote SSH access to your IoT devices & Raspberry Pi fleet using SocketXP. | In this article, we'll discuss how to use SocketXP IoT Remote SSH Access solution to SSH into your... | 0 | 2020-06-17T04:08:29 | https://dev.to/gvelrajan/secure-remote-ssh-access-to-your-iot-devices-raspberry-pi-fleet-using-socketxp-3aa4 | iotremotessh, iotconnectivity, managingraspberrypifleet, securetunnel | In this article, we'll discuss how to use the [SocketXP IoT Remote SSH Access solution](https://www.socketxp.com/iot/remote-access-iot-ssh-over-the-internet/) to SSH into your IoT or Raspberry Pi fleet.

<h2>What is SocketXP</h2>

[SocketXP](https://www.socketxp.com) is a <code>cloud-based [secure SSL/TLS reverse tunnelling... | gvelrajan |

358,103 | Understanding recursions and memory | Recursion is a very well-known concept in modern high-level programming languages. In this post, we w... | 0 | 2020-06-18T15:58:22 | https://dev.to/therise3107/understanding-recursions-and-memory-4eph | recursion, c, memory | Recursion is a very well-known concept in modern high-level programming languages. In this post, we will try to analyze recursion in the C language. I am fairly sure that learning it in C is sufficient to understand how it is implemented in other languages as well. That being said, recursion is language ag... | therise3107 |

383,465 | Inheritance and SubClasses Using ES6 | Javascript Inheritance and SubClasses In Javascript there are many different patterns to f... | 0 | 2020-07-06T04:00:05 | https://dev.to/cschratz/inheritance-and-subclasses-using-es6-3ncl | javascript, beginners, subclasses, es6 | ## JavaScript Inheritance and SubClasses

In JavaScript, there are many different patterns to follow for object instantiation. Each of these patterns (functional, functional-shared, prototypal, and pseudoclassical) follows specific syntax guidelines, and the pattern one chooses impacts how object inheritance i... | cschratz |

397,081 | Quick tips to enhance your gitlab issue workflow, part one | This post was originally posted on i-Logs blog and in my Collected Notes. When Emmanuel Bergmans and... | 0 | 2020-07-14T11:52:24 | https://dev.to/gwelr/quick-tips-to-enhance-your-gitlab-issue-workflow-part-one-1o2o | gitlab, workflow, beginners, methodology | <small>*This post was originally posted on [i-Logs blog](https://i-logs.com/blog/quick-tips-to-enhance-your-gitlab-issue-workflow-part-one/) and in my [Collected Notes](https://collectednotes.com/gwelr/quick-tips-to-enhance-your-gitlab-issue-workflow-part-one)*.</small>

When [Emmanuel Bergmans](https://www.linkedin.com... | gwelr |

398,534 | Wednesday Links - Edition 2020-07-15 | First milestone of Reactor 2020.0 -Codename Europium ( 5 min read ) ☢️ https://spring.io/blog/2020/07... | 6,965 | 2020-07-15T10:06:06 | https://dev.to/0xkkocel/wednesday-links-edition-2020-07-15-5gcd | java, spring, jvm, maven | First milestone of Reactor 2020.0 - Codename Europium (5 min read) ☢️

https://spring.io/blog/2020/07/10/first-milestone-of-reactor-2020-0-codename-europium

The Spring team wants to hear from you! (40 sec read) 🎤

https://spring.io/blog/2020/07/14/the-spring-team-wants-to-hear-from-you

APM headers in Kafka records ... | 0xkkocel |

399,506 | Starting a Python project | $ mkdir ~/my_project Create project directory under user home. $ cd ~/my_project $ git init Create... | 0 | 2020-07-16T01:07:13 | https://dev.to/kennethloh/starting-a-python-project-3eb5 | 1. `$ mkdir ~/my_project` Create project directory under user home.

2. `$ cd ~/my_project`

3. `$ git init` Create git repo.

4. `$ python -m venv venv` Create virtual environment within the project directory. Preferred over a centralised virtual environments directory e.g. `my_venvs/my_project` as it is easier to find ... | kennethloh | |

403,126 | TypeScript and Netlify Functions | Did you know that Netlify Functions are just using AWS Lambdas behind the scenes? This means you can... | 0 | 2020-07-18T13:25:17 | https://chiubaca.com/typescript-and-netlify-functions-37b8 | typescript, netlify, serverless | Did you know that Netlify Functions are just using AWS Lambdas behind the scenes?

This means you can use the same type definitions available for AWS Lambda in your Netlify functions too. Install the aws-lambda types by running the following:

```bash
npm install @types/aws-lambda --save-dev
```

You only need to impo... | chiubaca |

403,332 | Animatronics with Artificial Intelligence — Brings Unimaginable Results | Have you ever heard of Animatronics? It is the integration of Animation and Electronics. Imagine what... | 0 | 2020-07-18T18:20:37 | https://dev.to/ritheeshbaradwaj/animatronics-with-artificial-intelligence-brings-unimaginable-results-1b70 | machinelearning, writing | Have you ever heard of Animatronics? It is the integration of Animation and Electronics. Imagine what wonders we could achieve with Artificial Intelligence and Animatronics working together. Here's an article that gives a gist of the possibilities open to us if we combine Animatronics with AI. Hope you enjoy it!

ht... | ritheeshbaradwaj |

421,321 | All about processes and how can we view them | Linux is a multitasking operating system, which means that it creates an illusion that multiple progr... | 6,412 | 2020-08-08T12:22:03 | https://dev.to/yashsugandh/all-about-processes-and-how-can-we-view-them-1n2i | ubuntu, linux, computerscience, beginners | Linux is a multitasking operating system, which means that it creates an illusion that multiple programs are running at the same time by rapidly switching from one program to another.

The Linux kernel manages this through the use of processes.

Each process has the illusion that it is the only process on the computer.... | yashsugandh |

696,340 | Adding eslint-disable to files with errors | I recently needed to update an Ember project that was on version 3.6 (quite outdated)... | 0 | 2021-05-12T18:56:45 | https://eduardoweiland.info/posts/2021/05/adicionando-eslint-disable-nos-arquivos-com-erros/ | braziliandevs, eslint, javascript | ---

title: Adding eslint-disable to files with errors

published: true

date: 2021-05-12 17:09:49 UTC

tags: [braziliandevs, eslint, javascript]

canonical_url: https://eduardoweiland.info/posts/2021/05/adicionando-eslint-disable-nos-arquivos-com-erros/

---

I recently needed to update an Ember project that... | eduardoweiland |

429,425 | Last Form component you need | I have published my first NPM library for React Form, I have created this form component in my last 3... | 0 | 2020-08-16T19:45:46 | https://dev.to/gkhan205/published-first-npm-package-for-react-form-58eb | react, npm, showdev, codewithghazi | I have published my first NPM library for React forms. I built this form component over my last 3 years of experience, have tested it in more than 8 projects, and found it very useful.

There were times when creating form layouts, handling validations, and creating data for API POST and populating for... | gkhan205 |

492,181 | Why I Decided to Study Software Engineering | Why did I decide to learn software engineering? The short and cliche answer is: Balance. I consi... | 0 | 2020-10-19T18:47:12 | https://dev.to/nicklevenson/why-i-decided-to-study-software-engineering-40li | student, flatiron, codenewbie, career |

Why did I decide to learn software engineering? The short and cliche answer is:

*Balance*.

I consider myself to have a creative heart. Since I was little, I have loved project-oriented hobbies. As a kid, I was obsessed with medieval wea... | nicklevenson |

603,410 | Daily Developer Jokes - Sunday, Feb 14, 2021 | Check out today's daily developer joke! (a project by Fred Adams at xtrp.io) | 4,070 | 2021-02-14T13:00:23 | https://dev.to/dailydeveloperjokes/daily-developer-jokes-sunday-feb-14-2021-9b3 | jokes, dailydeveloperjokes | ---

title: "Daily Developer Jokes - Sunday, Feb 14, 2021"

description: "Check out today's daily developer joke! (a project by Fred Adams at xtrp.io)"

series: "Daily Developer Jokes"

cover_image: "https://private.xtrp.io/projects/DailyDeveloperJokes/thumbnail_generator/?date=Sunday%2C%20Feb%2014%2C%202021"

published: tr... | dailydeveloperjokes |

641,426 | How do I become a DevOps engineer? | A question I hear a lot is “How do I become a DevOps engineer?” I have two answers to this... | 0 | 2021-03-22T09:27:33 | https://jhall.io/archive/2021/03/21/how-do-i-become-a-devops-engineer/ | devops | ---

title: How do I become a DevOps engineer?

published: true

date: 2021-03-21 00:00:00 UTC

tags: devops

canonical_url: https://jhall.io/archive/2021/03/21/how-do-i-become-a-devops-engineer/

---

A question I hear a lot is “How do I become a DevOps engineer?”

I have two answers to this question.

The first probably is... | jhall |

660,872 | How to Create Subscribe Call to action Form | For the last few days, I've been working on a Some Design parts. I've always believed that a creativi... | 0 | 2021-04-10T06:12:22 | https://dev.to/itanand/how-to-create-subscribe-call-to-action-form-4pim | codepen | For the last few days, I've been working on some design pieces. I've always believed that creativity is important for any dev, since it connects you to future opportunities and helps you grow your network.

Today I created a subscribe call-to-action design using Pug, SCSS, and JavaScript (Babel). There's still s... | itanand |

704,282 | Angular dynamic modules at runtime with Module Federation | Angular 12 recently launched with the added enhancements of Webpack 5 and opening the door to using m... | 0 | 2021-05-20T21:54:34 | https://dev.to/seanperkins/angular-dynamic-modules-at-runtime-with-module-federation-mk5 | angular, webpack, modulefederation, microfrontend | Angular 12 recently launched with the enhancements of Webpack 5, opening the door to using module federation. If you are looking for a great deep-dive into module federation and micro-frontends, I suggest reading: https://www.angulararchitects.io/aktuelles/the-microfrontend-revolution-module-federation-in-webp... | seanperkins |

708,593 | AWS Route 53 with Terraform | In this article we will imagine that your team received two very important pieces of information. The... | 0 | 2021-05-25T21:53:01 | https://augustovaldivia.ca/r53.html | aws, terraform, awsroute53, cdn | In this article, we will imagine that your team has received two very important pieces of information: the first concerns issues with the company website, and the second concerns the future of your company.

First, the social media team has revealed to your team that a significant proportion of your customer base ... | valaug |

739,066 | Managing mapbox-gl state in React app | Description of the problem In the course of my work at geoalert.io, I have repeatedly... | 0 | 2021-06-25T11:11:05 | https://dev.to/dqunbp/managing-mapbox-gl-state-in-react-app-4328 | react, mapbox, xstate, javascript | ## Description of the problem

In the course of my work at [geoalert.io](http://geoalert.io/), I have repeatedly encountered the problem of managing the state of a `React` application with an embedded [mapbox-gl](https://docs.mapbox.com/mapbox-gl-js/api/) map.

I plan to cover the topic in a series of articles, including... | dqunbp |

739,737 | How to Add Push Notifications into a ReactJS App | Push notifications are a useful tool to engage and retain users. In this tutorial, we'll show you... | 0 | 2021-06-28T16:48:32 | https://onesignal.com/blog/how-to-integrate-push-notifications-in-react/ | react, webdev, javascript, frontend | ---

title: How to Add Push Notifications into a ReactJS App

published: true

date: 2021-06-25 22:53:02 UTC

tags: react, webdev, javascript, frontend

canonical_url: https://onesignal.com/blog/how-to-integrate-push-notifications-in-react/

---

Early this month at *Black Hat USA 2021*, researchers from *Wiz.io* [presented](https://www.blackhat.com/us-21/b... | johspaeth |

819,770 | Installing MongoDB on Kubernetes with Replica Sets and NO MongoDB Operator | Are you tired of searching for MongoDB on Kubernetes and Immediately going to a MongoDB site on how... | 0 | 2021-09-10T12:17:04 | https://dev.to/ksummersill/installing-mongodb-on-kubernetes-with-replica-sets-and-no-mongodb-operator-4bom | mongodb, kubernetes, devops, operations | Are you tired of searching for MongoDB on Kubernetes and immediately landing on a MongoDB page about how to use their operator? Are you tired of finding nothing but Helm packages where you have no clue what is really going on, or sets of instructions that are overly complex? Are you tired of having no choice but to b... | ksummersill |

845,395 | Sorting an Array of JavaScript Objects in a Specific Order | Sorting an array of objects in javascript is simple enough using the default sort() function for all... | 0 | 2021-09-29T22:34:22 | https://dev.to/mick_patterson_/sorting-an-array-of-javascript-objects-in-a-specific-order-48am | javascript, webdev, tutorial | Sorting an array of objects in JavaScript is simple enough using the built-in `sort()` method available on all arrays:

```javascript
const arr = [
  {
    name: "Nina"
  },
  {
    name: "Andre"
  },
  {
    name: "Graham"
  }
];

const sortedArr = arr.sort((a,b) => {
  if ( a.name < b.name ){
    return -1;
  }
  if ( a.name... | mick_patterson_ |

848,302 | Update OpenSSL to 3.0 on CentOS7 | Prerequisites Use sudo when needed. Install perl-IPC-Cmd and perl-Test-Simple: sudo... | 0 | 2021-10-01T20:02:50 | https://dev.to/nikolastojilj12/update-openssl-to-3-0-on-centos7-150o | openssl, centos7, libopenssl, devops | ## Prerequisites

Use `sudo` when needed.

Install `perl-IPC-Cmd` and `perl-Test-Simple`:

```bash
sudo yum install perl-IPC-Cmd perl-Test-Simple
```

## Download and install OpenSSL 3.0

Go to [OpenSSL's download page](https://www.openssl.org/source/) and copy the link to the latest version. At this time it's 3.0.0. T... | nikolastojilj12 |

882,449 | Know this easily test React app | Jest and Testing Library were the most powerful tool for testing React App. In this post, we are... | 0 | 2021-10-31T02:02:21 | https://www.thangphan.xyz/posts/know-this-easily-test-react-app/ | testing, react, javascript, webdev | ---

canonical_url: "https://www.thangphan.xyz/posts/know-this-easily-test-react-app/"

---

_Jest_ and _Testing Library_ are the most powerful tools for testing React apps. In this post, we are going to explore their most important concepts.

Let's dig in!

This is the simplest test that we can write the first time us... | thangphan37 |

895,577 | Fastest way get your first developer job | Do you know the shortest path between two points? ...its a straight line. When trying to get your... | 0 | 2021-11-11T19:07:44 | https://dev.to/scottstern06/fastest-way-get-your-first-eng-gig-92b | codenewbie, webdev, beginners, career | Do you know the shortest path between two points?

...it's a straight line.

When trying to get your first job as a software developer, there's a lot of stuff you don't know.

It might seem like a good idea to learn Python, and Ruby, and JavaScript, and Java (because Java kinda sounds like JavaScript).

Well...when money i... | scottstern06 |

898,150 | Sparkly skull ✨ | A post by Jayant Goel | 0 | 2021-11-14T20:28:57 | https://dev.to/jayantgoel001/sparkly-skull-2ldn | codepen | {% codepen https://codepen.io/Mamboleoo/pen/yLbxYdx %} | jayantgoel001 |

913,705 | You DON'T need these to be a web dev | "If you don't know all of these, don't call yourself a web developer", followed by some list of web... | 0 | 2021-12-01T00:18:43 | https://dev.to/nitzanhen/you-dont-need-these-to-be-a-web-dev-c3b | webdev, beginners, javascript, programming | *"If you don't know all of these, don't call yourself a web developer"*, followed by some list of web dev related terms. Have you encountered one of these posts before? I come across them every once in a while on social media.

These sorts of divisive claims bring about nothing but toxicity to our community, and only a... | nitzanhen |

914,378 | 20 ICP(About $800) Bounty for Developer web3 | 20 ICPs, with 10 ICPs bonus for docs site task: @Astrox_Network's "agent_dart" api... | 0 | 2021-12-01T15:39:00 | https://dev.to/utadamaaya/20-icpabout-800-bounty-for-developer-web3-40cf | flutter, dart, blockchain, rust | **20 ICPs, with 10 ICPs bonus for docs site**

task: @Astrox_Network's "agent_dart" API doc

link: https://github.com/AstroxNetwork/agent_dart/issues/13

**Acceptance Criteria:**

[comment on code to cover all APIs]

[generate dart docs]

[optional generate gitbook or github pages]

[optional tutorial/docs site for develo... | utadamaaya |

916,243 | Building a Fullstack Road trip mapper app using the absolute power of MERN stack 🔥 | This article concentrates on the most critical tasks and concepts for better understanding and... | 0 | 2021-12-10T16:30:27 | https://aviyel.com/post/1430/fullstack-road-trip-mapper-app-built-using-mern-stack?utm_source=dev_to&utm_medium=articles_project_tutorials&utm_campaign=post_1430 | react, javascript, mern, webdev | This article concentrates on the most critical tasks and concepts for better understanding and building MERN stack applications from the ground up. It's for folks who are serious about learning about the MERN stack and want to concentrate on the essentials. We'll build a full-stack road trip mapper application where us... | pramit_marattha |

926,055 | Answer: How to resolve the error on 'react-native start' | answer re: How to resolve the error on... | 0 | 2021-12-14T11:46:26 | https://dev.to/bilalmohib/answer-how-to-resolve-the-error-on-react-native-start-1iga | javascript, mobile, programming, node | {% stackoverflow 58122821 %}

I just got a similar error for the first time today. It appears that in `\node_modules\metro-config\src\defaults\blacklist.js` there is an invalid regular expression that needs to be changed. I changed the first expression under `sharedBlacklist` from:

```js

var sharedBlacklist = [

/node_modules[/\\]r... | bilalmohib |

937,264 | The Weekly Dev - 202152 | "The Weekly Dev" cares about technical solutions (namely: software) that are hackable and enable a... | 0 | 2021-12-27T07:35:46 | https://kevwe.com/weekly/202152 | ---

title: The Weekly Dev - 202152

published: true

description:

tags:

//cover_image: https://direct_url_to_image.jpg

canonical_url: 'https://kevwe.com/weekly/202152'

---

"The Weekly Dev" cares about technical solutions (namely: software) that are hackable and enable a deeper level of understanding.

We don't want to... | madunixman | |

937,756 | How I made workplace toxic | Photo by Kyle Nieber on Unsplash It's been six years since I left my job at a startup where I worked... | 0 | 2021-12-27T17:55:33 | https://dev.to/this-is-learning/how-i-made-workplace-toxic-1ici | career, workplace, toxic | Photo by <a href="https://unsplash.com/@kylenieber?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Kyle Nieber</a> on <a href="https://unsplash.com/?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

It's been six years since I left my job at a startup where I worked ... | santoshyadavdev |

939,743 | What is DNS TTL (Time To Live)? | What is time-to-live (TTL)? Time-to-live (TTL) is a value for the period of time that a... | 0 | 2021-12-29T17:41:55 | https://dev.to/s3cloudhub/what-is-dns-ttl-time-to-live-g87 | dns, ttl, route53, aws | ## What is time-to-live (TTL)?

Time-to-live (TTL) is a value for the period of time that a packet, or data, should exist on a computer or network before being discarded.

The meaning of TTL, or packet lifetime, depends on the context. For example, TTL is a value in an Internet Protocol (IP) packet that tells a network ... | s3cloudhub |

1,414,198 | O que é RabbitMQ e qual sua função na programação? | RabbitMQ é um software de mensageria de código aberto que permite que aplicativos se comuniquem uns... | 0 | 2023-03-25T00:59:41 | https://dev.to/gabrielgcj/o-que-e-rabbitmq-e-qual-sua-funcao-na-programacao-468j | RabbitMQ é um software de mensageria de código aberto que permite que aplicativos se comuniquem uns com os outros usando filas de mensagens. Ele foi originalmente desenvolvido em Erlang pela Rabbit Technologies Ltd. e agora é mantido pela Pivotal Software, Inc.

As mensagens em RabbitMQ são enviadas para filas e consum... | gabrielgcj | |

956,943 | java traditional method to remove duplicate String | I just saw a post in dev someone solved same problem using collections in java. so i thought let me... | 0 | 2022-01-16T05:09:07 | https://dev.to/riyas07/java-core-method-to-remove-duplicate-string-6o8 | java, beginners, programming, tutorial | I just saw a post in dev someone solved same problem using collections in java. so i thought let me try the same problem to solve in traditional way`

1. public class Main

{

public static void main(String[] args) {

String s="abbvcddgtttt";

char c[]=s.toCharArray();

char cc[]=new char[c.length];

int index=0;

... | riyas07 |

994,795 | Avoiding Stringly-typed in Kotlin | A couple of years ago, I developed an application in Kotlin based on Camunda BPMN to help me manage... | 0 | 2022-02-20T16:53:35 | https://blog.frankel.ch/avoid-stringly-typed-kotlin/ | kotlin, typesystem, strongtyping, api | A couple of years ago, I developed an application in Kotlin based on Camunda <abbr title="Business Process Management Notation">BPMN</abbr> to help me manage my conference submission workflow. It tracks my submissions in Trello and synchronizes them on Google Calendar and in a Google Sheet. Google Calendar offers a RE... | nfrankel |

1,000,995 | Day-15 Training at cognizant | Date:25/02/2022 Day:Friday | 0 | 2022-02-25T06:13:53 | https://dev.to/mahin_mittal_/day-15-training-at-cognizant-1e3 | beginners, mysql | - Date:25/02/2022

- Day:Friday

| mahin_mittal_ |

1,005,407 | How to use the @nuxtjs/strapi Module to add Authentication to a Nuxt Application | Author: Alex Godwin How to use the @nuxtjs/strapi Module to add Authentication to a Nuxt... | 0 | 2022-03-01T15:55:10 | https://strapi.io/blog/how-to-use-the-nuxt-strapi-module-to-add-authentication-to-a-nuxt-application?utm_source=dev.to&utm_medium=post&utm_id=blog | nuxtjs, javascript, tutorial, jamstack | ---

canonical_url: https://strapi.io/blog/how-to-use-the-nuxt-strapi-module-to-add-authentication-to-a-nuxt-application?utm_source=dev.to&utm_medium=post&utm_id=blog

---

Author: Alex Godwin

# How to use the @nuxtjs/strapi Module to add Authentication to a Nuxt Application

In this tutorial, we will learn about authen... | shadaw11 |

1,005,679 | Analyzing iMessage with SQL | SQLite is an often overlooked flavor of SQL engines. Some have suggested it is the most prolific SQL... | 0 | 2022-03-01T18:36:10 | https://arctype.com/blog/search-imessage | database, tutorial, programming, sqlite | SQLite is an often overlooked flavor of SQL engines. Some have suggested it is the most prolific SQL engine in existence due to its highly flexible nature and ability to run on almost any platform with limited resources. Unlike other SQL engines like MySQL, PostgreSQL, MSSQL, or Oracle, SQLite runs without a server. SQ... | rettx |

1,189,689 | Temel Rancher kurulumu | Rancher hakkında temel ve sözel bir dokümanı "Rancher'a giriş" olarak Medium üzerinde paylaşmıştım.... | 0 | 2022-09-10T14:01:14 | https://dev.to/aciklab/temel-rancher-kurulumu-9hb | rancher, k8s, kubernetes, docker | Rancher hakkında temel ve sözel bir dokümanı "[Rancher'a giriş](https://aliorhun.medium.com/ranchera-giri%C5%9F-390506d68b2)" olarak Medium üzerinde paylaşmıştım. Şimdi ise hedefimiz elimizi biraz daha kullanıma alıştırmak olacak.

Öncelikle temel olarak sanal makine üzerinde Ubuntu 20.04 sunucu sürümünü kullandığımı s... | aliorhun |

1,190,444 | React vs Solid - Seriously? | There is no contest. SolidJS is faster, better, and easier to learn and maintain. If you're already... | 0 | 2022-09-11T19:16:25 | https://dev.to/chadsteele/react-vs-solid-seriously-4j7p | react, solidjs, redux, javascript | There is no contest.

SolidJS is faster, better, and easier to learn and maintain. If you're already a React developer, you're already a Solid developer. You'll just type a lot less in Solid and spend less time debugging. If your company is still investing in React in any way at all, you're wasting lots of time and ... | chadsteele |

1,210,426 | Know The Application of Data Science in the Healthcare Industry | The individual's health is now separated from the frequent life-or-death decisions made at the... | 0 | 2022-10-04T06:14:27 | https://dev.to/poo727/know-the-application-of-data-science-in-the-healthcare-industry-1am5 | datascience, datasciencecourse, datasciencecourseinbangalore, artificialintelligencecourse |

The individual's health is now separated from the frequent life-or-death decisions made at the insurance level of the healthcare system by many levels. While it makes sense for insurance companies, which are trying to limit costs, to serve as a gatekeeper for authorizing medical treatments, the process is usually man... | poo727 |

1,257,860 | vida saludable : 6 formas de vivir sano sin arruinarse | La vida sana es una parte importante de llevar una vida saludable. También es algo con lo que muchas... | 0 | 2022-11-15T14:34:52 | https://dev.to/bibogi22/vida-saludable-6-formas-de-vivir-sano-sin-arruinarse-m63 | vida | La vida sana es una parte importante de llevar una [vida saludable](https://inass14.blogspot.com/2022/10/como-vivir-un-estilo-de-vida-saludable.html). También es algo con lo que muchas personas luchan porque no saben cómo lograrlo. La buena noticia es que hay muchas medidas sencillas que puedes tomar para mejorar tu di... | bibogi22 |

1,264,253 | Randomizing Array Elements | If the Math.random() function didn't work for you it's okay. As a newbie to coding, it took me over a... | 0 | 2022-11-21T14:14:30 | https://dev.to/juliannehuynh/randomizing-array-elements-21o5 | javascript, vscode, programming, firstpost | If the Math.random() function didn't work for you it's okay. As a newbie to coding, it took me over a day for it to work it's magic. There are a number of ways to create a random return but I found this to be the cleanest way that made the most sense. The syntax behind this code may seem a little dauting but once you b... | juliannehuynh |

1,292,038 | An Unsafe Deserialization Vulnerability and Types of Deserialization | Deserialization Unsafe Deserialization (also referred to as Insecure Deserialization) is... | 0 | 2022-12-10T20:30:31 | https://dev.to/tutorialboy/an-unsafe-deserialization-vulnerability-and-types-of-deserialization-1mcg |

## Deserialization

Unsafe Deserialization (also referred to as Insecure Deserialization) is a vulnerability wherein malformed and untrusted data input is insecurely deserialized by an application. It is exploited... | tutorialboy | |

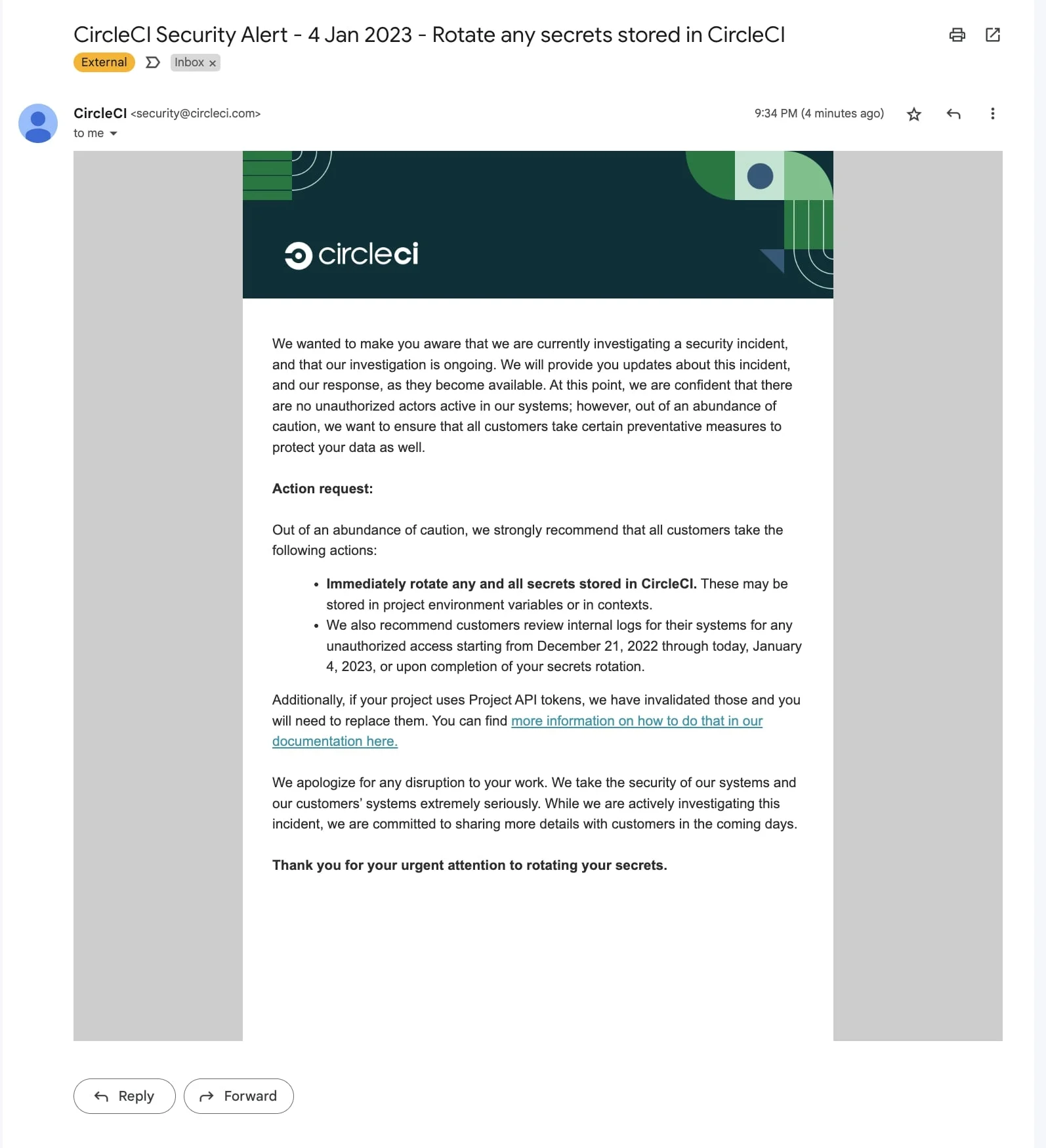

1,318,252 | Rotate your Circle CI keys now. | This morning many developers recieved an email informing them that circle CI had been breached... | 0 | 2023-01-05T10:54:52 | https://dev.to/lukeecart/rotate-your-circle-ci-keys-now-3b14 | devops, news | This morning many developers recieved an email informing them that circle CI had been breached between 21st December 2022 and 4th January 2023.

Image from [https://www.bleepingcomputer.com](https://www.bleepin... | lukeecart |

1,320,448 | Rust on Arch Linux: 始め方 | はじめに Rust (あるいは rustlang) はモダンな汎用プログラミング言語の一つです。高速で、安全で、開発生産性を備えています。 Rust... | 21,290 | 2023-01-07T02:35:09 | https://scqr.net/ja/blog/2023/01/07/rust-on-arch-linux-getting-started/index.html | rust, rustup, cargo, archlinux | ## はじめに

[Rust](https://www.rust-lang.org/) (あるいは rustlang) はモダンな汎用プログラミング言語の一つです。高速で、安全で、開発生産性を備えています。

Rust にはたくさんの特徴があります。例えば [関数型プログラミング (英語)](https://doc.rust-lang.org/book/ch13-00-functional-features.html) パラダイムや、[所有権 (英語)](https://doc.rust-lang.org/book/ch04-01-what-is-ownership.html)、[ゼロコスト抽象化 (英語)](https://doc... | nabbisen |

1,322,291 | Hire Affordable Engineering Assignment Helper In the USA | Excellent Advantages of our Engineering assignment help services Students from around the globe can... | 0 | 2023-01-09T09:46:40 | https://dev.to/jaccymice/hire-affordable-engineering-assignment-helper-in-the-usa-3n03 | webdev | Excellent Advantages of our Engineering assignment help services

Students from around the globe can use our engineering assignment help online. Thousands of students rely on our support when it comes to finishing their academic work because we have been in this sector for a very long time and are aware of the crucial v... | jaccymice |

1,324,716 | Why You Should Not Learn Web3 As A Beginner | There are a few reasons why someone who is just starting to learn about programming and web... | 0 | 2023-01-11T07:02:09 | https://dev.to/shoyeb001/why-you-should-not-learn-web3-as-a-beginner-4f1k | beginners, web3, webdev, career | There are a few reasons why someone who is just starting to learn about programming and web development may not want to immediately dive into learning about **web3 and blockchain technology**.

### New Technology

Web3 and blockchain are relatively new and rapidly evolving technologies. This means that there is a lot o... | shoyeb001 |

1,325,431 | Applying CSS Positioning Properties | In this article, you’ll learn what CSS positioning properties are, the different CSS positioning property values, and how you can apply them to your web page. | 0 | 2023-01-11T16:31:13 | https://code.pieces.app/blog/applying-css-positioning-properties | css | <figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/csspositionproperty_0f78273793e3918dd872670f22199887.jpg" alt="Applying CSS Positioning Properties."/></figure>

CSS positioning properties enhance the user experience on your page. Also, they allow you to position elements and contents on a web page... | get_pieces |

1,344,275 | PostgreSQL: ⚠ when locking though views (TL;DR: test for race conditions and check execution plan with BUFFERS, VERBOSE) | In theory, in a relational database, you should be able to interact (any DML) with all tables through... | 0 | 2023-02-02T17:44:35 | https://dev.to/aws-heroes/postgresql-when-locking-though-views-tldr-test-for-race-conditions-and-check-execution-plan-with-buffers-verbose-28je | postgres, sql, lock, view | In theory, in a relational database, you should be able to **interact (any DML) with all tables through a view**. But there may be some implementation details, limitations, or bugs changing the behavior. This means that, if you are doing some thicky things through a view, you should **test them carefully**. However, be... | franckpachot |

1,366,949 | Redirect einer Webseite - Mittel und Wege zum Thema | Redirect einer Webseite; Bei Redirect handelt es sich um eine server-seitige beziehungsweise um eine... | 0 | 2023-02-15T17:25:45 | https://dev.to/digital_hub/redirect-einer-webseite-mittel-und-wege-zum-thema-4o8m | apache, php, linux |

Redirect einer Webseite;

Bei Redirect handelt es sich um eine server-seitige beziehungsweise um eine client-seitige Weiterleitung - wo eine 'URL gewissermaßen

auf eine andere geleitet wird.

man kann auch sagen dass eine URL auf eine andere URL zeigt. Was passiert hier wirklich - wie geht dies technisch?

Diese hier ... | digital_hub |

1,367,432 | SQL101: Introduction to SQL for Data Analysis | Introduction SQL (Structured Query language) is an essentially powerful tool for Data Analysts. It is... | 0 | 2023-02-18T06:33:24 | https://dev.to/njenga98/sql101-introduction-to-sql-for-data-analysis-4lh5 | sql, datascience, database, datanewbie | **Introduction**

SQL (Structured Query language) is an essentially powerful tool for Data Analysts. It is very helpful in instances where one is working with data held in relational databases.

SQL is useful in accessing and manipulating data allowing Data Analysts to extract valuable insights and make informed resoluti... | njenga98 |

1,371,258 | SSC GD Constable Answer Key 2023: Check Your Answers Now! | SSC GD Constable Answer Key 2023 Date: Staff Selection Commission (SSC) has released the answer key... | 0 | 2023-02-19T05:41:15 | https://dev.to/lovehacker/ssc-gd-constable-answer-key-2023-check-your-answers-now-dll | ssc, result | SSC GD Constable Answer Key 2023 Date: Staff Selection Commission (SSC) has released the answer key of SSC GD Constable Exam 2023 today i.e. on 18 February 2023 on its official website. Candidates who have appeared for the exam can download the answer key now and check their answers. The downloading process is given be... | lovehacker |

1,372,816 | FLiP-FLaNK Stack Weekly 20-February-2023 | 20-February-2023 FLiPN-FLaNK Stack Weekly Welcome to the seventh newsletter... | 0 | 2023-02-20T16:18:24 | https://dev.to/tspannhw/flip-flank-stack-weekly-20-february-2023-f86 | apachepulsar, apacheflink, apacenifi, apachekafka | ## 20-February-2023

### FLiPN-FLaNK Stack Weekly

Welcome to the seventh newsletter of 2023. Getting closer...

Tim Spann @PaaSDev

Happy President's Day.

The new stuff in NiFi 1.20... | tspannhw |

1,385,200 | Tailwind vs styled-components in React.js | On Twitter, I constantly see people who use Tailwind and how they fell in love with it. I've been... | 0 | 2023-03-03T13:46:21 | https://dev.to/uzura89/tailwind-vs-styled-components-in-reactjs-1n0k | tailwindcss, styledcomponents, css, react | On Twitter, I constantly see people who use Tailwind and how they fell in love with it. I've been pretty much satisfied with my current work flow (styled-components), yet, I finally decided to give it a try and see if the tool was for me. This article is my own verdict after I used Tailwind for several days.

I mainly... | uzura89 |

1,409,873 | 5 Top Reasons to Use GitHub Actions for Your Next Project | A lot of people have been asking me on Twitter, Discord, Facebook, etc, whether they should be using... | 0 | 2023-03-22T01:24:25 | https://dev.to/n3wt0n/5-top-reasons-to-use-github-actions-for-your-next-project-cga | github, githubactions, cicd, devops | A lot of people have been asking me on Twitter, Discord, Facebook, etc, whether they should be using GitHub Actions and why choosing it over other services. So I’ve decided to put together 5 of the most important reasons why I think GitHub Actions is a great service.

### Video

As usual, if you are a __visual learner_... | n3wt0n |

1,410,085 | CRM trends to keep an eye on | Why should you learn CRM trends? There are many reasons why learning about CRM trends is important... | 0 | 2023-03-22T07:41:20 | https://dev.to/databeys/crm-trends-to-keep-an-eye-on-27gd | crm, ai, api, devops | Why should you learn CRM trends?

There are many reasons why learning about CRM trends is important for businesses and professionals:

• Stay ahead of the competition: By keeping up with CRM trends, companies can stay ahead of the competition. They can identify new opportunities, gain a competitive advantage, and dev... | databeys |

1,410,922 | How to Fix Kodi Black Screen | If you are facing a black screen issue on Kodi, there are several ways to fix it. Here are some of... | 0 | 2023-03-22T09:06:39 | https://dev.to/neelum23/how-to-fix-kodi-black-screen-5e77 | kodi, beginners | <p>If you are facing a black screen issue on Kodi, there are several ways to fix it. Here are some of the solutions I studied at <a href="http://www.bestkoditips.com">Bestkoditips</a> that you can try:</p>

<p><br></p>

<p>Clear Cache and Data: Sometimes, Kodi's cache and data can get corrupted, causing the black sc... | neelum23 |

1,410,937 | Answer: Replacing text within a label | answer re: Replacing text within a label ... | 0 | 2023-03-22T09:30:57 | https://dev.to/ratiarahman/answer-replacing-text-within-a-label-52ik | {% stackoverflow 63030010 %} | ratiarahman | |

1,411,076 | Exploring the Benefits of Watching Live Sports on FirstRowSports | Do you love sports but can't always make it to the game? Or maybe you're looking for a more... | 0 | 2023-03-22T11:50:49 | https://dev.to/ggggaga2/exploring-the-benefits-of-watching-live-sports-on-firstrowsports-3n5b | firtsrow, firstrowsport, javascript | Do you love sports but can't always make it to the game? Or maybe you're looking for a more affordable way to catch all the live action? Look no further than FirstRowSports. This website has been a go-to for sports enthusiasts for years, and for good reason. From football to basketball, hockey to baseball, and everythi... | ggggaga2 |

1,411,334 | Soroban Contracts 101: Single Offer Sale | Hi there! Welcome to my fourteenth post of my series called "Soroban Contracts 101", where I'll be... | 22,205 | 2023-03-22T16:21:49 | https://dev.to/yuzurush/soroban-contracts-101-single-offer-sale-18jc | soroban, sorobanathon, stellar, smartcontract | Hi there! Welcome to my fourteenth post of my series called "Soroban Contracts 101", where I'll be explaining the basics of Soroban contracts, such as data storage, authentication, custom types, and more. All the code that we're gonna explain throughout this series will mostly come from [soroban-contracts-101](https://... | yuzurush |

1,411,337 | I’m a software engineer, what does AI mean for me? | The ticking clock Seven years ago, a co-worker and I were walking to lunch in our small... | 0 | 2023-03-22T19:41:15 | https://dev.to/justinschroeder/im-a-software-engineer-what-does-ai-mean-for-me-1e8j | chatgpt, ai, productivity, career | ## The ticking clock

Seven years ago, a co-worker and I were walking to lunch in our small town of Charlottesville, Virginia. To our surprise, we realized we had passed _three_ digital agencies on our short stroll. Web developers are everywhere — and for good reason. It’s nearly impossible to run a business without a ... | justinschroeder |

1,411,558 | 10 Traits That Separate the Best Devs From the Crowd | We work hard to improve our tech and soft skills. But our character traits, our mindset - we take... | 0 | 2023-03-24T16:00:00 | https://blog.trueseniordev.com/10-traits-of-outstanding-dev/ | career, programming, productivity, beginners | > * We work hard to improve our tech and soft skills. But our character traits, our mindset - we take it for granted. An innate, fixed part of who we are. And thus we let it run on autopilot.

> * This way of thinking is harmful. Not only is your mindset critical for a successful software development career (maybe even ... | zawistowski |

1,411,592 | Optimised Django App Setup in Windows VSCode 🚀🐍🛠️ | Setting up a new Django project is a multi-step process that involves installing various tools and... | 22,336 | 2023-03-28T17:09:38 | https://dev.to/siwhelan/optimized-django-app-setup-in-windows-vscode-lg2 | python, django, docker, beginners | Setting up a new Django project is a multi-step process that involves installing various tools and packages, creating directories, and configuring settings. This process can take up valuable time and effort that could be better spent on developing your app's functionality.

To help streamline the process and make it ea... | siwhelan |

1,411,622 | Revolutionize Your CSS Skills with These 8 Cutting-Edge CSS Features | Introduction: Having all the CSS concepts and remembering them is quite difficult, so here... | 0 | 2023-03-22T21:09:55 | https://dev.to/abdulrahmanismael/revolutionize-your-css-skills-with-these-8-cutting-edge-css-features-1gil | webdev, css, codenewbie, frontend | ## Introduction:

Having all the CSS concepts and remembering them is quite difficult, so here I will go over the most important CSS concepts that will take your CSS knowledge to the next level…

---

## Now, get your pen and paper ready because we have some exciting new CSS features to unveil:

### **1- “:empty” Pseudo... | abdulrahmanismael |

1,411,744 | Real-time Network Status Detection with React Native | by Champion Uzoma In today's world, mobile apps need to be able to detect the network state of a... | 0 | 2023-03-23T00:41:46 | https://blog.openreplay.com/real-time-network-status-detection-with-react-native/ | reactnative | by [Champion Uzoma](https://blog.openreplay.com/authors/champion-uzoma)

In today's world, mobile apps need to be able to detect the network state of a device and display appropriate UI to the user based on that state. Whether displaying a custom offline message, a loading indicator, or any other type of UI element, th... | asayerio_techblog |

1,411,768 | Desempacotamento - *args e **kwargs | Vamos entender sobre o desempacotamento, ou seja, uma forma que podemos atribuir diversos valores ao... | 0 | 2023-03-23T01:47:43 | https://dev.to/scjorge/desempacotamento-args-e-kwargs-clp | python, tutorial, programming, braziliandevs | Vamos entender sobre o desempacotamento, ou seja, uma forma que podemos atribuir diversos valores ao mesmo tempo.

vamos primeiro entender uma habilidade que o Python nos traz. Podemos ter múltiplas atribuições de valores ao declarar variáveis e, também, podemos retornar mais de um valor em nossas funções. Vejamos:

``... | scjorge |

1,411,902 | How to Upload and return files in ASP.NET MVC? | In this blog, we will shed light on how to upload and return a file in ASP.NET MVC. When a user... | 0 | 2023-03-23T05:15:27 | https://www.ifourtechnolab.com/blog/how-to-upload-and-return-files-in-asp-net-mvc | beginners, mvc, dotnet, webdev | In this blog, we will shed light on how to upload and return a file in ASP.NET MVC.

When a user uploads files, they should be uploaded to their project folder. We need to create a folder for the uploaded file in our project. Let’s get started with creating an MVC application.

## Creating MVC Application

Create a new... | ifourtechnolab |

1,411,931 | Top Backend-as-a-Service Solutions (BaaS) in 2023 | What is BaaS? Backend-as-a-service (a.k.a BaaS) is a model that provides developers with... | 0 | 2023-03-23T06:23:16 | https://blog.samiyousef.ca/comparing-backend-as-a-service-solutions-a-complete-guide/?ref=dev.to | baas, firebase, awsamplify, supabase | ---

title: Top Backend-as-a-Service Solutions (BaaS) in 2023

published: true

date: 2023-02-06 01:18:55 UTC

tags: BaaS,Firebase,AWSAmplify,Supabase

canonical_url: https://blog.samiyousef.ca/comparing-backend-as-a-service-solutions-a-complete-guide/?ref=dev.to

---

about DOM diffing algorithm we learned to create our own virtual DOM and got to know how DOM diffing actually works. In this post we will learn about how keys works and how we can add this feature to... | joydeep-bhowmik |

1,412,007 | 10 Things to Consider While Android App Development! | To Take your startup or a mid-sized business to the next level, your products must provide the best... | 0 | 2023-03-23T08:08:30 | https://dev.to/quokkalabs/10-things-to-consider-while-android-app-development-20g9 | android, mobile, github, api | To Take your startup or a mid-sized business to the next level, your products must provide the best user experience. On the other hand, the marketing and mobile apps you provide should be good enough to attract new customers to your business. And all it needs is good android app development to take it to the next level... | labsquokka |
