id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,763,774 | Y flores para la más preciosa | I love you very much, my princess | 0 | 2024-02-17T03:42:21 | https://dev.to/romero28/y-flores-para-la-mas-preciosa-2jjn | codepen | I love you very much, my princess

{% codepen https://codepen.io/Thomas-Romero-Serrato/pen/YzgBBGz %} | romero28 |

1,763,809 | A good, open source tool to share files between Computer and mobile devices. | I often need to send files from my mobile phone to my desktop computer, and vice versa... | 0 | 2024-02-17T06:03:31 | https://dev.to/davychxn/a-good-open-source-tool-to-share-files-between-computer-and-mobile-devices-149j | I often need to send files from my mobile phone to my desktop computer, and vice versa (computer -> mobile phone).

Some people recommend a tool called "localsend".

It's an open-source project and works on multiple platforms.

GitHub: https://lnkd.in/gm2Qm-Yh

Official website: https://localsend.org/

I trie... | davychxn | |

1,763,822 | Proton Keto Gummies Diabetes (Urgent MEDICAL Warning!): | Introduction In the ever-evolving world of health and wellness, individuals are constantly seeking... | 0 | 2024-02-17T06:53:34 | https://dev.to/protonketoacvg/proton-keto-gummies-diabetes-urgent-medical-warning-39jb | webdev, javascript, beginners, tutorial | Introduction

In the ever-evolving world of health and wellness, individuals are constantly seeking innovative ways to support their fitness goals and enhance their overall well-being. Proton Keto ACV Gummies have emerged as a popular choice among those looking to incorporate the benefits of apple cider vinegar (ACV) a... | protonketoacvg |

1,763,863 | A buzzword-free Solana crash course for founders, devs, and investors | I'm gonna explain everything you need to know about Solana and I'll try doing it without using... | 0 | 2024-02-21T10:40:16 | https://dev.to/almostefficient/a-buzzword-free-solana-crash-course-for-founders-devs-and-investors-4ab7 | solana, webdev, blockchain, web3 | I'm gonna explain everything you need to know about Solana, and I'll try doing it without using buzzwords. There are three parts to this; I recommend skimming the parts you already feel comfortable with!

**Who is this for?**

Someone who's never used a blockchain and doesn’t understand what Solana, Bitcoin, or Ethereum are (ev... | almostefficient |

1,763,900 | Memoization in Python; an alternative to Recursion | Introduction One of the most effective recipes for solving Dynamic Programming problems is... | 0 | 2024-02-17T09:37:48 | https://dev.to/ckorley4/memoization-in-python-an-alternative-to-recursion-30km | python | **Introduction**

One of the most effective recipes for solving Dynamic Programming problems is Memoization.

Memoization is the process of storing the results of subproblems and reusing them when the same subproblem needs to be solved again. This reduces the time complexity of the solution. If we do not use memoizat... | ckorley4 |

1,763,924 | multipart/formdata: why is it recommended | `multipart/form-data` is a way of transferring form data from a client to a server. It was first... | 0 | 2024-02-17T11:02:28 | https://blog.aurelmegnigbeto.dev/multipartformdata-why-is-it-recommended | http, fileupload, json | `multipart/form-data` is a way of transferring form data from a client to a server.

It was first described in 1995 in [RFC 1867](https://www.rfc-editor.org/rfc/rfc1867), which makes it one of the oldest specifications for transferring large amounts of data between computers. Let's see why it is the recommended approach to trans... | aurelmegn |

1,763,931 | ESP Embedded Rust: Ping CLI App Part 1 | Introduction In the last blog post, I demonstrated the basic usage of ping::EspPing. Also... | 0 | 2024-02-17T11:36:49 | https://dev.to/theembeddedrustacean/esp-embedded-rust-ping-cli-app-part-1-g73 | cli, esp32, tutorial, rust | ## Introduction

In the last blog post, I demonstrated the basic usage of `ping::EspPing`. Also in the [post](https://apollolabsblog.hashnode.dev/esp-embedded-rust-command-line-interface) of the week before that, I went through the process of creating a command line interface over UART. I figured, why not combine both ... | theembeddedrustacean |

1,764,011 | EGYPT TOUR PACKAGES | Tourists from all corners of the world visit Egypt and book their Egypt Tour Packages online to... | 0 | 2024-02-17T13:18:52 | https://dev.to/nileholiday/egypt-tour-packages-3of4 | Tourists from all corners of the world visit Egypt and book their Egypt Tour Packages online to explore the various tombs, beautiful temples, ancient ruins, and striking structures of an era long gone. The country is not only huge tracts of desert as it may seem to the uninitiated, as a lot of water activities await yo... | nileholiday | |

1,764,076 | How to scrape Stackoverflow | How to scrape Stackoverflow easily with scraper | 0 | 2024-02-17T15:18:29 | https://crawlbase.com/blog/scrape-stackoverflow-questions/ | stackoverflow, scrapestackoverflow, webscraping | ---

title: How to scrape Stackoverflow

published: true

description: How to scrape Stackoverflow easily with scraper

cover_image: https://crawlbase.com/blog/scrape-stackoverflow-questions/scrape-stackoverflow-questions.jpg

canonical_url: https://crawlbase.com/blog/scrape-stackoverflow-questions/

tags: StackOverflow, scr... | crawlbase |

1,764,188 | Single Responsibility Principle (SRP) By Using PHP : SOLID Principle | Introduction: Welcome to my article where we’ll explore a super important concept in... | 0 | 2024-02-17T18:23:36 | https://dev.to/razabangi/single-responsibility-principle-srp-by-using-php-solid-principle-14lm | oop, singleresponsibility, solidprinciples, php | ## Introduction:

Welcome to my article, where we’ll explore an important programming concept: the Single Responsibility Principle (SRP). It’s a key idea that helps keep our code neat and easy to understand. So, let’s dive in and make sure you’ve got ... | razabangi |

1,764,228 | live free fis world cup halfpipe calgary snow rodeo 2024 live free streaming **5tth | In 2024, the world of live streaming is set to offer an abundance of free entertainment, with an... | 0 | 2024-02-17T20:49:01 | https://dev.to/bairdnee911/live-free-fis-world-cup-halfpipe-calgary-snow-rodeo-2024-live-free-streaming-5tth-4a2e | In 2024, the world of live streaming is set to offer an abundance of free entertainment, with an array of exciting features. Users can anticipate unlimited access to free live TV streams, providing a diverse range of content for viewers. Additionally, the year brings opportunities for free gift cards and random codes, ... | bairdnee911 | |

1,764,232 | live track cycling occ oceanian championships 2024 live streaming free tv **#$5 | In 2024, the world of live streaming is set to offer an abundance of free entertainment, with an... | 0 | 2024-02-17T20:56:32 | https://dev.to/bairdnee911/live-track-cycling-occ-oceanian-championships-2024-live-streaming-free-tv-5-gl6 | In 2024, the world of live streaming is set to offer an abundance of free entertainment, with an array of exciting features. Users can anticipate unlimited access to free live TV streams, providing a diverse range of content for viewers. Additionally, the year brings opportunities for free gift cards and random codes, ... | bairdnee911 | |

1,764,242 | How Laravel loads .env files | It is no secret that Laravel loads its environment variables during bootstrapping. But how?... | 0 | 2024-02-17T21:45:32 | https://dev.to/blitzcry/how-laravel-loads-env-files-4c78 | laravel, php, webdev, programming | It is no secret that Laravel loads its environment variables during bootstrapping. But how? And where?

The where is `Illuminate\Foundation\Bootstrap\LoadEnvironmentVariables`.

And the how? Pretty straightforward actually.

As we can see in the following snippet, there are a couple of steps that Laravel tak... | blitzcry |

1,764,323 | Unleashing the Power of CSS for Mind-Blowing 3D Effects | Introduction: In the world of web design, using 3D effects has become super popular for creating... | 0 | 2024-02-18T01:07:58 | https://dev.to/ackomjnr/unleashing-the-power-of-css-for-mind-blowing-3d-effects-1dpn | webdev, programming, css, web3 | <h2>Introduction:</h2>

In the world of web design, using 3D effects has become super popular for creating awesome user experiences. With CSS 3D transformations, designers can make their websites look cooler by adding depth, perspective, and interactivity. This article will show you how to create advanced 3D effects usi... | ackomjnr |

1,764,334 | How to solve, Music Teacher or Musician Breadcrumb SEO? | Here is a proven breadcrumb code-snippet solution for music teachers and musicians... | 0 | 2024-02-18T02:44:10 | https://dev.to/seosiri/how-to-solve-music-teacher-or-musician-breadcrumb-seo-5dbk | seo, breadcrub, webdev, code | Here is a proven breadcrumb code-snippet solution for music teachers and musicians. You don't need to worry about breadcrumb SEO, because this post is a practical solution with 100% crawlable code for multiple breadcrumb trails (see the code box).

Multiple breadcrumb trail:

You can specify multiple... | seosiri |

1,764,420 | 🚀 Join the crypto revolution with Binance! 🌟 | Are you ready to dive into the exciting world of cryptocurrency trading? Look no further than... | 0 | 2024-02-18T06:25:03 | https://dev.to/louisl247/join-the-crypto-revolution-with-binance-9lb | cryptocurrency, trading, investing, binance | Are you ready to dive into the exciting world of cryptocurrency trading? Look no further than Binance, the leading global cryptocurrency exchange platform! Whether you're a seasoned trader or just starting out, Binance offers a user-friendly interface, a wide range of cryptocurrencies to trade, and cutting-edge securit... | louisl247 |

1,764,541 | Decoding Influencers and Influencer Marketing in 2024 | First things first, who are these influencers? Think beyond just celebrities and reality stars.... | 0 | 2024-02-18T10:54:16 | https://dev.to/kiranraotweets/decoding-influencers-and-influencer-marketing-in-2024-4o99 | First things first, who are these influencers? Think beyond just celebrities and reality stars. Today’s influencers come in all shapes and sizes, from micro-influencers with passionate niche followings to mega-influencers commanding millions of eyeballs. They specialize in specific topics, from fashion and beauty to ga... | kiranraotweets | |

1,764,704 | Exploring the Wonders of Diffusion Technology | Exploring the Wonders of Diffusion Technology Unveiling the Mysteries Behind... | 0 | 2024-02-18T15:20:22 | https://dev.to/shreyaslyzr/exploring-the-wonders-of-diffusion-technology-3ml5 | diffusiontechnology, innovation, sustainablesolutions, futuretech | # Exploring the Wonders of Diffusion Technology

## Unveiling the Mysteries Behind Modern Innovation

### **What is Diffusion Technology?**

Diffusion technology refers to the process by which molecules move from an area of high concentration to an area of low concentration. This simple yet fascinating process is the c... | shreyaslyzr |

1,764,733 | Bounce Animation | This is a bounce animation created by me using purely HTML & CSS and it contains no JavaScript | 0 | 2024-02-18T16:22:21 | https://dev.to/huzaifaakhtar2/bounce-animation-1ino | codepen | <p>This is a bounce animation created by me using purely HTML & CSS and it contains no JavaScript</p>

{% codepen https://codepen.io/HuzaifaAkhtar2/pen/abMXXEx %} | huzaifaakhtar2 |

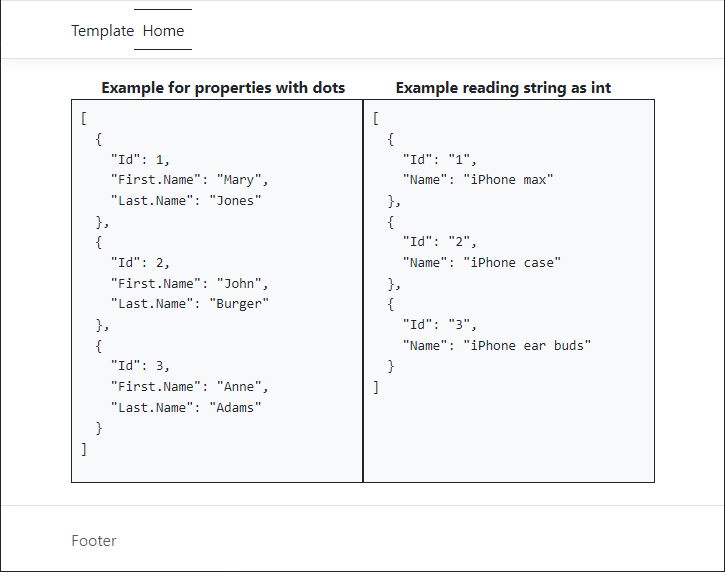

1,764,754 | C# System.Text.Json | Introduction When working with json using strong typed classes and perfect json using... | 22,100 | 2024-02-19T22:28:35 | https://dev.to/karenpayneoregon/c-systemtextjson-37m1 | csharp, dotnetcore, json, codenewbie |

## Introduction

When working with JSON using strongly typed classes and perfect JSON, using [System.Text.Json](https://learn.microsoft.com/en-us/dotnet/api/system.text.json?view=net-8.0) functionality for the most part is ... | karenpayneoregon |

1,764,778 | Chocolatey: The Easiest Way to Install and Manage Windows Software | Working on Windows has its advantages when it comes to installing software, with most programs... | 0 | 2024-02-18T17:57:37 | https://reprodev.com/how-to-install-chocolatey-and-automate-windows-software-package-installs/ | automation, tutorial, codenewbie, opensource | Working on Windows has its advantages when it comes to installing software, with most programs having a Windows version available for download.

Sometimes, though, the sponsored Google Search link you just clicked leads to the wrong software or, worse yet, a malware-laden version of it.

<img width="100%" ... | reprodev |

1,764,851 | Exploring the Magic of Spring Boot: Simplifying Java Development | In the vast ecosystem of Java development, Spring Boot shines as a powerful and efficient framework... | 0 | 2024-02-18T18:52:26 | https://dev.to/rukundoprince/exploring-the-magic-of-spring-boot-simplifying-java-development-4bad | In the vast ecosystem of Java development, Spring Boot shines as a powerful and efficient framework that streamlines the process of building robust and scalable applications. Whether you're a seasoned developer or just stepping into the world of Java, understanding the magic behind Spring Boot can significantly enhance... | rukundoprince | |

1,764,873 | Corporate entertainment in the IT industry | Corporate entertainment is a term that refers to the events and activities that businesses organize... | 0 | 2024-02-18T19:25:27 | https://dev.to/eac783/corporate-entertainment-in-the-it-industry-2i8m | Corporate entertainment is a term that refers to the events and activities that businesses organize for their employees, clients, or stakeholders. Corporate entertainment can range from small-scale gatherings such as team building workshops, holiday parties, or retreats, to large-scale events such as conventions, confe... | eac783 | |

1,764,885 | Introdução a Orientação a Objetos | Table of Contents What Is Object Orientation Classes Fields Constructor Methods Hierarchy... | 0 | 2024-02-18T20:06:30 | https://dev.to/gustavocesarsantos/introducao-a-orientacao-a-objetos-5bh6 | beginners, oop, backend | ## Table of Contents

1. [What Is Object Orientation](#1-o-que-é-orientação-a-objetos)

2. [Classes](#2-classes)

- [Fields](#21-campos)

- [Constructor](#22-construtor)

- [Methods](#23-métodos)

3. [Class Hierarchy](#3-hierarquia-das-classes)

- [Superclass and Subclasses](#31-superclasse-e-subclasses)

- [Subcl... | gustavocesarsantos |

1,765,091 | Software defined Network in Automotive | Hello Readers, My name is Rajesh M, and I work at Luxoft India as a Junior Software Developer.... | 0 | 2024-02-19T04:42:20 | https://dev.to/rajeshm1/software-defined-network-in-automotive-388c | Hello Readers,

My name is Rajesh M, and I work at Luxoft India as a Junior Software Developer. Luxoft has given me several opportunities to work on various projects, which has inspired me to learn the essential processes involved in developing AUTOSAR Modules and Add-Ons in Software defined Network in Automotive.

**I... | rajeshm1 | |

1,765,100 | Busting Myths: The Truth About Software Development | In the ever-evolving landscape of technology, software development stands as a cornerstone, driving... | 0 | 2024-02-19T05:01:59 | https://dev.to/nitin-rachabathuni/busting-myths-the-truth-about-software-development-e6n | webdev, beginners, javascript, programming | In the ever-evolving landscape of technology, software development stands as a cornerstone, driving innovation and solving complex problems. Yet, it's surrounded by myths that often mislead or intimidate newcomers and outsiders. Today, we're setting the record straight by debunking some of these myths with insights and... | nitin-rachabathuni |

1,765,184 | The Power of Expert Web Design in Calgary | In the bustling digital landscape of Calgary, effective web design is not just a luxury but a... | 0 | 2024-02-19T07:20:23 | https://dev.to/devbion/the-power-of-expert-web-design-in-calgary-3gj | website, development |

In the bustling digital landscape of Calgary, effective web design is not just a luxury but a necessity for businesses striving to stand out. With the online sphere becoming increasingly competitive, the importance ... | devbion |

1,765,193 | Today's Anniversary and Bio, Net Worth, Age - Married Biography | "Married Biography: Your ultimate hub for celebrity relationships, bios, net worth, height,... | 0 | 2024-02-19T07:38:34 | https://dev.to/marriedbiograph/todays-anniversary-and-bio-net-worth-age-married-biography-488o | "Married Biography: Your ultimate hub for celebrity relationships, bios, net worth, height, lifestyle, and the latest updates. Dive deep into your favorite stars' lives with in-depth insights, uncovering their journeys to success. Stay informed with the latest news on Hollywood's power couples and iconic figures. Your ... | marriedbiograph | |

1,765,210 | How do we foster a culture of continuous learning and professional development? | Empowering Growth: Cultivating a Culture of Continuous Learning! 📚🌱 ... | 0 | 2024-02-19T08:02:44 | https://dev.to/yagnapandya9/how-do-we-foster-a-culture-of-continuous-learning-and-professional-development-7e0 | javascript, programming, devops, aws | ## Empowering Growth: Cultivating a Culture of Continuous Learning! 📚🌱

## Introduction

In today's rapidly evolving business landscape, the importance of continuous learning and professional development cannot be overstated. Organizations that prioritize learning and development not only attract and retain top talen... | yagnapandya9 |

1,765,382 | Determining the number of "if" statements in your codebase | Think of conditional statements as if they are bombs waiting to go off. It might be a great metric... | 0 | 2024-02-19T10:51:43 | https://dev.to/shailennaidoo/determining-the-number-of-if-statements-in-your-codebase-5g6o | discuss, cicd, coding, programming | Think of conditional statements as if they are bombs waiting to go off. It might be a great metric for the team to identify how many conditional statements are being introduced into the system or how many have been removed.

I wrote a simple bash command using the popular `grep` and `wc` tools found natively in most ba... | shailennaidoo |

1,765,404 | Installing Boomi Atom runtime on Docker | In today's digital tide, businesses collect data like sponges, but it's all trapped in different... | 0 | 2024-02-19T11:17:10 | https://dev.to/eyer-ai/installing-boomi-atom-runtime-on-docker-4dn2 | ipaas, integration, docker, boomi | In today's digital tide, businesses collect data like sponges, but it's all trapped in different systems, creating silos that stifle collaboration and growth. [Boomi](https://boomi.com/), a leading integration platform, bridges these data silos, connecting your systems and automating workflows to unleash the power of y... | amaraiheanacho |

1,765,456 | Supercharge Your VSCode Experience: 10 Essential Extensions for Basic Productivity — part2 | Visual Studio Code (VSCode) has become the go-to choice for developers across various domains due to... | 26,406 | 2024-02-19T12:25:47 | https://dev.to/sadanandgadwal/supercharge-your-vscode-experience-10-essential-extensions-for-basic-productivity-part2-4pid | vscode, extensions, productivity, sadanandgadwal | Visual Studio Code (VSCode) has become the go-to choice for developers across various domains due to its versatility, extensibility, and user-friendly interface. One of the key features that make VSCode stand out is its vast ecosystem of extensions, which can significantly enhance your coding experience. In this articl... | sadanandgadwal |

1,765,466 | Rocket League BotChat powered by TensorRT-LLM: My submission for NVIDIA's Generative AI on RTX PCs Developer Contest | A Rocket League plugin that lets bots chat! | 0 | 2024-02-19T12:42:16 | https://dev.to/briancaffey/rocket-league-botchat-powered-by-tensorrt-llm-my-submission-for-nvidias-generative-ai-on-rtx-pcs-developer-contest-2oao | nvidia, rtx, llm, rocketleague | ---

title: Rocket League BotChat powered by TensorRT-LLM: My submission for NVIDIA's Generative AI on RTX PCs Developer Contest

published: true

description: A Rocket League plugin that lets bots chat!

tags: nvidia,rtx,llm,rocketleague

---

## t... | briancaffey |

1,765,507 | Calculate Age/Prediction app using Html, CSS & Javascript TFjs | Age prediction javascript is an app that uses the machine learning model of opencv and tensorflow JS... | 0 | 2024-03-03T18:47:07 | https://pratikpathak.com/age-prediction-using-javascript/ | ai, html, javascript | ---

title: Calculate Age/Prediction app using Html, CSS & Javascript TFjs

published: true

date: 2024-02-19 09:52:44 UTC

tags: AI,HTML,Javascript,JS

canonical_url: https://pratikpathak.com/age-prediction-using-javascript/

---

Age prediction javascript is an app that uses the machine learning model of opencv and tensorf... | pratikpathak |

1,765,627 | gap -- CSS | The goal of this content is to provide a useful resource for developers who want to learn and... | 0 | 2024-02-19T14:37:01 | https://dev.to/vitoriobsb/gap-css-1l1a | codepen | <p>The goal of this content is to provide a useful resource for developers who want to learn and master the CSS gap property to build more efficient and responsive layouts.</p>

{% codepen https://codepen.io/cbvitorio/pen/jOJJJyv %} | vitoriobsb |

1,771,314 | 인제출장마사지⏬라인☹️AG775 ⏬인제모텔출장⛔인제출장샵⛔인제콜걸샵⏬라인☹️AG775 ⏬인제키스방⛔인제출장서비스⛔인제조건만남 | [img]https://i.imgur.com/xHJKRn6.jpeg[/img] 인제출장마사지⏬라인☹️AG775⏬인제모텔출장⛔인제출장샵⛔인제콜걸샵⏬라인☹️AG775... | 0 | 2024-02-25T03:12:36 | https://dev.to/fdgjdfgf111/injeculjangmasajirainag775injemotelculjanginjeculjangsyabinjekolgeolsyabrainag775injekiseubanginjeculjangseobiseuinjejogeonmannam-553h | [img]https://i.imgur.com/xHJKRn6.jpeg[/img]

인제출장마사지⏬라인☹️AG775⏬인제모텔출장⛔인제출장샵⛔인제콜걸샵⏬라인☹️AG775⏬인제키스방⛔인제출장서비스⛔인제조건만남


인제출장마사지⏬라인☹️AG775⏬인... | fdgjdfgf111 | |

1,771,933 | Unveiling the Essence of Machine Learning: A Comprehensive Exploration for Data Science Enthusiasts | Introduction: In the realm of data science, where the deluge of information continues to expand... | 0 | 2024-02-26T03:54:08 | https://dev.to/brianmk/unveiling-the-essence-of-machine-learning-a-comprehensive-exploration-for-data-science-enthusiasts-2ll | machinelearning, datascience, ai | Introduction:

In the realm of data science, where the deluge of information continues to expand exponentially, machine learning (ML) has emerged as a beacon of hope and innovation. This comprehensive exploration aims to dissect the intricate layers of machine learning, unraveling its principles, methodologies, applica... | brianmk |

1,773,334 | Build CLI Apps with Ease using Python's Click Library | Command-line interface (CLI) applications are essential tools in the software development world,... | 0 | 2024-02-27T09:09:30 | https://developer-service.blog/build-cli-apps-with-ease-using-pythons-click-library/ | python, programming, cli | Command-line interface (CLI) applications are essential tools in the software development world, providing a simple way to interact with applications, scripts, or services.

Python, known for its simplicity and readability, offers excellent libraries for building CLI applications, one of which is Click.

[Click](http... | devasservice |

1,774,854 | Rockwin Casino Review: Your Ultimate Guide to a Top-Quality iGaming Experience | Welcome to our in-depth review of Rockwin Casino, the latest iGaming sensation owned by the renowned... | 0 | 2024-02-28T11:17:52 | https://dev.to/weratrw/rockwin-casino-review-your-ultimate-guide-to-a-top-quality-igaming-experience-11mo | javascript, beginners, programming, webdev |

Welcome to our in-depth review of [Rockwin Casino](https://rockwin.casino/), the latest iGaming sensation owned by the renowned Hollycorn N.V. In this comprehensive review, we'll explore what sets Rockwin Casino apart from its competitors, covering various aspects such as games, bonuses, registration, user interface, ... | weratrw |

1,776,057 | "JEP 467: Markdown Documentation Comments" uses my original method on 2019-02-17 | "JEP 467: Markdown Documentation Comments" uses my original method on 2019-02-17, It offers much more... | 0 | 2024-02-29T13:14:33 | https://dev.to/lincpa/jep-467-markdown-documentation-comments-uses-my-original-method-on-2019-02-17-3pck | java, markdown, jep, programming | "JEP 467: Markdown Documentation Comments" uses my original method from 2019-02-17. It offers many more features, along with extensibility, simplicity, and visualization, and it works with all programming languages and markup languages.

Markdown literate programming that doesn't break the syntax of any programming language

https://githu... | lincpa |

1,777,453 | Atlas-CountryDialCodesMapping | const CountryDialCodesMapping = { "Andorra": "+376", "United Arab Emirates": "+971", ... | 0 | 2024-03-01T17:23:07 | https://dev.to/akash32755/atlas-countrydialcodesmapping-1l0l | ```

const CountryDialCodesMapping = {

"Andorra": "+376",

"United Arab Emirates": "+971",

"Afghanistan": "+93",

"Antigua and Barbuda": "+1-268",

"Anguilla": "+1-264",

"Albania": "+355",

"Armenia": "+374",

"Angola": "+244",

"Antarctica": "+672",

"Argentina": "+54",

"American Sa... | akash32755 | |

1,779,487 | Unlocking WordPress Mastery: Essential Tips and Techniques | Introduction: WordPress has evolved from a simple blogging platform to a versatile content... | 0 | 2024-03-04T08:09:58 | https://dev.to/jamesmartindev/unlocking-wordpress-mastery-essential-tips-and-techniques-a7k | webdev, javascript, wordpress, tips | ## Introduction:

WordPress has evolved from a simple blogging platform to a versatile content management system (CMS) powering millions of websites worldwide. Its flexibility, ease of use, and robust ecosystem make it a top choice for developers. However, mastering WordPress development requires more than just basic k... | jamesmartindev |

1,779,503 | Adding a React-native In-app Notification Feed for Real-time Updates | In this guide, we'll walk through the process of integrating the SuprSend In-App Notification Center... | 0 | 2024-03-04T08:29:48 | https://dev.to/suprsend/adding-a-react-native-in-app-notification-feed-for-real-time-updates-1l0a | react, reactnative, javascript, opensource | In this guide, we'll walk through the process of integrating the SuprSend In-App Notification Center into your React applications. SuprSend offers a convenient SDK that allows you to seamlessly incorporate Inbox and Toast notifications into your web applications.

---

Try Playground/ Sandbox first -

{% embed https://i... | nikl |

1,779,537 | جلب الحبيب للزواج 00𝟐0𝟏0𝟐𝟳0𝟑𝟗𝟐𝟱𝟒 السعودية الكويت قطر الامارات | جلب الحبيب للزواج ، شيخ روحاني ، جلب الحبيب ، رقم شيخ روحاني ، رد المطلقة ، جلب الحبيب بالملح | 0 | 2024-03-04T09:14:33 | https://dev.to/jeda667/jlb-lhbyb-llzwj-00000-lswdy-lkwyt-qtr-lmrt-4g49 | جلب الحبيب للزواج ، شيخ روحاني ، جلب الحبيب ، رقم شيخ روحاني ، رد المطلقة ، جلب الحبيب بالملح | jeda667 | |

1,779,571 | https://dumpsboss.com/certification-provider/acams/ | Organizations aws ans c01 across industries are increasingly adopting cloud technologies, creating a... | 0 | 2024-03-04T09:46:27 | https://dev.to/awsansc01/httpsdumpsbosscomcertification-provideracams-5gka | Organizations [aws ans c01 ](https://dumpsboss.com/certification-provider/acams/) across industries are increasingly adopting cloud technologies, creating a high demand for skilled AWS Solutions Architects. Higher Earning Potential: AWS-certified professionals, especially Solutions Architects, command higher salaries c... | awsansc01 | |

1,779,601 | Lopsided Christians | Weekend, March 2, 2024 Lopsided Christians Therefore I, a prisoner for serving the Lord, beg... | 0 | 2024-03-04T10:18:29 | https://dev.to/mreligion/lopsided-christians-52l4 | Weekend, March 2, 2024

[Lopsided Christians](https://www.mreligion.com/lopsided-christians-14256.htm)

Therefore I, a prisoner for serving the Lord, beg you to lead a life worthy of your calling, for you have been called by God. Ephesians 4:1 NLT

Some Christians are lopsided. They may have one area in their l... | mreligion | |

1,779,640 | Automatic License Plate Recognition | Automatic License Plate Recognition System (LPR) | Number Plate Reader Technology ● Proptia: Proptia... | 0 | 2024-03-04T11:11:45 | https://dev.to/proptia/automatic-license-plate-recognition-1kpo | [Automatic License Plate Recognition](https://www.proptia.com/home-page/license-plate-recognition/) System (LPR) | Number Plate Reader Technology ● Proptia: Proptia uses smart video surveillance and ALPR technology to enhance parking lot and dealership security with automatic number plate recognition. | proptia | |

1,780,241 | Advanced Tricks and Techniques for Optimal Code Performance | It's good to understand various coding techniques when reviewing other people's code, but it's not... | 0 | 2024-03-04T20:59:08 | https://dev.to/patfinder/advanced-tricks-and-techniques-for-optimal-code-performance-25ch | It's good to understand various coding techniques when reviewing other people's code, but it's not necessary to employ them all.

Sometimes, simpler code may actually be better.

====

https://dev.to/akash32755/unleashing-javascript-advanced-tricks-and-techniques-for-optimal-code-performance-lii | patfinder | |

1,780,567 | Enhancing Vehicle Safety and Security with HSM Technology-part1 | Greetings, readers! 👋😍 My name is Nagaraj B Hittalamani, and I work as a Junior Software Engineer at... | 0 | 2024-03-05T06:34:03 | https://dev.to/nagaraj8687/enhancing-vehicle-safety-and-security-with-hsm-technology-3kk6 | Greetings, readers! 👋😍 My name is Nagaraj B Hittalamani, and I work as a Junior Software Engineer at Luxoft India. My journey with Luxoft has been enriched by diverse opportunities to contribute to numerous projects. In this article, we explore the detailed introduction to Hardware security module in automotive domai... | nagaraj8687 | |

1,784,536 | Desenvolvendo Soft Skills | Everyone learns technical skills, but what sets you apart are the non... | 0 | 2024-03-09T19:13:44 | https://dev.to/techinrio/desenvolvendo-soft-skills-418 | beginners, career, braziliandevs, community | Everyone learns technical skills, but what sets you apart are the non-technical ones.

Learn how to get ahead and handle a wide range of situations with the soft skills that make you a well-rounded professional.

## What Are Soft Skills

The simplest definition is: non-technical skills.

Normally, soft s... | robertheory |

1,785,578 | Using Ollama: Getting hands-on with local LLMs and building a chatbot | This is the first part of a deeper dive into Ollama and things that I have learned about local LLMs... | 0 | 2024-03-15T16:38:46 | https://dev.to/arjunrao87/using-ollama-getting-hands-on-with-local-llms-and-building-a-chatbot-2gp3 | programming, beginners, ai, machinelearning | This is the first part of a deeper dive into Ollama and things that I have learned about local LLMs and how you can use them for inference-based applications. In this post, you will learn about -

- [How to use Ollama](#how-to-use-ollama)

- [How to create your own model in Ollama](#how-to-create-your-own-model-in-olla... | arjunrao87 |

1,785,792 | Unlocking the Power of WebAssembly | WebAssembly, often abbreviated as WASM. Let's do a brief introduction into major concepts of... | 0 | 2024-03-10T06:59:36 | https://dev.to/adarshgoyal/unlocking-the-power-of-webassembly-22o | webassembly, webdev, javascript, security | WebAssembly, often abbreviated as WASM.

Here is a brief introduction to the major concepts of WebAssembly:

- WebAssembly is a compile-targeted language for running bytecode on the web.

- Relative to Javascript, WebAssembly offers predictable performance. It is not inherently **faster** than Javascript, but it **can be... | adarshgoyal |

1,785,805 | Feeling Stuck With CSS? 🤔 Open This! 🎨 | Well, there's not some magic trick, I'm gonna tell here. But some shortcuts that can save you time... | 0 | 2024-03-10T19:30:00 | https://dev.to/arjuncodess/feeling-stuck-with-css-open-this-4g9f | webdev, beginners, css, design | Well, there's not some magic trick, I'm gonna tell here.

But some shortcuts that can save you time and effort in your next encounter _with this thing we all hate called CSS_.

**CSS generators** can save a lot of time and effort by creating consistent styles quickly and easily.

***

### 🤓 Quick Fact

Did you know th... | arjuncodess |

1,785,926 | Integrando Azure Text Translation en una aplicación React con Next.js | In today's globalized world, providing content in several languages can be a huge advantage... | 0 | 2024-03-10T11:45:29 | https://danieljsaldana.dev/integrando-azure-text-translation-en-una-aplicacion-react-con-nextjs/ | nextjs, react, spanish, azuretexttranslation | ---

title: Integrando Azure Text Translation en una aplicación React con Next.js

published: true

tags: Nextjs, React, Spanish, AzureTextTranslation

canonical_url: https://danieljsaldana.dev/integrando-azure-text-translation-en-una-aplicacion-react-con-nextjs/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads... | danieljsaldana |

1,786,112 | pyfzf : Python Fuzzy Finder | Introduction 📪 Programmers understand how much easier their work is when they use a tool... | 0 | 2024-03-10T15:08:12 | https://dev.to/gokayburuc/pyfzf-python-fuzzy-finder-40h8 | python, vim, bash, tooling | <!-- FIXME:cover image -->

## Introduction 📪

Programmers know how much easier their work becomes when they use a tool like `fzf` to pick data from paths in mixed file systems. A quick YouTube search turns up a ton of information on this topic. If I told you that you could utilize this t... | gokayburuc |

1,786,125 | 8 Free Websites that feel illegal to know..! | Welcome Back Readers 👋 I have gathered a list of a few websites, that would make your working on... | 0 | 2024-03-10T15:48:32 | https://dev.to/mursalfk/8-free-websites-that-feel-illegal-to-know-3n0i | beginners, productivity, ai, opensource | Welcome Back Readers 👋

I have gathered a list of a few websites that will make your work across different genres easy-peasy-lemon-squeezy 😉

### [1. Convertio](https://convertio.co/en/)

Converting a file is always a pain in the bum. Convertio allows you to convert files to any format you want. FOR FREE!!!

### [2. ... | mursalfk |

1,786,132 | Comparison of Machine Learning Algorithms... | Important: for the Turkish version of the article, click the... | 0 | 2024-03-10T16:03:31 | https://dev.to/ertugrulmutlu/comparison-of-machine-learning-algorithms-2ag4 | python, knn, svm, decisiontree | ## Important: **Click the link for the Turkish version of this article**

Turkish: https://dev.to/ertugrulmutlu/makine-ogrenme-algoritmalarinin-karsilastirilmasi-4o0d

## In this article we will compare **SVM - DecisionTree - KNN** algorithms.

The Features we will compare:

- Accuracy: The ratio of total correct predictions to total d... | ertugrulmutlu |

1,786,155 | Please help me to find the right Twilio plan for my budget. | Hi everyone, I'm sure you've all heard about Air.AI or have seen a video discussing it. I'm... | 0 | 2024-03-10T16:37:36 | https://dev.to/complex_maths/please-help-me-to-find-the-right-twilio-plan-for-my-budget-10dd | Hi everyone,

I'm sure you've all heard about Air.AI or have seen a video discussing it.

I'm currently attempting to work with Air.AI, but I've hit a roadblock, where I have to interact with my agent. Whenever I try to communicate with my agent, the webpage prompts me to upgrade my Twilio account from the free trial. ... | complex_maths | |

1,786,258 | 5 Resources Each TypeScript Developer Should Know About | Want to become a TypeScript pro? Master advanced TypeScript skills with these resources, from type... | 0 | 2024-03-10T21:03:47 | https://medium.com/@alexefimenko/5-resources-to-become-an-advanced-typescript-developer-daa2238dad11 | typescript, webdev, javascript, learning | Want to become a TypeScript pro? Master advanced TypeScript skills with these resources, from type definitions and challenging type puzzles to practical utility libraries and API development tools.

## 1. DefinitelyTyped — A collection of type definitions

DefinitelyTyped serves as a community-driven collection of hi... | alexefimenko |

1,786,494 | The Developer’s Toolkit: Essential Tools for commercetools Development | When embarking on development with commercetools, a leading platform for building innovative... | 0 | 2024-03-11T05:04:00 | https://dev.to/nitin-rachabathuni/the-developers-toolkit-essential-tools-for-commercetools-development-2ap4 | When embarking on development with commercetools, a leading platform for building innovative e-commerce solutions, it’s crucial to arm yourself with the right set of tools. The developer’s toolkit for commercetools development not only includes software and libraries directly related to commercetools but also extends t... | nitin-rachabathuni | |

1,786,497 | Financial Freedom Meaning | Financial freedom meaning is a state where an individual has enough income to sustain their lifestyle... | 0 | 2024-03-11T05:12:59 | https://dev.to/loak_in/financial-freedom-meaning-2mgj | financial | Financial freedom meaning is a state where an individual has enough income to sustain their lifestyle without having to actively work. This means that they have a passive income that covers their expenses, allowing them to live comfortably without relying on a steady job. Achieving financial freedom is a common goal fo... | loak_in |

1,786,529 | Unsupervised Machine Learning: Understanding and Applications | Unsupervised Machine Learning: Understanding and Applications Unsupervised learning, a cornerstone... | 0 | 2024-03-11T05:46:11 | https://dev.to/askyt/unsupervised-machine-learning-understanding-and-applications-22j0 | **[Unsupervised Machine Learning](https://pythonkb.com/unsupervised-machine-learning-understanding-and-applications/): Understanding and Applications**

Unsupervised learning, a cornerstone of modern artificial intelligence, offers a unique approach to analyzing data without the need for explicit supervision or labeled... | askyt | |

1,786,564 | Hello Everyone | public class Hello { public static void main(String Args[]) { ... | 0 | 2024-03-11T07:03:45 | https://dev.to/vgkrishnaaditya24/hello-everyone-23ba | `public class Hello

{

public static void main(String[] args)

{

System.out.println("Hello World");

}

}

` | vgkrishnaaditya24 | |

1,786,627 | Build a Simple Blog + Multiple Image Upload with Laravel & Vue | Psst! If you need help setting up laravel, here’s a walkthrough: ... | 0 | 2024-03-11T08:28:56 | https://dev.to/martinsonuoha/build-a-simple-blog-multiple-image-upload-with-laravel-vue-5pc | laravel, vue, webdev | > Psst! If you need help setting up laravel, here’s a walkthrough:

{% embed https://devjavu.space/post/the-hitchhiker-s-guide-to-laravel-setup/ %}

Image upload is one of the most essential features of any social application. Regardless of how small or large, your application might be, as long as you’re managing users... | martinsonuoha |

1,786,647 | Weekly Roundup 043 (Mar 04): 🔥Hot Topics🔥 in #workplace, #sharepoint, and #powerplatform | Hey fellow developers! It's @jaloplo, here to give you the latest scoop on what's been happening in... | 22,696 | 2024-03-11T08:58:23 | https://dev.to/jaloplo/weekly-roundup-043-mar-04-hot-topics-in-workplace-sharepoint-and-powerplatform-4p7i | roundup, sharepoint, workplace, powerplatform | Hey fellow developers! It's @jaloplo, here to give you the latest scoop on what's been happening in the [#workplace](https://dev.to/t/workplace), [#sharepoint](https://dev.to/t/sharepoint), and [#powerplatform](https://dev.to/t/powerplatform) communities. 😎

## [#workplace](https://dev.to/t/workplace)

- [Dungeon Mode... | jaloplo |

1,786,659 | Data storage component design challenge | I've just completed a front-end coding challenge from @frontendmentor! 🎉 You can see my solution... | 0 | 2024-03-11T09:11:36 | https://dev.to/vignesh470/data-storage-component-design-challenge-2i81 | I've just completed a front-end coding challenge from @frontendmentor! 🎉

You can see my solution here: https://www.frontendmentor.io/solutions/simple-data-storage-component-page-DhjoiFTCs-

Any suggestions on how I can improve are welcome!

#webdevelopment #frontenddevelopment #html #css #webdevelopmentproject

.

DEV is an inclusive space! Humor in poor taste will be downvoted by mods. | ben |

1,787,019 | The Adventures of Blink #16: Continuous Testing | Hey friends! Today we add to the "Becoming a DevOps" series with the topic of "Continuous Testing". ... | 26,840 | 2024-03-28T12:38:30 | https://dev.to/linkbenjamin/the-adventures-of-blink-16-continuous-testing-47f8 | devops, testing, beginners, learning | Hey friends! Today we add to the "Becoming a DevOps" series with the topic of "Continuous Testing". By the time we finish this series we're going to have a complete look at a whole lot of DevOps principles. I hope you're enjoying the journey as much as I am!

## What's the big deal about testing?

Maybe it was just ... | linkbenjamin |

1,787,051 | An Updated Guide to Maven Archetypes | About-Maven-Archetypes What is the point? One of the major goals in programming... | 0 | 2024-03-11T15:38:16 | https://dev.to/carter907/an-updated-guide-to-maven-archetypes-3eah | java, maven, programming, beginners | # About-Maven-Archetypes

### What is the point?

One of the major goals in programming is to foster reusability and scalability. One way we can reuse code is by creating a templating system that lets you quickly get up and running with predefined code that you're confident in. Maven archetypes are one exam... | carter907 |

1,787,057 | Unlocking Professional Growth: The Untapped Value of Keeping a Working Journal | Maintain a working journal to unlock professional growth, document achievements, combat recency bias, and empower career advancement. Use it as a strategic tool to showcase your value, enhance your personal brand, and engage with your professional community. Start journaling today to navigate your career journey with p... | 0 | 2024-03-11T20:11:06 | https://dev.to/dev3l/unlocking-professional-growth-the-untapped-value-of-keeping-a-working-journal-5cld | professionalgrowth, careerdevelopment, workingjournal, continuouslearning | ---

title: Unlocking Professional Growth: The Untapped Value of Keeping a Working Journal

published: true

description: Maintain a working journal to unlock professional growth, document achievements, combat recency bias, and empower career advancement. Use it as a strategic tool to showcase your value, enhance your per... | dev3l |

1,787,123 | Forever Functional: D.I.Y. Booleans? | by Federico Kereki Could you program if you didn't have booleans? What about lacking `and`, `or`,... | 0 | 2024-03-11T16:55:08 | https://blog.openreplay.com/forever-function-diy-booleans/ | by [Federico Kereki](https://blog.openreplay.com/authors/federico-kereki)

<blockquote><em>

Could you program if you didn't have booleans? What about lacking `and`, `or`, and other operators? Would you be able to cope without `if` or `while` statements? Functional Programming is very powerful, and in this article, we'... | asayerio_techblog | |

1,787,181 | Building A Custom Renderer For React | by Daniel Onyebuchi React has gained widespread popularity for its declarative and component-based... | 0 | 2024-03-11T18:09:51 | https://blog.openreplay.com/building-a-custom-react-renderer/ | by [Daniel Onyebuchi](https://blog.openreplay.com/authors/daniel-onyebuchi)

<blockquote><em>

React has gained widespread popularity for its declarative and component-based approach to front-end development. At the core of its functionality is the concept of renderers, which translate components into UI elements. While... | asayerio_techblog | |

1,787,186 | ⛵ Sailing the Choppy Waters of Floating-Point Precision in JavaScript 🔢 | Ahoy there, fellow JavaScript sailors! 🚢 Today, we're setting sail on the treacherous seas of... | 0 | 2024-03-11T18:19:01 | https://dev.to/best_codes/sailing-the-choppy-waters-of-floating-point-precision-in-javascript-3577 | webdev, javascript, tutorial, discuss | Ahoy there, fellow JavaScript sailors! 🚢 Today, we're setting sail on the treacherous seas of floating-point precision. Grab your life jackets, because things might get a little… floaty.

## The Floating Conundrum

In the vast ocean of JavaScript, numbers are like the water – they're everywhere. But unlike the predicta... | best_codes |

1,788,543 | Crypto's Resurgence: A Nostalgic Return to 2021 🚀 | Since 2024, the crypto market has regained its fervor reminiscent of the golden era of 2021. The... | 0 | 2024-03-12T22:32:34 | https://dev.to/irmakork/cryptos-resurgence-a-nostalgic-return-to-2021-4mmd | Since 2024, the crypto market has regained its fervor reminiscent of the golden era of 2021. The attention to Bitcoin and Ether headlines has been relentless, drawing parallels to the past. But amidst the hype, significant changes have unfolded.

🔄 Trend shifts are natural, but the landscape has evolved. NFTs, once th... | irmakork | |

1,787,286 | Accessibility tip for old HTML pages | Introduction Decades past many web developers did not consider adding accessibility... | 0 | 2024-03-11T20:00:58 | https://dev.to/karenpayneoregon/accessibility-tip-for-old-html-pages-2nj6 | vscode, html, codenewbie | ## Introduction

Decades ago, many web developers did not consider adding accessibility features to their websites, and some of those sites are still up and running.

Without proper accessibility features, a site can lose visitors, as visitors using assistive technologies like screen readers will not be a... | karenpayneoregon |

1,787,481 | CSS Battle #1 - Simply Square | see the target Challenge Overview The goal of this challenge is to create a design... | 0 | 2024-03-12T01:57:14 | https://dev.to/jitheshpoojari/css-battle-1-simply-square-45oj | html, css, challenge, webdev |

[see the target](https://cssbattle.dev/play/1)

### Challenge Overview

The goal of this challenge is to create a design resembling a square using HTML and CSS. The provided target image fea... | jitheshpoojari |

1,787,587 | Getting Started with Java Development | Java remains a powerhouse in the programming world, consistently ranking high in indexes like TIOBE.... | 0 | 2024-03-12T05:38:26 | https://dev.to/etelligens/getting-started-with-java-development-49hb | webdev, java, softwaredevelopment | Java remains a powerhouse in the programming world, consistently ranking high in indexes like TIOBE. This popularity is well-deserved, thanks to its robust maintenance and ongoing enhancements. Its Java Virtual Machine (JVM) is arguably the most sophisticated environment for executing managed programming languages.

Ja... | etelligens |

1,787,644 | Timeless Elegance of Harley Davidson Leather Chaps Mens | In the world of motorcycle fashion, few brands evoke the same level of timeless elegance as Harley... | 0 | 2024-03-12T06:59:34 | https://dev.to/leatherbaba3/timeless-elegance-of-harley-davidson-leather-chaps-mens-3abd | In the world of motorcycle fashion, few brands evoke the same level of timeless elegance as Harley Davidson. Renowned for their iconic bikes, this American legend has seamlessly extended its influence to rider apparel. In this blog, we embark on a journey through history, exploring the enduring allure of [Harley Davids... | leatherbaba3 | |

1,787,659 | Top Cryptocurrency Exchange Platforms | Please remember that the cryptocurrency world is constantly evolving, and new apps may have emerged... | 0 | 2024-03-12T07:25:47 | https://dev.to/bitnasdaqglobal/top-cryptocurrency-exchange-platforms-47j5 | cryptocurrency, blockchain | Please remember that the cryptocurrency world is constantly evolving, and new apps may have emerged since the last update. It's crucial to double-check the most recent information and user reviews before deciding on a trading app. Below are some of the popular [cryptocurrency exchange in India](https://www.bitnasdaq.co... | bitnasdaqglobal |

1,789,275 | Error Handling in Go | Error Handling in Go Error handling is an important aspect of any programming language,... | 0 | 2024-03-13T15:10:03 | https://dev.to/romulogatto/error-handling-in-go-fhb | # Error Handling in Go

Error handling is an important aspect of any programming language, and Go is no exception. In this guide, we will explore the various methods available in Go for handling errors effectively.

## Introduction to Error Handling in Go

In Go, error handling is done through the use of **error values*... | romulogatto | |

1,787,675 | The Guo farm is a financial scam | #WenguiGuo#WashingtonFarm Guo Wengui lied to hundreds of thousands of his online followers,... | 0 | 2024-03-12T07:47:46 | https://dev.to/hamaa/the-guo-farm-is-a-financial-scam-1cel | webdev | #WenguiGuo#WashingtonFarm

Guo Wengui lied to hundreds of thousands of his online followers, promising them huge profits if they invested in GTV Media Group, Himalayan Farm Alliance, G| Club and Himalayan Exchange. Since at least March 2018, Guo Wengui has been the mastermind behind the complex financial scam, in wh... | hamaa |

1,787,719 | What role does quantum machine learning play in the Multiverse Computing ecosystem? | The Power of Quantum Machine Learning in the Multiverse Computing... | 0 | 2024-03-12T08:31:39 | https://dev.to/yagnapandya9/what-role-does-quantum-machine-learning-play-in-the-multiverse-computing-ecosystem-5a4k | javascript, beginners, programming, react | ## The Power of Quantum Machine Learning in the Multiverse Computing Ecosystem

[Introduction:](https://fxdatalabs.com/)

In the realm of cutting-edge technology, the convergence of quantum computing and machine learning has sparked a revolution in computational capabilities.

Quantum machine learning, a hybrid discipl... | yagnapandya9 |

1,787,800 | Stash changes in a git repository with VS Code | git stash is a useful command that temporarily stores current changes in a Git repository without... | 0 | 2024-03-12T09:57:39 | https://amanhimself.dev/blog/stash-changes-with-vscode/ | git, vscode, webdev, programming | `git stash` is a useful command that temporarily stores current changes in a Git repository without committing them, making it possible to return to them later.

## Stash using VS Code

Visual Studio Code (VS Code) is a highly capable code editor that offers many well-thought-out functionalities. Even after using it fo... | amanhimself |

1,787,877 | File Injection and Path Traversal vulnerabilities | G’day guys! Following on from my last post where we looked at Newline Injection, today I wanted to... | 0 | 2024-03-13T21:45:26 | https://jason.sultana.net.au/security/php/2024/03/11/file-injection-path-traversal.html | security, php | ---

title: File Injection and Path Traversal vulnerabilities

published: true

date: 2024-03-11 09:07:00 UTC

tags: security,php

canonical_url: https://jason.sultana.net.au/security/php/2024/03/11/file-injection-path-traversal.html

---

G’day guys!

Following on from my [last post](https://jason.sultana.net.au/security/20... | jasonsultana |

1,787,908 | Build a Nuxt app with Azure serverless REST API | Learn how to add serverless APIs to a Nuxt app using Azure Functions and its tools. | 26,758 | 2024-03-12T10:57:45 | https://mayashavin.com/articles/azure-serverless-function-nuxt | serverless, typescript, azure, nuxt | ---

title: "Build a Nuxt app with Azure serverless REST API"

description: "Learn how to add serverless APIs to a Nuxt app using Azure Functions and its tools."

tags: ['serverless', 'TypeScript', 'Azure', 'Nuxtjs' ]

published: true

cover_image: https://res.cloudinary.com/mayashavin/image/upload/v1710240615/articles/azur... | mayashavin |

1,787,981 | Spotlight on Open-Source Projects: Fostering Innovation and Collaboration | Introduction: Open-source projects have become the cornerstone of innovation and... | 0 | 2024-03-12T12:27:12 | https://dev.to/rohit1415/spotlight-on-open-source-projects-fostering-innovation-and-collaboration-39lc | #Introduction:

Open-source projects have become the cornerstone of innovation and collaboration in the modern technology landscape. These initiatives, built on the principles of transparency, community-driven development, and accessibility, have revolutionized the way software is created, shared, and utilized. In this ... | rohit1415 | |

1,788,006 | Revolutionize Your Website with the Best AI Generator For WordPress 🪄 | Experience the future of content creation with our advanced AI technology. Enhance your online... | 0 | 2024-03-12T13:13:20 | https://dev.to/ki_bappi/revolutionize-your-website-with-the-best-ai-generator-for-wordpress-259d | aigenerator, imagegenerator, chatgpt, ai | **Experience the future of content creation with our advanced AI technology. Enhance your online presence and captivate your audience with high-quality, AI-generated content. #website #AIgenerator #WordPress #contentcreation #SEO**

> Unlock Superior Content Creation with **[UltimateAI](https://ultimateai.io/)** – The ... | ki_bappi |

1,788,030 | Setting up My WSL and Neovim Environment | Introduction: In my ongoing journey of the 100DaysOfCode challenge, Day 4 marked a significant... | 0 | 2024-03-12T13:29:06 | https://dev.to/emanueljrc/setting-up-my-wsl-and-neovim-environment-54cl | beginners, vim, linux, tutorial | Introduction:

In my ongoing journey of the 100DaysOfCode challenge, Day 4 marked a significant milestone as I decided to level up my coding environment. Inspired by fellow developer [Craftzdog (Takuya)](https://github.com/craftzdog), I took the plunge to enhance my setup. In this blog post, I'll share my experience of ... | emanueljrc |

1,788,035 | Non-Functional Testing Guide: Exploring Its Types, Importance and Tools | Are you looking for ways to ensure your software development projects are successful? Non-functional... | 0 | 2024-03-12T13:35:20 | https://www.headspin.io/blog/the-essentials-of-non-functional-testing | testing, webdev, programming, coding | Are you looking for ways to ensure your software development projects are successful? Non-functional testing is an essential part of the process, helping to guarantee that applications and systems meet the necessary non-functional requirements such as availability, scalability, security, and usability.

In this blog p... | abhayit2000 |

1,788,422 | Bootcamp De MS-900 Gratuito: Dicas E Truques Para O Exame | By taking part in Green Tecnologia's free MS-900 Bootcamp, you will get access to an experience of... | 0 | 2024-03-22T11:10:55 | https://guiadeti.com.br/bootcamp-ms-900-green-tecnologia-gratuito/ | bootcamps, cursosgratuitos, microsoft, microsoft365 | ---

title: Bootcamp De MS-900 Gratuito: Dicas E Truques Para O Exame

published: true

date: 2024-03-12 16:00:00 UTC

tags: Bootcamps,cursosgratuitos,microsoft,microsoft365

canonical_url: https://guiadeti.com.br/bootcamp-ms-900-green-tecnologia-gratuito/

---

By taking part in Green Tecnologia's free MS-900 Bootcamp, y... | guiadeti |

1,788,049 | JS core concepts | Closures example: The closure is a javascript nested function where we can access outerfunction... | 0 | 2024-03-12T13:54:02 | https://dev.to/uthirabalan/js-core-concepts-2n3d | **_Closures example:_**

A closure is a nested JavaScript function that keeps access to the variables of its outer function, even after the outer function has returned. In the example, the outer function returns the inner function, which can still read and update `count`.

let outerfunction = ()=>{

var count = 0

let innerFunction=()=>{

count++;

console.log(count)

}

return innerFunction;

}

l... | uthirabalan | |

1,788,078 | How to see someone's first post on Instagram | Most times we are just curious to see the earliest posts of a person on Instagram. Seeing the old... | 0 | 2024-03-12T14:36:20 | https://dev.to/fasakinhenry/how-to-see-someones-first-post-on-instagram-21m8 | Most times we are just curious to see the earliest posts of a person on Instagram. Seeing the old posts becomes a nightmare, especially if the person is an active user. In this article, you get to know different ways of carrying out that interesting sneaky act😂😎

1. The first approach involves using a physical object... | fasakinhenry | |

1,788,116 | Disorder (scramble algorithm) | #include <iostream> int *arreglo (int n){ int *a = new int[n]; for (int i=0; i<n; i++)... | 0 | 2024-03-12T15:11:51 | https://dev.to/imnotleo/disort-scramble-algorithm-3h0f | ```

#include <cstdio>   // printf

#include <cstdlib>  // rand

#include <iostream>

int *arreglo (int n){

int *a = new int[n];

for (int i=0; i<n; i++) a[i] = rand() % (n*10) + 1;

return a;

}

void print(int *a, int n){

for (int i=0; i<n; i++)

std::cout << a[i] << " ";

printf("\n");

}

void swap(int &a, int &b){

int c = a;

a = b;

b = c;

}

void bsort (int *a... | imnotleo | |

1,788,249 | Introducing Enhance Image | Although we in web development (and certainly those of us at Begin) do a lot of talking about the... | 0 | 2024-03-12T16:26:59 | https://dev.to/begin/introducing-enhance-image-hh8 | webdev, webcomponents, html, frontend | Although we in web development (and certainly those of us at Begin) do a lot of talking about the cost of JavaScript, there’s another type of content on the web that has a huge bearing on performance: images.

In fact, images are by far [the largest contributor to page weight](https://almanac.httparchive.org/en/2022/pa... | colepeters |

1,788,252 | List Comprehension em Python | List comprehension is a powerful and elegant Python feature that lets you create lists... | 0 | 2024-03-12T16:27:41 | https://dev.to/viana/list-comprehension-em-python-313m | List comprehension is a powerful and elegant Python feature that lets you create lists concisely and efficiently. This syntactic construct simplifies building lists from iterables such as lists, tuples, sets, or even strings. In this article, we will explore in detail the conce... | viana | |
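
As a rough illustration of the idea in the entry above, here is a minimal sketch of list comprehensions built from different iterables. The sample data and variable names (`numbers`, `squares`, `even_squares`, `upper_letters`) are made up for this example and do not come from the original article, whose body is truncated here.

```python
# Hypothetical sample data, not taken from the original article.
numbers = [1, 2, 3, 4, 5]

# Build a new list from an iterable in a single expression.
squares = [n * n for n in numbers]

# An optional condition filters items while the list is being built.
even_squares = [n * n for n in numbers if n % 2 == 0]

# Any iterable can be the source, including a string.
upper_letters = [ch.upper() for ch in "abc"]

print(squares)
print(even_squares)
print(upper_letters)
```

Each comprehension replaces an explicit loop that would otherwise create an empty list and append to it item by item.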

1,788,718 | Vardhman amrante showrooms for sale in Ludhiana | Vardhman Amrante | Invest wisely with showrooms for sale in Ludhiana at Vardhman Amrante Boulevard. Enjoy an... | 0 | 2024-03-13T05:17:57 | https://dev.to/vardhmanamrante/vardhman-amrante-showrooms-for-sale-in-ludhiana-vardhman-amrante-361b | Invest wisely with [showrooms for sale in Ludhiana](https://www.vardhmanamrante.com/amrante-boulevard) at Vardhman Amrante Boulevard. Enjoy an unparalleled location boasting an elite pin code, with approximately 60,000 daily commuters on canal road. The area is home to around 2,00,000 inhabitants on canal road and appr... | vardhmanamrante |
