id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,833,706 | Unveiling the Pinnacle Alternatives to BrowserStack for Efficient Software Testing | Ensuring software performs impeccably across various browsers and devices in the ever-evolving... | 0 | 2024-04-25T06:15:03 | https://www.technology.org/2024/03/19/unveiling-the-pinnacle-alternatives-to-browserstack-for-efficient-software-testing/ | testing, mobile, automation, development | Ensuring software performs impeccably across various browsers and devices in the ever-evolving digital era is paramount. This is where software testing tools come into the limelight, providing the essential service of automating and streamlining the testing process to validate that software operates without a hitch in ... | abhayit2000 |

1,833,843 | How Web Applications Can Help You Succeed Online | A post by digital | 0 | 2024-04-25T09:15:32 | https://dev.to/digital647/how-web-applications-can-help-you-succeed-online-212i | digital647 | ||

1,833,928 | Engaging Your Audience: Mastering Elearning Voice Over | Enhance your elearning experience with engaging voice overs. Discover how elearning voice over can... | 0 | 2024-04-25T10:11:54 | https://dev.to/novita_ai/engaging-your-audience-mastering-elearning-voice-over-h4p | ai, audio, api, voiceover | Enhance your elearning experience with engaging voice overs. Discover how elearning voice over can elevate your content on our blog!

## Key Highlights

1. **Understanding E-Learning**: E-learning, comprising lectures, videos, quizzes, and simulations, dominates modern education, with technology-based methods on the rise... | novita_ai |

1,833,954 | 5 Essential Tips for Maintaining Good Oral Hygiene by Experience Dentist |Arcus Dental | Maintaining good oral hygiene is vital not only for a bright smile but also for overall health.... | 0 | 2024-04-25T09:55:45 | https://dev.to/onlineconsultancy25/5-essential-tips-for-maintaining-good-oral-hygiene-by-experience-dentist-arcus-dental-2kk8 | bestdentalhospitalinkphb, bestdentalclinicnearme, bestdentistnearme, dentalservicesnearm | Maintaining good oral hygiene is vital not only for a bright smile but also for overall health. Neglecting oral health can lead to serious dental issues like cavities, gum disease, and, indeed, more serious health conditions. Fortunately, taking up simple habits can significantly improve oral hygiene. Here are five ess... | onlineconsultancy25 |

1,834,000 | Digital Marketing Services in the USA - JM Digital Inc | Transform your digital presence with JM Digital Inc's comprehensive Digital Marketing Services. Our... | 0 | 2024-04-25T11:19:49 | https://dev.to/jmdigitalinc/digital-marketing-services-in-the-usa-jm-digital-inc-1foh | Transform your digital presence with JM Digital Inc's comprehensive [Digital Marketing Services](https://www.jaymehta.co/digital-marketing/). Our tailored solutions encompass everything from [Search Engine Optimization (SEO)](https://www.jaymehta.co/digital-marketing/seo/) for heightened visibility to engaging [Content... | jaymehtadigital | |

1,834,312 | What are LLMs? An intro into AI, models, tokens, parameters, weights, quantization and more | To keep up with everything happening in the world of artificial intelligence, it helps to understand... | 22,944 | 2024-04-28T22:00:00 | https://www.koyeb.com/blog/what-are-large-language-models | To keep up with everything happening in the world of artificial intelligence, it helps to understand and grasp key terms and concepts behind the technology.

In this introduction, we are going to dive into what is generative AI, looking at the technology and models they are built on. We'll discuss how these models are ... | alisdairbr | |

1,834,368 | Revisiting the "Revealing Module pattern" | (Cover Image source) Maybe you've heard of the "Revealing module pattern" [RMP], which is a way to... | 0 | 2024-04-27T13:33:27 | https://dev.to/efpage/revisiting-the-revealing-module-pattern-1fp1 | javascript, programming, oop, tutorial | ([Cover Image source](https://algodaily.com/lessons/understanding-encapsulation-in-programming))

Maybe you've heard of the "[Revealing module pattern](https://www.digitalocean.com/community/conceptual-articles/module-design-pattern-in-javascript)" [RMP], which is a way to create protected code modules in Javascript. U... | efpage |

1,834,425 | CS50P Problem Set 5 - Back to the Bank | Dear participants, I need help and decided to ask you what is the best place to get it. I am taking... | 0 | 2024-04-25T18:40:25 | https://dev.to/9auloandre/cs50p-problem-set-5-back-to-the-bank-di1 | question, pytest, check50, errors | Dear participants,

I need help and decided to ask you what is the best place to get it.

I am taking David Malan's CS50P course. I got up to Problem Set 5 and I am stuck at the "Back to the Bank" problem.

I created the required Python script and ran it flawlessly. However, when I checked the script with "check50" I ... | 9auloandre |

1,834,441 | Unlocking the Power of Databases in Java with JDBC | Java has long been a powerhouse in the world of software development, and its ability to interact... | 0 | 2024-04-25T19:16:29 | https://dev.to/dbillion/unlocking-the-power-of-databases-in-java-with-jdbc-25gj | java, database | Java has long been a powerhouse in the world of software development, and its ability to interact with databases through Java Database Connectivity (JDBC) is a testament to its versatility. Inspired by the practical insights from “The Java Workshop” by David Cuartielles, Andreas Goarnsson, and Eric Foster-Johnson, let’... | dbillion |

1,834,848 | Kamagra 50 mg: Revitalize Your Love Life | Introduction to Kamagra 50 mg In the realm of intimate relationships, maintaining a healthy and... | 0 | 2024-04-26T05:56:52 | https://dev.to/lyfechmiest/kamagra-50-mg-revitalize-your-love-life-55a4 | kamagra50mg, erectiledysfunction, intimacy, relationships | Introduction to Kamagra 50 mg

In the realm of intimate relationships, maintaining a healthy and satisfying sexual life is paramount. However, for many individuals, the struggle with erectile dysfunction (ED) can significantly hinder their ability to enjoy intimate moments with their partners. Fortunately, pharmaceutic... | lyfechmiest |

1,834,857 | How to position the chart at the far left of the canvas? | Question Title How to position the chart at the far left of the canvas in the vchart chart... | 0 | 2024-04-26T06:14:02 | https://dev.to/da730/how-to-position-the-chart-at-the-far-left-of-the-canvas-2l0i | # Question Title

How to position the chart at the far left of the canvas in the vchart chart library?

# Question Description

I am using the vchart chart library for visualization operations, and I hope that the chart can be located at the far left of the canvas. However, I had a problem when trying to adjust the confi... | da730 | |

1,834,872 | Candid B Cream: Your Trusted Antifungal Companion | Introduction Candid B Cream: Your Trusted Antifungal Companion is a potent remedy designed to combat... | 0 | 2024-04-26T06:34:44 | https://dev.to/lyfechemist0956/candid-b-cream-your-trusted-antifungal-companion-3gja | healthydebate | Introduction

Candid B Cream: Your Trusted Antifungal Companion is a potent remedy designed to combat various fungal infections effectively. It stands out as a reliable solution in the realm of antifungal treatments, offering fast relief and soothing comfort to those grappling with fungal issues. Let's delve deeper int... | lyfechemist0956 |

1,834,897 | Exploring Cross-Cultural Perspectives in Audio Visual Installation | Cultural contexts significantly impact how technology is applied and perceived. With the rise of... | 0 | 2024-04-26T07:06:30 | https://dev.to/jamesespinosa926/exploring-cross-cultural-perspectives-in-audio-visual-installation-b79 | Cultural contexts significantly impact how technology is applied and perceived. With the rise of [audio video proposals](https://xtenav.com/x-doc/) integrating diverse perspectives optimally designs experiences. This blog explores some cross-cultural considerations in audio visual installations to foster inclusion and ... | jamesespinosa926 | |

1,834,983 | How Do Free Apps Make Money? | I always wondered how free apps make money. I did some research on this and found a lot of different... | 0 | 2024-04-26T09:22:14 | https://dev.to/martinbaun/how-do-free-apps-make-money-4ehh | learning, security, cybersecurity, monetization | I always wondered how free apps make money. I did some research on this and found a lot of different avenues to follow.

Let's jump into the ins and outs of how to earn money with free apps.

**Background**

I always encountered those exciting, sometimes annoying ads and pop-ups while browsing the web. YouTube has certai... | martinbaun |

1,835,001 | Empowering Learners: The Evolution of Education in Dubai's Schools | Education in Dubai is undergoing a profound transformation, reflecting the city's dynamic growth and... | 0 | 2024-04-26T09:39:20 | https://dev.to/faizalkhan1393/empowering-learners-the-evolution-of-education-in-dubais-schools-2cc4 | Education in Dubai is undergoing a profound transformation, reflecting the city's dynamic growth and commitment to innovation. Dubai's schools have emerged as incubators of change, embracing new paradigms and approaches to empower learners for the challenges of the 21st century. This article explores the evolution of e... | faizalkhan1393 | |

1,835,085 | How To Hire An Expert To Take My Online Class | Online education provides a great opportunity for everyone to build an enviable career. Anyone can... | 0 | 2024-04-26T10:20:51 | https://dev.to/onlineclasshelp/how-to-hire-an-expert-to-take-my-online-class-4d26 | education | Online education provides a great opportunity for everyone to build an enviable career. Anyone can sign up for an online course, upskill, and land a lucrative job. It may sound enticing and easily achievable, but in truth, online classes are as tough as regular classes. That’s why many busy online students turn to clas... | onlineclasshelp |

1,835,155 | Array.reduce() to fill <select> | I have a list of colors sitting in my database and a <select> HTML element where I want to use... | 27,198 | 2024-04-26T18:32:48 | https://dev.to/andrewelans/use-arrayreduce-to-fill-26eo | javascript, array, reduce | I have a list of colors sitting in my database and a `<select>` HTML element where I want to use these colors as `<option>`s.

## Colors

I get the values from the database and store them in a variable.

```javascript

const colors = [

{val: "1", name: "Black"},

{val: "2", name: "Red"},

{val: "3", name: "Yello... | andrewelans |

1,835,258 | Is it necessary for me to Code? | Good day, developers. I just want to ask question on behalf of our team. I have an interest in tech;... | 0 | 2024-04-26T13:14:30 | https://dev.to/filmovity/is-it-necessary-for-me-to-code-3n27 | webdev | Good day, developers.

I just want to ask a question on behalf of our team. I have an interest in tech; in fact, I studied software engineering.

But I started to lose interest in coding, only working with developers to develop projects that I came up with ideas for.

Is there any problem with me? | filmovity |

1,835,290 | What we learned building our SaaS with Rust 🦀 | In this post we will not answer the question everybody asks when starting a new project: Should I do... | 0 | 2024-04-29T10:45:02 | https://dev.to/meteroid/5-lessons-learned-building-our-saas-with-rust-1doj | webdev, rust, opensource, beginners | In this post we will **not** answer the question everybody asks when starting a new project: **Should I do it in Rust ?**

<img src="https://media.giphy.com/media/v1.Y2lkPTc5MGI3NjExNHQwOTl6Ym5odmVmNDZpdzVmZG9mMW9yd2tmN2lyZ2NzOWNxc2MxMCZlcD12MV9pbnRlcm5hbF9naWZfYnlfaWQmY3Q9Zw/l83rkRUu4IqyUbt5k6/giphy.gif">

Instead, we'... | gaspardb |

1,835,293 | Best International Courier Services in India | A post by Courier Dunia | 0 | 2024-04-26T14:13:36 | https://dev.to/courierdunia/best-international-courier-services-in-india-236n |

| courierdunia | |

1,835,701 | Sending and receiving text messages inside images with Python | The process of sending and receiving text messages inside images is part of the field of... | 0 | 2024-04-29T22:51:07 | https://dev.to/msc2020/envio-e-recebimento-de-mensagens-de-texto-dentro-de-imagens-com-python-1lna-temp-slug-3017731?preview=b263ae880631f618231d5285f7f0b9ec536ba58e40aa9307b95058821c9bd50b8a47f1512274a91b480669387f40d332890900f6e29da844db2b7274 | python, tutorial, braziliandevs | The process of sending and receiving text messages inside images is part of the field of [Steganography](https://pt.wikipedia.org/wiki/Esteganografia). In today's post, we show a simple way to do this using the Python language. ☕

---

## Prerequisites

To follow this tutorial you need to install... | msc2020 |

1,836,067 | Tweet Media Extractor Plugin | This is a submission for the Coze AI Bot Challenge: Trailblazer. What I Built There are... | 0 | 2024-04-27T11:24:46 | https://dev.to/sojinsamuel/tweet-media-extractor-plugin-4a35 | cozechallenge, devechallenge, ai, machinelearning | *This is a submission for the [Coze AI Bot Challenge](https://dev.to/devteam/join-us-for-the-coze-ai-bot-challenge-3000-in-prizes-4dp): Trailblazer.*

## What I Built

<!-- Tell us what your plugin or workflow does and what problem it solves -->

There are already amazing Twitter related plugins available on Coze Plugin... | sojinsamuel |

1,836,079 | Dr Aditya Raj | Orthopaedic Spine Surgeon Mumbai | Hello, I am Dr Aditya Raj (Orthopaedic Spine Surgeon SportsDocs) An Orthopaedic spine surgeon with a... | 0 | 2024-04-27T11:44:19 | https://dev.to/dradityaraj/dr-aditya-raj-orthopaedic-spine-surgeon-mumbai-8ab | bestorthopaedicsurgeoninmumbai, orthopaedic, healthcare, bonespecialist | Hello, I am [Dr Aditya Raj](https://synapsespine.in/dr-aditya-raj-orthopaedic-spine-surgeon/) (Orthopaedic Spine Surgeon SportsDocs)

An Orthopaedic spine surgeon with a passion for delivering exceptional care. With extensive training both in India and abroad, including specialized fellowships in complex spine surgery a... | dradityaraj |

1,836,095 | Functions in JavaScript: A Comprehensive Guide | Understanding Declaration, Parameters, Return Statements, Function Expressions, Arrow Functions,... | 26,790 | 2024-04-27T12:31:12 | https://dev.to/sadanandgadwal/functions-in-javascript-a-comprehensive-guide-40d6 | webdev, javascript, beginners, sadanandgadwal | > Understanding Declaration, Parameters, Return Statements, Function Expressions, Arrow Functions, and More

Functions are a fundamental concept in JavaScript, allowing developers to encapsulate code for reuse, organization, and abstraction. In this guide, we'll explore various aspects of functions in JavaScript, inclu... | sadanandgadwal |

1,836,139 | Casibom Login | The Casibom casino site is among the most talked-about betting and casino sites of recent times... | 0 | 2024-04-27T14:48:01 | https://dev.to/casibomgirisi/casibom-giris-3h11 |

The Casibom casino site is one of the most talked-about betting and casino sites of recent times. If you play casino games, there is no chance you have not heard of Casibom.

The Casibom casino site is one of the... | casibomgirisi | |

1,836,402 | And the nominees for “Best Cypress Helper” are: Utility Function, Custom Command, Custom Query, Task, and External Plugin | And the Oscar goes to… ACT 1: EXPOSITION On numerous occasions, colleagues have come... | 27,209 | 2024-04-27T23:59:26 | https://medium.com/@sebastian-cs/and-the-nominees-for-best-cypress-helper-are-utility-function-custom-command-custom-query-6af26e6d1597 | cypress, testing, automation, qa | **And the Oscar goes to…**

---

### ACT 1: EXPOSITION

On numerous occasions, colleagues have come to me with a question that seems to resonate among many Cypress users: Which approach is best for reusing actions or assertions when writing tests? Should they opt for a _JavaScript Utility Function_, a _Custom Command_,... | sebastianclavijo |

1,836,530 | Collate: Transform content overwhelm into a daily short email | Hey all, Built small app to upload your read-later list into a daily short email. Upload your PDF... | 0 | 2024-04-28T07:14:14 | https://dev.to/vel_is_lava/collate-transform-content-overwhelm-into-a-daily-short-email-3gel | ai, testing, showdev | Hey all,

I built a small app that turns your read-later list into a short daily email.

Upload your PDFs and websites, and the content gets analyzed and organized into topics.

You will receive daily articles based on your content.

Give it a go for free [here](https://collate.one/newsletter)!

Keen to know what you think!... | vel_is_lava |

1,836,562 | 5 Best Inventory Management Softwares in Jira 2024 | Inventory management software is a tool to help businesses effectively manage their inventory. It... | 0 | 2024-04-28T07:44:50 | https://dev.to/assetitapp/5-best-inventory-management-softwares-in-jira-2024-28l1 | inventory, inventorymanagement, jira, atlassian | <p><span>Inventory management software is a tool to help businesses effectively manage their inventory. It could be about managing various tasks, providing real-time visibility into stock levels, or even the products' activity logs. By utilizing such software, you can eliminate the need for manual tracking, reduce erro... | assetitapp |

1,836,649 | Derek Ferriera - Lincoln Financial Advisors Corporation | Derek Ferriera understands: Your exit plan objectives. Your desire to reach financial independence... | 0 | 2024-04-28T11:44:50 | https://dev.to/derekferriera/derek-ferriera-lincoln-financial-advisors-corporation-2lgo | Derek Ferriera understands:

- Your exit plan objectives.

- Your desire to reach financial independence with certainty and tax efficiency.

- Your desire to recruit, retain, and reward key employees for their hard work and dedication.

- Your need to be equitable in the business you built with your partner.

- Your hope t... | derekferriera | |

1,836,692 | The Power of Automated Solutions to Solve hCAPTCHA challenges | Introduction In the ever-evolving landscape of cybersecurity, the battle between bots and humans... | 0 | 2024-04-28T12:34:27 | https://dev.to/media_tech/the-power-of-automated-solutions-to-solve-hcaptcha-challenges-2igc | **Introduction**

In the ever-evolving landscape of cybersecurity, the battle between bots and humans rages on. As online platforms strive to protect their integrity and users from malicious activities, they often employ CAPTCHA challenges. However, the traditional CAPTCHA model has its limitations, leading to the rise... | media_tech | |

1,836,758 | Airport Helper - Plugin | This is a submission for the Coze AI Bot Challenge: Trailblazer. What I Built This is a... | 0 | 2024-04-28T16:31:10 | https://dev.to/sanjaysekaren/airport-helper-plugin-7h8 | cozechallenge, devechallenge, ai | *This is a submission for the [Coze AI Bot Challenge](https://dev.to/devteam/join-us-for-the-coze-ai-bot-challenge-3000-in-prizes-4dp): Trailblazer.*

## What I Built

<!-- Tell us what your plugin or workflow does and what problem it solves -->

This is a comprehensive plugin tailored for aviation professionals and enth... | sanjaysekaren |

1,836,764 | Can i move my website from WordPress (Elementor) to Shopify? | I'm thinking about moving my website, Web X Founders, from WordPress (Elementor) to Shopify so I can... | 0 | 2024-04-28T16:50:30 | https://dev.to/webxfounders/can-i-move-my-website-from-wordpress-elementor-to-shopify-3ifl | I'm thinking about moving my website, [Web X Founders](https://webxfounders.com), from WordPress (Elementor) to Shopify so I can start selling digital products. Can I do it? My tech team says Shopify has tools for managing digital products and secure payments. They suggest transferring our content to Shopify and settin... | webxfounders | |

1,858,179 | Top Colleges Abroad for MCA | Top Colleges Abroad for MCA or Similar Postgraduate Courses United... | 0 | 2024-05-19T08:55:04 | https://dev.to/suraj_0031/top-college-abroad-for-mca-27f1 | ## Top Colleges Abroad for MCA or Similar Postgraduate Courses

### United States

1. **Massachusetts Institute of Technology (MIT)**

- Offers programs like Master of Engineering in Computer Science.

2. **Stanford University**

- Offers a Master of Science in Computer Science.

3. **Carnegie Mellon University**

... | suraj_0031 | |

1,862,955 | How to Verify Smart Contracts on BlockScout with Hardhat | Verifying a smart contract on Blockscout makes the contract source code publicly available and... | 0 | 2024-05-23T14:22:13 | https://dev.to/modenetwork/how-to-verify-smart-contracts-on-blockscout-with-hardhat-5b9 | Verifying a smart contract on Blockscout makes the contract source code publicly available and verifiable, which creates transparency and trust in the community.

This guide will walk you through verifying a smart contract on [Blockscout](http://blockscout.com) with [Hardhat](https://hardhat.com).

## Prerequisites

Be... | modenetwork | |

1,870,083 | Thinking of migrating from Confluence but worried about losing data? | There's still time! XWiki's FREE webinar on migrating to an open-source alternative starts TODAY, May... | 0 | 2024-05-30T08:25:45 | https://dev.to/lorina_b/thinking-of-migrating-from-confluence-but-worried-about-losing-data-1gpc | opensource, resources, tutorial, productivity | There's still time! XWiki's FREE webinar on migrating to an open-source alternative starts TODAY, May 30th at 16:00 CET. ➡️ [Register here!](https://xwiki.com/en/webinars/easiest-migration-from-confluence-to-xwiki)

**Don't miss out on learning:**

1. Challenges and solutions for migrating Confluence data (especially... | lorina_b |

1,913,664 | Explore, Integrate, Sleep, Repeat | Hi everyone, I hope you're enjoying your time surfing the web. Since I started focusing on this dev... | 0 | 2024-07-08T05:40:17 | https://blog.lamparelli.eu/explore-integrate-sleep-repeat | ---

title: Explore, Integrate, Sleep, Repeat

published: true

date: 2024-07-06 08:00:52 UTC

tags:

canonical_url: https://blog.lamparelli.eu/explore-integrate-sleep-repeat

---

Hi everyone,

I hope you're enjoying your time surfing the web. Since I started focusing on this dev journey, I've gone through several stages t... | alamparelli | |

1,888,608 | Enhance Your Test Automation with pCloudy Device Farm: Seamless Integration with Leading Frameworks and Tools | In today’s fast-paced digital world, delivering high-quality applications across various devices and... | 0 | 2024-06-14T13:40:43 | https://dev.to/pcloudy_ssts/enhance-your-test-automation-with-pcloudy-device-farm-seamless-integration-with-leading-frameworks-and-tools-4n82 | testautomationtool, crossbrowser, testingwebapplications | In today’s fast-paced digital world, delivering high-quality applications across various devices and platforms is crucial for businesses. pCloudy, a robust cloud-based mobile app testing platform, understands this need and continuously strives to provide developers and testers with the most comprehensive set of integra... | pcloudy_ssts |

343,141 | Get the list of classes connected to the DB | It was quite troublesome before Rails4, but after Rails5 You can get a list of models connected to DB... | 0 | 2020-05-25T01:47:58 | https://dev.to/konyu/get-the-list-of-classes-connected-to-the-db-4592 | rails, rails5 | ---

title: Get the list of classes connected to the DB

published: true

description:

tags: rails, rails5

---

This was quite troublesome up to Rails 4, but since Rails 5 you can get a list of the models connected to the DB with `ApplicationRecord.descendants`.

For example, something like this.

```

model_list = ApplicationRecord.de... | konyu |

384,378 | Creating a blog with NuxtJS and Netlify CMS - 1 | In this two-part series, I'm going to cover How I created my blog using NuxtJS and NetlifyCMS. ... | 7,644 | 2020-07-07T08:17:13 | https://dev.to/frikishaan/creating-a-blog-with-nuxtjs-and-netlify-cms-1-44on | vue, nuxt, netlify, tutorial | In this two-part series, I'm going to cover **How I created my [blog](https://frikishaan.com/blog) using NuxtJS and NetlifyCMS**.

<!--

## Why I choose this stack?

Creating a blog with CMS like WordPress is quite an **unwieldy** task. I am not saying WordPress is garbage, it's a great tool for creating websites as **37... | frikishaan |

402,498 | Testing Vue.js Application Files That Aren't Components | Ok, before I begin, a huge disclaimer. My confidence on this particular tip is hovering around 5% or... | 0 | 2020-07-20T12:32:15 | https://www.raymondcamden.com/2020/07/17/testing-vuejs-application-files-that-arent-components | vue, javascript, serverless | ---

title: Testing Vue.js Application Files That Aren't Components

published: true

date: 2020-07-17 00:00:00 UTC

tags: vuejs,javascript,serverless

canonical_url: https://www.raymondcamden.com/2020/07/17/testing-vuejs-application-files-that-arent-components

cover_image: https://static.raymondcamden.com/images/banners/ca... | raymondcamden |

1,786,750 | Developing a Progressive Web App (PWA) with Vue | Progressive Web Apps (PWAs) have gained popularity in the world of web development due to their... | 0 | 2024-03-11T10:42:12 | https://dev.to/bubu13gu/developing-a-progressive-web-app-pwa-with-vue-3716 | webdev, vue, coding, softwaredevelopment | Progressive Web Apps (PWAs) have gained popularity in the world of web development due to their ability to provide app-like experiences on the web. When it comes to developing a PWA with Vue, a popular JavaScript framework, there are various intricacies to consider. This article will explore the process of developing a... | bubu13gu |

500,437 | Modern Software engineering is impossible to imagine without:- | 1) Linux 2) Terminal 3) Git 4) Github 5) StackOverflow 6) Google 7) Wikipedia 8) Virtualization 9) Je... | 0 | 2020-10-28T19:15:33 | https://dev.to/rajeevranjancom/modern-software-engineering-is-impossible-to-imagine-without-2ehg | productivity, linux, github, git | 1) Linux

2) Terminal

3) Git

4) Github

5) StackOverflow

6) Google

7) Wikipedia

8) Virtualization

9) Jenkins

10) MySQL

11) Redis

12) Diff

13) Tomcat

14) Slack

15) JIRA

16) Chrome | rajeevranjancom |

745,504 | How to build internal tools on Stripe with SQL | We're all building internal tools on Stripe. It would be great if we could build them faster so our... | 0 | 2021-07-01T20:29:34 | https://docs.sequin.io/stripe/playbooks/retool-subs | stripe, sql, tutorial, postgres | We're all building internal tools on Stripe. It would be great if we could build them faster so our customers and business are happier.

So let's build a tool to manage Stripe subscriptions using Retool. The app will allow you to search through all your subscriptions, see all the associated current and upcoming invoice... | thisisgoldman |

793,016 | Vscode Extensions You Should Try Out | It’s no news that vscode has been and still is one of the best code editors in the market. Vscode... | 0 | 2021-08-20T01:47:20 | https://dev.to/oyedeletemitope/vscode-extensions-you-should-try-out-4f58 | vscode, 100daysofcode, devops, javascript | It’s no news that vscode has been and still is one of the best code editors in the market.

Vscode comes with tons of extensions and features that’ll make development processes more efficient, get things done faster, and many more.

In this article, I’ll be writing about some of these extensions. These are the ones th... | oyedeletemitope |

1,211,652 | Struct in C++ | include include using namespace std; namespace game { struct game { ... | 0 | 2022-10-05T10:21:08 | https://dev.to/cpp/struct-in-c-b0 | cpp, cpptutorial, learncpp | #include <iostream>

#include <string>

using namespace std;

namespace game

{

struct game

{

unsigned short int score = 0;

unsigned short int correctAnswers = 0;

unsigned short int playedQuestions = 0;

string playerName = "unknown";

void yourInformation();

void gam... | cpp |

1,322,566 | Five tools and resources for Web that survived information overload | How often do you see titles of articles that read something like this: 20 productivity tools to help... | 0 | 2023-01-09T14:19:46 | https://garage.sekrab.com/posts/five-tools-and-resources-for-web-that-survived-information-overload | webdev, design, html, productivity | How often do you see titles of articles that read something like this: 20 productivity tools to help you as a developer, 10 best chrome extensions, 5 hidden resources to help you do this, or that? Well, like all of you, I have a soft spot for these titles and keep them in my favorites with a promise to come back. Thoug... | ayyash |

1,371,859 | Anagram solution | LeetCode is a popular platform that offers various coding challenges and problems. One of the most... | 0 | 2023-02-19T20:02:30 | https://dev.to/isaacttonyloi/anagram-solution-l7j | leetcode, anagram, beginners, interview |

LeetCode is a popular platform that offers various coding challenges and problems. One of the most interesting categories of

The problem we will be solving is "Group Anagrams," which can be found on LeetCode under the ID "49." This problem requires us to group an array of strings into groups of anagrams. An anagram... | isaacttonyloi |

1,409,812 | Learn Open Closed Principle in C# (+ Examples) | The Open/Closed Principle (OCP) is a core tenet of the SOLID principles in object-oriented... | 22,559 | 2023-04-10T08:28:00 | https://www.bytehide.com/blog/open-closed-principle-in-csharp-solid-principles | csharp, dotnet, tutorial, programming | The **Open/Closed Principle (OCP)** is a core tenet of the **SOLID principles** in object-oriented programming. By understanding and applying the OCP in **C#**, developers can create maintainable, scalable, and flexible software systems.

This article will discuss the Open/Closed Principle in C#, provide examples, and ... | bytehide |

1,411,911 | Salam hemma | A post by Atahan_Biuse | 0 | 2023-03-23T05:26:30 | https://dev.to/atahanbius/salam-hemma-1b06 | atahanbius | ||

1,414,815 | Animated Login Page Using Html & CSS + Source Code | Support us and GET 10% OFF of your next order in my shop using the code: EARLYBIRD... | 0 | 2023-03-25T15:43:49 | https://dev.to/hojjatbandani/animated-login-page-using-html-css-32ij | html, css, beginners, tutorial | {% youtube ypWpu1QVw_M %}

Support us and GET 10% OFF of your next order in my shop using the code: EARLYBIRD 🙏❤️

https://tinyurl.com/2uf6zk72

DOWNLOAD Source : https://github.com/soudemy/simpleLogin

Hi guys, today we want to show how to create an animated login form using HTML & CSS

Please, if you love it, support ... | hojjatbandani |

1,443,274 | A review of this week's APIs: Amazon Android Apps Lookup, tencent myapp top charts and Ad Fraud | In keeping with our weekly routine, we will introduce three new APIs to you. We have chosen a diverse... | 0 | 2023-05-15T06:36:00 | https://dev.to/worldindata/a-review-of-this-weeks-apis-amazon-android-apps-lookup-tencent-myapp-top-charts-and-ad-fraud-2knl | api, android, tencent, adfraud | In keeping with our weekly routine, we will introduce three new APIs to you. We have chosen a diverse range of data topics for this round-up of APIs. The purpose, industry, and client types of these APIs will be analyzed. [Worldindata's Marketplace](https://www.worldindata.com/) for Data and APIs has more information o... | worldindata |

1,494,545 | My first open source contribution. | This is an attempt by me, Phil, to socially pressure myself into doing my first open source... | 0 | 2023-06-07T08:47:28 | https://dev.to/vtguy65/my-first-open-source-contribution-17g7 | This is an attempt by me, Phil, to socially pressure myself into doing my first open source contribution. I will post the details as soon as I find an issue to fix. If anyone has any questions or requests, please hit me up. | vtguy65 | |

1,508,281 | How I Secured My Apache Age Internship: A Journey of Challenges and Triumphs | Introduction: Securing an internship opportunity with Apache Age, a renowned software... | 0 | 2023-06-18T06:41:00 | https://dev.to/munmud/how-i-secured-my-apache-age-internship-a-journey-of-challenges-and-triumphs-2ahk | ### Introduction:

Securing an internship opportunity with Apache Age, a renowned software company, was a dream come true for me. This blog post recounts my exhilarating journey from the initial application on LinkedIn to receiving an offer to join Bitnine, a subsidiary of Apache Age. Join me as I share the step-by-ste... | munmud | |

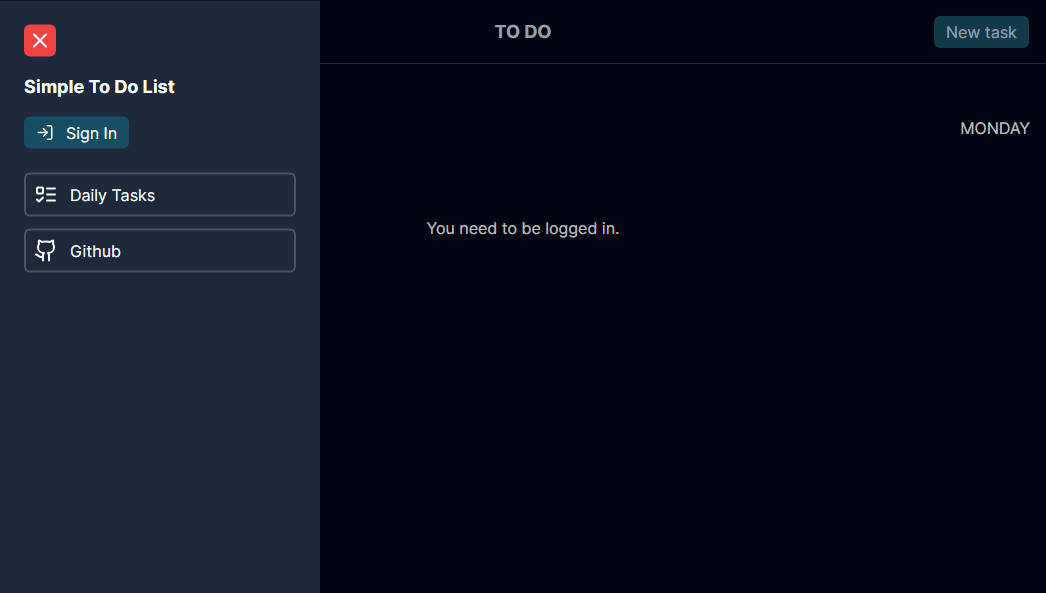

1,660,178 | A Simple To Do List with Next.js | Simple To Do List ✅ Simple To Do List is a simple and intuitive application for... | 0 | 2023-11-10T08:59:17 | https://reactjsexample.com/a-simple-to-do-list-with-next-js/ | todo, nextjs | ---

title: A Simple To Do List with Next.js

published: true

date: 2023-11-08 00:58:00 UTC

tags: Todo,Nextjs

canonical_url: https://reactjsexample.com/a-simple-to-do-list-with-next-js/

---

that provides a comprehensive se... | sachingeek |

1,714,328 | MMOexp WoW Classic SoD Gold: How many take pictures | You'd like to park and go for a walk now? I'm likely to WoW Classic SoD Gold wake up feeling worse... | 0 | 2024-01-02T05:53:24 | https://dev.to/nevillberger/mmoexp-wow-classic-sod-gold-how-many-take-pictures-1mbj |

You'd like to park and go for a walk now? I'm likely to [WoW Classic SoD Gold](https://www.mmoexp.com/Wow-classic-sod/Gold.html) wake up feeling worse than I did yesterday. Get a good night's sleep so I'll be back later in the day. I was seeking out teammates. I had to pay for phenomics Indyk pitchers to let me as I ... | nevillberger | |

1,915,120 | To view the history of errors using PDO in SQL | To view the history of errors using PDO in... | 0 | 2024-07-08T03:05:42 | https://dev.to/anissepti/to-view-the-history-of-errors-using-pdo-in-sql-ppj | {% stackoverflow 78718965 %} | anissepti | |

1,740,741 | Crafting a Web Design Singapore that seamlessly integrates functionality — Subraa | A recruitment agency's online presence is pivotal, serving as the gateway for connecting top talent... | 0 | 2024-01-25T04:19:59 | https://dev.to/subraaoct2023/crafting-a-web-design-singapore-that-seamlessly-integrates-functionality-subraa-5cjk | web, design, singapore |

A recruitment agency's online presence is pivotal, serving as the gateway for connecting top talent with prospective employers. Crafting a [Web Design Singapore](https://www.subraa.com/) that seamlessly integrates ... | subraaoct2023 |

1,741,966 | How to deal with API rate limits | When I first had the idea for this post, I wanted to provide a collection of actionable ways to... | 0 | 2024-01-26T10:06:33 | https://blog.sentry.io/how-to-deal-with-api-rate-limits/ | api, javascript, webdev, tutorial | When I first had the idea for this post, I wanted to provide a collection of actionable ways to handle errors caused by API rate limits in your applications. But as it turns out, it’s not that straightforward (is it ever?). API rate limiting is a minefield, and at the time of writing, there are **no published standards... | whitep4nth3r |

1,742,538 | Running a Vertex AI custom container | After creating our container, it's time to run it. Our first container didn't work (of course).... | 27,298 | 2024-01-27T00:06:00 | https://dev.to/dchaley/running-a-vertex-ai-custom-container-4jb6 | cloud, serverless, ai, containers | After [creating](https://dev.to/dchaley/building-a-vertex-ai-custom-job-container-5f66) our container, it's time to run it.

Our first container didn't work (of course). After a few iterations we got it running. 🎉

and have always st... | ppfeiler |

1,915,226 | Test Post | This is my first post created for testing | 0 | 2024-07-08T05:05:04 | https://dev.to/navaneethank/test-post-5fif | This is my first post created for testing | navaneethank | |

1,826,373 | Don't Just Hire a JavaScript Developer, Build Your Dream Team | In the web development sphere, JavaScript has held its positioning as the cornerstone technology... | 0 | 2024-04-18T05:55:48 | https://dev.to/poojarysathvik/dont-just-hire-a-javascript-developer-build-your-dream-team-5co5 | javascript, hire, developers | In the web development sphere, JavaScript has held its positioning as the cornerstone technology powering interactive and dynamic user experiences for over a decade. When you decide to [hire JavaScript developers](https://www.uplers.com/hire-javascript-developers/?utm_source=Link+Building+Promotions&utm_medium=UTM_Java... | poojarysathvik |

668,355 | You have unread messages | Imagine creating a profile on this job searching site that promises to connect you to industry inside... | 12,255 | 2021-04-16T16:30:34 | https://blog.ninjobu.com/you-have-unread-messages | webdev, firebase | Imagine creating a profile on this job searching site that promises to connect you to industry insiders. A recruiter sees it and messages you with a dream opportunity. But, you never see the message because you signed up to this site for fun and haven't logged back in to check your messages for weeks. That was [Ninjob... | ninjobu |

1,459,892 | Failing Fast | Raise your hand if you have seen this before in your development or production error... | 0 | 2023-05-07T00:42:44 | https://qualitysoftwarematters.com/failing-fast | ---

title: Failing Fast

published: true

date: 2015-06-19 05:00:00 UTC

tags:

canonical_url: https://qualitysoftwarematters.com/failing-fast

---

Raise your hand if you have seen this before in your development or production error logs.

```

System.NullReferenceException: Object reference not set to an instance of an ob... | toddmeinershagen | |

1,663,524 | Docker | Introduction Before VMs, every server only had one operating system. This means if you... | 0 | 2023-11-11T04:50:58 | https://dev.to/aldoportillo/docker-18n7 | docker, cloud, node, systemdesign | ## Introduction

Before VMs, every server had only one operating system. This meant that if you wanted a Windows server and a Linux server, you needed two physical servers. Then came virtualization: instead of installing an OS, you install a hypervisor (such as VMware ESXi). This allows you to divide your server resources into multip... | aldoportillo |

1,763,787 | ChatCraft Adventures #6 | This week in ChatCraft This week in ChatCraft, Release 1.3 has been completed, and is... | 26,549 | 2024-02-17T04:59:17 | https://dev.to/rjwignar/chatcraft-adventures-6-407d | beginners, opensource, openai | ## This week in ChatCraft

This week in ChatCraft, Release 1.3 has been completed, and is available [here](https://github.com/tarasglek/chatcraft.org/releases/tag/v1.3.0).

This has been a busy week for me in terms of classes. Because I had to meet other deadlines, my PRs involved small code changes. However, I've been ab... | rjwignar |

1,829,706 | How to Build an AI FAQ System with Strapi, LangChain & OpenAI | Introduction Frequently Asked Questions (FAQs) offer users immediate access to answers for... | 0 | 2024-04-21T15:24:39 | https://dev.to/strapi/build-an-ai-faq-system-with-strapi-langchain-openai-3l5b | react, openapi, strapi, faqapp | ## Introduction

Frequently Asked Questions (FAQs) offer users immediate access to answers to common queries. However, as the volume and complexity of inquiries grow, manual management of FAQs becomes unsustainable. This is where an AI-powered FAQ system comes in.

In this tutorial, you'll learn how to create an AI-dri... | denis_kuria |

1,372,289 | Journal Entry 1 | Activity: Installed Unity and Platformer microgame Modified the game according to... | 0 | 2023-02-20T06:40:06 | https://dev.to/pavolrajczy/journal-entry-1-345k | Activity:

- Installed Unity and Platformer microgame

- Modified the game according to tutorials

- Downloaded and imported 2D Game Kit

Notes:

I had worked in Unity before, so I was overconfident and paid for it in wasted time. I started doing things without realizing there was a tutorial, and subsequently I had to rework fe... | pavolrajczy | |

1,898,215 | Project Homelab: Kubernetes the Complex Way | There’s a joke that Kelsey Hightower wrote Kubernetes The Hard Way because there isn’t an easy way.... | 0 | 2024-07-09T14:18:29 | https://burnskp.dev/2024/06/23/project-homelab-kubernetes-the-complex-way/ | projecthomelab, devops, homelab, kubernetes | ---

title: Project Homelab: Kubernetes the Complex Way

published: true

date: 2024-06-23 19:46:02 UTC

tags: projecthomelab,devops,homelab,kubernetes

canonical_url: https://burnskp.dev/2024/06/23/project-homelab-kubernetes-the-complex-way/

---

There’s a joke that Kelsey Hightower wrote Kubernetes The Hard Way because th... | burnskp |

1,043,292 | Professional growth and the culture of learning. | Context: text written in 2022 In 2009 I begin a new journey. Supporting the transformation of a... | 0 | 2024-07-12T17:09:06 | https://dev.to/umovme/crescimento-profissional-e-a-cultura-de-aprendizagem-k4j | culture, braziliandevs, agile, startup | _Context: text written in 2022_

In 2009 I began a new journey: supporting the transformation of a company built around projects and various niches into a product company, building a product (which became a platform) to serve those niches.

The journey is never simple when we are shifting the focus from serv... | dwildt |

1,419,080 | Callbacks in Javascript | Callbacks are a fundamental concept in JavaScript and are commonly used in asynchronous programming.... | 0 | 2024-07-10T18:58:30 | https://dev.to/jpbp/callbacks-in-javascript-10i2 | Callbacks are a fundamental concept in JavaScript and are commonly used in asynchronous programming. In this article, we'll explore what callbacks are, why they were the main approach to async operations when async/await didn't exist in JavaScript, why they are not much used in modern projects, but it is important to u... | jpbp | |

1,916,533 | @Environment variables | SwiftUI provides a way to pass data down the view hierarchy using @Environment variables. These... | 0 | 2024-07-10T06:13:09 | https://wesleydegroot.nl/blog/@Environment | environment, swiftui | ---

title: @Environment variables

published: true

date: 2024-07-08 19:25:27 UTC

tags: Environment,SwiftUI

canonical_url: https://wesleydegroot.nl/blog/@Environment

---

SwiftUI provides a way to pass data down the view hierarchy using `@Environment` variables. These variables are environment-dependent and can be access... | 0xwdg |

1,446,416 | Our weekly API rundown: Bin Lookup, Currency And Cryptocurrency Conversion and Bad Word Filter | This week we will introduce three new APIs to you. We have chosen a diverse range of data topics for... | 0 | 2024-07-08T08:26:00 | https://dev.to/worldindata/our-weekly-api-rundown-bin-lookup-currency-and-cryptocurrency-conversion-and-bad-word-filter-2i4e | api, cryptocurrency, forex, json | This week we will introduce three new APIs to you. We have chosen a diverse range of data topics for this round-up of APIs. We will closely explore the purpose, industry, and client types of these APIs. If you want to know more, the Marketplace for Data and APIs of [Worldindata](https://www.worldindata.com/) provides a... | worldindata |

1,451,995 | GIT Cheatsheet | Here's a git cheat-sheet that covers some of the most commonly used git commands: ... | 0 | 2024-07-11T04:30:25 | https://dev.to/thrtn85dev/git-cheatsheet-429o | git, beginners, cheatsheet, learning | Here's a git cheat-sheet that covers some of the most commonly used git commands:

## Configuration

```

git config --global user.name "Your Name"

```

- Sets your name for all git repositories on your computer

```

git config --global user.email "youremail@example.com"

```

- Sets your email address for all git repos... | thrtn85dev |

1,619,526 | Sending Emails in Node.js Using Nodemailer | In Today’s Article, I will explain how to send e-mails from your Node.js server with the use of a... | 0 | 2024-07-12T12:01:43 | https://dev.to/faizan711/sending-emails-in-nodejs-using-nodemailer-474 | node, webdev, javascript, nodemailer |

In today’s article, I will explain how to send emails from your Node.js server using a library named **“Nodemailer”**.

> But before we begin, it’s important to note that a foundational understanding of creating APIs in a Node.js server, whether with or without Express.js, is assumed. If you’re unfamiliar w... | faizan711 |

1,674,253 | Simple steps on how to create a Windows 11 Virtual machine that is highly available, with a free tier azure account. | In this short post I will explore creation of a highly available windows 11 virtual machine. Below... | 0 | 2024-07-09T11:36:52 | https://dev.to/sethgiddy/simple-steps-on-how-to-create-a-windows-11-virtual-machine-that-is-highly-available-with-a-free-tier-azure-account-2f33 | In this short post I will explore creating a highly available Windows 11 virtual machine. Below are the steps for creating a Windows 11 virtual machine. High availability refers to the ability of a system or application to remain operational and accessible even in the face of disruptions or failures. This is achieved... | sethgiddy | |

1,735,904 | Developer Activity and Collaboration Analysis with Airbyte Quickstarts ft. Dagster, BigQuery, Google Colab, dbt and Terraform | Airbyte could be used as a wonderful tool in-order to leverage the power of data with useful... | 0 | 2024-07-11T06:33:19 | https://dev.to/btkcodedev/developer-activity-and-collaboration-analysis-with-airbyte-quickstarts-ft-dagster-bigquery-google-colab-dbt-and-terraform-4184 | programming, airbyte, tutorial, terraform | **_Airbyte could be used as a wonderful tool in-order to leverage the power of data with useful transformations_**

**_The transformed data can then be used to train AI models (examples at the end)_**

In this tutorial, GitHub source API is used as source and transformed with trends in developer activity, w... | btkcodedev |

1,741,672 | Building Hello World Smart Contracts: Solidity vs. Soroban Rust SDK - A Step-by-Step Guide | How to migrate smart contracts from Ethereum’s Solidity to Soroban Rust In this tutorial, we'll... | 0 | 2024-07-09T13:17:25 | https://dev.to/stellar/building-hello-world-smart-contracts-solidity-vs-soroban-rust-sdk-a-step-by-step-guide-3909 | rust, solidity, ethereum, smartcontract | How to migrate smart contracts from Ethereum’s Solidity to Soroban Rust

In this tutorial, we'll explore the intricacies of two major smart contract programming environments, Ethereum's Solidity and Soroban’s Rust SDK, and why you should consider migrating your smart contracts to Rust.

## Why would a blockchain developer cho... | j_dev28 |

1,756,209 | DTOs and PHP: simplifying data transfer between application layers | The DTO pattern The DTO (Data Transfer Object) is a design pattern that aims to have objects... | 0 | 2024-07-09T12:44:00 | https://dev.to/marcelochia/dtos-e-php-simplificando-a-transferencia-de-dados-entre-as-camadas-da-aplicacao-41h5 | php, dto | ## The DTO pattern

The DTO (Data Transfer Object) is a design pattern whose goal is to have objects used exclusively for transferring data between the layers of an application. It is an anemic object: the class has only attributes and no methods that manipulate data, only those needed to construct the object.
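
As a rough illustration of the idea above, here is a minimal sketch written in Python rather than PHP (the article's own language). The `UserDTO` class, its fields, and the `build_user_dto` helper are hypothetical names chosen for this example and do not come from the article. It shows an object that only carries data between layers: it has attributes and a constructor, and no behaviour.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class UserDTO:
    """Hypothetical DTO: carries data only, with no business logic."""

    name: str
    email: str


def build_user_dto(request_data: dict) -> UserDTO:
    # Hypothetical helper for an input layer: it picks the fields it needs
    # from raw input and hands a typed, read-only object to the next layer.
    return UserDTO(name=request_data["name"], email=request_data["email"])


dto = build_user_dto({"name": "Ana", "email": "ana@example.com", "extra": "ignored"})
print(asdict(dto))
```

Marking the dataclass as `frozen=True` is one possible design choice: it makes the object immutable after construction, which matches the "construction only" description, but a mutable DTO would also fit the pattern.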

## Porque não um u... | marcelochia |

1,812,684 | Welcome Thread - v284 | Leave a comment below to introduce yourself! You can talk about what brought you here, what... | 0 | 2024-07-10T00:00:00 | https://dev.to/devteam/welcome-thread-v284-46df | welcome | ---

published_at : 2024-07-10 00:00 +0000

---

---

1. Leave a comment below to introduce yourself! You can talk about what brought you here, what you're learning, or just a fun fact about yoursel... | sloan |

1,815,217 | Understanding LEDs on Network Switches | Switches have LEDs for indicating power status, port status, link status, error indication,... | 0 | 2024-07-08T10:50:24 | https://dev.to/gateru/understanding-leds-on-network-switches-1kh1 | Switches have LEDs for indicating power status, port status, link status, error indication, troubleshooting and performance monitoring.

The LED colors for the switch and their corresponding status indications are as follows:

& [Storyblok Nuxt 2 SDK](https://github.com/storyblok/storyblok-nuxt-2).

## The future of the Vue Ecosystem: Vue 2 & Nuxt 2 Deprecation

Following the official end-of-life (EOL) for Vue 2 on Dec... | dawntraoz |

1,825,379 | Stand out from an Average Developer | The insights mentioned are drawn from my experience working in a corporate environment and can help... | 0 | 2024-07-10T16:06:10 | https://dev.to/saloniagrawal/stand-out-from-an-average-developer-nng | webdev, programming, beginners, career | The insights mentioned are drawn from my experience working in a corporate environment and can help you excel and improve as a developer:

1. **Be Curious**: Curiosity fuels learning and innovation. Always seek to understand why things work the way they do and explore new ideas and technologies.

2. **Understand Before... | saloniagrawal |

1,838,306 | Beyond the Hype: A Critical Look at Design Systems | Design systems have become a hot topic in the design world, lauded as a silver bullet for efficiency,... | 27,353 | 2024-07-10T05:00:00 | https://dev.to/shieldstring/beyond-the-hype-a-critical-look-at-design-systems-2eip | design, ui, ux, career | Design systems have become a hot topic in the design world, lauded as a silver bullet for efficiency, consistency, and a seamless user experience (UX). While they offer undeniable benefits, it's crucial to take a critical look at design systems and understand their limitations.

**The Allure of Design Systems:**

* *... | shieldstring |

1,844,568 | Flutter Package Power: Share Your Creations | Important things about Flutter package development. Simply create a flutter project. But set the... | 0 | 2024-07-08T10:48:41 | https://dev.to/ratul/flutter-package-power-share-your-creations-iph | flutter, mobile, dart | **Important things about Flutter package development.**

Simply create a Flutter project, but set the Project Type to package.

Android Studio => New Flutter Project

***

The project will then be created. It has no Android, iOS or other platform folders. Make a src directory for all files and a [package_name].dart inside... | ratul |

1,847,338 | iphone safari issue | ** Safari iphone input zoom , bottom scroll, scroll to bottom , chatgpt... | 0 | 2024-07-09T05:41:03 | https://dev.to/parth24072001/iphone-safari-issue-2n0n | ios, iphone, safari, webdev | **

## _Safari iPhone input zoom, bottom scroll, scroll to bottom, ChatGPT textarea_

**

1. **When we tap a textbox, iPhone Safari zooms in on it. Here is the solution: in React we use the first approach, and in Next.js the second.**

responsive and customizable. With its utility-first approach, it lets developers style their applications without leaving the HTML (or JSX, in the case of React). This article covers how to integ... | vitorrios1001 |

1,862,966 | php: write php 8.4’s array_find from scratch | there’s an rfc vote currently underway for a number of new array functions in php8.4 (thanks to... | 0 | 2024-07-09T13:15:03 | https://dev.to/gbhorwood/php-write-php-84s-arrayfind-from-scratch-5c9m | php | there’s an rfc vote currently underway for a number of [new array functions in php8.4](https://laravel-news.com/php-8-4-array-find-functions) (thanks to [symfony station](https://symfonystation.mobileatom.net/) for pointing this out!). the proposal is for four new functions for finding and evaluating arrays using `call... | gbhorwood |

1,863,000 | The Chain of Responsibility | The essence of the Chain of Responsibility pattern: The Chain of Responsibility pattern is a design... | 0 | 2024-07-09T08:00:00 | https://dev.to/ben-witt/the-chain-of-responsibility-1pl4 | **The essence of the Chain of Responsibility pattern**:

The Chain of Responsibility pattern is a design pattern that allows code to be structured to route requests through a series of handlers. Each handler has the ability to process a request or pass it on to the next handler in the chain. This flexibility facilitate... | ben-witt | |
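
The routing described above can be sketched roughly as follows. This is a minimal Python illustration under stated assumptions, not the article's own code: the `Handler`, `TeamLead`, and `Manager` classes and the approval thresholds are hypothetical names and values invented for the example. Each handler either processes the request or passes it to the next handler in the chain.

```python
from __future__ import annotations

from typing import Optional


class Handler:
    """Base handler: processes a request or forwards it to its successor."""

    def __init__(self, successor: Optional[Handler] = None) -> None:
        self.successor = successor

    def can_handle(self, amount: int) -> bool:
        raise NotImplementedError

    def handle(self, amount: int) -> str:
        if self.can_handle(amount):
            return f"{type(self).__name__} approved {amount}"
        if self.successor is not None:
            # This handler cannot process the request, so pass it along.
            return self.successor.handle(amount)
        return f"no handler for {amount}"


class TeamLead(Handler):
    # Hypothetical rule: a team lead approves small amounts only.
    def can_handle(self, amount: int) -> bool:
        return amount <= 100


class Manager(Handler):
    # Hypothetical rule: a manager approves amounts up to 1000.
    def can_handle(self, amount: int) -> bool:
        return amount <= 1000


# Build the chain: TeamLead first, then Manager.
chain = TeamLead(Manager())

for amount in (50, 500, 5000):
    print(chain.handle(amount))
```

The caller only talks to the first handler, so handlers can be added, removed, or reordered without changing the calling code.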

1,871,560 | Django application with allauth configuration. | Hi, dev ninjas🥷! Welcome to my first post! Today, we're diving into Django's built-in authentication... | 0 | 2024-07-08T06:22:35 | https://dev.to/saiprasath/django-application-with-allauth-configuration-3oeo | django, allauth, sso, tutorial | Hi, dev ninjas🥷!

Welcome to my first post! Today, we're diving into Django's built-in authentication system, specifically using the django-allauth package. This powerful tool provides a range of authentication providers, simplifying the workflow for managing logins.

In this tutorial, we'll explore how to add Single ... | saiprasath |

1,873,344 | The Ongoing War Between CJS & ESM: A Tale of Two Module Systems | (This was originally an in-person talk I gave, so the following graphics are from said presentation... | 0 | 2024-07-11T22:27:03 | https://dev.to/greenteaisgreat/the-ongoing-war-between-cjs-esm-a-tale-of-two-module-systems-1jdg | javascript, modules, cjs, esm | (This was originally an in-person talk I gave, so the following graphics are from said presentation slides, the bulk of which are attributed to [SlidesGO](https://slidesgo.com/); check them out for awesome & free slides! Just be sure to credit them during your presentation <3)

---

## _**A**_ battle has raged on for _... | greenteaisgreat |

1,873,463 | Using an Existing Windows VM To Create and Attach a Data Disk To The VM And Initialise It For Use. | OS DISK The OS disk on a Windows VM refers to the virtual hard disk that contains the... | 0 | 2024-07-09T16:34:10 | https://dev.to/fola2royal/using-an-existing-windows-vm-to-create-and-attach-a-data-disk-to-the-vm-and-initialise-it-for-use-232h | cloudengineering, virtualmachine, cloudinfrastructure, azure | ## OS DISK

- The OS disk on a Windows VM refers to the virtual hard disk that contains the operating system. It’s the primary disk that a virtual machine (VM) boots from and is pre-installed with the selected OS when the VM is created.

- This disk includes the boot volume and it's recommended to store only the OS inf... | fola2royal |

1,873,729 | Getting Started with TypeScript: Type Annotations & Type Inference (Part I) | Type annotations and type inference are two different systems in typescript but in this post we will... | 0 | 2024-07-08T15:43:10 | https://dev.to/youxufkhan/getting-started-with-typescript-type-annotations-type-inference-part-i-2mgg | Type annotations and type inference are two different systems in TypeScript, but in this post we will talk about them in parallel.

## Type Annotations

**Code we add to tell TypeScript what type of value a variable will refer to**

_We tell TypeScript the type_

## Type Inference

**Typescript tries to figure out wh... | youxufkhan | |

1,876,870 | The HTML tags I use the most in my projects. | If you are interested in learning about web development, the first topic you will probably come... | 0 | 2024-07-08T22:55:39 | https://dev.to/audreymengue/the-html-tags-i-use-the-most-in-my-projects-d60 | webdev, html, beginners, programming | If you are interested in learning about web development, the first topic you will probably come across will be HTML. When it is presented to you in the first place, it might look like a lot to learn and it's normal, there is always a lot to learn in the tech industry. The hard truth is that you cannot be in tech witho... | audreymengue |

1,877,084 | 17 Libraries to Become a React Wizard 🧙♂️🔮✨ | React is getting better, especially with the latest release of React 19. Today, we're going to dive... | 0 | 2024-07-09T11:56:51 | https://dev.to/copilotkit/17-libraries-to-become-a-react-wizard-1g6k | react, webdev, javascript, programming | React is getting better, especially with the latest release of React 19.

Today, we're going to dive into 17 React libraries that will help you become a more productive developer and achieve React wizardry! Don't forget to bookmark this article and star these awesome open-source projects.

This list might sur... | anmolbaranwal |

1,878,682 | Props drilling 📸 useContext() | Props Drilling Passing data from a parent component down through multiple levels of nested... | 0 | 2024-07-08T22:31:11 | https://dev.to/jorjishasan/props-drilling-usecontext-146 | ## Props Drilling

Passing data from a parent component down through multiple levels of nested child components via props is called props drilling. This can make the code hard to manage and understand as the application grows. It's not so much a topic as a problem. How is it a problem?

In React, data flows from top to bottom ... | jorjishasan | |

1,878,791 | Adopting Database Guardrails - Cultural Shift | To build continuous database reliability, you need to have database guardrails - the right tools,... | 0 | 2024-07-11T08:00:00 | https://www.metisdata.io/blog/adopting-database-guardrails-cultural-shift | sql, database, monitoring | To build continuous database reliability, you need to have [database guardrails](https://www.metisdata.io/blog/metis-your-ultimate-database-guardrail) - the right tools, processes, and mindset. However, it’s not only about these technical components. It’s a cultural shift that we all need to make. Let’s see why and how... | adammetis |

1,880,599 | Ibuprofeno.py💊| #135: Explain this Python code | Explain this Python code Difficulty: Intermediate x = {1,2,3} y =... | 25,824 | 2024-07-08T11:00:00 | https://dev.to/duxtech/ibuprofenopy-135-explica-este-codigo-python-5em2 | python, spanish, learning, beginners | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Intermediate</mark></center>

```py

x = {1,2,3}

y = {3,4,5}

print(x.symmetric_difference(y))

```

* **A.** `{}`

* **B.** `{3}`

* **C.** `{1, 2, 4, 5}`

* **D.** `{1, 2, 3, 4, 5}`

---

{% details **Answer:** %}

👉 **C.** `{1, 2, 4... | duxtech |
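
To make the answer to the quiz above concrete, here is a small sketch that checks the result in two equivalent ways. It uses only built-in Python set operations; the variable name `result` is introduced here for illustration.

```python
x = {1, 2, 3}
y = {3, 4, 5}

# Elements that are in exactly one of the two sets.
result = x.symmetric_difference(y)

# The ^ operator is shorthand for the same operation on sets.
assert result == x ^ y

# Equivalent definition: the union minus the intersection.
assert result == (x | y) - (x & y)

print(sorted(result))  # [1, 2, 4, 5]
```

The shared element `3` is dropped because it appears in both sets, which is why option C is the right one.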
