id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

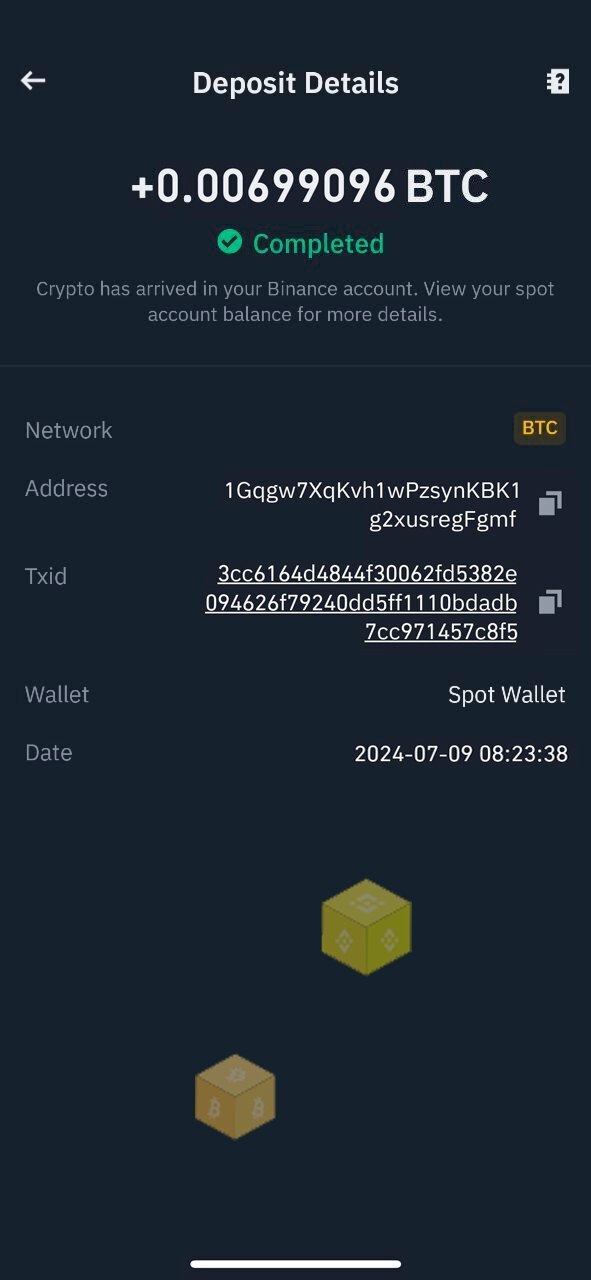

1,917,263 | Bitpower Security: The Iron Wall in the Blockchain | Bitpower Security: The Iron Wall in the Blockchain In the vast ocean of digital currencies,... | 0 | 2024-07-09T12:01:03 | https://dev.to/pings_iman_934c7bc4590ba4/bitpower-security-the-iron-wall-in-the-blockchain-4noc |

Bitpower Security: The Iron Wall in the Blockchain

In the vast ocean of digital currencies, Bitpower stands proudly like an indestructible fortress. In this fortress, every brick and tile is made of blockchain tech... | pings_iman_934c7bc4590ba4 | |

1,917,264 | Explore how BitPower Loop works | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide... | 0 | 2024-07-09T12:02:35 | https://dev.to/wgac_0f8ada999859bdd2c0e5/explore-how-bitpower-loop-works-mj2 | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide secure, efficient and transparent lending services. Here is how it works in detail:

1️⃣ Smart Contract Guarantee

BitPower Loop uses smart contract technology to automatically execute all lending transactions. This aut... | wgac_0f8ada999859bdd2c0e5 | |

1,917,265 | How personalisation works in Sitecore XM Cloud | In my previous article, I shared a comprehensive troubleshooting guide for Sitecore XM Cloud tracking... | 0 | 2024-07-11T11:07:43 | https://www.byteminds.co.uk/blog/how-personalisation-works-in-sitecore-xm-cloud | sitecore, xmcloud, personalization, webdev | In my previous article, I shared a comprehensive [troubleshooting guide for Sitecore XM Cloud tracking and personalisation](https://dev.to/byteminds_agency/troubleshooting-tracking-and-personalisation-in-sitecore-xm-cloud-2n6). The guide addresses common issues, explains investigation steps and provides solutions for t... | annagevel |

1,917,266 | Bitpower Security: The Iron Wall in the Blockchain | Bitpower Security: The Iron Wall in the Blockchain In the vast ocean of digital currencies,... | 0 | 2024-07-09T12:04:35 | https://dev.to/pingz_iman_38e5b3b23e011f/bitpower-security-the-iron-wall-in-the-blockchain-1o5f |

Bitpower Security: The Iron Wall in the Blockchain

In the vast ocean of digital currencies, Bitpower stands proudly like an indestructible fortress. In this fortress, every brick and tile is made of blockchain tech... | pingz_iman_38e5b3b23e011f | |

1,917,267 | Analysis of BitPower Lending Platform | Introduction With the development of blockchain technology, decentralized finance (DeFi) has... | 0 | 2024-07-09T12:10:24 | https://dev.to/woy_ca2a85cabb11e9fa2bd0d/analysis-of-bitpower-lending-platform-hbm |

Introduction

With the development of blockchain technology, decentralized finance (DeFi) has gradually become a hot topic in the financial industry. As an important project in this field, BitPower Lending Platform h... | woy_ca2a85cabb11e9fa2bd0d | |

1,917,268 | Day 27 of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post we have talked about DOM. In this post we are... | 0 | 2024-07-09T12:10:38 | https://dev.to/akshat0610/day-27-of-30-of-javascript-3262 | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post we talked about the DOM. In this post we are going to discuss its various interfaces.

So let's get started🔥

## Document Interface

The Document interface represents any web page loaded in the browser and serves as an entry point into the web page's content (DOM tree... | akshat0610 |

1,917,270 | Solar Energy for Your Home and Business | Solar energy is a versatile and sustainable solution for both residential and commercial... | 0 | 2024-07-09T12:12:17 | https://dev.to/sunphotonics/solar-energy-for-your-home-and-business-2h42 | energy | Solar energy is a versatile and sustainable solution for both residential and commercial applications, offering significant benefits for homeowners and businesses alike. For residential properties, solar energy can reduce electricity bills by generating clean, renewable power directly from the sun.

Homeowners can tak... | sunphotonics |

1,917,274 | FHIR and AI: Transforming Healthcare Interoperability | The healthcare industry is undergoing a technological revolution with the integration of FHIR (Fast... | 0 | 2024-07-09T12:13:11 | https://dev.to/relevant_software/fhir-and-ai-transforming-healthcare-interoperability-15hb | ai, softwaredevelopment | The healthcare industry is undergoing a technological revolution with the integration of FHIR (Fast Healthcare Interoperability Resources) and Artificial Intelligence (AI). This powerful combination is set to enhance data sharing and utilization, ultimately improving patient care and operational efficiency.

**What is ... | relevant_software |

1,917,275 | How do I speak to American customer service? Quick~Connect | To speak with American Airlines customer service, you can call their dedicated support line at... | 0 | 2024-07-09T12:13:25 | https://dev.to/okkulavq/how-do-i-speak-to-american-customer-service-quickconnect-4epk | americancustomerservice, americancustomersupport, speakamericanliveperson |

To speak with American Airlines customer service, you can call their dedicated support line at 𝟭-𝟴𝟯𝟯-𝟰𝟲𝟬-𝟭𝟴𝟯𝟴. This toll-free number connects you with a team of knowledgeable agents who can assist with flight bookings, reservations, and any other inquiries you may have. Alternatively, you can explore their ... | okkulavq |

1,917,276 | Introduction of python(parotta salna) | hi everyone this my first blog,if there is any mistakes in my English kindly adjust that...later will... | 0 | 2024-07-09T12:13:47 | https://dev.to/san_samsung_afd0d003cf6de/introduction-of-pythonparotta-salna-24gc | python, introduction | **hi** everyone this my first blog,if there is any mistakes in my English kindly adjust that...later will fix it..

Yesterday, I attended my first class of the Python course...

They are teaching Python for free, which is an impressive approach. I really welcome it and am thankful for it.

However,te... | san_samsung_afd0d003cf6de |

1,917,277 | Help Needed: Persistent "removeChild" Error with Google Translate on React 18.2.0. | Hello everyone, I'm reaching out for help with a persistent issue we've been experiencing in our... | 0 | 2024-07-09T12:14:32 | https://dev.to/mikex95/help-needed-persistent-removechild-error-with-google-translate-on-react-1820-3ogn | Hello everyone,

I'm reaching out for help with a persistent issue we've been experiencing in our React application whenever users translate the page using Google Translate.

We have the following error message:

`NotFoundError: Failed to execute 'removeChild' on 'Node': The node to be removed is not a child of this n... | mikex95 | |

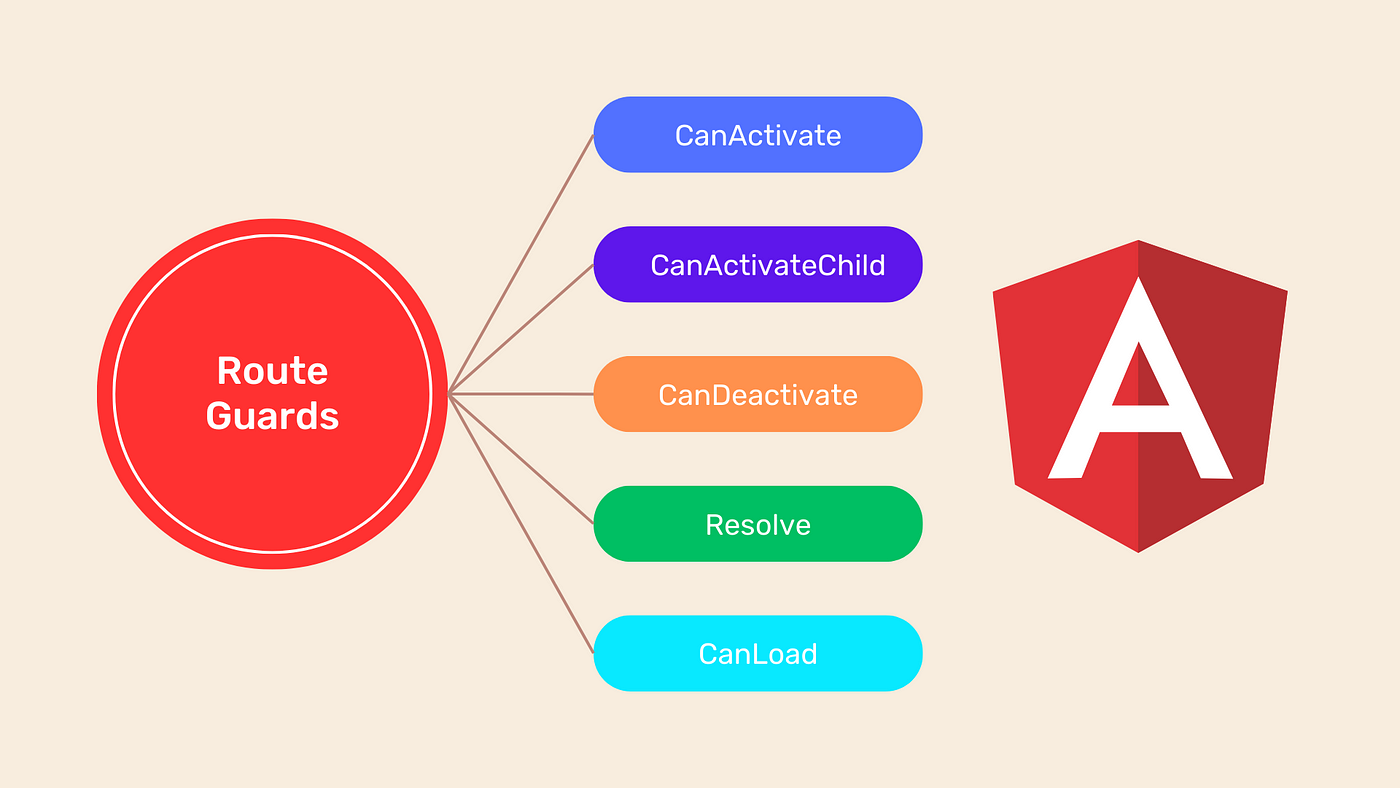

1,917,288 | Guards in Angular | Introduction In this article, we will explore how to use guards... | 0 | 2024-07-09T12:20:32 | https://dev.to/bilongodavid/guard-dans-angular-1o76 | javascript, angular, website |

### Introduction

In this article, we will explore how to use guards with Angular 17. Guards make it possible to protect routes and check certain conditions before allowing ac... | bilongodavid |

1,917,279 | BUY 5CLADBA POWDER | EMAIL:gregmceffie@gmail.com buy 5cladba 5cladba price 5cladba synthetic method buy 5cladba... | 0 | 2024-07-09T12:14:51 | https://dev.to/greg_mceffie_129f53f54771/buy-5cladba-powder-2cn7 | 5cladba, buy5cladba, order5cladba, 5cladbapowder | EMAIL:gregmceffie@gmail.com

buy 5cladba

5cladba price

5cladba synthetic method

buy 5cladba online

what is 5cladba

[www.buy5cladba.com](url)

5cladba cas number........

5cladba powder

5cladba cas:...............

#5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA ... | greg_mceffie_129f53f54771 |

1,917,280 | Inside the Box: June Community Update | Hey there 👋 Welcome back to "Inside the Box"! We've packed this issue with all the best bits from... | 26,773 | 2024-07-09T12:16:42 | https://dev.to/codesandboxio/inside-the-box-june-community-update-4dp2 | webdev, product, community | Hey there 👋

Welcome back to "Inside the Box"! We've packed this issue with all the best bits from last month.

Enjoy!

## Latest Product News

**[VS Code Web editor (beta)](https://codesandbox.io/docs/learn/editors/web/vscode-web) 🌟** — After several weeks of testing and iterations based on feedback from users who t... | filipeslima |

1,917,281 | 5CLADBA PRICE | EMAIL:gregmceffie@gmail.com buy 5cladba 5cladba price 5cladba synthetic method buy 5cladba... | 0 | 2024-07-09T12:18:35 | https://dev.to/greg_mceffie_129f53f54771/5cladba-price-3ea | 5cladba, 5cladbaprice, buy5cladba, 5cladbadeath | EMAIL:gregmceffie@gmail.com

buy 5cladba

5cladba price

5cladba synthetic method

buy 5cladba online

what is 5cladba

[www.buy5cladba.com](url)

5cladba cas number........

5cladba powder

5cladba cas:...............

#5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA ... | greg_mceffie_129f53f54771 |

1,917,286 | My subdomain proxy server in nodejs | Here is a Node.js proxy server that can be used to listen on subdomain routes. For example, I run a... | 0 | 2024-07-09T12:20:28 | https://dev.to/akram6t/my-subdomain-proxy-server-in-nodejs-3f2a | javascript, node, proxy, subdomain | Here is a Node.js proxy server that can be used to listen on subdomain routes.

For example, I run a server at **localhost:5000**, but I want to use a subdomain with it, like **subdomain1.localhost:5000** or something different.

```javascript

const express = require('express');

const app = express();

const httpProxy = require(... | akram6t |

1,918,126 | A new way to write media queries is coming to CSS: range ... | Certainly! Let's dive into the world of CSS Media Queries and explore how they enhance responsive web... | 0 | 2024-07-10T06:11:45 | https://dev.to/koolkamalkishor/new-css-media-queries-i67 | Certainly! Let's dive into the world of **CSS Media Queries** and explore how they enhance responsive web design.

## Understanding CSS Media Queries

CSS Media Queries allow us to adapt the presentation of content based on different viewport sizes. The viewport represents the visible area of a web page, which varies d... | koolkamalkishor | |

1,917,297 | My subdomain proxy server in nodejs | Here is a Node.js proxy server that can be used to listen on subdomain routes. For example, I run a... | 0 | 2024-07-09T12:20:37 | https://dev.to/akram6t/my-subdomain-proxy-server-in-nodejs-51kc | javascript, node, proxy, subdomain | Here is a Node.js proxy server that can be used to listen on subdomain routes.

For example, I run a server at **localhost:5000**, but I want to use a subdomain with it, like **subdomain1.localhost:5000** or something different.

```javascript

const express = require('express');

const app = express();

const httpProxy = require(... | akram6t |

1,917,311 | WHAT IS 5CLADBA | EMAIL:gregmceffie@gmail.com TO GET THE PRICE LIST AND PLACE YOUR ORDER buy 5cladba 5cladba... | 0 | 2024-07-09T12:22:17 | https://dev.to/greg_mceffie_129f53f54771/what-is-5cladba-3ojl | 5cladba, buy5cladba, 5cladbasale, order5cladba | EMAIL:gregmceffie@gmail.com

TO GET THE PRICE LIST AND PLACE YOUR ORDER

buy 5cladba

5cladba price

5cladba synthetic method

buy 5cladba online

what is 5cladba

[www.buy5cladba.com](url)

5cladba cas number........

5cladba powder

5cladba cas:...............

#5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADBA #5CLADB... | greg_mceffie_129f53f54771 |

1,917,312 | Introduction to BitPower decentralized smart contracts | What is BitPower? BitPower is a decentralized lending platform that uses blockchain technology to... | 0 | 2024-07-09T12:24:44 | https://dev.to/aimm_y/introduction-to-bitpower-decentralized-smart-contracts-1k55 | What is BitPower?

BitPower is a decentralized lending platform that uses blockchain technology to provide secure and efficient lending services through smart contracts.

Main features

Automatic execution

Smart contracts automatically execute transactions without human intervention.

Open source code

The code is open and ... | aimm_y | |

1,917,313 | python code editors and links | 09-07-2024 Applications python IDE pycharm online editors tonyedit google colab one... | 0 | 2024-07-09T12:25:03 | https://dev.to/jothilingam88/python-code-editors-and-links-1l02 | python, codeeditor, jopy | **09-07-2024**

Applications

[python IDE](https://www.python.org/downloads/)

[pycharm](https://www.jetbrains.com/pycharm/)

online editors

[tonyedit](https://tonyedit.netlify.app/texteditor-py.html)

[google colab](https://colab.research.google.com/notebook)

[one comp](https://onecompiler.com/python/42jtdw4v3)

[ed... | jothilingam88 |

1,922,976 | Pyenv and Pipenv on Fedora | Some projects or organizations use Pyenv and Pipenv, so you may need to install them. | 0 | 2024-07-14T08:32:47 | https://dev.to/veer66/pyenv-and-pipenv-on-fedora-13m2 | python | ---

title: Pyenv and Pipenv on Fedora

published: true

description: Some projects or organizations use Pyenv and Pipenv, so you may need to install them.

tags: python

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-14 08:09 +0000

---

I wonder if I can use ... | veer66 |

1,917,315 | Dedicated Software Development Team: Accelerate Your Development | In today’s rapidly changing technology world, this strategy is essential. The Covid pandemic altered... | 0 | 2024-07-09T12:26:22 | https://dev.to/infowindtech57/dedicated-software-development-team-accelerate-your-development-42oa | softwaredevelopment | In today’s rapidly changing technology world, this strategy is essential. The Covid pandemic altered our approach to work. The prevalence of remote teams has made it simpler to locate competent engineers worldwide. We’ll talk about the benefits of having a dedicated development team in this piece. We’ll provide a thoro... | infowindtech57 |

1,917,316 | What is Selenium? Why do we use Selenium for Automation? | Selenium is a portable automation and software testing tool for web applications. A test... | 0 | 2024-07-09T12:28:06 | https://dev.to/1234/what-is-selenium-why-do-we-use-selenium-for-automation-438f | Selenium is a portable automation and software testing tool for web applications. A test domain-specific language is also provided; to write test cases, one can use programming languages including C#, Java, Perl, PHP, Python, Ruby, Scala, and Groovy.

It can mimic user actions such as clicking, typing and navigat... | 1234 | |

1,917,317 | What is Selenium? Why do we use Selenium for Automation? | Selenium is a portable automation and software testing tool for web applications. A test... | 0 | 2024-07-09T12:28:11 | https://dev.to/1234/what-is-selenium-why-do-we-use-selenium-for-automation-3lhf | Selenium is a portable automation and software testing tool for web applications. A test domain-specific language is also provided; to write test cases, one can use programming languages including C#, Java, Perl, PHP, Python, Ruby, Scala, and Groovy.

It can mimic user actions such as clicking, typing and navigat... | 1234 | |

1,917,318 | What is Remote Upload? | What is Remote Upload? Remote upload is a feature that allows users to transfer files... | 0 | 2024-07-09T12:28:44 | https://dev.to/sh20raj/what-is-remote-upload-3e60 | remote, remoteupload | # What is Remote Upload?

Remote upload is a feature that allows users to transfer files directly from a web URL or other online sources to a cloud storage service without needing to download the file to their local device first. This can be especially useful for saving bandwidth, managing storage space, and streamlini... | sh20raj |

1,917,319 | How to setup postgres on ubuntu 20.04 | Ref Link: Installation Docs sudo apt install curl ca-certificates sudo install -d... | 0 | 2024-07-09T12:29:08 | https://dev.to/shaikhalamin/how-to-setup-postgres-on-ubuntu-2004-2df0 | postgres, ubuntu, client | Ref Link: [Installation Docs](https://www.postgresql.org/download/linux/ubuntu/)

```

sudo apt install curl ca-certificates

sudo install -d /usr/share/postgresql-common/pgdg

sudo curl -o /usr/share/postgresql-common/pgdg/apt.postgresql.org.asc --fail https://www.postgresql.org/media/keys/ACCC4CF8.asc

sudo sh -c 'echo... | shaikhalamin |

1,917,320 | How to do Regression Testing | If you are looking for how to do regression testing, then you have landed on the correct article! In... | 0 | 2024-07-09T12:31:07 | https://dev.to/morrismoses149/how-to-do-regression-testing-2969 | regressiontesting, testgrid | If you are looking for how to do regression testing, then you have landed on the correct article!

In agile, testing must evolve with each sprint, and testers must ensure that new changes do not interfere with the application’s existing functionality. This is referred to as regression testing.

Regression testing ensures... | morrismoses149 |

1,917,321 | 24/7[Connect]!! How do I connect to Singapore Airlines? #Singapore_Hotline(Number) | To connect with Singapore Airlines, you can call their customer service hotline at 1-833-460-1838.... | 0 | 2024-07-09T12:32:24 | https://dev.to/nekot68408/247connect-how-do-i-connect-to-singapore-airlines-singaporehotlinenumber-4pdh | To connect with Singapore Airlines, you can call their customer service hotline at 1-833-460-1838. Their representatives are available to assist you with flight bookings, reservation changes, and any other inquiries you may have. Alternatively, you can visit their website, singaporeair.com, to manage your booking, chec... | nekot68408 | |

1,917,322 | Biokemp: Customizable Smoking Filters Ecommerce Wix Studio Challenge | This is a submission for the Wix Studio Challenge . What I Built I created "Biokemp" an online store... | 0 | 2024-07-09T12:33:18 | https://dev.to/digikins/biokemp-customizable-smoking-filters-ecommerce-wix-studio-challenge-27fl | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

**What I Built**

I created "Biokemp" an online store dedicated to custom smoking filters. Users can personalize their filters to match their preferences and needs, ensuring a unique smoking experience.

**Demo**

https://digikentro.... | digikins |

1,917,324 | SIA[Hotline]!! How do I connect to Singapore Airlines? #Direct_Line @Live(Person) | To connect with Singapore, you have several options: But the most efficient way is to call their... | 0 | 2024-07-09T12:41:22 | https://dev.to/nekot68408/siahotline-how-do-i-connect-to-singapore-airlines-directline-liveperson-51fi | To connect with Singapore, you have several options: But the most efficient way is to call their customer service number ☎1-833-816-0098 Or +★彡 1-833-816-0098 彡★. Phone: Call Singapore customer support line at (0TA) 1-833-816-0098(Live Person).

To get in touch with a live person at Singapore, there are several methods... | nekot68408 | |

1,917,361 | How to Choose the Right Tooth Clinic Near Me? | ` Selecting a good dental clinic is not an easy thing as it would require special attention... | 0 | 2024-07-09T13:34:51 | https://dev.to/aarush_kibe_e1bf9f236fda6/how-to-choose-the-right-tooth-clinic-near-me-4g0g | `<p style="text-align: justify;"><span>Selecting a good dental clinic is not an easy thing as it would require special attention especially if you are planning to take dental treatment for the first time. For example, in such a situation, you may leave the question ‘Which dental clinic should I choose near me&rsq... | aarush_kibe_e1bf9f236fda6 | |

1,917,326 | Best Practices for Creating Container Images | Imagine running 100s of microservices in production without knowing the dos and don'ts around... | 0 | 2024-07-10T05:34:18 | https://devtron.ai/blog/best-practices-for-creating-container-image/ | docker, kubernetes, devops, cloud | Imagine running 100s of microservices in production without knowing the dos and don'ts around creating container images. It’d be chaotic. You don’t want to spend hours building your container images and also you would not want to deploy images with vulnerabilities.

Containerization has become a cornerstone of modern s... | devtron_inc |

1,917,328 | How to build a Todo app with Typescript | In this blog post, we will build a to-do app using typescript. The app allows users to add, delete,... | 0 | 2024-07-09T12:47:20 | https://p-blog.online/668d291bc61f9d5a320a0622? | webdev, typescript, beginners |

In this blog post, we will build a to-do app using typescript. The app allows users to add, delete, and update tasks, and users can mark completed tasks or unmark them. We will follow the Model, View, Controller... | pmadhav82 |

1,917,329 | Techniques for Relationship Platforms Promotion Effectively | Promoting relationship platforms is a nuanced task that requires a strategic approach. Relationship... | 0 | 2024-07-09T12:49:32 | https://dev.to/advertadsonline/techniques-for-relationship-platforms-promotion-effectively-4j04 | datingad, ppcad, adnetwork | <p><span style="font-weight: 400;">Promoting relationship platforms is a nuanced task that requires a strategic approach. Relationship platforms, whether dating apps, matchmaking websites, or relationship advice portals, are highly competitive spaces. Effective promotion can significantly enhance visibility, attract a ... | advertadsonline |

1,917,331 | SharePoint Workflows: Automating Business Processes for Greater Efficiency | Did you know that SharePoint workflows can drastically reduce the time and effort needed for your... | 0 | 2024-07-09T12:50:15 | https://dev.to/webtualglobal/sharepoint-workflows-automating-business-processes-for-greater-efficiency-417b | powerplatform, microsoft365consulting, sharepointworkflow, sharepointconsulting |

Did you know that [SharePoint workflows](https://www.webtualglobal.com/best-sharepoint-development-company-in-usa/) can drastically reduce the time and effort needed for your business processes? Recent stati... | webtualglobal |

1,917,332 | TailwindCSS Text. Free UI/UX design course | Text At the end of the previous lesson, we finally managed to place the Call to action... | 25,935 | 2024-07-09T15:00:00 | https://dev.to/keepcoding/tailwindcss-text-free-uiux-design-course-4p6f | tailwindcss, learning, webdev, design | ## Text

At the end of the previous lesson, we finally managed to place the Call to action elements perfectly in the center of our Hero Image. But the result is far from satisfactory.

First of all, you can hardly see anything here!

and user experience (UX) design in digital marketing cannot be overstated. These elements are the backbone of how users perceive and interact with your brand online. When done right, they can significantly ... | pragyan_paramitanayak_31 |

1,917,335 | Supercharging Your Cypress Tests with Custom Commands | Introduction Cypress is a powerful tool for end-to-end testing, offering a robust set of... | 0 | 2024-07-10T01:51:48 | https://dev.to/aswani25/supercharging-your-cypress-tests-with-custom-commands-4jlc | testing, webdev, javascript, cypress | ## Introduction

Cypress is a powerful tool for end-to-end testing, offering a robust set of built-in commands to interact with web applications. However, every project has unique needs that might not be fully covered by the default set of commands. This is where custom commands come in. Custom commands allow you to ext... | aswani25 |

1,917,336 | Screw The AI Hype: What Can It ACTUALLY Do For Cloud Native? | The hype train is in full effect when it comes to AI, and for a good reason. The problem is that no... | 0 | 2024-07-09T12:54:45 | https://dev.to/thenjdevopsguy/screw-the-ai-hype-what-can-it-actually-do-for-cloud-native-3k27 | kubernetes, programming, devops, docker | The hype train is in full effect when it comes to AI, and for a good reason. The problem is that no one is talking about the good reason. Currently, the AI hype is all about generative text, terrible automation, and attempting to perform actions that don’t make sense.

In this blog post, engineers will learn what GenAI... | thenjdevopsguy |

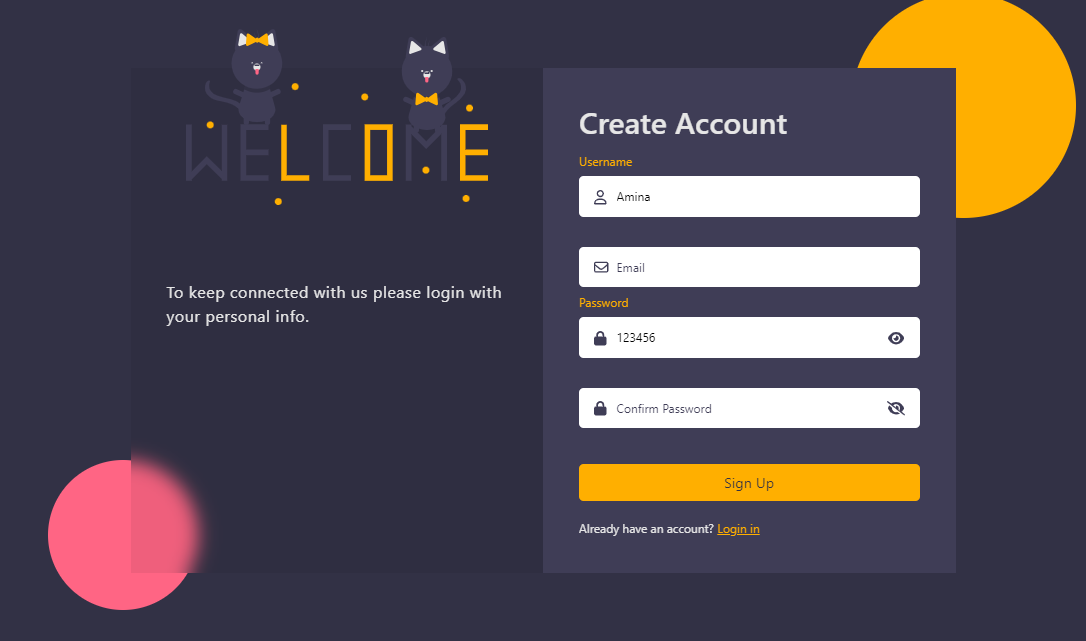

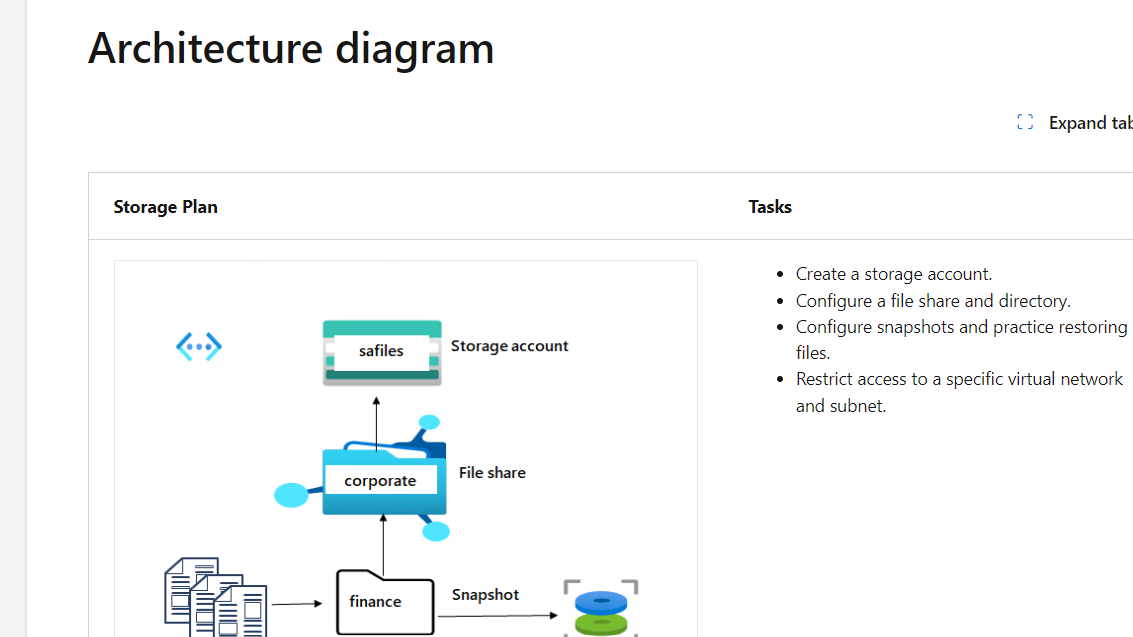

1,917,337 | Sign Up | I created a sign-up page using HTML, CSS and JS.🚀 To make sure the user enters valid input, I use... | 0 | 2024-07-09T12:55:16 | https://dev.to/minalfatih/sign-up-511a | html, css, javascript, frontend | I created a sign-up page using HTML, CSS and JS.🚀

To make sure the user enters valid input, I use regex in JS.

Repo link: https://github.com/minalfatih/Sign-Up

Live link: https://minalfatih.github.io/Sign-Up/
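
The regex check mentioned above could look roughly like the sketch below. The post does not show its actual patterns, so the field names (`username`, `email`, `password`), the rules, and the `validateSignUp` helper are all assumptions made for illustration, not the code from the linked repo.

```javascript
// Hypothetical validation rules; the original post does not show its patterns.
const patterns = {
  // 3 to 16 characters: letters, digits, or underscores
  username: /^[A-Za-z0-9_]{3,16}$/,
  // Deliberately loose email check: something@something.tld
  email: /^[^\s@]+@[^\s@]+\.[^\s@]+$/,
  // At least 8 characters, with at least one letter and one digit
  password: /^(?=.*[A-Za-z])(?=.*\d).{8,}$/,
};

// Returns the names of the fields that fail their pattern.
// A missing field is treated as an empty string, so it fails too.
function validateSignUp(form) {
  return Object.keys(patterns).filter(
    (field) => !patterns[field].test(String(form[field] ?? ""))
  );
}
```

In a page like this one, a helper of this kind would probably be called from the form's `submit` handler, with the returned field names used to show an error message next to each invalid input.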

The skill objective of this task is to;

-Create a storage account.

-Configure a ... | sethgiddy |

1,917,341 | Tuesday's AI Spotlight | A Quick Guide On How To Build AI Tools We're all busy innovating with AI! However, are we doing it... | 0 | 2024-07-09T13:29:58 | https://www.techdogs.com/td-articles/trending-stories/a-quick-guide-on-how-to-build-ai-tools | ai, tech | **A Quick Guide On How To Build AI Tools**

We're all busy innovating with AI! However, are we doing it effectively and efficiently to maximize ROI? Get ready to ace building AI tools like a pro with these tried-and-tested tips by experts. Dive right in!

(https://www.techdogs.com/td-articles/trending-stories/a-quick-g... | td_inc |

1,917,342 | What toSuccess function | What is the toSuccess function in Scala | 0 | 2024-07-09T13:08:55 | https://dev.to/riaz_a26a0105927bbde4d07f/what-tosuccess-function-2o8f | What is the toSuccess function in Scala

| riaz_a26a0105927bbde4d07f | |

1,917,343 | Day 28 of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post we have talked about Document and Element... | 0 | 2024-07-09T13:14:58 | https://dev.to/akshat0610/day-28-of-30-of-javascript-4980 | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post we talked about the Document and Element interfaces of the DOM. In this post we are going to discuss some other interfaces of the DOM.

So let's get started🔥

## Event Interface

The Event interface represents an event which takes place on an EventTarget. An event ... | akshat0610 |

1,917,379 | Prototype Prolog Projects | Instant Pattern-Mock-Ups | 0 | 2024-07-09T14:11:43 | https://dev.to/luciangreen/prototype-prolog-projects-4kih | spec, to, algorithms, prolog | ---

title: Prototype Prolog Projects

published: true

description: Instant Pattern-Mock-Ups

tags: spec, to, algorithm, prolog

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-09 12:59 +0000

---

<img width="366" alt="Spec to Algorithm" src="https://github.c... | luciangreen |

1,917,344 | Build Better With Stellar Smart Contract Challenge: A Companion Guide | Interested in our web3 challenge but not sure where to start? In this post, we'll share an overview... | 0 | 2024-07-09T13:16:16 | https://dev.to/stellar/build-better-with-stellar-smart-contract-challenge-a-companion-guide-2ing | rust, smartcontract, web3, stellarchallenge | Interested in our web3 challenge but not sure where to start? In this post, we'll share an overview of web3 and blockchain technology and set you up with all the information you need to learn about Stellar and the Soroban smart contracts platform.

## A Quick Primer on Web3

### What is web3 and blockchain?

Blockchain... | j_dev28 |

1,917,345 | Best Practices for Custom Commands in Cypress: A Detailed Guide | Introduction In our previous post, we introduced the concept of custom commands in Cypress... | 0 | 2024-07-11T12:31:33 | https://dev.to/aswani25/best-practices-for-custom-commands-in-cypress-a-detailed-guide-cl2 | testing, webdev, javascript, cypress | ## Introduction

In our previous post, we introduced the concept of custom commands in Cypress and demonstrated how they can simplify and enhance your testing framework. This follow-up post dives deeper into the best practices for creating and using custom commands, providing detailed examples to ensure your tests are m... | aswani25 |

1,917,346 | CUPPA CORNER | Cafe Menu | 0 | 2024-07-09T13:17:49 | https://dev.to/carm3n_sibanda_2f4b4d5774/cuppa-corner-401c | codepen | <p>Cafe Menu</p>

{% codepen https://codepen.io/Carmen-Sibs/pen/pomQdwm %} | carm3n_sibanda_2f4b4d5774 |

1,917,347 | Microsoft 365 Groups: A Comprehensive Guide | Microsoft 365 Groups is a powerful feature within the Microsoft 365 suite that enhances collaboration... | 0 | 2024-07-09T13:18:17 | https://dev.to/borisgigovic/microsoft-365-groups-a-comprehensive-guide-38ld | microsoft365, microsoftsecuritygroups, cloudsecurity, identityandaccess | Microsoft 365 Groups is a powerful feature within the Microsoft 365 suite that enhances collaboration and communication among team members. This comprehensive guide aims to demystify Microsoft 365 Groups by explaining what they are, how they can be used, the differences between Microsoft 365 Groups and legacy distribut... | borisgigovic |

1,917,354 | First Post! | Hello everyone! This is my first post. I will be posting more about my ICT journey on here, so stay... | 0 | 2024-07-09T13:25:01 | https://dev.to/chonkyqueen/first-post-53i0 | Hello everyone! This is my first post. I will be posting more about my ICT journey on here, so stay tuned. | chonkyqueen | |

1,917,355 | what do you think | A post by Elhoucine Toual | 0 | 2024-07-09T13:26:17 | https://dev.to/elhoucine_toual_43a3f872e/what-do-you-think-dj0 |

| elhoucine_toual_43a3f872e | |

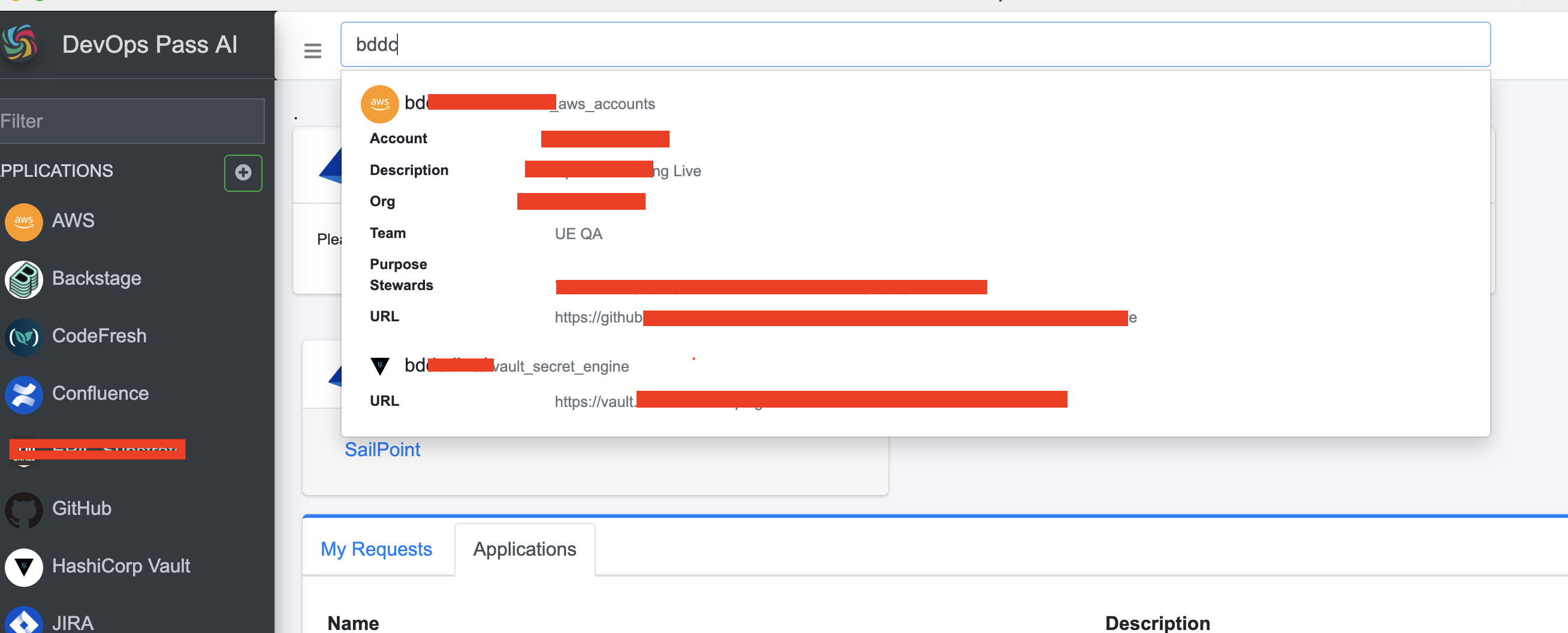

1,917,357 | DevOps Pass AI plugins | Right now DOP has a bunch of plugins which simplify your daily activities with the DevOps stack and... | 0 | 2024-07-09T13:28:21 | https://dev.to/devopspass-ai/devops-pass-ai-plugins-4mm0 | devops, webdev, automation, beginners | Right now DOP has a bunch of plugins which simplify your daily activities with the DevOps stack and navigation in it.

You can search your entire stack in one app:

And take the necessary actions on these entities just in a ... | devopspass |

1,917,358 | SpookySwap: Your Ally in Decentralized Cryptocurrency Trading | The world of decentralized finance (DeFi) is constantly expanding, and SpookySwap has... | 0 | 2024-07-09T13:32:02 | https://dev.to/jake_willson/spookyswap-tu-aliado-en-el-comercio-descentralizado-de-criptomonedas-1ab |

The world of decentralized finance (DeFi) is constantly expanding, and [SpookySwap](https://spookyswap.net/) has established itself as a leading platform in this space. Operating on the efficient Fantom network, SpookySwap offers users a decentralized exchange experience that is secure, fast and profitab... | jake_willson | |

1,917,359 | Flux Fundamentals: Mastering GitOps Deployments with Flux | GitOps, a set of principles that utilize Git as the single source of truth for managing... | 0 | 2024-07-09T13:32:43 | https://dev.to/platform_engineers/flux-fundamentals-mastering-gitops-deployments-with-flux-3k9o | GitOps, a set of principles that utilize Git as the single source of truth for managing infrastructure, has revolutionized the way platform engineering teams manage and deploy applications. One of the key tools in this space is Flux, an open-source project that automates the deployment of applications to Kubernetes clu... | shahangita | |

1,917,360 | dsadsadsadasdasdsadsadsa | dsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsada... | 0 | 2024-07-09T13:33:28 | https://dev.to/justinherrera/dsadsadsadasdas-5185 |

dsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdasdsadsadsadasdas | justinherrera | |

1,917,362 | Why does Woovi use MongoDB? | This article explains our decision-making at Woovi to build our fintech on top of MongoDB. ... | 0 | 2024-07-09T18:15:28 | https://dev.to/woovi/why-does-woovi-use-mongodb-m04 | mongodb, woovi | This article explains our decision-making at Woovi to build our fintech on top of MongoDB.

## Why MongoDB?

I think the two best general-purpose databases you can choose to build your startup on are MongoDB and PostgreSQL.

We decided to go with MongoDB for several reasons, outlined below.

## Database migrations

When you a... | sibelius |

1,917,363 | Unlocking the Power of Data with Data Science & Advanced Analytics | In today's data-driven world, businesses are increasingly relying on data science and advanced... | 0 | 2024-07-09T13:37:58 | https://dev.to/datameticasolutions/unlocking-the-power-of-data-with-data-science-advanced-analytics-9im | analytics, data, cloud, datascience | In today's data-driven world, businesses are increasingly relying on data science and advanced analytics to make informed decisions, improve operations, and gain a competitive edge. The realm of data science encompasses a variety of techniques, tools, and methodologies that allow organizations to extract meaningful ins... | datameticasolutions |

1,917,364 | Create Architecture Diagram as Code for a 2-Tier Bookstore Application | How to Create Architecture Diagram as Code for a 2-Tier Bookstore Application Creating... | 7,690 | 2024-07-09T15:20:30 | https://dev.to/aws-builders/create-architecture-diagram-as-code-for-a-2-tier-bookstore-application-2356 | aws, python, architecture, devrel | ## **How to Create Architecture Diagram as Code for a 2-Tier Bookstore Application**

Creating architecture `diagrams as code` is a modern approach that offers numerous benefits over traditional diagramming methods, including architecture diagram automation, and consistency. This approach allows for version-controlled,... | chefgs |

1,917,365 | WebAssembly: Revolutionizing Web Performance | The web has come a long way since the days of static HTML pages. Modern web applications are rich,... | 0 | 2024-07-09T13:42:44 | https://dev.to/andylarkin677/webassembly-revolutionizing-web-performance-1jla | webdev, javascript, webassembly, typescript | The web has come a long way since the days of static HTML pages. Modern web applications are rich, interactive, and complex, often rivaling native applications in functionality and user experience. However, achieving high performance with JavaScript, the primary language of the web, can be challenging, especially for c... | andylarkin677 |

1,917,366 | Unlocking the Power of AI Integration with Sista AI | Unleash the power of AI integration with Sista AI! Elevate your apps with smart functionality and seamless user experiences. 🚀 | 0 | 2024-07-09T13:45:52 | https://dev.to/sista-ai/unlocking-the-power-of-ai-integration-with-sista-ai-5dfc | ai, react, javascript, typescript | <h2>Introduction</h2><p>In today's tech-savvy world, the integration of AI technology has become a game-changer, revolutionizing user experiences in diverse industries. Sista AI, a leading AI integration platform, offers cutting-edge solutions to enhance user engagement and accessibility.</p><p>The demand for intellige... | sista-ai |

1,917,368 | How To Deal With Side Effects | Dealing with side effects in React is crucial to ensuring your components behave correctly and... | 0 | 2024-07-10T08:29:27 | https://dev.to/ark7/how-to-deal-with-side-effects-5f22 | javascript, programming, tutorial, webdev | Dealing with side effects in React is crucial to ensuring your components behave correctly and efficiently. React provides several hooks and lifecycle methods to handle side effects.

Certain components in React need to interact with things outside themselves. These things can be anything from querying data from a ser... | ark7 |

1,917,369 | How to get lucky | Originally posted on the Dev na Gringa Substack. Want to receive future articles by email? Subscribe... | 0 | 2024-07-09T13:54:09 | https://dev.to/lucasheriques/como-ter-sorte-346b | braziliandevs, career | Originally posted on the [Dev na Gringa Substack](https://devnagringa.substack.com/p/como-ter-mais-sorte?utm_source=devto). Want to receive future articles by email? [Subscribe for free here](https://devnagringa.substack.com/subscribe?utm_source=devto).

---

Luck can be created.

OK, maybe that sentence is a ... | lucasheriques |

1,917,370 | round college | https://maps.google.com/maps?cid=17509020529430427031 | 0 | 2024-07-09T13:54:31 | https://dev.to/round_college/round-college-21fa | [https://maps.google.com/maps?cid=17509020529430427031](https://maps.google.com/maps?cid=17509020529430427031) | round_college | |

1,917,371 | Java Enthusiast | Hello community. I'm joining the Java ecosystem. | 0 | 2024-07-09T13:54:33 | https://dev.to/and_sferr/java-enthusiast-3l0l | java, react, springboot, webdev | Hello community. I'm joining the Java ecosystem. | and_sferr |

1,917,372 | round college | https://drive.google.com/drive/folders/1wVjIpYwgun7ZJzOQtlpTGjgiZ9NBxddo?usp=drive_link | 0 | 2024-07-09T13:55:48 | https://dev.to/round_college/round-college-2jk2 | [https://drive.google.com/drive/folders/1wVjIpYwgun7ZJzOQtlpTGjgiZ9NBxddo?usp=drive_link](https://drive.google.com/drive/folders/1wVjIpYwgun7ZJzOQtlpTGjgiZ9NBxddo?usp=drive_link) | round_college | |

1,917,381 | j-Exec — Total.js | j-Exec is part of the jComponent library from Total.js. You can find more information about j-Exec on... | 0 | 2024-07-09T14:17:06 | https://dev.to/palo/j-exec-totaljs-2bo4 | frontend, ui, totaljs, development | j-Exec is part of the jComponent library from Total.js. You can find more information about j-Exec on [componentator.com](https://componentator.com/components/j-exec/) or [GitHub](https://github.com/totaljs/components/tree/master/j-Exec).

This component is a singleton and works for the whole HTML document, where it is u... | palo |

1,917,373 | The Importance of React in Web Development | React, developed and maintained by Facebook, has become one of the most popular JavaScript libraries... | 0 | 2024-07-09T13:58:11 | https://dev.to/patrick_chibueze_e2567f25/the-importance-of-react-in-web-development-1dn4 | react, webdev, reactjsdevelopment, programming | React, developed and maintained by Facebook, has become one of the most popular JavaScript libraries for building user interfaces, particularly single-page applications. Since its release in 2013, React has revolutionized web development with its component-based architecture, virtual DOM, and efficient rendering. This ... | patrick_chibueze_e2567f25 |

1,917,375 | Optimizing Performance in Full-Stack Applications | Optimizing performance in full-stack applications is crucial for ensuring a smooth user experience,... | 0 | 2024-07-09T14:02:44 | https://dev.to/chariesdevil/optimizing-performance-in-full-stack-applications-41e2 | app, developer, fullstack | Optimizing performance in full-stack applications is crucial for ensuring a smooth user experience, reducing server load, and improving overall efficiency. This involves a combination of front-end and back-end optimizations, efficient database management, and strategic use of caching. In this article, we'll explore var... | chariesdevil |

1,917,376 | Best Alternatives to Coolors | Introduction If you're working in graphic or UX/UI design, or even if you're a solo dev building your... | 0 | 2024-07-09T14:05:45 | https://dev.to/kolort/best-alternatives-to-coolors-3omj | css, resources, design | **Introduction**

If you're working in graphic or UX/UI design, or even if you're a solo dev building your own project, you know that the right color palette can make or break a project. Coolors.co has long been a favorite among designers for its ease of use and powerful color palette generation capabilities. However, it’s... | kolort |

1,917,377 | Wezterm QuickSelect | After using tmux for more than a decade, I’ve recently moved away from it and switched to using the... | 0 | 2024-07-09T14:18:41 | https://burnskp.com/2024/07/09/wezterm-quickselect/ | tips, wezterm | ---

title: Wezterm QuickSelect

published: true

date: 2024-07-09 13:50:44 UTC

tags: tips,wezterm

canonical_url: https://burnskp.com/2024/07/09/wezterm-quickselect/

---

After using tmux for more than a decade, I’ve recently moved away from it and switched to using the multiplex features in wezterm. One of my favorite pl... | burnskp |

1,917,378 | How to see images that are not recognized by the detector model? | We can create and train an Object Detection model based on Training Custom Object Detector —>... | 0 | 2024-07-09T14:08:25 | https://dev.to/yustasdev/how-to-see-images-that-are-not-recognized-by-the-detector-model-3i12 | We can create and train an Object Detection model based on Training Custom Object Detector —> [https://tensorflow-object-detection-api-tutorial.readthedocs.io/en/latest/index.html](https://tensorflow-object-detection-api-tutorial.readthedocs.io/en/latest/index.html)

There is also a file for evaluating the trained model ==> Training Custom Object Detector —> [https://tensorflow-object-detection-api-... | yustasdev | |

1,917,382 | The Cloud Resume Challenge: My Cloud Adventure ☁️ | Haiyue Yuan DevOps Enthusiast | AWS Certified DevOps Engineer – Professional My Website:... | 0 | 2024-07-09T14:17:07 | https://dev.to/yuan_hy/the-cloud-resume-challenge-my-cloud-adventure-5439 | webdev, aws, cicd, automation |

**Haiyue Yuan**

*DevOps Enthusiast | AWS Certified DevOps Engineer – Professional*

---

**My Website: _https://hi-yyuan.com/_**

**Source Code: _https://github.com/dadadei/yuan-aws-website_**

## Introduction

Hey there! 👋 I'm Haiyue Yuan, and I'm thrilled to share my adventure with the Cloud Resume Challenge. This p... | yuan_hy |

1,917,383 | Improve the User Experience with Loading Request | Have you ever wondered how to improve the user experience in your web applications during... | 0 | 2024-07-09T14:18:20 | https://dev.to/urian121/mejora-la-experiencia-del-usuario-con-loading-request-23da | spinner, loading, loader, progressbar | Have you ever wondered how to improve the user experience in your web applications during requests and asynchronous processes? 😫 There is nothing worse than a user staring at a blank screen, not knowing whether the app is doing anything. It's time to change that!

Introducing Loading Request, an npm package ... | urian121 |

1,917,480 | Fin Intercom vs GaliChat - The Best AI Assistant for your Business | GaliChat and Intercom's Fin are two AI-powered chatbots built to ease customer support by providing... | 0 | 2024-07-10T09:50:14 | https://dev.to/creativetim_official/fin-intercom-vs-galichat-the-best-ai-assistant-for-your-business-1c7d | ai, automation | [GaliChat](https://www.galichat.com/) and [Intercom's Fin](https://www.intercom.com/drlp/ai-chatbot) are **two AI-powered chatbots built to ease customer support by providing automated and accurate responses to user queries.**

While both are based on advanced AI language models, they differ in their specific features,... | creativetim_official |

1,917,481 | Python in Tamil | Python 1st day Installation of python software from www.python.org Code editors: Google colab... | 0 | 2024-07-09T14:32:09 | https://dev.to/umanathmsri/python-in-tamil-12pm | python, kaniyam, pythonintamil, beginners | Python 1st day

- Installation of python software from www.python.org

- Code editors: Google colab notebook- https://colab.research.google.com or Visual Studio Code (Microsoft)

- Basic Print Command

print("Hello World")

| umanathmsri |

1,917,482 | Redis at Woovi | This article explains our decision-making at Woovi to use Redis. Redis is a versatile database that... | 0 | 2024-07-10T11:13:52 | https://dev.to/woovi/redis-at-woovi-9p4 | redis, woovi | This article explains our decision-making at Woovi to use Redis.

Redis is a versatile database that can solve many problems when scaling a Fintech.

## Cache

The first problem Redis solves is caching data.

You can use a cache to reduce the load on external APIs or the main database.

We use it to... | sibelius |
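The caching idea described in the Redis article above can be sketched as a cache-aside lookup. This is only an illustration under stated assumptions: `TTLCache` is a hypothetical in-memory stand-in for Redis, and `get_with_cache` and the `loader` callback are names invented here, not anything taken from Woovi's code. With a real Redis client, the `get` and `set` calls would presumably map to reading a key and writing it with an expiry.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """Hypothetical in-memory stand-in for a Redis cache (illustration only)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        # Maps key -> (expiry timestamp, cached value).
        self._store: Dict[str, Tuple[float, Any]] = {}
        self._clock = clock

    def get(self, key: str) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            # The entry is stale: drop it and report a miss.
            del self._store[key]
            return None
        return value

    def set(self, key: str, value: Any, ttl_seconds: float) -> None:
        self._store[key] = (self._clock() + ttl_seconds, value)


def get_with_cache(
    cache: TTLCache,
    key: str,
    loader: Callable[[str], Any],
    ttl_seconds: float = 60.0,
) -> Any:
    """Cache-aside: try the cache first, call the slow source only on a miss."""
    cached = cache.get(key)
    if cached is not None:
        return cached
    value = loader(key)  # e.g. an external API or the main database
    cache.set(key, value, ttl_seconds)
    return value
```

The time-to-live bounds how stale a cached value can get, which is the usual trade-off when a cache sits in front of an external API or the main database.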

1,917,483 | podiatrist | https://maps.google.com/maps?cid=9329066601190808564 | 0 | 2024-07-09T14:40:34 | https://dev.to/podologo_fb726ec7bf084aa5/podiatrist-3l1b | [https://maps.google.com/maps?cid=9329066601190808564](https://maps.google.com/maps?cid=9329066601190808564) | podologo_fb726ec7bf084aa5 | |

1,917,484 | podiatrist | https://drive.google.com/drive/folders/13kp9jZrjnqjhglHsR2YHQ8vTJE5ZAi5D?usp=sharing | 0 | 2024-07-09T14:40:57 | https://dev.to/podologo_fb726ec7bf084aa5/podiatrist-kdm | [https://drive.google.com/drive/folders/13kp9jZrjnqjhglHsR2YHQ8vTJE5ZAi5D?usp=sharing](https://drive.google.com/drive/folders/13kp9jZrjnqjhglHsR2YHQ8vTJE5ZAi5D?usp=sharing) | podologo_fb726ec7bf084aa5 | |

1,917,485 | Modern Good Practices for Python Development | by Stuart Ellis | Best practices make our lives easy in the long run. Stuart Ellis covered some of them in this... | 0 | 2024-07-09T14:43:28 | https://dev.to/tankala/modern-good-practices-for-python-development-by-stuart-ellis-1gfk | python, beginners, programming | Best practices make our lives easy in the long run. Stuart Ellis covered some of them in [this article](https://www.stuartellis.name/articles/python-modern-practices/). He describes these as agreed modern best practices, although some, such as avoiding Poetry, are debatable. Other than those, they are mostly great practices whi... | tankala |

1,917,486 | Batman-Comic.CSS | Move aside, TailwindCSS, the next best CSS utility-class library, is already here, and it's all about web development... and comics. Because the caped crusader makes everything better. | 0 | 2024-07-09T14:48:55 | https://alvaromontoro.com/blog/68056/batman-comic-css | css, html, webdev, showdev | ---

title: Batman-Comic.CSS

published: true

description: Move aside, TailwindCSS, the next best CSS utility-class library, is already here, and it's all about web development... and comics. Because the caped crusader makes everything better.

tags: css,html,webdev,showdev

cover_image: https://dev-to-uploads.s3.amazonaws... | alvaromontoro |

1,917,487 | Automatic Repair Blue screen issue (BSOD) | Experiencing the Automatic Repair Blue Screen, also known as the Blue Screen of Death (BSOD), can... | 0 | 2024-07-10T09:39:50 | https://dev.to/madgan95/automatic-repair-blue-screen-issue-bsod-5bf8 | microsoft, beginners |

Experiencing the Automatic Repair Blue Screen, also known as the **Blue Screen of Death (BSOD)**, can be frustrating. This issue can arise from various causes, such as **sudden power loss**, **Windows registry col... | madgan95 |

1,917,488 | Mathematics for Machine Learning - Day 2 | A brief disclaimer. Today and all the other days are where I'll suck, I'll still dedicate... | 27,993 | 2024-07-09T16:05:25 | https://www.pourterra.com/blogs/2 | beginners, machinelearning, learning, tutorial | ### A brief disclaimer.

Today and all the other days are where I'll suck, I'll still dedicate the same amount of time to read a day, but the content might be less :D since even if a formula is proven, it's better to try and disprove it too. So I'll bribe you with a meme from reddit.

and zeros_like() in PyTorch | *My post explains ones() and ones_like(). zeros() can create the 1D or more D tensor of zero or more... | 0 | 2024-07-09T14:49:35 | https://dev.to/hyperkai/zeros-and-zeroslike-in-pytorch-2kml | pytorch, zeros, zeroslike, function | *[My post](https://dev.to/hyperkai/ones-and-oneslike-in-pytorch-930) explains [ones()](https://pytorch.org/docs/stable/generated/torch.ones.html) and [ones_like()](https://pytorch.org/docs/stable/generated/torch.ones_like.html).

[zeros()](https://pytorch.org/docs/stable/generated/torch.zeros.html) can create the 1D or... | hyperkai |

1,917,491 | 2nd day of my learning | Did the quizzes and tasks today, and I learned a few new topics | 0 | 2024-07-09T14:49:53 | https://dev.to/sandy74/2nd-day-of-my-learning-f7k | tutorial, python | Did the quizzes and tasks today, and I learned a few new topics | sandy74 |

1,917,492 | Exploring the Rise of Loader Electric Rickshaws: A Green Solution for Urban Transport | The rapid urbanization of cities across the globe has spurred a growing demand for efficient and... | 0 | 2024-07-09T14:50:07 | https://dev.to/citylifeev/exploring-the-rise-of-loader-electric-rickshaws-a-green-solution-for-urban-transport-3cn7 | news, ai, design, website | The rapid urbanization of cities across the globe has spurred a growing demand for efficient and eco-friendly transportation solutions. One such innovation that has gained significant traction is the loader electric rickshaw. These versatile vehicles offer a sustainable alternative to traditional fuel-powered transport... | citylifeev |

1,917,493 | The Hidden Power of the Box Model: What Every Frontend Dev Must Know | As the developer of flitter.dev, a rendering engine framework, I often find myself explaining the... | 0 | 2024-07-09T14:51:34 | https://dev.to/moondaeseung/the-hidden-power-of-the-box-model-what-every-frontend-dev-must-know-4ba2 | As the developer of [flitter.dev](flitter.dev), a rendering engine framework, I often find myself explaining the intricacies of how browsers and rendering engines work. This article aims to provide a comprehensive overview of the box model, its implementation in browsers, and how similar concepts are used in other fram... | moondaeseung | |

1,917,494 | ones() and ones_like() in PyTorch | *My post explains zeros() and zeros_like(). ones() can create the 1D or more D tensor of zero or... | 0 | 2024-07-09T14:52:29 | https://dev.to/hyperkai/ones-and-oneslike-in-pytorch-930 | pytorch, ones, oneslike, function | *[My post](https://dev.to/hyperkai/zeros-and-zeroslike-in-pytorch-2kml) explains [zeros()](https://pytorch.org/docs/stable/generated/torch.zeros.html) and [zeros_like()](https://pytorch.org/docs/stable/generated/torch.zeros_like.html).

[ones()](https://pytorch.org/docs/stable/generated/torch.ones.html) can create the ... | hyperkai |

1,917,495 | Delete automatic assignment of Public IPv4 addresses to Amazon EC2 instances using the AWS Systems Manager Automation runbook. | I recently did something similar using AWS Config -> Amazon EventBridge -> AWS Lambda in... | 0 | 2024-07-11T07:49:47 | https://dev.to/nishikawaakira/delete-automatic-assignment-of-public-ipv4-addresses-to-amazon-ec2-instances-using-the-aws-systems-manager-automation-runbook-15h8 | aws, automation |

I recently did something similar using AWS Config -> Amazon EventBridge -> AWS Lambda in [another article](https://dev.to/nishikawaakira/automatically-remove-automatic-ipv4-address-assignment-to-amazon-ec2-instances-12fl). In this case, I would like to use an Automation runbook in AWS Systems Manager to delete the public... | nishikawaakira |

1,917,496 | Okrolearn | Check out my machine learning library, which is a raw implementation of combining PyTorch with... | 0 | 2024-07-09T14:53:12 | https://dev.to/okerew/okrolearn-15kg | machinelearning, pytorch, python, analytics | Check out my machine learning library, which is a raw implementation of combining PyTorch with scikit-learn.

https://github.com/Okerew/okrolearn

Why did I make this project? I made it because I saw problems with PyTorch: there weren't any data analysis features, some more algorithms could be implemented, better support for ... | okerew |

1,917,497 | Mastering Kubernetes: Top 10 Commands You Should Know | Kubernetes is essential for container orchestration. Here are the top 10 most used Kubernetes... | 0 | 2024-07-09T14:54:43 | https://dev.to/wallacefreitas/mastering-kubernetes-top-10-commands-you-should-know-1j5o | kubernetes, devops | Kubernetes is essential for container orchestration. Here are the top 10 most used Kubernetes commands to help you manage your clusters efficiently:

**kubectl get: Display a list of resources in your cluster.**

```bash

kubectl get pods

```

**kubectl describe: Show detailed information about a specific resource, in... | wallacefreitas |

1,917,500 | Docker Cheatsheet: Essential Commands You Need to Know | Docker has become a go-to tool for developers, simplifying the process of creating, deploying, and... | 0 | 2024-07-09T15:16:50 | https://dev.to/enodi/docker-cheatsheet-essential-commands-you-need-to-know-16gk | docker, devops, productivity, devrel | Docker has become a go-to tool for developers, simplifying the process of creating, deploying, and running applications using containers. Here’s a handy cheat sheet of essential Docker commands to help you navigate Docker like a pro.

### 1. Docker Basics

- **docker --version**

This shows you the current version o... | enodi |

1,917,501 | Team Building; Aligning Developers; Funding for DevProd teams; Mistakes of First-time CTOs | 🙏 Points of inspiration for you this week before the summer heat kicks in, starting with ⤵️ Full... | 0 | 2024-07-09T14:57:04 | https://dev.to/grocto/team-building-aligning-developers-funding-for-devprod-teams-mistakes-of-first-time-ctos-1ij0 | beginners, devops, productivity, careerdevelopment | 🙏 Points of inspiration for you this week before the summer heat kicks in, starting with ⤵️

[Full Newsletter](https://grocto.substack.com/p/team-building-aligning-developers)

🎙️groCTO: Originals | Team Building 101: Communication & Innovation ft. Paul Lewis

In a recent episode, host Kovid Batra is joined by Paul ... | grocto |

1,917,503 | Port your Supabase App to Hyperlambda | Most of our partners don't care about anything but our ability to deliver kick ass AI chatbots.... | 0 | 2024-07-09T15:00:16 | https://ainiro.io/blog/port-your-supabase-app-to-hyperlambda | lowcode, productivity | Most of our partners don't care about anything but our ability to deliver [kick ass AI chatbots](https://ainiro.io/ai-chatbot). However, [Magic Cloud](https://ainiro.io/magic-cloud) is actually a complete low-code and no-code software development automation platform - And some of our partners require more than just an ... | polterguy |

1,917,504 | How to Create Stunning Custom Greeting Cards: Tips and Tools | Are you looking to create beautiful, custom greeting cards that leave a lasting impression? Whether... | 0 | 2024-07-09T15:00:38 | https://dev.to/john_david_138/how-to-create-stunning-custom-greeting-cards-tips-and-tools-1m68 | Are you looking to create beautiful, custom greeting cards that leave a lasting impression? Whether it's for birthdays, anniversaries, holidays, or just to say thank you, a well-designed card can make all the difference. At CardMakerz.com, we specialize in helping you craft personalized greeting cards that stand out. I... | john_david_138 | |

1,917,505 | What was your win this week? | I finally joined the dev.to community. It was quite a win for me! | 0 | 2024-07-09T15:03:27 | https://dev.to/yucelfaruksahan/what-was-your-win-this-week-4a59 | weeklyretro | I finally joined the dev.to community. It was quite a win for me!

| yucelfaruksahan |

1,917,506 | The Perils of Callback Hell: Navigating the Pyramid of Doom in JavaScript | In the world of JavaScript, asynchronous programming is essential for building responsive and... | 0 | 2024-07-09T15:05:09 | https://dev.to/junihoj/the-perils-of-callback-hell-navigating-the-pyramid-of-doom-in-javascript-alj | javascript, programming, softwaredevelopment, coding | In the world of JavaScript, asynchronous programming is essential for building responsive and efficient applications. However, as developers, we've all faced the daunting challenge of "callback hell" or the "pyramid of doom." This phenomenon occurs when callbacks become deeply nested, making code difficult to re... | junihoj |
