id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,917,508 | AI is the new "Blockchain"😬 and that's not good 😨 | The point of this post is to temper expectations for AI, not bash it. I know I Know, AI is amazing... | 0 | 2024-07-09T17:16:18 | https://dev.to/ducksauce88/ai-is-the-new-blockchain-and-thats-not-good-22d3 | ai, developer, bitcoin | The point of this post is to temper expectations for AI, not bash it.

I know, I know, AI is amazing and it has greatly improved our lives and how we work. I'm NOT saying AI isn't amazing; all I'm saying is there is a lot of fluff out there and expectations need to be set. This post should NOT be a discouragement for ... | ducksauce88 |

1,917,509 | Is Next.js 14 ready to be used in production for full ecommerce app?? | Hi Fellow Coders, I am to write an ecommerce website which should have good SEO, I don't know if... | 0 | 2024-07-09T15:09:51 | https://dev.to/nt24/is-nextjs-14-ready-to-be-used-in-production-for-full-ecommerce-app-43n2 | nextjs, explainlikeimfive, frontend, beginners | Hi Fellow Coders, I am about to write an ecommerce website that should have good SEO. I don't know if developing a full-fledged application in Next.js (with Redux, SSR, third-party API calls, image storage or an S3 bucket, forms and all) is a good idea. What's the experience with Next.js 14 and the new app router... I am wait... | nt24 |

1,917,510 | Introducing the new feature of DevOps Toolkit: Configuration Reusability 🚀 | Background In the world of DevOps, managing multiple tools on your computer can be quite a... | 0 | 2024-07-09T15:11:18 | https://dev.to/tungbq/introducing-the-new-feature-of-devops-toolkit-configuration-reusability-4mnn | devops, docker, tooling, opensource | ## Background

In the world of DevOps, managing multiple tools on your computer can be quite a challenge. I know this struggle firsthand. Setting up each tool, ensuring they work together, and keeping them updated is a time-consuming and often frustrating process.

That's why I created the [**DevOps Toolkit**](https://... | tungbq |

1,917,561 | Win2Asia: Asia's Leading Online Entertainment Agent | Welcome to Win2Asia, your premier destination for the best online entertainment in Asia! Win2Asia... | 28,008 | 2024-07-09T15:54:59 | https://win2asia.info | win2asia, win2, hiburan, hiburanonline | Welcome to [Win2Asia](https://kampret.online), your premier destination for the best online entertainment in Asia! Win2Asia is known as a leading online entertainment agent offering a wide selection of games and an unmatched experience for online gambling fans. With outstanding service and a platfo... | win2asia |

1,917,512 | Every JS13K gadget all at once! | We’re celebrating the thirteenth edition of the js13kGames competition this year - one of the ways to... | 0 | 2024-07-09T15:16:19 | https://medium.com/js13kgames/every-js13k-gadget-all-at-once-8942e59e0c95 | js13k, competition, prizes, swag | ---

title: Every JS13K gadget all at once!

published: true

date: 2024-07-09 14:58:10 UTC

tags: js13k,competition,prizes,swag

canonical_url: https://medium.com/js13kgames/every-js13k-gadget-all-at-once-8942e59e0c95

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/bel54yj6rrs2zebq2ix7.jpg

---

We’re ... | end3r |

1,917,513 | GPT-40 instantly processed visual information | Using a webcam, GPT-40 instantly processed visual information, reading text and providing accurate... | 0 | 2024-07-09T15:23:02 | https://dev.to/dipakahirav/gpt-40-instantly-processed-visual-information-528l | ai, chatgpt, openai, gpt3 | Using a webcam, GPT-40 instantly processed visual information, reading text and providing accurate summaries far faster than humans, proving its advanced capabilities.

please subscribe to my [YouTube channel](https://www.youtube.com/@DevDivewithDipak?sub_confirmation=1) to support my channel and get more web developm... | dipakahirav |

1,917,514 | The Future of Software Architecture? Exploring Uber's Domain-Oriented Model | Software architecture plays a crucial role in modern technology. It acts as the backbone of any... | 0 | 2024-07-09T15:15:40 | https://dev.to/marufhossain/the-future-of-software-architecture-exploring-ubers-domain-oriented-model-20lm | Software architecture plays a crucial role in modern technology. It acts as the backbone of any application, dictating how it functions, scales, and evolves. Among the many architectural models, Uber's domain-oriented model stands out as a beacon of innovation. This article dives into how this model is shaping the futu... | marufhossain | |

1,917,516 | Git branch upstreaming | hey folks, if you tried to do a git pull and you came across such a response where the command did... | 0 | 2024-07-09T15:16:30 | https://dev.to/mrjahanzeb/git-branch-upstreaming-28b3 | git, upstream, webdev | Hey folks, if you ran `git pull` and the command failed with a message asking you to set the upstream branch, you are in the right place.

git pull error message

```

You asked me to pull without telling me which branch you

want to merge with, and 'branch.uat.merge' in

your c... | mrjahanzeb |

1,917,517 | How to Start a Payment Company | Borderpal.co by Errandpay.com Flashy new tech companies and cutting-edge tech get a lot of buzz. But... | 0 | 2024-07-09T15:17:03 | https://dev.to/borderpal/how-to-start-a-payment-company-1fmc | Borderpal.co by Errandpay.com

Flashy new tech companies and cutting-edge tech get a lot of buzz. But for investors, the real excitement lies in booming tech hubs, areas where new companies are constantly popping up, fueled by money from around the world. These up-and-coming hubs offer a chance for quick profits compare... | borderpal | |

1,917,518 | The Complex World of Marine Salvage: An Educational Insight | Marine salvage is a field that straddles the line between adventure and necessity, blending the... | 0 | 2024-07-09T15:17:17 | https://dev.to/grace_janice_bea4163177b3/the-complex-world-of-marine-salvage-an-educational-insight-f4a |

Marine salvage is a field that straddles the line between adventure and necessity, blending the romance of high seas with the pragmatism of engineering and law. It involves the recovery of ships, cargo, and other p... | grace_janice_bea4163177b3 | |

1,917,519 | Code Smell 257 - Name With Collections | Avoid Using the Prefix "Collection" on Properties TL;DR: Drop "collection" prefix for clarity. ... | 9,470 | 2024-07-09T15:17:18 | https://maximilianocontieri.com/code-smell-257-name-with-collections | webdev, beginners, programming, rust | *Avoid Using the Prefix "Collection" on Properties*

> TL;DR: Drop "collection" prefix for clarity.

# Problems

- Redundant Naming

- Verbose Code

- Reduced Readability

- Refactoring Challenges

- Coupled to implementation

# Solutions

1. Use Simple Names

2. Remove 'collection' [from the name](https://dev.to/mcsee... | mcsee |

1,917,520 | imported auto parts | https://maps.google.com/maps?cid=11682291749944568516 | 0 | 2024-07-09T15:17:56 | https://dev.to/importedautoparts/imported-auto-parts-3fg4 | [https://maps.google.com/maps?cid=11682291749944568516](https://maps.google.com/maps?cid=11682291749944568516) | importedautoparts | |

1,917,521 | imported auto parts | https://drive.google.com/drive/folders/17nFFcdluc7DL3obJBH2qfzbJgMXieKsz?usp=drive_link | 0 | 2024-07-09T15:18:24 | https://dev.to/importedautoparts/imported-auto-parts-2ck6 | [https://drive.google.com/drive/folders/17nFFcdluc7DL3obJBH2qfzbJgMXieKsz?usp=drive_link](https://drive.google.com/drive/folders/17nFFcdluc7DL3obJBH2qfzbJgMXieKsz?usp=drive_link) | importedautoparts | |

1,917,523 | Fortisco Mobile Library Shelving Systems | Maximize Your Library's Space with Mobile Shelving Solutions In today’s libraries, space is often at... | 0 | 2024-07-09T15:19:38 | https://dev.to/bella_dw_dbb483e50c31a3a1/fortisco-mobile-library-shelving-systems-5gn2 | Maximize Your Library's Space with Mobile Shelving Solutions

In today’s libraries, space is often at a premium. At Fortisco, we offer [mobile library shelving](https://fortisco.com.my/our-products/mobile-library-shelving/) systems designed to optimize your available space while maintaining accessibility and ease of us... | bella_dw_dbb483e50c31a3a1 | |

1,917,524 | WiFi Scan React Native iOS | hi , need to scan nearby wifi networks within the app is it possible in ios , because need to share... | 0 | 2024-07-09T15:20:04 | https://dev.to/akilan_a_c9d54d84bbfc465b/wifi-scan-react-native-ios-1dp2 | reactnative, ios, react | Hi, I need to scan nearby Wi-Fi networks from within the app. Is this possible on iOS? I need to share Wi-Fi credentials with an IoT device via BLE. | akilan_a_c9d54d84bbfc465b |

1,917,530 | Introducing the New React MultiColumn ComboBox | TL;DR: The Syncfusion React MultiColumn ComboBox introduces advanced multi-column dropdown... | 0 | 2024-07-11T17:00:01 | https://www.syncfusion.com/blogs/post/new-react-multicolumn-combobox | react, development, web, ui | ---

title: Introducing the New React MultiColumn ComboBox

published: true

date: 2024-07-09 12:00:53 UTC

tags: react, development, web, ui

canonical_url: https://www.syncfusion.com/blogs/post/new-react-multicolumn-combobox

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/xz1gmeedncqu6z3d7zzh.png

---... | jollenmoyani |

1,917,532 | Easily Render Flat JSON Data in JavaScript File Manager | TL;DR: Explore the power of flat JSON data rendering in #JavaScript File Manager. This feature allows... | 0 | 2024-07-11T16:57:50 | https://www.syncfusion.com/blogs/post/render-flat-json-data-js-file-manager | javascript, development, filemanager, web | ---

title: Easily Render Flat JSON Data in JavaScript File Manager

published: true

date: 2024-07-09 13:03:46 UTC

tags: javascript, development, filemanager, web

canonical_url: https://www.syncfusion.com/blogs/post/render-flat-json-data-js-file-manager

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/article... | jollenmoyani |

1,917,535 | What’s New in Angular Query Builder: 2024 Volume 2 | TL;DR: In the 2024 Volume 2 release, the Syncfusion Angular Query Builder includes drag-and-drop... | 0 | 2024-07-11T16:56:21 | https://www.syncfusion.com/blogs/post/angular-query-builder-2024-volume-2 | angular, whatsnew, ui, web | ---

title: What’s New in Angular Query Builder: 2024 Volume 2

published: true

date: 2024-07-09 13:14:45 UTC

tags: angular, whatsnew, ui, web

canonical_url: https://www.syncfusion.com/blogs/post/angular-query-builder-2024-volume-2

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/5h2ok2p0n3x90gqayf4n... | jollenmoyani |

1,917,537 | Deploying native Quarkus REST API's in AWS Lambda | In recent days or weeks, as someone relatively new to AWS services, I faced challenges running a... | 0 | 2024-07-09T19:55:04 | https://dev.to/patryk_szczypie_f1c7101c/deploying-quarkus-rest-apis-in-aws-lambda-1j62 | webdev, quarkus, aws, java | In recent days or weeks, as someone relatively new to [AWS services](https://aws.amazon.com/products/?aws-products-all.sort-by=item.additionalFields.productNameLowercase&aws-products-all.sort-order=asc&awsf.re%3AInvent=*all&awsf.Free%20Tier%20Type=*all&awsf.tech-category=*all), I faced challenges running a [Quarkus](ht... | patryk_szczypie_f1c7101c |

1,917,538 | GetBlock Ambassador Program Goes Live: How to Get Rewards | GetBlock, a premium provider of RPC nodes and Web3 infrastructure, invites all crypto natives,... | 0 | 2024-07-09T15:23:10 | https://dev.to/getblockapi/getblock-ambassador-program-goes-live-how-to-get-rewards-p7e | blockchain, cryptocurrency, ambassadorprogram, nodes |

GetBlock, a premium provider of RPC nodes and Web3 infrastructure, invites all crypto natives, explorers, enthusiasts, and pros to spread the word about its opportunities and seize rewards for every new client who s... | getblockapi |

1,917,539 | Mastering Data Structures: A Comprehensive Collection of Free Programming Tutorials 🧠 | The article is about a comprehensive collection of free online programming tutorials focused on mastering data structures and algorithms. It features six high-quality courses from renowned institutions like UC Berkeley, SUNY Buffalo, Simplilearn, IIT Kharagpur, and IIT Delhi, covering topics such as advanced data struc... | 27,985 | 2024-07-09T15:23:11 | https://dev.to/getvm/mastering-data-structures-a-comprehensive-collection-of-free-programming-tutorials-2d9m | getvm, programming, freetutorial, collection |

Are you looking to level up your programming skills and dive deep into the world of data structures? 🤔 Look no further! We've curated a collection of free online tutorials that will take you on a journey through the fundamental and advanced concepts of data structures and algorithms.

offers a robust set of features to ensure that applications are secure from various threats. This article delves into the top ... | amr-saafan |

1,917,544 | Get Italy Visa From Dubai - Easy Application | worldtripdeal.com | Get your Italy Visa From Dubai quickly and easily with worldtripdeal.com. Submit your documents... | 0 | 2024-07-09T15:33:17 | https://dev.to/wtdseo53/get-italy-visa-from-dubai-easy-application-worldtripdealcom-58d9 | visa, italy, dubai | Get your Italy Visa From Dubai quickly and easily with worldtripdeal.com. Submit your documents including passport, UAE visa, Emirates ID, photos, NOC letter. Call Now or Visit [](https://www.worldtripdeal.com/en/visa/italy/visa-to-italy-28) | wtdseo53 |

1,917,546 | Flutter BLoC (Business Logic Component) | Flutter BLoC (Business Logic Component) architecture is a design pattern used to manage state in a... | 0 | 2024-07-09T15:36:00 | https://dev.to/siam786/flutter-bloc-business-logic-component-57kh | Flutter BLoC (Business Logic Component) architecture is a design pattern used to manage state in a Flutter application. It separates the business logic from the UI, making the code more modular and testable. Here's an overview of how to implement the BLoC architecture in Flutter.

### 1. Setting Up Your Flutter Project... | siam786 | |

1,917,547 | React Router v6: A Comprehensive Guide to Page Routing in React | Dive into a comprehensive guide on React Router and how to implement Page Routing inside a React project. | 0 | 2024-07-09T15:38:52 | https://code.pieces.app/blog/react-router-v6-a-comprehensive-guide-to-page-routing-in-react | <figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/react-router-v6_1cb0495d0ab8d945567e824b3118343f.jpg" alt="React code in an IDE."/></figure>

Users can navigate through web pages thanks to a process called routing. Routing in web applications is crucial, as it enables users to access different pag... | get_pieces | |

1,917,548 | Asynchronous/Fetch/HTTPS | Asynchronous The word "asynchronous" means not happening at the same time or not carried out at the same time... | 0 | 2024-07-09T15:39:40 | https://dev.to/bekmuhammaddev/asinxronfetchhttps-inc | javascript, frontend, asinxron, https | **Asynchronous**

The word "asynchronous" means _not happening at the same time_ or _not carried out at the same time_. In computer programming, asynchronous code is code that can run in parallel with other code while the program is executing. Waiting for the result of this code's execution is not... | bekmuhammaddev |

1,917,549 | Preview let syntax in HTML template in Angular 18 | Introduction In this blog post, I want to describe the let syntax variable that Angular... | 27,826 | 2024-07-09T15:50:35 | https://www.blueskyconnie.com/preview-the-let-syntax-in-html-template-in-angular-18/ | angular, tutorial, webdev | ## Introduction

In this blog post, I want to describe the let syntax variable that Angular 18.1.0 will release. This feature is debated in the Angular community: some people like it, others have concerns, and most people don't know when to use it in an Angular application.

I don't know either, but I use the sy... | railsstudent |

1,917,553 | veterinary | https://maps.google.com/maps?cid=5978527005209815401 | 0 | 2024-07-09T15:47:05 | https://dev.to/veterinary/veterinary-1cl8 | [https://maps.google.com/maps?cid=5978527005209815401](https://maps.google.com/maps?cid=5978527005209815401) | veterinary | |

1,917,554 | veterinary | https://drive.google.com/drive/folders/1Xxwu3c_AM4mLu9mmSc99qD2YZDiYW6eo?usp=sharing | 0 | 2024-07-09T15:47:40 | https://dev.to/veterinary/veterinary-2jkn | [https://drive.google.com/drive/folders/1Xxwu3c_AM4mLu9mmSc99qD2YZDiYW6eo?usp=sharing](https://drive.google.com/drive/folders/1Xxwu3c_AM4mLu9mmSc99qD2YZDiYW6eo?usp=sharing) | veterinary | |

1,917,555 | What is Cloud Computing ? | Tired of Expensive IT Costs and Managing Servers? Cloud Computing Can Be Your... | 0 | 2024-07-10T17:06:01 | https://dev.to/jesse_adu_akowuah_/what-is-cloud-computing--2kgf | beginners, aws, learning, cloud | ## Tired of Expensive IT Costs and Managing Servers? Cloud Computing Can Be Your Game-Changer!

The concept of cloud computing revolves around the provision of IT services to end users for a fee. Unlike traditional computing where users shoulder the upfront costs for every capability they need, cloud computing offers ... | jesse_adu_akowuah_ |

1,917,558 | Unleashing the Power of Decentralized Finance - Understanding its Crucial Role in Today's Economy | In the exhilarating world of digital currency, a new paradigm shift is carving out a niche. This... | 0 | 2024-07-09T15:51:30 | https://dev.to/bird_march/unleashing-the-power-of-decentralized-finance-understanding-its-crucial-role-in-todays-economy-4akd | cryptocurrency, ethereum, bitcoin | In the exhilarating world of digital currency, a new paradigm shift is carving out a niche. This variant makes use of a distributed model, in contrast to the conventional centralized approach. By eliminating mediators, it empowers users to gain total control over their funds and transactions.

This innovative method st... | bird_march |

1,922,992 | З | A post by Andrey Vizir | 0 | 2024-07-14T09:18:13 | https://dev.to/andrey_vi_1c62a8146a73/z-44bl | andrey_vi_1c62a8146a73 | ||

1,917,564 | How to Identify and Mitigate Flaky Tests: Best Practices and Strategies. | Enhancing Test Reliability and Efficiency in CI/CD Pipelines A flaky test is a test that sometimes... | 0 | 2024-07-09T15:58:35 | https://medium.com/mindroast/how-to-identify-and-mitigate-flaky-tests-best-practices-and-strategies-99e77f8d712e | javascript, webdev, programming, coding | _Enhancing Test Reliability and Efficiency in CI/CD Pipelines_

A flaky test is a test that sometimes passes and sometimes fails without any changes to the code being tested. These tests can be particularly troublesome because they undermine the reliability of the test suite.

Consider your CI/CD pipeline is configure... | apoorvtomar |
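The definition in the row above (a test that sometimes passes and sometimes fails with no code change) can be illustrated with a small Python sketch. It is not taken from the article: `classify_test` and `make_alternating_test` are hypothetical helpers, and the "flakiness" is simulated with a call counter rather than a real timing or network dependency.

```python
from typing import Callable


def classify_test(test_fn: Callable[[], None], runs: int = 20) -> str:
    """Run test_fn `runs` times against unchanged code and
    report 'pass', 'fail', or 'flaky' (mixed outcomes)."""
    passed = 0
    failed = 0
    for _ in range(runs):
        try:
            test_fn()
            passed += 1
        except AssertionError:
            failed += 1
    if passed and failed:
        return "flaky"
    return "pass" if passed else "fail"


def make_alternating_test() -> Callable[[], None]:
    """Hypothetical stand-in for a flaky test: fails on every second call."""
    calls = {"n": 0}

    def test() -> None:
        calls["n"] += 1
        assert calls["n"] % 2 == 1, "simulated intermittent failure"

    return test


def stable_test() -> None:
    assert 1 + 1 == 2


def broken_test() -> None:
    assert 1 + 1 == 3
```

Re-running a suspect test many times like this is only one possible way to surface flakiness; a consistently failing test is a real failure, not a flaky one, which is why the sketch keeps the two outcomes separate.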

1,917,565 | screw house | https://maps.google.com/maps?cid=8838499379340141534 | 0 | 2024-07-09T15:58:37 | https://dev.to/screwhouse/screw-house-55ik | [https://maps.google.com/maps?cid=8838499379340141534](https://maps.google.com/maps?cid=8838499379340141534) | screwhouse | |

1,917,566 | screw house | https://drive.google.com/drive/folders/1wtbVn6qv3Zoj3oi1cDl_b7fd8nJPuXi5?usp=sharing | 0 | 2024-07-09T15:59:02 | https://dev.to/screwhouse/screw-house-2jpb | [https://drive.google.com/drive/folders/1wtbVn6qv3Zoj3oi1cDl_b7fd8nJPuXi5?usp=sharing](https://drive.google.com/drive/folders/1wtbVn6qv3Zoj3oi1cDl_b7fd8nJPuXi5?usp=sharing) | screwhouse | |

1,917,567 | Win2 Alternative Link | Discover a smooth playing experience with Win2Asia through the latest alternative link. We understand... | 0 | 2024-07-09T15:59:17 | https://dev.to/win2asia/win2-link-alternatif-2bco | win2, win2link, alternatifwin2, win2alternatif | Discover a smooth playing experience with Win2Asia through the latest alternative link. We understand the importance of stable, unobstructed access for players. That is why we provide an alternative link that is always updated to ensure you can enjoy your favorite games without interruption. Kl... | win2asia |

1,917,569 | 3D Riemann Surface | Check out this Pen I made! | 0 | 2024-07-09T16:00:47 | https://dev.to/dan52242644dan/3d-riemann-surface-5c4k | codepen, javascript, html, programming | Check out this Pen I made!

{% codepen https://codepen.io/Dancodepen-io/pen/abgzbjZ %} | dan52242644dan |

1,917,570 | Algorithmic Trading: The Future of Finance | In today's fast-paced world of finance, innovation is the driving force that continues to shape the... | 27,673 | 2024-07-09T16:05:12 | https://dev.to/rapidinnovation/algorithmic-trading-the-future-of-finance-66b | In today's fast-paced world of finance, innovation is the driving force that continues to shape the industry's future. Technology is advancing at an unprecedented pace, and entrepreneurs and innovators are presented with a wide array of tools to redefine traditional financial practices. One such revolutionary technolog... | rapidinnovation | |

1,917,573 | Transforming Spaces with White Cube: Our Journey in Interior Design and Construction | Hello, Dev Community! At White Cube, we specialize in creating stunning interior designs and... | 0 | 2024-07-09T16:08:40 | https://dev.to/yerba_white_1c8c69f3a281f/transforming-spaces-with-white-cube-our-journey-in-interior-design-and-construction-fj9 | Hello, Dev Community!

At White Cube, we specialize in creating stunning interior designs and delivering exceptional construction projects. However, as the digital world evolves, we recognized the need to enhance our online presence to better serve our clients. Today, I want to share our journey of revamping the White ... | yerba_white_1c8c69f3a281f | |

1,917,575 | Lists, Stacks, Queues, and Priority Queues | Choosing the best data structures and algorithms for a particular task is one of the keys to... | 0 | 2024-07-09T16:14:09 | https://dev.to/paulike/lists-stacks-queues-and-priority-queues-18ic | java, programming, learning, beginners | Choosing the best data structures and algorithms for a particular task is one of the keys to developing high-performance software. A data structure is a collection of data organized in some fashion. The structure not only stores data but also supports operations for accessing and manipulating the data.

In object-orien... | paulike |

1,917,579 | Otolaryngologist | https://maps.google.com/maps?cid=4261584476696406095 | 0 | 2024-07-09T16:19:59 | https://dev.to/otolaryngologist/otolaryngologist-1jln | [https://maps.google.com/maps?cid=4261584476696406095](https://maps.google.com/maps?cid=4261584476696406095) | otolaryngologist | |

1,917,593 | Otolaryngologist | https://drive.google.com/drive/folders/1Jwk9GPJu8tEq2iLaORSCylfxxqdtmkj-?usp=sharing | 0 | 2024-07-09T16:20:29 | https://dev.to/otolaryngologist/otolaryngologist-327a | [https://drive.google.com/drive/folders/1Jwk9GPJu8tEq2iLaORSCylfxxqdtmkj-?usp=sharing](https://drive.google.com/drive/folders/1Jwk9GPJu8tEq2iLaORSCylfxxqdtmkj-?usp=sharing) | otolaryngologist | |

1,917,594 | Exploring the Impact of DefiLlama in Shaping the Landscape of Decentralized Finance | As the modern digital sphere continues to evolve, the advantages of a particular platform in this... | 0 | 2024-07-09T16:23:02 | https://dev.to/cryptonews/exploring-the-impact-of-defillama-in-shaping-the-landscape-of-decentralized-finance-1pmj | cryptocurrency, bitcoin, ethereum | As the modern digital sphere continues to evolve, the advantages of a particular platform in this rapidly changing economy become increasingly important. One such platform, known synonymously as [DefiLlama](https://defillama.co/), has solidified its niche within the landscape of Dispersed Financial systems. This innova... | cryptonews |

1,917,596 | TypeID-JS: Type Safe, K-Sortable Unique IDs for Javascript | Since we first announced TypeID last year, we've seen significant adoption and interest from the... | 28,009 | 2024-07-09T16:29:27 | https://www.jetify.com/blog/typeid-js-v1/ | javascript, typescript, webdev | Since we first announced [TypeID](https://github.com/jetify-com/typeid) last year, we've seen significant adoption and interest from the community, with 23 different language clients contributed by the community and 90,000 weekly NPM downloads of our Typescript Implementation.

Last week, we released version 1.0 of our... | lagoja |

1,917,597 | Cloud Native Live: Automate pinning GitHub Actions and container images to their digests | GitHub Actions, like open source dependencies, are vulnerable to malicious attacks. Pinning GitHub... | 0 | 2024-07-09T16:30:07 | https://dev.to/stacklok/cloud-native-live-automate-pinning-github-actions-and-container-images-to-their-digests-1jce | githubactions, security, cloudnative, github | GitHub Actions, like open source dependencies, are vulnerable to malicious attacks. Pinning GitHub Actions to their digests (instead of using floating tags) is recommended by GitHub: it’s the only way to use an Action as an immutable release, so that you’re always using a known-good version even if the source repo is c... | stacey_potter_3de75e600a1 |

1,917,600 | 8 Exciting New JavaScript Concepts You Need to Know | As a developer, staying up-to-date with the latest advancements in JavaScript is crucial to writing... | 0 | 2024-07-09T16:32:50 | https://dev.to/dipakahirav/8-exciting-new-javascript-concepts-you-need-to-know-45hp | javascript, webdev, beginners, learning | As a developer, staying up-to-date with the latest advancements in JavaScript is crucial to writing efficient, modern, and scalable code. In this post, we'll explore 8 new and exciting JavaScript concepts that you should know to take your coding skills to the next level.

please subscribe to my [YouTube channel](https:... | dipakahirav |

1,917,602 | Python - Day 1 | Python is a programming language that people can learn easily. This programming language was designed by Guido... | 0 | 2024-07-10T09:56:42 | https://dev.to/fathima_shaila/paittaannn-python-1m-naall-4h5l |

Python is a programming language that people can learn easily. Guido van Rossum began designing it in the 1980s and released it around 1991. This simple language quickly became popular.

<h3>Fields where Python is used</h3>

* Machine... | fathima_shaila | |

1,917,603 | Collections | The Collection interface defines the common operations for lists, vectors, stacks, queues, priority... | 0 | 2024-07-09T16:39:56 | https://dev.to/paulike/collections-3le7 | java, programming, learning, beginners | The **Collection** interface defines the common operations for lists, vectors, stacks, queues, priority queues, and sets.

The Java Collections Framework supports two types of containers:

- One for storing a collection of elements is simply called a _collection_.

- The other, for storing key/value pairs, is called a _... | paulike |

1,917,604 | Why I am learning Scala, and why I think you should too, in 2024 | Well, to start, let me give you some context. Scala is a general-purpose programming language and... | 0 | 2024-07-09T16:39:56 | https://dev.to/brunociccarino/por-que-eu-estou-aprendendo-e-acho-que-voces-tambem-deveriam-aprender-scala-em-2024-25b1 | scala, learning, algorithms, functional | Well, to start, let me give you some context. Scala is a general-purpose, multi-paradigm programming language created by Martin Odersky. In Scala, every value is an object and every function is a value.

I started getting interested in Scala when I first saw the project created by Twitter called cassovary ... | brunociccarino |

1,917,605 | New day | Good day market men and woman. It’s nice to get on board😂😂 | 0 | 2024-07-09T16:41:07 | https://dev.to/stickgod/new-day-g2m | Good day market men and woman. It’s nice to get on board😂😂

| stickgod | |

1,917,606 | Understanding the Josephus Problem: A Comprehensive Guide | Josephus Problem Explained 🎯 There are N people standing in a circle waiting to be... | 0 | 2024-07-09T16:43:12 | https://vampirepapi.hashnode.dev/understanding-the-josephus-problem-a-comprehensive-guide | dsa, recursion, algorithms, datastructures | ### [Josephus Problem Explained 🎯 ](https://youtu.be/ULUNeD0N9yI?si=7sCQtOfDpS8uWner)

There are N people standing in a circle waiting to be executed. The counting out begins at some point in the circle and proceeds around the circle in a fixed direction. In each step, a certain number of people are skipped and the ne... | vampirepapi |
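The problem statement in the row above is truncated, so the following Python sketch assumes the most common formulation: `n` people numbered 1..n stand in a circle and every `k`-th person is eliminated until one remains. The function name `josephus` and both parameters are illustrative and do not come from the article.

```python
def josephus(n: int, k: int) -> int:
    """Return the 1-based position of the survivor when every k-th
    person is eliminated from a circle of n people (assumed variant)."""
    if n < 1 or k < 1:
        raise ValueError("n and k must be positive")
    survivor = 0  # 0-based answer for a circle of size 1
    # Grow the circle one person at a time: J(size) = (J(size - 1) + k) % size
    for size in range(2, n + 1):
        survivor = (survivor + k) % size
    return survivor + 1  # convert to 1-based numbering


print(josephus(7, 3))  # prints 4
```

The loop is the iterative form of the usual recurrence, so it runs in O(n) time without recursion. If the article counts skipped people instead of counting the eliminated person, `k` would need to be shifted by one.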

1,917,607 | 1701. Average Waiting Time | 1701. Average Waiting Time Medium There is a restaurant with a single chef. You are given an array... | 27,523 | 2024-07-09T16:46:57 | https://dev.to/mdarifulhaque/1701-average-waiting-time-51ef | php, leetcode, algorithms, programming | 1701\. Average Waiting Time

Medium

There is a restaurant with a single chef. You are given an array `customers`, where <code>customers[i] = [arrival<sub>i</sub>, time<sub>i</sub>]:</code>

- <code>arrival<sub>i</sub></code> is the arrival time of the <code>i<sup>th</sup></code> customer. The arrival times are sorted ... | mdarifulhaque |
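The statement above is cut off, so this Python sketch assumes the usual version of the problem: the chef serves customers one at a time in the given order, the second element of each pair is the preparation time, and a customer's waiting time is the moment their order finishes minus their arrival time. The function name is illustrative, and the post itself is tagged PHP, so this is a stand-in rather than the author's solution.

```python
from typing import Sequence


def average_waiting_time(customers: Sequence[Sequence[int]]) -> float:
    """Simulate a single chef serving customers in arrival order."""
    chef_free_at = 0  # time at which the chef finishes the current order
    total_wait = 0
    for arrival, prep_time in customers:
        # The chef starts when both the chef and the customer are available.
        start = max(chef_free_at, arrival)
        chef_free_at = start + prep_time
        total_wait += chef_free_at - arrival
    return total_wait / len(customers)


print(average_waiting_time([[1, 2], [2, 5], [4, 3]]))  # prints 5.0
```

A single pass is enough because the arrival times are already sorted, so no queue or sorting step is needed.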

1,917,609 | print() | In the second live session of the course, I learned about print() function. The quiz was fun. | 0 | 2024-07-09T16:48:21 | https://dev.to/amotbeli/print-4b46 | programming, python, beginners, learning | In the second live session of the course, I learned about `print()` function.

The quiz was fun. | amotbeli |

1,917,610 | Understanding Nextjs cache management | Introduction Next.js is a widely used framework with a variety of utilities that enable... | 0 | 2024-07-09T16:50:17 | https://dev.to/dunedev/understanding-nextjs-cache-management-54o8 | ## Introduction

Next.js is a widely used framework with a variety of utilities that enable more agile and efficient development. However, there is one topic that I find not very easy to understand, and that is how Next.js caches different things.

To better understand this, I should start by explaining that Next.js us... | dunedev | |

1,917,611 | canoe vascular surgeon | https://maps.google.com/maps?cid=7590796267880986503 | 0 | 2024-07-09T16:50:40 | https://dev.to/canoevascularsurgeon/canoe-vascular-surgeon-3ngj | [https://maps.google.com/maps?cid=7590796267880986503](https://maps.google.com/maps?cid=7590796267880986503) | canoevascularsurgeon | |

1,917,612 | canoe vascular surgeon | https://drive.google.com/drive/folders/16vTPk6GAVFb6fgv5VBIDPxSl5-DrtpQx?usp=sharing | 0 | 2024-07-09T16:51:10 | https://dev.to/canoevascularsurgeon/canoe-vascular-surgeon-1lip | [https://drive.google.com/drive/folders/16vTPk6GAVFb6fgv5VBIDPxSl5-DrtpQx?usp=sharing](https://drive.google.com/drive/folders/16vTPk6GAVFb6fgv5VBIDPxSl5-DrtpQx?usp=sharing) | canoevascularsurgeon | |

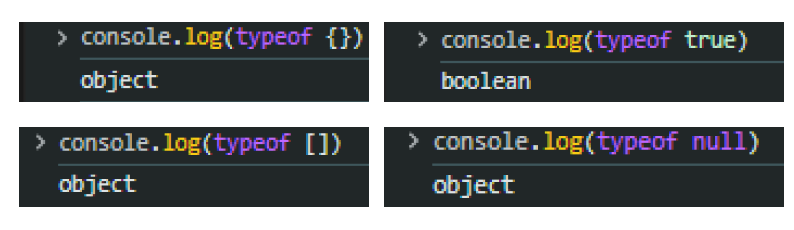

1,917,613 | Why "everything" is an object in JavaScript | In JavaScript, "everything" is considered an object or can behave like an object due to its design... | 0 | 2024-07-09T16:53:13 | https://dev.to/dandankara/why-everthing-is-a-object-in-javascript-37hh | javascript, beginners, learning | In JavaScript, "everything" is considered an object or can behave like an object due to its design principles. Here are some of the main reasons for this, with some examples:

But wait, you see `null` is... | dandankara |

1,917,614 | Iterators | Each collection is Iterable. You can obtain its Iterator object to traverse all the elements in the... | 0 | 2024-07-09T16:55:51 | https://dev.to/paulike/iterators-f3f | java, programming, learning, beginners | Each collection is **Iterable**. You can obtain its **Iterator** object to traverse all the elements in the collection. **Iterator** is a classic design pattern for walking through a data structure without having to expose the details of how data is stored in the data structure.

The **Collection** interface extends th... | paulike |

1,917,615 | 🚀 *New stage in my mobile development adventure!* 🚀 | 🚀 New stage in my mobile development adventure! 🚀 Today marks a big milestone for me: I've set up my... | 0 | 2024-07-09T16:56:35 | https://dev.to/alibiaphanuel/new-stage-in-my-mobile-development-adventure-2o15 | javascript, programming | 🚀 *New stage in my mobile development adventure!* 🚀

Today marks a big milestone for me: I've set up my mobile development environment with React Native! 🎉

It wasn't an easy task, but every challenge met is a new skill acquired. I'm extremely excited to continue my learning and develop powerful and elegant mobile a... | alibiaphanuel |

1,917,616 | 🚀 Advanced Terminal Calculator: Your Ultimate Dev Tool! 🌟 | 👋 Hey devs! I've been working on something super cool -- the Advanced Terminal Calculator! It's not... | 0 | 2024-07-09T16:57:34 | https://dev.to/safwanayyan/advanced-terminal-calculator-your-ultimate-dev-tool-15l0 | python, github, programming, opensource |

👋 Hey devs! I've been working on something super cool -- the **Advanced Terminal Calculator**! It's not just any old calculator. This baby combines a bunch of handy tools into one terminal interface. Whether you're a casual user or a pro, this calculator's got you covered. 🎉

* * * * *

### 🌟 Features

Imagine havi... | safwanayyan |

1,917,617 | Introduction to Offensive Security: A Beginner's Guide | In today's digital age, cybersecurity is more crucial than ever. With cyber threats evolving rapidly,... | 0 | 2024-07-09T16:58:00 | https://dev.to/resource_bunk/introduction-to-offensive-security-a-beginners-guide-ek1 | books, security, webdev, beginners | In today's digital age, cybersecurity is more crucial than ever. With cyber threats evolving rapidly, organizations and individuals alike must adopt proactive measures to safeguard their systems and data. One such proactive approach is offensive security, commonly known as penetration testing.

**What is Offensive Secu... | resource_bunk |

1,917,620 | Exploring the Frontier of AI: Deep Learning, Machine Learning, and More | Getting Started with Deep Learning: Modern agriculture is a complex science, and it requires quite a... | 0 | 2024-07-09T17:01:20 | https://dev.to/safwan_nasir_51209157325d/exploring-the-frontier-of-ai-deep-learning-machine-learning-and-more-35bg | deeplearning, ethicsinai, aiapplications, machinelearning |

Getting Started with Deep Learning: Modern agriculture is a complex science, and it requires quite a lot of efforts and time for a man to learn its basics let alone all the peculiarities of farming If you are interested in knowing what modern farming is and do not want to spend days and nights to explore the available... | safwan_nasir_51209157325d |

1,917,621 | Foundations of Offensive Security: Understanding Vulnerabilities | In the realm of cybersecurity, understanding vulnerabilities is akin to knowing your enemy's... | 0 | 2024-07-09T17:01:38 | https://dev.to/resource_bunk/foundations-of-offensive-security-understanding-vulnerabilities-4e8m | webdev, beginners, programming, tutorial | In the realm of cybersecurity, understanding vulnerabilities is akin to knowing your enemy's weaknesses before they strike. Vulnerabilities, whether they stem from software flaws, misconfigurations, or human error, pose significant risks to the security of systems and networks. In this blog post, we delve into the foun... | resource_bunk |

1,917,622 | Keeping Client Data Safe: Security Considerations for Cloud-Hosted ProLawyer | Law firms hold a vast amount of sensitive client data, making security a paramount concern. While... | 0 | 2024-07-09T17:01:52 | https://dev.to/petergroft/keeping-client-data-safe-security-considerations-for-cloud-hosted-prolawyer-2c6d | Law firms hold a vast amount of sensitive client data, making security a paramount concern. While [migrating ProLawyer to the cloud](https://www.clouddesktoponline.com/blog/prolawyer-system-requirements/) offers numerous advantages, it's crucial to understand the security implications and choose a cloud provider that p... | petergroft | |

1,917,624 | Penetration Testing Demystified: Techniques and Best Practices | In the realm of cybersecurity, staying ahead of potential threats requires a proactive approach to... | 0 | 2024-07-09T17:05:20 | https://dev.to/resource_bunk/penetration-testing-demystified-techniques-and-best-practices-46le | webdev, beginners, tutorial, learning | In the realm of cybersecurity, staying ahead of potential threats requires a proactive approach to identifying and mitigating vulnerabilities. Penetration testing, often referred to as pen testing, is a critical technique used by ethical hackers to assess the security of systems and networks. In this blog post, we expl... | resource_bunk |

1,917,625 | Setting Up Your Secure Lab Environment: Practice Makes Perfect | In the dynamic field of cybersecurity, hands-on practice is essential for mastering offensive... | 0 | 2024-07-09T17:08:21 | https://dev.to/resource_bunk/setting-up-your-secure-lab-environment-practice-makes-perfect-2m80 | beginners, tutorial, learning, opensource | In the dynamic field of cybersecurity, hands-on practice is essential for mastering offensive security techniques. Creating a secure lab environment provides ethical hackers and cybersecurity enthusiasts with a safe space to test and refine their skills without risking real-world systems. In this blog post, we explore ... | resource_bunk |

1,917,626 | 19 Frontend Resources Every Web Developer Must Bookmark 🎨✨ | Finding useful web development resources can be overwhelming for both beginners and experienced... | 0 | 2024-07-09T17:21:45 | https://madza.hashnode.dev/19-frontend-resources-every-web-developer-must-bookmark | webdev, coding, frontend, productivity | ---

title: 19 Frontend Resources Every Web Developer Must Bookmark 🎨✨

published: true

description:

tags: webdev, coding, frontend, productivity

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/ax7av2o3wnfidxr01p1o.png

canonical_url: https://madza.hashnode.dev/19-frontend-resources-every-web-devel... | madza |

1,917,627 | Mastering Reconnaissance in Cybersecurity: The Art of Gathering Intel | In the world of cybersecurity, knowledge is power. Before launching any offensive security operation,... | 0 | 2024-07-09T17:10:32 | https://dev.to/resource_bunk/mastering-reconnaissance-in-cybersecurity-the-art-of-gathering-intel-2g2e | beginners, tutorial, opensource, learning | In the world of cybersecurity, knowledge is power. Before launching any offensive security operation, ethical hackers must gather intelligence about their target to understand its weaknesses and vulnerabilities. This process, known as reconnaissance, plays a pivotal role in assessing security postures and identifying p... | resource_bunk |

1,917,628 | Understanding API Gateway Pricing: Maximizing Features While Minimizing Costs | Filtering and segmenting traffic to and from your app is critical to the consistent safety of your... | 0 | 2024-07-10T06:00:00 | https://www.getambassador.io/blog/api-gateway-pricing?utm_campaign=Corporate&utm_source=linkedin&utm_medium=social&utm_content=PricingBlog | api, apigateway, price | Filtering and segmenting traffic to and from your app is critical to the consistent safety of your software development lifecycle, and finding the right API Gateway is just as important. Let’s look through the key components of an API gateway and the different ways companies tend to price them.

What is an API Gateway?... | getambassador2024 |

1,917,629 | Understanding Taints and Tolerations in Kubernetes | Welcome back to my blog series on Kubernetes! Today we will be taking a dive into a crucial yet... | 0 | 2024-07-09T17:12:18 | https://dev.to/jensen1806/understanding-taints-and-tolerations-in-kubernetes-7oj | kubernetes, devops, cicd, containers | Welcome back to my blog series on Kubernetes! Today we will be taking a dive into a crucial yet confusing topic: Taints and Tolerations. Understanding this concept is vital for anyone working with Kubernetes, as it helps manage workloads more effectively. By the end of this post, you'll have a clear understanding of ho... | jensen1806 |

1,917,631 | Building a Robust Next.js Quiz App: My Journey | The Challenge Recently, I embarked on an exciting project: building a Next.js app with dynamic... | 0 | 2024-07-09T17:17:35 | https://dev.to/rakahsan/attach-jwt-with-fetch-for-next-js-14-server-action-2gi4 | The Challenge

Recently, I embarked on an exciting project: building a Next.js app with dynamic client-server interactions and static site generation. The initial implementation was seamless, especially with token-based authentication for the login page. Everything was running smoothly until my client threw in a new req... | rakahsan | |

1,917,678 | JavaScript 30 - 7 Array Cardio Day 2 | Hey all and welcome back to another day of Wes Bos's JavaScript30! Alright...it's been over 2 weeks... | 0 | 2024-07-10T19:01:19 | https://dev.to/virtualsobriety/javascript-30-7-array-cardio-day-2-4m59 | javascript, beginners, learning, webdev | Hey all and welcome back to another day of Wes Bos's [JavaScript30!](https://javascript30.com/) Alright...it's been over 2 weeks since my last post and that’s pretty sad. That being said, I did put in my notice at my current job and they have been running me into the ground so I haven't had as much time to work on m... | virtualsobriety |

1,917,648 | How we fixed the app downtime issue in NeetoDeploy | We have a new blog on: How we fixed the app downtime issue in NeetoDeploy. NeetoDeploy is an... | 0 | 2024-07-09T17:20:35 | https://dev.to/tsudhishnair/how-we-fixed-the-app-downtime-issue-in-neetodeploy-3l9b | webdev, devops, programming | We have a new blog on: How we fixed the app downtime issue in NeetoDeploy.

NeetoDeploy is an alternative to Heroku. At Neeto, we are building 20+ applications, and most of them are running on NeetoDeploy. 🔥

Learn more about the 520 response code and how we fixed the app downtime issue. 👀

Read more here: https:/... | tsudhishnair |

1,917,673 | Recommended Project: 'Grouping Employees by Phone Number' | The article is about a recommended Python programming project called "Grouping Employees by Phone Number" offered by LabEx. It highlights the project's focus on developing skills in file handling, data processing, and CSV file management. The article provides an overview of the key learning objectives, including workin... | 27,678 | 2024-07-09T17:28:55 | https://dev.to/labex/recommended-project-grouping-employees-by-phone-number-4b1a | labex, programming, course, python |

Are you looking to enhance your Python programming skills and gain practical experience in file handling, data processing, and CSV file management? If so, the [Grouping Employees by Phone Number project](https://labex.io/courses/project-personnel-grouping) offered by LabEx is an excellent choice for you.

, AZ-204 (Mi... | bren67 |

1,917,676 | Development Made Easy for Lazy and Productive Devs - Get Code Snippets for Full or Basic Props for Native or Expo Components | While building my project (Quotix), which I'm using to learn and apply my knowledge in Mobile... | 0 | 2024-07-09T17:39:11 | https://dev.to/cre8stevedev/development-made-easy-for-lazy-and-productive-devs-get-code-snippets-for-full-or-basic-props-for-native-or-expo-components-2c85 | vscode, extensions, reactnative, mobile | While building my project (Quotix), which I'm using to learn and apply my knowledge in Mobile Development using Expo (React Native Framework), I sometimes have to:

1. Jump back again to the docs

2. Search for a particular component

3. Take note of the props that suit my needs

4. Hop back to the IDE

Phew! I'm thrilled... | cre8stevedev |

1,917,762 | What was your win this week? | My repo on GitHub got 1300 stars & it was featured on GitHub trending! | 0 | 2024-07-09T19:38:25 | https://dev.to/sheru/what-was-your-win-this-week-477j | weeklyretro | My repo on GitHub got 1300 stars & it was featured on GitHub trending! | sheru |

1,917,679 | Understanding the sizeof Operator in C++: A Comprehensive Guide | Wassup guys! I recently published a YouTube tutorial on the sizeof operator in C++, and I wanted to... | 0 | 2024-07-09T17:45:39 | https://dev.to/kevinbjorv/understanding-the-sizeof-operator-in-c-a-comprehensive-guide-58nl | cpp, c, tutorial, programming | Wassup guys!

I recently published a YouTube tutorial on the `sizeof` operator in C++, and I wanted to share some insights and gems from the video here. You can watch the full tutorial [here](https://www.youtube.com/watch?v=DuiCjk2ksfc&t=4s).

What is the sizeof Operator?

The sizeof operator in C++ is a compile-time opera... | kevinbjorv |

1,917,680 | Getting Started with Vanilla JavaScript: Setting Up Your Development Environment | This simple guide will walk you through how to set up your development environment, to make working... | 0 | 2024-07-09T17:54:40 | https://dev.to/buchilazarus4/getting-started-with-vanilla-javascript-setting-up-your-development-environment-2od5 | news, beginners, javascript, codenewbie | This simple guide will walk you through how to set up your development environment, to make working with JavaScript smooth and interactive. The setup will use a simple folder structure with an HTML document and an external JavaScript file. This way, you can code along with the guide, write JavaScript in a separate file... | buchilazarus4 |

1,917,683 | Today topics | A post by Sathish Murugan | 0 | 2024-07-09T18:02:54 | https://dev.to/sathish_murugan_973523127/today-topics-3bai | sathish_murugan_973523127 | ||

1,917,684 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-07-09T18:06:47 | https://dev.to/rarestjunk/buy-verified-cash-app-account-5e3d | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | rarestjunk |

1,917,685 | Talk with You Series #1 | **cover image mirrors mood at the moment of posting Wanna start with the thoughts, that for a while,... | 0 | 2024-07-09T18:10:20 | https://dev.to/maxisbusy/talk-with-you-series-1-2931 | python, dsa, matrix, learning | **cover image mirrors mood at the moment of posting

I want to start with a thought: for a while now, I have had a habit of writing down the challenges I face on a daily basis, along with their potential solutions, whether they come up at my job or in my free-time activities.

Starting from this post, I've decided to introduce "Tal... | maxisbusy |

1,917,686 | Talk with You Series #1 | **cover image mirror mood at the moment of posting Wanna start with the thoughts, that for a while,... | 0 | 2024-07-09T18:10:20 | https://dev.to/maxisbusy/talk-with-you-series-1-36de | python, dsa, matrix, learning | **cover image mirror mood at the moment of posting

I want to start with a thought: for a while now, I have had a habit of writing down the challenges I face on a daily basis, along with their potential solutions, whether they come up at my job or in my free-time activities.

Starting from this post, I've decided to introduce "Talk... | maxisbusy |

1,917,692 | OOP Simplified: Quick Factory Methods with Encapsulation, Abstraction, and Polymorphism in TypeScript | This article explores the Factory Method design pattern in TypeScript, highlighting how it uses... | 0 | 2024-07-09T18:26:05 | https://dev.to/lphill/oop-simplified-quick-factory-methods-with-encapsulation-abstraction-and-polymorphism-in-typescript-1m89 | learning, typescript, oop, patterns | This article explores the Factory Method design pattern in TypeScript, highlighting how it uses object-oriented programming (OOP) principles: encapsulation, abstraction, and polymorphism.

Currently I am deepening my understanding of design patterns by studying the [catalog from Refactoring Guru](https://refactoring.g... | lphill |

1,917,693 | 100 days of python day 5 | Feeling existential about all of this. | 0 | 2024-07-09T18:26:47 | https://dev.to/myrojyn/100-days-of-python-day-5-3m92 | python, 100daysofpython | Feeling existential about all of this. | myrojyn |

1,917,694 | Run A NodeJs Application In a Docker Container | we will cover the following: 1.Installation of docker on ubuntu(AWS) 2.Containerisation of Nodejs... | 0 | 2024-07-09T18:27:33 | https://dev.to/ejay11/run-a-nodejs-application-in-a-docker-container-4d16 | devops, docker, aws, container |

We will cover the following:

1. Installing Docker on Ubuntu (AWS)

2. Containerising a Node.js application

3. Updating the application

4. Sharing the application

Prerequisites

- AWS Account with EC2 access

- Basic knowledge of Docker concepts and commands

**Steps to Set Up a Docker Project on Ubuntu (AWS EC2 Instance)**

... | ejay11 |

1,917,697 | Underrated React Hook - useSyncExternalStore | Overview Discover a hidden powerhouse in the React ecosystem: the “useSyncExternalStore”... | 0 | 2024-07-09T18:37:26 | https://dev.to/starneit/underrated-react-hook-usesyncexternalstore-4igj | webdev, javascript, beginners, programming |

## Overview

Discover a hidden powerhouse in the React ecosystem: the “useSyncExternalStore” hook. This article delves into its transformative potential, challenging traditional state management paradigms. By seamle... | starneit |

1,917,698 | Lists | The List interface extends the Collection interface and defines a collection for storing elements in... | 0 | 2024-07-09T18:39:26 | https://dev.to/paulike/lists-3e1p | java, programming, learning, beginners | The **List** interface extends the **Collection** interface and defines a collection for storing elements in a sequential order. To create a list, use one of its two concrete classes: **ArrayList** or **LinkedList**.

We used **ArrayList** to test the methods in the **Collection** interface in the preceding sections. N... | paulike |

1,917,699 | State Management with RxJS and React | Introduction Building big web apps can be tricky, especially when you have lots of... | 0 | 2024-07-09T18:42:13 | https://dev.to/starneit/state-management-with-rxjs-and-react-32km | webdev, javascript, beginners, programming |

## Introduction

Building big web apps can be tricky, especially when you have lots of different pieces of information to keep track of. But don’t worry, RxJS is here to help! It’s like a really cool tool that helps... | starneit |

1,917,700 | Tailwind CSS Is So Much More Than Just Inline CSS | Here is why I fell in love with Tailwind and you might too. As a recent Tailwind convert, I never... | 0 | 2024-07-09T18:42:39 | https://dev.to/safdarali/tailwind-css-is-so-much-more-than-just-inline-css-358n | tailwindcss, webdev, beginners, programming | Here is why I fell in love with Tailwind and you might too.

As a recent Tailwind convert, I never thought I’d say this, but…

Tailwind is sooo cool! I want to use it in all my web projects from now on.

There, I said it.

If you’ve never used Tailwind in your web dev projects before, you probably don’t understand what... | safdarali |

1,917,701 | Ready to Dive into React? Let's Build Your First App! | So you're ready to learn React, the powerful JavaScript library for building dynamic user interfaces!... | 0 | 2024-07-09T18:43:20 | https://dev.to/mahiya_khan_1d2dc6061abb7/ready-to-dive-into-react-lets-build-your-first-app-2j0p | webdev, beginners, javascript, react | So you're ready to learn React, the powerful JavaScript library for building dynamic user interfaces! That's awesome. But before we start building fancy components, we need a solid foundation. Let's get your first React app up and running!

**1. Node.js and npm (or yarn): Your Development Tools**

Think of Node.js ... | mahiya_khan_1d2dc6061abb7 |

1,917,702 | Differentiating Zustand and Redux | Overview Explore the differences between Zustand and Redux, two popular state management... | 0 | 2024-07-09T18:45:08 | https://dev.to/starneit/differentiating-zustand-and-redux-426i | react, webdev, javascript, typescript |

## Overview

Explore the differences between Zustand and Redux, two popular state management libraries in React. Efficient state management is crucial for complex web applications, and both Zustand and Redux offer u... | starneit |

1,917,704 | Issue 52 of AWS Cloud Security Weekly | (This is just the highlight of Issue 52 of AWS Cloud Security weekly @... | 0 | 2024-07-09T18:49:44 | https://aws-cloudsec.com/p/issue-52 | security, aws, news | (This is just the highlight of Issue 52 of AWS Cloud Security weekly @ https://aws-cloudsec.com/p/issue-52 << Subscribe to receive the full version in your inbox weekly for free!!).

**What happened in AWS CloudSecurity & CyberSecurity last week July 03-July 09, 2024?**

- AWS Managed Services (AMS) Accelerate customer ... | aws-cloudsec |

1,917,705 | Web4 — The community-based internet of the future | The internet has undergone numerous transformations since its inception, from static websites (Web1)... | 0 | 2024-07-09T18:54:15 | https://dev.to/web4/web4-the-community-based-internet-of-the-future-1nl7 | The internet has undergone numerous transformations since its inception, from static websites (Web1) to interactive platforms (Web2), and decentralized networks with blockchain technologies (Web3). However, the next stage of evolution, [Web4](https://web4.one), represents a fundamental realignment: it brings communitie... | web4 | |

1,917,706 | BSides Boulder 2024: Improving Security For All In The High Desert | Boulder, Colorado, is home to the University of Colorado Boulder, which is older than the state... | 0 | 2024-07-09T18:55:02 | https://blog.gitguardian.com/bsides-boulder-2024/ | security, cybersecurity, git, development | Boulder, Colorado, is home to the [University of Colorado Boulder](https://www.colorado.edu/?ref=blog.gitguardian.com), which is older than the state itself. A community focus on knowledge is evident there, as it has more bookstores per capita than any other city in the US. Boulder is considered a high desert, with an ... | dwayne_mcdaniel |

1,917,707 | The Comparator Interface | Comparator can be used to compare the objects of a class that doesn’t implement Comparable. You have... | 0 | 2024-07-09T18:56:23 | https://dev.to/paulike/the-comparator-interface-1ebc | java, programming, learning, beginners | **Comparator** can be used to compare the objects of a class that doesn’t implement **Comparable**. You have learned how to compare elements using the **Comparable** interface ([the section](https://dev.to/paulike/the-comparable-interface-1ncj)). Several classes in the Java API, such as **String**, **Date**, **Calendar... | paulike |

1,917,708 | Rust and Web Assembly Application | Hey Folks! Once again with a quick tutorial on how to create a web application with Rust and web... | 0 | 2024-07-09T19:46:23 | https://dev.to/bekbrace/rust-and-web-assembly-application-3hdc | rust, webassembly, programming, webdev | Hey Folks!

I'm back once again with a quick tutorial on how to create a web application with Rust and WebAssembly.

The application is a tax calculator, a simple app that demonstrates how you can build a web application with your Rust knowledge by leveraging WebAssembly.

Here you can watch the full t... | bekbrace |
