id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,901,283 | flash bitcoin software | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the... | 0 | 2024-06-26T12:10:27 | https://dev.to/holly_gost_557f3a53752bcb/flash-bitcoin-software-3mdc | flashusdt, flashbtc, flashbitcoin, whatisflashbitcoin | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the blockchain network, support for both Segwit and legacy addresses, live transaction tracking on the Bitcoin network explorer, and more. The software is user-friendly, safe, and secure, with 24/7 support available.

Telegram: @ma... | holly_gost_557f3a53752bcb |

1,901,282 | how to flash bitcoin | How to Buy Flash USDT: Unlock the Power of Tether with MartelGold Are you looking to get your hands... | 0 | 2024-06-26T12:08:36 | https://dev.to/bryan_nas_8d7e1c432bd86dc/how-to-flash-bitcoin-3bkn | flashbtc, flashbitcoin, flashusdt, flashbitcoinsoftware | How to Buy Flash USDT: Unlock the Power of Tether with MartelGold

Are you looking to get your hands on Flash USDT, the revolutionary Tether solution that’s taking the cryptocurrency world by storm? Look no further! In this article, we’ll guide you through the process of buying Flash USDT and unlocking its incredible b... | bryan_nas_8d7e1c432bd86dc |

1,901,281 | British Schools in Dubai | - Regent International School is a British School in Dubai for Early Year Foundation Stage to ... | 0 | 2024-06-26T12:08:25 | https://dev.to/miley_davis_7f3ee00c782d4/british-schools-in-dubai-5aga |

- Regent International School is a British School in Dubai for Early Year Foundation Stage to Year 13 with rich history of 40 years & follows the National Curriculum of England. Enquire now for fees & admissio... | miley_davis_7f3ee00c782d4 | |

1,901,280 | ⛳️Before and After⛳️ in JS | // Select the original button using jQuery const button = $('button'); // Create a new button that... | 0 | 2024-06-26T12:07:45 | https://dev.to/__khojiakbar__/before-and-after-in-js-36h0 | ```

// Select the original button using jQuery

const button = $('button');

// Create a new button that says 'Info'

const infoBtn = document.createElement('button');

infoBtn.classList.add('btn', 'btn-primary', 'px-5');

infoBtn.innerText = 'Info';

// Create a new button that says 'Cancel'

const cancelBtn = document.cre... | __khojiakbar__ | |

1,901,276 | what is flash usdt? | Hey there, fellow cryptocurrency enthusiasts! Are you looking for a new and exciting way to get... | 0 | 2024-06-26T12:05:48 | https://dev.to/holly_gost_557f3a53752bcb/what-is-flash-usdt-535l | flashusdt, flashbitcoin, flashbtc, howtoflashbtc | Hey there, fellow cryptocurrency enthusiasts! Are you looking for a new and exciting way to get involved in the world of digital currency? Look no further than Flash USDT, the innovative solution from MartelGold.

As a valued member of the MartelGold community, I’m excited to share with you the incredible benefits of F... | holly_gost_557f3a53752bcb |

1,901,274 | what is flash usdt? | Hey there, fellow cryptocurrency enthusiasts! Are you looking for a new and exciting way to get... | 0 | 2024-06-26T12:05:21 | https://dev.to/bryan_nas_8d7e1c432bd86dc/what-is-flash-usdt-4ldc | flashusdt, flashbtc, flashbitcoinsoftware, flashbitcoin | Hey there, fellow cryptocurrency enthusiasts! Are you looking for a new and exciting way to get involved in the world of digital currency? Look no further than Flash USDT, the innovative solution from MartelGold.

As a valued member of the MartelGold community, I’m excited to share with you the incredible benefits of F... | bryan_nas_8d7e1c432bd86dc |

1,901,270 | flash bitcoin transaction | How to Know Flash Bitcoin: Unlock the Secrets with MartelGold Hey there, fellow Bitcoin enthusiasts!... | 0 | 2024-06-26T12:03:27 | https://dev.to/bryan_nas_8d7e1c432bd86dc/flash-bitcoin-transaction-4e75 | flashusdt, flashbtc, flashbitcoin, flashbitcoinsoftware | How to Know Flash Bitcoin: Unlock the Secrets with MartelGold

Hey there, fellow Bitcoin enthusiasts! Are you tired of feeling left behind in the world of cryptocurrency? Do you want to stay ahead of the curve and unlock the full potential of Bitcoin? Look no further than FlashGen (BTC Generator), the innovative softwa... | bryan_nas_8d7e1c432bd86dc |

1,901,269 | how to flash bitcoin | How to Buy Flash USDT: Unlock the Power of Tether with MartelGold Are you looking to get your hands... | 0 | 2024-06-26T12:03:01 | https://dev.to/holly_gost_557f3a53752bcb/how-to-flash-bitcoin-5g5o | flashbtc, flashusdt, flashbitcoin, whatisflashbitcoin | How to Buy Flash USDT: Unlock the Power of Tether with MartelGold

Are you looking to get your hands on Flash USDT, the revolutionary Tether solution that’s taking the cryptocurrency world by storm? Look no further! In this article, we’ll guide you through the process of buying Flash USDT and unlocking its incredible b... | holly_gost_557f3a53752bcb |

1,901,268 | 30+ Amazing Product Ideas Ready for launch | Here are some ideas ready to be launched by anyone who loves any. 30+ free... | 0 | 2024-06-26T12:02:33 | https://dev.to/vickylove/30-amazing-product-ideas-ready-for-launch-223h | webdev, beginners, productivity, saas | Here are some ideas ready to be launched by anyone who loves any.

## 30+ free ideas

- Newsletter SaaS creator tool for agencies to acquire new targeted users and promote their product too

- YouTube channel or blog explaining tools to build different no-code platforms, challenges, how to build, where to market

- Simp... | vickylove |

1,901,267 | Air Conditioner: Cooling and Comfort | An Air Conditioner is a device designed to regulate the temperature, humidity, and air quality within... | 0 | 2024-06-26T12:02:21 | https://dev.to/alisha_janson_1d0a68d3db8/air-conditioner-cooling-and-comfort-jdn | tutorial, beginners | An [Air Conditioner](https://emw.ae/air-conditioner-repair/) is a device designed to regulate the temperature, humidity, and air quality within an indoor space. It functions by drawing warm air from a room, cooling it through a refrigeration cycle, and then releasing the cooled air back into the room. Modern air condit... | alisha_janson_1d0a68d3db8 |

1,885,570 | Monitor the Performance of Your Ruby on Rails Application Using AppSignal | In the first part of this article series, we deployed a simple Ruby on Rails application to... | 27,700 | 2024-06-26T12:00:00 | https://blog.appsignal.com/2024/06/12/monitor-the-performance-of-your-ruby-on-rails-application-using-appsignal.html | ruby, rails, apm, appsignal | In the first part of this article series, we deployed a simple Ruby on Rails application to DigitalOcean's app platform. We also hooked up a Rails app to AppSignal, seeing how simple errors are tracked and displayed in AppSignal's Errors dashboard.

In this part of the series, we'll dive into how to set up the followin... | iamaestimo |

1,901,261 | AR Drawing App Review | Unleashing Creativity with Augmented Reality In today's digital age, augmented reality (AR) is... | 0 | 2024-06-26T11:56:32 | https://dev.to/instantapps/ar-drawing-app-review-a7f | androiddev, android, learning, newbie | **Unleashing Creativity with Augmented Reality**

In today's digital age, augmented reality (AR) is revolutionizing the way we interact with technology, offering immersive and engaging experiences across various domains. One of the exciting applications of AR is in the field of art and drawing, exemplified by the [AR Dr... | instantapps |

1,896,526 | The Cornerstones of Ethical Software Development: Privacy, Transparency, Fairness, Security, and Accountability | Welcome back! In our previous article, we explored why ethical software development is essential in... | 27,798 | 2024-06-26T12:00:00 | https://dev.to/andresordazrs/the-cornerstones-of-ethical-software-development-privacy-transparency-fairness-security-and-accountability-296c | ethicaldevelopment, privacyandsecurity, softwareengineering, techethics | Welcome back! In our previous article, we explored why ethical software development is essential in today's tech-driven world. Now, we dive deeper into the core principles that form the foundation of ethical software development. These principles **—privacy, transparency, fairness, security, and accountability—** are c... | andresordazrs |

1,901,265 | AirportsTerminalGuides | World AirportsTerminalGuides is a comprehensive resource for travelers, providing essential information on... | 0 | 2024-06-26T11:59:53 | https://dev.to/airportsterminalguides_0a/airportsterminalguides-4ijl | World [AirportsTerminalGuides](https://airportsterminalguides.com/) is a comprehensive resource for travelers, providing essential information on more than 170 airports around the world. Here's what you'll find on their platform:

Terminal Maps:

Access detailed maps of airport terminals, making ... | airportsterminalguides_0a | |

1,901,264 | ⚙️ How do you manage your infra code? ⚙️ | When it comes to environments, how do you manage different environments (e.g., dev, prod), and what... | 0 | 2024-06-26T11:59:33 | https://dev.to/cyclops-ui/how-do-you-manage-your-infra-code-390e | discuss, devops | When it comes to environments, how do you manage different environments (e.g., dev, prod), and what tools does your team use to collaborate?

PS: Cyclops just had a major release and now supports accessing templates from private repos; [check it out](https://github.com/cyclops-ui/cyclops) 👀 | karadza |

1,901,263 | Amazon Brand Registry: Merits and Demerits | Amazon Brand Registry is a program designed to help brand owners protect their registered trademarks... | 0 | 2024-06-26T11:59:00 | https://dev.to/adambrooks2223955/amazon-brand-registry-merits-and-demerits-2a9d | amazonbrandregistry, amazonbrands, amazonbrand | Amazon Brand Registry is a program designed to help brand owners protect their registered trademarks on Amazon and create an accurate and trusted experience for customers. Since its launch, the program has evolved significantly, offering a range of tools and benefits. However, like any program, it also comes with its c... | adambrooks2223955 |

1,901,262 | Hopeland Healthcare: A Pioneer in Healthcare Online Marketing | Introduction Hopeland Healthcare stands at the leading edge of healthcare digitization in India. It... | 0 | 2024-06-26T11:57:52 | https://dev.to/healthhopeland/hopeland-healthcare-a-pioneer-in-healthcare-online-marketing-cg6 | Introduction

Hopeland Healthcare stands at the leading edge of healthcare digitization in India. It offers unrivalled expertise and innovative strategies to increase the online presence of health professionals. Founded in 2017 by Kaushal Pandey, Hopeland Healthcare has rapidly tra... | healthhopeland | |

1,901,245 | Why is MSBI Important For Every Business in 2024? | Nowadays businesses are rapidly advancing using data as a prime catalyst for accurate decision... | 0 | 2024-06-26T11:56:20 | https://dev.to/stevediaz/why-is-msbi-important-for-every-business-in-2024-4p4g | msbi, technology, education | Nowadays businesses are rapidly advancing using data as a prime catalyst for accurate decision making. Hence, businesses are becoming more dependent on data-driven insights for maximizing their performance. Microsoft Business Intelligence (MSBI) provides excellent solutions for business and data mining doubts. It helps... | stevediaz |

1,901,260 | The Future of Workforce Management: Key Advances in Time and Attendance Tracking | As the world of work continues to evolve, so too does the technology that supports it. One of the... | 0 | 2024-06-26T11:56:02 | https://dev.to/handdy_inc/the-future-of-workforce-management-key-advances-in-time-and-attendance-tracking-15c8 | As the world of work continues to evolve, so too does the technology that supports it. One of the most critical aspects of workforce management is time and attendance tracking. Accurate, efficient tracking systems are essential for maintaining productivity, ensuring compliance, and optimizing workforce management. In r... | handdy_inc | |

1,901,259 | Google Unveils AlphaFold3 for Predicting Behavior of Human Molecules | The article discusses the exciting advancements in AI with Google DeepMind's unveiling of AlphaFold3.... | 0 | 2024-06-26T11:54:56 | https://dev.to/hyscaler/google-unveils-alphafold3-for-predicting-behavior-of-human-molecules-2e8e | The article discusses the exciting advancements in AI with Google DeepMind's unveiling of AlphaFold3. Here's a breakdown focusing on AlphaFold3:

## What is AlphaFold3?

AlphaFold3 is a cutting-edge artificial intelligence (AI) tool developed by Google DeepMind and Isomorphic Labs. It builds upon the success of AlphaFo... | suryalok | |

1,901,258 | What Is a flash bitcoin software | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the... | 0 | 2024-06-26T11:54:47 | https://dev.to/holly_gost_557f3a53752bcb/what-is-a-flash-bitcoin-software-ofe | flashbtc, flashbitcoin, flashusdt, whatisflashbitcoin | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the blockchain network, support for both Segwit and legacy addresses, live transaction tracking on the Bitcoin network explorer, and more. The software is user-friendly, safe, and secure, with 24/7 support available.

Telegram: @ma... | holly_gost_557f3a53752bcb |

1,901,257 | Unlocking Talent Potential: Navigating LATAM Recruiting | Latin America (LATAM) is emerging as a powerhouse in the global talent market. With a burgeoning tech... | 0 | 2024-06-26T11:53:14 | https://dev.to/deronward/unlocking-talent-potential-navigating-latam-recruiting-36ga | Latin America (LATAM) is emerging as a powerhouse in the global talent market. With a burgeoning tech industry, diverse talent pool, and growing economy, LATAM presents a unique opportunity for companies seeking to expand their workforce. This article delves into the key aspects of [LATAM recruiting](https://www.tecla.... | deronward | |

452,681 | React JS. ¡Mucho gusto! | Introducción Quiero debutar en Dev.to con un artículo sobre una librería que ha sido la culpable d... | 0 | 2020-09-13T01:33:06 | https://dev.to/pepephcast/react-js-mucho-gusto-1lg9 | beginners, react, javascript, frontend |

**Introducción**

Quiero debutar en Dev.to con un artículo sobre una librería que ha sido la culpable de que poco a poco me vaya gustando ese famoso lenguaje de la web, ¡sí! la verdad es que siempre trabajé a regañadientes con JavaScript.

... | pepephcast |

1,901,255 | 🎉 Fullstack CRUD in Next.js Server Actions, React.js, TypeScript, TailwindCSS and PostgreSQL on Neon | Learn how to perform CRUD operations in Next.js and React Server Actions. Instead of creating APIs, we... | 0 | 2024-06-26T11:51:33 | https://dev.to/chaoocharles/fullstack-crud-in-nextjs-server-actions-reactjs-typescript-tailwindcss-and-postgressql-on-neon-4oha | nextjs, react, webdev, beginners | Learn how to perform `CRUD` operations in `Next.js and React Server Actions`. Instead of creating APIs, we will use async functions that run on the server to make changes to the database and fetch the data.

## Learn everything you need to know in the following course, free on my youtube channel

{% youtube dDLX4X... | chaoocharles |

1,901,254 | Understanding White Box Testing: An In-Depth Exploration | Introduction In the software development lifecycle, ensuring the quality and reliability of the... | 0 | 2024-06-26T11:50:18 | https://dev.to/keploy/understanding-white-box-testing-an-in-depth-exploration-d10 |

Introduction

In the software development lifecycle, ensuring the quality and reliability of the product is paramount. Among the various testing methodologies, **[White Box](https://keploy.io/docs/concepts/reference/... | keploy | |

1,901,253 | Looking for a junior dev role. I know JavaScript and PHP with a MySQL database. My email address: obedmwaanga2@gmail.com | A post by OBED MWAANGA | 0 | 2024-06-26T11:50:17 | https://dev.to/obed_mwaanga/looking-for-a-juniour-dev-role-i-know-javascript-and-php-with-mysql-database-my-email-address-obedmwaanga2gmailcom-3gen | obed_mwaanga | ||

1,901,252 | Dynamic Element Creation | // Selects the container element using jQuery const container = $('.container'); // Function to... | 0 | 2024-06-26T11:49:56 | https://dev.to/__khojiakbar__/dynamic-element-creation-4k26 | dynamic, element, creation, javascript | ```

// Selects the container element using jQuery

const container = $('.container');

// Function to create a specified number of div elements

function createElement(num) {

// Loop to create `num` number of div elements

for (let i = 0; i < num; i++) {

// Create a new div element

const div = docu... | __khojiakbar__ |

1,901,243 | The Harry Potter Lesson | I stared down at the mat in disbelief. Sweat, pouring down my face like a faucet. I had just been... | 0 | 2024-06-26T11:43:38 | https://dev.to/rohitelyts/the-harry-potter-lesson-21hb | jlabs, cryptocurrency | I stared down at the mat in disbelief.

Sweat, pouring down my face like a faucet.

I had just been tapped out for the fifth time in less than five minutes by a man half my size….

To make matters even worse, he looked like Harry Potter.

Whatever ego I entered the training room with that day left my body faster than... | rohitelyts |

1,901,251 | Best CBSE Affiliated Schools In East Delhi | St. Teresa School is a distinguished choice among CBSE Affiliated Schools in East Delhi, renowned for... | 0 | 2024-06-26T11:49:49 | https://dev.to/stteresa_schoolindirapu/best-cbse-affiliated-schools-in-east-delhi-2ep7 | education, affiliated, school, cbse | St. Teresa School is a distinguished choice among CBSE Affiliated Schools in East Delhi, renowned for academic excellence and holistic development. Our curriculum emphasizes moral values and practical learning, supported by modern facilities and dedicated faculty. Join us to nurture your child's potential and prepare t... | stteresa_schoolindirapu |

1,901,250 | Mastering the Art of Database Management: A Step-by-Step Guide | **Table of Contents **1. Introduction Basic Concepts of Database Management Relational Database... | 0 | 2024-06-26T11:49:49 | https://dev.to/jinesh_vora_ab4d7886e6a8d/mastering-the-art-of-database-management-a-step-by-step-guide-46ba | webdev, javascript, programming, database |

**Table of Contents**

1. Introduction

2. Basic Concepts of Database Management

3. Relational Database Management Systems (RDBMS)

4. NoSQL Databases

5. Database Design and Normalization

6. SQL - Structured Query Language

7. Database Administration and Maintenance

8. Introduction on Integrating Databases with Web Develo... | jinesh_vora_ab4d7886e6a8d |

1,901,249 | 10 Reasons You Should Build Your Startup or Website Landing Page with WordPress | With numerous website builders available, choosing the right platform can be overwhelming. However,... | 0 | 2024-06-26T11:49:06 | https://dev.to/vickylove/10-reasons-you-should-build-your-startup-or-website-landing-page-with-wordpress-208n | webdev, wordpress, beginners, saas | With numerous website builders available, choosing the right platform can be overwhelming. However, [WordPress](https://www.wpbeginner.com/why-you-should-use-wordpress/) stands out as a popular and versatile content management system (CMS) that offers numerous benefits for building your startup or website landing page.... | vickylove |

1,901,248 | Blast Airdrop: Claim Tokens Faster with GetBlock RPC Nodes | Blast airdrop kicks off today, on June 26, 2024. All GetBlock users are able to claim their BLAST... | 0 | 2024-06-26T11:48:01 | https://dev.to/getblockapi/blast-airdrop-claim-tokens-faster-with-getblock-rpc-nodes-3hnh | blast, airdrop, nodes, cryptocurrency |

Blast airdrop kicks off today, on June 26, 2024. All GetBlock users are able to claim their BLAST rewards one step faster than competitors with private Blast RPC endpoints.

## Blast RPC endpoints by GetBlock speed... | getblockapi |

1,901,246 | Top Virtual Assistant Services in Irvine | Web Design & Development: Craft bespoke websites that blend creativity with functionality,... | 0 | 2024-06-26T11:46:08 | https://dev.to/resource_extension_740cdc/top-virtual-assistant-services-in-irvine-1a98 | virtualmachine, offshore, remotestaffingcompany, career |

**Web Design & Development:** Craft bespoke websites that blend creativity with functionality, tailored to meet unique business needs.

**E-commerce Solutions:** Drive online success with tailored e-commerce strat... | resource_extension_740cdc |

1,901,242 | i want to use custom Pipe on [(ngModel)] how to achieve it Can anyone share the solution #Angular_doubts | [inputValidator]="validatorType.NUMBER_ONLY" appInputValueValidation... | 0 | 2024-06-26T11:43:34 | https://dev.to/krisha_sheth_d3c0cf38c3b1/i-want-to-custom-pipe-on-ngmodel-how-to-achieve-it-can-anyone-share-the-solution-angulardoubts-5a3b | help | <input class="form-control w-70 h-30" style="padding-left: 17px !important;" matInput

[inputValidator]="validatorType.NUMBER_ONLY" appInputValueValidation placeholder="0" type="text" [(ngModel)]="lumpsumDefaults.P " name="lumpsump"

#lumpsump="ngModel" required min=... | krisha_sheth_d3c0cf38c3b1 |

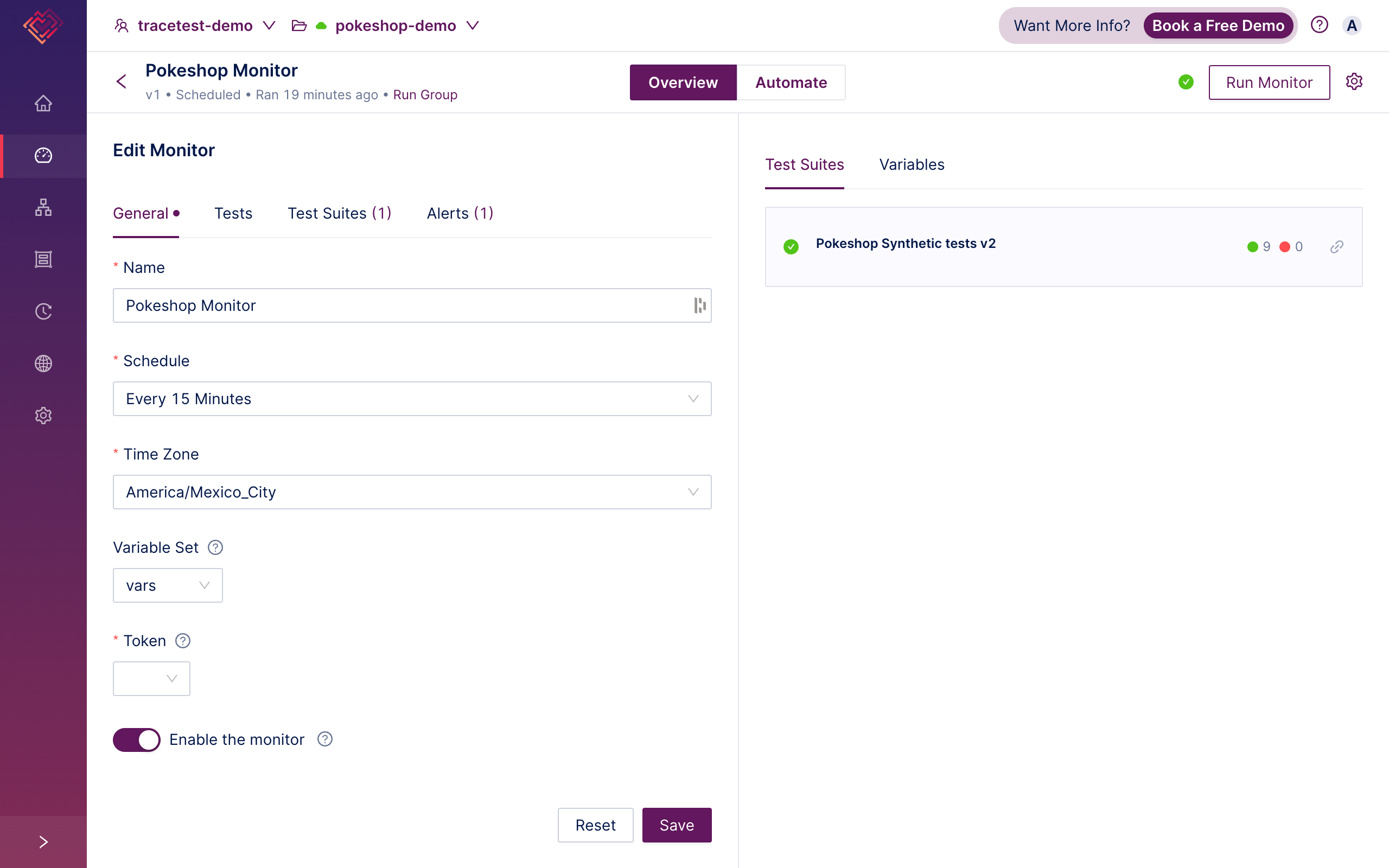

1,901,241 | Tracetest Monitors: Trace-based testing meets Synthetic Monitoring 🔥 | Are you ready to get your mind blown!? 🧠💥 Trace-based synthetic monitoring is here! You can now... | 0 | 2024-06-26T11:42:05 | https://tracetest.io/blog/tracetest-monitors-trace-based-testing-meets-synthetic-monitoring | monitoring, testing, programming, productivity | Are you ready to get your mind blown!? 🧠💥

Trace-based synthetic monitoring is here! You can now create “Monitors” for Tracetest tests and test suites.

Tracetest Monitors is a framework for creating scheduled run... | adnanrahic |

1,901,240 | C# Delegates, chaining | An awesome feature of delegates is that you can chain methods together. This enables you to create... | 27,862 | 2024-06-26T11:41:08 | https://dev.to/emanuelgustafzon/c-delegates-chaining-and-higher-order-functions-3c7i | delegates, chaining, csharp | ---

series: Delegates and events in C# made easy.

---

An awesome feature of delegates is that you can chain methods together.

This enables you to create an instance of the delegate object and, in one call, invoke multiple methods.

I think the code below largely explains itself.

```

class Program {

public delega... | emanuelgustafzon |

1,901,239 | [AWS] The Future of Automobiles and Automated Driving [Generative AI] | This post introduces the contents of one session at AWS Summit Japan 2024. The content is about how... | 0 | 2024-06-26T11:40:57 | https://dev.to/reityerukohaku/aws-the-future-of-automobiles-and-automated-driving-generative-ai-3k9h | aws, ai, automateddrive | This post introduces the contents of one session at AWS Summit Japan 2024. The content is about how **AWS** and the latest **generative AI technologies** relate to the automotive industry.

## Conventional Automobiles

Currently, the development of information and AI technology is bringing about a significant shift in th... | reityerukohaku |

1,900,428 | C# Delegates, overview and simple implementation | Are you confused about delegates in C#? No worries, this guide has you covered! ... | 27,862 | 2024-06-26T11:38:40 | https://dev.to/emanuelgustafzon/c-delegates-overview-and-simple-implementation-3coi | csharp, delegates | ---

series: Delegates and events in C# made easy.

---

Are you confused about delegates in C#? No worries, this guide has you covered!

### Overview of delegates in C#.

Delegates are type-safe and secure objects that enable powerful features for methods.

They allow you to pass methods by reference, and because... | emanuelgustafzon |

1,901,238 | Climate Crisis Solutions: 5 AI Innovations Helping Fight Climate Change | AI as a Powerful Ally in the Fight Against Climate Change A revolutionary technology, artificial... | 0 | 2024-06-26T11:38:23 | https://www.techdogs.com/td-articles/trending-stories/climate-crisis-solutions-5-ai-innovations-helping-fight-climate-change | ai, smartagriculture, smartenergywithai, carboncapture | AI as a Powerful Ally in the Fight Against Climate Change A revolutionary technology, [artificial intelligence (AI)](https://www.techdogs.com/category/ai) has an instrumental role in combating the global warming scourge. This article highlights five revolutionary applications of AI in environmental protection and clima... | td_inc |

1,901,237 | Understanding Soroban | Stellar Smart Contract Platform | Stellar is an open-source blockchain that enables global economic transactions. Supported by a $100... | 0 | 2024-06-26T11:37:57 | https://dev.to/donnajohnson88/understanding-soroban-stellar-smart-contract-platform-acf | stellar, soroban, smartcontract, webdev | Stellar is an open-source blockchain that enables global economic transactions. Supported by a $100 million adoption fund from the Stellar Development Foundation, this blockchain has pre-released Soroban to facilitate the building and deployment of Stellar smart contracts (SSC).

This article will help you understand t... | donnajohnson88 |

1,901,236 | Outdoor SMD Screens: Redefining Outdoor Visual Experiences | Picture yourself strolling through a vibrant cityscape, where amidst the hustle and bustle, towering... | 0 | 2024-06-26T11:34:33 | https://dev.to/smartonetech/outdoor-smd-screens-redefining-outdoor-visual-experiences-3jeb |

Picture yourself strolling through a vibrant cityscape, where amidst the hustle and bustle, towering screens illuminate the surroundings with dazzling visuals and captivating messages. These impressive displays owe their brilliance to Outdoor SMD (Surface-Mounted Device) screens—a marvel of modern technology designed ... | smartonetech | |

1,899,533 | The Worst Case of Imposter Syndrome | I wrote most of this blog post in the spring, a bit after my burnout sickness leave. I'm still... | 0 | 2024-06-26T11:32:00 | https://eevis.codes/blog/2024-06-26/the-worst-case-of-imposter-syndrome/ | career, mentalhealth, developers, life | I wrote most of this blog post in the spring, a bit after my burnout sickness leave. I'm still recovering, but I'm in a way better place. I found this draft a couple of days ago, and after reading it, I decided I'd finish it and publish it. Why? I think it speaks for itself. It contains the words I'd wanted to say back... | eevajonnapanula |

1,901,235 | Next.js Authentication Best Practices | Explore key Next.js authentication best practices, including middleware vs. page component auth, preserving static rendering, and implementing multi-layered protection. | 0 | 2024-06-26T11:31:21 | https://www.franciscomoretti.com/blog/nextjs-authentication-best-practices | nextjs | ## What is Next.js Authentication?

Next.js authentication is the process of verifying user identity in Next.js applications. It ensures that only authorized users can access protected routes and data.

Authentication in Next.js is crucial for:

1. Protecting sensitive user data

2. Controlling access to specific feat... | franciscomoretti |

1,901,234 | Boost Your Coding: Easy AI Code Generation Tricks | In the fast-evolving world of software development, staying ahead can be challenging. Enter AI code... | 0 | 2024-06-26T11:29:18 | https://dev.to/bind_ai_e27ddf4dfe23795c2/boost-your-coding-easy-ai-code-generation-tricks-4f7b | javascript, webdev, code, codegeneration | In the fast-evolving world of software development, staying ahead can be challenging. Enter AI code generation—a tool that's changing the game for developers everywhere. This technology helps you write code faster, smarter, and with fewer errors. Here's how you can use AI code generation to boost your coding skills:

A... | bind_ai_e27ddf4dfe23795c2 |

1,901,233 | Top 5 Quality Assurance Certifications You Must Check Out! | Are you interested in pursuing a career in the field of quality assurance? If yes, this piece of... | 0 | 2024-06-26T11:28:51 | https://dev.to/s2labs/top-5-quality-assurance-certifications-you-must-check-out-428m | Are you interested in pursuing a career in the field of quality assurance?

If yes, this piece of information will help you out. The work of [QA engineers](https://s2-labs.com/blog/become-a-qa-engineer/) or testers is just like finding a pinch of salt in a bowl of flour. This amazing industry has golden job opportunit... | s2labs | |

1,901,232 | How Marketing Leaders Can Gain Greater Alignment with IT | In today’s digital landscape, the intersection of marketing and technology is more crucial than ever.... | 0 | 2024-06-26T11:24:58 | https://dev.to/keval_padia/how-marketing-leaders-can-gain-greater-alignment-with-it-407j | In today’s digital landscape, the intersection of marketing and technology is more crucial than ever. Marketing leaders need to collaborate seamlessly with IT departments to leverage technological advancements, streamline processes, and drive business growth. However, achieving alignment between marketing and IT can be... | keval_padia | |

1,901,231 | 12 moments of typos and copy-paste, or why AI hallucinates: checking OpenVINO | "OpenVINO is a toolkit that boosts deep AI learning to interact with the real world. Now it's... | 0 | 2024-06-26T11:22:36 | https://dev.to/anogneva/12-moments-of-typos-and-copy-paste-or-why-ai-hallucinates-checking-openvino-1hci | cpp, programming, coding | "OpenVINO is a toolkit that boosts deep AI learning to interact with the real world. Now it's open-source!" This incredible news is a call to action for us. The project code has been checked, errors have been detected, and the first part of the article is ready to be read. It's showtime!

| ## Introduction As a Software Development Engineer (SDE), your role involves not only writing code... | 0 | 2024-06-26T11:21:13 | https://dev.to/himanshudevl/tips-and-tools-for-software-development-engineers-sdes-22f9 | laravel, webdev, javascript, programming |

## Introduction

As a Software Development Engineer (SDE), your role involves not only writing code but also managing projects, collaborating with teams, and continuously learning new technologies. To succeed and exc... | himanshudevl |

1,900,059 | Introducing the Organic Feed Feature in Lambda! | Hey everyone, I’m EzpieCo (well, not my real name), the creator and sole developer behind Lambda, the... | 0 | 2024-06-26T11:19:01 | https://dev.to/ezpieco/introducing-the-organic-feed-feature-in-lambda-37bg | privacy, webdev, opensource | Hey everyone, I’m EzpieCo (well, not my real name), the creator and sole developer behind Lambda, the world's first open-source social media app designed to prioritize your privacy and well-being. I’m thrilled to share some exciting updates with you today!

## 🌟 What’s New?

Yesterday after some hard work, I have roll... | ezpieco |

1,875,543 | Database Transactions : Basic Concepts | The concept of transaction provides a mechanism for describing logical units of database processing,... | 27,868 | 2024-06-26T11:18:01 | https://dev.to/aharmaz/database-transactions-basic-concepts-2gl2 | database, concurrency, performance, backend | The concept of a transaction provides a mechanism for describing logical units of database processing. Many systems have large databases and hundreds of concurrent users executing database transactions; examples include airline reservation, banking, supermarket checkout, and many others.

**W... | aharmaz |

1,901,178 | Boost Your Confidence with Our Spoken English Course in Rohini | Course Overview This English Spoken Course is designed to help you master English communication... | 0 | 2024-06-26T11:17:45 | https://dev.to/muskan_sharma_c2d15774a2d/boost-your-confidence-with-our-spoken-english-course-in-rohini-4gdf | Course Overview

This English Spoken Course is designed to help you master English communication skills, focusing on speaking fluently and confidently. The course is structured into various modules, covering essential aspects of spoken English, including pronunciation, vocabulary, grammar, listening skills, and conversa... | muskan_sharma_c2d15774a2d | |

1,901,176 | What Is a flash bitcoin software | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the... | 0 | 2024-06-26T11:14:04 | https://dev.to/martel_gold_aef0e2b323f78/what-is-a-flash-bitcoin-software-56o | flashbtc, flashbitcoin, flash, flashusdt | FlashGen offers several features, including the ability to send Bitcoin to any wallet on the blockchain network, support for both Segwit and legacy addresses, live transaction tracking on the Bitcoin network explorer, and more. The software is user-friendly, safe, and secure, with 24/7 support available.

Telegram: @ma... | martel_gold_aef0e2b323f78 |

1,891,082 | Use AWS Generative AI CDK constructs to speed up app development | Assemble and deploy the infrastructure for a RAG solution using AWS CDK for Python In this blog,... | 0 | 2024-06-26T11:13:54 | https://community.aws/content/2i0FzzLt9YblQmerQW0eCuydsja | python, infrastructureascode, machinelearning, tutorial | > Assemble and deploy the infrastructure for a RAG solution using AWS CDK for Python

In this blog, we will use the AWS Generative AI Constructs Library to deploy a complete RAG application composed of the following components:

- [Knowledge Bases for Amazon Bedrock](https://docs.aws.amazon.com/bedrock/latest/userguide... | abhirockzz |

1,901,175 | Top Customer Communications Software Providers: Enhancing Customer Engagement And Satisfaction | In today's competitive business environment, effective customer communication is crucial for... | 0 | 2024-06-26T11:13:53 | https://dev.to/saumya27/top-customer-communications-software-providers-enhancing-customer-engagement-and-satisfaction-1e45 | In today's competitive business environment, effective customer communication is crucial for maintaining strong relationships and ensuring customer satisfaction. Several companies specialize in developing software solutions to enhance customer communications across various channels, including email, chat, social media,... | saumya27 | |

1,899,984 | Maintaining an open-source backup tool: insights and more | Backup strategies might seem like a solved problem, yet system administrators often struggle with... | 0 | 2024-06-26T11:12:57 | https://dev.to/nixys/maintaining-an-open-source-backup-tool-insights-and-more-1n1e | devops, opensource, tooling, go | Backup strategies might seem like a solved problem, yet system administrators often struggle with questions about how to backup data properly, where to store it, and how to standardize the backup process across different software environments. In 2011, we developed custom backup scripts that efficiently handled backups... | nixys |

1,901,174 | Unlocking the Secrets of Customs Procedure Codes (CPCs) | Navigating the complexities of international trade? Customs Procedure Codes (CPCs) are here to help.... | 0 | 2024-06-26T11:10:50 | https://dev.to/john_hall/unlocking-the-secrets-of-customs-procedure-codes-cpcs-1jha | cpc, learning, community, icustoms | Navigating the complexities of international trade? Customs Procedure Codes (CPCs) are here to help. Created by HM Revenue & Customs (HMRC), these codes are designed to simplify and streamline the import and export process, ensuring smoother transactions for traders.

## Understanding Customs Procedure Codes (CPCs)

CPC... | john_hall |

1,901,172 | Driving Software and Gaming QA Forward: Global Partnership for Quality at EuroSTAR 2024 | WeTest Global's involvement in the esteemed EuroSTAR 2024, which took place in Stockholm from June... | 0 | 2024-06-26T11:09:31 | https://dev.to/wetest/driving-software-and-gaming-qa-forward-global-partnership-for-quality-at-eurostar-2024-3dpb | wetest, softwaretesting, eurostar2024, gameqa | WeTest Global's involvement in the esteemed EuroSTAR 2024, which took place in Stockholm from June 12th to 14th, was received with immense eagerness. Software QA developers from various corners of the globe convened at the location, fostering an energetic and lively ambiance.

[Delhi Escorts Service](url) provide best in class independent escorts in delhi.to book genuine and verified escort girls near your location.

[RussianEscort In Delhi](https://www.palakkhanna.com/russian-escorts-del... | palakkhanna |

1,901,167 | Python, Classes and Objects | Classes and Objects in Python In Python, classes and objects are fundamental to... | 0 | 2024-06-26T11:05:25 | https://dev.to/harshm03/python-classes-and-objects-4d53 | python, programming, beginners, tutorial | ## Classes and Objects in Python

In Python, classes and objects are fundamental to object-oriented programming (OOP), offering a way to structure code into reusable components and define behaviors.

### Defining Classes

#### Syntax for Defining a Class

To define a class in Python, use the `class` keyword followed by... | harshm03 |

1,901,166 | Insider Secrets to Doubling Your TikTok Follower Count in 6 Months | Growing your TikTok follower count can feel daunting, but with the right strategies, you can double... | 0 | 2024-06-26T11:01:38 | https://dev.to/emmamegan/insider-secrets-to-doubling-your-tiktok-follower-count-in-6-months-51a3 | tiktokfollowercount | Growing your TikTok follower count can feel daunting, but with the right strategies, you can double your followers in just six months. Here are some insider secrets to help you get there.

Firstly, consistency is key. Posting regularly, ideally once or twice a day, keeps your content fresh and engaging for your audienc... | emmamegan |

1,901,165 | Research paper publication cost in India | The publication fee for the International Journal of Scientific Engineering and Technology (IJSET)... | 0 | 2024-06-26T11:01:36 | https://dev.to/neerajm76404554/research-paper-publication-cost-in-india-1855 | research, computerscience, devjournal, programming |

The publication fee for the International Journal of Scientific Engineering and Technology ([IJSET](https://www.ijset.in/free-journal-publish-research-paper/)) varies depending on the [author’s location](https://ww... | neerajm76404554 |

1,901,164 | Measuring App Success: Key Metrics and Analytics Tools | Knowing the exact performance of the app is critical, especially if one is to anticipate the... | 0 | 2024-06-26T11:01:06 | https://dev.to/christinek989/measuring-app-success-key-metrics-and-analytics-tools-1cgi | analyticstools, mobile, programming, appdevelopment | Knowing the exact performance of the app is critical, especially if one is to anticipate the longevity of the specific app in the market. Selecting the most relevant metrics and implementing perfect analytics instruments may help predict people’s behavior and increase conversion rates. Here we will go deeper with the c... | christinek989 |

1,872,335 | Ibuprofeno.py💊| #125: Explain this Python code | Explain this Python code Difficulty: Easy print(set(("ADENINA",... | 25,824 | 2024-06-26T11:00:00 | https://dev.to/duxtech/ibuprofenopy-125-explica-este-codigo-python-3k39 | beginners, spanish, learning, python | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Easy</mark></center>

```py

print(set(("ADENINA", "TIMINA", "TIMINA", "GUANINA", "ADENINA", "CITOSINA")))

```
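
A small sketch for checking the behavior this quiz relies on: building a `set` from a tuple with repeated values. The variable names `bases` and `unique_bases` are illustrative and not part of the original post. It deliberately avoids printing the set itself, since the display order of a set is not something to rely on.

```python
# Same values as in the quiz snippet, duplicates included.
bases = ("ADENINA", "TIMINA", "TIMINA", "GUANINA", "ADENINA", "CITOSINA")

# A set keeps a single copy of each distinct element.
unique_bases = set(bases)

print(len(bases))         # how many items the tuple holds
print(len(unique_bases))  # how many distinct items remain

# Sets are unordered, so compare them with ==, not by printed order.
print(unique_bases == {"CITOSINA", "GUANINA", "TIMINA", "ADENINA"})
```

Comparing with `==` is the safer check here, because two sets with the same elements are equal regardless of the order in which they happen to be displayed.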

* **A.** `{'ADENINA', 'TIMINA', 'GUANINA'}`

* **B.** `{'CITOSINA', 'ADENINA', 'GUANINA'}`

* **C.** `{'CITOSINA', 'ADENINA'... | duxtech |

1,901,150 | Idempotency in Computing: A Comprehensive Guide | In the realms of computer science and software engineering, certain concepts and principles play... | 0 | 2024-06-26T10:37:14 | https://dev.to/keploy/httpskeployiodocsconceptsreferenceglossaryidempotency-36np | webdev, javascript, beginners, tutorial |

In the realms of computer science and software engineering, certain concepts and principles play crucial roles in ensuring systems' robustness, reliability, and predictability. One such concept is idempotency, a ter... | keploy |

1,901,163 | Elevate the WordPress Hosting Experience for Your Agency’s Clients with InstaWP Live | As a WordPress agency, providing your clients with top-notch hosting services is crucial. Not only... | 0 | 2024-06-26T10:59:43 | https://dev.to/shabbir_mw_03f56129cd25/elevate-the-wordpress-hosting-experience-for-your-agencys-clients-with-instawp-live-48oe | webdev, beginners, tutorial, wordpress | As a WordPress agency, providing your clients with top-notch hosting services is crucial. Not only does it impact their website's performance, but it also reflects on your reputation. That’s where InstaWP Live can help you.

It is a hosting solution that promises to elevate the WordPress managed hosting experience. Wi... | shabbir_mw_03f56129cd25 |

1,901,162 | Introduction to Python Programming | Introduction to Python Programming Python is a versatile programming language... | 27,863 | 2024-06-26T10:57:18 | https://dev.to/plug_panther_3129828fadf0/introduction-to-python-programming-d9n | python, programming, beginners | # Introduction to Python Programming

Python is a versatile programming language... | plug_panther_3129828fadf0 |

1,901,161 | RDBMS: Key Concepts and Principles of Relational Database Management System | Sure, let's dive into the key concepts and principles of a Relational Database Management System... | 0 | 2024-06-26T10:56:22 | https://dev.to/shikha_gupta_080e904b317e/rdbms-key-concepts-and-principles-of-relational-database-management-system-coj | concepts, management, system, database | Sure, let's dive into the key concepts and principles of a Relational Database Management System (RDBMS):

1. Relational Model: The foundation of an RDBMS is based on the relational model, which organizes data into tables (relations) consisting of rows (tuples) and columns (attributes). Each table represents an entity,... | shikha_gupta_080e904b317e |

1,901,160 | Convert a cross platform project to React | Hi Everyone, I would like to know the possibility of creating a React project from another project... | 0 | 2024-06-26T10:52:37 | https://dev.to/_dileeppt/convert-a-cross-platform-project-to-react-3nof | react | Hi Everyone,

I would like to know whether it is possible to create a React project from an existing project built with a different hybrid development framework. Based on my analysis and experience it is not possible, but any suggestions would strengthen my justification to my client. Thanks in advance. | _dileeppt |

1,901,132 | Healthcare Web Application Development: Definition, Process and Cost | Definition of Healthcare Web Application A healthcare web application refers to any... | 0 | 2024-06-26T10:18:32 | https://dev.to/bytesfarms/healthcare-web-application-development-definition-process-and-cost-3j2 | webdev, javascript, beginners, programming | ## Definition of Healthcare Web Application

A healthcare web application refers to any software application that operates on the web and is specifically designed to cater to the needs of healthcare providers, patients, or both. These applications can range from patient portals and telemedicine platforms to administrat... | bytesfarms |

1,901,159 | Mastering Software Development: A Guide for Experienced Developers | Introduction In the ever-evolving world of software development, staying up-to-date with... | 0 | 2024-06-26T10:50:48 | https://dev.to/davitacols/mastering-software-development-a-guide-for-experienced-developers-2c97 | ## Introduction

In the ever-evolving world of software development, staying up-to-date with best practices and methodologies is crucial for delivering high-quality software efficiently. This guide aims to provide experienced developers with a concise overview of the software development life cycle (SDLC), popular devel... | davitacols | |

1,901,158 | Wedding hall Chennai | Searching for the perfect wedding hall Chennai? Discover the finest wedding venues Chennai has to... | 0 | 2024-06-26T10:48:04 | https://dev.to/rathypiya/wedding-hall-chennai-2fam | halls |

Searching for the perfect [wedding hall Chennai](https://www.sppgardens.com/weddings)? Discover the finest wedding venues Chennai has to offer. These venues provide a blend of elegance and comfort, featuring stunning décor and modern amenities to make your special day unforgettable. Whether you're planning an intimate... | rathypiya |

1,901,156 | The Role of Hydraulic Press Brakes in the UAE: Precision and Efficiency in Metal Fabrication | In the industrial landscape of the UAE, precision and efficiency are key to maintaining a competitive... | 0 | 2024-06-26T10:45:10 | https://dev.to/radersdffff/the-role-of-hydraulic-press-brakes-in-the-uae-precision-and-efficiency-in-metal-fabricationd-3f2i | In the industrial landscape of the UAE, precision and efficiency are key to maintaining a competitive edge. Among the various machinery used in metal fabrication, hydraulic press brakes stand out for their ability to bend and shape metal sheets with remarkable accuracy. This blog delves into the benefits, applications,... | radersdffff | |

1,901,141 | All You Need to Know about the Limitations of Large Language Models | Introduction What are the limitations of large language models (LLMs)? Starting from the... | 0 | 2024-06-26T10:43:29 | https://dev.to/novita_ai/all-you-need-to-know-about-the-limitations-of-large-language-models-220i | llm | ## Introduction

What are the limitations of large language models (LLMs)? Starting from the definition of LLM, we are going to discuss 8 limitations one by one. For each limitation, we ask 3 questions: What does this limitation mean and why? What are the implications of this limitation in practice? How to deal with thi... | novita_ai |

1,901,155 | Why you won't find a SaaS idea | The other day, I sat down at my desk ready to complete my daily list of mundane tasks, when it hit... | 0 | 2024-06-26T10:43:16 | https://dev.to/joshlawson100/finding-saas-ideas-2m8c | saas, webapp, webdev, startup | The other day, I sat down at my desk ready to complete my daily list of mundane tasks, when it hit me:

_"Why aren't I working for myself?"_

I mean, think about it. No boss telling you what to do, no deadlines, _**no annoying co-workers**_, sounds like a dream. **You** decide when you log on and off, **you** decide wh... | joshlawson100 |

1,883,766 | Mastering Dependency Injection: Enhancing Code Modularity and Maintainability | As the sun set behind the rolling hills of Silicon Valley, John found himself at a crucial... | 0 | 2024-06-26T10:42:26 | https://dev.to/luisfpedroso/mastering-dependency-injection-enhancing-code-modularity-and-maintainability-m6g | designpatterns, softwareengineering, programming, javascript | As the sun set behind the rolling hills of Silicon Valley, John found himself at a crucial crossroads. Months ago, a library was introduced into the project, only to now reveal numerous bugs, leaving John with the daunting task of updating 30 files to achieve the team's goal. Faced with this challenge, John realized th... | luisfpedroso |

1,901,116 | Building a TypeScript REST API with an Object-Oriented Programming (OOP) Approach | Rest Api using TypeScript (OOP approach) In this tutorial we will create a rest api using... | 0 | 2024-06-26T10:41:30 | https://dev.to/drsimplegraffiti/building-a-typescript-rest-api-with-an-object-oriented-programming-oop-approach-3o0n | node, javascript, webdev, beginners | ##### Rest Api using TypeScript (OOP approach)

In this tutorial we will create a REST API using TypeScript, following an OOP approach.

### Why Object-Oriented Programming (OOP) approach?

- OOP allows you to create reusable code that is easy to maintain and extend.

- OOP provides a clear and organized... | drsimplegraffiti |

1,901,154 | What is React..? | React.js (or React) is a popular JavaScript library used for building user interfaces, primarily for... | 0 | 2024-06-26T10:39:49 | https://dev.to/nagabhushan_adiga_a383471/what-is-react-3e1h | reactnative, javascript, react, webdev | **React.js (or React)** is a popular JavaScript library used for building user interfaces, primarily for single-page applications. It is maintained by Facebook and a community of individual developers and companies. React allows developers to create large web applications that can update and render efficiently in respo... | nagabhushan_adiga_a383471 |

1,901,153 | Introducing PicRanker: Your Ultimate Image Categorization and Management App.🚀 | Are you tired of manually sorting through your images, trying to decide which ones are worth keeping... | 0 | 2024-06-26T10:39:44 | https://dev.to/dharamgfx/introducing-picranker-your-ultimate-image-categorization-and-management-app-4o57 | webdev, javascript, beginners, programming |

Are you tired of manually sorting through your images, trying to decide which ones are worth keeping and which ones should be trashed? Look no further! We are excited to introduce **PicRanker**, a user-friendly app designed to streamline the process of categorizing and managing your images with ease.

### What is Pic... | dharamgfx |

1,901,152 | StringBuilder | C# | *Hello everyone! Today we will look at the StringBuilder class in the C# programming language! * Plan: Almost... | 0 | 2024-06-26T10:37:21 | https://dev.to/ozodbek_soft/stringbuilder-c-4paf | dotnet, csharp, uzbek, stringbuilder | **Hello everyone! Today we will look at the StringBuilder class in the C# programming language!**

**Plan:**

- There is almost no plan, because in today's lesson we will learn StringBuilder simply as a dialog, from zero to pro level! I think you and others will like it. A reminder: in this article I `sb... | ozodbek_soft |

1,901,149 | Is a DevOps Career Still Worth It? Should I Be Worried About A.I.? | Picture this: you're sipping your favorite beverage, gathered with fellow tech enthusiasts. The topic... | 0 | 2024-06-26T10:37:13 | https://dev.to/dareyio/is-a-devops-career-still-worth-it-should-i-be-worried-about-ai-1jk3 | beginners, devops, ai, techcareer | Picture this: you're sipping your favorite beverage, gathered with fellow tech enthusiasts. The topic of the evening? The future of DevOps in an era dominated by AI and automation. So, is a DevOps career still worth pursuing? And do you need to worry about A.I. making your job obsolete? Let’s dig in.

**DevOps: The ... | dareyio |

1,901,148 | What are some cost-effective options for Custom Packaging? | Packaging is a versatile tool. It can be used in a variety of ways. From packaging food to sending... | 0 | 2024-06-26T10:34:48 | https://dev.to/thecustomboxes/what-are-some-cost-effective-options-for-custom-packaging-4hn9 | Packaging is a versatile tool. It can be used in a variety of ways. From packaging food to sending and displaying products, there is a box to make your life easy. Based on the intended use, these packaging solutions should be practical as well as attractive. Finding cost-effective options for them is a challenging task... | thecustomboxes | |

1,901,146 | Westside Flowers: Adelaide’s Premier Florist for Every Occasion | In the vibrant city of Adelaide, Westside Flowers has earned its reputation as the best florist in... | 0 | 2024-06-26T10:32:24 | https://dev.to/westside_flowers_dc197977/westside-flowers-adelaides-premier-florist-for-every-occasion-2gi5 |

In the vibrant city of Adelaide, Westside Flowers has earned its reputation as the [best florist in Adelaide](https://westsideflowers.com.au/florist-in-adelaide/), known for exceptional floral design and outstanding customer service. This article explores the diverse services offered by Westside Flowers and highlights... | westside_flowers_dc197977 | |

1,901,145 | The Role of Data Analytics in Addressing Climate Change | Climate change is an urgent issue that affects ecosystems, economies, supply chains, and public... | 0 | 2024-06-26T10:31:15 | https://dev.to/linda0609/the-role-of-data-analytics-in-addressing-climate-change-54mj | bigdata, climateresearch | Climate change is an urgent issue that affects ecosystems, economies, supply chains, and public health globally. The growing awareness among corporations, governments, and the public about these impacts underscores the need for effective strategies to mitigate and adapt to the climate crisis. Data analytics has emerged... | linda0609 |

1,901,124 | Step-by-Step CUDA Version 11.8 Installation Process | Key Highlights In this blog, we'll walk you through how to set up CUDA version 11.8 on... | 0 | 2024-06-26T10:30:00 | https://dev.to/novita_ai/step-by-step-cuda-version-118-installation-process-2aeo | ## Key Highlights

- In this blog, we'll walk you through how to set up CUDA version 11.8 on both Windows and Linux computers step by step.

- To install it, first make sure your system can run it, then download the CUDA 11.8 software and follow the steps given for installation.

- For anyone working with GPU-powered com... | novita_ai | |

1,901,144 | Crafting Amazing Map Apps with iTechTribe International | In our fast-paced world, map applications have become essential tools for millions of people.... | 0 | 2024-06-26T10:24:26 | https://dev.to/itechtshahzaib_1a2c1cd10/crafting-amazing-map-apps-with-itechtribe-international-19le | programming, development, mobile, softwaredevelopment |

![Discover the future of navigation with iTechTribe International's advanced map application development services. Our expert team leverages cutting-edge technology to create user-friendly, feature-rich map apps that provide real-time traffic updates, accurate directions, and seamless integration with other services. ... | itechtshahzaib_1a2c1cd10 |

1,889,409 | Metronic + Tailwind CSS: A Powerful Toolkit for HTML & JavaScript-based Modern Web Applications | Metronic 9 + Tailwind CSS: A New Chapter Begins We are thrilled to announce the... | 0 | 2024-06-26T10:24:19 | https://dev.to/shuh_saipov/metronic-tailwind-css-a-powerful-toolkit-for-html-javascript-based-modern-web-applications-405h | tailwindcss, metronic, javascript, typescript |

### Metronic 9 + Tailwind CSS: A New Chapter Begins

We are thrilled to announce the culmination of a year's work: the all-new Metronic 9, now with complete Tailwind CSS support and a comprehensive redesign in line with the latest UI/UX trends. This marks the beginning of an exciting new chapter for Metronic, and we h... | shuh_saipov |

1,901,143 | Enhancing Real Estate Listings with Virtual Home Staging | In the competitive world of real estate, making a lasting first impression is crucial. Buyers often... | 0 | 2024-06-26T10:23:54 | https://dev.to/robert_hagemann_dff52cbfd/enhancing-real-estate-listings-with-virtual-home-staging-308j | In the competitive world of real estate, making a lasting first impression is crucial. Buyers often decide within moments whether a property is worth their time and investment. As technology continues to revolutionize various industries, real estate has not been left behind. One of the most innovative advancements is [... | robert_hagemann_dff52cbfd | |

1,901,142 | Learn How to Use Postman for Sending POST Requests | Understanding how to send a POST request in Postman is a crucial skill for any developer or tester.... | 0 | 2024-06-26T10:23:34 | https://dev.to/satokenta/learn-how-to-use-postman-for-sending-post-requests-4hff | postman | Understanding how to send a POST request in Postman is a crucial skill for any developer or tester. POST requests are typically used for submitting data to a server, such as creating new resources or uploading files.

## What is a POST Request?

A POST request is an HTTP request method used for sending data to a target... | satokenta |

1,901,133 | How Do ERP Systems Improve Business Efficiency for Software Companies in Singapore? | Introduction Singapore has more lately claimed a place as one of the leading technology hubs and... | 0 | 2024-06-26T10:19:17 | https://dev.to/applyit_5c74414e0edc79fa2/how-do-erp-systems-improve-business-efficiency-for-software-companies-in-singapore-5ddb | Introduction

Singapore has recently established itself as one of the leading technology hubs, and today a number of software companies in the country provide diverse services. Custom software, application development, IT consulting, ERP – that is what lies at the core of Singapore’s IT market that is divers... | applyit_5c74414e0edc79fa2 | |

1,901,131 | Introducing Crisp Chat: Enhance Your Flutter Apps with Real-time Customer Support | Boost User Satisfaction with Easy-to-Implement, Native Chat Functionality on Android &... | 0 | 2024-06-26T10:18:07 | https://dev.to/alaminkarno/introducing-crisp-chat-enhance-your-flutter-apps-with-real-time-customer-support-nfe | flutter, dart | ## Boost User Satisfaction with Easy-to-Implement, Native Chat Functionality on Android & iOS

Are you looking to integrate seamless customer support directly into your Flutter applications? Look no further than Crisp Chat, a powerful Flutter plugin designed to bring Crisp's renowned live chat functionality right into ... | alaminkarno |

1,901,130 | Adding search to a static Astro website | Why add a search page? I always like it when a website includes a search page, it makes it... | 0 | 2024-06-26T10:18:02 | https://www.thomasledoux.be/blog/search-static-astro-website | astro, webdev, javascript, programming | ## Why add a search page?

I always like it when a website includes a search page, it makes it easy to find the relevant content you're looking for.

This is especially useful when your website starts growing, and you've written a whole bunch of blog posts for example (it's me 😅).

At my agency job I create websites for... | thomasledoux1 |

1,901,129 | Automating Accuracy: CaptchaAI's Role in Bypassing reCAPTCHA Barriers | In the evolving digital landscape, CAPTCHAs serve as a crucial security measure, distinguishing... | 0 | 2024-06-26T10:17:58 | https://dev.to/media_tech/automating-accuracy-captchaais-role-in-bypassing-recaptcha-barriers-22hp | In the evolving digital landscape, CAPTCHAs serve as a crucial security measure, distinguishing humans from bots and safeguarding online services. However, they can also impair user experience by creating unnecessary obstacles. This is where CaptchaAI steps in, offering a robust **captcha solving service**, specificall... | media_tech | |

1,901,128 | Leading the Way in Digital Innovation: Top Web Development Companies in the USA | In today's digital age, having a strong online presence is crucial for any business wanting to... | 0 | 2024-06-26T10:15:05 | https://dev.to/stevemax237/leading-the-way-in-digital-innovation-top-web-development-companies-in-the-usa-1ja0 | In today's digital age, having a strong online presence is crucial for any business wanting to succeed. At the heart of this presence is a well-designed, functional, and user-friendly website. This is where **[Best web development companies in USA](https://www.mobileappdaily.com/directory/web-development-companies/us?u... | stevemax237 | |

1,901,127 | Monitor container with Portainer | Create a volume docker volume create portainer_data... | 0 | 2024-06-26T10:09:29 | https://dev.to/martabakgosong/monitor-container-with-portainer-41nd | docker, podman |

Create a volume

```

docker volume create portainer_data

```

The `docker run` command used to create Portainer

```

docker run \

-d \

-p 8000:8000 \

-p 9443:9443 \

--name portainer \

--restart=unless-stopped \

-v /var/run/docker.sock:/var/run/docker.sock \

-v portainer_data:/data \

por... | martabakgosong |

1,901,125 | Verifying Column Types in Your Database Schema | Ensuring consistency in your database schema is crucial for maintaining data integrity and... | 0 | 2024-06-26T10:08:14 | https://dev.to/msnmongare/verifying-column-types-in-your-database-schema-jlb | sql, postgres, tutorial, beginners | Ensuring consistency in your database schema is crucial for maintaining data integrity and facilitating efficient database operations. One important aspect of schema consistency is verifying the column types across your tables. This is especially important when working with large databases or integrating new data sourc... | msnmongare |

1,901,123 | Engaging Community Features | Goa Game is a captivating online game that has gained immense popularity among gamers. It offers an... | 0 | 2024-06-26T10:04:16 | https://dev.to/fhgjhgj/engaging-community-features-41pd | Goa Game is a captivating online game that has gained immense popularity among gamers. It offers an exciting and immersive experience that keeps players engaged for hours. Whether you are a casual gamer or a serious enthusiast, Goa Game provides endless entertainment and challenges. In this article, we will explore the... | fhgjhgj | |

1,901,122 | What You Need to Know About the Trà Vinh Lottery: A Detailed Guide from A to Z | The Trà Vinh lottery is an exciting game of chance that offers big prize opportunities for... | 0 | 2024-06-26T10:01:21 | https://dev.to/xs_travinh_d553ec88e43cc2/nhung-dieu-can-biet-ve-xo-so-tra-vinh-huong-dan-chi-tiet-tu-a-den-z-jog | The Trà Vinh lottery is an exciting game of chance that gives players the opportunity to win big prizes. With a weekly draw schedule and a varied prize structure, the Trà Vinh lottery is not only a source of fun but also contributes to community development activities. In this article, xstravinh.com will provide you with a guide... | xs_travinh_d553ec88e43cc2 |
