id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,901,691 | Global Color Measuring Instrument Market by Market growth, trend, opportunity and forecast 2023-2030 | Global Color Measuring Instrument Market The Color Measuring Instrument Market is expected to grow... | 0 | 2024-06-26T17:46:52 | https://dev.to/reportprime23/global-color-measuring-instrument-market-by-market-growth-trend-opportunity-and-forecast-2023-2030-4in3 |

Global Color Measuring Instrument Market

The Color Measuring Instrument Market is expected to grow from USD 1.10 Billion in 2022 to USD 2.50 Billion by 2030, at a CAGR of 11.20% during the forecast period.

Get Sample Report: https://www.reportprime.com/enquiry/sample-report/1467

Global Color Measuring Instrument Mark... | reportprime23 | |

1,901,690 | Global Coriolis Mass Flowmeters Market by Market growth, trend, opportunity and forecast 2023-2030 | Global Coriolis Mass Flowmeters Market The Coriolis Mass Flowmeters Market is expected to grow from... | 0 | 2024-06-26T17:46:23 | https://dev.to/reportprime23/global-coriolis-mass-flowmeters-market-by-market-growth-trend-opportunity-and-forecast-2023-2030-4lnj |

Global Coriolis Mass Flowmeters Market

The Coriolis Mass Flowmeters Market is expected to grow from USD 2.50 Billion in 2022 to USD 3.70 Billion by 2030, at a CAGR of 5.10% during the forecast period.

Get Sample Report: https://www.reportprime.com/enquiry/sample-report/7205

Global Coriolis Mass Flowmeters Market Size... | reportprime23 | |

1,901,680 | Securing the Kingdom: IAM Best Practices for AWS Cloud Castle | It was a dark and stormy night when the alarm bells started ringing across the cloud castle. Deep... | 0 | 2024-06-26T17:45:36 | https://dev.to/ikoh_sylva/securing-the-kingdom-iam-best-practices-for-aws-cloud-castle-2085 | cloudcomputing, aws, cloudskills, cloudpractitioner | It was a dark and stormy night when the alarm bells started ringing across the cloud castle. Deep within the servers, the sentries detected a disturbance - an unauthorized attempt to access the crown jewels.

"Sound the alarm!" barked Sir Lancelot, the stalwart chief of security. "We've got a breach!"

As the castle gu... | ikoh_sylva |

1,901,687 | The New Wave (Exploring the Future) | Exploring the Future: Web Development, Programming, AI, and Open Source Web Development Trends The... | 0 | 2024-06-26T17:44:56 | https://dev.to/dan52242644dan/the-new-wave-exploring-the-future-4291 | webdev, programming, ai, opensource | Exploring the Future: Web Development, Programming, AI, and Open Source

Web Development Trends

The landscape of web development is constantly evolving, with new technologies and methodologies emerging to enhance user experience and developer efficiency. In 2024, several key trends are shaping the future of web developm... | dan52242644dan |

1,901,679 | How to install Anaconda on Arch Linux | Links: Anaconda: Anaconda AUR Package Miniconda: Miniconda AUR Package Step 1: Clone... | 0 | 2024-06-26T17:36:15 | https://dev.to/kutt27/how-to-install-anaconda-on-arch-linux-5a1c | anaconda, python, archlinux, jupyter | **Links:**

- Anaconda: [Anaconda AUR Package](https://aur.archlinux.org/packages/anaconda)

- Miniconda: [Miniconda AUR Package](https://aur.archlinux.org/packages/miniconda3)

## Step 1: Clone the Package to Your System

1. Click on the `Git Clone URL` and copy the repository link.

2. Open your terminal and navigate to... | kutt27 |

1,901,686 | Are PRs effective in the code review process? 👩💻 | Well, love them or hate them, but you can't escape them! 😥 Dive deep into today's compelling... | 0 | 2024-06-26T17:44:28 | https://dev.to/grocto/are-prs-effective-in-the-code-review-process-29kk | webdev, devops, learning, career | Well, love them or hate them, but you can't escape them! 😥

Dive deep into today's compelling discussion on enhancing DevEx, code reviews, leading Gen Zs, AI dev tools & more in #ep43 of the groCTO podcast featuring Jacob Singh, CTO in Residence at Alpha Wave Global 🎙

[](https://grocto.substack.com/p/ep-43-en... | grocto |

1,901,685 | Google Drive API and Google Cloud Billing | Will the Google Drive API cost me anything after my Google Cloud free trial ends? Sorry, I am confused. | 0 | 2024-06-26T17:43:32 | https://dev.to/phil_cajurao/google-drive-api-and-google-cloud-billing-3gea | Will the Google Drive API cost me anything after my Google Cloud free trial ends? Sorry, I am confused. | phil_cajurao | |

1,901,683 | Aggregation query in Cosmos DB | Aggregation Queries in Cosmos DB with Ternary: A Workaround for Performance Concerns Due to potential... | 0 | 2024-06-26T17:40:40 | https://dev.to/seifolahghaderi/aggregation-query-in-cosmos-db-366b | Aggregation Queries in Cosmos DB with Ternary: A Workaround for Performance Concerns

Due to potential performance issues, I understand it's not typical to write aggregation queries like SUM and AVG on NoSQL databases. However, sometimes it's necessary to find a workaround for temporary situations. With my extensive exp... | seifolahghaderi | |

1,901,682 | Process Scheduling | During my recent exploration of "Operating System Concepts with Java," I delved into Chapter 4,... | 0 | 2024-06-26T17:39:04 | https://dev.to/_hm/process-scheduling-52a | webdev, beginners, programming, tutorial | During my recent exploration of "Operating System Concepts with Java," I delved into Chapter 4, focusing on process scheduling—an area integral to our work as software developers. This chapter provides a comprehensive view of how operating systems manage this critical task.

It's always enriching to deepen your underst... | _hm |

1,901,629 | Let’s Get Hands-On with WordPress FSE Theme and Custom Blocks — Part 1 | FSE (Full Site Editing): Blessing and Curse The introduction of Full Site Editing (FSE)... | 0 | 2024-06-26T17:37:56 | https://dev.to/silviamalavasi/lets-get-hands-on-with-wordpress-fse-theme-and-custom-blocks-part-1-lmj | wordpress, development, fse, blockthemes | # FSE (Full Site Editing): Blessing and Curse

The introduction of Full Site Editing (FSE) represents one of the most significant evolutions in WordPress history. The aim is to provide users with a visual builder-like experience, allowing them to see a direct preview in the dashboard of what will happen on the frontend... | silviamalavasi |

1,901,675 | A More Straightforward Guide to Solving Two Sum. | When approaching the classic two sum problem you can answer in a few different ways. You can attempt... | 0 | 2024-06-26T17:32:18 | https://dev.to/shavonharrisdev/a-more-straightforward-guide-to-solving-two-sum-2gbi | When approaching the classic Two Sum problem, you can answer in a few different ways. You can attempt a brute-force method in which you consider every possible pair of numbers. This uses a nested for loop, which does not give an efficient runtime. Or, you can use the one-pass method with a hashmap, which is much more effici... | shavonharrisdev | |
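The two approaches named in the Two Sum entry above can be sketched as follows. This is a minimal illustration in Python, not the article's own code (its body is truncated here and its language is not shown); the function names `two_sum_brute` and `two_sum_one_pass` are hypothetical.

```python
def two_sum_brute(nums, target):
    """Brute force: check every pair with a nested loop, O(n^2) time."""
    for i in range(len(nums)):
        for j in range(i + 1, len(nums)):
            if nums[i] + nums[j] == target:
                return [i, j]
    return None  # no pair adds up to target


def two_sum_one_pass(nums, target):
    """One pass with a hashmap (dict): O(n) time, O(n) extra space."""
    seen = {}  # maps a value already visited to its index
    for i, value in enumerate(nums):
        complement = target - value
        if complement in seen:
            # The complement appeared earlier, so the pair is complete.
            return [seen[complement], i]
        seen[value] = i
    return None  # no pair adds up to target
```

The one-pass version trades a little memory for speed: each number is looked at once, and the dictionary lookup replaces the inner loop.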

1,901,674 | 7 Worst States To Buy Property in the Next 5 Years: A Comprehensive Guide | Investing in real estate can be a lucrative venture, but not all investments are created equal. As we... | 0 | 2024-06-26T17:29:07 | https://dev.to/axon_group_e1f753b73b4102/7-worst-states-to-buy-property-in-the-next-5-years-a-comprehensive-guide-4bei | california, florida, connecticut, ohio | Investing in real estate can be a lucrative venture, but not all investments are created equal. As we look towards the next five years, certain U.S. states present more challenges than opportunities for potential property buyers. High costs, economic instability, and environmental risks contribute to making these state... | axon_group_e1f753b73b4102 |

1,901,671 | Finding the Best Leather Minimalist Wallet in the USA | Hi everyone, I'm on the hunt for a Leather Minimalist Wallet here in the USA, and despite the... | 0 | 2024-06-26T17:27:14 | https://dev.to/lucast/finding-the-best-leather-minimalist-wallet-in-the-usa-20ib | Hi everyone,

I'm on the hunt for a Leather Minimalist Wallet here in the USA, and despite the multitude of options available, I still haven't been able to pinpoint the best one. I'm looking for a wallet that combines both style and functionality, with a sleek design that doesn't compromise on quality. Ideally, it shou... | lucast | |

1,901,391 | 40 Days Of Kubernetes (5/40) | Day 5/40 What is Kubernetes Video Link @piyushsachdeva Git Repository My Git... | 0 | 2024-06-26T17:24:42 | https://dev.to/sina14/40-days-of-kubernetes-540-376d | kubernetes, 40daysofkubernetes | ## Day 5/40

# What is Kubernetes

[Video Link](https://www.youtube.com/watch?v=SGGkUCctL4I)

@piyushsachdeva

[Git Repository](https://github.com/piyushsachdeva/CKA-2024/)

[My Git Repo](https://github.com/sina14/40daysofkubernetes)

We're going to look at `Kubernetes` architecture in depth.

1. `Control Plane` components.... | sina14 |

1,901,666 | Exploring the main areas of Programming | Programming is a multifaceted and essential skill in the modern digital world, encompassing various... | 0 | 2024-06-26T17:23:41 | https://dev.to/annalaura2/exploring-the-main-areas-of-programming-248e | code, backend, frontend, programming |

Programming is a multifaceted and essential skill in the modern digital world, encompassing various fields that allow developers to create, innovate, and solve problems in unique ways. In this text, we'll explore the main areas of programming, highlighting their characteristics, applications, and career opportunities... | annalaura2 |

1,892,585 | Part 10 — Controller Input | In this part, we will make the watch hidden by default and show it in a few seconds by pressing a... | 0 | 2024-06-18T15:01:34 | https://dev.to/kurohuku/part-10-controller-input-1mdm | In this part, we will make the watch hidden by default and show it in a few seconds by pressing a controller button.

There are various ways to get controller input with Unity. This time, we will use [OpenVR Input A... | kurohuku | |

1,901,638 | AI in Cybersecurity: Improving Data Security Services | Artificial intelligence (AI) integration has significantly transformed numerous industries, with... | 0 | 2024-06-26T17:22:08 | https://dev.to/calsoftinc/ai-in-cybersecurity-improving-data-security-services-5028 | ai, cybersecurity, database, security | Artificial intelligence (AI) integration has significantly transformed numerous industries, with cybersecurity being one of the most influential practices. Sensitive data can now be protected from sophisticated cyberattacks with the use of AI-powered data security services. Utilizing AI's improved threat detection, real-... | calsoftinc |

1,901,627 | The Ultimate Guide to Mastering EC2 Compute Instances and a Step-by-Step Deployment Project | Introduction Amazon Elastic Compute Cloud (EC2) is a cornerstone of Amazon Web Services... | 0 | 2024-06-26T17:20:38 | https://dev.to/hacker_haii/the-ultimate-guide-to-mastering-ec2-compute-instances-and-a-step-by-step-deployment-project-3o3e | aws, devops, virtualmachine, cloud | ## **Introduction**

Amazon Elastic Compute Cloud (EC2) is a cornerstone of Amazon Web Services (AWS), providing resizable compute capacity in the cloud. EC2 allows users to launch virtual servers, known as instances, which can be configured to meet specific needs. This flexibility makes EC2 a critical tool in cloud co... | hacker_haii |

1,901,661 | Exploring the main areas of programming | Exploring the Main Areas of Programming Programming is a multifaceted skill... | 0 | 2024-06-26T17:20:34 | https://dev.to/annalaura2/explorando-as-principais-areas-da-programacao-f9l | programming, techtalks, backend, frontend | ### Exploring the Main Areas of Programming

Programming is a multifaceted and essential skill in the modern digital world, encompassing various areas that allow developers to create, innovate, and solve problems in unique ways. In this text, we will explore the main areas of programming, highlighting... | annalaura2 |

1,901,650 | Dynamic DOM Element Creation : <template> | In HTML Create parent div <!--------------- Parent div --------------> ... | 0 | 2024-06-26T17:19:35 | https://dev.to/__khojiakbar__/dynamic-dom-element-creation--400k | javascript, dom, template | ### In HTML

1. Create parent div

```

<!--------------- Parent div -------------->

<div id="parent" class="card m-5 mx-auto" >

<h1>Hello Template</h1>

</div>

```

2. Create template:

```

<!----------------- Template tag ------------>

<template id="template">

<div class="card d-flex">

... | __khojiakbar__ |

1,901,414 | Who's the real boss? - Lead vs Manager | Lead vs Manager in the workplace: Who is who? Distinct Paths in Team Leadership The... | 0 | 2024-06-26T14:44:28 | https://dev.to/zoltan_fehervari_52b16d1d/lead-vs-manager-59ag | lead, manager, teamleadership, management | [Lead vs Manager](https://bluebirdinternational.com/lead-vs-manager/) in the workplace: Who is who?

## Distinct Paths in Team Leadership

The distinction between leads and managers is marked by their focus and scope of authority.

**Leads** go deep into technical details, steering projects with their expertise.

**Man... | zoltan_fehervari_52b16d1d |

1,899,083 | Exploring Angular 18’s RedirectCommand class and @let block | Written by Lewis Cianci✏️ In a single day, 385,000 babies are born, the sun evaporates over a... | 0 | 2024-06-26T17:19:20 | https://blog.logrocket.com/exploring-angular-18-redirectcommand-class-let-block | angular, webdev | **Written by [Lewis Cianci](https://blog.logrocket.com/author/lewiscianci/)✏️**

In a single day, 385,000 babies are born, the sun evaporates over a trillion tons of water off the surface of the ocean, and fifteen new stable versions of Angular are released. Okay, that’s an exaggeration (not the babies and evaporation ... | leemeganj |

1,901,647 | #amazon#nail | Best nail design. Click here.... https://t.ly/C9Mrt | 0 | 2024-06-26T17:16:42 | https://dev.to/shahin1310/amazonnail-2dki | Best nail design.

Click here....

[](url)https://t.ly/C9Mrt | shahin1310 | |

1,901,645 | Adding ESP to supercharge your Django Email 🚀 | Whether you are trying to build your own SaaS or building a project for a client, it's very important... | 0 | 2024-06-26T17:16:18 | https://foxcraft.hashnode.dev/how-to-add-email-service-provider-to-django | django, webdev, djangoemail, email | Whether you are trying to build your own SaaS or building a project for a client, it's very important to have email integration for sending invoices, newsletters, updates and more.

Using Django's default SMTP email setup is not scalable: emails hit per-day limits or end up in spam folders.

[Email Service Prov... | paul_freeman |

1,901,639 | Even Server-Side TypeScript Needs the Option to Avoid Asynchronous Processing | I am developing a TypeScript ORM library called Accel Record. Unlike other TypeScript/JavaScript ORM... | 27,598 | 2024-06-26T17:15:11 | https://dev.to/koyopro/even-server-side-typescript-needs-the-option-to-avoid-asynchronous-processing-1opm | typescript, javascript, node | I am developing a TypeScript ORM library called [Accel Record](https://www.npmjs.com/package/accel-record).

Unlike other TypeScript/JavaScript ORM libraries, Accel Record adopts a synchronous API instead of an asynchronous API.

In the process of examining the ORM interface, we compared the advantages and disadvantages... | koyopro |

1,901,644 | Top 10 Reasons that Slow Down Your Woocommerce Site | A fast website is key for any online store's success. This is especially true for WooCommerce... | 0 | 2024-06-26T17:14:31 | https://dev.to/ayeshamehta/top-10-reasons-that-slow-down-your-woocommerce-site-34i2 | technology, woocommerce, development |

A fast website is key for any online store's success. This is especially true for WooCommerce sites. Here speed directly impacts user experience and sales. In the long run it will affect your revenue.

Unfortunatel... | ayeshamehta |

1,901,657 | Free Data Analysis Courses: Excel and Power BI | Develop your Data Analysis skills and boost your career at any company with the... | 0 | 2024-06-28T13:38:05 | https://guiadeti.com.br/cursos-analise-de-dados-gratuitos-excel-power-bi/ | cursogratuito, analisededados, cursosgratuitos, dados | ---

title: Free Data Analysis Courses: Excel and Power BI

published: true

date: 2024-06-26 17:13:45 UTC

tags: CursoGratuito,analisededados,cursosgratuitos,dados

canonical_url: https://guiadeti.com.br/cursos-analise-de-dados-gratuitos-excel-power-bi/

---

Develop your Data Analysis skills and boost... | guiadeti |

1,901,635 | Understanding Kubernetes Pods and YAML Fundamentals | Hello everyone, welcome back to my blog series on CKA 2024. In this seventh entry of the series, we'll... | 0 | 2024-06-26T17:10:12 | https://dev.to/jensen1806/understanding-kubernetes-pods-and-yaml-fundamentals-534g | kubernetes, docker, cka, containers | Hello everyone, welcome back to my blog series on CKA 2024. In this seventh entry of the series, we'll be diving deep into Kubernetes Pods and the basics of YAML, which is the primary language used in Kubernetes configurations. We will also explore the concept of Pods in Kubernetes, setting the stage for the hands-on ex... | jensen1806 |

1,901,630 | Day 3 #Shell script(Advance) | Hey Guys! Here is another shell script document for you with advance shell script code. Link ---... | 0 | 2024-06-26T17:06:26 | https://dev.to/dev_roy/day-3-shell-scriptadvance-1850 | Hey Guys!

Here is another shell script document for you, with advanced shell script code.

Link --- https://medium.com/@devroypartner/shell-script-chapter-3-advance-56418bbf7f98

#Shellscript

#Aws

#CloudComputing

#DevOps

| dev_roy | |

1,901,628 | Armored Athletes: The Wonders of Rhinoceros Beetles | In the realm of insects, size and strength reign supreme for some. Enter the rhinoceros beetles, a... | 0 | 2024-06-26T17:02:53 | https://dev.to/newmanartdesigns/armored-athletes-the-wonders-of-rhinoceros-beetles-5g5e | rhinocerosbeetles, beetles, rhinobeetles, newmanartdesigns | In the realm of insects, size and strength reign supreme for some. Enter the rhinoceros beetles, a subfamily of scarab beetles boasting impressive physiques and herculean feats. These magnificent creatures are not just captivating to look at; they're living testaments to the wonders of evolution. Chalcosoma caucasus (R... | newmanartdesigns |

1,899,778 | 🔍 Dive into CSS Anchor Positioning – Let's Hang! | Hey 👋 This weeks newsletter is packed full of great reads and resources here's a quick look: 🔗 No... | 0 | 2024-06-26T17:00:00 | https://dev.to/adam/dive-into-css-anchor-positioning-lets-hang-5eob | css, ux, git, html |

**Hey** 👋 This week's newsletter is packed full of great reads and resources. Here's a quick look:

🔗 No more 404s – smarter URL handling

🧩 Pure CSS Nesting & BEM Modifiers

⚔️ Master auto transitions in CSS

Enjoy & stay inspired 👋 - Adam at Unicorn Club.

---

## 📬 Want More? Subscribe to Our Newsletter!

Ge... | adam |

1,901,626 | SEO and Social Media Marketing Services | Introduction In the digital age, having a strong online presence is essential for... | 0 | 2024-06-26T16:59:56 | https://dev.to/theranking_geeks/seo-and-social-media-marketing-services-5cgm | seo, searchengineoptimization | ## Introduction

In the digital age, having a strong online presence is essential for businesses of all sizes. Two of the most effective strategies for achieving this are **[SEO and Social Media Marketing Services](https://therankinggeeks.ai/services/website-seo/)**. Both SEO and SMM play crucial roles in driving traff... | theranking_geeks |

1,901,625 | Build A Currency Converter in ReactJS | Best Beginner ReactJS Project | Are you new to ReactJS and looking for a project to enhance your skills? Building a currency... | 0 | 2024-06-26T16:58:54 | https://www.codingnepalweb.com/build-currency-converter-project-reactjs/ | webdev, react, javascript, beginners |

Are you new to ReactJS and looking for a project to enhance your skills? Building a currency converter using ReactJS and CSS is an excellent way to do just that. This project reinforces fundamen... | codingnepal |

1,901,624 | Could not locate module in test using @app | I'm trying to work with tests in NestJS now and I couldn't move forward due to a problem with the... | 0 | 2024-06-26T16:58:39 | https://dev.to/fgsl/could-not-locate-module-in-test-using-app-2im8 | I'm trying to work with tests in NestJS now and I couldn't move forward due to a problem with the location of the modules.

The application was already responding, the problems started when I tried to run the tests.

The first try gave me several errors like this:

```

Cannot find module 'src/common/dto/paginated-respon... | fgsl | |

1,901,442 | How I started to code in 1991 ? | Hey everyone! Amir here from Alexandria, Egypt. Today, I want to take you on a nostalgic journey back... | 0 | 2024-06-26T16:57:29 | https://dev.to/bekbrace/atari-800xl-1991-coding-and-father-son-moments-5846 | coding, programming, codenewbie, watercooler | Hey everyone! Amir here from Alexandria, Egypt. Today, I want to take you on a nostalgic journey back to my childhood, where the Atari 800XL and my dad, Samuel, played starring roles. This isn't just a story about a computer; it's about the joy of learning, the excitement of discovery, and the special bond between a fa... | bekbrace |

1,901,623 | CONCEPTS OF CLOUD COMPUTING | virtualization? Virtualization is a tech tools that makes use of the host OS of a single computer... | 0 | 2024-06-26T16:57:27 | https://dev.to/emerivic/concepts-of-cloud-computing-kkn | cloudcomputing, virtualmachine | - virtualization?

Virtualization is a technology that uses the host OS of a single computer to create multiple layers of guest OSes. This is done with the help of virtualization software known as a hypervisor. The guest OSes created can take the form of servers, networks, and storage, depending on the re... | emerivic |

1,901,621 | Exploring OpenAI: Revolutionizing Industries with Artificial Intelligence | Artificial Intelligence (AI) has rapidly evolved over the past decade, transforming various sectors... | 0 | 2024-06-26T16:52:31 | https://dev.to/secretsauce/exploring-openai-revolutionizing-industries-with-artificial-intelligence-4pgm | Artificial Intelligence (AI) has rapidly evolved over the past decade, transforming various sectors and offering innovative solutions to complex problems. Among the leading organizations at the forefront of AI development is OpenAI. This research lab is dedicated to ensuring that artificial general intelligence (AGI) b... | secretsauce | |

1,901,619 | CREATING AN IOT HUB IN AZURE PORTAL | The aim of this walkthrough is to create an IoT hub in Azure portal and configure the hub to... | 27,629 | 2024-06-26T16:48:30 | https://dev.to/aizeon/creating-an-iot-hub-in-azure-portal-k02 | azure, tutorial, iot, raspberrypi | The aim of this walkthrough is to create an IoT hub in Azure portal and configure the hub to authenticate a connection to an IoT device using the Raspberry Pi device simulator.

## **PREREQUISITE**

- Working computer

- Internet connection

- Microsoft Azure account + active subscription

## **PROCEDURE**

### **LOCATE ... | aizeon |

1,901,616 | Banking management system | Hello guys I'm here to notify that I'm going to create a banking management system with the help of... | 0 | 2024-06-26T16:44:46 | https://dev.to/rahul_singh_28e35238348c4/banking-management-system-ij0 | java, sql | Hello guys, I'm here to announce that I'm going to create a banking management system with the help of Java and its concepts, i.e. Swing and AWT. For the database I have used SQL. | rahul_singh_28e35238348c4 |

1,901,612 | EVM Reverse Engineering Challenge 0x00 | It's been a while since I don't blog, so I think it's time. I've been writing an Sandwich bot, mostly... | 27,871 | 2024-06-26T16:43:31 | https://gealber.com/evm-reverse-challenge-0x00 | ethereum, evm |

It's been a while since I last blogged, so I think it's time. I've been writing a sandwich bot, mostly at night after work, which according to my forecast will take me my whole life :). In case you don't know what a sandwich bot is, let me tell you: it is not a robot that makes sandwiches, though that would be awesome. Just go... | gealber |

1,901,614 | PROJECT ENIGMA -> NO. 1 - ZIP-ZAP (Personalized URL file sharing app) | Posting this as an update for Buildspace Night & Weekend S5. Update No. 1 -> Day 1(defining of... | 0 | 2024-06-26T16:43:09 | https://dev.to/star173/project-enigma-no-1-zip-zap-personalized-url-file-sharing-app-3c1d | buildspace, nws5, opensource, discuss | Posting this as an update for Buildspace Night & Weekend S5.

Update No. 1 -> Day 1(defining of Problem) & Day 2(working out the layout)

## Problem

I constantly need to move files around between my various machines. I often use the cloud, but as my accounts have 2FA, it takes a bit of time to log in. Hence when in... | star173 |

1,901,646 | Badlucky escaped! | Creating games to promote the js13kGames competition started in 2014 when Triskaidekaphobia was... | 0 | 2024-06-26T17:16:36 | https://medium.com/js13kgames/badlucky-escaped-0bb042146106 | competition, floodescape, js13k, badlucky | ---

title: Badlucky escaped!

published: true

date: 2024-06-26 16:42:34 UTC

tags: competition,floodescape,js13k,badlucky

canonical_url: https://medium.com/js13kgames/badlucky-escaped-0bb042146106

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/zu5euh70f959z61cluyv.png

---

Creating games to promote... | end3r |

1,901,611 | Beyond Genetics: Exploring Thick Hair Causes and Solutions That Work | Beyond Genetics: Exploring Thick Hair Causes and Solutions That Work... | 0 | 2024-06-26T16:39:40 | https://dev.to/amanda_drake/beyond-genetics-exploring-thick-hair-causes-and-solutions-that-work-577d | Beyond Genetics: Exploring Thick Hair Causes and Solutions That Work

https://gro.fullyvital.com/dg-serum/#aff=nasr_eldin | amanda_drake | |

1,901,607 | Troubleshooting CORS Issues in Express.js: A Simple Misconfiguration | Recently, while working on a Cross-Origin Resource Sharing (CORS) issue, I encountered a perplexing... | 0 | 2024-06-26T16:36:11 | https://dev.to/sepiyush/troubleshooting-cors-issues-in-expressjs-a-simple-misconfiguration-2e4k | cors, backend, node, express | Recently, while working on a Cross-Origin Resource Sharing (CORS) issue, I encountered a perplexing problem. Despite using the `cors` package with Express.js, my API was not sending the `Access-Control-Allow-Origin` header. This is a common issue developers face, so I decided to share my experience and solution.

### T... | sepiyush |

1,901,605 | Recommending an expert | There are a lot of untrue recommendations and its hard to tell who is legit. If you have lost money... | 0 | 2024-06-26T16:34:55 | https://dev.to/martha_gillies_d870de848a/recommending-an-expert-18pf | There are a lot of untrue recommendations and its hard to tell who is legit. If you have lost money to scam contact (zattrecoverypro1 ⓐ G mail Dot Com) they will surely help you out. Took me long to find them

| martha_gillies_d870de848a | |

1,901,604 | Decoding Demystified : How LLMs Generate Text - III | Welcome back to our series on Generative AI and Large Language Models (LLMs). In the previous blogs,... | 0 | 2024-06-26T16:34:14 | https://dev.to/mahakfaheem/decoding-demystified-how-llms-generate-text-iii-3a0d | beginners, learning, ai, community | Welcome back to our series on Generative AI and Large Language Models (LLMs). In the previous blogs, we explored the foundational concepts and architectures behind LLMs, as well as the critical roles of prompting and training. Now, we will delve into the process of generating text with LLMs, commonly referred to as dec... | mahakfaheem |

1,901,603 | Orangeville Tree Service | Orangeville Tree Service employs certified arborists to maintain the health and beauty of your trees.... | 0 | 2024-06-26T16:32:45 | https://dev.to/orangeville_treeservice_/orangeville-tree-service-4n0o | treeservices, treecare, treeremoval, treetrimming | Orangeville Tree Service employs certified arborists to maintain the health and beauty of your trees. [Tree Services Orangeville ON](https://www.orangevilletreeservice.ca/) offer services like trimming, removal, and stump grinding, ensuring the job is done efficiently and safely, prioritizing customer satisfaction in O... | orangeville_treeservice_ |

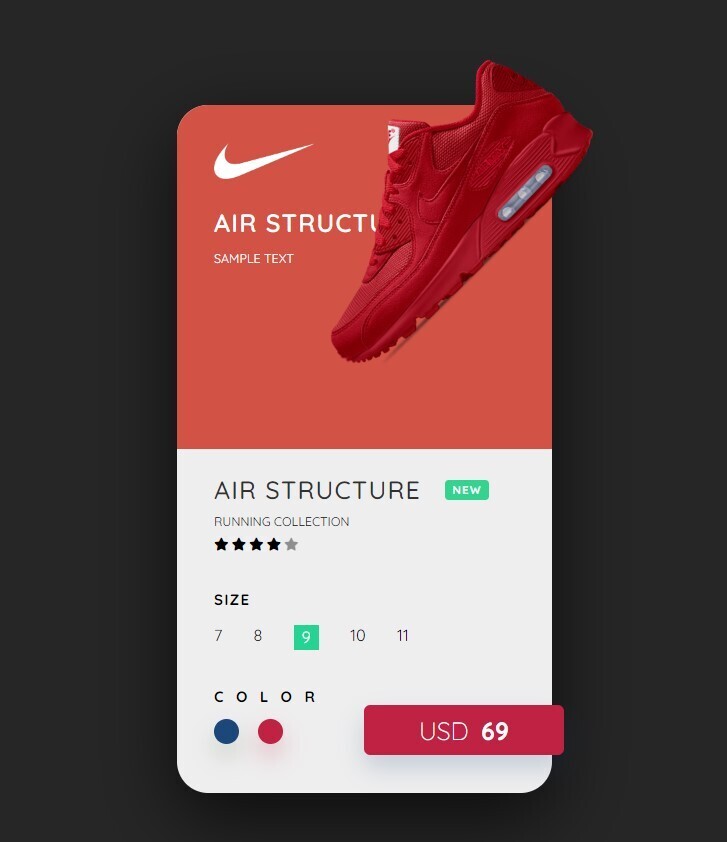

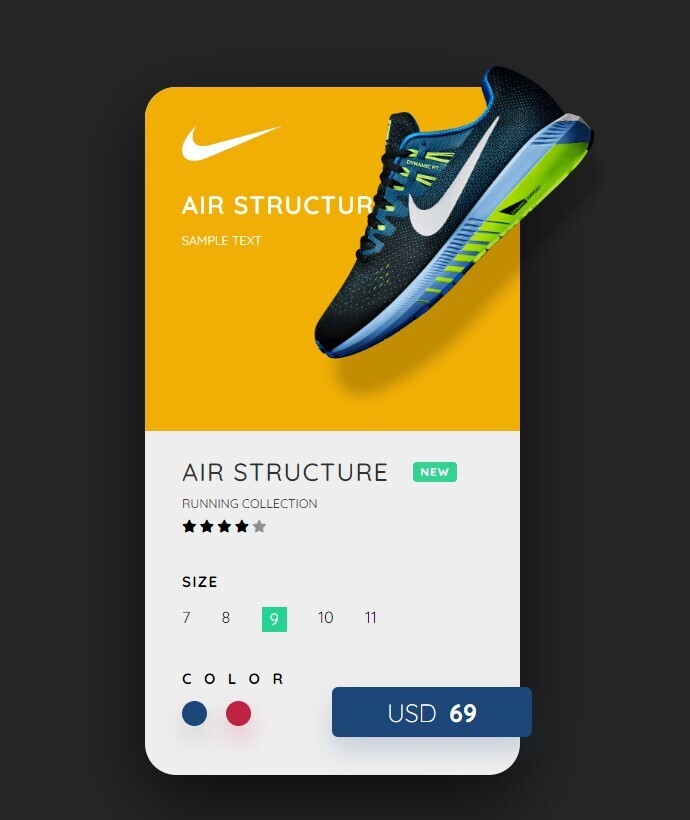

1,901,602 | 🌟 Project 2: Nike Product Card - Part of My 50 Projects Challenge 🏆 | Hello dev.to community! 👋 I'm excited to share the second project of my 50 projects challenge. This... | 0 | 2024-06-26T16:31:06 | https://dev.to/bytesage/project-2-nike-product-card-part-of-my-50-projects-challenge-2mje | webdev, javascript, beginners, programming |

Hello dev.to community! 👋 I'm excited to share the second project of my 50 projects challenge. This project is... | bytesage |

1,901,601 | Concurrency and Parallelism in PHP | Concurrency and parallelism are essential concepts in modern programming, allowing applications to... | 0 | 2024-06-26T16:27:25 | https://dev.to/francescoagati/concurrency-and-parallelism-in-php-6fc | php, concurrency, parallelism, thread | Concurrency and parallelism are essential concepts in modern programming, allowing applications to perform multiple tasks simultaneously, either through interleaved execution (concurrency) or simultaneous execution (parallelism). PHP, primarily known for its synchronous execution model, has evolved to support these par... | francescoagati |

1,901,600 | Behind `NoInfer` in TypeScript | Disclaimer: As of now, I have not found documentation that explicitly supports the type inference... | 0 | 2024-06-26T16:23:33 | https://dev.to/allieschen/behind-noinfer-in-typescript-319 | typescript | **Disclaimer**: As of now, I have not found documentation that explicitly supports the type inference mechanism discussed in this article. This is based on my understanding and experience with TypeScript. Readers are encouraged to explore further and refer to the official TypeScript documentation for additional informa... | allieschen |

1,901,570 | Rails Migration Tips and Examples (not a full step by step) | Generates the model and migration, but also all of the RCAVs for index, show, create, update, and... | 0 | 2024-06-26T16:21:43 | https://dev.to/mayas1111/rails-migration-tips-and-examples-not-a-full-step-by-step-3g1d | migration, ruby, database, generate | Generates the model and migration, but also all of the RCAVs for index, show, create, update, and destroy.

- The model name must be singular.

- Separate column names and datatypes with colons (NO SPACES).

- Separate name:datatype pairs with spaces (NO COMMAS).

```

rails generate draft:resource <MODEL_NAME_SINGULAR> <CO... | mayas1111 |

1,889,165 | Java Fundamentals, Continued | Object-oriented programming Object-oriented programming (OOP) is central to Java, which... | 0 | 2024-06-15T02:24:21 | https://dev.to/devsjavagirls/continuacao-fundamentos-592n | **Object-oriented programming**

Object-oriented programming (OOP) is central to Java, which adopts this methodology to handle the growing complexity of programs, organizing them around data rather than just code, and using key concepts such as encapsulation, polymorphism, and inheritance to create ... | devsjavagirls | |

1,900,608 | What is a Ledger and Why Are Names Harder? | Portuguese Version What is Ledger Series What is a Ledger and why you need to learn about... | 0 | 2024-06-26T16:20:54 | https://dev.to/woovi/what-is-a-ledger-and-why-are-names-harder-25jn | webdev, javascript, programming | [Portuguese Version](https://daniloab.substack.com/p/o-que-e-ledger-e-por-que-nomes-sao)

## What is Ledger Series

1. [What is a Ledger and why you need to learn about it?](https://dev.to/woovi/what-is-ledger-and-why-does-it-need-idempotence-18n9)

2. [What is Ledger and why does it need Idempotence?](https://dev.to/woov... | daniloab |

1,900,377 | Get to know Xperience by Kentico: Unlocking performance insights with MiniProfiler | In today's fast-paced digital world, performance is a critical factor in ensuring a seamless user... | 0 | 2024-06-26T16:19:47 | https://dev.to/michael419/get-to-know-xperience-by-kentico-unlocking-performance-insights-with-miniprofiler-2b2c | kentico, xperience, miniprofiler | In today's fast-paced digital world, performance is a critical factor in ensuring a seamless user experience, and as developers, we constantly seek tools that help us optimize and monitor the performance of our applications.

One such powerful tool is MiniProfiler, and when integrated with Xperience by Kentico, it offe... | michael419 |

1,901,510 | Advanced Angular Forms: Dynamic Fields & Custom Validators | Introduction Forms are a fundamental part of web applications, allowing users to input and... | 0 | 2024-06-26T16:19:05 | https://dev.to/wirefuture/advanced-angular-forms-dynamic-fields-custom-validators-3cn0 | webdev, angular, javascript, typescript | ## Introduction

Forms are a fundamental part of web applications, allowing users to input and submit data. Angular's reactive forms make form handling robust and flexible, but more complex, dynamic forms call for advanced techniques. In this article, we'll delve into advanced Angular ... | tapeshm |

1,901,509 | How to Choose the Best Game Site for Your Needs | Choosing the best slot site for your needs requires careful consideration of several factors to... | 0 | 2024-06-26T16:16:51 | https://dev.to/nehasinghjk/how-to-choose-the-best-game-site-for-your-needs-29dd | games | Choosing the best slot site for your needs requires careful consideration of several factors to ensure a safe, enjoyable, and rewarding experience. With countless online slot sites available, it can be overwhelming to navigate through the options.

Here are some essential tips to help you make an informed decision:

<h2... | nehasinghjk |

1,901,502 | Improving Efficiency in Defect Resolution - Your Input Needed | 👀 Are you or your team spending more time debugging defects than building game-changing... | 0 | 2024-06-26T16:03:53 | https://dev.to/ericwood73/improving-efficiency-in-defect-resolution-your-input-needed-50mn | productivity, softwaredevelopment, defectresolution, debugging | ## 👀 Are you or your team spending more time debugging defects than building game-changing features?

I'm on a mission to **bridge the gaps** holding us back. I’m creating a program to boost efficiency and effectiveness in defect resolution. But I need YOUR insights to ensure it delivers the greatest value.

### 📝 [Joi... | ericwood73 |

1,875,668 | Join us for the Wix Studio Challenge with Special Guest Judge Ania Kubów: $3,000 in Prizes! | We are so excited to announce our first partnered challenge with Wix. Running through July 07 July... | 0 | 2024-06-26T16:16:40 | https://dev.to/devteam/join-us-for-the-wix-studio-challenge-with-special-guest-judge-ania-kubow-3000-in-prizes-3ial | devchallenge, wixstudiochallenge, webdev, javascript | ---

title: Join us for the Wix Studio Challenge with Special Guest Judge Ania Kubów: $3,000 in Prizes!

published: true

description:

tags: devchallenge, wixstudiochallenge, webdev, javascript

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/cz0a4xd2f6x923a6jeyv.png

# Use a ratio of 100:42 for best... | thepracticaldev |

1,901,507 | Colima in macOS | Hey there! If you're like me, trying to set up colima on your M1 Mac and getting stuck with these... | 0 | 2024-06-26T16:16:23 | https://dev.to/netoht/colima-in-macos-44g | Hey there! If you're like me, trying to set up colima on your M1 Mac and getting stuck with these pesky proxy issues, self-signed certificates and volumes - don't sweat it. I've got a fix for you.

Once we’ve wrapped up the setup, we’ll give it a whirl using docker compose and two images: mongodb and redis. These two ... | netoht | |

1,901,508 | How to Install Devise | Use Devise for Users Install rails generate devise:install Enter fullscreen mode ... | 0 | 2024-06-26T16:15:20 | https://dev.to/mayas1111/how-to-install-devise-28pd | devise, users, programming, ruby | Use Devise for Users

Install

```

rails generate devise:install

```

Change :delete to :get in the Devise initializer file

```

# config/initializers/devise.rb, Line 269

config.sign_out_via = :get

```

Set a root route in routes

```

# config/routes.rb

root "boards#index"

```

Use our new generator supplied by the gem to create the Use... | mayas1111 |

1,901,505 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-26T16:07:16 | https://dev.to/thesexillwr933/buy-verified-cash-app-account-1a5a | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | thesexillwr933 |

1,901,503 | Day 30 of my progress as a vue dev | About today Today was a decent day,I ended up finishing my last landing page and spent a decent... | 0 | 2024-06-26T16:05:42 | https://dev.to/zain725342/day-30-of-my-progress-as-a-vue-dev-5d45 | webdev, vue, typescript, tailwindcss | **About today**

Today was a decent day. I finished my last landing page and spent a good amount of time working on my job project, so I think it was satisfactory.

**What's next?**

I will be ending this series here as it sort of got a little directionless and did not entirely serve the purpose I started it fo... | zain725342 |

1,897,705 | [DAY 63-65] I built a markdown previewer using React | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I... | 27,380 | 2024-06-26T16:00:00 | https://dev.to/thomascansino/day-63-65-i-built-a-markdown-previewer-18a4 | learning, react, javascript, webdev | Hi everyone! Welcome back to another blog where I document the things I learned in web development. I do this because it helps me retain the information and concepts, since writing them down is a form of active recall.

On days 63-65, after completing the first project in the Front End Development Libraries course, I moved on to the s... | thomascansino |

1,901,500 | Setting Up a New Angular Project and Installing Dependencies | Created by Google, Angular is a popular, powerful framework for building rich, dynamic, and snappy... | 0 | 2024-06-26T15:59:25 | https://dev.to/orases1/setting-up-a-new-angular-project-and-installing-dependencies-1a4o | webdev, programming, angular | Created by Google, [Angular](https://angular.io/) is a popular, powerful framework for building rich, dynamic, and snappy web applications.

Angular stands out in modern web development due to its extensive toolset, which includes powerful features such as [two-way data binding](https://angular.io/guide/two-way-bindin... | orases1 |

1,901,497 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-26T15:56:13 | https://dev.to/rcukmiller65/buy-verified-cash-app-account-1kdm | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | rcukmiller65 |

1,901,496 | TIL: The Basics of GitHub | "Every morning we are born again. What we do today is what matters most." — Buddha When I began... | 0 | 2024-06-26T15:56:05 | https://dev.to/ghewitt95/til-the-basics-of-github-307a | webdev, beginners, todayilearned, github | > "Every morning we are born again. What we do today is what matters most." — Buddha

When I began writing blog posts earlier this year, I would start off with a quote that reflected my current outlook on life. I think it would be a good idea to continue that tradition here.

Today I learned how to store a static webpa... | ghewitt95 |

1,901,495 | Discover Your Path to a Healthier Lifestyle with Happy Dieter | In today's hectic world, maintaining a healthy diet can feel impossible. Between work, family, and... | 0 | 2024-06-26T15:55:36 | https://dev.to/alphapik/discover-your-path-to-a-healthier-lifestyle-with-happy-dieter-4ool | <p>

In today's hectic world, maintaining a healthy diet can feel impossible.

Between work, family, and social commitments, who has the time?

That's where <a href="https://www.happydieter.net/" target="_blank">Happy Dieter</a> steps in.

</p>

<h3>Real Questions, Real Solutions</h3>

<p>

Ever wondered how to balance a bu... | alphapik | |

1,888,505 | This Week In Python | Fri, June 14, 2024 This Week in Python is a concise reading list about what happened in the past... | 0 | 2024-06-14T11:42:37 | https://bas.codes/posts/this-week-python-078 | thisweekinpython, python |

**Fri, June 14, 2024**

This Week in Python is a concise reading list about what happened in the past week in the Python universe.

## Python Articles

- [Optimal SQLite settings for Django](https://gcollazo.com/optimal-sqlite-settings-for-django/)

- [How much math can you do in 10 lines of Python](https://word... | bascodes |

1,901,494 | FastAPI Beyond CRUD Part 14 - Model And Schema Relationships (One To Many SQLModel) | In this video, we leverage SQLModel’s capabilities to demonstrate how to effectively manage one to... | 0 | 2024-06-26T15:54:49 | https://dev.to/jod35/fastapi-beyond-crud-part-14-model-and-schema-relationships-one-to-many-sqlmodel-335c | fastapi, api, python, webdev | In this video, we use SQLModel’s capabilities to demonstrate how to manage one-to-many relationships between tables. We also explore serializing data model objects so they can be transformed into API-friendly formats such as JSON.

{%youtube H-7NNblkzrg%} | jod35 |

1,901,493 | Introduction to Azure with .NET Examples | Microsoft Azure is Microsoft's cloud platform offering powerful tools for developers and businesses... | 0 | 2024-06-26T15:47:09 | https://dev.to/adrianbailador/introduction-to-azure-with-net-examples-3ccc | azure, dotnet, cloudcomputing, csharp | Microsoft Azure is Microsoft's cloud platform offering powerful tools for developers and businesses to efficiently and securely build, deploy, and manage applications. In this article, we'll explore some key Azure services and provide practical .NET examples to illustrate their use.

## Discovering Azure

### What is A... | adrianbailador |

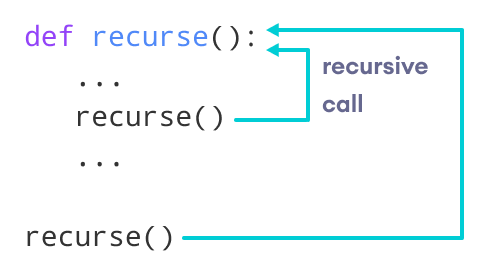

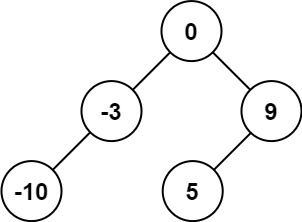

1,901,441 | alternate way of doing word split/phrase segmentation in python | Doing word split with recursion felt a bit complex to me , so I tried to do it in an easier... | 0 | 2024-06-26T15:43:46 | https://dev.to/alexey_27/alternate-way-of-doing-word-splitphrase-segmentation-in-python-pj1 | algorithms, recursion, python, learning | ## **Doing** word split with recursion felt a bit complex to me, so I tried to do it in an easier way.
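
To make the idea of a non-recursive word split concrete, here is a minimal sketch of one possible iterative approach (a small dynamic-programming table). It is not necessarily the method used later in this post: the function name `word_split` and its two parameters only mirror the signature shown in the post, while the body, the `vocabulary` set, and the `best` table are assumptions made for illustration.

```python
def word_split(phrase, list_of_words):
    """Return one way to split `phrase` into entries of `list_of_words`, or None."""
    vocabulary = set(list_of_words)
    # best[i] holds a list of words that spells phrase[:i], or None if no split exists
    best = [None] * (len(phrase) + 1)
    best[0] = []  # the empty prefix needs no words
    for end in range(1, len(phrase) + 1):
        for start in range(end):
            piece = phrase[start:end]
            # extend a known split of phrase[:start] with a vocabulary word
            if best[start] is not None and piece in vocabulary:
                best[end] = best[start] + [piece]
                break
    return best[len(phrase)]


print(word_split("themanran", ["the", "ran", "man"]))    # ['the', 'man', 'ran']
print(word_split("themanran", ["clown", "ran", "man"]))  # None
```

The table replaces the call stack: each position in the phrase is solved once, so there is no recursion depth to reason about.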

---

- The Hard Way (recursion) --

```

def word_split(phrase,list_of_words, output = None... | alexey_27 |

1,901,490 | EDR vs. XDR vs. SIEM vs. MDR vs. SOAR | The world of Cybersecurity is buzzing with tech jargon and abbreviations. Many enterprises prefer to... | 0 | 2024-06-26T15:43:43 | https://dev.to/sachindra149/edr-vs-xdr-vs-siem-vs-mdr-vs-soar-2blp | soar, cybersecurity, technology, detectionandresponse | The world of Cybersecurity is buzzing with tech jargon and abbreviations. Many enterprises prefer to use newer approaches to combat the ever-evolving security risks and attack vectors. To counter threats, there are several tools and solutions including **SIEM** (Security Information and Event Management), **MDR** (Mana... | sachindra149 |

1,901,484 | WebSocket : Create your First WebSocket connection | What is Backend Communication? When One Backend system interacts with other Backend systems, Its... | 0 | 2024-06-26T15:32:14 | https://dev.to/nirvanjha2004/websocket-create-your-first-websocket-connection-47o2 | webdev, javascript, beginners, tutorial | **What is Backend Communication?**

When one backend system interacts with other backend systems, it's called backend communication. In the real world, there are many backend systems, not just one. Let's see some examples:

1. For example, for a website like PayTM, whenever you do a transaction, the following might happen... | nirvanjha2004 |

1,901,488 | Blog: Creating, Modifying, and Destroying an EC2 Instance and Hosting a Static Website Using S3 in AWS with Terraform | Introduction Hello Friends! As part of my internship, I’ve been exploring the capabilities of... | 0 | 2024-06-26T15:42:21 | https://dev.to/jeshlin_pv_1628a63168e90/blog-creating-modifying-and-destroying-an-ec2-instance-and-hosting-a-static-website-using-s3-in-aws-with-terraform-11md | cloudcomputing, aws, cloudskills, blog | **Introduction**