id (int64) | title (string) | description (string) | collection_id (int64) | published_timestamp (timestamp[s]) | canonical_url (string) | tag_list (string) | body_markdown (string) | user_username (string) |

|---|---|---|---|---|---|---|---|---|

1,900,043 | A feature-rich and cheaper developer tool than Retool | In the world of low-code platforms, you’ve probably heard of Retool and DronaHQ. Both are stellar... | 0 | 2024-06-25T12:08:58 | https://dev.to/aaikansh_22/a-feature-rich-and-cheaper-developer-tool-than-retool-c46 | development, developers, lowcode, softwaredevelopment | In the world of low-code platforms, you’ve probably heard of Retool and DronaHQ. Both are stellar tools for building internal applications. But after using both for some time now, I’ve come to appreciate what DronaHQ brings to the table. I am **pleased with the UI building capabilities, visual workflow builder, and eng... | aaikansh_22 |

1,900,055 | Raspberry Pi: What you need to know | What is Raspberry Pi, and how is it different from regular computers? How can I take advantage of it?... | 0 | 2024-06-25T12:04:08 | https://dev.to/jane_white_74334c599bfafa/raspberry-pi-what-you-need-to-know-lhc | raspberrypi, iot, raspberryboards, homeautomation | What is Raspberry Pi, and how is it different from regular computers? How can I take advantage of it? You may have questions like these about Raspberry Pi. Raspberry Pi is a single-board computer that offers almost all the functionality of a regular computer, and the exciting part is that it is small and handy. But how can su... | jane_white_74334c599bfafa |

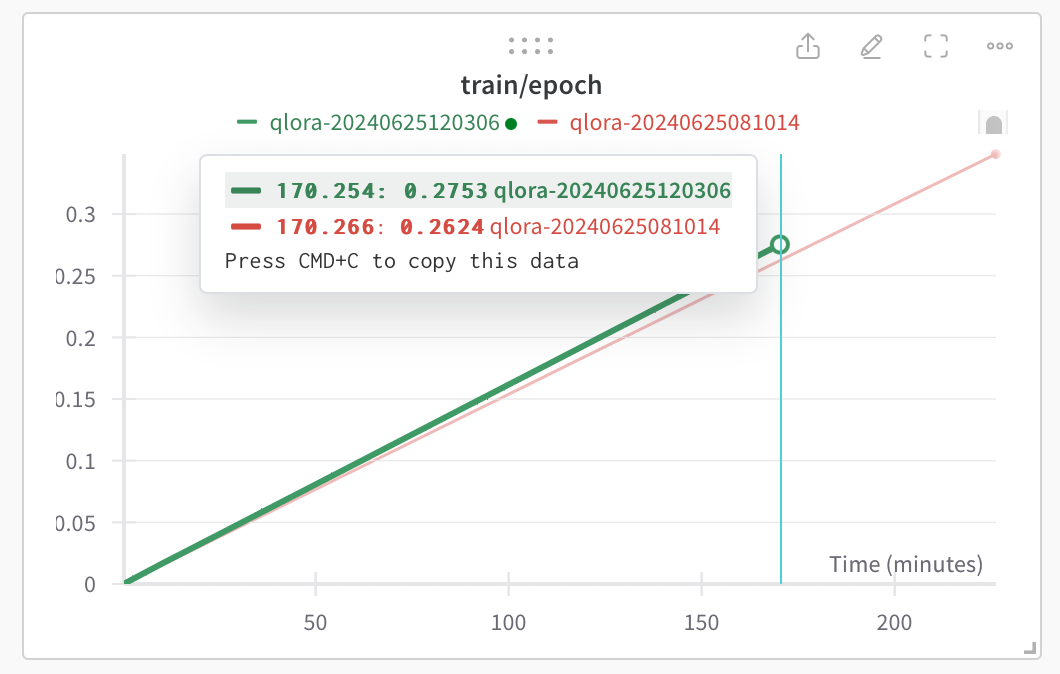

1,900,047 | 4090 - ECC ON vs ECC OFF | Fine-tuning an LLM via Hugging Face TRL/Torch: ECC On: 2,22 epochs/day ECC Off: 2,33 epochs/day... | 0 | 2024-06-25T12:03:18 | https://dev.to/maximsaplin/4090-ecc-on-vs-ecc-off-36m4 | ai, machinelearning, llm, hardware | Fine-tuning an LLM via Hugging Face TRL/Torch:

- ECC On: 2,22 epochs/day

- ECC Off: 2,33 epochs/day [+5%]

3D Mark TimeSpy Graphics Score:

- ECC On: 36400

- ECC Off: 37000 [+1,6%]

I have noticed the "Change EC... | maximsaplin |

1,900,054 | Passive Memristor Market, Global Outlook and Forecast 2024-2030 | The global Passive Memristor market was valued at US$ million in 2023 and is projected to reach US$... | 0 | 2024-06-25T12:02:05 | https://dev.to/prajakta_pawar_e02edd9c38/passive-memristor-market-global-outlook-and-forecast-2024-2030-2i90 | The global Passive Memristor market was valued at US$ million in 2023 and is projected to reach US$ million by 2030, at a CAGR of % during the forecast period. The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market sizes.

The U.S. Market is Estimated at $ Million in 2023, While Ch... | prajakta_pawar_e02edd9c38 | |

1,900,053 | Timing Control Logic Control Boards Market, Global Outlook and Forecast 2024-2030 | The global Timing Control Logic Control Boards market was valued at US$ million in 2023 and is... | 0 | 2024-06-25T12:01:28 | https://dev.to/prajakta_pawar_e02edd9c38/timing-control-logic-control-boards-market-global-outlook-and-forecast-2024-2030-55j7 | The global Timing Control Logic Control Boards market was valued at US$ million in 2023 and is projected to reach US$ million by 2030, at a CAGR of % during the forecast period. The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market sizes.

The U.S. Market is Estimated at $ Million... | prajakta_pawar_e02edd9c38 | |

1,900,050 | Highlight.js copy button plugin | Highlight.js is a quick and easy tool for adding syntax highlighting to your code blocks, but one feature it... | 0 | 2024-06-25T12:00:14 | https://farazpatankar.com/p/highlight-js-copy-button-plugin | javascript, typescript, tutorial, webdev | [Highlight.js](https://highlightjs.readthedocs.io/en/latest/index.html) is a quick and easy tool for adding syntax highlighting to your code blocks, but one feature it lacks is a copy button for quickly copying the contents of a code block.

I use Highlight.js on my personal website and wanted this feature to be able to quickly ... | farazpatankar |

1,900,026 | useImperativeHandle hook | useImperativeHandle: This hook customizes the instance value that is exposed to parent components... | 0 | 2024-06-25T11:32:50 | https://dev.to/geetika_bajpai_a654bfd1e0/useimperativehandle-hook-1ghn | <u>useImperativeHandle:</u> This hook customizes the instance value that is exposed to parent components when using ref. It allows you to define methods on the child component that can be called from the parent component.

<u>forwardRef: </u>This is a React higher-order component that allows a parent component to direc... | geetika_bajpai_a654bfd1e0 | |

1,885,559 | Advanced Dependency Injection in Elixir with Rewire | In our last post, we explored how Dependency Injection (DI) is a powerful design pattern that can... | 27,591 | 2024-06-25T12:00:00 | https://blog.appsignal.com/2024/06/11/advanced-dependency-injection-in-elixir-with-rewire.html | elixir | In our last post, we explored how Dependency Injection (DI) is a powerful design pattern that can improve our ExUnit tests.

In this article, we will dive deeper into the topic of DI in Elixir, focusing on the Rewire library for Elixir projects.

We will cover Rewire's core concepts, how to get started with it, and pra... | allanmacgregor |

1,899,360 | Tutorial: Learn how to use the H2 Database with Spring Boot! 🤔 | In this tutorial, we’ll review an example application, written in the Groovy Programming... | 0 | 2024-06-25T11:59:22 | https://thospfuller.com/2024/06/14/h2-database-with-spring-boot/ | java, groovy, database, softwareengineering | **In this [tutorial](https://thospfuller.com/categories/tutorials/), we’ll review an example application, written in the [Groovy Programming Language](https://www.groovy-lang.org/), that demonstrates how to use the [H2 relational database](https://h2database.com/) ([H2 DB](https://h2database.com/) / [H2... | thospfuller |

1,900,052 | Exploring Next.js Middleware | What is Middleware in Next.js? Imagine that your web app is a nightclub, and middleware is the... | 0 | 2024-06-25T11:58:55 | https://dev.to/basimghouri/exploring-nextjs-middleware-3d78 | allah, prophet, faith, challenge | **What is Middleware in Next.js?**

Imagine that your web app is a nightclub, and middleware is the bouncer at the door. This bouncer decides who gets in based on their IDs. In Next.js, middleware acts as this gatekeeper for your app’s requests, letting you run code before the request completes. It’s perfect for tasks l... | basimghouri |

1,900,051 | PINNACLEINFOTECHSOLUTIONS | Need fast and accurate bulk drawing conversions? We offer CAD conversion services to transform your... | 0 | 2024-06-25T11:58:24 | https://dev.to/pinnacleinfotechsolutions/pinnacleinfotechsolutions-53ke |

Need fast and accurate bulk drawing conversions? We offer CAD conversion services to transform your PDFs, hand sketches, or 2D CAD drawings into clean, editable CAD files. Our experts ensure top quality at affordable rates.

We offer a wide range of BIM solutions to Architecture, Engineering & Construction (AEC) fir... | pinnacleinfotechsolutions | |

1,900,049 | Why should you hire a professional makeup artist in Bangalore? | Hiring a professional makeup artist in Bangalore can significantly enhance your appearance and... | 0 | 2024-06-25T11:57:53 | https://dev.to/amber_obrein_3f827c05a5d4/why-should-you-hire-a-professional-makeup-artist-in-bangalore-n43 | Hiring a professional makeup artist in Bangalore can significantly enhance your appearance and overall experience for any special occasion, particularly weddings. First and foremost, a skilled makeup artist brings expertise and knowledge of different makeup techniques suitable for various skin types and tones. Bangalor... | amber_obrein_3f827c05a5d4 | |

1,900,046 | Analogue Compression Load Cell Unit Market, Global Outlook and Forecast 2024-2030 | The global Analogue Compression Load Cell Unit market was valued at US$ million in 2023 and is... | 0 | 2024-06-25T11:55:38 | https://dev.to/prajakta_pawar_e02edd9c38/analogue-compression-load-cell-unit-market-global-outlook-and-forecast-2024-2030-293o | The global Analogue Compression Load Cell Unit market was valued at US$ million in 2023 and is projected to reach US$ million by 2030, at a CAGR of % during the forecast period. The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market sizes.

The U.S. Market is Estimated at $ Million... | prajakta_pawar_e02edd9c38 | |

1,900,045 | Pressure-Resistant Position Sensor Market, Global Outlook and Forecast 2024-2030 | The global Pressure-Resistant Position Sensor market was valued at US$ million in 2023 and is... | 0 | 2024-06-25T11:55:03 | https://dev.to/prajakta_pawar_e02edd9c38/pressure-resistant-position-sensor-market-global-outlook-and-forecast-2024-2030-5310 | The global Pressure-Resistant Position Sensor market was valued at US$ million in 2023 and is projected to reach US$ million by 2030, at a CAGR of % during the forecast period. The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market sizes.

The U.S. Market is Estimated at $ Million ... | prajakta_pawar_e02edd9c38 | |

1,900,044 | Essential Tips for Junior Developers: What Thousands of Code Reviews Taught Me | Background Over the last year or so, I reviewed over 2,000 merge requests from almost 50... | 0 | 2024-06-25T11:54:19 | https://dev.to/vnjogani/essential-tips-for-junior-developers-what-thousands-of-code-reviews-taught-me-2cga | webdev, python, programming, beginners | ## Background

Over the last year or so, I reviewed over 2,000 merge requests from almost 50 engineers, many of whom were junior engineers just starting their careers. After a while, I started to notice a pattern of frequently occurring issues in the code reviews. With some GPT-assisted analysis, I compiled the follow... | vnjogani |

1,900,042 | Optimize the Kubernetes Dev Experience By Creating Silos | Are silos bad? Is abstraction bad? What’s too much and too little? These are questions that... | 0 | 2024-06-25T11:53:08 | https://dev.to/thenjdevopsguy/optimize-the-kubernetes-dev-experience-by-creating-silos-77d | kubernetes, devops, docker, cloud | Are silos bad?

Is abstraction bad?

What’s too much and too little?

These are questions that engineers and leadership teams alike have been asking themselves for years. For better or for worse, there’s no absolute answer.

In this blog post, we’ll get as close to an answer as possible.

## Silos And Less Abstractions... | thenjdevopsguy |

1,900,041 | Creating Tomcat Threadpools for better throughput | We faced an issue in a front-facing Java Tomcat application in production. This application receives... | 0 | 2024-06-25T11:52:54 | https://dev.to/sumateja/creating-tomcat-threadpools-for-better-throughput-2l36 | java, tomcat, threadpools, springboot | We faced an issue in a front-facing Java Tomcat application in production. This application receives REST calls from an admin UI as well as from external customers calling the same REST endpoints.

## The Problem

There were two kinds of requests: GET-based calls and POST calls. The problem was that non c... | sumateja |

1,900,039 | DOM in JavaScript: The Backbone of Modern Web Development | Hello, fellow developers and IT professionals! Today, we’re diving into the Document Object Model, or... | 0 | 2024-06-25T11:47:40 | https://dev.to/gadekar_sachin/dom-in-javascript-the-backbone-of-modern-web-development-9i1 | javascript, programming, dom, learning |

Hello, fellow developers and IT professionals! Today, we’re diving into the Document Object Model, or DOM, and its significance in JavaScript. Whether you're a beginner or a seasoned pro, understanding the DOM is crucial for creating dynamic and interactive web applications. Let’s explore what the DOM is, why it’s ess... | gadekar_sachin |

1,900,038 | How Much Does it Cost to Hire a Software Development Team? | If you have software development requirements, hiring a dedicated team is an important factor that... | 0 | 2024-06-25T11:45:47 | https://dev.to/lucyzeniffer/how-much-does-it-cost-to-hire-a-software-development-team-1ac1 | If you have software development requirements, hiring a dedicated team can significantly help you manage them. Major parameters like project complexity, location, and experience level decide the overall budget of the software development, including the hiri... | lucyzeniffer | |

1,900,037 | Safety First: Ilya Sutskever Launches Safe Superintelligence Inc. Focused on Responsible AI | Sutskever Prioritizes Safety with New Venture: Safe Superintelligence Inc. The world of... | 0 | 2024-06-25T11:44:50 | https://dev.to/hyscaler/safety-first-ilya-sutskever-launches-safe-superintelligence-inc-focused-on-responsible-ai-4126 | ## Sutskever Prioritizes Safety with New Venture: Safe Superintelligence Inc.

The world of Artificial Intelligence (AI) is rapidly evolving, and concerns around its safe development are growing louder. Ilya Sutskever, a pioneering figure in AI research and former chief scientist at OpenAI, is taking a bold step toward... | suryalok | |

1,900,036 | How to Export Office 365 Emails to PST File? | Exporting emails from Office 365 to a PST file is a common task for users needing to create backups,... | 0 | 2024-06-25T11:44:32 | https://dev.to/alora_eve_7185da91e6a21a7/how-to-export-office-365-emails-to-pst-file-3ff8 | Exporting emails from Office 365 to a PST file is a common task for users needing to create backups, migrate data, or access emails offline. A PST file is a data file used by Microsoft Outlook to store emails, contacts, calendar events, and other items. You can use the **Advik [Office 365 Backup Tool](https://www.advik... | alora_eve_7185da91e6a21a7 | |

1,900,035 | 5 Best Bug Tracking Software in 2024 [with Pros & Cons] | In software development, bugs can be difficult to spot in the earliest stages of production.... | 0 | 2024-06-25T11:43:58 | https://dev.to/morrismoses149/5-best-bug-tracking-software-in-2024-with-pros-cons-ppf | bugtracking, software, testgrid | In software development, bugs can be difficult to spot in the earliest stages of production. Yet when they go unnoticed, debugging can become a nightmare for developers and their clients.

With so many bug-tracking software packages on the market today, knowing which will best suit your needs and budget i... | morrismoses149 |

1,900,034 | Laser Energy Measurement Heads Market, Global Outlook and Forecast 2024-2030 | The global Laser Energy Measurement Heads market was valued at US$ million in 2023 and is projected... | 0 | 2024-06-25T11:42:19 | https://dev.to/prajakta_pawar_e02edd9c38/laser-energy-measurement-heads-market-global-outlook-and-forecast-2024-2030-48n8 | The global Laser Energy Measurement Heads market was valued at US$ million in 2023 and is projected to reach US$ million by 2030, at a CAGR of % during the forecast period. The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market sizes.

The U.S. Market is Estimated at $ Million in 2... | prajakta_pawar_e02edd9c38 | |

1,900,029 | Ways to Choose the Best Mobile Application Development Company | A mobile application development company works on making software applications custom-made for mobile... | 0 | 2024-06-25T11:41:43 | https://dev.to/appsait/ways-to-choose-the-best-mobile-application-development-company-3i4l | app, development, webdev, mobile | A mobile application development company works on making software applications custom-made for mobile devices such as smartphones and tablets. These organisations employ teams of talented experts, including developers, designers, and quality assurance specialists, to conceptualise, plan, build, and deliver mobil... | appsait |

1,900,033 | How Much Does Drake Tax Software Cost | Cost, expenditures, and maintenance costs are significant concerns for tax firms, CPAs, or... | 0 | 2024-06-25T11:41:03 | https://dev.to/him_tyagi/how-much-does-drake-tax-software-cost-jhd | beginners, techtalks | Cost, expenditures, and maintenance costs are significant concerns for tax firms, CPAs, or accountants when opting for tax software to streamline and speed up their work. This is true in the case of Drake Tax Software, a popular software known for its wide array of features in terms of the tax system, accuracy, reliabi... | him_tyagi |

1,900,032 | Streamlining International Trade with HS Codes | The Harmonized System of Codes (HS code) is an essential framework for international trade, developed... | 0 | 2024-06-25T11:39:59 | https://dev.to/john_hall/streamlining-international-trade-with-hs-codes-5e58 | ai, learning, blockchain, software | The Harmonized System of Codes (HS code) is an essential framework for international trade, maintained by the World Customs Organization (WCO) and in use since 1988. HS codes help classify products for customs purposes, ensuring accurate identification and efficient movement of goods.

Core Features of HS Codes:

Widespread Use: Empl... | john_hall |

1,900,028 | How to Avoid Adding New Code that Uses Deprecated Code? | Spring cleaning your code? Developers are constantly improving code and adding new features.... | 0 | 2024-06-25T11:39:09 | https://dev.to/gemal/how-to-avoid-adding-new-code-that-uses-deprecated-code-10hk | php, ci, development | Spring cleaning your code? Developers are constantly improving code and adding new features. Sometimes, this includes deprecating older code as newer, faster alternatives become available. However, it's not always feasible to immediately update all instances where the deprecated code is used.

At [DinnerBooking](https:... | gemal |

1,900,031 | Microcontroller Board Market, Global Outlook and Forecast 2024-2030 | The global Microcontroller Board market was valued at USD 1.47 billion in 2023 and is projected to... | 0 | 2024-06-25T11:37:35 | https://dev.to/prajakta_pawar_e02edd9c38/microcontroller-board-market-global-outlook-and-forecast-2024-2030-5f2m | The global Microcontroller Board market was valued at USD 1.47 billion in 2023 and is projected to reach USD 2.27 billion by 2030, growing at a Compound Annual Growth Rate (CAGR) of 6.4% during the forecast period (2024-2030). The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market ... | prajakta_pawar_e02edd9c38 | |

1,900,030 | Building Your Own SpicyChat AI: A Developer's Guide | Ah, SpicyChat AI - the sassy chatbot that's been stealing hearts and confusing grandparents since it... | 0 | 2024-06-25T11:36:26 | https://dev.to/elisaray/building-your-own-spicychat-ai-a-developers-guide-4l7h | ai, chatbot, spicychat, roleplaychat | Ah, SpicyChat AI - the sassy chatbot that's been stealing hearts and confusing grandparents since it hit the app stores. You've probably thought to yourself, "Hey, I could make that!" right after your third coffee of the day.

Well, my ambitious friend, you're in luck! This guide will walk you through the treacherous ... | elisaray |

1,898,828 | JavaScript: Working with Set | Hey, beautiful people, how's it going? Let's keep digging deeper into JavaScript's structures, and this time... | 0 | 2024-06-25T11:36:00 | https://dev.to/cristuker/javascript-trabalhando-com-set-1k9b | javascript, braziliandevs, beginners, node | Hey, beautiful people, how's it going? Let's keep digging deeper into JavaScript's structures, and this time we'll talk about Set, the data structure, not the number seven (*sete* in Portuguese).

.

**Links**

📺 ... | alnutile |

1,900,027 | Microcontroller Board Market, Global Outlook and Forecast 2024-2030 | The global Microcontroller Board market was valued at USD 1.47 billion in 2023 and is projected to... | 0 | 2024-06-25T11:34:14 | https://dev.to/prajakta_pawar_e02edd9c38/microcontroller-board-market-global-outlook-and-forecast-2024-2030-35cn | The global Microcontroller Board market was valued at USD 1.47 billion in 2023 and is projected to reach USD 2.27 billion by 2030, growing at a Compound Annual Growth Rate (CAGR) of 6.4% during the forecast period (2024-2030). The influence of COVID-19 and the Russia-Ukraine War was considered while estimating market ... | prajakta_pawar_e02edd9c38 | |

1,900,025 | The Ultimate Guide to Cardboard Boxes, Mailing Bags, Paper Bags, and Padded Envelopes | For all your shipping and packaging needs, choosing the right materials is crucial. Cardboard boxes... | 0 | 2024-06-25T11:32:21 | https://dev.to/blogging/the-ultimate-guide-to-cardboard-boxes-mailing-bags-paper-bags-and-padded-envelopes-4ig6 | For all your shipping and packaging needs, choosing the right materials is crucial. Cardboard boxes are perfect for sturdy and reliable protection of various items, making them great for storage and transport. Mailing bags are lightweight and durable, ideal for securely sending documents and smaller goods. Paper bags a... | blogging | |

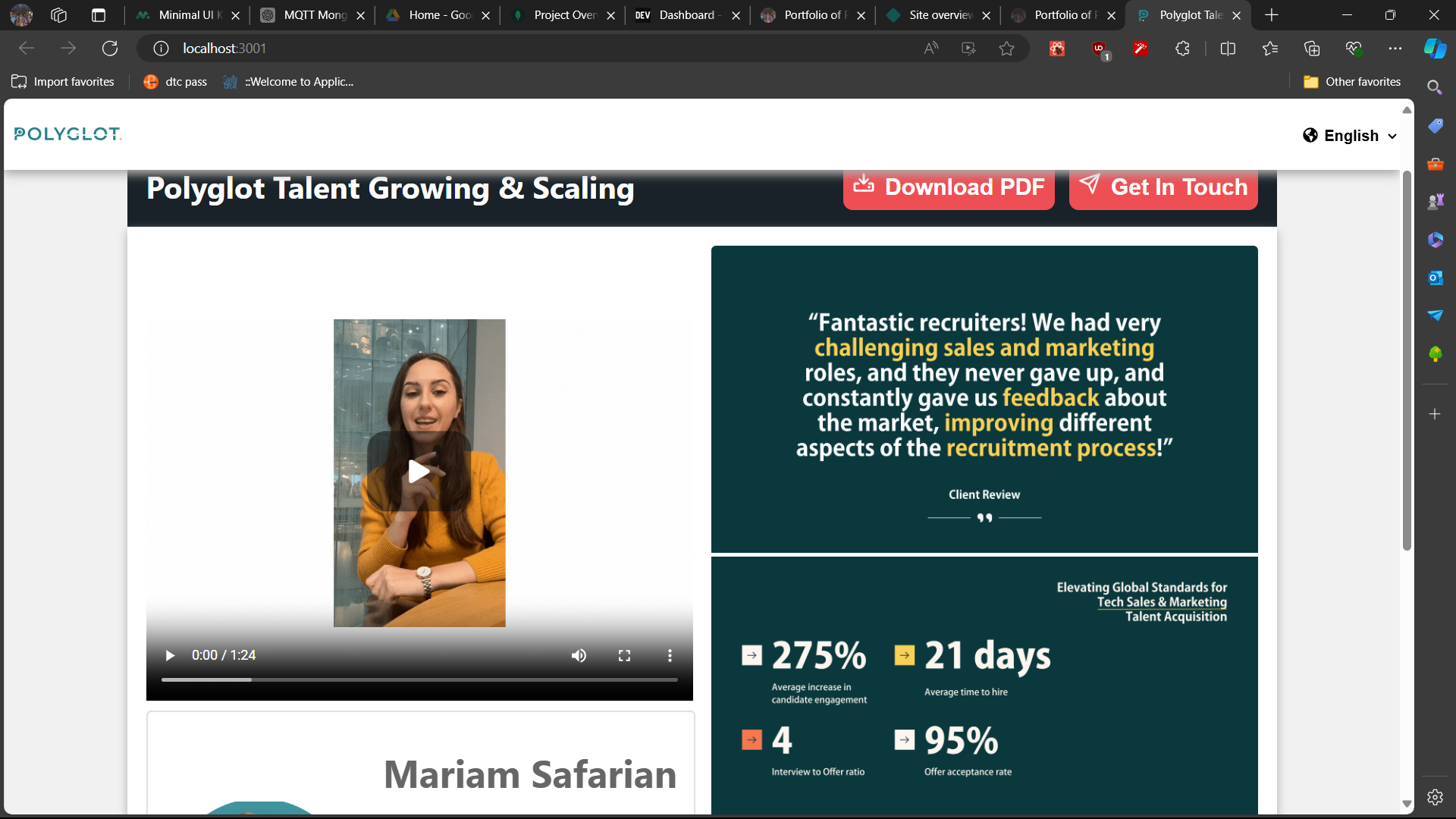

1,900,024 | Landing Page For Client | Navigation Bar (Nav Bar) Components: Logo and Language translator. Styling: Consistent... | 0 | 2024-06-25T11:30:30 | https://dev.to/pranav-29/landing-page-for-client-496p |

winning marketing strategy requires constant innovation. Businesses persistently seek fresh tactics to cultivate stronger customer relationships and amplify their brand voice. Her... | princy_srivastava_aab5c55 | |

1,900,015 | Bitcoin: A Digital Gold Rush | What is Bitcoin? Let's simplify it by comparing it to gold. Why does gold have value? Not just... | 0 | 2024-06-25T11:20:33 | https://dev.to/tushar_pal/bitcoin-a-digital-gold-rush-1mm4 | bitcoin, blog, beginners, 2min | What is Bitcoin?

Let's simplify it by comparing it to gold. Why does gold have value?

Not just because Indian aunties wear it at weddings, but because we, as a society, have collectively assigned it value. Why? Well, there's a limited supply of gold in the world, and it takes time, effort, and resources to mine it. En... | tushar_pal |

1,900,012 | Roast my product | Hey there, I'm Victor, Founder and developer at kit.domains. A few weeks ago, I launched... | 0 | 2024-06-25T11:20:11 | https://dev.to/victor_sh/roast-my-product-18go | webdev | Hey there,

I'm Victor, Founder and developer at [kit.domains](https://kit.domains).

A few weeks ago, I launched KIT.domains on Product Hunt and a few other platforms. Currently, I have some stats:

- 1k visitors

- 51 customers

- 115 domains added

The project started as just **domain/SSL expiration date tracking**.

... | victor_sh |

1,900,010 | Birth of Blockchain | Once upon a time, people used to exchange goods like shoes for things they needed, like grains. It... | 0 | 2024-06-25T11:18:47 | https://dev.to/tushar_pal/birth-of-blockchain-4i4 | Once upon a time, people used to exchange goods like shoes for things they needed, like grains. It was a simple system, but it had its problems. As time passed, people realized it wasn't very efficient.

Then, something shiny came along - gold! Gold was special because there wasn't too much of it in the world, and you... | tushar_pal | |

1,900,008 | Discover a Realistic Parking Experience with the Car Parking Multiplayer APK | Do you love the idea of combining fun with learning? Look no further than Car Parking Multiplayer... | 0 | 2024-06-25T11:14:43 | https://dev.to/carparking_/car-parking-multiplayer-apk-ile-gercekci-otopark-deneyimini-kesfedin-30dp | games, gaming, onlinegaming, online | Do you love the idea of combining fun with learning? Look no further than Car Parking Multiplayer, an impressive simulation game that offers realistic vehicle maneuvering. Its latest version (V 4.8.18.3) can now be downloaded for free with a single click, and the game offers an impressive journey that will take you into the world of parking... | carparking_ |

1,896,501 | Navigating the Complexities of Cloud Solutions: An opinionated Developer's Perspective | Working on an international project has its unique set of challenges and opportunities. Our current... | 0 | 2024-06-25T11:12:05 | https://dev.to/kalstong/navigating-the-complexities-of-cloud-solutions-an-opinionated-developers-perspective-1578 | softwareengineering, architecture, cloud, aws | Working on an international project has its unique set of challenges and opportunities. Our current project involves a frontend application leveraging a Backend for Frontend (BFF) pattern running on a Kubernetes (k8s) cluster and a robust backend infrastructure on AWS. We employ a variety of AWS resources, including Dy... | kalstong |

1,900,006 | Why TraceHawk is the Only Block Explorer You'll Need for Arbitrum Orbit? | Rollups are distinct from L2 protocols, and so is their need for a block explorer. TraceHawk... | 0 | 2024-06-25T11:09:18 | https://dev.to/tracehawk/why-tracehawk-is-the-only-block-explorer-youll-need-for-arbitrum-orbit-2fd6 | <p>Rollups are distinct from L2 protocols, and so is their need for a block explorer. TraceHawk recognized the unique capabilities of an explorer designed especially to serve L2/L3 rollups. That’s why, after the successful launch of the <a href="https://www.tracehawk.io/blog/tracehawk-for-op-stack-rollups-everythi... | tracehawk | |

1,879,408 | A Blog on the core architectural component of Azure. | Introduction to Cloud Computing for beginners Cloud computing is the delivery of computing... | 0 | 2024-06-25T11:09:11 | https://dev.to/jdbastus/a-blog-on-the-core-architectural-component-of-azure-5291 | infrastructureasaservice, platformasaservice, softwareasaservice |

## Introduction to Cloud Computing for beginners

Cloud computing is the delivery of computing services such as databases, networking, software, servers, and storage over the internet. In other words, cloud computing is the use of hosted services (data storage, servers, networking, databases, software, etc.) over the internet, or renting y... | jdbastus |

1,900,005 | How to animate the path in SVG | Demo online | 0 | 2024-06-25T11:08:26 | https://dev.to/fridaymeng/how-to-animate-the-path-in-svg-1474 |

[Demo online](https://addgraph.com/deepLearning) | fridaymeng | |

1,899,990 | GBase 8c Implementation Guide: Performance Optimization | 1. Database Configuration Optimization 1.1. Memory Management The memory... | 0 | 2024-06-25T10:53:23 | https://dev.to/congcong/gbase-8c-implementation-guide-performance-optimization-il | ## 1. Database Configuration Optimization

### 1.1. Memory Management

The memory configuration of a database directly affects its processing capacity. GBase 8c provides various memory-related configuration parameters such as shared_buffers, work_mem, and max_process_memory. Properly setting these parameters can signifi... | congcong | |

1,900,000 | 16 Killer Web Applications to Boost Your Workflow with AI 🚀🔥 | Artificial Intelligence tools can significantly enhance productivity by automating routine tasks,... | 0 | 2024-06-25T11:07:32 | https://madza.hashnode.dev/16-killer-web-applications-to-boost-your-workflow-with-ai | webdev, coding, ai, productivity | ---

title: 16 Killer Web Applications to Boost Your Workflow with AI 🚀🔥

published: true

description:

tags: webdev, coding, ai, productivity

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/ztaj8460d5yhnb44mzj9.png

canonical_url: https://madza.hashnode.dev/16-killer-web-applications-to-boost-your... | madza |

1,900,003 | New York City fast and smooth internet | New York City internet comparison Nearly all NYC internet providers start in the $40–$50 price range,... | 0 | 2024-06-25T11:03:25 | https://dev.to/bass_mvp_4ad4d2ca0143b313/newyork-city-fast-and-smooth-internet-12mb | homeinternet, newyork, city, wifi | New York City internet comparison

Nearly all NYC internet providers start in the $40–$50 price range, except Astound, which starts at about half as much as other introductory plans. The average internet speed for a basic plan is 300 Mbps, except for T-Mobile 5G. Fiber-optic internet from Verizon Fios and cable internet fr... | bass_mvp_4ad4d2ca0143b313 |

1,900,002 | Understanding Django's settings.py File: A Comprehensive Guide for Beginners | Introduction The settings.py file is often referred to as the heart of a Django project.... | 0 | 2024-06-25T11:03:10 | https://dev.to/rupesh_mishra/understanding-djangos-settingspy-file-a-comprehensive-guide-for-beginners-4n5b | beginners, programming, python, backenddevelopment |

## Introduction

The `settings.py` file is often referred to as the heart of a Django project. It contains all the configuration of your Django installation, controlling aspects like database settings, installed applications, middleware, URL configuration, static file directories, and much more. Understanding this fil... | rupesh_mishra |

1,900,001 | GBase 8s SYSBldRelease() Function Guide | 1. Overview of SYSBldRelease() Function From a session connected to a GBase 8s database... | 0 | 2024-06-25T11:03:02 | https://dev.to/congcong/gbase-8s-sysbldrelease-function-guide-2k15 | ## 1. Overview of SYSBldRelease() Function

From a session connected to a GBase 8s database that supports explicit transaction logging, you can register or unregister DataBlade modules by calling the built-in `SYSBldPrepare()` SQL function. Another built-in function, `SYSBldRelease()`, returns the version string of the... | congcong | |

1,872,334 | Ibuprofeno.py💊| #124: Explain this Python code | Explain this Python code Difficulty: Easy print(set([1, 2, 2, 3, 3, 3,... | 25,824 | 2024-06-25T11:00:00 | https://dev.to/duxtech/ibuprofenopy-124-explica-este-codigo-python-34m6 | beginners, learning, python, spanish | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Easy</mark></center>

```py

print(set([1, 2, 2, 3, 3, 3, 4, 4, 4, 4, 5, 5, 5, 5, 5]))

```

* **A.** `{0, 1, 2, 3, 4, 5}`

* **B.** `{1, 2, 3, 4, 5}`

* **C.** `{0, 1, 2, 3, 4}`

* **D.** `{}`

---

{% details **Answer:** %}

👉 **B.... | duxtech |

1,899,503 | (Unofficial) Getting Started with Elixir Phoenix Guide | Hey, this guide is meant to be a recreation of the Getting Started with Rails Guide, but for Elixir... | 0 | 2024-06-25T11:00:00 | https://dev.to/andyklimczak/very-unofficial-getting-started-with-elixir-phoenix-guide-3k55 | elixir, phoenix, webdev, tutorial | ---

title: (Unofficial) Getting Started with Elixir Phoenix Guide

published: true

description:

tags: elixir, phoenix, webdev, tutorial

# Use a ratio of 100:42 for best results.

published_at: 2024-06-25 07:00 -0400

cover_image: https://images.unsplash.com/photo-1490718687940-0ecadf414600?q=80&h=420&w=1000&auto=format&f... | andyklimczak |

1,899,998 | Python vs Java: The Battle of the Programming Titans | *Table of Contents * Introduction: The Clash of the Coding Giants Python: The Versatile... | 0 | 2024-06-25T10:57:25 | https://dev.to/jinesh_vora_ab4d7886e6a8d/python-vs-java-the-battle-of-the-programming-titans-flm | webdev, javascript, programming, python |

**Table of Contents**

1. Introduction: The Clash of the Coding Giants

2. Python: The Versatile Powerhouse

3. Java: The Robust and Reliable Workhorse

4. Syntax and Readability: Simplicity vs. Verbosity

5. Performance and Efficiency: Balancing Speed with Resource Utilization

6. Web Development: The Landscape of Pyt... | jinesh_vora_ab4d7886e6a8d |

1,899,997 | Python vs Java: The Battle of the Programming Titans | *Table of Contents * Introduction: The Clash of the Coding Giants Python: The Versatile... | 0 | 2024-06-25T10:57:19 | https://dev.to/jinesh_vora_ab4d7886e6a8d/python-vs-java-the-battle-of-the-programming-titans-3g5j | webdev, javascript, programming, python |

**Table of Contents**

1. Introduction: The Clash of the Coding Giants

2. Python: The Versatile Powerhouse

3. Java: The Robust and Reliable Workhorse

4. Syntax and Readability. Simplicity vs. Verbosity

5. Performance and Efficiency: Balancing Speed with the Resource Utilization

6. Web Development: The Landscape of Pyt... | jinesh_vora_ab4d7886e6a8d |

1,899,996 | Iron Casting: Advancing Manufacturing Through Continuous Improvement | Advancing Manufacturing Through Continuous Improvement: The Benefits of Iron Casting Iron casting is... | 0 | 2024-06-25T10:57:01 | https://dev.to/ghjkl_tyuio_157de5e4171e7/iron-casting-advancing-manufacturing-through-continuous-improvement-kf6 | ironcasting | Advancing Manufacturing Through Continuous Improvement: The Benefits of Iron Casting

Iron casting is a process used in manufacturing to create metal complex that meet the requirements of various industries. The process involves iron melting pouring it into a mold to create the desired shape. Iron casting is an innovat... | ghjkl_tyuio_157de5e4171e7 |

1,899,855 | What Are the Challenges and Applications of Large Language Models? | Introduction What are the challenges and applications of large language models?... | 0 | 2024-06-25T10:45:06 | https://dev.to/novita_ai/what-are-the-challenges-and-applications-of-large-language-models-2577 | llm | ## Introduction

What are the challenges and applications of large language models? Referencing the work "Challenges and Applications of Large Language Models" by Kaddour, J., Harris, J., Mozes, M., Bradley, H., Raileanu, R., & McHardy, R., this blog is going to discuss this question in a plain and simple way. Let's beg... | novita_ai |

1,899,995 | The Art of Software Development: From Planning to Deployment | The art of software development is a complex and multifaceted process that spans several stages, each... | 0 | 2024-06-25T10:56:12 | https://dev.to/coderowersoftware/the-art-of-software-development-from-planning-to-deployment-49d2 | softwaredevelopment, softwareengineering, webdev, development | The art of **software development** is a complex and multifaceted process that spans several stages, each crucial to the success of the final product. From initial planning to deployment, every phase requires careful consideration, collaboration, and a thorough understanding of both technical and non-technical aspects.... | coderower |

1,899,994 | How do I reset my Apple ID password without security questions? | To reset your Apple ID password without security questions, visit recovery apple id Enter your Apple... | 0 | 2024-06-25T10:55:59 | https://dev.to/wingtonash1230/how-do-i-reset-my-apple-id-password-without-security-questions-pok | To reset your Apple ID password without security questions, visit [recovery apple id](https://iforgottapple.com/) Enter your Apple ID and follow the on-screen instructions. You can choose to reset your password using your trusted device or phone number. If you have two-factor authentication enabled, you'll receive a co... | wingtonash1230 | |

1,899,993 | Redes: Datagrama | Introdução No mundo das redes de computadores, o termo "datagrama" ocupa um papel... | 0 | 2024-06-25T10:55:27 | https://dev.to/iamthiago/redes-datagrama-4p42 | ## Introdução

No mundo das redes de computadores, o termo "datagrama" ocupa um papel fundamental. Frequentemente associado ao protocolo IP (Internet Protocol), o datagrama é a unidade básica de transferência de dados. Este artigo explora o conceito de datagrama, sua estrutura, funcionamento, e importância na comunicaç... | iamthiago | |

1,899,992 | Emaar Park Edge Karachi: Where Comfort Meets Elegance | In the rapidly evolving urban landscape of Karachi, one name stands out as a beacon of luxury and... | 0 | 2024-06-25T10:53:50 | https://dev.to/jackking050/emaar-park-edge-karachi-where-comfort-meets-elegance-3mb6 | real, estate | In the rapidly evolving urban landscape of Karachi, one name stands out as a beacon of luxury and modernity – Emaar Park Edge Karachi. This exceptional residential development by Emaar Properties redefines the concept of upscale living, combining contemporary design with unparalleled amenities. As a testament to Emaar'... | jackking050 |

1,899,991 | Balancing Security and Usability: Ensuring Effective Information Security without Overburdening Employees | There is a fine line between adequate security measures and overbearing security protocols that can... | 0 | 2024-06-25T10:53:36 | https://dev.to/borisgigovic/balancing-security-and-usability-ensuring-effective-information-security-without-overburdening-employees-26h9 | computersecurity, networksecurity, cyberawareness, securitypractices | There is a fine line between adequate security measures and overbearing security protocols that can lead to employee fatigue and decreased productivity. Striking the right balance—implementing just enough security to protect critical data without overwhelming employees—is essential for creating a secure yet efficient w... | borisgigovic |

1,899,989 | Using a moisturiser pump can save you money in the long run, as it helps to prevent waste. | screenshot-1712338209671.png The Benefits of Using a Moisturiser Pump Do you know that using a... | 0 | 2024-06-25T10:52:30 | https://dev.to/fdsaz_fgcvx_f7e80e5ef010e/using-a-moisturiser-pump-can-save-you-money-in-the-long-run-as-it-helps-to-prevent-waste-c4d | design | screenshot-1712338209671.png

The Benefits of Using a Moisturiser Pump

Do you know that using a moisturiser pump can save you money in the long run? Yes, you read it right. This innovative product can help you prevent wastage and ensure safety while using the product. We will discuss the advantages of using a moistu... | fdsaz_fgcvx_f7e80e5ef010e |

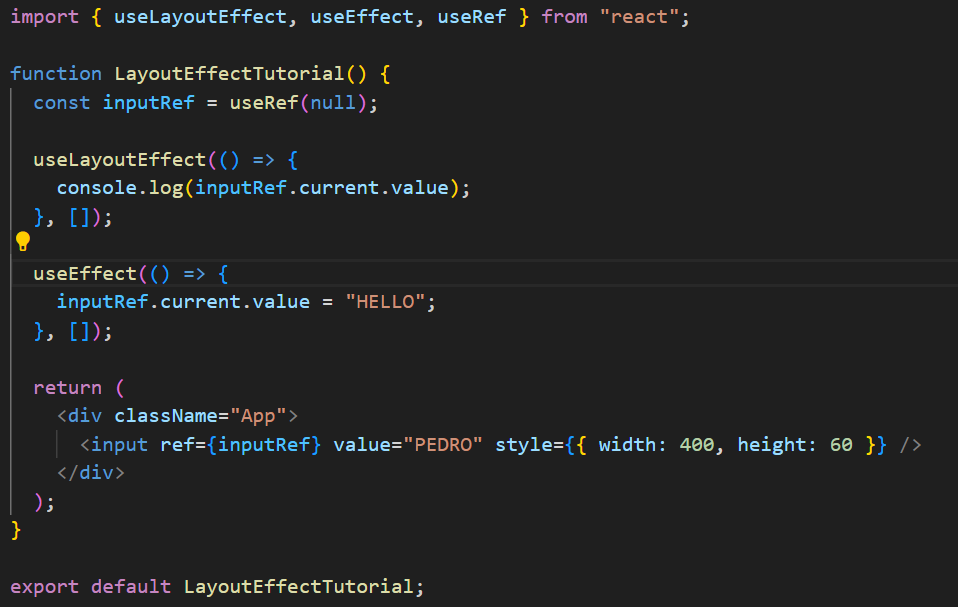

1,899,988 | useLayoutEffect hook | useLayoutEffect is called with a callback function and an empty dependency array ([]). This hook... | 0 | 2024-06-25T10:51:33 | https://dev.to/geetika_bajpai_a654bfd1e0/uselayouteffect-hook-5ca2 |

1. useLayoutEffect is called with a callback function and an empty dependency array ([]).

2. This hook runs synchronously after DOM updates but before the browser paints the screen.

3. In this example, it logs the ... | geetika_bajpai_a654bfd1e0 | |

1,899,987 | Why Z1 K2 Comfortline Knee Orthosis is the Best Choice for Your Knee Support Needs | With regards to knee braces, the Z1 K2 Comfortline Knee Orthosis sticks out as an advanced choice.... | 0 | 2024-06-25T10:49:25 | https://dev.to/mahaveer_singh_285b9fed3b/why-z1-k2-comfortline-knee-orthosis-is-the-best-choice-for-your-knee-support-needs-3i2n | brace | With regards to knee braces, the Z1 K2 Comfortline Knee Orthosis sticks out as an advanced choice. Whether you're an athlete, getting better from any damage, or certainly searching for higher knee assist, this knee brace offers unparalleled functions and blessings. Right here's why the Z1 K2 Comfortline is the quality ... | mahaveer_singh_285b9fed3b |

1,899,986 | MyHTSpace | MyHTSpace is an online portal to find out anything you want to know about Harris Teeter. So, don’t... | 0 | 2024-06-25T10:48:37 | https://dev.to/myhtspacelive/myhtspace-bfh | MyHTSpace is an online portal to find out anything you want to know about Harris Teeter. So, don’t worry if you can’t figure out how to log in to MyHTSpace. You will learn how to do it. After reading this article, you won’t have any more problems because you’ll know everything there is to know about it.

https://myhtsp... | myhtspacelive | |

1,899,810 | What Is Cumulative Reasoning With Large Language Models? | Introduction What is cumulative reasoning with large language models? Why do we need... | 0 | 2024-06-25T10:45:07 | https://dev.to/novita_ai/what-is-cumulative-reasoning-with-large-language-models-42la | llm | ## Introduction

What is cumulative reasoning with large language models? Why do we need cumulative reasoning for LLMs? What does cumulative reasoning with LLMs look like? Can LLMs Do Cumulative Reasoning Well? In this blog, we will discuss these questions one by one in a plain and simple way, referencing the paper titl... | novita_ai |

1,899,870 | Rent NVIDIA A100 Cloud GPU Today | Introduction The NVIDIA A100 GPU has really changed the game in cloud computing, bringing... | 0 | 2024-06-25T10:45:00 | https://dev.to/novita_ai/rent-nvidia-a100-cloud-gpu-today-4ppm | ## Introduction

The NVIDIA A100 GPU has really changed the game in cloud computing, bringing top-notch power and cool features for AI, machine learning, and tasks that need a lot of computing muscle. Because it's so powerful and can handle more work at once, organizations are picking the A100 to speed up their projects... | novita_ai | |

1,899,983 | The Role of Payment Gateways in Safeguarding Mobile Payments | Phone payments and mobile payment systems have changed how people and businesses make transactions in... | 0 | 2024-06-25T10:44:53 | https://dev.to/david_mark_61fd09e0f67a52/the-role-of-payment-gateways-in-safeguarding-mobile-payments-3b9g | paymentgateway, paymentprocess, paymentsolutions, onlinepayments | Phone payments and mobile payment systems have changed how people and businesses make transactions in the digital era. Payment gateways play a key role in ensuring secure and smooth transactions on different platforms.

**The Rise of Phone Payments and Mobile Payment Systems**

Phone payments and [mobile payment systems... | david_mark_61fd09e0f67a52 |

1,899,982 | Quantum-Resistant Blockchain: The Future of Secure Digital Transactions | 1. Introduction The advent of blockchain technology has revolutionized various sectors... | 27,619 | 2024-06-25T10:44:34 | https://dev.to/aishik_chatterjee_0060e71/quantum-resistant-blockchain-the-future-of-secure-digital-transactions-3b58 | ## 1\. Introduction

The advent of blockchain technology has revolutionized various sectors by

providing a decentralized, transparent, and secure method of recording

transactions. However, the development of quantum computing poses a potential

risk to the cryptographic algorithms that underpin blockchain security. This... | aishik_chatterjee_0060e71 | |

1,899,981 | Sauce Filling Machines: Meeting the Needs of Small and Medium-Sized Businesses | Sauce Filling Machines: perfect for Small in addition businesses that are medium-Sized Small and... | 0 | 2024-06-25T10:43:43 | https://dev.to/ghjkl_tyuio_157de5e4171e7/sauce-filling-machines-meeting-the-needs-of-small-and-medium-sized-businesses-4pa6 | machine |

Sauce Filling Machines: perfect for Small in addition businesses that are medium-Sized

Small and businesses that are medium-sized constantly researching in order to enhance effectiveness in addition manufacturing plus staying of the investing arrange. one unit which can only help organizations achieve these kind o... | ghjkl_tyuio_157de5e4171e7 |

1,899,980 | Vue-extendable Tailwind admin panel | We started new open-source project. URL https://adminforth.dev Quick example:... | 0 | 2024-06-25T10:42:38 | https://dev.to/ivictbor/we-started-creating-extendable-with-vue-admin-solution-i00 | backoffice, admin, tailwindcss, vue | We started new open-source project.

URL https://adminforth.dev

Quick example: https://adminforth.dev/docs/tutorial/gettingStarted

[Image](https://adminforth.dev/assets/images/localhost_3500_resource_apparts-d3c1eb4d2ad47f021d6fe5318030a4f9.png)

Main points:

* Always free MIT-license, we are web dev team so awarenes... | ivictbor |

1,899,978 | Creating an Opensource E-Learning Solution: Structuring the base of the Project's codebase | Hello Everyone, in this article, we will see how to structure the project codebase, we'll take a look... | 0 | 2024-06-25T10:41:14 | https://dev.to/inaryo/creating-an-opensource-e-learning-solution-structuring-the-base-of-the-projects-codebase-2ao5 | opensource, symfony, webdev, devjournal | Hello Everyone, in this article, we will see how to structure the project codebase, we'll take a look to the licensing, the contributing , the documentation and the code of conduct.

## Where to start ?

As for any project or any decision in your life, you need to be informed and have the information crucial for your pr... | inaryo |

1,899,977 | The Future Of Product Matching In E-Commerce: Trends And Innovations | Introduction In the fast-paced world of e-commerce, effective product matching is essential for... | 0 | 2024-06-25T10:38:53 | https://dev.to/saumya27/the-future-of-product-matching-in-e-commerce-trends-and-innovations-6e6 | Introduction

In the fast-paced world of e-commerce, effective product matching is essential for providing a seamless shopping experience for customers. Product matching involves identifying and linking identical or similar products from different sources, ensuring that customers can find the best options available acr... | saumya27 | |

1,899,976 | Tips to Get a Start Up Lån | Securing a startup business loan is a crucial step in turning your entrepreneurial dreams into... | 0 | 2024-06-25T10:36:48 | https://dev.to/yuncture/tips-to-get-a-start-up-lan-34h | Securing a startup business loan is a crucial step in turning your entrepreneurial dreams into reality. Whether you need capital to launch your business or expand an existing one, understanding the process of obtaining a **start up lån** is essential. Yuncture, a leading företagsinkubator, investerare, and kontorshotel... | yuncture | |

1,899,975 | Hey all, happy to join | I build software ON - not for - WiX. I’m running a hackathon with $4k in sponsored prize money. Top... | 0 | 2024-06-25T10:36:05 | https://dev.to/roger_hunt_ideatrek/hey-all-happy-to-join-44i9 | hackathon, wixstudiochallenge, javascript | I build software ON - not for - WiX.

I’m running a hackathon with $4k in sponsored prize money. Top prize, beginner prizes, and community prizes.

Happy to chat! | roger_hunt_ideatrek |

1,899,974 | How Sichuan DeepFast is Meeting the Challenges of Deep Drilling | How DeepFast Sichuan is Deeper Drilling of Innovation Introduction The company's label is Sichuan... | 0 | 2024-06-25T10:34:30 | https://dev.to/ghjkl_tyuio_157de5e4171e7/how-sichuan-deepfast-is-meeting-the-challenges-of-deep-drilling-4201 | deepdrilling | How DeepFast Sichuan is Deeper Drilling of Innovation

Introduction

The company's label is Sichuan DeepFast they are understood for their ingenious innovation solution outstanding. We'll check out the benefits of Sichuan DeepFast, their items that are ingenious precaution, ways to utilize their solutions. Our team... | ghjkl_tyuio_157de5e4171e7 |

1,899,972 | The Future of Manufacturing: Innovations in Secondary Packing Systems | SECONDARY PACKAGING SYSTEM.jpg The Future of Manufacturing: Secondary Packing Systems Innovation is... | 0 | 2024-06-25T10:32:52 | https://dev.to/fdsaz_fgcvx_f7e80e5ef010e/the-future-of-manufacturing-innovations-in-secondary-packing-systems-707 | design | SECONDARY PACKAGING SYSTEM.jpg

The Future of Manufacturing: Secondary Packing Systems

Innovation is key when it comes to manufacturing. New technologies are being developed every to improve the product quality and safety of Blowing System products while minimizing costs time. One area of innovation in manufacturing... | fdsaz_fgcvx_f7e80e5ef010e |

1,899,970 | Top 5 medium Rust open source project to contribute. | https://grenierdudev.com/posts/top-5-medium-rust-open-source-project-to-contribute-137028d | 0 | 2024-06-25T10:30:24 | https://dev.to/grenierdudev/top-5-medium-rust-open-source-project-to-contribute-2a2i | rust, opensource, deno, wgpu | https://grenierdudev.com/posts/top-5-medium-rust-open-source-project-to-contribute-137028d | grenierdudev |

1,899,969 | ELO 3: The Pinnacle of Comfort in Damac Hills 2 | Damac Hills 2, formerly known as Akoya Oxygen, stands as one of the most prestigious residential... | 0 | 2024-06-25T10:30:10 | https://dev.to/elodamac3/elo-3-the-pinnacle-of-comfort-in-damac-hills-2-abo | webdev, javascript, programming, beginners | Damac Hills 2, formerly known as Akoya Oxygen, stands as one of the most prestigious residential communities in Dubai, renowned for its lush greenery, serene environment, and luxurious living standards. Nestled within this vibrant enclave is [elo 3 damac hills 2](https://www.leadroyal.ae/elo-3-at-damac-hills-2/), a pro... | elodamac3 |

1,899,968 | Personalization at Scale: How AI Enhances Customer Engagement | In today's digital landscape, personalization is crucial for fostering meaningful connections with... | 0 | 2024-06-25T10:29:34 | https://dev.to/nisargshah/personalization-at-scale-how-ai-enhances-customer-engagement-37kk | ai, customerengagement, design | In today's digital landscape, personalization is crucial for fostering meaningful connections with customers. However, scaling personalization can be challenging. Artificial Intelligence (AI) revolutionizes this process, offering businesses the ability to engage customers more effectively.

**The Power of AI in Persona... | nisargshah |

1,899,967 | Building Your First Web Application with Flask: A Step-by-Step Guide | Flask is a lightweight web framework for Python, making it easy to get started with web development.... | 0 | 2024-06-25T10:29:21 | https://dev.to/zaiba_sa/building-your-first-web-application-with-flask-a-step-by-step-guide-5p8 | flask, python, webdev, webapp | Flask is a lightweight web framework for Python, making it easy to get started with web development. In this guide, we'll create a simple web application using Flask and the command line.

**Step 1: Install Flask**

First, ensure you have Python installed. You can check by running:

python --version

Next, install Flask u... | zaiba_sa |

1,899,966 | HTML Graphics, HTML Canvas, HTML SVG in detail with examples | HTML Graphics HTML graphics contains: HTML Canvas HTML SVG What is HTML... | 0 | 2024-06-25T10:28:04 | https://dev.to/wasifali/html-graphics-html-canvas-html-svg-in-detail-with-examples-4960 | webdev, javascript, html, learning | ## **HTML Graphics**

HTML graphics contains:

HTML Canvas

HTML SVG

## **What is HTML Canvas?**

The HTML `<canvas>` element is used to draw graphics, on the fly, via JavaScript.

## **Canvas Examples**

A canvas is a rectangular area on an HTML page. By default, a canvas has no border and no content.

```HTML

<canvas id="my... | wasifali |

1,899,965 | what is chatgpt | ChatGPT stands as a testament to the remarkable progress in artificial intelligence, specifically... | 0 | 2024-06-25T10:24:35 | https://dev.to/whatischatgpt/what-is-chatgpt-3mpn | ChatGPT stands as a testament to the remarkable progress in artificial intelligence, specifically within the domain of natural language processing (NLP). Developed by OpenAI, ChatGPT embodies the latest advancements in deep learning, leveraging a transformative neural network architecture known as transformers. This mo... | whatischatgpt | |

1,899,964 | The Rise of Progressive Web Apps, iTechTribe International | Hey everyone! In today’s fast-paced digital world, delivering fast, reliable, and engaging web... | 0 | 2024-06-25T10:24:23 | https://dev.to/itechtshahzaib_1a2c1cd10/the-rise-of-progressive-web-apps-itechtribeint-525j |

Hey everyone! In today’s fast-paced digital world, delivering fast, reliable, and engaging web experiences is more important than ever. That’s w... | itechtshahzaib_1a2c1cd10 | |

1,899,963 | Saas Development Company | Techno Derivation stands at the forefront as a leading SAAS web development company, offering bespoke... | 0 | 2024-06-25T10:23:54 | https://dev.to/mukesh_td_677df7f5967aef6/saas-development-company-2m5m | softwaredevelopmentcompany, itcompany, webdevelopmentcomapny, gamedevelopmentcomoany |

Techno Derivation stands at the forefront as a leading SAAS web development company, offering bespoke solutions tailored to your unique business needs. As a trusted [SAAS development company](https://technoderivation.com/saas-development-company

stands out as a powerful suite of business applications designed to meet these needs. Combining the capabilities o... | mylearnnest |

1,899,766 | Angular Signals EventBus pattern | In several use cases, I find the event bus pattern to be very effective. Angular signals provide an... | 0 | 2024-06-25T10:09:49 | https://dev.to/ferdiesletering/angular-signals-eventbus-pattern-15ce | angular, webdev, javascript | In several use cases, I find the event bus pattern to be very effective. Angular signals provide an excellent mechanism for managing state, so I decided to implement the event bus pattern using Angular signals.

In my recent project, I am developing a dashboard where the widgets need to communicate with each other whil... | ferdiesletering |

1,899,886 | RTS TV APK DOWNLOAD LATEST VERSION | Introduction Welcome to RTS TV APK Download, your ultimate destination for accessing a... | 0 | 2024-06-25T10:09:42 | https://dev.to/rtstvapk/rts-tv-apk-download-latest-version-2h31 | rtstv, rtstvapk, rts, rtstvapkdownload | ## Introduction

Welcome to **[RTS TV APK Download](https://rtstvapkdownload.in/)**, your ultimate destination for accessing a wide range of live TV channels, movies, and sports events directly on your Android device. Our platform offers a seamless and user-friendly way to download the latest RTS TV APK, ensuring you s... | rtstvapk |

1,899,885 | Discover Serenity: The Best Spas in Thaltej | Thaltej, a serene and upscale locality in Ahmedabad, is well-known for its peaceful atmosphere and... | 0 | 2024-06-25T10:08:32 | https://dev.to/abitamim_patel_7a906eb289/discover-serenity-the-best-spas-in-thaltej-2ci3 | Thaltej, a serene and upscale locality in Ahmedabad, is well-known for its peaceful atmosphere and modern amenities. Among its many attractions, the spas in Thaltej stand out as oases of relaxation and rejuvenation. Whether you’re looking for a soothing massage, a refreshing facial, or comprehensive wellness treatments... | abitamim_patel_7a906eb289 | |

1,425,542 | How to improve your Git commits with Commitizen | Summary Commitizen is a CLI tool that can be used to help communicate changes made in... | 0 | 2023-04-04T09:29:18 | https://neurowinter.com/git/2023/04/04/commitizen/ | git | ---

title: How to improve your Git commits with Commitizen

published: true

date: 2023-04-03 12:00:00 UTC

tags: git

canonical_url: https://neurowinter.com/git/2023/04/04/commitizen/

---

## Summary

Commitizen is a CLI tool that can be used to help communicate changes made in commits to both future you and other team me... | neurowinter |

1,899,884 | Mastering Postman Scripts: Top Examples for Technical Professionals | Postman Scripts, leveraging the power of JavaScript, transform routine API testing into tailored,... | 0 | 2024-06-25T10:07:21 | https://dev.to/sattyam/mastering-postman-scripts-top-examples-for-technical-professionals-4hk3 | postmanapi, postman | Postman Scripts, leveraging the power of JavaScript, transform routine API testing into tailored, automated operations. This article explores the various ways Postman scripts can optimize your API testing regimen, supplying you with code examples to improve efficiency and effectiveness.

## Why Use Postman Scripts?

Th... | sattyam |

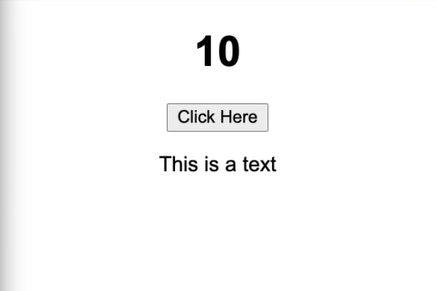

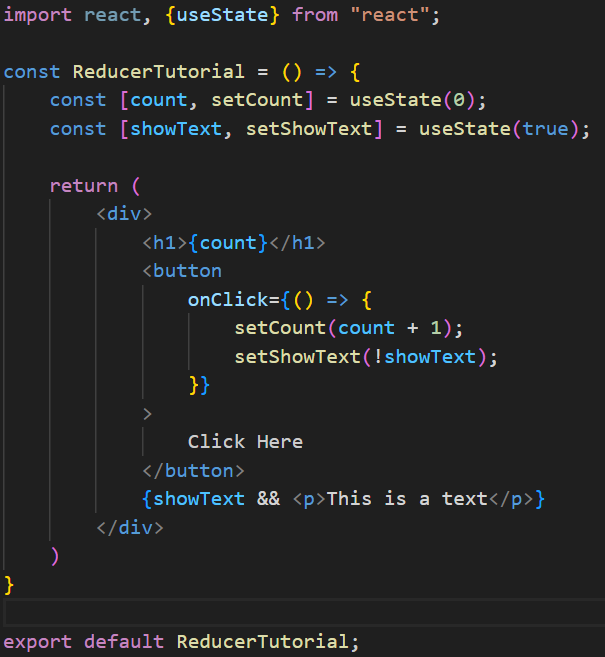

1,899,883 | useReducer hook | We will see how to implement this using the useState hook. The ReducerTutorial React component... | 0 | 2024-06-25T10:07:02 | https://dev.to/geetika_bajpai_a654bfd1e0/usereducer-hook-550i |

We will see how to implement this using the useState hook.

The ReducerTutorial React component demonstrates ... | geetika_bajpai_a654bfd1e0 |

The top public SQL queries from the community will appear here once available.