text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

```

# This Python 3 environment comes with many helpful analytics libraries installed

# It is defined by the kaggle/python Docker image: https://github.com/kaggle/docker-python

import os

for dirname, _, filenames in os.walk('/kaggle/input'):
    for filename in filenames:
        print(os.path.join(dirname, filename))

# Import helpful libraries

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

import numpy as np # linear algebra

import matplotlib.pyplot as plt

import seaborn as sns # bar plots of the most frequent words

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.feature_extraction.text import TfidfTransformer

from sklearn import feature_extraction, linear_model, model_selection, preprocessing

from sklearn.metrics import accuracy_score

from sklearn.model_selection import train_test_split

from sklearn.pipeline import Pipeline

# Load the data, and separate the target

f='../input/newsdata/data/Fake.csv'

t='../input/newsdata/data/True.csv'

fake = pd.read_csv(f)

true = pd.read_csv(t)

# Get the shape of fake and true data

fake.shape

true.shape

# Preview the first 5 rows of the fake data

fake.head()

# Preview the first 5 rows of the true data

true.head()

# Add flag to track fake and real

fake['target'] = 'fake'

true['target'] = 'true'

# Concatenate dataframes

data = pd.concat([fake, true]).reset_index(drop = True)

data.shape

# Shuffle the data

from sklearn.utils import shuffle

data = shuffle(data)

data = data.reset_index(drop=True)

# preview first 5 rows of data

data.head()

# Remove the date and title columns (we won't use them in the analysis)

data.drop(["date"],axis=1,inplace=True)

data.drop(["title"],axis=1,inplace=True)

data.head()

# Removing punctuation

import string

def punctuation_removal(text):
    # Keep every character that is not in string.punctuation
    all_list = [char for char in text if char not in string.punctuation]
    clean_str = ''.join(all_list)
    return clean_str

data['text'] = data['text'].apply(punctuation_removal)

data.head()

# Convert to lowercase

data['text'] = data['text'].apply(lambda x: x.lower())

data.head()

# Removing stopwords

import nltk

nltk.download('stopwords')

from nltk.corpus import stopwords

stop = stopwords.words('english')

data['text'] = data['text'].apply(lambda x: ' '.join([word for word in x.split() if word not in (stop)]))

data.head()

# DATA EXPLORATION

# How many articles per subject?

print(data.groupby(['subject'])['text'].count())

data.groupby(['subject'])['text'].count().plot(kind="bar")

plt.show()

# How many fake and real articles?

print(data.groupby(['target'])['text'].count())

data.groupby(['target'])['text'].count().plot(kind="bar")

plt.show()

# Word cloud for fake news

from wordcloud import WordCloud

fake_data = data[data["target"] == "fake"]

all_words = ' '.join([text for text in fake_data.text])

wordcloud = WordCloud(width= 800, height= 500,

max_font_size = 110,

collocations = False).generate(all_words)

plt.figure(figsize=(12,10))

plt.imshow(wordcloud, interpolation='bilinear')

plt.axis("off")

plt.show()

# Word cloud for real news

from wordcloud import WordCloud

real_data = data[data["target"] == "true"]

all_words = ' '.join([text for text in real_data.text])

wordcloud = WordCloud(width= 800, height= 500,

max_font_size = 110,

collocations = False).generate(all_words)

plt.figure(figsize=(12,10))

plt.imshow(wordcloud, interpolation='bilinear')

plt.axis("off")

plt.show()

# Most frequent words counter (Code adapted from https://www.kaggle.com/rodolfoluna/fake-news-detector)

from nltk import tokenize

token_space = tokenize.WhitespaceTokenizer()

def counter(text, column_text, quantity):
    # Join all documents, tokenize on whitespace and count each token
    all_words = ' '.join([text for text in text[column_text]])
    token_phrase = token_space.tokenize(all_words)
    frequency = nltk.FreqDist(token_phrase)
    df_frequency = pd.DataFrame({"Word": list(frequency.keys()),
                                 "Frequency": list(frequency.values())})
    # Keep only the `quantity` most frequent words
    df_frequency = df_frequency.nlargest(columns = "Frequency", n = quantity)
    plt.figure(figsize=(12,8))
    ax = sns.barplot(data = df_frequency, x = "Word", y = "Frequency", color = 'blue')
    ax.set(ylabel = "Count")
    plt.xticks(rotation='vertical')
    plt.show()

# Most frequent words in fake news

counter(data[data["target"] == "fake"], "text", 20)

# Most frequent words in real news

counter(data[data["target"] == "true"], "text", 20)

#MODELLING

# Function to plot the confusion matrix

from sklearn import metrics

import itertools

def plot_confusion_matrix(cm, classes,
                          normalize=False,
                          title='Confusion matrix',
                          cmap=plt.cm.Blues):
    plt.imshow(cm, interpolation='nearest', cmap=cmap)
    plt.title(title)
    plt.colorbar()
    tick_marks = np.arange(len(classes))
    plt.xticks(tick_marks, classes, rotation=45)
    plt.yticks(tick_marks, classes)

    if normalize:
        cm = cm.astype('float') / cm.sum(axis=1)[:, np.newaxis]
        print("Normalized confusion matrix")
    else:
        print('Confusion matrix, without normalization')

    # Write each count in its cell, in white on dark cells and black on light ones
    thresh = cm.max() / 2.
    for i, j in itertools.product(range(cm.shape[0]), range(cm.shape[1])):
        plt.text(j, i, cm[i, j],
                 horizontalalignment="center",
                 color="white" if cm[i, j] > thresh else "black")

    plt.tight_layout()
    plt.ylabel('True label')
    plt.xlabel('Predicted label')

# Split the data

X_train,X_test,y_train,y_test = train_test_split(data['text'], data.target, test_size=0.2, random_state=42)

# Trying different modelling techniques to get better predictions

#LOGISTIC REGRESSION

# Vectorizing and applying TF-IDF

from sklearn.linear_model import LogisticRegression

pipe = Pipeline([('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('model_1', LogisticRegression())])

# Fitting the model

model_1 = pipe.fit(X_train, y_train)

# Accuracy

prediction = model_1.predict(X_test)

print("Accuracy using Logistic Regression: {}%".format(round(accuracy_score(y_test, prediction)*100,2)))

model1_cm=metrics.confusion_matrix(y_test, prediction)

plot_confusion_matrix(model1_cm, classes=['Fake', 'Real'])

#RANDOM FOREST CLASSIFIER

from sklearn.ensemble import RandomForestClassifier

pipe = Pipeline([('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('model_2', RandomForestClassifier(n_estimators=50, criterion="entropy"))])

#fitting the model

model_2 = pipe.fit(X_train, y_train)

#prediction and accuracy

prediction = model_2.predict(X_test)

print("Accuracy using Random Forest Classifier: {}%".format(round(accuracy_score(y_test, prediction)*100,2)))

model2_cm = metrics.confusion_matrix(y_test, prediction)

plot_confusion_matrix(model2_cm, classes=['Fake', 'Real'])

#DECISION TREE CLASSIFIER

from sklearn.tree import DecisionTreeClassifier

# Vectorizing and applying TF-IDF

pipe = Pipeline([('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('model_3', DecisionTreeClassifier(criterion= 'entropy',

max_depth = 20,

splitter='best',

random_state=42))])

# Fitting the model

model_3 = pipe.fit(X_train, y_train)

# Accuracy

prediction = model_3.predict(X_test)

print("Accuracy using Decision Tree Classifier: {}%".format(round(accuracy_score(y_test, prediction)*100,2)))

model3_cm = metrics.confusion_matrix(y_test, prediction)

plot_confusion_matrix(model3_cm, classes=['Fake', 'Real'])

# Run the code to save predictions in the format used for competition scoring

# `prediction` holds the decision tree predictions on the test set
output = pd.DataFrame({'prediction': prediction})

output.to_csv('submission.csv', index=False)

```

| github_jupyter |

# exma quick start

In this tutorial we will take the typical molecular dynamics case of a Lennard-Jones (LJ) fluid, in its solid phase and in its liquid phase, and we will see how to obtain different properties of them using this library.

This first part of the code will be common to all three sections. We are going to import the necessary libraries and define the variables that we will use next.

```

import matplotlib.pyplot as plt

import numpy as np

```

We are going to define the _strings_ that point to the trajectory files (assuming we are in _exma/docs/source_).

```

fsolid = "tutorial_data/solid.xyz"

fliquid = "tutorial_data/liquid.xyz"

```

We leave these variables defined as strings and we do not read them since `exma` is in charge of reading xyz or lammpstrj files.

These trajectories were generated with a [homemade code](https://github.com/fernandezfran/tiny_md); in both cases there are 201 frames and 500 atoms. Next we define the parameters of the simulation cell for each case (this is necessary because the files are xyz; if they were lammpstrj we could skip this cell).

```

solid_box = np.full(3, 7.46901)

liquid_box = np.full(3, 8.54988)

```

In this case the distances are given in Lennard-Jones units.

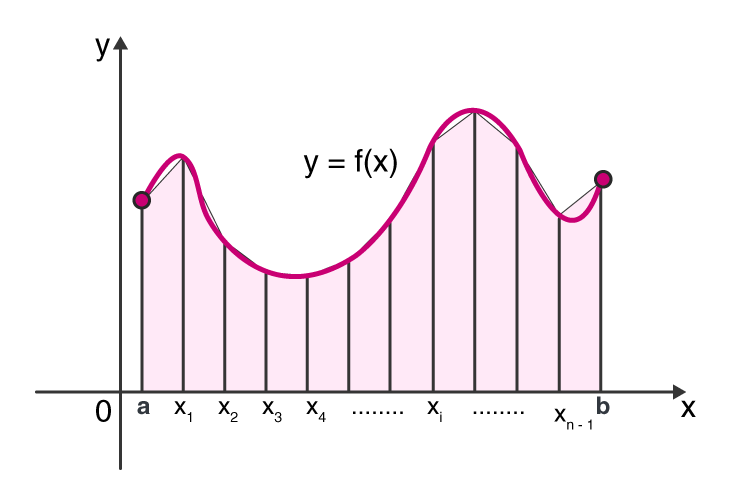

## Mean Square Displacement (MSD)

The mean square displacement (MSD) is a measure of the deviation of the position of the particles with respect to a reference position over time. From it, it is possible to obtain, through a linear regression, the tracer diffusion coefficient. For more information you can start by reading the Wikipedia article on [mean square displacement](https://en.wikipedia.org/wiki/Mean_squared_displacement).
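To make the definition concrete, here is a minimal pure-Python sketch of an MSD calculation. It is not how `exma` computes it: the function name `mean_square_displacement` and the toy data are hypothetical, and the sketch assumes unwrapped coordinates (no periodic-boundary correction) with the first frame as reference.

```python
def mean_square_displacement(frames):
    """Return the MSD of every frame relative to the first frame.

    `frames` is a list of frames; each frame is a list of (x, y, z)
    unwrapped positions, one tuple per particle.
    """
    reference = frames[0]
    msd = []
    for frame in frames:
        total = 0.0
        for (x, y, z), (x0, y0, z0) in zip(frame, reference):
            total += (x - x0) ** 2 + (y - y0) ** 2 + (z - z0) ** 2
        # average the squared displacement over all particles
        msd.append(total / len(reference))
    return msd


# toy trajectory: two particles moving one unit per frame along x, in opposite directions
toy_frames = [[(t, 0.0, 0.0), (-t, 1.0, 0.0)] for t in (0.0, 1.0, 2.0)]
print(mean_square_displacement(toy_frames))  # [0.0, 1.0, 4.0]
```

The toy particles move ballistically, so the MSD grows quadratically with the frame index; a diffusing liquid would instead show linear growth at long times.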

We start by importing the `MeanSquareDisplacement` class from exma.

```

from exma import MeanSquareDisplacement

```

As in every Pair Analyzer, we have dedicated `calculate`, `plot` and `save` methods. The latter will not be used in this tutorial, but it is useful when we want to save the results to a file and avoid re-running the calculations, which can be demanding for long simulations.

```

# for both structures we discard the first 10 equilibration frames

solid_msd = MeanSquareDisplacement(fsolid, 0.05, "Ar", start=10, xyztype="image")

liquid_msd = MeanSquareDisplacement(fliquid, 0.05, "Ar", start=10, xyztype="image")

```

At this point we have only instantiated the class. We can now run the calculation, for which it is necessary to pass the optional argument with the cell length in each direction.

```

solid_msd.calculate(box=solid_box)

liquid_msd.calculate(box=liquid_box)

```

We can see directly from the numbers how in the liquid phase a larger quadratic displacement is obtained already in the first steps. But to be more illustrative, we can plot both curves on the same graph, using `plt.gca()` of matplotlib.

```

ax = plt.gca()

# we pass the same axis to both plots and define the labels offered by the wrapper to the plot function

solid_msd.plot(ax=ax, plot_kws={"label": "solid"})

liquid_msd.plot(ax=ax, plot_kws={"label": "liquid"})

# we add the legend to the plot

plt.legend()

```

We obtain the expected response for a LJ fluid: the liquid phase diffuses with the expected linear behavior and the solid phase does not diffuse.
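For reference, the linear regime is usually interpreted through the Einstein relation. In three dimensions and at long times it is commonly written as below, where $D$ is the diffusion coefficient and the angle brackets denote an average over particles. The symbols are this note's notation, not part of the `exma` API.

$$
\mathrm{MSD}(t) = \left\langle \left| \mathbf{r}_i(t) - \mathbf{r}_i(0) \right|^2 \right\rangle \simeq 6 D t
\quad\Longrightarrow\quad
D \approx \frac{1}{6}\,\frac{\mathrm{d}\,\mathrm{MSD}(t)}{\mathrm{d}t}
$$

Under this reading, the slope of a linear fit to the liquid curve divided by 6 estimates $D$, while the flat solid curve corresponds to $D \approx 0$.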

## Radial Distribution Function (RDF)

The pair radial distribution function (RDF), _g(r)_, characterizes the local structure of a fluid, and describes the probability to find an atom in a shell at distance _r_ from a reference atom. This quantity is calculated as the ratio between the average density at distance _r_ from the reference atom and the density at that same distance of an ideal gas. For more information you can start reading the Wikipedia article of [radial distribution function](https://en.wikipedia.org/wiki/Radial_distribution_function).
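The ratio described above can be sketched for a single frame in plain Python. This is only an illustration under stated assumptions, not the `exma` implementation: the function name `radial_distribution`, the cubic periodic box and the toy lattice are all hypothetical choices.

```python
import math


def radial_distribution(positions, box, rmax, nbins):
    """Histogram-based g(r) for one frame in a cubic periodic box of side `box`."""
    n = len(positions)
    dr = rmax / nbins
    counts = [0] * nbins
    for i in range(n - 1):
        for j in range(i + 1, n):
            d2 = 0.0
            for a, b in zip(positions[i], positions[j]):
                delta = a - b
                # minimum image convention: use the nearest periodic copy
                delta -= box * round(delta / box)
                d2 += delta * delta
            r = math.sqrt(d2)
            if r < rmax:
                counts[int(r / dr)] += 2  # the pair counts once for each atom
    density = n / box ** 3
    g = []
    for k, count in enumerate(counts):
        # volume of the spherical shell between k*dr and (k+1)*dr
        shell = 4.0 / 3.0 * math.pi * ((k + 1) ** 3 - k ** 3) * dr ** 3
        # observed neighbors per atom divided by the ideal-gas expectation
        g.append(count / (n * density * shell))
    return g


# toy system: 3x3x3 simple cubic lattice with unit spacing
lattice = [(float(x), float(y), float(z))
           for x in range(3) for y in range(3) for z in range(3)]
g = radial_distribution(lattice, box=3.0, rmax=1.5, nbins=3)
print(g)
```

With `rmax` set to half the box length, the minimum image convention guarantees that each pair is counted at most once per atom. A real calculation would also average the histogram over all production frames.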

We start importing the `RadialDistributionFunction` class from exma.

```

from exma import RadialDistributionFunction

```

The usage is analogous to that of the MSD; only some parameters of the initializer change.

```

# for both structures we discard the first 10 equilibration frames

solid_rdf = RadialDistributionFunction(fsolid, "Ar", "Ar", start=10, rmax=solid_box[0] / 2)

liquid_rdf = RadialDistributionFunction(fliquid, "Ar", "Ar", start=10, rmax=liquid_box[0] / 2)

```

In this case we declare that the RDF is calculated up to half the box length, i.e. atoms at a greater distance are ignored.

```

solid_rdf.calculate(box=solid_box)

liquid_rdf.calculate(box=liquid_box)

```

As before, we can obtain the corresponding graph.

```

ax = plt.gca()

# we pass the same axis to both plots and define the labels offered by the wrapper to the plot function

solid_rdf.plot(ax=ax, plot_kws={"label": "solid"})

liquid_rdf.plot(ax=ax, plot_kws={"label": "liquid"})

# we add the legend to the plot

plt.legend()

```

We get the expected results. For the solid phase we have the defined peaks of an fcc crystal with noise given by the temperature and for the liquid phase we get the usual behavior of a liquid. For both systems we have that the g(r) oscillates around 1 for larger distances.

## Coordination Number (CN)

The coordination number (CN), also called ligancy, of a given atom in a chemical system is defined as the number of atoms, molecules or ions bonded to it. This quantity is calculated by counting the neighbors of a given atom type that lie within a cutoff distance of the central atom.
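As a rough illustration of counting neighbors within a cutoff, here is a small pure-Python sketch. It is not the `exma` implementation: the function name `coordination_numbers`, the cubic periodic box and the toy lattice are hypothetical.

```python
def coordination_numbers(positions, box, rcut):
    """Number of neighbors within `rcut` of each atom in a cubic periodic box."""
    n = len(positions)
    neighbors = [0] * n
    for i in range(n - 1):
        for j in range(i + 1, n):
            d2 = 0.0
            for a, b in zip(positions[i], positions[j]):
                delta = a - b
                delta -= box * round(delta / box)  # minimum image convention
                d2 += delta * delta
            if d2 <= rcut * rcut:
                # the pair contributes one neighbor to each of the two atoms
                neighbors[i] += 1
                neighbors[j] += 1
    return neighbors


# toy system: 3x3x3 simple cubic lattice with unit spacing
lattice = [(float(x), float(y), float(z))
           for x in range(3) for y in range(3) for z in range(3)]
cn = coordination_numbers(lattice, box=3.0, rcut=1.1)
print(sum(cn) / len(cn))  # 6.0
```

A simple cubic lattice has 6 nearest neighbors per site, which is what the sketch returns; a close-packed structure evaluated with a first-shell cutoff would give values near 12 instead.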

From the previous graph we can define the cut-off radius to consider only the first neighbors.

```

solid_rcut = 1.29

liquid_rcut = 1.56

```

Now we import the `CoordinationNumber` class.

```

from exma import CoordinationNumber

```

The usage is the same as in the previous classes.

```

solid_cn = CoordinationNumber(fsolid, "Ar", "Ar", solid_rcut, start=10)

liquid_cn = CoordinationNumber(fliquid, "Ar", "Ar", liquid_rcut, start=10)

```

In this case there is no point in making a plot, so the `calculate` method directly gives us the mean and standard deviation of the coordination number calculated over all the production frames.

```

solid_cn.calculate(box=solid_box)

liquid_cn.calculate(box=liquid_box)

```

In both cases we obtain roughly the same CN, close to 12, the typical value of a close-packed structure such as the _fcc_ crystal and its amorphous counterpart.

| github_jupyter |

<h1> Scaling up ML using Cloud ML Engine </h1>

In this notebook, we take a previously developed TensorFlow model to predict taxi fares and package it up so that it can be run in Cloud MLE. For now, we'll run this on a small dataset. The model that was developed is rather simplistic, and therefore, the accuracy of the model is not great either. However, this notebook illustrates *how* to package up a TensorFlow model to run it within Cloud ML.

Later in the course, we will look at ways to make a more effective machine learning model.

<h2> Environment variables for project and bucket </h2>

Note that:

<ol>

<li> Your project id is the *unique* string that identifies your project (not the project name). You can find this from the GCP Console dashboard's Home page. My dashboard reads: <b>Project ID:</b> cloud-training-demos </li>

<li> Cloud training often involves saving and restoring model files. If you don't have a bucket already, I suggest that you create one from the GCP console (because it will dynamically check whether the bucket name you want is available). A common pattern is to prefix the bucket name by the project id, so that it is unique. Also, for cost reasons, you might want to use a single region bucket. </li>

</ol>

<b>Change the cell below</b> to reflect your Project ID and bucket name.

```

import os

PROJECT = 'cloud-training-demos' # REPLACE WITH YOUR PROJECT ID

BUCKET = 'cloud-training-demos-ml' # REPLACE WITH YOUR BUCKET NAME

REGION = 'us-central1' # REPLACE WITH YOUR BUCKET REGION e.g. us-central1

# for bash

os.environ['PROJECT'] = PROJECT

os.environ['BUCKET'] = BUCKET

os.environ['REGION'] = REGION

os.environ['TFVERSION'] = '1.4' # Tensorflow version

%bash

gcloud config set project $PROJECT

gcloud config set compute/region $REGION

```

Allow the Cloud ML Engine service account to read/write to the bucket containing training data.

```

%bash

PROJECT_ID=$PROJECT

AUTH_TOKEN=$(gcloud auth print-access-token)

SVC_ACCOUNT=$(curl -X GET -H "Content-Type: application/json" \

-H "Authorization: Bearer $AUTH_TOKEN" \

https://ml.googleapis.com/v1/projects/${PROJECT_ID}:getConfig \

| python -c "import json; import sys; response = json.load(sys.stdin); \

print(response['serviceAccount'])")

echo "Authorizing the Cloud ML Service account $SVC_ACCOUNT to access files in $BUCKET"

gsutil -m defacl ch -u $SVC_ACCOUNT:R gs://$BUCKET

gsutil -m acl ch -u $SVC_ACCOUNT:R -r gs://$BUCKET # error message (if bucket is empty) can be ignored

gsutil -m acl ch -u $SVC_ACCOUNT:W gs://$BUCKET

```

<h2> Packaging up the code </h2>

Take your code and put into a standard Python package structure. <a href="taxifare/trainer/model.py">model.py</a> and <a href="taxifare/trainer/task.py">task.py</a> contain the Tensorflow code from earlier (explore the <a href="taxifare/trainer/">directory structure</a>).

```

!find taxifare

!cat taxifare/trainer/model.py

```

<h2> Find absolute paths to your data </h2>

Note the absolute paths below. In Datalab, /content is mapped to the location that the home icon takes you to.

```

%bash

echo $PWD

rm -rf $PWD/taxi_trained

head -1 $PWD/taxi-train.csv

head -1 $PWD/taxi-valid.csv

```

<h2> Running the Python module from the command-line </h2>

```

%bash

rm -rf taxifare.tar.gz taxi_trained

export PYTHONPATH=${PYTHONPATH}:${PWD}/taxifare

python -m trainer.task \

--train_data_paths="${PWD}/taxi-train*" \

--eval_data_paths=${PWD}/taxi-valid.csv \

--output_dir=${PWD}/taxi_trained \

--train_steps=1000 --job-dir=./tmp

%bash

ls $PWD/taxi_trained/export/exporter/

%writefile ./test.json

{"pickuplon": -73.885262,"pickuplat": 40.773008,"dropofflon": -73.987232,"dropofflat": 40.732403,"passengers": 2}

%bash

model_dir=$(ls ${PWD}/taxi_trained/export/exporter)

gcloud ml-engine local predict \

--model-dir=${PWD}/taxi_trained/export/exporter/${model_dir} \

--json-instances=./test.json

```

<h2> Running locally using gcloud </h2>

```

%bash

rm -rf taxifare.tar.gz taxi_trained

gcloud ml-engine local train \

--module-name=trainer.task \

--package-path=${PWD}/taxifare/trainer \

-- \

--train_data_paths=${PWD}/taxi-train.csv \

--eval_data_paths=${PWD}/taxi-valid.csv \

--train_steps=1000 \

--output_dir=${PWD}/taxi_trained

```

When I ran it (due to random seeds, your results will be different), the ```average_loss``` (Mean Squared Error) on the evaluation dataset was 187, meaning that the RMSE was around 13.7.
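The step from the reported loss to the RMSE is just a square root. A tiny sketch of that arithmetic, using the value quoted above (the variable names are illustrative only):

```python
import math

average_loss = 187.0  # mean squared error reported on the evaluation dataset
rmse = math.sqrt(average_loss)  # RMSE is the square root of the MSE
print(round(rmse, 2))  # 13.67
```

Because the RMSE is in the same units as the fare, it is easier to interpret than the raw loss.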

```

from google.datalab.ml import TensorBoard

TensorBoard().start('./taxi_trained')

for pid in TensorBoard.list()['pid']:
    TensorBoard().stop(pid)
    print('Stopped TensorBoard with pid {}'.format(pid))

```

If the above step (to stop TensorBoard) appears stalled, just move on to the next step. You don't need to wait for it to return.

```

!ls $PWD/taxi_trained

```

<h2> Submit training job using gcloud </h2>

First copy the training data to the cloud. Then, launch a training job.

After you submit the job, go to the cloud console (http://console.cloud.google.com) and select <b>Machine Learning | Jobs</b> to monitor progress.

<b>Note:</b> Don't be concerned if the notebook stalls (with a blue progress bar) or returns with an error about being unable to refresh auth tokens. This is a long-lived Cloud job and work is going on in the cloud. Use the Cloud Console link (above) to monitor the job.

```

%bash

echo $BUCKET

gsutil -m rm -rf gs://${BUCKET}/taxifare/smallinput/

gsutil -m cp ${PWD}/*.csv gs://${BUCKET}/taxifare/smallinput/

%%bash

OUTDIR=gs://${BUCKET}/taxifare/smallinput/taxi_trained

JOBNAME=lab3a_$(date -u +%y%m%d_%H%M%S)

echo $OUTDIR $REGION $JOBNAME

gsutil -m rm -rf $OUTDIR

gcloud ml-engine jobs submit training $JOBNAME \

--region=$REGION \

--module-name=trainer.task \

--package-path=${PWD}/taxifare/trainer \

--job-dir=$OUTDIR \

--staging-bucket=gs://$BUCKET \

--scale-tier=BASIC \

--runtime-version=$TFVERSION \

-- \

--train_data_paths="gs://${BUCKET}/taxifare/smallinput/taxi-train*" \

--eval_data_paths="gs://${BUCKET}/taxifare/smallinput/taxi-valid*" \

--output_dir=$OUTDIR \

--train_steps=10000

```

Don't be concerned if the notebook appears stalled (with a blue progress bar) or returns with an error about being unable to refresh auth tokens. This is a long-lived Cloud job and work is going on in the cloud.

<b>Use the Cloud Console link to monitor the job and do NOT proceed until the job is done.</b>

<h2> Deploy model </h2>

Find out the actual name of the subdirectory where the model is stored and use it to deploy the model. Deploying the model will take up to <b>5 minutes</b>.

```

%bash

gsutil ls gs://${BUCKET}/taxifare/smallinput/taxi_trained/export/exporter

%bash

MODEL_NAME="taxifare"

MODEL_VERSION="v1"

MODEL_LOCATION=$(gsutil ls gs://${BUCKET}/taxifare/smallinput/taxi_trained/export/exporter | tail -1)

echo "Run these commands one-by-one (the very first time, you'll create a model and then create a version)"

#gcloud ml-engine versions delete ${MODEL_VERSION} --model ${MODEL_NAME}

#gcloud ml-engine models delete ${MODEL_NAME}

gcloud ml-engine models create ${MODEL_NAME} --regions $REGION

gcloud ml-engine versions create ${MODEL_VERSION} --model ${MODEL_NAME} --origin ${MODEL_LOCATION} --runtime-version $TFVERSION

```

<h2> Prediction </h2>

```

%bash

gcloud ml-engine predict --model=taxifare --version=v1 --json-instances=./test.json

from googleapiclient import discovery

from oauth2client.client import GoogleCredentials

import json

credentials = GoogleCredentials.get_application_default()

api = discovery.build('ml', 'v1', credentials=credentials,
                      discoveryServiceUrl='https://storage.googleapis.com/cloud-ml/discovery/ml_v1_discovery.json')

# One instance with the same fields as test.json
request_data = {'instances':
  [
    {
      'pickuplon': -73.885262,
      'pickuplat': 40.773008,
      'dropofflon': -73.987232,
      'dropofflat': 40.732403,
      'passengers': 2,
    }
  ]
}

parent = 'projects/%s/models/%s/versions/%s' % (PROJECT, 'taxifare', 'v1')

response = api.projects().predict(body=request_data, name=parent).execute()

print("response={0}".format(response))

```

<h2> Train on larger dataset </h2>

I have already followed the steps below and the files are already available. <b> You don't need to do the steps in this comment. </b> In the next chapter (on feature engineering), we will avoid all this manual processing by using Cloud Dataflow.

Go to http://bigquery.cloud.google.com/ and type the query:

<pre>

SELECT

(tolls_amount + fare_amount) AS fare_amount,

pickup_longitude AS pickuplon,

pickup_latitude AS pickuplat,

dropoff_longitude AS dropofflon,

dropoff_latitude AS dropofflat,

passenger_count*1.0 AS passengers,

'nokeyindata' AS key

FROM

[nyc-tlc:yellow.trips]

WHERE

trip_distance > 0

AND fare_amount >= 2.5

AND pickup_longitude > -78

AND pickup_longitude < -70

AND dropoff_longitude > -78

AND dropoff_longitude < -70

AND pickup_latitude > 37

AND pickup_latitude < 45

AND dropoff_latitude > 37

AND dropoff_latitude < 45

AND passenger_count > 0

AND ABS(HASH(pickup_datetime)) % 1000 == 1

</pre>

Note that this is now 1,000,000 rows (i.e. 100x the original dataset). Export this to CSV using the following steps (Note that <b>I have already done this and made the resulting GCS data publicly available</b>, so you don't need to do it.):

<ol>

<li> Click on the "Save As Table" button and note down the name of the dataset and table.

<li> On the BigQuery console, find the newly exported table in the left-hand-side menu, and click on the name.

<li> Click on "Export Table"

<li> Supply your bucket name and give it the name train.csv (for example: gs://cloud-training-demos-ml/taxifare/ch3/train.csv). Note down what this is. Wait for the job to finish (look at the "Job History" on the left-hand-side menu)

<li> In the query above, change the final "== 1" to "== 2" and export this to Cloud Storage as valid.csv (e.g. gs://cloud-training-demos-ml/taxifare/ch3/valid.csv)

<li> Download the two files, remove the header line and upload it back to GCS.

</ol>

<p/>

<p/>

<h2> Run Cloud training on 1-million row dataset </h2>

This took 60 minutes and used 1 million rows as input. The model is exactly the same as above. The only changes are to the input (to use the larger dataset) and to the Cloud MLE tier (to use STANDARD_1 instead of BASIC -- STANDARD_1 is approximately 10x more powerful than BASIC). At the end of the training the loss was 32, but the RMSE (calculated on the validation dataset) was stubbornly at 9.03. So, simply adding more data doesn't help.

```

%%bash

XXXXX this takes 60 minutes. if you are sure you want to run it, then remove this line.

OUTDIR=gs://${BUCKET}/taxifare/ch3/taxi_trained

JOBNAME=lab3a_$(date -u +%y%m%d_%H%M%S)

CRS_BUCKET=cloud-training-demos # use the already exported data

echo $OUTDIR $REGION $JOBNAME

gsutil -m rm -rf $OUTDIR

gcloud ml-engine jobs submit training $JOBNAME \

--region=$REGION \

--module-name=trainer.task \

--package-path=${PWD}/taxifare/trainer \

--job-dir=$OUTDIR \

--staging-bucket=gs://$BUCKET \

--scale-tier=STANDARD_1 \

--runtime-version=$TFVERSION \

-- \

--train_data_paths="gs://${CRS_BUCKET}/taxifare/ch3/train.csv" \

--eval_data_paths="gs://${CRS_BUCKET}/taxifare/ch3/valid.csv" \

--output_dir=$OUTDIR \

--train_steps=100000

```

## Challenge Exercise

Modify your solution to the challenge exercise in d_trainandevaluate.ipynb appropriately. Make sure that you implement training and deployment. Increase the size of your dataset by 10x since you are running on the cloud. Does your accuracy improve?

Copyright 2016 Google Inc. Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License

| github_jupyter |

# Train-validation tagging

This notebook shows how to split a training dataset into train and validation folds using tags

**Input**:

- Source project

- Train-validation split ratio

**Output**:

- New project with images randomly tagged by `train` or `val`, based on split ratio
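The tagging rule itself does not depend on any API, so it can be sketched with the standard library alone. The helper `assign_split_tags` and the image names below are hypothetical, and the seeded generator is an added assumption for reproducibility.

```python
import random


def assign_split_tags(names, validation_fraction, seed=0):
    """Map each name to 'val' with probability `validation_fraction`, else 'train'."""
    rng = random.Random(seed)  # seeded so that the split is reproducible
    return {
        name: "val" if rng.random() <= validation_fraction else "train"
        for name in names
    }


image_names = ["img_{}.jpg".format(i) for i in range(10)]
tags = assign_split_tags(image_names, validation_fraction=0.4)
print(tags)
```

Each image is tagged independently, so the realized validation share only approximates the requested ratio, especially for small datasets. Shuffling the names and slicing at a fixed index would give an exact split instead.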

## Configuration

Edit the following settings for your own case

```

import supervisely_lib as sly

from tqdm import tqdm

import random

import os

team_name = "jupyter_tutorials"

workspace_name = "cookbook"

project_name = "tutorial_project"

dst_project_name = "tutorial_project_tagged"

validation_fraction = 0.4

tag_meta_train = sly.TagMeta('train', sly.TagValueType.NONE)

tag_meta_val = sly.TagMeta('val', sly.TagValueType.NONE)

# Obtain server address and your api_token from environment variables

# Edit those values if you run this notebook on your own PC

address = os.environ['SERVER_ADDRESS']

token = os.environ['API_TOKEN']

# Initialize API object

api = sly.Api(address, token)

```

## Verify input values

Test that context (team / workspace / project) exists

```

# Get IDs of team, workspace and project by names

team = api.team.get_info_by_name(team_name)

if team is None:
    raise RuntimeError("Team {!r} not found".format(team_name))

workspace = api.workspace.get_info_by_name(team.id, workspace_name)

if workspace is None:
    raise RuntimeError("Workspace {!r} not found".format(workspace_name))

project = api.project.get_info_by_name(workspace.id, project_name)

if project is None:
    raise RuntimeError("Project {!r} not found".format(project_name))

print("Team: id={}, name={}".format(team.id, team.name))

print("Workspace: id={}, name={}".format(workspace.id, workspace.name))

print("Project: id={}, name={}".format(project.id, project.name))

```

## Get Source ProjectMeta

```

meta_json = api.project.get_meta(project.id)

meta = sly.ProjectMeta.from_json(meta_json)

print("Source ProjectMeta: \n", meta)

```

## Construct Destination ProjectMeta

```

dst_meta = meta.add_img_tag_metas([tag_meta_train, tag_meta_val])

print("Destination ProjectMeta:\n", dst_meta)

```

## Create Destination project

```

# check if destination project already exists. If yes - generate new free name

if api.project.exists(workspace.id, dst_project_name):
    dst_project_name = api.project.get_free_name(workspace.id, dst_project_name)

print("Destination project name: ", dst_project_name)

dst_project = api.project.create(workspace.id, dst_project_name)

api.project.update_meta(dst_project.id, dst_meta.to_json())

print("Destination project has been created: id={}, name={!r}".format(dst_project.id, dst_project.name))

```

## Iterate over all images, tag them and add to destination project

```

for dataset in api.dataset.get_list(project.id):
    print('Dataset: {}'.format(dataset.name), flush=True)
    dst_dataset = api.dataset.create(dst_project.id, dataset.name)
    images = api.image.get_list(dataset.id)
    with tqdm(total=len(images), desc="Process annotations") as progress_bar:
        for batch in sly.batched(images):
            image_ids = [image_info.id for image_info in batch]
            image_names = [image_info.name for image_info in batch]

            ann_infos = api.annotation.download_batch(dataset.id, image_ids)

            anns_to_upload = []
            for ann_info in ann_infos:
                ann = sly.Annotation.from_json(ann_info.annotation, meta)
                # Tag as 'val' with probability validation_fraction, otherwise as 'train'
                tag = sly.Tag(tag_meta_val) if random.random() <= validation_fraction else sly.Tag(tag_meta_train)
                ann = ann.add_tag(tag)
                anns_to_upload.append(ann)

            dst_image_infos = api.image.upload_ids(dst_dataset.id, image_names, image_ids)
            dst_image_ids = [image_info.id for image_info in dst_image_infos]
            api.annotation.upload_anns(dst_image_ids, anns_to_upload)
            progress_bar.update(len(batch))

print("Project {!r} has been successfully uploaded".format(dst_project.name))

print("Number of images: ", api.project.get_images_count(dst_project.id))

```

| github_jupyter |

## Train GPT on addition

Train a GPT model on a dedicated addition dataset to see if a Transformer can learn to add.
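Before the notebook code, a minimal sketch of how an addition problem can be turned into a sequence of integers may help. It reuses the character-level vocabulary that the notebook defines further down; the `encode`/`decode` helpers and the exact placement of the `answer` and `end` markers are assumptions made for illustration, not the notebook's own functions.

```python
import string

# special tokens first, then every character listed in the notebook's vocabulary
vocab = ["pad", "answer", "end"] + list(
    " " + string.punctuation + string.digits + string.ascii_uppercase + string.ascii_lowercase
)
char_to_id = {tok: i for i, tok in enumerate(vocab)}
id_to_char = {i: tok for tok, i in char_to_id.items()}


def encode(question, answer):
    """Encode question characters, an 'answer' marker, answer characters and an 'end' marker."""
    ids = [char_to_id[ch] for ch in question]
    ids.append(char_to_id["answer"])
    ids.extend(char_to_id[ch] for ch in answer)
    ids.append(char_to_id["end"])
    return ids


def decode(ids):
    """Map ids back to characters, skipping the three special tokens (ids 0 to 2)."""
    return "".join(id_to_char[i] for i in ids if i > 2)


ids = encode("12+34", "46")
print(ids)
print(decode(ids))  # 12+3446
```

The model only ever sees the integer list; the markers let a training loop mask the question part of the loss and tell generation where to stop.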

```

# set up logging

import logging

logging.basicConfig(

format="%(asctime)s - %(levelname)s - %(name)s - %(message)s",

datefmt="%m/%d/%Y %H:%M:%S",

level=logging.INFO,

)

# make deterministic

from mingpt.utils import set_seed

set_seed(42)

import numpy as np

import torch

import string

import os

from tqdm.auto import tqdm

import torch.nn as nn

from torch.nn import functional as F

%load_ext autoreload

%autoreload 2

test = []

for i in range(7):
    test.append([])

test[0]

class PrepareData:

""" Tokenizer helper functions """

def __init__(self, mem_slots):

self.mem_slots = mem_slots

self.vocab = ['pad', 'answer', 'end'] + list(' ' + string.punctuation + string.digits + string.ascii_uppercase + string.ascii_lowercase)

self.vocab_size = len(self.vocab) # 10 possible digits 0..9

# Max input characters plus max answer characters

self.src_max_size = 30

self.max_trg = 5

self.block_size = 160 + 32

self.t = {k: v for v, k in enumerate(self.vocab)} # Character to ID

self.idx = {v: k for k, v in self.t.items()} # ID to Character

def initiate_mem_slot_data(self, fname):

# split up all addition problems into either training data or test data

# head_tail = os.path.split(fname)

src, trg = [], []

with open(fname, "r") as file:

text = file.read()[:-1] # Excluding the final linebreak

text_list = text.split('\n')

src = text_list[0:][::2]

trg = text_list[1:][::2]

os.remove(fname)

with open(fname, "a") as file:

for src, trg in zip(src, trg):

file.write(src + '\n')

file.write(trg + '\n')

for _ in range(self.mem_slots):

file.write('\n')

def prepare_data(self, fname):

# split up all addition problems into either training data or test data

# head_tail = os.path.split(fname)

dataset = []

for _ in range(self.mem_slot + 2):

dataset.append([])

with open(fname, "r") as file:

text = file.read()[:-1] # Excluding the final linebreak

text_list = text.split('\n')

for i in range(self.mem_slot + 2):

dataset[i] = text_list[i:][::self.mem_slot + 2]

self.max_src = len(max(dataset[0], key=len))

self.max_trg = len(max(dataset[1], key=len))

return dataset

def sort_data_by_len(self, indexes, data):

test_data_by_length = []

for index in indexes:

test_data_by_length.append([index, len(data[index])])

test_data_by_length = sorted(test_data_by_length, key=lambda x: x[1])

return [i[0] for i in test_data_by_length]

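    def src2Canvas(self, src):
        # Left-align the source token IDs on a canvas of 'pad' tokens
        # (assumes `src` is already a list of token IDs).
        x = [self.t['pad']] * self.src_max_size
        x[:len(src)] = src
        return x

    def trg2Canvas(self):
        # Empty target canvas. Uses self.max_trg because no trg_max_size
        # attribute is ever defined on this class.
        return [self.t['pad']] * self.max_trg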

def tensor2string(self, tensor):

return ''.join([self.idx[tok] for tok in tensor.tolist()])

    def string2digits(self, string):
        # Token IDs are integers, so return a list instead of joining them into a string
        return [self.t[tok] for tok in string]

def mask_padding(self, digits):

return [-100 if tok == self.t['pad'] else tok for tok in digits]

    def mask_question(self, digits, src):
        # `digits` is expected to be a tensor; -100 masks the question positions from the loss
        digits[:len(src)] = -100
        return digits

def locate_token(self, token, tensor):

return None if self.t[token] not in tensor.tolist() else tensor.tolist().index(self.t[token])

from torch.utils.data import Dataset

class AdditionDataset(Dataset):

"""

Returns addition problems of up to some number of digits in the inputs. Recall

that all GPT cares about are sequences of integers, and completing them according to

patterns in the data. Therefore, we have to somehow encode addition problems

as a sequence of integers.

"""

def __init__(self, fname, split):

self.split = split # train/test

self.vocab = ['pad', 'answer', 'end', 'right', 'wrong'] + list(' ' + string.punctuation + string.digits + string.ascii_uppercase + string.ascii_lowercase)

self.vocab_size = len(self.vocab) # special tokens plus all printable characters

# Max input characters plus max answer characters

# self.block_size = 160 + 32

self.t = {k: v for v, k in enumerate(self.vocab)} # Character to ID

self.idx = {v: k for k, v in self.t.items()} # ID to Character

# split up all addition problems into either training data or test data

with open(fname, "r") as file:

text = file.read()[:-1] # Excluding the final linebreak

text_list = text.split('\n')

self.src = text_list[0:][::2]

self.trg = text_list[1:][::2]

self.src_trg = [src+trg for src,trg in zip(self.src,self.trg)]

self.max_trg = np.ceil((sum(map(len, self.trg)) / len(self.trg)))

self.block_size = len(max(self.src_trg, key=len)) + 1

data_len = len(self.src) # total number of possible combinations

r = np.random.RandomState(1337) # make deterministic

perm = r.permutation(data_len)

num_test = int(data_len*0.1) # hold out 10% of the whole dataset for testing

# Sort test data by length to batch predictions

test_data_by_length = []

for index in perm[:num_test]:

test_data_by_length.append([index, len(self.src[index])])

test_data_by_length = sorted(test_data_by_length, key=lambda x: x[1])

test_data_by_length = [i[0] for i in test_data_by_length]

self.ixes = np.array(test_data_by_length) if split == 'test' else perm[num_test:]

def __len__(self):

return self.ixes.size

def __getitem__(self, idx):

# given a problem index idx, first recover the associated a + b

idx = self.ixes[idx]

src = self.src[idx]

trg = self.trg[idx]

src_trg = list(src) + ['answer'] + list(trg) + ['end']

src_trg = [self.t[tok] for tok in src_trg] # convert each character to its token index

# x will be input to GPT and y will be the associated expected outputs

x = [self.t['pad']] * self.block_size

y = [self.t['pad']] * self.block_size

x[:len(src_trg[:-1])] = src_trg[:-1]

y[:len(src_trg[1:])] = src_trg[1:] # predict the next token in the sequence

y = [-100 if tok == self.t['pad'] else tok for tok in y] # -100 will mask loss to zero

x = torch.tensor(x, dtype=torch.long)

y = torch.tensor(y, dtype=torch.long)

y[:len(src)] = -100 # we will only train in the output locations. -100 will mask loss to zero

return x, y

# create a dataset

easy = 'data/numbers__place_value.txt'

medium = 'data/numbers__is_prime.txt'

hard = 'data/numbers__list_prime_factors.txt'

train_dataset = AdditionDataset(fname=easy, split='train')

test_dataset = AdditionDataset(fname=easy, split='test')

# for i in range(0, len(train_dataset)):

# if len(train_dataset[i][0]) != 52 or len(train_dataset[i][1]) != 52:

# print(train_dataset.block_size)

# print(len(train_dataset[i][0]))

# print(len(train_dataset[i][1]))

# print(train_dataset[i])

train_dataset[0] # sample a training instance just to see what one raw example looks like

from mingpt.model import GPT, GPTConfig, GPT1Config

# initialize a baby GPT model

mconf = GPTConfig(train_dataset.vocab_size, train_dataset.block_size,

n_layer=2, n_head=4, n_embd=128)

model = GPT(mconf)

from mingpt.trainer import Trainer, TrainerConfig

# initialize a trainer instance and kick off training

tconf = TrainerConfig(max_epochs=1, batch_size=512, learning_rate=6e-4,

lr_decay=True, warmup_tokens=1024, final_tokens=50*len(train_dataset)*(14+1),

num_workers=0)

trainer = Trainer(model, train_dataset, test_dataset, tconf)

trainer.train()

trainer.save_checkpoint()

# now let's give the trained model an addition exam

from torch.utils.data.dataloader import DataLoader

from mingpt.utils import sample, Tokenizer

def give_exam(dataset, batch_size=1, max_batch_size=512, max_batches=-1):

t = Tokenizer(dataset)

results, examples = [], []

loader = DataLoader(dataset, batch_size=batch_size, shuffle=False)

prev_src_len, predict, batch, x_in = 0, 0, 0, 0

pbar = tqdm(enumerate(loader), total=len(loader))

for b, (x, y) in pbar:

src_len = t.locateToken('answer', x[0])

x_in = leftover if prev_src_len == -1 else x_in

# Concat input source with same length

if prev_src_len == src_len:

x_in = torch.cat((x_in, x), 0)

elif prev_src_len == 0:

x_in = x

else:

prev_src_len = -1

predict = 1

leftover = x

prev_src_len = src_len

batch += 1

# Make a prediction when the source length increases or the batch reaches max_batch_size

if predict or batch == max_batch_size:

src_len = t.locateToken('answer', x_in[0]) + 1

batch, predict, prev_src_len, = 0, 0, 0

x_cut = x_in[:, :src_len]

pred = x_cut.to(trainer.device)

pred = sample(model, pred, int(dataset.max_trg+1))

for i in range(x_in.size(0)):

pad, end= t.locateToken('pad', x_in[i]), t.locateToken('end', pred[i])

x, out = x_in[i][src_len:pad], pred[i][src_len:end]

x, out = t.tensor2string(x), t.tensor2string(out)

correct = 1 if x == out else 0

results.append(correct)

question = x_in[i][:src_len-1]

question_str = t.tensor2string(question)

if not correct:

examples.append([question_str, x, out, t.tensor2string(x_cut[i]), t.tensor2string(x_in[i]), t.tensor2string(pred[i]), pad, end])

if max_batches >= 0 and b+1 >= max_batches:

break

print("final score: %d/%d = %.2f%% correct" % (np.sum(results), len(results), 100*np.mean(results)))

return examples

# test set: how well did we generalize?

examples = give_exam(test_dataset, batch_size=1, max_batches=-1)

print("Q: %s\nX:%s\nO:%s\n" % (examples[0][0], examples[0][1] , examples[0][2]))

for item in examples:

print("Question:", item[0])

print("X:", item[1])

print("Out:", item[2])

# training set: how well did we memorize?

give_exam(train_dataset, batch_size=1024, max_batches=1)

# well that's amusing... our model learned everything except 55 + 45

import itertools as it

f = ['-1', '-1', '2', '1', '1']

it.takewhile(lambda x: x!='2', f)

f

```

| github_jupyter |

# Demo 1: a demo based on visual-92-categories-task MEG data

Here is a demo based on the publicly available visual-92-categories-task MEG datasets. (Reference: Cichy, R. M., Pantazis, D., & Oliva, A. “Resolving human object recognition in space and time.” Nature neuroscience (2014): 17(3), 455-462.) MNE-Python has been used to load this dataset.

```

# -*- coding: utf-8 -*-

' a demo based on visual-92-categories-task MEG data '

# Users can learn how to use Neurora to do research based on EEG/MEG etc data.

__author__ = 'Zitong Lu'

import numpy as np

import os.path as op

from pandas import read_csv

import mne

from mne.io import read_raw_fif

from mne.datasets import visual_92_categories

from neurora.nps_cal import nps

from neurora.rdm_cal import eegRDM

from neurora.rdm_corr import rdm_correlation_spearman

from neurora.corr_cal_by_rdm import rdms_corr

from neurora.rsa_plot import plot_rdm, plot_corrs_by_time, plot_nps_hotmap, plot_corrs_hotmap

```

## Section 1: loading example data

Here, we use the MNE-Python toolbox to load and process the data. You can learn more about this process from the MNE-Python documentation (https://mne-tools.github.io/stable/index.html).

```

data_path = visual_92_categories.data_path()
fname = op.join(data_path, 'visual_stimuli.csv')
conds = read_csv(fname)

# Build one '/'-joined condition label per stimulus
conditions = []
for c in conds.values:
    cond_tags = list(c[:2])
    cond_tags += [('not-' if i == 0 else '') + conds.columns[k]
                  for k, i in enumerate(c[2:], 2)]
    conditions.append('/'.join(map(str, cond_tags)))
event_id = dict(zip(conditions, conds.trigger + 1))
print(event_id)

sub_id = [0, 1, 2]
megdata = np.zeros([3, 92, 306, 1101], dtype=np.float32)
subindex = 0
for id in sub_id:
    fname = op.join(data_path, 'sample_subject_'+str(id)+'_tsss_mc.fif')
    raw = read_raw_fif(fname)
    events = mne.find_events(raw, min_duration=.002)
    events = events[events[:, 2] <= 92]
    subdata = np.zeros([92, 306, 1101], dtype=np.float32)
    # Average the epochs of each of the 92 conditions
    for i in range(92):
        epochs = mne.Epochs(raw, events=events, event_id=i + 1, baseline=None,
                            tmin=-0.1, tmax=1, preload=True)
        data = epochs.average().data
        subdata[i] = data
    megdata[subindex] = subdata
    subindex = subindex + 1

# the shape of MEG data: megdata is [3, 92, 306, 1101]
# n_subs = 3, n_conditions = 92, n_channels = 306, n_timepoints = 1101 (-100ms to 1000ms)

```

## Section 2: Preprocessing

```

# shape of megdata: [n_subs, n_cons, n_chls, n_ts] -> [n_cons, n_subs, n_chls, n_ts]

megdata = np.transpose(megdata, (1, 0, 2, 3))

# shape of megdata: [n_cons, n_subs, n_chls, n_ts] -> [n_cons, n_subs, n_trials, n_chls, n_ts]

# here data is averaged, so set n_trials = 1

megdata = np.reshape(megdata, [92, 3, 1, 306, 1101])

```
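To see what these two steps do to the array layout, here is a small sketch on a dummy array. The sizes are shrunk for illustration and the names `toy`, `swapped` and `with_trials` are made up; only NumPy is assumed.

```python
import numpy as np

# Toy stand-in for megdata: [n_subs, n_cons, n_chls, n_ts]
n_subs, n_cons, n_chls, n_ts = 3, 4, 2, 5
toy = np.arange(n_subs * n_cons * n_chls * n_ts, dtype=np.float32)
toy = toy.reshape(n_subs, n_cons, n_chls, n_ts)

# Swap the subject and condition axes: -> [n_cons, n_subs, n_chls, n_ts]
swapped = np.transpose(toy, (1, 0, 2, 3))

# Insert a singleton trial axis: -> [n_cons, n_subs, 1, n_chls, n_ts]
with_trials = np.reshape(swapped, [n_cons, n_subs, 1, n_chls, n_ts])

print(swapped.shape)
print(with_trials.shape)

# The values are unchanged; only the way they are addressed differs
print(np.array_equal(with_trials[2, 1, 0], toy[1, 2]))
```

The last line checks that condition 2 of subject 1 is the same block of data before and after the rearrangement.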

## Section 3: Calculating the neural pattern similarity

```

# Select the data for two stimulus categories and calculate the
# neural pattern similarity (NPS) between them:
# seeing human faces vs. seeing non-human faces

# get data under the "humanface" condition
megdata_humanface = megdata[12:24]
# get data under the "nonhumanface" condition
megdata_nonhumanface = megdata[36:48]

# Average the data over the conditions in each category
avg_megdata_humanface = np.average(megdata_humanface, axis=0)
avg_megdata_nonhumanface = np.average(megdata_nonhumanface, axis=0)

# Create the NPS input data
# Here we extract the data from the first 5 channels between 0ms and 1000ms
nps_data = np.zeros([2, 3, 1, 5, 1000])  # n_cons=2, n_subs=3, n_trials=1, n_chls=5, n_ts=1000
nps_data[0] = avg_megdata_humanface[:, :, :5, 100:1100]  # the data start at -100ms,
nps_data[1] = avg_megdata_nonhumanface[:, :, :5, 100:1100]  # so 100:1100 corresponds to 0ms-1000ms

# Calculate the NPS with a 10ms time-window
# (the sampling frequency is 1000Hz, i.e. one sample per ms, so time_win=10 samples)
# The result gets its own name so that the imported nps() function is not overwritten
nps_result = nps(nps_data, time_win=10, time_step=10, sub_opt=0)

# Plot the NPS results
plot_nps_hotmap(nps_result[:, :, 0], time_unit=[0, 0.01], abs=True)

# Smooth the results and plot
plot_nps_hotmap(nps_result[:, :, 0], time_unit=[0, 0.01], abs=True, smooth=True)

```

## Section 4: Calculating single RDM and Plotting

```

# Calculate the RDM based on the data during 190ms-210ms

rdm = eegRDM(megdata[:, :, :, :, 290:310], sub_opt=0)

# Plot this RDM

plot_rdm(rdm, percentile=True)

```

## Section 5: Calculating RDMs and Plotting

```

# Calculate the RDMs with a 10ms time-window
# (the sampling frequency is 1000Hz, i.e. one sample per ms, so time_win=10 samples)
rdms = eegRDM(megdata, time_opt=1, time_win=10, time_step=10, sub_opt=0)

# Plot the RDMs of -100ms, 0ms, 100ms, 200ms, 300ms, 400ms
# (window index t starts at t*10ms - 100ms)
times = [0, 10, 20, 30, 40, 50]
for t in times:
    plot_rdm(rdms[t], percentile=True)

```
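The index arithmetic used throughout this demo (a recording that starts at -100ms, sampled at 1000Hz, windowed in steps of 10 samples) can be written down as a small sketch. The helper names `ms_to_sample` and `ms_to_window` are hypothetical and not part of NeuroRA.

```python
def ms_to_sample(t_ms, t_start_ms=-100, sfreq_hz=1000):
    """Sample index of time t_ms when the recording starts at t_start_ms."""
    return int(round((t_ms - t_start_ms) * sfreq_hz / 1000))


def ms_to_window(t_ms, t_start_ms=-100, sfreq_hz=1000, time_step=10):
    """Index of the time window (time_step samples wide) that starts at t_ms."""
    return ms_to_sample(t_ms, t_start_ms, sfreq_hz) // time_step


print(ms_to_sample(0), ms_to_sample(190))    # 100 290
print(ms_to_window(200), ms_to_window(800))  # 30 90
print(1101 // 10)                            # 110 full windows in 1101 samples
```

So sample 290 is 190ms, and window 30 is the one that starts at 200ms.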

## Section 6: Calculating the Similarity between two RDMs

```

# RDM of 200ms

rdm_sample1 = rdms[30]

# RDM of 800ms

rdm_sample2 = rdms[90]

# calculate the correlation coefficient between these two RDMs

corr = rdm_correlation_spearman(rdm_sample1, rdm_sample2)

print(corr)

```

## Section 7: Calculating the Similarity and Plotting

```

# Calculate the representational similarity between 200ms and all the time points

corrs1 = rdms_corr(rdm_sample1, rdms)

# Plot the corrs1

corrs1 = np.reshape(corrs1, [1, 110, 2])

plot_corrs_by_time(corrs1, time_unit=[-0.1, 0.01])

# Calculate and Plot multi-corrs

corrs2 = rdms_corr(rdm_sample2, rdms)

corrs = np.zeros([2, 110, 2])

corrs[0] = corrs1

corrs[1] = corrs2

labels = ["by 200ms's data", "by 800ms's data"]

plot_corrs_by_time(corrs, labels=labels, time_unit=[-0.1, 0.01])

```

## Section 8: Calculating the RDMs for each channel

```

# Calculate the RDMs for the first six channels by a 10ms time-window between 0ms and 1000ms

rdms_chls = eegRDM(megdata[:, :, :, :6, 100:1100], chl_opt=1, time_opt=1, time_win=10, time_step=10, sub_opt=0)

# Create a 'human-related' coding model RDM

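model_rdm = np.ones([92, 92])
for i in range(92):
    for j in range(92):
        # the first 24 conditions are treated as similar to each other
        if (i < 24) and (j < 24):
            model_rdm[i, j] = 0
    # a condition is never dissimilar to itself
    model_rdm[i, i] = 0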

# Plot this coding model RDM

plot_rdm(model_rdm)

# Calculate the representational similarity between the neural activities and the coding model for each channel

corrs_chls = rdms_corr(model_rdm, rdms_chls)

# Plot the representational similarity results

plot_corrs_hotmap(corrs_chls, time_unit=[0, 0.01])

# Set more parameters and re-plot

plot_corrs_hotmap(corrs_chls, time_unit=[0, 0.01], lim=[-0.15, 0.15], smooth=True, cmap='bwr')

```
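The nested loop that builds the coding model RDM can also be expressed with array slicing. The sketch below rebuilds the loop version and a vectorized version side by side and checks that they agree; `loop_rdm`, `vec_rdm` and `n_human` are illustrative names, and only NumPy is assumed.

```python
import numpy as np

n_conditions, n_human = 92, 24

# Loop version, following the cell above
loop_rdm = np.ones([n_conditions, n_conditions])
for i in range(n_conditions):
    for j in range(n_conditions):
        if (i < n_human) and (j < n_human):
            loop_rdm[i, j] = 0
    loop_rdm[i, i] = 0

# Vectorized version
vec_rdm = np.ones([n_conditions, n_conditions])
vec_rdm[:n_human, :n_human] = 0   # the first 24 conditions form one similar block
np.fill_diagonal(vec_rdm, 0)      # every condition is identical to itself

print(np.array_equal(loop_rdm, vec_rdm))
```

Both versions give the same matrix; the vectorized one just makes the block structure of the model easier to read.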

| github_jupyter |

# Detailed Steps Example

#### This notebook demonstrates, step by step, how the data cleaning, peak fitting and descriptor generation work. It serves as a detailed companion to `ProcessData_PlotDescriptors_Examples.ipynb`.

## Packages and Needed Python Files Preparation

### First we import packages we need:

```

import glob

import itertools

import matplotlib

import matplotlib.pyplot as plt

import numpy as np

import os

import pandas as pd

import peakutils

import scipy

import sqlite3 as sql

from diffcapanalyzer import chachifuncs as ccf

from diffcapanalyzer import descriptors as dct

```

### Then, import the data we want to process:

```

df = pd.read_csv(os.path.join('../data/ARBIN/CS2_33/CS2_33_8_30_10.csv'))

database = 'example_db.db'

base_filename = 'CS2_33_8_30_10'

datatype = 'ARBIN'

```

## Processing Data, including Data Cleaning, Peak Fitting and Descriptors Generation

### Data Cleaning

First, we import the raw data of cycle 1

```

raw_data = ccf.load_sep_cycles(base_filename, database, datatype)

raw_df = raw_data[1]

# user can change the index of raw_df to see other cycles

fig1 = plt.figure(figsize = (8,8), facecolor = 'w', edgecolor= 'k')

plt.plot(raw_df['Voltage(V)'], raw_df['dQ/dV'], c = 'black', linewidth = 2, label = 'Raw Cycle Data')

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.xlabel('Voltage(V)', fontsize = 20)

plt.xticks(fontsize = 20)

plt.yticks(fontsize = 20)

plt.tick_params(size = 10, width = 1)

plt.title('Raw Data of Cycle 1', fontsize = 24)

# plt.xlim(0, 4)

plt.ylim(-10,10)

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle_Raw Data.png', bbox_inches='tight', dpi = 600)

```

As we can see in the raw data, there is noise and there are jumps at both ends. To eliminate them, we need to remove the data points where the dV value is zero (or close to zero).

Therefore, we execute the function `drop_inf_nan_dqdv`, which drops rows where dV=0 (or about 0) from a dataframe that has already had dV calculated, then recalculates dV and calculates dQ/dV.

```

rawdf = ccf.init_columns(raw_df, datatype)

rawdf1 = ccf.calc_dq_dqdv(rawdf, datatype)

clean_df = ccf.drop_inf_nan_dqdv(rawdf1, datatype)

# Clean charge and discharge cycles separately:

charge, discharge = ccf.sep_char_dis(clean_df, datatype)

charge = ccf.clean_charge_discharge_separately(charge, datatype)

discharge = ccf.clean_charge_discharge_separately(discharge, datatype)

fig2 = plt.figure(figsize = (8,8), facecolor = 'w', edgecolor= 'k')

plt.plot(charge['Voltage(V)'], charge['dQ/dV'], c = 'green', linewidth = 2, label = 'Clean Charge Cycle Data')

plt.plot(discharge['Voltage(V)'], discharge['dQ/dV'], c = 'green', linewidth = 2, label = 'Clean Discharge Cycle Data')

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.xlabel('Voltage(V)', fontsize = 20)

plt.xticks(fontsize = 20)

plt.yticks(fontsize = 20)

plt.tick_params(size = 10, width = 1)

plt.title('Clean Data of Cycle 1', fontsize = 24)

# plt.xlim(0, 4)

plt.ylim(-10,10)

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle-Clean Data.png', bbox_inches='tight', dpi = 600)

```
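The idea behind dropping the dV≈0 rows can be illustrated without the package. The sketch below is not the implementation of `drop_inf_nan_dqdv`; it is a minimal plain-Python version under the assumption that voltage and capacity are simple lists, and the function name and the `dv_tol` tolerance are made up for illustration.

```python
def dqdv_without_flat_steps(voltage, capacity, dv_tol=1e-6):
    """Return (voltage, dQ/dV) lists, skipping steps where |dV| <= dv_tol."""
    kept_v, kept_dqdv = [], []
    prev_v, prev_q = voltage[0], capacity[0]
    for v, q in zip(voltage[1:], capacity[1:]):
        dv = v - prev_v
        if abs(dv) <= dv_tol:
            # The voltage did not move: dQ/dV would be inf or nan, so drop the point
            continue
        kept_v.append(v)
        kept_dqdv.append((q - prev_q) / dv)
        # dV for the next point is measured from the last point we kept
        prev_v, prev_q = v, q
    return kept_v, kept_dqdv


voltage = [3.0, 3.0, 3.1, 3.1, 3.2]
capacity = [0.00, 0.01, 0.02, 0.03, 0.05]
v, dqdv = dqdv_without_flat_steps(voltage, capacity)
print(v)
print([round(x, 3) for x in dqdv])
```

The two points where the voltage did not change are dropped, and dQ/dV stays finite for the points that remain.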

To help the computer recognize peaks more easily later, we apply the Savitzky–Golay filter to our data to get a nice, smooth curve.

The cycle has already been separated into charge and discharge parts, so we smooth each part with the Savitzky–Golay filter:

```

windowlength = 9

polyorder = 3

# Apply the Savitzky–Golay filter.
# The if statement handles datasets that have fewer data points than the
# window length given to the filter. Without it, the filter throws an error
# when given a dataset with too few points. This way, we simply forego the
# smoothing and copy the unsmoothed dQ/dV values.
if len(discharge) > windowlength:
    smooth_discharge = ccf.my_savgolay(discharge, windowlength, polyorder)
else:
    discharge['Smoothed_dQ/dV'] = discharge['dQ/dV']
    smooth_discharge = discharge

# Same as above, but for the charge cycle.
if len(charge) > windowlength:
    smooth_charge = ccf.my_savgolay(charge, windowlength, polyorder)
else:
    charge['Smoothed_dQ/dV'] = charge['dQ/dV']
    smooth_charge = charge

fig3 = plt.figure(figsize = (8,8), facecolor = 'w', edgecolor= 'k')

plt.plot(charge['Voltage(V)'], charge['Smoothed_dQ/dV'], c = 'red', linewidth = 2, label = 'Smooth Charge Cycle Data')

plt.plot(discharge['Voltage(V)'], discharge['Smoothed_dQ/dV'], c = 'red', linewidth = 2, label = 'Smooth Discharge Cycle Data')

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.xlabel('Voltage(V)', fontsize = 20)

plt.xticks(fontsize = 20)

plt.yticks(fontsize = 20)

plt.tick_params(size = 10, width = 1)

plt.title('Smooth Data of Cycle 1', fontsize = 24)

#plt.xlim(2.8, 4.2)

plt.ylim(-10,10)

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle_Smooth Data.png', bbox_inches='tight', dpi = 600)

```
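For readers who want to see what a Savitzky–Golay filter does internally, here is a rough sketch: fit a low-order polynomial to each sliding window and keep the fitted value at the window centre. This is not the code behind `ccf.my_savgolay`; the function `savgol_sketch` is hypothetical, assumes an odd window length, and leaves the edge points unsmoothed for simplicity.

```python
import numpy as np


def savgol_sketch(y, window_length=9, polyorder=3):
    """Smooth y with a sliding polynomial fit (edge points are left as they are)."""
    y = np.asarray(y, dtype=float)
    if len(y) <= window_length:
        # Too few points for the window: skip smoothing, as in the cell above
        return y.copy()
    half = window_length // 2
    offsets = np.arange(-half, half + 1)
    smoothed = y.copy()
    for i in range(half, len(y) - half):
        coeffs = np.polyfit(offsets, y[i - half:i + half + 1], polyorder)
        smoothed[i] = np.polyval(coeffs, 0)  # fitted value at the window centre
    return smoothed


x = np.linspace(0, 1, 50)
cubic = x ** 3 - x

# A cubic curve passes through a cubic fit unchanged
print(np.allclose(savgol_sketch(cubic), cubic))

# Inputs shorter than the window are returned as they are
print(savgol_sketch([1.0, 2.0, 3.0]))
```

Because the filter fits a polynomial rather than taking a plain moving average, it removes noise while distorting peak shapes less, which matters for the peak finding that follows.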

### Peak Finding

Once we have the smoothed data, we apply the `peak_finder` function, which is based on the open-source package PeakUtils, to locate the peaks in both the charge and discharge data.

```

# we first create the column of the dataframe according to the datatype

(cycle_ind_col, data_point_col, volt_col, curr_col,

dis_cap_col, char_cap_col, charge_or_discharge) = ccf.col_variables(datatype)

chargeloc_dict = {}

param_df = pd.DataFrame(columns=['Cycle','Model_Parameters_charge','Model_Parameters_discharge'])

# and we determine the max length of the dataframe
if len(clean_df[cycle_ind_col].unique()) > 1:
    length_list = [len(clean_df[clean_df[cycle_ind_col] == cyc])
                   for cyc in clean_df[cycle_ind_col].unique() if cyc != 1]
    lenmax = max(length_list)
else:
    length_list = 1
    lenmax = len(clean_df)

import peakutils

import scipy.signal

peak_thresh=2/max(charge['Smoothed_dQ/dV'])

# user can change the above peak_thresh to adjust the sensitivity of the peak_finder

# apply peak_finder

i_charge, volts_i_ch, peak_heights_c = dct.peak_finder(charge,'c', windowlength, polyorder, datatype, lenmax, peak_thresh)

i_discharge, volts_i_dic, peak_heights_d = dct.peak_finder(discharge,'d', windowlength, polyorder, datatype, lenmax, peak_thresh)

# set up a figure

fig4 = plt.figure(figsize = (8,8), facecolor = 'w', edgecolor= 'k')

plt.plot(charge['Voltage(V)'], charge['Smoothed_dQ/dV'], 'r')

plt.plot(discharge['Voltage(V)'], discharge['Smoothed_dQ/dV'], 'r')

plt.xlabel('Voltage(V)', fontsize = 20)

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.xticks(fontsize = 20)

plt.yticks(fontsize = 20)

plt.tick_params(size = 10, width = 1)

plt.title('Peak Location of Cycle 1', fontsize = 24)

plt.plot(charge['Voltage(V)'][i_charge], charge['Smoothed_dQ/dV'][i_charge], 'o', c='b')

plt.plot(discharge['Voltage(V)'][i_discharge], discharge['Smoothed_dQ/dV'][i_discharge], 'o', c='b')

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle_peak_finder.png', bbox_inches='tight', dpi = 600)

```

### Model Generation

Next, we apply the functions `model_gen` and `model_eval` to generate a model that fits those peaks. The model is a mixture of pseudo-Voigt distributions combined with a Gaussian function, fitted to the peaks.

```

# change the number of cyc according to the cycle number

cyc = 1

# we first assign some variables in charge cycle

V_series_c = smooth_charge[volt_col]

dQdV_series_c = smooth_charge['Smoothed_dQ/dV']

# apply model_gen and model_eval to generate the model and iterate from the initial guess to the best fit

par_c, mod_c, indices_c = dct.model_gen(V_series_c, dQdV_series_c, 'c', i_charge, cyc, peak_thresh)

model_c = dct.model_eval(V_series_c, dQdV_series_c, 'c', par_c, mod_c)

if model_c is not None:
    mod_y_c = mod_c.eval(params=model_c.params, x=V_series_c)
    myseries_c = pd.Series(mod_y_c)
    myseries_c = myseries_c.rename('Model')
    model_c_vals = model_c.values
    new_df_mody_c = pd.concat([myseries_c, V_series_c, dQdV_series_c, smooth_charge[cycle_ind_col]], axis=1)
else:
    mod_y_c = None
    new_df_mody_c = None
    model_c_vals = None

# now the discharge

V_series_d = smooth_discharge[volt_col]

dQdV_series_d = smooth_discharge['Smoothed_dQ/dV']

par_d, mod_d, indices_d = dct.model_gen(V_series_d, dQdV_series_d, 'd', i_discharge, cyc, peak_thresh)

model_d = dct.model_eval(V_series_d, dQdV_series_d, 'd', par_d, mod_d)

if model_d is not None:
    mod_y_d = mod_d.eval(params=model_d.params, x=V_series_d)
    myseries_d = pd.Series(mod_y_d)
    myseries_d = myseries_d.rename('Model')
    new_df_mody_d = pd.concat([-myseries_d, V_series_d, dQdV_series_d, smooth_discharge[cycle_ind_col]], axis=1)
    model_d_vals = model_d.values
else:
    mod_y_d = None
    new_df_mody_d = None
    model_d_vals = None

# combine charge and discharge models (pd.concat silently drops a None entry)
if new_df_mody_c is not None or new_df_mody_d is not None:
    new_df_mody = pd.concat([new_df_mody_c, new_df_mody_d], axis=0)
else:
    new_df_mody = None

new_df_mody

# plots data

fig5 = plt.figure(figsize = (18,8), facecolor = 'w', edgecolor= 'k')

plt.subplot(1, 2, 1)

plt.plot(smooth_charge['Voltage(V)'], smooth_charge['Smoothed_dQ/dV'], c = 'red', linewidth = 2, label = 'Smooth Data')

plt.plot(smooth_charge['Voltage(V)'], model_c.init_fit, 'k--')

plt.plot(smooth_charge['Voltage(V)'], model_c.best_fit, 'b-')

plt.xlabel('Voltage(V)', fontsize = 20)

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.rcParams.update({'font.size':20})

plt.title('Charge Peak Fitting of Cycle 1', fontsize = 24)

plt.legend(['Raw Data', 'Initial Model', 'Fitted Model'], loc=2, fontsize=10)

plt.subplot(1, 2, 2)

plt.plot(smooth_discharge['Voltage(V)'], -smooth_discharge['Smoothed_dQ/dV'], c = 'red', linewidth = 2, label = 'Smooth Data')

plt.plot(smooth_discharge['Voltage(V)'], model_d.init_fit[::-1], 'k--')

plt.plot(smooth_discharge['Voltage(V)'], model_d.best_fit[::-1], 'b-')

plt.xlabel('Voltage(V)', fontsize = 20)

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.rcParams.update({'font.size':20})

plt.title('Discharge Peak Fitting of Cycle 1', fontsize = 24)

plt.legend(['Raw Data', 'Initial Model', 'Fitted Model'], loc=2, fontsize=10)

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle_generate model', bbox_inches='tight', dpi = 600)

```
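As background, a pseudo-Voigt profile is a weighted sum of a Gaussian and a Lorentzian that share a centre and a width. The sketch below uses a common parameterization in which both parts have a full width at half maximum of `2 * sigma`; whether `model_gen` uses exactly this parameterization is an assumption, and all function names here are illustrative.

```python
import math


def gaussian(x, amplitude, center, sigma):
    """Area-normalized Gaussian scaled by amplitude."""
    return (amplitude / (sigma * math.sqrt(2 * math.pi))
            * math.exp(-(x - center) ** 2 / (2 * sigma ** 2)))


def lorentzian(x, amplitude, center, sigma):
    """Area-normalized Lorentzian scaled by amplitude."""
    return amplitude / math.pi * sigma / ((x - center) ** 2 + sigma ** 2)


def pseudo_voigt(x, amplitude, center, sigma, fraction):
    """Mix of Gaussian (weight 1 - fraction) and Lorentzian (weight fraction)."""
    sigma_g = sigma / math.sqrt(2 * math.log(2))  # gives the Gaussian part FWHM = 2 * sigma
    return ((1 - fraction) * gaussian(x, amplitude, center, sigma_g)
            + fraction * lorentzian(x, amplitude, center, sigma))


# A peak centred at 3.5 V; the profile is symmetric around its centre
peak = dict(amplitude=1.0, center=3.5, sigma=0.05, fraction=0.5)
for volts in (3.4, 3.45, 3.5, 3.55, 3.6):
    print(volts, round(pseudo_voigt(volts, **peak), 4))
```

The `fraction` parameter slides the shape between a pure Gaussian (0) and a pure Lorentzian (1), which lets one function describe peaks with heavier or lighter tails.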

The overall fitted model, together with the raw data, is shown below:

```

# DataFrame.append was removed in pandas 2.0; pd.concat does the same job
smooth_df = pd.concat([smooth_charge, smooth_discharge])

fig6 = plt.figure(figsize = (8,8), facecolor = 'w', edgecolor= 'k')

plt.plot(raw_df['Voltage(V)'], raw_df['dQ/dV'], 'k-', label = 'Raw Data')

plt.plot(smooth_df['Voltage(V)'], new_df_mody['Model'], 'r--')

plt.xlabel('Voltage(V)', fontsize = 20)

plt.ylabel('dQ/dV (Ah/V)', fontsize =20)

plt.rcParams.update({'font.size':20})

plt.title('Model Generation of Cycle 1', fontsize = 24)

plt.legend(['Raw Data', 'Fitted Model'], loc=2, fontsize=10)

plt.ylim(-10,10)

# Uncomment the following line if you would like to save the plot.

# plt.savefig(fname = 'MyExampleCycle_final generate model', bbox_inches='tight', dpi = 600)

```

Moreover, the fitted model also gives us the descriptors of the peaks. The code below shows how to extract them:

```

# extract the peak location from the descriptors dictionary

center_c = [value for key, value in model_c_vals.items() if 'center' in key.lower()]

center_d = [value for key, value in model_d_vals.items() if 'center' in key.lower()]

center_c = center_c[0:-1]

center_d = center_d[0:-1]

print("The locations (V) of the charge peaks of cycle", cyc, "are", center_c)
print("The locations (V) of the discharge peaks of cycle", cyc, "are", center_d)
print("The heights (Ah/V) of the charge peaks of cycle", cyc, "are", peak_heights_c)
print("The heights (Ah/V) of the discharge peaks of cycle", cyc, "are", peak_heights_d)

```

| github_jupyter |

# Indexing

Okay guys today's lecture is indexing.

> What is indexing?

At heart, indexing is the ability to inspect a value inside an object. So basically if we have a list, X, of 100 items and our index is 'i' then 'i of X' returns the value at position *i* inside the list (p.s. we can index strings too).

Okay, so what is the Syntax for this? Glad you asked:

{variable} [{integer}]

So if we wanted to index into something called "a_string" in code it would look something like:

a_string[integer]

Now, the integer in question cannot be any number from -infinity to +infinity. Rather, it is bounded by the size of the variable. For example, if the size of the variable is 5 that means our integer has to be in the range -5 to 4. Or more generally:

Index Range ::

Lower Bound = -len(variable)

Upper Bound = len(variable) - 1

Anything outside this range = IndexError

Just as a quick explanation, len() is a built-in function that gets the size of the object, and adding a "-" sign in front of an integer 'flips' its sign:

```

# flipping signs of numbers...

a = 5

b = -5

print(-a, -b)

# len function

x1 = []

x2 = "12"

x3 = [1,2,3]

print(len(x1), len(x2), len(x3))

x = [1,2,3]

print(x[100]) # <--- IndexError! 100 is waayyy out of bounds

```
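Here is a small sketch of the bounds rule in action. The helper `valid_index_range` is made up for this example; it just packages the lower and upper bound formulas from above.

```python
def valid_index_range(seq):
    """Return the (lowest, highest) integer index that seq accepts."""
    return -len(seq), len(seq) - 1


word = "hello"
low, high = valid_index_range(word)
print(low, high)              # -5 4
print(word[low], word[high])  # h o

# One step outside the range, in either direction, raises an IndexError
for i in (high + 1, low - 1):
    try:
        word[i]
    except IndexError as err:
        print(i, "->", err)
```

Notice that the lowest valid index points at the first character and the highest valid index points at the last one.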

Now, those bounds I have just given might sound a bit arbitrary, but actually I can explain exactly how they work. Consider the following picture:

So in this picture we have the string ‘hello’. The two rows of numbers represent the indexes of this string. In Python we start counting from 0 which means the first item in a list/string always has an index of 0. And since we start counting at zero then that means the last item in the list/string is len(item)-1 like so:

```

string = "hello"

print(string[0]) # first item

print(string[len(string)-1]) # last item

```

So that explains the first row of numbers in the image. What about the second row? Well, in Python not only can you index forwards you can also index backwards.
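A quick sketch that prints both rows of indexes for "hello" and checks that each character can be reached either way (the variable names are just for illustration):

```python
word = "hello"
n = len(word)

print("char   :", "  ".join(f"{ch:>2}" for ch in word))
print("forward:", "  ".join(f"{i:>2}" for i in range(n)))
print("back   :", "  ".join(f"{i:>2}" for i in range(-n, 0)))

# The forward index i and the backward index i - len(word) name the same character
for i in range(n):
    assert word[i] == word[i - n]
```

So the backward index is always the forward index minus the length of the string.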

## Readability counts...

So basically index [0] will always be the start of the list/string and an index of [-1] will always be the end. If you want the middle "l" in "hello" you have a choice; either [2] or [-3] will work. **And, as a general rule, if two pieces of code are equivalent, go with whichever is more readable.**

> There should be one-- and preferably only one --obvious way to do it. ~ Zen of Python

For example:

```

a_string = "Hello"

# indexing first item...
print(a_string[0])                 # Readable
print(a_string[-len(a_string)])    # Less readable

# indexing last item...
print(a_string[-1])                # Readable
print(a_string[len(a_string)-1])   # Less readable
print(a_string[4])                 # Avoid this wherever possible! BAD BAD BAD!!

```

You might wonder what is wrong with index[4] to reference the end of the list.

The problem with using index[4] instead of [-1] is that the former way of doing things is considerably less readable. Without actually checking the length of the input the meaning of index[4] is somewhat ambiguous; is this the end? Near the beginning/middle? Meanwhile [-1] **always** refers to the end regardless of input size, and so therefore its meaning is always clear **even when** we don’t know the size of the input.

Index[len(a_string)-1] meanwhile always refers to the end of the list but it is considerably more verbose and less readable than the simple [-1].

## The Index Method

The string class AND the list class both have an index method, and now that we have just covered indexing we are in a position to understand its output.

Basically, we ask if an item is in a string/list. And if it is, the method returns an index for that item. For example:

```

a_list = ["qwerty", "dave", "magic johnson", "qwerty"]

a_string = "Helllllllo how ya doin fam?"

# notice that Python returns the index of the first match.

print(a_list.index("qwerty"))

print(a_string.index("l"))

# if the item is not in the list, you get a ValueError:

print(a_list.index("chris"))

```
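Since the index method raises a ValueError for missing items, you may want a version that doesn't crash your program. Below is one possible sketch; the helper name `find_index` is made up, and it uses try/except, which is covered properly later.

```python
def find_index(seq, item, default=None):
    """Like seq.index(item), but return default instead of raising ValueError."""
    try:
        return seq.index(item)
    except ValueError:
        return default


names = ["qwerty", "dave", "magic johnson", "qwerty"]
print(find_index(names, "dave"))   # 1
print(find_index(names, "chris"))  # None
print("chris" in names)            # False
```

The default is None rather than -1 on purpose: -1 is itself a valid index (the last item), so returning it for "not found" would be easy to misuse.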

## What can we do with indexing?

Obviously we can do a lot with indexing. In the case of lists, for example, we can change the value of the list at position ‘i’. It's simple to do that:

```

a_list = [1,2,3]

print(a_list)

a_list[-1] = "a"

print(a_list)

a_list[0] = "c"

print(a_list)

a_list[1] = "b"

print(a_list)

```

### Can we change the values inside strings?

Let's try!

```

a_string = "123"

a_string[0] = "a" # <-- Error; strings are an "immutable" data type in Python.

```

In Python, strings are immutable, which is a fancy way of saying that they are set in stone; once created you just can't change them. Your only option is to create new strings with the data you want.

If we create a new string we can reuse the old variable name if we want. But in that case we haven't changed the value of the string. Rather, we created a new string and gave it the old variable name, and that's allowed.

Here is one way we can change the value of 'a_string':

```

a_string = "123"

a_string = "a" + a_string[1:] # slicing, see below.

print(a_string)

```
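The same trick works for any position, not just the first one. Here is a sketch of a small helper (the name `replace_at` is made up) that builds a new string with one character swapped out:

```python
def replace_at(text, i, new_char):
    """Return a copy of text with the character at index i replaced."""
    if not -len(text) <= i < len(text):
        raise IndexError("string index out of range")
    i %= len(text)  # turn a negative index into its positive twin
    return text[:i] + new_char + text[i + 1:]


original = "123"
changed = replace_at(original, 0, "a")
print(changed)                        # a23
print(original)                       # 123
print(replace_at(original, -1, "z"))  # 12z
```

Notice that `original` still holds "123" afterwards; the helper never changes a string, it only makes new ones.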

## Making Grids

> "Flat is better than nested". ~ Zen of Python

Talking of lists, remember that we can go all "inception-like" with lists and shove lists inside lists inside lists. How can we index a beast like that? Well, with difficulty...

```

this_is_insane = [ [[[[[[[[[[[[100]]]]]]]]]]]] ] # WTF !!??

print(this_is_insane[0][0][0][0][0][0][0][0][0][0][0][0][0])

```

To index a list inside a list the syntax is to add another [{integer}] on the end. Repeat until you get to the required depth.

list[{integer}][{integer}]

In the case of the above the value 100 was nested inside so many lists that it took a lot of effort to tease it out. Structures like this are hard to work with, which is why the usual advice is to 'flatten' your lists wherever possible.

With this said, nested structures are not all bad. A really common way of representing a grid in Python is to use nested lists. In which case, we can index any square we want by first indexing the 'row' and then the 'column'. Like so:

grid[row][column]

If you ever want to build simple board games (chess, connect 4, etc) you might find the representation useful. In code:

```

grid = [ ["0"] * 5 for _ in range(5) ] # building a nested list, in style. 'List Comprehensions' are not covered in this course.

print("The Grid looks like this...:", grid[2:], "\n")

# Note: "grid[2:]" above is a 'slice' (more on slicing below), in this case I'm using slicing to truncate the results,

# observe that three lists get printed, not five.

def print_grid():
    """This function simply prints grid, row by row."""
    for row in grid:  # This is a for-loop, more on these later!
        print(row)

print_grid()

print("\n")

grid[0][0] = "X" # Top-left corner

grid[0][-1] = "Y" # Top-right corner

grid[-1][0] = "W" # Bottom-left corner

grid[-1][-1] = "Z" # Bottom-right corner

grid[2][2] = "A" # Somewhere near the middle

print_grid()

# Quick note: since the corner indexes are defined by 0 and -1, these numbers should work for all n x n grids.

```
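One thing to watch out for when building grids: a tempting shortcut is to multiply the outer list as well. The sketch below (variable names are mine) suggests why that is usually a mistake. Multiplying the outer list repeats the *same* row object, so changing one square appears to change a whole column:

```python
good_grid = [["0"] * 3 for _ in range(3)]  # three separate row lists
bad_grid = [["0"] * 3] * 3                 # the SAME row list, three times over

good_grid[0][0] = "X"
bad_grid[0][0] = "X"

print(good_grid)  # [['X', '0', '0'], ['0', '0', '0'], ['0', '0', '0']]
print(bad_grid)   # [['X', '0', '0'], ['X', '0', '0'], ['X', '0', '0']]
```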

Anyway, that's enough about indexing for now, let's move on to the topic of slicing...

## Slicing

What is slicing? Well it is a bit like indexing, only instead of returning point 'X' we return all the values between the points (x, y). Just as with indexing, you can slice strings as well as lists.

Note: start points are *inclusive* and endpoints are *exclusive*.

{variable} [{start} : {end} : {step}]

* Where start, end and step are all integer values.

It is also worth noting that start, end and step are all optional. When one is left out, start defaults to the beginning of the list, end defaults to the end of the list, and step defaults to 1.

If you give start/end an integer, Python will treat that number as an index. Thus, a_list[2:10] says "Hey Python, go fetch me all the values in 'a_list' starting at index 2 up to **(but not including)** index 10."

Unlike indexing, however, if you try to slice outside the range you won't get an error message. If you have a list of length five and try to slice with values 0 and 100, Python will just return the whole list. If you try to slice the list at 100 and 200, an empty list '[]' will be the result. Let's see a few examples:

```

lst = list(range(1,21)) # list(range) just makes a list of numbers 1 to 20

# The below function just makes it faster for me to type out the test cases below.

def printer(start, end, lst):
    """Helper function, takes two integers (start, end) and a list/string.

    Returns a formatted string that contains: start, end and lst[start:end].
    An empty string ("") for start or end means "leave that side of the slice blank"."""
    if start:
        if end:
            sliced = lst[start:end]
        else:
            sliced = lst[start:]
    elif end:
        sliced = lst[:end]
    else:
        sliced = lst[:]
    return "slice is '[{}:{}]', which returns: {}".format(start, end, sliced)

print("STARTING LIST IS:", lst)

print("")

# Test cases

print("SLICING LISTS...")

print(printer("","", lst)) # [:] is sometimes called a 'shallow copy' of a list.

print(printer("", 5, lst )) # first 5 items.

print(printer(14,"", lst)) # starting at index 14, go to the end.

print(printer(200,500,lst)) # No errors for indexes that should be "out of bounds".

print(printer(5,10, lst))

print(printer(4,5, lst))

# Negative numbers work too. In the case below we start at the 5th last item and move toward the 2nd to last item.

print(printer(-5,-2, lst))

print(printer(-20,-1, lst)) # note that this list finishes at 19, not 20.

# and for good measure, a few strings:

print("\nSLICING STRINGS...")

a_string = "Hello how are you?"

print(printer("","", a_string)) # The whole string aka a 'shallow copy'

print(printer(0,5, a_string))

print(printer(6,9, a_string))

print(printer(10,13, a_string))

print(printer(14, 17, a_string))

print(printer(17, "", a_string))

```
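To make the contrast with indexing concrete, here is a short sketch (the list name is just for illustration). Slicing past the end quietly gives back whatever exists, whereas indexing past the end raises an `IndexError`:

```python
short_list = [1, 2, 3, 4, 5]

print(short_list[0:100])    # [1, 2, 3, 4, 5]
print(short_list[100:200])  # []

try:
    short_list[100]  # indexing is stricter than slicing
except IndexError as error:
    print("IndexError:", error)
```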

Alright, so that's the basics of slicing covered. The only remaining question is what the final "step" argument does. Basically, the step lets us take every *nth* element of the list/string and skip the ones in between.

For example, suppose that I have (just as before) a list of numbers 1-to-20, but this time I want to return the EVEN numbers between 15 and 19. Intuitively we know that the result should be [16,18] but how can we do this in code?

```

a_list = list(range(1,21))

sliced_list = a_list[15:19:2]

print(sliced_list)

print(a_list[17])

```

How does this work? Well, index 15 is the number 16 (remember we count from 0 in Python). We then skip index 16 (which holds an odd number, 17) and go straight to index 17 (which is the number 18). The next index to look at would be 19, but since the endpoint (19) is exclusive we stop there.
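If you want to check which indexes a stepped slice visits, one way (a sketch, with 'visited' being a name I made up) is to feed the same start, end and step to 'range' and look at each index in turn:

```python
a_list = list(range(1, 21))

visited = list(range(15, 19, 2))  # the indexes that a_list[15:19:2] looks at
print(visited)                    # [15, 17]

for index in visited:
    print("index", index, "holds the number", a_list[index])

print(a_list[15:19:2])            # [16, 18]
```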

One last thing I'd like to note is that we got even numbers in this case because we started with an even number (index 15 = 16). Had we started with an odd number, this process would have returned odd numbers. The starting point matters in the same way for any step size. For example:

```

a_list = list(range(0,206))

slice1 = a_list[::10] # every 10th element starting from zero = [0, 10, 20, ...]

slice2 = a_list[5::10] # every 10th element starting from 5 = [5, 15, 25,...]

a_string = "a123a123a123a123a123a123a123" # this pattern has a period of 4.

slice3 = a_string[::4] # starts at index 0 ('a'), returns only 'a' characters

slice4 = a_string[3::4] # starts at index 3 ('3'), returns only '3' characters

print(slice1, slice2, slice3, slice4, sep="\n")

```

In both of the list cases above we are using a step of size 10. If we start at 0 that means we get:

0, 10, 20...

but if we start at 5 then the sequence we get is

5, 15, 25...

In the case of the string example above, the pattern has a length of four and then repeats. Thus, if we start at character n and use a step of 4, the result will be "nnnnnn...".

## Reversing lists with step

The very last thing I want to show you about the step argument is that if you set step to -1 it will reverse the string/list.

For example:

```

a_list = list(range(1, 11))

print(a_list)

print(a_list[::-1]) # reverses the list

```
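Negative steps also work together with start and end, as long as start is the *larger* index, since we are now walking backwards. A small sketch to illustrate:

```python
a_list = list(range(1, 11))

print(a_list[8:2:-2])  # [9, 7, 5]  (indexes 8, 6, 4; index 2 is excluded)
print(a_list[2:8:-1])  # []  (we cannot walk backwards from index 2 to index 8)
print("Hello"[::-1])   # olleH
```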

## The Range Function

You may have observed that I use the 'range' function in some of the above examples. This function doesn't have anything to do with indexing or slicing, but I thought I would briefly talk about it here because, although the syntax is different, it works in a very similar way to slicing. More specifically, the range function takes up to 3 arguments: start, end and step (optional). These arguments work in a similar way to how start, end and step work with regard to slicing. Allow me to demonstrate:

```

list_1 = list(range(1,21))

list_1 = list_1[2::3]

print(list_1)

# The above 3 lines can be refactored to:

list_2 = list(range(3, 21, 3))

print(list_2)

```

You will note a small difference between the two ways of doing things. When we slice we start the count at 2, whereas with range we start the count at 3. The difference comes from the fact that the range function is dealing with the numbers themselves, whereas the slice is using indexes (in the original list, index 2 holds the number 3).
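As a side note, in Python 3 a range can itself be indexed and sliced, just like a list, which makes the parallel with slicing quite concrete. A small sketch (variable names chosen for illustration):

```python
numbers = range(1, 21)  # the numbers 1 to 20, not yet turned into a list

sliced = numbers[2::3]  # slicing a range gives back another range
print(list(sliced))                           # [3, 6, 9, 12, 15, 18]
print(list(sliced) == list(range(3, 21, 3)))  # True
print(numbers[2])                             # 3
```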

And just as with slicing, a step of -1 counts backwards...

```

list_3 = list(range(10, -1, -1)) # this says: "start at the number 10 and count backwards to 0"

# please remember that start points are inclusive BUT endpoints are exclusive,

# if we want to include 0 in the results we must have an endpoint +1 of our target.

# in this case the number one past zero (when counting backwards) is -1.

print(list_3)

```

| github_jupyter |

```

from fastai.text.all import *

chunked??

```

Let's look at how long it takes to tokenize a sample of 1000 IMDB reviews.

```

path = untar_data(URLs.IMDB_SAMPLE)

df = pd.read_csv(path/'texts.csv')

df.head(2)

ss = L(list(df.text))

ss[0]

```

We'll start with the simplest approach:

```

def delim_tok(s, delim=' '): return L(s.split(delim))

s = ss[0]

delim_tok(s)

```

...and a general way to tokenize a bunch of strings:

```

def apply(func, items): return list(map(func, items))

```

Let's time it:

```

%%timeit -n 2 -r 3

global t

t = apply(delim_tok, ss)

```

...and the same thing with 2 workers:

```

%%timeit -n 2 -r 3

parallel(delim_tok, ss, n_workers=2, progress=False)

```

How about if we put half the work in each worker?

```

batches32 = [L(list(o)).map(str) for o in np.array_split(ss, 32)]

batches8 = [L(list(o)).map(str) for o in np.array_split(ss, 8 )]

batches = [L(list(o)).map(str) for o in np.array_split(ss, 2 )]

```

```

%%timeit -n 2 -r 3

parallel(partial(apply, delim_tok), batches, progress=False, n_workers=2)

```

So there's a lot of overhead in using parallel processing in Python. :(
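One likely contributor, assuming `parallel` runs its workers as separate processes, is that Python has to serialize (pickle) inputs and results to move them between processes. The sketch below only uses the standard library and made-up stand-in text rather than the IMDB sample, so treat the timings as a rough indication, not a measurement of `parallel` itself:

```python
import pickle
import time

# Made-up stand-in data: 1000 short "reviews" (NOT the IMDB sample).
texts = ["this is a short made up review about a film"] * 1000

start = time.perf_counter()
tokens = [text.split(" ") for text in texts]
tokenize_seconds = time.perf_counter() - start

start = time.perf_counter()
# Roughly what moving the results from one process to another involves.
round_trip = pickle.loads(pickle.dumps(tokens))
pickle_seconds = time.perf_counter() - start

print(f"tokenizing:        {tokenize_seconds:.6f}s")
print(f"pickle round trip: {pickle_seconds:.6f}s")
```

When the work per item is as cheap as `str.split`, costs like this can be comparable to the work itself, before even counting the time needed to start the worker processes.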

Let's see why. What if we do nothing interesting in our function?

```

%%timeit -n 2 -r 3

global t

t = parallel(noop, batches, progress=False, n_workers=2)

```