
```

import numpy as np

import pandas as pd

import pyodbc

import time

import pickle

import operator

from operator import itemgetter

from joblib import Parallel, delayed

from sklearn import linear_model

from sklearn.linear_model import Ridge

from sklearn.tree import DecisionTreeRegressor

from sklearn.model_selection import cross_val_score

from sqlalchemy import create_engine

import matplotlib.pyplot as plt

%matplotlib inline

```

Before running anything, download the preprocessed version of the natality data from https://www.dropbox.com/s/395rrh0c826gw9r/Natality_small.csv?dl=0

```

conn = pyodbc.connect("Driver={SQL Server Native Client 11.0};"

"Server=localhost;"

"Database=master;"

"Trusted_Connection=yes;")

cur = conn.cursor()

engine = create_engine('mssql+pyodbc://localhost/master?driver=SQL+Server+Native+Client+11.0')

def construct_sec_order(arr):
    # data generation function helper.
    second_order_feature = []
    num_cov_sec = len(arr[0])
    for a in arr:
        tmp = []
        for i in range(num_cov_sec):
            for j in range(i+1, num_cov_sec):
                tmp.append( a[i] * a[j] )
        second_order_feature.append(tmp)
    return np.array(second_order_feature)

def data_generation_dense_2(num_control, num_treated, num_cov_dense, num_covs_unimportant,
                            control_m = 0.1, treated_m = 0.9):
    # the data generation function that I'll use.
    xc = np.random.binomial(1, 0.5, size=(num_control, num_cov_dense)) # data for control group
    xt = np.random.binomial(1, 0.5, size=(num_treated, num_cov_dense)) # data for treatment group
    errors1 = np.random.normal(0, 0.05, size=num_control) # some noise
    errors2 = np.random.normal(0, 0.05, size=num_treated) # some noise
    dense_bs_sign = np.random.choice([-1,1], num_cov_dense)
    #dense_bs = [ np.random.normal(dense_bs_sign[i]* (i+2), 1) for i in range(len(dense_bs_sign)) ]
    dense_bs = [ np.random.normal(s * 10, 1) for s in dense_bs_sign ]
    yc = np.dot(xc, np.array(dense_bs)) + errors1 # y for control group
    treatment_eff_coef = np.random.normal( 1.5, 0.15, size=num_cov_dense)
    treatment_effect = np.dot(xt, treatment_eff_coef)
    second = construct_sec_order(xt[:,:5])
    treatment_eff_sec = np.sum(second, axis=1)
    yt = np.dot(xt, np.array(dense_bs)) + treatment_effect + treatment_eff_sec + errors2 # y for treated group
    xc2 = np.random.binomial(1, control_m, size=(num_control, num_covs_unimportant)) # unimportant covariates, control group
    xt2 = np.random.binomial(1, treated_m, size=(num_treated, num_covs_unimportant)) # unimportant covariates, treated group
    df1 = pd.DataFrame(np.hstack([xc, xc2]),
                       columns=['{0}'.format(i) for i in range(num_cov_dense + num_covs_unimportant)])
    df1['outcome'] = yc
    df1['treated'] = 0
    df2 = pd.DataFrame(np.hstack([xt, xt2]),
                       columns=['{0}'.format(i) for i in range(num_cov_dense + num_covs_unimportant )] )
    df2['outcome'] = yt
    df2['treated'] = 1
    df = pd.concat([df1,df2])
    df['matched'] = 0
    return df, dense_bs, treatment_eff_coef

# this function takes the current covariate list, the covariate we consider dropping, name of the data table,

# name of the holdout table, the threshold (below which we consider as no match), and balancing regularization

# as input; and outputs the matching quality

def score_tentative_drop_c(cov_l, c, db_name, holdout_df, thres = 0, tradeoff = 0.1):

covs_to_match_on = set(cov_l) - {c} # the covariates to match on

# the following query fetches the matched results (the covariates, the outcome, the treatment indicator)

s = time.time()

##cur.execute('''with temp AS

## (SELECT

## {0}

## FROM {3}

## where "matched"=0

## group by {0}

## Having sum("treated")>'0' and sum("treated")<count(*)

## )

## (SELECT {1}, {3}."treated", {3}."outcome"

## FROM temp, {3}

## WHERE {2}

## )

## '''.format(','.join(['"C{0}"'.format(v) for v in covs_to_match_on ]),

## ','.join(['{1}."C{0}"'.format(v, db_name) for v in covs_to_match_on ]),

## ' AND '.join([ '{1}."C{0}"=temp."C{0}"'.format(v, db_name) for v in covs_to_match_on ]),

## db_name

## ) )

##res = np.array(cur.fetchall())

cur.execute('''with temp AS

(SELECT

{0}

FROM {3}

where "matched"=0

group by {0}

Having sum("treated")>'0' and sum("treated")<count(*)

)

(SELECT {1}, treated, outcome

FROM {3}

WHERE EXISTS

(SELECT 1

FROM temp

WHERE {2}

)

)

'''.format(','.join(['"{0}"'.format(v) for v in covs_to_match_on ]),

','.join(['{1}."{0}"'.format(v, db_name) for v in covs_to_match_on ]),

' AND '.join([ '{1}."{0}"=temp."{0}"'.format(v, db_name) for v in covs_to_match_on ]),

db_name

) )

res = np.array(cur.fetchall())

time_match = time.time() - s

s = time.time()

# the number of unmatched control units

cur.execute('''select count(*) from {} where "matched" = 0 and "treated" = 0'''.format(db_name))

num_control = cur.fetchall()

# the number of unmatched treated units

cur.execute('''select count(*) from {} where "matched" = 0 and "treated" = 1'''.format(db_name))

num_treated = cur.fetchall()

time_BF = time.time() - s

# fetch from database the holdout set

##s = time.time()

##cur.execute('''select {0}, "treated", "outcome"

## from {1}

## '''.format( ','.join( ['"C{0}"'.format(v) for v in covs_to_match_on ] ) , holdout))

##holdout = np.array(cur.fetchall())

s = time.time() # the time for fetching data into memory is not counted if use this

# below is the regression part for PE

#ridge_c = Ridge(alpha=0.1)

#ridge_t = Ridge(alpha=0.1)

tree_c = DecisionTreeRegressor(max_depth=8, random_state=0)

tree_t = DecisionTreeRegressor(max_depth=8, random_state=0)

holdout = holdout_df.copy()

holdout = holdout[ list(covs_to_match_on) + ['treated', 'outcome']]

mse_t = np.mean(cross_val_score(tree_t, holdout[holdout['treated'] == 1].iloc[:,:-2],

holdout[holdout['treated'] == 1]['outcome'] , scoring = 'neg_mean_squared_error' ) )

mse_c = np.mean(cross_val_score(tree_c, holdout[holdout['treated'] == 0].iloc[:,:-2],

holdout[holdout['treated'] == 0]['outcome'], scoring = 'neg_mean_squared_error' ) )

#mse_t = np.mean(cross_val_score(ridge_t, holdout[holdout['treated'] == 1].iloc[:,:-2],

# holdout[holdout['treated'] == 1]['outcome'] , scoring = 'neg_mean_squared_error' ) )

#mse_c = np.mean(cross_val_score(ridge_c, holdout[holdout['treated'] == 0].iloc[:,:-2],

# holdout[holdout['treated'] == 0]['outcome'], scoring = 'neg_mean_squared_error' ) )

# above is the regression part for PE

time_PE = time.time() - s

if len(res) == 0:

return (( mse_t + mse_c ), time_match, time_PE, time_BF)

##return mse_t + mse_c

else:

return (tradeoff * (float(len(res[res[:,-2]==0]))/num_control[0][0] + float(len(res[res[:,-2]==1]))/num_treated[0][0]) +\

( mse_t + mse_c ), time_match, time_PE, time_BF)

##return reg_param * (float(len(res[res[:,-2]==0]))/num_control[0][0] + float(len(res[res[:,-2]==1]))/num_treated[0][0]) +\

## ( mse_t + mse_c )

# update matched units

# this function takes the current set of covariates, the name of the data table, and the current level;

# it sets the "matched" column of the newly matched units to that level

def update_matched(covs_matched_on, db_name, level):

cur.execute('''with temp AS

(SELECT

{0}

FROM {3}

where "matched"=0

group by {0}

Having sum("treated")>'0' and sum("treated")<count(*)

)

update {3} set "matched"={4}

WHERE EXISTS

(SELECT {0}

FROM temp

WHERE {2} and {3}."matched" = 0

)

'''.format(','.join(['"{0}"'.format(v) for v in covs_matched_on]),

','.join(['{1}."{0}"'.format(v, db_name) for v in covs_matched_on]),

' AND '.join([ '{1}."{0}"=temp."{0}"'.format(v, db_name) for v in covs_matched_on ]),

db_name,

level

) )

conn.commit()

return

# get CATEs

# this function takes a list of covariates, the name of the data table, and the matching level as input;

# it outputs a dataframe with one row per matched combination of covariate values, holding the mean

# control and treated outcomes (effect_c, effect_t) and the group sizes (count_c, count_t)

def get_CATE(cov_l, db_name, level):

cur.execute(''' select {0}, avg(outcome * 1.0), count(*)

from {1}

where matched = {2} and treated = 0

group by {0}

'''.format(','.join(['"{0}"'.format(v) for v in cov_l]),

db_name, level) )

res_c = cur.fetchall()

cur.execute(''' select {0}, avg(outcome * 1.0), count(*)

from {1}

where matched = {2} and treated = 1

group by {0}

'''.format(','.join(['"{0}"'.format(v) for v in cov_l]),

db_name, level) )

res_t = cur.fetchall()

if (len(res_c) == 0) | (len(res_t) == 0):

return None

cov_l = list(cov_l)

result = pd.merge(pd.DataFrame(np.array(res_c), columns=['{}'.format(i) for i in cov_l]+['effect_c', 'count_c']),

pd.DataFrame(np.array(res_t), columns=['{}'.format(i) for i in cov_l]+['effect_t', 'count_t']),

on = ['{}'.format(i) for i in cov_l], how = 'inner')

result_df = result[['{}'.format(i) for i in cov_l] + ['effect_c', 'effect_t', 'count_c', 'count_t']]

# -- the following section are moved to after getting the result

#d = {}

#for i, row in result.iterrows():

# k = ()

# for j in range(len(cov_l)):

# k = k + ((cov_l[j], row[j]),)

# d[k] = (row['effect_c'], row['effect_t'], row['std_t'], row['std_c'], row['count_c'], row['count_t'])

# -- the above section are moved to after getting the result

return result_df

def run(db_name, holdout_df, num_covs, reg_param = 0.1):
    cur.execute('update {0} set matched = 0'.format(db_name)) # reset the matched indicator to 0
    conn.commit()
    covs_dropped = [] # covariates dropped so far
    ds = []
    level = 1
    timings = [0]*5 # first entry - match (groupby and join),
                    # second entry - regression (compute PE),
                    # third entry - compute BF,
                    # fourth entry - keep track of CATE,
                    # fifth entry - update database table (mark matched units).
    cur_covs = range(num_covs) # initialize the current covariates to be all covariates
    # make predictions and save to disk
    s = time.time()
    update_matched(cur_covs, db_name, level) # match without dropping anything
    timings[4] = timings[4] + time.time() - s
    s = time.time()
    d = get_CATE(cur_covs, db_name, level) # get CATE without dropping anything
    timings[3] = timings[3] + time.time() - s
    ds.append(d)
    ##s = time.time()
    ##cur.execute('''update {} set "matched"=2 WHERE "matched"=1 '''.format(db_name)) # mark the matched units as matched and
    #they are no longer seen by the algorithm
    ##conn.commit()
    ##timings[4] = timings[4] + time.time() - s
    while len(cur_covs)>1:
        print(cur_covs) # print current set of covariates
        level += 1
        # the early stopping conditions
        cur.execute('''select count(*) from {} where "matched"=0 and "treated"=0'''.format(db_name))
        if cur.fetchall()[0][0] == 0:
            break
        cur.execute('''select count(*) from {} where "matched"=0 and "treated"=1'''.format(db_name))
        if cur.fetchall()[0][0] == 0:
            break
        best_score = -np.inf
        cov_to_drop = None
        cur_covs = list(cur_covs)
        for c in cur_covs:
            # pass reg_param through instead of a hard-coded 0.1 (same value by default)
            score,time_match,time_PE,time_BF = score_tentative_drop_c(cur_covs, c, db_name,
                                                                      holdout_df, tradeoff = reg_param)
            timings[0] = timings[0] + time_match
            timings[1] = timings[1] + time_PE
            timings[2] = timings[2] + time_BF
            if score > best_score:
                best_score = score
                cov_to_drop = c
        cur_covs = set(cur_covs) - {cov_to_drop} # remove the dropped covariate from the current covariate set
        s = time.time()
        update_matched(cur_covs, db_name, level)
        timings[4] = timings[4] + time.time() - s
        s = time.time()
        d = get_CATE(cur_covs, db_name, level)
        timings[3] = timings[3] + time.time() - s
        ds.append(d)
        ##s = time.time()
        ##cur.execute('''update {} set "matched"=2 WHERE "matched"=1 '''.format(db_name))
        ##conn.commit()
        ##timings[4] = timings[4] + time.time() - s
        covs_dropped.append(cov_to_drop) # record the covariate dropped at this level
    return timings, ds

```
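
The matching rule inside `update_matched` and `score_tentative_drop_c` is easy to lose in the SQL: units are grouped by their values on the current covariates, and a group counts as matched only if it holds at least one treated and at least one control unit (`sum(treated) > 0 and sum(treated) < count(*)`). Below is a minimal in-memory sketch of that rule in plain Python. It is not the notebook's database code; `matched_groups` and the toy `units` list are hypothetical names introduced only for illustration, and the sketch does not remove already matched units between levels as `run` does.

```python
from collections import defaultdict


def matched_groups(units, covariates):
    """Group units by covariate values and keep groups containing both arms."""
    groups = defaultdict(list)
    for idx, unit in enumerate(units):
        key = tuple(unit[c] for c in covariates)
        groups[key].append(idx)
    # Same condition as the HAVING clause: 0 < number of treated < group size.
    return {
        key: members
        for key, members in groups.items()
        if 0 < sum(units[i]["treated"] for i in members) < len(members)
    }


# Hypothetical toy data: two binary covariates, a treatment flag, an outcome.
units = [
    {"0": 1, "1": 0, "treated": 1, "outcome": 5.0},
    {"0": 1, "1": 0, "treated": 0, "outcome": 3.0},
    {"0": 0, "1": 1, "treated": 1, "outcome": 4.0},
    {"0": 0, "1": 0, "treated": 0, "outcome": 1.0},
]

exact = matched_groups(units, ["0", "1"])  # match on both covariates
relaxed = matched_groups(units, ["0"])     # match after dropping covariate "1"
print(exact)
print(relaxed)
```

With both covariates only the first two units form a valid group. Dropping covariate `1` lets the remaining treated and control units meet in one group, which is the effect the covariate-dropping loop in `run` relies on.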

Five-minute APGAR score as outcome

```

df = pd.read_csv('Natality_small.csv')

df = df[df['ABAssistedVentilation'] != 'U']

df = df[df['ABAssistedVentilationMoreThan6Hrs'] != 'U']

df = df[df['ABAdmissionToNICU'] != 'U']

df = df[df['ABSurfactant'] != 'U']

df = df[df['ABAntibiotics'] != 'U']

df = df[df['ABSeizures'] != 'U']

df = df.drop(columns=['FiveMinuteAPGARScoreRecode', 'DeliveryMethodRecodeRevised'])

df['outcome'] = df['FiveMinAPGARScore']

df = df[df['outcome'] != 99]

df = df.drop(columns=['FiveMinAPGARScore'])

df.loc[df['CigaretteRecode'] == 'Y', 'CigaretteRecode'] = 1

df.loc[df['CigaretteRecode'] == 'N', 'CigaretteRecode'] = 0

df['matched'] = 0

cols = [c for c in df.columns if c.lower()[:4] != 'flag']

df = df[cols]

char_col = []
for c in df.columns:
    if df[c].dtype != np.int64:
        char_col.append(c)
for c in char_col:
    df[c] = df[c].astype(str)
    l = sorted(list(np.unique(df[c])))
    print(c, l)
    for i in range(len(l)):
        df.loc[df[c] == l[i], c] = i
rename_dict = dict()
cols = list(df.columns)
cols.remove('matched')
cols.remove('outcome')
cols.remove('CigaretteRecode')
for i in range(len(cols)):
    rename_dict[i] = cols[i]
rename_dict['treated'] = 'CigaretteRecode'
inv_rename_dict = {v:k for k,v in rename_dict.items()}

import pickle

pickle.dump(rename_dict, open('natality_rename_dict_score', 'wb'))

df.rename(columns = inv_rename_dict, inplace = True)

from sklearn.model_selection import train_test_split

df, holdout = train_test_split(df, test_size = 0.1, random_state = 345)

df.to_csv('Natality_db_score.csv', index = False)

holdout.to_csv('Natality_holdout_score.csv', index = False)

#holdout = pd.read_csv('Natality_holdout.csv')

df.to_sql('natality_score', engine, chunksize=100)

del df

#df.to_sql('natality', engine, chunksize=100)

holdout = pd.read_csv('Natality_holdout_score.csv')

holdout.rename(columns = {str(i):i for i in range(166)}, inplace = True)

#del df

db_name = 'natality_score'

s = time.time()

res = run(db_name, holdout, 91)

print (time.time() - s)

#pickle.dump(res, open('natality_cigar_score_res', 'wb'))

```
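
The preprocessing cell above turns every non-integer column into integer codes by sorting its distinct string values and replacing each value with its position in that sorted list. A small sketch of the same idea in plain Python, assuming a single hypothetical column of `Y`/`N`/`U` flags; `encode_column` is an illustrative helper, not part of the notebook:

```python
def encode_column(values):
    """Map each value to the index of its string form among the sorted levels."""
    levels = sorted({str(v) for v in values})
    code_of = {level: i for i, level in enumerate(levels)}
    return [code_of[str(v)] for v in values], levels


# Hypothetical column with three distinct levels.
codes, levels = encode_column(["N", "Y", "U", "N"])
print(levels)  # sorted distinct levels
print(codes)   # integer code for every original value
```

Because the codes follow alphabetical order, they carry no numeric meaning; exact matching only needs equal values to receive equal codes.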

Abnormality as outcome, with the prenatal care start time used to define sub-populations.

```

df = pd.read_csv('Natality_small.csv')

df = df[df['ABAssistedVentilation'] != 'U']

df = df[df['ABAssistedVentilationMoreThan6Hrs'] != 'U']

df = df[df['ABAdmissionToNICU'] != 'U']

df = df[df['ABSurfactant'] != 'U']

df = df[df['ABAntibiotics'] != 'U']

df = df[df['ABSeizures'] != 'U']

df = df.drop(columns=['FiveMinuteAPGARScoreRecode', 'DeliveryMethodRecodeRevised'])

df['outcome'] = np.array((df['ABAssistedVentilation'] == 'Y' ) |\

(df['ABAssistedVentilationMoreThan6Hrs'] == 'Y') |\

(df['ABAdmissionToNICU'] == 'Y') |\

(df['ABSurfactant'] == 'Y') |\

(df['ABAntibiotics'] == 'Y') |\

(df['ABSeizures'] == 'Y') )

df['outcome'] = df['outcome'].astype(int)

df = df.drop(columns = ['ABAssistedVentilation', 'ABAssistedVentilationMoreThan6Hrs',

'ABAdmissionToNICU', 'ABSurfactant', 'ABAntibiotics', 'ABSeizures'])

df.loc[df['CigaretteRecode'] == 'Y', 'CigaretteRecode'] = 1

df.loc[df['CigaretteRecode'] == 'N', 'CigaretteRecode'] = 0

df['matched'] = 0

cols = [c for c in df.columns if c.lower()[:4] != 'flag']

df = df[cols]

char_col = []
for c in df.columns:
    if df[c].dtype != np.int64:
        char_col.append(c)
for c in char_col:
    df[c] = df[c].astype(str)
    l = sorted(list(np.unique(df[c])))
    print(c, l)
    for i in range(len(l)):
        df.loc[df[c] == l[i], c] = i
rename_dict = dict()
cols = list(df.columns)
cols.remove('matched')
cols.remove('outcome')
cols.remove('CigaretteRecode')
for i in range(len(cols)):
    rename_dict[i] = cols[i]
rename_dict['treated'] = 'CigaretteRecode'
inv_rename_dict = {v:k for k,v in rename_dict.items()}

import pickle

pickle.dump(rename_dict, open('natality_rename_dict_abnormality', 'wb'))

df.rename(columns = inv_rename_dict, inplace = True)

from sklearn.model_selection import train_test_split

df, holdout = train_test_split(df, test_size = 0.1, random_state = 345)

df.to_csv('Natality_db_abnormality.csv', index = False)

holdout.to_csv('Natality_holdout_abnormality.csv', index = False)

#holdout = pd.read_csv('Natality_holdout.csv')

df.to_sql('natality_abnormality', engine, chunksize=100)

holdout = pd.read_csv('Natality_holdout_abnormality.csv')

#df.to_sql('natality', engine, chunksize=100)

holdout = pd.read_csv('Natality_holdout_abnormality.csv')

holdout.rename(columns = {str(i):i for i in range(166)}, inplace = True)

#del df

db_name = 'natality_abnormality'

s = time.time()

res = run(db_name, holdout, 86)

print (time.time() - s)

pickle.dump(res, open('natality_cigar_abnormality_res', 'wb'))

res = pickle.load(open('natality_cigar_score_res', 'rb'))

rename_dict = pickle.load(open('natality_rename_dict_score', 'rb'))

def weighted_avg_and_std(values, weights):
    """
    Return the weighted average and standard deviation.
    values, weights -- Numpy ndarrays with the same shape.
    """
    ave = np.average(values, weights=weights)
    var = np.average((values-ave)**2, weights=weights) # Fast and numerically precise
    return (ave, np.sqrt(var))

g1_effect = []

g2_effect = []

g1_size = []

g2_size = []

for i in range(len(res[1])):
    print(i)
    r = res[1][i]
    if r is None:
        continue
    if '11' not in list(r.columns):
        break
    r_small = r[(r['11'] <= 2) ]
    r_large = r[(r['11'] >= 3) & (r['11'] <= 4) ]
    g1_effect = g1_effect + list(r_small['effect_t'] - r_small['effect_c'] )
    g1_size = g1_size + list(r_small['count_t'] + r_small['count_c'] )
    g2_effect = g2_effect + list( r_large['effect_t'] - r_large['effect_c'] )
    g2_size = g2_size + list( r_large['count_t'] + r_large['count_c'] )

g1_effect = [float(v) for v in g1_effect ]

g2_effect = [float(v) for v in g2_effect ]

g1_size = [float(v) for v in g1_size ]

g2_size = [float(v) for v in g2_size ]

g1_mean, g1_std = weighted_avg_and_std(np.array(g1_effect), np.array(g1_size) )

g2_mean, g2_std = weighted_avg_and_std(np.array(g2_effect), np.array(g2_size) )

plt.rcParams['font.size'] = 15

plt.figure(figsize = (5,5))

plt.scatter([0,1], [g1_mean, g2_mean])

plt.errorbar([0,1], [g1_mean, g2_mean], yerr = [g1_std, g2_std], linestyle = 'None')

plt.xticks([0,1], ['0', '1'])

plt.xlabel('Prenatal Care Beginning Time Code')

plt.ylabel('Estimated Treatment Effect')

plt.xlim([-1,2])

plt.ylim([-1,1])

plt.tight_layout()

plt.savefig('natality_score_prenatal.png', dpi = 150)

res = pickle.load(open('natality_cigar_score_res', 'rb'))

rename_dict = pickle.load(open('natality_rename_dict_score', 'rb'))

effect = []

size = []

for i in range(len(res[1])):
    print(i)
    r = res[1][i]
    if r is None:
        continue
    effect = effect + list(r['effect_t'] - r['effect_c'] )
    size = size + list(r['count_t'] + r['count_c'] )

effect = [float(v) for v in effect ]

size = [float(v) for v in size ]

avr, std = weighted_avg_and_std(np.array(effect), np.array(size) )

g1_effect = []

g2_effect = []

g3_effect = []

g1_size = []

g2_size = []

g3_size = []

for i in range(len(res[1])):
    print(i)
    r = res[1][i]
    if r is None:
        continue
    if '11' not in list(r.columns):
        break
    #r1 = r[(r['6'] == 0) ]
    r2 = r[(r['7'] >=7) ]
    r3 = r[(r['7'] < 7)]
    #g1_effect = g1_effect + list(r1['effect_t'] - r1['effect_c'] )
    #g1_size = g1_size + list(r1['count_t'] + r1['count_c'] )
    g2_effect = g2_effect + list( r2['effect_t'] - r2['effect_c'] )
    g2_size = g2_size + list( r2['count_t'] + r2['count_c'] )
    g3_effect = g3_effect + list( r3['effect_t'] - r3['effect_c'] )
    g3_size = g3_size + list( r3['count_t'] + r3['count_c'] )

g1_effect = [float(v) for v in g1_effect ]

g2_effect = [float(v) for v in g2_effect ]

g3_effect = [float(v) for v in g3_effect ]

g1_size = [float(v) for v in g1_size ]

g2_size = [float(v) for v in g2_size ]

g3_size = [float(v) for v in g3_size ]

#g1_mean, g1_std = weighted_avg_and_std(np.array(g1_effect), np.array(g1_size) )

g2_mean, g2_std = weighted_avg_and_std(np.array(g2_effect), np.array(g2_size) )

g3_mean, g3_std = weighted_avg_and_std(np.array(g3_effect), np.array(g3_size) )

plt.rcParams['font.size'] = 15

plt.figure(figsize = (5,5))

plt.scatter([0,1,2], [g3_mean, g2_mean, avr])

plt.errorbar([0,1,2], [g3_mean, g2_mean, avr], yerr = [g3_std, g2_std, std], linestyle = 'None')

plt.xticks([0,1,2], ['0', '1', 'whole'])

plt.xlabel("Gestational Hypertension Code")

plt.ylabel('Estimated Treatment Effect')

plt.xlim([-1,3])

#plt.ylim([-1,1])

plt.tight_layout()

#plt.savefig('natality_score_hypertensions_whole.png', dpi = 150)

```
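
The plots above summarise the matched groups by a size-weighted mean and standard deviation of the per-group effect `effect_t - effect_c`, with `count_t + count_c` as the weight. The arithmetic can be checked by hand on a tiny example. The sketch below restates `weighted_avg_and_std` in plain Python; the two matched groups are made-up numbers, not results from the natality data.

```python
import math


def weighted_mean_and_std(values, weights):
    """Weighted mean and (population) weighted standard deviation."""
    total = sum(weights)
    mean = sum(v * w for v, w in zip(values, weights)) / total
    var = sum(w * (v - mean) ** 2 for v, w in zip(values, weights)) / total
    return mean, math.sqrt(var)


# Hypothetical matched groups: (effect_t, effect_c, count_t, count_c).
groups = [(9.0, 8.0, 3, 1), (7.0, 8.0, 2, 2)]
effects = [t - c for t, c, _, _ in groups]    # per-group treated minus control mean
sizes = [nt + nc for _, _, nt, nc in groups]  # per-group number of units
mean, std = weighted_mean_and_std(effects, sizes)
print(mean, std)
```

Here the two groups have equal size and opposite effects of +1 and -1, so the weighted mean is 0 and the weighted standard deviation is 1. Note that this spread describes heterogeneity across groups; it is not a standard error of the mean.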

| github_jupyter |

```

# Import some libraries

import torch

import torchvision

from torch import nn

from torch.utils.data import DataLoader

from torchvision import transforms

from torchvision.datasets import MNIST

from matplotlib import pyplot as plt

# Convert vector to image
def to_img(x):
    x = 0.5 * (x + 1)
    x = x.view(x.size(0), 28, 28)
    return x

# Displaying routine
def display_images(in_, out, n=1):
    for N in range(n):
        if in_ is not None:
            in_pic = to_img(in_.cpu().data)
            plt.figure(figsize=(18, 6))
            for i in range(4):
                plt.subplot(1,4,i+1)
                plt.imshow(in_pic[i+4*N])
                plt.axis('off')
        out_pic = to_img(out.cpu().data)
        plt.figure(figsize=(18, 6))
        for i in range(4):
            plt.subplot(1,4,i+1)
            plt.imshow(out_pic[i+4*N])
            plt.axis('off')

# Define data loading step

batch_size = 256

img_transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5,), (0.5,))  # MNIST has a single channel

])

dataset = MNIST('./data', transform=img_transform, download=True)

dataloader = DataLoader(dataset, batch_size=batch_size, shuffle=True)

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Define model architecture and reconstruction loss

# n = 28 x 28 = 784

d = 30 # for standard AE (under-complete hidden layer)

# d = 500 # for denoising AE (over-complete hidden layer)

class Autoencoder(nn.Module):
    def __init__(self):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(28 * 28, d),
            nn.Tanh(),
        )
        self.decoder = nn.Sequential(
            nn.Linear(d, 28 * 28),
            nn.Tanh(),
        )

    def forward(self, x):
        x = self.encoder(x)
        x = self.decoder(x)
        return x

model = Autoencoder().to(device)

criterion = nn.MSELoss()

# Configure the optimiser

learning_rate = 1e-3

optimizer = torch.optim.Adam(

model.parameters(),

lr=learning_rate,

)

```

*Comment* or *uncomment* a few lines of code to switch between the *standard AE* and the *denoising* one.

Don't forget to **(1)** change the size of the hidden layer accordingly, **(2)** re-create the model, and **(3)** pass the new model's parameters to the optimiser.
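
The denoising variant corrupts the input with `nn.Dropout`, which in training mode zeroes each element with probability `p` and scales the surviving elements by `1 / (1 - p)`. With the default `p = 0.5`, a pixel normalised to `[-1, 1]` therefore ends up either at 0 or doubled, in `[-2, 2]`, and the noise mask takes the values 0 or 2. The following plain-Python sketch imitates that corruption on a handful of made-up pixel values; `dropout` here is an illustrative stand-in, not the PyTorch layer.

```python
import random


def dropout(values, p=0.5, rng=random):
    """Zero each value with probability p and rescale the rest by 1 / (1 - p)."""
    keep_scale = 1.0 / (1.0 - p)
    noise = [0.0 if rng.random() < p else keep_scale for _ in values]
    corrupted = [v * n for v, n in zip(values, noise)]
    return corrupted, noise


rng = random.Random(0)                     # fixed seed so the run is repeatable
pixels = [-1.0, -0.5, 0.0, 0.5, 1.0, 1.0]  # hypothetical pixels, already in [-1, 1]
corrupted, noise = dropout(pixels, p=0.5, rng=rng)
print(noise)
print(corrupted)
```

This scaling is also why the OpenCV comparison later in the notebook maps the corrupted image back to `[0, 1]` with `/ 4 + 0.5` and builds its inpainting mask as `2 - noise`.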

```

# Train standard or denoising autoencoder (AE)

num_epochs = 1

# do = nn.Dropout() # comment out for standard AE

for epoch in range(num_epochs):
    for data in dataloader:
        img, _ = data
        img.requires_grad_()
        img = img.view(img.size(0), -1).to(device)  # flatten and move to the model's device
        # img_bad = do(img).to(device) # comment out for standard AE
        # ===================forward=====================
        output = model(img) # feed <img> (for std AE) or <img_bad> (for denoising AE)
        loss = criterion(output, img.data)
        # ===================backward====================
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    # ===================log========================
    print(f'epoch [{epoch + 1}/{num_epochs}], loss:{loss.item():.4f}')
    display_images(None, output) # pass (None, output) for std AE, (img_bad, output) for denoising AE

# Visualise a few kernels of the encoder

display_images(None, model.encoder[0].weight, 5)

! conda install -y --name codas-ml opencv

# Let's compare the autoencoder inpainting capabilities vs. OpenCV

from cv2 import inpaint, INPAINT_NS, INPAINT_TELEA

# Inpaint with Telea and Navier-Stokes methods

dst_TELEA = list()

dst_NS = list()

for i in range(3, 7):
    corrupted_img = ((img_bad.data.cpu()[i].view(28, 28) / 4 + 0.5) * 255).byte().numpy()
    mask = 2 - img_bad.grad_fn.noise.cpu()[i].view(28, 28).byte().numpy()
    dst_TELEA.append(inpaint(corrupted_img, mask, 3, INPAINT_TELEA))
    dst_NS.append(inpaint(corrupted_img, mask, 3, INPAINT_NS))

tns_TELEA = [torch.from_numpy(d) for d in dst_TELEA]

tns_NS = [torch.from_numpy(d) for d in dst_NS]

TELEA = torch.stack(tns_TELEA).float()

NS = torch.stack(tns_NS).float()

# Compare the results: [noise], [img + noise], [img], [AE, Telea, Navier-Stokes] inpainting

with torch.no_grad():
    display_images(img_bad.grad_fn.noise[3:7], img_bad[3:7])
    display_images(img[3:7], output[3:7])
    display_images(TELEA, NS)

```

| github_jupyter |

```

import numpy as np

import pandas as pd

#import matplotlib.pylab as plt

import matplotlib.pyplot as plt

from sklearn.preprocessing import MinMaxScaler

from sklearn.metrics import silhouette_score

from sklearn import cluster

from sklearn.cluster import KMeans

from sklearn.datasets import make_blobs

import seaborn as sns

sns.set()

from sklearn.neighbors import NearestNeighbors

from yellowbrick.cluster import KElbowVisualizer

from sklearn.decomposition import PCA

from sklearn.manifold import TSNE

from sklearn.model_selection import train_test_split

from mpl_toolkits.mplot3d import Axes3D

from sklearn.metrics import accuracy_score

%matplotlib inline

plt.rcParams['figure.figsize'] = (16, 9)

plt.style.use('ggplot')

```

## Load the data and drop the columns that are not needed

```

dfRead = pd.read_csv('Interaccion_todasLasSesiones.csv')

df = dfRead.drop(['Sesion','Id'], axis=1)

#df = df[df['Fsm']!=0]

```

## Data filtering

## Histogram of the grades

```

plt.rcParams['figure.figsize'] = (16, 9)

plt.style.use('ggplot')

datos = df.drop(['Nota'], axis=1).hist()

plt.grid(True)

plt.show()

```

## Create the clustering inputs and the categories

```

clusters = df[['Nota']]

X = df.drop(['Nota'], axis=1)

## Normalize the data so that every feature lies in the range (0, 1)

scaler = MinMaxScaler(feature_range=(0, 1))

x = scaler.fit_transform(X)

```
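
Min-max scaling maps each feature column linearly so that its smallest value becomes 0 and its largest becomes 1: `x' = (x - min) / (max - min)`. A minimal sketch of that formula for one column in plain Python follows. `min_max_scale` is an illustrative helper, and sending a constant column to the lower bound is an assumption of this sketch.

```python
def min_max_scale(column, feature_range=(0.0, 1.0)):
    """Linearly rescale one column of numbers into feature_range."""
    lo, hi = feature_range
    col_min, col_max = min(column), max(column)
    span = col_max - col_min
    if span == 0:
        # Assumption of this sketch: a constant column maps to the lower bound.
        return [lo for _ in column]
    return [lo + (v - col_min) / span * (hi - lo) for v in column]


scaled = min_max_scale([2.0, 4.0, 10.0])
print(scaled)
```

Scaling matters here because both K-Means and DBSCAN rely on Euclidean distances, so a feature with a large raw range would otherwise dominate the clustering.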

## Define the clustering methods to use

```

def clusterDBscan(x):
    db = cluster.DBSCAN(eps=0.25, min_samples=5)
    db.fit(x)
    return db.labels_

def clusterKMeans(x, n_clusters):
    return cluster.k_means(x, n_clusters=n_clusters)[1]

```

## Helper functions to reduce the dimensionality if needed

```

def reducir_dim(x, ndim):
    pca = PCA(n_components=ndim)
    return pca.fit_transform(x)

def reducir_dim_tsne(x, ndim):
    pca = TSNE(n_components=ndim)
    return pca.fit_transform(x)

```

## Plot the candidate numbers of clusters using the silhouette score

```

def calculaSilhoutter(x, clusters):
    res=[]
    fig, ax = plt.subplots(1,figsize=(20, 5))
    for numCluster in range(2, 7):
        res.append(silhouette_score(x, clusterKMeans(x,numCluster )))
    ax.plot(range(2, 7), res)
    ax.set_xlabel("n clusters")
    ax.set_ylabel("silhouette score")
    ax.set_title("K-Means")

calculaSilhoutter(x, clusters)

```

## Plot the candidate numbers of clusters using the elbow method

```

model = KMeans()

visualizer = KElbowVisualizer(model, k=(2,7), metric='calinski_harabasz', timings=False)

visualizer.fit(x) # Fit the data to the visualizer

visualizer.show()

clus_km = clusterKMeans(x, 3)

clus_db = clusterDBscan(x)

def reducir_dataset(x, how):
    if how == "pca":
        res = reducir_dim(x, ndim=2)
    elif how == "tsne":
        res = reducir_dim_tsne(x, ndim=2)
    else:
        return x[:, :2]
    return res

results = pd.DataFrame(np.column_stack([reducir_dataset(x, how="tsne"), clusters, clus_km, clus_db]), columns=["x", "y", "clusters", "clus_km", "clus_db"])

def mostrar_resultados(res):
    """Show the results of the clustering algorithms.
    """
    fig, ax = plt.subplots(1, 3, figsize=(20, 5))
    sns.scatterplot(data=res, x="x", y="y", hue="clusters", ax=ax[0], legend="full")
    ax[0].set_title('Ground Truth')
    sns.scatterplot(data=res, x="x", y="y", hue="clus_km", ax=ax[1], legend="full")
    ax[1].set_title('K-Means')
    sns.scatterplot(data=res, x="x", y="y", hue="clus_db", ax=ax[2], legend="full")
    ax[2].set_title('DBSCAN')

mostrar_resultados(results)

kmeans = KMeans(n_clusters=3,init = "k-means++")

kmeans.fit(x)

labels = kmeans.predict(x)

X['Cluster_Km']=labels

dfRead['Cluster_Km']=labels

X.groupby('Cluster_Km').mean()

```

## DBSCAN

```

neigh = NearestNeighbors(n_neighbors=2)

nbrs = neigh.fit(x)

distances, indices = nbrs.kneighbors(x)

distances = np.sort(distances, axis=0)

distancias = distances[:,1]

plt.plot(distancias)

plt.ylim(0, 0.4)

dbscan = cluster.DBSCAN(eps=0.25, min_samples=5)

dbscan.fit(x)

clusterDbscan = dbscan.labels_

X['Cluster_DB']=clusterDbscan

dfRead['Cluster_DB']=clusterDbscan

X.groupby('Cluster_DB').mean()

dfRead

```
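
The DBSCAN cell above sorts each point's distance to its nearest neighbour and plots the curve; a common heuristic is to pick `eps` near the "knee" where the curve starts to rise sharply. The sketch below computes the same sorted curve by brute force in plain Python on four made-up points. `kth_neighbor_distances` is a hypothetical helper, not a scikit-learn function.

```python
import math


def kth_neighbor_distances(points, k=1):
    """Sorted distance from every point to its k-th nearest other point."""
    result = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        result.append(dists[k - 1])
    return sorted(result)


# Hypothetical data: a tight cluster of three points and one far-away outlier.
points = [(0.0, 0.0), (0.0, 0.1), (0.1, 0.0), (1.0, 1.0)]
curve = kth_neighbor_distances(points, k=1)
print(curve)
```

The three clustered points sit at the flat start of the curve and the outlier produces the jump at the end, so an `eps` slightly above the flat part would keep the cluster together and leave the outlier as noise.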

| github_jupyter |

```

import numpy as np

import pandas as pd

import treelib

from pathlib import Path

from treelib import Node, Tree

DATA_DIR = Path('../../data/retail-rocket')

EXPORT_DIR = Path('../../data/retail-rocket') / 'saved'

PATH_CATEGORY_TREE = DATA_DIR / 'category_tree.csv'

PATH_EVENTS = DATA_DIR /'events.csv'

PATH_ITEM_PROPS1 = DATA_DIR / 'item_properties_part1.csv'

PATH_ITEM_PROPS2 = DATA_DIR / 'item_properties_part2.csv'

```

# Creating Category Features via Tree

The category tree provided is given as a table of edges. We want to be able to get all the levels given a leaf node.
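
Getting all levels for a leaf amounts to following parent links until a top-level category is reached and then reversing the path. A minimal sketch of that walk using only a dictionary built from the edge table, assuming a few made-up edges; `path_from_root` and `parent_of` are illustrative names, and the notebook itself delegates this walk to `treelib`:

```python
def path_from_root(categoryid, parent_of):
    """Return the categories from the top level down to categoryid."""
    if categoryid not in parent_of:
        return []  # unknown category: no levels available
    path, seen = [], set()
    node = categoryid
    while node is not None and node not in seen:  # `seen` guards against cycles
        seen.add(node)
        path.append(node)
        node = parent_of.get(node)
    return path[::-1]


# Hypothetical edge rows (categoryid, parentid); None marks a top-level category.
edges = [(10, None), (20, 10), (30, 20), (40, 10)]
parent_of = dict(edges)
levels = path_from_root(30, parent_of)
print(levels)
```

Each position in the returned list corresponds to one `categoryid_lvl` column, so shallower categories simply yield shorter lists.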

```

cat_tree_df = pd.read_csv(PATH_CATEGORY_TREE)

cat_tree_df.head()

tree = Tree()

ROOT = 'cat_tree'

tree.create_node(identifier=ROOT)

tree.create_node(identifier=-1, parent=ROOT) # temp

for _, row in cat_tree_df.iterrows():
    categoryid, parentid = row
    if np.isnan(parentid):
        parentid = ROOT
    else:
        parentid = int(parentid)
    categoryid = int(categoryid)
    if not tree.contains(parentid):
        tree.create_node(identifier=parentid, parent=-1)
    if not tree.contains(categoryid):
        tree.create_node(identifier=categoryid, parent=parentid)
    else:
        if tree.get_node(categoryid).bpointer == -1:
            tree.move_node(categoryid, parentid)
tree.link_past_node(-1)

# Print the tree structure

# tree.show(line_type='ascii-em')

```

# Item Properties

We are provided with a number of item properties that can change over time, but we will only be working with `categoryid` (and the latest record of it).

```

item_props_df = pd.concat([

pd.read_csv(PATH_ITEM_PROPS1, usecols=['itemid', 'property', 'value']),

pd.read_csv(PATH_ITEM_PROPS2, usecols=['itemid', 'property', 'value']),

])

item_props_df = item_props_df.loc[item_props_df['property']=='categoryid']\

.drop_duplicates().drop('property', axis=1).set_index('itemid')

item_props_df.columns = ['categoryid']

item_props_df['categoryid'] = item_props_df['categoryid'].astype(np.uint16)

# Could memoize if we wanted, meh

def get_cats(categoryid):
    try:
        return list(tree.rsearch(categoryid))[::-1][1:]
    except treelib.exceptions.NodeIDAbsentError:
        return []

item_categories_df = pd.DataFrame(item_props_df['categoryid'].map(get_cats).tolist())

item_categories_df.columns = [f'categoryid_lvl{i}' for i in range(6)]

item_categories_df.index = item_props_df.index

item_categories_df.reset_index(inplace=True)

# lvl3-5 are mostly NaN, probably want to chop them off

item_categories_df.to_msgpack(EXPORT_DIR / 'item_categories.msg')

item_categories_df.head()

```
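
The cell above drops duplicate rows without looking at when each property was recorded, so an item whose category changed may still appear more than once. If only the latest `categoryid` per item is wanted, one option is to keep the record with the largest timestamp. The sketch below shows that selection in plain Python. It assumes each raw record also carries a `timestamp` field, which the notebook does not load, and `latest_category` and the sample records are hypothetical.

```python
def latest_category(records):
    """Keep, for every item, the categoryid from its most recent record."""
    latest = {}
    for timestamp, itemid, prop, value in records:
        if prop != "categoryid":
            continue  # ignore every other property
        if itemid not in latest or timestamp > latest[itemid][0]:
            latest[itemid] = (timestamp, int(value))
    return {itemid: cat for itemid, (_, cat) in latest.items()}


# Hypothetical records: (timestamp in ms, itemid, property, value).
records = [
    (1431226800000, 1, "categoryid", "338"),
    (1433041200000, 1, "categoryid", "1114"),
    (1431226800000, 2, "available", "1"),
    (1431226800000, 2, "categoryid", "50"),
]
print(latest_category(records))
```

Item 1 changed category once, and only its later value survives; the unrelated `available` property is skipped.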

# Events

Pre-split our event facts at the holdout date.

```

HOLDOUT_DATE = '2015-09-01'

events_df = pd.read_csv(PATH_EVENTS, usecols=['timestamp', 'visitorid', 'event', 'itemid'])

events_df['timestamp'] = pd.to_datetime(events_df['timestamp'], unit='ms')

events_df.to_msgpack(EXPORT_DIR / 'events.msg')

events_df.loc[events_df['timestamp'] < HOLDOUT_DATE].to_msgpack(EXPORT_DIR / 'events_tsplit.msg')

events_df.loc[events_df['timestamp'] >= HOLDOUT_DATE].to_msgpack(EXPORT_DIR / 'events_vsplit.msg')

```
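
The split above is purely temporal: every event strictly before `HOLDOUT_DATE` goes to one file and every event on or after it goes to the other. A small sketch of the same rule with the standard library, assuming millisecond Unix timestamps interpreted as UTC; `split_events` and the three sample events are hypothetical:

```python
from datetime import datetime, timezone

HOLDOUT_DATE = datetime(2015, 9, 1, tzinfo=timezone.utc)


def split_events(events, holdout_date=HOLDOUT_DATE):
    """Split (timestamp_ms, visitorid, event, itemid) rows at holdout_date."""
    before, after = [], []
    for row in events:
        when = datetime.fromtimestamp(row[0] / 1000, tz=timezone.utc)
        (before if when < holdout_date else after).append(row)
    return before, after


# Hypothetical events: one in August 2015, one exactly at the boundary, one later.
events = [
    (1440000000000, 1, "view", 100),
    (1441065600000, 2, "addtocart", 200),
    (1442000000000, 3, "transaction", 300),
]
train, valid = split_events(events)
print(len(train), len(valid))
```

An event stamped exactly at midnight on the holdout date lands in the later split, matching the `>=` comparison used in the notebook.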

| github_jupyter |

# Simplified VQGAN+CLIP

```

# Licensed under the MIT License

# Copyright (c) 2021 Katherine Crowson

# Permission is hereby granted, free of charge, to any person obtaining a copy

# of this software and associated documentation files (the "Software"), to deal

# in the Software without restriction, including without limitation the rights

# to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

# copies of the Software, and to permit persons to whom the Software is

# furnished to do so, subject to the following conditions:

# The above copyright notice and this permission notice shall be included in

# all copies or substantial portions of the Software.

# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

# AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

# OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

# THE SOFTWARE.

```

## Docker Volumes

```

from extra import *

MODELS_FOLDER = Path("/tf/models")

OUTPUTS_FOLDER= Path("/tf/outputs")

SRC_FOLDER= Path("/tf/outputs/src")

```

## Download pre-trained models:

```

from get_models import list_models, download_models

models = list_models(return_list=True)

print(models)

download_models(models, output=MODELS_FOLDER)

# Select a model
model = models[1]

if (Path(MODELS_FOLDER)/ f"{model}.yaml").exists():
    print(f"{model} already exists.")
else:
    print(f"Downloading: {model}")
    download_models(model, output=MODELS_FOLDER)

```

## Configuration

**Define main parameters:**

```

#!ls $MODELS_FOLDER

# Text used as input to generate the image:
txt = 'Todo va a salir bien'
prompts = [txt]

# Image size
#size = (200, 200) # 6129 MiB of VRAM
size = (340, 340) # 8693 MiB of VRAM
#size = (450, 450) # 10201 MiB of VRAM

# Number of iterations used to create the image; more iterations give more detail
iterations = 250

# Model to use
# downloaded models:
# !ls $MODELS_FOLDER

model = 'vqgan_imagenet_f16_1024'

```

**Define secondary parameters:**

```

config = {

"init_image": None,

"seed": None,

"step_size": 0.2,

"cutn": 64,

"cut_pow": 1.,

"display_freq": 5,

"image_prompts": [],

"noise_prompt_seeds": [],

"noise_prompt_weights": [],

"init_weight": 0.,

"clip_model": 'ViT-B/32',

}

# OVERWRITE RESULTS

overwrite=True

```

## Generate frames of the resulting process:

```

from generate_images import *

last_frame_index = generate_images(

prompts=prompts,

model=model,

outputs_folder=OUTPUTS_FOLDER,

models_folder=MODELS_FOLDER,

iterations=iterations,

**config,

overwrite=overwrite

)

experiment_name = to_experiment_name(prompts)

experiment_folder = Path(OUTPUTS_FOLDER) / experiment_name

create_video(last_frame_index, Path(experiment_folder))

!cp $experiment_folder/video.mp4 temp.mp4

from IPython.display import Video

video_file = "temp.mp4"

Video(video_file)

!nvidia-smi

# Free GPU memory

reset_kernel()

```

---

Original notebook: https://colab.research.google.com/drive/1L8oL-vLJXVcRzCFbPwOoMkPKJ8-aYdPN

| github_jupyter |

<a href="https://colab.research.google.com/github/jeffheaton/t81_558_deep_learning/blob/master/t81_558_class_10_3_text_generation.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# T81-558: Applications of Deep Neural Networks

**Module 10: Time Series in Keras**

* Instructor: [Jeff Heaton](https://sites.wustl.edu/jeffheaton/), McKelvey School of Engineering, [Washington University in St. Louis](https://engineering.wustl.edu/Programs/Pages/default.aspx)

* For more information visit the [class website](https://sites.wustl.edu/jeffheaton/t81-558/).

# Module 10 Material

* Part 10.1: Time Series Data Encoding for Deep Learning [[Video]](https://www.youtube.com/watch?v=dMUmHsktl04&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_10_1_timeseries.ipynb)

* Part 10.2: Programming LSTM with Keras and TensorFlow [[Video]](https://www.youtube.com/watch?v=wY0dyFgNCgY&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_10_2_lstm.ipynb)

* **Part 10.3: Text Generation with Keras and TensorFlow** [[Video]](https://www.youtube.com/watch?v=6ORnRAz3gnA&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_10_3_text_generation.ipynb)

* Part 10.4: Image Captioning with Keras and TensorFlow [[Video]](https://www.youtube.com/watch?v=NmoW_AYWkb4&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_10_4_captioning.ipynb)

* Part 10.5: Temporal CNN in Keras and TensorFlow [[Video]](https://www.youtube.com/watch?v=i390g8acZwk&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_10_5_temporal_cnn.ipynb)

# Google CoLab Instructions

The following code ensures that Google CoLab is running the correct version of TensorFlow.

```

try:
    %tensorflow_version 2.x
    COLAB = True
    print("Note: using Google CoLab")
except:
    print("Note: not using Google CoLab")
    COLAB = False

```

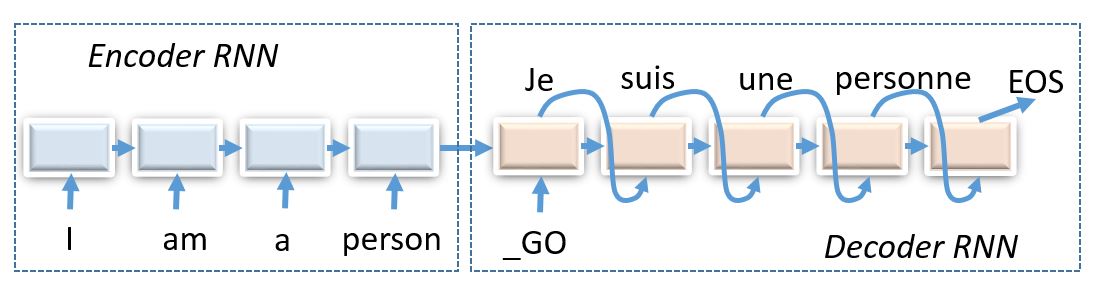

# Part 10.3: Text Generation with LSTM

Recurrent neural networks are also known for their ability to generate text. As a result, the output of the neural network can be free-form text. In this section, we will see how to train an LSTM on a textual document, such as classic literature, so that it learns to output new text that appears to be of the same form as the training material. If you train your LSTM on [Shakespeare](https://en.wikipedia.org/wiki/William_Shakespeare), it will learn to crank out new prose similar to what Shakespeare had written.

Don't get your hopes up. You are not going to teach your deep neural network to write the next [Pulitzer Prize for Fiction](https://en.wikipedia.org/wiki/Pulitzer_Prize_for_Fiction). The prose generated by your neural network will be nonsensical. However, it will usually be nearly grammatical and of a similar style to the source training documents.

A neural network generating nonsensical text based on literature may not seem useful at first glance. However, this technology gets so much interest because it forms the foundation for many more advanced technologies. The fact that the LSTM will typically learn human grammar from the source document opens a wide range of possibilities. You can use similar technology to complete sentences when a user is entering text. Simply the ability to output free-form text becomes the foundation of many other technologies. In the next part, we will use this technique to create a neural network that can write captions for images to describe what is going on in the picture.

### Additional Information

The following are some of the articles that I found useful in putting this section together.

* [The Unreasonable Effectiveness of Recurrent Neural Networks](http://karpathy.github.io/2015/05/21/rnn-effectiveness/)

* [Keras LSTM Generation Example](https://keras.io/examples/lstm_text_generation/)

### Character-Level Text Generation

There are several different approaches to teaching a neural network to output free-form text. The most basic question is whether you wish the neural network to learn at the word or character level. In many ways, learning at the character level is the more interesting of the two. The LSTM is learning to construct its own words without even being shown what a word is. We will begin with character-level text generation. In the next module, we will use nearly the same technique at the word level to implement automatic captioning.

We begin by importing the needed Python packages and defining the sequence length, named **maxlen**. Time-series neural networks always accept their input as a fixed-length array. Because you might not use all of the sequence elements, it is common to fill extra elements with zeros. You will divide the text into sequences of this length, and the neural network will train to predict what comes after this sequence.

```

from tensorflow.keras.callbacks import LambdaCallback

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense

from tensorflow.keras.layers import LSTM

from tensorflow.keras.optimizers import RMSprop

from tensorflow.keras.utils import get_file

import numpy as np

import random

import sys

import io

import requests

import re

```

For this simple example, we will train the neural network on the classic children's book [Treasure Island](https://en.wikipedia.org/wiki/Treasure_Island). We begin by loading this text into a Python string and displaying the first 1,000 characters.

```

r = requests.get("https://data.heatonresearch.com/data/t81-558/text/"\

"treasure_island.txt")

raw_text = r.text

print(raw_text[0:1000])

```

We will extract all unique characters from the text and sort them. This technique allows us to assign a unique ID to each character. Because we sort the characters, the IDs stay the same from run to run for a given text; if new characters were added to the original text, the IDs would change. We build two dictionaries. The first, **char_indices**, converts a character into its ID. The second, **indices_char**, converts an ID back into its character.

```

processed_text = raw_text.lower()

processed_text = re.sub(r'[^\x00-\x7f]',r'', processed_text)

print('corpus length:', len(processed_text))

chars = sorted(list(set(processed_text)))

print('total chars:', len(chars))

char_indices = dict((c, i) for i, c in enumerate(chars))

indices_char = dict((i, c) for i, c in enumerate(chars))

```
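As a small illustration of the two lookup tables, the sketch below builds them for a toy string instead of the full corpus and round-trips a word through its IDs. The names `toy_text`, `toy_char_indices`, `toy_indices_char`, `encode`, and `decode` are hypothetical and are not part of the notebook.

```python
toy_text = "treasure island"

# Sorting the unique characters makes the ID assignment deterministic.
toy_chars = sorted(set(toy_text))
toy_char_indices = {c: i for i, c in enumerate(toy_chars)}
toy_indices_char = {i: c for i, c in enumerate(toy_chars)}

def encode(text):
    """Convert a string into a list of integer character IDs."""
    return [toy_char_indices[c] for c in text]

def decode(ids):
    """Convert a list of integer character IDs back into a string."""
    return "".join(toy_indices_char[i] for i in ids)

ids = encode("island")
print(ids)
print(decode(ids))
```

Any character that never appears in the source text has no ID, so `encode` would raise a `KeyError` for it; the notebook avoids this by only ever encoding text drawn from the corpus itself.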

We are now ready to build the actual sequences. Just like previous neural networks, there will be an $x$ and $y$. For this LSTM, each $x$ is a sequence of **maxlen** characters, and the matching $y$ is the single character that follows it. The following code slides a window over the text in steps of three characters to generate these training pairs.

```

# cut the text in semi-redundant sequences of maxlen characters

maxlen = 40

step = 3

sentences = []

next_chars = []

for i in range(0, len(processed_text) - maxlen, step):
    sentences.append(processed_text[i: i + maxlen])
    next_chars.append(processed_text[i + maxlen])

print('nb sequences:', len(sentences))

sentences

print('Vectorization...')

# np.bool was removed from recent NumPy releases; the built-in bool is equivalent
x = np.zeros((len(sentences), maxlen, len(chars)), dtype=bool)
y = np.zeros((len(sentences), len(chars)), dtype=bool)

for i, sentence in enumerate(sentences):
    for t, char in enumerate(sentence):
        x[i, t, char_indices[char]] = 1
    y[i, char_indices[next_chars[i]]] = 1

x.shape

y.shape

```

The dummy variables for $y$ are shown below.

```

y[0:10]

```
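To make the windowing and one-hot encoding easier to follow, here is a minimal sketch of the same steps on a toy string. The values `toy_maxlen = 4` and `toy_step = 2`, and all `toy_` names, are assumptions chosen only to keep the output small.

```python
import numpy as np

toy_text = "hello world"
toy_maxlen, toy_step = 4, 2

toy_chars = sorted(set(toy_text))
toy_char_indices = {c: i for i, c in enumerate(toy_chars)}

# Slide a window over the text; the character after each window is the target.
windows, targets = [], []
for i in range(0, len(toy_text) - toy_maxlen, toy_step):
    windows.append(toy_text[i:i + toy_maxlen])
    targets.append(toy_text[i + toy_maxlen])

# One-hot encode: x has one row per time step, y has a single row per window.
toy_x = np.zeros((len(windows), toy_maxlen, len(toy_chars)), dtype=bool)
toy_y = np.zeros((len(windows), len(toy_chars)), dtype=bool)
for i, window in enumerate(windows):
    for t, char in enumerate(window):
        toy_x[i, t, toy_char_indices[char]] = True
    toy_y[i, toy_char_indices[targets[i]]] = True

print(list(zip(windows, targets)))
print(toy_x.shape, toy_y.shape)
```

Each window contributes exactly one `True` per time step in `toy_x` and exactly one `True` in its row of `toy_y`, which is the same layout the full-size `x` and `y` arrays use.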

Next, we create the neural network. This neural network's primary feature is the LSTM layer, which allows the sequences to be processed.

```

# build the model: a single LSTM

print('Build model...')

model = Sequential()

model.add(LSTM(128, input_shape=(maxlen, len(chars))))

model.add(Dense(len(chars), activation='softmax'))

optimizer = RMSprop(learning_rate=0.01)

model.compile(loss='categorical_crossentropy', optimizer=optimizer)

model.summary()

```

The LSTM will produce new text character by character. We will need to sample the correct letter from the LSTM predictions each time. The **sample** function accepts the following two parameters:

* **preds** - The output neurons.

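* **temperature** - Controls how adventurous the sampling is. Values near 0.0 are the most conservative (the most likely character is almost always chosen), 1.0 samples from the network's predictions unchanged, and values above 1.0 are increasingly willing to make spelling and other errors.

The sample function below rescales the neural network predictions by the temperature and renormalizes them with a [softmax](https://en.wikipedia.org/wiki/Softmax_function). This causes each output neuron to become a probability of its particular letter, and the function then draws one character from that distribution.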

```

def sample(preds, temperature=1.0):
    # helper function to sample an index from a probability array
    preds = np.asarray(preds).astype('float64')
    preds = np.log(preds) / temperature
    exp_preds = np.exp(preds)
    preds = exp_preds / np.sum(exp_preds)
    probas = np.random.multinomial(1, preds, 1)
    return np.argmax(probas)

```
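The effect of the temperature is easiest to see on a small, made-up probability vector. The sketch below repeats only the rescaling step of `sample` (without the random draw); `apply_temperature` and `toy_preds` are hypothetical names introduced for this illustration.

```python
import numpy as np

def apply_temperature(preds, temperature):
    """Rescale a probability vector the same way `sample` does before drawing."""
    logits = np.log(np.asarray(preds, dtype="float64")) / temperature
    exp_logits = np.exp(logits)
    return exp_logits / exp_logits.sum()

toy_preds = [0.6, 0.3, 0.1]  # made-up network output for three characters
for temperature in [0.2, 1.0, 2.0]:
    print(temperature, np.round(apply_temperature(toy_preds, temperature), 3))
```

A temperature below 1.0 concentrates probability on the most likely character, 1.0 leaves the predictions unchanged, and a temperature above 1.0 flattens the distribution so that unlikely characters are drawn more often.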

Keras calls the following function at the end of each training epoch. It generates sample text at several temperatures so that you can watch the neural network improve; as training progresses, the generated text should look more realistic.

```

def on_epoch_end(epoch, _):
    # Function invoked at end of each epoch. Prints generated text.
    print("******************************************************")
    print('----- Generating text after Epoch: %d' % epoch)

    start_index = random.randint(0, len(processed_text) - maxlen - 1)
    for temperature in [0.2, 0.5, 1.0, 1.2]:
        print('----- temperature:', temperature)

        generated = ''
        sentence = processed_text[start_index: start_index + maxlen]
        generated += sentence
        print('----- Generating with seed: "' + sentence + '"')
        sys.stdout.write(generated)

        for i in range(400):
            x_pred = np.zeros((1, maxlen, len(chars)))
            for t, char in enumerate(sentence):
                x_pred[0, t, char_indices[char]] = 1.

            preds = model.predict(x_pred, verbose=0)[0]
            next_index = sample(preds, temperature)
            next_char = indices_char[next_index]

            generated += next_char
            sentence = sentence[1:] + next_char

            sys.stdout.write(next_char)
            sys.stdout.flush()
        print()

```

We are now ready to train. It can take up to an hour to train this network, depending on how fast your computer is. If you have a GPU available, please make sure to use it.

```

# Ignore useless W0819 warnings generated by TensorFlow 2.0. Hopefully can remove this ignore in the future.

# See https://github.com/tensorflow/tensorflow/issues/31308

import logging, os

logging.disable(logging.WARNING)

os.environ["TF_CPP_MIN_LOG_LEVEL"] = "3"

# Fit the model

print_callback = LambdaCallback(on_epoch_end=on_epoch_end)

model.fit(x, y,

batch_size=128,

epochs=60,

callbacks=[print_callback])

```

| github_jupyter |

```

import numpy as np

import pandas as pd

import pickle

import json

import gensim

import os

import re

from sklearn.model_selection import train_test_split

from pandas.plotting import scatter_matrix

from keras.preprocessing.text import Tokenizer

from keras.preprocessing.sequence import pad_sequences

from keras.preprocessing import sequence

from keras.optimizers import RMSprop, SGD

from keras.models import Sequential, Model

from keras.layers.core import Dense, Dropout, Activation, Flatten, Reshape

from keras.layers import Input, Bidirectional, LSTM, regularizers

from keras.layers.embeddings import Embedding

from keras.layers.convolutional import Conv1D, MaxPooling1D, MaxPooling2D, Conv2D

from keras.layers.normalization import BatchNormalization

from keras.callbacks import EarlyStopping

%matplotlib inline

import matplotlib

import numpy as np

import matplotlib.pyplot as plt

# Change these to match your file paths :)

filename = '../wyns/data/tweet_global_warming.csv' #64,706 reviews

model_path = "GoogleNews-vectors-negative300.bin"

word_vector_model = gensim.models.KeyedVectors.load_word2vec_format(model_path, binary=True)

def normalize(txt, vocab=None, replace_char=' ',
              max_length=300, pad_out=False,
              to_lower=True, reverse = False,
              truncate_left=False, encoding=None,
              letters_only=False):

    txt = txt.split()
    # Remove URLs
    # This will keep characters and other symbols
    txt = [re.sub(r'http:.*', '', r) for r in txt]
    txt = [re.sub(r'https:.*', '', r) for r in txt]
    txt = ( " ".join(txt))

    # Remove non-emoticon punctuation and numbers
    txt = re.sub("[.,!0-9]", " ", txt)
    if letters_only:
        txt = re.sub("[^a-zA-Z]", " ", txt)
    txt = " ".join(txt.split())

    # store length for multiple comparisons
    txt_len = len(txt)
    if truncate_left:
        txt = txt[-max_length:]
    else:
        txt = txt[:max_length]

    # change case
    if to_lower:
        txt = txt.lower()
    # Reverse order
    if reverse:
        txt = txt[::-1]
    # replace chars
    if vocab is not None:
        txt = ''.join([c if c in vocab else replace_char for c in txt])
    # re-encode text
    if encoding is not None:
        txt = txt.encode(encoding, errors="ignore")
    # pad out if needed
    if pad_out and max_length>txt_len:
        txt = txt + replace_char * (max_length - txt_len)

    # Strip @mentions one at a time; the first remaining mention is always at index 1
    if txt.find('@') > -1:
        print(len(txt.split('@'))-1)
        print(txt.split('@'))
        for i in range(len(txt.split('@'))-1):
            try:
                if str(txt.split('@')[1]).find(' ') > -1:
                    to_remove = '@' + str(txt.split('@')[1].split(' ')[0]) + " "
                else:
                    to_remove = '@' + str(txt.split('@')[1])
                print(to_remove)
                txt = txt.replace(to_remove,'')
            except IndexError:
                # No mentions left (an earlier replace removed duplicates)
                pass
    return txt

# What does this normalization function look like?

clean_text = normalize("This is A sentence. @sarahisabutthead with @sarah things! 123 :) and a link https://gitub.com @blah")

print(clean_text)

string = "@dez_blanchfield @SpEducatorCWSN @VolcanoScouting @USGSVolcanoes @Volcanoes_NPS @TmanSpeaks @DioFavatas @helene_wpli @ScheuerJo @martinfredras @HelenClarkNZ @dez_blanchfield, can you explain your answer? If oceans are rising, and getting heavier, why won't this increased weight have consequences? See: https://t.co/DupaCkMnIE"

print(string)

print(normalize(string))

```
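The mention-stripping loop inside `normalize` can also be written as a single regular expression. The sketch below is an alternative under the assumption that a mention is `@` followed by letters, digits, or underscores; `strip_mentions` is a hypothetical helper, and its handling of whitespace and punctuation around a mention differs slightly from the loop above.

```python
import re

def strip_mentions(text):
    """Remove @mentions and collapse the whitespace they leave behind."""
    without_mentions = re.sub(r'@\w+', '', text)
    return " ".join(without_mentions.split())

print(strip_mentions("@alice thanks for the link @bob_99, see you soon"))
```

A single `re.sub` call avoids re-splitting the string on every iteration and does not depend on the mentions being separated by spaces.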

We want to balance the distribution of sentiment:

```

def balance(df):
    print("Balancing the classes")
    type_counts = df['Sentiment'].value_counts()
    min_count = min(type_counts.values)

    balanced_df = None
    for key in type_counts.keys():
        # Downsample every class to the size of the smallest one
        df_sub = df[df['Sentiment']==key].sample(n=min_count, replace=False)
        if balanced_df is not None:
            # DataFrame.append was removed in pandas 2.0; pd.concat is the replacement
            balanced_df = pd.concat([balanced_df, df_sub])
        else:
            balanced_df = df_sub
    return balanced_df

def review_to_sentiment(review):
    # Review is coming in as Y/N/NaN
    # this then cleans the summary and review and gives it a positive or negative value
    norm_text = normalize(review[0])
    if review[1] in ('Yes', 'Y'):
        return ['positive', norm_text]
    elif review[1] in ('No', 'N'):
        return ['negative', norm_text]
    else:
        return ['other', norm_text]

data = []
with open(filename, 'r', encoding='latin') as f:
    for i,line in enumerate(f):
        if i == 0: #skip header while i diagnose
            continue
        # as we read in, clean
        line_data = line.split(",")
        data.append(review_to_sentiment(line_data))

twitter = pd.DataFrame(data, columns=['Sentiment', 'clean_text'], dtype=str)

# print(twitter)

# For this demo lets just keep one and five stars the others are marked 'other

# twitter = twitter[twitter['Sentiment'].isin(['positive', 'negative'])]

# twitter.head()

# balanced_twitter = balance(twitter)

# len(balanced_twitter)

# Now go from the pandas into lists of text and labels

text = twitter['clean_text'].values

labels_0 = pd.get_dummies(twitter['Sentiment']) # mapping of the labels with dummies (has headers)

labels = labels_0.values # removes the headers

labels[:10] # negative, other, positive

labels = labels[:,[0,2]]

labels[:10] # negative, positive

# Perform the Train/test split

X_train_, X_test_, Y_train_, Y_test_ = train_test_split(text,labels, test_size = 0.2, random_state = 42)

max_fatures = 2000

max_len = 40

batch_size = 32

embed_dim = 300

lstm_out = 140

dense_out=len(labels[0])

tokenizer = Tokenizer(num_words=max_fatures, split=' ')

tokenizer.fit_on_texts(X_train_)

X_train = tokenizer.texts_to_sequences(X_train_)

X_train = pad_sequences(X_train, maxlen=max_len, padding='post')

X_test = tokenizer.texts_to_sequences(X_test_)

X_test = pad_sequences(X_test, maxlen=max_len, padding='post')

word_index = tokenizer.word_index

from sklearn.ensemble import GradientBoostingClassifier

from sklearn.multioutput import MultiOutputClassifier

gb = GradientBoostingClassifier(n_estimators = 4000)

gb = MultiOutputClassifier(gb, n_jobs=2)

gb.fit(X_train,Y_train_)

print(gb.score(X_test,Y_test_))

blah = 'look \n at \n me \n go'

blah2 = blah.replace

print(blah)

# What does the data look like?

# It is a one-hot encoding of the label, either positive or negative

Y_train_[:5]

X_train_[42]

### Now for a simple bidirectional LSTM algorithm we set our feature sizes and train a tokenizer

# First we Tokenize and get the data into a form that the model can read - this is BoW

# In this cell we are also going to define some of our hyperparameters

max_fatures = 2000

max_len = 40

batch_size = 32

embed_dim = 300

lstm_out = 140

dense_out=len(labels[0]) #length of features

tokenizer = Tokenizer(num_words=max_fatures, split=' ')

tokenizer.fit_on_texts(X_train_)

X_train = tokenizer.texts_to_sequences(X_train_)

X_train = pad_sequences(X_train, maxlen=max_len, padding='post')

X_test = tokenizer.texts_to_sequences(X_test_)

X_test = pad_sequences(X_test, maxlen=max_len, padding='post')

word_index = tokenizer.word_index

# Now what does our data look like?

# The tokenizer maps each word to an integer index; the padded index sequences are fed to the Embedding layer

# The model uses that layer to look up (and fine-tune) a vector for each word

X_test[:,-1].mean()

# What does a word vector look like?

# Ahhhh, like a bunch of numbers

word_vector_model.word_vec('hello')

print('Prepare the embedding matrix')

# prepare embedding matrix

num_words = min(max_fatures, len(word_index))

embedding_matrix = np.zeros((num_words, embed_dim))

for word, i in word_index.items():
    # Skip words whose index falls outside the embedding matrix
    if i >= num_words:
        continue
    # words not found in embedding index will be all-zeros.
    if word in word_vector_model.vocab:
        embedding_matrix[i] = word_vector_model.word_vec(word)

# load pre-trained word embeddings into an Embedding layer

# note that we set trainable = True to fine tune the embeddings

embedding_layer = Embedding(num_words,

embed_dim,

weights=[embedding_matrix],

input_length=max_len,

trainable=True)

embedding_matrix[1]

# Define the model using the pre-trained embedding

# import tensorflow as tf

# with tf.device('/cpu:0'):

embedding_layer = Embedding(num_words,

embed_dim,

weights=[embedding_matrix],

input_length=max_len,

trainable=True)

sequence_input = Input(shape=(max_len,), dtype='int32')

embedded_sequences = embedding_layer(sequence_input)

x = Bidirectional(LSTM(lstm_out, recurrent_dropout=0.5, activation='tanh'))(embedded_sequences)

# preds = Dense(250, activation='softmax')(x)

preds = Dense(dense_out, activation='softmax')(x)

model = Model(sequence_input, preds)

model.compile(loss='categorical_crossentropy',

optimizer='adam',

metrics=['acc'])

print(model.summary())

model_hist_embedding = model.fit(X_train, Y_train_, epochs = 80, batch_size=batch_size, verbose = 2,

validation_data=(X_test,Y_test_))


# Training Accuracy

x = np.arange(len(model_hist_embedding.history['acc'])) + 1  # one point per trained epoch

plt.plot(x, model_hist_embedding.history['acc'])

plt.plot(x, model_hist_embedding.history['val_acc'])

plt.legend(['Training', 'Testing'], loc='lower right')

plt.ylabel("Accuracy")

axes = plt.gca()

axes.set_ylim([0.45,1.01])

plt.xlabel("Epoch")

plt.title("LSTM Accuracy")

plt.show()

#model_hist_embedding.model.save("../../wyns/data/climate_sentiment_m2.h5")

```

| github_jupyter |

# Statistical Data Modeling

Some or most of you have probably taken some undergraduate- or graduate-level statistics courses. Unfortunately, the curricula for most introductory statistics courses are mostly focused on conducting **statistical hypothesis tests** as the primary means for inference: t-tests, chi-squared tests, analysis of variance, etc. Such tests seek to estimate whether groups or effects are "statistically significant", a concept that is poorly understood, and hence often misused, by most practitioners. Even when interpreted correctly, statistical significance is a questionable goal for statistical inference, as it is of limited utility.

A far more powerful approach to statistical analysis involves building flexible **models** with the overarching aim of *estimating* quantities of interest. This section of the tutorial illustrates how to use Python to build statistical models of low to moderate difficulty from scratch, and use them to extract estimates and associated measures of uncertainty.

```

%matplotlib inline

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# Set some Pandas options

pd.set_option('display.notebook_repr_html', False)

pd.set_option('display.max_columns', 20)

pd.set_option('display.max_rows', 25)

```

Estimation
==========

A recurring statistical problem is finding estimates of the relevant parameters that correspond to the distribution that best represents our data.

In **parametric** inference, we specify *a priori* a suitable distribution, then choose the parameters that best fit the data.

* e.g. $\mu$ and $\sigma^2$ in the case of the normal distribution

```

x = np.array([ 1.00201077, 1.58251956, 0.94515919, 6.48778002, 1.47764604,

5.18847071, 4.21988095, 2.85971522, 3.40044437, 3.74907745,

1.18065796, 3.74748775, 3.27328568, 3.19374927, 8.0726155 ,

0.90326139, 2.34460034, 2.14199217, 3.27446744, 3.58872357,

1.20611533, 2.16594393, 5.56610242, 4.66479977, 2.3573932 ])

_ = plt.hist(x, bins=8)

```

### Fitting data to probability distributions

We start with the problem of finding values for the parameters that provide the best fit between the model and the data, called point estimates. First, we need to define what we mean by ‘best fit’. There are two commonly used criteria:

* **Method of moments** chooses the parameters so that the sample moments (typically the sample mean and variance) match the theoretical moments of our chosen distribution.

* **Maximum likelihood** chooses the parameters to maximize the likelihood, which measures how likely it is to observe our given sample.

### Discrete Random Variables

$$X = \{0,1\}$$

$$Y = \{\ldots,-2,-1,0,1,2,\ldots\}$$

**Probability Mass Function**:

For discrete $X$,

$$Pr(X=x) = f(x|\theta)$$

***e.g. Poisson distribution***

The Poisson distribution models unbounded counts:

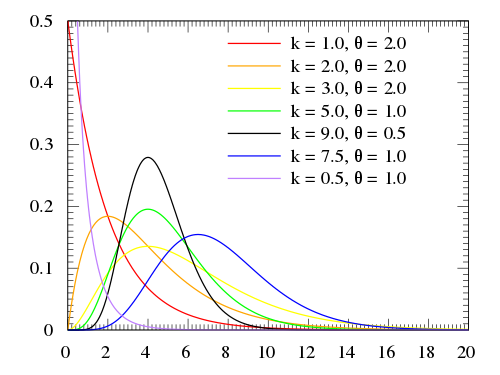

<div style="font-size: 150%;">

$$Pr(X=x)=\frac{e^{-\lambda}\lambda^x}{x!}$$

</div>

* $X=\{0,1,2,\ldots\}$

* $\lambda > 0$

$$E(X) = \text{Var}(X) = \lambda$$
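The property that the Poisson mean and variance both equal $\lambda$ can be checked by simulation. The following is a minimal sketch; the rate `lam = 3.0`, the seed, and the sample size are arbitrary choices made for the illustration.

```python
import numpy as np

rng = np.random.default_rng(42)  # fixed seed so the sketch is repeatable
lam = 3.0                        # arbitrary rate chosen for the illustration
draws = rng.poisson(lam, size=100_000)

print("sample mean:    ", draws.mean())
print("sample variance:", draws.var(ddof=1))
```

Both printed values should be close to `lam`, and they get closer as the sample size grows.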

### Continuous Random Variables

$$X \in [0,1]$$

$$Y \in (-\infty, \infty)$$

**Probability Density Function**:

For continuous $X$,

$$Pr(x \le X \le x + dx) = f(x|\theta)dx \, \text{ as } \, dx \rightarrow 0$$

***e.g. normal distribution***

<div style="font-size: 150%;">

$$f(x) = \frac{1}{\sqrt{2\pi\sigma^2}}\exp\left[-\frac{(x-\mu)^2}{2\sigma^2}\right]$$

</div>

* $X \in \mathbf{R}$

* $\mu \in \mathbf{R}$

* $\sigma>0$

$$\begin{align}E(X) &= \mu \cr

\text{Var}(X) &= \sigma^2 \end{align}$$

### Example: Nashville Precipitation

The dataset `nashville_precip.txt` contains [NOAA precipitation data for Nashville measured since 1871](http://bit.ly/nasvhville_precip_data). The gamma distribution is often a good fit to aggregated rainfall data, and will be our candidate distribution in this case.

```

precip = pd.read_table("data/nashville_precip.txt", index_col=0, na_values='NA', sep=r'\s+')

precip.head()

_ = precip.hist(sharex=True, sharey=True, grid=False)

plt.tight_layout()

```

The first step is recognizing what sort of distribution to fit our data to. A few observations:

1. The data are skewed, with a longer tail to the right than to the left

2. The data are positive-valued, since they are measuring rainfall

3. The data are continuous

There are a few possible choices, but one suitable candidate is the **gamma distribution**, written here with shape $\alpha$ and scale $\beta$:

<div style="font-size: 150%;">

$$x \sim \text{Gamma}(\alpha, \beta) = \frac{x^{\alpha-1}e^{-x/\beta}}{\beta^{\alpha}\Gamma(\alpha)}$$

</div>

The ***method of moments*** simply assigns the empirical mean and variance to their theoretical counterparts, so that we can solve for the parameters.

So, for the gamma distribution, the mean and variance are:

<div style="font-size: 150%;">

$$ \hat{\mu} = \bar{X} = \alpha \beta $$

$$ \hat{\sigma}^2 = S^2 = \alpha \beta^2 $$

</div>

So, if we solve for these parameters, we can use a gamma distribution to describe our data:

<div style="font-size: 150%;">

$$ \alpha = \frac{\bar{X}^2}{S^2}, \, \beta = \frac{S^2}{\bar{X}} $$

</div>
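As a quick check of these formulas, the sketch below applies them to synthetic gamma draws with a known shape and scale and recovers values close to the truth. The parameter values, seed, and sample size are assumptions made only for this illustration; they are unrelated to the rainfall data.

```python
import numpy as np

rng = np.random.default_rng(0)
true_alpha, true_beta = 3.0, 2.0  # shape and scale, arbitrary illustrative values
sample = rng.gamma(shape=true_alpha, scale=true_beta, size=50_000)

x_bar = sample.mean()
s2 = sample.var(ddof=1)

alpha_hat = x_bar ** 2 / s2  # alpha = mean^2 / variance
beta_hat = s2 / x_bar        # beta  = variance / mean

print(f"alpha_hat = {alpha_hat:.3f}, beta_hat = {beta_hat:.3f}")
```

Because the estimates depend only on the sample mean and variance, they are cheap to compute, which is the main appeal of the method of moments.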

Let's deal with the missing value in the October data. Given what we are trying to do, it is most sensible to fill in the missing value with the average of the available values.

```

precip.fillna(value={'Oct': precip.Oct.mean()}, inplace=True)

```

Now, let's calculate the sample moments of interest, the means and variances by month:

```

precip_mean = precip.mean()

precip_mean

precip_var = precip.var()

precip_var

```

We then use these moments to estimate $\alpha$ and $\beta$ for each month:

```

alpha_mom = precip_mean ** 2 / precip_var

beta_mom = precip_var / precip_mean

alpha_mom, beta_mom

```

We can use the `gamma.pdf` function in `scipy.stats.distributions` to plot the distributions implied by the calculated alphas and betas. For example, here is January:

```

from scipy.stats.distributions import gamma

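# `normed` was removed from matplotlib; `density=True` is the replacement
precip.Jan.hist(density=True, bins=20)

# SciPy's third positional argument is `loc`, so the scale estimate must be passed by keyword
plt.plot(np.linspace(0, 10), gamma.pdf(np.linspace(0, 10), alpha_mom.iloc[0], scale=beta_mom.iloc[0]))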

```
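SciPy's `gamma` takes the shape first, followed by `loc` and then `scale`, so the scale is safest passed by keyword. The short sketch below, using made-up values for `shape` and `scale`, shows how the two call styles differ.

```python
from scipy.stats import gamma

shape, scale = 2.0, 1.5  # illustrative values, not estimates from the rainfall data

# With scale passed by keyword, the mean and variance are alpha*beta and alpha*beta^2.
print(gamma.mean(shape, scale=scale), gamma.var(shape, scale=scale))

# Passed positionally, the second value is `loc`, which only shifts the distribution.
print(gamma.mean(shape, scale), gamma.var(shape, scale))
```

Only the keyword form reproduces the moments that the method-of-moments formulas assume.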

Looping over all months, we can create a grid of plots for the distribution of rainfall, using the gamma distribution:

```

axs = precip.hist(density=True, figsize=(12, 8), sharex=True, sharey=True, bins=15, grid=False)

for ax in axs.ravel():
    # Get month
    m = ax.get_title()
    # Plot fitted distribution (the scale must be passed by keyword; the third positional argument is loc)
    x = np.linspace(*ax.get_xlim())
    ax.plot(x, gamma.pdf(x, alpha_mom[m], scale=beta_mom[m]))
    # Annotate with parameter estimates
    label = 'alpha = {0:.2f}\nbeta = {1:.2f}'.format(alpha_mom[m], beta_mom[m])
    ax.annotate(label, xy=(10, 0.2))

plt.tight_layout()

```

Maximum Likelihood
==================

**Maximum likelihood** (ML) fitting is usually more work than the method of moments, but it is preferred as the resulting estimator is known to have good theoretical properties.

There is a ton of theory regarding ML. We will restrict ourselves to the mechanics here.

Say we have some data $y = y_1,y_2,\ldots,y_n$ that is distributed according to some distribution:

<div style="font-size: 120%;">

$$Pr(Y_i=y_i | \theta)$$

</div>

Here, for example, is a **Poisson distribution** that describes the distribution of some discrete variables, typically *counts*:

```

y = np.random.poisson(5, size=100)

plt.hist(y, bins=12, density=True)

plt.xlabel('y'); plt.ylabel('Pr(y)')

```

The product $\prod_{i=1}^n Pr(y_i | \theta)$ gives us a measure of how **likely** it is to observe values $y_1,\ldots,y_n$ given the parameters $\theta$. Maximum likelihood fitting consists of choosing the appropriate function $l= Pr(Y|\theta)$ to maximize for a given set of observations. We call this function the *likelihood function*, because it is a measure of how likely the observations are if the model is true.

> Given these data, how likely is this model?

In the above model, the data were drawn from a Poisson distribution with parameter $\lambda =5$.

$$L(y|\lambda=5) = \frac{e^{-5} 5^y}{y!}$$

So, for any given value of $y$, we can calculate its likelihood:

```

poisson_like = lambda x, lam: np.exp(-lam) * (lam**x) / (np.arange(x)+1).prod()

lam = 6

value = 10

poisson_like(value, lam)

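# Joint log-likelihood of the whole sample: sum the log of each observation's likelihood
np.sum([np.log(poisson_like(yi, lam)) for yi in y])

lam = 8

np.sum([np.log(poisson_like(yi, lam)) for yi in y])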

```

We can plot the likelihood function for any value of the parameter(s):

```

lambdas = np.linspace(0,15)

x = 5

plt.plot(lambdas, [poisson_like(x, l) for l in lambdas])

plt.xlabel(r'$\lambda$')

plt.ylabel(r'L($\lambda$|x={0})'.format(x))

```

How does the likelihood function differ from the probability distribution function (PDF)? The likelihood is a function of the parameter(s) *given the data*, whereas the PDF returns the probability of data given a particular parameter value. Here is the Poisson distribution (strictly a probability mass function, since the variable is discrete) for $\lambda=5$.

```

lam = 5

xvals = np.arange(15)

plt.bar(xvals, [poisson_like(x, lam) for x in xvals])

plt.xlabel('x')

plt.ylabel(r'Pr(X|$\lambda$=5)')

```

Why are we interested in the likelihood function?

A reasonable estimate of the true, unknown value for the parameter is one which **maximizes the likelihood function**. So, inference is reduced to an optimization problem.
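To see inference as optimization in the simplest case, the sketch below evaluates the Poisson log-likelihood on a grid of candidate rates and picks the largest. The synthetic counts, the grid, and the helper `poisson_loglike` are assumptions made for this illustration; for the Poisson model the maximizer is known to be the sample mean, which gives a check on the result.

```python
import numpy as np
from scipy.special import gammaln

rng = np.random.default_rng(1)
counts = rng.poisson(5, size=200)  # synthetic counts, true rate 5

def poisson_loglike(lam, data):
    """Log-likelihood of an i.i.d. Poisson sample at rate `lam`."""
    data = np.asarray(data)
    return np.sum(data * np.log(lam) - lam - gammaln(data + 1))

grid = np.linspace(0.1, 15, 1491)  # candidate rates, spaced 0.01 apart
loglikes = np.array([poisson_loglike(lam, counts) for lam in grid])
lam_hat = grid[np.argmax(loglikes)]

print("grid maximizer:", lam_hat)
print("sample mean:   ", counts.mean())
```

A grid search is only practical for one or two parameters, which is one reason numerical optimizers are used for models such as the gamma.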

Going back to the rainfall data, if we are using a gamma distribution we need to maximize:

$$\begin{align}l(\alpha,\beta) &= \sum_{i=1}^n \log\left[\beta^{\alpha} x_i^{\alpha-1} e^{-\beta x_i}\Gamma(\alpha)^{-1}\right] \cr
&= n[(\alpha-1)\overline{\log(x)} - \bar{x}\beta + \alpha\log(\beta) - \log\Gamma(\alpha)]\end{align}$$

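(*It's usually easier to work on the log scale.*)

where $n = 2012 - 1871 = 141$, the bar indicates an average over all *i*, and $\beta$ is now the rate parameter (the reciprocal of the scale used in the method-of-moments formulas above). We choose $\alpha$ and $\beta$ to maximize $l(\alpha,\beta)$.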

Notice $l$ is infinite if any $x$ is zero. We do not have any zeros, but we do have an NA value for one of the October data, which we dealt with above.

### Finding the MLE

To find the maximum of any function, we typically take the *derivative* with respect to the variable to be maximized, set it to zero and solve for that variable.

$$\frac{\partial l(\alpha,\beta)}{\partial \beta} = n\left(\frac{\alpha}{\beta} - \bar{x}\right) = 0$$

This can be solved as $\beta = \alpha/\bar{x}$. However, substituting this into the derivative with respect to $\alpha$ and setting it to zero yields:

$$\frac{\partial l(\alpha,\beta)}{\partial \alpha} = n\left[\log(\alpha) + \overline{\log(x)} - \log(\bar{x}) - \frac{\Gamma'(\alpha)}{\Gamma(\alpha)}\right] = 0$$

This has no closed form solution. We must use ***numerical optimization***!

Numerical optimization algorithms take an initial "guess" at the solution, and iteratively improve the guess until it gets "close enough" to the answer.

Here, we will use the Newton-Raphson algorithm:

<div style="font-size: 120%;">

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}$$

</div>

Which is available to us via SciPy:

```

from scipy.optimize import newton

```
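To make the update rule concrete, here is a minimal hand-written version of the iteration, applied to the toy problem $f(x) = x^2 - 2$, whose positive root is $\sqrt{2}$. The function `newton_raphson`, its tolerance, and its iteration cap are illustrative assumptions and not SciPy's implementation.

```python
def newton_raphson(f, fprime, x0, tol=1e-10, max_iter=50):
    """Iterate x <- x - f(x)/f'(x) until the step is smaller than `tol`."""
    x = x0
    for _ in range(max_iter):
        step = f(x) / fprime(x)
        x = x - step
        if abs(step) < tol:
            return x
    raise RuntimeError("Newton-Raphson did not converge")

# Toy example: root of x^2 - 2, starting from x0 = 1
root = newton_raphson(lambda x: x**2 - 2, lambda x: 2 * x, x0=1.0)
print(root)
```

The method can diverge when the starting value sits where the derivative is nearly flat, so the initial guess matters.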

Here is a graphical example of how Newton-Raphson converges on a solution, using an arbitrary function:

```

# some function

func = lambda x: 3./(1 + 400*np.exp(-2*x)) - 1

xvals = np.linspace(0, 6)

plt.plot(xvals, func(xvals))

plt.text(5.3, 2.1, '$f(x)$', fontsize=16)

# zero line

plt.plot([0,6], [0,0], 'k-')

# value at step n

plt.plot([4,4], [0,func(4)], 'k:')

plt.text(4, -.2, '$x_n$', fontsize=16)

# tangent line

tanline = lambda x: -0.858 + 0.626*x

plt.plot(xvals, tanline(xvals), 'r--')

# point at step n+1

xprime = 0.858/0.626

plt.plot([xprime, xprime], [tanline(xprime), func(xprime)], 'k:')

plt.text(xprime+.1, -.2, '$x_{n+1}$', fontsize=16)

```

To apply the Newton-Raphson algorithm, we need functions that return the **first and second derivatives** of the log-likelihood with respect to the variable of interest: the first derivative is the function whose root we seek, and the second derivative is its slope. In our case, these are:

```

from scipy.special import psi, polygamma

dlgamma = lambda m, log_mean, mean_log: np.log(m) - psi(m) - log_mean + mean_log

dl2gamma = lambda m, *args: 1./m - polygamma(1, m)

```

where `log_mean` and `mean_log` are $\log{\bar{x}}$ and $\overline{\log(x)}$, respectively. `psi` is the digamma function, the first derivative of $\log\Gamma$, and `polygamma(1, m)` is the trigamma function, its second derivative.

```

# Calculate statistics

log_mean = precip.mean().apply(np.log)

mean_log = precip.apply(np.log).mean()

```

Time to optimize!

```

# Alpha MLE for December

alpha_mle = newton(dlgamma, 2, dl2gamma, args=(log_mean.iloc[-1], mean_log.iloc[-1]))

alpha_mle

```

And now plug this back into the solution for beta:

<div style="font-size: 120%;">

$$ \beta = \frac{\alpha}{\bar{X}} $$

</div>

```

beta_mle = alpha_mle/precip.mean().iloc[-1]

beta_mle

```
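One way to check this two-step procedure is to run it on simulated data where the true parameters are known. The sketch below assumes a shape of 3 and a rate of 2 (arbitrary values), draws a sample, and repeats the Newton-Raphson step followed by the closed-form solution for $\beta$. The names `sim`, `alpha_hat` and `beta_hat` are hypothetical.

```python
import numpy as np
from scipy.optimize import newton
from scipy.special import psi, polygamma

# Simulated data with known shape and rate (assumed values)
rng = np.random.default_rng(42)
true_alpha, true_beta = 3.0, 2.0
sim = rng.gamma(true_alpha, 1 / true_beta, size=5000)  # NumPy takes scale = 1/rate

# Summary statistics that appear in the score equation
log_mean = np.log(sim.mean())
mean_log = np.log(sim).mean()

# First and second derivatives of the profile log-likelihood in alpha
dlgamma = lambda m: np.log(m) - psi(m) - log_mean + mean_log
dl2gamma = lambda m: 1. / m - polygamma(1, m)

# Newton-Raphson for alpha, then the closed-form solution for beta
alpha_hat = newton(dlgamma, 2.0, fprime=dl2gamma)
beta_hat = alpha_hat / sim.mean()

print(alpha_hat, beta_hat)
```

With a few thousand observations, the estimates should fall close to the values used to simulate the data.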

We can compare the fit of the estimates derived from MLE to those from the method of moments:

```

dec = precip.Dec

dec.hist(density=True, bins=10, grid=False)

x = np.linspace(0, dec.max())

plt.plot(x, gamma.pdf(x, alpha_mom[-1], beta_mom[-1]), 'm-')

plt.plot(x, gamma.pdf(x, alpha_mle, scale=1/beta_mle), 'r--')  # beta_mle is a rate; SciPy takes scale = 1/rate

```

For some common distributions, SciPy includes methods for fitting via MLE:

```

from scipy.stats import gamma

gamma.fit(precip.Dec)

```

This fit is not directly comparable to our estimates, however, because SciPy's `gamma.fit` method fits a 3-parameter version of the gamma distribution, with shape, location, and scale parameters, where the scale is the reciprocal of our rate $\beta$.
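One way to bring the two closer together is to hold the location at zero with the `floc=0` argument, so that only the shape and scale are estimated. The sketch below does this on simulated data (the sample and the true values of 3 and 2 are assumptions) and converts the fitted scale back to a rate.

```python
import numpy as np
from scipy.stats import gamma

# Simulated gamma data with shape 3 and rate 2 (assumed values)
rng = np.random.default_rng(1)
sim = rng.gamma(3.0, 1 / 2.0, size=5000)

# Fix the location at 0 so only shape and scale are fitted
shape_hat, loc_hat, scale_hat = gamma.fit(sim, floc=0)

# Convert SciPy's scale to the rate used in the text
rate_hat = 1 / scale_hat

print(shape_hat, loc_hat, rate_hat)
```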

### Example: truncated distribution

Suppose that we observe $Y$ truncated below at $a$ (where $a$ is known). If $X$ denotes the observed, truncated variable, then:

$$ P(X \le x) = P(Y \le x|Y \gt a) = \frac{P(a \lt Y \le x)}{P(Y \gt a)}$$

(so, $Y$ is the original variable and $X$ is the truncated variable)

Then $X$ has the density:

$$f_X(x) = \frac{f_Y (x)}{1-F_Y (a)} \, \text{for} \, x \gt a$$

Suppose $Y \sim N(\mu, \sigma^2)$ and $x_1,\ldots,x_n$ are independent observations of $X$. We can use maximum likelihood to find $\mu$ and $\sigma$.

First, we can simulate a truncated distribution using a `while` statement to eliminate samples that are outside the support of the truncated distribution.

```

x = np.random.normal(size=10000)

a = -1

x_small = x < a

while x_small.sum():

    x[x_small] = np.random.normal(size=x_small.sum())

    x_small = x < a

_ = plt.hist(x, bins=100)

```

We can construct a log likelihood for this function using the conditional form:

$$f_X(x) = \frac{f_Y (x)}{1-F_Y (a)} \, \text{for} \, x \gt a$$

```

from scipy.stats.distributions import norm

trunc_norm = lambda theta, a, x: -(np.log(norm.pdf(x, theta[0], theta[1])) -

np.log(1 - norm.cdf(a, theta[0], theta[1]))).sum()

```

For this example, we will use another optimization algorithm, the **Nelder-Mead simplex algorithm**. It has a couple of advantages:

- it does not require derivatives

- it can optimize (minimize) a vector of parameters

SciPy implements this algorithm in its `fmin` function:

```

from scipy.optimize import fmin

fmin(trunc_norm, np.array([1,2]), args=(-1, x))

```

In general, simulating data is a terrific way of testing your model before using it with real data.
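As a hedged illustration of that workflow, the sketch below simulates a standard normal truncated below at $-1$, then recovers $\mu$ and $\sigma$ with Nelder-Mead through `scipy.optimize.minimize`. It differs from the code above in a few assumed details: samples are filtered rather than redrawn, `logpdf` and `logsf` replace the explicit logarithms for numerical stability, and non-positive values of $\sigma$ are rejected. The names `x_trunc` and `trunc_norm_nll` are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.stats import norm

# Simulate a standard normal truncated below at a by discarding draws <= a
rng = np.random.default_rng(7)
a = -1.0
draws = rng.normal(size=20000)
x_trunc = draws[draws > a]

def trunc_norm_nll(theta, a, x):
    """Negative log-likelihood of a normal truncated below at `a`."""
    mu, sigma = theta
    if sigma <= 0:
        return np.inf  # keep the simplex away from invalid scales
    # log f_Y(x) - log(1 - F_Y(a)), summed over observations
    return -(norm.logpdf(x, mu, sigma) - norm.logsf(a, mu, sigma)).sum()

result = minimize(trunc_norm_nll, x0=[1.0, 2.0], args=(a, x_trunc),
                  method="Nelder-Mead")
mu_hat, sigma_hat = result.x

print(mu_hat, sigma_hat)
```

If the estimates land near 0 and 1, the likelihood is at least consistent with the mechanism that generated the data.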

### Kernel density estimates

In some instances, we may not be interested in the parameters of a particular distribution of data, but just a smoothed representation of the data at hand. In this case, we can estimate the distribution *non-parametrically* (i.e. making no assumptions about the form of the underlying distribution) using kernel density estimation.

```

# Some random data

y = np.random.random(15) * 10

y

x = np.linspace(0, 10, 100)

# Smoothing parameter

s = 0.4

# Calculate the kernels

kernels = np.transpose([norm.pdf(x, yi, s) for yi in y])

plt.plot(x, kernels, 'k:')

plt.plot(x, kernels.sum(1))

plt.plot(y, np.zeros(len(y)), 'ro', ms=10)

```
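The summed kernels in the plot above are not yet a density, because their total area equals the number of data points. A minimal sketch of the normalized estimate averages the kernels instead, so that the curve integrates to approximately one. The function `gaussian_kernel_density`, the simulated data, and the grid are assumptions for illustration.

```python
import numpy as np

def gaussian_kernel_density(grid, data, bandwidth):
    """Average of Gaussian kernels centred on each data point."""
    z = (grid[:, None] - data[None, :]) / bandwidth
    kernels = np.exp(-0.5 * z**2) / (bandwidth * np.sqrt(2 * np.pi))
    return kernels.mean(axis=1)

# Simulated data and the same smoothing parameter as above
rng = np.random.default_rng(3)
data = rng.random(15) * 10
bandwidth = 0.4

# Extend the grid past the data so the kernel tails are included
grid = np.linspace(data.min() - 4 * bandwidth, data.max() + 4 * bandwidth, 2000)
density = gaussian_kernel_density(grid, data, bandwidth)

# Trapezoidal rule: the area under the estimate should be close to 1
area = np.sum((density[1:] + density[:-1]) / 2 * np.diff(grid))
print(area)
```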

SciPy implements a Gaussian KDE that automatically chooses an appropriate bandwidth. Let's create a bi-modal distribution of data that is not easily summarized by a parametric distribution:

```

# Create a bi-modal distribution with a mixture of Normals.

x1 = np.random.normal(0, 3, 50)

x2 = np.random.normal(4, 1, 50)

# Append by row

x = np.r_[x1, x2]

plt.hist(x, bins=8, density=True)

from scipy.stats import gaussian_kde

density = gaussian_kde(x)

xgrid = np.linspace(x.min(), x.max(), 100)

plt.hist(x, bins=8, density=True)

plt.plot(xgrid, density(xgrid), 'r-')

```
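The automatic bandwidth can be inspected and overridden through the `bw_method` argument of `gaussian_kde`. The sketch below, on simulated bi-modal data, compares the default (Scott's rule), Silverman's rule, and a scalar factor. The value 0.1 is an arbitrary choice for illustration.

```python
import numpy as np
from scipy.stats import gaussian_kde

# Simulated bi-modal data (a mixture of two normals)
rng = np.random.default_rng(5)
data = np.r_[rng.normal(0, 3, 50), rng.normal(4, 1, 50)]

kde_scott = gaussian_kde(data)                             # default: Scott's rule
kde_silverman = gaussian_kde(data, bw_method="silverman")  # Silverman's rule
kde_narrow = gaussian_kde(data, bw_method=0.1)             # scalar factor (arbitrary)

# `factor` multiplies the sample standard deviation to give the kernel width
print(kde_scott.factor, kde_silverman.factor, kde_narrow.factor)
```

A smaller factor follows the data more closely and yields a bumpier curve, while a larger one smooths over features such as the second mode.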

### Exercise: Cervical dystonia analysis

Recall the cervical dystonia dataset, which comes from a clinical trial of botulinum toxin type B (BotB) for patients with cervical dystonia from nine U.S. sites. The response variable is the score on the Toronto Western Spasmodic Torticollis Rating Scale (TWSTRS), which measures severity, pain, and disability of cervical dystonia (high scores mean more impairment). One way to check the efficacy of the treatment is to compare the distribution of TWSTRS for control and treatment patients at the end of the study.

Use the method of moments or MLE to calculate the mean and variance of TWSTRS at week 16 for one of the treatments and the control group. Assume that the distribution of the `twstrs` variable is normal:

$$f(x \mid \mu, \sigma^2) = \sqrt{\frac{1}{2\pi\sigma^2}} \exp\left\{ -\frac{1}{2} \frac{(x-\mu)^2}{\sigma^2} \right\}$$

```

cdystonia = pd.read_csv("data/cdystonia.csv")