
## Recap

Here's the code you've written so far.

```

# Code you have previously used to load data

import pandas as pd

from sklearn.metrics import mean_absolute_error

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeRegressor

# Path of the file to read

iowa_file_path = '../input/home-data-for-ml-course/train.csv'

home_data = pd.read_csv(iowa_file_path)

# Create target object and call it y

y = home_data.SalePrice

# Create X

features = ['LotArea', 'YearBuilt', '1stFlrSF', '2ndFlrSF', 'FullBath', 'BedroomAbvGr', 'TotRmsAbvGrd']

X = home_data[features]

# Split into validation and training data

train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=1)

# Specify Model

iowa_model = DecisionTreeRegressor(random_state=1)

# Fit Model

iowa_model.fit(train_X, train_y)

# Make validation predictions and calculate mean absolute error

val_predictions = iowa_model.predict(val_X)

val_mae = mean_absolute_error(val_predictions, val_y)

print("Validation MAE when not specifying max_leaf_nodes: {:,.0f}".format(val_mae))

# Using best value for max_leaf_nodes

iowa_model = DecisionTreeRegressor(max_leaf_nodes=100, random_state=1)

iowa_model.fit(train_X, train_y)

val_predictions = iowa_model.predict(val_X)

val_mae = mean_absolute_error(val_predictions, val_y)

print("Validation MAE for best value of max_leaf_nodes: {:,.0f}".format(val_mae))

# Set up code checking

from learntools.core import binder

binder.bind(globals())

from learntools.machine_learning.ex6 import *

print("\nSetup complete")

```

# Exercises

Data science isn't always this easy. But replacing the decision tree with a Random Forest is going to be an easy win.

## Step 1: Use a Random Forest

```

from sklearn.ensemble import RandomForestRegressor

# Define the model. Set random_state to 1

rf_model = RandomForestRegressor(random_state=1)

# fit your model

rf_model.fit(train_X, train_y)

# Calculate the mean absolute error of your Random Forest model on the validation data

rf_val_predictions = rf_model.predict(val_X)

rf_val_mae = mean_absolute_error(rf_val_predictions, val_y)

print("Validation MAE for Random Forest Model: {:,.0f}".format(rf_val_mae))

step_1.check()

# The lines below will show you a hint or the solution.

# step_1.hint()

step_1.solution()

```

# Think about Your Results

Under what circumstances might you prefer the Decision Tree to the Random Forest, even though the Random Forest generally gives more accurate predictions? Weigh in or follow the discussion in [this discussion thread](https://kaggle.com/learn-forum/----) **TODO: Add the link**

# Keep Going

So far, you have followed specific instructions at each step of your project. This helped you learn key ideas and build your first model, but now you know enough to try things on your own.

Machine Learning competitions are a great way to try your own ideas and learn more as you independently navigate a machine learning project. Learn **[how to submit your work to a Kaggle competition](https://www.kaggle.com/dansbecker/submitting-from-a-kernel)**.

---

**[Course Home Page](https://www.kaggle.com/learn/machine-learning)**

| github_jupyter |

<a href="https://colab.research.google.com/github/SauravMaheshkar/trax/blob/SauravMaheshkar-example-1/examples/trax_data_Explained.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

```

#@title

# Copyright 2020 Google LLC.

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

# https://www.apache.org/licenses/LICENSE-2.0

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

## Install the Latest Version of Trax

!pip install --upgrade trax

```

Notebook Author: [@SauravMaheshkar](https://github.com/SauravMaheshkar)

# Introduction

```

import trax

```

# Serial Fn

In Trax, we use combinators to build input pipelines, much like building deep learning models. The `Serial` combinator applies layers serially using function composition and uses stack semantics to manage data.

Trax has the following definition for a `Serial` combinator.

```
def Serial(*fns):
  def composed_fns(generator=None):
    for f in fastmath.tree_flatten(fns):
      generator = f(generator)
    return generator
  return composed_fns
```

The `Serial` function has the following structure:

* It takes an arbitrary number of functions as **input**

* It flattens the (possibly nested) structure of functions into a list

* It iterates through the list and applies the functions serially

---

The [`fastmath.tree_flatten()`](https://github.com/google/trax/blob/c38a5b1e4c5cfe13d156b3fc0bfdb83554c8f799/trax/fastmath/numpy.py#L195) function takes a tree as input and returns a flattened list. This way we can pass generator functions like `Tokenize` and `Shuffle` in any nesting and apply them serially by *iterating* through the list.

Initially, `generator` is `None`, so the first function in the list receives no input and is expected to produce the stream itself (for example, a data source such as `TFDS`). In each subsequent iteration, `generator` is replaced by the output of applying the next function in the list to it.
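The data flow described above can be sketched without Trax. This is a minimal illustration, not the library's implementation: `serial` is a simplified stand-in that flattens only one level of nesting (instead of calling `fastmath.tree_flatten`), and the stage functions `source`, `double`, and `keep_multiples_of_four` are hypothetical names invented for the example.

```python
def serial(*fns):
    """Simplified stand-in for trax.data.Serial (flattens one nesting level only)."""
    flat = []
    for f in fns:
        # Accept either a single stage or a list/tuple of stages.
        flat.extend(f if isinstance(f, (list, tuple)) else [f])

    def composed(generator=None):
        for f in flat:
            generator = f(generator)  # each stage wraps the previous stream
        return generator

    return composed


def source(_):
    # First stage: ignores its input (None) and produces the stream.
    yield from range(6)


def double(stream):
    for x in stream:
        yield 2 * x


def keep_multiples_of_four(stream):
    for x in stream:
        if x % 4 == 0:
            yield x


pipeline = serial(source, [double, keep_multiples_of_four])
result = list(pipeline())
print(result)  # [0, 4, 8]
```

Because every stage is a generator, nothing is computed until the resulting stream is consumed, which is why the pipeline can sit in front of a large dataset.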

# Log Function

```
def Log(n_steps_per_example=1, only_shapes=True):
  def log(stream):
    counter = 0
    for example in stream:
      item_to_log = example
      if only_shapes:
        item_to_log = fastmath.nested_map(shapes.signature, example)
      if counter % n_steps_per_example == 0:
        logging.info(str(item_to_log))
        print(item_to_log)
      counter += 1
      yield example
  return log
```

Every Deep Learning Framework needs to have a logging component for efficient debugging.

The `trax.data.Log` generator uses the `absl` package for logging. It uses the [`fastmath.nested_map`](https://github.com/google/trax/blob/c38a5b1e4c5cfe13d156b3fc0bfdb83554c8f799/trax/fastmath/numpy.py#L80) function, which applies a given function recursively to every element inside an object. In the case depicted below, it maps `shapes.signature` recursively over each example in the input stream, thus giving us the shapes of the various objects in our stream.

---

The following two cells show the difference between setting the `only_shapes` argument to `False` and to `True`.
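To make the idea of a recursive map concrete, here is a small sketch. It is not the Trax implementation: `nested_map` below is a simplified version handling only lists, tuples, and dicts, and it records type names where Trax would record `shapes.signature`.

```python
def nested_map(fn, obj):
    """Apply fn to every leaf of a nested structure of lists, tuples and dicts."""
    if isinstance(obj, list):
        return [nested_map(fn, v) for v in obj]
    if isinstance(obj, tuple):
        return tuple(nested_map(fn, v) for v in obj)
    if isinstance(obj, dict):
        return {k: nested_map(fn, v) for k, v in obj.items()}
    return fn(obj)  # leaf: apply the function itself


example = ("a review", [1, 0], {"id": 7})
types = nested_map(lambda leaf: type(leaf).__name__, example)
print(types)  # ('str', ['int', 'int'], {'id': 'int'})
```

The output keeps the nesting of the input and only replaces the leaves, which is what makes the logged shapes easy to match against the original example.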

```

data_pipeline = trax.data.Serial(

trax.data.TFDS('imdb_reviews', keys=('text', 'label'), train=True),

trax.data.Tokenize(vocab_dir='gs://trax-ml/vocabs/', vocab_file='en_8k.subword', keys=[0]),

trax.data.Log(only_shapes=False)

)

example = data_pipeline()

print(next(example))

data_pipeline = trax.data.Serial(

trax.data.TFDS('imdb_reviews', keys=('text', 'label'), train=True),

trax.data.Tokenize(vocab_dir='gs://trax-ml/vocabs/', vocab_file='en_8k.subword', keys=[0]),

trax.data.Log(only_shapes=True)

)

example = data_pipeline()

print(next(example))

```

# Shuffling our datasets

Trax offers two generator functions to add shuffle functionality in our input pipelines.

1. The `shuffle` function shuffles a given stream

2. The `Shuffle` function returns a shuffle function instead

## `shuffle`

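```
def shuffle(samples, queue_size):
  if queue_size < 1:
    raise ValueError(f'Arg queue_size ({queue_size}) is less than 1.')
  if queue_size == 1:
    logging.warning('Queue size of 1 results in no shuffling.')
  queue = []
  try:
    # Fill the queue with the first `queue_size` samples.
    for _ in range(queue_size):
      queue.append(next(samples))
    # Streaming shuffle: yield a sample from a random queue position, then
    # refill that position with the next sample from the input stream.
    for sample in samples:
      i = np.random.randint(queue_size)
      yield queue[i]
      queue[i] = sample
  except StopIteration:
    # Reached only if the stream ends before the queue is full.
    logging.warning(
        'Not enough samples (%d) to fill initial queue (size %d).',
        len(queue), queue_size)
  # No new samples coming in; shuffle and drain the queue.
  np.random.shuffle(queue)
  for sample in queue:
    yield sample
```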

The `shuffle` function takes two inputs: the data stream and the queue size (the number of samples held in the buffer within which the shuffling takes place). It raises an error for queue sizes below 1 and logs a warning for a queue size of 1, which results in no shuffling. After filling the queue, it uses [`np.random.randint()`](https://docs.python.org/3/library/random.html#random.randint) to pick a random position in the range given by `queue_size`, yields the sample at that position, and replaces it with the next incoming sample. Once the stream is exhausted, it shuffles the samples remaining in the queue with [`np.random.shuffle()`](https://docs.python.org/3/library/random.html#random.shuffle) and yields them.

```

sentence = ['Sed ut perspiciatis unde omnis iste natus error sit voluptatem accusantium doloremque laudantium, totam rem aperiam, eaque ipsa quae ab illo inventore veritatis et quasi architecto beatae vitae dicta sunt explicabo. Nemo enim ipsam voluptatem quia voluptas sit aspernatur aut odit aut fugit, sed quia consequuntur magni dolores eos qui ratione voluptatem sequi nesciunt. Neque porro quisquam est, qui dolorem ipsum quia dolor sit amet, consectetur, adipisci velit, sed quia non numquam eius modi tempora incidunt ut labore et dolore magnam aliquam quaerat voluptatem. Ut enim ad minima veniam, quis nostrum exercitationem ullam corporis suscipit laboriosam, nisi ut aliquid ex ea commodi consequatur? Quis autem vel eum iure reprehenderit qui in ea voluptate velit esse quam nihil molestiae consequatur, vel illum qui dolorem eum fugiat quo voluptas nulla pariatur?',

'But I must explain to you how all this mistaken idea of denouncing pleasure and praising pain was born and I will give you a complete account of the system, and expound the actual teachings of the great explorer of the truth, the master-builder of human happiness. No one rejects, dislikes, or avoids pleasure itself, because it is pleasure, but because those who do not know how to pursue pleasure rationally encounter consequences that are extremely painful. Nor again is there anyone who loves or pursues or desires to obtain pain of itself, because it is pain, but because occasionally circumstances occur in which toil and pain can procure him some great pleasure. To take a trivial example, which of us ever undertakes laborious physical exercise, except to obtain some advantage from it? But who has any right to find fault with a man who chooses to enjoy a pleasure that has no annoying consequences, or one who avoids a pain that produces no resultant pleasure?',

'Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur. Excepteur sint occaecat cupidatat non proident, sunt in culpa qui officia deserunt mollit anim id est laborum',

'At vero eos et accusamus et iusto odio dignissimos ducimus qui blanditiis praesentium voluptatum deleniti atque corrupti quos dolores et quas molestias excepturi sint occaecati cupiditate non provident, similique sunt in culpa qui officia deserunt mollitia animi, id est laborum et dolorum fuga. Et harum quidem rerum facilis est et expedita distinctio. Nam libero tempore, cum soluta nobis est eligendi optio cumque nihil impedit quo minus id quod maxime placeat facere possimus, omnis voluptas assumenda est, omnis dolor repellendus. Temporibus autem quibusdam et aut officiis debitis aut rerum necessitatibus saepe eveniet ut et voluptates repudiandae sint et molestiae non recusandae. Itaque earum rerum hic tenetur a sapiente delectus, ut aut reiciendis voluptatibus maiores alias consequatur aut perferendis doloribus asperiores repellat.']

def sample_generator(x):

for i in x:

yield i

example_shuffle = list(trax.data.inputs.shuffle(sample_generator(sentence), queue_size = 2))

example_shuffle

```

## `Shuffle`

```
def Shuffle(queue_size=1024):
  return lambda g: shuffle(g, queue_size)
```

This function returns a function that applies the aforementioned `shuffle` with the given `queue_size`; it is mostly used in input pipelines.

# Batch Generators

## `batch`

This function creates batches from the input generator.

```
def batch(generator, batch_size):
  if batch_size <= 0:
    raise ValueError(f'Batch size must be positive, but is {batch_size}.')
  buf = []
  for example in generator:
    buf.append(example)
    if len(buf) == batch_size:
      batched_example = tuple(np.stack(x) for x in zip(*buf))
      yield batched_example
      buf = []
```

It keeps adding objects from the generator into a list until the size becomes equal to the `batch_size` and then creates batches using the `np.stack()` function.

It also raises an error for non-positive batch_sizes.
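The buffering logic can be tried out on plain Python data. The sketch below is an illustration under simplifying assumptions: `batch_lists` is a hypothetical name, and `np.stack` is replaced by plain lists so the example runs without NumPy.

```python
def batch_lists(generator, batch_size):
    """List-based variant of the batching loop shown above."""
    if batch_size <= 0:
        raise ValueError(f'Batch size must be positive, but is {batch_size}.')
    buf = []
    for example in generator:
        buf.append(example)
        if len(buf) == batch_size:
            # zip(*buf) regroups [(x1, y1), (x2, y2)] into (x1, x2), (y1, y2).
            yield tuple(list(field) for field in zip(*buf))
            buf = []


examples = [(i, i % 2) for i in range(5)]
batches = list(batch_lists(iter(examples), batch_size=2))
print(batches)  # [([0, 1], [0, 1]), ([2, 3], [0, 1])]
```

Note that the fifth example never appears in the output: as in the loop above, a trailing group smaller than `batch_size` is not yielded.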

## `Batch`

```
def Batch(batch_size):
  return lambda g: batch(g, batch_size)
```

This function returns a function that applies the aforementioned `batch` with the given batch size.

# Pad to Maximum Dimensions

The `pad_to_max_dims` function is used to pad a tuple of tensors to a joint dimension and return their batch.

For example, in this case a pair of tensors `(1, 2)` and `((3, 4), (5, 6))` is changed to `(1, 2, 0)` and `((3, 4), (5, 6), 0)`.

```

import numpy as np

tensors = np.array([(1.,2.),

((3.,4.),(5.,6.))])

padded_tensors = trax.data.inputs.pad_to_max_dims(tensors=tensors, boundary=3)

padded_tensors

```
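The basic idea of padding to a joint dimension can be shown on one-dimensional sequences. This is only a sketch of the concept, not of `pad_to_max_dims` itself: `pad_sequences` is a hypothetical helper, it handles a single dimension, and it ignores the `boundary` argument.

```python
def pad_sequences(sequences, pad_value=0):
    """Right-pad every sequence with pad_value up to the longest length."""
    if not sequences:
        return []
    max_len = max(len(s) for s in sequences)
    return [list(s) + [pad_value] * (max_len - len(s)) for s in sequences]


padded = pad_sequences([[1, 2], [3, 4, 5, 6], [7]])
print(padded)  # [[1, 2, 0, 0], [3, 4, 5, 6], [7, 0, 0, 0]]
```

Once all sequences share the same length they can be stacked into a single rectangular batch.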

# Creating Buckets

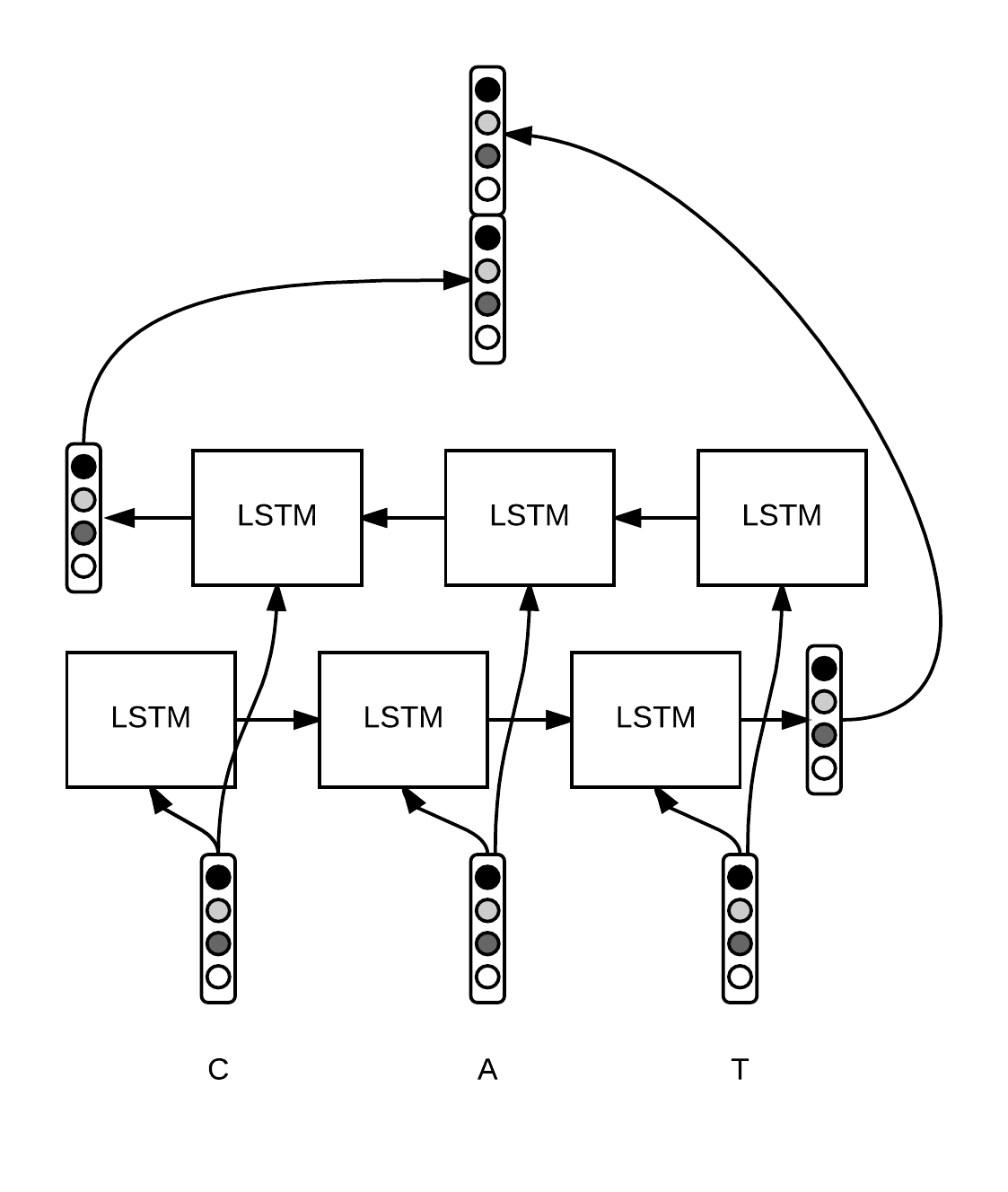

When training recurrent neural networks with a large vocabulary, a method called bucketing is usually applied.

The usual technique of padding ensures that all occurrences within a mini-batch are of the same length. However, this reduces the inter-batch variability and intuitively puts similar sentences into the same batch, which reduces the overall robustness of the system.

Thus, we use bucketing: multiple buckets are created depending on the length of the sentences, and each occurrence is assigned to the bucket that corresponds to its length. We need to ensure that the bucket sizes are large enough to add some variability to the system.

## `bucket_by_length`

```
def bucket_by_length(generator, length_fn, boundaries, batch_sizes,
                     strict_pad_on_len=False):
  buckets = [[] for _ in range(len(batch_sizes))]
  boundaries = boundaries + [math.inf]
  for example in generator:
    length = length_fn(example)
    bucket_idx = min([i for i, b in enumerate(boundaries) if length <= b])
    buckets[bucket_idx].append(example)
    if len(buckets[bucket_idx]) == batch_sizes[bucket_idx]:
      batched = zip(*buckets[bucket_idx])
      boundary = boundaries[bucket_idx]
      boundary = None if boundary == math.inf else boundary
      padded_batch = tuple(
          pad_to_max_dims(x, boundary, strict_pad_on_len) for x in batched)
      yield padded_batch
      buckets[bucket_idx] = []
```

---

This function can be summarised as:

* Create one bucket for each entry in the `batch_sizes` list

* Assign each example to the first bucket whose boundary is greater than or equal to its length

* When a bucket holds as many examples as its batch size, pad them with the `pad_to_max_dims` function and yield the batch

---

### Parameters

1. **generator:** The input generator function

2. **length_fn:** A custom function for determining the length of an example, not necessarily `len()`

3. **boundaries:** A python list containing corresponding bucket boundaries

4. **batch_sizes:** A python list containing batch sizes

5. **strict_pad_on_len:** A python boolean variable (`True` or `False`). If set to `True`, the function pads on the length dimension so that dim[0] is strictly a multiple of the boundary.
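The bucket selection step can be isolated into a few lines. The sketch below reuses the same expression as `bucket_by_length`; the helper name `bucket_index` and the sample lengths are made up for the illustration.

```python
import math


def bucket_index(length, boundaries):
    """Index of the first bucket whose upper boundary is >= length."""
    bounds = list(boundaries) + [math.inf]  # the last bucket is unbounded
    return min(i for i, b in enumerate(bounds) if length <= b)


boundaries = [32, 128, 512, 2048]
indices = [bucket_index(n, boundaries) for n in (10, 32, 33, 600, 5000)]
print(indices)  # [0, 0, 1, 3, 4]
```

Since an unbounded bucket is appended after the last boundary, `batch_sizes` needs one more entry than `boundaries`, which is why the pipelines in this notebook pair four boundaries with five batch sizes.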

## `BucketByLength`

```
def BucketByLength(boundaries, batch_sizes,
                   length_keys=None, length_axis=0, strict_pad_on_len=False):
  length_keys = length_keys or [0, 1]
  length_fn = lambda x: _length_fn(x, length_axis, length_keys)
  return lambda g: bucket_by_length(g, length_fn, boundaries, batch_sizes, strict_pad_on_len)
```

---

This function is usually used inside input pipelines (*combinators*) and uses the aforementioned `bucket_by_length`. It applies a predefined `length_fn`, which chooses the maximum shape on `length_axis` over `length_keys`.

Its use is illustrated below.

```

data_pipeline = trax.data.Serial(

trax.data.TFDS('imdb_reviews', keys=('text', 'label'), train=True),

trax.data.Tokenize(vocab_dir='gs://trax-ml/vocabs/', vocab_file='en_8k.subword', keys=[0]),

trax.data.BucketByLength(boundaries=[32, 128, 512, 2048],

batch_sizes=[512, 128, 32, 8, 1],

length_keys=[0]),

trax.data.Log(only_shapes=True)

)

example = data_pipeline()

print(next(example))

```

# Filter by Length

```
def FilterByLength(max_length, length_keys=None, length_axis=0):
  length_keys = length_keys or [0, 1]
  length_fn = lambda x: _length_fn(x, length_axis, length_keys)
  def filtered(gen):
    for example in gen:
      if length_fn(example) <= max_length:
        yield example
  return filtered
```

---

This function uses the same predefined `length_fn` to keep only those examples whose length is less than or equal to the given `max_length` parameter.
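The filtering behaviour is easy to check on a toy stream. This is a sketch with a hypothetical helper `filter_by_length` that uses the built-in `len` as the length function, rather than Trax's `_length_fn`.

```python
def filter_by_length(stream, max_length, length_fn=len):
    """Yield only the examples whose length does not exceed max_length."""
    for example in stream:
        if length_fn(example) <= max_length:
            yield example


kept = list(filter_by_length(iter(["a", "abc", "abcdef"]), max_length=3))
print(kept)  # ['a', 'abc']
```

An example whose length equals `max_length` is kept, because the comparison is `<=` rather than `<`.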

```

Filtered = trax.data.Serial(

trax.data.TFDS('imdb_reviews', keys=('text', 'label'), train=True),

trax.data.Tokenize(vocab_dir='gs://trax-ml/vocabs/', vocab_file='en_8k.subword', keys=[0]),

trax.data.BucketByLength(boundaries=[32, 128, 512, 2048],

batch_sizes=[512, 128, 32, 8, 1],

length_keys=[0]),

trax.data.FilterByLength(max_length=2048, length_keys=[0]),

trax.data.Log(only_shapes=True)

)

filtered_example = Filtered()

print(next(filtered_example))

```

# Adding Loss Weights

## `add_loss_weights`

```
def add_loss_weights(generator, id_to_mask=None):
  for example in generator:
    if len(example) > 3 or len(example) < 2:
      assert id_to_mask is None, 'Cannot automatically mask this stream.'
      yield example
    else:
      if len(example) == 2:
        weights = np.ones_like(example[1]).astype(np.float32)
      else:
        weights = example[2].astype(np.float32)
      mask = 1.0 - np.equal(example[1], id_to_mask).astype(np.float32)
      weights *= mask
      yield (example[0], example[1], weights)
```

---

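This function adds a loss-weight tensor to each example in the input stream. For a two-element example `(inputs, targets)`, the weights start as a tensor of ones with the same shape as the targets; for a three-element example, the existing third element is used as the weights. Positions where the target equals `id_to_mask` then have their weight set to 0.

**Masking** is essentially a way to tell sequence-processing layers that certain timesteps in an input are missing, and thus should be skipped when processing the data.

Thus, the output stream consists of `(inputs, targets, weights)` triples in which masked positions do not contribute to the loss.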
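The effect of the mask can be illustrated on a single target sequence. The sketch below uses plain Python lists instead of NumPy arrays, and both the helper `loss_weights` and the padding id `PAD = 0` are assumptions made for the example.

```python
def loss_weights(targets, id_to_mask):
    """Weight 1.0 for real tokens, 0.0 for positions equal to id_to_mask."""
    return [0.0 if t == id_to_mask else 1.0 for t in targets]


PAD = 0  # hypothetical id of the padding token
targets = [5, 9, 3, PAD, PAD]
weights = loss_weights(targets, PAD)
print(weights)  # [1.0, 1.0, 1.0, 0.0, 0.0]
```

Multiplying a per-position loss by these weights removes the padded positions from the total.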

---

### Parameters

1. **generator:** The input data generator

2. **id_to_mask:** The value to mask, i.e. the target id whose positions receive a weight of 0. In NLP this is typically the id of the `<PAD>` token.

```

train_generator = trax.data.inputs.add_loss_weights(

data_generator(batch_size, x_train, y_train,vocab['<PAD>'], True),

id_to_mask=vocab['<PAD>'])

```

For example, in this case I used the `add_loss_weights` function to mask the `<PAD>` tokens while implementing Named Entity Recognition using the Reformer architecture. You can read more about the project [here](https://www.kaggle.com/sauravmaheshkar/trax-ner-using-reformer).

## `AddLossWeights`

This function applies the aforementioned `add_loss_weights` to the data stream.

```
def AddLossWeights(id_to_mask=None):
  return lambda g: add_loss_weights(g, id_to_mask=id_to_mask)
```

```

data_pipeline = trax.data.Serial(

trax.data.TFDS('imdb_reviews', keys=('text', 'label'), train=True),

trax.data.Tokenize(vocab_dir='gs://trax-ml/vocabs/', vocab_file='en_8k.subword', keys=[0]),

trax.data.Shuffle(),

trax.data.FilterByLength(max_length=2048, length_keys=[0]),

trax.data.BucketByLength(boundaries=[ 32, 128, 512, 2048],

batch_sizes=[512, 128, 32, 8, 1],

length_keys=[0]),

trax.data.AddLossWeights(),

trax.data.Log(only_shapes=True)

)

example = data_pipeline()

print(next(example))

```

| github_jupyter |

# Simple Waveform

Following [this tutorial](https://www.pythonforengineers.com/audio-and-digital-signal-processingdsp-in-python/).

```

%matplotlib inline

import wave

import struct

import matplotlib.pyplot as plt

import numpy as np

import astropy.units as u

import kplr

import celerite

from celerite import terms

from scipy.ndimage import gaussian_filter1d

from astropy.io import fits

frequency = 440 * u.Hz

sampling_rate = 48000 * u.Hz

duration = 5 * u.s

num_samples = int(duration * sampling_rate)

# The sampling rate of the analog to digital convert

amplitude = 20000

file = "test.wav"

x = np.arange(0, num_samples)

sine_wave = np.sin(2 * np.pi * float(frequency/sampling_rate) * x)

@u.quantity_input(sampling_rate=u.Hz)
def write_wave(waveform, num_samples, sampling_rate, path='test.wav'):
    nframes = num_samples
    comptype = "NONE"
    compname = "not compressed"
    nchannels = 1
    sampwidth = 2

    fig, ax = plt.subplots()
    ax.plot(np.arange(len(waveform))/sampling_rate.value, waveform)

    with wave.open(path, 'w') as wav_file:
        wav_file.setparams((nchannels, sampwidth, int(sampling_rate.value), nframes, comptype, compname))
        frames = struct.pack(len(waveform)*'h', *(waveform*amplitude).astype(int).tolist())
        wav_file.writeframes(frames)
    return fig, ax


def light_curve(koi):
    client = kplr.API()

    # Find the target KOI.
    koi = client.koi(koi + 0.01) #Kepler-17

    # Get a list of light curve datasets.
    lcs = koi.get_light_curves(short_cadence=False)

    # Loop over the datasets and read in the data.
    time, flux, ferr, quality = [], [], [], []
    for lc in lcs:
        with lc.open() as f:
            # The lightcurve data are in the first FITS HDU.
            hdu_data = f[1].data
            t = hdu_data["time"]
            f = hdu_data["sap_flux"]
            not_nan = ~np.isnan(f) & ~np.isnan(t)
            fit = np.polyval(np.polyfit(t[not_nan], f[not_nan], 2), t[not_nan])
            time.append(t[not_nan])
            flux.append(f[not_nan]/fit)
            ferr.append(hdu_data["sap_flux_err"])
            quality.append(hdu_data["sap_quality"])
    return time, flux


def gp_interpolate(time, flux, N=10000):
    kernel = terms.SHOTerm(log_S0=-5, log_omega0=np.log(2*np.pi*20), log_Q=1/np.sqrt(2))
    gp = celerite.GP(kernel)
    gp.compute(time)

    from scipy.optimize import minimize

    def neg_log_like(params, y, gp):
        gp.set_parameter_vector(params)
        return -gp.log_likelihood(y)

    initial_params = gp.get_parameter_vector()
    bounds = gp.get_parameter_bounds()

    r = minimize(neg_log_like, initial_params, method="L-BFGS-B", args=(flux, gp)) # bounds=bounds,
    gp.set_parameter_vector(r.x)

    x = np.linspace(time.min(), time.max(), N)
    predicted_flux = gp.predict(flux, x, return_cov=False)
    return x, predicted_flux

write_wave(sine_wave, num_samples, sampling_rate)

```
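For readers without `astropy` or `numpy` installed, the core of the WAV-writing step can be sketched with the standard library alone. This is an illustration under stated assumptions, not the notebook's method: `sine_frames` is a hypothetical helper, the clip is shortened to 10 ms, and the file is written to an in-memory buffer instead of `test.wav`.

```python
import io
import math
import struct
import wave


def sine_frames(frequency_hz, sampling_rate_hz, duration_s, amplitude=20000):
    """Return 16-bit little-endian PCM frames for a sine tone."""
    n = round(duration_s * sampling_rate_hz)
    samples = [
        int(amplitude * math.sin(2 * math.pi * frequency_hz * i / sampling_rate_hz))
        for i in range(n)
    ]
    return struct.pack(f'<{n}h', *samples)


frames = sine_frames(440, 48000, 0.01)  # 480 samples, 2 bytes each

buffer = io.BytesIO()  # in-memory stand-in for a .wav file on disk
with wave.open(buffer, 'wb') as wav_file:
    wav_file.setnchannels(1)      # mono
    wav_file.setsampwidth(2)      # 2 bytes per sample (int16)
    wav_file.setframerate(48000)  # samples per second
    wav_file.writeframes(frames)

wav_bytes = buffer.getvalue()
print(len(frames), wav_bytes[:4])
```

The amplitude of 20000 stays below the int16 maximum of 32767, so the `'h'` format in `struct.pack` never overflows.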

# Trappist-1

```

d = fits.getdata('data/nPLDTrappist.fits')

time, flux = d['CADN'], d['FLUX']

flux /= flux.mean()

condition = (flux > 0.9) & (flux < 1.1) & (~np.isnan(flux)) & (~np.isnan(time))

fit = np.polyval(np.polyfit(time[condition] - time.mean(), flux[condition], 10), time[condition] - time.mean())

time = time[condition]

flux = flux[condition] - fit

flux = gaussian_filter1d(flux, 5)

plt.plot(time, flux)

x, predicted_flux = gp_interpolate(time[150:], flux[150:], N=int(8e4))  # np.linspace needs an integer sample count

plt.plot(x, predicted_flux)

#flux = np.tile(flux / flux.max(), 100)

predicted_flux = np.tile(np.concatenate([predicted_flux, predicted_flux[::-1]]), 50)

#layers = 100*predicted_flux + np.tile(predicted_flux[::10], 10)# + np.tile(predicted_flux[::2], 2)

write_wave(100*predicted_flux[::2], len(predicted_flux[::2]), sampling_rate*2, path='trappist1.wav')

```

# Kepler-62

```

time, flux = light_curve(701)

time = np.concatenate(time)

flux = np.concatenate(flux)

flux -= flux.mean()

flux = gaussian_filter1d(flux, 10)

plt.plot(time, flux)

flux = np.tile(np.concatenate([flux, flux[::-1]]), 30)

write_wave(100*flux[::2], len(flux[::2]), sampling_rate*2, path='k62.wav')

```

# Kepler-296

```

time, flux = light_curve(1422)

time = np.concatenate(time)

flux = np.concatenate(flux)

flux -= flux.mean()

from scipy.ndimage import gaussian_filter1d

flux = gaussian_filter1d(flux, 10)

plt.plot(time, flux)

plt.plot(time, flux)

x, predicted_flux = gp_interpolate(time, flux)

plt.plot(x, predicted_flux)

flux = np.tile(np.concatenate([predicted_flux, predicted_flux[::-1]]), 50)

fig, ax = write_wave(40*flux, len(flux), sampling_rate, path='k296.wav')

# predicted_flux = np.tile(np.concatenate([predicted_flux, predicted_flux[::-1]]), 5)

# fig, ax = write_wave(100*predicted_flux, len(predicted_flux), sampling_rate*2, path='k296.wav')

```

# Kepler-442

```

time, flux = light_curve(4742)

time = np.concatenate(time)

flux = np.concatenate(flux)

flux -= flux.mean()

flux = gaussian_filter1d(flux, 10)

plt.plot(time, flux)

plt.plot(time, flux)

#x, predicted_flux = gp_interpolate(time, flux)

#plt.plot(x, predicted_flux)

flux = np.tile(np.concatenate([flux, flux[::-1]]), 30)

fig, ax = write_wave(100*flux[::2], len(flux[::2]), sampling_rate*2, path='k442.wav')

```

# Kepler-1229

```

time, flux = light_curve(2418)

time = np.concatenate(time)

flux = np.concatenate(flux)

flux -= flux.mean()

flux = gaussian_filter1d(flux, 40)

plt.plot(time, flux)

plt.plot(time, flux)

x, predicted_flux = gp_interpolate(time, flux)

plt.plot(x, predicted_flux)

flux = np.tile(np.concatenate([predicted_flux, predicted_flux[::-1]]), 30)

fig, ax = write_wave(100*flux, len(flux), sampling_rate, path='k1229.wav')

```

# Kepler-186

```

time, flux = light_curve(571)

time = np.concatenate(time)

flux = np.concatenate(flux)

flux -= flux.mean()

flux = gaussian_filter1d(flux, 10)

plt.plot(time, flux)

flux = np.tile(np.concatenate([flux, flux[::-1]]), 30)

fig, ax = write_wave(100*flux[::2], len(flux[::2]), sampling_rate*2, path='k186.wav')

```

| github_jupyter |

```

# Copyright 2020 Google LLC

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import collections

import functools

import itertools

import os

import pathlib

import re

import textwrap

import matplotlib.pyplot as plt

import numpy as np

import datasets

import shutil

import tensorflow as tf

import transformers

import tqdm

# Prepare different HuggingFace objects that will definitely be needed.

kilt = datasets.load_dataset("kilt_tasks")

eli5 = {k.split("_")[0]: v for k, v in kilt.items() if "eli5" in k}

tokenizer = transformers.GPT2TokenizerFast.from_pretrained("gpt2-xl")

print(f"Dataset split keys: {list(eli5.keys())}")

# Extract the lengths of combined question and answer text, once tokenized with the GPT2 tokenizer.

def get_len(sample):
    question = sample["input"].strip()
    answer = min(sample["output"]["answer"], key=len).strip()
    len_ = len(tokenizer(question + " " + answer)["input_ids"])
    return {"len_": len_}

mapped = eli5["train"].map(get_len, num_proc=os.cpu_count())

# Count the number of entries of each length, then sort by length

counts = collections.Counter(mapped["len_"])

sorted_counts = sorted(counts.items(), key=lambda x: x[0])

# Compute the ratio of samples that are in a certain percentile of lengths, and compute how long retrievals

# would have to be for a certain fraction of the dataset to have access to that amount of retrievals

context_length = 1024

max_num_retrievals = 4

fractions = [.55, .6, .65, .7, .75, .775, .8, .825, .85, .875, .9, .925, .95, .975][::-1]

points = {}

max_pairs = {}

for top_fraction in fractions:
    qty_accumulator = 0
    cumulative = [
        (count, qty_accumulator := qty / len(eli5["train"]) + qty_accumulator)
        for count, qty in sorted_counts if qty_accumulator < top_fraction
    ]
    x = [x[0] for x in cumulative]
    y = [x[1] for x in cumulative]
    points[top_fraction] = (x, y)
    max_pair = max(cumulative, key=lambda pair: pair[1])
    max_pairs[top_fraction] = max_pair[0]
    print(f"{top_fraction:0.1%}: {max_pair[0]} bpe tokens or fewer")
    for i in range(1, max_num_retrievals + 1):
        print(f"({context_length} - {max_pair[0]}) / {i} = {(context_length - max_pair[0]) / i:0.0f}")

# Graph the results.

plt.figure(figsize=(10, 10));

ax = plt.gca()

ax.margins(tight=True)

ax.plot(*points[max(fractions)]);

plt.yticks(np.linspace(0, 1, 21))

percentages = max_pairs.keys()

lengths = max_pairs.values()

for x, y in zip(lengths, percentages):
    ax.plot((x, x), (0, y), color="red")

ax.scatter(lengths,
           percentages, color="red");

ax.scatter(lengths,
           [0 for _ in lengths], color="red");

for y, x in max_pairs.items():
    ax.text(x - 25, y + 0.025, f"{y:0.1%}", size=13)
    ax.text(x - 10, -0.032, f"{x}", size=10)

```
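The arithmetic behind the printed table can be reproduced on a toy list of lengths. The sketch below is an illustration only: `length_at_fraction` is a hypothetical helper, the lengths are invented, and floor division is used where the cell above rounds with `:0.0f`.

```python
from collections import Counter
from itertools import accumulate


def length_at_fraction(lengths, fraction):
    """Smallest length L such that at least `fraction` of samples have length <= L."""
    counts = sorted(Counter(lengths).items())
    total = len(lengths)
    cumulative = accumulate(qty for _, qty in counts)
    for (length, _), seen in zip(counts, cumulative):
        if seen / total >= fraction:
            return length
    return counts[-1][0]


context_length = 1024
lengths = [100, 100, 200, 300, 300, 300, 400, 500, 600, 900]  # made-up token counts

cutoff = length_at_fraction(lengths, 0.8)
# Tokens left per retrieval once the question and answer fit in the context.
budgets = [(context_length - cutoff) // i for i in range(1, 5)]
print(cutoff, budgets)  # 500 [524, 262, 174, 131]
```

Reading the result: if 80% of the question-answer pairs need 500 tokens or fewer, then with a 1024-token context those pairs leave room for, say, two retrievals of up to 262 tokens each.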

| github_jupyter |

#### Dependencies

```

import numpy as np

import pandas as pd

import xarray as xr

# geo libs are optional

from shapely.geometry import Point

import geopandas as gpd

from geopandas import GeoDataFrame

# cd ../..

pwd # should be top-level, i.e. ~/*/generative-downscaling/

temp_1 = xr.open_dataset("./data/raw/temp/1406/2m_temperature_1991_1.40625deg.nc")

temp_5 = xr.open_dataset("./data/raw/temp/5625/2m_temperature_1991_5.625deg.nc")

```

#### Basic XArray

```

temp_1

temp_1.dims # 8760/365 = 24!

temp_5.dims

temp_1.coords

type(temp_1.t2m.data)

```

#### Basic Filtering

```

daily = temp_1.isel(time=(temp_1.time.dt.hour == 0))

daily.dims

daily_5 = temp_5.isel(time=(temp_5.time.dt.hour == 0))

daily_5.dims

```

## Visual Exploration

### Location & Consistency

#### Where is the Data Located?

```

def obs_by_geo(weatherbench: pd.DataFrame) -> GeoDataFrame:
    """ Return total observations by coordinate """
    obs_by_geo = weatherbench.groupby(["lat","lon"]).size()
    obs_by_geo.name = "count"
    obs_by_geo = obs_by_geo.reset_index()
    gdf = GeoDataFrame(
        obs_by_geo,
        geometry=gpd.points_from_xy(obs_by_geo.lon - 180, obs_by_geo.lat)
    )
    return gdf

fine_cts = obs_by_geo(daily.to_dataframe())

coarse_cts = obs_by_geo(daily_5.to_dataframe())

world = gpd.read_file(gpd.datasets.get_path('naturalearth_lowres'))

ax = fine_cts.plot(

ax=world.plot(figsize=(15, 8), alpha=.33),

marker='o', color='red', markersize=.05,

label = "Fine Resolution"

);

coarse_cts.plot(

ax=ax,

marker='o', color='green', markersize=1,

label = "Coarse Resolution"

);

ax.set_title("WeatherBench Dataset Geographic Coverage (Full)", fontsize=20);

ax.set_xlabel("Longitude", fontsize=14);

ax.set_ylabel("Latitude", fontsize=14);

ax.legend(fontsize=12);

```

#### Americas Only

```

EPSILON = .0001

NA_LAT_FINE = (-5, 85+EPSILON) # epsilon to avoid non-inclusive right

NA_LON_FINE = (-137.5+180, -47.5+180+EPSILON)

NA_LAT_COARSE = (-10, 88+EPSILON) # Coarse should be wider for full coverage

NA_LON_COARSE = (-145+180, -45+180+EPSILON)

daily_na = daily.sel(lat=slice(*NA_LAT_FINE), lon=slice(*NA_LON_FINE))

daily_na_5 = daily_5.sel(lat=slice(*NA_LAT_COARSE), lon=slice(*NA_LON_COARSE))

fine_cts_americas = fine_cts[fine_cts['lat'].between(*NA_LAT_FINE) & fine_cts['lon'].between(*NA_LON_FINE)]

coarse_cts_americas = coarse_cts[coarse_cts['lat'].between(*NA_LAT_COARSE) & coarse_cts['lon'].between(*NA_LON_COARSE)]

americas = world[world['continent'].isin(["North America", "South America"])]

ax = fine_cts_americas.plot(

ax=americas.plot(figsize=(8,8), alpha=.33),

marker='o', color='red', markersize=.05,

label = "Fine Resolution"

);

coarse_cts_americas.plot(

ax=ax,

marker='o', color='green', markersize=1,

label = "Coarse Resolution"

);

ax.set_xlim(-160,-20);

ax.set_ylim(-30,90);

ax.set_title("WeatherBench Dataset Coverage (Americas Only)", fontsize=20);

ax.set_xlabel("Longitude", fontsize=14);

ax.set_ylabel("Latitude", fontsize=14);

ax.legend(fontsize=12);

```

### Temperature

```

t5_pdf = temp_5.to_dataframe()

# t5_pdf = t5_pdf[:100]

# coords = t5_pdf.reset_index()[['lat','lon']].drop_duplicates()

# coords[coords['lat'].between(40,50) & coords['lon'].between(70+180,80+180)]

coords_mtl_approx = (47.8125, 253.125) # lat, lon

mtl_1991 = t5_pdf.loc[coords_mtl_approx[0]].loc[coords_mtl_approx[1]]

# String aggregator names give stable column labels ('min', 'max') across pandas versions
mtl_1991_hi_lo = mtl_1991\
    .groupby([mtl_1991.index.date])\
    .agg(['min', 'max'])\
    .rename(columns={'min': 'low', 'max': 'high'})

mtl_1991_hi_lo_c = mtl_1991_hi_lo - 273.15 # kelvin to celsius

mtl_1991_hi_lo_c.plot()

```

| github_jupyter |

# Video Codec Unit (VCU) Demo Example: STREAM_IN -> DECODE -> DISPLAY

# Introduction

The Video Codec Unit (VCU) in the ZynqMP SoC is capable of encoding and decoding AVC/HEVC compressed video streams in real time.

This notebook example acts as the client pipeline in the streaming use case. It needs to be run along with a server notebook (__vcu-demo-transcode-to-streamout.ipynb or vcu-demo-camera-encode-streamout.ipynb__). It receives encoded data over the network, decodes it using the VCU, and renders it on a DP/HDMI monitor.

# Implementation Details

<img src="pictures/block-diagram-streamin-decode.png" align="center" alt="Drawing" style="width: 600px; height: 200px"/>

This example requires two boards: board-1 is used for transcode and stream-out (as a server) and **board-2** is used for stream-in and decode (as a client). Alternatively, VLC player on the host machine can be used as the client instead of board-2. (More details regarding the test setup for board-1 can be found in the transcode → stream-out example.)

__Note:__ This notebook needs to be run along with "vcu-demo-transcode-to-streamout.ipynb" or "vcu-demo-camera-encode-streamout.ipynb". The configuration settings below are for Client-side pipeline.

### Board Setup

**Board 2 is used for streaming-in and decode purpose (as a client)**

1. Connect 4k DP/HDMI display to board.

2. Connect serial cable to monitor logs on serial console.

3. If Board is connected to private network, then export proxy settings in /home/root/.bashrc file on board as below,

- create/open a bashrc file using "vi ~/.bashrc"

- Insert below line to bashrc file

- export http_proxy="< private network proxy address >"

- export https_proxy="< private network proxy address >"

- Save and close bashrc file.

4. Connect two boards in the same network so that they can access each other using IP address.

5. Check server IP on server board.

- root@zcu106-zynqmp:~#ifconfig

6. Check client IP.

7. Check connectivity for board-1 & board-2.

- root@zcu106-zynqmp:~#ping <board-2's IP>

8. Run stream-in → Decode on board-2

If VLC player on the host is used as the client instead of board-2, create a test.sdp file on the host with the content below (add a separate line in test.sdp for each item) and play test.sdp on the host machine.

1. v=0 c=IN IP4 <Client machine IP address>

2. m=video 50000 RTP/AVP 96

3. a=rtpmap:96 H264/90000

4. a=framerate=30

Troubleshooting the VLC player setup:

1. IP4 is the client IP address.

2. H264 or H265 is used, depending on the codec type received on the client.

3. Turn off the firewall on the host machine if packets are not received by VLC.
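The test.sdp content listed above can also be produced from Python. This is only an illustrative sketch: the helper name `build_sdp`, its parameters, and the example IP address are assumptions and not part of the demo scripts. It reproduces the entries listed above and assumes that `v=0` and the `c=` entry go on separate lines.

```python
def build_sdp(client_ip, port=50000, codec="H264", framerate=30):
    """Return the text of a minimal SDP description (hypothetical helper)."""
    lines = [
        "v=0",
        "c=IN IP4 {}".format(client_ip),
        "m=video {} RTP/AVP 96".format(port),
        "a=rtpmap:96 {}/90000".format(codec),
        "a=framerate={}".format(framerate),
    ]
    return "\n".join(lines) + "\n"

# Placeholder address for illustration; replace it with the real client IP.
sdp_text = build_sdp("192.168.1.10")
print(sdp_text)
# To save the file: open("test.sdp", "w").write(sdp_text)
```

Passing `codec="H265"` changes only the `a=rtpmap` line, which matches the troubleshooting note about choosing H264 or H265 according to the received codec.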

```

from IPython.display import HTML

HTML('''<script>

code_show=true;

function code_toggle() {

if (code_show){

$('div.input').hide();

} else {

$('div.input').show();

}

code_show = !code_show

}

$( document ).ready(code_toggle);

</script>

<form action="javascript:code_toggle()"><input type="submit" value="Click here to toggle on/off the raw code."></form>''')

```

# Run the Demo

```

from ipywidgets import interact

import ipywidgets as widgets

from common import common_vcu_demo_streamin_decode_display

import os

from ipywidgets import HBox, VBox, Text, Layout

```

### Video

```

codec_type=widgets.RadioButtons(

options=['avc', 'hevc'],

description='Codec Type:',

disabled=False)

video_sink={'kmssink':['DP', 'HDMI'], 'fakevideosink':['none']}

def print_video_sink(VideoSink):

    pass

def select_video_sink(VideoCodec):

    display_type.options = video_sink[VideoCodec]

sink_name = widgets.RadioButtons(options=sorted(video_sink.keys(), key=lambda k: len(video_sink[k]), reverse=True), description='Video Sink:')

init = sink_name.value

display_type = widgets.RadioButtons(options=video_sink[init], description='Display:')

j = widgets.interactive(print_video_sink, VideoSink=display_type)

i = widgets.interactive(select_video_sink, VideoCodec=sink_name)

HBox([codec_type, i, j])

```

### Audio

```

audio_sink={'none':['none'], 'aac':['auto','alsasink','pulsesink'],'vorbis':['auto','alsasink','pulsesink']}

audio_src={'none':['none'], 'aac':['auto','alsasrc','pulsesrc'],'vorbis':['auto','alsasrc','pulsesrc']}

#val=sorted(audio_sink, key = lambda k: (-len(audio_sink[k]), k))

def print_audio_sink(AudioSink):

    pass

def print_audio_src(AudioSrc):

    pass

def select_audio_sink(AudioCodec):

    audio_sinkW.options = audio_sink[AudioCodec]

    audio_srcW.options = audio_src[AudioCodec]

audio_codecW = widgets.RadioButtons(options=sorted(audio_sink.keys(), key=lambda k: len(audio_sink[k])), description='Audio Codec:')

init = audio_codecW.value

audio_sinkW = widgets.RadioButtons(options=audio_sink[init], description='Audio Sink:')

audio_srcW = widgets.RadioButtons(options=audio_src[init], description='Audio Src:')

j = widgets.interactive(print_audio_sink, AudioSink=audio_sinkW)

i = widgets.interactive(select_audio_sink, AudioCodec=audio_codecW)

HBox([i, j])

```

### Advanced options:

```

kernel_recv_buffer_size=widgets.Text(value='',

placeholder='(optional) 16000000',

description='Kernel Recv Buf Size:',

style={'description_width': 'initial'},

#layout=Layout(width='33%', height='30px'),

disabled=False)

port_number=widgets.Text(value='',

placeholder='(optional) 50000, 42000',

description=r'Port No:',

#style={'description_width': 'initial'},

#

disabled=False)

#kernel_recv_buffer_size

HBox([kernel_recv_buffer_size, port_number])

entropy_buffers=widgets.Dropdown(

options=['2', '3', '4', '5', '6', '7', '8', '9', '10', '11', '12', '13', '14', '15'],

value='5',

description='Entropy Buffers Nos:',

style={'description_width': 'initial'},

disabled=False,)

show_fps=widgets.Checkbox(

value=False,

description='show-fps',

#style={'description_width': 'initial'},

disabled=False)

HBox([entropy_buffers, show_fps])

from IPython.display import clear_output

from IPython.display import Javascript

def run_all(ev):

    display(Javascript('IPython.notebook.execute_cells_below()'))

def clear_op(event):

    clear_output(wait=True)

    return

button1 = widgets.Button(

description='Clear Output',

style= {'button_color':'lightgreen'},

#style= {'button_color':'lightgreen', 'description_width': 'initial'},

layout={'width': '300px'}

)

button2 = widgets.Button(

description='',

style= {'button_color':'white'},

#style= {'button_color':'lightgreen', 'description_width': 'initial'},

layout={'width': '38px'},

disabled=True

)

button1.on_click(run_all)

button1.on_click(clear_op)

def start_demo(event):

    #clear_output(wait=True)

    arg = common_vcu_demo_streamin_decode_display.cmd_line_args_generator(port_number.value, codec_type.value, audio_codecW.value, display_type.value, kernel_recv_buffer_size.value, sink_name.value, entropy_buffers.value, show_fps.value, audio_sinkW.value);

    #sh vcu-demo-streamin-decode-display.sh $arg > logs.txt 2>&1

    !sh vcu-demo-streamin-decode-display.sh $arg

    return

button = widgets.Button(

description='click to start vcu-stream_in-decode-display demo',

style= {'button_color':'lightgreen'},

#style= {'button_color':'lightgreen', 'description_width': 'initial'},

layout={'width': '350px'}

)

button.on_click(start_demo)

HBox([button, button2, button1])

```

# References

[1] https://xilinx-wiki.atlassian.net/wiki/spaces/A/pages/18842546/Xilinx+Video+Codec+Unit

[2] https://www.xilinx.com/support.html#documentation (Refer to PG252)

| github_jupyter |

<table>

<tr align=left><td><img align=left src="https://i.creativecommons.org/l/by/4.0/88x31.png">

<td>Text provided under a Creative Commons Attribution license, CC-BY. All code is made available under the FSF-approved MIT license. (c) Kyle T. Mandli</td>

</table>

```

%matplotlib inline

from __future__ import print_function

import numpy

import matplotlib.pyplot as plt

```

# Convergence Results for Initial Value Problems

Convergence for IVPs is a bit different than for BVPs. In general we want

$$

\lim_{\Delta t \rightarrow 0} U^N = u(t_f)

$$

where $t_f$ is the final desired time and $N$ is the number of time steps needed to reach $t_f$ such that

$$

N \Delta t = t_f \quad \Rightarrow N = \frac{t_f}{\Delta t}.

$$

We need to be careful, however, about what we mean by a convergent method. A method can be convergent for a particular set of equations and particular initial conditions but not for others. Practically speaking, we would like convergence results to apply to a reasonably large set of equations and initial conditions. With these considerations we have the following definition of convergence for IVPs.

If applying an $r$-step method to an ODE of the form

$$

u'(t) = f(t,u)

$$

with $f(t,u)$ Lipschitz continuous in $u$, and with any set of starting values satisfying

$$

\lim_{\Delta t\rightarrow 0} U^\nu(\Delta t) = u_0 \quad \text{for} \quad \nu = 0, 1, \ldots, r-1

$$

(i.e. the bootstrap startup for the multi-step method is consistent with the initial value as $\Delta t \rightarrow 0$), then the method is said to be *convergent* in the sense

$$

\lim_{\Delta t \rightarrow 0} U^N = u(t_f).

$$

As we saw previously for a method to be convergent it must be

- **consistent** - the local truncation error $\tau = \mathcal{O}(\Delta t^p)$ where $p > 0$ and

- **zero-stable** - a similar minimal form of stability implying that the sum total of the errors as $\Delta t \rightarrow 0$ is bounded and has the same order as $\tau$ which we know goes to zero as $\Delta t \rightarrow 0$.

## One-Step Method Convergence

Consider the simple linear problem

$$

\frac{\text{d}u}{\text{d}t} = \lambda u + g(t) \quad \text{with}\quad u(t_0) = u_0

$$

which we know has the solution

$$

u(t) = u_0 e^{\lambda (t - t_0)} + \int^t_{t_0} e^{\lambda (t - \tau)} g(\tau) d\tau.

$$

### Forward Euler on a Linear Problem

Applying Euler's method to this problem leads to

$$\begin{aligned}

U^{n+1} &= U^n + \Delta t (\lambda U^n + g(t_n)) \\

&= (1 + \Delta t \lambda) U^n + \Delta t g(t_n).

\end{aligned}$$

We also know the local truncation error is

$$\begin{aligned}

\tau^n &= \left (\frac{u(t_{n+1}) - u(t_n)}{\Delta t} \right ) - (\lambda u(t_n) + g(t_n)) \\

&= \left (u'(t_n) + \frac{1}{2} \Delta t u''(t_n) + \mathcal{O}(\Delta t^2) \right ) - u'(t_n) \\

&= \frac{1}{2} \Delta t u''(t_n) + \mathcal{O}(\Delta t^2).

\end{aligned}$$

Noting the original definition of $\tau^n$ we can rewrite the expression for the local truncation error as

$$

u(t_{n+1}) = (1 + \Delta t \lambda) u(t_n) + \Delta t g(t_n) + \Delta t \tau^n

$$

which in combination with the application of Euler's method leads to an expression for the global error

$$\begin{aligned}

E^{n+1} = U^{n+1} - u(t_{n+1}) &= (1 + \Delta t \lambda) U^n - (1 + \Delta t \lambda) u(t_n) - \Delta t \tau^n \\

&= (1+\Delta t \lambda) E^n - \Delta t \tau^n.

\end{aligned}$$

Expanding this expression out backwards in time to $n=0$ leads to

$$

E^n = (1 + \Delta t \lambda)^n E^0 - \Delta t \sum^n_{i=1} (1 + \Delta t \lambda)^{n-i} \tau^{i - 1}.

$$

We can now see the importance of the term $(1 + \Delta t \lambda)$. We can bound this term by

$$

|1 + \Delta t \lambda| \leq 1 + \Delta t |\lambda| \leq e^{\Delta t |\lambda|}

$$

which then implies the term in the summation can be bounded by

$$

|1 + \Delta t \lambda|^{n - i} \leq e^{(n-i) \Delta t |\lambda|} \leq e^{n \Delta t |\lambda|} \leq e^{|\lambda| t_f}

$$

Using this expression in the expression for the global error we find

$$\begin{aligned}

E^n &= (1 + \Delta t \lambda)^n E^0 - \Delta t \sum^n_{i=1} (1 + \Delta t \lambda)^{n-i} \tau^{i - 1} \\

|E^n| &\leq e^{|\lambda| t_f} |E^0| + \Delta t \sum^n_{i=1} e^{|\lambda| t_f} |\tau^{i - 1}| \\

&= e^{|\lambda| t_f} \left(|E^0| + \Delta t \sum^n_{i=1} |\tau^{i - 1}|\right) \\

&\leq e^{|\lambda| t_f} \left(|E^0| + n \Delta t \max_{1 \leq i \leq n} |\tau^{i - 1}|\right)

\end{aligned}$$

In other words the global error is bounded by the original global error and the maximum one-step error made multiplied by the number of time steps taken. If $N = \frac{t_f}{\Delta t}$ as before and taking into account the local truncation error we can simplify this expression further to

$$

|E^n| \leq e^{|\lambda| t_f} \left[|E^0| + t_f \left(\frac{1}{2} \Delta t |u''| + \mathcal{O}(\Delta t^2)\right ) \right]

$$

If we assume that we have used the correct initial condition $u_0$ then $E^0 \rightarrow 0$ as $\Delta t \rightarrow 0$ and we see that the method is truly convergent as

$$

|E^n| \leq e^{|\lambda| t_f} t_f \left(\frac{1}{2} \Delta t |u''| + \mathcal{O}(\Delta t^2)\right ) = \mathcal{O}(\Delta t).

$$
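The first-order bound can be checked numerically. The sketch below is an illustration under assumed parameters ($\lambda = -1$, $t_f = 2$, and the step counts are arbitrary choices). It uses the test problem $u'(t) = \lambda (u - \cos t) - \sin t$ with $u(0) = 1$, which has the linear form above with $g(t) = -\lambda \cos t - \sin t$ and exact solution $u(t) = \cos t$. Halving $\Delta t$ should roughly halve the error at $t_f$.

```python
import math

def forward_euler_error(lam, t_f, num_steps):
    """Error |U^N - u(t_f)| of forward Euler for u' = lam*(u - cos t) - sin t, u(0) = 1."""
    dt = t_f / num_steps
    U = 1.0
    for n in range(num_steps):
        t_n = n * dt
        # Forward Euler evaluates the right-hand side at (t_n, U^n)
        U = U + dt * (lam * (U - math.cos(t_n)) - math.sin(t_n))
    return abs(U - math.cos(t_f))

err_coarse = forward_euler_error(-1.0, 2.0, 1000)
err_fine = forward_euler_error(-1.0, 2.0, 2000)
ratio = err_coarse / err_fine   # close to 2 for a first-order method
print(err_coarse, err_fine, ratio)
```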

### Relation to Stability for BVPs

We can see the relationship between the previous version of stability and the one outlined above. Try writing the forward Euler method as a linear system.

Forward Euler:

$$

A = \frac{1}{\Delta t} \begin{bmatrix}

1 \\

-(1 + \Delta t \lambda) & 1 \\

& -(1 + \Delta t \lambda) & 1 \\

& & -(1 + \Delta t \lambda) & 1 \\

& & & \ddots & \ddots \\

& & & & -(1 + \Delta t \lambda) & 1 \\

& & & & & -(1 + \Delta t \lambda) & 1

\end{bmatrix}

$$

with

$$

U = \begin{bmatrix} U^1 \\ U^2 \\ \vdots \\ U^N \end{bmatrix} ~~~~

F = \begin{bmatrix} (1 / \Delta t + \lambda) U^0 + g(t_0) \\ g(t_1) \\ \vdots \\ g(t_{N-1}) \end{bmatrix}

$$

Following our previous stability result and taking $\hat{U}$ to be the vector obtained from the true solution ($\hat{U}^i = u(t_i)$) we then have

$$

A U = F ~~~~~~ A \hat{U} = F + \tau

$$

and therefore

$$

A (U - \hat{U}) = -\tau.

$$

Noting that $U - \hat{U} = E$ we can then invert the matrix $A$ to find the relationship between the truncation error $\tau$ and the global error $E$. As before we then require that $A$ is invertible (which is trivial in this case since $A$ is lower triangular with a nonzero diagonal) and that $||A^{-1}|| < C$ in some norm as $\Delta t \rightarrow 0$. We can see this as

$$

A^{-1} = \Delta t \begin{bmatrix}

1 \\

(1 + \Delta t \lambda) & 1 \\

(1 + \Delta t \lambda)^2 & (1 + \Delta t \lambda) & 1 \\

(1 + \Delta t \lambda)^3 & (1 + \Delta t \lambda)^2 & (1 + \Delta t \lambda) & 1\\

\vdots & & & \ddots \\

(1 + \Delta t \lambda)^{N-1} & (1 + \Delta t \lambda)^{N-2} & (1 + \Delta t \lambda)^{N-3} & \cdots & (1 + \Delta t \lambda) & 1

\end{bmatrix}

$$

whose infinity norm is

$$

||A^{-1}||_\infty = \Delta t \sum^N_{m=1} | (1 + \Delta t \lambda)^{N-m} |

$$

and therefore

$$

||A^{-1}||_\infty \leq \Delta t N e^{|\lambda| T} = T e^{|\lambda| T}.

$$

As $\Delta t \rightarrow 0$ this is bounded for **fixed T**.
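A quick numerical check of this bound, as a sketch only: the function name `inv_norm_inf` and the values of $\lambda$, $T$, and $N$ are assumptions chosen for illustration. The norm is evaluated directly from the sum above rather than by forming the matrix.

```python
import math

def inv_norm_inf(lam, T, N):
    """Infinity norm of A^{-1} from the formula dt * sum_m |1 + dt*lam|^(N - m)."""
    dt = T / N
    growth = abs(1.0 + dt * lam)
    return dt * sum(growth ** (N - m) for m in range(1, N + 1))

lam, T = -3.0, 2.0
bound = T * math.exp(abs(lam) * T)
norms = [inv_norm_inf(lam, T, N) for N in (10, 100, 1000)]
print(norms, bound)
```

For fixed $T$ the computed norms stay below $T e^{|\lambda| T}$ as $N$ grows, which is the uniform bound the stability argument needs.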

### General One-Step Method Convergence

Consider the general one step method denoted by

$$

U^{n+1} = U^n + \Delta t \Phi(U^n, t_n, \Delta t).

$$

Assume $\Phi$ is continuous in $t$ and $\Delta t$ and Lipschitz continuous in $u$ with Lipschitz constant $L$ (related to the Lipschitz constant of $f$). If the one-step method is consistent, i.e.

$$

\Phi(u,t,0) = f(t,u)

$$

for all $u$ and $t$, and the local truncation error is

$$

\tau^n =\frac{u(t_{n+1}) - u(t_n)}{\Delta t} - \Phi(u(t_n), t_n, \Delta t)

$$

then the one-step method is convergent.

Using the general approach we used for forward Euler we know that the true solution and $\tau$ are related through

$$

u(t_{n+1}) = u(t_n) + \Delta t \Phi(u(t_n), t_n, \Delta t) + \Delta t \tau^n

$$

which subtracted from the approximate solution

$$

U^{n+1} = U^n + \Delta t \Phi(U^n, t_n, \Delta t)

$$

leads to

$$

E^{n+1} = E^n + \Delta t (\Phi(U^n, t_n, \Delta t) - \Phi(u(t_n), t_n, \Delta t)) - \Delta t \tau^n.

$$

Using the Lipschitz continuity of $\Phi$ we then have

$$

|E^{n+1}| \leq |E^n| + \Delta t L |E^n| + \Delta t |\tau^n|

$$

which has the same form as we saw in the proof for forward Euler.
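To see what this recursion implies, the sketch below iterates the inequality with equality (the worst case) and compares the result with the closed-form bound $e^{L t_f} (|E^0| + t_f \max |\tau|)$ obtained by the same argument as for forward Euler. The values of $L$, $t_f$, and the assumed truncation error $\tau \approx \Delta t / 2$ are illustrative choices, not taken from a particular method.

```python
import math

def iterate_error_bound(E0, L, dt, tau_max, num_steps):
    """Apply |E^{n+1}| <= (1 + dt*L)|E^n| + dt*tau_max with equality, num_steps times."""
    E = E0
    for _ in range(num_steps):
        E = (1.0 + dt * L) * E + dt * tau_max
    return E

t_f, L, E0 = 1.0, 5.0, 0.0
results = []
for N in (10, 100, 1000):
    dt = t_f / N
    tau_max = 0.5 * dt   # assumed first-order truncation error
    worst = iterate_error_bound(E0, L, dt, tau_max, N)
    closed = math.exp(L * t_f) * (E0 + t_f * tau_max)
    results.append((N, worst, closed))
    print(N, worst, closed)
```

The worst-case error stays below the closed-form bound and shrinks in proportion to $\Delta t$, as expected for a consistent one-step method.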

## Zero-Stability for Linear Multistep Methods

We can also make general statements for linear multistep methods although it is important to note that we have additional requirements for linear multistep methods so that they are convergent. As an example consider the method

$$

U^{n+2} - 3 U^{n+1} + 2 U^n = - \Delta t f(U^n)

$$

so that $\alpha_0 = 2$, $\alpha_1 = -3$, and $\alpha_2 = 1$ and $\beta_0 = -1$ with the rest equal to zero. Note that these coefficients satisfy our conditions for being consistent with a truncation error

$$

\tau^n = \frac{1}{\Delta t} (u(t_{n+2}) - 3 u(t_{n+1}) + 2 u(t_n) + \Delta t u'(t_n)) = \frac{1}{2} \Delta t u''(t_n) + \mathcal{O}(\Delta t^2).

$$

It turns out that although this method is consistent the global error does not converge in general!

$$

U^{n+2} - 3 U^{n+1} + 2 U^n = - \Delta t f(U^n)

$$

Consider the above method with the trivial ODE

$$

u'(t) = 0 \quad u(0) = 0

$$

so that we are left with the method

$$

U^{n+2} - 3 U^{n+1} + 2 U^n = 0.

$$

If we have exact values for $U^0$ and $U^1$ then this method would lead to $U^n = 0$. In general however we only have an approximation to $U^1$ so what happens then? We can solve the linear difference equation in terms of $U^0$ and $U^1$ to find

$$

U^n = 2 U^0 - U^1 + 2^n (U^1 - U^0).

$$

If we assume an error on the order of $\mathcal{O}(\Delta t)$ for $U^1$, the factor $2^n$ quickly leads to large values even for small $n$!
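The growth is easy to reproduce. The sketch below iterates $U^{n+2} = 3 U^{n+1} - 2 U^n$ with $U^0 = 0$ and an error of size $\Delta t$ in $U^1$, and compares the result with the closed-form solution above. The step size and the number of steps are assumed values for illustration, and exact fractions are used so the comparison is free of rounding error.

```python
from fractions import Fraction

def iterate_two_step(U0, U1, num_steps):
    """Iterate U^{n+2} = 3 U^{n+1} - 2 U^n and return [U^0, ..., U^num_steps]."""
    U = [U0, U1]
    for _ in range(num_steps - 1):
        U.append(3 * U[-1] - 2 * U[-2])
    return U

dt = Fraction(1, 100)                       # assumed step size
U = iterate_two_step(Fraction(0), dt, 20)   # U^1 carries an O(dt) error
closed_form = [2 * U[0] - U[1] + 2**n * (U[1] - U[0]) for n in range(21)]
print(float(U[10]), float(U[20]))
```

Even though the exact solution is identically zero, the computed values grow like $2^n \Delta t$.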

### Characteristic Polynomials and Linear Difference Equations

As a short aside, say we wanted to solve

$$\sum^r_{j=0} \alpha_j U^{n+j} = 0$$

given initial conditions $U^0, U^1, \ldots, U^{r-1}$. We look for solutions of the general form $U^n = \xi^n$.

Plugging this into the equation we have

$$

\sum^r_{j=0} \alpha_j \xi^{n+j} = 0

$$

which simplifies to

$$

\sum^r_{j=0} \alpha_j \xi^j = 0

$$

by dividing by $\xi^n$.

If $\xi$ then is a root of the polynomial

$$

\rho(\xi) = \sum^r_{j=0} \alpha_j \xi^j

$$

then $\xi$ solves the equation.

Note that since the difference equation is linear, a linear combination of solutions is also a solution, so for distinct roots the general solution has the form

$$

U^n = c_1 \xi_1^n + c_2 \xi_2^n + \cdots + c_r \xi^n_r.

$$

Given initial values for $U^0, U^1, \ldots$ we can uniquely determine the $c_j$.

### General Zero-Stability Result for LMM

An $r$-step LMM is *zero-stable* if the roots of the characteristic polynomial $\rho(\xi)$ satisfy

$$

|\xi_j| \leq 1 \quad \quad \text{for} \quad j=1,2,3,\ldots,r

$$

if $\xi_j$ is not repeated and $|\xi_j| < 1$ for repeated roots.

#### Example

Consider the linear multistep method

$$

U^{n+2} - 2 U^{n+1} + U^n = \frac{\Delta t}{2} (f(U^{n+2}) - f(U^n)).

$$

Applying this to the ODE $u'(t) = 0$ leads to the difference equation

$$

U^{n+2} - 2 U^{n+1} + U^n = 0

$$

whose characteristic polynomial is

$$

\rho(\xi) = \xi^2 - 2 \xi + 1 = (\xi - 1)^2

$$

leading to the general solution

$$

U^n = c_1 + c_2 n.

$$

Here we see that, given $U^0$ and $U^1$, the solution will in general grow linearly with $n$, which will again lead to a divergent solution.

#### Example

Consider the linear multistep method

$$

U^{n+3} - 2 U^{n+2} + \frac{5}{4} U^{n+1} - \frac{1}{4} U^n = \frac{\Delta t}{4} f(U^n).

$$

Apply this to the ODE $u'(t) = 0$ and determine whether this method is zero-stable.

Applied to the ODE $u'(t) = 0$ we have the linear difference equation

$$

U^{n+3} - 2 U^{n+2} + \frac{5}{4} U^{n+1} - \frac{1}{4} U^n = 0

$$

leading to

$$

\rho(\xi) = \xi^3 - 2 \xi^2 + \frac{5}{4} \xi - \frac{1}{4} = 0

$$

whose solutions are

$$

\xi_1 = 1, \xi_2 = \xi_3 = 1 / 2

$$

with the general solution

$$

U^n = c_1 + c_2 \frac{1}{2^n} + c_3 n \frac{1}{2^n}

$$

which does converge due to the factor of $1 / 2^n$!
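The root condition can be checked numerically for the examples in this section. The sketch below is one possible implementation, not a definitive one: the function name `is_zero_stable` and the tolerance `tol`, which decides when a computed root lies on the unit circle or coincides with another root, are assumptions.

```python
import numpy

def is_zero_stable(alpha, tol=1e-6):
    """Root condition for rho(xi) = sum_j alpha[j] * xi**j, alpha = [alpha_0, ..., alpha_r]."""
    roots = numpy.roots(alpha[::-1])   # numpy.roots expects the highest power first
    for i, xi in enumerate(roots):
        if abs(xi) > 1.0 + tol:
            return False               # root outside the unit circle
        on_circle = abs(abs(xi) - 1.0) <= tol
        repeated = any(abs(xi - other) <= tol
                       for j, other in enumerate(roots) if j != i)
        if on_circle and repeated:
            return False               # repeated root on the unit circle
    return True

print(is_zero_stable([2, -3, 1]))             # xi^2 - 3 xi + 2
print(is_zero_stable([1, -2, 1]))             # (xi - 1)^2
print(is_zero_stable([-0.25, 1.25, -2, 1]))   # (xi - 1)(xi - 1/2)^2
```

The first two polynomials fail the condition (a root at $\xi = 2$ and a double root at $\xi = 1$, respectively), while the third passes because its repeated root lies strictly inside the unit circle.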

### Example: Adams Methods

All Adams methods take the general form

$$

U^{n+r} = U^{n+r-1} + \Delta t \sum^r_{j=0} \beta_j f(U^{n+j})

$$

which has the characteristic polynomial (for the ODE $u'(t)=0$)

$$

\rho(\xi) = \xi^r - \xi^{r-1} = (\xi - 1) \xi^{r-1}

$$

leading to the roots $\xi_1 = 1$ and $\xi_2 = \xi_3 = \cdots = \xi_r = 0$, which satisfy the general zero-stability result. Since Adams methods are also consistent, all Adams methods are convergent.

## Absolute Stability

Although zero-stability guarantees stability it is much more difficult to work with in general as the limit $\Delta t \rightarrow 0$ can be difficult to compute. Instead we often consider a finite $\Delta t$ and examine if the method is stable for this particular choice of $\Delta t$. This has the practical upside that it will also tell us what particular $\Delta t$ will ensure that our method is indeed stable.

### Example

Consider the problem

$$u'(t) = \lambda (u - \cos t) - \sin t \quad \text{with} \quad u(0) = 1$$

whose exact solution is

$$u(t) = \cos t.$$

We can compute an estimate for what $\Delta t$ we need to use by examining the truncation error

$$\begin{aligned}

\tau &= \frac{1}{2} \Delta t u''(t) + \mathcal{O}(\Delta t^2) \\

&= -\frac{1}{2} \Delta t \cos t + \mathcal{O}(\Delta t^2)

\end{aligned}$$

and therefore

$$|E^n| \leq \Delta t \max_{0 \leq t \leq t_f} |\cos t| = \Delta t.$$

If we want a solution where $|E^n| < 10^{-3}$ then $\Delta t \approx 10^{-3}$. Turning to the application of Euler's method, let's apply this to the cases $\lambda = -10$ and $\lambda = -2100$.

```

# Compare accuracy between Euler

f = lambda t, lam, u: lam * (u - numpy.cos(t)) - numpy.sin(t)

u_exact = lambda t: numpy.cos(t)

t_f = 2.0

num_steps = [2**n for n in range(4, 10)]

# num_steps = [2**n for n in range(15,20)]

delta_t = numpy.empty(len(num_steps))

error_10 = numpy.empty(len(num_steps))

error_2100 = numpy.empty(len(num_steps))

for (i, N) in enumerate(num_steps):

    t = numpy.linspace(0, t_f, N)

    delta_t[i] = t[1] - t[0]

    # Compute Euler solution for lambda = -10 (forward Euler evaluates f at (t_n, U^n))

    U = numpy.empty(t.shape)

    U[0] = 1.0

    for (n, t_n) in enumerate(t[:-1]):

        U[n+1] = U[n] + delta_t[i] * f(t_n, -10.0, U[n])

    error_10[i] = numpy.abs(U[-1] - u_exact(t_f)) / numpy.abs(u_exact(t_f))

    # Repeat for lambda = -2100

    U = numpy.empty(t.shape)

    U[0] = 1.0

    for (n, t_n) in enumerate(t[:-1]):

        U[n+1] = U[n] + delta_t[i] * f(t_n, -2100.0, U[n])

    error_2100[i] = numpy.abs(U[-1] - u_exact(t_f)) / numpy.abs(u_exact(t_f))

# Plot error vs. delta_t

fig = plt.figure()

fig.set_figwidth(fig.get_figwidth() * 2)

axes = fig.add_subplot(1, 2, 1)

axes.loglog(delta_t, error_10, 'bo', label='Forward Euler')

order_C = lambda delta_x, error, order: numpy.exp(numpy.log(error) - order * numpy.log(delta_x))

axes.loglog(delta_t, order_C(delta_t[1], error_10[1], 1.0) * delta_t**1.0, 'r--', label="1st Order")

axes.legend(loc=4)

axes.set_title("Comparison of Errors")

axes.set_xlabel(r"$\Delta t$")

axes.set_ylabel("$|U(t_f) - u(t_f)|$")

axes = fig.add_subplot(1, 2, 2)

axes.loglog(delta_t, error_2100, 'bo', label='Forward Euler')

axes.loglog(delta_t, order_C(delta_t[1], error_2100[1], 1.0) * delta_t**1.0, 'r--', label="1st Order")

axes.set_title("Comparison of Errors")

axes.set_xlabel(r"$\Delta t$")

axes.set_ylabel("$|U(t_f) - u(t_f)|$")

plt.show()

```

So what went wrong with $\lambda = -2100$? The global error should go as

$$E^{n+1} = (1 + \Delta t \lambda) E^n - \Delta t \tau^n$$

If $\Delta t \approx 10^{-3}$ then for the case $\lambda = -10$ the previous global error is multiplied by

$$1 + 10^{-3} \cdot (-10) = 0.99$$

which means the contribution from $E^n$ will slowly decrease as we take more time steps. For the other case we have

$$1 + 10^{-3} \cdot (-2100) = -1.1$$

which means that for this $\Delta t$ the error made in previous time steps will grow! For this not to happen we need $|1 + \Delta t \lambda| \leq 1$, i.e. $\Delta t \leq 2 / 2100 \approx 9.5 \times 10^{-4}$, which would lead to convergence again.
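The two factors above, and the effect of a smaller step, can be tabulated directly. This is a small sketch. The helper name `euler_growth_factor` and the second step size are assumptions chosen for illustration.

```python
def euler_growth_factor(lam, dt):
    """Factor (1 + dt*lam) that multiplies the previous global error in forward Euler."""
    return 1.0 + dt * lam

for lam in (-10.0, -2100.0):
    for dt in (1e-3, 4e-4):
        factor = euler_growth_factor(lam, dt)
        status = "decays" if abs(factor) <= 1.0 else "grows"
        print(lam, dt, factor, status)
```

Only the combination $\lambda = -2100$, $\Delta t = 10^{-3}$ gives a factor larger than one in magnitude. Reducing the step to $4 \times 10^{-4}$ brings it back inside the unit interval.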

### Absolute Stability of the Forward Euler Method

Consider again the simple test problem $u'(t) = \lambda u$. We know from before that applying Euler's method to this problem leads to an update of the form

$$U_{n+1} = (1 + \Delta t \lambda) U_n.$$

As may have been clear from the last example, we know that if

$$|1 + \Delta t \lambda| \leq 1$$

that the method will be stable; this is called **absolute stability**. Note that the product $\Delta t \lambda$ is what matters here, and we often consider a **region of absolute stability** in the complex plane defined by this inequality, where now $z = \Delta t \lambda$. This allows the values of $\lambda$ to be complex, which can be an important case to consider, especially for systems of equations where the $\lambda$s are identified as the eigenvalues.

```

# Plot the region of absolute stability for Forward Euler

fig = plt.figure()

axes = fig.add_subplot(1, 1, 1)

t = numpy.linspace(0.0, 2.0 * numpy.pi, 100)

axes.fill(numpy.cos(t) - 1.0, numpy.sin(t), 'b')

axes.plot([-3, 3],[0.0, 0.0],'k--')

axes.plot([0.0, 0.0],[-3, 3],'k--')

axes.set_xlim((-3, 3.0))

axes.set_ylim((-3,3))

axes.set_aspect('equal')

axes.set_title("Absolute Stability Region for Forward Euler")

plt.show()

```

### General Stability Regions for Linear Multistep Methods

Going back to linear multistep methods and applying them in general to our test problem we have

$$

\sum^r_{j=0} \alpha_j U_{n+j} = \Delta t \sum^r_{j=0} \beta_j \lambda U_{n+j}

$$

which can be written as

$$

\sum^r_{j=0} (\alpha_j - \beta_j \Delta t \lambda) U_{n+j} = 0

$$

or using our notation of $z = \Delta t \lambda$ we have

$$

\sum^r_{j=0} (\alpha_j - \beta_j z) U_{n+j} = 0.

$$

This has a similar form to the linear difference equations considered above! Letting

$$

\rho(\xi) = \sum^r_{j=0} \alpha_j \xi^j

$$

and

$$

\sigma(\xi) = \sum^r_{j=0} \beta_j \xi^j

$$

we can write the expression above as

$$

\pi(\xi, z) = \rho(\xi) - z \sigma(\xi)

$$

called the **stability polynomial** of the linear multi-step method.

It turns out that if the roots $\xi_i$ of this polynomial satisfy

$$

|\xi_i| \leq 1

$$

then the multi-step method is absolutely-stable. We then define the region of absolute stability as the values for $z$ for which this is true. This approach can also be applied to one-step methods.

### Example: Forward Euler's Method

Examining forward Euler's method we have

$$\begin{aligned}

0 &= U_{n+1} - U_n - \Delta t \lambda U_n \\

&= U_{n+1} - U_n (1 + \Delta t \lambda)\\

&= \xi - (1 + z)\\

&=\pi(\xi, z)

\end{aligned}$$

whose root is $\xi = 1 + z$ and we have re-derived the stability region we had found before.

### Absolute Stability of the Backward Euler Method

The backward version of Euler's method is defined as

$$

U_{n+1} = U_n + \Delta t f(t_{n+1}, U_{n+1}).

$$

Check to see if backward Euler is absolutely stable.

If we again consider the test problem from before we find that

$$\begin{aligned}

0 &= U_{n+1} (1 - \Delta t \lambda) - U_n \\

&= \xi (1 - z) - 1

\end{aligned}$$

which has the root $\xi = \frac{1}{1 - z}$. We then have

$$

\left|\frac{1}{1-z}\right| \leq 1 \leftrightarrow |1 - z| \geq 1

$$

so in fact the stability region encompasses the entire complex plane except for the interior of a circle centered at $(1, 0)$ of radius 1, implying that the backward Euler method is in fact stable for any choice of $\Delta t$ whenever $\lambda$ lies in the left-half plane.
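The two stability regions can be compared by evaluating the roots $\xi = 1 + z$ and $\xi = 1 / (1 - z)$ at a few points. In this sketch the function names and the sample values of $z = \Delta t \lambda$ are assumptions chosen for illustration.

```python
def forward_euler_factor(z):
    """Root of the forward Euler stability polynomial: xi = 1 + z."""
    return 1.0 + z

def backward_euler_factor(z):
    """Root of the backward Euler stability polynomial: xi = 1 / (1 - z)."""
    return 1.0 / (1.0 - z)

# Sample points in the left-half plane
samples = [-0.5, -2.1, -100.0, complex(-1.0, 3.0)]
for z in samples:
    print(z, abs(forward_euler_factor(z)), abs(backward_euler_factor(z)))
```

Backward Euler gives $|\xi| \leq 1$ at every sample, while forward Euler does so only for $z = -0.5$, the one point inside its unit disk centered at $-1$.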

## Application to Stiff ODEs

Consider again the ODE we examined before

$$u'(t) = \lambda (u - \cos t) - \sin t$$

except this time with general initial condition $u(t_0) = \eta$. What happens to solutions that are slightly different from $\eta = 1$ or $t_0 = 0$? The general solution of the ODE is

$$u(t) = e^{\lambda (t - t_0)} (\eta - \cos t_0) + \cos t.$$

```

# Plot "hairy" solutions to the ODE

u = lambda t_0, eta, lam, t: numpy.exp(lam * (t - t_0)) * (eta - numpy.cos(t_0)) + numpy.cos(t)

for lam in [-1, -10]:

    fig = plt.figure()

    axes = fig.add_subplot(1, 1, 1)

    for eta in numpy.linspace(-1, 1, 10):

        for t_0 in numpy.linspace(0.0, 9.0, 10):

            t = numpy.linspace(t_0,10.0,100)

            axes.plot(t, u(t_0, eta, lam, t),'b')

    t = numpy.linspace(0.0,10.0,100)

    axes.plot(t, numpy.cos(t), 'r', linewidth=5)

    axes.set_title(r"Perturbed Solutions $\lambda = %s$" % lam)

    axes.set_xlabel('$t$')

    axes.set_ylabel('$u(t)$')

plt.show()

# Plot "inverse hairy" solutions to the ODE

u = lambda t_0, eta, lam, t: numpy.exp(lam * (t - t_0)) * (eta - numpy.cos(t_0)) + numpy.cos(t)

num_steps = 10

error = numpy.ones(num_steps) * 1.0

t_hat = numpy.linspace(0.0, 10.0, num_steps + 1)

t_whole = numpy.linspace(0.0, 10.0, 1000)

fig = plt.figure()

axes = fig.add_subplot(1, 1, 1)

eta = 1.0

lam = 0.1

for n in range(1,num_steps):

    t = numpy.linspace(t_hat[n-1], t_hat[n], 100)

    U = u(t_hat[n-1], eta, lam, t)

    axes.plot(t, U, 'b')

    axes.plot(t_whole, u(t_hat[n-1], eta, lam, t_whole),'b--')

    # (-1.0)**n alternates the sign of the perturbation; -1.0**n would always be -1.0

    axes.plot([t[-1], t[-1]], (U[-1], U[-1] + (-1.0)**n * error[n]), 'r')

    eta = U[-1] + (-1.0)**n * error[n]

t = numpy.linspace(0.0, 10.0, 100)

axes.plot(t, numpy.cos(t), 'g')

axes.set_title(r"Perturbed Solutions $\lambda = %s$" % lam)

axes.set_xlabel('$t$')

axes.set_ylabel('$u(t)$')

axes.set_ylim((-10,10))

plt.show()

```

### Example: Chemical systems

Consider the transition of a chemical $A$ to a chemical $C$ through the process

$$A \overset{K_1}{\rightarrow} B \overset{K_2}{\rightarrow} C.$$

If we let

$$\vec{u} = \begin{bmatrix} [A] \\ [B] \\ [C] \end{bmatrix}$$

then we can model this simple chemical reaction with the system of ODEs

$$\frac{\text{d} \vec{u}}{\text{d} t} =

\begin{bmatrix}

-K_1 & 0 & 0 \\

K_1 & -K_2 & 0 \\

0 & K_2 & 0

\end{bmatrix} \vec{u}$$

The solution of this system is of the form

$$u_j(t) = c_{j1} e^{-K_1 t} + c_{j2}e^{-K_2 t} + c_{j3}$$

```

# Solve the chemical systems example

# Problem parameters

K_1 = 3

K_2 = 1

# K_1 = 30.0

# K_2 = 1.0

A = numpy.array([[-K_1, 0, 0], [K_1, -K_2, 0], [0, K_2, 0]])

f = lambda u: numpy.dot(A, u)

t = numpy.linspace(0.0, 8.0, 128)

delta_t = t[1] - t[0]

U = numpy.empty((t.shape[0], 3))

U[0, :] = [2.5, 5.0, 2.0]

for n in range(t.shape[0] - 1):

    U[n+1, :] = U[n, :] + delta_t * f(U[n, :])

fig = plt.figure()

axes = fig.add_subplot(1, 1, 1)

axes.plot(t, U)

axes.set_title("Chemical System")

axes.set_xlabel("$t$")

axes.set_ylabel("$[A], [B], [C]$")

axes.set_ylim((0.0, 10.))

plt.show()

```

### What is stiffness?

In general a **stiff** ODE is one where $u'(t) \ll f'(t, u)$. For systems of ODEs the **stiffness ratio**

$$\frac{\max_p |\lambda_p|}{\min_p |\lambda_p|}$$

can be used to characterize the stiffness of the system. In our last example this ratio was $K_1 / K_2$ if $K_1 > K_2$. As we increased this ratio we observed that the numerical method became unstable, and only a reduction in $\Delta t$ led to a stable solution again. For explicit time-stepping methods this is problematic, as reducing the time step to accommodate only one of the species makes the whole computation very expensive. For example, forward Euler has the stability criterion

$$|1 + \Delta t \lambda| < 1$$

where $\lambda$ will have to be the eigenvalue of the system that is largest in magnitude.
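The step-size restriction can be seen directly on the chemical system. The sketch below is an illustration under assumed values: it uses $K_1 = 30$, $K_2 = 1$, the same initial concentrations as the example above, and two step sizes placed on either side of the real-axis limit $\Delta t = 2 / \max_p |\lambda_p|$ that follows from the criterion. The function name `euler_chemical` is hypothetical.

```python
def euler_chemical(K_1, K_2, dt, num_steps, u0=(2.5, 5.0, 2.0)):
    """Forward Euler for A -> B -> C with rates K_1, K_2; returns the final [A], [B], [C]."""
    A, B, C = u0
    for _ in range(num_steps):
        dA = -K_1 * A
        dB = K_1 * A - K_2 * B
        dC = K_2 * B
        A, B, C = A + dt * dA, B + dt * dB, C + dt * dC
    return A, B, C

K_1, K_2 = 30.0, 1.0
stiffness_ratio = max(K_1, K_2) / min(K_1, K_2)   # nonzero eigenvalues are -K_1 and -K_2
dt_limit = 2.0 / max(K_1, K_2)                    # from |1 + dt*lambda| <= 1 with real lambda < 0
stable = euler_chemical(K_1, K_2, 0.4 * dt_limit, 200)
unstable = euler_chemical(K_1, K_2, 1.5 * dt_limit, 200)
print(stiffness_ratio, dt_limit, stable, unstable)
```

Below the limit $[A]$ decays and the total concentration is conserved. Above it the fast mode is amplified at every step, even though that mode is physically unimportant after a short transient.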

```

# Plot the region of absolute stability for Forward Euler

fig = plt.figure()

axes = fig.add_subplot(1, 1, 1)

t = numpy.linspace(0.0, 2.0 * numpy.pi, 100)

K_1 = 3.0

K_2 = 1.0

delta_t = 1.0

eigenvalues = [-K_1, -K_2]

axes.fill(numpy.cos(t) - 1.0, numpy.sin(t), color='lightgray')

for lam in eigenvalues:

    print(lam * delta_t)

    axes.plot(lam * delta_t, 0.0, 'ko')

axes.plot([-3, 3],[0.0, 0.0],'k--')

axes.plot([0.0, 0.0],[-3, 3],'k--')

# axes.set_xlim((-3, 1))

axes.set_ylim((-2,2))

axes.set_aspect('equal')

axes.set_title("Absolute Stability Region for Forward Euler")

plt.show()

```

### A-Stability

What if we could expand the absolute stability region to encompass more of the left-half plane or, even better, all of it? A method whose stability region contains the entire left-half plane is called **A-stable**. We have already seen one example of this with backward Euler, which has a stability region of

$$|1 - z| \geq 1$$

which covers the full left-half plane.

It turns out that for linear multi-step methods a theorem by Dahlquist proves that no LMM satisfies the A-stability criterion beyond second order (trapezoidal rule). There are, however, higher-order Runge-Kutta methods that do.

Perhaps this is too restrictive though. Often large eigenvalues for systems (for instance coming from a PDE discretization for the heat equation) lie completely on the real line. If the stability region can encompass as much of the real line as possible while leaving out the rest of the left-half plane we can possibly get a more efficient method. There are a number of methods that can be constructed that have this property but are higher-order.

```

# Plot the region of absolute stability for Backward Euler

fig = plt.figure()

axes = fig.add_subplot(1, 1, 1)

t = numpy.linspace(0.0, 2.0 * numpy.pi, 100)

K_1 = 3.0

K_2 = 1.0

delta_t = 1.0

eigenvalues = [-K_1, -K_2]

axes.set_facecolor('lightgray')

axes.fill(numpy.cos(t) + 1.0, numpy.sin(t), 'w')

for lam in eigenvalues:

    print(lam * delta_t)

    axes.plot(lam * delta_t, 0.0, 'ko')

axes.plot([-3, 3],[0.0, 0.0],'k--')

axes.plot([0.0, 0.0],[-3, 3],'k--')

# axes.set_xlim((-3, 1))

axes.set_ylim((-2,2))

axes.set_aspect('equal')

axes.set_title("Absolute Stability Region for Backward Euler")

plt.show()

```

### L-Stability

It turns out not all A-stable methods are alike. Consider the backward Euler method and the trapezoidal method. The stability polynomial for the trapezoidal method is

$$\begin{aligned}

0 &= U_{n+1} - U_n - \Delta t \frac{1}{2} (\lambda U_n + \lambda U_{n+1}) \\

&= U_{n+1}\left(1 - \frac{1}{2} \Delta t \lambda \right ) - U_n \left(1 + \frac{1}{2}\Delta t \lambda \right) \\

&= \left(\xi - \frac{1 + \frac{1}{2}z}{1 - \frac{1}{2} z}\right) \left(1 - \frac{1}{2} z \right )\\

\end{aligned}$$

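whose single root $\xi = \frac{1 + \frac{1}{2}z}{1 - \frac{1}{2} z}$ satisfies $|\xi| \leq 1$ whenever $\text{Re}(z) \leq 0$, which shows that it is A-stable. Let's apply both of these methods to a problem we have seen before and see what happens.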

```

# Compare accuracy between backward Euler and the trapezoidal rule

f = lambda t, lam, u: lam * (u - numpy.cos(t)) - numpy.sin(t)

u_exact = lambda t_0, eta, lam, t: numpy.exp(lam * (t - t_0)) * (eta - numpy.cos(t_0)) + numpy.cos(t)

t_0 = 0.0

eta = 1.5

lam = -1e6

num_steps = [10, 20, 40, 50]

delta_t = numpy.empty(len(num_steps))

error_euler = numpy.empty(len(num_steps))

error_trap = numpy.empty(len(num_steps))

# NOTE: t_f (the final time) is assumed to have been defined in an earlier cell

for (i, N) in enumerate(num_steps):

    t = numpy.linspace(0, t_f, N)

    delta_t[i] = t[1] - t[0]

    u = u_exact(t_0, eta, lam, t_f)

    # Compute backward Euler solution; t_n here is the new time level t[n+1]

    U_euler = numpy.empty(t.shape)

    U_euler[0] = eta

    for (n, t_n) in enumerate(t[1:]):

        U_euler[n+1] = (U_euler[n] - lam * delta_t[i] * numpy.cos(t_n) - delta_t[i] * numpy.sin(t_n)) / (1.0 - lam * delta_t[i])

    # Absolute error at t_f, matching the axis label used in the plots

    error_euler[i] = numpy.abs(U_euler[-1] - u)

    # Compute using trapezoidal; the explicit half uses f at the old time level t[n]

    U_trap = numpy.empty(t.shape)

    U_trap[0] = eta

    for (n, t_n) in enumerate(t[1:]):

        U_trap[n+1] = (U_trap[n] + delta_t[i] * 0.5 * f(t[n], lam, U_trap[n]) - 0.5 * lam * delta_t[i] * numpy.cos(t_n) - 0.5 * delta_t[i] * numpy.sin(t_n)) / (1.0 - 0.5 * lam * delta_t[i])

    error_trap[i] = numpy.abs(U_trap[-1] - u)

# Plot error vs. delta_t

fig = plt.figure()

fig.set_figwidth(fig.get_figwidth() * 2)

axes = fig.add_subplot(1, 2, 1)

axes.plot(t, U_euler, 'ro-')

axes.plot(t, u_exact(t_0, eta, lam, t),'k')

axes = fig.add_subplot(1, 2, 2)

axes.loglog(delta_t, error_euler, 'bo')

order_C = lambda delta_x, error, order: numpy.exp(numpy.log(error) - order * numpy.log(delta_x))

axes.loglog(delta_t, order_C(delta_t[1], error_euler[1], 1.0) * delta_t**1.0, 'r--', label="1st Order")

axes.loglog(delta_t, order_C(delta_t[1], error_euler[1], 2.0) * delta_t**2.0, 'b--', label="2nd Order")

axes.legend(loc=4)

axes.set_title("Comparison of Errors for Backwards Euler")

axes.set_xlabel(r"$\Delta t$")

axes.set_ylabel(r"$|U(t_f) - u(t_f)|$")

# Plots for trapezoid

fig = plt.figure()

fig.set_figwidth(fig.get_figwidth() * 2)

axes = fig.add_subplot(1, 2, 1)

axes.plot(t, U_trap, 'ro-')

axes.plot(t, u_exact(t_0, eta, lam, t),'k')

axes = fig.add_subplot(1, 2, 2)

axes.loglog(delta_t, error_trap, 'bo', label='Trapezoidal')

order_C = lambda delta_x, error, order: numpy.exp(numpy.log(error) - order * numpy.log(delta_x))

axes.loglog(delta_t, order_C(delta_t[1], error_trap[1], 1.0) * delta_t**1.0, 'r--', label="1st Order")

axes.loglog(delta_t, order_C(delta_t[1], error_trap[1], 2.0) * delta_t**2.0, 'b--', label="2nd Order")

axes.legend(loc=4)

axes.set_title("Comparison of Errors for Trapezoidal Rule")

axes.set_xlabel(r"$\Delta t$")

axes.set_ylabel(r"$|U(t_f) - u(t_f)|$")

plt.show()

```

It turns out that if we look at a one-step method and define the following ratio

$$U_{n+1} = R(z) U_n$$

we can define another form of stability, called **L-stability**, where we require that the method is A-stable and that

$$\lim_{z \rightarrow \infty} |R(z)| = 0.$$

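Backward Euler, with $R(z) = \frac{1}{1 - z}$, is L-stable, while the trapezoidal method, for which $|R(z)| = \left|\frac{1 + \frac{1}{2}z}{1 - \frac{1}{2}z}\right| \rightarrow 1$ as $z \rightarrow \infty$, is not.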
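The limit in the L-stability definition can be checked numerically. The sketch below evaluates $|R(z)|$ for the two methods at increasingly large negative real $z$, using $R(z) = 1/(1 - z)$ for backward Euler and $R(z) = (1 + z/2)/(1 - z/2)$ for the trapezoidal rule; the names `R_backward_euler` and `R_trapezoidal` are introduced here only for illustration.

```python
import numpy

# Amplification factors U_{n+1} = R(z) U_n with z = delta_t * lambda
R_backward_euler = lambda z: 1.0 / (1.0 - z)
R_trapezoidal = lambda z: (1.0 + 0.5 * z) / (1.0 - 0.5 * z)

# March z toward -infinity along the negative real axis
for z in -numpy.logspace(0, 8, 5):
    print("z = %10.1e   |R_BE| = %10.3e   |R_trap| = %12.9f"
          % (z, abs(R_backward_euler(z)), abs(R_trapezoidal(z))))
```

For backward Euler $|R(z)|$ decays toward zero, so very stiff components are damped in a single step. For the trapezoidal rule $|R(z)|$ approaches one, so those components persist, merely changing sign from step to step.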

## Backward Differencing Formulas

A class of LMMs that are useful for stiff ODE problems are the backward difference formula (BDF) methods, which have the form

$$\alpha_0 U_n + \alpha_1 U_{n+1} + \cdots + \alpha_r U_{n+r} = \Delta t \beta_r f(U_{n+r})$$

These methods can be derived directly from backwards finite differences from the point $U_{n+r}$ and the rest of the points back in time. One can then derive $r$-step methods that are $r$th-order accurate this way. Some of the methods are

$$\begin{aligned}

r = 1:& & U_{n+1} - U_n = \Delta t f(U_{n+1}) \\

r = 2:& &3 U_{n+2} - 4 U_{n+1} + U_n = 2 \Delta t f(U_{n+2}) \\

r = 3:& &11U_{n+3} - 18U_{n+2} + 9U_{n+1} - 2 U_n = 6 \Delta t f(U_{n+3}) \\

r = 4:& &25 U_{n+4} - 48 U_{n+3} +36 U_{n+2} -16 U_{n+1} +3 U_n = 12 \Delta t f(U_{n+4})

\end{aligned}$$
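One way to reproduce these coefficients is to ask for the backward finite difference at $t_{n+r}$ that differentiates polynomials up to degree $r$ exactly. The sketch below sets this up as a small Vandermonde system; the function name `bdf_coefficients` and the normalization $\beta_r = 1$ are choices made here for illustration, so the results have to be rescaled to match the integer form above.

```python
import numpy

def bdf_coefficients(r):
    """Return alpha such that sum_j alpha[j] U_{n+j} = delta_t * f(U_{n+r}).

    The weights are the backward finite difference approximation of the
    first derivative at t_{n+r}, normalized so that beta_r = 1.
    """
    nodes = numpy.arange(r + 1, dtype=float)  # t_{n+j} measured in units of delta_t
    # Row k enforces exactness for the monomial s^k:
    #   sum_j alpha_j * j^k = d/ds(s^k) evaluated at s = r
    A = numpy.vander(nodes, increasing=True).T
    b = numpy.array([0.0] + [k * float(r)**(k - 1) for k in range(1, r + 1)])
    return numpy.linalg.solve(A, b)

# Scale factors that turn the weights into the integer form of the table
for (r, scale) in [(1, 1.0), (2, 2.0), (3, 6.0), (4, 12.0)]:
    print("r = %d:" % r, numpy.round(scale * bdf_coefficients(r), 10))
```

The printed rows list the coefficients of $U_n, U_{n+1}, \ldots, U_{n+r}$ in that order, and the scale factor is the number multiplying $\Delta t$ on the right-hand side.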

## Plotting Stability Regions

We can think of the roots of the stability polynomial $\xi_j$ as complex numbers and write them in exponential form

$$

\xi_j = |\xi_j| e^{i \theta}.

$$

Here $|\xi_j|$ is the modulus (or magnitude) of the complex number and is defined as $|\xi_j| = \sqrt{x^2 + y^2}$ where $\xi_j = x + i y$. If the $\xi_j$s are on the boundary of the absolute stability region then we know that $|\xi_j| = 1$. Using this in conjunction with the stability polynomial then leads to

$$

\rho(e^{i\theta}) - z \sigma(e^{i\theta}) = 0

$$

which, solving for $z$, leads to

$$

z(\theta) = \frac{\rho(e^{i\theta})}{\sigma(e^{i\theta})}.

$$

As an example consider the Adams-Bashforth 2-step method. Applying it to $f(u) = \lambda u$ and multiplying through by 2, the stability polynomial can be found as

$$\begin{aligned}

U_{n+2} &= U_{n+1} + \frac{\Delta t}{2} (-f(U_n) + 3 f(U_{n+1})) \\

0 &= U_{n+2} - U_{n+1} - \frac{\Delta t}{2} (-f(U_n) + 3 f(U_{n+1})) \\

&= U_{n+2} - U_{n+1} - \frac{1}{2} (-\Delta t \lambda U_n + 3 \Delta t \lambda U_{n+1}) \Rightarrow \\

\pi(\xi, z) &= 2 \xi^2 - 2 \xi - 3 z\xi + z \\

&= \rho(\xi) - z \sigma(\xi)

\end{aligned}$$

where

$$

\rho(\xi) = 2 ( \xi - 1) \xi ~~~ \text{and} ~~~ \sigma(\xi) = 3 \xi - 1

$$

so that, with $\xi = e^{i\theta}$,

$$

z(\theta) = \frac{2 (\xi - 1) \xi}{3 \xi - 1}.

$$

This does not necessarily ensure that, given a $\theta$, $z(\theta)$ will lie on the boundary of the absolute stability region. This can occur when $|\xi_j| = 1$ on the curve but $|\xi_j| > 1$ both to the left and to the right of it, so that the curve does not mark the boundary of the region. To determine whether a particular region outlined by this curve is inside or outside of the stability region we can evaluate all the roots of $\pi(\xi, z)$ at some $z$ inside of the region in question and check whether $|\xi_j| < 1$ for all $j$.
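The procedure for the Adams-Bashforth 2-step method can be sketched as follows, using $\rho(\xi) = 2(\xi - 1)\xi$, $\sigma(\xi) = 3\xi - 1$ and $\pi(\xi, z) = \rho(\xi) - z \sigma(\xi)$. The function names `ab2_boundary` and `ab2_max_root` and the two test points are assumptions made for this illustration.

```python
import numpy

def ab2_boundary(theta):
    """Boundary locus z(theta) = rho(xi) / sigma(xi) with xi = exp(i theta)."""
    xi = numpy.exp(1j * theta)
    return 2.0 * (xi - 1.0) * xi / (3.0 * xi - 1.0)

def ab2_max_root(z):
    """Largest modulus among the roots of pi(xi, z) = 2 xi^2 - (2 + 3 z) xi + z."""
    return numpy.max(numpy.abs(numpy.roots([2.0, -(2.0 + 3.0 * z), z])))

# Trace the candidate boundary curve in the complex z-plane
theta = numpy.linspace(0.0, 2.0 * numpy.pi, 200)
z_boundary = ab2_boundary(theta)
print("Curve crosses the real axis at z =", ab2_boundary(numpy.pi).real)

# Decide which side of the curve is stable by testing one point on each side
for z_test in [-0.5, -1.5]:
    print("z = %4.1f   max |xi_j| = %.4f" % (z_test, ab2_max_root(z_test)))
```

Plotting `z_boundary.real` against `z_boundary.imag` draws the curve itself, while the root test tells us which side of it belongs to the stability region.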

For one-step methods this becomes easier. If we look at the ratio $R(z)$ we defined earlier as

$$

U_{n+1} = R(z) U_n

$$

in the case of the $p$th-order Taylor series method applied to $u'(t) = \lambda u$ we get

$$\begin{aligned}

U_{n+1} &= U_n + \Delta t \lambda U_n + \frac{1}{2}\Delta t^2 \lambda^2 U_n + \cdots + \frac{1}{p!}\Delta t^p \lambda^p U_n \\

&=\left(1 + z + \frac{1}{2} z^2 + \frac{1}{6} z^3 + \cdots +\frac{1}{p!}z^p\right) U_n \Rightarrow \\

R(z) &= 1 + z + \frac{1}{2} z^2 + \frac{1}{6} z^3 + \cdots +\frac{1}{p!}z^p.

\end{aligned}$$

Setting $R(z) = e^{i\theta}$ (where $|R(z)| = 1$) could lead to a way of solving for the boundary, but this is very difficult to do in general. Instead, if we plot the contours of $|R(z)|$ in the complex plane we can pick out the $|R(z)| = 1$ contour and plot that.
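Before plotting, it can help to evaluate $|R(z)|$ directly. The sketch below evaluates the truncated Taylor series for a few orders $p$ and scans the negative real axis for the leftmost point where $|R(z)| \leq 1$; the helper name `taylor_R` and the grid used for the scan are assumptions made for this illustration.

```python
import math
import numpy

def taylor_R(z, p):
    """R(z) = 1 + z + z^2 / 2 + ... + z^p / p! for the pth-order Taylor series method."""
    return sum(z**k / math.factorial(k) for k in range(p + 1))

# Scan the negative real axis for the points where |R(z)| <= 1
x = numpy.linspace(-4.0, 0.0, 4001)
for p in (1, 2, 4):
    stable = numpy.abs(taylor_R(x, p)) <= 1.0
    print("p = %d: leftmost stable point on the grid is z = %.3f" % (p, x[stable].min()))
```

The same function accepts a complex grid, for example `X + 1j * Y` built with `numpy.meshgrid`, so `numpy.abs(taylor_R(X + 1j * Y, p))` can be passed to a contour plot with `levels=[1.0]` to draw the boundary.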

```

theta = numpy.linspace(0.0, 2.0 * numpy.pi, 100)

# ==================================

# Forward euler

fig = plt.figure()

fig.set_figwidth(fig.get_figwidth() * 2.0)

fig.set_figheight(fig.get_figheight() * 2.0)

axes = fig.add_subplot(2, 2, 1)