code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Constrained optimization using scipy

#

# **<NAME>, PhD**

#

# This demo is based on the original Matlab demo accompanying the <a href="https://mitpress.mit.edu/books/applied-computational-economics-and-finance">Computational Economics and Finance</a> 2001 textbook by <NAME> and <NAME>.

#

# Original (Matlab) CompEcon file: **demopt08.m**

#

# Running this file requires the Python version of CompEcon. This can be installed with pip by running

#

# # !pip install compecon --upgrade

#

# <i>Last updated: 2021-Oct-01</i>

# <hr>

# ## About

#

# The problem is

#

# \begin{equation*}

# \max\{-x_0^2 - (x_1-1)^2 - 3x_0 + 2\}

# \end{equation*}

#

# subject to

#

# \begin{align*}

# 4x_0 + x_1 &\leq 0.5\\

# x_0^2 + x_0x_1 &\leq 2.0\\

# x_0 &\geq 0 \\

# x_1 &\geq 0

# \end{align*}

# ## Using scipy

#

# The **scipy.optimize.minimize** function minimizes functions subject to equality constraints, inequality constraints, and bounds on the choice variables.

# +

import numpy as np

from scipy.optimize import minimize

np.set_printoptions(precision=4,suppress=True)

# -

# * First, we define the objective function, changing its sign so we can minimize it

def f(x):

    return x[0]**2 + (x[1]-1)**2 + 3*x[0] - 2

# * Second, we specify the inequality constraints using a tuple of two dictionaries (one per constraint), writing each of them in the form $g_i(x) \geq 0$, that is

# \begin{align*}

# 0.5 - 4x_0 - x_1 &\geq 0\\

# 2.0 - x_0^2 - x_0x_1 &\geq 0

# \end{align*}

cons = ({'type': 'ineq', 'fun': lambda x: 0.5 - 4*x[0] - x[1]},

{'type': 'ineq', 'fun': lambda x: 2.0 - x[0]**2 - x[0]*x[1]})

# * Third, we specify the bounds on $x$:

# \begin{align*}

# 0 &\leq x_0 \leq \infty\\

# 0 &\leq x_1 \leq \infty

# \end{align*}

bnds = ((0, None), (0, None))

# * Finally, we minimize the problem, using the SLSQP method, starting from $x=[0,1]$

x0 = [0.0, 1.0]

res = minimize(f, x0, method='SLSQP', bounds=bnds, constraints=cons)

print(res)
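# As a sanity check that does not depend on scipy, the same problem can be searched by brute force on a grid. This is only an illustrative sketch: the names `neg_objective` and `feasible` and the 0.001 grid spacing are assumptions of this example, not part of the original demo.

```python
def neg_objective(x0, x1):
    """Negated objective, the same expression that f(x) minimizes."""
    return x0**2 + (x1 - 1)**2 + 3*x0 - 2

def feasible(x0, x1):
    """Both inequality constraints plus the non-negativity bounds."""
    return (4*x0 + x1 <= 0.5) and (x0**2 + x0*x1 <= 2.0) and x0 >= 0 and x1 >= 0

# 4*x0 + x1 <= 0.5 with x >= 0 implies x0 <= 0.125 and x1 <= 0.5,
# so a grid over [0, 0.125] x [0, 0.5] covers the whole feasible set.
grid = ((i / 1000, j / 1000) for i in range(126) for j in range(501))
best_x = min((p for p in grid if feasible(*p)), key=lambda p: neg_objective(*p))
best_f = neg_objective(*best_x)
print(best_x, best_f)
```

# On this grid the minimizer is $x = (0, 0.5)$ with value $-1.75$, so the SLSQP result should land close to that point.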

|

_build/jupyter_execute/notebooks/opt/08 Constrained optimization using scipy.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.6.12 64-bit (''mash'': conda)'

# language: python

# name: python3

# ---

# # t-SNE

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

X = pd.read_csv('checkpoints/visual_test2/latent_space.tsv',sep='\t', header=0, index_col=0)

labels = pd.read_csv('data/labels.tsv', sep='\t',header=0, index_col=0)

n_classes = 34 #no of classes to visualize

labels = labels[labels['sample_type.samples'].isin(range(n_classes))]

X = X.reset_index()

labels = labels.reset_index()

X = X.rename(columns={'index': 'sample'})

df = pd.merge(X, labels, on='sample', how='inner', sort=False)[['sample','sample_type.samples']]

X = X[X['sample'].isin(df['sample'])]

# Note: X_embedded is only computed in the TSNE cell further down; run that cell before this one.
plt.scatter(X_embedded[:, 0], X_embedded[:, 1], c=df.loc[:,'sample_type.samples'], s=0.5)

# +

import math

import numpy as np

from matplotlib.colors import ListedColormap

from matplotlib.cm import hsv

def generate_colormap(number_of_distinct_colors: int = 80):
    if number_of_distinct_colors == 0:
        number_of_distinct_colors = 80

    number_of_shades = 7
    number_of_distinct_colors_with_multiply_of_shades = int(math.ceil(number_of_distinct_colors / number_of_shades) * number_of_shades)

    # Create an array of evenly spaced floats in the half-open interval [0, 1)
    linearly_distributed_nums = np.arange(number_of_distinct_colors_with_multiply_of_shades) / number_of_distinct_colors_with_multiply_of_shades

    # We are going to reorganise monotonically growing numbers in such way that there will be single array with saw-like pattern
    # but each saw tooth is slightly higher than the one before
    # First divide linearly_distributed_nums into number_of_shades sub-arrays containing linearly distributed numbers
    arr_by_shade_rows = linearly_distributed_nums.reshape(number_of_shades, number_of_distinct_colors_with_multiply_of_shades // number_of_shades)

    # Transpose the above matrix (columns become rows) - as a result each row contains saw tooth with values slightly higher than row above
    arr_by_shade_columns = arr_by_shade_rows.T

    # Keep number of saw teeth for later
    number_of_partitions = arr_by_shade_columns.shape[0]

    # Flatten the above matrix - join each row into single array
    nums_distributed_like_rising_saw = arr_by_shade_columns.reshape(-1)

    # HSV colour map is cyclic (https://matplotlib.org/tutorials/colors/colormaps.html#cyclic), we'll use this property
    initial_cm = hsv(nums_distributed_like_rising_saw)

    lower_partitions_half = number_of_partitions // 2
    upper_partitions_half = number_of_partitions - lower_partitions_half

    # Modify lower half in such way that colours towards beginning of partition are darker
    # First colours are affected more, colours closer to the middle are affected less
    lower_half = lower_partitions_half * number_of_shades
    for i in range(3):
        initial_cm[0:lower_half, i] *= np.arange(0.2, 1, 0.8/lower_half)

    # Modify second half in such way that colours towards end of partition are less intense and brighter
    # Colours closer to the middle are affected less, colours closer to the end are affected more
    for i in range(3):
        for j in range(upper_partitions_half):
            modifier = np.ones(number_of_shades) - initial_cm[lower_half + j * number_of_shades: lower_half + (j + 1) * number_of_shades, i]
            modifier = j * modifier / upper_partitions_half
            initial_cm[lower_half + j * number_of_shades: lower_half + (j + 1) * number_of_shades, i] += modifier

    return ListedColormap(initial_cm)

# -
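# The saw-tooth reordering inside `generate_colormap` (reshape, transpose, flatten) is easier to follow on a tiny array. The sketch below uses 3 shades and integers instead of 7 shades and floats in [0, 1); those sizes are chosen only for readability.

```python
import numpy as np

number_of_shades = 3   # the function above uses 7
n = 6                  # must be a multiple of number_of_shades

nums = np.arange(n)                                           # 0, 1, ..., 5
by_rows = nums.reshape(number_of_shades, n // number_of_shades)
saw = by_rows.T.reshape(-1)                                   # read the transposed matrix row by row

print(by_rows.tolist())
print(saw.tolist())
```

# Each "tooth" of the flattened result steps through the shades, and each tooth starts slightly higher than the previous one, so neighbouring indices map to well-separated hues.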

import matplotlib

cm = generate_colormap(n_classes)

colors = []

for i in range(n_classes):
    rgba = cm(i)
    # rgb2hex accepts rgb or rgba
    colors.append(matplotlib.colors.rgb2hex(rgba))

tumors = ['LUAD','BRCA', 'UCEC','OV','LUSC','HNSC','KIRC','CESC','normal','PRAD','GBM','PAAD','BLCA','SARC',

'THCA','KIRP','UVM','LIHC','SKCM','ACC','LGG','STAD','PCPG','TGCT','COAD','THYM','LAML','KICH','ESCA','UCS',

'READ','MESO','DLBC','CHOL']

from tsnecuda import TSNE

X_embedded = TSNE(n_components=2, perplexity=15, learning_rate=30).fit_transform(X.iloc[:,1:])

# +

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

import numpy as np

x = X_embedded[:, 0]

y = X_embedded[:, 1]

categories = df.loc[:,'sample_type.samples'].to_numpy()

colormap = np.array(colors)

plt.scatter(x, y, s=1, c=colormap[categories])

pop_a = mpatches.Patch(color='#0b559f', label='Population A')

pop_b = mpatches.Patch(color='#89bedc', label='Population B')

handles = []

for t, c in zip(tumors, colors):
    handles.append(mpatches.Patch(color=c, label=t))

plt.legend(loc='upper center', bbox_to_anchor=(1.4, 1.05), handles=handles,

ncol=3, fancybox=True, shadow=True)

plt.title('Visualization using t-SNE')

plt.xlabel('Dimension 1')

plt.ylabel('Dimension 2')

plt.savefig('images/tsne.png')

plt.show()

# -

# # Log

# ## Train log

path = "./checkpoints/omics_mode/ABC/ABC_inter/train_log.txt"

with open(path) as f:
    lines = f.readlines()

l = lines[-2]

sp = l.split(']')[1].split(': ')

int(sp[2].strip().split(' ')[0])

def process_train_log(lines):
    recon_A, recon_B, recon_C, kl, classifier, accuracy = [], [], [], [], [], []
    for l in lines:
        if l.startswith("[TRAIN]"):
            # print(l)
            sp = l.split(']')[2].split(': ')
            spl = l.split(']')[1].split(': ')
            epoch = int(spl[2].strip().split(' ')[0])
            if(epoch==7166):
                recon_A.append(float(sp[1].split(' ')[0]))
                recon_B.append(float(sp[2].split(' ')[0]))
                recon_C.append(float(sp[3].split(' ')[0]))
                kl.append(float(sp[4].split(' ')[0]))
                classifier.append(float(sp[5].split(' ')[0]))
                accuracy.append(float(sp[6].split(' ')[0]))
    # print(recon_A, recon_B, recon_C, kl, classifier, accuracy)
    return recon_A, recon_B, recon_C, kl, classifier, accuracy

recon_A, recon_B, recon_C, kl, classifier, accuracy = process_train_log(lines)

plt.plot(recon_A)

plt.plot(recon_B)

plt.plot(recon_C)

plt.plot(accuracy)

plt.xlabel('No of epochs')

plt.ylabel('Train accuracy')

plt.title('Cancer type classification with multi-omics data')

# ## Test log

path = "./checkpoints/omics_mode/ABC/ABC_inter2/test_log.txt"

with open(path) as f:
    lines = f.readlines()

l = lines[-2]

spl = l.split(']')[1].split(': ')

int(spl[2].strip().split(' ')[0])

print(l)

def process_test_log(lines):
    recon_A, recon_B, recon_C, kl, classifier, accuracy = [], [], [], [], [], []
    for l in lines:
        if l.startswith("[TEST]"):
            # print(l)
            sp = l.split(']')[2].split(': ')
            spl = l.split(']')[1].split(': ')
            epoch = int(spl[2].strip().split(' ')[0])
            if(epoch==1792):
                recon_A.append(float(sp[1].split(' ')[0]))
                recon_B.append(float(sp[2].split(' ')[0]))
                recon_C.append(float(sp[3].split(' ')[0]))
                kl.append(float(sp[4].split(' ')[0]))
                classifier.append(float(sp[5].split(' ')[0]))
                accuracy.append(float(sp[6].split(' ')[0]))
    # print(recon_A, recon_B, recon_C, kl, classifier, accuracy)
    return recon_A, recon_B, recon_C, kl, classifier, accuracy

recon_A, recon_B, recon_C, kl, classifier, accuracy = process_test_log(lines)

plt.plot(accuracy)

|

Visualisation.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Betfair predictions for the 2019 vote

#

#

#

# [Betfair](https://www.betfair.com/exchange/plus/politics) is a betting exchange, where punters can gamble

# on sports and other events including the UK election. There is no bookmaker — it's just punters gambling against each other — so it embodies the [_wisdom of the crowd_](https://en.wikipedia.org/wiki/Wisdom_of_the_crowd).

# It's also a real-time data exchange, so we can get real-time snapshots of opinion, not lagged like conventional polling.

#

# In this analysis, we look at Betfair's predictions for each constituency. We plot them on a three-way map, placing each point according to how the 2017 vote split between Labour / Conservative / LibDem. Constituencies in the bottom right went Conservative, those in the top right went Labour, and those on the left went LibDem

# (and other constituencies are plotted according to their vote share between these three parties).

#

# The points to look at are the constituencies on the border. For example, if there's a blue point above the line, it means a constituency which went Labour in 2017, but is likely to go Conservative in 2019.

# ## Preamble

import numpy as np

import pandas

import matplotlib.pyplot as plt

import json

# +

# To read an Excel file from within Python, install this package.

# (If you're unable to install it on your system, then open the Excel file

# from the House of Commons Library in Excel, save as CSV, then use

# pandas.read_csv.)

# !pip install --user xlrd

# -

# # Data import

# There are many useful statistics at https://commonslibrary.parliament.uk/local-data/constituency-dashboard/. Here I'm just using data about the 2017 election.

url = 'https://data.parliament.uk/resources/constituencystatistics/Current-Parliament-Election-Results.xlsx'

vote2017 = pandas.read_excel(url, sheet_name='DATA')

# At [Betfair Exchange](https://www.betfair.com/exchange/plus/politics), users make bets for and against outcomes ("back" and "lay"). A crude summary of how to read it:

#

# * if there is an open offer to back a candidate at odds $q$, then the market believes that the probability of this candidate's winning is $\leq 1/q$

#

# * if there is an open offer to lay a candidate at odds $q$, then the market believes that the probability of this candidate's winning is $\geq 1/q$.

#

#

# I have used the Betfair json api to fetch the latest betting data.

# The code for fetching it is at the bottom -- but you need to sign up

# with betfair to use their api. To save the bother, I have assembled the

# betting data into a single json file. The format is a list with one item per constituency,

# ```

# [(market, (runners,), (prices,)), ...]

# ```

# where

#

# * `market` comes from [listMarketCatalogue](https://docs.developer.betfair.com/display/1smk3cen4v3lu3yomq5qye0ni/listMarketCatalogue)

# and lists the COMPETITION and EVENT details for a constituency

# * `runners` comes from [listMarketCatalogue](https://docs.developer.betfair.com/display/1smk3cen4v3lu3yomq5qye0ni/listMarketCatalogue) and lists the RUNNER_DESCRIPTION for each candidate in the constituency

# * `prices` comes from [listMarketBook](https://docs.developer.betfair.com/display/1smk3cen4v3lu3yomq5qye0ni/listMarketBook) and lists the current odds for each candidate

#

# +

with open('data/betfair_20191209.json') as f:
    res = json.load(f)

prices = []
runners = []
for m,(r,),(p,) in iter(res.values()):
    for pr in p['runners']:
        layed = max([b['price'] for b in pr['ex']['availableToBack']], default=np.nan)
        backed = min([b['price'] for b in pr['ex']['availableToLay']], default=np.nan)
        prices.append([p['marketId'], pr['selectionId'], layed, backed])
    for rr in r['runners']:
        runners.append([m['marketId'], m['marketName'], rr['selectionId'], rr['runnerName']])

prices = pandas.DataFrame.from_records(prices, columns=['marketId','runnerId','layed','backed'])

runners = pandas.DataFrame.from_records(runners, columns=['marketId','marketName','runnerId','runnerName'])

odds = prices.merge(runners, how='outer', on=['marketId','runnerId']).reset_index()

# -

# The Betfair data labels each constituency by `marketId`. UK sources usually label by `ONSconstID`, an id from the Office for National Statistics. I have assembled a mapping file between them.

# +

# Bothersomely, Betfair marketId is a string but it looks like a floating point number. To stop pandas from

# converting it (and thereby truncating or losing trailing zeros), we have to tell it explicitly what type to use.

# np.str was only an alias for the builtin str and was removed in NumPy 1.24, so use str directly.
const_ids = pandas.read_csv('data/constituency_id_map.csv', dtype={'betfair_id': str})

# -
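# To see why the id has to stay a string, consider what happens when a value that looks like a decimal number is parsed as a float. The id below is made up for illustration and is not taken from the mapping file.

```python
market_id = "1.160"            # hypothetical id, for illustration only

as_float = float(market_id)    # what an automatic numeric conversion would do
round_trip = str(as_float)

print(market_id, round_trip, market_id == round_trip)
```

# The trailing zero does not survive the round trip, so a later merge on `marketId` would silently fail to match such rows.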

# # Data preparation

# It's overwhelming to plot all parties (including the "Space Navies Party" etc.)

# so we'll restrict attention to the most common parties.

# This tabulation lists the parties, ordered by the number of candidates they stood in 2017. This lets us see how the popular parties were named, so we can filter out the others.

vote2017.groupby('PartyShortName').apply(len) \

.sort_values(ascending=False) \

.iloc[:10]

# The `vote2017` dataframe has one row per constituency:candidate. We'll cut it down to one row per constituency, and put the candidates (for major parties) in columns. This will be easier for the plots we want to do next.

#

# There are also some extra per-constituency fields, such as constituency name and turnout, which we'll merge in.

# +

constituencies = \

vote2017.loc[np.isin(vote2017.PartyShortName, ['Con','Lab','LD','Green','SNP','PC'])] \

.groupby(['ONSconstID','PartyShortName'])['Votes'].apply(sum) \

.unstack(fill_value=0).reset_index() \

.rename_axis(None, axis=1)

df = vote2017.drop_duplicates('ONSconstID') \

[['ONSconstID','ConstituencyName','RegionName','Turnout','Electorate']]

constituencies = constituencies.merge(df, on='ONSconstID')

# -

# Next, align the 2017 vote data with the Betfair predictions. There are many interesting things in the Betfair data, but all we'll pull out is its prediction for the most likely winning party in each constituency.

#

# There are many candidates that no one wants to bet on. This could either be because the candidate is a sure-fire winner, or a sure-fire loser. For the purposes of plotting, I'll only use candidates where there is an actual betting market.

# +

# As described above, the offered odds tell us about the probability of winning

df = odds.copy()

df['pmin'] = 1 / df.backed

df['pmax'] = 1 / df.layed

df['p'] = (df.pmin + df.pmax) / 2

bfwin = df.loc[~pandas.isna(df.p)] \

.sort_values('p', ascending=False) \

.groupby('marketId')['runnerName'].apply(lambda x: x.iloc[0]) \

.reset_index(name='predwin')

# see also .nlargest, .head

# Look up the official ONS id for each constituency.

# Relabel the columns, and keep only the ones we'll use for plotting.

bfwin = bfwin.merge(const_ids, left_on='marketId', right_on='betfair_id', how='outer')

bfwin = pandas.DataFrame({'ONSconstID': bfwin.id, 'predwin': bfwin.predwin})

# -
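# The reading of back and lay odds described earlier can be written as a small helper. This is a sketch of the same arithmetic as the `pmin` / `pmax` / `p` columns; the function name and the example odds are assumptions made for illustration. In the dataframe, the best back price is stored in the `layed` column and the best lay price in the `backed` column.

```python
def implied_probability(best_back, best_lay):
    """Return (pmin, pmax, midpoint) implied by the best back and lay odds."""
    pmax = 1 / best_back   # an open offer to back at odds q suggests P(win) <= 1/q
    pmin = 1 / best_lay    # an open offer to lay at odds q suggests P(win) >= 1/q
    return pmin, pmax, (pmin + pmax) / 2

# Hypothetical market: best back price 2.0, best lay price 2.5
pmin, pmax, p = implied_probability(best_back=2.0, best_lay=2.5)
print(pmin, pmax, p)
```

# The midpoint is a crude point estimate; the width `pmax - pmin` reflects the bid-ask spread and is larger in thin markets.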

# # Plotting code

# +

party_style = {

'Con': (np.cos(2*np.pi/6), -np.sin(2*np.pi/6), 2),

'Lab': (np.cos(2*np.pi/6), np.sin(2*np.pi/6), 2),

'LD': (-1,0, 4)

}

df = constituencies.merge(bfwin, on='ONSconstID')

df['x'] = 0

df['y'] = 0

for party,(dx,dy,__) in party_style.items():
    df['x'] += np.log(np.maximum(df[party],1)) * dx
    df['y'] += np.log(np.maximum(df[party],1)) * dy

# -
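# The three-way layout places each constituency at a sum of three direction vectors, one per party, each scaled by the log of that party's vote. A minimal sketch of the same calculation on plain numbers follows; the vote counts are invented for illustration.

```python
import math

# Same unit directions as party_style (the third tuple entry there is only a line length)
directions = {
    'Con': (math.cos(2 * math.pi / 6), -math.sin(2 * math.pi / 6)),
    'Lab': (math.cos(2 * math.pi / 6),  math.sin(2 * math.pi / 6)),
    'LD':  (-1.0, 0.0),
}

def position(votes):
    """Map a dict of party votes to (x, y) on the three-way plot."""
    x = sum(math.log(max(votes[p], 1)) * dx for p, (dx, dy) in directions.items())
    y = sum(math.log(max(votes[p], 1)) * dy for p, (dx, dy) in directions.items())
    return x, y

three_way_tie = position({'Con': 10000, 'Lab': 10000, 'LD': 10000})   # hypothetical votes
labour_lead = position({'Con': 10000, 'Lab': 20000, 'LD': 10000})     # hypothetical votes
print(three_way_tie, labour_lead)
```

# The three directions sum to zero, so an exact three-way tie lands at the origin, and a Labour lead moves the point up and to the right, which matches the description of the map.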

# First attempt at a plot ...

fig,ax = plt.subplots()

ax.scatter(df.x, df.y)

plt.show()

# Final plot, after iteratively fiddling with the layout, the colours, the legend, etc.

# +

# We'll specify colours for the main parties, and leave the others on a

# standard colour palette.

parties = np.unique(df.predwin[~pandas.isna(df.predwin)])

def col(i):
    cols = {'Labour': (217,55,63),
            'Conservative': (0,100,157),
            'Liberal Democrat': (235,179,45),
            'SNP': (252,238,92),
            'Green': (0,128,0)}
    cols2 = plt.get_cmap('Set2', len(parties))
    if i in cols:
        return np.array(cols[i])/255
    elif pandas.isna(i):
        return '0.7'
    else:
        return cols2(np.where(parties==i)[0])

# Set up the plot
with plt.rc_context({'figure.figsize': (8,6)}):
    fig,ax = plt.subplots()

# Scatter plot, for each constituency, colour-coded by predicted winner
i = pandas.isna(df.predwin)
ax.scatter(df.x[i], df.y[i], label='no bet', alpha=.6, color=col(np.nan))
for p in parties:
    i = df.predwin==p
    ax.scatter(df.x[i], df.y[i], label=p, alpha=.6, color=col(p))

# Annotate with text (and trim some points that are out of bounds --
# ax.text doesn't respect xlim and ylim)
for i in np.arange(len(df)):
    if df.x[i]<4.5 and df.y[i]>-2:
        ax.text(df.x[i], df.y[i], df.ConstituencyName[i], fontsize=1)

# Grid lines, to lay out the axes showing which seats went which way in 2017
for (dx,dy,m) in party_style.values():
    ax.plot([0,-m*dx],[0,-m*dy], color='black', linestyle='dashed')

# Configure the scales

ax.set_xlim([-2,4.5])

ax.set_ylim([-2,2.5])

ax.set_xticks([])

ax.set_yticks([])

ax.legend(title='Betfair prediction', loc='upper left', bbox_to_anchor=(1,1,0,0))

# Save as pdf, so we can zoom and search the text labels

plt.savefig('predictions.pdf', transparent=True, bbox_inches='tight', pad_inches=0)

plt.show()

# -

# # Appendix: code to fetch data from Betfair

# To use the Betfair api, you need to create a Betfair account, and then an application key at https://docs.developer.betfair.com/visualisers/api-ng-account-operations/.

#

# +

import requests

import json

from IPython.display import clear_output

import time

import pathlib

import pandas

import numpy as np

endpoint = "https://api.betfair.com/exchange/betting/rest/v1.0/"

# +

# Login

# I don't like to store sensitive data in a version-controlled Jupyter notebook.

# Instead, I store credentials in a json file, not under version control.

# I have two-factor authentication turned on, so Betfair tells me to append

# an auth_code (from an Authenticator app on my phone) to the password field.

CREDENTIALS_FILE = 'betfair_creds.json'

with open(CREDENTIALS_FILE) as f:
    creds = json.loads(f.read())

auth_code = input()

conn = requests.Session()

conn.headers['Accept'] = 'application/json'

conn.headers['X-Application'] = creds['app_key']

r = conn.post('https://identitysso.betfair.com/api/login',

data = {'username': creds['username'], 'password': creds['password'] + str(auth_code)}

)

r.raise_for_status()

r = r.json()

assert r['status'] == 'SUCCESS'

conn.headers['X-Authentication'] = r['token']

# +

# Get a list of all constituencies

politics = conn.post(endpoint+'listEventTypes/',

json = {'filter': {'textQuery':'Politics'}})

politics = politics.json()[0]['eventType']['id']

events = conn.post(endpoint+'listEvents/',

json = {'filter' :{'eventTypeIds': [politics], 'textQuery':'Constituencies'}})

events = [int(e['event']['id']) for e in events.json()]

competitions = conn.post(endpoint+'listCompetitions/',

json = {'filter': {'eventIds': events}})

competitions = [int(c['competition']['id']) for c in competitions.json()]

markets = conn.post(endpoint+'listMarketCatalogue/',

json = {'filter': {'competitionIds': competitions},

'marketProjection': ['EVENT','COMPETITION'],

'maxResults': 1000})

markets = markets.json()

print(f"{len(markets)} markets")

# +

# Get current prices for all constituencies

res = {}

for i,market in enumerate(markets):
    m = market['marketId']
    if m in res: continue
    clear_output(wait=True)
    print(f'{i+1} / {len(markets)}')
    print(market)
    r = conn.post(endpoint+'listMarketCatalogue/',
                  json = {'filter': {'marketIds': [m]},
                          'marketProjection': ['RUNNER_DESCRIPTION'],
                          'maxResults': 1000})
    b = conn.post(endpoint+'listMarketBook/',
                  json = {'marketIds': [m],
                          'priceProjection': {
                              'priceData': ["EX_BEST_OFFERS", "EX_TRADED"],
                              'virtualise': True
                          }
                         })
    res[m] = (market, r.json(), b.json())
    time.sleep(3)

with open('betfair_data.json', 'w') as f:
    json.dump(res, f)

|

scicomp-master/vote2019/analysis.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (scivis-plankton)

# language: python

# name: scivis-plankton

# ---

# # Random Forest Classifier - Label 3

#

# Classify plankton species (keeping detritus) using random forests.

# delete variables in memory

# %reset

# Import libraries

import pandas as pd

import numpy as np

from sklearn.preprocessing import LabelEncoder

from sklearn.ensemble import RandomForestClassifier

from sklearn import metrics

import matplotlib.pyplot as plt

import seaborn as sns

import pickle

# Load the data, which have been preprocessed in R.

# +

train = pd.read_csv("../../../data/processed/labelled-features/labelled-features-train.csv")

train = train.set_index('index')

test = pd.read_csv("../../../data/processed/labelled-features/labelled-features-test.csv")

test = test.set_index('index')

# -

print(train["label3"].unique())

print( len( train["label3"].unique() ) )

for col in train.columns:
    print(col)

# These are the columns we are retaining in the features matrix (X)

cols_retain = [ col for col in train.columns if col not in ['filename', 'label1', 'label2', 'label3',

'img_file_name', 'img_rank'] ]

for col in cols_retain:
    print(col)

# Encode target labels with value between 0 and n_classes-1.


LE = LabelEncoder()

LE.fit( train['label3'] ) # fit label encoder

y_train = LE.transform( train['label3'] ) # transform labels to normalized encoding

y_test = LE.transform( test['label3'] ) # transform labels to normalized encoding

LE.classes_
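# For intuition, the mapping that `LabelEncoder` produces can be reproduced in plain Python: the classes are the sorted unique labels, and each label is replaced by its position in that sorted list. The label names below are invented for illustration and are not taken from the dataset.

```python
labels = ["copepod", "detritus", "copepod", "diatom"]   # hypothetical labels

classes = sorted(set(labels))                 # plays the role of LE.classes_
to_code = {name: code for code, name in enumerate(classes)}
encoded = [to_code[name] for name in labels]  # plays the role of LE.transform(labels)

print(classes)
print(encoded)
```

# Because the codes follow alphabetical order, the numeric distance between two codes carries no meaning about how similar the classes are.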

X_train = train[cols_retain] # Features

X_test = test[cols_retain] # Features

# Apply random forest classifier using default settings and make prediction

# Create a random forest classifier

clf=RandomForestClassifier(n_estimators=100) # this is the default number of trees in the forest

# +

import time

tic = time.perf_counter()

clf.fit(X_train,y_train) # Train the model using the training sets

toc = time.perf_counter()

print("Time to train model: %.4f seconds" % (toc-tic))

# -

#Make prediction using features in test set

y_pred=clf.predict(X_test)

y_pred

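# Calculate metrics on the random forest model. The error metrics (MAE, MSE, RMSE) are computed on the integer label codes, whose ordering is arbitrary, so accuracy and the classification report are the meaningful measures here.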

print('Mean Absolute Error:', metrics.mean_absolute_error(y_test, y_pred))

print('Mean Squared Error:', metrics.mean_squared_error(y_test, y_pred))

print('Root Mean Squared Error:', np.sqrt(metrics.mean_squared_error(y_test, y_pred)))

print("Accuracy:",metrics.accuracy_score(y_test, y_pred))

print(metrics.classification_report(y_test,y_pred, target_names=LE.classes_))

plt.rcParams['figure.figsize'] = [12, 8]

plt.rcParams['figure.dpi'] = 100

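# plot_confusion_matrix was removed in scikit-learn 1.2; ConfusionMatrixDisplay.from_estimator (scikit-learn >= 1.0) replaces it
metrics.ConfusionMatrixDisplay.from_estimator(clf, X_test, y_test)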

plt.show()

# Find important features for classification

feature_names = X_train.columns

feature_imp = pd.Series(clf.feature_importances_,index=feature_names).sort_values(ascending=False)

print(feature_imp)

# Creating a bar plot

sns.barplot(x=feature_imp, y=feature_imp.index)

# Add labels to your graph

plt.rcParams['figure.figsize'] = [12, 8]

plt.rcParams['figure.dpi'] = 100

plt.xlabel('Feature Importance Score')

plt.ylabel('Features')

plt.title("Visualizing Important Features")

plt.show()

# The following calculates precision, recall, accuracy and f1 using the pre-computed confusion matrix

confusion_matrix = metrics.confusion_matrix(y_test, y_pred)

from evaluate_model import model_metrics

accuracy, precision, recall, f1 = model_metrics(confusion_matrix)

print("precision = %.3f" % precision)

print("recall = %.3f" % recall)

print("accuracy = %.3f" % accuracy)

print("f1 = %.3f" % f1)
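# The `model_metrics` helper comes from a local `evaluate_model` module that is not shown here. As a rough sketch of what such a helper might compute, the function below derives accuracy and macro-averaged precision, recall and F1 from a confusion matrix. The name `macro_metrics`, the choice of macro averaging and the toy matrix are all assumptions of this example.

```python
def macro_metrics(cm):
    """Accuracy and macro-averaged precision, recall, F1 from a square confusion matrix.

    cm[i][j] is the number of samples with true class i predicted as class j
    (the convention used by sklearn.metrics.confusion_matrix).
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[k][k] for k in range(n))
    precisions, recalls, f1s = [], [], []
    for k in range(n):
        tp = cm[k][k]
        predicted_k = sum(cm[i][k] for i in range(n))   # column sum
        actual_k = sum(cm[k])                           # row sum
        prec = tp / predicted_k if predicted_k else 0.0
        rec = tp / actual_k if actual_k else 0.0
        f1 = 2 * prec * rec / (prec + rec) if (prec + rec) else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    return correct / total, sum(precisions) / n, sum(recalls) / n, sum(f1s) / n

toy_cm = [[5, 1],
          [2, 2]]   # made-up two-class example
acc, prec, rec, f1 = macro_metrics(toy_cm)
print(acc, prec, rec, f1)
```

# Macro averaging weights every class equally, which matters when rare plankton classes sit next to a large detritus class.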

# Export pre-trained model as pkl file so that it can later be used in scivision

with open('/output/models/randomforest/rf-label3.pkl','wb') as f:
    pickle.dump(clf,f)

|

notebooks/python/dsg2021/random_forest_label3_with_detritus.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/", "height": 36} id="XqhnaAncBm3Y" outputId="376d4295-9af0-4930-b2e9-7fa1a69127c6"

import tensorflow as tf

from tensorflow import keras

tf.__version__

# + id="HVfCcR6HCUsu"

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

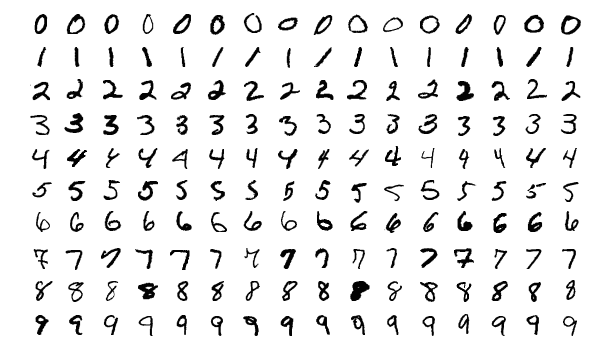

# + colab={"base_uri": "https://localhost:8080/"} id="mTepy9G6CZej" outputId="6f7548ea-f483-44f3-d844-17f478f04fd6"

# loading the MNIST dataset

mnist = tf.keras.datasets.mnist

(X_train, y_train), (X_test, y_test)=mnist.load_data()

# + colab={"base_uri": "https://localhost:8080/", "height": 446} id="vc1OR4-2CwOX" outputId="fb3bec18-aa9c-4c0d-ad4e-47fe02f2c7a0"

plt.figure(figsize = (7,7))

plt.imshow(X_train[0], cmap = 'binary')

# + id="gYXa5tvaDH46"

# Normalize the data and split the training data into training and validation sets.

X_valid, X_train = X_train[:5000]/255, X_train[5000:]/255

X_test = X_test/255

y_valid, y_train = y_train[:5000], y_train[5000:]

# + id="iu0u7LA3EGj6"

# in this cell we are defining the layers architecture for our model

LAYERS = [

keras.layers.Flatten(input_shape = [28,28], name = 'inputLayer'),

keras.layers.Dense(300, activation = 'relu'),

keras.layers.Dense(100, activation = 'relu'),

keras.layers.Dense(10, activation = 'softmax')

]

# + colab={"base_uri": "https://localhost:8080/"} id="jacQ2mjU3RyL" outputId="c0237521-3d85-44fe-ad1c-3793a9fa4ada"

model_classifier = keras.models.Sequential(LAYERS)

model_classifier.layers

# + colab={"base_uri": "https://localhost:8080/"} id="tXMG9YoT3iZ7" outputId="3c4b5cf7-fc9c-458e-9016-0700ea38c3b4"

model_classifier.summary()

# + colab={"base_uri": "https://localhost:8080/"} id="yYmM-tiO3q_4" outputId="83b57849-9d23-4e23-9ada-aa0c5b324fba"

weights, biases = model_classifier.layers[1].get_weights()

weights.shape

# + [markdown] id="0xjMKMuI7cku"

# ### The shape in the cell output above follows from full connectivity: each of the 784 inputs (28 x 28 pixels) feeds all 300 neurons of the next layer, which gives the (784, 300) weight matrix.
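# The same reasoning gives the parameter counts reported by `model_classifier.summary()`: a dense layer has one weight per input-output pair plus one bias per output. A short sketch of that arithmetic for the layer sizes defined in `LAYERS`:

```python
layer_sizes = [784, 300, 100, 10]   # flattened input, two hidden layers, output

# weights (n_in * n_out) plus biases (n_out) for each dense layer
params_per_layer = [n_in * n_out + n_out
                    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]
total_params = sum(params_per_layer)

print(params_per_layer, total_params)
```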

# + id="Hc_MVzUf6LIY"

LOSS_FUNCTION = "sparse_categorical_crossentropy"

OPTIMIZER = "SGD"

METRICS = ["accuracy"]

EPOCHS = 10

VALIDATION = (X_valid, y_valid)

# + id="VXffD0zt7d73"

model_classifier.compile(loss= LOSS_FUNCTION, optimizer = OPTIMIZER, metrics = METRICS)

# + colab={"base_uri": "https://localhost:8080/"} id="VLw8cLxd8dLh" outputId="6a935f00-e5d8-4e7c-acb8-aca29332d085"

model_classifier.fit(X_train, y_train, epochs = EPOCHS, validation_data= VALIDATION, batch_size = 32)

# + [markdown] id="l7r9qWvgS492"

# ## Each epoch runs 1719 iterations: the model sees all 55000 training points once, split across 1719 forward and backward passes.

#

# 1719 comes from dividing 55000 by the batch size of 32 and rounding up (55000 / 32 = 1718.75).
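# The iteration count can be checked with a couple of lines of arithmetic. The variable names are chosen for this sketch only.

```python
import math

n_train = 60000 - 5000     # MNIST training images minus the 5000 held out for validation
batch_size = 32

steps_per_epoch = math.ceil(n_train / batch_size)
last_batch_size = n_train - (steps_per_epoch - 1) * batch_size

print(steps_per_epoch, last_batch_size)
```

# The final batch of each epoch is smaller than the others because 55000 is not a multiple of 32.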

# + colab={"base_uri": "https://localhost:8080/", "height": 284} id="b_Qgb2ds83Zx" outputId="e03559a5-4d4f-4214-f7a0-a221d1e3b7d0"

pd.DataFrame(model_classifier.history.history).plot()

# + colab={"base_uri": "https://localhost:8080/"} id="KwSLdzZz-_iN" outputId="94707586-a780-4972-ee27-c6999b63dc2b"

# it will print loss and accuracy score

model_classifier.evaluate(X_test, y_test)

# + colab={"base_uri": "https://localhost:8080/"} id="uF958D28TrjT" outputId="c1d24fe4-b67a-4f37-9974-497683d36bc7"

model_classifier.predict(X_test[:5])

# + colab={"base_uri": "https://localhost:8080/"} id="8JMJ5mjAU9my" outputId="da791439-94cd-4c34-d880-695b9b468afa"

model_classifier.predict(X_test[:5]).round(3)

# + colab={"base_uri": "https://localhost:8080/"} id="QCodo5IZVA6u" outputId="042bde69-ebc3-4096-aed3-fbe3fa02080e"

np.argmax(model_classifier.predict(X_test[:5]), axis = 1)

# + colab={"base_uri": "https://localhost:8080/"} id="GMa00KqJVPtY" outputId="1d2b7acf-2a34-4776-b581-ca64a4a4d462"

y_test[:5]

# + id="w2vpWm1PVfoD"

|

ANN_Implimentation_Demo - 26th Sep/ANN_Implimentation_Demo - 26th Sep.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import os

os.environ['CUDA_VISIBLE_DEVICES'] = ''

from glob import glob

goemotions = glob('goemotions_*.csv')

goemotions

import malaya

import pandas as pd

df = pd.read_csv(goemotions[0])

df.head()

transformer = malaya.translation.en_ms.transformer()

preprocessing = malaya.preprocessing.preprocessing(normalize = [],

annotate = [],

lowercase = [],

expand_english_contractions = True)

texts = df['text'].tolist()

# +

from tqdm import tqdm

translate_nmt, translate_replace = [], []

for i in tqdm(range(len(texts))):
    s = texts[i]
    r_nmt = None
    r_replace = None
    try:
        r_nmt = transformer.greedy_decoder([s])[0]
    except Exception:
        # keep None when translation fails for this text
        pass
    try:
        r_replace = ' '.join(preprocessing.process(s))
    except Exception:
        # keep None when preprocessing fails for this text
        pass
    translate_nmt.append(r_nmt)
    translate_replace.append(r_replace)

# -

df['translate_nmt'] = translate_nmt

df['translate_replace'] = translate_replace

df.to_csv('goemotions_2.translated.csv', index = False)

|

corpus/goemotions/translate-geomotions-part2.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Creating a simple visualization.

# In this exercise, we will create our first simple plot using Matplotlib.

# #### Import statements

# Import the necessary modules and enable plotting within a jupyter notebook.

# +

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

# -

# #### Creating a figure

# Explicitly create a figure and set the dpi to 200.

plt.figure(dpi=200)

# #### Plotting data pairs

# Plot the following data pairs (x, y) as circles, which are connected via line segments: (1, 1), (2, 3), (4, 4), (5, 3). Visualize the plot.

plt.plot([1, 2, 4, 5], [1, 3, 4, 3], '-o')

plt.show()

|

Lesson03/Exercise03/exercise03_solution.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import warnings

warnings.filterwarnings('ignore')

df = pd.read_csv('student-por.csv')

df.head()

df = pd.read_csv('student-por.csv', sep=';')

df.head()

df.isnull().sum()

df.fillna(-99.0, inplace=True)

df[df.isna().any(axis=1)]

df['age'] = df['age'].fillna(df['age'].median())

# mode() returns a Series, so take its first entry as the fill value
df['sex'] = df['sex'].fillna(df['sex'].mode()[0])

df['guardian'] = df['guardian'].fillna(df['guardian'].mode()[0])

df.head()
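# The imputation rule used here (median for numeric columns, most frequent value for categorical ones) can be sketched in plain Python. This is an illustrative stand-in, not the pandas implementation; `impute` is a hypothetical helper and assumes each column has at least one non-missing value.

```python
from statistics import median, mode


def impute(values):
    """Fill None entries: median for numeric columns, mode for the rest."""
    present = [v for v in values if v is not None]
    numeric = all(isinstance(v, (int, float)) for v in present)
    fill = median(present) if numeric else mode(present)
    return [fill if v is None else v for v in values]


ages = impute([15, None, 17, 16])
guardians = impute(["mother", None, "father", "mother"])
print(ages)
print(guardians)
```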

categorical_columns = df.columns[df.dtypes==object].tolist()

from sklearn.preprocessing import OneHotEncoder

ohe = OneHotEncoder()

hot = ohe.fit_transform(df[categorical_columns])

hot_df = pd.DataFrame(hot.toarray())

hot_df.head()

print(hot)

hot
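# To make the encoded matrix easier to read, here is a minimal pure-Python sketch of one-hot encoding for a single column. It is an assumption-laden illustration (sorted categories, dense lists) rather than what `OneHotEncoder` does internally; `one_hot` is a hypothetical helper and the values are made up.

```python
def one_hot(values):
    """Return (categories, rows), where each row contains a single 1."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    rows = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1
        rows.append(row)
    return categories, rows


categories, rows = one_hot(["GP", "MS", "GP"])
print(categories)
print(rows)
```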

cold_df = df.select_dtypes(exclude=["object"])

cold_df.head()

# +

from scipy.sparse import csr_matrix

cold = csr_matrix(cold_df)

from scipy.sparse import hstack

final_sparse_matrix = hstack((hot, cold))

final_df = pd.DataFrame(final_sparse_matrix.toarray())

final_df.head()

# -

from sklearn.base import TransformerMixin

class NullValueImputer(TransformerMixin):
    def __init__(self):
        pass

    def fit(self, X, y=None):
        return self

    def transform(self, X, y=None):
        for column in X.columns.tolist():
            if column in X.columns[X.dtypes==object].tolist():
                # mode() returns a Series, so take its first entry as the fill value
                X[column] = X[column].fillna(X[column].mode()[0])
            else:
                X[column] = X[column].fillna(X[column].median())
        return X

df = pd.read_csv('student-por.csv', sep=';')

nvi = NullValueImputer().fit_transform(df)

nvi.head()

class SparseMatrix(TransformerMixin):
    def __init__(self):
        pass

    def fit(self, X, y=None):
        return self

    def transform(self, X, y=None):
        categorical_columns = X.columns[X.dtypes==object].tolist()
        ohe = OneHotEncoder()
        hot = ohe.fit_transform(X[categorical_columns])
        cold_df = X.select_dtypes(exclude=["object"])
        cold = csr_matrix(cold_df)
        final_sparse_matrix = hstack((hot, cold))
        final_csr_matrix = final_sparse_matrix.tocsr()
        return final_csr_matrix

sm = SparseMatrix().fit_transform(nvi)

print(sm)

sm_df = pd.DataFrame(sm.toarray())

sm_df.head()

df = pd.read_csv('student-por.csv', sep=';')

y = df.iloc[:, -1]

X = df.iloc[:, :-3]

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=2)

from sklearn.pipeline import Pipeline

data_pipeline = Pipeline([('null_imputer', NullValueImputer()), ('sparse', SparseMatrix())])

X_train_transformed = data_pipeline.fit_transform(X_train)

import numpy as np

from sklearn.model_selection import GridSearchCV

from sklearn.model_selection import cross_val_score, KFold

from sklearn.metrics import mean_squared_error as MSE

from xgboost import XGBRegressor

y_train.value_counts()

kfold = KFold(n_splits=5, shuffle=True, random_state=2)

def cross_val(model):
    scores = cross_val_score(model, X_train_transformed, y_train, scoring='neg_root_mean_squared_error', cv=kfold)
    rmse = (-scores.mean())
    return rmse
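# As a rough illustration of what a shuffled 5-fold split does, the sketch below partitions sample indices into folds using only the standard library. `kfold_indices` is a hypothetical helper; it does not reproduce scikit-learn's exact shuffling, only the idea that every sample is held out exactly once.

```python
import random


def kfold_indices(n_samples, n_splits, seed):
    """Yield (train, test) index lists that partition range(n_samples)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder over the first folds so sizes differ by at most one.
    base, extra = divmod(n_samples, n_splits)
    start = 0
    for fold in range(n_splits):
        size = base + (1 if fold < extra else 0)
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        start += size
        yield train, test


folds = list(kfold_indices(n_samples=12, n_splits=5, seed=2))
for train, test in folds:
    print(len(train), len(test))
```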

cross_val(XGBRegressor(objective='reg:squarederror'))

X_train_2, X_test_2, y_train_2, y_test_2 = train_test_split(X_train_transformed, y_train, random_state=2)

def n_estimators(model):
    eval_set = [(X_test_2, y_test_2)]
    eval_metric = "rmse"
    model.fit(X_train_2, y_train_2, eval_metric=eval_metric, eval_set=eval_set, early_stopping_rounds=100)
    y_pred = model.predict(X_test_2)
    rmse = MSE(y_test_2, y_pred)**0.5
    return rmse

n_estimators(XGBRegressor(n_estimators=5000))
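# The early-stopping rule used above can be sketched independently of XGBoost: training halts once the validation metric has not improved for a fixed number of rounds. The helper `best_round` and the score list are hypothetical, chosen only to show the logic.

```python
def best_round(scores, patience):
    """Return the index of the best (lowest) score, stopping once
    `patience` rounds pass without improvement."""
    best_index = 0
    for index, score in enumerate(scores):
        if score < scores[best_index]:
            best_index = index
        elif index - best_index >= patience:
            break
    return best_index


rmse_by_round = [3.0, 2.5, 2.2, 2.3, 2.25, 2.4, 2.1]
stopped_at = best_round(rmse_by_round, patience=3)
patient = best_round(rmse_by_round, patience=10)
print(stopped_at, patient)
```

# With a small patience the search stops before it sees the later, better round, which is why a generous value such as 100 is paired with a large `n_estimators`.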

def grid_search(params, reg=XGBRegressor(objective='reg:squarederror')):
    grid_reg = GridSearchCV(reg, params, scoring='neg_mean_squared_error', cv=kfold)
    grid_reg.fit(X_train_transformed, y_train)
    best_params = grid_reg.best_params_
    print("Best params:", best_params)
    # The scorer returns negative MSE, so negate it before taking the root.
    best_score = np.sqrt(-grid_reg.best_score_)
    print("Best score:", best_score)
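# A grid search tries every combination of the listed values, so the cost grows multiplicatively with each added parameter. The sketch below enumerates a grid with `itertools.product`; `expand_grid` is a hypothetical helper, not part of scikit-learn, and the fold count of 5 is taken from the `KFold` defined earlier.

```python
from itertools import product


def expand_grid(params):
    """Return every combination of the listed values as a list of dicts."""
    names = list(params)
    combos = product(*(params[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


grid = expand_grid({'max_depth': [1],
                    'min_child_weight': [2, 3],
                    'subsample': [0.5, 0.6, 0.7, 0.8, 0.9],
                    'n_estimators': [31, 50]})
n_fits = len(grid) * 5  # one fit per combination per fold
print(len(grid), n_fits)
```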

grid_search(params={'max_depth':[1, 2, 3, 4, 6, 7, 8],

'n_estimators':[31]})

grid_search(params={'max_depth':[1, 2],

'min_child_weight':[1,2,3,4,5],

'n_estimators':[31]})

grid_search(params={'max_depth':[1],

'min_child_weight':[2,3],

'subsample':[0.5, 0.6, 0.7, 0.8, 0.9],

'n_estimators':[31, 50]})

grid_search(params={'max_depth':[1],

'min_child_weight':[1, 2, 3],

'subsample':[0.8, 0.9, 1],

'colsample_bytree':[0.5, 0.6, 0.7, 0.8, 0.9, 1],

'n_estimators':[50]})

grid_search(params={'max_depth':[1],

'min_child_weight':[3],

'subsample':[.8],

'colsample_bytree':[0.9],

'colsample_bylevel':[0.6, 0.7, 0.8, 0.9, 1],

'colsample_bynode':[0.6, 0.7, 0.8, 0.9, 1],

'n_estimators':[50]})

cross_val(XGBRegressor(max_depth=1,

min_child_weight=3,

subsample=0.8,

colsample_bytree=0.9,

colsample_bylevel=0.9,

colsample_bynode=0.8,

objective='reg:squarederror',

booster='dart',

one_drop=True))

X_test_transformed = data_pipeline.fit_transform(X_test)

type(y_train)

model = XGBRegressor(max_depth=1,

min_child_weight=3,

subsample=0.8,

colsample_bytree=0.9,

colsample_bylevel=0.9,

colsample_bynode=0.8,

n_estimators=50,

objective='reg:squarederror')

model.fit(X_train_transformed, y_train)

y_pred = model.predict(X_test_transformed)

rmse = MSE(y_pred, y_test)**0.5

rmse

model = XGBRegressor(max_depth=1,

min_child_weight=5,

subsample=0.6,

colsample_bytree=0.9,

colsample_bylevel=0.9,

colsample_bynode=0.8,

n_estimators=50,

objective='reg:squarederror')

model.fit(X_train_transformed, y_train)

y_pred = model.predict(X_test_transformed)

rmse = MSE(y_pred, y_test)**0.5

rmse

full_pipeline = Pipeline([('null_imputer', NullValueImputer()),

('sparse', SparseMatrix()),

('xgb', XGBRegressor(max_depth=1,

min_child_weight=5,

subsample=0.6,

colsample_bytree=0.9,

colsample_bylevel=0.9,

colsample_bynode=0.8,

objective='reg:squarederror'))])

full_pipeline.fit(X, y)

new_data = X_test

full_pipeline.predict(new_data)

np.round(full_pipeline.predict(new_data))

new_df = pd.read_csv('student-por.csv', sep=';')

new_X = new_df.iloc[:, :-3]

new_y = new_df.iloc[:, -1]

new_model = full_pipeline.fit(new_X, new_y)

more_new_data = X_test[:25]

np.round(new_model.predict(more_new_data))

single_row = X_test[:1]

single_row_plus = pd.concat([single_row, X_test[:25]])

print(np.round(new_model.predict(single_row_plus))[:1])

|

Chapter10/XGBoost_Model_Deployment-Copy1.ipynb

|

# ---

#

# jupyter:

# jupytext:

# formats: ipynb,md

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---


# ---

# title: "Loops"

# teaching: 40

# exercises: 10

# questions:

# - "How can I perform the same actions on many different files?"

# objectives:

# - "Write a loop that applies one or more commands separately to each file in a set of files."

# - "Trace the values taken on by a loop variable during execution of the loop."

# - "Explain the difference between a variable's name and its value."

# - "Explain why spaces and some punctuation characters shouldn't be used in file names."

# - "Demonstrate how to see what commands have recently been executed."

# - "Re-run recently executed commands without retyping them."

# keypoints:

# - "A `for` loop repeats commands once for every thing in a list."

# - "Every `for` loop needs a variable to refer to the thing it is currently operating on."

# - "Use `$name` to expand a variable (i.e., get its value). `${name}` can also be used."

# - "Do not use spaces, quotes, or wildcard characters such as '*' or '?' in filenames, as it complicates variable expansion."

# - "Give files consistent names that are easy to match with wildcard patterns to make it easy to select them for looping."

# - "Use the up-arrow key to scroll up through previous commands to edit and repeat them."

# - "Use <kbd>Ctrl</kbd>+<kbd>R</kbd> to search through the previously entered commands."

# - "Use `history` to display recent commands, and `![number]` to repeat a command by number."

# ---

# **Loops** are a programming construct which allow us to repeat a command or set of commands

# for each item in a list.

# As such they are key to productivity improvements through automation.

# Similar to wildcards and tab completion, using loops also reduces the

# amount of typing required (and hence reduces the number of typing mistakes).

# Suppose we have several hundred genome data files named `basilisk.dat`, `minotaur.dat`, and

# `unicorn.dat`.

# For this example, we'll use the `creatures` directory which only has three example files,

# but the principles can be applied to many many more files at once.

# The structure of these files is the same: the common name, classification, and updated date are

# presented on the first three lines, with DNA sequences on the following lines.

# Let's look at the files:

# ```

# $ head -n 5 basilisk.dat minotaur.dat unicorn.dat

# ```

# {: .language-bash}

# We would like to print out the classification for each species, which is given on the second

# line of each file.

# For each file, we would need to execute the command `head -n 2` and pipe this to `tail -n 1`.

# We’ll use a loop to solve this problem, but first let’s look at the general form of a loop:

# ```

# for thing in list_of_things

# do

#     operation_using $thing    # Indentation within the loop is not required, but aids legibility

# done

# ```

# {: .language-bash}

# and we can apply this to our example like this:

# ```

# $ for filename in basilisk.dat minotaur.dat unicorn.dat

# > do

# > head -n 2 $filename | tail -n 1

# > done

# ```

# {: .language-bash}

# ```

# CLASSIFICATION: basiliscus vulgaris

# CLASSIFICATION: bos hominus

# CLASSIFICATION: equus monoceros

# ```

# {: .output}

# > ## Follow the Prompt

# >

# > The shell prompt changes from `$` to `>` and back again as we were

# > typing in our loop. The second prompt, `>`, is different to remind

# > us that we haven't finished typing a complete command yet. A semicolon, `;`,

# > can be used to separate two commands written on a single line.

# {: .callout}

# When the shell sees the keyword `for`,

# it knows to repeat a command (or group of commands) once for each item in a list.

# Each time the loop runs (called an iteration), an item in the list is assigned in sequence to

# the **variable**, and the commands inside the loop are executed, before moving on to

# the next item in the list.

# Inside the loop,

# we call for the variable's value by putting `$` in front of it.

# The `$` tells the shell interpreter to treat

# the variable as a variable name and substitute its value in its place,

# rather than treat it as text or an external command.

# In this example, the list is three filenames: `basilisk.dat`, `minotaur.dat`, and `unicorn.dat`.

# Each time the loop iterates, it will assign a file name to the variable `filename`

# and run the `head` command.

# The first time through the loop,

# `$filename` is `basilisk.dat`.

# The interpreter runs the command `head` on `basilisk.dat`

# and pipes the first two lines to the `tail` command,

# which then prints the second line of `basilisk.dat`.

# For the second iteration, `$filename` becomes

# `minotaur.dat`. This time, the shell runs `head` on `minotaur.dat`

# and pipes the first two lines to the `tail` command,

# which then prints the second line of `minotaur.dat`.

# For the third iteration, `$filename` becomes

# `unicorn.dat`, so the shell runs the `head` command on that file,

# and `tail` on the output of that.

# Since the list was only three items, the shell exits the `for` loop.

# > ## Same Symbols, Different Meanings

# >

# > Here we see `>` being used as a shell prompt, whereas `>` is also

# > used to redirect output.

# > Similarly, `$` is used as a shell prompt, but, as we saw earlier,

# > it is also used to ask the shell to get the value of a variable.

# >

# > If the *shell* prints `>` or `$` then it expects you to type something,

# > and the symbol is a prompt.

# >

# > If *you* type `>` or `$` yourself, it is an instruction from you that

# > the shell should redirect output or get the value of a variable.

# {: .callout}

# When using variables it is also

# possible to put the names into curly braces to clearly delimit the variable

# name: `$filename` is equivalent to `${filename}`, but is different from

# `${file}name`. You may find this notation in other people's programs.

# We have called the variable in this loop `filename`

# in order to make its purpose clearer to human readers.

# The shell itself doesn't care what the variable is called;

# if we wrote this loop as:

# + language="bash"

# $ for x in basilisk.dat minotaur.dat unicorn.dat

# > do

# > head -n 2 $x | tail -n 1

# > done

# -


# {: .language-bash}

# or:

# + language="bash"

# $ for temperature in basilisk.dat minotaur.dat unicorn.dat

# > do

# > head -n 2 $temperature | tail -n 1

# > done

# -


# {: .language-bash}

# it would work exactly the same way.

# *Don't do this.*

# Programs are only useful if people can understand them,

# so meaningless names (like `x`) or misleading names (like `temperature`)

# increase the odds that the program won't do what its readers think it does.

# > ## Variables in Loops

# >

# > This exercise refers to the `shell-lesson-data/molecules` directory.

# > `ls` gives the following output:

# >

#

# ```

# > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# ```

#

# > {: .output}

# >

# > What is the output of the following code?

# >

# + language="bash"

# > $ for datafile in *.pdb

# > > do

# > > ls *.pdb

# > > done

# -


# > {: .language-bash}

# >

# > Now, what is the output of the following code?

# >

# + language="bash"

# > $ for datafile in *.pdb

# > > do

# > > ls $datafile

# > > done


# -


# > {: .language-bash}

# >

# > Why do these two loops give different outputs?

# >

# > > ## Solution

# > > The first code block gives the same output on each iteration through

# > > the loop.

# > > Bash expands the wildcard `*.pdb` within the loop body (as well as

# > > before the loop starts) to match all files ending in `.pdb`

# > > and then lists them using `ls`.

# > > The expanded loop would look like this:

# > > ```

# > > $ for datafile in cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > > do

# > > > ls cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > > done

# > > ```

# > > {: .language-bash}

# > >

# > > ```

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > cubane.pdb ethane.pdb methane.pdb octane.pdb pentane.pdb propane.pdb

# > > ```

# > > {: .output}

# > >

# > > The second code block lists a different file on each loop iteration.

# > > The value of the `datafile` variable is evaluated using `$datafile`,

# > > and then listed using `ls`.

# > >

# > > ```

# > > cubane.pdb

# > > ethane.pdb

# > > methane.pdb

# > > octane.pdb

# > > pentane.pdb

# > > propane.pdb

# > > ```

# > > {: .output}

# > {: .solution}

# {: .challenge}

# > ## Limiting Sets of Files

# >

# > What would be the output of running the following loop in the

# > `shell-lesson-data/molecules` directory?

# >

# + language="bash"

# > $ for filename in c*

# > > do

# > > ls $filename

# > > done

# -


# > {: .language-bash}

# >

# > 1. No files are listed.

# > 2. All files are listed.

# > 3. Only `cubane.pdb`, `octane.pdb` and `pentane.pdb` are listed.

# > 4. Only `cubane.pdb` is listed.

# >

# > > ## Solution

# > > 4 is the correct answer. `*` matches zero or more characters, so any file name starting with

# > > the letter c, followed by zero or more other characters will be matched.

# > {: .solution}

# >

# > How would the output differ from using this command instead?

# >

# + language="bash"

# > $ for filename in *c*

# > > do

# > > ls $filename

# > > done

# -


# > {: .language-bash}

# >

# > 1. The same files would be listed.

# > 2. All the files are listed this time.

# > 3. No files are listed this time.

# > 4. The files `cubane.pdb` and `octane.pdb` will be listed.

# > 5. Only the file `octane.pdb` will be listed.

# >

# > > ## Solution

# > > 4 is the correct answer. `*` matches zero or more characters, so a file name with zero or more

# > > characters before a letter c and zero or more characters after the letter c will be matched.

# > {: .solution}

# {: .challenge}

# > ## Saving to a File in a Loop - Part One

# >

# > In the `shell-lesson-data/molecules` directory, what is the effect of this loop?

# >

# + language="bash"

# > for alkanes in *.pdb

# > do

# > echo $alkanes

# > cat $alkanes > alkanes.pdb

# > done

# -


# > {: .language-bash}

# >

# > 1. Prints `cubane.pdb`, `ethane.pdb`, `methane.pdb`, `octane.pdb`, `pentane.pdb` and

# > `propane.pdb`, and the text from `propane.pdb` will be saved to a file called `alkanes.pdb`.

# > 2. Prints `cubane.pdb`, `ethane.pdb`, and `methane.pdb`, and the text from all three files

# > would be concatenated and saved to a file called `alkanes.pdb`.

# > 3. Prints `cubane.pdb`, `ethane.pdb`, `methane.pdb`, `octane.pdb`, and `pentane.pdb`,

# > and the text from `propane.pdb` will be saved to a file called `alkanes.pdb`.

# > 4. None of the above.

# >

# > > ## Solution

# > > 1. The text from each file in turn gets written to the `alkanes.pdb` file.

# > > However, the file gets overwritten on each loop iteration, so the final content of `alkanes.pdb`

# > > is the text from the `propane.pdb` file.

# > {: .solution}

# {: .challenge}

# > ## Saving to a File in a Loop - Part Two

# >

# > Also in the `shell-lesson-data/molecules` directory,

# > what would be the output of the following loop?

# >

# + language="bash"

# > for datafile in *.pdb

# > do

# > cat $datafile >> all.pdb

# > done

# -


# > {: .language-bash}

# >

# > 1. All of the text from `cubane.pdb`, `ethane.pdb`, `methane.pdb`, `octane.pdb`, and

# > `pentane.pdb` would be concatenated and saved to a file called `all.pdb`.

# > 2. The text from `ethane.pdb` will be saved to a file called `all.pdb`.

# > 3. All of the text from `cubane.pdb`, `ethane.pdb`, `methane.pdb`, `octane.pdb`, `pentane.pdb`

# > and `propane.pdb` would be concatenated and saved to a file called `all.pdb`.

# > 4. All of the text from `cubane.pdb`, `ethane.pdb`, `methane.pdb`, `octane.pdb`, `pentane.pdb`

# > and `propane.pdb` would be printed to the screen and saved to a file called `all.pdb`.

# >

# > > ## Solution

# > > 3 is the correct answer. `>>` appends to a file, rather than overwriting it with the redirected

# > > output from a command.

# > > Given the output from the `cat` command has been redirected, nothing is printed to the screen.

# > {: .solution}

# {: .challenge}

# Let's continue with our example in the `shell-lesson-data/creatures` directory.

# Here's a slightly more complicated loop:

# + language="bash"

# $ for filename in *.dat

# > do

# > echo $filename

# > head -n 100 $filename | tail -n 20

# > done

# -


# {: .language-bash}

# The shell starts by expanding `*.dat` to create the list of files it will process.

# The **loop body**

# then executes two commands for each of those files.

# The first command, `echo`, prints its command-line arguments to standard output.

# For example:

# + language="bash"

# $ echo hello there

# -


# {: .language-bash}

# prints:

#

# ```

# hello there

# ```

#

# {: .output}

# In this case,

# since the shell expands `$filename` to be the name of a file,

# `echo $filename` prints the name of the file.

# Note that we can't write this as:

# + language="bash"

# $ for filename in *.dat

# > do

# > $filename

# > head -n 100 $filename | tail -n 20

# > done

# -


# {: .language-bash}

# because then the first time through the loop,

# when `$filename` expanded to `basilisk.dat`, the shell would try to run `basilisk.dat` as a program.

# Finally,

# the `head` and `tail` combination selects lines 81-100

# from whatever file is being processed

# (assuming the file has at least 100 lines).

# > ## Spaces in Names

# >

# > Spaces are used to separate the elements of the list

# > that we are going to loop over. If one of those elements

# > contains a space character, we need to surround it with

# > quotes, and do the same thing to our loop variable.

# > Suppose our data files are named:

# >

#

# ```

# > red dragon.dat

# > purple unicorn.dat

# ```

#

# > {: .source}

# >

# > To loop over these files, we would need to add double quotes like so:

# >

# + language="bash"

# > $ for filename in "red dragon.dat" "purple unicorn.dat"

# > > do

# > > head -n 100 "$filename" | tail -n 20

# > > done

# -


# > {: .language-bash}

# >

# > It is simpler to avoid using spaces (or other special characters) in filenames.

# >

# > The files above don't exist, so if we run the above code, the `head` command will be unable

# > to find them, however the error message returned will show the name of the files it is

# > expecting:

# >

#

# ```

# > head: cannot open ‘red dragon.dat’ for reading: No such file or directory

# > head: cannot open ‘purple unicorn.dat’ for reading: No such file or directory

# ```

#

# > {: .output}

# >

# > Try removing the quotes around `$filename` in the loop above to see the effect of the quote

# > marks on spaces. Note that we get a result from the loop command for unicorn.dat

# > when we run this code in the `creatures` directory:

# >

#

# ```

# > head: cannot open ‘red’ for reading: No such file or directory

# > head: cannot open ‘dragon.dat’ for reading: No such file or directory

# > head: cannot open ‘purple’ for reading: No such file or directory

# > CGGTACCGAA

# > AAGGGTCGCG

# > CAAGTGTTCC

# > ...

# ```

#

# > {: .output}

# {: .callout}

# We would like to modify each of the files in `shell-lesson-data/creatures`, but also save a version

# of the original files, naming the copies `original-basilisk.dat`, `original-minotaur.dat`, and `original-unicorn.dat`.

# We can't use:

# + language="bash"

# $ cp *.dat original-*.dat

# -


# {: .language-bash}

# because that would expand to:

# + language="bash"

# $ cp basilisk.dat minotaur.dat unicorn.dat original-*.dat

# -


# {: .language-bash}

# This wouldn't back up our files; instead, we get an error:

#

# ```

# cp: target `original-*.dat' is not a directory

# ```

#

# {: .error}

# This problem arises when `cp` receives more than two inputs. When this happens, it

# expects the last input to be a directory where it can copy all the files it was passed.

# Since there is no directory named `original-*.dat` in the `creatures` directory we get an

# error.

# Instead, we can use a loop:

# + language="bash"

# $ for filename in *.dat

# > do

# > cp $filename original-$filename

# > done

# -


# {: .language-bash}

# This loop runs the `cp` command once for each filename.

# The first time,

# when `$filename` expands to `basilisk.dat`,

# the shell executes:

# + language="bash"

# cp basilisk.dat original-basilisk.dat

# -


# {: .language-bash}

# The second time, the command is:

# + language="bash"

# cp minotaur.dat original-minotaur.dat

# -


# {: .language-bash}

# The third and last time, the command is:

# + language="bash"

# cp unicorn.dat original-unicorn.dat

# -


# {: .language-bash}

# Since the `cp` command does not normally produce any output, it's hard to check

# that the loop is doing the correct thing.

# However, we learned earlier how to print strings using `echo`, and we can modify the loop

# to use `echo` to print our commands without actually executing them.

# As such we can check what commands *would be* run in the unmodified loop.

# The following diagram

# shows what happens when the modified loop is executed, and demonstrates how the

# judicious use of `echo` is a good debugging technique.

#

# ## Nelle's Pipeline: Processing Files

# Nelle is now ready to process her data files using `goostats.sh` ---

# a shell script written by her supervisor.

# This calculates some statistics from a protein sample file, and takes two arguments:

# 1. an input file (containing the raw data)

# 2. an output file (to store the calculated statistics)

# Since she's still learning how to use the shell,

# she decides to build up the required commands in stages.

# Her first step is to make sure that she can select the right input files --- remember,

# these are ones whose names end in 'A' or 'B', rather than 'Z'.

# Starting from her home directory, Nelle types:

# + language="bash"

# $ cd north-pacific-gyre/2012-07-03

# $ for datafile in NENE*A.txt NENE*B.txt

# > do

# > echo $datafile

# > done

# -

# {: .language-bash}

#

# ```

# NENE01729A.txt

# NENE01729B.txt

# NENE01736A.txt

# ...

# NENE02043A.txt

# NENE02043B.txt

# ```

#

# {: .output}

# Her next step is to decide

# what to call the files that the `goostats.sh` analysis program will create.

# Prefixing each input file's name with 'stats' seems simple,

# so she modifies her loop to do that:

# + language="bash"

# $ for datafile in NENE*A.txt NENE*B.txt

# > do

# > echo $datafile stats-$datafile

# > done

# -

# {: .language-bash}

#

# ```

# NENE01729A.txt stats-NENE01729A.txt

# NENE01729B.txt stats-NENE01729B.txt

# NENE01736A.txt stats-NENE01736A.txt

# ...

# NENE02043A.txt stats-NENE02043A.txt

# NENE02043B.txt stats-NENE02043B.txt

# ```

#

# {: .output}

# She hasn't actually run `goostats.sh` yet,

# but now she's sure she can select the right files and generate the right output filenames.

# Typing in commands over and over again is becoming tedious,

# though,

# and Nelle is worried about making mistakes,

# so instead of re-entering her loop,

# she presses <kbd>↑</kbd>.

# In response,

# the shell redisplays the whole loop on one line

# (using semi-colons to separate the pieces):

# + language="bash"

# $ for datafile in NENE*A.txt NENE*B.txt; do echo $datafile stats-$datafile; done

# -


# {: .language-bash}

# Using the left arrow key,

# Nelle backs up and changes the command `echo` to `bash goostats.sh`:

# + language="bash"

# $ for datafile in NENE*A.txt NENE*B.txt; do bash goostats.sh $datafile stats-$datafile; done

# -


# {: .language-bash}

# When she presses <kbd>Enter</kbd>,

# the shell runs the modified command.

# However, nothing appears to happen --- there is no output.

# After a moment, Nelle realizes that since her script doesn't print anything to the screen

# any longer, she has no idea whether it is running, much less how quickly.

# She kills the running command by typing <kbd>Ctrl</kbd>+<kbd>C</kbd>,

# uses <kbd>↑</kbd> to repeat the command,

# and edits it to read:

# + language="bash"

# $ for datafile in NENE*A.txt NENE*B.txt; do echo $datafile;

# bash goostats.sh $datafile stats-$datafile; done

# -


# {: .language-bash}

# > ## Beginning and End

# >

# > We can move to the beginning of a line in the shell by typing <kbd>Ctrl</kbd>+<kbd>A</kbd>

# > and to the end using <kbd>Ctrl</kbd>+<kbd>E</kbd>.

# {: .callout}

# When she runs her program now,

# it produces one line of output every five seconds or so:

#

# ```

# NENE01729A.txt

# NENE01729B.txt

# NENE01736A.txt

# ...

# ```

#

# {: .output}

# 1518 times 5 seconds,

# divided by 60,

# tells her that her script will take about two hours to run.

# As a final check,

# she opens another terminal window,

# goes into `north-pacific-gyre/2012-07-03`,

# and uses `cat stats-NENE01729B.txt`

# to examine one of the output files.

# It looks good,

# so she decides to get some coffee and catch up on her reading.

# > ## Those Who Know History Can Choose to Repeat It

# >

# > Another way to repeat previous work is to use the `history` command to

# > get a list of the last few hundred commands that have been executed, and

# > then to use `!123` (where '123' is replaced by the command number) to

# > repeat one of those commands. For example, if Nelle types this:

# >

# + language="bash"

# > $ history | tail -n 5

# -

# > {: .language-bash}

#

# ```

# > 456 ls -l NENE0*.txt

# > 457 rm stats-NENE01729B.txt.txt

# > 458 bash goostats.sh NENE01729B.txt stats-NENE01729B.txt

# > 459 ls -l NENE0*.txt

# > 460 history

# ```

#

# > {: .output}

# >

# > then she can re-run `goostats.sh` on `NENE01729B.txt` simply by typing

# > `!458`.

# {: .callout}

# > ## Other History Commands

# >

# > There are a number of other shortcut commands for getting at the history.

# >

# > - <kbd>Ctrl</kbd>+<kbd>R</kbd> enters a history search mode 'reverse-i-search' and finds the

# > most recent command in your history that matches the text you enter next.

# > Press <kbd>Ctrl</kbd>+<kbd>R</kbd> one or more additional times to search for earlier matches.

# > You can then use the left and right arrow keys to choose that line and edit

# > it then hit <kbd>Return</kbd> to run the command.

# > - `!!` retrieves the immediately preceding command

# > (you may or may not find this more convenient than using <kbd>↑</kbd>)

# > - `!$` retrieves the last word of the last command.

# > That's useful more often than you might expect: after

# > `bash goostats.sh NENE01729B.txt stats-NENE01729B.txt`, you can type

# > `less !$` to look at the file `stats-NENE01729B.txt`, which is

# > quicker than doing <kbd>↑</kbd> and editing the command-line.

# {: .callout}

# > ## Doing a Dry Run

# >

# > A loop is a way to do many things at once --- or to make many mistakes at

# > once if it does the wrong thing. One way to check what a loop *would* do

# > is to `echo` the commands it would run instead of actually running them.

# >

# > Suppose we want to preview the commands the following loop will execute

# > without actually running those commands:

# >

# + language="bash"

# > $ for datafile in *.pdb

# > > do

# > > cat $datafile >> all.pdb

# > > done

# -


# > {: .language-bash}

# >

# > What is the difference between the two loops below, and which one would we

# > want to run?

# >

# + language="bash"

# > # Version 1

# > $ for datafile in *.pdb

# > > do

# > > echo cat $datafile >> all.pdb

# > > done

# -


# > {: .language-bash}

# >

# + language="bash"

# > # Version 2

# > $ for datafile in *.pdb

# > > do

# > > echo "cat $datafile >> all.pdb"

# > > done

# -


# > {: .language-bash}

# >

# > > ## Solution

# > > The second version is the one we want to run.

# > > This prints to screen everything enclosed in the quote marks, expanding the

# > > loop variable name because we have prefixed it with a dollar sign.

# > > It also *does not* modify nor create the file `all.pdb`, as the `>>`

# > > is treated literally as part of a string rather than as a

# > > redirection instruction.

# > >

# > > The first version appends the output from the command `echo cat $datafile`

# > > to the file, `all.pdb`. This file will just contain the list;

# > > `cat cubane.pdb`, `cat ethane.pdb`, `cat methane.pdb` etc.

# > >

# > > Try both versions for yourself to see the output! Be sure to open the

# > > `all.pdb` file to view its contents.

# > {: .solution}

# {: .challenge}

# > ## Nested Loops

# >

# > Suppose we want to set up a directory structure to organize

# > some experiments measuring reaction rate constants with different compounds

# > *and* different temperatures. What would be the

# > result of the following code:

# >

# > ```
# > $ for species in cubane ethane methane
# > > do
# > >     for temperature in 25 30 37 40
# > >     do
# > >         mkdir $species-$temperature
# > >     done
# > > done
# > ```

|

_episodes/05-loop.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: drlnd

# language: python

# name: drlnd

# ---

# # Collaboration and Competition

#

# ---

#

# In this notebook, you will learn how to use the Unity ML-Agents environment for the third project of the [Deep Reinforcement Learning Nanodegree](https://www.udacity.com/course/deep-reinforcement-learning-nanodegree--nd893) program.

#

# ### 1. Start the Environment

#

# We begin by importing the necessary packages. If the code cell below returns an error, please revisit the project instructions to double-check that you have installed [Unity ML-Agents](https://github.com/Unity-Technologies/ml-agents/blob/master/docs/Installation.md) and [NumPy](http://www.numpy.org/).

from IPython.display import display, HTML

display(HTML(

'<style>'

'#notebook { padding-top:0px !important; } '

'.container { width:95% !important; } '

'.end_space { min-height:0px !important; } '

'</style>'

))

from unityagents import UnityEnvironment

import numpy as np

# Next, we will start the environment! **_Before running the code cell below_**, change the `file_name` parameter to match the location of the Unity environment that you downloaded.

#

# - **Mac**: `"path/to/Tennis.app"`

# - **Windows** (x86): `"path/to/Tennis_Windows_x86/Tennis.exe"`

# - **Windows** (x86_64): `"path/to/Tennis_Windows_x86_64/Tennis.exe"`

# - **Linux** (x86): `"path/to/Tennis_Linux/Tennis.x86"`

# - **Linux** (x86_64): `"path/to/Tennis_Linux/Tennis.x86_64"`

# - **Linux** (x86, headless): `"path/to/Tennis_Linux_NoVis/Tennis.x86"`

# - **Linux** (x86_64, headless): `"path/to/Tennis_Linux_NoVis/Tennis.x86_64"`

#

# For instance, if you are using a Mac, then you downloaded `Tennis.app`. If this file is in the same folder as the notebook, then the line below should appear as follows:

# ```

# env = UnityEnvironment(file_name="Tennis.app")

# ```

#env = UnityEnvironment(file_name="Tennis_Linux/Tennis.x86_64")

env = UnityEnvironment(file_name="Tennis_Windows_x86_64/Tennis.exe")

# Environments contain **_brains_** which are responsible for deciding the actions of their associated agents. Here we check for the first brain available, and set it as the default brain we will be controlling from Python.

# get the default brain

brain_name = env.brain_names[0]

brain = env.brains[brain_name]

# ### 2. Examine the State and Action Spaces

#

# In this environment, two agents control rackets to bounce a ball over a net. If an agent hits the ball over the net, it receives a reward of +0.1. If an agent lets a ball hit the ground or hits the ball out of bounds, it receives a reward of -0.01. Thus, the goal of each agent is to keep the ball in play.

#

# The observation space consists of 8 variables corresponding to the position and velocity of the ball and racket. Two continuous actions are available, corresponding to movement toward (or away from) the net, and jumping.

#

# Run the code cell below to print some information about the environment.

# +

# reset the environment

env_info = env.reset(train_mode=True)[brain_name]

# number of agents

num_agents = len(env_info.agents)

print('Number of agents:', num_agents)

# size of each action

action_size = brain.vector_action_space_size

print('Size of each action:', action_size)

# examine the state space

states = env_info.vector_observations

state_size = states.shape[1]

print('There are {} agents. Each observes a state with length: {}'.format(states.shape[0], state_size))

print('The state for the first agent looks like:', states[0])

# -
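# The cell above only inspects the state and action spaces. As a minimal sketch of what a joint action for this environment could look like, the snippet below samples one random action vector per agent. It assumes two agents with two continuous action components each (as described above) and, as an additional assumption not stated in this notebook, that each component should lie in `[-1, 1]`. The helper name `sample_random_actions` is hypothetical.

```python
import numpy as np


def sample_random_actions(num_agents, action_size, rng):
    """Draw one random action vector per agent (illustrative helper)."""
    # Standard-normal samples: one row per agent, one column per action component.
    actions = rng.standard_normal((num_agents, action_size))
    # Assumption: valid actions lie in [-1, 1], so out-of-range samples are clipped.
    return np.clip(actions, -1.0, 1.0)


rng = np.random.default_rng(0)
actions = sample_random_actions(num_agents=2, action_size=2, rng=rng)
print(actions.shape)  # (2, 2)
```

# With the real environment, an array of this shape would typically be passed to `env.step(actions)`; here it is only generated, so the sketch runs without Unity.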

# When finished, you can close the environment by calling `env.close()`. Leave it open for now, since the training code below still needs it.

# ### 4. It's Your Turn!

#

# Now it's your turn to train your own agent to solve the environment! During training, set `train_mode=True`, so that the line for resetting the environment looks like the following:

# ```python

# env_info = env.reset(train_mode=True)[brain_name]

# ```

# +

#from buffer import ReplayBuffer

from common.Memory import ReplayMemory

from maddpg import MADDPG

import torch

import numpy as np

from tensorboardX import SummaryWriter

import os

from utilities import transpose_list, transpose_to_tensor

from collections import deque

# keep training awake

#from workspace_utils import keep_awake

# for saving gif

#import imageio

# %load_ext autoreload

# %autoreload 2

# +

seed = 0

np.random.seed(seed)

torch.manual_seed(seed)

# number of training episodes

# lower this (say, to 30000) for a shorter experiment

number_of_episodes = 60000

episode_length = 200

batchsize = 256

# number of episodes between saves of the policy and gif

save_interval = 200
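# `batchsize` above is presumably the number of transitions drawn from replay memory for each update. The `ReplayMemory` class imported from `common.Memory` is not shown in this notebook, so the sketch below uses a hypothetical stand-in, `SimpleReplayMemory`, only to illustrate the general idea: a fixed-capacity buffer that discards its oldest transitions and returns uniformly sampled mini-batches. Its method names are assumptions and need not match the real class.

```python
import random
from collections import deque


class SimpleReplayMemory:
    """Fixed-capacity FIFO store of transitions (hypothetical stand-in)."""

    def __init__(self, capacity):
        # A deque with maxlen silently drops the oldest item once it is full.
        self.memory = deque(maxlen=capacity)

    def push(self, transition):
        """Store one transition, e.g. (obs, action, reward, next_obs, done)."""
        self.memory.append(transition)

    def sample(self, batch_size):
        """Return batch_size distinct transitions chosen uniformly at random."""
        return random.sample(list(self.memory), batch_size)

    def __len__(self):
        return len(self.memory)


random.seed(0)
memory = SimpleReplayMemory(capacity=5)
for step in range(8):
    # Dummy transition: (obs, action, reward, next_obs, done)
    memory.push((step, 0.0, 0.1, step + 1, False))

batch = memory.sample(3)
print(len(memory), len(batch))  # 5 3
```

# Pushing eight transitions into a memory of capacity five leaves only the five most recent ones, which is why a larger capacity retains more past episodes.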

# +

# amplitude of OU noise

# this slowly decreases to 0

#noise = 2

#noise_reduction = 0.999

log_path = os.getcwd()+"/log"

model_dir= os.getcwd()+"/model_dir"

os.makedirs(model_dir, exist_ok=True)

# replay memory; the argument (1e5) is presumably its capacity in transitions

buffer = ReplayMemory(int(1e5))

# initialize policy and critic

in_actor = state_size

hidden_in_actor = 256

hidden_out_actor = 128

out_actor = 2

# critic input = obs from both agents + actions from both agents