| code | repo_path |
|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Homework No. 8

# # Student: <NAME>

import numpy as np

import matplotlib.pyplot as plt

# Consider the Sturm-Liouville problem (SLP): $-\phi''(r) + l(l+1)r^{-2}\phi(r) - 2r^{-1}\phi(r) = 2E_{nl}\phi(r)$, $\phi(0) = \phi(\infty) = 0$
#
# Rewrite it with a substitution, bringing it to a form similar to the one from the lecture:
#
# $\lambda = -2E_{nl} = -2 \cdot \frac{-1}{2(n+l+1)^2} = \frac{1}{(n+l+1)^2}$
#
# $\phi''(r) - (l(l+1)r^{-2} - 2r^{-1})\phi(r) = \lambda\phi(r)$
#
# $\phi''(r) - p(r)\phi(r) = \lambda\rho(r)\phi(r)$
#
# where $p(r) = l(l+1)r^{-2} - 2r^{-1}$, $\rho(r) = 1$
#
# $\phi(0) = \phi(R) = 0$

# Use a second-order grid (finite-difference) approximation to solve this SLP.
#
# (The spectrum of the matrix may be found with library functions.)
#
# Compute the first 5 eigenvalues for $l = 0$ with accuracy $\epsilon = 10^{-5}$
#
# (Start the computations with R = 10.)
#
# Plot the first 5 eigenfunctions.

# To do this, we need to build the matrix and find its spectrum.

# +

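def rho(x):
    return 1

def p(x, l):
    return l * (l + 1) * x ** (-2) - 2 * x ** (-1)

def get_matrix(l, R, N):
    # Second-order finite-difference matrix for phi'' - p(r) phi on the
    # interior nodes r_i = i * h, i = 1..N-1, with phi(0) = phi(R) = 0.
    h = R / N
    rhos = [rho(i * h) for i in range(1, N)]
    ps = [p(i * h, l) for i in range(1, N)]
    A = np.zeros((N - 1, N - 1))
    for i in range(0, N - 1):
        if i != 0:
            A[i][i - 1] = 1 / h ** 2
        A[i][i] = - (2 / h ** 2 + ps[i])
        if i != N - 2:
            A[i][i + 1] = 1 / h ** 2
    # A phi = lambda phi with lambda = -2E, so the eigenvalues of -A / 2 are the energies E.
    return -A / 2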

# -

# From the lecture we know that grid methods converge as $O(h^2)$, so for the error to be of order $10^{-5}$ we need to solve:
#
# $h^2 = 10^{-5} \Leftrightarrow h = 10^{-2.5} \Leftrightarrow R / N = 10^{-2.5} \Leftrightarrow N = 10^{2.5} \cdot R$
#
# If R = 10, then $N = 10^{3.5}$
#
# So we take $N = 4000$

# However, O-notation hides constants that can matter a lot. In practice, a reasonably good answer is not obtained with R = 10, N = 4000, most likely also because the higher eigenfunctions extend well beyond r = 10 and are cut off by the boundary condition at R. With R = 100, N = 5000 the required accuracy of $10^{-5}$ is reached.
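# The arithmetic above can be checked with a minimal sketch. The helper name `grid_size_for_tolerance` is hypothetical, and the estimate deliberately ignores the constant hidden in $O(h^2)$, so it is only a starting point for N.

```python
import math

def grid_size_for_tolerance(R, eps):
    """Smallest N with (R / N)**2 <= eps, ignoring the hidden O(h^2) constant."""
    return math.ceil(R / math.sqrt(eps))

N_R10 = grid_size_for_tolerance(10, 1e-5)
N_R100 = grid_size_for_tolerance(100, 1e-5)
print(N_R10, N_R100)
```

# For a fixed step h the required N grows linearly with R, which is why enlarging the domain makes the matrix much bigger at the same nominal accuracy.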

# +

R = 100

A = get_matrix(0, R, 5000)

eig = np.linalg.eig(A)
spectrum = eig[0]
# np.linalg.eig returns eigenvectors as columns; transpose so that each row is one eigenvector.
eig_vectors = eig[1].T
indexes = np.argsort(spectrum)[:5]
print("First 5 eigenvalues")
print(spectrum[indexes])
print("---")
print("First 5 eigenfunctions")
print(eig_vectors[indexes])

# -
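# A small check of how `np.linalg.eig` lays out its output may help when picking eigenfunctions by index. The 3x3 matrix below is a made-up test case, not part of the assignment; the sketch only verifies that column `k` of the second output pairs with eigenvalue `k`.

```python
import numpy as np

# Small symmetric test matrix (hypothetical, for illustration only).
M = np.array([[2.0, 1.0, 0.0],
              [1.0, 2.0, 1.0],
              [0.0, 1.0, 2.0]])
values, vectors = np.linalg.eig(M)
order = np.argsort(values)

# Column k of `vectors` is the eigenvector that belongs to values[k].
residuals = [
    np.linalg.norm(M @ vectors[:, k] - values[k] * vectors[:, k]) for k in order
]
smallest_vec = vectors[:, order[0]]
print(values[order], max(residuals))
```

# Indexing rows of `vectors` instead of columns mixes components of different eigenvectors, which shows up as a rapidly oscillating curve when plotted.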

def draw_eig_func(data, R):
    N = (len(data) + 1)
    h = R / N
    # Pad with the boundary values phi(0) = phi(R) = 0.
    data_y = np.zeros(N + 1)
    data_y[1:N] += data
    data_x = [i * h for i in range(N + 1)]
    plt.subplot(211)
    plt.plot(data_x, data_y)
    plt.ylabel("eigenfunction(r)")
    plt.xlabel("r")

plt.figure(figsize=(10, 10), dpi=180)

draw_eig_func(eig_vectors[indexes][0], R)

plt.title("eigen function for lambda1 = " + str(spectrum[indexes][0]))

plt.show()

plt.figure(figsize=(10, 10), dpi=180)

draw_eig_func(eig_vectors[indexes][1], R)

plt.title("eigen function for lambda2 = " + str(spectrum[indexes][1]))

plt.show()

plt.figure(figsize=(10, 10), dpi=180)

draw_eig_func(eig_vectors[indexes][2], R)

plt.title("eigen function for lambda3 = " + str(spectrum[indexes][2]))

plt.show()

plt.figure(figsize=(10, 10), dpi=180)

draw_eig_func(eig_vectors[indexes][3], R)

plt.title("eigen function for lambda4 = " + str(spectrum[indexes][3]))

plt.show()

plt.figure(figsize=(10, 10), dpi=180)

draw_eig_func(eig_vectors[indexes][4], R)

plt.title("eigen function for lambda5 = " + str(spectrum[indexes][4]))

plt.show()

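# Apparently, the smaller the eigenvalue, the shorter the period of this "eye", so starting from the 4th eigenvalue the plot comes out solid blue: the curve oscillates too much. Note that `np.linalg.eig` returns eigenvectors as the columns of its second output, so curves like this are likely what one gets when rows of that matrix are plotted; the lowest eigenfunctions of this problem are expected to be smooth.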

# +

def get_A_numerov(l, R, N):
    h = R / N
    rhos = [rho(i * h) for i in range(1, N)]
    ps = [p(i * h, l) for i in range(1, N)]
    A = np.zeros((N - 1, N - 1))
    for i in range(0, N - 1):
        if i != 0:
            A[i][i - 1] = 1 / h ** 4 - 1 / 12 * ps[i - 1] / h ** 2
        A[i][i] = - (2 / h ** 4 + ps[i] - 1 / 6 * ps[i] / h ** 2)
        if i != N - 2:
            A[i][i + 1] = 1 / h ** 4 - 1 / 12 * ps[i + 1] / h ** 2
    return A

def get_B_numerov(l, R, N):
    h = R / N
    rhos = [rho(i * h) for i in range(1, N)]
    B = np.zeros((N - 1, N - 1))
    for i in range(0, N - 1):
        if i != 0:
            B[i][i - 1] = rhos[i - 1] / h ** 2 / 12
        B[i][i] = rhos[i] - 1/ 6 * rhos[i] / h ** 2
        if i != N - 2:
            B[i][i + 1] = rhos[i + 1] / h ** 2 / 12
    return B

def get_matrix_numerov(l, R, N):
    return -np.matmul(np.linalg.inv(get_B_numerov(l, R, N)), get_A_numerov(l, R, N)) / 2

# +

def get_first_5(A):
    return np.sort(np.linalg.eig(A)[0])[:5]

def draw_error(method, R, N_min, N_max):
    real = [-1 / (2 * (n + 1) ** 2) for n in range(5)]
    data_x = [i for i in range(N_min, N_max + 1)]
    data_y = []
    for N in range(N_min, N_max + 1):
        matrix = method(0, R, N)
        data_y.append(np.log10(max(abs(get_first_5(matrix) - real))))
    plt.subplot(211)
    plt.plot(data_x, data_y)
    plt.ylabel("log10(max error)")
    plt.xlabel("N")

# -

plt.figure(figsize=(10, 10), dpi=180)

R = 300

N_min = 6

N_max = 200

draw_error(get_matrix, R, N_min, N_max)

draw_error(get_matrix_numerov, R, N_min, N_max)

plt.title("Max error N")

plt.legend(("mesh", "numerov"))

plt.show()

# To get a more or less adequate error, R had to be increased. It is clearly visible that mesh gives a straight line, which means the error really does behave as $O(h^2)$. We see that Numerov copes better for large N, as expected, since it is more accurate: better than $h^4$.
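# One way to check the $O(h^2)$ claim numerically is to halve the step and look at how the error shrinks. The sketch below is an illustration under assumptions, not the notebook's method: the helper `ground_state_energy` is hypothetical, it only looks at the lowest eigenvalue for $l = 0$ (exact value $-1/2$), and R = 30 with N = 300 and 600 are arbitrary choices.

```python
import numpy as np

def ground_state_energy(R, N):
    """Lowest eigenvalue of -1/2 phi'' - phi / r on (0, R), phi(0) = phi(R) = 0."""
    h = R / N
    r = h * np.arange(1, N)                      # interior nodes
    off = -0.5 / h**2 * np.ones(N - 2)           # off-diagonal of -1/2 * second difference
    H = np.diag(1.0 / h**2 - 1.0 / r) + np.diag(off, 1) + np.diag(off, -1)
    return np.linalg.eigvalsh(H)[0]              # eigvalsh returns ascending eigenvalues

exact = -0.5
err_coarse = abs(ground_state_energy(30, 300) - exact)   # h = 0.1
err_fine = abs(ground_state_energy(30, 600) - exact)     # h = 0.05
observed_order = np.log2(err_coarse / err_fine)
print(err_coarse, err_fine, observed_order)
```

# An observed order close to 2 is what a second-order scheme should give; the same comparison could be repeated with another matrix builder to see its order.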

|

hw08/hw08.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

import numpy as np

import numpy.testing as npt

import pandas as pd

import diff_classifier.msd as msd

import pandas.util.testing as pdt

from scipy import interpolate

import diff_classifier.features as ft

import math

frames = 10

d = {'Frame': np.linspace(0, frames, frames),

'X': np.sin(np.linspace(0, frames, frames)+3),

'Y': np.cos(np.linspace(0, frames, frames)+3),

'Track_ID': np.ones(frames)}

df = pd.DataFrame(data=d)

df = msd.all_msds2(df, frames=frames+1)

ft.msd_ratio(df, 1, 9)

d4

frames = 10

d = {'Frame': np.linspace(0, frames, frames),

'X': np.linspace(0, frames, frames)+5,

'Y': np.linspace(0, frames, frames)+3,

'Track_ID': np.ones(frames)}

df = pd.DataFrame(data=d)

df = msd.all_msds2(df, frames=frames+1)

ft.efficiency(df)

d = {'Frame': [0, 1, 2, 3, 4, 0, 1, 2, 3, 4],

'Track_ID': [1, 1, 1, 1, 1, 2, 2, 2, 2, 2],

'X': [0, 0, 1, 1, 2, 1, 1, 2, 2, 3],

'Y': [0, 1, 1, 2, 2, 0, 1, 1, 2, 2]}

df = pd.DataFrame(data=d)

dfi = msd.all_msds2(df, frames = 5)

feat = ft.calculate_features(dfi)

dfi

feat

frames = 6

d = {'Frame': np.linspace(0, frames, frames),

'X': [0, 1, 1, 2, 2, 3],

'Y': [0, 0, 1, 1, 2, 2],

'Track_ID': np.ones(frames)}

df = pd.DataFrame(data=d)

df = msd.all_msds2(df, frames=frames+1)

assert ft.aspectratio(df)[0:2] == (3.9000000000000026, 0.7435897435897438)

npt.assert_almost_equal(ft.aspectratio(df)[2], np.array([1.5, 1. ]))

ft.aspectratio(df)[2]

# +

frames = 10

d = {'Frame': np.linspace(0, frames, frames),

'X': np.linspace(0, frames, frames)+5,

'Y': np.linspace(0, frames, frames)+3,

'Track_ID': np.ones(frames)}

df = pd.DataFrame(data=d)

df = msd.all_msds2(df, frames=frames+1)

d1, d2, d3, d4, d5, d6 = ft.minBoundingRect(df)

o1, o2, o3, o4 = (-2.356194490192, 0, 14.142135623730, 0)

o5 = np.array([10, 8])

o6 = np.array([[5., 3.], [15., 13.], [15., 13.], [5., 3.]])

assert math.isclose(d1, o1, abs_tol=1e-10)

assert math.isclose(d2, o2, abs_tol=1e-10)

assert math.isclose(d3, o3, abs_tol=1e-10)

assert math.isclose(d4, o4, abs_tol=1e-10)

npt.assert_almost_equal(d5, o5)

npt.assert_almost_equal(d6, o6)

# -

d1

o1

|

notebooks/development/02_21_18_updating_test_functions.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# %config InlineBackend.figure_formats = ['svg']

# %matplotlib inline

import pandas as pd

import numpy as np

import matplotlib

import matplotlib.pyplot as plt

import math

from tqdm import tqdm_notebook as tqdm

import pickle

import os

from typing import List, Dict

import seaborn as sns

sns.set_palette("dark")

# -

os.chdir("../../java")

colormap = {

"cooperative": "C0",

"truncation": "C1",

"pps": "C2",

"random_sample": "C3",

"dyadic_truncation": "C6",

"dyadic_b4": "C1",

"dyadic_b10": "C2",

"q_cooperative": "C0",

"q_truncation": "C1",

"q_pps": "C2",

"q_random_sample": "C3",

"q_dyadic_b2": "C6",

"spacesaving": "C4",

"cms_min": "C5",

"kll": "C4",

"low_discrep": "C5",

"yahoo_mg": "C7",

}

markers = {

"cooperative": "x",

"truncation": "^",

"pps": "s",

"random_sample": "+",

"dyadic_truncation": "<",

"dyadic_b4": "^",

"dyadic_b10": "+",

"q_cooperative": "x",

"q_truncation": "^",

"q_pps": "s",

"q_random_sample": "+",

"q_dyadic_b2": "<",

"spacesaving": "D",

"cms_min": "o",

"kll": "D",

"low_discrep": "o",

"yahoo_mg": "*",

}

alg_display_name = {

"cooperative": "Cooperative",

"truncation": "Truncation",

"pps": "PPS",

"random_sample": "USample",

"dyadic_truncation": "Hierarchy",

"dyadic_b4": "Hierarchy $b=4$",

"dyadic_b10": "Hierarchy $b=10$",

"q_cooperative": "Cooperative",

"q_truncation": "Truncation",

"q_pps": "PPS",

"q_random_sample": "USample",

"q_dyadic_b2": "Hierarchy",

"spacesaving": "SpaceSaving",

"cms_min": "CMS",

"kll": "KLL",

"low_discrep": "LowDiscrep",

"yahoo_mg": "MG"

}

data_display_name = {

"caida_10M": "CAIDA",

"zipf1p1_10M": "Zipf",

"msft_network_10M": "Provider",

"msft_os_10M": "OSBuild",

"power_2M": "Power",

"uniform_1M": "Uniform",

"msft_records_10M": "Traffic",

}

def get_error_file(experiment_name):
    return os.path.join(
        "output/results/{}".format(experiment_name),
        "errors.csv"
    )

def query_length_plot(
    experiment_name,
    sketch_names: List,
    item_agg="max",
    query_agg="mean",
    ax = None,
    absolute=False,
):
    e_combined = pd.read_csv(get_error_file(experiment_name))
    e_combined["e_norm"] = e_combined[item_agg] / e_combined["total"]
    if absolute:
        e_combined["e_norm"] = e_combined[item_agg]
    eg = e_combined.groupby(["sketch", "query_len"]).aggregate({
        "e_norm": ["mean", "std", "max", "count"],
    })
    eg["err"] = eg[("e_norm", query_agg)]
    eg["err_std"] = eg["e_norm", "std"] / np.sqrt(eg["e_norm", "count"])
    if ax is None:
        f = plt.figure(figsize=(6,4.5))
        ax = f.gca()
    for method in sketch_names:
        eg_cur = eg.loc[method]
        ax.errorbar(
            eg_cur.index,
            eg_cur["err"],
            yerr=eg_cur["err_std"],
            color=colormap[method],
            markersize=5,
            lw=.5,
        )
        ax.plot(
            eg_cur.index,
            eg_cur["err"],
            label=alg_display_name[method],
            marker=markers[method],
            color=colormap[method],
            markersize=5,
            fillstyle="none",
            lw=.5,
        )
    ax.set_xscale("log")
    ax.set_yscale("log")
    # ax.grid(axis="y", linestyle=(0, (2, 10)))
    return ax, eg
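
# The error bars in `query_length_plot` are the standard error of the mean, i.e. the per-group standard deviation divided by the square root of the group size. A minimal sketch on synthetic data follows; the numbers are made up and the column names only mirror the roles assumed for `errors.csv`.

```python
import numpy as np
import pandas as pd

# Synthetic error table (made-up numbers, for illustration only).
errors = pd.DataFrame({
    "sketch": ["a", "a", "a", "a", "b", "b"],
    "query_len": [1, 1, 2, 2, 1, 1],
    "e_norm": [1.0, 3.0, 2.0, 2.0, 4.0, 8.0],
})

# One row per (sketch, query_len) group with mean, sample std and group size.
stats = errors.groupby(["sketch", "query_len"])["e_norm"].agg(["mean", "std", "count"])
stats["sem"] = stats["std"] / np.sqrt(stats["count"])

sem_a1 = stats.loc[("a", 1), "sem"]
print(stats)
```

# Selecting the single `e_norm` column before aggregating keeps the result columns flat, which avoids the tuple-style column keys used in the function above.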

def query_time_plot(
    experiment_name,
    sketch_names: List,
    ax = None,
):
    e_combined = pd.read_csv(get_error_file(experiment_name))
    eg = e_combined.groupby(["sketch", "query_len"]).aggregate({
        "query_time": ["mean", "std", "max", "count"],
    })
    eg["time"] = eg[("query_time", "mean")]
    # Create an axis when none is passed, mirroring query_length_plot.
    if ax is None:
        f = plt.figure(figsize=(6,4.5))
        ax = f.gca()
    for method in sketch_names:
        eg_cur = eg.loc[method]
        ax.plot(
            eg_cur.index,
            eg_cur["time"],
            label=alg_display_name[method],
            marker=markers[method],
            color=colormap[method],
            markersize=5,
            fillstyle="none",
            lw=.5,
        )
    ax.set_xscale("log")
    ax.set_yscale("log")
    # ax.grid(axis="y", linestyle=(0, (2, 10)))
    return ax, eg

# +

sketch_names = [

"cooperative",

"dyadic_truncation",

"random_sample",

"cms_min",

"truncation",

"yahoo_mg",

"pps"

]

sketch_size = 64

experiments = [

"l_zipf_f",

"l_caida_f",

"l_mos_f",

"l_mnetwork_f",

]

cur_experiment = experiments[0]

fig = plt.figure(figsize=(4,3), dpi=100)

ax = fig.gca()

query_length_plot(

cur_experiment,

sketch_names,

item_agg="max",

query_agg="mean",

ax=ax,

absolute=False,

)

ax.set_title(cur_experiment)

ax.set_xlabel("Query Length (Segments)")

ax.set_ylabel("Relative Error")

fig.tight_layout()

lgd = ax.legend(frameon=False, loc='upper center', bbox_to_anchor=(.5, 1.5), ncol=5)

fig.show()

# fname = "output/plots/linear_freq.pdf"

# fname = "output/{}/query_error.png".format(cur_experiment)

# fig.savefig(fname, bbox_extra_artists=(lgd,), bbox_inches='tight')

fig = plt.figure(figsize=(4,3), dpi=100)

ax = fig.gca()

query_time_plot(

cur_experiment,

sketch_names,

ax=ax,

)

ax.set_title(cur_experiment)

ax.set_xlabel("Query Length (Segments)")

ax.set_ylabel("Query Time (ms)")

fig.tight_layout()

lgd = ax.legend(frameon=False, loc='upper center', bbox_to_anchor=(.5, 1.4), ncol=4)

fig.show()

# fname = "output/{}/query_time.png".format(cur_experiment)

# fig.savefig(fname, dpi=200, bbox_extra_artists=(lgd,), bbox_inches='tight')

# +

sketch_names = [

"cooperative",

"dyadic_truncation",

# "dyadic_b3",

"random_sample",

"truncation",

"kll",

"low_discrep",

"pps"

]

sketch_size = 64

experiments = [

"l_power_q",

"l_mrecords_q",

"l_uniform_q",

]

cur_experiment = experiments[0]

fig = plt.figure(figsize=(4,3), dpi=100)

ax = fig.gca()

query_length_plot(

cur_experiment,

sketch_names,

item_agg="max",

query_agg="mean",

ax=ax,

absolute=False,

)

ax.set_title(cur_experiment)

ax.set_xlabel("Query Length (Segments)")

ax.set_ylabel("Relative Error")

fig.tight_layout()

lgd = ax.legend(frameon=False, loc='upper center', bbox_to_anchor=(.5, 1.5), ncol=5)

fig.show()

# fname = "output/plots/linear_freq.pdf"

# fig.savefig(fname, bbox_extra_artists=(lgd,), bbox_inches='tight')

fig = plt.figure(figsize=(4,3), dpi=100)

ax = fig.gca()

query_time_plot(

cur_experiment,

sketch_names,

ax=ax,

)

ax.set_title(cur_experiment)

ax.set_xlabel("Query Length (Segments)")

ax.set_ylabel("Query Time (ms)")

fig.tight_layout()

lgd = ax.legend(frameon=False, loc='upper center', bbox_to_anchor=(.5, 1.5), ncol=5)

fig.show()

# -

# # Varying Segments

enames = [

"l_caida_f",

"l_caidaseg1_f",

"l_caidaseg2_f"

]

dfs = [

pd.read_csv(get_error_file(en))

for en in enames

]

df = pd.concat(dfs).reset_index(drop=True)

df["adj_query_len"] = df["granularity"] / df["query_len"]

df_sel = df[df["adj_query_len"] == 4.0]

df_sel.groupby(["sketch", "granularity"]).aggregate({

"max": ["mean"]

})

# # Varying Size

fnames = [

"l_zipfsize_f",

"l_caidasize_f",

]

df = pd.read_csv(get_error_file(fnames[1]))

df_sel = df[df["query_len"] == 512]

df_sel.groupby(["sketch", "size"]).aggregate({

"max": ["mean"]

})

# # Accumulator

df = pd.read_csv(get_error_file("l_poweracc_q"))

df_sel = df[df["query_len"] == 512]

df_sel.groupby(["sketch", "accumulator_size"]).aggregate({

"max": ["mean"]

})

# # Cubes

df = pd.read_csv(get_error_file("c_bcube_f"))

df["err_n"] = df["max"] / df["total"]

dfg = df.groupby(["sketch"]).aggregate({"err_n": "mean"})

dfg

df = pd.read_csv(get_error_file("c_bcube_lesion_f"))

df["err_n"] = df["max"] / df["total"]

dfg = df.groupby(["sketch", "workload_query_prob"]).aggregate({"err_n": "mean"})

dfg

# # Cube Runtime

df = pd.read_csv(get_error_file("c_mrecords_q"))

dfg = df.groupby(["sketch", "query_len"]).aggregate({"query_time": "mean"})

dfg

|

python/notebooks/RemoteExperiment.ipynb

|

'''

Example implementations of HARK.ConsumptionSaving.ConsPortfolioModel

'''

from HARK.ConsumptionSaving.ConsPortfolioModel import PortfolioConsumerType, init_portfolio

from HARK.ConsumptionSaving.ConsIndShockModel import init_lifecycle

from HARK.utilities import plot_funcs

from copy import copy

from time import time

import numpy as np

import matplotlib.pyplot as plt

# Make and solve an example portfolio choice consumer type

print('Now solving an example portfolio choice problem; this might take a moment...')

MyType = PortfolioConsumerType()

MyType.cycles = 0

t0 = time()

MyType.solve()

t1 = time()

MyType.cFunc = [MyType.solution[t].cFuncAdj for t in range(MyType.T_cycle)]

MyType.ShareFunc = [MyType.solution[t].ShareFuncAdj for t in range(MyType.T_cycle)]

print('Solving an infinite horizon portfolio choice problem took ' + str(t1-t0) + ' seconds.')

# Plot the consumption and risky-share functions

print('Consumption function over market resources:')

plot_funcs(MyType.cFunc[0], 0., 20.)

print('Risky asset share as a function of market resources:')

print('Optimal (blue) versus Theoretical Limit (orange)')

plt.xlabel('Normalized Market Resources')

plt.ylabel('Portfolio Share')

plt.ylim(0.0,1.0)

# Since we are using a discretization of the lognormal distribution,

# the limit is numerically computed and slightly different from

# the analytical limit obtained by Merton and Samuelson for infinite wealth

plot_funcs([MyType.ShareFunc[0]

# ,lambda m: RiskyShareMertSamLogNormal(MyType.RiskPrem,MyType.CRRA,MyType.RiskyVar)*np.ones_like(m)

,lambda m: MyType.ShareLimit*np.ones_like(m)

] , 0., 200.)

# Now simulate this consumer type

MyType.track_vars = ['cNrm', 'Share', 'aNrm', 't_age']

MyType.T_sim = 100

MyType.initialize_sim()

MyType.simulate()

print('\n\n\n')

print('For derivation of the numerical limiting portfolio share')

print('as market resources approach infinity, see')

print('http://www.econ2.jhu.edu/people/ccarroll/public/lecturenotes/AssetPricing/Portfolio-CRRA/')

""

# Make another example type, but this one optimizes risky portfolio share only

# on the discrete grid of values implicitly chosen by RiskyCount, using explicit

# value maximization.

init_discrete_share = init_portfolio.copy()

init_discrete_share['DiscreteShareBool'] = True

init_discrete_share['vFuncBool'] = True # Have to actually construct value function for this to work

# Make and solve a discrete portfolio choice consumer type

print('Now solving a discrete choice portfolio problem; this might take a minute...')

DiscreteType = PortfolioConsumerType(**init_discrete_share)

DiscreteType.cycles = 0

t0 = time()

DiscreteType.solve()

t1 = time()

DiscreteType.cFunc = [DiscreteType.solution[t].cFuncAdj for t in range(DiscreteType.T_cycle)]

DiscreteType.ShareFunc = [DiscreteType.solution[t].ShareFuncAdj for t in range(DiscreteType.T_cycle)]

print('Solving an infinite horizon discrete portfolio choice problem took ' + str(t1-t0) + ' seconds.')

# Plot the consumption and risky-share functions

print('Consumption function over market resources:')

plot_funcs(DiscreteType.cFunc[0], 0., 50.)

print('Risky asset share as a function of market resources:')

print('Optimal (blue) versus Theoretical Limit (orange)')

plt.xlabel('Normalized Market Resources')

plt.ylabel('Portfolio Share')

plt.ylim(0.0,1.0)

# Since we are using a discretization of the lognormal distribution,

# the limit is numerically computed and slightly different from

# the analytical limit obtained by Merton and Samuelson for infinite wealth

plot_funcs([DiscreteType.ShareFunc[0]

,lambda m: DiscreteType.ShareLimit*np.ones_like(m)

] , 0., 200.)

print('\n\n\n')

""

# Make another example type, but this one can only update their risky portfolio

# share in any particular period with 15% probability.

init_sticky_share = init_portfolio.copy()

init_sticky_share['AdjustPrb'] = 0.15

# Make and solve a "sticky" portfolio choice consumer type

print('Now solving a portfolio choice problem with "sticky" portfolio shares; this might take a moment...')

StickyType = PortfolioConsumerType(**init_sticky_share)

StickyType.cycles = 0

t0 = time()

StickyType.solve()

t1 = time()

StickyType.cFuncAdj = [StickyType.solution[t].cFuncAdj for t in range(StickyType.T_cycle)]

StickyType.cFuncFxd = [StickyType.solution[t].cFuncFxd for t in range(StickyType.T_cycle)]

StickyType.ShareFunc = [StickyType.solution[t].ShareFuncAdj for t in range(StickyType.T_cycle)]

print('Solving an infinite horizon sticky portfolio choice problem took ' + str(t1-t0) + ' seconds.')

# Plot the consumption and risky-share functions

print('Consumption function over market resources when the agent can adjust his portfolio:')

plot_funcs(StickyType.cFuncAdj[0], 0., 50.)

print("Consumption function over market resources when the agent CAN'T adjust, by current share:")

M = np.linspace(0., 50., 200)
for s in np.linspace(0.,1.,21):
    C = StickyType.cFuncFxd[0](M, s*np.ones_like(M))
    plt.plot(M,C)
plt.xlim(0.,50.)
plt.ylim(0.,None)
plt.show()

print('Risky asset share function over market resources (when possible to adjust):')

print('Optimal (blue) versus Theoretical Limit (orange)')

plt.xlabel('Normalized Market Resources')

plt.ylabel('Portfolio Share')

plt.ylim(0.0,1.0)

plot_funcs([StickyType.ShareFunc[0]

,lambda m: StickyType.ShareLimit*np.ones_like(m)

] , 0., 200.)

""

# Make another example type, but this one has *age-varying* perceptions of risky asset returns.

# Begin by making a lifecycle dictionary, but adjusted for the portfolio choice model.

init_age_varying_risk_perceptions = copy(init_lifecycle)

init_age_varying_risk_perceptions['RiskyCount'] = init_portfolio['RiskyCount']

init_age_varying_risk_perceptions['ShareCount'] = init_portfolio['ShareCount']

init_age_varying_risk_perceptions['aXtraMax'] = init_portfolio['aXtraMax']

init_age_varying_risk_perceptions['aXtraCount'] = init_portfolio['aXtraCount']

init_age_varying_risk_perceptions['aXtraNestFac'] = init_portfolio['aXtraNestFac']

init_age_varying_risk_perceptions['BoroCnstArt'] = init_portfolio['BoroCnstArt']

init_age_varying_risk_perceptions['CRRA'] = init_portfolio['CRRA']

init_age_varying_risk_perceptions['DiscFac'] = init_portfolio['DiscFac']

init_age_varying_risk_perceptions['RiskyAvg'] = [1.08]*init_lifecycle['T_cycle']

init_age_varying_risk_perceptions['RiskyStd'] = list(np.linspace(0.20,0.30,init_lifecycle['T_cycle']))

init_age_varying_risk_perceptions['RiskyAvgTrue'] = 1.08

init_age_varying_risk_perceptions['RiskyStdTrue'] = 0.20

AgeVaryingRiskPercType = PortfolioConsumerType(**init_age_varying_risk_perceptions)

AgeVaryingRiskPercType.cycles = 1

# Solve the agent type with age-varying risk perceptions

#print('Now solving a portfolio choice problem with age-varying risk perceptions...')

t0 = time()

AgeVaryingRiskPercType.solve()

AgeVaryingRiskPercType.cFunc = [AgeVaryingRiskPercType.solution[t].cFuncAdj for t in range(AgeVaryingRiskPercType.T_cycle)]

AgeVaryingRiskPercType.ShareFunc = [AgeVaryingRiskPercType.solution[t].ShareFuncAdj for t in range(AgeVaryingRiskPercType.T_cycle)]

t1 = time()

print('Solving a ' + str(AgeVaryingRiskPercType.T_cycle) + ' period portfolio choice problem with age-varying risk perceptions took ' + str(t1-t0) + ' seconds.')

# Plot the consumption and risky-share functions

print('Consumption function over market resources in each lifecycle period:')

plot_funcs(AgeVaryingRiskPercType.cFunc, 0., 20.)

print('Risky asset share function over market resources in each lifecycle period:')

plot_funcs(AgeVaryingRiskPercType.ShareFunc, 0., 200.)

# The code below tests the mathematical limits of the model.

# +

# Create a grid of market resources for the plots

mMin = 0 # Minimum ratio of assets to income to plot

mMax = 5*1e2 # Maximum ratio of assets to income to plot

mPts = 1000 # Number of points to plot

eevalgrid = np.linspace(0,mMax,mPts) # range of values of assets for the plot

# Number of points that will be used to approximate the risky distribution

risky_count_grid = [5,200]

# Plot by ages (time periods) at which to plot. We will use the default life-cycle calibration.

ages = [2, 4, 6, 8]

#Create a function to compute the Merton-Samuelson limiting portfolio share.

def RiskyShareMertSamLogNormal(RiskPrem,CRRA,RiskyVar):
    return RiskPrem/(CRRA*RiskyVar)
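
# A worked instance of the Merton-Samuelson formula above may make its scale easier to read. The calibration values are assumptions chosen for illustration, not read from HARK, and `merton_samuelson_share` is a hypothetical stand-alone copy of the function.

```python
def merton_samuelson_share(risk_prem, crra, risky_var):
    """Limiting risky share: risk premium / (CRRA * variance of the risky return)."""
    return risk_prem / (crra * risky_var)

# Illustrative (assumed) calibration, not read from HARK.
risky_avg, rfree, crra, risky_std = 1.08, 1.03, 5.0, 0.20
share = merton_samuelson_share(risky_avg - rfree, crra, risky_std ** 2)
print(round(share, 4))
```

# With a 5-point risk premium, relative risk aversion of 5 and a 20% standard deviation, the limiting share is a quarter of wealth; doubling the risk aversion halves it.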

# + Calibration and solution

for rcount in risky_count_grid:

    # Create a new dictionary and replace the number of points that
    # approximate the risky return distribution

    # Create new dictionary copying the default
    merton_dict = init_lifecycle.copy()
    merton_dict['RiskyCount'] = rcount

    # Create and solve agent
    agent = PortfolioConsumerType(**merton_dict)
    agent.solve()

    # Compute the analytical Merton-Samuelson limiting portfolio share
    RiskyVar = agent.RiskyStd**2
    RiskPrem = agent.RiskyAvg - agent.Rfree
    MS_limit = RiskyShareMertSamLogNormal(RiskPrem,
                                          agent.CRRA,
                                          RiskyVar)

    # Now compute the limiting share numerically, using the approximated
    # distribution
    agent.update_ShareLimit()
    NU_limit = agent.ShareLimit

    plt.figure()
    for a in ages:
        plt.plot(eevalgrid,
                 agent.solution[a]\
                 .ShareFuncAdj(eevalgrid),
                 label = 't = %i' %(a))

    plt.axhline(NU_limit, c='k', ls='-.', label = 'Exact limit as $m\\rightarrow \\infty$.')
    plt.axhline(MS_limit, c='k', ls='--', label = 'M&S Limit without returns discretization.')
    plt.ylim(0,1.05)
    plt.xlim(eevalgrid[0],eevalgrid[-1])
    plt.legend()
    plt.title('Risky Portfolio Share by Age\n Risky distribution with {points} equiprobable points'.format(points = rcount))
    plt.xlabel('Wealth (m)')
    plt.ioff()
    plt.draw()

# -

|

examples/ConsumptionSaving/example_ConsPortfolioModel.ipynb

|

# ---

# jupyter:

# jupytext:

# formats: ipynb,.md//md

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Flow Chart Generator

from jp_flowchartjs.jp_flowchartjs import FlowchartWidget

# *An [issue](https://github.com/adrai/flowchart.js/issues/186) has been filed regarding the misaligned return path in the flow diagram in `jp_flowchartjs`.*

# ## 03_1_1

#

# Mermaid code https://mermaid-js.github.io/mermaid-live-editor/

# The mermaid editor is supposed to encode/decode base 64 strings

# but I can't get my own encoder/decoder to work to encode/decode things the same way?

#

# graph LR

# A(Start) --> B[Set the count to 0]

# B --> C[Swim a length]

# C --> D[Add 1 to the count]

# D --> E{Is the<br/>count less<br/>than 20?}

# E --> |Yes| C

# E --> |No| F(End)

#

# Long description:

#

# A flow chart for a person swimming 20 lengths of a pool. The flow chart starts with an oval shape labelled ‘Start’. From here there are sequences of boxes connected by arrows: first ‘Set the count to 0’, then ‘Swim a length’ and last ‘Add 1 to the count’. From this box an arrow leads to a decision diamond labelled ‘Is the count less than 20?’ Two arrows lead from this: one labelled ‘Yes’, the other ‘No’. The ‘Yes’ branch leads back to rejoin the box ‘Swim a length’. The ‘No’ branch leads directly to an oval shape labelled ‘End’. There is thus a loop in the chart which includes the steps ‘Swim a length’ and ‘Add 1 to the count’ and ends with the decision ‘Is the count less than 20?’
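
# The loop described above can also be written as ordinary code. The sketch below is an illustration only; the function name `swim_lengths` and the `log` list are hypothetical, and each line is annotated with the flow chart step it stands for.

```python
def swim_lengths(target=20):
    count = 0                      # Set the count to 0
    log = []
    while True:
        log.append("swim")         # Swim a length
        count += 1                 # Add 1 to the count
        if not count < target:     # Is the count less than 20?
            break                  # No: go to End
    return count, log

count, log = swim_lengths()
print(count, len(log))
```

# Because the decision comes after the two steps, the body always runs at least once, which matches the chart: the test sits at the end of the loop, not at the start.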

# %%flowchart_magic

st=>start: Start

e=>end: End

op1=>operation: Set the count to 0

op2=>operation: Swim a length

op3=>operation: Add 1 to the count

cond=>condition: Is the count less than 20?

st(right)->op1(right)->op2(right)->op3(right)->cond

cond(yes,right)->op2

cond(no)->e

# ### Ironing

#

# Mermaid drawing (this is far from ideal...Maybe better to use draw.io?)<font color='red'>JD: To be resolved.</font>

#

# graph LR

# A(Start) --> B{Any clothes<br>left in<br/>basket?}

# B --> |Yes| C[Take out<br/>item and<br/>iron]

# C --> D[Put it on pile<br/>of ironed<br/>clothes]

# D --> B

# B --> |No| E(End)

#

# Long description:

#

# A flow chart for a person ironing clothes. This starts with an oval ‘Start’. An arrow leads to a decision diamond ‘Any clothes left in basket?’ Two arrows lead from this: one labelled ‘Yes’, the other ‘No’. The ‘Yes’ branch continues in turn to two boxes ‘Take out item and iron’ and ‘Put it on pile of ironed clothes’; an arrow leads back from this box to rejoin the decision ‘Any clothes left in basket?’ The ‘No’ branch leads directly to an oval ‘End’. There is thus a loop in the chart which begins with the decision ‘Any clothes left in basket?’ and includes the steps ‘Take out item and iron’ and ‘Put it on pile of ironed clothes’.

# %%flowchart_magic

st=>start: Start

e=>end: End

cond=>condition: Any clothes left in basket?

op2=>operation: Take out item and iron

op3=>operation: Put it on pile of ironed clothes

st(right)->cond

cond(yes, right)->op2

op2(right)->op3(top)->cond

cond(no, bottom)->e

# graph TD

# A(Start) --> B[Set counter to 0]

# B --> C[# Draw side<br/>...code...]

# C --> D[# Turn ninety degrees<br/>...code...]

# D --> E[Add 1 to counter]

# E --> F{Is counter < 4?}

# F --> |Yes| C

# F --> |No| G(End)

#

# Long description:

#

# A flow chart for a robot program with a loop. This starts with an oval ‘Start’. An arrow leads first to a box ‘Set counter to 0’ and then to a sequence of further boxes: ‘# Draw side’ with an implication of the code associated with that, ‘# Turn ninety degrees’, again with a hint regarding the presence of code associated with that activity, and lastly ‘Add 1 to counter’. The arrow from this box leads to a decision diamond ‘Is counter < 4?’ Two arrows lead from this, one labelled ‘Yes’, the other ‘No’. The ‘Yes’ branch loops back to rejoin the sequence at ‘# Draw side code’. The ‘No’ branch leads directly to an oval ‘End’. There is thus a loop in the chart which includes the sequence of motor control commands, incrementing the counter and ends with the decision ‘Is counter < 4?’.

# %%flowchart_magic

st=>start: Start

e=>end: End

op1=>operation: Set counter to 0

op2=>operation: Draw side code

op3=>operation: Turn ninety degrees code

op4=>operation: Add 1 to counter

cond=>condition: Is counter < 4?

st(right)->op1(right)->op2->op3->cond

cond(yes, right)->op2

cond(no, bottom)->e

# ### 02_Robot_Lab/Section_00_02.md

#

#

# +

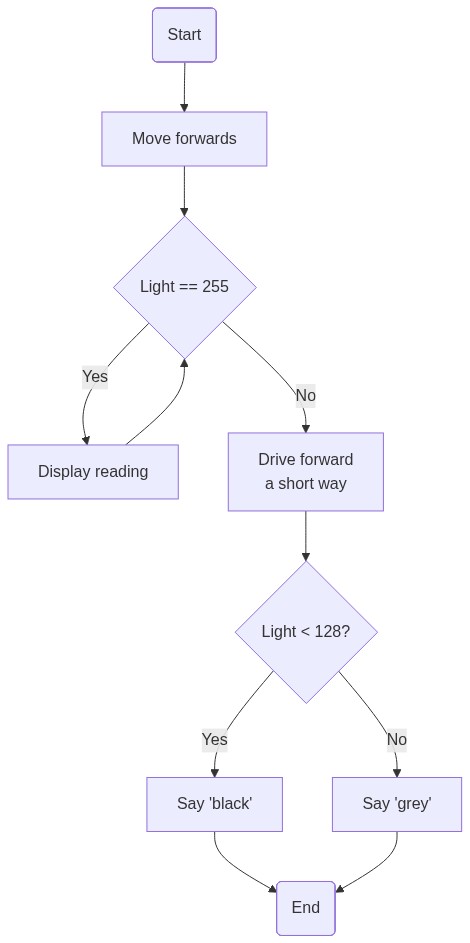

# %%flowchart_magic

st=>start: Start

e=>end: End

op1=>operation: Start moving forwards

cond1=>condition: Light < 100?

op2=>parallel: Display reading (continue drive forwards)

op3=>operation: Drive forward a short way

cond2=>condition: Light < 50?

op4=>operation: Say "grey"

op5=>operation: Say "black"

st(right)->op1(right)->cond1({'flowstate':{'cond1':{'yes-text' : 'ssno', 'no-text' : 'yes'}}} )

cond1(no,right)->op2(path1, top)->cond1

cond1(yes)->op3->cond2

cond2(no)->op4->e

cond2(yes)->op5->e

# -

# <div class='alert alert-warning'>The flowchart.js layout algorithm will allow us to change yes/no labels on conditions, but the magic does not currently provide a way to pass alternative values in (eg swapping yes/no labels to change the sense of the decision as drawn). Also, the layout algorithm seems to be broken when swapping yes and no.</div>

# +

## # Mermaid.js code

graph TD

A(Start) --> B[Move forwards]

B --> C{Light == 100}

C --> |Yes| D[Display reading]

D --> C

C --> |No| E[Drive forward<br/>a short way]

E --> F{Light < 50?}

F --> |Yes| G[Say 'black']

F --> |No| H[Say 'grey']

G --> I(End)

H --> I

# -

# We can do checklists in notebooks:

#

# - [ ] item 1

# - [ ] item 2

# +

# # %%flowchart_magic

st=>start: Start

e=>end: End

op1=>operation: Start moving forwards

cond1=>condition: Light < 100?

op2=>parallel: Display reading (continue drive forwards)

op3=>operation: Drive forward a short way

cond2=>condition: Light < 50?

op4=>operation: Say "grey"

op5=>operation: Say "black"

st(right)->op1(right)->cond1({'flowstate':{'cond1':{'yes-text' : 'ssno', 'no-text' : 'yes'}}} )

cond1(no,right)->op2(path1, top)->cond1

cond1(yes)->op3->cond2

cond2(no)->op4->e

cond2(yes)->op5->e

# -

# The flow chart starts with a *Start* label followed by a rectangular *Start moving forwards* step. An initial diamond shaped decision block tests the condition "Light < 100". If false ("no"), the program continues with a rectangular "Display reading (continue drive forwards)" step which then returns control back to the first decision block. If true ("yes") control progresses via a rectangular "Drive forward a short way" step and then another diamond shaped decision step which tests the condition "Light < 50". If "no", control passes to a rectangular "Say 'grey'" step and then a terminating "End" condition. If "yes", control passes to a rectangular "Say 'black'" step and then also connects to the terminating "End" condition.
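
# The description above maps onto a short control-flow sketch. This is an illustration under assumptions: `classify_line` is a hypothetical name, and the list of readings stands in for a real light sensor, with one extra reading taken after the short forward drive.

```python
def classify_line(readings):
    """Follow the flow chart over a sequence of light readings (hypothetical values)."""
    readings = iter(readings)
    displayed = []
    for light in readings:          # robot is moving forwards
        if light < 100:             # first decision: Light < 100?
            break                   # yes: leave the display loop
        displayed.append(light)     # no: display reading and keep driving
    else:
        return displayed, None      # readings ran out before the first test passed
    light = next(readings, light)   # drive forward a short way, then read again
    return displayed, "black" if light < 50 else "grey"   # second decision: Light < 50?

print(classify_line([100, 100, 80, 30]))
print(classify_line([100, 90, 70]))
```

# The first decision forms the loop and the second is a plain two-way branch, so the two diamonds in the chart become one loop and one conditional expression.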

# ## Design cycle

# + active=""

# # st=>start: Start

# e=>end: End

# op1=>operation: Generate

# op2=>parallel: Evaluate

# st(right)->op1(right)->op2

# op2(path1, top)->op1

# op2(path2, right)->e

|

content/backgrounds/Flowchart Generator.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from sklearn import datasets

from sklearn import metrics

from sklearn.datasets import load_iris

iris = load_iris()

x = iris.data # independent features

y = iris.target # dependent features

#print(x)

#print(y)

y

# +

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split (x, y, test_size = 0.20,random_state = 0)

# +

#GaussianNB is specifically used when the features have continuous values.

from sklearn.naive_bayes import GaussianNB

model = GaussianNB()

model.fit(X_train, y_train)

prediction = model.predict(X_test)

print(prediction)

print(y_test)

# -

from sklearn.metrics import confusion_matrix

confusion_matrix(y_test,prediction)

TPN=11+13+5

Total=30

accuracy =TPN/Total

accuracy
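
# The manual calculation above adds up the diagonal of the confusion matrix and divides by the number of test samples. A minimal sketch of the same arithmetic, written so that nothing is hard-coded, is shown here. The matrix is hypothetical: only its diagonal (11, 13, 5) and its total (30) are taken from the numbers used above, and the position of the single misclassification is an assumption.

```python
# Hypothetical 3x3 confusion matrix (rows: true class, columns: predicted class).
# The diagonal and the total match the figures used above; the location of the
# one off-diagonal error is assumed for illustration.
cm = [
    [11, 0, 0],
    [0, 13, 0],
    [0, 1, 5],
]

correct = sum(cm[i][i] for i in range(len(cm)))  # diagonal = correct predictions
total = sum(sum(row) for row in cm)              # every test sample
manual_accuracy = correct / total
print(round(manual_accuracy, 4))
```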

# +

from sklearn.metrics import accuracy_score

print(accuracy_score(y_test, prediction))

# +

from sklearn.metrics import f1_score

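# iris has three classes, so f1_score needs an explicit averaging strategy;
# the default average='binary' raises a ValueError for multiclass targets.
print(f1_score(y_test, prediction, average='macro'))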

# +

print(metrics.confusion_matrix(y_test, prediction))

prediction

|

Lec 15 Naive Bayes Questions/Ma'am/gaussian.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # A software bug

# ## Problem Definition

# You are using legacy software to solve a production mix Continuous Linear Programming (CLP) problem in your company, where the objective is to maximise profits. The following table provides the solution of the primal, the dual, and a sensitivity analysis for the three decision variables that represent the quantities to produce of each product.

#

# |Variables | Solution | Reduced cost | Objective Coefficient | Lower bound | Upper bound|

# |---|---|---|---|---|---|

# |$x_1$ | 300.00 | ? | 30.00 | 24.44 | Inf|

# |$x_2$ | 33.33 | ? | 20.00 | -0.00 | 90.00|

# |$x_3$ | 0 | -8.33 | 40.00 | -Inf | 48.33 |

#

# Answer the following questions:

#

# **a.** Notice that there are some values missing (a bug in the software shows a ? sign instead of a numerical value, remember to use Python the next time around). Fill the missing values and explain your decision

# The reduced costs of $x_1$ and $x_2$ must be zero: both variables are basic (they take non-zero values in the solution), and the reduced cost of a basic variable is always zero.

#

# **b.** According to the provided solution, which of the three products has the highest impact in your objective function? Motivate your response

# The product of the objective coefficient times the solution determines the impact in the objective function. In this case, the variable that has the highest impact in the objective function is $x_1$
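
# As a quick check of the reasoning above, the contribution of each product can be computed as objective coefficient times solution value. This is only a sketch: the numbers are copied from the first table, while the dictionary layout and variable names are illustrative choices.

```python
# (objective coefficient, solution value) for each product, from the first table.
products = {
    "x1": (30.00, 300.00),
    "x2": (20.00, 33.33),
    "x3": (40.00, 0.00),
}

# Contribution to the objective function = coefficient * quantity produced.
contributions = {name: coef * qty for name, (coef, qty) in products.items()}
largest = max(contributions, key=contributions.get)

for name, value in contributions.items():
    print(name, round(value, 2))
print("Largest contribution:", largest)
```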

# **c.** What does the model tell you about product $x_3$. Is it profitable to manufacture under the current conditions modeled in the problem? Provide quantitative values to motivate your response

# In this solution, it is not profitable to manufacture $x_3$. In fact, manufacturing 1 unit would have a negative impact of 8.33 in the objective function.

#

# **d.** Recall that in this type of problem, the objective coefficient represents the marginal profit per unit of product. What would happen if the objective coefficient of variable $x_3$ is increased over 50? Describe what would be the impact in the obtained solution

# The upper bound of the objective coefficient is 48.33, meaning that the coefficient would need to increase up to 48.33 to make this product profitable. If the objective coefficient goes over this value, as it does at 50, we would experience a change in the FBS: most likely, variable $x_3$ would enter the solution.
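
# The 48.33 threshold can be recovered from the table itself. For a non-basic variable in a maximisation problem, the coefficient has to improve by the size of the reduced cost before the variable becomes attractive. The sketch below is a worked check under that standard assumption; the variable names are illustrative.

```python
coef_x3 = 40.00          # current objective coefficient of x3 (from the table)
reduced_cost_x3 = -8.33  # reduced cost of x3 (from the table)

# Threshold at which x3 stops being unprofitable: 40.00 + 8.33.
upper_bound_x3 = coef_x3 - reduced_cost_x3

new_coef = 50.00         # value proposed in question d
basis_changes = new_coef > upper_bound_x3

print(round(upper_bound_x3, 2), basis_changes)
```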

#

#

#

# The following table represents the value obtained for the decision variables related to the constraints:

#

# | Constraint | Right Hand Side | Shadow Price | Slack | Min RHS | Max RHS |

# |-----|-----|-----|----|-----|----|

# | $s_1$ | 400.00 | 6.67 | 0.00 | 300.00 | 525.00 |

# | $s_2$ | 600.00 | 11.67 | 0.00 | 0.00 | 800.00 |

# | $s_3$ | 600.00 | 0.00 | 166.67 | 433.33 | Inf |

#

# Bearing in mind that the constraints represent the availability of 3 limited resources (operating time in minutes) and that the type of constraints is in every case "less or equal" answer the following questions:

#

# **e.** Which decision variables are basic? How many are there and, is this result expected? Motivate your response

# The basic variables are $x_1$, $x_2$, and $s_3$, the only ones with non-zero values. There are three of them, as many as there are constraints, which is the expected result for a Feasible Basic Solution (FBS).

#

# **f.** Imagine that you had to cut down costs by reducing the availability of the limited resources in the model. Which one would you select? How much could you cut down without changing the production mix? Motivate your response

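# If the Right Hand Sides represent availability of resources, we could reduce the availability of the third constraint by up to 166.67 units (its slack, down to the Min RHS of 433.33) without any change in the base. Its shadow price is zero, so the objective function would not change either.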

#

# **g.** Now, with the money saved, you could invest to increase the availability of other limited resources. Again, indicate which one would you select and by how much you would increase the availability of this resource without changing the production mix. Motivate your response

# The best option is to invest in the resource of the second constraint, since it has the largest shadow price. The RHS can increase up to 800 units without a change in the base.
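
# The effect of such an investment can be estimated as shadow price times the increase in the right hand side, as long as the new value stays within the Max RHS. The sketch below applies that rule to the two binding constraints; the figures come from the second table, and the dictionary layout is an illustrative choice.

```python
# Binding constraints from the second table.
constraints = {
    "s1": {"rhs": 400.00, "shadow_price": 6.67, "max_rhs": 525.00},
    "s2": {"rhs": 600.00, "shadow_price": 11.67, "max_rhs": 800.00},
}

# Largest objective gain available without changing the base:
# (Max RHS - current RHS) * shadow price.
gains = {
    name: (c["max_rhs"] - c["rhs"]) * c["shadow_price"]
    for name, c in constraints.items()
}
best = max(gains, key=gains.get)

for name, gain in gains.items():
    print(name, round(gain, 2))
print("Best resource to expand:", best)
```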

#

# **h.** How do the columns of the two tables relate to the primal and dual problem? Moreover, for each column, not considering upper and lower bounds, write down a brief description of each column and the relationship it has with the primal and dual problem

# In the first table, the column Solution contains the values of the decision variables of the primal, and the column Reduced cost contains the values of the slack variables of the dual.

# In the second table, the column Slack contains the values of the slack variables of the primal, and the column Shadow Price contains the values of the decision variables of the dual.

# + [markdown] pycharm={"name": "#%% md\n"}

#

|

docs/source/CLP/solved/A software bug (Solved).ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:ml] *

# language: python

# name: conda-env-ml-py

# ---

# # Early Stopping

# %reload_ext autoreload

# %autoreload 2

# %matplotlib inline

import sys

sys.path.insert(0, "../src")

# +

import numpy as np

import pandas as pd

from sklearn import metrics

from sklearn import model_selection

import albumentations as A

import torch

import callbacks

import config

import dataset

import models

import engine

# +

import numpy as np

import torch

class EarlyStopping:

def __init__(self, patience=7, mode="max", delta=0.0001):

self.patience = patience

self.mode = mode

self.delta = delta

self.best_score = None

self.counter = 0

self.early_stop = False

if mode == "max":

self.val_score = -np.inf

elif mode == "min":

self.val_score = np.inf

def __call__(self, epoch_score, model, model_path):

if self.mode == "max":

score = np.copy(epoch_score)

elif self.mode == "min":

score = -1.0 * epoch_score

if self.best_score is None:

self.best_score = score

self.save_checkpoint(epoch_score, model, model_path)

elif score < self.best_score + self.delta:

self.counter += 1

print(f"EarlyStopping counter: {self.counter} out of {self.patience}")

if self.counter >= self.patience:

self.early_stop = True

else:

self.best_score = score

self.counter = 0

self.save_checkpoint(epoch_score, model, model_path)

def save_checkpoint(self, epoch_score, model, model_path):

if np.isfinite(epoch_score):  # skip saving on inf or NaN; a tuple membership test does not reliably catch NaN

print(f"Validation score improved ({self.val_score} --> {epoch_score}). Saving model!")

torch.save(model.state_dict(), model_path)

self.val_score = epoch_score

# -
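
# The patience logic of the class above can be followed without PyTorch. The sketch below reproduces only the counter for `mode="max"`; the function name `epochs_until_stop` and the score history are hypothetical, and no checkpoint is saved.

```python
def epochs_until_stop(scores, patience=3, delta=0.0001):
    """Return the epoch index at which early stopping triggers, or None."""
    best = None
    counter = 0
    for epoch, score in enumerate(scores):
        if best is None or score >= best + delta:
            # Improvement by at least delta: remember it and reset the counter.
            best = score
            counter = 0
        else:
            # No sufficient improvement: count one more "bad" epoch.
            counter += 1
            if counter >= patience:
                return epoch
    return None


history = [0.80, 0.85, 0.85, 0.84, 0.86, 0.86, 0.85, 0.86]
print(epochs_until_stop(history, patience=3))
```

# Note that repeating the best score does not reset the counter: an epoch only counts as an improvement when it beats the best score by at least `delta`.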

df = pd.read_csv(config.TRAIN_CSV)

# df_train, df_valid = model_selection.train_test_split(df, test_size=0.1, stratify=df.digit)

train_idx, valid_idx = model_selection.train_test_split(np.arange(len(df)), test_size=0.1, stratify=df.digit)

train_dataset = dataset.EMNISTDataset(df, train_idx)

valid_dataset = dataset.EMNISTDataset(df, valid_idx)

train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=config.TRAIN_BATCH_SIZE, shuffle=True)

valid_loader = torch.utils.data.DataLoader(valid_dataset, batch_size=config.TEST_BATCH_SIZE)

# +

EPOCHS = 200

device = torch.device(config.DEVICE)

model = models.SpinalVGG()

# model = models.Model()

model.to(device)

optimizer = torch.optim.Adam(model.parameters())

scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(

optimizer, mode='max', verbose=True, patience=10, factor=0.5

)

early_stop = callbacks.EarlyStopping(patience=15, mode="max")

for epoch in range(EPOCHS):

engine.train(train_loader, model, optimizer, device)

predictions, targets = engine.evaluate(valid_loader, model, device)

predictions = np.array(predictions)

predictions = np.argmax(predictions, axis=1)

accuracy = metrics.accuracy_score(targets, predictions)

print(f"Epoch: {epoch}, Valid accuracy={accuracy}")

model_path = "./test.pt"

early_stop(accuracy, model, model_path)

if early_stop.early_stop:

print(f"Early stopping. Best score {early_stop.best_score}. Loading weights...")

model.load_state_dict(torch.load(model_path))

break

scheduler.step(accuracy)

# -

model.load_state_dict(torch.load("./test.pt"))

torch.save(model.state_dict(), "../models/spinalvgg.pt")

model = models.SpinalVGG()

model.load_state_dict(torch.load("../models/spinalvgg.pt"))

model.to(device)

df_test = pd.read_csv(config.TEST_CSV)

test_dataset = dataset.EMNISTTestDataset(df_test)

test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=config.TEST_BATCH_SIZE)

predictions = engine.infer(test_loader, model, device)

predictions = np.array(predictions)

predictions = np.argmax(predictions, axis=1)

submission = pd.DataFrame({"id": df_test.id, "digit": predictions})

submission.to_csv("../output/spinalvgg.csv", index=False)

submission.head()

|

notebooks/04-early-stopping.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# %matplotlib notebook

from collections import Counter

import dill

import glob

import igraph as ig

import itertools

import leidenalg

#import magic

import matplotlib

from matplotlib import pyplot

import numba

import numpy

import os

import pickle

from plumbum import local

import random

import re

import scipy

from scipy.cluster import hierarchy

import scipy.sparse as sps

from scipy.spatial import distance

import scipy.stats as stats

from sklearn.feature_extraction.text import TfidfTransformer

from sklearn.decomposition import TruncatedSVD

from sklearn import neighbors

from sklearn import metrics

import sys

import umap

#from plotly import tools

#import plotly.offline as py

#import plotly.graph_objs as go

#py.init_notebook_mode(connected=True)

# +

def find_nearest_genes(peak_files, out_subdir, refseq_exon_bed):

#get unix utilities

bedtools, sort, cut, uniq, awk = local['bedtools'], local['sort'], local['cut'], local['uniq'], local['awk']

#process the peak files to find nearest genes

nearest_genes = []

for path in sorted(peak_files):

out_path = os.path.join(out_subdir, os.path.basename(path).replace('.bed', '.nearest_genes.txt'))

cmd = (bedtools['closest', '-D', 'b', '-io', '-id', '-a', path, '-b', refseq_exon_bed] |

cut['-f1,2,3,5,9,12'] | #fields are chrom, start, stop, peak sum, gene name, distance

awk['BEGIN{OFS="\t"}{if($6 > -1200){print($1, $2, $3, $6, $5, $4);}}'] |

sort['-k5,5', '-k6,6nr'] |

cut['-f5,6'])()

with open(out_path, 'w') as out:

prev_gene = None

for idx, line in enumerate(str(cmd).strip().split('\n')):

# compare the whole gene name: a prefix test would treat e.g. "abc-10" as a repeat of "abc-1"
if prev_gene is None or line.split()[0] != prev_gene:

# print(line)

line_split = line.strip().split()

prev_gene = line_split[0]

out.write(line + '\n')

nearest_genes.append(out_path)

return nearest_genes
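
# The loop in find_nearest_genes keeps the first line for every gene in the
# sorted bedtools output. A minimal stand-alone sketch of that step follows.
# The helper name `first_line_per_gene` and the sample lines are hypothetical,
# and the input is assumed to be sorted by gene name already.

```python
from itertools import groupby


def first_line_per_gene(lines):
    """Keep the first line of each run of lines that share a gene name."""
    kept = []
    # groupby yields one group per run of consecutive lines with the same key.
    for _, group in groupby(lines, key=lambda line: line.split()[0]):
        kept.append(next(group))
    return kept


sorted_lines = ["abc-1\t9.0", "abc-1\t4.0", "abc-10\t7.5", "def-2\t3.0"]
print(first_line_per_gene(sorted_lines))
```

# Grouping on the whole first field keeps "abc-10" separate from "abc-1",
# which a prefix comparison would not do.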

def load_expr_db(db_path):

if os.path.basename(db_path) == 'RepAvgGeneTPM.csv':

with open(db_path) as lines_in:

db_headers = lines_in.readline().strip().split(',')[1:]

db_vals = numpy.loadtxt(db_path, delimiter=',', skiprows=1, dtype=object)[:,1:]

else:

with open(db_path) as lines_in:

db_headers = lines_in.readline().strip().split('\t')

db_vals = numpy.loadtxt(db_path, delimiter='\t', skiprows=1, dtype=object)

print('Loaded DB shape: {!s}'.format(db_vals.shape))

return (db_headers, db_vals)

TOPN=500

def get_gene_data(genes_path, gene_expr_db, topn=TOPN):

if isinstance(genes_path, list):

genes_list = genes_path

else:

with open(genes_path) as lines_in:

genes_list = [elt.strip().split()[:2] for elt in lines_in]

gene_idx = [(numpy.where(gene_expr_db[:,0] == elt[0])[0],elt[1]) for elt in genes_list]

gene_idx_sorted = sorted(gene_idx, key=lambda x:float(x[1]), reverse=True)

gene_idx, gene_weights = zip(*[elt for elt in gene_idx_sorted if len(elt[0]) > 0][:topn])

gene_idx = [elt[0] for elt in gene_idx]

gene_data = gene_expr_db[:,1:].astype(float)[gene_idx,:]

denom = numpy.sum(gene_data, axis=1)[:,None] + 1e-8

gene_norm = gene_data/denom

return gene_idx, gene_data, gene_norm, len(genes_list), numpy.array(gene_weights, dtype=float)

def sample_db(data_norm, expr_db, data_weights=None, nsamples=1000):

samples = []

rs = numpy.random.RandomState(15321)

random_subset = numpy.arange(expr_db.shape[0])

num_to_select = data_norm.shape[0]

for idx in range(nsamples):

rs.shuffle(random_subset)

db_subset = expr_db[random_subset[:num_to_select]][:,1:].astype(float)

denom = numpy.sum(db_subset, axis=1) + 1e-8  # 1-D row sums; the transposes on the next line handle broadcasting

db_subset_norm = numpy.mean((db_subset.T/denom).T, axis=0)

if data_weights is not None:

samples.append(numpy.log2(numpy.average(data_norm, axis=0, weights=data_weights)/db_subset_norm))

else:

samples.append(numpy.log2(numpy.average(data_norm, axis=0, weights=None)/db_subset_norm))

samples = numpy.vstack(samples)

samples_mean = numpy.mean(samples, axis=0)

samples_sem = stats.sem(samples, axis=0)

conf_int = numpy.array([stats.t.interval(0.95, samples.shape[0]-1,

loc=samples_mean[idx], scale=samples_sem[idx])

for idx in range(samples.shape[1])]).T

conf_int[0] = samples_mean - conf_int[0]

conf_int[1] = conf_int[1] - samples_mean

return samples_mean, conf_int
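
# The quantity that sample_db averages over many random draws is a log2 ratio
# of mean expression proportions. The sketch below computes that ratio once,
# in plain Python, for a tiny made-up example. The helper name
# `mean_proportions` and both matrices are hypothetical.

```python
import math


def mean_proportions(rows, eps=1e-8):
    """Normalise each row to proportions, then average each column."""
    normed = [[v / (sum(row) + eps) for v in row] for row in rows]
    ncols = len(rows[0])
    return [sum(r[c] for r in normed) / len(normed) for c in range(ncols)]


target = [[8.0, 2.0], [6.0, 4.0]]      # hypothetical target genes x tissues
background = [[5.0, 5.0], [5.0, 5.0]]  # hypothetical random background genes

target_mean = mean_proportions(target)
background_mean = mean_proportions(background)

# Positive values: the tissue is over-represented among the target genes.
log2_ratio = [math.log2(t / b) for t, b in zip(target_mean, background_mean)]
print([round(v, 3) for v in log2_ratio])
```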

def plot_l2_tissues(nearest_genes_glob, refdata, expr_db=None, expr_db_headers=None, ncols=3,

topn=TOPN, weights=False, nsamples=100, savefile=None, display_in_notebook=True):

if expr_db is None:

#Get all L2 tissue expression data to normalize the distribution of genes from peaks

l2_tissue_db_path = os.path.join(refdata,'gexplore_l2_tissue_expr.txt')

expr_db_headers, expr_db = load_expr_db(l2_tissue_db_path)

gene_lists = glob.glob(nearest_genes_glob)

if os.path.basename(gene_lists[0]).startswith('peaks'):

gene_lists.sort(key=lambda x:int(os.path.basename(x).split('.')[0].replace('peaks', '')))

elif os.path.basename(gene_lists[0]).startswith('topic'):

gene_lists.sort(key=lambda x:int(os.path.basename(x).split('.')[1].replace('rank', '')))

else:

gene_lists.sort(key=lambda x:os.path.basename(x).split('.')[0])

gene_list_data = [(os.path.basename(path).split('.')[0], get_gene_data(path, expr_db, topn=topn)) for path in gene_lists]

print('\n'.join(['{!s} nearest genes: found {!s} out of {!s} total'.format(fname, data.shape[0], gene_list_len)

for (fname, (data_idx, data, data_norm, gene_list_len, gene_weights)) in gene_list_data]))

l2_tissue_colors = [('Body wall muscle', '#e51a1e'),

('Intestinal/rectal muscle', '#e51a1e'),

('Pharyngeal muscle', '#377db8'),

('Pharyngeal epithelia', '#377db8'),

('Pharyngeal gland', '#377db8'),

('Seam cells', '#4eae4a'),

('Non-seam hypodermis', '#4eae4a'),

('Rectum', '#4eae4a'),

('Ciliated sensory neurons', '#984ea3'),

('Oxygen sensory neurons', '#984ea3'),

('Touch receptor neurons', '#984ea3'),

('Cholinergic neurons', '#984ea3'),

('GABAergic neurons', '#984ea3'),

('Pharyngeal neurons', '#984ea3'),

('flp-1(+) interneurons', '#984ea3'),

('Other interneurons', '#984ea3'),

('Canal associated neurons', '#984ea3'),

('Am/PH sheath cells', '#ff8000'),

('Socket cells', '#ff8000'),

('Excretory cells', '#ff8000'),

('Intestine', '#fcd800'),

('Germline', '#f97fc0'),

('Somatic gonad precursors', '#f97fc0'),

('Distal tip cells', '#f97fc0'),

('Vulval precursors', '#f97fc0'),

('Sex myoblasts', '#f97fc0'),

('Coelomocytes', '#a75629')]

idx_by_color = {}

for idx, (name, color) in enumerate(l2_tissue_colors):

try:

idx_by_color[color][1].append(idx)

except KeyError:

idx_by_color[color] = [name, [idx]]

# rs = numpy.random.RandomState(15321)

# random_subset = numpy.arange(expr_db.shape[0])

# rs.shuffle(random_subset)

# #num_to_select = int(numpy.mean([neuron_data.shape[0], emb_muscle_data.shape[0], l2_muscle_data.shape[0]]))

# num_to_select = len(random_subset)

# l2_tissue_db_subset = expr_db[random_subset[:num_to_select]][:,1:].astype(float)

# denom = numpy.sum(l2_tissue_db_subset, axis=1)[:,None] + 1e-8

# l2_tissue_db_norm = numpy.mean(l2_tissue_db_subset/denom, axis=0)

print('Tissue DB norm shape: {!s}'.format(expr_db.shape))

pyplot.rcParams.update({'xtick.labelsize':14,

'ytick.labelsize':14,

'xtick.major.pad':8})

ind = numpy.arange(len(expr_db_headers) - 1)

width = 0.66

axis_fontsize = 18

title_fontsize = 19

nrows = int(numpy.ceil(len(gene_list_data)/float(ncols)))

fig, axes = pyplot.subplots(nrows=nrows, ncols=ncols, figsize=(7 * ncols, 7 * nrows), sharey=True)

for idx, (fname, (data_idx, data, data_norm, gene_list_len, gene_weights)) in enumerate(gene_list_data):

ax_idx = (idx//ncols, idx%ncols) if nrows > 1 else idx

# to_plot = numpy.log2(numpy.mean(data_norm, axis=0)/l2_tissue_db_norm)

# import pdb; pdb.set_trace()

if weights is True:

# to_plot = numpy.log2(numpy.average(data_norm, axis=0, weights=gene_weights)/l2_tissue_db_norm)

to_plot, errs = sample_db(data_norm, expr_db, data_weights=gene_weights, nsamples=nsamples)

else:

# to_plot = numpy.log2(numpy.average(data_norm, axis=0, weights=None)/l2_tissue_db_norm)

to_plot, errs = sample_db(data_norm, expr_db, data_weights=None, nsamples=nsamples)

for idx, (name, color) in enumerate(l2_tissue_colors):

axes[ax_idx[0],ax_idx[1]].bar(ind[idx], to_plot[idx], width, yerr=errs[:,idx][:,None], color=color, label=name)

axes[ax_idx[0],ax_idx[1]].axhline(0, color='k')

axes[ax_idx[0],ax_idx[1]].set_xlim((-1, len(expr_db_headers)))

axes[ax_idx[0],ax_idx[1]].set_title('{!s}\n({!s} genes)\n'.format(fname, data.shape[0]), fontsize=title_fontsize)

axes[ax_idx[0],ax_idx[1]].set_ylabel('Log2 ratio of mean expr proportion\n(ATAC targets:Random genes)', fontsize=axis_fontsize)

axes[ax_idx[0],ax_idx[1]].set_xlabel('L2 tissues', fontsize=axis_fontsize)

axes[ax_idx[0],ax_idx[1]].set_xticks(ind + width/2)

axes[ax_idx[0],ax_idx[1]].set_xticklabels([])

#axes[0].set_xticklabels(expr_db_headers[1:], rotation=90)

if nrows > 1:

axes[0,ncols-1].legend(bbox_to_anchor=[1.0,1.0])

else:

axes[-1].legend(bbox_to_anchor=[1.0,1.0])

if display_in_notebook is True:

fig.tight_layout()

if savefile is not None:

fig.savefig(savefile, bbox_inches='tight')

def plot_stages(nearest_genes_glob, refdata, expr_db=None, expr_db_headers=None, ncols=3, topn=TOPN, weights=False):

if expr_db is None:

#Get all stages expression data to normalize the distribution of genes from peaks

stage_db_path = os.path.join(refdata,'gexplore_stage_expr.txt')

expr_db_headers, expr_db = load_expr_db(stage_db_path)

gene_lists = glob.glob(nearest_genes_glob)

if os.path.basename(gene_lists[0]).startswith('peaks'):

gene_lists.sort(key=lambda x:int(os.path.basename(x).split('.')[0].replace('peaks', '')))

elif os.path.basename(gene_lists[0]).startswith('topic'):

gene_lists.sort(key=lambda x:int(os.path.basename(x).split('.')[1].replace('rank', '')))

else:

gene_lists.sort(key=lambda x:os.path.basename(x).split('.')[0])

gene_list_data = [(os.path.basename(path).split('.')[0], get_gene_data(path, expr_db, topn=topn)) for path in gene_lists]

print('\n'.join(['{!s} nearest genes: found {!s} out of {!s} total'.format(fname, data.shape[0], gene_list_len)

for (fname, (data_idx, data, data_norm, gene_list_len, gene_weights)) in gene_list_data]))

rs = numpy.random.RandomState(15321)

random_subset = numpy.arange(expr_db.shape[0])

rs.shuffle(random_subset)

#num_to_select = int(numpy.mean([neuron_data.shape[0], emb_muscle_data.shape[0], l2_muscle_data.shape[0]]))

num_to_select = len(random_subset)

stage_db_subset = expr_db[random_subset[:num_to_select]][:,1:].astype(float)

denom = numpy.sum(stage_db_subset, axis=1)[:,None] + 1e-8

stage_db_norm = numpy.mean(stage_db_subset/denom, axis=0)

print('Stage DB norm shape: {!s}'.format(stage_db_norm.shape))

emb_idx = [expr_db_headers[1:].index(elt) for elt in expr_db_headers[1:]

if elt.endswith('m') or elt == '4-cell']

larva_idx = [expr_db_headers[1:].index(elt) for elt in expr_db_headers[1:]

if elt.startswith('L')]

adult_idx = [expr_db_headers[1:].index(elt) for elt in expr_db_headers[1:]

if 'adult' in elt]

dauer_idx = [expr_db_headers[1:].index(elt) for elt in expr_db_headers[1:]

if 'dauer' in elt]

# rest_idx = [expr_db_headers[1:].index(elt) for elt in expr_db_headers[1:]

# if not elt.endswith('m') and not elt.startswith('L') and elt != '4-cell']

pyplot.rcParams.update({'xtick.labelsize':20,

'ytick.labelsize':20,

'xtick.major.pad':8})

ind = numpy.arange(len(expr_db_headers) - 1)

width = 0.66

axis_fontsize = 25

title_fontsize = 27

nrows = int(numpy.ceil(len(gene_list_data)/float(ncols)))

fig, axes = pyplot.subplots(nrows=nrows, ncols=ncols, figsize=(7 * ncols, 7 * nrows), sharey=True)

for idx, (fname, (data_idx, data, data_norm, gene_list_len, gene_weights)) in enumerate(gene_list_data):

ax_idx = (idx//ncols, idx%ncols) if nrows > 1 else idx

# to_plot = numpy.log2(numpy.mean(data_norm, axis=0)/stage_db_norm)

if weights is True:

to_plot = numpy.log2(numpy.average(data_norm, axis=0, weights=gene_weights)/stage_db_norm)

else:

to_plot = numpy.log2(numpy.average(data_norm, axis=0, weights=None)/stage_db_norm)

axes[ax_idx].bar(ind[emb_idx], to_plot[emb_idx], width, color='orange', label='Embryo')

axes[ax_idx].bar(ind[larva_idx], to_plot[larva_idx], width, color='blue', label='Larva')

axes[ax_idx].bar(ind[adult_idx], to_plot[adult_idx], width, color='red', label='Adult')

axes[ax_idx].bar(ind[dauer_idx], to_plot[dauer_idx], width, color='green', label='Dauer')

# axes[ax_idx].bar(ind[rest_idx], to_plot[rest_idx], width, color='grey', label='Other')

axes[ax_idx].axhline(0, color='k')

axes[ax_idx].set_xlim((-1, len(expr_db_headers)))

axes[ax_idx].set_title('{!s}\n({!s} genes)\n'.format(fname, data.shape[0]), fontsize=title_fontsize)

axes[ax_idx].set_ylabel('Log2 Ratio of Mean Expr Proportion\n(ATAC Targets:All Genes)', fontsize=axis_fontsize)

axes[ax_idx].set_xlabel('Developmental Stage', fontsize=axis_fontsize)

axes[ax_idx].set_xticks(ind + width/2)

axes[ax_idx].set_xticklabels([])

fig.tight_layout()

def leiden_clustering(umap_res, resolution_range=(0,1), random_state=2, kdtree_dist='euclidean'):

tree = neighbors.KDTree(umap_res, metric=kdtree_dist)

vals, i, j = [], [], []

for idx in range(umap_res.shape[0]):

dist, ind = tree.query([umap_res[idx]], k=25)

vals.extend(list(dist.squeeze()))

j.extend(list(ind.squeeze()))

i.extend([idx] * len(ind.squeeze()))

print(len(vals))

ginput = sps.csc_matrix((numpy.array(vals), (numpy.array(i),numpy.array(j))),

shape=(umap_res.shape[0], umap_res.shape[0]))

sources, targets = ginput.nonzero()

edgelist = zip(sources.tolist(), targets.tolist())

G = ig.Graph(edges=list(edgelist))

optimiser = leidenalg.Optimiser()

optimiser.set_rng_seed(random_state)

profile = optimiser.resolution_profile(G, leidenalg.CPMVertexPartition, resolution_range=resolution_range, number_iterations=0)

print([len(elt) for elt in profile])

return profile

def write_peaks_and_map_to_genes(data_array, row_headers, c_labels, out_dir, refseq_exon_bed,

uniqueness_threshold=3, num_peaks=1000):

#write the peaks present in each cluster to bed files

if not os.path.isdir(out_dir):

os.makedirs(out_dir)

else:

local['rm']('-r', out_dir)

os.makedirs(out_dir)

#write a file of peaks per cluster in bed format

peak_files = []

for idx, cluster_name in enumerate(sorted(set(c_labels))):

cell_coords = numpy.where(c_labels == cluster_name)

peak_sums = numpy.mean(data_array[:,cell_coords[0]], axis=1)

peak_sort = numpy.argsort(peak_sums)

# sorted_peaks = peak_sums[peak_sort]

# print('Cluster {!s} -- Present Peaks: {!s}, '

# 'Min Peaks/Cell: {!s}, '

# 'Max Peaks/Cell: {!s}, '

# 'Peaks in {!s}th cell: {!s}'.format(cluster_name, numpy.sum(peak_sums > 0),

# sorted_peaks[0], sorted_peaks[-1],

# num_peaks, sorted_peaks[-num_peaks]))

out_tmp = os.path.join(out_dir, 'peaks{!s}.tmp.bed'.format(cluster_name))

out_path = out_tmp.replace('.tmp', '')

peak_indices = peak_sort[-num_peaks:]

with open(out_tmp, 'w') as out:

out.write('\n'.join('chr'+'\t'.join(elt) if not elt[0].startswith('chr') else '\t'.join(elt)

for elt in numpy.hstack([row_headers[peak_indices],

peak_sums[peak_indices,None].astype(str)])) + '\n')

(local['sort']['-k1,1', '-k2,2n', out_tmp] > out_path)()

os.remove(out_tmp)

peak_files.append(out_path)

bedtools, sort, cut, uniq, awk = local['bedtools'], local['sort'], local['cut'], local['uniq'], local['awk']

out_subdir = os.path.join(out_dir, 'nearest_genes')

if not os.path.isdir(out_subdir):

os.makedirs(out_subdir)

nearest_genes = []

for path in sorted(peak_files):

out_path = os.path.join(out_subdir, os.path.basename(path).replace('.bed', '.nearest_genes.txt'))

cmd = (bedtools['closest', '-D', 'b', '-io', '-id', '-a', path, '-b', refseq_exon_bed] |

cut['-f1,2,3,5,9,12'] | #fields are chrom, start, stop, peak sum, gene name, distance

awk['BEGIN{OFS="\t"}{if($6 > -1200){print($1, $2, $3, $6, $5, $4);}}'] |

sort['-k5,5', '-k6,6nr'] |

cut['-f5,6'])()

with open(out_path, 'w') as out:

prev_gene = None

for idx, line in enumerate(str(cmd).strip().split('\n')):

# compare the whole gene name: a prefix test would treat e.g. "abc-10" as a repeat of "abc-1"
if prev_gene is None or line.split()[0] != prev_gene:

# print(line)

line_split = line.strip().split()

prev_gene = line_split[0]

out.write(line + '\n')

nearest_genes.append(out_path)

all_genes = []

# for idx in range(len(nearest_genes)):

# nearest_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes.txt'.format(idx))

for nearest_genes_path in nearest_genes:

with open(nearest_genes_path) as lines_in:

all_genes.append([elt.strip().split() for elt in lines_in.readlines()])

# count_dict = Counter([i[0] for i in itertools.chain(*[all_genes[elt] for elt in range(len(nearest_genes))])])

count_dict = Counter([i[0] for i in itertools.chain(*all_genes)])

#print unique genes

for idx, nearest_genes_path in enumerate(nearest_genes):

unique_genes = [elt for elt in all_genes[idx] if count_dict[elt[0]] < uniqueness_threshold]

print(idx, len(unique_genes))

# unique_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes_lt_{!s}.txt'.

# format(idx, uniqueness_threshold))

unique_genes_path = os.path.splitext(nearest_genes_path)[0] + '_lt_{!s}.txt'.format(uniqueness_threshold)

with open(unique_genes_path, 'w') as out:

out.write('\n'.join(['\t'.join(elt) for elt in unique_genes]) + '\n')

#print shared genes

shared_genes_by_cluster = []

all_genes = [dict([(k,float(v)) for k,v in elt]) for elt in all_genes]

for gene_name in sorted(count_dict.keys()):

if count_dict[gene_name] < uniqueness_threshold:

continue

shared_genes_by_cluster.append([gene_name])

for cluster_dict in all_genes:

shared_genes_by_cluster[-1].append(cluster_dict.get(gene_name, 0.0))

shared_out = os.path.join(out_subdir, 'non-unique_genes_lt_{!s}.txt'.

format(uniqueness_threshold))

numpy.savetxt(shared_out, shared_genes_by_cluster, fmt='%s')

# fmt=('%s',)+tuple('%18f' for _ in range(len(all_genes))))

return

def write_peaks_and_map_to_genes2(data_array, peak_topic_specificity, row_headers, c_labels, out_dir,

refseq_exon_bed, uniqueness_threshold=3, num_peaks=1000):

# import pdb; pdb.set_trace()

#write the peaks present in each cluster to bed files

if not os.path.isdir(out_dir):

os.makedirs(out_dir)

else:

local['rm']('-r', out_dir)

os.makedirs(out_dir)

#write a file of peaks per cluster in bed format

peak_files = []

for idx, cluster_name in enumerate(sorted(set(c_labels))):

cell_coords = numpy.where(c_labels == cluster_name)

peaks_present = numpy.sum(data_array[cell_coords[0],:], axis=0)

out_tmp = os.path.join(out_dir, 'peaks{!s}.tmp.bed'.format(cluster_name))

out_path = out_tmp.replace('.tmp', '')

# peak_indices = peak_sort[-num_peaks:]

peak_scores = (peak_topic_specificity ** 2) * peaks_present

sort_idx = numpy.argsort(peak_scores[peaks_present.astype(bool)])

peak_indices = sort_idx[-num_peaks:]

with open(out_tmp, 'w') as out:

# out.write('\n'.join('chr'+'\t'.join(elt) if not elt[0].startswith('chr') else '\t'.join(elt)

# for elt in numpy.hstack([row_headers[peaks_present.astype(bool)][peak_indices],

# peak_scores[peaks_present.astype(bool)][peak_indices,None].astype(str)])) + '\n')

out.write('\n'.join('\t'.join(elt) for elt in

numpy.hstack([row_headers[peaks_present.astype(bool)][peak_indices],

peak_scores[peaks_present.astype(bool)][peak_indices,None].astype(str)])) + '\n')

(local['sort']['-k1,1', '-k2,2n', out_tmp] > out_path)()

os.remove(out_tmp)

peak_files.append(out_path)

bedtools, sort, cut, uniq, awk = local['bedtools'], local['sort'], local['cut'], local['uniq'], local['awk']

out_subdir = os.path.join(out_dir, 'nearest_genes')

if not os.path.isdir(out_subdir):

os.makedirs(out_subdir)

nearest_genes = []

for path in sorted(peak_files):

out_path = os.path.join(out_subdir, os.path.basename(path).replace('.bed', '.nearest_genes.txt'))

cmd = (bedtools['closest', '-D', 'b', '-io', '-id', '-a', path, '-b', refseq_exon_bed] |

cut['-f1,2,3,5,9,12'] | #fields are chrom, start, stop, peak sum, gene name, distance

awk['BEGIN{OFS="\t"}{if($6 > -1200){print($1, $2, $3, $6, $5, $4);}}'] |

sort['-k5,5', '-k6,6nr'] |

cut['-f5,6'])()

with open(out_path, 'w') as out:

prev_gene = None

for idx, line in enumerate(str(cmd).strip().split('\n')):

# compare the whole gene name: a prefix test would treat e.g. "abc-10" as a repeat of "abc-1"
if prev_gene is None or line.split()[0] != prev_gene:

# print(line)

line_split = line.strip().split()

prev_gene = line_split[0]

out.write(line + '\n')

nearest_genes.append(out_path)

    # Load the (gene, score) rows written for each cluster
    all_genes = []
    # for idx in range(len(nearest_genes)):
    #     nearest_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes.txt'.format(idx))
    for nearest_genes_path in nearest_genes:
        with open(nearest_genes_path) as lines_in:
            all_genes.append([elt.strip().split() for elt in lines_in.readlines()])

    # Count the number of clusters in which each gene appears
    # count_dict = Counter([i[0] for i in itertools.chain(*[all_genes[elt] for elt in range(len(nearest_genes))])])
    count_dict = Counter([i[0] for i in itertools.chain(*all_genes)])

    # Write the genes found in fewer than uniqueness_threshold clusters, one file per cluster
    for idx, nearest_genes_path in enumerate(nearest_genes):
        unique_genes = [elt for elt in all_genes[idx] if count_dict[elt[0]] < uniqueness_threshold]
        print(idx, len(unique_genes))
        # unique_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes_lt_{!s}.txt'.
        #                                  format(idx, uniqueness_threshold))
        unique_genes_path = os.path.splitext(nearest_genes_path)[0] + '_lt_{!s}.txt'.format(uniqueness_threshold)
        with open(unique_genes_path, 'w') as out:
            out.write('\n'.join(['\t'.join(elt) for elt in unique_genes]) + '\n')

    # Write the shared genes (found in at least uniqueness_threshold clusters)
    # as one row per gene with its score in every cluster (0.0 where absent)
    shared_genes_by_cluster = []
    all_genes = [dict([(k,float(v)) for k,v in elt]) for elt in all_genes]
    for gene_name in sorted(count_dict.keys()):
        if count_dict[gene_name] < uniqueness_threshold:
            continue
        shared_genes_by_cluster.append([gene_name])
        for cluster_dict in all_genes:
            shared_genes_by_cluster[-1].append(cluster_dict.get(gene_name, 0.0))
    shared_out = os.path.join(out_subdir, 'non-unique_genes_lt_{!s}.txt'.
                              format(uniqueness_threshold))
    numpy.savetxt(shared_out, shared_genes_by_cluster, fmt='%s')
    # fmt=('%s',)+tuple('%18f' for _ in range(len(all_genes))))
    return

def write_peaks_and_map_to_genes3(data_array, row_headers, c_labels, out_dir,
                                  refseq_exon_bed, uniqueness_threshold=3, num_peaks=1000):
    # import pdb; pdb.set_trace()
    # Start from an empty output directory (an existing one is removed first)
    if not os.path.isdir(out_dir):
        os.makedirs(out_dir)
    else:
        local['rm']('-r', out_dir)
        os.makedirs(out_dir)

    # Sum the rows of data_array that belong to each cluster, then TF-IDF-weight the aggregated profiles
    agg_clusters = numpy.vstack([numpy.sum(data_array[numpy.where(c_labels == cluster_idx)[0]], axis=0)
                                 for cluster_idx in sorted(set(c_labels))])
    tfidf = TfidfTransformer(norm='l2', use_idf=True, smooth_idf=True, sublinear_tf=False)
    agg_clusters_tfidf = tfidf.fit_transform(agg_clusters).toarray()

    # Write the num_peaks highest-scoring peaks of each cluster to a coordinate-sorted BED file
    peak_files = []
    for idx, cluster_name in enumerate(sorted(set(c_labels))):
        out_tmp = os.path.join(out_dir, 'peaks{!s}.tmp.bed'.format(cluster_name))
        out_path = out_tmp.replace('.tmp', '')
        sort_idx = numpy.argsort(agg_clusters_tfidf[idx])
        peak_indices = sort_idx[-num_peaks:]
        with open(out_tmp, 'w') as out:
            # out.write('\n'.join('chr'+'\t'.join(elt) if not elt[0].startswith('chr') else '\t'.join(elt)
            #                     for elt in numpy.hstack([row_headers[peaks_present.astype(bool)][peak_indices],
            #                                              peak_scores[peaks_present.astype(bool)][peak_indices,None].astype(str)])) + '\n')
            out.write('\n'.join('\t'.join(elt) for elt in
                                numpy.hstack([row_headers[peak_indices],
                                              agg_clusters_tfidf[idx][peak_indices,None].astype(str)])) + '\n')
        (local['sort']['-k1,1', '-k2,2n', out_tmp] > out_path)()
        os.remove(out_tmp)
        peak_files.append(out_path)

    bedtools, sort, cut, uniq, awk = local['bedtools'], local['sort'], local['cut'], local['uniq'], local['awk']
    out_subdir = os.path.join(out_dir, 'nearest_genes')
    if not os.path.isdir(out_subdir):
        os.makedirs(out_subdir)

    nearest_genes = []
    for path in sorted(peak_files):
        out_path = os.path.join(out_subdir, os.path.basename(path).replace('.bed', '.nearest_genes.txt'))
        cmd = (bedtools['closest', '-D', 'b', '-io', '-id', '-a', path, '-b', refseq_exon_bed] |
               cut['-f1,2,3,5,9,12'] |  # fields are chrom, start, stop, peak sum, gene name, distance
               awk['BEGIN{OFS="\t"}{if($6 > -1200){print($1, $2, $3, $6, $5, $4);}}'] |
               sort['-k5,5', '-k6,6nr'] |
               cut['-f5,6'])()
        # Rows are sorted by gene name, then by peak score (descending),
        # so the first row seen for a gene is its highest-scoring peak
        with open(out_path, 'w') as out:
            prev_gene = None
            for idx, line in enumerate(str(cmd).strip().split('\n')):
                line_split = line.strip().split()
                # Compare full gene names so that names sharing a prefix stay distinct; skip blank lines
                if line_split and line_split[0] != prev_gene:
                    # print(line)
                    prev_gene = line_split[0]
                    out.write(line + '\n')
        nearest_genes.append(out_path)

    # Load the (gene, score) rows written for each cluster
    all_genes = []
    # for idx in range(len(nearest_genes)):
    #     nearest_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes.txt'.format(idx))
    for nearest_genes_path in nearest_genes:
        with open(nearest_genes_path) as lines_in:
            all_genes.append([elt.strip().split() for elt in lines_in.readlines()])

    # Count the number of clusters in which each gene appears
    # count_dict = Counter([i[0] for i in itertools.chain(*[all_genes[elt] for elt in range(len(nearest_genes))])])
    count_dict = Counter([i[0] for i in itertools.chain(*all_genes)])

    # Write the genes found in fewer than uniqueness_threshold clusters, one file per cluster
    for idx, nearest_genes_path in enumerate(nearest_genes):
        unique_genes = [elt for elt in all_genes[idx] if count_dict[elt[0]] < uniqueness_threshold]
        print(idx, len(unique_genes))
        # unique_genes_path = os.path.join(out_subdir, 'peaks{!s}.nearest_genes_lt_{!s}.txt'.
        #                                  format(idx, uniqueness_threshold))
        unique_genes_path = os.path.splitext(nearest_genes_path)[0] + '_lt_{!s}.txt'.format(uniqueness_threshold)
        with open(unique_genes_path, 'w') as out:
            out.write('\n'.join(['\t'.join(elt) for elt in unique_genes]) + '\n')

    # Write the shared genes (found in at least uniqueness_threshold clusters)
    # as one row per gene with its score in every cluster (0.0 where absent)
    shared_genes_by_cluster = []
    all_genes = [dict([(k,float(v)) for k,v in elt]) for elt in all_genes]
    for gene_name in sorted(count_dict.keys()):
        if count_dict[gene_name] < uniqueness_threshold:
            continue
        shared_genes_by_cluster.append([gene_name])
        for cluster_dict in all_genes:
            shared_genes_by_cluster[-1].append(cluster_dict.get(gene_name, 0.0))
    shared_out = os.path.join(out_subdir, 'non-unique_genes_lt_{!s}.txt'.
                              format(uniqueness_threshold))
    numpy.savetxt(shared_out, shared_genes_by_cluster, fmt='%s')
    # fmt=('%s',)+tuple('%18f' for _ in range(len(all_genes))))
    return

# -
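
# The gene-level bookkeeping done by the `write_peaks_and_map_to_genes*` functions above
# (keep one row per gene, then separate genes by how many clusters they occur in) can be
# tried on toy data without bedtools. This is only a minimal sketch: the helper names
# `keep_first_per_gene` and `split_unique_genes` and all `demo_*` values are hypothetical,
# and the rows are assumed to be (gene, score) pairs already sorted by gene name.

# +
from collections import Counter
import itertools


def keep_first_per_gene(rows):
    """Keep the first (gene, score) row of each run of identical gene names."""
    kept, prev_gene = [], None
    for gene, score in rows:
        # Full-name comparison: 'ABC' and 'ABC1' are treated as different genes
        if gene != prev_gene:
            kept.append((gene, score))
            prev_gene = gene
    return kept


def split_unique_genes(genes_by_cluster, uniqueness_threshold=3):
    """Per cluster, keep the genes that occur in fewer than uniqueness_threshold clusters."""
    counts = Counter(gene for gene, _ in itertools.chain(*genes_by_cluster))
    return [[(gene, score) for gene, score in cluster if counts[gene] < uniqueness_threshold]
            for cluster in genes_by_cluster]


# Toy input: 'ABC' appears twice in one cluster's rows and in two different clusters
demo_rows = [('ABC', 9.0), ('ABC', 2.0), ('ABC1', 5.0)]
demo_kept = keep_first_per_gene(demo_rows)
demo_unique = split_unique_genes([[('ABC', 9.0)], [('ABC', 4.0), ('XYZ', 1.0)]],
                                 uniqueness_threshold=2)
print(demo_kept)
print(demo_unique)
# -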

# ## Peaks model
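
# The loading code in this section appears to treat each `.bow` file as a short header
# (skipped with `skiprows=3`) followed by 1-based (row, column, count) triplets. The cell
# below is a small sketch of that conversion on made-up numbers; the `demo_*` names and
# values are hypothetical, and `sps` is assumed to be the alias for `scipy.sparse`.

# +
import numpy
import scipy.sparse as sps

# Toy triplets standing in for the body of a .bow file: (row, column, count), 1-based indices
demo_triplets = numpy.array([[1, 1, 2],
                             [1, 3, 1],
                             [2, 2, 5]])

# Subtract 1 from both index columns to obtain the 0-based indices that csr_matrix expects
demo_matrix = sps.csr_matrix((demo_triplets[:, 2],
                              (demo_triplets[:, 0] - 1, demo_triplets[:, 1] - 1)))
print(demo_matrix.shape)
print(demo_matrix.toarray())
# -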

# +

# read in sc peak table
peaktable_path = '../tissue_analysis/glia/filtered_peaks_iqr4.0_low_cells.bow'
peak_data_sparse = numpy.loadtxt(peaktable_path, dtype=int, skiprows=3)
peak_data = sps.csr_matrix((peak_data_sparse[:,2], (peak_data_sparse[:,0] - 1, peak_data_sparse[:,1] - 1)))
cell_names_path = '../tissue_analysis/glia/filtered_peaks_iqr4.0_low_cells.indextable.txt'
cell_names = numpy.loadtxt(cell_names_path, dtype=object)[:,0]
peak_names_path = '../tissue_analysis/glia/filtered_peaks_iqr4.0_low_cells.extra_cols.bed'
peak_row_headers = numpy.loadtxt(peak_names_path, dtype=object)
#chr_regex = re.compile('[:-]')
peak_row_headers = numpy.hstack([peak_row_headers, numpy.array(['name'] * peak_row_headers.shape[0])[:,None]])
print(peak_data.shape)

orig_peaktable_path = '../tissue_analysis/glia/glia_all_peaks.bow'
orig_peak_data_sparse = numpy.loadtxt(orig_peaktable_path, dtype=int, skiprows=3)
orig_peak_data = sps.csr_matrix((orig_peak_data_sparse[:,2],
                                 (orig_peak_data_sparse[:,0] - 1, orig_peak_data_sparse[:,1] - 1)))
orig_cell_names_path = '../tissue_analysis/glia/glia_all_peaks.zeros_filtered.indextable.txt'
orig_cell_names = numpy.loadtxt(orig_cell_names_path, dtype=object)[:,0]
orig_peak_names_path = '../tissue_analysis/glia/glia_all_peaks.zeros_filtered.bed'
orig_peak_row_headers = numpy.loadtxt(orig_peak_names_path, dtype=object)