code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/", "height": 255} colab_type="code" id="4WBvY6GVYBtS" outputId="3d8e3fd4-5c05-4d62-c22a-a38bb15ee8d9"

from math import sqrt

import tensorflow as tf

from tensorflow import keras

import pandas as pd

from tensorflow.keras import Sequential

from tensorflow.keras.layers import LSTM, Dense, Dropout, Conv1D, GRU

from tensorflow.keras.losses import mean_squared_error

from numpy import concatenate

from sklearn.preprocessing import MinMaxScaler

import matplotlib.pyplot as plt

# Reframe a time series as a supervised-learning dataset
def series_to_supervised(data, columns, n_in=1, n_out=1, dropnan=True):
    n_vars = 1 if isinstance(data, list) else data.shape[1]
    df = pd.DataFrame(data)
    cols, names = list(), list()
    # input sequence (t-n, ... t-1)
    for i in range(n_in, 0, -1):
        cols.append(df.shift(i))
        names += [('%s%d(t-%d)' % (columns[j], j + 1, i))
                  for j in range(n_vars)]
    # forecast sequence (t, t+1, ... t+n)
    for i in range(0, n_out):
        cols.append(df.shift(-i))
        if i == 0:
            names += [('%s%d(t)' % (columns[j], j + 1)) for j in range(n_vars)]
        else:
            names += [('%s%d(t+%d)' % (columns[j], j + 1, i))
                      for j in range(n_vars)]
    # put it all together
    agg = pd.concat(cols, axis=1)
    agg.columns = names
    # drop rows with NaN values (the shifts leave NaNs at the edges)
    if dropnan:
        agg = agg.dropna()
    return agg
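# A minimal NumPy-only sketch of the same lag-based reframing, to show what the
# shifted columns look like on a toy array. `make_lagged` and `toy` are hypothetical
# names used for illustration only; they are not part of the notebook.

```python
import numpy as np

def make_lagged(values, n_in=1):
    """Pair each row with the n_in rows that precede it (hypothetical helper)."""
    values = np.asarray(values, dtype=float)
    if values.ndim == 1:
        values = values.reshape(-1, 1)
    n_rows = values.shape[0]
    # Column blocks are ordered t-n_in, ..., t-1, t.
    # Rows without a full history are dropped, like dropna() after shift().
    blocks = [values[n_in - lag: n_rows - lag] for lag in range(n_in, -1, -1)]
    return np.hstack(blocks)

toy = np.array([[1, 10], [2, 20], [3, 30], [4, 40]])
lagged = make_lagged(toy, n_in=1)
print(lagged)
```

# Each output row holds the previous time step followed by the current one, so the
# last column can serve as the prediction target.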

dataset = pd.read_csv(

'Machine_usage_groupby.csv')

dataset_columns = dataset.columns

values = dataset.values

print(dataset)

# Normalize all features to the range [0, 1]

scaler = MinMaxScaler(feature_range=(0, 1))

scaled = scaler.fit_transform(values)

# Reframe as a supervised-learning problem

reframed = series_to_supervised(scaled, dataset_columns, 1, 1)

values = reframed.values

# Split into training and test sets

n_train_hours = 20000

train = values[:n_train_hours, :]

test = values[n_train_hours:, :]

# Split each set into inputs (all but the last column) and target (last column)

train_x, train_y = train[:, :-1], train[:, -1]

test_x, test_y = test[:, :-1], test[:, -1]

# To feed this data to a recurrent layer (LSTM/GRU), reshape it into the 3D format [samples, timesteps, features]

train_X = train_x.reshape((train_x.shape[0], 1, train_x.shape[1]))

test_X = test_x.reshape((test_x.shape[0], 1, test_x.shape[1]))
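# The reshape above can be checked on a small array. This is only an illustrative
# sketch; `flat` and `cube` are made-up names and the values are arbitrary.

```python
import numpy as np

flat = np.arange(12, dtype=float).reshape(4, 3)          # 4 samples, 3 features
cube = flat.reshape((flat.shape[0], 1, flat.shape[1]))   # one timestep per sample
print(flat.shape, "->", cube.shape)
```

# With a single timestep, each sample is a sequence of length one, so the recurrent
# layers see no history beyond the lagged features already packed into the row.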

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} colab_type="code" id="CHM-UGeYYgZN" outputId="e7dd414f-87a9-440d-b685-eab153b4d915"

model = Sequential()

model.add(Conv1D(filters=32, kernel_size=5,

strides=1, padding="causal",

activation="relu"))

model.add(

GRU(

32,

input_shape=(

train_X.shape[1],

train_X.shape[2]),

return_sequences=True))

model.add(GRU(16, input_shape=(train_X.shape[1], train_X.shape[2])))

model.add(Dense(16, activation="relu"))

model.add(Dense(1))

model.compile(loss=tf.keras.losses.Huber(),

optimizer='adam',

metrics=["mse"])

history = model.fit(

train_X,

train_y,

epochs=50,

batch_size=72,

validation_data=(

test_X,

test_y),

verbose = 2)
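# The model is compiled with the Huber loss. As a rough sketch of what that loss
# computes per residual (assuming the Keras default delta=1.0), the hypothetical
# helper below is quadratic for small errors and linear for large ones.

```python
def huber(error, delta=1.0):
    """Huber loss for a single residual (illustrative, not the Keras implementation)."""
    abs_err = abs(error)
    if abs_err <= delta:
        return 0.5 * error * error            # quadratic region, like MSE
    return delta * (abs_err - 0.5 * delta)    # linear region, like MAE

for e in (0.5, 1.0, -2.0):
    print(e, huber(e))
```

# The linear tail makes training less sensitive to occasional spikes in the target
# than plain mean squared error would be.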

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} colab_type="code" id="8uPW-5baYiLN" outputId="133dd57f-d8ab-4957-d7bc-32f2520ebb83"

# Plot the training history

plt.plot(history.history['loss'], label='train')

plt.plot(history.history['val_loss'], label='test')

plt.legend()

plt.show()

# make the prediction

yHat = model.predict(test_X)

inv_yHat = concatenate((yHat, test_x[:, 1:]), axis=1) # concatenate the arrays

inv_yHat = inv_yHat[:, 0]

test_y = test_y.reshape((len(test_y), 1))

inv_y = concatenate((test_y, test_x[:, 1:]), axis=1)

inv_y = inv_y[:, 0]

rmse = sqrt(mean_squared_error(inv_yHat, inv_y))

print('Test RMSE: %.8f' % rmse)

mse = mean_squared_error(inv_yHat, inv_y)

print('Test MSE: %.8f' % mse)

yhat = model.predict(test_X)

test_X_reshaped = test_X.reshape((test_X.shape[0], test_X.shape[2]))

inv_yhat = concatenate((yhat, yhat, test_X_reshaped[:, 1:]), axis=1)

inv_yhat = inv_yhat[:, 0]

test_y = test_y.reshape((len(test_y), 1))

inv_y = concatenate((test_y, test_y, test_X_reshaped[:, 1:]), axis=1)

inv_y = inv_y[:, 0]

plt.plot(inv_yhat, label='prediction')

plt.plot(inv_y, label='real')

plt.xlabel('time')

plt.ylabel('cpu_usage_percent')

plt.legend()

plt.show()

plt.plot(inv_yhat[:500], label='prediction')

plt.plot(inv_y[:500], label='real_cpu_usage_percent')

plt.xlabel('time')

plt.ylabel('cpu_usage_percent')

plt.legend()

plt.show()

plt.plot(inv_yhat[:50], label='prediction')

plt.plot(inv_y[:50], label='real_cpu_usage_percent')

plt.xlabel('time')

plt.ylabel('cpu_usage_percent')

plt.legend()

plt.show()

| Project_Alibaba_workload/E50_Alibaba_cluster_predict_compare/Train_20000/COV+GRU+GRU+D+D+E50/.ipynb_checkpoints/COV+GRU+GRU+D+D+E50-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import matplotlib.pyplot as plt

import miepython as mp

import matplotlib as mpl

mpl.rcParams["xtick.direction"] = "in"

mpl.rcParams["ytick.direction"] = "in"

mpl.rcParams["lines.markeredgecolor"] = "k"

mpl.rcParams["lines.markeredgewidth"] = 1

mpl.rcParams["figure.dpi"] = 200

from matplotlib import rc

rc('font', family='serif')

rc('text', usetex=True)

rc('xtick', labelsize='medium')

rc('ytick', labelsize='medium')

def cm2inch(value):

return value/2.54

link = r"https://refractiveindex.info/tmp/data/organic/(C8H8)n%20-%20polystyren/Zhang.txt"

poly = np.genfromtxt(link, delimiter='\t')

N = len(poly)//2

poly_lam = poly[1:N,0][:40]

poly_mre = poly[1:N,1][:40]

poly_mim = poly[N+1:,1][:40]

poly_mim

# +

plt.figure(figsize=( cm2inch(16),cm2inch(8)))

plt.plot(poly_lam*1000,poly_mre,color='tab:blue')

#plt.xlim(300,800)

#plt.ylim(-,3)

plt.xlabel('Wavelength (nm)')

plt.ylabel('$n_r$')

#plt.text(350, 1.2, '$m_{re}$', color='blue', fontsize=14)

#plt.text(350, 2.2, '$m_{im}$', color='red', fontsize=14)

ax=plt.gca()

ax.spines['left'].set_color("red")

ax2=ax.twinx()

plt.semilogy(poly_lam*1000,poly_mim,color='tab:red')

plt.ylabel('$n_i$', color = "tab:red")

plt.tight_layout()

plt.savefig("refractive_index.pdf")

# +

plt.semilogy(poly_lam*1000,poly_mim,color='red')

#plt.xlim(300,800)

#plt.ylim(-,3)

plt.xlabel('Wavelength (nm)')

plt.ylabel('Refractive Index')

#plt.text(350, 1.2, '$m_{re}$', color='blue', fontsize=14)

#plt.text(350, 2.2, '$m_{im}$', color='red', fontsize=14)

plt.title('Imaginary part of the Refractive Index of Polystyrene')

plt.show()

# +

r = 1.5 #radius in microns

x = 2*np.pi*r/poly_lam;

m = poly_mre - 1.0j * poly_mim

qext, qsca, qback, g = mp.mie(m,x)

absorb = (qext - qsca) * np.pi * r**2

scatt = qsca * np.pi * r**2

extinct = qext* np.pi * r**2

plt.plot(poly_lam*1000,absorb, label=r"$\sigma_{abs}$")
plt.plot(poly_lam*1000,scatt, label=r"$\sigma_{sca}$")
plt.plot(poly_lam*1000,extinct, "--", label=r"$\sigma_{ext}$")

#plt.text(350, 0.35,'$\sigma_{abs}$', color='blue', fontsize=14)

#plt.text(350, 0.54,'$\sigma_{sca}$', color='red', fontsize=14)

#plt.text(350, 0.84,'$\sigma_{ext}$', color='green', fontsize=14)

plt.xlabel("Wavelength (nm)")

plt.ylabel(r"Cross Section ($\mu$m$^2$)")
plt.title(r"Cross Sections for %.1f$\mu$m Polystyrene Spheres" % (r*2))

plt.xlim(400,800)

plt.legend()

plt.show()

# +

x = 2*np.pi*r/poly_lam;

m = poly_mre - 1.0j * poly_mim

qext, qsca, qback, g = mp.mie(m,x)

qpr = qext - g*qsca

plt.plot(poly_lam*1000,qpr)

plt.xlabel("Wavelength (nm)")

plt.ylabel("Efficiency $Q_{pr}$")

plt.title(r"Radiation Pressure Efficiency for %.1f$\mu$m Polystyrene Spheres" % (r*2))

plt.show()

# -

r0 = 1.5e-6

Cpr = np.pi * r0 *r0 * qpr

E0 = 4.5e-3 / (np.pi * 1.75e-3 ** 2 )

c = 299792458 / 1.33

# ## Radiation Pressure

#

# The radiation pressure efficiency is given by [e.g., Kerker, p. 94]

#

# $$

# Q_{pr}=Q_{ext}-g Q_{sca}

# $$

#

# and is the momentum given to the scattering particle [van de Hulst, p. 13] in the direction of the incident wave. The radiation pressure cross section $C_{pr}$ is just the efficiency multiplied by the geometric cross section

#

# $$

# C_{pr} = \pi r^2 Q_{pr}

# $$

#

# The radiation pressure cross section $C_{pr}$ can be interpreted as the area of a black wall that would receive the same force from the same incident wave. The actual force on the particle is

#

# $$

# F = E_0 \frac{C_{pr}}{c}

# $$

#

# where $E_0$ is the irradiance (W/m$^2$) on the sphere and $c$ is the velocity of the radiation in the medium.

#
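# As a worked example of the force formula, the sketch below plugs in the beam
# parameters used in this notebook (4.5 mW over a 1.75 mm beam radius, water with
# n = 1.33, a 1.5 micron sphere). The value Q_pr = 1 is a placeholder chosen for
# illustration, not a Mie result, and `radiation_force` is a hypothetical helper.

```python
import math

def radiation_force(q_pr, radius_m, power_w, beam_radius_m, n_medium=1.33):
    """F = E0 * C_pr / c for a uniform beam (illustrative sketch)."""
    c_pr = math.pi * radius_m**2 * q_pr                    # C_pr = pi r^2 Q_pr, in m^2
    irradiance = power_w / (math.pi * beam_radius_m**2)    # E0 in W/m^2
    c_medium = 299792458 / n_medium                        # speed of light in the medium
    return irradiance * c_pr / c_medium                    # force in newtons

force_fn = radiation_force(1.0, 1.5e-6, 4.5e-3, 1.75e-3) * 1e15
print("Force for Q_pr = 1: %.4f fN" % force_fn)
```

# Because the force is linear in $Q_{pr}$, the actual force at a given wavelength is
# this number scaled by the Mie efficiency computed above.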

F = E0 * Cpr / c

plt.plot(poly_lam*1000,F*1e15)

plt.xlabel("Wavelength (nm)")

plt.ylabel("Force (fN)")

# +

Fs = []

rs = np.linspace(0.5, 3, 100)

I = np.argmin(abs(poly_lam*1000 - 532))

for r in rs:
    x = 2*np.pi*r/poly_lam
    m = poly_mre - 1.0j * poly_mim
    qext, qsca, qback, g = mp.mie(m,x)
    qpr = qext - g*qsca
    r0 = r * 1e-6
    Cpr = np.pi * r0 *r0 * qpr
    E0 = 4.5e-3 / (np.pi * 1.75e-3 ** 2 )
    c = 299792458 / 1.33
    F = E0 * Cpr / c
    Fs.append(F[I]*1e15)

# +

plt.plot(rs, Fs)

#plt.title(r"Optical force for a 532 nm plane wave on Polystyrene Spheres")
plt.xlabel(r"$a$ ($\mu$m)")
plt.ylabel(r"$F_\mathrm{opt}$ (fN)")

# -

| 02_body/chapter2/images/Calcul_force_optique/.ipynb_checkpoints/Calcul optical force-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/", "height": 72} id="0uUeDqA32K9o" outputId="27b66765-ee49-4504-f32e-f34776c4f3b4"

import urllib.parse

from IPython.display import Markdown as md

### change to reflect your notebook

_nb_loc = "04_problem_types/10f_image_captioning.ipynb"

_nb_title = "Image Captions"

_nb_message = """

This notebook shows you how to train an ML model to generate captions for images. The training dataset is the COCO large-scale object detection, segmentation, and captioning dataset.

"""

### no need to change any of this

_icons=["https://raw.githubusercontent.com/GoogleCloudPlatform/practical-ml-vision-book/master/logo-cloud.png", "https://www.tensorflow.org/images/colab_logo_32px.png", "https://www.tensorflow.org/images/GitHub-Mark-32px.png", "https://www.tensorflow.org/images/download_logo_32px.png"]

_links=["https://console.cloud.google.com/ai-platform/notebooks/deploy-notebook?" + urllib.parse.urlencode({"name": _nb_title, "download_url": "https://github.com/GoogleCloudPlatform/practical-ml-vision-book/raw/master/"+_nb_loc}), "https://colab.research.google.com/github/GoogleCloudPlatform/practical-ml-vision-book/blob/master/{0}".format(_nb_loc), "https://github.com/GoogleCloudPlatform/practical-ml-vision-book/blob/master/{0}".format(_nb_loc), "https://raw.githubusercontent.com/GoogleCloudPlatform/practical-ml-vision-book/master/{0}".format(_nb_loc)]

md("""<table class="tfo-notebook-buttons" align="left"><td><a target="_blank" href="{0}"><img src="{4}"/>Run in AI Platform Notebook</a></td><td><a target="_blank" href="{1}"><img src="{5}" />Run in Google Colab</a></td><td><a target="_blank" href="{2}"><img src="{6}" />View source on GitHub</a></td><td><a href="{3}"><img src="{7}" />Download notebook</a></td></table><br/><br/><h1>{8}</h1>{9}""".format(_links[0], _links[1], _links[2], _links[3], _icons[0], _icons[1], _icons[2], _icons[3], _nb_title, _nb_message))

# + [markdown] id="fa3LRWv9BNk9"

# ## Enable GPU

# This notebook and pretty much every other notebook in this repository will run faster if you are using a GPU.

#

# On Colab:

# * Navigate to Edit→Notebook Settings

# * Select GPU from the Hardware Accelerator drop-down

#

# On Cloud AI Platform Notebooks:

# * Navigate to https://console.cloud.google.com/ai-platform/notebooks

# * Create an instance with a GPU or select your instance and add a GPU

#

# Next, we'll confirm that we can connect to the GPU with tensorflow:

# + colab={"base_uri": "https://localhost:8080/"} id="pL9G21yy2K2Q" outputId="27f2eeab-a187-4a90-cf67-4ae594e8e9c0"

# Not needed in Colab

# %pip install --quiet tfds-nightly # In Nov 2020, coco_captions is available only in the nightly build

# %pip uninstall -y h5py

# %pip install --quiet 'h5py < 3.0.0' # https://github.com/tensorflow/tensorflow/issues/44467

# + colab={"base_uri": "https://localhost:8080/"} id="G20XL3WTBXhj" outputId="a928c30a-f6d6-4120-9da1-f4f5753b9821"

import tensorflow as tf

print(tf.version.VERSION)

device_name = tf.test.gpu_device_name()

if device_name != '/device:GPU:0':
    raise SystemError('GPU device not found')

print('Found GPU at: {}'.format(device_name))

# + [markdown] id="zr6sJGEIBe7D"

# ## Read and visualize dataset

#

# We will use the TensorFlow datasets capability to read the [COCO captions](https://www.tensorflow.org/datasets/catalog/coco_captions) dataset.

# This version contains images, bounding boxes, labels, and captions from COCO 2014, split into the subsets defined by Karpathy and Li (2015). It also takes
# care of some data quality issues with the original dataset (for example, some
# of the images in the original dataset did not have captions).

#

# **Note**: This dataset is too large to store in an ephemeral location.

# Therefore, I'm storing the data in the GCS bucket corresponding to this book.

# If you access it from a Notebook outside the US, it will be (a) slow and

# (b) subject to a network charge.

# + id="U2WQtNeGBbMD"

GCS_DIR="gs://practical-ml-vision-book/tdfs_cache"

# Change these to control the accuracy/speed

VOCAB_SIZE = 5000 # use fewer words to speed up convergence

ATTN_UNITS = 512 # size of dense layer in Attention; larger is more fine-grained

EPOCHS = 20 # train longer for greater accuracy

BATCH_SIZE = 64 # larger batch sizes lead to smoother convergence, but need more memory

EARLY_STOP_THRESH = 0.0001 # stop once loss improvement is less than this value

EMBED_DIM = 256 # embedding dimension for both images and words

# This is what Inception was trained with, so don't change unless you

# use a different pre-trained model. Inception takes (299, 299, 3) as

# input and provides (64, 2048) as output

IMG_HEIGHT = 299

IMG_WIDTH = 299

IMG_CHANNELS = 3

FEATURES_SHAPE = 2048

ATTN_FEATURES_SHAPE = 64

# + id="8JxC6DhwAcw5"

import matplotlib.pylab as plt

import numpy as np

import tensorflow as tf

import tensorflow_datasets as tfds

def filter_for_crowds(example):

return (tf.math.count_nonzero(example['objects']['is_crowd']) > 0)

def get_image_label(example):

captions = example['captions']['text'] # all the captions

img_id = example['image/id']

img = example['image']

img = tf.image.resize(img, (IMG_HEIGHT, IMG_WIDTH)) # inception size

img = tf.keras.applications.inception_v3.preprocess_input(img)

return {

'image_tensor': img,

'image_id': img_id,

'captions': captions

}

trainds = tfds.load('coco_captions',

split='train',

shuffle_files=False,

data_dir=GCS_DIR)

# reduce number of images in one of these ways

trainds = trainds.filter(filter_for_crowds)

#trainds = trainds.take(10000)

trainds = trainds.map(get_image_label)

# + colab={"base_uri": "https://localhost:8080/", "height": 389} id="KGz2bQaKV3iI" outputId="4bd05dee-e0f5-4934-ac0d-2afa0a20bad8"

f, ax = plt.subplots(1, 4, figsize=(20, 5))

for idx, data in enumerate(trainds.take(4)):

ax[idx].imshow(data['image_tensor'].numpy())

ax[idx].set_title('image_id={}'.format(data['image_id'].numpy()))

ax[idx].set_xlabel(data['captions'].numpy()[0].decode('utf-8')[:30] + str("..."))

# + [markdown] id="y4dyKHB2W4vZ"

# ## Tokenize captions

#

# Add a start and end token to each caption.

# Then send to the Keras tokenizer which will lowercase the captions

# and remove punctuation etc. It will also retain only the most frequent

# words.

# + colab={"base_uri": "https://localhost:8080/"} id="rSdiDPgbLpG_" outputId="f3603c44-17eb-46f9-c6f1-05f362cec614"

# get all the captions to feed into the Tokenizer

STOPWORDS = {'ourselves', 'hers', 'between', 'yourself', 'but', 'again', 'there', 'about', 'once', 'during', 'out', 'very', 'having', 'with', 'they', 'own', 'an', 'be', 'some', 'for', 'do', 'its', 'yours', 'such', 'into', 'of', 'most', 'itself', 'other', 'off', 'is', 's', 'am', 'or', 'who', 'as', 'from', 'him', 'each', 'the', 'themselves', 'until', 'below', 'are', 'we', 'these', 'your', 'his', 'through', 'don', 'nor', 'me', 'were', 'her', 'more', 'himself', 'this', 'down', 'should', 'our', 'their', 'while', 'above', 'both', 'up', 'to', 'ours', 'had', 'she', 'all', 'no', 'when', 'at', 'any', 'before', 'them', 'same', 'and', 'been', 'have', 'in', 'will', 'on', 'does', 'yourselves', 'then', 'that', 'because', 'what', 'over', 'why', 'so', 'can', 'did', 'not', 'now', 'under', 'he', 'you', 'herself', 'has', 'just', 'where', 'too', 'only', 'myself', 'which', 'those', 'i', 'after', 'few', 'whom', 't', 'being', 'if', 'theirs', 'my', 'against', 'a', 'by', 'doing', 'it', 'how', 'further', 'was', 'here', 'than'}

def preprocess_caption(c):

caption = "<start> {} <end>".format(c.decode('utf-8'))

words = [word for word in caption.lower().split()

if word not in STOPWORDS]

return (' '.join(words))

train_captions = []

for data in trainds:

str_captions = [preprocess_caption(c) for c in data['captions'].numpy()]

train_captions.extend(str_captions)

print(train_captions[:5])

# + colab={"base_uri": "https://localhost:8080/"} id="z2FW4ob5NikW" outputId="60f31007-6f03-4136-886a-0f9447b0c12b"

# Choose the most frequent words from the vocabulary

tokenizer = tf.keras.preprocessing.text.Tokenizer(num_words=VOCAB_SIZE,

oov_token="<unk>",

filters='!"#$%&()*+.,-/:;=?@[\]^_`{|}~ ')

tokenizer.fit_on_texts(train_captions)

train_seqs = tokenizer.texts_to_sequences(train_captions)

tokenizer.word_index['<pad>'] = 0

tokenizer.index_word[0] = '<pad>'

# pads each vector to the max_length of the captions

cap_vector = tf.keras.preprocessing.sequence.pad_sequences(train_seqs, padding='post')

max_caption_length = len(cap_vector[0])

print("max_caption_length={}".format(max_caption_length))

print(cap_vector[0])

print([tokenizer.index_word[idx] for idx in cap_vector[0]])
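# To make the tokenize-and-pad step concrete, here is a dependency-free sketch of
# the same idea: keep the most frequent words, map everything else to <unk>, and
# right-pad with <pad> (id 0). `build_vocab` and `encode_and_pad` are hypothetical
# helpers, not Keras API, and they only approximate what the Tokenizer does.

```python
from collections import Counter

def build_vocab(captions, num_words):
    """Map the most frequent words to ids; 0 is <pad>, 1 is <unk>."""
    counts = Counter(word for c in captions for word in c.split())
    vocab = {"<pad>": 0, "<unk>": 1}
    for word, _ in counts.most_common(num_words - 2):
        vocab[word] = len(vocab)
    return vocab

def encode_and_pad(captions, vocab, max_len):
    """Convert captions to id lists, truncated or right-padded to max_len."""
    rows = []
    for c in captions:
        ids = [vocab.get(w, vocab["<unk>"]) for w in c.split()][:max_len]
        rows.append(ids + [vocab["<pad>"]] * (max_len - len(ids)))
    return rows

toy_captions = ["<start> dog runs <end>", "<start> dog <end>"]
toy_vocab = build_vocab(toy_captions, num_words=10)
toy_padded = encode_and_pad(toy_captions, toy_vocab, max_len=4)
print(toy_padded)
```

# Reserving id 0 for padding is what lets the loss function later mask out padded
# positions by testing for zero.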

# + colab={"base_uri": "https://localhost:8080/"} id="X0LHFYjhBo32" outputId="647be30d-0357-49a2-a49b-010b59696ed0"

def create_batched_ds(trainds, batchsize):

# generator that does tokenization, padding on the caption strings

# and yields img, caption

def generate_image_captions():

for data in trainds:

captions = data['captions']

img_tensor = data['image_tensor']

str_captions = [preprocess_caption(c) for c in data['captions'].numpy()]

seqs = tokenizer.texts_to_sequences(str_captions)

# Pad each vector to the max_length of the captions

padded = tf.keras.preprocessing.sequence.pad_sequences(

seqs, padding='post', maxlen=max_caption_length)

for caption in padded:

yield img_tensor, caption # repeat image

return tf.data.Dataset.from_generator(

generate_image_captions,

(tf.float32, tf.int32)).batch(batchsize)

for img, caption in create_batched_ds(trainds, 193).take(2):

print(img.shape, caption.shape)

print(caption[0])

# + [markdown] id="1ehA-gDDYh47"

# ## Create Captioning Model

#

# It consists of an image encoder, followed by a caption decoder.

# The caption decoder incorporates an attention mechanism that

# focuses on different parts of the input image.

# + id="inFxAZKi9RqE"

class ImageEncoder(tf.keras.Model):

def __init__(self, embedding_dim):

super(ImageEncoder, self).__init__()

inception = tf.keras.applications.InceptionV3(

include_top=False,

weights='imagenet'

)

self.model = tf.keras.Model(inception.input,

inception.layers[-1].output)

self.fc = tf.keras.layers.Dense(embedding_dim)

def call(self, x):

x = self.model(x)

x = tf.reshape(x, (x.shape[0], -1, x.shape[3]))

x = self.fc(x)

x = tf.nn.relu(x)

return x

class BahdanauAttention(tf.keras.Model):

def __init__(self, units):

super(BahdanauAttention, self).__init__()

self.W1 = tf.keras.layers.Dense(units)

self.W2 = tf.keras.layers.Dense(units)

self.V = tf.keras.layers.Dense(1)

def call(self, features, hidden):

# features(CNN_encoder output) shape == (batch_size, 64, embedding_dim)

# hidden shape == (batch_size, hidden_size)

# hidden_with_time_axis shape == (batch_size, 1, hidden_size)

hidden_with_time_axis = tf.expand_dims(hidden, 1)

# attention_hidden_layer shape == (batch_size, 64, units)

attention_hidden_layer = (tf.nn.tanh(self.W1(features) +

self.W2(hidden_with_time_axis)))

# score shape == (batch_size, 64, 1)

# This gives you an unnormalized score for each image feature.

score = self.V(attention_hidden_layer)

# attention_weights shape == (batch_size, 64, 1)

attention_weights = tf.nn.softmax(score, axis=1)

# context_vector shape after sum == (batch_size, embedding_dim)

context_vector = attention_weights * features

context_vector = tf.reduce_sum(context_vector, axis=1)

return context_vector, attention_weights
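# The shape bookkeeping in BahdanauAttention can be traced with plain NumPy.
# This is a sketch under simplifying assumptions: random matrices stand in for the
# three Dense layers, biases are omitted, and the sizes are arbitrary small numbers.

```python
import numpy as np

rng = np.random.default_rng(0)
batch, locations, embed_dim, hidden_size, units = 2, 64, 8, 6, 5

features = rng.normal(size=(batch, locations, embed_dim))   # encoder output
hidden = rng.normal(size=(batch, hidden_size))              # decoder state
W1 = rng.normal(size=(embed_dim, units))     # stands in for Dense(units) on features
W2 = rng.normal(size=(hidden_size, units))   # stands in for Dense(units) on hidden
V = rng.normal(size=(units, 1))              # stands in for Dense(1)

# Additive score for every image location: (batch, 64, 1)
score = np.tanh(features @ W1 + (hidden @ W2)[:, None, :]) @ V
score = score - score.max(axis=1, keepdims=True)            # numerical stability
weights = np.exp(score) / np.exp(score).sum(axis=1, keepdims=True)

# Weighted sum over the 64 locations: (batch, embed_dim)
context = (weights * features).sum(axis=1)
print(weights.shape, context.shape)
```

# The softmax runs over axis 1, so the 64 weights for each image sum to one and the
# context vector is a convex combination of the location features.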

class CaptionDecoder(tf.keras.Model):

def __init__(self, embedding_dim, units, vocab_size):

super(CaptionDecoder, self).__init__()

self.units = units

self.embedding = tf.keras.layers.Embedding(vocab_size, embedding_dim)

self.gru = tf.keras.layers.GRU(self.units,

return_sequences=True,

return_state=True,

recurrent_initializer='glorot_uniform')

self.fc1 = tf.keras.layers.Dense(self.units)

self.fc2 = tf.keras.layers.Dense(vocab_size)

self.attention = BahdanauAttention(self.units)

def call(self, x, features, hidden):

# defining attention as a separate model

context_vector, attention_weights = self.attention(features, hidden)

# x shape after passing through embedding == (batch_size, 1, embedding_dim)

x = self.embedding(x)

# x shape after concatenation == (batch_size, 1, embedding_dim + hidden_size)

x = tf.concat([tf.expand_dims(context_vector, 1), x], axis=-1)

# passing the concatenated vector to the GRU

output, state = self.gru(x)

# shape == (batch_size, max_length, hidden_size)

x = self.fc1(output)

# x shape == (batch_size * max_length, hidden_size)

x = tf.reshape(x, (-1, x.shape[2]))

# output shape == (batch_size * max_length, vocab)

x = self.fc2(x)

return x, state, attention_weights

def reset_state(self, batch_size):

return tf.zeros((batch_size, self.units))

encoder = ImageEncoder(EMBED_DIM)

decoder = CaptionDecoder(EMBED_DIM, ATTN_UNITS, VOCAB_SIZE)

optimizer = tf.keras.optimizers.Adam()

loss_object = tf.keras.losses.SparseCategoricalCrossentropy(

from_logits=True, reduction='none')

def loss_function(real, pred):

mask = tf.math.logical_not(tf.math.equal(real, 0))

loss_ = loss_object(real, pred)

mask = tf.cast(mask, dtype=loss_.dtype)

loss_ *= mask

return tf.reduce_mean(loss_)
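# A small numeric sketch of the padding mask used in loss_function. The loss values
# and token ids below are made up. It also shows that averaging over all positions
# (as reduce_mean does) gives a different number than averaging over real tokens only.

```python
import numpy as np

per_token_loss = np.array([2.0, 1.0, 3.0, 5.0])   # made-up per-position losses
targets = np.array([7, 12, 0, 0])                  # 0 is the <pad> id
mask = (targets != 0).astype(per_token_loss.dtype)

masked = per_token_loss * mask
mean_over_all = masked.mean()                # divides by 4 positions
mean_over_real = masked.sum() / mask.sum()   # divides by the 2 real tokens
print(mean_over_all, mean_over_real)
```

# Either normalization can work for training; the choice mainly changes the scale of
# the reported loss when batches contain a lot of padding.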

# + [markdown] id="pGVl8cQpZ5Qu"

# ## Training loop

#

# Here, we use a custom training loop because we have to add on to the decoder

# input (dec_input) one word at a time.

# + id="PADm7AHuHw9I"

loss_plot = []

@tf.function

def train_step(img_tensor, target):

loss = 0

# initializing the hidden state for each batch

# because the captions are not related from image to image

hidden = decoder.reset_state(batch_size=target.shape[0])

dec_input = tf.expand_dims([tokenizer.word_index['<start>']] * target.shape[0], 1)

with tf.GradientTape() as tape:

features = encoder(img_tensor)

for i in range(1, target.shape[1]):

# passing the features through the decoder

predictions, hidden, _ = decoder(dec_input, features, hidden)

loss += loss_function(target[:, i], predictions)

# using teacher forcing

dec_input = tf.expand_dims(target[:, i], 1)

total_loss = (loss / int(target.shape[1]))

trainable_variables = encoder.trainable_variables + decoder.trainable_variables

gradients = tape.gradient(loss, trainable_variables)

optimizer.apply_gradients(zip(gradients, trainable_variables))

return loss, total_loss

checkpoint_path = "./checkpoints/train"

ckpt = tf.train.Checkpoint(encoder=encoder,

decoder=decoder,

optimizer = optimizer)

ckpt_manager = tf.train.CheckpointManager(ckpt, checkpoint_path, max_to_keep=5)

# + colab={"base_uri": "https://localhost:8080/"} id="6TW8wuySH1nd" outputId="c33e4f3d-65b0-49ba-b1e7-06e1569d610f"

import time

batched_ds = create_batched_ds(trainds, BATCH_SIZE)

prev_loss = 999

for epoch in range(EPOCHS):

start = time.time()

total_loss = 0

num_steps = 0

for batch, (img_tensor, target) in enumerate(batched_ds):

batch_loss, t_loss = train_step(img_tensor, target)

total_loss += t_loss

num_steps += 1

if batch % 100 == 0:

print ('Epoch {} Batch {} Loss {:.4f}'.format(

epoch + 1, batch, batch_loss.numpy() / int(target.shape[1])))

current_loss = total_loss / num_steps

# storing the epoch end loss value to plot later

loss_plot.append(current_loss)

ckpt_manager.save()

print ('Epoch {} Loss {:.6f} Time taken {:.1f} sec'.format(

epoch + 1,

current_loss,

time.time() - start))

# stop once it has converged

improvement = prev_loss - current_loss

if improvement < EARLY_STOP_THRESH:

print("Stopping because improvement={} < {}".format(improvement, EARLY_STOP_THRESH))

break

prev_loss = current_loss
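# The stopping rule in the loop above can be isolated as a small function. This is
# a sketch only; `epochs_until_stop` is a hypothetical helper and the loss values
# in the example are invented.

```python
def epochs_until_stop(losses, threshold):
    """Return how many epochs run before the improvement drops below threshold."""
    prev_loss = float("inf")
    for epoch, current_loss in enumerate(losses, start=1):
        if prev_loss - current_loss < threshold:
            return epoch            # converged (or got worse): stop here
        prev_loss = current_loss
    return len(losses)              # never triggered: all epochs ran

print(epochs_until_stop([1.0, 0.5, 0.49995, 0.4], threshold=0.0001))
```

# Note that a single noisy epoch where the loss rises also triggers the rule, since
# the improvement is then negative.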

# + id="uzpGKbigXmKs"

plt.plot(loss_plot);

# + [markdown] id="otiuFI4ZaK6w"

# ## Prediction

#

# To predict, we generate the caption one word at a time, feeding the

# decoder with the previous predictions.

# -

for id in range(10):

print(id, tokenizer.index_word[id])

# + id="EkmKr8nxMNyG"

## Probabilistic prediction using the trained model

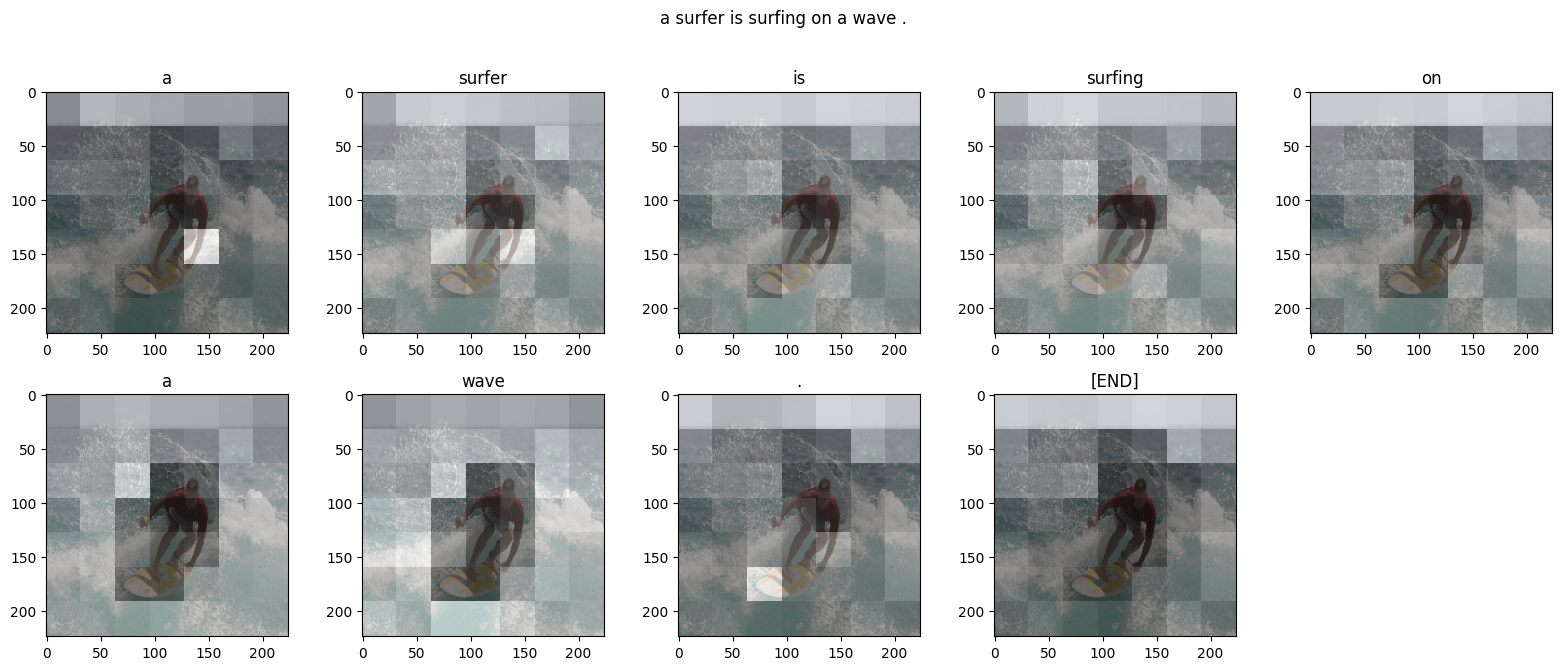

def plot_attention(image, result, attention_plot):

fig = plt.figure(figsize=(10, 10))

len_result = len(result)

num_panels = len_result//2

if num_panels*2 < len_result:

num_panels += 1

for l in range(len_result):

temp_att = np.resize(attention_plot[l], (8, 8))

ax = fig.add_subplot(len_result//2, num_panels, l+1)

ax.set_title(result[l])

img = ax.imshow(image)

ax.imshow(temp_att, cmap='gray', alpha=0.6, extent=img.get_extent())

plt.tight_layout()

plt.show()

def predict_caption(filename):

attention_plot = np.zeros((max_caption_length, ATTN_FEATURES_SHAPE))

hidden = decoder.reset_state(batch_size=1)

img = tf.image.decode_jpeg(tf.io.read_file(filename), channels=IMG_CHANNELS)

img = tf.image.resize(img, (IMG_HEIGHT, IMG_WIDTH)) # inception size

img_tensor_val = tf.keras.applications.inception_v3.preprocess_input(img)

features = encoder(tf.expand_dims(img_tensor_val, axis=0))

dec_input = tf.expand_dims([tokenizer.word_index['<start>']], 0)

result = []

previous_word_ids = []

for i in range(max_caption_length):

predictions, hidden, attention_weights = decoder(dec_input, features, hidden)

attention_plot[i] = tf.reshape(attention_weights, (-1, )).numpy()

if i < 10:

# mask out <pad> <unk> <start> <end>, since we don't want <end>

masked_predictions = predictions[0]

mask = [0.0, 0, 0, 0] + [1.0] * (masked_predictions.shape[0] - 4)

# end is okay after 4th word

if i > 3:

mask[3] = 1

# avoid repeating words

for p in previous_word_ids:

mask[p] = 0

mask = tf.convert_to_tensor(mask)

masked_predictions *= mask

# keep only the top (i+2) words, and draw out of log distribution

top_probs, top_idxs = tf.math.top_k(input=masked_predictions, k=(i+2), sorted=False)

chosen_id = tf.random.categorical([top_probs], 1)[0].numpy()

predicted_id = top_idxs.numpy()[chosen_id][0]

else:

# draws from log distribution given by predictions

predicted_id = tf.random.categorical(predictions, 1)[0][0].numpy()

result.append(tokenizer.index_word[predicted_id])

previous_word_ids.append(predicted_id)

if tokenizer.index_word[predicted_id] == '<end>':

return img, result, attention_plot

dec_input = tf.expand_dims([predicted_id], 0)

attention_plot = attention_plot[:len(result), :]

return img, result, attention_plot

# image from https://commons.wikimedia.org/wiki/File:Flying_Kites_At_Sunset.jpg

filename = "gs://practical-ml-vision-book/images/800px-Flying_Kites_At_Sunset.jpg"

image, caption, attention_plot = predict_caption(filename)

print(caption)

plot_attention(image, caption, attention_plot)

# -
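# The sampling step in predict_caption draws the next word from the top few
# candidates. The NumPy sketch below shows one common variant of that idea, which
# is not the notebook's exact method: banned tokens are set to -inf (multiplying a
# logit by zero would leave it at 0 rather than remove it), and the draw is made
# from a softmax over the k largest logits. `sample_top_k` is a hypothetical helper.

```python
import numpy as np

def sample_top_k(logits, k, banned_ids=(), rng=None):
    """Sample one token id from the k highest-scoring, non-banned logits."""
    rng = np.random.default_rng() if rng is None else rng
    logits = np.array(logits, dtype=float)
    if len(banned_ids) > 0:
        logits[list(banned_ids)] = -np.inf      # banned tokens get zero probability
    top_ids = np.argsort(logits)[-k:]           # indices of the k largest logits
    top_logits = logits[top_ids]
    probs = np.exp(top_logits - top_logits.max())
    probs /= probs.sum()
    return int(rng.choice(top_ids, p=probs))

rng = np.random.default_rng(0)
draws = {sample_top_k([5.0, 4.0, 0.0, -1.0], k=2, banned_ids=[0], rng=rng)
         for _ in range(50)}
print(draws)
```

# Growing k with the position in the caption, as the notebook does with k=(i+2),
# keeps the first words close to greedy decoding and allows more variety later.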

# The model has managed to capture the key aspects of the image:

# <pre>

# group of people standing in a field.

# </pre>

# However, the model hasn't quite figured out the attention (note the attention is similar throughout).

# + [markdown] id="7KbY7guiqFiB"

# ## Plots for book

# + id="gGgCq-nkZvyV"

print(encoder.summary())

# + id="aS6wcOlkZyIn"

print(decoder.summary())

# + [markdown] id="l_fNzWuY2UoB"

# Copyright 2020 Google Inc. Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

| 10_adv_problems/10f_image_captioning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ___

#

# <a href='http://www.pieriandata.com'><img src='../Pierian_Data_Logo.png'/></a>

# ___

# <center><em>Copyright by Pierian Data Inc.</em></center>

# <center><em>For more information, visit us at <a href='http://www.pieriandata.com'>www.pieriandata.com</a></em></center>

# # Q Learning Exercise

# **We'll be reviewing and testing your skills with Q-Learning on a continuous space! Please feel free to reference the lecture notebooks. You are definitely not expected to fill out all this code from memory; the goal is to understand the core concepts and apply them to a different situation.**

#

# --------------------

#

# ## Complete the tasks in bold below.

# In this exercise we take another look at the MountainCar-v0 (https://gym.openai.com/envs/MountainCar-v0/) game. This is the game from our original discussion of OpenAI Gym environments, for which we created an agent manually.
# Remember that the goal is to reach the top of the mountain within some time limit.

#

# -----

# **TASK: Import any relevant libraries you think you might need.** <br />

#

# **TASK: Create the gym mountain car environment** <br />

#

# **TASK: Write a function to create a numpy array holding the bins for the observations of the car (position and velocity).** <br />

# Feel free to explore different bins per observation spacings.

# The function should take one argument which acts as the bins per observation <br />

# Hint: You can find the observations here: https://github.com/openai/gym/blob/master/gym/envs/classic_control/mountain_car.py

# <br /> Hint: You will probably need around 25 bins for good results, but feel free to use fewer to reduce training time. <br />

#

def create_bins(num_bins_per_observation):

# CODE HERE

return bins

# **TASK: Here you should write the code which creates the bins and defines the NUM_BINS attribute**

# **TASK: Create a function that will take in observations from the environment and the bins array and return the discretized version of the observation.**

# Now we need the code to discretize the observations. We can use the same code as used in the last notebook

def discretize_observation(observations, bins):

# CODE HERE

return tuple(binned_observations) # Important for later indexing

# **Let's check to make sure your previous two function calls work with a quick task! Otherwise it may be hard to debug later on.**

# **TASK: Confirm that your *create_bins()* function works with *discretize_observation()* by running the following cell.**

# +

test_bins = create_bins(5)

np.testing.assert_almost_equal(test_bins[0], [-1.2 , -0.75, -0.3 , 0.15, 0.6])

np.testing.assert_almost_equal(test_bins[1], [-0.07 , -0.035, 0. , 0.035, 0.07 ])

test_observation = np.array([-0.9, 0.03])

discretized_test_bins = discretize_observation(test_observation, test_bins)

assert discretized_test_bins == (1, 3)

# -

# **TASK: Create the Q-Table** <br />

# Remember the shape that the Q-Table needs to have.

# +

# CREATE THE Q TABLE

# -
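
# One way the Q-Table could be laid out, as a sketch: one axis per discretized observation plus a final axis for the actions. The constants below are assumptions for illustration (25 bins as suggested in the hint, and the three MountainCar-v0 actions).

```python
import numpy as np

SKETCH_NUM_BINS = 25     # assumed bins per observation
SKETCH_NUM_ACTIONS = 3   # MountainCar-v0: push left, no push, push right

# Shape: (position bins, velocity bins, actions)
sketch_q_table = np.zeros((SKETCH_NUM_BINS, SKETCH_NUM_BINS, SKETCH_NUM_ACTIONS))
print(sketch_q_table.shape)
# Indexing with a discretized state yields one Q-value per action
print(sketch_q_table[(1, 3)])
```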

# **TASK: Fill out the Epsilon Greedy Action Selection function:**

def epsilon_greedy_action_selection(epsilon, q_table, discrete_state):

#CODE HERE

return action
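
# A hedged sketch of epsilon-greedy selection: with probability epsilon a random action is explored, otherwise the action with the highest Q-value is exploited. The extra `rng` parameter and the function name are assumptions made so the snippet is reproducible; in the exercise the random action would more likely come from the environment's action space.

```python
import numpy as np


def sketch_epsilon_greedy(epsilon, q_table, discrete_state, rng):
    # Explore: with probability epsilon pick a uniformly random action
    if rng.random() < epsilon:
        return int(rng.integers(q_table.shape[-1]))
    # Exploit: otherwise pick the action with the highest Q-value in this state
    return int(np.argmax(q_table[discrete_state]))


rng = np.random.default_rng(0)
q = np.zeros((5, 5, 3))
q[(1, 3)] = [0.1, 0.9, 0.2]
greedy_action = sketch_epsilon_greedy(0.0, q, (1, 3), rng)
print(greedy_action)
```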

# **TASK: Fill out the function to compute the next Q value.**

def compute_next_q_value(old_q_value, reward, next_optimal_q_value):

return # CODE HERE
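
# As a sketch, the standard Q-learning update moves the old estimate towards the target $r + \gamma \max_{a'} Q(s', a')$ by a fraction $\alpha$. The constants mirror the ALPHA and GAMMA values from the hyperparameter cell of this notebook; the names are illustrative.

```python
SKETCH_ALPHA = 0.8  # learning rate
SKETCH_GAMMA = 0.9  # discount factor


def sketch_next_q_value(old_q_value, reward, next_optimal_q_value):
    # Bootstrapped target: immediate reward plus discounted best future value
    target = reward + SKETCH_GAMMA * next_optimal_q_value
    # Move the old estimate a fraction ALPHA of the way towards the target
    return old_q_value + SKETCH_ALPHA * (target - old_q_value)


updated = sketch_next_q_value(0.0, -1.0, 0.0)
print(updated)
```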

# **TASK: Create a function to reduce epsilon, feel free to choose any reduction method you want. We'll use a reduction with BURN_IN and EPSILON_END limits in the solution. We'll also show a way to reduce epsilon based on the number of epochs. Feel free to experiment here.**

def reduce_epsilon(epsilon, epoch):

# CODE HERE

return epsilon
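
# One possible reduction schedule, sketched under the assumption that epsilon decays linearly only between the BURN_IN and EPSILON_END epochs. The constants copy the solution hyperparameters listed in this notebook; other schedules (for example exponential decay) are equally valid.

```python
SKETCH_BURN_IN = 100
SKETCH_EPSILON_END = 10000
SKETCH_EPSILON_REDUCE = 0.0001


def sketch_reduce_epsilon(epsilon, epoch):
    # Only decay between the burn-in epoch and the cut-off epoch (inclusive)
    if SKETCH_BURN_IN <= epoch <= SKETCH_EPSILON_END:
        epsilon -= SKETCH_EPSILON_REDUCE
    return epsilon


eps = 1.0
for epoch in range(30000):
    eps = sketch_reduce_epsilon(eps, epoch)
print(round(eps, 4))
```

# With these values epsilon is reduced 9901 times, so it ends close to 0.0099 and the agent keeps a small amount of exploration.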

# **TASK: Define your hyperparameters. Note, we'll show our solution hyperparameters here, but depending on your *reduce_epsilon* function, your epsilon hyperparameters may be different.**

# Here are the solution's initial hyperparameters; yours will probably be different!

# +

# Feel free to change!

EPOCHS = 30000

BURN_IN = 100

epsilon = 1

EPSILON_END = 10000

EPSILON_REDUCE = 0.0001

ALPHA = 0.8

GAMMA = 0.9

# -

# **TASK: Create the training loop for the reinforcement learning agent and run the loop. We've gone ahead and created the basic structure of the loop with some comments. We also pre-filled the visualization portion.** <br />

# Note: Use the lecture notebook as a guide and reference

# +

####### VISUALIZATION CODE FOR YOU. TOTALLY OPTIONAL. ##########################

########## FEEL FREE TO REMOVE OR ADD YOUR OWN VISUAL CODE. #################

log_interval = 100 # How often do we update the plot? (Just for performance reasons)

### Here we set up the routine for the live plotting of the achieved points ######

fig = plt.figure()

ax = fig.add_subplot(111)

plt.ion()

fig.canvas.draw()

max_position_log = [] # to store all achieved points

mean_positions_log = [] # to store a running mean of the last 30 results

epochs = [] # store the epoch for plotting

#############################################################################

################################## TRAINING TASKS ##########################

###########################################################################

for epoch in range(EPOCHS):

################################# TODO ######################################

# TODO: Get initial observation and discretize them. Set done to False

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

#############################

# These lines are for plotting.

max_position = -np.inf

epochs.append(epoch)

#############################

# TASK TO DO: As long as current run is alive (i.e not done) perform the following steps:

while not done: # Perform current run as long as done is False (as long as there is still time to reach the top)

# TASK TO DO: Select action according to epsilon-greedy strategy

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

# TASK TO DO: Perform selected action and get next state. Do not forget to discretize it

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

# TASK TO DO: Get old Q-value from Q-Table and get next optimal Q-Value

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

# TASK TO DO: Compute next Q-Value and insert it into the table

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

# TASK TO DO: Update the old state with the new one

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

##############################

## Only for plotting the results - store the highest point the car is able to reach

if position > max_position:

max_position = position

# TASK TO DO: Reduce epsilon

#########################################

#

#

# CODE NEEDED HERE!!!

#

#

##########################################

##############################################################################

max_position_log.append(max_position) # log the highest position the car was able to reach

running_mean = round(np.mean(max_position_log[-30:]), 2) # Compute running mean of position over the last 30 epochs

mean_positions_log.append(running_mean) # and log it

################ Plot the points and running mean ##################

if epoch % log_interval == 0:

ax.clear()

ax.scatter(epochs, max_position_log)

ax.plot(epochs, max_position_log)

ax.plot(epochs, mean_positions_log, label=f"Running Mean: {running_mean}")

plt.legend()

fig.canvas.draw()

######################################################################

env.close()

# -

# **TASK: Use your Q-Table to test your agent and render its performance.**

# +

# CODE HERE

# -

# **OPTIONAL: Play with our Q-Table with 40 bins per observation.**

# +

# We saved our matrix q_table for you (with 40 bins)

# -

our_q_table = np.load('40bin_qtable_mountaincar.npy')

our_q_table.shape

# **Great job! Note how you could train for many more epochs/episodes or edit hyperparameters, the more complex the environment, the more choices you have to experiment with!**

| practical_ai/archive/06-Classical-Q-Learning/02-Q-Learning-Exercise.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: cdiscount kernel

# language: python

# name: cdiscount

# ---

# +

# Load the "autoreload" extension

# %load_ext autoreload

# always reload modules marked with "%aimport"

# %autoreload 1

import os

import sys

from sklearn.metrics import roc_curve

# add the 'src' directory as one where we can import modules

src_dir = os.path.join(os.getcwd(), os.pardir, 'src')

sys.path.append(src_dir)

# import my method from the source code

# %aimport data.read_data

# %aimport models.train_model

# %aimport features.build_features

# %aimport visualization.visualize

from data.read_data import read_data, get_stopwords

from models.train_model import split_train, score_function, get_fasttext, model_ridge, model_xgb, model_lightgbm

from features.build_features import get_vec, to_categorical, replace_na, to_tfidf, stack_sparse, to_sparse_int

from visualization.visualize import plot_roc, plot_scatter

# -

train = read_data(test=False)

y = train['Target']

stopwords = get_stopwords()

train.head()

# # Feature engineering

train = replace_na(train, ['review_content', 'review_title'])

X_dummies = to_categorical(train, 'review_stars')

X_content = to_tfidf(train, 'review_content', stopwords)

X_title = to_tfidf(train, 'review_title', stopwords)

X_length = to_sparse_int(train, 'review_content')

sparse_merge = stack_sparse([X_dummies, X_content, X_title, X_length])

X_train, X_test, y_train, y_test = split_train(sparse_merge, y, 0.2)

# # LightGBM

model_lgb = model_lightgbm(X_train, y_train)

preds = model_lgb.predict_proba(X_test)

preds1 = preds[:,1]

score_function(y_test, preds1)

fpr, tpr, _ = roc_curve(y_test, preds1)

plot_roc(fpr, tpr)
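
# To make the ROC step concrete, here is a small pure-Python sketch of how ROC points and the area under the curve can be computed from labels and scores. It is not the code behind `roc_curve` or `plot_roc`; the function names and the toy data are illustrative assumptions.

```python
def sketch_roc_points(y_true, scores):
    # Sweep the decision threshold over every distinct score, highest first
    positives = sum(y_true)
    negatives = len(y_true) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def sketch_auc(points):
    # Trapezoidal rule over consecutive (fpr, tpr) points
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


roc = sketch_roc_points([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
auc = sketch_auc(roc)
print(roc)
print(auc)
```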

# # Ridge

model_rdg = model_ridge(X_train, y_train)

preds = model_rdg.predict(X=X_test)

score_function(y_test, preds)

fpr, tpr, _ = roc_curve(y_test, preds)

plot_roc(fpr, tpr)

# # XGBoost

model_xgboost = model_xgb(X_train, y_train)

preds = model_xgboost.predict_proba(X_test)

preds1 = preds[:,1]

score_function(y_test, preds1)

fpr, tpr, _ = roc_curve(y_test, preds1)

plot_roc(fpr, tpr)

| notebooks/02-Train.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 11, "hidden": false, "row": 0, "width": 12}, "report_default": {"hidden": false}}}}

# This is the <a href="https://jupyter.org/">Jupyter Notebook</a>, an interactive coding and computation environment. For this lab, you do not have to write any code, you will only be running it.

#

# To use the notebook:

# - "Shift + Enter" runs the code within the cell (so does the forward arrow button near the top of the document)

# - You can alter variables and re-run cells

# - If you want to start with a clean slate, restart the Kernel either by going to the top, clicking on Kernel: Restart, or by "esc + 00" (if you do this, you will need to re-run the following block of code before running any other cells in the notebook)

# -

from gpgLabs.Mag.MagDipoleApp import MagneticDipoleApp

# # Magnetic Prism Applet

#

#

# + [markdown] extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 3, "hidden": true, "row": 11, "width": 12}, "report_default": {"hidden": true}}}}

# ## Purpose

#

# From the Magnetic Dipole applet, we have learned what the anomalous magnetic field observed at the ground's surface looks like.

# The objective here is to learn about the magnetic field observed at the ground's surface, caused by a rectangular susceptible prism.

#

#

# ## What is shown

#

# - <b>The colour map</b> shows the strength of the chosen parameter (Bt, Bx, By, or Bz) as a function of position.

#

# - Imagine doing a two-dimensional survey over a susceptible sphere that has been magnetized by the Earth's magnetic field specified by inclination and declination. "Measurement" location is the centre of each coloured box. This is a simple (but easily programmable) alternative to generating a smooth contour map.

#

# - The anomaly depends upon magnetic latitude, direction of the inducing (Earth's) field, the depth of the buried dipole, and the magnetic moment of the buried dipole.

#

#

# ## Important Notes:

#

# - <b>Inclination (I)</b> and <b>declination (D)</b> describe the orientation of the Earth's ambient field at the centre of the survey area. Positive inclination implies you are in the northern hemisphere, and positive declination implies that magnetic north is to the east of geographic north.

#

# - The <b>"length"</b> adjuster changes the size of the square survey area. The default of 72 means the survey square is 72 metres on a side.

#

# - The <b>"data spacing"</b> adjuster changes the distance between measurements. The default of 1 means measurements were acquired over the survey square on a 1-metre grid. Likewise, "data spacing = 2" means each coloured box is 2 m square.

#

# - The <b>"depth"</b> adjuster changes the depth (in metres) to the top of the buried prism.

#

# - The <b>"magnetic moment (M)"</b> adjuster changes the strength of the induced field. Units are A·m$^2$. This is related to the strength of the inducing field, the susceptibility of the buried sphere, and the volume of susceptible material.

# - <b>Bt, Bx, By, Bz</b> are Total field, X-component (positive northwards), Y-component (positive eastwards), and Z-component (positive down) of the anomaly field respectively.

#

# - Checking the <b>fixed scale</b> button fixes the colour scale so that the end points of the colour scale are minimum and maximum values for the current data set.

#

# - You can generate a <b>profile</b> along either "East" or "North" direction

#

# - Check <b>half width</b> to see the half width of the anomaly. Anomaly width is noted on the bottom of the graph.

#

# - Measurements are taken 1m above the surface.
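
#
# The inclination, declination and component conventions above can be sketched in a few lines. This is an illustrative sketch, not the applet's code: it assumes X points north, Y east and Z down, and that the total-field anomaly Bt is approximated by projecting the anomalous field onto the direction of the inducing field. All function names are hypothetical.

```python
import math


def sketch_field_direction(inclination_deg, declination_deg):
    # Unit vector of the inducing field with X north, Y east, Z down
    inc = math.radians(inclination_deg)
    dec = math.radians(declination_deg)
    return (math.cos(inc) * math.cos(dec),
            math.cos(inc) * math.sin(dec),
            math.sin(inc))


def sketch_total_field_anomaly(b_anomaly, inclination_deg, declination_deg):
    # Projection of the anomalous field (Bx, By, Bz) onto the inducing field direction
    direction = sketch_field_direction(inclination_deg, declination_deg)
    return sum(b * d for b, d in zip(b_anomaly, direction))


vertical = sketch_field_direction(90.0, 0.0)
print(vertical)
print(sketch_total_field_anomaly((0.0, 0.0, 5.0), 90.0, 0.0))
```

# With an inclination of 90 degrees the inducing field points straight down, so under this approximation Bt reduces to Bz.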

# + [markdown] extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 11, "hidden": false, "row": 11, "width": 6}, "report_default": {"hidden": false}}}}

# ## Define a 3D prism

# Compared to the MagneticDipoleApplet, there are additional parameters to define a prism.

#

# - $\triangle x$: length in North (X) direction (m)

# - $\triangle y$: length in East (Y) direction (m)

# - $\triangle z$: length in Depth (z) direction (m) below the receiver

# - depth: top boundary of the prism (m)

# - I$_{prism}$: inclination of the prism (reference is north direction)

# - D$_{prism}$: declination of the prism (reference is north direction)

# -

mag = MagneticDipoleApp()

mag.interact_plot_model_prism()

| Notebooks/MagneticPrismApplet.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

from keras.applications.inception_v3 import InceptionV3

from keras.applications.inception_v3 import preprocess_input

from keras.preprocessing import image

from keras.models import Model

from keras.layers import Dense, GlobalAveragePooling2D

import cv2

# +

base_model = InceptionV3(weights='imagenet', include_top=False)#, input_shape=(299, 299, 3))

x = base_model.output

x = GlobalAveragePooling2D()(x)

x = Dense(1024, activation='relu')(x)

out = Dense(2, activation='softmax')(x)

model = Model(inputs=base_model.input, outputs=out)

for layer in base_model.layers:

layer.trainable = False

model.compile(optimizer='adam', loss='categorical_crossentropy')

# -

model.summary()
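
# Because every layer of the base model is frozen, only the two new Dense layers are trained. As a rough cross-check of the summary, the arithmetic below estimates their parameter counts, assuming the globally pooled InceptionV3 feature vector has 2048 entries. The variable names are illustrative.

```python
# Assumed feature width of InceptionV3 after global average pooling
pooled_features = 2048
hidden_units = 1024
num_classes = 2

# A Dense layer has (inputs * units) weights plus one bias per unit
hidden_params = pooled_features * hidden_units + hidden_units
output_params = hidden_units * num_classes + num_classes
trainable_params = hidden_params + output_params
print(hidden_params, output_params, trainable_params)
```

# Under that assumption, the total should agree with the trainable parameter count reported by the summary.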

# +

from keras.preprocessing.image import ImageDataGenerator

def normalize(image):

# image2 = image / 255.

# image2 = image2 - 0.5

# image2 = image2 * 2.

return image

train_datagen = ImageDataGenerator(

rescale=1/255.,

# preprocessing_function=normalize,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True)

train_generator = train_datagen.flow_from_directory(

'flowers/train',

target_size=(299, 299),

batch_size=32,

class_mode='categorical')

#test_datagen = ImageDataGenerator(rescale=1./255)

#validation_generator = test_datagen.flow_from_directory(

# 'data/validation',

# target_size=(150, 150),

# batch_size=32,

# class_mode='binary')

# -

model.fit_generator(train_generator, steps_per_epoch=32, epochs=2)

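import numpy as np

image = cv2.imread('flowers/train/Rose/test - 4.jpg')
image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)  # OpenCV loads BGR; the training generator yields RGB
image = cv2.resize(image, (299, 299))
preprocessed_image = image / 255.  # same scaling as rescale=1/255. in train_datagen
model.predict(np.expand_dims(preprocessed_image, axis=0))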

| Transfer learning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

sns.set()

# -

# # Loading the dataset

# Load the dataset and configure pandas to display all columns

# +

dataset = pd.read_csv("dataset.csv", sep=';', decimal=',', index_col=0)

pd.set_option('display.max_columns', None)

pd.set_option('display.max_rows', 100)

# -

# First records:

dataset.head()

dataset.shape

# The dataset contains 7041 objects.

# Each object has 84 features.

# Feature description:

dataset.describe(include='all')

dataset.columns

# ## Target features

# target features (all of real-valued type)

target_features = ['химшлак последний Al2O3', 'химшлак последний CaO',

'химшлак последний FeO', 'химшлак последний MgO',

'химшлак последний MnO', 'химшлак последний R',

'химшлак последний SiO2']

# # Number of missing values and data types

def make_description(df):

description = pd.concat([df.isna().sum(), df.dtypes, df.nunique()], axis=1)

description.rename(columns={0: "num of NaN", 1: "dtypes", 2: "nunique"}, inplace=True)

return description

make_description(dataset).sort_values('num of NaN', ascending=False)
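
# To show what the description table contains, here is a small sketch on a toy frame (the data and the names `toy` and `summary` are made up for illustration). It builds the same three columns: missing-value count, dtype and number of unique non-NaN values.

```python
import numpy as np
import pandas as pd

toy = pd.DataFrame({"a": [1.0, np.nan, 3.0], "b": ["x", "x", None]})

# One row per feature: NaN count, dtype, and number of distinct non-missing values
summary = pd.concat([toy.isna().sum(), toy.dtypes, toy.nunique()], axis=1)
summary.columns = ["num of NaN", "dtypes", "nunique"]
print(summary)
```

# Note that `nunique()` ignores missing values by default, which is why a column with one real value plus NaN reports 1.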

# ## Missing values by column

# As can be seen, some features have a significant number of missing values (more than 33%).

# It is also worth noting that some features contain only 1 unique value besides NaN.

# +

def list_features_with_single_value(df):
    features_list = []
    for feature in df.columns:
        if df[feature].nunique() == 1:
            features_list.append(feature)
    return features_list


def list_features_with_lots_of_nan(df):
    features_list = []
    for feature in df.columns:
        if feature in target_features:  # skip the target features
            continue
        # use the df argument rather than the global dataset
        num_of_nan = df[feature].isna().sum()
        if num_of_nan > df.shape[0] / 3:
            features_list.append(feature)
    return features_list

# +

features_with_lots_of_nan = list_features_with_lots_of_nan(dataset)

print("Columns with a large number of missing values:")

print(features_with_lots_of_nan)

features_with_single_value = list_features_with_single_value(dataset)

print("Features with a single unique value:")

print(features_with_single_value)

# -

# ## Missing values by row

# Find the number of rows in which more than 33% of the features are missing:

def list_rows_with_lots_of_nan(df):
    # Collect index labels (not positions) so the result can be passed to DataFrame.drop
    numbers_of_nan = df.isna().sum(axis=1)
    return numbers_of_nan[numbers_of_nan > df.shape[1] / 3].index.tolist()

rows_with_lots_of_nan = list_rows_with_lots_of_nan(dataset)

print("Rows with a large number of missing values: {}".format(len(rows_with_lots_of_nan)))
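
# A small sketch of the row filter on a toy frame with a non-default index. It collects index labels rather than positions, so the result can be handed to `DataFrame.drop` safely. The toy data and names are assumptions for illustration.

```python
import numpy as np
import pandas as pd

toy = pd.DataFrame(
    {"a": [1.0, np.nan, 3.0], "b": [np.nan, np.nan, 2.0], "c": [1.0, 5.0, np.nan]},
    index=[10, 20, 30],
)
nan_per_row = toy.isna().sum(axis=1)
# Keep the labels of rows that miss more than a third of their values
sparse_rows = nan_per_row[nan_per_row > toy.shape[1] / 3].index.tolist()
print(sparse_rows)
print(toy.drop(sparse_rows, axis=0).shape)
```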

# ## Categorical features

#

# The categorical features are:

# - **nplv** - heat (melt) number, carries no meaning

# - **DT** - date and time of the heat

# - **МАРКА** - steel grade (?)

# - **ПРОФИЛЬ** - steel profile (?)

# +

categorical_features = ["nplv", "DT", "МАРКА", "ПРОФИЛЬ"]

dataset[categorical_features].head()

# -

print("Total number of missing categorical values: {}".format(dataset[categorical_features].isna().sum().sum()))

# #### МАРКА

print("Number of unique values: {}".format(dataset["МАРКА"].nunique()))

print("\nFrequency of each value:")

print(dataset["МАРКА"].value_counts())

# #### ПРОФИЛЬ

print("Number of unique values: {}".format(dataset["ПРОФИЛЬ"].nunique()))

print("\nFrequency of each value:")

print(dataset["ПРОФИЛЬ"].value_counts())

# ## Real-valued features

#

# All the remaining 80 features are real-valued

numerical_features = ['t вып-обр', 't обработка',

't под током', 't продувка', 'ПСН гр.', 'чист расход C',

'чист расход Cr', 'чист расход Mn', 'чист расход Si', 'чист расход V',

'температура первая', 'температура последняя', 'Ar (интенс.)',

'N2 (интенс.)', 'эл. энергия (интенс.)', 'произв жидкая сталь',

'произв количество обработок', 'произв количество плавок',

'произв количество плавок (цел)', 'расход газ Ar', 'расход газ N2',

'расход C пров.', 'сыпуч известь РП', 'сыпуч кварцит',

'сыпуч кокс пыль УСТК', 'сыпуч кокс. мелочь (сух.)',

'сыпуч кокс. мелочь КМ1', 'сыпуч шпат плав.', 'ферспл CaC2',

'ферспл FeMo', 'ферспл FeSi-75', 'ферспл FeV азот.', 'ферспл FeV-80',

'ферспл Mn5Si65Al0.5', 'ферспл Ni H1 пласт.', 'ферспл SiMn18',

'ферспл ферванит', 'ферспл фх850А', 'эл. энергия',

'химсталь первый Al_1', 'химсталь первый C_1', 'химсталь первый Cr_1',

'химсталь первый Cu_1', 'химсталь первый Mn_1', 'химсталь первый Mo_1',

'химсталь первый N_1', 'химсталь первый Ni_1', 'химсталь первый P_1',

'химсталь первый S_1', 'химсталь первый Si_1', 'химсталь первый Ti_1',

'химсталь первый V_1', 'химсталь последний Al', 'химсталь последний C',

'химсталь последний Ca', 'химсталь последний Cr',

'химсталь последний Cu', 'химсталь последний Mn',

'химсталь последний Mo', 'химсталь последний N',

'химсталь последний Ni', 'химсталь последний P', 'химсталь последний S',

'химсталь последний Si', 'химсталь последний Ti',

'химсталь последний V', 'химшлак первый Al2O3_1',

'химшлак первый CaO_1', 'химшлак первый FeO_1', 'химшлак первый MgO_1',

'химшлак первый MnO_1', 'химшлак первый R_1', 'химшлак первый SiO2_1',

'химшлак последний Al2O3', 'химшлак последний CaO',

'химшлак последний FeO', 'химшлак последний MgO',

'химшлак последний MnO', 'химшлак последний R',

'химшлак последний SiO2']

dataset[numerical_features].shape

# ### Number of NaN values for each real-valued feature

dataset[numerical_features].isna().sum()

# # Distribution of the target features

# Initial, before cleaning:

for feature in target_features:

#sns.displot(dataset[feature])

print(feature)

print("Min: {}, max: {}".format(dataset[feature].min(), dataset[feature].max()))

print("Mean: {}, median: {}, mode: {}, std: {}".format(dataset[feature].mean(),

dataset[feature].median(),

dataset[feature].mode()[0],

dataset[feature].std()))

print("Number of NaN: {}".format(dataset[feature].isna().sum()))

print()

# After cleaning:

# +

features_to_drop = list(set(features_with_lots_of_nan + features_with_single_value))

preprocessed_dataset = dataset.drop(features_to_drop, axis=1)

preprocessed_dataset = preprocessed_dataset.drop(rows_with_lots_of_nan, axis=0)

print("Dataset size after initial cleaning: {}\n".format(preprocessed_dataset.shape))

for feature in target_features:

#sns.displot(dataset[feature])

print(feature)

print("Min: {}, max: {}".format(preprocessed_dataset[feature].min(), preprocessed_dataset[feature].max()))

print("Mean: {}, median: {}, mode: {}, std: {}".format(preprocessed_dataset[feature].mean(),

preprocessed_dataset[feature].median(),

preprocessed_dataset[feature].mode()[0],

preprocessed_dataset[feature].std()))

print("Number of NaN: {}".format(preprocessed_dataset[feature].isna().sum()))

print()

| Melekhin/0_Exploratory_Data_Analysis.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Section 1. Business understanding

#

# The three business questions that I focused on in this analysis are:

#

# Question 1. What are the differences in listing prices among neighbourhoods?

#

# Question 2. How do listing prices change during the year?

#

# Question 3. How should you price your listing based on location and room type?

# # Section 2. Data Understanding

# ## Import Libraries and Data

# +

# For Analysis

import numpy as np

import pandas as pd

# For Visualizations

import matplotlib.pyplot as plt

# %matplotlib inline

import seaborn as sns

#Import machine learning

from sklearn.linear_model import LinearRegression

from sklearn.ensemble import RandomForestRegressor

from sklearn.model_selection import train_test_split #split

from sklearn.metrics import r2_score, mean_squared_error #metrics

# -

# ## Gather Data

# Import Data

listings_df = pd.read_csv('listings.csv')

calendar_df = pd.read_csv('calendar.csv')

reviews_df = pd.read_csv('reviews.csv') #didn't use in this exercise

# Start with exploring the data and data type for each dataset.

#

# ## Data info

listings_df.head()

reviews_df.head()

calendar_df.head()

listings_df.info()

reviews_df.info()

calendar_df.info()

# # Section 3. Data preparation

#

# From the information above, the data needs some preprocessing:

# 1. Find the missing values for each dataset.

# 2. We can merge the listings and calendar to get the complete daily listing list over the year.

# 3. Select relevant columns for the analysis

# 4. Convert price to float and convert datetime to month and year

# 5. Recode the zipcode to neighbourhood

# 6. Clean the missing values

# +

#find percentage of missing values for each column

listings_missing_df = listings_df.isnull().mean()*100

#filter out only columns, which have missing values

listings_columns_with_nan = listings_missing_df[listings_missing_df > 0]

#plot the results

plt.figure(figsize = (12, 12)) #determine the size of the chart

listings_columns_with_nan.plot.barh(title='Percentage of missing values in listings, %', color = "midnightblue")

plt.xticks(fontsize = 8) # format the labels for the x-axis

plt.yticks(fontsize = 10) # format the y-axis

plt.show()

# -

# Find missing values in calendar

calendar_df.isnull().mean().sort_values(ascending=False)#rank the most missing values

# Find missing values in reviews

reviews_df.isnull().mean().sort_values(ascending=False)#rank the most missing values

# The missing-value analysis shows that more than 80% of the values are missing in the columns 'square feet' and 'license', so those two will not be useful for our analysis. In addition, about 30% of the values in the 'price' column of the calendar dataset are missing. These need to be cleaned as well, since 'price' will be our dependent variable for the exploratory analysis and the later prediction models.

#

# Then let's take a look at the information for some of those columns to find the appropriate columns for our neighborhood comparison. I am specifically interested in the columns related to 'zipcode' and 'neighborhood'.

#

# From the information below, the neighbourhood information in the dataset is incomplete: a significant portion of rows are labeled as others, which is not useful for our analysis. We do, however, have a nearly complete record of the zipcode for each listing, which is more useful for the following analysis.

listings_df.zipcode.value_counts()

listings_df.neighbourhood_group_cleansed.value_counts()

# ### Merge and select datasets

#

# Listing information and listing calendar information are merged to provide a full picture of the listing information throughout the year.

#merge datasets

listings_df = listings_df.rename(index=str, columns={"id": "listing_id"})

df_merged = pd.merge(calendar_df, listings_df, on = 'listing_id')

df_merged.columns

#select the relevant columns for analysis

df_selected = df_merged[['listing_id', 'date', 'price_x','zipcode','property_type','room_type', 'accommodates', 'bathrooms', 'bedrooms',

'beds', 'bed_type']]

df_selected.head()

# ### Clean data

#

# First, convert price to float and split the date into month and year so that later analysis is easier. Then recode all zipcodes to neighbourhoods based on the Seattle neighbourhood map.

# Lastly, drop all the missing values in the dataset.

#convert price_x to float

pd.options.mode.chained_assignment = None # default='warn'

df_selected['price_x'] = df_selected['price_x'].astype(str)

df_selected['price'] = df_selected['price_x'].str.replace('[$, ]', '', regex=True).astype('float')  # regex=True: treat '[$, ]' as a character class

df_selected = df_selected.drop(columns = ['price_x'])
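
# A short sketch of the price conversion on made-up strings. It assumes prices are formatted like "$1,250.00"; `regex=True` is passed explicitly so that `'[$, ]'` is interpreted as a character class on every pandas version.

```python
import pandas as pd

raw_prices = pd.Series(["$1,250.00", "$85.00"])

# Strip dollar signs, thousands separators and spaces, then convert to float
clean_prices = raw_prices.str.replace("[$, ]", "", regex=True).astype("float")
print(clean_prices.tolist())
```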

# +

#convert date from the calendar into month and year, then drop the date column

def get_month_from_date(row):

''' Get month from date represented as a string '''

return int(row['date'].split('-')[1])

def get_year_from_date(row):

''' Get year from date represented as a string '''

return int(row['date'].split('-')[0])

df_selected['month'] = df_selected.apply(lambda row: get_month_from_date(row),axis=1)

df_selected['year'] = df_selected.apply(lambda row: get_year_from_date(row),axis=1)

df_selected = df_selected.drop(columns = ['date'])

#select data from 2016 by filtering on the year column

df_selected_2016 = df_selected[df_selected['year'] == 2016].copy()

df_selected_2016
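
# As an alternative sketch to the row-wise string splitting, the month and year can be extracted in a vectorized way with `pd.to_datetime`, and one year can then be selected with a boolean mask. The toy dates below are made up for illustration.

```python
import pandas as pd

toy = pd.DataFrame({"date": ["2016-01-04", "2016-12-31", "2017-01-02"]})

parsed = pd.to_datetime(toy["date"])
toy["month"] = parsed.dt.month
toy["year"] = parsed.dt.year

# Boolean mask keeps only the rows whose year is 2016
toy_2016 = toy[toy["year"] == 2016]
print(toy_2016)
```

# On large frames this tends to be considerably faster than `DataFrame.apply` with `axis=1`.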

# +

# Recode zipcode to neighbourhood

def neighbourhood(value):

if value in ['98177', '98133', '98117', '98103', '98107']:

return 'Northwest Seattle'

elif value in ['98125','98105', '98115']:

return 'Northeast Seattle'

elif value in ['98199', '98119', '98109']:

return 'Magnolia&Queen Anne'

elif value in ['98122', '98112', '99\n98122','98102']:

return 'Central Seattle'

elif value in ['98121', '98101', '98104', '98134']:

return 'Downtown Seattle'

elif value in ['98144', '98108', '98118', '98178']:

return 'Southeast Seattle'

elif value in ['98116', '98136', '98126', '98106','98146']:

return 'West Seattle&Delridge'

return value

df_selected_2016['zipcode'] = df_selected_2016['zipcode'].apply(neighbourhood)

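# Note: rename() returns a copy that is only displayed here; later cells keep using the 'zipcode' column of df_selected_2016, which now holds neighbourhood names
df_selected.rename(columns={'zipcode': 'neighbourhood'})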
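
# The same recoding can be sketched with a dictionary lookup instead of a chain of if/elif branches. Only two of the groups are reproduced here to keep the example short; the names `ZIP_GROUPS`, `ZIP_TO_AREA` and `sketch_neighbourhood` are hypothetical.

```python
# Hypothetical lookup built from a subset of the zipcode groups used in this notebook
ZIP_GROUPS = {
    'Northeast Seattle': ['98125', '98105', '98115'],
    'Downtown Seattle': ['98121', '98101', '98104', '98134'],
}

# Invert the grouping into a flat zipcode -> neighbourhood mapping
ZIP_TO_AREA = {z: area for area, zips in ZIP_GROUPS.items() for z in zips}


def sketch_neighbourhood(value):
    # Unknown zipcodes fall through unchanged, as in the if/elif version
    return ZIP_TO_AREA.get(value, value)


print(sketch_neighbourhood('98105'), sketch_neighbourhood('98000'))
```

# A flat mapping like this can also be passed to `Series.map` or `Series.replace`, which keeps the grouping data separate from the logic.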

# +

#find percentage of missing values for each column

df_selected_missing = df_selected_2016.isnull().mean()*100

#filter out only columns, which have missing values

df_selected_with_nan = df_selected_missing[df_selected_missing > 0]

#plot the results

plt.figure(figsize = (12, 9)) #determine the size of the chart

df_selected_with_nan.plot.barh(title='Percentage of missing values in selected column, %', color = "midnightblue")

plt.xticks(fontsize = 8) # format the labels for the x-axis

plt.yticks(fontsize = 10) # format the y-axis

plt.show()

# -

# From the figure above, we can see that around 30% of the values in the 'price' column are missing, along with a few missing values in other columns. Since 'price' is our main variable for modeling, and the missing values are mostly due to listings not being available at certain times of the year, we drop all rows with missing values so that we keep only the prices from when the listings were on the market.

#drop the missing values

df_selected_2016_cleaned = df_selected_2016.dropna()

#check the percentage of the missing values dropped

(len(df_selected_2016)-len(df_selected_2016_cleaned))/len(df_selected_2016)*100

# # Data Evaluation

# ## Comparison of Neighbourhood

#

# In this section, the total number of listings and the average listing price in each neighbourhood are compared. This gives some insight into how listing prices and counts vary by location.

# +

#calculate the total count of listings in each neighbourhood

df_neighbourhood_count = df_selected_2016_cleaned['zipcode'].value_counts()

#plot the results

plt.figure(figsize = (10, 8)) #determine the size of the chart

df_neighbourhood_count.plot.barh(title='Comparison of Listings', color = "midnightblue")

plt.xticks(rotation = 45, fontsize = 14) # format the labels for the x-axis

plt.yticks(fontsize = 14) # format the y-axis

plt.xlabel("Total Listings",fontsize = 14)

plt.savefig('comparison of total listings.png')

plt.show()

# -

#plot the listing price distribution in each neighbourhood

sns.set(rc={'figure.figsize':(14,9)})

ax = sns.boxplot(x = df_selected_2016_cleaned['zipcode'], y = df_selected_2016_cleaned['price'])

plt.legend(title = 'neighborhood comparison', fontsize='x-large', title_fontsize='40')

plt.xlabel(" ")

plt.ylabel("Listing Price ($)",fontsize = 14)

plt.savefig('pricing comparison for neighbourhood.png')

# +

#find the number of unique listings for each month in 2016

number_of_listings_by_month = df_selected_2016_cleaned.groupby('month')['listing_id'].nunique()

#plot the number of listings per month in 2016

plt.figure(figsize=(10,5))

plt.plot(number_of_listings_by_month)

plt.xticks(np.arange(1, 13, step=1))

plt.ylabel('Number of listings per month')

plt.xlabel('Month')

plt.title('Number of listings per month in 2016', fontsize = 14)

plt.savefig('number of available listings.png')

plt.show()

# +

#get list of neighbourhoods

neighbourhoods = df_selected_2016_cleaned['zipcode'].unique()

#get prices by month and neighbourhood

price_by_month_neighbourhood = df_selected_2016_cleaned.groupby(['month','zipcode']).mean(numeric_only=True).reset_index()

#plot prices for each neighbourhood

fig = plt.figure(figsize=(20,10))

ax = plt.subplot(111)

for neighbourhood in neighbourhoods:

ax.plot(price_by_month_neighbourhood[price_by_month_neighbourhood['zipcode'] == neighbourhood]['month'],

price_by_month_neighbourhood[price_by_month_neighbourhood['zipcode'] == neighbourhood]['price'],

label = neighbourhood)

box = ax.get_position()

ax.set_position([box.x0, box.y0, box.width * 0.8, box.height])

ax.legend(loc='center left', bbox_to_anchor=(1, 0.5),fontsize = 24)

plt.ylabel('Average price, $', fontsize = 30)

plt.xlabel('Month', fontsize = 30)

plt.title('Average price for neighbourhood, $', fontsize = 30)

plt.xticks(np.arange(1, 13, step=1),fontsize = 14)

plt.yticks(fontsize = 14)

plt.savefig('average price for neighbourhood.png')

plt.show()

# +

#get list of neighbourhoods

neighbourhoods = df_selected_2016_cleaned['zipcode'].unique()

#get total listing by month and neighbourhood

listing_by_month_neighbourhood = df_selected_2016_cleaned.groupby(['month','zipcode']).count().reset_index()

listing_by_month_neighbourhood

#plot total listing for each neighbourhood

fig = plt.figure(figsize=(20,10))

ax = plt.subplot(111)

for neighbourhood in neighbourhoods:
    # rows for this neighbourhood only
    subset = listing_by_month_neighbourhood[listing_by_month_neighbourhood['zipcode'] == neighbourhood]
    ax.plot(subset['month'], subset['listing_id'], label = neighbourhood)

box = ax.get_position()

ax.set_position([box.x0, box.y0, box.width * 0.8, box.height])

ax.legend(loc='center left', bbox_to_anchor=(1, 0.5))

plt.ylabel('Total Listing per month')

plt.xlabel('Month')

plt.title('Total Listing per month', fontsize = 14)

plt.savefig('Total Listing per month.png')

plt.show()

# -

# # Section 4. Data Modeling

#

# The data is preprocessed as follows:

# 1. Recode `property_type` and `bed_type` into fewer categories, grouping the types by how often each occurs and by its average price, to simplify the model.

# 2. Remove the year column, since it provides no useful information for the model prediction.
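
# The recoding in step 1 amounts to a lookup from raw category to group label. The cell below is a minimal sketch on toy data, assuming a dict-based mapping; the helper name `recode_categories`, the `toy_*` variables and the 'Boat' value are illustrative and not part of the original analysis.

# +
import pandas as pd

def recode_categories(series, groups):
    """Map each raw category to its group label; categories not listed are kept unchanged."""
    # invert {label: [raw, ...]} into {raw: label}
    lookup = {raw: label for label, raws in groups.items() for raw in raws}
    # map() yields NaN for unknown categories, fillna() restores the original value
    return series.map(lookup).fillna(series)

# toy example (hypothetical values, not taken from the dataset)
toy_types = pd.Series(['House', 'Townhouse', 'Apartment', 'Boat'])
toy_groups = {'House': ['House', 'Townhouse'],
              'Apartment/Condo': ['Apartment', 'Condominium']}
toy_recoded = recode_categories(toy_types, toy_groups)
print(toy_recoded.tolist())
# -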

#make a copy of the clean dataset for prediction

df_predict = df_selected_2016_cleaned.copy()

df_predict.head()

df_predict['property_type'].value_counts()

df_predict['room_type'].value_counts()

df_predict['bed_type'].value_counts()

# average of the numeric columns per group, sorted by average price
df_predict.groupby(['bedrooms']).mean(numeric_only=True).sort_values(['price'])

df_predict.groupby(['bed_type']).mean(numeric_only=True).sort_values(['price'])

# +

# Recode property_type

def property_type(value):
    """Collapse the raw property types into a few broader groups."""
    if value in ['House', 'Townhouse', 'Loft']:
        return 'House'
    elif value in ['Apartment', 'Condominium', 'Chalet', 'Bed & Breakfast']:
        return 'Apartment/Condo'
    elif value in ['Camper/RV', 'Bungalow', 'Cabin']:
        return 'Outdoor_Room'
    elif value in ['Tent', 'Treehouse', 'Dorm', 'Yurt']:
        return 'Outdoor_Tent'
    # any other type is kept unchanged
    return value

df_predict['property_type'] = df_predict['property_type'].apply(property_type)

# +

# Recode bed_type

def bed_type(value):
    """Collapse the raw bed types into two broader groups."""
    if value in ['Real Bed','Airbed']:
        return 'Bed'
    elif value in ['Futon','Pull-out Sofa','Couch']:
        return 'Futon/Sofa/Couch'
    # any other type is kept unchanged
    return value

df_predict['bed_type'] = df_predict['bed_type'].apply(bed_type)

# -

df_predict.head()

df_predict_re = df_predict[['zipcode','property_type','room_type',

'accommodates','bathrooms','bedrooms',

'beds','bed_type','month','price']]

df_predict_re.head()

# ### Set up parameters

#

# Create dummy variables for categorical data and split the test/train dataset.
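
# As an illustration of the dummy encoding only (not of the split), the cell below is a minimal sketch on a toy frame. The `toy_*` names and values are made up for the example, and the categorical column is named explicitly rather than detected by dtype.

# +
import pandas as pd

# toy feature table: one categorical column, one numeric column
toy_X = pd.DataFrame({'room_type': ['Entire home/apt', 'Private room', 'Private room'],
                      'beds': [2, 1, 1]})
toy_cat_cols = ['room_type']

# one indicator column per category, named <column>_<category>
toy_dummies = pd.get_dummies(toy_X[toy_cat_cols])

# replace the categorical column by its indicator columns
toy_encoded = pd.concat([toy_X.drop(columns=toy_cat_cols), toy_dummies], axis=1)
print(toy_encoded.columns.tolist())
# -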

#select X,Y data

X = df_predict_re.iloc[:,:-1]

Y = df_predict_re.iloc[:,-1]

# select non-numeric variables and create dummies

non_num_vars = X.select_dtypes(include=['object']).columns

X[non_num_vars].head()

# create dummy variables

dummy_vars = pd.get_dummies(X[non_num_vars])

dummy_vars

# drop non-numeric variables and add the dummies

X_dummy = X.drop(non_num_vars,axis=1)

X_dummy = pd.merge(X_dummy,dummy_vars, left_index=True, right_index=True)

X_dummy.head()

#split the test and train dataset

from sklearn.model_selection import train_test_split

X_train, X_test, Y_train, Y_test = train_test_split(X_dummy, Y, test_size = 0.2, random_state = 0)

# # Section 5. Evaluation

#

# ## Random Forest Regression Model

# +

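# train the Random Forest regressor
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score  # used for the scores below

RFregressor = RandomForestRegressor(n_estimators=100,
                                    criterion='squared_error',  # named 'mse' before scikit-learn 1.0
                                    random_state=42,
                                    n_jobs=-1)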

RFregressor.fit(X_train, Y_train)

#calculate scores for the model

y_train_preds_RF = RFregressor.predict(X_train)

y_test_preds_RF = RFregressor.predict(X_test)

print('Random Forest MSE train: %.3f, test: %.3f' % (

mean_squared_error(Y_train, y_train_preds_RF),

mean_squared_error(Y_test, y_test_preds_RF)))

print('Random Forest R^2 train: %.3f, test: %.3f' % (

r2_score(Y_train, y_train_preds_RF),

r2_score(Y_test, y_test_preds_RF)))

# -
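
# The MSE is expressed in squared dollars, so its square root (RMSE) is often easier to read against listing prices. R^2 is 1 - SS_res / SS_tot, the share of price variance the model explains. The cell below is a minimal sketch of these formulas with NumPy on made-up numbers; `regression_scores` and `toy_scores` are illustrative names, not part of the original analysis.

# +
import numpy as np

def regression_scores(y_true, y_pred):
    """Return MSE, RMSE and R^2 for two equally long sequences of values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    mse = ss_res / len(y_true)
    return {'mse': mse, 'rmse': np.sqrt(mse), 'r2': 1.0 - ss_res / ss_tot}

# toy prices and predictions (hypothetical values)
toy_scores = regression_scores([100, 150, 200], [110, 150, 190])
print(toy_scores)
# -

# The same helper could be applied to `Y_test` and `y_test_preds_RF` to report the test error in dollars.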

# show the residuals of train and test

plt.scatter(y_train_preds_RF, y_train_preds_RF - Y_train,

c='blue', marker='o', label='Training data')

plt.scatter(y_test_preds_RF, y_test_preds_RF - Y_test,

c='lightgreen', marker='s', label='Test data')

plt.xlabel('Predicted values')

plt.ylabel('Residuals')

plt.legend(loc='upper left')

plt.show()

# show the comparison of predicted and actual values

plt.scatter(y_train_preds_RF, Y_train,

c='blue', marker='o', label='Training data')

plt.scatter(y_test_preds_RF, Y_test,

c='lightgreen', marker='s', label='Test data')

plt.xlabel('Predicted values')

plt.ylabel('Actual listing values')

plt.legend(loc='upper left')

plt.show()

# +

#get feature importances from the model

headers = ["name", "score"]

values = sorted(zip(X_train.columns, RFregressor.feature_importances_), key=lambda x: x[1] * -1)

forest_feature_importances = pd.DataFrame(values, columns = headers)

forest_feature_importances = forest_feature_importances.sort_values(by = ['score'], ascending = False)

features = forest_feature_importances['name'][:15]

y_pos = np.arange(len(features))

scores = forest_feature_importances['score'][:15]

#plot feature importances

plt.figure(figsize=(10,5))

plt.barh(y_pos, scores,align='center', alpha=0.5)

plt.yticks(y_pos, features)

plt.xlabel('Score')

plt.ylabel('Features')

plt.title('Feature importances (Random Forest)')

plt.savefig('feature importances RF.png')

plt.show()

# -

# ## Linear Regression model

#import linear regression model

from sklearn.linear_model import LinearRegression

LRregressor = LinearRegression()

LRregressor.fit(X_train, Y_train)

# +

#calculate scores for the model

y_train_preds_LR = LRregressor.predict(X_train)

y_test_preds_LR = LRregressor.predict(X_test)

print('Linear Regression MSE train: %.3f, test: %.3f' % (

mean_squared_error(Y_train, y_train_preds_LR),

mean_squared_error(Y_test, y_test_preds_LR)))

print('Linear Regression R^2 train: %.3f, test: %.3f' % (

r2_score(Y_train, y_train_preds_LR),

r2_score(Y_test, y_test_preds_LR)))

# -

# show the residuals of train and test

plt.scatter(y_train_preds_LR, y_train_preds_LR - Y_train,

c='blue', marker='o', label='Training data')

plt.scatter(y_test_preds_LR, y_test_preds_LR - Y_test,

c='lightgreen', marker='s', label='Test data')

plt.xlabel('Predicted values')

plt.ylabel('Residuals')

plt.legend(loc='upper left')

plt.show()

# show the comparison of predicted and actual values

plt.scatter(y_train_preds_LR, Y_train,

c='blue', marker='o', label='Training data')

plt.scatter(y_test_preds_LR, Y_test,

c='lightgreen', marker='s', label='Test data')

plt.xlabel('Predicted values')

plt.ylabel('Actual listing values')

plt.legend(loc='upper left')

plt.show()