code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Q#

# language: qsharp

# name: iqsharp

# ---

# # Superposition Kata

#

# **Superposition** quantum kata is a series of exercises designed

# to get you familiar with the concept of superposition and with programming in Q#.

# It covers the following topics:

# * basic single-qubit and multi-qubit gates,

# * superposition,

# * flow control and recursion in Q#.

#

# It is recommended to complete the [BasicGates kata](./../BasicGates/BasicGates.ipynb) before this one to get familiar with the basic gates used in quantum computing. The list of basic gates available in Q# can be found at [Microsoft.Quantum.Intrinsic](https://docs.microsoft.com/qsharp/api/qsharp/microsoft.quantum.intrinsic).

#

# Each task is wrapped in one operation preceded by the description of the task.

# Your goal is to fill in the blank (marked with `// ...` comments)

# with some Q# code that solves the task. To verify your answer, run the cell using Ctrl/⌘+Enter.

#

# The tasks are given in approximate order of increasing difficulty; harder ones are marked with asterisks.

# To begin, first prepare this notebook for execution (if you skip this step, you'll get a "Syntax does not match any known patterns" error when you try to execute Q# code in the next cells):

%package Microsoft.Quantum.Katas::0.11.2003.3107

# > The package versions in the output of the cell above should always match. If you are running the Notebooks locally and the versions do not match, please install the IQ# version that matches the version of the `Microsoft.Quantum.Katas` package.

# > <details>

# > <summary><u>How to install the right IQ# version</u></summary>

# > For example, if the version of the `Microsoft.Quantum.Katas` package above is 0.1.2.3, the installation steps are as follows:

# >

# > 1. Stop the kernel.

# > 2. Uninstall the existing version of IQ#: `dotnet tool uninstall microsoft.quantum.iqsharp -g`

# > 3. Install the matching version: `dotnet tool install microsoft.quantum.iqsharp -g --version 0.1.2.3`

# > 4. Reinstall the kernel: `dotnet iqsharp install`

# > 5. Restart the Notebook.

# > </details>

#

# ### <a name="plus-state"></a> Task 1. Plus state.

#

# **Input:** A qubit in the $|0\rangle$ state.

#

# **Goal:** Change the state of the qubit to $|+\rangle = \frac{1}{\sqrt{2}} \big(|0\rangle + |1\rangle\big)$.

# +

%kata T01_PlusState_Test

operation PlusState (q : Qubit) : Unit {

// Hadamard gate H will convert |0⟩ state to |+⟩ state.

// Type the following: H(q);

// Then run the cell using Ctrl/⌘+Enter.

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#plus-state).*

# ### <a name="minus-state"></a> Task 2. Minus state.

#

# **Input:** A qubit in the $|0\rangle$ state.

#

# **Goal:** Change the state of the qubit to $|-\rangle = \frac{1}{\sqrt{2}} \big(|0\rangle - |1\rangle\big)$.

# +

%kata T02_MinusState_Test

operation MinusState (q : Qubit) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#minus-state).*

# ### <a name="unequal-superposition"></a> Task 3*. Unequal superposition.

#

# **Inputs:**

#

# 1. A qubit in the $|0\rangle$ state.

# 2. Angle $\alpha$, in radians, represented as `Double`.

#

# **Goal:** Change the state of the qubit to $\cos{\alpha} |0\rangle + \sin{\alpha} |1\rangle$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# Experiment with rotation gates from the Microsoft.Quantum.Intrinsic namespace.

# Note that all rotation operators rotate the state by <i>half</i> of their angle argument.

# </details>
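# For intuition about the half-angle convention, here is a plain-Python state-vector sketch (not Q# code) that applies $R_y(\theta) = e^{-i\theta Y/2}$ to $|0\rangle$. It assumes this standard matrix form for Q#'s `Ry` gate. The helper names `ry_matrix` and `apply` are illustrative only.

```python
import math

def ry_matrix(theta):
    """2x2 matrix of R_y(theta) = exp(-i * theta * Y / 2); all entries are real."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s],
            [s, c]]

def apply(matrix, state):
    """Multiply a 2x2 matrix by a 2-component state vector [amp_0, amp_1]."""
    return [matrix[0][0] * state[0] + matrix[0][1] * state[1],
            matrix[1][0] * state[0] + matrix[1][1] * state[1]]

zero = [1.0, 0.0]          # amplitudes of |0> and |1>
theta = 0.6                # angle argument passed to the rotation
amp0, amp1 = apply(ry_matrix(theta), zero)
print(amp0, amp1)          # cos(theta / 2) and sin(theta / 2)
```

# The printed amplitudes are $\cos\frac{\theta}{2}$ and $\sin\frac{\theta}{2}$. The angle that appears in the state is therefore half of the argument passed to the gate.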

# +

%kata T03_UnequalSuperposition_Test

operation UnequalSuperposition (q : Qubit, alpha : Double) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#unequal-superposition).*

# ### <a name="superposition-of-all-basis-vectors-on-two-qubits"></a>Task 4. Superposition of all basis vectors on two qubits.

#

# **Input:** Two qubits in the $|00\rangle$ state (stored in an array of length 2).

#

# **Goal:** Change the state of the qubits to $|+\rangle \otimes |+\rangle = \frac{1}{2} \big(|00\rangle + |01\rangle + |10\rangle + |11\rangle\big)$.

# +

%kata T04_AllBasisVectors_TwoQubits_Test

operation AllBasisVectors_TwoQubits (qs : Qubit[]) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#superposition-of-all-basis-vectors-on-two-qubits).*

# ### <a name="superposition-of-basis-vectors-with-phases"></a>Task 5. Superposition of basis vectors with phases.

#

# **Input:** Two qubits in the $|00\rangle$ state (stored in an array of length 2).

#

# **Goal:** Change the state of the qubits to $\frac{1}{2} \big(|00\rangle + i|01\rangle - |10\rangle - i|11\rangle\big)$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# Is this state separable?

# </details>
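# One way to test the hint for two qubits: the state $a|00\rangle + b|01\rangle + c|10\rangle + d|11\rangle$ factors into a product of single-qubit states exactly when $ad - bc = 0$, because the $2 \times 2$ amplitude matrix then has rank 1. Below is a plain-Python sketch (not Q#; helper names are illustrative) that builds a tensor product and applies this test.

```python
import math

def kron(u, v):
    """Tensor product of single-qubit states [u0, u1] and [v0, v1] -> [|00>, |01>, |10>, |11>]."""
    return [u[0] * v[0], u[0] * v[1], u[1] * v[0], u[1] * v[1]]

def is_separable(state, tol=1e-12):
    """A two-qubit state [a, b, c, d] is a product state iff a*d - b*c == 0."""
    a, b, c, d = state
    return abs(a * d - b * c) < tol

h = 1 / math.sqrt(2)
plus = [h, h]
plus_plus = kron(plus, plus)          # (|00> + |01> + |10> + |11>) / 2
bell = [h, 0, 0, h]                   # (|00> + |11>) / sqrt(2), entangled
phases = [0.5, 0.5j, -0.5, -0.5j]     # the target state of this task

print(is_separable(plus_plus), is_separable(bell), is_separable(phases))
```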

# +

%kata T05_AllBasisVectorsWithPhases_TwoQubits_Test

operation AllBasisVectorsWithPhases_TwoQubits (qs : Qubit[]) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#superposition-of-basis-vectors-with-phases).*

# ### <a name="bell-state"></a>Task 6. Bell state $|\Phi^{+}\rangle$.

#

# **Input:** Two qubits in the $|00\rangle$ state (stored in an array of length 2).

#

# **Goal:** Change the state of the qubits to $|\Phi^{+}\rangle = \frac{1}{\sqrt{2}} \big (|00\rangle + |11\rangle\big)$.

#

# > You can find detailed coverage of Bell states and their creation [in this blog post](https://blogs.msdn.microsoft.com/uk_faculty_connection/2018/02/06/a-beginners-guide-to-quantum-computing-and-q/).

# +

%kata T06_BellState_Test

operation BellState (qs : Qubit[]) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#bell-state).*

# ### <a name="all-bell-states"></a> Task 7. All Bell states.

#

# **Inputs:**

#

# 1. Two qubits in the $|00\rangle$ state (stored in an array of length 2).

# 2. An integer index.

#

# **Goal:** Change the state of the qubits to one of the Bell states, based on the value of index:

#

# <table>

# <col width="50"/>

# <col width="200"/>

# <tr>

# <th style="text-align:center">Index</th>

# <th style="text-align:center">State</th>

# </tr>

# <tr>

# <td style="text-align:center">0</td>

# <td style="text-align:center">$|\Phi^{+}\rangle = \frac{1}{\sqrt{2}} \big (|00\rangle + |11\rangle\big)$</td>

# </tr>

# <tr>

# <td style="text-align:center">1</td>

# <td style="text-align:center">$|\Phi^{-}\rangle = \frac{1}{\sqrt{2}} \big (|00\rangle - |11\rangle\big)$</td>

# </tr>

# <tr>

# <td style="text-align:center">2</td>

# <td style="text-align:center">$|\Psi^{+}\rangle = \frac{1}{\sqrt{2}} \big (|01\rangle + |10\rangle\big)$</td>

# </tr>

# <tr>

# <td style="text-align:center">3</td>

# <td style="text-align:center">$|\Psi^{-}\rangle = \frac{1}{\sqrt{2}} \big (|01\rangle - |10\rangle\big)$</td>

# </tr>

# </table>

# +

%kata T07_AllBellStates_Test

operation AllBellStates (qs : Qubit[], index : Int) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition.ipynb#all-bell-states).*

# ### <a name="greenberger-horne-zeilinger"></a> Task 8. Greenberger–Horne–Zeilinger state.

#

# **Input:** $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state (stored in an array of length $N$).

#

# **Goal:** Change the state of the qubits to the GHZ state $\frac{1}{\sqrt{2}} \big (|0\dots0\rangle + |1\dots1\rangle\big)$.

#

# > For the syntax of flow control statements in Q#, see [the Q# documentation](https://docs.microsoft.com/quantum/language/statements#control-flow).

# +

%kata T08_GHZ_State_Test

operation GHZ_State (qs : Qubit[]) : Unit {

// You can find N as Length(qs).

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition_Part2.ipynb#greenberger-horne-zeilinger).*

# ### <a name="superposition-of-all-basis-vectors"></a> Task 9. Superposition of all basis vectors.

#

# **Input:** $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state.

#

# **Goal:** Change the state of the qubits to an equal superposition of all basis vectors $\frac{1}{\sqrt{2^N}} \big (|0 \dots 0\rangle + \dots + |1 \dots 1\rangle\big)$.

#

# > For example, for $N = 2$ the final state should be $\frac{1}{2} \big (|00\rangle + |01\rangle + |10\rangle + |11\rangle\big)$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# Is this state separable?

# </details>

# +

%kata T09_AllBasisVectorsSuperposition_Test

operation AllBasisVectorsSuperposition (qs : Qubit[]) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition_Part2.ipynb#superposition-of-all-basis-vectors).*

# ### <a name="superposition-of-all-even-or-all-odd-numbers"></a> Task 10. Superposition of all even or all odd numbers.

#

# **Inputs:**

#

# 1. $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state (stored in an array of length $N$).

# 2. A boolean `isEven`.

#

# **Goal:** Prepare a superposition of all *even* numbers if `isEven` is `true`, or of all *odd* numbers if `isEven` is `false`.

# A basis state encodes an integer number using [big-endian](https://en.wikipedia.org/wiki/Endianness) binary notation: state $|01\rangle$ corresponds to the integer $1$, and state $|10\rangle$ corresponds to the integer $2$.

#

# > For example, for $N = 2$ and `isEven = false` you need to prepare the superposition $\frac{1}{\sqrt{2}} \big (|01\rangle + |11\rangle\big )$,

# > and for $N = 2$ and `isEven = true`, the superposition $\frac{1}{\sqrt{2}} \big (|00\rangle + |10\rangle\big )$.
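# To make the big-endian convention concrete, the plain-Python sketch below (not Q#; helper names are illustrative) converts bit strings to integers, most significant bit first. It then lists the even and odd basis states for $N = 2$, matching the example above.

```python
def bits_to_int(bits):
    """Big-endian: the first bit is the most significant, so [0, 1] -> 1 and [1, 0] -> 2."""
    value = 0
    for bit in bits:
        value = 2 * value + bit
    return value

def basis_label(value, n):
    """Big-endian ket label of an integer on n qubits, e.g. 2 on 2 qubits -> '10'."""
    return format(value, "0{}b".format(n))

n = 2
evens = [basis_label(k, n) for k in range(2 ** n) if k % 2 == 0]
odds = [basis_label(k, n) for k in range(2 ** n) if k % 2 == 1]
print(evens, odds)  # ['00', '10'] ['01', '11']
```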

# +

%kata T10_EvenOddNumbersSuperposition_Test

operation EvenOddNumbersSuperposition (qs : Qubit[], isEven : Bool) : Unit {

// ...

}

# -

# *Can't come up with a solution? See the explained solution in the [Superposition Workbook](./Workbook_Superposition_Part2.ipynb#superposition-of-all-even-or-all-odd-numbers).*

# ### <a name="threestates-twoqubits"></a>Task 11*. $\frac{1}{\sqrt{3}} \big(|00\rangle + |01\rangle + |10\rangle\big)$ state.

#

# **Input:** Two qubits in the $|00\rangle$ state.

#

# **Goal:** Change the state of the qubits to $\frac{1}{\sqrt{3}} \big(|00\rangle + |01\rangle + |10\rangle\big)$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# If you need trigonometric functions, you can find them in the Microsoft.Quantum.Math namespace; you'll need to add <pre>open Microsoft.Quantum.Math;</pre> to the code before the operation definition.

# </details>

# +

%kata T11_ThreeStates_TwoQubits_Test

operation ThreeStates_TwoQubits (qs : Qubit[]) : Unit {

// ...

}

# -

# ### Task 12*. Hardy state.

#

# **Input:** Two qubits in the $|00\rangle$ state.

#

# **Goal:** Change the state of the qubits to $\frac{1}{\sqrt{12}} \big(3|00\rangle + |01\rangle + |10\rangle + |11\rangle\big)$.
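# Before designing a preparation circuit, it can help to confirm that a target state is normalized. For the Hardy state, $\frac{3^2 + 1 + 1 + 1}{12} = 1$. Below is a quick plain-Python check (not Q#) of the Task 11 and Task 12 target states, with amplitudes listed in the order $|00\rangle, |01\rangle, |10\rangle, |11\rangle$.

```python
import math

def norm_squared(amplitudes):
    """Sum of squared magnitudes; equals 1 for a valid (normalized) quantum state."""
    return sum(abs(a) ** 2 for a in amplitudes)

three_states = [1 / math.sqrt(3)] * 3 + [0.0]   # Task 11 target
hardy = [3 / math.sqrt(12)] + [1 / math.sqrt(12)] * 3  # Task 12 target

print(norm_squared(three_states), norm_squared(hardy))
```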

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# If you need trigonometric functions, you can find them in the Microsoft.Quantum.Math namespace; you'll need to add <pre>open Microsoft.Quantum.Math;</pre> to the code before the operation definition.

# </details>

# +

%kata T12_Hardy_State_Test

operation Hardy_State (qs : Qubit[]) : Unit {

// ...

}

# -

# ### Task 13. Superposition of $|0 \dots 0\rangle$ and the given bit string.

#

# **Inputs:**

#

# 1. $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state.

# 2. A bit string of length $N$ represented as `Bool[]`. Bit values `false` and `true` correspond to $|0\rangle$ and $|1\rangle$ states. You are guaranteed that the first bit of the bit string is `true`.

#

# **Goal:** Change the state of the qubits to an equal superposition of $|0 \dots 0\rangle$ and the basis state given by the bit string.

#

# > For example, for the bit string `[true, false]` the state required is $\frac{1}{\sqrt{2}}\big(|00\rangle + |10\rangle\big)$.

# +

%kata T13_ZeroAndBitstringSuperposition_Test

operation ZeroAndBitstringSuperposition (qs : Qubit[], bits : Bool[]) : Unit {

// ...

}

# -

# ### Task 14. Superposition of two bit strings.

#

# **Inputs:**

#

# 1. $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state.

# 2. Two bit strings of length $N$ represented as `Bool[]`s. Bit values `false` and `true` correspond to $|0\rangle$ and $|1\rangle$ states. You are guaranteed that the two bit strings differ in at least one bit.

#

# **Goal:** Change the state of the qubits to an equal superposition of the basis states given by the bit strings.

#

# > For example, for bit strings `[false, true, false]` and `[false, false, true]` the state required is $\frac{1}{\sqrt{2}}\big(|010\rangle + |001\rangle\big)$.

#

# > If you need to define any helper functions, you'll need to create an extra code cell for them and execute it before returning to this cell.

# +

%kata T14_TwoBitstringSuperposition_Test

operation TwoBitstringSuperposition (qs : Qubit[], bits1 : Bool[], bits2 : Bool[]) : Unit {

// ...

}

# -

# ### Task 15*. Superposition of four bit strings.

#

# **Inputs:**

#

# 1. $N$ ($N \ge 1$) qubits in the $|0 \dots 0\rangle$ state.

# 2. Four bit strings of length $N$, represented as `Bool[][]` `bits`. `bits` is a $4 \times N$ array which describes the bit strings as follows: `bits[i]` describes the `i`-th bit string and has $N$ elements. You are guaranteed that all four bit strings will be distinct.

#

# **Goal:** Change the state of the qubits to an equal superposition of the four basis states given by the bit strings.

#

# > For example, for $N = 3$ and `bits = [[false, true, false], [true, false, false], [false, false, true], [true, true, false]]` the state required is $\frac{1}{2}\big(|010\rangle + |100\rangle + |001\rangle + |110\rangle\big)$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# Remember that you can allocate extra qubits. If you do, you'll need to return them to the $|0\rangle$ state before releasing them.

# </details>

# +

%kata T15_FourBitstringSuperposition_Test

operation FourBitstringSuperposition (qs : Qubit[], bits : Bool[][]) : Unit {

// ...

}

# -

# ### Task 16**. W state on $2^k$ qubits.

#

# **Input:** $N = 2^k$ qubits in the $|0 \dots 0\rangle$ state.

#

# **Goal:** Change the state of the qubits to the [W state](https://en.wikipedia.org/wiki/W_state): an equal superposition of the $N$ basis states on $N$ qubits that have Hamming weight 1 (exactly one qubit in the $|1\rangle$ state).

#

# > For example, for $N = 4$ the required state is $\frac{1}{2}\big(|1000\rangle + |0100\rangle + |0010\rangle + |0001\rangle\big)$.
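# The W state is easy to enumerate classically. This plain-Python sketch (not Q#; `w_state` is an illustrative helper) lists the $N$ basis states of Hamming weight 1, each with the common amplitude $\frac{1}{\sqrt{N}}$.

```python
import math

def w_state(n):
    """Map each n-qubit basis label with exactly one '1' to its amplitude 1/sqrt(n)."""
    amp = 1 / math.sqrt(n)
    return {"".join("1" if j == k else "0" for j in range(n)): amp for k in range(n)}

state = w_state(4)
print(sorted(state, reverse=True))  # ['1000', '0100', '0010', '0001']
```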

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# You can use the `Controlled` functor to perform arbitrary controlled gates.

# </details>

# +

%kata T16_WState_PowerOfTwo_Test

operation WState_PowerOfTwo (qs : Qubit[]) : Unit {

// ...

}

# -

# ### Task 17**. W state on an arbitrary number of qubits.

#

# **Input:** $N$ qubits in the $|0 \dots 0\rangle$ state ($N$ is not necessarily a power of 2).

#

# **Goal:** Change the state of the qubits to the [W state](https://en.wikipedia.org/wiki/W_state): an equal superposition of the $N$ basis states on $N$ qubits that have Hamming weight 1 (exactly one qubit in the $|1\rangle$ state).

#

# > For example, for $N = 3$ the required state is $\frac{1}{\sqrt{3}}\big(|100\rangle + |010\rangle + |001\rangle\big)$.

#

# <br/>

# <details>

# <summary><b>Need a hint? Click here</b></summary>

# You can modify the signature of the given operation to specify its controlled specialization.

# </details>

# +

%kata T17_WState_Arbitrary_Test

operation WState_Arbitrary (qs : Qubit[]) : Unit {

// ...

}

| Superposition/Superposition.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import openpnm as op

import openpnm.models.geometry as gm

import openpnm.models.misc as mm

import openpnm.models.physics as pm

import scipy as sp

print(op.__version__)

# %matplotlib inline

# ## Generate Two Networks with Different Spacing

spacing_lg = 0.00006

layer_lg = op.network.Cubic(shape=[10, 10, 1], spacing=spacing_lg)

spacing_sm = 0.00002

layer_sm = op.network.Cubic(shape=[30, 5, 1], spacing=spacing_sm)

# ## Position Networks Appropriately, then Stitch Together

# Start by assigning labels to each network for identification later

layer_sm['pore.small'] = True

layer_sm['throat.small'] = True

layer_lg['pore.large'] = True

layer_lg['throat.large'] = True

# Next, manually offset the catalyst layer (CL, the small network) by one full thickness relative to the gas diffusion layer (GDL, the large network)

layer_sm['pore.coords'] -= [0, spacing_sm*5, 0]

layer_sm['pore.coords'] += [0, 0, spacing_lg/2 - spacing_sm/2] # And shift up by 1/2 a lattice spacing
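# The z-shift above lines up the mid-planes of the two one-pore-thick layers. The y-shift moves the small layer entirely to one side of y = 0. The arithmetic is sketched below. It assumes that `Cubic` places pore centres at (i + 0.5) * spacing along each axis. That is an assumption about OpenPNM's lattice layout and is not stated in this notebook.

```python
import math

spacing_lg = 0.00006
spacing_sm = 0.00002

# Assumption: in a one-pore-thick Cubic network the pore centres sit at z = spacing / 2.
z_lg = spacing_lg / 2
z_sm = spacing_sm / 2
dz = spacing_lg / 2 - spacing_sm / 2           # z-shift applied to the small layer above
print(math.isclose(z_sm + dz, z_lg))           # True: the mid-planes coincide

# Assumption: along y the small layer's centres sit at (j + 0.5) * spacing_sm, j = 0..4.
ny_sm = 5
y_sm_max = (ny_sm - 0.5) * spacing_sm - spacing_sm * 5   # largest y after the y-shift
print(y_sm_max < 0)                            # True: the small layer now lies below y = 0
```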

# Finally, pass both networks to `stitch`, which will stitch the CL onto the GDL

from openpnm.topotools import stitch

stitch(network=layer_lg, donor=layer_sm,

P_network=layer_lg.pores('bottom'),

P_donor=layer_sm.pores('top'),

len_max=0.00005)

combo_net = layer_lg

combo_net.name = 'combo'

# ## Create Geometry Objects for Each Layer

Ps = combo_net.pores('small')

Ts = combo_net.throats('small')

geom_sm = op.geometry.GenericGeometry(network=combo_net, pores=Ps, throats=Ts)

Ps = combo_net.pores('large')

Ts = combo_net.throats('small', mode='not')

geom_lg = op.geometry.GenericGeometry(network=combo_net, pores=Ps, throats=Ts)

# ### Add Geometrical Properties to the *Small* Domain

# The *small* domain will be treated as a continuum, so instead of assigning pore sizes we want each 'pore' to be the same size as the lattice cell.

geom_sm['pore.diameter'] = spacing_sm

geom_sm['pore.area'] = spacing_sm**2

geom_sm['throat.diameter'] = spacing_sm

geom_sm['throat.area'] = spacing_sm**2

geom_sm['throat.length'] = 1e-12 # A very small number to represent nearly 0-length

# ### Add Geometrical Properties to the *Large* Domain

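import numpy as np  # use NumPy directly; SciPy's NumPy aliases such as sp.rand are deprecated

geom_lg['pore.diameter'] = spacing_lg*np.random.rand(combo_net.num_pores('large'))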

geom_lg.add_model(propname='pore.area',

model=gm.pore_area.sphere)

geom_lg.add_model(propname='throat.diameter',

model=mm.misc.from_neighbor_pores,

pore_prop='pore.diameter', mode='min')

geom_lg.add_model(propname='throat.area',

model=gm.throat_area.cylinder)

geom_lg.add_model(propname='throat.length',

model=gm.throat_length.straight)

# ## Create Phase and Physics Objects

air = op.phases.Air(network=combo_net, name='air')

phys_lg = op.physics.GenericPhysics(network=combo_net, geometry=geom_lg, phase=air)

phys_sm = op.physics.GenericPhysics(network=combo_net, geometry=geom_sm, phase=air)

# Add pore-scale models for diffusion to each Physics:

phys_lg.add_model(propname='throat.diffusive_conductance',

model=pm.diffusive_conductance.ordinary_diffusion)

phys_sm.add_model(propname='throat.diffusive_conductance',

model=pm.diffusive_conductance.ordinary_diffusion)

# For the *small* layer we've used a normal diffusive conductance model, which, when combined with the diffusion coefficient of air, is equivalent to open-air diffusion. If we want the *small* layer to have some tortuosity, we must account for this:

porosity = 0.5

tortuosity = 2

phys_sm['throat.diffusive_conductance'] *= (porosity/tortuosity)

# Note that this extra line is NOT a pore-scale model, so it will be over-written when the `phys_sm` object is regenerated.
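# The scaling above applies the common effective-diffusivity relation $D_{\text{eff}} = D\,\varepsilon/\tau$, where $\varepsilon$ is the porosity and $\tau$ the tortuosity. An ordinary diffusive conductance is proportional to the diffusivity, so it is scaled by the same factor. Below is a plain-Python illustration of the arithmetic. The open-air conductance `g_open` is a made-up value.

```python
porosity = 0.5
tortuosity = 2

# Effective diffusivity of a porous continuum: D_eff = D * porosity / tortuosity.
scale = porosity / tortuosity
print(scale)  # 0.25

g_open = 4.0e-12              # hypothetical open-air throat conductance, illustration only
g_porous = g_open * scale     # conductance after accounting for porosity and tortuosity
print(g_porous)
```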

# ### Add a Reaction Term to the Small Layer

# A standard n-th order chemical reaction is $r = k \cdot x^b$, or more generally $r = A_1 \cdot x^{A_2} + A_3$. This model is available as `power_law` in `openpnm.models.physics.generic_source_term` (accessed here as `pm.generic_source_term`), and we must specify values for each of the constants.
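# The sketch below is a plain-Python version of the rate expression above, written independently of OpenPNM's implementation. It evaluates $r = A_1 x^{A_2} + A_3$. Because $r$ is nonlinear in $x$, iterative solvers commonly linearize it about the current guess $x_0$ as $r \approx S_1 x + S_2$, with $S_1 = A_1 A_2 x_0^{A_2 - 1}$ and $S_2 = r(x_0) - S_1 x_0$. Treat this as a generic Newton-style illustration, not a description of OpenPNM's internals.

```python
def power_law_rate(x, A1, A2, A3):
    """Reaction rate r = A1 * x**A2 + A3."""
    return A1 * x ** A2 + A3

def linearize(x0, A1, A2, A3):
    """Tangent-line coefficients so that r(x) is approximately S1 * x + S2 near x0."""
    S1 = A1 * A2 * x0 ** (A2 - 1)                  # dr/dx evaluated at x0
    S2 = power_law_rate(x0, A1, A2, A3) - S1 * x0  # intercept of the tangent line
    return S1, S2

# Constants matching the notebook: A1 = 1e-10, A2 = 2, A3 = 0; x0 is an arbitrary guess.
A1, A2, A3 = 1e-10, 2, 0
x0 = 0.5
S1, S2 = linearize(x0, A1, A2, A3)
print(power_law_rate(x0, A1, A2, A3), S1 * x0 + S2)  # equal: the tangent is exact at x0
```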

# Set Source Term

air['pore.A1'] = 1e-10 # Reaction pre-factor

air['pore.A2'] = 2 # Reaction order

air['pore.A3'] = 0 # A generic offset that is not needed so set to 0

phys_sm.add_model(propname='pore.reaction',

model=pm.generic_source_term.power_law,

A1='pore.A1', A2='pore.A2', A3='pore.A3',

X='pore.mole_fraction',

regen_mode='deferred')

# ## Perform a Diffusion Calculation

Deff = op.algorithms.ReactiveTransport(network=combo_net, phase=air)

Ps = combo_net.pores(['large', 'right'], mode='intersection')

Deff.set_value_BC(pores=Ps, values=1)

Ps = combo_net.pores('small')

Deff.set_source(propname='pore.reaction', pores=Ps)

Deff.settings['conductance'] = 'throat.diffusive_conductance'

Deff.settings['quantity'] = 'pore.mole_fraction'

Deff.run()

# ## Visualize the Concentration Distribution

# Save the results to a VTK file for visualization in Paraview:

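air.update(Deff.results())  # copy the computed mole fractions onto the phase so they are included in the VTK export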

op.io.VTK.save(network=combo_net, phases=[air])


| Simulations/mixed_continuum_and_pore_network.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Pandana network accessibility simple demo

#

# This notebook uses [pandana](https://udst.github.io/pandana/network.html) (v0.2) to download street network and points-of-interest data from OpenStreetMap and then calculate network accessibility to the points of interest. Note: pandana currently only runs on Python 2.

#

# For a more in-depth demo, check out [pandana-accessibility-demo-full.ipynb](pandana-accessibility-demo-full.ipynb).

import pandana, matplotlib.pyplot as plt

from pandana.loaders import osm

# %matplotlib inline

import matplotlib

print(matplotlib.__version__)

print(pandana.version)

bbox = [48.0616244, 11.360777, 48.2481162, 11.7229083]  # lat-long bounding box for Munich: [lat_min, lng_min, lat_max, lng_max]

# [37.76, -122.35, 37.9, -122.17] is an alternative lat-long bounding box for Berkeley/Oakland

amenity = 'pub' #accessibility to this type of amenity

distance = 1500 #max distance in meters

# ## Download points of interest (POIs) and network data from OpenStreetMap

# first download the points of interest corresponding to the specified amenity type

pois = osm.node_query(bbox[0], bbox[1], bbox[2], bbox[3], tags='"amenity"="{}"'.format(amenity))

pois[['amenity', 'name', 'lat', 'lon']].tail()

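# query the OSM API for the street network within the specified bounding box

network = osm.network_from_bbox(bbox[0], bbox[1], bbox[2], bbox[3])

# cache the downloaded network to disk so the slow OSM query need not be repeated

import pickle

with open('munich_net.pkl', 'wb') as f:
    pickle.dump(network, f)

# how many network nodes did we get for this bounding box?

len(network[0].index)

# reload the cached network (later runs can start here and skip the download)

with open('munich_net.pkl', 'rb') as f:
    network = pickle.load(f)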

# +

import pandana as pdna

network = pdna.Network(network[0]["x"], network[0]["y"],
                       network[1].reset_index()['level_0'],
                       network[1].reset_index()['level_1'],
                       network[1].reset_index()[["distance"]])

# -

# ## Process the network data then compute accessibility

# identify nodes that are connected to fewer than some threshold of other nodes within a given distance

# do nothing with this for now, but see full example in other notebook for more

lcn = network.low_connectivity_nodes(impedance=1000, count=10, imp_name='distance')

# precomputes the range queries (the reachable nodes within this maximum distance)

# so, as long as you use a smaller distance, cached results will be used

network.precompute(distance + 1)

# initialize the underlying C++ points-of-interest engine

network.init_pois(num_categories=1, max_dist=distance, max_pois=7)

# initialize a category for this amenity with the locations specified by the lon and lat columns

network.set_pois(category='my_amenity', x_col=pois['lon'], y_col=pois['lat'])

# +

# search for the n nearest amenities to each node in the network

access = network.nearest_pois(distance=distance, category='my_amenity', num_pois=7)

# each df cell represents the network distance from the node to each of the n POIs

access.head()

# -
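# Each value returned by `nearest_pois` is a network (shortest-path) distance rather than a straight-line one. The plain-Python sketch below illustrates the idea on a hypothetical toy graph. It is not pandana's C++ engine, and it finds only the single nearest POI rather than the `num_pois` nearest. It runs a multi-source Dijkstra search from every POI at once and ignores paths longer than the search distance.

```python
import heapq

def nearest_poi_distance(edges, pois, max_dist):
    """Multi-source Dijkstra: shortest network distance from each node to its
    nearest POI node; nodes farther than max_dist are left out."""
    graph = {}
    for u, v, w in edges:                       # undirected weighted edges
        graph.setdefault(u, []).append((v, w))
        graph.setdefault(v, []).append((u, w))

    dist = {p: 0.0 for p in pois}
    heap = [(0.0, p) for p in pois]
    heapq.heapify(heap)
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue                            # stale heap entry, already improved
        for nbr, w in graph.get(node, []):
            nd = d + w
            if nd <= max_dist and nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                heapq.heappush(heap, (nd, nbr))
    return dist

# Toy street graph: (node, node, length in metres), illustrative only.
edges = [("a", "b", 400), ("b", "c", 700), ("c", "d", 900), ("a", "d", 2000)]
result = nearest_poi_distance(edges, pois=["a"], max_dist=1500)
print(result)  # {'a': 0.0, 'b': 400.0, 'c': 1100.0}
```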

# ## Plot the accessibility

# +

# keyword arguments to pass for the matplotlib figure

bbox_aspect_ratio = (bbox[2] - bbox[0]) / (bbox[3] - bbox[1])

fig_kwargs = {'facecolor':'w',

'figsize':(10, 10 * bbox_aspect_ratio)}

# keyword arguments to pass for scatter plots

plot_kwargs = {'s':5,

'alpha':0.9,

'cmap':'viridis_r',

'edgecolor':'none'}

# -

# plot the distance to the nth nearest amenity

n = 1

bmap, fig, ax = network.plot(access[n], bbox=bbox, plot_kwargs=plot_kwargs, fig_kwargs=fig_kwargs)

#ax.set_axis_bgcolor('k')

ax.set_title('Walking distance (m) to nearest {} around Munich'.format(amenity), fontsize=15)

fig.savefig('images/accessibility-pub-munich.png', dpi=200, bbox_inches='tight')

plt.show()


| accessibility/pandana-accessibility-demo-simple.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---


# + colab={"base_uri": "https://localhost:8080/"} id="Xk5T7drQnZ8z" outputId="19a22226-06e5-49cf-a9a7-d02b35a98341"

# ls

# + colab={"base_uri": "https://localhost:8080/"} id="jdlCyJMOjvkK" outputId="a9fb89c3-2327-42f8-dd71-cbaf79fceb1c"

# cd /content/drive/MyDrive/yolov5_miniproject

# + colab={"base_uri": "https://localhost:8080/"} id="T7qe_L7lnljk" outputId="b95178ef-b8a0-4f7f-bab7-f5193bcd8841"

# !git clone https://github.com/ultralytics/yolov5

# + colab={"base_uri": "https://localhost:8080/"} id="RDYyBTjvlY9i" outputId="df1d3e7e-08ba-4264-e320-0d28badd803f"

# %cd yolov5

# + id="-VDdu1NQlY-t" colab={"base_uri": "https://localhost:8080/"} outputId="3d89c1de-bd4e-4a09-9197-fa6aec12fe1c"

# !git reset --hard 886f1c03d839575afecb059accf74296fad395b6

# + colab={"base_uri": "https://localhost:8080/"} id="DBMLKPHMmtkc" outputId="d9e4e248-7680-48d7-8fc3-291e1735a56f"

# !pip install -qr requirements.txt # install dependencies (ignore errors)

import torch

from IPython.display import Image, clear_output # to display images

from utils.google_utils import gdrive_download # to download models/datasets

# clear_output()

print('Setup complete. Using torch %s %s' % (torch.__version__, torch.cuda.get_device_properties(0) if torch.cuda.is_available() else 'CPU'))

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} id="1Y1GvdbKoHV_" outputId="e7577539-3e4c-4448-8c8e-20bedc503356"

# !pip install roboflow

from roboflow import Roboflow

rf = Roboflow(api_key="YOUR_ROBOFLOW_API_KEY")  # replace with your own key; do not publish real keys in notebooks

project = rf.workspace("roboflow-gw7yv").project("vehicles-openimages")

dataset = project.version(1).download("yolov5")

# + colab={"base_uri": "https://localhost:8080/"} id="b-xvGDAupIL_" outputId="1862d298-f572-474d-e235-ea49d660a066"

# %cd yolov5

# %ls

# + colab={"base_uri": "https://localhost:8080/"} id="fYybqZ0Wr091" outputId="df11795b-659a-4ff3-bfdd-badaffc6fee8"

# %cat Vehicles-OpenImages-1/data.yaml

# + id="oR5t_P3otDio"

# define number of classes based on YAML

import yaml

with open("Vehicles-OpenImages-1" + "/data.yaml", 'r') as stream:
    num_classes = str(yaml.safe_load(stream)['nc'])

# + colab={"base_uri": "https://localhost:8080/"} id="WUkMLHwZtOii" outputId="0dce510a-eff7-43c2-aa81-2bb4b84a455d"

# %cat /content/yolov5/models/yolov5s.yaml

# + id="aNPaMbbCtVlX"

from IPython.core.magic import register_line_cell_magic

@register_line_cell_magic

def writetemplate(line, cell):
    with open(line, 'w') as f:
        f.write(cell.format(**globals()))
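# The `writetemplate` magic works because `str.format` replaces every `{name}` placeholder in the cell text with the global variable of that name. Below is a plain-Python illustration. `num_classes = "5"` is a hypothetical stand-in for the value read from `data.yaml`.

```python
# Hypothetical class count standing in for the value read from data.yaml.
num_classes = "5"

template = "nc: {num_classes}  # number of classes\ndepth_multiple: 0.33\n"
rendered = template.format(**globals())   # same substitution the magic performs
print(rendered)
```

# Any literal `{` or `}` in a template would have to be doubled (`{{`, `}}`) to survive `.format()`.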

# + id="M-hjWugAtjLh"

# %%writetemplate /content/yolov5/models/custom_yolov5s.yaml

# parameters
nc: {num_classes}  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple

# anchors
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Focus, [64, 3]],  # 0-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
   [-1, 3, BottleneckCSP, [128]],
   [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
   [-1, 9, BottleneckCSP, [256]],
   [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
   [-1, 9, BottleneckCSP, [512]],
   [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
   [-1, 1, SPP, [1024, [5, 9, 13]]],
   [-1, 3, BottleneckCSP, [1024, False]],  # 9
  ]

# YOLOv5 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, BottleneckCSP, [512, False]],  # 13
   [-1, 1, Conv, [256, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3
   [-1, 3, BottleneckCSP, [256, False]],  # 17 (P3/8-small)
   [-1, 1, Conv, [256, 3, 2]],
   [[-1, 14], 1, Concat, [1]],  # cat head P4
   [-1, 3, BottleneckCSP, [512, False]],  # 20 (P4/16-medium)
   [-1, 1, Conv, [512, 3, 2]],
   [[-1, 10], 1, Concat, [1]],  # cat head P5
   [-1, 3, BottleneckCSP, [1024, False]],  # 23 (P5/32-large)
   [[17, 20, 23], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]

# + colab={"base_uri": "https://localhost:8080/"} id="6QrlYyFPtnNp" outputId="c59cf490-480a-48b0-8cd0-933f6a34ccd4"

# !pip install wandb


# + colab={"base_uri": "https://localhost:8080/"} id="DUyZeom_uVZb" outputId="252289c4-8f77-4b8f-817a-537b2c5287e8"

# %%time

# %cd /content/yolov5/

# !python train.py --img 416 --batch 16 --epochs 100 --data Vehicles-OpenImages-1/data.yaml --cfg ./models/custom_yolov5s.yaml --weights '' --name yolov5s_results --cache
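# The `P3/8`, `P4/16` and `P5/32` comments in the model config give the output strides of the three detection levels. The plain-Python calculation below uses the `--img 416` setting from this notebook and assumes square 416 × 416 inputs. It gives the grid size at each level and the total number of anchor-box predictions per image, with three anchors per level as listed under `anchors:`.

```python
img_size = 416                          # matches the --img flag above
strides = {"P3": 8, "P4": 16, "P5": 32}
anchors_per_level = 3                   # three (width, height) pairs per level in the config

# The image side must divide evenly by the largest stride for the grids to line up.
assert img_size % max(strides.values()) == 0

grids = {level: img_size // s for level, s in strides.items()}
num_boxes = anchors_per_level * sum(g * g for g in grids.values())
print(grids)      # {'P3': 52, 'P4': 26, 'P5': 13}
print(num_boxes)  # 10647
```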

| yolov5.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# %matplotlib inline

import openpathsampling as paths

import numpy as np

import matplotlib.pyplot as plt

import os

import openpathsampling.visualize as ops_vis

from IPython.display import SVG

# ### Advanced analysis techniques

#

# Now we'll move on to a few more advanced analysis techniques. (These are discussed in Paper II.)

#

# With the fixed path length ensemble, we should check for recrossings. To do this, we create an ensemble which represents the recrossing paths: a frame in $\beta$, possible frames in neither $\alpha$ nor $\beta$, and then a frame in $\alpha$.

#

# Then we check whether any subtrajectory of a trial trajectory matches that ensemble, by using the `Ensemble.split()` function. We can then further refine to see which steps that included trials with recrossings were actually accepted.
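# The plain-Python sketch below illustrates the pattern behind the recrossing ensemble without OpenPathSampling. It works on a hypothetical sequence of per-frame labels: `'A'` for $\alpha$, `'B'` for $\beta$, `'-'` for neither. It finds every subtrajectory made of one frame in $\beta$, any number of frames in neither state, and then one frame in $\alpha$. This mirrors the ensemble defined below but is not how `Ensemble.split()` is implemented.

```python
def find_recrossings(labels):
    """Return inclusive (start, end) index pairs of subtrajectories of the form:
    one 'B' frame, zero or more '-' frames, then one 'A' frame."""
    segments = []
    for start, label in enumerate(labels):
        if label != "B":
            continue
        end = start + 1
        while end < len(labels) and labels[end] == "-":
            end += 1                      # skip frames in neither state
        if end < len(labels) and labels[end] == "A":
            segments.append((start, end))
    return segments

# An alpha -> beta -> alpha -> beta path contains one beta ... alpha piece.
path = list("AA--BB-AA--BB")
print(find_recrossings(path))  # [(5, 7)]
```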

# %%time

flexible = paths.Storage("tps_nc_files/alanine_dipeptide_tps.nc")

# %%time

fixed = paths.Storage("tps_nc_files/alanine_dipeptide_fixed_tps.nc")

flex_scheme = flexible.schemes[0]

fixed_scheme = fixed.schemes[0]

# +

# TODO: cache trajectories too?

# -

# create the ensemble that identifies recrossings

alpha = fixed.volumes.find('C_7eq')

beta = fixed.volumes.find('alpha_R')

recrossing_ensemble = paths.SequentialEnsemble([

paths.LengthEnsemble(1) & paths.AllInXEnsemble(beta),

paths.OptionalEnsemble(paths.AllOutXEnsemble(alpha | beta)),

paths.LengthEnsemble(1) & paths.AllInXEnsemble(alpha)

])

# %%time

# now we check each step to see if its trial has a recrossing

steps_with_recrossing = []

for step in fixed.steps:
    # trials is a list of samples: with shooting, only one in the list
    recrossings = []  # default for initial empty move (no trials in step[0].change)
    for trial in step.change.trials:
        recrossings = recrossing_ensemble.split(trial.trajectory)
    # recrossings contains a list with the recrossing trajectories
    # (len(recrossings) == 0 if no recrossings)
    if len(recrossings) > 0:
        steps_with_recrossing += [step]  # save for later analysis

accepted_recrossings = [step for step in steps_with_recrossing if step.change.accepted is True]

print "Trials with recrossings:", len(steps_with_recrossing)

print "Accepted trials with recrossings:", len(accepted_recrossings)

# Note that the number of accepted trials with recrossings does not account for how long each trial remained active. It also doesn't tell us whether a trial represented a new recrossing event or was correlated with the previous one.

# Let's take a look at one of the accepted trajectories with a recrossing event. We'll plot the value of $\psi$, since this is what distinguishes the two states. We'll also select the frames that are actually inside each state and color them (red for $\alpha$, blue for $\beta$).

# +

psi = fixed.cvs.find('psi')

trajectory = accepted_recrossings[0].active[0].trajectory

in_alpha_indices = [trajectory.index(s) for s in trajectory if alpha(s)]

in_alpha_psi = [psi(trajectory)[i] for i in in_alpha_indices]

in_beta_indices = [trajectory.index(s) for s in trajectory if beta(s)]

in_beta_psi = [psi(trajectory)[i] for i in in_beta_indices]

plt.plot(psi(trajectory), 'k-')

plt.plot(in_alpha_indices, in_alpha_psi, 'ro') # alpha in red

plt.plot(in_beta_indices, in_beta_psi, 'bo') # beta in blue

# -

# Now let's see how many recrossing events there are in each accepted trial. If there's one recrossing, then the trajectory must go $\alpha\to\beta\to\alpha\to\beta$ to be accepted. Two recrossings would mean $\alpha\to\beta\to\alpha\to\beta\to\alpha\to\beta$.

recrossings_per = []

for step in accepted_recrossings:
    for test in step.change.trials:
        recrossings_per.append(len(recrossing_ensemble.split(test.trajectory)))

print recrossings_per

# these numbers come from accepted trial steps, not all steps

print sum(recrossings_per)

print len(recrossings_per)

print len([x for x in recrossings_per if x==2])

# # Comparing the fixed and flexible simulations

# %%time

# transition path length distribution

flex_ens = flex_scheme.network.sampling_ensembles[0]

fixed_transition_segments = sum([flex_ens.split(step.active[0].trajectory) for step in fixed.steps],[])

fixed_transition_length = [len(traj) for traj in fixed_transition_segments]

flexible_transition_length = [len(s.active[0].trajectory) for s in flexible.steps]

print len(fixed_transition_length)

bins = np.linspace(0, 400, 80);

plt.hist(flexible_transition_length, bins, alpha=0.5, normed=True, label="flexible");

plt.hist(fixed_transition_length, bins, alpha=0.5, normed=True, label="fixed");

plt.legend(loc='upper right');

# #### Identifying different mechanisms using custom ensembles

#

# We expected the plot above to be very similar for both cases. However, we know that the $\alpha\to\beta$ transition in alanine dipeptide can occur via two mechanisms: since $\psi$ is periodic, the transition can occur due to an overall increase in $\psi$, or due to an overall decrease in $\psi$. We also know that the alanine dipeptide transitions aren't actually all that rare, so they will occur spontaneously in long simulations.

#

#

# This section shows how to create custom ensembles to identify whether the transition occurred with an increasing $\psi$ or a decreasing $\psi$. We also need to account for (unlikely) edge cases where the path starts in one direction but completes the transition from the other.

# First, we'll create a few more `Volume` objects. In this case, we will completely tile the Ramachandran space; while a complete tiling isn't necessary, it is often useful.

# first, we fully subdivide the Ramachandran space

phi = fixed.cvs.find('phi')

deg = 180.0/np.pi

nml_plus = paths.PeriodicCVDefinedVolume(psi, -160/deg, -100/deg, -np.pi, np.pi)

nml_minus = paths.PeriodicCVDefinedVolume(psi, 0/deg, 100/deg, -np.pi, np.pi)

nml_alpha = (paths.PeriodicCVDefinedVolume(phi, 0/deg, 180/deg, -np.pi, np.pi) &

paths.PeriodicCVDefinedVolume(psi, 100/deg, 200/deg, -np.pi, np.pi))

nml_beta = (paths.PeriodicCVDefinedVolume(phi, 0/deg, 180/deg, -np.pi, np.pi) &

paths.PeriodicCVDefinedVolume(psi, -100/deg, 0/deg, -np.pi, np.pi))
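# Some of these `PeriodicCVDefinedVolume` bounds, such as 100° to 200°, run past $\pi$, so membership has to wrap around the periodic boundary. Below is a minimal sketch of that wrapping logic. It assumes a half-open interval $[\lambda_{min}, \lambda_{max})$ on $[-\pi, \pi)$. The function name `in_periodic_range` is hypothetical, and this is not OPS's implementation or necessarily its exact boundary convention.

```python
import math


def in_periodic_range(x, lambda_min, lambda_max,
                      period_min=-math.pi, period_max=math.pi):
    """True if angle x lies in [lambda_min, lambda_max) on a periodic domain.

    Bounds may extend past the period boundary (e.g. 100 deg to 200 deg);
    all values are wrapped into [period_min, period_max) before comparing.
    """
    period = period_max - period_min
    if lambda_max - lambda_min >= period:
        return True  # the interval covers the whole circle

    def wrap(v):
        return (v - period_min) % period + period_min

    x, lo, hi = wrap(x), wrap(lambda_min), wrap(lambda_max)
    if lo <= hi:
        return lo <= x < hi
    # The interval straddles the boundary, e.g. [100, 180) U [-180, -160) degrees
    return x >= lo or x < hi


deg = 180.0 / math.pi
print(in_periodic_range(-170 / deg, 100 / deg, 200 / deg))  # True: 200 deg wraps to -160 deg
```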

# +

#TODO: plot to display where these volumes are

# -

# Next, we'll create ensembles for the "increasing" and "decreasing" transitions. These transitions mark a crossing of either the `nml_plus` or the `nml_minus`. These aren't necessarily $\alpha\to\beta$ transitions. However, any $\alpha\to\beta$ transition must contain at least one subtrajectory which satisfies one of these ensembles.
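# The `increasing` and `decreasing` ensembles are sequences of contiguous runs. Each consists of frames on the $\alpha$ side, then frames in `nml_plus` (or `nml_minus`), then frames on the $\beta$ side. The sketch below is a rough analogy, not the OPS implementation. It encodes each frame with a hypothetical one-letter label and matches those runs with regular expressions. It returns the same kinds of categories as `categorize_transitions` further down.

```python
import re

# Hypothetical one-letter frame labels:
# 'a' = in alpha or nml_alpha, 'b' = in beta or nml_beta,
# 'p' = in nml_plus, 'm' = in nml_minus
PATTERNS = {
    "increasing": re.compile(r"a+p+b+"),
    "decreasing": re.compile(r"a+m+b+"),
}


def categorize(labels):
    """Return 'increasing', 'decreasing', 'multiple' or None for one labeled path."""
    text = "".join(labels)
    matched = [name for name, pattern in PATTERNS.items() if pattern.search(text)]
    if len(matched) > 1:
        return "multiple"
    return matched[0] if matched else None


print(categorize("aaappbbb"))  # increasing
```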

increasing = paths.SequentialEnsemble([

paths.AllInXEnsemble(alpha | nml_alpha),

paths.AllInXEnsemble(nml_plus),

paths.AllInXEnsemble(beta | nml_beta)

])

decreasing = paths.SequentialEnsemble([

paths.AllInXEnsemble(alpha | nml_alpha),

paths.AllInXEnsemble(nml_minus),

paths.AllInXEnsemble(beta | nml_beta)

])

# Finally, we'll write a little function that characterizes a set of trajectories according to these ensembles. It returns a dictionary mapping the ensemble (`increasing` or `decreasing`) to a list of trajectories that have a subtrajectory that satisfies it (at least one entry in `ensemble.split(trajectory)`). That dictionary also contains keys for `'multiple'` matched ensembles and `None` if no ensemble was matched. Trajectories for either of these keys would need to be investigated further.

def categorize_transitions(ensembles, trajectories):
    results = {ens: [] for ens in ensembles + ['multiple', None]}
    for traj in trajectories:
        matched_ens = None
        for ens in ensembles:
            if len(ens.split(traj)) > 0:
                if matched_ens is not None:
                    matched_ens = 'multiple'
                else:
                    matched_ens = ens
        results[matched_ens].append(traj)
    return results

# With that function defined, let's use it!

categorized = categorize_transitions(ensembles=[increasing, decreasing],

trajectories=fixed_transition_segments)

print "increasing:", len(categorized[increasing])

print "decreasing:", len(categorized[decreasing])

print " multiple:", len(categorized['multiple'])

print " None:", len(categorized[None])

# Comparing to the flexible length simulation:

flex_trajs = [step.active[0].trajectory for step in flexible.steps]

flex_categorized = categorize_transitions(ensembles=[increasing, decreasing],

trajectories=flex_trajs[::10])

print "increasing:", len(flex_categorized[increasing])

print "decreasing:", len(flex_categorized[decreasing])

print " multiple:", len(flex_categorized['multiple'])

print " None:", len(flex_categorized[None])

# So the fixed length sampling is somehow capturing both kinds of transitions (probably because they are not really that rare). Let's see what the path length distribution from only the decreasing transitions looks like:

plt.hist([len(traj) for traj in flex_categorized[decreasing]], bins, alpha=0.5, normed=True);

plt.hist([len(traj) for traj in categorized[decreasing]], bins, alpha=0.5, normed=True);

# Still a little off, although this might be due to bad sampling. Let's see how many of the decorrelated trajectories have this kind of transition.

full_fixed_tree = ops_vis.PathTree(

fixed.steps,

ops_vis.ReplicaEvolution(replica=0)

)

full_history = full_fixed_tree.generator

# start with the decorrelated trajectories

fixed_decorrelated = full_history.decorrelated_trajectories

# find the A->B transitions from the decorrelated trajectories

decorrelated_transitions = sum([flex_ens.split(traj) for traj in fixed_decorrelated], [])

# find the A->B transition from these which are decreasing

decorrelated_decreasing = sum([decreasing.split(traj) for traj in decorrelated_transitions], [])

print len(decorrelated_decreasing)

# So this is based on only 11 decorrelated trajectory transitions. That's not a lot of statistics.

#

# However, we expect to see a *very* different distribution for the "increasing" paths:

plt.hist([len(traj) for traj in categorized[increasing]], bins, normed=True, alpha=0.5, color='g');

# Let's also check whether we go back and forth between the increasing transition and the decreasing transition, or whether there's just a single change from one type to the other.

def find_switches(ensembles, trajectories):
    switches = []
    last_category = None
    traj_num = 0
    for traj in trajectories:
        category = None
        for ens in ensembles:
            if len(ens.split(traj)) > 0:
                if category is not None:
                    category = 'multiple'
                else:
                    category = ens
        if last_category != category:
            switches.append((category, traj_num))
        traj_num += 1
        last_category = category
    return switches

switches = find_switches([increasing, decreasing], fixed_transition_segments)

print [switch[1] for switch in switches], len(fixed_transition_segments)

# So there are a lot of switches early in the simulation, and then it sticks with one type of transition for much longer.

# Even though we know the alanine dipeptide transitions are not particularly rare, this does give us reason to re-check the temperature. First we'll check what the integrator says its temperature is, then we'll calculate the temperature based on the kinetic energy of every 50th trajectory.

#

# Note that the code below is specific to using the OpenMM engine.
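# The temperature estimate used below is the equipartition relation $T = 2\,\mathrm{KE} / (N_\mathrm{dof} R)$. Here $N_\mathrm{dof}$ counts 3 per massive particle, minus one per constraint, minus 3 more if center-of-mass motion is removed. Below is a NumPy-only sketch of the same arithmetic, assuming masses in daltons and velocities in nm/ps. The helper names are hypothetical.

```python
import numpy as np

# Molar gas constant R = k_B * N_A in kJ/(mol K)
R_KJ_PER_MOL_K = 0.008314462618


def count_dof(masses, n_constraints, removes_com_motion):
    """Degrees of freedom with the same bookkeeping as the OpenMM cell below."""
    dof = 3 * int(np.sum(np.asarray(masses) > 0))  # massless particles carry no DOF
    dof -= n_constraints
    if removes_com_motion:
        dof -= 3  # a center-of-mass motion remover fixes the total momentum
    return dof


def instantaneous_temperature(masses, velocities, dof):
    """T = 2 KE / (dof * R).

    Masses in g/mol (daltons) and velocities in nm/ps give 0.5 * m * v**2 in kJ/mol.
    """
    m = np.asarray(masses, dtype=float)[:, None]  # shape (N, 1) to broadcast over x, y, z
    v = np.asarray(velocities, dtype=float)       # shape (N, 3)
    kinetic_energy = 0.5 * np.sum(m * v ** 2)     # kJ/mol
    return 2.0 * kinetic_energy / (dof * R_KJ_PER_MOL_K)


dof = count_dof([2.0, 2.0], n_constraints=0, removes_com_motion=False)
print(instantaneous_temperature([2.0, 2.0], [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]], dof))
```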

every_50th_trajectory = [step.active[0].trajectory for step in fixed.steps[::50]]

# make a set to remove duplicates, if trajs aren't decorrelated

every_50th_traj_snapshots = list(set(sum(every_50th_trajectory, [])))

# sadly, it looks like that trick with set doesn't do any good here

# +

# this is ugly as sin: we need a better way of doing it (ideally as a snapshot feature)

# dof calculation taken from OpenMM's StateDataReporter

import simtk.openmm as mm

import simtk.unit

dof = 0

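# `engine` is not defined earlier in this notebook; as an assumption, load the
# OpenMM engine that was saved with the fixed-length simulation
engine = fixed.engines[0]
system = engine.simulation.system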

dofs_from_particles = 0

for i in range(system.getNumParticles()):
    if system.getParticleMass(i) > 0*simtk.unit.dalton:
        dofs_from_particles += 3

dofs_from_constraints = system.getNumConstraints()

dofs_from_motion_removers = 0

if any(type(system.getForce(i)) == mm.CMMotionRemover for i in range(system.getNumForces())):
    dofs_from_motion_removers += 3

dof = dofs_from_particles - dofs_from_constraints - dofs_from_motion_removers

#print dof, "=", dofs_from_particles, "-", dofs_from_constraints, "-", dofs_from_motion_removers

kinetic_energies = []

potential_energies = []

temperatures = []

R = simtk.unit.BOLTZMANN_CONSTANT_kB * simtk.unit.AVOGADRO_CONSTANT_NA

for snap in every_50th_traj_snapshots:
    engine.current_snapshot = snap
    state = engine.simulation.context.getState(getEnergy=True)
    ke = state.getKineticEnergy()
    temperatures.append(2 * ke / dof / R)

# -

plt.plot([T / T.unit for T in temperatures])

mean_T = np.mean(temperatures)

plt.plot([mean_T / mean_T.unit]*len(temperatures), 'r')

print "Mean temperature:", np.mean(temperatures).format("%.2f")

| examples/alanine_dipeptide_tps/AD_tps_4_advanced.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.9.7 64-bit (''c-m_env'': venv)'

# name: python3

# ---

from seqeval.metrics import accuracy_score

from seqeval.metrics import classification_report

from seqeval.metrics import f1_score

y_true = [['O', 'O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']]

y_pred = [['O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']]

f1_score(y_true, y_pred)

accuracy_score(y_true, y_pred)

print(classification_report(y_true, y_pred))

# +

from typing import List, Dict, Sequence

class Matrics:

    def __init__(self, sents_true_labels: Sequence[Sequence[Dict]], sents_pred_labels: Sequence[Sequence[Dict]]):
        self.sents_true_labels = sents_true_labels
        self.sents_pred_labels = sents_pred_labels
        self.types = set(entity['type'] for sent in sents_true_labels for entity in sent)
        self.confusion_matrices = {type: {'TP': 0, 'TN': 0, 'FP': 0, 'FN': 0} for type in self.types}
        self.scores = {type: {'p': 0, 'r': 0, 'f1': 0} for type in self.types}

    def cal_confusion_matrices(self) -> None:
        """Calculate confusion matrices for all sentences."""
        for true_labels, pred_labels in zip(self.sents_true_labels, self.sents_pred_labels):
            for true_label in true_labels:
                entity_type = true_label['type']
                prediction_hit_count = 0
                for pred_label in pred_labels:
                    if pred_label['type'] != entity_type:
                        continue
                    if (pred_label['start_idx'] == true_label['start_idx']
                            and pred_label['end_idx'] == true_label['end_idx']
                            and pred_label['text'] == true_label['text']):  # TP
                        self.confusion_matrices[entity_type]['TP'] += 1
                        prediction_hit_count += 1
                    elif ((pred_label['start_idx'] == true_label['start_idx']
                           or pred_label['end_idx'] == true_label['end_idx'])
                          and pred_label['text'] != true_label['text']):  # boundary error: count FN and FP
                        self.confusion_matrices[entity_type]['FP'] += 1
                        self.confusion_matrices[entity_type]['FN'] += 1
                        prediction_hit_count += 1
                if prediction_hit_count != 1:  # FN: the model made no prediction for true_label
                    self.confusion_matrices[entity_type]['FN'] += 1
                prediction_hit_count = 0  # reset to default

    def cal_scores(self) -> None:
        """Calculate precision, recall, f1."""
        confusion_matrices = self.confusion_matrices
        scores = {type: {'p': 0, 'r': 0, 'f1': 0} for type in self.types}
        for entity_type, confusion_matrix in confusion_matrices.items():
            if confusion_matrix['TP'] == 0 and confusion_matrix['FP'] == 0:
                scores[entity_type]['p'] = 0
            else:
                scores[entity_type]['p'] = confusion_matrix['TP'] / (confusion_matrix['TP'] + confusion_matrix['FP'])
            if confusion_matrix['TP'] == 0 and confusion_matrix['FN'] == 0:
                scores[entity_type]['r'] = 0
            else:
                scores[entity_type]['r'] = confusion_matrix['TP'] / (confusion_matrix['TP'] + confusion_matrix['FN'])
            if scores[entity_type]['p'] == 0 or scores[entity_type]['r'] == 0:
                scores[entity_type]['f1'] = 0
            else:
                scores[entity_type]['f1'] = (2 * scores[entity_type]['p'] * scores[entity_type]['r']
                                             / (scores[entity_type]['p'] + scores[entity_type]['r']))
        self.scores = scores

    def print_confusion_matrices(self):
        for entity_type, matrix in self.confusion_matrices.items():
            print(f"{entity_type}: {matrix}")

    def print_scores(self):
        for entity_type, score in self.scores.items():
            print(f"{entity_type}: f1 {score['f1']:.4f}, precision {score['p']:.4f}, recall {score['r']:.4f}")


if __name__ == "__main__":
    sents_true_labels = [[{'start_idx': 0, 'end_idx': 1, 'text': 'Foreign Ministry', 'type': 'ORG'},
                          {'start_idx': 3, 'end_idx': 4, 'text': '<NAME>', 'type': 'PER'},
                          {'start_idx': 6, 'end_idx': 6, 'text': 'Reuters', 'type': 'ORG'}]]
    sents_pred_labels = [[{'start_idx': 3, 'end_idx': 3, 'text': 'Shen', 'type': 'PER'},
                          {'start_idx': 6, 'end_idx': 6, 'text': 'Reuters', 'type': 'ORG'}]]
    matrics = Matrics(sents_true_labels, sents_pred_labels)
    matrics.cal_confusion_matrices()
    matrics.print_confusion_matrices()
    matrics.cal_scores()
    matrics.print_scores()

# PER: {'TP': 0, 'TN': 0, 'FP': 1, 'FN': 1}

# ORG: {'TP': 1, 'TN': 0, 'FP': 0, 'FN': 1}

# PER: f1 0.0000, precision 0.0000, recall 0.0000

# ORG: f1 0.6667, precision 1.0000, recall 0.5000

# -
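# The per-type scores in the expected output follow directly from the confusion counts. Below is a standalone check of that arithmetic. It uses the same convention as `cal_scores`, where a ratio with a zero denominator is reported as 0. `prf1` is a hypothetical helper name.

```python
def prf1(tp, fp, fn):
    """Precision, recall and F1 from entity-level counts; 0.0 where undefined."""
    p = tp / (tp + fp) if tp + fp else 0.0
    r = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * p * r / (p + r) if p + r else 0.0
    return p, r, f1


# ORG counts from the expected output above: TP=1, FP=0, FN=1
print("ORG: f1 {2:.4f}, precision {0:.4f}, recall {1:.4f}".format(*prf1(1, 0, 1)))
# prints: ORG: f1 0.6667, precision 1.0000, recall 0.5000
```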

| notebooks/002_scipyNER.ipynb |

# ---

# title: "Deep Dream"

# output:

# html_notebook:

# theme: cerulean

# highlight: textmate

# jupyter:

# jupytext:

# cell_metadata_filter: name,tags,-all

# text_representation:

# extension: .r

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: R

# language: R

# name: ir

# ---

# + name="setup" tags=["remove_cell"]

knitr::opts_chunk$set(warning = FALSE, message = FALSE)

# -

# ***

#

# This notebook contains the code samples found in Chapter 8, Section 2 of [Deep Learning with R](https://www.manning.com/books/deep-learning-with-r). Note that the original text features far more content, in particular further explanations and figures: in this notebook, you will only find source code and related comments.

#

# ***

#

# ## Implementing Deep Dream in Keras

#

# We will start from a convnet pre-trained on ImageNet. In Keras, we have many such convnets available: VGG16, VGG19, Xception, ResNet50, and more. Although the same process works with any of these, your convnet of choice will naturally affect your visualizations, since different convnet architectures result in different learned features. The convnet used in the original Deep Dream release was an Inception model, and in practice Inception is known to produce very nice-looking Deep Dreams, so we will use the InceptionV3 model that comes with Keras.

# +

library(keras)

# We will not be training our model,

# so we use this command to disable all training-specific operations

k_set_learning_phase(0)

# Build the InceptionV3 network.

# The model will be loaded with pre-trained ImageNet weights.

model <- application_inception_v3(
  weights = "imagenet",
  include_top = FALSE
)

# -

# Next, we compute the "loss", the quantity that we will seek to maximize during the gradient ascent process. In Chapter 5, for filter visualization, we were trying to maximize the value of a specific filter in a specific layer. Here we will simultaneously maximize the activation of all filters in a number of layers. Specifically, we will maximize a weighted sum of the L2 norm of the activations of a set of high-level layers. The exact set of layers we pick (as well as their contribution to the final loss) has a large influence on the visuals that we will be able to produce, so we want to make these parameters easily configurable. Lower layers result in geometric patterns, while higher layers result in visuals in which you can recognize some classes from ImageNet (e.g. birds or dogs). We'll start from a somewhat arbitrary configuration involving four layers -- but you will definitely want to explore many different configurations later on:

# Named list mapping layer names to a coefficient

# quantifying how much the layer's activation

# will contribute to the loss we will seek to maximize.

# Note that these are layer names as they appear

# in the built-in InceptionV3 application.

# You can list all layer names using `summary(model)`.

layer_contributions <- list(

mixed2 = 0.2,

mixed3 = 3,

mixed4 = 2,

mixed5 = 1.5

)

# Now let's define a tensor that contains our loss, i.e. the weighted sum of the L2 norm of the activations of the layers listed above.

# +

# Get the symbolic outputs of each "key" layer (we gave them unique names).

layer_dict <- model$layers

names(layer_dict) <- lapply(layer_dict, function(layer) layer$name)

# Define the loss.

loss <- k_variable(0)

for (layer_name in names(layer_contributions)) {

# Add the L2 norm of the features of a layer to the loss.

coeff <- layer_contributions[[layer_name]]

activation <- layer_dict[[layer_name]]$output

scaling <- k_prod(k_cast(k_shape(activation), "float32"))

loss <- loss + (coeff * k_sum(k_square(activation)) / scaling)

}

# -
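# For reference, the quantity built above is computed per layer as the coefficient times the sum of squared activations divided by the number of elements. In other words, it is a size-normalized squared L2 norm. Below is a NumPy sketch of the same formula, written in Python purely for illustration. The toy activation arrays are made up and do not come from InceptionV3.

```python
import numpy as np


def dream_loss(activations, contributions):
    """Sum over layers of coeff * sum(a**2) / a.size.

    `activations` maps layer name -> array; `contributions` maps
    layer name -> coefficient, like `layer_contributions` above.
    """
    loss = 0.0
    for name, coeff in contributions.items():
        a = activations[name]
        loss += coeff * np.sum(np.square(a)) / a.size
    return loss


# Made-up activation tensors with a batch dimension of 1
acts = {
    "mixed2": np.ones((1, 4, 4, 8)),
    "mixed3": np.full((1, 2, 2, 16), 2.0),
}
print(dream_loss(acts, {"mixed2": 0.2, "mixed3": 3}))
```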

# Now we can set up the gradient ascent process:

# +

# This holds our generated image

dream <- model$input

# Normalize gradients.

grads <- k_gradients(loss, dream)[[1]]

grads <- grads / k_maximum(k_mean(k_abs(grads)), 1e-7)

# Set up function to retrieve the value

# of the loss and gradients given an input image.

outputs <- list(loss, grads)

fetch_loss_and_grads <- k_function(list(dream), outputs)

eval_loss_and_grads <- function(x) {
  outs <- fetch_loss_and_grads(list(x))
  loss_value <- outs[[1]]
  grad_values <- outs[[2]]
  list(loss_value, grad_values)
}

gradient_ascent <- function(x, iterations, step, max_loss = NULL) {
  for (i in 1:iterations) {
    c(loss_value, grad_values) %<-% eval_loss_and_grads(x)
    if (!is.null(max_loss) && loss_value > max_loss)
      break
    cat("...Loss value at", i, ":", loss_value, "\n")
    x <- x + (step * grad_values)
  }
  x
}

# -
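# The ascent loop above divides the gradient by its mean absolute value, so the step size does not depend on the gradient's scale. It also stops early once the loss exceeds `max_loss`. Below is a Python/NumPy sketch of that control flow on a toy objective, $\sum x^2$, which stands in for the Deep Dream loss. The `loss_and_grad` callback is an assumption of this sketch.

```python
import numpy as np


def gradient_ascent(x, loss_and_grad, iterations, step, max_loss=None):
    """Normalized-gradient ascent with an optional early stop, mirroring the R function."""
    for i in range(1, iterations + 1):
        loss_value, grad = loss_and_grad(x)
        if max_loss is not None and loss_value > max_loss:
            break  # stop before the loss grows large enough to cause artifacts
        print("...Loss value at", i, ":", loss_value)
        # Scale-free step: divide by the mean absolute gradient (floored at 1e-7)
        grad = grad / max(np.mean(np.abs(grad)), 1e-7)
        x = x + step * grad
    return x


def toy_loss_and_grad(x):
    """Toy objective standing in for the Deep Dream loss: sum(x**2), gradient 2x."""
    return float(np.sum(x ** 2)), 2 * x


x_final = gradient_ascent(np.array([1.0, 1.0]), toy_loss_and_grad,
                          iterations=100, step=0.5, max_loss=10)
print(x_final)
```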

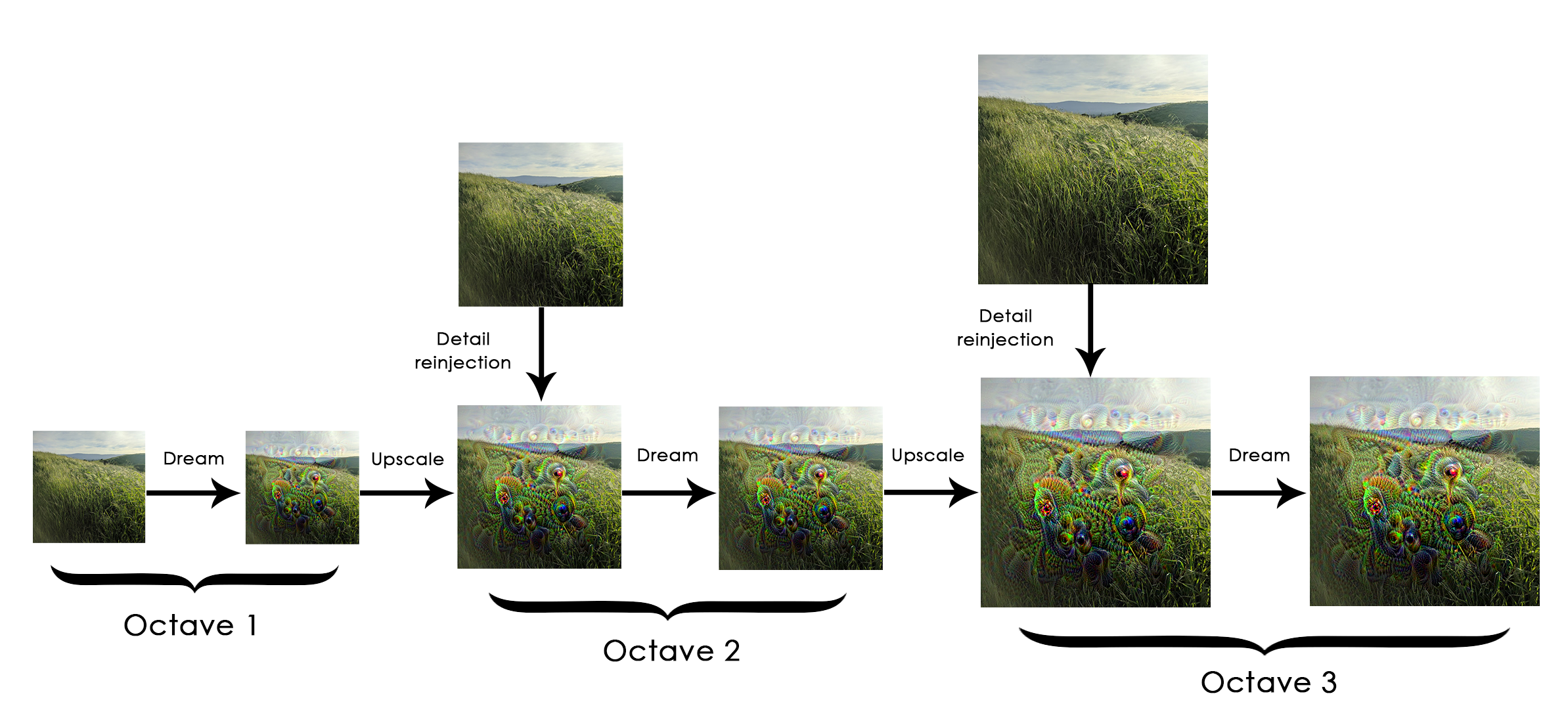

# Finally, here is the actual Deep Dream algorithm.

#

# First, we define a list of "scales" (also called "octaves") at which we will process the images. Each successive scale is larger than the previous one by a factor of 1.4 (i.e. 40% larger): we start by processing a small image and then progressively upscale it:

#

#

#

# Then, for each successive scale, from the smallest to the largest, we run gradient ascent to maximize the loss we have previously defined, at that scale. After each gradient ascent run, we upscale the resulting image by 40%.

#

# To avoid losing a lot of image detail after each successive upscaling (resulting in increasingly blurry or pixelated images), we leverage a simple trick: after each upscaling, we reinject the lost details back into the image, which is possible since we know what the original image should look like at the larger scale. Given a small image S and a larger image size L, we can compute the difference between the original image (assumed larger than L) resized to size L and the original resized to size S -- this difference quantifies the details lost when going from S to L.
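# The scale schedule and the detail-reinjection step can be checked with plain arithmetic. The sketch below is in Python for illustration only. It computes the (height, width) per octave the same way as the R loop. It keeps only height and width, whereas the R code also divides the channel entry, which `resize_img` ignores. A hypothetical nearest-neighbour `resize_nearest` stands in for `image_array_resize`. It shows that `lost_detail` is exactly what the shrink-and-upscale round trip removed.

```python
import numpy as np


def successive_shapes(original_hw, num_octave, octave_scale):
    """(height, width) per scale, smallest first, as in the R loop."""
    shapes = [tuple(original_hw)]
    for i in range(1, num_octave + 1):
        # int() truncates toward zero, like R's as.integer() for positive values
        shapes.append(tuple(int(d / octave_scale ** i) for d in original_hw))
    return shapes[::-1]


def resize_nearest(img, hw):
    """Nearest-neighbour resize of a 2-D array (a stand-in for image_array_resize)."""
    h, w = hw
    rows = np.arange(h) * img.shape[0] // h
    cols = np.arange(w) * img.shape[1] // w
    return img[rows][:, cols]


print(successive_shapes((600, 800), num_octave=3, octave_scale=1.4))
# [(218, 291), (306, 408), (428, 571), (600, 800)]

# Detail reinjection for one step from a small size S to a larger size L
original = np.arange(64, dtype=float).reshape(8, 8)
S, L = (2, 2), (4, 4)
upscaled_shrunk = resize_nearest(resize_nearest(original, S), L)
same_size_original = resize_nearest(original, L)
lost_detail = same_size_original - upscaled_shrunk
```

# Adding `lost_detail` back to the upscaled small image recovers the original at size L, which is why the R loop adds it after every upscaling.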

# +

resize_img <- function(img, size) {

image_array_resize(img, size[[1]], size[[2]])

}

save_img <- function(img, fname) {

img <- deprocess_image(img)

image_array_save(img, fname)

}

# Util function to open, resize, and format pictures into appropriate tensors

preprocess_image <- function(image_path) {

image_load(image_path) %>%

image_to_array() %>%

array_reshape(dim = c(1, dim(.))) %>%

inception_v3_preprocess_input()

}

# Util function to convert a tensor into a valid image

deprocess_image <- function(img) {

img <- array_reshape(img, dim = c(dim(img)[[2]], dim(img)[[3]], 3))

img <- img / 2

img <- img + 0.5

img <- img * 255

dims <- dim(img)

img <- pmax(0, pmin(img, 255))

dim(img) <- dims

img

}

# +

# Playing with these hyperparameters will also allow you to achieve new effects

step <- 0.01 # Gradient ascent step size

num_octave <- 3 # Number of scales at which to run gradient ascent

octave_scale <- 1.4 # Size ratio between scales

iterations <- 20 # Number of ascent steps per scale

# If our loss gets larger than 10,

# we will interrupt the gradient ascent process, to avoid ugly artifacts

max_loss <- 10

# Create the output folder for the intermediate and final images
dir.create("dream")

# Set this to the path of the image you want to use
base_image_path <- "~/Downloads/creative_commons_elephant.jpg"

# Load the image into an array

img <- preprocess_image(base_image_path)

# We prepare a list of shapes

# defining the different scales at which we will run gradient ascent

original_shape <- dim(img)[-1]

successive_shapes <- list(original_shape)

for (i in 1:num_octave) {

shape <- as.integer(original_shape / (octave_scale ^ i))

successive_shapes[[length(successive_shapes) + 1]] <- shape

}

# Reverse list of shapes, so that they are in increasing order

successive_shapes <- rev(successive_shapes)

# Resize the array of the image to our smallest scale

original_img <- img

shrunk_original_img <- resize_img(img, successive_shapes[[1]])

for (shape in successive_shapes) {
  cat("Processing image shape", shape, "\n")
  img <- resize_img(img, shape)
  img <- gradient_ascent(img,
                         iterations = iterations,
                         step = step,
                         max_loss = max_loss)
  upscaled_shrunk_original_img <- resize_img(shrunk_original_img, shape)
  same_size_original <- resize_img(original_img, shape)
  lost_detail <- same_size_original - upscaled_shrunk_original_img
  img <- img + lost_detail
  shrunk_original_img <- resize_img(original_img, shape)
  save_img(img, fname = sprintf("dream/at_scale_%s.png",
                                paste(shape, collapse = "x")))
}

save_img(img, fname = "dream/final_dream.png")

# -

plot(as.raster(deprocess_image(img) / 255))

| notebooks/8.2-deep-dream.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: cflows

# language: python

# name: cflows

# ---

# ## Config

import os

os.environ['CUDA_VISIBLE_DEVICES'] = '0'

# +

# %load_ext autoreload

# %autoreload 2

from pathlib import Path

from experiment import data_path, device

model_name = 'celeba-cef-joint'

checkpoint_path = data_path / 'cef_models' / model_name

gen_path = data_path / 'generated' / model_name

# -

# ## Load data

# +

import torchvision

from torch.utils.data import DataLoader

from torchvision import transforms

import data

transform = transforms.Compose([

transforms.Resize((64, 64)),

transforms.ToTensor(),

])

train_data = data.CelebA(root=data_path, split='train', transform=transform)

val_data = data.CelebA(root=data_path, split='valid', transform=transform)

test_data = data.CelebA(root=data_path, split='test', transform=transform)

# -

# ## Define model

# +

from nflows import cef_models

flow = cef_models.CelebACEFlow().to(device)

# -

# ## Train

# +

import torch.optim as opt

from experiment import train_injective_flow

optim = opt.Adam(flow.parameters(), lr=0.0001)

scheduler = opt.lr_scheduler.CosineAnnealingLR(optim, 300)

def weight_schedule():
    for _ in range(300):
        yield 0.001, 10000
        scheduler.step()

train_loader = DataLoader(train_data, batch_size=256, shuffle=True, num_workers=30)

val_loader = DataLoader(val_data, batch_size=256, shuffle=True, num_workers=30)

train_injective_flow(flow, optim, scheduler, weight_schedule, train_loader, val_loader,

model_name, checkpoint_path=checkpoint_path, checkpoint_frequency=25)

# -

# ## Generate some samples

# +

from experiment import save_samples

save_samples(flow, num_samples=len(test_data), gen_path=gen_path, checkpoint_epoch=-1, batch_size=512)

| experiments/celeba-cef-joint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: tf_gpu

# language: python

# name: tf_gpu

# ---

# # 4.2. OpenAI Gym Environments

# ## Gym basics and a simple text environment

# +

import gym # pip install gym, pip install gym[atari]

from IPython import display

import matplotlib

import matplotlib.pyplot as plt

# %matplotlib inline

env = gym.make('Taxi-v3')

env.reset()

# -

env.P # {state: {action: [(probability, next state, reward, done)]}}
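# `env.P` stores the full transition model as nested dictionaries. Taxi's transitions are deterministic, so each list holds a single tuple with probability 1.0, but the layout also allows stochastic transitions. The sketch below uses a made-up two-state table in the same layout. It shows how such a table can be queried for the expected immediate reward of each action. The helper names are hypothetical.

```python
def expected_reward(P, state, action):
    """Expected immediate reward of `action` in `state`.

    P[state][action] is a list of (probability, next_state, reward, done) tuples.
    """
    return sum(prob * reward for prob, _next_state, reward, _done in P[state][action])


def greedy_action(P, state):
    """Action with the highest expected immediate reward (ignores future rewards)."""
    return max(P[state], key=lambda action: expected_reward(P, state, action))


# Hypothetical two-state table in the same layout as env.P
toy_P = {
    0: {0: [(1.0, 1, -1, False)],
        1: [(0.5, 0, -10, False), (0.5, 1, 20, True)]},
    1: {0: [(1.0, 1, 0, True)],
        1: [(1.0, 1, 0, True)]},
}

print(expected_reward(toy_P, 0, 1))  # 5.0
print(greedy_action(toy_P, 0))       # 1
```

# The same helpers could be applied to `env.P` from the cell above.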

import time

for step in range(100):
    action = env.action_space.sample()
    new_state, reward, done, info = env.step(action)
    # Redraw the text rendering in place instead of appending output
    display.clear_output(wait=True)
    print(env.render(mode='ansi'))
    print(f'Timestep: {step + 1}')
    print(f'State: {new_state}')
    print(f'Action: {action}')
    print(f'Reward: {reward}')
    time.sleep(0.2)

| Section 4/Video 4.2.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Tutorial 3: Realism and Complexity

# ==================================

#

# Up to now, we've fitted some fairly crude and unrealistic lens models. For example, we've modeled the lens `Galaxy`'s

# mass as a sphere. Given that most lens galaxies are elliptical, we should probably model their mass as elliptical! We've

# also omitted the lens `Galaxy`'s light, which typically outshines the source galaxy.

#

# In this example, we'll start using a more realistic lens model.

#

# In my experience, the simplest lens model (i.e. the one with the fewest parameters) that provides a good fit to real

# strong lenses is as follows:

#

# 1) An `EllipticalSersic` `LightProfile` for the lens `Galaxy`'s light.

# 2) An `EllipticalIsothermal` (SIE) `MassProfile` for the lens `Galaxy`'s mass.

# 3) An `EllipticalExponential` `LightProfile` for the source `Galaxy`'s light (to be honest, this is too simple,

# but let's worry about that later).

#

# This has a total of 18 non-linear parameters, which is over double the number of parameters we've fitted up to now.

# In future exercises, we'll fit even more complex models, with some 20-30+ non-linear parameters.
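# The count of 18 can be reproduced by listing the free parameters of each profile. The breakdown below is an assumption about this version of PyAutoLens, chosen to be consistent with the stated total. It gives each profile a centre and two elliptical components, plus that profile's own size and brightness parameters. The parameter names are illustrative.

```python
# Assumed free parameters of each profile (names are illustrative).
ellipse = ["centre_y", "centre_x", "elliptical_comps_0", "elliptical_comps_1"]
model_parameters = {
    "lens light: EllipticalSersic": ellipse + ["intensity", "effective_radius", "sersic_index"],
    "lens mass: EllipticalIsothermal": ellipse + ["einstein_radius"],
    "source light: EllipticalExponential": ellipse + ["intensity", "effective_radius"],
}

for component, names in model_parameters.items():
    print(f"{component}: {len(names)}")

total = sum(len(names) for names in model_parameters.values())
print("total:", total)  # total: 18
```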

# +

# %matplotlib inline

from pyprojroot import here

workspace_path = str(here())

# %cd $workspace_path

print(f"Working Directory has been set to `{workspace_path}`")

from os import path

import autolens as al

import autolens.plot as aplt

import autofit as af

# -

# We'll use new strong lensing data, where:

#

# - The lens `Galaxy`'s `LightProfile` is an `EllipticalSersic`.

# - The lens `Galaxy`'s total mass distribution is an `EllipticalIsothermal`.

# - The source `Galaxy`'s `LightProfile` is an `EllipticalExponential`.

# +

dataset_name = "light_sersic__mass_sie__source_exp"

dataset_path = path.join("dataset", "howtolens", "chapter_2", dataset_name)

imaging = al.Imaging.from_fits(

image_path=path.join(dataset_path, "image.fits"),

noise_map_path=path.join(dataset_path, "noise_map.fits"),

psf_path=path.join(dataset_path, "psf.fits"),

pixel_scales=0.1,

)

# -

# We'll create and use a 2.5" circular `Mask2D`.

mask = al.Mask2D.circular(

shape_2d=imaging.shape_2d, pixel_scales=imaging.pixel_scales, radius=2.5

)

# When plotted, the lens light is clearly visible in the centre of the image.

aplt.Imaging.subplot_imaging(imaging=imaging, mask=mask)

# Like in the previous tutorial, we use a `SettingsPhaseImaging` object to specify that our model-fitting procedure uses a

# regular `Grid`.

# +

settings_masked_imaging = al.SettingsMaskedImaging(grid_class=al.Grid, sub_size=2)

settings = al.SettingsPhaseImaging(settings_masked_imaging=settings_masked_imaging)

# -

# Now let's fit the dataset using a phase.

phase = al.PhaseImaging(
    search=af.DynestyStatic(
        path_prefix="howtolens",
        name="phase_t3_realism_and_complexity",
        n_live_points=80,
    ),
    settings=settings,
    galaxies=af.CollectionPriorModel(
        lens_galaxy=al.GalaxyModel(
            redshift=0.5, bulge=al.lp.EllipticalSersic, mass=al.mp.EllipticalIsothermal
        ),
        source_galaxy=al.GalaxyModel(redshift=1.0, bulge=al.lp.EllipticalExponential),
    ),
)

# Let's run the phase.

# +

print(
    "Dynesty has begun running - check out the autolens_workspace/output/3_realism_and_complexity"
    " folder for live output of the results, images and lens model."
    " This Jupyter notebook cell will progress once Dynesty has completed - this could take some time!"
)

result = phase.run(dataset=imaging, mask=mask)

print("Dynesty has finished running - you may now continue the notebook.")

# -

# And let's look at the fit to the `Imaging` data, which, as we are used to, fits the data brilliantly!

aplt.FitImaging.subplot_fit_imaging(fit=result.max_log_likelihood_fit)

# Up to now, all of our non-linear searches have been successes. They find a lens model that provides a visibly good fit

# to the data, minimizing the residuals and inferring a high log likelihood value.

#

# These solutions are called `global` maxima: they correspond to the highest-likelihood regions of the entirety of

# parameter space. There are no other lens models in parameter space that would give higher likelihoods; this is the

# model we always want to infer!

#

# However, non-linear searches may not always successfully locate the global maximum lens model. They may instead infer

# a `local maximum`, a solution which has a high log likelihood value relative to the lens models near it in parameter

# space, but whose log likelihood is significantly below the `global` maximum solution somewhere else in parameter space.

#

# Inferring such a solution is essentially a failure of our `NonLinearSearch`, and it is something we do not want to

# happen! Let's infer a local maximum by reducing the number of `live points` Dynesty uses to map out parameter space.

# We're going to use so few that it has no hope of locating the global maximum, ultimately finding and inferring a local

# maximum instead.
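# To make the idea of a local maximum concrete, here is a toy sketch that has nothing to do with PyAutoLens or

# Dynesty: a hypothetical one-dimensional log likelihood with two peaks, explored by a greedy hill climber (a much

# simpler technique than Dynesty's nested sampling). Where the climber starts decides which peak it ends on, and only

# one of those peaks is the global maximum.

```python
import math

def log_likelihood(x):
    # Hypothetical 1D log likelihood with two peaks: a local maximum near
    # x = -2 (height ln 0.5) and the global maximum near x = 3 (height ~0).
    return math.log(0.5 * math.exp(-(x + 2) ** 2) + math.exp(-(x - 3) ** 2))

def hill_climb(x, step=0.01, max_steps=10_000):
    """Greedily step left or right while the log likelihood keeps increasing."""
    for _ in range(max_steps):
        here = log_likelihood(x)
        left, right = log_likelihood(x - step), log_likelihood(x + step)
        if right > here and right >= left:
            x += step
        elif left > here:
            x -= step
        else:
            break  # neither neighbour is better: we sit on a (possibly local) maximum
    return x

for start in (-3.0, 2.0):
    peak = hill_climb(start)
    print(f"start {start:+.1f} -> x = {peak:+.2f}, log L = {log_likelihood(peak):.3f}")
```

# The climber started at -3 stops on the lower peak because every nearby step goes downhill, which is exactly the

# trap a poorly configured search can fall into in a much higher-dimensional lens model parameter space.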

# +

phase = al.PhaseImaging(

search=af.DynestyStatic(

path_prefix="howtolens",

name="phase_t3_realism_and_complexity__local_maxima",

n_live_points=5,

),

settings=settings,

galaxies=af.CollectionPriorModel(

lens_galaxy=al.GalaxyModel(

redshift=0.5, bulge=al.lp.EllipticalSersic, mass=al.mp.EllipticalIsothermal

),

source_galaxy=al.GalaxyModel(redshift=1.0, bulge=al.lp.EllipticalExponential),

),

)

print(

"Dynesty has begun running - check out the autolens_workspace/output/3_realism_and_complexity"

" folder for live output of the results, images and lens model."

" This Jupyter notebook cell will progress once Dynesty has completed - this could take some time!"

)

result_local_maxima = phase.run(dataset=imaging, mask=mask)

print("Dynesty has finished running - you may now continue the notebook.")

# -

# Let's look at the fit to the `Imaging` data, which is clearly worse than our original fit above.

aplt.FitImaging.subplot_fit_imaging(fit=result_local_maxima.max_log_likelihood_fit)

# Finally, just to be sure we hit a local maximum, let's compare the maximum log likelihood values of the two results.

#

# The local maxima value is significantly lower, confirming that our `NonLinearSearch` simply failed to locate lens

# models which fit the data better when it searched parameter space.

print("Likelihood of Global Model:")

print(result.max_log_likelihood_fit.log_likelihood)

print("Likelihood of Local Model:")

print(result_local_maxima.max_log_likelihood_fit.log_likelihood)
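# A difference in log likelihood translates into a likelihood ratio of exp(delta ln L), assuming the printed values

# are natural-log likelihoods. The sketch below uses hypothetical numbers in place of the two printed values and

# reports the ratio as a power of ten, because `math.exp` overflows for differences above roughly 709.

```python
import math

def compare_log_likelihoods(log_l_global, log_l_local):
    """Return (delta ln L, delta log10 L) between two fits.

    Assumes both inputs are natural-log likelihoods. The likelihood ratio
    is exp(delta ln L) = 10 ** (delta ln L / ln 10).
    """
    delta = log_l_global - log_l_local
    return delta, delta / math.log(10)

# Hypothetical values standing in for the two printed log likelihoods
delta, delta_log10 = compare_log_likelihoods(5000.0, 4950.0)
print(f"delta ln L = {delta:.1f}, likelihood ratio = 10^{delta_log10:.1f}")
```

# Even a modest-looking gap of 50 in log likelihood means the global solution is about 10^21.7 times more likely.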

# In this example, we intentionally made our `NonLinearSearch` fail, by using so few live points it had no hope of

# sampling parameter space thoroughly. For modeling real lenses we wouldn't do this on purpose, but the risk of inferring

# a local maximum is still very real, especially as we make our lens model more complex.

#

# Let's think about *complexity*. As we made our lens model more realistic, we also made it more complex. For this

# tutorial, our non-linear parameter space went from 7 dimensions to 18. This means there was a much larger *volume* of

# parameter space to search. As this volume grows, so does the chance that our `NonLinearSearch` gets lost

# and infers a local maximum, especially if we don't set it up with enough live points!
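# A rough back-of-the-envelope sketch of why volume matters, under an illustrative assumption that is not from this

# tutorial: suppose that in every dimension the high-likelihood region spans 10% of the prior range, independently.

# The fraction of the total volume it occupies is then 0.1 to the power of the number of dimensions. Dynesty does not

# sample uniformly at random, so the "draws per hit" column is only a naive baseline.

```python
def good_volume_fraction(n_dims, fraction_per_dim=0.1):
    # Assumption: independent dimensions, each with the same "good" fraction
    return fraction_per_dim ** n_dims

for n_dims in (7, 18):
    frac = good_volume_fraction(n_dims)
    print(f"{n_dims:>2} dimensions: fraction = {frac:.0e}, "
          f"~{1 / frac:.0e} uniform random draws per hit")
```

# Going from 7 to 18 dimensions shrinks the good region by eleven orders of magnitude under this assumption.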

#

# At its core, lens modeling is all about learning how to get a `NonLinearSearch` to find the global maximum region of

# parameter space, even when the lens model is extremely complex.

#

# And with that, we're done. In the next exercise, we'll learn how to deal with failure and begin thinking about how we

# can ensure our `NonLinearSearch` finds the global-maximum log likelihood solution. Before that, think about

# the following:

#

# 1) When you look at an image of a strong lens, do you get a sense of roughly what values certain lens model

# parameters are?

#

# 2) The `NonLinearSearch` failed because parameter space was too complex. Could we make it less complex, whilst

# still keeping our lens model fairly realistic?

#

# 3) The source galaxy in this example had only 7 non-linear parameters. Real source galaxies may have multiple

# components (e.g. a bar, disk, bulge, star-forming knot) and there may even be more than 1 source galaxy! Do you

# think there is any hope of us navigating a parameter space if the source contributes 20+ parameters by itself?

| howtolens/chapter_2_lens_modeling/tutorial_3_realism_and_complexity.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

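# Min-max normalise the list p in place so its values span [0, 1]
p = [2, 4, 10, 6, 8, 4]
min0, max0 = min(p), max(p)
diff = max0 - min0  # max >= min, so abs() is not needed
for i in range(len(p)):
    # Guard against division by zero when all values are equal
    p[i] = (p[i] - min0) / diff if diff else 0.0
print(p)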

# -

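# FizzBuzz: print "Fizz" for multiples of 3, "Buzz" for multiples of 5,
# "FizzBuzz" for multiples of both, and the number otherwise
nmax = 100
for n in range(1, nmax + 1):
    message = ''
    if not n % 3:
        message = 'Fizz'
    if not n % 5:
        message += 'Buzz'
    print(message or n)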

| ue7/ue7.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import datetime
import time

import numpy as np
import pandas as pd
import shapely.geometry
import simpy

import openclsim.core as core
import openclsim.model as model

# +

simulation_start = datetime.datetime.now()

my_env = simpy.Environment(initial_time=time.mktime(simulation_start.timetuple()))

my_env.epoch = time.mktime(simulation_start.timetuple())

registry = {}

keep_resources = {}
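# The simulation clock above starts at `time.mktime(...)`, i.e. seconds since the Unix epoch, which suggests

# (an assumption, not something this notebook states) that openclsim reads `env.now` in seconds, so the

# `"duration": 3600` values further down correspond to one hour. A standard-library sketch of that conversion,

# using a fixed hypothetical start time instead of `datetime.datetime.now()`:

```python
import datetime
import time

# Fixed, hypothetical start time so the example is reproducible
start = datetime.datetime(2020, 1, 1, 12, 0, 0)

# time.mktime() treats the struct_time as local time and returns
# seconds since the epoch as a float (microseconds are dropped)
epoch_seconds = time.mktime(start.timetuple())

# Advancing a seconds-based clock by 3600 moves it forward by one hour
one_hour_later = datetime.datetime.fromtimestamp(epoch_seconds + 3600)
print(epoch_seconds, one_hour_later)
```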

# +

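# Build the simulation classes at runtime by combining openclsim.core mixins
# with the three-argument form type(name, bases, namespace)
Site = type(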

"Site",

(

core.Identifiable,

core.Log,

core.Locatable,

core.HasContainer,

core.HasResource,

),

{},

)

# Vessels: movable resources that can load, carry and unload material
TransportProcessingResource = type(

"TransportProcessingResource",

(

core.Identifiable,

core.Log,

core.ContainerDependentMovable,

core.Processor,

core.HasResource,

core.LoadingFunction,

core.UnloadingFunction,

),

{},

)
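# The `type(name, bases, {})` calls above are equivalent to writing `class Site(...): pass`. The sketch below shows

# the pattern with two hypothetical mixins standing in for the `openclsim.core` classes; it assumes, without checking

# openclsim's source, that those mixins cooperate in the same way, each taking its own keyword arguments and passing

# the rest along the method resolution order (MRO) with `super().__init__(**kwargs)`.

```python
# Hypothetical mixins, NOT the real openclsim.core classes
class Identifiable:
    def __init__(self, name, **kwargs):
        super().__init__(**kwargs)  # forward remaining kwargs along the MRO
        self.name = name

class HasContainer:
    def __init__(self, capacity, level=0, **kwargs):
        super().__init__(**kwargs)
        self.capacity = capacity
        self.level = level

# Same as: class Site(Identifiable, HasContainer): pass
Site = type("Site", (Identifiable, HasContainer), {})

site = Site(name="Winlocatie", capacity=100, level=100)
print([cls.__name__ for cls in Site.__mro__])
```

# Because every mixin forwards what it does not use, a single dict of keyword arguments (like `data_from_site`) can

# configure all of the composed behaviours at once.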

# +

location_from_site = shapely.geometry.Point(5.1, 52)

location_to_site = shapely.geometry.Point(5, 52.1)

location_to_site_2 = shapely.geometry.Point(5, 52.2)

data_from_site = {

"env": my_env,

"name": "Winlocatie",  # Dutch: extraction (borrow) site

"geometry": location_from_site,

"capacity": 100,

"level": 100,

}

data_to_site = {

"env": my_env,

"name": "Dumplocatie",  # Dutch: dump site

"geometry": location_to_site,

"capacity": 55,

"level": 0,

}

from_site = Site(**data_from_site)

to_site = Site(**data_to_site)

# +

data_cutter = {

"env": my_env,

"name": "Cutter_1",

"geometry": location_from_site,

"capacity": 5,

"compute_v": lambda x: 10,

"loading_rate": 3600/5,

"unloading_rate": 3600/5

}

data_barge_1 = {

"env": my_env,

"name": "Barge_1",

"geometry": location_to_site,

"capacity": 5,

"compute_v": lambda x: 10,

"loading_rate": 3600/5,

"unloading_rate": 3600/5

}

data_barge_2 = {

"env": my_env,

"name": "Barge_2",

"geometry": location_to_site,

"capacity": 5,

"compute_v": lambda x: 10,

"loading_rate": 3600/5,

"unloading_rate": 3600/5

}

cutter = TransportProcessingResource(**data_cutter)

barge_1 = TransportProcessingResource(**data_barge_1)

barge_2 = TransportProcessingResource(**data_barge_2)
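# With these numbers it is worth checking how many full loads the run can move before one of the sites becomes the

# limit. The sketch below copies the values from the cells above and assumes (hypothetically, since the stopping rule

# is defined further down) that every transfer moves exactly the `amount` of 5 and that the run ends once no further

# full load can be supplied or received.

```python
# Values copied from the notebook cells above
from_site_level = 100   # "level" of Winlocatie
to_site_capacity = 55   # "capacity" of Dumplocatie (initial level 0)
load_per_trip = 5       # "amount" in each ShiftAmountActivity

# Only as much material can move as the more restrictive site allows
movable = min(from_site_level, to_site_capacity)
full_loads = movable // load_per_trip
leftover_at_origin = from_site_level - full_loads * load_per_trip
print(full_loads, leftover_at_origin)
```

# Under these assumptions the dump site's capacity, not the material available, is the binding constraint.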

# +

requested_resources = {}

single_run = [

model.MoveActivity(**{

"env": my_env,

"name": "sailing empty_1",

"registry": registry,

"mover": barge_1,

"destination": from_site,

"postpone_start": True,

}),

model.ShiftAmountActivity(**{

"env": my_env,

"name": "Transfer MP_1",

"registry": registry,

"processor": cutter,

"origin": from_site,

"destination": barge_1,

"amount": 5,

"duration": 3600,

"postpone_start": True,

# "requested_resources":requested_resources

}),

model.MoveActivity(**{

"env": my_env,

"name": "sailing filled_1",

"registry": registry,

"mover": barge_1,

"destination": to_site,

"postpone_start": True,

}),

model.ShiftAmountActivity(**{

"env": my_env,

"name": "Transfer TP_1",

"registry": registry,

"processor": barge_1,

"origin": barge_1,

"destination": to_site,

"amount": 5,

"duration": 3600,

"postpone_start": True,

# "requested_resources":requested_resources

})

]

sequential_activity_data = {

"env": my_env,