code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Django Shell-Plus

# language: python

# name: django_extensions

# ---

import csv, re

# Count the current objects in Book (COUNT(*) in the database instead of loading every row)

Book.objects.count()

file = "data/zotero/CBAB.csv"

with open(file, 'r', encoding='utf-8', newline='') as data:
    reader = csv.reader(data)
    datalist = list(reader)

failed = []
saved = []

# Skip the header row; column indices follow the Zotero CSV export layout
for row in datalist[1:]:
    fields = dict(zoterokey=row[0],
                  item_type=row[1],
                  author=row[3],
                  title=row[4],
                  publication_title=row[5],
                  short_title=row[21],
                  place=row[27])
    # Only set publication_year when the export provides one
    if row[2] != "":
        fields['publication_year'] = row[2]
    NewBook = Book(**fields)
    try:
        NewBook.save()
        saved.append(row)
    except Exception:
        # Keep the failing row for later inspection instead of aborting the import
        failed.append(row)

print('saved: {} objects \nfailed: {} objects'.format(len(saved), len(failed)))

# +

# delete all Book-objects:

#Book.objects.all().delete()

# -

|

zoteroExport_to_MySQL.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# Virtually everyone has had an online experience where a website makes personalized recommendations in hopes of future sales or ongoing traffic. Amazon tells you “Customers Who Bought This Item Also Bought”, Udemy tells you “Students Who Viewed This Course Also Viewed”. And Netflix awarded a $1 million prize to a developer team in 2009, for an algorithm that increased the accuracy of the company’s recommendation system by 10 percent.

#

# Without further ado, if you want to learn how to build a recommender system from scratch, let’s get started.

# ## The Data

# [Book-Crossings](http://www2.informatik.uni-freiburg.de/~cziegler/BX/) is a book rating dataset compiled by <NAME>. It contains 1.1 million ratings of 270,000 books by 90,000 users. Explicit ratings are on a scale from 1 to 10; a rating of 0 marks an implicit interaction.

#

# The data consists of three tables: ratings, books info, and users info. I downloaded these three tables from [here](http://www2.informatik.uni-freiburg.de/~cziegler/BX/).

#Importing all the required libraries

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

# Reading the data files. on_bad_lines='skip' (pandas >= 1.3) replaces error_bad_lines=False, which was removed in pandas 2.0.

books = pd.read_csv('BX-CSV-Dump/BX-Books.csv', sep=';', on_bad_lines='skip', encoding="latin-1")

books.columns = ['ISBN', 'bookTitle', 'bookAuthor', 'yearOfPublication', 'publisher', 'imageUrlS', 'imageUrlM', 'imageUrlL']

users = pd.read_csv('BX-CSV-Dump/BX-Users.csv', sep=';', on_bad_lines='skip', encoding="latin-1")

users.columns = ['userID', 'Location', 'Age']

ratings = pd.read_csv('BX-CSV-Dump/BX-Book-Ratings.csv', sep=';', on_bad_lines='skip', encoding="latin-1")

ratings.columns = ['userID', 'ISBN', 'bookRating']

# ### Ratings data

#

# The ratings data set provides a list of ratings that users have given to books. It includes 1,149,780 records and 3 fields: userID, ISBN, and bookRating.

print(ratings.shape)

print(list(ratings.columns))

ratings.head(10)

# ### Ratings distribution

#

# The ratings are very unevenly distributed, and the vast majority of ratings are 0.

plt.rc("font", size=15)

ratings.bookRating.value_counts(sort=False).plot(kind='bar')

plt.title('Rating Distribution\n')

plt.xlabel('Rating')

plt.ylabel('Count')

plt.savefig('system1.png', bbox_inches='tight')

plt.show()

# ### Books data

#

# The books dataset provides book details. It includes 271,360 records and 8 fields: ISBN, book title, book author, publisher and so on.

print(books.shape)

print(list(books.columns))

books.head(10)

# ### Users data

#

# This dataset provides the user demographic information. It includes 278,858 records and 3 fields: user id, location, and age.

print(users.shape)

print(list(users.columns))

users.head(10)

# ### Age distribution

#

# The most active users are those in their 20s and 30s.

users.Age.hist(bins=[0, 10, 20, 30, 40, 50, 100])

plt.title('Age Distribution\n')

plt.xlabel('Age')

plt.ylabel('Count')

plt.savefig('system2.png', bbox_inches='tight')

plt.show()

# ## Recommendations based on rating counts

rating_count = pd.DataFrame(ratings.groupby('ISBN')['bookRating'].count())

rating_count.sort_values('bookRating', ascending=False).head()

# The book with ISBN “0971880107” received the most ratings.

# Let’s find out which book it is, and which books make up the top 5.

most_rated_books = pd.DataFrame(['0971880107', '0316666343', '0385504209', '0060928336', '0312195516'], index=np.arange(5), columns = ['ISBN'])

most_rated_books_summary = pd.merge(most_rated_books, books, on='ISBN')

most_rated_books_summary

# The book that received the most ratings in this data set is <NAME>’s “Wild Animus”. The five most-rated books have something in common: they are all novels. This suggests that novels are popular and likely to receive more ratings. A recommender based purely on rating counts would therefore also suggest “Wild Animus” to someone who likes “The Lovely Bones: A Novel”.

# ## Recommendations based on correlations

# We use Pearson’s r correlation coefficient to measure the linear correlation between two variables, in our case, the ratings for two books.
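#
# As a minimal sketch of what this coefficient measures, Pearson’s r can be computed by hand and checked against `np.corrcoef`. The two rating vectors and the helper `pearson_r` are made up for illustration and are not taken from the data set.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation of two equally long 1D sequences (hypothetical helper)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Centre both vectors, then divide their covariance by the product of their spreads
    xc = x - x.mean()
    yc = y - y.mean()
    return float((xc * yc).sum() / np.sqrt((xc ** 2).sum() * (yc ** 2).sum()))


# Made-up ratings given by the same five users to two books
book_a = [8, 6, 9, 3, 7]
book_b = [7, 5, 10, 2, 6]

r_manual = pearson_r(book_a, book_b)
r_numpy = float(np.corrcoef(book_a, book_b)[0, 1])
print(r_manual, r_numpy)
```

# A value close to 1 means that users who rate one book highly tend to rate the other highly as well.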

#

# First, we need to find out the average rating, and the number of ratings each book received.

average_rating = pd.DataFrame(ratings.groupby('ISBN')['bookRating'].mean())

average_rating['ratingCount'] = pd.DataFrame(ratings.groupby('ISBN')['bookRating'].count())

average_rating.sort_values('ratingCount', ascending=False).head()

# Observations: In this data set, the book that received the most ratings was not highly rated at all. Recommendations based on rating counts alone would therefore be misleading here, so we need a better system.

# #### To ensure statistical significance, users with fewer than 200 ratings and books with fewer than 100 ratings are excluded

counts1 = ratings['userID'].value_counts()

ratings = ratings[ratings['userID'].isin(counts1[counts1 >= 200].index)]

# Count ratings per book (ISBN), not per rating value, so that rarely rated books are dropped

counts = ratings['ISBN'].value_counts()

ratings = ratings[ratings['ISBN'].isin(counts[counts >= 100].index)]
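# The count-based exclusion described in the heading above can be sketched on a toy table. The data frame `toy` and the thresholds of 2 are assumptions chosen so the effect is visible on six rows; the real thresholds are 200 and 100.

```python
import pandas as pd

# Made-up ratings: user 3 rated only one book, book 'c' was rated only once
toy = pd.DataFrame({
    'userID': [1, 1, 1, 2, 2, 3],
    'ISBN': ['a', 'b', 'c', 'a', 'b', 'a'],
    'bookRating': [5, 0, 7, 8, 0, 9],
})

min_user_ratings = 2  # illustrative threshold
min_book_ratings = 2  # illustrative threshold

# Keep only users with enough ratings
user_counts = toy['userID'].value_counts()
kept = toy[toy['userID'].isin(user_counts[user_counts >= min_user_ratings].index)]

# Then keep only books with enough ratings among the remaining rows
book_counts = kept['ISBN'].value_counts()
kept = kept[kept['ISBN'].isin(book_counts[book_counts >= min_book_ratings].index)]
print(kept)
```

# Note that the second filter is applied after the first, so a book's count only includes ratings from the users that were kept.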

# ### Rating matrix

# We convert the ratings table to a 2D matrix. The matrix will be sparse because not every user rated every book.

ratings_pivot = ratings.pivot(index='userID', columns='ISBN', values='bookRating')

userID = ratings_pivot.index

ISBN = ratings_pivot.columns

print(ratings_pivot.shape)

ratings_pivot.head()
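# To make the sparsity concrete, here is a small sketch on made-up data (the frame `toy` is hypothetical). It pivots four ratings into a user × book matrix and measures the share of empty cells.

```python
import pandas as pd

# Three users, three books, but only four ratings
toy = pd.DataFrame({
    'userID': [1, 1, 2, 3],
    'ISBN': ['a', 'b', 'a', 'c'],
    'bookRating': [5, 7, 8, 9],
})

# One row per user, one column per book; unrated combinations become NaN
toy_pivot = toy.pivot(index='userID', columns='ISBN', values='bookRating')

# Fraction of user/book pairs without a rating
missing_fraction = float(toy_pivot.isna().to_numpy().mean())
print(toy_pivot)
print(missing_fraction)
```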

# Let’s find out which books are correlated with the 2nd most rated book “The Lovely Bones: A Novel”.

bones_ratings = ratings_pivot['0316666343']

similar_to_bones = ratings_pivot.corrwith(bones_ratings)

corr_bones = pd.DataFrame(similar_to_bones, columns=['pearsonR'])

corr_bones.dropna(inplace=True)

corr_summary = corr_bones.join(average_rating['ratingCount'])

corr_summary[corr_summary['ratingCount']>=300].sort_values('pearsonR', ascending=False).head(10)

# We obtained the books’ ISBNs, but we need to find out the titles of the books to see whether they make sense.

books_corr_to_bones = pd.DataFrame(['0312291639', '0316601950', '0446610038', '0446672211', '0385265700', '0345342968', '0060930535', '0375707972', '0684872153'],

index=np.arange(9), columns=['ISBN'])

corr_books = pd.merge(books_corr_to_bones, books, on='ISBN')

corr_books

# Let’s select three books from the above highly correlated list to examine: <b>“The Nanny Diaries: A Novel”, “The Pilot’s Wife: A Novel” and “Where the Heart Is”</b>.

#

# <b>“The Nanny Diaries”</b> satirizes upper-class Manhattan society as seen through the eyes of their children’s caregivers.

#

# <b>“The Pilot’s Wife”</b> is the third novel in Shreve’s informal trilogy to be set in a large beach house on the New Hampshire coast that used to be a convent. Note that it is not by the author of <b>“The Lovely Bones”</b>.

#

# <b>“Where the Heart Is”</b> dramatizes in detail the tribulations of lower-income and foster children in the United States.

#

# These three books sound like they would be highly correlated with <b>“The Lovely Bones”</b>. It seems our correlation recommender system is working.

# ## Collaborative Filtering Using k-Nearest Neighbors (kNN)

# kNN is a machine learning algorithm that finds clusters of similar users based on common book ratings and makes predictions using the average rating of the top-k nearest neighbors. For example, we first present the ratings in a matrix with one row for each item (book) and one column for each user.

#

# We then find the k items that have the most similar user engagement vectors. In this case, the nearest neighbors of item id 5 are [7, 4, 8, …]. Now, let’s implement kNN in our book recommender system.

#

# Starting from the original data set, we will be only looking at the popular books. In order to find out which books are popular, we combine books data with ratings data.

combine_book_rating = pd.merge(ratings, books, on='ISBN')

columns = ['yearOfPublication', 'publisher', 'bookAuthor', 'imageUrlS', 'imageUrlM', 'imageUrlL']

combine_book_rating = combine_book_rating.drop(columns, axis=1)

combine_book_rating.head()

# We then group by book titles and create a new column for total rating count.

# +

combine_book_rating = combine_book_rating.dropna(axis = 0, subset = ['bookTitle'])

book_ratingCount = (combine_book_rating.

groupby(by = ['bookTitle'])['bookRating'].

count().

reset_index().

rename(columns = {'bookRating': 'totalRatingCount'})

[['bookTitle', 'totalRatingCount']]

)

book_ratingCount.head()

# -

# We combine the rating data with the total rating count data. This gives us exactly what we need to find out which books are popular and to filter out lesser-known books.

rating_with_totalRatingCount = combine_book_rating.merge(book_ratingCount, left_on = 'bookTitle', right_on = 'bookTitle', how = 'left')

rating_with_totalRatingCount.head()

# Let’s look at the statistics of total rating count:

pd.set_option('display.float_format', lambda x: '%.3f' % x)

print(book_ratingCount['totalRatingCount'].describe())

# The median book has been rated only once. Let’s look at the top of the distribution:

print(book_ratingCount['totalRatingCount'].quantile(np.arange(.9, 1, .01)))

# About 1% of the books received 50 or more ratings. Because we have so many books in our data, we will limit it to the top 1%, and this will give us 2713 unique books.

popularity_threshold = 50

rating_popular_book = rating_with_totalRatingCount.query('totalRatingCount >= @popularity_threshold')

rating_popular_book.head()
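# The quantile-then-threshold step can be illustrated on a made-up count distribution. The series `toy_counts` is hypothetical and only mimics the long tail seen above, where most books have a single rating.

```python
import pandas as pd

# 90 books rated once, 8 rated five times, 2 rated very often
toy_counts = pd.Series([1] * 90 + [5] * 8 + [60, 120], name='totalRatingCount')

# The median sits at 1; only the extreme upper quantiles show popular books
top_quantiles = toy_counts.quantile([0.5, 0.9, 0.99])
print(top_quantiles)

# Keep only the entries at or above an illustrative popularity threshold
toy_threshold = 50
toy_popular = toy_counts[toy_counts >= toy_threshold]
print(len(toy_popular))
```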

# #### Filter to users in US and Canada only

#

# To improve computing speed and avoid running into a “MemoryError”, I limit the user data to users in the US and Canada, and then combine the user data with the rating data and the total rating count data.

# +

combined = rating_popular_book.merge(users, left_on = 'userID', right_on = 'userID', how = 'left')

us_canada_user_rating = combined[combined['Location'].str.contains("usa|canada")]

us_canada_user_rating=us_canada_user_rating.drop('Age', axis=1)

us_canada_user_rating.head()

# -

# #### Implementing kNN

#

# We convert our table to a 2D matrix, and fill the missing values with zeros (since we will calculate distances between rating vectors). We then transform the values(ratings) of the matrix dataframe into a scipy sparse matrix for more efficient calculations.

# #### Finding the Nearest Neighbors

# We use unsupervised algorithms with sklearn.neighbors. The algorithm we use to compute the nearest neighbors is “brute”, and we specify “metric=cosine” so that the algorithm will calculate the cosine similarity between rating vectors. Finally, we fit the model.

# +

us_canada_user_rating = us_canada_user_rating.drop_duplicates(['userID', 'bookTitle'])

us_canada_user_rating_pivot = us_canada_user_rating.pivot(index = 'bookTitle', columns = 'userID', values = 'bookRating').fillna(0)

from scipy.sparse import csr_matrix

us_canada_user_rating_matrix = csr_matrix(us_canada_user_rating_pivot.values)

from sklearn.neighbors import NearestNeighbors

model_knn = NearestNeighbors(metric = 'cosine', algorithm = 'brute')

model_knn.fit(us_canada_user_rating_matrix)

# -
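# As a rough sketch of what a brute-force cosine search computes, the distances can be reproduced with NumPy alone. The helper `cosine_neighbors` and the matrix `toy_matrix` are made up for illustration. This is not the scikit-learn implementation, and it assumes no row is all zeros.

```python
import numpy as np


def cosine_neighbors(matrix, query_index, n_neighbors):
    """Return (distances, indices) of the rows closest to one query row (hypothetical helper)."""
    matrix = np.asarray(matrix, dtype=float)
    norms = np.linalg.norm(matrix, axis=1)
    query = matrix[query_index]
    # Cosine similarity of every row with the query row
    similarities = matrix @ query / (norms * norms[query_index])
    # Cosine distance is one minus cosine similarity
    distances = 1.0 - similarities
    order = np.argsort(distances, kind='stable')[:n_neighbors]
    return distances[order], order


# Rows are books, columns are users; 0 means "no rating"
toy_matrix = np.array([
    [5, 0, 3, 0],
    [4, 0, 3, 0],
    [0, 5, 0, 4],
    [5, 1, 2, 0],
])

toy_distances, toy_indices = cosine_neighbors(toy_matrix, query_index=0, n_neighbors=3)
print(toy_indices, toy_distances)
```

# The query row itself comes back first with a distance of (numerically) zero, which is why the first hit is usually skipped when printing recommendations.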

# #### Test our model and make some recommendations:

# In this step, the kNN algorithm measures distance to determine the “closeness” of instances. Nothing is classified here: for a randomly chosen book we retrieve its six nearest neighbors and print them as recommendations. The code treats the first hit as the query book itself.

# +

query_index = np.random.choice(us_canada_user_rating_pivot.shape[0])

distances, indices = model_knn.kneighbors(us_canada_user_rating_pivot.iloc[query_index, :].values.reshape(1, -1), n_neighbors = 6)

for i in range(0, len(distances.flatten())):
    if i == 0:
        print('Recommendations for {0}:\n'.format(us_canada_user_rating_pivot.index[query_index]))
    else:
        print('{0}: {1}, with distance of {2}:'.format(i, us_canada_user_rating_pivot.index[indices.flatten()[i]], distances.flatten()[i]))

# -

# Perfect! <NAME> Novels definitely should be recommended, one after another.

# ### Collaborative Filtering Using Matrix Factorization

#

# Matrix Factorization is simply a mathematical tool for playing around with matrices. Matrix Factorization techniques are usually more effective because they allow users to discover the latent (hidden) features underlying the interactions between users and items (books).

#

# We use singular value decomposition (SVD), one of the Matrix Factorization models, to identify latent factors.

#

# As with kNN, we convert our USA Canada user rating table into a 2D matrix (called a utility matrix here) and fill the missing values with zeros.

us_canada_user_rating_pivot2 = us_canada_user_rating.pivot(index = 'userID', columns = 'bookTitle', values = 'bookRating').fillna(0)

us_canada_user_rating_pivot2.head()

# We then transpose this utility matrix, so that the bookTitles become rows and the userIDs become columns. We use TruncatedSVD to decompose it and reduce its dimensionality. The compression happens on the dataframe’s columns, since we must preserve the book titles. We choose n_components = 12 for just 12 latent variables, and as you can see, our data’s dimensions have been reduced significantly from 40017 X 2442 to 746 X 12.

us_canada_user_rating_pivot2.shape

X = us_canada_user_rating_pivot2.values.T

X.shape

# +

import sklearn

from sklearn.decomposition import TruncatedSVD

SVD = TruncatedSVD(n_components=12, random_state=17)

matrix = SVD.fit_transform(X)

matrix.shape

# -
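# The idea behind this reduction can be sketched with a plain NumPy SVD. This is a stand-in for illustration and not what `TruncatedSVD` does internally. The matrix `toy_X` is random made-up data with books as rows and users as columns.

```python
import numpy as np

rng = np.random.default_rng(17)

# 6 "books" rated by 20 "users" (random integers between 0 and 10)
toy_X = rng.integers(0, 11, size=(6, 20)).astype(float)

n_components = 3  # illustrative number of latent factors

# Thin SVD: toy_X = U @ diag(s) @ Vt
U, s, Vt = np.linalg.svd(toy_X, full_matrices=False)

# Keep the strongest components: each book is now described by 3 numbers
toy_reduced = U[:, :n_components] * s[:n_components]

# Book-to-book Pearson correlations in the reduced space
toy_corr = np.corrcoef(toy_reduced)
print(toy_reduced.shape, toy_corr.shape)
```

# The rows (books) are preserved while the 20 user columns collapse into 3 latent columns, and the correlation matrix is square in the number of books.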

# We calculate Pearson’s r correlation coefficient for every book pair in our final matrix. To compare this with the results from kNN, we pick the book “Two for the Dough” and find the books that have high correlation coefficients (between 0.9 and 1.0) with it.

import warnings

warnings.filterwarnings("ignore",category =RuntimeWarning)

corr = np.corrcoef(matrix)

corr.shape

us_canada_book_title = us_canada_user_rating_pivot2.columns

us_canada_book_list = list(us_canada_book_title)

coffey_hands = us_canada_book_list.index("Two for the Dough")

print(coffey_hands)

corr_coffey_hands = corr[coffey_hands]

#corr_coffey_hands

list(us_canada_book_title[(corr_coffey_hands>0.9)])

|

Book Recommender System.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Estimation of the CTA point source sensitivity

# ## Introduction

#

# This notebook explains how to estimate the CTA sensitivity for a point-like IRF at a fixed zenith angle and fixed offset using the full containment IRFs distributed for the CTA 1DC. The significance is computed for a 1D analysis (ON-OFF regions) with the Li & Ma formula.

#

# We use here an approximate approach with an energy dependent integration radius to take into account the variation of the PSF. We will first determine the 1D IRFs including a containment correction.

#

# We will be using the following Gammapy class:

#

# * [gammapy.spectrum.SensitivityEstimator](https://docs.gammapy.org/dev/api/gammapy.spectrum.SensitivityEstimator.html)

# ## Setup

# As usual, we'll start with some setup ...

# %matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

import astropy.units as u

from astropy.coordinates import Angle

from gammapy.irf import load_cta_irfs

from gammapy.spectrum import SensitivityEstimator, CountsSpectrum

# ## Define analysis region and energy binning

#

# Here we assume a source at 0.5 degree from the pointing position. The extraction radius is not fixed here; it is determined per energy bin from the PSF further below.

# +

offset = Angle("0.5 deg")

energy_reco = np.logspace(-1.8, 1.5, 20) * u.TeV

energy_true = np.logspace(-2, 2, 100) * u.TeV

# -

# ## Load IRFs

#

# We extract the 1D IRFs from the full 3D IRFs provided by CTA.

filename = (

"$GAMMAPY_DATA/cta-1dc/caldb/data/cta/1dc/bcf/South_z20_50h/irf_file.fits"

)

irfs = load_cta_irfs(filename)

arf = irfs["aeff"].to_effective_area_table(offset, energy=energy_true)

rmf = irfs["edisp"].to_energy_dispersion(

offset, e_true=energy_true, e_reco=energy_reco

)

psf = irfs["psf"].to_energy_dependent_table_psf(theta=offset)

# ## Determine energy dependent integration radius

#

# Here we will determine an integration radius that varies with the energy to ensure a constant fraction of flux enclosure (e.g. 68%). We then apply the fraction to the effective area table.

#

# By doing so we implicitly assume that energy dispersion has a negligible effect. This should be valid for large enough reconstructed energy bins, as long as the bias in the energy estimation is close to zero.

containment = 0.68

energy = np.sqrt(energy_reco[1:] * energy_reco[:-1])

on_radii = psf.containment_radius(energy=energy, fraction=containment)

solid_angles = 2 * np.pi * (1 - np.cos(on_radii)) * u.sr

arf.data.data *= containment
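# The solid-angle expression used above is the area of a spherical cap. A quick stand-alone check with plain NumPy (the helper `cone_solid_angle` is made up for illustration and ignores units) shows that it reduces to the flat-disc area πθ² for small radii.

```python
import numpy as np


def cone_solid_angle(radius_deg):
    """Solid angle in steradian of a cone with the given half-opening angle in degrees."""
    theta = np.deg2rad(radius_deg)
    return 2 * np.pi * (1 - np.cos(theta))


small_radius_deg = 0.1
exact = cone_solid_angle(small_radius_deg)
flat = np.pi * np.deg2rad(small_radius_deg) ** 2  # small-angle approximation
print(exact, flat)

# A half-opening angle of 180 degrees covers the full sphere
full_sphere = cone_solid_angle(180.0)
print(full_sphere)
```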

# ## Estimate background

#

# We now provide a workaround to estimate the background from the tabulated IRF in the energy bins we consider.

bkg_data = irfs["bkg"].evaluate_integrate(

fov_lon=0 * u.deg, fov_lat=offset, energy_reco=energy_reco

)

bkg = CountsSpectrum(

energy_reco[:-1], energy_reco[1:], data=(bkg_data * solid_angles)

)

# ## Compute sensitivity

#

# We impose a minimal number of expected signal counts of 5 per bin and a minimal significance of 3 per bin. We assume an alpha of 0.2 (ratio between ON and OFF area).

# We then run the sensitivity estimator.

sensitivity_estimator = SensitivityEstimator(

arf=arf, rmf=rmf, bkg=bkg, livetime="5h", gamma_min=5, sigma=3, alpha=0.2

)

sensitivity_table = sensitivity_estimator.run()
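# For reference, the Li & Ma significance mentioned in the introduction can be written in a few lines. This is a stand-alone sketch of the formula as it is commonly quoted (Li & Ma 1983, Eq. 17), not the Gammapy implementation. The helper name and the sign convention for deficits are choices made here, and both counts are assumed to be positive.

```python
import numpy as np


def li_ma_significance(n_on, n_off, alpha):
    """Li & Ma significance for ON/OFF counts and exposure ratio alpha (hypothetical helper)."""
    n_on = float(n_on)
    n_off = float(n_off)
    total = n_on + n_off
    term_on = n_on * np.log((1 + alpha) / alpha * n_on / total)
    term_off = n_off * np.log((1 + alpha) * n_off / total)
    # Guard against tiny negative values from rounding when there is no excess
    ts = max(2 * (term_on + term_off), 0.0)
    # Convention chosen here: report a deficit as a negative significance
    sign = 1.0 if n_on >= alpha * n_off else -1.0
    return float(sign * np.sqrt(ts))


# 50 ON counts against 100 OFF counts with alpha = 0.2 (expected background: 20)
print(li_ma_significance(50, 100, 0.2))

# No excess at all: ON counts equal alpha times OFF counts
print(li_ma_significance(20, 100, 0.2))
```

# With `sigma=3`, an energy bin passes the significance criterion once the expected excess pushes this value to 3 or above.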

# ## Results

#

# The results are given as an Astropy table. The column `criterion` distinguishes the bins where the sensitivity is limited by the required statistical significance from the bins where it is limited by the minimum number of signal counts.

# This is visible in the plot below.

# Show the results table

sensitivity_table

# +

# Save it to file (could use e.g. format of CSV or ECSV or FITS)

# sensitivity_table.write('sensitivity.ecsv', format='ascii.ecsv')

# +

# Plot the sensitivity curve

t = sensitivity_estimator.results_table

is_s = t["criterion"] == "significance"

plt.plot(

t["energy"][is_s],

t["e2dnde"][is_s],

"s-",

color="red",

label="significance",

)

is_g = t["criterion"] == "gamma"

plt.plot(

t["energy"][is_g], t["e2dnde"][is_g], "*-", color="blue", label="gamma"

)

plt.loglog()

plt.xlabel("Energy ({})".format(t["energy"].unit))

plt.ylabel("Sensitivity ({})".format(t["e2dnde"].unit))

plt.legend();

# -

# We add some control plots showing the expected number of background counts per bin and the ON region size cut (here the 68% containment radius of the PSF).

# +

# Plot expected number of counts for signal and background

fig, ax1 = plt.subplots()

# ax1.plot( t["energy"], t["excess"],"o-", color="red", label="signal")

ax1.plot(
    t["energy"], t["background"], "o-", color="black", label="background"
)

ax1.loglog()

ax1.set_xlabel("Energy ({})".format(t["energy"].unit))

ax1.set_ylabel("Expected number of bkg counts")

ax2 = ax1.twinx()

ax2.set_ylabel("ON region radius ({})".format(on_radii.unit), color="red")

ax2.semilogy(t["energy"], on_radii, color="red", label="PSF68")

ax2.tick_params(axis="y", labelcolor="red")

ax2.set_ylim(0.01, 0.5)

# -

# ## Exercises

#

# * Also compute the sensitivity for a 20 hour observation

# * Compare how the sensitivity differs between 5 and 20 hours by plotting the ratio as a function of energy.

|

tutorials/cta_sensitivity.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (herschelhelp_internal)

# language: python

# name: helpint

# ---

# # ELAIS-N2 - Merging HELP data products

#

# This notebook merges the various HELP data products on ELAIS-N2.

#

# It is first used to create a catalogue that will be used for SED fitting by CIGALE by merging the optical master list, the photo-z and the XID+ far infrared fluxes. Then, this notebook is used to incorporate the CIGALE physical parameter estimations and generate the final HELP data product on the field.

from herschelhelp_internal import git_version

print("This notebook was run with herschelhelp_internal version: \n{}".format(git_version()))

import datetime

print("This notebook was executed on: \n{}".format(datetime.datetime.now()))

# +

import numpy as np

from astropy.table import Column, MaskedColumn, Table, join, vstack

from herschelhelp.filters import get_filter_meta_table

from herschelhelp_internal.utils import add_column_meta

filter_mean_lambda = {

item['filter_id']: item['mean_wavelength'] for item in

get_filter_meta_table()

}

# +

# Set this to true to produce only the catalogue for CIGALE and to false

# to continue and merge the CIGALE results too.

FIRST_RUN_FOR_CIGALE = True

SUFFIX = '20180218'

# -

# # Reading the masterlist, XID+, and photo-z catalogues

# +

# Master list

ml = Table.read(

"../../dmu1/dmu1_ml_ELAIS-N2/data/master_catalogue_elais-n2_{}.fits".format(SUFFIX))

ml.meta = None

# +

# # XID+ MIPS24

# xid_mips24 = Table.read("../../dmu26/dmu26_XID+MIPS_CDFS-SWIRE/data/"

# "dmu26_XID+MIPS_CDFS-SWIRE_cat_20170901.fits")

# xid_mips24.meta = None

# # Adding the error column

# xid_mips24.add_column(Column(

# data=np.max([xid_mips24['FErr_MIPS_24_u'] - xid_mips24['F_MIPS_24'],

# xid_mips24['F_MIPS_24'] - xid_mips24['FErr_MIPS_24_l']],

# axis=0),

# name="ferr_mips_24"

# ))

# xid_mips24['F_MIPS_24'].name = "f_mips_24"

# xid_mips24 = xid_mips24['help_id', 'f_mips_24', 'ferr_mips_24', 'flag_mips_24']

# +

# # XID+ PACS

# xid_pacs = Table.read("../../dmu26/dmu26_XID+PACS_CDFS-SWIRE/data/"

# "dmu26_XID+PACS_CDFS-SWIRE_cat_20171019.fits")

# xid_pacs.meta = None

# # Convert from mJy to μJy

# for col in ["F_PACS_100", "FErr_PACS_100_u", "FErr_PACS_100_l",

# "F_PACS_160", "FErr_PACS_160_u", "FErr_PACS_160_l"]:

# xid_pacs[col] *= 1000

# xid_pacs.add_column(Column(

# data=np.max([xid_pacs['FErr_PACS_100_u'] - xid_pacs['F_PACS_100'],

# xid_pacs['F_PACS_100'] - xid_pacs['FErr_PACS_100_l']],

# axis=0),

# name="ferr_pacs_green"

# ))

# xid_pacs['F_PACS_100'].name = "f_pacs_green"

# xid_pacs['flag_PACS_100'].name = "flag_pacs_green"

# xid_pacs.add_column(Column(

# data=np.max([xid_pacs['FErr_PACS_160_u'] - xid_pacs['F_PACS_160'],

# xid_pacs['F_PACS_160'] - xid_pacs['FErr_PACS_160_l']],

# axis=0),

# name="ferr_pacs_red"

# ))

# xid_pacs['F_PACS_160'].name = "f_pacs_red"

# xid_pacs['flag_PACS_160'].name = "flag_pacs_red"

# xid_pacs = xid_pacs['help_id', 'f_pacs_green', 'ferr_pacs_green',

# 'flag_pacs_green', 'f_pacs_red', 'ferr_pacs_red',

# 'flag_pacs_red']

# +

# # XID+ SPIRE

# xid_spire = Table.read("../../dmu26/dmu26_XID+SPIRE_CDFS-SWIRE/data/"

# "dmu26_XID+SPIRE_CDFS-SWIRE_cat_20170919.fits")

# xid_spire.meta = None

# xid_spire['HELP_ID'].name = "help_id"

# # Convert from mJy to μJy

# for col in ["F_SPIRE_250", "FErr_SPIRE_250_u", "FErr_SPIRE_250_l",

# "F_SPIRE_350", "FErr_SPIRE_350_u", "FErr_SPIRE_350_l",

# "F_SPIRE_500", "FErr_SPIRE_500_u", "FErr_SPIRE_500_l"]:

# xid_spire[col] *= 1000

# xid_spire.add_column(Column(

# data=np.max([xid_spire['FErr_SPIRE_250_u'] - xid_spire['F_SPIRE_250'],

# xid_spire['F_SPIRE_250'] - xid_spire['FErr_SPIRE_250_l']],

# axis=0),

# name="ferr_spire_250"

# ))

# xid_spire['F_SPIRE_250'].name = "f_spire_250"

# xid_spire.add_column(Column(

# data=np.max([xid_spire['FErr_SPIRE_350_u'] - xid_spire['F_SPIRE_350'],

# xid_spire['F_SPIRE_350'] - xid_spire['FErr_SPIRE_350_l']],

# axis=0),

# name="ferr_spire_350"

# ))

# xid_spire['F_SPIRE_350'].name = "f_spire_350"

# xid_spire.add_column(Column(

# data=np.max([xid_spire['FErr_SPIRE_500_u'] - xid_spire['F_SPIRE_500'],

# xid_spire['F_SPIRE_500'] - xid_spire['FErr_SPIRE_500_l']],

# axis=0),

# name="ferr_spire_500"

# ))

# xid_spire['F_SPIRE_500'].name = "f_spire_500"

# xid_spire = xid_spire['help_id',

# 'f_spire_250', 'ferr_spire_250', 'flag_spire_250',

# 'f_spire_350', 'ferr_spire_350', 'flag_spire_350',

# 'f_spire_500', 'ferr_spire_500', 'flag_spire_500']

# +

# Photo-z

#photoz = Table.read("../../dmu24/dmu24_/data/")

#photoz.meta = None

#photoz = photoz['help_id', 'z1_median']

#photoz['z1_median'].name = 'redshift'

#photoz['redshift'][photoz['redshift'] < 0] = np.nan # -99 used for missing values

# +

# Temp spec-z

ml['zspec'][ml['zspec'] < 0] = np.nan # -99 used for missing values

# -

# Flags

flags = Table.read("../../dmu6/dmu6_v_ELAIS-N2/data/elais-n2_20180218_flags.fits")

# # Merging

# +

# merged_table = join(ml, xid_mips24, join_type='left')

# # Fill values

# for col in xid_mips24.colnames:

# if col.startswith("f_") or col.startswith("ferr_"):

# merged_table[col].fill_value = np.nan

# elif col.startswith("flag_"):

# merged_table[col].fill_value = False

# merged_table = merged_table.filled()

# +

# merged_table = join(merged_table, xid_pacs, join_type='left')

# # Fill values

# for col in xid_pacs.colnames:

# if col.startswith("f_") or col.startswith("ferr_"):

# merged_table[col].fill_value = np.nan

# elif col.startswith("flag_"):

# merged_table[col].fill_value = False

# merged_table = merged_table.filled()

# +

# merged_table = join(merged_table, xid_spire, join_type='left')

# # Fill values

# for col in xid_spire.colnames:

# if col.startswith("f_") or col.startswith("ferr_"):

# merged_table[col].fill_value = np.nan

# elif col.startswith("flag_"):

# merged_table[col].fill_value = False

# merged_table = merged_table.filled()

# +

#merged_table = join(merged_table, photoz, join_type='left')

# Fill values

#merged_table['redshift'].fill_value = np.nan

#merged_table = merged_table.filled()

# -

merged_table = ml

# +

for col in flags.colnames:
    if 'flag' in col:
        try:
            merged_table.remove_column(col)
        except KeyError:
            print("Column: {} not in masterlist.".format(col))

merged_table = join(merged_table, flags, join_type='left')

# Fill values
for col in merged_table.colnames:
    if 'flag' in col:
        merged_table[col].fill_value = False
merged_table = merged_table.filled()

# -

# # Saving the catalogue for CIGALE (first run)

if FIRST_RUN_FOR_CIGALE:

    # Sorting the columns
    bands_tot = [col[2:] for col in merged_table.colnames
                 if col.startswith('f_') and not col.startswith('f_ap')]
    bands_ap = [col[5:] for col in merged_table.colnames
                if col.startswith('f_ap_')]
    bands = list(set(bands_tot) | set(bands_ap))
    bands.sort(key=lambda x: filter_mean_lambda[x])

    columns = ['help_id', 'field', 'ra', 'dec', 'hp_idx', 'ebv',  # 'redshift',
               'zspec']
    for band in bands:
        for col_tpl in ['f_{}', 'ferr_{}', 'f_ap_{}', 'ferr_ap_{}',
                        'm_{}', 'merr_{}', 'm_ap_{}', 'merr_ap_{}',
                        'flag_{}']:
            colname = col_tpl.format(band)
            if colname in merged_table.colnames:
                columns.append(colname)
    columns += ['stellarity', 'stellarity_origin', 'flag_cleaned',
                'flag_merged', 'flag_gaia', 'flag_optnir_obs',
                'flag_optnir_det', 'zspec_qual', 'zspec_association_flag']

    # Check that we did not forget any column
    # assert set(columns) == set(merged_table.colnames)
    print(set(columns) - set(merged_table.colnames))
    print(set(merged_table.colnames) - set(columns))

    merged_table = add_column_meta(merged_table, '../columns.yml')
    merged_table[columns].write("data/ELAIS-N2_{}_cigale.fits".format(SUFFIX), overwrite=True)

# # Merging CIGALE outputs

#

# We merge the CIGALE outputs into the main catalogue. The CIGALE products provide several χ² values with associated thresholds. For simplicity, we convert each χ²/threshold pair to a flag.

if not FIRST_RUN_FOR_CIGALE:

    # Cigale outputs
    cigale = Table.read("../../dmu28/dmu28_CDFS-SWIRE/data/HELP_final_results.fits")
    cigale['id'].name = "help_id"

    # We convert the various Chi2 and threshold to flags
    flag_cigale_opt = cigale["UVoptIR_OPTchi2"] <= cigale["UVoptIR_OPTchi2_threshold"]
    flag_cigale_ir = cigale["UVoptIR_IRchi2"] <= cigale["UVoptIR_IRchi2_threshold"]
    flag_cigale = (
        (cigale["UVoptIR_best.reduced_chi_square"]
         <= cigale["UVoptIR_best.reduced_chi_square_threshold"]) &
        flag_cigale_opt & flag_cigale_ir)
    flag_cigale_ironly = cigale["IRonly_IRchi2"] <= cigale["IRonly_IRchi2_threshold"]

    cigale.add_columns([
        MaskedColumn(flag_cigale, "flag_cigale",
                     dtype=int, fill_value=-1),
        MaskedColumn(flag_cigale_opt, "flag_cigale_opt",
                     dtype=int, fill_value=-1),
        MaskedColumn(flag_cigale_ir, "flag_cigale_ir",
                     dtype=int, fill_value=-1),
        MaskedColumn(flag_cigale_ironly, "flag_cigale_ironly",
                     dtype=int, fill_value=-1)
    ])

    cigale['UVoptIR_bayes.stellar.m_star'].name = "cigale_mstar"
    cigale['UVoptIR_bayes.stellar.m_star_err'].name = "cigale_mstar_err"
    cigale['UVoptIR_bayes.sfh.sfr10Myrs'].name = "cigale_sfr"
    cigale['UVoptIR_bayes.sfh.sfr10Myrs_err'].name = "cigale_sfr_err"
    cigale['UVoptIR_bayes.dust.luminosity'].name = "cigale_dustlumin"
    cigale['UVoptIR_bayes.dust.luminosity_err'].name = "cigale_dustlumin_err"
    cigale['IR_bayes.dust.luminosity'].name = "cigale_dustlumin_ironly"
    cigale['IR_bayes.dust.luminosity_err'].name = "cigale_dustlumin_ironly_err"

    cigale = cigale['help_id', 'cigale_mstar', 'cigale_mstar_err', 'cigale_sfr',
                    'cigale_sfr_err', 'cigale_dustlumin', 'cigale_dustlumin_err',
                    'cigale_dustlumin_ironly', 'cigale_dustlumin_ironly_err',
                    'flag_cigale', 'flag_cigale_opt', 'flag_cigale_ir',
                    'flag_cigale_ironly']

if not FIRST_RUN_FOR_CIGALE:

    merged_table = join(merged_table, cigale, join_type='left')

    # Fill values
    for col in cigale.colnames:
        if col.startswith("cigale_"):
            merged_table[col].fill_value = np.nan
        elif col.startswith("flag_"):
            merged_table[col].fill_value = -1
    merged_table = merged_table.filled()

# # Sorting columns

#

# We sort the columns by increasing band wavelength.

if not FIRST_RUN_FOR_CIGALE:
    bands = [col[2:] for col in merged_table.colnames
             if col.startswith('f_') and not col.startswith('f_ap')]
    bands.sort(key=lambda x: filter_mean_lambda[x])
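# The wavelength-based ordering can be tried out without any catalogue. In this sketch the band names, the mean wavelengths in `toy_mean_lambda` and the column list `toy_colnames` are all made up for illustration.

```python
# Hypothetical mean wavelengths per band (arbitrary but consistent units)
toy_mean_lambda = {'irac_i1': 35500.0, 'sdss_r': 6200.0, 'wfcam_k': 22000.0}

# Hypothetical catalogue columns in no particular order
toy_colnames = ['help_id', 'f_irac_i1', 'ferr_irac_i1', 'f_ap_sdss_r',
                'f_sdss_r', 'f_wfcam_k', 'flag_sdss_r']

# Band names are recovered from the total-flux columns, then sorted by wavelength
toy_bands = [col[2:] for col in toy_colnames
             if col.startswith('f_') and not col.startswith('f_ap')]
toy_bands.sort(key=lambda band: toy_mean_lambda[band])

# Rebuild the column order band by band, keeping only columns that exist
toy_columns = ['help_id']
for band in toy_bands:
    for col_tpl in ['f_{}', 'ferr_{}', 'f_ap_{}', 'flag_{}']:
        colname = col_tpl.format(band)
        if colname in toy_colnames:
            toy_columns.append(colname)

print(toy_bands)
print(toy_columns)
```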

if not FIRST_RUN_FOR_CIGALE:
    columns = ['help_id', 'field', 'ra', 'dec', 'hp_idx', 'ebv', 'redshift', 'zspec']
    for band in bands:
        for col_tpl in ['f_{}', 'ferr_{}', 'f_ap_{}', 'ferr_ap_{}',
                        'm_{}', 'merr_{}', 'm_ap_{}', 'merr_ap_{}',
                        'flag_{}']:
            colname = col_tpl.format(band)
            if colname in merged_table.colnames:
                columns.append(colname)
    columns += ['cigale_mstar', 'cigale_mstar_err', 'cigale_sfr', 'cigale_sfr_err',
                'cigale_dustlumin', 'cigale_dustlumin_err', 'cigale_dustlumin_ironly',
                'cigale_dustlumin_ironly_err', 'flag_cigale', 'flag_cigale_opt',
                'flag_cigale_ir', 'flag_cigale_ironly', 'stellarity',
                'stellarity_origin', 'flag_cleaned', 'flag_merged', 'flag_gaia',
                'flag_optnir_obs', 'flag_optnir_det', 'zspec_qual',
                'zspec_association_flag']

if not FIRST_RUN_FOR_CIGALE:

# Check that we did not forget any column

assert set(columns) == set(merged_table.colnames)
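
# The column ordering can be tried out without a catalogue. The sketch below is
# only an illustration: the band names, the wavelengths in `mean_lambda`, and the
# `available` column set are made-up stand-ins for `filter_mean_lambda` and
# `merged_table.colnames`.

```python
# Hypothetical band names and mean wavelengths (illustrative values only).
mean_lambda = {"irac_i1": 35500.0, "decam_g": 4800.0, "wfc_r": 6200.0}
# Hypothetical set of columns present in the table (besides help_id).
available = {"f_decam_g", "ferr_decam_g", "m_decam_g", "f_wfc_r",
             "f_irac_i1", "ferr_irac_i1", "flag_irac_i1"}

# Bands sorted by increasing mean wavelength.
bands = sorted(mean_lambda, key=mean_lambda.get)

# For each band, keep only the templated columns that actually exist.
templates = ["f_{}", "ferr_{}", "m_{}", "merr_{}", "flag_{}"]
ordered = ["help_id"]
for band in bands:
    for tpl in templates:
        name = tpl.format(band)
        if name in available:
            ordered.append(name)

# Same kind of sanity check as in the notebook: no column was forgotten.
assert set(ordered) == available | {"help_id"}
print(ordered)
```

# Building the list from templates and then asserting set equality catches both
# a column that was never listed and a name that does not exist in the table.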

# # Saving

if not FIRST_RUN_FOR_CIGALE:
    merged_table = add_column_meta(merged_table, '../columns.yml')
    merged_table[columns].write("data/CDFS-SWIRE.fits", overwrite=True)

|

dmu32/dmu32_ELAIS-N2/ELAIS-N2_catalogue_merging.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Alternating Line Current

# ## Import modules

# +

import os

import numpy as np

import KUEM as EM

import matplotlib.pyplot as plt

plt.close("all")

# -

# ## Setup constants and settings

# +

# Constants for J

Current = 1

Frequency = 2

# Grid constants

N = np.array([49, 49, 1], dtype = int)

delta_x = np.array([2, 2, 2])

x0 = np.array([-1, -1, -1])

Boundaries = [["closed", "closed"], ["closed", "closed"], "periodic"]

# Evaluation constants

StaticsExact = True

DynamicsExact = True

Progress = 5

approx_n = 0.1

# Video constants

FPS = 30

Speed = 0.2

Delta_t = 5

TimeConstant = 5

Steps = int(FPS / Speed * Delta_t)

SubSteps = int(np.ceil(TimeConstant * Delta_t * np.max(N / delta_x) / Steps))

dt = Delta_t / (Steps * SubSteps)

# Plotting settings

PlotScalar = True

PlotContour = False

PlotVector = True

PlotStreams = False

StreamDensity = 2

StreamLength = 1

ContourLevels = 10

ContourLim = (0, 0.15)

# File names

FilePos = "AlternatingLineCurrent/"

Name_B_2D = "ExAlternatingLineCurrentB_2D.avi"

Name_A_2D = "ExAlternatingLineCurrentA_2D.avi"

Name_B_1D = "ExAlternatingLineCurrentB_1D.avi"

Name_A_1D = "ExAlternatingLineCurrentA_1D.avi"

Save = True

# -
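
# The step counts follow directly from the video settings. As a quick check, the
# sketch below redoes the arithmetic with the standard library only; the value
# 49 / 2 is `np.max(N / delta_x)` worked out by hand for the grid defined above.

```python
import math

# Values copied from the settings cell above.
FPS, Speed, Delta_t, TimeConstant = 30, 0.2, 5, 5
cells_per_unit = 49 / 2   # max(N / delta_x) for N = [49, 49, 1], delta_x = [2, 2, 2]

# Number of video frames: frames per second of video, slowed down by Speed,
# over Delta_t units of simulated time.
steps = int(FPS / Speed * Delta_t)

# Substeps per frame, so that the time step stays small relative to the grid spacing.
sub_steps = int(math.ceil(TimeConstant * Delta_t * cells_per_unit / steps))

# Resulting simulation time step.
dt = Delta_t / (steps * sub_steps)
print(steps, sub_steps, dt)
```

# With these settings one substep per frame is enough; a finer grid or a larger
# `TimeConstant` would raise `sub_steps` and shrink `dt` accordingly.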

# ## Create the J function

# Define the current

def J(dx, N, x0, c, mu0):
    # Create grid
    Grid = np.zeros(tuple(N) + (4,))

    # Add in the current, normalising so the current is the same no matter the grid size
    Grid[int(N[0] / 2), int(N[1] / 2), :, 3] = Current / (dx[0] * dx[1])

    # Turn into a vector
    J_Vector = EM.to_vector(Grid, N)

    # Return the vector
    def get_J(t):
        return J_Vector * np.sin(2 * np.pi * Frequency * t)

    return get_J

# ## Setup the simulation

# Setup the simulation

Sim = EM.sim(N, delta_x = delta_x, x0 = x0, approx_n = approx_n, dt = dt, J = J, boundaries = Boundaries)

# ## Define the samplers

# +

# Set clim

max_val_A = 0.3

max_val_B = 2.5

clim_A = np.array([-max_val_A, max_val_A])

clim_B = np.array([-max_val_B, max_val_B])

# Define hat vectors

x_hat = np.array([1, 0, 0])

y_hat = np.array([0, 1, 0])

hat = np.array([0, 0, 1])

B_hat = np.array([0, -1, 0])

# Define the resolutions

Res_scalar = 1000

Res_vector = 30

Res_line = 1000

# Define extents

extent = [0, delta_x[0], 0, delta_x[1]]

PointsSize = np.array([delta_x[0], delta_x[1]])

x_vals = np.linspace(0, delta_x[0] / 2, Res_line)

# Get grid points

Points_scalar = EM.sample_points_plane(x_hat, y_hat, np.array([0, 0, 0]), PointsSize, np.array([Res_scalar, Res_scalar]))

Points_vector = EM.sample_points_plane(x_hat, y_hat, np.array([0, 0, 0]), PointsSize, np.array([Res_vector, Res_vector]))

Points_line = EM.sample_points_line(np.array([0, 0, 0]), np.array([delta_x[0] / 2, 0, 0]), Res_line)

# Setup samplers

Sampler_B_2D = EM.sampler_B_vector(Sim, Points_vector, x_hat, y_hat)

Sampler_A_2D = EM.sampler_A_scalar(Sim, Points_scalar, hat = hat)

Sampler_B_1D = EM.sampler_B_line(Sim, Points_line, x = x_vals, hat = B_hat)

Sampler_A_1D = EM.sampler_A_line(Sim, Points_line, x = x_vals, hat = hat)

# -

# ## Simulate

# +

# Solve the statics problem

print("Solving starting conditions")

StaticTime = Sim.solve(exact = StaticsExact, progress = Progress)

print(f"Solved starting conditions in {StaticTime:.2g} s")

# Solve the dynamics

print("Solving dynamics")

DynamicTime = Sim.dynamics(Steps, SubSteps, exact = DynamicsExact, progress = Progress)

print(f"Solved dynamics in {DynamicTime:.2g} s")

# -

# ## Create videos

# +

# Create folder

if Save is True and not os.path.exists(FilePos):
    os.mkdir(FilePos)

# Save the videos
if Save is True:
    print("Creating videos, this may take a while")
    Sampler_B_2D.make_video(FilePos + Name_B_2D, FPS = FPS, extent = extent, clim = clim_B, density = StreamDensity, length = StreamLength, use_vector = PlotVector, use_streams = PlotStreams)
    print(f"Created video {Name_B_2D}")
    Sampler_A_2D.make_video(FilePos + Name_A_2D, FPS = FPS, extent = extent, clim = clim_A, contour_lim = ContourLim, levels = ContourLevels, use_scalar = PlotScalar, use_contour = PlotContour)
    print(f"Created video {Name_A_2D}")
    Sampler_B_1D.make_video(FilePos + Name_B_1D, FPS = FPS, ylim = clim_B)
    print(f"Created video {Name_B_1D}")
    Sampler_A_1D.make_video(FilePos + Name_A_1D, FPS = FPS, ylim = clim_A)
    print(f"Created video {Name_A_1D}")

|

Examples/ExAlternatingLineCurrent.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# **Download** (right-click, save target as ...) this page as a jupyterlab notebook from: [Lab5](http://172.16.31.10/engr-1330-webroot/8-Labs/Lab05/Lab05.ipynb)

#

# ___

# # <font color=darkred>Laboratory 5: Sequence, Selection, and Repetition - Oh My! </font>

#

# **LAST NAME, FIRST NAME**

#

# **R00000000**

#

# ENGR 1330 Laboratory 5 - In-Lab

# Preamble script block to identify host, user, and kernel

import sys

# ! hostname

# ! whoami

print(sys.executable)

print(sys.version)

print(sys.version_info)

# ## Sequence

#

# Our first structure is sequential. To belabor the concept we will compute a short list of cubes, by cubing each element in a list and placing it into another list. The example below is dumb, but useful to introduce repetition later in the lab.

#

# First an unusual cell, used to reset a notebook - it will clear the workspace. Here we use it so the notebook will work the same for everyone (at least at first).

# reset this notebook

# %reset -f

# do a manual kernel restart to get the execution count to restart at one

AList = [0.0,1.0,2.0,3.0,4.0] # Create a list of floats

BList = [] #empty list to accept values

position = -1 # set position pointer to -1

position = position + 1 # increment position

BList.append(pow(AList[position],3)) # append to BList to build list of cubes

position = position + 1

BList.append(pow(AList[position],3))

position = position + 1

BList.append(pow(AList[position],3))

position = position + 1

BList.append(pow(AList[position],3))

position = position + 1

BList.append(pow(AList[position],3))

print(BList)

# ### Selection

#

# Our next structure is selection, illustrated by a simple example

#

# A council member will not be allowed to vote on an ordinance if his/her attendance at council meetings is less than 75%.

# Take the following inputs from the user:

#

# 1. Number of council meetings held.

# 2. Number of council meetings attended.

#

# Compute the percentage of meetings attended

#

# $$\%_{attended} = \frac{Meetings_{attended}}{Meetings_{total}}*100$$

#

# Use the result to decide whether the council member will be allowed to vote or not.

# use our simple I/O methods to obtain council persons name

council_name = str(input('enter council person name'))

# use our simple I/O methods to obtain meeting count

meetings_total = int(input('How many meetings since last vote?'))

# use our simple I/O methods to obtain meetings attended

prompt_string = 'How many meetings did ' + council_name + ' attend? '

meetings_attended = int(input(prompt_string))

# compute percent_attendance
percent_attend = 100.0*(meetings_attended/meetings_total) #the 100.0 forces float
# select and make eligibility report
if percent_attend < 75:
    print('Council person ',council_name,' attended ',percent_attend,' percent of meetings and is NOT eligible to vote')
else:
    print('Council person ',council_name,' attended ',percent_attend,' percent of meetings and is eligible to vote')
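
# The same selection can be wrapped in a function so it can be tried without
# typing at a prompt. This is only a sketch: the name `eligibility`, the
# `threshold` parameter, and the guard against zero meetings are our additions.

```python
def eligibility(meetings_attended, meetings_total, threshold=75.0):
    """Return (percent_attended, is_eligible) for one council member."""
    if meetings_total <= 0:
        # Avoid dividing by zero when no meetings were held.
        raise ValueError("meetings_total must be positive")
    percent = 100.0 * meetings_attended / meetings_total
    # Eligible unless attendance is below the threshold.
    return percent, percent >= threshold

print(eligibility(9, 12))   # exactly 75 percent, so eligible
print(eligibility(8, 12))   # below 75 percent, so not eligible
```

# Note that exactly 75 percent counts as eligible, matching the `< 75` test above.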

# ## <font color=purple>Repetition (Loops)</font>

# - Controlled repetition

# - Structured FOR Loop

# - Structured WHILE Loop

# ### <font color=purple>Count controlled repetition</font>

#  <br>

#

# Count-controlled repetition is also called definite repetition because the number of repetitions is known before the loop begins executing.

# When we do not know in advance the number of times we want to execute a statement, we cannot use count-controlled repetition.

# In such an instance, we would use sentinel-controlled repetition.

#

# A count-controlled repetition will exit after running a certain number of times.

# The count is kept in a variable called an index or counter.

# When the index reaches a certain value (the loop bound) the loop will end.

#

# Count-controlled repetition requires

#

# * control variable (or loop counter)

# * initial value of the control variable

# * increment (or decrement) by which the control variable is modified each iteration through the loop

# * condition that tests for the final value of the control variable

#

# We can use both `for` and `while` loops, for count controlled repetition, but the `for` loop in combination with the `range()` function is more common.

#

# #### Structured `FOR` loop

# We have seen the for loop already, but we will formally introduce it here. The `for` loop executes a block of code repeatedly until the condition in the `for` statement is no longer true.

#

# #### Looping through an iterable

# An iterable is anything that can be looped over - typically a list, string, or tuple.

# The syntax for looping through an iterable is illustrated by an example.

#

# First a generic syntax

#

#     for a in iterable:
#         print(a)

#

# Notice the colon `:` and the indentation.

# Now a specific example:

# ___

# ### Example: A Loop to Begin With!

#

# Make a list with "Walter", "Jesse", "Gus", "Hank". Then, write a loop that prints all the elements of your list.

# +

# # set a list
# BB = ["Walter","Jesse","Gus","Hank"]
# # loop thru the list
# for AllStrings in BB:
#     print(AllStrings)

# -

# ___

# #### The `range()` function to create an iterable

#

# The `range(begin,end,increment)` function will create an iterable starting at a value of `begin`, in steps defined by `increment` (`begin += increment`), and stopping before `end` is reached; the value `end` itself is not included.

#

# So a generic syntax becomes

#

#     for a in range(begin,end,increment):
#         print(a)

#

# The example that follows is count-controlled repetition (increment skip if greater)

# +

# # set a list
# BB = ["Walter","Jesse","Gus","Hank"]
# # loop thru the list
# for i in range(0,4,1): # Change the numbers, what happens?
#     print(BB[i])

# -
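
# A quick way to see what `range()` produces is to turn it into a list. The
# values below are just illustrations chosen for this sketch.

```python
print(list(range(0, 4, 1)))    # [0, 1, 2, 3]     the end value 4 is not included
print(list(range(0, 10, 3)))   # [0, 3, 6, 9]     steps of 3
print(list(range(5, 0, -1)))   # [5, 4, 3, 2, 1]  a negative increment counts down
print(list(range(4)))          # [0, 1, 2, 3]     begin defaults to 0, increment to 1
```

# This is why `range(0,4,1)` matches the four valid positions of a four-element list.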

# ___

# ### Example: That's odd!

#

# Write a loop to print all the odd numbers between 0 and 10.

# +

# # For loop with range
# for x in range(1,10,2): # a sequence from 1 to 9 with steps of 2
#     print(x)

# -

# ___

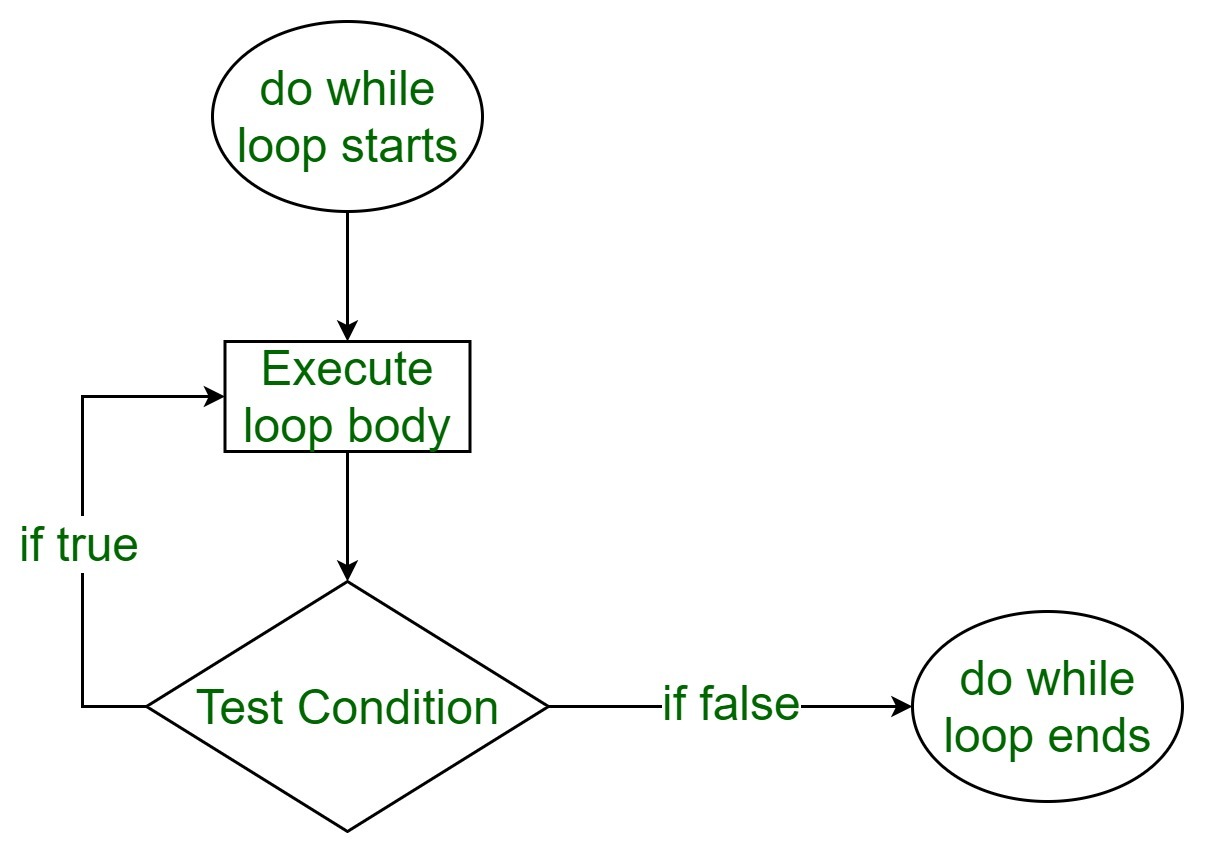

# ### <font color=purple>Sentinel-controlled repetition</font>

#  <br>

#

# When loop control is based on the value of what we are processing, sentinel-controlled repetition is used.

# Sentinel-controlled repetition is also called indefinite repetition because it is not known in advance how many times the loop will be executed.

#

#

# <!-- <br>-->

#

# It is a repetition procedure for solving a problem by using a sentinel value (also called a signal value, a dummy value or a flag value) to indicate "end of process".

# The sentinel value itself need not be a part of the processed data.

#

# One common example of using sentinel-controlled repetition is when we are processing data from a file and we do not know in advance when we would reach the end of the file.

#

# We can use both `for` and `while` loops, for __Sentinel__ controlled repetition, but the `while` loop is more common.

#

# #### Structured `WHILE` loop

# The `while` loop repeats a block of instructions inside the loop while a condition remains true.

#

# First a generic syntax

#

#     while condition is true:
#         execute a
#         execute b
#         ....

#

# Notice our friend, the colon `:` and the indentation again.

# +

# # set a counter
# counter = 5
# # while loop
# while counter > 0:
#     print("Counter = ",counter)
#     counter = counter -1

# -

# > The while loop structure just depicted is a "decrement, skip if equal" in lower level languages. The next structure, also a while loop is an "increment, skip if greater" structure.

# # set a counter
# counter = 0
# # while loop
# while counter <= 5: # change this line to: while counter <= 5: what happens?
#     print ("Counter = ",counter)
#     counter = counter +1 # change this line to: counter +=1 what happens?

# Beware, it's easy to create an infinite loop with this structure. The lab instructor will do so, and illustrate how to regain control of your computer when you do.
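
# Here is a small sketch of sentinel-controlled repetition. The data and the
# sentinel value -999 are made up for illustration. The loop stops when it meets
# the sentinel, and the extra bounds check keeps it from running past the end of
# the list if the sentinel is missing.

```python
readings = [12.5, 7.0, 3.25, -999, 42.0]   # hypothetical data; -999 marks the end
SENTINEL = -999

total = 0.0
count = 0
index = 0
# Keep going until the sentinel is found (or the data runs out).
while index < len(readings) and readings[index] != SENTINEL:
    total += readings[index]
    count += 1
    index += 1   # forgetting this line would create an infinite loop

print("processed", count, "values, total =", total)
```

# The value after the sentinel is never processed, and the sentinel itself is not
# added to the total.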

# ___

# ### <font color=purple>Nested Repetition | Loops within Loops</font>

#

# <!--  <br> -->

#

#

# > Round like a circle in a spiral, like a wheel within a wheel <br>

# Never ending or beginning on an ever spinning reel <br>

# Like a snowball down a mountain, or a carnival balloon <br>

# Like a carousel that's turning running rings around the moon <br>

# Like a clock whose hands are sweeping past the minutes of its face <br>

# And the world is like an apple whirling silently in space <br>

# Like the circles that you find in the windmills of your mind! <br>

# <br>

# ***Windmills of Your Mind lyrics © Sony/ATV Music Publishing LLC, BMG Rights Management*** <br>

# ***Songwriters: <NAME> / <NAME> / <NAME>*** <br>

# ***Recommended versions: <NAME> | <NAME> | <NAME>*** <br>

# "Like the circles that you find in the windmills of your mind", Nested repetition is when a control structure is placed inside of the body or main part of another control structure.

#

# #### `break` to exit out of a loop

#

# Sometimes you may want to exit the loop when a certain condition different from the counting

# condition is met. Perhaps you are looping through a list and want to exit when you find the

# first element in the list that matches some criterion. The break keyword is useful for such

# an operation.

# For example run the following program:

# +

# #
# j = 0
# for i in range(0,5,1):
#     j += 2
#     print ("i = ",i,"j = ",j)
#     if j == 6:
#         break

# +
# # One Small Change
# j = 0
# for i in range(0,5,1):
#     j += 2
#     print( "i = ",i,"j = ",j)
#     if j == 7:
#         break

# -

# In the first case, the for loop only executes 3 times before the condition j == 6 is TRUE and the loop is exited.

# In the second case, j == 7 never happens so the loop completes all its anticipated traverses.

#

# In both cases an `if` statement was used within a for loop. Such "mixed" control structures

# are quite common (and pretty necessary).

# A `while` loop contained within a `for` loop, with several `if` statements would be very common and such a structure is called __nested control.__

# There is typically an upper limit to nesting but the limit is pretty large - easily in the
# hundreds. It depends on the language and the system architecture; suffice to say it is not
# a practical limit except possibly for general-domain AI applications.
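
# As a sketch of nested control, the example below puts a `while` loop and two
# `if` statements inside a `for` loop to find the first zero in a small grid. The
# grid values are made up for illustration.

```python
grid = [[3, 8, 1],
        [4, 0, 9],
        [7, 2, 6]]

found = None
for row_index, row in enumerate(grid):        # outer loop: one pass per row
    col_index = 0
    while col_index < len(row):               # inner loop: walk along the row
        if row[col_index] == 0:
            found = (row_index, col_index)
            break                             # leaves only the inner while loop
        col_index += 1
    if found is not None:
        break                                 # a second break leaves the outer for loop

print(found)
```

# A `break` only exits the loop it sits in, which is why the outer loop needs its
# own test and `break`.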

# <hr>

# We can also do mundane activities and leverage loops, arithmetic, and format codes to make useful tables, like the one in the next example.

#

# ### Example: Cosines in the loop!

#

# Write a loop to print a table of the cosines of numbers from 0 up to (but not including) 0.1 with steps of 0.001.

# +

# import math # package that contains cosine
# print(" Cosines ")
# print(" x ","|"," cos(x) ")
# print("--------|--------")
# for i in range(0,100,1):
#     x = float(i)*0.001
#     print("%.3f" % x, " |", " %.4f " % math.cos(x)) # note the format code and the placeholder % and syntax of using package

# -

# ___

# ### Example: Getting the hang of it!

#

# Write a Python script that takes a real input value (a float) for x and returns the y

# value according to the rules below

#

# \begin{gather}

# y = x~for~0 <= x < 1 \\

# y = x^2~for~1 <= x < 2 \\

# y = x + 2~for~2 <= x < 3 \\

# \end{gather}

#

# Test the script with x values of 0.0, 1.0, 1.1, and 2.1. <br>
# Add functionality to **automatically** populate the table below:

#

# |x|y(x)|

# |---:|---:|

# |0.0| |

# |1.0| |

# |2.0| |

# |3.0| |

# |4.0| |

# |5.0| |

# +

# userInput = input('Enter a float: ') #ask for user's input
# x = float(userInput)
# print("x:", x)
# if x >= 0 and x < 1:
#     y = x
#     print("y is equal to",y)
# elif x >= 1 and x < 2:
#     y = x*x
#     print("y is equal to",y)
# else:
#     y = x+2
#     print("y is equal to",y)

# +

# without pretty table
# print("---x---","|","---y---")
# print("--------|--------")
# for x in range(0,6,1):
#     if x >= 0 and x < 1:
#         y = x
#         print("%4.f" % x, " |", " %4.f " % y)
#     elif x >= 1 and x < 2:
#         y = x*x
#         print("%4.f" % x, " |", " %4.f " % y)
#     else:
#         y = x+2
#         print("%4.f" % x, " |", " %4.f " % y)

# +

# # with pretty table
# from prettytable import PrettyTable #Required to create tables
# t = PrettyTable(['x', 'y']) #Define an empty table
# for x in range(0,6,1):
#     if x >= 0 and x < 1:
#         y = x
#         print("for x equal to", x, ", y is equal to",y)
#         t.add_row([x, y]) #will add a row to the table "t"
#     elif x >= 1 and x < 2:
#         y = x*x
#         print("for x equal to", x, ", y is equal to",y)
#         t.add_row([x, y])
#     else:
#         y = x+2
#         print("for x equal to", x, ", y is equal to",y)
#         t.add_row([x, y])
# print(t)

# -
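
# The scripts above apply `y = x + 2` to every x that fails the first two tests,
# including values of 3 or more and negative values, which the stated rules do
# not cover. One possible way to handle this, shown here only as a sketch, is a
# function that returns `None` outside the defined range. The name `y_of_x` and
# the choice of `None` are our assumptions, not part of the exercise.

```python
def y_of_x(x):
    """Piecewise rule from the exercise; None when x is outside [0, 3)."""
    if 0 <= x < 1:
        return x
    elif 1 <= x < 2:
        return x ** 2
    elif 2 <= x < 3:
        return x + 2
    return None   # the rules say nothing about x < 0 or x >= 3

for x in [0.0, 1.0, 1.1, 2.1, 3.0]:
    print(x, y_of_x(x))
```

# Python allows chained comparisons such as `0 <= x < 1`, which read much like
# the mathematical statement of each rule.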

# ___

# #### The `continue` statement

# The `continue` instruction skips the rest of the loop body for the current iteration and moves on to the next pass through the loop.
# It is best illustrated by an example.

# +

# j = 0
# for i in range(0,5,1):
#     j += 2
#     print ("\n i = ", i , ", j = ", j) #here the \n is a newline command
#     if j == 6:
#         continue
#     else:
#         print(" this message will be skipped over if j = 6 ") # still within the loop, so the skip is implemented
# #When j ==6 the line after the continue keyword is not printed.
# #Other than that one difference the rest of the script runs normally.

# -
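
# Another small sketch of `continue`: collect the odd numbers below 10 by
# skipping the even ones. The list name `odds` is just for illustration.

```python
odds = []
for n in range(0, 10, 1):
    if n % 2 == 0:
        continue          # even number: skip the append below, go to the next n
    odds.append(n)        # only reached for odd n

print(odds)   # [1, 3, 5, 7, 9]
```

# Unlike `break`, `continue` does not end the loop; it only ends the current pass.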

# ___

# #### The `try`, `except` structure

#

# An important control structure (and a pretty cool one for error trapping) is the `try`, `except`

# statement.

#

# The statement controls how the program proceeds when an error occurs in an instruction.

# The structure is really useful to trap likely errors (divide by zero, wrong kind of input)

# yet let the program keep running or at least issue a meaningful message to the user.

#

# The syntax is:

#

#     try:
#         do something
#     except:
#         do something else if "do something" raises an error

#

# Here is a really simple, but hugely important example:

# +

#MyErrorTrap.py
# x = 12.
# y = 12.
# while y >= -12.: # sentinel controlled repetition
#     try:
#         print ("x = ", x, "y = ", y, "x/y = ", x/y)
#     except:
#         print ("error divide by zero")
#     y -= 1

# -

# So this silly code starts with x fixed at a value of 12, and y starting at 12 and decreasing by
# 1 until y drops below -12. The code prints the ratio of x to y, and at one point y is equal to zero
# and the division would be undefined. By trapping the error the code can issue us a message
# and keep running.

#

# Modify the script as shown below, run it, and see what happens.

# +

#NoErrorTrap.py
# x = 12.
# y = 12.
# while y >= -12.: # sentinel controlled repetition
#     print ("x = ", x, "y = ", y, "x/y = ", x/y)
#     y -= 1

# -
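
# A bare `except:` traps every kind of error, including ones you did not expect.
# A common refinement, sketched below, is to name the error you are prepared to
# handle. `ZeroDivisionError` is the built-in exception Python raises for
# division by zero; the function name `safe_ratio` is ours.

```python
def safe_ratio(x, y):
    """Return x / y, or None when y is zero."""
    try:
        return x / y
    except ZeroDivisionError:
        # Only division by zero is trapped; any other error still stops the program.
        return None

print(safe_ratio(12.0, 4.0))   # 3.0
print(safe_ratio(12.0, 0.0))   # None
```

# Naming the exception keeps genuine bugs (a misspelled variable, a wrong type)
# from being silently reported as "divide by zero".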

# ___

# ## Readings

#

# *Here are some great reads on this topic:*

# - "Python for Loop" available at [https://www.programiz.com/python-programming/for-loop/](https://www.programiz.com/python-programming/for-loop/)<br>

# - "Python "for" Loops (Definite Iteration)" by <NAME> available at [https://realpython.com/python-for-loop/](https://realpython.com/python-for-loop/) <br>

# - "Python "while" Loops (Indefinite Iteration)" by <NAME> available at [https://realpython.com/python-while-loop/](https://realpython.com/python-while-loop/) <br>

# - "loops in python" available at [https://www.geeksforgeeks.org/loops-in-python/ ](https://www.geeksforgeeks.org/loops-in-python/) <br>

# - "Python Exceptions: An Introduction" by <NAME> available at [https://realpython.com/python-exceptions/](https://realpython.com/python-exceptions/) <br>

#

# *Here are some great videos on these topics:*

# - "Python For Loops - Python Tutorial for Absolute Beginners" by Programming with Mosh available at [https://www.youtube.com/watch?v=94UHCEmprCY](https://www.youtube.com/watch?v=94UHCEmprCY) <br>

# - "Python Tutorial for Beginners 7: Loops and Iterations - For/While Loops" by <NAME> available at [https://www.youtube.com/watch?v=6iF8Xb7Z3wQ](https://www.youtube.com/watch?v=6iF8Xb7Z3wQ) <br>

# - "Python 3 Programming Tutorial - For loop" by sentdex available at [https://www.youtube.com/watch?v=xtXexPSfcZg ](https://www.youtube.com/watch?v=xtXexPSfcZg) <br><br>

|

8-Labs/Lab05/dev_src/Lab05-Copy1.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import os

import csv

import time

import pickle

import warnings

import numpy as np

import pandas as pd

from src.fABBA_test import fABBA

from src.ABBA import ABBA

from src.mydefaults import mydefaults

from collections import defaultdict

from tslearn.metrics import dtw as dtw

warnings.filterwarnings('ignore')

datadir = 'UCRArchive_2018/'

tol = [0.05*i for i in range(1,11)]

ts_count = 0

for root, dirs, files in os.walk(datadir):
    for file in files:
        if file.endswith('tsv'):
            with open(os.path.join(root, file)) as f:
                content = f.readlines()
                ts_count += len(content)

print('Number of time series:', ts_count)

alphas = [0.1,0.2,0.3,0.4,0.5,0.6,0.7,0.8,0.9]

sorting_methods = ['2-norm']

for sortname in sorting_methods:
    print('\n sorting method:', sortname)
    # np.nan is used instead of np.NaN, which was removed in NumPy 2.0
    D_fABBA_2 = len(alphas)*ts_count*[np.nan]
    D_fABBA_DTW = len(alphas)*ts_count*[np.nan]
    D_fABBA_time = len(alphas)*ts_count*[np.nan]
    alphalist = len(alphas)*ts_count*[np.nan]
    countlist = len(alphas)*ts_count*[np.nan]
    ts_name = len(alphas)*ts_count*[''] # time series name for debugging
    tol_used = len(alphas)*ts_count*[np.nan] # Store tol used
    csymbolicNum = len(alphas)*ts_count*[np.nan] # Store amount of symbols
    cpiecesNum = len(alphas)*ts_count*[np.nan] # Store amount of pieces after compression
    ctsname = len(alphas)*ts_count*['']
    index = 0

    for root, dirs, files in os.walk(datadir):
        for file in files:
            if file.endswith('tsv'):
                print(' file:', file)
                with open(os.path.join(root, file)) as tsvfile:
                    tsvfile = csv.reader(tsvfile, delimiter='\t')
                    for ind, column in enumerate(tsvfile):
                        for alpha in alphas:
                            # print('alpha:' + str(alpha) + ' file:', file)
                            ts_name[index] += str(file) + '_' + str(ind)
                            ts = [float(i) for i in column]
                            ts = np.array(ts[1:])
                            ts = ts[~np.isnan(ts)]
                            # z-normalise, guarding against (near-)constant series
                            norm_ts = (ts - np.mean(ts))
                            std = np.std(norm_ts, ddof=1)
                            std = std if std > np.finfo(float).eps else 1
                            norm_ts /= std
                            if len(norm_ts) < 100:
                                break

                            # pick the smallest tol that compresses to at most 1/5 of the length
                            tol_index = 0
                            CompressionTolHigh = False
                            for tol_index in range(len(tol)):
                                abba = ABBA(tol=tol[tol_index], verbose=0)
                                pieces = abba.compress(norm_ts)
                                ABBA_len = len(pieces)
                                if ABBA_len <= len(norm_ts)/5:
                                    tol_used[index] = tol[tol_index]
                                    break
                                elif tol_index == len(tol)-1:
                                    CompressionTolHigh = True
                            if CompressionTolHigh:
                                continue # uniform to performance profiles test!

                            fabba = fABBA(verbose=0, alpha=alpha, scl=1, sorting=sortname)
                            st = time.time()
                            symbolic_tsf = fabba.digitize(pieces[:,:2])
                            ed = time.time()
                            time_fabba = ed - st
                            symbolnum = len(set(symbolic_tsf))
                            csymbolicNum[index] = symbolnum
                            cpiecesNum[index] = len(pieces)
                            ctsname[index] = str(file) + '_' + str(ind)
                            ts_fABBA = fabba.inverse_transform(symbolic_tsf, norm_ts[0])
                            D_fABBA_2[index] = np.linalg.norm(norm_ts - ts_fABBA)
                            D_fABBA_DTW[index] = dtw(norm_ts, ts_fABBA)
                            D_fABBA_time[index] = time_fabba
                            alphalist[index] = alpha
                            countlist[index] = fabba.nr_dist
                            index += 1

    Datastore = pd.DataFrame(columns=['ts name', 'number of pieces',
                                      'number of symbols', 'tol', 'fABBA_2',
                                      'fABBA_DTW', 'fABBA_time', 'alpha'])
    Datastore["ts name"] = ctsname
    Datastore["number of pieces"] = cpiecesNum
    Datastore["number of symbols"] = csymbolicNum
    Datastore["tol"] = tol_used
    Datastore["fABBA_2"] = D_fABBA_2
    Datastore["fABBA_DTW"] = D_fABBA_DTW
    Datastore["fABBA_time"] = D_fABBA_time
    Datastore["alpha"] = alphalist
    Datastore["count"] = countlist
    Datastore.to_csv('results/count_rate'+sortname+'.csv',index=False)

# -
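
# The normalisation step in the experiment (subtract the mean, divide by the
# sample standard deviation, fall back to 1 for near-constant series) can be
# checked in isolation. The sketch below uses only the standard library; the
# function name `z_normalize` is ours, and `sys.float_info.epsilon` plays the
# role of `np.finfo(float).eps`.

```python
import statistics
import sys

def z_normalize(values):
    """Centre on the mean and divide by the sample standard deviation."""
    mean = statistics.fmean(values)
    centred = [v - mean for v in values]
    # Sample standard deviation (n - 1 in the denominator, like ddof=1).
    std = statistics.stdev(centred) if len(centred) > 1 else 0.0
    if std <= sys.float_info.epsilon:
        std = 1.0   # (near-)constant series: leave the centred values unscaled
    return [v / std for v in centred]

print(z_normalize([1.0, 2.0, 3.0]))
print(z_normalize([5.0, 5.0, 5.0]))
```

# Without the guard, a constant series would be divided by zero and every value
# would become NaN.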

|

exp/count_rate_2norm.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="GNkzTFfynsmV" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="8c419f94-3d61-4fe1-e733-43b3ae9c28f0"

# !pip install -U tensorflow

# + id="56XEQOGknrAk" colab_type="code" outputId="332db93d-fe68-4753-8f64-5dd86563b0ce" colab={"base_uri": "https://localhost:8080/", "height": 34}

import tensorflow as tf

print(tf.__version__)

# + id="sLl52leVp5wU" colab_type="code" colab={}

import numpy as np

import matplotlib.pyplot as plt

def plot_series(time, series, format="-", start=0, end=None):
    plt.plot(time[start:end], series[start:end], format)
    plt.xlabel("Time")
    plt.ylabel("Value")
    plt.grid(True)

# + id="tP7oqUdkk0gY" colab_type="code" outputId="77159534-adf8-478b-a5c6-5a8086e4b9c5" colab={"base_uri": "https://localhost:8080/", "height": 228}

# !wget --no-check-certificate \

# https://storage.googleapis.com/laurencemoroney-blog.appspot.com/Sunspots.csv \

# -O /tmp/sunspots.csv

# + id="NcG9r1eClbTh" colab_type="code" outputId="b4592520-3d6f-4882-d807-2880f036856f" colab={"base_uri": "https://localhost:8080/", "height": 388}

import csv

time_step = []

sunspots = []

with open('/tmp/sunspots.csv') as csvfile:
    reader = csv.reader(csvfile, delimiter=',')
    next(reader)  # skip the header row
    for row in reader:
        sunspots.append(float(row[2]))
        time_step.append(int(row[0]))

series = np.array(sunspots)

time = np.array(time_step)

plt.figure(figsize=(10, 6))

plot_series(time, series)

# + id="L92YRw_IpCFG" colab_type="code" colab={}

split_time = 3000

time_train = time[:split_time]

x_train = series[:split_time]

time_valid = time[split_time:]

x_valid = series[split_time:]

window_size = 60

batch_size = 32

shuffle_buffer_size = 1000

# + id="lJwUUZscnG38" colab_type="code" colab={}

def windowed_dataset(series, window_size, batch_size, shuffle_buffer):
    dataset = tf.data.Dataset.from_tensor_slices(series)
    # windows of window_size inputs plus one label, sliding by one step
    dataset = dataset.window(window_size + 1, shift=1, drop_remainder=True)
    dataset = dataset.flat_map(lambda window: window.batch(window_size + 1))
    # shuffle, then split each window into (features, label)
    dataset = dataset.shuffle(shuffle_buffer).map(lambda window: (window[:-1], window[-1]))
    dataset = dataset.batch(batch_size).prefetch(1)
    return dataset
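
# To see what the windowing step produces, here is a plain-Python sketch that
# builds the same (features, label) pairs for a tiny series. It leaves out the
# shuffling and batching, and the helper name `make_windows` is ours, not part of
# TensorFlow.

```python
def make_windows(series, window_size):
    """Return (features, label) pairs: window_size values, then the next value."""
    pairs = []
    # Only full windows of window_size + 1 values are kept (like drop_remainder=True).
    for start in range(len(series) - window_size):
        window = series[start:start + window_size + 1]
        pairs.append((window[:-1], window[-1]))
    return pairs

for features, label in make_windows([10, 20, 30, 40, 50], window_size=3):
    print(features, "->", label)
```

# A series of length n therefore yields n - window_size training examples, each
# pairing window_size consecutive values with the value that follows them.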

# + id="AclfYY3Mn6Ph" colab_type="code" outputId="6e853f39-8d48-411c-d42e-697d764545bf" colab={"base_uri": "https://localhost:8080/", "height": 106}

dataset = windowed_dataset(x_train, window_size, batch_size, shuffle_buffer_size)

model = tf.keras.models.Sequential([
    tf.keras.layers.Dense(20, input_shape=[window_size], activation="relu"),
    tf.keras.layers.Dense(10, activation="relu"),
    tf.keras.layers.Dense(1)
])

# `learning_rate` replaces the deprecated `lr` argument, which recent Keras versions reject
model.compile(loss="mse", optimizer=tf.keras.optimizers.SGD(learning_rate=1e-7, momentum=0.9))
model.fit(dataset,epochs=100,verbose=0)

# + id="GaC6NNMRp0lb" colab_type="code" outputId="c9e06a20-f6c2-4b2c-97c0-b7e8520b9b1e" colab={"base_uri": "https://localhost:8080/", "height": 388}

forecast=[]

# use `t` as the loop variable so the `time` array defined earlier is not overwritten
for t in range(len(series) - window_size):
    forecast.append(model.predict(series[t:t + window_size][np.newaxis]))

forecast = forecast[split_time-window_size:]

results = np.array(forecast)[:, 0, 0]

plt.figure(figsize=(10, 6))

plot_series(time_valid, x_valid)

plot_series(time_valid, results)

# + id="13XrorC5wQoE" colab_type="code" outputId="55cc5244-c090-4711-c053-5aa4690876fd" colab={"base_uri": "https://localhost:8080/", "height": 167}

tf.keras.metrics.mean_absolute_error(x_valid, results).numpy()

# + id="xLUj4WMr3bF1" colab_type="code" colab={}

|

python/coursera_python/DL_AI_LM/4/4/.ipynb_checkpoints/S+P_Week_4_Lesson_1-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Discover emerging trends

# In this notebook, we analyze the trends of Stack Overflow questions. In particular, we find the share of new questions tagged with each of four popular Python data science libraries: matplotlib, numpy, scikit-learn, and pandas.

#

# The data was retrieved from Stack Overflow's handy data explorer [using this query](http://data.stackexchange.com/stackoverflow/query/767327/select-all-posts-from-a-single-tag).

#

# We use a pandas stacked area plot to show, by quarter, the percentage of new Stack Overflow questions that belong to each of those four libraries.

# +

import pandas as pd

# %matplotlib inline

# -

import glob

dfs = [pd.read_csv(file_name, parse_dates=['creationdate'])

for file_name in glob.glob('../data/stackoverflow/*.csv')]

df = pd.concat(dfs)

df.head()

df.groupby([pd.Grouper(key='creationdate', freq='QS'), 'tagname']) \

.size() \

.unstack('tagname', fill_value=0) \

.pipe(lambda x: x.div(x.sum(1), axis=0)) \

.plot(kind='area', figsize=(12,6), title='Percentage of New Stack Overflow Questions') \

.legend(loc='upper left')

# Pandas has grown the fastest

|

Pandas Tricks/Emerging Stack Overflow Trends.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + id="EbrFD1vMR_qS"

import tensorflow as tf

import os

import pandas as pd

import numpy as np

import warnings

warnings.filterwarnings("ignore")

from sklearn import preprocessing

from sklearn.preprocessing import MinMaxScaler, StandardScaler

import matplotlib.pyplot as plt

#https://colab.research.google.com/drive/1b3CUJuDOmPmNdZFH3LQDmt5F0K3FZhqD?usp=sharing

# + colab={"base_uri": "https://localhost:8080/", "height": 644} id="RRVN-4QOSKAx" outputId="0fae4e16-1266-4d53-81fe-2ef0c08efb66"

df = pd.read_excel("preparedData.xlsx")

# -

df.index = pd.to_datetime(df['date'], format='%d-%m-%Y')

df[:26]

df.drop(columns=['reproduction_rate', 'new_tests', 'positive_rate',

'tests_per_case', 'total_vaccinations', 'people_vaccinated',

'people_fully_vaccinated', 'total_boosters', 'new_vaccinations',

'stringency_index', 'population', 'population_density', 'median_age',

'aged_65_older', 'aged_70_older', 'gdp_per_capita', 'extreme_poverty',

'cardiovasc_death_rate', 'diabetes_prevalence', 'female_smokers',

'male_smokers', 'life_expectancy', 'human_development_index',

'covid: (Ireland)', 'COVID-19 testing: (Ireland)',

'COVID-19 rapid antigen test: (Ireland)',

'Health Service Executive: (Ireland)', 'Vaccination: (Ireland)',

'book covid test: (Ireland)_x', 'how many covid cases today: (Ireland)',

'pcr covid test: (Ireland)', 'close contact covid: (Ireland)',

'book a covid test: (Ireland)', 'vaccination centre: (Ireland)',

'pharmacy near me: (Ireland)',

'Treatment and management of COVID-19: (Ireland)',

'Hand sanitizer: (Ireland)', 'Face mask: (Ireland)',

'book covid test: (Ireland)_y', 'covid test dublin: (Ireland)',

'covid test centre: (Ireland)', 'hse covid vaccine: (Ireland)',

'hse vaccine portal: (Ireland)', 'hse portal vaccine: (Ireland)',

'pcr test hse: (Ireland)', 'hse covid test: (Ireland)',

'hse vaccine registration: (Ireland)',

'how long will it take to vaccinate ireland: (Ireland)'], inplace=True)

# + colab={"base_uri": "https://localhost:8080/", "height": 289} id="3fWZ3nYxS3oe" outputId="bde8d1c4-df76-49fc-d5f2-869e042c7c9e"

cases = df['new_cases_smoothed']

plt.title("COVID-19 Cases in Ireland")

cases.plot()

plt.savefig("New Cases.jpg", bbox_inches='tight')

# + id="bY2yEu2QTBXP"

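def df_to_X_y(df, window_size=5):
    """Slice a univariate series into (window, next value) training pairs."""
    df_as_np = df.to_numpy()
    X = []
    y = []
    for i in range(len(df_as_np)-window_size):
        # Wrap each value in a list so one window has shape (window_size, 1)
        row = [[a] for a in df_as_np[i:i+window_size]]
        X.append(row)
        # The label is the value immediately after the window
        label = df_as_np[i+window_size]
        y.append(label)
    return np.array(X), np.array(y)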

# + colab={"base_uri": "https://localhost:8080/"} id="qhGUH0NoV9Zq" outputId="3775d432-2dbe-4046-a355-b8fa9dcd9ca1"

WINDOW_SIZE = 5

X, y = df_to_X_y(cases, WINDOW_SIZE)

X.shape, y.shape
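
# The windowing step above can be checked on a toy series. This is a minimal
# sketch, assuming a made-up series 0..9 in place of `new_cases_smoothed`; the
# names `series`, `X_demo` and `y_demo` are illustrative and not part of the notebook.

```python
import numpy as np

# Toy data standing in for the smoothed case counts (illustrative only).
series = np.arange(10, dtype=float)
window_size = 5

# Each sample holds `window_size` consecutive values with a trailing feature axis;
# the label is the value that comes right after the window.
X_demo = np.array([series[i:i + window_size].reshape(-1, 1)
                   for i in range(len(series) - window_size)])
y_demo = series[window_size:]

print(X_demo.shape, y_demo.shape)  # (5, 5, 1) (5,)
```

# A series of length n yields n - window_size samples, which is why X has
# fewer rows than the original frame.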

# + colab={"base_uri": "https://localhost:8080/"} id="Vsy2-BjnWMhB" outputId="f20d3567-88c0-4d28-8fbb-38b7193f4ab4"

X_train, y_train = X[:490], y[:490]

X_val, y_val = X[490:630], y[490:630]

X_test, y_test = X[630:], y[630:]

X_train.shape, y_train.shape, X_val.shape, y_val.shape, X_test.shape, y_test.shape
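
# The split above uses hard-coded row indices (490 and 630). As a rough
# alternative, the same chronological split could be expressed with fractions.
# `chronological_split` and its default fractions are hypothetical, chosen only
# for illustration; this is not the notebook's own method.

```python
import numpy as np

def chronological_split(X, y, train_frac=0.7, val_frac=0.2):
    """Hypothetical helper: split samples in time order, without shuffling."""
    n = len(X)
    train_end = round(n * train_frac)
    val_end = train_end + round(n * val_frac)
    train = (X[:train_end], y[:train_end])
    val = (X[train_end:val_end], y[train_end:val_end])
    test = (X[val_end:], y[val_end:])
    return train, val, test

# Toy arrays with 100 samples, window length 5, one feature (illustrative only).
X_toy = np.zeros((100, 5, 1))
y_toy = np.arange(100, dtype=float)
(train_X, train_y), (val_X, val_y), (test_X, test_y) = chronological_split(X_toy, y_toy)
print(len(train_X), len(val_X), len(test_X))
```

# Keeping the rows in order matters for a time series: the validation and test
# windows should come strictly after the training windows.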

# + colab={"base_uri": "https://localhost:8080/"} id="4jZz4ZjpW217" outputId="6bf1a2da-db28-44c9-ea3e-89612832e203"

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import *

from tensorflow.keras.callbacks import ModelCheckpoint

from tensorflow.keras.losses import MeanSquaredError

from tensorflow.keras.metrics import RootMeanSquaredError

from tensorflow.keras.optimizers import Adam

model1 = Sequential()

model1.add(InputLayer((5, 1)))

model1.add(LSTM(64))

model1.add(Dense(8, 'relu'))

model1.add(Dense(1, 'linear'))

model1.summary()

# + id="5jMK7auDXwEr"

cp1 = ModelCheckpoint('model1/', save_best_only=True)

model1.compile(loss=MeanSquaredError(), optimizer=Adam(learning_rate=0.0001), metrics=[RootMeanSquaredError()])

# + colab={"base_uri": "https://localhost:8080/"} id="CWeSakSwYLtr" outputId="5307480e-0540-44f3-f3fb-1a5ec0a3adef"

model1.fit(X_train, y_train, validation_data=(X_val, y_val), epochs=10, callbacks=[cp1])

# + id="vdaqGHG4YZkN"

from tensorflow.keras.models import load_model

model1 = load_model('model1/')

# + colab={"base_uri": "https://localhost:8080/", "height": 420} id="byObmr8CZRhp" outputId="00bd6717-cff6-4e3c-a708-89e503e01bdb"

train_predictions = model1.predict(X_train).flatten()

train_results = pd.DataFrame(data={'Train Predictions':train_predictions, 'Actuals':y_train})

train_results

# + colab={"base_uri": "https://localhost:8080/", "height": 286} id="KTqY8r6_Zpev" outputId="cfd490b8-668c-4b58-ec0b-6b79620f3ac3"

import matplotlib.pyplot as plt

plt.plot(train_results['Train Predictions'][50:100])

plt.plot(train_results['Actuals'][50:100])

# + colab={"base_uri": "https://localhost:8080/", "height": 420} id="vuY5nmGYZ8ix" outputId="0cf2414b-fdbf-40d7-a277-90d29814b3dc"

val_predictions = model1.predict(X_val).flatten()

val_results = pd.DataFrame(data={'Val Predictions':val_predictions, 'Actuals':y_val})

val_results

# + colab={"base_uri": "https://localhost:8080/", "height": 282} id="6MlctzuuaQww" outputId="7147a4ca-90a3-4c2d-ae2b-76fe40a2ff0b"

plt.plot(val_results['Val Predictions'][:100])

plt.plot(val_results['Actuals'][:100])

# + colab={"base_uri": "https://localhost:8080/", "height": 420} id="5O59Q8MTaRN7" outputId="4e55d8f6-c9ad-4349-9da7-e937b5ade6ae"

test_predictions = model1.predict(X_test).flatten()

test_results = pd.DataFrame(data={'Test Predictions':test_predictions, 'Actuals':y_test})

test_results

# + colab={"base_uri": "https://localhost:8080/", "height": 282} id="m8UzGIfEaW-P" outputId="85be190b-81ac-4223-be21-cdc0f67e592f"

plt.plot(test_results['Test Predictions'][:100])

plt.plot(test_results['Actuals'][:100])

# + id="nWdZkoPo-MZM"

# Part 2

# + id="XFf7bCFlctu8"

from sklearn.metrics import mean_squared_error as mse

def plot_predictions1(model, X, y, start=0, end=100):
    """Plot predictions against actuals for rows start:end; return the results and the MSE."""
    predictions = model.predict(X).flatten()
    results = pd.DataFrame(data={'Predictions':predictions, 'Actuals':y})
    plt.plot(results['Predictions'][start:end])
    plt.plot(results['Actuals'][start:end])
    return results, mse(y, predictions)

# + colab={"base_uri": "https://localhost:8080/", "height": 511} id="pdabyyStdavq" outputId="e07aa785-101b-462b-81e8-35581ce0812f"

plot_predictions1(model1, X_test, y_test)
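
# An error figure is easier to judge next to a naive baseline. The sketch below
# computes the RMSE of a persistence forecast, which simply repeats the last
# value of each window. `persistence_rmse` and the toy series are assumptions
# made for illustration; they do not appear in the notebook.

```python
import numpy as np

def persistence_rmse(X, y):
    """RMSE of a naive forecast that repeats the last value in each window."""
    last_values = X[:, -1, 0]  # final time step, first feature
    return float(np.sqrt(np.mean((y - last_values) ** 2)))

# Toy series and windows built the same way as in df_to_X_y (illustrative only).
series = np.array([1.0, 2.0, 4.0, 7.0, 11.0, 16.0, 22.0])
window = 3
X_demo = np.array([series[i:i + window].reshape(-1, 1)
                   for i in range(len(series) - window)])
y_demo = series[window:]

print(persistence_rmse(X_demo, y_demo))
```

# With the notebook's arrays the call would be persistence_rmse(X_test, y_test),
# which is directly comparable to the square root of the MSE returned by
# plot_predictions1.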

# + colab={"base_uri": "https://localhost:8080/"} id="K6KDLXc3dg3x" outputId="eaddf43a-5f36-46ef-8e95-6a0870ae8b4f"

model2 = Sequential()

model2.add(InputLayer((5, 1)))

model2.add(Conv1D(64, kernel_size=2))

model2.add(Flatten())

model2.add(Dense(8, 'relu'))

model2.add(Dense(1, 'linear'))

model2.summary()

# + id="YoGw3DQeeTES"

cp2 = ModelCheckpoint('model2/', save_best_only=True)

model2.compile(loss=MeanSquaredError(), optimizer=Adam(learning_rate=0.0001), metrics=[RootMeanSquaredError()])

# + colab={"base_uri": "https://localhost:8080/"} id="fUe56iajetZe" outputId="db7d44ec-e162-4e66-a3ba-1162c04fde63"

model2.fit(X_train, y_train, validation_data=(X_val, y_val), epochs=10, callbacks=[cp2])

# + colab={"base_uri": "https://localhost:8080/"} id="_0mmRGP8exPo" outputId="6851ddb2-a5b5-405b-c3fe-68c4233c7df2"

model3 = Sequential()

model3.add(InputLayer((5, 1)))

model3.add(GRU(64))

model3.add(Dense(8, 'relu'))

model3.add(Dense(1, 'linear'))

model3.summary()

# + id="WbQzFWjXfa2i"

cp3 = ModelCheckpoint('model3/', save_best_only=True)

model3.compile(loss=MeanSquaredError(), optimizer=Adam(learning_rate=0.0001), metrics=[RootMeanSquaredError()])

# + colab={"base_uri": "https://localhost:8080/"} id="BzB6BUOQfivn" outputId="1f282be0-2d44-46a9-be3f-c448b98daf81"

model3.fit(X_train, y_train, validation_data=(X_val, y_val), epochs=10, callbacks=[cp3])

# -

df2 = pd.read_excel("preparedData.xlsx")

date_time = pd.to_datetime(df.pop('date'), format='%d-%m-%Y')

# + id="Db8BJQONjbAT"

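def df_to_X_y2(df2, window_size=6):
    """Slice a multivariate frame into windows; the label is column 0 of the next row."""
    # Read from the argument rather than the global `df`
    df_as_np = df2.to_numpy()
    X = []
    y = []
    for i in range(len(df_as_np)-window_size):
        # Each window keeps all feature columns for window_size consecutive rows
        row = [r for r in df_as_np[i:i+window_size]]
        X.append(row)
        label = df_as_np[i+window_size][0]
        y.append(label)
    return np.array(X), np.array(y)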

# + colab={"base_uri": "https://localhost:8080/"} id="eJhF1cIDleQ1" outputId="f571aa4c-d18c-41ef-d9c2-652ab09f9b3b"

X1, y1 = df_to_X_y2(df)

X1.shape, y1.shape

# + colab={"base_uri": "https://localhost:8080/"} id="FMOArQgyoTnq" outputId="405a6dfd-4335-4d27-de23-a2fed6e08f24"

X_train1, y_train1 = X1[:490], y1[:490]