
# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

#

# # Logistic Regression 3-class Classifier

#

#

# Shown below are the decision boundaries of a logistic-regression classifier on the
# first two dimensions (sepal length and width) of the [iris](https://en.wikipedia.org/wiki/Iris_flower_data_set)
# dataset. The data points are colored according to their labels.

#

#

#

# +

print(__doc__)

# Code source: <NAME>

# Modified for documentation by <NAME>

# License: BSD 3 clause

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import LogisticRegression

from sklearn import datasets

# import some data to play with

iris = datasets.load_iris()

X = iris.data[:, :2] # we only take the first two features.

Y = iris.target

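# Create an instance of the Logistic Regression classifier and fit the data.
# With the lbfgs solver a 3-class target is already fit as a multinomial model,
# so the deprecated `multi_class` argument is not needed.
logreg = LogisticRegression(C=1e5, solver='lbfgs')
logreg.fit(X, Y)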

# Plot the decision boundary. For that, we will assign a color to each

# point in the mesh [x_min, x_max]x[y_min, y_max].

x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5

y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5

h = .02 # step size in the mesh

xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))

Z = logreg.predict(np.c_[xx.ravel(), yy.ravel()])

# Put the result into a color plot

Z = Z.reshape(xx.shape)

plt.figure(1, figsize=(4, 3))

plt.pcolormesh(xx, yy, Z, cmap=plt.cm.Paired)

# Plot also the training points

plt.scatter(X[:, 0], X[:, 1], c=Y, edgecolors='k', cmap=plt.cm.Paired)

plt.xlabel('Sepal length')

plt.ylabel('Sepal width')

plt.xlim(xx.min(), xx.max())

plt.ylim(yy.min(), yy.max())

plt.xticks(())

plt.yticks(())

plt.show()

# -
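A multinomial logistic regression gives every class a linear score, turns the scores into probabilities with a softmax, and predicts the class with the highest score. The sketch below checks this against scikit-learn on the same two iris features. `max_iter=1000` is an assumption added only to avoid convergence warnings; it is not part of the original example.

```python
import numpy as np
from sklearn import datasets
from sklearn.linear_model import LogisticRegression

iris = datasets.load_iris()
X = iris.data[:, :2]  # sepal length and width
y = iris.target

clf = LogisticRegression(C=1e5, solver='lbfgs', max_iter=1000).fit(X, y)

# One linear score per class: shape (n_samples, 3)
scores = X @ clf.coef_.T + clf.intercept_

# Softmax turns each row of scores into class probabilities
exp_scores = np.exp(scores - scores.max(axis=1, keepdims=True))
proba = exp_scores / exp_scores.sum(axis=1, keepdims=True)

# The predicted class is the one with the highest score
manual_pred = clf.classes_[scores.argmax(axis=1)]

print(np.array_equal(manual_pred, clf.predict(X)))
print(np.allclose(proba, clf.predict_proba(X)))
```

The colored regions in the plot are simply the areas of the plane where each class's score is the largest.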

|

04 Lineal Regression/.ipynb_checkpoints/plot_iris_logistic-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# -*- coding: utf-8 -*-

########### Random dataset used for practice.
### By: <NAME>
### Date: 03/08/2020
### Algorithm: KNN
### Dataset: bank-full.csv
### Goal: based on historical data, predict whether or not a client will subscribe to a term deposit.
### Dataset for study provided by: archive.ics.uci.edu
### A description of each attribute is available in the file 'descricao_bank_full' in the folder 00_datasets

# +

import pandas as pd

dataset = pd.read_csv('../00_datasets/bank-full.csv')

# +

# categorical attributes: [0, 1, 2, 3, 4, 6, 7, 8, 10, 15]

# -

previsores = dataset.iloc[ :, 0:16].values

classe = dataset.iloc[ :, 16 ].values

# +

from sklearn.preprocessing import LabelEncoder

label_encoder = LabelEncoder()

# Encode each categorical column (indices listed above) as integers
categorical_columns = [0, 1, 2, 3, 4, 6, 7, 8, 10, 15]
for col in categorical_columns:
    previsores[:, col] = label_encoder.fit_transform(previsores[:, col])

classe = label_encoder.fit_transform(classe)

# +

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

previsores = scaler.fit_transform(previsores)
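KNN compares points by distance, so without scaling a feature measured in large units (such as an account balance) would dominate features on small scales. `StandardScaler` subtracts each column's mean and divides by its standard deviation in the population form (`ddof=0`). A minimal check on a small hypothetical array, not the bank data:

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical rows: (age, balance)
X = np.array([[25.0, 1000.0],
              [35.0, 50.0],
              [45.0, 3000.0],
              [55.0, 200.0]])

scaled = StandardScaler().fit_transform(X)

# Same transformation by hand: column mean 0, column standard deviation 1
manual = (X - X.mean(axis=0)) / X.std(axis=0)

print(np.allclose(scaled, manual))
```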

# +

from sklearn.model_selection import train_test_split

previsores_treinamento, previsores_teste, classe_treinamento, classe_teste = train_test_split( previsores, classe, test_size=0.25, random_state=0 )

# +

from sklearn.neighbors import KNeighborsClassifier

classificador = KNeighborsClassifier( n_neighbors=5, metric='minkowski', p=2 )

classificador.fit( previsores_treinamento, classe_treinamento )

previsoes = classificador.predict( previsores_teste )

# +

from sklearn.metrics import accuracy_score

accuracy = accuracy_score( classe_teste, previsoes )

accuracy
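`KNeighborsClassifier` with `metric='minkowski'` and `p=2` uses the Euclidean distance and predicts the majority label among the `n_neighbors` closest training points. The from-scratch version below is a sketch on random synthetic data (not the bank dataset) and assumes integer class labels, as produced by `LabelEncoder`. It builds the full distance matrix, which is fine for small arrays but not memory-efficient for the whole dataset.

```python
import numpy as np
from sklearn.neighbors import KNeighborsClassifier


def knn_predict(X_train, y_train, X_test, k=5):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    # Pairwise Euclidean distances, shape (n_test, n_train)
    diffs = X_test[:, None, :] - X_train[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=2))
    # Indices of the k closest training points for each test point
    nearest = np.argsort(dists, axis=1)[:, :k]
    # Most frequent label among the neighbours
    return np.array([np.bincount(y_train[idx]).argmax() for idx in nearest])


rng = np.random.default_rng(0)
X_train = rng.normal(size=(60, 3))
y_train = rng.integers(0, 2, size=60)
X_test = rng.normal(size=(20, 3))

ours = knn_predict(X_train, y_train, X_test, k=5)
sk = (KNeighborsClassifier(n_neighbors=5, metric='minkowski', p=2)
      .fit(X_train, y_train)
      .predict(X_test))

print((ours == sk).all())
```

With two classes and an odd `k` there are no voting ties, which keeps the comparison with scikit-learn unambiguous.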

# -

|

04_aprendizagem-baseada-em-instancias/KNN_bank_full.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [default]

# language: python

# name: python2

# ---

# +

import microdrop_sync.microdrop_sync as ms

import microdrop_sync.utils

reload(microdrop_sync.device)

reload(microdrop_sync.microdrop_sync)

reload(microdrop_sync.utils)

utils = microdrop_sync.utils.MicrodropUtils()

microdrop = ms.MicrodropSync()

device = microdrop.device

from pprint import PrettyPrinter

pp = PrettyPrinter()

# -

filelocation1 = r"C:\Users\lucaszw\Desktop\Devices\90_pin_map.svg"
filelocation2 = r"C:\Users\lucaszw\Desktop\Devices\pin_map.svg"

dmf_device1 = device.get_from_filelocation(filelocation1)

dmf_device2 = device.load_from_filelocation(filelocation2)

device.change_dmf_device(dmf_device1)

device.device_info_is_running()

device.stop_device_info_plugin()

device.start_device_info_plugin()

|

Device.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="PF219yewQCuv"

import pandas as pd

import numpy as np

import seaborn as sns

import scipy as sp

# + id="zSjmkZ8vQRIY"

dados = pd.read_csv('dados.csv')

# + colab={"base_uri": "https://localhost:8080/", "height": 204} id="Fnl9CST_QasK" outputId="7a44d884-c5b5-4501-da06-f12fcf99e94e"

dados.head()

# + id="ZUEPmANAQnyi"

sexo = {0: 'Masculino',

1: 'Feminino'}

cor = {0: 'Indígena',

2: 'Branca',

4: 'Preta',

6: 'Amarela',

8: 'Parda',

9: 'Sem declaração'}

estudo = {

1: 'Sem instrução',

2: '1 ano',

3: '2 anos',

4: '3 anos',

5: '4 anos',

6: '5 anos',

7: '6 anos',

8: '7 anos',

9: '8 anos',

10: '9 anos',

11: '10 anos',

12: '11 anos',

13: '12 anos',

14: '13 anos',

15: '14 anos',

16: '15 anos',

17: 'Não Determinando'

}

estados = {

11: 'Rondônia (RO)',

12: 'Acre (AC)',

13: 'Amazonas (AM)',

14: 'Roraima (RR)',

15: 'Pará (PA)',

16: 'Amapá (AP)',

17: 'Tocantins (TO)',

21: 'Maranhão (MA)',

22: 'Piauí (PI)',

23: 'Ceará (CE)',

24: 'Rio Grande do Norte (RN)',

25: 'Paraíba (PB)',

26: 'Pernambuco (PE)',

27: 'Alagoas (AL)',

28: 'Sergipe (SE)',

29: 'Bahia (BA)',

31: 'Minas Gerais (MG)',

32: 'Espírito Santo (ES)',

33: 'Rio de Janeiro (RJ)',

35: 'São Paulo (SP)',

41: 'Paraná (PR)',

42: 'Santa Catarina (SC)',

43: 'Rio Grande do Sul (RS)',

50: 'Mato Grosso do Sul (MS)',

51: 'Mato Grosso (MT)',

52: 'Goiás (GO)',

53: 'Distrito Federal (DF)'

}

# + [markdown] id="mkyCoffnQKet"

# # Probability distributions

# + [markdown] id="DVl8TC6fRBQ3"

# ## Binomial distribution

# + id="I62ZjsRnTStg"

from scipy.special import comb

# + colab={"base_uri": "https://localhost:8080/"} id="fef3kalGTcN3" outputId="53d56a80-412f-4494-deb8-4b57f0f9668a"

combinacoes = comb(60,6)

combinacoes

# + colab={"base_uri": "https://localhost:8080/"} id="B8vx85-tThjc" outputId="996c4901-901f-43ba-ed7f-ed67e11d08aa"

1/combinacoes*100

# + colab={"base_uri": "https://localhost:8080/"} id="SVN1mFdpT_Oq" outputId="f63f2d31-bae7-4ece-d3c6-90a9215ba19b"

comb(25,20)

# + colab={"base_uri": "https://localhost:8080/"} id="zQgTMJADUbyN" outputId="698d8bb2-125b-4612-cd92-5a80419df3f8"

1/comb(25,20)

# + colab={"base_uri": "https://localhost:8080/"} id="cCFgsINwUdUU" outputId="421b5233-7fa5-4e58-9393-ed52ec3614cb"

n = 10

n

# + colab={"base_uri": "https://localhost:8080/"} id="Dlc9MV9SVIzG" outputId="9e29f548-e60b-4a54-b7b8-08612e0fc7a2"

p = 1/3

p

# + colab={"base_uri": "https://localhost:8080/"} id="1lYyYtLmVc4i" outputId="30c25de4-42ff-4bfd-e542-d216b46416ff"

q = 1-p

q

# + colab={"base_uri": "https://localhost:8080/"} id="F-_dDaVSVfK6" outputId="ea37f872-944b-4e12-acc1-b0a8b1191cd8"

k = 5

k

# + colab={"base_uri": "https://localhost:8080/"} id="h7kpIttgVlIq" outputId="b21a86cd-f0c0-4544-8573-e419f56bcf6b"

prob = (comb(n, k) * (p**k) * (q ** (n - k)))

prob
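The binomial probability computed by hand above is P(X = k) = C(n, k) p^k (1 - p)^(n - k). It needs only the standard library, and a tail probability such as P(X >= 5) is just the sum of the individual terms. A small sketch, checked against `scipy.stats.binom`:

```python
from math import comb

from scipy.stats import binom


def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p): C(n, k) * p**k * (1 - p)**(n - k)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


n, p = 10, 1 / 3

# Probability of exactly 5 successes in 10 trials
pmf_5 = binomial_pmf(5, n, p)

# P(X >= 5): add up the probabilities of 5, 6, ..., 10 successes
tail = sum(binomial_pmf(k, n, p) for k in range(5, n + 1))

print(f'{pmf_5:.8f}  {tail:.8f}')
```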

# + id="VtDjS-YwWATl"

from scipy.stats import binom

# + colab={"base_uri": "https://localhost:8080/"} id="P53Df_qAWSok" outputId="60066e09-8a78-4aa7-f622-4b850a5ae023"

prob = binom.pmf(k,n,p)

print(f'{prob:.8f}')

# + colab={"base_uri": "https://localhost:8080/"} id="AphyFq5GWjEo" outputId="67be3870-6848-45db-a21b-c3abc9322976"

binom.pmf(5,n,p) + binom.pmf(6,n,p) + binom.pmf(7,n,p) + binom.pmf(8,n,p) + binom.pmf(9,n,p) + binom.pmf(10,n,p)

# + colab={"base_uri": "https://localhost:8080/"} id="Gc33z7DTWz_E" outputId="3139ac92-2bc2-4f18-a0b0-6e1ce47f3d13"

binom.pmf([5, 6, 7, 8, 9, 10], n, p).sum()

# + colab={"base_uri": "https://localhost:8080/"} id="d01Zu4l7XGvK" outputId="1dc6e140-227f-452b-8ba9-d870d9d15e7c"

1 - binom.cdf(4, n, p)

# + colab={"base_uri": "https://localhost:8080/"} id="zTWDpAcNXPnS" outputId="fdf8e6df-ad3d-4e00-f829-0dfa4e56cdc0"

binom.sf(4, n, p)

# + id="HGtC4eaJXdHy"

k = 2

n = 4

p = 1/2

# + colab={"base_uri": "https://localhost:8080/"} id="ERh5uVTZYIaV" outputId="b2392825-cf35-4871-d453-61090b60dd84"

binom.pmf(k, n, p)

# + id="4DbHA5gbYKQO"

k = 3

n = 10

p = 1/6

# + colab={"base_uri": "https://localhost:8080/"} id="09-jGyavY73O" outputId="f59ae2e0-c8a0-4c64-995e-138ecb6af6a0"

1-binom.cdf(k, n, p)

# + id="Jk_F8OjsY8ml"

# example

p = 0.6

n = 12

k = 8

# + colab={"base_uri": "https://localhost:8080/"} id="3w7yOdTCgI9B" outputId="7fd8757a-3fbb-44fe-c11d-be9d63c74327"

prob = binom.pmf(k, n, p)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="Gc89pqejgTCr" outputId="3b5f2c6f-a6d0-4277-d243-80d6ba205223"

n = 30 * prob

n

# + id="LoYDegoUghdS"

p = 0.22

n = 3

k = 2

# + colab={"base_uri": "https://localhost:8080/"} id="XIidc1fdg7XI" outputId="c9e09a71-b425-4939-8578-92f5af8cf7e9"

prob = binom.pmf(k, n, p)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="CUDXHxhNg77n" outputId="033c3685-0380-4e71-8bd2-d3ac2125f06d"

50*prob

# + [markdown] id="3j4LSvjvh_MP"

# ## Poisson probability distribution

# + colab={"base_uri": "https://localhost:8080/"} id="UOrF7lCIhKll" outputId="f4c7c754-b437-4c88-9b37-0a2d54a4ecf1"

np.e

# + id="_T2TeYS3izZo"

media = 20

k = 15

# + colab={"base_uri": "https://localhost:8080/"} id="lrjRkUnQjgS1" outputId="3dffb9ac-0555-4e08-adfc-7c5297bd22c1"

import math

prob = ((np.e**(-media)) * (media**k)) / math.factorial(k)

print(f'{prob:.8f}')

# + id="7BGN5WbOj4FH"

from scipy.stats import poisson

# + colab={"base_uri": "https://localhost:8080/"} id="x6VunZG3kFUL" outputId="2b0c5c53-8712-4e2d-f125-622c7773bff6"

prob = poisson.pmf(k, media)

prob
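The Poisson formula P(X = k) = e^(-mu) mu^k / k! only needs the standard `math` module. `math.factorial` is used here because `np.math` is deprecated in recent NumPy versions. A sketch for the mean of 20 used above:

```python
import math

from scipy.stats import poisson


def poisson_pmf(k, mu):
    """P(X = k) for X ~ Poisson(mu): e**(-mu) * mu**k / k!."""
    return math.exp(-mu) * mu**k / math.factorial(k)


mu = 20  # average number of occurrences per interval
prob_15 = poisson_pmf(15, mu)
prob_25 = poisson_pmf(25, mu)

print(f'{prob_15:.8f}  {prob_25 * 100:.2f}%')
```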

# + colab={"base_uri": "https://localhost:8080/"} id="ry18FVrMkJ4y" outputId="2f90171c-325e-4047-c13c-7a3f13791acb"

k = 25

media = 20

prob = poisson.pmf(k, media)

print(f'{prob*100:.2f}')

# + [markdown] id="YSlpHPM6k4vZ"

# ## Normal distribution

# + id="aGtsfefpkoIv"

from scipy.stats import norm

# + colab={"base_uri": "https://localhost:8080/", "height": 0} id="9b_fCLv3nNNm" outputId="f2718145-d0f6-49fa-c01f-868bae6aef72"

tabela_normal_padronizada = pd.DataFrame(

[],

index=[f"{i/100:.2f}" for i in range(0, 400, 10)],

columns=[f"{i/100:.2f}" for i in range(0, 10)]

)

for index in tabela_normal_padronizada.index:
    for columns in tabela_normal_padronizada.columns:
        Z = np.round(float(index) + float(columns), 2)
        tabela_normal_padronizada.loc[index, columns] = f'{norm.cdf(Z):.4f}'

tabela_normal_padronizada.rename_axis('Z', axis=1, inplace=True)

tabela_normal_padronizada
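Each cell of the table is Phi(z) = P(Z <= z) for a standard normal Z, with z = row value + column value. Phi can be expressed with the error function: Phi(z) = (1 + erf(z / sqrt(2))) / 2. A sketch that reproduces table entries with the standard library:

```python
import math

from scipy.stats import norm


def standard_normal_cdf(z):
    """Phi(z) = P(Z <= z) for Z ~ N(0, 1), written in terms of the error function."""
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))


# For example, row 1.00 / column 0.00 of the table corresponds to z = 1.00
for z in (0.0, 1.0, 1.96):
    print(f'{z:.2f}  {standard_normal_cdf(z):.4f}')
```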

# + id="6g3LcAtooxyp"

media = 1.70

dp = 0.1

x = 1.80

# + colab={"base_uri": "https://localhost:8080/"} id="BTXB7SUUTfth" outputId="92055d94-15e5-429a-f978-7b89d1f6448a"

Z = (x - media)/dp

Z

# + colab={"base_uri": "https://localhost:8080/"} id="sWOfTs6bTlwX" outputId="d56214c5-51fa-4eb8-f545-8df3f49d711b"

prob = 0.8413

prob

# + colab={"base_uri": "https://localhost:8080/"} id="GIF89WozT6Qp" outputId="46a1bc1f-6fa3-48bd-9d5a-9e1421f7ba64"

prob=norm.cdf(Z)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="-CfjZqCbUKzf" outputId="c8a67076-6f59-464b-df99-74f305581a7c"

prob = norm.cdf((85-70)/5)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="HTZJHFtCUniI" outputId="2d2d636c-b5e9-4719-99f6-e6e3db4fce36"

prob = norm.cdf((1.80-1.70)/0.1) - norm.cdf((1.60-1.70)/0.1)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="YUL0AHnEVGuz" outputId="0c48e2ab-7e33-4db7-db6f-4ddecab5fe06"

prob = (norm.cdf((1.80-1.70)/0.1) - 0.5)*2

prob

# + colab={"base_uri": "https://localhost:8080/"} id="dyx1WDDgVwBQ" outputId="b5607dff-b7c3-48cc-a029-4cd51682bfbc"

prob = norm.cdf((1.80-1.70)/0.1) - (1 - norm.cdf((1.80-1.70)/0.1))

prob

# + colab={"base_uri": "https://localhost:8080/"} id="JXTvdMV7WY5g" outputId="701b6292-e434-45a7-d140-4bc0490eb989"

media = 300

dp = 50

x1 = 250

x2 = 350

# P(x1 < X < x2) = Phi(z2) - Phi(z1), with x1 < x2
prob = norm.cdf((x2-media)/dp) - norm.cdf((x1-media)/dp)

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="kKfec8jWXMxx" outputId="30c9024d-d2e5-43c0-8ee3-35ce3b5a51ba"

media = 300

dp = 50

x1 = 400

x2 = 500

prob = norm.cdf((x2-media)/dp) - norm.cdf((x1-media)/dp)

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="fVNe-1tRXFSU" outputId="fe3c0292-e792-4391-da37-af86fa31ebb2"

media = 1.70

dp = 0.1

x1 = 1.90

prob = 1- (norm.cdf((x1-media)/dp))

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="bjVjxz7EYZV0" outputId="77c9839c-37f6-4849-db75-4a2bd1d5f7d5"

media = 1.70

dp = 0.1

x1 = 1.90

prob = (norm.cdf(-(x1-media)/dp))

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="DGjwNXcxYbaL" outputId="caefe7a5-a789-4dd1-c9a5-3940dc404dab"

media = 720

dp = 30

x1 = 650

x2 = 750

x3 = 800

x4 = 700

prob = norm.cdf((x2-media)/dp) - norm.cdf((x1-media)/dp)

prob*100
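For X ~ N(mu, sigma), the probability of falling between two values is P(x1 < X < x2) = Phi((x2 - mu)/sigma) - Phi((x1 - mu)/sigma). The larger bound goes first; otherwise the result comes out negative. A sketch using `statistics.NormalDist` from the standard library with values from the exercises above:

```python
from statistics import NormalDist


def prob_between(x1, x2, mu, sigma):
    """P(x1 < X < x2) for X ~ N(mu, sigma), assuming x1 < x2."""
    dist = NormalDist(mu, sigma)
    return dist.cdf(x2) - dist.cdf(x1)


p_250_350 = prob_between(250, 350, mu=300, sigma=50)  # within one standard deviation
p_650_750 = prob_between(650, 750, mu=720, sigma=30)

print(f'{p_250_350 * 100:.2f}%  {p_650_750 * 100:.2f}%')
```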

# + colab={"base_uri": "https://localhost:8080/"} id="8EZYtk20Y_gl" outputId="26254dd7-9467-46a0-ad7c-b6af1ff41d2f"

prob = (norm.cdf(-(x3-media)/dp))

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="HnOgB0moZNit" outputId="ae2a098a-0779-422a-e6b8-e1d877a83b00"

prob = (norm.cdf((x4-media)/dp))

prob*100

# + colab={"base_uri": "https://localhost:8080/"} id="TzCLDlpiZQ58" outputId="f23e6b02-8f59-428a-d638-ee89c60e57ea"

prob = norm.cdf(1.96)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="LSURMz_GcKc7" outputId="3b205bed-36f5-4abb-b3a2-40ee0278ced5"

prob = 1-norm.cdf(2.15)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="tRWdVpP5cTiw" outputId="d6fe8803-6cf2-467b-8c61-5b33796f84f5"

prob = norm.cdf(-0.78)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="sfsmnD5-cdjl" outputId="8a997bb5-e093-4875-d74c-a60a3bcd8cea"

prob = 1-norm.cdf(0.59)

prob

# + [markdown] id="BQXyi-ZUcyGE"

# # Sampling

# + [markdown] id="wt-5Pnedc3KI"

# ## Population and sample

# + colab={"base_uri": "https://localhost:8080/"} id="wgPDiprMch6V" outputId="d016c0b8-6a90-43f9-bae3-dca8ba1caa0e"

dados.shape[0]

# + colab={"base_uri": "https://localhost:8080/"} id="-_FP1H2Meai2" outputId="cab9a48b-cfcd-4639-c45d-46afd9e773a4"

dados.Renda.mean()

# + id="h3MnchT-hQy_"

amostra = dados.sample(n = 100, random_state=101)

# + colab={"base_uri": "https://localhost:8080/"} id="JU8NVfZlhh2i" outputId="707e7a23-a682-46fb-9963-9a7f1ace35cf"

amostra.shape[0]

# + colab={"base_uri": "https://localhost:8080/"} id="wf3XBGzRhjTo" outputId="b57cadf9-e64a-4fb1-dfd7-458befc6c8b9"

amostra.Renda.mean()

# + colab={"base_uri": "https://localhost:8080/"} id="9AhDiBx5hogZ" outputId="f00d5148-369b-48ac-a8a4-0f5fe6b339fd"

dados.Sexo.value_counts(normalize=True)

# + colab={"base_uri": "https://localhost:8080/"} id="vwSl__94h47c" outputId="4c72c0cf-4994-40fb-9446-a4be6f57daa2"

amostra.Sexo.value_counts(normalize=True)

# + [markdown] id="5TOHrVfpjS5y"

# # Estimation

# + [markdown] id="BMTZe6mbjVbx"

# ## Central limit theorem

# + id="WYGteoRBh7kJ"

n = 2000

total_amostras = 1500

# + id="RUHaZigUk0xu"

amostras = pd.DataFrame()

# + colab={"base_uri": "https://localhost:8080/", "height": 0} id="9LxXoIA2j6gY" outputId="64862f31-4032-42d8-a89c-14981a8c2e90"

for i in range(total_amostras):
    _ = dados.Idade.sample(n)
    _.index = range(0, len(_))
    amostras['Amostra_' + str(i)] = _

amostras

# + colab={"base_uri": "https://localhost:8080/"} id="9QtOjq3LkBqm" outputId="e9298834-ae28-4978-e742-57d9e4759d09"

amostras.mean()

# + colab={"base_uri": "https://localhost:8080/", "height": 0} id="LYQGMsowmyux" outputId="fd2a6d6d-5019-4740-f103-bb4d2196a6c4"

amostras.mean().hist()

# + colab={"base_uri": "https://localhost:8080/"} id="2F1iYeGhm63a" outputId="87cf2a37-8afc-4f51-e018-7efdaf7f5340"

dados.Idade.mean()

# + colab={"base_uri": "https://localhost:8080/"} id="HDm2p2k5m-Y6" outputId="01bcff7a-8242-4af7-a96b-1d382addf339"

amostras.mean().mean()

# + colab={"base_uri": "https://localhost:8080/"} id="s-6jx5mmnCrO" outputId="78ffa3e1-2069-40d0-83bd-19043afa7964"

amostras.mean().std()

# + colab={"base_uri": "https://localhost:8080/"} id="YGWXpqWfnJEO" outputId="917ffe57-f891-46fd-92e4-4391089c4511"

dados.Idade.std()

# + colab={"base_uri": "https://localhost:8080/"} id="5M59BfMrnQXA" outputId="96705507-8f73-488a-cc7d-7b33bf6b99d8"

dados.Idade.std()/np.sqrt(n)
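The central limit theorem says that sample means cluster around the population mean with standard deviation sigma / sqrt(n), even when the population itself is skewed. A small simulation sketch with a synthetic exponential population (mean 1, standard deviation 1), not the `dados` data:

```python
import numpy as np

rng = np.random.default_rng(42)

sample_size = 100  # observations per sample
n_samples = 2000   # number of samples drawn

# Skewed synthetic population: exponential with mean 1 and standard deviation 1
draws = rng.exponential(scale=1.0, size=(n_samples, sample_size))
sample_means = draws.mean(axis=1)

# CLT: the means should average about 1, with spread about 1 / sqrt(100) = 0.1
print(sample_means.mean(), sample_means.std(ddof=1), 1 / np.sqrt(sample_size))
```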

# + [markdown] id="5F5rRjpnBZ_b"

# ## Confidence interval

# + id="RczjjEMpnVhU"

media_amostral = 5050

significancia = 0.05

confiança = (1-significancia)

alpha = confiança/2+0.5  # cumulative probability for the two-sided z value (0.975 at 95% confidence)

dp = 150

n=20

raiz_n = np.sqrt(n)

# + colab={"base_uri": "https://localhost:8080/"} id="AWPcSZzBDCqf" outputId="299bc89b-5516-4a6d-f9cc-9c90840c2d85"

z = norm.ppf(alpha)

round(z, 4)

# + id="ZGSxuXhuDxKz"

# standard error of the mean (sigma / sqrt(n))

sigma = dp/raiz_n

# + colab={"base_uri": "https://localhost:8080/"} id="99JB4YvsD4B9" outputId="0887f189-48ba-47c2-d4a6-d5e26715c9f3"

e = z * sigma

e

# + colab={"base_uri": "https://localhost:8080/"} id="4QHcgtuAD-oA" outputId="6091397e-02e0-4d52-80c0-b0e4a099a0c0"

e = norm.ppf((1-0.05)/2+0.5)*dp/np.sqrt(n)

round(e, 2)  # grams

# + colab={"base_uri": "https://localhost:8080/"} id="X-9LKab7EoZx" outputId="84daad32-f49e-488b-af2a-3f11ace601bf"

# Confidence interval

intervalo = (

media_amostral - e,

media_amostral + e

)

intervalo

# + colab={"base_uri": "https://localhost:8080/"} id="NHfMfgRxFiUf" outputId="880787e8-e9af-429b-8e90-<KEY>"

# The first argument is the confidence level (newer SciPy renamed the `alpha` keyword to `confidence`)
intervalo = norm.interval(confiança, loc=media_amostral, scale=sigma)

intervalo
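When the population standard deviation is known, the interval is the sample mean plus or minus e = z * sigma / sqrt(n), where z is the two-sided standard normal quantile. A standard-library sketch that reproduces the margin for the example above (mean 5050, sigma 150, n 20):

```python
import math
from statistics import NormalDist


def z_interval(sample_mean, sigma, n, confidence=0.95):
    """Confidence interval for the mean when the population sigma is known."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # two-sided quantile
    margin = z * sigma / math.sqrt(n)               # margin of error e
    return sample_mean - margin, sample_mean + margin, margin


low, high, margin = z_interval(5050, sigma=150, n=20, confidence=0.95)
print(round(margin, 2), (round(low, 2), round(high, 2)))
```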

# + id="YhX4A-axGJ_l"

# exercise
media_amostral = None  # not given in this exercise

significancia = 0.05

confiança = (1-significancia)

alpha = confiança/2+0.5

dp = 6

n=50

raiz_n = np.sqrt(n)

# + colab={"base_uri": "https://localhost:8080/"} id="aqDB7ktmG3sX" outputId="fa6aacd9-1e2c-4861-e695-468bf3c4058f"

e = norm.ppf(alpha)*dp/np.sqrt(n)

round(e, 2)  # grams

# + id="vERiGQOFJoKR"

# exercise
media_amostral = 28
confiança = 0.9
significancia = 1 - confiança
alpha = confiança/2+0.5
dp = 11
n = 1976
raiz_n = np.sqrt(n)
sigma = dp/raiz_n

# + colab={"base_uri": "https://localhost:8080/"} id="AnmwxmS5KFn1" outputId="8392dcd8-8173-4104-ad2a-e0318606b429"

intervalo = norm.interval(confiança, loc=media_amostral, scale=sigma)

intervalo

# + [markdown] id="AmCqEq2BNGWK"

# ## Sample size calculation

# + id="6Y1EIfevNzYa"

confiança = 0.95

alpha = confiança/2+0.5

sigma = 3323.29  # population standard deviation

e = 100

# + colab={"base_uri": "https://localhost:8080/"} id="HAD4yZsdKQFa" outputId="814eee0a-5714-4ef1-c494-3fb2368e2b78"

z = norm.ppf(alpha)

z

# + colab={"base_uri": "https://localhost:8080/"} id="QGCjW5NFOB7l" outputId="6a5b545a-96bb-492f-968e-59c59a34865d"

n = (norm.ppf(alpha)*((sigma)/(e)))**2

int(round(n))
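Solving e = z * sigma / sqrt(n) for n gives n = (z * sigma / e)^2. The sketch below rounds up with `math.ceil`, a choice that guarantees the margin of error is not exceeded (plain `round` can land one observation short):

```python
import math
from statistics import NormalDist


def required_sample_size(sigma, margin, confidence=0.95):
    """Smallest n with z * sigma / sqrt(n) <= margin (infinite population)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil((z * sigma / margin) ** 2)


n_needed = required_sample_size(sigma=3323.29, margin=100, confidence=0.95)
print(n_needed)
```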

# + id="dP3OnEdFOzcu"

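# exercise
media_amostral = 45.50
significancia = 0.1
confianca = 1 - significancia
alpha = confianca/2+0.5
dp = 15  # standard deviation given in the problem (used as sigma)
n = None  # unknown: this is what we solve for
erro = 0.1
e = media_amostral * erro  # margin of error (10% of the mean)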

#raiz_n = np.sqrt(n)

#sigma = dp/raiz_n

# + colab={"base_uri": "https://localhost:8080/"} id="hdxr-WbyP16v" outputId="6efaff20-2f31-4a8f-9997-87d7a7007147"

n = (norm.ppf(alpha)*((dp)/(e)))**2

int(round(n))

# + colab={"base_uri": "https://localhost:8080/"} id="StEUtYPxRwd9" outputId="dfa45cd4-ef47-4988-d09e-082c3ebabb40"

media = 45.5

sigma = 15

significancia = 0.10

confianca = 1 - significancia

z = norm.ppf(0.5 + (confianca / 2))

erro_percentual = 0.10

e = media * erro_percentual

n = (z * (sigma / e)) ** 2

n.round()

# + [markdown] id="bz_WH1s2V9n0"

# ## Sample size for a finite population

# + id="O5m49lJ1RttD"

N = 10000  # population size
confianca = 0.95
significancia = 1 - confianca
alpha = confianca/2+0.5
z = norm.ppf(alpha)  # standard normal quantile
sigma = None  # population standard deviation (unknown)
s = 12  # sample standard deviation
e = 5  # margin of error

# + colab={"base_uri": "https://localhost:8080/"} id="Es5a5muGWy8R" outputId="1de13d85-3f34-4014-c69d-5a711de64eaf"

n = ((z**2)*(s**2)*(N)) / (((z**2)*(s**2))+((e**2)*(N-1)))

int(n.round())
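For a population of known size N, the required sample shrinks to n = z^2 s^2 N / (z^2 s^2 + e^2 (N - 1)), and it approaches the infinite-population value (z s / e)^2 as N grows. A sketch with the first exercise's inputs (N = 10000, s = 12, e = 5):

```python
from statistics import NormalDist


def finite_sample_size(N, s, margin, confidence=0.95):
    """Sample size for a finite population of size N (s: standard deviation, margin: e)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return (z**2 * s**2 * N) / (z**2 * s**2 + margin**2 * (N - 1))


n_finite = finite_sample_size(N=10000, s=12, margin=5)

# Infinite-population formula with the same inputs, for comparison
z_95 = NormalDist().inv_cdf(0.975)
n_infinite = (z_95 * 12 / 5) ** 2

print(round(n_finite), round(n_finite, 2), round(n_infinite, 2))
```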

# + id="xZeTljUXXjrJ"

N = 2000  # population size
confianca = 0.95
significancia = 1 - confianca
alpha = confianca/2+0.5
z = norm.ppf(alpha)  # standard normal quantile
sigma = None  # population standard deviation (unknown)
s = 0.480  # sample standard deviation
e = 0.3  # margin of error

# + colab={"base_uri": "https://localhost:8080/"} id="R1nAziZ0Yh4M" outputId="1753f488-1b13-4e96-a7a4-1efeea5a0a1c"

n = ((z**2)*(s**2)*(N)) / (((z**2)*(s**2))+((e**2)*(N-1)))

int(n.round())

# + id="sbqtJkppYiNx"

renda_5mil = dados.query('Renda <=5000').Renda

# + id="09a0BcvGZpEm"

N = None  # population size (unknown)
confianca = 0.95
significancia = 1 - confianca
alpha = confianca/2+0.5
z = norm.ppf(alpha)  # standard normal quantile
sigma = renda_5mil.std()  # population standard deviation
media = renda_5mil.mean()
s = None  # sample standard deviation (not used here)
e = 10  # margin of error

# + colab={"base_uri": "https://localhost:8080/"} id="eYY1OovIaROk" outputId="feff32b3-1177-43ba-ba69-ae4fcaf3c59a"

n = int((z * (sigma / e)) ** 2)

n

# + colab={"base_uri": "https://localhost:8080/"} id="O1CjcguPaR9Z" outputId="3d9c737d-7e2a-4bf8-d5ab-c7968e9b8ed7"

intervalo = norm.interval(confianca, loc=media, scale=sigma/np.sqrt(n))

intervalo

# + id="wg4S8owbazKl"

import matplotlib.pyplot as plt

# + id="rEBzQnu2bN3K"

tamanho_simulacao = 1000

medias = [renda_5mil.sample(n=n).mean() for i in range(tamanho_simulacao)]

medias = pd.DataFrame(medias)

# + colab={"base_uri": "https://localhost:8080/", "height": 391} id="csV69Czebkwv" outputId="729db28f-9ead-4e6c-cf33-d072375f952e"

ax = medias.plot(style = '.')

ax.figure.set_size_inches(12,6)

ax.hlines(y= media, xmin=0, xmax=tamanho_simulacao, color='black', linestyles='dashed')

ax.hlines(y= intervalo[0], xmin=0, xmax=tamanho_simulacao, color='red', linestyles='dashed')

ax.hlines(y= intervalo[1], xmin=0, xmax=tamanho_simulacao, color='red', linestyles='dashed')

ax
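The plot compares sample means against the interval limits. A related check is coverage: about 95% of intervals built from independent samples should contain the true mean. A simulation sketch with a hypothetical normal population (mean 50, standard deviation 10):

```python
import numpy as np
from statistics import NormalDist

rng = np.random.default_rng(0)

pop_mean, pop_sd = 50.0, 10.0  # hypothetical population parameters
n_per_sample, n_sim = 30, 2000
z_95 = NormalDist().inv_cdf(0.975)
margin = z_95 * pop_sd / np.sqrt(n_per_sample)

# Each row is one sample; its interval is (mean - margin, mean + margin)
sample_means = rng.normal(pop_mean, pop_sd, size=(n_sim, n_per_sample)).mean(axis=1)
covered = np.abs(sample_means - pop_mean) <= margin
coverage = covered.mean()

print(f'{coverage:.1%} of the intervals contain the population mean')
```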

# + [markdown] id="z4gDB_uFfTy0"

# ## Challenge

# + colab={"base_uri": "https://localhost:8080/", "height": 204} id="o5WhkbJxc5e9" outputId="5eb46b46-1077-4ed3-e45d-018be8460917"

dados.head()

# + id="n8Rmc-HSfYDj"

k = 7

n = 10

p = 0.7

# + colab={"base_uri": "https://localhost:8080/"} id="V8mYqKJnfy4o" outputId="1cbfc91f-1f56-4469-92d5-ce2e95ee8a0f"

prob = binom.pmf(k, n, p)

prob

# + colab={"base_uri": "https://localhost:8080/"} id="aGn2-8iAgPEW" outputId="f2e28a6f-9c66-4cc8-9248-760a521c92ac"

media = 100

#media = n * prob

n = media / prob

n

# + id="M3D6eO06g1CT"

dataset= dados.Renda.sample(n=200, random_state=101)

# + colab={"base_uri": "https://localhost:8080/"} id="vMMZcm1zhw27" outputId="7e6d57d8-ef22-49d1-9bbe-fccb7356fa66"

dataset.mean()

# + colab={"base_uri": "https://localhost:8080/"} id="5vkCLIvvh1c7" outputId="ff397ca5-f478-4625-8b86-457475d12c8b"

dataset.std()

# + id="rrQgHnykh3oU"

media_amostra = dataset.mean()

desvio_padrao_amostra = dataset.std()

recursos = 150000

custo_por_entrevista = 100

# + id="VK3fQoLSiQYL"

e = 0.1*media_amostra

# + id="XRtgikWh3ZeK"

z = norm.ppf((0.9/2)+0.5)

# + colab={"base_uri": "https://localhost:8080/"} id="Y0noJ8nl3mwa" outputId="ff45dfb4-9c97-4538-cc5b-287014ce5421"

n_90 = int((z * (desvio_padrao_amostra/ e))**2)

n_90

# + colab={"base_uri": "https://localhost:8080/"} id="BxzPDorL32zv" outputId="548b6e5f-744f-4f07-f7c7-66006739b186"

z = norm.ppf((0.95/2)+0.5)

n_95 = int((z * (desvio_padrao_amostra/ e))**2)

n_95

# + colab={"base_uri": "https://localhost:8080/"} id="qDviOZUZ4Dxc" outputId="5d62d38c-e1e3-4e07-be1b-28217d3a8dbe"

z = norm.ppf((0.99/2)+0.5)

n_99 = int((z * (desvio_padrao_amostra/ e))**2)

n_99

# + colab={"base_uri": "https://localhost:8080/"} id="Khq_UWD54HYu" outputId="623904a3-1403-46f7-a635-8f9921ac03d1"

print(f'Cost of the survey at 90% confidence: R${n_90*custo_por_entrevista:,.2f}')
print(f'Cost of the survey at 95% confidence: R${n_95*custo_por_entrevista:,.2f}')
print(f'Cost of the survey at 99% confidence: R${n_99*custo_por_entrevista:,.2f}')

# + colab={"base_uri": "https://localhost:8080/"} id="je_vO8Ws4bPg" outputId="f5624be6-5d7b-47b2-ea1a-9b419e87c12e"

intervalo = norm.interval(0.95, loc=media_amostra, scale=desvio_padrao_amostra/np.sqrt(n_95))

intervalo

# + colab={"base_uri": "https://localhost:8080/"} id="FVRxRXYz47v5" outputId="2963181c-6ef5-4169-824a-f51152e6ac51"

n = recursos/custo_por_entrevista

n

# + colab={"base_uri": "https://localhost:8080/"} id="zkV1_3c05X3e" outputId="2cfe4e48-eb1d-474c-a914-f55b07e5bf01"

e = norm.ppf((1-0.05)/2+0.5)*desvio_padrao_amostra/np.sqrt(n)

round(e, 2)

# + colab={"base_uri": "https://localhost:8080/"} id="pGkoYT9H5y6k" outputId="168937be-50ca-425b-f7ee-827e0a1c48e0"

e_percentual = (e / media_amostra )*100

e_percentual

# + id="doYtDpgG6L2M"

e = 0.05*media_amostra

# + colab={"base_uri": "https://localhost:8080/"} id="dxCgVX8r6mn8" outputId="4a198412-801d-4195-ec2f-318e4cd5cf07"

z = norm.ppf((0.95/2)+0.5)

n_95 = int((z * (desvio_padrao_amostra/ e))**2)

n_95

# + colab={"base_uri": "https://localhost:8080/"} id="5kcThSVw6w71" outputId="a886e15b-2efc-469f-ae44-7da02d3f84ca"

custo = n_95*custo_por_entrevista

custo

# + id="MkaUh06b7Z8n"

|

alura_estatistica_probabilidade.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="vl-0acX6FBQu"

# # This notebook describes how FruitNet was built and trained.

# *The source code for the FruitNet app is available [here](https://github.com/AlexanderKlanovets/fruitnet).*

#

# ## Installing and importing dependencies

#

# For this project, the following tools were used:

# - [Tensorflow 2](https://www.tensorflow.org/install) for building and training the model;

# - [Numpy](https://numpy.org/) for working with arrays;

# - [Matplotlib](https://matplotlib.org/) for visualizing the data.

#

# + id="yHwLm_tUIghQ"

# !pip install -q tf-nightly numpy matplotlib

# + id="OyA5UUZGIqHE"

import matplotlib.pyplot as plt

import numpy as np

import os

import tensorflow as tf

from tensorflow.keras.preprocessing import image_dataset_from_directory

from google.colab import drive

# -

# I trained the model in Google Colab. The dataset was uploaded to my Google Drive, which I then mounted in Colab:

# + id="QB88VcquIzQa" outputId="623e3865-eaa2-4de0-c0fa-7c2e26a000c5" colab={"base_uri": "https://localhost:8080/", "height": 34}

drive.mount('/content/drive')

# + [markdown] id="8MJ3JNefIOvl"

# ## Data preprocessing

#

# The dataset used for model implementation is [Fruits fresh and rotten for classification](https://www.kaggle.com/sriramr/fruits-fresh-and-rotten-for-classification) provided by <NAME>.

#

# The data preprocessing consists of the following steps:

# - data download;

# - data samples visualization;

# - creating training, validation and test sets from the initial dataset;

# - rescaling pixel values of the images in the datasets.

#

# ### Data download

# + id="tEXd71W0I9s1" outputId="6b1f2faa-30db-4074-8c2a-fd813dfa52b3" colab={"base_uri": "https://localhost:8080/", "height": 34}

PATH = '/content/drive/My Drive/Rotten fruits dataset/dataset'

train_dir = os.path.join(PATH, 'train')

validation_dir = os.path.join(PATH, 'test')

LABELS = ['fresh apple', 'fresh banana', 'fresh orange',

'rotten apple', 'rotten banana', 'rotten orange']

BATCH_SIZE = 32

EPOCHS = 20

IMG_SIZE = (160, 160)

train_dataset = image_dataset_from_directory(train_dir,

shuffle=True,

batch_size=BATCH_SIZE,

image_size=IMG_SIZE,

label_mode='categorical')

# + id="HFOfQHNCJV5A" outputId="e10200b8-ebf2-473d-8cb6-41cd672c714b" colab={"base_uri": "https://localhost:8080/", "height": 34}

validation_dataset = image_dataset_from_directory(validation_dir,

shuffle=True,

batch_size=BATCH_SIZE,

image_size=IMG_SIZE,

label_mode='categorical')

# + [markdown] id="ZWFhnx7RKZxH"

# ### Visualizing data samples

# + id="AZ76xNotJbMb" outputId="332b630e-53fd-4771-8d28-a939df1f0f0f" colab={"base_uri": "https://localhost:8080/", "height": 591}

class_names = train_dataset.class_names

plt.figure(figsize=(10, 10))
for images, labels in train_dataset.take(1):
    for i in range(9):
        ax = plt.subplot(3, 3, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        # Labels are one-hot vectors (label_mode='categorical'), so take the argmax
        plt.title(class_names[np.argmax(labels[i])])
        plt.axis("off")
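With `label_mode='categorical'`, each label is a one-hot vector rather than an integer. The class index is the position of the 1, which `argmax` recovers. A NumPy sketch with a hypothetical batch of three labels, using the class order from `LABELS`:

```python
import numpy as np

# Class order taken from LABELS above
labels_text = ['fresh apple', 'fresh banana', 'fresh orange',
               'rotten apple', 'rotten banana', 'rotten orange']

# Hypothetical batch of three one-hot labels
one_hot = np.array([[0, 0, 1, 0, 0, 0],
                    [1, 0, 0, 0, 0, 0],
                    [0, 0, 0, 0, 1, 0]], dtype=np.float32)

indices = one_hot.argmax(axis=1)  # position of the 1 in each row
titles = [labels_text[i] for i in indices]
print(titles)
```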

# + [markdown] id="diITNkiBKv0q"

# ### Creating a test dataset

# + id="M2TyDNjfK2zM"

val_batches = tf.data.experimental.cardinality(validation_dataset)

test_dataset = validation_dataset.take(val_batches // 5)

validation_dataset = validation_dataset.skip(val_batches // 5)

# + id="KEmVVSDgLYun" outputId="36835509-2211-462d-8883-a8dd797b6a36" colab={"base_uri": "https://localhost:8080/", "height": 51}

val_batches_num = tf.data.experimental.cardinality(validation_dataset)

test_batches_num = tf.data.experimental.cardinality(test_dataset)

print('Number of validation batches: %d' % val_batches_num)

print('Number of test batches: %d' % test_batches_num)

# + [markdown] id="RnptH4tqK3cJ"

# ### Configuring the dataset for performance

# + id="s-ZUqyEoLb4D"

AUTOTUNE = tf.data.experimental.AUTOTUNE

train_dataset = train_dataset.prefetch(buffer_size=AUTOTUNE)

validation_dataset = validation_dataset.prefetch(buffer_size=AUTOTUNE)

test_dataset = test_dataset.prefetch(buffer_size=AUTOTUNE)

# + [markdown] id="pt2Bj_-9LX5D"

# ### Rescaling pixel values

# + id="mm-3yos4NPVx"

preprocess_input = tf.keras.applications.mobilenet_v2.preprocess_input

# + [markdown] id="nCHKbveML4P3"

# ## Building the model

#

# I used Google's MobileNetV2 as the base model for this problem. Here it is imported without its classification layers:

# + id="duN2bpaiLjD7"

IMG_SHAPE = IMG_SIZE + (3,)

base_model = tf.keras.applications.MobileNetV2(input_shape=IMG_SHAPE,

include_top=False,

weights='imagenet')

# -

# Freezing the convolutional base before applying transfer learning:

# + id="h9ud0TPbLodM"

base_model.trainable = False

# + id="BFPrMm5bMuZF" outputId="aa206984-84d4-42d1-d339-963ed5b913af" colab={"base_uri": "https://localhost:8080/", "height": 34}

image_batch, label_batch = next(iter(train_dataset))

feature_batch = base_model(image_batch)

print(feature_batch.shape)

# + id="9hL9Til-Lo6q"

base_model.summary()

# -

# Converting the features from the convolutional layers into one vector per image with global average pooling. Since each mini-batch holds 32 images, this gives 32 vectors:

# + id="LO3B1nskL4c5" outputId="c9994309-b069-470e-9677-1c2ba6b53c98" colab={"base_uri": "https://localhost:8080/", "height": 34}

global_average_layer = tf.keras.layers.GlobalAveragePooling2D()

feature_batch_average = global_average_layer(feature_batch)

print(feature_batch_average.shape)

# -
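# A minimal NumPy sketch of what `GlobalAveragePooling2D` computes: the mean of each channel over the spatial grid. The 5x5x1280 feature-map shape is an assumption (the usual MobileNetV2 output for 160x160 inputs with `include_top=False`), and the random array only stands in for `feature_batch`.

```python
import numpy as np

# Hypothetical stand-in for a batch of MobileNetV2 feature maps:
# 32 images, 5x5 spatial grid, 1280 channels.
rng = np.random.default_rng(0)
feature_batch = rng.standard_normal((32, 5, 5, 1280)).astype("float32")

# Global average pooling = mean over the height and width axes,
# leaving one 1280-element vector per image.
pooled = feature_batch.mean(axis=(1, 2))
print(pooled.shape)  # (32, 1280)
```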

# Adding a custom classification layer:

# + id="3lNWDZSCMJx1" outputId="3fd9b3ee-cc8f-4467-f0ac-1e23d77eb998" colab={"base_uri": "https://localhost:8080/", "height": 34}

prediction_layer = tf.keras.layers.Dense(6, activation='softmax')

prediction_batch = prediction_layer(feature_batch_average)

print(prediction_batch.shape)

# -

# Putting it all together:

# + id="NaihBAHeMbzh"

inputs = tf.keras.Input(shape=IMG_SHAPE)  # same input shape as the base model

x = preprocess_input(inputs)

x = base_model(x, training=False)

x = global_average_layer(x)

x = tf.keras.layers.Dropout(0.2)(x)

outputs = prediction_layer(x)

model = tf.keras.Model(inputs, outputs)

# -

# ## Compiling the model

# + id="WgxK0qkzNBkf"

base_learning_rate = 0.0001

model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=base_learning_rate),
              # the Dense layer already applies softmax, so the loss receives probabilities
              loss=tf.keras.losses.CategoricalCrossentropy(from_logits=False),
              metrics=['accuracy'])
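# Why `from_logits` must match the last layer: the prediction layer already applies softmax, so the loss should receive probabilities. The NumPy sketch below uses illustrative helper functions, not the Keras implementation. It shows that cross-entropy on probabilities equals cross-entropy on logits with an internal log-softmax. Feeding probabilities into a loss that expects logits applies softmax twice and gives a different value (larger in this example).

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # shift for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def cce_from_probs(y_true, probs, eps=1e-7):
    # Expects probabilities, i.e. the from_logits=False convention
    probs = np.clip(probs, eps, 1.0)
    return -(y_true * np.log(probs)).sum(axis=-1).mean()

def cce_from_logits(y_true, logits):
    # Expects raw scores and applies log-softmax internally (from_logits=True)
    z = logits - logits.max(axis=-1, keepdims=True)
    log_probs = z - np.log(np.exp(z).sum(axis=-1, keepdims=True))
    return -(y_true * log_probs).sum(axis=-1).mean()

y_true = np.eye(6)[[0, 3]]  # two one-hot labels over 6 classes
logits = np.array([[2.0, 0.1, -1.0, 0.3, 0.0, 0.5],
                   [0.2, 0.1, 0.0, 3.0, -0.5, 0.4]])
probs = softmax(logits)

loss_matched = cce_from_logits(y_true, logits)        # logits + from_logits=True
loss_probs = cce_from_probs(y_true, probs)            # softmax + from_logits=False
loss_double_softmax = cce_from_logits(y_true, probs)  # mismatch: softmax applied twice
print(loss_matched, loss_probs, loss_double_softmax)
```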

# + id="yh8uaKVjNiU1" outputId="f61790c1-c45a-4c11-df63-2a413047a9af" colab={"base_uri": "https://localhost:8080/", "height": 391}

model.summary()

# + id="oZuOdVLZNsfF" outputId="a7b2aa3d-0170-461e-8386-b92d75f33b36" colab={"base_uri": "https://localhost:8080/", "height": 34}

initial_epochs = 10

loss0, accuracy0 = model.evaluate(validation_dataset)

# + id="orhpJaCyjYS5" outputId="f5558db7-1cfd-437a-8706-d22a09fa5007" colab={"base_uri": "https://localhost:8080/", "height": 51}

print("initial loss: {:.2f}".format(loss0))

print("initial accuracy: {:.2f}".format(accuracy0))

# -

# ## Training the model

# + id="o7VZDjk4N5M0" outputId="c3b5381a-1987-499b-e2fc-4df6c2d1728a" colab={"base_uri": "https://localhost:8080/", "height": 697}

history = model.fit(train_dataset,

epochs=EPOCHS,

validation_data=validation_dataset)

# -

# ## Plotting the learning curves

# + id="MFrVzp2XN8rK" outputId="3280211d-f80b-4f0f-e7f7-c64ddc741e15" colab={"base_uri": "https://localhost:8080/", "height": 513}

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

plt.figure(figsize=(8, 8))

plt.subplot(2, 1, 1)

plt.plot(acc, label='Training Accuracy')

plt.plot(val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.ylabel('Accuracy')

plt.ylim([min(plt.ylim()),1])

plt.title('Training and Validation Accuracy')

plt.subplot(2, 1, 2)

plt.plot(loss, label='Training Loss')

plt.plot(val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.ylabel('Cross Entropy')

plt.ylim([0,1.0])

plt.title('Training and Validation Loss')

plt.xlabel('epoch')

plt.show()

# + id="xDWFHPCh3ZtR"

model.save('fruit_classifier_v2_dropout.h5')

# -

# ## Checking the final accuracy:

# + id="bxSxLiCNLKvw" outputId="eb5ca7bf-0be8-4053-b4dd-159abf70ef2b" colab={"base_uri": "https://localhost:8080/", "height": 34}

loss_final, accuracy_final = model.evaluate(test_dataset)

# + id="j1qlecCHqv1m" outputId="39152040-583f-461e-f4ed-62435863fd7d" colab={"base_uri": "https://localhost:8080/", "height": 51}

print("Final loss: {:.2f}".format(loss_final))

print("Final accuracy: {:.2f}".format(accuracy_final))

|

notebooks/FruitNetTransferLearning.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# <a href="https://colab.research.google.com/github/haribharadwaj/notebooks/blob/main/BME511/SystemIdentificationMAP.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# # ML vs. MAP estimation for system identification

# +

import numpy as np

import pylab as pl

# Setting it so figs will be a bit bigger

from matplotlib import pyplot as plt

plt.rcParams['figure.figsize'] = [5, 3.33]

plt.rcParams['figure.dpi'] = 120

# -

# ## ML filter (same as the previous active-filter code)

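from scipy import linalg

def deconvML(x, y, p):
    # Design matrix: row i holds x[p-1+i], x[p-2+i], ..., x[i], so that
    # A @ h reproduces y[p-1:] for a causal FIR filter h of length p
    A = linalg.toeplitz(x[(p-1):], x[:p][::-1])
    ysub = y[(p-1):]
    # Least-squares (ML under i.i.d. Gaussian noise) solution via the pseudoinverse
    h = np.dot(linalg.pinv(A), ysub)
    return h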
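# A NumPy-only sketch of the same least-squares deconvolution on a hypothetical, noiseless 4-tap system. The helper `conv_matrix` is an illustrative stand-in that builds the same matrix as `linalg.toeplitz(x[(p-1):], x[:p][::-1])`. With no noise and a full-rank design matrix, the ML estimate recovers the true filter.

```python
import numpy as np

def conv_matrix(x, p):
    # Row i holds x[p-1+i], x[p-2+i], ..., x[i], so that
    # (A @ h)[i] = sum_k h[k] * x[p-1+i-k] = y[p-1+i]
    n_rows = len(x) - p + 1
    return np.array([x[i:i + p][::-1] for i in range(n_rows)])

rng = np.random.default_rng(1)
h_true = np.array([0.5, -0.3, 0.2, 0.1])  # hypothetical 4-tap system
x = rng.standard_normal(200)
y = np.convolve(x, h_true)[:len(x)]       # causal filtering, like lfilter(h_true, 1, x)

p = len(h_true)
A = conv_matrix(x, p)
# Least squares on the rows where the full filter overlaps the input
h_ml, *_ = np.linalg.lstsq(A, y[p - 1:], rcond=None)
print(np.round(h_ml, 6))
```

With noise added to `y`, the same code returns a noisy estimate. That noise is what the MAP estimate below is meant to tame.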

# ## Simulated test scenario

#

# ### Impulse response

# +

fs = 1024

t = np.arange(0, 2, 1./fs)

f = 10

tau = 0.25 / f

h = np.sin(2 * np.pi * f * t) * np.exp(-t/tau)

pl.plot(t, h);

pl.xlabel('Time (s)')

pl.ylabel('h(t)')

pl.xlim([0, 0.4])

# -

# ### Create some inputs and outputs

# +

from scipy import signal

f2 = 5

tau2 = 0.25 / f2

h2 = np.sin(2 * np.pi * f2 * t) * np.exp(-t/tau2)

x = signal.lfilter(h2, 1, np.random.randn(t.shape[0]))

SNR = 100

y_temp = signal.lfilter(h, 1, x)

sigma_n = np.sqrt((y_temp ** 2).mean()) / SNR

y = y_temp + np.random.randn(t.shape[0]) * sigma_n

pl.subplot(211)

pl.plot(t, x)

pl.ylabel('x(t)')

pl.subplot(212)

pl.plot(t, y)

pl.xlabel('Time (s)')

pl.ylabel('y(t)')

# +

p = 500

hhat = deconvML(x, y, p)

tplot = np.arange(p) / fs

pl.plot(tplot, hhat)

pl.plot(tplot, h[:tplot.shape[0]], '--')

pl.xlabel('Time (s)')

pl.ylabel('System Function')

pl.legend((r'$\widehat{h}(t)$', 'h(t)'))

pl.xlim([0, 0.4])

# -

# ## MAP filter estimate

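def deconvMAP(x, y, p, lam):
    A = linalg.toeplitz(x[(p-1):], x[:p][::-1])
    ysub = y[(p-1):]
    # Regularized normal equations: (A^T A + lam * I) h = A^T y
    B = np.dot(A.T, A) + lam * np.eye(p)
    # Solve the linear system directly instead of forming inv(B) explicitly
    h = linalg.solve(B, np.dot(A.T, ysub))
    return h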
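# The MAP formula can be derived under two assumptions that do not appear in the code. First, the noise in $y = Ah + n$ is i.i.d. Gaussian, $n \sim \mathcal{N}(0, \sigma_n^2 I)$. Second, the prior on the filter is zero-mean Gaussian, $h \sim \mathcal{N}(0, \sigma_h^2 I)$. Setting the gradient of the negative log-posterior to zero then gives the regularized normal equations solved by `deconvMAP`. Here $y$ stands for `ysub`, and `lam` plays the role of the noise-to-prior variance ratio:

```latex
\hat{h}_{\mathrm{MAP}}
  = \arg\min_h \left[ \frac{\lVert y - A h \rVert^2}{2\sigma_n^2}
                    + \frac{\lVert h \rVert^2}{2\sigma_h^2} \right]
\;\Longrightarrow\;
\left(A^\top A + \lambda I\right)\hat{h}_{\mathrm{MAP}} = A^\top y,
\qquad \lambda = \frac{\sigma_n^2}{\sigma_h^2}
```

# As $\lambda \to 0$ (a very broad prior), this reduces to the least-squares/ML estimate whenever $A^\top A$ is invertible.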

# +

p = 500

lam = 100 # Hyperparameter

hhat = deconvMAP(x, y, p, lam)

tplot = np.arange(p) / fs

pl.plot(tplot, hhat)

pl.plot(tplot, h[:tplot.shape[0]], '--')

pl.xlabel('Time (s)')

pl.ylabel('System Function')

pl.legend((r'$\widehat{h}(t)$', 'h(t)'))

pl.xlim([0, 0.4])

# -

# ## L-curve for choosing hyperparameter(s): Bias-variance tradeoff in action

# +

lams = 10. ** np.arange(-5, 5, 0.1)

fit_error = np.zeros(lams.shape)

h_norm = np.zeros(lams.shape)

for k, lam in enumerate(lams):
    hhat = deconvMAP(x, y, p, lam)
    y_fitted = signal.lfilter(hhat, 1, x)
    fit_error[k] = ((y - y_fitted) ** 2.).mean()
    h_norm[k] = (hhat ** 2.).mean()

pl.loglog(h_norm, fit_error)

pl.xlabel('L2 norm of parameter estimate')

pl.ylabel('Squared-error of the fitted solution')

# -

pl.loglog(h_norm, lams)

pl.xlabel('L2 norm of parameter estimate')

pl.ylabel(r'$\lambda$')
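# A small NumPy sketch of the bias-variance tradeoff behind the L-curve. It uses a hypothetical random regression problem rather than the deconvolution data. As $\lambda$ grows, the squared norm of the ridge/MAP solution can only shrink and the squared fitting error can only grow, so the L-curve traces a monotone tradeoff.

```python
import numpy as np

rng = np.random.default_rng(2)
A = rng.standard_normal((120, 30))  # hypothetical design matrix
y = A @ rng.standard_normal(30) + 0.5 * rng.standard_normal(120)

lams = 10.0 ** np.arange(-2, 5)
norms, residuals = [], []
for lam in lams:
    # Ridge / MAP solution of (A^T A + lam I) h = A^T y
    h = np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ y)
    norms.append(np.sum(h ** 2))
    residuals.append(np.sum((y - A @ h) ** 2))

# Larger lambda: smaller parameter norm (less variance), larger fit error (more bias)
print(np.all(np.diff(norms) <= 0), np.all(np.diff(residuals) >= 0))
```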

|

BME511/SystemIdentificationMAP.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

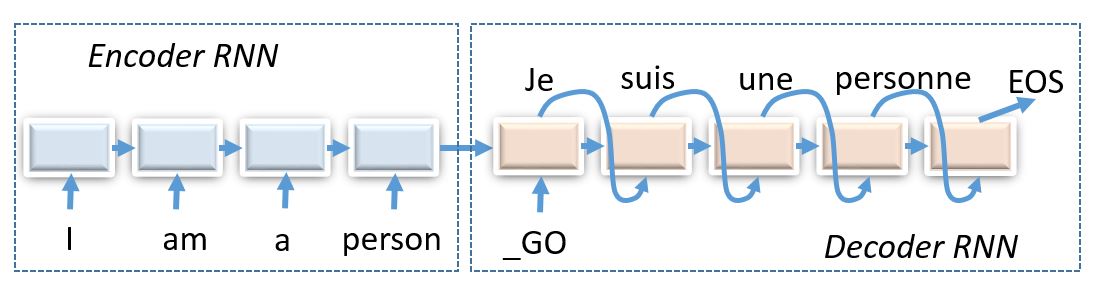

# ## Week 8: Reinforcement Learning for seq2seq

#

# This time we'll solve the problem of transcribing Hebrew words into English, also known as g2p (grapheme2phoneme).

#

# * word (sequence of letters in source language) -> translation (sequence of letters in target language)

#

# Unlike what most deep learning researchers do, we won't only train the model to maximize the likelihood of the correct translation; we will also employ reinforcement learning to teach it to translate with as few errors as possible.

#

#

# ### About the task

#

# One notable property of Hebrew is that it's a consonantal script: vowels are mostly not written. One could mark vowels with diacritics (niqqud) on the consonants, but you don't expect people to do that in everyday life.

#

# Therefore, some Hebrew characters will correspond to several English letters and others to none, so we use an encoder-decoder architecture to figure that out.

#

#

# _(img: esciencegroup.files.wordpress.com)_

#

# Encoder-decoder architectures are about converting anything to anything, including

# * Machine translation and spoken dialogue systems

# * [Image captioning](http://mscoco.org/dataset/#captions-challenge2015) and [image2latex](https://openai.com/requests-for-research/#im2latex) (convolutional encoder, recurrent decoder)

# * Generating [images by captions](https://arxiv.org/abs/1511.02793) (recurrent encoder, convolutional decoder)

# * Grapheme2phoneme - convert words to transcripts

#

# We chose simplified __Hebrew->English__ machine translation for words and short phrases (character-level), as it is relatively quick to train even without a gpu cluster.

# +

EASY_MODE = True #If True, only translates phrases of at most 20 characters (way easier).

#Useful for initial coding.

#If false, works with all phrases (please switch to this mode for homework assignment)

MODE = "he-to-en" #way we translate. Either "he-to-en" or "en-to-he"

MAX_OUTPUT_LENGTH = 50 if not EASY_MODE else 20 #maximal length of _generated_ output, does not affect training

REPORT_FREQ = 100 #how often to evaluate validation score

# -

# ### Step 1: preprocessing

#

# We shall store dataset as a dictionary

# `{ word1:[translation1,translation2,...], word2:[...],...}`.

#

# This is mostly due to the fact that many words have several correct translations.

#

# We have implemented this thing for you so that you can focus on more interesting parts.

#

#

# __Attention python2 users!__ You may want to cast everything to unicode later during homework phase, just make sure you do it _everywhere_.

# +

import numpy as np

from collections import defaultdict

word_to_translation = defaultdict(list) #our dictionary

bos = '_'

eos = ';'

with open("main_dataset.txt", encoding="utf-8") as fin:
    for line in fin:
        # strip the trailing newline (safe even if the last line has none)
        en,he = line.rstrip('\n').lower().replace(bos,' ').replace(eos,' ').split('\t')
        word,trans = (he,en) if MODE=='he-to-en' else (en,he)

        if len(word) < 3: continue
        if EASY_MODE:
            if max(len(word),len(trans))>20:
                continue

        word_to_translation[word].append(trans)

print ("size = ",len(word_to_translation))

# -

#get all unique lines in source language

all_words = np.array(list(word_to_translation.keys()))

# get all unique lines in translation language

all_translations = np.array([ts for all_ts in word_to_translation.values() for ts in all_ts])

# ### split the dataset

#

# We hold out 10% of all words to be used for validation.

#

from sklearn.model_selection import train_test_split

train_words,test_words = train_test_split(all_words,test_size=0.1,random_state=42)

# ### Building vocabularies

#

# We now need to build vocabularies that map strings to token ids and vice versa. We're gonna need these fellas when we feed training data into model or convert output matrices into english words.

from voc import Vocab

inp_voc = Vocab.from_lines(''.join(all_words), bos=bos, eos=eos, sep='')

out_voc = Vocab.from_lines(''.join(all_translations), bos=bos, eos=eos, sep='')
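# To make the `to_matrix` / `to_lines` round trip concrete, here is a hypothetical minimal character vocabulary. It is not the `voc.Vocab` class used above. It only assumes the convention printed in the next cell (id 0 = bos, id 1 = eos) and pads short lines with eos.

```python
import numpy as np

class CharVocab:
    """Hypothetical minimal character vocabulary (not the `voc.Vocab` class)."""

    def __init__(self, text, bos="_", eos=";"):
        chars = sorted(set(text) - {bos, eos})
        self.tokens = [bos, eos] + chars  # ids 0 and 1 reserved for bos/eos
        self.token_to_ix = {t: i for i, t in enumerate(self.tokens)}
        self.bos_ix, self.eos_ix = 0, 1

    def to_matrix(self, lines):
        # bos + characters + eos, right-padded with eos to the longest line
        rows = [[self.bos_ix] + [self.token_to_ix[c] for c in line] + [self.eos_ix]
                for line in lines]
        width = max(map(len, rows))
        return np.array([r + [self.eos_ix] * (width - len(r)) for r in rows],
                        dtype="int32")

    def to_lines(self, matrix):
        lines = []
        for row in matrix:
            chars = []
            for ix in row:
                if ix == self.bos_ix:
                    continue
                if ix == self.eos_ix:  # stop at the first eos; the rest is padding
                    break
                chars.append(self.tokens[ix])
            lines.append("".join(chars))
        return lines

voc = CharVocab("shalom" + "toda")
m = voc.to_matrix(["shalom", "toda"])
print(m)
print(voc.to_lines(m))  # ['shalom', 'toda']
```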

# +

# Here's how you cast lines into ids and backwards.

batch_lines = all_words[:5]

batch_ids = inp_voc.to_matrix(batch_lines)

batch_lines_restored = inp_voc.to_lines(batch_ids)

print("lines")

print(batch_lines)

print("\nwords to ids (0 = bos, 1 = eos):")

print(batch_ids)

print("\nback to words")

print(batch_lines_restored)

# -

# Draw word/translation length distributions to estimate the scope of the task.

# +

import matplotlib.pyplot as plt

# %matplotlib inline

plt.figure(figsize=[8,4])

plt.subplot(1,2,1)

plt.title("words")

plt.hist(list(map(len,all_words)),bins=20);

plt.subplot(1,2,2)

plt.title('translations')

plt.hist(list(map(len,all_translations)),bins=20);

# -

# ### Step 3: deploy encoder-decoder (1 point)

#

# __assignment starts here__

#

# Our architecture consists of two main blocks:

# * Encoder reads words character by character and outputs code vector (usually a function of last RNN state)

# * Decoder takes that code vector and produces translations character by character

#

# Then it gets fed into a model that follows this simple interface:

# * __`model.symbolic_translate(inp, **flags) -> out, logp`__ - takes symbolic int32 matrix of hebrew words, produces output tokens sampled from the model and output log-probabilities for all possible tokens at each tick.

# * __`model.symbolic_score(inp, out, **flags) -> logp`__ - takes symbolic int32 matrices of hebrew words and their english translations. Computes the log-probabilities of all possible english characters given english prefixes and hebrew word.

# * __`model.weights`__ - weights from all model layers [a list of variables]

#

# That's all! It's as hard as it gets. With those two methods alone you can implement all kinds of prediction and training.

# +

import tensorflow as tf

tf.reset_default_graph()

s = tf.InteractiveSession()

# ^^^ if you get "variable *** already exists": re-run this cell again

# +

from basic_model_tf import BasicTranslationModel

model = BasicTranslationModel('model',inp_voc,out_voc,

emb_size=64, hid_size=128)

s.run(tf.global_variables_initializer())

# +

# Play around with symbolic_translate and symbolic_score

inp = tf.placeholder_with_default(np.random.randint(0,10,[3,5],dtype='int32'),[None,None])

out = tf.placeholder_with_default(np.random.randint(0,10,[3,5],dtype='int32'),[None,None])

# translate inp (with untrained model)

sampled_out, logp = model.symbolic_translate(inp, greedy=False)

print("\nSymbolic_translate output:\n",sampled_out,logp)

print("\nSample translations:\n", s.run(sampled_out))

# -

# score logp(out | inp) with untrained input

logp = model.symbolic_score(inp,out)

print("\nSymbolic_score output:\n",logp)

print("\nLog-probabilities (clipped):\n", s.run(logp)[:,:2,:5])

# +

# Prepare any operations you want here

input_sequence = tf.placeholder('int32', [None,None])

greedy_translations, logp = model.symbolic_translate(input_sequence, greedy=True)

def translate(lines):
    """
    You are given a list of input lines.
    Make your neural network translate them.
    :return: a list of output lines
    """
    # Convert lines to a matrix of indices
    lines_ix = inp_voc.to_matrix(lines)

    # Compute translations in form of indices
    trans_ix = s.run(greedy_translations, {input_sequence: lines_ix})

    # Convert translations back into strings
    return out_voc.to_lines(trans_ix)

# +

print("Sample inputs:",all_words[:3])

print("Dummy translations:",translate(all_words[:3]))

assert isinstance(greedy_translations,tf.Tensor) and greedy_translations.dtype.is_integer, "trans must be a tensor of integers (token ids)"

assert translate(all_words[:3]) == translate(all_words[:3]), "make sure translation is deterministic (use greedy=True and disable any noise layers)"

assert type(translate(all_words[:3])) is list and (type(translate(all_words[:1])[0]) is str or type(translate(all_words[:1])[0]) is unicode), "translate(lines) must return a sequence of strings!"

print("Tests passed!")

# -

# ### Scoring function

#

# LogLikelihood is a poor estimator of model performance.

# * If we predict zero probability once, it shouldn't ruin entire model.

# * It is enough to learn just one translation if there are several correct ones.

# * What matters is how many mistakes model's gonna make when it translates!

#

# Therefore, we will use the minimal Levenshtein distance. It measures how many characters we need to add/remove/replace in the model's translation to make it match the closest correct translation. Alternatively, one could use character-level BLEU/RougeL or other similar metrics.

#

# The catch here is that Levenshtein distance is not differentiable: it isn't even continuous. We can't train our neural network to maximize it by gradient descent.
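# A pure-Python sketch of the dynamic-programming recurrence behind Levenshtein distance, plus the "closest reference" rule that `get_distance` applies. The notebook itself uses the `editdistance` package; the helper names here are illustrative.

```python
def levenshtein(a, b):
    # prev[j] = edit distance between the current prefix of a and b[:j]
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # substitute (free if equal)
        prev = cur
    return prev[-1]

def min_distance(trans, references):
    # Score against the closest of several acceptable translations
    return min(levenshtein(trans, ref) for ref in references)

print(levenshtein("kitten", "sitting"))             # 3
print(min_distance("shalon", ["shalom", "salom"]))  # 1
```

# The `min` over integer edit counts is exactly why this score has no useful gradient, which motivates the policy-gradient approach later on.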

# +

import editdistance # !pip install editdistance

def get_distance(word,trans):
    """
    A function that takes word and predicted translation
    and evaluates (Levenshtein's) edit distance to closest correct translation
    """
    references = word_to_translation[word]
    assert len(references)!=0,"wrong/unknown word"
    return min(editdistance.eval(trans,ref) for ref in references)

def score(words, bsize=100):
    """a function that computes levenshtein distance for bsize random samples"""
    assert isinstance(words,np.ndarray)
    batch_words = np.random.choice(words,size=bsize,replace=False)
    batch_trans = translate(batch_words)
    distances = list(map(get_distance,batch_words,batch_trans))
    return np.array(distances,dtype='float32')

# -

#should be around 5-50 and decrease rapidly after training :)

[score(test_words,10).mean() for _ in range(5)]

# ## Step 2: Supervised pre-training

#

# Here we define a function that trains our model through maximizing log-likelihood a.k.a. minimizing crossentropy.

# +

# import utility functions

from basic_model_tf import initialize_uninitialized, infer_length, infer_mask, select_values_over_last_axis

class supervised_training:

    # variables for inputs and correct answers
    input_sequence = tf.placeholder('int32',[None,None])
    reference_answers = tf.placeholder('int32',[None,None])

    # Compute log-probabilities of all possible tokens at each step. Use model interface.
    logprobs_seq = model.symbolic_score(input_sequence, reference_answers)

    # compute mean crossentropy
    crossentropy = - select_values_over_last_axis(logprobs_seq,reference_answers)
    mask = infer_mask(reference_answers, out_voc.eos_ix)
    loss = tf.reduce_sum(crossentropy * mask)/tf.reduce_sum(mask)

    # Build weights optimizer. Use model.weights to get all trainable params.
    train_step = tf.train.AdamOptimizer().minimize(loss, var_list=model.weights)

# initialize optimizer params while keeping model intact
initialize_uninitialized(s)

# -
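# A NumPy sketch of the masked cross-entropy computed above. `select_last_axis` and `eos_mask` are hypothetical re-implementations of `select_values_over_last_axis` and `infer_mask`. In particular, the assumption that the mask keeps tokens up to and including the first eos is a guess about `infer_mask`, not its documented behavior.

```python
import numpy as np

def select_last_axis(values, indices):
    # values: [batch, time, n_tokens], indices: [batch, time] -> [batch, time]
    return np.take_along_axis(values, indices[..., None], axis=-1)[..., 0]

def eos_mask(ids, eos_ix):
    # 1 up to and including the first eos in each row, 0 afterwards (assumed semantics)
    is_eos = (ids == eos_ix).astype(int)
    eos_seen_before = np.cumsum(is_eos, axis=1) - is_eos
    return (eos_seen_before == 0).astype("float32")

logp = np.log(np.full((1, 4, 3), 1 / 3))  # uniform model over 3 tokens
answers = np.array([[2, 1, 1, 1]])        # token 2, then eos (=1) and eos padding
mask = eos_mask(answers, eos_ix=1)        # [[1, 1, 0, 0]]
xent = -select_last_axis(logp, answers)
loss = (xent * mask).sum() / mask.sum()   # mean over real tokens only
print(mask, loss)                         # loss = log(3) for a uniform model
```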

# Actually run training on minibatches

import random

def sample_batch(words, word_to_translation, batch_size):
    """
    sample random batch of words and random correct translation for each word
    example usage:
    batch_x,batch_y = sample_batch(train_words, word_to_translation,10)
    """
    #choose words
    batch_words = np.random.choice(words,size=batch_size)

    #choose translations
    batch_trans_candidates = list(map(word_to_translation.get,batch_words))
    batch_trans = list(map(random.choice,batch_trans_candidates))
    return inp_voc.to_matrix(batch_words), out_voc.to_matrix(batch_trans)

bx,by = sample_batch(train_words, word_to_translation, batch_size=3)

print("Source:")

print(bx)

print("Target:")

print(by)

# +

from IPython.display import clear_output

from tqdm import tqdm,trange #or use tqdm_notebook,tnrange

loss_history=[]

editdist_history = []

for i in trange(25000):
    bx,by = sample_batch(train_words, word_to_translation, 32)

    feed_dict = {
        supervised_training.input_sequence:bx,
        supervised_training.reference_answers:by
    }

    loss,_ = s.run([supervised_training.loss,supervised_training.train_step],feed_dict)
    loss_history.append(loss)

    if (i+1)%REPORT_FREQ==0:
        clear_output(True)
        current_scores = score(test_words)
        editdist_history.append(current_scores.mean())
        plt.figure(figsize=(12,4))
        plt.subplot(131)
        plt.title('train loss / training time')
        plt.plot(loss_history)
        plt.grid()
        plt.subplot(132)
        plt.title('val score distribution')
        plt.hist(current_scores, bins = 20)
        plt.subplot(133)
        plt.title('val score / training time')
        plt.plot(editdist_history)
        plt.grid()
        plt.show()
        print("llh=%.3f, mean score=%.3f"%(np.mean(loss_history[-10:]),np.mean(editdist_history[-10:])))

# Note: it's okay if loss oscillates up and down as long as it gets better on average over long term (e.g. 5k batches)

# -

for word in train_words[:10]:
    print("%s -> %s"%(word,translate([word])[0]))

# +

test_scores = []
for start_i in trange(0,len(test_words),32):
    batch_words = test_words[start_i:start_i+32]
    batch_trans = translate(batch_words)
    distances = list(map(get_distance,batch_words,batch_trans))
    test_scores.extend(distances)

print("Supervised test score:",np.mean(test_scores))

# -

# ## Preparing for reinforcement learning (2 points)

#

# First we need to define loss function as a custom tf operation.

#

# The simple way to do so is through `tensorflow.py_func` wrapper.

# ```

# def my_func(x):
#     # x will be a numpy array with the contents of the placeholder below
#     return np.sinh(x)

# inp = tf.placeholder(tf.float32)

# y = tf.py_func(my_func, [inp], tf.float32)

# ```

#

#

# __Your task__ is to implement the `_compute_levenshtein` function that takes matrices of word and translation indices, converts them back to strings and computes the minimal Levenshtein distance via the __get_distance__ function above.

#

# +

def _compute_levenshtein(words_ix,trans_ix):
    """
    A custom tensorflow operation that computes levenshtein loss for predicted trans.

    Params:
    - words_ix - a matrix of letter indices, shape=[batch_size,word_length]
    - trans_ix - a matrix of output letter indices, shape=[batch_size,translation_length]

    Please implement the function and make sure it passes tests from the next cell.
    """
    #convert words to strings
    words = inp_voc.to_lines(words_ix)
    assert type(words) is list and type(words[0]) is str and len(words)==len(words_ix)

    #convert translations to strings
    translations = out_voc.to_lines(trans_ix)
    assert type(translations) is list and type(translations[0]) is str and len(translations)==len(trans_ix)

    #compute levenshtein distances. can be arbitrary python code.
    distances = list(map(get_distance, words, translations))
    assert type(distances) in (list,tuple,np.ndarray) and len(distances) == len(words_ix)

    distances = np.array(list(distances),dtype='float32')
    return distances

def compute_levenshtein(words_ix,trans_ix):
    out = tf.py_func(_compute_levenshtein,[words_ix,trans_ix,],tf.float32)
    out.set_shape([None])
    return tf.stop_gradient(out)

# -

# Simple test suite to make sure your implementation is correct. Hint: if you run into any bugs, feel free to use print from inside _compute_levenshtein.

# +

#test suite

#sample random batch of (words, correct trans, wrong trans)

batch_words = np.random.choice(train_words, size=100 )

batch_trans = list(map(random.choice,map(word_to_translation.get,batch_words )))

batch_trans_wrong = np.random.choice(all_translations,size=100)

batch_words_ix = tf.constant(inp_voc.to_matrix(batch_words))

batch_trans_ix = tf.constant(out_voc.to_matrix(batch_trans))

batch_trans_wrong_ix = tf.constant(out_voc.to_matrix(batch_trans_wrong))

# +

#assert compute_levenshtein is zero for ideal translations

correct_answers_score = compute_levenshtein(batch_words_ix ,batch_trans_ix).eval()

assert np.all(correct_answers_score==0),"a perfect translation got nonzero levenshtein score!"

print("Everything seems alright!")

# +

#assert compute_levenshtein matches actual scoring function

wrong_answers_score = compute_levenshtein(batch_words_ix,batch_trans_wrong_ix).eval()

true_wrong_answers_score = np.array(list(map(get_distance,batch_words,batch_trans_wrong)))

assert np.all(wrong_answers_score==true_wrong_answers_score),"for some word symbolic levenshtein is different from actual levenshtein distance"

print("Everything seems alright!")

# -

# Once you got it working...

#

#

# * You may now want to __remove/comment asserts__ from function code for a slight speed-up.

#

# * There's a more detailed tutorial on custom tensorflow ops: [`py_func`](https://www.tensorflow.org/api_docs/python/tf/py_func), [`low-level`](https://www.tensorflow.org/api_docs/python/tf/py_func).

# ## 3. Self-critical policy gradient (2 points)

#

# In this section you'll implement an algorithm called self-critical sequence training (here's an [article](https://arxiv.org/abs/1612.00563)).

#

# The algorithm is a vanilla policy gradient with a special baseline.

#

# $$ \nabla J = E_{x \sim p(x)} E_{y \sim \pi(y|x)} \nabla \log \pi(y|x) \cdot (R(x,y) - b(x)) $$

#

# Here reward R(x,y) is a __negative levenshtein distance__ (since we minimize it). The baseline __b(x)__ represents how well model fares on word __x__.

#

# In practice, this means that we compute baseline as a score of greedy translation, $b(x) = R(x,y_{greedy}(x)) $.

#

# Luckily, we already obtained the required outputs: `model.greedy_translations, model.greedy_mask` and we only need to compute levenshtein using `compute_levenshtein` function.

#
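# A NumPy sketch of the self-critical surrogate loss built in the next cell, on plain arrays so the arithmetic is easy to check. It computes advantage = (-sampled distance) - (-greedy distance), a per-token REINFORCE term weighted by that advantage, and an entropy bonus. In the TensorFlow graph, gradients flow only through the log-probabilities; NumPy here computes only the loss value. The helper `scst_loss` is illustrative; only the 0.01 entropy coefficient mirrors the notebook.

```python
import numpy as np

def scst_loss(sample_logp, sample_ids, mask, sample_dist, greedy_dist, entropy_coef=0.01):
    """sample_logp: [batch, time, n_tokens]; sample_ids, mask: [batch, time];
    sample_dist, greedy_dist: [batch] Levenshtein distances."""
    rewards = -sample_dist                 # R(x, y_sampled)
    baseline = -greedy_dist                # b(x) = R(x, y_greedy)
    advantage = rewards - baseline         # > 0 if the sample beat greedy decoding
    logp_taken = np.take_along_axis(sample_logp, sample_ids[..., None], axis=-1)[..., 0]
    J = logp_taken * advantage[:, None]
    loss = -(J * mask).sum() / mask.sum()
    entropy = -(np.exp(sample_logp) * sample_logp).sum(axis=-1)  # H = -sum p log p
    loss -= entropy_coef * (entropy * mask).sum() / mask.sum()
    return loss, advantage

logp = np.log(np.full((2, 3, 4), 0.25))  # uniform policy over 4 tokens
ids = np.zeros((2, 3), dtype=int)
mask = np.ones((2, 3))
loss, adv = scst_loss(logp, ids, mask,
                      sample_dist=np.array([1.0, 4.0]),
                      greedy_dist=np.array([2.0, 2.0]))
print(adv)   # [ 1. -2.]
print(loss)
```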

# +

class trainer:

    input_sequence = tf.placeholder('int32',[None,None])

    # use model to __sample__ symbolic translations given input_sequence
    sample_translations, sample_logp = model.symbolic_translate(input_sequence, greedy=False)
    # use model to __greedy__ symbolic translations given input_sequence
    greedy_translations, greedy_logp = model.symbolic_translate(input_sequence, greedy=True)

    rewards = - compute_levenshtein(input_sequence, sample_translations)

    # compute __negative__ levenshtein for greedy mode
    baseline = - compute_levenshtein(input_sequence, greedy_translations)

    # compute advantage using rewards and baseline
    advantage = rewards - baseline
    assert advantage.shape.ndims ==1, "advantage must be of shape [batch_size]"

    # compute log_pi(a_t|s_t), shape = [batch, seq_length]
    logprobs_phoneme = select_values_over_last_axis(sample_logp, sample_translations)

    # Compute policy gradient
    # or rather a surrogate function whose gradient is the policy gradient
    J = logprobs_phoneme*advantage[:,None]

    mask = infer_mask(sample_translations,out_voc.eos_ix)
    loss = - tf.reduce_sum(J*mask) / tf.reduce_sum(mask)

    # regularize with negative entropy. Don't forget the sign!
    # note: for entropy you need probabilities for all tokens (sample_logp), not just logprobs_phoneme
    entropy = - tf.reduce_sum(tf.exp(sample_logp) * sample_logp, axis=-1)  # H = -sum(p*log_p)
    assert entropy.shape.ndims == 2, "please make sure elementwise entropy is of shape [batch,time]"

    loss -= 0.01*tf.reduce_sum(entropy*mask) / tf.reduce_sum(mask)

    # compute weight updates, clip by norm
    grads = tf.gradients(loss,model.weights)
    grads = tf.clip_by_global_norm(grads,50)[0]

    train_step = tf.train.AdamOptimizer(learning_rate=1e-5).apply_gradients(zip(grads, model.weights,))

initialize_uninitialized()

# -

# # Policy gradient training

#

for i in trange(100000):
    bx = sample_batch(train_words,word_to_translation,32)[0]
    pseudo_loss,_ = s.run([trainer.loss, trainer.train_step],{trainer.input_sequence:bx})

    loss_history.append(pseudo_loss)

    if (i+1)%REPORT_FREQ==0:
        clear_output(True)
        current_scores = score(test_words)
        editdist_history.append(current_scores.mean())
        plt.figure(figsize=(8,4))
        plt.subplot(121)
        plt.title('val score distribution')
        plt.hist(current_scores, bins = 20)
        plt.subplot(122)
        plt.title('val score / training time')
        plt.plot(editdist_history)
        plt.grid()
        plt.show()
        print("J=%.3f, mean score=%.3f"%(np.mean(loss_history[-10:]),np.mean(editdist_history[-10:])))

model.translate("EXAMPLE;")

# ### Results

for word in train_words[:10]:
    print("%s -> %s"%(word,translate([word])[0]))

# +

test_scores = []
for start_i in trange(0,len(test_words),32):
    batch_words = test_words[start_i:start_i+32]
    batch_trans = translate(batch_words)
    distances = list(map(get_distance,batch_words,batch_trans))
    test_scores.extend(distances)

print("Policy gradient test score:",np.mean(test_scores))

# ^^ If you get Out Of Memory, please replace this with batched computation

# -

# ## Step 6: Make it actually work (5++ pts)

#

# In this section we want you to finally __restart with EASY_MODE=False__ and experiment to find a good model/curriculum for that task.

#

# We recommend the following architecture

#

# ```

# encoder---decoder

#

# P(y|h)

# ^

# LSTM -> LSTM

# ^ ^

# LSTM -> LSTM

# ^ ^

# input y_prev

# ```

#

# with __both__ LSTMs having at least as many units as the default GRU.

#

#

# It's okay to modify the code above without copy-pasting it.

#

# __Some tips:__

# * You will likely need to adjust pre-training time for such a network.

# * Supervised pre-training may benefit from clipping gradients somehow.

# * SCST may tolerate a higher learning rate in some cases, and may benefit from changing the entropy regularizer over time.

# * There's more than one way of sending information from encoder to decoder, especially if there's more than one layer:

# * __Vanilla:__ layer_i of encoder last state goes to layer_i of decoder initial state

# * __Intermediate layers:__ add dense (and possibly concat) layers between encoder last and decoder first.

# * __Every tick:__ feed encoder last state _on every iteration_ of decoder.

#

#

# * It's often useful to save pre-trained model parameters to not re-train it every time you want new policy gradient parameters.

# * When leaving training for nighttime, try setting REPORT_FREQ to a larger value (e.g. 500) not to waste time on it.

#

#

# * (advanced deep learning) It may be a good idea to first train on small phrases and then adapt to larger ones (a.k.a. training curriculum).

# * (advanced nlp) You may want to switch from raw utf8 to something like unicode or even syllables to make task easier.

# * (advanced nlp) Since hebrew words are written __with vowels omitted__, you may want to use a small Hebrew vowel markup dataset at `he-pron-wiktionary.txt`.

#

# __Formal criteria__:

#

# To get 5 points we want you to build an architecture that:

# * _doesn't consist of single GRU_

# * _works better_ than single GRU baseline.

# * We also want you to provide either learning curve or trained model, preferably both

# * ... and write a brief report or experiment log describing what you did and how it fared.

# ### Bonus hints: [here](https://github.com/yandexdataschool/Practical_RL/blob/master/week8_scst/bonus.ipynb)

assert not EASY_MODE, "make sure you set EASY_MODE = False at the top of the notebook."

# `[your report/log here or anywhere you please]`

# __Contributions:__ This notebook is brought to you by

# * Yandex [MT team](https://tech.yandex.com/translate/)

# * <NAME> ([DeniskaMazur](https://github.com/DeniskaMazur)), <NAME> ([Omrigan](https://github.com/Omrigan/)), <NAME> ([TixFeniks](https://github.com/tixfeniks)) and <NAME> ([justheuristic](https://github.com/justheuristic/))

# * Dataset is parsed from [Wiktionary](https://en.wiktionary.org), which is under CC-BY-SA and GFDL licenses.

#

|

Practical_RL/week8_scst/practice_tf.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Library imports

# +

#usual imports

import pandas as pd

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

#Outliers

from scipy import stats

# -

data = pd.read_csv('data/raw/Walmart_Store_sales.csv')

data.head()

print(f'There are {data.shape[0]} rows and {data.shape[1]} columns in this dataset')

print(f'Columns in this dataset {list(data.columns)}')

print(f'Overall missing values: \n{100*data.isnull().sum()/data.shape[0]}')

data.dtypes

data['Store'].value_counts()

# There are no missing values in the Store column, so we can convert it to an integer

data.dtypes

data['Store'] = data['Store'].astype(int)

data.head(1)

data['Holiday_Flag'].isnull().sum()

data[data['Holiday_Flag'].isnull()]

# # Re-assess Holiday_Flag column

# +

#Rows dated 27/08/2010 (<NAME> Day in Texas) will be set to 1 in Holiday_Flag

#The rest of the dates are not holidays according to the national register so we'll transform them to 0

# -

data.loc[data.Date == "27-08-2010", "Holiday_Flag"] = 1.0

data['Holiday_Flag'].isnull().sum()

# the remaining missing flags are not holidays (see the comment above), so fill them with 0
data['Holiday_Flag'] = data['Holiday_Flag'].fillna(0.0)

data['Holiday_Flag'].isnull().sum()

# +

days = data['Holiday_Flag'].value_counts()[0]

holidays = data['Holiday_Flag'].value_counts()[1]

print(f'There are {days} normal days in the dataset')

print(f'There are {holidays} holidays in the dataset')

# -

data = data.dropna(subset=['Weekly_Sales'])

# +

#Drop the nan values in Temperature/Fuel Price/CPI/Unemployment

# -

data = data.dropna(subset=['Temperature','Fuel_Price','CPI','Unemployment'])

data.describe()

# # Computing the mean ± 3 standard deviations interval (covers about 99.73% of values if the data are normally distributed)

y23 = [data.Temperature.mean()-3*data.Temperature.std(),data.Temperature.mean()+3*data.Temperature.std()]

y24 = [data.Fuel_Price.mean()-3*data.Fuel_Price.std(),data.Fuel_Price.mean()+3*data.Fuel_Price.std()]

y25 = [data.CPI.mean()-3*data.CPI.std(),data.CPI.mean()+3*data.CPI.std()]

y26 = [data.Unemployment.mean()-3*data.Unemployment.std(),data.Unemployment.mean()+3*data.Unemployment.std()]
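# The four intervals above repeat the same mean ± 3·std pattern. A sketch of a reusable helper
# (the function names are hypothetical, not part of the notebook); note that it drops values
# on both sides of each interval, whereas the notebook only removes Unemployment values above
# the upper bound:

```python
import pandas as pd

def sigma_bounds(s, k=3.0):
    """Return (mean - k*std, mean + k*std); pandas' std uses ddof=1."""
    mu, sd = s.mean(), s.std()
    return mu - k * sd, mu + k * sd

def drop_sigma_outliers(df, columns, k=3.0):
    """Keep rows whose values lie inside every listed column's interval.

    All bounds are computed on the unfiltered frame, as in the notebook.
    """
    keep = pd.Series(True, index=df.index)
    for col in columns:
        low, high = sigma_bounds(df[col], k)
        keep &= df[col].between(low, high)
    return df[keep]

# Made-up example: twenty ordinary values and one extreme one
toy = pd.DataFrame({"Unemployment": [10.0] * 20 + [1000.0]})
print(sigma_bounds(toy["Unemployment"]))
cleaned = drop_sigma_outliers(toy, ["Unemployment"])
print(len(toy), "->", len(cleaned))
```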

print(f'Any values outside of this interval within Temperature will be removed {y23}')

print(f'Any values outside of this interval within Fuel price will be removed {y24}')

print(f'Any values outside of this interval within CPI will be removed {y25}')

print(f'Any values outside of this interval within Unemployment will be removed {y26}')

# +

# Comparing these intervals with the min/max shown by data.describe(), only Unemployment needs outlier removal, so we focus on it

# -

data.drop(data[data.Unemployment > y26[1]].index, inplace=True)

data.describe()

print(f'After cleaning, there are only {data.shape[0]} rows left; we dropped {100 - data.shape[0] / 150 * 100:.2f}% of the rows')

data.head()

# ## EDA

store_sales = data.groupby('Store')['Weekly_Sales'].sum()

first_shop = store_sales.sort_values(ascending = False).index[0]

print(f'Store n°{first_shop} has the highest total sales summed over all weeks')

plt.figure(figsize = (10, 5))

g = sns.barplot(data = data, x = 'Store', y = 'Weekly_Sales', color = 'RED')

g.set_title("Weekly sales per store")

plt.show()

# +

# Even though store #13 holds the record for the biggest weekly sale,

# it isn't the best performer overall: #4 seems to perform better (note that the y-axis is scaled by 10^6)

# -
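# The notebook mixes three different per-store statistics: the total over all weeks
# (groupby(...).sum(), used for first_shop), the mean per week (what sns.barplot draws by
# default) and the single biggest week. A sketch on made-up numbers showing how they can
# disagree:

```python
import pandas as pd

# Hypothetical data: store 1 has one exceptional week, store 2 is steadily higher
sales = pd.DataFrame({
    "Store": [1, 1, 1, 2, 2, 2],
    "Weekly_Sales": [5.0, 1.0, 1.0, 3.0, 3.0, 3.0],
})

per_store = sales.groupby("Store")["Weekly_Sales"].agg(["sum", "mean", "max"])
best_total = per_store["sum"].idxmax()  # highest total over all weeks
best_mean = per_store["mean"].idxmax()  # highest average week
best_week = per_store["max"].idxmax()   # biggest single week
print(per_store)
print(best_total, best_mean, best_week)
```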

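# numeric_only=True skips the string Date column (pandas >= 2.0 raises an error otherwise)
data.corr(numeric_only=True)

plt.figure(figsize = (16,10))

sns.heatmap(data.corr(numeric_only=True), cmap = 'Reds', annot = True)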

plt.show()
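# To read the heatmap row that matters most here, one can rank features by their correlation
# with Weekly_Sales. A sketch on a made-up frame (columns A and B are placeholders, not
# Walmart features); numeric_only=True skips string columns such as Date:

```python
import pandas as pd

toy = pd.DataFrame({
    "Date": ["05-02-2010", "12-02-2010", "19-02-2010", "26-02-2010"],
    "Weekly_Sales": [1.0, 2.0, 3.0, 4.0],
    "A": [2.0, 4.0, 6.0, 8.0],  # perfectly proportional to sales
    "B": [1.0, 0.0, 1.0, 0.0],  # weakly, negatively related
})

corr_with_sales = (
    toy.corr(numeric_only=True)["Weekly_Sales"]
    .drop("Weekly_Sales")
    .sort_values(key=lambda s: s.abs(), ascending=False)  # strongest first, sign ignored
)
print(corr_with_sales)
```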

# Since we are dealing with sales, two time-related factors could be taken into account: the week (i.e. the date) of each sale, and the quarter, which is how financial firms usually assess their performance.

#

# However, we drop the Date column below; deriving week or quarter features from it could help fine-tune the model, and this could be explored in the future.

#
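# As a possible starting point for that future work, the week and quarter can be derived from
# Date before it is dropped. A sketch assuming the day-month-year format used in the earlier
# filter on "27-08-2010"; the new column names are illustrative:

```python
import pandas as pd

toy = pd.DataFrame({"Date": ["05-02-2010", "27-08-2010", "31-12-2010"]})

dates = pd.to_datetime(toy["Date"], format="%d-%m-%Y")
toy["Year"] = dates.dt.year
toy["Quarter"] = dates.dt.quarter                      # 1..4
toy["Week"] = dates.dt.isocalendar().week.astype(int)  # ISO week number 1..53
print(toy)
```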

data = data[['Store','Weekly_Sales','Holiday_Flag','Temperature','Fuel_Price','CPI','Unemployment']]

# +

#data.to_csv('./data/walmart_store_cleaned.csv', index = False)

|

03-Walmart Sales/walmart_store.ipynb

|

# Assignment: Linear regression on the Advertising data

# =====================================================

#

# **TODO**: Edit this cell to fill in your NYU Net ID and your name:

#

# - **Net ID**:

# - **Name**:

# To illustrate principles of linear regression, we are going to use some

# data from the textbook “An Introduction to Statistical Learning

# with Applications in R” (<NAME>, <NAME>, <NAME>,

# <NAME>) (available via NYU Library).

#

# The dataset is described as follows:

#

# > Suppose that we are statistical consultants hired by a client to

# > provide advice on how to improve sales of a particular product. The

# > `Advertising` data set consists of the sales of that product in 200

# > different markets, along with advertising budgets for the product in

# > each of those markets for three different media: TV, radio, and

# > newspaper.

# >

# > …

# >

# > It is not possible for our client to directly increase sales of the

# > product. On the other hand, they can control the advertising

# > expenditure in each of the three media. Therefore, if we determine

# > that there is an association between advertising and sales, then we

# > can instruct our client to adjust advertising budgets, thereby

# > indirectly increasing sales. In other words, our goal is to develop an

# > accurate model that can be used to predict sales on the basis of the

# > three media budgets.

#

# Sales are reported in thousands of units, and the TV, radio, and newspaper

# budgets are reported in thousands of dollars.

#

# For this assignment, you will fit a linear regression model to a small

# dataset. You will iteratively improve your linear regression model by

# examining the residuals at each stage, in order to identify problems

# with the model.

#

# Make sure to include your name and net ID in a text cell at the top of

# the notebook.

# +

from sklearn import metrics

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

import seaborn as sns

sns.set()

from IPython.core.interactiveshell import InteractiveShell

InteractiveShell.ast_node_interactivity = "all"

# -

# ### 1. Read in and pre-process data

#

# In this section, you will read in the “Advertising” data, and make sure

# it is loaded correctly. Visually inspect the data using a pairplot, and

# note any meaningful observations. In particular, comment on which

# features appear to be correlated with product sales, and which features

# appear to be correlated with one another. Then, split the data into

# training data (70%) and test data (30%).

#

# **The code in this section is provided for you**. However, you should

# add a text cell at the end of this section, in which you write your

# comments and observations.

# #### Read in data

url = 'https://www.statlearning.com/s/Advertising.csv'

df = pd.read_csv(url, index_col=0)

df.head()

# Note that in this dataset, the first column in the data file is the row

# label; that’s why we use `index_col=0` in the `read_csv` command. If we

# omitted that argument, we would have an additional (unnamed)

# column in the dataset, containing the row number.
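# A small self-contained illustration of the effect of `index_col=0`, using a made-up CSV
# string laid out like the Advertising file (empty first header field, row labels in the
# first column); the numbers are placeholders, not the real data:

```python
import io
import pandas as pd

csv_text = ",TV,radio,newspaper,sales\n1,100.0,20.0,30.0,10.0\n2,50.0,10.0,5.0,6.0\n"

with_index = pd.read_csv(io.StringIO(csv_text), index_col=0)
without_index = pd.read_csv(io.StringIO(csv_text))

print(list(with_index.columns))     # row labels became the index
print(list(without_index.columns))  # row labels kept as an extra, unnamed column
```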

#

# (You can try removing the `index_col` argument and re-running the cell

# above, to see the effect and to understand why we used this argument.)

# #### Visually inspect the data

sns.pairplot(df);

# The most important panels here are on the bottom row, where `sales` is

# on the vertical axis and the advertising budgets are on the horizontal

# axes.

# #### Split up data

#

# We will use 70% of the data for training and the remaining 30% to test

# the regression model.

train, test = train_test_split(df, test_size=0.3)

train.info()

test.info()
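# `train_test_split` draws a different random split on every run. A sketch on a made-up
# 200-row frame (the same size as the Advertising data) showing the 70/30 sizes, with
# `random_state` added as an assumption so that the split is reproducible:

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

toy = pd.DataFrame({"x": np.arange(200), "y": np.arange(200) * 2.0})

train_a, test_a = train_test_split(toy, test_size=0.3, random_state=42)
train_b, test_b = train_test_split(toy, test_size=0.3, random_state=42)

print(len(train_a), len(test_a))
print(test_a.index.equals(test_b.index))  # same seed, same rows
```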

# ### 2. Fit simple linear regression models

#

# Use the training data to fit a simple linear regression to predict

# product sales, for each of three features: TV ad budget, radio ad

# budget, and newspaper ad budget. In other words, you will fit *three*

# regression models, with each model being trained on one feature. For

# each of the three regression models, create a plot of the training data

# and the regression line, with product sales ($y$) on the vertical axis

# and the feature on which the model was trained ($x$) on the horizontal

# axis.
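# A minimal sketch of the "one feature per model" setup on made-up data where sales depend on
# TV only (the numbers are hypothetical, chosen so that the fit is easy to check). Each model
# sees a single-column DataFrame, which is the 2-D input `LinearRegression.fit` expects:

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
toy = pd.DataFrame({
    "TV": rng.uniform(0, 300, 100),
    "radio": rng.uniform(0, 50, 100),
    "newspaper": rng.uniform(0, 100, 100),
})
toy["sales"] = 3.0 + 0.05 * toy["TV"]  # hypothetical noiseless relationship

models = {}
for feature in ["TV", "radio", "newspaper"]:
    reg = LinearRegression().fit(toy[[feature]], toy["sales"])
    models[feature] = reg
    print(feature, reg.intercept_, reg.coef_)

# Points on the fitted TV line, e.g. for drawing it over a scatter of the training data
x_line = pd.DataFrame({"TV": np.linspace(0, 300, 5)})
y_line = models["TV"].predict(x_line)
```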

#

# Also, for each regression model, print the intercept and coefficients,