text | source |

|---|---|

# Notes from the Hufflepuff Unconference

April 28th, we ran the Hufflepuff Unconference in Berkeley, at the MIRI/CFAR office common space.

There's room for improvement in how the Unconference could have been run, but it succeeded at the core things I wanted to accomplish:

* Established common knowledge of what proble... | https://www.lesswrong.com/posts/stQcoPWFm9R3EixSC/notes-from-the-hufflepuff-unconference |

# Reflexive Oracles and superrationality: prisoner's dilemma

*This grew out of an exchange with Jessica Taylor during MIRI's recent visit to the FHI.* Still getting my feel for the fixed point approach; let me know of any errors.

The question is, how can we make use of a reflective oracle to reach outcomes that are... | https://www.lesswrong.com/posts/5bd75cc58225bf067037505e/reflexive-oracles-and-superrationality-prisoner-s-dilemma |

# Reflexive Oracles and superrationality: Pareto

In the previous post, I looked at potentially Pareto agents playing the prisoner's dilemma. But the prisoner's dilemma is an unusual kind of problem: the space of all possible expected outcomes can be reached by individual players choosing independent mixed strategies.

... | https://www.lesswrong.com/posts/5bd75cc58225bf0670375068/reflexive-oracles-and-superrationality-pareto |
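The remark above about independent mixed strategies in the prisoner's dilemma can be sketched numerically. This is a minimal illustration, not the post's own construction: the payoff table and the name `expected_payoffs` are assumptions chosen for the example.

```python
from typing import Dict, Tuple

# Hypothetical payoff table (an assumption, not taken from the post):
# keys are (player 1 action, player 2 action),
# values are (player 1 payoff, player 2 payoff).
PAYOFFS: Dict[Tuple[str, str], Tuple[float, float]] = {
    ("C", "C"): (2.0, 2.0),
    ("C", "D"): (0.0, 3.0),
    ("D", "C"): (3.0, 0.0),
    ("D", "D"): (1.0, 1.0),
}


def expected_payoffs(p: float, q: float) -> Tuple[float, float]:
    """Expected payoffs when player 1 cooperates with probability p and
    player 2 cooperates with probability q, each mixing independently."""
    u1 = 0.0
    u2 = 0.0
    for a1, w1 in (("C", p), ("D", 1.0 - p)):
        for a2, w2 in (("C", q), ("D", 1.0 - q)):
            x, y = PAYOFFS[(a1, a2)]
            # Independence: the joint probability is the product w1 * w2.
            u1 += w1 * w2 * x
            u2 += w1 * w2 * y
    return u1, u2
```

For this particular table the cross terms cancel, giving u1 = 1 - p + 2q and u2 = 1 - q + 2p. The map is therefore linear in (p, q), and the unit square of independent strategies covers the whole convex hull of the four pure outcomes. A differently chosen payoff table need not have this property.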

# Existential risk from AI without an intelligence explosion

\[xpost from [my blog](http://alexmennen.com/index.php/2017/05/25/existential-risk-from-ai-without-an-intelligence-explosion/)\]

In discussions of existential risk from AI, it is often assumed that the existential catastrophe would follow an intelligence ex... | https://www.lesswrong.com/posts/bFcbG2TQCCE3krhEY/existential-risk-from-ai-without-an-intelligence-explosion |

# The Atomic Bomb Considered As Hungarian High School Science Fair Project

**I.**

A group of Manhattan Project physicists [created](https://en.wikipedia.org/wiki/The_Martians_(scientists)) a tongue-in-cheek mythology where superintelligent Martian scouts landed in Budapest in the late 19th century and stayed for abou... | https://www.lesswrong.com/posts/xPJKZyPCvap4Fven8/the-atomic-bomb-considered-as-hungarian-high-school-science |

# Futarchy Fix

Robin Hanson's Futarchy is a proposal to let prediction markets make governmental decisions. We can view an operating Futarchy as an agent, and ask if it is aligned with the interests of its constituents. I am aware of two main failures of alignment: (1) since predicting rare events is rewarded in propo... | https://www.lesswrong.com/posts/5bd75cc58225bf0670375432/futarchy-fix |

# Why I am not currently working on the AAMLS agenda

(note: this is not an official MIRI statement, this is a personal statement. I am not speaking for others who have been involved with the agenda.)

The AAMLS (Alignment for Advanced Machine Learning Systems) agenda is a project at MIRI that is about determi... | https://www.lesswrong.com/posts/5bd75cc58225bf067037541b/why-i-am-not-currently-working-on-the-aamls-agenda |

# Acausal trade: double decrease

*A putative new idea for AI control; index [here](https://agentfoundations.org/item?id=601)*.

Other posts in the series: [Introduction](https://agentfoundations.org/item?id=1465), [Double decrease](https://agentfoundations.org/item?id=1463), [Pre-existence deals](https://agentfoun... | https://www.lesswrong.com/posts/5bd75cc58225bf0670375414/acausal-trade-double-decrease |

# Counterfactually uninfluenceable agents

*A putative new idea for AI control; index [here](https://agentfoundations.org/item?id=601)*.

[Techniques](https://agentfoundations.org/item?id=1097) [used](https://www.dropbox.com/s/xv70s8q3c2o4ta2/IIRLposter.pdf?raw=1) to counter agents taking [biased decisions](https:/... | https://www.lesswrong.com/posts/5bd75cc58225bf067037536b/counterfactually-uninfluenceable-agents |

# Cooperative Oracles: Introduction

This is the first in a series of posts introducing a new tool called a Cooperative Oracle. All of these posts are joint work with Sam Eisenstat, Tsvi Benson-Tilsen, and Nisan Stiennon.

Here is my plan for posts in this sequence. I will update this as I go.

1) [Introduction](https:/... | https://www.lesswrong.com/posts/5bd75cc58225bf0670375419/cooperative-oracles-introduction |

# Rationalist Seder: A Story of War

For whatever reason, the rationality community is inordinately Jewish. Among other things, this resulted in 2011 in people in New York putting together "Rationalist Seder", a reframing of the story of Jewish Liberation From Egypt to reflect upon liberation in general. What does it m... | https://www.lesswrong.com/posts/wP2BJJnJKD6gow58L/rationalist-seder-a-story-of-war |

# A new, better way to read the Sequences

A new way to read the Sequences:

[**https://www.readthesequences.com**](https://www.readthesequences.com)

It's also more mobile-friendly than a PDF/mobi/epub.

(The content is from the book — _Rationality: From AI to Zombies_. Books I through IV are up already; Books V and V... | https://www.lesswrong.com/posts/YoWLYphmLRYpC2qMQ/a-new-better-way-to-read-the-sequences |

# Mode Collapse and the Norm One Principle

_\[Epistemic status: I assign a 70% chance that this model proves to be useful, 30% chance it describes things we are already trying to do to a large degree, and won't cause us to update much.\]_

I'm going to talk about something that's a little weird, because it uses some ... | https://www.lesswrong.com/posts/BZgnhWCx3ovs5cxgg/mode-collapse-and-the-norm-one-principle |

# Becoming a Better Community

So I've been following Project Hufflepuff, the rationalist community's effort to become not better rationalists (per se), but a better community. I recently read the summary of the recent Project Hufflepuff Unconference, and I had a thought.

**The Problem**

==============... | https://www.lesswrong.com/posts/MBbuef8LkSRYbz6He/becoming-a-better-community |

# Bet or update: fixing the will-to-wager assumption

(Warning: completely obvious reasoning that I'm only posting because I haven't seen it spelled out anywhere.)

Some people say, expanding on an idea of de Finetti, that Bayesian rational agents should offer two-sided bets based on their beliefs. For example, if you ... | https://www.lesswrong.com/posts/zxhTv6AirgD6tRTrz/bet-or-update-fixing-the-will-to-wager-assumption |
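The two-sided betting idea mentioned above can be made concrete with a small sketch. The function names and the 90% figure in the usage note are hypothetical, chosen only to illustrate what "fair odds at your stated belief" means; they are not the post's own example.

```python
def fair_stake_against(belief: float, stake_for: float) -> float:
    """Counter-stake that makes a bet on the event break even in expectation,
    for an agent who assigns probability `belief` to the event."""
    if not 0.0 < belief < 1.0:
        raise ValueError("belief must be strictly between 0 and 1")
    return stake_for * (1.0 - belief) / belief


def expected_gain(belief: float, stake_for: float, stake_against: float) -> float:
    """Expected gain, under `belief`, of risking `stake_for` on the event
    to win `stake_against` if it happens."""
    return belief * stake_against - (1.0 - belief) * stake_for
```

With a hypothetical belief of 0.9, risking 9 units on the event is fair against a counter-stake of about 1 unit, and `expected_gain` is then approximately zero. The opposite side of the same bet has the negated expected gain, which is also approximately zero, so an agent offering these odds is indifferent between the two sides.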

# Bring up Genius

_(This is a "Pareto translation" of_ Bring up Genius _by László Polgár, the book [recently mentioned](http://slatestarcodex.com/2017/05/30/hungarian-education-iii-mastering-the-core-teachings-of-the-budapestians/) at_ Slate Star Codex_. I hope that selected 20% of the book text, translated approximat... | https://www.lesswrong.com/posts/J9pNx22bj5RuiRjAj/bring-up-genius |

# SSC Journal Club: AI Timelines

**I.**

A few years ago, Muller and Bostrom et al surveyed AI researchers to assess their opinion on AI progress and superintelligence. Since then, deep learning took off, AlphaGo beat human Go champions, and the field has generally progressed. I’ve been waiting for a new survey for a ... | https://www.lesswrong.com/posts/qL8Z9TBCNWQyN6yLq/ssc-journal-club-ai-timelines |

# Bi-Weekly Rational Feed

===Highly Recommended Articles:

[Bring Up Genius by Viliam (lesswrong)](/r/discussion/lw/p48/bring_up_genius/) \- An "80/20" translation. Positive motivation. Extreme resistance from the Hungarian government and press. Polgar's five principles. Biting criticism of the school system. Learning... | https://www.lesswrong.com/posts/sTBsv2qDBwsbDM8rg/bi-weekly-rational-feed |

# We are the Athenians, not the Spartans

The Peloponnesian War was a war between two empires: the seadwelling Athenians, and the landlubber Spartans. Spartans were devoted to duty and country, living in barracks and drinking the black broth. From birth they trained to be the caste dictators of a slaveowning society, w... | https://www.lesswrong.com/posts/sPbEiZZZFB4hxaEAQ/we-are-the-athenians-not-the-spartans |

# Why I think worse than death outcomes are not a good reason for most people to avoid cryonics

*Content note*: torture, suicide, things that are worse than death

*Follow-up to*: [http://lesswrong.com/r/discussion/lw/lrf/can\_we\_decrease\_the\_risk\_of\_worsethandeath/](/r/discussion/lw/lrf/can_we_decrease_the_risk_... | https://www.lesswrong.com/posts/WgypBZ3ahvKimifyY/why-i-think-worse-than-death-outcomes-are-not-a-good-reason |

# The Rationalistsphere and the Less Wrong wiki

Hi everyone!

For people not acquainted with me, [I'm Deku-shrub](https://twitter.com/Deku_shrub), often known online for [my cybercrime research](https://pirate.london/tagged/dark-web), as well as fairly heavy involvement in the global transhumanist movement with projec... | https://www.lesswrong.com/posts/JDrDti63FE6BXZCjv/the-rationalistsphere-and-the-less-wrong-wiki |

# Thought experiment: coarse-grained VR utopia

I think I've come up with a fun thought experiment about friendly AI. It's pretty obvious in retrospect, but I haven't seen it posted before.

When thinking about what friendly AI should do, one big source of difficulty is that the inputs are supposed to be human intuiti... | https://www.lesswrong.com/posts/Y27zZYJS35F4ZJdeN/thought-experiment-coarse-grained-vr-utopia |

# Announcing AASAA - Accelerating AI Safety Adoption in Academia (and elsewhere)

AI safety is a small field. It has only about 50 researchers, and it’s mostly talent-constrained. I believe this number should be drastically higher.

A: the missing step from zero to hero

I have spoken to many intelligent, self-mot... | https://www.lesswrong.com/posts/SMd4ATu2vzMuSbr3v/announcing-aasaa-accelerating-ai-safety-adoption-in-academia |

# Concrete Ways You Can Help Make the Community Better

_There is a TLDR at the bottom_

Lots of people really value the lesswrong community but aren't sure how to contribute. The rationalist community can be intimidating. We have a lot of very smart people and the standards can be high. Nonetheless there are lots of c... | https://www.lesswrong.com/posts/suY8SxAzGSckdo9fu/concrete-ways-you-can-help-make-the-community-better |

# LessWrong 2.0 Feature Roadmap & Feature Suggestions

This post will serve as a place to discuss what features the new LessWrong 2.0 should have, and I will try to keep this post updated with our feature roadmap plans.

Here is roughly the set of features we are planning to develop over the next few weeks:

UPDATED: A... | https://www.lesswrong.com/posts/6XZLexLJgc5ShT4in/lesswrong-2-0-feature-roadmap-and-feature-suggestions |

# Welcome to Lesswrong 2.0

Lesswrong 2.0 is a project by Oliver Habryka, Ben Pace, and Matthew Graves with the aim of revitalizing the Lesswrong discussion platform. Oliver and Ben are currently working on the project full-time and Matthew Graves is providing part-time support and oversight from MIRI.

Our main goals ... | https://www.lesswrong.com/posts/HJDbyFFKf72F52edp/welcome-to-lesswrong-2-0 |

# Instrumental Rationality 1: Starting Advice

Starting Advice

---------------

\[This is the first post in the Instrumental Rationality Sequence. It's a collection of four concepts that I think are central to instrumental rationality—caring about the obvious, looking for practical things, practicing in pieces, and rea... | https://www.lesswrong.com/posts/s5DKBNaSRrQcc92d3/instrumental-rationality-1-starting-advice |

# Distinctions of the Moment

I could use a better name for this (any suggestions?), but I'll use "distinctions of the moment" for the moment.

A distinction of the moment is a distinction made between two synonyms or near-synonyms (or sometimes antonyms), for the sake of pointing out a difference which is important to... | https://www.lesswrong.com/posts/kKTRqes9MN6sAPgZd/distinctions-of-the-moment |

# Pair Debug to Understand, not Fix

_I'm an adjunct instructor at CFAR, but this is my opinion, not CFAR's._

At CFAR, one of the exercises is 'pair debugging'; one person is the protagonist exploring one of their problems, and the other person is the helper, helping them understand and solve the problem. (Like many t... | https://www.lesswrong.com/posts/K2Ajrko4mowY26Xac/pair-debug-to-understand-not-fix |

# Idea for LessWrong: Video Tutoring

Update 7/9/17: I propose that Learners individually reach out to Teachers, and set up meetings. It seems like the most practical way of getting started, but I am not sure and am definitely open to other ideas. Other notes:

* There seems to be agreement that the best way to do th... | https://www.lesswrong.com/posts/ZPNNQaRdBTsnJ44Ax/idea-for-lesswrong-video-tutoring |

# Bi-Weekly Rational Feed

===Highly Recommended Articles:

[Introducing The EA Involvement Guide by The Center for Effective Altruism (EA forum)](http://effective-altruism.com/ea/1bj/introducing_the_ea_involvement_guide/) \- A huge list of concrete actions you can take to get involved. Every action has a brief descrip... | https://www.lesswrong.com/posts/8oKeHtiFR2qDKrKmA/bi-weekly-rational-feed |

# On Dragon Army

Analysis Of: [Dragon Army: Theory & Charter (30 Minute Read)](http://lesswrong.com/lw/p23/dragon_army_theory_charter_30min_read/)

Epistemic Status: Varies all over the map from point to point

Length Status: In theory I suppose it could be longer

This is a long post responding to an almo... | https://www.lesswrong.com/posts/KShZSJyBwY7K3hcWN/on-dragon-army |

# Loebian cooperation in the tiling agents problem

The [tiling agents problem](http://lesswrong.com/lw/hmt/tiling_agents_for_selfmodifying_ai_opfai_2/) is about formalizing how AIs can create successor AIs that are at least as smart. Here's a toy model I came up with, which is similar to [Benya's old model](http://les... | https://www.lesswrong.com/posts/5bd75cc58225bf0670375459/loebian-cooperation-in-the-tiling-agents-problem |

# Self-modification as a game theory problem

In this post I'll try to show a surprising link between two research topics on LW: game-theoretic cooperation between AIs (quining, Loebian cooperation, modal combat, etc) and stable self-modification of AIs (tiling agents, Loebian obstacle, etc).

When you're trying to coo... | https://www.lesswrong.com/posts/yX2reFadzj3xKxEFm/self-modification-as-a-game-theory-problem |

# Just Saying What You Mean is Impossible

Response to: [Conversation Deliberately Skirts the Border of Incomprehensibility](http://slatestarcodex.com/2017/06/26/conversation-deliberately-skirts-the-border-of-incomprehensibility/) (SlateStarCodex)

“Why don’t people just say what they mean?”

They can’t.

I don’t mean ... | https://www.lesswrong.com/posts/RnfL2kARS4BgSeZHM/just-saying-what-you-mean-is-impossible |

# Why Are Transgender People Immune To Optical Illusions?

*\[Epistemic status: So, so speculative. Don’t take any of this seriously until it’s replicated and endorsed by other people.\]*

**I.**

If you’ve ever wanted to see a glitch in the Matrix, watch this spinning mask:

, I’ve noticed a recent trend towards skepticism of B... | https://www.lesswrong.com/posts/GTAFKjdQoSa9smKmj/one-magisterium-bayes |

# Epistemic Spot Check: A Guide To Better Movement (Todd Hargrove)

This is part of an ongoing series assessing where the epistemic bar should be for self-help books.

Introduction

------------

Thesis: increasing your physical capabilities is more often a matter of teaching your neurological system than it is anything... | https://www.lesswrong.com/posts/mjneyoZjyk9oC5ocA/epistemic-spot-check-a-guide-to-better-movement-todd |

# Rescuing the Extropy Magazine archives

This is possibly of more interest to old-school [Extropians](https://hpluspedia.org/wiki/Extropianism): you may be aware that the defunct [Extropy Institute's website](http://www.extropy.org/) is very slow and broken, and certainly inaccessible to newcomers.

Anyhow, I have recently pieced ... | https://www.lesswrong.com/posts/gxouYfj9jJLTzhmkM/rescuing-the-extropy-magazine-archives |

# Subtle Forms of Confirmation Bias

There are at least two types of confirmation bias.

The first is **_selective attention:_** a tendency to pay attention to, or recall, that which confirms the hypothesis you are thinking about rather than that which speaks against it.

The second is **_selective experimentation:_** ... | https://www.lesswrong.com/posts/mmwyubv724MTvvL5Z/subtle-forms-of-confirmation-bias |

# Steelmanning the Chinese Room Argument

_(This post grew out of an [old conversation](/lw/ig8) with Wei Dai.)_

Imagine a person sitting in a room, communicating with the outside world through a terminal. Further imagine that the person knows some secret fact (e.g. that the Moon landings were a hoax), but is absolute... | https://www.lesswrong.com/posts/EZbzGWwtNNWtw79tg/steelmanning-the-chinese-room-argument |

# Bayesian probability theory as extended logic -- a new result

I have a new paper that strengthens the case for strong Bayesianism, a.k.a. [One Magisterium Bayes](/r/discussion/lw/p71/onemagisterium_bayes/ "One Magisterium Bayes"). The paper is entitled "[From propositional logic to plausible reasoning: a uniqueness ... | https://www.lesswrong.com/posts/T7aQqNm6m8pTXZYnd/bayesian-probability-theory-as-extended-logic-a-new-result |

# Against lone wolf self-improvement

LW has a problem. Openly or covertly, many posts here promote the idea that a rational person ought to be able to self-improve on their own. Some of it comes from Eliezer's refusal to attend college (and Luke dropping out of his bachelor's, etc). Some of it comes from our concept of... | https://www.lesswrong.com/posts/HPrv2WXDcSFDrwA5M/against-lone-wolf-self-improvement |

# Bi-Weekly Rational Feed

===Highly Recommended Articles:

[Just Saying What You Mean Is Impossible by Zvi Mowshowitz](https://thezvi.wordpress.com/2017/06/28/just-saying-what-you-mean-is-impossible/) \- "Humans are automatically doing lightning fast implicit probabilistic analysis on social information in the backgrou... | https://www.lesswrong.com/posts/YHw9w47DGFtgAALHY/bi-weekly-rational-feed |

# In praise of fake frameworks

Related to: [Bucket errors](/lw/o2k/flinching_away_from_truth_is_often_about/), [Categorizing Has Consequences](/lw/nx/categorizing_has_consequences/), [Fallacies of Compression](/lw/nw/fallacies_of_compression/)

Followup to: [Gears in Understanding](/lw/ozz/gears_in_understanding/)

... | https://www.lesswrong.com/posts/wDP4ZWYLNj7MGXWiW/in-praise-of-fake-frameworks |

# Becoming stronger together

> I want people to go forth, but also to return. Or maybe even to go forth and stay simultaneously, because this is the Internet and we can get away with that sort of thing; I've learned some interesting things on _Less Wrong_, lately, and if continuing motivation over years is any sort o... | https://www.lesswrong.com/posts/mSmoKx49ckrz9i9Ls/becoming-stronger-together |

# Delegative Inverse Reinforcement Learning

$$\newcommand{\Comment}[1]{}$$

$$\newcommand{\Bool}{\{0,1\}}$$

$$\newcommand{\Words}{{\Bool^*}}$$

$$\DeclareMathOperator{\Sgn}{sgn}$$

$$\DeclareMathOperator{\Supp}{supp}$$

$$\DeclareMathOperator{\Stab}{stab}$$

$$\DeclareMathOperator{\Img}{Im}$$

$$\DeclareMathOperator{\Dom}... | https://www.lesswrong.com/posts/5bd75cc58225bf067037546b/delegative-inverse-reinforcement-learning |

# LessWrong Is Not about Forum Software, LessWrong Is about Posts (Or: How to Immanentize the LW 2.0 Eschaton in 2.5 Easy Steps!)

\[epistemic status: I was going to do a lot of research for this post, but I decided not to as there are no sources on the internet so I'd have to interview people directly and I'd rather h... | https://www.lesswrong.com/posts/vCebeGvzqQ6kbe4iy/lesswrong-is-not-about-forum-software-lesswrong-is-about |

# Current thoughts on Paul Christiano's research agenda

This post summarizes my thoughts on Paul Christiano's agenda in general and [ALBA](https://ai-alignment.com/alba-an-explicit-proposal-for-aligned-ai-17a55f60bbcf) in particular.

***

(note: at the time of writing, I am not employed at MIRI)

(in general, op... | https://www.lesswrong.com/posts/5bd75cc58225bf067037545b/current-thoughts-on-paul-christano-s-research-agenda |

# Change Is Bad

Epistemic Status: Public service reminder (I want to be able to link to this in the future)

Leads to: [Choices are Bad](https://thezvi.wordpress.com/2017/07/22/choices-are-bad/), [Choices Are Really Bad](https://thezvi.wordpress.com/2017/08/12/choices-are-really-bad/), [Complexity Is Bad](https://thez... | https://www.lesswrong.com/posts/3BdhcinirjztnsBFi/change-is-bad |

# The dark arts: Examples from the Harris-Adams conversation

Recently, James_Miller [posted](/r/discussion/lw/p9e/sam_harris_and_scott_adams_debate_trump_a_model/#comments) a conversation between Sam Harris and Scott Adams about Donald Trump. James_Miller titled it "a model rationalist disagreement". While I agree tha... | https://www.lesswrong.com/posts/ZCir477TEMPN6q5o7/the-dark-arts-examples-from-the-harris-adams-conversation |

# Choices are Bad

Epistemic Status: Spent way too long deciding what to put here

Kind of a Follow-Up to: [Change is Bad](https://thezvi.wordpress.com/2017/07/20/change-is-bad/)

Will Hopefully Lead To, But No Promises: Some combination of, when and if I write them: [Complexity is Bad](https://thezvi.wordpress.com/201... | https://www.lesswrong.com/posts/ufd9YWXLdWaLFMxfL/choices-are-bad |

# Book Review: Mathematics for Computer Science (Suggestion for MIRI Research Guide)

**tl;dr**: I read Mathematics for Computer Science (MCS) and found it excellent. I sampled Discrete Mathematics and Its Applications (Rosen)—currently recommended in MIRI's [research guide](https://intelligence.org/research-guide/)—as... | https://www.lesswrong.com/posts/bdvbsf8Y6FbrC2bea/book-review-mathematics-for-computer-science-suggestion-for |

# People don't have beliefs any more than they have goals: Beliefs As Body Language

Many people, anyway.

There is a common mistake in modeling humans, to think that they have actual goals, and that they deduce their actions from those goals. First there is a goal, then there is an action which is born from the goal. ... | https://www.lesswrong.com/posts/TGzYTkmrtaE4yuEch/people-don-t-have-beliefs-any-more-than-they-have-goals |

# Models of human relationships - tools to understand people

**This post will not teach you the models here.** This post is a summary of the models that I carry in my head. I have written most of the descriptions without looking them up (See [Feynman notebook method](http://calnewport.com/blog/2015/11/25/the-feynman... | https://www.lesswrong.com/posts/omC7asRTxCNayy5Q4/models-of-human-relationships-tools-to-understand-people |

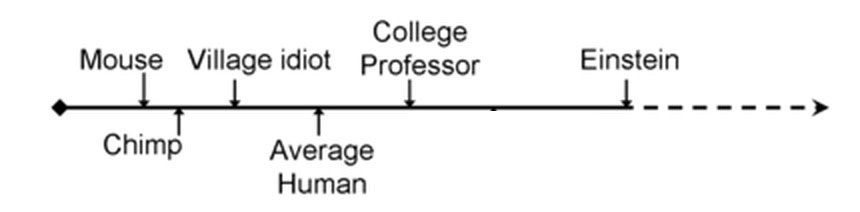

# Where The Falling Einstein Meets The Rising Mouse

Eliezer Yudkowsky argues that forecasters err in expecting artificial intelligence progress to look like this:

…when in fact it will probably look like this:

: [The Order of the Soul (Compass Rose)](http://benjaminrosshoffman.com/the-order-of-the-soul/)

Last week we went to see a classroom for our son.

The school in question had quite a good reputation. This was a place people wanted their kids to go, and when we had been there... | https://www.lesswrong.com/posts/M6CPbgHWKsEseZiev/something-was-wrong |

# Bi-weekly Rational Feed

===Highly Recommended Articles:

[Skills Most Employable by 80,000 Hours](https://80000hours.org/articles/skills-most-employable/) \- Metrics: Satisfaction, risk of automation, and breadth of applicability. Leadership and social skills will gain the most in value. The least valuable skills in... | https://www.lesswrong.com/posts/Pr6E7XpTTrDeML82y/bi-weekly-rational-feed |

# The Lizard People Of Alpha Draconis 1 Decided To Build An Ansible

**I.**

The lizard people of Alpha Draconis 1 decided to build an [ansible](https://en.wikipedia.org/wiki/Ansible).

The transmitter was a colossal tower of silksteel, doorless and windowless. Inside were millions of modular silksteel cubes, each fill... | https://www.lesswrong.com/posts/iLMkKDKmfbMkDuQBm/the-lizard-people-of-alpha-draconis-1-decided-to-build-an |

# Prediction should be a sport

So, I've been thinking about prediction markets and why they aren't really catching on as much as I think they should.

My suspicion is that (beside Robin Hanson's signaling explanation, and the amount of work it takes to get to the large numbers of predictors where the quality of result... | https://www.lesswrong.com/posts/TaXd7izDmegoFRmeG/prediction-should-be-a-sport |

# Stable Pointers to Value: An Agent Embedded in Its Own Utility Function

(This post is largely a write-up of a conversation with Scott Garrabrant.)

## Stable Pointers to Value

How do we build stable pointers to values?

As a first example, consider the wireheading problem for AIXI-like agents in the case of a... | https://www.lesswrong.com/posts/5bd75cc58225bf06703754b3/stable-pointers-to-value-an-agent-embedded-in-its-own-utility-function |

# Embracing Metamodernism

I’ve never much felt like I was part of a cultural movement. I’m too much a “[digital native](http://marcprensky.com/digital-native/)” to be fully part of [Gen X](http://content.time.com/time/arts/article/0,8599,1731528,00.html). I’m insufficiently idealistic to be a [Millennial](http://www.p... | https://www.lesswrong.com/posts/7fLC4aQtJbgcmqJYT/embracing-metamodernism |

# Autopoietic systems and difficulty of AGI alignment

I have recently come to the opinion that AGI alignment is probably extremely hard. But it's not clear exactly what AGI or AGI alignment are. And there are some forms of alignment of "AI" systems that are easy. Here I operationalize "AGI" and "AGI alignment" in ... | https://www.lesswrong.com/posts/5bd75cc58225bf06703754b9/autopoietic-systems-and-difficulty-of-agi-alignment |

# Ten small life improvements

I've accumulated a lot of small applications and items that make my life incrementally better. Most of these ultimately came from someone else's recommendation, so I thought I'd pay it forward by posting ten of my favorite small improvements.

(I've given credit where I remember who intro... | https://www.lesswrong.com/posts/nW6S5QJmPLhWoagfH/ten-small-life-improvements |

# Using modal fixed points to formalize logical causality

*This post is a simplified introduction to existing ideas by Eliezer Yudkowsky, Wei Dai, Vladimir Nesov and myself. For those who already understand them, this post probably won't contain anything new. As always, I take no personal credit for the ideas, only f... | https://www.lesswrong.com/posts/5bd75cc58225bf0670374e61/using-modal-fixed-points-to-formalize-logical-causality |

# Play in Easy Mode

Epistemic Status: Love the player, love the game

Also consider: [Playing on Hard Mode](https://thezvi.wordpress.com/2017/08/26/play-in-hard-mode/)

Raymond Arnold asked me, why not play in easy mode?

Easy Mode is easier. The reason to Play in Easy Mode is because it is the best known way to achie... | https://www.lesswrong.com/posts/nJPtHHq6L7MAMBvRK/play-in-easy-mode |

# Play in Hard Mode

Epistemic Status: Love the player, love the game

Also consider: [Playing on Easy Mode](https://thezvi.wordpress.com/2017/08/26/play-in-easy-mode/)

Raymond Arnold asked me, why do you insist on playing in hard mode?

Hard mode is harder. The reason to Play in Hard Mode is because it is the only kn... | https://www.lesswrong.com/posts/7hLWZf6kFkduecH2g/play-in-hard-mode |

# Rational Feed

===Highly Recommended Articles:

[What Is Rationalist Berkleys Community Culture by Zvi Mowshowitz](https://thezvi.wordpress.com/2017/08/12/what-is-rationalist-berkleys-community-culture/) \- The original rationalist community mission was to save the world, not to be nice to each other. Sarah recently s... | https://www.lesswrong.com/posts/qvg5z4qRg3paLJ9sJ/rational-feed |

# Intrinsic properties and Eliezer's metaethics

#### Abstract

I give an account for why some properties seem intrinsic while others seem extrinsic. In light of this account, the property of moral goodness seems intrinsic in one way and extrinsic in another. Most properties do not suffer from this ambiguity. I suggest... | https://www.lesswrong.com/posts/8ZvKTHH5PbChtZrbp/intrinsic-properties-and-eliezer-s-metaethics |

# The Doomsday argument in anthropic decision theory

**EDIT**: added a simplified version [here](/r/discussion/lw/pdk/simplified_anthropic_doomsday/).

_[Crossposted](https://agentfoundations.org/item?id=1655) at the intelligent agents forum._

In [Anthropic Decision Theory](/lw/891/anthropic_decision_theory_i_sleepin... | https://www.lesswrong.com/posts/g94oAbSna8hpGJTSu/the-doomsday-argument-in-anthropic-decision-theory |

# I Can Tolerate Anything Except The Outgroup

*\[Content warning: Politics, religion, social justice, spoilers for “The Secret of Father Brown”. This isn’t especially original to me and I don’t claim anything more than to be explaining and rewording things I have heard from a bunch of other people. Unapologetically Am... | https://www.lesswrong.com/posts/GLMFmFvXGyAcG25ni/i-can-tolerate-anything-except-the-outgroup |

# Learning To Love Scientific Consensus

*\[**Related to:** [Contrarians, Crackpots, and Consensus](http://slatestarcodex.com/2015/08/09/contrarians-crackpots-and-consensus/), [How Common Are Science Failures?](http://slatestarcodex.com/2014/07/02/how-common-are-science-failures/). Epistemic status is “subtle and likel... | https://www.lesswrong.com/posts/ERPL3v2Y976W7XG3j/learning-to-love-scientific-consensus |

# The Case Of The Suffocating Woman

*\[Content warning: panic, suffocation\]*

**I.**

I recently presented this case at a conference and I figured you guys might want to hear it too. Various details have been obfuscated or changed around to protect confidentiality of the people involved.

A 20-something year old woma... | https://www.lesswrong.com/posts/HTGCGASf9xfB6edAh/the-case-of-the-suffocating-woman |

# Beware Isolated Demands For Rigor

**I.**

From Identity, Personal Identity, and the Self by John Perry:

> "There is something about practical things that knocks us off our philosophical high horses. Perhaps Heraclitus really thought he couldn’t step in the same river twice. Perhaps he even received tenure for that ... | https://www.lesswrong.com/posts/fzeoYhKoYPR3tDYFT/beware-isolated-demands-for-rigor |

# Intellectual Progress Inside and Outside Academia

This post is taken from a recent Facebook conversation that included Wei Dai, Eliezer Yudkowsky, Vladimir Slepnev, Stuart Armstrong, Maxim Kesin, Qiaochu Yuan and Robby Bensinger, about the ability of academia to make the key intellectual progress required in AI alignm... | https://www.lesswrong.com/posts/xQ9tMMk3RArodLtDq/intellectual-progress-inside-and-outside-academia |

# If It’s Worth Doing, It’s Worth Doing With Made-Up Statistics

I do not believe that the utility weights I worked on last week – the ones that say living in North Korea is 37% as good as living in the First World – are objectively correct or correspond to any sort of natural category. So why do I find them so interes... | https://www.lesswrong.com/posts/9Tw5RqnEzqEtaoEkq/if-it-s-worth-doing-it-s-worth-doing-with-made-up-statistics |

# Online discussion is better than pre-publication peer review

Related: [Why Academic Papers Are A Terrible Discussion Forum](https://rationalconspiracy.com/2012/06/20/why-academic-papers-are-a-terrible-discussion-forum/), [Four Layers of Intellectual Conversation](https://www.facebook.com/yudkowsky/posts/101548881834... | https://www.lesswrong.com/posts/a3aGosA987cZ4aRAB/online-discussion-is-better-than-pre-publication-peer-review |

# New business opportunities due to self-driving cars

This is a slightly expanded version of a talk presented at the Less Wrong European Community Weekend 2017.

Predictions about self-driving cars in the popular press are pretty boring. Truck drivers are losing their jobs, self-driving cars will be more rented than o... | https://www.lesswrong.com/posts/vsE5cRtukmJqwcHk4/new-business-opportunities-due-to-self-driving-cars |

# Rational Feed

=== Updates:

I have been a little more selective about which articles make it onto the feed. I have not been overly selective, and all of the obviously general-interest rationalist articles still make it.

Unless people object I am going to try a "weekly feed". The bi-weekly feed is pretty long. I curr... | https://www.lesswrong.com/posts/afcweBCS8rQuNwK4q/rational-feed |

# Is Feedback Suffering?

_NB: Some of the terminology and concepts I used here are incompatible with my more recent work because this was written before I [formalized my philosophy](https://mapandterritory.org/introduction-to-noematology-fac7ae7d805d), but is dangerously close enough to them that it may cause confusio... | https://www.lesswrong.com/posts/exxGw52mxMRGbrosw/is-feedback-suffering |

# Epistemic Spot Check: Exercise for Mood and Anxiety (Michael W. Otto, Jasper A.J. Smits)

### Introduction

Everyone knows exercise (along with diet and sleep) makes a big difference in depression and anxiety. Depressed and anxious people are almost by definition bad at transforming information about how to improve t... | https://www.lesswrong.com/posts/SaYr2PWR5sx6Tc6Bg/epistemic-spot-check-exercise-for-mood-and-anxiety-michael-w |

# 2017 LessWrong Survey

The [2017 LessWrong Survey](https://www.fortforecast.com/limesurvey/index.php/265172?lang=en) is here! This year we're interested in community response to the LessWrong 2.0 initiative. I've also gone through and fixed as many bugs as I could find reported on the last survey, and reintroduced it... | https://www.lesswrong.com/posts/r5RF3kFENYh5GsFj2/2017-lesswrong-survey |

# LW 2.0 Open Beta starts 9/20

Two years ago, I wrote [Lesswrong 2.0](/lw/n0l/lesswrong_20/). It’s been quite the adventure since then; I took up the mantle of organizing work to improve the site but was missing some of the core skills, and also never quite had the time to make it my top priority. Earlier this year, I... | https://www.lesswrong.com/posts/bxctncS8qcgeCEMGN/lw-2-0-open-beta-starts-9-20 |

# LW 2.0 Strategic Overview

**Update: We're in open beta! At this point you will be able to sign up / login with your LW 1.0 accounts (if the latter, we did not copy over your passwords, so hit "forgot password" to receive a password-reset email).**

Hey Everyone!

This is the post for discussing the vision that I and... | https://www.lesswrong.com/posts/rEHLk9nC5TtrNoAKT/lw-2-0-strategic-overview |

# Against Facebook: The Stalking

Epistemic Status: Nothing we didn’t basically already know, but felt obligated to share

Previously: [Against Facebook](https://thezvi.wordpress.com/2017/04/22/against-facebook/), [Against Facebook: Comparison to Alternatives and Call to Action](https://thezvi.wordpress.com/2017/04/22/... | https://www.lesswrong.com/posts/XYmJXJrAtQg89gtN9/against-facebook-the-stalking |

# LW 2.0 Open Beta Live

The [LW 2.0 Open Beta is now live](https://www.lesserwrong.com/); this means you can create an account, start reading and posting, and tell us what you think.

Four points:

1) In case you're just tuning in, I took up the mantle of revitalizing LW through improving its codebase some time ago, a... | https://www.lesswrong.com/posts/SR8cqwbMLmKqR7p5s/lw-2-0-open-beta-live |

# Against EA PR

Scott Alexander recently wrote about [weird effective altruism](http://slatestarcodex.com/2017/08/16/fear-and-loathing-at-effective-altruism-global-2017/). Many people (mostly, but not entirely, people who aren't effective altruists) offered the opinion that weird effective altruists should be banned f... | https://www.lesswrong.com/posts/gbNdc7gCXCy2ZWqXe/against-ea-pr |

# Common vs Expert Jargon

_tldr: Jargon always has a complexity cost, but you can put effort into making a concept more accessible, and it's especially valuable to put that effort in for terms that you'd like to be used by layfolk, or that you expect to be used a lot in spaces where you expect lots of layfolk to be r... | https://www.lesswrong.com/posts/DcRFTx62sTTRQo3Jw/common-vs-expert-jargon |

# Why Attitudes Matter

Sometimes when I am giving ethical advice to people I say things like "it's important to think of yourself and your partner as being on the same team" or "just remember that women in short skirts are almost certainly not wearing short skirts to arouse you in particular" or "cultivate your curios... | https://www.lesswrong.com/posts/YNYxHAbsrxsCwCs7m/why-attitudes-matter |

# Motivating a Semantics of Logical Counterfactuals

(**Disclaimer:** _This post was written as part of the CFAR/MIRI AI Summer Fellows Program, and as a result is a vocalisation of my own thought process rather than a concrete or novel proposal. However, it is an area of research I shall be pursuing in the coming mont... | https://www.lesswrong.com/posts/6t5vRHrwPDi5C9hYr/motivating-a-semantics-of-logical-counterfactuals |

# Notes From an Apocalypse

(This is a loose adaptation of a talk I sometimes give on the Cambrian Explosion, smoothed a bit for popular consumption. That talk, in turn, draws heavily from a 2006 paper by Charles Marshall, titled “Explaining the Cambrian ‘Explosion’ of Animals”. It can be read in full [here](http://www... | https://www.lesswrong.com/posts/iuNSrBoX2W5qHCAAo/notes-from-an-apocalypse |

# LW 2.0 Site Update: 09/21/17

Hey! A few quick updates on edits done today (well, yesterday - we have late work hours).

Thanks Chris_Leong for making a [post](/posts/vtZsEerABCjhtgizX/beta-first-impressions) to document folks' bugs. In the comments ozymandias requested that the page containing every post published t... | https://www.lesswrong.com/posts/hb8mLTfNYirmEpWzQ/lw-2-0-site-update-09-21-17 |

# Naturalized induction – a challenge for evidential and causal decision theory

As some of you may know, I disagree with many of the criticisms leveled against [evidential decision theory](https://en.wikipedia.org/wiki/Evidential_decision_theory) (EDT). Most notably, I believe that Smoking lesion-type problems [don't]... | https://www.lesswrong.com/posts/kgsaSbJqWLtJfiCcz/naturalized-induction-a-challenge-for-evidential-and-causal |

# Voting Weight Discussion

One of the distinguishing features of LW 2.0 is Voting Weight. People with more karma will be able to give higher-weighted upvotes and downvotes.

This is part of an overall plan to make it better at sorting signal from noise. But it's a major change to the system and seemed worthy of a dedi... | https://www.lesswrong.com/posts/fPT7o9TRWobrXoiYJ/voting-weight-discussion |

# LW 2.0 Site Update: 09/22/17

Another set of quick updates done today:

* We had a bad bug where in about 50% of cases when a user created a post on the frontpage, it would neither show up on their userpage, nor on the frontpage, but only on the all-posts view. This is now fixed. Sorry for anyone who was confused b... | https://www.lesswrong.com/posts/8MsoEbiWJbrHQmbe7/lw-2-0-site-update-09-22-17 |

# Nobody does the thing that they are supposedly doing

I feel like one of the most important lessons I’ve had about How the World Works, which has taken quite a bit of time to sink in, is:

**In general, neither organizations nor individual people do the thing that their supposed role says they should do.** Rather the... | https://www.lesswrong.com/posts/8iAJ9QsST9X9nzfFy/nobody-does-the-thing-that-they-are-supposedly-doing |

# Out to Get You

Epistemic Status: Reference.

Expanded From: [Against Facebook](https://thezvi.wordpress.com/2017/04/22/against-facebook/), as the post originally intended.

Some things are fundamentally Out to Get You.

They seek resources at your expense. Fees are hidden. Extra options are foisted upon you. Things ... | https://www.lesswrong.com/posts/ENBzEkoyvdakz4w5d/out-to-get-you |

# Deontologist Envy

Many consequentialists of my acquaintance appear to suffer from a tragic case of deontologist envy.

In consequentialism, one makes ethical decisions by choosing the actions that have the best consequences, whether that means maximizing your own happiness and flourishing (consequentialist ethical e... | https://www.lesswrong.com/posts/ZkWvFmvTe3bvvfL69/deontologist-envy |

# The Five Hindrances to Doing the Thing

> _"Compare your normal level of consciousness with that of an athlete in the zone, or with a person in an emergency. You'll realize that daily life consists mostly of different degrees of dullness and mindlessness."_

>

> \- Culadasa (John Yates, Ph.D.), _The Mind Illuminated_... | https://www.lesswrong.com/posts/fYwD3Bt7X57RxQfSY/the-five-hindrances-to-doing-the-thing |

# Frontpage Posting and Commenting Guidelines

Welcome to the new _LessWrong!_ Our goal with the _LessWrong_ frontpage is to host high-quality discussion on a wide range of topics, in a way that allows users to make better collective progress toward the truth.

New posts automatically get posted to a user's _personal b... | https://www.lesswrong.com/posts/tKTcrnKn2YSdxkxKG/frontpage-posting-and-commenting-guidelines |
