| problem | solution | answer | problem_is_valid | solution_is_valid | question_type | problem_type | problem_raw | solution_raw | metadata | uuid | id |
|---|---|---|---|---|---|---|---|---|---|---|---|

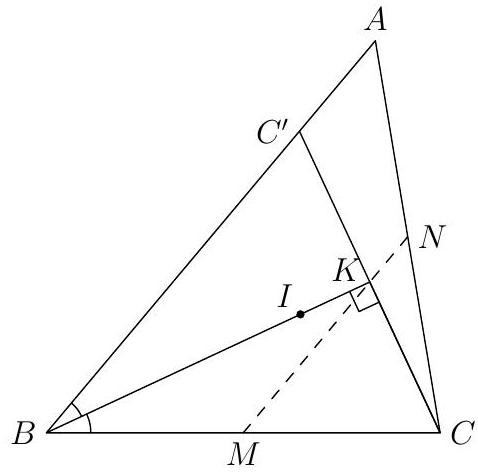

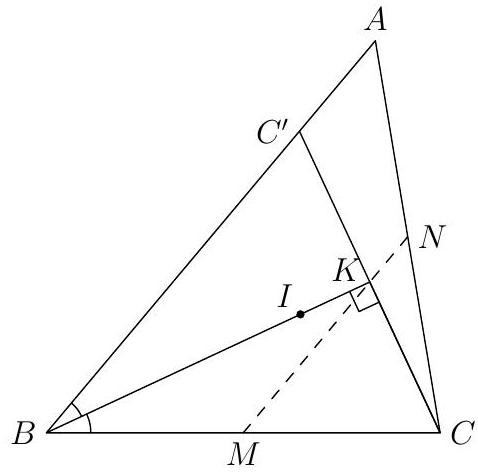

Let $K$ be as in the previous problem. Let $M$ be the midpoint of $B C$ and $N$ the midpoint of $A C$. Show that $K$ lies on line $M N$.

|

Since $I, K, E, C$ are concyclic, we have $\angle I K C=\angle I E C=90^{\circ}$. Let $C^{\prime}$ be the reflection of $C$ across $B I$; since $B I$ bisects $\angle A B C$, the point $C^{\prime}$ lies on line $A B$. Then $K$ is the midpoint of $C C^{\prime}$. Consider the dilation centered at $C$ with factor $\frac{1}{2}$: it sends $A$ to $N$ and $B$ to $M$, hence line $A B$ to line $M N$, and it sends $C^{\prime}$ to $K$. Since $C^{\prime}$ lies on $A B$, it follows that $K$ lies on $M N$.

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $K$ be as in the previous problem. Let $M$ be the midpoint of $B C$ and $N$ the midpoint of $A C$. Show that $K$ lies on line $M N$.

|

Since $I, K, E, C$ are concyclic, we have $\angle I K C=\angle I E C=90^{\circ}$. Let $C^{\prime}$ be the reflection of $C$ across $B I$; since $B I$ bisects $\angle A B C$, the point $C^{\prime}$ lies on line $A B$. Then $K$ is the midpoint of $C C^{\prime}$. Consider the dilation centered at $C$ with factor $\frac{1}{2}$: it sends $A$ to $N$ and $B$ to $M$, hence line $A B$ to line $M N$, and it sends $C^{\prime}$ to $K$. Since $C^{\prime}$ lies on $A B$, it follows that $K$ lies on $M N$.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team1-solutions.jsonl",

"problem_match": "\n12. [40]",

"solution_match": "\nSolution: "

}

|

feef6375-04d8-54e6-ac85-b345e979ac0c

| 608,351

|

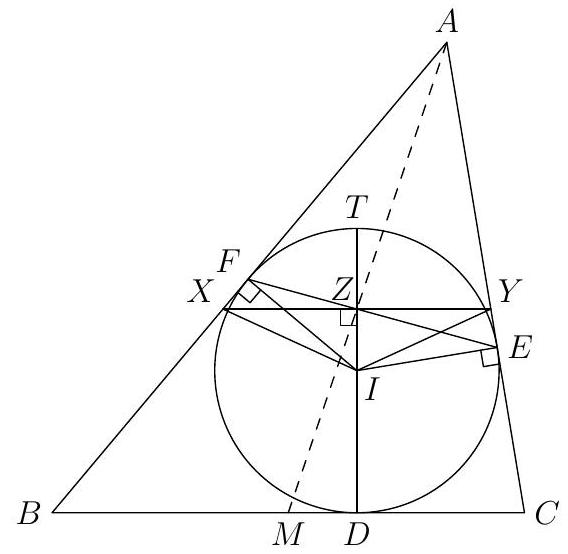

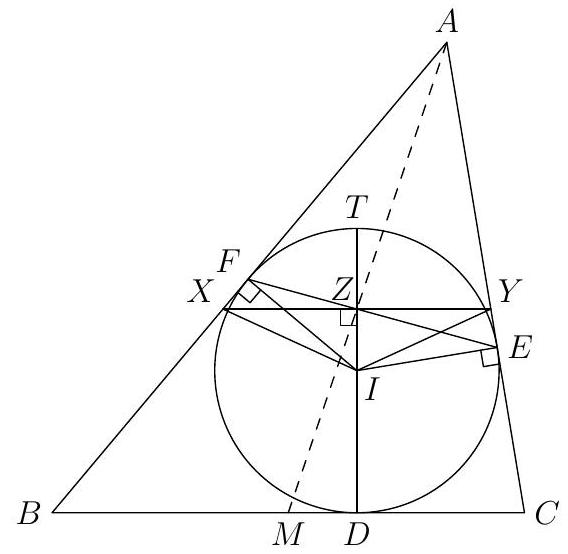

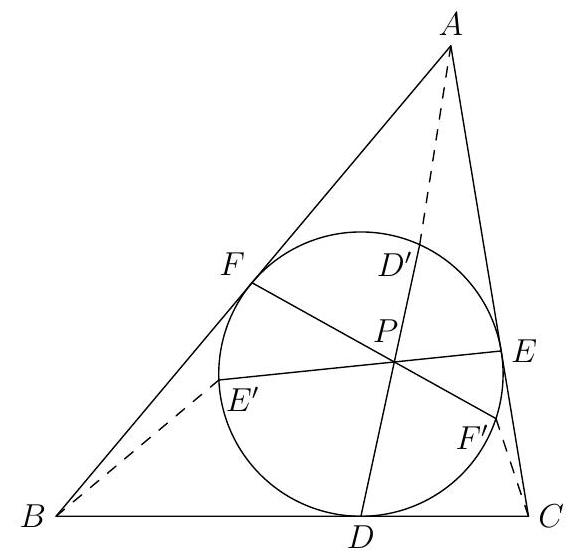

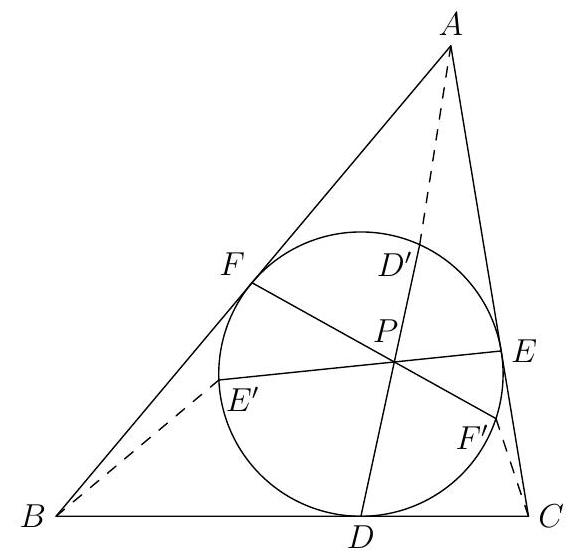

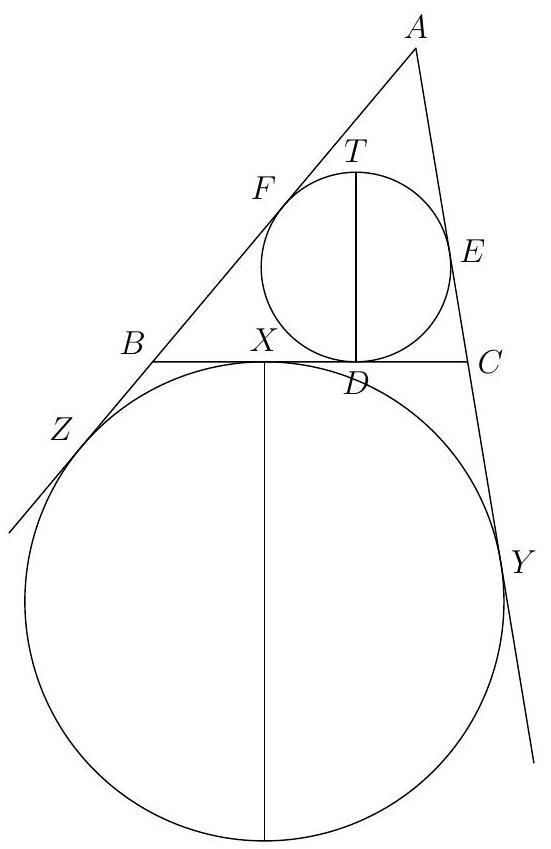

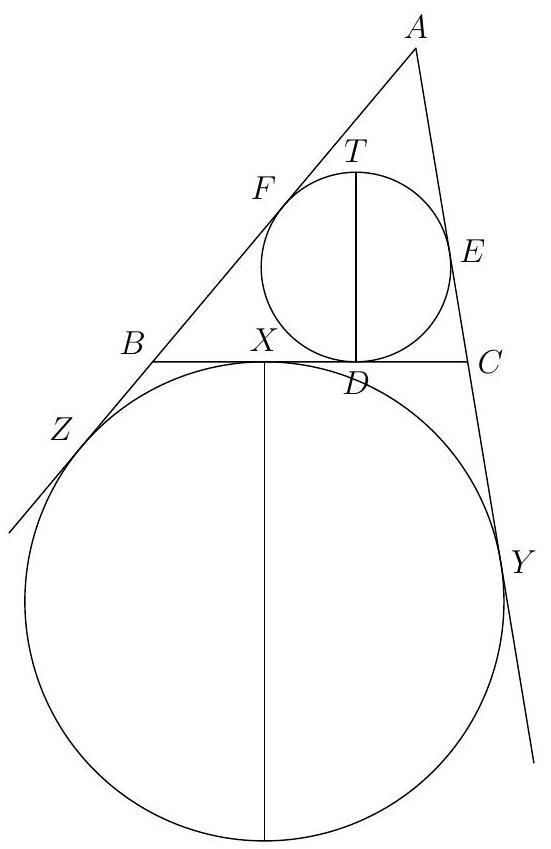

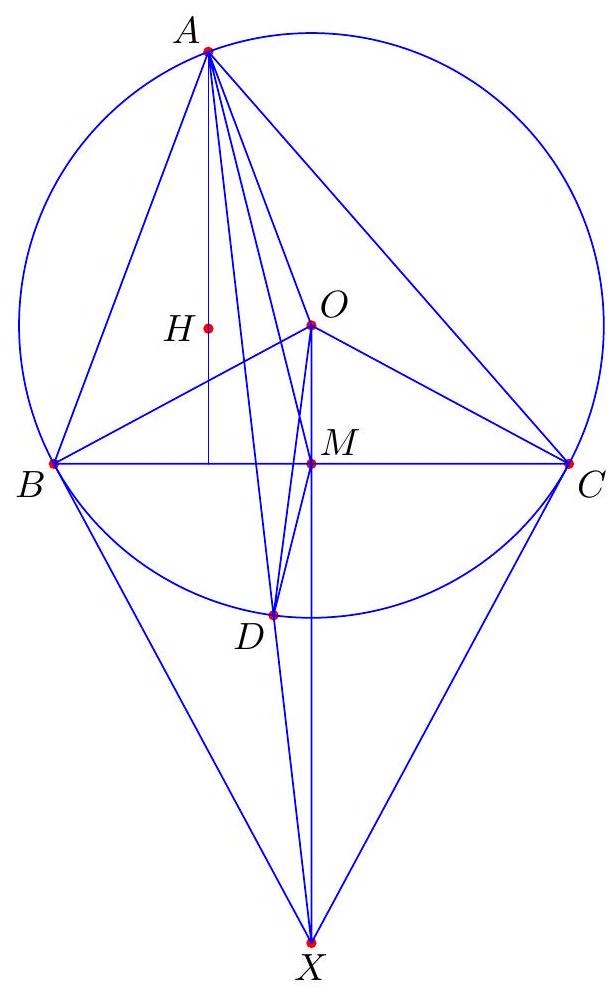

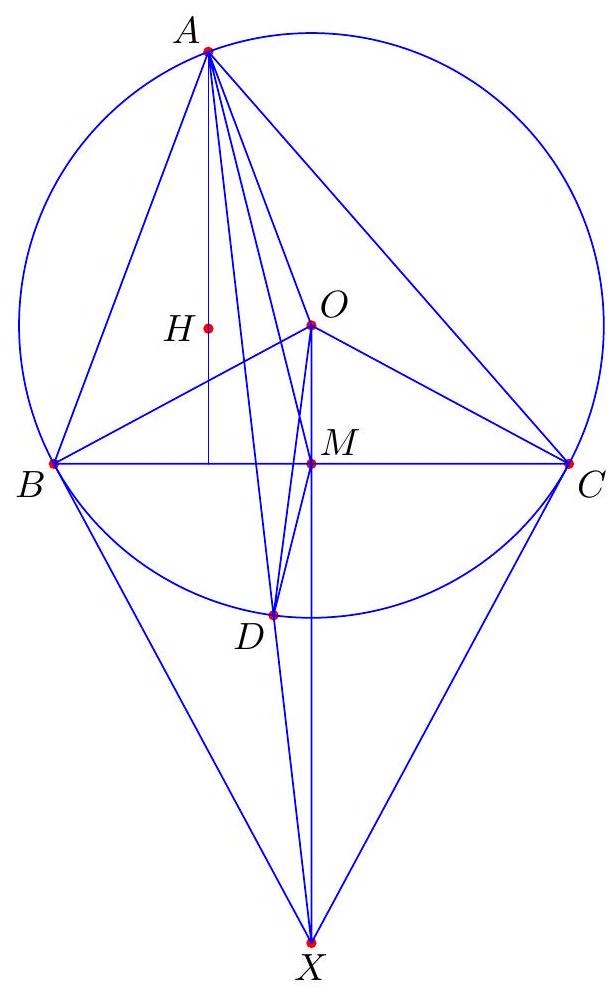

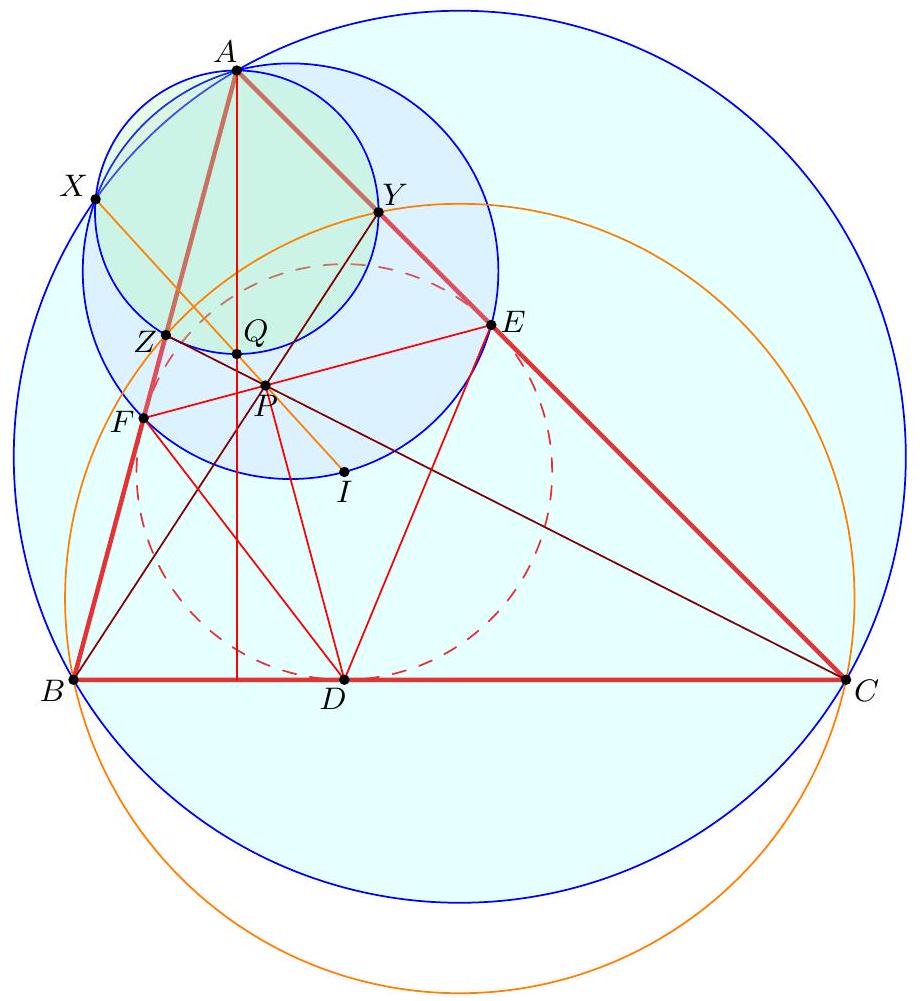

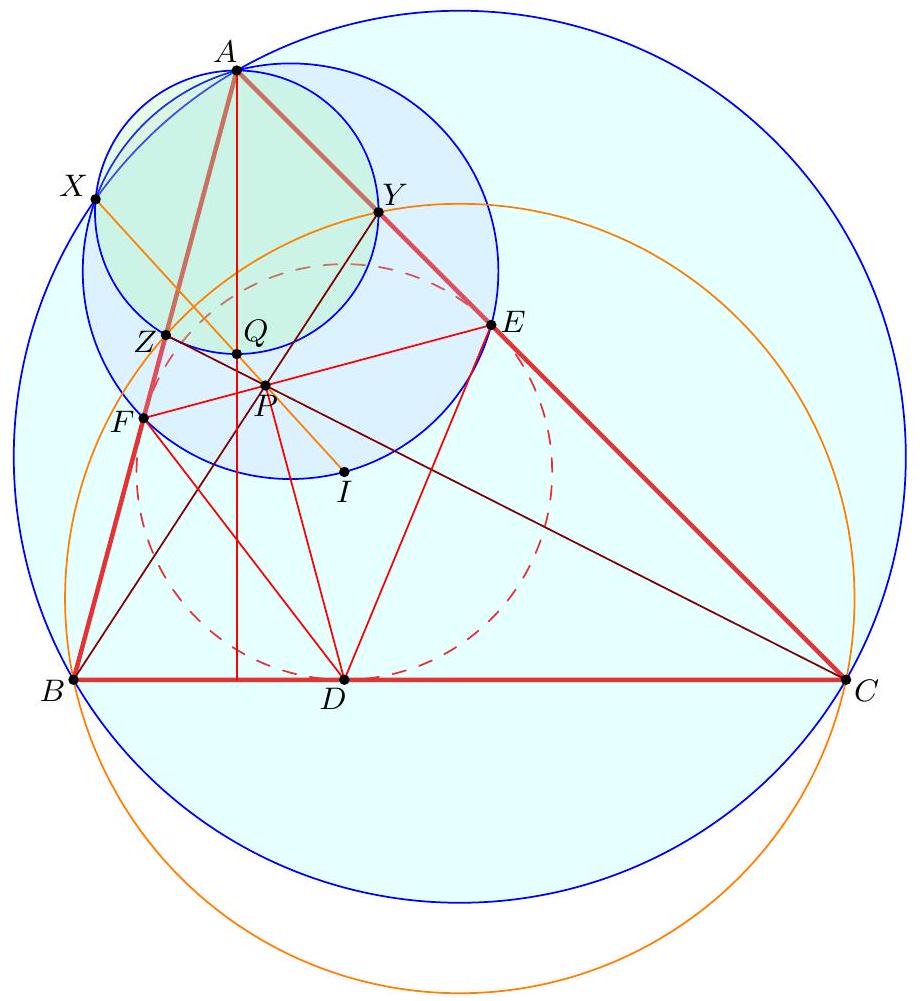

Let $M$ be the midpoint of $B C$, and $T$ diametrically opposite to $D$ on the incircle of $A B C$. Show that $D T, A M, E F$ are concurrent.

|

If $A B=A C$, then the result is clear as $A M$ and $D T$ coincide. So, assume that $A B \neq A C$.

Let lines $D T$ and $E F$ meet at $Z$. Construct a line through $Z$ parallel to $B C$, and let it meet $A B$ and $A C$ at $X$ and $Y$, respectively. We have $\angle X Z I=90^{\circ}$, and $\angle X F I=90^{\circ}$. Therefore, $F, Z, I, X$ are concyclic, and thus $\angle I X Z=\angle I F Z$. By similar arguments, we also have $\angle I Y Z=\angle I E Z$. Thus, triangles $I F E$ and $I X Y$ are similar. Since $I E=I F$, we must also have $I X=I Y$. Since $I Z$ is an altitude of the isosceles triangle $I X Y, Z$ is the midpoint of $X Y$.

Since $X Y$ and $B C$ are parallel, there is a dilation centered at $A$ that sends $X Y$ to $B C$. So it must send the midpoint $Z$ to the midpoint $M$. Therefore, $A, Z, M$ are collinear. It follows that $D T, A M, E F$ are concurrent.

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $M$ be the midpoint of $B C$, and $T$ diametrically opposite to $D$ on the incircle of $A B C$. Show that $D T, A M, E F$ are concurrent.

|

If $A B=A C$, then the result is clear as $A M$ and $D T$ coincide. So, assume that $A B \neq A C$.

Let lines $D T$ and $E F$ meet at $Z$. Construct a line through $Z$ parallel to $B C$, and let it meet $A B$ and $A C$ at $X$ and $Y$, respectively. We have $\angle X Z I=90^{\circ}$, and $\angle X F I=90^{\circ}$. Therefore, $F, Z, I, X$ are concyclic, and thus $\angle I X Z=\angle I F Z$. By similar arguments, we also have $\angle I Y Z=\angle I E Z$. Thus, triangles $I F E$ and $I X Y$ are similar. Since $I E=I F$, we must also have $I X=I Y$. Since $I Z$ is an altitude of the isosceles triangle $I X Y, Z$ is the midpoint of $X Y$.

Since $X Y$ and $B C$ are parallel, there is a dilation centered at $A$ that sends $X Y$ to $B C$. So it must send the midpoint $Z$ to the midpoint $M$. Therefore, $A, Z, M$ are collinear. It follows that $D T, A M, E F$ are concurrent.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team1-solutions.jsonl",

"problem_match": "\n13. [40]",

"solution_match": "\nSolution: "

}

|

ba595b09-5f66-5359-b370-fbe7cd8a4e63

| 608,352

|

Let $P$ be a point inside the incircle of $A B C$. Let lines $D P, E P, F P$ meet the incircle again at $D^{\prime}, E^{\prime}, F^{\prime}$. Show that $A D^{\prime}, B E^{\prime}, C F^{\prime}$ are concurrent.

|

Using the trigonometric version of Ceva's theorem, it suffices to prove that

$$

\frac{\sin \angle B A D^{\prime}}{\sin \angle D^{\prime} A C} \cdot \frac{\sin \angle C B E^{\prime}}{\sin \angle E^{\prime} B A} \cdot \frac{\sin \angle A C F^{\prime}}{\sin \angle F^{\prime} C B}=1 .

$$

Using sine law, we have

$$

\sin \angle B A D^{\prime}=\frac{F D^{\prime}}{A D^{\prime}} \cdot \sin \angle A F D^{\prime}=\frac{F D^{\prime}}{A D^{\prime}} \cdot \sin \angle F D D^{\prime}

$$

Let $r$ be the inradius of $A B C$. Using the extended sine law, we have $F D^{\prime}=2 r \sin \angle F D D^{\prime}$. Therefore,

$$

\sin \angle B A D^{\prime}=\frac{2 r}{A D^{\prime}} \cdot \sin ^{2} \angle F D D^{\prime}

$$

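Doing the same for each factor in the product above, the common factors $\frac{2 r}{A D^{\prime}}, \frac{2 r}{B E^{\prime}}, \frac{2 r}{C F^{\prime}}$ cancel, and we get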

$$

\frac{\sin \angle B A D^{\prime}}{\sin \angle D^{\prime} A C} \cdot \frac{\sin \angle C B E^{\prime}}{\sin \angle E^{\prime} B A} \cdot \frac{\sin \angle A C F^{\prime}}{\sin \angle F^{\prime} C B}=\left(\frac{\sin \angle F D D^{\prime}}{\sin \angle D^{\prime} D E} \cdot \frac{\sin \angle D E E^{\prime}}{\sin \angle E^{\prime} E F} \cdot \frac{\sin \angle E F F^{\prime}}{\sin \angle F^{\prime} F D}\right)^{2}

$$

Since $D D^{\prime}, E E^{\prime}, F F^{\prime}$ are concurrent, the above expression equals 1 by trig Ceva applied to triangle $D E F$. The result follows.

Remark: This result is known as Steinbart Theorem. Beware that its converse is not completely true. For more information and discussion, see Darij Grinberg's paper "Variations of the Steinbart Theorem" at http://de.geocities.com/darij_grinberg/.

## Glossary and some possibly useful facts

- A set of points is collinear if they lie on a common line. A set of lines is concurrent if they pass through a common point. A set of points is concyclic if they lie on a common circle.

- Given $A B C$ a triangle, the three angle bisectors are concurrent at the incenter of the triangle. The incenter is the center of the incircle, which is the unique circle inscribed in $A B C$, tangent to all three sides.

- Ceva's theorem states that given $A B C$ a triangle, and points $X, Y, Z$ on sides $B C, C A, A B$, respectively, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{B X}{X C} \cdot \frac{C Y}{Y A} \cdot \frac{A Z}{Z B}=1

$$

- "Trig" Ceva states that given $A B C$ a triangle, and points $X, Y, Z$ inside the triangle, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{\sin \angle B A X}{\sin \angle X A C} \cdot \frac{\sin \angle C B Y}{\sin \angle Y B A} \cdot \frac{\sin \angle A C Z}{\sin \angle Z C B}=1 .

$$

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $P$ be a point inside the incircle of $A B C$. Let lines $D P, E P, F P$ meet the incircle again at $D^{\prime}, E^{\prime}, F^{\prime}$. Show that $A D^{\prime}, B E^{\prime}, C F^{\prime}$ are concurrent.

|

Using the trigonometric version of Ceva's theorem, it suffices to prove that

$$

\frac{\sin \angle B A D^{\prime}}{\sin \angle D^{\prime} A C} \cdot \frac{\sin \angle C B E^{\prime}}{\sin \angle E^{\prime} B A} \cdot \frac{\sin \angle A C F^{\prime}}{\sin \angle F^{\prime} C B}=1 .

$$

Using sine law, we have

$$

\sin \angle B A D^{\prime}=\frac{F D^{\prime}}{A D^{\prime}} \cdot \sin \angle A F D^{\prime}=\frac{F D^{\prime}}{A D^{\prime}} \cdot \sin \angle F D D^{\prime}

$$

Let $r$ be the inradius of $A B C$. Using the extended sine law, we have $F D^{\prime}=2 r \sin \angle F D D^{\prime}$. Therefore,

$$

\sin \angle B A D^{\prime}=\frac{2 r}{A D^{\prime}} \cdot \sin ^{2} \angle F D D^{\prime}

$$

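Doing the same for each factor in the product above, the common factors $\frac{2 r}{A D^{\prime}}, \frac{2 r}{B E^{\prime}}, \frac{2 r}{C F^{\prime}}$ cancel, and we get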

$$

\frac{\sin \angle B A D^{\prime}}{\sin \angle D^{\prime} A C} \cdot \frac{\sin \angle C B E^{\prime}}{\sin \angle E^{\prime} B A} \cdot \frac{\sin \angle A C F^{\prime}}{\sin \angle F^{\prime} C B}=\left(\frac{\sin \angle F D D^{\prime}}{\sin \angle D^{\prime} D E} \cdot \frac{\sin \angle D E E^{\prime}}{\sin \angle E^{\prime} E F} \cdot \frac{\sin \angle E F F^{\prime}}{\sin \angle F^{\prime} F D}\right)^{2}

$$

Since $D D^{\prime}, E E^{\prime}, F F^{\prime}$ are concurrent, the above expression equals 1 by trig Ceva applied to triangle $D E F$. The result follows.

Remark: This result is known as Steinbart Theorem. Beware that its converse is not completely true. For more information and discussion, see Darij Grinberg's paper "Variations of the Steinbart Theorem" at http://de.geocities.com/darij_grinberg/.

## Glossary and some possibly useful facts

- A set of points is collinear if they lie on a common line. A set of lines is concurrent if they pass through a common point. A set of points is concyclic if they lie on a common circle.

- Given $A B C$ a triangle, the three angle bisectors are concurrent at the incenter of the triangle. The incenter is the center of the incircle, which is the unique circle inscribed in $A B C$, tangent to all three sides.

- Ceva's theorem states that given $A B C$ a triangle, and points $X, Y, Z$ on sides $B C, C A, A B$, respectively, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{B X}{X C} \cdot \frac{C Y}{Y A} \cdot \frac{A Z}{Z B}=1

$$

- "Trig" Ceva states that given $A B C$ a triangle, and points $X, Y, Z$ inside the triangle, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{\sin \angle B A X}{\sin \angle X A C} \cdot \frac{\sin \angle C B Y}{\sin \angle Y B A} \cdot \frac{\sin \angle A C Z}{\sin \angle Z C B}=1 .

$$

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team1-solutions.jsonl",

"problem_match": "\n14. [40]",

"solution_match": "\nSolution: "

}

|

4a863bc9-62a7-57b2-b2ca-6c0a84b4a26b

| 608,353

|

(Distributive law) Prove that $(x \oplus y) \odot z=x \odot z \oplus y \odot z$ for all $x, y, z \in \mathbb{R} \cup\{\infty\}$.

|

This is equivalent to proving that

$$

\min (x, y)+z=\min (x+z, y+z) .

$$

Consider two cases. If $x \leq y$, then $L H S=x+z$ and $R H S=x+z$. If $x>y$, then $L H S=y+z$ and $R H S=y+z$. It follows that $L H S=R H S$.
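The case analysis above can also be spot-checked numerically. The following is a minimal sketch, assuming $\oplus$ is `min` and $\odot$ is ordinary addition as in the displayed identity; the helper names `t_add` and `t_mul` and the sample values are illustrative choices, not part of the original problem.

```python
import itertools
import math

INF = math.inf  # stands in for the element "infinity" of R ∪ {∞}

def t_add(x, y):
    """Tropical addition: the minimum of x and y."""
    return min(x, y)

def t_mul(x, y):
    """Tropical multiplication: the ordinary sum x + y."""
    return x + y

# Hypothetical sample values, including infinity.
samples = [-2.5, -1, 0, 1, 3.75, INF]

# Check (x ⊕ y) ⊙ z == (x ⊙ z) ⊕ (y ⊙ z) on every triple of samples.
distributive_ok = all(
    t_mul(t_add(x, y), z) == t_add(t_mul(x, z), t_mul(y, z))
    for x, y, z in itertools.product(samples, repeat=3)
)
print(distributive_ok)
```

A finite check like this does not replace the proof; it only confirms that the identity behaves as expected on the chosen values.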

|

proof

|

Yes

|

Yes

|

proof

|

Algebra

|

(Distributive law) Prove that $(x \oplus y) \odot z=x \odot z \oplus y \odot z$ for all $x, y, z \in \mathbb{R} \cup\{\infty\}$.

|

This is equivalent to proving that

$$

\min (x, y)+z=\min (x+z, y+z) .

$$

Consider two cases. If $x \leq y$, then $L H S=x+z$ and $R H S=x+z$. If $x>y$, then $L H S=y+z$ and $R H S=y+z$. It follows that $L H S=R H S$.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n1. [10]",

"solution_match": "\nSolution: "

}

|

c7b6ebe6-8ebf-5617-ad07-4eda696fd2a0

| 608,354

|

By a tropical polynomial we mean a function of the form

$$

p(x)=a_{n} \odot x^{n} \oplus a_{n-1} \odot x^{n-1} \oplus \cdots \oplus a_{1} \odot x \oplus a_{0}

$$

where exponentiation is as defined in the previous problem.

Let $p$ be a tropical polynomial. Prove that

$$

p\left(\frac{x+y}{2}\right) \geq \frac{p(x)+p(y)}{2}

$$

for all $x, y \in \mathbb{R} \cup\{\infty\}$. (This means that all tropical polynomials are concave.)

|

First, note that for any $x_{1}, \ldots, x_{n}, y_{1}, \ldots, y_{n}$, we have

$$

\min \left\{x_{1}+y_{1}, x_{2}+y_{2}, \ldots, x_{n}+y_{n}\right\} \geq \min \left\{x_{1}, x_{2}, \ldots, x_{n}\right\}+\min \left\{y_{1}, y_{2}, \ldots, y_{n}\right\} .

$$

Indeed, suppose that $x_{m}+y_{m}=\min _{i}\left\{x_{i}+y_{i}\right\}$, then $x_{m} \geq \min _{i} x_{i}$ and $y_{m} \geq \min _{i} y_{i}$, and so $\min _{i}\left\{x_{i}+y_{i}\right\}=x_{m}+y_{m} \geq \min _{i} x_{i}+\min _{i} y_{i}$.

Now, let us write a tropical polynomial in a more familiar notation. We have

$$

p(x)=\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\} .

$$

So

$$

\begin{aligned}

p\left(\frac{x+y}{2}\right) & =\min _{0 \leq k \leq n}\left\{a_{k}+k\left(\frac{x+y}{2}\right)\right\} \\

& =\frac{1}{2} \min _{0 \leq k \leq n}\left\{\left(a_{k}+k x\right)+\left(a_{k}+k y\right)\right\} \\

& \geq \frac{1}{2}\left(\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\}+\min _{0 \leq k \leq n}\left\{a_{k}+k y\right\}\right) \\

& =\frac{1}{2}(p(x)+p(y)) .

\end{aligned}

$$
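As a sanity check on the chain of (in)equalities above, here is a small sketch that evaluates $p(x)=\min_k\{a_k+kx\}$ directly and tests the midpoint inequality on a grid. The coefficient list and the grid are hypothetical examples, and the function names are illustrative.

```python
def tropical_poly(coeffs, x):
    """Evaluate p(x) = min_k (a_k + k*x), where coeffs[k] is a_k."""
    return min(a_k + k * x for k, a_k in enumerate(coeffs))

def midpoint_concave(coeffs, x, y):
    """Check p((x + y)/2) >= (p(x) + p(y))/2 for one pair (x, y)."""
    mid = tropical_poly(coeffs, (x + y) / 2)
    return mid >= (tropical_poly(coeffs, x) + tropical_poly(coeffs, y)) / 2

# Hypothetical coefficients a_0, ..., a_3 and an integer grid of test points.
coeffs = [3, 1, 2, -1]
points = range(-10, 11)
all_pairs_ok = all(midpoint_concave(coeffs, x, y) for x in points for y in points)
print(all_pairs_ok)
```

Integer inputs are used so that every midpoint is a half-integer and the floating-point comparison is exact.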

|

proof

|

Yes

|

Yes

|

proof

|

Inequalities

|

By a tropical polynomial we mean a function of the form

$$

p(x)=a_{n} \odot x^{n} \oplus a_{n-1} \odot x^{n-1} \oplus \cdots \oplus a_{1} \odot x \oplus a_{0}

$$

where exponentiation is as defined in the previous problem.

Let $p$ be a tropical polynomial. Prove that

$$

p\left(\frac{x+y}{2}\right) \geq \frac{p(x)+p(y)}{2}

$$

for all $x, y \in \mathbb{R} \cup\{\infty\}$. (This means that all tropical polynomials are concave.)

|

First, note that for any $x_{1}, \ldots, x_{n}, y_{1}, \ldots, y_{n}$, we have

$$

\min \left\{x_{1}+y_{1}, x_{2}+y_{2}, \ldots, x_{n}+y_{n}\right\} \geq \min \left\{x_{1}, x_{2}, \ldots, x_{n}\right\}+\min \left\{y_{1}, y_{2}, \ldots, y_{n}\right\} .

$$

Indeed, suppose that $x_{m}+y_{m}=\min _{i}\left\{x_{i}+y_{i}\right\}$, then $x_{m} \geq \min _{i} x_{i}$ and $y_{m} \geq \min _{i} y_{i}$, and so $\min _{i}\left\{x_{i}+y_{i}\right\}=x_{m}+y_{m} \geq \min _{i} x_{i}+\min _{i} y_{i}$.

Now, let us write a tropical polynomial in a more familiar notation. We have

$$

p(x)=\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\} .

$$

So

$$

\begin{aligned}

p\left(\frac{x+y}{2}\right) & =\min _{0 \leq k \leq n}\left\{a_{k}+k\left(\frac{x+y}{2}\right)\right\} \\

& =\frac{1}{2} \min _{0 \leq k \leq n}\left\{\left(a_{k}+k x\right)+\left(a_{k}+k y\right)\right\} \\

& \geq \frac{1}{2}\left(\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\}+\min _{0 \leq k \leq n}\left\{a_{k}+k y\right\}\right) \\

& =\frac{1}{2}(p(x)+p(y)) .

\end{aligned}

$$

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n3. [35]",

"solution_match": "\nSolution: "

}

|

f22cf218-a735-5e30-ab3a-e7f013561692

| 608,356

|

(Fundamental Theorem of Algebra) Let $p$ be a tropical polynomial:

$$

p(x)=a_{n} \odot x^{n} \oplus a_{n-1} \odot x^{n-1} \oplus \cdots \oplus a_{1} \odot x \oplus a_{0}, \quad a_{n} \neq \infty

$$

Prove that we can find $r_{1}, r_{2}, \ldots, r_{n} \in \mathbb{R} \cup\{\infty\}$ so that

$$

p(x)=a_{n} \odot\left(x \oplus r_{1}\right) \odot\left(x \oplus r_{2}\right) \odot \cdots \odot\left(x \oplus r_{n}\right)

$$

for all $x$.

|

Again, we have

$$

p(x)=\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\} .

$$

So the graph of $y=p(x)$ can be drawn as follows: first, draw all the lines $y=a_{k}+k x$, $k=0,1, \ldots, n$, then trace out the lowest broken line, which then is the graph of $y=p(x)$.

So $p(x)$ is piecewise linear and continuous, and has slopes from the set $\{0,1,2, \ldots, n\}$. We know from the previous problem that $p(x)$ is concave, and so its slope must be nonincreasing (this can also be observed simply from the drawing of the graph of $y=p(x)$ ). Then, let $r_{k}$ denote the $x$-coordinate of the leftmost kink such that the slope of the graph is less than $k$ to the right of this kink. Then, $r_{n} \leq r_{n-1} \leq \cdots \leq r_{1}$, and for $r_{k+1} \leq x \leq r_{k}$, the graph of $p$ is linear with slope $k$. Note that it is possible that $r_{k+1}=r_{k}$, if no segment of $p$ has slope $k$. Also, since $a_{n} \neq \infty$, the leftmost piece of $p(x)$ must have slope $n$, and thus $r_{n}$ exists, and thus all $r_{i}$ exist.

Now, compare $p(x)$ with

$$

\begin{aligned}

q(x) & =a_{n} \odot\left(x \oplus r_{1}\right) \odot\left(x \oplus r_{2}\right) \odot \cdots \odot\left(x \oplus r_{n}\right) \\

& =a_{n}+\min \left(x, r_{1}\right)+\min \left(x, r_{2}\right)+\cdots+\min \left(x, r_{n}\right) .

\end{aligned}

$$

For $r_{k+1} \leq x \leq r_{k}$, the slope of $q(x)$ is $k$, and for $x \leq r_{n}$ the slope of $q$ is $n$ and for $x \geq r_{1}$ the slope of $q$ is $0$. So $q$ is piecewise linear, and of course it is continuous. It follows that the graph of $q$ coincides with that of $p$ up to a vertical translation. Taking any $x<r_{n}$, we see that $q(x)=a_{n}+n x=p(x)$, so the graphs of $p$ and $q$ coincide, and thus they must be the same function.
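The construction of the $r_k$ can be tried out on a small example. The sketch below is one possible reading of the argument, assuming every coefficient is a finite integer: it takes $r_k$ to be the leftmost crossing point of two of the lines $y=a_k+kx$ at which the slope of $p$ to the right is less than $k$, then compares $p$ with $q$ on a grid. The function names and the sample polynomial are illustrative.

```python
from fractions import Fraction
from itertools import combinations

def p(coeffs, x):
    """p(x) = min_k (a_k + k*x), where coeffs[k] is a_k."""
    return min(a + k * x for k, a in enumerate(coeffs))

def right_slope(coeffs, x):
    """Slope of p just right of x: the smallest k whose line attains the minimum at x."""
    value = p(coeffs, x)
    return min(k for k, a in enumerate(coeffs) if a + k * x == value)

def tropical_roots(coeffs):
    """Return [r_1, ..., r_n], assuming all coefficients are finite integers."""
    n = len(coeffs) - 1
    # Kinks of p can only occur where two of the lines y = a_k + k*x cross.
    crossings = sorted({Fraction(coeffs[i] - coeffs[j], j - i)
                        for i, j in combinations(range(n + 1), 2)})
    return [min(x for x in crossings if right_slope(coeffs, x) < k)
            for k in range(1, n + 1)]

def q(a_n, roots, x):
    """q(x) = a_n + min(x, r_1) + ... + min(x, r_n)."""
    return a_n + sum(min(x, r) for r in roots)

coeffs = [1, 5, 0]  # hypothetical example: p(x) = min(1, 5 + x, 2x)
roots = tropical_roots(coeffs)
grid = [Fraction(m, 4) for m in range(-40, 41)]
agrees = all(p(coeffs, x) == q(coeffs[-1], roots, x) for x in grid)
print(roots, agrees)
```

In this example the line $y=5+x$ is never the lowest, so no segment of $p$ has slope 1 and the two roots coincide, matching the remark in the proof about equal consecutive $r_k$.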

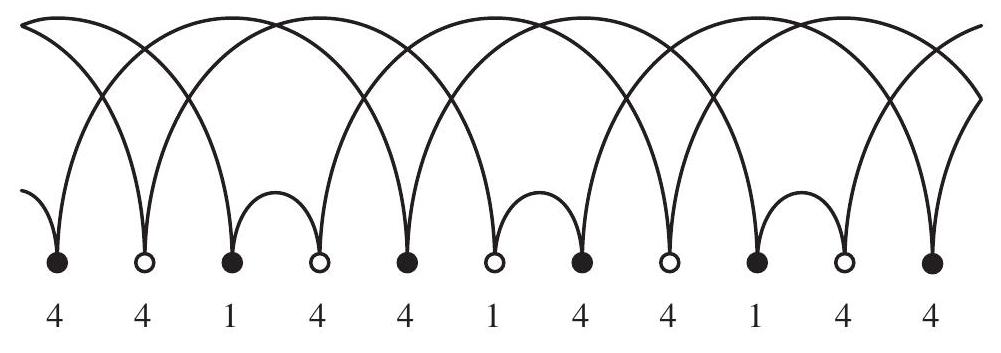

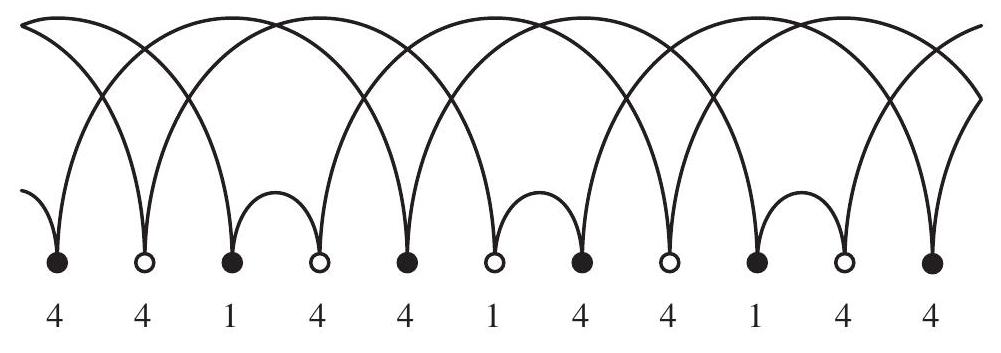

## Juggling [125]

A juggling sequence of length $n$ is a sequence $j(\cdot)$ of $n$ nonnegative integers, usually written as a string

$$

j(0) j(1) \ldots j(n-1)

$$

such that the mapping $f: \mathbb{Z} \rightarrow \mathbb{Z}$ defined by

$$

f(t)=t+j(\bar{t})

$$

is a permutation of the integers. Here $\bar{t}$ denotes the remainder of $t$ when divided by $n$. In this case, we say that $f$ is the corresponding juggling pattern.

For a juggling pattern $f$ (or its corresponding juggling sequence), we say that it has $b$ balls if the permutation induces $b$ infinite orbits on the set of integers. Equivalently, $b$ is the maximum number such that we can find a set of $b$ integers $\left\{t_{1}, t_{2}, \ldots, t_{b}\right\}$ so that the sets $\left\{t_{i}, f\left(t_{i}\right), f\left(f\left(t_{i}\right)\right), f\left(f\left(f\left(t_{i}\right)\right)\right), \ldots\right\}$ are all infinite and mutually disjoint (i.e. non-overlapping) for $i=1,2, \ldots, b$. (This definition will become clear in a second.)

Now is probably a good time to pause and think about what all this has to do with juggling. Imagine that we are juggling a number of balls, and at time $t$, we toss a ball from our hand up to a height $j(\bar{t})$. This ball stays up in the air for $j(\bar{t})$ units of time, so that it comes back to our hand at time $f(t)=t+j(\bar{t})$. Then, the juggling pattern presents a simplified model of how balls are juggled (for instance, we ignore information such as which hand we use to toss the ball). A throw height of 0 (i.e., $j(\bar{t})=0$ and $f(t)=t$ ) represents that no throw takes place at time $t$, which could correspond to an empty hand. Then, $b$ is simply the minimum number of balls needed to carry out the juggling.

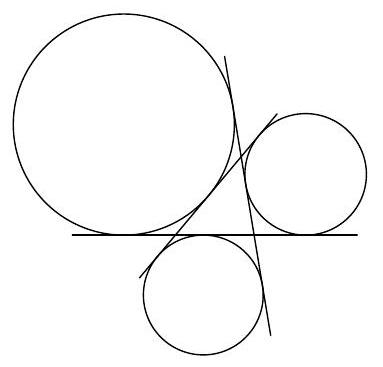

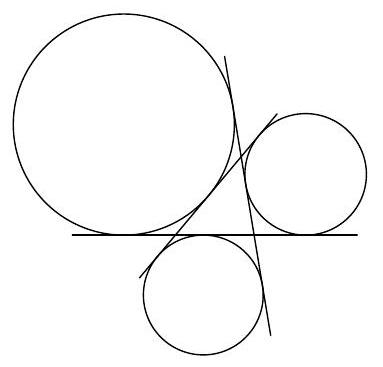

The following graphical representation may be helpful to you. On a horizontal line, a curve is drawn from $t$ to $f(t)$. For instance, the following diagram depicts the juggling sequence 441 (or the juggling sequences 414 and 144). Then $b$ is simply the number of contiguous "paths" drawn, which is 3 in this case.

Figure 1: Juggling diagram of 441.

|

proof

|

Yes

|

Yes

|

proof

|

Algebra

|

(Fundamental Theorem of Algebra) Let $p$ be a tropical polynomial:

$$

p(x)=a_{n} \odot x^{n} \oplus a_{n-1} \odot x^{n-1} \oplus \cdots \oplus a_{1} \odot x \oplus a_{0}, \quad a_{n} \neq \infty

$$

Prove that we can find $r_{1}, r_{2}, \ldots, r_{n} \in \mathbb{R} \cup\{\infty\}$ so that

$$

p(x)=a_{n} \odot\left(x \oplus r_{1}\right) \odot\left(x \oplus r_{2}\right) \odot \cdots \odot\left(x \oplus r_{n}\right)

$$

for all $x$.

|

Again, we have

$$

p(x)=\min _{0 \leq k \leq n}\left\{a_{k}+k x\right\} .

$$

So the graph of $y=p(x)$ can be drawn as follows: first, draw all the lines $y=a_{k}+k x$, $k=0,1, \ldots, n$, then trace out the lowest broken line, which then is the graph of $y=p(x)$.

So $p(x)$ is piecewise linear and continuous, and has slopes from the set $\{0,1,2, \ldots, n\}$. We know from the previous problem that $p(x)$ is concave, and so its slope must be nonincreasing (this can also be observed simply from the drawing of the graph of $y=p(x)$ ). Then, let $r_{k}$ denote the $x$-coordinate of the leftmost kink such that the slope of the graph is less than $k$ to the right of this kink. Then, $r_{n} \leq r_{n-1} \leq \cdots \leq r_{1}$, and for $r_{k+1} \leq x \leq r_{k}$, the graph of $p$ is linear with slope $k$. Note that it is possible that $r_{k+1}=r_{k}$, if no segment of $p$ has slope $k$. Also, since $a_{n} \neq \infty$, the leftmost piece of $p(x)$ must have slope $n$, and thus $r_{n}$ exists, and thus all $r_{i}$ exist.

Now, compare $p(x)$ with

$$

\begin{aligned}

q(x) & =a_{n} \odot\left(x \oplus r_{1}\right) \odot\left(x \oplus r_{2}\right) \odot \cdots \odot\left(x \oplus r_{n}\right) \\

& =a_{n}+\min \left(x, r_{1}\right)+\min \left(x, r_{2}\right)+\cdots+\min \left(x, r_{n}\right) .

\end{aligned}

$$

For $r_{k+1} \leq x \leq r_{k}$, the slope of $q(x)$ is $k$, and for $x \leq r_{n}$ the slope of $q$ is $n$ and for $x \geq r_{1}$ the slope of $q$ is $0$. So $q$ is piecewise linear, and of course it is continuous. It follows that the graph of $q$ coincides with that of $p$ up to a vertical translation. Taking any $x<r_{n}$, we see that $q(x)=a_{n}+n x=p(x)$, so the graphs of $p$ and $q$ coincide, and thus they must be the same function.

## Juggling [125]

A juggling sequence of length $n$ is a sequence $j(\cdot)$ of $n$ nonnegative integers, usually written as a string

$$

j(0) j(1) \ldots j(n-1)

$$

such that the mapping $f: \mathbb{Z} \rightarrow \mathbb{Z}$ defined by

$$

f(t)=t+j(\bar{t})

$$

is a permutation of the integers. Here $\bar{t}$ denotes the remainder of $t$ when divided by $n$. In this case, we say that $f$ is the corresponding juggling pattern.

For a juggling pattern $f$ (or its corresponding juggling sequence), we say that it has $b$ balls if the permutation induces $b$ infinite orbits on the set of integers. Equivalently, $b$ is the maximum number such that we can find a set of $b$ integers $\left\{t_{1}, t_{2}, \ldots, t_{b}\right\}$ so that the sets $\left\{t_{i}, f\left(t_{i}\right), f\left(f\left(t_{i}\right)\right), f\left(f\left(f\left(t_{i}\right)\right)\right), \ldots\right\}$ are all infinite and mutually disjoint (i.e. non-overlapping) for $i=1,2, \ldots, b$. (This definition will become clear in a second.)

Now is probably a good time to pause and think about what all this has to do with juggling. Imagine that we are juggling a number of balls, and at time $t$, we toss a ball from our hand up to a height $j(\bar{t})$. This ball stays up in the air for $j(\bar{t})$ units of time, so that it comes back to our hand at time $f(t)=t+j(\bar{t})$. Then, the juggling pattern presents a simplified model of how balls are juggled (for instance, we ignore information such as which hand we use to toss the ball). A throw height of 0 (i.e., $j(\bar{t})=0$ and $f(t)=t$ ) represents that no throw takes place at time $t$, which could correspond to an empty hand. Then, $b$ is simply the minimum number of balls needed to carry out the juggling.

The following graphical representation may be helpful to you. On a horizontal line, a curve is drawn from $t$ to $f(t)$. For instance, the following diagram depicts the juggling sequence 441 (or the juggling sequences 414 and 144). Then $b$ is simply the number of contiguous "paths" drawn, which is 3 in this case.

Figure 1: Juggling diagram of 441.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n4. [40]",

"solution_match": "\nSolution: "

}

|

02605877-1d7b-58a4-9b5e-e0de91f7ce4f

| 608,357

|

Suppose that $j(0) j(1) \cdots j(n-1)$ is a valid juggling sequence. For $i=0,1, \ldots, n-1$, let $a_{i}$ denote the remainder of $j(i)+i$ when divided by $n$. Prove that $\left(a_{0}, a_{1}, \ldots, a_{n-1}\right)$ is a permutation of $(0,1, \ldots, n-1)$.

|

Suppose that $a_{i}=j(i)+i-b_{i} n$, where $b_{i}$ is an integer. Note that $f\left(i-b_{i} n\right)=i-b_{i} n+j(i)=a_{i}$. Since $\left\{i-b_{i} n \mid i=0,1, \ldots, n-1\right\}$ contains $n$ distinct integers (as their residues $\bmod n$ are all distinct), and $f$ is a permutation, we see that after applying the map $f$, the resulting set $\left\{a_{0}, a_{1}, \ldots, a_{n-1}\right\}$ is a set of $n$ distinct integers. Since $0 \leq a_{i}<n$ by definition, we see that $\left(a_{0}, a_{1}, \ldots, a_{n-1}\right)$ is a permutation of $(0,1, \ldots, n-1)$.
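The residues $a_i$ are easy to compute, which gives a quick necessary test for a candidate sequence. The following is a minimal sketch of that test; the function names are illustrative, and the code only checks the condition proved above (it makes no claim here about sufficiency).

```python
def landing_residues(seq):
    """Return [a_0, ..., a_{n-1}] with a_i = (j(i) + i) mod n."""
    n = len(seq)
    return [(j + i) % n for i, j in enumerate(seq)]

def passes_permutation_test(seq):
    """True if (a_0, ..., a_{n-1}) is a permutation of (0, ..., n-1)."""
    return sorted(landing_residues(seq)) == list(range(len(seq)))

print(landing_residues([4, 4, 1]), passes_permutation_test([4, 4, 1]))
print(landing_residues([2, 1, 0]), passes_permutation_test([2, 1, 0]))
```

For 441 the residues are all distinct, while for 210 every throw lands on the same residue, so 210 fails the test and cannot be a juggling sequence.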

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Suppose that $j(0) j(1) \cdots j(n-1)$ is a valid juggling sequence. For $i=0,1, \ldots, n-1$, let $a_{i}$ denote the remainder of $j(i)+i$ when divided by $n$. Prove that $\left(a_{0}, a_{1}, \ldots, a_{n-1}\right)$ is a permutation of $(0,1, \ldots, n-1)$.

|

Suppose that $a_{i}=j(i)+i-b_{i} n$, where $b_{i}$ is an integer. Note that $f\left(i-b_{i} n\right)=i-b_{i} n+j(i)=a_{i}$. Since $\left\{i-b_{i} n \mid i=0,1, \ldots, n-1\right\}$ contains $n$ distinct integers (as their residues $\bmod n$ are all distinct), and $f$ is a permutation, we see that after applying the map $f$, the resulting set $\left\{a_{0}, a_{1}, \ldots, a_{n-1}\right\}$ is a set of $n$ distinct integers. Since $0 \leq a_{i}<n$ by definition, we see that $\left(a_{0}, a_{1}, \ldots, a_{n-1}\right)$ is a permutation of $(0,1, \ldots, n-1)$.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n6. [40]",

"solution_match": "\nSolution: "

}

|

e0446597-b63f-5e37-acf0-4ac458034e07

| 608,359

|

Prove that the number of balls $b$ in a juggling sequence $j(0) j(1) \cdots j(n-1)$ is simply the average

$$

b=\frac{j(0)+j(1)+\cdots+j(n-1)}{n} .

$$

|

Consider the corresponding juggling diagram. Say the length of a curve from $t$ to $f(t)$ is $f(t)-t$. Fix a positive integer $M$, and let us draw only the curves whose left endpoint lies inside $[0, M n-1]$. For every single ball, the sum of the lengths of the curves drawn corresponding to that ball is between $M n-J$ and $M n+J$, where $J=\max \{j(0), j(1), \ldots, j(n-1)\}$. It follows that the sum of the lengths of the curves drawn is between $b(M n-J)$ and $b(M n+J)$. Since the curve drawn at $t$ has length $j(\bar{t})$, the sum of the lengths of the curves drawn is $M(j(0)+j(1)+\cdots+j(n-1))$. It follows that

$$

b(M n-J) \leq M(j(0)+j(1)+\cdots+j(n-1)) \leq b(M n+J)

$$

Dividing by $M n$, we get

$$

b\left(1-\frac{J}{n M}\right) \leq \frac{j(0)+j(1)+\cdots+j(n-1)}{n} \leq b\left(1+\frac{J}{n M}\right)

$$

Since we can take $M$ to be arbitrarily large, we must have

$$

b=\frac{j(0)+j(1)+\cdots+j(n-1)}{n}

$$

as desired.
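The formula can be compared against a direct count on small examples. The sketch below uses a different counting device from the proof, stated here as an assumption: in a valid pattern each ball in the air contributes exactly one curve crossing the gap between times $t$ and $t+1$, so $b$ equals the number of throw times $s \leq t$ with $f(s)>t$. The function name `balls_by_cut` is illustrative.

```python
def balls_by_cut(seq, t=0):
    """Count curves s -> f(s) with s <= t < f(s), assuming seq is a valid juggling sequence."""
    n = len(seq)
    longest = max(seq)
    # A curve starting before t - longest cannot reach past t, so a finite window suffices.
    return sum(1 for s in range(t - longest, t + 1) if s + seq[s % n] > t)

for seq in ([4, 4, 1], [5, 1], [3]):
    print(seq, balls_by_cut(seq), sum(seq) / len(seq))
```

For each of these sample sequences the count at the cut matches the average of the throw heights.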

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Prove that the number of balls $b$ in a juggling sequence $j(0) j(1) \cdots j(n-1)$ is simply the average

$$

b=\frac{j(0)+j(1)+\cdots+j(n-1)}{n} .

$$

|

Consider the corresponding juggling diagram. Say the length of a curve from $t$ to $f(t)$ is $f(t)-t$. Fix a positive integer $M$, and let us draw only the curves whose left endpoint lies inside $[0, M n-1]$. For every single ball, the sum of the lengths of the curves drawn corresponding to that ball is between $M n-J$ and $M n+J$, where $J=\max \{j(0), j(1), \ldots, j(n-1)\}$. It follows that the sum of the lengths of the curves drawn is between $b(M n-J)$ and $b(M n+J)$. Since the curve drawn at $t$ has length $j(\bar{t})$, the sum of the lengths of the curves drawn is $M(j(0)+j(1)+\cdots+j(n-1))$. It follows that

$$

b(M n-J) \leq M(j(0)+j(1)+\cdots+j(n-1)) \leq b(M n+J)

$$

Dividing by $M n$, we get

$$

b\left(1-\frac{J}{n M}\right) \leq \frac{j(0)+j(1)+\cdots+j(n-1)}{n} \leq b\left(1+\frac{J}{n M}\right)

$$

Since we can take $M$ to be arbitrarily large, we must have

$$

b=\frac{j(0)+j(1)+\cdots+j(n-1)}{n}

$$

as desired.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n8. [40]",

"solution_match": "\nSolution: "

}

|

52161c7e-715f-5464-a88f-9258867fbf3f

| 608,361

|

Show that the converse of the previous statement is false by providing a non-juggling sequence $j(0) j(1) j(2)$ of length 3 where the average $\frac{1}{3}(j(0)+j(1)+j(2))$ is an integer. Show that your example works.

|

One such example is 210, whose average $\frac{1}{3}(2+1+0)=1$ is an integer. This is not a juggling sequence since $f(0)=f(1)=2$, so $f$ is not a permutation.
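The counterexample can be confirmed by evaluating $f(t)=t+j(\bar{t})$ directly. This is a minimal sketch; the helper name `f` mirrors the notation of the problem, and the list `seq` encodes the string 210.

```python
def f(seq, t):
    """Landing time f(t) = t + j(t mod n) for the sequence seq."""
    return t + seq[t % len(seq)]

seq = [2, 1, 0]
average_is_integer = sum(seq) % len(seq) == 0
landing_times = [f(seq, t) for t in range(len(seq))]
print(average_is_integer, landing_times)
```

Two different throw times with the same landing time mean that $f$ is not injective, hence not a permutation of the integers.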

## Incircles [180]

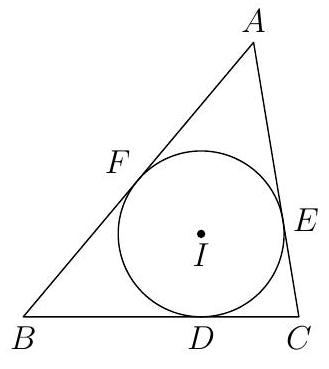

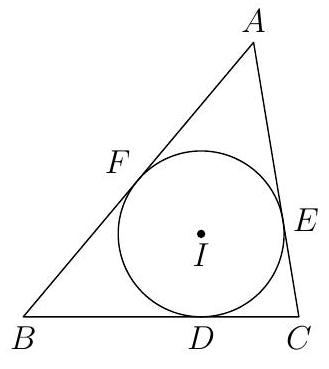

In the following problems, $A B C$ is a triangle with incenter $I$. Let $D, E, F$ denote the points where the incircle of $A B C$ touches sides $B C, C A, A B$, respectively.

At the end of this section you can find some terminology and theorems that may be helpful to you.

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Show that the converse of the previous statement is false by providing a non-juggling sequence $j(0) j(1) j(2)$ of length 3 where the average $\frac{1}{3}(j(0)+j(1)+j(2))$ is an integer. Show that your example works.

|

One such example is 210, whose average $\frac{1}{3}(2+1+0)=1$ is an integer. This is not a juggling sequence since $f(0)=f(1)=2$, so $f$ is not a permutation.

## Incircles [180]

In the following problems, $A B C$ is a triangle with incenter $I$. Let $D, E, F$ denote the points where the incircle of $A B C$ touches sides $B C, C A, A B$, respectively.

At the end of this section you can find some terminology and theorems that may be helpful to you.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n9. [5]",

"solution_match": "\nSolution: "

}

|

74ec490a-ee87-5504-b291-8a1983e85d2e

| 608,362

|

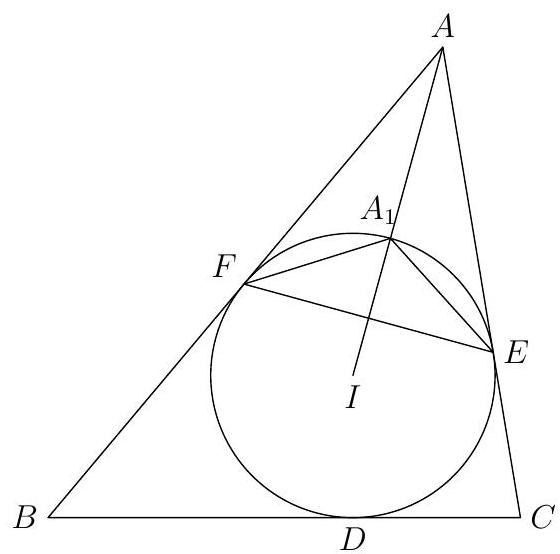

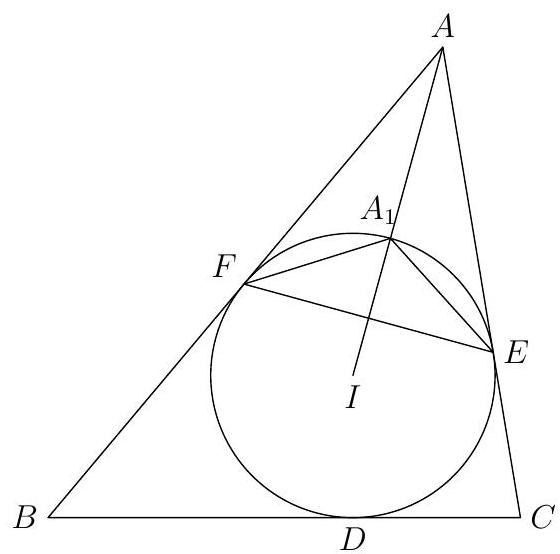

Show that the incenter of triangle $A E F$ lies on the incircle of $A B C$.

|

Let segment $A I$ meet the incircle at $A_{1}$. Let us show that $A_{1}$ is the incenter of $A E F$.

Since $A E=A F$ and $A A_{1}$ is the angle bisector of $\angle E A F$, we find that $A_{1} E=A_{1} F$. Using tangent-chord, we see that $\angle A F A_{1}=\angle A_{1} E F=\angle A_{1} F E$. Therefore, $A_{1}$ lies on the angle bisector of $\angle A F E$. Since $A_{1}$ also lies on the angle bisector of $\angle E A F$, the point $A_{1}$ must be the incenter of $A E F$, as desired.

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Show that the incenter of triangle $A E F$ lies on the incircle of $A B C$.

|

Let segment $A I$ meet the incircle at $A_{1}$. Let us show that $A_{1}$ is the incenter of $A E F$.

Since $A E=A F$ and $A A_{1}$ is the angle bisector of $\angle E A F$, we find that $A_{1} E=A_{1} F$. Using tangent-chord, we see that $\angle A F A_{1}=\angle A_{1} E F=\angle A_{1} F E$. Therefore, $A_{1}$ lies on the angle bisector of $\angle A F E$. Since $A_{1}$ also lies on the angle bisector of $\angle E A F$, the point $A_{1}$ must be the incenter of $A E F$, as desired.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n12. [35]",

"solution_match": "\nSolution: "

}

|

fcce9c1e-d0a0-5ad4-9d09-df3a092790c4

| 608,365

|

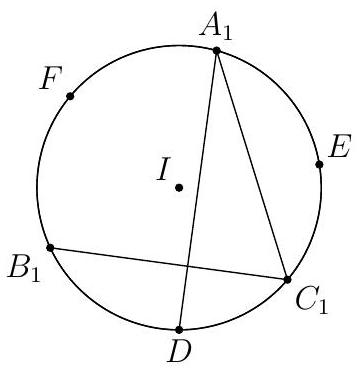

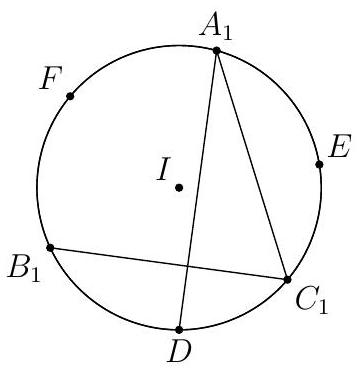

Let $A_{1}, B_{1}, C_{1}$ be the incenters of triangle $A E F, B D F, C D E$, respectively. Show that $A_{1} D, B_{1} E, C_{1} F$ all pass through the orthocenter of $A_{1} B_{1} C_{1}$.

|

Using the result from the previous problem, we see that $A_{1}, B_{1}, C_{1}$ are respectively the midpoints of the arcs $F E, F D, D E$ of the incircle. We have

$$

\begin{aligned}

\angle D A_{1} C_{1}+\angle B_{1} C_{1} A_{1} & =\frac{1}{2} \angle D I C_{1}+\frac{1}{2} \angle B_{1} I F+\frac{1}{2} \angle F I A_{1} \\

& =\frac{1}{4}(\angle E I D+\angle D I F+\angle F I E) \\

& =\frac{1}{4} \cdot 360^{\circ} \\

& =90^{\circ} .

\end{aligned}

$$

It follows that $A_{1} D$ is perpendicular to $B_{1} C_{1}$, and thus $A_{1} D$ passes through the orthocenter of $A_{1} B_{1} C_{1}$. Similarly, $B_{1} E$ and $C_{1} F$ are perpendicular to $C_{1} A_{1}$ and $A_{1} B_{1}$, respectively, so $A_{1} D, B_{1} E, C_{1} F$ all pass through the orthocenter of $A_{1} B_{1} C_{1}$.

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $A_{1}, B_{1}, C_{1}$ be the incenters of triangle $A E F, B D F, C D E$, respectively. Show that $A_{1} D, B_{1} E, C_{1} F$ all pass through the orthocenter of $A_{1} B_{1} C_{1}$.

|

Using the result from the previous problem, we see that $A_{1}, B_{1}, C_{1}$ are respectively the midpoints of the arcs $F E, F D, D E$ of the incircle. We have

$$

\begin{aligned}

\angle D A_{1} C_{1}+\angle B_{1} C_{1} A_{1} & =\frac{1}{2} \angle D I C_{1}+\frac{1}{2} \angle B_{1} I F+\frac{1}{2} \angle F I A_{1} \\

& =\frac{1}{4}(\angle E I D+\angle D I F+\angle F I E) \\

& =\frac{1}{4} \cdot 360^{\circ} \\

& =90^{\circ} .

\end{aligned}

$$

It follows that $A_{1} D$ is perpendicular to $B_{1} C_{1}$, so $A_{1} D$ is an altitude of $A_{1} B_{1} C_{1}$ and passes through its orthocenter. By the same argument applied to $B_{1} E$ and $C_{1} F$, all three lines $A_{1} D, B_{1} E, C_{1} F$ pass through the orthocenter of $A_{1} B_{1} C_{1}$.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n13. [35]",

"solution_match": "\nSolution: "

}

|

4521613b-f650-5ace-9e0c-1c09492b1068

| 608,366

|

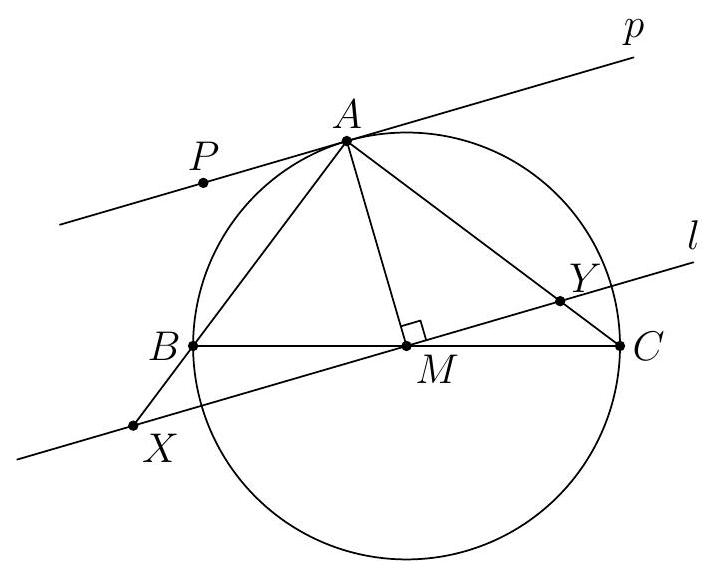

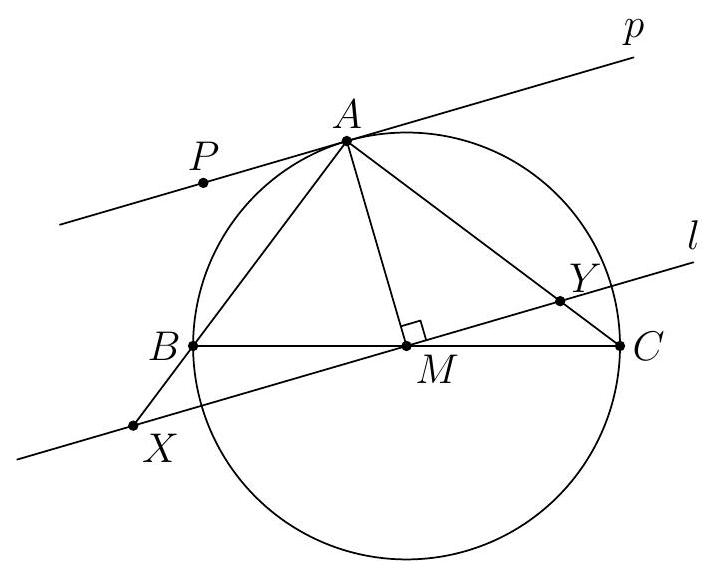

Let $X$ be the point on side $B C$ such that $B X=C D$. Show that the excircle of $A B C$ opposite vertex $A$ touches segment $B C$ at $X$.

|

Let the excircle touch lines $B C, A C$ and $A B$ at $X^{\prime}, Y$ and $Z$, respectively. Using the equal tangent property repeatedly, we have

$$

B X^{\prime}-X^{\prime} C=B Z-C Y=(E Y-C Y)-(F Z-B Z)=C E-B F=C D-B D .

$$

Since also $B X^{\prime}+X^{\prime} C=B C=C D+B D$, it follows that $B X^{\prime}=C D$, and thus $X^{\prime}=X$. So the excircle touches $B C$ at $X$.

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $X$ be the point on side $B C$ such that $B X=C D$. Show that the excircle of $A B C$ opposite vertex $A$ touches segment $B C$ at $X$.

|

Let the excircle touch lines $B C, A C$ and $A B$ at $X^{\prime}, Y$ and $Z$, respectively. Using the equal tangent property repeatedly, we have

$$

B X^{\prime}-X^{\prime} C=B Z-C Y=(E Y-C Y)-(F Z-B Z)=C E-B F=C D-B D .

$$

Since also $B X^{\prime}+X^{\prime} C=B C=C D+B D$, it follows that $B X^{\prime}=C D$, and thus $X^{\prime}=X$. So the excircle touches $B C$ at $X$.

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n14. [40]",

"solution_match": "\nSolution: "

}

|

f72e89a8-28bf-5bc5-8740-243dd8ba5e75

| 608,367

|

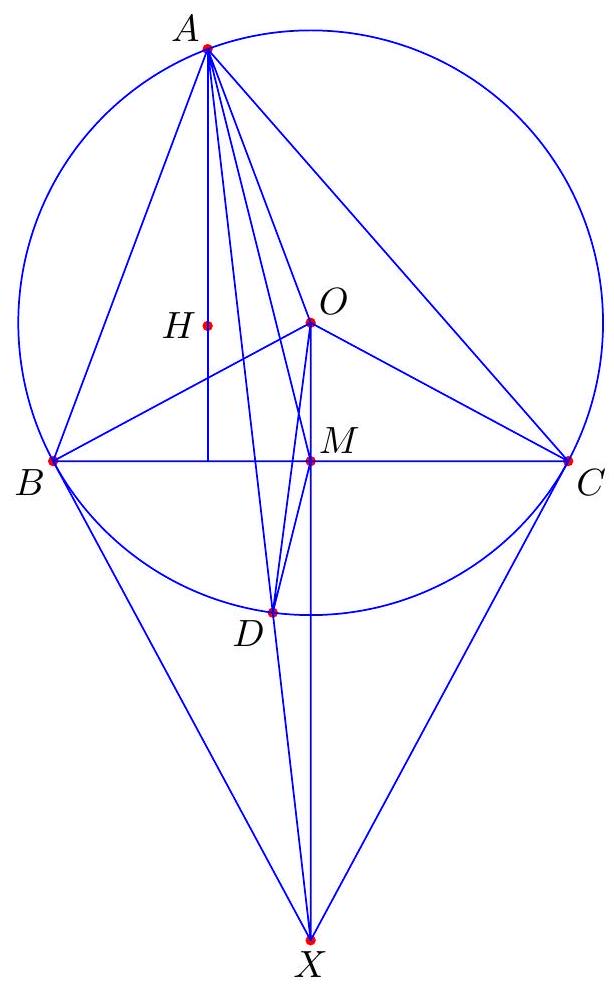

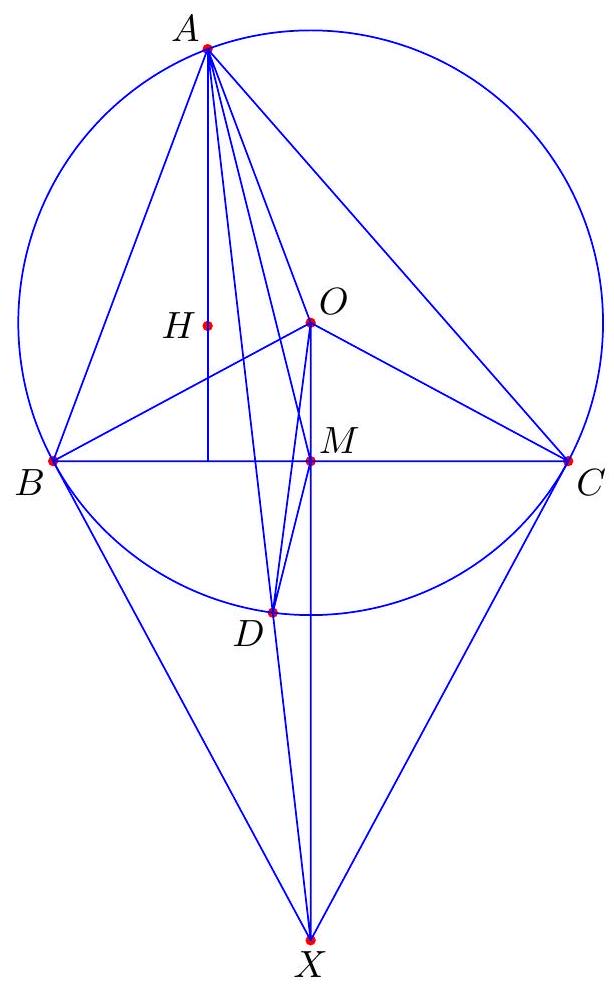

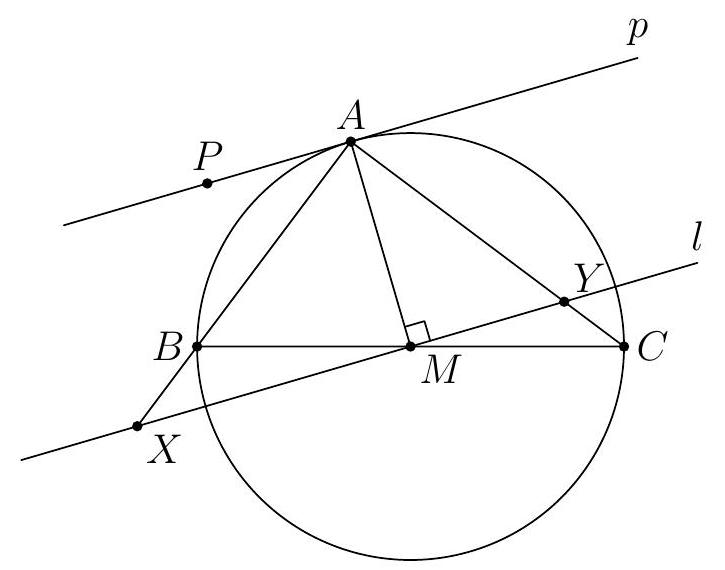

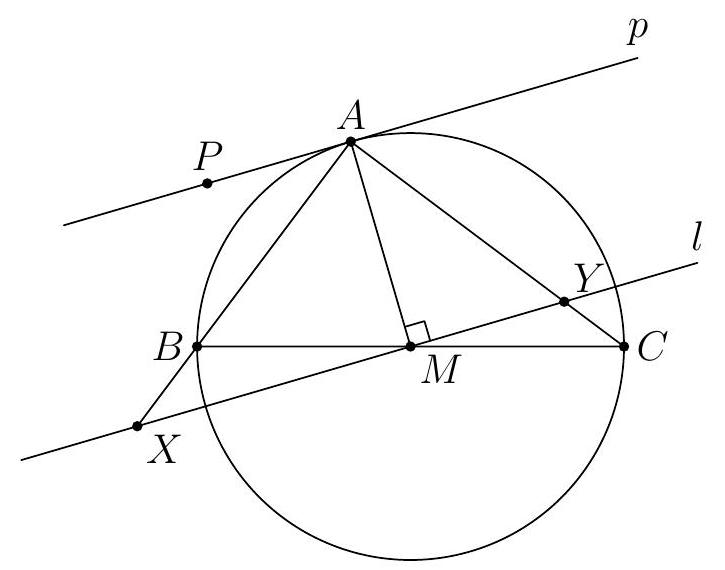

Let $X$ be as in the previous problem. Let $T$ be the point diametrically opposite to $D$ on the incircle of $A B C$. Show that $A, T, X$ are collinear.

|

Consider a dilation centered at $A$ that carries the incircle to the excircle. This dilation must send the diameter $D T$ to some diameter of the excircle that is perpendicular to $B C$. The only such diameter is the one that goes through $X$. It follows that $T$ gets carried to $X$. Therefore, $A, T, X$ are collinear.

## Glossary and some possibly useful facts

- A set of points is collinear if they lie on a common line. A set of lines is concurrent if they pass through a common point.

- Given $A B C$ a triangle, the three angle bisectors are concurrent at the incenter of the triangle. The incenter is the center of the incircle, which is the unique circle inscribed in $A B C$, tangent to all three sides.

- The excircles of a triangle $A B C$ are the three circles on the exterior of the triangle that are tangent to all three lines $A B, B C, C A$.

- The orthocenter of a triangle is the point of concurrency of the three altitudes.

- Ceva's theorem states that given $A B C$ a triangle, and points $X, Y, Z$ on sides $B C, C A, A B$, respectively, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{B X}{X C} \cdot \frac{C Y}{Y A} \cdot \frac{A Z}{Z B}=1

$$

|

proof

|

Yes

|

Yes

|

proof

|

Geometry

|

Let $X$ be as in the previous problem. Let $T$ be the point diametrically opposite to $D$ on the incircle of $A B C$. Show that $A, T, X$ are collinear.

|

Consider a dilation centered at $A$ that carries the incircle to the excircle. This dilation must send the diameter $D T$ to some diameter of the excircle that is perpendicular to $B C$. The only such diameter is the one that goes through $X$. It follows that $T$ gets carried to $X$. Therefore, $A, T, X$ are collinear.

## Glossary and some possibly useful facts

- A set of points is collinear if they lie on a common line. A set of lines is concurrent if they pass through a common point.

- Given $A B C$ a triangle, the three angle bisectors are concurrent at the incenter of the triangle. The incenter is the center of the incircle, which is the unique circle inscribed in $A B C$, tangent to all three sides.

- The excircles of a triangle $A B C$ are the three circles on the exterior of the triangle that are tangent to all three lines $A B, B C, C A$.

- The orthocenter of a triangle is the point of concurrency of the three altitudes.

- Ceva's theorem states that given $A B C$ a triangle, and points $X, Y, Z$ on sides $B C, C A, A B$, respectively, the lines $A X, B Y, C Z$ are concurrent if and only if

$$

\frac{B X}{X C} \cdot \frac{C Y}{Y A} \cdot \frac{A Z}{Z B}=1

$$

|

{

"resource_path": "HarvardMIT/segmented/en-112-2008-feb-team2-solutions.jsonl",

"problem_match": "\n15. [40]",

"solution_match": "\nSolution: "

}

|

0b3a2597-7f6b-5328-8fda-b767d1ac045d

| 608,368

|

Say that $\frac{a}{b}$ is a positive rational number in simplest form, with $a \neq 1$. Further, say that $n$ is an integer such that:

$$

\frac{1}{n}>\frac{a}{b}>\frac{1}{n+1}

$$

Show that when $\frac{a}{b}-\frac{1}{n+1}$ is written in simplest form, its numerator is smaller than $a$.

|

We have $\frac{a}{b}-\frac{1}{n+1}=\frac{a(n+1)-b}{b(n+1)}$. Therefore, when we write it in simplest form, its numerator will be at most $a(n+1)-b$. We claim that $a(n+1)-b<a$. Indeed, this is the same as $a n-b<0 \Longleftrightarrow a n<b \Longleftrightarrow \frac{b}{a}>n$, which is given.
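
The inequality can be spot-checked with exact rational arithmetic. The following is a minimal sketch; the helper name `reduced_numerator` is an assumption, and it takes $0<\frac{a}{b}<1$ with $a \neq 1$ in lowest terms so that $n=\lfloor b / a\rfloor \geq 1$ satisfies the hypothesis.

```python
from fractions import Fraction
from math import gcd

def reduced_numerator(a, b):
    """Numerator of a/b - 1/(n+1) in lowest terms, where 1/n > a/b > 1/(n+1)."""
    n = b // a  # since a does not divide b, this n satisfies the strict bounds
    assert Fraction(1, n) > Fraction(a, b) > Fraction(1, n + 1)
    # Fraction reduces to lowest terms automatically.
    return (Fraction(a, b) - Fraction(1, n + 1)).numerator

def check_all(limit):
    """Check the claim for every admissible a/b with b below the limit."""
    return all(
        reduced_numerator(a, b) < a
        for b in range(3, limit)
        for a in range(2, b)
        if gcd(a, b) == 1
    )
```

For instance, `reduced_numerator(4, 13)` subtracts $\frac{1}{4}$ from $\frac{4}{13}$ and returns the numerator of $\frac{3}{52}$.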

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

Say that $\frac{a}{b}$ is a positive rational number in simplest form, with $a \neq 1$. Further, say that $n$ is an integer such that:

$$

\frac{1}{n}>\frac{a}{b}>\frac{1}{n+1}

$$

Show that when $\frac{a}{b}-\frac{1}{n+1}$ is written in simplest form, its numerator is smaller than $a$.

|

We have $\frac{a}{b}-\frac{1}{n+1}=\frac{a(n+1)-b}{b(n+1)}$. Therefore, when we write it in simplest form, its numerator will be at most $a(n+1)-b$. We claim that $a(n+1)-b<a$. Indeed, this is the same as $a n-b<0 \Longleftrightarrow a n<b \Longleftrightarrow \frac{b}{a}>n$, which is given.

|

{

"resource_path": "HarvardMIT/segmented/en-121-2008-nov-team-solutions.jsonl",

"problem_match": "\n4. ",

"solution_match": "\nSolution: "

}

|

c1e35a4b-2e23-5782-b52c-aa52691b5560

| 608,456

|

Now, using information from problems 4 and 5 , prove that the following method to decompose any positive rational number will always terminate:

Step 1. Start with the fraction $\frac{a}{b}$. Let $t_{1}$ be the largest unit fraction $\frac{1}{n}$ which is less than or equal to $\frac{a}{b}$.

Step 2. If we have already chosen $t_{1}$ through $t_{k}$, and if $t_{1}+t_{2}+\ldots+t_{k}$ is still less than $\frac{a}{b}$, then let $t_{k+1}$ be the largest unit fraction less than both $t_{k}$ and $\frac{a}{b}$.

Step 3. If $t_{1}+\ldots+t_{k+1}$ equals $\frac{a}{b}$, the decomposition is found. Otherwise, repeat step 2 .

Why does this method never result in an infinite sequence of $t_{i}$ ?

|

Let $\frac{a_{k}}{b_{k}}=\frac{a}{b}-t_{1}-\ldots-t_{k}$, where $\frac{a_{k}}{b_{k}}$ is a fraction in simplest terms. Initially, this algorithm will have $t_{1}=1, t_{2}=\frac{1}{2}, t_{3}=\frac{1}{3}$, etc. until $\frac{a_{k}}{b_{k}}<\frac{1}{k+1}$. This will eventually happen by problem 5, since there exists a $k$ such that $\frac{1}{1}+\ldots+\frac{1}{k+1}>\frac{a}{b}$. At that point, there is some $n$ with $\frac{1}{n}<t_{k}$ such that $\frac{1}{n}>\frac{a_{k}}{b_{k}}>\frac{1}{n+1}$. In this case, $t_{k+1}=\frac{1}{n+1}$.

Suppose that there exists $n_{k}$ such that $\frac{1}{n_{k}}>\frac{a_{k}}{b_{k}}>\frac{1}{n_{k}+1}$ for some $k$. Then we have $t_{k+1}=\frac{1}{n_{k}+1}$ and $\frac{a_{k+1}}{b_{k+1}}<\frac{1}{n_{k}\left(n_{k}+1\right)}$. This shows that once we have found $n_{k}$ such that $\frac{1}{n_{k}}>\frac{a_{k}}{b_{k}}>\frac{1}{n_{k}+1}$ and $\frac{1}{n_{k}} \leq t_{k}$, we no longer have to worry about $t_{k+1}$ being less than $t_{k}$, since $t_{k+1}=\frac{1}{n_{k}+1}<\frac{1}{n_{k}}<$ $t_{k}$, and also $n_{k+1} \geq n_{k}\left(n_{k}+1\right)$ while $\frac{1}{n_{k}\left(n_{k}+1\right)} \leq \frac{1}{n_{k}+1}=t_{k+1}$.

On the other hand, once we have found such an $n_{k}$, the sequence $\left\{a_{k}\right\}$ must be decreasing by problem 4. Since the $a_{k}$ are all nonnegative integers, we eventually have to get to 0 (as there is no infinite decreasing sequence of positive integers). Therefore, after some finite number of steps the algorithm terminates with $a_{k}=0$, so $0=\frac{a_{k}}{b_{k}}=\frac{a}{b}-t_{1}-\ldots-t_{k}$, so $\frac{a}{b}=t_{1}+\ldots+t_{k}$, which is what we wanted.
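
The method can be sketched in a few lines of exact arithmetic. This is an illustrative reading of the steps, not a definitive implementation: it assumes that in Step 2 the new unit fraction is compared against the remaining amount $\frac{a}{b}-t_{1}-\ldots-t_{k}$ and may equal it, and the function name `greedy_unit_fractions` is hypothetical.

```python
from fractions import Fraction

def greedy_unit_fractions(a, b):
    """Return denominators n_1 < n_2 < ... with sum of 1/n_i equal to a/b."""
    remainder = Fraction(a, b)
    denominators = []
    last = 0
    while remainder > 0:
        # Smallest n with 1/n <= remainder is ceil(1/remainder).
        smallest = -(-remainder.denominator // remainder.numerator)
        # Also force the new unit fraction to be smaller than the previous one.
        n = max(last + 1, smallest)
        denominators.append(n)
        remainder -= Fraction(1, n)
        last = n
    return denominators
```

For $\frac{4}{13}$ this produces the denominators 4, 18, 468, and the remainders have numerators 4, 3, 1, 0, matching the decreasing sequence $\left\{a_{k}\right\}$ in the argument above.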

## Juicy Numbers [100]

A juicy number is an integer $j>1$ for which there is a sequence $a_{1}<a_{2}<\ldots<a_{k}$ of positive integers such that $a_{k}=j$ and such that the sum of the reciprocals of all the $a_{i}$ is 1 . For example, 6 is a juicy number because $\frac{1}{2}+\frac{1}{3}+\frac{1}{6}=1$, but 2 is not juicy.

In this part, you will investigate some of the properties of juicy numbers. Remember that if you do not solve a question, you can still use its result on later questions.

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

Now, using information from problems 4 and 5 , prove that the following method to decompose any positive rational number will always terminate:

Step 1. Start with the fraction $\frac{a}{b}$. Let $t_{1}$ be the largest unit fraction $\frac{1}{n}$ which is less than or equal to $\frac{a}{b}$.

Step 2. If we have already chosen $t_{1}$ through $t_{k}$, and if $t_{1}+t_{2}+\ldots+t_{k}$ is still less than $\frac{a}{b}$, then let $t_{k+1}$ be the largest unit fraction less than both $t_{k}$ and $\frac{a}{b}$.

Step 3. If $t_{1}+\ldots+t_{k+1}$ equals $\frac{a}{b}$, the decomposition is found. Otherwise, repeat step 2 .

Why does this method never result in an infinite sequence of $t_{i}$ ?

|

Let $\frac{a_{k}}{b_{k}}=\frac{a}{b}-t_{1}-\ldots-t_{k}$, where $\frac{a_{k}}{b_{k}}$ is a fraction in simplest terms. Initially, this algorithm will have $t_{1}=1, t_{2}=\frac{1}{2}, t_{3}=\frac{1}{3}$, etc. until $\frac{a_{k}}{b_{k}}<\frac{1}{k+1}$. This will eventually happen by problem 5, since there exists a $k$ such that $\frac{1}{1}+\ldots+\frac{1}{k+1}>\frac{a}{b}$. At that point, there is some $n$ with $\frac{1}{n}<t_{k}$ such that $\frac{1}{n}>\frac{a_{k}}{b_{k}}>\frac{1}{n+1}$. In this case, $t_{k+1}=\frac{1}{n+1}$.

Suppose that there exists $n_{k}$ such that $\frac{1}{n_{k}}>\frac{a_{k}}{b_{k}}>\frac{1}{n_{k}+1}$ for some $k$. Then we have $t_{k+1}=\frac{1}{n_{k}+1}$ and $\frac{a_{k+1}}{b_{k+1}}<\frac{1}{n_{k}\left(n_{k}+1\right)}$. This shows that once we have found $n_{k}$ such that $\frac{1}{n_{k}}>\frac{a_{k}}{b_{k}}>\frac{1}{n_{k}+1}$ and $\frac{1}{n_{k}} \leq t_{k}$, we no longer have to worry about $t_{k+1}$ being less than $t_{k}$, since $t_{k+1}=\frac{1}{n_{k}+1}<\frac{1}{n_{k}}<$ $t_{k}$, and also $n_{k+1} \geq n_{k}\left(n_{k}+1\right)$ while $\frac{1}{n_{k}\left(n_{k}+1\right)} \leq \frac{1}{n_{k}+1}=t_{k+1}$.

On the other hand, once we have found such an $n_{k}$, the sequence $\left\{a_{k}\right\}$ must be decreasing by problem 4. Since the $a_{k}$ are all nonnegative integers, we eventually have to get to 0 (as there is no infinite decreasing sequence of positive integers). Therefore, after some finite number of steps the algorithm terminates with $a_{k}=0$, so $0=\frac{a_{k}}{b_{k}}=\frac{a}{b}-t_{1}-\ldots-t_{k}$, so $\frac{a}{b}=t_{1}+\ldots+t_{k}$, which is what we wanted.

## Juicy Numbers [100]

A juicy number is an integer $j>1$ for which there is a sequence $a_{1}<a_{2}<\ldots<a_{k}$ of positive integers such that $a_{k}=j$ and such that the sum of the reciprocals of all the $a_{i}$ is 1 . For example, 6 is a juicy number because $\frac{1}{2}+\frac{1}{3}+\frac{1}{6}=1$, but 2 is not juicy.

In this part, you will investigate some of the properties of juicy numbers. Remember that if you do not solve a question, you can still use its result on later questions.

|

{

"resource_path": "HarvardMIT/segmented/en-121-2008-nov-team-solutions.jsonl",

"problem_match": "\n6. ",

"solution_match": "\nSolution: "

}

|

81161faf-9a32-5ded-b80f-e40b1a3d0f23

| 608,458

|

Let $p$ be a prime. Given a sequence of positive integers $b_{1}$ through $b_{n}$, exactly one of which is divisible by $p$, show that when

$$

\frac{1}{b_{1}}+\frac{1}{b_{2}}+\ldots+\frac{1}{b_{n}}

$$

is written as a fraction in lowest terms, then its denominator is divisible by $p$. Use this fact to explain why no prime $p$ is ever juicy.

|

We can assume that $b_{n}$ is the term divisible by $p$ (i.e. $b_{n}=k p$ ) since the order of addition doesn't matter. We can then write

$$

\frac{1}{b_{1}}+\frac{1}{b_{2}}+\ldots+\frac{1}{b_{n-1}}=\frac{a}{b}

$$

where $b$ is not divisible by $p$ (since none of the $b_{i}$ are). But then $\frac{a}{b}+\frac{1}{k p}=\frac{k p a+b}{k p b}$. Since $b$ is not divisible by $p$, $k p a+b$ is not divisible by $p$, so we cannot remove the factor of $p$ from the denominator. In particular, $p$ cannot be juicy: being juicy means we have a sum $\frac{1}{b_{1}}+\ldots+\frac{1}{b_{n}}=1$, where $b_{1}<b_{2}<\ldots<b_{n}=p$, so none of the $b_{i}$ with $i<n$ are divisible by $p$ and the sum has a denominator divisible by $p$, whereas 1 can be written as $\frac{1}{1}$, which has a denominator not divisible by $p$.
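
A brute-force search over small cases is consistent with this conclusion. The sketch below is only an illustration (the name `is_juicy` is an assumption); it tries every subset of $\{2, \ldots, j-1\}$, which is exponential in $j$ and meant for small inputs only.

```python
from fractions import Fraction
from itertools import combinations

def is_juicy(j):
    """True if some increasing sequence ending in j has reciprocals summing to 1."""
    target = 1 - Fraction(1, j)
    # The value 1 can never appear, since 1/1 + 1/j already exceeds 1.
    candidates = range(2, j)
    for size in range(len(candidates) + 1):
        for subset in combinations(candidates, size):
            total = sum((Fraction(1, a) for a in subset), Fraction(0))
            if total == target:
                return True
    return False
```

On small inputs the search finds $\frac{1}{2}+\frac{1}{3}+\frac{1}{6}$ for $j=6$ and $\frac{1}{2}+\frac{1}{4}+\frac{1}{6}+\frac{1}{12}$ for $j=12$, and finds nothing for the primes 2, 3, 5, 7, 11.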

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

Let $p$ be a prime. Given a sequence of positive integers $b_{1}$ through $b_{n}$, exactly one of which is divisible by $p$, show that when

$$

\frac{1}{b_{1}}+\frac{1}{b_{2}}+\ldots+\frac{1}{b_{n}}

$$

is written as a fraction in lowest terms, then its denominator is divisible by $p$. Use this fact to explain why no prime $p$ is ever juicy.

|

We can assume that $b_{n}$ is the term divisible by $p$ (i.e. $b_{n}=k p$ ) since the order of addition doesn't matter. We can then write

$$

\frac{1}{b_{1}}+\frac{1}{b_{2}}+\ldots+\frac{1}{b_{n-1}}=\frac{a}{b}

$$

where $b$ is not divisible by $p$ (since none of the $b_{i}$ are). But then $\frac{a}{b}+\frac{1}{k p}=\frac{k p a+b}{k p b}$. Since $b$ is not divisible by $p$, $k p a+b$ is not divisible by $p$, so we cannot remove the factor of $p$ from the denominator. In particular, $p$ cannot be juicy: being juicy means we have a sum $\frac{1}{b_{1}}+\ldots+\frac{1}{b_{n}}=1$, where $b_{1}<b_{2}<\ldots<b_{n}=p$, so none of the $b_{i}$ with $i<n$ are divisible by $p$ and the sum has a denominator divisible by $p$, whereas 1 can be written as $\frac{1}{1}$, which has a denominator not divisible by $p$.

|

{

"resource_path": "HarvardMIT/segmented/en-121-2008-nov-team-solutions.jsonl",

"problem_match": "\n3. ",

"solution_match": "\nSolution: "

}

|

f2742ee7-a8ba-564b-b6ad-f9bb46bdca42

| 608,461

|

Let $n \geq 3$ be a positive integer. A triangulation of a convex $n$-gon is a set of $n-3$ of its diagonals which do not intersect in the interior of the polygon. Along with the $n$ sides, these diagonals separate the polygon into $n-2$ disjoint triangles. Any triangulation can be viewed as a graph: the vertices of the graph are the corners of the polygon, and the $n$ sides and $n-3$ diagonals are the edges.

For a fixed $n$-gon, different triangulations correspond to different graphs. Prove that all of these graphs have the same chromatic number.

|

We will show that all triangulations have chromatic number 3, by induction on $n$. As a base case, if $n=3$, a triangle has chromatic number 3. Now, given a triangulation of an $n$-gon for $n>3$, every edge is either a side or a diagonal of the polygon. There are $n$ sides and only $n-2$ triangles, so the Pigeonhole Principle guarantees a triangle with two side edges. These two sides must be adjacent, so we can remove this triangle to leave a triangulation of an $(n-1)$-gon, which has chromatic number 3 by the inductive hypothesis. Adding the last triangle adds only one new vertex with two neighbors, so we can color this vertex with whichever of the three colors is not used on its neighbors.
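
The induction translates directly into a procedure: peel off vertices of degree 2 until one triangle remains, then restore them in reverse order. The sketch below assumes the polygon's corners are numbered $0, \ldots, n-1$ in order and that `diagonals` is a valid triangulation; the function name is hypothetical.

```python
def three_color_triangulation(n, diagonals):
    """Return a dict vertex -> color in {0, 1, 2} for a triangulated n-gon."""
    adj = {v: set() for v in range(n)}
    for v in range(n):
        w = (v + 1) % n  # polygon side
        adj[v].add(w)
        adj[w].add(v)
    for u, w in diagonals:
        adj[u].add(w)
        adj[w].add(u)

    # Peel "ear" vertices (degree 2) until a single triangle is left.
    remaining = {v: set(nbrs) for v, nbrs in adj.items()}
    removed = []
    while len(remaining) > 3:
        ear = next(v for v, nbrs in remaining.items() if len(nbrs) == 2)
        for w in remaining.pop(ear):
            remaining[w].discard(ear)
        removed.append(ear)

    # Color the last triangle, then restore ears in reverse order.
    color = {v: i for i, v in enumerate(remaining)}
    for v in reversed(removed):
        used = {color[w] for w in adj[v] if w in color}
        color[v] = min({0, 1, 2} - used)
    return color
```

When an ear is restored, only its two neighbors at removal time are already colored, so exactly one of the three colors is free.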

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Let $n \geq 3$ be a positive integer. A triangulation of a convex $n$-gon is a set of $n-3$ of its diagonals which do not intersect in the interior of the polygon. Along with the $n$ sides, these diagonals separate the polygon into $n-2$ disjoint triangles. Any triangulation can be viewed as a graph: the vertices of the graph are the corners of the polygon, and the $n$ sides and $n-3$ diagonals are the edges.

For a fixed $n$-gon, different triangulations correspond to different graphs. Prove that all of these graphs have the same chromatic number.

|

We will show that all triangulations have chromatic number 3, by induction on $n$. As a base case, if $n=3$, a triangle has chromatic number 3. Now, given a triangulation of an $n$-gon for $n>3$, every edge is either a side or a diagonal of the polygon. There are $n$ sides and only $n-2$ triangles, so the Pigeonhole Principle guarantees a triangle with two side edges. These two sides must be adjacent, so we can remove this triangle to leave a triangulation of an $(n-1)$-gon, which has chromatic number 3 by the inductive hypothesis. Adding the last triangle adds only one new vertex with two neighbors, so we can color this vertex with whichever of the three colors is not used on its neighbors.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team1-solutions.jsonl",

"problem_match": "\n1. [8]",

"solution_match": "\nSolution: "

}

|

9209b951-07aa-5f5a-aa65-a6c8d2eed284

| 608,547

|

A graph is finite if it has a finite number of vertices.

(a) $[6]$ Let $G$ be a finite graph in which every vertex has degree $k$. Prove that the chromatic number of $G$ is at most $k+1$.

|

We find a good coloring with $k+1$ colors. Order the vertices and color them one by one. Since each vertex has at most $k$ neighbors, one of the $k+1$ colors has not been used on a neighbor, so there is always a good color for that vertex. In fact, we have shown that any graph in which every vertex has degree at most $k$ can be colored with $k+1$ colors.

(b) [10] In terms of $n$, what is the minimum number of edges a finite graph with chromatic number $n$ could have? Prove your answer.

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

A graph is finite if it has a finite number of vertices.

(a) $[6]$ Let $G$ be a finite graph in which every vertex has degree $k$. Prove that the chromatic number of $G$ is at most $k+1$.

|

We find a good coloring with $k+1$ colors. Order the vertices and color them one by one. Since each vertex has at most $k$ neighbors, one of the $k+1$ colors has not been used on a neighbor, so there is always a good color for that vertex. In fact, we have shown that any graph in which every vertex has degree at most $k$ can be colored with $k+1$ colors.

(b) [10] In terms of $n$, what is the minimum number of edges a finite graph with chromatic number $n$ could have? Prove your answer.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team1-solutions.jsonl",

"problem_match": "\n3. ",

"solution_match": "\nSolution: "

}

|

d3aa505e-c42f-522c-8773-0f5c0ecfa3c6

| 608,549

|

The size of a finite graph is the number of vertices in the graph.

(a) [15] Show that, for any $n>2$, and any positive integer $N$, there are finite graphs with size at least $N$ and with chromatic number $n$ such that removing any vertex (and all its incident edges) from the graph decreases its chromatic number.

|

Let $k>1$ be an odd number, and let $G$ be a graph with $k$ vertices arranged in a circle, with each vertex connected to its two neighbors. If $n=3$, these graphs can be arbitrarily large, and are the graphs we need. If $n>3$, let $H$ be a complete graph on $n-3$ vertices, and let $J$ be the graph created by adding an edge from every vertex in $G$ to every vertex in $H$. Then $n-3$ colors are needed to color $H$ and another 3 are needed to color $G$, so $n$ colors is both necessary and sufficient for a good coloring of $J$. Now, say a vertex is removed from $J$. There are two cases:

If the vertex was removed from $G$, then the remaining vertices in $G$ can be colored with 2 colors, because the cycle has been broken. A set of $n-3$ different colors can be used to color $H$, so only $n-1$ colors are needed to color the reduced graph. On the other hand, if the vertex was removed from $H$, then $n-4$ colors are used to color $H$ and 3 used to color $G$. So removing any vertex decreases the chromatic number of $J$.
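
For small parameters the construction can be verified exhaustively. The sketch below is an assumption-laden illustration: `chromatic_number` is a plain backtracking search suitable only for tiny graphs, and `build_join` and `is_vertex_critical` are hypothetical helper names for the graph $J$ and the property being proved.

```python
from itertools import combinations

def chromatic_number(vertices, edges):
    """Smallest number of colors in a proper coloring (exhaustive search)."""
    vertices = list(vertices)
    adj = {v: set() for v in vertices}
    for u, w in edges:
        adj[u].add(w)
        adj[w].add(u)

    def extend(i, color, c):
        if i == len(vertices):
            return True
        v = vertices[i]
        for col in range(c):
            if all(color.get(w) != col for w in adj[v]):
                color[v] = col
                if extend(i + 1, color, c):
                    return True
                del color[v]
        return False

    c = 0
    while not extend(0, {}, c):
        c += 1
    return c

def build_join(k, n):
    """Odd cycle G on k vertices joined to a complete graph H on n-3 vertices."""
    cycle = [("g", i) for i in range(k)]
    clique = [("h", j) for j in range(n - 3)]
    edges = [(cycle[i], cycle[(i + 1) % k]) for i in range(k)]
    edges += list(combinations(clique, 2))
    edges += [(g, h) for g in cycle for h in clique]
    return cycle + clique, edges

def is_vertex_critical(vertices, edges):
    """True if deleting any single vertex lowers the chromatic number."""
    chi = chromatic_number(vertices, edges)
    for v in vertices:
        rest = [u for u in vertices if u != v]
        rest_edges = [(a, b) for a, b in edges if v not in (a, b)]
        if chromatic_number(rest, rest_edges) >= chi:
            return False
    return True
```

With $k=5$ and $n=5$, for example, $J$ has 7 vertices, and the search confirms both that its chromatic number is 5 and that every single-vertex deletion lowers it.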

(b) [15] Show that, for any positive integers $n$ and $r$, there exists a positive integer $N$ such that for any finite graph having size at least $N$ and chromatic number equal to $n$, it is possible to remove $r$ vertices (and all their incident edges) in such a way that the remaining vertices form a graph with chromatic number at least $n-1$.

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

The size of a finite graph is the number of vertices in the graph.

(a) [15] Show that, for any $n>2$, and any positive integer $N$, there are finite graphs with size at least $N$ and with chromatic number $n$ such that removing any vertex (and all its incident edges) from the graph decreases its chromatic number.

|

Let $k>1$ be an odd number, and let $G$ be a graph with $k$ vertices arranged in a circle, with each vertex connected to its two neighbors. If $n=3$, these graphs can be arbitrarily large, and are the graphs we need. If $n>3$, let $H$ be a complete graph on $n-3$ vertices, and let $J$ be the graph created by adding an edge from every vertex in $G$ to every vertex in $H$. Then $n-3$ colors are needed to color $H$ and another 3 are needed to color $G$, so $n$ colors is both necessary and sufficient for a good coloring of $J$. Now, say a vertex is removed from $J$. There are two cases:

If the vertex was removed from $G$, then the remaining vertices in $G$ can be colored with 2 colors, because the cycle has been broken. A set of $n-3$ different colors can be used to color $H$, so only $n-1$ colors are needed to color the reduced graph. On the other hand, if the vertex was removed from $H$, then $n-4$ colors are used to color $H$ and 3 used to color $G$. So removing any vertex decreases the chromatic number of $J$.

(b) [15] Show that, for any positive integers $n$ and $r$, there exists a positive integer $N$ such that for any finite graph having size at least $N$ and chromatic number equal to $n$, it is possible to remove $r$ vertices (and all their incident edges) in such a way that the remaining vertices form a graph with chromatic number at least $n-1$.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team1-solutions.jsonl",

"problem_match": "\n5. ",

"solution_match": "\nSolution: "

}

|

4c32fb52-d72e-5247-8e7d-2c86e33933ce

| 608,551

|

For any set of graphs $G_{1}, G_{2}, \ldots, G_{n}$ all having the same set of vertices $V$, define their overlap, denoted $G_{1} \cup G_{2} \cup \cdots \cup G_{n}$, to be the graph having vertex set $V$ for which two vertices are adjacent in the overlap if and only if they are adjacent in at least one of the graphs $G_{i}$.

(a) $[\mathbf{1 0}]$ Let $G$ and $H$ be graphs having the same vertex set and let $a$ be the chromatic number of $G$ and $b$ the chromatic number of $H$. Find, in terms of $a$ and $b$, the largest possible chromatic number of $G \cup H$. Prove your answer.

|

[NOTE: This problem differs from the problem statement in the test as administered at the 2009 HMMT. The reader is encouraged to try it before reading the solution.]

The bound on $k$ follows from iterating part (a).

Let $G$ be a graph with chromatic number $n$. Consider a coloring of $G$ using $n$ colors labeled $1,2, \ldots, n$. For $i$ from 1 to $\left\lceil\log _{2}(n)\right\rceil$, define $G_{i}$ to be the graph on the vertices of $G$ in which two vertices are connected by an edge if and only if they are adjacent in $G$ and the $i$th digits from the right in the binary expansions of their colors do not match. Clearly each of the graphs $G_{i}$ has chromatic number at most 2, by coloring each vertex with the $i$th digit of the binary expansion of its color in $G$. Moreover, each edge of $G$ occurs in some $G_{i}$, since two vertices whose colors match in every digit have the same color and so are not connected by an edge. Therefore $G_{1} \cup G_{2} \cup \cdots \cup G_{\left\lceil\log _{2}(n)\right\rceil}=G$, and so we have found such a decomposition of $G$.
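
The binary-digit decomposition is short to write out. In the sketch below each $G_{i}$ keeps only edges of $G$, an assumption made here so that the union is exactly $G$; the function name `bit_decomposition` and the edge-list representation are hypothetical.

```python
def bit_decomposition(edges, color, n):
    """Split the edges of a properly n-colored graph into ceil(log2 n) layers.

    color maps each vertex to a label in 1..n; layer i holds the edges whose
    endpoints differ in binary digit i (counting from the right, from 0).
    """
    bits = (n - 1).bit_length()  # equals ceil(log2(n)) for n >= 1
    layers = []
    for i in range(bits):
        layer = [
            (u, w) for u, w in edges
            if (color[u] >> i) & 1 != (color[w] >> i) & 1
        ]
        layers.append(layer)
    return layers
```

Each layer is 2-colorable by construction (use digit $i$ of the original color), and because the labels $1, \ldots, n$ are distinct in their lowest $\left\lceil\log _{2}(n)\right\rceil$ digits, every edge lands in at least one layer.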

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

For any set of graphs $G_{1}, G_{2}, \ldots, G_{n}$ all having the same set of vertices $V$, define their overlap, denoted $G_{1} \cup G_{2} \cup \cdots \cup G_{n}$, to be the graph having vertex set $V$ for which two vertices are adjacent in the overlap if and only if they are adjacent in at least one of the graphs $G_{i}$.

(a) $[\mathbf{1 0}]$ Let $G$ and $H$ be graphs having the same vertex set and let $a$ be the chromatic number of $G$ and $b$ the chromatic number of $H$. Find, in terms of $a$ and $b$, the largest possible chromatic number of $G \cup H$. Prove your answer.

|

[NOTE: This problem differs from the problem statement in the test as administered at the 2009 HMMT. The reader is encouraged to try it before reading the solution.]

The bound on $k$ follows from iterating part (a).

Let $G$ be a graph with chromatic number $n$. Consider a coloring of $G$ using $n$ colors labeled $1,2, \ldots, n$. For $i$ from 1 to $\left\lceil\log _{2}(n)\right\rceil$, define $G_{i}$ to be the graph on the vertices of $G$ in which two vertices are connected by an edge if and only if they are adjacent in $G$ and the $i$th digits from the right in the binary expansions of their colors do not match. Clearly each of the graphs $G_{i}$ has chromatic number at most 2, by coloring each vertex with the $i$th digit of the binary expansion of its color in $G$. Moreover, each edge of $G$ occurs in some $G_{i}$, since two vertices whose colors match in every digit have the same color and so are not connected by an edge. Therefore $G_{1} \cup G_{2} \cup \cdots \cup G_{\left\lceil\log _{2}(n)\right\rceil}=G$, and so we have found such a decomposition of $G$.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team1-solutions.jsonl",

"problem_match": "\n6. ",

"solution_match": "\nSolution: "

}

|

c986e895-05b9-57e5-9545-8506f0ac8175

| 608,552

|

Let $n$ be a positive integer. Let $V_{n}$ be the set of all sequences of 0 's and 1's of length $n$. Define $G_{n}$ to be the graph having vertex set $V_{n}$, such that two sequences are adjacent in $G_{n}$ if and only if they differ in either 1 or 2 places. For instance, if $n=3$, the sequences $(1,0,0)$, $(1,1,0)$, and $(1,1,1)$ are mutually adjacent, but $(1,0,0)$ is not adjacent to $(0,1,1)$.

Show that, if $n+1$ is not a power of 2 , then the chromatic number of $G_{n}$ is at least $n+2$.

|

We will assume that there is a coloring with $n+1$ colors and derive a contradiction. For each string $s$, let $T_{s}$ be the set consisting of all strings that differ from $s$ in at most 1 place. Thus $T_{s}$ has size $n+1$ and all vertices in $T_{s}$ are adjacent. In particular, if there is an $(n+1)$-coloring, then each color is used exactly once in $T_{s}$. Let $c$ be one of the colors that we used. We will determine how many vertices are colored with $c$. We will do this by counting in two ways.

Let $k$ be the number of vertices colored with color $c$. Each such vertex is part of $T_{s}$ for exactly $n+1$ values of $s$. On the other hand, each $T_{s}$ contains exactly one vertex with color $c$. It follows that $k(n+1)=2^{n}$. In particular, since $k$ is an integer, $n+1$ divides $2^{n}$. This is a contradiction since $n+1$ is not a power of 2 by assumption, so actually there can be no $(n+1)$-coloring, as claimed.
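
Both ingredients of the counting argument are easy to check by machine for small $n$: that each $T_{s}$ is a set of $n+1$ mutually adjacent strings, and that $n+1$ divides $2^{n}$ only when $n+1$ is a power of 2. The helper names below are assumptions made for this sketch.

```python
from itertools import combinations, product

def ball(s):
    """T_s: the string s together with every string differing in one place."""
    flips = [s[:i] + (1 - s[i],) + s[i + 1:] for i in range(len(s))]
    return [s] + flips

def hamming(x, y):
    """Number of positions where two equal-length sequences differ."""
    return sum(a != b for a, b in zip(x, y))

def ball_is_clique(s):
    """True if all members of T_s are pairwise adjacent in G_n."""
    return all(hamming(x, y) in (1, 2) for x, y in combinations(ball(s), 2))

def is_power_of_two(m):
    return m > 0 and m & (m - 1) == 0
```

For $n=3$ every $T_{s}$ has 4 elements and is a clique, and since $4$ divides $2^{3}$ the argument gives no obstruction there, which matches the hypothesis that $n+1$ is not a power of 2.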

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Let $n$ be a positive integer. Let $V_{n}$ be the set of all sequences of 0 's and 1's of length $n$. Define $G_{n}$ to be the graph having vertex set $V_{n}$, such that two sequences are adjacent in $G_{n}$ if and only if they differ in either 1 or 2 places. For instance, if $n=3$, the sequences $(1,0,0)$, $(1,1,0)$, and $(1,1,1)$ are mutually adjacent, but $(1,0,0)$ is not adjacent to $(0,1,1)$.

Show that, if $n+1$ is not a power of 2 , then the chromatic number of $G_{n}$ is at least $n+2$.

|

We will assume that there is a coloring with $n+1$ colors and derive a contradiction. For each string $s$, let $T_{s}$ be the set consisting of all strings that differ from $s$ in at most 1 place. Thus $T_{s}$ has size $n+1$ and all vertices in $T_{s}$ are adjacent. In particular, if there is an $(n+1)$-coloring, then each color is used exactly once in $T_{s}$. Let $c$ be one of the colors that we used. We will determine how many vertices are colored with $c$. We will do this by counting in two ways.

Let $k$ be the number of vertices colored with color $c$. Each such vertex is part of $T_{s}$ for exactly $n+1$ values of $s$. On the other hand, each $T_{s}$ contains exactly one vertex with color $c$. It follows that $k(n+1)=2^{n}$. In particular, since $k$ is an integer, $n+1$ divides $2^{n}$. This is a contradiction since $n+1$ is not a power of 2 by assumption, so actually there can be no $(n+1)$-coloring, as claimed.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team1-solutions.jsonl",

"problem_match": "\n7. [20]",

"solution_match": "\nSolution: "

}

|

b03af06a-5be7-5de0-a23e-be2950b63b84

| 608,553

|

Let $n \geq 3$ be a positive integer. A triangulation of a convex $n$-gon is a set of $n-3$ of its diagonals which do not intersect in the interior of the polygon. Along with the $n$ sides, these diagonals separate the polygon into $n-2$ disjoint triangles. Any triangulation can be viewed as a graph: the vertices of the graph are the corners of the polygon, and the $n$ sides and $n-3$ diagonals are the edges.

For a fixed $n$-gon, different triangulations correspond to different graphs. Prove that all of these graphs have the same chromatic number.

|

We will show that all triangulations have chromatic number 3, by induction on $n$. As a base case, if $n=3$, a triangle has chromatic number 3. Now, given a triangulation of an $n$-gon for $n>3$, every edge is either a side or a diagonal of the polygon. There are $n$ sides and only $n-2$ triangles, so the Pigeonhole Principle guarantees a triangle with two side edges. These two sides must be adjacent, so we can remove this triangle to leave a triangulation of an $(n-1)$-gon, which has chromatic number 3 by the inductive hypothesis. Adding the last triangle adds only one new vertex with two neighbors, so we can color this vertex with whichever of the three colors is not used on its neighbors.

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Let $n \geq 3$ be a positive integer. A triangulation of a convex $n$-gon is a set of $n-3$ of its diagonals which do not intersect in the interior of the polygon. Along with the $n$ sides, these diagonals separate the polygon into $n-2$ disjoint triangles. Any triangulation can be viewed as a graph: the vertices of the graph are the corners of the polygon, and the $n$ sides and $n-3$ diagonals are the edges.

For a fixed $n$-gon, different triangulations correspond to different graphs. Prove that all of these graphs have the same chromatic number.

|

We will show that all triangulations have chromatic number 3, by induction on $n$. As a base case, if $n=3$, a triangle has chromatic number 3. Now, given a triangulation of an $n$-gon for $n>3$, every edge is either a side or a diagonal of the polygon. There are $n$ sides and only $n-2$ triangles, so the Pigeonhole Principle guarantees a triangle with two side edges. These two sides must be adjacent, so we can remove this triangle to leave a triangulation of an $(n-1)$-gon, which has chromatic number 3 by the inductive hypothesis. Adding the last triangle adds only one new vertex with two neighbors, so we can color this vertex with whichever of the three colors is not used on its neighbors.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team2-solutions.jsonl",

"problem_match": "\n3. [8]",

"solution_match": "\nSolution: "

}

|

9209b951-07aa-5f5a-aa65-a6c8d2eed284

| 608,547

|

Let $G$ be a finite graph in which every vertex has degree less than or equal to $k$. Prove that the chromatic number of $G$ is less than or equal to $k+1$.

|

Using a greedy algorithm we find a good coloring with $k+1$ colors. Order the vertices and color them one by one; since each vertex has at most $k$ neighbors, one of the $k+1$ colors has not been used on a neighbor, so there is always a good color for that vertex. In fact, we have shown that any graph in which every vertex has degree at most $k$ can be colored with $k+1$ colors.
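As an illustration of the greedy argument, here is a short Python sketch. The function name `greedy_coloring` and the adjacency-dictionary representation are assumptions made for this example; colors are numbered from 0, and the vertex order is simply the dictionary's iteration order.

```python
def greedy_coloring(adjacency):
    """Color vertices one by one with the smallest color unused by neighbors."""
    colors = {}
    for vertex in adjacency:
        # Colors already taken by neighbors that were colored earlier.
        used = {colors[n] for n in adjacency[vertex] if n in colors}
        color = 0
        while color in used:
            color += 1
        colors[vertex] = color
    return colors


# A 5-cycle: every vertex has degree k = 2, so at most k + 1 = 3 colors appear.
cycle = {i: [(i - 1) % 5, (i + 1) % 5] for i in range(5)}
coloring = greedy_coloring(cycle)
```

Because a vertex has at most $k$ neighbors, the `while` loop can skip at most $k$ values, so the chosen color never exceeds $k$.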

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

Let $G$ be a finite graph in which every vertex has degree less than or equal to $k$. Prove that the chromatic number of $G$ is less than or equal to $k+1$.

|

Using a greedy algorithm we find a good coloring with $k+1$ colors. Order the vertices and color them one by one; since each vertex has at most $k$ neighbors, one of the $k+1$ colors has not been used on a neighbor, so there is always a good color for that vertex. In fact, we have shown that any graph in which every vertex has degree at most $k$ can be colored with $k+1$ colors.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team2-solutions.jsonl",

"problem_match": "\n4. [10]",

"solution_match": "\nSolution: "

}

|

2be76ca6-e1d3-5719-8f03-84f317e8a835

| 608,557

|

(a) [5] If a single vertex (and all its incident edges) is removed from a finite graph, show that the graph's chromatic number cannot decrease by more than 1.

|

Suppose the chromatic number of the graph was $C$, and removing a single vertex resulted in a graph with chromatic number at most $C-2$. Then we can color the remaining graph with at most $C-2$ colors. Replacing the vertex and its edges, we can then choose any color not already used to form a coloring of the original graph using at most $C-1$ colors, contradicting the fact that $C$ is the chromatic number of the graph.

(b) [15] Show that, for any $n>2$, there are infinitely many graphs with chromatic number $n$ such that removing any vertex (and all its incident edges) from the graph decreases its chromatic number.

|

proof

|

Yes

|

Yes

|

proof

|

Combinatorics

|

(a) [5] If a single vertex (and all its incident edges) is removed from a finite graph, show that the graph's chromatic number cannot decrease by more than 1.

|

Suppose the chromatic number of the graph was $C$, and removing a single vertex resulted in a graph with chromatic number at most $C-2$. Then we can color the remaining graph with at most $C-2$ colors. Replacing the vertex and its edges, we can then choose any color not already used to form a coloring of the original graph using at most $C-1$ colors, contradicting the fact that $C$ is the chromatic number of the graph.

(b) [15] Show that, for any $n>2$, there are infinitely many graphs with chromatic number $n$ such that removing any vertex (and all its incident edges) from the graph decreases its chromatic number.

|

{

"resource_path": "HarvardMIT/segmented/en-122-2009-feb-team2-solutions.jsonl",

"problem_match": "\n6. ",

"solution_match": "\nSolution: "

}

|

023b49a0-6ac7-5f2e-bcde-5ffcd40b9526

| 608,559

|

You are trying to sink a submarine. Every second, you launch a missile at a point of your choosing on the $x$-axis. If the submarine is at that point at that time, you sink it. A firing sequence is a sequence of real numbers that specify where you will fire at each second. For example, the firing sequence $2,3,5,6, \ldots$ means that you will fire at 2 after one second, 3 after two seconds, 5 after three seconds, 6 after four seconds, and so on.

(a) [5] Suppose that the submarine starts at the origin and travels along the positive $x$-axis with an (unknown) positive integer velocity. Show that there is a firing sequence that is guaranteed to hit the submarine eventually.

|

The firing sequence $1,4,9, \ldots, n^{2}, \ldots$ works. If the velocity of the submarine is $v$, then after $v$ seconds it will be at $x=v^{2}$, the same location where the missile lands at time $v$.

(b) [10] Suppose now that the submarine starts at an unknown integer point on the non-negative $x$-axis and again travels with an unknown positive integer velocity. Show that there is still a firing sequence that is guaranteed to hit the submarine eventually.

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

You are trying to sink a submarine. Every second, you launch a missile at a point of your choosing on the $x$-axis. If the submarine is at that point at that time, you sink it. A firing sequence is a sequence of real numbers that specify where you will fire at each second. For example, the firing sequence $2,3,5,6, \ldots$ means that you will fire at 2 after one second, 3 after two seconds, 5 after three seconds, 6 after four seconds, and so on.

(a) [5] Suppose that the submarine starts at the origin and travels along the positive $x$-axis with an (unknown) positive integer velocity. Show that there is a firing sequence that is guaranteed to hit the submarine eventually.

|

The firing sequence $1,4,9, \ldots, n^{2}, \ldots$ works. If the velocity of the submarine is $v$, then after $v$ seconds it will be at $x=v^{2}$, the same location where the missile lands at time $v$.

(b) [10] Suppose now that the submarine starts at an unknown integer point on the non-negative $x$-axis and again travels with an unknown positive integer velocity. Show that there is still a firing sequence that is guaranteed to hit the submarine eventually.

|

{

"resource_path": "HarvardMIT/segmented/en-132-2010-feb-team1-solutions.jsonl",

"problem_match": "\n1. ",

"solution_match": "\nSolution: "

}

|

f6571ba6-9250-5547-86ab-7b530d2324ab

| 608,702

|

You are trying to sink a submarine. Every second, you launch a missile at a point of your choosing on the $x$-axis. If the submarine is at that point at that time, you sink it. A firing sequence is a sequence of real numbers that specify where you will fire at each second. For example, the firing sequence $2,3,5,6, \ldots$ means that you will fire at 2 after one second, 3 after two seconds, 5 after three seconds, 6 after four seconds, and so on.

(a) [5] Suppose that the submarine starts at the origin and travels along the positive $x$-axis with an (unknown) positive integer velocity. Show that there is a firing sequence that is guaranteed to hit the submarine eventually.

|

Represent the submarine's motion by an ordered pair $(a, b)$, where $a$ is the starting point of the submarine and $b$ is its velocity. We want to find a way to map each positive integer to a possible ordered pair so that every ordered pair is covered. This way, if we fire at $b_{n} n+a_{n}$ at time $n$, where $\left(a_{n}, b_{n}\right)$ is the point that $n$ maps to, then we will eventually hit the submarine. (Keep in mind that $b_{n} n+a_{n}$ would be the location of the submarine at time $n$.) There are many such ways to map the positive integers to possible points; here is one way:

$$

\begin{aligned}

& 1 \rightarrow(1,1), 2 \rightarrow(2,1), 3 \rightarrow(1,2), 4 \rightarrow(3,1), 5 \rightarrow(2,2), 6 \rightarrow(1,3), 7 \rightarrow(4,1), 8 \rightarrow(3,2), \\

& 9 \rightarrow(2,3), 10 \rightarrow(1,4), 11 \rightarrow(5,1), 12 \rightarrow(4,2), 13 \rightarrow(3,3), 14 \rightarrow(2,4), 15 \rightarrow(1,5), \ldots

\end{aligned}

$$

(The path of points traces out diagonal lines that sweep every lattice point with positive coordinates.) Since we cover every point, we will eventually hit the submarine.

Remark: The mapping shown above is a bijection between the positive integers and ordered pairs of positive integers $(a, b)$.
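The diagonal enumeration and the resulting firing sequence can be sketched in Python as follows. The names `pairs`, `firing_sequence`, and `time_of_hit`, and the search bound `limit`, are assumptions introduced for this illustration. The sketch follows the listing above, which starts at $a=1$; a start at the origin would need the first coordinate shifted down by one.

```python
from itertools import count, islice


def pairs():
    """Yield (a, b) along diagonals a + b = 2, 3, 4, ... as in the listing."""
    for s in count(2):
        for a in range(s - 1, 0, -1):
            yield a, s - a


def firing_sequence():
    """At time n, fire where a submarine with parameters (a_n, b_n) would be."""
    for n, (a, b) in enumerate(pairs(), start=1):
        yield b * n + a


def time_of_hit(start, velocity, limit=10_000):
    """Return the first time n <= limit at which the shot hits, else None."""
    for n, shot in enumerate(islice(firing_sequence(), limit), start=1):
        if shot == start + velocity * n:
            return n
    return None


first_pairs = list(islice(pairs(), 6))
```

A submarine with parameters $(a, b)$ is hit no later than the time $n$ at which its pair appears in the enumeration, although an earlier shot may hit it by coincidence.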

|

proof

|

Yes

|

Yes

|

proof

|

Number Theory

|

You are trying to sink a submarine. Every second, you launch a missile at a point of your choosing on the $x$-axis. If the submarine is at that point at that time, you sink it. A firing sequence is a sequence of real numbers that specify where you will fire at each second. For example, the firing sequence $2,3,5,6, \ldots$ means that you will fire at 2 after one second, 3 after two seconds, 5 after three seconds, 6 after four seconds, and so on.

(a) [5] Suppose that the submarine starts at the origin and travels along the positive $x$-axis with an (unknown) positive integer velocity. Show that there is a firing sequence that is guaranteed to hit the submarine eventually.

|

Represent the submarine's motion by an ordered pair $(a, b)$, where $a$ is the starting point of the submarine and $b$ is its velocity. We want to find a way to map each positive integer to a possible ordered pair so that every ordered pair is covered. This way, if we fire at $b_{n} n+a_{n}$ at time $n$, where $\left(a_{n}, b_{n}\right)$ is the point that $n$ maps to, then we will eventually hit the submarine. (Keep in mind that $b_{n} n+a_{n}$ would be the location of the submarine at time $n$.) There are many such ways to map the positive integers to possible points; here is one way:

$$

\begin{aligned}

& 1 \rightarrow(1,1), 2 \rightarrow(2,1), 3 \rightarrow(1,2), 4 \rightarrow(3,1), 5 \rightarrow(2,2), 6 \rightarrow(1,3), 7 \rightarrow(4,1), 8 \rightarrow(3,2), \\

& 9 \rightarrow(2,3), 10 \rightarrow(1,4), 11 \rightarrow(5,1), 12 \rightarrow(4,2), 13 \rightarrow(3,3), 14 \rightarrow(2,4), 15 \rightarrow(1,5), \ldots

\end{aligned}

$$

(The path of points traces out diagonal lines that sweep every lattice point with positive coordinates.) Since we cover every point, we will eventually hit the submarine.

Remark: The mapping shown above is a bijection between the positive integers and ordered pairs of positive integers $(a, b)$.

|

{

"resource_path": "HarvardMIT/segmented/en-132-2010-feb-team1-solutions.jsonl",

"problem_match": "\n1. ",

"solution_match": "\nSolution: "

}

|

f6571ba6-9250-5547-86ab-7b530d2324ab

| 608,702

|

Call a positive integer in base 10 $k$-good if we can split it into two integers $y$ and $z$, such that $y$ is all digits on the left and $z$ is all digits on the right, and such that $y=k \cdot z$. For example, 2010 is 2-good because we can split it into 20 and 10 and $20=2 \cdot 10$. 20010 is also 2-good, because we can split it into 20 and 010. In addition, it is 20-good, because we can split it into 200 and 10.

Show that there exists a 48-good perfect square.
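To make the definition concrete, here is a small Python sketch that tests whether a number is $k$-good by trying every split of its decimal digits. The function name `is_k_good` is an assumption for this illustration, and leading zeros in the right part are allowed, as in the 20010 example.

```python
def is_k_good(number, k):
    """Return True if some split of the decimal digits gives y = k * z."""
    digits = str(number)
    for i in range(1, len(digits)):
        y = int(digits[:i])   # all digits on the left
        z = int(digits[i:])   # all digits on the right (leading zeros allowed)
        if y == k * z:
            return True
    return False


examples = [is_k_good(2010, 2), is_k_good(20010, 2), is_k_good(20010, 20)]
```

All three example checks from the statement evaluate to `True` under this definition.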

|