repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |
|---|---|---|---|---|---|---|---|---|---|---|---|

dropbox/sqlalchemy-stubs | sqlalchemy | 240 | Assigning to a Union of a nullable and non-nullable column fails | I'm running the latest (at the time of writing) `mypy` (0.950) and `sqlalchemy-stubs` (0.4) and hitting this issue:

```python
from sqlalchemy import Column, Integer
from sqlalchemy.ext.declarative import declarative_base

Base = declarative_base()


class Dog(Base):
    __tablename__ = 'dogs'
    age = Column(Integer)


class Cat(Base):
    __tablename__ = 'cats'
    age = Column(Integer, nullable=False)


Animal = Dog | Cat
animal: Animal = Cat()
animal.age = 20  # Mypy error, should be fine!
```

The error I get is:

```

error: Incompatible types in assignment (expression has type "int", variable has type "Union[Column[Optional[int]], Column[int]]")

```

but I don't think there should be an error.

My mypy config in my pyproject.toml is just:

```

[tool.mypy]

plugins = "sqlmypy"

```

| open | 2022-05-16T20:00:08Z | 2022-05-16T20:00:08Z | https://github.com/dropbox/sqlalchemy-stubs/issues/240 | [] | Garrett-R | 0 |

holoviz/panel | plotly | 7,343 | Directly export notebook app into interactive HTML |

#### Is your feature request related to a problem? Please describe.

I often need to export a notebook app to HTML rendered by Panel for sharing. I understand there is a `.save` method for exporting to HTML, but it requires me to explicitly concatenate and arrange the Panel objects before exporting, which is not very convenient. Jupyter nbconvert can also export to HTML, but the result is not interactive the way Panel output is.

#### Describe the solution you'd like

It would be great to have a command-line option that exports the whole notebook to HTML, either in the default layout or in the `.servable()` layout, e.g.:

```

panel export notebook.ipynb --servable

```

#### Describe alternatives you've considered

I have to explicitly concatenate and arrange the Panel objects before exporting.

| open | 2024-09-29T09:51:01Z | 2025-02-20T15:04:53Z | https://github.com/holoviz/panel/issues/7343 | [

"type: feature"

] | YongcaiHuang | 5 |

deezer/spleeter | deep-learning | 514 | Deleted pretrained_models folder and now it tracebacks when redownloading | I was cleaning up my home directory and deleted the pretrained_models folder a while ago. Now when I run Spleeter I get the traceback below. I'm not sure if this is user error or not. I solved it by manually downloading and extracting the models, so this isn't a blocker or anything.

C:\Users\Simon Jaeger>c:\python37\python -m spleeter separate -o spleeter -p spleeter:2stems -i test.flac

INFO:spleeter:Downloading model archive https://github.com/deezer/spleeter/releases/download/v1.4.0/2stems.tar.gz

Traceback (most recent call last):

File "c:\python37\lib\runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "c:\python37\lib\runpy.py", line 85, in _run_code

exec(code, run_globals)

File "c:\python37\lib\site-packages\spleeter\__main__.py", line 58, in <module>

entrypoint()

File "c:\python37\lib\site-packages\spleeter\__main__.py", line 54, in entrypoint

main(sys.argv)

File "c:\python37\lib\site-packages\spleeter\__main__.py", line 46, in main

entrypoint(arguments, params)

File "c:\python37\lib\site-packages\spleeter\commands\separate.py", line 45, in entrypoint

synchronous=False

File "c:\python37\lib\site-packages\spleeter\separator.py", line 310, in separate_to_file

sources = self.separate(waveform, audio_descriptor)

File "c:\python37\lib\site-packages\spleeter\separator.py", line 271, in separate

return self._separate_librosa(waveform, audio_descriptor)

File "c:\python37\lib\site-packages\spleeter\separator.py", line 247, in _separate_librosa

sess = self._get_session()

File "c:\python37\lib\site-packages\spleeter\separator.py", line 228, in _get_session

get_default_model_dir(self._params['model_dir']))

File "c:\python37\lib\site-packages\spleeter\utils\estimator.py", line 25, in get_default_model_dir

return model_provider.get(model_dir)

File "c:\python37\lib\site-packages\spleeter\model\provider\__init__.py", line 67, in get

model_directory)

File "c:\python37\lib\site-packages\spleeter\model\provider\github.py", line 97, in download

with requests.get(url, stream=True) as response:

AttributeError: __enter__

| closed | 2020-11-08T07:19:21Z | 2020-11-20T14:20:07Z | https://github.com/deezer/spleeter/issues/514 | [] | Simon818 | 1 |
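The `AttributeError: __enter__` at the end of the traceback suggests that the object returned by `requests.get` does not implement the context-manager protocol. This is an assumption, since the issue does not state which version of `requests` was installed. A minimal sketch of a version-independent workaround wraps the response in `contextlib.closing`; `LegacyResponse` and `download` below are hypothetical stand-ins so that the example runs without network access, and they are not Spleeter's own code.

```python
from contextlib import closing


class LegacyResponse:
    """Hypothetical stand-in for a response that has close() but no __enter__/__exit__."""

    def __init__(self, chunks):
        self._chunks = chunks
        self.closed = False

    def iter_content(self):
        # Yield the payload piece by piece, like a streamed download.
        yield from self._chunks

    def close(self):
        self.closed = True


def download(response):
    """Read a streamed response and always close it, even without context-manager support."""
    with closing(response) as stream:
        return b"".join(stream.iter_content())


response = LegacyResponse([b"model-", b"archive"])
payload = download(response)
```

`closing` only requires a `close()` method, so the same pattern works whether or not the wrapped object is itself a context manager.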

ageitgey/face_recognition | python | 776 | face_landmarks not accurate | Hi, I am building a toy robot head with a camera to imitate human facial expressions.

If I raise my eyebrows in front of the camera, the eyebrows in face_landmarks don't rise as much as mine do; in fact, the landmarks change only a little. It seems the algorithm is predicting where the eyebrows should be rather than detecting where they really are.

Do you have any suggestions how I can achieve my goal?

Thank you. | open | 2019-03-18T16:21:28Z | 2019-03-18T16:21:28Z | https://github.com/ageitgey/face_recognition/issues/776 | [] | hyansuper | 0 |

idealo/imagededup | computer-vision | 74 | Duplicates not found, even if the source and test images are the same | I took the [CIFAR 10 example code](https://idealo.github.io/imagededup/examples/CIFAR10_deduplication/)

But I get the following error, even though the `duplicates_test` variable contains two duplicates, which are basically the same images in both the source and test folders: `{'labels12-source.jpg': [], 'labels9-source.jpg': []}`

This is the error that I'm getting

`plot_duplicates(image_dir=image_dir, duplicate_map=duplicates_test, filename=list(duplicates_test.keys())[0])

File "D:\ProgramData\Anaconda3\lib\site-packages\imagededup\utils\plotter.py", line 123, in plot_duplicates

assert len(retrieved) != 0, 'Provided filename has no duplicates!'

AssertionError: Provided filename has no duplicates!` | closed | 2019-11-15T15:01:52Z | 2019-11-27T15:23:49Z | https://github.com/idealo/imagededup/issues/74 | [] | zubairahmed-ai | 3 |
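In the map printed in the issue, both filenames map to an empty list, which is the condition the assertion in `plot_duplicates` rejects. A minimal sketch, assuming the duplicate map is a plain dict of filename to list of matches, filters out the empty entries before plotting; `files_with_duplicates` is a hypothetical helper, not part of imagededup.

```python
def files_with_duplicates(duplicate_map):
    """Return only the filenames whose list of retrieved duplicates is non-empty."""
    return [name for name, matches in duplicate_map.items() if matches]


# The map reported in the issue: both entries have empty lists.
duplicates_test = {"labels12-source.jpg": [], "labels9-source.jpg": []}
plottable = files_with_duplicates(duplicates_test)

if not plottable:
    print("No file has duplicates; nothing to plot.")
```

Passing only names from `plottable` to the plotting call would avoid the assertion, although it would not explain why no duplicates were retrieved in the first place.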

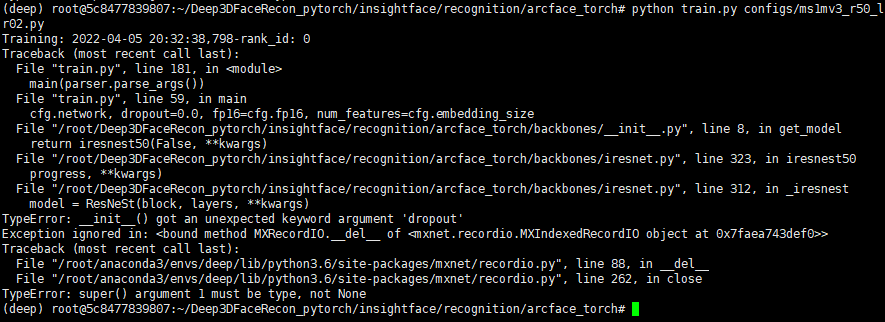

deepinsight/insightface | pytorch | 1,963 | Can't train | I encountered the following problem when retraining the data, how should I solve it?

| open | 2022-04-05T12:36:31Z | 2022-04-06T01:41:48Z | https://github.com/deepinsight/insightface/issues/1963 | [] | NewtOliver | 2 |

dbfixtures/pytest-postgresql | pytest | 1,087 | Remove ability to pre-populate database on a client fixture level | closed | 2025-02-12T17:38:58Z | 2025-02-15T11:14:22Z | https://github.com/dbfixtures/pytest-postgresql/issues/1087 | [] | fizyk | 0 | |

widgetti/solara | fastapi | 497 | Fullscreen scrolling example is broken | The [fullscreen scrolling example](https://solara.dev/examples/fullscreen/scrolling) is currently broken:

<img width="261" alt="image" src="https://github.com/widgetti/solara/assets/37669773/d081f32c-7624-43ec-a31e-1e4a722b36a2">

| open | 2024-02-07T13:05:50Z | 2024-02-07T14:46:09Z | https://github.com/widgetti/solara/issues/497 | [] | langestefan | 3 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,487 | [Bug]: Non checkpoints found. Can't run without a checkpoint. | ### Checklist

- [ ] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [X] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

I get the same error as [this old bug report][1], but the proposed [solution][2] does not work - it references the [installation instructions][3], specifically downloading the model, but I think that's out of date as I don't see that now. Where exactly do I get the model from, and where do I place it?

I'm new to all this, sorry if I'm doing something stupid but I've tried to get this working for a while on a couple of different machines. I'm on Fedora 40 if it makes any difference.

[1]: https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/5134

[2]: https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/5134#issuecomment-1328347775

[3]: https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Install-and-Run-on-NVidia-GPUs

### Steps to reproduce the problem

Install on a new install of Fedora 40:

```

pyenv install 3.11

pyenv global 3.11

wget -q https://raw.githubusercontent.com/AUTOMATIC1111/stable-diffusion-webui/master/webui.sh

export COMMANDLINE_ARGS="--skip-torch-cuda-test" # I found I needed these as my GPU wouldn't get recognised

python_cmd=python3 bash -x webui.sh

```

### What should have happened?

Run and work correctly on a first-time install

### What browsers do you use to access the UI ?

Google Chrome

### Sysinfo

Sorry, I don't see the prompted option in the web UI and if I run webui.sh with --dump-sysinfo I get an AttributeError from python

### Console logs

```Shell

loading stable diffusion model: FileNotFoundError

Traceback (most recent call last):

File "/home/john/.pyenv/versions/3.11.10/lib/python3.11/threading.py", line 1002, in _bootstrap

self._bootstrap_inner()

File "/home/john/.pyenv/versions/3.11.10/lib/python3.11/threading.py", line 1045, in _bootstrap_inner

self.run()

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/venv/lib/python3.11/site-packages/anyio/_backends/_asyncio.py", line 807, in run

result = context.run(func, *args)

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/venv/lib/python3.11/site-packages/gradio/utils.py", line 707, in wrapper

response = f(*args, **kwargs)

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/modules/ui.py", line 1165, in <lambda>

update_image_cfg_scale_visibility = lambda: gr.update(visible=shared.sd_model and shared.sd_model.cond_stage_key == "edit")

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/modules/shared_items.py", line 175, in sd_model

return modules.sd_models.model_data.get_sd_model()

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/modules/sd_models.py", line 693, in get_sd_model

load_model()

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/modules/sd_models.py", line 788, in load_model

checkpoint_info = checkpoint_info or select_checkpoint()

^^^^^^^^^^^^^^^^^^^

File "/home/john/src/stable-diffusion/download/stable-diffusion-webui/modules/sd_models.py", line 234, in select_checkpoint

raise FileNotFoundError(error_message)

FileNotFoundError: No checkpoints found. When searching for checkpoints, looked at:

- file /home/john/src/stable-diffusion/download/stable-diffusion-webui/model.ckpt

- directory /home/john/src/stable-diffusion/download/stable-diffusion-webui/models/Stable-diffusionCan't run without a checkpoint. Find and place a .ckpt or .safetensors file into any of those locations.

```


### Additional information

_No response_ | closed | 2024-09-14T18:27:39Z | 2024-09-15T05:25:50Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16487 | [

"bug-report"

] | johngavingraham | 3 |
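The error message names the places that were searched and the accepted file types (`.ckpt` or `.safetensors`). A minimal sketch, assuming it is run from the root of the webui checkout, lists any checkpoint files sitting directly in the `models/Stable-diffusion` directory; `find_checkpoints` is a hypothetical helper and not the webui's own lookup code.

```python
from pathlib import Path

CHECKPOINT_SUFFIXES = {".ckpt", ".safetensors"}


def find_checkpoints(model_dir):
    """List checkpoint files directly inside model_dir, sorted by name."""
    directory = Path(model_dir)
    if not directory.is_dir():
        return []
    return sorted(
        path for path in directory.iterdir()
        if path.is_file() and path.suffix.lower() in CHECKPOINT_SUFFIXES
    )


if __name__ == "__main__":
    # Directory relative to the webui checkout, as named in the error message.
    found = find_checkpoints("models/Stable-diffusion")
    print(found if found else "No checkpoints found in models/Stable-diffusion")
```

An empty result here would be consistent with the `FileNotFoundError` in the log above.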

bendichter/brokenaxes | matplotlib | 85 | How to use a different scale in one part of the axis | I want to use a different scale in one part of the axis.

I tried to change the limits and tick count in the first part of yaxis but it did not work.

```

ax = bax.axs[1]

start, end = ax.get_ylim()

ax.yaxis.set_ticks(np.arange(start, end, 1000))

```

I want to expand the scale of the first part of the yaxis. Any help would be appreciated. Thanks in advance!

| closed | 2022-04-30T15:29:32Z | 2022-04-30T20:30:28Z | https://github.com/bendichter/brokenaxes/issues/85 | [] | sammy17 | 1 |

LibreTranslate/LibreTranslate | api | 134 | Add a parameter in the API to translate html |

Maybe you could add a parameter (format: text or html) in the API to allow translating html?

Thanks to [argosopentech/translate-html](https://github.com/argosopentech/translate-html)

I can do a pull request if interested. | closed | 2021-09-09T07:01:39Z | 2021-09-11T20:03:54Z | https://github.com/LibreTranslate/LibreTranslate/issues/134 | [

"enhancement",

"good first issue"

] | dingedi | 4 |
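The proposal above amounts to one extra field in the translate request. A minimal sketch of what a client-side payload could look like, assuming the existing `q`, `source` and `target` fields and the proposed `format` field with the values `text` or `html`; `build_translate_payload` is a hypothetical helper used only for illustration.

```python
import json

ALLOWED_FORMATS = ("text", "html")


def build_translate_payload(q, source, target, fmt="text"):
    """Build a JSON body for a translate request, validating the proposed format field."""
    if fmt not in ALLOWED_FORMATS:
        raise ValueError(f"format must be one of {ALLOWED_FORMATS}, got {fmt!r}")
    return json.dumps({"q": q, "source": source, "target": target, "format": fmt})


body = build_translate_payload("<p>Hello <b>world</b></p>", "en", "fr", fmt="html")
```

Defaulting to `text` keeps existing clients working unchanged if they never send the new field.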

hack4impact/flask-base | sqlalchemy | 160 | Documentation on http://hack4impact.github.io/flask-base outdated. Doesn't match with README | Hi,

It seems that parts of the documentation on https://hack4impact.github.io/flask-base/ are outdated.

For example, the **setup section** of the documentation mentions

```

$ pip install -r requirements/common.txt

$ pip install -r requirements/dev.txt

```

But there is no **requirements** folder.

Whereas the setup section in the README mentions

```

pip install -r requirements.txt

```

I find it confusing to have two sources with different information. | closed | 2018-03-20T09:35:42Z | 2018-05-31T17:57:06Z | https://github.com/hack4impact/flask-base/issues/160 | [] | s-razaq | 0 |

ultralytics/ultralytics | pytorch | 19,743 | How to Train a Custom YOLO Pose Estimation Model for Object 6D Pose | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Dear Ultralytics Team,

I am currently working on 6D pose estimation for objects (specifically apples) and would like to train a custom YOLO pose estimation model using Ultralytics YOLO.

Could you please provide guidance on how to train a YOLO model for object pose estimation, similar to how YOLOv8-Pose is used for human keypoint detection? Specifically:

Dataset Preparation:

What kind of annotations are required for training an object pose estimation model?

1. Should I label keypoints on the object (e.g., specific points on an apple)?

2. How should the dataset be formatted (e.g., COCO-style keypoints, YOLO format)?

Model Training:

1. Which YOLO version is best suited for object pose estimation (YOLOv7-Pose, YOLOv8-Pose, or custom modifications)?

2. What training configurations should be used for pose estimation?

3. Can I modify YOLOv8-Pose to predict 3D keypoints instead of 2D keypoints?

Pose Conversion:

1. Once YOLO predicts keypoints, how can I use them to obtain the 6D object pose?

2. Would PnP (Perspective-n-Point) be a good approach to convert 2D keypoints into a 6D pose?

I would greatly appreciate any guidance, official documentation, or references that can help me train a YOLO-based object pose estimation model.

### Additional

_No response_ | open | 2025-03-17T09:20:31Z | 2025-03-23T23:37:38Z | https://github.com/ultralytics/ultralytics/issues/19743 | [

"question",

"pose"

] | mike55688 | 5 |
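On the PnP question raised above: PnP recovers a rotation $R$ and translation $t$ from 2D-3D correspondences under the pinhole camera model. As a reminder of that model, here is the standard projection equation. The symbols are introduced here for illustration and do not come from the issue: $K$ is the camera intrinsics matrix, $(X_i, Y_i, Z_i)$ is the $i$-th keypoint in the object frame, $(u_i, v_i)$ is its predicted pixel location, and $s_i$ is a per-point scale factor.

```latex
s_i \begin{bmatrix} u_i \\ v_i \\ 1 \end{bmatrix}
= K \,[\, R \mid t \,]
\begin{bmatrix} X_i \\ Y_i \\ Z_i \\ 1 \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
```

Given $K$ and enough non-degenerate correspondences (the minimal P3P case uses three, usually with a fourth to resolve the ambiguity), a PnP solver estimates the six degrees of freedom in $R$ and $t$. This presumes that the 3D keypoint coordinates on the object are known in advance.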

AntonOsika/gpt-engineer | python | 835 | Azure OpenAI Integration is not working anymore | The last working version I think was around v0.0.6. v0.1.0 is not working anymore with the following effects:

## Expected Behavior

When using the `--azure` parameter, the Azure OpenAI endpoint should be used. (X.openai.azure.com)

## Current Behavior

Instead of the Azure OpenAI endpoint, the usual OpenAI endpoint is being used. (api.openai.com)

## Failure Information

```

gpt-engineer --azure https://X.openai.azure.com -v ./projects/example/ gpt-4-32k

DEBUG:openai:message='Request to OpenAI API' method=get path=https://api.openai.com/v1/models/gpt-4-32k

DEBUG:openai:api_version=None data=None message='Post details'

DEBUG:urllib3.util.retry:Converted retries value: 2 -> Retry(total=2, connect=None, read=None, redirect=None, status=None)

DEBUG:urllib3.connectionpool:Starting new HTTPS connection (1): api.openai.com:443

DEBUG:urllib3.connectionpool:https://api.openai.com:443 "GET /v1/models/gpt-4-32k HTTP/1.1" 401 262

DEBUG:openai:message='OpenAI API response' path=https://api.openai.com/v1/models/gpt-4-32k processing_ms=3 request_id=XX response_code=401

INFO:openai:error_code=invalid_api_key error_message='Incorrect API key provided: XX. You can find your API key at https://platform.openai.com/account/api-keys.' error_param=None error_type=invalid_request_error message='OpenAI API error received' stream_error=False

```

### Steps to Reproduce

1. Use the command as seen above with the --azure parameter.

2. Use verbose output to verify that it's not going to the AzOAI Endpoint, but to the default OAI endpoint.

| closed | 2023-11-02T08:40:17Z | 2023-12-24T09:52:48Z | https://github.com/AntonOsika/gpt-engineer/issues/835 | [

"bug"

] | niklasfink | 8 |

ultralytics/yolov5 | machine-learning | 13,228 | RuntimeError: Caught RuntimeError in replica 0 on device 0 | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

When training with yolov5x6.pt, setting the image size to 640 works, but changing it to 1280 results in an error: RuntimeError: Caught RuntimeError in replica 0 on device 0

### Additional

_No response_ | closed | 2024-07-29T05:01:37Z | 2024-10-20T19:51:01Z | https://github.com/ultralytics/yolov5/issues/13228 | [

"question"

] | Bailin-He | 2 |

hyperspy/hyperspy | data-visualization | 3,223 | scipy.interp1d legacy | `interp1d` is a legacy function in SciPy that will be deprecated in the future: https://docs.scipy.org/doc/scipy/tutorial/interpolate/1D.html#legacy-interface-for-1-d-interpolation-interp1d

It is currently used 6 times in the codebase: (3 in hs, 1 in rsciio, 2 in eels): https://github.com/search?q=repo%3Ahyperspy%2Fhyperspy%20interp1d&type=code

In view of the legacy nature, it would make sense to use the HyperSpy 2.0 release to actually replace all uses of `interp1d` as the kwargs might be slightly different and thus it is an api break.

In #3214 `scipy.interpolate.make_interp_spline` is used instead, which has similar behavior to `interp1d` and is probably suitable for most other occurrences. | closed | 2023-09-01T23:11:37Z | 2023-09-28T07:31:15Z | https://github.com/hyperspy/hyperspy/issues/3223 | [] | jlaehne | 1 |

tensorlayer/TensorLayer | tensorflow | 615 | Failed: TensorLayer (a17229d4) | *Sent by Read the Docs (readthedocs@readthedocs.org). Created by [fire](https://fire.fundersclub.com/).*

---

TensorLayer build #7203498

Build Failed for TensorLayer (1.3.2)

You can find out more about this failure here:

[TensorLayer build #7203498](https://readthedocs.org/projects/tensorlayer/builds/7203498/) \- failed

If you have questions, a good place to start is the FAQ:

<https://docs.readthedocs.io/en/latest/faq.html>

You can unsubscribe from these emails in your [Notification Settings](https://readthedocs.org/dashboard/tensorlayer/notifications/)

Keep documenting,

Read the Docs

<https://readthedocs.org>

| closed | 2018-05-17T07:58:11Z | 2018-05-17T08:00:30Z | https://github.com/tensorlayer/TensorLayer/issues/615 | [] | fire-bot | 0 |

serengil/deepface | machine-learning | 1,316 | [BUG]: broken weight files | ### Before You Report a Bug, Please Confirm You Have Done The Following...

- [X] I have updated to the latest version of the packages.

- [X] I have searched for both [existing issues](https://github.com/serengil/deepface/issues) and [closed issues](https://github.com/serengil/deepface/issues?q=is%3Aissue+is%3Aclosed) and found none that matched my issue.

### DeepFace's version

v0.0.93

### Python version

3.9

### Operating System

Debian

### Dependencies

-

### Reproducible example

```Python

-

```

### Relevant Log Output

_No response_

### Expected Result

_No response_

### What happened instead?

_No response_

### Additional Info

As mentioned in [this issue](https://github.com/serengil/deepface/issues/1315), a weight file is sometimes corrupted while downloading, and the load_weights call then throws an exception. We should raise a meaningful message if load_weights fails, something like "weights file seems broken, try to delete it and download from this url and copy to that folder".

- We have many load_weights lines. We may consider wrapping them in a common function.

- We may also consider comparing the hash of the target file, but this comes with a cost. | closed | 2024-08-21T12:59:13Z | 2024-08-31T15:56:10Z | https://github.com/serengil/deepface/issues/1316 | [

"bug"

] | serengil | 0 |
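The two ideas in the issue, a common wrapper around `load_weights` and an optional hash comparison, can be sketched together. This is a minimal illustration and not DeepFace's implementation: `load_weights_checked` and `sha256_of_file` are hypothetical names, the choice of SHA-256 is an assumption, and `model` is assumed to be any object exposing a `load_weights(path)` method.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA-256 hex digest of a file without loading it fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def load_weights_checked(model, weight_file, source_url, expected_sha256=None):
    """Load weights via model.load_weights and turn failures into an actionable message."""
    # Optional integrity check; skipped when no reference hash is supplied.
    if expected_sha256 is not None and sha256_of_file(weight_file) != expected_sha256:
        raise ValueError(
            f"Hash mismatch for {weight_file}. Delete it and download it again from {source_url}."
        )
    try:
        model.load_weights(weight_file)
    except Exception as err:
        raise ValueError(
            f"The weights file {weight_file} seems broken. "
            f"Delete it, download it again from {source_url}, and copy it to the same folder."
        ) from err
    return model
```

Making the hash optional reflects the cost concern in the issue: hashing a large weight file on every load adds startup time, so callers can opt in only where it matters.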

proplot-dev/proplot | data-visualization | 86 | You also depend on pyyaml | https://github.com/lukelbd/proplot/blob/168df5109cc87e1f308711b2657f6126b82a19ff/proplot/rctools.py#L13 | closed | 2019-12-15T14:54:12Z | 2019-12-16T03:42:06Z | https://github.com/proplot-dev/proplot/issues/86 | [

"distribution"

] | hmaarrfk | 1 |

great-expectations/great_expectations | data-science | 10,607 | Improve OpenSSF Scorecard Report - remove critical issue by changing pr-title-checker.yml CI workflow | **Describe the bug**

Noticed when viewing the OpenSSF scorecard for the Great Expectations library at:

https://scorecard.dev/viewer/?uri=github.com/great-expectations/great_expectations

There is a critical *Dangerous-Workflow* pattern detected - the error is as follows:

> Warn: script injection with untrusted input ' github.event.pull_request.title ': .github/workflows/pr-title-checker.yml:17

The workflow passes the pull_request.title value to a script command, which introduces a possible script injection issue.

**To Reproduce**

The issue is in the github workflow in this file:

- https://github.com/great-expectations/great_expectations/blob/develop/.github/workflows/pr-title-checker.yml

**Expected behavior**

To avoid the OpenSSF warning about script injection in the pull_request.title value, we could use GitHub Actions' built-in if condition to validate the PR title. This approach avoids using shell commands that could be vulnerable to injection attacks. Here's a revised version of the workflow:

```
jobs:
  check:
    runs-on: ubuntu-latest
    steps:
      - name: Check PR title validity
        if: "!contains(github.event.pull_request.title, '[FEATURE]') && !contains(github.event.pull_request.title, '[BUGFIX]') && !contains(github.event.pull_request.title, '[DOCS]') && !contains(github.event.pull_request.title, '[MAINTENANCE]') && !contains(github.event.pull_request.title, '[CONTRIB]') && !contains(github.event.pull_request.title, '[RELEASE]')"
        run: |
          echo "Invalid PR title - please prefix with one of: [FEATURE] | [BUGFIX] | [DOCS] | [MAINTENANCE] | [CONTRIB] | [RELEASE]"
          exit 1
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```

This version uses the `if` condition to check if the PR title contains any of the accepted prefixes. If none of the prefixes are found, it outputs an error message and exits with a status of 1.

This approach mitigates the risk of script injection by avoiding the use of shell commands to process the PR title.

**Environment (please complete the following information):**

- Operating System: any

- Great Expectations Version: 1.2.0

- Data Source: n/a

- Cloud environment: n/a

**Additional context**

- https://github.com/ossf/scorecard/blob/367426ed5d9cc62f4944dc4a2174f3bbb5e22169/docs/checks.md#dangerous-workflow

- https://docs.github.com/en/actions/security-for-github-actions/security-guides/security-hardening-for-github-actions#understanding-the-risk-of-script-injections

| closed | 2024-10-31T15:04:40Z | 2024-11-06T21:54:32Z | https://github.com/great-expectations/great_expectations/issues/10607 | [] | nils-woxholt | 0 |

ResidentMario/missingno | pandas | 81 | Problem exporting to PDF | When using msno.matrix() and trying to export to pdf using matplotlib:

`fig.savefig('fig1.pdf', format='pdf', bbox_inches='tight')`

I get a PDF file with an empty plot. All text components appear normally (ticks, labels, titles, etc.), but the plot itself is blank, as can be seen in the attached figure.

| closed | 2019-01-09T13:29:39Z | 2019-03-17T04:25:14Z | https://github.com/ResidentMario/missingno/issues/81 | [] | aguinaldoabbj | 3 |

ipyflow/ipyflow | jupyter | 27 | better logo for JupyterLab startup kernel | Maybe the Python logo where the snake is wearing a helmet, or something like that. | closed | 2020-05-12T18:45:44Z | 2020-05-13T22:31:55Z | https://github.com/ipyflow/ipyflow/issues/27 | [] | smacke | 0 |

pydantic/FastUI | pydantic | 154 | Question: Is there a way to redirect response to an end point or url that's not part of the fastui endpoints? | I tried using RedirectResponse from starlette.responses, like: return RedirectResponse('/logout') or return RedirectResponse('logout.html'). I also tried return [c.FireEvent(event=GoToEvent(url='/logout'))] but it always gives me this error: "Request Error Response not valid JSON". It seems the url is always captured by fastui's /api endpoint. I'd really like to have a way to redirect the page to an external link.

thanks!

| closed | 2024-01-16T10:43:50Z | 2024-02-18T10:50:42Z | https://github.com/pydantic/FastUI/issues/154 | [] | fmrib00 | 2 |

plotly/dash-table | plotly | 430 | [dash-table] Display problem for editable (dropdown) datatable with row_selectable | Hey :slightly_smiling_face:

This is my first post here…

I have a problem (see image) of shift between the lines of my datatable and the checkboxes.

This problem appeared with the addition of the ability to modify the data with a dropdown on a column.

If I change the parameter with “editable = False,” this problem disappears.

Do you have an idea of what can cause this?

Thank you ! :slightly_smiling_face:

### Package version ###

3.6.8 |Anaconda, Inc.| (default, Dec 30 2018, 01:22:34)

[GCC 7.3.0]

Dash : 0.42.0

Dash_table : 3.6.0

dash_core_components : 0.47.0

dash_renderer : 0.23.0

Google chrome :

Version 73.0.3683.103 (Build officiel) (64 bits)

Source code :

dt.DataTable(
    id='TAB1_datatable',
    columns=[{}],
    data=[{}],
    pagination_mode=False,
    sorting=True,
    sorting_type="multi",
    style_table={
        'maxHeight': '400',
        'minWidth': '100%',
        'border': 'thin lightgrey solid',
    },
    style_header={
        'backgroundColor': '#a6ebff',
        'fontWeight': 'bold',
        'textAlign': 'left'
    },
    style_cell={
        'textAlign': 'left',
        'minWidth': '100px',
        'maxWidth': '1000px',
    },
    style_cell_conditional=[{
        'if': {'row_index': 'odd'},
        'backgroundColor': 'rgb(248, 248, 248)'
    },
    ],
    style_data_conditional=[{}],
    row_selectable=True,
    selected_rows=[],
    n_fixed_rows=[1],
    n_fixed_columns=[1],
    editable=True,
    column_static_dropdown=[
        {
            'id': 'Global_Status',
            'dropdown': [
                {'label': i, 'value': i} for i in ['OK', 'NOT OK']
            ]
        },
    ]
),

style_data_conditional, columns and data are created with callbacks | open | 2019-05-13T15:47:34Z | 2019-05-13T15:50:22Z | https://github.com/plotly/dash-table/issues/430 | [] | cedricperrotey | 0 |

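modelscope/data-juicer | data-visualization | 603 | FT-Data Ranker LLM fine-tuning data competition: could the competition data be shared for use with the Data-Juicer project? | Dear Data-Juicer framework developers, hello. We recently needed to process data for large models. Through the paper "Data-Juicer: A One-Stop Data Processing System for Large Language Models" we learned about Data-Juicer, the open-source data processing framework for large models, and we would like to use and explore it further. We noticed that you published the "FT-Data Ranker: LLM Fine-Tuning Data Competition (7B model track)" on Tianchi. However, the competition has ended and the original data can no longer be obtained. Could you provide the original data so that we can explore and use the Data-Juicer framework? Many thanks 🙏. | open | 2025-03-03T09:45:30Z | 2025-03-04T06:27:35Z | https://github.com/modelscope/data-juicer/issues/603 | [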

"question"

] | user2311717757 | 1 |

vitalik/django-ninja | django | 527 | Call result of another URL from another URL | In order to be able to get results and avoid an intermediate classic http call, I wanted to call the result of one URL from another, like so:

("Pseudocode").

```
@api.post("/test-dependent")
def composite_result(request):
    result = {"icons": media_icon(request), "other": "whatever"}
    return result


@api.get("/media-icon", response=List[MediaIconSchema])
def media_icon(request):
    objs = MediaIcon.objects.all()
    return list(objs)
```

How could I get the "icons" result, parsed into the MediaIconSchema structure, from another function? Is there a way to call a function that has been registered with django-ninja, in order to avoid code repetition?

Thanks for the info,

| open | 2022-08-12T15:09:57Z | 2022-08-14T08:24:54Z | https://github.com/vitalik/django-ninja/issues/527 | [] | martinlombana | 1 |
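One way to avoid both the intermediate HTTP call and the duplicated logic is to move the query into a plain helper that both endpoints call. The sketch below shows that pattern in framework-free Python so it runs on its own. The route decorators are deliberately omitted, `MediaIconRecord` and `load_media_icons` are hypothetical stand-ins for the model and its query, and `asdict` stands in for whatever schema serialisation the real project uses.

```python
from dataclasses import dataclass, asdict


@dataclass
class MediaIconRecord:
    """Hypothetical stand-in for a MediaIcon row; a real project would query the ORM instead."""
    name: str
    url: str


def load_media_icons():
    """Shared helper holding the query logic used by both endpoints."""
    return [
        MediaIconRecord("play", "/static/play.svg"),
        MediaIconRecord("pause", "/static/pause.svg"),
    ]


def media_icon(request):
    # First endpoint body: serialise the shared result.
    return [asdict(icon) for icon in load_media_icons()]


def composite_result(request):
    # Second endpoint reuses the helper instead of issuing an HTTP call.
    return {"icons": [asdict(icon) for icon in load_media_icons()], "other": "whatever"}


result = composite_result(request=None)
```

Keeping the serialisation step explicit in the composite endpoint matters because a directly called view function returns its raw objects, without the response-schema conversion the framework would apply to an HTTP response.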

Farama-Foundation/PettingZoo | api | 710 | Error running tutorial: 'ProcConcatVec' object has no attribute 'pipes' | I'm running into an error with this long stack trace when I try to run the 13 line tutorial:

```

/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/torch/utils/tensorboard/__init__.py:4: DeprecationWarning: distutils Version classes are deprecated. Use packaging.version instead.

if not hasattr(tensorboard, '__version__') or LooseVersion(tensorboard.__version__) < LooseVersion('1.15'):

/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/torch/utils/tensorboard/__init__.py:4: DeprecationWarning: distutils Version classes are deprecated. Use packaging.version instead.

if not hasattr(tensorboard, '__version__') or LooseVersion(tensorboard.__version__) < LooseVersion('1.15'):

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 116, in spawn_main

exitcode = _main(fd, parent_sentinel)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 125, in _main

prepare(preparation_data)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 236, in prepare

_fixup_main_from_path(data['init_main_from_path'])

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 287, in _fixup_main_from_path

main_content = runpy.run_path(main_path,

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/runpy.py", line 268, in run_path

return _run_module_code(code, init_globals, run_name,

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/runpy.py", line 97, in _run_module_code

_run_code(code, mod_globals, init_globals,

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/runpy.py", line 87, in _run_code

exec(code, run_globals)

File "/Users/erick/dev/rl/main_pettingzoo.py", line 22, in <module>

env = ss.concat_vec_envs_v1(env, 8, num_cpus=4, base_class="stable_baselines3")

File "/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/supersuit/vector/vector_constructors.py", line 60, in concat_vec_envs_v1

vec_env = MakeCPUAsyncConstructor(num_cpus)(*vec_env_args(vec_env, num_vec_envs))

File "/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/supersuit/vector/constructors.py", line 38, in constructor

return ProcConcatVec(

File "/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/supersuit/vector/multiproc_vec.py", line 144, in __init__

proc.start()

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/process.py", line 121, in start

self._popen = self._Popen(self)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/context.py", line 224, in _Popen

return _default_context.get_context().Process._Popen(process_obj)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/context.py", line 284, in _Popen

return Popen(process_obj)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/popen_spawn_posix.py", line 32, in __init__

super().__init__(process_obj)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/popen_fork.py", line 19, in __init__

self._launch(process_obj)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/popen_spawn_posix.py", line 42, in _launch

prep_data = spawn.get_preparation_data(process_obj._name)

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 154, in get_preparation_data

_check_not_importing_main()

File "/usr/local/Cellar/python@3.9/3.9.12/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/spawn.py", line 134, in _check_not_importing_main

raise RuntimeError('''

RuntimeError:

An attempt has been made to start a new process before the

current process has finished its bootstrapping phase.

This probably means that you are not using fork to start your

child processes and you have forgotten to use the proper idiom

in the main module:

if __name__ == '__main__':

freeze_support()

...

The "freeze_support()" line can be omitted if the program

is not going to be frozen to produce an executable.

Exception ignored in: <function ProcConcatVec.__del__ at 0x112cf1310>

Traceback (most recent call last):

File "/Users/erick/.local/share/virtualenvs/rl-0i49mzF7/lib/python3.9/site-packages/supersuit/vector/multiproc_vec.py", line 210, in __del__

for pipe in self.pipes:

AttributeError: 'ProcConcatVec' object has no attribute 'pipes'

```

Maybe the issue here is some mismatch in library versioning, but I found no reference to which `supersuit` version is supposed to run with the tutorial (or with the rest of the code).
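Another possibility, going by the text of the `RuntimeError` itself rather than by versions: the script creates worker processes at module level while the `spawn` start method is in use. Below is a minimal standard-library sketch of the idiom the message asks for. The names `worker` and `main` are made up for illustration; in `main_pettingzoo.py` the assumption is that the `ss.concat_vec_envs_v1(...)` call and the training code would move into such a `main()` function.

```python
import multiprocessing as mp


def worker(n):
    # Placeholder task; stands in for whatever each child process does.
    print(f"worker {n} running")


def main():
    # "spawn" re-imports the main module in every child, so process
    # creation must not happen at import time.
    ctx = mp.get_context("spawn")
    procs = [ctx.Process(target=worker, args=(i,)) for i in range(2)]
    for p in procs:
        p.start()
    for p in procs:
        p.join()
    return [p.exitcode for p in procs]


if __name__ == "__main__":
    # Only the parent process enters this branch; children import the
    # module under a different name and skip it.
    mp.freeze_support()  # harmless unless the program is frozen
    main()
```

With the guard in place, the re-import performed by each child no longer tries to start further processes, which is what the bootstrapping error complains about.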

I am running python 3.9 with `supersuit` 3.4 and `pettingzoo` 1.18.1 | closed | 2022-05-29T13:17:28Z | 2022-08-14T18:22:20Z | https://github.com/Farama-Foundation/PettingZoo/issues/710 | [] | erickrf | 16 |

amidaware/tacticalrmm | django | 1,950 | Add info to Automation policy manager output summary | Add counts of each type of item and total and increase title to more chars

<img width="801" alt="2024-07-31_031728 - automation output" src="https://github.com/user-attachments/assets/a63695c5-1776-43d6-95e5-787e0ef3b434">

| open | 2024-07-31T07:20:29Z | 2024-11-04T15:15:09Z | https://github.com/amidaware/tacticalrmm/issues/1950 | [

"enhancement"

] | silversword411 | 1 |

scrapy/scrapy | web-scraping | 6,478 | Get rid of `testfixtures` | We only use `testfixtures.LogCapture` and I expect it should be easy, [though not trivial](https://docs.pytest.org/en/stable/how-to/unittest.html), to replace it with the pytest `caplog` fixture, reducing our test dependencies.

Alternatively we can try moving to [`twisted.logger.capturedLogs`](https://docs.twisted.org/en/stable/core/howto/logger.html#capturing-log-events-for-testing) but it was only added in Twisted 19.7.0. | open | 2024-09-20T16:52:42Z | 2025-03-13T17:47:19Z | https://github.com/scrapy/scrapy/issues/6478 | [

"enhancement",

"CI"

] | wRAR | 8 |

AirtestProject/Airtest | automation | 376 | airtest raises an error when running a script | **Describe the bug**

adb.py raises AdbError while running a script

Log:

```

adb server version (40) doesn't match this client (39); killing...

[pocoservice.apk] stdout: b'\r\ncom.netease.open.pocoservice.InstrumentedTestAsLauncher:'

[pocoservice.apk] stderr: b''

[pocoservice.apk] retrying instrumentation PocoService

[05:00:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell am force-stop com.netease.open.pocoservice ; echo ---$?---

2019-04-26 17:00:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell am force-stop com.netease.open.pocoservice ; echo ---$?---

Exception in thread Thread-2:

Traceback (most recent call last):

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/threading.py", line 917, in _bootstrap_inner

self.run()

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/threading.py", line 865, in run

self._target(*self._args, **self._kwargs)

File "/usr/local/lib/python3.7/site-packages/poco/drivers/android/uiautomation.py", line 211, in loop

self._start_instrument(port_to_ping) # 尝试重启

File "/usr/local/lib/python3.7/site-packages/poco/drivers/android/uiautomation.py", line 235, in _start_instrument

self.adb_client.shell(['am', 'force-stop', PocoServicePackage])

File "/usr/local/lib/python3.7/site-packages/airtest/core/android/adb.py", line 354, in shell

out = self.raw_shell(cmd).rstrip()

File "/usr/local/lib/python3.7/site-packages/airtest/core/android/adb.py", line 326, in raw_shell

out = self.cmd(cmds, ensure_unicode=False)

File "/usr/local/lib/python3.7/site-packages/airtest/core/android/adb.py", line 187, in cmd

raise AdbError(stdout, stderr)

airtest.core.error.AdbError: stdout[b''] stderr[b"* daemon not running; starting now at tcp:5037\nADB server didn't ACK\nFull server startup log: /var/folders/nx/8qylv0hs66v2zhbm22505kkr0000gn/T//adb.501.log\nServer had pid: 33767\n--- adb starting (pid 33767) ---\nadb I 04-26 17:00:12 33767 453132 main.cpp:56] Android Debug Bridge version 1.0.40\nadb I 04-26 17:00:12 33767 453132 main.cpp:56] Version 4986621\nadb I 04-26 17:00:12 33767 453132 main.cpp:56] Installed as /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb\nadb I 04-26 17:00:12 33767 453132 main.cpp:56] \nadb E 04-26 17:00:12 33767 453138 usb_osx.cpp:340] Could not open interface: e00002c5\nadb E 04-26 17:00:12 33767 453138 usb_osx.cpp:301] Could not find device interface\nadb I 04-26 17:00:12 33765 453135 usb_osx.cpp:308] reported max packet size for a83a6617d030 is 512\nadb I 04-26 17:00:12 33765 453128 adb_auth_host.cpp:416] adb_auth_init...\nadb I 04-26 17:00:12 33765 453128 adb_auth_host.cpp:174] read_key_file '/Users/anonymous/.android/adbkey'...\nadb I 04-26 17:00:12 33765 453128 adb_auth_host.cpp:467] Calling send_auth_response\nerror: could not install *smartsocket* listener: Address already in use\n\n* failed to start daemon\nerror: cannot connect to daemon\n"]

* daemon started successfully

[05:01:04][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 uninstall com.chainsguard.safebox

2019-04-26 17:01:04 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 uninstall com.chainsguard.safebox

[05:01:08][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 install ./apk/com.chainsguard.safebox.apk

2019-04-26 17:01:08 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 install ./apk/com.chainsguard.safebox.apk

[05:01:10][INFO]<airtest.core.api> Try finding:

Template(./air/_chainsguard.air/tpl1546589231057.png)

2019-04-26 17:01:10 cv.py[line:39] INFO Try finding:

Template(./air/_chainsguard.air/tpl1546589231057.png)

[05:01:10][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell pm path jp.co.cyberagent.stf.rotationwatcher ; echo ---$?---

2019-04-26 17:01:10 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell pm path jp.co.cyberagent.stf.rotationwatcher ; echo ---$?---

[05:01:11][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell export CLASSPATH=/data/app/jp.co.cyberagent.stf.rotationwatcher-1/base.apk;exec app_process /system/bin jp.co.cyberagent.stf.rotationwatcher.RotationWatcher

2019-04-26 17:01:11 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell export CLASSPATH=/data/app/jp.co.cyberagent.stf.rotationwatcher-1/base.apk;exec app_process /system/bin jp.co.cyberagent.stf.rotationwatcher.RotationWatcher

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell ls /data/local/tmp/minicap ; echo ---$?---

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell ls /data/local/tmp/minicap ; echo ---$?---

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell ls /data/local/tmp/minicap.so ; echo ---$?---

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell ls /data/local/tmp/minicap.so ; echo ---$?---

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -v 2>&1

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -v 2>&1

[05:01:12][DEBUG]<airtest.core.android.minicap> version:5

2019-04-26 17:01:12 minicap.py[line:72] DEBUG version:5

[05:01:12][DEBUG]<airtest.core.android.minicap> skip install minicap

2019-04-26 17:01:12 minicap.py[line:79] DEBUG skip install minicap

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 forward --no-rebind tcp:15156 localabstract:minicap_15156

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 forward --no-rebind tcp:15156 localabstract:minicap_15156

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -i ; echo ---$?---

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -i ; echo ---$?---

[05:01:12][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -n 'minicap_15156' -P 720x1280@720x1280/0 -l 2>&1

2019-04-26 17:01:12 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -n 'minicap_15156' -P 720x1280@720x1280/0 -l 2>&1

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'PID: 8428'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'PID: 8428'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: Using projection 720x1280@720x1280/0'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: Using projection 720x1280@720x1280/0'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:240) Creating SurfaceComposerClient'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:240) Creating SurfaceComposerClient'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:243) Performing SurfaceComposerClient init check'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:243) Performing SurfaceComposerClient init check'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:250) Creating virtual display'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:250) Creating virtual display'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:256) Creating buffer queue'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:256) Creating buffer queue'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:261) Creating CPU consumer'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:261) Creating CPU consumer'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:265) Creating frame waiter'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:265) Creating frame waiter'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:269) Publishing virtual display'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (external/MY_minicap/src/minicap_23.cpp:269) Publishing virtual display'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (jni/minicap/JpgEncoder.cpp:64) Allocating 2766852 bytes for JPG encoder'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (jni/minicap/JpgEncoder.cpp:64) Allocating 2766852 bytes for JPG encoder'

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (/home/lxn3032/minicap_for_ide/jni/minicap/minicap.cpp:473) Server start'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (/home/lxn3032/minicap_for_ide/jni/minicap/minicap.cpp:473) Server start'

[05:01:12][DEBUG]<airtest.core.android.minicap> (1, 24, 8428, 720, 1280, 720, 1280, 0, 2)

2019-04-26 17:01:12 minicap.py[line:239] DEBUG (1, 24, 8428, 720, 1280, 720, 1280, 0, 2)

[05:01:12][DEBUG]<airtest.utils.nbsp> [minicap_server]b'INFO: (/home/lxn3032/minicap_for_ide/jni/minicap/minicap.cpp:475) New client connection'

2019-04-26 17:01:12 nbsp.py[line:37] DEBUG [minicap_server]b'INFO: (/home/lxn3032/minicap_for_ide/jni/minicap/minicap.cpp:475) New client connection'

[05:01:13][DEBUG]<airtest.core.android.adb> /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -i ; echo ---$?---

2019-04-26 17:01:13 adb.py[line:142] DEBUG /usr/local/lib/python3.7/site-packages/airtest/core/android/static/adb/mac/adb -s a83a6617d030 shell LD_LIBRARY_PATH=/data/local/tmp /data/local/tmp/minicap -i ; echo ---$?---

[05:01:13][DEBUG]<airtest.core.api> resize: (219, 72)->(146, 48), resolution: (1080, 1920)=>(720, 1280)

2019-04-26 17:01:13 cv.py[line:216] DEBUG resize: (219, 72)->(146, 48), resolution: (1080, 1920)=>(720, 1280)

[05:01:13][DEBUG]<airtest.core.api> try match with _find_template

2019-04-26 17:01:13 cv.py[line:155] DEBUG try match with _find_template

```

**Python version:** `3.7.3`

**airtest version:** `1.0.25`

**Device**

- Model: Redmi 3S

- MIUI: 10 9.3.28 developer build

- OS: Android 6.0.1

**Other relevant environment information**

macOS 10.14.4 | closed | 2019-04-26T09:50:30Z | 2019-04-26T10:25:06Z | https://github.com/AirtestProject/Airtest/issues/376 | [] | i11m20n | 2 |

Kav-K/GPTDiscord | asyncio | 447 | [BUG] Taggable mentions overriding converse opener | **Describe the bug**

During a GPT converse with a custom opener set, using an @mention of the bot reverts back to the default

**To Reproduce**

Steps to reproduce the behaviour:

1. Start a /gpt converse with an opener or opener_file

2. Inside of the thread / conversation, send a message that begins with the taggable name (eg @bot I think today is Monday)

**Expected behaviour**

Inside of a converse thread with a custom opener or opener_file the bot should reply in its proper context whether it is tagged or not

| closed | 2023-12-11T07:11:23Z | 2023-12-31T10:05:17Z | https://github.com/Kav-K/GPTDiscord/issues/447 | [

"bug"

] | jeffe | 1 |

docarray/docarray | fastapi | 1,830 | Error on subindice Embedding type for Torch Tensor moving from GPU to CPU. | ### Initial Checks

- [X] I have read and followed [the docs](https://docs.docarray.org/) and still think this is a bug

### Description

I have found an issue with the following sequence:

Object

SubIndiceObject with an attribute Optional[AnyEmbedding]

I am running an operation on GPU and storing the TorchEmbedding on that attribute

Then I am converting the value back into a NDArrayEmbedding on CPU and storing in the same attribute.

When indexing the data into weaviate, it still tracks that attribute as a TorchTensor on GPU and tries to convert to a NDArrayEmbedding.

In order to fix it, I ran the cpu() operation prior to saving that tensor on the attribute.

I don't know if it is a bug or by design but logging the scenario nonetheless.

### Example Code

_No response_

### Python, DocArray & OS Version

```Text

docarray version: 0.39.1

```

### Affected Components

- [X] [Vector Database / Index](https://docs.docarray.org/user_guide/storing/docindex/)

- [ ] [Representing](https://docs.docarray.org/user_guide/representing/first_step)

- [ ] [Sending](https://docs.docarray.org/user_guide/sending/first_step/)

- [ ] [storing](https://docs.docarray.org/user_guide/storing/first_step/)

- [ ] [multi modal data type](https://docs.docarray.org/data_types/first_steps/) | open | 2023-11-06T19:44:17Z | 2023-12-23T14:52:32Z | https://github.com/docarray/docarray/issues/1830 | [] | vincetrep | 3 |

streamlit/streamlit | machine-learning | 9,947 | More consistent handling of relative paths | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [X] I added a descriptive title and summary to this issue.

### Summary

We have two main ways relative paths are parsed:

- For Page and navigation commands, paths are relative to the entrypoint file

- For most other commands (like media), paths are relative to the current working directory

Although this may be the same for many people, Community Cloud in particular uses the root of the repository as the current working directory, which can necessitate some rearranging of files/path handling for complex apps. When it comes to Community Cloud's handling of Python dependencies, both the entrypoint-file directory and the root of the repository are searched, in that order. (This is not the case for packages.txt, nor for Streamlit's config.toml. The former must be handled in Cloud, but the latter may be handled in open source.)

It would be nice if all of Streamlit's paths prioritized "relative to the entrypoint file" then fell back to "relative to the current working directory." This could be implemented for `.streamlit/config.toml` (or anything in the `.streamlit` folder before falling back to the user's global settings). This could also be used for all local file paths (`st.image`, `st.video`, etc).
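The proposed lookup order could be sketched roughly as follows. This is only an illustration of the idea, not Streamlit's implementation: `resolve_relative_path` and its parameters are hypothetical names, and the fallback when nothing exists (returning the cwd-based path) is an assumption.

```python
from pathlib import Path
from typing import Optional


def resolve_relative_path(
    relative: str, entrypoint_dir: Path, cwd: Optional[Path] = None
) -> Path:
    """Hypothetical helper: prefer the entrypoint directory, then the cwd."""
    cwd = Path.cwd() if cwd is None else cwd
    candidate = Path(relative)
    if candidate.is_absolute():
        # Absolute paths are returned unchanged.
        return candidate
    for base in (entrypoint_dir, cwd):
        resolved = base / candidate
        if resolved.exists():
            return resolved
    # Nothing found in either place: fall back to the cwd-relative path,
    # so a later "file not found" error matches today's behaviour.
    return cwd / candidate
```

A call such as `resolve_relative_path("logo.png", Path(__file__).parent)` would then pick up a file next to the entrypoint script even when the app is launched from the repository root.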

Related to #7731, #7578 (though more restrictive than those requests).

cc @tvst @sfc-gh-tteixeira

### Why?

Consistency.

### How?

_No response_

### Additional Context

_No response_ | open | 2024-11-29T09:14:30Z | 2024-11-29T09:16:40Z | https://github.com/streamlit/streamlit/issues/9947 | [

"type:enhancement"

] | sfc-gh-dmatthews | 1 |

nltk/nltk | nlp | 3,165 | a lot of NLTK DATA does not express their license | Dear NLTK.

I read "NLTK corpora are provided under the terms given in the README file for each corpus; all are redistributable and available for non-commercial use."

But many NLTK data packages do not state their license.

Please see below.

https://www.nltk.org/nltk_data/

This is very unfriendly to users. Please state the license of every NLTK corpus. | open | 2023-06-16T17:02:04Z | 2023-06-16T17:09:26Z | https://github.com/nltk/nltk/issues/3165 | [] | hiDevman | 0 |

timkpaine/lantern | plotly | 96 | Plotly probplot | closed | 2017-10-19T16:57:35Z | 2017-11-26T05:13:24Z | https://github.com/timkpaine/lantern/issues/96 | [

"feature",

"plotly/cufflinks"

] | timkpaine | 1 | |

ultrafunkamsterdam/undetected-chromedriver | automation | 1,744 | "HTTP Error 404: Not Found" Showing when driver is initialised | For this simple code:

```

import undetected_chromedriver as uc

import time

if __name__ == '__main__':

driver = uc.Chrome()

time.sleep(20)

```

I get this error:

```

Traceback (most recent call last):

File "/home/omkmorendha/Desktop/Work/JSX_scraping/exp.py", line 5, in <module>

driver = uc.Chrome()

File "/home/omkmorendha/.local/share/virtualenvs/JSX_scraping-34LRwH2V/lib/python3.10/site-packages/undetected_chromedriver/__init__.py", line 258, in __init__

self.patcher.auto()

File "/home/omkmorendha/.local/share/virtualenvs/JSX_scraping-34LRwH2V/lib/python3.10/site-packages/undetected_chromedriver/patcher.py", line 178, in auto

self.unzip_package(self.fetch_package())

File "/home/omkmorendha/.local/share/virtualenvs/JSX_scraping-34LRwH2V/lib/python3.10/site-packages/undetected_chromedriver/patcher.py", line 287, in fetch_package

return urlretrieve(download_url)[0]

File "/usr/lib/python3.10/urllib/request.py", line 241, in urlretrieve

with contextlib.closing(urlopen(url, data)) as fp:

File "/usr/lib/python3.10/urllib/request.py", line 216, in urlopen

return opener.open(url, data, timeout)

File "/usr/lib/python3.10/urllib/request.py", line 525, in open

response = meth(req, response)

File "/usr/lib/python3.10/urllib/request.py", line 634, in http_response

response = self.parent.error(

File "/usr/lib/python3.10/urllib/request.py", line 563, in error

return self._call_chain(*args)

File "/usr/lib/python3.10/urllib/request.py", line 496, in _call_chain

result = func(*args)

File "/usr/lib/python3.10/urllib/request.py", line 643, in http_error_default

raise HTTPError(req.full_url, code, msg, hdrs, fp)

urllib.error.HTTPError: HTTP Error 404: Not Found

``` | closed | 2024-02-15T12:25:30Z | 2024-02-15T12:43:12Z | https://github.com/ultrafunkamsterdam/undetected-chromedriver/issues/1744 | [] | omkmorendha | 2 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 792 | Datasets_Root | The instructions say to run the preprocessing. With the datasets, how do I figure out where the dataset root is? What command line is needed to do so?

I'm using Windows 10 BTW.

It launches and works without any dataset_root listed but I want to make it work better. And without the datasets I can't | closed | 2021-07-08T17:46:29Z | 2021-08-25T09:42:20Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/792 | [] | C4l1b3r | 10 |

sebp/scikit-survival | scikit-learn | 159 | Terminal Node Constraint for Random Survival Forests | Is there any parameter/variable which states the minimum number of "uncensored samples" required to be at a leaf node? I think it's called "a minimum of d0 > 0 unique deaths" in the original paper. | open | 2020-12-29T07:31:02Z | 2021-01-27T09:21:45Z | https://github.com/sebp/scikit-survival/issues/159 | [

"enhancement"

] | mastervii | 4 |

Yorko/mlcourse.ai | matplotlib | 358 | Add athlete_events.csv to data folder | The athlete_events.csv file seems to be missing from the data folder. Sure, it can be downloaded via the [Kaggle link][0] but it's not immediately obvious.

[0]: https://www.kaggle.com/heesoo37/120-years-of-olympic-history-athletes-and-results/version/2 | closed | 2018-10-01T11:37:05Z | 2018-10-04T14:11:54Z | https://github.com/Yorko/mlcourse.ai/issues/358 | [

"invalid"

] | morcmarc | 1 |

0b01001001/spectree | pydantic | 21 | falcon endpoint function doesn't get the right `self` | This is due to the decorator.

```py

class Demo:

def test(self): pass

def on_get(self, req, resp):

self.test() # this will raise AttributeError

pass

```
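For illustration, here is a generic sketch of a method decorator that forwards `self` unchanged. It is not spectree's actual code: `validate` and `parse_class_name` are hypothetical names. It also shows that `__qualname__` carries the enclosing class name, which may help when every endpoint method shares the same name.

```python
import functools


def validate(func):
    """Hypothetical validation decorator that keeps the bound instance."""

    @functools.wraps(func)
    def wrapper(self, req, resp, *args, **kwargs):
        # Validation would happen here; `self` is passed through untouched.
        return func(self, req, resp, *args, **kwargs)

    return wrapper


def parse_class_name(func):
    """Return the enclosing class name, e.g. 'Demo' for Demo.on_get."""
    parts = func.__qualname__.split(".")
    # A plain function has no enclosing class; fall back to its own name.
    return parts[-2] if len(parts) > 1 else parts[0]


class Demo:
    def test(self):
        return "ok"

    @validate
    def on_get(self, req, resp):
        # Works because the wrapper receives and forwards `self`.
        return self.test()
```

Because `functools.wraps` copies `__qualname__` onto the wrapper, `parse_class_name(Demo.on_get)` still sees the original method's qualified name.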

And the `parse_name` function for falcon endpoint function should return the class name instead of the method name. Since the method names are all the same. | closed | 2020-01-09T08:25:43Z | 2020-01-12T10:20:55Z | https://github.com/0b01001001/spectree/issues/21 | [] | kemingy | 2 |

benbusby/whoogle-search | flask | 580 | Ratelimited | Hello, I still don't know how to solve this problem, all was working ok until today that I received this message when trying to use Whoogle.

Thanks | closed | 2021-12-15T11:02:38Z | 2021-12-24T00:07:44Z | https://github.com/benbusby/whoogle-search/issues/580 | [

"question"

] | gaditano66 | 10 |

shibing624/text2vec | nlp | 29 | 'Word2VecKeyedVectors' object has no attribute 'key_to_index' | Hi, how to fix 'Word2VecKeyedVectors' object has no attribute 'key_to_index' after loading GoogleNews-vectors-negative300.bin file in w2v = KeyedVectors.load_word2vec_format(word2vec_path, binary=True)
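
One possible explanation, stated as an assumption: `key_to_index` is the gensim 4.x attribute name, while gensim 3.x `KeyedVectors` objects expose the vocabulary through `vocab` instead. The helper below (`vocab_index` is a made-up name) is a small sketch that reads the word-to-index mapping on either version.

```python
def vocab_index(keyed_vectors):
    """Return a word -> row-index mapping on either gensim API generation."""
    if hasattr(keyed_vectors, "key_to_index"):
        # gensim >= 4.0 stores the mapping directly.
        return dict(keyed_vectors.key_to_index)
    # gensim 3.x: `vocab` maps each word to an entry with an `.index` attribute.
    return {word: entry.index for word, entry in keyed_vectors.vocab.items()}
```

With the loaded vectors this would be called as `vocab_index(w2v)`; upgrading gensim to 4.x is the other route if the calling code expects `key_to_index`.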

| closed | 2021-09-04T16:12:36Z | 2021-09-04T16:14:37Z | https://github.com/shibing624/text2vec/issues/29 | [

"bug"

] | AdhyaSuman | 0 |

gee-community/geemap | jupyter | 1,445 | The code "cartoee_subplots" does not work | Dear,

When I run "cartoee_subplots.ipynb" I get the following graphic with projection problem for the image (srtm):

I think the problem is in the following line:

cartoee.add_layer(ax, srtm, region=region, vis_params=vis, cmap="terrain")

Code: https://github.com/giswqs/geemap/blob/master/examples/notebooks/cartoee_subplots.ipynb

Raul | closed | 2023-02-23T14:44:50Z | 2023-06-30T18:43:32Z | https://github.com/gee-community/geemap/issues/1445 | [

"bug"

] | raulpoppiel | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | pytorch | 1,222 | 'Namespace' object has no attribute 'n_epochs' | Hi, I was trying to run **train.py** for png sets.

The number of training images = 11036

`python3 train.py --dataroot datasets/AS2SE/ --name AS2SE_1 --model cycle_gan --phase train --gpu_ids 0,1,2 --batch_size 16 --preprocess none --load_size 170 --print_freq 1000 --save_epoch_freq 200 --input_nc 1 --output_nc 1`

(I set input_nc and output_nc to 1 because my png images are grayscale. Right?)

Then I met the error message like below.

There was no n_epochs in the **train_options.py** file.

What is n_epochs and how can I solve this issue?

```

Traceback (most recent call last):

File "train.py", line 34, in <module>

for epoch in range(opt.epoch_count, opt.n_epochs + opt.n_epochs_decay + 1): # outer loop for different epochs; we save the model by <epoch_count>, <epoch_count>+<save_latest_freq>

AttributeError: 'Namespace' object has no attribute 'n_epochs'

```

Thank you in advance.

| open | 2021-01-11T13:05:36Z | 2021-01-12T01:40:50Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1222 | [] | lucid0921 | 1 |

pywinauto/pywinauto | automation | 906 | Announcement: I'm making a PyWinAutoUI (graphical) GUI with advanced features | My end goal is to do on-screen lessons with overlayed arrows as done on bubble.is (and their set of lessons), but since I don't want to always be coding to create a simple enough lesson, I thought I should give PyWinAuto a GUI frontend that detects / records clicks in .py script format.

Here's a screenshot:

I'm assuming I should post back here when it's ready.

| closed | 2020-04-01T21:55:36Z | 2020-04-28T05:46:10Z | https://github.com/pywinauto/pywinauto/issues/906 | [

"enhancement",

"New Feature",

"success_stories"

] | enjoysmath | 30 |

aleju/imgaug | machine-learning | 119 | Problem loading and showing images | I tried but failed. This is my code and the error, thanks.

import imgaug as ia

from imgaug import augmenters as iaa

import numpy as np

import cv2

import scipy

from scipy import misc

ia.seed(1)

# Example batch of images.

# The array has shape (32, 64, 64, 3) and dtype uint8.

#scipy.ndimage.imread('cat.jpg', flatten=False, mode="RGB")

images = cv2.imread('C:\python3.6.4.64bit\images\cat.jpg',1)

images = np.array(

[ia.quokka(size=(64, 64)) for _ in range(32)],

dtype=np.uint8

)

seq = iaa.Sequential([

iaa.Fliplr(0.5), # horizontal flips

iaa.Crop(percent=(0, 0.1)), # random crops

# Small gaussian blur with random sigma between 0 and 0.5.

# But we only blur about 50% of all images.

iaa.Sometimes(0.5,

iaa.GaussianBlur(sigma=(0, 0.5))

),

# Strengthen or weaken the contrast in each image.

iaa.ContrastNormalization((0.75, 1.5)),

# Add gaussian noise.

# For 50% of all images, we sample the noise once per pixel.

# For the other 50% of all images, we sample the noise per pixel AND

# channel. This can change the color (not only brightness) of the

# pixels.

iaa.AdditiveGaussianNoise(loc=0, scale=(0.0, 0.05*255), per_channel=0.5),

# Make some images brighter and some darker.

# In 20% of all cases, we sample the multiplier once per channel,

# which can end up changing the color of the images.

iaa.Multiply((0.8, 1.2), per_channel=0.2),

# Apply affine transformations to each image.

# Scale/zoom them, translate/move them, rotate them and shear them.

iaa.Affine(

scale={"x": (0.8, 1.2), "y": (0.8, 1.2)},

translate_percent={"x": (-0.2, 0.2), "y": (-0.2, 0.2)},

rotate=(-25, 25),

shear=(-8, 8)

)

], random_order=True) # apply augmenters in random order

images_aug = seq.augment_images(images)

cv2.imshow("Original", images_aug)

===============================

and show this error:

Traceback (most recent call last):

File "C:/python3.6.4.64bit/augg.py", line 52, in <module>

cv2.imshow("Original", images_aug)

cv2.error: C:\projects\opencv-python\opencv\modules\core\src\array.cpp:2493: error: (-206) Unrecognized or unsupported array type in function cvGetMat

| open | 2018-04-05T17:55:33Z | 2018-04-16T15:49:38Z | https://github.com/aleju/imgaug/issues/119 | [] | ghost | 7 |

modoboa/modoboa | django | 3,263 | Incorrect DNS status for subdomains | OS: Debian 12

Modoboa: 2.2.4

Installer used: Yes

Webserver: Nginx

Database: MySQL

I configured a subdomain in Modoboa, and all the records are correct in Cloudflare, and emails are sending and receiving. The issue is with the DNS status in Modoboa, it shows "Domain has no MX record" ("No MX record found for this domain."), DNSBL shows "No information available for this domain.", and SPF shows "No record found".

Interestingly, the DKIM, DMARC and autoconfig are showing "green".

My Cloudflare records are:

- A: mail.sub (points to the IP)

- CNAME: autoconfig.sub

- CNAME: autodiscover.sub

- MX: sub (points to: mail.sub.domain.com)

- TXT: _dmarc.sub (with DMARC configuration)

- TXT: sub (with SPF configuration)

- TXT: modoboa._domainkey.sub (with DKIM) | closed | 2024-06-13T19:06:27Z | 2024-07-15T15:46:04Z | https://github.com/modoboa/modoboa/issues/3263 | [

"feedback-needed"

] | hugohamelcom | 17 |

nltk/nltk | nlp | 3,120 | nltk.download('punkt') not working |

Please help me with this issue

| open | 2023-02-08T15:56:34Z | 2023-02-08T16:02:46Z | https://github.com/nltk/nltk/issues/3120 | [] | Bhargav2193 | 1 |

piskvorky/gensim | nlp | 2,844 | Conflicts between hyperparameters for negative sampling? | Hi,

I wonder if there are possible interactions/conflicts when you use negative sampling with `negative>0` and have hierarchical softmax accidentally activated (`hs=1`). The docs say that negative sampling is used (`negative>0`) only if `hs=0`. So can I assume that with `hs=1` and `negative>0`, _**no**_ negative sampling is used?

Python 3.6

Win 10

NumPy 1.18.1

SciPy 1.1.0

gensim 3.8.1

| closed | 2020-05-18T16:30:41Z | 2020-10-28T02:08:32Z | https://github.com/piskvorky/gensim/issues/2844 | [

"question"

] | datistiquo | 1 |

snarfed/granary | rest-api | 163 | MF2-Atom missing published/updated dates | granary.io fails to parse the dt-published fields on aaronparecki.com (@aaronpk) and does not include them in the Atom feed.

https://granary.io/url?input=html&output=atom&url=https%3A//aaronparecki.com/&hub=https%3A//switchboard.p3k.io/ | closed | 2019-03-28T19:58:01Z | 2019-03-29T04:05:49Z | https://github.com/snarfed/granary/issues/163 | [] | alexmingoia | 1 |

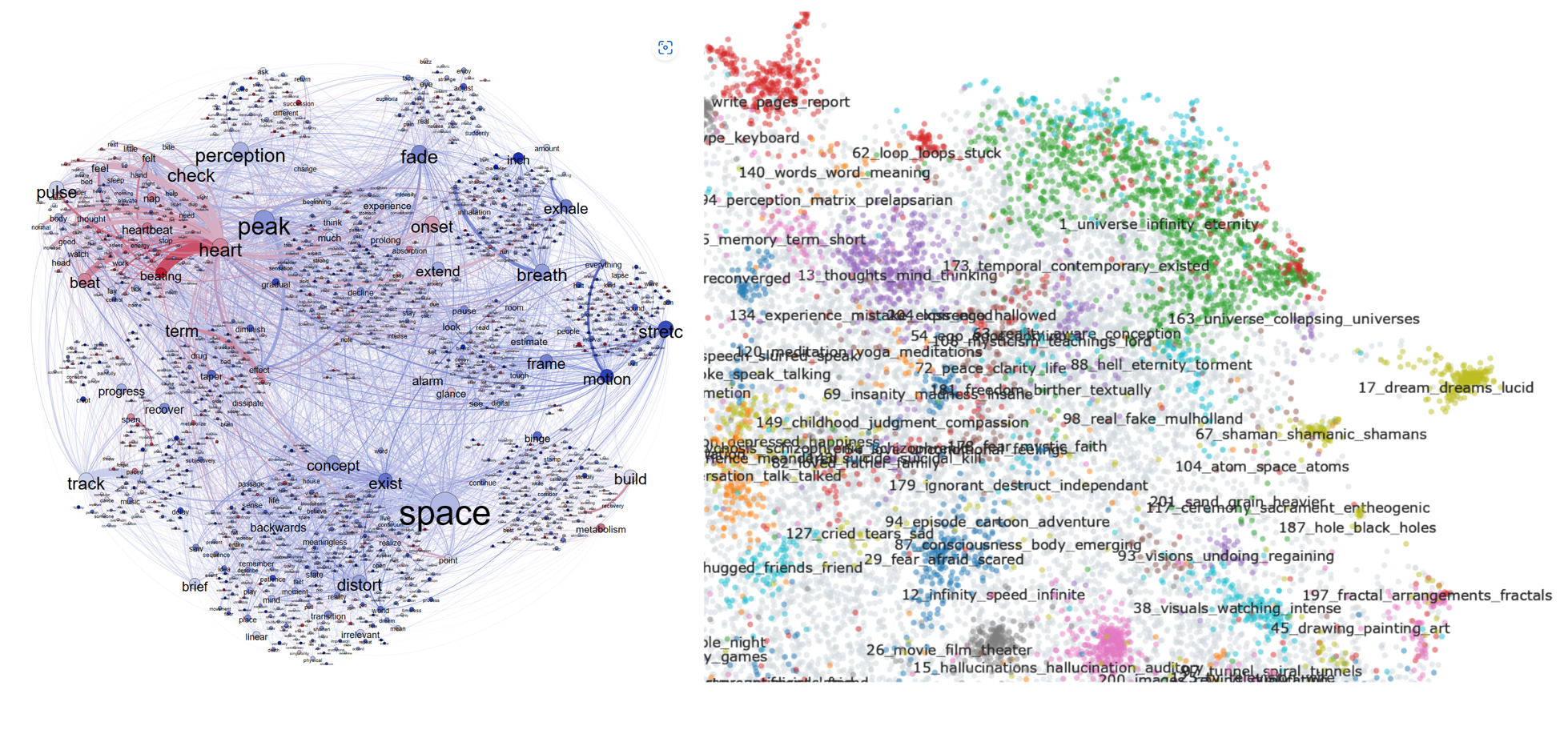

MaartenGr/BERTopic | nlp | 1,153 | Change node colour in visualize_documents based on class | Hi,

Love the BERTopic library!

I wanted to ask whether in `.visualize_documents`, it would be possible to change the colour of the document nodes according to my own class labels. All my documents have labels for 'blue', 'white', and 'red', representing slower, faster or normal time perception. It would be great to compare how topics are clustered in `.visualize_documents` (right) and my own method (left).

Appreciate the help!

Best

Akseli

| closed | 2023-04-04T13:50:55Z | 2023-04-10T06:28:50Z | https://github.com/MaartenGr/BERTopic/issues/1153 | [] | Akseli-Ilmanen | 3 |

httpie/cli | python | 778 | body data by parts not printed even if written on a socket | Hi Devs,

I really like your software and the presentation of the data in the terminal!

However, for a GET whose response body is sent in multiple parts over a keep-alive connection, only the headers are shown on the client side (httpie) after they are flushed to the socket. curl, on the other hand, shows each new piece of data as soon as it is written to the socket. Therefore, I must conclude it's a bug!

Server side sample:

```

[2019-05-17 13:52:11.270] TRACE: [connection:7] append response (#0), flags: { final_parts, connection_keepalive }, write group size: 2

[2019-05-17 13:52:11.270] TRACE: [connection:7] start next write group for response (#0), size: 2

[2019-05-17 13:52:11.270] TRACE: [connection:7] start response (#0): HTTP/1.1 200 OK

[2019-05-17 13:52:11.270] TRACE: [connection:7] sending resp data, buf count: 2, total size: 213

[2019-05-17 13:52:11.270] TRACE: [connection:7] outgoing data was sent: 213 bytes

[2019-05-17 13:52:11.270] TRACE: [connection:7] finishing current write group

[2019-05-17 13:52:11.270] TRACE: [connection:7] should keep alive

[2019-05-17 13:52:11.270] TRACE: [connection:7] start waiting for request

[2019-05-17 13:52:11.270] TRACE: [connection:7] continue reading request

...

[2019-05-17 13:53:17.892] WARN: [connection:1] try to write response, while socket is closed

[2019-05-17 13:53:17.892] TRACE: [connection:2] append response (#0), flags: { not_final_parts, connection_keepalive }, write group size: 3

[2019-05-17 13:53:17.892] TRACE: [connection:2] start next write group for response (#0), size: 3

[2019-05-17 13:53:17.892] TRACE: [connection:2] sending resp data, buf count: 3, total size: 86

[2019-05-17 13:53:17.892] TRACE: [connection:5] append response (#0), flags: { not_final_parts, connection_keepalive }, write group size: 3

[2019-05-17 13:53:17.892] TRACE: [connection:5] start next write group for response (#0), size: 3

[2019-05-17 13:53:17.892] TRACE: [connection:5] sending resp data, buf count: 3, total size: 86

[2019-05-17 13:53:17.892] TRACE: [connection:2] outgoing data was sent: 86 bytes

[2019-05-17 13:53:17.892] TRACE: [connection:2] finishing current write group

[2019-05-17 13:53:17.892] TRACE: [connection:5] outgoing data was sent: 86 bytes

[2019-05-17 13:53:17.893] TRACE: [connection:5] finishing current write group

[2019-05-17 13:53:17.893] TRACE: [connection:5] should keep alive

[2019-05-17 13:53:17.892] TRACE: [connection:2] should keep alive

...

```

Curl Listener:

```

n0t ~ $ curl -v 127.0.0.1:8080/tata/listen

* Trying 127.0.0.1...

* TCP_NODELAY set

* Connected to 127.0.0.1 (127.0.0.1) port 8080 (#0)

> GET /tata/listen HTTP/1.1

> Host: 127.0.0.1:8080

> User-Agent: curl/7.64.1

> Accept: */*

>

< HTTP/1.1 200 OK

< Connection: keep-alive

< Server: RESTinio

< Content-Type: application/json

< Access-Control-Allow-Origin: *

< Transfer-Encoding: chunked

<

{"data":"d2FmZmVzIG5ldmVy","id":"633971162421690157","type":0}

{"data":"d2FmZmVzIG5ldmVy","id":"9359431324407924376","type":0}

{"data":"d2FmZmVzIG5ldmVy","id":"12979840589662321868","type":0}

{"data":"bmV2ZXIgc2F5IG5ldmVy","id":"3961064829737946963","type":0}

{"data":"bmV2ZXIgc2F5IG5ldmVy","id":"14572780874153121707","type":0}

{"data":"bmV2ZXIgc2F5IG5ldmVy","id":"5299785977823115375","type":0}

{"data":"d2FmZmVzIG5ldmVy","expired":true,"id":"12979840589662321868","type":0}

{"data":"d2FmZmVzIG5ldmVy","expired":true,"id":"633971162421690157","type":0}

{"data":"d2FmZmVzIG5ldmVy","expired":true,"id":"9359431324407924376","type":0}

```

Httpie Listener:

```

n0t ~ $ http 127.0.0.1:8080/tata/listen

HTTP/1.1 200 OK

Access-Control-Allow-Origin: *

Connection: keep-alive

Content-Type: application/json

Server: RESTinio

Transfer-Encoding: chunked

^C

```
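
For comparison, here is a minimal standard-library sketch of a client that prints each body part as soon as a full line arrives, which is the behaviour curl shows above. It assumes the server writes one JSON object per line; the function names `iter_json_lines` and `listen` are hypothetical, and the host, port and path are the ones from the examples above.

```python
import http.client
import json
from typing import Any, Dict, Iterable, Iterator


def iter_json_lines(stream: Iterable[bytes]) -> Iterator[Dict[str, Any]]:
    """Yield one decoded JSON object per non-empty line of `stream`."""
    for raw in stream:
        line = raw.strip()
        if not line:
            continue  # skip blank separator lines between events
        yield json.loads(line)


def listen(host: str = "127.0.0.1", port: int = 8080,
           path: str = "/tata/listen") -> None:
    """Print events as they arrive; runs until the server closes the stream."""
    conn = http.client.HTTPConnection(host, port)
    conn.request("GET", path)
    response = conn.getresponse()
    # HTTPResponse is file-like and decodes chunked transfer encoding,
    # so iterating over it yields body lines without waiting for the end.
    for event in iter_json_lines(response):
        print(event, flush=True)
```

Calling `listen()` against the server above should print one dictionary per event without waiting for the response to finish.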

Cheers,

Seva | closed | 2019-05-17T18:02:39Z | 2019-08-29T11:50:16Z | https://github.com/httpie/cli/issues/778 | [] | binarytrails | 1 |

lepture/authlib | flask | 561 | OpenID Connect Front-Channel Logout | I suggest implementing helpers for [OpenID Connect Front-Channel Logout](https://openid.net/specs/openid-connect-frontchannel-1_0.html)

> This specification defines a logout mechanism that uses front-channel communication via the User Agent between the OP and RPs being logged out that does not need an OpenID Provider iframe on Relying Party pages, as [OpenID Connect Session Management 1.0](https://openid.net/specs/openid-connect-frontchannel-1_0.html#OpenID.Session) [OpenID.Session] does. Other protocols have used HTTP GETs to RP URLs that clear login state to achieve this; this specification does the same thing.
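
To illustrate what such a helper could look like on the OP side, here is a minimal sketch that builds the URL the OP would load (typically in a hidden iframe) for one RP. The function name `build_frontchannel_logout_url` is hypothetical and is not an existing Authlib API; the `iss` and `sid` query parameter names are the ones the specification defines for RPs that require session information, and the example URLs are placeholders.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def build_frontchannel_logout_url(frontchannel_logout_uri: str,
                                  issuer: Optional[str] = None,
                                  sid: Optional[str] = None) -> str:
    """Return the RP logout URL, adding `iss` and `sid` when both are given.

    Hypothetical helper: any query parameters already present in the
    registered logout URI are kept as they are.
    """
    parts = urlsplit(frontchannel_logout_uri)
    query = parse_qsl(parts.query, keep_blank_values=True)
    if issuer is not None and sid is not None:
        query += [("iss", issuer), ("sid", sid)]
    return urlunsplit((parts.scheme, parts.netloc, parts.path,
                       urlencode(query), parts.fragment))


# Example with placeholder values:
url = build_frontchannel_logout_url(
    "https://rp.example.org/logout?x=1",
    issuer="https://op.example.com",
    sid="abc",
)
```

An RP-side helper would do the reverse: read `iss` and `sid` from the request, compare them with the values from the ID Token of the current session, and only then clear the login state.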

Related issues #292 #500 #560 | open | 2023-07-03T15:59:17Z | 2025-02-20T20:36:59Z | https://github.com/lepture/authlib/issues/561 | [

"spec",

"feature request"

] | azmeuk | 0 |

dpgaspar/Flask-AppBuilder | flask | 1,813 | OAUTH : Gitlab - The redirect URI included is not valid | ### Environment

Flask-Appbuilder version:

```

Flask 1.1.2

Flask-AppBuilder 3.4.4

Flask-Babel 2.0.0

Flask-Caching 1.10.1

Flask-JWT-Extended 3.25.1

Flask-Login 0.4.1

Flask-OpenID 1.3.0

Flask-Session 0.4.0

Flask-SQLAlchemy 2.5.1

Flask-WTF 0.14.3

```

pip freeze output:

```

alembic==1.7.6

amqp==5.0.9

anyio==3.5.0

apache-airflow==2.2.4

apache-airflow-providers-celery==2.1.0

apache-airflow-providers-ftp==2.0.1

apache-airflow-providers-http==2.0.3

apache-airflow-providers-imap==2.2.0

apache-airflow-providers-postgres==3.0.0

apache-airflow-providers-sqlite==2.1.0

apispec==3.3.2

argcomplete==1.12.3

attrs==20.3.0

Authlib==0.15.5

aws-cfn-bootstrap==2.0

Babel==2.9.1

billiard==3.6.4.0

blinker==1.4

boto3==1.21.7

botocore==1.24.7

cached-property==1.5.2

cachelib==0.6.0

cattrs==1.10.0

celery==5.2.3

certifi==2020.12.5

cffi==1.15.0

charset-normalizer==2.0.12

click==8.0.3

click-didyoumean==0.3.0

click-plugins==1.1.1

click-repl==0.2.0

clickclick==20.10.2

cloudpickle==1.4.1

colorama==0.4.4

colorlog==4.8.0

commonmark==0.9.1

connexion==2.11.1

croniter==1.3.4

cryptography==3.4.8

dask==2021.6.0

defusedxml==0.7.1

Deprecated==1.2.13

dill==0.3.1.1

distributed==2.19.0

dnspython==2.2.0

docutils==0.16

email-validator==1.1.3

eventlet==0.33.0

Flask==1.1.2

Flask-AppBuilder==3.4.4

Flask-Babel==2.0.0

Flask-Caching==1.10.1

Flask-JWT-Extended==3.25.1

Flask-Login==0.4.1

Flask-OpenID==1.3.0

Flask-Session==0.4.0

Flask-SQLAlchemy==2.5.1

Flask-WTF==0.14.3

flower==1.0.0

fsspec==2022.1.0

gevent==21.12.0

graphviz==0.19.1

greenlet==1.1.2

gunicorn==20.1.0

h11==0.12.0

HeapDict==1.0.1

httpcore==0.14.7

httpx==0.22.0

humanize==4.0.0

idna==3.3

importlib-metadata==4.11.1

importlib-resources==5.4.0

inflection==0.5.1

iso8601==1.0.2

isodate==0.6.1

itsdangerous==1.1.0

Jinja2==3.0.3

jmespath==0.10.0

jsonschema==3.2.0

kombu==5.2.3

lazy-object-proxy==1.4.3

locket==0.2.1

lockfile==0.12.2

Mako==1.1.6

Markdown==3.3.6

MarkupSafe==2.0.1

marshmallow==3.14.1

marshmallow-enum==1.5.1

marshmallow-oneofschema==3.0.1

marshmallow-sqlalchemy==0.26.1

msgpack==1.0.3

numpy==1.20.3

openapi-schema-validator==0.1.6

openapi-spec-validator==0.3.3

packaging==21.3

pandas==1.3.5

partd==1.2.0

pendulum==2.1.2

prison==0.2.1

prometheus-client==0.13.1

prompt-toolkit==3.0.28

psutil==5.9.0

psycopg2-binary==2.9.3

pycparser==2.21

Pygments==2.11.2

PyJWT==1.7.1

pyparsing==2.4.7

pyrsistent==0.16.1

pystache==0.5.4

python-daemon==2.3.0

python-dateutil==2.8.2

python-nvd3==0.15.0

python-slugify==4.0.1

python3-openid==3.2.0

pytz==2021.3

pytzdata==2020.1

PyYAML==5.4.1

requests==2.27.1

rfc3986==1.5.0

rich==11.2.0

s3transfer==0.5.2

sentry-sdk==1.5.5

setproctitle==1.2.2

simplejson==3.2.0

six==1.16.0

sniffio==1.2.0

sortedcontainers==2.4.0

SQLAlchemy==1.3.24

SQLAlchemy-JSONField==1.0.0

SQLAlchemy-Utils==0.38.2

statsd==3.3.0

swagger-ui-bundle==0.0.9

tabulate==0.8.9

tblib==1.7.0

tenacity==8.0.1

termcolor==1.1.0

text-unidecode==1.3

toolz==0.11.2

tornado==6.1

typing-extensions==3.10.0.2

unicodecsv==0.14.1

urllib3==1.26.8

vine==5.0.0

wcwidth==0.2.5

Werkzeug==1.0.1

wrapt==1.13.3

WTForms==2.3.3

zict==2.0.0

zipp==3.7.0

zope.event==4.5.0

zope.interface==5.4.0

```

### Describe the expected results

Apache Airflow uses FAB for its UI and authentication. In my use case, I'm trying to use OAuth with my GitLab instance (GitLab CE).

https://airflow.apache.org/docs/apache-airflow/2.2.4/security/webserver.html

My Airflow instance is behind an AWS ALB. The ALB configuration forwards requests to my Airflow instance, and there is no issue with this part.

My GitLab instance should be the authentication reference.
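
GitLab only accepts a redirect URI that matches the callback URL registered for the OAuth application, so it can help to compare the two component by component. The sketch below is a debugging aid under stated assumptions: the `/oauth-authorized/<provider>` callback path is what FAB is believed to use and should be checked against the installed version, `airflow.example.com` is a placeholder host, and both function names are hypothetical.

```python
from typing import List
from urllib.parse import urlsplit, urlunsplit


def expected_callback(base_url: str, provider: str) -> str:
    """Build the callback URL to register, assuming FAB's
    `/oauth-authorized/<provider>` route (an assumption, see above)."""
    parts = urlsplit(base_url)
    path = parts.path.rstrip("/") + "/oauth-authorized/" + provider
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))


def redirect_uri_differences(registered: str, sent: str) -> List[str]:
    """Name the URL components in which the two redirect URIs differ."""
    a, b = urlsplit(registered), urlsplit(sent)
    differences = []
    if a.scheme != b.scheme:
        differences.append("scheme")
    if a.netloc != b.netloc:
        differences.append("host")
    if a.path != b.path:
        differences.append("path")
    return differences


registered = expected_callback("https://airflow.example.com/", "gitlab")
```

The `sent` value is the `redirect_uri` query parameter visible in the browser's address bar on the GitLab error page. A `scheme` difference, for instance, would suggest the application is generating `http://` URLs behind a load balancer that terminates TLS.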

<img width="701" alt="image" src="https://user-images.githubusercontent.com/17825769/156008592-d43dab25-baba-487f-a0e8-b603375f9b38.png">

### Describe the actual results

When I try to connect with the OAuth button, an error occurs:

"Sign up with" -> An error has occurred "The redirect URI included is not valid."

[DEBUG] webserver log :

```

x.x.x.x - - [28/Feb/2022:17:16:05 +0000] "GET /health HTTP/1.1" 200 159 "-" "ELB-HealthChecker/2.0"

x.x.x.x - - [28/Feb/2022:17:16:05 +0000] "GET /health HTTP/1.1" 200 159 "-" "ELB-HealthChecker/2.0"