repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

sktime/sktime | scikit-learn | 7,557 | [BUG] 1st generation reducer - inconsistent use of parameters in global vs local case | The sliding window transform in the 1st generation reducer interfaced via `make_reduce` seems to be problematic, as some parameters are only used in the global or the local case, whereas one would expect them to be used in both.

Specifically, as the refactor https://github.com/sktime/sktime/pull/7556 shows:

* `fh`, `window_length` are used only in the local branch

* `transformers` are used only in the global branch

(in `_sliding_window_transform`)

This seems like a logic error. | open | 2024-12-21T17:55:45Z | 2024-12-21T17:56:13Z | https://github.com/sktime/sktime/issues/7557 | [

"bug",

"module:forecasting"

] | fkiraly | 0 |

pydantic/pydantic-settings | pydantic | 171 | Field fails to be initialized/validated when explicitly passing `env=` | ### Initial Checks

- [X] I confirm that I'm using Pydantic V2

### Description

When explicitly passing the value of the environment variable to use to initialize a field, such field is not initialized with the given env var. This results in an error like this `Field required [type=missing, input_value={}, input_type=dict]`

### Example Code

```Python

import os

from pydantic_settings import BaseSettings

from pydantic import Field

os.environ["APP_TEXT"] = "Hello World"

class Settings(BaseSettings):

text: str = Field(env="APP_TEXT")

print(Settings().text)

```

### Python, Pydantic & OS Version

```Text

pydantic version: 2.4.1

pydantic-core version: 2.10.1

pydantic-core build: profile=release pgo=false

install path: /Users/gvso/Lev/sendgrid_test/venv/lib/python3.10/site-packages/pydantic

python version: 3.10.8 (main, Feb 13 2023, 14:35:14) [Clang 14.0.0 (clang-1400.0.29.202)]

platform: macOS-13.5.2-arm64-arm-64bit

related packages: typing_extensions-4.7.1 pydantic-settings-2.0.3

```

| closed | 2023-09-26T20:55:19Z | 2023-10-02T16:24:05Z | https://github.com/pydantic/pydantic-settings/issues/171 | [

"unconfirmed"

] | gvso | 2 |

streamlit/streamlit | data-visualization | 10,880 | `st.dataframe` displays wrong indizes for pivoted dataframe | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

Under some conditions streamlit will display the wrong indices in pivoted / multi indexed dataframes.

### Reproducible Code Example

[](https://issues.streamlitapp.com/?issue=gh-10880)

```Python

import streamlit as st

import pandas as pd

df = pd.DataFrame(

{"Index": ["X", "Y", "Z"], "A": [1, 2, 3], "B": [6, 5, 4], "C": [9, 7, 8]}

)

df = df.set_index("Index")

st.dataframe(df)

st.dataframe(df.T.corr())

st.dataframe(df.T.corr().unstack())

print(df.T.corr().unstack())

```

### Steps To Reproduce

1. `streamlit run` the provided code.

2. Look at the result of the last `st.dataframe()` call.

### Expected Behavior

Inner index should be correct.

### Current Behavior

The provided code renders the following tables:

The first two tables are correct, while the last one displays a duplicate of the first index instead of the second one.

In comparison, this is the correct output from the `print()` statement:

```

Index Index

X X 1.000000

Y 0.999597

Z 0.888459

Y X 0.999597

Y 1.000000

Z 0.901127

Z X 0.888459

Y 0.901127

Z 1.000000

dtype: float64

```

### Is this a regression?

- [ ] Yes, this used to work in a previous version.

### Debug info

- Streamlit version: 1.42.2

- Python version: 3.12.9

- Operating System: Linux

- Browser: Google Chrome / Firefox

### Additional Information

The problem does not occur when the default index is used.

```python

import streamlit as st

import pandas as pd

df = pd.DataFrame({"A": [1, 2, 3], "B": [6, 5, 4], "C": [9, 7, 8]})

st.dataframe(df.T.corr().unstack())

```

This renders the correct dataframe:

---

This issue is possibly related to https://github.com/streamlit/streamlit/issues/3696 (parsing column names and handling their types) | open | 2025-03-23T15:50:44Z | 2025-03-24T13:49:35Z | https://github.com/streamlit/streamlit/issues/10880 | [

"type:bug",

"feature:st.dataframe",

"status:confirmed",

"priority:P3",

"feature:st.data_editor"

] | punsii2 | 2 |

aminalaee/sqladmin | sqlalchemy | 700 | Add messages support | ### Checklist

- [X] There are no similar issues or pull requests for this yet.

### Is your feature related to a problem? Please describe.

I would like to display feedback to users after a form submission, not just error messages. This would allow for warnings and success messages.

### Describe the solution you would like.

Django Admin uses this

https://docs.djangoproject.com/en/dev/ref/contrib/messages/#django.contrib.messages.add_message

### Describe alternatives you considered

_No response_

### Additional context

I may be willing to work on this, if there is interest. | open | 2024-01-19T23:25:17Z | 2024-05-15T20:07:55Z | https://github.com/aminalaee/sqladmin/issues/700 | [] | jonocodes | 11 |

dunossauro/fastapi-do-zero | pydantic | 55 | Create a contributors list! | Add to the mkdocs README a list thanking everyone who reviewed and edited material on the pages! | closed | 2023-11-30T04:57:23Z | 2023-12-01T00:21:13Z | https://github.com/dunossauro/fastapi-do-zero/issues/55 | [

"Site"

] | dunossauro | 0 |

FlareSolverr/FlareSolverr | api | 1,403 | Browser doesn't load testing page | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Discussions

### Have you ACTUALLY checked all these?

YES

### Environment

```markdown

- FlareSolverr version: 3.3.21

- Last working FlareSolverr version: -

- Operating system: Linux archlinux 6.11.5-zen1-1-zen

- Are you using Docker: no

- FlareSolverr User-Agent (see log traces or / endpoint): doesn't get to this point

- Are you using a VPN: no

- Are you using a Proxy: no

- Are you using Captcha Solver: no

- If using captcha solver, which one: -

- URL to test this issue: https://google.com

```

### Description

This issue is similar to https://github.com/FlareSolverr/FlareSolverr/issues/1384, but it is another bug.

When using docker in a headless mode it works, but using it on the desktop **without** headless through `python src/flaresolverr.py` starts the browser and then simply doesn't load anything.

Can be helpful: ~~I'm using linux, hyprland~~ it doesn't work on both linux and windows, x64 processor, I have tested it on an nvidia gpu, and intel's integrated gpu.

I found that removing `options.add_argument('--disable-software-rasterizer')` in `src/utils.py` fixes it for me, but I don't know whether the problem is specific to my setup or affects x86-64 CPUs in general.

### Logged Error Messages

```text

2024-10-28 11:19:14 INFO FlareSolverr 3.3.21

2024-10-28 11:19:14 INFO Testing web browser installation...

2024-10-28 11:19:14 INFO Platform: Linux-6.11.5-zen1-1-zen-x86_64-with-glibc2.40

2024-10-28 11:19:14 INFO Chrome / Chromium path: /usr/bin/chromium

2024-10-28 11:19:14 INFO Chrome / Chromium major version: 130

2024-10-28 11:19:14 INFO Launching web browser...

And then an infinite load.

```

### Screenshots

| closed | 2024-10-28T10:21:51Z | 2024-11-24T18:30:36Z | https://github.com/FlareSolverr/FlareSolverr/issues/1403 | [] | MAKMED1337 | 13 |

collerek/ormar | pydantic | 541 | 'str' object has no attribute 'toordinal' | **Describe the bug**

Hi!

After the latest update (0.10.24), it looks like querying for dates, using strings, is no longer working.

- My field is of type `ormar.Date(nullable=True)`.

- Calling `await MyModel.objects.get(field=value)` fails when the value is `'2022-01-20'`

- Calling `await MyModel.objects.get(field=parse_date(value))` works when the value is ^

Querying with the plain string value worked before (on 10.23).

**Stack trace**

```python

../../.virtualenvs/project/lib/python3.10/site-packages/ormar/queryset/queryset.py:948: in get

return await self.filter(*args, **kwargs).get()

../../.virtualenvs/project/lib/python3.10/site-packages/ormar/queryset/queryset.py:968: in get

rows = await self.database.fetch_all(expr)

../../.virtualenvs/project/lib/python3.10/site-packages/databases/core.py:149: in fetch_all

return await connection.fetch_all(query, values)

../../.virtualenvs/project/lib/python3.10/site-packages/databases/core.py:271: in fetch_all

return await self._connection.fetch_all(built_query)

../../.virtualenvs/project/lib/python3.10/site-packages/databases/backends/postgres.py:174: in fetch_all

rows = await self._connection.fetch(query_str, *args)

../../.virtualenvs/project/lib/python3.10/site-packages/asyncpg/connection.py:601: in fetch

return await self._execute(

../../.virtualenvs/project/lib/python3.10/site-packages/asyncpg/connection.py:1639: in _execute

result, _ = await self.__execute(

../../.virtualenvs/project/lib/python3.10/site-packages/asyncpg/connection.py:1664: in __execute

return await self._do_execute(

../../.virtualenvs/project/lib/python3.10/site-packages/asyncpg/connection.py:1711: in _do_execute

result = await executor(stmt, None)

asyncpg/protocol/protocol.pyx:183: in bind_execute

???

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

> ???

E asyncpg.exceptions.DataError: invalid input for query argument $2: '2022-01-20' ('str' object has no attribute 'toordinal')

```

----------------------

Let me know if you want me to try and create a reproducible example. I thought I would open the issue first, in case you immediately knew what might have changed.

Thanks for maintaining the package! 👏 | open | 2022-01-20T13:38:50Z | 2022-01-24T09:44:58Z | https://github.com/collerek/ormar/issues/541 | [

"bug"

] | sondrelg | 5 |
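
The `toordinal` error in the ormar report above suggests that the database driver received a plain `str` where it expected a `datetime.date`. Below is a minimal sketch of a caller-side workaround using only the standard library, assuming the value is an ISO `YYYY-MM-DD` string; `to_date` is a hypothetical helper, not part of ormar or asyncpg.

```python
from datetime import date


def to_date(value):
    """Return a date object, parsing ISO 'YYYY-MM-DD' strings when needed.

    Hypothetical helper for illustration; not part of ormar or asyncpg.
    """
    if isinstance(value, date):
        return value
    return date.fromisoformat(value)


raw = "2022-01-20"
parsed = to_date(raw)

# A plain str lacks the method named in the error message; a date has it.
print(hasattr(raw, "toordinal"))
print(hasattr(parsed, "toordinal"))
```

Passing `to_date(value)` as the filter value would mirror the `parse_date(value)` call that the reporter says still works.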

ShishirPatil/gorilla | api | 934 | [bug] Hosted Gorilla: <Issue> | Exception: Error communicating with OpenAI: HTTPConnectionPool(host='zanino.millennium.berkeley.edu', port=8000): Max retries exceeded with url: /v1/chat/completions (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7c39c8555a10>: Failed to establish a new connection: [Errno 111] Connection refused'))

Failed model: gorilla-7b-hf-v1, for prompt: I would like to translate 'I feel very good today.' from English to Chinese | open | 2025-03-12T05:01:02Z | 2025-03-12T05:01:02Z | https://github.com/ShishirPatil/gorilla/issues/934 | [

"hosted-gorilla"

] | tolgakurtuluss | 0 |

biolab/orange3 | pandas | 6,461 | Data Sets doesn't remember non-English selection | According to @BlazZupan, if one chooses a Slovenian dataset (in English version of Orange?) and saves the workflow, this data set is not selected after reloading the workflow.

I suspect the problem occurs because the language combo is not a setting and is always reset to English for English Orange (and to Slovenian for Slovenian), and thus the data set is not chosen because it is not shown.

The easiest solution would be to save the language as a schema-only setting. | closed | 2023-06-02T12:50:51Z | 2023-06-16T08:02:49Z | https://github.com/biolab/orange3/issues/6461 | [

"bug"

] | janezd | 0 |

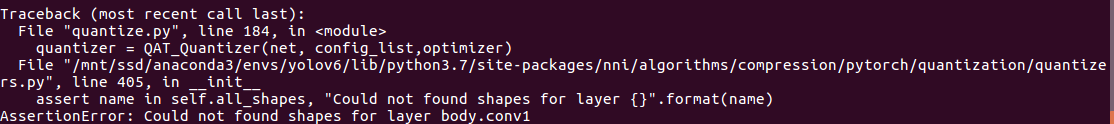

microsoft/nni | tensorflow | 5,253 | AssertionError: Could not found shapes for layer body.conv1" for QAT_quantizer() | **Describe the issue**:

I am using QAT_quantizer() and my quantize config is :

Config_list = [{

'quant_tyes': ['input'],

'quant_bits': {'input': 8},

'op_types': ['Conv2d']

}]

I call the quantizer by "quantizer = QAT_quantizer (net, config_list, optimizer)" without passing "dummy_input". But I encounter such error "Traceback (most recent call last):

File "quantize.py", line 184, in <module>

quantizer = QAT_Quantizer(net, config_list,optimizer)

File "/mnt/ssd/anaconda3/envs/yolov6/lib/python3.7/site-packages/nni/algorithms/compression/pytorch/quantization/quantizers.py", line 405, in __init__

assert name in self.all_shapes, "Could not found shapes for layer {}".format(name)

AssertionError: Could not found shapes for layer body.conv1"

The error can be resolved by passing a dummy_input to the QAT_quantizer, but I do not want to pass one (I do not need BN folding here). How can I resolve the error without passing a dummy_input?

**Environment**:

- NNI version: 2.5, 2.9

- Python version: 3.7

| closed | 2022-11-30T23:24:28Z | 2023-05-08T07:47:29Z | https://github.com/microsoft/nni/issues/5253 | [] | ToniButland1998 | 3 |

Avaiga/taipy | data-visualization | 1,979 | [DOCS] Fix Markdown in README.md | ### Issue Description

In README.md, the following 'Getting Started' link is not rendered correctly as there is a typo in the markdown.

### Screenshots or Examples (if applicable)

### Proposed Solution (optional)

We should fix the markdown so that the link is rendered correctly.

### Code of Conduct

- [X] I have checked the [existing issues](https://github.com/Avaiga/taipy/issues?q=is%3Aissue+).

- [X] I am willing to work on this issue (optional) | closed | 2024-10-09T06:53:20Z | 2024-10-09T14:07:22Z | https://github.com/Avaiga/taipy/issues/1979 | [

"📈 Improvement",

"📄 Documentation",

"🟨 Priority: Medium"

] | Sriparno08 | 9 |

AirtestProject/Airtest | automation | 896 | Cannot switch from a Windows window to a child window | **Cannot switch from a Windows window to a child window**

```

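I connect to the main window through airtest, and an operation in the main window opens a child window, but I cannot switch to the child window with the airtest API and have to write a custom method instead. Below is my own code that switches to the child window and then matches a template against a screenshot to find its position, which is roughly the `exists` method operating on the child window.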

```

```

from airtest.core.win.win import Windows

dev = device()

child_win = dev._app.window(title="xx", class_name="xx")

handle = child_win.wrapper_object().handle

_dev = Windows(handle=handle)

screen = _dev.snapshot(filename=None, quality=ST.SNAPSHOT_QUALITY)

match_pos = Template(r"tpl1618821110989.png", record_pos=(-0.004, -0.12), resolution=(1667, 887)).match_in(screen)

print(match_pos)

```

**Python version:** `python3.6.8`

**airtest version:** `1.2.8`

**Device:**

- OS: Windows server 2012 r2

| open | 2021-04-22T07:08:27Z | 2021-04-27T08:37:57Z | https://github.com/AirtestProject/Airtest/issues/896 | [

"enhancement"

] | hfdzlsw | 0 |

MaartenGr/BERTopic | nlp | 1,277 | OSError: libcudart.so: cannot open shared object file: No such file or directory | Having already done:

```

!pip install cugraph-cu11 cudf-cu11 cuml-cu11 --extra-index-url=https://pypi.nvidia.com

!pip uninstall cupy-cuda115 -y

!pip uninstall cupy-cuda11x -y

!pip install cupy-cuda11x -f https://pip.cupy.dev/aarch64

```

When trying to:

`from cuml.cluster import HDBSCAN`

I get:

`OSError: libcudart.so: cannot open shared object file: No such file or directory` | closed | 2023-05-19T10:02:18Z | 2023-05-19T13:38:43Z | https://github.com/MaartenGr/BERTopic/issues/1277 | [] | noahberhe | 2 |

opengeos/leafmap | streamlit | 119 | style_callback param for add_geojson() not working? | ### Environment Information

- leafmap version: 0.5.0

- Python version: 3.9

- Operating System: Linux/macOS

### Description

I want to use the `style_callback` parameter for `map.add_geojson()`, but the chosen style which sets only the color seems not to be respected. I think the style dicts are the same for ipyleaflet and leafmap, at least I could not find any contradictory information. See below.

```python

import requests

data = requests.get((

"https://raw.githubusercontent.com/telegeography/www.submarinecablemap.com"

"/master/web/public/api/v3/cable/cable-geo.json"

)).json()

callback = lambda feat: {"color": feat["properties"]["color"]}

```

```python

import leafmap

m = leafmap.Map(center=[0, 0], zoom=2)

m.add_geojson(data, style_callback=callback)

m.layout.height = "100px"

m

```

<img width="704" alt="Screen Shot 2021-10-03 at 11 12 53" src="https://user-images.githubusercontent.com/1001778/135747358-09d121b3-bcbc-44ff-992b-ee9036255963.png">

```python

import ipyleaflet

m = ipyleaflet.Map(center=[0, 0], zoom=2)

m += ipyleaflet.GeoJSON(data=data, style_callback=callback)

m.layout.height = "100px"

m

```

<img width="705" alt="Screen Shot 2021-10-03 at 11 14 14" src="https://user-images.githubusercontent.com/1001778/135747399-52943e61-da18-4365-96e7-76cc1e55dac6.png">

| closed | 2021-10-03T09:19:18Z | 2024-09-22T07:42:05Z | https://github.com/opengeos/leafmap/issues/119 | [

"bug"

] | deeplook | 11 |

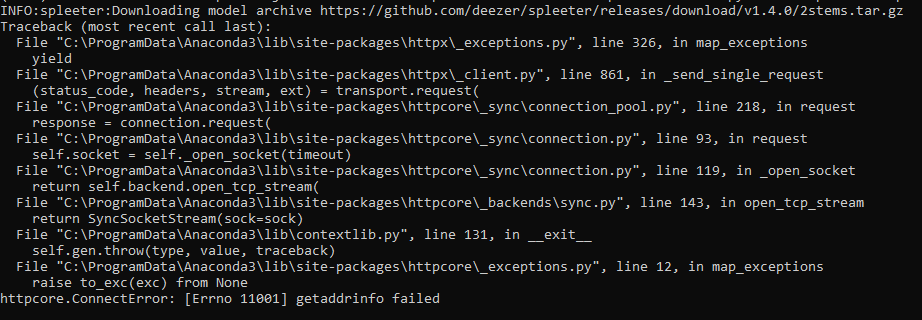

deezer/spleeter | deep-learning | 593 | [Errno 11001] getaddrinfo failed | I have a problem when I execute this command :

python -m spleeter separate -o output/ audio_example.mp3

the error I get is the following:

I would like to point out that:

1 - I'm on windows that's why I added the "python -m ".

2 - I installed spleeter with pip after installing ffmpeg-python without any error message or warning. | closed | 2021-03-05T15:31:55Z | 2021-05-18T17:03:56Z | https://github.com/deezer/spleeter/issues/593 | [

"bug",

"invalid"

] | hadji-yousra | 1 |

ageitgey/face_recognition | python | 1,288 | Append new entries to pickle file (KNNClassifier object) | * face_recognition version: v1.22

* Python version: 3.6

* Operating System: Mac

### Description

I am trying to add new encodings and names to saved pickle file (KNNClassifier object) - but unable to append.

### What I Did

```

# Save the trained KNN classifier

if os.path.getsize(model_save_path) > 0:

if model_save_path is not None:

with open(model_save_path, 'rb') as f:

unpickler = pickle.Unpickler(f)

clf = unpickler.load()

newEncodings = X, y

clf.append(newEncodings)

with open(model_save_path,'wb') as f:

pickle.dump(clf, f)

else:

if model_save_path is not None:

with open(model_save_path, 'wb') as f:

pickle.dump(knn_clf, f)

```

Getting error: `'KNeighborsClassifier' object has no attribute 'append'`. Is there any way to achieve this? Please advise.

Another question: if I retrain on all images for every new training request, will that affect the verification process while the pickle file is in use, or can the OS handle that?

I am working on moving to MySQL, if anyone did this please share your thoughts. Thank you! | closed | 2021-02-24T06:39:40Z | 2021-03-07T15:05:37Z | https://github.com/ageitgey/face_recognition/issues/1288 | [] | rathishkumar | 3 |
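
A fitted classifier object has no `append`, so one possible approach to the face_recognition question above is to persist the raw encodings and names next to the model, extend those lists, and refit. The sketch below covers only the storage half with the standard library. The function name `append_training_data`, the `(encodings, names)` tuple layout and the `.tmp` suffix are assumptions made for illustration, not part of `face_recognition`.

```python
import os
import pickle


def append_training_data(store_path, new_encodings, new_names):
    """Extend a pickled (encodings, names) pair and return the merged lists.

    Hypothetical helper: the tuple layout is an assumption, not the
    format used by the face_recognition examples.
    """
    encodings, names = [], []
    if os.path.exists(store_path) and os.path.getsize(store_path) > 0:
        with open(store_path, "rb") as f:
            encodings, names = pickle.load(f)

    encodings.extend(new_encodings)
    names.extend(new_names)

    # Write to a temporary file first, then swap it into place, so a
    # reader never opens a half-written pickle.
    tmp_path = store_path + ".tmp"
    with open(tmp_path, "wb") as f:
        pickle.dump((encodings, names), f)
    os.replace(tmp_path, store_path)
    return encodings, names
```

The merged lists could then be passed to a fresh classifier, for example `knn_clf.fit(encodings, names)`, and the refitted model saved with the same write-then-replace pattern.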

pydantic/pydantic-ai | pydantic | 559 | You can't use sync call and async streaming in the same application | If you use the async streaming text feature together with a sync call in the same application this leads to an exception regarding the event loop.

Python 3.12.8

pydantic-ai-slim[vertexai, openai]==0.0.15

Steps to reproduce:

```

import asyncio

from pydantic_ai import Agent

from pydantic_ai.models.vertexai import VertexAIModel

model = VertexAIModel(

model_name="gemini-1.5-flash",

service_account_file=xxx,

project_id=xxx,

region=xxx,

)

agent = Agent(model=model)

response = agent.run_sync(user_prompt="Hi")

async def run_agent(user_prompt):

async with agent.run_stream(user_prompt=user_prompt) as result:

async for message in result.stream_text(delta=True):

print(message)

response = asyncio.run(run_agent(user_prompt="Hi"))

```

Exception:

```

Traceback (most recent call last):

File "/Users/xxx/xxx/xxx/src/tet2.py", line 27, in <module>

response = asyncio.run(run_agent(user_prompt="Hi"))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/asyncio/runners.py", line 194, in run

return runner.run(main)

^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/asyncio/base_events.py", line 686, in run_until_complete

return future.result()

^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/src/tet2.py", line 23, in run_agent

async with agent.run_stream(user_prompt=user_prompt) as result:

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/pydantic_ai/agent.py", line 408, in run_stream

async with agent_model.request_stream(messages, model_settings) as model_response:

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/pydantic_ai/models/gemini.py", line 183, in request_stream

async with self._make_request(messages, True, model_settings) as http_response:

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/pydantic_ai/models/gemini.py", line 221, in _make_request

async with self.http_client.stream(

^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/contextlib.py", line 210, in __aenter__

return await anext(self.gen)

^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_client.py", line 1583, in stream

response = await self.send(

^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_client.py", line 1629, in send

response = await self._send_handling_auth(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_client.py", line 1657, in _send_handling_auth

response = await self._send_handling_redirects(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_client.py", line 1694, in _send_handling_redirects

response = await self._send_single_request(request)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_client.py", line 1730, in _send_single_request

response = await transport.handle_async_request(request)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpx/_transports/default.py", line 394, in handle_async_request

resp = await self._pool.handle_async_request(req)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/connection_pool.py", line 256, in handle_async_request

raise exc from None

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/connection_pool.py", line 236, in handle_async_request

response = await connection.handle_async_request(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/connection.py", line 103, in handle_async_request

return await self._connection.handle_async_request(request)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/http11.py", line 136, in handle_async_request

raise exc

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/http11.py", line 106, in handle_async_request

) = await self._receive_response_headers(**kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/http11.py", line 177, in _receive_response_headers

event = await self._receive_event(timeout=timeout)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_async/http11.py", line 217, in _receive_event

data = await self._network_stream.read(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/httpcore/_backends/anyio.py", line 35, in read

return await self._stream.receive(max_bytes=max_bytes)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/anyio/streams/tls.py", line 204, in receive

data = await self._call_sslobject_method(self._ssl_object.read, max_bytes)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/anyio/streams/tls.py", line 147, in _call_sslobject_method

data = await self.transport_stream.receive()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/xxx/xxx/xxx/.venv/lib/python3.12/site-packages/anyio/_backends/_asyncio.py", line 1289, in receive

await self._protocol.read_event.wait()

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/asyncio/locks.py", line 209, in wait

fut = self._get_loop().create_future()

^^^^^^^^^^^^^^^^

File "/Users/xxx/.local/share/uv/python/cpython-3.12.8-macos-aarch64-none/lib/python3.12/asyncio/mixins.py", line 20, in _get_loop

raise RuntimeError(f'{self!r} is bound to a different event loop')

RuntimeError: <asyncio.locks.Event object at 0x107e104a0 [unset]> is bound to a different event loop

``` | closed | 2024-12-28T12:05:41Z | 2025-01-11T14:02:40Z | https://github.com/pydantic/pydantic-ai/issues/559 | [

"question",

"Stale"

] | alenowak | 7 |

deepinsight/insightface | pytorch | 1,985 | Could you tell me how to use this framework for face detection and alignment of my own dataset? | open | 2022-04-25T03:28:13Z | 2022-04-25T03:28:13Z | https://github.com/deepinsight/insightface/issues/1985 | [] | leonardzzy | 0 | |

zappa/Zappa | django | 973 | SQLite 3.8.3 or later is required (found 3.7.17). | I keep getting the error when I call zappa deploy dev. The zappa tail provides this output:

SQLite 3.8.3 or later is required (found 3.7.17).

SQLite 3.8.3 or later is required (found 3.7.17).

Traceback (most recent call last):

File "/var/task/handler.py", line 609, in lambda_handler

return LambdaHandler.lambda_handler(event, context)

File "/var/task/handler.py", line 240, in lambda_handler

handler = cls()

File "/var/task/handler.py", line 146, in __init__

wsgi_app_function = get_django_wsgi(self.settings.DJANGO_SETTINGS)

File "/var/task/zappa/ext/django_zappa.py", line 20, in get_django_wsgi

return get_wsgi_application()

File "/var/task/django/core/wsgi.py", line 12, in get_wsgi_application

django.setup(set_prefix=False)

File "/var/task/django/__init__.py", line 24, in setup

apps.populate(settings.INSTALLED_APPS)

File "/var/task/django/apps/registry.py", line 114, in populate

app_config.import_models()

File "/var/task/django/apps/config.py", line 211, in import_models

self.models_module = import_module(models_module_name)

File "/var/lang/lib/python3.8/importlib/__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

My Django version is 3.15. What should I change? | closed | 2021-04-29T20:12:03Z | 2022-07-16T04:56:14Z | https://github.com/zappa/Zappa/issues/973 | [] | viktor-idenfy | 4 |
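
For the Zappa report above, a quick way to confirm which SQLite library a given Python runtime is linked against is to ask the standard `sqlite3` module. This is only a diagnostic sketch; `REQUIRED` is taken from the error message, and `sqlite_is_new_enough` is a hypothetical helper name.

```python
import sqlite3

REQUIRED = (3, 8, 3)  # minimum version quoted in the error message


def sqlite_is_new_enough(required=REQUIRED):
    """Compare the SQLite library linked into this Python with a minimum."""
    return sqlite3.sqlite_version_info >= required


print(sqlite3.sqlite_version, sqlite_is_new_enough())
```

Running this inside the deployed environment rather than locally would show whether the runtime's bundled SQLite is the limiting factor.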

Textualize/rich | python | 2,859 | [BUG] COLORTERM in combination with FORCE_COLOR does not work anymore | - [x] I've checked [docs](https://rich.readthedocs.io/en/latest/introduction.html) and [closed issues](https://github.com/Textualize/rich/issues?q=is%3Aissue+is%3Aclosed) for possible solutions.

- [x] I can't find my issue in the [FAQ](https://github.com/Textualize/rich/blob/master/FAQ.md).

**Describe the bug**

Commit 1ebf82300fdf4960fd9a04afc60fdecee7ab50da broke the combination of "FORCE_COLOR" and "COLORTERM" taken from the environment variables.

I've created a simple test:

```python

import io

from rich.console import Console

def test_force_color():

console = Console(file=io.StringIO(), _environ={

"FORCE_COLOR": "1",

"COLORTERM": "truecolor",

})

assert console.is_terminal

assert console.color_system == "truecolor"

```

If `master` or 1ebf82300fdf4960fd9a04afc60fdecee7ab50da is checked out it fails, because the `color_system` is `None`. If the commit before (b89d0362e8ebcb18902f0f0a206879f1829b5c0b) is checked out the test succeeds.

I guess that the order of when `FORCE_COLOR` and `COLORTERM` are interpreted got changed.

**Platform**

<details>

<summary>Click to expand</summary>

* What platform (Win/Linux/Mac) are you running on? Linux (Manjaro)

* What terminal software are you using? kitty

</details>

| closed | 2023-03-06T10:12:41Z | 2023-04-14T06:37:40Z | https://github.com/Textualize/rich/issues/2859 | [

"Needs triage"

] | ThunderKey | 3 |

aleju/imgaug | machine-learning | 391 | bgr image preprocess problem | I read the image with cv2, but after preprocessing it with `imgaug` functions, I think the image is automatically converted to `rgb` channel order. How can I use `imgaug` to preprocess a `bgr` image? | closed | 2019-08-22T07:41:48Z | 2019-08-23T05:55:41Z | https://github.com/aleju/imgaug/issues/391 | [] | as754770178 | 4 |

tox-dev/tox | automation | 2,430 | Tox can't handle path that contains dash (" - ") in it | ### Tox.ini

```

[tox]

minversion = 3.8.0

envlist = python3.8, python3.9, flake8, mypy

isolated_build = true

[testenv]

setenv =

PYTHONPATH = {toxinidir}

deps =

-r {toxinidir}{/}requirements_dev.txt

commands =

pytest pytest --basetemp={envtmpdir} --cov-report term-missing`

```

### Steps

Run `tox`

### Expectation

Should run without error

### Error encountered

```

ERROR: usage: pytest.EXE [options] [file_or_dir] [file_or_dir] [...]

pytest.EXE: error: unrecognized arguments: - ReliSource Inc\15. UNARI\unari\.tox\python3.8\tmp

inifile: D:\OneDrive - ReliSource Inc\15. UNARI\unari\pyproject.toml

rootdir: D:\OneDrive - ReliSource Inc\15. UNARI\unari

ERROR: InvocationError for command 'D:\OneDrive - ReliSource Inc\15. UNARI\unari\.tox\python3.8\Scripts\pytest.EXE' pytest '--basetemp=D:\OneDrive' - ReliSource 'Inc\15.' 'UNARI\unari\.tox\python3.8\tmp'

--cov-report term-missing (exited with code 4)`

```

### Probable cause

Tox can't handle path that contains dash (" - ") in it | closed | 2022-06-01T13:38:14Z | 2023-01-19T11:50:02Z | https://github.com/tox-dev/tox/issues/2430 | [

"bug:normal",

"needs:more-info"

] | MdFahimulIslam | 3 |
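
The error output in the tox report above shows the `--basetemp` value broken into several arguments, which points at the spaces in the path rather than at the dash itself. The sketch below uses Python's `shlex` to illustrate whitespace splitting of an unquoted versus a quoted argument. tox's own command parsing is not identical to `shlex`, and the path is shortened and written with forward slashes purely for illustration.

```python
import shlex

# Illustrative path containing spaces, similar in shape to the report.
path = "D:/OneDrive - ReliSource Inc/tmp"

# Unquoted: whitespace ends the argument, so the path falls apart.
unquoted = shlex.split(f"pytest --basetemp={path}")

# Quoted: the whole path stays attached to --basetemp.
quoted = shlex.split(f'pytest --basetemp="{path}"')

print(unquoted)
print(quoted)
```

By analogy, quoting the substitution in `tox.ini`, as in `--basetemp="{envtmpdir}"`, may be worth trying; whether that resolves this particular setup is an assumption.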

polarsource/polar | fastapi | 5,088 | License Key Read resource is missing Created At field | Looks like the License Key Read resource is missing a Created At field.

We should expose it as we usually do with our resources. | open | 2025-02-24T13:32:38Z | 2025-02-28T10:09:46Z | https://github.com/polarsource/polar/issues/5088 | [

"bug",

"contributor friendly",

"python"

] | emilwidlund | 1 |

huggingface/transformers | machine-learning | 36,058 | TypeError: BartModel.forward() got an unexpected keyword argument 'labels' | ### System Info

```

TypeError Traceback (most recent call last)

[<ipython-input-37-3435b262f1ae>](https://localhost:8080/#) in <cell line: 0>()

----> 1 trainer.train()

5 frames

[/usr/local/lib/python3.11/dist-packages/torch/nn/modules/module.py](https://localhost:8080/#) in _call_impl(self, *args, **kwargs)

1745 or _global_backward_pre_hooks or _global_backward_hooks

1746 or _global_forward_hooks or _global_forward_pre_hooks):

-> 1747 return forward_call(*args, **kwargs)

1748

1749 result = None

TypeError: BartModel.forward() got an unexpected keyword argument 'labels'

```

### Who can help?

@ArthurZucker, @muellerzr, @SunMarc

### Information

- [ ] The official example scripts

- [ ] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own task or dataset (give details below)

### Reproduction

```python

import torch

import matplotlib.pyplot as plt

from transformers import pipeline

from datasets import Dataset, DatasetDict

from transformers import BartTokenizer, BartModel, TrainingArguments, Trainer, DataCollatorForSeq2Seq

import pandas as pd

# from datasets import Dataset

df_train = pd.read_csv('../test-set/train.csv')

df_test = pd.read_csv('../test-set/test.csv')

df_val = pd.read_csv('../test-set/valid.csv')

df_train = df_train.dropna()

df_test = df_test.dropna()

df_val = df_val.dropna()

# Convert DataFrames to Hugging Face Datasets

dataset_train = Dataset.from_pandas(df_train)

dataset_test = Dataset.from_pandas(df_test)

dataset_val = Dataset.from_pandas(df_val)

# Create DatasetDict

dataset_dict = DatasetDict({

'train': dataset_train,

'test': dataset_test,

'validation': dataset_val

})

dataset_samsum = dataset_dict

split_train_test_val = [len(dataset_samsum[split]) for split in dataset_samsum]

from transformers import BartTokenizer, BartModel

model_ckpt = "facebook/bart-large"

tokenizer = BartTokenizer.from_pretrained('facebook/bart-large')

model = BartModel.from_pretrained('facebook/bart-large')

d_len = [len(tokenizer.encode(s)) for s in dataset_samsum["train"]["judgement"]]

s_len = [len(tokenizer.encode(s)) for s in dataset_samsum["train"]["summary"]]

def convert_examples_to_features(example_batch):
    input_encodings = tokenizer(example_batch["judgement"], max_length=1024, truncation=True)

    # Using target_tokenizer for summaries
    with tokenizer.as_target_tokenizer():
        target_encodings = tokenizer(example_batch["summary"], max_length=128, truncation=True)

    return {
        "input_ids": input_encodings["input_ids"],
        "attention_mask": input_encodings["attention_mask"],
        "labels": target_encodings["input_ids"]
    }

dataset_samsum_pt = dataset_samsum.map(convert_examples_to_features, batched=True)

columns = ["input_ids", "labels", "attention_mask"]

dataset_samsum_pt.set_format(type="torch", columns=columns)

# Collator for Handling length imbalances and attention masks

seq2seq_data_collator = DataCollatorForSeq2Seq(tokenizer, model=model)

training_args = TrainingArguments( output_dir="bart-large-bodosum",

num_train_epochs=1,

warmup_steps=500,

per_device_train_batch_size=1,

per_gpu_eval_batch_size=1,

weight_decay=0.01,

logging_steps=10,

evaluation_strategy='steps',

eval_steps=500,

save_steps=1e6,

gradient_accumulation_steps=16,

)

trainer = Trainer(model=model, args=training_args, tokenizer=tokenizer, data_collator=seq2seq_data_collator,

train_dataset=dataset_samsum_pt["train"],

eval_dataset=dataset_samsum_pt["validation"])

trainer.train()

```

### Expected behavior

I am trying to fine-tune the `facebook/bart-large` model for a summarization task, but calling `trainer.train()` raises the error above. Please help me solve this issue | closed | 2025-02-06T05:41:36Z | 2025-02-07T09:05:38Z | https://github.com/huggingface/transformers/issues/36058 | [

"bug"

] | mwnthainarzary | 2 |

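xuebinqin/U-2-Net | computer-vision | 362 | QAT quantization of the u2netp model | I used PyTorch's FX quantization tooling to apply QAT to u2netp. I first loaded the trained FP32 model and then ran QAT on the u2netp model following the standard workflow. The loss grows with every epoch, the mIoU keeps shrinking, and the model after QAT is completely wrong. | open | 2023-08-10T06:13:08Z | 2023-08-10T06:13:08Z | https://github.com/xuebinqin/U-2-Net/issues/362 | [] | ZHIZIHUABU | 0 |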

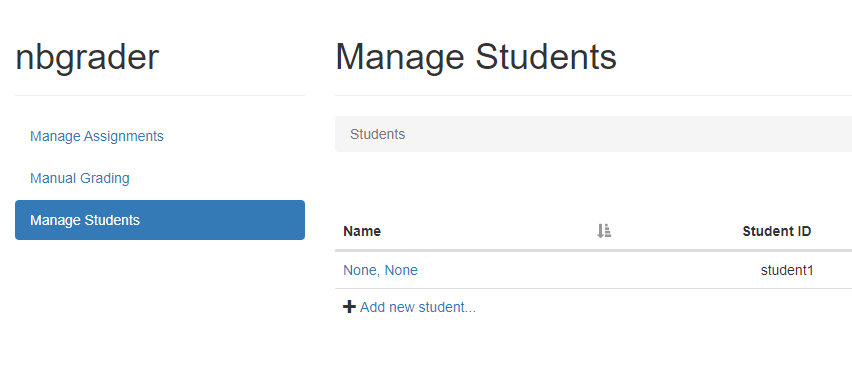

jupyter/nbgrader | jupyter | 1,304 | adding students requires both web gui and jupyter_config.py | <!--

Thanks for helping to improve nbgrader!

If you are submitting a bug report or looking for support, please use the below

template so we can efficiently solve the problem.

If you are requesting a new feature, feel free to remove irrelevant pieces of

the issue template.

-->

### Operating system

ubuntu server 18.04.3

### `nbgrader --version`

0.7.0.dev

### `jupyterhub --version` (if used with JupyterHub)

1.1.0

### `jupyter notebook --version`

6.0.2

### Expected behavior

Adding students in web gui (Manage Students) enables courses and assignments to be listed for students

### Actual behavior

Having to add student in both jupyter_config.py and 'manage students'

### Steps to reproduce the behavior

Starting from demos (demos_multiple_classes) : add student1 to courses101 through web management.

When listing assignments for student1, nothing appears.

After adding student to group through `jupyterhub_config.py`, assignments and courses are correctly listed.

```

# instructor1 and instructor2 have access to different shared servers:

c.JupyterHub.load_groups = {

'formgrade-course101': [

'instructor1',

'grader-course101',

],

'formgrade-course123': [

'instructor2',

'grader-course123'

],

# Have to add all students here manually for courses to be listed for them

'nbgrader-course101': ['student1'],

'nbgrader-course123': ['student1']

}

```

Is this the expected behavior? It is a lot of setup to add several users, and I'm wondering if there is a better way. | open | 2020-01-21T07:45:45Z | 2020-01-21T07:45:45Z | https://github.com/jupyter/nbgrader/issues/1304 | [] | Lapin-Blanc | 0 |

scikit-image/scikit-image | computer-vision | 6,871 | New canny implementation silently fails with integer images. | ### Description:

The new `skimage.feature.canny` implementation silently fails if given an integer image. This worked on `scikit-image<=0.19`, and no longer works with `scikit-image=0.20`. The documentation says that any dtype should work:

```

image : 2D array

Grayscale input image to detect edges on; can be of any dtype.

```

### Way to reproduce:

```

from skimage.feature import canny

import numpy as np

im = np.zeros((100, 100))

im[0: 50, 0: 50] = 1.0

print("Edge pixels with float input: ", canny(im, low_threshold=0, high_threshold=1).sum())

print("Edge pixels with int input: ", canny(im.astype(np.int64), low_threshold=0, high_threshold=1).sum())

```

This prints on new skimage (0.20):

```

Edge pixels with float input: 182

Edge pixels with int input: 0

```

And on old skimage (0.19):

```

Edge pixels with float input: 144

Edge pixels with int input: 144

```

As I write this test case I also need to ask ... why did the number of pixels change?

### Version information:

```Shell

3.10.10 | packaged by conda-forge | (main, Mar 24 2023, 20:08:06) [GCC 11.3.0]

Linux-3.10.0-1160.88.1.el7.x86_64-x86_64-with-glibc2.17

scikit-image version: 0.20.0

numpy version: 1.23.5

```

| closed | 2023-04-05T19:13:19Z | 2023-09-17T11:41:52Z | https://github.com/scikit-image/scikit-image/issues/6871 | [

":bug: Bug"

] | erykoff | 27 |

sqlalchemy/alembic | sqlalchemy | 661 | MySQL dialect types generating spurious revisions in 1.4 | Auto-generating a revision in 1.3.3 does not pick up any changes, however after upgrading to 1.4, every MySQL dialect type column generates a revision such as this one:

```

op.alter_column('comp_details', 'market_type_id',

existing_type=mysql.INTEGER(display_width=10, unsigned=True),

type_=mysql.INTEGER(unsigned=True),

existing_nullable=True)

```

Here are the column definitions for that one:

```

market_type_id = Column(INTEGER(unsigned=True), nullable=True)

```

I only use the mysql specific integer types, so am unsure if this manifests with other mysql dialect types but would be happy to dig deeper if it would be helpful. | closed | 2020-02-21T13:34:10Z | 2020-02-27T20:50:51Z | https://github.com/sqlalchemy/alembic/issues/661 | [

"bug",

"autogenerate - detection",

"mysql"

] | peterschutt | 10 |

dunossauro/fastapi-do-zero | sqlalchemy | 291 | Error running alembic upgrade head in the lesson 9 exercise | While doing the lesson 9 exercise of adding the created_at and updated_at columns to the todos table, when I run the command alembic upgrade head, I get the following error:

sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) Cannot add a column with non-constant default

[SQL: ALTER TABLE todos ADD COLUMN created_at DATETIME DEFAULT (CURRENT_TIMESTAMP) NOT NULL]

(Background on this error at: https://sqlalche.me/e/20/e3q8)
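
The error message comes from SQLite itself rather than from Alembic. The following is a minimal sketch using only the standard-library `sqlite3` module; the table layout and the helper name `try_alter` are assumptions made for illustration. It adds a row first, because newer SQLite versions appear to reject the non-constant default only when the table already contains data.

```python
import sqlite3


def try_alter(statement):
    """Run one ALTER TABLE on a fresh, non-empty table; return the error text or None."""
    conn = sqlite3.connect(":memory:")
    try:
        # Hypothetical minimal version of the todos table.
        conn.execute("CREATE TABLE todos (id INTEGER PRIMARY KEY, title TEXT)")
        conn.execute("INSERT INTO todos (title) VALUES ('existing row')")
        conn.execute(statement)
        return None
    except sqlite3.Error as exc:
        return str(exc)
    finally:
        conn.close()


# Same statement as in the report: the default is an expression, not a constant.
non_constant = try_alter(
    "ALTER TABLE todos ADD COLUMN created_at DATETIME "
    "DEFAULT (CURRENT_TIMESTAMP) NOT NULL"
)

# A literal default is accepted by ALTER TABLE ... ADD COLUMN.
constant = try_alter(
    "ALTER TABLE todos ADD COLUMN created_at DATETIME "
    "DEFAULT '1970-01-01 00:00:00' NOT NULL"
)

print(non_constant)
print(constant)
```

This only demonstrates the SQLite restriction; how the migration for the exercise should be written is a separate question.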

| closed | 2025-02-03T13:17:58Z | 2025-02-03T18:06:40Z | https://github.com/dunossauro/fastapi-do-zero/issues/291 | [] | leoneville | 2 |

strawberry-graphql/strawberry | asyncio | 2,791 | DataLoader load_many should set or provide an option to return exceptions | <!--- This template is entirely optional and can be removed, but is here to help both you and us. -->

<!--- Anything on lines wrapped in comments like these will not show up in the final text. -->

## Feature Request Type

- [ ] Core functionality

- [x] Alteration (enhancement/optimization) of existing feature(s)

- [x] New behavior

## Description

The current implementation of `load_many` on the dataloader uses `asyncio.gather` to run the batch of keys through the existing `load` implementation (it's a single line):

```python

def load_many(self, keys):
    return gather(*map(self.load, keys))

```

This means that if any of the individual `load` tasks raises an exception, the entire `load_many` call will fail. So for example, if key 1 returns a value but key 2 fails by raising, then this code:

```python

results = await my_loader.load_many([1, 2])

```

raises an exception and the value for key 1 can't be used.

In some cases it would be useful to do:

```python

results = await my_loader.load_many([1, 2])

for result in results:
    if isinstance(result, Exception):
        # handle error
    else:
        # handle successful result

```

This would match the implementation of `loadMany` in JS dataloader project: https://github.com/graphql/dataloader#loadmanykeys

## Implementation notes

The behaviour can be achieved with the `return_exceptions` argument to `gather`. For example:

```python

def load_many(self, keys):
    return gather(*map(self.load, keys), return_exceptions=True)

```

https://docs.python.org/3/library/asyncio-task.html#asyncio.gather
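
To make the difference concrete, here is a small self-contained sketch of the `gather` behavior using plain `asyncio`. The `load` coroutine is a stand-in for `DataLoader.load`, not Strawberry's implementation, and the `return_exceptions` parameter on `load_many` is the hypothetical opt-in flag discussed in this issue.

```python
import asyncio


async def load(key):
    # Stand-in for DataLoader.load: key 2 fails, every other key succeeds.
    if key == 2:
        raise ValueError(f"could not load key {key}")
    return key * 10


async def load_many(keys, return_exceptions=False):
    # Same shape as the one-line implementation, with the flag passed through.
    return await asyncio.gather(*map(load, keys), return_exceptions=return_exceptions)


async def main():
    # Default behavior: the first failure propagates and no results are returned.
    try:
        await load_many([1, 2, 3])
        raised = False
    except ValueError:
        raised = True

    # With the flag: failures come back as values, in key order.
    results = await load_many([1, 2, 3], return_exceptions=True)
    return raised, results


raised, results = asyncio.run(main())
print(raised, results)
```

Results stay aligned with the input keys, so callers can pair each key with either its value or its exception.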

## Open questions

Adding `return_exceptions` in-place would change the behaviour of existing code. Another option would be to add a `return_exceptions` optional argument to the `load_many` method and allow clients to specify the behaviour (leaving the existing behaviour unchanged). I don't have a strong instinct either way. | open | 2023-05-30T08:01:56Z | 2025-03-20T15:56:11Z | https://github.com/strawberry-graphql/strawberry/issues/2791 | [] | jthorniley | 0 |

flasgger/flasgger | rest-api | 545 | Flasgger does not load when hostname has a path | I have a Flask application and I've integrated [Flasgger](https://github.com/flasgger/flasgger) for documentation. When I run my app locally, I can access swagger at http://127.0.0.1:8000/swagger/index.html. But when it's deployed to our dev environment, the hostname is https://services.company.com/my-flask-app. And when I add /swagger/index.html at the end of that URL, swagger does not load.

This is how I've configured swagger:

```

swagger_config = {

"termsOfService": None,

"specs": [

{

"endpoint": "swagger",

"route": "/swagger.json",

}

],

"static_url_path": "/swagger",

"swagger_ui_standalone_preset_js": "./swagger-ui-standalone-preset.js",

"swagger_ui_css": "./swagger-ui.css",

"swagger_ui_bundle_js": "./swagger-ui-bundle.js",

"jquery_js": "./lib/jquery.min.js",

"specs_route": "/swagger/index.html",

}

```

The path to `/swagger.json` is still wrong.

Also, when I try to make a request, the base URL is `http://127.0.0.1:8000` rather than the full hostname with the required path prefix, `http://127.0.0.1:8000/my-flask-app/<endpoint>`.

Any ideas on how I can resolve this? | open | 2022-08-05T07:40:30Z | 2022-08-05T07:40:30Z | https://github.com/flasgger/flasgger/issues/545 | [] | catalinapopa-uipath | 0 |

yt-dlp/yt-dlp | python | 11,907 | No video formats found with youtube:player_client=all and live-from-start in livestreams | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm reporting that yt-dlp is broken on a **supported** site

- [X] I've verified that I have **updated yt-dlp to nightly or master** ([update instructions](https://github.com/yt-dlp/yt-dlp#update-channels))

- [X] I've checked that all provided URLs are playable in a browser with the same IP and same login details

- [X] I've checked that all URLs and arguments with special characters are [properly quoted or escaped](https://github.com/yt-dlp/yt-dlp/wiki/FAQ#video-url-contains-an-ampersand--and-im-getting-some-strange-output-1-2839-or-v-is-not-recognized-as-an-internal-or-external-command)

- [X] I've searched [known issues](https://github.com/yt-dlp/yt-dlp/issues/3766) and the [bugtracker](https://github.com/yt-dlp/yt-dlp/issues?q=) for similar issues **including closed ones**. DO NOT post duplicates

- [X] I've read the [guidelines for opening an issue](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#opening-an-issue)

- [ ] I've read about [sharing account credentials](https://github.com/yt-dlp/yt-dlp/blob/master/CONTRIBUTING.md#are-you-willing-to-share-account-details-if-needed) and I'm willing to share it if required

### Region

Indonesia

### Provide a description that is worded well enough to be understood

Since the latest stable release [2024.12.23](https://github.com/yt-dlp/yt-dlp/releases/tag/2024.12.23), and also on master [2024.12.23.232653](https://github.com/yt-dlp/yt-dlp-master-builds/releases/tag/2024.12.23.232653), yt-dlp has been failing to extract the maximum resolution of an ongoing livestream. I use this in an automated VOD grabber that downloads the processed VODs after the stream is over.

Here is the command I usually use to extract the resolution:

`yt-dlp --extractor-args 'youtube:player_client=all' --live-from-start --print width 'https://www.youtube.com/@Valkyrae/live'`

I use all player clients so that I never need to touch the setting again and always get the largest available resolution, since the default limits it to 1080p. I use `--live-from-start` so that resolutions higher than 1080p show up for the livestream.

Now it just prints NA. After further investigation, it seems that no video formats are found even though I set the YouTube player client to `all`. Interestingly, it works when I use `youtube:player_client=android_vr` instead of `all`.

Shouldn't the `all` option also include `android_vr`? It seems to be included, but the formats available from the `android_vr` client don't appear to be used.

### Provide verbose output that clearly demonstrates the problem

- [X] Run **your** yt-dlp command with **-vU** flag added (`yt-dlp -vU <your command line>`)

- [ ] If using API, add `'verbose': True` to `YoutubeDL` params instead

- [X] Copy the WHOLE output (starting with `[debug] Command-line config`) and insert it below

### Complete Verbose Output

```shell

[debug] Command-line config: ['-vU', '--extractor-args', 'youtube:player_client=all', '--live-from-start', '--print', 'width', 'https://www.youtube.com/@Valkyrae/live']

[debug] Encodings: locale UTF-8, fs utf-8, pref UTF-8, out utf-8, error utf-8, screen utf-8

[debug] yt-dlp version master@2024.12.23.232653 from yt-dlp/yt-dlp-master-builds [65cf46cdd] (darwin_exe)

[debug] Python 3.13.1 (CPython arm64 64bit) - macOS-14.7.2-arm64-arm-64bit-Mach-O (OpenSSL 3.0.15 3 Sep 2024)

[debug] exe versions: ffmpeg 7.0.2 (setts), ffprobe 7.0.2, phantomjs 2.1.1, rtmpdump 2.4

[debug] Optional libraries: Cryptodome-3.21.0, brotli-1.1.0, certifi-2024.12.14, curl_cffi-0.7.1, mutagen-1.47.0, requests-2.32.3, sqlite3-3.45.3, urllib3-2.3.0, websockets-14.1

[debug] Proxy map: {}

[debug] Request Handlers: urllib, requests, websockets, curl_cffi

[debug] Loaded 1837 extractors

[debug] Fetching release info: https://api.github.com/repos/yt-dlp/yt-dlp-master-builds/releases/latest

Latest version: master@2024.12.23.232653 from yt-dlp/yt-dlp-master-builds

yt-dlp is up to date (master@2024.12.23.232653 from yt-dlp/yt-dlp-master-builds)

[youtube:tab] Extracting URL: https://www.youtube.com/@Valkyrae/live

[youtube:tab] @Valkyrae/live: Downloading webpage

[youtube] Extracting URL: https://www.youtube.com/watch?v=gko77bw1CT4

[youtube] gko77bw1CT4: Downloading webpage

[youtube] gko77bw1CT4: Downloading ios player API JSON

[youtube] gko77bw1CT4: Downloading ios music player API JSON

[youtube] gko77bw1CT4: Downloading ios creator player API JSON

[youtube] gko77bw1CT4: Downloading web embedded client config

[youtube] gko77bw1CT4: Downloading player 03dbdfab

[youtube] gko77bw1CT4: Downloading web embedded player API JSON

[youtube] gko77bw1CT4: Downloading web safari player API JSON

[youtube] gko77bw1CT4: Downloading web music client config

[youtube] gko77bw1CT4: Downloading web music player API JSON

[youtube] gko77bw1CT4: Downloading web creator player API JSON

[youtube] gko77bw1CT4: Downloading tv player API JSON

[youtube] gko77bw1CT4: Downloading tv embedded player API JSON

[youtube] gko77bw1CT4: Downloading mweb player API JSON

[youtube] gko77bw1CT4: Downloading android player API JSON

[youtube] gko77bw1CT4: Downloading android music player API JSON

[youtube] gko77bw1CT4: Downloading android creator player API JSON

[youtube] gko77bw1CT4: Downloading android vr player API JSON

[youtube] gko77bw1CT4: Downloading MPD manifest

WARNING: [youtube] gko77bw1CT4: web client dash formats require a PO Token which was not provided. They will be skipped as they may yield HTTP Error 403. You can manually pass a PO Token for this client with --extractor-args "youtube:po_token=web+XXX. For more information, refer to https://github.com/yt-dlp/yt-dlp/wiki/Extractors#po-token-guide . To enable these broken formats anyway, pass --extractor-args "youtube:formats=missing_pot"

[youtube] gko77bw1CT4: Downloading MPD manifest

[youtube] gko77bw1CT4: Downloading MPD manifest

[youtube] gko77bw1CT4: Downloading MPD manifest

[youtube] gko77bw1CT4: Downloading MPD manifest

[youtube] gko77bw1CT4: Downloading MPD manifest

[youtube] gko77bw1CT4: Downloading MPD manifest

ERROR: [youtube] gko77bw1CT4: This video is not available

File "yt_dlp/extractor/common.py", line 742, in extract

File "yt_dlp/extractor/youtube.py", line 4541, in _real_extract

File "yt_dlp/extractor/common.py", line 1276, in raise_no_formats

```

| closed | 2024-12-25T22:03:02Z | 2024-12-26T01:19:18Z | https://github.com/yt-dlp/yt-dlp/issues/11907 | [

"site-bug",

"site:youtube"

] | ThePhoenix576 | 2 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,056 | Create Generated Column in MariaDB cannot specify null | ### Discussed in https://github.com/sqlalchemy/sqlalchemy/discussions/10055

<div type='discussions-op-text'>

<sup>Originally posted by **iamrinshibuya** July 3, 2023</sup>

Hello, when using [Computed Columns](https://docs.sqlalchemy.org/en/20/core/defaults.html#computed-columns-generated-always-as), the following code works without any changes on PostgreSQL, but it fails in MariaDB.

```python

import asyncio

from sqlalchemy import Computed, text

from sqlalchemy.orm import (

Mapped,

DeclarativeBase,

mapped_column,

)

from sqlalchemy.ext.asyncio import create_async_engine

# works with this

# engine = create_async_engine('postgresql+psycopg://...')

# does not work with this

engine = create_async_engine('mariadb+asyncmy://...')

class Base(DeclarativeBase):
    pass

class Square(Base):
    __tablename__ = 'square'

    id: Mapped[int] = mapped_column(primary_key=True)
    side: Mapped[int]
    area: Mapped[int] = mapped_column(Computed(text('4 * side')), index=True)

async def main():
    async with engine.begin() as conn:
        await conn.run_sync(Base.metadata.drop_all)
        await conn.run_sync(Base.metadata.create_all)

asyncio.run(main())

```

```

sqlalchemy.exc.ProgrammingError: (asyncmy.errors.ProgrammingError) (1064, "You have an error in your SQL syntax; check the manual that corresponds to your MariaDB server version for the right syntax to use near 'NOT NULL, \n\tPRIMARY KEY (id)\n)' at line 4")

[SQL:

CREATE TABLE square (

id INTEGER NOT NULL AUTO_INCREMENT,

side INTEGER NOT NULL,

area INTEGER GENERATED ALWAYS AS (4 * side) NOT NULL,

PRIMARY KEY (id)

)

]

(Background on this error at: https://sqlalche.me/e/20/f405)

```

What should I do so that the table gets created in MariaDB?

Making the column nullable (`area: Mapped[int | None]`) seems to fix this, but I have the following concerns

- I find it counterproductive in my case, the generated column always has a value.

- In PostgreSQL this is a "non-nullable" / required column and I'd like to not change the behavior just to accommodate MariaDB (the code has to support both dialects)</div>

The docs of mariadb seem to indicate that the null clause is not allowed with generated always https://mariadb.com/kb/en/generated-columns/

| closed | 2023-07-03T19:29:03Z | 2023-10-20T04:51:21Z | https://github.com/sqlalchemy/sqlalchemy/issues/10056 | [

"bug",

"sql",

"PRs (with tests!) welcome",

"mariadb"

] | CaselIT | 16 |

microsoft/nni | machine-learning | 5,412 | Support for unified lightning package | **Describe the issue**:

It seems that nni does not support Lightning under the new unified package name `lightning` instead of `pytorch_lightning`; using nni with the unified package breaks. Unfortunately, I don't know the Pythonic way to fix this, as there are still two separate package versions out there.

```python

import lightning as pl

import torch

[...]

import nni

from nni.compression.pytorch import LightningEvaluator

[...]

trainer = nni.trace(pl.Trainer)(

[...]

evaluator = LightningEvaluator(trainer, data)

```

**Environment**:

- NNI version: 2.10

- Training service (local|remote|pai|aml|etc): local

- Client OS: Win10

- Python version: 3.10

- PyTorch/TensorFlow version: 1.13

- Lightning version: 1.8.6

- Is conda/virtualenv/venv used?: conda

- Is running in Docker?: no

**Error message**:

```

Only support traced pytorch_lightning.Trainer, please use nni.trace(pytorch_lightning.Trainer) to initialize the trainer.

```

| open | 2023-02-28T12:31:02Z | 2023-03-01T02:39:20Z | https://github.com/microsoft/nni/issues/5412 | [] | funnym0nk3y | 1 |

tflearn/tflearn | tensorflow | 1,181 | tflearn | WARNING:tensorflow:From /home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tensorflow/python/compat/v2_compat.py:101: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

Instructions for updating:

non-resource variables are not supported in the long term

Scipy not supported!

Building Encoder

WARNING:tensorflow:From /home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tflearn-0.5.0-py3.7.egg/tflearn/initializations.py:110: calling UniformUnitScaling.__init__ (from tensorflow.python.ops.init_ops) with dtype is deprecated and will be removed in a future version.

Instructions for updating:

Call initializer instance with the dtype argument instead of passing it to the constructor

WARNING:tensorflow:From /home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tensorflow/python/util/deprecation.py:549: UniformUnitScaling.__init__ (from tensorflow.python.ops.init_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.initializers.variance_scaling instead with distribution=uniform to get equivalent behavior.

Tensor("ge/Relu:0", shape=(?, ?, 64), dtype=float32)

Tensor("ge/Relu_1:0", shape=(?, ?, 128), dtype=float32)

Tensor("ge/Relu_2:0", shape=(?, ?, 128), dtype=float32)

Tensor("ge/Relu_3:0", shape=(?, ?, 256), dtype=float32)

Tensor("ge/Relu_4:0", shape=(?, ?, 128), dtype=float32)

Tensor("ge/Max:0", shape=(?, 128), dtype=float32)

Building Decoder

Traceback (most recent call last):

File "/home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tflearn-0.5.0-py3.7.egg/tflearn/initializations.py", line 198, in xavier

ModuleNotFoundError: No module named 'tensorflow.contrib'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "pc_sampling_rec.py", line 196, in <module>

train(args)

File "pc_sampling_rec.py", line 75, in train

outpj=decoder(word,layer_sizes=dec_args['layer_sizes'],b_norm=dec_args['b_norm'],b_norm_decay=1.0,b_norm_finish=dec_args['b_norm_finish'],b_norm_decay_finish=1.0,verbose=dec_args['verbose'])

File "/media/ubuntu/0f083fd5-b631-4342-9812-7e262eaff979/ZZY/2024对抗攻击+数据蒸馏/PCDNet/encoders_decoders.py", line 187, in decoder_with_fc_only

layer = fully_connected(layer, layer_sizes[i], activation='linear', weights_init='xavier', name=name, regularizer=regularizer, weight_decay=weight_decay, reuse=reuse, scope=scope_i)

File "/home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tflearn-0.5.0-py3.7.egg/tflearn/layers/core.py", line 152, in fully_connected

File "/home/ubuntu/anaconda3/envs/zzy_data/lib/python3.7/site-packages/tflearn-0.5.0-py3.7.egg/tflearn/initializations.py", line 201, in xavier

NotImplementedError: 'xavier_initializer' not supported, please update TensorFlow. | open | 2024-03-25T01:02:29Z | 2024-03-25T01:04:05Z | https://github.com/tflearn/tflearn/issues/1181 | [] | WillingDil | 1 |

flasgger/flasgger | rest-api | 134 | HTTPS is not supported in the current flasgger version | It seems there is an HTTPS issue in Swagger UI that was fixed in a later version:

https://github.com/swagger-api/swagger-ui/issues/3166

so flasgger currently does not support HTTPS API requests either.

| closed | 2017-07-18T06:33:01Z | 2018-07-31T07:53:07Z | https://github.com/flasgger/flasgger/issues/134 | [

"bug"

] | ghost | 12 |

CorentinJ/Real-Time-Voice-Cloning | python | 436 | Error in preprocessing data for synthesizer | While running `synthesizer_preprocess_audio.py`, I'm getting the following error:

```

Arguments:

datasets_root: /home/amin/voice_cloning/Datasets

out_dir: /home/amin/voice_cloning/Datasets/SV2TTS/synthesizer

n_processes: None

skip_existing: True

hparams:

Using data from:

/home/amin/voice_cloning/Datasets/LibriSpeech/train-other-500

LibriSpeech: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1166/1166 [00:00<00:00, 2563.25speakers/s]

The dataset consists of 0 utterances, 0 mel frames, 0 audio timesteps (0.00 hours).

Traceback (most recent call last):

File "synthesizer_preprocess_audio.py", line 52, in <module>

preprocess_librispeech(**vars(args))

File "/home/amin/voice_cloning/Real-Time-Voice-Cloning-master/synthesizer/preprocess.py", line 49, in preprocess_librispeech

print("Max input length (text chars): %d" % max(len(m[5]) for m in metadata))

ValueError: max() arg is an empty sequence

```

I'm preprocessing LibriSpeech500 but apparently the synthesizer preprocessor is failing to create the proper metadata file.

Has anyone seen the same issue?

| closed | 2020-07-22T06:52:38Z | 2020-07-22T07:35:15Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/436 | [] | amintavakol | 0 |

ets-labs/python-dependency-injector | flask | 665 | Allow Closing to detect dependent resources passed as kwargs | Hi,

I have the same issue of this user:

https://github.com/ets-labs/python-dependency-injector/issues/633#issuecomment-1361813043

Can you fix it? | open | 2023-02-06T08:37:43Z | 2023-02-06T09:03:47Z | https://github.com/ets-labs/python-dependency-injector/issues/665 | [] | mauros191 | 0 |

ipython/ipython | data-science | 14,368 | gtk/GTKAgg matplotlib backend is not available | Using the latest IPython (8.22.2) and Matplotlib (3.8.3) the list of available IPython backends is

```python

In [1]: %matplotlib --list

Available matplotlib backends: ['tk', 'gtk', 'gtk3', 'gtk4', 'wx', 'qt4', 'qt5', 'qt6', 'qt', 'osx', 'nbagg', 'webagg', 'notebook', 'agg', 'svg', 'pdf', 'ps', 'inline', 'ipympl', 'widget']

```

which includes 'gtk'. But there is no such backend available in Matplotlib:

```python

In [1]: %matplotlib gtk

<snip>

ValueError: Key backend: 'gtkagg' is not a valid value for backend; supported values are ['GTK3Agg', 'GTK3Cairo', 'GTK4Agg', 'GTK4Cairo', 'MacOSX', 'nbAgg', 'QtAgg', 'QtCairo', 'Qt5Agg', 'Qt5Cairo', 'TkAgg', 'TkCairo', 'WebAgg', 'WX', 'WXAgg', 'WXCairo', 'agg', 'cairo', 'pdf', 'pgf', 'ps', 'svg', 'template']

```

I think it was removed in 2018 (matplotlib/matplotlib#10426) so I assume there has been no real-world use of it for a while.

I think it should be removed from the list of allowed backends in IPython. However, I don't think any action is necessary now as I will deal with this as part of the wider change to move the matplotlib backend resolution from IPython to Matplotlib (#14311). | closed | 2024-03-11T14:14:38Z | 2024-05-14T09:24:17Z | https://github.com/ipython/ipython/issues/14368 | [] | ianthomas23 | 1 |

vitalik/django-ninja | pydantic | 1,158 | Exceptions log level | **Is your feature request related to a problem? Please describe.**

_Feature to change log level of exceptions and/or remove the logging._

By default, any exception raised while handling an endpoint inside the context manager in operation.py triggers this part of the code, regardless of what kind of exception it is.

_By default, **Django** logs 404s and similar errors at **WARNING**._

So even a 404 results in an extra ERROR log.

Because of that, we can't, for example, create a custom Django log handler that sends only real ERRORs to email, a messenger, etc.

_Ideally the logic would be:_

_exception_handlers handled the exception? -> WARNING

_exception_handlers did not handle the exception? -> ERROR

_There is `on_exception()` in NinjaAPI for that._

So I am not sure why there is logging logic in operation.py before a handler for the exception has been found.

**Describe the solution you'd like**

**Add ability to change and/or remove exception logging in operation.py** | closed | 2024-05-09T11:52:05Z | 2024-05-09T11:56:05Z | https://github.com/vitalik/django-ninja/issues/1158 | [] | mrisedev | 1 |

sgl-project/sglang | pytorch | 4,404 | [Bug] When starting with dp, forward_batch.global_num_tokens_gpu is None. | ### Checklist

- [x] 1. I have searched related issues but cannot get the expected help.

- [ ] 2. The bug has not been fixed in the latest version.

- [ ] 3. Please note that if the bug-related issue you submitted lacks corresponding environment info and a minimal reproducible demo, it will be challenging for us to reproduce and resolve the issue, reducing the likelihood of receiving feedback.

- [ ] 4. If the issue you raised is not a bug but a question, please raise a discussion at https://github.com/sgl-project/sglang/discussions/new/choose Otherwise, it will be closed.

- [x] 5. Please use English, otherwise it will be closed.

### Describe the bug

[2025-03-14 08:52:33 DP1 TP0] Scheduler hit an exception: Traceback (most recent call last):

File "/data/LLM_server/sglang-main/python/sglang/srt/managers/scheduler.py", line 1714, in run_scheduler_process

scheduler = Scheduler(server_args, port_args, gpu_id, tp_rank, dp_rank)

File "/data/LLM_server/sglang-main/python/sglang/srt/managers/scheduler.py", line 218, in __init__

self.tp_worker = TpWorkerClass(

File "/data/LLM_server/sglang-main/python/sglang/srt/managers/tp_worker_overlap_thread.py", line 63, in __init__

self.worker = TpModelWorker(server_args, gpu_id, tp_rank, dp_rank, nccl_port)

File "/data/LLM_server/sglang-main/python/sglang/srt/managers/tp_worker.py", line 74, in __init__

self.model_runner = ModelRunner(

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/model_runner.py", line 166, in __init__

self.initialize(min_per_gpu_memory)

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/model_runner.py", line 207, in initialize

self.init_cuda_graphs()

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/model_runner.py", line 881, in init_cuda_graphs

self.cuda_graph_runner = CudaGraphRunner(self)

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/cuda_graph_runner.py", line 251, in __init__

self.capture()

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/cuda_graph_runner.py", line 323, in capture

) = self.capture_one_batch_size(bs, forward)

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/cuda_graph_runner.py", line 402, in capture_one_batch_size

run_once()

File "/data/LLM_server/sglang-main/python/sglang/srt/model_executor/cuda_graph_runner.py", line 395, in run_once

logits_output = forward(input_ids, forward_batch.positions, forward_batch)

File "/data/anaconda3/envs/tenserrt_llm/lib/python3.10/site-packages/torch/utils/_contextlib.py", line 116, in decorate_context

return func(*args, **kwargs)

File "/data/LLM_server/sglang-main/python/sglang/srt/models/qwen2.py", line 375, in forward

return self.logits_processor(

File "/data/anaconda3/envs/tenserrt_llm/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/data/anaconda3/envs/tenserrt_llm/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

File "/data/LLM_server/sglang-main/python/sglang/srt/layers/logits_processor.py", line 306, in forward

logits = self._get_logits(pruned_states, lm_head, logits_metadata)

File "/data/LLM_server/sglang-main/python/sglang/srt/layers/logits_processor.py", line 412, in _get_logits

dp_gather(hidden_states, local_hidden_states, logits_metadata, "embedding")

File "/data/LLM_server/sglang-main/python/sglang/srt/layers/dp_attention.py", line 154, in dp_gather

local_start_pos, local_num_tokens = get_dp_local_info(forward_batch)

File "/data/LLM_server/sglang-main/python/sglang/srt/layers/dp_attention.py", line 94, in get_dp_local_info

cumtokens = torch.cumsum(forward_batch.global_num_tokens_gpu, dim=0)

TypeError: cumsum() received an invalid combination of arguments - got (NoneType, dim=int), but expected one of:

* (Tensor input, int dim, *, torch.dtype dtype = None, Tensor out = None)

* (Tensor input, name dim, *, torch.dtype dtype = None, Tensor out = None)

### Reproduction

CUDA_VISIBLE_DEVICES=4,5,6,7 python -m sglang.launch_server --model-path /data/MODELS/QwQ-32B-GPTQ-int8 --host 0.0.0.0 --tp 2 --dp 2

### Environment

(tenserrt_llm) [server@6000gpu sglang-main]$ python3 -m sglang.check_env

INFO 03-14 09:03:13 __init__.py:190] Automatically detected platform cuda.

/data/anaconda3/envs/tenserrt_llm/lib/python3.10/site-packages/torch/utils/cpp_extension.py:1964: UserWarning: TORCH_CUDA_ARCH_LIST is not set, all archs for visible cards are included for compilation.

If this is not desired, please set os.environ['TORCH_CUDA_ARCH_LIST'].

warnings.warn(

Python: 3.10.0 (default, Mar 3 2022, 09:58:08) [GCC 7.5.0]

CUDA available: True

GPU 0,1: NVIDIA RTX A6000

GPU 0,1 Compute Capability: 8.6

CUDA_HOME: /usr/local/cuda

NVCC: Cuda compilation tools, release 12.4, V12.4.131

CUDA Driver Version: 550.135

PyTorch: 2.5.1+cu124

sglang: 0.4.3.post4

sgl_kernel: 0.0.5

flashinfer: 0.2.3+cu124torch2.5

triton: 3.1.0

transformers: 4.48.3

torchao: 0.9.0

numpy: 1.26.4

aiohttp: 3.11.13

fastapi: 0.115.11

hf_transfer: 0.1.9

huggingface_hub: 0.29.3

interegular: 0.3.3

modelscope: 1.23.2

orjson: 3.10.15

packaging: 24.2

psutil: 7.0.0

pydantic: 2.10.6

multipart: 0.0.20

zmq: 26.3.0

uvicorn: 0.34.0

uvloop: 0.21.0

vllm: 0.7.2

openai: 1.66.3

tiktoken: 0.9.0

anthropic: 0.49.0

decord: 0.6.0

NVIDIA Topology:

GPU0 GPU1 GPU2 GPU3 GPU4 GPU5 GPU6 GPU7 CPU Affinity NUMA Affinity GPU NUMA ID

GPU0 X NV4 PXB PXB SYS SYS SYS SYS 0-35,72-107 0 N/A

GPU1 NV4 X PXB PXB SYS SYS SYS SYS 0-35,72-107 0 N/A

GPU2 PXB PXB X NV4 SYS SYS SYS SYS 0-35,72-107 0 N/A

GPU3 PXB PXB NV4 X SYS SYS SYS SYS 0-35,72-107 0 N/A

GPU4 SYS SYS SYS SYS X NV4 PXB PXB 36-71,108-143 1 N/A

GPU5 SYS SYS SYS SYS NV4 X PXB PXB 36-71,108-143 1 N/A

GPU6 SYS SYS SYS SYS PXB PXB X NV4 36-71,108-143 1 N/A

GPU7 SYS SYS SYS SYS PXB PXB NV4 X 36-71,108-143 1 N/A

Legend:

X = Self

SYS = Connection traversing PCIe as well as the SMP interconnect between NUMA nodes (e.g., QPI/UPI)

NODE = Connection traversing PCIe as well as the interconnect between PCIe Host Bridges within a NUMA node

PHB = Connection traversing PCIe as well as a PCIe Host Bridge (typically the CPU)

PXB = Connection traversing multiple PCIe bridges (without traversing the PCIe Host Bridge)

PIX = Connection traversing at most a single PCIe bridge

NV# = Connection traversing a bonded set of # NVLinks

ulimit soft: 65535 | open | 2025-03-14T01:04:33Z | 2025-03-20T02:35:51Z | https://github.com/sgl-project/sglang/issues/4404 | [] | zzk2021 | 3 |

jpadilla/django-rest-framework-jwt | django | 292 | auth0 and rest framework jwt | rest-framework-jwt assumes that you already have a registered user.

In my case, I want to authenticate users via Auth0 and then create them in my Django app.

How could I do this?

Thanks | closed | 2016-12-22T08:26:26Z | 2017-03-04T16:38:50Z | https://github.com/jpadilla/django-rest-framework-jwt/issues/292 | [] | saius | 1 |

dpgaspar/Flask-AppBuilder | rest-api | 2,195 | Support Personal Access Tokens in addition to AUTH_TYPE | Hi, is it possible to support access tokens in addition to OAuth/OIDC authentication,

so that other applications can communicate with the API of a FAB application?

I ran into this when trying to use DataHub to ingest metadata from Superset, which is built with FAB. For now this only works when Superset is configured with DB_AUTH or LDAP_AUTH, but not with OAUTH/OIDC.

If FAB supported access tokens regardless of the authentication type, this would open new ways to communicate with the APIs of FAB applications.

What do you think? | open | 2024-02-10T19:44:17Z | 2024-02-20T09:58:38Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/2195 | [] | shohamyamin | 1 |

recommenders-team/recommenders | deep-learning | 1,980 | [BUG] Set scipy version back to use the latest one | ### Description

This issue is a backlog to revert the temporary workaround #1971 | closed | 2023-08-29T03:39:45Z | 2024-04-30T04:58:10Z | https://github.com/recommenders-team/recommenders/issues/1980 | [

"bug"

] | loomlike | 2 |

sdl60660/letterboxd_recommendations | web-scraping | 18 | Failed on "Getting User's Movie" stage: redis_get_user_data_job_status failed | Can't seem to scrape my profile's movies on any device or network. It seems to be logging "redis_get_user_data_job_status" as failed. | closed | 2023-11-30T05:11:41Z | 2024-02-02T22:37:54Z | https://github.com/sdl60660/letterboxd_recommendations/issues/18 | [] | TomasCarlson | 2 |

iMerica/dj-rest-auth | rest-api | 319 | Sending verification email to false email produces 500 Internal server error | Currently, when a user registers, I'm sending a verification email using a custom account adapter:

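```
from allauth.account.adapter import DefaultAccountAdapter
from django.core.mail import EmailMessage
from django.template.loader import render_to_string

# `secret` is the project's own settings module; its import is not shown in the original.


class CustomAccountAdapter(DefaultAccountAdapter):
    def get_email_confirmation_url(self, request, confirmation):
        # Point the confirmation link at the frontend instead of the backend.
        return f"{secret.FRONT_URL}/verify-email?key={confirmation.key}"

    def send_mail(self, template_prefix, email, context):
        # Render the HTML template and send the verification email.
        subject = 'Welcome to Website.com, please verify your email'
        template_name = 'verification_email.html'
        body = render_to_string(template_name, context).strip()
        msg = EmailMessage(subject, body, self.get_from_email(), [email])
        msg.content_subtype = 'html'
        msg.send()
```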

The issue is that when I try to sign up with a fake email, for example sdavc@idhoihfhssdadiohdfij.com (which many people would probably try to do), the Django server responds with a 500 Internal Server Error:

`smtplib.SMTPRecipientsRefused: {'sdavc@idhoihfhssdadiohdfij.com': (450, b'4.1.2 <sdavc@idhoihfhssdadiohdfij.com>: Recipient address rejected: Domain not found')}`

How can I return an error, similar to the one returned when a user tries to register with an email that already exists, so that I can display it in my frontend?
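One way this might be approached, sketched under assumptions: catch `smtplib.SMTPRecipientsRefused` around the send call and re-raise it as an application-level error that the API layer can turn into a 4xx response. `EmailDeliveryError` and `send_with_recipient_check` are hypothetical names, not part of dj-rest-auth or django-allauth.

```python
import smtplib


class EmailDeliveryError(Exception):
    """Hypothetical application-level error for a refused recipient."""


def send_with_recipient_check(send, recipient):
    """Call `send()` and convert a refused recipient into EmailDeliveryError.

    `send` is any zero-argument callable that performs the SMTP send.
    """
    try:
        send()
    except smtplib.SMTPRecipientsRefused as exc:
        # exc.recipients maps each refused address to (smtp_code, reason).
        _code, reason = exc.recipients.get(recipient, (None, b""))
        if isinstance(reason, bytes):
            reason = reason.decode("utf-8", errors="replace")
        raise EmailDeliveryError(
            f"Could not deliver to {recipient}: {reason}"
        ) from exc
```

Inside `send_mail`, `msg.send()` could then be called as `send_with_recipient_check(msg.send, email)`. How the resulting error is mapped to a response (for example, a validation error on the `email` field) depends on the project and is not shown here.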

| open | 2021-10-19T01:40:38Z | 2021-10-19T01:40:38Z | https://github.com/iMerica/dj-rest-auth/issues/319 | [] | adrenaline681 | 0 |

OWASP/Nettacker | automation | 250 | CSV result export feature | Currently Nettacker can only produce results in JSON, TXT and HTML formats. A new feature to produce results in CSV format is needed.

Command line option `-o`:

`-o results.csv` | closed | 2020-04-26T23:38:24Z | 2020-05-16T23:53:06Z | https://github.com/OWASP/Nettacker/issues/250 | [

"ask for feature"

] | securestep9 | 1 |

kizniche/Mycodo | automation | 1,058 | Generic Analog pH/EC Actions broken on AJAX-enabled interface | ### Describe the problem/bug

The AJAX-enabled interface doesn't allow Actions to be executed. Clicking the "Calibrate Slot" buttons doesn't appear to do anything.

### Versions:

- Mycodo Version: 8.11.0 + master branch commit [8d46745](https://github.com/kizniche/Mycodo/commit/8d46745b8439abad903f49eb9da0e27756e8fdca)

- Raspberry Pi Version: 3B

- Raspbian OS Version: Linux raspberrypi 5.10.17-v7+ #1403 SMP Mon Feb 22 11:29:51 GMT 2021 armv7l GNU/Linux

### Reproducibility

Please list specific setup details that are involved and the steps to reproduce the behavior:

1. Upgrade to master branch commit [8d46745](https://github.com/kizniche/Mycodo/commit/8d46745b8439abad903f49eb9da0e27756e8fdca)

2. Browse to Generic Analog pH/EC input.