repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

mage-ai/mage-ai | data-science | 5,221 | [BUG] Setting cache_block_output_in_memory breaking pipeline on K8s (EKS) | ### Mage version

0.9.72

### Describe the bug

All pipelines that we configure, as explained in [the docs](https://docs.mage.ai/design/data-pipeline-management#cache-block-output-in-memory), to store the data in memory instead of writing it to the EFS filesystem are passing an empty dataframe between blocks.

We set both required settings:

```yaml

cache_block_output_in_memory: true

run_pipeline_in_one_process: true

```

Thus, the whole pipeline runs in a single k8s job.

However, only the first block finishes successfully.

The direct downstream block does not receive any data.

When I set `cache_block_output_in_memory: false`, everything works as expected.

### To reproduce

1. Set `cache_block_output_in_memory: true` and `run_pipeline_in_one_process: true`

2. Create a pipeline run using the "Run@once" trigger

3. Check the pipeline run's logs

### Expected behavior

I expect data to be passed between blocks even if data is kept fully in memory and not spilled out to disk.

### Screenshots

_No response_

### Operating system

- AWS EKS (K8s)

- EFS

### Additional context

_No response_ | open | 2024-06-23T16:20:34Z | 2024-06-23T16:20:34Z | https://github.com/mage-ai/mage-ai/issues/5221 | [

"bug"

] | MartinLoeper | 0 |

iperov/DeepFaceLab | machine-learning | 675 | Newest update not being able to use Full Face images... | It gives me this error when I use the aligned src that I always use (full face). This is the latest DFL with XSeg and whole face. Previous versions work fine.

| closed | 2020-03-25T00:54:31Z | 2020-03-25T22:37:17Z | https://github.com/iperov/DeepFaceLab/issues/675 | [] | mpmo10 | 2 |

deeppavlov/DeepPavlov | nlp | 853 | ODQA inference speed very very slow | Running the default configuration and model on an EC2 p2.xlarge instance (~60 GB RAM and an NVIDIA K80 GPU), inference for simple questions takes 40 seconds to 5 minutes.

Sometimes, no result even after 10 minutes.

<img width="1093" alt="MobaXterm_2019-05-27_16-36-13" src="https://user-images.githubusercontent.com/3790163/58415912-98020200-809d-11e9-936e-022089c5aba3.png">

| closed | 2019-05-27T11:06:48Z | 2020-05-21T10:05:58Z | https://github.com/deeppavlov/DeepPavlov/issues/853 | [] | shubhank008 | 12 |

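PaddlePaddle/PaddleHub | nlp | 2,320 | yolov3_darknet53_pedestrian takes a long time to download on every load, and inference fails | Thank you for reporting a PaddleHub usage issue, and thank you very much for contributing to PaddleHub!

When submitting your issue, please also provide the following information:

- Version and environment information

1) PaddleHub and PaddlePaddle versions: please provide your PaddleHub and PaddlePaddle version numbers, e.g. PaddleHub1.4.1, PaddlePaddle1.6.2

2) System environment: please describe the system type, e.g. Linux/Windows/MacOS/, and the Python version

- Reproduction information: if an error is reported, please provide the reproduction environment and steps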

```python
import paddlehub as hub
import cv2

pedestrian_detector = hub.Module(name="yolov3_darknet53_pedestrian")
result = pedestrian_detector.object_detection(images=[cv2.imread('/home/ai02/test/people/8.jpg')])
print(result)
```

/bin/python3 /home/ai02/test/people/test.py

Download https://bj.bcebos.com/paddlehub/paddlehub_dev/yolov3_darknet53_pedestrian_1_1_0.zip

[##################################################] 100.00%

Decompress /home/ai02/.paddlehub/tmp/tmp7dw1rgc9/yolov3_darknet53_pedestrian_1_1_0.zip

Traceback (most recent call last):

File "/home/ai02/test/people/test.py", line 4, in <module>

pedestrian_detector = hub.Module(name="yolov3_darknet53_pedestrian")

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/module.py", line 388, in __new__

module = cls.init_with_name(

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/module.py", line 487, in init_with_name

user_module_cls = manager.install(

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/manager.py", line 190, in install

return self._install_from_name(name, version, ignore_env_mismatch)

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/manager.py", line 265, in _install_from_name

return self._install_from_url(item['url'])

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/manager.py", line 258, in _install_from_url

return self._install_from_archive(file)

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/module/manager.py", line 374, in _install_from_archive

for path, ds, ts in xarfile.unarchive_with_progress(archive, _tdir):

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/utils/xarfile.py", line 225, in unarchive_with_progress

with open(name, mode='r') as file:

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/utils/xarfile.py", line 162, in open

return XarFile(name, mode, **kwargs)

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/utils/xarfile.py", line 91, in __init__

if self.arctype in ['tar.gz', 'tar.bz2', 'tar.xz', 'tar', 'tgz', 'txz']:

AttributeError: 'XarFile' object has no attribute 'arctype'

Exception ignored in: <function XarFile.__del__ at 0x7fd670f1fb80>

Traceback (most recent call last):

File "/home/ai02/.local/lib/python3.8/site-packages/paddlehub/utils/xarfile.py", line 101, in __del__

self._archive_fp.close()

AttributeError: 'XarFile' object has no attribute '_archive_fp' | closed | 2024-02-27T02:21:25Z | 2024-03-17T05:39:36Z | https://github.com/PaddlePaddle/PaddleHub/issues/2320 | [] | sun-rabbit | 4 |

microsoft/qlib | machine-learning | 1,193 | How to adapt LSTM to DDG-DA | I want to adapt LSTM to DDG-DA. How can I do that?

What I have tried:

1. Modified `rolling_benchmark.py` to use the LSTM parameters.

2. Fixed the bug caused by changing the dataset object to `TSDatasetH`.

I changed `qlib/contrib/meta/data_selection/model.py` as follows:

```python
def reweight(self, data: Union[pd.DataFrame, pd.Series]):
    # TODO: handling TSDataSampler
    if isinstance(data, pd.DataFrame):
        idx = data.index
    else:
        idx = data.get_index()
    w_s = pd.Series(1.0, index=idx)
    for k, w in self.time_weight.items():
        w_s.loc[slice(*k)] = w
    logger.info(f"Reweighting result: {w_s}")
    return w_s
```

However, the validation loss stays the same across epochs, and I don't know why.
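For reference, here is a stdlib-only sketch of what the modified `reweight` computes. It assumes the index is a sequence of `(datetime, instrument)` pairs and that `time_weight` maps inclusive `(start, end)` date ranges to weights, which is how `w_s.loc[slice(*k)]` behaves on a sorted index. `reweight_sketch` and the toy data are hypothetical, not qlib's API; printing such a result can help check whether the reweighting changes anything at all.

```python
from datetime import date


def reweight_sketch(index, time_weight):
    """Return a {(datetime, instrument): weight} dict.

    Mirrors the loop in `reweight`: every entry starts at 1.0, and each
    (start, end) key in `time_weight` overwrites the weight of all entries
    whose date lies in the inclusive range [start, end], like
    `w_s.loc[slice(start, end)] = w` on a sorted index.
    """
    weights = {key: 1.0 for key in index}
    for (start, end), w in time_weight.items():
        for key in index:
            if start <= key[0] <= end:
                weights[key] = w
    return weights


# Toy data: three days for one hypothetical instrument, last two down-weighted.
index = [(date(2020, 1, d), "INST_A") for d in (1, 2, 3)]
time_weight = {(date(2020, 1, 2), date(2020, 1, 3)): 0.5}
weights = reweight_sketch(index, time_weight)
```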

| closed | 2022-07-12T11:53:33Z | 2022-10-21T15:05:41Z | https://github.com/microsoft/qlib/issues/1193 | [

"question",

"stale"

] | Xxiaoting | 2 |

taverntesting/tavern | pytest | 787 | How can I get coverage after running pytest? | How can I get coverage after running this command?

```shell
pytest -v test_01_init_gets.tavern.yaml --html=all.html
``` | closed | 2022-06-09T10:17:46Z | 2022-06-15T09:37:31Z | https://github.com/taverntesting/tavern/issues/787 | [] | iakirago | 2 |

sqlalchemy/alembic | sqlalchemy | 1,246 | Minor typing issue for alembic.context.configure in 1.11.0 | **Describe the bug**

The signature of the `alembic.context.configure` function changed in 1.11.0: the type of the `compare_server_default` argument uses `Column` classes without type arguments, even though `Column` is generic. This kind of definition produces warnings in static type checkers.
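To illustrate the difference a strict checker cares about, here is a stdlib-only sketch using a stand-in generic `Column` class (not SQLAlchemy's): a bare `Column` annotation leaves the type parameter unspecified, which Pyright in strict mode reports as partially unknown, whereas `Column[Any]` spells it out. The function names are hypothetical.

```python
from typing import Any, Generic, TypeVar, get_args, get_type_hints

T = TypeVar("T")


class Column(Generic[T]):
    """Stand-in for a generic class such as SQLAlchemy 2.0's Column[_T]."""


def bare(col: Column) -> None:
    """Type argument omitted: strict checkers see Column[Unknown]."""


def parametrized(col: Column[Any]) -> None:
    """Type argument given explicitly."""


# At runtime the bare annotation carries no type arguments at all.
bare_args = get_args(get_type_hints(bare)["col"])
param_args = get_args(get_type_hints(parametrized)["col"])
```

Spelling the parameter types with explicit arguments (for example `Column[Any]`) is the kind of change that would presumably silence the warning.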

**Expected behavior**

No warnings reported by static type checkers

**To Reproduce**

E.g. using VSCode with Pyright in "strict" mode (usually it is a part of `env.py`):

```py

from alembic.context import configure

# Throws: Type of "configure" is partially unknown

```

**Versions.**

- OS: MacOS

- Python: 3.11.3

- Alembic: 1.11.0

- SQLAlchemy: 2.0.13

- Database: Postgres 15

- DBAPI: asyncpg

**Additional context**

**Have a nice day!**

| closed | 2023-05-16T18:00:39Z | 2023-05-17T15:15:23Z | https://github.com/sqlalchemy/alembic/issues/1246 | [

"bug",

"pep 484"

] | AlexanderPodorov | 2 |

graphql-python/graphene-django | django | 840 | Validate Meta.fields and Meta.exclude on DjangoObjectType | tl;dr: DjangoObjectType ignores all unknown values in `Meta.fields`. It should compare the fields list with the available Model's fields instead.

---

I'm in the process of rewriting a DRF-based backend with graphene-django, and I was surprised when my graphene-django-generated schema was silently missing fields I had specified in `fields`.

(I'm copy-pasting `fields` from DRF serializers into the DjangoObjectType's Meta class.)

It turns out some of these fields were implemented as properties or methods on models, and I'm OK with writing custom resolvers for those (otherwise there's no way to detect types, at least in the absence of type hints), but I didn't expect DjangoObjectType to quietly accept unknown values.

I believe the reason is that `graphene_django.types.construct_fields` iterates over the model's fields, but it could (and should) iterate over `only_fields` too.
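A minimal sketch of the proposed check, assuming it only needs the list of model field names: compare the names in `Meta.fields` (and, by the same logic, `Meta.exclude`) against them and fail loudly on anything unknown. `find_unknown` and `validate_meta_fields` are hypothetical helpers, not graphene-django's API; a fuller check would presumably also have to accept names declared as custom fields on the type itself.

```python
def find_unknown(model_field_names, names):
    """Return the entries of `names` that are not fields of the model."""
    known = set(model_field_names)
    return [name for name in (names or ()) if name not in known]


def validate_meta_fields(model_field_names, fields=None, exclude=None):
    """Raise ValueError if Meta.fields or Meta.exclude name unknown fields."""
    for option, names in (("fields", fields), ("exclude", exclude)):
        unknown = find_unknown(model_field_names, names)
        if unknown:
            raise ValueError(
                f"Meta.{option} contains unknown field(s): {', '.join(unknown)}"
            )


# A typo ("emial") in Meta.fields would be reported instead of silently dropped.
model_fields = ["id", "name", "email"]
typos = find_unknown(model_fields, ["id", "emial"])
```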

Implementing the same check for `exclude` also seems like a good idea to me (otherwise you could make a typo in `exclude`, but never notice it until it's too late). | closed | 2019-12-29T11:45:02Z | 2019-12-31T13:55:46Z | https://github.com/graphql-python/graphene-django/issues/840 | [] | berekuk | 1 |

NullArray/AutoSploit | automation | 798 | Divided by zero exception68 | Error: Attempted to divide by zero.68 | closed | 2019-04-19T16:00:55Z | 2019-04-19T16:37:44Z | https://github.com/NullArray/AutoSploit/issues/798 | [] | AutosploitReporter | 0 |

seleniumbase/SeleniumBase | web-scraping | 2,216 | Setting a `user_data_dir` while using Chrome extensions | The first time, I used SeleniumBase to open Chrome and added extensions, such as editcookies, and I also set "user-data-dir", which generated a dedicated profile folder. At the end I called driver.quit(). The second time, I just opened SeleniumBase with "user-data-dir=<that folder path>", but I can't find the installed extensions in Chrome. Did I do something wrong? How can I see the extensions installed during the first session after the second startup?

Waiting for your reply, thanks. | closed | 2023-10-28T11:04:25Z | 2023-10-29T06:36:57Z | https://github.com/seleniumbase/SeleniumBase/issues/2216 | [

"question"

] | SiTu-JIanying | 1 |

scikit-learn/scikit-learn | python | 30,753 | ⚠️ CI failed on Linux_Runs.pylatest_conda_forge_mkl (last failure: Feb 03, 2025) ⚠️ | **CI failed on [Linux_Runs.pylatest_conda_forge_mkl](https://dev.azure.com/scikit-learn/scikit-learn/_build/results?buildId=73883&view=logs&j=dde5042c-7464-5d47-9507-31bdd2ee0a3a)** (Feb 03, 2025)

- Test Collection Failure | closed | 2025-02-03T02:34:16Z | 2025-02-03T16:44:29Z | https://github.com/scikit-learn/scikit-learn/issues/30753 | [] | scikit-learn-bot | 1 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,256 | Export failure when users have configured a language that has been disabled | **Describe the bug**

The 'Save/Export' submission function returns an error.

**To Reproduce**

Steps to reproduce the behavior:

1. Recipient login

2. Go to 'Submissions'

3. Click on 'save/export' in the list of submissions

4. An error is shown on a new page: `{"error_message": "InternalServerError [Unexpected]", "error_code": 1, "arguments": ["Unexpected"]}`

5. Open the specific submission

6. Click on 'save/export' at the top of the report page

7. An error is shown on a new page: `{"error_message": "InternalServerError [Unexpected]", "error_code": 1, "arguments": ["Unexpected"]}`

**Expected behavior**

A zip file should be downloaded.

**Desktop:**

- OS: Windows 10

- Browser: Edge

- Version [103.0.1264.77]

**Additional context**

Email sent to admin with this content:

Version: 4.9.9

KeyError Mapping key not found.

Traceback (most recent call last):

File "/usr/lib/python3/dist-packages/twisted/internet/defer.py", line 1416, in _inlineCallbacks

result = result.throwExceptionIntoGenerator(g)

File "/usr/lib/python3/dist-packages/twisted/python/failure.py", line 512, in throwExceptionIntoGenerator

return g.throw(self.type, self.value, self.tb)

File "/usr/lib/python3/dist-packages/globaleaks/handlers/export.py", line 130, in get

files = yield prepare_tip_export(self.session.cc, tip_export)

File "/usr/lib/python3/dist-packages/twisted/internet/defer.py", line 1418, in _inlineCallbacks

result = g.send(result)

File "/usr/lib/python3/dist-packages/globaleaks/handlers/export.py", line 109, in prepare_tip_export

export_template = Templating().format_template(tip_export['notification']['export_template'], tip_export).encode()

KeyError: 'export_template'
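The traceback shows `tip_export['notification']` lacking the `export_template` key, which fits the title: presumably no notification templates are loaded for a language that has been disabled. One possible mitigation, sketched under that assumption, is to fall back to the default language instead of raising `KeyError`. The per-language dict layout and `get_notification_template` are hypothetical, not GlobaLeaks' actual code.

```python
def get_notification_template(templates, user_language, default_language, key):
    """Return templates[lang][key], trying the user's language first.

    `templates` maps language codes to dicts of template strings (assumed
    layout). A disabled language is modeled as missing from `templates` or
    missing the requested key; in that case the default language is used.
    """
    for lang in (user_language, default_language):
        value = templates.get(lang, {}).get(key)
        if value is not None:
            return value
    raise KeyError(key)


# "de" is configured for the user but disabled, so only "en" templates exist.
templates = {"en": {"export_template": "EN export"}}
fallback = get_notification_template(templates, "de", "en", "export_template")
```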

| open | 2022-08-04T14:12:42Z | 2022-08-05T10:18:36Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3256 | [

"T: Bug",

"C: Backend"

] | zangels | 8 |

collerek/ormar | sqlalchemy | 980 | pytest DatabaseBackend is not running | **Describe the bug**

When trying to run tests with `pytest`, I get an exception `DatabaseBackend is not running`.

I think that `pytest` is using `BaseMeta`'s database, which is not the test database.

This is the setup code:

```python
TEST_DATABASE_URL_WITH_DB = f"postgresql://....."
# tried with postgresql+asyncpg as well
database = databases.Database(TEST_DATABASE_URL_WITH_DB)


@pytest.fixture()
def engine():
    return sqlalchemy.create_engine(DATABASE_URL_WITH_DB)
    # yield engine
    # engine.sync_engine.dispose()


@pytest.fixture(autouse=True)
def create_test_database(engine):
    metadata = BaseMeta.metadata
    metadata.drop_all(engine)
    metadata.create_all(engine)
    # await database.connect()
    yield
    # await database.disconnect()
    metadata.drop_all(engine)


@pytest.mark.asyncio
async def test_actual_logic():
    await database.connect()
    async with database:
        org = await Org.objects.create(name="test-org", auth0_id="test-org-auth0-id")
```

The models:

```python
database = databases.Database(PROD_DATABASE_URL)


class BaseMeta(ormar.ModelMeta):
    metadata = metadata
    database = database


class Org(ormar.Model):
    id = ormar.Integer(primary_key=True)
    public_id: str = ormar_postgres_extensions.UUID(
        # pass the callable (not uuid.uuid4()) so each row gets a fresh UUID
        index=True, unique=True, nullable=False, default=uuid.uuid4
    )
    name: str = ormar.Text(max_length=320, index=True)
    auth0_id: str = ormar.Text(max_length=320, index=True, nullable=True)

    class Meta(BaseMeta):
        tablename = "orgs"
```

Stack trace:

```bash

@pytest.mark.asyncio

async def test_actual_logic():

await database.connect()

> org = await Org.objects.create(name="test-org", auth0_id="test-org-auth0-id")

tests/efforts/test_service.py:57:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

../../../../.venvs/core/lib/python3.11/site-packages/ormar/queryset/queryset.py:1121: in create

instance = await instance.save()

../../../../.venvs/core/lib/python3.11/site-packages/ormar/models/model.py:94: in save

pk = await self.Meta.database.execute(expr)

../../../../.venvs/core/lib/python3.11/site-packages/databases/core.py:164: in execute

async with self.connection() as connection:

../../../../.venvs/core/lib/python3.11/site-packages/databases/core.py:235: in __aenter__

raise e

../../../../.venvs/core/lib/python3.11/site-packages/databases/core.py:232: in __aenter__

await self._connection.acquire()

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <databases.backends.postgres.PostgresConnection object at 0x106afa7d0>

async def acquire(self) -> None:

print("acquire")

print(self._database._pool)

assert self._connection is None, "Connection is already acquired"

> assert self._database._pool is not None, "DatabaseBackend is not running"

E AssertionError: DatabaseBackend is not running

../../../../.venvs/core/lib/python3.11/site-packages/databases/backends/postgres.py:180: AssertionError

```
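A stdlib-only illustration of the suspicion above, assuming the model keeps a reference to the `database` object defined next to `BaseMeta`: connecting a *different* database object in the test does not start the pool of the one the model actually uses, so the same "not running" check fails. `FakeDatabase` and `OrgMeta` are hypothetical synchronous stand-ins, not the `databases` or `ormar` API.

```python
class FakeDatabase:
    """Hypothetical stand-in for databases.Database (sync, no real I/O)."""

    def __init__(self, url):
        self.url = url
        self.pool = None  # plays the role of the backend's connection pool

    def connect(self):
        self.pool = object()

    def execute(self, query):
        # Mirrors the `_pool is not None` check from the traceback.
        if self.pool is None:
            raise AssertionError("DatabaseBackend is not running")
        return f"{query} on {self.url}"


prod_database = FakeDatabase("postgresql://prod")  # what BaseMeta.database holds
test_database = FakeDatabase("postgresql://test")  # what the test module creates


class OrgMeta:
    database = prod_database  # the model keeps a reference to the prod object


test_database.connect()  # starts the pool of the test object only
try:
    OrgMeta.database.execute("INSERT INTO orgs (name) VALUES ('test-org')")
    outcome = "inserted"
except AssertionError as exc:
    outcome = str(exc)
```

In the real setup this suggests connecting the same object the models reference, or building that object from the test URL when running tests.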

**Versions (please complete the following information):**

- Database backend used (mysql/sqlite/postgres): **postgres 14.1**

- Python version: 3.11

- `ormar` version: 0.12.0

- if applicable `fastapi` version 0.88 | closed | 2023-01-08T13:21:39Z | 2023-01-09T18:22:27Z | https://github.com/collerek/ormar/issues/980 | [

"bug"

] | AdamGold | 2 |

Gozargah/Marzban | api | 1,543 | Node Data Limit | I believe it would be useful to be able to specify a Node Data Limit, as some servers don't have unlimited traffic; therefore we must be careful that the server's traffic usage doesn't exceed the limit. | closed | 2024-12-27T23:18:05Z | 2024-12-28T14:42:00Z | https://github.com/Gozargah/Marzban/issues/1543 | [] | iamtheted | 0 |

modin-project/modin | data-science | 7,465 | BUG: Series.rename_axis raises AttributeError | ### Modin version checks

- [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest released version of Modin.

- [x] I have confirmed this bug exists on the main branch of Modin. (In order to do this you can follow [this guide](https://modin.readthedocs.io/en/stable/getting_started/installation.html#installing-from-the-github-main-branch).)

### Reproducible Example

```python

import modin.pandas as pd

s = pd.Series(["dog", "cat", "monkey"])

s.rename_axis("animal")

```

### Issue Description

`Series.rename_axis` should rename the index of the series, but currently raises due to a missing method.

Found in Snowpark pandas: https://github.com/snowflakedb/snowpark-python/pull/3040

### Expected Behavior

Does not raise and renames the index.

### Error Logs

<details>

```python-traceback

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/Users/joshi/code/modin/modin/logging/logger_decorator.py", line 149, in run_and_log

result = obj(*args, **kwargs)

File "/Users/joshi/code/modin/modin/pandas/series.py", line 1701, in rename_axis

return super().rename_axis(

File "/Users/joshi/code/modin/modin/logging/logger_decorator.py", line 149, in run_and_log

result = obj(*args, **kwargs)

File "/Users/joshi/code/modin/modin/pandas/base.py", line 2565, in rename_axis

return self._set_axis_name(mapper, axis=axis, inplace=inplace)

File "/Users/joshi/code/modin/modin/pandas/series.py", line 358, in __getattr__

raise err

File "/Users/joshi/code/modin/modin/pandas/series.py", line 354, in __getattr__

return _SERIES_EXTENSIONS_.get(key, object.__getattribute__(self, key))

AttributeError: 'Series' object has no attribute '_set_axis_name'. Did you mean: '_get_axis_number'?

```

</details>

### Installed Versions

<details>

INSTALLED VERSIONS

------------------

commit : c114e7b0a38ff025c5f69ff752510a62ede6506f

python : 3.10.13.final.0

python-bits : 64

OS : Darwin

OS-release : 24.3.0

Version : Darwin Kernel Version 24.3.0: Thu Jan 2 20:24:23 PST 2025; root:xnu-11215.81.4~3/RELEASE_ARM64_T6020

machine : arm64

processor : arm

byteorder : little

LC_ALL : None

LANG : en_US.UTF-8

LOCALE : en_US.UTF-8

Modin dependencies

------------------

modin : 0.32.0+19.gc114e7b0.dirty

ray : 2.34.0

dask : 2024.8.1

distributed : 2024.8.1

pandas dependencies

-------------------

pandas : 2.2.2

numpy : 1.26.4

pytz : 2023.3.post1

dateutil : 2.8.2

setuptools : 68.0.0

pip : 23.3

Cython : None

pytest : 8.3.2

hypothesis : None

sphinx : 5.3.0

blosc : None

feather : None

xlsxwriter : None

lxml.etree : 5.3.0

html5lib : None

pymysql : None

psycopg2 : 2.9.9

jinja2 : 3.1.4

IPython : 8.17.2

pandas_datareader : None

adbc-driver-postgresql: None

adbc-driver-sqlite : None

bs4 : 4.12.2

bottleneck : None

dataframe-api-compat : None

fastparquet : 2024.5.0

fsspec : 2024.6.1

gcsfs : None

matplotlib : 3.9.2

numba : None

numexpr : 2.10.1

odfpy : None

openpyxl : 3.1.5

pandas_gbq : 0.23.1

pyarrow : 17.0.0

pyreadstat : None

python-calamine : None

pyxlsb : None

s3fs : 2024.6.1

scipy : 1.14.1

sqlalchemy : 2.0.32

tables : 3.10.1

tabulate : None

xarray : 2024.7.0

xlrd : 2.0.1

zstandard : None

tzdata : 2023.3

qtpy : None

pyqt5 : None

</details>

| closed | 2025-03-11T21:12:35Z | 2025-03-20T20:56:20Z | https://github.com/modin-project/modin/issues/7465 | [

"bug 🦗",

"P3"

] | sfc-gh-joshi | 0 |

microsoft/nni | data-science | 5,038 | Can I use NetAdapt with YOLOv5? | **Describe the issue**:

**Environment**:

- NNI version:

- Training service (local|remote|pai|aml|etc):

- Client OS:

- Server OS (for remote mode only):

- Python version:

- PyTorch/TensorFlow version:

- Is conda/virtualenv/venv used?:

- Is running in Docker?:

**Configuration**:

- Experiment config (remember to remove secrets!):

- Search space:

**Log message**:

- nnimanager.log:

- dispatcher.log:

- nnictl stdout and stderr:

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->

**How to reproduce it?**: | open | 2022-08-01T10:26:41Z | 2022-08-04T01:49:59Z | https://github.com/microsoft/nni/issues/5038 | [] | mumu1431 | 1 |

samuelcolvin/watchfiles | asyncio | 330 | Expose `follow_links` | ### Description

Notifications for linked files seem to be deduplicated at the `notify` level, which leads to issues like https://github.com/Aider-AI/aider/issues/3315.

I believe this could be solved by exposing `notify`'s `follow_links` and then setting it to `False` in the client program.
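A stdlib-only sketch of why following links can make events look deduplicated, assuming the watcher keys paths by their resolved target: once a symlink is resolved with `os.path.realpath`, it and the file it points to collapse into a single key, so a consumer interested in the link path itself loses that distinction. This only illustrates the idea; it is not how `notify` or watchfiles are implemented, and `group_by_real_path` is a hypothetical helper.

```python
import os
import tempfile


def group_by_real_path(paths):
    """Group paths by the file they ultimately point to (os.path.realpath)."""
    groups = {}
    for path in paths:
        groups.setdefault(os.path.realpath(path), []).append(path)
    return groups


with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "settings.yml")
    link = os.path.join(tmp, "settings-link.yml")
    with open(target, "w") as fh:
        fh.write("debug: true\n")
    os.symlink(target, link)  # requires symlink support (e.g. Linux/macOS)
    groups = group_by_real_path([target, link])
    # Both paths resolve to the same real file, so they land in one group.
    group_count = len(groups)
    members = sorted(os.path.basename(p) for p in next(iter(groups.values())))
```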

### Example Code

```Python

```

### Watchfiles Output

```Text

```

### Operating System & Architecture

Linux-6.13.2-zen1-1-zen-x86_64-with-glibc2.41

#1 ZEN SMP PREEMPT_DYNAMIC Sat, 08 Feb 2025 18:54:38 +0000

### Environment

_No response_

### Python & Watchfiles Version

python: 3.12.8 (main, Jan 3 2025, 17:16:36) [GCC 14.2.1 20240910], watchfiles: 1.0.4

### Rust & Cargo Version

_No response_ | open | 2025-02-27T09:06:11Z | 2025-02-27T09:06:11Z | https://github.com/samuelcolvin/watchfiles/issues/330 | [

"bug"

] | bard | 0 |

sgl-project/sglang | pytorch | 4,410 | [Bug] support gemma3 | ### Describe the bug

I get this error:

```

ValueError: The checkpoint you are trying to load has model type `gemma3` but Transformers does not recognize this architecture. This could be because of an issue with the checkpoint, or because your version of Transformers is out of date.

```

After updating Transformers to `transformers-4.49.0`, I got:

```

File "/opt/my-venv/lib/python3.12/site-packages/transformers/models/auto/auto_factory.py", line 833, in register

raise ValueError(f"'{key}' is already used by a Transformers model.")

ValueError: '<class 'sglang.srt.configs.qwen2_5_vl_config.Qwen2_5_VLConfig'>' is already used by a Transformers model.

```

### Reproduction

```

python -m sglang.launch_server --model-path /opt/model/models--google--gemma-3-27b-it/snapshots/dfb98f29ff907e391ceed2be3834ca071ea260f1 --served-model-name gemma-3-27b-it --mem-fraction-static 0.7 --tp 2 --host 0.0.0.0 --port 8000

```

### Environment

Ubuntu, with 2 `rtx a6000` GPUs with `nvlink` bridge support

```

sglang[all]>=0.4.4.post1

Driver Version: 570.124.04 CUDA Version: 12.8

``` | closed | 2025-03-14T04:46:15Z | 2025-03-18T19:01:16Z | https://github.com/sgl-project/sglang/issues/4410 | [] | Liusuqing | 4 |

OFA-Sys/Chinese-CLIP | nlp | 20 | Error on import cn_clip: UnicodeDecodeError: 'gbk' codec can't decode byte 0x81 in position 1564: illegal multibyte sequence | import cn_clip.clip as clip

Exception raised: UnicodeDecodeError

Traceback (most recent call last):

File "D:\develop\anaconda3\lib\runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "D:\develop\anaconda3\lib\runpy.py", line 85, in _run_code

exec(code, run_globals)

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy\__main__.py", line 45, in <module>

cli.main()

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy/..\debugpy\server\cli.py", line 444, in main

run()

File "c:\Users\saizong\.vscode\extensions\ms-python.python-2022.4.1\pythonFiles\lib\python\debugpy/..\debugpy\server\cli.py", line 285, in run_file

runpy.run_path(target_as_str, run_name=compat.force_str("__main__"))

File "D:\develop\anaconda3\lib\runpy.py", line 263, in run_path

pkg_name=pkg_name, script_name=fname)

File "D:\develop\anaconda3\lib\runpy.py", line 96, in _run_module_code

mod_name, mod_spec, pkg_name, script_name)

File "D:\develop\anaconda3\lib\runpy.py", line 85, in _run_code

exec(code, run_globals)

File "d:\develop\workspace\today_video\clipcn.py", line 5, in <module>

import cn_clip.clip as clip

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\__init__.py", line 3, in <module>

_tokenizer = FullTokenizer()

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\bert_tokenizer.py", line 170, in __init__

self.vocab = load_vocab(vocab_file)

File "D:\develop\anaconda3\Lib\site-packages\cn_clip\clip\bert_tokenizer.py", line 132, in load_vocab

token = convert_to_unicode(reader.readline())

UnicodeDecodeError: 'gbk' codec can't decode byte 0x81 in position 1564: illegal multibyte sequence
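The traceback points at `load_vocab` reading the vocab file with the platform default encoding, which is GBK on this machine. A minimal sketch of the usual fix, assuming the vocab file is UTF-8 encoded: open it with an explicit `encoding="utf-8"`. This is a standalone BERT-style re-implementation for illustration, not the package's actual `load_vocab`.

```python
import collections
import os
import tempfile


def load_vocab(vocab_file):
    """Load a BERT-style vocab file (one token per line) into an OrderedDict.

    encoding="utf-8" is passed explicitly; without it, open() uses the
    locale's preferred encoding, which is GBK (cp936) on Chinese-language
    Windows and fails on UTF-8 byte sequences that are not valid GBK.
    """
    vocab = collections.OrderedDict()
    with open(vocab_file, "r", encoding="utf-8") as reader:
        for index, line in enumerate(reader):
            vocab[line.strip()] = index
    return vocab


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "vocab.txt")
    with open(path, "w", encoding="utf-8") as fh:
        fh.write("[PAD]\n中\n文\n")
    vocab = load_vocab(path)
```

If patching the installed package is not an option, running Python in UTF-8 mode (`python -X utf8`, or setting the environment variable `PYTHONUTF8=1`, available since Python 3.7) also makes `open()` default to UTF-8.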

How should I handle this? Thanks! | closed | 2022-11-28T08:07:28Z | 2022-12-13T11:37:28Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/20 | [] | bigmarten | 13 |

huggingface/datasets | machine-learning | 6,935 | Support for pathlib.Path in datasets 2.19.0 | ### Describe the bug

After the recent update of `datasets`, `Dataset.save_to_disk` no longer accepts a `pathlib.Path`. It was supported in 2.18.0 and previous versions. Is this intentional? Or was it supported before only because of a Python duck-typing miracle?

### Steps to reproduce the bug

```

from datasets import Dataset

import pathlib

path = pathlib.Path("./my_out_path")

Dataset.from_dict(

{"text": ["hello world"], "label": [777], "split": ["train"]}

).save_to_disk(path)

```

This results in an error when using datasets 2.19:

```

Traceback (most recent call last):

File "<stdin>", line 3, in <module>

File "/Users/jb/scratch/venv/lib/python3.11/site-packages/datasets/arrow_dataset.py", line 1515, in save_to_disk

fs, _ = url_to_fs(dataset_path, **(storage_options or {}))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jb/scratch/venv/lib/python3.11/site-packages/fsspec/core.py", line 383, in url_to_fs

chain = _un_chain(url, kwargs)

^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jb/scratch/venv/lib/python3.11/site-packages/fsspec/core.py", line 323, in _un_chain

if "::" in path

^^^^^^^^^^^^

TypeError: argument of type 'PosixPath' is not iterable

```

Converting to str works, however.

```

Dataset.from_dict(

{"text": ["hello world"], "label": [777], "split": ["train"]}

).save_to_disk(str(path))

```
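Until `save_to_disk` accepts path-like objects again, the `str(...)` conversion can be wrapped once. A minimal sketch using the standard `os.fspath`, which returns a `str` unchanged and converts any `os.PathLike` (such as `pathlib.Path`); `as_str_path` is a hypothetical helper name, not part of `datasets`.

```python
import os
import pathlib


def as_str_path(path):
    """Return `path` as a plain str; accepts str or any os.PathLike."""
    return os.fspath(path)


path = pathlib.Path("./my_out_path")
plain = as_str_path(path)
# Usage with the issue's example (requires `datasets`, not executed here):
# Dataset.from_dict(
#     {"text": ["hello world"], "label": [777], "split": ["train"]}
# ).save_to_disk(as_str_path(path))
```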

### Expected behavior

My dataset gets saved to disk without an error.

### Environment info

aiohttp==3.9.5

aiosignal==1.3.1

attrs==23.2.0

certifi==2024.2.2

charset-normalizer==3.3.2

datasets==2.19.0

dill==0.3.8

filelock==3.14.0

frozenlist==1.4.1

fsspec==2024.3.1

huggingface-hub==0.23.2

idna==3.7

multidict==6.0.5

multiprocess==0.70.16

numpy==1.26.4

packaging==24.0

pandas==2.2.2

pyarrow==16.1.0

pyarrow-hotfix==0.6

python-dateutil==2.9.0.post0

pytz==2024.1

PyYAML==6.0.1

requests==2.32.3

six==1.16.0

tqdm==4.66.4

typing_extensions==4.12.0

tzdata==2024.1

urllib3==2.2.1

xxhash==3.4.1

yarl==1.9.4 | open | 2024-05-30T12:53:36Z | 2025-01-14T11:50:22Z | https://github.com/huggingface/datasets/issues/6935 | [] | lamyiowce | 2 |

ultralytics/ultralytics | pytorch | 19,425 | KeyError: 'ratio_pad' | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

When I started training on my self-built dataset, the following error was reported during the first validation. Please help me solve this problem. I can't figure out where exactly the problem is. Thank you.

> Traceback (most recent call last):

File "D:\XXX\XXX\ultralytics-8.3.78\train.py", line 9, in <module>

results = model.train(data=r"./data.yaml",

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\engine\model.py", line 810, in train

self.trainer.train()

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\engine\trainer.py", line 208, in train

self._do_train(world_size)

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\engine\trainer.py", line 433, in _do_train

self.metrics, self.fitness = self.validate()

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\engine\trainer.py", line 607, in validate

metrics = self.validator(self)

File "D:\XXX\XXX\venv\lib\site-packages\torch\utils\_contextlib.py", line 116, in decorate_context

return func(*args, **kwargs)

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\engine\validator.py", line 193, in __call__

self.update_metrics(preds, batch)

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\models\yolo\detect\val.py", line 139, in update_metrics

pbatch = self._prepare_batch(si, batch)

File "D:\XXX\XXX\ultralytics-8.3.78\ultralytics\models\yolo\detect\val.py", line 115, in _prepare_batch

ratio_pad = batch["ratio_pad"][si]

KeyError: 'ratio_pad'

### Additional

_No response_ | closed | 2025-02-25T16:42:35Z | 2025-02-27T06:48:48Z | https://github.com/ultralytics/ultralytics/issues/19425 | [

"question",

"detect"

] | ywWang-coder | 6 |

mlfoundations/open_clip | computer-vision | 827 | How to get the hidden_state from every layer of the ViT in the OpenCLIP vision encoder? | If you could solve my problem, thanks a lot! | open | 2024-02-24T07:03:10Z | 2024-04-12T19:50:25Z | https://github.com/mlfoundations/open_clip/issues/827 | [] | jzssz | 2 |

jupyter/docker-stacks | jupyter | 1,969 | [ENH] - /home/jovyan/work is confusing (documentation) | ### What docker image(s) is this feature applicable to?

scipy-notebook

### What change(s) are you proposing?

User Guide documentation suggests mounting a local directory (`$PWD`, etc.) to `/home/jovyan/work` to persist notebooks. An excellent suggestion, let's keep our data around.

But at no point in _Quick Start_, _Selecting an Image_, _Running a Container_, or _Common Features_ does the documentation tell you that, by default, new notebooks are saved to `/home/jovyan`.

### How does this affect the user?

The user will discover that their data didn't persist only upon running a new container.

Further, there's no immediately available troubleshooting topic or search query that will reveal that you didn't click "work" in the left sidebar of jupyter-server. The natural inclination is to click the large, friendly Python logo to get a Python notebook, since, after all, that's why you're here.

But that notebook ends up in `/home/jovyan`.

### Anything else?

I was running:

```shell
docker run --rm --name=jupyter \
  -p 8888:8888 -v $(pwd):/home/jovyan/work \
  -e RESTARTABLE=yes \
  jupyter/scipy-notebook:python-3.11.4 "$@"
```

I'm using jupyter for instructional purposes. My resolution is to mount `$PWD` to `/home/jovyan` (without the `work` folder) and accept that I end up with extra files on the host from jovyan's `$HOME` (which I presume could be an issue when selecting another image at a later date). Not a problem, since I'm primarily concerned with making sure I don't lose any .ipynb files. | closed | 2023-08-17T13:55:59Z | 2023-08-18T17:16:50Z | https://github.com/jupyter/docker-stacks/issues/1969 | [

"type:Enhancement"

] | 4kbyte | 2 |

simple-login/app | flask | 2,188 | Wrong unsubscribe link format? | It looks to me like the way the original unsubscribe links are encoded does not match the way simple-login handles them.

In `app/handler/unsubscribe_encoder.py`, line 100:

`return f"{config.URL}/dashboard/unsubscribe/encoded?data={encoded}"`

In `app/dashboard/views/unsubscribe.py`, line 76:

`@dashboard_bp.route("/unsubscribe/encoded/<encoded_request>", methods=["GET"])`
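Flask matches `<encoded_request>` against the URL path, so a query string such as `?data=...` never binds to that route. The plain-Python sketch below illustrates the mismatch; `build_unsubscribe_link` and `matches_encoded_route` are hypothetical helpers, not SimpleLogin code, and they assume the dashboard blueprint is mounted at `/dashboard` and that the encoded value contains no `/` (Flask's default string converter does not match slashes).

```python
from urllib.parse import urlsplit

# Full path prefix of the route, assuming the blueprint lives under /dashboard.
ROUTE_PREFIX = "/dashboard/unsubscribe/encoded/"

def build_unsubscribe_link(base_url: str, encoded: str) -> str:
    # Put the payload in the path so it binds to <encoded_request>.
    return f"{base_url}{ROUTE_PREFIX}{encoded}"

def matches_encoded_route(url: str) -> bool:
    # Routing only looks at the path; the query string is ignored.
    path = urlsplit(url).path
    rest = path[len(ROUTE_PREFIX):] if path.startswith(ROUTE_PREFIX) else ""
    # The default converter needs one non-empty segment without slashes.
    return bool(rest) and "/" not in rest

print(matches_encoded_route("https://app.example/dashboard/unsubscribe/encoded?data=abc"))  # False
print(matches_encoded_route(build_unsubscribe_link("https://app.example", "abc")))         # True
```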

In practice, the links did not work for me unless I changed the URL format from `/dashboard/unsubscribe/encoded?data=DATA` to `/dashboard/unsubscribe/encoded/DATA`. | open | 2024-08-19T06:02:53Z | 2024-12-21T18:55:35Z | https://github.com/simple-login/app/issues/2188 | [] | a-bali | 1 |

iMerica/dj-rest-auth | rest-api | 333 | RegisterView complete_signup receives HttpRequest instead of a Request | I needed to access `Request.data` inside the `AccountAdapter`, and it worked until I tested it with a raw JSON body.

Examining `perform_create()` in [RegisterView](https://github.com/iMerica/dj-rest-auth/blob/b72a55f86b2667e0fa10070485967f5e42588e3b/dj_rest_auth/registration/views.py#L76):

```
def perform_create(self, serializer):
    user = serializer.save(self.request)
    if allauth_settings.EMAIL_VERIFICATION != \
            allauth_settings.EmailVerificationMethod.MANDATORY:
        if getattr(settings, 'REST_USE_JWT', False):
            self.access_token, self.refresh_token = jwt_encode(user)
        else:
            create_token(self.token_model, user, serializer)

    complete_signup(
        self.request._request, user,
        allauth_settings.EMAIL_VERIFICATION,
        None,
    )
    return user
```

I noticed that `complete_signup` receives `self.request._request`, which is Django's `HttpRequest`.

My code accessed request data as follows:

```
def send_confirmation_mail(self, request, email_confirmation, signup):  # noqa: D102
    request.POST['value_passed_to_email_template']
```

Everything worked; even tests using `rest_framework.test.APIClient` passed.

That is, until I tried to POST a raw JSON body:

`http POST localhost:8000/auth/registration/ value_passed_to_email_template=a_value`

This caused `KeyError` because `request.POST` was empty.

It took me some time to notice that the `request` inside `send_confirmation_mail` is `WSGIRequest (django HttpRequest)` and not `rest_framework.request.Request`.
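The behaviour follows from how the two request objects parse bodies: Django fills `HttpRequest.POST` only for form encodings (`application/x-www-form-urlencoded` and `multipart/form-data`), while DRF's `Request.data` runs the configured parsers, which by default include a JSON parser. The sketch below is a simplified model of that difference, not Django or DRF code; `django_post` and `drf_data` are hypothetical names, and multipart parsing is left out.

```python
import json
from urllib.parse import parse_qs

def media_type(content_type: str) -> str:
    # Drop parameters such as "; charset=utf-8".
    return content_type.split(";", 1)[0].strip().lower()

def django_post(content_type: str, body: bytes) -> dict:
    """Simplified HttpRequest.POST: only url-encoded forms are parsed in this model."""
    if media_type(content_type) == "application/x-www-form-urlencoded":
        # QueryDict returns the last value for a repeated key; mirror that.
        return {k: v[-1] for k, v in parse_qs(body.decode("utf-8")).items()}
    return {}  # a JSON body leaves request.POST empty

def drf_data(content_type: str, body: bytes) -> dict:
    """Simplified rest_framework Request.data: JSON bodies are parsed as well."""
    if media_type(content_type) == "application/json":
        return json.loads(body.decode("utf-8") or "{}")
    return django_post(content_type, body)

form = b"value_passed_to_email_template=a_value"
raw = b'{"value_passed_to_email_template": "a_value"}'
print(django_post("application/x-www-form-urlencoded", form))  # {'value_passed_to_email_template': 'a_value'}
print(django_post("application/json", raw))                     # {}
print(drf_data("application/json", raw))                        # {'value_passed_to_email_template': 'a_value'}
```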

Then I did some tests.

1. POST body as `x-www-form-urlencoded` -> PASS

2. POST body as `form-data` -> PASS

3. POST body as `raw` -> FAIL | open | 2021-11-25T10:58:16Z | 2021-11-25T10:58:16Z | https://github.com/iMerica/dj-rest-auth/issues/333 | [] | 1oglop1 | 0 |

exaloop/codon | numpy | 195 | compile on mac failed when link libcodonc.dylib | Mac OS: Catalina

version: 10.15

llvm: [clang+llvm-15.0.7-x86_64-apple-darwin21.0.tar.xz](https://github.com/llvm/llvm-project/releases/download/llvmorg-15.0.7/clang+llvm-15.0.7-x86_64-apple-darwin21.0.tar.xz)

cmake version 3.24.3

codon: v0.15.5

```
[build] [ 95%] Linking CXX shared library libcodonc.dylib
[build] Undefined symbols for architecture x86_64:
[build] "typeinfo for llvm::ErrorInfoBase", referenced from:
[build] typeinfo for llvm::ErrorInfo<codon::error::ParserErrorInfo, llvm::ErrorInfoBase> in compiler.cpp.o
[build] typeinfo for llvm::ErrorInfo<llvm::ErrorList, llvm::ErrorInfoBase> in jit.cpp.o
[build] typeinfo for llvm::ErrorInfo<codon::error::ParserErrorInfo, llvm::ErrorInfoBase> in jit.cpp.o
[build] typeinfo for llvm::ErrorInfo<codon::error::RuntimeErrorInfo, llvm::ErrorInfoBase> in jit.cpp.o
[build] typeinfo for llvm::ErrorInfo<llvm::ErrorList, llvm::ErrorInfoBase> in memory_manager.cpp.o
[build] typeinfo for llvm::ErrorInfo<llvm::jitlink::JITLinkError, llvm::ErrorInfoBase> in memory_manager.cpp.o
[build] typeinfo for llvm::ErrorInfo<codon::error::PluginErrorInfo, llvm::ErrorInfoBase> in plugins.cpp.o
[build] ...
[build] "typeinfo for llvm::JITEventListener", referenced from:
[build] typeinfo for codon::DebugListener in debug_listener.cpp.o
[build] "typeinfo for llvm::SectionMemoryManager", referenced from:
[build] typeinfo for codon::BoehmGCMemoryManager in memory_manager.cpp.o
[build] "typeinfo for llvm::cl::GenericOptionValue", referenced from:
[build] typeinfo for llvm::cl::OptionValueCopy<std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> > > in gpu.cpp.o
[build] "typeinfo for llvm::orc::ObjectLinkingLayer::Plugin", referenced from:
[build] typeinfo for codon::DebugPlugin in debug_listener.cpp.o
[build] "typeinfo for llvm::detail::format_adapter", referenced from:
[build] typeinfo for llvm::detail::provider_format_adapter<unsigned long long> in memory_manager.cpp.o
[build] "typeinfo for llvm::jitlink::JITLinkMemoryManager::InFlightAlloc", referenced from:
[build] typeinfo for codon::BoehmGCJITLinkMemoryManager::IPInFlightAlloc in memory_manager.cpp.o
[build] "typeinfo for llvm::jitlink::JITLinkMemoryManager", referenced from:
[build] typeinfo for codon::BoehmGCJITLinkMemoryManager in memory_manager.cpp.o
[build] ld: symbol(s) not found for architecture x86_64
[build] clang-15: error: linker command failed with exit code 1 (use -v to see invocation)
[build] make[2]: *** [libcodonc.dylib] Error 1
[build] make[1]: *** [CMakeFiles/codonc.dir/all] Error 2
[build] make: *** [all] Error 2
```
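Every undefined symbol in the log above is a `typeinfo` (RTTI) record for an LLVM class. A short script can pull those class names out of a build log; `missing_typeinfo` is a hypothetical helper written for this log's format. Undefined `typeinfo` symbols for LLVM classes often indicate that LLVM was built without RTTI while the code linking against it uses RTTI; `llvm-config --has-rtti` reports how a given LLVM build was configured.

```python
import re
from typing import List

# Matches ld64 lines such as:
#   [build] "typeinfo for llvm::JITEventListener", referenced from:
UNDEFINED_TYPEINFO = re.compile(r'"typeinfo for ([^"]+)", referenced from:')

def missing_typeinfo(log: str) -> List[str]:
    """Return the classes whose typeinfo symbol the linker could not resolve."""
    return UNDEFINED_TYPEINFO.findall(log)

log = '''[build] "typeinfo for llvm::ErrorInfoBase", referenced from:
[build] typeinfo for llvm::ErrorInfo<llvm::ErrorList, llvm::ErrorInfoBase> in jit.cpp.o
[build] "typeinfo for llvm::JITEventListener", referenced from:'''
print(missing_typeinfo(log))  # ['llvm::ErrorInfoBase', 'llvm::JITEventListener']
```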

Dependencies of `libcodonc.dylib` listed in `build/CMakeFiles/codonc.dir/build.make`:

```

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/compiler.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/debug_listener.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/engine.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/error.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/jit.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/compiler/memory_manager.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/dsl/plugins.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/expr.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/stmt.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/type.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/link.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/class.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/function.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/union.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/static.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/ast/types/traits.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/cache.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/common.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/peg/peg.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/doc/doc.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/format/format.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/simplify.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/ctx.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/assign.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/basic.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/call.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/class.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/collections.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/cond.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/function.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/access.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/import.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/loops.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/op.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/simplify/error.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/translate/translate.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/translate/translate_ctx.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/typecheck.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/infer.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/ctx.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/assign.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/basic.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/call.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/class.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/collections.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/cond.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/function.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/access.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/loops.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/op.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/typecheck/error.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/parser/visitors/visitor.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/attribute.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/analysis.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/dataflow/capture.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/dataflow/cfg.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/dataflow/dominator.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/dataflow/reaching.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/module/global_vars.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/analyze/module/side_effect.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/base.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/const.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/dsl/nodes.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/flow.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/func.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/instr.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/llvm/gpu.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/llvm/llvisitor.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/llvm/optimize.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/module.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/cleanup/canonical.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/cleanup/dead_code.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/cleanup/global_demote.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/cleanup/replacer.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/folding/const_fold.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/folding/const_prop.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/folding/folding.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/lowering/imperative.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/lowering/pipeline.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/manager.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/parallel/openmp.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/parallel/schedule.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pass.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pythonic/dict.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pythonic/generator.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pythonic/io.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pythonic/list.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/transform/pythonic/str.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/types/types.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/cloning.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/format.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/inlining.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/irtools.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/matching.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/outlining.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/side_effect.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/util/visitor.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/value.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/cir/var.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon/util/common.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/extra/jupyter/jupyter.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/codon_rules.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/omp_rules.cpp.o

libcodonc.dylib: CMakeFiles/codonc.dir/build.make

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAArch64AsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAMDGPUAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMARMAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAVRAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBPFAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMipsAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMSP430AsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPowerPCAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRISCVAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSparcAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSystemZAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVEAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMWebAssemblyAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMX86AsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAArch64CodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAMDGPUCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMARMCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAVRCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBPFCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMipsCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMSP430CodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMNVPTXCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPowerPCCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRISCVCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSparcCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSystemZCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVECodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMWebAssemblyCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMX86CodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMXCoreCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAArch64Desc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAMDGPUDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMARMDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAVRDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBPFDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMipsDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMSP430Desc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMNVPTXDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPowerPCDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRISCVDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSparcDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSystemZDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVEDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMWebAssemblyDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMX86Desc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMXCoreDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAArch64Info.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAMDGPUInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMARMInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAVRInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBPFInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMipsInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMSP430Info.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMNVPTXInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPowerPCInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRISCVInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSparcInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSystemZInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVEInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMWebAssemblyInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMX86Info.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMXCoreInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAggressiveInstCombine.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAnalysis.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBitWriter.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCore.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMExtensions.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMipo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMIRReader.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMInstCombine.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMInstrumentation.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMC.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMCJIT.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMObjCARCOpts.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMOrcJIT.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRemarks.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMScalarOpts.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSupport.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSymbolize.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMTarget.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMTransformUtils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVectorize.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPasses.a

libcodonc.dylib: _deps/fmt-build/libfmtd.a

libcodonc.dylib: libcodonrt.dylib

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAArch64Utils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAMDGPUUtils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMIRParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMARMUtils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMHexagonInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiDesc.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLanaiInfo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMWebAssemblyUtils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMGlobalISel.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCFGuard.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAsmPrinter.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSelectionDAG.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCodeGen.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libPolly.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libPollyISL.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMPasses.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMObjCARCOpts.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCoroutines.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMipo.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBitWriter.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMIRReader.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAsmParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMInstrumentation.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMVectorize.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMFrontendOpenMP.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMLinker.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMScalarOpts.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAggressiveInstCombine.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMInstCombine.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMTransformUtils.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMCDisassembler.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMExecutionEngine.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMTarget.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMAnalysis.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMProfileData.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSymbolize.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMDebugInfoPDB.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMDebugInfoMSF.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMDebugInfoDWARF.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRuntimeDyld.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMJITLink.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMObject.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMCParser.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMMC.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMDebugInfoCodeView.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBitReader.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMCore.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMRemarks.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBitstreamReader.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMTextAPI.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMBinaryFormat.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMOrcTargetProcess.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMOrcShared.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMSupport.a

libcodonc.dylib: /Users/robot/Projects/llvm/lib/libLLVMDemangle.a

libcodonc.dylib: /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.15.sdk/usr/lib/libz.tbd

libcodonc.dylib: /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.15.sdk/usr/lib/libcurses.tbd

libcodonc.dylib: CMakeFiles/codonc.dir/link.txt

@$(CMAKE_COMMAND) -E cmake_echo_color --switch=$(COLOR) --green --bold --progress-dir=/Users/robot/GitHub/codon/build/CMakeFiles --progress-num=$(CMAKE_PROGRESS_106) "Linking CXX shared library libcodonc.dylib"

$(CMAKE_COMMAND) -E cmake_link_script CMakeFiles/codonc.dir/link.txt --verbose=$(VERBOSE)

``` | closed | 2023-02-12T05:17:33Z | 2024-11-08T18:43:29Z | https://github.com/exaloop/codon/issues/195 | [] | dipadipa | 2 |

plotly/dash | data-visualization | 2,691 | [Feature Request] Validate Arguments to components | If I break my Dash app by supplying, for example, the wrong type for `marks` when instantiating a `dcc.Slider`, the error message is not useful: "Error loading layout" is displayed in the browser, and nothing at all is logged on the back end.

What I'd hope for, and to some extent expect, is a helpful error message pointing to the issue, something like:

```python

Slider(marks={year: year for year in df["Year"].unique()}, ...)

is an invalid type, as what's required is dict[str, str | dict].

```

Or perhaps it would show the actual values that were passed to `Slider`; you get the idea.
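As a rough illustration of the kind of message being requested, here is a plain-Python check of a `marks` value against the type quoted above, `dict[str, str | dict]`. `validate_marks` is hypothetical: it is not part of Dash, and Dash's real prop types may be broader than this.

```python
from typing import Any, List

def validate_marks(marks: Any) -> List[str]:
    """Describe every way `marks` deviates from dict[str, str | dict]."""
    if not isinstance(marks, dict):
        return [f"marks must be a dict, got {type(marks).__name__}"]
    problems = []
    for key, value in marks.items():
        if not isinstance(key, str):
            problems.append(f"marks key {key!r} must be str, got {type(key).__name__}")
        if not isinstance(value, (str, dict)):
            problems.append(
                f"marks[{key!r}] must be str or dict, got {type(value).__name__}"
            )
    return problems

for problem in validate_marks({2007: 2007}):
    print(problem)
# marks key 2007 must be str, got int
# marks[2007] must be str or dict, got int
```

Reporting every problem at once, rather than stopping at the first, is the design choice that would make "Error loading layout" replaceable with a single actionable message.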

**Describe alternatives you've considered**

The only alternative is to revert (hopefully) your most recent changes which broke the app; otherwise, to go bug-hunting.

**Additional context**

Possibly something like `pydantic` could be useful here, and if type hints were added to the components, either directly or via Pydantic models, there might not be a lot of additional work to implement such a validation feature. I haven't dug too deeply into the React-side props validation and how you've implemented "React Component" -> "Python Class", but maybe it would make sense to tackle it from that end and generate the Python component classes from that.

I'm happy to implement this, by the way!

Cheers,

Zev | closed | 2023-11-13T12:08:23Z | 2023-12-16T12:23:50Z | https://github.com/plotly/dash/issues/2691 | [] | zevaverbach | 7 |

scikit-learn-contrib/metric-learn | scikit-learn | 185 | [DOC] Calibration example | It would be nice to have an example in the doc which demonstrates how to calibrate the pairwise metric learners with respect to several scores as introduced in #168, as well as the use of CalibratedClassifierCV (once this is properly tested, see #173) | open | 2019-03-14T16:11:44Z | 2021-04-22T21:25:32Z | https://github.com/scikit-learn-contrib/metric-learn/issues/185 | [

"documentation"

] | bellet | 0 |

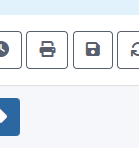

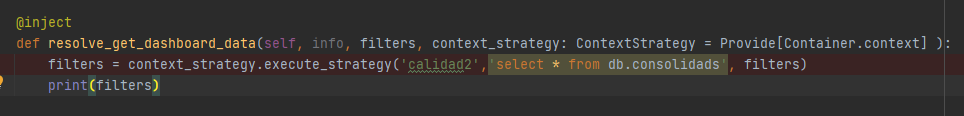

ets-labs/python-dependency-injector | flask | 335 | Unable to inject dependencies in Django Graphene project | Hi, I have tried to set up dependency-injector for a project using Django and GraphQL with [Graphene](https://graphene-python.org/), but I get `'Provide' object has no attribute 'execute_strategy'`. I followed these steps [https://python-dependency-injector.ets-labs.org/examples/django.html](https://python-dependency-injector.ets-labs.org/examples/django.html) for the Django setup; however, the dependency is not injected. I have something like:

Where `resolve_get_dashboard_data` is a typical GraphQL resolver | closed | 2020-12-14T15:04:10Z | 2020-12-14T16:48:33Z | https://github.com/ets-labs/python-dependency-injector/issues/335 | [

"question"

] | juanmarin96 | 2 |

InstaPy/InstaPy | automation | 6,530 | like_by_tags not working! pls suggest if any xpath is changed | Traceback (most recent call last):

  File "C:/Scarper/insta2.py", line 62, in <module>
    session.like_by_tags(smart_hashtags,amount=random.randint(5, 6))
  File "C:\Users\CJ\miniconda3\envs\Scarper\lib\site-packages\instapy-0.6.16-py3.7.egg\instapy\instapy.py", line 1995, in like_by_tags
    self.browser, self.max_likes, self.min_likes, self.logger
  File "C:\Users\CJ\miniconda3\envs\Scarper\lib\site-packages\instapy-0.6.16-py3.7.egg\instapy\like_util.py", line 933, in verify_liking
    likes_count = post_page["items"][0]["like_count"]
KeyError: 'items'

---------------------------------------------------------

**like_util.py**

line 933:

```python
def verify_liking(browser, maximum, minimum, logger):
    """Get the amount of existing existing likes and compare it against maximum
    & minimum values defined by user"""
    post_page = get_additional_data(browser)
    #DEF: 22jan
    print(post_page)
    likes_count = post_page["items"][0]["like_count"]
    if not likes_count:
        likes_count = 0
```
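The `KeyError: 'items'` means the payload returned by `get_additional_data` no longer contains an `items` list, so indexing it directly fails. A defensive lookup avoids the crash while the new response shape is investigated. `extract_like_count` is a hypothetical helper, and the `{"items": [{"like_count": N}]}` shape is inferred from the line that raised, not from any Instagram documentation.

```python
from typing import Any, Optional

def extract_like_count(post_page: Any) -> Optional[int]:
    """Return post_page["items"][0]["like_count"] if present, else None instead of raising."""
    if not isinstance(post_page, dict):
        return None
    items = post_page.get("items")
    if not isinstance(items, list) or not items or not isinstance(items[0], dict):
        return None
    count = items[0].get("like_count")
    return count if isinstance(count, int) else None

print(extract_like_count({"items": [{"like_count": 12}]}))  # 12
print(extract_like_count({"graphql": {}}))                  # None
```

In `verify_liking`, a `None` result could be logged next to the printed `post_page`, which shows what the response looks like now.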

| open | 2022-03-02T08:57:24Z | 2022-03-02T08:58:05Z | https://github.com/InstaPy/InstaPy/issues/6530 | [] | charan89 | 0 |

s3rius/FastAPI-template | graphql | 4 | Change aioschedule to aioscheduler | Currently, in `schedule.py`, I use the Aioschedule library, but there is a higher-performance library called Aioscheduler.

We need to change aioschedule to the new [scheduler lib](https://pypi.org/project/aioscheduler/). | closed | 2020-11-15T12:51:38Z | 2021-08-30T01:25:07Z | https://github.com/s3rius/FastAPI-template/issues/4 | [] | s3rius | 1 |

Yorko/mlcourse.ai | seaborn | 758 | Proofread topic 7 | - Fix issues

- Fix typos

- Correct the translation where needed

- Add images where necessary | closed | 2023-10-24T07:41:55Z | 2024-08-25T08:10:28Z | https://github.com/Yorko/mlcourse.ai/issues/758 | [

"enhancement",

"articles"

] | Yorko | 2 |

pennersr/django-allauth | django | 4,070 | ModuleNotFoundError: No module named 'allauth.socialaccount.providers.linkedin' | Seems like allauth.socialaccount.providers.linkedin is not yet implemented; only linkedin_oauth2 is implemented, even though the documentation says "linkedin_oauth2" is now deprecated.

Current latest version of django-allauth: **64.1.0**

| closed | 2024-08-24T16:32:00Z | 2024-08-24T18:50:27Z | https://github.com/pennersr/django-allauth/issues/4070 | [] | takuonline | 1 |

tableau/server-client-python | rest-api | 1,520 | Retry for request in use_server_version | **Describe the bug**

We are indexing data from multiple Tableau instances as a service provider integrating with Tableau.

We observed flaky requests on some instances:

```
2024-11-01T08:42:57.565777616Z stderr F INFO 2024-11-01 08:42:57,565 server 14 140490986871680 Could not get version info from server: <class 'tableauserverclient.server.endpoint.exceptions.InternalServerError'>
2024-11-01T08:42:57.565846529Z stderr F
2024-11-01T08:42:57.565851784Z stderr F Internal error 504 at https://XXXX/selectstar/api/2.4/serverInfo
2024-11-01T08:42:57.565860665Z stderr F b'<html>\r\n<head><title>504 Gateway Time-out</title></head>\r\n<body>\r\n<center><h1>504 Gateway Time-out</h1></center>\r\n<hr><center>nginx/1.25.3</center>\r\n</body>\r\n</html>\r\n'
2024-11-01T08:42:57.566048361Z stderr F INFO 2024-11-01 08:42:57,565 server 14 140490986871680 versions: None, 2.4
```

We observed that the request in `use_server_version` is not retried with exponential backoff, which is good practice in such scenarios. There is no easy way for callers to add this themselves, because the call happens implicitly in `__init__`.
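A generic retry with capped exponential backoff and full jitter might look like the sketch below. `call_with_backoff` and its parameters are hypothetical and not part of tableauserverclient; the `sleep` argument is injectable so the helper can be tested without waiting. Judging by the log above (`versions: None, 2.4`), the failed version request is logged and the client falls back to API 2.4 rather than raising, so a retry like this would have to wrap the request where it is made inside the library.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")

def call_with_backoff(
    fn: Callable[[], T],
    *,
    retries: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying up to `retries` times on the given exception types.

    Before retry k (0-based) it waits a random time in
    [0, min(max_delay, base_delay * 2**k)] ("full jitter"), which spreads out
    retries from many concurrent clients hitting the same overloaded server.
    """
    attempt = 0
    while True:
        try:
            return fn()
        except retry_on:
            if attempt >= retries:
                raise  # out of retries: surface the last error
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, delay))
            attempt += 1
```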

**Versions**

Details of your environment, including:

- Tableau Server version (or note if using Tableau Online)

- Python version

- TSC library version

**To Reproduce**

Steps to reproduce the behavior. Please include a code snippet where possible.

The issue is transient.

1/ Initialize the SDK:

```
self._server = TSC.Server(
    base_url,
    use_server_version=True,
    http_options={"timeout": self.REQUEST_TIMEOUT},
)
```

2/ Ensure that network connectivity to Tableau is unreliable and may drop connection.

**Results**

What are the results or error messages received?

See exception above. | open | 2024-11-01T19:57:41Z | 2025-01-03T23:54:02Z | https://github.com/tableau/server-client-python/issues/1520 | [

"Design Proposal",

"docs"

] | ad-m-ss | 2 |

donnemartin/data-science-ipython-notebooks | numpy | 33 | "Error 503 No healthy backends" | Hello,

When I try to open the hyperlinks that should take me to the corresponding IPython notebook, I get "Error 503 No healthy backends":

"No healthy backends

Guru Mediation:

Details: cache-fra1236-FRA 1462794681 3780339426

Varnish cache server"

<img width="833" alt="capture" src="https://cloud.githubusercontent.com/assets/14320144/15112809/3e3a020c-15f9-11e6-9440-bfed7debac08.PNG">

<img width="350" alt="capture2" src="https://cloud.githubusercontent.com/assets/14320144/15112808/3e391b62-15f9-11e6-86b0-cf57a5d2e16e.PNG">

Thanks

Jiahong Wang

| closed | 2016-05-09T12:19:03Z | 2016-05-10T09:55:50Z | https://github.com/donnemartin/data-science-ipython-notebooks/issues/33 | [

"question"

] | wangjiahong | 1 |

iterative/dvc | machine-learning | 10,234 | gc: keep last `n` versions of data files, while ignoring commits with only code changes | Suppose I have the following commits in my project (from newest to oldest):

```
sha | changes
------------------------------
a01 | only dvc files changed
a02 | only code files changed
a03 | only dvc files changed
a04 | both dvc and code files changed
```
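One possible workaround is to compute the `--num` value from the history instead of hard-coding it. You walk the commits from newest to oldest and stop at the n-th commit that changed DVC files. The helper below is hypothetical and not part of DVC. It assumes you already know which commits touched `.dvc`/`dvc.lock` files, for example from `git log --format=%H -- '*.dvc' dvc.lock`.

```python
from typing import AbstractSet, Sequence


def num_commits_for_last_data_versions(
    history: Sequence[str], data_commits: AbstractSet[str], n: int
) -> int:
    """Return how many commits, counted from history[0] (the newest), must be
    covered so that the last n data-changing commits are all included.

    history      -- commit SHAs ordered newest to oldest
    data_commits -- SHAs of commits that changed DVC-tracked files
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    seen = 0
    for index, sha in enumerate(history):
        if sha in data_commits:
            seen += 1
            if seen == n:
                return index + 1  # include every commit up to this one
    # Fewer than n data versions exist: cover the whole history.
    return len(history)
```

For the history above with n = 2, the helper walks past `a02` (code only) and stops at `a03`, returning 3. You would then pass `--num 3 --rev a01` to `dvc gc` so that the `a03` data is kept.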

Now suppose I'd like to keep the last 2 versions of DVC-tracked files. If I use this command:

```
dvc gc -w --cloud -r my-remote --num 2 --rev a01
```

it would only consider commits `a01` and `a02`, and therefore **only the last version of the files is kept** (whereas I need to keep the files from commit `a03` as well). This matters especially when the command runs from an automated script at a regular interval, say every week: we then don't know the commit history in advance and cannot tune the command arguments. | closed | 2024-01-12T11:10:04Z | 2024-03-05T01:58:07Z | https://github.com/iterative/dvc/issues/10234 | [

"p3-nice-to-have",

"A: gc"

] | mkaze | 5 |

gradio-app/gradio | deep-learning | 10,813 | ERROR: Exception in ASGI application after downgrading pydantic to 2.10.6 | ### Describe the bug

The same error was reported in https://github.com/gradio-app/gradio/issues/10662, where the suggested fix was to downgrade pydantic. Even after downgrading pydantic, I still see the same error.

I am running my code on Kaggle, and this is the error:

```
ERROR: Exception in ASGI application
Traceback (most recent call last):
  File "/usr/local/lib/python3.10/dist-packages/uvicorn/protocols/http/h11_impl.py", line 403, in run_asgi
    result = await app( # type: ignore[func-returns-value]
  File "/usr/local/lib/python3.10/dist-packages/uvicorn/middleware/proxy_headers.py", line 60, in __call__
    return await self.app(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/fastapi/applications.py", line 1054, in __call__
    await super().__call__(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/applications.py", line 112, in __call__
    await self.middleware_stack(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/middleware/errors.py", line 187, in __call__
    raise exc
  File "/usr/local/lib/python3.10/dist-packages/starlette/middleware/errors.py", line 165, in __call__
    await self.app(scope, receive, _send)
  File "/usr/local/lib/python3.10/dist-packages/gradio/route_utils.py", line 789, in __call__
    await self.app(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/middleware/exceptions.py", line 62, in __call__
    await wrap_app_handling_exceptions(self.app, conn)(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/_exception_handler.py", line 53, in wrapped_app
    raise exc
  File "/usr/local/lib/python3.10/dist-packages/starlette/_exception_handler.py", line 42, in wrapped_app
    await app(scope, receive, sender)
  File "/usr/local/lib/python3.10/dist-packages/starlette/routing.py", line 714, in __call__
    await self.middleware_stack(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/routing.py", line 734, in app
    await route.handle(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/routing.py", line 288, in handle
    await self.app(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/routing.py", line 76, in app
    await wrap_app_handling_exceptions(app, request)(scope, receive, send)
  File "/usr/local/lib/python3.10/dist-packages/starlette/_exception_handler.py", line 53, in wrapped_app
    raise exc
  File "/usr/local/lib/python3.10/dist-packages/starlette/_exception_handler.py", line 42, in wrapped_app
    await app(scope, receive, sender)
  File "/usr/local/lib/python3.10/dist-packages/starlette/routing.py", line 73, in app
    response = await f(request)
  File "/usr/local/lib/python3.10/dist-packages/fastapi/routing.py", line 301, in app
    raw_response = await run_endpoint_function(
  File "/usr/local/lib/python3.10/dist-packages/fastapi/routing.py", line 214, in run_endpoint_function
    return await run_in_threadpool(dependant.call, **values)
  File "/usr/local/lib/python3.10/dist-packages/starlette/concurrency.py", line 37, in run_in_threadpool
    return await anyio.to_thread.run_sync(func)
  File "/usr/local/lib/python3.10/dist-packages/anyio/to_thread.py", line 33, in run_sync
    return await get_asynclib().run_sync_in_worker_thread(
  File "/usr/local/lib/python3.10/dist-packages/anyio/_backends/_asyncio.py", line 877, in run_sync_in_worker_thread
    return await future
  File "/usr/local/lib/python3.10/dist-packages/anyio/_backends/_asyncio.py", line 807, in run
    result = context.run(func, *args)
  File "/usr/local/lib/python3.10/dist-packages/gradio/routes.py", line 584, in main
    gradio_api_info = api_info(request)
  File "/usr/local/lib/python3.10/dist-packages/gradio/routes.py", line 615, in api_info
    api_info = utils.safe_deepcopy(app.get_blocks().get_api_info())
  File "/usr/local/lib/python3.10/dist-packages/gradio/blocks.py", line 3019, in get_api_info
    python_type = client_utils.json_schema_to_python_type(info)
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 931, in json_schema_to_python_type
    type_ = _json_schema_to_python_type(schema, schema.get("$defs"))
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 985, in _json_schema_to_python_type
    des = [
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 986, in <listcomp>
    f"{n}: {_json_schema_to_python_type(v, defs)}{get_desc(v)}"
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 993, in _json_schema_to_python_type
    f"str, {_json_schema_to_python_type(schema['additionalProperties'], defs)}"
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 939, in _json_schema_to_python_type
    type_ = get_type(schema)
  File "/usr/local/lib/python3.10/dist-packages/gradio_client/utils.py", line 898, in get_type
    if "const" in schema:
TypeError: argument of type 'bool' is not iterable
```
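The last frames suggest a likely cause. `_json_schema_to_python_type` recurses into `schema['additionalProperties']`, and JSON Schema allows that value to be a plain boolean. The membership test `"const" in schema` then fails on a `bool`. The snippet below reproduces that failure mode in isolation. Both functions are hypothetical stand-ins, not gradio's actual code. The guarded variant shows one way a boolean sub-schema could be handled.

```python
def get_type_unguarded(schema):
    # Mirrors the failing check from the traceback: a membership test on the schema.
    if "const" in schema:
        return "const"
    return schema.get("type", "Any")


def get_type_guarded(schema):
    # JSON Schema allows a boolean in place of a schema object:
    # True accepts any value, False accepts none.
    if isinstance(schema, bool):
        return "Any" if schema else "None"
    if "const" in schema:
        return "const"
    return schema.get("type", "Any")


dict_schema = {"type": "object", "additionalProperties": True}
sub_schema = dict_schema["additionalProperties"]  # a bool, not a dict

try:
    get_type_unguarded(sub_schema)
except TypeError as exc:
    print("TypeError:", exc)  # same exception type as in the traceback

print(get_type_guarded(sub_schema))
```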

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```
!pip install -Uqq fastai
!pip uninstall gradio -y
!pip uninstall pydantic -y
!pip cache purge
!pip install pydantic==2.10.6
!pip install gradio

import gradio as gr
from fastai.learner import load_learner
from fastai.vision.core import PILImage

learn = load_learner('export.pkl')
labels = learn.dls.vocab

def predict(img):
    img = PILImage.create(img)
    pred, pred_idx, probs = learn.predict(img)
    # Map each class label to its predicted probability for gr.Label
    return {labels[i]: float(probs[i].item()) for i in range(len(labels))}

gr.Interface(
    fn=predict,
    inputs=gr.Image(),
    outputs=gr.Label()
).launch(share=True)
```

### Screenshot

_No response_

### Logs

```shell

```

### System Info

```shell
Gradio Environment Information:
------------------------------
Operating System: Linux
gradio version: 5.21.0
gradio_client version: 1.7.2

------------------------------------------------
gradio dependencies in your environment:

aiofiles: 22.1.0
anyio: 3.7.1
audioop-lts is not installed.
fastapi: 0.115.11
ffmpy: 0.5.0
gradio-client==1.7.2 is not installed.
groovy: 0.1.2
httpx: 0.28.1
huggingface-hub: 0.29.0
jinja2: 3.1.4
markupsafe: 2.1.5
numpy: 1.26.4
orjson: 3.10.12
packaging: 24.2
pandas: 2.2.3
pillow: 11.0.0
pydantic: 2.10.6
pydub: 0.25.1
python-multipart: 0.0.20
pyyaml: 6.0.2
ruff: 0.11.0
safehttpx: 0.1.6
semantic-version: 2.10.0
starlette: 0.46.1
tomlkit: 0.13.2
typer: 0.15.1
typing-extensions: 4.12.2
urllib3: 2.3.0
uvicorn: 0.34.0
authlib; extra == 'oauth' is not installed.
itsdangerous; extra == 'oauth' is not installed.

gradio_client dependencies in your environment:

fsspec: 2024.12.0
httpx: 0.28.1
huggingface-hub: 0.29.0
packaging: 24.2
typing-extensions: 4.12.2
websockets: 14.1
```

### Severity

Blocking usage of gradio | open | 2025-03-15T15:27:56Z | 2025-03-17T18:26:54Z | https://github.com/gradio-app/gradio/issues/10813 | [

"bug"

] | yumengzhao92 | 1 |

nltk/nltk | nlp | 2,818 | WordNetLemmatizer in nltk.stem module | What parameters does `WordNetLemmatizer.lemmatize()` in the `nltk.stem` module take?

Looking at the documentation, what are the candidate values of the **`pos`** parameter?
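`lemmatize()` expects WordNet's single-letter POS codes: `"n"` (noun), `"v"` (verb), `"a"` (adjective), `"r"` (adverb), and `"s"` (satellite adjective). `nltk.pos_tag()`, by contrast, returns Penn Treebank tags such as `NN`, `VBD`, or `JJ`, so the two have to be mapped. A minimal sketch of such a mapping follows. `treebank_to_wordnet` is a hypothetical helper, not an NLTK function. The string constants are assumed to equal `nltk.corpus.wordnet.NOUN`, `VERB`, `ADJ`, and `ADV`.

```python
# WordNet POS codes accepted by WordNetLemmatizer.lemmatize(pos=...);
# assumed equal to nltk.corpus.wordnet.NOUN, VERB, ADJ and ADV.
NOUN, VERB, ADJ, ADV = "n", "v", "a", "r"


def treebank_to_wordnet(tag: str) -> str:
    """Map a Penn Treebank tag (as returned by nltk.pos_tag) to a WordNet POS code.

    Tags with no WordNet counterpart (determiners, particles, ...) fall back
    to NOUN, which is also the lemmatizer's default.
    """
    if tag.startswith("JJ"):  # JJ, JJR, JJS
        return ADJ
    if tag.startswith("VB"):  # VB, VBD, VBG, VBN, VBP, VBZ
        return VERB
    if tag.startswith("RB"):  # RB, RBR, RBS
        return ADV
    return NOUN
```

With NLTK installed and the WordNet and tagger data downloaded, you might then call `WordNetLemmatizer().lemmatize(word, pos=treebank_to_wordnet(tag))` for each `(word, tag)` pair returned by `nltk.pos_tag(tokens)`.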

The default value is `'n'` (noun). But when I use `pos_tag()` to get a word's part of speech, the returned tag comes from a much larger set of options. | closed | 2021-09-26T02:44:43Z | 2021-09-27T08:20:53Z | https://github.com/nltk/nltk/issues/2818 | [

"documentation"

] | Beliefuture | 3 |

clovaai/donut | computer-vision | 188 | key information extraction with DonUT on hand-written documents? | Hi everyone,

Has anyone tried fine-tuning DonUT for key information extraction on a corpus of documents that are partly digital and partly handwritten? Specifically, I'd like to know whether anyone has evidence of how it performs on handwritten text. All the guidance on generating a synthetic pre-training dataset with SynthDoG focuses on choosing appropriate fonts for digital text.