repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

miguelgrinberg/Flask-Migrate | flask | 242 | Tutorial sections 4.5+ - No Changes in Schema detected (Solution?) | Hi

For the command-line examples in Sections 4.5-4.9 to work (namely `flask db migrate -m "users table"` and the subsequent upgrades), you need the import statements shown in the Shell Context example in Section 4.10.

As noted in other issues and posts, without these imports the models and the `db` object are not visible to the `flask db` commands. Once you add them, the problem is solved.
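Assuming the cause is the one described above (the models module never gets imported), a toy model of the mechanism may help. Alembic's autogenerate compares the tables registered on the models' metadata with the database, and a model class only registers its table when the module that defines it actually runs. The classes below are hypothetical stand-ins written with the standard library, not Flask-SQLAlchemy or Alembic code.

```python
# Toy model of why `flask db migrate` can report "No changes in schema detected".
# Hypothetical classes only; this is NOT Flask-SQLAlchemy or Alembic code.

class MetaData:
    """Stands in for db.metadata: a registry of table names."""
    def __init__(self):
        self.tables = {}

metadata = MetaData()

class Model:
    """Stands in for db.Model: defining a subclass registers its table."""
    def __init_subclass__(cls, **kwargs):
        super().__init_subclass__(**kwargs)
        metadata.tables[cls.__tablename__] = cls

def autogenerate(metadata, existing_tables):
    """Return tables that the models define but the database lacks."""
    return sorted(set(metadata.tables) - set(existing_tables))

def import_models():
    """Simulates `from app.models import User, Post`: class bodies run only on import."""
    class User(Model):
        __tablename__ = "user"

    class Post(Model):
        __tablename__ = "post"

print(autogenerate(metadata, existing_tables=[]))  # [] -> "No changes in schema detected"
import_models()
print(autogenerate(metadata, existing_tables=[]))  # ['post', 'user']
```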

Maybe the tutorial needs an amendment?

Example:

I compared my Section 4.5 code base against the Microblog "source" repo:

```shell
(microblog_venv) ╭─jrock@ritchie ~/git
╰─$ diff --ignore-all-space ./microblog_source/microblog.py ./jrock/microblog/microblog.py 1 ↵
1,7c1,2
< from app import app, db
< from app.models import User, Post
<
<
< @app.shell_context_processor
< def make_shell_context():
<     return {'db': db, 'User': User, 'Post': Post}
---
> # From our module import that app 'file'
> from app import app
\ No newline at end of file
(microblog_venv) ╭─jrock@ritchie ~/git
╰─$
``` | closed | 2018-12-24T19:30:01Z | 2019-04-07T09:55:51Z | https://github.com/miguelgrinberg/Flask-Migrate/issues/242 | [

"question"

] | JeanNiBee | 2 |

jina-ai/serve | machine-learning | 5,272 | Align on OpenTelemetry service names and cloud semantic attributes. | The current tracers use the default runtime name or module name to create spans. These names are very generic and don't differentiate between different flows. Without unique service names and other cloud deployment attributes ([k8s](https://opentelemetry.io/docs/reference/specification/resource/semantic_conventions/k8s/), [cloud provider attributes](https://opentelemetry.io/docs/reference/specification/resource/semantic_conventions/#cloud-provider-specific-attributes)), traces will be impossible to filter and very messy to view.
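The OpenTelemetry specification already defines environment variables for this. `OTEL_SERVICE_NAME` sets `service.name`, and `OTEL_RESOURCE_ATTRIBUTES` carries comma-separated, percent-encoded `key=value` pairs such as `k8s.namespace.name` or `cloud.provider`. When both set `service.name`, `OTEL_SERVICE_NAME` takes precedence. Below is a minimal standard-library sketch of that resolution order. `resolve_resource_attributes` is a hypothetical helper, not a Jina function. The official Python SDK's `Resource.create()` should already perform this merge, so in practice Jina could likely delegate to it.

```python
import os
from typing import Dict, Mapping, Optional
from urllib.parse import unquote

def resolve_resource_attributes(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Merge OTEL_RESOURCE_ATTRIBUTES and OTEL_SERVICE_NAME following the spec's precedence."""
    env = os.environ if env is None else env
    attrs: Dict[str, str] = {}
    # OTEL_RESOURCE_ATTRIBUTES looks like "key1=value1,key2=value2" with percent-encoded values.
    for pair in env.get("OTEL_RESOURCE_ATTRIBUTES", "").split(","):
        key, sep, value = pair.partition("=")
        if sep and key.strip():
            attrs[key.strip()] = unquote(value.strip())
    # OTEL_SERVICE_NAME wins over a service.name given in OTEL_RESOURCE_ATTRIBUTES.
    service_name = env.get("OTEL_SERVICE_NAME", "").strip()
    if service_name:
        attrs["service.name"] = service_name
    # Spec fallback; real SDKs append ":<executable name>" when it is known.
    attrs.setdefault("service.name", "unknown_service")
    return attrs

if __name__ == "__main__":
    print(resolve_resource_attributes({
        "OTEL_SERVICE_NAME": "my-search-flow",  # hypothetical per-flow name
        "OTEL_RESOURCE_ATTRIBUTES": "k8s.namespace.name=prod,cloud.provider=aws",
    }))
    # {'k8s.namespace.name': 'prod', 'cloud.provider': 'aws', 'service.name': 'my-search-flow'}
```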

# Proposal

- Allow users to provide or override the deployment name through environment variables, which the instrumentation library then uses.

- Jina AI Cloud specific attributes need to be manually supported and documented.

- Self hosted cloud specific attributes need to be set by the user using environment variables as defined in the OpenTelemetry [documentation](https://opentelemetry.io/docs/reference/specification/resource/semantic_conventions/#cloud-provider-specific-attributes). | closed | 2022-10-11T15:07:15Z | 2022-11-21T12:38:55Z | https://github.com/jina-ai/serve/issues/5272 | [] | girishc13 | 1 |

holoviz/panel | matplotlib | 6,927 | panel.serve() implicitly and unconditionally captures SIGINT under the hood | ### ALL software version info

panel 1.4.4 (currently latest). Others are irrelevant, I believe, but still:

- python 3.9

- bokeh 3.4.1

- OS Windows 11

- browser FireFox (definitely irrelevant)

### Description of expected behavior and the observed behavior

#### Observed:

`panel.serve(..., threaded=False)` delegates to `panel.io.server.get_server()`.

This in turn attempts (with silent failure!?) to install a `SIGINT` handler that eventually calls `server.io_loop.stop()`.

This behavior stops the asyncio loop in its tracks without allowing any opportunity for code that shares the same event loop to perform its own orderly cleanup.

Note also that the signal handler is installed unconditionally; no arguments can be passed in to prevent it. In particular, this is done even when `start=False` is passed.

#### Expected:

IMHO, since `panel.serve()` is intended for programmatic server operation from user code, it is none of its business to do ANY kind of OS signal handling. Nor is it within its charter to stop the event loop it is running on.

All of this should be handled by higher level code that has better awareness of the environment it is being run in, and what else may be running in it.

I expect `panel.serve()`, especially if called with `start=False`, to just create a server. That's it. Not `start()` and definitely not `stop()` it for me.

Even if this overreach is somehow deemed to be within the charter of `panel.serve()` in some cases, there should be a mechanism in place to allow the caller to prevent this when undesired.
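The behavior asked for here, where the application rather than `panel.serve()` decides what SIGINT means, can be sketched with plain asyncio. This sketch uses no Panel API. `launch_sequence` and `main` are hypothetical stand-ins for the `SpaceShip` example below. The point is that cancelling the work task, instead of stopping the loop, lets its `finally` clause run. Note that `loop.add_signal_handler` is Unix-only (Windows event loops raise `NotImplementedError`), which matters for the Windows setup above.

```python
import asyncio
import signal
from typing import List, Optional

async def launch_sequence(log: List[str]) -> None:
    """Stands in for make_launch_preparations(): long-running work with cleanup in `finally`."""
    try:
        await asyncio.sleep(3600)  # e.g. await asyncio.wait(tasks_running, ...)
    finally:
        log.append("cleanup ran")  # e.g. pump out tanks, stop the dashboard server

async def main(log: List[str], simulate_sigint_after: Optional[float] = None) -> None:
    loop = asyncio.get_running_loop()
    task = asyncio.create_task(launch_sequence(log))
    try:
        # Application-level policy: SIGINT cancels the work task; the loop keeps running.
        loop.add_signal_handler(signal.SIGINT, task.cancel)
    except (NotImplementedError, RuntimeError):
        pass  # Windows event loops and non-main threads do not support add_signal_handler
    if simulate_sigint_after is not None:
        loop.call_later(simulate_sigint_after, task.cancel)  # test hook instead of a real Ctrl+C
    try:
        await task
    except asyncio.CancelledError:
        log.append("cancelled")

if __name__ == "__main__":
    events: List[str] = []
    asyncio.run(main(events, simulate_sigint_after=0.1))
    print(events)  # ['cleanup ran', 'cancelled']
```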

### Complete, minimal, self-contained example code that reproduces the issue

In the following program, if SIGINT is received while in the `try` block, things will explode.

This is because the event loop gets stopped by `panel.serve` while the coroutine is inside `asyncio.wait(tasks_running, ...)`, and the `finally` clause never gets to run.

```python
import asyncio

import panel

fueling_dashboard: panel.viewable.Viewable

class SpaceShip:
    async def monitor_fuel_tanks(self, how: str):
        while True:
            fueling_dashboard.update_fuel_display(self.poll_fuel_sensors(how))
            await asyncio.sleep(0.5)

    async def make_launch_preparations(self, ...):
        from asyncio import create_task, ALL_COMPLETED
        fueling_dashboard_server = panel.serve(fueling_dashboard, start=False, threaded=False, ...)
        panel.state.execute(self.monitor_fuel_tanks('carefully'))  # schedule background update task before starting server
        fueling_dashboard_server.start()
        tasks_running = {create_task(self.pump_in_oxygen(fill_level=0.95)), create_task(self.pump_in_hydrogen(fill_level=0.95))}
        try:
            _, tasks_running = await asyncio.wait(tasks_running, return_when=ALL_COMPLETED)
        finally:
            was_successful = not tasks_running
            if not was_successful:  # something went wrong; e.g. KeyboardInterrupt or asyncio.CancelledError because SIGINT was received
                fueling_dashboard.big_red_flashing_lamp.turn_on()
                for task in tasks_running:  # stop pumping in
                    task.cancel()
                # empty tanks to prevent explosion
                await asyncio.wait({self.pump_out_oxygen(), self.pump_out_hydrogen()}, return_when=ALL_COMPLETED)
            fueling_dashboard_server.stop()  # no longer needed
        return was_successful
```

### Stack traceback and/or browser JavaScript console output

not applicable

### Screenshots or screencasts of the bug in action

not applicable

- [x] I may be interested in making a pull request to address this (but I'm not sure I know enough about what else could break)

| open | 2024-06-17T13:26:21Z | 2024-06-17T13:45:18Z | https://github.com/holoviz/panel/issues/6927 | [] | mcskatkat | 0 |

inducer/pudb | pytest | 257 | Failure on Windows 10 | `pip install` reports success:

```
C:\Users\bruce\Documents\Git\on-java>pip install pudb
Collecting pudb
  Downloading pudb-2017.1.2.tar.gz (53kB)
    100% |████████████████████████████████| 61kB 72kB/s
Collecting urwid>=1.1.1 (from pudb)
  Downloading urwid-1.3.1.tar.gz (588kB)
    100% |████████████████████████████████| 593kB 262kB/s
Collecting pygments>=1.0 (from pudb)
  Using cached Pygments-2.2.0-py2.py3-none-any.whl
Installing collected packages: urwid, pygments, pudb
  Running setup.py install for urwid ... done
  Running setup.py install for pudb ... done
Successfully installed pudb-2017.1.2 pygments-2.2.0 urwid-1.3.1
```

But when I try to run the first example:

`python -m pudb.run binsearch.py`

I see:

```
Traceback (most recent call last):
  File "C:\Python\Python36\lib\runpy.py", line 193, in _run_module_as_main
    "__main__", mod_spec)
  File "C:\Python\Python36\lib\runpy.py", line 85, in _run_code
    exec(code, run_globals)
  File "C:\Python\Python36\lib\site-packages\pudb\run.py", line 38, in <module>
    main()
  File "C:\Python\Python36\lib\site-packages\pudb\run.py", line 34, in main
    steal_output=options.steal_output)
  File "C:\Python\Python36\lib\site-packages\pudb\__init__.py", line 64, in runscript
    dbg = _get_debugger(steal_output=steal_output)
  File "C:\Python\Python36\lib\site-packages\pudb\__init__.py", line 50, in _get_debugger
    dbg = Debugger(**kwargs)
  File "C:\Python\Python36\lib\site-packages\pudb\debugger.py", line 152, in __init__
    self.ui = DebuggerUI(self, stdin=stdin, stdout=stdout, term_size=term_size)
  File "C:\Python\Python36\lib\site-packages\pudb\debugger.py", line 1905, in __init__
    self.screen = ThreadsafeRawScreen()
  File "C:\Python\Python36\lib\site-packages\urwid\raw_display.py", line 89, in __init__
    fcntl.fcntl(self._resize_pipe_rd, fcntl.F_SETFL, os.O_NONBLOCK)
NameError: name 'fcntl' is not defined
``` | closed | 2017-06-06T16:44:20Z | 2017-06-06T21:20:14Z | https://github.com/inducer/pudb/issues/257 | [] | BruceEckel | 10 |

alteryx/featuretools | data-science | 2,287 | Move all Natural Language primitives that don't require an external library into core Featuretools | - As a user of Featuretools core, I want to apply NL primitives without installing nlp-primitives.

- We need to move all the primitives that don't require an external library into Featuretools core:

- https://github.com/alteryx/nlp_primitives | closed | 2022-09-12T18:00:12Z | 2022-10-20T16:25:16Z | https://github.com/alteryx/featuretools/issues/2287 | [] | gsheni | 0 |

PokeAPI/pokeapi | graphql | 373 | Availability of named resources (for current hosting) | This issue is to consolidate updates for the current outage of named resources (e.g. /pokemon/bulbasaur) into one place.

See also #374 for work on getting named resources working when we move to Netlify. | closed | 2018-09-22T22:10:54Z | 2018-09-22T23:55:51Z | https://github.com/PokeAPI/pokeapi/issues/373 | [] | tdmalone | 14 |

slackapi/python-slack-sdk | asyncio | 959 | RTMClient v2 - OSError: [Errno 9] Bad file descriptor | RTMClient v2 regularly disconnects (26 times in a 24-hour period).

### Reproducible in:

#### The Slack SDK version

slack-sdk==3.3.1

#### Python runtime version

Python 3.8.7

#### OS info

Linux 5.4.97-gentoo #1 SMP

#### Steps to reproduce:

Run the RTMClientv2 for extended periods of time.

### Expected result:

The connection should remain established and only disconnect when the Slack server sends a `goodbye` event (after approximately 8 hours) or when there is a network disruption.

### Actual result:

There doesn't appear to be any `goodbye` event sent to trigger a disconnect. The disconnection issue only affects RTMClient v2, not the Socket Mode client. The bot is idle when the error occurs.

```
2021-02-15 16:51:17,915 DEBUG slack_sdk.rtm.v2 Message processing completed (type: hello)
2021-02-15 17:28:09,571 INFO slack_sdk.rtm.v2 The connection seems to be stale. Disconnecting... (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717)
2021-02-15 17:28:09,636 ERROR slack_sdk.rtm.v2 on_error invoked (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717, error: OSError, message: [Errno 9] Bad file descriptor)
Traceback (most recent call last):
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/connection.py", line 255, in run_until_completion
    ] = _receive_messages(
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 131, in _receive_messages
    return _fetch_messages(
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 154, in _fetch_messages
    remaining_bytes = receive()
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 125, in receive
    received_bytes = sock.recv(size)
  File "/usr/lib/python3.8/ssl.py", line 1228, in recv
    return super().recv(buflen, flags)
OSError: [Errno 9] Bad file descriptor
2021-02-15 17:28:09,636 INFO slack_sdk.rtm.v2 The connection has been closed (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717)
2021-02-15 17:28:09,637 INFO slack_sdk.rtm.v2 Stopped receiving messages from a connection (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717)
2021-02-15 17:28:09,637 INFO slack_sdk.rtm.v2 The session seems to be already closed. Going to reconnect... (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717)
2021-02-15 17:28:09,637 INFO slack_sdk.rtm.v2 Connecting to a new endpoint...
2021-02-15 17:28:10,215 INFO slack_sdk.rtm.v2 The connection has been closed (session id: ae73a7cb-f2f0-41cd-ad7d-ced66fde6717)
2021-02-15 17:28:10,215 INFO slack_sdk.rtm.v2 A new session has been established (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 17:28:10,215 INFO slack_sdk.rtm.v2 Connected to a new endpoint...
2021-02-15 17:28:10,638 INFO slack_sdk.rtm.v2 Starting to receive messages from a new connection (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 17:28:10,639 DEBUG slack_sdk.rtm.v2 on_message invoked: (message: {"type": "hello", "region":"eu-central-1", "start": true, "host_id":"gs-fra-angr"})
2021-02-15 17:28:10,639 DEBUG slack_sdk.rtm.v2 A new message enqueued (current queue size: 1)
2021-02-15 17:28:10,639 DEBUG slack_sdk.rtm.v2 A message dequeued (current queue size: 0)
2021-02-15 17:28:10,639 DEBUG slack_sdk.rtm.v2 Message processing started (type: hello)
2021-02-15 17:28:10,644 DEBUG slack_sdk.rtm.v2 Message processing completed (type: hello)
2021-02-15 18:34:23,979 INFO slack_sdk.rtm.v2 The connection seems to be stale. Disconnecting... (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 18:34:24,127 ERROR slack_sdk.rtm.v2 on_error invoked (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5, error: OSError, message: [Errno 9] Bad file descriptor)
Traceback (most recent call last):
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/connection.py", line 255, in run_until_completion
    ] = _receive_messages(
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 131, in _receive_messages
    return _fetch_messages(
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 154, in _fetch_messages
    remaining_bytes = receive()
  File "/opt/errbot/lib/python3.8/site-packages/slack_sdk/socket_mode/builtin/internals.py", line 125, in receive
    received_bytes = sock.recv(size)
  File "/usr/lib/python3.8/ssl.py", line 1228, in recv
    return super().recv(buflen, flags)
OSError: [Errno 9] Bad file descriptor
2021-02-15 18:34:24,127 INFO slack_sdk.rtm.v2 The connection has been closed (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 18:34:24,131 INFO slack_sdk.rtm.v2 Stopped receiving messages from a connection (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 18:34:24,131 INFO slack_sdk.rtm.v2 The session seems to be already closed. Going to reconnect... (session id: 55954e5b-0b93-4c11-b515-362c90bf59f5)
2021-02-15 18:34:24,132 INFO slack_sdk.rtm.v2 Connecting to a new endpoint...
```
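In both cases the log shows the client deciding the connection is stale and disconnecting, followed within a fraction of a second by an `OSError` from the `recv()` call in the receive loop. That pattern is consistent with one code path closing the socket while another is still reading from it. This is an interpretation, not a confirmed diagnosis. The error itself is easy to reproduce with the standard library. A receive loop could also treat it as an expected shutdown when it knows it initiated the close. `receive_once` and its `closing` flag are hypothetical and not part of slack_sdk.

```python
import errno
import socket
from typing import Optional

def receive_once(sock: socket.socket, closing: bool) -> Optional[bytes]:
    """Read one chunk. If we closed the socket ourselves (closing=True), EBADF is an
    expected shutdown signal rather than an error worth reporting."""
    try:
        return sock.recv(1024)
    except OSError as exc:
        if closing and exc.errno == errno.EBADF:
            return None
        raise

if __name__ == "__main__":
    reader, writer = socket.socketpair()
    reader.close()  # e.g. the "connection seems to be stale" path closes the socket
    try:
        reader.recv(1024)  # what the receive loop does next
    except OSError as exc:
        print(exc)  # on Linux: [Errno 9] Bad file descriptor, as in the log
    print(receive_once(reader, closing=True))  # None: treated as a clean shutdown
    writer.close()
```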

| closed | 2021-02-17T00:46:52Z | 2021-02-19T08:42:31Z | https://github.com/slackapi/python-slack-sdk/issues/959 | [

"rtm-client",

"Version: 3x"

] | nzlosh | 4 |

PaddlePaddle/models | computer-vision | 4,833 | Resolved | closed | 2020-09-03T03:12:17Z | 2020-09-03T07:32:16Z | https://github.com/PaddlePaddle/models/issues/4833 | [] | kyuer | 0 |

d2l-ai/d2l-en | deep-learning | 2,097 | d2l makes os.environ["CUDA_VISIBLE_DEVICES"] = "1" invalid | When I import d2l into my project, I found that if `os.environ["CUDA_VISIBLE_DEVICES"]` is set after importing d2l, it has no effect.

<img width="443" alt="image" src="https://user-images.githubusercontent.com/38461329/162663129-7294395b-f58a-4de4-8eed-4dde85bd2229.png">

Before running this program

While running this program

Even though setting it before importing d2l works, I still hope this bug can be fixed, since that would be more elegant and convenient.

| closed | 2022-04-11T04:05:52Z | 2022-04-11T09:05:07Z | https://github.com/d2l-ai/d2l-en/issues/2097 | [] | FelliYang | 1 |

Gozargah/Marzban | api | 1,059 | Returning Null for admin_id When a User Is Created in Telegram Bot | The sudo admin has entered their numeric Telegram ID in `.env` as `TELEGRAM_ADMIN_ID`.

In addition, they also entered the Telegram ID when creating the admin (in the `admins` table, the sudo user's `telegram_id` column contains the numeric Telegram ID).

Now, when the sudo admin creates a user through the bot, the `admin_id` value in the `users` table is null.

`app/db/crud.py` has a `get_admin_by_telegram_id` function, but it is not used in the `confirm_user_command` function in `app/telegram/handlers/admin.py`.
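Below is a minimal sketch of the lookup the report suggests, using an in-memory SQLite database from the standard library. The table layout is a simplified assumption. `create_user_from_bot` is a hypothetical stand-in for what `confirm_user_command` would do. Marzban itself uses SQLAlchemy, and its real `get_admin_by_telegram_id` signature may differ.

```python
import sqlite3
from typing import Optional

# Simplified, hypothetical schema mirroring the columns named in the report.
SCHEMA = """
CREATE TABLE admins (id INTEGER PRIMARY KEY, username TEXT, telegram_id INTEGER);
CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT,
                    admin_id INTEGER REFERENCES admins(id));
"""

def get_admin_by_telegram_id(conn: sqlite3.Connection, telegram_id: int) -> Optional[int]:
    """Return the admin's primary key for a Telegram user ID, or None if unknown."""
    row = conn.execute(
        "SELECT id FROM admins WHERE telegram_id = ?", (telegram_id,)
    ).fetchone()
    return row[0] if row else None

def create_user_from_bot(conn: sqlite3.Connection, username: str, sender_telegram_id: int) -> int:
    """Hypothetical bot handler: attribute the new user to the admin who sent the command."""
    admin_id = get_admin_by_telegram_id(conn, sender_telegram_id)  # None -> NULL, as in the report
    cur = conn.execute(
        "INSERT INTO users (username, admin_id) VALUES (?, ?)", (username, admin_id)
    )
    conn.commit()
    return cur.lastrowid

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO admins (username, telegram_id) VALUES ('sudo', 123456789)")
    user_id = create_user_from_bot(conn, "alice", sender_telegram_id=123456789)
    print(conn.execute("SELECT admin_id FROM users WHERE id = ?", (user_id,)).fetchone())  # (1,)
```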

| closed | 2024-06-23T21:17:27Z | 2024-07-03T06:34:55Z | https://github.com/Gozargah/Marzban/issues/1059 | [

"Bug"

] | amotlagh | 1 |

blb-ventures/strawberry-django-plus | graphql | 249 | hard dependency on django.contrib.auth | The following line in `utils/typing.py`:

`UserType: TypeAlias = Union[AbstractUser, AnonymousUser]`

requires "django.contrib.auth" to be included in `INSTALLED_APPS`.

It would be better to use

`AbstractBaseUser` from `django/contrib/auth/base_user.py`, since importing it does not require the app to be installed and it is the base class of `AbstractUser`; a separate `UserType` alias would then not be necessary.

This would also improve compatibility with third-party user apps.

| closed | 2023-06-19T09:05:20Z | 2023-06-24T12:25:58Z | https://github.com/blb-ventures/strawberry-django-plus/issues/249 | [] | devkral | 1 |

KrishnaswamyLab/PHATE | data-visualization | 124 | Install issue, probably sklearn -> scikit-learn versions | We are getting errors when installing PHATE, and I think the problem traces back to here and to updates in scikit-learn. I posted details in the graphtools repo: https://github.com/KrishnaswamyLab/graphtools/issues/64.

I believe scikit-learn 1.2.0 breaks graphtools/PHATE. Have you seen this issue? Thanks in advance. | closed | 2022-12-27T15:29:49Z | 2023-01-03T07:14:53Z | https://github.com/KrishnaswamyLab/PHATE/issues/124 | [

"bug"

] | bbimber | 4 |

onnx/onnx | pytorch | 6,572 | It should be dim instead of a | https://github.com/onnx/onnx/blob/96a0ca4374d2198944ff882bd273e64222b59cb9/onnx/reference/ops/op_center_crop_pad.py#L24 | open | 2024-12-04T08:03:06Z | 2024-12-04T08:03:06Z | https://github.com/onnx/onnx/issues/6572 | [] | aksenventwo | 0 |

postmanlabs/httpbin | api | 661 | URL prefix? | Hey there,

My company uses httpbin heavily, and while we think it's fantastic, one recurring problem is serving httpbin behind a URL path prefix (e.g. mydomain.com/httpbin rather than httpbin.mydomain.com). We've scoured the documentation and this does not seem to be possible, even though Flask apps can support it. Is there documentation I'm missing, or could support for this feature be added? | open | 2021-11-30T18:24:13Z | 2023-08-29T00:05:59Z | https://github.com/postmanlabs/httpbin/issues/661 | [] | juandiegopalomino | 1 |

mckinsey/vizro | pydantic | 798 | Add default styling for waterfall chart to chart template | Context: https://github.com/mckinsey/vizro/pull/786 | open | 2024-10-08T09:50:55Z | 2024-10-08T13:35:27Z | https://github.com/mckinsey/vizro/issues/798 | [] | huong-li-nguyen | 3 |

ansible/ansible | python | 84,743 | PowerShell module doesn't accept type list in spec | ### Summary

When I run a custom module whose argument spec contains an option of type `list`, it fails with:

`"Exception calling "Create" with "2" argument(s): Unable to cast object of type 'System.String' to type 'System.Collections.IList'.`

Minimal reproducible example:

```powershell
#AnsibleRequires -CSharpUtil Ansible.Basic

$spec = @{
    options = @{
        list_option = @{
            type="list"
        }
    }
}

[Ansible.Basic.AnsibleModule]::Create($args, $spec)
```

### Issue Type

Bug Report

### Component Name

module_util Ansible.Basic

### Ansible Version

```console
ansible [core 2.18.2]
  config file = None
  configured module search path = [ '/home/vscode/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules' ]
  ansible python module location = /mnt/poetry-persistent-cache/virtualenvs/j1abaca-8Y3pZR--lS-py3.12/lib/python3.12/site-packages/ansible
  ansible collection location = /home/vscode/.ansible/collections:/usr/share/ansible/collections
  executable location = /mnt/poetry-persistent-cache/virtualenvs/<env name>/bin/ansible
  python version = 3.12.8 (main, Dec 4 2024, 20:37:48) [GCC 10.2.1 20210110] (/mnt/poetry-persistent-cache/virtualenvs/<env name>/bin/python)
  jinja version = 3.1.5
  libyaml = True
```

### Configuration

```console
CONFIG_FILE() = None
GALAXY SERVERS:
```

### OS / Environment

VSCode devcontainers:

mcr.microsoft.com/devcontainers/python:3.12-bullseye

### Steps to Reproduce

```shell
ansible-playbook <playbook.yaml> -i <inventory file.yml>
```

### Expected Results

I expect `Create` to return a valid `AnsibleModule` object.

### Actual Results

```console
The full traceback is:
Exception calling "Create" with "2" argument(s): "Unable to cast object of type 'System.String' to type 'System.Collections.IList'."
At line:49 char:1
+ New-AnsibleModule -Arguments $args -Spec $spec
+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
    + CategoryInfo : NotSpecified: (:) [New-AnsibleModule], MethodInvocationException
    + FullyQualifiedErrorId : InvalidCastException,New-AnsibleModule

ScriptStackTrace:
at New-AnsibleModule, <No file>: line 66
at <ScriptBlock>, <No file>: line 49

System.Management.Automation.MethodInvocationException: Exception calling "Create" with "2" argument(s): "Unable to cast object of type 'System.String' to type 'System.Collections.IList'." ---> System.InvalidCastException: Unable to cast object of type 'System.String' to type 'System.Collections.IList'.
   at Ansible.Basic.AnsibleModule.CheckRequiredIf(IDictionary param, IList requiredIf) in c:\Users\mcanady\AppData\Local\Temp\1dfcd3e2-e15a-4631-b743-71d8667ecbbd\vltxogcu.0.cs:line 1198
   at Ansible.Basic.AnsibleModule.CheckArguments(IDictionary spec, IDictionary param, List`1 legalInputs) in c:\Users\mcanady\AppData\Local\Temp\1dfcd3e2-e15a-4631-b743-71d8667ecbbd\vltxogcu.0.cs:line 1004
   at Ansible.Basic.AnsibleModule..ctor(String[] args, IDictionary argumentSpec, IDictionary[] fragments) in c:\Users\mcanady\AppData\Local\Temp\1dfcd3e2-e15a-4631-b743-71d8667ecbbd\vltxogcu.0.cs:line 276
   at CallSite.Target(Closure , CallSite , Type , String[] , Object )
   --- End of inner exception stack trace ---
   at System.Management.Automation.ExceptionHandlingOps.CheckActionPreference(FunctionContext funcContext, Exception exception)
   at System.Management.Automation.Interpreter.ActionCallInstruction`2.Run(InterpretedFrame frame)
   at System.Management.Automation.Interpreter.EnterTryCatchFinallyInstruction.Run(InterpretedFrame frame)
   at System.Management.Automation.Interpreter.EnterTryCatchFinallyInstruction.Run(InterpretedFrame frame)
   at System.Management.Automation.Interpreter.Interpreter.Run(InterpretedFrame frame)
   at System.Management.Automation.Interpreter.LightLambda.RunVoid1[T0](T0 arg0)
   at System.Management.Automation.PSScriptCmdlet.RunClause(Action`1 clause, Object dollarUnderbar, Object inputToProcess)
   at System.Management.Automation.PSScriptCmdlet.DoEndProcessing()
   at System.Management.Automation.CommandProcessorBase.Complete()
failed: [129.57.30.48] (item={'identity': 'JLAB\\CNILDS2$', 'rights': ['Enroll'], 'control_type': 'Deny'}) => {
    "ansible_loop_var": "item",
    "changed": false,
    "item": {
        "control_type": "Deny",
        "identity": "JLAB\\CNILDS2$",
        "rights": [
            "Enroll"
        ]
    },
    "msg": "Unhandled exception while executing module: Exception calling \"Create\" with \"2\" argument(s): \"Unable to cast object of type 'System.String' to type 'System.Collections.IList'.\""
}
```

### Code of Conduct

- [x] I agree to follow the Ansible Code of Conduct | closed | 2025-02-24T16:12:51Z | 2025-03-10T13:00:03Z | https://github.com/ansible/ansible/issues/84743 | [

"bug",

"affects_2.18"

] | michaeldcanady | 5 |

python-gino/gino | sqlalchemy | 692 | Continually Getting TypeError: 'GinoExecutor' object is not callable | * GINO version: 1.0.0

* Python version: 3.7

* asyncpg version: 0.20.1

* aiocontextvars version: Not installed

* PostgreSQL version: 12.1

### Description

Attempting to make a simple query but am unable to.

### What I Did

While running:

```
domain = await Domain.get(Domain.domain == 'http://domain.com').gino().first()
```

I get:

```
TypeError: 'GinoExecutor' object is not callable
```

In context, here's some more code:

```python
# ./db/__init__.py
from gino import Gino
from scraper import config

print(config.DB_DSN)

import asyncio

gino_db = Gino()


async def main():
    await gino_db.set_bind(config.DB_DSN)

asyncio.get_event_loop().run_until_complete(main())

# Import your models here so Alembic will pick them up
from db.models import *
```

My main.py:

```python
...
loop.run_until_complete(init_db())
...
```

And finally the init_db() function

```python
async def init_db():
    engine = await gino_db.set_bind(config.DB_DSN, echo=True)
    domain = await Domain.query.where(Domain.domain == 'http://equipomedia.com').gino().first()
```

What's going wrong? In the same code I'm able to call `domains = await Domain.query.gino.all()` and get a valid response.
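The error message is consistent with `.gino` being a property that already returns a `GinoExecutor`; the working call `Domain.query.gino.all()` uses it without parentheses. If so, `.gino()` tries to call the executor object itself. Below is a standard-library toy reproduction of that pattern, with hypothetical `Query` and `Executor` classes standing in for GINO's. By analogy, the failing line would presumably become `Domain.query.where(Domain.domain == 'http://domain.com').gino.first()`.

```python
class Executor:
    """Stands in for GinoExecutor: offers .first() / .all() for a query."""
    def __init__(self, query):
        self.query = query

    def first(self):
        return f"first row of {self.query.sql}"

class Query:
    def __init__(self, sql):
        self.sql = sql

    @property
    def gino(self):
        # A property: merely accessing `.gino` already returns the executor.
        return Executor(self)

q = Query("SELECT * FROM domains WHERE domain = $1")
print(q.gino.first())  # works: property access, then a method call
try:
    q.gino().first()  # calls the Executor instance itself
except TypeError as exc:
    print(exc)  # 'Executor' object is not callable
```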

[Github Repo](https://github.com/austincollinpena/spell-check-scraper) | closed | 2020-06-03T08:46:19Z | 2020-06-03T15:06:26Z | https://github.com/python-gino/gino/issues/692 | [] | austincollinpena | 4 |

ultralytics/ultralytics | pytorch | 19,708 | Assertion Error | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hellooo,

I fine-tuned the model like this on my images, which have a different size than usual:

```python
from ultralytics import YOLO

model = YOLO('yolo11m-seg.pt')
results = model.train(
    data='data.yaml',
    epochs=100,
    imgsz=2560,
    batch=1,
    device=0,
    lr0=0.01,
    weight_decay=0.0005,
    workers=8
)
```

I then exported my `.pt` model to a TensorRT `.engine` file like this:

`model.export(format="engine")`

```text
with input shape (1, 3, 2560, 2560) BCHW and output shape(s) ((1, 37, 134400), (1, 32, 640, 640)) (129.3 MB)
TensorRT: input "images" with shape(1, 3, 2560, 2560) DataType.FLOAT
TensorRT: output "output0" with shape(1, 37, 134400) DataType.FLOAT
TensorRT: output "output1" with shape(1, 32, 640, 640) DataType.FLOAT
[03/14/2025-18:32:49] [TRT] [I] Local timing cache in use. Profiling results in this builder pass will not be stored.
[03/14/2025-18:34:20] [TRT] [I] Compiler backend is used during engine build.
[03/14/2025-18:36:05] [TRT] [E] Error Code: 9: Skipping tactic 0x001526e231ae2e51 due to exception Cask convolution execution
[03/14/2025-18:37:08] [TRT] [I] [GraphReduction] The approximate region cut reduction algorithm is called.
[03/14/2025-18:37:08] [TRT] [I] Detected 1 inputs and 5 output network tensors.
[03/14/2025-18:37:09] [TRT] [I] Total Host Persistent Memory: 731472 bytes
[03/14/2025-18:37:09] [TRT] [I] Total Device Persistent Memory: 1952256 bytes
[03/14/2025-18:37:09] [TRT] [I] Max Scratch Memory: 1320550400 bytes
[03/14/2025-18:37:09] [TRT] [I] [BlockAssignment] Started assigning block shifts. This will take 363 steps to complete.
[03/14/2025-18:37:09] [TRT] [I] [BlockAssignment] Algorithm ShiftNTopDown took 27.7015ms to assign 13 blocks to 363 nodes requiring 2123367424 bytes.
[03/14/2025-18:37:09] [TRT] [I] Total Activation Memory: 2123366400 bytes
[03/14/2025-18:37:09] [TRT] [I] Total Weights Memory: 105195780 bytes
[03/14/2025-18:37:09] [TRT] [I] Compiler backend is used during engine execution.
[03/14/2025-18:37:09] [TRT] [I] Engine generation completed in 260.223 seconds.
[03/14/2025-18:37:09] [TRT] [I] [MemUsageStats] Peak memory usage of TRT CPU/GPU memory allocators: CPU 9 MiB, GPU 3202 MiB
```

Everything seems to complete correctly, but when I try to run inference, I always get the same error:

```text
assert im.shape == s, f"input size {im.shape} {'>' if self.dynamic else 'not equal to'} max model size {s}"
AssertionError: input size torch.Size([1, 3, 640, 640]) not equal to max model size (1, 3, 2560, 2560)
```
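The exported shapes can be checked with a little arithmetic. This assumes the standard YOLO head strides of 8, 16 and 32, and the usual 32 mask coefficients for segmentation models. For a square input of size n, the number of predictions is (n/8)² + (n/16)² + (n/32)². That gives 134,400 for 2560 (matching `output0`) but 8,400 for 640. Likewise, the mask prototypes are n/4 = 640 pixels wide for a 2560 input, and the 37 channels would be 4 box values + 1 class + 32 mask coefficients.

So the engine appears to be built for exactly 2560×2560, and the 640 input in the error suggests inference is running at the default image size. Passing the training size explicitly (e.g. `model.predict(..., imgsz=2560)`) is the likely remedy, but that is an assumption not verified here.

```python
from typing import Sequence

STRIDES = (8, 16, 32)  # assumption: standard YOLO head strides (P3/P4/P5)
MASK_COEFFS = 32       # assumption: number of mask prototypes in YOLO seg models

def num_predictions(imgsz: int, strides: Sequence[int] = STRIDES) -> int:
    """Total grid cells over all output scales for a square imgsz x imgsz input."""
    return sum((imgsz // s) ** 2 for s in strides)

def proto_size(imgsz: int) -> int:
    """Side length of the mask-prototype output (stride 4)."""
    return imgsz // 4

def num_classes(channels: int, mask_coeffs: int = MASK_COEFFS) -> int:
    """output0 channels = 4 box values + class scores + mask coefficients."""
    return channels - 4 - mask_coeffs

print(num_predictions(2560), proto_size(2560), num_classes(37))  # 134400 640 1
print(num_predictions(640), proto_size(640))                     # 8400 160
```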

### Additional

_No response_ | closed | 2025-03-14T23:15:49Z | 2025-03-15T22:13:59Z | https://github.com/ultralytics/ultralytics/issues/19708 | [

"question",

"segment",

"exports"

] | davidpacios | 5 |

vanna-ai/vanna | data-visualization | 408 | Add No-SQL Database Support | Description: This feature request proposes adding support for No-SQL databases to the vanna project. No-SQL databases are a type of database that is not based on the relational model. They are a good option for storing data that does not fit well into a relational schema, such as JSON data.

Benefits:

- Increased flexibility: No-SQL databases can store a wider variety of data types than relational databases.

- Improved scalability: No-SQL databases can scale more easily than relational databases.

- Reduced complexity: No-SQL databases can simplify the development process by eliminating the need to design and manage relational schemas.

Drawbacks:

- Potential for increased complexity: While No-SQL databases can simplify the development process in some cases, they can also introduce complexity if not used carefully.

- Limited querying capabilities: No-SQL databases may not offer the same level of querying capabilities as relational databases.

Proposed Implementation:

- The vanna project should be extended to support a popular No-SQL database, such as MongoDB or Firebase.

- The project should provide a way for users to connect to their No-SQL database instance.

- The project should provide a way for users to query and manipulate their data in the No-SQL database.

I hope this helps! | open | 2024-05-04T16:33:26Z | 2025-03-20T06:33:58Z | https://github.com/vanna-ai/vanna/issues/408 | [] | shrijayan | 4 |

deeppavlov/DeepPavlov | nlp | 1,239 | Provide an extensive example of API usage in go-bot | The go-bot can query an API to get data ([example](https://github.com/deepmipt/DeepPavlov/blob/master/examples/gobot_extended_tutorial.ipynb)), which lets it conduct a dialog that relies on explicit knowledge.

In the provided example, the bot queries the database to perform read operations. Other kinds of operations (e.g. updates) seem to be possible, but we have no examples of such cases.

**The contribution could follow these steps**:

* investigate the purpose of update queries in goal-oriented datasets

* find a dataset with such API calls or generate an artificial one

* provide an example of the go-bot trained on this dataset and using this data | closed | 2020-06-04T13:50:55Z | 2022-04-06T10:17:34Z | https://github.com/deeppavlov/DeepPavlov/issues/1239 | [

"Documentation",

"code",

"easy"

] | oserikov | 1 |

mithi/hexapod-robot-simulator | plotly | 33 | ❗Some unstable poses are marked as stable | ❗ Some unstable poses are not marked as unstable

<img width="1253" alt="Screen Shot 2020-04-18 at 10 19 55 PM" src="https://user-images.githubusercontent.com/1670421/79640224-f9a1f200-81c2-11ea-9ee0-c18d8d655417.png">
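The usual static-stability criterion for a legged robot is that the center of gravity, projected onto the ground, must lie inside the support polygon (the convex hull of the feet touching the ground). Below is a minimal standard-library sketch of that check. It assumes foot positions and the center of gravity are already projected to (x, y) ground coordinates. The function names are hypothetical, and this is not necessarily how the simulator currently decides stability. A CoG exactly on an edge is treated as unstable.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); > 0 means b is left of the ray o->a."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def convex_hull(points: Sequence[Point]) -> List[Point]:
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and _cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and _cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def is_statically_stable(contacts: Sequence[Point], cog: Point, margin: float = 0.0) -> bool:
    """True if the projected CoG is inside the support polygon and more than
    `margin` away from every edge."""
    hull = convex_hull(contacts)
    if len(hull) < 3:
        return False  # fewer than three non-collinear contacts cannot support the body
    for i in range(len(hull)):
        a, b = hull[i], hull[(i + 1) % len(hull)]
        signed_distance = _cross(a, b, cog) / math.dist(a, b)  # > 0 means inside (CCW hull)
        if signed_distance <= margin:
            return False
    return True

if __name__ == "__main__":
    feet = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0)]  # tripod gait: three feet on the ground
    print(is_statically_stable(feet, cog=(1.0, 1.0)))  # True
    print(is_statically_stable(feet, cog=(3.0, 3.0)))  # False
```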

| closed | 2020-04-11T10:26:25Z | 2020-04-23T15:22:07Z | https://github.com/mithi/hexapod-robot-simulator/issues/33 | [

"bug",

"help wanted",

"PRIORITY"

] | mithi | 1 |

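Johnserf-Seed/TikTokDownload | api | 719 | [BUG] Error Downloading TikTok Posts Due to msToken API Error | ## Detailed description of the bug

When I try to download TikTok posts with the `f2 tk` command, I frequently run into errors. The same setup process works fine for downloading from Douyin.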

## System platform

<details>

<summary>Click to expand</summary>

- **Operating system**: Windows 11

- **Python version**: Python 3.11.1

- **F2 version**: 0.0.1.5

- **Browser**: Firefox

- **Network environment**: United States, no proxy

</details>

## Steps to reproduce

<details>

<summary>Click to expand</summary>

1. Set up the TikTok configuration in the `douyin\Lib\site-packages\f2\conf\app.yaml` file.

2. Run the command `f2 tk --auto-cookie firefox`.

3. Run the command `f2 tk -u https://www.tiktok.com/@xxxx`.

**Configuration file (app.yaml)**:

```yaml
tiktok:
  cookie: ak_bmsc=xxxx; passport_csrf_token=xxxx; passport_csrf_token_default=xxxx; multi_sids=xxxx; cmpl_token=xxxx; passport_auth_status=xxxx; passport_auth_status_ss=xxxx; sid_guard=xxxx; uid_tt=xxxx; uid_tt_ss=xxxx; sid_tt=xxxx; sessionid=xxxx; sessionid_ss=xxxx; ssid_ucp_v1=xxxx; tt-target-idc-sign=xxxx; tt_chain_token=xxxx; bm_sv=xxxx; store-idc=xxxx; ttwid=xxxx; odin_tt=xxxx; msToken=xxxx; tiktok_webapp_theme=light
  cover: false
  desc: false
  folderize: false
  interval: all
  languages: en_US
  max_connections: 5
  max_counts: 0
  max_retries: 4
  max_tasks: 6
  mode: post
  music: false
  naming: '{create}_{aweme_id}_{desc}'
  page_counts: 20
  path: ./Download
  timeout: 6
```

**Error message**:

```plaintext
ERROR msToken API error: msToken content does not meet requirements
INFO Generating a fake msToken
INFO Generating a fake msToken
ERROR Parsing the
https://www.tiktok.com/api/user/detail/?WebIdLastTime=1716643217&aid=1988&app_language=zh-Hans&app_name=tiktok_web&browser_language=zh-CN&browser_name=Mozilla&browser_online=true&browser_platform=Win32&browser_version=5.0%20(Windows%20NT%2010.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36&channel=tiktok_web&cookie_enabled=true&device_id=7306060721837852167&device_platform=web_pc&focus_state=true&from_page=user&history_len=4&is_fullscreen=false&is_page_visible=true&language=zh-Hans&os=windows&priority_region=&referer=&region=SG&root_referer=https://www.tiktok.com/&screen_height=1080&screen_width=1920&webcast_language=zh-Hans&tz_name=Asia/Hong_Kong&msToken=BsX4yCeHR4DH+4Z1kGkzncqJnTIraH3-ZL85kv0lC3us+O04tDhlndLqN7Ff8zZq134EIxtk06RDjnRFvhQRVgIHJFhJDRee+RcSZ8d6dWQ9k22tf5uVDevFoNkO3q43FlszpFZ1X2WzgFM9MP==&secUid=MS4wLjABAAAA1TQUIEMS7ThX22wMrfKDn1G_yIYHRQ4kCM3WxsgDUJbLYN5SExsIbJH_-L5YG-gY&uniqueId=&X-Bogus=DFS...
endpoint JSON failed: Expecting property name enclosed in double quotes: line 2 column 5 (char 6)
ERROR API content request failed, please replace the cookie with a new one and try again
```

**Debug command**:

Please run `f2 -d DEBUG` and attach the log files from the log directory so the logs can be used for diagnosis.

</details>

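## Expected behavior

I expect the posts to download successfully without running into the msToken error.

## Screenshots

If applicable, add screenshots to help explain your problem.

## Log files

Please attach the debug log files to help diagnose the problem.

## Additional information

Any additional information that may help resolve the problem.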

- [x] I have checked the [documentation](https://johnserf-seed.github.io/f2/quick-start.html) and [closed issues](https://github.com/Johnserf-Seed/f2/issues?q=is%3Aissue+is%3Aclosed) for possible solutions.

- [x] I did not find my problem in the [FAQ](https://johnserf-seed.github.io/f2/question-answer/qa.html).

- [x] This issue is public, and I have removed all sensitive information.

- [x] I understand that issues not submitted according to the template will not be prioritized. | closed | 2024-05-25T13:39:14Z | 2024-07-03T00:11:59Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/719 | [

"故障(bug)",

"已确认(confirmed)"

] | iDataist | 4 |

521xueweihan/HelloGitHub | python | 2,844 | [Open-source self-recommendation] ReactPress: a React-based blog & CMS content management system | ## Recommended project

- Project URL: <https://github.com/fecommunity/reactpress>


- Category: JS


- Project title: A React-based blog & CMS content management system


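- Project description: `ReactPress` is an open-source publishing platform built with React. Users can set up their own blog or website on a server that supports React and a MySQL database. `ReactPress` can also be used as a content management system (CMS).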


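- Highlights:

- 📦 Tech stack: built on `React` + `NextJS` + `MySQL 5.7` + `NestJS`

- 🌈 Componentized: follows the interaction language and visual style of the latest `antd 5.20`

- 🌍 Internationalization: switch between Chinese and English, with i18n configuration management

- 🌞 Light/dark themes: switch freely between light and dark mode

- 🖌️ Content authoring: built-in `Markdown` editor for writing articles, plus category and tag management

- 📃 Page management: create custom pages

- 💬 Comment management: manage comments on content

- 📷️ Media management: upload files locally or to `OSS`

- 📱 Mobile: fully adapted to mobile H5 pages

- Example code: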

```bash

$ git clone --depth=1 https://github.com/fecommunity/reactpress.git

$ cd reactpress

$ npm i -g pnpm

$ pnpm i

```

- Screenshots:

- Update plans:

Weekly updates.

| open | 2024-11-11T14:40:52Z | 2024-11-11T14:40:52Z | https://github.com/521xueweihan/HelloGitHub/issues/2844 | [] | fecommunity | 0 |

odoo/odoo | python | 202,218 | [18.0] hr_holidays: on leave refusal, first_approver_id and second_approver_id are wrongly updated | ### Odoo Version

- [ ] 16.0

- [ ] 17.0

- [x] 18.0

- [ ] Other (specify)

### Steps to Reproduce

Given a leave whose `validate_type` is 'both' (validated 'By Employee's Approver and Time Off Officer'):

When the employee's first approver approves the leave and the second approver refuses it,

Then the leave's first_approver_id and second_approver_id are wrong.

When the employee's first approver refuses the leave,

Then the leave's first_approver_id and second_approver_id are wrong.

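```python
def action_refuse(self):
    ...
    validated_holidays = self.filtered(lambda hol: hol.state == 'validate1')
    # Leaves already approved once: the refuser is written to first_approver_id,
    # overwriting the approver who granted the first approval
    validated_holidays.write({'state': 'refuse', 'first_approver_id': current_employee.id})
    # Leaves not yet approved: the refuser (the first approver) is written to second_approver_id
    (self - validated_holidays).write({'state': 'refuse', 'second_approver_id': current_employee.id})
    ...
```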
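Read together, the two scenarios suggest the fields are swapped: when the leave is in `validate1`, the refusal is the second-level decision, and while the leave still awaits its first approval, it is the first-level one. A minimal plain-Python model of that expected behavior, assuming the refuser should be recorded in the approver slot of the stage being decided; `Leave` and `refuse` are hypothetical stand-ins, not the Odoo ORM or an upstream fix:

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Leave:
    """Plain-Python stand-in for an hr.leave record (not the Odoo ORM)."""
    state: str  # in this model: 'confirm' = awaiting first approval, 'validate1' = first approval given
    first_approver_id: Optional[int] = None
    second_approver_id: Optional[int] = None


def refuse(leaves: Iterable[Leave], current_employee_id: int) -> None:
    """Record the refuser in the approver slot of the stage being decided."""
    for leave in leaves:
        if leave.state == 'validate1':
            # First approval already given: keep it; the refuser acts as second approver
            leave.second_approver_id = current_employee_id
        else:
            # Still awaiting first approval ('confirm' in this model): the refuser is the first approver
            leave.first_approver_id = current_employee_id
        leave.state = 'refuse'
```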

### Log Output

```shell

```

### Support Ticket

_No response_ | open | 2025-03-18T05:20:46Z | 2025-03-18T05:20:46Z | https://github.com/odoo/odoo/issues/202218 | [] | dsauvage | 0 |

learning-at-home/hivemind | asyncio | 320 | [Minor] make "could not connect" errors in example more pronounced | In examples/albert, if a peer cannot connect to others, it will print something like:

```

[...][WARN][dht.node.create:234] DHTNode bootstrap failed: none of the initial_peers responded to a ping.

```

That's useful, but nobody will ever see this warning among 100+ lines of other logs (training config, module warnings, etc.).

Let's make this warning into an error? | closed | 2021-07-15T13:10:20Z | 2021-08-20T15:08:42Z | https://github.com/learning-at-home/hivemind/issues/320 | [

"enhancement"

] | justheuristic | 0 |

jupyterlab/jupyter-ai | jupyter | 871 | Jupyter AI plugin schema is never loaded; contains unused cell toolbar and menus | I was trying to add a shortcut for https://github.com/jupyterlab/jupyter-ai/issues/799 and noticed that there is `schema/plugin.json` which contains a cell toolbar button and menu actions:

https://github.com/jupyterlab/jupyter-ai/blob/5183bc9281d81a953b0f360e76b03dc15f3d8987/packages/jupyter-ai/schema/plugin.json#L7-L43

However, these do not show up in the UI because the schema is not correctly hooked up, and these commands are not defined. Is this intended?

I am also confused about the design direction: the original version of the UI did include a dedicated cell toolbar button, but this seems to have changed early on, and I cannot find an issue or PR documenting the rationale for the change. | closed | 2024-07-05T09:24:31Z | 2024-07-08T15:51:15Z | https://github.com/jupyterlab/jupyter-ai/issues/871 | [

"bug"

] | krassowski | 1 |

facebookresearch/fairseq | pytorch | 5,538 | Has anyone got the MMPT example to work? | ## 🐛 Bug

I am running into many dependency errors when trying to use the VideoCLIP model. If anyone has gotten it to work, please share details such as which package versions you have installed.

For context, I'm trying to use the model to check whether a video and a text match, and to get a score for that.
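For reference, the traceback below ends in `ConfigAttributeError: Missing key includes`, raised at `if config.includes is not None:` in `mmpt/utils/load_config.py`. In newer OmegaConf releases (assumed here to be 2.1 and later), attribute access to a missing key raises this error, whereas 2.0 returned `None`, so code written against 2.0 can fail this way. A minimal sketch of a tolerant version of that lookup, using `.get()`, which returns `None` for an absent key on both a plain `dict` and an OmegaConf `DictConfig`; `pop_includes` is a hypothetical helper, not part of MMPT:

```python
from typing import Any, MutableMapping


def pop_includes(config: MutableMapping[str, Any]) -> Any:
    """Remove and return config["includes"], or return None if the key is absent.

    .get() does not raise on a missing key, unlike attribute access
    (config.includes) on a DictConfig in recent OmegaConf releases.
    Works on plain dicts and on DictConfig, which is a MutableMapping.
    """
    includes = config.get("includes")
    if includes is not None:
        config.pop("includes")
    return includes
```

Inside `recursive_config`, `includes = pop_includes(config)` would stand in for the `config.includes` check and the `config.pop("includes")` that follows it.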

I am currently stuck with this error while running the example code:

```

---------------------------------------------------------------------------

ConfigAttributeError Traceback (most recent call last)

Cell In[1], line 6

1 import torch

3 from mmpt.models import MMPTModel

----> 6 model, tokenizer, aligner = MMPTModel.from_pretrained(

7 "projects/retri/videoclip/how2.yaml")

9 model.eval()

12 # B, T, FPS, H, W, C (VideoCLIP is trained on 30 fps of s3d)

File ~/PycharmProjects/fairseq/examples/MMPT/mmpt/models/mmfusion.py:39, in MMPTModel.from_pretrained(cls, config, checkpoint)

37 from ..utils import recursive_config

38 from ..tasks import Task

---> 39 config = recursive_config(config)

40 mmtask = Task.config_task(config)

41 checkpoint_path = os.path.join(config.eval.save_path, checkpoint)

File ~/PycharmProjects/fairseq/examples/MMPT/mmpt/utils/load_config.py:58, in recursive_config(config_path)

56 """allows for stacking of configs in any depth."""

57 config = OmegaConf.load(config_path)

---> 58 if config.includes is not None:

59 includes = config.includes

60 config.pop("includes")

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/dictconfig.py:355, in DictConfig.__getattr__(self, key)

351 return self._get_impl(

352 key=key, default_value=_DEFAULT_MARKER_, validate_key=False

353 )

354 except ConfigKeyError as e:

--> 355 self._format_and_raise(

356 key=key, value=None, cause=e, type_override=ConfigAttributeError

357 )

358 except Exception as e:

359 self._format_and_raise(key=key, value=None, cause=e)

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/base.py:231, in Node._format_and_raise(self, key, value, cause, msg, type_override)

223 def _format_and_raise(

224 self,

225 key: Any,

(...)

229 type_override: Any = None,

230 ) -> None:

--> 231 format_and_raise(

232 node=self,

233 key=key,

234 value=value,

235 msg=str(cause) if msg is None else msg,

236 cause=cause,

237 type_override=type_override,

238 )

239 assert False

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/_utils.py:899, in format_and_raise(node, key, value, msg, cause, type_override)

896 ex.ref_type = ref_type

897 ex.ref_type_str = ref_type_str

--> 899 _raise(ex, cause)

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/_utils.py:797, in _raise(ex, cause)

795 else:

796 ex.__cause__ = None

--> 797 raise ex.with_traceback(sys.exc_info()[2])

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/dictconfig.py:351, in DictConfig.__getattr__(self, key)

348 raise AttributeError()

350 try:

--> 351 return self._get_impl(

352 key=key, default_value=_DEFAULT_MARKER_, validate_key=False

353 )

354 except ConfigKeyError as e:

355 self._format_and_raise(

356 key=key, value=None, cause=e, type_override=ConfigAttributeError

357 )

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/dictconfig.py:442, in DictConfig._get_impl(self, key, default_value, validate_key)

438 def _get_impl(

439 self, key: DictKeyType, default_value: Any, validate_key: bool = True

440 ) -> Any:

441 try:

--> 442 node = self._get_child(

443 key=key, throw_on_missing_key=True, validate_key=validate_key

444 )

445 except (ConfigAttributeError, ConfigKeyError):

446 if default_value is not _DEFAULT_MARKER_:

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/basecontainer.py:73, in BaseContainer._get_child(self, key, validate_access, validate_key, throw_on_missing_value, throw_on_missing_key)

64 def _get_child(

65 self,

66 key: Any,

(...)

70 throw_on_missing_key: bool = False,

71 ) -> Union[Optional[Node], List[Optional[Node]]]:

72 """Like _get_node, passing through to the nearest concrete Node."""

---> 73 child = self._get_node(

74 key=key,

75 validate_access=validate_access,

76 validate_key=validate_key,

77 throw_on_missing_value=throw_on_missing_value,

78 throw_on_missing_key=throw_on_missing_key,

79 )

80 if isinstance(child, UnionNode) and not _is_special(child):

81 value = child._value()

File /opt/anaconda3/envs/test_env_3_9/lib/python3.9/site-packages/omegaconf/dictconfig.py:480, in DictConfig._get_node(self, key, validate_access, validate_key, throw_on_missing_value, throw_on_missing_key)

478 if value is None:

479 if throw_on_missing_key:

--> 480 raise ConfigKeyError(f"Missing key {key!s}")

481 elif throw_on_missing_value and value._is_missing():

482 raise MissingMandatoryValue("Missing mandatory value: $KEY")

ConfigAttributeError: Missing key includes

full_key: includes

object_type=dict

``` | open | 2024-09-05T18:08:36Z | 2024-10-13T07:51:31Z | https://github.com/facebookresearch/fairseq/issues/5538 | [

"bug",

"needs triage"

] | qingy1337 | 1 |

jeffknupp/sandman2 | sqlalchemy | 112 | Does sandman2 support ">" and "<" when querying data? | ### Environment

MySQL 8.0

sandman2 1.2.1

pymysql 0.9.3

Postman 7.2.2

Operating system: win7 x64

### Description of issue

**step1 create table and insert data**

Create the database pspace, create the table t_areainfo, and insert data into the table, like this:

"create database if not exists pspace;

create table if not exists pspace.t_areainfo(

id int primary key,

level int,

name varchar(255),

parentId int,

status int

);

insert into pspace.t_areainfo values(1, 0, 'aaa', 0, 0),(2, 0, 'bbb', 1, 0),(3, 0, 'ccc', 1, 0),(4, 0, 'ddd', 2, 0);"

**step2 start sandman2ctl then use postman query data**

Start sandman2ctl in cmd like this: "sandman2ctl mysql+pymysql://admin:juan@localhost/pspace".

Then query the data in Postman with a GET request to "127.0.0.1:5000/t_areainfo/"; this works well.

**step3 query data with GET using "<"**

A GET request to "127.0.0.1:5000/t_areainfo/?id<3" returns this message:

"{

"message": "Invalid field [id<3]"

}"

Is this a bug, or is it not supported? Thanks. | closed | 2019-07-11T06:35:48Z | 2019-07-22T07:33:35Z | https://github.com/jeffknupp/sandman2/issues/112 | [] | abcweizhuo | 1 |

graphdeco-inria/gaussian-splatting | computer-vision | 1,151 | Why aren't the camera's intrinsic parameters (cx, cy) adjusted with image resolution? | When the image resolution is reduced by a factor of 2, 4, or 8, why are the camera's intrinsic parameters, especially cx and cy, not adjusted accordingly?
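For reference, this is how pinhole intrinsics change under resizing, for any renderer that actually uses cx and cy: downscaling by a factor s divides fx, fy, cx and cy by s, since all four are measured in pixels. If a renderer builds its projection only from field-of-view angles and assumes the principal point is the image center, cx and cy never enter it, so whether 3DGS needs this depends on its camera code (an assumption to check, not something stated here). A minimal sketch; `Intrinsics` and `downscale_intrinsics` are hypothetical names:

```python
from typing import NamedTuple


class Intrinsics(NamedTuple):
    fx: float
    fy: float
    cx: float
    cy: float


def downscale_intrinsics(k: Intrinsics, factor: float, half_pixel: bool = False) -> Intrinsics:
    """Intrinsics for an image resized by 1/factor in both dimensions.

    half_pixel=False: pixel (0, 0) covers [0, 1) x [0, 1), so every coordinate scales by 1/factor.
    half_pixel=True: pixel centers sit at integer coordinates (OpenCV convention),
    so the principal point is shifted by half a pixel before and after scaling.
    """
    s = 1.0 / factor
    if half_pixel:
        cx = (k.cx + 0.5) * s - 0.5
        cy = (k.cy + 0.5) * s - 0.5
    else:
        cx, cy = k.cx * s, k.cy * s
    return Intrinsics(k.fx * s, k.fy * s, cx, cy)
```

The two conventions differ by less than half a pixel in the principal point, which matters mostly at the smallest resolutions.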

| open | 2025-02-03T16:43:58Z | 2025-03-04T08:34:51Z | https://github.com/graphdeco-inria/gaussian-splatting/issues/1151 | [] | scott198510 | 1 |

strawberry-graphql/strawberry | fastapi | 3,349 | When I run with python3, an ImportError occurs | ImportError: cannot import name 'GraphQLError' from 'graphql'

## Describe the Bug

It works well when run with `poetry run app.main:main`.

However, running `python3 app/main.py` raises the following ImportError.

_**Line where the error occurs**_

<img width="849" alt="image" src="https://github.com/strawberry-graphql/strawberry/assets/10377550/713ab6b4-76c1-4b0d-84e1-80903f8855ea">

**_Traceback_**

```bash

Traceback (most recent call last):

File "<frozen importlib._bootstrap>", line 1176, in _find_and_load

File "<frozen importlib._bootstrap>", line 1147, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 690, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 940, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "/Users/evanhwang/dev/ai-hub/hub-api/app/bootstrap/admin/bootstrapper.py", line 4, in <module>

from app.bootstrap.admin.router import AdminRouter

File "/Users/evanhwang/dev/ai-hub/hub-api/app/bootstrap/admin/router.py", line 3, in <module>

import strawberry

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/__init__.py", line 1, in <module>

from . import experimental, federation, relay

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/federation/__init__.py", line 1, in <module>

from .argument import argument

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/federation/argument.py", line 3, in <module>

from strawberry.arguments import StrawberryArgumentAnnotation

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/arguments.py", line 18, in <module>

from strawberry.annotation import StrawberryAnnotation

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/annotation.py", line 23, in <module>

from strawberry.custom_scalar import ScalarDefinition

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/custom_scalar.py", line 19, in <module>

from strawberry.exceptions import InvalidUnionTypeError

File "/Users/evanhwang/Library/Caches/pypoetry/virtualenvs/hub-api-UM7sgzi1-py3.11/lib/python3.11/site-packages/strawberry/exceptions/__init__.py", line 6, in <module>

from graphql import GraphQLError

ImportError: cannot import name 'GraphQLError' from 'graphql' (/Users/evanhwang/dev/ai-hub/hub-api/app/graphql/__init__.py)

```
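The last frame shows `from graphql import GraphQLError` resolving to `/Users/evanhwang/dev/ai-hub/hub-api/app/graphql/__init__.py`, the project's own `app/graphql` package, rather than the installed `graphql-core` library. A likely explanation: `python3 app/main.py` puts the script's directory (`app/`) first on `sys.path`, so a local `graphql` package there shadows the library, whereas the Poetry entry point does not add `app/` to the path. A minimal sketch for checking which file a top-level import resolves to; `module_origin` is a hypothetical helper:

```python
import importlib.util
import sys
from typing import Optional


def module_origin(name: str) -> Optional[str]:
    """Return the file a top-level `import name` would load, or None if it is not found."""
    spec = importlib.util.find_spec(name)
    return spec.origin if spec is not None else None


if __name__ == "__main__":
    # sys.path[0] is the directory of the script being run (e.g. app/ for `python3 app/main.py`)
    print("sys.path[0] =", sys.path[0])
    print("graphql ->", module_origin("graphql"))
```

If the second line points into `app/graphql`, renaming that package, or starting the app as a module (`python3 -m app.main`, which puts the current directory rather than `app/` on `sys.path`), are the usual ways to avoid the clash.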

## System Information

- Operating System: Mac Ventura 13.5.1(22G90)

- Strawberry Version (if applicable):

Entered in pyproject.toml as follows:

```bash

strawberry-graphql = {extras = ["debug-server", "fastapi"], version = "^0.217.1"}

```

**_pyproject.toml_**

```toml

##############################################################################

# Poetry dependency settings

# - https://python-poetry.org/docs/managing-dependencies/#dependency-groups

# - Dependencies are looked up on PyPI by default.

##############################################################################

[tool.poetry.dependencies]

python = "3.11.*"

fastapi = "^0.103.2"

uvicorn = "^0.23.2"

poethepoet = "^0.24.0"

requests = "^2.31.0"

poetry = "^1.6.1"

sqlalchemy = "^2.0.22"

sentry-sdk = "^1.32.0"

pydantic-settings = "^2.0.3"

psycopg2-binary = "^2.9.9"

cryptography = "^41.0.4"

python-ulid = "^2.2.0"

ulid = "^1.1"

redis = "^5.0.1"

aiofiles = "^23.2.1"

pyyaml = "^6.0.1"

python-jose = "^3.3.0"

strawberry-graphql = {extras = ["debug-server", "fastapi"], version = "^0.217.1"}

[tool.poetry.group.dev.dependencies]

pytest = "^7.4.0"

pytest-mock = "^3.6.1"

httpx = "^0.24.1"

poetry = "^1.5.1"

sqlalchemy = "^2.0.22"

redis = "^5.0.1"

mypy = "^1.7.0"

types-aiofiles = "^23.2.0.0"

types-pyyaml = "^6.0.12.12"

commitizen = "^3.13.0"

black = "^23.3.0" # formatter

isort = "^5.12.0" # import sorting

pycln = "^2.1.5" # remove unused imports

ruff = "^0.0.275" # linting

##############################################################################

# poethepoet

# - https://github.com/nat-n/poethepoet

# - Task runner configuration via poe

##############################################################################

types-requests = "^2.31.0.20240106"

pre-commit = "^3.6.0"

[tool.poe.tasks.format-check-only]

help = "Check without formatting with 'pycln', 'black', 'isort'."

sequence = [

{cmd = "pycln --check ."},

{cmd = "black --check ."},

{cmd = "isort --check-only ."}

]

[tool.poe.tasks.format]

help = "Run formatter with 'pycln', 'black', 'isort'."

sequence = [

{cmd = "pycln -a ."},

{cmd = "black ."},

{cmd = "isort ."}

]

[tool.poe.tasks.lint]

help = "Run linter with 'ruff'."

cmd = "ruff ."

[tool.poe.tasks.type-check]

help = "Run type checker with 'mypy'"

cmd = "mypy ."

[tool.poe.tasks.clean]

help = "Clean mypy_cache, pytest_cache, pycache..."

cmd = "rm -rf .coverage .mypy_cache .pytest_cache **/__pycache__"

##############################################################################

# isort

# - https://pycqa.github.io/isort/

# - Settings for the Python import-sorting module

##############################################################################

[tool.isort]

profile = "black"

##############################################################################

# ruff

# - https://github.com/astral-sh/ruff

# - A Rust-based formatter and linter.

##############################################################################

[tool.ruff]

select = [

"E", # pycodestyle errors

"W", # pycodestyle warnings

"F", # pyflakes

"C", # flake8-comprehensions

"B", # flake8-bugbear

# "T20", # flake8-print

]

ignore = [

"E501", # line too long, handled by black

"E402", # module level import not at top of file

"B008", # do not perform function calls in argument defaults

"C901", # too complex

]

[tool.commitizen]

##############################################################################

# mypy settings

# - https://mypy.readthedocs.io/en/stable/

# - Performs static type checking.

##############################################################################

[tool.mypy]

python_version = "3.11"

packages=["app"]

exclude=["tests"]

ignore_missing_imports = true

show_traceback = true

show_error_codes = true

disable_error_code="misc, attr-defined"

follow_imports="skip"

#strict = false

# The following options are included in --strict.

warn_unused_configs = true # Warns about per-module [mypy-<pattern>] config sections that match no processed files. (This requires turning off incremental mode with --no-incremental.)

disallow_any_generics = false # Disallows generic types without explicit type parameters. For example, a bare x: list is not allowed; you must always write something explicit such as x: list[int].

disallow_subclassing_any = true # Reports an error when a class subclasses a value of type Any. This can happen when the base class is imported from a module that doesn't exist (with --ignore-missing-imports) or when the import statement has a # type: ignore comment.

disallow_untyped_calls = true # Reports an error when a function with type annotations calls a function defined without type annotations.

disallow_untyped_defs = false # Reports function definitions that have no type annotations or incomplete ones. (A superset of --disallow-incomplete-defs.)

disallow_incomplete_defs = false # Reports partly annotated function definitions, while still allowing entirely unannotated ones.

check_untyped_defs = true # Always type-checks the bodies of unannotated functions. (By default, bodies of unannotated functions are not type-checked.) All parameters are treated as Any and the return type is always inferred as Any.

disallow_untyped_decorators = true # Reports an error when a typed function is decorated with an untyped decorator.

warn_redundant_casts = true # Reports unnecessary casts, i.e. casts that can safely be removed.

warn_unused_ignores = false # Warns about # type: ignore comments on lines that don't actually produce an error.

warn_return_any = false # Warns when a function declared with a non-Any return type returns a value of type Any.

no_implicit_reexport = true # By default, values imported into a module are treated as exported, so mypy lets other modules import them. With this flag, they are not re-exported unless imported with from-as or listed in __all__.

strict_equality = true # By default mypy allows always-false comparisons such as 42 == 'no'. This flag prohibits them and also reports identity and container checks between non-overlapping types.

extra_checks = true # Enables additional checks that are technically correct but may be inconvenient in real code. In particular, it prohibits partial overlap in TypedDict updates and makes arguments prepended via Concatenate positional-only.

# Without the pydantic plugin, spurious type errors may be reported

# - https://www.twoistoomany.com/blog/2023/04/12/pydantic-mypy-plugin-in-pyproject/

plugins = ["pydantic.mypy", "strawberry.ext.mypy_plugin"]

##############################################################################

# Build system settings

##############################################################################

[build-system]

requires = ["poetry-core"]

build-backend = "poetry.core.masonry.api"

##############################################################################

# virtualenv settings

# - When a poetry command runs in the project and no venv exists, create one at the '.venv' path

##############################################################################

[virtualenvs]

create = true

in-project = true

path = ".venv"

```

## Additional Context

- I already ran `Invalidate Caches` in PyCharm. | closed | 2024-01-19T02:17:47Z | 2025-03-20T15:56:34Z | https://github.com/strawberry-graphql/strawberry/issues/3349 | [] | evan-hwang | 6 |

mckinsey/vizro | data-visualization | 991 | Allow horizontally-aligned radio items | ### Which package?

vizro

### What's the problem this feature will solve?

[dbc.RadioItems](https://dash-bootstrap-components.opensource.faculty.ai/docs/components/input/) on which `vm.RadioItems` is based allows horizontally-aligned radio items through the `inline` option.

### Describe the solution you'd like

Allow `vm.RadioItems` to take an `inline` option and propagate it to `dbc.RadioItems`.

### Code of Conduct

- [x] I agree to follow the [Code of Conduct](https://github.com/mckinsey/vizro/blob/main/CODE_OF_CONDUCT.md). | closed | 2025-02-04T16:39:06Z | 2025-03-24T20:03:57Z | https://github.com/mckinsey/vizro/issues/991 | [

"Feature Request :nerd_face:"

] | gtauzin | 11 |

pydata/xarray | numpy | 9,877 | infer_freq() doesn't recognize monthly output if the time dimension is the middle of each month | ### What happened?

CESM used to write the time dimension of its output files at the end of the averaging period, so for monthly output the following would hold:

* January averages would have a time dimension of midnight on February 1

* February averages would have a time dimension of midnight on March 1

* etc

The version currently being developed uses the middle of the averaging period, so

* January averages now have a time dimension of noon on January 16 (15.5 days into a 31 day month)

* February averages now have a time dimension of midnight on February 15 (14 days into a 28 day month)

* etc

Some of our diagnostic packages (https://geocat-comp.readthedocs.io/en/latest/user_api/generated/geocat.comp.climatologies.climatology_average.html) require uniformly spaced data and rely on `xr.infer_freq()` to enforce that. `infer_freq()` recognizes Feb 1, March 1, April 1, ... as monthly but does not do the same for January 16 (12:00), Feb 15, March 16 (12:00), April 16, ...

### What did you expect to happen?

It would be great if `infer_freq()` could recognize a time dimension of monthly mid-points as having a monthly frequency
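As a stopgap until `infer_freq()` handles mid-month stamps, a check that counts calendar months sidesteps the day of the month entirely. A minimal sketch, assuming the time values expose `.year` and `.month` (as `datetime.datetime` and `cftime` datetimes do); `is_monthly` is a hypothetical helper, not xarray or geocat-comp API, and it only tests for consecutive calendar months, not the full range of frequencies `infer_freq()` recognizes:

```python
from datetime import datetime
from typing import Any, Sequence


def is_monthly(times: Sequence[Any]) -> bool:
    """True if each timestamp falls in the calendar month right after the previous one.

    Only .year and .month are inspected, so month-start, month-end and mid-month
    stamps all count as monthly.
    """
    if len(times) < 2:
        return False  # a single timestamp has no spacing to check
    month_index = [t.year * 12 + (t.month - 1) for t in times]
    return all(b - a == 1 for a, b in zip(month_index, month_index[1:]))


if __name__ == "__main__":
    mid = [datetime(1, 1, 16, 12), datetime(1, 2, 15), datetime(1, 3, 16, 12)]
    print(is_monthly(mid))  # True
```

With the MVCE below, `is_monthly(list(mid_month.values))` should apply the same check to the decoded `noleap` times.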

### Minimal Complete Verifiable Example

```Python

import numpy as np

import xarray as xr

month_bounds = np.array([0., 31., 59., 90., 120., 151., 181., 212., 243., 273., 304., 334., 365.])

mid_month = xr.decode_cf(xr.DataArray(0.5*(month_bounds[:-1] + month_bounds[1:]), attrs={'units': 'days since 0001-01-01 00:00:00', 'calendar': 'noleap'}).to_dataset(name='time'))['time']

end_month = xr.decode_cf(xr.DataArray(month_bounds[1:], attrs={'units': 'days since 0001-01-01 00:00:00', 'calendar': 'noleap'}).to_dataset(name='time'))['time']

print(f'infer_freq(mid_month) = {xr.infer_freq(mid_month)}') # None

print(f'infer_freq(end_month) = {xr.infer_freq(end_month)}') # 'MS'

```

### MVCE confirmation

- [X] Minimal example — the example is as focused as reasonably possible to demonstrate the underlying issue in xarray.

- [X] Complete example — the example is self-contained, including all data and the text of any traceback.

- [X] Verifiable example — the example copy & pastes into an IPython prompt or [Binder notebook](https://mybinder.org/v2/gh/pydata/xarray/main?urlpath=lab/tree/doc/examples/blank_template.ipynb), returning the result.

- [X] New issue — a search of GitHub Issues suggests this is not a duplicate.

- [X] Recent environment — the issue occurs with the latest version of xarray and its dependencies.

### Relevant log output

```Python

>>> print(f'infer_freq(mid_month) = {xr.infer_freq(mid_month)}') # None

infer_freq(mid_month) = None

>>> print(f'infer_freq(end_month) = {xr.infer_freq(end_month)}') # 'MS'

infer_freq(end_month) = MS

```

### Anything else we need to know?

I'm not familiar enough with `xarray` to be able to offer up a solution, but I figured logging the issue was a good first step. Sorry I can't do more!

### Environment

<details>

INSTALLED VERSIONS

------------------

commit: None

python: 3.12.8 | packaged by conda-forge | (main, Dec 5 2024, 14:24:40) [GCC 13.3.0]

python-bits: 64

OS: Linux

OS-release: 5.14.21-150400.24.18-default

machine: x86_64

processor: x86_64

byteorder: little

LC_ALL: en_US.UTF-8

LANG: en_US.UTF-8

LOCALE: ('en_US', 'UTF-8')

libhdf5: None

libnetcdf: None

xarray: 2024.11.0

pandas: 2.2.3

numpy: 2.2.0

scipy: None

netCDF4: None

pydap: None

h5netcdf: None

h5py: None

zarr: None

cftime: 1.6.4

nc_time_axis: None

iris: None

bottleneck: None

dask: None

distributed: None

matplotlib: None

cartopy: None

seaborn: None

numbagg: None

fsspec: None

cupy: None

pint: None

sparse: None

flox: None

numpy_groupies: None

setuptools: 75.6.0

pip: 24.3.1

conda: None

pytest: None

mypy: None

IPython: None

sphinx: None

</details>

| open | 2024-12-11T22:31:47Z | 2024-12-11T22:31:47Z | https://github.com/pydata/xarray/issues/9877 | [

"bug",

"needs triage"

] | mnlevy1981 | 0 |

joeyespo/grip | flask | 121 | Perhaps it would be nice to have a WYSIWYG option | It would be nice to be able to edit the page on localhost and have the changes pushed back to the README file once done.

I'm not sure whether it is possible, or whether it would be an improvement, but I thought I'd throw it out there.

| closed | 2015-05-23T08:50:57Z | 2015-05-23T16:30:46Z | https://github.com/joeyespo/grip/issues/121 | [

"out-of-scope"

] | kootenpv | 1 |

wkentaro/labelme | deep-learning | 1,495 | Raster artifacts and ghosting appear when adjusting brightness and contrast in labelme v5.5 | ### Provide environment information

python 3.8.19

### What OS are you using?

Windows 10

### Describe the Bug

When I adjust brightness and contrast, raster artifacts and ghosting appear. The contrast does change, but the image is obscured by the raster pattern and overlapping copies, and going back to the default values does not undo it; I have to reopen the file to get it back to normal. Nothing is printed on the console.

The images are a series of 32-bit .png images, 1616x970 in size.

labelme: 5.5.0

### Expected Behavior

_No response_

### To Reproduce

_No response_ | open | 2024-09-19T02:20:25Z | 2024-09-19T02:27:36Z | https://github.com/wkentaro/labelme/issues/1495 | [

"issue::bug"

] | Downsiren | 1 |

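AirtestProject/Airtest | automation | 493 | opencv-contrib-python version issue: "There is no SIFT module in your OpenCV environment!" | (Please fill this in following the prompts below; it helps us locate and solve the problem quickly. Thanks for your cooperation. Otherwise the issue will be closed directly.)

**(Important! Issue category)**

* Problems using the AirtestIDE test development environment -> https://github.com/AirtestProject/AirtestIDE/issues

* Widget recognition, UI hierarchy tree, or poco library errors -> https://github.com/AirtestProject/Poco/issues

* Image recognition or device control problems -> follow the steps below

**Describe the bug**

(Briefly and clearly summarize the problem you ran into, or paste the error traceback.)

Running an airtest script on Linux fails with "There is no SIFT module in your OpenCV environment!"

Following https://github.com/AirtestProject/Airtest/issues/377, I tried to install opencv-contrib-python 3.2.0.7. It installs on some Linux machines, but on others it reports "ERROR: airtest 1.0.24 has requirement opencv-contrib-python==3.4.2.17, but you'll have opencv-contrib-python 3.2.0.7 which is incompatible."

In the environments where opencv-contrib-python==3.2.0.7 does install, the "There is no SIFT module in your OpenCV environment!" error indeed no longer appears.

Why does installing opencv-contrib-python==3.2.0.7 report an error in some environments?

(Neither Python 2 nor Python 3 works.)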

```
Collecting opencv-contrib-python==3.2.0.7
Downloading https://files.pythonhosted.org/packages/34/70/323020070a925c75d53042923265807c7915181921e0484911249f1d3336/opencv_contrib_python-3.2.0.7-cp27-cp27m-win_amd64.whl (28.4MB)
|████████████████████████████████| 28.4MB 7.3MB/s
Requirement already satisfied: numpy>=1.11.1 in c:\python27\lib\site-packages (from opencv-contrib-python==3.2.0.7) (1.16.4)
ERROR: airtest 1.0.24 has requirement opencv-contrib-python==3.4.2.17, but you'll have opencv-contrib-python 3.2.0.7 which is incompatible.
Installing collected packages: opencv-contrib-python
Successfully installed opencv-contrib-python-3.2.0.7
```
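The pip log above ends with `Successfully installed opencv-contrib-python-3.2.0.7`, so the `ERROR:` line appears to be pip reporting a conflict with airtest 1.0.24's pinned `opencv-contrib-python==3.4.2.17`, not a failed install. To see whether a given environment can actually create a SIFT detector, here is a minimal sketch; it assumes SIFT is exposed as `cv2.xfeatures2d.SIFT_create` in contrib 3.x builds and as `cv2.SIFT_create` from OpenCV 4.4 on, and `sift_available` is a hypothetical helper, not part of airtest. It avoids type annotations so it also runs on Python 2.7:

```python
def sift_available():
    """Return True if this OpenCV build can actually create a SIFT detector."""
    try:
        import cv2
    except ImportError:
        return False  # OpenCV is not installed at all
    factories = (
        getattr(cv2, "SIFT_create", None),  # main module (assumed OpenCV >= 4.4)
        getattr(getattr(cv2, "xfeatures2d", None), "SIFT_create", None),  # contrib 3.x builds
    )
    for factory in factories:
        if factory is None:
            continue
        try:
            factory()
            return True
        except cv2.error:
            continue  # present, but built without the non-free module
    return False


if __name__ == "__main__":
    print("SIFT available: %s" % sift_available())
```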

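**Screenshots**

(Paste screenshots of the problem, if any.)

(For image- or device-related problems in AirtestIDE, paste the relevant error output from the AirtestIDE console window.)

**Steps to reproduce**

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

(What you expected to get or see.)

**Python version:** `python2.7`

**airtest version:** `1.0.24`

> The airtest version can be found with the `pip freeze` command.

**Device:**

- Model: [e.g. google pixel 2]

- OS: [e.g. Android 8.1]

- (Other information)

**Other relevant environment information**

(Other runtime environments, e.g. fails on Linux Ubuntu 16.04 but works on Windows.)

Fails on Linux Ubuntu 16.04; works on Windows with opencv-contrib-python==3.4.2.17. Installing opencv-contrib-python==3.2.0.7 causes problems on both Linux and Windows.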

| open | 2019-08-12T13:41:51Z | 2019-08-13T01:43:04Z | https://github.com/AirtestProject/Airtest/issues/493 | [] | SHUJIAN01 | 1 |

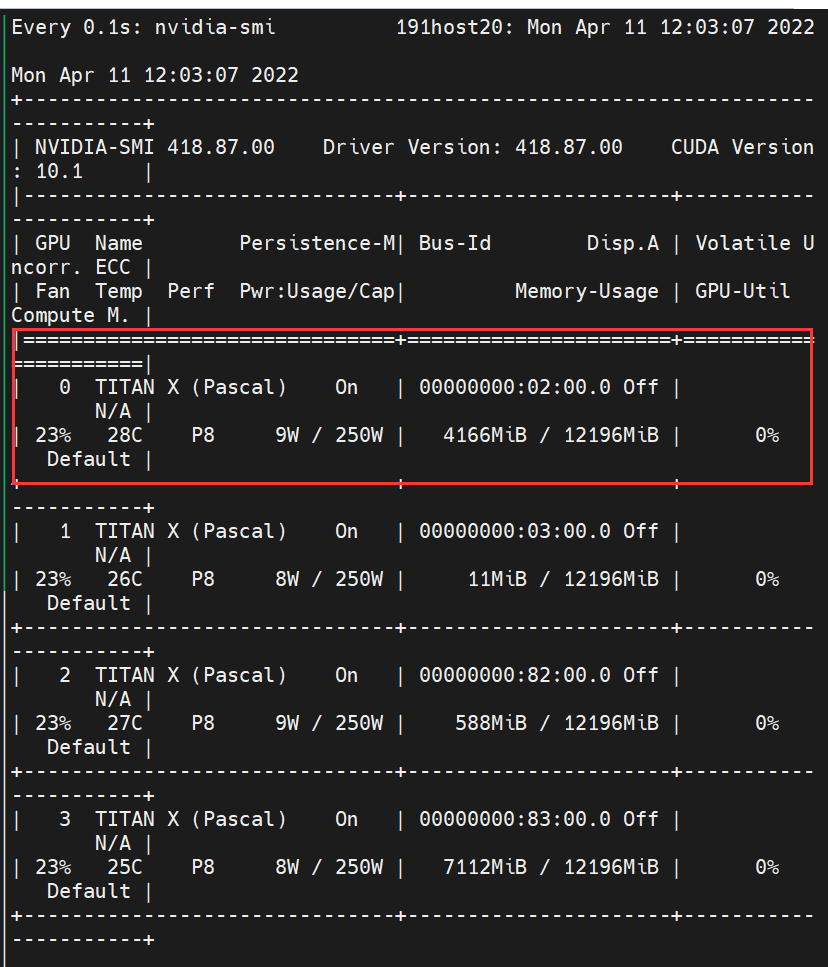

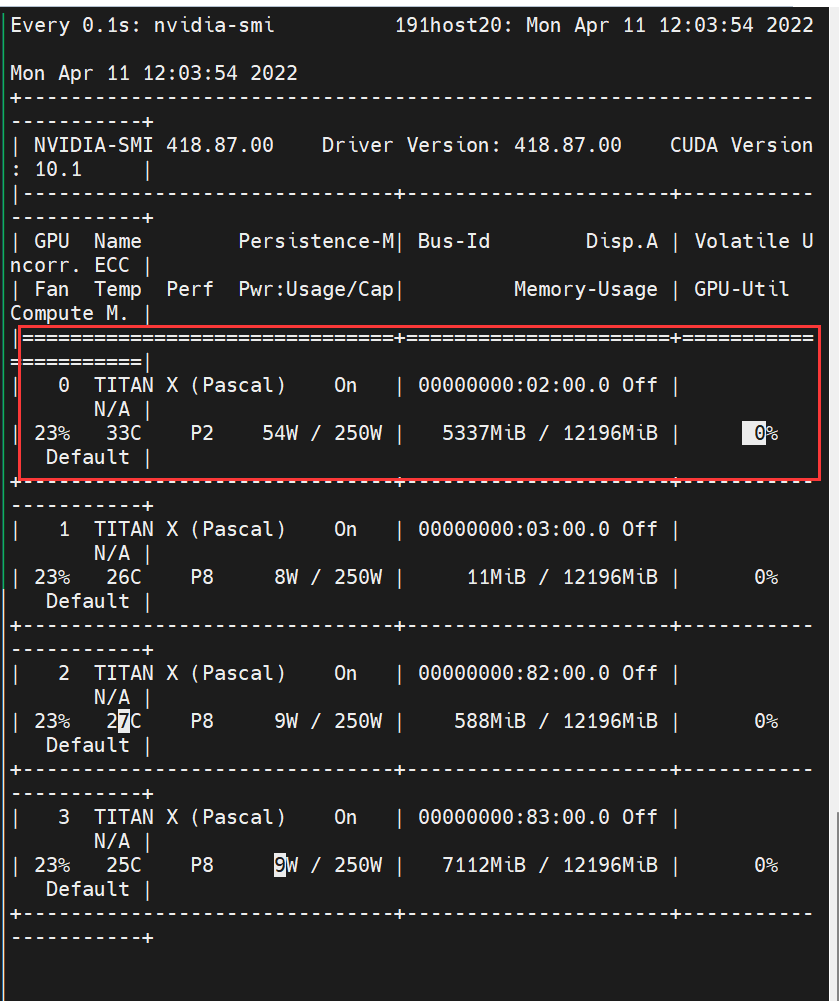

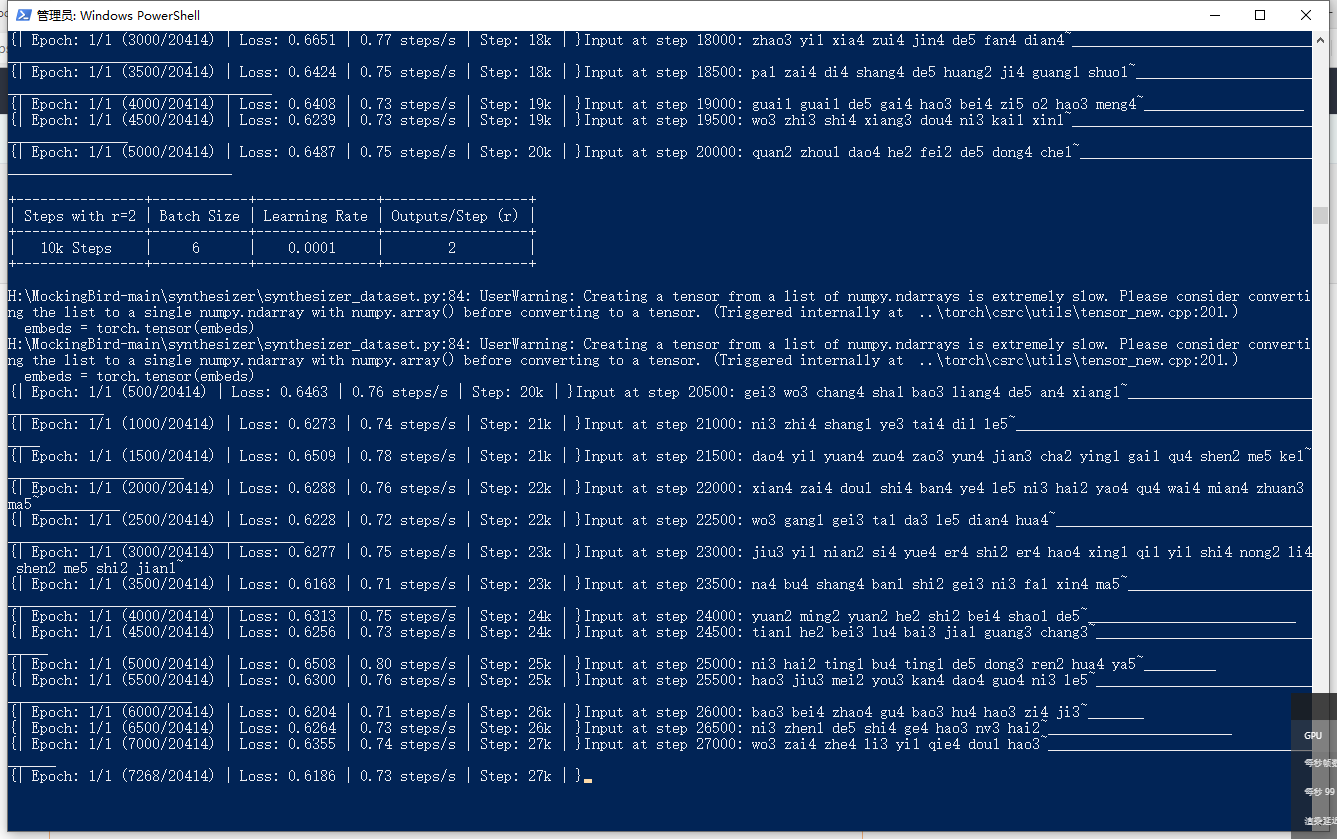

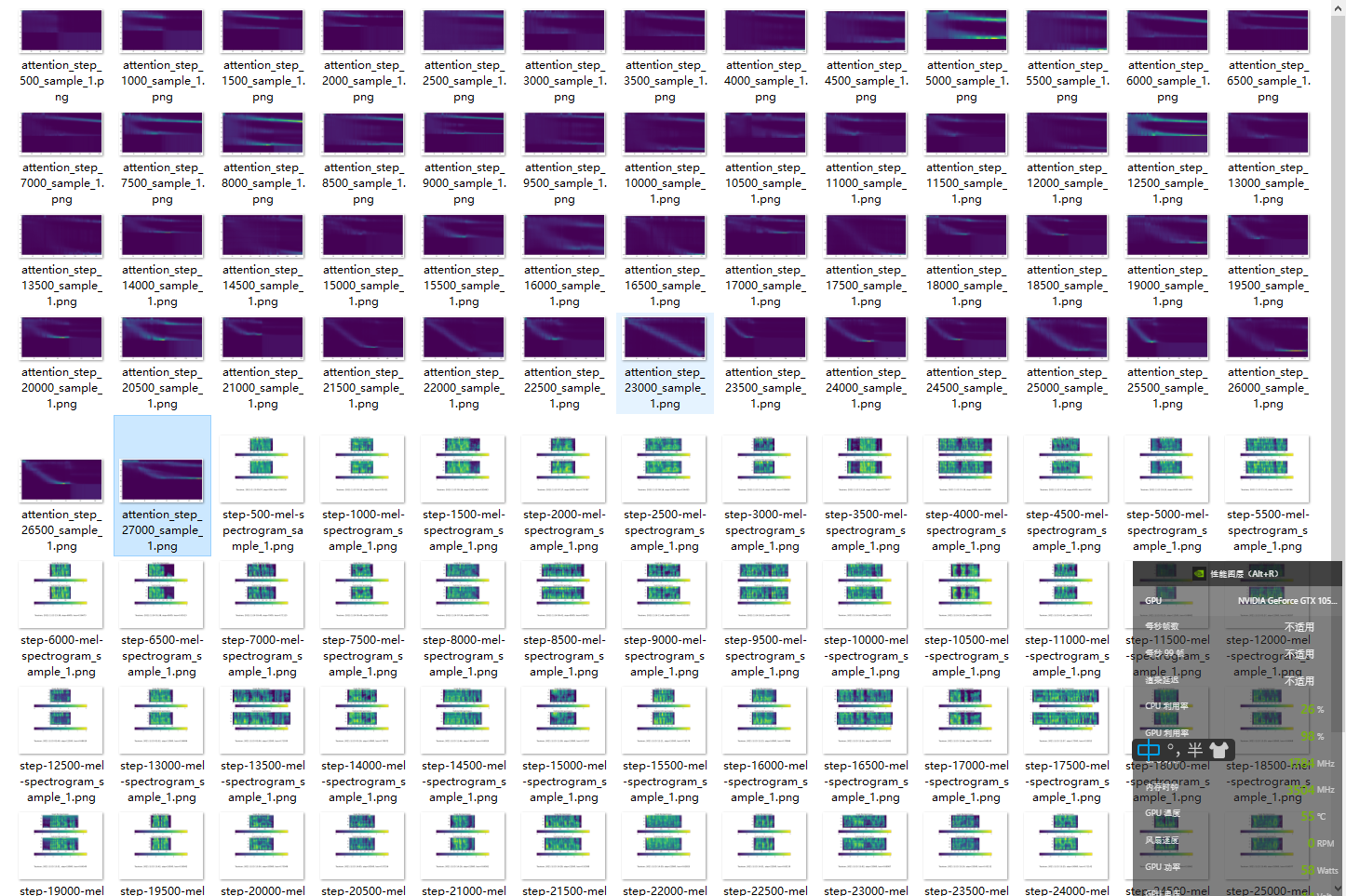

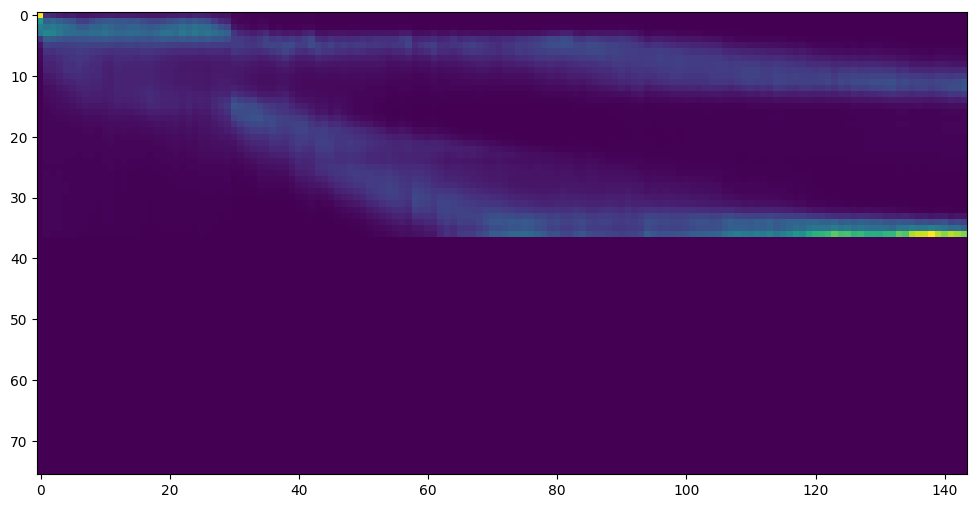

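babysor/MockingBird | deep-learning | 234 | At 25k training steps the attention line still hasn't appeared, and an extra file showed up. Is this normal? | At 25k training steps the attention line still hasn't appeared, and an extra file showed up. Is this normal?

Is this expected, or did I do something wrong?

My CPU is an i5-9400F and my GPU is a 1505ti. The GPU-related value (the batch size in the schedule below) can only be set as high as 6, and GPU utilization already fluctuates between 80% and 100%.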

    # Tacotron Training
    tts_schedule = [(2, 1e-3, 10_000, 6),   # Progressive training schedule
                    (2, 5e-4, 15_000, 6),   # (r, lr, step, batch_size)
                    (2, 2e-4, 20_000, 6),   # (r, lr, step, batch_size)
                    (2, 1e-4, 30_000, 6),   #
                    (2, 5e-5, 40_000, 6),   #
                    (2, 1e-5, 60_000, 6),   #
                    (2, 5e-6, 160_000, 6),  # r = reduction factor (# of mel frames
                    (2, 3e-6, 320_000, 6),  # synthesized for each decoder iteration)
                    (2, 1e-6, 640_000, 6)], # lr = learning rate
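Reading the schedule above: assuming, as in the Real-Time-Voice-Cloning trainer that MockingBird is derived from, that stages run in order and each tuple's `step` is the global step at which that stage ends, the stage in effect at 25k steps is `(2, 1e-4, 30_000, 6)`. A minimal sketch for looking this up; `active_stage` and `TTS_SCHEDULE` are hypothetical names, with the values copied from the schedule above:

```python
from typing import List, Optional, Tuple

# (r, lr, step, batch_size); step is assumed to be the global step at which the stage ends
Stage = Tuple[int, float, int, int]

TTS_SCHEDULE: List[Stage] = [
    (2, 1e-3, 10_000, 6), (2, 5e-4, 15_000, 6), (2, 2e-4, 20_000, 6),
    (2, 1e-4, 30_000, 6), (2, 5e-5, 40_000, 6), (2, 1e-5, 60_000, 6),
    (2, 5e-6, 160_000, 6), (2, 3e-6, 320_000, 6), (2, 1e-6, 640_000, 6),
]


def active_stage(schedule: List[Stage], step: int) -> Optional[Stage]:
    """Return the stage in effect at `step`, or None once the last stage has ended."""
    for stage in schedule:
        if step < stage[2]:
            return stage
    return None


if __name__ == "__main__":
    print(active_stage(TTS_SCHEDULE, 25_000))  # (2, 0.0001, 30000, 6)
```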

Any pointers from the experts would be much appreciated, thanks! | open | 2021-11-25T08:53:44Z | 2022-05-27T13:22:16Z | https://github.com/babysor/MockingBird/issues/234 | [] | yemaohaker | 13 |

ultralytics/ultralytics | computer-vision | 18,920 | Validation of YOLO pretrained COCO on custom Dataset - Zero metrics | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hello, I want to evaluate the YOLO model pre-trained on COCO on my custom dataset.

My test dataset contains only one labeled class: the COCO `boat` class.

My YAML file is as follows:

```yaml
path: N:/IA/data_2024/split_coco_to_data
train:
val: images/test
test:

# Classes
names:
  0: person
  1: bicycle
  2: car
  3: motorcycle
  4: airplane
  5: bus
  6: train
  7: truck
  8: boat
  9: traffic light
  10: fire hydrant
  11: stop sign
  12: parking meter
  13: bench
  14: bird
  15: cat
  16: dog
  17: horse
  18: sheep
  19: cow
  20: elephant
  21: bear
  22: zebra
  23: giraffe
  24: backpack
  25: umbrella
  26: handbag
  27: tie
  28: suitcase
  29: frisbee
  30: skis
  31: snowboard
  32: sports ball
  33: kite
  34: baseball bat
  35: baseball glove
  36: skateboard
  37: surfboard
  38: tennis racket
  39: bottle
  40: wine glass
  41: cup
  42: fork
  43: knife
  44: spoon
  45: bowl
  46: banana
  47: apple
  48: sandwich
  49: orange
  50: broccoli
  51: carrot
  52: hot dog
  53: pizza
  54: donut
  55: cake
  56: chair
  57: couch
  58: potted plant
  59: bed
  60: dining table
  61: toilet
  62: tv
  63: laptop
  64: mouse
  65: remote
  66: keyboard
  67: cell phone
  68: microwave
  69: oven
  70: toaster
  71: sink
  72: refrigerator
  73: book
  74: clock
  75: vase
  76: scissors
  77: teddy bear
  78: hair drier
  79: toothbrush
```

**Since I don't have the COCO training data, I'm leaving the `train` path in the YAML file blank.**

I get the following result when I try to print the metrics:

```
[]
```

I do get the test images with the predictions, as well as the `predictions.json` file containing all the predictions.

However, I don't get any output metrics. They all stay at their default value of 0. I don't understand where the error comes from, since my YAML file and my whole folder tree are correctly defined.
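One cause worth ruling out when validation reports zero metrics is that the ground-truth labels are not being found or do not use the expected class id. Below is a minimal sketch that checks two things: every test image has a label file, and every box uses class id 8 (`boat` in the 80-name YAML above). It assumes the usual Ultralytics layout, in which `.../images/test/x.jpg` is labeled by `.../labels/test/x.txt`. The helper names are hypothetical and not part of the Ultralytics API.

```python
from pathlib import Path
from typing import Dict, List

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def label_path_for(image_path: Path) -> Path:
    """Map .../images/<split>/x.jpg to .../labels/<split>/x.txt (assumed layout).

    Raises ValueError if the path has no "images" component.
    """
    parts = list(image_path.parts)
    # Replace the last "images" directory component with "labels".
    idx = len(parts) - 1 - parts[::-1].index("images")
    parts[idx] = "labels"
    return Path(*parts).with_suffix(".txt")


def unexpected_class_ids(label_text: str, expected: int = 8) -> List[int]:
    """Return the class ids in a YOLO label file that differ from `expected`."""
    ids = [int(line.split()[0]) for line in label_text.splitlines() if line.strip()]
    return [i for i in ids if i != expected]


def scan(images_dir: str, expected: int = 8) -> Dict[str, list]:
    """Collect images without a label file and label files with other class ids."""
    report: Dict[str, list] = {"missing_labels": [], "unexpected_classes": []}
    for img in sorted(Path(images_dir).iterdir()):
        if img.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        label = label_path_for(img)
        if not label.exists():
            report["missing_labels"].append(img.name)
            continue
        bad = unexpected_class_ids(label.read_text(), expected)
        if bad:
            report["unexpected_classes"].append((label.name, bad))
    return report
```

For the dataset in this issue, the call would be `scan("N:/IA/data_2024/split_coco_to_data/images/test")`. An empty report rules this cause out.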

### Additional

_No response_ | open | 2025-01-27T15:58:13Z | 2025-01-31T14:07:29Z | https://github.com/ultralytics/ultralytics/issues/18920 | [

"question",

"detect"

] | adriengoleb | 37 |

streamlit/streamlit | python | 10,112 | pills and segmented_control with customized image display for options | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [X] I added a descriptive title and summary to this issue.

### Summary

The `pills` and `segmented_control` components are fantastic. However, one feature is currently missing: displaying arbitrary images on the option "buttons", which `st.button` supports.

### Why?

The motivation is the same as for allowing arbitrary images in `st.button`: in many use cases, images are a clear and compact way to present options.

### How?

Allow `format_func` to return base64-encoded image data or a path to a local image or icon file.

### Additional Context

https://github.com/streamlit/streamlit/issues/7300

https://github.com/streamlit/streamlit/pull/9670 | closed | 2025-01-04T16:09:34Z | 2025-01-10T15:26:58Z | https://github.com/streamlit/streamlit/issues/10112 | [

"type:enhancement",

"feature:st.segmented_control",

"feature:st.pills"

] | nycjersey | 2 |

streamlit/streamlit | streamlit | 10,115 | Input widget does not take input from password manager | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [X] I added a very descriptive title to this issue.

- [X] I have provided sufficient information below to help reproduce this issue.

### Summary

When my password manager (Dashlane version 6.2451.1 - latest) autofills an e-mail address into a text input widget, the on_change callback is not triggered and the session state is not updated.

Typing in the e-mail address in the input widget with the keyboard works as expected.

### Reproducible Code Example

```Python
import streamlit as st

st.text_input('Input e-mail', key='test_key')

if st.button('Submit'):
    print("input: " + st.session_state['test_key'])
```

### Steps To Reproduce

1. Set up the Dashlane password manager (add some credentials for autofill)

2. Open page with code example

3. Use Dashlane to autofill the text input widget

4. Click submit (does not work)

5. Adjust the text using keyboard input

6. Click submit again (works)

### Expected Behavior

I expect the session state to be updated (and on_change to be triggered) when an e-mail address is set in the input widget by the password manager.

### Current Behavior

https://github.com/user-attachments/assets/10fd4c53-cf87-46bb-9316-ef62f0a7b867

### Is this a regression?

- [ ] Yes, this used to work in a previous version.

### Debug info

- Streamlit version: 1.41.1

- Python version: 3.12.3

- Operating System: macOS 12.6

- Browser: Chrome version 131.0.6778.205 (Official Build) (arm64)

### Additional Information

_No response_ | open | 2025-01-06T14:24:31Z | 2025-03-09T08:25:35Z | https://github.com/streamlit/streamlit/issues/10115 | [

"type:bug",

"status:confirmed",

"priority:P3",

"feature:st.text_input"

] | sandervdhimst | 4 |

TencentARC/GFPGAN | pytorch | 499 | gfpgan | billing problem

| open | 2024-01-29T06:48:54Z | 2024-02-29T04:42:55Z | https://github.com/TencentARC/GFPGAN/issues/499 | [] | assassin1382 | 6 |

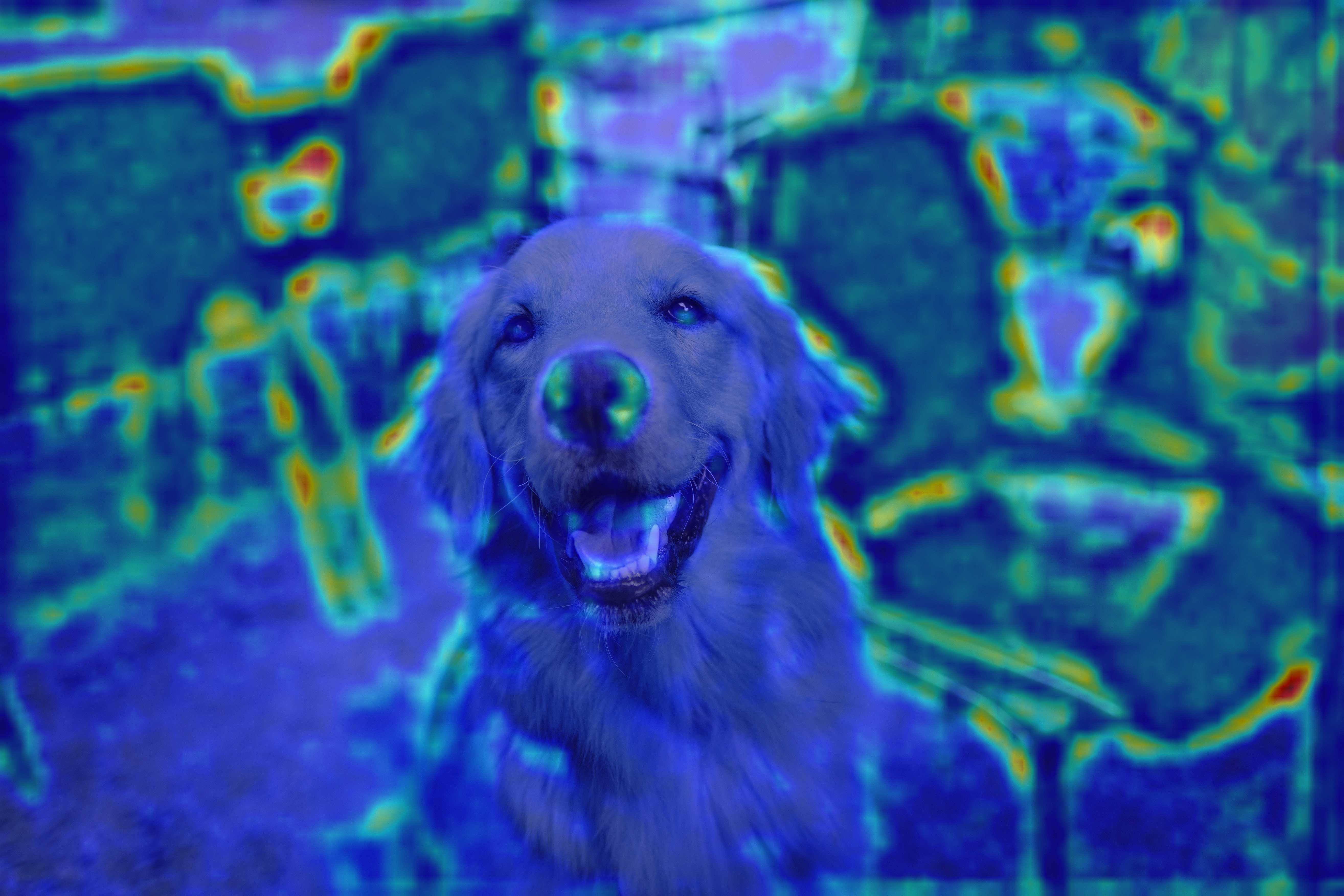

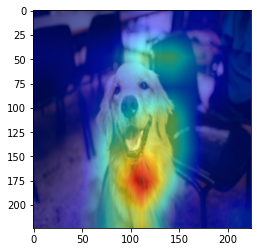

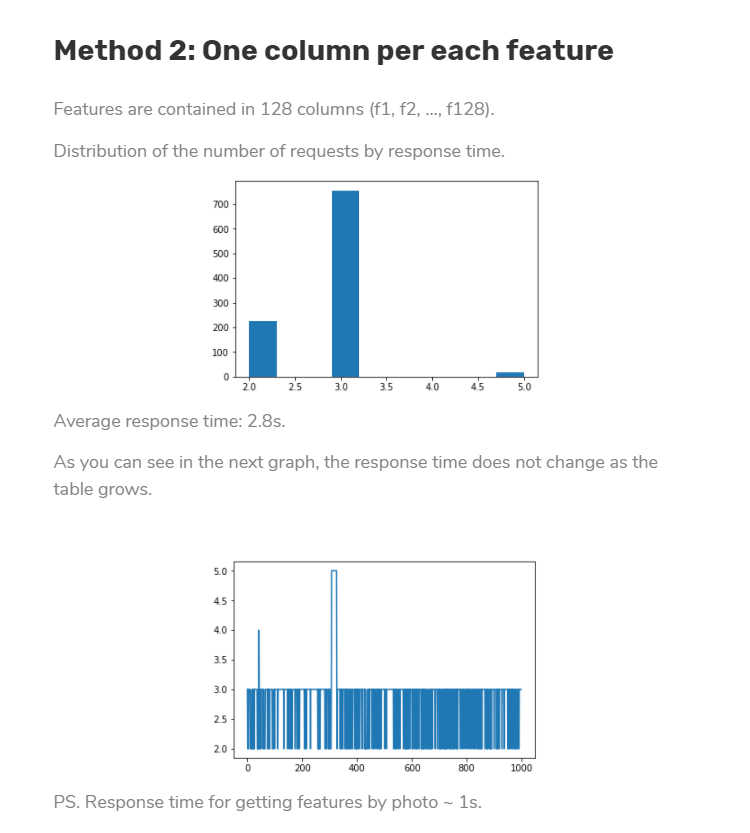

ultralytics/yolov5 | deep-learning | 13,042 | how to find why mAP suddenly increased | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I trained YOLOv5s for 500 epochs, and around the 385th to 387th epochs, there was a sudden increase in mAP, resulting in the best result at about 80%. After this peak, the mAP gradually decreased.

I've repeated this training several times to see whether the sudden increase would appear again, but it didn't. Without the jump, the best mAP in these subsequent runs was about 70% instead of 80%.

My questions are:

How can this phenomenon be explained?

How can I identify the specific reason for this sudden increase in mAP?

I suspect that an inappropriate learning rate might have caused this issue. Should I adjust the learning rate or other hyperparameters?

Attached are images showing the mAP increase during the initial training (around the 385th to 387th epochs) and subsequent trainings where the sudden increase did not appear.

👆 Sudden increase at about the 385th to 387th epochs in the initial training

👆 No sudden increase in subsequent trainings with the same dataset and parameters

👆 Batch size and epochs were changed from 16 to 96 and from 500 to 1000, respectively, but the result was the same
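One way to narrow down the cause is to find the epochs with the largest epoch-to-epoch mAP change in the per-epoch log, then compare them with the learning rate at those epochs. Below is a minimal sketch. It assumes the `results.csv` that YOLOv5 writes to the run directory has the columns `epoch`, `metrics/mAP_0.5` and `x/lr0`, possibly padded with spaces. `largest_map_jumps` is a hypothetical helper.

```python
import csv
import io
from typing import List, Tuple


def largest_map_jumps(csv_text: str, map_col: str = "metrics/mAP_0.5",
                      lr_col: str = "x/lr0", top: int = 3) -> List[Tuple[int, float, float]]:
    """Return the `top` epochs with the largest epoch-to-epoch mAP increase.

    Each entry is (epoch, mAP increase versus the previous epoch, lr at that epoch).
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    # Strip the whitespace padding from header names and values.
    rows = [{k.strip(): v.strip() for k, v in row.items()} for row in reader]
    jumps = []
    for prev, cur in zip(rows, rows[1:]):
        delta = float(cur[map_col]) - float(prev[map_col])
        jumps.append((int(cur["epoch"]), delta, float(cur[lr_col])))
    # Largest increases first.
    jumps.sort(key=lambda j: j[1], reverse=True)
    return jumps[:top]
```

Compare the learning rate reported for the top epoch with the values at neighboring epochs. This shows whether the jump coincided with a learning-rate change or happened under an unchanged schedule.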

### Additional

_No response_ | closed | 2024-05-28T02:09:34Z | 2024-10-20T19:46:43Z | https://github.com/ultralytics/yolov5/issues/13042 | [

"question"

] | MiNaMisan | 6 |

aimhubio/aim | tensorflow | 2,645 | Remove soft lock from UI | ## 🚀 Feature

When running Aim in remote server mode, it's difficult for users to clear failed runs or soft locks.

In the UI they only see a generic error. On the remote server side, the logs show:

```
Error while trying to delete run '1901419848fb433ab111647c'. Cannot delete Run '1901419848fb433ab111647c'. Run is locked..
```

An admin can resolve this by manually deleting the lock from the filesystem, but this is operationally expensive.

It would be great if users could resolve this from the UI.

### Motivation

- Minimise operational overheads.

### Alternatives

- Operational time manually deleting locks for failed runs.

| open | 2023-04-11T12:45:24Z | 2023-12-29T14:28:13Z | https://github.com/aimhubio/aim/issues/2645 | [

"type / enhancement",

"area / Web-UI"

] | dcarrion87 | 5 |

dolevf/graphql-cop | graphql | 27 | How can I use graphql-cop as package in the test suite | Hi,

I would like to know whether graphql-cop can be imported as a package.

Aswathy | closed | 2023-08-30T09:00:15Z | 2023-10-31T05:12:31Z | https://github.com/dolevf/graphql-cop/issues/27 | [

"question"

] | abnair24 | 2 |

mljar/mljar-supervised | scikit-learn | 100 | Add `explain` and `performance` modes | There should be an `explain` mode in AutoML that produces explanations.

There should be a `performance` mode that maximizes the accuracy of the models built by AutoML. | closed | 2020-06-02T10:57:12Z | 2020-07-08T09:38:35Z | https://github.com/mljar/mljar-supervised/issues/100 | [

"enhancement"

] | pplonski | 1 |

allenai/allennlp | pytorch | 5,033 | Publish info about each model implementation in models repo | From our discussion on Slack.

This could be markdown docs, ideally automatically generated. Could also publish to our API docs.

Kind of related to #4720 | open | 2021-03-02T18:12:27Z | 2021-03-02T18:12:27Z | https://github.com/allenai/allennlp/issues/5033 | [] | epwalsh | 0 |

Esri/arcgis-python-api | jupyter | 1,750 | Doc - remove reference to "PlacesAPI (beta)" | In the "Find Places" guide, we have a reference to the Places API beta. This feature is no longer in beta, so we can remove the reference to it from the guide. We can simply refer to it as "Places service".

https://developers.arcgis.com/python/guide/find-places/