repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

horovod/horovod | machine-learning | 2,961 | PyTorch sparse allreduce fails with torch nightly | Repro:

```

pip install --no-cache-dir --pre torch torchvision -f https://download.pytorch.org/whl/nightly/cpu/torch_nightly.html

# install horovod

horovodrun -np 2 pytest -vs "test/parallel/test_torch.py::TorchTests::test_async_sparse_allreduce"

```

The test hangs, which has been causing test failures.

cc @chongxiaoc @romerojosh

@chongxiaoc can you or @irasit take a look? | closed | 2021-06-08T19:31:19Z | 2021-06-10T03:11:05Z | https://github.com/horovod/horovod/issues/2961 | [

"bug"

] | tgaddair | 2 |

huggingface/datasets | numpy | 6,819 | Give more details in `DataFilesNotFoundError` when getting the config names | ### Feature request

After https://huggingface.co/datasets/cis-lmu/Glot500/commit/39060e01272ff228cc0ce1d31ae53789cacae8c3, the dataset viewer gives the following error:

```

{

"error": "Cannot get the config names for the dataset.",

"cause_exception": "DataFilesNotFoundError",

"cause_message": "No (supported) data files found in cis-lmu/Glot500",

"cause_traceback": [

"Traceback (most recent call last):\n",

" File \"/src/services/worker/src/worker/job_runners/dataset/config_names.py\", line 73, in compute_config_names_response\n config_names = get_dataset_config_names(\n",

" File \"/src/services/worker/.venv/lib/python3.9/site-packages/datasets/inspect.py\", line 347, in get_dataset_config_names\n dataset_module = dataset_module_factory(\n",

" File \"/src/services/worker/.venv/lib/python3.9/site-packages/datasets/load.py\", line 1873, in dataset_module_factory\n raise e1 from None\n",

" File \"/src/services/worker/.venv/lib/python3.9/site-packages/datasets/load.py\", line 1854, in dataset_module_factory\n return HubDatasetModuleFactoryWithoutScript(\n",

" File \"/src/services/worker/.venv/lib/python3.9/site-packages/datasets/load.py\", line 1245, in get_module\n module_name, default_builder_kwargs = infer_module_for_data_files(\n",

" File \"/src/services/worker/.venv/lib/python3.9/site-packages/datasets/load.py\", line 595, in infer_module_for_data_files\n raise DataFilesNotFoundError(\"No (supported) data files found\" + (f\" in {path}\" if path else \"\"))\n",

"datasets.exceptions.DataFilesNotFoundError: No (supported) data files found in cis-lmu/Glot500\n"

]

}

```

because the deleted files were still listed in the README, see https://huggingface.co/datasets/cis-lmu/Glot500/discussions/4

Ideally, the error message would include the name of the first configuration with missing files, to help the user understand how to fix it. Here, it would say that configuration `aze_Ethi` has no supported data files, instead of saying that the `cis-lmu/Glot500` *dataset* has no supported data files (which is not true).
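To make the request concrete, here is a minimal sketch of an error that names the offending configuration. It does not use the `datasets` internals: the exception class is a local stand-in, and `check_configs_have_files`, the `config_to_files` mapping and the `eng_Latn` entry are hypothetical names chosen for illustration only.

```python
class DataFilesNotFoundError(FileNotFoundError):
    """Local stand-in for the exception named in the traceback above."""


def check_configs_have_files(dataset_name, config_to_files):
    """Raise an error naming the first config whose file list is empty.

    `config_to_files` is a hypothetical mapping: config name -> list of data files.
    """
    for config_name, files in config_to_files.items():
        if not files:
            raise DataFilesNotFoundError(
                f"No (supported) data files found for config '{config_name}' "
                f"in {dataset_name}"
            )


# Hypothetical input: one config with files, one whose files were deleted.
configs = {"eng_Latn": ["eng_Latn/train.arrow"], "aze_Ethi": []}
try:
    check_configs_have_files("cis-lmu/Glot500", configs)
except DataFilesNotFoundError as err:
    message = str(err)
    print(message)
```

With a message of this shape, the viewer could point the user at `aze_Ethi` rather than at the whole dataset.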

### Motivation

Giving more detail in the error would help the Datasets Hub users to debug why the dataset viewer does not work.

### Your contribution

Not sure how best to fix this, as the methods in the traceback loop over the dataset configs in many places. Maybe it would be easier to handle if the code isolated each config completely. | open | 2024-04-17T11:19:47Z | 2024-04-17T11:19:47Z | https://github.com/huggingface/datasets/issues/6819 | [

"enhancement"

] | severo | 0 |

CorentinJ/Real-Time-Voice-Cloning | python | 706 | Can't start demo_cli or demo_toolbox.py | C:\Users\n-har\Desktop\deepaudio>python demo_cli.py

> Traceback (most recent call last):

File "demo_cli.py", line 4, in <module>

from synthesizer.inference import Synthesizer

File "C:\Users\n-har\Desktop\deepaudio\synthesizer\inference.py", line 1, in <module>

import torch

File "C:\Users\n-har\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\torch\__init__.py", line 81, in <module>

ctypes.CDLL(dll)

File "C:\Program Files\WindowsApps\PythonSoftwareFoundation.Python.3.7_3.7.2544.0_x64__qbz5n2kfra8p0\lib\ctypes\__init__.py", line 364, in __init__

self._handle = _dlopen(self._name, mode)

OSError: [WinError 126] The specified module could not be found

C:\Users\n-har\Desktop\deepaudio>python demo_toolbox.py

> Traceback (most recent call last):

File "demo_toolbox.py", line 2, in <module>

from toolbox import Toolbox

File "C:\Users\n-har\Desktop\deepaudio\toolbox\__init__.py", line 1, in <module>

from toolbox.ui import UI

File "C:\Users\n-har\Desktop\deepaudio\toolbox\ui.py", line 2, in <module>

from matplotlib.backends.backend_qt5agg import FigureCanvasQTAgg as FigureCanvas

File "C:\Users\n-har\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\matplotlib\backends\backend_qt5agg.py", line 11, in <module>

from .backend_qt5 import (

File "C:\Users\n-har\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\matplotlib\backends\backend_qt5.py", line 16, in <module>

import matplotlib.backends.qt_editor.figureoptions as figureoptions

File "C:\Users\n-har\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\matplotlib\backends\qt_editor\figureoptions.py", line 11, in <module>

from matplotlib.backends.qt_compat import QtGui

File "C:\Users\n-har\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.7_qbz5n2kfra8p0\LocalCache\local-packages\Python37\site-packages\matplotlib\backends\qt_compat.py", line 177, in <module>

raise ImportError("Failed to import any qt binding")

ImportError: Failed to import any qt binding

PIP Freeze:

> appdirs==1.4.4

audioread==2.1.9

certifi==2020.12.5

cffi==1.14.5

chardet==4.0.0

cycler==0.10.0

decorator==4.4.2

dill==0.3.3

ffmpeg==1.4

future==0.18.2

idna==2.10

inflect==5.3.0

joblib==1.0.1

jsonpatch==1.32

jsonpointer==2.1

kiwisolver==1.3.1

librosa==0.8.0

llvmlite==0.36.0

matplotlib==3.3.4

multiprocess==0.70.11.1

numba==0.53.0

numpy==1.19.3

packaging==20.9

Pillow==8.1.2

pooch==1.3.0

pycparser==2.20

pynndescent==0.5.2

pyparsing==2.4.7

PyQt5==5.15.4

PyQt5-Qt5==5.15.2

PyQt5-sip==12.8.1

python-dateutil==2.8.1

pyzmq==22.0.3

requests==2.25.1

resampy==0.2.2

scikit-learn==0.24.1

scipy==1.6.1

six==1.15.0

sounddevice==0.4.1

SoundFile==0.10.3.post1

threadpoolctl==2.1.0

torch==1.5.1+cpu

torchfile==0.1.0

torchvision==0.6.1+cpu

tornado==6.1

tqdm==4.59.0

typing-extensions==3.7.4.3

umap-learn==0.5.1

Unidecode==1.2.0

urllib3==1.26.4

visdom==0.1.8.9

websocket-client==0.58.0

Using Windows 10 with Python 3.7.9. Did I miss something? | closed | 2021-03-16T19:17:31Z | 2021-03-16T20:55:35Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/706 | [] | t0g3pii | 1 |

fastapi/fastapi | fastapi | 12,402 | [BUG] In version 0.115.0 of FastAPI, the pydantic model that has declared an alias cannot correctly receive query parameters | Thank you for all the work you have done. I have opened the [discussion](https://github.com/fastapi/fastapi/discussions/12401) as requested, but I think this issue is quite important. I am opening this issue only to keep the discussion from being drowned out, and I apologize for any offense.

### Example Code

```python
import uvicorn
from typing import Literal

from fastapi import FastAPI, Query
from pydantic import BaseModel, ConfigDict, Field
from pydantic.alias_generators import to_camel

app = FastAPI()


class FilterParams(BaseModel):
    model_config = ConfigDict(alias_generator=to_camel)

    limit: int = Field(100, gt=0, le=100)
    offset: int = Field(0, ge=0)
    order_by: Literal['created_at', 'updated_at'] = 'created_at'
    tags: list[str] = []


@app.get('/items/')
async def read_items(filter_query: FilterParams = Query()):
    return filter_query


if __name__ == '__main__':
    uvicorn.run(app='app:app')
```

### Description

Running the example above and opening http://127.0.0.1:8000/items/?offset=1&orderBy=updated_at in the browser returns an incorrect result: the `orderBy` value is not received.

```

{

"limit": 100,

"offset": 1,

"orderBy": "created_at",

"tags": []

}

```

The correct result should be as follows

```

{

"limit": 100,

"offset": 1,

"orderBy": "updated_at",

"tags": []

}

```
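For reference, the alias lookup I expect can be sketched in plain Python, without FastAPI or pydantic. This is only an illustration of the expected behavior: `to_camel` here is a simplified stand-in for the pydantic helper, and `FIELDS`, `DEFAULTS` and `parse_query` are hypothetical names, not part of either library.

```python
def to_camel(snake: str) -> str:
    """Convert snake_case to camelCase (simplified stand-in for pydantic's helper)."""
    head, *tail = snake.split("_")
    return head + "".join(part.capitalize() for part in tail)


FIELDS = ["limit", "offset", "order_by", "tags"]
DEFAULTS = {"limit": 100, "offset": 0, "order_by": "created_at", "tags": []}


def parse_query(query: dict) -> dict:
    """Look up each field under its camelCase alias, falling back to the default."""
    result = {}
    for field in FIELDS:
        alias = to_camel(field)
        result[alias] = query.get(alias, DEFAULTS[field])
    return result


# The query string from the description, already split into key/value pairs.
parsed = parse_query({"offset": 1, "orderBy": "updated_at"})
print(parsed)
```

The point is that `order_by` should be read from the `orderBy` key of the query string, which is what the expected output above reflects.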

### Operating System

Windows

### Operating System Details

_No response_

### FastAPI Version

0.115.0

### Pydantic Version

2.9.2

### Python Version

3.9.19

### Additional Context

_No response_ | open | 2024-10-08T06:42:41Z | 2025-01-21T06:56:01Z | https://github.com/fastapi/fastapi/issues/12402 | [] | insistence | 10 |

miguelgrinberg/Flask-SocketIO | flask | 754 | Buffer/Queue fills up when client is not consuming socketio emit | I have a flask-socketio server which when the client is connected emits log messages. The intention is for real time log viewing. I noticed the application was leaking memory (occasionally) so after some investigation I found that on occasion the disconnect message from the client was getting lost and therefore the server was unaware that the client was no longer listening and this was causing memory to be eaten up.

After some more research I found a reference that said that the client MUST be consuming or the buffer/queue would fill up rather than the messages be silently dropped (like they are if no client is connected). So the memory is leaked in the gap between the client disconnecting and the ping timeout closing the connection on the server side.

While I understand that I need to fix the lost disconnect message, a client can clearly disconnect silently, so I wonder whether there is a way to reset/clear this buffer/queue and get that memory back?
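To illustrate the kind of behavior I am after, here is a minimal sketch of a bounded buffer on the application side. It does not touch Flask-SocketIO's internal queue, and I do not know whether the library exposes anything like it; `BoundedLogBuffer` is a hypothetical helper that caps how many log lines can pile up while a client is not consuming.

```python
from collections import deque


class BoundedLogBuffer:
    """Keep at most `maxlen` pending log lines; the oldest are dropped first."""

    def __init__(self, maxlen: int) -> None:
        self._pending = deque(maxlen=maxlen)

    def push(self, line: str) -> None:
        # deque(maxlen=...) silently evicts the oldest entry when full.
        self._pending.append(line)

    def drain(self) -> list:
        """Return and clear everything currently buffered."""
        lines = list(self._pending)
        self._pending.clear()
        return lines


buffer = BoundedLogBuffer(maxlen=3)
for i in range(5):
    buffer.push(f"log line {i}")
drained = buffer.drain()
print(drained)
```

With a cap like this, memory use stays bounded between the silent disconnect and the ping timeout, at the cost of losing the oldest lines.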

Sorry if the answer is out there but I did try my best.

Thanks

Max | closed | 2018-08-01T19:58:49Z | 2019-06-08T23:16:06Z | https://github.com/miguelgrinberg/Flask-SocketIO/issues/754 | [

"investigate"

] | maximillion90 | 3 |

JaidedAI/EasyOCR | deep-learning | 492 | When do you plan to release v1.4 to pypi | Hi! You added dict output in v1.4. I look forward to trying it, but the latest version on PyPI is still 1.3.2. When do you plan to update it? | closed | 2021-07-19T15:16:32Z | 2021-07-20T08:57:28Z | https://github.com/JaidedAI/EasyOCR/issues/492 | [] | AndreyGurevich | 1 |

python-restx/flask-restx | flask | 420 | flask restx user case to adopt flask-oidc authentication | Hello Team,

Recently I have been working on OIDC auth for my flask-restx app.

Most examples I see online about flask-oidc are based on a bare-bones Flask app, and that usually works.

But after searching, I do not see any use case where flask-restx adopts flask-oidc for authentication, so that we can enjoy the benefit of testing the API through the Swagger UI.

Any thoughts or a quick example you have in mind?

| open | 2022-03-17T19:13:57Z | 2022-03-17T19:13:57Z | https://github.com/python-restx/flask-restx/issues/420 | [

"question"

] | zhoupoko2000 | 0 |

SYSTRAN/faster-whisper | deep-learning | 618 | faster_whisper batch encode time-consume issue | I ran a test of batching in faster-whisper.

The time taken by batched encoding grows in proportion to the number of samples, so it seems that batched encoding does not work as expected in CTranslate?

By the way, decode/generate has the same issue with batched operations.

| open | 2023-12-14T06:45:25Z | 2023-12-14T09:30:22Z | https://github.com/SYSTRAN/faster-whisper/issues/618 | [] | dyyzhmm | 2 |

ContextLab/hypertools | data-visualization | 251 | tests for backend management | the matplotlib backend management code only works in ipython/jupyter notebook-based environments. we could use some of the tricks @paxtonfitzpatrick is using in the [davos](https://github.com/ContextLab/davos) package to run tests for that code. | open | 2021-08-07T15:16:47Z | 2021-08-07T15:16:47Z | https://github.com/ContextLab/hypertools/issues/251 | [

"enhancement",

"help wanted"

] | jeremymanning | 0 |

mljar/mercury | jupyter | 474 | Notebook hangs in "WorkerState.Busy" indefinitely | Hi, I haven't been able to deploy any notebooks in Mercury (other than the demo). I've followed the documentation and set up the requirements.txt file, but the Worker never finishes. I've waited up to an hour. Any suggestions? | open | 2024-12-04T19:10:26Z | 2024-12-05T21:50:02Z | https://github.com/mljar/mercury/issues/474 | [] | Pancake205 | 3 |

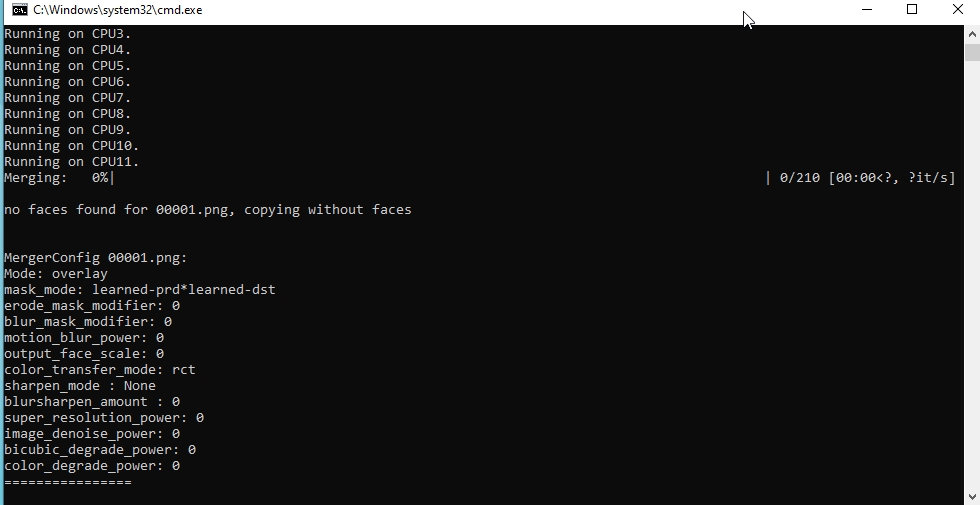

iperov/DeepFaceLab | machine-learning | 5,576 | Merge error | After training with Quick96, I ran the merge. It prints "no faces found for 00001.png, copying without faces" and a window with hotkeys appears. Inside the data_dst folder, a merged folder appeared containing one frame that indeed does not contain the desired face, as well as a merged_masked folder containing a file with a black square. That's all. My dst video contains more than just the face I want to replace. How, then, do I replace a face in a video that also contains other faces? (P.S. The frames that contain the face I want to replace do not contain other faces. P.P.S. In the aligned folder, I deleted the frames without the face I want to replace.) What am I doing wrong?

| open | 2022-10-30T16:54:12Z | 2023-09-21T04:21:43Z | https://github.com/iperov/DeepFaceLab/issues/5576 | [] | Margaret93 | 2 |

tfranzel/drf-spectacular | rest-api | 1,094 | How to only include `ApiKeyAuth` authentication/authorization strategy? | ### Describe the bug

We are only exposing endpoints that use `djangorestframework-api-key` in our generated schemas. We're following the blueprint instructions [here](https://github.com/tfranzel/drf-spectacular/blob/0.25.1/docs/blueprints.rst#djangorestframework-api-key) to include `ApiKeyAuth`.

However, we're also seeing `basicAuth` and `cookieAuth` in the generated docs, how do we suppress those?

Including an empty list for `AUTHENTICATION_WHITELIST` does not seem to affect it.

### To Reproduce

In `settings.py`:

```py
SPECTACULAR_SETTINGS = {
    # ...
    "AUTHENTICATION_WHITELIST": [],
    "APPEND_COMPONENTS": {
        "securitySchemes": {
            "ApiKeyAuth": {
                "type": "apiKey",
                "in": "header",
                "name": "Authorization",
            }
        }
    },
    "SECURITY": [{"ApiKeyAuth": []}],
}
```

### Expected behavior

We would like to only see the `ApiKeyAuth` strategy in generated docs

### Observed behavior

We also see `basicAuth` and `cookieAuth`

In Swagger "Authorize":

In Redoc "Authorizations":

In `.yaml` file "securitySchemes":

```yml
components:
  securitySchemes:
    ApiKeyAuth:
      type: apiKey
      in: header
      name: Authorization
    basicAuth:
      type: http
      scheme: basic
    cookieAuth:
      type: apiKey
      in: cookie
      name: sessionid
```

| closed | 2023-10-31T15:58:33Z | 2023-11-02T17:57:37Z | https://github.com/tfranzel/drf-spectacular/issues/1094 | [] | alexburner | 6 |

dynaconf/dynaconf | fastapi | 449 | [bug] LazyFormatted TypeError exception in Django TEMPLATES DIRS | **Describe the bug**

A TypeError exception (`expected str, bytes or os.PathLike object, not Lazy`) is raised when a templates dir path is added using the `@format` or `@jinja` tokens.
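The message has the same shape as the one `os.fspath` produces for any object that is not a `str`, `bytes` or path-like. A minimal sketch, assuming that the unevaluated value ends up being passed to a path function somewhere in Django's template loading (I have not confirmed the exact call site); the `Lazy` class below is a hypothetical stand-in, not dynaconf's own class:

```python
import os


class Lazy:
    """Hypothetical stand-in for a lazy setting value that was never evaluated."""

    def __init__(self, template: str) -> None:
        self.template = template


try:
    # A str would pass through unchanged; an unevaluated object is rejected.
    os.fspath(Lazy("{this.BASE_DIR}/templates"))
except TypeError as err:
    error_message = str(err)
    print(error_message)
```

If this is what happens, the entries in `DIRS` would need to be evaluated to plain strings before Django reads them.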

**To Reproduce**

Steps to reproduce the behavior:

1. Having the following config files:

<!-- Please adjust if you are using different files and formats! -->

<details>

<summary> Config files </summary>

**.env**

```bash

DJANGO_ENV=development

DJANGO_SETTINGS_MODULE=project.settings

```

and

**settings.yaml**

```yaml
[default]
TEMPLATES:
- APP_DIRS: true
  BACKEND: django.template.backends.django.DjangoTemplates
  DIRS:
  - "@format {this.BASE_DIR}/templates"
  OPTIONS:
    context_processors:
    - django.template.context_processors.debug
    - django.template.context_processors.request
    - django.contrib.auth.context_processors.auth
    - django.contrib.messages.context_processors.messages
```

</details>

2. Having the following app code:

<details>

<summary> Code </summary>

**settings.py**

```python

import os

import dynaconf

BASE_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))

settings = dynaconf.DjangoDynaconf(__name__, BASE_DIR=BASE_DIR)

```

</details>

**Environment (please complete the following information):**

- OS: Windows

- Dynaconf version: 3.1.2

- Frameworks: Django 2.2.16 | closed | 2020-10-14T16:46:43Z | 2021-03-01T17:50:04Z | https://github.com/dynaconf/dynaconf/issues/449 | [

"bug"

] | dgavrilov | 0 |

NVlabs/neuralangelo | computer-vision | 214 | Error during "requirements.txt" installation | Hello,

I followed this tutorial video "https://www.youtube.com/watch?v=NEF5bGyTqmk" step by step, but when I run

`pip install -r requirements.txt`

I got this error:

`Collecting git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch (from -r requirements.txt (line 3))

Cloning https://github.com/NVlabs/tiny-cuda-nn/ to /tmp/pip-req-build-1bu7dhfy

Running command git clone --filter=blob:none --quiet https://github.com/NVlabs/tiny-cuda-nn/ /tmp/pip-req-build-1bu7dhfy

Resolved https://github.com/NVlabs/tiny-cuda-nn/ to commit c91138bcd4c6877c8d5e60e483c0581aafc70cce

Running command git submodule update --init --recursive -q

Preparing metadata (setup.py) ... done

Collecting addict (from -r requirements.txt (line 1))

Using cached addict-2.4.0-py3-none-any.whl.metadata (1.0 kB)

Requirement already satisfied: gdown in /home/stein/miniconda3/envs/neuralangelo/lib/python3.8/site-packages (from -r requirements.txt (line 2)) (5.2.0)

Requirement already satisfied: gpustat in /home/stein/miniconda3/envs/neuralangelo/lib/python3.8/site-packages (from -r requirements.txt (line 4)) (1.1.1)

Collecting icecream (from -r requirements.txt (line 5))

Using cached icecream-2.1.3-py2.py3-none-any.whl.metadata (1.4 kB)

Collecting imageio-ffmpeg (from -r requirements.txt (line 6))

Using cached imageio_ffmpeg-0.5.1-py3-none-manylinux2010_x86_64.whl.metadata (1.6 kB)

Collecting imutils (from -r requirements.txt (line 7))

Using cached imutils-0.5.4.tar.gz (17 kB)

Preparing metadata (setup.py) ... done

Collecting ipdb (from -r requirements.txt (line 8))

Using cached ipdb-0.13.13-py3-none-any.whl.metadata (14 kB)

Collecting k3d (from -r requirements.txt (line 9))

Using cached k3d-2.16.1-py3-none-any.whl.metadata (6.8 kB)

Collecting kornia (from -r requirements.txt (line 10))

Using cached kornia-0.7.3-py2.py3-none-any.whl.metadata (7.7 kB)

Collecting lpips (from -r requirements.txt (line 11))

Using cached lpips-0.1.4-py3-none-any.whl.metadata (10 kB)

Collecting matplotlib (from -r requirements.txt (line 12))

Using cached matplotlib-3.7.5-cp38-cp38-manylinux_2_12_x86_64.manylinux2010_x86_64.whl.metadata (5.7 kB)

Collecting mediapy (from -r requirements.txt (line 13))

Using cached mediapy-1.2.2-py3-none-any.whl.metadata (4.8 kB)

Collecting nvidia-ml-py3 (from -r requirements.txt (line 14))

Using cached nvidia-ml-py3-7.352.0.tar.gz (19 kB)

Preparing metadata (setup.py) ... done

Collecting open3d (from -r requirements.txt (line 15))

Using cached open3d-0.18.0-cp38-cp38-manylinux_2_27_x86_64.whl.metadata (4.2 kB)

Collecting opencv-python-headless (from -r requirements.txt (line 16))

Using cached opencv_python_headless-4.10.0.84-cp37-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (20 kB)

Collecting OpenEXR (from -r requirements.txt (line 17))

Using cached openexr-3.3.1-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (10 kB)

Collecting pathlib (from -r requirements.txt (line 18))

Using cached pathlib-1.0.1-py3-none-any.whl.metadata (5.1 kB)

Requirement already satisfied: pillow in /home/stein/miniconda3/envs/neuralangelo/lib/python3.8/site-packages (from -r requirements.txt (line 19)) (10.4.0)

Collecting plotly (from -r requirements.txt (line 20))

Using cached plotly-5.24.1-py3-none-any.whl.metadata (7.3 kB)

Collecting pyequilib (from -r requirements.txt (line 21))

Using cached pyequilib-0.5.8-py3-none-any.whl.metadata (8.4 kB)

Collecting pyexr (from -r requirements.txt (line 22))

Using cached pyexr-0.4.0-py3-none-any.whl.metadata (4.5 kB)

Collecting PyMCubes (from -r requirements.txt (line 23))

**Using cached pymcubes-0.1.6.tar.gz (109 kB)

Installing build dependencies ... error

error: subprocess-exited-with-error

× pip subprocess to install build dependencies did not run successfully.

│ exit code: 1

╰─> [9 lines of output]

Collecting setuptools

Using cached setuptools-75.2.0-py3-none-any.whl.metadata (6.9 kB)

Collecting wheel

Using cached wheel-0.44.0-py3-none-any.whl.metadata (2.3 kB)

Collecting Cython

Using cached Cython-3.0.11-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (3.2 kB)

ERROR: Ignored the following versions that require a different python version: 1.25.0 Requires-Python >=3.9; 1.25.1 Requires-Python >=3.9; 1.25.2 Requires-Python >=3.9; 1.26.0 Requires-Python <3.13,>=3.9; 1.26.1 Requires-Python <3.13,>=3.9; 1.26.2 Requires-Python >=3.9; 1.26.3 Requires-Python >=3.9; 1.26.4 Requires-Python >=3.9; 2.0.0 Requires-Python >=3.9; 2.0.1 Requires-Python >=3.9; 2.0.2 Requires-Python >=3.9; 2.1.0 Requires-Python >=3.10; 2.1.0rc1 Requires-Python >=3.10; 2.1.1 Requires-Python >=3.10; 2.1.2 Requires-Python >=3.10

ERROR: Could not find a version that satisfies the requirement numpy~=2.0 (from versions: 1.3.0, 1.4.1, 1.5.0, 1.5.1, 1.6.0, 1.6.1, 1.6.2, 1.7.0, 1.7.1, 1.7.2, 1.8.0, 1.8.1, 1.8.2, 1.9.0, 1.9.1, 1.9.2, 1.9.3, 1.10.0.post2, 1.10.1, 1.10.2, 1.10.4, 1.11.0, 1.11.1, 1.11.2, 1.11.3, 1.12.0, 1.12.1, 1.13.0, 1.13.1, 1.13.3, 1.14.0, 1.14.1, 1.14.2, 1.14.3, 1.14.4, 1.14.5, 1.14.6, 1.15.0, 1.15.1, 1.15.2, 1.15.3, 1.15.4, 1.16.0, 1.16.1, 1.16.2, 1.16.3, 1.16.4, 1.16.5, 1.16.6, 1.17.0, 1.17.1, 1.17.2, 1.17.3, 1.17.4, 1.17.5, 1.18.0, 1.18.1, 1.18.2, 1.18.3, 1.18.4, 1.18.5, 1.19.0, 1.19.1, 1.19.2, 1.19.3, 1.19.4, 1.19.5, 1.20.0, 1.20.1, 1.20.2, 1.20.3, 1.21.0, 1.21.1, 1.21.2, 1.21.3, 1.21.4, 1.21.5, 1.21.6, 1.22.0, 1.22.1, 1.22.2, 1.22.3, 1.22.4, 1.23.0, 1.23.1, 1.23.2, 1.23.3, 1.23.4, 1.23.5, 1.24.0, 1.24.1, 1.24.2, 1.24.3, 1.24.4)

ERROR: No matching distribution found for numpy~=2.0

[end of output]**

note: This error originates from a subprocess, and is likely not a problem with pip.

error: subprocess-exited-with-error

× pip subprocess to install build dependencies did not run successfully.

│ exit code: 1

╰─> See above for output.

note: This error originates from a subprocess, and is likely not a problem with pip.

`

I tried to install numpy 2.0, but this time I got this error:

` pip install numpy==2.0.0

ERROR: Ignored the following versions that require a different python version: 1.25.0 Requires-Python >=3.9; 1.25.1 Requires-Python >=3.9; 1.25.2 Requires-Python >=3.9; 1.26.0 Requires-Python <3.13,>=3.9; 1.26.1 Requires-Python <3.13,>=3.9; 1.26.2 Requires-Python >=3.9; 1.26.3 Requires-Python >=3.9; 1.26.4 Requires-Python >=3.9; 2.0.0 Requires-Python >=3.9; 2.0.1 Requires-Python >=3.9; 2.0.2 Requires-Python >=3.9; 2.1.0 Requires-Python >=3.10; 2.1.0rc1 Requires-Python >=3.10; 2.1.1 Requires-Python >=3.10; 2.1.2 Requires-Python >=3.10

ERROR: Could not find a version that satisfies the requirement numpy==2.0.0 (from versions: 1.3.0, 1.4.1, 1.5.0, 1.5.1, 1.6.0, 1.6.1, 1.6.2, 1.7.0, 1.7.1, 1.7.2, 1.8.0, 1.8.1, 1.8.2, 1.9.0, 1.9.1, 1.9.2, 1.9.3, 1.10.0.post2, 1.10.1, 1.10.2, 1.10.4, 1.11.0, 1.11.1, 1.11.2, 1.11.3, 1.12.0, 1.12.1, 1.13.0, 1.13.1, 1.13.3, 1.14.0, 1.14.1, 1.14.2, 1.14.3, 1.14.4, 1.14.5, 1.14.6, 1.15.0, 1.15.1, 1.15.2, 1.15.3, 1.15.4, 1.16.0, 1.16.1, 1.16.2, 1.16.3, 1.16.4, 1.16.5, 1.16.6, 1.17.0, 1.17.1, 1.17.2, 1.17.3, 1.17.4, 1.17.5, 1.18.0, 1.18.1, 1.18.2, 1.18.3, 1.18.4, 1.18.5, 1.19.0, 1.19.1, 1.19.2, 1.19.3, 1.19.4, 1.19.5, 1.20.0, 1.20.1, 1.20.2, 1.20.3, 1.21.0, 1.21.1, 1.21.2, 1.21.3, 1.21.4, 1.21.5, 1.21.6, 1.22.0, 1.22.1, 1.22.2, 1.22.3, 1.22.4, 1.23.0, 1.23.1, 1.23.2, 1.23.3, 1.23.4, 1.23.5, 1.24.0, 1.24.1, 1.24.2, 1.24.3, 1.24.4)

ERROR: No matching distribution found for numpy==2.0.0

`

I also tried to update Python but failed. Any solutions or ideas?
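Reading the log again, the paths show a Python 3.8 environment (`lib/python3.8`), while pip reports `2.0.0 Requires-Python >=3.9` for numpy, so the `numpy~=2.0` build dependency cannot be satisfied there. A minimal sketch of that check; the helper name `can_install_numpy2` is made up for illustration and the 3.9 bound is taken only from the log above:

```python
import sys

# Lower bound copied from the pip log: "2.0.0 Requires-Python >=3.9".
NUMPY2_MIN_PYTHON = (3, 9)


def can_install_numpy2(python_version) -> bool:
    """Compare the (major, minor) part of a version tuple with the bound."""
    return tuple(python_version[:2]) >= NUMPY2_MIN_PYTHON


print(can_install_numpy2((3, 8)))            # the environment from the log
print(can_install_numpy2(sys.version_info))  # the interpreter running this
```

So it looks like the environment needs Python 3.9 or newer, or a PyMCubes version whose build does not require numpy 2, but I am not sure which of the two the project expects.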

| open | 2024-10-25T21:20:28Z | 2024-10-25T21:36:59Z | https://github.com/NVlabs/neuralangelo/issues/214 | [] | canonar | 1 |

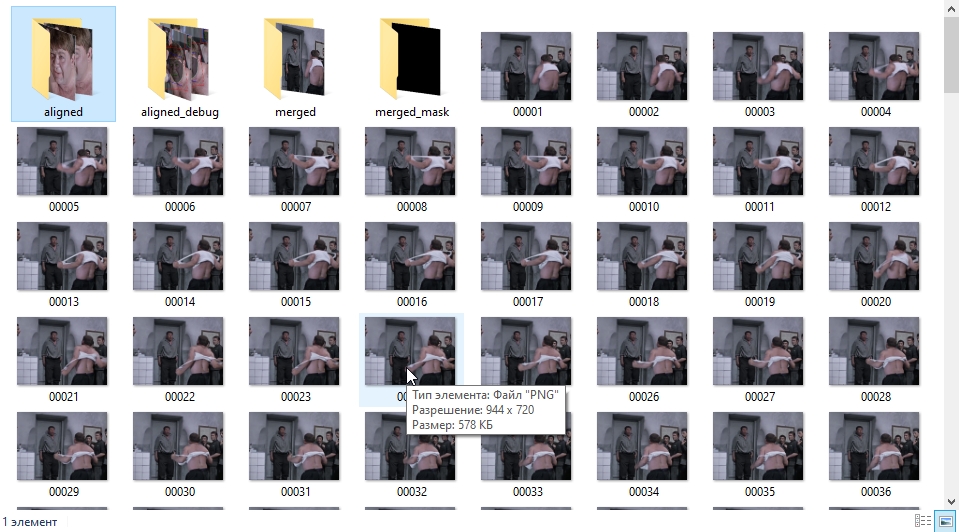

microsoft/nni | data-science | 5,110 | Using 2 GPUs training to train DARTS in parallel, but get 2 different search architecture? | **Describe the issue**:

I used 2 GPUs to train DARTS, but from the output, I find that I get 2 different results.

And I used 'export_onnx', but I didn't get the output model under the specified directory.

```python
if __name__ == "__main__":
    parser = ArgumentParser("darts")
    parser.add_argument("--layers", default=8, type=int)
    parser.add_argument("--batch-size", default=64, type=int)
    parser.add_argument("--log-frequency", default=10, type=int)
    parser.add_argument("--epochs", default=1, type=int)
    parser.add_argument("--channels", default=16, type=int)
    parser.add_argument("--unrolled", default=False, action="store_true")
    parser.add_argument("--visualization", default=False, action="store_true")
    parser.add_argument("--v1", default=False, action="store_true")
    parser.add_argument("--local_rank", default=0, type=int, help="node rank for distributed training")
    args = parser.parse_args()

    dataset_train, dataset_valid = datasets.get_dataset("cifar10")
    length = len(dataset_train)
    train_size, validate_size = int(0.5 * length), int(0.5 * length)
    train_set, validate_set = torch.utils.data.random_split(dataset_train, [train_size, validate_size])

    model = CNN(32, 3, args.channels, 10, args.layers)
    evaluator = pl.Classification(
        train_dataloaders=pl.DataLoader(train_set, batch_size=45, pin_memory=True, num_workers=4),
        val_dataloaders=pl.DataLoader(validate_set, batch_size=45, pin_memory=True, num_workers=4),
        max_epochs=1,
        accelerator="gpu",
        devices=2,
        strategy='ddp',
        log_every_n_steps=10,
        export_onnx=Path("searched_models/"),
    )

    exploration_strategy = strategy.DARTS()
    exp = RetiariiExperiment(model, evaluator=evaluator, strategy=exploration_strategy)

    exp_config = RetiariiExeConfig('local')
    exp_config.experiment_name = 'cifa10'
    exp_config.max_trial_number = 1
    exp_config.trial_concurrency = 1
    exp_config.trial_gpu_number = 2
    exp_config.training_service.use_active_gpu = True
    exp_config.execution_engine = 'oneshot'

    exp.run(exp_config, 8081)

    exported_arch = exp.export_top_models()[0]
    print(exported_arch)
```

output model:

It seems two processes on 2 GPUs print different results. Should I just use the model trained by rank 0 or is there anything wrong with my use?

```shell

{'normal_n2_p0': 'maxpool', 'normal_n2_p1': 'maxpool', 'normal_n2_switch': [0, 1], 'normal_n3_p0': 'maxpool', 'normal_n3_p1': 'maxpool', 'normal_n3_p2': 'maxpool', 'normal_n3_switch': [0, 1], 'normal_n4_p0': 'maxpool', 'normal_n4_p1': 'maxpool', 'normal_n4_p2': 'maxpool', 'normal_n4_p3': 'maxpool', 'normal_n4_switch': [0, 2], 'normal_n5_p0': 'maxpool', 'normal_n5_p1': 'maxpool', 'normal_n5_p2': 'maxpool', 'normal_n5_p3': 'maxpool', 'normal_n5_p4': 'dilconv5x5', 'normal_n5_switch': [0, 3], 'reduce_n2_p0': 'maxpool', 'reduce_n2_p1': 'maxpool', 'reduce_n2_switch': [0, 1], 'reduce_n3_p0': 'maxpool', 'reduce_n3_p1': 'maxpool', 'reduce_n3_p2': 'maxpool', 'reduce_n3_switch': [0, 2], 'reduce_n4_p0': 'maxpool', 'reduce_n4_p1': 'maxpool', 'reduce_n4_p2': 'maxpool', 'reduce_n4_p3': 'maxpool', 'reduce_n4_switch': [0, 2], 'reduce_n5_p0': 'maxpool', 'reduce_n5_p1': 'sepconv5x5', 'reduce_n5_p2': 'maxpool', 'reduce_n5_p3': 'dilconv5x5', 'reduce_n5_p4': 'dilconv3x3', 'reduce_n5_switch': [0, 3]}

{'normal_n2_p0': 'maxpool', 'normal_n2_p1': 'maxpool', 'normal_n2_switch': [0, 1], 'normal_n3_p0': 'maxpool', 'normal_n3_p1': 'maxpool', 'normal_n3_p2': 'maxpool', 'normal_n3_switch': [0, 1], 'normal_n4_p0': 'maxpool', 'normal_n4_p1': 'maxpool', 'normal_n4_p2': 'sepconv5x5', 'normal_n4_p3': 'dilconv5x5', 'normal_n4_switch': [0, 3], 'normal_n5_p0': 'maxpool', 'normal_n5_p1': 'maxpool', 'normal_n5_p2': 'maxpool', 'normal_n5_p3': 'maxpool', 'normal_n5_p4': 'maxpool', 'normal_n5_switch': [0, 3], 'reduce_n2_p0': 'maxpool', 'reduce_n2_p1': 'maxpool', 'reduce_n2_switch': [0, 1], 'reduce_n3_p0': 'maxpool', 'reduce_n3_p1': 'maxpool', 'reduce_n3_p2': 'maxpool', 'reduce_n3_switch': [0, 2], 'reduce_n4_p0': 'maxpool', 'reduce_n4_p1': 'maxpool', 'reduce_n4_p2': 'maxpool', 'reduce_n4_p3': 'maxpool', 'reduce_n4_switch': [0, 2], 'reduce_n5_p0': 'maxpool', 'reduce_n5_p1': 'dilconv5x5', 'reduce_n5_p2': 'dilconv5x5', 'reduce_n5_p3': 'dilconv5x5', 'reduce_n5_p4': 'sepconv5x5', 'reduce_n5_switch': [0, 3]}

```
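If reporting from rank 0 only is the right approach, the guard could look like the sketch below. It assumes the DDP launcher sets a `LOCAL_RANK` environment variable in each process, which I have not verified for this setup, and `is_rank_zero` and `report` are hypothetical helpers. It only suppresses the duplicate printout; it does not explain why the two processes end up with different architectures.

```python
import os


def is_rank_zero(environ=os.environ) -> bool:
    """True when LOCAL_RANK is unset or "0" (assumed to be set by the DDP launcher)."""
    return int(environ.get("LOCAL_RANK", "0")) == 0


def report(exported_arch: dict, environ=os.environ):
    """Print the architecture from a single process and return it; None elsewhere."""
    if is_rank_zero(environ):
        print(exported_arch)
        return exported_arch
    return None


# Simulated environments for the two processes.
report({"normal_n2_p0": "maxpool"}, {"LOCAL_RANK": "0"})  # prints
report({"normal_n2_p0": "maxpool"}, {"LOCAL_RANK": "1"})  # stays silent
```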

| open | 2022-09-05T06:59:23Z | 2023-10-12T02:12:07Z | https://github.com/microsoft/nni/issues/5110 | [

"NAS 2.0"

] | toufunao | 2 |

huggingface/peft | pytorch | 1,363 | Error while fetching adapter layer from huggingface library | ### System Info

```

pa_extractor = LlamaForCausalLM.from_pretrained(LLAMA_MODEL_NAME,

token=HF_ACCESS_TOKEN,

max_length=LLAMA2_MAX_LENGTH,

pad_token_id=cls.tokenizer.eos_token_id,

device_map="auto",

quantization_config=bnb_config)

pa_extractor.load_adapter(PEFT_MODEL_NAME, token=HF_ACCESS_TOKEN, device_map="auto")

```

# getting the below error while executing :

401 client error, Repository Not Found for url: https://huggingface.co/muskan/llama2/resolve/main/adapter_model.safetensors.

Please make sure you specified the correct `repo_id` and `repo_type`.

If you are trying to access a private or gated repo, make sure you are authenticated. Invalid username or password

This error occurs while calling pa_extractor.load_adapter

### Who can help?

@pacman100 @younesbelkada @sayakpaul

### Information

- [X] The official example scripts

- [ ] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder

- [X] My own task or dataset (give details below)

### Reproduction

The model is in my private repo; the problem should be reproducible if you use load_adapter to fetch any adapter layer from HF directly.

### Expected behavior

Should be able to download the peft adapter layer successfully | closed | 2024-01-16T11:39:08Z | 2024-03-10T15:03:37Z | https://github.com/huggingface/peft/issues/1363 | [] | Muskanb | 2 |

comfyanonymous/ComfyUI | pytorch | 7,000 | Pinning | ### Feature Idea

To pin means to fix something in place.

If I move a group that contains pinned nodes, I expect the nodes to stay pinned to the group, but they do not. The nodes are torn off the group instead, which looks strange.

I would expect that a pinned node in a group cannot be moved inside the group, but moves with the group. It should not be possible to change the group's border so that the pinned node ends up outside the group.

A group should also have the option to be pinned.

Maybe also a way to collapse/expand a group, to hide its content.

Because group nodes can be disabled or bypassed, it makes no sense for a pinned node to be left behind when the group is moved and then have to be reset separately outside the group.

### Existing Solutions

None

### Other

None | open | 2025-02-27T16:36:36Z | 2025-02-27T23:43:13Z | https://github.com/comfyanonymous/ComfyUI/issues/7000 | [

"Feature",

"Frontend"

] | schoenid | 0 |

huggingface/datasets | machine-learning | 6,695 | Support JSON file with an array of strings | Support loading a dataset from a JSON file with an array of strings.
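As a rough sketch of the intended behavior, using only the standard library: each string in the array becomes one row with a single column. The helper name `records_from_string_array` and the default column name `text` are assumptions made for illustration, not the `datasets` API or its actual column naming.

```python
import json


def records_from_string_array(raw_json: str, column: str = "text") -> list:
    """Turn a JSON array of strings into one single-column record per string."""
    data = json.loads(raw_json)
    if not (isinstance(data, list) and all(isinstance(item, str) for item in data)):
        raise ValueError("expected a JSON array of strings")
    return [{column: item} for item in data]


rows = records_from_string_array('["first line", "second line"]')
print(rows)
```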

See: https://huggingface.co/datasets/CausalLM/Refined-Anime-Text/discussions/1 | closed | 2024-02-26T12:35:11Z | 2024-03-08T14:16:25Z | https://github.com/huggingface/datasets/issues/6695 | [

"enhancement"

] | albertvillanova | 1 |

huggingface/datasets | deep-learning | 7,194 | datasets.exceptions.DatasetNotFoundError for private dataset | ### Describe the bug

The following Python code tries to download a private dataset and fails with the error `datasets.exceptions.DatasetNotFoundError: Dataset 'ClimatePolicyRadar/all-document-text-data-weekly' doesn't exist on the Hub or cannot be accessed.`. Downloading a public dataset doesn't work.

``` py

from datasets import load_dataset

_ = load_dataset("ClimatePolicyRadar/all-document-text-data-weekly")

```

This seems to be just an issue with my machine config as the code above works with a colleague's machine. So far I have tried:

- logging back out and in from the Huggingface CLI using `huggingface-cli logout`

- manually removing the token cache at `/Users/kalyan/.cache/huggingface/token` (found using `huggingface-cli env`)

- manually passing a token in `load_dataset`

My output of `huggingface-cli whoami`:

```

kdutia

orgs: ClimatePolicyRadar

```

### Steps to reproduce the bug

```

python

Python 3.12.2 (main, Feb 6 2024, 20:19:44) [Clang 15.0.0 (clang-1500.1.0.2.5)] on darwin

Type "help", "copyright", "credits" or "license" for more information.

>>> from datasets import load_dataset

>>> _ = load_dataset("ClimatePolicyRadar/all-document-text-data-weekly")

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/Users/kalyan/Library/Caches/pypoetry/virtualenvs/open-data-cnKQNmjn-py3.12/lib/python3.12/site-packages/datasets/load.py", line 2074, in load_dataset

builder_instance = load_dataset_builder(

^^^^^^^^^^^^^^^^^^^^^

File "/Users/kalyan/Library/Caches/pypoetry/virtualenvs/open-data-cnKQNmjn-py3.12/lib/python3.12/site-packages/datasets/load.py", line 1795, in load_dataset_builder

dataset_module = dataset_module_factory(

^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/kalyan/Library/Caches/pypoetry/virtualenvs/open-data-cnKQNmjn-py3.12/lib/python3.12/site-packages/datasets/load.py", line 1659, in dataset_module_factory

raise e1 from None

File "/Users/kalyan/Library/Caches/pypoetry/virtualenvs/open-data-cnKQNmjn-py3.12/lib/python3.12/site-packages/datasets/load.py", line 1597, in dataset_module_factory

raise DatasetNotFoundError(f"Dataset '{path}' doesn't exist on the Hub or cannot be accessed.") from e

datasets.exceptions.DatasetNotFoundError: Dataset 'ClimatePolicyRadar/all-document-text-data-weekly' doesn't exist on the Hub or cannot be accessed.

>>>

```

### Expected behavior

The dataset downloads successfully.

### Environment info

From `huggingface-cli env`:

```

- huggingface_hub version: 0.25.1

- Platform: macOS-14.2.1-arm64-arm-64bit

- Python version: 3.12.2

- Running in iPython ?: No

- Running in notebook ?: No

- Running in Google Colab ?: No

- Running in Google Colab Enterprise ?: No

- Token path ?: /Users/kalyan/.cache/huggingface/token

- Has saved token ?: True

- Who am I ?: kdutia

- Configured git credential helpers: osxkeychain

- FastAI: N/A

- Tensorflow: N/A

- Torch: N/A

- Jinja2: 3.1.4

- Graphviz: N/A

- keras: N/A

- Pydot: N/A

- Pillow: N/A

- hf_transfer: N/A

- gradio: N/A

- tensorboard: N/A

- numpy: 2.1.1

- pydantic: N/A

- aiohttp: 3.10.8

- ENDPOINT: https://huggingface.co

- HF_HUB_CACHE: /Users/kalyan/.cache/huggingface/hub

- HF_ASSETS_CACHE: /Users/kalyan/.cache/huggingface/assets

- HF_TOKEN_PATH: /Users/kalyan/.cache/huggingface/token

- HF_HUB_OFFLINE: False

- HF_HUB_DISABLE_TELEMETRY: False

- HF_HUB_DISABLE_PROGRESS_BARS: None

- HF_HUB_DISABLE_SYMLINKS_WARNING: False

- HF_HUB_DISABLE_EXPERIMENTAL_WARNING: False

- HF_HUB_DISABLE_IMPLICIT_TOKEN: False

- HF_HUB_ENABLE_HF_TRANSFER: False

- HF_HUB_ETAG_TIMEOUT: 10

- HF_HUB_DOWNLOAD_TIMEOUT: 10

```

from `datasets-cli env`:

```

- `datasets` version: 3.0.1

- Platform: macOS-14.2.1-arm64-arm-64bit

- Python version: 3.12.2

- `huggingface_hub` version: 0.25.1

- PyArrow version: 17.0.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.6.1

``` | closed | 2024-10-03T07:49:36Z | 2024-10-03T10:09:28Z | https://github.com/huggingface/datasets/issues/7194 | [] | kdutia | 2 |

jacobgil/pytorch-grad-cam | computer-vision | 110 | About target_category | Would you mind explaining the function of this parameter in detail? | closed | 2021-07-07T02:14:08Z | 2021-07-10T14:08:18Z | https://github.com/jacobgil/pytorch-grad-cam/issues/110 | [] | m250317460 | 4 |

aio-libs-abandoned/aioredis-py | asyncio | 567 | xread with non-integer timeout argument hangs indefinitely | The `timeout` argument [is passed verbatim to redis](https://github.com/aio-libs/aioredis/blob/master/aioredis/commands/streams.py#L252). When that argument is not an integer, the XREAD will hang.

Example:

`await r.xread(["system_event_stream"], timeout=0.1, latest_ids=[0])` shows up in `redis monitor` as `1554191321.856104 [3 172.18.0.16:58130] "XREAD" "BLOCK" "0.1" "STREAMS" "system_event_stream" "0"` and hangs forever.

It's probably best to assert the argument is an integer. Other python functions often accept seconds for timeouts, so a warning/error would be good. | closed | 2019-04-02T07:55:47Z | 2019-07-09T14:17:28Z | https://github.com/aio-libs-abandoned/aioredis-py/issues/567 | [] | tino | 1 |
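The guard suggested in the aioredis issue above could look roughly like the sketch below. The helper name `validate_block_timeout` is hypothetical and not part of aioredis; the sketch only assumes that the value ends up as the `BLOCK` argument of `XREAD`, which Redis expects as an integer number of milliseconds.

```python
def validate_block_timeout(timeout):
    """Return `timeout` unchanged if it is None or a non-negative int.

    Hypothetical helper, not an aioredis API: it rejects floats such as 0.1
    before they can be sent to Redis as the BLOCK argument.
    """
    if timeout is None:
        return None
    # bool is a subclass of int, so reject it explicitly.
    if isinstance(timeout, bool) or not isinstance(timeout, int):
        raise TypeError(
            f"timeout must be an int number of milliseconds, got {timeout!r}"
        )
    if timeout < 0:
        raise ValueError("timeout must be non-negative")
    return timeout
```

A caller who thinks in seconds would then convert explicitly, for example `validate_block_timeout(int(0.1 * 1000))`, instead of passing `0.1` through.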

holoviz/panel | matplotlib | 7,190 | test | Thanks for contacting us! Please read and follow these instructions carefully, then delete this introductory text to keep your issue easy to read. Note that the issue tracker is NOT the place for usage questions and technical assistance; post those at [Discourse](https://discourse.holoviz.org) instead. Issues without the required information below may be closed immediately.

#### ALL software version info

(this library, plus any other relevant software, e.g. bokeh, python, notebook, OS, browser, etc)

#### Description of expected behavior and the observed behavior

#### Complete, minimal, self-contained example code that reproduces the issue

```

# code goes here between backticks

```

#### Stack traceback and/or browser JavaScript console output

#### Screenshots or screencasts of the bug in action

- [ ] I may be interested in making a pull request to address this

| closed | 2024-08-27T08:48:25Z | 2024-08-27T08:49:23Z | https://github.com/holoviz/panel/issues/7190 | [

"TRIAGE"

] | hoxbro | 0 |

microsoft/unilm | nlp | 1,583 | Kosmo2.5 Chinese performance very bad | Why not consider adding Chinese support? | open | 2024-06-23T03:30:22Z | 2024-07-14T14:56:19Z | https://github.com/microsoft/unilm/issues/1583 | [] | luohao123 | 2 |

Miserlou/Zappa | django | 1,509 | Can't parse ".serverless/requirements/xlrd/biffh.py" unless encoding is latin | <!--- Provide a general summary of the issue in the Title above -->

## Context

When `detect_flask` is called during `zappa init`, it fails with an encoding error because of the commented-out block at the head of `xlrd/biffh.py`.

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

<!--- Also, please make sure that you are running Zappa _from a virtual environment_ and are using Python 2.7/3.6 -->

## Expected Behavior

<!--- Tell us what should happen -->

It should not error out.

## Actual Behavior

<!--- Tell us what happens instead -->

It fails with an encoding exception at f.readlines()

## Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bug -->

just add encoding='latin' to the open call
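A minimal sketch of that idea, assuming the failing code simply opens each `.py` file and calls `readlines()`. The helper name `read_lines_tolerant` is hypothetical and is not Zappa's actual code; it tries UTF-8 first and only falls back to Latin-1, so files that are valid UTF-8 are still decoded correctly.

```python
def read_lines_tolerant(path):
    """Read a text file as UTF-8, falling back to Latin-1 on decode errors.

    Hypothetical helper for illustration. Latin-1 maps every byte to a
    character, so the fallback cannot raise UnicodeDecodeError.
    """
    try:
        with open(path, encoding="utf-8") as f:
            return f.readlines()
    except UnicodeDecodeError:
        with open(path, encoding="latin-1") as f:
            return f.readlines()
```

Since the scan only looks for import statements, mis-decoded characters inside comments should not matter for this purpose.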

## Steps to Reproduce

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug include code to reproduce, if relevant -->

1. have xlrd as a dependency

2. call zappa init

3.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Zappa version used:

* Operating System and Python version: OSX, python 3

* The output of `pip freeze`:

* Link to your project (optional):

* Your `zappa_settings.py`:

| open | 2018-05-14T18:00:14Z | 2018-05-14T18:00:14Z | https://github.com/Miserlou/Zappa/issues/1509 | [] | joshmalina | 0 |

httpie/http-prompt | api | 193 | Preview request? | While the `env` command is somewhat useful it would be nice to preview a request before sending it… perhaps a `show` or `dump` or `req` command would be helpful? | open | 2021-04-24T02:03:26Z | 2021-05-07T06:40:29Z | https://github.com/httpie/http-prompt/issues/193 | [] | jenstroeger | 2 |

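newpanjing/simpleui | django | 401 | The simpleui layer dialog does not work. | **Bug description**

In the layer dialog, clicking the confirm button sends the AJAX request to the wrong URL, so the dialog does not work. The code at fault is described below.

In `simpletags.py`, in the `get_model_ajax_url` method:

The key is written incorrectly: `{}:{}_{}_changelist` should be changed to `{}:{}_{}_ajax` | closed | 2021-10-15T02:12:01Z | 2021-10-15T06:27:45Z | https://github.com/newpanjing/simpleui/issues/401 | [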

"bug"

] | cqjinxiaotao | 0 |

JohnSnowLabs/nlu | streamlit | 246 | Model Loading | I am loading model like this

```

import sparknlp

import nlu

spark = sparknlp.start()

df = spark.read.csv("nlp_data.csv")

res = nlu.load("pos").predict(df[["text"]].rdd.flatMap(lambda x: x).collect())

print(res)

spark.stop()

```

Each time I get the following messages in my console:

```

com.johnsnowlabs.nlp#spark-nlp_2.12 added as a dependency

:: resolving dependencies :: org.apache.spark#spark-submit-parent-3f17e4b8-0bdf-40c5-9879-d62f9c2dc974;1.0

confs: [default]

found com.johnsnowlabs.nlp#spark-nlp_2.12;5.2.3 in central

found com.typesafe#config;1.4.2 in central

found org.rocksdb#rocksdbjni;6.29.5 in central

found com.amazonaws#aws-java-sdk-s3;1.12.500 in central

found com.amazonaws#aws-java-sdk-kms;1.12.500 in central

found com.amazonaws#aws-java-sdk-core;1.12.500 in central

found commons-logging#commons-logging;1.1.3 in central

found commons-codec#commons-codec;1.15 in central

found org.apache.httpcomponents#httpclient;4.5.13 in central

found org.apache.httpcomponents#httpcore;4.4.13 in central

found software.amazon.ion#ion-java;1.0.2 in central

found com.fasterxml.jackson.dataformat#jackson-dataformat-cbor;2.12.6 in central

found joda-time#joda-time;2.8.1 in central

found com.amazonaws#jmespath-java;1.12.500 in central

found com.github.universal-automata#liblevenshtein;3.0.0 in central

found com.google.protobuf#protobuf-java-util;3.0.0-beta-3 in central

found com.google.protobuf#protobuf-java;3.0.0-beta-3 in central

found com.google.code.gson#gson;2.3 in central

found it.unimi.dsi#fastutil;7.0.12 in central

found org.projectlombok#lombok;1.16.8 in central

found com.google.cloud#google-cloud-storage;2.20.1 in central

found com.google.guava#guava;31.1-jre in central

found com.google.guava#failureaccess;1.0.1 in central

found com.google.guava#listenablefuture;9999.0-empty-to-avoid-conflict-with-guava in central

found com.google.errorprone#error_prone_annotations;2.18.0 in central

found com.google.j2objc#j2objc-annotations;1.3 in central

found com.google.http-client#google-http-client;1.43.0 in central

found io.opencensus#opencensus-contrib-http-util;0.31.1 in central

found com.google.http-client#google-http-client-jackson2;1.43.0 in central

found com.google.http-client#google-http-client-gson;1.43.0 in central

found com.google.api-client#google-api-client;2.2.0 in central

found com.google.oauth-client#google-oauth-client;1.34.1 in central

found com.google.http-client#google-http-client-apache-v2;1.43.0 in central

found com.google.apis#google-api-services-storage;v1-rev20220705-2.0.0 in central

found com.google.code.gson#gson;2.10.1 in central

found com.google.cloud#google-cloud-core;2.12.0 in central

found io.grpc#grpc-context;1.53.0 in central

found com.google.auto.value#auto-value-annotations;1.10.1 in central

found com.google.auto.value#auto-value;1.10.1 in central

found javax.annotation#javax.annotation-api;1.3.2 in central

found com.google.cloud#google-cloud-core-http;2.12.0 in central

found com.google.http-client#google-http-client-appengine;1.43.0 in central

found com.google.api#gax-httpjson;0.108.2 in central

found com.google.cloud#google-cloud-core-grpc;2.12.0 in central

found io.grpc#grpc-alts;1.53.0 in central

found io.grpc#grpc-grpclb;1.53.0 in central

found org.conscrypt#conscrypt-openjdk-uber;2.5.2 in central

found io.grpc#grpc-auth;1.53.0 in central

found io.grpc#grpc-protobuf;1.53.0 in central

found io.grpc#grpc-protobuf-lite;1.53.0 in central

found io.grpc#grpc-core;1.53.0 in central

found com.google.api#gax;2.23.2 in central

found com.google.api#gax-grpc;2.23.2 in central

found com.google.auth#google-auth-library-credentials;1.16.0 in central

found com.google.auth#google-auth-library-oauth2-http;1.16.0 in central

found com.google.api#api-common;2.6.2 in central

found io.opencensus#opencensus-api;0.31.1 in central

found com.google.api.grpc#proto-google-iam-v1;1.9.2 in central

found com.google.protobuf#protobuf-java;3.21.12 in central

found com.google.protobuf#protobuf-java-util;3.21.12 in central

found com.google.api.grpc#proto-google-common-protos;2.14.2 in central

found org.threeten#threetenbp;1.6.5 in central

found com.google.api.grpc#proto-google-cloud-storage-v2;2.20.1-alpha in central

found com.google.api.grpc#grpc-google-cloud-storage-v2;2.20.1-alpha in central

found com.google.api.grpc#gapic-google-cloud-storage-v2;2.20.1-alpha in central

found com.fasterxml.jackson.core#jackson-core;2.14.2 in central

found com.google.code.findbugs#jsr305;3.0.2 in central

found io.grpc#grpc-api;1.53.0 in central

found io.grpc#grpc-stub;1.53.0 in central

found org.checkerframework#checker-qual;3.31.0 in central

found io.perfmark#perfmark-api;0.26.0 in central

found com.google.android#annotations;4.1.1.4 in central

found org.codehaus.mojo#animal-sniffer-annotations;1.22 in central

found io.opencensus#opencensus-proto;0.2.0 in central

found io.grpc#grpc-services;1.53.0 in central

found com.google.re2j#re2j;1.6 in central

found io.grpc#grpc-netty-shaded;1.53.0 in central

found io.grpc#grpc-googleapis;1.53.0 in central

found io.grpc#grpc-xds;1.53.0 in central

found com.navigamez#greex;1.0 in central

found dk.brics.automaton#automaton;1.11-8 in central

found com.johnsnowlabs.nlp#tensorflow-cpu_2.12;0.4.4 in central

found com.microsoft.onnxruntime#onnxruntime;1.16.3 in central

:: resolution report :: resolve 1966ms :: artifacts dl 54ms

:: modules in use:

com.amazonaws#aws-java-sdk-core;1.12.500 from central in [default]

com.amazonaws#aws-java-sdk-kms;1.12.500 from central in [default]

com.amazonaws#aws-java-sdk-s3;1.12.500 from central in [default]

com.amazonaws#jmespath-java;1.12.500 from central in [default]

com.fasterxml.jackson.core#jackson-core;2.14.2 from central in [default]

com.fasterxml.jackson.dataformat#jackson-dataformat-cbor;2.12.6 from central in [default]

com.github.universal-automata#liblevenshtein;3.0.0 from central in [default]

com.google.android#annotations;4.1.1.4 from central in [default]

com.google.api#api-common;2.6.2 from central in [default]

com.google.api#gax;2.23.2 from central in [default]

com.google.api#gax-grpc;2.23.2 from central in [default]

com.google.api#gax-httpjson;0.108.2 from central in [default]

com.google.api-client#google-api-client;2.2.0 from central in [default]

com.google.api.grpc#gapic-google-cloud-storage-v2;2.20.1-alpha from central in [default]

com.google.api.grpc#grpc-google-cloud-storage-v2;2.20.1-alpha from central in [default]

com.google.api.grpc#proto-google-cloud-storage-v2;2.20.1-alpha from central in [default]

com.google.api.grpc#proto-google-common-protos;2.14.2 from central in [default]

com.google.api.grpc#proto-google-iam-v1;1.9.2 from central in [default]

com.google.apis#google-api-services-storage;v1-rev20220705-2.0.0 from central in [default]

com.google.auth#google-auth-library-credentials;1.16.0 from central in [default]

com.google.auth#google-auth-library-oauth2-http;1.16.0 from central in [default]

com.google.auto.value#auto-value;1.10.1 from central in [default]

com.google.auto.value#auto-value-annotations;1.10.1 from central in [default]

com.google.cloud#google-cloud-core;2.12.0 from central in [default]

com.google.cloud#google-cloud-core-grpc;2.12.0 from central in [default]

com.google.cloud#google-cloud-core-http;2.12.0 from central in [default]

com.google.cloud#google-cloud-storage;2.20.1 from central in [default]

com.google.code.findbugs#jsr305;3.0.2 from central in [default]

com.google.code.gson#gson;2.10.1 from central in [default]

com.google.errorprone#error_prone_annotations;2.18.0 from central in [default]

com.google.guava#failureaccess;1.0.1 from central in [default]

com.google.guava#guava;31.1-jre from central in [default]

com.google.guava#listenablefuture;9999.0-empty-to-avoid-conflict-with-guava from central in [default]

com.google.http-client#google-http-client;1.43.0 from central in [default]

com.google.http-client#google-http-client-apache-v2;1.43.0 from central in [default]

com.google.http-client#google-http-client-appengine;1.43.0 from central in [default]

com.google.http-client#google-http-client-gson;1.43.0 from central in [default]

com.google.http-client#google-http-client-jackson2;1.43.0 from central in [default]

com.google.j2objc#j2objc-annotations;1.3 from central in [default]

com.google.oauth-client#google-oauth-client;1.34.1 from central in [default]

com.google.protobuf#protobuf-java;3.21.12 from central in [default]

com.google.protobuf#protobuf-java-util;3.21.12 from central in [default]

com.google.re2j#re2j;1.6 from central in [default]

com.johnsnowlabs.nlp#spark-nlp_2.12;5.2.3 from central in [default]

com.johnsnowlabs.nlp#tensorflow-cpu_2.12;0.4.4 from central in [default]

com.microsoft.onnxruntime#onnxruntime;1.16.3 from central in [default]

com.navigamez#greex;1.0 from central in [default]

com.typesafe#config;1.4.2 from central in [default]

commons-codec#commons-codec;1.15 from central in [default]

commons-logging#commons-logging;1.1.3 from central in [default]

dk.brics.automaton#automaton;1.11-8 from central in [default]

io.grpc#grpc-alts;1.53.0 from central in [default]

io.grpc#grpc-api;1.53.0 from central in [default]

io.grpc#grpc-auth;1.53.0 from central in [default]

io.grpc#grpc-context;1.53.0 from central in [default]

io.grpc#grpc-core;1.53.0 from central in [default]

io.grpc#grpc-googleapis;1.53.0 from central in [default]

io.grpc#grpc-grpclb;1.53.0 from central in [default]

io.grpc#grpc-netty-shaded;1.53.0 from central in [default]

io.grpc#grpc-protobuf;1.53.0 from central in [default]

io.grpc#grpc-protobuf-lite;1.53.0 from central in [default]

io.grpc#grpc-services;1.53.0 from central in [default]

io.grpc#grpc-stub;1.53.0 from central in [default]

io.grpc#grpc-xds;1.53.0 from central in [default]

io.opencensus#opencensus-api;0.31.1 from central in [default]

io.opencensus#opencensus-contrib-http-util;0.31.1 from central in [default]

io.opencensus#opencensus-proto;0.2.0 from central in [default]

io.perfmark#perfmark-api;0.26.0 from central in [default]

it.unimi.dsi#fastutil;7.0.12 from central in [default]

javax.annotation#javax.annotation-api;1.3.2 from central in [default]

joda-time#joda-time;2.8.1 from central in [default]

org.apache.httpcomponents#httpclient;4.5.13 from central in [default]

org.apache.httpcomponents#httpcore;4.4.13 from central in [default]

org.checkerframework#checker-qual;3.31.0 from central in [default]

org.codehaus.mojo#animal-sniffer-annotations;1.22 from central in [default]

org.conscrypt#conscrypt-openjdk-uber;2.5.2 from central in [default]

org.projectlombok#lombok;1.16.8 from central in [default]

org.rocksdb#rocksdbjni;6.29.5 from central in [default]

org.threeten#threetenbp;1.6.5 from central in [default]

software.amazon.ion#ion-java;1.0.2 from central in [default]

:: evicted modules:

commons-logging#commons-logging;1.2 by [commons-logging#commons-logging;1.1.3] in [default]

commons-codec#commons-codec;1.11 by [commons-codec#commons-codec;1.15] in [default]

com.google.protobuf#protobuf-java-util;3.0.0-beta-3 by [com.google.protobuf#protobuf-java-util;3.21.12] in [default]

com.google.protobuf#protobuf-java;3.0.0-beta-3 by [com.google.protobuf#protobuf-java;3.21.12] in [default]

com.google.code.gson#gson;2.3 by [com.google.code.gson#gson;2.10.1] in [default]

---------------------------------------------------------------------

| | modules || artifacts |

| conf | number| search|dwnlded|evicted|| number|dwnlded|

---------------------------------------------------------------------

| default | 85 | 0 | 0 | 5 || 80 | 0 |

---------------------------------------------------------------------

:: retrieving :: org.apache.spark#spark-submit-parent-3f17e4b8-0bdf-40c5-9879-d62f9c2dc974

confs: [default]

0 artifacts copied, 80 already retrieved (0kB/27ms)

pos_anc download started this may take some time.

Approximate size to download 3.9 MB

[ / ]pos_anc download started this may take some time.

Approximate size to download 3.9 MB

[ — ]Download done! Loading the resource.

[OK!]

sentence_detector_dl download started this may take some time.

Approximate size to download 354.6 KB

[ | ]sentence_detector_dl download started this may take some time.

Approximate size to download 354.6 KB

[ / ]Download done! Loading the resource.

[ — ]2024-02-06 14:43:45.340048: I external/org_tensorflow/tensorflow/core/platform/cpu_feature_guard.cc:151] This TensorFlow binary is optimized with oneAPI Deep Neural Network Library (oneDNN) to use the following CPU instructions in performance-critical operations: AVX2 FMA

To enable them in other operations, rebuild TensorFlow with the appropriate compiler flags.

[OK!]

```

Does this mean that I am downloading the model(s) from the internet again and again, or are they being loaded from the jar files?

I assume that the jar files are now on my local system since it took some time when I first installed spark-nlp, and now it just prints the jars information almost immediately when I run the code | open | 2024-02-06T09:22:44Z | 2024-02-06T09:22:44Z | https://github.com/JohnSnowLabs/nlu/issues/246 | [] | ArijitSinghEDA | 0 |

python-visualization/folium | data-visualization | 1,596 | Formatted popups or tooltips with variable strings? | I'm using Python 3.10.4 and the most recent version of folium. My needs are simple: to create popups or tooltips on a map showing the name of the location and some information. I am fetching the results from a pandas dataframe, over which I'm iterating with iterrows. The salient code currently is this:

tooltip = row["place"] + ", Population: "+str(row["population"])

which produces a single line, like

Crumbletown, Population: 4.5

But what I want is for the text to be formatted, so it looks for example like this:

**Crumbletown**

Population: 4.5

maybe with a larger font for the heading. I know formatting can be done with html (as per the popup.ipynb example notebook). But what I don't know is how to include a string variable in the html code, and whether this issue is a Python issue, an html issue, or something else. Note that I am a folium beginner, so forgive me if this is a trivial question. Many thanks! | closed | 2022-05-23T05:29:25Z | 2022-11-17T15:29:11Z | https://github.com/python-visualization/folium/issues/1596 | [] | amca01 | 1 |
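For the folium question above, one way to put string variables into HTML is a plain Python f-string, so this is a Python matter rather than a folium one. The sketch below builds the markup only; the helper name `tooltip_html` and the inline font size are illustrative assumptions, and the values are HTML-escaped in case a place name contains characters such as `<` or `&`.

```python
from html import escape


def tooltip_html(place, population):
    """Build a two-line HTML snippet: bold place name, then the population.

    Hypothetical helper for illustration; the styling is an arbitrary choice.
    """
    return (
        f"<b style='font-size: 14px'>{escape(str(place))}</b><br>"
        f"Population: {escape(str(population))}"
    )
```

Inside the `iterrows` loop, the result of `tooltip_html(row["place"], row["population"])` could then be passed as the `tooltip=` (or `popup=`) argument of `folium.Marker`, which accepts an HTML string.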

microsoft/unilm | nlp | 1,275 | [BEIT3] How to apply GradCam on the beit3 models? | Hi. I want to see the gradcam image of the beit3 model. I used the grad-cam library [https://github.com/jacobgil/pytorch-grad-cam], but I got this error. 'RuntimeError: element 0 of tensors does not require grad and does not have a grad_fn'.

Please help..

Thank you! | closed | 2023-08-30T08:29:48Z | 2023-08-30T10:12:15Z | https://github.com/microsoft/unilm/issues/1275 | [] | TheNha | 6 |

scrapy/scrapy | web-scraping | 5,850 | Invalid Copyright Notice | I am not a lawyer but I believe your copyright notice at https://github.com/scrapy/scrapy/blob/master/LICENSE

is invalid in the USA (and probably elsewhere) because it lacks a date.

Copyright (c) Scrapy developers.

Should be:

Copyright (c) 2011 Scrapy developers.

Or

Copyright (c) 2011-2023 Scrapy developers.

Or a variation of the above.

From https://www.copyright.gov/circs/circ01.pdf#page=7

"""

Copyright Notice

A copyright notice is a statement placed on copies or phonorecords of a work to inform the public that a copyright owner is claiming ownership of the work. A copyright notice consists of three elements:

• The copyright symbol © or (p) for phonorecords, the word “Copyright,” or the abbreviation “Copr.”;

• The year of first publication of the work (or of creation if the work is unpublished); and

• The name of the copyright owner, an abbreviation by which the name can be recognized, or a generally known alternative designation.

"""

| closed | 2023-03-15T16:38:43Z | 2024-01-03T18:17:56Z | https://github.com/scrapy/scrapy/issues/5850 | [] | RogerHaase | 1 |

QuivrHQ/quivr | api | 2,960 | TOtot | closed | 2024-08-07T10:59:51Z | 2024-08-07T11:00:57Z | https://github.com/QuivrHQ/quivr/issues/2960 | [] | StanGirard | 1 | |

python-gitlab/python-gitlab | api | 2,575 | How to use python-gitlab library to search a string in every commits? | I noticed that `commits = project.commits.list(all=True)` lists every commit, but I don't know how to run a search against each commit. Can it be done? :) | closed | 2023-05-25T02:39:41Z | 2024-05-27T01:20:03Z | https://github.com/python-gitlab/python-gitlab/issues/2575 | [] | umeharasang | 1 |

modelscope/data-juicer | data-visualization | 98 | [Bug]: alphanumeric_filter, char.isalnum() | ### Before Reporting 报告之前

- [X] I have pulled the latest code of main branch to run again and the bug still existed. 我已经拉取了主分支上最新的代码,重新运行之后,问题仍不能解决。

- [X] I have read the [README](https://github.com/alibaba/data-juicer/blob/main/README.md) carefully and no error occurred during the installation process. (Otherwise, we recommend that you can ask a question using the Question template) 我已经仔细阅读了 [README](https://github.com/alibaba/data-juicer/blob/main/README_ZH.md) 上的操作指引,并且在安装过程中没有错误发生。(否则,我们建议您使用Question模板向我们进行提问)

### Search before reporting 先搜索,再报告

- [X] I have searched the Data-Juicer [issues](https://github.com/alibaba/data-juicer/issues) and found no similar bugs. 我已经在 [issue列表](https://github.com/alibaba/data-juicer/issues) 中搜索但是没有发现类似的bug报告。

### OS 系统

ubuntu

### Installation Method 安装方式

pip

### Data-Juicer Version Data-Juicer版本

v0.1.2

### Python Version Python版本

3.8

### Describe the bug 描述这个bug

https://github.com/alibaba/data-juicer/blob/main/data_juicer/ops/filter/alphanumeric_filter.py#L75

``````

alnum_count = sum(

map(lambda char: 1

if char.isalnum() else 0, sample[self.text_key]))

``````

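Python 3 strings are Unicode by default, so `'汉字'.isalnum()` returns True. `encode()` defaults to UTF-8, and once a character is encoded to UTF-8 bytes, Chinese characters no longer return True.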

``````

alnum_count = sum(

map(lambda char: 1

if char.encode().isalnum() else 0, sample[self.text_key]))

``````
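The difference between the two variants can be checked in isolation. The sketch below is not Data-Juicer code: the function names are hypothetical, and it assumes the ratio is the alphanumeric count divided by the text length.

```python
def alnum_ratio_unicode(text):
    """Ratio using str.isalnum(), which counts any Unicode letter or digit."""
    count = sum(1 for ch in text if ch.isalnum())
    return count / len(text) if text else 0.0


def alnum_ratio_ascii(text):
    """Ratio using bytes.isalnum(), which only accepts ASCII letters and digits."""
    count = sum(1 for ch in text if ch.encode().isalnum())
    return count / len(text) if text else 0.0
```

With these, a purely Chinese string scores 1.0 under the first function and 0.0 under the second, which is the behavior the report describes.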

### To Reproduce 如何复现

python tools/analyze_data.py --config configs/demo/analyser.yaml

### Configs 配置信息

``````

project_name: 'demo-analyser'

dataset_path: 'demos/data/demo-dataset.jsonl' # path to your dataset directory or file

np: 4 # number of subprocess to process your dataset

text_keys: 'text'

export_path: './outputs/demo-analyser/demo-analyser-result.jsonl'

# process schedule

# a list of several process operators with their arguments

process:

- alphanumeric_filter:

``````

### Logs 报错日志

_No response_

### Screenshots 截图

The fifth row is entirely Chinese, so its `alnum_ratio` (alphanumeric ratio) should correctly be 0.

### Additional 额外信息

_No response_ | closed | 2023-11-24T01:52:00Z | 2023-12-22T09:32:20Z | https://github.com/modelscope/data-juicer/issues/98 | [

"bug",

"stale-issue"

] | simplew2011 | 3 |

ageitgey/face_recognition | python | 1,355 | [Question] : Is any way to recognise masked face img | Hello guys ,

face_recognition fails to detect masked faces and to identify the person's name.

How can we tackle this issue?

Any suggestions?

Thanks | open | 2021-08-11T11:34:08Z | 2021-08-24T00:39:04Z | https://github.com/ageitgey/face_recognition/issues/1355 | [] | VinayChaudhari1996 | 1 |

StructuredLabs/preswald | data-visualization | 231 | [FEATURE] Use API endpoints as a source | **Is your feature request related to a problem? Please describe.**

Today, users can add in CSV, Postgres, and Clickhouse as sources. S3 is coming soon too. We want to support APIs as sources.

**Describe the solution you'd like**

An API source type which pulls from the API (w/ necessary keys/auth) upon running a query.

**Describe alternatives you've considered**

Separately dumping an API output JSON to a file, and then importing that via pandas.

**Additional context**

Take a look at issue 153, as well as the current implementations of sources (the 3 above) in `preswald/engine/managers/data.py` and `preswald/interfaces/data.py` | open | 2025-03-13T00:27:50Z | 2025-03-15T14:46:04Z | https://github.com/StructuredLabs/preswald/issues/231 | [

"enhancement"

] | shivam-singhal | 1 |

ultralytics/yolov5 | deep-learning | 13,216 | gpu memory usage is low but out of memory | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

When training YOLOv5, I found that the GPU was barely used: GPU memory usage is very low, while memory usage increased very quickly and ended in an out-of-memory error. How can I use the GPU for training?

command is `python .\train.py --device 0 --epochs 1 --batch-size 16`

### Additional

_No response_ | open | 2024-07-24T15:52:07Z | 2024-10-27T13:30:50Z | https://github.com/ultralytics/yolov5/issues/13216 | [

"question"

] | leooobreak | 2 |

AutoGPTQ/AutoGPTQ | nlp | 611 | [QUESTION] How to unload AutoGPTQForCausalLM.from_quantized model from GPU to CPU in order to free up GPU memory | Hi

I have loaded a model with the following code:

```

DEVICE = "cuda:0" if torch.cuda.is_available() else "cpu"

print(DEVICE)

embeddings = HuggingFaceInstructEmbeddings(

model_name="hkunlp/instructor-xl",model_kwargs={"device":DEVICE}

)

model_name_or_path = "./models/Llama-2-13B-chat-GPTQ"

model_basename = "model"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

model = AutoGPTQForCausalLM.from_quantized(

model_name_or_path,

revision= "gptq-4bit-128g-actorder_True", #"gptq-8bit-128g-actorder_False", #revision="gptq-4bit-128g-actorder_True",

model_basename=model_basename,

use_safetensors=True,

trust_remote_code=True,

inject_fused_attention=False,

device=DEVICE,

quantize_config=None,

)

```

And if I print out this model, it has the following structure:

```

LlamaGPTQForCausalLM(

(model): LlamaForCausalLM(

(model): LlamaModel(

(embed_tokens): Embedding(32000, 5120, padding_idx=0)

(layers): ModuleList(

(0-39): 40 x LlamaDecoderLayer(

(self_attn): LlamaAttention(

(rotary_emb): LlamaRotaryEmbedding()

(k_proj): QuantLinear()

(o_proj): QuantLinear()

(q_proj): QuantLinear()

(v_proj): QuantLinear()

)

(mlp): LlamaMLP(

(act_fn): SiLUActivation()

(down_proj): QuantLinear()

(gate_proj): QuantLinear()

(up_proj): QuantLinear()

)

(input_layernorm): LlamaRMSNorm()

(post_attention_layernorm): LlamaRMSNorm()

)

)

(norm): LlamaRMSNorm()

)

(lm_head): Linear(in_features=5120, out_features=32000, bias=False)

)

)

```

Is there a way that I can unload this model from the GPU to free up GPU memory?

I tried ```model.to(torch.device("cpu")) ```

and it did not work.

Thanks | open | 2024-03-26T10:58:28Z | 2024-03-26T10:58:28Z | https://github.com/AutoGPTQ/AutoGPTQ/issues/611 | [] | tommycmy | 0 |
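For the AutoGPTQ question above, a commonly used pattern is to drop every reference to the model and then ask PyTorch to release its cached GPU memory. The sketch below is an assumption-laden illustration rather than an AutoGPTQ API: `release_model` is a hypothetical helper, it expects the model to be held in a dictionary, and it only works if nothing else (a pipeline, a notebook variable) still references the model.

```python
import gc


def release_model(holder, key="model"):
    """Drop the reference stored in `holder[key]` and free cached GPU memory.

    Hypothetical helper for illustration. `holder` is any dict that owns the
    only remaining reference to the model.
    """
    holder.pop(key, None)  # remove the last reference we control
    gc.collect()           # collect any reference cycles that kept it alive
    try:
        import torch
    except ImportError:
        return  # nothing else to do without PyTorch installed
    if torch.cuda.is_available():
        # Return cached, now-unused blocks from PyTorch's allocator to the driver.
        torch.cuda.empty_cache()
```

With a plain variable instead of a dictionary, the equivalent steps would be `del model`, `gc.collect()`, then `torch.cuda.empty_cache()`.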

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 787 | LibriTTS & older models | Hey - I've been playing with the Tensorflow version of this repo with a LibriTTS model for some time now & have had some good results from it. The model i've been using was from here & had a partial train of a LibriTTS dataset which was really good for punctuation etc.

Just upgraded my GPU to a 3080 Ti (rare I know!) & tried to get the repo working with this GPU. Unfortunately the CUDA SDK (10.1) doesn't appear to work, & every time I try to load an audio file to clone, the toolkit just hangs.

So I've ended up pulling the latest repo & pretrained models, but noticed there's no punctuation - which is a real shame...

Would appreciate any thoughts on either getting the older repo working or how to get LibriTTS into the mix?

(Also, if needed I can provide a 5950X and GPU to train models if it would help restore some of the functionality around this) | closed | 2021-07-02T20:46:51Z | 2021-09-14T17:35:29Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/787 | [] | ThePowerOfMonkeys | 1 |

inducer/pudb | pytest | 375 | source not displayed (linecache filename mismatch) | I'm debugging a Python 3 script, which I typically invoke from the directory it lives in, as:

> ./fooBar.py parm1

If I instrument my script with a `set_trace()` call and start the script as above, it hits the breakpoint and stops. However, no source code is displayed. If, on the other hand, I enter the debugger from the command line, as:

> python3 -m pudb fooBar.py parm1

and _then_ hit continue, the source is displayed when I reach the `set_trace()` call.

Things to note:

* The filename is mixed case, as in my examples above.

* I am running this on Linux.

* The file is on a network storage device (netApp), not local to my Linux server.

* The linecache key for the filename in the non-working case is `./fooBar.py` as would be expected. Apparently, the filename used when looking up the file upon encountering the breakpoint is not exactly the same.

* If, instead of using the relative path to invoke the script from the command line (e.g., `./fooBar.py`), I use the absolute path (e.g., `$PWD/fooBar.py`), it hits the breakpoint and displays the source as it should.
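
The key mismatch described in the list above can be reproduced with the standard library alone. This is a minimal sketch, assuming a throwaway file in a temporary directory stands in for `fooBar.py` (`cache_keys_for` is a hypothetical helper name). It shows only that `linecache` stores lines under the exact filename string it was given, not how pudb itself resolves filenames, which remains a guess on my part.

```python
import linecache
import os
import tempfile


def cache_keys_for(script_name="fooBar.py"):
    """Load a script via its relative name and report which linecache keys exist."""
    old_cwd = os.getcwd()
    with tempfile.TemporaryDirectory() as tmp:
        os.chdir(tmp)
        try:
            with open(script_name, "w") as fh:
                fh.write("print('hello')\n")
            relative = "./" + script_name
            absolute = os.path.abspath(script_name)
            # Populate the cache using the relative spelling only.
            linecache.getlines(relative)
            # The cache is a dict keyed by the filename string as passed in.
            return relative in linecache.cache, absolute in linecache.cache
        finally:
            os.chdir(old_cwd)  # leave the directory before it is removed


relative_cached, absolute_cached = cache_keys_for()
print(relative_cached, absolute_cached)
```

The relative spelling is reported as cached and the absolute one is not, so a lookup that normalizes the path differently from the code that filled the cache would miss the entry.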

| open | 2020-01-23T16:00:46Z | 2020-01-27T22:40:09Z | https://github.com/inducer/pudb/issues/375 | [] | dccarson | 1 |

whitphx/streamlit-webrtc | streamlit | 1,119 | Camera doesn't start when offline state | I'm creating a camera app that runs locally.

Also, I want to run it even when the PC is not connected to a network. However, if I press the start button while the PC is offline, the camera does not start and nothing is written to the log.

<img width="617" alt="camera_image" src="https://user-images.githubusercontent.com/13214003/199183279-cd5779d3-2fe4-4aee-b281-23f431da2e13.png">

Of course, the camera is displayed correctly when connected to the Internet.

Currently, when using streamlit-webrtc, we only do simple processing like the code below.

```

webrtc_streamer(

key="image-filter",

mode=WebRtcMode.SENDRECV,

video_frame_callback=callback_func,

media_stream_constraints={"video": True},

)

```

What do I need to do to make streamlit-webrtc work offline? | closed | 2022-11-01T07:47:25Z | 2022-12-06T04:26:35Z | https://github.com/whitphx/streamlit-webrtc/issues/1119 | [] | RoloAfrole | 2 |

ultralytics/ultralytics | deep-learning | 19,320 | About YOLOv12 support | ### Search before asking

- [x] I have searched the Ultralytics [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar feature requests.

### Description

Since YOLOv12 is released at https://github.com/sunsmarterjie/yolov12, will the models of YOLOv12 be supported? Thank you.

### Use case

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | closed | 2025-02-20T01:09:22Z | 2025-02-21T08:41:36Z | https://github.com/ultralytics/ultralytics/issues/19320 | [

"enhancement",

"question",

"fixed"

] | curtis18 | 5 |

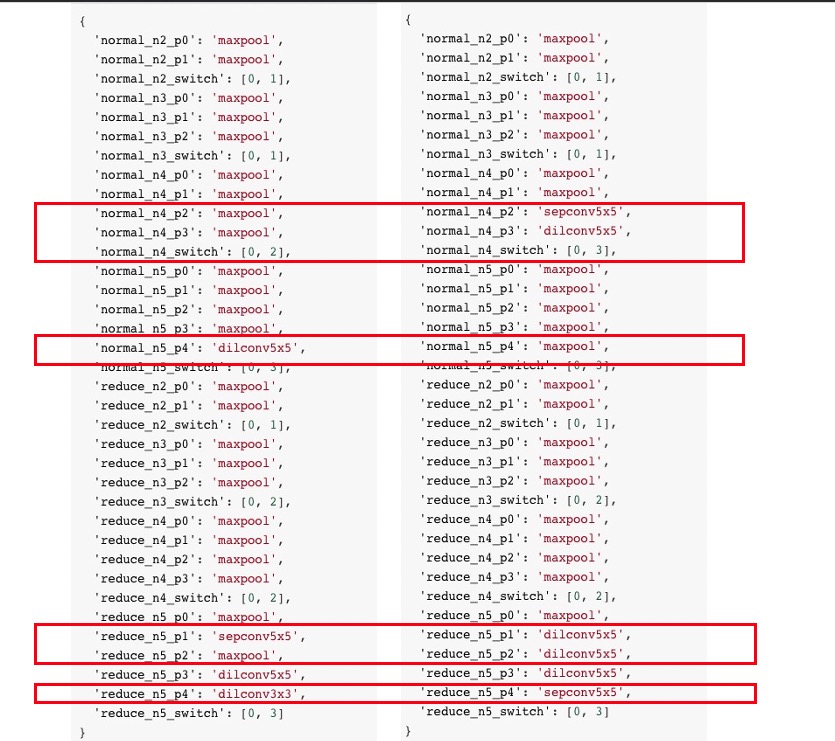

HIT-SCIR/ltp | nlp | 268 | srl_srl_train project compilation error |

| closed | 2017-12-09T06:51:22Z | 2017-12-10T03:54:40Z | https://github.com/HIT-SCIR/ltp/issues/268 | [] | JaneWangle | 1 |

SciTools/cartopy | matplotlib | 2,228 | Source code no longer included on PyPI | On conda-forge, we're trying to build 0.22.0:

https://github.com/conda-forge/cartopy-feedstock/pull/156

However, we're running into issues because a `.tar.gz` file wasn't included in the PyPI release. First, our bot was not able to create a PR for the release (see https://github.com/conda-forge/cartopy-feedstock/issues/155). Then, when I tried to use the source from GitHub instead, `setuptools-scm` complains:

```

LookupError: setuptools-scm was unable to detect version for /home/conda/feedstock_root/build_artifacts/cartopy_1691225778495/work.

Make sure you're either building from a fully intact git repository or PyPI tarballs. Most other sources (such as GitHub's tarballs, a git checkout without the .git folder) don't contain the necessary metadata and will not work.

For example, if you're using pip, instead of https://github.com/user/proj/archive/master.zip use git+https://github.com/user/proj.git#egg=proj

[end of output]

```

While there are workarounds that I've used in the past (I'm a little rusty so I'll have to dig them up), it seems like the cleanest solution would be to include the source on PyPI again. | closed | 2023-08-05T09:04:07Z | 2023-08-07T22:04:16Z | https://github.com/SciTools/cartopy/issues/2228 | [] | xylar | 4 |

modin-project/modin | pandas | 7,043 | `BaseQueryCompiler.repartition()` works slow | [`BaseQueryCompiler.repartition()`](https://github.com/modin-project/modin/blob/14452a8414bdec10e3b5cfa05e98bd26c6e1bafc/modin/core/storage_formats/base/query_compiler.py#L6711) is slower than the same logic implemented at the partition level (see perf measurements in [this PR](https://github.com/modin-project/modin/pull/7016)) | open | 2024-03-08T13:16:37Z | 2024-03-08T13:16:38Z | https://github.com/modin-project/modin/issues/7043 | [

"Performance 🚀",

"P2"

] | dchigarev | 0 |

ultralytics/yolov5 | machine-learning | 12,730 | export int8 tflite with customdataset | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

Hi there,

I trained a model on a custom dataset, got a .pt file, and converted it to .tflite.

The export script runs without errors, and I then ran inference on an image.

However, the class names in the detection results are not correct.

**Example: the custom dataset's class labels are A, B, C, so the predicted class should be 'C' (class_index=2),

but the class reported for the inferred image is 'car', which is class_index=2 in coco128.yaml.**

Below is my export.py command:

**python export.py --data custom_dataset.yaml --weight /home/aaa/yolov5/runs/train/moel_res/weights/best.pt --int8 --include tflite**

I tried to check whether the --data parameter is being used correctly, but I haven't been able to confirm it yet.

Thanks.

### Additional

_No response_ | closed | 2024-02-13T05:34:06Z | 2024-02-13T11:45:58Z | https://github.com/ultralytics/yolov5/issues/12730 | [

"question"

] | timingisnow | 2 |

2noise/ChatTTS | python | 744 | decoder error on all_codes.masked_fill & what's the correct version of vector_quantize_pytorch | An error occurred as follows while changing the default decoder to DVAE (inferring with `use_decoder=False`). Could it be caused by an incompatible version of `vector_quantize_pytorch==1.17.3`? I have also tried `vector-quantize-pytorch==1.16.1`, `vector-quantize-pytorch==1.15.5`, and `vector-quantize-pytorch==1.14.24`.

```code

File "/workspace/ChatTTS/ChatTTS/model/dvae.py", line 95, in _embed

feat = self.quantizer.get_output_from_indices(x)

File "/usr/local/lib/python3.10/dist-packages/vector_quantize_pytorch/residual_fsq.py", line 248, in get_output_from_indices

outputs = tuple(rvq.get_output_from_indices(chunk_indices) for rvq, chunk_indices in zip(self.rvqs, indices))

File "/usr/local/lib/python3.10/dist-packages/vector_quantize_pytorch/residual_fsq.py", line 248, in <genexpr>

outputs = tuple(rvq.get_output_from_indices(chunk_indices) for rvq, chunk_indices in zip(self.rvqs, indices))

File "/usr/local/lib/python3.10/dist-packages/vector_quantize_pytorch/residual_fsq.py", line 134, in get_output_from_indices

codes = self.get_codes_from_indices(indices)

File "/usr/local/lib/python3.10/dist-packages/vector_quantize_pytorch/residual_fsq.py", line 120, in get_codes_from_indices

all_codes = all_codes.masked_fill(rearrange(mask, 'b n q -> q b n 1'), 0.)

RuntimeError: expected self and mask to be on the same device, but got mask on cpu and self on cuda:0

../aten/src/ATen/native/cuda/IndexKernel.cu:92: operator(): block: [2,0,0], thread: [36,0,0] Assertion `-sizes[i] <= index && index < sizes[i] && "index out of bounds"` failed.

```

environment:

```

av 13.0.0

chattts 0.0.0 /workspace/ChatTTS

gradio 4.42.0

gradio_client 1.3.0

nemo_text_processing 1.0.2

numba 0.60.0

numpy 1.26.4

pybase16384 0.3.7

pydub 0.25.1

pynini 2.1.5

torch 2.1.2

torchaudio 2.1.2

tqdm 4.66.5

transformers 4.44.2

transformers-stream-generator 0.0.5

vector-quantize-pytorch 1.16.1

vocos 0.1.0

WeTextProcessing 1.0.3

```

Would you be able to offer me some suggestions, please? | closed | 2024-09-05T08:09:57Z | 2024-10-23T04:01:31Z | https://github.com/2noise/ChatTTS/issues/744 | [

"question",

"stale"

] | unbelievable3513 | 2 |

netbox-community/netbox | django | 17,688 | GraphQL filters (AND, OR and NOT) don't work for custom filterset fields | ### Deployment Type

Self-hosted

### NetBox Version

v4.1.3

### Python Version

3.10

### Steps to Reproduce

Using a GraphQL filter with AND, OR, or NOT on a field that has a custom implementation in the filterset (or only appears in the filterset), for example asn_id on Site, doesn't work.

1. Create 4 sites with IDs 1 to 4

2. Create 4 ASNs and assign each to a single Site (1-4)

3. Use the following GraphQL query

```

{

site_list(filters: {asn_id: "1", OR: {asn_id: "4"}}) {

name

asns {

id

}

}

}

```

### Expected Behavior

Will get a list of 2 sites.

### Observed Behavior

Get an empty list. | closed | 2024-10-07T17:00:19Z | 2025-03-10T19:19:02Z | https://github.com/netbox-community/netbox/issues/17688 | [

"type: bug",

"status: accepted",

"topic: GraphQL",

"severity: medium",

"netbox"

] | arthanson | 6 |

sgl-project/sglang | pytorch | 3,880 | [Feature] Run DeepSeek V3 W4-only | ### Checklist