repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

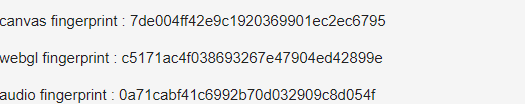

pyppeteer/pyppeteer | automation | 151 | How to disguise browser fingerprint? | I didn't find any documentation about injecting JavaScript before the page loads.

How can I modify the browser fingerprint information shown in the picture?
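
For context, pyppeteer exposes `page.evaluateOnNewDocument`, which registers a function that runs in every new document before the page's own scripts. The following is a minimal sketch under assumptions: the helper names `build_init_script` and `open_with_overrides` are hypothetical, and the overridden properties (`navigator.webdriver`, `navigator.languages`) are only examples; real fingerprinting checks cover far more than these.

```python
import json


def build_init_script(overrides):
    """Return a JavaScript arrow function that redefines navigator properties.

    Hypothetical helper: `overrides` maps a navigator property name to the
    value its getter should return.
    """
    lines = []
    for name, value in overrides.items():
        lines.append(
            "Object.defineProperty(navigator, %s, {get: () => %s});"
            % (json.dumps(name), json.dumps(value))
        )
    return "() => {\n" + "\n".join(lines) + "\n}"


async def open_with_overrides(url, overrides):
    # Imported lazily so the script builder works without pyppeteer installed.
    from pyppeteer import launch

    browser = await launch()
    page = await browser.newPage()
    # Must be registered before navigation so it runs ahead of page scripts.
    await page.evaluateOnNewDocument(build_init_script(overrides))
    await page.goto(url)
    return browser, page


script = build_init_script({"webdriver": False, "languages": ["en-US", "en"]})
```

The script is built separately from the browser code so it can be inspected or unit-tested without launching Chromium.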

| closed | 2020-07-12T01:14:20Z | 2020-07-12T04:10:39Z | https://github.com/pyppeteer/pyppeteer/issues/151 | [

"invalid"

] | xiaohuimc | 1 |

allenai/allennlp | nlp | 5,260 | I think the implementation of bimpm_matching is wrong | <!--

Please fill this template entirely and do not erase any of it.

We reserve the right to close without a response bug reports which are incomplete.

If you have a question rather than a bug, please ask on [Stack Overflow](https://stackoverflow.com/questions/tagged/allennlp) rather than posting an issue here.

-->

## Checklist

<!-- To check an item on the list replace [ ] with [x]. -->

- [ ] I have verified that the issue exists against the `main` branch of AllenNLP.

- [x] I have read the relevant section in the [contribution guide](https://github.com/allenai/allennlp/blob/main/CONTRIBUTING.md#bug-fixes-and-new-features) on reporting bugs.

- [ ] I have checked the [issues list](https://github.com/allenai/allennlp/issues) for similar or identical bug reports.

- [ ] I have checked the [pull requests list](https://github.com/allenai/allennlp/pulls) for existing proposed fixes.

- [ ] I have checked the [CHANGELOG](https://github.com/allenai/allennlp/blob/main/CHANGELOG.md) and the [commit log](https://github.com/allenai/allennlp/commits/main) to find out if the bug was already fixed in the main branch.

- [ ] I have included in the "Description" section below a traceback from any exceptions related to this bug.

- [ ] I have included in the "Related issues or possible duplicates" section below all related issues and possible duplicate issues (If there are none, check this box anyway).

- [ ] I have included in the "Environment" section below the name of the operating system and Python version that I was using when I discovered this bug.

- [ ] I have included in the "Environment" section below the output of `pip freeze`.

- [ ] I have included in the "Steps to reproduce" section below a minimally reproducible example.

## Description

I read the source code of the Bi-MPM model (https://github.com/allenai/allennlp/blob/main/allennlp/modules/bimpm_matching.py) and found that the Attentive-Matching in the code is very different from what is described in the original paper.

For example, on lines 350 and 351, softmax is applied over the hidden_state dimension (the last dimension). However, to match the paper, I think we just need to divide the weighted sum by the sum of the weights.
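
To make the proposed computation concrete, here is a small pure-Python sketch of the attentive vector as this issue reads the paper: cosine similarities serve as weights, and the weighted sum is divided by the sum of the weights. The function names are illustrative and this is not the AllenNLP implementation.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def attentive_vector(h_i, others):
    """Mean of `others`, weighted by each vector's cosine similarity to `h_i`.

    Assumes the weights do not sum to zero; a real implementation would
    need a small epsilon in the denominator.
    """
    weights = [cosine(h_i, h_j) for h_j in others]
    total = sum(weights)
    dim = len(h_i)
    weighted_sum = [
        sum(w * h_j[d] for w, h_j in zip(weights, others)) for d in range(dim)
    ]
    return [x / total for x in weighted_sum]


mean_vec = attentive_vector([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
```

Note that the normalization here runs over the other sentence's time steps, not over the hidden dimension.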

<!-- Please provide a clear and concise description of what the bug is here. -->

<details>

<summary><b>Python traceback:</b></summary>

<p>

<!-- Paste the traceback from any exception (if there was one) in between the next two lines below -->

```

```

</p>

</details>

## Related issues or possible duplicates

- None

## Environment

<!-- Provide the name of operating system below (e.g. OS X, Linux) -->

OS:

<!-- Provide the Python version you were using (e.g. 3.7.1) -->

Python version:

<details>

<summary><b>Output of <code>pip freeze</code>:</b></summary>

<p>

<!-- Paste the output of `pip freeze` in between the next two lines below -->

```

```

</p>

</details>

## Steps to reproduce

<details>

<summary><b>Example source:</b></summary>

<p>

<!-- Add a fully runnable example in between the next two lines below that will reproduce the bug -->

```

```

</p>

</details>

| closed | 2021-06-15T02:41:30Z | 2021-07-28T16:13:55Z | https://github.com/allenai/allennlp/issues/5260 | [

"bug",

"stale"

] | zhaowei-wang-nlp | 6 |

healthchecks/healthchecks | django | 1,006 | Discord Webhook integration | Hello,

Thanks for healthchecks!

Would it be possible to get [Discord Webhook integration](https://support.discord.com/hc/en-us/articles/228383668-Intro-to-Webhooks)?

These are simpler to set up than the Discord App integration.
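
As a rough illustration of why webhooks are simple: a Discord webhook accepts an HTTP POST with a JSON body containing a `content` field. The sketch below only builds the request and does not send it; the helper name, the message wording, and the placeholder URL are assumptions, and this is not how healthchecks structures its integrations.

```python
import json
import urllib.request


def build_webhook_request(webhook_url, check_name, status):
    """Build (but do not send) a POST request for a Discord webhook."""
    payload = {"content": f'Check "{check_name}" is {status.upper()}'}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL; a real one comes from the channel's webhook settings.
req = build_webhook_request(
    "https://discord.com/api/webhooks/ID/TOKEN", "backup", "down"
)
# urllib.request.urlopen(req) would deliver the message.
```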

| open | 2024-05-25T16:12:58Z | 2024-08-28T16:45:04Z | https://github.com/healthchecks/healthchecks/issues/1006 | [

"good-first-issue"

] | r3mi | 9 |

MaartenGr/BERTopic | nlp | 1,109 | `_preprocess_text` does not remove stop words | I tried to aggregate documents by topics using the following code:

```

# Aggregate documents by topics

documents = pd.DataFrame({"Document": docs, "ID": range(len(docs)), "Topic": topics})

documents_per_topic = documents.groupby(['Topic'], as_index=False).agg({'Document': ' '.join})

cleaned_docs = topic_model._preprocess_text(documents_per_topic.Document.values)

```

However, the stop words do not seem to have been removed, as seen in the word cloud (there are many `s`, `ing`, etc.):

BTW, I have explicitly set stop words in the configuration of the BERTopic model:

```

import os

import pandas as pd

path_dataset = 'Dataset'

df_all = pd.read_json(os.path.join(path_dataset, 'all_filtered.json'))

docs = df_all['Challenge_original_content_gpt_summary'].tolist()

# visualize the best challenge topic model

from sklearn.feature_extraction.text import TfidfVectorizer

from bertopic.vectorizers import ClassTfidfTransformer

from sentence_transformers import SentenceTransformer

from bertopic.representation import KeyBERTInspired

from bertopic import BERTopic

from hdbscan import HDBSCAN

from umap import UMAP

# Step 1 - Extract embeddings

embedding_model = SentenceTransformer("all-mpnet-base-v2")

# Step 2 - Reduce dimensionality

umap_model = UMAP(n_components=5, metric='manhattan',

random_state=42, low_memory=False)

# Step 3 - Cluster reduced embeddings

min_samples = int(35 * 0.5)

hdbscan_model = HDBSCAN(min_cluster_size=35,

min_samples=min_samples, prediction_data=True)

# Step 4 - Tokenize topics

vectorizer_model = TfidfVectorizer(stop_words="english", ngram_range=(1, 2))

# Step 5 - Create topic representation

ctfidf_model = ClassTfidfTransformer(reduce_frequent_words=True)

# Step 6 - (Optional) Fine-tune topic representation

representation_model = KeyBERTInspired()

# All steps together

topic_model = BERTopic(

embedding_model=embedding_model,

umap_model=umap_model,

hdbscan_model=hdbscan_model,

vectorizer_model=vectorizer_model,

ctfidf_model=ctfidf_model,

representation_model=representation_model,

calculate_probabilities=True

)

topics, probs = topic_model.fit_transform(docs)

``` | closed | 2023-03-21T06:33:50Z | 2023-03-21T10:06:31Z | https://github.com/MaartenGr/BERTopic/issues/1109 | [] | zhimin-z | 1 |

dmlc/gluon-cv | computer-vision | 1,514 | temporal segment network load increases on inference | I tried running inference with a pretrained TSN model for action recognition from the Gluon model zoo. For the first few frames the CPU consumption was low, but it gradually increased on later frames | closed | 2020-11-11T05:31:07Z | 2021-05-22T06:40:20Z | https://github.com/dmlc/gluon-cv/issues/1514 | [

"Stale"

] | athulvingt | 1 |

AntonOsika/gpt-engineer | python | 896 | pip metadata problem in 0.2.0? (downgrades install to 0.1.0) |

## Expected Behavior

Using `python -m pip install gpt-engineer` should install version 0.2.0 by now.

## Current Behavior

pip seems to reject 0.2.0 (as the package metadata is "0.0.0"???) and installs 0.1.0 instead.

## Failure Information

Running the below pip command shows a message:

```

Discarding https://files.pythonhosted.org/packages/17/d3/adbca4a7f982636fc8a57f41bd174105f5b78b557749fc1e5d19d6f89dea/gpt_engineer-0.2.0.tar.gz (from https://pypi.org/simple/gpt-engineer/) (requires-python:>=3.8.1,<3.12): Requested gpt-engineer from https://files.pythonhosted.org/packages/17/d3/adbca4a7f982636fc8a57f41bd174105f5b78b557749fc1e5d19d6f89dea/gpt_engineer-0.2.0.tar.gz has inconsistent version: expected '0.2.0', but metadata has '0.0.0'

Downloading gpt_engineer-0.1.0-py3-none-any.whl.metadata (7.5 kB)

```

### Steps to Reproduce

Using an anaconda installation on MacOS

conda create -n gpt-engineer python=3.11.5

conda activate gpt-engineer

python -m pip install gpt-engineer

| closed | 2023-12-11T15:57:10Z | 2023-12-14T17:53:00Z | https://github.com/AntonOsika/gpt-engineer/issues/896 | [

"bug",

"triage"

] | IanRogers | 2 |

kornia/kornia | computer-vision | 2,173 | Using images from tutorials is breaking in some places | ## 📚 Documentation

Using images from tutorials is crashing in some places

- Face detection - https://kornia.readthedocs.io/en/latest/applications/face_detection.html

similar to https://github.com/kornia/kornia/pull/2167/commits/b0f2e61c95b1a1ad8290bb589ffeeb864839fc6d | open | 2023-01-23T21:52:30Z | 2023-01-24T16:08:43Z | https://github.com/kornia/kornia/issues/2173 | [

"bug :bug:",

"docs :books:"

] | johnnv1 | 3 |

pennersr/django-allauth | django | 3,161 | Changing primary key for user model causes No Reverse Match | Reverse for 'account_reset_password_from_key' with keyword arguments '{'uidb36': 'mgodhrawala402@gmail.com', 'key': 'bbz25w-9c6941d5cb69a49883f15bc8e076f504'}' not found. 1 pattern(s) tried: ['accounts/password/reset/key/(?P<uidb36>[0-9A-Za-z]+)-(?P<key>.+)/$']
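
The mismatch can be reproduced with the pattern quoted in the error message alone: the `uidb36` group only allows `[0-9A-Za-z]`, so an email address (containing `@` and `.`) can never match. This is a sketch using Python's `re` module, not Django's URL resolver; the `matches` helper is hypothetical.

```python
import re

# Path pattern copied from the error message; fullmatch replaces the trailing "$".
KEY_PATH = re.compile(
    r"accounts/password/reset/key/(?P<uidb36>[0-9A-Za-z]+)-(?P<key>.+)/"
)


def matches(uid, key):
    """Return True if the reset path built from uid and key fits the pattern."""
    path = f"accounts/password/reset/key/{uid}-{key}/"
    return KEY_PATH.fullmatch(path) is not None


KEY = "bbz25w-9c6941d5cb69a49883f15bc8e076f504"
email_uid_ok = matches("mgodhrawala402@gmail.com", KEY)  # email as primary key
base36_uid_ok = matches("1a", KEY)                       # base36-encoded integer
```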

| closed | 2022-09-19T00:18:17Z | 2022-12-10T21:55:04Z | https://github.com/pennersr/django-allauth/issues/3161 | [] | mustansirgodhrawala | 1 |

plotly/dash | data-visualization | 2,449 | dcc.Upload doesn't support rendering of tif image files with html.Img | **Describe your context**

Please provide us your environment, so we can easily reproduce the issue.

- replace the result of `pip list | grep dash` below

```

dash 2.8.1

dash-canvas 0.1.0

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

dash-uploader 0.6.0

```

- if frontend related, tell us your Browser, Version and OS

- OS: Ubuntu

- Browser Chrome

- Version Version 110.0.5481.177 (Official Build) (64-bit)

**Describe the bug**

I am trying to render .tif image files from `dcc.Upload` using `html.Img` components. The tifs render perfectly fine using a direct path read:

```

html.Img(src=Image.open(os.path.join(os.path.dirname(os.path.abspath(__file__)),

"sample.tif")))

```

but when using dcc.Upload and the base64 string, nothing appears.

**Expected behavior**

I would expect that Plotly Dash components would be able to render .tif files using the upload feature, as the HTML Img components are able to. Is there some sort of additional conversion required, is this a bug, or is support not being offered for this feature?

This lack of image rendering can also be seen here: https://dash.plotly.com/dash-core-components/upload when attempting to upload .tifs, nothing will appear.
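
One possible workaround, sketched under assumptions: many browsers (Chrome included) do not decode TIFF in an `<img>` element, so the uploaded data URI could be re-encoded as PNG on the server before being passed to `html.Img`. The helper names below are hypothetical, Pillow is assumed to be installed, and some TIFF modes may need an extra conversion step before saving as PNG.

```python
import base64
import io


def split_data_uri(contents):
    """Split a dcc.Upload `contents` string into (mime_type, raw_bytes)."""
    header, encoded = contents.split(",", 1)
    mime_type = header[len("data:"):].split(";")[0]
    return mime_type, base64.b64decode(encoded)


def tiff_contents_to_png_uri(contents):
    """Re-encode an uploaded image as a PNG data URI usable as an Img src."""
    # Imported lazily; Pillow is an assumed dependency.
    from PIL import Image

    _, raw = split_data_uri(contents)
    buffer = io.BytesIO()
    Image.open(io.BytesIO(raw)).save(buffer, format="PNG")
    encoded = base64.b64encode(buffer.getvalue()).decode("ascii")
    return "data:image/png;base64," + encoded


# Tiny fake payload, used only to show how the data URI is split.
sample = "data:image/tiff;base64," + base64.b64encode(b"II*\x00").decode("ascii")
mime, raw = split_data_uri(sample)
```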

| open | 2023-03-10T15:31:05Z | 2024-08-13T14:29:22Z | https://github.com/plotly/dash/issues/2449 | [

"bug",

"P3"

] | matt-sd-watson | 2 |

RobertCraigie/prisma-client-py | asyncio | 351 | Add foreign key constraint failed error | closed | 2022-04-01T17:51:39Z | 2022-04-30T04:07:06Z | https://github.com/RobertCraigie/prisma-client-py/issues/351 | [

"kind/subtask"

] | RobertCraigie | 0 | |

google-deepmind/graph_nets | tensorflow | 31 | Pretrained Networks | closed | 2018-12-01T06:38:10Z | 2019-03-27T23:03:00Z | https://github.com/google-deepmind/graph_nets/issues/31 | [] | ferreirafabio | 0 | |

jina-ai/serve | machine-learning | 5,401 | Wrong host ip it served really | **Describe the bug**

I start a service:

```python
f = Flow(port=22456, host_in='127.0.0.1', host='127.0.0.1').add(uses=xxxx)
with f:
    f.block()
```

Then I run `netstat -ant`.

It seems that it binds to 0.0.0.0, not 127.0.0.1. Why?

**Environment**

jina 3.10.1

docarray 0.17.0

jcloud 0.0.35

jina-hubble-sdk 0.19.1

jina-proto 0.1.13

protobuf 3.20.0

proto-backend cpp

grpcio 1.48.0

pyyaml 5.3.1

python 3.7.0

platform Linux

platform-release 3.10.0-862.14.1.5.h442.eulerosv2r7.x86_64

platform-version #1 SMP Fri May 15 22:01:58 UTC 2020

architecture x86_64

processor x86_64

uid 171880166524263

session-id 22b0ff44-65b2-11ed-8def-9c52f8450567

uptime 2022-11-16T21:25:27.563941

ci-vendor (unset)

internal False

JINA_DEFAULT_HOST (unset)

JINA_DEFAULT_TIMEOUT_CTRL (unset)

JINA_DEPLOYMENT_NAME (unset)

JINA_DISABLE_UVLOOP (unset)

JINA_EARLY_STOP (unset)

JINA_FULL_CLI (unset)

JINA_GATEWAY_IMAGE (unset)

JINA_GRPC_RECV_BYTES (unset)

JINA_GRPC_SEND_BYTES (unset)

JINA_HUB_NO_IMAGE_REBUILD (unset)

JINA_LOG_CONFIG (unset)

JINA_LOG_LEVEL (unset)

JINA_LOG_NO_COLOR (unset)

JINA_MP_START_METHOD (unset)

JINA_OPTOUT_TELEMETRY (unset)

JINA_RANDOM_PORT_MAX (unset)

JINA_RANDOM_PORT_MIN (unset)

| closed | 2022-11-17T02:56:57Z | 2022-11-17T09:00:07Z | https://github.com/jina-ai/serve/issues/5401 | [] | wqh17101 | 1 |

pytest-dev/pytest-mock | pytest | 312 | #note-about-usage-as-context-manager leads to no message | https://github.com/pytest-dev/pytest-mock/blob/35e2dca0ab5e0a0e1580359f7effd6ef99a7c8e6/src/pytest_mock/plugin.py#L212-L220

Leads to the now not-existing section of the README.rst:

https://github.com/pytest-dev/pytest-mock/blob/4c3caaf2260f77ed10e855a20207023dded12c07/README.rst#L277-L315 | closed | 2022-09-09T10:25:48Z | 2022-09-09T11:58:12Z | https://github.com/pytest-dev/pytest-mock/issues/312 | [] | stdedos | 1 |

ydataai/ydata-profiling | pandas | 1,426 | to_html ignores sensitive parameter and exposes data | ### Current Behaviour

In ydata-profile v4.5.0, `ProfileReport.to_html()` ignores the sensitive parameter and exposes data, similar to the bug reported in #1300.

### Expected Behaviour

No sensitive data shown.

### Data Description

A list of integers from 0 - 9, inclusive.

### Code that reproduces the bug

```Python

import pandas as pd

from ydata_profiling import ProfileReport

data = [[i] for i in range(10)]

df = pd.DataFrame(data, columns=['sensitive_column'])

displayHTML(ProfileReport(df, sensitive=True).to_html())

```

### pandas-profiling version

v4.5.0

### Dependencies

```Text

pandas==1.1.5

```

### OS

_No response_

### Checklist

- [X] There is not yet another bug report for this issue in the [issue tracker](https://github.com/ydataai/pandas-profiling/issues)

- [X] The problem is reproducible from this bug report. [This guide](http://matthewrocklin.com/blog/work/2018/02/28/minimal-bug-reports) can help to craft a minimal bug report.

- [X] The issue has not been resolved by the entries listed under [Common Issues](https://pandas-profiling.ydata.ai/docs/master/pages/support_contrib/common_issues.html). | open | 2023-08-11T18:25:03Z | 2023-08-24T15:38:41Z | https://github.com/ydataai/ydata-profiling/issues/1426 | [

"information requested ❔"

] | ch-nickgustafson | 1 |

piskvorky/gensim | nlp | 3,184 | Reduce duplication in word2vec.pyx source code | OK, we can deal with this separately.

_Originally posted by @mpenkov in https://github.com/RaRe-Technologies/gensim/pull/3169#discussion_r660297089_ | open | 2021-06-29T05:44:26Z | 2021-06-29T05:44:42Z | https://github.com/piskvorky/gensim/issues/3184 | [

"housekeeping"

] | mpenkov | 0 |

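HIT-SCIR/ltp | nlp | 655 | Is it still necessary to add ltp.to("cuda")? | When loading the model, I saw that the initialization already checks whether a GPU is available.

I also saw a similar check in the documentation, so is this call necessary?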

| closed | 2023-06-30T02:35:46Z | 2023-07-04T07:56:42Z | https://github.com/HIT-SCIR/ltp/issues/655 | [] | liyanfu520 | 1 |

pydata/pandas-datareader | pandas | 125 | Treasury returns | To my knowledge, pandas-datareader does not support loading of US treasury returns from the federal reserve. These are implemented in zipline: https://github.com/quantopian/zipline/blob/master/zipline/data/treasuries.py

Is there interest in adding these to `pandas-datareader`? What's the preferred style?

| closed | 2015-11-23T11:28:54Z | 2018-01-18T17:27:50Z | https://github.com/pydata/pandas-datareader/issues/125 | [] | twiecki | 2 |

coqui-ai/TTS | python | 2,884 | [Bug] Sound too quick when synthesizing one-word speech like "hello". | ### Describe the bug

Hello, I want to synthesize speech that contains only one word, like "Hello".

I tried the model named "tts_models/en/ek1/tacotron2". I got the wav file, but it plays so quickly that I cannot hear the word clearly. Is there any way to solve this?

### To Reproduce

tts --text "hello" --model_name tts_models/en/ek1/tacotron2 --vocoder_name vocoder_models/en/ek1/wavegrad --out_path ./hello.wav

### Expected behavior

_No response_

### Logs

_No response_

### Environment

```shell

-TTS 1.3.

```

### Additional context

_No response_ | closed | 2023-08-23T10:42:18Z | 2023-08-26T20:29:32Z | https://github.com/coqui-ai/TTS/issues/2884 | [

"bug"

] | travisCxy | 2 |

PokeAPI/pokeapi | api | 441 | Egg Group Missing: Field | As described here:

https://bulbapedia.bulbagarden.net/wiki/Egg_Group

https://bulbapedia.bulbagarden.net/wiki/Field_(Egg_Group)

The field group is missing (or does not seem to work.)

Its other group name would be **ground** but that does not seem to work either.

Ex.

I've tried both **egg-group/field** and **egg-group/ground**.

Neither of them yields a result. | closed | 2019-08-05T07:05:51Z | 2019-08-06T15:26:02Z | https://github.com/PokeAPI/pokeapi/issues/441 | [] | bausshf | 3 |

Kitware/trame | data-visualization | 623 | Bug with exporting the plotter to an HTML file | I found a bug with exporting the plotter to an HTML file ```self.plotter.export_html()```.

When I open it in a browser, it works normally.

However, when I open trame in desktop mode (using this line ```sys.argv.append('--app')```),

not only does the HTML file fail to export, but a strange dialog box also pops up.

I look forward to a resolution to this issue.

Thanks.

Windows 11

Python 3.11

Trame 3.6.5

Pyvista 0.44.0

``` python
import sys

import pyvista as pv
from pyvista.trame import PyVistaLocalView
from trame.decorators import TrameApp
from trame_vtk.modules.vtk.serializers import encode_lut
from trame.app import get_server
from trame.widgets import vuetify3 as vuetify
from trame.ui.vuetify3 import SinglePageLayout

encode_lut(True)


@TrameApp()
class KlGModelApp:
    def __init__(self):
        self.view = None
        self.server = get_server(client_type="vue3")
        self.plotter = pv.Plotter(off_screen=True)
        sphere = pv.Sphere(center=(0, 0, 0))
        self.plotter.add_mesh(sphere)
        self.build_ui()

    @property
    def state(self):
        return self.server.state

    @property
    def ctrl(self):
        return self.server.controller

    def export_to_html(self):
        self.plotter.export_html("pv.html")

    def build_ui(self):
        with SinglePageLayout(_server=self.server) as layout:
            layout.title.set_text("(ο´・д・)??")
            with layout.toolbar:
                vuetify.VDivider(vertical=True, classes="mx-2")
                vuetify.VBtn(children="Export HTML", click=self.export_to_html)
            with layout.content:
                with vuetify.VContainer(fluid=True, classes="pa-0 fill-height"):
                    self.view = PyVistaLocalView(self.plotter)


# -----------------------------------------------------------------------------
# Main
# -----------------------------------------------------------------------------

if __name__ == "__main__":
    app = KlGModelApp()
    sys.argv.append('--app')
    app.server.start(width=1800, height=900, port=8081)
```

| closed | 2024-10-28T10:02:28Z | 2024-11-04T14:54:55Z | https://github.com/Kitware/trame/issues/623 | [] | Brandon-Xu | 2 |

jupyterhub/zero-to-jupyterhub-k8s | jupyter | 2,995 | Customizing jupyter docker image not working | <!-- Thank you for contributing. These HTML comments will not render in the issue, but you can delete them once you've read them if you prefer! -->

### Bug description

I tried creating a customized notebook image following https://z2jh.jupyter.org/en/latest/jupyterhub/customizing/user-environment.html#customize-an-existing-docker-image, but after building it, pushing it to Docker Hub, and changing the config.yaml file, it does not work. The image puller is in a CrashLoopBackOff state.

#### Expected behaviour

continuous image puller is working

#### Actual behaviour

continuous image puller is in backoff state Init:CrashLoopBackOff

### How to reproduce

<!-- Use this section to describe the steps that a user would take to experience this bug. -->

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

### Your personal set up

<!--

Tell us a little about the system you're using.

Please include information about how you installed,

e.g. are you using a distribution such as zero-to-jupyterhub or the-littlest-jupyterhub.

-->

- OS:

<!-- [e.g. ubuntu 20.04, macOS 11.0] -->

- Version(s):

<!-- e.g. jupyterhub --version, python --version --->

<details><summary>Full environment</summary>

<!-- For reproduction, it's useful to have the full environment. For example, the output of `pip freeze` or `conda list` --->

```

# paste output of `pip freeze` or `conda list` here

```

</details>

<details><summary>Configuration</summary>

<!--

For JupyterHub, especially include information such as what Spawner and Authenticator are being used.

Be careful not to share any sensitive information.

You can paste jupyterhub_config.py below.

To exclude lots of comments and empty lines from auto-generated jupyterhub_config.py, you can do:

grep -v '\(^#\|^[[:space:]]*$\)' jupyterhub_config.py

-->

```python

# jupyterhub_config.py

```

</details>

<details><summary>Logs</summary>

<!--

Errors are often logged by jupytehub. How you get logs depends on your deployment.

With kubernetes it might be:

kubectl get pod # hub pod name starts with hub...

kubectl logs hub-...

# or for a single-user server

kubectl logs jupyter-username

Or the-littlest-jupyterhub:

journalctl -u jupyterhub

# or for a single-user server

journalctl -u jupyter-username

-->

```

# paste relevant logs here, if any

```

</details>

| closed | 2023-01-10T09:22:32Z | 2023-01-10T09:27:52Z | https://github.com/jupyterhub/zero-to-jupyterhub-k8s/issues/2995 | [

"support"

] | rafmacalaba | 2 |

tpvasconcelos/ridgeplot | plotly | 171 | [DISCUSSION] Using `ridgeplot` for `sktime` and `skpro` distributional predictions? | For a while I have now been thinking about what a good plotting modality would be for fully distributional predictions, i.e., the output of `predict_proba` in `sktime` or `skpro`.

The challenge is that you have a (marginal) distribution for each entry in a `pandas`-like table, which seems hard to visualize. I've experimented with panels (`matplotlib.subplots`) but I wasn't quite happy with the result.

Now, by accident (just curious clicking), I've discovered `ridgeplot`.

What would you think of using the look & feel of `ridgeplot` as a plotting function in `BaseDistribution`? Rows would be the rows of the data-frame-like structure, and maybe there are also columns (but I am happy with the single-variable case too).

The main difference is that the distribution does not need to be estimated via KDE, you already have it in a form where you can access `pdf`, `cdf`, etc, completely, and you have the quantile function too which helps with selecting x-axis range.

Plotting `cdf` and other distribution defining functions would also be neat, of course `pdf` (if exists), or `cdf` (for survival) are already great.

Imagined usage, sth like

```python
fcst = BuildSth(Complex(params), more_params)
fcst.fit(y, fh=range(1, 10))
y_dist = fcst.predict_proba()
y_dist.plot()  # default is pdf for continuous distributions
y_dist.plot("cdf")
```

Dependencies-wise, one could imagine `ridgeplot` as a plotting softdep like `matplotlib` or `seaborn`, of `skpro` and therefore indirectly of `sktime`.

What do you think? | closed | 2024-01-31T02:36:32Z | 2024-02-01T11:48:15Z | https://github.com/tpvasconcelos/ridgeplot/issues/171 | [] | fkiraly | 2 |

TencentARC/GFPGAN | pytorch | 519 | Image blending problem while caching the gfpgan model | I have created an API for Real-ESRGAN using FastAPI, and it works properly for multiple user requests. However, when I load the models (Real-ESRGAN and GFPGAN) up front using lru_cache (functools) to reduce the inference time, I encounter the following two errors during execution.

**1. Sometimes the faces from one user's request get mixed up with another user's request.**

**2. In some requests, I get the following error.**

```

Traceback (most recent call last):

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/middleware/errors.py", line 164, in __call__

await self.app(scope, receive, _send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/middleware/exceptions.py", line 62, in __call__

await wrap_app_handling_exceptions(self.app, conn)(scope, receive, send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/_exception_handler.py", line 64, in wrapped_app

raise exc

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

await app(scope, receive, sender)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/routing.py", line 758, in __call__

await self.middleware_stack(scope, receive, send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/routing.py", line 778, in app

await route.handle(scope, receive, send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/routing.py", line 299, in handle

await self.app(scope, receive, send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/routing.py", line 79, in app

await wrap_app_handling_exceptions(app, request)(scope, receive, send)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/_exception_handler.py", line 64, in wrapped_app

raise exc

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

await app(scope, receive, sender)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/routing.py", line 74, in app

response = await func(request)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/fastapi/routing.py", line 299, in app

raise e

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/fastapi/routing.py", line 294, in app

raw_response = await run_endpoint_function(

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/fastapi/routing.py", line 193, in run_endpoint_function

return await run_in_threadpool(dependant.call, **values)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/starlette/concurrency.py", line 42, in run_in_threadpool

return await anyio.to_thread.run_sync(func, *args)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/anyio/to_thread.py", line 56, in run_sync

return await get_async_backend().run_sync_in_worker_thread(

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/anyio/_backends/_asyncio.py", line 2134, in run_sync_in_worker_thread

return await future

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/anyio/_backends/_asyncio.py", line 851, in run

result = context.run(func, *args)

File "D:/Image Super Resolution/Models/Real-ESRGAN/api.py", line 102, in process_image

intermediate_image = hd_process(img_array)

File "D:/Image Super Resolution/Models/Real-ESRGAN/api.py", line 58, in hd_process

_, _, output = face_enhancer.enhance(img_array, has_aligned=False, only_center_face=False, paste_back=True)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/torch/utils/_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/gfpgan/utils.py", line 144, in enhance

restored_img = self.face_helper.paste_faces_to_input_image(upsample_img=bg_img)

File "D:/Image Super Resolution/Models/Real-ESRGAN/env/lib/site-packages/facexlib/utils/face_restoration_helper.py", line 291, in paste_faces_to_input_image

assert len(self.restored_faces) == len(self.inverse_affine_matrices), ('length of restored_faces and affine_matrices are different.')

AssertionError: length of restored_faces and affine_matrices are different.

```

This is the small code snippet from my api:

```
@lru_cache()
def loading_model():
    real_esrgan_model_path = "D:/Image Super Resolution/Models/Real-ESRGAN/weights/RealESRGAN_x4plus.pth"
    gfpgan_model_path = "D:/Image Super Resolution/Models/Real-ESRGAN/env/Lib/site-packages/gfpgan/weights/GFPGANv1.3.pth"
    model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=4)
    netscale = 4
    upsampler = RealESRGANer(scale=netscale,model_path=real_esrgan_model_path,dni_weight=0.5,model=model,tile=0,tile_pad=10,pre_pad=0,half=False)
    face_enhancer = GFPGANer(model_path=gfpgan_model_path,upscale=4,arch='clean',channel_multiplier=2,bg_upsampler=upsampler)
    return face_enhancer


def hd_process(file):
    filename = file.filename.split('.')[0]
    save_path = os.path.join("temp_images", f"{filename}.jpg")
    content = file.file.read()
    with open(save_path, 'wb') as image_file:
        image_file.write(content)
    img_array = cv2.imread(save_path, cv2.IMREAD_UNCHANGED)
    face_enhancer = loading_model()
    with torch.no_grad():
        _, _, output = face_enhancer.enhance(img_array, has_aligned=False, only_center_face=False, paste_back=True)
    output_rgb = cv2.cvtColor(output, cv2.COLOR_BGR2RGB)
    del face_enhancer
    torch.cuda.empty_cache()
    return output_rgb
```

So, when I went through the code of GFPGAN, I found that GFPGANer contains an "enhance" function which calls the "facexlib" library for face enhancement and face-related operations. The "enhance" function clears all list variables of "facexlib" after every execution by reinitializing them. This type of behavior is only observed when I load the model into the cache; otherwise, it works properly. Is there any way to cache the model and also resolve this error? | open | 2024-02-21T10:25:05Z | 2024-03-15T08:04:52Z | https://github.com/TencentARC/GFPGAN/issues/519 | [] | dummyuser-123 | 8 |
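
A possible direction, sketched under an unconfirmed assumption: if the mixing comes from several threadpool workers mutating the per-call state of one cached object at the same time, then keeping the cached model but serializing calls with a lock would avoid it. `StatefulEnhancer` below is a hypothetical stand-in for the real model wrapper, not GFPGAN's API.

```python
import threading
from functools import lru_cache


class StatefulEnhancer:
    """Hypothetical stand-in for a model wrapper that keeps per-call state."""

    def __init__(self):
        self.faces = []

    def enhance(self, image):
        self.faces = []           # reset state left over from the previous call
        self.faces.extend(image)  # "detect" faces and store them on the object
        return list(self.faces)   # "paste back" using the stored state


@lru_cache(maxsize=1)
def load_model():
    # Built once, then shared by every request.
    return StatefulEnhancer()


_enhance_lock = threading.Lock()


def enhance_serialized(image):
    # Only one thread at a time may use the shared, stateful object.
    with _enhance_lock:
        return load_model().enhance(image)


results = {}


def worker(name):
    results[name] = enhance_serialized([name] * 3)


threads = [threading.Thread(target=worker, args=(f"user{i}",)) for i in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

The trade-off is that inference becomes sequential; creating one stateful helper per request while sharing only the weights would be an alternative, if the library allows it.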

jeffknupp/sandman2 | sqlalchemy | 235 | Is it possible to serialize the models/code that sandman2 generates? | My understanding is that `sandmanctl` generates SQLAlchemy models and Flask routes for my DB on the fly.

Is it in any way possible to store the generated code so it can reviewed, put under version control etc? | open | 2021-09-09T13:48:40Z | 2021-09-09T13:48:40Z | https://github.com/jeffknupp/sandman2/issues/235 | [] | arne-cl | 0 |

PaddlePaddle/PaddleHub | nlp | 2,164 | Sending a request to the service in a container fails with: (External) CUDA error(3), initialization error. | Error message:

{"msg":"(External) CUDA error(3), initialization error.

[Hint: 'cudaErrorInitializationError'. The API call failed because the CUDA driver and runtime could not be initialized. ] (at /paddle/paddle/phi/backends/gpu/cuda/cuda_info.cc:172)

","results":"","status":"101"}

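You are welcome to report problems with using PaddleHub; thank you very much for your contribution to PaddleHub!

When filing your issue, please also provide the following information:

1) Version and environment information

Container (CE) version: docker 20.10.21, build baeda1f

Base image: paddlepaddle/paddle 2.3.2-gpu-cuda11.2-cudnn8

Paddle-related packages in the resulting container: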

paddle-bfloat 0.1.7

paddle2onnx 1.0.1

paddlefsl 1.1.0

paddlehub 2.3.0

paddlenlp 2.4.2

paddlepaddle-gpu 2.3.2.post112

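Python version: 3.7.13

I am planning to use PLATO-2 from Knover to train a chitchat service, using the 24-layer model. After roughly completing the model training stage, I deployed the service with PaddleHub. Before that, I had tested it with a script inside the container; although the replies were somewhat odd, the functionality worked without errors. I then deployed the service with `hub serving start`, and when I tested the service with JMeter, the error above was reported.

I renamed my model to "plato2_cn24".

The module.py in my project is based on another developer's open-source project; its contents are as follows: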

# coding:utf-8

# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import ast

import os

import json

import sys

import argparse

import contextlib

from collections import namedtuple

import paddle.fluid as fluid

import paddlehub as hub

from paddlehub.module.module import runnable

from paddlehub.module.nlp_module import DataFormatError

from paddlehub.common.logger import logger

from paddlehub.module.module import moduleinfo, serving

import plato2_cn_small.models as plato_models

from plato2_cn_small.tasks.dialog_generation import DialogGeneration

from plato2_cn_small.utils import check_cuda, Timer

from plato2_cn_small.utils.args import parse_args

import translate as trans

import jieba

from collections import namedtuple

@moduleinfo(

name="plato2_cn_small",

version="1.0.0",

summary=

"A novel pre-training model for dialogue generation, incorporated with latent discrete variables for one-to-many relationship modeling. "

"This model is a minor revision from plato2_en_base, making it be able to do conversation in Chinese and English (translated)",

author="baidu-nlp, Dongyang Yan",

author_email="dongyangyan@bjtu.edu.cn",

type="nlp/text_generation",

)

class Plato(hub.NLPPredictionModule):

def _initialize(self):

"""

initialize with the necessary elements

"""

if "CUDA_VISIBLE_DEVICES" not in os.environ:

raise RuntimeError(

"The module only support GPU. Please set the environment variable CUDA_VISIBLE_DEVICES."

)

args = self.setup_args()

self.task = DialogGeneration(args)

self.model = plato_models.create_model(args, fluid.CUDAPlace(0))

self.Example = namedtuple("Example", ["src", "data_id"])

self._interactive_mode = False

self._from_lang = "cn"

self._to_lang = "cn"

self._trans_en2cn = trans.Translator("zh-cn", 'en')

self._trans_cn2en = trans.Translator('en', 'zh-cn')

def setup_args(self, tokenized=False):

"""

Setup arguments.

"""

assets_path = os.path.join(self.directory, "assets")

vocab_path = os.path.join(assets_path, "vocab.txt")

init_pretraining_params = os.path.join(assets_path, "12L", "Plato")

spm_model_file = os.path.join(assets_path, "spm.model")

nsp_inference_model_path = os.path.join(assets_path, "12L", "NSP")

config_path = os.path.join(assets_path, "12L.json")

# ArgumentParser.parse_args use argv[1:], it will drop the first one arg, so the first one in sys.argv should be ""

if not tokenized:

sys.argv = [

"", "--model", "Plato", "--vocab_path",

"%s" % vocab_path, "--do_lower_case", "False",

"--init_pretraining_params",

"%s" % init_pretraining_params, "--spm_model_file",

"%s" % spm_model_file, "--nsp_inference_model_path",

"%s" % nsp_inference_model_path, "--ranking_score", "nsp_score",

"--do_generation", "True", "--batch_size", "1", "--config_path",

"%s" % config_path

]

else:

sys.argv = [

"", "--model", "Plato", "--data_format", "tokenized", "--vocab_path",

"%s" % vocab_path, "--do_lower_case", "False",

"--init_pretraining_params",

"%s" % init_pretraining_params, "--spm_model_file",

"%s" % spm_model_file, "--nsp_inference_model_path",

"%s" % nsp_inference_model_path, "--ranking_score", "nsp_score",

"--do_generation", "True", "--batch_size", "1", "--config_path",

"%s" % config_path

]

parser = argparse.ArgumentParser()

plato_models.add_cmdline_args(parser)

DialogGeneration.add_cmdline_args(parser)

args = parse_args(parser)

args.load(args.config_path, "Model")

args.run_infer = True # only build infer program

return args

@serving

def generate(self, texts):

"""

Get the robot responses of the input texts.

Args:

texts(list or str): If not in the interactive mode, texts should be a list in which every element is the chat context separated with '\t'.

Otherwise, texts should be one sentence. The module can get the context automatically.

Returns:

results(list): the robot responses.

"""

if not texts:

return []

if self._from_lang == 'cn':

if not self._interactive_mode:

texts = [' '.join(list(jieba.cut(text))) for text in texts]

else:

texts = ' '.join(list(jieba.cut(texts)))

if self._interactive_mode:

if isinstance(texts, str):

if self._from_lang == 'en':

texts = self._trans_en2cn.translate(texts)

self.context.append(texts.strip())

texts = [" [SEP] ".join(self.context[-self.max_turn:])]

else:

raise ValueError(

"In the interactive mode, the input data should be a string."

)

elif not isinstance(texts, list):

raise ValueError(

"If not in the interactive mode, the input data should be a list."

)

else:

if self._from_lang == 'en':

texts = [self._trans_en2cn.translate(text) for text in texts]

bot_responses = []

for i, text in enumerate(texts):

example = self.Example(src=text.replace("\t", " [SEP] "), data_id=i)

record = self.task.reader._convert_example_to_record(

example, is_infer=True)

data = self.task.reader._pad_batch_records([record], is_infer=True)

pred = self.task.infer_step(self.model, data)[0] # batch_size is 1

bot_response = pred["response"] # ignore data_id and score

bot_responses.append(bot_response)

if self._interactive_mode:

self.context.append(bot_responses[0].strip())

if self._to_lang == 'en':

bot_responses = [self._trans_cn2en.translate(resp) for resp in bot_responses]

if self._to_lang == 'cn':

bot_responses = [''.join(resp.split()) for resp in bot_responses]

return bot_responses

@serving

def generate_for_test(self, records):

"""

Get the robot responses of the input texts.

Args:

list of dicts: numerical data, [field_values, ...]

field_values = {

"token_ids": src_token_ids,

"type_ids": src_type_ids,

"pos_ids": src_pos_ids,

"tgt_start_idx": tgt_start_idx

}

Returns:

results(list): the robot responses.

"""

if not records:

return []

if self._interactive_mode:

print("Warning: This function is not suitable for interactive mode.")

elif not isinstance(records, list):

raise ValueError(

"If not in the interactive mode, the input data should be a list.")

fields = ["token_ids", "type_ids", "pos_ids", "tgt_start_idx", "data_id"]

Record = namedtuple("Record", fields, defaults=(None,) * len(fields))

record_all = []

for i, record in enumerate(records):

record["data_id"] = i

record = Record(**record)

record_all.append(record)

data = self.task.reader._pad_batch_records(record_all, is_infer=True)

pred = self.task.infer_step(self.model, data)

bot_responses = [p["response"] for p in pred]

return bot_responses

def set_dialog_mode(self, from_lang='cn', to_lang='cn'):

"""

To set the mode of dialog, from_lang is the language type of input, and

to_lang is the language type from the robot. "cn": Chinese; "en": English.

Default: from_lang is "cn", to_lang is "cn".

"""

self._from_lang = from_lang

self._to_lang = to_lang

@contextlib.contextmanager

def interactive_mode(self, max_turn=6):

"""

Enter the interactive mode.

Args:

max_turn(int): the max dialogue turns. max_turn = 1 means the robot can only remember the last one utterance you have said.

"""

self._interactive_mode = True

self.max_turn = max_turn

self.context = []

yield

self.context = []

self._interactive_mode = False

@runnable

def run_cmd(self, argvs):

"""

Run as a command

"""

self.parser = argparse.ArgumentParser(

description='Run the %s module.' % self.name,

prog='hub run %s' % self.name,

usage='%(prog)s',

add_help=True)

self.arg_input_group = self.parser.add_argument_group(

title="Input options", description="Input data. Required")

self.arg_config_group = self.parser.add_argument_group(

title="Config options",

description="Run configuration for controlling module behavior, optional.")

self.add_module_input_arg()

args = self.parser.parse_args(argvs)

try:

input_data = self.check_input_data(args)

except (DataFormatError, RuntimeError):

self.parser.print_help()

return None

results = self.generate(texts=input_data)

return results

if __name__ == "__main__":

module = Plato()

for result in module.generate([

"你是机器人吗?",

"如果你不是机器人,那你得皮肤是什么颜色的呢?"

]):

print(result)

"""

import paddlehub as hub

import os

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

module = hub.Module("plato2_en&cn_base")

# change the dialog language.

module.set_dialog_mode(from_lang='en', to_lang='cn')

"""

with module.interactive_mode(max_turn=3):

while True:

human_utterance = input()

robot_utterance = module.generate(human_utterance)

print("Robot: %s" % robot_utterance[0])

| open | 2022-12-05T09:46:47Z | 2022-12-05T10:58:10Z | https://github.com/PaddlePaddle/PaddleHub/issues/2164 | [] | what-is-perfect | 2 |

qubvel-org/segmentation_models.pytorch | computer-vision | 334 | Is it possible to increase the U-Net context (input size different than the output size)? | Nowadays, most implementations have inputs of the same size as the outputs. However, the original U-Net has a 572x572 image as input and a 388x388 mask as output. I think this extra context is useful in many applications. Would it be possible to add this additional context in the segmentation_models.pytorch?

Thanks in advance! | closed | 2021-01-23T21:52:25Z | 2022-02-28T01:54:55Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/334 | [

"Stale"

] | bpmsilva | 2 |

PokeAPI/pokeapi | graphql | 324 | Asynchronous Python Wrapper? | I was deep into my project when I embarrassingly noticed that the pokeapi wrapper I used ([PokeBase](https://github.com/GregHilmes/pokebase) by Greg Hilmes) is not asynchronous.

Now I have the issue that I am not particularly experienced in this field and the wrapper is already fairly deeply integrated into the project.

So does anybody already have some sort of asynchronous python wrapper or would be willing to create one?
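As a stopgap until a native async wrapper exists, the blocking calls could probably be pushed onto a worker thread so the event loop stays responsive. A minimal sketch, assuming only the standard library; `fetch_pokemon_blocking` is a hypothetical stand-in for whatever synchronous call the wrapper makes and is not part of PokeBase:

```python
import asyncio
import time
from functools import partial


def fetch_pokemon_blocking(name):
    """Hypothetical stand-in for a synchronous wrapper call (e.g. an HTTP request)."""
    time.sleep(0.1)  # simulate blocking network I/O
    return {"name": name}


async def fetch_pokemon(name):
    """Run the blocking call in the default thread pool without blocking the loop."""
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(None, partial(fetch_pokemon_blocking, name))


async def fetch_many(names):
    """Fetch several resources concurrently; results keep the input order."""
    return await asyncio.gather(*(fetch_pokemon(n) for n in names))


if __name__ == "__main__":
    print(asyncio.run(fetch_many(["bulbasaur", "charmander"])))
```

This keeps the existing synchronous wrapper in place and only changes how it is called, which may be less work than replacing it throughout the project.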

Any help would be greatly appreciated as I don't think that I could create something efficient in a timely manner! | closed | 2018-03-01T21:06:31Z | 2018-03-02T13:20:56Z | https://github.com/PokeAPI/pokeapi/issues/324 | [] | AtomToast | 1 |

Yorko/mlcourse.ai | seaborn | 773 | Proofread topic 9 | - Fix issues

- Fix typos

- Correct the translation where needed

- Add images where necessary | open | 2024-08-25T07:53:55Z | 2024-08-25T08:11:36Z | https://github.com/Yorko/mlcourse.ai/issues/773 | [

"enhancement",

"articles"

] | Yorko | 0 |

matterport/Mask_RCNN | tensorflow | 2,713 | ValueError: operands could not be broadcast together with shapes (571,800,3) (300,506,3) | I was trying to remove the background and keep only the segmented object in my image. It worked for one image, but the rest raise this error. Has anyone faced this issue?
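The shapes in the error can be checked against NumPy's broadcasting rule: dimensions are compared from the last one backwards, and each pair must be equal or contain a 1. A minimal sketch in plain Python; `broadcastable` is a hypothetical helper written for illustration, not a NumPy or Mask_RCNN function:

```python
from itertools import zip_longest


def broadcastable(shape_a, shape_b):
    """Return True if two array shapes are compatible under NumPy's broadcasting rule.

    Dimensions are compared from the last one backwards; each pair must be
    equal, or one of the two must be 1. Missing leading dimensions count as 1.
    """
    pairs = zip_longest(reversed(shape_a), reversed(shape_b), fillvalue=1)
    return all(a == b or a == 1 or b == 1 for a, b in pairs)


if __name__ == "__main__":
    print(broadcastable((571, 800, 3), (300, 506, 3)))
    print(broadcastable((571, 800, 3), (571, 800, 1)))
```

The two shapes from the error differ in both height and width, so one of the arrays would presumably need to be resized to match the other before they are combined.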

| closed | 2021-10-26T09:25:58Z | 2021-11-17T17:30:51Z | https://github.com/matterport/Mask_RCNN/issues/2713 | [] | hcyeow | 1 |

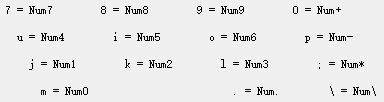

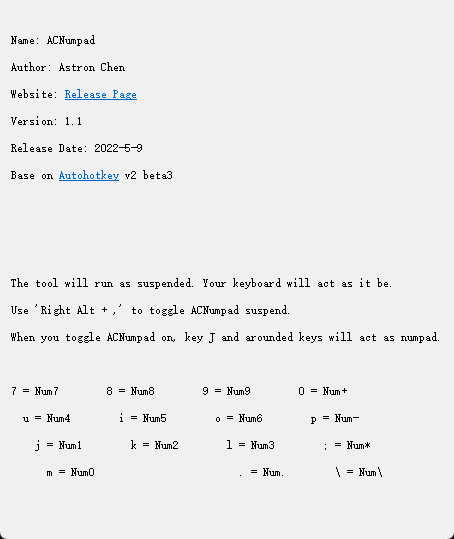

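521xueweihan/HelloGitHub | python | 2,200 | [Self-recommendation] ACNumpad, adds a numeric keypad to the main keyboard area | ## Project recommendation

- Project URL: [ACNumpad](https://github.com/AstronChen/ACNumpad)

- Category: AutoHotkey

- Platform

Windows

- Planned future updates:

- Add custom key-mapping support.

- Keep the software simple.

- Project description:

- Runs in the background and adds a numeric keypad to the main keyboard area that can be toggled at any time.

- Portable, free, simple, efficient.

- Compared with similar software, switching is more convenient and there are fewer hotkey conflicts.

- Problems it solves:

① Entering numbers quickly.

② Applications where numpad shortcuts greatly improve efficiency, such as several well-known audio and video production programs.

## 1. Installation

a. Unzip anywhere and run.

b. (Optional) Send a shortcut to the Startup folder.

Install for the current user: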

```

%USERPROFILE%\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup

```

Install for all local users:

```

%APPDATA%\Microsoft\Windows\Start Menu\Programs\Startup

```

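c. (Optional) Go to Settings > Personalization > Taskbar > Other system tray icons and toggle ACNumpad so that it is always shown.

## 2. Usage

ACNumpad runs silently in the background. It shows a tray icon that helps you tell whether the numeric keypad is enabled.

When ACNumpad first starts, it is suspended by default. The tray icon is red and the keyboard is not changed in any way.

When you press Right Alt + , (comma) or click "Toggle Suspend" in the tray icon's right-click menu, the numpad is activated and the tray icon turns green.

The key changes are as follows:

## 3. Uninstallation

Exit ACNumpad and delete its directory.

# Screenshots

# Related tools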

AutoHotkey: https://www.autohotkey.com/

Ahk2Exe: https://github.com/AutoHotkey/Ahk2Exe

| closed | 2022-05-12T12:28:50Z | 2022-05-24T03:23:56Z | https://github.com/521xueweihan/HelloGitHub/issues/2200 | [] | AstronChen | 1 |

ageitgey/face_recognition | machine-learning | 1,447 | What is the image size sweet-spot? | I have been crawling the discussions here hoping to find any recommended sizes for training images.

We are building an app that will capture multiple images of each person so we can control the input.

The app will capture a full face and neck. So the face will occupy most of the image's available space.

Can anyone recommend the smallest sized file we should make to keep processing times to a minimum?

For instance is 400px x 500px suitable at 72dpi?

What would be suitable jpeg quality settings as a percentage?

Thanks in advance.

| closed | 2022-09-18T14:51:05Z | 2023-01-18T00:44:05Z | https://github.com/ageitgey/face_recognition/issues/1447 | [] | julianadormon | 5 |

zappa/Zappa | flask | 1,179 | How to add regions to existing deployment? | I'd like to either add select regions to an existing deployment or switch to deploying globally. Ideally the former but I don't know if it's possible. I've seen many articles/docs referencing the option in the `init` function for deploying globally, but I haven't seen anywhere what the zappa settings file should look like or how to update an existing application to this. Can anyone point me in the right direction? | closed | 2022-09-29T13:59:03Z | 2024-04-13T20:13:02Z | https://github.com/zappa/Zappa/issues/1179 | [

"documentation",

"no-activity",

"auto-closed"

] | davidgolden | 6 |

adamerose/PandasGUI | pandas | 213 | Installing Pandasgui breaks opencv/matplotlib compatibility | Ubuntu 20.04.5 LTS

To reproduce:

Install matplotlib, and opencv, then install pandasgui. Note that you can no longer plot anything with matplotlib due to the following error:

```

QObject::moveToThread: Current thread (0x2c2de30) is not the object's thread (0x36f3050).

Cannot move to target thread (0x2c2de30)

qt.qpa.plugin: Could not load the Qt platform plugin "xcb" in "/.../venv/lib/python3.8/site-packages/cv2/qt/plugins" even though it was found.

This application failed to start because no Qt platform plugin could be initialized. Reinstalling the application may fix this problem.

Available platform plugins are: xcb, eglfs, linuxfb, minimal, minimalegl, offscreen, vnc, wayland-egl, wayland, wayland-xcomposite-egl, wayland-xcomposite-glx, webgl.

Process finished with exit code 134 (interrupted by signal 6: SIGABRT)

```

I suspect this is because pandasgui installs PyQt5 as a dependency, breaking opencv's and matplotlib's reliance on the Qt installed on the system. Pandasgui should handle this gracefully and make use of existing backends rather than force-installing PyQt5.

| open | 2022-09-29T14:46:47Z | 2022-09-29T14:46:47Z | https://github.com/adamerose/PandasGUI/issues/213 | [

"bug"

] | ckyleda | 0 |

widgetti/solara | fastapi | 145 | TypeError: set_parent() takes 3 positional arguments but 4 were given | When trying the First script example on the Quickstart of the docs, it works correctly when executed on Jupyter notebook, but it won't work as a script directly executed via solara executable.

When doing:

**solara run .\first_script.py**

the server starts but then it keeps logging the following error:

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\uvicorn\protocols\websockets\websockets_impl.py", line 254, in run_asgi

result = await self.app(self.scope, self.asgi_receive, self.asgi_send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\uvicorn\middleware\proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\applications.py", line 122, in __call__

await self.middleware_stack(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\middleware\errors.py", line 149, in __call__

await self.app(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\middleware\gzip.py", line 26, in __call__

await self.app(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\middleware\exceptions.py", line 79, in __call__

raise exc

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\middleware\exceptions.py", line 68, in __call__

await self.app(scope, receive, sender)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\routing.py", line 718, in __call__

await route.handle(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\routing.py", line 341, in handle

await self.app(scope, receive, send)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\starlette\routing.py", line 82, in app

await func(session)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\starlette.py", line 197, in kernel_connection

await thread_return

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\to_thread.py", line 34, in run_sync

func, *args, cancellable=cancellable, limiter=limiter

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\_backends\_asyncio.py", line 877, in run_sync_in_worker_thread

return await future

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\_backends\_asyncio.py", line 807, in run

result = context.run(func, *args)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\starlette.py", line 190, in websocket_thread_runner

anyio.run(run)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\_core\_eventloop.py", line 68, in run

return asynclib.run(func, *args, **backend_options)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\_backends\_asyncio.py", line 204, in run

return native_run(wrapper(), debug=debug)

File "c:\users\jicas\anaconda3\envs\ml\lib\asyncio\runners.py", line 43, in run

return loop.run_until_complete(main)

File "c:\users\jicas\anaconda3\envs\ml\lib\asyncio\base_events.py", line 587, in run_until_complete

return future.result()

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\anyio\_backends\_asyncio.py", line 199, in wrapper

return await func(*args)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\starlette.py", line 182, in run

await server.app_loop(ws_wrapper, session_id, connection_id, user)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\server.py", line 148, in app_loop

process_kernel_messages(kernel, msg)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\server.py", line 179, in process_kernel_messages

kernel.set_parent(None, msg)

File "c:\users\jicas\anaconda3\envs\ml\lib\site-packages\solara\server\kernel.py", line 294, in set_parent

super().set_parent(ident, parent, channel)

TypeError: set_parent() takes 3 positional arguments but 4 were given

Is there anything I can do to avoid this error?

Thanks in advance. | closed | 2023-06-06T10:05:14Z | 2023-07-28T09:55:25Z | https://github.com/widgetti/solara/issues/145 | [

"bug"

] | jicastillow | 5 |

tflearn/tflearn | data-science | 338 | Is it possible to change the tensor shape in the model define process | Hello, there,

I want to define the data as

```

Input:

Images:(NxM) x height x width x channel,

label: N x L

```

Is it possible to change the shape of the tensor in the network definition, such as

```

net = input_data(inputs, [-1, NxM, height, width, channel]) # inputs

net = conv_2d(net, 32, 3) # convolutional neural network

net = do some things here # change the shape of tensor, maybe tf.reshape(net, (N, -1))?

net = fully_connected(net, L)

```

| closed | 2016-09-12T16:29:58Z | 2016-09-15T16:00:46Z | https://github.com/tflearn/tflearn/issues/338 | [] | ShownX | 3 |

man-group/arctic | pandas | 628 | enum34 should be used via enum-compat for python 3.6+ compatibility | **enum34** (one of arctic's dependencies in [setup.py](https://github.com/manahl/arctic/blob/5ef7f322481fcee7a275e3b3708c6c3ecdab6304/setup.py#L83)) should be used via [enum-compat](https://pypi.org/project/enum-compat/0.0.2/) for **python 3.6+ compatibility**

Please see: https://stackoverflow.com/questions/43124775/why-python-3-6-1-throws-attributeerror-module-enum-has-no-attribute-intflag

enum34 should not be installed anymore starting from python 3.6
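One way to express this, sketched here as an assumption rather than as arctic's actual setup.py, is to add enum34 only on interpreters that lack the standard-library `enum` module (Python older than 3.4). `build_install_requires` is a hypothetical helper and the package names passed to it are placeholders:

```python
import sys


def build_install_requires(base_requirements, version_info=sys.version_info):
    """Return the requirement list, adding enum34 only where stdlib enum is missing."""
    requirements = list(base_requirements)
    if tuple(version_info[:2]) < (3, 4):
        # enum34 backports the stdlib enum module; on newer interpreters it can
        # shadow the real module and break features such as enum.IntFlag.
        requirements.append("enum34")
    return requirements


if __name__ == "__main__":
    print(build_install_requires(["pandas", "pymongo"]))
```

The same condition can also be written declaratively as a PEP 508 environment marker in the requirement string, for example `enum34; python_version < "3.4"`.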

| closed | 2018-09-20T14:53:30Z | 2018-11-13T14:11:19Z | https://github.com/man-group/arctic/issues/628 | [] | fersarr | 1 |

graphql-python/graphene-sqlalchemy | graphql | 337 | Sorting not working | ```

def int_timestamp():

return int(time.time())

class UserActivity(TimestampedModel, Base, DictModel):

__tablename__ = 'user_activities'

id = Column(Integer, primary_key=True)

user_id = Column(Integer, ForeignKey("user.id", ondelete='SET NULL'))

username = Column(String())

timestamp = Column(Integer, default=int_timestamp)

class UserActivityModel(SQLAlchemyObjectType):

class Meta:

model = UserActivity

only_fields = ()

exclude_fields = ('user_id',)

interfaces = (relay.Node,)

class Query(ObjectType):

list_user_activities = SQLAlchemyConnectionField(

type=UserActivityModel,

sort=UserActivityModel.sort_argument()

)

def resolve_list_user_activities(self, info: ResolveInfo, sort=None, first=None, after=None, **kwargs):

# Build the query

query = UserActivityModel.get_query(info)

query = query.filter_by(**kwargs)

return query.all()

graphql_app = GraphQLApp(schema=Schema(query=Query))

```

My query in GQL:

```

query {

listUserActivities(first: 3, sort: TIMESTAMP_DESC) {

edges {

node {

username

timestamp

}

}

}

}

```

The result:

```

{

"data": {

"listUserActivities": {

"edges": [

{

"node": {

"username": "adis@ulap.co",

"timestamp": 1644321703

}

},

{

"node": {

"username": "adis@ulap.co",

"timestamp": 1644334763

}

},

{

"node": {

"username": "adis@ulap.co",

"timestamp": 1644344156

}

}

]

}

}

}

```

What's really strange is the `first` argument appears to apply a limit to the result, but the sort argument appears to just be swallowed and unused. Switching to `TIMESTAMP_ASC` produces the same result. I'm trying to find examples online to help with this. What am I doing wrong or what can i try here?

I'm using `2.3.0` | closed | 2022-04-27T16:53:47Z | 2023-02-25T00:48:48Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/337 | [

"question"

] | kastolars | 11 |

thunlp/OpenPrompt | nlp | 282 | What is the principle behind training PromptModel with backpropagation? | In the README example, the prompt model is a (template, plm, verbalizer) triple, and the template and verbalizer are given manually and do not change. So how can Step 7: Train and inference train the prompt model at all? Does it modify the plm? | open | 2023-06-18T16:47:35Z | 2023-06-18T16:48:36Z | https://github.com/thunlp/OpenPrompt/issues/282 | [] | 2catycm | 1 |

man-group/arctic | pandas | 425 | Metadata for Tickstore | Hi, I see from the documents that metadata can be saved in a VersionStore. However, I'm using TickStore, and for now I can only save the metadata of each symbol in a separate mongo library, which is very inconvenient.

Is there a way to save metadata in a TickStore? | closed | 2017-09-26T08:47:32Z | 2017-12-03T23:25:14Z | https://github.com/man-group/arctic/issues/425 | [] | SnowWalkerJ | 3 |

PokemonGoF/PokemonGo-Bot | automation | 5,716 | UBUNTU 16 | Hi,

I have installed the bot on Ubuntu 16.04.

But I can't get the bot working. If I start the bot with the default config, it goes to sleep within 2 seconds, and when the sleep is done it just sleeps again.

If I go with the optimizer, it stops at:

2016-09-27 20:59:14,676 [pokemongo_bot.health_record.bot_event] [INFO] Health check is enabled. For more information:

2016-09-27 20:59:14,676 [pokemongo_bot.health_record.bot_event] [INFO] https://github.com/PokemonGoF/PokemonGo-Bot/tree/dev#analytics

2016-09-27 20:59:14,677 [PokemonGoBot] [INFO] Starting bot...

2016-09-27 20:59:14,694 [PokemonOptimizer] [INFO] Buddy Dragonite walking: 0.00 / 5.00 km

2016-09-27 20:59:14,694 [PokemonOptimizer] [INFO] Pokemon Bag: 209 / 250

I've been trying to figure this out all day, but I have now given up. Is there something wrong with the bot at the moment?

| closed | 2016-09-27T18:59:59Z | 2016-09-27T19:51:26Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5716 | [] | perpysling2 | 3 |

tqdm/tqdm | pandas | 1,380 | Unnecessary | - [ ] I have marked all applicable categories:

+ [ ] exception-raising bug

+ [ ] visual output bug

- [ ] I have visited the [source website], and in particular

read the [known issues]

- [ ] I have searched through the [issue tracker] for duplicates

- [ ] I have mentioned version numbers, operating system and

environment, where applicable:

```python

import tqdm, sys

print(tqdm.__version__, sys.version, sys.platform)

```

[source website]: https://github.com/tqdm/tqdm/

[known issues]: https://github.com/tqdm/tqdm/#faq-and-known-issues

[issue tracker]: https://github.com/tqdm/tqdm/issues?q=

| closed | 2022-10-07T03:33:55Z | 2022-10-13T21:13:23Z | https://github.com/tqdm/tqdm/issues/1380 | [

"invalid ⛔"

] | soheil | 0 |

littlecodersh/ItChat | api | 217 | Auto-reply to messages does nothing | My version is Python 3.5

This is the program's process

The source code is here

```

import itchat

@itchat.msg_register(itchat.content.TEXT)

def text_reply(msg):

return msg['Text']

itchat.auto_login()

itchat.run()

``` | closed | 2017-01-27T07:43:53Z | 2017-02-02T14:51:14Z | https://github.com/littlecodersh/ItChat/issues/217 | [

"question"

] | Ericxiaoshuang | 1 |

aiortc/aiortc | asyncio | 381 | Ice connection state stuck in checking | Hi!

I have been trying to handle frames from a Wowza streaming server using Python.

I have been working on this code for a while and cannot understand why my ICE connection stays in the checking state; I am not receiving any frames or any feedback from the server.

I have looked at a lot of examples in this repository but cannot reproduce their behavior in my code.

Here is my code.

```python

import asyncio

import cv2

from aiortc import (

RTCIceCandidate,

MediaStreamTrack,

RTCPeerConnection,

RTCSessionDescription,

RTCConfiguration,

)

pc = RTCPeerConnection(RTCConfiguration(iceServers=[]))

@pc.on("iceconnectionstatechange")

async def on_iceconnectionstatechange():

print(f"ICE connection state is {pc.iceConnectionState}")

if pc.iceConnectionState == "failed":

await pc.close()

if pc.iceConnectionState == "checking":

candidates = pc.localDescription.sdp.split("\r\n")

for candidate in candidates:

if "a=candidate:" in candidate:

print("added ice candidate")

candidate = candidate.replace("a=candidate:", "")

splitted_data = candidate.split(" ")

remote_ice_candidate = RTCIceCandidate(

foundation=splitted_data[0],

component=splitted_data[1],

protocol=splitted_data[2],

priority=int(splitted_data[3]),

ip=splitted_data[4],

port=int(splitted_data[5]),

type=splitted_data[7],

sdpMid=0,

sdpMLineIndex=0,

)

pc.addIceCandidate(remote_ice_candidate)

@pc.on("track")

async def on_track(track):

print(f"Track {track.kind} received")

if track.kind == "video":

local_video = VideoTransformTrack(track)

pc.addTrack(local_video)

await local_video.recv()

@track.on("ended")

def on_ended():

print(f"Track {track.kind} ended")

@pc.on("datachannel")

async def on_datachannel(channel):

print(f"changed datachannel to {channel}")

@pc.on("signalingstatechange")

async def on_signalingstatechange():

print(f"changed signalingstatechange {pc.signalingState}")

@pc.on("icegatheringstatechange")

async def on_icegatheringstatechange():

print(f"changed icegatheringstatechange {pc.iceGatheringState}")

class VideoTransformTrack(MediaStreamTrack):

"""

A video stream track that transforms frames from an another track.

"""

kind = "video"

def __init__(self, track):

super().__init__() # don't forget this!

self.track = track

async def recv(self):

print("trying to retrieve frame...")

frame = await self.track.recv()

print("framed retrieved.")

return frame

async def offer(sdp, sdp_type):

offer = RTCSessionDescription(sdp=sdp, type=sdp_type)

# handle offer

await pc.setRemoteDescription(offer)

# send answer

answer = await pc.createAnswer()

await pc.setLocalDescription(answer)

print("finished offer.")

if __name__ == "__main__":

sdp_type = "offer"

sdp = "v=0\r\no=WowzaStreamingEngine-next 948965951 2 IN IP4 127.0.0.1\r\ns=-\r\nt=0 0\r\na=fingerprint:sha-256 53:21:2B:54:27:E2:4F:16:7F:A9:70:0D:21:D0:0A:DF:9D:8A:E1:A7:6B:7B:A9:2B:57:D9:42:EB:C9:7A:76:8C\r\na=group:BUNDLE video\r\na=ice-options:trickle\r\na=msid-semantic:WMS *\r\nm=video 9 RTP/SAVPF 97\r\na=rtpmap:97 H264/90000\r\na=fmtp:97 packetization-mode=1;profile-level-id=42001f;sprop-parameter-sets=Z00AH52oFAFum4CAgKAAAAMAIAAAAwMQgA==,aO48gA==\r\na=cliprect:0,0,720,1280\r\na=framesize:97 1280-720\r\na=framerate:12.0\r\na=control:trackID=1\r\nc=IN IP4 0.0.0.0\r\na=sendrecv\r\na=ice-pwd:30f8917b33a334eb74fb468068b9b492\r\na=ice-ufrag:206f59a4\r\na=mid:video\r\na=msid:{af74b9ec-8e25-42a6-829c-ef935ac422c2} {4b9b5630-3b6b-41c9-9ca9-50bf00d7be78}\r\na=rtcp-fb:97 nack\r\na=rtcp-fb:97 nack pli\r\na=rtcp-fb:97 ccm fir\r\na=rtcp-mux\r\na=setup:actpass\r\na=ssrc:1715144048 cname:{a4361dd7-133c-4777-90dc-671394ecafe9}\r\n"

loop = asyncio.get_event_loop()

loop.run_until_complete(offer(sdp, sdp_type))

loop.run_forever()

```
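The string handling in the `checking` branch above can be sketched in isolation. This is a standard-library-only illustration of how an `a=candidate:` SDP line splits into fields; `parse_candidate_line`, `parse_candidates` and the `Candidate` tuple are hypothetical names, and the sketch does not call aiortc:

```python
from collections import namedtuple

Candidate = namedtuple(
    "Candidate",
    ["foundation", "component", "protocol", "priority", "ip", "port", "type"],
)


def parse_candidate_line(line):
    """Parse one 'a=candidate:...' SDP line into a Candidate, or return None."""
    prefix = "a=candidate:"
    if not line.startswith(prefix):
        return None
    fields = line[len(prefix):].split()
    # Layout: foundation component protocol priority ip port "typ" type [extensions...]
    if len(fields) < 8 or fields[6] != "typ":
        return None
    return Candidate(
        foundation=fields[0],
        component=int(fields[1]),
        protocol=fields[2].lower(),
        priority=int(fields[3]),
        ip=fields[4],
        port=int(fields[5]),
        type=fields[7],
    )


def parse_candidates(sdp):
    """Collect every candidate found in an SDP blob (handles \\r\\n line endings)."""
    parsed = (parse_candidate_line(line) for line in sdp.splitlines())
    return [c for c in parsed if c is not None]
```

Note that the offer SDP in the script contains no `a=candidate:` lines, and the handler reads from `pc.localDescription`, so the candidates it adds are the local ones. Whether that explains the stuck state is not something this sketch establishes.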

This is the output from the code.

```txt

Track video received

trying to retrieve frame...

changed signalingstatechange stable

changed signalingstatechange stable

changed icegatheringstatechange gathering

changed icegatheringstatechange complete

finished offer.

ICE connection state is checking

added ice candidate

added ice candidate

added ice candidate

added ice candidate

added ice candidate

added ice candidate

```

In this case "framed retrieved." is never printed and the ICE connection state stays in checking.

Any feedback would be appreciated!

Cheers

Jcanabarro | closed | 2020-06-17T18:58:55Z | 2021-08-05T12:38:58Z | https://github.com/aiortc/aiortc/issues/381 | [

"invalid"

] | jcanabarro | 8 |

plotly/dash | data-visualization | 2,608 | [BUG] adding restyleData to input causing legend selection to clear automatically | Thank you so much for helping improve the quality of Dash!

We do our best to catch bugs during the release process, but we rely on your help to find the ones that slip through.

**Describe your context**

Please provide us your environment, so we can easily reproduce the issue.

- replace the result of `pip list | grep dash` below

```

dash 2.11.1

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

- if frontend related, tell us your Browser, Version and OS

- OS: [MacOS Ventura 13.5, Apple M1]

- Browser [chrome]

- Version [chrome Version 115.0.5790.114 (Official Build) (arm64)]

**Describe the bug**

After adding `restyleData` in `Input` in `@app.callback` like below:

@app.callback(

Output("graph", "figure"),

Input("input_1", "value"),

Input('upload-data', 'contents'),

Input("graph", "restyleData"),

)

When I click or double click on a legend, the graph is initially updated correctly (e.g. removing the data series on a single click, or showing only that data series on a double click). But then the graph reverts to its initial state automatically (i.e. all data series are shown) after a certain period of time (depending on the size of the df). If I click or double click the legend a second time, the graph does not revert automatically. If I click or double click a third time, the graph reverts again automatically, and so on.

To isolate the issue, the `restyleData` input is not used anywhere in the function (e.g. `def update_line_chart(input_1, contents, restyleData)`) but in a `print` statement. But the `restyleData` content prints out correctly it seems.

**Expected behavior**

When I click or double click on a legend, the update to the graph should persist.

**Screenshots**

If applicable, add screenshots or screen recording to help explain your problem.

https://github.com/plotly/dash/assets/5752865/79f3cdfd-2c13-46c7-8097-98e9fa046217

| closed | 2023-08-01T04:39:47Z | 2024-07-25T13:39:35Z | https://github.com/plotly/dash/issues/2608 | [] | crossingchen | 3 |

httpie/cli | python | 1,388 | No such file or directory: '~/.config/httpie/version_info.json' | ## Checklist

- [x] I've searched for similar issues.

- [x] I'm using the latest version of HTTPie.

---

## Minimal reproduction code and steps

1. Run any `http` command, e.g. `http GET http://localhost:8004/consumers/test`

## Current result

```bash

❯ http GET http://localhost:8004/consumers/test

HTTP/1.1 200

Connection: keep-alive

Content-Length: 0

Date: Fri, 06 May 2022 06:28:00 GMT

Keep-Alive: timeout=60

X-B3-TraceId: baf0d94787afeb82

~ on ☁️ (ap-southeast-1)

❯ Traceback (most recent call last):

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/__main__.py", line 19, in <module>

sys.exit(main())

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/__main__.py", line 9, in main

exit_status = main()

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/core.py", line 162, in main

return raw_main(

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/core.py", line 44, in raw_main

return run_daemon_task(env, args)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/internal/daemon_runner.py", line 47, in run_daemon_task

DAEMONIZED_TASKS[options.task_id](env)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/internal/update_warnings.py", line 51, in _fetch_updates

with open_with_lockfile(file, 'w') as stream:

File "/opt/homebrew/Cellar/python@3.10/3.10.4/Frameworks/Python.framework/Versions/3.10/lib/python3.10/contextlib.py", line 135, in __enter__

return next(self.gen)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/utils.py", line 287, in open_with_lockfile

with open(file, *args, **kwargs) as stream:

FileNotFoundError: [Errno 2] No such file or directory: '/Users/na/.config/httpie/version_info.json'

```

## Expected result

```bash

❯ http GET http://localhost:8004/consumers/test

HTTP/1.1 200

Connection: keep-alive

Content-Length: 0

Date: Fri, 06 May 2022 06:28:00 GMT

Keep-Alive: timeout=60

X-B3-TraceId: baf0d94787afeb82

```

(without the `FileNotFoundError`)
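The traceback ends in a plain `open(file, 'w')` call, which raises `FileNotFoundError` when the parent directory (here `~/.config/httpie`) does not exist. The sketch below shows the general pattern of creating the parent directory before writing. The helper name `write_json_creating_parent` and the payload are hypothetical; this is not HTTPie's actual code or its eventual fix.

```python
import json
import tempfile
from pathlib import Path


def write_json_creating_parent(path: Path, payload: dict) -> None:
    """Write `payload` as JSON to `path`, creating missing parent directories."""
    # Without this line, open(..., "w") fails if the directory is absent.
    path.parent.mkdir(parents=True, exist_ok=True)
    with open(path, "w") as stream:
        json.dump(payload, stream)


# Demonstrate against a temporary directory instead of the real home directory.
with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / ".config" / "httpie" / "version_info.json"
    write_json_creating_parent(target, {"placeholder": True})  # placeholder payload
    print(target.exists())  # True
```

As a manual workaround under the same reasoning, creating the directory by hand (for example with `mkdir -p ~/.config/httpie`) should let the write succeed.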

---

## Debug output

Please re-run the command with `--debug`, then copy the entire command & output and paste both below:

```bash

❯ http --debug GET http://localhost:8004/consumers/test

HTTPie 3.2.0

Requests 2.27.1

Pygments 2.12.0

Python 3.10.4 (main, Apr 26 2022, 19:36:29) [Clang 13.1.6 (clang-1316.0.21.2)]

/opt/homebrew/Cellar/httpie/3.2.0/libexec/bin/python3.10

Darwin 21.4.0

<Environment {'apply_warnings_filter': <function Environment.apply_warnings_filter at 0x104179d80>,

'args': Namespace(),

'as_silent': <function Environment.as_silent at 0x104179c60>,

'colors': 256,

'config': {'default_options': []},

'config_dir': PosixPath('/Users/nico.arianto/.config/httpie'),

'devnull': <property object at 0x104153b00>,

'is_windows': False,

'log_error': <function Environment.log_error at 0x104179cf0>,

'program_name': 'http',

'quiet': 0,

'rich_console': <functools.cached_property object at 0x104169570>,

'rich_error_console': <functools.cached_property object at 0x10416b0a0>,

'show_displays': True,

'stderr': <_io.TextIOWrapper name='<stderr>' mode='w' encoding='utf-8'>,

'stderr_isatty': True,

'stdin': <_io.TextIOWrapper name='<stdin>' mode='r' encoding='utf-8'>,

'stdin_encoding': 'utf-8',

'stdin_isatty': True,

'stdout': <_io.TextIOWrapper name='<stdout>' mode='w' encoding='utf-8'>,

'stdout_encoding': 'utf-8',

'stdout_isatty': True}>

<PluginManager {'adapters': [],

'auth': [<class 'httpie.plugins.builtin.BasicAuthPlugin'>,

<class 'httpie.plugins.builtin.DigestAuthPlugin'>,

<class 'httpie.plugins.builtin.BearerAuthPlugin'>],

'converters': [],

'formatters': [<class 'httpie.output.formatters.headers.HeadersFormatter'>,

<class 'httpie.output.formatters.json.JSONFormatter'>,

<class 'httpie.output.formatters.xml.XMLFormatter'>,

<class 'httpie.output.formatters.colors.ColorFormatter'>]}>

>>> requests.request(**{'auth': None,

'data': RequestJSONDataDict(),

'headers': <HTTPHeadersDict('User-Agent': b'HTTPie/3.2.0')>,

'method': 'get',

'params': <generator object MultiValueOrderedDict.items at 0x104483220>,

'url': 'http://localhost:8004/consumers/test'})

HTTP/1.1 200

Connection: keep-alive

Content-Length: 0

Date: Fri, 06 May 2022 06:37:17 GMT

Keep-Alive: timeout=60

X-B3-TraceId: 5c2a368fd5b3f98a

~ on ☁️ (ap-southeast-1)

❯ Traceback (most recent call last):

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/__main__.py", line 19, in <module>

sys.exit(main())

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/__main__.py", line 9, in main

exit_status = main()

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/core.py", line 162, in main

return raw_main(

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/core.py", line 44, in raw_main

return run_daemon_task(env, args)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/internal/daemon_runner.py", line 47, in run_daemon_task

DAEMONIZED_TASKS[options.task_id](env)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/internal/update_warnings.py", line 51, in _fetch_updates

with open_with_lockfile(file, 'w') as stream:

File "/opt/homebrew/Cellar/python@3.10/3.10.4/Frameworks/Python.framework/Versions/3.10/lib/python3.10/contextlib.py", line 135, in __enter__

return next(self.gen)

File "/opt/homebrew/Cellar/httpie/3.2.0/libexec/lib/python3.10/site-packages/httpie/utils.py", line 287, in open_with_lockfile

with open(file, *args, **kwargs) as stream:

FileNotFoundError: [Errno 2] No such file or directory: '/Users/nico.arianto/.config/httpie/version_info.json'

```

## Additional information, screenshots, or code examples

Installation via `homebrew`

| closed | 2022-05-06T06:38:24Z | 2022-05-07T00:43:46Z | https://github.com/httpie/cli/issues/1388 | [

"bug",

"new"

] | nico-arianto | 3 |

microsoft/unilm | nlp | 1,501 | Image decoder download for beitv3 | **Describe**

For my personal research, I would like to have the pre-training parameters for the BEiT3-base-indomain version of the decoder.

Is there any place where I can download it?

| open | 2024-04-07T11:50:28Z | 2024-04-07T11:50:28Z | https://github.com/microsoft/unilm/issues/1501 | [] | YangSun22 | 0 |

feder-cr/Jobs_Applier_AI_Agent_AIHawk | automation | 322 | Linked in | closed | 2024-09-08T17:25:06Z | 2024-09-08T22:31:12Z | https://github.com/feder-cr/Jobs_Applier_AI_Agent_AIHawk/issues/322 | [] | TheGeoHaze | 1 | |

ultralytics/ultralytics | machine-learning | 19,669 | Minor but critical bug in /examples/YOLOv8-ONNXRuntime/main.py | ### Search before asking

- [ ] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

Other

### Bug

In line 184:

`gain = min(self.input_height / self.img_height, self.input_width / self.img_height)`

The second ratio divides the input width by the image height. It should be:

`gain = min(self.input_height / self.img_height, self.input_width / self.img_width)`
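To make the difference concrete, here is a small worked example comparing the two expressions for a non-square image. The function names and the 480x640 image size are illustrative assumptions, not code taken from the example script.

```python
def corrected_gain(input_height: int, input_width: int, img_height: int, img_width: int) -> float:
    """Scale factor using height/height and width/width, as proposed above."""
    return min(input_height / img_height, input_width / img_width)


def reported_gain(input_height: int, input_width: int, img_height: int, img_width: int) -> float:
    """Scale factor as quoted from line 184: the width ratio divides by img_height."""
    return min(input_height / img_height, input_width / img_height)


# Hypothetical case: a 480 (height) x 640 (width) image and a 640 x 640 model input.
input_h, input_w, img_h, img_w = 640, 640, 480, 640

print(corrected_gain(input_h, input_w, img_h, img_w))  # 1.0
print(reported_gain(input_h, input_w, img_h, img_w))   # 1.3333333333333333
```

For square images the two expressions agree, which may be why the discrepancy is easy to miss.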

### Environment

Ultralytics 8.3.80 🚀 Python-3.11.11 torch-2.6.0 CPU (Apple M1 Max)

Setup complete ✅ (10 CPUs, 32.0 GB RAM, 846.0/926.4 GB disk)

OS macOS-15.3.1-arm64-arm-64bit

Environment Darwin

Python 3.11.11

Install pip

RAM 32.00 GB

Disk 846.0/926.4 GB

CPU Apple M1 Max

CPU count 10

GPU None

GPU count None

CUDA None

numpy ✅ 2.1.1<=2.1.1,>=1.23.0

matplotlib ✅ 3.10.0>=3.3.0

opencv-python ✅ 4.11.0.86>=4.6.0

pillow ✅ 11.1.0>=7.1.2

pyyaml ✅ 6.0.2>=5.3.1

requests ✅ 2.32.3>=2.23.0

scipy ✅ 1.15.2>=1.4.1

torch ✅ 2.6.0>=1.8.0

torch ✅ 2.6.0!=2.4.0,>=1.8.0; sys_platform == "win32"

torchvision ✅ 0.21.0>=0.9.0

tqdm ✅ 4.67.1>=4.64.0

psutil ✅ 5.9.0

py-cpuinfo ✅ 9.0.0

pandas ✅ 2.2.3>=1.1.4

seaborn ✅ 0.13.2>=0.11.0

ultralytics-thop ✅ 2.0.14>=2.0.0

### Minimal Reproducible Example

Run the current example code and you will get incorrect bounding boxes.

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | open | 2025-03-13T01:15:49Z | 2025-03-14T01:29:03Z | https://github.com/ultralytics/ultralytics/issues/19669 | [

"bug",

"exports"

] | FitzWang | 2 |