repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

keras-team/keras | python | 20,790 | Conv2D with torch backend causes errors (RuntimeError: view size is not compatible with input tensor's size and stride) | Running on Apple M1. This code works for `tensorflow` and `jax` backends, but fails when the backend is `torch`.

The reported error is:

`RuntimeError: view size is not compatible with input tensor's size and stride (at least one dimension spans across two contiguous subspaces). Use .reshape(...) instead.`

Python 3.12, miniforge3, keras 3.8.0, torch 2.5.1

The error seems to relate to the Conv2D layer. If I remove Conv2D, the error disappears.

```python
import os

os.environ["KERAS_BACKEND"] = "torch"

import keras, numpy as np

model = keras.models.Sequential([
    keras.layers.Input(shape=(32, 32, 3)),
    keras.layers.Conv2D(filters=10, kernel_size=(3, 3), strides=(1,1), activation='relu'),
    keras.layers.Flatten(),
    keras.layers.Dense(10, activation='softmax')
])

model.compile(optimizer='adam', loss='mse')
model.summary()

x_train = np.zeros((64, 32, 32, 3), dtype=np.float32)
y_train = np.zeros((64, 10), dtype=np.float32)

model.fit(x=x_train, y=y_train, batch_size=64, epochs=1)

``` | closed | 2025-01-21T12:29:26Z | 2025-01-21T13:54:58Z | https://github.com/keras-team/keras/issues/20790 | [

"backend:torch"

] | gyohng | 5 |

datadvance/DjangoChannelsGraphqlWs | graphql | 46 | WebSocket connection to 'wss://api-such.andsuch.xyz/graphql/' failed: Error during WebSocket handshake: Unexpected response code: 400 | Hello @prokher, thanks for this great library. I recently deployed my application which uses [`django-graphql-playground`](https://github.com/jaydenwindle/django-graphql-playground) to test subscriptions. It works great locally. However in production, I get the following error in my browser console:

```

WebSocket connection to 'wss://api-such.andsuch.xyz/graphql/' failed: Error during WebSocket handshake: Unexpected response code: 400

```

...and in `graphql-playground`, I get:

```

{

"error": "Could not connect to websocket endpoint wss://api-such.andsuch.xyz/graphql/. Please check if the endpoint url is correct."

}

```

I checked Network tab in Chrome and discovered that websocket connections are closing immediately. What could be causing this?

Here's what my `docker-compose.yml` looks like:

```
version: '3.7'

services:
  nginx:
    container_name: nginx
    image: nginx
    restart: always
    depends_on:
      - web
    volumes:
      - ./web/dev.nginx.template:/etc/nginx/conf.d/dev.nginx.template
      - ./static:/static
      - ./media:/media
    ports:
      - "8080:80"
    networks:
      - SOME_NETWORK
    command: /bin/bash -c "envsubst \"`env | awk -F = '{printf \" $$%s\", $$1}'`\" < /etc/nginx/conf.d/dev.nginx.template > /etc/nginx/conf.d/default.conf && exec nginx -g 'daemon off;'"

  web:
    container_name: web
    restart: always
    build: ./web
    networks:
      - SOME_NETWORK
    depends_on:
      - postgres
      - redis
    volumes:
      - ./web:/usr/src/app/
    environment:
      - REDIS_HOST=redis
      - REDIS_PORT=6379
      - GRAPHQL_ENDPOINT=https://api-such.andsuch.xyz/graphql/
    command: bash -c /start.sh

  postgres:
    container_name: postgres
    restart: always
    image: postgres:latest
    networks:
      - SOME_NETWORK
    volumes:
      - pgdata:/var/lib/postgresql/data/

  redis:
    container_name: redis
    restart: always
    image: redis:latest
    networks:
      - SOME_NETWORK
    ports:
      - "6379:6379"
    volumes:
      - redisdata:/data

volumes:
  pgdata:
  redisdata:

networks:
  SOME_NETWORK:
    name: SOME_NETWORK
    driver: bridge
```

`settings.py`

```python
...
...
CHANNEL_LAYERS = {
    'default': {
        'BACKEND': 'channels_redis.core.RedisChannelLayer',
        'CONFIG': {
            'hosts': [(os.getenv('REDIS_HOST', 'redis'), os.getenv('REDIS_PORT', 6379))],
        }
    }
}
...
...
```
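
One detail in the `hosts` entry may be worth ruling out. This is an assumption, not a confirmed cause of the 400 response: `os.getenv` returns a string whenever the variable is set, so with `REDIS_PORT=6379` coming from `docker-compose.yml` the port arrives as `'6379'` rather than `6379`. A minimal sketch that normalizes it to an integer follows; the helper name `redis_hosts` is hypothetical.

```python
import os


def redis_hosts():
    """Build the channel-layer host list with the port as an int."""
    host = os.getenv("REDIS_HOST", "redis")
    # os.getenv returns a str when the variable is set, so convert explicitly.
    port = int(os.getenv("REDIS_PORT", "6379"))
    return [(host, port)]
```

With this helper, the `'hosts'` value in `CHANNEL_LAYERS` could be written as `redis_hosts()` instead of the inline list.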

`urls.py`

```python
...
...
from graphql_playground.views import GraphQLPlaygroundView

urlpatterns = [
    path('admin/', admin.site.urls),
    path('playground/', GraphQLPlaygroundView.as_view(
        endpoint=os.getenv('GRAPHQL_ENDPOINT'))),
]
...
```

`nginx`

```
server {
    client_max_body_size 10M;

    listen 443 ssl;
    listen [::]:443 ssl;

    server_name api-such.andsuch.xyz;

    ssl_certificate /etc/ssl/certs/andsuch.xyz.pem;
    ssl_certificate_key /etc/ssl/certs/andsuch.xyz.key;

    location = /favicon.ico { access_log off; log_not_found off; }

    location / {
        proxy_set_header Host $http_host;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection ‘upgrade’;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
        proxy_pass http://0.0.0.0:8080;
    }
}
```

What could be wrong? I'm out of ideas | closed | 2020-09-01T05:13:07Z | 2020-10-17T04:09:10Z | https://github.com/datadvance/DjangoChannelsGraphqlWs/issues/46 | [] | Cimmanuel | 3 |

voxel51/fiftyone | data-science | 5,060 | [FR] Way to Pre-render thumbnails of the patches you see in patch view |

### Proposal Summary

maybe this already exists, but I want to be able to pre-render out the crops of the views you see in patch view, and have those load at run time

### Motivation

I have a dataset that I have been having major performance issues with. The full-size images are 10,000 pixels wide and I have about 500 of them. Each image can have around 1-30 detections that are generally about 200 pixels or smaller. When I load them in the FiftyOne App, things get really slow (to the point of being unusable) and the app often crashes. I have been battling with this for a couple of months now, and one solution I had was to generate thumbnails for all the samples with some code like this:

```
import os

import fiftyone as fo
import fiftyone.utils.image as foui

# `dataset`, `output_dir`, and `target_size` are defined elsewhere in the script

samples_to_process = []
for sample in dataset:
    filename = os.path.basename(sample.filepath)
    thumbnail_path = f"{output_dir}/{filename}"
    sample["thumbnail_path"]=thumbnail_path
    sample.save()

    # Only queue samples whose thumbnail has not been rendered yet
    if not os.path.exists(thumbnail_path):
        samples_to_process.append(sample)
    else:
        sample["thumbnail_path"]=thumbnail_path

if samples_to_process:
    # Create a new dataset with the samples to process
    dataset_to_process = fo.Dataset()
    dataset_to_process.add_samples(samples_to_process)
    print("making extra thumbnails")
    print(samples_to_process)

    foui.transform_images(
        dataset_to_process,
        size=target_size,
        output_field="thumbnail_path",
        output_dir=output_dir,
    )

dataset.save()
```

Now I get two options when using the app: speed with pixelation, or full resolution that is unusable.

Patches taken from shrunken samples (runs fast, but they are too pixelated to identify)

Patches from full-size images (goes super slow and often crashes everything)

Instead it would make more sense to be able to have the patch view load pre-rendered thumbnails of each patch (not a thumbnail of the FULL sample, but rather the patches)

Since the patches are generally like 200 pixels and smaller the performance would be great, and this performance wouldn't lower the cropped image quality.
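
To make the idea concrete, here is a rough sketch of the arithmetic such pre-rendering would need, assuming detections store their boxes in FiftyOne's relative `[top-left-x, top-left-y, width, height]` format. The helper name `patch_pixel_box` and its `padding` parameter are hypothetical and not part of FiftyOne.

```python
def patch_pixel_box(bounding_box, image_width, image_height, padding=0):
    """Convert a relative [x, y, w, h] box into a pixel (left, top, right, bottom) box."""
    x, y, w, h = bounding_box
    # Scale relative coordinates to pixels and clamp to the image bounds.
    left = max(0, round(x * image_width) - padding)
    top = max(0, round(y * image_height) - padding)
    right = min(image_width, round((x + w) * image_width) + padding)
    bottom = min(image_height, round((y + h) * image_height) + padding)
    return left, top, right, bottom


# A detection covering a region of an 8000 x 4000 image
print(patch_pixel_box([0.25, 0.5, 0.125, 0.25], 8000, 4000))  # (2000, 2000, 3000, 3000)
```

The tuple is in the `(left, upper, right, lower)` order that `PIL.Image.crop` expects, so each patch could be cropped once from the full-size image and saved to disk.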

maybe this is something one can already do, but i don't really know how?

This "tips and tricks" thing suggests something related, but i don't really understand it.

https://voxel51.com/blog/fiftyone-computer-vision-tips-and-tricks-may-19-2023/

### What areas of FiftyOne does this feature affect?

- [X ] App: FiftyOne application

- [ ] Core: Core `fiftyone` Python library

- [ ] Server: FiftyOne server

### Details

### Willingness to contribute

The FiftyOne Community welcomes contributions! Would you or another member of your organization be willing to contribute an implementation of this feature?

- [ ] Yes. I can contribute this feature independently

- [ ] Yes. I would be willing to contribute this feature with guidance from the FiftyOne community

- [x ] No. I cannot contribute this feature at this time, i dont know how to code a fiftyone

| open | 2024-11-07T01:53:43Z | 2024-11-20T15:40:34Z | https://github.com/voxel51/fiftyone/issues/5060 | [

"feature"

] | quitmeyer | 3 |

clovaai/donut | computer-vision | 245 | Query Regarding Tree-Based Accuracy Calculation in Donut Model | Hello,

I am currently working on understanding the code within the donut model repository, specifically focusing on the tree-based accuracy calculation. While examining the codebase, I came across the utilization of the zss.distance function for accuracy calculation.

My inquiry pertains to the distance function, particularly concerning the concept of "keyroots of tree." I am seeking clarification on the definition and significance of these "keyroots of tree" within the context of the accuracy calculation process. Could someone kindly provide an explanation or insight into this matter?
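
For reference, a small sketch of the usual definition, which may not match every detail of the library: in the Zhang-Shasha algorithm that `zss` implements, nodes are numbered in postorder, and a keyroot is the highest-numbered node among all nodes that share the same leftmost leaf (equivalently, the root plus every node that has a left sibling). The `Node` class and `keyroots` function below are illustrative only and are not the `zss` API.

```python
class Node:
    """Minimal ordered tree node used only for this illustration."""

    def __init__(self, label, children=None):
        self.label = label
        self.children = children or []


def keyroots(root):
    """Return (postorder_labels, keyroot_indices) using 0-based postorder indices."""
    labels = []    # labels[i] = label of the node with postorder index i
    leftmost = []  # leftmost[i] = postorder index of the leftmost leaf under node i

    def visit(node):
        first_leaf = None
        for child in node.children:
            child_index = visit(child)
            if first_leaf is None:
                first_leaf = leftmost[child_index]
        index = len(labels)
        labels.append(node.label)
        # A leaf is its own leftmost leaf.
        leftmost.append(index if first_leaf is None else first_leaf)
        return index

    visit(root)

    # For each distinct leftmost leaf, keep the node with the highest index.
    highest = {}
    for index, leaf in enumerate(leftmost):
        highest[leaf] = index
    return labels, sorted(highest.values())


# Tree f(d(a, c(b)), e); postorder is a, b, c, d, e, f
tree = Node("f", [Node("d", [Node("a"), Node("c", [Node("b")])]), Node("e")])
labels, roots = keyroots(tree)
print([labels[i] for i in roots])  # ['c', 'e', 'f']
```

The algorithm runs its forest-distance dynamic program once per pair of keyroots (one from each tree) rather than once per pair of nodes, which is why these nodes matter for the cost of the distance computation.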

Thank you. | open | 2023-08-31T09:38:02Z | 2023-08-31T09:38:02Z | https://github.com/clovaai/donut/issues/245 | [] | Mann1904 | 0 |

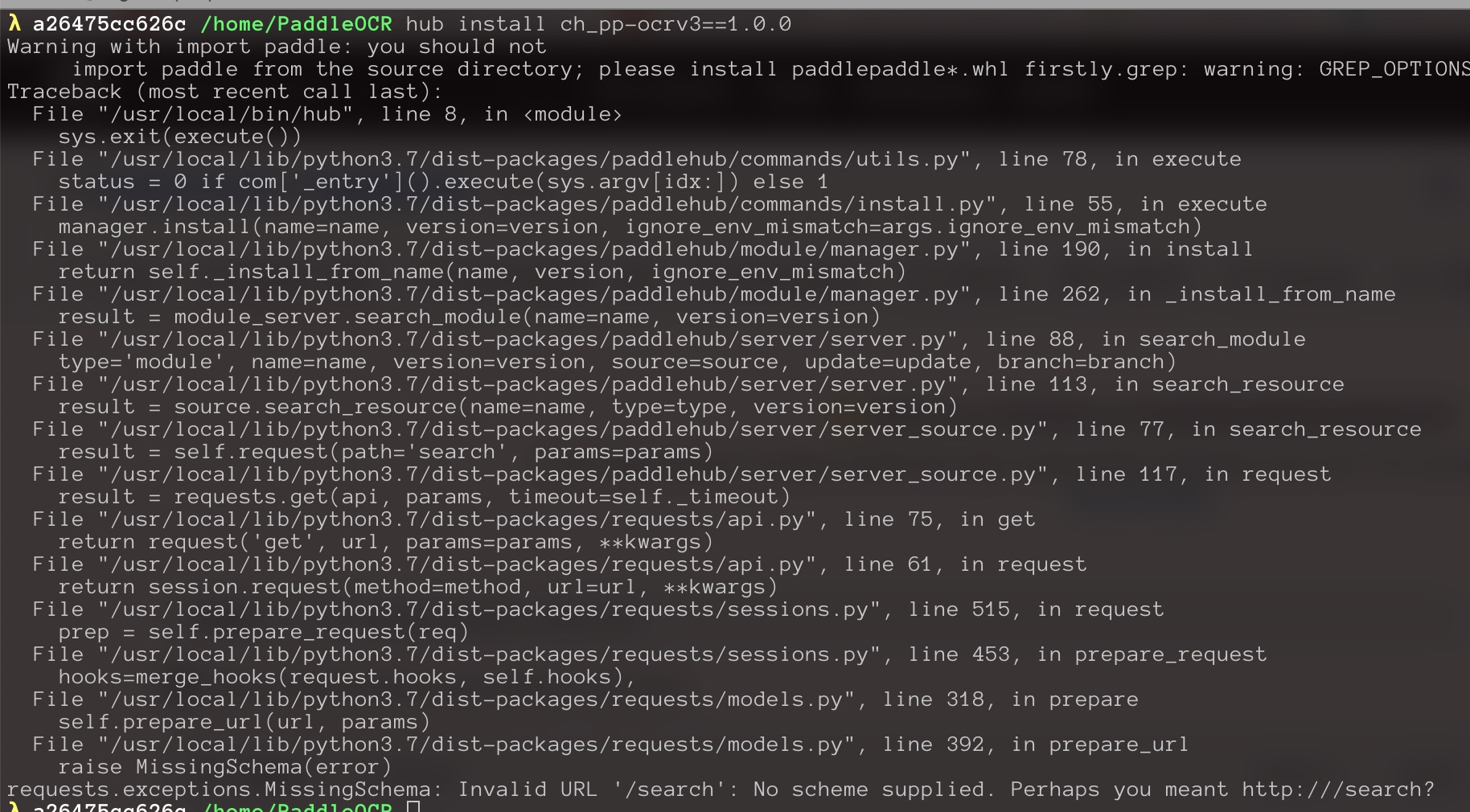

PaddlePaddle/PaddleHub | nlp | 2,072 | Error when installing a model |

I wanted to try out a model, but it raised an error.

paddlepaddle 2.3.0

paddle hub 2.3.0 | closed | 2022-10-14T03:11:37Z | 2022-10-14T06:52:15Z | https://github.com/PaddlePaddle/PaddleHub/issues/2072 | [] | hbo-lambda | 3 |

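JoeanAmier/TikTokDownloader | api | 416 | How do I correctly get the link to my own account's home page? | Starting to fetch favorites data

Failed to fetch account summary

self: failed to fetch account information

Program run time: 0 minutes 3 seconds

Exited batch download of favorited works (Douyin) mode

Please select a collection function: | closed | 2025-03-04T01:26:14Z | 2025-03-04T10:50:35Z | https://github.com/JoeanAmier/TikTokDownloader/issues/416 | [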

"文档补充(docs)",

"适合新手(good first issue)"

] | lenmao | 3 |

iperov/DeepFaceLab | machine-learning | 5,737 | not working on AMD GPU | After following the instructions and selecting a video file, the application stops working. It responds to inputs, but does not detect faces, swap them, or play the video.

OS: Windows 11

GPU: RX 7900 XTX 24Gb (latest drivers) | open | 2023-10-16T20:54:21Z | 2023-10-16T20:54:21Z | https://github.com/iperov/DeepFaceLab/issues/5737 | [] | f1am3d | 0 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 592 | Training on custom data | is there a guide for training this using custom voice data we collect on our own?

| closed | 2020-11-09T14:23:34Z | 2021-04-28T19:41:00Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/592 | [] | michaellin99999 | 1 |

facebookresearch/fairseq | pytorch | 5,195 | Dialogue Speech-to-Unit Encoder for dGSLM - checkpoint issues | ## 🐛 Bug

I tried to load the checkpoints (Fisher HuBERT model and k-means model) from

[Dialogue Speech-to-Unit Encoder for dGSLM: The Fisher HuBERT model](https://github.com/facebookresearch/fairseq/tree/main/examples/textless_nlp/dgslm/hubert_fisher)

and got the following error:

`No such file or directory: '/checkpoint/ntuanh/experiments/dialogue-LM/hubert/fisher-vad-10s-separated/kmeans/hubert_iter2/km500/dict.km.txt'`

After trying to fix it with an external k-means dictionary file, I got dimension mismatch in the weights.

### To Reproduce

1. Download the checkpoint files under [Fisher HuBERT model](https://dl.fbaipublicfiles.com/textless_nlp/dgslm/checkpoints/hubert/hubert_fisher.pt) and [k-means model](https://dl.fbaipublicfiles.com/textless_nlp/dgslm/checkpoints/hubert/hubert_fisher_km_500.bin) from [Dialogue Speech-to-Unit Encoder for dGSLM: The Fisher HuBERT model](https://github.com/facebookresearch/fairseq/tree/main/examples/textless_nlp/dgslm/hubert_fisher)

2. Create a Python script using the code snippet shown at the bottom of the readme file

3. Refer to these checkpoint files when passing the paths to HubertTokenizer

4. Include the path to a (dummy) audio file

5. Run Python script

6. See error

Note: I tried the other method as well (by using quantize_with_kmeans.py) but got the same error.

Trace:

```

Traceback (most recent call last):

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/clustering/quantize_with_kmeans.py", line 144, in <module>

main(args, logger)

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/clustering/quantize_with_kmeans.py", line 101, in main

features_batch = get_features(

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/pretrained/utils.py", line 73, in get_features

generator, num_files = get_feature_iterator(

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/pretrained/utils.py", line 58, in get_feature_iterator

reader = feature_reader_cls(

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/pretrained/hubert_feature_reader.py", line 23, in __init__

) = fairseq.checkpoint_utils.load_model_ensemble_and_task(

File "/home/user/fairseq/fairseq/checkpoint_utils.py", line 484, in load_model_ensemble_and_task

model = task.build_model(cfg.model, from_checkpoint=True)

File "/home/user/fairseq/fairseq/tasks/fairseq_task.py", line 355, in build_model

model = models.build_model(cfg, self, from_checkpoint)

File "/home/user/fairseq/fairseq/models/__init__.py", line 106, in build_model

return model.build_model(cfg, task)

File "/home/user/fairseq/fairseq/models/hubert/hubert.py", line 335, in build_model

model = HubertModel(cfg, task.cfg, task.dictionaries)

File "/home/user/fairseq/fairseq/tasks/hubert_pretraining.py", line 139, in dictionaries

return self.state.dictionaries

File "/home/user/fairseq/fairseq/tasks/fairseq_task.py", line 42, in __getattr__

self._state[name] = self._factories[name]()

File "/home/user/fairseq/fairseq/tasks/hubert_pretraining.py", line 149, in load_dictionaries

dictionaries = [

File "/home/user/fairseq/fairseq/tasks/hubert_pretraining.py", line 150, in <listcomp>

Dictionary.load(f"{label_dir}/dict.{label}.txt")

File "/home/user/fairseq/fairseq/data/dictionary.py", line 228, in load

d.add_from_file(f)

File "/home/user/fairseq/fairseq/data/dictionary.py", line 241, in add_from_file

raise fnfe

File "/home/user/fairseq/fairseq/data/dictionary.py", line 238, in add_from_file

with open(PathManager.get_local_path(f), "r", encoding="utf-8") as fd:

FileNotFoundError: [Errno 2] No such file or directory: '/checkpoint/ntuanh/experiments/dialogue-LM/hubert/fisher-vad-10s-separated/kmeans/hubert_iter2/km500/dict.km.txt'

```

#### Code sample

```
# Load the Hubert tokenizer
from examples.textless_nlp.dgslm.dgslm_utils import HubertTokenizer

encoder = HubertTokenizer(
    hubert_path = "/path/to/hubert_ckpt.pt",
    hubert_layer = 12,
    km_path = "path/to/km.bin"
)

# Encode the audio to units
path = "/path/to/stereo/audio.wav"
codes = encoder.wav2codes(path)
```

### Expected behavior

I assume that the path to the k-means dictionary file should be a relative path and that the dictionary file should be included in the HuBERT model checkpoint itself.

Note: As a temporary measure, I tried to overwrite the path that causes the error with [dictionary1](https://dl.fbaipublicfiles.com/textless_nlp/dgslm/checkpoints/speech_dlm/dict.unitA.txt) in [Generative Spoken Dialogue Language Modeling](https://github.com/facebookresearch/fairseq/tree/main/examples/textless_nlp/dgslm) which has exactly 500 elements. After this, I got another error:

```

Traceback (most recent call last):

File "/home/user/hubert.py", line 6, in <module>

encoder = HubertTokenizer(

File "/home/user/fairseq/examples/textless_nlp/dgslm/dgslm_utils.py", line 27, in __init__

self.feature_extractor = HubertFeatureReader(hubert_path, hubert_layer, use_cuda=use_cuda)

File "/home/user/fairseq/examples/textless_nlp/gslm/speech2unit/pretrained/hubert_feature_reader.py", line 23, in __init__

) = fairseq.checkpoint_utils.load_model_ensemble_and_task(

File "/home/user/fairseq/fairseq/checkpoint_utils.py", line 493, in load_model_ensemble_and_task

model.load_state_dict(

File "/home/user/fairseq/fairseq/models/fairseq_model.py", line 128, in load_state_dict

return super().load_state_dict(new_state_dict, strict)

File "/home/liviaq/miniconda3/envs/asr/lib/python3.9/site-packages/torch/nn/modules/module.py", line 2041, in load_state_dict

raise RuntimeError('Error(s) in loading state_dict for {}:\n\t{}'.format(

RuntimeError: Error(s) in loading state_dict for HubertModel:

size mismatch for label_embs_concat: copying a param with shape torch.Size([504, 256]) from checkpoint, the shape in current model is torch.Size([1008, 256]).

size mismatch for final_proj.weight: copying a param with shape torch.Size([256, 768]) from checkpoint, the shape in current model is torch.Size([512, 768]).

size mismatch for final_proj.bias: copying a param with shape torch.Size([256]) from checkpoint, the shape in current model is torch.Size([512]).

```

Based on this, I believe that there is also some dimension mismatch between the pre-trained weights and the actual model weights.

### Environment

- fairseq Version: main

- PyTorch Version: 2.0.1

- OS (e.g., Linux): Linux

- How you installed fairseq (`pip`, source): source

- Build command you used (if compiling from source): `pip install --editable ./`

- Python version: 3.9.12

- CUDA/cuDNN version: 11.8

- GPU models and configuration: 1 GPU (24 GB), Driver Version: 520.61.05

| closed | 2023-06-06T10:55:16Z | 2023-06-06T15:26:00Z | https://github.com/facebookresearch/fairseq/issues/5195 | [

"bug",

"needs triage"

] | qianlivia | 2 |

JaidedAI/EasyOCR | machine-learning | 1,342 | Fine-Tuning EasyOCR on Urdu Textbooks | I'm fine-tuning the EasyOCR model (arabic.pth) for Urdu TextBooks text recognition. Currently, I'm passing the image of an entire page as input and providing the corresponding text for the entire page. However, the performance is almost zero.

My question is: Is this approach correct, or should I split the page (image) into smaller regions for better performance? | open | 2024-12-04T11:58:03Z | 2024-12-04T11:58:03Z | https://github.com/JaidedAI/EasyOCR/issues/1342 | [] | mujeeb-merwat | 0 |

man-group/notebooker | jupyter | 166 | pdf report generation broken in python 3.8 | In python 3.8 pdf reports are not showing correct charts but instead their string versions | closed | 2024-01-09T16:45:05Z | 2024-02-29T08:28:50Z | https://github.com/man-group/notebooker/issues/166 | [] | marcinapostoluk | 2 |

slackapi/python-slack-sdk | asyncio | 1,651 | How to share files in an ephemeral way to a user in a channel or DM without making the files publicly available on the internet? | This is the upload method I'm using to upload and post PDF files to Slack. The files are generated by a script on Google Cloud Run and uploaded directly to Slack; they're not hosted on Google Cloud Run, since it's serverless.

```
def upload_file(
    self,
    channel_id: str,
    user_id: str,
    file_bytes: bytes,
    filename: str,
    title: str,
    initial_comment: str = "",
) -> dict:
    """
    Uploads a file to Slack using the external file upload workflow and posts
    an ephemeral message (visible only to the specified user) with an attachment
    referencing the uploaded file.
    """
    # Step 1: Get the upload URL from Slack.
    file_length = len(file_bytes)
    upload_url_resp = self.client.files_getUploadURLExternal(
        filename=filename, length=file_length
    )
    if not upload_url_resp.get("ok"):
        raise ValueError(f"Failed to get upload URL: {upload_url_resp}")

    upload_url = upload_url_resp["upload_url"]
    file_id = upload_url_resp["file_id"]

    # Step 2: Upload the file bytes using an HTTP POST.
    payload = {
        "filename": filename,
        "token": self.token,
    }
    post_response = requests.post(upload_url, params=payload, data=file_bytes)
    if post_response.status_code != 200:
        raise ValueError(
            f"File upload failed (status {post_response.status_code}): {post_response.text}"
        )

    # Step 3: Complete the external upload so the file appears in Slack.
    complete_resp = self.client.files_completeUploadExternal(
        files=[{"id": file_id, "title": title}],
        channel_id=channel_id,
        initial_comment=initial_comment,
        thread_ts=None,
    )
    if not complete_resp.get("ok"):
        raise ValueError(f"Failed to complete file upload: {complete_resp}")

    # Step 4: Post an ephemeral message with an attachment referencing the file.
    attachment = {
        "title": title,
        "image_url": upload_url,
    }
    post_msg_resp = self.client.chat_postEphemeral(
        channel=channel_id,
        user=user_id,
        text=initial_comment or f"File uploaded: {title}",
        attachments=[attachment],
    )
    return post_msg_resp
```

I want to share files in an ephemeral way, but this method only shares files publicly.

```
post_msg_resp = self.client.chat_postEphemeral(
    channel=channel_id,
    user=user_id,
    text=initial_comment or f"File uploaded: {title}",
    attachments=[attachment],
)
```

so I must remove the `channel_id` and then grab the link and use `chat_postEphemeral` to send the URL to the user. Therefore `files_completeUploadExternal` can no longer post files directly to Slack.

- I'm unable to show the PDF as an embeddable attachment, the way that `files_completeUploadExternal` shows the file

- I'm unable to use `files_completeUploadExternal` to post to a channel privately (ephemerally) or to send the PDF via DM

- I don't want to make the permalinks shareable publicly, and then send the links via `chat_postEphemeral` because the data is sensitive and belongs to my company.

If I make the data public, I'd have to create another service that deletes the data every few days and use a database to keep track of the visible files. It's a whole other project.

| closed | 2025-02-04T18:29:50Z | 2025-02-19T16:18:47Z | https://github.com/slackapi/python-slack-sdk/issues/1651 | [

"question",

"web-client",

"Version: 3x"

] | elieobeid7 | 4 |

huggingface/datasets | pytorch | 7,418 | pyarrow.lib.arrowinvalid: cannot mix list and non-list, non-null values with map function | ### Describe the bug

The `map` function raises a pyarrow.lib.ArrowInvalid error on some examples when processing the dataset.

### Steps to reproduce the bug

```
from datasets import load_dataset
from PIL import Image, PngImagePlugin

dataset = load_dataset("leonardPKU/GEOQA_R1V_Train_8K")

system_prompt="You are a helpful AI Assistant"

def make_conversation(example):
    prompt = []
    prompt.append({"role": "system", "content": system_prompt})
    prompt.append(
        {
            "role": "user",
            "content": [
                {"type": "image"},
                {"type": "text", "text": example["problem"]},
            ]
        }
    )
    return {"prompt": prompt}

def check_data_types(example):
    for key, value in example.items():
        if key == 'image':
            if not isinstance(value, PngImagePlugin.PngImageFile):
                print(value)
        if key == "problem" or key == "solution":
            if not isinstance(value, str):
                print(value)
    return example

dataset = dataset.map(check_data_types)
dataset = dataset.map(make_conversation)
```
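
A possible explanation, stated as an assumption rather than a confirmed diagnosis: in `make_conversation` the system message's `content` is a string while the user message's `content` is a list, and Arrow needs a single type for that field across all messages. A minimal sketch that keeps `content` a list in every message follows; the name `make_uniform_conversation` is hypothetical.

```python
system_prompt = "You are a helpful AI Assistant"


def make_uniform_conversation(example):
    """Build a prompt whose "content" field is a list in every message."""
    prompt = [
        {
            "role": "system",
            # Wrapped in a list so it has the same type as the user content.
            "content": [{"type": "text", "text": system_prompt}],
        },
        {
            "role": "user",
            "content": [
                {"type": "image"},
                {"type": "text", "text": example["problem"]},
            ],
        },
    ]
    return {"prompt": prompt}
```

It would be used in place of the original function, as in `dataset.map(make_uniform_conversation)`. Whether this removes the error for this particular dataset is untested here.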

### Expected behavior

Successfully process the dataset with map

### Environment info

datasets==3.3.1 | open | 2025-02-21T10:58:06Z | 2025-02-25T15:26:46Z | https://github.com/huggingface/datasets/issues/7418 | [] | alexxchen | 4 |

huggingface/datasets | machine-learning | 6,451 | Unable to read "marsyas/gtzan" data | Hi, this is my code and the error:

```

from datasets import load_dataset

gtzan = load_dataset("marsyas/gtzan", "all")

```

[error_trace.txt](https://github.com/huggingface/datasets/files/13464397/error_trace.txt)

[audio_yml.txt](https://github.com/huggingface/datasets/files/13464410/audio_yml.txt)

Python 3.11.5

Jupyter Notebook 6.5.4

Windows 10

I'm able to download and work with other datasets, but not this one. For example, both these below work fine:

```

from datasets import load_dataset

dataset = load_dataset("facebook/voxpopuli", "pl", split="train", streaming=True)

minds = load_dataset("PolyAI/minds14", name="en-US", split="train")

```

Thanks for your help

https://huggingface.co/datasets/marsyas/gtzan/tree/main | closed | 2023-11-25T15:13:17Z | 2023-12-01T12:53:46Z | https://github.com/huggingface/datasets/issues/6451 | [] | gerald-wrona | 3 |

matplotlib/mplfinance | matplotlib | 450 | Where can I find help documentation of mplfinance parameters? | Hi, I saw other people mentioning "mpf.plot(df, type='pnf', pnf_params=dict(box_size=7))" parameters in other issue, but I did not find any documents containing these parameters on the mplfinance webpage(including github and pypi.org). Where did these parameters come from? Are there any more optional parameters can use of mplfinance?

Thank you ! | closed | 2021-10-02T23:59:37Z | 2021-10-03T01:23:13Z | https://github.com/matplotlib/mplfinance/issues/450 | [

"question"

] | sunjar2020 | 1 |

zappa/Zappa | django | 558 | [Migrated] import routes causing 404 error | Originally from: https://github.com/Miserlou/Zappa/issues/1475 by [johnedstone](https://github.com/johnedstone)

What is it about the import statement `from app import routes` in this [example](https://blog.miguelgrinberg.com/post/the-flask-mega-tutorial-part-i-hello-world) that causes the app to fail (returns 404) when deployed as a lambda package with Zappa?

Later: I just realized that if I changed the `app_function` value in `zappa_settings.json` generated by `zappa init` to `microblog.app` (or `app.app` ) that it would work. The default: `"app_function": "microblog.__init__.app"`, though it works with `flask run`, doesn't work when deployed with zappa. You can close this issue. Thanks again for this awesome project! | closed | 2021-02-20T12:22:44Z | 2022-07-16T07:07:35Z | https://github.com/zappa/Zappa/issues/558 | [] | jneves | 1 |

axnsan12/drf-yasg | django | 422 | AttributeError: module 'ruamel.yaml' has no attribute 'SafeDumper' | Hello! After docker image build, trying to run containers via docker-compose up, and facing error:

Traceback (most recent call last):

django_1 | File "manage.py", line 21, in <module>

django_1 | main()

django_1 | File "manage.py", line 17, in main

django_1 | execute_from_command_line(sys.argv)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/__init__.py", line 381, in execute_from_command_line

django_1 | utility.execute()

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/__init__.py", line 375, in execute

django_1 | self.fetch_command(subcommand).run_from_argv(self.argv)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/base.py", line 323, in run_from_argv

django_1 | self.execute(*args, **cmd_options)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/base.py", line 361, in execute

django_1 | self.check()

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/base.py", line 390, in check

django_1 | include_deployment_checks=include_deployment_checks,

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/commands/migrate.py", line 65, in _run_checks

django_1 | issues.extend(super()._run_checks(**kwargs))

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/management/base.py", line 377, in _run_checks

django_1 | return checks.run_checks(**kwargs)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/checks/registry.py", line 72, in run_checks

django_1 | new_errors = check(app_configs=app_configs)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/checks/urls.py", line 13, in check_url_config

django_1 | return check_resolver(resolver)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/core/checks/urls.py", line 23, in check_resolver

django_1 | return check_method()

django_1 | File "/usr/local/lib/python3.6/site-packages/django/urls/resolvers.py", line 398, in check

django_1 | for pattern in self.url_patterns:

django_1 | File "/usr/local/lib/python3.6/site-packages/django/utils/functional.py", line 80, in __get__

django_1 | res = instance.__dict__[self.name] = self.func(instance)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/urls/resolvers.py", line 579, in url_patterns

django_1 | patterns = getattr(self.urlconf_module, "urlpatterns", self.urlconf_module)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/utils/functional.py", line 80, in __get__

django_1 | res = instance.__dict__[self.name] = self.func(instance)

django_1 | File "/usr/local/lib/python3.6/site-packages/django/urls/resolvers.py", line 572, in urlconf_module

django_1 | return import_module(self.urlconf_name)

django_1 | File "/usr/local/lib/python3.6/importlib/__init__.py", line 126, in import_module

django_1 | return _bootstrap._gcd_import(name[level:], package, level)

django_1 | File "<frozen importlib._bootstrap>", line 994, in _gcd_import

django_1 | File "<frozen importlib._bootstrap>", line 971, in _find_and_load

django_1 | File "<frozen importlib._bootstrap>", line 955, in _find_and_load_unlocked

django_1 | File "<frozen importlib._bootstrap>", line 665, in _load_unlocked

django_1 | File "<frozen importlib._bootstrap_external>", line 678, in exec_module

django_1 | File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

django_1 | File "/app/config/urls.py", line 20, in <module>

django_1 | from drf_yasg.views import get_schema_view

django_1 | File "/usr/local/lib/python3.6/site-packages/drf_yasg/views.py", line 14, in <module>

django_1 | from .renderers import (

django_1 | File "/usr/local/lib/python3.6/site-packages/drf_yasg/renderers.py", line 11, in <module>

django_1 | from .codecs import VALIDATORS, OpenAPICodecJson, OpenAPICodecYaml

django_1 | File "/usr/local/lib/python3.6/site-packages/drf_yasg/codecs.py", line 133, in <module>

django_1 | class SaneYamlDumper(yaml.SafeDumper):

django_1 | AttributeError: module 'ruamel.yaml' has no attribute 'SafeDumper' | closed | 2019-07-26T10:52:12Z | 2019-09-29T15:41:58Z | https://github.com/axnsan12/drf-yasg/issues/422 | [] | Almaz97 | 2 |

sqlalchemy/alembic | sqlalchemy | 548 | create_module_proxy can be fooled when it wraps methods that have explicit arguments | There's an import artifact of some kind that is changing the behavior of `@contextlib.contextmanager` in one environment, such that `inspect.getargspec(fn)` of the function is returning its full set of keyword defaults, rather than a generic `*arg, **kw`. We can simulate this by removing the decorator from the method in question:

```diff
diff --git a/alembic/operations/base.py b/alembic/operations/base.py
index 90b3500..a5b0706 100644
--- a/alembic/operations/base.py
+++ b/alembic/operations/base.py
@@ -171,7 +171,7 @@ class Operations(util.ModuleClsProxy):
         yield op
         op._remove_proxy()
 
-    @contextmanager
+    #@contextmanager
     def batch_alter_table(
         self,
         table_name,
```

then we get a simple import error:

```

$ PYTHONPATH=~/dev/sqlalchemy/lib/ python -m alembic

Traceback (most recent call last):

File "/opt/python-3.7.0/lib/python3.7/runpy.py", line 183, in _run_module_as_main

mod_name, mod_spec, code = _get_module_details(mod_name, _Error)

File "/opt/python-3.7.0/lib/python3.7/runpy.py", line 142, in _get_module_details

return _get_module_details(pkg_main_name, error)

File "/opt/python-3.7.0/lib/python3.7/runpy.py", line 109, in _get_module_details

__import__(pkg_name)

File "/home/classic/dev/alembic/alembic/__init__.py", line 5, in <module>

from . import op # noqa

File "/home/classic/dev/alembic/alembic/op.py", line 5, in <module>

Operations.create_module_class_proxy(globals(), locals())

File "/home/classic/dev/alembic/alembic/util/langhelpers.py", line 56, in create_module_class_proxy

cls._setup_proxy(globals_, locals_, attr_names)

File "/home/classic/dev/alembic/alembic/util/langhelpers.py", line 61, in _setup_proxy

cls._add_proxied_attribute(methname, globals_, locals_, attr_names)

File "/home/classic/dev/alembic/alembic/util/langhelpers.py", line 69, in _add_proxied_attribute

methname, globals_, locals_

File "/home/classic/dev/alembic/alembic/util/langhelpers.py", line 176, in _create_method_proxy

exec_(func_text, globals_, lcl)

File "<string>", line 1, in <module>

NameError: name 'immutabledict' is not defined

```

because it doesn't have the "util" prefix that the actual code does:

```

def batch_alter_table(

self,

table_name,

schema=None,

recreate="auto",

copy_from=None,

table_args=(),

table_kwargs=util.immutabledict(),

reflect_args=(),

reflect_kwargs=util.immutabledict(),

naming_convention=None,

):

``` | closed | 2019-03-29T17:18:23Z | 2019-03-30T03:28:15Z | https://github.com/sqlalchemy/alembic/issues/548 | [] | zzzeek | 1 |

xuebinqin/U-2-Net | computer-vision | 160 | ModuleNotFoundError: No module named 'PIL' | Having problems with

from PIL import Image

though I have the following installed in PyCharm venv on Windows 10:

pillow

When trying to install PIL it tells me:

`pip install PIL

ERROR: Could not find a version that satisfies the requirement PIL

ERROR: No matching distribution found for PIL

` | open | 2021-01-31T13:45:58Z | 2021-01-31T13:45:58Z | https://github.com/xuebinqin/U-2-Net/issues/160 | [] | coveritytest | 0 |

strawberry-graphql/strawberry | django | 3,503 | Support explicit setting of federation version | ## Feature Request Type

- [ ] Core functionality

- [x] Alteration (enhancement/optimization) of existing feature(s)

- [ ] New behavior

## Description

The latest version of strawberry supports federation v2.7 and hardcodes the directive urls as such: https://github.com/strawberry-graphql/strawberry/pull/3420

The apollo federation version at my company is v2.5 so I've updated our schema generator to manually replace `v2.7` with `v2.5`

It would be nice to be able to set this at the schema level, e.g.

`federation.Schema(query=Query, federation_version="v2.5")`

At first glance it seems relatively straightforward:

1. Add `federation_version` argument to the schema, default to `"v2.7"` if `enable_federation_2=True`

2. Set `enable_federation_2=True` if federation_version >= 2

3. Update schema urls with the version so the schema generator writes that version
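As a rough illustration of the manual replacement described above, here is a minimal sketch that rewrites the federation spec URL in a printed schema string. It does not use any strawberry API; the function name `pin_federation_version` and the URL pattern `https://specs.apollo.dev/federation/vX.Y` are assumptions for illustration, and the sketch only changes the URL, not which directives are actually emitted.

```python
import re

# Matches the federation spec URL prefix followed by a version such as "v2.7".
# Assumption: the printed schema links to the spec with a URL of this shape.
_FEDERATION_URL = re.compile(r"(https://specs\.apollo\.dev/federation/)v\d+\.\d+")


def pin_federation_version(sdl: str, version: str = "v2.5") -> str:
    """Return the SDL with every federation spec URL rewritten to `version`.

    Hypothetical helper, not part of strawberry.
    """
    if not re.fullmatch(r"v\d+\.\d+", version):
        raise ValueError(f"unexpected federation version: {version!r}")
    # Keep the URL prefix (group 1) and swap only the version suffix.
    return _FEDERATION_URL.sub(lambda m: m.group(1) + version, sdl)
```

This only covers step 3; whether a given directive is valid for the pinned version would still have to be checked separately.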

But I imagine that the versioning actually changes underlying support, so then there are outstanding questions:

* Would we support old federation versions and new ones?

* Would we have to validate the version number against what features are supported?

* Would we just trust the user that they know which features are available if they're setting the version explicitly?

* Would it be preferable to overwrite the `print_schema` function with version instead?

Not sure what the approach should be, but I don't think this library should dictate the federation version, and more explicitly, I think that users of this library should be able to get library updates unrelated to federation version, rather than having to pin to the version before some federation version was explicitly supported / mandated. | open | 2024-05-16T16:46:49Z | 2025-03-20T15:56:44Z | https://github.com/strawberry-graphql/strawberry/issues/3503 | [

"enhancement",

"discussion",

"federation"

] | bradleyoesch | 5 |

Yorko/mlcourse.ai | seaborn | 678 | time series part 1: weighted_average, weights order | In the `weighted_average` function [here](https://mlcourse.ai/articles/topic9-part1-time-series/), larger weights need to be assigned to more recent observations, however, that's not the case for the implementation. | closed | 2021-01-03T23:52:28Z | 2021-01-04T00:15:14Z | https://github.com/Yorko/mlcourse.ai/issues/678 | [

"minor_fix"

] | Yorko | 1 |

suitenumerique/docs | django | 245 | Can't copy paste content from the doc itself | ## Bug Report

**Problematic behavior**

I can't copy paste content from the doc I'm working on.

When I copy paste from outside the docs it works

**Steps to Reproduce**

1. Select content on the doc

2. Ctrl + C

3. Ctrl + V

4. Nothing happens

**Environment**

docs.numerique.gouv.fr on firefox

- Impress version:

- Platform:

**Possible Solution**


**Additional context/Screenshots**


| closed | 2024-09-11T13:39:16Z | 2024-10-17T15:15:23Z | https://github.com/suitenumerique/docs/issues/245 | [

"bug",

"good first issue",

"frontend",

"editor",

"Firefox"

] | virgile-dev | 3 |

piskvorky/gensim | nlp | 2,760 | Proper tokenizers for pretrained word embeddings models? | #### Problem description

I want to tokenize the text the same way it was tokenized when the model was trained. For example, the google-news word2vec model has separate vectors for common phrases, like San Francisco. It is also not clear which tokenizer to use for other models, for instance how to handle apostrophes in words like "shouldn't".

Is there any general way to do so? | closed | 2020-02-24T09:29:42Z | 2020-02-24T10:18:02Z | https://github.com/piskvorky/gensim/issues/2760 | [] | IvanLazarevsky | 1 |

jupyter/docker-stacks | jupyter | 1,908 | Support installing RStudio Server on jupyter/datascience-notebook | ### What docker image(s) is this feature applicable to?

datascience-notebook

### What changes are you proposing?

I'm trying to install RStudio Server on `jupyter/datascience-notebook:hub-4.0.0`. However, the build fails with the error `libc6-i386 : Depends: libc6 (= 2.31-0ubuntu9.9) but 2.35-0ubuntu3.1 is to be installed`. Thanks.

```

FROM jupyter/datascience-notebook:hub-4.0.0

USER root

WORKDIR /tmp

# https://posit.co/download/rstudio-server/

RUN apt-get update && \

apt-get install -y --no-install-recommends \

file \

git \

libapparmor1 \

libclang-dev \

libcurl4-openssl-dev \

libedit2 \

libobjc4 \

libssl-dev \

libpq5 \

lsb-release \

psmisc \

procps \

python-setuptools \

pwgen \

sudo \

wget

RUN wget https://download2.rstudio.org/server/jammy/amd64/rstudio-server-2023.03.1-446-amd64.deb -O rstudio-server.deb && \

dpkg -i rstudio-server.deb && \

rm rstudio-server.deb

```

### How does this affect the user?

New feature for Rstudio users.

### Anything else?

_No response_ | closed | 2023-05-28T12:10:01Z | 2023-06-02T12:48:29Z | https://github.com/jupyter/docker-stacks/issues/1908 | [

"type:Enhancement",

"status:Need Info"

] | ShichenXie | 3 |

chezou/tabula-py | pandas | 217 | ImportError: No module named 'pandas.errors' while importing tabula |

# Summary of your issue


`import tabula`

```

ImportError Traceback (most recent call last)

<ipython-input-36-21d3fd5ede8c> in <module>

----> 1 import tabula

/Library/Frameworks/Python.framework/Versions/3.5/lib/python3.5/site-packages/tabula/__init__.py in <module>

1 from pkg_resources import DistributionNotFound, get_distribution

2

----> 3 from .io import convert_into, convert_into_by_batch, read_pdf, read_pdf_with_template

4 from .util import environment_info

5

/Library/Frameworks/Python.framework/Versions/3.5/lib/python3.5/site-packages/tabula/io.py in <module>

31 import pandas as pd

32

---> 33 from .errors import CSVParseError, JavaNotFoundError

34 from .file_util import localize_file

35 from .template import load_template

/Library/Frameworks/Python.framework/Versions/3.5/lib/python3.5/site-packages/tabula/errors/__init__.py in <module>

----> 1 from pandas.errors import ParserError

2

3

4 class CSVParseError(ParserError):

5 """Error represents CSV parse error, which mainly caused by pandas.

ImportError: No module named 'pandas.errors'

```
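A possible reading of this traceback, offered as an assumption rather than a confirmed diagnosis: as far as I know the `pandas.errors` module first shipped in pandas 0.20, so an older pandas would not provide it. The sketch below compares a version string against that threshold; the helper names `major_minor` and `has_errors_module` are made up for illustration.

```python
import re


def major_minor(version: str) -> tuple:
    """Extract (major, minor) as integers from a dotted version string."""
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"cannot parse version: {version!r}")
    return int(match.group(1)), int(match.group(2))


def has_errors_module(pandas_version: str) -> bool:
    """Return True if this pandas version is expected to ship `pandas.errors`.

    Assumption: the module was introduced in pandas 0.20.
    """
    return major_minor(pandas_version) >= (0, 20)
```

In a live environment, the string to check would be `pandas.__version__`.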

## environment_info

Running ipython notebook in python3 environment

`>>ipython3 notebook`

`>> python3 --version`

`Python 3.5.0`

`>>java -version`

`java version "1.8.0_60"

Java(TM) SE Runtime Environment (build 1.8.0_60-b27)

Java HotSpot(TM) 64-Bit Server VM (build 25.60-b23, mixed mode)`

`macOS High Sierra`

```

Package Version

---------------------------

ipykernel 5.1.4

ipython 7.9.0

notebook 6.0.3

numpy 1.11.2

pandas 0.19.0

pip 20.0.2

six 1.14.0

tabula-py 2.0.4

wheel 0.31.0

```

| closed | 2020-02-13T02:40:01Z | 2020-02-13T03:08:32Z | https://github.com/chezou/tabula-py/issues/217 | [] | zkid18 | 2 |

thtrieu/darkflow | tensorflow | 421 | AssertionError with four byte size difference when converting | I'm getting a rather weird error trying to convert a `darknet` trained Tiny YOLO (adjusted model, transfer learned using a custom dataset) using `flow --savepb`, which complains about finding an unexpected file size. The size difference appears to be exactly four bytes though:

```python

Traceback (most recent call last):

File "./flow", line 6, in <module>

cliHandler(sys.argv)

File "/home/mmayer/dev/ml/darkflow/darkflow/cli.py", line 22, in cliHandler

tfnet = TFNet(FLAGS)

File "/home/mmayer/dev/ml/darkflow/darkflow/net/build.py", line 58, in __init__

darknet = Darknet(FLAGS)

File "/home/mmayer/dev/ml/darkflow/darkflow/dark/darknet.py", line 27, in __init__

self.load_weights()

File "/home/mmayer/dev/ml/darkflow/darkflow/dark/darknet.py", line 82, in load_weights

wgts_loader = loader.create_loader(*args)

File "/home/mmayer/dev/ml/darkflow/darkflow/utils/loader.py", line 105, in create_loader

return load_type(path, cfg)

File "/home/mmayer/dev/ml/darkflow/darkflow/utils/loader.py", line 19, in __init__

self.load(*args)

File "/home/mmayer/dev/ml/darkflow/darkflow/utils/loader.py", line 77, in load

walker.offset, walker.size)

AssertionError: expect 63184556 bytes, found 63184560

```

I was trying it with different versions of TensorFlow, specifically 1.0.1 and 1.3.1, but that didn't change anything.

Does anyone have an idea what could trigger this issue?
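One possible cause, stated as an assumption and not verified against this particular weights file: newer darknet builds appear to write the `seen` counter in the weights header as 8 bytes instead of 4, which would make the header 20 bytes long instead of 16 and would match a four-byte mismatch. The sketch below guesses the header size from the first 12 bytes; the function name `darknet_header_size` and the `major * 10 + minor >= 2` rule are assumptions based on how darknet is commonly described, not on darkflow's code.

```python
import struct


def darknet_header_size(header: bytes) -> int:
    """Guess the length of a darknet weights header from its first 12 bytes.

    Assumed layout: three little-endian int32 values (major, minor, revision)
    followed by a `seen` counter that is 4 or 8 bytes wide.
    """
    if len(header) < 12:
        raise ValueError("need at least 12 bytes of header")
    major, minor, _revision = struct.unpack("<3i", header[:12])
    # Assumption: `seen` is stored as 8 bytes once major*10 + minor >= 2
    # (on a 64-bit build), and as a 4-byte int before that.
    seen_size = 8 if major * 10 + minor >= 2 else 4
    return 12 + seen_size
```

If this returns 20 for the file in question while the loader skips only 16 bytes, that would be consistent with the assertion above.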

| open | 2017-11-03T12:53:45Z | 2019-09-23T08:16:19Z | https://github.com/thtrieu/darkflow/issues/421 | [] | sunsided | 17 |

kiwicom/pytest-recording | pytest | 78 | [FEATURE] Parameterize vcr_config | **Is your feature request related to a problem? Please describe.**

I'm working on a library that supports multiple web services behind a single interface.

Now I've written some tests for that interface and would like to run them for each of the web services I support. This is where pytest's fixture parameterization shines and it works well without VCR in the picture, the implementations simply make real requests.

Obviously that's not ideal, so I wanna use VCR. I need different configurations for each web service though. Unfortunately I can't find a way to parameterize vcr_config.

**Describe the solution you'd like**

No idea, sorry.

**Describe alternatives you've considered**

I guess I could use the vcr fixture somehow?

| open | 2021-10-10T21:19:04Z | 2021-10-10T21:19:04Z | https://github.com/kiwicom/pytest-recording/issues/78 | [

"Status: Review Needed",

"Type: Feature"

] | dAnjou | 0 |

vimalloc/flask-jwt-extended | flask | 145 | Why do we need a separate fresh login endpoint? | Hi,

It's more of a best practice question. I've been working on this extension recently. And I noticed that in the documentation, it is recommended to create three endpoints `/login`, `/refresh` and `/fresh-login`.

I can understand that we don't want the user to login via credentials every time thus we need the `/refresh` endpoint. But I'm not sure about the `/fresh-login` endpoint, since that the `/login` endpoint gives us a fresh access token already.

I understand the concern that we don't want to generate refresh tokens over and over again. But my argument would be that, in practice, we only need the user authenticates via credentials once in a long period of time, and meanwhile, he only needs to use the refresh token to re-authenticate. I feel like using the `/login` endpoint would be sufficient as opposed to introducing another `/fresh-login` endpoint, and there wouldn't be an abuse of generating refresh tokens.

Please let me know if I erred. Thank you.

| closed | 2018-05-03T19:46:38Z | 2018-05-03T22:31:09Z | https://github.com/vimalloc/flask-jwt-extended/issues/145 | [] | CristianoYL | 4 |

3b1b/manim | python | 1,294 | Subsequent fade on submobject of TexMobject not behaving as expected | I would like to fade out and fade in a submobject of a TexMobject. In my example below, I would like to see the "B" disappearing and appearing again. However, on my system the fade in does not work. The B remains faded out.

Is my assumption on the expected behavior correct? Or am I using the wrong approach for the second call to the fade method? I would appreciate any tips or hints!

I am on manimlib 0.1.11 in a linux environment.

Thanks for the great library!

```

class FadeInFadeOutSubMobject(Scene):

def construct(self):

text = TexMobject("A","B","C")

self.play(FadeIn(text))

self.wait(1)

self.play(FadeOut(text))

text[1].fade(1)

self.wait(1)

self.play(FadeIn(text))

self.wait(1)

self.play(FadeOut(text))

text[1].fade(0)

self.play(FadeIn(text))

self.wait(1)

```

| closed | 2020-12-19T18:15:04Z | 2020-12-20T08:24:05Z | https://github.com/3b1b/manim/issues/1294 | [] | msmart | 2 |

proplot-dev/proplot | matplotlib | 359 | ax.step where parameter | ### Description

In `ax.step`, the 'where' parameter doesn't do anything in proplot.

### Steps to reproduce

```python

import numpy as np

import proplot as pplt

x = np.linspace(-5.0, 5.0, 11)

y = np.exp(-0.5 * x**2)

fig, axes = pplt.subplots(nrows=3, figsize=(4.0, 3.0), spany=False)

for ax, where in zip(axes, ['pre', 'post', 'mid']):

ax.step(x, y, where=where, color='black', alpha=0.2)

ax.scatter(x, y, color='black', marker='.')

ax.format(ylabel=where)

```

**Expected behavior**: The `where` parameter should shift the locations of the steps.

**Actual behavior**: The `where` parameter does not shift the locations of the steps.

### Equivalent steps in matplotlib

```python

import numpy as np

from matplotlib import pyplot as plt

x = np.linspace(-5.0, 5.0, 11)

y = np.exp(-0.5 * x**2)

fig, axes = plt.subplots(nrows=3, figsize=(4.0, 3.0), sharex=True)

for ax, where in zip(axes, ['pre', 'post', 'mid']):

ax.step(x, y, where=where, color='black', alpha=0.2)

ax.scatter(x, y, color='black', marker='.')

ax.set_ylabel(where)

```

### Proplot version

matplotlib=3.4.3

proplot=0.9.5 | closed | 2022-05-15T18:27:42Z | 2023-03-29T22:11:56Z | https://github.com/proplot-dev/proplot/issues/359 | [

"bug"

] | austin-hoover | 3 |

plotly/dash-table | dash | 450 | Conditional formatting in for loop broken since upgrade to 0.3.7 | The conditional formatting stopped working since upgrading to 0.3.7 when used in a for loop.

Below code used to work fine in previous versions:

```

[{

'if': {'column_id': str(x), 'filter': '{} < num(0.9)'.format(x)},

'background-color': '#9cd5ac'

} for x in ['column1','column2']

]

```

I found out that the numbers expression needs to be changed from num(0.9) to just 0.9, otherwise it will throw an error: "unable to evaluate target: syntax tree is invalid"

When I remove the num(), the error is gone but the conditional formatting still does not work.

How do I have to adapt my code? | closed | 2019-05-30T14:11:16Z | 2019-05-30T19:40:20Z | https://github.com/plotly/dash-table/issues/450 | [] | philspbr | 3 |

flairNLP/flair | nlp | 2,991 | Building token embeddings from subtokens (subtoken_pooling) | I have a question regarding how the (FLAIR) token embeddings are generated from the subtoken embeddings, which are the outputs of the TransformerWordEmbeddings.

In the [docs ](https://github.com/flairNLP/flair/blob/master/resources/docs/TUTORIAL_7_TRAINING_A_MODEL.md#training-a-named-entity-recognition-ner-model-with-transformers) and [paper](https://arxiv.org/pdf/2011.06993.pdf) you recommend using the `subtoken_pooling="first"`.

However, I have found the following paper **[Subword Pooling Makes a Difference](https://aclanthology.org/2021.eacl-main.194.pdf)** that actually advises the opposite, that is using the "last" subtoken. This is proven by using an attention mechanism on the token level.

My question is, have you experimented with other subtoken_pooling policies? | closed | 2022-11-16T10:08:57Z | 2023-06-11T11:25:47Z | https://github.com/flairNLP/flair/issues/2991 | [

"question",

"wontfix"

] | kobiche | 3 |

pallets/flask | flask | 4,656 | Two Flask server spawned using multiprocessing not working as expected. | **Issue Outline**

I tried to spawn two Flask processes using `multiprocessing` library as mentioned below.

The response from the server is fluctuating/crossing between the two server's '/' route.

More information on: https://stackoverflow.com/questions/72758633/two-flask-processes-spawned-using-multiprocessing-are-not-working-properly

**Reproducer Code:**

```

# ======================= server 1 ======================

from flask import Flask

app1 = Flask("App1")

@app1.route('/')

def index1():

return "Hello This is app 1"

class FlaskApp1:

def start(self):

global app1

app1.run(host="0.0.0.0", port=6000, debug=True)

# ======================= server 2 ======================

app2 = Flask("App2")

@app2.route('/')

def index2():

return "Hello This is app 2"

class FlaskApp2:

def start(self):

global app2

app2.run(host="0.0.0.0", port=7000, debug=True)

# ======================= MAIN ======================

from multiprocessing import Process

if __name__ == "__main__":

p1 = Process(target=FlaskApp1().start, args=())

p2 = Process(target=FlaskApp2().start, args=())

p1.start()

p2.start()

p1.join()

p2.join()

```

**Output:**

The response is fluctuating between the '/' route of two servers

```

======== From Server 1 ==========

# curl localhost:6000

Hello This is app 1

# curl localhost:6000

Hello This is app 2

# curl localhost:6000

Hello This is app 1

# curl localhost:6000

Hello This is app 2

# curl localhost:6000

Hello This is app 1

# curl localhost:6000

Hello This is app 2

# curl localhost:6000

Hello This is app 1

# curl localhost:6000

Hello This is app 2

========= From server 2 ==========

# curl localhost:7000

Hello This is app 1

# curl localhost:7000

Hello This is app 2

# curl localhost:7000

Hello This is app 1

# curl localhost:7000

Hello This is app 2

# curl localhost:7000

Hello This is app 1

# curl localhost:7000

Hello This is app 1

# curl localhost:7000

Hello This is app 2

```

**Expected Behavior:**

Requests to port 6000 should consistently return `Hello This is app 1`, and requests to port 7000 should consistently return `Hello This is app 2`, across multiple requests.

**Environment:**

python3 = 3.7.10

Flask = 1.1.2, 2.1.2

| closed | 2022-06-28T15:32:51Z | 2022-07-02T21:56:23Z | https://github.com/pallets/flask/issues/4656 | [] | sssyam | 3 |

ckan/ckan | api | 7,576 | Cannot update language translations | CKAN version 2.9.8

Trying to update language translation in English to change default CKAN labels following: https://docs.ckan.org/en/2.9/contributing/i18n.html

No error messages when compiling po file to generate mo file. However, interface labels are not being updated. Tested this across different languages with the same outcome. This was previously working. Any suggestions?

| open | 2023-05-04T21:15:35Z | 2023-06-03T10:23:45Z | https://github.com/ckan/ckan/issues/7576 | [] | isomemo | 4 |

ray-project/ray | machine-learning | 51,634 | [core/scheduler] Split giant ray core C++ target into small ones | Subissue of #50586 . | open | 2025-03-24T06:35:47Z | 2025-03-24T06:37:37Z | https://github.com/ray-project/ray/issues/51634 | [

"enhancement",

"core"

] | Ziy1-Tan | 1 |

marcomusy/vedo | numpy | 939 | Allow for cloning a mesh-inherited class to retain its class type | Currently if you clone a vedo.Plane, it converts it into a vedo.Mesh (the parent class) and loses attributes such as "normal". It would be useful for the copy to remain as a vedo.Plane. | closed | 2023-10-03T22:44:49Z | 2023-11-16T10:53:10Z | https://github.com/marcomusy/vedo/issues/939 | [

"enhancement",

"fixed"

] | JeffreyWardman | 1 |

paperless-ngx/paperless-ngx | machine-learning | 8,418 | 2006, 'Server has gone away' | I see some issues and at least one discussion, regarding topic "2006, 'Server has gone away'". All of them are closed, some are marked as being not a bug. However, I am facing this issue as well when using paperless-ngx via docker compose, but using an external MariaDB instance.

It happens predictably when processing a PDF takes some time, in my case when analysing a PDF attachment from an e-mail retrieved by a workflow.

| closed | 2024-12-03T14:52:47Z | 2024-12-03T15:00:41Z | https://github.com/paperless-ngx/paperless-ngx/issues/8418 | [

"not a bug"

] | m0wlheld | 2 |

python-gino/gino | asyncio | 632 | [question] how manage alter with Gino | * GINO version: 0.8.5

* Python version: 3.8.1

* asyncpg version: 0.20.1

* aiocontextvars version: 0.2.2

* PostgreSQL version: 11

### Description

I created a table `test` to see whether ALTER statements are managed by GINO:

```

class Test(db.Model):

__tablename__ = "test"

id = db.Column(db.Integer(), primary_key=True)

foo = db.Column(db.Integer())

async def main():

await db.set_bind("postgresql://test:test@localhost/test")

await db.gino.create_all()

loop = asyncio.get_event_loop()

loop.run_until_complete(main())

```

The table is created successfully, but I'm wondering whether it's possible to avoid a "migration/upgrade script", or at least avoid the ALTER part, so that a change like

```

class Test(db.Model):

__tablename__ = "test"

id = db.Column(db.Integer(), primary_key=True)

foo = db.Column(db.Integer())

bar = db.Column(db.Integer())

```

will automatically alter the table (by the way, in this case it should raise an exception because the new column has no default value or nullable=True) | closed | 2020-02-17T10:56:03Z | 2020-04-20T23:04:32Z | https://github.com/python-gino/gino/issues/632 | [

"question"

] | flapili | 4 |

google-research/bert | nlp | 885 | What should "num_tpu_cores" be set to? | I am trying to run pretraining with **run_pretraining.py** on a **tpu-v2-32**.

What value should "num_tpu_cores" be set to?

When I tested with a tpu-v2-8, it worked fine (num_tpu_cores=8).

python3 run_pretraining.py \

--input_file=gs://... \

--output_dir=gs://... \

--do_train=True \

--do_eval=True \

--bert_config_file=/data/workspace/bert/bert_config.json \

--train_batch_size=64 \

--max_seq_length=128 \

--max_predictions_per_seq=19 \

--num_train_steps=100 \

--num_warmup_steps=70 \

--learning_rate=1e-4 \

--use_tpu=True \

--num_tpu_cores=32 \

--tpu_name=grpc://ip:8470 \

--tpu_zone=us-central1-a \

--gcp_project=myproject

**These are the parameters I run with. Are they correct? When I do this, I get an error like this:**

ValueError: TPUConfig.num_shards is not set correctly. According to TPU system metadata for Tensorflow master (grpc://...:8470): num_replicas should be (8), got (32). For non-model-parallelism, num_replicas should be the total num of TPU cores in the system. For model-parallelism, the total number of TPU cores should be num_cores_per_replica * num_replicas. Please set it accordingly or leave it as `None`

**When I set "num_tpu_cores=8", I get the following error:**

I1025 05:22:42.688320 140065835681600 tpu_estimator.py:557] Init TPU system

ERROR:tensorflow:Error recorded from evaluation_loop: From /job:worker/replica:0/task:0:

Cloud TPU: Invalid TPU configuration, ensure ClusterResolver is passed to tpu.RunConfig

[[{{node configure_distributed_tpu/_0}}]]

Am I missing something else? Or which one should I set?

| closed | 2019-10-25T05:27:02Z | 2020-08-08T12:39:57Z | https://github.com/google-research/bert/issues/885 | [] | jwkim912 | 2 |

microsoft/qlib | deep-learning | 1,791 | Client Error when downloading data | Dear authors:

I get a client error when running the commands from your official documentation:

> # download 1d

> python scripts/get_data.py qlib_data --target_dir ~/.qlib/qlib_data/cn_data --region cn

>

> # download 1min

> python scripts/get_data.py qlib_data --target_dir ~/.qlib/qlib_data/qlib_cn_1min --region cn --interval 1min

>

>

or

> from qlib.tests.data import GetData

>

> GetData().qlib_data(exists_skip=True)

> requests.exceptions.HTTPError: 403 Client Error: Server failed to authenticate the request. Make sure the value of Authorization header is formed correctly including the signature. for url: https://qlibpublic.blob.core.windows.net/data/default/stock_data/v2/qlib_data_cn_1d_0.9.zip?sv=2020-10-02&st=2023-06-21T00%3A58%3A06Z&se=2043-06-22T00%3A58%3A00Z&sr=c&sp=rl&sig=ykiWECM7%2BsPLXE32ZM6p2Tc1mMDeUaCmo8LD%2BOKiAo4%3D

Is there any solution?

Envs:

Mac ( Intel )

python3.8

the latest version of qlib | closed | 2024-05-22T07:57:25Z | 2024-05-24T05:44:23Z | https://github.com/microsoft/qlib/issues/1791 | [

"question"

] | Yottaxx | 1 |

scrapy/scrapy | web-scraping | 6,553 | Recommendation for docstring for functions and classes in the file exporters.py |

### Description

The exporters.py file lacks consistent docstrings for its functions and classes. While some docstrings are present, many are either missing or incomplete. This inconsistency can make it challenging for developers, especially newcomers, to understand the purpose and usage of these components without delving deep into the implementation. This affects the maintainability and readability of the code.

### Steps to Reproduce

- Open the exporters.py file in the Scrapy codebase.

- Inspect the classes and functions, noting missing or incomplete docstrings.

- Compare this with the expectations outlined in Python's PEP 257 guidelines for docstrings.

**Expected behavior:**

Each class should have a clear and concise docstring describing:

- The purpose of the class.

- An overview of its main methods and attributes.

Each function should have a docstring explaining:

- Its purpose.

- Input parameters, their types, and expected values.

- Return values and their types.

- Any side effects, exceptions raised, or special behaviors.
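To make the expectations above concrete, here is a small sketch of a function documented in that style. The function `export_rows` is hypothetical and is not part of Scrapy or of exporters.py; the reST field-list layout (`:param:`, `:returns:`, `:raises:`) is one common convention and is an assumption about what the project would prefer.

```python
def export_rows(items, fields=None):
    """Build one list of values per item, ordered by field name.

    Hypothetical example used only to illustrate docstring structure.

    :param items: iterable of dict-like items to export
    :param fields: field names to include, in output order; when ``None``,
        the keys of the first item are used
    :type fields: list[str] or None
    :returns: a list of rows, each row being a list of values
    :rtype: list[list]
    :raises KeyError: if an item lacks one of the requested fields
    """
    items = list(items)
    if not items:
        return []
    if fields is None:
        # Fall back to the key order of the first item.
        fields = list(items[0].keys())
    return [[item[name] for name in fields] for item in items]
```

The docstring states the purpose, each parameter, the return value, and the exception raised, which covers the points listed above.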

**Actual behavior:**

Many classes and functions in exporters.py lack docstrings. Existing docstrings, where present, are inconsistent in detail and format.

**Reproduces how often:**

100% of the time when inspecting exporters.py.

### Versions


### Additional context


| closed | 2024-11-20T04:20:53Z | 2024-11-20T19:13:56Z | https://github.com/scrapy/scrapy/issues/6553 | [] | dami1025 | 2 |

horovod/horovod | tensorflow | 3,268 | Unable to load most recent checkpoint for Pytorch and Pytorch lightning Estimator | **Environment:**

1. Framework: PyTorch

2. Framework version: 1.8.1

3. Horovod version: 0.23.0

4. MPI version:

5. CUDA version:

6. NCCL version:

7. Python version: 3.8

8. Spark / PySpark version: 3.1.2

9. Ray version:

10. OS and version:

11. GCC version:

12. CMake version:

**Bug report:**

In case of pytorch lightning estimator, the _read_checkpoint() API does not return the latest checkpoint stored in the run path.

Reason: Pytorch lightning estimator calls store.get_checkpoints() which looks for a folder named 'checkpoint' in run path while there is no folder named checkpoint, instead there is a temp folder generated via tempfile.TemporaryDirectory()

In case of pytorch estimator, the checkpoint stored in run path is not overwritten if multiple iterations are done using the same run path, which leads to _load_checkpoint() API returning the stale checkpoint.

| closed | 2021-11-10T11:34:06Z | 2021-11-23T07:12:35Z | https://github.com/horovod/horovod/issues/3268 | [

"bug"

] | kamalsharma2 | 3 |

learning-at-home/hivemind | asyncio | 581 | forking before initialization of the MPFuture handler - server runtime not initialized in WSL --new_hive | Apparently there is an issue of forking before initialization of the MPFuture handler. It happens when running a private hive under WSL. No such problem happens when running pure Linux or WSL with the real hive.

What likely happens:

--> The ConnectionHandlers were created - as a process - while we were still initializing the background thread - the MPFuture handler - and they forked the state of the main process, in which the MPFuture handler was not fully initialized - and was broken.

--> the `ConnectionHandlers` could not write self.ready.set_result - this method is serviced by their (broken) MPFuture handler.

--> the ModuleContainer got stuck in run -> handler.run_in_background -> handler.wait_until_ready().

--> the ModuleContainer never reached Runtime.run() - it was called after handler.run_in_background().

--> the Runtime never started processing batches.

--> the connection handlers, which received a request from the client, gave the task to the Runtime, but did not receive a response - the Runtime was never launched.

--> the client did not receive a response from the server.

How to reproduce:

run in WSL: `HIVEMIND_LOGLEVEL=DEBUG python -m petals.cli.run_server bigscience/bloom-560m --new_swarm --identity tests/test.id --host_maddrs /ip4/127.0.0.1/tcp/32337 --throughput 1 --torch_dtype float32 --compression NONE --attn_cache_tokens 2048 --max_chunk_size_bytes 1024`

Problem symptoms:

Server runtime seems inactive.

- no message `Started`

- not responding to client calls

**Environment**

Please list:

* python version (e.g. 3.8.1); 3.10

* hivemind.__version__; 1.1.9

* Please copy and paste the output from pytorch [environment collection script](https://raw.githubusercontent.com/pytorch/pytorch/master/torch/utils/collect_env.py)

If the script doesn't work, please report pytorch and numpy versions manually. We also encourage you to include any additional information that you believe can help us solve the issue.

PyTorch version: 2.0.1

Is debug build: False

CUDA used to build PyTorch: 11.7

ROCM used to build PyTorch: N/A

OS: Ubuntu 20.04.5 LTS (x86_64)

GCC version: (Ubuntu 9.4.0-1ubuntu1~20.04.1) 9.4.0

Clang version: Could not collect

CMake version: version 3.16.3

Libc version: glibc-2.31

Python version: 3.10.12 | packaged by conda-forge | (main, Jun 23 2023, 22:40:32) [GCC 12.3.0] (64-bit runtime)

Python platform: Linux-5.15.90.1-microsoft-standard-WSL2-x86_64-with-glibc2.31

Is CUDA available: True

CUDA runtime version: Could not collect

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: GPU 0: NVIDIA GeForce RTX 3090

Nvidia driver version: 531.79

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

Address sizes: 48 bits physical, 48 bits virtual

CPU(s): 16

On-line CPU(s) list: 0-15

Thread(s) per core: 2

Core(s) per socket: 8

Socket(s): 1

Vendor ID: AuthenticAMD

CPU family: 23

Model: 8

Model name: AMD Ryzen 7 2700X Eight-Core Processor

Stepping: 2

CPU MHz: 3700.062

BogoMIPS: 7400.12

Hypervisor vendor: Microsoft

Virtualization type: full

L1d cache: 256 KiB

L1i cache: 512 KiB

L2 cache: 4 MiB

L3 cache: 16 MiB

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Mitigation; untrained return thunk; SMT vulnerable

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl and seccomp

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines, IBPB conditional, STIBP disabled, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl tsc_reliable nonstop_tsc cpuid extd_apicid pni pclmulqdq ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand hypervisor lahf_lm cmp_legacy cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw topoext ssbd ibpb vmmcall fsgsbase bmi1 avx2 smep bmi2 rdseed adx smap clflushopt sha_ni xsaveopt xsavec xgetbv1 xsaves clzero xsaveerptr virt_ssbd arat

Versions of relevant libraries:

[pip3] mypy==0.991

[pip3] mypy-extensions==0.4.3

[pip3] numpy==1.22.4

[pip3] torch==2.0.1

[pip3] triton==2.0.0

[conda] blas 2.16 mkl conda-forge

[conda] libblas 3.8.0 16_mkl conda-forge

[conda] libcblas 3.8.0 16_mkl conda-forge

[conda] liblapack 3.8.0 16_mkl conda-forge

[conda] liblapacke 3.8.0 16_mkl conda-forge

[conda] mkl 2020.2 256

[conda] numpy 1.25.1 pypi_0 pypi

[conda] pytorch 2.0.1 py3.10_cuda11.7_cudnn8.5.0_0 pytorch

[conda] pytorch-cuda 11.7 h778d358_5 pytorch

[conda] pytorch-mutex 1.0 cuda pytorch

[conda] torchtriton 2.0.0 py310 pytorch | open | 2023-08-01T22:31:50Z | 2023-08-03T20:48:38Z | https://github.com/learning-at-home/hivemind/issues/581 | [

"bug"

] | poedator | 1 |

lanpa/tensorboardX | numpy | 158 | the problem about add_image | Hello, I want to draw an attention image, but when I call `add_image`, no image is written. Calling `add_scalar`, however, does generate some files.

PyTorch 0.4 / torchvision 0.2 / tensorboard 1.7.0 | closed | 2018-06-04T08:40:52Z | 2018-06-11T02:09:35Z | https://github.com/lanpa/tensorboardX/issues/158 | [] | travel-go | 3 |

AutoViML/AutoViz | scikit-learn | 115 | Issue installing AutoViz on Kaggle Notebook | Hi!

I encountered the error below when trying to install AutoViz in a Kaggle Notebook. Is there a solution? Thanks.

| closed | 2024-11-11T14:32:59Z | 2025-01-28T21:37:08Z | https://github.com/AutoViML/AutoViz/issues/115 | [] | vincentfeng9109 | 1 |

biolab/orange3 | data-visualization | 6,065 | Group By: Add quartile outputs | <!--

Thanks for taking the time to submit a feature request!

For the best chance at our team considering your request, please answer the following questions to the best of your ability.

-->

**What's your use case?**

I would like to summarize large datasets through the 5-number set (minimum, Q1, median, Q3, maximum) + mean, but quartiles are not available in the Group By GUI.

**What's your proposed solution?**

<!-- Be specific, clear, and concise. -->

Integrate quartile computations and 1 or 2 check boxes ("Quartiles" or "First quartile" / "Third quartile") in the GUI of the Group By widget.

**Are there any alternative solutions?**

These can be computed with a custom python script using pandas in the meantime.
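A minimal sketch of that pandas workaround, assuming a table with a hypothetical `group` column to group by and a numeric `value` column (both names, and the helper functions `q1` and `q3`, are illustrative and not part of Orange):

```python
import pandas as pd

# Hypothetical example data; in a Python Script widget this would come
# from the input table converted to a DataFrame.
df = pd.DataFrame({
    "group": ["a", "a", "a", "a", "a", "b", "b", "b", "b", "b"],
    "value": [1, 2, 3, 4, 5, 10, 20, 30, 40, 50],
})


def q1(series):
    """First quartile (25th percentile, linear interpolation)."""
    return series.quantile(0.25)


def q3(series):
    """Third quartile (75th percentile, linear interpolation)."""
    return series.quantile(0.75)


# Five-number summary plus mean, one row per group.
summary = df.groupby("group")["value"].agg(
    ["min", q1, "median", q3, "max", "mean"]
)
print(summary)
```

The result columns take their names from the aggregation functions, so the quartiles appear as `q1` and `q3` next to the built-in statistics.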

| closed | 2022-07-18T13:27:47Z | 2023-01-27T13:55:22Z | https://github.com/biolab/orange3/issues/6065 | [

"wish",

"snack"

] | chourroutm | 4 |

onnx/onnx | machine-learning | 5,989 | SegFault during shape inference if the schema not be set inference function | # Bug Report

### Describe the bug

Shape inference segfaults if the schema has no inference function set.

### Reproduction instructions

cpp (Optional):

```

ONNX_OPERATOR_SCHEMA(CustomOp);

```

python:

```

import onnx

from onnx import defs

input = """

<

ir_version: 7,

opset_import: [

"" : 1

]

>

agraph (float[N, 128] X, int32 Y) => (float[N] Z)

{

Z = CustomOp(X, Y)

}

"""

model = onnx.parser.parse_model(input)

op_schema = defs.OpSchema(

'CustomOp',

'',

1,

inputs=[

],

outputs=[

],

)

# Uncomment if the schema is not registered in C++

# onnx.defs.register_schema(op_schema)

onnx.shape_inference.infer_shapes(model, True)

```

### Expected behavior

Raise a python exception rather than crash.

### Notes

The root cause is that the input or output list of the schema is empty while the corresponding field in the graph is non-empty, so the `input.back` taken from the schema is invalid.

| closed | 2024-03-02T15:23:13Z | 2024-03-04T22:07:42Z | https://github.com/onnx/onnx/issues/5989 | [

"bug"

] | OYCN | 0 |

adbar/trafilatura | web-scraping | 196 | updated benchmarks | There have been several significant releases since the last benchmark 10 months ago; I'm curious whether there have been any notable changes there.

https://trafilatura.readthedocs.io/en/latest/evaluation.html#results-2021-06-07 | closed | 2022-04-13T21:57:58Z | 2022-05-18T20:41:00Z | https://github.com/adbar/trafilatura/issues/196 | [

"question"

] | derekperkins | 3 |

1313e/CMasher | matplotlib | 32 | Colormap summary table | You mention in a documentation note that colormaps are sorted according to minimum and maximum lightness values, starting (sequential) or central (diverging and cyclic) lightness value, difference between the minimum and maximum lightness values, and RMSE (root mean square error) of the derivative of the lightness profile. Could these quantities be centralized in a table?

| closed | 2021-04-06T10:02:44Z | 2021-05-11T06:48:32Z | https://github.com/1313e/CMasher/issues/32 | [

"feature request",

"awaiting response"

] | ycopin | 22 |

flairNLP/flair | nlp | 3,395 | Assertion error while reading training data | I am building this for my organisation, for Indian addresses. I can import the libraries and create the labelled dataset,

but training the model fails with a connection error, I guess because my organisation doesn't allow pull requests from GitHub.

Any workaround? | open | 2024-01-16T05:04:17Z | 2024-01-17T05:54:17Z | https://github.com/flairNLP/flair/issues/3395 | [

"question"

] | shubh-singhal | 0 |

pyqtgraph/pyqtgraph | numpy | 2,685 | This is not working in case parameterTree example | https://github.com/pyqtgraph/pyqtgraph/blob/7642e18e1d1ff569b1ef6db844c09280fc5fcddf/pyqtgraph/examples/_buildParamTypes.py#L1 | closed | 2023-04-11T11:54:56Z | 2023-05-24T00:09:33Z | https://github.com/pyqtgraph/pyqtgraph/issues/2685 | [

"cannot reproduce"

] | yashwanth-eng | 3 |

A3M4/YouTube-Report | matplotlib | 13 | Step 5 console error | I get this error when I try to do step 5. I tried to upgrade pip using 'python -m pip installs --upgrade pip' but it still doesn't work.

| closed | 2019-12-15T12:23:24Z | 2019-12-15T22:28:31Z | https://github.com/A3M4/YouTube-Report/issues/13 | [] | SpicySnow | 2 |

s3rius/FastAPI-template | fastapi | 40 | Database is not initialized without migrations | If you choose to skip adding migrations, you'll face this issue.

We must add a function to the application's startup that initializes the database using metadata. | closed | 2021-10-10T05:57:07Z | 2021-10-13T10:03:45Z | https://github.com/s3rius/FastAPI-template/issues/40 | [

"bug"

] | s3rius | 2 |

pytest-dev/pytest-qt | pytest | 437 | BUG: Multiple `PyQt`/`PySide` Versions Not Supported | ### Bug

I ran into this issue when trying to fix a bug with [pyvistaqt](https://qtdocs.pyvista.org/). It appears, for some reason, when all of

- `PyQt5`

- `PySide2`

- `PyQt6`

- `PySide6`

are installed, `pytest-qt` fails to create a `QApplication` instance, causing tests to silently fail.

**NOTE**: `QT_API` is not set to anything on my system when this occurs.
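To check which of these bindings are actually importable in the active environment, a small sketch like the following may help. The helper name `installed_qt_bindings` is hypothetical; it relies only on the standard library and does not import Qt itself:

```python
import os
from importlib.util import find_spec

# The four bindings listed above.
QT_BINDINGS = ("PyQt5", "PySide2", "PyQt6", "PySide6")


def installed_qt_bindings(candidates=QT_BINDINGS):
    """Return the names in `candidates` that can be found on the import path."""
    return [name for name in candidates if find_spec(name) is not None]


print("Importable Qt bindings:", installed_qt_bindings())
print("QT_API:", os.environ.get("QT_API"))
```

Running this before and after step 7 below should show whether one or all four bindings are visible to the interpreter that `pytest` uses.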

### System Information

OS: Windows 10, x64-bit

Python: 3.8.10 x64-bit (CPython)

pytest-qt: 4.1.0

### Steps to Reproduce

1. Clone the `pyvistaqt` repo and cd into the folder

```powershell

git clone https://github.com/pyvista/pyvistaqt.git

cd pyvistaqt

```

2. Create and activate a virtual environment

```powershell

py -m venv .venv && .venv\Scripts\Activate.ps1

```

3. Install dependencies

```powershell

py -m pip install --upgrade pip

py -m pip install -r requirements_test.txt

py -m pip install -r requirements_docs.txt

```

5. First, install only `PySide6`

```powershell

py -m pip install pyside6

```

6. Sanity Check: Run tests to verify everything is working correctly (All Pass)

```powershell

pytest

```

7. Now install `PyQt5`, `PySide2`, and `PyQt6`

```powershell

py -m pip install pyqt5 pyside2 pyqt6

```

8. Rerun `pytest` and tests will fail silently

```powershell

PS> pytest

=================================== test session starts =======================================

platform win32 -- Python 3.8.10, pytest-7.1.2, pluggy-1.0.0

PySide6 6.3.1 -- Qt runtime 6.3.1 -- Qt compiled 6.3.1

rootdir: %USERPROFILE%\Code\external\pyvistaqt-demo\pyvistaqt, configfile: pytest.ini

plugins: cov-3.0.0, memprof-0.2.0, mypy-plugins-1.9.3, mypy-testing-0.0.11, qt-4.1.0, sphinx-0.4.0

collected 2 items

tests\test_plotting.py

```

9. Re-run `pytest` in verbose mode while printing output to stdout/stderr

```powershell

PS> pytest -v -s

==================================== test session starts ======================================