repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

Gozargah/Marzban | api | 1,364 | Users migration strategy when node gets blocked by censor. | First of all, I would like to thank you for the work you’ve done on this project. It’s truly an important and valuable contribution to the fight against censorship.

Is there any recommended strategy for quickly and seamlessly migrating users if the node they are on gets blocked by a censor based on its IP address?

If there is no automated solution for this task at the moment, have you considered adding such functionality?

| closed | 2024-10-14T10:42:12Z | 2024-10-14T14:39:30Z | https://github.com/Gozargah/Marzban/issues/1364 | [] | lk-geimfari | 1 |

mars-project/mars | scikit-learn | 3,268 | [BUG] Ray executor raises ValueError: WRITEBACKIFCOPY base is read-only | <!--

Thank you for your contribution!

Please review https://github.com/mars-project/mars/blob/master/CONTRIBUTING.rst before opening an issue.

-->

**Describe the bug**

A clear and concise description of what the bug is.

```python

_____________________ test_predict_sparse_callable_kernel ______________________

setup = <mars.deploy.oscar.session.SyncSession object at 0x33564eee0>

def test_predict_sparse_callable_kernel(setup):

# This is a non-regression test for #15866

# Custom sparse kernel (top-K RBF)

def topk_rbf(X, Y=None, n_neighbors=10, gamma=1e-5):

nn = NearestNeighbors(n_neighbors=10, metric="euclidean", n_jobs=-1)

nn.fit(X)

W = -1 * mt.power(nn.kneighbors_graph(Y, mode="distance"), 2) * gamma

W = mt.exp(W)

assert W.issparse()

return W.T

n_classes = 4

n_samples = 500

n_test = 10

X, y = make_classification(

n_classes=n_classes,

n_samples=n_samples,

n_features=20,

n_informative=20,

n_redundant=0,

n_repeated=0,

random_state=0,

)

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=n_test, random_state=0

)

model = LabelPropagation(kernel=topk_rbf)

> model.fit(X_train, y_train)

mars/learn/semi_supervised/tests/test_label_propagation.py:143:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

mars/learn/semi_supervised/_label_propagation.py:369: in fit

return super().fit(X, y, session=session, run_kwargs=run_kwargs)

mars/learn/semi_supervised/_label_propagation.py:231: in fit

ExecutableTuple(to_run).execute(session=session, **(run_kwargs or dict()))

mars/core/entity/executable.py:267: in execute

ret = execute(*self, session=session, **kw)

mars/deploy/oscar/session.py:1888: in execute

return session.execute(

mars/deploy/oscar/session.py:1682: in execute

execution_info: ExecutionInfo = fut.result(

../../.pyenv/versions/3.8.13/lib/python3.8/concurrent/futures/_base.py:444: in result

return self.__get_result()

../../.pyenv/versions/3.8.13/lib/python3.8/concurrent/futures/_base.py:389: in __get_result

raise self._exception

mars/deploy/oscar/session.py:1868: in _execute

await execution_info

../../.pyenv/versions/3.8.13/lib/python3.8/asyncio/tasks.py:695: in _wrap_awaitable

return (yield from awaitable.__await__())

mars/deploy/oscar/session.py:105: in wait

return await self._aio_task

mars/deploy/oscar/session.py:953: in _run_in_background

raise task_result.error.with_traceback(task_result.traceback)

mars/services/task/supervisor/processor.py:372: in run

await self._process_stage_chunk_graph(*stage_args)

mars/services/task/supervisor/processor.py:250: in _process_stage_chunk_graph

chunk_to_result = await self._executor.execute_subtask_graph(

mars/services/task/execution/ray/executor.py:551: in execute_subtask_graph

meta_list = await asyncio.gather(*output_meta_object_refs)

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

awaitable = ObjectRef(c3f6db450a565c05ffffffffffffffffffffffff0100000001000000)

@types.coroutine

def _wrap_awaitable(awaitable):

"""Helper for asyncio.ensure_future().

Wraps awaitable (an object with __await__) into a coroutine

that will later be wrapped in a Task by ensure_future().

"""

> return (yield from awaitable.__await__())

E ray.exceptions.RayTaskError(ValueError): ray::execute_subtask() (pid=15135, ip=127.0.0.1)

E At least one of the input arguments for this task could not be computed:

E ray.exceptions.RayTaskError: ray::execute_subtask() (pid=15135, ip=127.0.0.1)

E At least one of the input arguments for this task could not be computed:

E ray.exceptions.RayTaskError: ray::execute_subtask() (pid=15135, ip=127.0.0.1)

E File "/home/admin/mars/mars/services/task/execution/ray/executor.py", line 185, in execute_subtask

E execute(context, chunk.op)

E File "/home/admin/mars/mars/core/operand/core.py", line 491, in execute

E result = executor(results, op)

E File "/home/admin/mars/mars/tensor/arithmetic/core.py", line 165, in execute

E ret = cls._execute_cpu(op, xp, lhs, rhs, **kw)

E File "/home/admin/mars/mars/tensor/arithmetic/core.py", line 142, in _execute_cpu

E return cls._get_func(xp)(lhs, rhs, **kw)

E File "/home/admin/mars/mars/lib/sparse/__init__.py", line 93, in power

E return a**b

E File "/home/admin/mars/mars/lib/sparse/array.py", line 503, in __pow__

E x = self.spmatrix.power(naked_other)

E File "/home/admin/.pyenv/versions/3.8.13/lib/python3.8/site-packages/scipy/sparse/_data.py", line 114, in power

E data = self._deduped_data()

E File "/home/admin/.pyenv/versions/3.8.13/lib/python3.8/site-packages/scipy/sparse/_data.py", line 32, in _deduped_data

E self.sum_duplicates()

E File "/home/admin/.pyenv/versions/3.8.13/lib/python3.8/site-packages/scipy/sparse/_compressed.py", line 1118, in sum_duplicates

E self.sort_indices()

E File "/home/admin/.pyenv/versions/3.8.13/lib/python3.8/site-packages/scipy/sparse/_compressed.py", line 1164, in sort_indices

E _sparsetools.csr_sort_indices(len(self.indptr) - 1, self.indptr,

E ValueError: WRITEBACKIFCOPY base is read-only

../../.pyenv/versions/3.8.13/lib/python3.8/asyncio/tasks.py:695: RayTaskError(ValueError)

```

```python

________________________ test_label_binarize_multilabel ________________________

setup = <mars.deploy.oscar.session.SyncSession object at 0x332666190>

def test_label_binarize_multilabel(setup):

y_ind = np.array([[0, 1, 0], [1, 1, 1], [0, 0, 0]])

classes = [0, 1, 2]

pos_label = 2

neg_label = 0

expected = pos_label * y_ind

y_sparse = [sp.csr_matrix(y_ind)]

for y in [y_ind] + y_sparse:

> check_binarized_results(y, classes, pos_label, neg_label, expected)

mars/learn/preprocessing/tests/test_label.py:250:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

mars/learn/preprocessing/tests/test_label.py:186: in check_binarized_results

inversed = _inverse_binarize_thresholding(

../../.pyenv/versions/3.8.13/lib/python3.8/site-packages/sklearn/preprocessing/_label.py:649: in _inverse_binarize_thresholding

y.eliminate_zeros()

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <3x3 sparse matrix of type '<class 'numpy.int64'>'

with 4 stored elements in Compressed Sparse Row format>

def eliminate_zeros(self):

"""Remove zero entries from the matrix

This is an *in place* operation.

"""

M, N = self._swap(self.shape)

> _sparsetools.csr_eliminate_zeros(M, N, self.indptr, self.indices,

self.data)

E ValueError: WRITEBACKIFCOPY base is read-only

../../.pyenv/versions/3.8.13/lib/python3.8/site-packages/scipy/sparse/_compressed.py:1077: ValueError

```

related issue: https://github.com/scipy/scipy/issues/8678
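Both failing frames are SciPy routines that modify a sparse matrix in place (`sort_indices`, `eliminate_zeros`), so the matrix's `data`, `indices` and `indptr` buffers must be writable. One plausible cause, stated as an assumption rather than a confirmed diagnosis, is that NumPy arrays fetched from Ray's object store are zero-copy and therefore read-only. A minimal workaround sketch is to copy the matrix before mutating it whenever one of its buffers is read-only; `ensure_writable_csr` is a hypothetical helper, not part of Mars or SciPy.

```python
import numpy as np
import scipy.sparse as sp


def ensure_writable_csr(m):
    """Return `m` unchanged if its buffers are writable, else a copy.

    Hypothetical helper: in-place SciPy routines such as sort_indices()
    and eliminate_zeros() write to data/indices/indptr, so all three
    arrays must be writable.
    """
    if all(a.flags.writeable for a in (m.data, m.indices, m.indptr)):
        return m
    # csr_matrix.copy() copies data, indices and indptr into new arrays,
    # which NumPy creates as writable.
    return m.copy()


# Simulate a matrix whose buffers arrived read-only (for example after
# zero-copy deserialization). This mirrors, not reproduces, the Ray setup.
y = sp.csr_matrix(np.array([[0, 1, 0], [1, 1, 1], [0, 0, 0]]))
for arr in (y.data, y.indices, y.indptr):
    arr.setflags(write=False)

y = ensure_writable_csr(y)
y.eliminate_zeros()  # operates on writable copies
print(y.nnz)  # 4
```

The helper copies only when a buffer is actually read-only, so matrices that are already writable pay no extra memory cost.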

**To Reproduce**

To help us reproducing this bug, please provide information below:

1. Your Python version

2. The version of Mars you use

3. Versions of crucial packages, such as numpy, scipy and pandas

4. Full stack of the error.

5. Minimized code to reproduce the error.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Additional context**

Add any other context about the problem here.

| closed | 2022-09-21T09:56:43Z | 2022-10-13T03:43:28Z | https://github.com/mars-project/mars/issues/3268 | [

"type: bug",

"mod: learn"

] | fyrestone | 0 |

supabase/supabase-py | fastapi | 516 | Cannot set options when instantiating Supabase client | **Describe the bug**

Cannot set options (such as schema, timeout etc.) for Supabase client in terminal or Jupyter notebook.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a Supabase client in a new .py file using your database URL and service role key, and attempt to set an option:

```python

supabase: Client = create_client(url, key, {"schema": "some_other_schema"})

```


2. Attempt to run your .py file in the terminal, VSCode debug mode or a Jupyter notebook.

3. It will throw the error: `AttributeError: 'dict' object has no attribute 'headers'`

**Expected behavior**

Successful creation of a new Supabase client, allowing the user to fetch or insert data in, for example, a table in a schema other than 'public'.
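The error message suggests that `create_client` reads its options through attribute access (`options.headers`), so a plain `dict` cannot work. As far as I know, supabase-py expects a `ClientOptions` object from `supabase.lib.client_options` here (treat that import path as an assumption and check it against your installed version). The self-contained sketch below reproduces only the mechanism, using the hypothetical stand-ins `Options` and `make_client`; it does not import supabase.

```python
from dataclasses import dataclass, field


@dataclass
class Options:
    """Hypothetical stand-in for an options object that is read by attribute."""
    schema: str = "public"
    headers: dict = field(default_factory=dict)


def make_client(url, key, options=None):
    """Hypothetical client factory that, like the one in the report,
    accesses options.headers directly."""
    options = options or Options()
    headers = {"apikey": key, **options.headers}  # attribute access
    return {"url": url, "schema": options.schema, "headers": headers}


# A dict fails the same way as in the report.
try:
    make_client("https://example.invalid", "key", {"schema": "some_other_schema"})
except AttributeError as exc:
    print(exc)  # 'dict' object has no attribute 'headers'

# An options object works.
client = make_client("https://example.invalid", "key", Options(schema="some_other_schema"))
print(client["schema"])  # some_other_schema
```

With supabase-py itself, the equivalent call would presumably be `create_client(url, key, options=ClientOptions(schema="some_other_schema"))`.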

**Screenshots**

<img width="916" alt="image" src="https://github.com/supabase-community/supabase-py/assets/101295184/7fbbce52-4b21-4190-b270-92d763535f65">

**Desktop (please complete the following information):**

- OS: macOS

| closed | 2023-08-08T04:41:12Z | 2023-08-08T05:31:18Z | https://github.com/supabase/supabase-py/issues/516 | [] | d-c-turner | 1 |

scikit-image/scikit-image | computer-vision | 6,906 | regionprops and regionprops_table crash when spacing != 1 | ### Description:

`skimage.measure.regionprops` and `skimage.measure.regionprops_table` crash when certain properties are requested and the `spacing` parameter is set to anything other than 1 (the default).

I think the `spacing` parameter is new in v0.20.0, so this is probably a new bug.

The code below reproduces it.

When I request the properties `label`, `area`, and `equivalent_diameter_area`, everything works fine with a custom `spacing`. Every other property I tried seems to end up indexing into a `float` value.

```
props_dict_passing 1 ... PASSED
props_dict_passing 2 ... PASSED
---------------------------------------------------------------------------
TypeError Traceback (most recent call last)
Cell In[14], line 42
39 print("props_dict_passing 2 ... PASSED")
41 # Fails now that spacing != 1 and eccentricity is passed
---> 42 props_dict_failing = regionprops_table(
43 label_image=label_image,
44 intensity_image=test_img,
45 spacing=0.5,
46 properties=bad_properties,
47 )
48 print("props_dict_failing ... PASSED")

File ~/mambaforge/envs/test-robusta-package/lib/python3.9/site-packages/skimage/measure/_regionprops.py:1038, in regionprops_table(label_image, intensity_image, properties, cache, separator, extra_properties, spacing)
1031 intensity_image = np.zeros(
1032 label_image.shape + intensity_image.shape[ndim:],
1033 dtype=intensity_image.dtype
1034 )
1035 regions = regionprops(label_image, intensity_image=intensity_image,
1036 cache=cache, extra_properties=extra_properties, spacing=spacing)
-> 1038 out_d = _props_to_dict(regions, properties=properties,
1039 separator=separator)
1040 return {k: v[:0] for k, v in out_d.items()}
1042 return _props_to_dict(
1043 regions, properties=properties, separator=separator
...
264 delta[:, np.newaxis] ** np.arange(order + 1, dtype=float_dtype)
265 )
266 calc = np.rollaxis(calc, dim, image.ndim)

TypeError: 'float' object is not subscriptable
```

### Way to reproduce:

```python


from skimage.filters import threshold_otsu

from skimage.segmentation import clear_border

from skimage.measure import label, regionprops_table

from skimage.morphology import closing, square

import numpy as np

# Create random image 640x480

test_img = np.random.randint(0, 255, (640, 480))

# Detect objects

thresh = threshold_otsu(test_img)

bw = closing(test_img > thresh, square(3))

cleared = clear_border(bw)

label_image = label(cleared)

# Bugs start

good_properties = [

"label",

"equivalent_diameter_area",

"area",

]

# Works

props_dict_passing = regionprops_table(

label_image=label_image,

intensity_image=test_img,

spacing=0.5,

properties=good_properties,

)

print("props_dict_passing 1 ... PASSED")

# Add eccentricity (or major/minor axis and other float properties)

bad_properties = good_properties + ["eccentricity"]

# Works because spacing == 1

props_dict_passing2 = regionprops_table(

label_image=label_image,

intensity_image=test_img,

spacing=1,

properties=bad_properties,

)

print("props_dict_passing 2 ... PASSED")

# Fails now that spacing != 1 and eccentricity is passed

props_dict_failing = regionprops_table(

label_image=label_image,

intensity_image=test_img,

spacing=0.5,

properties=bad_properties,

)

print("props_dict_failing ... PASSED")

```
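The traceback ends in `'float' object is not subscriptable`, which suggests that the scalar `spacing=0.5` reaches code that indexes spacing per axis. As I read the `regionprops` documentation, `spacing` is meant to hold one value per image axis, so a workaround worth trying (an assumption, not a confirmed fix) is to pass `spacing=(0.5, 0.5)`. The hypothetical helper below broadcasts a scalar to that form and checks the length.

```python
import numpy as np


def normalize_spacing(spacing, ndim):
    """Return spacing as a tuple of `ndim` floats.

    Hypothetical helper: a scalar is repeated for every axis; a sequence
    must already contain one entry per axis.
    """
    arr = np.atleast_1d(np.asarray(spacing, dtype=float))
    if arr.ndim != 1:
        raise ValueError("spacing must be a scalar or a 1-D sequence")
    if arr.size == 1:
        arr = np.repeat(arr, ndim)
    if arr.size != ndim:
        raise ValueError(f"expected {ndim} spacing values, got {arr.size}")
    return tuple(float(s) for s in arr)


print(normalize_spacing(0.5, 2))        # (0.5, 0.5)
print(normalize_spacing((1, 0.25), 2))  # (1.0, 0.25)
```

Applied to the repro, the failing call would pass `spacing=normalize_spacing(0.5, label_image.ndim)`.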

### Version information:

```Shell

3.9.16 | packaged by conda-forge | (main, Feb 1 2023, 21:39:03)

[GCC 11.3.0]

Linux-5.10.147-133.644.amzn2.x86_64-x86_64-with-glibc2.26

scikit-image version: 0.21.0rc0

numpy version: 1.24.2

```

| open | 2023-04-21T18:31:04Z | 2023-09-16T14:09:05Z | https://github.com/scikit-image/scikit-image/issues/6906 | [

":bug: Bug"

] | tony-res | 6 |

onnx/onnx | machine-learning | 6,011 | [Feature request] Shape Inference for Einsum instead of Rank Inference | ### System information

v1.15.0

### What is the problem that this feature solves?

In the development of ONNX Runtime, we need to know the output shape of each op node for static graph compilation. ONNX shape inference gives us almost every output shape except that of Einsum: in `onnx/defs/math/defs.cc`, Einsum has only a rank inference function, not a shape inference function. In a nutshell, shape inference for Einsum would help static graph compilation.

### Alternatives considered

_No response_

### Describe the feature

As with the shape inference for all other ops, shape inference for Einsum should infer the full output shape, not just its rank, from the input shapes and the `equation` attribute.
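To make the difference from rank inference concrete, here is a minimal Python sketch of shape inference for Einsum. It maps each equation label to a dimension size taken from the input shapes and reads the output shape off the right-hand side. In implicit mode (no `->`), it uses the labels that occur exactly once, in alphabetical order, as NumPy does. It is an illustration under simplifying assumptions, not the C++ code in `onnx/defs/math/defs.cc` or the linked PR: ellipsis (`...`) broadcasting and symbolic dimensions are not handled, and a repeated label must have the same size everywhere. `infer_einsum_shape` is a hypothetical name.

```python
def infer_einsum_shape(equation, input_shapes):
    """Infer the Einsum output shape from its equation and input shapes.

    Simplified sketch: no ellipsis, no broadcasting of size-1 dimensions.
    """
    equation = equation.replace(" ", "")
    lhs, rhs = equation.split("->") if "->" in equation else (equation, None)
    terms = lhs.split(",")
    if len(terms) != len(input_shapes):
        raise ValueError("number of operands does not match the equation")

    sizes = {}   # label -> dimension size
    counts = {}  # label -> number of occurrences across all inputs
    for term, shape in zip(terms, input_shapes):
        if len(term) != len(shape):
            raise ValueError(f"term {term!r} does not match rank of {shape}")
        for lab, dim in zip(term, shape):
            if sizes.setdefault(lab, dim) != dim:
                raise ValueError(f"label {lab!r} has sizes {sizes[lab]} and {dim}")
            counts[lab] = counts.get(lab, 0) + 1

    if rhs is None:
        # Implicit mode: labels that appear exactly once, alphabetically.
        rhs = "".join(sorted(lab for lab, c in counts.items() if c == 1))
    missing = [lab for lab in rhs if lab not in sizes]
    if missing:
        raise ValueError(f"output labels {missing} do not appear in any input")
    return tuple(sizes[lab] for lab in rhs)


print(infer_einsum_shape("ij,jk->ik", [(2, 3), (3, 4)]))              # (2, 4)
print(infer_einsum_shape("bij,bjk->bik", [(5, 2, 3), (5, 3, 4)]))     # (5, 2, 4)
print(infer_einsum_shape("ij,jk", [(2, 3), (3, 4)]))                  # (2, 4)
print(infer_einsum_shape("ii", [(3, 3)]))                             # ()
```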

We have developed a prototype version, which can be found in PR https://github.com/onnx/onnx/pull/6010. We would be delighted if this feature request is accepted. Alternatively, we are more than willing to provide assistance in incorporating this feature.

### Will this influence the current api (Y/N)?

No

### Feature Area

shape_inference

### Are you willing to contribute it (Y/N)

Yes

### Notes

_No response_ | closed | 2024-03-11T06:06:49Z | 2024-03-26T23:52:17Z | https://github.com/onnx/onnx/issues/6011 | [

"topic: enhancement",

"module: shape inference"

] | peishenyan | 1 |

huggingface/diffusers | deep-learning | 10,987 | Spatio-temporal diffusion models | **Is your feature request related to a problem? Please describe.**

Consider including the models listed in https://github.com/yyysjz1997/Awesome-TimeSeries-SpatioTemporal-Diffusion-Model/blob/main/README.md

| open | 2025-03-06T14:39:11Z | 2025-03-06T14:39:11Z | https://github.com/huggingface/diffusers/issues/10987 | [] | moghadas76 | 0 |

JaidedAI/EasyOCR | pytorch | 681 | Accelerate reader.readtext() with OpenMP | Hello all, this is more a question than an issue. I know `reader.readtext()` can be accelerated if I have a GPU with CUDA available; I was wondering if there was a flag to accelerate it with multi-threading (OpenMP).

Regards,

Victor | open | 2022-03-14T01:31:44Z | 2022-03-14T01:31:44Z | https://github.com/JaidedAI/EasyOCR/issues/681 | [] | vkrGitHub | 0 |

open-mmlab/mmdetection | pytorch | 11,753 | RuntimeError: handle_0 INTERNAL ASSERT FAILED at "../c10/cuda/driver_api.cpp":15, please report a bug to PyTorch. | Thanks for your error report and we appreciate it a lot.

**Checklist**

1. I have searched related issues but cannot get the expected help.

2. I have read the [FAQ documentation](https://mmdetection.readthedocs.io/en/latest/faq.html) but cannot get the expected help.

3. The bug has not been fixed in the latest version.

**Describe the bug**

When I used Mask2Former for instance segmentation, the following error occurred:

mask_pred = mask_pred[is_thing]

RuntimeError: handle_0 INTERNAL ASSERT FAILED at "../c10/cuda/driver_api.cpp":15, please report a bug to PyTorch.

**Reproduction**

1. What command or script did you run?

```none

A placeholder for the command.

```

2. Did you make any modifications on the code or config? Did you understand what you have modified?

3. What dataset did you use?

A segmentation dataset

**Environment**

1. Please run `python mmdet/utils/collect_env.py` to collect necessary environment information and paste it here.

2. You may add additional information that may be helpful for locating the problem, such as

- How you installed PyTorch \[e.g., pip, conda, source\]

- Other environment variables that may be related (such as `$PATH`, `$LD_LIBRARY_PATH`, `$PYTHONPATH`, etc.)

**Error traceback**

If applicable, paste the error traceback here.

```none
return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]
05/29 16:12:42 - mmengine - INFO - Saving checkpoint at 44 iterations
Traceback (most recent call last):
File "tools/train.py", line 121, in <module>
main()
File "tools/train.py", line 117, in main
runner.train()
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/runner/runner.py", line 1777, in train
model = self.train_loop.run() # type: ignore
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/runner/loops.py", line 294, in run
self.runner.val_loop.run()
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/runner/loops.py", line 373, in run
self.run_iter(idx, data_batch)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/torch/utils/_contextlib.py", line 115, in decorate_context
return func(*args, **kwargs)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/runner/loops.py", line 393, in run_iter
outputs = self.runner.model.val_step(data_batch)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/model/base_model/base_model.py", line 133, in val_step
return self._run_forward(data, mode='predict') # type: ignore
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmengine/model/base_model/base_model.py", line 361, in _run_forward
results = self(**data, mode=mode)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
return self._call_impl(*args, **kwargs)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
return forward_call(*args, **kwargs)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmdet/models/detectors/base.py", line 94, in forward
return self.predict(inputs, data_samples)
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmdet/models/detectors/maskformer.py", line 103, in predict
results_list = self.panoptic_fusion_head.predict(
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmdet/models/seg_heads/panoptic_fusion_heads/maskformer_fusion_head.py", line 255, in predict
ins_results = self.instance_postprocess(
File "/home/xuym/miniconda3/envs/openmmlab/lib/python3.8/site-packages/mmdet/models/seg_heads/panoptic_fusion_heads/maskformer_fusion_head.py", line 167, in instance_postprocess
mask_pred = mask_pred[is_thing]
RuntimeError: handle_0 INTERNAL ASSERT FAILED at "../c10/cuda/driver_api.cpp":15, please report a bug to PyTorch.
```

**Bug fix**

If you have already identified the reason, you can provide the information here. If you are willing to create a PR to fix it, please also leave a comment here and that would be much appreciated!

| open | 2024-05-29T08:23:07Z | 2024-05-29T08:23:22Z | https://github.com/open-mmlab/mmdetection/issues/11753 | [] | AIzealotwu | 0 |

cookiecutter/cookiecutter-django | django | 4,872 | You probably don't need `get_user_model` | ## Description

Import `User` directly, rather than using `get_user_model`.

## Rationale

`get_user_model` is meant for *reusable* apps, whereas, as I understand it, this project targets building websites rather than packages. Within the `users` app in particular it makes no sense to use it (are we expecting users to create a new user model in a custom app but then keep the `users` app in their project?). Switching would also keep new users from assuming they have to use it in all their custom apps (which may or may not be what happened to me). For a more in-depth explanation of why it's an anti-pattern, read this [blog post](https://adamj.eu/tech/2022/03/27/you-probably-dont-need-djangos-get-user-model/). | closed | 2024-02-18T02:15:15Z | 2024-02-21T10:01:58Z | https://github.com/cookiecutter/cookiecutter-django/issues/4872 | [

"enhancement"

] | mfosterw | 1 |

apache/airflow | data-science | 47,630 | AIP-38 Turn dag run breadcrumb into a dropdown | ### Body

Make it easier to switch between dag runs in the graph view by using the breadcrumb as a dropdown like we had in the designs when the graph view was in its own modal.

### Committer

- [x] I acknowledge that I am a maintainer/committer of the Apache Airflow project. | closed | 2025-03-11T15:37:11Z | 2025-03-17T14:06:34Z | https://github.com/apache/airflow/issues/47630 | [

"kind:feature",

"area:UI",

"AIP-38"

] | bbovenzi | 2 |

Miserlou/Zappa | django | 1,525 | Support for generating slimmer packages | Currently zappa packaging will include all pip packages installed in the virtualenv. Installing zappa in the venv brings in a ton of dependencies. Depending on the app's actual needs, most/all of these don't actually need to be packaged and shipped to lambda. This unnecessarily increases the size of the package which makes zappa deploy/update much slower than it would otherwise.

As an example, for a simple hello world app, the package is over 8MB. The vast majority of this data is unneeded.

A possible approach is to add an option to:

- not package anything from the venv

- use requirements.txt in a way that doesn't slow deployment down
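Both options amount to shipping only what the app actually needs. As a rough sketch of that selection step (not Zappa's packaging code), one can start from the app's top-level requirements and walk the dependency graph; anything installed in the venv but not reachable, such as Zappa's own tooling dependencies, becomes a candidate for exclusion. The graph below is a hypothetical, hard-coded example; a real tool would read it from the installed packages' metadata.

```python
from collections import deque


def packages_to_ship(top_level, dep_graph):
    """Return every package reachable from the app's top-level requirements."""
    needed, queue = set(), deque(top_level)
    while queue:
        pkg = queue.popleft()
        if pkg in needed:
            continue
        needed.add(pkg)
        queue.extend(dep_graph.get(pkg, ()))
    return needed


# Hypothetical venv contents: package -> direct dependencies (illustrative only).
venv = {
    "flask": ["werkzeug", "jinja2", "click", "itsdangerous"],
    "jinja2": ["markupsafe"],
    "zappa": ["boto3", "troposphere", "click", "requests", "kappa"],
    "boto3": ["botocore", "s3transfer", "jmespath"],
    "requests": ["urllib3", "idna", "certifi"],
}
installed = set(venv) | {dep for deps in venv.values() for dep in deps}

ship = packages_to_ship(["flask"], venv)
skip = installed - ship
print(sorted(ship))
print(sorted(skip))
```

Shared dependencies (here `click`) stay in the package because the app reaches them too; only packages that nothing in the app's closure depends on are dropped.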

I see #525 and #542 but they don't seem to be resolved yet. Let me know if I'm missing anything! | open | 2018-06-08T20:15:28Z | 2019-04-04T14:08:19Z | https://github.com/Miserlou/Zappa/issues/1525 | [

"feature-request"

] | figelwump | 15 |

ultralytics/yolov5 | machine-learning | 12,514 | A question about improving YOLOv5 | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar bug report.

### YOLOv5 Component

Training, Detection

### Bug

I want to improve ECA attention, but I have hit a bug that I cannot solve, and I would like your help, @glenn-jocher. When I run yolo.py it works, but when I run train.py, errors appear.

```python
class EfficientChannelAttention(nn.Module):  # Efficient Channel Attention module
    def __init__(self, c, b=1, gamma=2):
        super(EfficientChannelAttention, self).__init__()
        t = int(abs((math.log(c, 2) + b) / gamma))
        k = t if t % 2 else t + 1
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        self.conv1 = nn.Conv1d(1, 1, kernel_size=k, padding=int(k/2), bias=False)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        # print('x is:{}'.format(x.size))
        out = self.avg_pool(x)
        # print('out is:{}'.format(out))
        out_flat = out.view(-1)
        orig_shape = out.size()
        print('out_flat:{}'.format(out_flat))
        sorted_indices = torch.argsort(out_flat,descending=True)
        print('sorted_indices:{}'.format(sorted_indices))
        reshape_indices = sorted_indices.view(*orig_shape)
        # print('reshape_indices:{}'.format(reshape_indices.shape))
        soted_out = out.flatten()[sorted_indices].reshape(*orig_shape)
        # print('soted_out:{}'.format(soted_out))
        # sorted_x = x.view(x.size()[0],-1,x.size()[-2],x.size()[-1])[reshape_indices]
        sorted_x = torch.index_select(x, dim = 1, index =sorted_indices)
        # print('sorted_x shape:{}'.format(sorted_x.shape))
        # print('sorted x:{}'.format(sorted_x))
        out2 = self.avg_pool(sorted_x)
        # print('avgpool check of the sorting:{}'.format(out2))
        soted_out = self.conv1(soted_out.squeeze(-1).transpose(-1, -2)).transpose(-1, -2).unsqueeze(-1)
        soted_out = self.sigmoid(soted_out)
        # print('out shape:{}'.format(out.shape))
        # print(out * sorted_x)
        return soted_out * sorted_x
```

```yaml
# parameters
nc: 80  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple

# anchors
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Focus, [64, 3]],  # 0-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
   [-1, 3, C3, [128]],
   [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
   [-1, 9, C3, [256]],
   [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
   [-1, 9, C3, [512]],
   [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
   [-1, 1, SPP, [1024, [5, 9, 13]]],
   [-1, 3, C3, [1024, False]],  # 9
  ]

# YOLOv5 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, C3, [512, False]],  # 13
   [-1, 1, Conv, [256, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3
   [-1, 3, C3, [256, False]],  # 17 (P3/8-small)
   [-1, 1, Conv, [256, 3, 2]],
   [[-1, 14], 1, Concat, [1]],  # cat head P4
   [-1, 3, C3, [512, False]],  # 20 (P4/16-medium)
   [-1, 1, Conv, [512, 3, 2]],
   [-1, 1, EfficientChannelAttention, [512]],
   [[-1, 10], 1, Concat, [1]],  # cat head P5
   [-1, 3, C3, [1024, False]],  # 23 (P5/32-large)
   [[17, 20, 24], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
```

When I use the CPU, the following problem appears:

```
Epoch gpu_mem box obj cls total labels img_size
0%| | 0/11049 [00:00<?, ?it/s]
out_flat:tensor([ 0.13989, 0.01097, 0.67497, ..., 0.14956, 0.13888, -0.00238], grad_fn=<ViewBackward0>)
sorted_indices:tensor([ 27, 84, 107, ..., 539, 596, 706])
Traceback (most recent call last):
File "/home/wjh/learning/1/yolov5-5.0/train.py", line 543, in <module>
train(hyp, opt, device, tb_writer)
File "/home/wjh/learning/1/yolov5-5.0/train.py", line 303, in train
pred = model(imgs) # forward
File "/home/wjh/.conda/envs/Yolov5/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1194, in _call_impl
return forward_call(*input, **kwargs)
File "/home/wjh/learning/1/yolov5-5.0/models/yolo.py", line 123, in forward
return self.forward_once(x, profile) # single-scale inference, train
File "/home/wjh/learning/1/yolov5-5.0/models/yolo.py", line 139, in forward_once
x = m(x) # run
File "/home/wjh/.conda/envs/Yolov5/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1194, in _call_impl
return forward_call(*input, **kwargs)
File "/home/wjh/learning/1/yolov5-5.0/models/common.py", line 411, in forward
sorted_x = torch.index_select(x, dim = 1, index =sorted_indices)
RuntimeError: INDICES element is out of DATA bounds, id=918 axis_dim=256

Process finished with exit code
```

The error says an index is out of bounds, but I checked the indices while running yolo.py and everything was fine.
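A possible explanation, offered as a hypothesis rather than a confirmed diagnosis: `out` has shape `(batch, channels, 1, 1)`, so `out.view(-1)` flattens across the batch as well as the channels, and `torch.argsort` can then return indices up to `batch * channels - 1`. Passing those to `torch.index_select(x, dim=1, ...)` overruns the channel axis, which matches `id=918 axis_dim=256`. If `yolo.py` only runs the module on a batch of one, every index stays below the channel count there, which would explain why only `train.py` fails. The NumPy sketch below (with made-up shapes) contrasts the flattened sort with a per-sample sort along the channel axis; in PyTorch the per-sample version would use `torch.argsort(..., dim=1, descending=True)` and `torch.gather` with the index expanded to the shape of `x`.

```python
import numpy as np

rng = np.random.default_rng(0)
batch, channels, h, w = 4, 8, 5, 5
x = rng.standard_normal((batch, channels, h, w))

# Global average pooling, shape (batch, channels, 1, 1).
pooled = x.mean(axis=(2, 3), keepdims=True)

# Flattened pattern (as in the report): batch and channel positions are
# mixed, so indices reach batch * channels - 1, beyond the channel axis.
flat_idx = np.argsort(-pooled.reshape(-1))
print(flat_idx.max() >= channels)  # True

# Per-sample pattern: sort channels within each sample (descending).
order = np.argsort(-pooled[:, :, 0, 0], axis=1)  # (batch, channels)
sorted_pooled = np.take_along_axis(pooled[:, :, 0, 0], order, axis=1)
# The index broadcasts over the spatial axes, reordering whole channel maps.
sorted_x = np.take_along_axis(x, order[:, :, None, None], axis=1)
```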

### Environment

_No response_

### Minimal Reproducible Example

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | closed | 2023-12-16T12:44:17Z | 2024-10-20T19:34:34Z | https://github.com/ultralytics/yolov5/issues/12514 | [

"bug",

"Stale"

] | haoaZ | 5 |

praw-dev/praw | api | 1,404 | Smarter MoreComments Algorithm | Since we basically wait on a bunch of MoreComments anyway, why not merge all of them into one big MoreComments and then replace as needed? That would make the `replace_more` algorithm much faster.
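A rough sketch of the batching idea under stated assumptions: each placeholder is modeled as a list of hidden child IDs, all IDs are fetched in chunks through one callable standing in for a single API request, and fetched comments are attached to their parents through a dict keyed by comment ID. `Comment`, `MoreStub`, `expand_all`, `fetch_children` and the chunk size of 100 are hypothetical illustrations, not PRAW's API or a documented request limit.

```python
from dataclasses import dataclass, field


@dataclass
class Comment:
    """Hypothetical, minimal stand-in for a fetched comment."""
    id: str
    parent_id: str
    replies: list = field(default_factory=list)


@dataclass
class MoreStub:
    """Hypothetical stand-in for a MoreComments placeholder."""
    children: list  # IDs of comments hidden behind this placeholder


def chunks(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def expand_all(stubs, comments_by_id, fetch_children, batch_size=100):
    """Resolve many stubs with as few fetch calls as possible.

    Returns (number_of_fetch_calls, comments_whose_parent_is_unknown).
    """
    # 1. Merge the child IDs of every stub into one queue.
    pending = [cid for stub in stubs for cid in stub.children]

    # 2. Fetch them in batches rather than one request per stub.
    fetched, calls = [], 0
    for batch in chunks(pending, batch_size):
        fetched.extend(fetch_children(batch))
        calls += 1

    # 3. Register everything first (a fetched comment may be the parent of
    #    another fetched comment), then link children to parents.
    for comment in fetched:
        comments_by_id[comment.id] = comment
    orphans = []
    for comment in fetched:
        parent = comments_by_id.get(comment.parent_id)
        if parent is None:
            orphans.append(comment)  # e.g. top-level replies to the submission
        else:
            parent.replies.append(comment)
    return calls, orphans


# Usage with fake data: two stubs resolved by a single fetch.
root = Comment("a", "post")
known = {"a": root}
stubs = [MoreStub(["b", "c"]), MoreStub(["d"])]
fake_api = {"b": Comment("b", "a"), "c": Comment("c", "a"), "d": Comment("d", "b")}
calls, orphans = expand_all(stubs, known, lambda ids: [fake_api[i] for i in ids], batch_size=3)
print(calls)  # 1
```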

PR #1403 implements a queue, so we could theoretically combine the requests and fetch a lot at once. If the problem is linking the new comments to their parent comments, we could use a dict of some sort and attach each new object under the matching Comment key. | closed | 2020-04-22T03:59:07Z | 2021-05-20T17:46:48Z | https://github.com/praw-dev/praw/issues/1404 | [

"Feature",

"Discussion"

] | PythonCoderAS | 3 |

BeastByteAI/scikit-llm | scikit-learn | 86 | Can you share a link to Agent Dingo? | Can you share a link to Agent Dingo? | closed | 2024-03-03T19:33:56Z | 2024-03-04T21:57:06Z | https://github.com/BeastByteAI/scikit-llm/issues/86 | [] | Sandy4321 | 1 |

AutoGPTQ/AutoGPTQ | nlp | 363 | Why does inference get slower at lower bit widths? (in comparison with ggml) | Hi Team,

Thanks for the great work.

I have a few questions about quantized inference. While benchmarking, I found that inference gets slower as I go down to lower bit widths (4 -> 3 -> 2). Below are the inference results on an A6000 GPU:

- 4-bit (3.7 GB): 48 tokens/s

- 3-bit (2.9 GB): 38 tokens/s

- 2-bit (2.2 GB): 39 tokens/s

Why does inference get slower at lower bit widths? This is not the case for ggml, where inference gets faster.

Thanks | closed | 2023-10-06T21:26:56Z | 2023-10-25T12:54:20Z | https://github.com/AutoGPTQ/AutoGPTQ/issues/363 | [

"bug"

] | Darshvino | 1 |

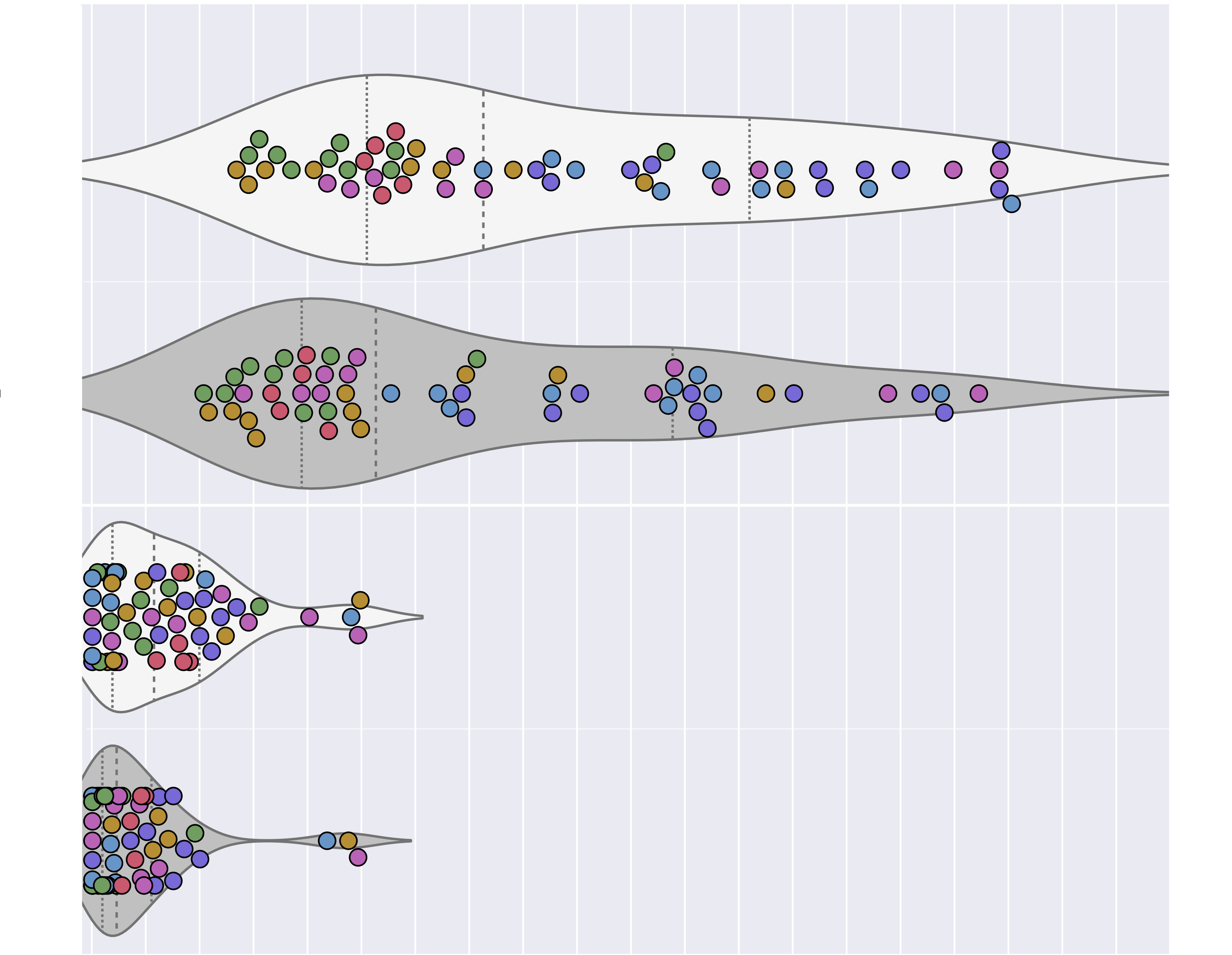

mwaskom/seaborn | data-science | 2,986 | swarmplot change point maximum displacement from center | Hi,

I am trying to plot a `violinplot` + `swarmplot` combination with multiple hues and many points, and I am struggling to get good clarity with as few overlapping points as possible. I tried both `swarmplot` and `stripplot`, with and without `dodge`.

Since I have multiple categories on the y-axis, I have also played around with the figure size, setting it to large height values. This improves the clarity of the violin plots, but the swarm/strip plots remain unchanged and crowded, with massive overlap. I know there will always be overlap when many points share the same or similar x-values, but I would like to make the most of the space available between y-values for the swarms. Is there a way to increase the maximum displacement from the center for the swarm plots? With `stripplot`'s `jitter` I can disperse the points, but they still overlap randomly quite a bit and also start to spill over into the neighboring violin plots.

Tried with seaborn versions `0.11.2` and `0.12.0rc0`.

I attached a partial plot, as the original is quite large:

```

...

sns.set_theme()

sns.set(rc={"figure.figsize": (6, 18)})

...

PROPS = {'boxprops': {'edgecolor': 'black'},

'medianprops': {'color': 'black'},

'whiskerprops': {'color': 'black'},

'capprops': {'color': 'black'}}

ax = sns.violinplot(x=stat2show, y=y_cat, data=data_df, width=1.7, fliersize=0,

linewidth=0.75, order=y_order, palette=qual_colors,

scale="count", inner="quartile", **PROPS)

sns.swarmplot(x=stat2show, y=y_cat, data=data_df, size=5.2, color='white',

linewidth=0.5, hue="Data Set", edgecolor='black',

palette=data_set_palette, order=y_order, dodge=False,

hue_order=data_set_hue_order)

...

```
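If the seaborn version in use offers no direct control over swarm width (I have not verified this for the versions above), one workaround is to compute the offsets yourself and draw them with a plain scatter plot. The sketch below is a simplified greedy swarm layout with a configurable `max_offset`. It is not seaborn's algorithm, and it assumes the value axis and the offset axis share the same units (rescale first if they do not). `capped_swarm_offsets` is a hypothetical name.

```python
import math


def capped_swarm_offsets(values, diameter, max_offset):
    """Greedy swarm layout with a cap on displacement from the center line.

    Points are placed in the given order at offsets 0, +d, -d, +2d, -2d, ...
    (d = diameter), taking the first slot that does not overlap an already
    placed point. If no slot within `max_offset` is free, the point goes on
    the center line and is allowed to overlap.
    """
    max_steps = int(max_offset // diameter)
    candidates = [0.0]
    for k in range(1, max_steps + 1):
        candidates += [k * diameter, -k * diameter]

    placed = []  # (value, offset) pairs already laid out
    offsets = []
    for v in values:
        chosen = 0.0
        for off in candidates:
            if all(math.hypot(v - pv, off - po) >= diameter for pv, po in placed):
                chosen = off
                break
        placed.append((v, chosen))
        offsets.append(chosen)
    return offsets


print(capped_swarm_offsets([0, 0, 0], diameter=1.0, max_offset=5.0))  # [0.0, 1.0, -1.0]
```

For a horizontal layout like the one above, the offsets would be added to each category's y position before calling `ax.scatter`, with `max_offset` kept just under half the distance between categories so points stay clear of neighboring violins.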

Thanks for any help! | closed | 2022-08-30T10:05:12Z | 2022-08-30T11:44:51Z | https://github.com/mwaskom/seaborn/issues/2986 | [] | ohickl | 4 |

plotly/dash | dash | 2,302 | [BUG] Error when passing list of components in dash component properties other than children. | ```

dash 2.7.0

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

dash-mantine-components 0.11.0a0

```

**Describe the bug**

When passing components in dash component properties other than `children`, an error is thrown if a list of components is passed.

```python
from dash import Dash
from dash_iconify import DashIconify
import dash_mantine_components as dmc

app = Dash(__name__)

app.layout = dmc.Divider(label=["GitHub", DashIconify(icon="fa:github")])

if __name__ == "__main__":
    app.run_server(debug=True)
```

Error:

<img width="1614" alt="Screenshot 2022-11-06 at 12 10 52 AM" src="https://user-images.githubusercontent.com/91216500/200135808-1b1ea37d-4b7b-4871-9b02-e02412340600.png">

This behaviour is observed even if a single component is passed in the list:

```python

app.layout = dmc.Divider(label=[DashIconify(icon="fa:github")])

```

**Expected behavior**

No error should be displayed even when multiple components are passed.

**Screenshots**

NA | closed | 2022-11-05T18:48:04Z | 2022-12-05T16:24:34Z | https://github.com/plotly/dash/issues/2302 | [] | snehilvj | 0 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 730 | Looking for performance metric for cyclegan | Hi, we often apply CycleGAN to unpaired data, so some performance metrics cannot be applied, such as:

- SSIM

- PSNR

For my dataset, I would like to use CycleGAN to map images from the winter season to the spring season, and there is no paired data for any image. Could you tell me how I can evaluate CycleGAN's performance (i.e., how to know whether the output is close to a realistic image)?
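One metric commonly used for unpaired image translation is the Fréchet Inception Distance (FID), which compares the mean and covariance of deep features extracted from real and generated images (lower is better). The sketch below is a minimal NumPy implementation of the distance itself. It assumes you have already extracted feature vectors (for example, Inception-v3 pooling features) for real spring images and for translated winter-to-spring images; the helper names are illustrative and not part of this repository.

```python
import numpy as np


def _sqrtm_psd(m: np.ndarray) -> np.ndarray:
    """Square root of a symmetric positive semi-definite matrix via eigendecomposition."""
    m = (m + m.T) / 2.0                 # remove tiny asymmetry from round-off
    w, v = np.linalg.eigh(m)
    w = np.clip(w, 0.0, None)           # clip small negative eigenvalues from round-off
    return (v * np.sqrt(w)) @ v.T


def frechet_distance(mu1, sigma1, mu2, sigma2) -> float:
    """||mu1 - mu2||^2 + Tr(S1 + S2 - 2 (S1 S2)^(1/2)) for two Gaussians."""
    mu1, mu2 = np.atleast_1d(mu1).astype(float), np.atleast_1d(mu2).astype(float)
    sigma1 = np.atleast_2d(sigma1).astype(float)
    sigma2 = np.atleast_2d(sigma2).astype(float)
    diff = mu1 - mu2
    s1_half = _sqrtm_psd(sigma1)
    # Tr((S1 S2)^(1/2)) equals Tr((S1^(1/2) S2 S1^(1/2))^(1/2)); the latter matrix is symmetric PSD.
    tr_covmean = np.trace(_sqrtm_psd(s1_half @ sigma2 @ s1_half))
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * tr_covmean)


def fid_from_features(feats_real: np.ndarray, feats_fake: np.ndarray) -> float:
    """FID between two (n_samples, n_features) arrays of image features."""
    return frechet_distance(
        feats_real.mean(axis=0), np.cov(feats_real, rowvar=False),
        feats_fake.mean(axis=0), np.cov(feats_fake, rowvar=False),
    )
```

Published FID values are normally computed with a reference implementation and a fixed Inception checkpoint, so numbers from a sketch like this are best used for relative comparisons between your own models.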

| closed | 2019-08-14T21:55:25Z | 2020-04-25T18:18:55Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/730 | [] | John1231983 | 6 |

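Evil0ctal/Douyin_TikTok_Download_API | api | 439 | Douyin videos can be parsed but cannot be downloaded | ***Platform where the error occurred?***

Douyin

***Endpoint where the error occurred?***

Web APP

***Submitted input value?***

e.g.: a short video link

***Did you retry?***

e.g.: Yes, the error still persisted X time after it first occurred.

***Have you read this project's README or API documentation?***

e.g.: Yes, and I am quite sure the problem is caused by the program.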

{

"code": 400,

"message": "Client error '403 Forbidden' for url 'http://v3-web.douyinvod.com/045365e0ddece3cd7bb6ee83a1f2207c/6687bd6a/video/tos/cn/tos-cn-ve-15c001-alinc2/oEheBigbIOzQoZA3EBy2VNiBQOkaRAfR30AEpT/?a=6383&ch=26&cr=3&dr=0&lr=all&cd=0%7C0%7C0%7C3&cv=1&br=1508&bt=1508&cs=0&ds=4&ft=pEaFx4hZffPdhb~NI1VNvAq-antLjrKaM9V.RkaFmfTeejVhWL6&mime_type=video_mp4&qs=0&rc=MztoNTVkODxoZGc8ZDg7M0BpM29oN3E5cjw2czMzNGkzM0A0NTZeLjYwNmMxNi42YGAyYSNmZDA0MmRzNm1gLS1kLWFzcw%3D%3D&btag=c0000e00008000&cquery=100B_100x_100z_100o_100w&dy_q=1720164672&feature_id=46a7bb47b4fd1280f3d3825bf2b29388&l=20240705153112480A6FC15A49E08AB062'\nFor more information check: https://developer.mozilla.org/en-US/docs/Web/HTTP/Status/403",

"support": "Please contact us on Github: https://github.com/Evil0ctal/Douyin_TikTok_Download_API",

"time": "2024-07-05 07:28:20",

"router": "/api/download",

"params": {

"url": "https://v.douyin.com/i6CgdQHY/",

"prefix": "true",

"with_watermark": "false"

}

}

| closed | 2024-07-05T07:34:35Z | 2024-07-10T02:50:07Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/439 | [

"BUG"

] | zttlovedouzi | 3 |

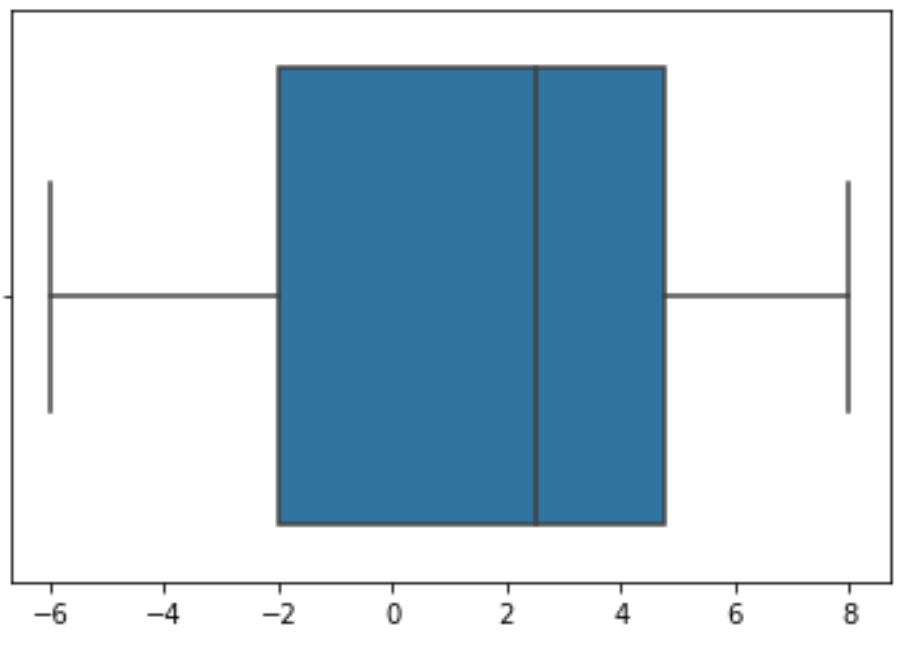

mwaskom/seaborn | pandas | 3,025 | Boxplot Bug | Hi

The issue happens when using sns.boxplot to show quartiles. According to the Wikipedia article on quartiles (https://en.wikipedia.org/wiki/Quartile), Q1, Q2 (the median), and Q3 are all medians, but of different subsets. If that is the case, then for the list -6, -3, 1, 4, 5, 8, Q1 is -3, the median is 2.5, and Q3 is 5. However, when running sns.boxplot, I find that Q1, shown at the edge of the blue box, is -2. Hence, I think this is a bug.

To help you investigate, I have pasted both the source code and the plot below.
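The two values differ because there are several conventions for computing quartiles. Q1 = -3 comes from taking medians of the lower and upper halves, the method described on Wikipedia. seaborn's `boxplot` appears to draw its boxes with matplotlib, whose `cbook.boxplot_stats` computes quartiles with `numpy.percentile`'s default linear interpolation between order statistics. The pure-Python sketch below implements both conventions so they can be compared; the helper names are illustrative.

```python
import statistics
from typing import List, Sequence


def linear_quartiles(values: Sequence[float]) -> List[float]:
    """Q1, median, Q3 by linear interpolation between order statistics
    (the default method of numpy.percentile)."""
    xs = sorted(values)
    out = []
    for q in (0.25, 0.5, 0.75):
        pos = q * (len(xs) - 1)          # fractional index into the sorted data
        lo = int(pos)
        hi = min(lo + 1, len(xs) - 1)
        out.append(xs[lo] + (pos - lo) * (xs[hi] - xs[lo]))
    return out


def median_of_halves(values: Sequence[float]) -> List[float]:
    """Q1, median, Q3 as medians of the lower half, the whole set, and the upper half
    (for odd n, the middle value is excluded from both halves)."""
    xs = sorted(values)
    n = len(xs)
    lower = xs[: n // 2]
    upper = xs[(n + 1) // 2:]
    return [statistics.median(lower), statistics.median(xs), statistics.median(upper)]


data = [-6, -3, 1, 4, 5, 8]
```

For this list, `linear_quartiles(data)` gives `[-2.0, 2.5, 4.75]` and `median_of_halves(data)` gives `[-3, 2.5, 5]`, so a box edge at -2 is consistent with the interpolation convention rather than with a miscalculation.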

| closed | 2022-09-14T18:06:19Z | 2022-09-15T00:58:12Z | https://github.com/mwaskom/seaborn/issues/3025 | [] | tac628 | 1 |

davidteather/TikTok-Api | api | 855 | time out error and new connection error | When I run the code, it shows a timeout error and a new-connection error, but I can access https://www.tiktok.com/@laurenalaina

The code is:

```
from TikTokApi import TikTokApi

verify_fp = " "
api = TikTokApi(custom_verify_fp=verify_fp)
user = api.user(username="laurenalaina")

for video in user.videos():
    print(video.id)
```

It shows:

```

TimeoutError Traceback (most recent call last)

~\anaconda3\lib\site-packages\urllib3\connection.py in _new_conn(self)

158 try:

--> 159 conn = connection.create_connection(

160 (self._dns_host, self.port), self.timeout, **extra_kw

~\anaconda3\lib\site-packages\urllib3\util\connection.py in create_connection(address, timeout, source_address, socket_options)

83 if err is not None:

---> 84 raise err

85

~\anaconda3\lib\site-packages\urllib3\util\connection.py in create_connection(address, timeout, source_address, socket_options)

73 sock.bind(source_address)

---> 74 sock.connect(sa)

75 return sock

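TimeoutError: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.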

During handling of the above exception, another exception occurred:

NewConnectionError Traceback (most recent call last)

~\anaconda3\lib\site-packages\urllib3\connectionpool.py in urlopen(self, method, url, body, headers, retries, redirect, assert_same_host, timeout, pool_timeout, release_conn, chunked, body_pos, **response_kw)

669 # Make the request on the httplib connection object.

--> 670 httplib_response = self._make_request(

671 conn,

~\anaconda3\lib\site-packages\urllib3\connectionpool.py in _make_request(self, conn, method, url, timeout, chunked, **httplib_request_kw)

380 try:

--> 381 self._validate_conn(conn)

382 except (SocketTimeout, BaseSSLError) as e:

~\anaconda3\lib\site-packages\urllib3\connectionpool.py in _validate_conn(self, conn)

975 if not getattr(conn, "sock", None): # AppEngine might not have `.sock`

--> 976 conn.connect()

977

~\anaconda3\lib\site-packages\urllib3\connection.py in connect(self)

307 # Add certificate verification

--> 308 conn = self._new_conn()

309 hostname = self.host

~\anaconda3\lib\site-packages\urllib3\connection.py in _new_conn(self)

170 except SocketError as e:

--> 171 raise NewConnectionError(

172 self, "Failed to establish a new connection: %s" % e

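NewConnectionError: <urllib3.connection.HTTPSConnection object at 0x00000251A9F6D670>: Failed to establish a new connection: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.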

During handling of the above exception, another exception occurred:

MaxRetryError Traceback (most recent call last)

~\anaconda3\lib\site-packages\requests\adapters.py in send(self, request, stream, timeout, verify, cert, proxies)

438 if not chunked:

--> 439 resp = conn.urlopen(

440 method=request.method,

~\anaconda3\lib\site-packages\urllib3\connectionpool.py in urlopen(self, method, url, body, headers, retries, redirect, assert_same_host, timeout, pool_timeout, release_conn, chunked, body_pos, **response_kw)

723

--> 724 retries = retries.increment(

725 method, url, error=e, _pool=self, _stacktrace=sys.exc_info()[2]

~\anaconda3\lib\site-packages\urllib3\util\retry.py in increment(self, method, url, response, error, _pool, _stacktrace)

438 if new_retry.is_exhausted():

--> 439 raise MaxRetryError(_pool, url, error or ResponseError(cause))

440

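MaxRetryError: HTTPSConnectionPool(host='www.tiktok.com', port=443): Max retries exceeded with url: / (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x00000251A9F6D670>: Failed to establish a new connection: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.'))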

During handling of the above exception, another exception occurred:

ConnectionError Traceback (most recent call last)

<ipython-input-10-c2db16034fb7> in <module>

6 user = api.user(username="laurenalaina")

7

----> 8 for video in user.videos():

9 print(video.id)

~\anaconda3\lib\site-packages\TikTokApi\api\user.py in videos(self, count, cursor, **kwargs)

131

132 if not self.user_id and not self.sec_uid:

--> 133 self.__find_attributes()

134

135 first = True

~\anaconda3\lib\site-packages\TikTokApi\api\user.py in __find_attributes(self)

261 # It is more efficient to check search first, since self.user_object() makes HTML request.

262 found = False

--> 263 for u in self.parent.search.users(self.username):

264 if u.username == self.username:

265 found = True

~\anaconda3\lib\site-packages\TikTokApi\api\search.py in search_type(search_term, obj_type, count, offset, **kwargs)

78 cursor = offset

79

---> 80 spawn = requests.head(

81 "https://www.tiktok.com",

82 proxies=Search.parent._format_proxy(processed.proxy),

~\anaconda3\lib\site-packages\requests\api.py in head(url, **kwargs)

102

103 kwargs.setdefault('allow_redirects', False)

--> 104 return request('head', url, **kwargs)

105

106

~\anaconda3\lib\site-packages\requests\api.py in request(method, url, **kwargs)

59 # cases, and look like a memory leak in others.

60 with sessions.Session() as session:

---> 61 return session.request(method=method, url=url, **kwargs)

62

63

~\anaconda3\lib\site-packages\requests\sessions.py in request(self, method, url, params, data, headers, cookies, files, auth, timeout, allow_redirects, proxies, hooks, stream, verify, cert, json)

528 }

529 send_kwargs.update(settings)

--> 530 resp = self.send(prep, **send_kwargs)

531

532 return resp

~\anaconda3\lib\site-packages\requests\sessions.py in send(self, request, **kwargs)

641

642 # Send the request

--> 643 r = adapter.send(request, **kwargs)

644

645 # Total elapsed time of the request (approximately)

~\anaconda3\lib\site-packages\requests\adapters.py in send(self, request, stream, timeout, verify, cert, proxies)

514 raise SSLError(e, request=request)

515

--> 516 raise ConnectionError(e, request=request)

517

518 except ClosedPoolError as e:

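ConnectionError: HTTPSConnectionPool(host='www.tiktok.com', port=443): Max retries exceeded with url: / (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x00000251A9F6D670>: Failed to establish a new connection: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.'))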

```
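`WinError 10060` means the TCP connection to `www.tiktok.com:443` timed out before any HTTP exchange took place, so the failure occurs below TikTokApi. A browser that can open the page may be going through a system proxy or VPN that the Python process does not use. The standard-library sketch below (the helper names `can_connect` and `report` are illustrative) checks raw reachability from the same interpreter and prints the proxy settings that `urllib.request.getproxies()` finds, which is also where `requests` looks by default.

```python
import socket
import urllib.request


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a plain TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Timeouts, refused connections and DNS failures are all OSError subclasses.
        return False


def report(host: str = "www.tiktok.com") -> None:
    # Proxy settings from environment variables (and, on Windows, the registry).
    print("proxies seen by Python:", urllib.request.getproxies())
    print(f"{host}:443 reachable:", can_connect(host))
```

Calling `report()` in the same notebook helps narrow things down: if it prints `False` while the page opens in a browser, the problem is likely network or proxy configuration rather than TikTokApi itself.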

**Desktop (please complete the following information):**

- OS: [Windows 10][jupyter notebook]

- TikTokApi Version [e.g. 5.0.0] - if out of date upgrade before posting an issue

**Additional context**

Add any other context about the problem here.

| closed | 2022-03-13T04:00:57Z | 2023-08-08T22:18:06Z | https://github.com/davidteather/TikTok-Api/issues/855 | [

"bug"

] | sxy-dawnwind | 2 |

litestar-org/litestar | pydantic | 3,814 | Enhancement: consider adding mypy plugin for type checking `data.create_instance(id=1, address__id=2)` | ### Summary

Right now [`create_instance`](https://docs.litestar.dev/latest/reference/dto/data_structures.html#litestar.dto.data_structures.DTOData.create_instance) can take any `**kwargs`.

But mypy has no way of actually checking that `id=1, address__id=2` are valid keywords for this call.

It can be caught when executed, sure. But typechecking is much faster than writing code + writing tests + running them.

In Django we have a similar pattern of passing keywords like this to filters. Like: `User.objects.filter(id=1, settings__profile="public")`. For this we use a custom mypy plugin: https://github.com/typeddjango/django-stubs/blob/c9c729073417d0936cb944ab8585ad236ab30321/mypy_django_plugin/transformers/orm_lookups.py#L10

What does it do?

- It checks that simple keyword arguments are indeed the correct ones

- It checks that nested `__` ones also exist on the nested model

- It still allows `**custom_data` unpacking

- It generates an error that can be silenced with `type: ignore[custom-code]`

- All checks like this can be turned off when `custom-code` is disabled in mypy checks

- It does not affect anything else

- It slows down type-checking a bit for users who added this plugin

- For users without a plugin - nothing happens

- Pyright and PyCharm are unaffected

- It is better to bundle this plugin, but it could also be a third-party package (which would be really hard to maintain)

Plus, more features can be added in the future, including proper DI checking, URL params, etc.

It will require its own set of tests via `typing.assert_type` and maintenance time. Mypy sometimes breaks plugin-facing APIs.

I can write this plugin if others are interested :)
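As a rough illustration of the lookup check described in the list above, here is a runtime analogue (not a mypy plugin) that walks `__`-separated keyword names through nested dataclass annotations. The `Person`/`Address` models and the `invalid_lookups` helper are hypothetical; a real plugin would perform a similar walk over mypy's type information at check time instead of at runtime.

```python
from dataclasses import dataclass, is_dataclass
from typing import List, get_type_hints


@dataclass
class Address:  # hypothetical nested model
    id: int
    street: str


@dataclass
class Person:  # hypothetical top-level model
    id: int
    address: Address


def invalid_lookups(model: type, **kwargs: object) -> List[str]:
    """Return the keyword names whose `__`-separated path does not exist on `model`."""
    bad = []
    for key in kwargs:
        current = model
        for part in key.split("__"):
            # Only dataclasses have fields to descend into; anything else ends the path.
            hints = get_type_hints(current) if is_dataclass(current) else {}
            if part not in hints:
                bad.append(key)
                break
            current = hints[part]
    return bad
```

For example, `invalid_lookups(Person, id=1, address__id=2)` returns `[]`, while `invalid_lookups(Person, address__zip=3)` returns `["address__zip"]`.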

### Basic Example

_No response_

### Drawbacks and Impact

_No response_

### Unresolved questions

_No response_ | open | 2024-10-16T09:31:03Z | 2025-03-20T15:55:00Z | https://github.com/litestar-org/litestar/issues/3814 | [

"Enhancement",

"Typing",

"DTOs"

] | sobolevn | 0 |

graphdeco-inria/gaussian-splatting | computer-vision | 720 | how to construct our own dataset as input for 3d-GS from images taken by a phone | Hi,

Thanks for your great work. I'd like to try your pipeline on my own dataset. I took a few images and wanted to use COLMAP to obtain the necessary files, as in your dataset. When I run your Python script `convert.py`, the output files are totally different from yours. The images are stored in this layout: `./360_v2/bottles/input`. Is there anything wrong with this data format?

thx for your time

best,

| open | 2024-03-21T10:02:58Z | 2024-03-21T10:02:58Z | https://github.com/graphdeco-inria/gaussian-splatting/issues/720 | [] | Ericgone | 0 |

django-cms/django-cms | django | 7,482 | [BUG] Wizard create page doesn't work | ## Description

When I start with a fresh install, I get the wizard with 'new page', and I get the message in red: "Please choose an option from below to proceed to the next step.".

## Steps to reproduce

I followed these docs to set up django-cms v4: https://django-cms-docs.readthedocs.io/en/latest/how_to/01-install.html

## Expected behaviour

When you choose an option in the wizard, the corresponding form should appear.

## Actual behaviour

When choosing an option, I get the red message "Please choose an option from below to proceed to the next step."

## Screenshots

<img width="1375" alt="Screenshot 2023-01-22 at 12 01 49" src="https://user-images.githubusercontent.com/34129243/213912639-352a1761-08a6-4c2a-92cb-31aad98bd552.png">

## Additional information (CMS/Python/Django versions)

Python 3.9

Django 4.1.5

Django CMS 4.1.0rc1

## Do you want to help fix this issue?

* [ + ] Yes, I want to help fix this issue and I will join #workgroup-pr-review on [Slack](https://www.django-cms.org/slack) to confirm with the community that a PR is welcome.

* [ ] No, I only want to report the issue.

| closed | 2023-01-22T11:09:12Z | 2023-01-28T13:36:56Z | https://github.com/django-cms/django-cms/issues/7482 | [] | svandeneertwegh | 1 |

microsoft/MMdnn | tensorflow | 608 | pytorch to IR error?? | Platform (like ubuntu 16.04/win10):

Ubuntu 16.04.6 LTS

Python version:

2.7

Source framework with version (like Tensorflow 1.4.1 with GPU):

1.13.1 with GPU

Destination framework with version (like CNTK 2.3 with GPU):

pytorch version: 1.0.1.post2

Pre-trained model path (webpath or webdisk path):

mmdownload -f pytorch -n resnet101 -o ./

Running scripts:

mmtoir -f pytorch -d resnet101 --inputShape 3,224,224 -n imagenet_resnet101.pth

mmdnn setup: pip install -U git+https://github.com/Microsoft/MMdnn.git@master

mmtoir -f pytorch -d resnet101 --inputShape 3,224,224 -n imagenet_resnet101.pth

Traceback (most recent call last):

File "/home/luna/.local/bin/mmtoir", line 10, in <module>

sys.exit(_main())

File "/home/luna/.local/lib/python2.7/site-packages/mmdnn/conversion/_script/convertToIR.py", line 192, in _main

ret = _convert(args)

File "/home/luna/.local/lib/python2.7/site-packages/mmdnn/conversion/_script/convertToIR.py", line 92, in _convert

parser = PytorchParser(model, inputshape[0])

File "/home/luna/.local/lib/python2.7/site-packages/mmdnn/conversion/pytorch/pytorch_parser.py", line 85, in __init__

self.pytorch_graph.build(self.input_shape)

File "/home/luna/.local/lib/python2.7/site-packages/mmdnn/conversion/pytorch/pytorch_graph.py", line 124, in build

trace.set_graph(PytorchGraph._optimize_graph(trace.graph(), False))

File "/home/luna/.local/lib/python2.7/site-packages/mmdnn/conversion/pytorch/pytorch_graph.py", line 74, in _optimize_graph

graph = torch._C._jit_pass_onnx(graph, aten)

TypeError: _jit_pass_onnx(): incompatible function arguments. The following argument types are supported:

1. (arg0: torch::jit::Graph, arg1: torch._C._onnx.OperatorExportTypes) -> torch::jit::Graph

| open | 2019-03-07T06:31:41Z | 2019-06-26T15:19:47Z | https://github.com/microsoft/MMdnn/issues/608 | [] | lunalulu | 16 |

flasgger/flasgger | api | 517 | swag_from did not update components.schemas - OpenAPI 3.0 | Hi

I am converting a project OpenAPI from v2.0 to v3.0

The problem is that `swag_from` does not update `components.schemas` when I load an additional YAML file. (Previously, with v2.0, where we had `definitions`, everything worked fine and `definitions` was updated by `swag_from` from the extra YAML file.)

Here is simple code to reproduce this issue:

`swagger_all.yml`

```yml
openapi: 3.0.1
info:
  title: Test
  description: Testing
  version: 1.0.0
paths: {}
# paths:
#   /get_cost:
#     post:
#       summary: a test with cascading $refs
#       requestBody:
#         description: request
#         content:
#           application/json:
#             schema:
#               $ref: '#/components/schemas/GetCostRequest'
#         required: true
#       responses:
#         200:
#           description: OK
#           content:
#             application/json:
#               schema:
#                 type: array
#                 items:
#                   $ref: '#/components/schemas/GetCostResponse'
#         201:
#           description: Created
#           content: {}
#         401:
#           description: Unauthorized
#           content: {}
components:
  schemas:
    Cost:
      title: Cost
      type: object
      properties:
        currency:
          type: string
          description: cost currency (3-letters code)
        value:
          type: number
          description: cost value
    GeoPosition:
      title: GeoPosition
      type: object
      properties:
        latitude:
          type: number
          description: latitude in float
          format: double
        longitude:
          type: number
          description: longitude in float
          format: double
    # GetCostRequest:
    #   title: GetCost Request
    #   type: object
    #   properties:
    #     level:
    #       type: integer
    #     location:
    #       $ref: '#/components/schemas/Location'
    # GetCostResponse:
    #   title: GetCost response
    #   type: object
    #   properties:
    #     cost:
    #       $ref: '#/components/schemas/Cost'
    #     description:
    #       type: string
    Location:
      title: Location
      type: object
      properties:
        name:
          type: string
          description: name of the location
        position:
          $ref: '#/components/schemas/GeoPosition'
```

and `extra.yml`

```yml
summary: a test
requestBody:
  description: request
  content:
    application/vnd.api+json:
      schema:
        $ref: '#/components/schemas/GetCostRequest'
  required: true
responses:
  200:
    description: OK
    content:
      application/json:
        schema:
          type: array
          items:
            $ref: '#/components/schemas/GetCostResponse'
  201:
    description: Created
    content: {}
  401:
    description: Unauthorized
    content: {}
components:
  schemas:
    GetCostRequest:
      title: GetCost Request
      type: object
      properties:
        level:
          type: integer
        location:
          $ref: '#/components/schemas/Location'
    GetCostResponse:
      title: GetCost response
      type: object
      properties:
        cost:
          $ref: '#/components/schemas/Cost'
        description:
          type: string
```

and the Python code:

```py
from flask import Flask, jsonify, Blueprint
from flasgger import swag_from, Swagger

try:
    import simplejson as json
except ImportError:
    import json

app = Flask(__name__)
app.config['SWAGGER'] = {'openapi': '3.0.1', 'uiversion': 3}
swagger = Swagger(app, template_file='swagger_all.yml')

api = Blueprint('api', __name__, url_prefix='/api')


@api.route('/get_cost', methods=['POST'])
@swag_from(specs='extra.yml', validation=True)
def get_cost():
    result = dict(description='The best place',
                  cost=dict(currency='EUR', value=123456))
    return jsonify([result])


app.register_blueprint(api)

if __name__ == '__main__':
    app.run(debug=True)
```

When I look at the generated `apispec_1.json` file, the `components.schemas` entry is not updated by `swag_from` (only `paths` is updated); it contains only the entries from the file passed via `template_file`.

Here is the error I am getting:

```bash

Errors

Resolver error at paths./api/get_cost.post.requestBody.content.application/vnd.api+json.schema.$ref

Could not resolve reference: Could not resolve pointer: /components/schemas/GetCostRequest does not exist in document

Resolver error at paths./api/get_cost.post.responses.200.content.application/json.schema.items.$ref

Could not resolve reference: Could not resolve pointer: /components/schemas/GetCostResponse does not exist in document

```
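Until `swag_from` merges `components` itself, one possible workaround is to merge the schemas before building the `Swagger` object. The helper below is a hypothetical sketch that works on plain dicts (for example, both files loaded with PyYAML's `yaml.safe_load`). It copies every entry under `components.schemas` of the extra specs into a copy of the template and refuses conflicting redefinitions.

```python
import copy


def merge_component_schemas(template: dict, *extras: dict) -> dict:
    """Return a copy of `template` whose components.schemas also contains
    the schemas declared under components.schemas in each extra spec."""
    merged = copy.deepcopy(template)
    schemas = merged.setdefault("components", {}).setdefault("schemas", {})
    for extra in extras:
        for name, schema in extra.get("components", {}).get("schemas", {}).items():
            if name in schemas and schemas[name] != schema:
                raise ValueError(f"conflicting definitions for schema {name!r}")
            schemas[name] = copy.deepcopy(schema)
    return merged
```

The merged dict could then be passed as `Swagger(app, template=merged)` instead of `template_file=...`. This assumes flasgger processes the `template` argument the same way as a template file, which is worth verifying against your flasgger version.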

Any suggestions?

| open | 2022-01-14T14:58:46Z | 2022-03-10T05:43:20Z | https://github.com/flasgger/flasgger/issues/517 | [] | arabnejad | 2 |

pyjanitor-devs/pyjanitor | pandas | 959 | Extend select_columns to groupby objects | # Brief Description

Allow column selection on pandas dataframe groupby objects with `select_columns`

# Example API

``mtcars.groupby('cyl').select_columns('*p')``
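Until such a method exists, roughly the same result can be obtained in plain pandas by resolving the pattern against the DataFrame's columns before grouping. The sketch below uses shell-style matching from `fnmatch`, which assumes `'*p'` has the same glob meaning as in `select_columns`. `grouped_select` is a hypothetical helper name.

```python
from fnmatch import fnmatchcase

import pandas as pd


def grouped_select(df: pd.DataFrame, by: str, pattern: str):
    """Group `df` by `by` and keep only the non-key columns whose names match a glob pattern."""
    cols = [c for c in df.columns if c != by and fnmatchcase(str(c), pattern)]
    return df.groupby(by)[cols]
```

With this helper, `grouped_select(mtcars, 'cyl', '*p')` plays the role of the proposed `mtcars.groupby('cyl').select_columns('*p')`.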

| closed | 2021-11-27T03:12:29Z | 2021-12-11T10:27:08Z | https://github.com/pyjanitor-devs/pyjanitor/issues/959 | [] | samukweku | 2 |

MilesCranmer/PySR | scikit-learn | 424 | [BUG]: PySR runs well once and then stops after error | ### What happened?

Hello,

I was trying to use PySR and I ran into a problem: I ran it once and the model was able to identify the equation correctly. However, when I run my code on other data, nothing happens except that the code stops with the following error (see below).

I am not sure if I am causing this problem or what the problem could be. I am running the code in Python 3.11.0 and Julia 1.8.5. If there is already an issue that would help, then sorry for posting the same question twice. I hope that you can help me in resolving this problem.

Best wishes,

Bartosz

### Version

0.16.3

### Operating System

Windows

### Package Manager

pip

### Interface

Jupyter Notebook

### Relevant log output

```shell

UserWarning Traceback (most recent call last)

Cell In[45], line 19

1 from pysr import PySRRegressor

3 model = PySRRegressor(

4 niterations=40, # < Increase me for better results

5 binary_operators=["+", "*", "-"],

(...)

17 progress=False

18 )

---> 19 model.fit(x_train_ic,x_dot)

File ~\Anaconda3\envs\tristan\Lib\site-packages\pysr\sr.py:1904, in PySRRegressor.fit(self, X, y, Xresampled, weights, variable_names, X_units, y_units)

1900 seed = random_state.get_state()[1][0] # For julia random

1902 self._setup_equation_file()

-> 1904 mutated_params = self._validate_and_set_init_params()

1906 (

1907 X,

1908 y,

(...)

1915 X, y, Xresampled, weights, variable_names, X_units, y_units

1916 )

1918 if X.shape[0] > 10000 and not self.batching:

File ~\Anaconda3\envs\tristan\Lib\site-packages\pysr\sr.py:1346, in PySRRegressor._validate_and_set_init_params(self)

1344 parameter_value = 1

1345 elif parameter == "progress" and not buffer_available:

-> 1346 warnings.warn(

1347 "Note: it looks like you are running in Jupyter. "

1348 "The progress bar will be turned off."

1349 )

1350 parameter_value = False

1351 packed_modified_params[parameter] = parameter_value

UserWarning: Note: it looks like you are running in Jupyter. The progress bar will be turned off.

```
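`warnings.warn` only raises an exception (and produces a traceback like the one above) when the active filter for that warning is `"error"`, for example after `warnings.simplefilter("error")` has run earlier in the notebook or in an imported module, or when Python was started with `-W error`. So one thing worth checking is `warnings.filters` in the failing kernel. If the filter cannot be changed globally, the warning can be silenced just around the call. `fit_quietly` below is a hypothetical helper, not part of PySR.

```python
import warnings

# Start of the message from the log above; filterwarnings matches it as a regex at the start of the text.
JUPYTER_PROGRESS_MSG = r"Note: it looks like you are running in Jupyter"


def fit_quietly(fit, *args, **kwargs):
    """Call fit(*args, **kwargs) with only the Jupyter progress-bar UserWarning ignored."""
    with warnings.catch_warnings():
        # filterwarnings inserts at the front of the filter list, so this wins over an outer "error" filter.
        warnings.filterwarnings("ignore", message=JUPYTER_PROGRESS_MSG, category=UserWarning)
        return fit(*args, **kwargs)
```

Usage would be `fit_quietly(model.fit, x_train_ic, x_dot)`; the previous filter settings are restored when the call returns.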

### Extra Info

This is the minimal example; `x_train_ic` is just a time series and `x_dot` is its derivative.

```
from pysr import PySRRegressor

model = PySRRegressor(
    niterations=40,  # < Increase me for better results
    binary_operators=["+", "*", "-"],
    # unary_operators=[
    #     "cos",
    #     "exp",
    #     "sin",
    #     "inv(x) = 1/x",
    #     # ^ Custom operator (julia syntax)
    # ],
    # extra_sympy_mappings={"inv": lambda x: 1 / x},
    # ^ Define operator for SymPy as well
    loss="loss(prediction, target) = (prediction - target)^2",
    # ^ Custom loss function (julia syntax)
    progress=False
)

model.fit(x_train_ic, x_dot)

``` | open | 2023-09-13T13:51:57Z | 2023-09-13T18:34:50Z | https://github.com/MilesCranmer/PySR/issues/424 | [

"bug"

] | BMP-TUD | 1 |

PaddlePaddle/PaddleNLP | nlp | 9,482 | [Docs]: The prediction demo loads the model parameters twice, which is illogical | ### Software environment

```Markdown

- paddlepaddle:

- paddlepaddle-gpu:

- paddlenlp:

```

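### Detailed description

```Markdown
In this document, the model parameters are loaded twice at prediction time: first the original model, then the trained parameters. Logically, only the trained parameters need to be loaded. Could this be improved?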

```

| closed | 2024-11-22T09:03:43Z | 2025-02-05T00:20:47Z | https://github.com/PaddlePaddle/PaddleNLP/issues/9482 | [

"documentation",

"stale"

] | williamPENG1 | 6 |

jupyter/nbviewer | jupyter | 703 | Markdown rendering issue for ipython notebooks in Github but not nbviewer | (I wasn't certain where to raise this issue but the nbviewer blog recommended this repo.).

**Issue**: For ipython notebooks viewed in Github (but not nbviewer), if there is any Markdown-formatted text nested between inline LaTeX _within the same paragraph block_, the Markdown formatting does not render correctly.

For example, take a look at this [ipython notebook](https://github.com/redwanhuq/machine-learning/blob/master/sms_spam_filter.ipynb). If you search for the word "subsets", you'll notice that the 1st example displays as "\<em>subsets\</em>", instead of italicized Markdown rendering. (FYI in the ipython notebook editor, I'm using the proper Markdown syntax, i.e., \*subsets*) Whereas, the same notebook in nbviewer [doesn't exhibit this issue](http://nbviewer.jupyter.org/github/redwanhuq/machine-learning/blob/master/sms_spam_filter.ipynb).

Oddly, if the Markdown-formatted text is flanked by inline LaTeX either before or after (but not both) within the same paragraph block, then the issue doesn't appear at all in the GitHub viewer. | closed | 2017-06-15T14:12:38Z | 2017-06-23T23:39:00Z | https://github.com/jupyter/nbviewer/issues/703 | [] | redwanhuq | 3 |

sigmavirus24/github3.py | rest-api | 403 | Bug in Feeds API | In lines 361-362 of `github.py`:

```
for d in links.values():
    d['href'] = URITemplate(d['href'])
```

there is a bug. When the user has no `current_user_organization_url`, `current_user_organization_urls`, or a similar link, `d` will be an empty list `[]`, so this throws `TypeError: list indices must be integers, not unicode`.

So I added a line between them, `if d:`, and it works well.
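A slightly more general version of that guard is sketched below. It assumes (unverified against the Feeds API) that a value in `links` can be a single link dict, a list of link dicts, or an empty list. `expand_link_templates` and its `make_template` parameter are hypothetical names, with `make_template` standing in for `URITemplate`.

```python
from typing import Callable, Dict


def expand_link_templates(links: Dict[str, object], make_template: Callable[[str], object]) -> Dict[str, object]:
    """Replace each link's 'href' string with make_template(href), in place.

    Each value may be a link dict, a list of link dicts, or an empty/falsy entry."""
    for value in links.values():
        entries = value if isinstance(value, list) else [value]
        for entry in entries:
            if entry:  # skip empty entries instead of indexing into them
                entry['href'] = make_template(entry['href'])
    return links
```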

| closed | 2015-06-30T13:57:50Z | 2015-11-08T00:25:37Z | https://github.com/sigmavirus24/github3.py/issues/403 | [] | jzau | 1 |

sammchardy/python-binance | api | 773 | Failed to parse error [SOLVED] | My code works fine if I run it in VS Code with the Python virtualenv

Python version: [3.8.5] 64-bit

However, if I run the same code in the terminal using Python [3.8.5] 64-bit, I get this error:

nick-pc@nickpc-HP-xw4300-Workstation:~/Documents/pythonprojects/DCAbot$ /usr/bin/python3 /home/nick-pc/Documents/pythonprojects/DCAbot/DCAETHbot.py

Traceback (most recent call last):

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/models.py", line 382, in prepare_url

scheme, auth, host, port, path, query, fragment = parse_url(url)

File "/usr/lib/python3/dist-packages/urllib3/util/url.py", line 392, in parse_url

return six.raise_from(LocationParseError(source_url), None)

File "<string>", line 3, in raise_from

urllib3.exceptions.LocationParseError: Failed to parse: https://api.binance.com/api/v3/ping

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/nick-pc/Documents/pythonprojects/DCAbot/DCAETHbot.py", line 8, in <module>

client = Client(keys.api_key, keys.api_secret)

File "/home/nick-pc/.local/lib/python3.8/site-packages/binance/client.py", line 105, in __init__

self.ping()

File "/home/nick-pc/.local/lib/python3.8/site-packages/binance/client.py", line 392, in ping

return self._get('ping', version=self.PRIVATE_API_VERSION)

File "/home/nick-pc/.local/lib/python3.8/site-packages/binance/client.py", line 237, in _get

return self._request_api('get', path, signed, version, **kwargs)

File "/home/nick-pc/.local/lib/python3.8/site-packages/binance/client.py", line 202, in _request_api

return self._request(method, uri, signed, **kwargs)

File "/home/nick-pc/.local/lib/python3.8/site-packages/binance/client.py", line 196, in _request

self.response = getattr(self.session, method)(uri, **kwargs)

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/sessions.py", line 555, in get

return self.request('GET', url, **kwargs)

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/sessions.py", line 528, in request

prep = self.prepare_request(req)

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/sessions.py", line 456, in prepare_request

p.prepare(

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/models.py", line 316, in prepare

self.prepare_url(url, params)

File "/home/nick-pc/.local/lib/python3.8/site-packages/requests/models.py", line 384, in prepare_url

raise InvalidURL(*e.args)

requests.exceptions.InvalidURL: Failed to parse: https://api.binance.com/api/v3/ping

- Python version: [3.8.5] 64-bit

- Virtual Env: [virtualenv]

- OS: [Ubuntu]

- python-binance version [0.7.9]
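One detail in the traceback: `requests` is loaded from `~/.local/lib/python3.8/site-packages`, while `urllib3` comes from the system's `/usr/lib/python3/dist-packages`, so the terminal interpreter may be combining package versions that the virtualenv does not. The standard-library sketch below (the `package_origin` helper is illustrative) reports which copy of a package an interpreter actually imports.

```python
import importlib.util
from importlib import metadata
from typing import Optional, Tuple


def package_origin(name: str) -> Tuple[Optional[str], Optional[str]]:
    """Return (installed version, file the module is loaded from) for a top-level module.

    Either element is None if it cannot be determined. The version lookup assumes the
    distribution name equals the import name (true for requests and urllib3, but not for
    python-binance, whose import name is `binance`)."""
    spec = importlib.util.find_spec(name)
    origin = spec.origin if spec is not None else None
    try:
        version = metadata.version(name)
    except metadata.PackageNotFoundError:
        version = None
    return version, origin
```

Running `for name in ("requests", "urllib3"): print(name, package_origin(name))` with both `/usr/bin/python3` and the virtualenv's interpreter should show whether they resolve to different copies.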

| closed | 2021-04-18T04:27:02Z | 2021-05-11T12:43:30Z | https://github.com/sammchardy/python-binance/issues/773 | [] | fuzzybannana | 1 |

mars-project/mars | pandas | 2,584 | [BUG] mars.dataframe.DataFrame.loc[i:j] semantics is different with pandas | # Reporting a bug

```
import pandas as pd
import mars
import numpy as np

df = pd.DataFrame(np.random.rand(5, 3))
sliced_df = df.loc[0:1]
# Out[6]: sliced_df
#           0         1         2
# 0  0.362741  0.466188  0.750695
# 1  0.775940  0.544655  0.711621

mars.new_session()
md = mars.dataframe.DataFrame(np.random.rand(5, 3))
sliced_md = md.loc[0:1]
sliced_md.execute()
# Out[14]: sliced_md
#           0         1         2
# 0  0.851917  0.508231  0.908007
```

As you can see, Mars treats the end label of the slice as exclusive (right-open), while pandas treats it as inclusive (right-closed), so the two behave differently.
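For reference, pandas documents label-based slicing with `.loc` as including both endpoints, whereas position-based slicing with `.iloc` follows Python's half-open convention. This small pandas-only sketch shows the distinction the report expects Mars to mirror:

```python
import numpy as np
import pandas as pd

df = pd.DataFrame(np.arange(15).reshape(5, 3))

by_label = df.loc[0:1]      # labels 0 through 1, end label included
by_position = df.iloc[0:1]  # positions [0, 1), end position excluded

print(len(by_label), len(by_position))  # 2 1
```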

Python 3.7.9 & Pandas 1.2.0 & mars 0.7.5 | closed | 2021-11-24T06:33:29Z | 2022-09-05T03:26:57Z | https://github.com/mars-project/mars/issues/2584 | [

"type: bug",

"mod: dataframe"

] | dlee992 | 0 |

coqui-ai/TTS | pytorch | 4,043 | [Feature request] Support for Quantized ONNX Model Conversion for Stream Inference | <!-- Welcome to the 🐸TTS project!

We are excited to see your interest, and appreciate your support! --->

**🚀 Feature Description**

Is there support in Coqui TTS for converting models to a quantized ONNX format for stream inference? This feature would enhance model performance and reduce inference time for real-time applications.

**Solution**

Implement a workflow or tool within Coqui TTS for easy conversion of TTS models to quantized ONNX format.

**Alternative Solutions**

Currently, external tools like ONNX Runtime or TensorRT can be used for post-conversion quantization, but having this feature natively would streamline the process.
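As an illustration of that external route, ONNX Runtime's `quantize_dynamic` can post-process an already-exported model into one with int8 weights. This sketch assumes you already have an exported `.onnx` file: Coqui TTS is not involved, the file names are placeholders, and the effect on speech quality and latency would need to be measured for each model.

```python
from pathlib import Path
from typing import Union


def quantize_onnx_int8(src: Union[str, Path], dst: Union[str, Path]) -> Path:
    """Write a dynamically quantized (int8 weights) copy of the ONNX model at `src` to `dst`."""
    # Imported lazily so this module can be imported without onnxruntime installed.
    from onnxruntime.quantization import QuantType, quantize_dynamic

    quantize_dynamic(str(src), str(dst), weight_type=QuantType.QInt8)
    return Path(dst)
```

Example call: `quantize_onnx_int8("tts_model.onnx", "tts_model.int8.onnx")`.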

**Additional context**

Any existing documentation or insights on this topic would be appreciated. Thank you!

| closed | 2024-11-02T04:01:41Z | 2024-12-28T11:58:22Z | https://github.com/coqui-ai/TTS/issues/4043 | [

"wontfix",

"feature request"

] | TranDacKhoa | 1 |

modin-project/modin | data-science | 7,405 | BUG: incorrect iloc behavior in modin when assigning index values based on row indices | ### Modin version checks

- [X] I have checked that this issue has not already been reported.

- [X] I have confirmed this bug exists on the latest released version of Modin.

- [ ] I have confirmed this bug exists on the main branch of Modin. (In order to do this you can follow [this guide](https://modin.readthedocs.io/en/stable/getting_started/installation.html#installing-from-the-github-main-branch).)

### Reproducible Example

```python
import pandas as pd
import modin.pandas as mpd

dict1 = {
    'index_test': [-1, -1, -1]
}

df1 = pd.DataFrame(dict1)
mdf1 = mpd.DataFrame(dict1)

row_indices = [2, 0]

df1.iloc[row_indices, 0] = df1.iloc[row_indices].index
mdf1.iloc[row_indices, 0] = mdf1.iloc[row_indices].index

print(df1)  # as expected: 0, -1, 2
print('-------------')
print(mdf1)  # NOT as expected: 2, -1, 0
#    index_test
# 0           0
# 1          -1
# 2           2
# -------------
#    index_test
# 0           2
# 1          -1
# 2           0
```

### Issue Description

When assigning values with `iloc` in modin, the behavior deviates from pandas. Specifically, assigning index values to a subset of rows works correctly in pandas, but modin assigns the values in the wrong order.

### Expected Behavior

This issue occurs consistently when assigning values by row position with `iloc` in modin. Modin is expected to mirror pandas, but instead the values are assigned in a different order.

Expected output produced with pandas:

```
   index_test
0           0
1          -1
2           2
```

Actual output produced with modin:

```
   index_test
0           2
1          -1
2           0
```
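The pandas semantics can be modeled in plain Python: the k-th right-hand value goes to row `row_indices[k]`. Pairing the same values with the *sorted* positions instead reproduces the modin output exactly. This suggests, but does not confirm, that modin sorts the positional indexer without reordering the values. The helper names below are hypothetical and are not modin internals.

```python
def assign_positional(column, positions, values):
    """pandas-style iloc assignment: values[k] goes to row positions[k]."""
    out = list(column)
    for pos, val in zip(positions, values):
        out[pos] = val
    return out


def assign_sorted_positions(column, positions, values):
    """Hypothetical faulty variant: positions are sorted, values are not."""
    out = list(column)
    for pos, val in zip(sorted(positions), values):
        out[pos] = val
    return out


column = [-1, -1, -1]
row_indices = [2, 0]
rhs = [2, 0]  # the contents of df.iloc[row_indices].index

pandas_like = assign_positional(column, row_indices, rhs)
modin_like = assign_sorted_positions(column, row_indices, rhs)
```

`pandas_like` matches the pandas output above (0, -1, 2), and `modin_like` matches the modin output (2, -1, 0).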

### Error Logs

_No response_

### Installed Versions

<details>

PyDev console: using IPython 8.23.0

INSTALLED VERSIONS

------------------

commit : 3e951a63084a9cbfd5e73f6f36653ee12d2a2bfa

python : 3.11.8

python-bits : 64

OS : Windows

OS-release : 10

Version : 10.0.22631

machine : AMD64

processor : Intel64 Family 6 Model 186 Stepping 2, GenuineIntel

byteorder : little

LC_ALL : None

LANG : None

LOCALE : English_Austria.1252

Modin dependencies

------------------

modin : 0.32.0

ray : 2.20.0

dask : 2024.5.2

distributed : 2024.5.2

pandas dependencies

-------------------

pandas : 2.2.3

numpy : 1.26.4

pytz : 2024.1

dateutil : 2.9.0.post0

pip : 24.2

Cython : 3.0.10

sphinx : None

IPython : 8.23.0

adbc-driver-postgresql: None

adbc-driver-sqlite : None

bs4 : 4.12.3

blosc : None

bottleneck : 1.3.7

dataframe-api-compat : None

fastparquet : None

fsspec : 2023.10.0

html5lib : None

hypothesis : None

gcsfs : None

jinja2 : 3.1.3

lxml.etree : None

matplotlib : 3.8.2

numba : None

numexpr : 2.8.7

odfpy : None

openpyxl : None

pandas_gbq : None

psycopg2 : None

pymysql : None

pyarrow : 14.0.2

pyreadstat : None

pytest : 8.1.1

python-calamine : None

pyxlsb : None

s3fs : None

scipy : 1.14.0

sqlalchemy : 2.0.25

tables : 3.9.2

tabulate : 0.9.0

xarray : None

xlrd : None

xlsxwriter : None

zstandard : 0.23.0

tzdata : 2024.1

qtpy : 2.4.1

pyqt5 : None

</details>

| closed | 2024-10-14T07:13:20Z | 2025-02-27T19:59:55Z | https://github.com/modin-project/modin/issues/7405 | [

"bug 🦗",

"P1"

] | SchwurbeI | 3 |

fastapi/sqlmodel | pydantic | 75 | Add sessionmaker | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X] I already read and followed all the tutorial in the docs and didn't find an answer.

- [X] I already checked if it is not related to SQLModel but to [Pydantic](https://github.com/samuelcolvin/pydantic).

- [X] I already checked if it is not related to SQLModel but to [SQLAlchemy](https://github.com/sqlalchemy/sqlalchemy).

### Commit to Help

- [X] I commit to help with one of those options 👆

### Example Code

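```python
from sqlalchemy.orm import sessionmaker
from sqlmodel import create_engine

engine = create_engine("sqlite://")  # placeholder URL for illustration
Session = sessionmaker(engine)  # builds plain SQLAlchemy sessions, not sqlmodel.Session
```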

### Description

Add a SQLAlchemy-compatible `sessionmaker` that generates SQLModel sessions.

### Wanted Solution

I would like SQLModel to provide a working `sessionmaker` that returns `sqlmodel.Session` objects.

### Wanted Code

```python

from sqlmodel import sessionmaker

```

### Alternatives

_No response_

### Operating System

macOS

### Operating System Details

_No response_

### SQLModel Version

0.0.4

### Python Version

3.9.6

### Additional Context

_No response_ | open | 2021-09-02T21:00:03Z | 2024-05-14T11:03:00Z | https://github.com/fastapi/sqlmodel/issues/75 | [

"feature"

] | hitman-gdg | 6 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,119 | Always out of memory when testing | Hi,

My testing set has about 7,000 images in total. Some of them are very large, for example 2,000 × 3,000 pixels, and I always run out of GPU memory. The test script only runs on a single GPU rather than on multiple GPUs. How can I fix this problem? Many thanks! | closed | 2020-08-06T13:47:36Z | 2020-08-07T04:12:09Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1119 | [] | GuoLanqing | 2 |

gradio-app/gradio | python | 10,564 | Misplaced Chat Avatar While Thinking | ### Describe the bug

While the chatbot is thinking, the avatar icon is misplaced. While it is generating a response, or once it has finished, the avatar is positioned correctly.

I believe this is similar to https://github.com/gradio-app/gradio/issues/9655, but it is a special edge case. I mostly notice the issue with rectangular images.

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python
import gradio as gr
from time import sleep

AVATAR = "./car.png"

# Define a simple chatbot function
def chatbot_response(message, hist):
    # Simulate a slow response so the "thinking" state stays visible
    sleep(10)
    return "Gradio is pretty cool!"

# Create a chat interface using gr.ChatInterface
chatbot = gr.ChatInterface(
    fn=chatbot_response,
    chatbot=gr.Chatbot(
        label="LLM",
        elem_id="chatbot",
        avatar_images=(
            None,
            AVATAR
        ),
    )
)

# Launch the chatbot
chatbot.launch()
```

### Screenshot

### Logs

```shell

```

### System Info

```shell

(base) carter.yancey@Yancy-XPS:~$ gradio environment

Gradio Environment Information:

------------------------------

Operating System: Linux

gradio version: 5.13.1

gradio_client version: 1.6.0

------------------------------------------------

gradio dependencies in your environment:

aiofiles: 23.2.1

anyio: 3.7.1

audioop-lts is not installed.

fastapi: 0.115.7

ffmpy: 0.3.2

gradio-client==1.6.0 is not installed.

httpx: 0.25.1

huggingface-hub: 0.27.1

jinja2: 3.1.2

markupsafe: 2.1.3

numpy: 1.26.2

orjson: 3.9.10

packaging: 23.2

pandas: 1.5.3

pillow: 10.0.0

pydantic: 2.5.1

pydub: 0.25.1

python-multipart: 0.0.20

pyyaml: 6.0.1

ruff: 0.2.2

safehttpx: 0.1.6

semantic-version: 2.10.0

starlette: 0.45.3

tomlkit: 0.12.0

typer: 0.15.1

typing-extensions: 4.8.0

urllib3: 2.3.0

uvicorn: 0.24.0.post1

authlib; extra == 'oauth' is not installed.

itsdangerous; extra == 'oauth' is not installed.

gradio_client dependencies in your environment:

fsspec: 2023.10.0

httpx: 0.25.1

huggingface-hub: 0.27.1

packaging: 23.2

typing-extensions: 4.8.0

websockets: 11.0.3

```

### Severity

I can work around it | closed | 2025-02-11T18:31:28Z | 2025-03-04T21:23:07Z | https://github.com/gradio-app/gradio/issues/10564 | [

"bug",

"💬 Chatbot"

] | CarterYancey | 0 |

ultralytics/yolov5 | machine-learning | 13,447 | training stuck | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I used your framework to modify YOLO and define my own model, but training gets stuck right at the beginning, at the point shown below. Why does this happen?

### Additional

_No response_ | closed | 2024-12-06T06:35:00Z | 2024-12-06T10:43:26Z | https://github.com/ultralytics/yolov5/issues/13447 | [

"question"

] | passingdragon | 3 |

Kanaries/pygwalker | matplotlib | 670 | [BUG] Installation from conda-forge yields No module named 'lib2to3' | **Describe the bug**

When installing pygwalker via conda, the installation itself succeeds, but a subsequent import fails with:

```sh

----> 1 import pygwalker as pyg

File ~/miniforge3/envs/scratchpad/lib/python3.13/site-packages/pygwalker/__init__.py:16

13 __version__ = "0.3.17"

14 __hash__ = __rand_str()

---> 16 from pygwalker.api.walker import walk

17 from pygwalker.api.gwalker import GWalker

18 from pygwalker.api.html import to_html

File ~/miniforge3/envs/scratchpad/lib/python3.13/site-packages/pygwalker/api/walker.py:10

8 from pygwalker.data_parsers.database_parser import Connector

9 from pygwalker._typing import DataFrame

---> 10 from pygwalker.services.format_invoke_walk_code import get_formated_spec_params_code_from_frame

11 from pygwalker.services.kaggle import auto_set_kanaries_api_key_on_kaggle, adjust_kaggle_default_font_size

12 from pygwalker.utils.execute_env_check import check_convert, get_kaggle_run_type, check_kaggle

File ~/miniforge3/envs/scratchpad/lib/python3.13/site-packages/pygwalker/services/format_invoke_walk_code.py:3

1 from typing import Optional, List, Any

2 from types import FrameType