id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

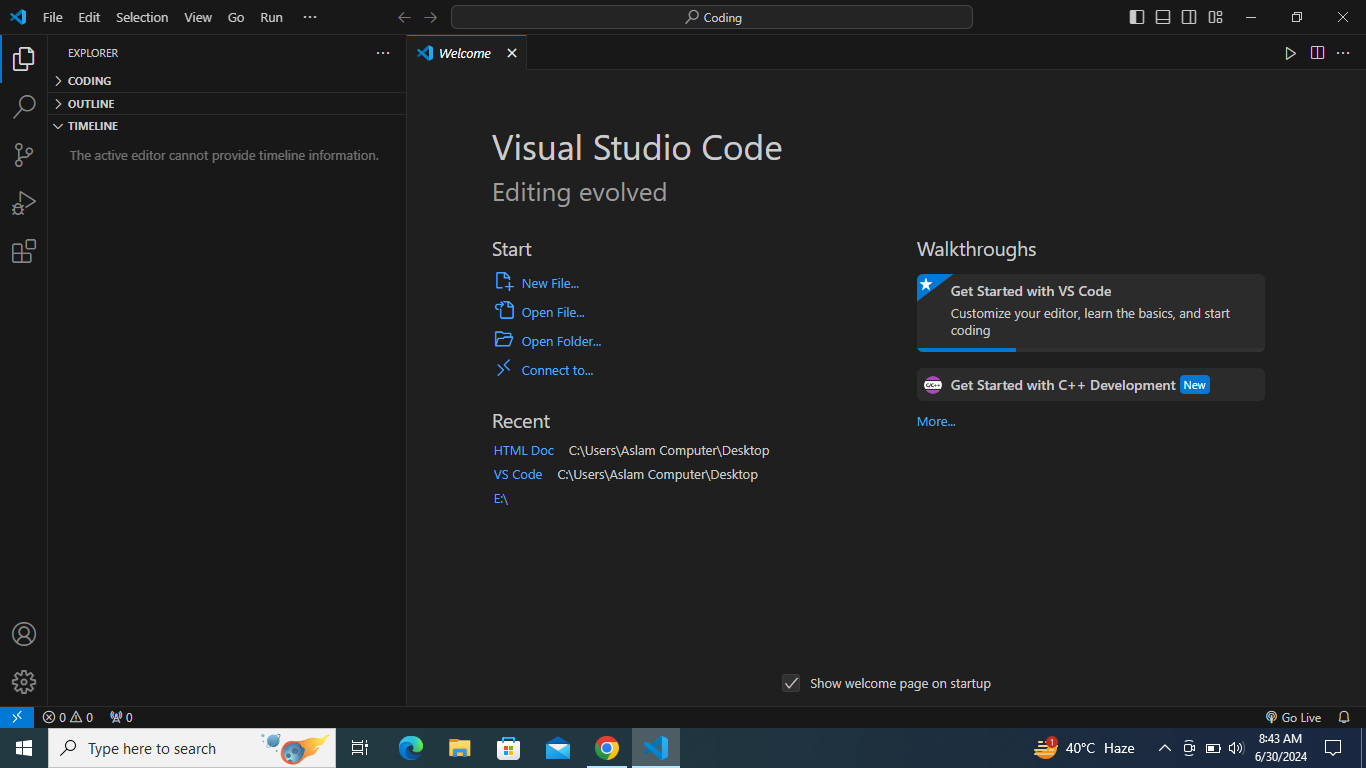

1,906,747 | How to create or write HTML code as example in Visual Studio Code(VS Code) | Method of writing HTML code in VS Code Writing HTML code in Visual Studio Code (VS Code)... | 0 | 2024-06-30T15:43:02 | https://dev.to/wasifali/how-to-create-or-write-html-code-as-example-in-visual-studio-codevs-code-2hpb | webdev, html, coding, learning | ## **Method of writing HTML code in VS Code**

Writing HTML code in Visual Studio Code (VS Code) is straightforward and follows these steps:

**Open VS Code**: Launch the Visual Studio Code editor on your computer.

## ... | wasifali |

1,906,746 | Step-by-Step Guide to Using the Hashnode API for Developers | Welcome to this comprehensive guide on using the Hashnode API! Whether you're a seasoned developer or... | 0 | 2024-06-30T15:41:23 | https://raajaryan.tech/step-by-step-guide-to-using-the-hashnode-api-for-developers | api, beginners, tutorial, react |

Welcome to this comprehensive guide on using the Hashnode API! Whether you're a seasoned developer or just starting out, this post will equip you with everything you need to know about interacting with Hashnode's powerful API. By the end of this guide, you'll be able to fetch articles, publish content, and integrate... | raajaryan |

1,906,744 | Python Development Environment on WSL2 | This post guides you through how to set up a Python development environment on Windows Subsystem for... | 0 | 2024-06-30T15:31:48 | https://dev.to/iamgauravpande/python-development-environment-on-wsl2-2o7m | python, development, microsoft, wsl2 | This post guides you through how to set up a Python development environment on Windows Subsystem for Linux (WSL2).

**What is WSL2?**

WSL2 lets a Windows user run a virtual Linux environment on the Windows machine itself. This is a handy feature for quick development needs on your local machine.

Below Steps would l... | iamgauravpande |

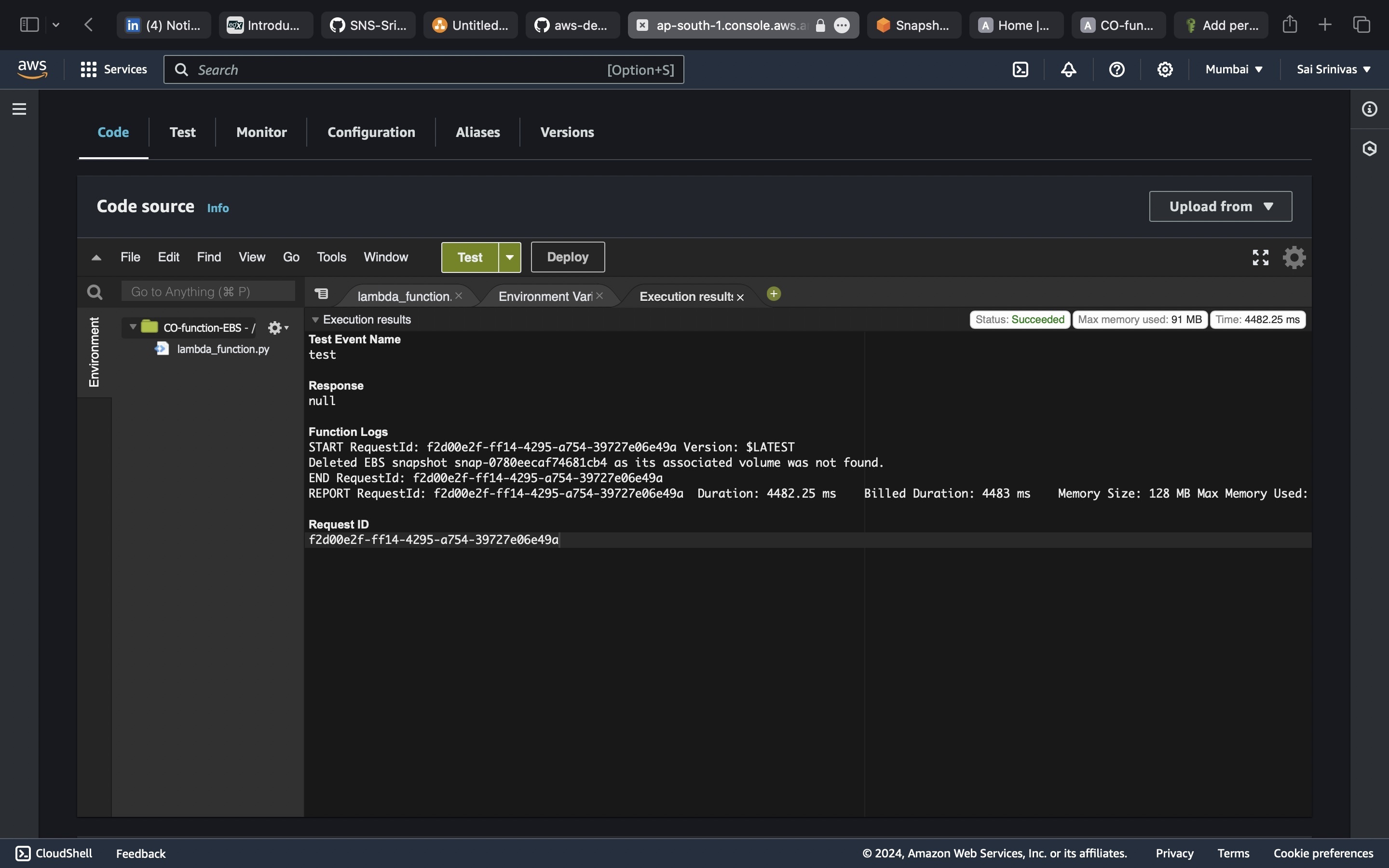

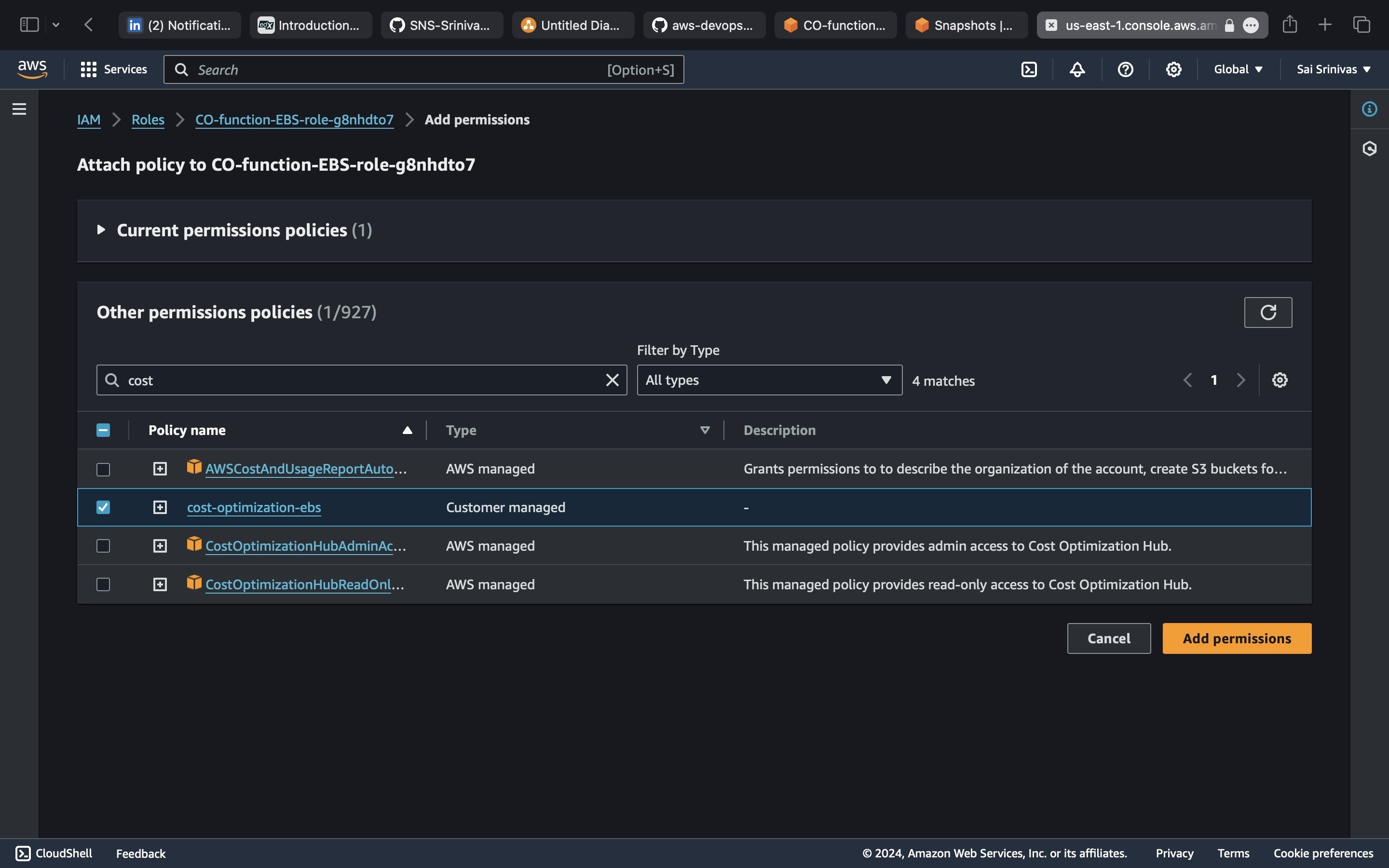

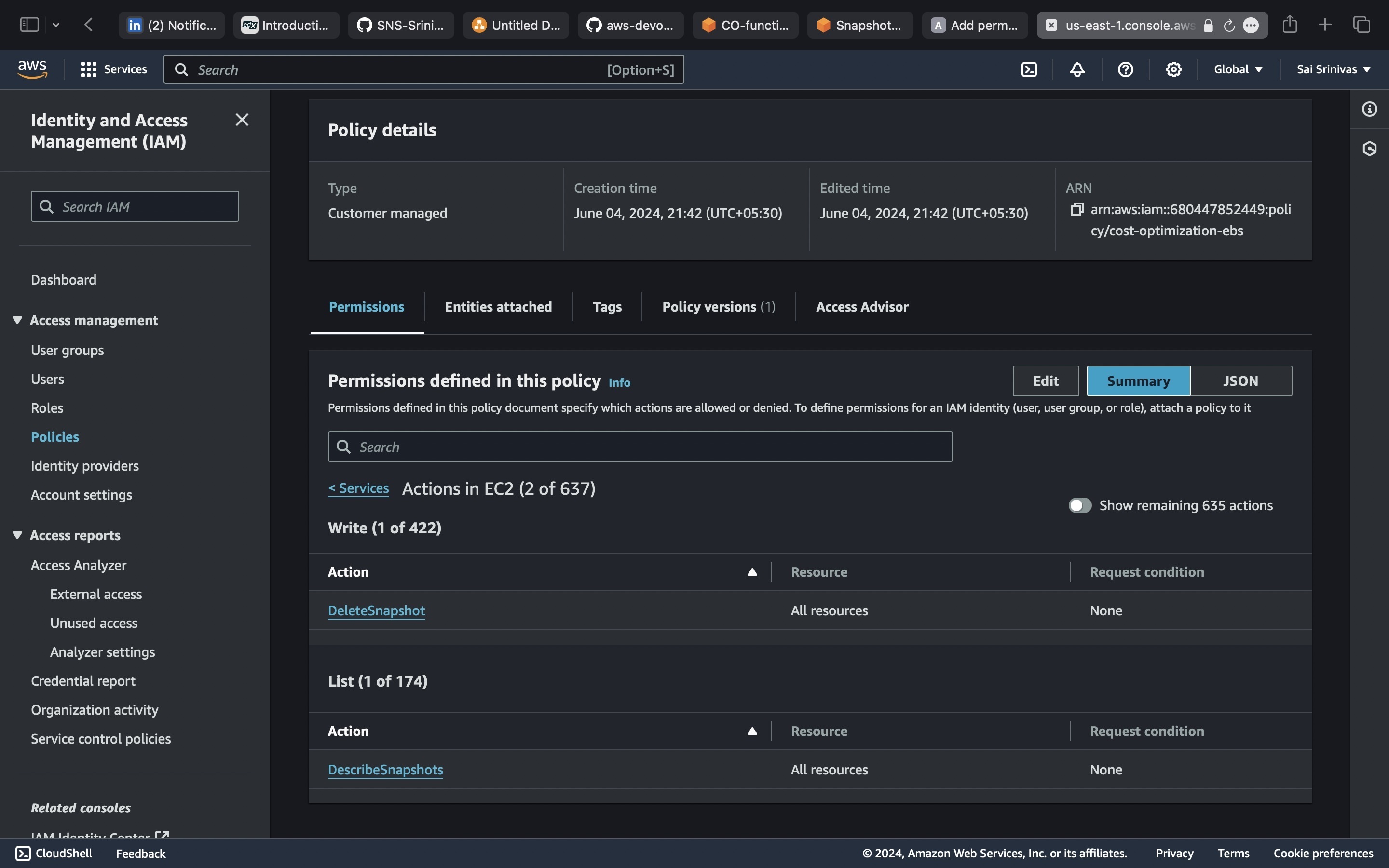

1,906,743 | AWS Cloud Cost Optimization - Identifying Stale Resources | In the fast-paced world of cloud computing, managing costs efficiently is crucial for any... | 0 | 2024-06-30T15:28:14 | https://dev.to/sukuru_naga_sai_srinivasu/aws-cloud-cost-optimization-identifying-stale-resources-52ao | cloud, costoptimization, aws, lambda |

In the fast-pac... | sukuru_naga_sai_srinivasu |

1,906,686 | Performance: mistakes to avoid | It might seem obvious, but performance is a concern, and it's easy to make mistakes in that... | 16,464 | 2024-06-30T15:20:51 | https://dev.to/spo0q/performance-mistakes-to-avoid-4pca | programming, performance, bookmarks, development | It might seem obvious, but performance is a concern, and it's easy to make mistakes in that particular matter.

This topic can be quite sensitive in dev teams because performance failures are usually impactful.

## 🦄 "Too unlimited"?

Indeed, resources like memory are limited. Even if you can enable horizontal scaling... | spo0q |

1,906,687 | Frontend Face-Off: React vs. Vue.js - An HNG Intern's Perspective | Ever wondered which tool to use to build awesome websites? Today, we compare two frontend titans:... | 0 | 2024-06-30T15:18:55 | https://dev.to/barshow/frontend-face-off-react-vs-vuejs-an-hng-interns-perspective-5466 | hng | Ever wondered which tool to use to build awesome websites? Today, we compare two frontend titans: ReactJS (the one we use at HNG [link to HNG Internship website, https://hng.tech/internship]) and Vue.js! Both are JavaScript libraries that help you create dynamic and interactive web experiences, but they have their own ... | barshow |

1,906,685 | Dive into the Captivating World of Modern 3D Graphics Programming! 🎮 | Comprehensive guide to modern 3D graphics programming, covering the latest techniques and technologies. Suitable for beginners and experienced developers. | 27,801 | 2024-06-30T15:14:29 | https://getvm.io/tutorials/learning-modern-3d-graphics-programming | getvm, programming, freetutorial, technicaltutorials |

As a passionate programmer and graphics enthusiast, I recently discovered an incredible resource that has completely transformed my understanding of modern 3D graphics programming. Allow me to introduce you to "Learning Modern 3D Graphics Programming" by Jason L. McKesson – a comprehensive guide that will take you on... | getvm |

1,906,684 | .present? VS .any? VS .exists? | Something I have a difficult time wrapping my head around as a newbie is how actually super... | 0 | 2024-06-30T15:13:49 | https://dev.to/sakuramilktea/present-vs-any-vs-exists-34pp | rails, database, queries, sql |

Something I have a difficult time wrapping my head around as a newbie is how actually super important it is to care about query speeds.

Different methods will have _vastly_ different query speeds, and it's therefor... | sakuramilktea |

1,906,682 | The WebAssembly Magic 🪄 | Hello amazing people 👋, Have you ever thought about running some other programming language on the... | 0 | 2024-06-30T15:08:51 | https://dev.to/prathamjagga/the-wasm-magic-3p2n | webdev, programming, devops, performance | Hello amazing people 👋,

Have you ever thought about running a programming language other than JavaScript in the browser 🤔, but then decided it isn't possible because browsers natively support only JavaScript?

Now what if I tell you that WebAssembly is the solution here, it enables us to run any compil... | prathamjagga |

1,906,455 | Optimizing Your React App: A Guide to Production-Ready Setup with Webpack, TypeScript, ESLint, and Prettier - 2024 | In this blog post, we'll cover everything you need to know to set up a React app that's ready for... | 0 | 2024-06-30T15:08:11 | https://dev.to/shivampawar/optimizing-your-react-app-a-guide-to-production-ready-setup-with-webpack-typescript-eslint-and-prettier-2024-4lcl | react, learning, webpack, typescript | In this blog post, we'll cover everything you need to know to set up a React app that's ready for deployment.

GitHub Repo: https://github.com/shivam-pawar/sample-react-app

## Prerequisites

Before we begin, make sure you have Node.js and npm (or yarn) installed on your machine.

## Initialize a new project

Use your C... | shivampawar |

1,906,681 | Keeping Your Data Close: Cross-Region Replication with AWS DMS | Keeping Your Data Close: Cross-Region Replication with AWS DMS In today's digital... | 0 | 2024-06-30T15:05:35 | https://dev.to/virajlakshitha/keeping-your-data-close-cross-region-replication-with-aws-dms-14bo |

# Keeping Your Data Close: Cross-Region Replication with AWS DMS

In today's digital landscape, businesses require resilient and scalable solutions to ensure data availability and disaster recovery. Geographic redundancy, achieved b... | virajlakshitha | |

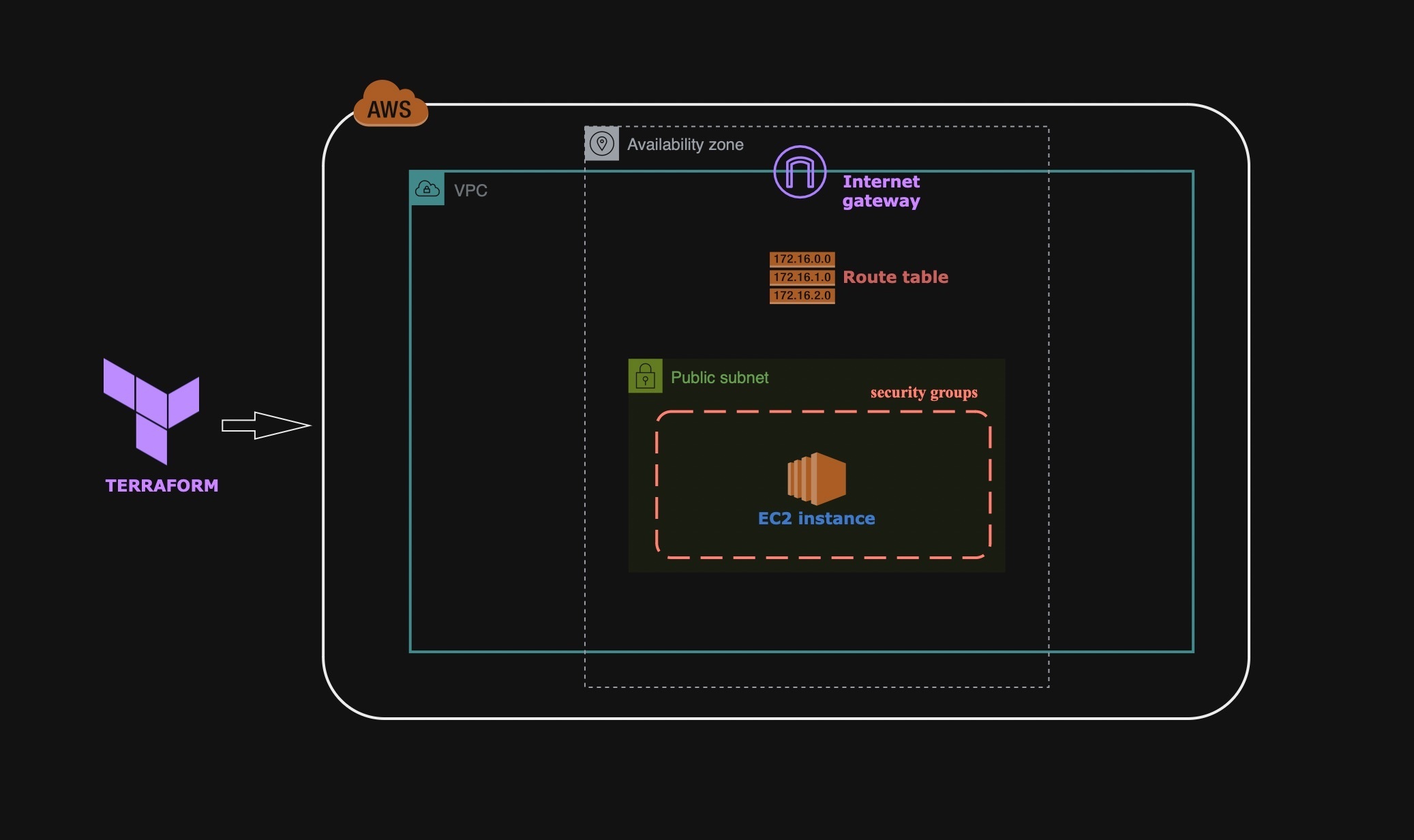

1,906,680 | Networking-Architecture-using-Terraform | 🖥 Excited to Share My Latest Project with AWS and Terraform! I am thrilled to announce that I... | 0 | 2024-06-30T15:03:29 | https://dev.to/sukuru_naga_sai_srinivasu/networking-architecture-using-terraform-4flp | aws, terraform |

🖥 Excited to Share My Latest Project with AWS and Terraform!

I am thrilled to announce that I have recently completed a small but impactful project leveraging AWS and Terraform. This hand... | sukuru_naga_sai_srinivasu |

1,906,678 | Automating Email Notifications for S3 Object Uploads using SNS | Introduction: In today's cloud-centric world, automation is key to managing and scaling... | 0 | 2024-06-30T14:59:13 | https://dev.to/mohanapriya_s_1808/automating-email-notifications-for-s3-object-uploads-using-sns-5efb | **Introduction:**

In today's cloud-centric world, automation is key to managing and scaling infrastructure efficiently. Amazon Web Services (AWS) offers a robust suite of services that allow developers to build complex systems with relative ease. One such powerful combination is Amazon Simple Storage Service (S3) and ... | mohanapriya_s_1808 | |

1,906,657 | Mastering Caching in Distributed Systems: Strategies for Consistency and Scalability | Handling Caching in a Distributed System is difficult but not impossible. This is going to be long... | 0 | 2024-06-30T14:57:57 | https://dev.to/nayanraj-adhikary/deep-dive-caching-in-distributed-systems-at-scale-3h1g | webdev, javascript, development, beginners | Handling Caching in a Distributed System is difficult but not impossible.

This is going to be long, but informative.

Throughout, I will refer to a Distributed System as DS.

For a Basic Understanding of Caching refer to my previous blogs

1. [Deep Dive into Caching: Techniques for High-Performance Web Apps](https://dev.t... | nayanraj-adhikary |

1,906,676 | Issue 005 - The Bottleneck of Continuous Writing | I went to the sea again this weekend. Every time I connect with nature and feel the sea breeze, it... | 0 | 2024-06-30T14:56:27 | https://dev.to/justin3go/issue-005-the-bottleneck-of-continuous-writing-313b |

I went to the sea again this weekend. Every time I connect with nature and feel the sea breeze, it seems like all the fatigue of the week just vanishes.

> website: [fav0.com](https://fav0.com/en/)

## \>\> Topics to Discuss

**The Bottleneck of Continuous Writing**

... | justin3go | |

1,906,675 | About using Microsoft office 365 (offline version) for free | As a budding Data Analyst, I have encountered challenges with utilizing Microsoft 365 due to... | 0 | 2024-06-30T14:52:16 | https://dev.to/dipalee_gaware_b4630cc678/about-using-microsoft-office-365-offline-version-for-free-42im | As a budding Data Analyst, I have encountered challenges with utilizing Microsoft 365 due to financial constraints. I am seeking guidance on how to access or download Microsoft Office 365 for free in its offline version. This is important as certain functionalities I require are not available in the online version. You... | dipalee_gaware_b4630cc678 | |

1,906,674 | What Is BC-404: A Comprehensive Guide to the Latest Deflationary NFT Standard | Introduction: Recently, a new NFT standard called BC-404 has emerged following the advent of the... | 0 | 2024-06-30T14:51:03 | https://dev.to/nft_research/what-is-bc-404-a-comprehensive-guide-to-the-latest-deflationary-nft-standard-99j | nft, web3 | Introduction:

Recently, a new NFT standard called BC-404 has emerged following the advent of the ERC-404 standard, bringing fresh possibilities to the NFT market.

BC-404, which stands for Bonding Curve 404, is the first deflationary NFT contract in the cryptocurrency space, building upon and improving the ERC-404 stan... | nft_research |

1,906,672 | React vs. Vue: Frontend Duel. | Hey folks, I’ve been diving into two awesome frontend technologies: React and Vue. Both are super... | 0 | 2024-06-30T14:42:00 | https://dev.to/mashobtechie/react-vs-vue-frontend-duel-m97 | webdev, javascript, frontend, programming | Hey folks,

I’ve been diving into two awesome frontend technologies: React and Vue. Both are super powerful but have their own vibes. Since I use ReactJS a lot, I thought it’d be cool to explore Vue too, which I did, though only for a short period of time. So, here’s what I found:

Let's start with React:

React is a library for b... | mashobtechie |

1,906,671 | 7 Books That Make You A Great Tech Lead | This article was originally posted in my blog:... | 0 | 2024-06-30T14:39:40 | https://dev.to/codebymedu/7-books-that-make-you-a-great-tech-lead-16gf | leadership, frontend, beginners | This article was originally posted in my blog: [https://www.codebymedu.com/blog/7-books-for-tech-lead](https://www.codebymedu.com/blog/7-books-for-tech-lead)

If you're already a tech lead or are planning to become a tech lead in the future, I've gathered 7 of the books I've read that helped me become a successful tech... | codebymedu |

1,906,669 | React vs Flutter | Getting into the field of frontend web development can be exciting and at the same time confusing. Here... | 0 | 2024-06-30T14:36:59 | https://dev.to/cebuka/react-vs-flutter-1mac | webdev, flutter, react, beginners | Getting into the field of frontend web development can be exciting and at the same time confusing. Here you'll discover that there are myriad tools to get the job done. The thought of which tool to use can become a hindrance to productivity. In this article, we compare and contrast two well-known web technology tools: Rea... | cebuka |

1,906,668 | How I built a Billion Dollar Company - Alone! | 14 months ago I had a falling out with my VC guy. We went different ways. My VC guy got to keep my... | 0 | 2024-06-30T14:33:42 | https://ainiro.io/blog/how-i-built-a-billion-dollar-company-alone | startup | 14 months ago I had a falling out with my VC guy. We went different ways. My VC guy got to keep my previous company entirely, and I got to keep my IP and he released me from all non-competes and existing agreements we had.

Yesterday people started whispering that AINIRO might be worth one billion dollars, so I've ther... | polterguy |

1,906,666 | Page navigation with react-router-dom | To navigate to pages in a React project, we use react-router-dom, which offers many features such as... | 0 | 2024-06-30T14:31:00 | https://dev.to/thinhkhang97/page-navigation-with-react-router-dom-a38 | webdev, react, navigation | To navigate to pages in a React project, we use react-router-dom, which offers many features such as routing and creating layouts with <Outlet/>, etc. Today, I'd like to show you how to use react-router-dom in a React project with Vite.

I recommend you read my article on setting up a React project [here](https://dev.t... | thinhkhang97 |

1,906,665 | MyFirstApp - React Native with Expo (P10) - Create a Layout Profile | MyFirstApp - React Native with Expo (P10) - Create a Layout Profile | 27,894 | 2024-06-30T14:30:00 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p10-create-a-layout-profile-29cc | react, reactnative, webdev, tutorial | MyFirstApp - React Native with Expo (P10) - Create a Layout Profile

{% youtube NTiZwSiEq9M %} | skipperhoa |

1,906,663 | My Journey of Taming Transitive Dependencies in Spring Boot | The beauty of being a Software Developer lies in the endless journey of learning and overcoming... | 0 | 2024-06-30T14:29:27 | https://dev.to/the_zen/my-journey-of-taming-transitive-dependencies-in-spring-boot-3n2c | java, springboot, developers, softwareengineering | The beauty of being a Software Developer lies in the endless journey of learning and overcoming challenges. Among the myriads of obstacles I’ve faced, one stands out as particularly overwhelming: **_Managing Transitive Dependencies in my Spring Boot application using Maven_**.

This is the tale of my struggle, frustra... | the_zen |

1,906,664 | Choosing Your Frontend Champion: React vs Vue Explained for Beginners | Earlier this year, I set out on a roadmap to master full-stack web development, starting with... | 0 | 2024-06-30T14:29:08 | https://dev.to/sochuks/choosing-your-frontend-champion-react-vs-vue-explained-for-beginners-2ojp | frontend, react, vue | Earlier this year, I set out on a roadmap to master full-stack web development, starting with frontend technologies. A quick internet search provided results to many exciting frameworks and their capabilities but soon enough I was able to narrow my decision to two of the most popular frameworks React and Vue. Both offe... | sochuks |

1,906,661 | MyFirstApp - React Native with Expo (P9) - Custom Bottom Tab | MyFirstApp - React Native with Expo (P9) - Custom Bottom Tab | 27,894 | 2024-06-30T14:28:31 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p9-custom-bottom-tab-3eb6 | react, reactnative, webdev, tutorial | MyFirstApp - React Native with Expo (P9) - Custom Bottom Tab

{% youtube 0scLTwrfoZg %} | skipperhoa |

1,906,660 | MyFirstApp - React Native with Expo (P8) - Add Bottom Sheet | MyFirstApp - React Native with Expo (P8) - Add Bottom Sheet | 27,894 | 2024-06-30T14:27:20 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p8-add-bottom-sheet-5abh | react, reactnative, webdev, tutorial | MyFirstApp - React Native with Expo (P8) - Add Bottom Sheet

{% youtube 9w8hnLNB5DY %} | skipperhoa |

1,906,659 | Understanding Closures in JavaScript: A Powerful Mechanism for Variable Scope | Demystifying the Concept of Closures Closures are a fundamental concept in JavaScript that... | 0 | 2024-06-30T14:23:59 | https://dev.to/sahilatahar/understanding-closures-in-javascript-a-powerful-mechanism-for-variable-scope-2mfg | ## Demystifying the Concept of Closures

Closures are a fundamental concept in JavaScript that can be challenging to grasp at first, but once understood, they become a powerful tool in your programming arsenal. In this blog post, we'll dive deep into the world of closures, exploring what they are, how they work, and wh... | sahilatahar | |

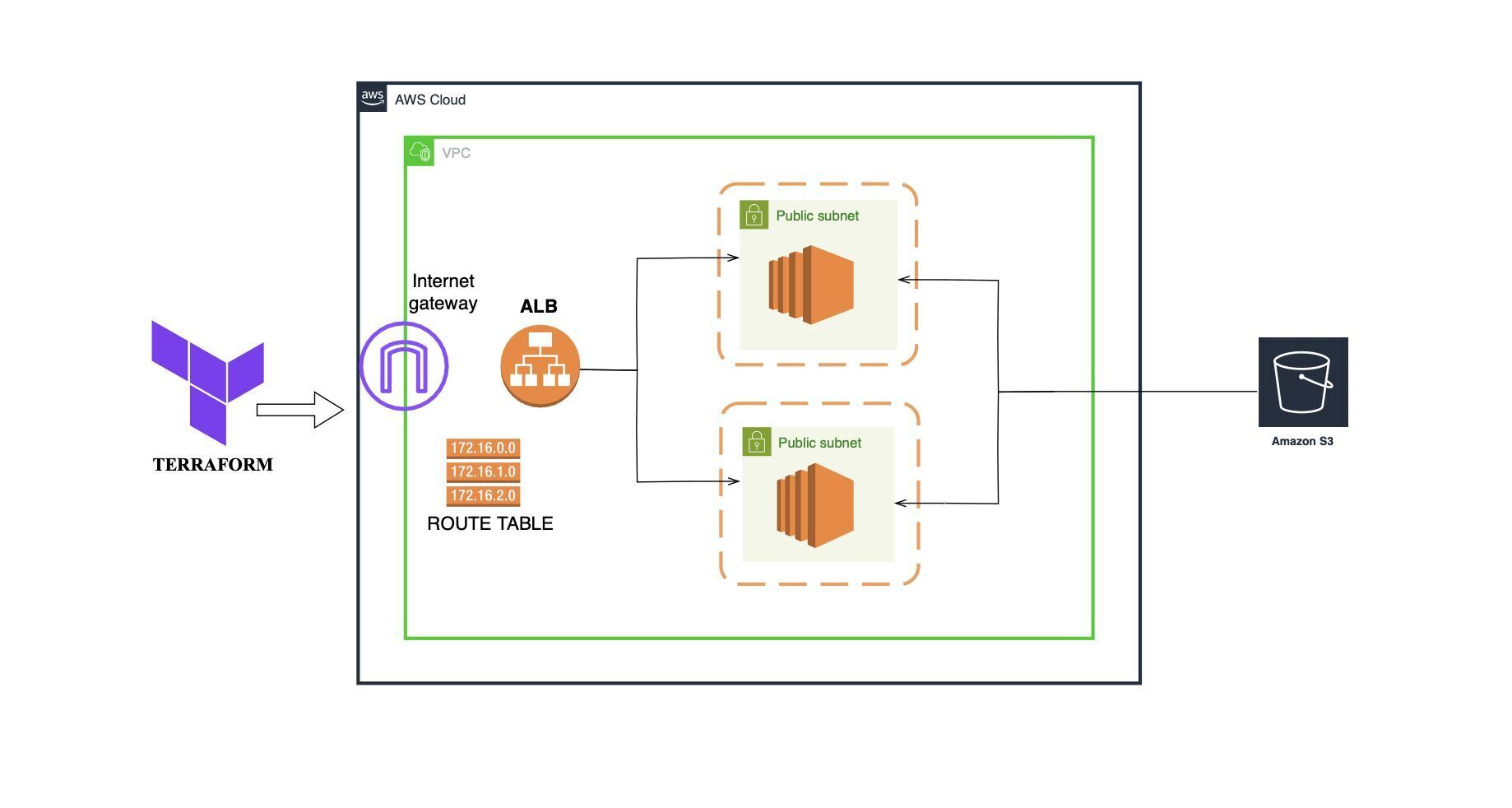

1,906,658 | AWS-infrastructure-using-Terraform | 🚀 Excited to share my latest project where I set up a highly available and scalable infrastructure... | 0 | 2024-06-30T14:22:41 | https://dev.to/sukuru_naga_sai_srinivasu/aws-infrastructure-using-terraform-3p6j | aws, terraform |

🚀 Excited to share my latest project where I set up a highly available and scalable infrastructure on AWS using Terraform! 🌐

🔧 Project Overview:

1) Provider Configuration: Utilized AWS as the cloud provide... | sukuru_naga_sai_srinivasu |

1,906,656 | Day20- 90DaysOfDevOps | Hey Learners! Welcome back. We learned about Docker, Docker-Compose, Docker-Volumes and Docker... | 0 | 2024-06-30T14:20:58 | https://dev.to/oncloud7/day20-90daysofdevops-156b | devops, docker, awschallenge, 90daysofdevop | Hey Learners! Welcome back. We learned about Docker, Docker-Compose, Docker-Volumes and Docker Networking hands-on. Now it's time to create a comprehensive cheat sheet of all the commands we've learned so far. Let's get started.

_**Docker Commands:-**_

**docker login**

**docker pull <image-name>**

**docker push <repo... | oncloud7 |

1,906,633 | A Comprehensive Guide to Functional Testing with Selenium | Functional testing is a crucial aspect of software development, ensuring that applications perform as... | 0 | 2024-06-30T14:14:53 | https://dev.to/iaadidev/a-comprehensive-guide-to-functional-testing-with-selenium-1mek | selenium, testing, devops, linux |

Functional testing is a crucial aspect of software development, ensuring that applications perform as expected under various conditions. Among the numerous tools available for functional testing, Selenium stands out due to its flexibility and extensive support for web applications. In this blog, we will explore Selen... | iaadidev |

1,906,632 | SvelteKit vs React: A Technical Comparison | Web development frameworks are constantly evolving, and two popular options for building modern web... | 0 | 2024-06-30T14:10:39 | https://dev.to/chiater_dev/sveltekit-vs-react-a-technical-comparison-297p | webdev, javascript, programming, beginners |

Web development frameworks are constantly evolving, and two popular options for building modern web applications are SvelteKit and React. This article will compare these technologies across several key dimensions to help developers make informed choices for their projects.

## Overview

### SvelteKit

SvelteKit is a f... | chiater_dev |

1,906,631 | Our Approach to AWS Well-Architected Best Practices | What is Well-Architected? The Well-Architected framework, a comprehensive compilation of... | 0 | 2024-06-30T14:08:37 | https://dev.to/shu85t/our-approach-to-aws-well-architected-best-practices-3edg | aws, wellarchitectedframework | ## What is Well-Architected?

The Well-Architected framework, a comprehensive compilation of 'best practices' for cloud architecture, is created by AWS.

https://aws.amazon.com/architecture/well-architected

Based on this review, you can identify risks that are not in line with best practices.

## Impressions from Conduc... | shu85t |

1,906,628 | Developing Custom Plugins for CoreDNS | Develop and Run Custom Plugins for CoreDNS | 0 | 2024-06-30T14:05:27 | https://dev.to/satrobit/developing-custom-plugins-for-coredns-4jnj | tutorial, go, linux, programming | ---

title: Developing Custom Plugins for CoreDNS

published: true

description: Develop and Run Custom Plugins for CoreDNS

tags: tutorial,go,linux,programming

---

## Introduction To CoreDNS

CoreDNS is a powerful, flexible DNS server written in Go. One of its key features is its plugin-based architecture, which allows u... | satrobit |

1,906,629 | Comparing Frontend Technologies: ReactJS vs VueJS | Introduction As a newbie to front-end development, navigating the landscape of... | 0 | 2024-06-30T14:04:04 | https://dev.to/xyzeez/comparing-frontend-technologies-reactjs-vs-vuejs-210j | react, veu, webdev, frontend | ## Introduction

As a newbie to front-end development, navigating the landscape of technologies can be overwhelming. Among the many frameworks and libraries available, ReactJS and VueJS have emerged as two of the most popular and widely adopted tools for building modern web applications. Both offer powerful features, a ... | xyzeez |

1,906,627 | A Frontend Technology Comparison of Svelte vs Alpine.js | Introduction In this article, we will learn about Svelte & Alpine.js, along with discussing the... | 0 | 2024-06-30T14:00:57 | https://dev.to/audu_sunday_c2624dc16e5b3/a-frontend-technology-comparison-of-svelte-vs-alpinejs-1eka | beginners, webdev, hng | **Introduction**

In this article, we will learn about Svelte & Alpine.js, along with discussing the significant distinction that differentiates Svelte from Alpine.js.

In the dynamic world of frontend development, finding the right tool can significantly impact the efficiency and performance of your projects. While popu... | audu_sunday_c2624dc16e5b3 |

1,906,625 | A Backend Story | This story is quite intriguing as I would not call myself a Backend Engineer or Programmer yet. I am... | 0 | 2024-06-30T13:57:00 | https://dev.to/veescript/a-backend-story-2cp5 | backenddevelopment, php, python, beginners | This story is quite intriguing as I would not call myself a Backend Engineer or Programmer yet.

I am a person with no background at all in backend programming except the basic CSC 201 class that taught us little to nothing about Python programming. All I knew was, there was something called languages, environments and ... | veescript |

1,906,626 | TypeScript vs React; which should I learn as a frontend developer. | React is a JavaScript library used to build reusable UI components for single-page applications. It... | 0 | 2024-06-30T13:56:40 | https://dev.to/victor_88/typescript-vs-react-which-should-i-learn-as-a-frontend-developer-38gh | React is a JavaScript library used to build reusable UI components for single-page applications. It uses JSX (JavaScript XML), a syntax that allows you to write HTML-like code. Although JSX is not compulsory, it makes building applications with React easier.

TypeScript is a superset of JavaScript, which means that typescr... | victor_88 | |

1,906,623 | Moonlight Architecture - The Old-New | Moonlight Architecture is an architecture that has existed for a while but remained nameless.... | 0 | 2024-06-30T13:54:52 | https://dev.to/jet_ezra/moonlight-architecture-the-old-new-4ph5 | php, pionia, webdev, restapi | [Moonlight Architecture](https://pionia.netlify.app/moonlight/moonlight-architecture/) is an architecture that has existed for a while but remained nameless. Therefore, it is not right to say it is a new architecture yet most developers and companies have been using it. It borrows its features from commonly used archit... | jet_ezra |

1,906,622 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-06-30T13:53:40 | https://dev.to/wgac_0f8ada999859bdd2c0e5/paper-detailing-bitpower-loops-security-i6d | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | wgac_0f8ada999859bdd2c0e5 | |

1,896,879 | functions and their inverses: 2 insightful examples. | Math is often considered hard. But, that is not true. Math is about logic. Following what you know to... | 27,818 | 2024-06-30T13:53:18 | https://dev.to/0xc0der/functions-and-their-inverses-2-insightful-examples-3iha | math, tutorial, learning, mathpills | Math is often considered hard. But, that is not true. Math is about logic. Following what you know to reach an understanding of what you don't. That we can do naturally. Our brains are wired to think.

**In my opinion**, most problems with math can be traced to:

- lack of **foundation**.

- unwillingness to g... | 0xc0der |

1,906,621 | REACTJS | Hey!! I started my frontend journey with HTML, CSS and JavaScript, which took me about one year to... | 0 | 2024-06-30T13:52:34 | https://dev.to/anthonynelson/reactjs-5401 | Hey!! I started my frontend journey with HTML, CSS and JavaScript, which took me about one year to learn well enough to start building static websites. I then moved on to learning a framework. I had plenty of frameworks to choose from, such as Angular, Vue and React, but I ended up going for Re... | anthonynelson | |

1,906,101 | Google I/O Extended 2024 Cape Town | Google I/O Extended events are organized by local Google Developer Groups worldwide as an extended... | 0 | 2024-06-30T13:51:59 | https://dev.to/muhammedsalie/google-io-extended-2024-cape-town-4bai | ioextended, ioextended2024, gdgcapetown, machinelearning | Google I/O Extended events are organized by local Google Developer Groups worldwide as an extended version of the main I/O conference held in California.

The Google Developer Group in Cape Town, in partnership with... | muhammedsalie |

1,906,620 | TIL you can create keyboard shortcuts to switch between a specific desktop | Normally on a Mac, you swipe left or right on the trackpad to switch between desktops. But... | 0 | 2024-06-30T13:51:32 | https://dev.to/benji011/til-you-can-create-keyboard-shortcuts-to-switch-between-a-specific-desktop-1ic6 | todayilearned, mac, osx | Normally on a Mac, you swipe left or right on the trackpad to switch between desktops. But you can add a keyboard shortcut that jumps straight to a specific desktop instead of swiping multiple times or swiping up to select one.

## How to do this

> System preferences > Keyboard > Keyboard shortcuts > Mission Control > Tick the c... | benji011 |

1,906,618 | Shaping the Future: A Deep Dive into Top Universities in Uttarakhand | Introduction Uttarakhand, nestled in the lap of the Himalayas, not only boasts breathtaking natural... | 0 | 2024-06-30T13:49:45 | https://dev.to/nisha_rawat_b538a76f5cc46/shaping-the-future-a-deep-dive-into-top-universities-in-uttarakhand-15d2 | **Introduction**

Uttarakhand, nestled in the lap of the Himalayas, not only boasts breathtaking natural beauty but also houses several esteemed universities and colleges that contribute significantly to the region's educational prowess. This article aims to provide an in-depth exploration of some of the top educational... | nisha_rawat_b538a76f5cc46 | |

1,906,617 | Introduction to Apache Hadoop & MapReduce | The History of Hadoop There are mainly two problems with the big data. Storage for a... | 0 | 2024-06-30T13:48:35 | https://dev.to/shvshydv/introduction-to-apache-hadoop-30ka | hadoop, dataengineering, bigdata, datascience | ## The History of Hadoop

There are two main problems with big data:

- Storage for a huge amount of data.

- Processing of that stored data.

In 2003, Google published a paper describing its distributed file system, the **Google File System (GFS)**, which can be used for storing large data sets.

Similarly in 2004, Go... | shvshydv |

1,906,616 | Revolutionizing Visual Content: The Power of Undress AI | Overview of Undress AI Discover how Undress AI reshapes photo editing with its advanced capabilities... | 0 | 2024-06-30T13:45:36 | https://dev.to/gogato2980/revolutionizing-visual-content-the-power-of-undress-ai-4ig6 | **Overview of Undress AI**

Discover how Undress AI reshapes photo editing with its advanced capabilities in realistic clothing alterations. This platform caters to professionals across industries, providing intuitive tools for enhancing visual storytelling.

**Key Features of Undress AI**

Advanced AI Technology

Undress... | gogato2980 | |

1,906,615 | Comparing Frontend Technologies: ReactJS vs. Svelte | When choosing a frontend framework, it's important to know what each one offers. Let's compare... | 0 | 2024-06-30T13:42:56 | https://dev.to/badmuseniola/comparing-frontend-technologies-reactjs-vs-svelte-5d8m | When choosing a frontend framework, it's important to know what each one offers. Let's compare ReactJS and Svelte to see which might be best for your next project.

**ReactJS**

ReactJS, developed by Facebook, is known for building dynamic user interfaces with a component-based architecture.

**Key Features:**

- Compon... | badmuseniola | |

1,906,614 | Array search methods in JavaScript.! | Array search methods in JavaScript. Array indexOf() Array lastIndexOf() Array... | 0 | 2024-06-30T13:37:52 | https://dev.to/samandarhodiev/array-search-methods-in-javascript-406p | **Array search methods in JavaScript**

`Array indexOf()

Array lastIndexOf()

Array includes()

Array find()

Array findIndex()

Array findLast()

Array findLastIndex()`

<u>1. `indexOf()`</u>

This method returns the index at which an array element is located.

<u>**Syntax:** `arrayName.indexOf(item, start);`</u>
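As a minimal sketch of this syntax, the snippet below shows how the optional `start` argument changes where the search begins. The `fruits` array and its values are hypothetical sample data, not taken from the original article.

```javascript
// Hypothetical sample data, used only for illustration.
const fruits = ["apple", "banana", "cherry", "banana"];

// Without a start index, the search begins at index 0
// and stops at the first match.
const first = fruits.indexOf("banana");

// With start = 2, the search skips indexes 0 and 1,
// so the second "banana" is the one that is found.
const afterTwo = fruits.indexOf("banana", 2);

// When the item is not in the array, indexOf returns -1.
const missing = fruits.indexOf("mango");

console.log(first, afterTwo, missing); // 1 3 -1
```

Because a missing item yields `-1` rather than `undefined` or `false`, a presence check is usually written as `fruits.indexOf(x) !== -1`.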

... | samandarhodiev | |

1,906,613 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-06-30T13:35:06 | https://dev.to/weq_24a494dd3a467ace6aca5/paper-detailing-bitpower-loops-security-31jj | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | weq_24a494dd3a467ace6aca5 | |

1,906,611 | Understanding Security Context in Kubernetes | Introduction Kubernetes, a leader in container orchestration, ensures that applications... | 0 | 2024-06-30T13:31:01 | https://dev.to/piyushbagani15/understanding-security-context-in-kubernetes-1gkn | kubernetes, security | ## Introduction

Kubernetes, a leader in container orchestration, ensures that applications run efficiently and securely across a cluster of machines. An essential component of Kubernetes' security mechanism is the security context, which configures permissions and access controls for Pods and containers. This blog del... | piyushbagani15 |

1,906,609 | Ego, Not Pride, Comes Before a Fall | Let me tell you a bit about myself and why I am qualified to have an opinion on pride vs ego in... | 0 | 2024-06-30T13:29:23 | https://dev.to/thesimpledev/ego-not-pride-comes-before-a-fall-3bcl | programming, career | Let me tell you a bit about myself and why I am qualified to have an opinion on pride vs ego in Software Engineering. I have been working as a software engineer for over 17 years, prior to that I spent 15 years programming as an amateur. At 41, I have over 30 years of experience programming for fun and profit. During t... | thesimpledev |

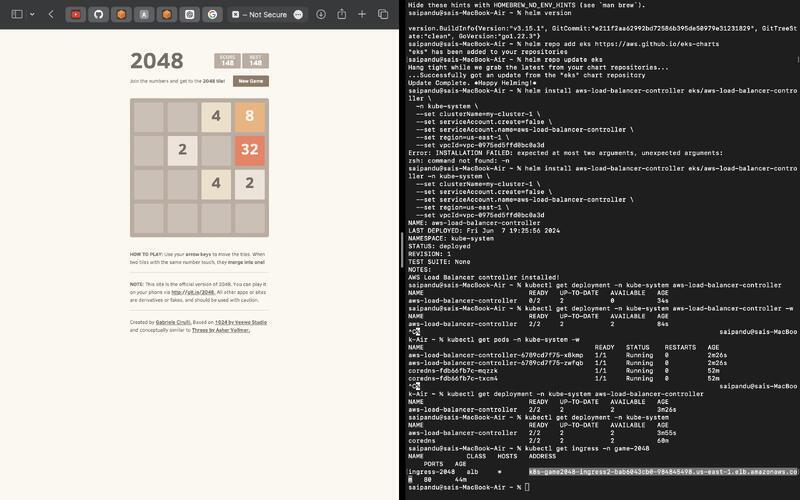

1,906,608 | Kubernetes-project-using-an-EKS-cluster | 🚀Excited to share my recent Kubernetes project where I deployed the 2048 Game Application using an... | 0 | 2024-06-30T13:27:33 | https://dev.to/sukuru_naga_sai_srinivasu/kubernetes-project-using-an-eks-cluster-42kg | kubernetes, aws, cloudcomputing |

🚀Excited to share my recent Kubernetes project where I deployed the 2048 Game Application using an EKS cluster! This project was a great learning experience, leveraging EKS, Fargate, and the Application Load Bal... | sukuru_naga_sai_srinivasu |

1,906,607 | Effective Debugging Techniques for React JS: Debugging Doesn’t Have to Be a Drag! | Hey there, fellow React JS developer! 🧑💻 We’ve all been there: you’re cruising along, building your... | 0 | 2024-06-30T13:24:18 | https://dev.to/a_shokn/effective-debugging-techniques-for-react-js-debugging-doesnt-have-to-be-a-drag-2iea | webdev, javascript, beginners, tutorial | Hey there, fellow React JS developer! 🧑💻

We’ve all been there: you’re cruising along, building your latest and greatest app, when suddenly… BAM! An error message pops up out of nowhere. Panic sets in. But fear not! Debugging doesn’t have to be a drag. In fact, with the right techniques, it can be as smooth as butte... | a_shokn |

1,906,606 | Swapping in elasticsearch to the proto-OLIVER | In the last video of the llm-zoomcamp, which I didn't post about, I reformatted the code to make it... | 0 | 2024-06-30T13:24:03 | https://dev.to/cmcrawford2/swapping-in-elasticsearch-to-the-proto-oliver-2ml1 | llm, rag | In the last video of the [llm-zoomcamp](https://github.com/datatalksclub/llm-zoomcamp), which I didn't post about, I reformatted the code to make it modular, so I could swap in a different search engine or a different LLM. In this video, the last video of module 1, I learned how to exchange elasticsearch with the in-me... | cmcrawford2 |

1,906,605 | Use XDebug for PHP Project Debugging | XDebug is an indispensable debugging tool in PHP development, offering powerful features for... | 0 | 2024-06-30T13:22:46 | https://dev.to/servbay/use-xdebug-for-php-project-debugging-2i4o | php, webdev, beginners, programming | [XDebug](https://xdebug.org/) is an indispensable debugging tool in PHP development, offering powerful features for breakpoint debugging, performance analysis, and code coverage. With XDebug, developers can set breakpoints in the code, inspect variable values, trace function call stacks, analyze performance bottlenecks... | servbay |

1,906,603 | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data... | 27,915 | 2024-06-30T13:20:00 | https://dev.to/adewalebab/a-technical-report-on-my-observations-on-httpswwwkagglecomdatasetskyanyogasample-sales-data-3e17 | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data

My First Glance Observation of Sample Sales Data

The Sample Sales Data set available on Kaggle provides a collection of records related to sales transactions. It consists of a single CSV file containing various attributes that describe each tr... | adewalebab | |

1,906,604 | #2 Open Close Principle ['O' in SOLID] | OCP - Open/Close Principle The Open/Close Principle is the second principle in the Solid Design... | 0 | 2024-06-30T13:19:55 | https://dev.to/vinaykumar0339/2-open-close-principle-o-in-solid-2jj6 | openclosedprinciple, solidprinciples, designprinciples | **OCP - Open/Close Principle**

The Open/Close Principle is the second of the SOLID design principles.

1. Software entities should be open for extension but closed for modification.

**Today we will discuss a discount calculator system.**

**Violating OCP:**

```swift

enum DiscountType {

case seasonal

... | vinaykumar0339 |

1,906,602 | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data... | 0 | 2024-06-30T13:18:12 | https://dev.to/adewalebab/a-technical-report-on-my-observations-on-httpswwwkagglecomdatasetskyanyogasample-sales-data-4j0m | beginners | A Technical Report on My Observations on https://www.kaggle.com/datasets/kyanyoga/sample-sales-data

My First Glance Observation of Sample Sales Data

The Sample Sales Data set available on Kaggle provides a collection of records related to sales transactions. It consists of a single CSV file containing various attributes that describe each tr... | adewalebab |

1,906,601 | BitPower: Security Analysis of Decentralized Lending Platform | Introduction The rapid development of decentralized finance (DeFi) has made security an important... | 0 | 2024-06-30T13:18:08 | https://dev.to/aimm_y/bitpower-security-analysis-of-decentralized-lending-platform-2nf0 | Introduction

The rapid development of decentralized finance (DeFi) has made security an important consideration for users to choose a platform. As a leading decentralized lending platform, BitPower provides users with highly secure lending services through smart contracts and blockchain technology. This article briefly... | aimm_y | |

1,894,521 | ✨ Do this first if you are using an Auth Provider 🧙♂️ 🫵 | In this article, I will show you how you can safely backup your users data in your own database when... | 0 | 2024-06-30T13:16:24 | https://dev.to/shricodev/do-this-first-if-you-are-using-an-auth-provider-1ndo | webdev, javascript, opensource, programming | In this article, I will show you how you can safely back up your users' data in your own database when using an Authentication Provider.

#... | shricodev |

1,906,600 | A First Glance Review on Retail Sales Data | Introduction The dataset under review is a retail sales data sample, containing information on sales... | 0 | 2024-06-30T13:15:38 | https://dev.to/fadeelah/a-first-glance-review-on-retail-sales-data-2hi3 | data, analytics, datascience, powerplatform | **Introduction**

The dataset under review is a retail sales data sample, containing information on sales transactions, including variables such as product codes, customer information, order quantities, sales, and dates. As a data analysis intern, I'm always on the lookout for fresh insights and trends, and this dataset... | fadeelah |

1,906,599 | Frontend Technologies | A journey of a thousand miles begins with one step they say, I would say my journey into the tech... | 0 | 2024-06-30T13:12:46 | https://dev.to/veescript/frontend-technologies-3a85 | webdev, html, css, beginners | A journey of a thousand miles begins with one step, they say; I would say my journey into the tech world begins with this post.

I'm a brilliant analytical individual who discovered I have an affinity for numbers and lines of code. I mean I became fascinated with how coding works and while I don't know most functions, I... | veescript |

1,906,597 | Tech notes 02 - Most Important Command Line Notes | I finished the Command Line course from Elzero Web School on YouTube and the link to the notes... | 0 | 2024-06-30T13:10:20 | https://dev.to/omar99/most-important-command-line-notes-345g | cmd, tutorial | **I finished the Command Line course from Elzero Web School on YouTube; here is the link to the notes document.**

[cmd course - elzero - notes commands](https://docs.google.com/document/d/1V-uM4pyXYzr3J2jLjtPLjoE-Hckh57c7o67Ql6vbo5M/edit?usp=sharing)

**Note: The course is in Arabic but the notes are in English.** | omar99 |

1,906,595 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-06-30T13:08:40 | https://dev.to/sang_ce3ded81da27406cb32c/paper-detailing-bitpower-loops-security-da3 | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | sang_ce3ded81da27406cb32c | |

1,906,594 | How i solved a backend issue relating to Super apps(Miniapps) and prisma | When you journey into software development and decide to move into the backend aspect if the web,... | 0 | 2024-06-30T13:06:30 | https://dev.to/ejiroosiephri/how-i-solved-a-backend-issue-relating-to-super-appsminiapps-and-prisma-k2i | **When you journey into software development and decide to move into the backend side of the web, your debugging, understanding, and problem-solving skills must be off the charts.**

In this short article, I will discuss how I solved one of the pressing issues I faced while developing a super app with minia... | ejiroosiephri | |

1,906,591 | BitPower: Security Analysis of Decentralized Lending Platform | Introduction The rapid development of decentralized finance (DeFi) has made security an important... | 0 | 2024-06-30T13:00:11 | https://dev.to/aimm_x_54a3484700fbe0d3be/bitpower-security-analysis-of-decentralized-lending-platform-28pb | Introduction

The rapid development of decentralized finance (DeFi) has made security an important consideration for users to choose a platform. As a leading decentralized lending platform, BitPower provides users with highly secure lending services through smart contracts and blockchain technology. This article briefly... | aimm_x_54a3484700fbe0d3be | |

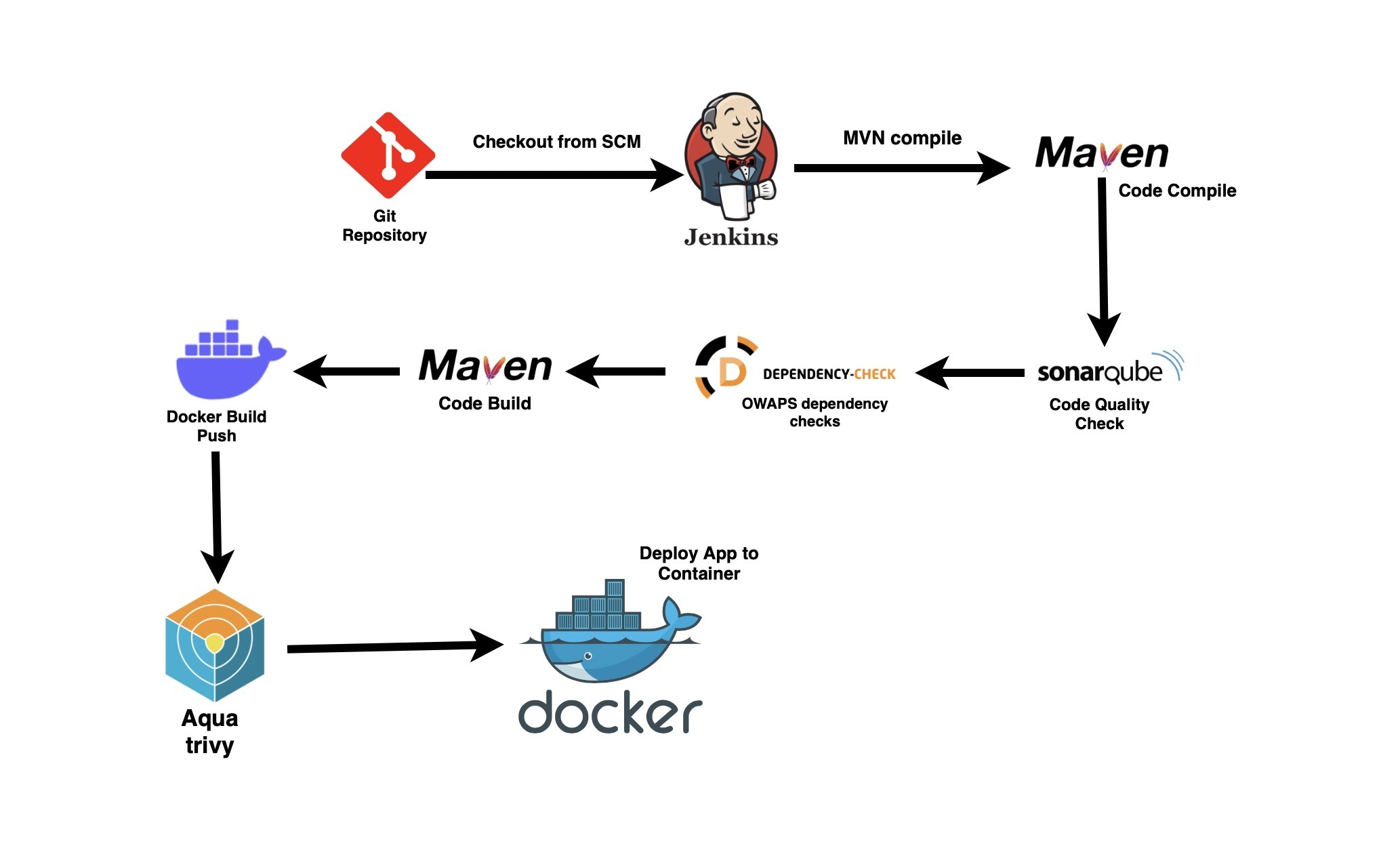

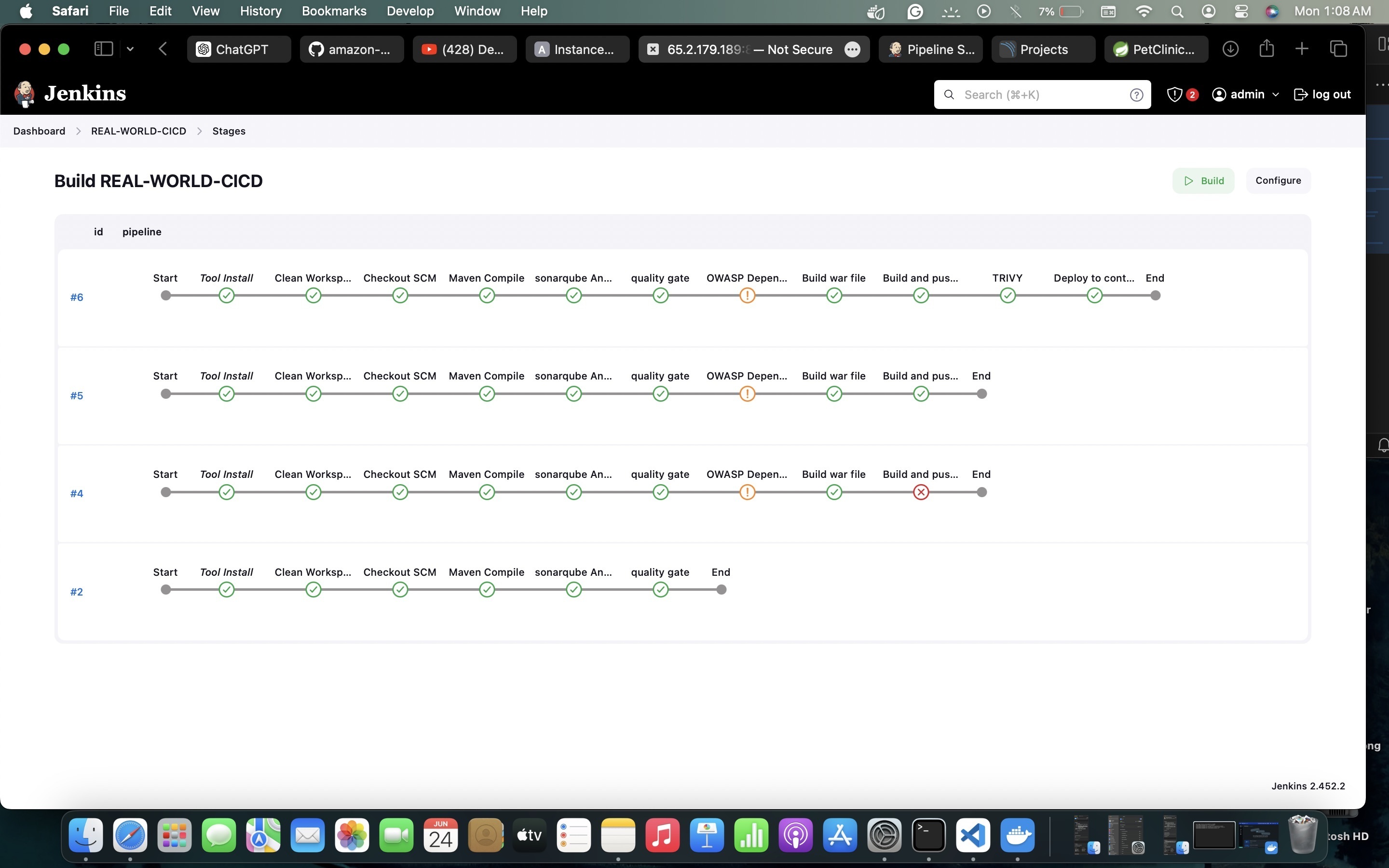

1,906,589 | CICD-PIPELINE (SIMPLE PET CLINIC WEB-APP) | 🚀I'm excited to share my First CI/CD Project,I have deployed a Java-based Pet Clinic application... | 0 | 2024-06-30T12:56:19 | https://dev.to/sukuru_naga_sai_srinivasu/cicd-pipeline-simple-pet-clinic-web-app-3p2i | jenkins, cicd, docker, aws |

🚀 I'm excited to share my first CI/CD project: I have deployed a Java-based Pet Clinic application using Jenki... | sukuru_naga_sai_srinivasu |

1,906,588 | The operating mechanism of BitPower Loop | Introduction With the development of blockchain technology, decentralized finance (DeFi) has become a... | 0 | 2024-06-30T12:52:40 | https://dev.to/woy_ca2a85cabb11e9fa2bd0d/the-operating-mechanism-of-bitpower-loop-5c06 | btc | Introduction

With the development of blockchain technology, decentralized finance (DeFi) has become a hot topic in the field of financial technology. As a decentralized lending protocol based on smart contracts, BitPower Loop is committed to providing secure, transparent and efficient financial services. This article w... | woy_ca2a85cabb11e9fa2bd0d |

1,906,586 | Git revert commit – Come rimuovere l'ultimo commit fatto | Fonte: Git Revert Commit – How to Undo the Last Commit Voglio riportare due metodi per poter... | 0 | 2024-06-30T12:51:31 | https://dev.to/mcale/git-revert-commit-come-rimuovere-lultimo-commit-fatto-1nb3 | git, revert, italian |

Source: [Git Revert Commit – How to Undo the Last Commit](https://www.freecodecamp.org/news/git-revert-commit-how-to-undo-the-last-commit/)

I want to describe two methods for restoring your code when faulty code has been committed by mistake or when you have committed to the wrong target branch.

## Il co... | mcale |

1,906,587 | The operating mechanism of BitPower Loop | Introduction With the development of blockchain technology, decentralized finance (DeFi) has become a... | 0 | 2024-06-30T12:51:28 | https://dev.to/woy_621fc0f3ac62fff68606e/the-operating-mechanism-of-bitpower-loop-2085 | btc | Introduction

With the development of blockchain technology, decentralized finance (DeFi) has become a hot topic in the field of financial technology. As a decentralized lending protocol based on smart contracts, BitPower Loop is committed to providing secure, transparent and efficient financial services. This article w... | woy_621fc0f3ac62fff68606e |

1,906,585 | The operating mechanism of BitPower Loop | Introduction With the development of blockchain technology, decentralized finance (DeFi) has become a... | 0 | 2024-06-30T12:49:41 | https://dev.to/wot_ee4275f6aa8eafb35b941/the-operating-mechanism-of-bitpower-loop-3l0 | btc | Introduction

With the development of blockchain technology, decentralized finance (DeFi) has become a hot topic in the field of financial technology. As a decentralized lending protocol based on smart contracts, BitPower Loop is committed to providing secure, transparent and efficient financial services. This article w... | wot_ee4275f6aa8eafb35b941 |

1,906,584 | How to Cancel Fetch Requests in JavaScript | Ever wanted to cancel a fetch request in JavaScript? Discover how the **AbortController **makes it... | 0 | 2024-06-30T12:47:49 | https://dev.to/adriangube/how-to-cancel-fetch-requests-in-javascript-4ggd | javascript, webdev, beginners, tutorial | Ever wanted to **cancel a fetch request in JavaScript?** Discover how the **AbortController** makes it easy to manage and stop async operations. Check out this quick guide to master it in just a few steps!

First, you might wonder when you would need to cancel a fetch request. Here are some examples:

When **filtering a ... | adriangube |

1,906,582 | The operating mechanism of BitPower Loop | Introduction With the development of blockchain technology, decentralized finance (DeFi) has become a... | 0 | 2024-06-30T12:44:46 | https://dev.to/wot_dcc94536fa18f2b101e3c/the-operating-mechanism-of-bitpower-loop-56ip | btc | Introduction

With the development of blockchain technology, decentralized finance (DeFi) has become a hot topic in the field of financial technology. As a decentralized lending protocol based on smart contracts, BitPower Loop is committed to providing secure, transparent and efficient financial services. This article w... | wot_dcc94536fa18f2b101e3c |

1,906,581 | Security: BitPower's impeccable security | Security: BitPower's impeccable security BitPower, a decentralized platform built on the blockchain,... | 0 | 2024-06-30T12:43:58 | https://dev.to/pingz_iman_38e5b3b23e011f/security-bitpowers-impeccable-security-5a76 |

Security: BitPower's impeccable security

BitPower, a decentralized platform built on the blockchain, knows the importance of security. In this rapidly changing digital world, security is the top priority for every u... | pingz_iman_38e5b3b23e011f | |

1,906,579 | An Exposition into Vue and React | Introduction As part of the tasks on HNG Internship 11, I'm supposed to talk about two... | 0 | 2024-06-30T12:41:49 | https://dev.to/ikuewumi/an-exposition-into-vue-and-react-m22 | webdev, javascript, vue, react | ## Introduction

As part of the tasks for HNG Internship 11, I'm supposed to talk about two frontend technologies, so let's get into it! ReactJS and Vue are among the most popular JavaScript frameworks used to create front-end applications. In this article, we'll be diving into the similarities, differences, and various pros a... | ikuewumi |

1,906,580 | BitPower: Security Analysis of Decentralized Lending Platform | Introduction The rapid development of decentralized finance (DeFi) has made security an important... | 0 | 2024-06-30T12:41:47 | https://dev.to/aimm_l_6b8a62242513520c18/bitpower-security-analysis-of-decentralized-lending-platform-40bj | Introduction

The rapid development of decentralized finance (DeFi) has made security an important consideration for users to choose a platform. As a leading decentralized lending platform, BitPower provides users with highly secure lending services through smart contracts and blockchain technology. This article briefly... | aimm_l_6b8a62242513520c18 | |

1,906,578 | Premier Badminton Streaming Platform | In today's digital era, access to live sports content has become increasingly important for fans... | 0 | 2024-06-30T12:40:31 | https://dev.to/rakettv/premier-badminton-streaming-platform-375p | In today's digital era, access to live sports content has become increasingly important for fans around the globe. Rakettv.net stands out as a premier channel for badminton enthusiasts, offering live streaming, highlights, and a variety of related content from world tours. As a free platform, Rakettv.net garners signif... | rakettv | |

1,906,462 | Buy Negative Google Reviews | https://dmhelpshop.com/product/buy-negative-google-reviews/ Buy Negative Google Reviews Negative... | 0 | 2024-06-30T10:01:42 | https://dev.to/carika4293/buy-negative-google-reviews-53do | node, aws, learning, career | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-negative-google-reviews/\n\n\n\n\nBuy Negative Google Reviews\nNegative reviews on Google are detrimental critiques that expose customers’ unfavorable experiences with a business. These reviews can significantly damage a company’s reputation, presenting challenges in both attracting new customers and retaining current ones. If you are considering purchasing negative Google reviews from dmhelpshop.com, we encourage you to reconsider and instead focus on providing exceptional products and services to ensure positive feedback and sustainable success.\n\nWhy Buy Negative Google Reviews from dmhelpshop\nWe take pride in our fully qualified, hardworking, and experienced team, who are committed to providing quality and safe services that meet all your needs. Our professional team ensures that you can trust us completely, knowing that your satisfaction is our top priority. With us, you can rest assured that you’re in good hands.\n\nIs Buy Negative Google Reviews safe?\nAt dmhelpshop, we understand the concern many business persons have about the safety of purchasing Buy negative Google reviews. We are here to guide you through a process that sheds light on the importance of these reviews and how we ensure they appear realistic and safe for your business. Our team of qualified and experienced computer experts has successfully handled similar cases before, and we are committed to providing a solution tailored to your specific needs. 
Contact us today to learn more about how we can help your business thrive.\n\nBuy Google 5 Star Reviews\nReviews represent the opinions of experienced customers who have utilized services or purchased products from various online or offline markets. These reviews convey customer demands and opinions, and ratings are assigned based on the quality of the products or services and the overall user experience. Google serves as an excellent platform for customers to leave reviews since the majority of users engage with it organically. When you purchase Buy Google 5 Star Reviews, you have the potential to influence a large number of people either positively or negatively. Positive reviews can attract customers to purchase your products, while negative reviews can deter potential customers.\n\nIf you choose to Buy Google 5 Star Reviews, people will be more inclined to consider your products. However, it is important to recognize that reviews can have both positive and negative impacts on your business. Therefore, take the time to determine which type of reviews you wish to acquire. Our experience indicates that purchasing Buy Google 5 Star Reviews can engage and connect you with a wide audience. By purchasing positive reviews, you can enhance your business profile and attract online traffic. Additionally, it is advisable to seek reviews from reputable platforms, including social media, to maintain a positive flow. We are an experienced and reliable service provider, highly knowledgeable about the impacts of reviews. Hence, we recommend purchasing verified Google reviews and ensuring their stability and non-gropability.\n\nLet us now briefly examine the direct and indirect benefits of reviews:\nReviews have the power to enhance your business profile, influencing users at an affordable cost.\nTo attract customers, consider purchasing only positive reviews, while negative reviews can be acquired to undermine your competitors. 
Collect negative reports on your opponents and present them as evidence.\nIf you receive negative reviews, view them as an opportunity to understand user reactions, make improvements to your products and services, and keep up with current trends.\nBy earning the trust and loyalty of customers, you can control the market value of your products. Therefore, it is essential to buy online reviews, including Buy Google 5 Star Reviews.\nReviews serve as the captivating fragrance that entices previous customers to return repeatedly.\nPositive customer opinions expressed through reviews can help you expand your business globally and achieve profitability and credibility.\nWhen you purchase positive Buy Google 5 Star Reviews, they effectively communicate the history of your company or the quality of your individual products.\nReviews act as a collective voice representing potential customers, boosting your business to amazing heights.\nNow, let’s delve into a comprehensive understanding of reviews and how they function:\nGoogle, with its significant organic user base, stands out as the premier platform for customers to leave reviews. When you purchase Buy Google 5 Star Reviews , you have the power to positively influence a vast number of individuals. Reviews are essentially written submissions by users that provide detailed insights into a company, its products, services, and other relevant aspects based on their personal experiences. In today’s business landscape, it is crucial for every business owner to consider buying verified Buy Google 5 Star Reviews, both positive and negative, in order to reap various benefits.\n\nWhy are Google reviews considered the best tool to attract customers?\nGoogle, being the leading search engine and the largest source of potential and organic customers, is highly valued by business owners. Many business owners choose to purchase Google reviews to enhance their business profiles and also sell them to third parties. 
Without reviews, it is challenging to reach a large customer base globally or locally. Therefore, it is crucial to consider buying positive Buy Google 5 Star Reviews from reliable sources. When you invest in Buy Google 5 Star Reviews for your business, you can expect a significant influx of potential customers, as these reviews act as a pheromone, attracting audiences towards your products and services. Every business owner aims to maximize sales and attract a substantial customer base, and purchasing Buy Google 5 Star Reviews is a strategic move.\n\nAccording to online business analysts and economists, trust and affection are the essential factors that determine whether people will work with you or do business with you. However, there are additional crucial factors to consider, such as establishing effective communication systems, providing 24/7 customer support, and maintaining product quality to engage online audiences. If any of these rules are broken, it can lead to a negative impact on your business. Therefore, obtaining positive reviews is vital for the success of an online business\n\nWhat are the benefits of purchasing reviews online?\nIn today’s fast-paced world, the impact of new technologies and IT sectors is remarkable. Compared to the past, conducting business has become significantly easier, but it is also highly competitive. To reach a global customer base, businesses must increase their presence on social media platforms as they provide the easiest way to generate organic traffic. Numerous surveys have shown that the majority of online buyers carefully read customer opinions and reviews before making purchase decisions. In fact, the percentage of customers who rely on these reviews is close to 97%. Considering these statistics, it becomes evident why we recommend buying reviews online. 
In an increasingly rule-based world, it is essential to take effective steps to ensure a smooth online business journey.\n\nBuy Google 5 Star Reviews\nMany people purchase reviews online from various sources and witness unique progress. Reviews serve as powerful tools to instill customer trust, influence their decision-making, and bring positive vibes to your business. Making a single mistake in this regard can lead to a significant collapse of your business. Therefore, it is crucial to focus on improving product quality, quantity, communication networks, facilities, and providing the utmost support to your customers.\n\nReviews reflect customer demands, opinions, and ratings based on their experiences with your products or services. If you purchase Buy Google 5-star reviews, it will undoubtedly attract more people to consider your offerings. Google is the ideal platform for customers to leave reviews due to its extensive organic user involvement. Therefore, investing in Buy Google 5 Star Reviews can significantly influence a large number of people in a positive way.\n\nHow to generate google reviews on my business profile?\nFocus on delivering high-quality customer service in every interaction with your customers. By creating positive experiences for them, you increase the likelihood of receiving reviews. These reviews will not only help to build loyalty among your customers but also encourage them to spread the word about your exceptional service. It is crucial to strive to meet customer needs and exceed their expectations in order to elicit positive feedback. If you are interested in purchasing affordable Google reviews, we offer that service.\n\n\n\n\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | carika4293 |

1,906,577 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-06-30T12:36:59 | https://dev.to/kjask_jklshd_cecbd37d6d57/paper-detailing-bitpower-loops-security-5502 | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | kjask_jklshd_cecbd37d6d57 | |

1,906,575 | Security: BitPower's impeccable security | Security: BitPower's impeccable security BitPower, a decentralized platform built on the blockchain,... | 0 | 2024-06-30T12:32:30 | https://dev.to/pings_iman_934c7bc4590ba4/security-bitpowers-impeccable-security-10mk |

Security: BitPower's impeccable security

BitPower, a decentralized platform built on the blockchain, knows the importance of security. In this rapidly changing digital world, security is the top priority for every u... | pings_iman_934c7bc4590ba4 | |

1,906,574 | BotPower Introduction: | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our... | 0 | 2024-06-30T12:30:13 | https://dev.to/xin_l_9aced9191ff93f0bf12/botpower-introduction-27b0 |

BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our powerful AI engine can automatically handle tedious tasks, from data analysis to customer service, and easily meet various challenges. Whether it is a small or medium-sized business or a large enterprise, BotPower can... | xin_l_9aced9191ff93f0bf12 | |

1,906,573 | BitPower: Security Analysis of Decentralized Lending Platform | Introduction The rapid development of decentralized finance (DeFi) has made security an important... | 0 | 2024-06-30T12:29:54 | https://dev.to/aimm/bitpower-security-analysis-of-decentralized-lending-platform-1fkd | Introduction

The rapid development of decentralized finance (DeFi) has made security an important consideration for users to choose a platform. As a leading decentralized lending platform, BitPower provides users with highly secure lending services through smart contracts and blockchain technology. This article briefly... | aimm | |

1,906,572 | Security: BitPower's impeccable security | Security: BitPower's impeccable security BitPower, a decentralized platform built on the blockchain,... | 0 | 2024-06-30T12:23:29 | https://dev.to/pingd_iman_9228b54c026437/security-bitpowers-impeccable-security-hk4 |

Security: BitPower's impeccable security

BitPower, a decentralized platform built on the blockchain, knows the importance of security. In this rapidly changing digital world, security is the top priority for every ... | pingd_iman_9228b54c026437 | |

1,906,571 | BotPower Introduction: | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our... | 0 | 2024-06-30T12:20:44 | https://dev.to/xin_lin_fc39c6250ef2ab451/botpower-introduction-53e7 |

BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our powerful AI engine can automatically handle tedious tasks, from data analysis to customer service, and easily meet various challenges. Whether it is a small or medium-sized business or a large enterprise, BotPower can... | xin_lin_fc39c6250ef2ab451 | |

1,906,558 | Paper detailing BitPower Loop’s security | Security Research of BitPower Loop BitPower Loop is a decentralized lending platform based on... | 0 | 2024-06-30T12:19:20 | https://dev.to/asfg_f674197abb5d7428062d/paper-detailing-bitpower-loops-security-83f | Security Research of BitPower Loop

BitPower Loop is a decentralized lending platform based on blockchain technology, dedicated to providing users with safe, transparent and efficient financial services. Its core security comes from multi-level technical measures and mechanism design, which ensures the robust operation ... | asfg_f674197abb5d7428062d | |

1,906,557 | Transforming Cloud Infrastructure with Terraform: Build, Change, Deploy | In today's cloud-centric landscape, managing infrastructure efficiently is key to scalability and... | 0 | 2024-06-30T12:17:32 | https://dev.to/rashmitha_v_d0cfc20ba7152/transforming-cloud-infrastructure-with-terraform-build-change-deploy-4o4g | In today's cloud-centric landscape, managing infrastructure efficiently is key to scalability and reliability. Enter Terraform, an Infrastructure as Code (IaC) tool that revolutionizes how we provision, manage, and evolve cloud resources across platforms like AWS, Azure, and Google Cloud. With Terraform, you define yo... | rashmitha_v_d0cfc20ba7152 | |

1,906,550 | Step-by-Step Guide to Setting Up Push Notifications in Node.js: Backend Configuration | Introduction to Push Notifications Push notifications are a powerful way to keep users... | 27,914 | 2024-06-30T12:15:52 | https://dev.to/sanjampreetsingh/step-by-step-guide-to-setting-up-push-notifications-in-nodejs-backend-configuration-53gn | webdev, javascript, programming, tutorial | ## Introduction to Push Notifications

Push notifications are a powerful way to keep users engaged by delivering timely and relevant information directly to their devices. Unlike traditional pull mechanisms where t... | sanjampreetsingh |

1,906,556 | BitPower: Security Analysis of Decentralized Lending Platform | Introduction The rapid development of decentralized finance (DeFi) has made security an important... | 0 | 2024-06-30T12:13:58 | https://dev.to/aimm_w_1761d19cef7fa886fd/bitpower-security-analysis-of-decentralized-lending-platform-cce | Introduction

The rapid development of decentralized finance (DeFi) has made security an important consideration for users to choose a platform. As a leading decentralized lending platform, BitPower provides users with highly secure lending services through smart contracts and blockchain technology. This article briefly... | aimm_w_1761d19cef7fa886fd | |

1,906,554 | Mastering Time: Using Fake Timers with Vitest | Level Up Your Timers Tests With Speed and Isolation | 0 | 2024-06-30T12:08:59 | https://brunosabot.dev/posts/2024/mastering-time-using-fake-timers-with-vitest/ | javascript, testing, vitest, codequality | ---

title: "Mastering Time: Using Fake Timers with Vitest"

published: true

date: 2024-06-30 12:08:59 UTC

description: Level Up Your Timers Tests With Speed and Isolation

tags: javascript, testing, vitest, quality

canonical_url: https://brunosabot.dev/posts/2024/mastering-time-using-fake-timers-with-vitest/

cover_image:... | brunosabot |

1,906,553 | Security: BitPower's impeccable security | Security: BitPower's impeccable security BitPower, a decentralized platform built on the blockchain,... | 0 | 2024-06-30T12:07:13 | https://dev.to/pingc_iman_034e9f20936ef4/security-bitpowers-impeccable-security-5gcl |

Security: BitPower's impeccable security

BitPower, a decentralized platform built on the blockchain, knows the importance of security. In this rapidly changing digital world, security is the top priority for every u... | pingc_iman_034e9f20936ef4 | |

1,906,552 | Ad-Free AI Chat | Normal Article... | 0 | 2024-06-30T12:00:12 | https://dev.to/haroonafgpt/ad-free-ai-chat-44bf | chatbot, ai, mobile | Normal Article Links:

https://ad-free-gpt-android-app-44571291.hubspotpagebuilder.com/

https://adfreeaichat.wordpress.com/2023/12/17/discover-the-future-of-fun-innovative-ad-free-ai-chat-android-app-without-interruptions/

https://haroonafgpt.wixsite.com/ad-free-ai-chat/post/experience-innovation-fun-discover-the-ad-fre... | haroonafgpt |

1,906,551 | BotPower Introduction: | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our... | 0 | 2024-06-30T11:58:31 | https://dev.to/bao_xin_145cb69d4d8d82453/botpower-introduction-1mmp | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our powerful AI engine can automatically handle tedious tasks, from data analysis to customer service, and easily meet various challenges. Whether it is a small or medium-sized business or a large enterprise, BotPower can ... | bao_xin_145cb69d4d8d82453 | |

1,906,549 | Creating a Synchronized Vertical and Horizontal Scrolling Component for Web Apps | Introduction Microsoft Teams' mobile agenda page offers a sleek and intuitive interface... | 0 | 2024-06-30T11:56:30 | https://dev.to/rahul_patwa_f99f19cd1519b/creating-a-synchronized-vertical-and-horizontal-scrolling-component-for-web-apps-1igc | #### Introduction

Microsoft Teams' mobile agenda page offers a sleek and intuitive interface with synchronized vertical and horizontal scrolling. This design allows users to scroll through dates horizontally and see the corresponding events in a vertical list. Inspired by this elegant solution, I decided to create a si... | rahul_patwa_f99f19cd1519b | |
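The synchronization described in this entry can be sketched as two small pure functions: one maps the vertical scroll position to the date whose events are currently in view, and the other computes how far to scroll the horizontal date strip so that date is centered. This is only a minimal sketch, not the author's implementation; the names `activeDateIndex` and `chipScrollLeft`, the pixel-offset inputs, and the fixed-width date chips are all assumptions made for illustration.

```typescript
// Hypothetical helper (not from the original post).
// sectionTops[i] is the vertical offset in pixels where the events for
// date i begin; the array is assumed to be sorted in ascending order.
function activeDateIndex(scrollTop: number, sectionTops: number[]): number {
  let active = 0;
  // The active date is the last section that starts at or above scrollTop.
  sectionTops.forEach((top, i) => {
    if (top <= scrollTop) {
      active = i;
    }
  });
  return active;
}

// Hypothetical helper (not from the original post).
// Returns the horizontal scroll offset that centers the chip for `index`,
// assuming every date chip has the same width. Clamped so it never goes
// below zero for the first few chips.
function chipScrollLeft(
  index: number,
  chipWidth: number,
  viewportWidth: number
): number {
  const chipCenter = index * chipWidth + chipWidth / 2;
  return Math.max(0, chipCenter - viewportWidth / 2);
}
```

In a real component these would probably be called from a scroll handler on the vertical list, with the result assigned to the `scrollLeft` of the horizontal strip; keeping the math in pure functions makes it easy to test without a DOM.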

1,906,546 | BotPower Introduction: | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our... | 0 | 2024-06-30T11:46:03 | https://dev.to/xin_wang_e8a515f2373224df/botpower-introduction-21h0 | BotPower is a revolutionary AI tool designed to improve work efficiency and simplify daily tasks. Our powerful AI engine can automatically handle tedious tasks, from data analysis to customer service, and easily meet various challenges. Whether it is a small or medium-sized business or a large enterprise, BotPower can ... | xin_wang_e8a515f2373224df | |

1,906,544 | Saveinsta - Download Pictures from Instagram | Instaloader is a tool to download pictures (or videos) along with their captions and other metadata... | 0 | 2024-06-30T11:41:49 | https://dev.to/save_insta/saveinsta-download-pictures-from-instagram-406n | instagram, astro, github, python | Instaloader is a tool to download pictures (or videos) along with their captions and other metadata from Instagram.

With Python installed, do:

$ pip3 install instaloader

$ instaloader profile [profile ...]

See Install Instaloader for more options on how to install Instaloader.

Instaloader downloads public and priv... | save_insta |

1,906,543 | Mastering Grids in UI Design | Day 11: Mastering Grids in UI Design 👋 Hello, LinkedIn Community! I'm Prince Chouhan, a B.Tech CSE... | 0 | 2024-06-30T11:35:30 | https://dev.to/prince_chouhan/mastering-grids-in-ui-design-3pbb | ui, uidesign, ux, uxdesign | Day 11: Mastering Grids in UI Design

👋 Hello, LinkedIn Community!

I'm Prince Chouhan, a B.Tech CSE student with a passion for UI/UX design. Today, I'm diving into the essential role of grids in UI design.

🗓️ Day 11 Topic: Grids in UI Design

Key Highlights:

1️⃣ Types of Grids:

- Column Grid:

- Vertical columns... | prince_chouhan |

1,906,542 | Free time | I was curious about online casinos and needed a reliable site that accepted Mastercard for deposits.... | 0 | 2024-06-30T11:33:18 | https://dev.to/alexseen18/free-time-3ajn | I was curious about online casinos and needed a reliable site that accepted Mastercard for deposits. I came across [https://casinoonlineca.ca/payment-options/mastercard/](https://casinoonlineca.ca/payment-options/mastercard/) and it turned out to be a fantastic resource. The site explains how to use Mastercard for onli... | alexseen18 | |

1,873,040 | USB HID Down the rabbit hole: Logitech G435 dongle | Last time, in the first post of this series, I explored the vendor interface of my Logitech Mouse,... | 0 | 2024-06-30T11:32:21 | https://dev.to/endes/usb-hid-down-the-rabbit-hole-logitech-g435-dongle-33if | usb, reverseengineering, hardware, reversing | Last time, in the first post of this series, I explored the vendor interface of my Logitech mouse; now let's explore the dongle of my headphones. As I said last time, it caught my attention that it can send "phone" HID events. Also, Logitech doesn't provide any official software for configuring the headphones (like the ... | endes |

1,906,538 | Learning CRUD Operations with NodeJS | Task Backend task I want to share a recent experience I faced while learning crud with nodejs. I was... | 0 | 2024-06-30T11:25:57 | https://dev.to/ojerahi_daniel/learning-crud-operations-with-nodejs-11i1 | Task Backend task

I want to share a recent experience I had while learning CRUD with Node.js.

I was working on a simple quote generator API and wanted to try CRUD operations on it, such as adding new quotes and viewing existing ones. I struggled to understand how to handle the requests properly. After so... | ojerahi_daniel |
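The CRUD operations mentioned in this entry (adding quotes, viewing existing ones) can be illustrated with a small in-memory store. This is a hedged sketch only: the post's actual code is not shown here, so the `Quote` shape, the `QuoteStore` class, and its method names are hypothetical, and a real API would wire these methods to HTTP route handlers and a database.

```typescript
// Hypothetical data shape; the original post's fields are not known.
interface Quote {
  id: number;
  text: string;
  author: string;
}

// Hypothetical in-memory store standing in for a database.
class QuoteStore {
  private quotes: Quote[] = [];
  private nextId = 1;

  // Create: store a new quote and return it with its assigned id.
  add(text: string, author: string): Quote {
    const quote: Quote = { id: this.nextId++, text, author };
    this.quotes.push(quote);
    return quote;
  }

  // Read all: return a shallow copy so callers cannot reorder or
  // truncate the internal array.
  list(): Quote[] {
    return [...this.quotes];
  }

  // Read one: undefined when no quote has the given id.
  get(id: number): Quote | undefined {
    return this.quotes.find((q) => q.id === id);
  }

  // Update: change the text of an existing quote, if it exists.
  update(id: number, text: string): Quote | undefined {
    const quote = this.get(id);
    if (quote) {
      quote.text = text;
    }
    return quote;
  }

  // Delete: returns true only if a quote was actually removed.
  remove(id: number): boolean {
    const before = this.quotes.length;
    this.quotes = this.quotes.filter((q) => q.id !== id);
    return this.quotes.length < before;
  }
}
```

In an HTTP API, each method would typically sit behind one route (for example, `add` behind a POST handler and `list` behind a GET handler), with the handler responsible for parsing the request and choosing the status code.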
